
Apprenticeship Learning via Inverse Reinforcement Learning
Pieter Abbeel, Andrew Y. Ng
Stanford University
NIPS 2003, Workshop

Motivation
• Typical RL setting
  – Given: system model, reward function
  – Return: policy optimal with respect to the given model and reward function
• The reward function may be hard to specify exactly
• E.g. driving well on a highway: need to trade off
  – Distance, speed, lane preference
11/21/2020 NIPS 2003, Workshop 2

Apprenticeship Learning
• = task of learning by observing an expert/teacher
• Previous work:
  – Mostly tries to mimic the teacher by learning the mapping from states to actions directly
  – Lacks strong performance guarantees
• Our approach
  – Returns a policy with performance as good as the expert's, as measured according to the expert's unknown reward function
  – Reduces the problem to solving the control problem with a given reward
  – Algorithm inspired by Inverse Reinforcement Learning (Ng and Russell, 2000)

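Preliminaries
• Markov Decision Process $(S, A, T, \gamma, D, R)$
  – $R(s) = w^T \phi(s)$, where $\phi : S \to [0, 1]^k$ is a $k$-dimensional feature vector
• Value of a policy
  $U_w(\pi) = E[\sum_{t=0}^{\infty} \gamma^t R(s_t) \mid \pi] = E[\sum_{t=0}^{\infty} \gamma^t w^T \phi(s_t) \mid \pi] = w^T E[\sum_{t=0}^{\infty} \gamma^t \phi(s_t) \mid \pi]$
• Feature distribution
  $\mu(\pi) = E[\sum_{t=0}^{\infty} \gamma^t \phi(s_t) \mid \pi] \in \frac{1}{1-\gamma} [0, 1]^k$
• $U_w(\pi) = w^T \mu(\pi)$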
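As a small numerical check of $U_w(\pi) = w^T \mu(\pi)$, the sketch below computes $\mu(\pi)$ for a hypothetical two-state MDP under a fixed policy and compares $w^T \mu(\pi)$ with ordinary policy evaluation of the reward $R(s) = w^T \phi(s)$. The transition matrix `P_pi`, start distribution `D`, features `phi`, and weights `w` are made-up illustrative values, not taken from the slides; both quantities are computed by fixed-point iteration, which converges because $\gamma < 1$.

```python
# Hypothetical two-state example (all numbers made up for illustration).
GAMMA = 0.9

def feature_expectations(P_pi, D, phi, gamma, iters=1000):
    """mu(pi) = E[sum_t gamma^t phi(s_t) | pi] for a finite MDP under a fixed policy.

    P_pi[s][s2]: transition probability under the policy; D[s]: start distribution;
    phi[s]: k-dimensional feature vector of state s.
    M[s] is the discounted feature sum when starting in s; it satisfies
    M[s] = phi[s] + gamma * sum_s2 P_pi[s][s2] * M[s2].
    """
    n, k = len(P_pi), len(phi[0])
    M = [[0.0] * k for _ in range(n)]
    for _ in range(iters):
        M = [[phi[s][i] + gamma * sum(P_pi[s][s2] * M[s2][i] for s2 in range(n))
              for i in range(k)]
             for s in range(n)]
    # Average over the start-state distribution D.
    return [sum(D[s] * M[s][i] for s in range(n)) for i in range(k)]

def policy_value(P_pi, D, R, gamma, iters=1000):
    """U(pi) = E[sum_t gamma^t R(s_t) | pi] by iterative policy evaluation."""
    n = len(P_pi)
    V = [0.0] * n
    for _ in range(iters):
        V = [R[s] + gamma * sum(P_pi[s][s2] * V[s2] for s2 in range(n)) for s in range(n)]
    return sum(D[s] * V[s] for s in range(n))

P_pi = [[0.5, 0.5],   # from state 0: stay, or move to state 1
        [0.0, 1.0]]   # state 1 is absorbing
D = [1.0, 0.0]        # always start in state 0
phi = [[1.0, 0.0],    # one-hot features
       [0.0, 1.0]]
w = [0.3, -0.7]

mu = feature_expectations(P_pi, D, phi, GAMMA)
R = [sum(wi * fi for wi, fi in zip(w, phi[s])) for s in range(len(phi))]
print(mu)                                      # approx [1.818, 8.182]
print(sum(wi * mi for wi, mi in zip(w, mu)))   # w^T mu(pi), approx -5.18
print(policy_value(P_pi, D, R, GAMMA))         # same value
```

Each component of `mu` lies in $[0, 1/(1-\gamma)] = [0, 10]$, matching the range stated above.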

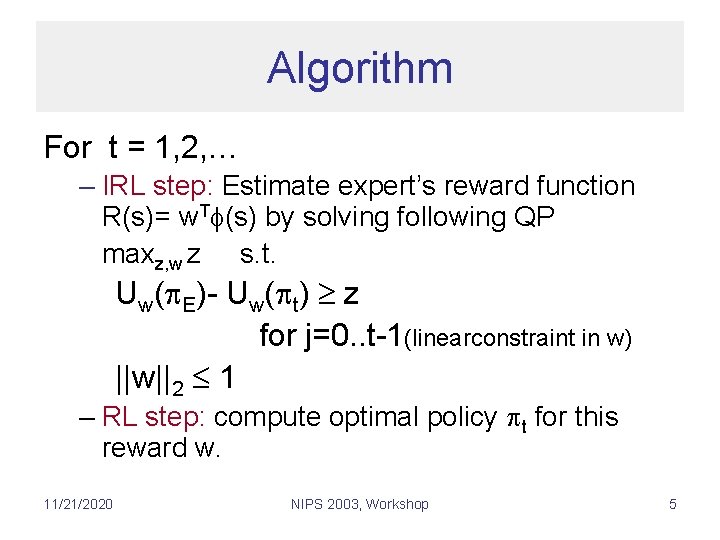

Algorithm
For t = 1, 2, …
– IRL step: Estimate the expert's reward function $R(s) = w^T \phi(s)$ by solving the following QP:
  $\max_{z, w} z$ s.t. $U_w(\pi_E) - U_w(\pi_j) \ge z$ for $j = 0, \ldots, t-1$ (linear constraints in $w$), $\|w\|_2 \le 1$
– RL step: Compute the optimal policy $\pi_t$ for this reward $w$.
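A minimal pure-Python sketch of the loop above, under stated assumptions. The IRL step does not call a QP solver; it uses the dual of the max-margin problem: with $d_j = \mu_E - \mu(\pi_j)$, the optimal margin $z$ equals the smallest norm of a point in the convex hull of the $d_j$, and the optimal $w$ is that point normalized. The min-norm point is found with Gilbert's algorithm (Frank-Wolfe with exact line search), a swapped-in technique rather than the one on the slide; for larger problems an off-the-shelf solver would be the natural choice. The RL step is a hypothetical stand-in `rl_step` that picks, from a made-up finite list of candidate feature distributions, the one maximizing $w^T \mu$; a real implementation would solve the MDP for reward $w^T \phi(s)$. The stopping threshold `eps` is also an assumption.

```python
import math

def dot(a, b):
    return sum(x * y for x, y in zip(a, b))

def irl_step(mu_E, mus, iters=10_000, tol=1e-12):
    """Max-margin IRL step: max_{z,w} z s.t. w.(mu_E - mu_j) >= z for all j, ||w||_2 <= 1.

    Solved through its dual: the optimal z is the minimum norm over the convex
    hull of d_j = mu_E - mu_j, found with Gilbert's algorithm
    (Frank-Wolfe with exact line search). Returns (w, z).
    """
    ds = [[e - m for e, m in zip(mu_E, mu)] for mu in mus]
    x = list(ds[0])                              # start at a vertex of the hull
    for _ in range(iters):
        s = min(ds, key=lambda d: dot(x, d))     # vertex minimizing <x, d>
        gap = dot(x, x) - dot(x, s)              # Frank-Wolfe duality gap, >= 0
        if gap <= tol:
            break
        diff = [si - xi for si, xi in zip(s, x)]
        g = min(1.0, gap / dot(diff, diff))      # exact line search on segment [x, s]
        x = [xi + g * di for xi, di in zip(x, diff)]
    z = math.sqrt(dot(x, x))
    w = [xi / z for xi in x] if z > 0 else [0.0] * len(x)
    return w, z

def apprenticeship_learning(mu_E, rl_step, mu_0, eps=1e-6, max_iter=100):
    """Alternate IRL and RL steps; stop once the margin z is at most eps (assumed rule)."""
    mus = [mu_0]                      # mu(pi_0) of an arbitrary initial policy
    for _ in range(max_iter):
        w, z = irl_step(mu_E, mus)    # IRL step
        if z <= eps:
            break
        mus.append(rl_step(w))        # RL step: mu(pi_t) of a policy optimal for w
    return w, z, mus

# Hypothetical RL step: choose among a fixed, made-up set of candidate policies.
candidates = [[0.0, 0.0], [1.0, 0.0], [0.0, 1.0]]

def rl_step(w):
    return max(candidates, key=lambda mu: dot(w, mu))

w, z, mus = apprenticeship_learning([0.0, 1.0], rl_step, candidates[0])
print(z, mus)   # 0.0 [[0.0, 0.0], [0.0, 1.0]]
```

Here the expert's feature distribution coincides with one candidate, so the second RL step recovers it and the margin drops to zero.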

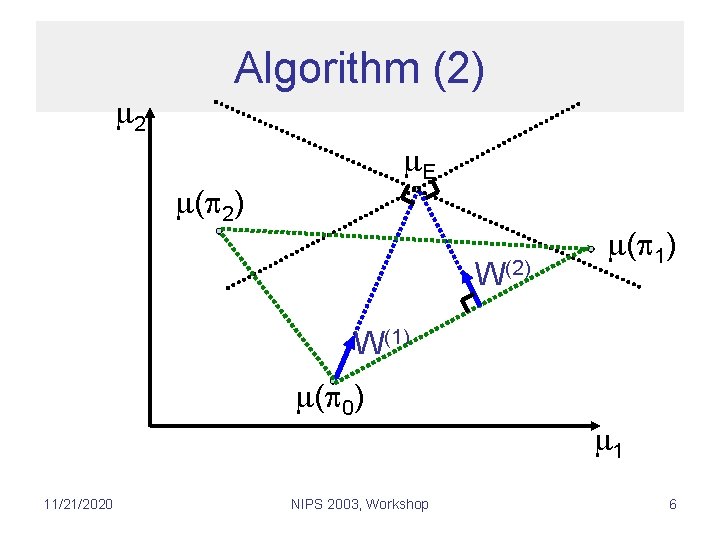

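Algorithm (2)
[Figure: iterations of the algorithm in feature-distribution space, showing $\mu_E$, $\mu(\pi_0)$, $\mu(\pi_1)$, $\mu(\pi_2)$ and the weight vectors $w^{(1)}$, $w^{(2)}$.]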

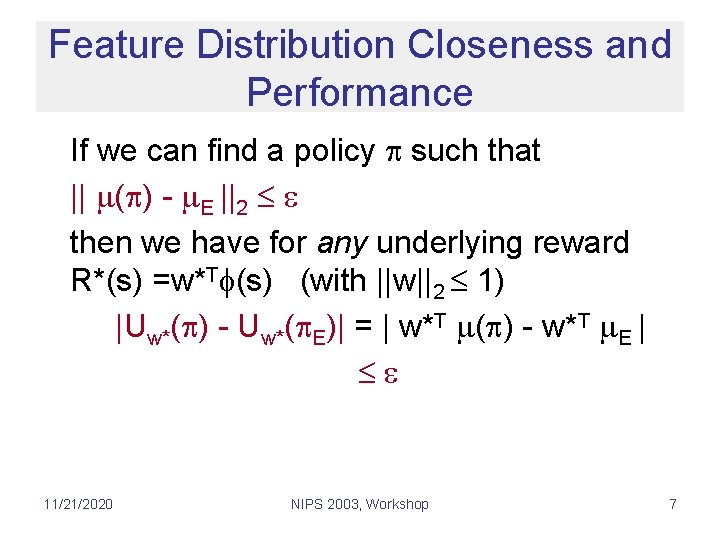

Feature Distribution Closeness and Performance
If we can find a policy $\pi$ such that $\|\mu(\pi) - \mu_E\|_2 \le \epsilon$, then for any underlying reward $R^*(s) = w^{*T} \phi(s)$ (with $\|w^*\|_2 \le 1$) we have
$|U_{w^*}(\pi) - U_{w^*}(\pi_E)| = |w^{*T} \mu(\pi) - w^{*T} \mu_E| \le \|w^*\|_2 \, \|\mu(\pi) - \mu_E\|_2 \le \epsilon$
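A quick randomized sanity check that the performance gap $|U_{w^*}(\pi) - U_{w^*}(\pi_E)| = |w^{*T}(\mu(\pi) - \mu_E)|$ stays within $\epsilon$, which follows from the Cauchy-Schwarz inequality. The dimension `k`, tolerance `eps`, and sampling scheme are arbitrary choices for illustration. The bound is attained when $w^*$ is a unit vector parallel to $\mu(\pi) - \mu_E$.

```python
import math
import random

def norm(v):
    return math.sqrt(sum(x * x for x in v))

def performance_gap(w, mu, mu_E):
    """|U_w(pi) - U_w(pi_E)| = |w^T (mu(pi) - mu_E)|."""
    return abs(sum(wi * (a - b) for wi, a, b in zip(w, mu, mu_E)))

def random_vector_in_ball(rng, k, radius):
    """Random direction with a random length in [0, radius)."""
    v = [rng.gauss(0.0, 1.0) for _ in range(k)]
    scale = radius * rng.random() / norm(v)
    return [x * scale for x in v]

def worst_gap(k=5, eps=0.1, trials=10_000, seed=0):
    rng = random.Random(seed)
    worst = 0.0
    for _ in range(trials):
        w = random_vector_in_ball(rng, k, 1.0)             # ||w*||_2 <= 1
        mu_E = [rng.uniform(0.0, 10.0) for _ in range(k)]   # arbitrary expert mu_E
        delta = random_vector_in_ball(rng, k, eps)          # ||mu - mu_E||_2 <= eps
        mu = [m + d for m, d in zip(mu_E, delta)]
        worst = max(worst, performance_gap(w, mu, mu_E))
    return worst

print(worst_gap() <= 0.1)                                    # True
print(performance_gap([1.0, 0.0], [0.1, 0.0], [0.0, 0.0]))   # 0.1: the bound is tight
```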

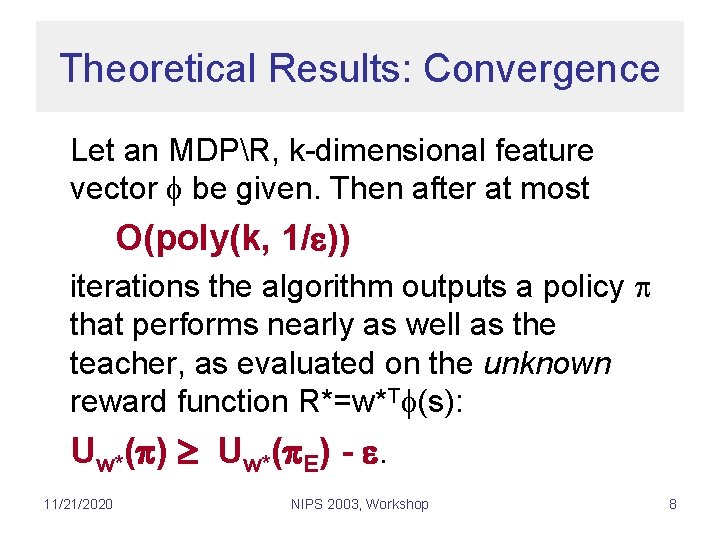

Theoretical Results: Convergence
Let an MDP\R (an MDP without a reward function) and a $k$-dimensional feature vector $\phi$ be given. Then after at most $O(\mathrm{poly}(k, 1/\epsilon))$ iterations, the algorithm outputs a policy $\pi$ that performs nearly as well as the teacher, as evaluated on the unknown reward function $R^*(s) = w^{*T} \phi(s)$:
$U_{w^*}(\pi) \ge U_{w^*}(\pi_E) - \epsilon$.

Theoretical Results: Sampling
In practice, we have to use sampling estimates of the expert's feature distribution. We still obtain $\epsilon$-optimal performance with high probability if the number of samples is $O(\mathrm{poly}(k, 1/\epsilon))$.
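A sketch of the sampling estimate $\hat\mu_E = \frac{1}{m} \sum_{i=1}^{m} \sum_{t} \gamma^t \phi(s_t^{(i)})$, assuming each expert demonstration is available as a finite list of visited states and the feature map `phi` is known; the function name and toy data are hypothetical. Truncating trajectories after $H$ steps biases each component by at most $\gamma^H/(1-\gamma)$, since features lie in $[0, 1]$.

```python
def empirical_feature_expectations(trajectories, phi, gamma):
    """Monte Carlo estimate of mu_E from m expert trajectories (lists of states)."""
    k = len(phi(trajectories[0][0]))
    total = [0.0] * k
    for traj in trajectories:
        discount = 1.0
        for s in traj:
            # Accumulate gamma^t * phi(s_t) for this trajectory.
            total = [acc + discount * f for acc, f in zip(total, phi(s))]
            discount *= gamma
    m = len(trajectories)
    return [acc / m for acc in total]

# Toy example: two states with one-hot features.
def phi(s):
    return [1.0, 0.0] if s == 0 else [0.0, 1.0]

demos = [[0, 1], [1, 1]]
print(empirical_feature_expectations(demos, phi, gamma=0.5))   # [0.5, 1.0]
```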

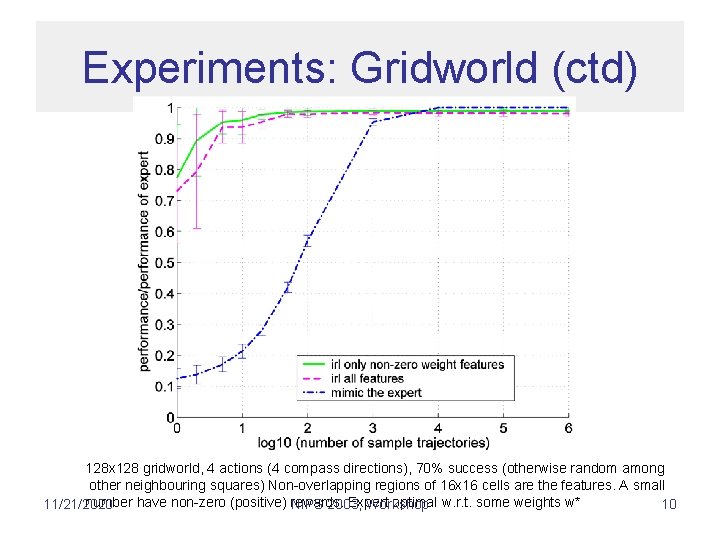

Experiments: Gridworld (ctd)
• 128 x 128 gridworld, 4 actions (the 4 compass directions); an action succeeds with probability 70%, otherwise the agent moves randomly to one of the other neighbouring squares
• The features are non-overlapping regions of 16 x 16 cells (8 x 8 = 64 regions, so k = 64). A small number of regions have non-zero (positive) rewards.
• The expert is optimal w.r.t. some weights w*
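A sketch of this gridworld's feature map and transition model. The slide leaves two details open, so these are assumptions: the remaining 30% probability is split evenly over the other three compass moves, and a move that would leave the grid keeps the agent in place. Features are one-hot indicators of the 16 x 16 region containing the cell; the names `phi`, `transition`, and `MOVES` are hypothetical.

```python
GRID = 128               # 128 x 128 cells
REGION = 16              # 16 x 16 cells per feature region
N_REG = GRID // REGION   # 8 regions per side -> k = 64 features
MOVES = {"N": (0, 1), "S": (0, -1), "E": (1, 0), "W": (-1, 0)}

def phi(x, y):
    """One-hot feature vector: which 16 x 16 region contains cell (x, y)."""
    f = [0.0] * (N_REG * N_REG)
    f[(x // REGION) * N_REG + (y // REGION)] = 1.0
    return f

def transition(x, y, action, p_success=0.7):
    """Next-cell distribution for taking `action` in cell (x, y).

    Assumed: the other (1 - p_success) is split evenly over the other three
    compass moves, and moves off the grid leave the agent where it is.
    """
    probs = {}
    for a, (dx, dy) in MOVES.items():
        p = p_success if a == action else (1 - p_success) / 3
        nx, ny = x + dx, y + dy
        if not (0 <= nx < GRID and 0 <= ny < GRID):
            nx, ny = x, y
        probs[(nx, ny)] = probs.get((nx, ny), 0.0) + p
    return probs

print(len(phi(0, 0)))                          # 64
print(round(transition(0, 0, "S")[(0, 0)], 3))  # 0.8: blocked S and W moves stay put
```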

Experiments: Car Driving

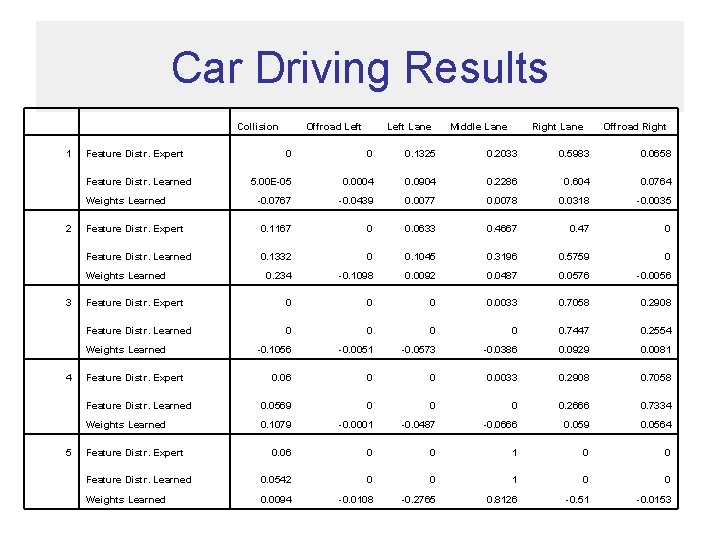

Car Driving Results
Features (in order): Collision, Offroad Left, Left Lane, Middle Lane, Right Lane, Offroad Right
• Expert 1
  – Feature Distr. Expert: 0, 0, 0.1325, 0.2033, 0.5983, 0.0658
  – Feature Distr. Learned: 5.00E-05, 0.0004, 0.0904, 0.2286, 0.604, 0.0764
  – Weights Learned: -0.0767, -0.0439, 0.0077, 0.0078, 0.0318, -0.0035
• Expert 2
  – Feature Distr. Expert: 0.1167, 0, 0.0633, 0.4667, 0.47, 0
  – Feature Distr. Learned: 0.1332, 0, 0.1045, 0.3196, 0.5759, 0
  – Weights Learned: 0.234, -0.1098, 0.0092, 0.0487, 0.0576, -0.0056
• Expert 3
  – Feature Distr. Expert (4 of 6 values recovered): 0, 0.0033, 0.7058, 0.2908
  – Feature Distr. Learned (4 of 6 values recovered): 0, 0, 0.7447, 0.2554
  – Weights Learned: -0.1056, -0.0051, -0.0573, -0.0386, 0.0929, 0.0081
• Expert 4
  – Feature Distr. Expert: 0.06, 0, 0, 0.0033, 0.2908, 0.7058
  – Feature Distr. Learned (4 of 6 values recovered): 0.0569, 0, 0.2666, 0.7334
  – Weights Learned: 0.1079, -0.0001, -0.0487, -0.0666, 0.059, 0.0564
• Expert 5
  – Feature Distr. Expert: 0.06, 0, 0, 1, 0, 0
  – Feature Distr. Learned: 0.0542, 0, 0, 1, 0, 0
  – Weights Learned: 0.0094, -0.0108, -0.2765, 0.8126, -0.51, -0.0153

Conclusion
• Our algorithm returns a policy with performance as good as the expert's, as evaluated according to the expert's unknown reward function
• Reduced the problem to solving the control problem with a given reward
• Algorithm guaranteed to converge in $\mathrm{poly}(k, 1/\epsilon)$ iterations
• Sample complexity $\mathrm{poly}(k, 1/\epsilon)$

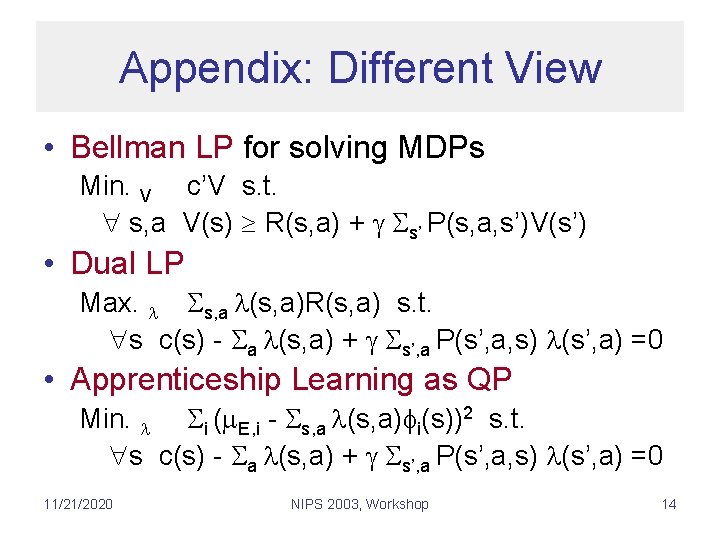

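Appendix: Different View
• Bellman LP for solving MDPs
  $\min_V c^T V$ s.t. $\forall s, a:\ V(s) \ge R(s, a) + \gamma \sum_{s'} P(s, a, s') V(s')$
• Dual LP
  $\max_\lambda \sum_{s, a} \lambda(s, a) R(s, a)$ s.t. $\forall s:\ c(s) - \sum_a \lambda(s, a) + \gamma \sum_{s', a} P(s', a, s) \lambda(s', a) = 0$, $\lambda \ge 0$
• Apprenticeship Learning as QP
  $\min_\lambda \sum_i (\mu_{E,i} - \sum_{s, a} \lambda(s, a) \phi_i(s))^2$ s.t. $\forall s:\ c(s) - \sum_a \lambda(s, a) + \gamma \sum_{s', a} P(s', a, s) \lambda(s', a) = 0$, $\lambda \ge 0$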
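To see why the apprenticeship problem becomes a QP in $\lambda$, the sketch below computes the discounted occupancy measure $\lambda(s, a)$ of a fixed stochastic policy on a toy MDP, checks that it satisfies the dual LP's flow constraint, and recovers the feature distribution as $\mu_i = \sum_{s,a} \lambda(s, a) \phi_i(s)$. That map is linear in $\lambda$, so the squared distance to $\mu_E$ is quadratic. The array layout `P[s][a][s2]`, the policy table `pi[s][a]`, the start distribution `c`, and all numbers are assumptions of this example.

```python
def occupancy_measure(P, pi, c, gamma, iters=1000):
    """lambda(s, a) = d(s) * pi[s][a], where the discounted state visitation d solves
    d(s) = c(s) + gamma * sum_{s', a} P[s'][a][s] * lambda(s', a)."""
    n, m = len(P), len(P[0])
    d = list(c)
    for _ in range(iters):
        lam = [[d[s] * pi[s][a] for a in range(m)] for s in range(n)]
        d = [c[s] + gamma * sum(P[s2][a][s] * lam[s2][a]
                                for s2 in range(n) for a in range(m))
             for s in range(n)]
    return [[d[s] * pi[s][a] for a in range(m)] for s in range(n)]

def flow_residual(P, lam, c, gamma):
    """Per state: c(s) - sum_a lambda(s, a) + gamma * sum_{s', a} P(s', a, s) lambda(s', a)."""
    n, m = len(P), len(P[0])
    return [c[s] - sum(lam[s]) + gamma * sum(P[s2][a][s] * lam[s2][a]
                                             for s2 in range(n) for a in range(m))
            for s in range(n)]

def mu_from_occupancy(lam, phi):
    """mu_i = sum_{s,a} lambda(s, a) * phi_i(s), which is linear in lambda."""
    k = len(phi[0])
    return [sum(lam[s][a] * phi[s][i] for s in range(len(lam)) for a in range(len(lam[s])))
            for i in range(k)]

# Toy MDP: action 0 leads to state 0, action 1 leads to state 1 (deterministic).
P = [[[1.0, 0.0], [0.0, 1.0]],
     [[1.0, 0.0], [0.0, 1.0]]]
pi = [[0.5, 0.5],   # state 0: pick either action
      [0.0, 1.0]]   # state 1: always action 1 (stay)
c = [1.0, 0.0]      # start in state 0
phi = [[1.0, 0.0], [0.0, 1.0]]

lam = occupancy_measure(P, pi, c, gamma=0.9)
print(max(abs(r) for r in flow_residual(P, lam, c, 0.9)) < 1e-9)   # True
print(mu_from_occupancy(lam, phi))                                 # approx [1.818, 8.182]
```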

Different View (ctd.)
• Our algorithm is equivalent to iteratively
  – linearizing the QP at the current point (IRL step)
  – solving the resulting LP (RL step)
• Why not solve the QP directly? This is typically only possible for very small toy problems (curse of dimensionality).
