Lecture 10: SeqGAN, Chatbot, Reinforcement Learning

Based on the following two papers:

- L. Yu, W. Zhang, J. Wang, Y. Yu, SeqGAN: Sequence generative adversarial nets with policy gradient. AAAI, 2017.
- J. Li, W. Monroe, T. Shi, S. Jean, A. Ritter, D. Jurafsky, Adversarial learning for neural dialogue generation. arXiv:1701.06547v4, 2017.
- And HY Lee's lecture notes.

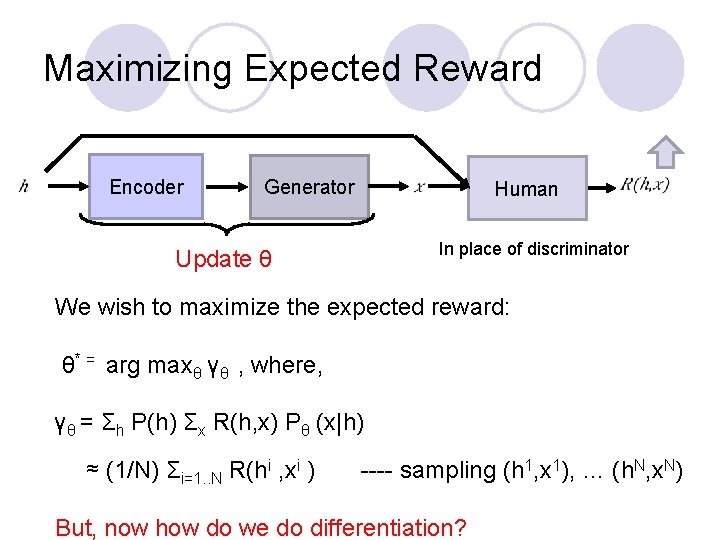

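Maximizing Expected Reward

The encoder-generator (chatbot) with parameters $\theta$ produces a response $x$ to an input $h$. A human, in place of a discriminator, scores it with a reward $R(h, x)$, which is used to update $\theta$.

We wish to maximize the expected reward:

$$\theta^* = \arg\max_\theta \gamma_\theta, \qquad \gamma_\theta = \sum_h P(h) \sum_x R(h, x)\, P_\theta(x \mid h) \approx \frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i),$$

where the approximation comes from sampling $(h^1, x^1), \ldots, (h^N, x^N)$.

But now, how do we differentiate this?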
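To make the sampling approximation concrete, here is a minimal Python sketch that compares the exact double sum with the sample average. The inputs, responses, probabilities and reward function are all hypothetical toy values chosen for illustration; none of them come from the lecture.

```python
import random

# Hypothetical toy setup (not from the lecture): two inputs h, three responses x.
P_H = {"hi": 0.5, "bye": 0.5}
P_X_GIVEN_H = {
    "hi": {"hello": 0.7, "bye": 0.1, "ok": 0.2},
    "bye": {"hello": 0.1, "bye": 0.8, "ok": 0.1},
}

def reward(h, x):
    # Stand-in for the human score R(h, x): 1 for a fitting reply, else 0.
    return 1.0 if (h, x) in {("hi", "hello"), ("bye", "bye")} else 0.0

def exact_expected_reward():
    # gamma = sum_h P(h) sum_x R(h, x) P(x | h)
    return sum(
        P_H[h] * sum(reward(h, x) * p for x, p in P_X_GIVEN_H[h].items())
        for h in P_H
    )

def sampled_expected_reward(n, rng):
    # gamma ~ (1/N) sum_i R(h_i, x_i) with (h_i, x_i) drawn from P(h) P(x | h)
    total = 0.0
    for _ in range(n):
        h = rng.choices(list(P_H), weights=list(P_H.values()))[0]
        dist = P_X_GIVEN_H[h]
        x = rng.choices(list(dist), weights=list(dist.values()))[0]
        total += reward(h, x)
    return total / n
```

Calling `sampled_expected_reward(20000, random.Random(0))` should land close to `exact_expected_reward()`, and the gap shrinks as `n` grows.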

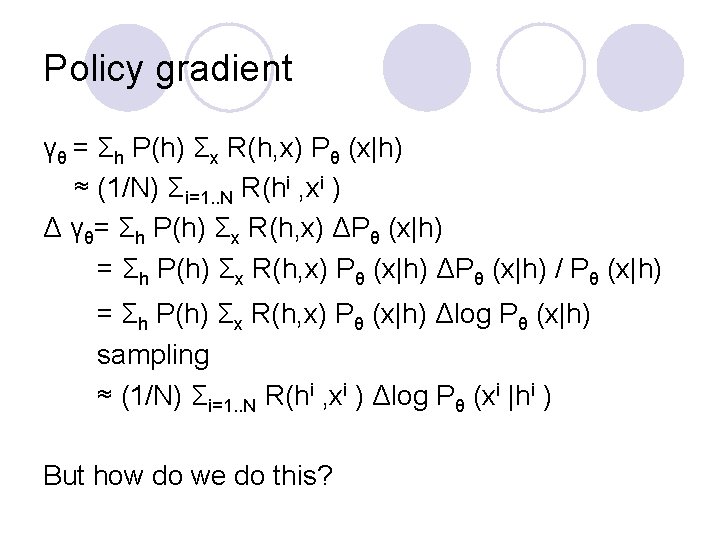

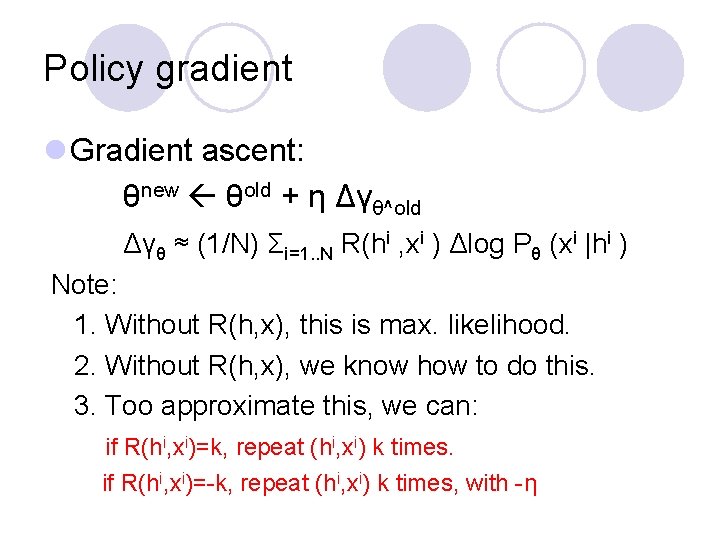

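Policy gradient

$$\begin{aligned}
\gamma_\theta &= \sum_h P(h) \sum_x R(h, x)\, P_\theta(x \mid h) \approx \frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i) \\
\nabla_\theta \gamma_\theta &= \sum_h P(h) \sum_x R(h, x)\, \nabla_\theta P_\theta(x \mid h) \\
&= \sum_h P(h) \sum_x R(h, x)\, P_\theta(x \mid h)\, \frac{\nabla_\theta P_\theta(x \mid h)}{P_\theta(x \mid h)} \\
&= \sum_h P(h) \sum_x R(h, x)\, P_\theta(x \mid h)\, \nabla_\theta \log P_\theta(x \mid h) \\
&\approx \frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i)\, \nabla_\theta \log P_\theta(x^i \mid h^i) \qquad \text{(sampling)}
\end{aligned}$$

But how do we do this?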
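The last line of the derivation can be checked numerically. The sketch below assumes a toy policy that is a softmax over three candidate responses for one fixed input (an assumption made for illustration, not the lecture's encoder-decoder model) and compares the exact gradient with the sampled estimate.

```python
import math
import random

def softmax(theta):
    m = max(theta)
    exps = [math.exp(t - m) for t in theta]
    z = sum(exps)
    return [e / z for e in exps]

def grad_log_prob(theta, x):
    # For a softmax policy: d/d theta_j log P(x) = 1[j == x] - P(j)
    p = softmax(theta)
    return [(1.0 if j == x else 0.0) - p[j] for j in range(len(theta))]

def exact_gradient(theta, rewards):
    # sum_x R(x) P(x) grad log P(x), which simplifies to P(j) * (R(j) - E[R])
    p = softmax(theta)
    mean_r = sum(r * q for r, q in zip(rewards, p))
    return [p[j] * (rewards[j] - mean_r) for j in range(len(theta))]

def sampled_gradient(theta, rewards, n, rng):
    # (1/N) sum_i R(x_i) grad log P(x_i), with x_i sampled from the policy
    p = softmax(theta)
    grad = [0.0] * len(theta)
    for x in rng.choices(range(len(theta)), weights=p, k=n):
        g = grad_log_prob(theta, x)
        for j in range(len(theta)):
            grad[j] += rewards[x] * g[j] / n
    return grad
```

With enough samples the two gradients agree closely, which is the point of the log-derivative step: it turns a sum over all responses into an average over sampled ones.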

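Policy gradient

- Gradient ascent: $\theta^{new} \leftarrow \theta^{old} + \eta\, \nabla_\theta \gamma_{\theta^{old}}$, where

$$\nabla_\theta \gamma_\theta \approx \frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i)\, \nabla_\theta \log P_\theta(x^i \mid h^i)$$

Note:

1. Without $R(h, x)$, this is maximum likelihood.
2. Without $R(h, x)$, we know how to do this.
3. To approximate this, we can: if $R(h^i, x^i) = k$, repeat $(h^i, x^i)$ $k$ times; if $R(h^i, x^i) = -k$, repeat $(h^i, x^i)$ $k$ times with $-\eta$.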
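Note 3 can be illustrated with a small sketch: weighting each sample's log-likelihood gradient by an integer reward gives the same gradient as repeating the sample that many times, with a flipped sign for negative rewards. The softmax policy over three responses and the function names are assumptions for illustration.

```python
import math

def softmax(theta):
    m = max(theta)
    exps = [math.exp(t - m) for t in theta]
    z = sum(exps)
    return [e / z for e in exps]

def grad_log_prob(theta, x):
    p = softmax(theta)
    return [(1.0 if j == x else 0.0) - p[j] for j in range(len(theta))]

def reward_weighted_gradient(theta, samples, rewards):
    # (1/N) sum_i R_i * grad log P(x_i)
    n = len(samples)
    grad = [0.0] * len(theta)
    for x, r in zip(samples, rewards):
        g = grad_log_prob(theta, x)
        for j in range(len(theta)):
            grad[j] += r * g[j] / n
    return grad

def repeated_sample_gradient(theta, samples, rewards):
    # Same quantity via repetition: a sample with reward k appears k times;
    # a sample with reward -k appears k times with the sign (i.e. eta) flipped.
    n = len(samples)
    grad = [0.0] * len(theta)
    for x, r in zip(samples, rewards):
        sign = 1.0 if r >= 0 else -1.0
        for _ in range(abs(r)):
            g = grad_log_prob(theta, x)
            for j in range(len(theta)):
                grad[j] += sign * g[j] / n
    return grad

def ascent_step(theta, grad, eta):
    # theta_new = theta_old + eta * gradient
    return [t + eta * g for t, g in zip(theta, grad)]
```

For example, with `samples = [0, 1, 2, 0]` and integer `rewards = [2, -1, 3, 0]`, both gradient functions return the same vector up to floating-point rounding.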

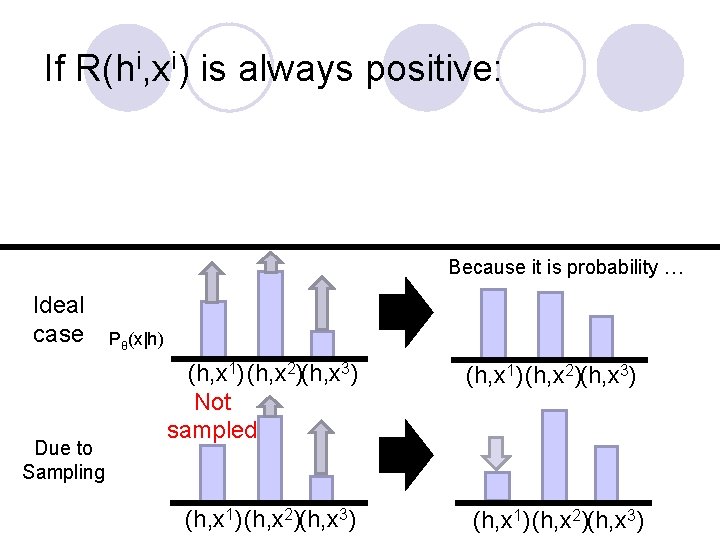

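If $R(h^i, x^i)$ is always positive

Because $P_\theta(x \mid h)$ is a probability distribution, it must sum to 1 over the responses, so the probabilities of $(h, x^1)$, $(h, x^2)$, $(h, x^3)$ cannot all increase.

- Ideal case: every response is sampled. Each one is pushed up, but after normalization the responses with larger reward gain probability and the others lose it.
- Due to sampling: a response that happens not to be sampled receives no push at all, so its probability decreases regardless of how good it is.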

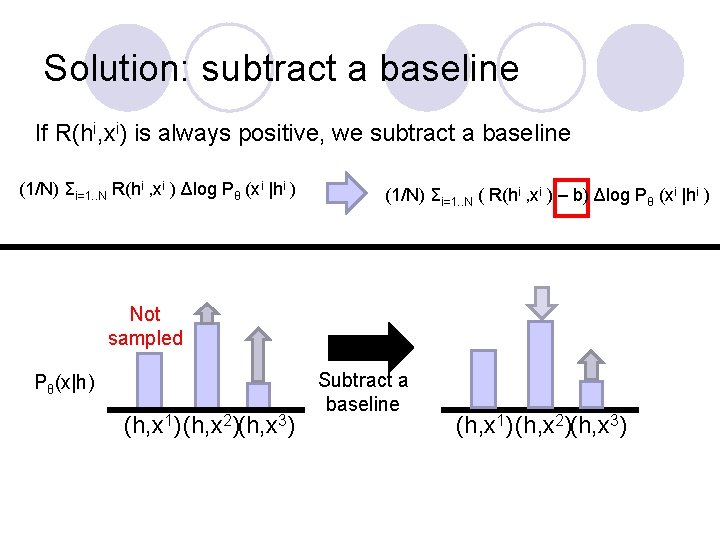

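Solution: subtract a baseline

If $R(h^i, x^i)$ is always positive, we subtract a baseline $b$, replacing

$$\frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i)\, \nabla_\theta \log P_\theta(x^i \mid h^i)$$

with

$$\frac{1}{N} \sum_{i=1}^{N} \bigl(R(h^i, x^i) - b\bigr)\, \nabla_\theta \log P_\theta(x^i \mid h^i).$$

After subtracting the baseline, sampled responses with below-baseline reward are pushed down, so a response among $(h, x^1)$, $(h, x^2)$, $(h, x^3)$ that was not sampled is no longer the only one penalized.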
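The effect of the baseline can be seen in a small sketch. It assumes a softmax policy over three responses, two of which are sampled with positive rewards, and uses the mean sampled reward as the baseline. The mean is one common choice and is an assumption here; the slides do not specify $b$.

```python
import math

def softmax(theta):
    m = max(theta)
    exps = [math.exp(t - m) for t in theta]
    z = sum(exps)
    return [e / z for e in exps]

def policy_gradient(theta, samples, rewards, baseline=0.0):
    # (1/N) sum_i (R_i - b) * grad log P(x_i) for a softmax policy
    p = softmax(theta)
    n = len(samples)
    grad = [0.0] * len(theta)
    for x, r in zip(samples, rewards):
        for j in range(len(theta)):
            indicator = 1.0 if j == x else 0.0
            grad[j] += (r - baseline) * (indicator - p[j]) / n
    return grad

def step(theta, grad, eta=1.0):
    return [t + eta * g for t, g in zip(theta, grad)]

theta0 = [0.0, 0.0, 0.0]      # uniform policy over responses 0, 1, 2
samples = [0, 1]              # response 2 is never sampled
rewards = [3.0, 1.0]          # both rewards are positive
b = sum(rewards) / len(rewards)

p_plain = softmax(step(theta0, policy_gradient(theta0, samples, rewards)))
p_base = softmax(step(theta0, policy_gradient(theta0, samples, rewards, baseline=b)))
```

Without the baseline, the unsampled response ends up with the lowest probability in `p_plain`. With the baseline, the sampled low-reward response drops below it in `p_base`, and the unsampled response keeps most of its probability.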

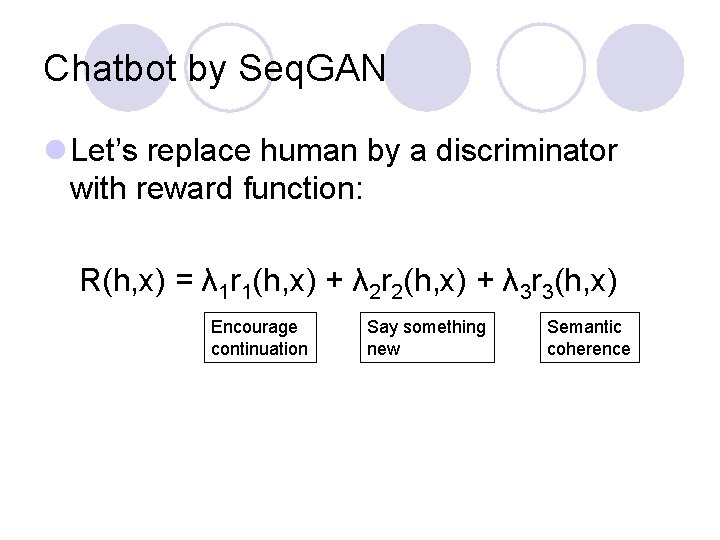

Chatbot by SeqGAN

- Let's replace the human by a discriminator with reward function:

$$R(h, x) = \lambda_1 r_1(h, x) + \lambda_2 r_2(h, x) + \lambda_3 r_3(h, x)$$

where $r_1$ encourages continuation, $r_2$ rewards saying something new, and $r_3$ rewards semantic coherence.

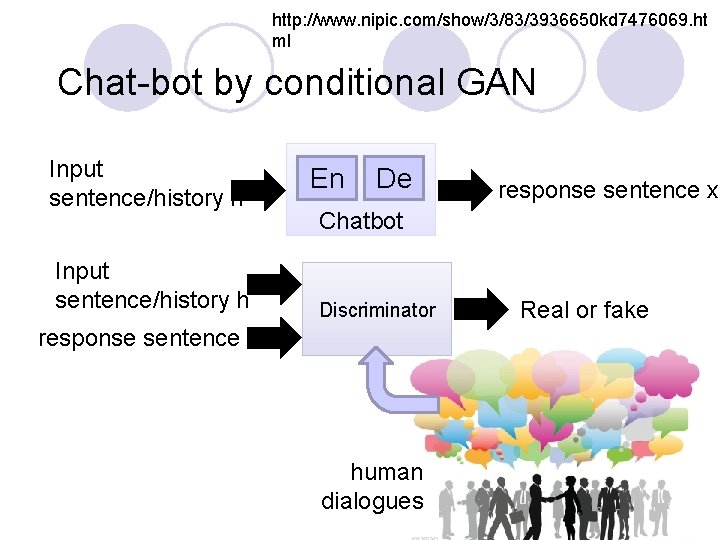

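Chat-bot by conditional GAN

- Chatbot (En, De): takes the input sentence/history $h$ and produces a response sentence $x$.
- Discriminator: takes the input $h$ with a response sentence $x$, either from the chatbot or from human dialogues, and decides whether it is real or fake.

Image source: http://www.nipic.com/show/3/83/3936650kd7476069.html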

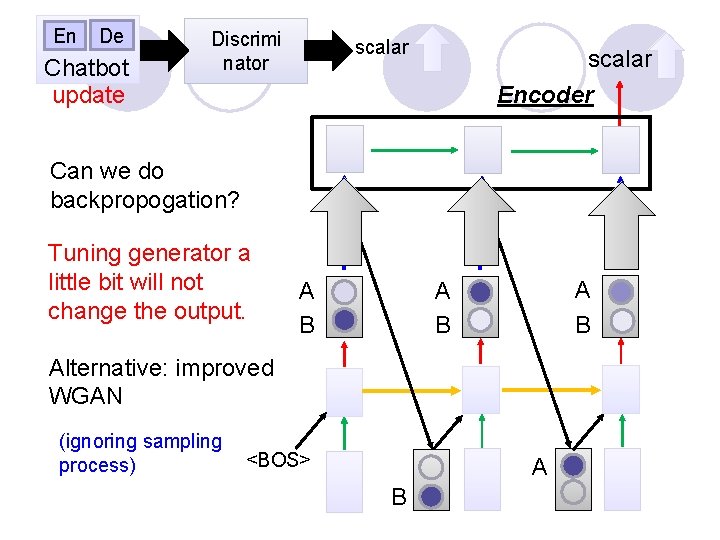

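Can we do backpropagation?

The discriminator maps the chatbot's (En, De) output to a scalar, and we would like to use that scalar to update the chatbot. But the decoder, starting from <BOS>, samples discrete tokens (A, B in the figure), so tuning the generator a little bit will not change the output, and no gradient flows back through the sampling step.

Alternative: improved WGAN (ignoring the sampling process).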

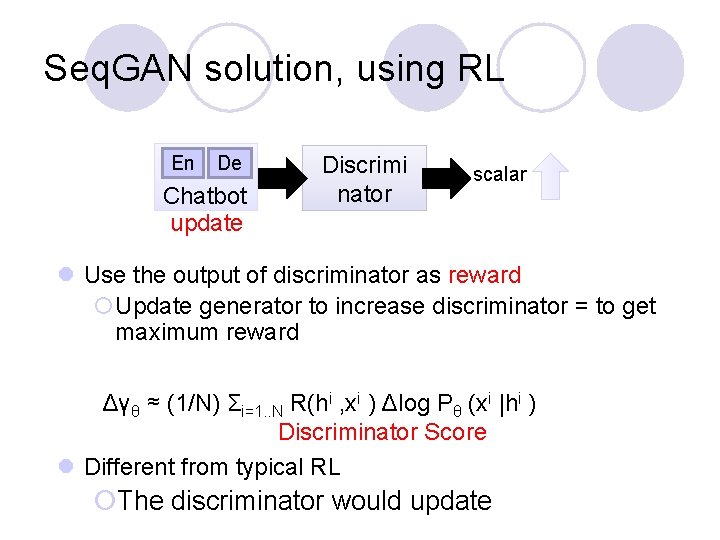

SeqGAN solution, using RL

- Use the output of the discriminator (a scalar score) as the reward.
  - Update the generator (En, De) to increase the discriminator score, i.e. to get maximum reward:

$$\nabla_\theta \gamma_\theta \approx \frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i)\, \nabla_\theta \log P_\theta(x^i \mid h^i), \qquad R(h^i, x^i) = \text{discriminator score}$$

- Different from typical RL:
  - The discriminator would update.

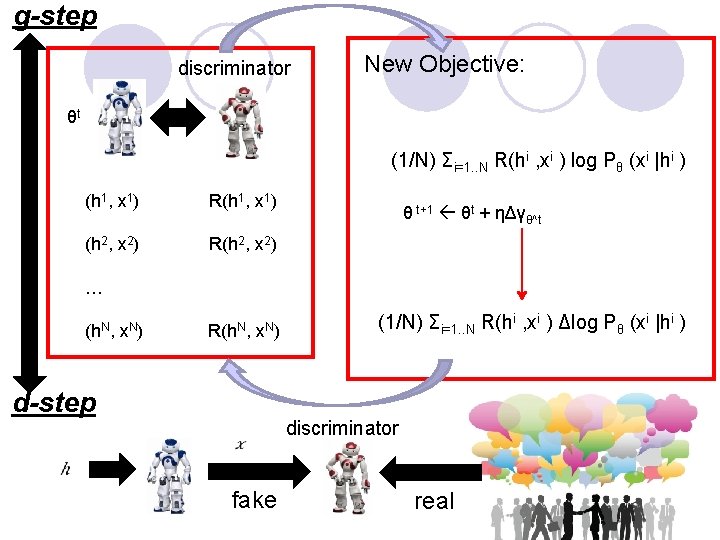

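g-step and d-step

- g-step: with the current generator $\theta^t$, sample $(h^1, x^1), (h^2, x^2), \ldots, (h^N, x^N)$ and let the discriminator score them as $R(h^1, x^1), R(h^2, x^2), \ldots, R(h^N, x^N)$. The new objective is

$$\frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i)\, \log P_\theta(x^i \mid h^i),$$

  whose gradient is $\frac{1}{N} \sum_{i=1}^{N} R(h^i, x^i)\, \nabla_\theta \log P_\theta(x^i \mid h^i)$, giving the update $\theta^{t+1} \leftarrow \theta^t + \eta\, \nabla_\theta \gamma_{\theta^t}$.
- d-step: update the discriminator to separate the generator's (fake) responses from real ones.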
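The alternation of d-steps and g-steps can be sketched on a toy problem. Everything below is an assumption made for illustration: a single fixed input, three candidate responses, a softmax generator, a discriminator with one logit per response, and a "human" who always gives response 0. It is not the architecture used in the papers.

```python
import math
import random

def softmax(theta):
    m = max(theta)
    exps = [math.exp(t - m) for t in theta]
    z = sum(exps)
    return [e / z for e in exps]

def sigmoid(v):
    return 1.0 / (1.0 + math.exp(-v))

def train(rounds=200, n=64, eta_g=0.5, eta_d=0.5, seed=0):
    rng = random.Random(seed)
    k = 3                      # toy response set {0, 1, 2} for one fixed input h
    real_response = 0          # assumption: real dialogues always use response 0
    theta = [0.0] * k          # generator logits
    w = [0.0] * k              # discriminator logits, D(x) = sigmoid(w[x])
    for _ in range(rounds):
        p = softmax(theta)
        # d-step: ascend log D(real) + log(1 - D(fake)) on sampled fakes.
        fakes = rng.choices(range(k), weights=p, k=n)
        grad_w = [0.0] * k
        for x in fakes:
            grad_w[x] -= sigmoid(w[x]) / n                         # d/dw log(1 - D) = -D
        grad_w[real_response] += 1.0 - sigmoid(w[real_response])  # d/dw log D = 1 - D
        w = [wi + eta_d * g for wi, g in zip(w, grad_w)]
        # g-step: policy gradient with the discriminator score as reward.
        samples = rng.choices(range(k), weights=p, k=n)
        grad_theta = [0.0] * k
        for x in samples:
            r = sigmoid(w[x])
            for j in range(k):
                grad_theta[j] += r * ((1.0 if j == x else 0.0) - p[j]) / n
        theta = [t + eta_g * g for t, g in zip(theta, grad_theta)]
    return softmax(theta), [sigmoid(wi) for wi in w]

gen_probs, disc_scores = train()
```

In this toy setting the generator should shift most of its probability onto response 0, since that is the only response the discriminator ever sees labelled as real.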

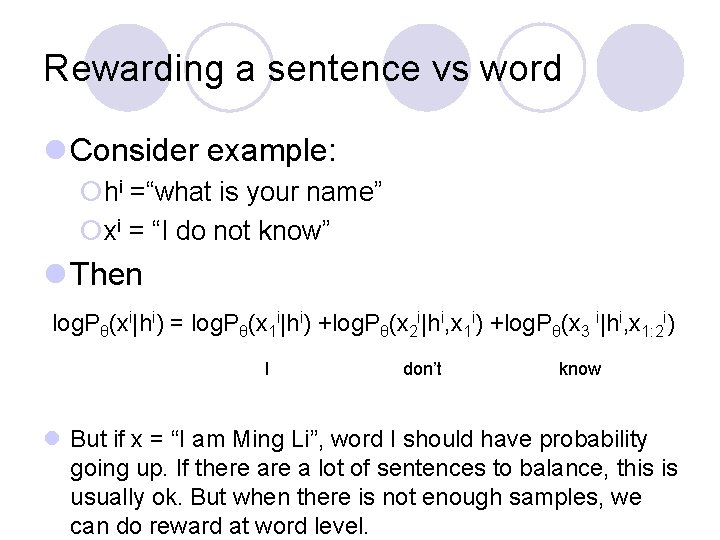

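Rewarding a sentence vs. a word

- Consider an example:
  - $h^i$ = "what is your name"
  - $x^i$ = "I don't know"
- Then

$$\log P_\theta(x^i \mid h^i) = \log P_\theta(x^i_1 \mid h^i) + \log P_\theta(x^i_2 \mid h^i, x^i_1) + \log P_\theta(x^i_3 \mid h^i, x^i_{1:2}),$$

  with one term each for "I", "don't" and "know". This response deserves a low reward, and a sentence-level reward pushes all three terms down, including the one for "I".
- But if $x$ = "I am Ming Li", the word "I" should have its probability go up. If there are a lot of sentences to balance this out, it is usually OK. But when there are not enough samples, we can reward at the word level.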
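A short sketch of the decomposition in the example above: the sentence log-probability is the sum of per-token conditional log-probabilities, and a sentence-level reward applies the same weight to every token's term. The per-token probabilities, reward and baseline are made-up numbers for illustration only.

```python
import math

# Hypothetical conditional probabilities P(x_t | h, x_{1:t-1}) for the reply
# "I don't know" to "what is your name" (illustrative values only).
TOKEN_PROBS = [("I", 0.5), ("don't", 0.2), ("know", 0.9)]

def sentence_log_prob(token_probs):
    # log P(x | h) = sum_t log P(x_t | h, x_{1:t-1})
    return sum(math.log(p) for _, p in token_probs)

def sentence_level_weights(token_probs, reward, baseline):
    # With a sentence-level reward, every token's grad-log-prob term
    # is multiplied by the same factor (R - b).
    return [(token, reward - baseline) for token, _ in token_probs]

log_p = sentence_log_prob(TOKEN_PROBS)
weights = sentence_level_weights(TOKEN_PROBS, reward=0.1, baseline=0.5)
```

Here every token, including "I", receives the same negative weight, which is exactly the issue a word-level reward is meant to address.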

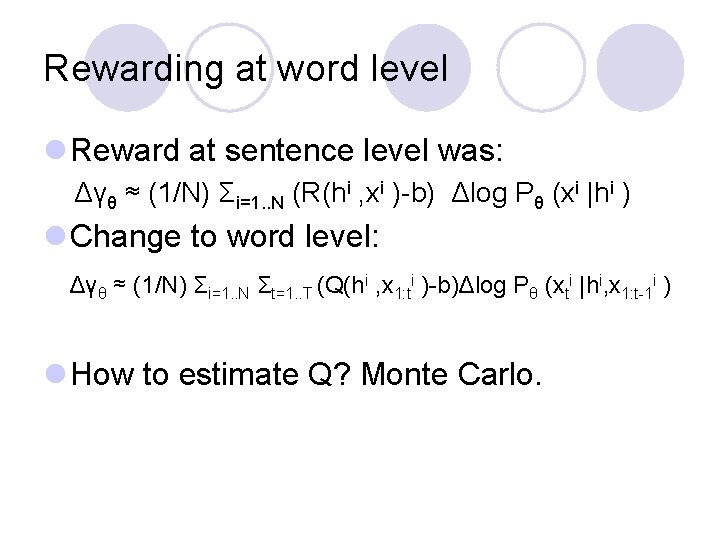

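Rewarding at word level

- Reward at sentence level was:

$$\nabla_\theta \gamma_\theta \approx \frac{1}{N} \sum_{i=1}^{N} \bigl(R(h^i, x^i) - b\bigr)\, \nabla_\theta \log P_\theta(x^i \mid h^i)$$

- Change to word level:

$$\nabla_\theta \gamma_\theta \approx \frac{1}{N} \sum_{i=1}^{N} \sum_{t=1}^{T} \bigl(Q(h^i, x^i_{1:t}) - b\bigr)\, \nabla_\theta \log P_\theta(x^i_t \mid h^i, x^i_{1:t-1})$$

- How to estimate $Q$? Monte Carlo.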

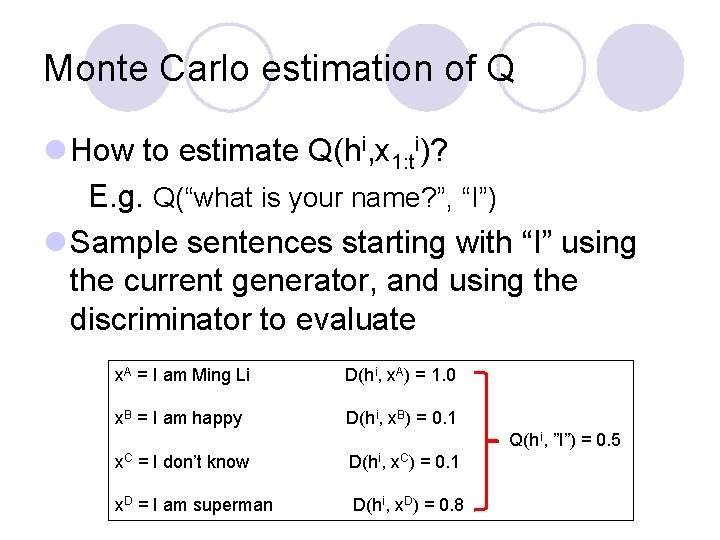

Monte Carlo estimation of Q

- How to estimate $Q(h^i, x^i_{1:t})$? E.g. $Q$("what is your name?", "I").
- Sample sentences starting with "I" using the current generator, and use the discriminator to evaluate them:
  - $x^A$ = "I am Ming Li", $D(h^i, x^A) = 1.0$
  - $x^B$ = "I am happy", $D(h^i, x^B) = 0.1$
  - $x^C$ = "I don't know", $D(h^i, x^C) = 0.1$
  - $x^D$ = "I am superman", $D(h^i, x^D) = 0.8$
- Averaging the four scores gives $Q(h^i, \text{"I"}) = (1.0 + 0.1 + 0.1 + 0.8)/4 = 0.5$.
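The estimate above is just an average of discriminator scores over sampled completions. A minimal sketch follows, with the four example sentences and their scores hard-coded in place of a real generator and discriminator. The function names are hypothetical.

```python
def monte_carlo_q(prefix, rollouts, discriminator):
    """Average discriminator score over sampled completions of `prefix`."""
    scores = [discriminator(sentence) for sentence in rollouts(prefix)]
    return sum(scores) / len(scores)

# The four completions and scores from the example, used as a lookup table.
EXAMPLE_SCORES = {
    "I am Ming Li": 1.0,
    "I am happy": 0.1,
    "I don't know": 0.1,
    "I am superman": 0.8,
}

def example_rollouts(prefix):
    # Stand-in for sampling completions from the current generator.
    return [s for s in EXAMPLE_SCORES if s.startswith(prefix)]

def example_discriminator(sentence):
    # Stand-in for D(h, x) with h fixed to "what is your name?".
    return EXAMPLE_SCORES[sentence]

q_i = monte_carlo_q("I", example_rollouts, example_discriminator)
```

In a real system `example_rollouts` would sample from the generator conditioned on the input and the prefix, and more rollouts would give a lower-variance estimate at higher cost.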

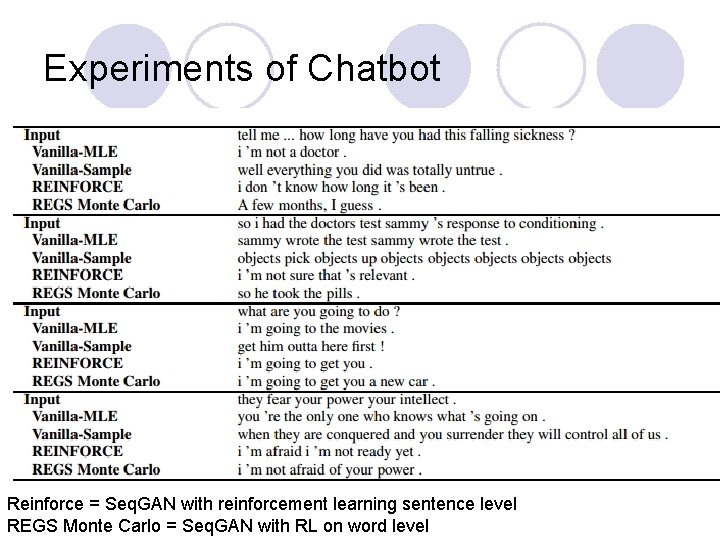

Experiments of Chatbot

- Reinforce = SeqGAN with reinforcement learning at sentence level
- REGS Monte Carlo = SeqGAN with RL at word level

Example results from Li et al. 2016 (Li, Monroe, Ritter, Galley, Gao, Jurafsky, EMNLP 2016)
