Using Inaccurate Models in Reinforcement Learning

Pieter Abbeel, Morgan Quigley and Andrew Y. Ng, Stanford University

Overview
- Reinforcement learning in high-dimensional continuous state-spaces.
  - Model-based RL: Difficult to build an accurate model.
  - Model-free RL: Often requires large numbers of real-life trials.
- We present a hybrid algorithm, which requires only
  - an approximate model,
  - a small number of real-life trials.
- Resulting policy is (locally) near-optimal.
- Experiments on flight simulator and real RC car.

Reinforcement learning formalism
- Markov Decision Process (MDP)
  - $M = (S, A, T, H, s_0, R)$.
  - $S = \mathbb{R}^n$ (continuous state space).
  - Time-varying, deterministic dynamics: $T = \{ f_t : S \times A \to S,\ t = 0, \dots, H \}$.
- Goal: find a policy $\pi : S \to A$ that maximizes $U(\pi) = E\left[ \sum_{t=0}^{H} R(s_t) \mid \pi \right]$.
- Focus: task of trajectory following.
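The formalism above can be illustrated with a minimal sketch. It assumes a scalar state and action (the slides use a continuous, high-dimensional state space), a list of H step functions f_0, ..., f_{H-1} that produce s_1, ..., s_H, and toy dynamics, policy, and reward chosen only for illustration. The names `rollout` and `utility` are hypothetical.

```python
from typing import Callable, List, Sequence

Dynamics = Callable[[float, float], float]  # one f_t: (state, action) -> next state


def rollout(dynamics: Sequence[Dynamics],
            policy: Callable[[float], float],
            s0: float) -> List[float]:
    """Return [s_0, ..., s_H] under deterministic, time-varying dynamics."""
    states = [s0]
    for f_t in dynamics:  # f_0, ..., f_{H-1}
        s = states[-1]
        states.append(f_t(s, policy(s)))
    return states


def utility(states: Sequence[float], reward: Callable[[float], float]) -> float:
    """Sum of R(s_t) for t = 0..H; no expectation is needed for deterministic dynamics."""
    return sum(reward(s) for s in states)


# Toy example (all values are illustrative assumptions).
H = 3
dynamics = [lambda s, a: s + a] * H  # the same f_t at every time step
states = rollout(dynamics, policy=lambda s: -0.5 * s, s0=4.0)
value = utility(states, reward=lambda s: -(s ** 2))
```

Here the policy halves the state at every step, so the state sequence is 4, 2, 1, 0.5 and the utility is the negated sum of squares.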

Motivating Example
- Student driver learning to make a 90-degree right turn
  - Only a few trials needed.
  - No accurate model.
- Student driver has access to:
  - Real-life trial.
  - Crude model.
- Result: good policy gradient estimate.

Algorithm Idea
- Input to algorithm: approximate model.
- Start by computing the optimal policy according to the model.
- The policy is optimal according to the model, so no improvement is possible based on the model.
- Figure labels: real-life trajectory, target trajectory.

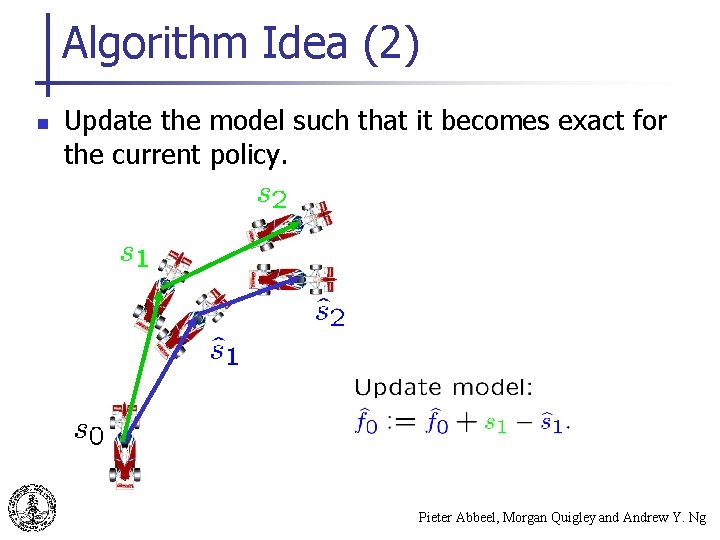

Algorithm Idea (2)
- Update the model such that it becomes exact for the current policy.

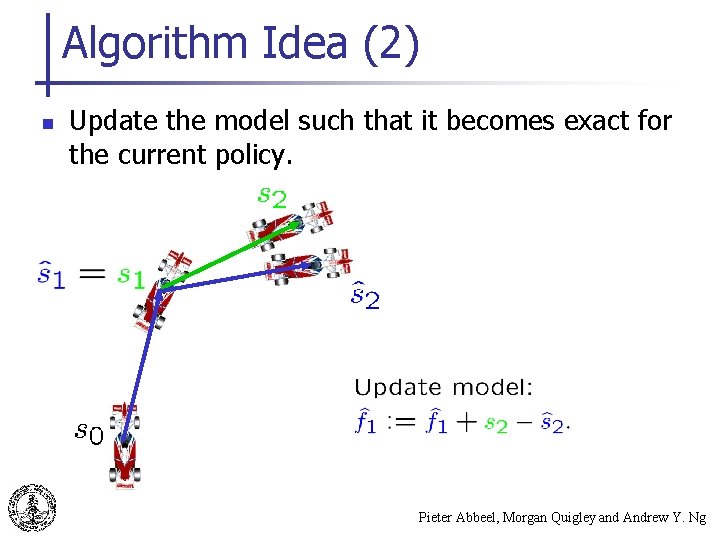

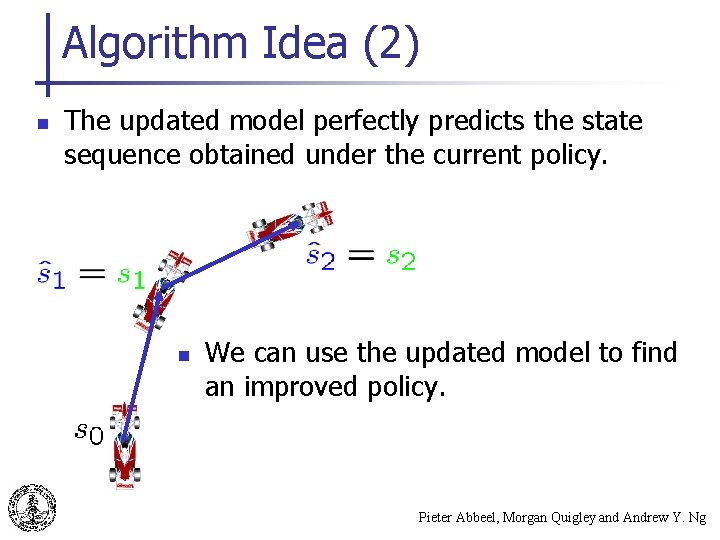

Algorithm Idea (2)
- The updated model perfectly predicts the state sequence obtained under the current policy.
- We can use the updated model to find an improved policy.
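One way to make a model exact for the current policy is to add a time-indexed offset equal to the prediction error observed at each step of the real-life trial. The sketch below assumes that additive form, a scalar state, and hypothetical names (`correct_model`, `crude_model`); the recorded trajectory is made-up illustrative data.

```python
from typing import Callable, List

Model = Callable[[int, float, float], float]  # (t, state, action) -> next state


def correct_model(model: Model, states: List[float], actions: List[float]) -> Model:
    """Return a model that reproduces the recorded trajectory exactly.

    offsets[t] is the gap between the observed next state and the
    model's prediction at the recorded (state, action) pair.
    """
    offsets = [states[t + 1] - model(t, states[t], actions[t])
               for t in range(len(actions))]

    def corrected(t: int, s: float, a: float) -> float:
        return model(t, s, a) + offsets[t]

    return corrected


def crude_model(t: int, s: float, a: float) -> float:
    return s + a  # hypothetical approximate model


# Hypothetical recorded real-life trial: states s_0..s_3 under actions a_0..a_2.
recorded_states = [0.0, 0.9, 1.8, 2.7]
recorded_actions = [1.0, 1.0, 1.0]
updated = correct_model(crude_model, recorded_states, recorded_actions)

# Replaying the same actions through the updated model follows the recorded states.
replayed = [recorded_states[0]]
for t, a in enumerate(recorded_actions):
    replayed.append(updated(t, replayed[-1], a))
```

The updated model keeps the crude model's derivatives with respect to state and action; only its predictions along the executed trajectory change.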

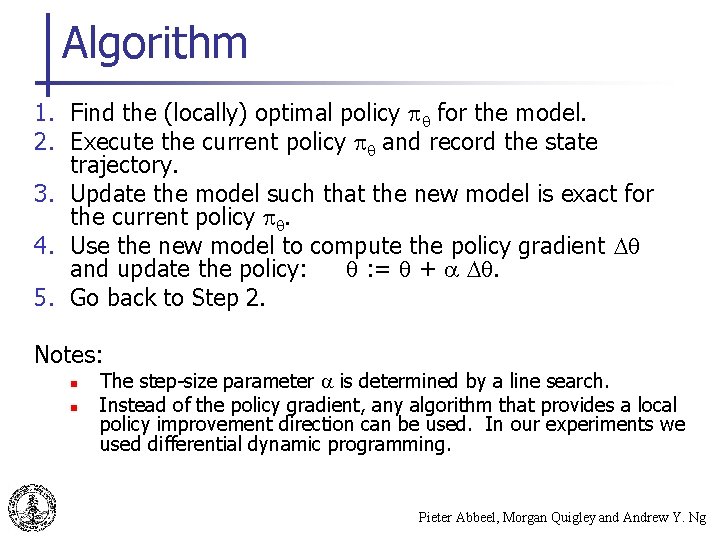

Algorithm
1. Find the (locally) optimal policy $\pi_\theta$ for the model.
2. Execute the current policy $\pi_\theta$ and record the state trajectory.
3. Update the model such that the new model is exact for the current policy $\pi_\theta$.
4. Use the new model to compute the policy gradient $\nabla_\theta U$ and update the policy: $\theta := \theta + \alpha \nabla_\theta U$.
5. Go back to Step 2.

Notes:
- The step-size parameter $\alpha$ is determined by a line search.
- Instead of the policy gradient, any algorithm that provides a local policy improvement direction can be used. In our experiments we used differential dynamic programming.
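The five steps can be sketched on a toy problem. Everything below is an illustrative assumption rather than the authors' implementation: a scalar state, an open-loop policy whose parameters are the action sequence, a stand-in "real" system that the learner only executes, a finite-difference gradient in place of an analytic gradient or DDP, an additive time-indexed offset for the model update, and a backtracking line search that spends one real-life trial per candidate step size.

```python
from typing import Callable, List

Step = Callable[[int, float, float], float]  # (t, state, action) -> next state


def real_step(t: int, s: float, a: float) -> float:
    return s + 0.7 * a + 0.2  # stand-in for the real system (never inspected)


def crude_model(t: int, s: float, a: float) -> float:
    return s + a  # approximate model: wrong gain, missing drift


def rollout(step: Step, theta: List[float], s0: float) -> List[float]:
    states = [s0]
    for t, a in enumerate(theta):
        states.append(step(t, states[-1], a))
    return states


def utility(states: List[float], target: List[float]) -> float:
    return -sum((s - g) ** 2 for s, g in zip(states, target))


def corrected(model: Step, states: List[float], theta: List[float]) -> Step:
    # Step 3: add per-time-step offsets so the model matches the real trial.
    offsets = [states[t + 1] - model(t, states[t], theta[t])
               for t in range(len(theta))]

    def step(t: int, s: float, a: float) -> float:
        return model(t, s, a) + offsets[t]

    return step


def model_gradient(model: Step, theta: List[float], s0: float,
                   target: List[float], eps: float = 1e-4) -> List[float]:
    # Step 4: central finite differences of the utility under the model.
    grad = []
    for k in range(len(theta)):
        up = theta[:k] + [theta[k] + eps] + theta[k + 1:]
        down = theta[:k] + [theta[k] - eps] + theta[k + 1:]
        diff = (utility(rollout(model, up, s0), target)
                - utility(rollout(model, down, s0), target))
        grad.append(diff / (2 * eps))
    return grad


def hybrid_rl(theta: List[float], s0: float, target: List[float],
              iterations: int = 30) -> List[float]:
    theta = list(theta)
    for _ in range(iterations):
        states = rollout(real_step, theta, s0)            # Step 2: real-life trial
        current = utility(states, target)
        model = corrected(crude_model, states, theta)     # Step 3
        grad = model_gradient(model, theta, s0, target)   # Step 4
        alpha, improved = 1.0, False
        while alpha > 1e-6 and not improved:              # backtracking line search
            candidate = [th + alpha * g for th, g in zip(theta, grad)]
            if utility(rollout(real_step, candidate, s0), target) > current:
                theta, improved = candidate, True
            alpha /= 2.0
        if not improved:
            break                                         # no improving step found
    return theta                                          # Step 5 is the loop itself


target = [0.0, 1.0, 2.0, 3.0, 3.0, 3.0]
s0 = 0.0
# Step 1: for crude_model the optimal open-loop actions are the target increments.
theta_model = [target[t + 1] - target[t] for t in range(len(target) - 1)]
theta_hybrid = hybrid_rl(theta_model, s0, target)
u_model = utility(rollout(real_step, theta_model, s0), target)
u_hybrid = utility(rollout(real_step, theta_hybrid, s0), target)
```

Because the line search only accepts candidates that improve real-life utility, `u_hybrid` cannot fall below `u_model` in this sketch; how close it gets to optimal depends on the iteration budget.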

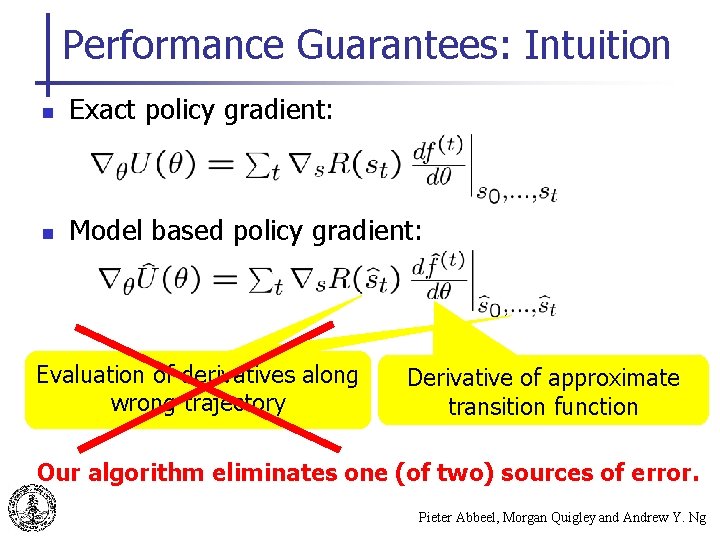

Performance Guarantees: Intuition
- Exact policy gradient: [equation]
- Model-based policy gradient: [equation], with two sources of error:
  - Evaluation of derivatives along wrong trajectory.
  - Derivative of approximate transition function.
- Our algorithm eliminates one (of two) sources of error.
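The two error sources can be made explicit with the chain rule. The following is a sketch under assumed notation, not a transcription of the slide's equations: $\pi_\theta$ is the parameterized policy, $f_t$ the true dynamics, $\hat{f}_t$ the approximate model, $s_t$ the real-life trajectory, and $\hat{s}_t$ the trajectory predicted by the model.

```latex
% Exact gradient: true dynamics, evaluated along the real trajectory s_0, ..., s_H.
\nabla_\theta U(\theta) = \sum_{t=0}^{H} \frac{\partial R}{\partial s}(s_t)\, \frac{d s_t}{d\theta},
\qquad
\frac{d s_{t+1}}{d\theta}
  = \frac{\partial f_t}{\partial s}\, \frac{d s_t}{d\theta}
  + \frac{\partial f_t}{\partial a}
    \left( \frac{\partial \pi_\theta}{\partial \theta}
         + \frac{\partial \pi_\theta}{\partial s}\, \frac{d s_t}{d\theta} \right),
\quad \text{all evaluated at } \bigl(s_t, \pi_\theta(s_t)\bigr).

% Model-based gradient: the same recursion with
%   (i)  f_t replaced by \hat{f}_t   (derivative of approximate transition function), and
%   (ii) every term evaluated at (\hat{s}_t, \pi_\theta(\hat{s}_t))   (wrong trajectory).

% Hybrid algorithm: the updated model reproduces s_0, ..., s_H, so the derivatives of
% \hat{f}_t are evaluated at (s_t, \pi_\theta(s_t)); error (ii) is removed, error (i) remains.
```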

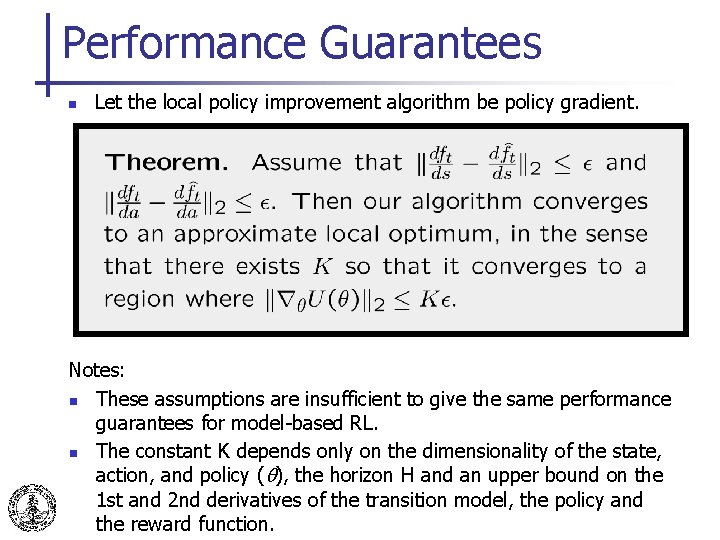

Performance Guarantees
- Let the local policy improvement algorithm be policy gradient.

Notes:
- These assumptions are insufficient to give the same performance guarantees for model-based RL.
- The constant K depends only on the dimensionality of the state, action, and policy ($\theta$), the horizon H, and an upper bound on the 1st and 2nd derivatives of the transition model, the policy and the reward function.

Experiments
- We use differential dynamic programming (DDP) to find control policies in the model.
- Two systems:
  - Flight simulator
  - RC car

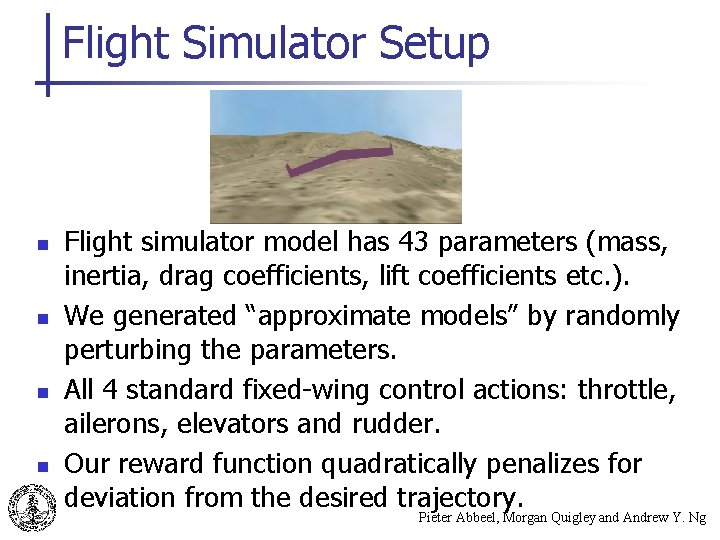

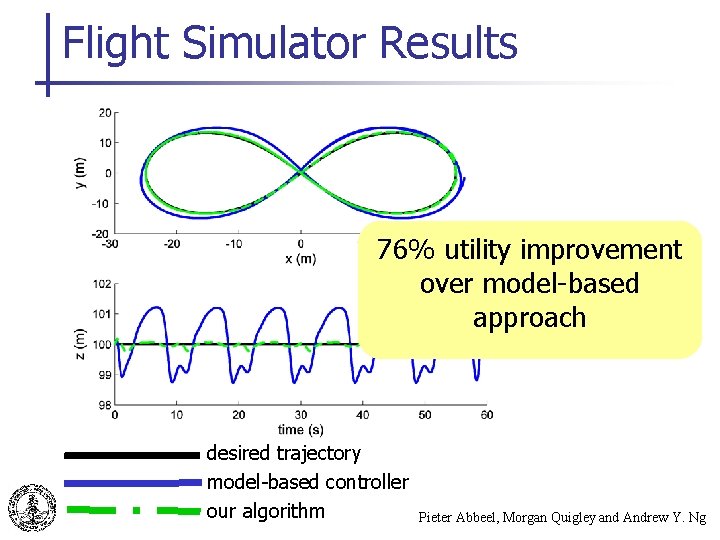

Flight Simulator Setup
- Flight simulator model has 43 parameters (mass, inertia, drag coefficients, lift coefficients, etc.).
- We generated “approximate models” by randomly perturbing the parameters.
- All 4 standard fixed-wing control actions: throttle, ailerons, elevators and rudder.
- Our reward function quadratically penalizes deviation from the desired trajectory.
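The setup above can be sketched as follows. The slides do not say how the parameters were perturbed or how the quadratic penalty was weighted, so the multiplicative uniform perturbation, the per-dimension weights, and the parameter names and values below are all assumptions made for illustration.

```python
import random
from typing import Dict, Sequence


def perturb_parameters(params: Dict[str, float], scale: float,
                       rng: random.Random) -> Dict[str, float]:
    """Scale each parameter by a random factor in [1 - scale, 1 + scale] (assumed scheme)."""
    return {name: value * (1.0 + rng.uniform(-scale, scale))
            for name, value in params.items()}


def quadratic_reward(state: Sequence[float], desired: Sequence[float],
                     weights: Sequence[float]) -> float:
    """Negative weighted squared deviation from the desired state."""
    return -sum(w * (s - d) ** 2 for s, d, w in zip(state, desired, weights))


# Hypothetical nominal parameters; the simulator in the slides has 43 of them.
nominal = {"mass": 1.2, "drag_coefficient": 0.03, "lift_coefficient": 0.9}
approximate = perturb_parameters(nominal, scale=0.2, rng=random.Random(0))
penalty = quadratic_reward(state=[1.0, 2.0], desired=[1.0, 4.0], weights=[1.0, 0.5])
```

Seeding the generator makes a given approximate model reproducible across runs.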

Flight Simulator Movie

Flight Simulator Results
- 76% utility improvement over model-based approach.
- Plot legend: desired trajectory, model-based controller, our algorithm.

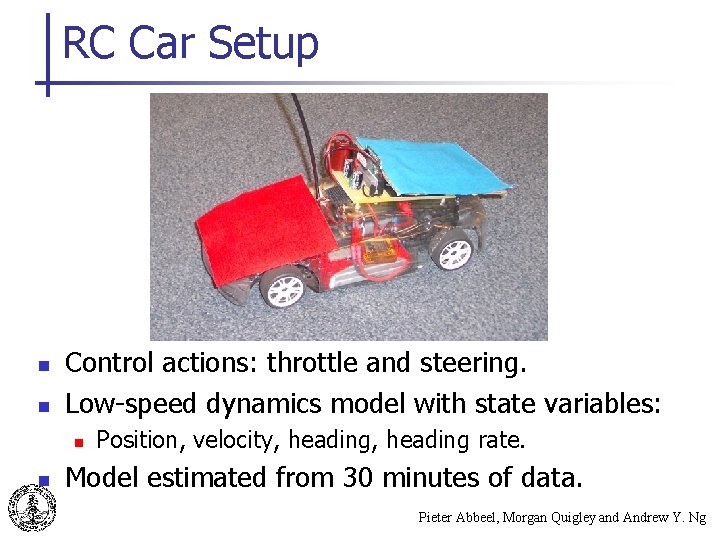

RC Car Setup
- Control actions: throttle and steering.
- Low-speed dynamics model with state variables:
  - Position, velocity, heading rate.
- Model estimated from 30 minutes of data.

RC Car: Open-Loop Turn

RC Car: Circle

RC Car: Figure-8 Maneuver

Related Work
- Iterative Learning Control:
  - Uchiyama (1978), Longman et al. (1992), Moore (1993), Horowitz (1993), Bien et al. (1991), Owens et al. (1995), Chen et al. (1997), …
- Successful robot control with limited number of trials:
  - Atkeson and Schaal (1997), Morimoto and Doya (2001).
- Robust control theory:
  - Zhou et al. (1995), Dullerud and Paganini (2000), …
  - Bagnell et al. (2001), Morimoto and Atkeson (2002), …

Conclusion
- We presented an algorithm that uses a crude model and a small number of real-life trials to find a policy that works well in real life.
- Our theoretical results show that, assuming a deterministic setting and a reasonable model, our algorithm returns a policy that is (locally) near-optimal.
- Our experiments show that our algorithm can significantly improve on purely model-based RL by using only a small number of real-life trials, even when the true system is not deterministic.

Motivating Example
- Student driver learning to make a 90-degree right turn
- Key aspects
  - Only a few trials needed.
  - No accurate model.
  - Real-life trial: shows whether turn is wide or short.
  - Crude model: turning steering wheel more to the right results in sharper turn, turning steering wheel more to the left results in wider turn.
- Result: good policy gradient estimate.

- Slides: 23