A Comparison of Methods for Transductive Transfer Learning

Andrew Arnold, advised by William W. Cohen. Machine Learning Department, School of Computer Science, Carnegie Mellon University. May 30, 2007.

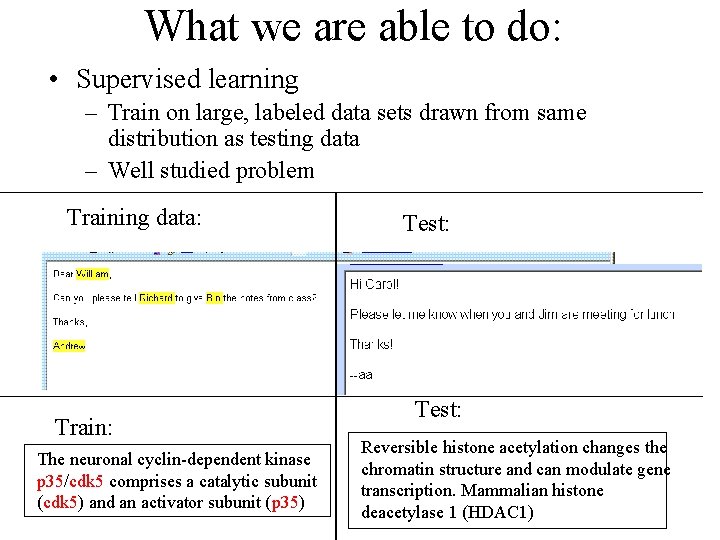

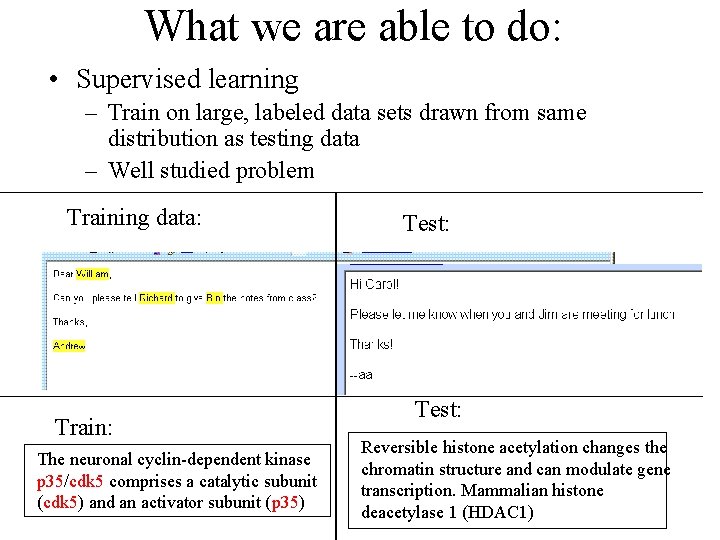

What we are able to do:

- Supervised learning
  - Train on large, labeled data sets drawn from the same distribution as the testing data
  - Well-studied problem

Train: The neuronal cyclin-dependent kinase p35/cdk5 comprises a catalytic subunit (cdk5) and an activator subunit (p35)

Test: Reversible histone acetylation changes the chromatin structure and can modulate gene transcription. Mammalian histone deacetylase 1 (HDAC1)

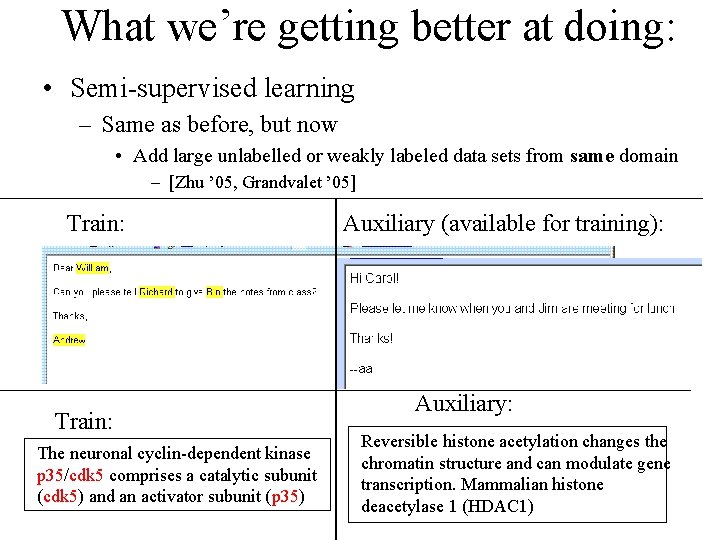

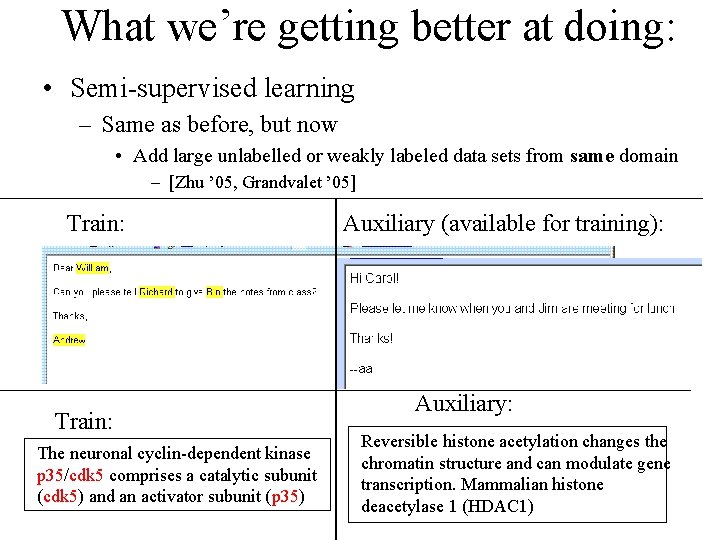

What we’re getting better at doing:

- Semi-supervised learning
  - Same as before, but now add large unlabeled or weakly labeled data sets from the same domain
  - [Zhu ’05, Grandvalet ’05]

Train: The neuronal cyclin-dependent kinase p35/cdk5 comprises a catalytic subunit (cdk5) and an activator subunit (p35)

Auxiliary (available for training): Reversible histone acetylation changes the chromatin structure and can modulate gene transcription. Mammalian histone deacetylase 1 (HDAC1)

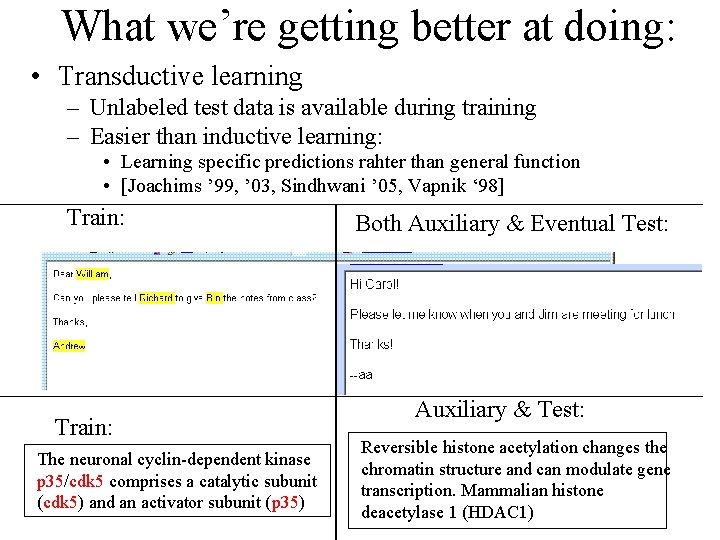

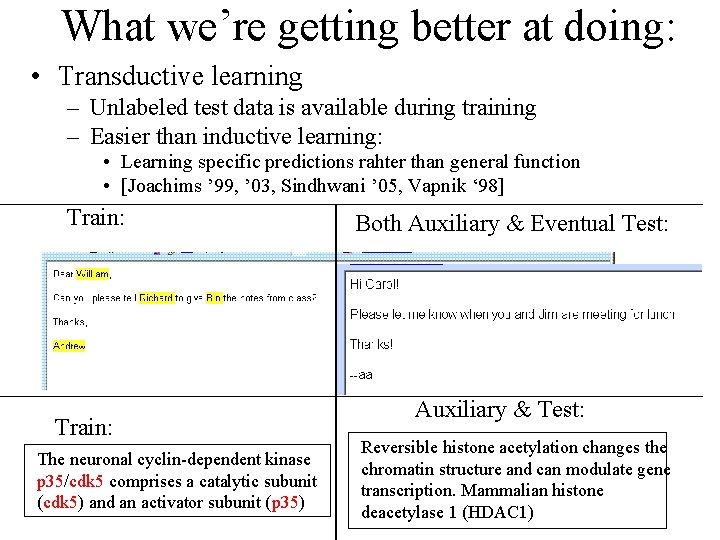

What we’re getting better at doing:

- Transductive learning
  - Unlabeled test data is available during training
  - Easier than inductive learning:
    - Learning specific predictions rather than a general function
    - [Joachims ’99, ’03, Sindhwani ’05, Vapnik ’98]

Train: The neuronal cyclin-dependent kinase p35/cdk5 comprises a catalytic subunit (cdk5) and an activator subunit (p35)

Auxiliary & Test (both auxiliary and eventual test data): Reversible histone acetylation changes the chromatin structure and can modulate gene transcription. Mammalian histone deacetylase 1 (HDAC1)

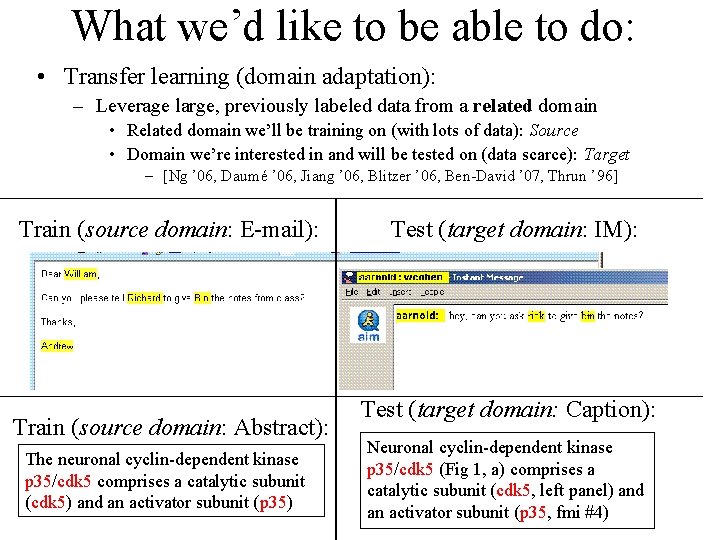

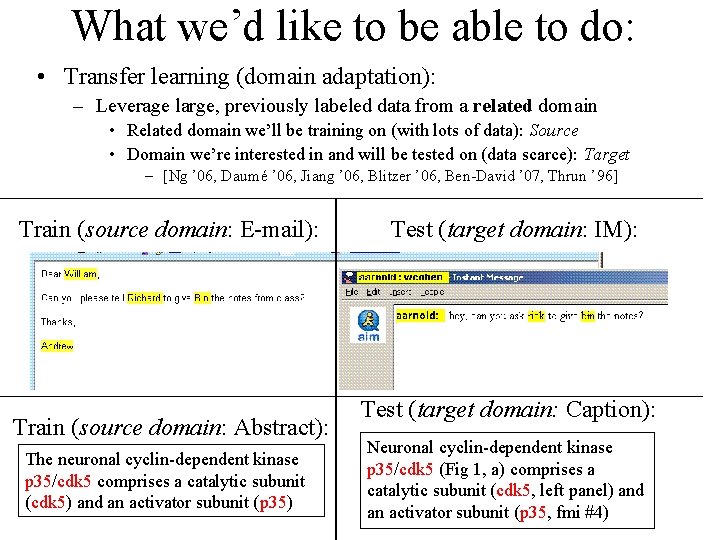

What we’d like to be able to do:

- Transfer learning (domain adaptation):
  - Leverage large, previously labeled data from a related domain
    - Source: the related domain we’ll be training on (with lots of data)
    - Target: the domain we’re interested in and will be tested on (data scarce)
  - [Ng ’06, Daumé ’06, Jiang ’06, Blitzer ’06, Ben-David ’07, Thrun ’96]

Example domain pairs: E-mail (source) and IM (target); Abstract (source) and Caption (target).

Train (source domain: Abstract): The neuronal cyclin-dependent kinase p35/cdk5 comprises a catalytic subunit (cdk5) and an activator subunit (p35)

Test (target domain: Caption): Neuronal cyclin-dependent kinase p35/cdk5 (Fig 1, a) comprises a catalytic subunit (cdk5, left panel) and an activator subunit (p35, fmi #4)

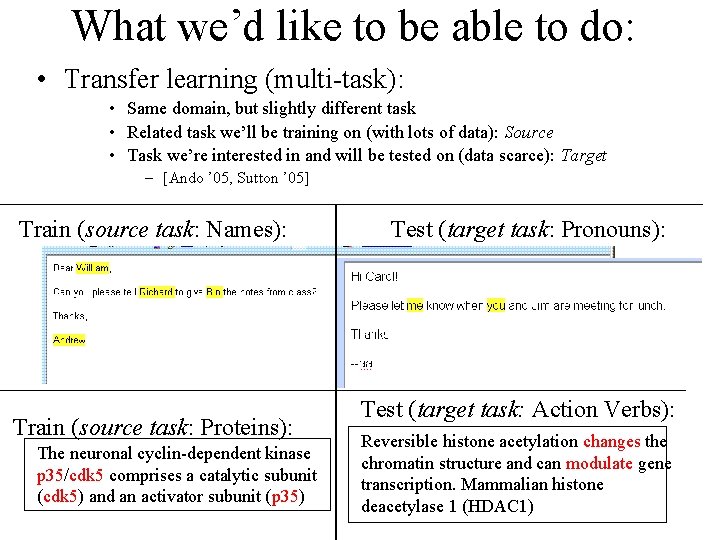

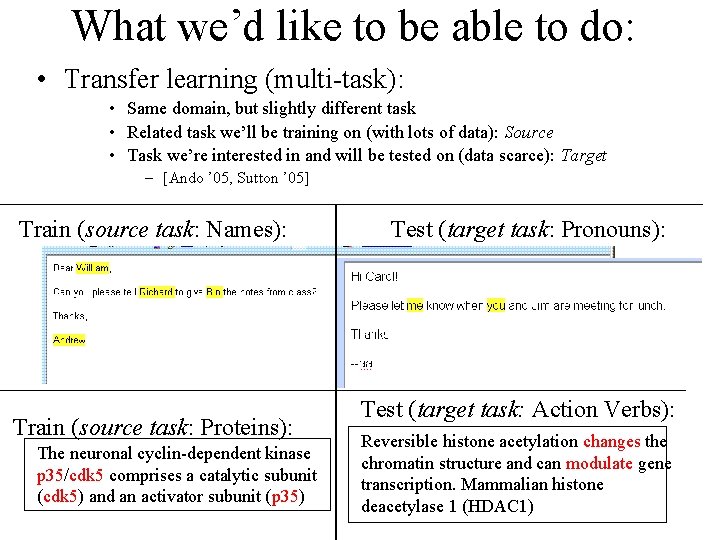

What we’d like to be able to do:

- Transfer learning (multi-task):
  - Same domain, but slightly different task
    - Source: the related task we’ll be training on (with lots of data)
    - Target: the task we’re interested in and will be tested on (data scarce)
  - [Ando ’05, Sutton ’05]

Example task pairs: Names (source) and Pronouns (target); Proteins (source) and Action Verbs (target).

Train (source task: Proteins): The neuronal cyclin-dependent kinase p35/cdk5 comprises a catalytic subunit (cdk5) and an activator subunit (p35)

Test (target task: Action Verbs): Reversible histone acetylation changes the chromatin structure and can modulate gene transcription. Mammalian histone deacetylase 1 (HDAC1)

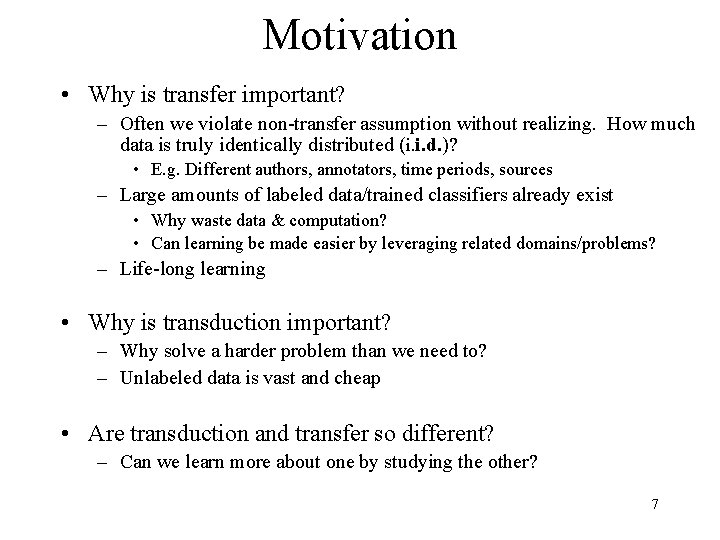

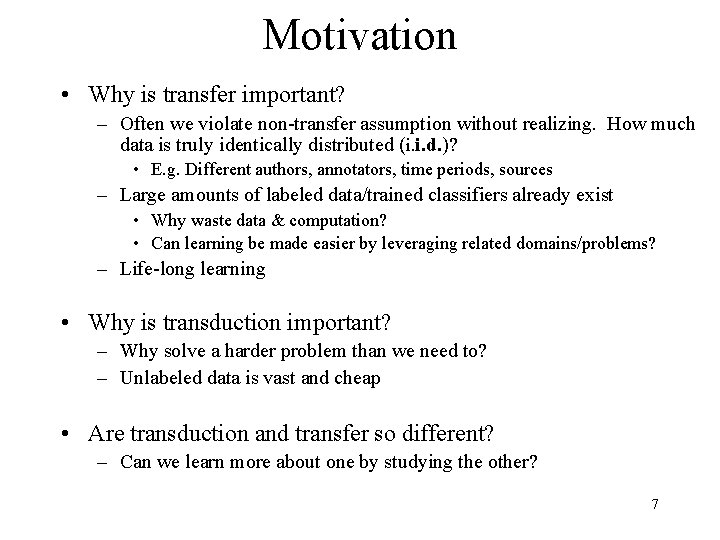

Motivation

- Why is transfer important?
  - Often we violate the non-transfer assumption without realizing it. How much data is truly identically distributed (i.i.d.)?
    - E.g., different authors, annotators, time periods, sources
  - Large amounts of labeled data/trained classifiers already exist
    - Why waste data & computation?
    - Can learning be made easier by leveraging related domains/problems?
  - Life-long learning
- Why is transduction important?
  - Why solve a harder problem than we need to?
  - Unlabeled data is vast and cheap
- Are transduction and transfer so different?
  - Can we learn more about one by studying the other?

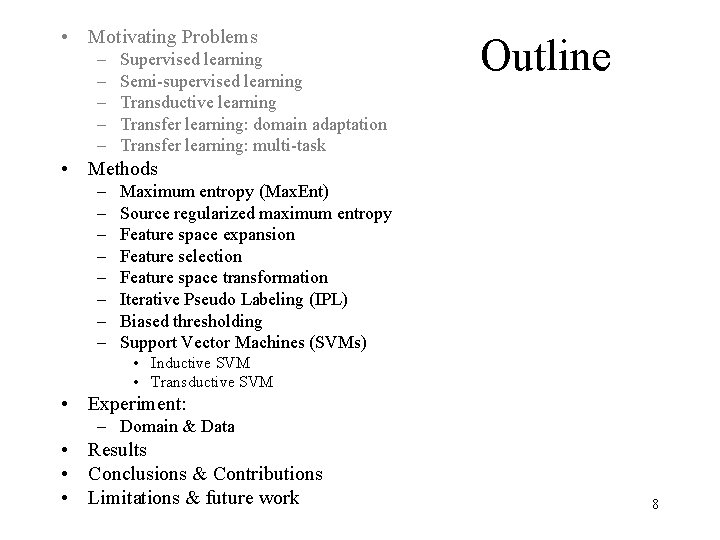

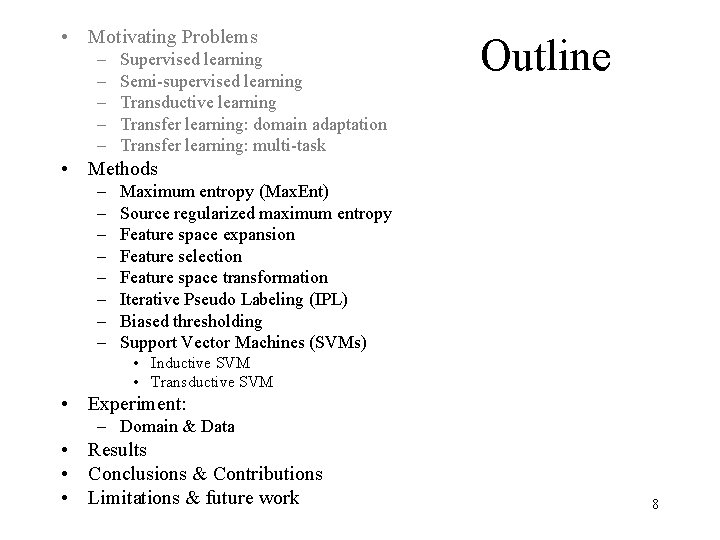

Outline

- Motivating Problems
  - Supervised learning
  - Semi-supervised learning
  - Transductive learning
  - Transfer learning: domain adaptation
  - Transfer learning: multi-task
- Methods
  - Maximum entropy (MaxEnt)
  - Source-regularized maximum entropy
  - Feature space expansion
  - Feature selection
  - Feature space transformation
  - Iterative Pseudo Labeling (IPL)
  - Biased thresholding
  - Support Vector Machines (SVMs)
    - Inductive SVM
    - Transductive SVM
- Experiment
  - Domain & Data
- Results
- Conclusions & Contributions
- Limitations & future work

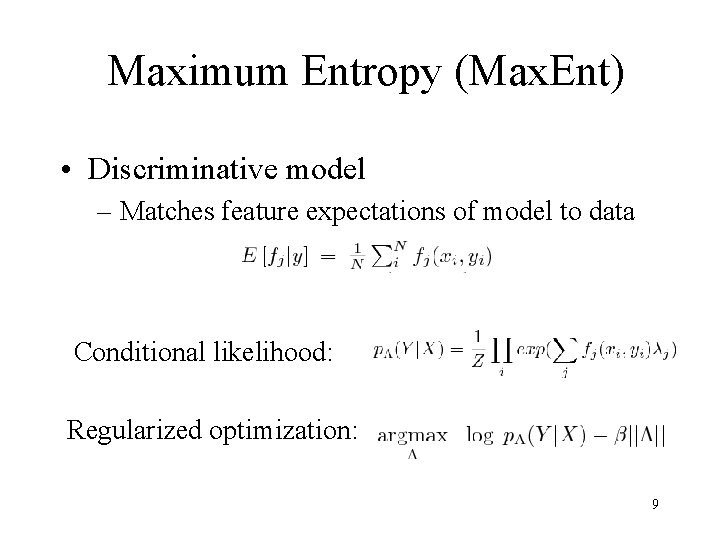

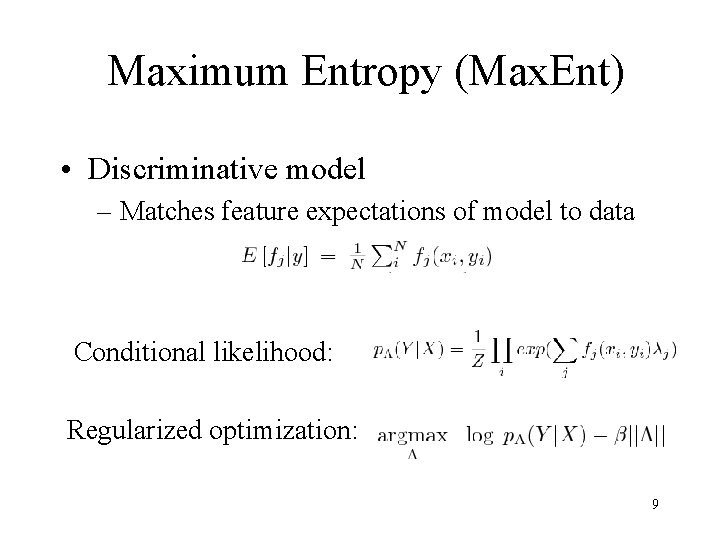

Maximum Entropy (MaxEnt)

- Discriminative model
  - Matches feature expectations of model to data
- Conditional likelihood and regularized optimization: [equations on slide image]
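
The equations on this slide did not survive extraction. As a hedged reconstruction, the textbook form of a MaxEnt model is sketched below. The feature functions f_i, the Gaussian prior variance σ², and the example index j are assumptions, not notation confirmed by the slides; Λ = (λ_1, ..., λ_n) follows the symbol used on the source-regularized MaxEnt slide.

```latex
% Conditional likelihood of label y given input x
p_\Lambda(y \mid x) =
  \frac{\exp\left(\sum_i \lambda_i f_i(x, y)\right)}
       {\sum_{y'} \exp\left(\sum_i \lambda_i f_i(x, y')\right)}

% Regularized optimization: log-likelihood with a zero-mean Gaussian prior
\hat{\Lambda} = \arg\max_{\Lambda}
  \sum_j \log p_\Lambda(y_j \mid x_j) \;-\; \sum_i \frac{\lambda_i^2}{2\sigma^2}

% Without the prior term, the optimum matches feature expectations
\sum_j f_i(x_j, y_j) = \sum_j \sum_{y} p_{\hat{\Lambda}}(y \mid x_j)\, f_i(x_j, y)
```

The last line is the sense in which the model "matches feature expectations": at the unregularized optimum, the empirical count of each feature equals its expected count under the model.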

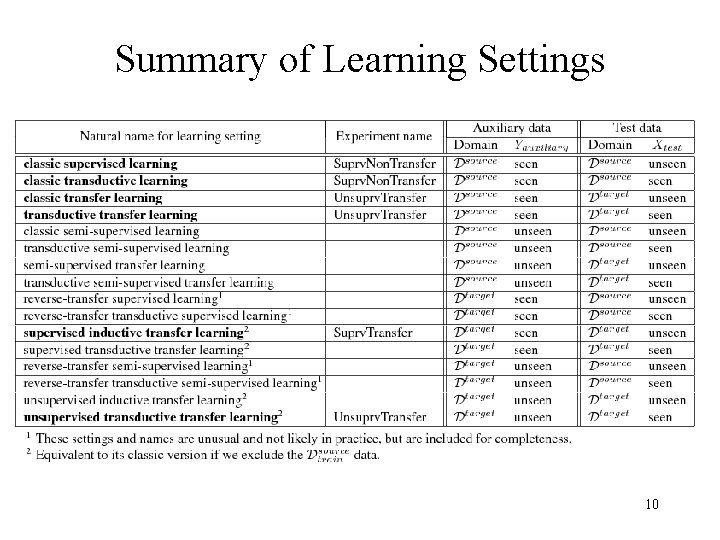

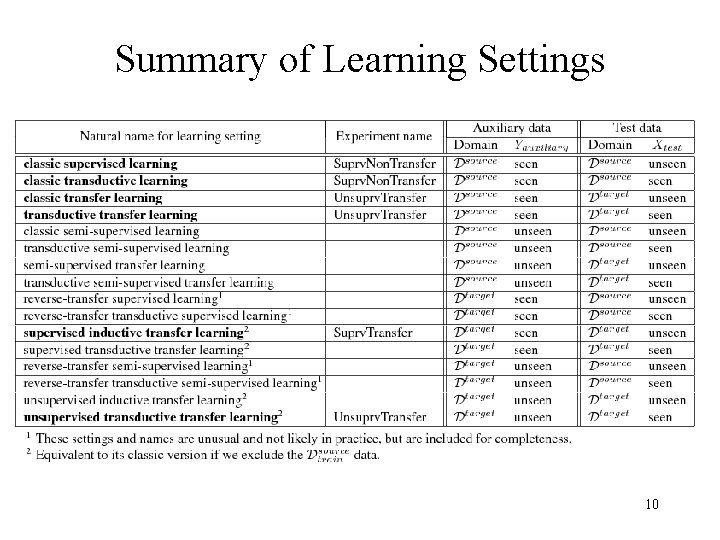

Summary of Learning Settings

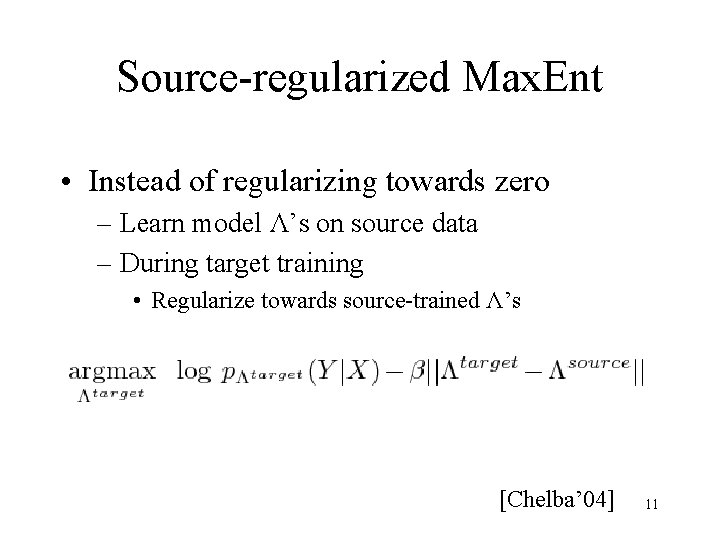

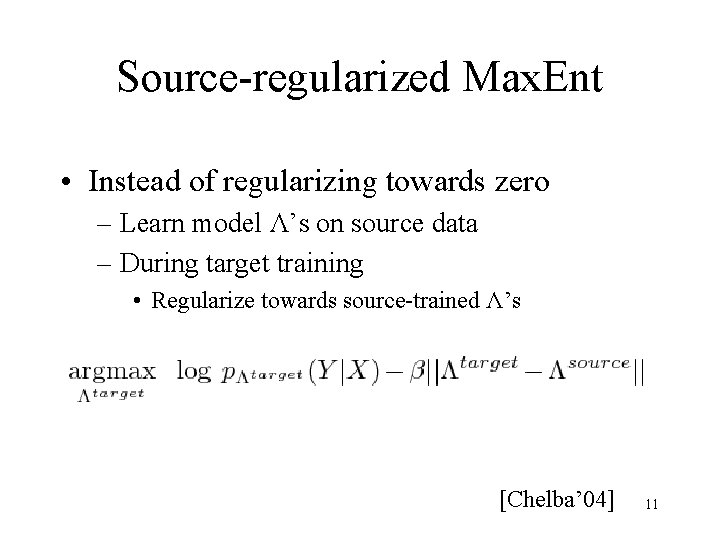

Source-regularized MaxEnt

- Instead of regularizing towards zero:
  - Learn model parameters Λ on source data
  - During target training, regularize towards the source-trained Λ [Chelba ’04]
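
A minimal sketch of the idea, assuming a binary MaxEnt model (logistic regression) with a Gaussian prior whose mean is either zero or the source-trained weights. The function names, the toy data, the learning rate, and the variance `sigma2` are all illustrative assumptions; this is not the implementation used in the talk.

```python
import math


def sigmoid(z):
    # Numerically stable logistic function.
    if z >= 0:
        return 1.0 / (1.0 + math.exp(-z))
    e = math.exp(z)
    return e / (1.0 + e)


def train_maxent(X, y, prior_mean=None, sigma2=1.0, lr=0.05, epochs=500):
    """Binary MaxEnt (logistic regression) trained by batch gradient ascent.

    The Gaussian prior is centred on prior_mean: zeros give the usual
    regularization towards zero, source-trained weights give the
    source-regularized variant.
    """
    d = len(X[0])
    mu = [0.0] * d if prior_mean is None else list(prior_mean)
    w = list(mu)  # start from the prior mean
    for _ in range(epochs):
        # Gradient of the penalty -(w_i - mu_i)^2 / (2 * sigma2).
        grad = [-(w[i] - mu[i]) / sigma2 for i in range(d)]
        # Gradient of the conditional log-likelihood.
        for xj, yj in zip(X, y):
            err = yj - sigmoid(sum(wi * xi for wi, xi in zip(w, xj)))
            for i in range(d):
                grad[i] += err * xj[i]
        w = [w[i] + lr * grad[i] for i in range(d)]
    return w


# Toy, made-up data: the last feature is a constant bias term.
X_source = [[1.0, 0.0, 1.0], [0.9, 0.2, 1.0], [0.1, 1.0, 1.0], [0.0, 0.8, 1.0]]
y_source = [1, 1, 0, 0]
X_target = [[0.8, 0.1, 1.0], [0.2, 0.9, 1.0]]
y_target = [1, 0]

# Step 1: learn weights on the source domain (prior mean = 0).
source_weights = train_maxent(X_source, y_source)
# Step 2: train on the scarce target data, pulled towards the source weights.
target_weights = train_maxent(X_target, y_target, prior_mean=source_weights, sigma2=0.5)
```

A smaller `sigma2` keeps the target model closer to the source weights; a larger one lets the scarce target data dominate.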

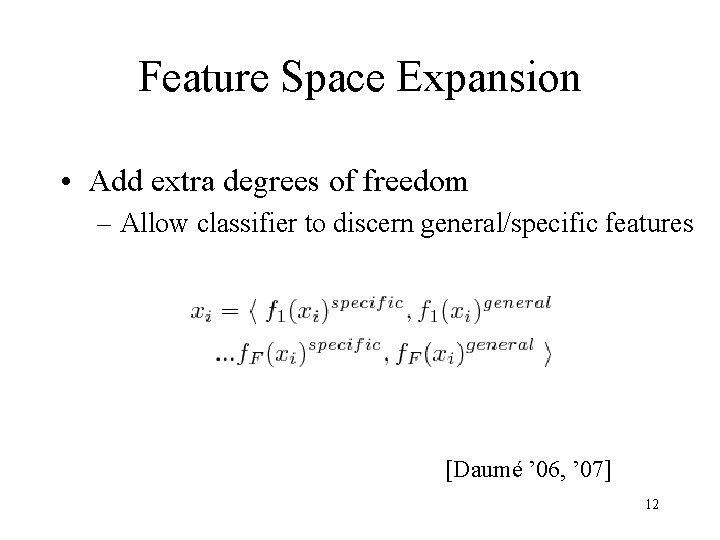

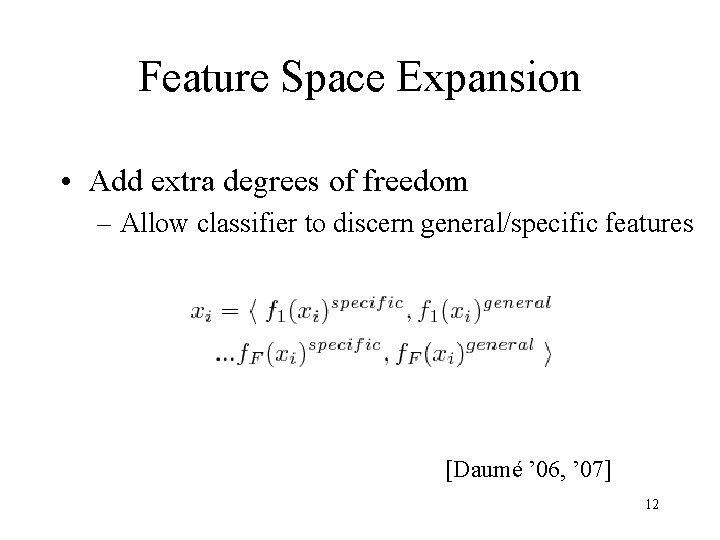

Feature Space Expansion

- Add extra degrees of freedom
  - Allow classifier to discern general/specific features [Daumé ’06, ’07]
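
One common way to add these degrees of freedom is the feature augmentation of Daumé ’07, where every feature gets a general copy plus a domain-specific copy. The sketch below assumes that variant; the slides do not show which exact expansion was used, and the function name is hypothetical.

```python
def expand_features(x, domain):
    """Map x to [general copy, source-only copy, target-only copy]."""
    zeros = [0.0] * len(x)
    if domain == "source":
        return list(x) + list(x) + zeros
    if domain == "target":
        return list(x) + zeros + list(x)
    raise ValueError("domain must be 'source' or 'target'")


# A source example activates the general and source-specific blocks,
# a target example the general and target-specific blocks.
source_example = expand_features([1.0, 2.0], "source")
target_example = expand_features([1.0, 2.0], "target")
```

A linear classifier trained on the expanded vectors can put weight on the general block for features that behave the same in both domains, and on a domain-specific block for features that do not.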

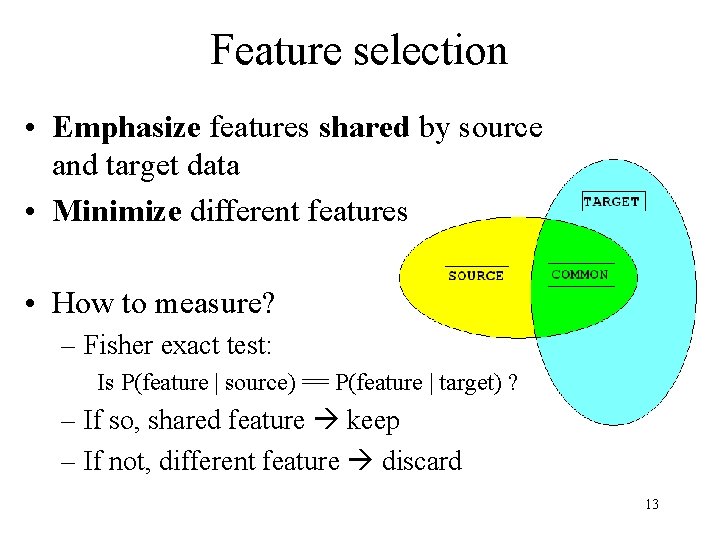

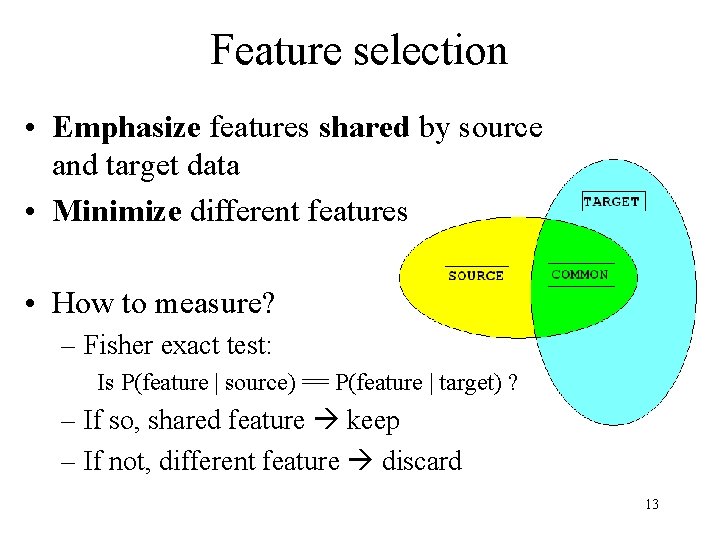

Feature selection

- Emphasize features shared by source and target data
- Minimize features that differ between the two
- How to measure?
  - Fisher exact test: is P(feature | source) = P(feature | target)?
  - If so, it is a shared feature: keep it
  - If not, it is a different feature: discard it
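
The test above can be sketched in plain Python as a two-sided Fisher exact test on a 2x2 table of (domain) by (feature present or absent). The significance level `alpha`, the function names, and the feature names in the usage lines are assumptions for illustration; the slides do not state the threshold that was used.

```python
from math import comb


def fisher_exact_two_sided(a, b, c, d):
    """Two-sided Fisher exact test p-value for the 2x2 table [[a, b], [c, d]]."""
    row1, row2, col1 = a + b, c + d, a + c
    total_tables = comb(row1 + row2, col1)

    def prob(k):
        # Hypergeometric probability of seeing k in the top-left cell.
        return comb(row1, k) * comb(row2, col1 - k) / total_tables

    p_observed = prob(a)
    lo, hi = max(0, col1 - row2), min(row1, col1)
    # Sum over all tables that are at least as unlikely as the observed one.
    p_value = sum(p for p in map(prob, range(lo, hi + 1)) if p <= p_observed * (1 + 1e-9))
    return min(1.0, p_value)


def select_shared_features(source_counts, target_counts, n_source, n_target, alpha=0.05):
    """Keep features whose occurrence rate does not differ significantly between domains."""
    kept = []
    for feature in sorted(set(source_counts) | set(target_counts)):
        s = source_counts.get(feature, 0)
        t = target_counts.get(feature, 0)
        p = fisher_exact_two_sided(s, n_source - s, t, n_target - t)
        if p >= alpha:  # cannot reject P(feature | source) == P(feature | target)
            kept.append(feature)
    return kept


# Hypothetical counts: number of examples (out of 100 per domain) in which each feature fires.
shared = select_shared_features(
    {"is_capitalized": 50, "has_fig_ref": 1},
    {"is_capitalized": 48, "has_fig_ref": 40},
    n_source=100,
    n_target=100,
)
```

Note that failing to reject equality is weaker than showing the rates are equal, so with very little data almost every feature would be kept.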

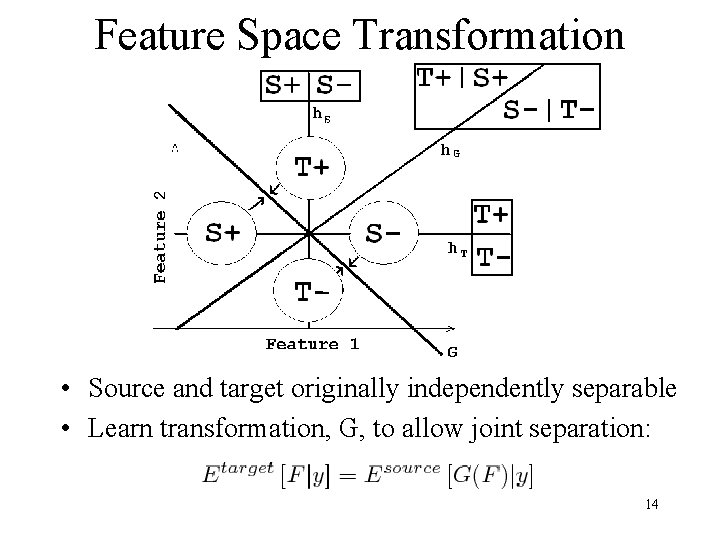

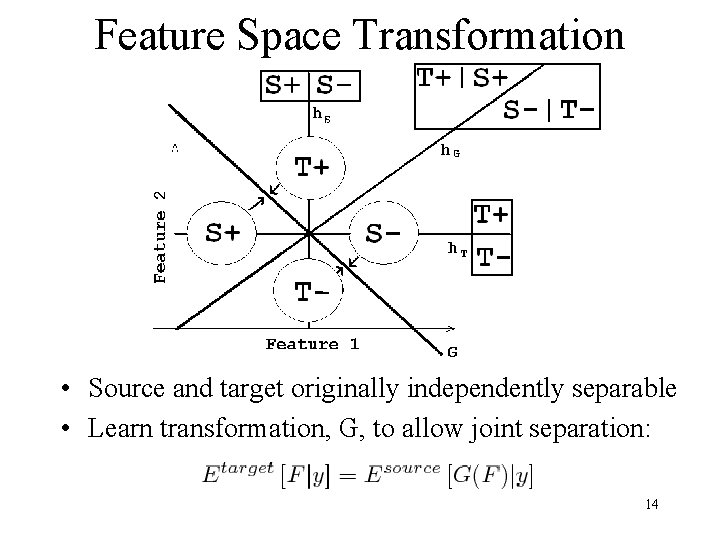

Feature Space Transformation

- Source and target originally independently separable
- Learn transformation, G, to allow joint separation

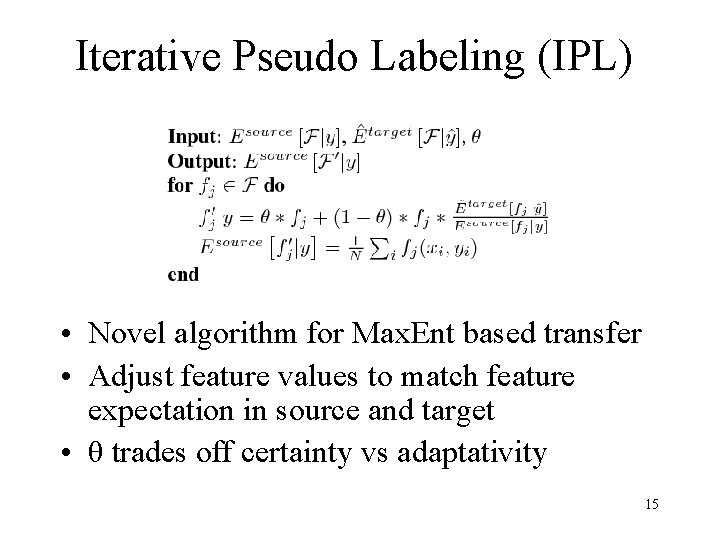

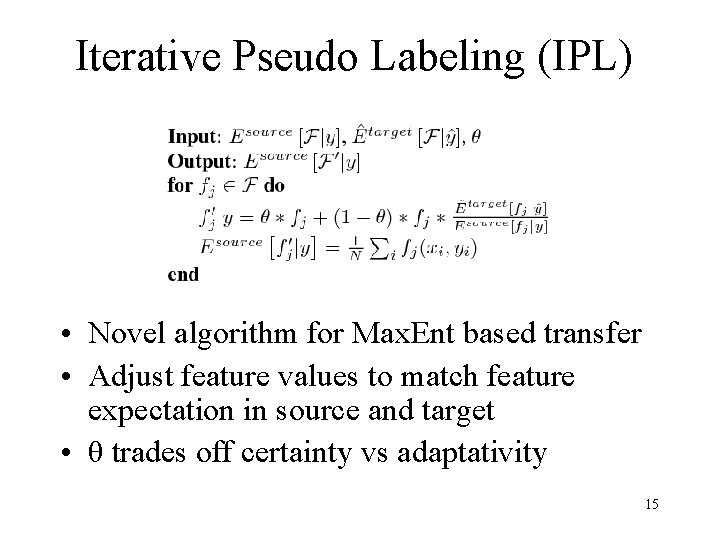

Iterative Pseudo Labeling (IPL)

- Novel algorithm for MaxEnt-based transfer
- Adjust feature values to match feature expectations in source and target
- θ trades off certainty vs. adaptivity

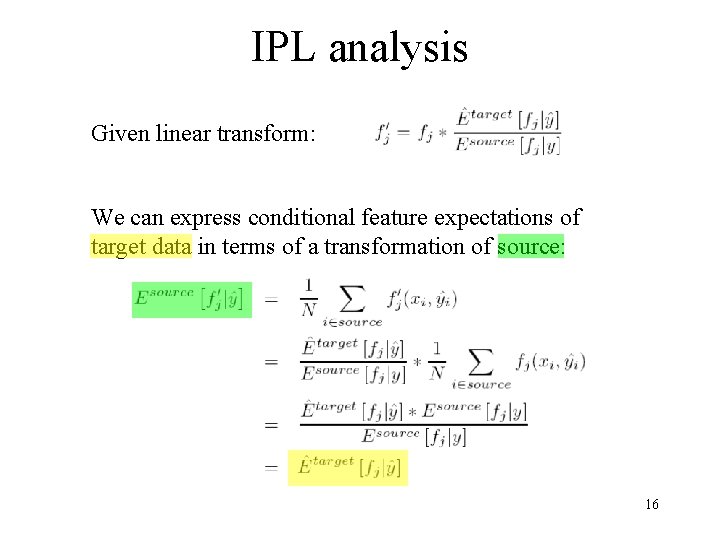

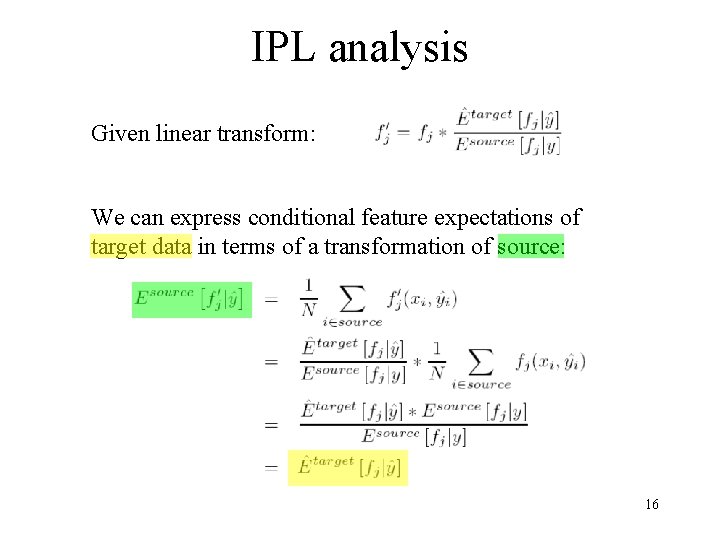

IPL analysis

Given a linear transform, we can express the conditional feature expectations of the target data in terms of a transformation of the source. [equations on slide image]

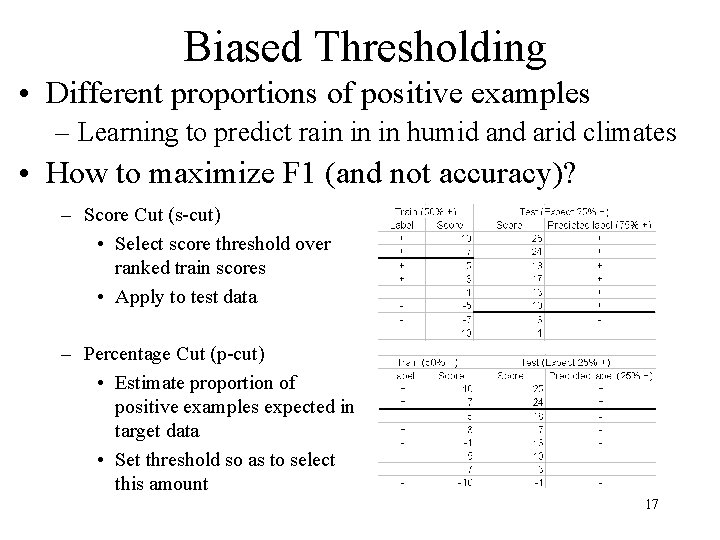

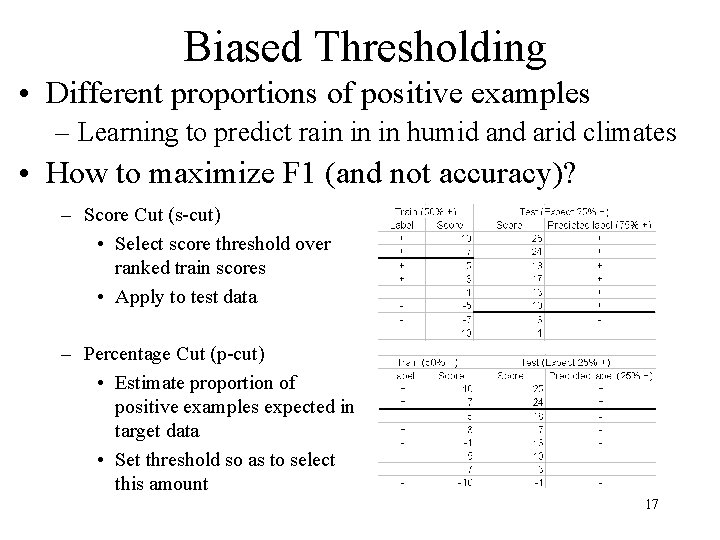

Biased Thresholding

- Different proportions of positive examples
  - E.g., learning to predict rain in humid and arid climates
- How to maximize F1 (and not accuracy)?
  - Score Cut (s-cut)
    - Select score threshold over ranked train scores
    - Apply to test data
  - Percentage Cut (p-cut)
    - Estimate proportion of positive examples expected in target data
    - Set threshold so as to select this amount
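
The two cuts can be sketched as follows, assuming classifier scores where higher means more likely positive and labels in {0, 1}. The function names and the tie-handling are illustrative choices, not details taken from the talk.

```python
def f1_from_predictions(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    if tp == 0:
        return 0.0
    precision, recall = tp / (tp + fp), tp / (tp + fn)
    return 2 * precision * recall / (precision + recall)


def s_cut(train_scores, train_labels):
    """Score cut: pick the training-score threshold with the best training F1."""
    best_threshold, best_f1 = None, -1.0
    for threshold in sorted(set(train_scores)):
        preds = [1 if s >= threshold else 0 for s in train_scores]
        f1 = f1_from_predictions(train_labels, preds)
        if f1 > best_f1:  # ties keep the lowest threshold
            best_threshold, best_f1 = threshold, f1
    return best_threshold


def p_cut(target_scores, positive_rate):
    """Percentage cut: threshold that labels roughly positive_rate of the target as positive."""
    k = int(positive_rate * len(target_scores) + 0.5)  # round half up
    if k <= 0:
        return float("inf")  # nothing is labeled positive
    ranked = sorted(target_scores, reverse=True)
    return ranked[min(k, len(ranked)) - 1]  # tied scores may select slightly more than k


def apply_threshold(scores, threshold):
    return [1 if s >= threshold else 0 for s in scores]
```

The s-cut threshold is learned on the source and then applied to the target unchanged, so it carries over the source's class balance. The p-cut only needs an estimate of the target's positive rate, which is the kind of small prior knowledge about the target that the talk discusses.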

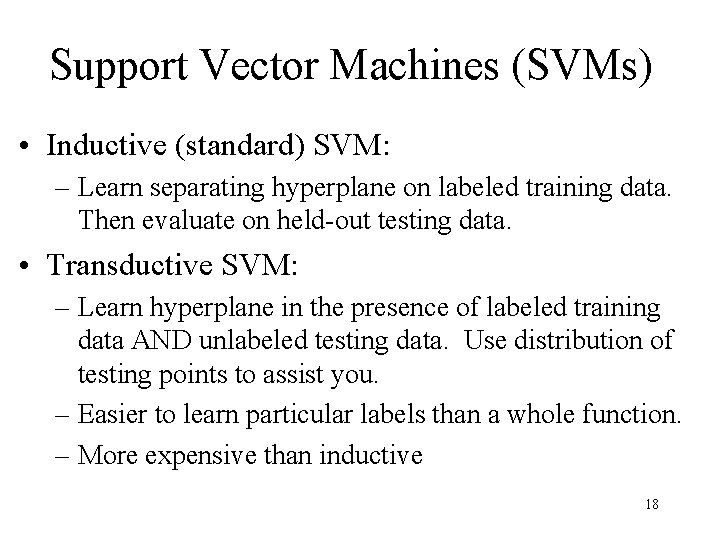

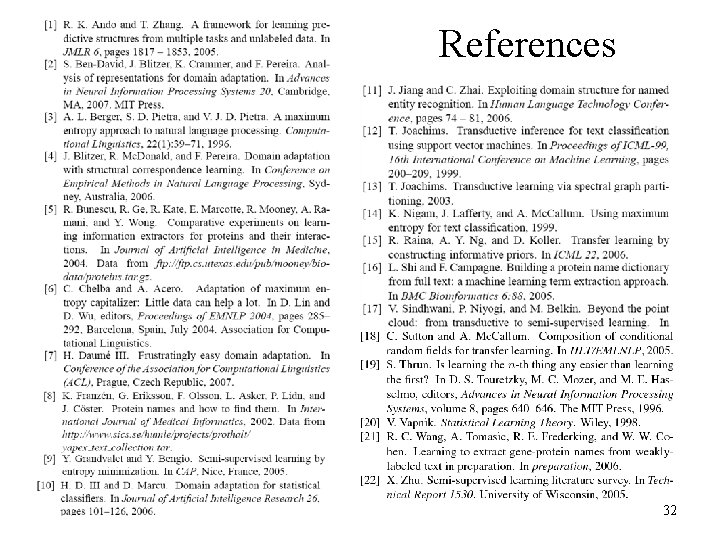

Support Vector Machines (SVMs)

- Inductive (standard) SVM:
  - Learn a separating hyperplane on labeled training data, then evaluate on held-out testing data.
- Transductive SVM:
  - Learn a hyperplane in the presence of labeled training data AND unlabeled testing data, using the distribution of the testing points to help place it.
  - Easier to learn particular labels than a whole function.
  - More expensive than inductive.

![Transductive vs. Inductive SVM [Joachims ’99, ’03]](https://slidetodoc.com/presentation_image/2ad4f1b16ac4b7aae44b053e266d73d4/image-19.jpg)

Transductive vs. Inductive SVM [Joachims ’99, ’03]

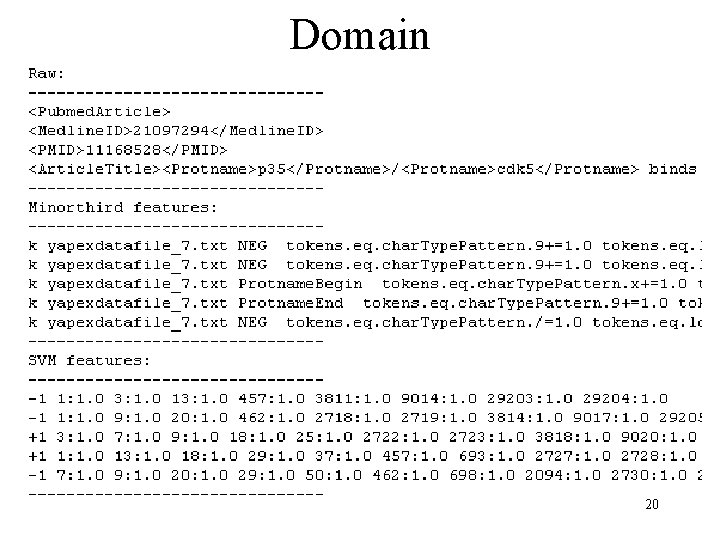

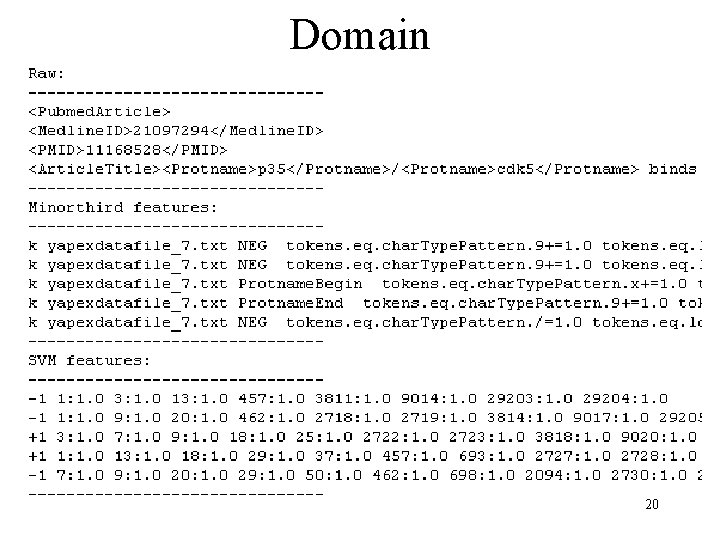

Domain

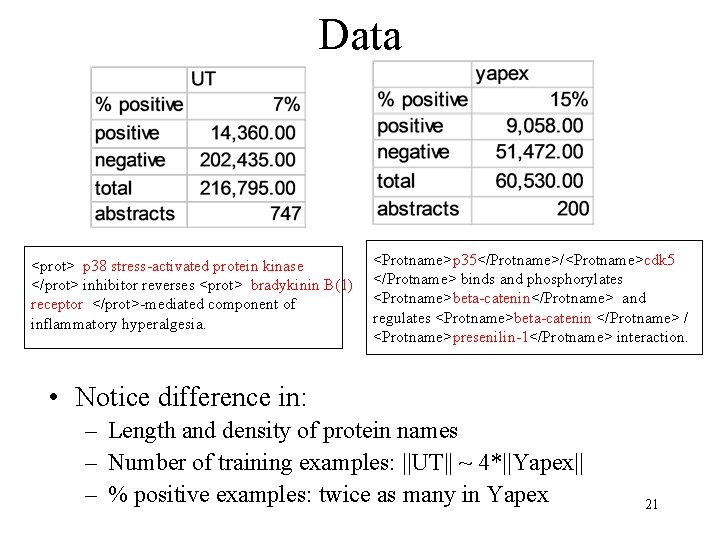

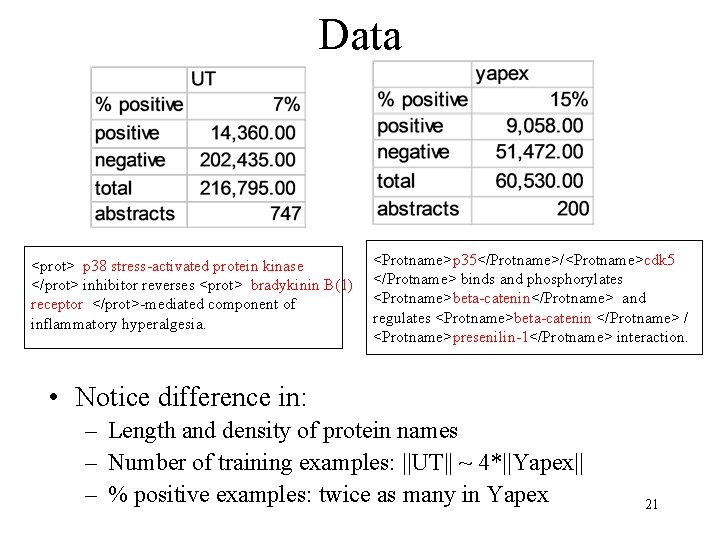

Data

<prot> p38 stress-activated protein kinase </prot> inhibitor reverses <prot> bradykinin B(1) receptor </prot>-mediated component of inflammatory hyperalgesia.

<Protname>p35</Protname>/<Protname>cdk5 </Protname> binds and phosphorylates <Protname>beta-catenin</Protname> and regulates <Protname>beta-catenin </Protname> / <Protname>presenilin-1</Protname> interaction.

- Notice difference in:
  - Length and density of protein names
  - Number of training examples: ||UT|| ~ 4 * ||Yapex||
  - % positive examples: twice as many in Yapex

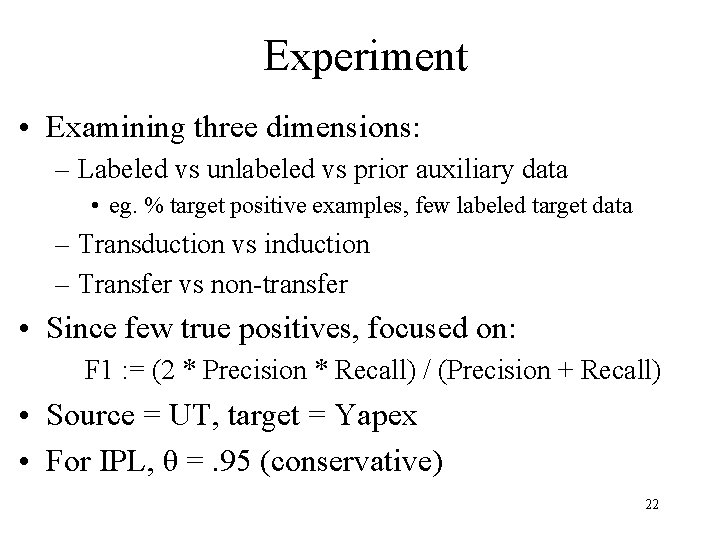

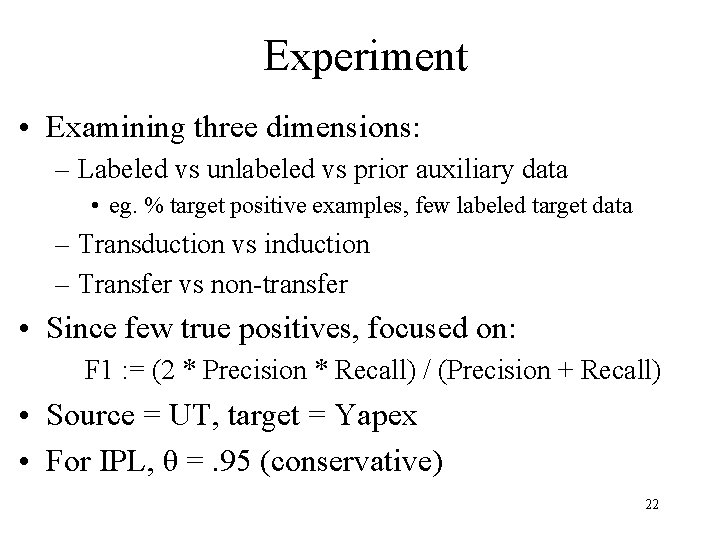

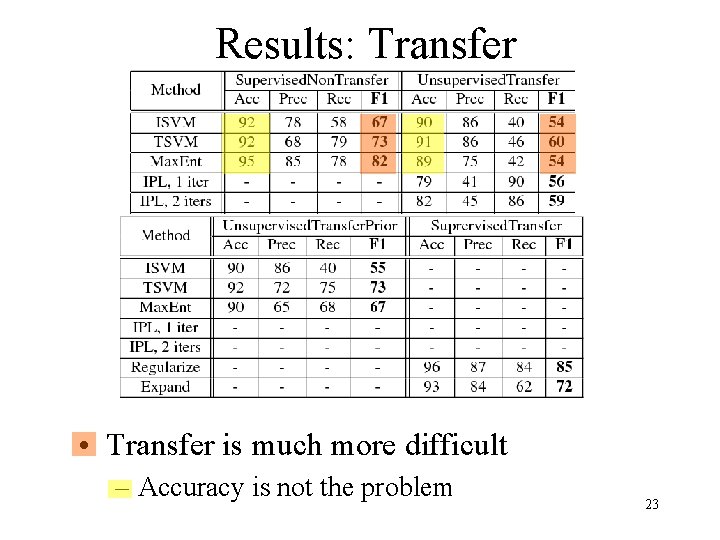

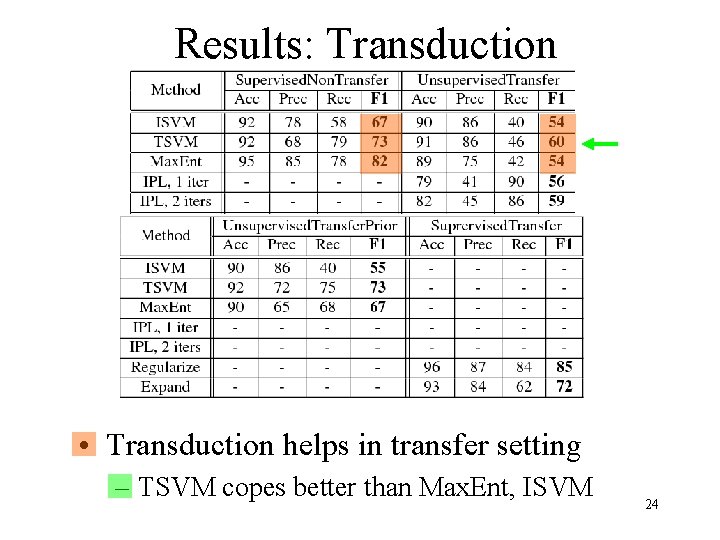

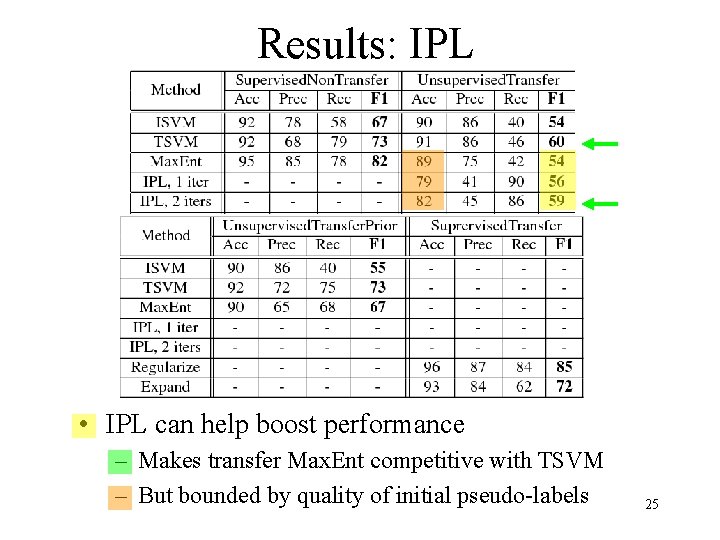

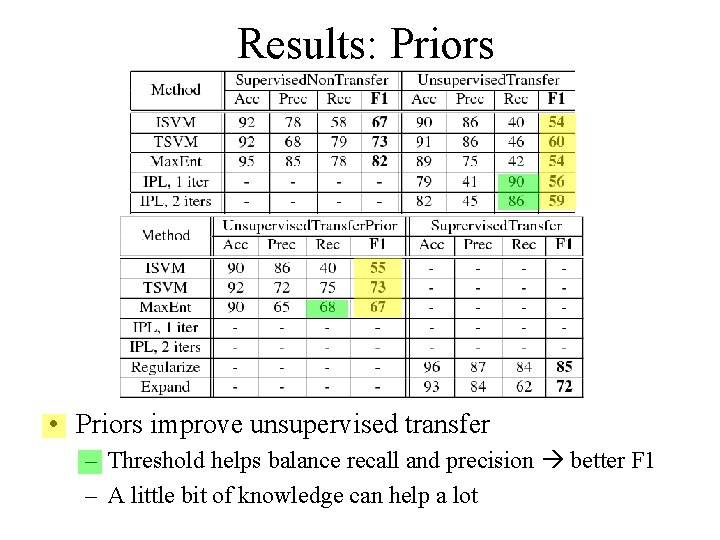

Experiment

- Examining three dimensions:
  - Labeled vs. unlabeled vs. prior auxiliary data
    - E.g., % target positive examples, few labeled target data
  - Transduction vs. induction
  - Transfer vs. non-transfer
- Since few true positives, focused on: F1 := (2 * Precision * Recall) / (Precision + Recall)
- Source = UT, target = Yapex
- For IPL, θ = 0.95 (conservative)
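
A small worked example of why F1 rather than accuracy is the metric of interest when positives are rare. The data below is made up for illustration and is unrelated to the UT or Yapex corpora; the function names are hypothetical.

```python
def accuracy(y_true, y_pred):
    return sum(1 for t, p in zip(y_true, y_pred) if t == p) / len(y_true)


def precision_recall_f1(y_true, y_pred):
    tp = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 1)
    fp = sum(1 for t, p in zip(y_true, y_pred) if t == 0 and p == 1)
    fn = sum(1 for t, p in zip(y_true, y_pred) if t == 1 and p == 0)
    precision = tp / (tp + fp) if tp + fp else 0.0
    recall = tp / (tp + fn) if tp + fn else 0.0
    f1 = 2 * precision * recall / (precision + recall) if precision + recall else 0.0
    return precision, recall, f1


# 100 tokens, only 5 of which are positive (e.g. part of a protein name).
y_true = [1] * 5 + [0] * 95

# A classifier that never predicts positive is 95% accurate but has F1 = 0.
always_negative = [0] * 100
acc_neg = accuracy(y_true, always_negative)
_, _, f1_neg = precision_recall_f1(y_true, always_negative)

# A classifier that finds 3 of the 5 positives, with 2 false alarms.
some_hits = [1, 1, 1, 0, 0] + [1, 1] + [0] * 93
acc_hits = accuracy(y_true, some_hits)
prec_hits, rec_hits, f1_hits = precision_recall_f1(y_true, some_hits)
```

Here the second classifier is only slightly more accurate than the first (0.96 against 0.95), yet its F1 is 0.6 against 0, which is the distinction the experiment is designed to measure.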

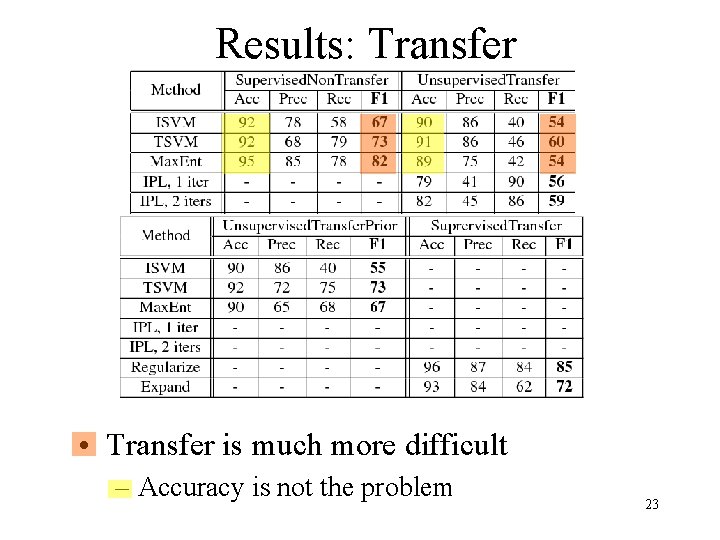

Results: Transfer

- Transfer is much more difficult
  - Accuracy is not the problem

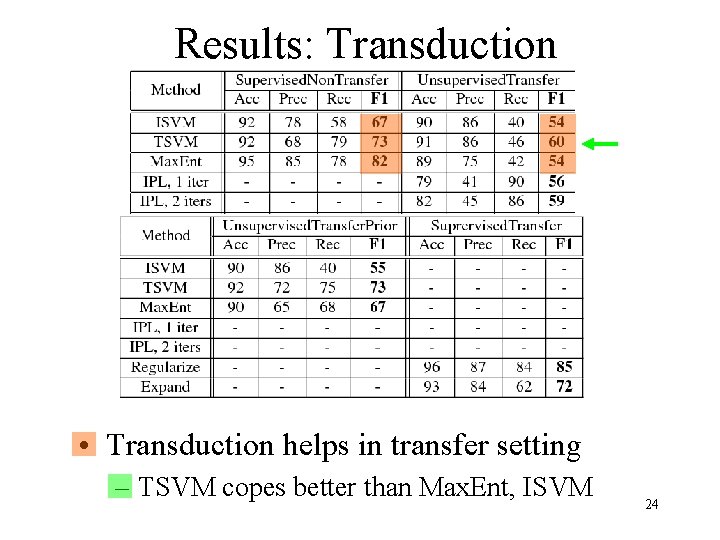

Results: Transduction

- Transduction helps in the transfer setting
  - TSVM copes better than MaxEnt and ISVM

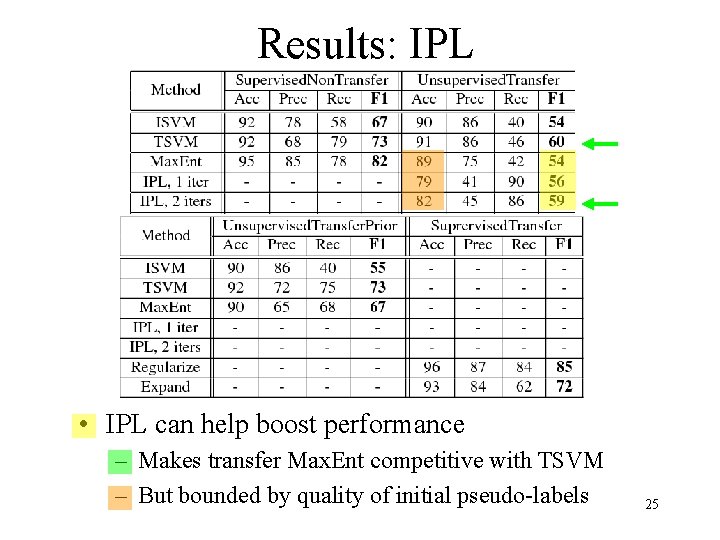

Results: IPL

- IPL can help boost performance
  - Makes transfer MaxEnt competitive with TSVM
  - But bounded by quality of initial pseudo-labels

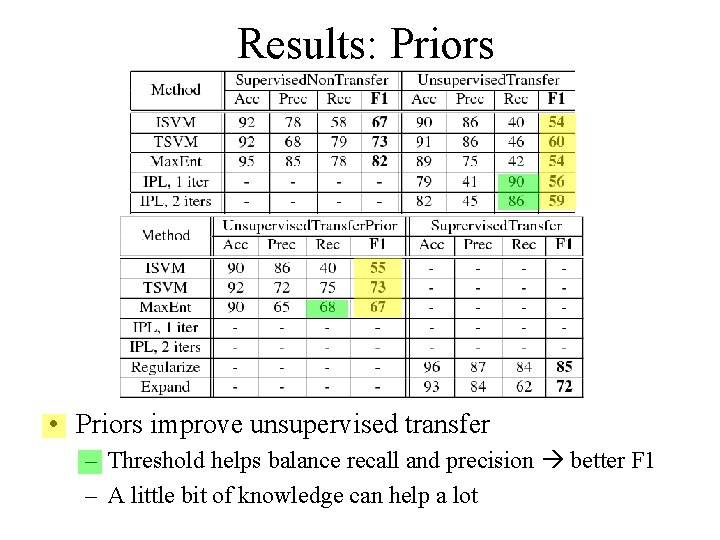

Results: Priors

- Priors improve unsupervised transfer
  - Threshold helps balance recall and precision, giving better F1
  - A little bit of knowledge can help a lot

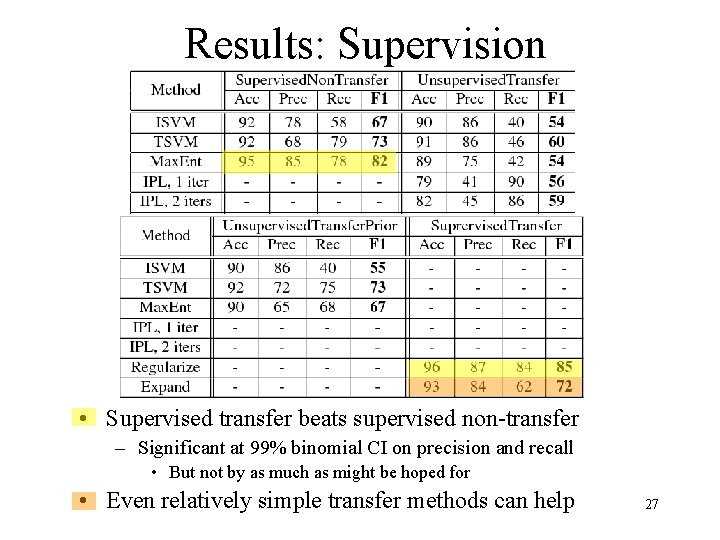

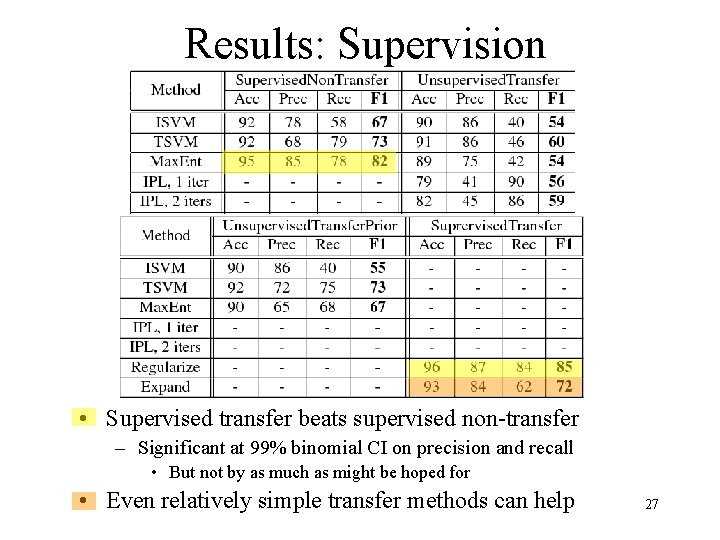

Results: Supervision

- Supervised transfer beats supervised non-transfer
  - Significant at 99% binomial CI on precision and recall
- But not by as much as might be hoped for
- Even relatively simple transfer methods can help

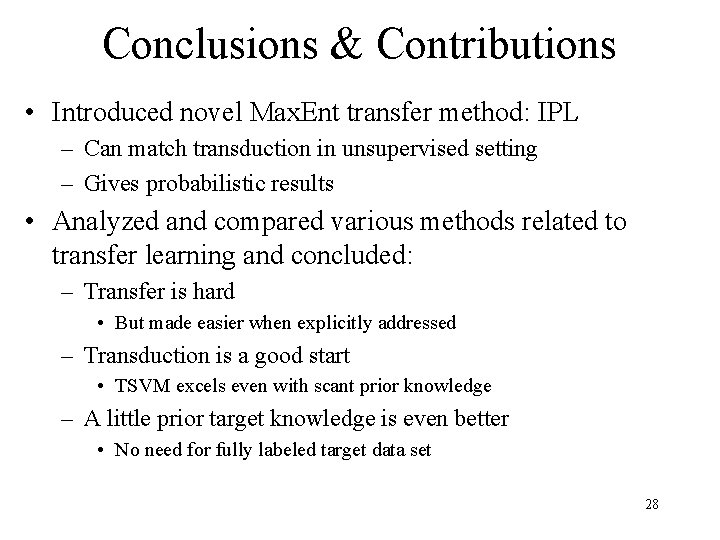

Conclusions & Contributions

- Introduced novel MaxEnt transfer method: IPL
  - Can match transduction in unsupervised setting
  - Gives probabilistic results
- Analyzed and compared various methods related to transfer learning and concluded:
  - Transfer is hard
    - But made easier when explicitly addressed
  - Transduction is a good start
    - TSVM excels even with scant prior knowledge
  - A little prior target knowledge is even better
    - No need for fully labeled target data set

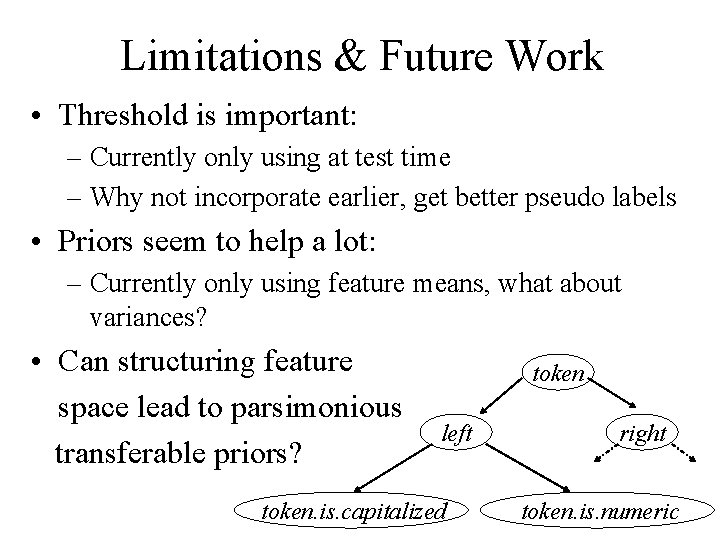

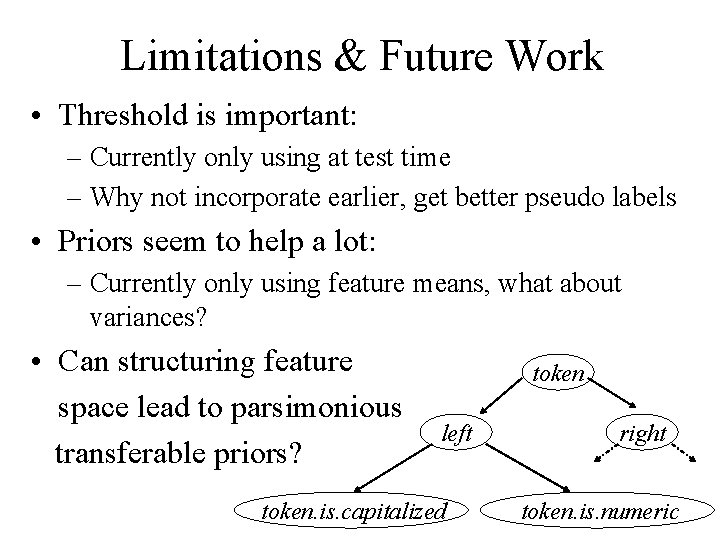

Limitations & Future Work

- Threshold is important:
  - Currently only using at test time
  - Why not incorporate earlier, to get better pseudo-labels?
- Priors seem to help a lot:
  - Currently only using feature means; what about variances?
- Can structuring feature space lead to parsimonious transferable priors?

[Figure: example of structured feature names, e.g. left token.is.capitalized, right token.is.numeric]

Limitations & Future Work: high-level

- How to better make use of source data?
  - Why doesn’t source data help so much?
- Is IPL convex?
  - Is this exactly what we want to optimize?
  - How does regularization affect convexity?
- What, exactly, is the relationship between transduction and transfer?
  - Can their theories be unified?
- When is it worth explicitly modeling transfer?
  - How different do the domains need to be?
  - How much source/target data do we need?
  - What kind of priors do we need?

☺ Thank you! ☺ Questions?

References