
Generative Models; Naive Bayes
Dan Roth | danroth@seas.upenn.edu | http://www.cis.upenn.edu/~danroth/ | 461 C, 3401 Walnut
Slides were created by Dan Roth (for CIS 519/419 at Penn or CS 446 at UIUC), by Eric Eaton for CIS 519/419 at Penn, or from other authors who have made their ML slides available.
CIS 419/519 Fall’ 19

Administration
• Projects:
  – Come to my office hours at least once to discuss the project.
  – Posters for the projects will be presented at the last meeting of the class, Monday, December 9; we’ll start earlier: 9 am – noon.
  – We will also ask you to prepare a 3-minute video.
• Final reports will only be due after the Final exam, on December 19.
  – Specific instructions are on the web page and will also be sent on Piazza.

Administration (2)
• Exam:
  – The exam will take place on the originally assigned date, 12/19.
    • Location: TBD
    • Structured similarly to the midterm.
    • 120 minutes; closed books.
  – What is covered:
    • Cumulative!
    • Slightly more focus on the material covered after the previous midterm.
    • However, notice that the ideas in this class are cumulative!!
    • Everything that we present in class and in the homework assignments.
    • Material that is in the slides but is not discussed in class is not part of the material required for the exam.
      – Example 1: We talked about Boosting, but not about boosting the confidence.
      – Example 2: We talked about multiclassification (OvA, AvA), but not about Error Correcting Codes, and not about constraint classification (in the slides).
  – We will give practice exams. HW 5 will also serve as preparation.
• Applied Machine Learning:
  – The exams are mostly about understanding Machine Learning.
  – HW is about applying machine learning.

Recap: Error Driven Learning

Recap: Error Driven Learning (2)
• Inductive learning comes into play when the distribution is not known.
• Then, there are two basic approaches to take:
  – Discriminative (Direct) Learning, and
  – Bayesian Learning (Generative).
• Running example:
  – Text Correction: “I saw the girl it the park” → “I saw the girl in the park”

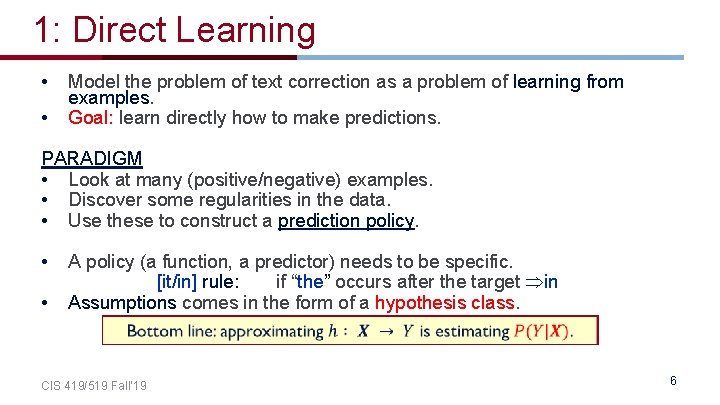

1: Direct Learning
• Model the problem of text correction as a problem of learning from examples.
• Goal: learn directly how to make predictions.
PARADIGM
• Look at many (positive/negative) examples.
• Discover some regularities in the data.
• Use these to construct a prediction policy.
• A policy (a function, a predictor) needs to be specific.
  – [it/in] rule: if “the” occurs after the target → in
• Assumptions come in the form of a hypothesis class.

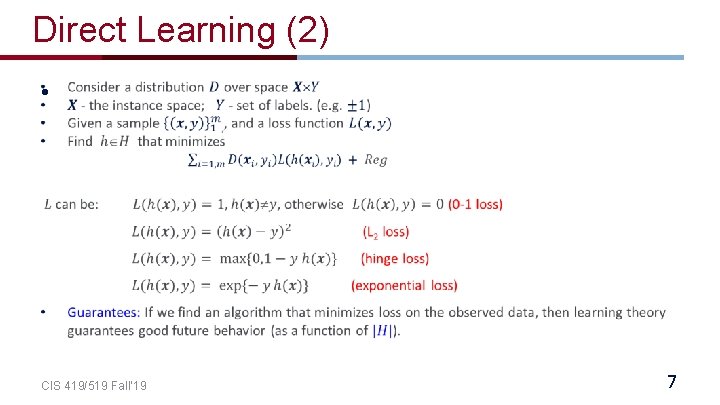

Direct Learning (2)

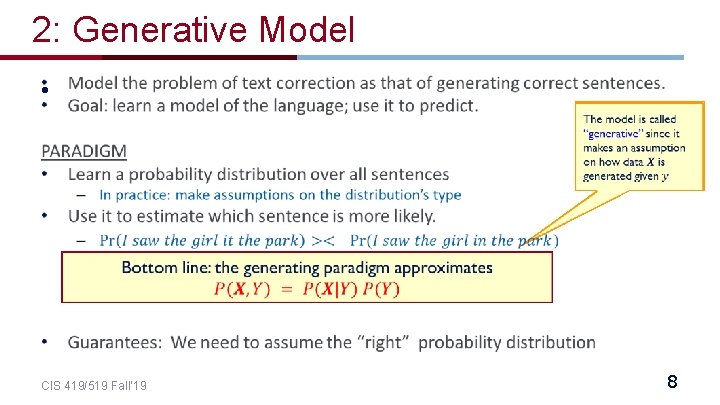

2: Generative Model

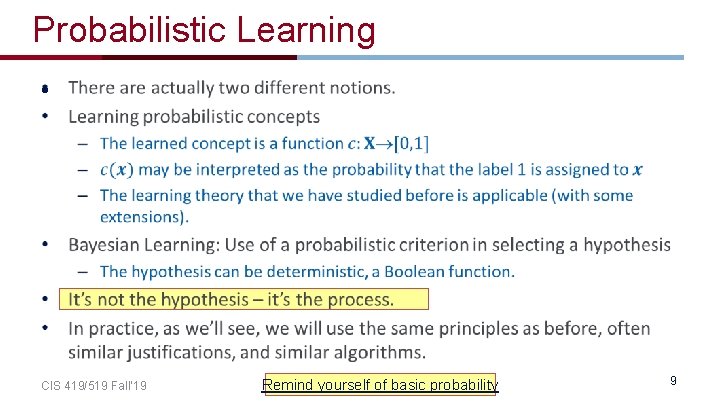

Probabilistic Learning
Remind yourself of basic probability.

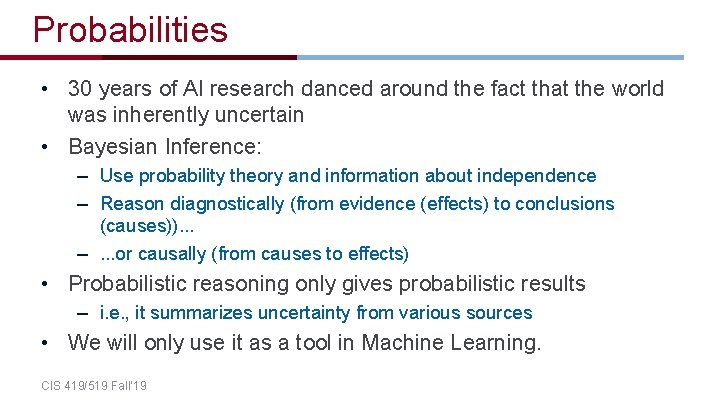

Probabilities
• 30 years of AI research danced around the fact that the world was inherently uncertain.
• Bayesian Inference:
  – Use probability theory and information about independence.
  – Reason diagnostically (from evidence (effects) to conclusions (causes)) . . .
  – . . . or causally (from causes to effects).
• Probabilistic reasoning only gives probabilistic results
  – i.e., it summarizes uncertainty from various sources.
• We will only use it as a tool in Machine Learning.

Concepts
• Probability, Probability Space and Events
• Joint Events
• Conditional Probabilities
• Independence
  – Next week’s recitation will provide a refresher on probability.
  – Use the material we provided online.
• Next I will give a very quick refresher.

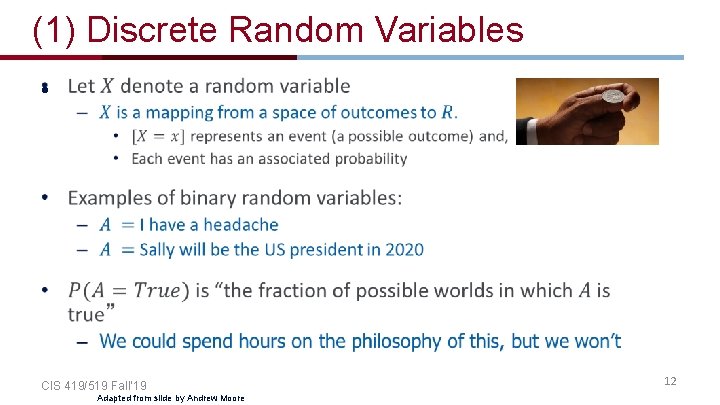

(1) Discrete Random Variables
Adapted from slide by Andrew Moore

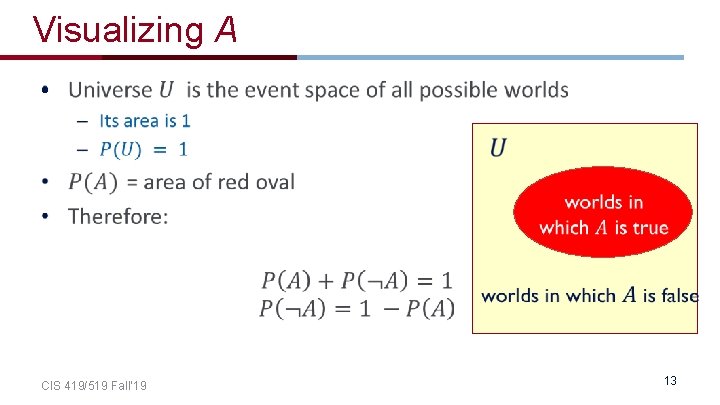

Visualizing A

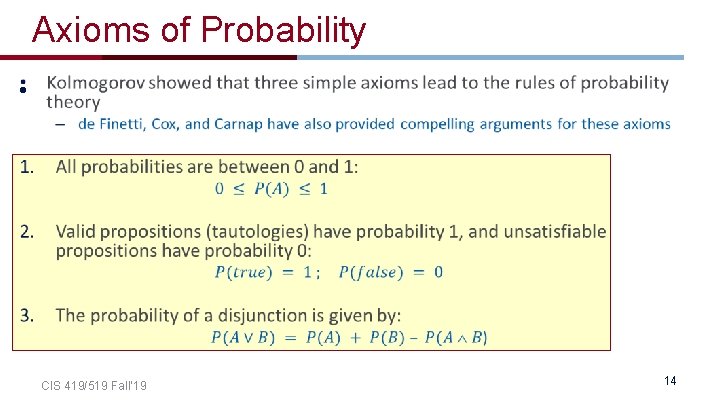

Axioms of Probability

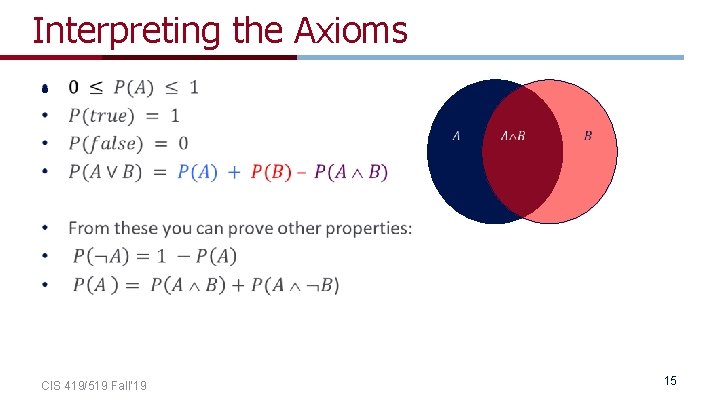

Interpreting the Axioms

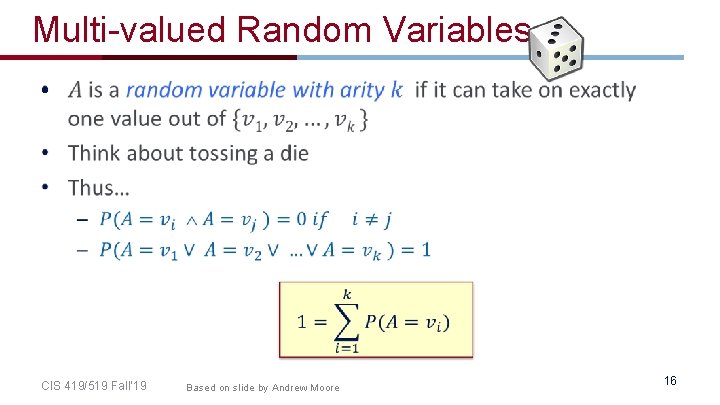

Multi-valued Random Variables
Based on slide by Andrew Moore

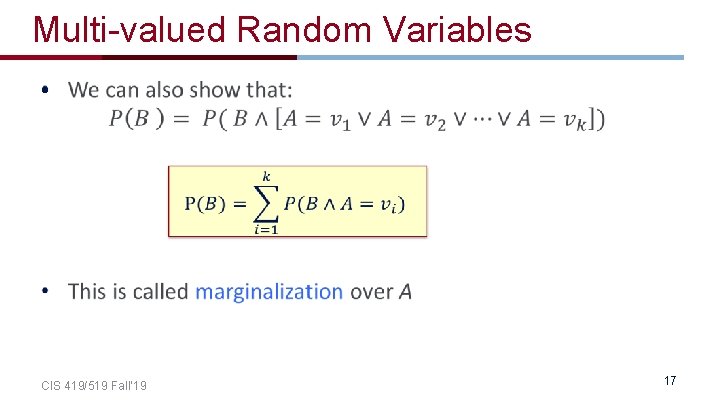

Multi-valued Random Variables

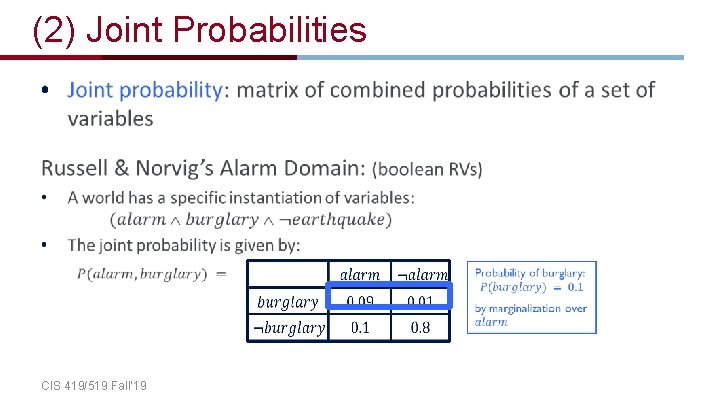

(2) Joint Probabilities

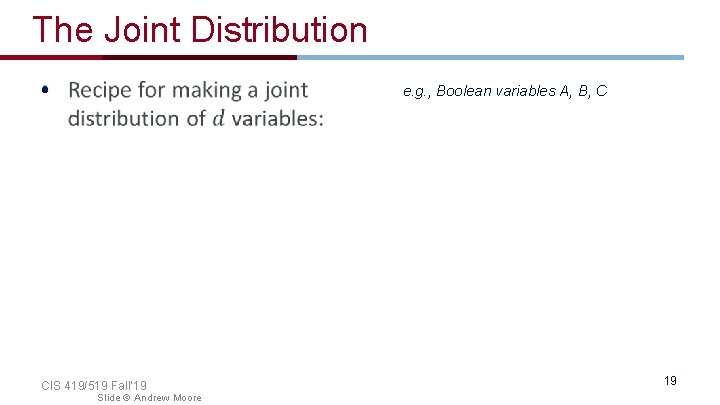

The Joint Distribution
e.g., Boolean variables A, B, C
Slide © Andrew Moore

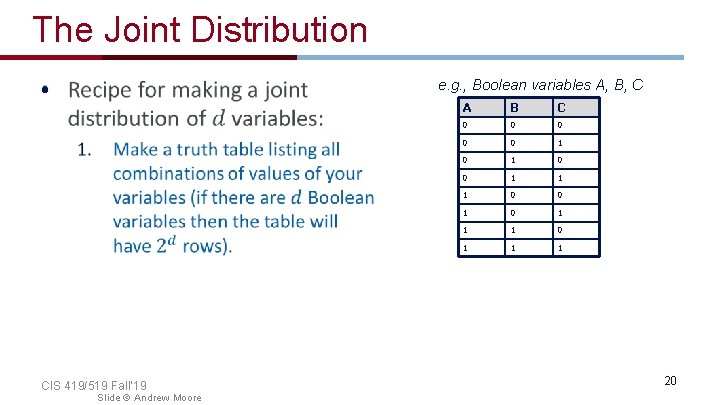

The Joint Distribution
e.g., Boolean variables A, B, C
[Table: truth table over A, B, C, partially filled in]
Slide © Andrew Moore

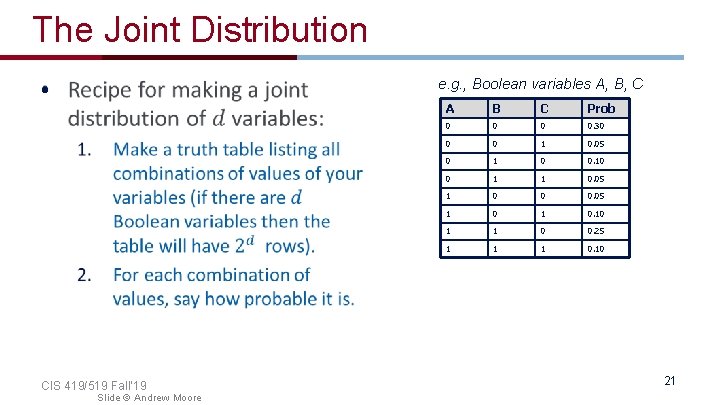

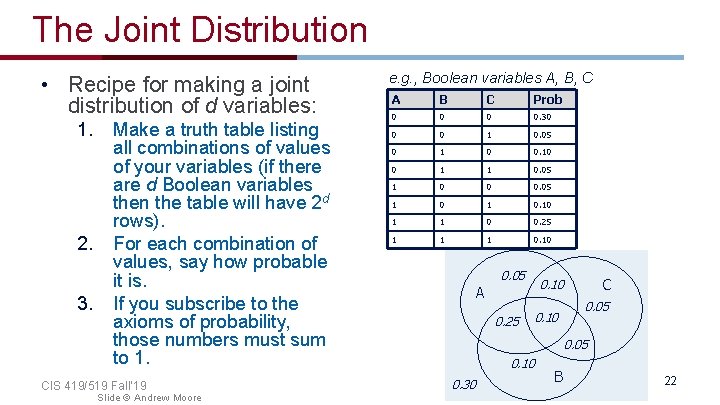

The Joint Distribution
e.g., Boolean variables A, B, C

| A | B | C | Prob |
|---|---|---|------|
| 0 | 0 | 0 | 0.30 |
| 0 | 0 | 1 | 0.05 |
| 0 | 1 | 0 | 0.10 |
| 0 | 1 | 1 | 0.05 |
| 1 | 0 | 0 | 0.05 |
| 1 | 0 | 1 | 0.10 |
| 1 | 1 | 0 | 0.25 |
| 1 | 1 | 1 | 0.10 |

Slide © Andrew Moore

The Joint Distribution
Recipe for making a joint distribution of d variables:
1. Make a truth table listing all combinations of values of your variables (if there are d Boolean variables then the table will have 2^d rows).
2. For each combination of values, say how probable it is.
3. If you subscribe to the axioms of probability, those numbers must sum to 1.

e.g., Boolean variables A, B, C, with the same probabilities as in the previous table.
[Figure: Venn diagram of A, B, and C, each region labeled with its probability from the table]
Slide © Andrew Moore

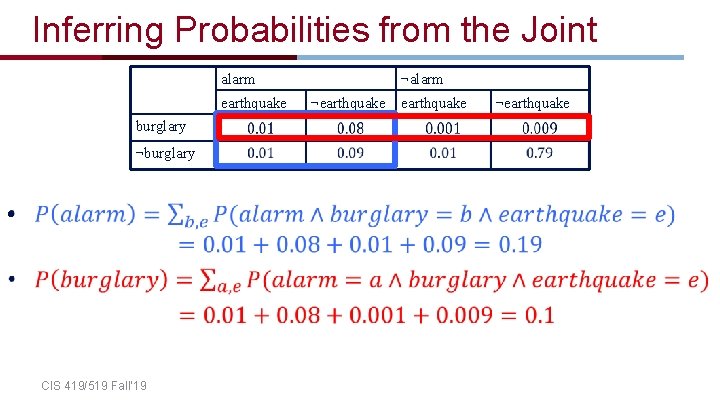
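As a minimal sketch of the recipe, the A, B, C table can be stored as a dictionary and queried by summing matching rows. The helper name `prob` is hypothetical; the two assertions mirror steps 1 and 3 of the recipe (all 2^d rows present, probabilities sum to 1).

```python
from itertools import product

# Joint distribution over Boolean A, B, C: (a, b, c) -> probability
joint = {
    (0, 0, 0): 0.30, (0, 0, 1): 0.05, (0, 1, 0): 0.10, (0, 1, 1): 0.05,
    (1, 0, 0): 0.05, (1, 0, 1): 0.10, (1, 1, 0): 0.25, (1, 1, 1): 0.10,
}

def prob(event):
    """Sum the probabilities of all rows (a, b, c) for which event(a, b, c) is true."""
    return sum(p for row, p in joint.items() if event(*row))

assert set(joint) == set(product((0, 1), repeat=3))   # step 1: all 2^3 rows listed
assert abs(sum(joint.values()) - 1.0) < 1e-9           # step 3: the numbers sum to 1

p_a = prob(lambda a, b, c: a == 1)
p_b = prob(lambda a, b, c: b == 1)
p_a_and_b = prob(lambda a, b, c: a == 1 and b == 1)
print(f"P(A) = {p_a:.2f}, P(A|B) = {p_a_and_b / p_b:.2f}")  # P(A) = 0.50, P(A|B) = 0.70
```

Any event over A, B, C can be expressed as such a predicate, which is why the joint table is enough to answer every probabilistic query about these variables (at the cost of 2^d entries).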

Inferring Probabilities from the Joint
[Table: joint distribution over burglary/¬burglary, alarm/¬alarm, and earthquake/¬earthquake]

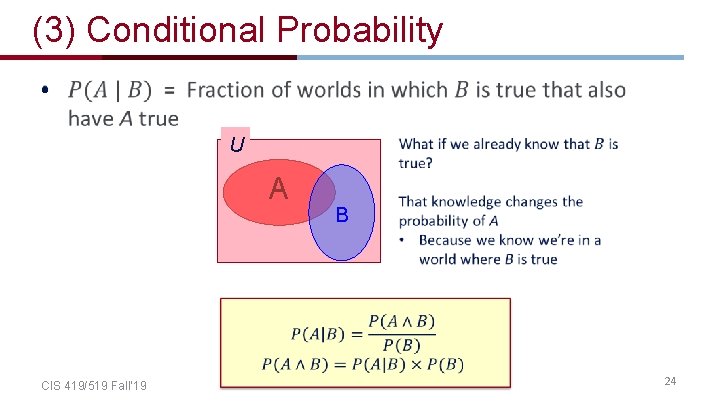

(3) Conditional Probability
[Figure: events A and B inside the universe U]

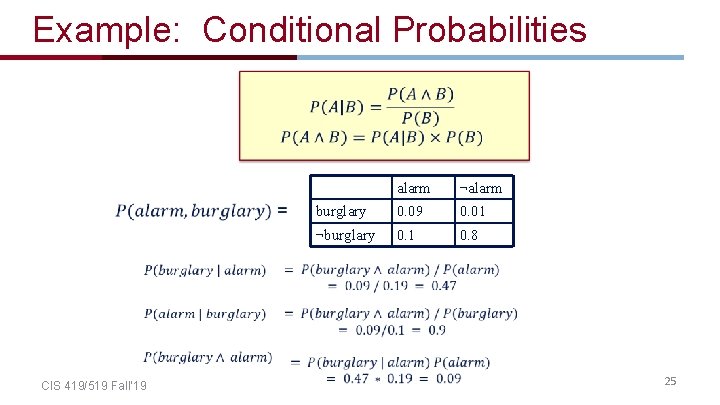

Example: Conditional Probabilities

| | alarm | ¬alarm |
|---|---|---|
| burglary | 0.09 | 0.01 |
| ¬burglary | 0.1 | 0.8 |

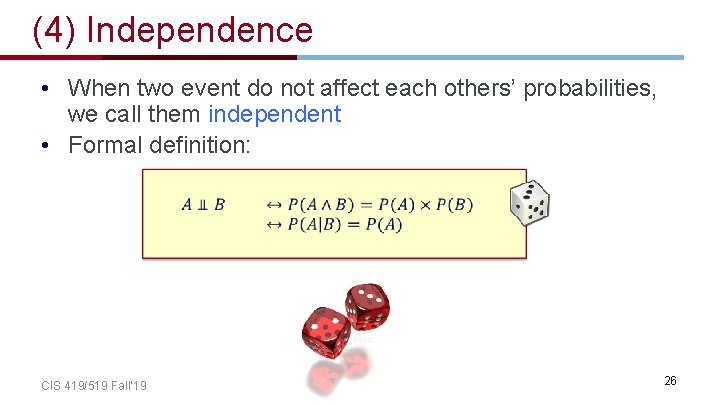
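Conditional probabilities follow from the joint table through P(A | B) = P(A ∧ B) / P(B). A small sketch with the alarm/burglary numbers from the table:

```python
# Joint distribution from the table: (burglary, alarm) -> probability
joint = {(True, True): 0.09, (True, False): 0.01, (False, True): 0.1, (False, False): 0.8}

p_alarm = joint[(True, True)] + joint[(False, True)]      # marginalize out burglary
p_burglary = joint[(True, True)] + joint[(True, False)]   # marginalize out alarm

# Conditional probability: P(A | B) = P(A and B) / P(B)
p_burglary_given_alarm = joint[(True, True)] / p_alarm
p_alarm_given_burglary = joint[(True, True)] / p_burglary
print(f"P(burglary | alarm) = {p_burglary_given_alarm:.3f}")  # 0.474
print(f"P(alarm | burglary) = {p_alarm_given_burglary:.3f}")  # 0.900
```

Observing the alarm raises the probability of a burglary from 0.10 to about 0.47, while a burglary sets off the alarm 90% of the time: the two conditionals are different quantities, and Bayes’ rule is what connects them.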

(4) Independence
• When two events do not affect each other’s probabilities, we call them independent.
• Formal definition: A and B are independent iff P(A ∧ B) = P(A) · P(B).

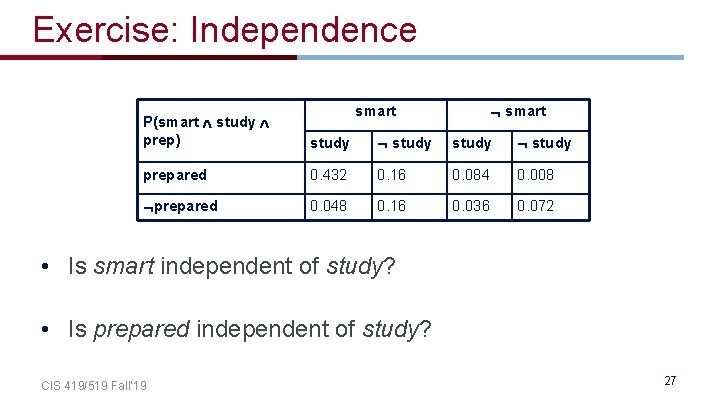

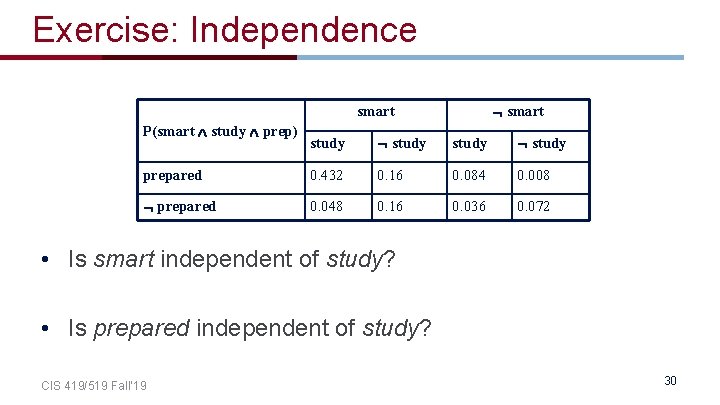

Exercise: Independence

| P(smart, study, prep) | smart, study | smart, ¬study | ¬smart, study | ¬smart, ¬study |
|---|---|---|---|---|
| prepared | 0.432 | 0.16 | 0.084 | 0.008 |
| ¬prepared | 0.048 | 0.16 | 0.036 | 0.072 |

• Is smart independent of study?
• Is prepared independent of study?

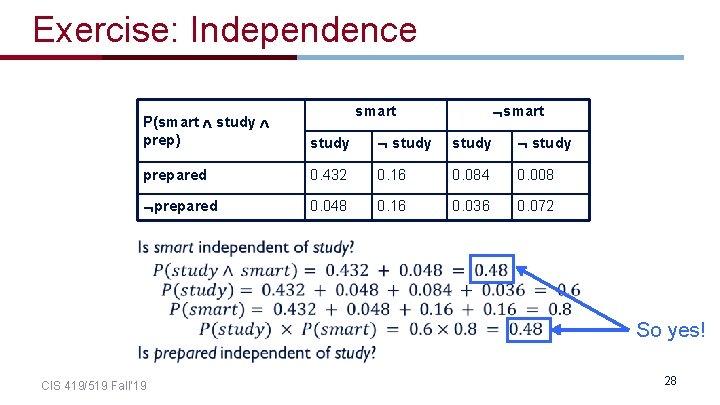

Exercise: Independence (answer)
From the same table: P(smart) = 0.432 + 0.16 + 0.048 + 0.16 = 0.8 and P(study) = 0.432 + 0.048 + 0.084 + 0.036 = 0.6, while P(smart ∧ study) = 0.432 + 0.048 = 0.48 = 0.8 × 0.6. So yes! smart is independent of study.

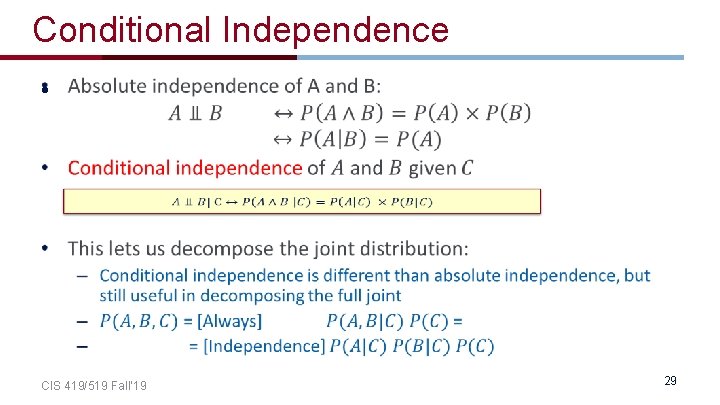
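Both questions can be checked mechanically by comparing P(X = a, Y = b) with P(X = a) · P(Y = b) for every value pair. This sketch assumes the table’s columns are (smart, study), (smart, ¬study), (¬smart, study), (¬smart, ¬study), since the slide’s column headers were lost in extraction; the helper names are hypothetical.

```python
# (smart, study, prepared) -> probability, under the assumed column layout
joint = {
    (1, 1, 1): 0.432, (1, 0, 1): 0.16, (0, 1, 1): 0.084, (0, 0, 1): 0.008,
    (1, 1, 0): 0.048, (1, 0, 0): 0.16, (0, 1, 0): 0.036, (0, 0, 0): 0.072,
}
SMART, STUDY, PREP = 0, 1, 2   # positions of the variables in each row tuple

def prob(assign):
    """P of a partial assignment {variable index: value}, summing matching rows."""
    return sum(p for row, p in joint.items()
               if all(row[i] == v for i, v in assign.items()))

def independent(i, j, tol=1e-9):
    """X_i and X_j are independent iff P(X_i=a, X_j=b) = P(X_i=a) P(X_j=b) for all a, b."""
    return all(abs(prob({i: a, j: b}) - prob({i: a}) * prob({j: b})) <= tol
               for a in (0, 1) for b in (0, 1))

print("smart independent of study:", independent(SMART, STUDY))
print("prepared independent of study:", independent(PREP, STUDY))
```

For the second question, P(prepared ∧ study) = 0.516 while P(prepared) · P(study) = 0.684 × 0.6 ≈ 0.41, so studying and being prepared are not independent.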

Conditional Independence


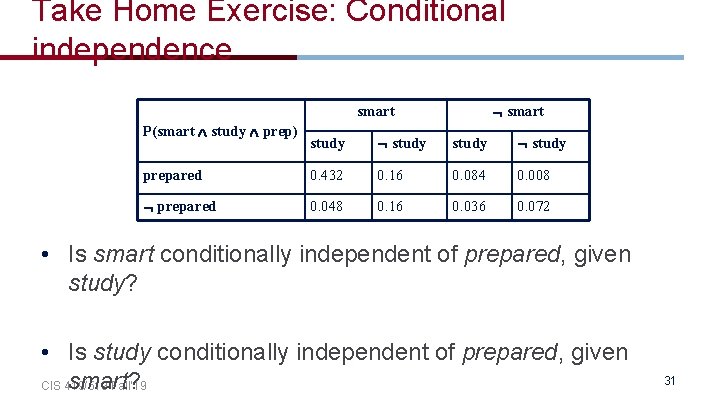

Take Home Exercise: Conditional independence
Using the same joint table over smart, study, and prepared:
• Is smart conditionally independent of prepared, given study?
• Is study conditionally independent of prepared, given smart?

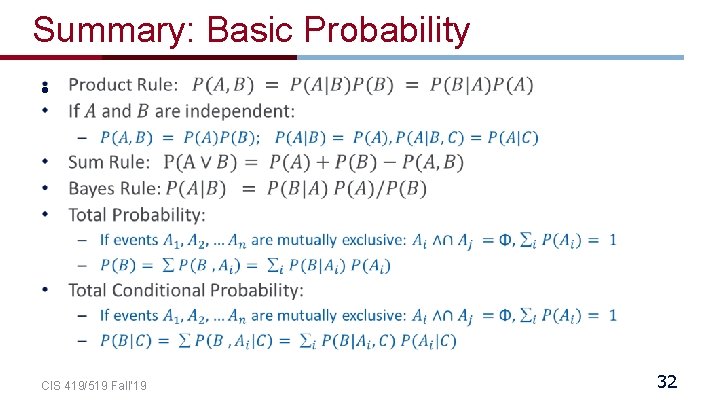
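To check your answers to the take-home exercise, conditional independence can be tested the same way as plain independence, but inside each slice of the conditioning variable: P(a, b | g) = P(a | g) · P(b | g) for every value g. As before, this sketch assumes the column layout (smart, study), (smart, ¬study), (¬smart, study), (¬smart, ¬study); `p` and `cond_independent` are hypothetical helper names.

```python
# (smart, study, prepared) -> probability, under the assumed column layout
joint = {
    (1, 1, 1): 0.432, (1, 0, 1): 0.16, (0, 1, 1): 0.084, (0, 0, 1): 0.008,
    (1, 1, 0): 0.048, (1, 0, 0): 0.16, (0, 1, 0): 0.036, (0, 0, 0): 0.072,
}
VARS = ("smart", "study", "prepared")

def p(**fixed):
    """Probability that the named variables take the given values (marginalizing the rest)."""
    return sum(pr for row, pr in joint.items()
               if all(row[VARS.index(k)] == v for k, v in fixed.items()))

def cond_independent(a, b, given, tol=1e-9):
    """True iff P(a, b | given) == P(a | given) * P(b | given) for all value combinations."""
    for va in (0, 1):
        for vb in (0, 1):
            for vg in (0, 1):
                pg = p(**{given: vg})
                lhs = p(**{a: va, b: vb, given: vg}) / pg
                rhs = (p(**{a: va, given: vg}) / pg) * (p(**{b: vb, given: vg}) / pg)
                if abs(lhs - rhs) > tol:
                    return False
    return True

print(cond_independent("smart", "prepared", given="study"))
print(cond_independent("study", "prepared", given="smart"))
```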

Summary: Basic Probability

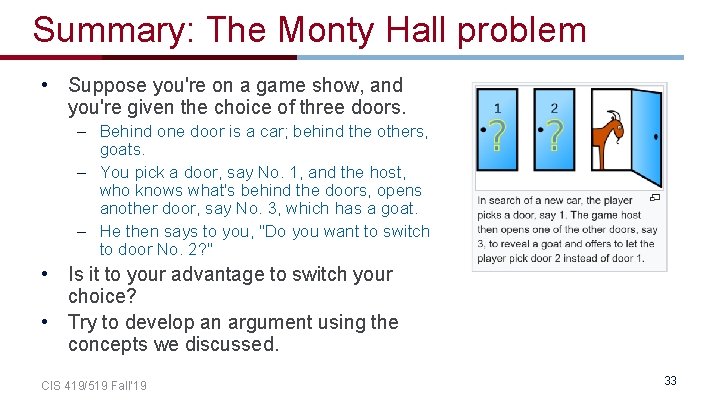

Summary: The Monty Hall problem
• Suppose you're on a game show, and you're given the choice of three doors.
  – Behind one door is a car; behind the others, goats.
  – You pick a door, say No. 1, and the host, who knows what's behind the doors, opens another door, say No. 3, which has a goat.
  – He then says to you, "Do you want to switch to door No. 2?"
• Is it to your advantage to switch your choice?
• Try to develop an argument using the concepts we discussed.

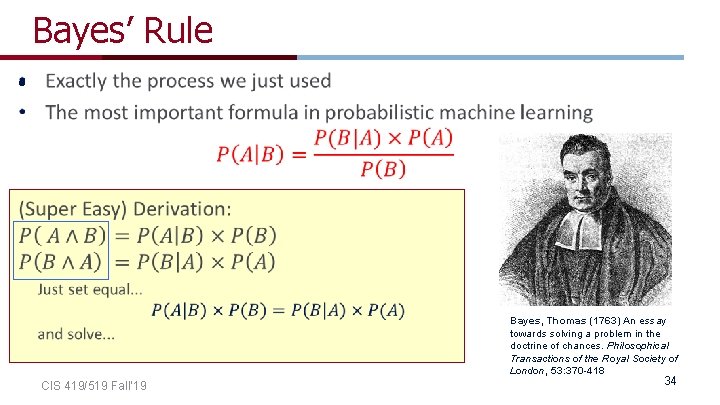
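One way to test your argument is to enumerate the sample space exactly. This sketch assumes the standard rules: the car is placed uniformly at random, the host always opens a goat door other than yours, and he picks uniformly when two such doors are available. Exact fractions avoid any sampling noise.

```python
from fractions import Fraction

def win_probability(switch: bool) -> Fraction:
    """Exact P(win) by enumerating car location, contestant's pick, and host's choice."""
    doors = (1, 2, 3)
    total = Fraction(0)
    for car in doors:                      # car placed uniformly at random
        for pick in doors:                 # contestant's pick, uniform
            # host opens a door that is neither the contestant's pick nor the car
            host_options = [d for d in doors if d != pick and d != car]
            for opened in host_options:    # host chooses uniformly among valid doors
                weight = Fraction(1, 3) * Fraction(1, 3) * Fraction(1, len(host_options))
                final = next(d for d in doors if d not in (pick, opened)) if switch else pick
                total += weight * (final == car)
    return total

print("stay:", win_probability(False), "switch:", win_probability(True))  # stay: 1/3 switch: 2/3
```

Staying wins only when the first pick was right (probability 1/3); switching wins in every other case, because the host’s choice is forced to reveal the only remaining goat.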

Bayes’ Rule
• Bayes, Thomas (1763). An essay towards solving a problem in the doctrine of chances. Philosophical Transactions of the Royal Society of London, 53: 370-418.

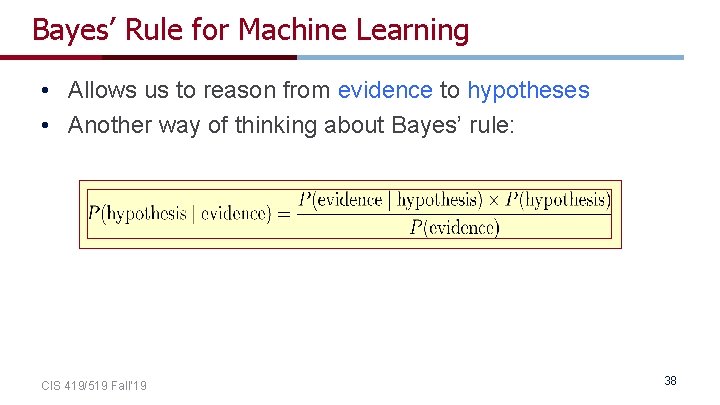

Bayes’ Rule for Machine Learning
• Allows us to reason from evidence to hypotheses.
• Another way of thinking about Bayes’ rule:

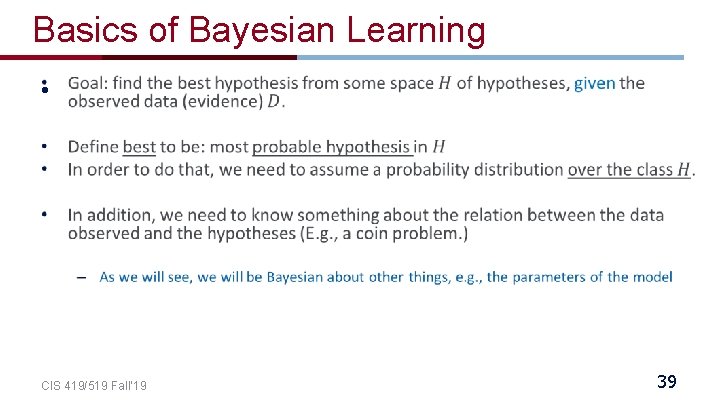
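To make “reasoning from evidence to hypotheses” concrete, here is a sketch over a finite hypothesis space: P(h | D) = P(D | h) P(h) / P(D), with P(D) = Σ_h P(D | h) P(h). The three coin biases, the prior, and the observed 7 heads / 3 tails are hypothetical values chosen so that the maximum a posteriori (MAP) and maximum likelihood (ML) hypotheses differ.

```python
# Hypothetical hypothesis space: possible biases (P(heads)) of a coin, with a prior
prior = {0.3: 0.1, 0.5: 0.8, 0.8: 0.1}
heads, tails = 7, 3   # hypothetical observed data D

# Likelihood of this particular sequence (the binomial coefficient cancels in Bayes' rule)
likelihood = {h: h ** heads * (1 - h) ** tails for h in prior}
evidence = sum(likelihood[h] * prior[h] for h in prior)          # P(D)
posterior = {h: likelihood[h] * prior[h] / evidence for h in prior}

h_map = max(posterior, key=posterior.get)    # argmax_h P(h | D)
h_ml = max(likelihood, key=likelihood.get)   # argmax_h P(D | h)
print({h: round(p, 3) for h, p in posterior.items()}, "MAP:", h_map, "ML:", h_ml)
```

The data favor the 0.8 coin (it has the highest likelihood), but the strong prior on a fair coin makes 0.5 the MAP hypothesis; with a uniform prior the two would coincide.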

Basics of Bayesian Learning

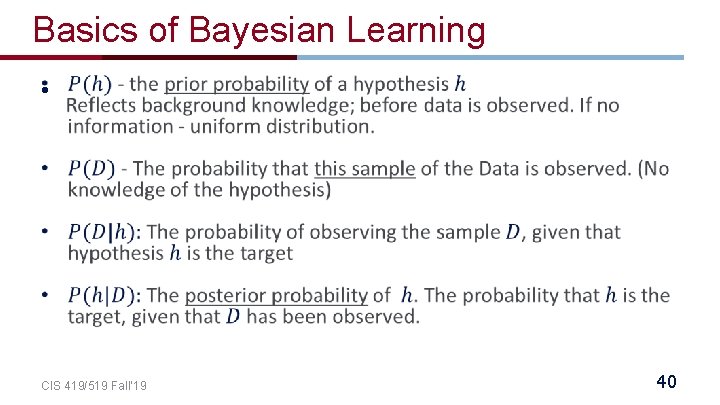

Basics of Bayesian Learning

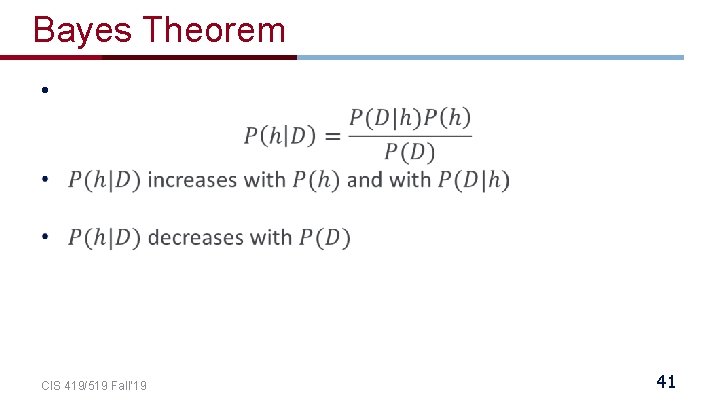

Bayes Theorem

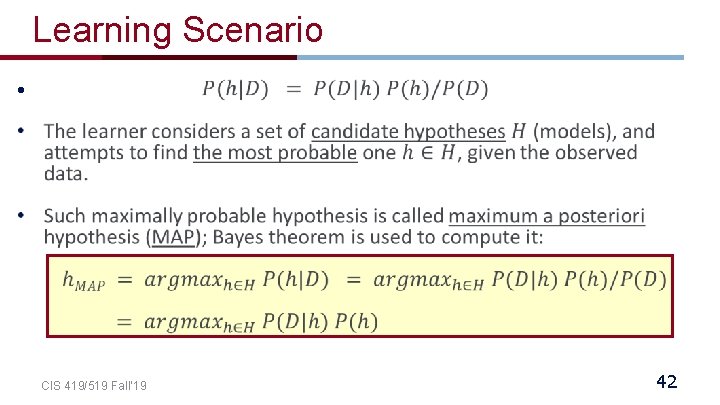

Learning Scenario

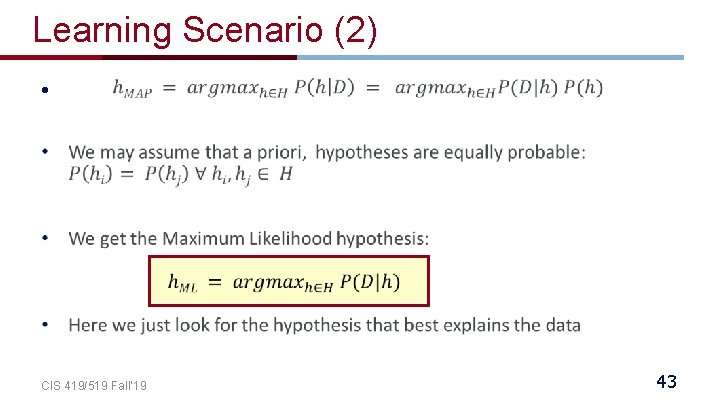

Learning Scenario (2)

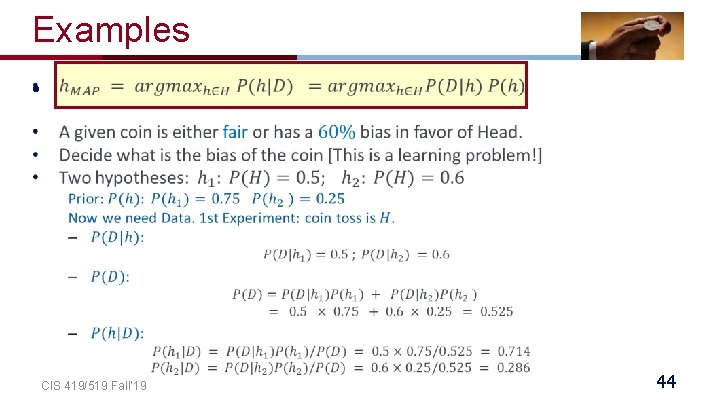

Examples

Examples (2)

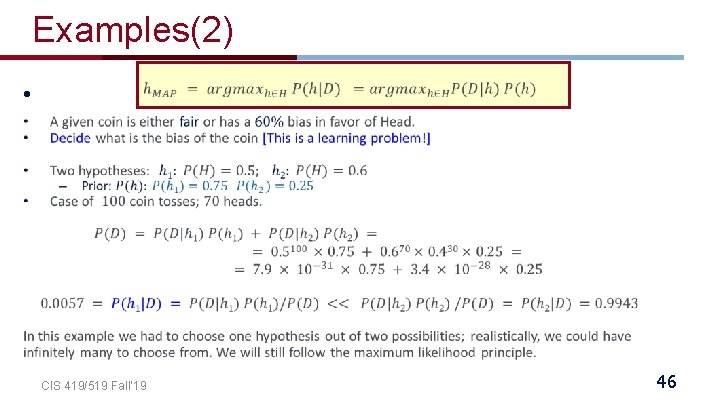

Examples (2)

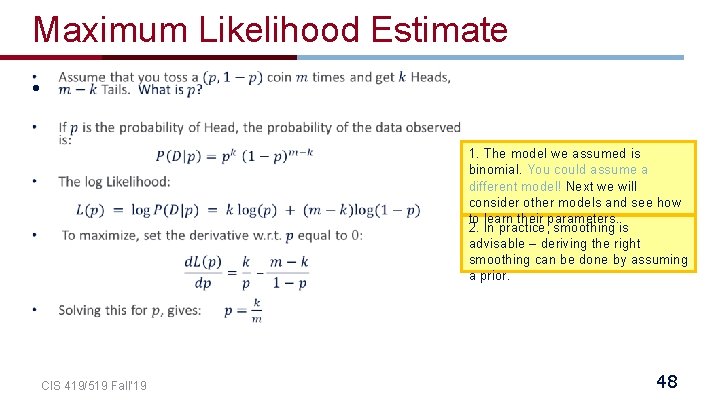

Maximum Likelihood Estimate
1. The model we assumed is binomial. You could assume a different model! Next we will consider other models and see how to learn their parameters.
2. In practice, smoothing is advisable; deriving the right smoothing can be done by assuming a prior.

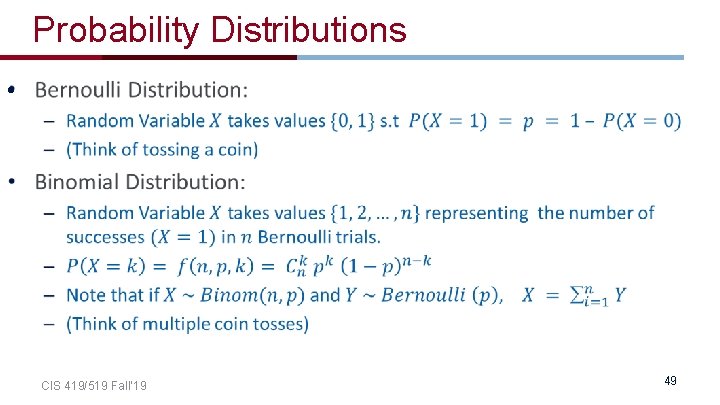
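A sketch of both remarks, assuming the binomial model with k heads in n tosses: the maximum likelihood estimate is k / n, and one standard way to derive smoothing from a prior is to take the MAP estimate under a Beta(a, b) prior, whose mode is (k + a − 1) / (n + a + b − 2). The Beta prior is a choice made for this illustration; the slide’s own derivation is in the figure and may differ.

```python
def binomial_mle(heads: int, n: int) -> float:
    """Maximum likelihood estimate of P(heads) under a binomial model: k / n."""
    return heads / n

def binomial_map_beta(heads: int, n: int, a: float = 2.0, b: float = 2.0) -> float:
    """MAP estimate of P(heads) under a Beta(a, b) prior (valid for a, b > 1).
    With a = b = 2 this is add-one (Laplace) smoothing: (k + 1) / (n + 2)."""
    return (heads + a - 1) / (n + a + b - 2)

# Three tosses, no heads: the MLE says heads is impossible, the smoothed estimate does not.
print(binomial_mle(0, 3), binomial_map_beta(0, 3))  # 0.0 0.2
```

The prior acts like a + b − 2 imaginary tosses, so its influence fades as n grows and the MAP estimate approaches the MLE.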

Probability Distributions

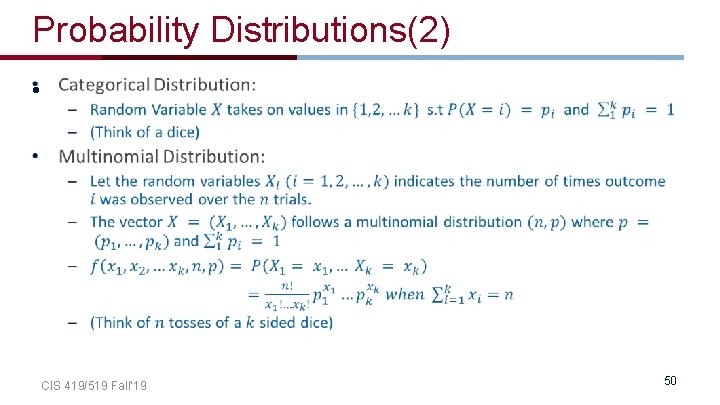

Probability Distributions (2)

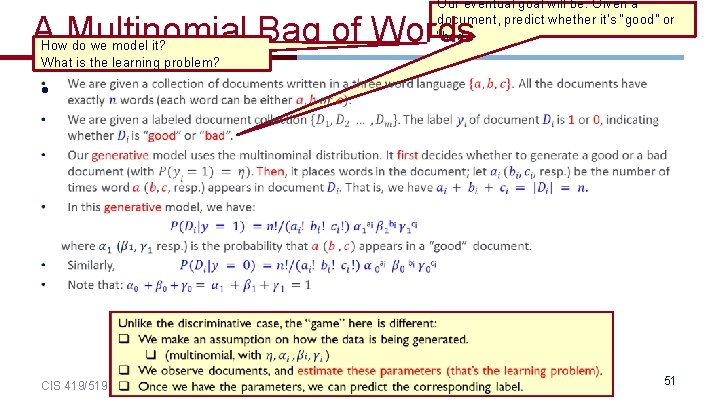

A Multinomial Bag of Words
Our eventual goal will be: given a document, predict whether it’s “good” or “bad”.
• How do we model it?
• What is the learning problem?

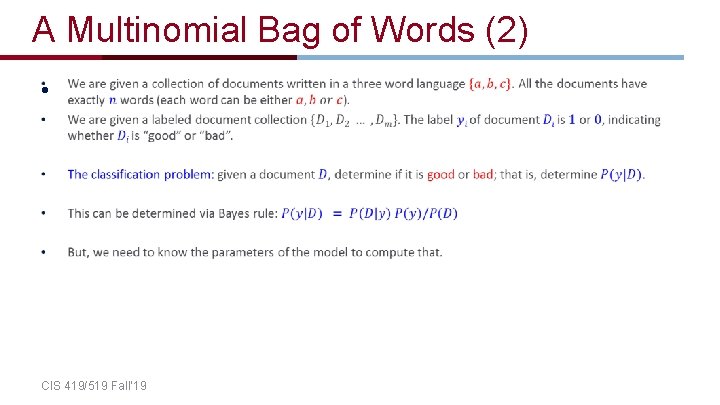

A Multinomial Bag of Words (2)

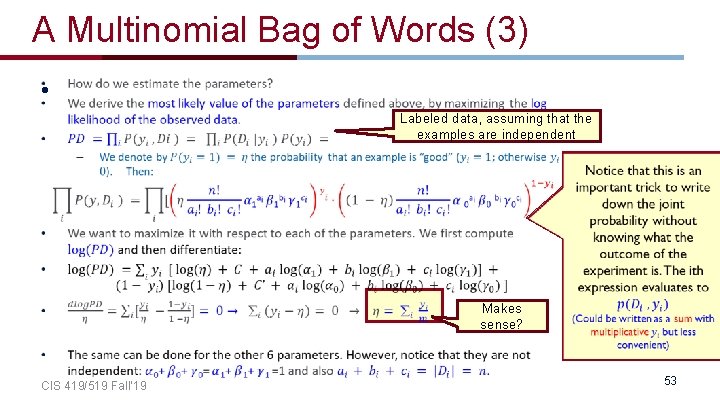

A Multinomial Bag of Words (3)
• Labeled data, assuming that the examples are independent.
• Makes sense?

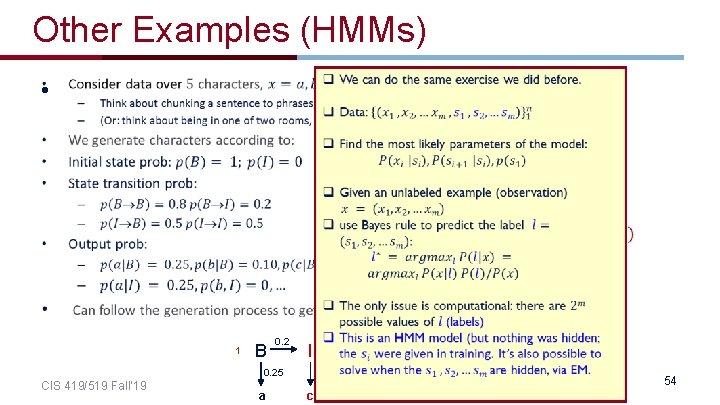
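Under the multinomial bag-of-words model with independent labeled examples, the maximum likelihood estimates reduce to counting: P(y) is the fraction of documents with label y, and P(w | y) is the count of word w in documents labeled y divided by the total number of words in those documents. A sketch with hypothetical toy documents (no smoothing yet):

```python
from collections import Counter

def fit_multinomial(docs):
    """docs: list of (list_of_words, label). Returns MLE class priors and P(word | label)."""
    label_counts = Counter(label for _, label in docs)
    word_counts = {label: Counter() for label in label_counts}
    for words, label in docs:
        word_counts[label].update(words)          # pool word counts per label
    priors = {y: c / len(docs) for y, c in label_counts.items()}
    theta = {}
    for y, counts in word_counts.items():
        total = sum(counts.values())              # total number of word tokens with label y
        theta[y] = {w: c / total for w, c in counts.items()}
    return priors, theta

# Hypothetical labeled documents
docs = [
    (["great", "fun", "great"], "good"),
    (["fun", "plot"], "good"),
    (["boring", "plot", "boring"], "bad"),
]
priors, theta = fit_multinomial(docs)
print(priors["good"], theta["good"]["great"])
```

Words never seen with a label get probability zero here, which is exactly the problem the smoothing discussed later addresses.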

Other Examples (HMMs)
[Figure: an HMM with hidden states B and I, labeled with transition probabilities between states and emission probabilities over the symbols a, c, d]

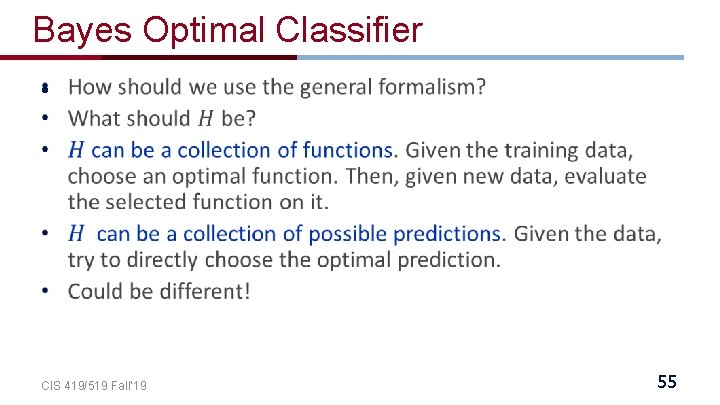
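An HMM is another generative model: it assigns a joint probability to a state sequence and an observation sequence, P(s, o) = P(s_1) P(o_1 | s_1) ∏_t P(s_t | s_{t−1}) P(o_t | s_t). The sketch below uses the state names B, I and symbols a, c, d from the figure, but all numbers are hypothetical and are not read off the slide.

```python
def hmm_joint(states, obs, start, trans, emit):
    """P(states, obs) = P(s1) P(o1|s1) * prod_t P(s_t|s_{t-1}) P(o_t|s_t)."""
    p = start[states[0]] * emit[states[0]][obs[0]]
    for t in range(1, len(states)):
        p *= trans[states[t - 1]][states[t]] * emit[states[t]][obs[t]]
    return p

# Hypothetical parameters (not taken from the slide's figure)
start = {"B": 1.0, "I": 0.0}
trans = {"B": {"B": 0.2, "I": 0.8}, "I": {"B": 0.5, "I": 0.5}}
emit = {"B": {"a": 0.5, "c": 0.25, "d": 0.25},
        "I": {"a": 0.25, "c": 0.25, "d": 0.5}}
print(hmm_joint(["B", "I", "I"], ["a", "d", "d"], start, trans, emit))
```

As with the bag-of-words model, the parameters of such a model can be estimated from labeled sequences by counting transitions and emissions.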

Bayes Optimal Classifier

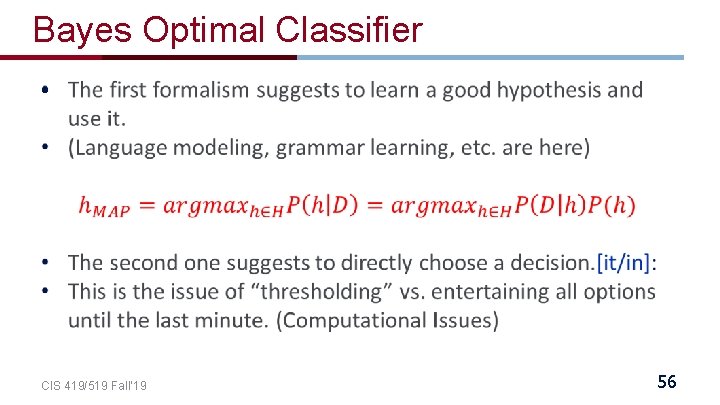

Bayes Optimal Classifier

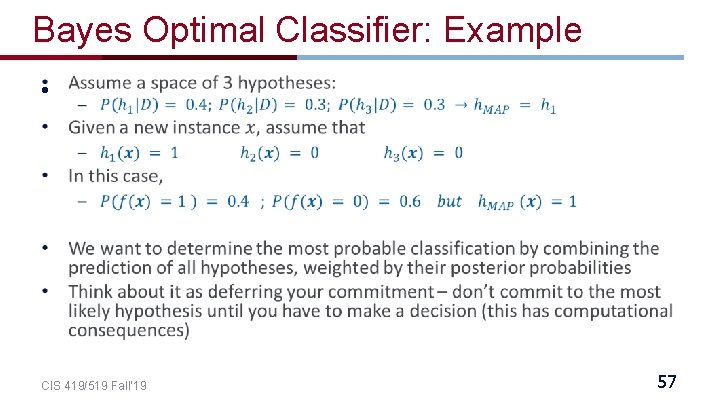

Bayes Optimal Classifier: Example

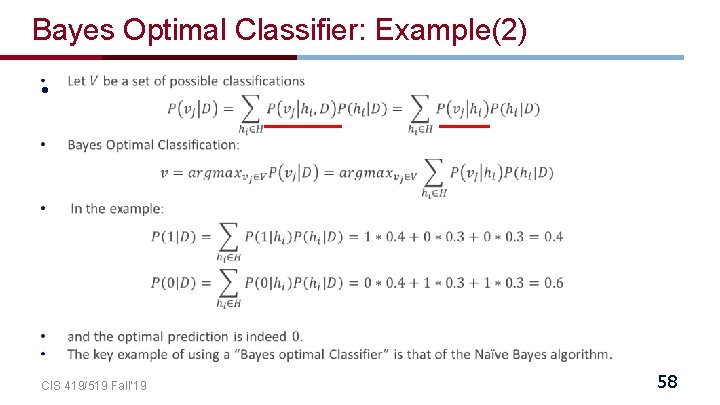

Bayes Optimal Classifier: Example (2)

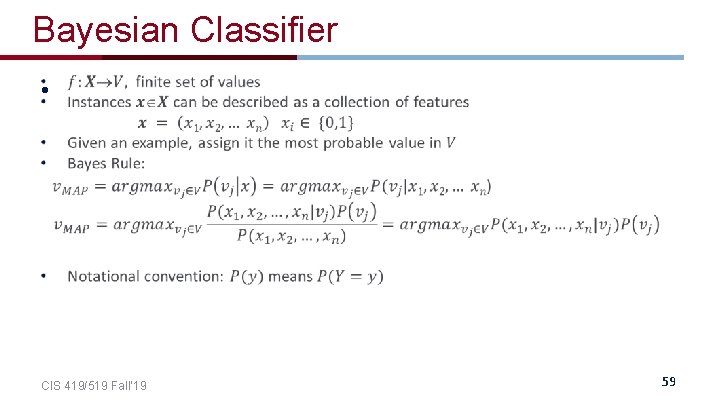
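The Bayes optimal classifier does not commit to a single hypothesis; it weights every hypothesis’s prediction by its posterior, P(v | D) = Σ_h P(v | h) P(h | D), and outputs the most probable label v. A sketch with hypothetical posteriors (a common textbook illustration, not necessarily the slide’s numbers) in which the MAP hypothesis and the Bayes optimal prediction disagree:

```python
# Hypothetical posteriors P(h | D) and each hypothesis's (deterministic) prediction on a new x
posterior = {"h1": 0.4, "h2": 0.3, "h3": 0.3}
prediction = {"h1": "+", "h2": "-", "h3": "-"}

# MAP: use the single most probable hypothesis
map_h = max(posterior, key=posterior.get)
map_label = prediction[map_h]

# Bayes optimal: P(v | D) = sum_h P(v | h) P(h | D), where P(v | h) is 1 if h predicts v, else 0
vote = {}
for h, p in posterior.items():
    vote[prediction[h]] = vote.get(prediction[h], 0.0) + p
bayes_label = max(vote, key=vote.get)
print(map_label, bayes_label)  # + -
```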

Bayesian Classifier

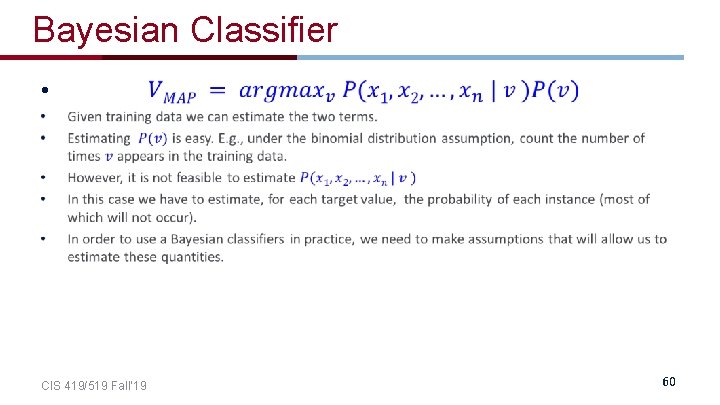

Bayesian Classifier

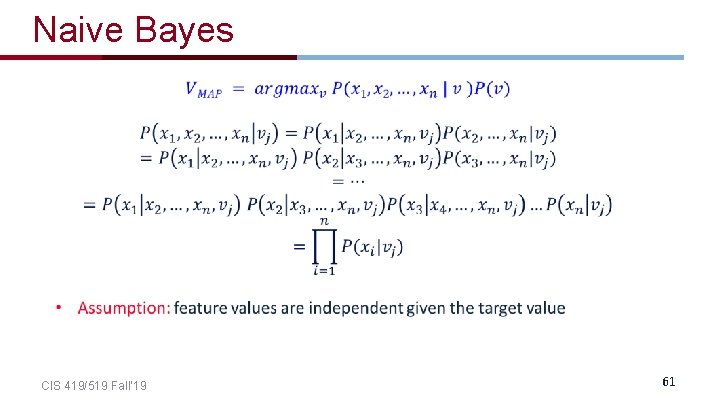

Naive Bayes

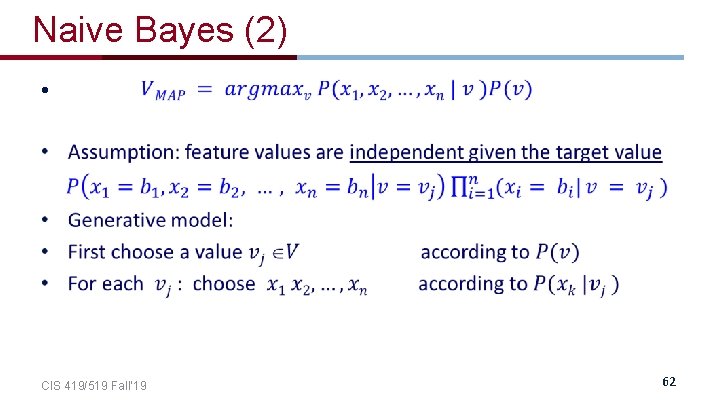

Naive Bayes (2)

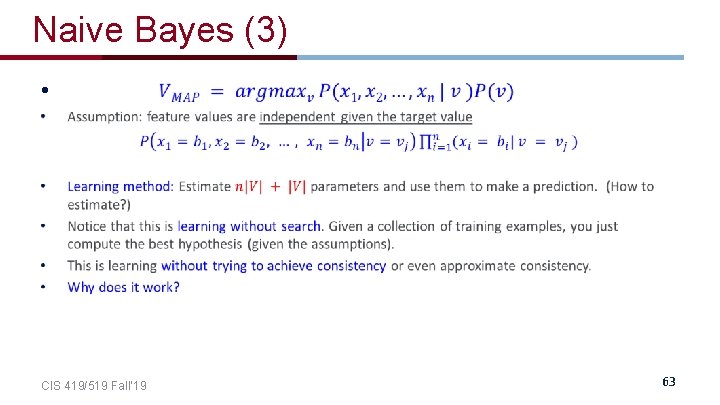

Naive Bayes (3)

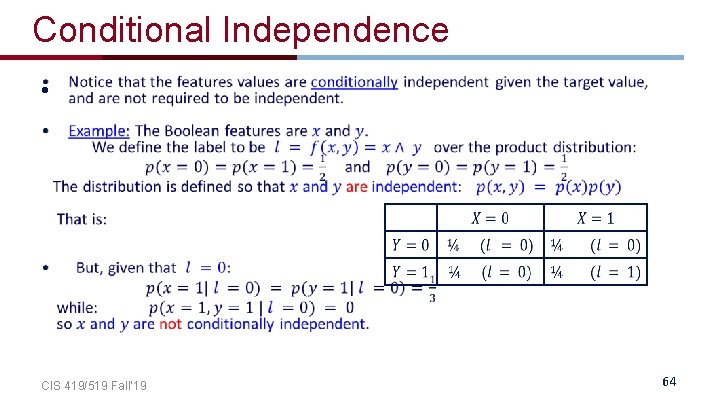

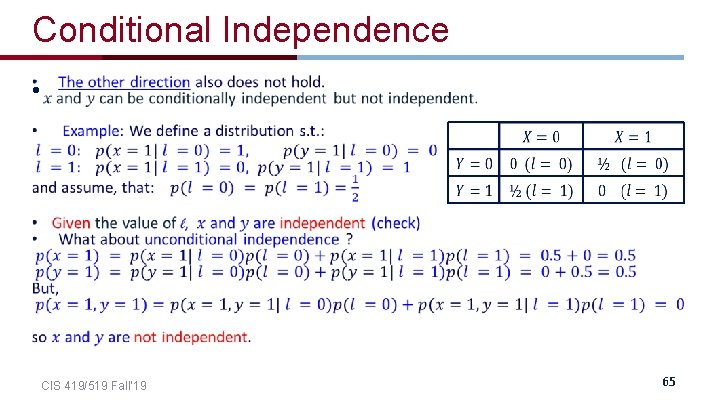

Conditional Independence

Conditional Independence

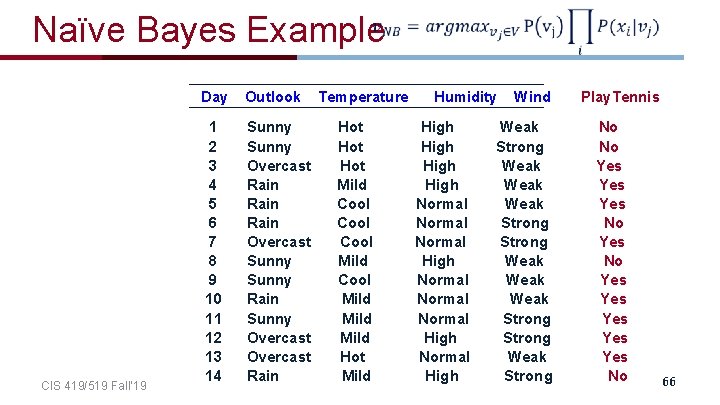

Naïve Bayes Example
[Table: 14 days (Day 1–14) described by Outlook (Sunny, Overcast, Rain), Temperature (Hot, Mild, Cool), Humidity (High, Normal), and Wind (Weak, Strong), with the label PlayTennis (Yes/No)]

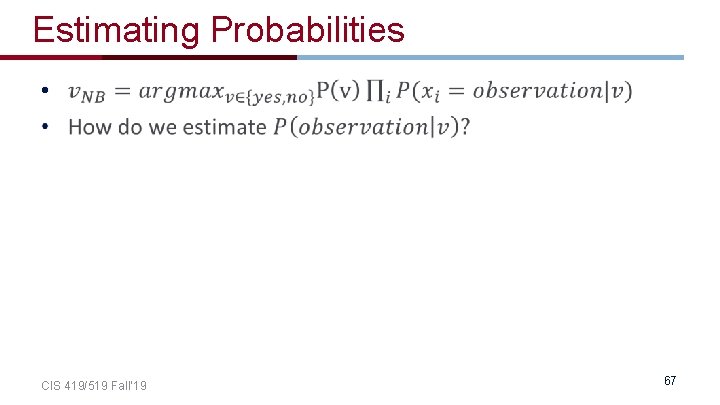
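The extracted table is incomplete, so this sketch assumes its 14 rows are the standard PlayTennis data from Mitchell’s Machine Learning (1997); the surviving Outlook values are consistent with that data, but the other columns could not be verified. Naive Bayes estimates P(v) and each P(x_i | v) by counting, then predicts argmax_v P(v) ∏_i P(x_i | v). The query day ⟨Sunny, Cool, High, Strong⟩ is the textbook’s example, used here for illustration.

```python
from collections import Counter, defaultdict

# Assumption: Mitchell's PlayTennis data. Columns: Outlook, Temperature, Humidity, Wind, PlayTennis
data = [
    ("Sunny", "Hot", "High", "Weak", "No"), ("Sunny", "Hot", "High", "Strong", "No"),
    ("Overcast", "Hot", "High", "Weak", "Yes"), ("Rain", "Mild", "High", "Weak", "Yes"),
    ("Rain", "Cool", "Normal", "Weak", "Yes"), ("Rain", "Cool", "Normal", "Strong", "No"),
    ("Overcast", "Cool", "Normal", "Strong", "Yes"), ("Sunny", "Mild", "High", "Weak", "No"),
    ("Sunny", "Cool", "Normal", "Weak", "Yes"), ("Rain", "Mild", "Normal", "Weak", "Yes"),
    ("Sunny", "Mild", "Normal", "Strong", "Yes"), ("Overcast", "Mild", "High", "Strong", "Yes"),
    ("Overcast", "Hot", "Normal", "Weak", "Yes"), ("Rain", "Mild", "High", "Strong", "No"),
]

def train_nb(rows):
    """Counts for the maximum likelihood estimates of P(v) and P(x_i | v)."""
    label_counts = Counter(r[-1] for r in rows)
    feat_counts = defaultdict(Counter)      # (feature index, label) -> Counter of values
    for *x, v in rows:
        for i, xi in enumerate(x):
            feat_counts[(i, v)][xi] += 1
    return label_counts, feat_counts

def nb_scores(x, label_counts, feat_counts):
    """Unnormalized P(v) * prod_i P(x_i | v) for each label v."""
    n = sum(label_counts.values())
    scores = {}
    for v, nv in label_counts.items():
        s = nv / n
        for i, xi in enumerate(x):
            s *= feat_counts[(i, v)][xi] / nv   # unseen value -> count 0 -> probability 0
        scores[v] = s
    return scores

scores = nb_scores(("Sunny", "Cool", "High", "Strong"), *train_nb(data))
print(max(scores, key=scores.get), {v: round(s, 4) for v, s in scores.items()})
```

Under this assumed data, the score for No (5/14 · 3/5 · 1/5 · 4/5 · 3/5 ≈ 0.0206) beats the score for Yes (9/14 · 2/9 · 3/9 · 3/9 · 3/9 ≈ 0.0053), so the prediction is No. Dividing each score by their sum gives normalized posteriors.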

Estimating Probabilities

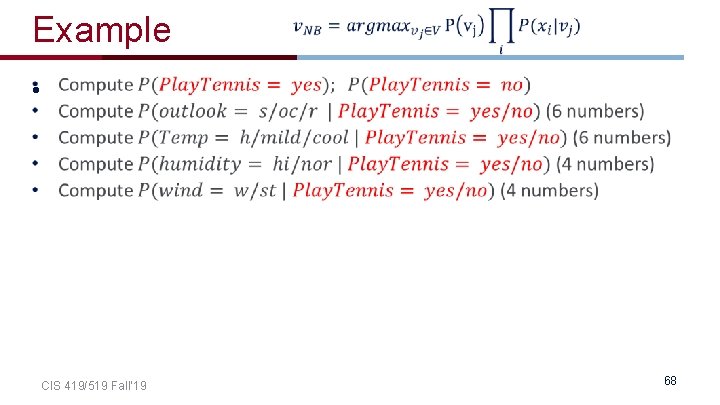

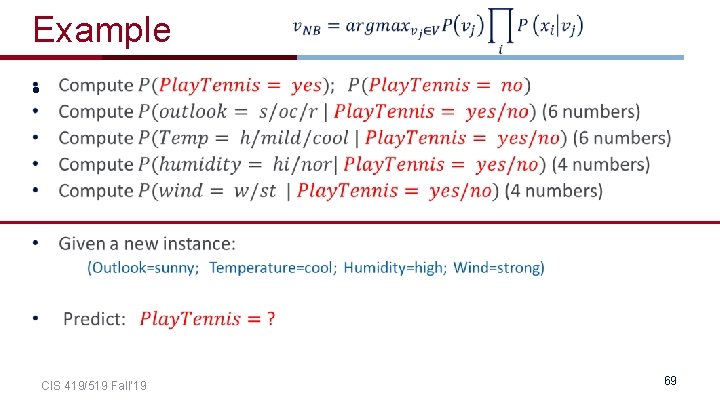

Example

Example

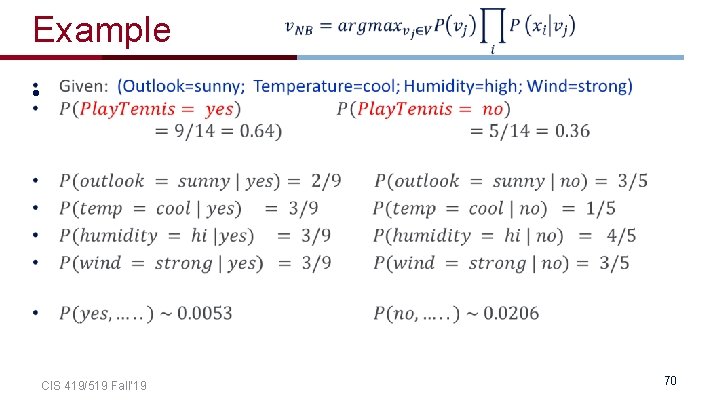

Example

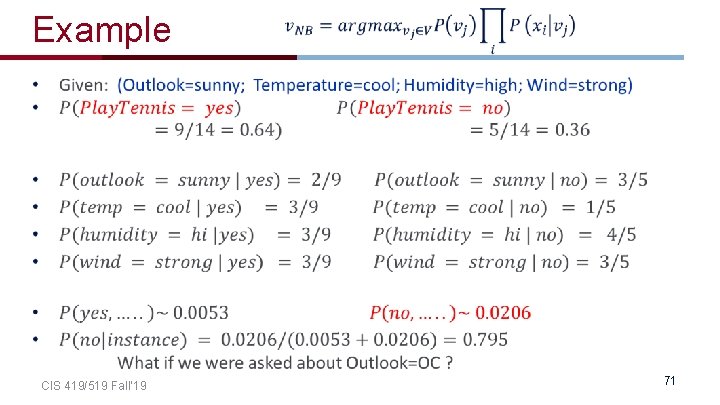

Example

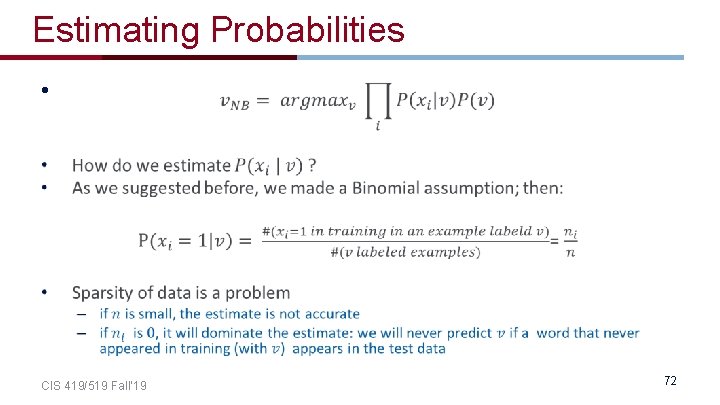

Estimating Probabilities

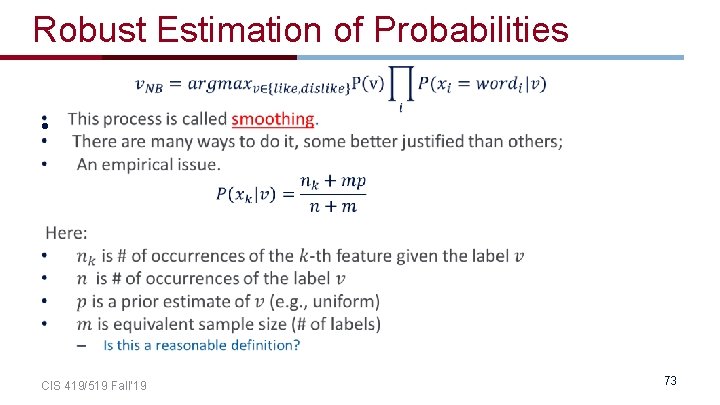

Robust Estimation of Probabilities

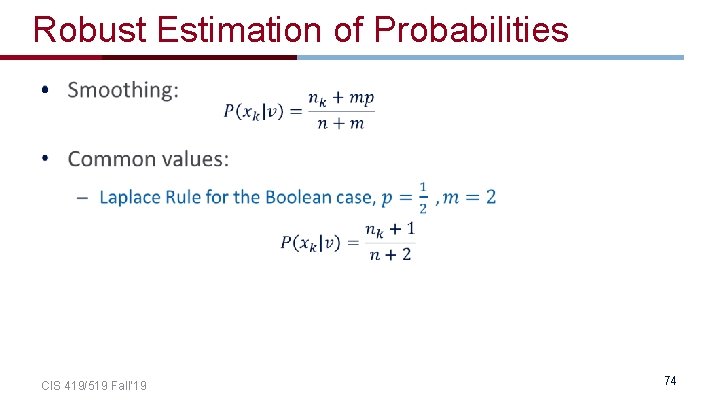

Robust Estimation of Probabilities

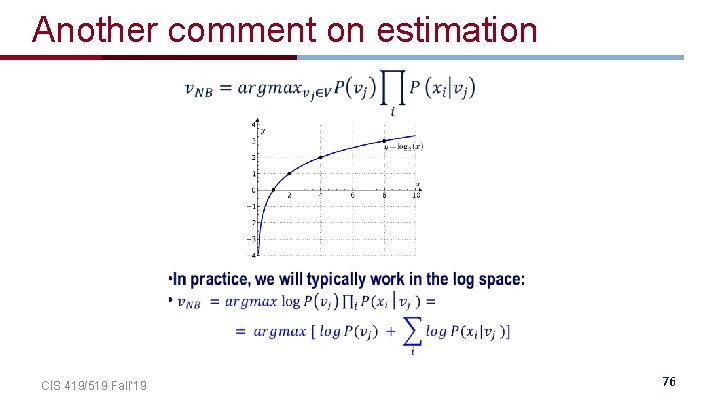
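Count-based estimates assign probability zero to any value never seen with a label, and a single zero wipes out the whole Naive Bayes product. A common robust alternative is the m-estimate, (n_c + m·p) / (n + m), where p is a prior guess and m an equivalent sample size; Laplace (add-one) smoothing is the special case p = 1/k, m = k for a k-valued variable. This is a sketch of those standard formulas; the exact form on the slide is in the figure.

```python
def m_estimate(n_c: int, n: int, p: float, m: float) -> float:
    """m-estimate of a probability: (n_c + m * p) / (n + m).
    n_c: count of the event, n: number of examples, p: prior estimate, m: equivalent sample size."""
    return (n_c + m * p) / (n + m)

def laplace(n_c: int, n: int, k: int) -> float:
    """Add-one (Laplace) smoothing for a k-valued variable: the m-estimate with p = 1/k, m = k."""
    return m_estimate(n_c, n, p=1.0 / k, m=k)

# A value of a 3-valued feature never observed among 5 examples with some label:
print(0 / 5, laplace(0, 5, k=3))  # 0.0 0.125
```

With m = 0 the m-estimate falls back to the raw frequency n_c / n; larger m pulls the estimate toward p.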

Another comment on estimation

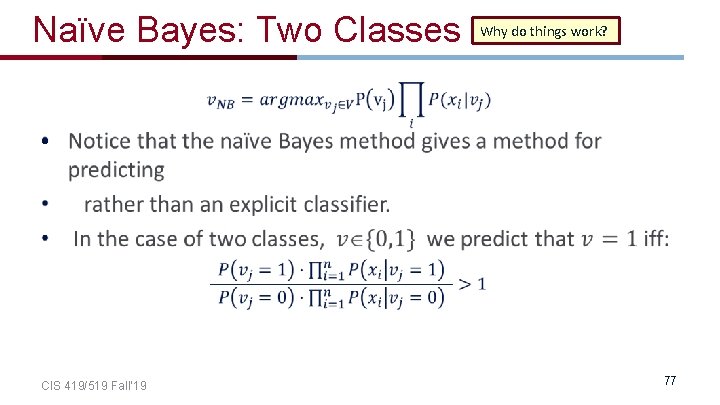

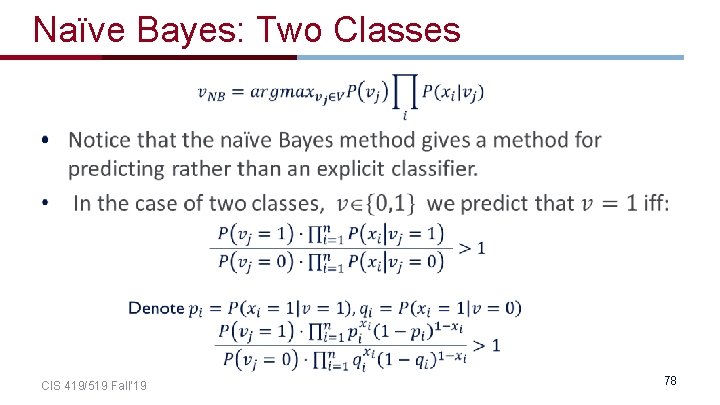

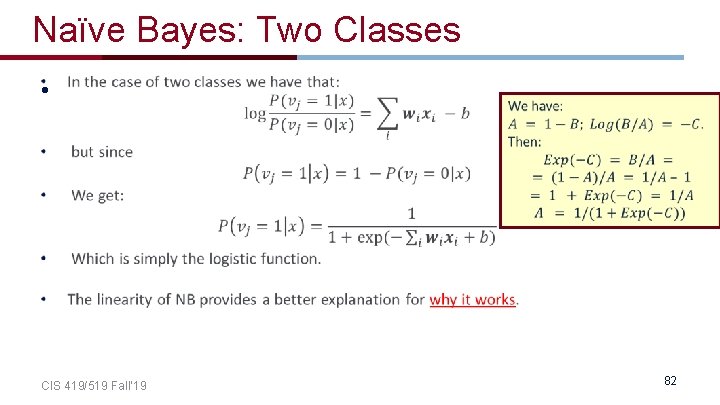

Naïve Bayes: Two Classes
Why do things work?

Naïve Bayes: Two Classes

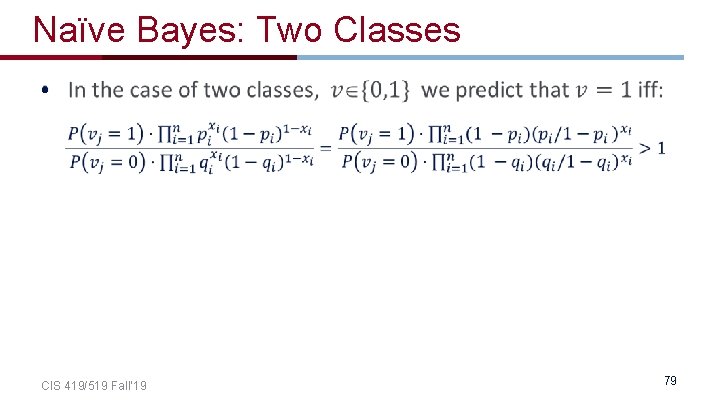

Naïve Bayes: Two Classes

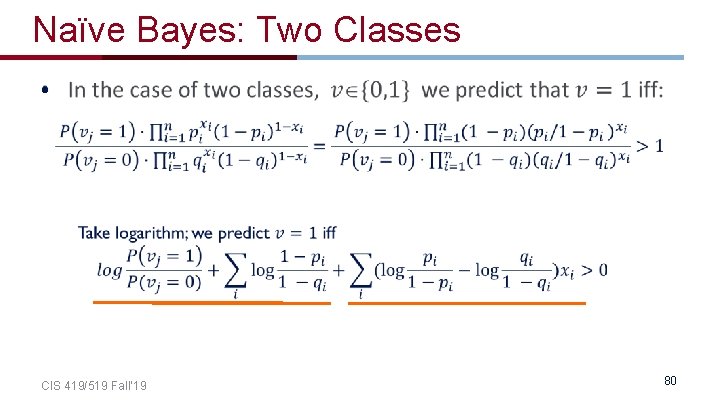

Naïve Bayes: Two Classes

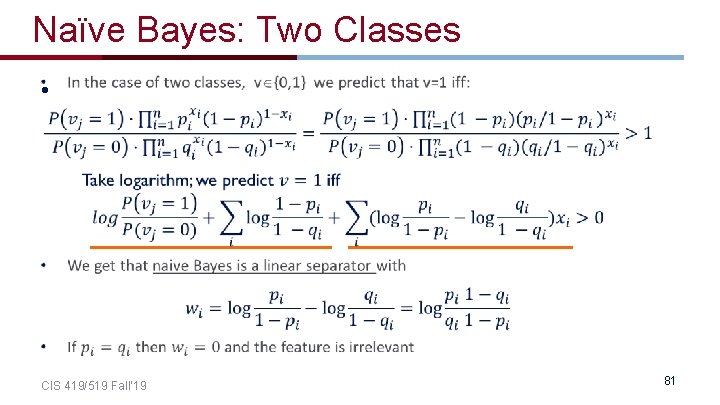

Naïve Bayes: Two Classes

Naïve Bayes: Two Classes

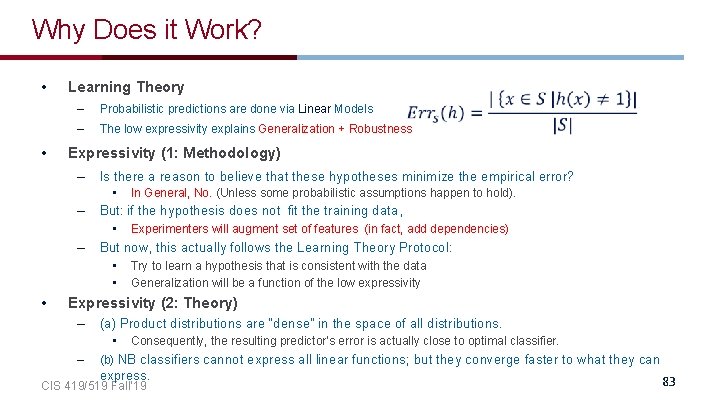
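For two classes and Boolean features, write p_i = P(x_i = 1 | v = 1) and q_i = P(x_i = 1 | v = 0). Taking the log of the Naive Bayes posterior ratio gives a linear function, log P(v=1|x)/P(v=0|x) = b + Σ_i w_i x_i with w_i = log[p_i(1 − q_i) / (q_i(1 − p_i))] and b = log[P(v=1)/P(v=0)] + Σ_i log[(1 − p_i)/(1 − q_i)]. This sketch checks that identity numerically on every input, using hypothetical parameters.

```python
import math
from itertools import product

def nb_log_odds(x, prior1, p, q):
    """log [P(v=1|x) / P(v=0|x)] computed directly from the Naive Bayes product form."""
    s = math.log(prior1 / (1 - prior1))
    for xi, pi, qi in zip(x, p, q):
        s += math.log(pi if xi else 1 - pi) - math.log(qi if xi else 1 - qi)
    return s

def nb_as_linear(prior1, p, q):
    """Weights w and bias b such that the Naive Bayes log-odds equal b + sum_i w_i x_i."""
    b = math.log(prior1 / (1 - prior1)) + sum(math.log((1 - pi) / (1 - qi)) for pi, qi in zip(p, q))
    w = [math.log(pi * (1 - qi) / (qi * (1 - pi))) for pi, qi in zip(p, q)]
    return w, b

# Hypothetical parameters: P(v=1), p_i = P(x_i=1 | v=1), q_i = P(x_i=1 | v=0)
prior1, p, q = 0.4, [0.8, 0.3, 0.6], [0.2, 0.5, 0.7]
w, b = nb_as_linear(prior1, p, q)

# Both forms agree on every Boolean input, so NB predicts v = 1 iff b + w.x > 0.
for x in product((0, 1), repeat=len(p)):
    linear = b + sum(wi * xi for wi, xi in zip(w, x))
    assert math.isclose(nb_log_odds(x, prior1, p, q), linear, abs_tol=1e-9)
print("w =", [round(wi, 3) for wi in w], "b =", round(b, 3))
```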

Why Does it Work?
• Learning Theory
  – Probabilistic predictions are done via Linear Models.
  – The low expressivity explains Generalization + Robustness.
• Expressivity (1: Methodology)
  – Is there a reason to believe that these hypotheses minimize the empirical error?
    • In general, no (unless some probabilistic assumptions happen to hold).
  – But: if the hypothesis does not fit the training data,
    • experimenters will augment the set of features (in fact, add dependencies).
  – But now, this actually follows the Learning Theory Protocol:
    • Try to learn a hypothesis that is consistent with the data.
    • Generalization will be a function of the low expressivity.
• Expressivity (2: Theory)
  – (a) Product distributions are “dense” in the space of all distributions.
    • Consequently, the resulting predictor’s error is actually close to that of the optimal classifier.
  – (b) NB classifiers cannot express all linear functions; but they converge faster to what they can express.

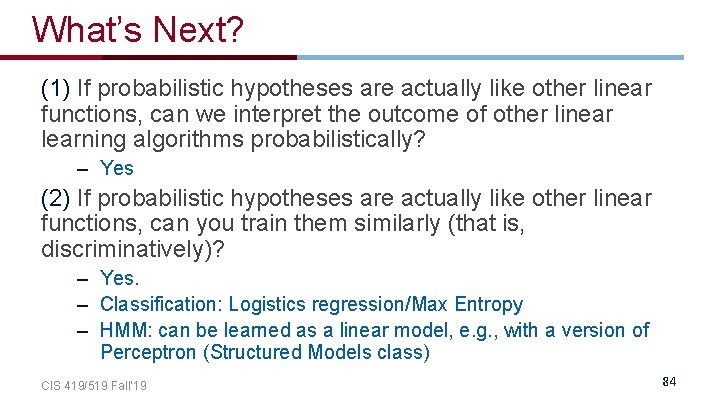

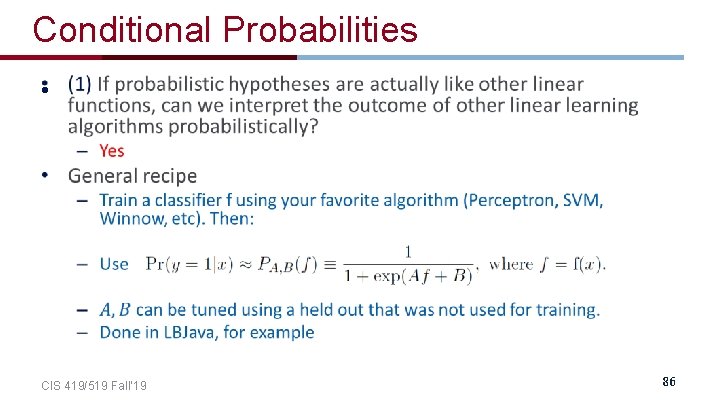

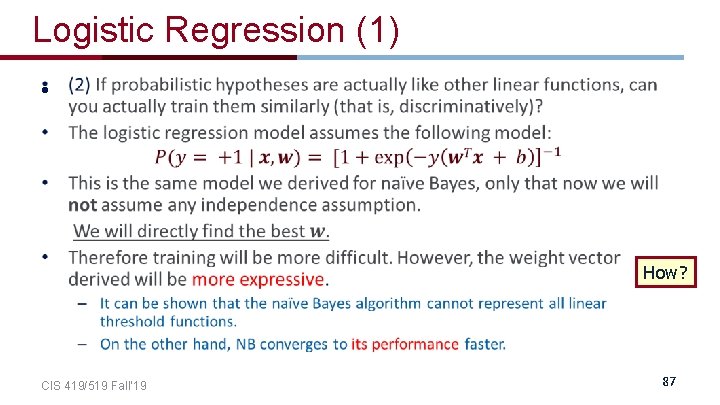

What’s Next?
(1) If probabilistic hypotheses are actually like other linear functions, can we interpret the outcome of other linear learning algorithms probabilistically?
  – Yes.
(2) If probabilistic hypotheses are actually like other linear functions, can you train them similarly (that is, discriminatively)?
  – Yes.
  – Classification: Logistic regression / Max Entropy
  – HMM: can be learned as a linear model, e.g., with a version of Perceptron (Structured Models class)

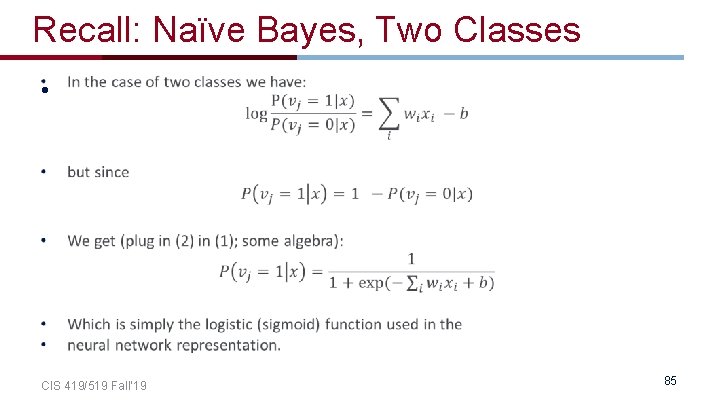

Recall: Naïve Bayes, Two Classes

Conditional Probabilities

Logistic Regression (1)
• How?

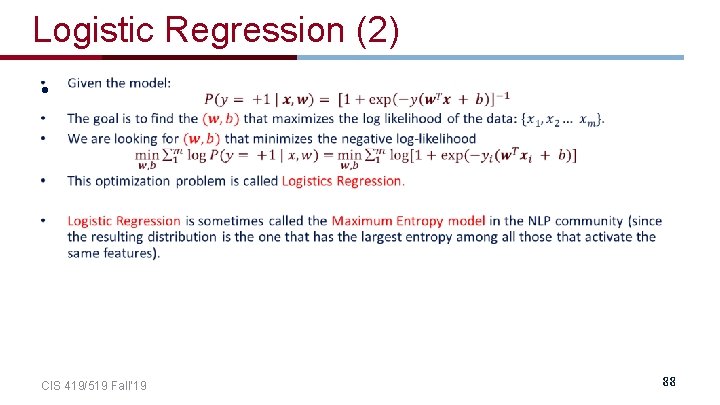

Logistic Regression (2)

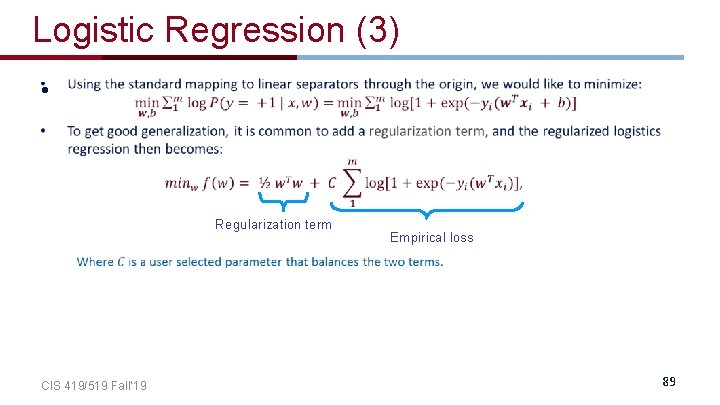

Logistic Regression (3)
[Objective annotated with its two parts: a regularization term and the empirical loss]

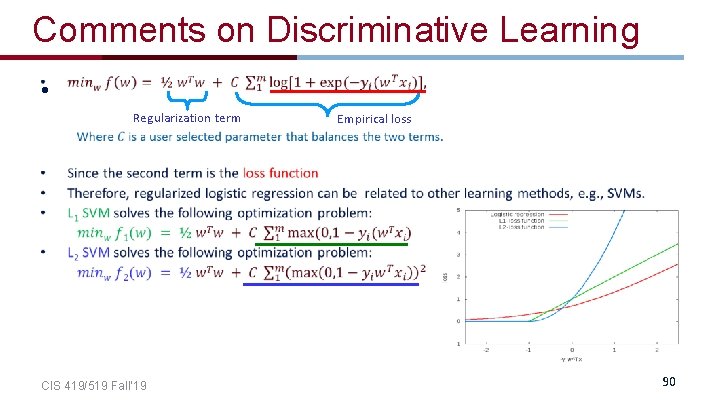
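A minimal sketch of discriminative training, assuming the objective has the common form ½‖w‖² + C Σ_i log(1 + exp(−y_i w·x_i)) with labels y_i ∈ {−1, +1}: the first term is the regularization term and the sum is the empirical loss. Plain gradient descent is used for simplicity; C, the step size, the epoch count, and the toy data are hypothetical, and the bias is handled as a constant feature (so it is regularized too in this sketch).

```python
import numpy as np

def train_logreg(X, y, C=1.0, lr=0.05, epochs=2000):
    """Gradient descent on 0.5*||w||^2 + C * sum_i log(1 + exp(-y_i * w.x_i)), y_i in {-1, +1}."""
    w = np.zeros(X.shape[1])
    for _ in range(epochs):
        margins = y * (X @ w)
        # d/dw log(1 + exp(-m_i)) = -y_i x_i / (1 + exp(m_i))
        grad = w - C * (X.T @ (y / (1.0 + np.exp(margins))))
        w -= lr * grad
    return w

def prob_positive(X, w):
    """P(y = +1 | x) = 1 / (1 + exp(-w.x)), the logistic function of a linear score."""
    return 1.0 / (1.0 + np.exp(-(X @ w)))

# Hypothetical toy data; the last column is a constant 1 acting as a bias feature.
X = np.array([[2.0, 1.0, 1.0], [1.0, 2.0, 1.0], [-1.0, -2.0, 1.0], [-2.0, -1.0, 1.0]])
y = np.array([1.0, 1.0, -1.0, -1.0])
w = train_logreg(X, y)
print(np.sign(X @ w), prob_positive(X, w).round(3))
```

The hypothesis has the same form as two-class Naive Bayes (a logistic function of a linear score), but w is chosen to fit P(y | x) directly rather than derived from estimates of P(x | y) and P(y).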

Comments on Discriminative Learning
[Objective annotated with its two parts: a regularization term and the empirical loss]

A few more NB examples

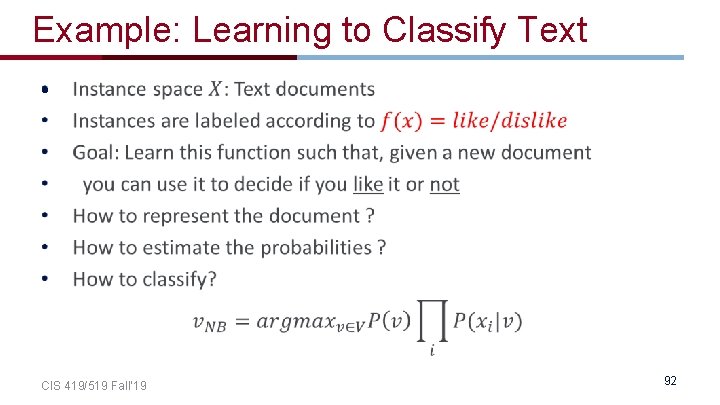

Example: Learning to Classify Text

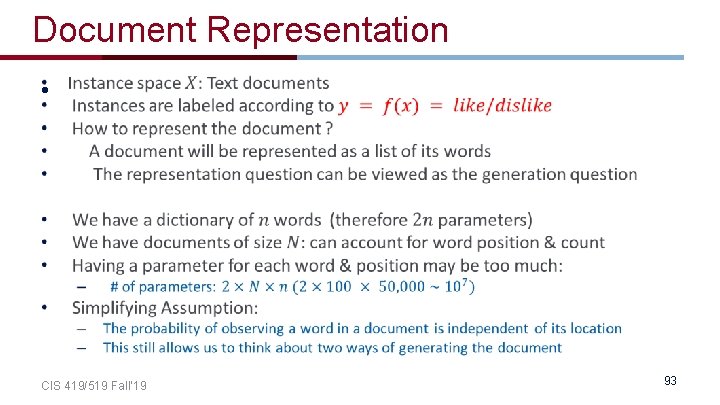

Document Representation

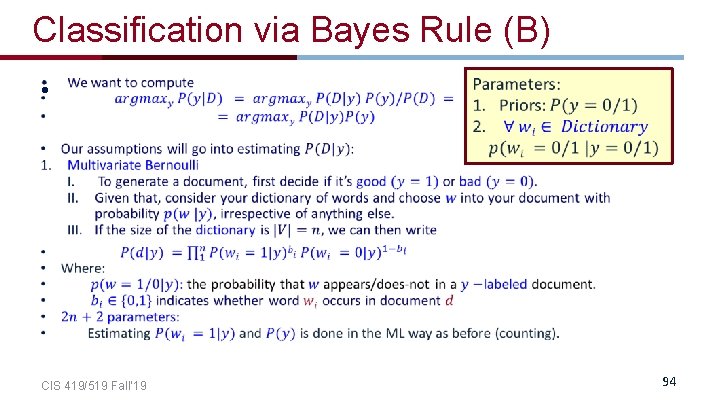

Classification via Bayes Rule (B)

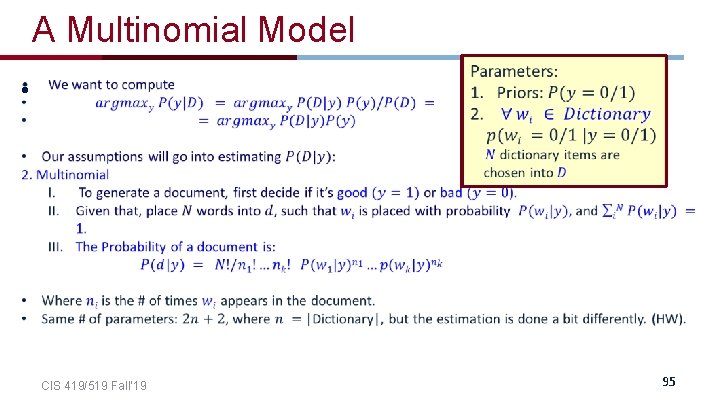

A Multinomial Model

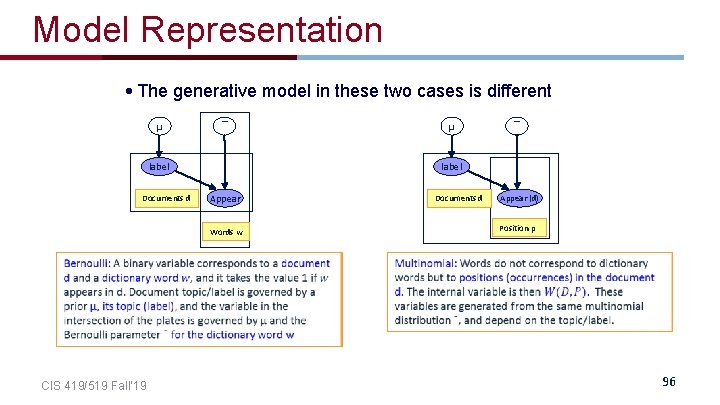
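Putting the multinomial model to work for text classification: estimate P(y) and P(w | y) from labeled documents, then predict argmax_y log P(y) + Σ_{positions} log P(w_p | y). This sketch assumes add-α smoothing with α = 1 over the training vocabulary and simply skips words never seen in training; both are choices made for the illustration. Working in log space avoids numerical underflow on long documents.

```python
import math
from collections import Counter

def train_multinomial_nb(docs, alpha=1.0):
    """docs: list of (list_of_words, label). Smoothed log P(y) and log P(w | y)."""
    vocab = {w for words, _ in docs for w in words}
    labels = Counter(y for _, y in docs)
    counts = {y: Counter() for y in labels}
    for words, y in docs:
        counts[y].update(words)
    log_prior = {y: math.log(c / len(docs)) for y, c in labels.items()}
    log_lik = {}
    for y, c in counts.items():
        denom = sum(c.values()) + alpha * len(vocab)   # add alpha for every vocabulary word
        log_lik[y] = {w: math.log((c[w] + alpha) / denom) for w in vocab}
    return log_prior, log_lik

def classify(words, log_prior, log_lik):
    """argmax_y log P(y) + sum over word occurrences of log P(w | y); unseen words are skipped."""
    def score(y):
        return log_prior[y] + sum(log_lik[y][w] for w in words if w in log_lik[y])
    return max(log_prior, key=score)

# Hypothetical labeled documents
docs = [(["great", "fun", "great"], "good"), (["fun", "plot"], "good"),
        (["boring", "plot", "boring"], "bad")]
model = train_multinomial_nb(docs)
print(classify(["boring", "fun", "boring"], *model))
```

Each occurrence of a word contributes a factor, so repeated words count repeatedly; that is what distinguishes this position-based multinomial model from a model with one binary "appears" variable per vocabulary word.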

Model Representation
• The generative model in these two cases is different.
[Figure: two plate diagrams with parameters µ and a label. First: plates over Documents d and Words w, with a node Appear. Second: plates over Documents d and Position p, with a node Appear (d).]

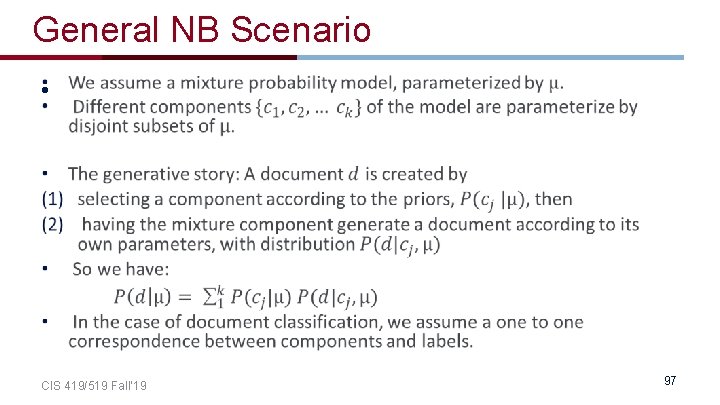

General NB Scenario

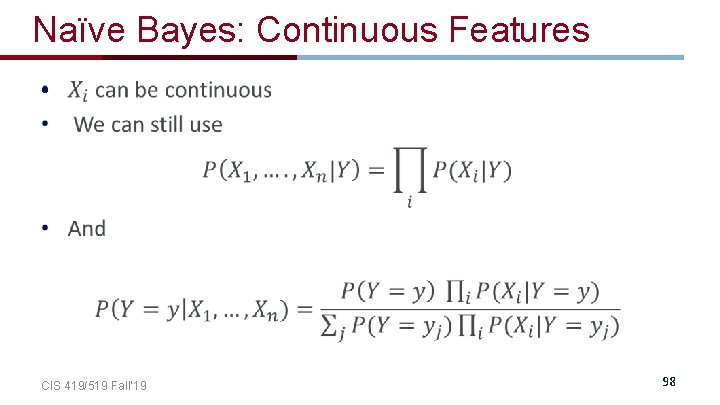

Naïve Bayes: Continuous Features

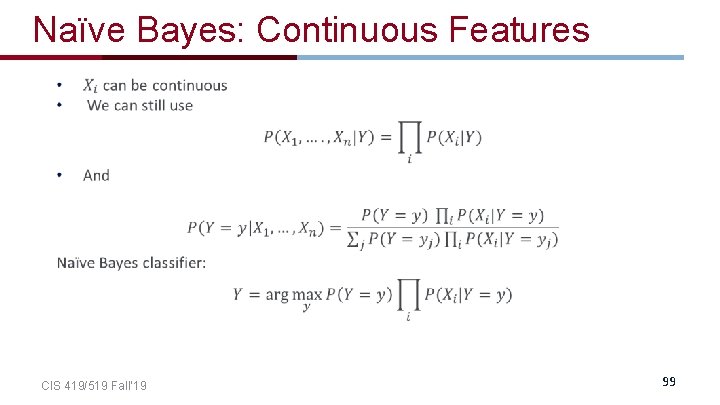

Naïve Bayes: Continuous Features

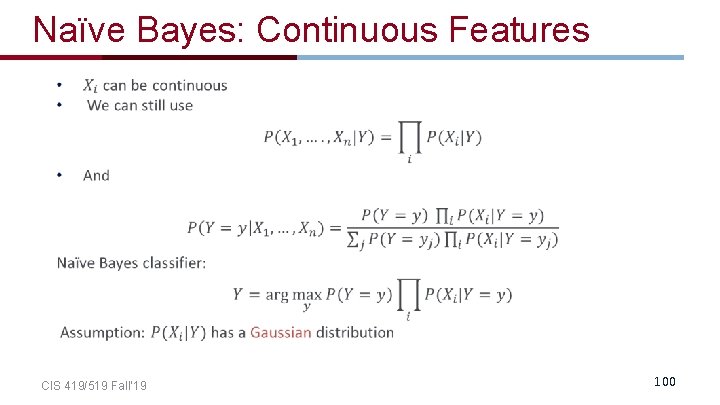

Naïve Bayes: Continuous Features

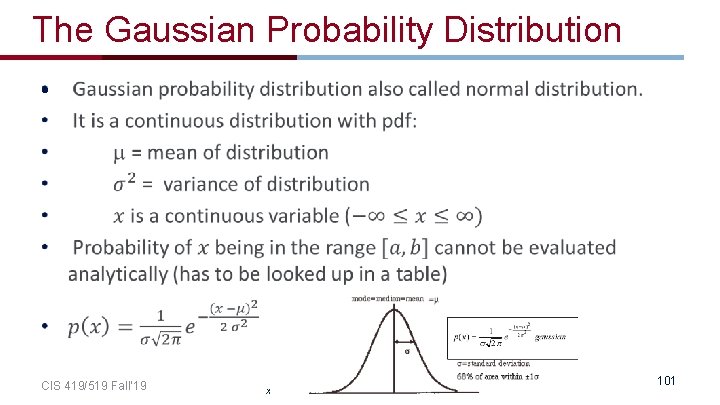

The Gaussian Probability Distribution

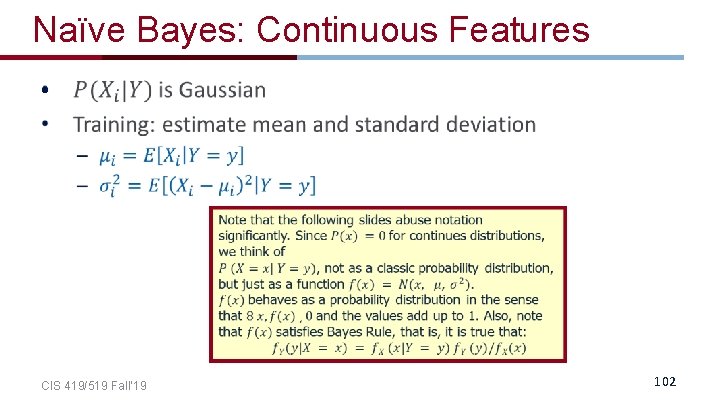

Naïve Bayes: Continuous Features

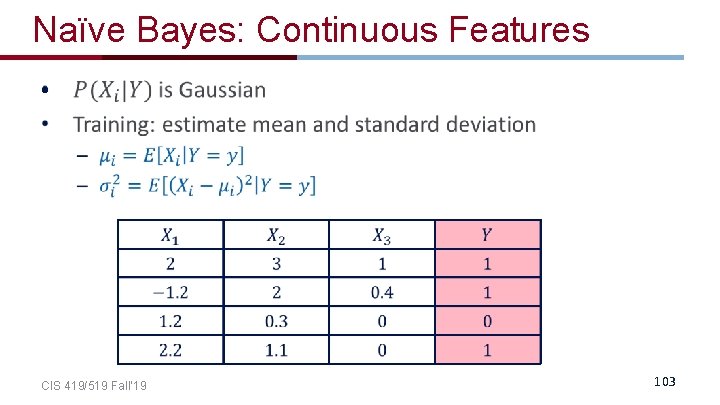

Naïve Bayes: Continuous Features

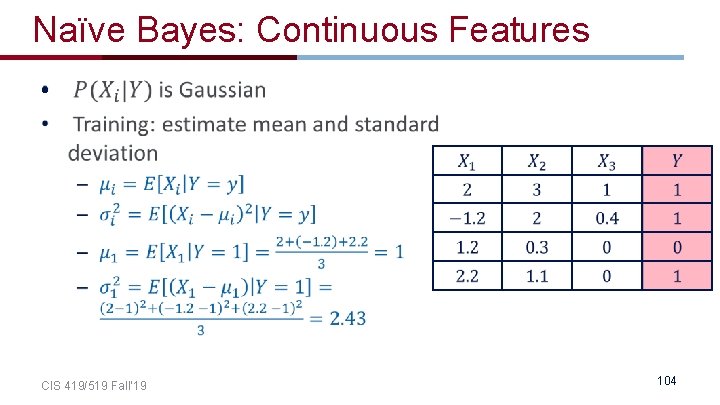

Naïve Bayes: Continuous Features

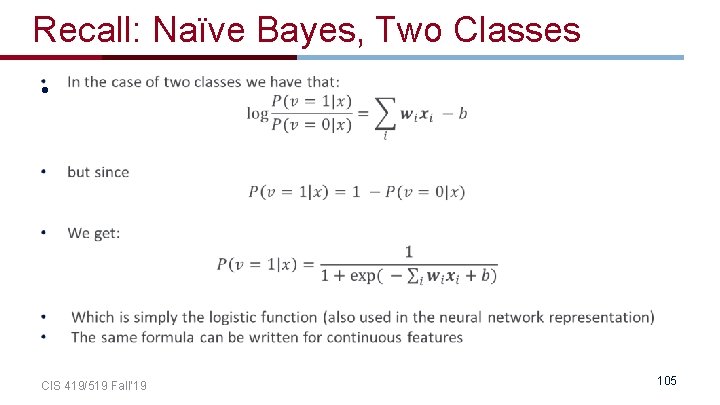
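With continuous features, a common choice is to model each P(x_i | v) as a Gaussian N(μ_{i,v}, σ²_{i,v}) whose mean and variance are estimated per label and per feature. This sketch uses maximum likelihood estimates (the variance divides by n, not n − 1), assumes no estimated variance is zero, and classifies with log densities; the toy data are hypothetical.

```python
import math
from collections import defaultdict

def fit_gaussian_nb(X, y):
    """Per label: prior, and per-feature mean and variance (maximum likelihood)."""
    groups = defaultdict(list)
    for xi, yi in zip(X, y):
        groups[yi].append(xi)
    model = {}
    for label, rows in groups.items():
        n = len(rows)
        means = [sum(col) / n for col in zip(*rows)]
        variances = [sum((v - m) ** 2 for v in col) / n for col, m in zip(zip(*rows), means)]
        model[label] = (n / len(X), means, variances)
    return model

def log_gaussian(x, mean, var):
    """log N(x; mean, var) = -0.5 * log(2 * pi * var) - (x - mean)^2 / (2 * var)."""
    return -0.5 * math.log(2 * math.pi * var) - (x - mean) ** 2 / (2 * var)

def predict(model, x):
    """argmax_v log P(v) + sum_i log N(x_i; mu_{i,v}, sigma^2_{i,v})."""
    def score(label):
        prior, means, variances = model[label]
        return math.log(prior) + sum(log_gaussian(xi, m, v) for xi, m, v in zip(x, means, variances))
    return max(model, key=score)

# Hypothetical two-feature data with labels 0 and 1
X = [[1.0, 2.1], [1.2, 1.9], [0.8, 2.0], [3.0, 4.2], [3.2, 3.8], [2.9, 4.0]]
y = [0, 0, 0, 1, 1, 1]
model = fit_gaussian_nb(X, y)
print(predict(model, [1.1, 2.0]), predict(model, [3.1, 4.1]))
```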

Recall: Naïve Bayes, Two Classes

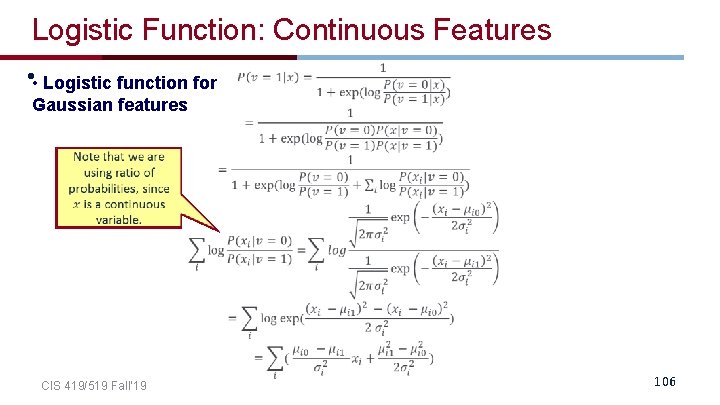

Logistic Function: Continuous Features
• Logistic function for Gaussian features
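Under the usual assumption that each feature’s variance σ_i² is shared by both classes, the Gaussian Naive Bayes posterior is exactly a logistic function of a linear score: P(v=1 | x) = 1 / (1 + exp(−(b + Σ_i w_i x_i))), with w_i = (μ_{i1} − μ_{i0}) / σ_i² and b = log[π/(1 − π)] + Σ_i (μ_{i0}² − μ_{i1}²) / (2σ_i²), where π = P(v=1). The quadratic terms in x_i cancel because the variances match. This sketch checks the identity numerically with hypothetical parameters.

```python
import math

def gaussian_pdf(x, mean, var):
    """Density of N(mean, var) at x."""
    return math.exp(-(x - mean) ** 2 / (2 * var)) / math.sqrt(2 * math.pi * var)

def posterior_nb(x, pi1, mu0, mu1, var):
    """P(v=1 | x) from Gaussian class-conditionals that share the variance var[i] per feature."""
    l1, l0 = pi1, 1 - pi1
    for xi, m0, m1, s2 in zip(x, mu0, mu1, var):
        l1 *= gaussian_pdf(xi, m1, s2)
        l0 *= gaussian_pdf(xi, m0, s2)
    return l1 / (l1 + l0)

def logistic_params(pi1, mu0, mu1, var):
    """w_i = (mu1_i - mu0_i) / var_i;  b = log(pi1 / (1 - pi1)) + sum_i (mu0_i^2 - mu1_i^2) / (2 var_i)."""
    w = [(m1 - m0) / s2 for m0, m1, s2 in zip(mu0, mu1, var)]
    b = math.log(pi1 / (1 - pi1)) + sum((m0 ** 2 - m1 ** 2) / (2 * s2)
                                        for m0, m1, s2 in zip(mu0, mu1, var))
    return w, b

# Hypothetical parameters: P(v=1), class means per feature, shared variances per feature
pi1, mu0, mu1, var = 0.3, [0.0, 1.0], [2.0, -1.0], [1.0, 4.0]
w, b = logistic_params(pi1, mu0, mu1, var)
for x in ([0.5, 0.0], [1.5, 2.0], [-1.0, 3.0]):
    sigmoid = 1 / (1 + math.exp(-(b + sum(wi * xi for wi, xi in zip(w, x)))))
    assert math.isclose(posterior_nb(x, pi1, mu0, mu1, var), sigmoid, rel_tol=1e-9)
print("w =", w, "b =", round(b, 4))
```

If the variances differed between classes, the quadratic terms would not cancel and the decision boundary would no longer be linear.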
