Naive Bayes model: Comp 221 Tutorial 4 (Assignment 1)

TA: Zhang Kai

Outline

- Bayes probability model
- Naive Bayes classifier
  - Text classification
  - Digit classification
- Assignment specifications

Naive Bayes classifier

- A naive Bayes classifier is a simple probabilistic classifier based on applying Bayes' theorem with strong (naive) independence assumptions; more specifically, it uses an independent feature model.

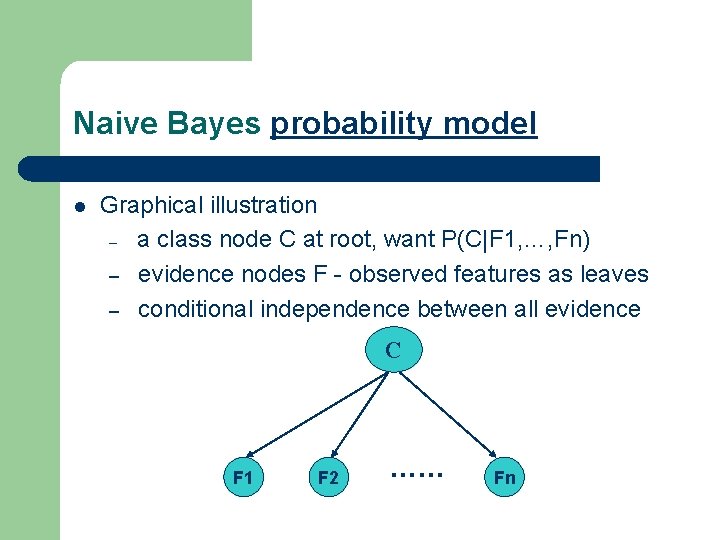

Naive Bayes probability model

- Graphical illustration
  - a class node $C$ at the root; we want $P(C \mid F_1, \dots, F_n)$
  - evidence nodes $F_i$: the observed features, as leaves
  - conditional independence between all evidence nodes, given $C$

Figure: class node $C$ with leaf nodes $F_1, F_2, \dots, F_n$

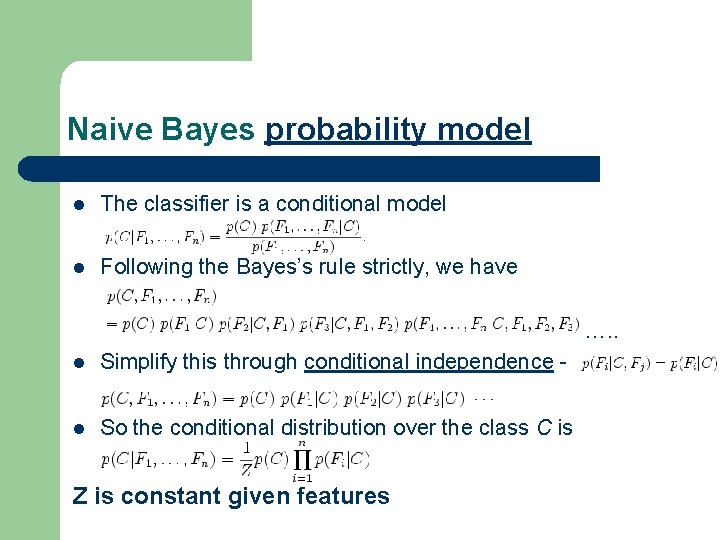

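Naive Bayes probability model

- The classifier is a conditional model: $P(C \mid F_1, \dots, F_n)$
- Following Bayes' rule strictly, we have $P(C \mid F_1, \dots, F_n) = \dfrac{P(C)\, P(F_1, \dots, F_n \mid C)}{P(F_1, \dots, F_n)}$
- Simplify this through conditional independence: $P(F_1, \dots, F_n \mid C) = \prod_{i=1}^{n} P(F_i \mid C)$
- So the conditional distribution over the class $C$ is $P(C \mid F_1, \dots, F_n) = \dfrac{1}{Z}\, P(C) \prod_{i=1}^{n} P(F_i \mid C)$, where $Z = P(F_1, \dots, F_n)$ is a constant given the features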

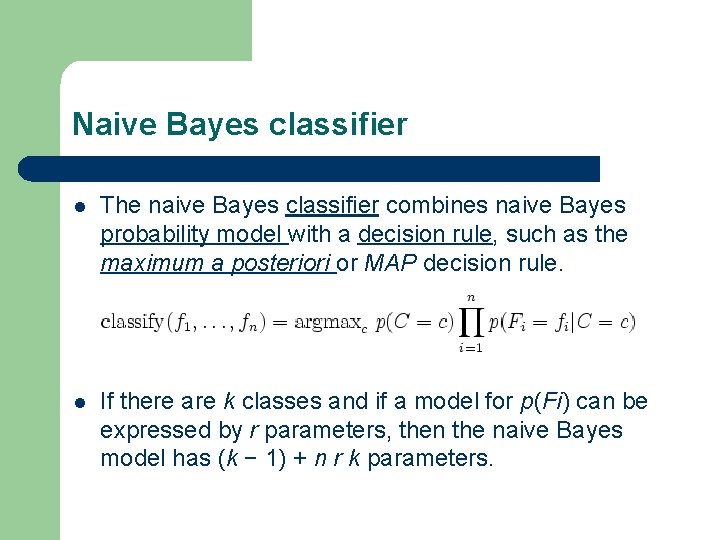
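The last step can be checked numerically. The C++ sketch below is an illustration only, not the required assignment interface; the function name `posterior` and its inputs are hypothetical, and the likelihoods are assumed to be already evaluated at the observed feature values. It forms the unnormalized scores $P(C)\prod_i P(F_i \mid C)$, sums them to obtain $Z$, and divides.

```cpp
#include <cstddef>
#include <vector>

// Posterior P(C = c | F_1, ..., F_n) for every class c under the naive Bayes model.
// prior[c]         : P(C = c)
// likelihood[c][i] : P(F_i = f_i | C = c), evaluated at the observed value f_i
std::vector<double> posterior(const std::vector<double>& prior,
                              const std::vector<std::vector<double>>& likelihood) {
    std::vector<double> score(prior.size());
    double z = 0.0;  // Z: sum of the unnormalized scores over all classes
    for (std::size_t c = 0; c < prior.size(); ++c) {
        double s = prior[c];
        for (double p : likelihood[c]) s *= p;  // conditional independence: multiply factors
        score[c] = s;
        z += s;
    }
    for (double& s : score) s /= z;  // dividing by Z makes the posteriors sum to 1
    return score;
}
```

With two equally likely classes and likelihoods {0.8, 0.5} and {0.2, 0.5}, the scores are 0.2 and 0.05, so $Z = 0.25$ and the posteriors are 0.8 and 0.2.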

Naive Bayes classifier

- The naive Bayes classifier combines the naive Bayes probability model with a decision rule, such as the maximum a posteriori (MAP) decision rule: $\text{classify}(f_1, \dots, f_n) = \arg\max_{c} P(C = c) \prod_{i=1}^{n} P(F_i = f_i \mid C = c)$
- If there are $k$ classes and a model for each $P(F_i \mid C = c)$ can be expressed with $r$ parameters, then the naive Bayes model has $(k - 1) + nrk$ parameters.

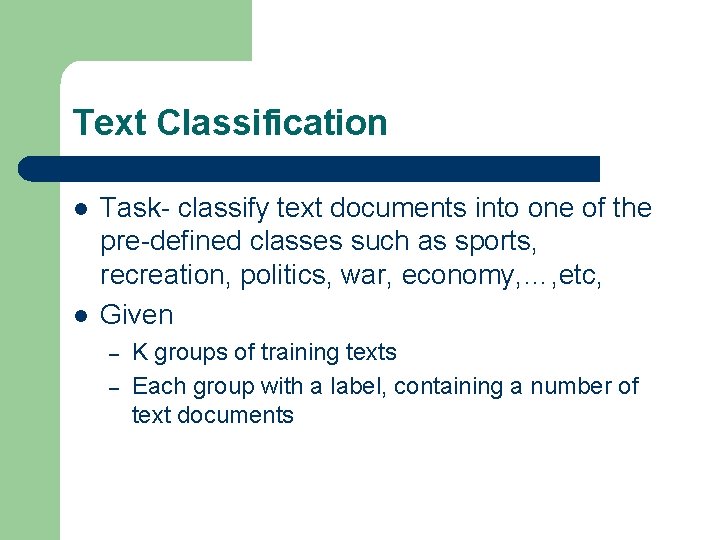
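A minimal C++ sketch of the MAP rule and of the parameter count; the names `map_decision` and `num_parameters` are hypothetical. The rule compares log scores rather than raw products (the argmax is unchanged because log is increasing), and it drops $Z$ because $Z$ is the same for every class.

```cpp
#include <cmath>
#include <cstddef>
#include <limits>
#include <vector>

// MAP decision rule: argmax over c of log P(C = c) + sum_i log P(F_i = f_i | C = c).
// likelihood[c][i] holds P(F_i = f_i | C = c) evaluated at the observed values.
std::size_t map_decision(const std::vector<double>& prior,
                         const std::vector<std::vector<double>>& likelihood) {
    std::size_t best = 0;
    double best_score = -std::numeric_limits<double>::infinity();
    for (std::size_t c = 0; c < prior.size(); ++c) {
        double score = std::log(prior[c]);
        for (double p : likelihood[c]) score += std::log(p);
        if (score > best_score) {
            best_score = score;
            best = c;
        }
    }
    return best;
}

// Number of parameters: (k - 1) free class priors plus r parameters for each
// of the n per-feature models in each of the k classes.
long num_parameters(long k, long n, long r) {
    return (k - 1) + n * r * k;
}
```

For example, for the digit task with pixels binarized to black/white (an assumption), each $P(F_i \mid C = c)$ is a Bernoulli distribution with $r = 1$, and `num_parameters(10, 256, 1)` gives $9 + 2560 = 2569$.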

Text classification

- Task: classify text documents into one of several predefined classes, such as sports, recreation, politics, war, economy, etc.
- Given:
  - K groups of training texts
  - each group with a label, containing a number of text documents

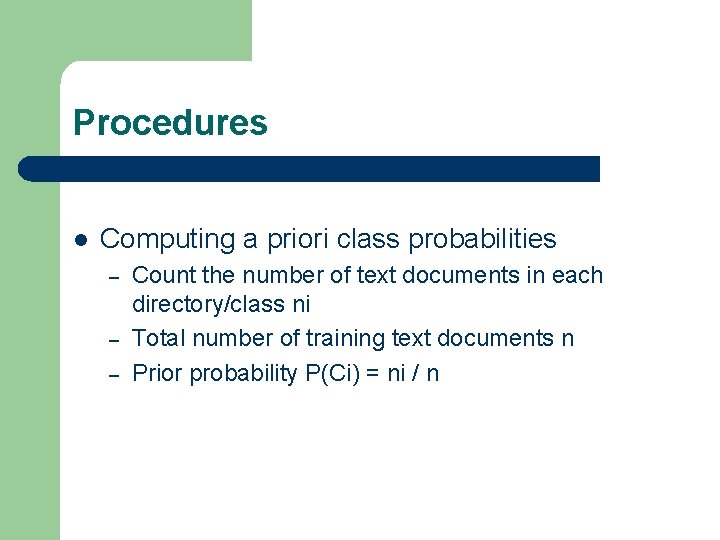

Procedures

- Computing a priori class probabilities
  - Count the number of text documents in each directory/class, $n_i$
  - Total number of training text documents, $n$
  - Prior probability $P(C_i) = n_i / n$

- Computing class-conditional word likelihoods
  - Suppose we have chosen $m$ key words, denoted $w_1, w_2, \dots, w_m$
  - Count the number of times $c_{ji}$ that word $w_j$ occurs in text class $C_i$
  - Count the number of words $n_i$ in class $C_i$ (here $n_i$ counts words, not documents)
  - The class-conditional probability is $P(w_j \mid C_i) = c_{ji} / n_i$

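- Classifying a new message $d$
  - Compute the features of $d$, i.e., the number of times each word $w_j$ occurs in $d$
  - $P(C_i \mid d) = P(C_i \mid w_1, w_2, \dots, w_d) \propto P(C_i)\, P(w_1 \mid C_i)\, P(w_2 \mid C_i) \cdots P(w_d \mid C_i)$, where $w_1, \dots, w_d$ are the words occurring in $d$
  - Assign $d$ to the class $i$ that has the maximum posterior probability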
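The three steps above can be sketched in C++. This is a minimal sketch, not the required assignment interface: the names `class_priors`, `word_likelihoods`, `classify`, and `Likelihood` are hypothetical, each class's training text is assumed to be already preprocessed into a single word list, and words of $d$ that are not key words are simply skipped. Classification sums log probabilities instead of multiplying, which selects the same class; since every occurrence of a key word in $d$ adds one factor, the word counts of $d$ act as its features.

```cpp
#include <cmath>
#include <cstddef>
#include <limits>
#include <string>
#include <unordered_map>
#include <vector>

using Likelihood = std::unordered_map<std::string, double>;  // P(w_j | C_i) for one class

// Step 1: prior P(C_i) = n_i / n from the number of training documents per class.
std::vector<double> class_priors(const std::vector<int>& docs_per_class) {
    int n = 0;
    for (int ni : docs_per_class) n += ni;
    std::vector<double> prior;
    for (int ni : docs_per_class) prior.push_back(static_cast<double>(ni) / n);
    return prior;
}

// Step 2: P(w_j | C_i) = c_ji / n_i for one class; class_words holds every
// (preprocessed) word of that class, so n_i = class_words.size().
// Key words never seen in the class get 0 here (no smoothing in this sketch).
Likelihood word_likelihoods(const std::vector<std::string>& class_words,
                            const std::vector<std::string>& keywords) {
    std::unordered_map<std::string, int> count;  // c_ji for every word of the class
    for (const auto& w : class_words) ++count[w];
    const double ni = static_cast<double>(class_words.size());
    Likelihood p;
    for (const auto& w : keywords) {
        auto it = count.find(w);
        p[w] = (it == count.end() ? 0.0 : it->second) / ni;
    }
    return p;
}

// Step 3: assign d (a list of preprocessed words) to the class i that maximizes
// log P(C_i) + sum over the words w of d of log P(w | C_i).
std::size_t classify(const std::vector<std::string>& d,
                     const std::vector<double>& prior,
                     const std::vector<Likelihood>& likelihood) {
    std::size_t best = 0;
    double best_score = -std::numeric_limits<double>::infinity();
    for (std::size_t i = 0; i < prior.size(); ++i) {
        double score = std::log(prior[i]);
        for (const auto& w : d) {
            auto it = likelihood[i].find(w);
            if (it != likelihood[i].end()) score += std::log(it->second);  // skip non-key words
        }
        if (score > best_score) {
            best_score = score;
            best = i;
        }
    }
    return best;
}
```

Because unseen key words keep probability 0 in `word_likelihoods`, a single such word drives a class's score to minus infinity and rules that class out entirely.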

Points to note

- Preprocessing
  - eliminate punctuation
  - eliminate numerals
  - convert all characters to lowercase
  - eliminate all words with fewer than 4 letters

- You need to build a large vocabulary and separately count how often each word is encountered. The vocabulary can be built using a hash table.
- How should the key words $w_j$ be chosen?
  - For each class, pick out the $k$ most frequent words
  - For all the training data together, pick out the $k$ most frequent words
  - Take the union of all these words as the key words/features

- Zero probabilities must be avoided (why?)
  - A zero occurs when a word has been encountered in only one class but not in the others; for those other classes its class-conditional probability is then zero
  - To prevent this, re-estimate the conditional probability as $P(w_j \mid C_i) = \varepsilon / n_i$, with $\varepsilon$ a small, tunable number
- Convert all probabilities to log probabilities (log likelihoods) to avoid exceeding the dynamic range of the computer representation of real numbers: a product of many small probabilities underflows to zero, whereas the sum of their logs does not
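A sketch of these practical points in C++. Treating every non-letter as a word separator is an assumption (deleting punctuation in place, so that "don't" becomes "dont", is an equally valid reading), as are the hypothetical function names, alphabetical tie-breaking in `top_k`, and storing the key words in a `std::set`. `std::unordered_map` plays the role of the hash table. `smoothed_log_likelihood` applies the $\varepsilon / n_i$ re-estimate only to zero counts and returns a log probability. The last two functions illustrate the range problem: 400 factors of $10^{-3}$ multiply to $10^{-1200}$, which underflows to 0.0 in double precision, while the sum of their logs is about $-2763.1$.

```cpp
#include <algorithm>
#include <cctype>
#include <cmath>
#include <cstddef>
#include <set>
#include <sstream>
#include <string>
#include <unordered_map>
#include <utility>
#include <vector>

// Preprocessing: non-letters (punctuation, numerals) become separators,
// letters are lowercased, and words with fewer than 4 letters are dropped.
std::vector<std::string> preprocess(const std::string& text) {
    std::string cleaned;
    for (unsigned char ch : text)
        cleaned += std::isalpha(ch) ? static_cast<char>(std::tolower(ch)) : ' ';
    std::istringstream in(cleaned);
    std::vector<std::string> words;
    std::string w;
    while (in >> w)
        if (w.size() >= 4) words.push_back(w);
    return words;
}

using Vocabulary = std::unordered_map<std::string, int>;  // hash table: word -> count

Vocabulary build_vocabulary(const std::vector<std::string>& words) {
    Vocabulary v;
    for (const auto& w : words) ++v[w];
    return v;
}

// The k most frequent words of a vocabulary (ties broken alphabetically).
std::vector<std::string> top_k(const Vocabulary& v, std::size_t k) {
    std::vector<std::pair<std::string, int>> items(v.begin(), v.end());
    std::sort(items.begin(), items.end(), [](const auto& a, const auto& b) {
        return a.second != b.second ? a.second > b.second : a.first < b.first;
    });
    std::vector<std::string> out;
    for (std::size_t i = 0; i < items.size() && i < k; ++i) out.push_back(items[i].first);
    return out;
}

// Key words: union of every class's top-k words and the overall top-k words.
std::set<std::string> choose_keywords(const std::vector<Vocabulary>& per_class,
                                      const Vocabulary& overall, std::size_t k) {
    std::set<std::string> keys;
    for (const auto& v : per_class)
        for (const auto& w : top_k(v, k)) keys.insert(w);
    for (const auto& w : top_k(overall, k)) keys.insert(w);
    return keys;
}

// log P(w_j | C_i), with a zero count c_ji re-estimated as epsilon / n_i.
double smoothed_log_likelihood(int c_ji, int n_i, double epsilon) {
    return std::log((c_ji > 0 ? c_ji : epsilon) / n_i);
}

// Multiplying many small probabilities underflows double precision to 0.0 ...
double naive_product(int count, double p) {
    double result = 1.0;
    for (int i = 0; i < count; ++i) result *= p;
    return result;
}

// ... while summing their logs stays comfortably in range.
double log_product(int count, double p) {
    double result = 0.0;
    for (int i = 0; i < count; ++i) result += std::log(p);
    return result;
}
```

For example, `preprocess("The 2008 Olympics: Beijing's GAMES!")` returns `{"olympics", "beijing", "games"}`.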

Digit classification (assignment 1)

- The USPS data set contains normalized handwritten digits, scanned by the U.S. Postal Service
  - 16 x 16 grayscale images
  - 7291 training and 2007 test observations
  - Format: each line consists of the digit id (0-9) followed by the 256 grayscale values
- The test set is notoriously "difficult"
- Download it from here

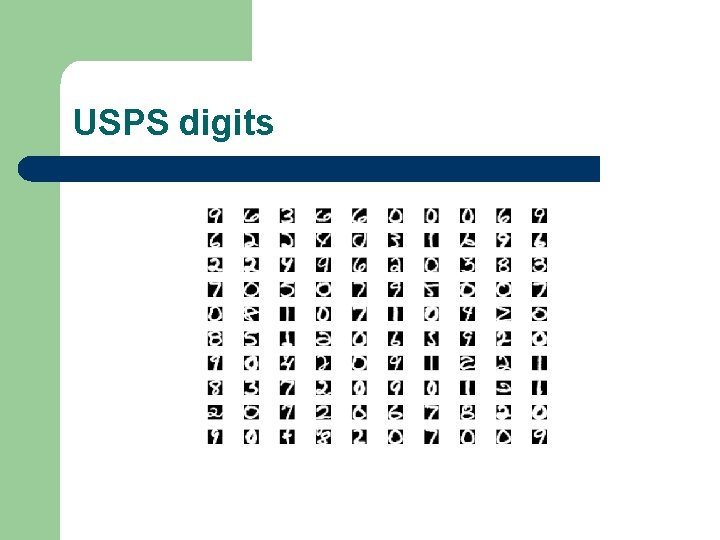
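A sketch of reading one line of the format described above. The whitespace separator and the struct name `UspsDigit` are assumptions to check against the downloaded file; the digit id is read as a floating-point number and truncated, in case the file writes it as, e.g., `6.0000`.

```cpp
#include <sstream>
#include <stdexcept>
#include <string>
#include <vector>

struct UspsDigit {
    int label;                   // digit id, 0-9
    std::vector<double> pixels;  // 256 grayscale values of the 16 x 16 image
};

// Parse one line: the digit id followed by 256 whitespace-separated gray values.
UspsDigit parse_usps_line(const std::string& line) {
    std::istringstream in(line);
    double id = 0.0;  // read as double in case the id is written as "6.0000"
    if (!(in >> id)) throw std::runtime_error("missing digit id");
    UspsDigit d{static_cast<int>(id), {}};
    double v = 0.0;
    while (in >> v) d.pixels.push_back(v);
    if (d.pixels.size() != 256) throw std::runtime_error("expected 256 grayscale values");
    return d;
}
```

Reading a whole file then amounts to calling `parse_usps_line` on each line obtained with `std::getline`.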

USPS digits

Setting

- Classes: 0-9
- Features: each pixel is used as a feature, so there are 16 × 16 = 256 features
  - Rather than pixel gray values, we can use more informative features, such as (detected) corners, crosses, slope, center of gravity, etc.
  - How should the real-valued features be quantized?
- Task: classify new digits into one of the classes
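One simple answer to the quantization question is equal-width binning; this is one choice among many, not the method the assignment prescribes. The sketch assumes the gray values lie in a known range `[lo, hi]` (check your copy of the data; `[-1, 1]` in the tests is only an example) and maps each value to a level in `0..bins-1`, so that $P(F_i = \text{level} \mid C)$ can be estimated by counting. Function names are hypothetical; derived features such as corners or crosses would need their own extraction code, not shown here.

```cpp
#include <algorithm>
#include <vector>

// Map a real-valued feature x, assumed to lie in [lo, hi], to one of `bins`
// equal-width levels 0..bins-1. x == hi, or values slightly outside the range,
// are clamped to the end bins.
int quantize(double x, double lo, double hi, int bins) {
    int b = static_cast<int>((x - lo) / (hi - lo) * bins);
    return std::min(std::max(b, 0), bins - 1);
}

// Quantize every pixel of an image, giving discrete features whose
// class-conditional distributions can be estimated by counting.
std::vector<int> quantize_image(const std::vector<double>& pixels,
                                double lo, double hi, int bins) {
    std::vector<int> levels;
    levels.reserve(pixels.size());
    for (double x : pixels) levels.push_back(quantize(x, lo, hi, bins));
    return levels;
}
```

With `bins = 2` this reduces to binarizing each pixel at the midpoint of the range.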

Specifications

- (Preliminary; assignment 1 will be released on Friday)
- You can use either Matlab or C++ for programming
  - If you use C++, you should create the class and its members/functions as required
  - If you use Matlab, you should write the functions as required
  - The input and output formats will also be fixed in the assignment

Files

- Matlab file to read the USPS data
  - `[n, digit, label] = read_usps(path, file);` with, e.g., `path = 'c:...';` and `file = 'usps_train.txt';`
  - `n`: number of digits/images obtained
  - `digit`: a 16 by n matrix; `label`: the label of each image
- You may want to use it to read the USPS data

- Matlab file to output a series of files
  - `output(str, i1, i2);`
  - `str`: the common part of the file names
  - `i1` and `i2`: the starting and ending integers
- You may want to use it to write the digits into separate files with any naming scheme you like
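If you work in C++ instead of Matlab, a hypothetical counterpart of `output` might build the series of file names and write one digit per file. The `.txt` extension, the lack of zero padding, and the one-value-per-line layout are assumptions; any naming scheme works.

```cpp
#include <cstddef>
#include <fstream>
#include <string>
#include <vector>

// File names str + i + ext for i = i1..i2, e.g. "digit1.txt", "digit2.txt", ...
std::vector<std::string> series_names(const std::string& str, int i1, int i2,
                                      const std::string& ext = ".txt") {
    std::vector<std::string> names;
    for (int i = i1; i <= i2; ++i) names.push_back(str + std::to_string(i) + ext);
    return names;
}

// Write each digit (a vector of gray values) to its own file, one value per line.
// Returns false if any file could not be opened.
bool write_digits(const std::vector<std::vector<double>>& digits, const std::string& str) {
    std::vector<std::string> names = series_names(str, 1, static_cast<int>(digits.size()));
    for (std::size_t k = 0; k < digits.size(); ++k) {
        std::ofstream out(names[k]);
        if (!out) return false;
        for (double v : digits[k]) out << v << '\n';
    }
    return true;
}
```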
