Generative Topic Models

Outline
- Naive Bayes model
- Mixture models
- PLSA
- LDA

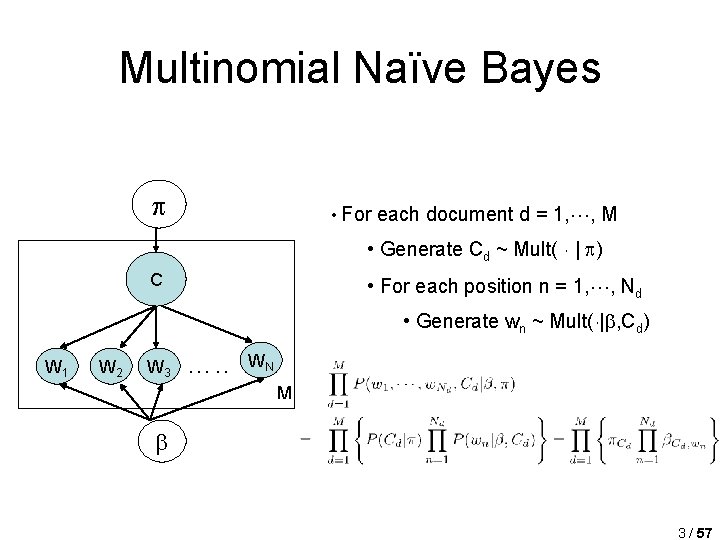

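Multinomial Naïve Bayes
- For each document d = 1, …, M
  - Generate $C_d \sim \text{Mult}(\cdot \mid \pi)$
  - For each position n = 1, …, N_d
    - Generate $w_n \sim \text{Mult}(\cdot \mid \beta, C_d)$

(Plate diagram: class node C with word nodes W_1, W_2, W_3, …, W_N, inside a plate over M documents.)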
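
The generative process above can be simulated directly. The following is a minimal sketch, assuming `pi` is the class prior and `beta[c]` is the word distribution of class c (both names are ours, and the function name `sample_naive_bayes_corpus` is hypothetical). Every word of a document is drawn independently given the document's single class, which is exactly the naive Bayes assumption.

```python
import random


def sample_naive_bayes_corpus(pi, beta, doc_lengths, seed=0):
    """Draw (C_d, words) pairs from the multinomial naive Bayes generative process.

    pi: class prior, pi[c] = P(C = c)  (assumed name).
    beta: beta[c][w] = P(w | C = c), one word distribution per class (assumed name).
    doc_lengths: N_d for each of the M documents.
    """
    rng = random.Random(seed)
    classes = range(len(pi))
    vocab = range(len(beta[0]))
    corpus = []
    for n_d in doc_lengths:                                   # for each document d = 1..M
        c_d = rng.choices(classes, weights=pi)[0]             # C_d ~ Mult(. | pi)
        # N_d i.i.d. draws given the class: w_n ~ Mult(. | beta, C_d)
        words = rng.choices(vocab, weights=beta[c_d], k=n_d)
        corpus.append((c_d, words))
    return corpus
```

For example, with `beta = [[1.0, 0.0, 0.0], [0.0, 0.0, 1.0]]`, every class-0 document consists only of word 0 and every class-1 document only of word 2.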

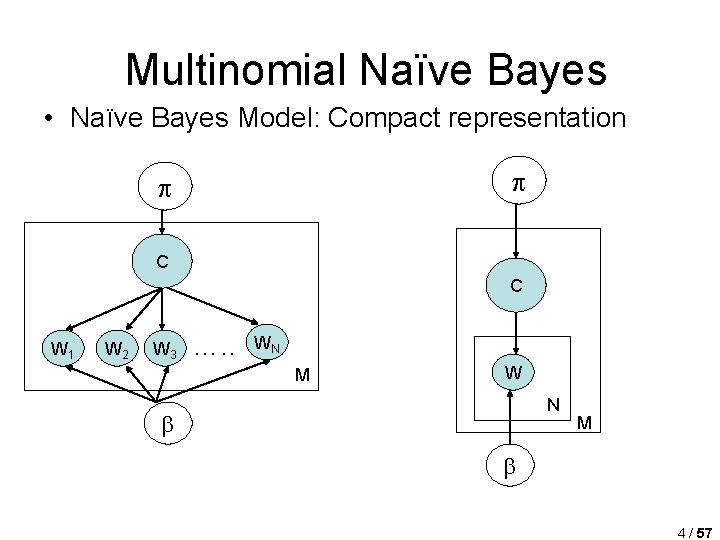

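Multinomial Naïve Bayes
- Naïve Bayes model: compact (plate) representation

(Figure: left, node C with word nodes W_1, …, W_N inside a plate over M documents; right, the compact form with a single node W inside a plate over N positions, nested in the plate over M.)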

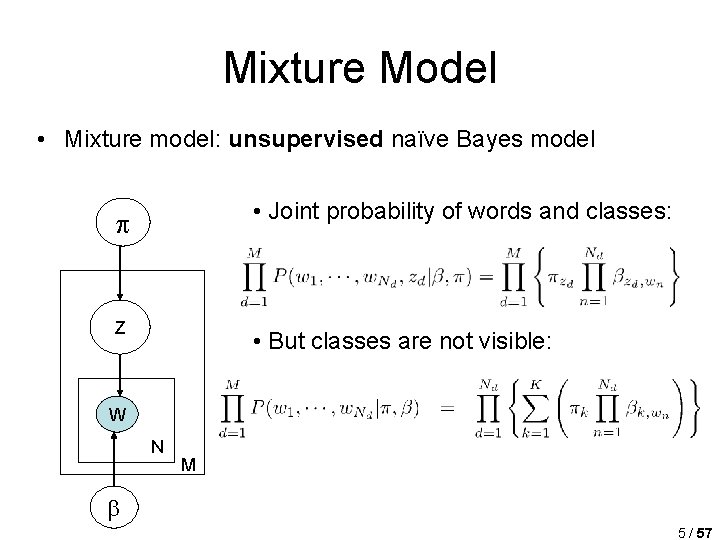

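Mixture Model
- Mixture model: unsupervised naïve Bayes model
- Joint probability of words and classes, with class prior π and class-conditional word distributions β: $P(\mathbf{w}, C) = \pi_{C} \prod_{n=1}^{N} \beta_{C, w_n}$
- But classes are not visible, so the class becomes a latent variable $Z$ that is summed out: $P(\mathbf{w}) = \sum_{z} \pi_{z} \prod_{n=1}^{N} \beta_{z, w_n}$

(Plate diagram: latent class Z takes the place of the observed class C; word node W inside a plate over N positions, nested in a plate over M documents.)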
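
Because the class is hidden, a mixture of unigrams scores a document by summing the joint probability over every possible class, and the normalised joint terms give the posterior over the hidden class (the E-step quantity used when learning the model with EM). A minimal sketch, assuming `pi[z]` is the class prior and `beta[z][w]` the class-conditional word probability, both strictly positive (names are ours); it works in log space with a log-sum-exp so long documents do not underflow.

```python
import math


def log_sum_exp(values):
    """Numerically stable log(sum(exp(v) for v in values))."""
    m = max(values)
    return m + math.log(sum(math.exp(v - m) for v in values))


def mixture_doc_posterior(words, pi, beta):
    """Return (log P(w), [P(z | w) for each z]) for one document.

    P(w) = sum_z pi[z] * prod_n beta[z][w_n]; all probabilities assumed > 0.
    """
    # log P(w, z) for each value of the hidden class z
    log_joint = [math.log(pi[z]) + sum(math.log(beta[z][w]) for w in words)
                 for z in range(len(pi))]
    log_marginal = log_sum_exp(log_joint)          # sum out the hidden class
    posterior = [math.exp(lj - log_marginal) for lj in log_joint]
    return log_marginal, posterior
```

With `pi = [0.5, 0.5]`, `beta = [[0.9, 0.1], [0.2, 0.8]]` and the document `[0, 0]`, the joint terms are 0.405 and 0.02, so P(w) = 0.425 and the first class receives posterior 0.405 / 0.425.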

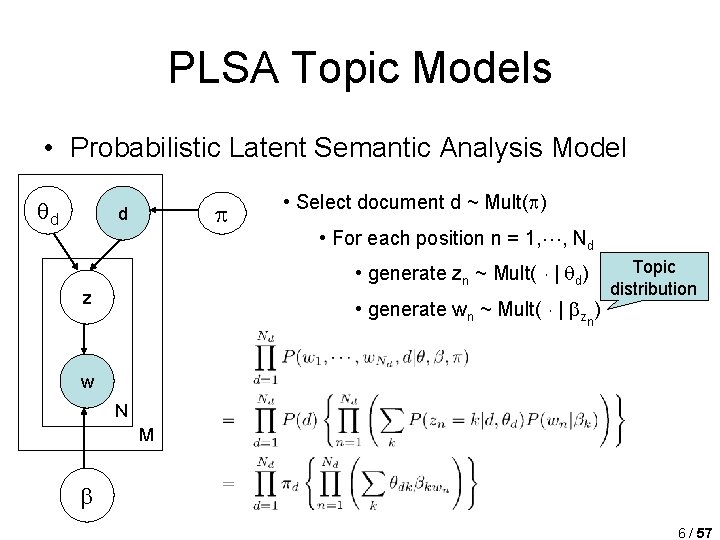

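PLSA Topic Models
- Probabilistic Latent Semantic Analysis model
  - Select document $d \sim \text{Mult}(\cdot \mid \pi)$
  - For each position n = 1, …, N_d
    - Generate $z_n \sim \text{Mult}(\cdot \mid \theta_d)$
    - Generate $w_n \sim \text{Mult}(\cdot \mid \beta_{z_n})$

(Plate diagram: nodes d, θ_d (labelled "topic distribution"), z and w, with a plate over N positions nested in a plate over M documents.)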
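
A sketch of this process, assuming `pi[d]` is the probability of picking training document d, `theta[d]` its topic distribution and `beta[z]` the word distribution of topic z (all names ours, function name hypothetical). Note that the document is chosen by index from the training set, and each word gets its own topic, so one document can mix several topics.

```python
import random


def sample_plsa_tokens(pi, theta, beta, n_words, seed=0):
    """Draw one document index and N_d words from the PLSA generative process.

    pi[d]: probability of picking training document d.
    theta[d][z]: topic distribution of training document d.
    beta[z][w]: word distribution of topic z.
    """
    rng = random.Random(seed)
    d = rng.choices(range(len(pi)), weights=pi)[0]        # d ~ Mult(. | pi): an existing document
    words = []
    for _ in range(n_words):                               # for each position n = 1..N_d
        z_n = rng.choices(range(len(theta[d])), weights=theta[d])[0]    # z_n ~ Mult(. | theta_d)
        w_n = rng.choices(range(len(beta[z_n])), weights=beta[z_n])[0]  # w_n ~ Mult(. | beta_{z_n})
        words.append(w_n)
    return d, words
```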

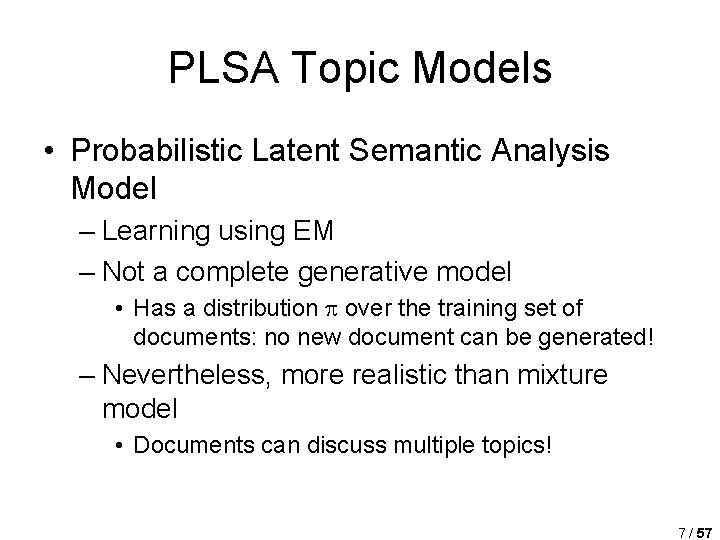

PLSA Topic Models
- Probabilistic Latent Semantic Analysis model
  - Learning using EM
  - Not a complete generative model
    - Has a distribution only over the training set of documents: no new document can be generated!
  - Nevertheless, more realistic than the mixture model
    - Documents can discuss multiple topics!
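
EM learning can be sketched on a document-word count matrix. This minimal version fits the parameterisation P(w | d) = Σ_z P(z | d) P(w | z); the document distribution P(d) is omitted because its maximum-likelihood estimate (proportional to document length) does not affect the topic parameters. The function name, the random initialisation and the fixed iteration count are our assumptions. The E-step computes P(z | d, w), the M-step renormalises the expected counts, and the recorded log-likelihood should never decrease.

```python
import math
import random


def plsa_em(counts, n_topics, n_iters=50, seed=0):
    """Fit P(z | d) and P(w | z) by EM on a document-word count matrix.

    counts[d][w] is how often word w occurs in training document d; every
    document is assumed to contain at least one word.
    Returns (theta, beta, history): theta[d][z] = P(z | d),
    beta[z][w] = P(w | z), and the log-likelihood before each iteration.
    """
    rng = random.Random(seed)
    n_docs, n_vocab = len(counts), len(counts[0])

    def normalize(row):
        total = sum(row)
        return [x / total for x in row]

    # Random, strictly positive starting point (breaks topic symmetry).
    theta = [normalize([rng.random() + 0.1 for _ in range(n_topics)]) for _ in range(n_docs)]
    beta = [normalize([rng.random() + 0.1 for _ in range(n_vocab)]) for _ in range(n_topics)]
    history = []
    for _ in range(n_iters):
        exp_dz = [[0.0] * n_topics for _ in range(n_docs)]   # expected counts n(d, z)
        exp_zw = [[0.0] * n_vocab for _ in range(n_topics)]  # expected counts n(z, w)
        loglik = 0.0
        for d in range(n_docs):
            for w in range(n_vocab):
                n_dw = counts[d][w]
                if n_dw == 0:
                    continue
                # E-step: P(z | d, w) is proportional to P(z | d) * P(w | z).
                joint = [theta[d][z] * beta[z][w] for z in range(n_topics)]
                p_w_given_d = sum(joint)
                loglik += n_dw * math.log(p_w_given_d)
                for z in range(n_topics):
                    resp = n_dw * joint[z] / p_w_given_d
                    exp_dz[d][z] += resp
                    exp_zw[z][w] += resp
        # M-step: renormalise the expected counts.
        theta = [normalize(row) for row in exp_dz]
        beta = [normalize(row) for row in exp_zw]
        history.append(loglik)
    return theta, beta, history
```

Since `theta` has one row per training document, the fitted model has nothing to say about an unseen document without extra fitting, which is the limitation listed above.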

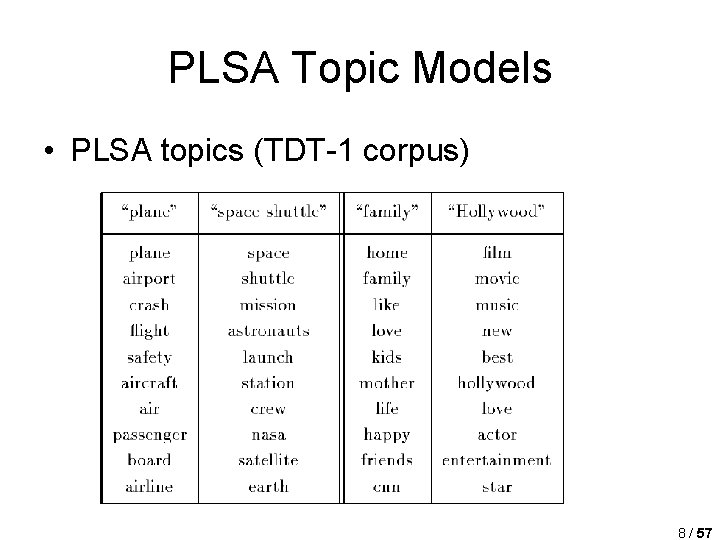

PLSA Topic Models
- PLSA topics (TDT-1 corpus)

LDA Topic Models

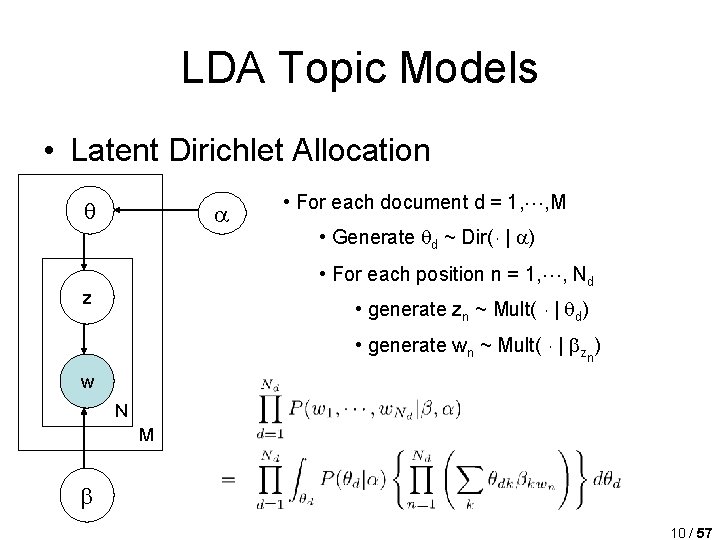

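LDA Topic Models
- Latent Dirichlet Allocation
  - For each document d = 1, …, M
    - Generate $\theta_d \sim \text{Dir}(\cdot \mid \alpha)$
    - For each position n = 1, …, N_d
      - Generate $z_n \sim \text{Mult}(\cdot \mid \theta_d)$
      - Generate $w_n \sim \text{Mult}(\cdot \mid \beta_{z_n})$

(Plate diagram: nodes θ_d, z and w, with a plate over N positions nested in a plate over M documents.)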
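
The key difference from PLSA is that each document's topic proportions θ_d are drawn fresh from a Dirichlet, so the process can produce documents that were never in the training set. A minimal sketch, assuming a Dirichlet parameter vector `alpha` and per-topic word distributions `beta[z]` (names and function names are ours); the Dirichlet draw uses the standard construction of normalising independent Gamma(α_k, 1) samples.

```python
import random


def sample_dirichlet(alpha, rng):
    """Dir(alpha) via normalised independent Gamma(alpha_k, 1) draws."""
    g = [rng.gammavariate(a, 1.0) for a in alpha]
    total = sum(g)
    return [x / total for x in g]


def sample_lda_corpus(alpha, beta, doc_lengths, seed=0):
    """Return a list of (theta_d, words) pairs drawn from the LDA generative process."""
    rng = random.Random(seed)
    topics = range(len(beta))
    vocab = range(len(beta[0]))
    corpus = []
    for n_d in doc_lengths:                                 # for each document d = 1..M
        theta_d = sample_dirichlet(alpha, rng)              # theta_d ~ Dir(. | alpha)
        doc = []
        for _ in range(n_d):                                # for each position n = 1..N_d
            z_n = rng.choices(topics, weights=theta_d)[0]   # z_n ~ Mult(. | theta_d)
            w_n = rng.choices(vocab, weights=beta[z_n])[0]  # w_n ~ Mult(. | beta_{z_n})
            doc.append(w_n)
        corpus.append((theta_d, doc))
    return corpus
```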

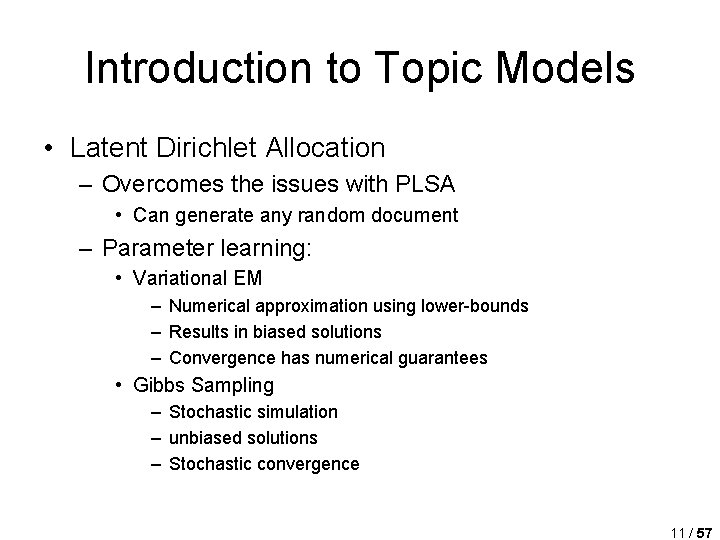

Introduction to Topic Models
- Latent Dirichlet Allocation
  - Overcomes the issues with PLSA
    - Can generate new random documents, not just the training set
  - Parameter learning:
    - Variational EM
      - Numerical approximation using lower bounds
      - Results in biased solutions
      - Convergence has numerical guarantees
    - Gibbs sampling
      - Stochastic simulation
      - Unbiased solutions (in the limit of many samples)
      - Stochastic convergence
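
The slide does not say which Gibbs sampler it means. The sketch below uses the widely used collapsed sampler, which integrates out θ_d and resamples one token's topic at a time from its full conditional. This requires a symmetric Dirichlet prior `eta` on the topic-word distributions, which is an assumption beyond the LDA slide, where β is a plain parameter. The function name, `alpha`, `eta` and `n_sweeps` defaults are illustrative choices, not values from the source.

```python
import random


def lda_collapsed_gibbs(docs, n_topics, n_vocab, alpha=0.1, eta=0.01, n_sweeps=200, seed=0):
    """Collapsed Gibbs sampling for LDA with symmetric Dirichlet priors.

    docs: list of documents, each a list of word ids in range(n_vocab).
    alpha: prior on each theta_d; eta: assumed prior on each topic-word distribution.
    Returns (theta, phi) estimated from the counts of the final sample.
    """
    rng = random.Random(seed)
    topics = range(n_topics)
    n_dk = [[0] * n_topics for _ in docs]   # tokens in doc d assigned to topic k
    n_kw = [[0] * n_vocab for _ in topics]  # times word w is assigned to topic k
    n_k = [0] * n_topics                    # tokens assigned to topic k
    z = []                                  # current topic of every token

    def update(d, k, w, delta):
        n_dk[d][k] += delta
        n_kw[k][w] += delta
        n_k[k] += delta

    for d, doc in enumerate(docs):          # random initial assignment
        z.append([rng.randrange(n_topics) for _ in doc])
        for k, w in zip(z[d], doc):
            update(d, k, w, +1)

    for _ in range(n_sweeps):
        for d, doc in enumerate(docs):
            for i, w in enumerate(doc):
                update(d, z[d][i], w, -1)   # remove token i from the counts
                # Full conditional P(z_i = k | other assignments, words), unnormalised.
                weights = [(n_dk[d][k] + alpha) * (n_kw[k][w] + eta) / (n_k[k] + n_vocab * eta)
                           for k in topics]
                z[d][i] = rng.choices(topics, weights=weights)[0]
                update(d, z[d][i], w, +1)   # add it back with its new topic

    theta = [[(n_dk[d][k] + alpha) / (len(doc) + n_topics * alpha) for k in topics]
             for d, doc in enumerate(docs)]
    phi = [[(n_kw[k][w] + eta) / (n_k[k] + n_vocab * eta) for w in range(n_vocab)]
           for k in topics]
    return theta, phi
```

Each step is a random draw, so the estimates fluctuate from sweep to sweep ("stochastic convergence"); in practice one discards early sweeps and may average over several samples.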

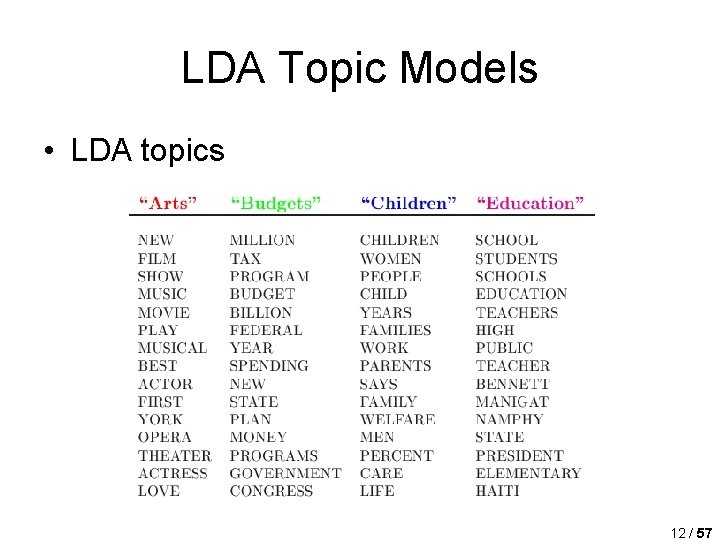

LDA Topic Models
- LDA topics

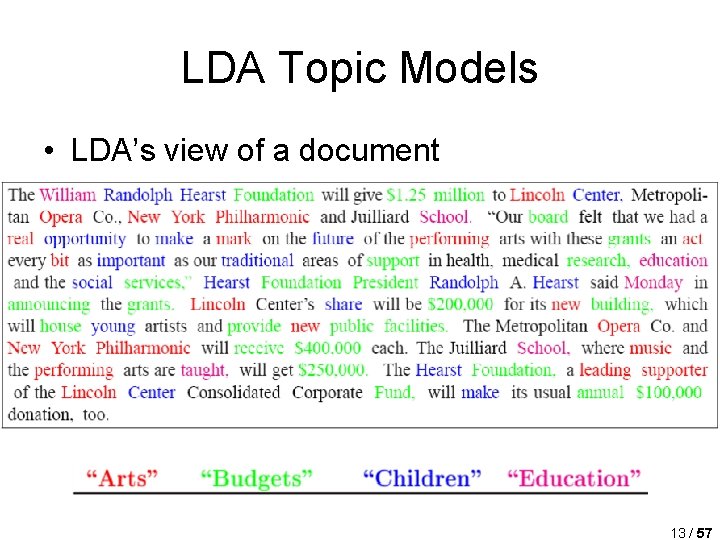

LDA Topic Models
- LDA’s view of a document

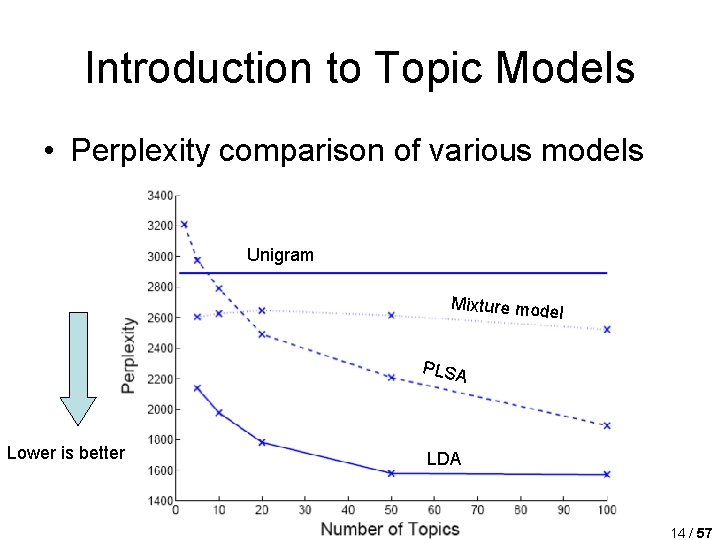

Introduction to Topic Models
- Perplexity comparison of various models (lower is better)

(Figure: perplexity of the unigram, mixture, PLSA and LDA models.)
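
Perplexity is the exponentiated negative average log-likelihood per held-out word, i.e. the inverse geometric mean of the per-word probability, which is why lower is better. A minimal sketch with hypothetical function names; the unigram scorer is only for illustration, and for the mixture, PLSA or LDA models the per-document log-likelihoods would come from that model (for LDA this itself requires an approximation).

```python
import math


def perplexity(doc_log_likelihoods, doc_lengths):
    """exp(-(total held-out log-likelihood) / (total number of held-out words))."""
    return math.exp(-sum(doc_log_likelihoods) / sum(doc_lengths))


def unigram_log_likelihood(doc, p_word):
    """log P(doc) under a unigram model with word probabilities p_word (all > 0)."""
    return sum(math.log(p_word[w]) for w in doc)
```

As a sanity check, a uniform unigram model over V words has perplexity exactly V: it is as uncertain as a fair V-sided die at every position.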
