Data Mining and Machine Learning: Naive Bayes

David Corne, HWU, dwcorne@gmail.com

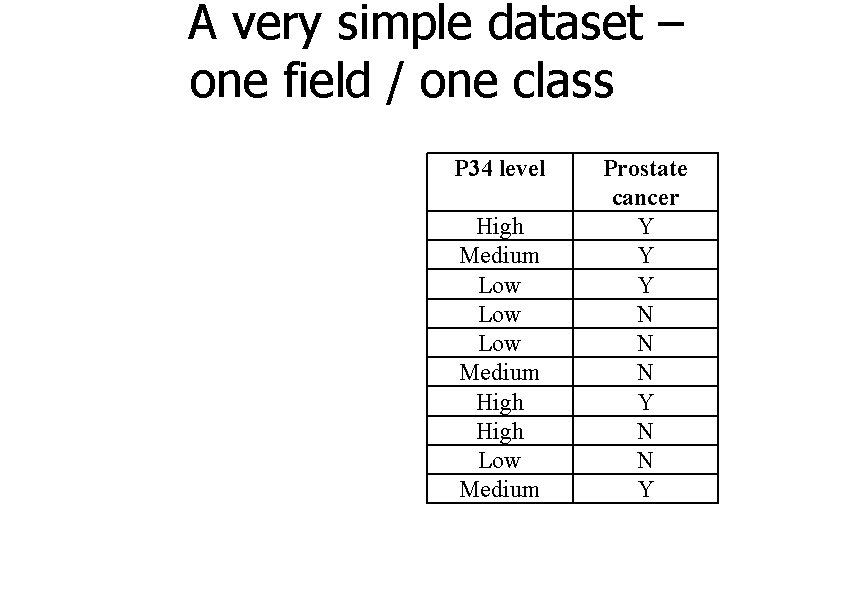

A very simple dataset: one field, one class

[Table: P34 level (High / Medium / Low) and prostate cancer (Y / N) for ten patients; the individual rows are not recoverable from this transcript.]

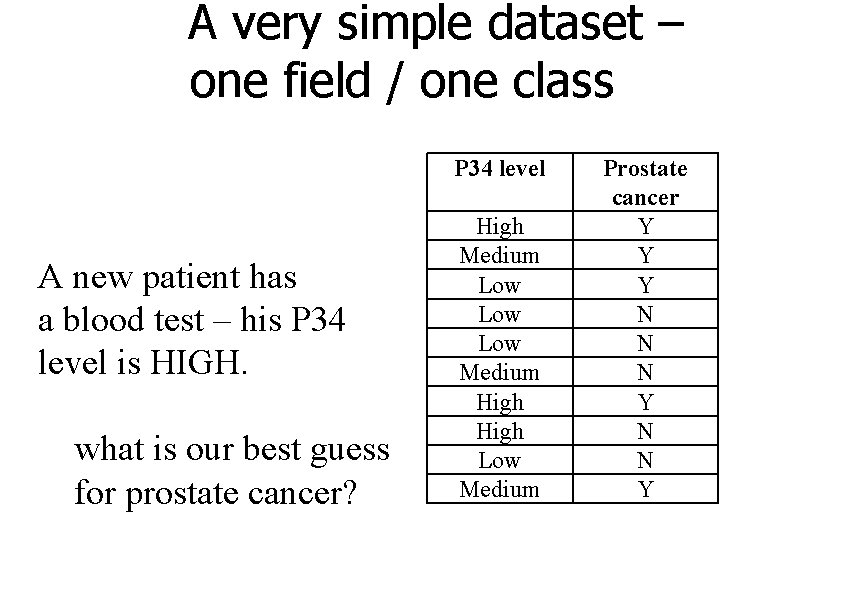

A new patient has a blood test: his P34 level is High. What is our best guess for prostate cancer?

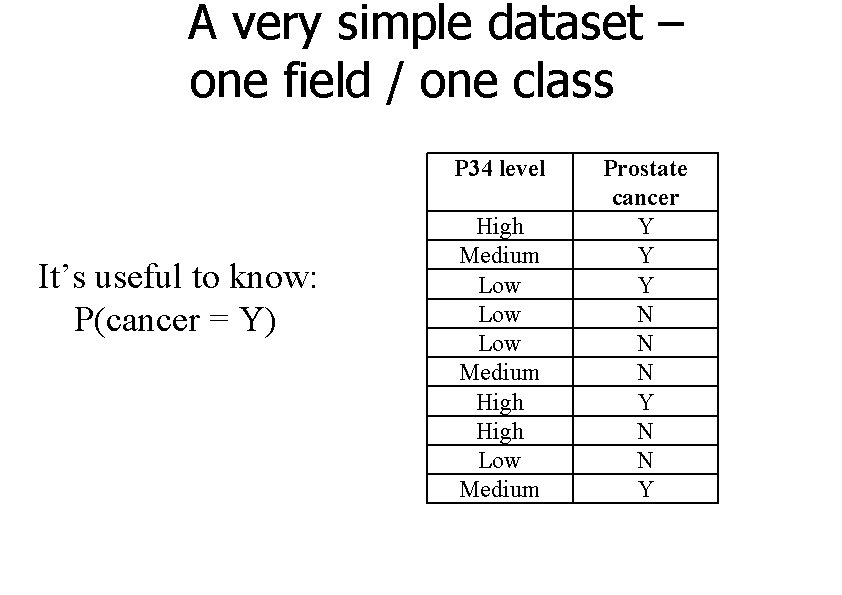

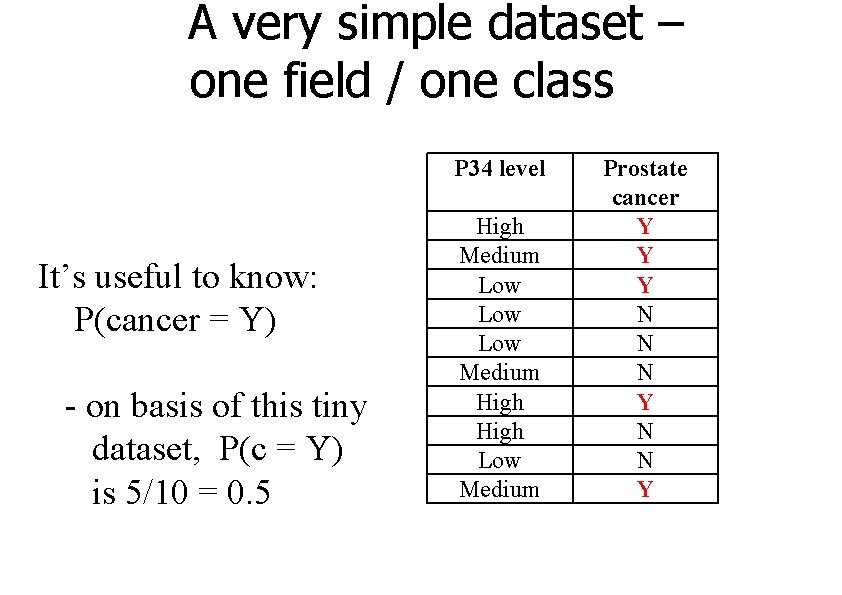

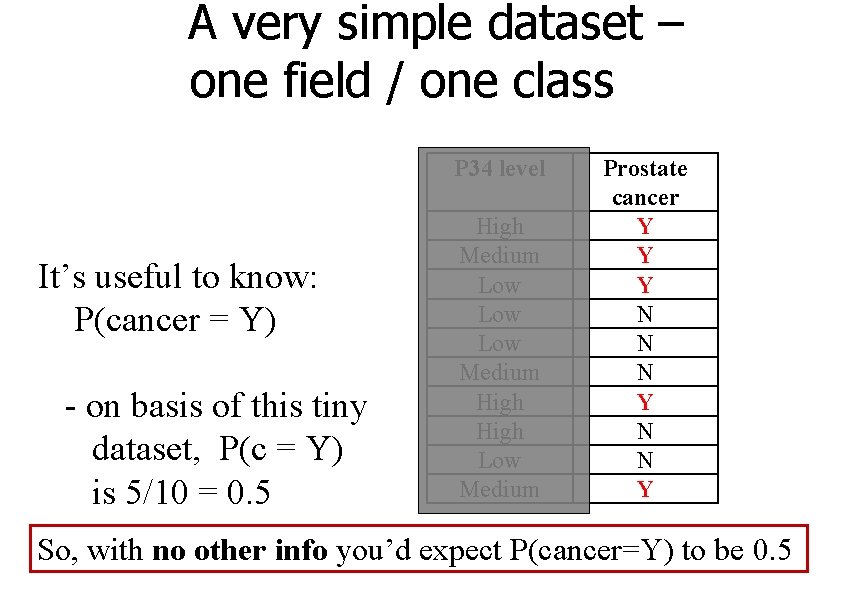

It's useful to know P(cancer = Y). On the basis of this tiny dataset, P(c = Y) is 5/10 = 0.5. So, with no other information, you'd expect P(cancer = Y) to be 0.5.

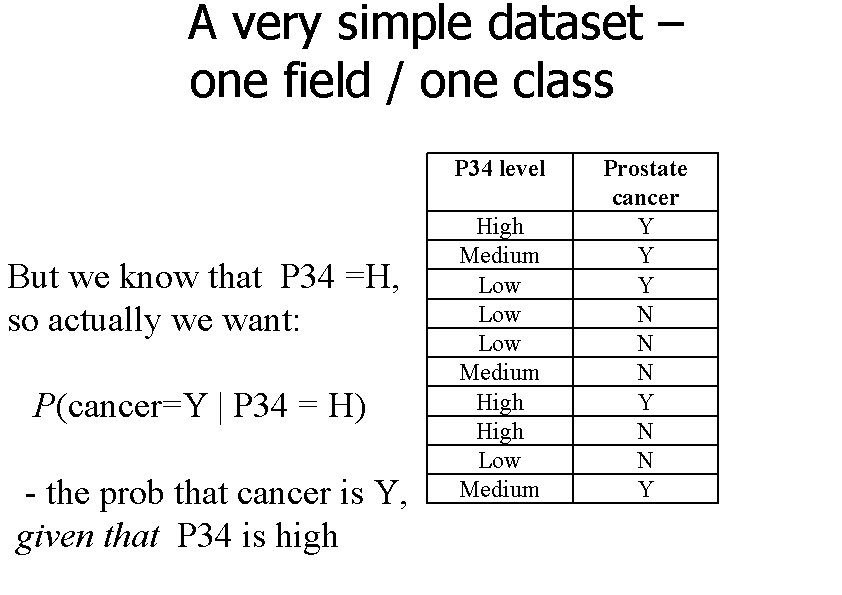

But we know that P34 = H, so actually we want P(cancer = Y | P34 = H): the probability that cancer is Y, given that P34 is High.

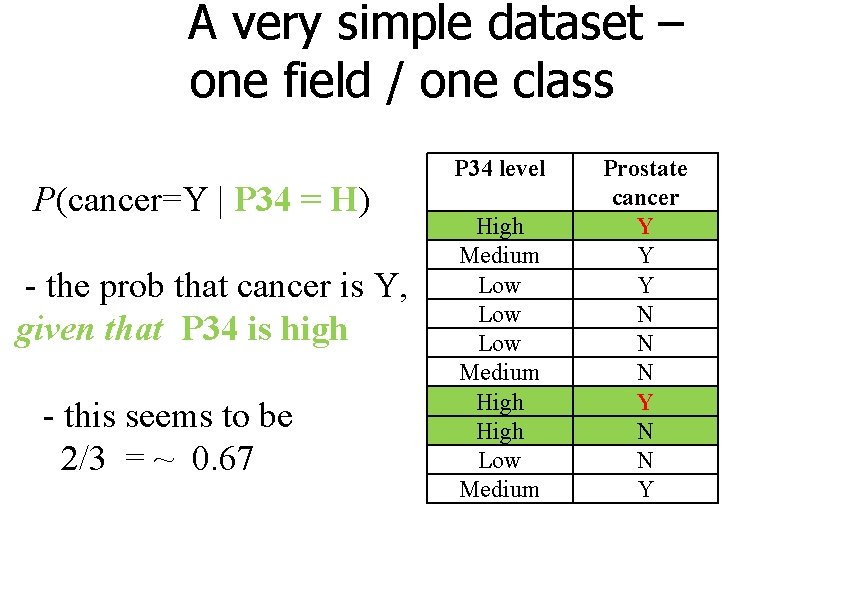

P(cancer = Y | P34 = H), the probability that cancer is Y given that P34 is High, seems to be 2/3 ≈ 0.67: of the patients with a High P34 level, two in every three have cancer = Y.

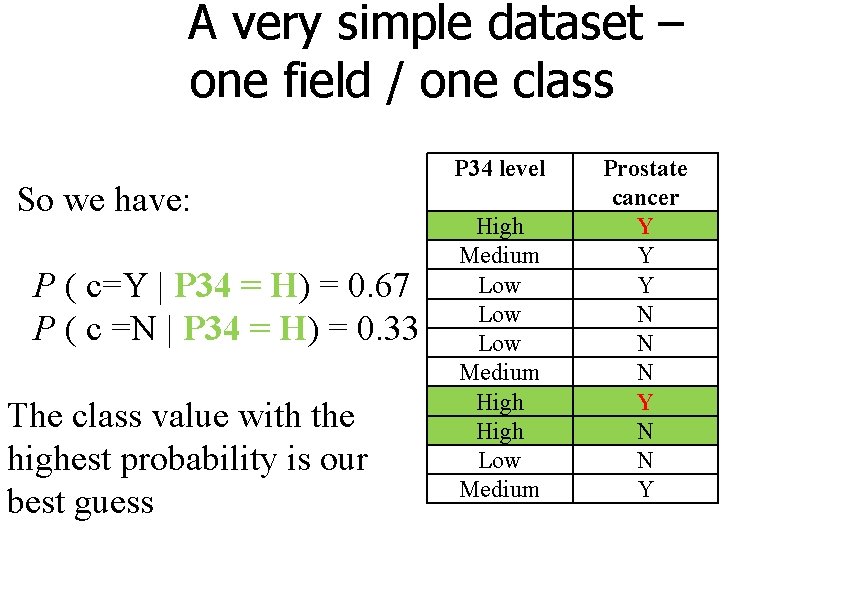

So we have:

P(c = Y | P34 = H) = 0.67

P(c = N | P34 = H) = 0.33

The class value with the highest probability is our best guess.

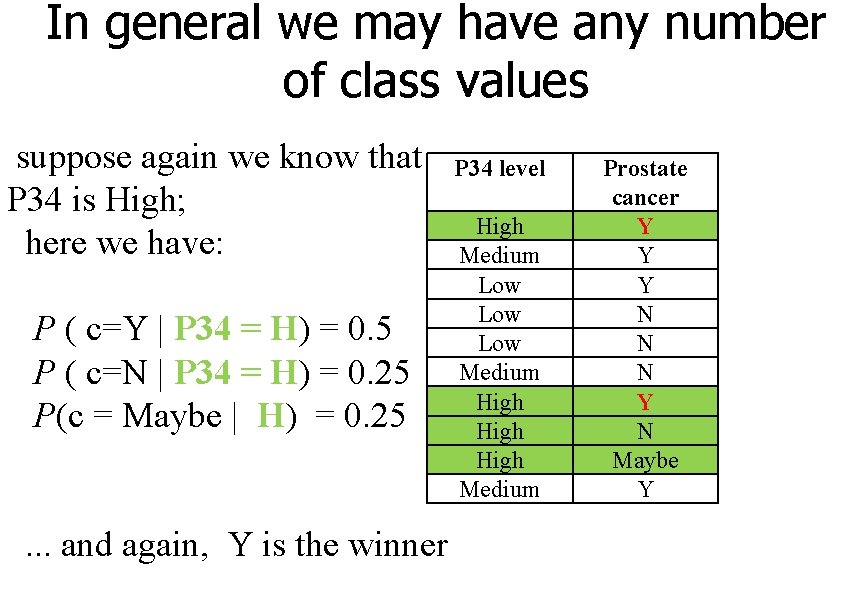

In general we may have any number of class values. Suppose again we know that P34 is High; here we have:

P(c = Y | P34 = H) = 0.5

P(c = N | P34 = H) = 0.25

P(c = Maybe | P34 = H) = 0.25

... and again, Y is the winner.

[Table: P34 level against prostate cancer, now with three class values (Y / N / Maybe); the individual rows are not recoverable from this transcript.]

That is the essence of Naive Bayes, but the probability calculations are much trickier when there is more than one field, so we make a 'naive' assumption that makes them simpler.

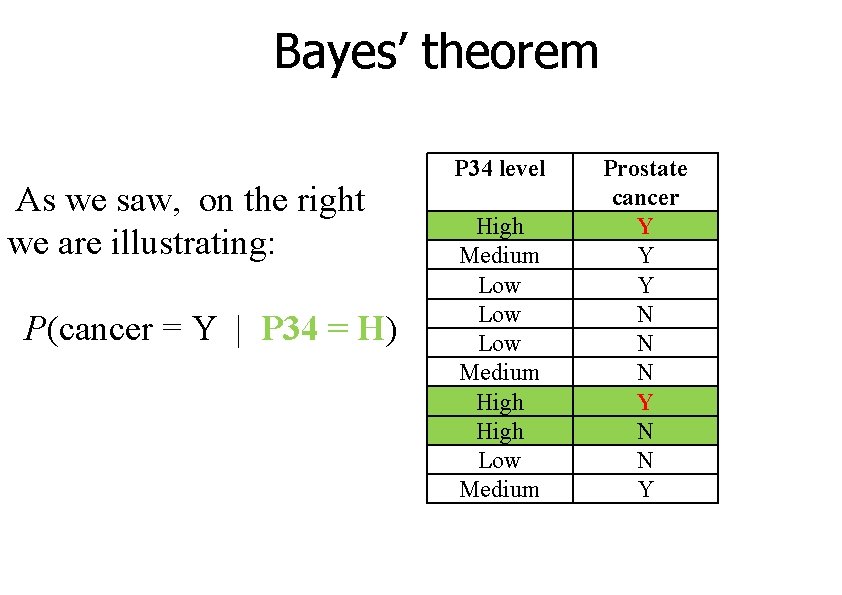

Bayes' theorem

As we saw, on the right we are illustrating P(cancer = Y | P34 = H).

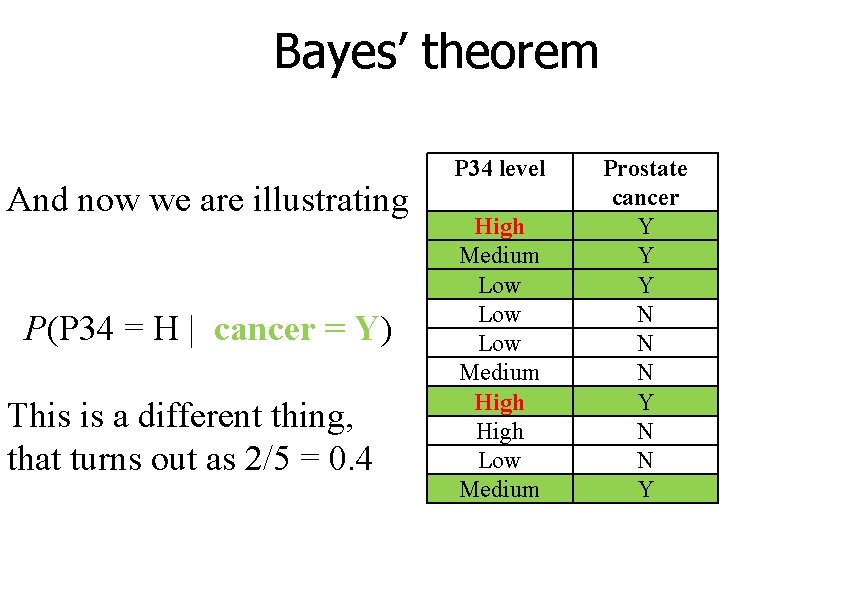

Bayes' theorem

And now we are illustrating P(P34 = H | cancer = Y). This is a different thing, which turns out to be 2/5 = 0.4.

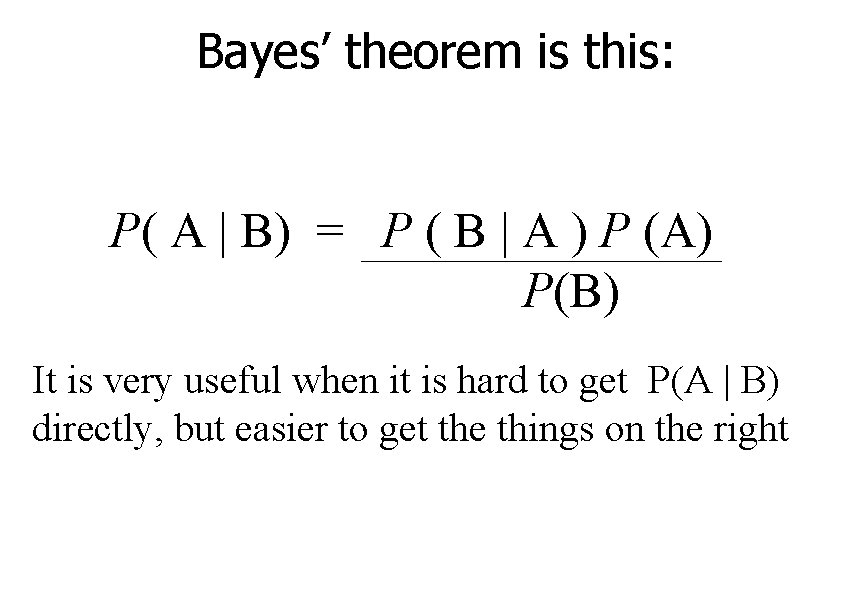

Bayes' theorem is this:

$$P(A \mid B) = \frac{P(B \mid A)\,P(A)}{P(B)}$$

It is very useful when it is hard to get P(A | B) directly, but easier to get the things on the right.
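As a quick check of the theorem on the numbers quoted so far, the sketch below plugs in P(P34 = H | cancer = Y) = 2/5 and P(cancer = Y) = 5/10. The value P(P34 = H) = 3/10 is not stated on the slides; it is an assumption here, being the value implied by the 2/3 figure on a ten-patient dataset. The function name `bayes` is only illustrative.

```python
from fractions import Fraction


def bayes(p_b_given_a, p_a, p_b):
    """Return P(A | B) computed from P(B | A), P(A) and P(B)."""
    return p_b_given_a * p_a / p_b


p_h_given_y = Fraction(2, 5)   # P(P34 = H | cancer = Y), quoted on the slides
p_y = Fraction(5, 10)          # P(cancer = Y), quoted on the slides
p_h = Fraction(3, 10)          # P(P34 = H): assumed, not stated on the slides

p_y_given_h = bayes(p_h_given_y, p_y, p_h)
print(p_y_given_h, float(p_y_given_h))
```

Using exact fractions rather than floats makes it easy to see that the result agrees with the 2/3 obtained by counting directly.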

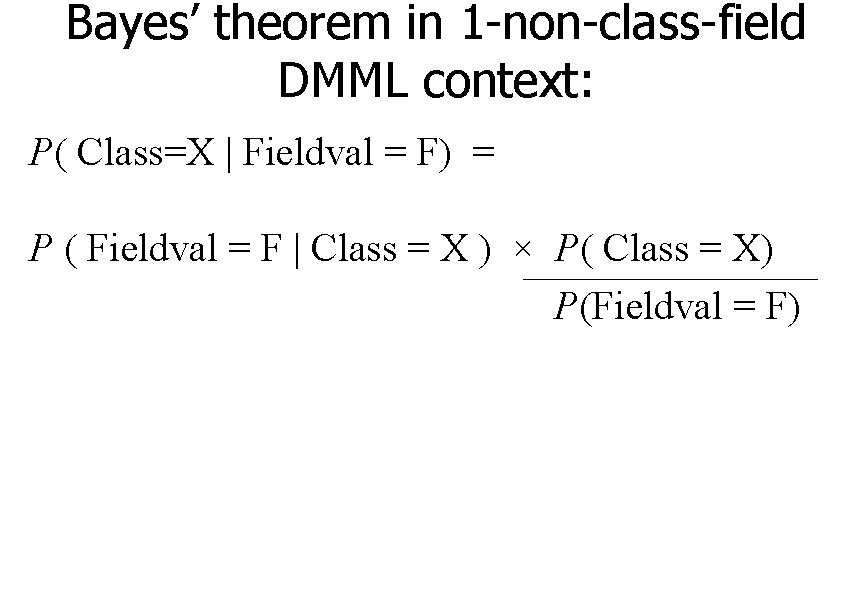

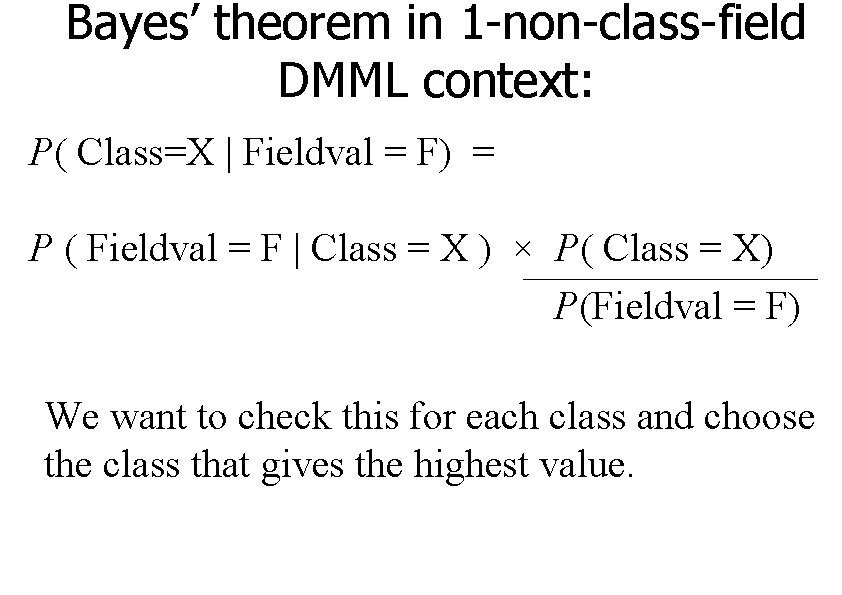

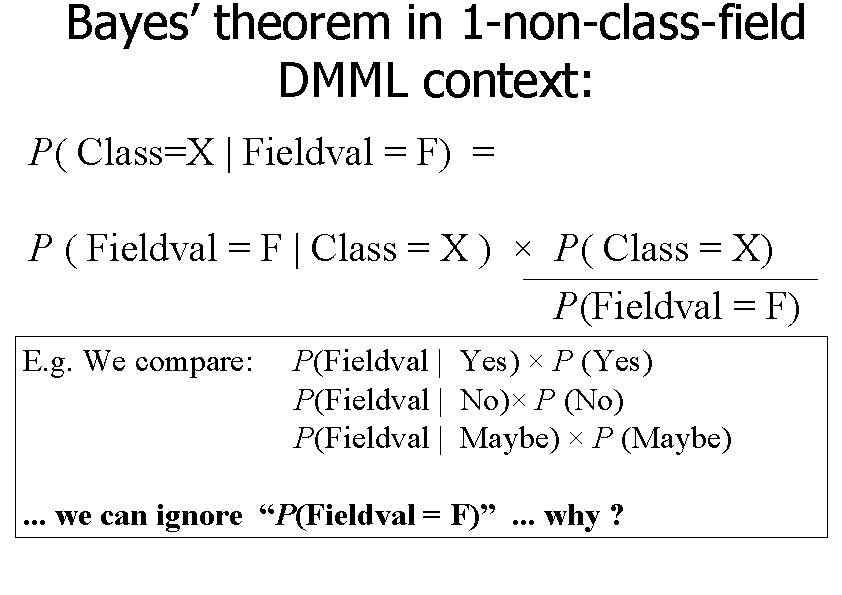

Bayes' theorem in the DMML context, with one non-class field:

$$P(\text{Class}=X \mid \text{Fieldval}=F) = \frac{P(\text{Fieldval}=F \mid \text{Class}=X) \times P(\text{Class}=X)}{P(\text{Fieldval}=F)}$$

We want to evaluate this for each class and choose the class that gives the highest value. E.g. we compare:

P(Fieldval | Yes) × P(Yes)

P(Fieldval | No) × P(No)

P(Fieldval | Maybe) × P(Maybe)

... we can ignore P(Fieldval = F) ... why? (It is the same for every class, so it cannot change which class comes out highest.)

And that is exactly how we do Naive Bayes for a one-field dataset.
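The one-field procedure can be sketched in a few lines of Python. The rows in `DATA` are hypothetical: they are chosen only so that the counts agree with the probabilities quoted on the slides (5 of 10 patients with cancer, 2 of the 5 cancer patients with High P34), and they are not the original table. The name `class_scores` is likewise just illustrative.

```python
from collections import Counter

# Hypothetical (P34 level, cancer) rows, chosen to match the quoted probabilities.
DATA = [("H", "Y"), ("H", "Y"), ("M", "Y"), ("M", "Y"), ("L", "Y"),
        ("H", "N"), ("M", "N"), ("L", "N"), ("L", "N"), ("L", "N")]


def class_scores(data, field_value):
    """Return {class: P(field_value | class) * P(class)}, estimated by counting."""
    class_counts = Counter(cls for _, cls in data)
    joint_counts = Counter(data)  # counts of (value, class) pairs
    total = len(data)
    scores = {}
    for cls, n_cls in class_counts.items():
        likelihood = joint_counts[(field_value, cls)] / n_cls  # P(F | C)
        prior = n_cls / total                                  # P(C)
        scores[cls] = likelihood * prior
    return scores


scores = class_scores(DATA, "H")
best = max(scores, key=scores.get)

# Dividing by the sum of the scores (which equals P(F)) gives the posteriors,
# but it does not change which class wins.
posteriors = {cls: s / sum(scores.values()) for cls, s in scores.items()}
print(scores, best, posteriors)
```

On this assumed data the winning class for P34 = H is Y, with a posterior of about 0.67, in line with the figures above.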

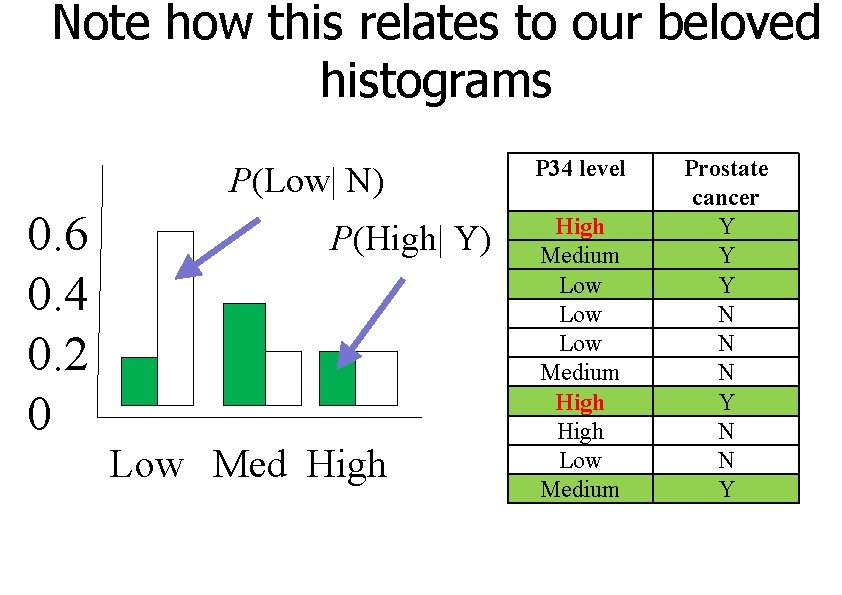

Note how this relates to our beloved histograms.

[Histogram: P34 level (Low, Med, High) on the x-axis, values from 0 to 0.6 on the y-axis, with bars labelled P(Low | N) and P(High | Y).]

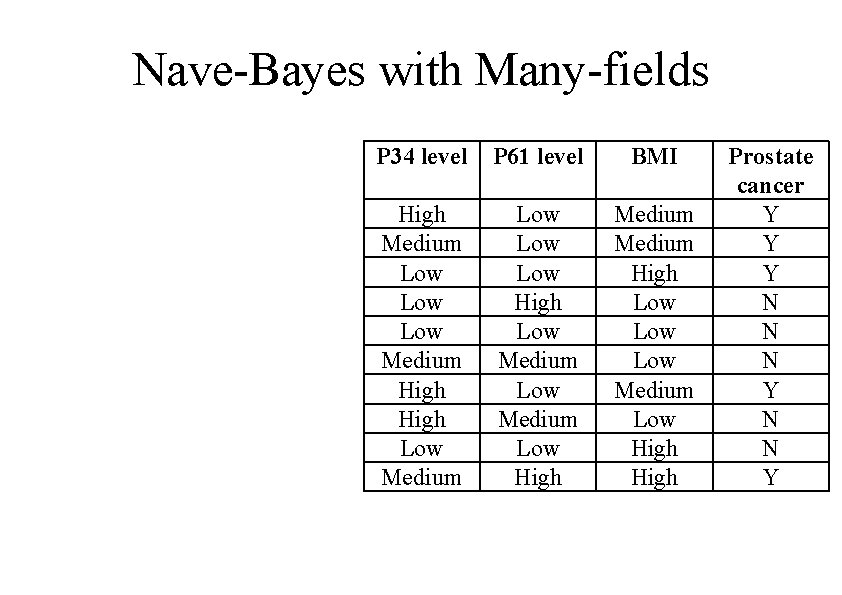

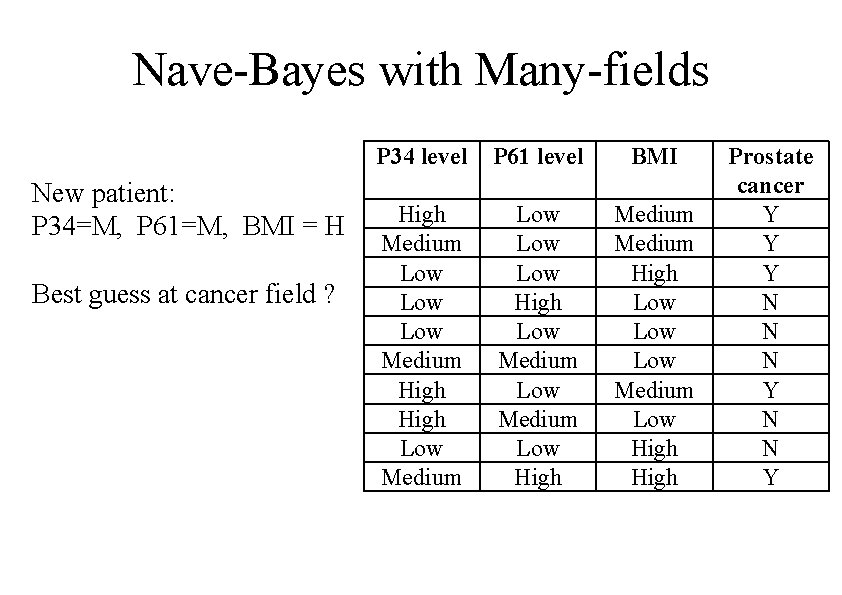

Naive Bayes with many fields

[Table: P34 level, P61 level and BMI (each High / Medium / Low) and prostate cancer (Y / N); the individual rows are not recoverable from this transcript.]

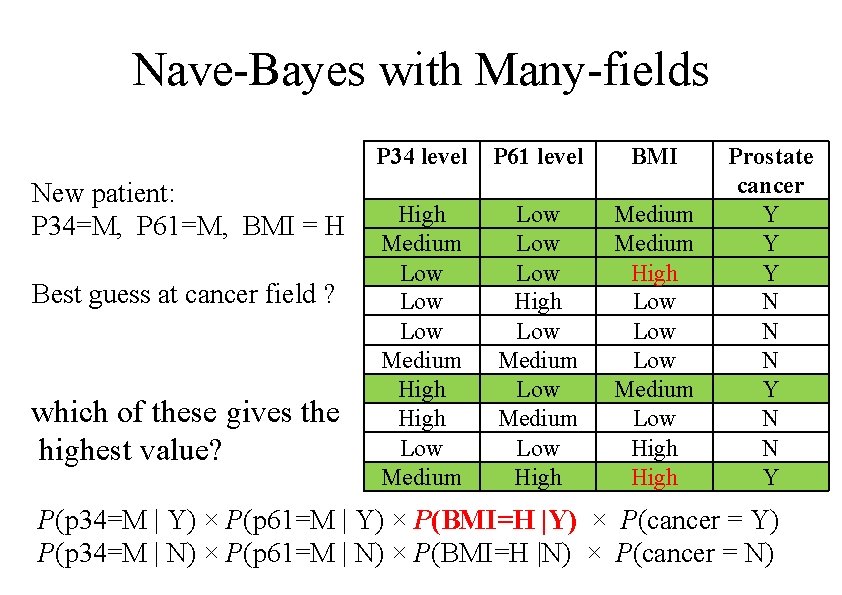

New patient: P34 = M, P61 = M, BMI = H. Best guess at the cancer field?

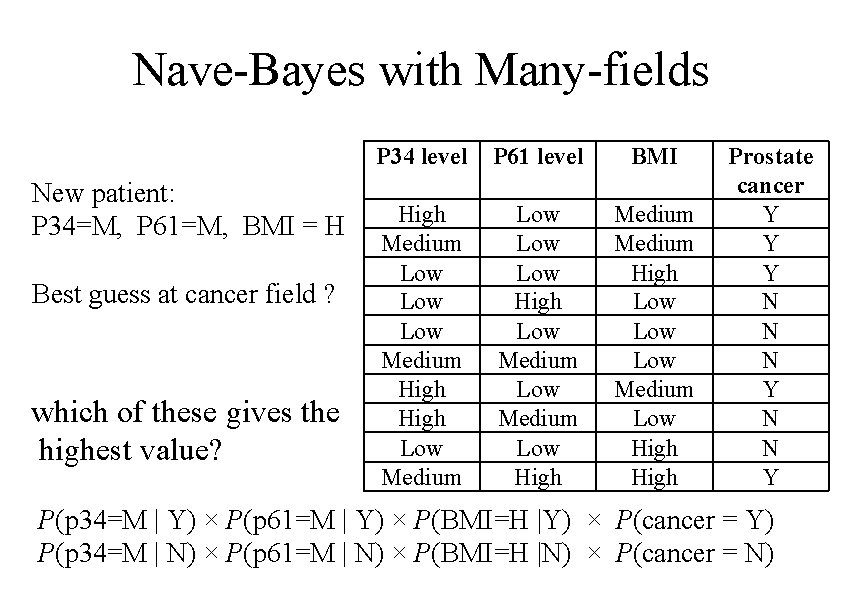

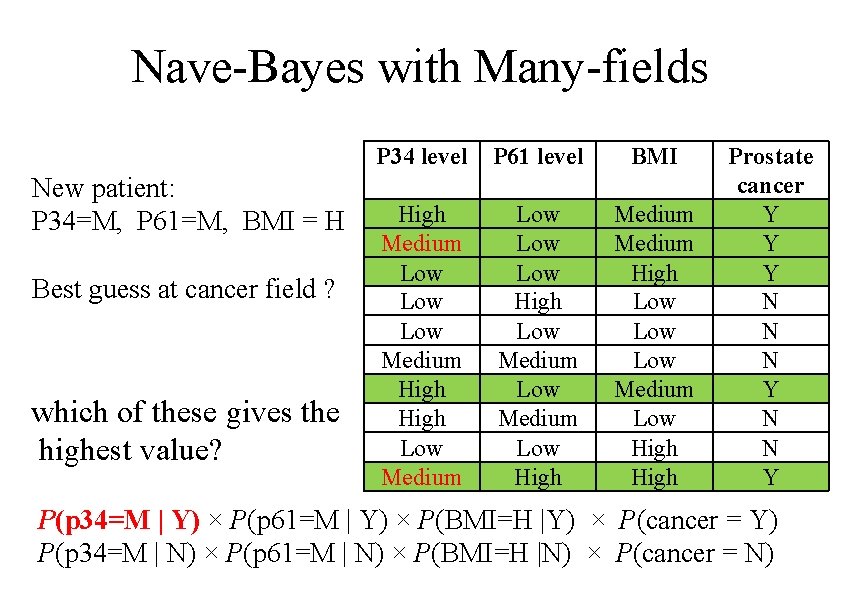

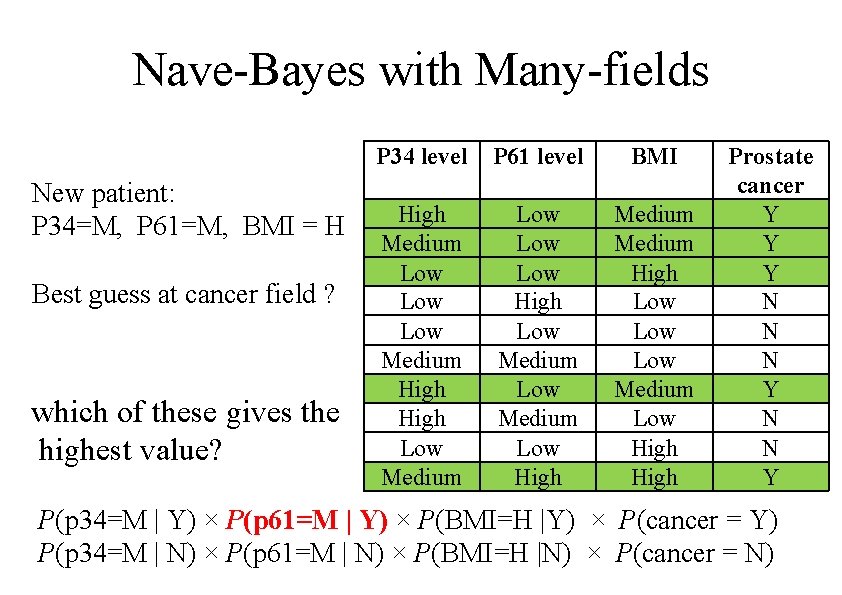

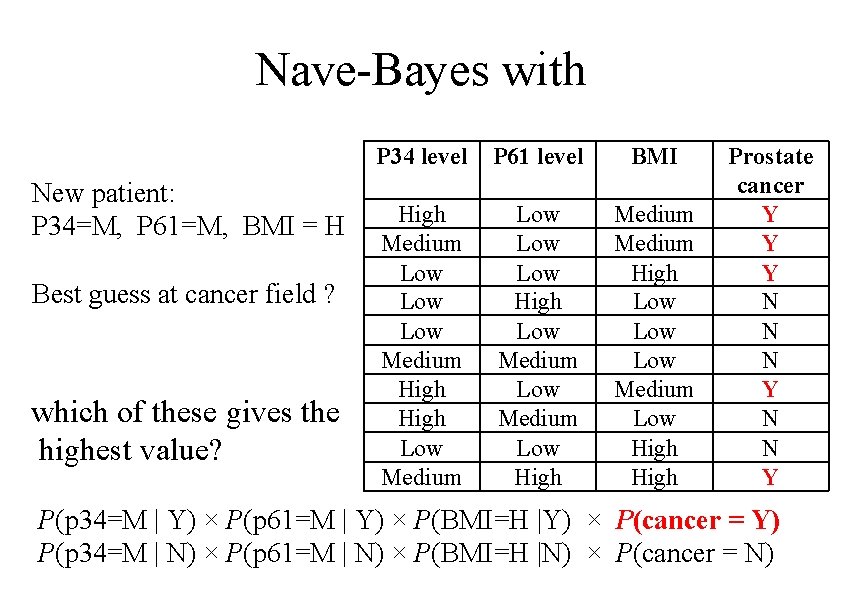

Naive Bayes with many fields

New patient: P34 = M, P61 = M, BMI = H. Best guess at the cancer field? Which of these gives the highest value?

P(P34 = M | Y) × P(P61 = M | Y) × P(BMI = H | Y) × P(cancer = Y)

P(P34 = M | N) × P(P61 = M | N) × P(BMI = H | N) × P(cancer = N)

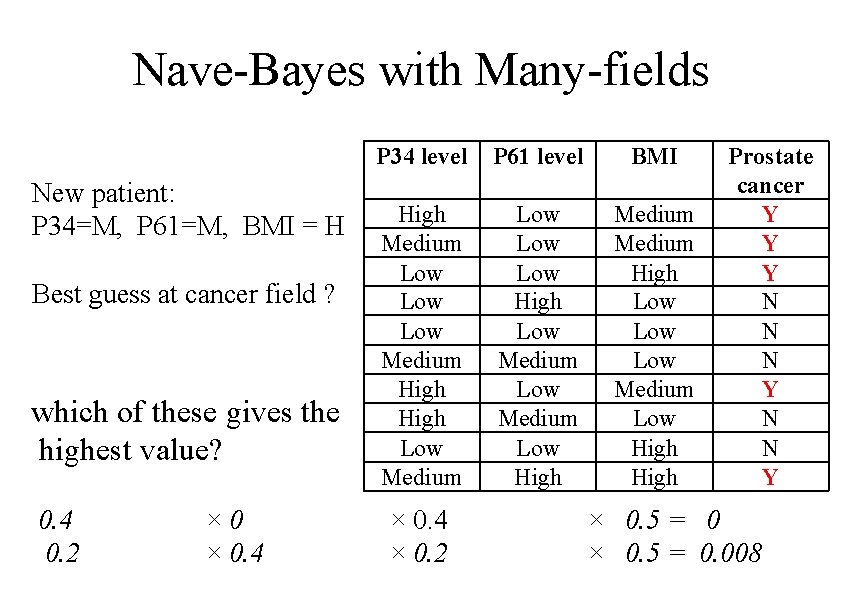

Naive Bayes with many fields

New patient: P34 = M, P61 = M, BMI = H. Which of these gives the highest value?

Y: 0.4 × 0 × 0.4 × 0.5 = 0

N: 0.2 × 0.4 × 0.2 × 0.5 = 0.008

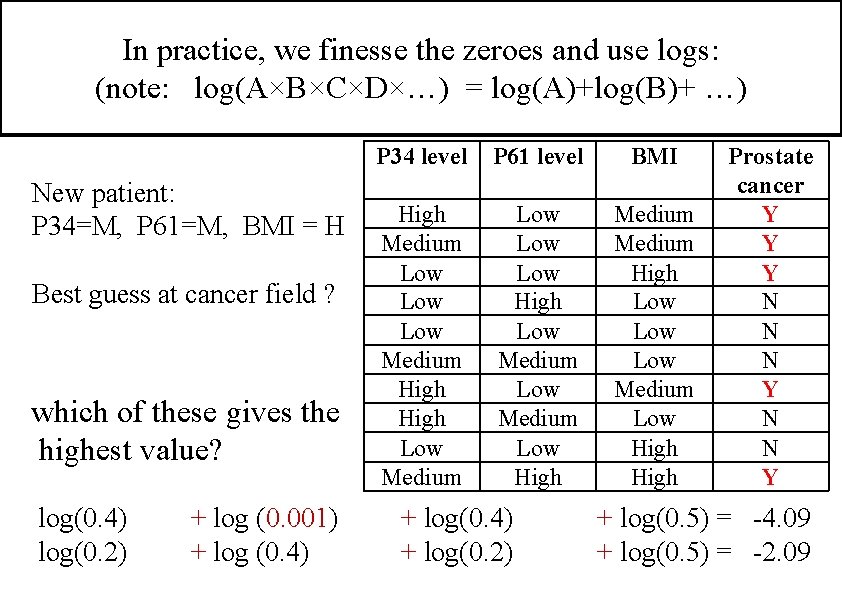

In practice, we finesse the zeroes and use logs (note: log(A × B × C × D × …) = log(A) + log(B) + …). Here a zero probability is replaced by 0.001, and the logs are base 10.

New patient: P34 = M, P61 = M, BMI = H. Which of these gives the highest value?

Y: log(0.4) + log(0.001) + log(0.4) + log(0.5) ≈ -4.097

N: log(0.2) + log(0.4) + log(0.2) + log(0.5) ≈ -2.097
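The log version of the example can be reproduced with the short sketch below. It rests on a few assumptions: base-10 logs (which is what the slide totals imply), the first row of figures belonging to class Y, and the zero being the P61 factor for class Y. The names `FLOOR` and `log_score` are illustrative only.

```python
import math

FLOOR = 0.001  # stand-in used in place of a zero probability


def log_score(probabilities):
    """Sum of base-10 logs; any probability below FLOOR (including 0) is raised to FLOOR."""
    return sum(math.log10(max(p, FLOOR)) for p in probabilities)


# Factors in the order P(P34=M | c), P(P61=M | c), P(BMI=H | c), P(c).
score_y = log_score([0.4, 0.0, 0.4, 0.5])
score_n = log_score([0.2, 0.4, 0.2, 0.5])
print(round(score_y, 3), round(score_n, 3))
```

The larger (less negative) score wins, so under these assumptions the best guess for the new patient is N.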

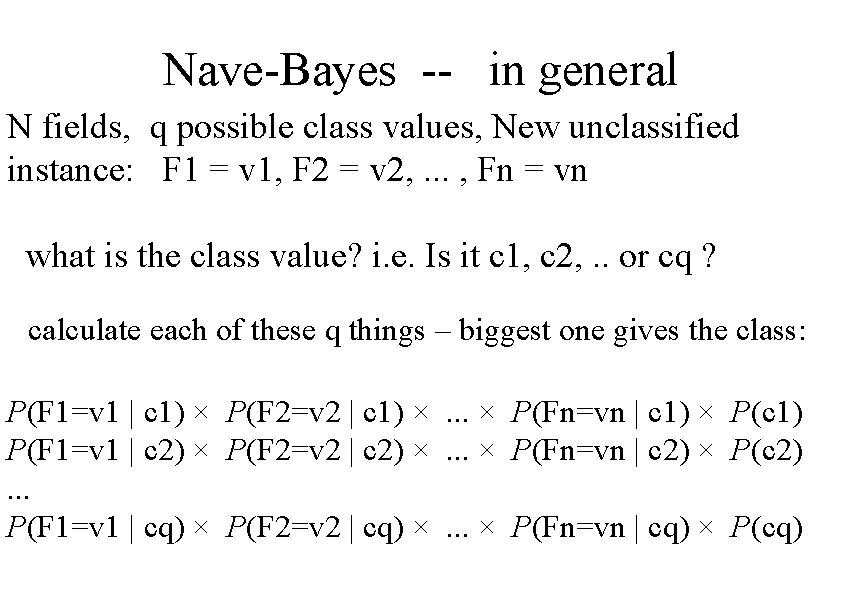

Naive Bayes, in general

n fields, q possible class values. New unclassified instance: F1 = v1, F2 = v2, ..., Fn = vn. What is the class value, i.e. is it c1, c2, ... or cq?

Calculate each of these q things; the biggest one gives the class:

P(F1 = v1 | c1) × P(F2 = v2 | c1) × ... × P(Fn = vn | c1) × P(c1)

P(F1 = v1 | c2) × P(F2 = v2 | c2) × ... × P(Fn = vn | c2) × P(c2)

...

P(F1 = v1 | cq) × P(F2 = v2 | cq) × ... × P(Fn = vn | cq) × P(cq)
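A minimal sketch of the general procedure for categorical fields follows. It is not the nb.awk script used in the coursework; `train`, `classify` and the tiny `rows` dataset are hypothetical names and data introduced only for illustration. It uses the plain product form, with no handling of zero counts.

```python
from collections import Counter, defaultdict


def train(rows):
    """rows: list of (tuple_of_field_values, class_label). Returns the count tables."""
    class_counts = Counter()
    value_counts = defaultdict(Counter)  # (field index, class) -> counts of values
    for values, cls in rows:
        class_counts[cls] += 1
        for i, v in enumerate(values):
            value_counts[(i, cls)][v] += 1
    return class_counts, value_counts


def classify(model, instance):
    """Return (best class, {class: P(F1|c) x ... x P(Fn|c) x P(c)})."""
    class_counts, value_counts = model
    total = sum(class_counts.values())
    scores = {}
    for cls, n_cls in class_counts.items():
        score = n_cls / total                              # P(c)
        for i, v in enumerate(instance):
            score *= value_counts[(i, cls)][v] / n_cls     # P(Fi = vi | c)
        scores[cls] = score
    return max(scores, key=scores.get), scores


# Hypothetical two-field dataset.
rows = [(("H", "L"), "Y"), (("H", "H"), "Y"), (("M", "L"), "Y"),
        (("L", "H"), "N"), (("M", "H"), "N"), (("L", "L"), "N")]
label, scores = classify(train(rows), ("H", "L"))
print(label, scores)
```

Storing raw counts rather than probabilities keeps training to a single pass over the data; the divisions happen only at classification time.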

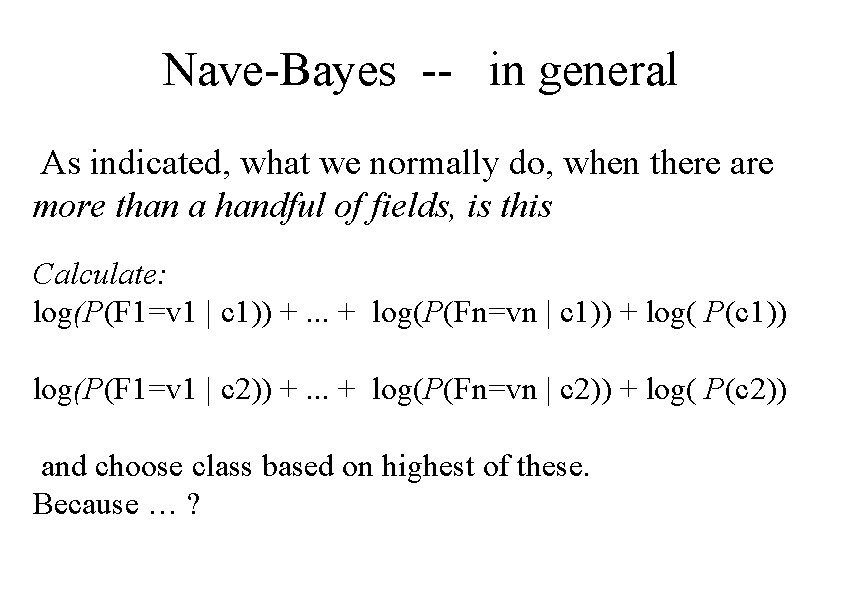

Naive Bayes, in general

As indicated, what we normally do, when there are more than a handful of fields, is this. Calculate:

log(P(F1 = v1 | c1)) + ... + log(P(Fn = vn | c1)) + log(P(c1))

log(P(F1 = v1 | c2)) + ... + log(P(Fn = vn | c2)) + log(P(c2))

and choose the class based on the highest of these. Because … ?

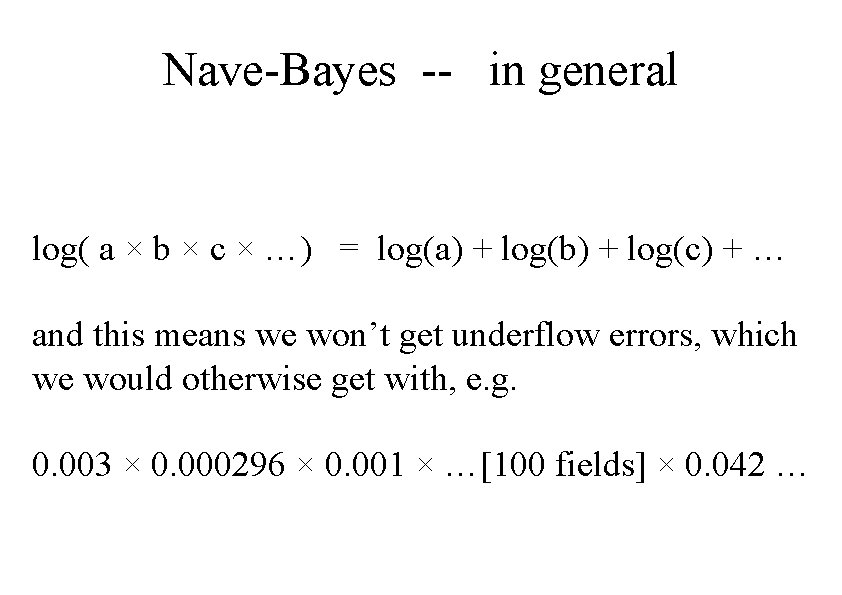

Naive Bayes, in general

log(a × b × c × …) = log(a) + log(b) + log(c) + …

and this means we won't get underflow errors, which we would otherwise get with, e.g., 0.003 × 0.000296 × 0.001 × … [100 fields] × 0.042 …
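The underflow problem is easy to demonstrate. The sketch below multiplies 200 hypothetical per-field probabilities of 0.001 each (the count and the value are assumptions chosen to make the effect obvious) and compares the result with the sum of their logs.

```python
import math

probs = [0.001] * 200  # hypothetical per-field probabilities

product = 1.0
for p in probs:
    product *= p  # the true value, 1e-600, is far below the smallest positive double

log_sum = sum(math.log10(p) for p in probs)

print(product)  # the product has underflowed to zero
print(log_sum)  # the log-sum is an ordinary number, close to -600
```

Once the product has collapsed to zero for every class, the classes can no longer be ranked, whereas the log-sums remain comparable.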

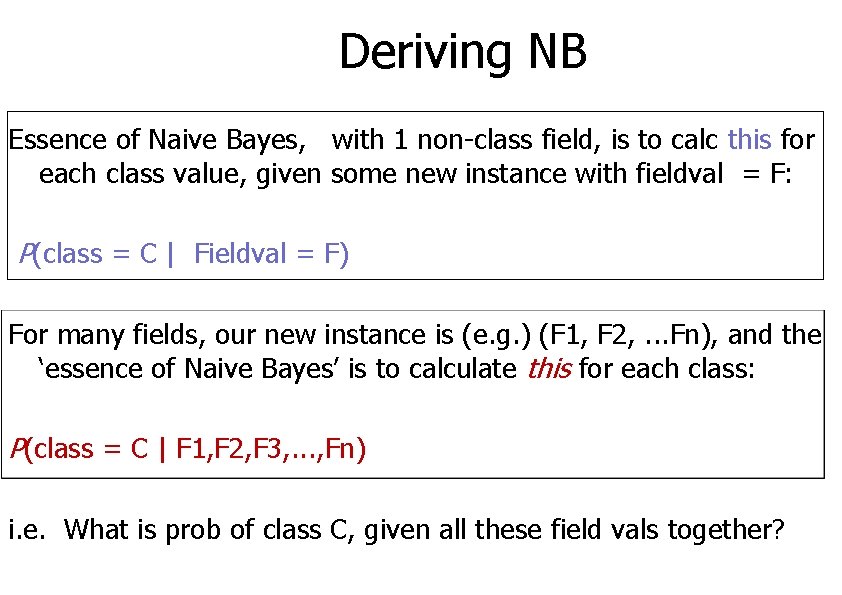

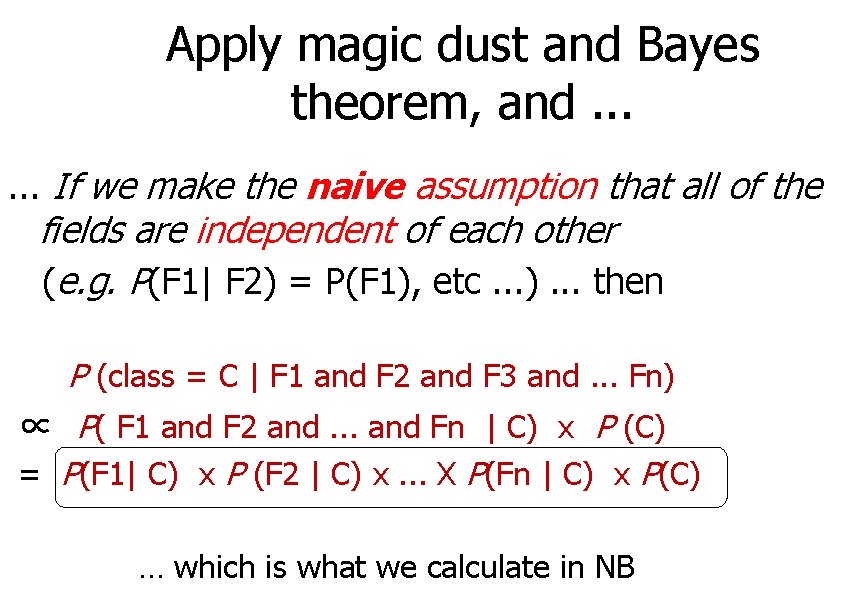

Deriving NB

The essence of Naive Bayes, with one non-class field, is to calculate this for each class value, given some new instance with Fieldval = F:

P(class = C | Fieldval = F)

For many fields, our new instance is (e.g.) (F1, F2, ..., Fn), and the 'essence of Naive Bayes' is to calculate this for each class:

P(class = C | F1, F2, F3, ..., Fn)

i.e. what is the probability of class C, given all these field values together?

Apply magic dust and Bayes' theorem, and ... if we make the naive assumption that all of the fields are independent of each other given the class (e.g. P(F1 | F2, C) = P(F1 | C), etc.), then

P(class = C | F1 and F2 and F3 and ... and Fn)

∝ P(F1 and F2 and ... and Fn | C) × P(C)

= P(F1 | C) × P(F2 | C) × ... × P(Fn | C) × P(C)

... which is what we calculate in NB.

Confusion and CW2

80% 'accuracy' ... means our classifier predicts the correct class 80% of the time, right?

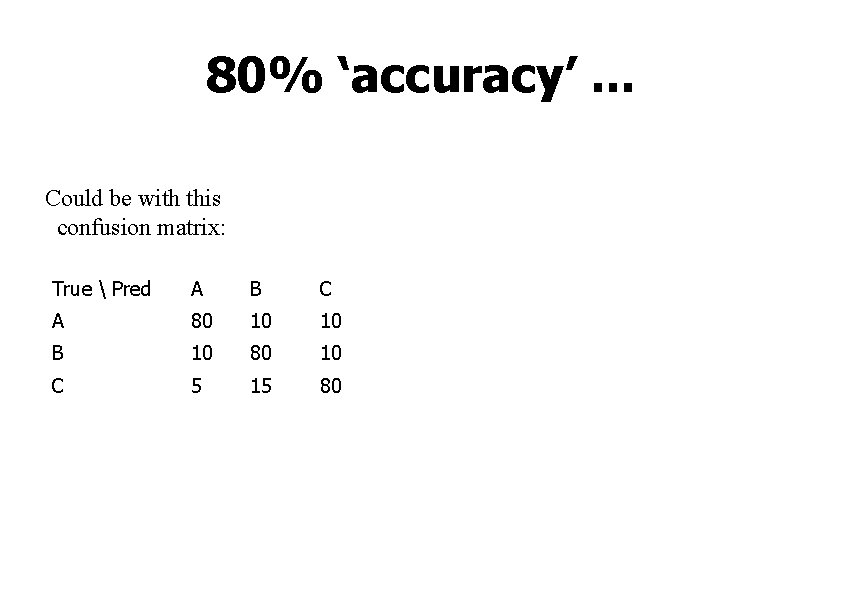

80% 'accuracy' ... could be with this confusion matrix:

| True \ Pred | A | B | C |
|---|---|---|---|
| A | 80 | 10 | 10 |
| B | 10 | 80 | 10 |
| C | 5 | 15 | 80 |

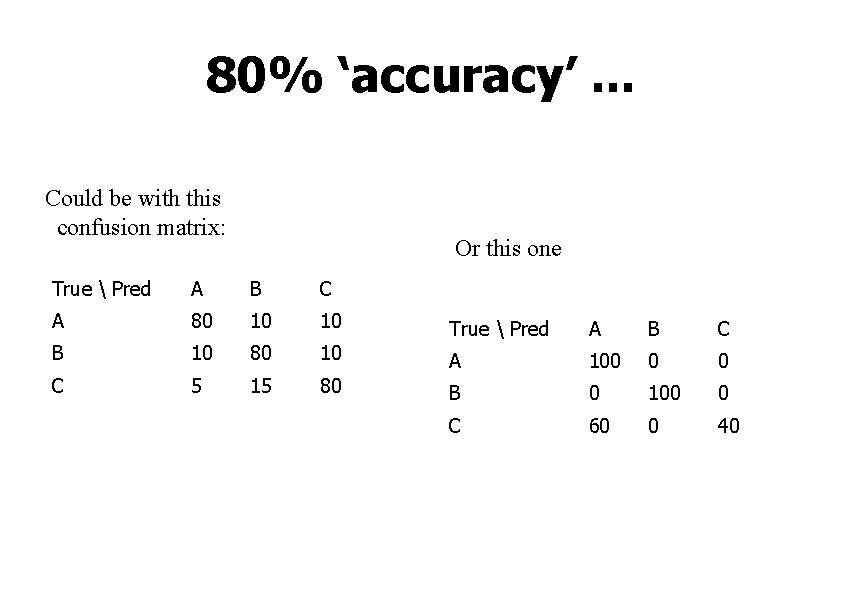

Or this one:

| True \ Pred | A | B | C |
|---|---|---|---|
| A | 100 | 0 | 0 |
| B | 0 | 100 | 0 |
| C | 60 | 0 | 40 |

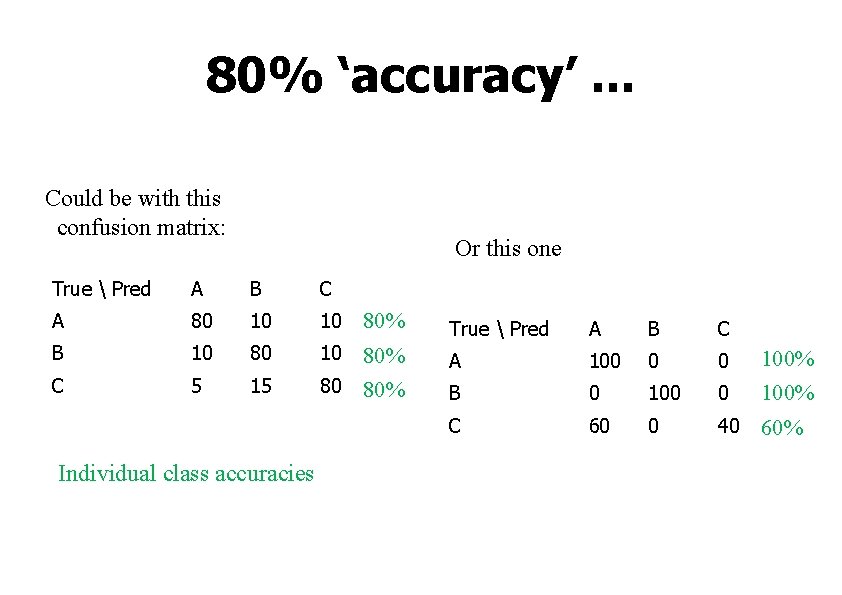

Individual class accuracies (correct predictions for a class divided by the number of instances of that class):

First matrix: A 80%, B 80%, C 80%

Second matrix: A 100%, B 100%, C 40%

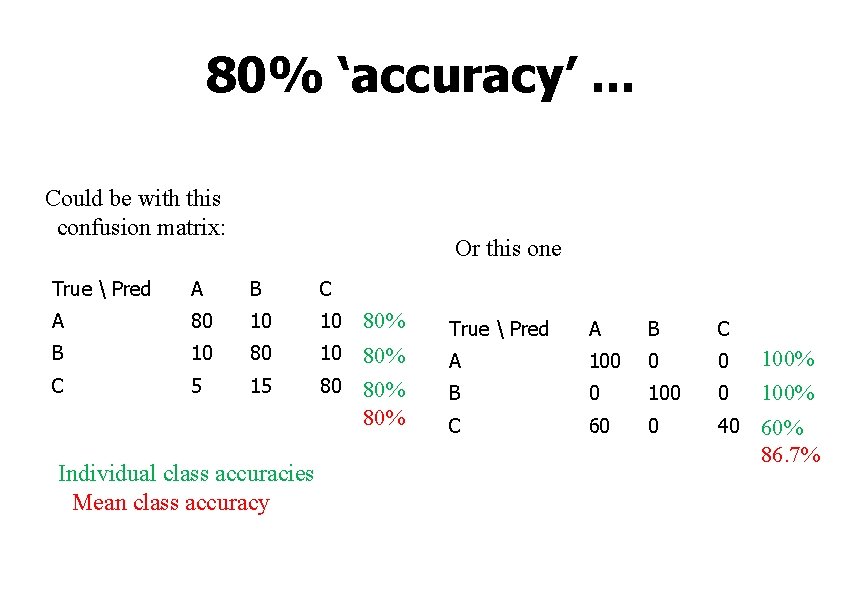

Mean class accuracy: 80% for the first matrix and 80% for the second. With equal-sized classes, as here, the mean class accuracy equals the overall accuracy, even though the individual class accuracies can differ widely.

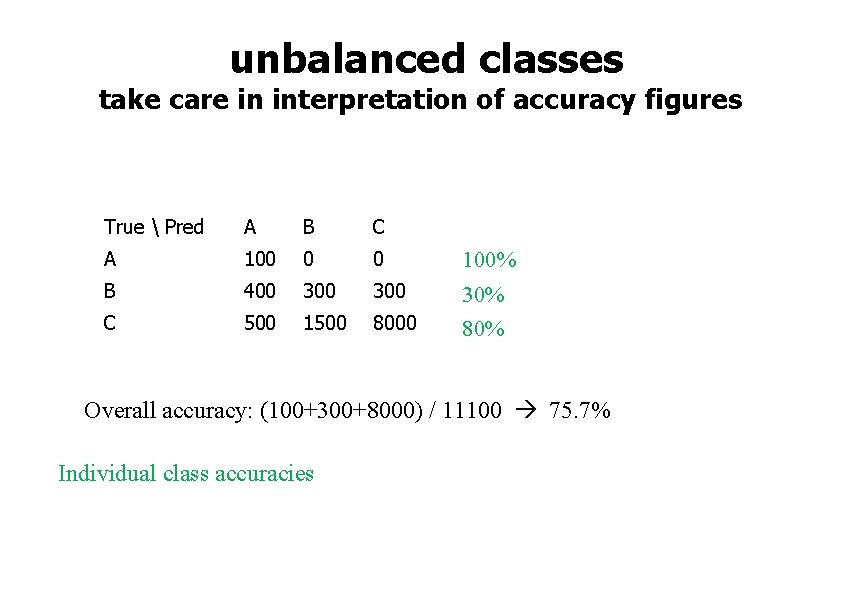

Unbalanced classes: take care in interpretation of accuracy figures

| True \ Pred | A | B | C | Class accuracy |
|---|---|---|---|---|
| A | 100 | 0 | 0 | 100% |
| B | 400 | 300 | 300 | 30% |
| C | 500 | 1500 | 8000 | 80% |

Overall accuracy: (100 + 300 + 8000) / 11100 = 75.7%

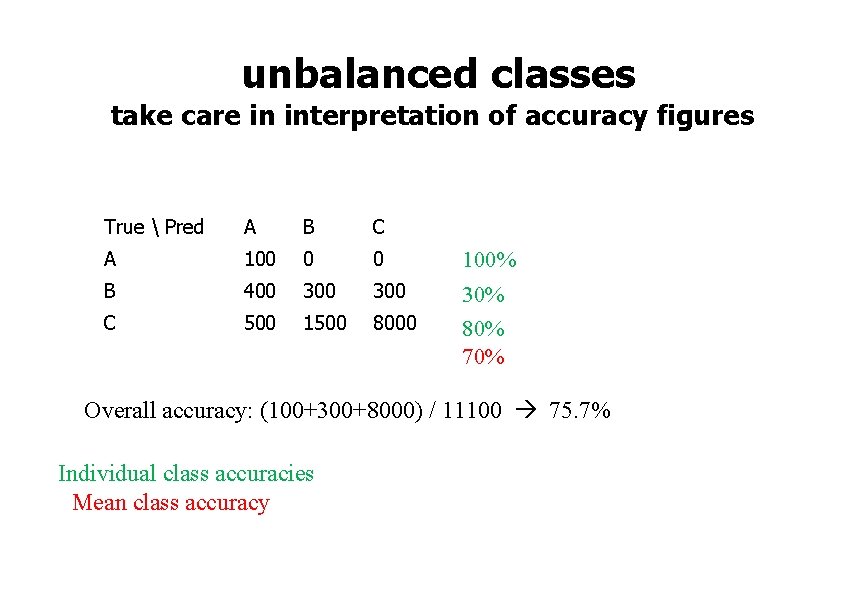

Mean class accuracy: (100% + 30% + 80%) / 3 = 70%

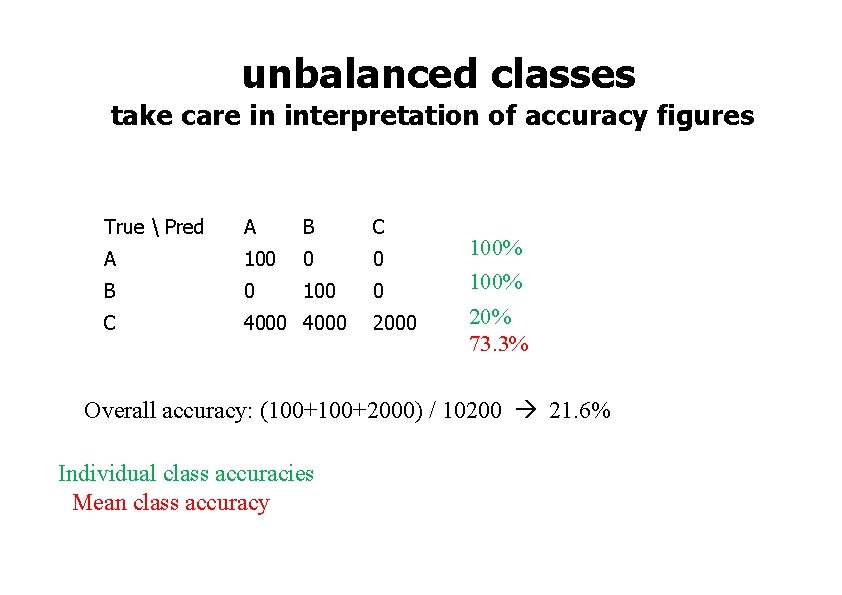

Unbalanced classes: take care in interpretation of accuracy figures

| True \ Pred | A | B | C | Class accuracy |
|---|---|---|---|---|
| A | 100 | 0 | 0 | 100% |
| B | 0 | 100 | 0 | 100% |
| C | 4000 | 4000 | 2000 | 20% |

Overall accuracy: (100 + 100 + 2000) / 10200 = 21.6%

Mean class accuracy: (100% + 100% + 20%) / 3 = 73.3%
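The three figures discussed here (overall accuracy, individual class accuracies, mean class accuracy) can be computed from a confusion matrix as sketched below. The function name `accuracy_report` is hypothetical. The matrix is the first unbalanced example, assuming rows are true classes and columns are predictions, and assuming the third entry of row B is 300, which is the value implied by the 30% class accuracy and the total of 11100.

```python
def accuracy_report(matrix):
    """matrix[i][j] = number of instances of true class i predicted as class j.

    Returns (overall accuracy, list of per-class accuracies, mean class accuracy).
    """
    n = len(matrix)
    total = sum(sum(row) for row in matrix)
    correct = sum(matrix[i][i] for i in range(n))
    per_class = [matrix[i][i] / sum(matrix[i]) for i in range(n)]
    return correct / total, per_class, sum(per_class) / n


unbalanced = [[100, 0, 0],
              [400, 300, 300],
              [500, 1500, 8000]]

overall, per_class, mean_class = accuracy_report(unbalanced)
print(f"{overall:.1%}", [f"{a:.0%}" for a in per_class], f"{mean_class:.1%}")
```

Overall accuracy is dominated by the largest class, while mean class accuracy weights every class equally, which is why the two can diverge sharply on unbalanced data.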

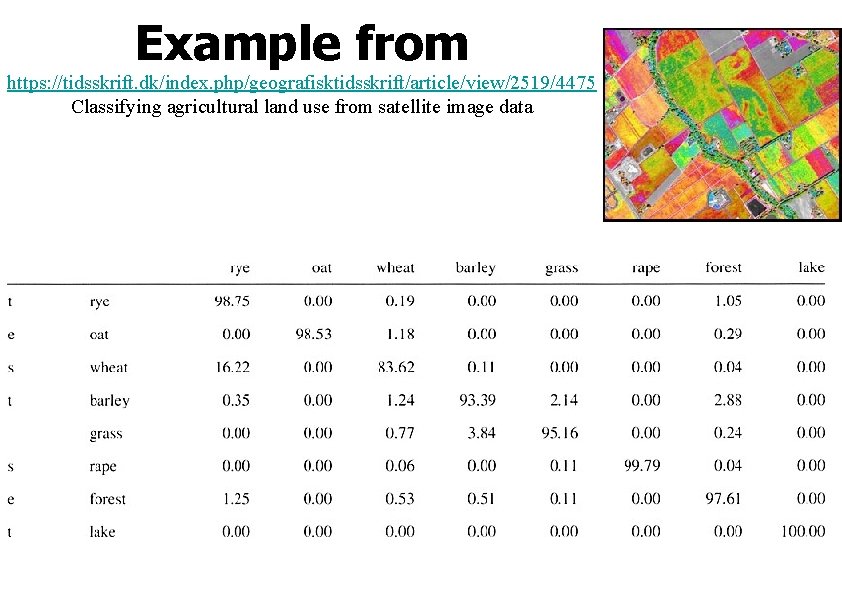

Example from https://tidsskrift.dk/index.php/geografisktidsskrift/article/view/2519/4475

Classifying agricultural land use from satellite image data

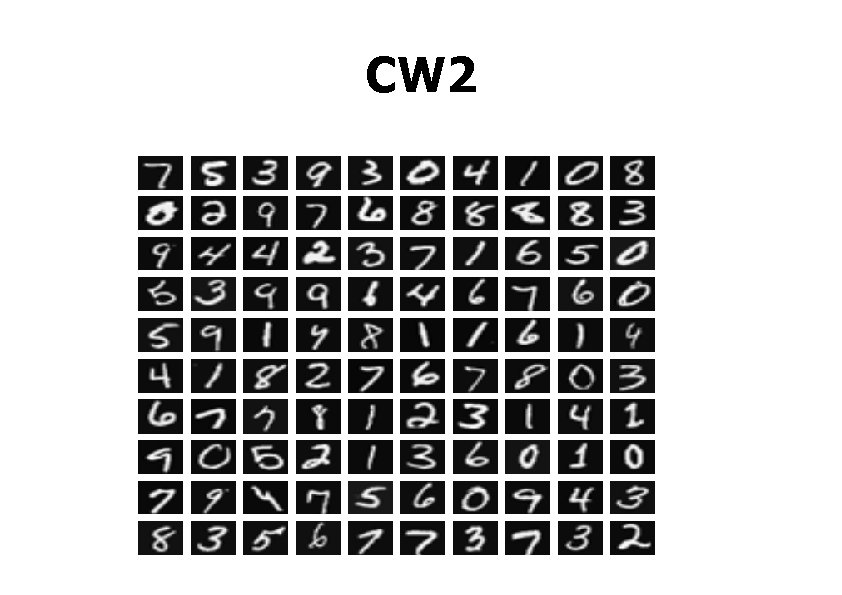

CW2

Datasets

In this coursework you will work with a 'handwritten digit recognition' dataset. Get it from my site here:

http://www.macs.hw.ac.uk/~dwcorne/Teaching/DMML/optall.txt

Also pick up ten other versions of the same dataset, as follows:

http://www.macs.hw.ac.uk/~dwcorne/Teaching/DMML/opt0.txt

http://www.macs.hw.ac.uk/~dwcorne/Teaching/DMML/opt1.txt

http://www.macs.hw.ac.uk/~dwcorne/Teaching/DMML/opt2.txt

[etc...]

http://www.macs.hw.ac.uk/~dwcorne/Teaching/DMML/opt9.txt

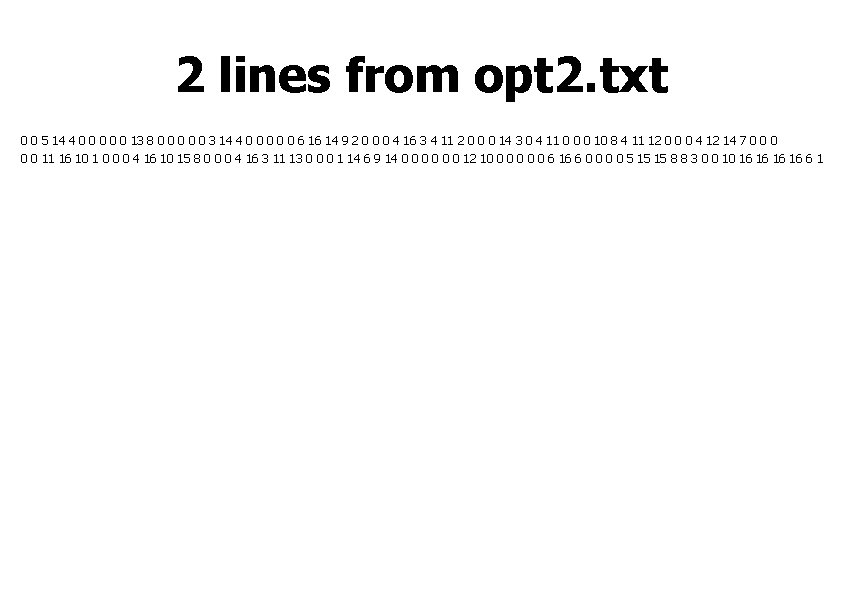

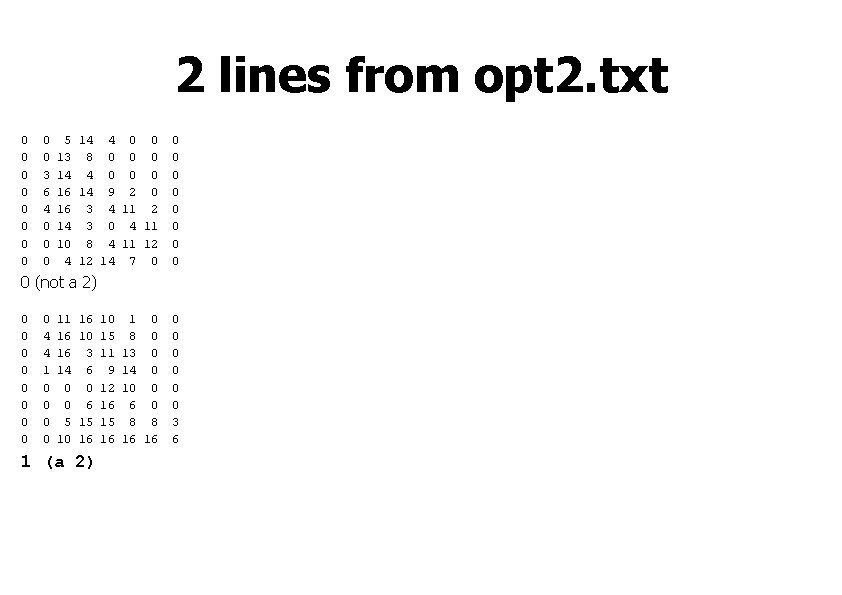

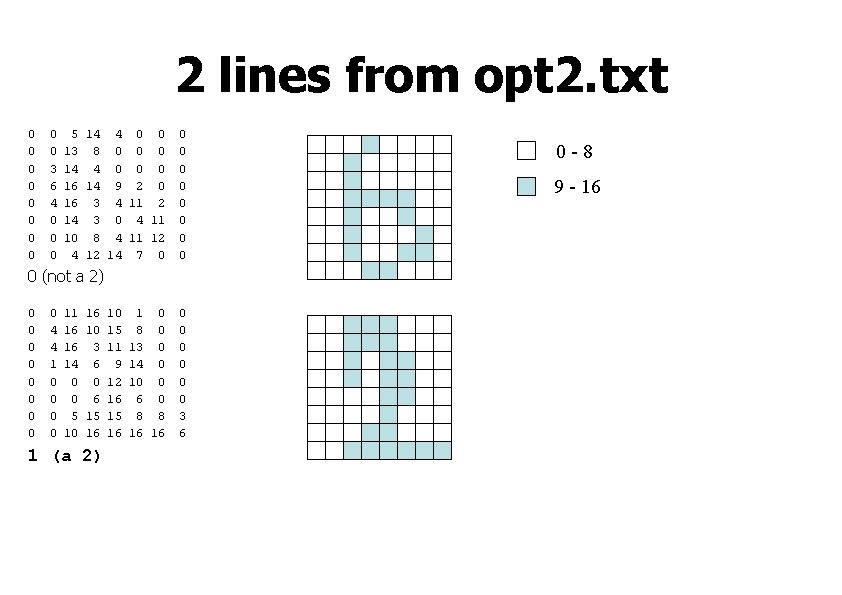

2 lines from opt2.txt

0 0 5 14 4 0 0 0 13 8 0 0 0 3 14 4 0 0 0 6 16 14 9 2 0 0 0 4 16 3 4 11 2 0 0 0 14 3 0 4 11 0 0 0 10 8 4 11 12 0 0 0 4 12 14 7 0 0 0 11 16 10 1 0 0 0 4 16 10 15 8 0 0 0 4 16 3 11 13 0 0 0 1 14 6 9 14 0 0 0 12 10 0 0 6 16 6 0 0 5 15 15 8 8 3 0 0 10 16 16 6 1

2 lines from opt 2. txt 0 0 0 0 0 3 6 4 0 0 0 5 14 4 0 0 13 8 0 0 0 14 4 0 0 0 16 14 9 2 0 16 3 4 11 2 14 3 0 4 11 10 8 4 11 12 4 12 14 7 0 0 0 0 0 (not a 2) 0 0 0 0 0 4 4 1 0 0 11 16 16 14 0 0 5 10 16 10 3 6 0 6 15 16 1 (a 2) 10 15 11 9 12 16 15 16 1 0 8 0 13 0 14 0 10 0 6 0 8 8 16 16 0 0 0 3 6

2 lines from opt 2. txt 0 0 0 0 0 3 6 4 0 0 0 5 14 4 0 0 13 8 0 0 0 14 4 0 0 0 16 14 9 2 0 16 3 4 11 2 14 3 0 4 11 10 8 4 11 12 4 12 14 7 0 0 0 0 0 (not a 2) 0 0 0 0 0 4 4 1 0 0 11 16 16 14 0 0 5 10 16 10 3 6 0 6 15 16 1 (a 2) 10 15 11 9 12 16 15 16 1 0 8 0 13 0 14 0 10 0 6 0 8 8 16 16 0 0 0 3 6 0 -8 9 - 16

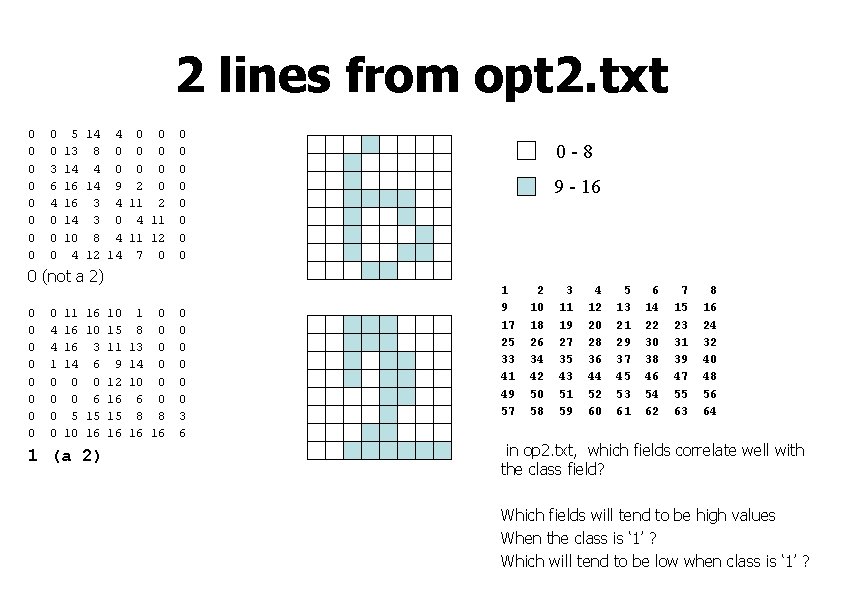

2 lines from opt 2. txt 0 0 0 0 0 3 6 4 0 0 0 5 14 4 0 0 13 8 0 0 0 14 4 0 0 0 16 14 9 2 0 16 3 4 11 2 14 3 0 4 11 10 8 4 11 12 4 12 14 7 0 0 0 0 0 (not a 2) 0 0 0 0 0 4 4 1 0 0 11 16 16 14 0 0 5 10 16 10 3 6 0 6 15 16 1 (a 2) 10 15 11 9 12 16 15 16 1 0 8 0 13 0 14 0 10 0 6 0 8 8 16 16 0 0 0 3 6 0 -8 9 - 16 1 9 17 25 33 41 49 57 2 10 18 26 34 42 50 58 3 11 19 27 35 43 51 59 4 12 20 28 36 44 52 60 5 13 21 29 37 45 53 61 6 14 22 30 38 46 54 62 7 15 23 31 39 47 55 63 8 16 24 32 40 48 56 64 in op 2. txt, which fields correlate well with the class field? Which fields will tend to be high values When the class is ‘ 1’ ? Which will tend to be low when class is ‘ 1’ ?

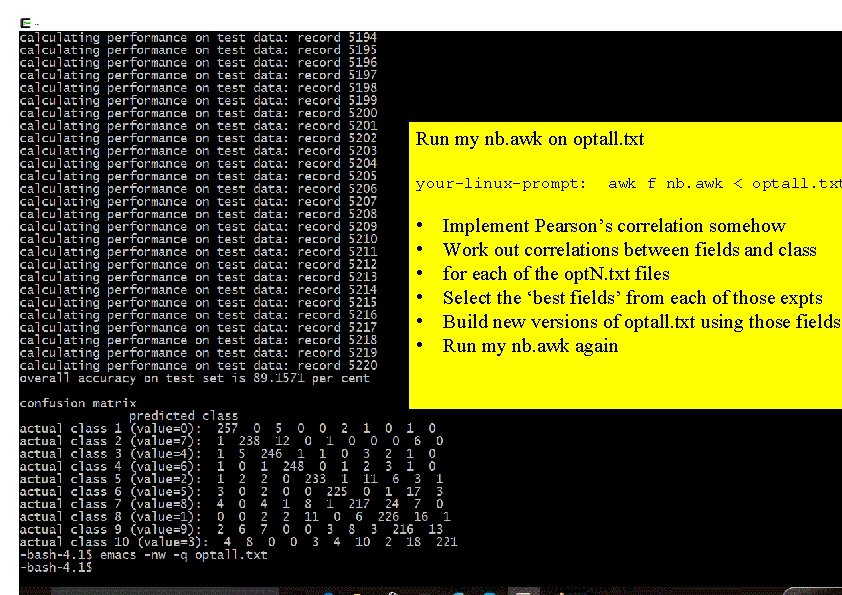

1. Produce a version of optall.txt that has the instances in a randomised order.
2. Run my naïve Bayes awk script on the resulting version of optall.txt.
3. Implement a program or script that allows you to work out the correlation between any two fields.
4. Using your program, find out the correlation between each field and the class field, using only the first 50% of instances in the data file, for all the optN files. For each optN.txt file, keep a record of the top five fields, in order of absolute correlation value.

• Run my nb.awk on optall.txt. At your Linux prompt: awk -f nb.awk < optall.txt
• Implement Pearson's correlation somehow
• Work out correlations between fields and class for each of the optN.txt files
• Select the 'best fields' from each of those experiments
• Build new versions of optall.txt using those fields
• Run my nb.awk again
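The shuffling and correlation steps might be sketched as follows. This is one possible approach, not a reference solution. It assumes each line of an optN.txt file holds whitespace-separated numbers with the class field last, which is an assumption about the file layout. The names `pearson`, `load`, `shuffled` and `top_fields` are hypothetical. Field indices here are zero-based, unlike the 1 to 64 numbering on the slide.

```python
import math
import random


def pearson(xs, ys):
    """Pearson correlation coefficient of two equal-length numeric sequences."""
    n = len(xs)
    mean_x, mean_y = sum(xs) / n, sum(ys) / n
    cov = sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
    var_x = sum((x - mean_x) ** 2 for x in xs)
    var_y = sum((y - mean_y) ** 2 for y in ys)
    if var_x == 0 or var_y == 0:
        return 0.0  # constant field: correlation is undefined, treated as 0 here
    return cov / math.sqrt(var_x * var_y)


def load(path):
    """Read whitespace-separated numeric rows (assumed layout: class field last)."""
    with open(path) as f:
        return [[float(tok) for tok in line.split()] for line in f if line.strip()]


def shuffled(rows, seed=0):
    """Return a copy of rows in a reproducible random order."""
    rows = list(rows)
    random.Random(seed).shuffle(rows)
    return rows


def top_fields(rows, k=5):
    """Zero-based indices of the k fields with the largest |correlation| with
    the class, computed on the first 50% of rows only."""
    half = rows[: len(rows) // 2]
    classes = [r[-1] for r in half]
    n_fields = len(half[0]) - 1
    return sorted(range(n_fields),
                  key=lambda i: abs(pearson([r[i] for r in half], classes)),
                  reverse=True)[:k]
```

A typical use would be `top_fields(load("opt2.txt"))`, repeated for each optN.txt file, with `shuffled` applied to optall.txt before running nb.awk.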

Next – feature selection
