Generative and Discriminative Models in NLP: A Survey

- Slides: 39

Generative and Discriminative Models in NLP: A Survey. Kristina Toutanova, Computer Science Department, Stanford University

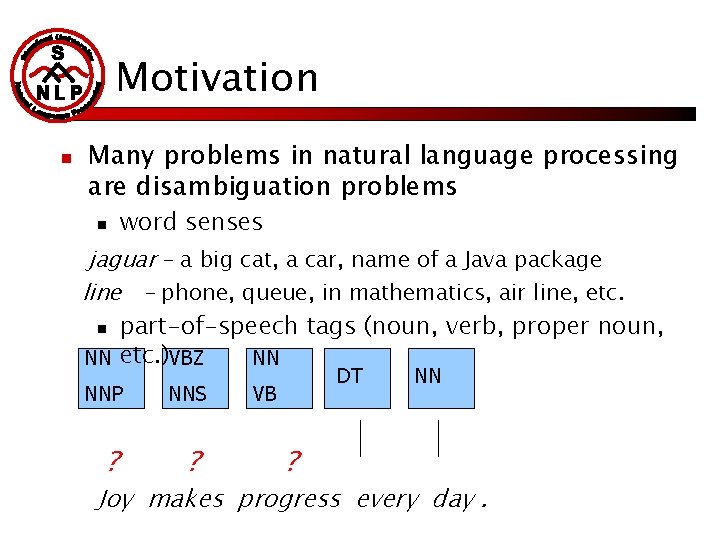

Motivation

- Many problems in natural language processing are disambiguation problems:
  - word senses: "jaguar" (a big cat, a car, the name of a Java package); "line" (phone, queue, in mathematics, air line, etc.)
  - part-of-speech tags (noun, verb, proper noun, etc.): in "Joy makes progress every day.", the candidate tags shown on the slide are NN, NNP, VBZ, NNS, VB, and DT, with question marks on the ambiguous choices

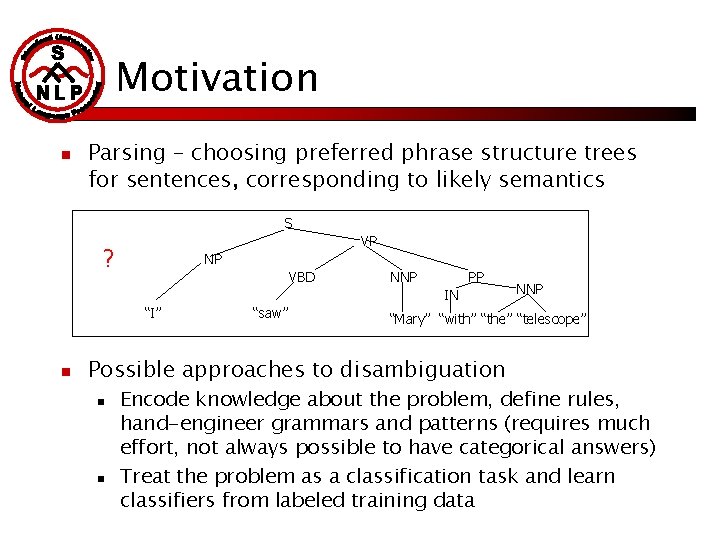

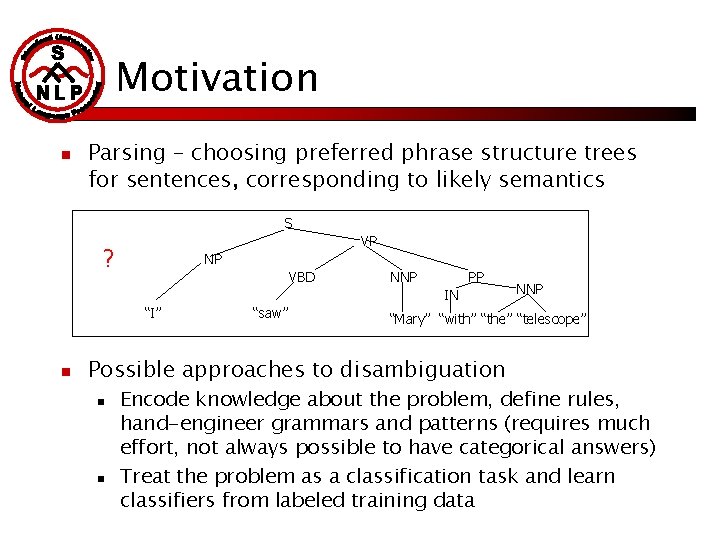

Motivation

- Parsing: choosing preferred phrase structure trees for sentences, corresponding to likely semantics. Example: "I saw Mary with the telescope" (parse tree figure with nodes S, VP, NP, VBD, NNP, PP, IN; a question mark marks the ambiguous attachment)

Possible approaches to disambiguation

- Encode knowledge about the problem, define rules, hand-engineer grammars and patterns (requires much effort; categorical answers are not always possible)
- Treat the problem as a classification task and learn classifiers from labeled training data

Overview

- General ML perspective
- Examples
- The case of Part-of-Speech Tagging
- The case of Syntactic Parsing
- Conclusions

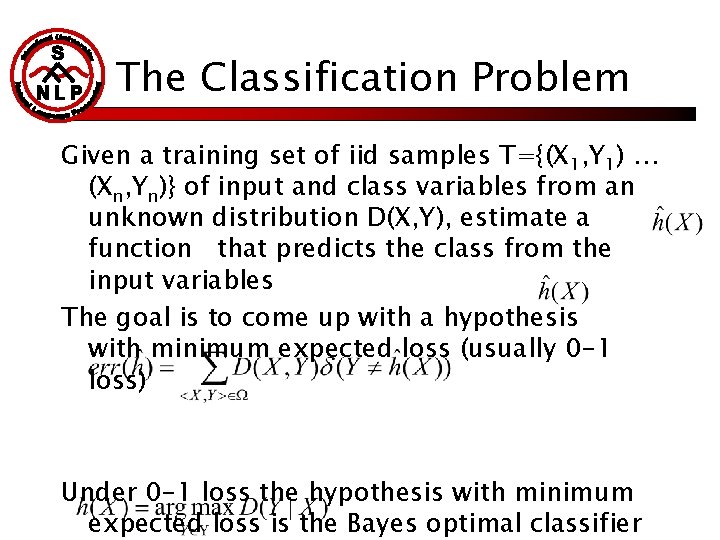

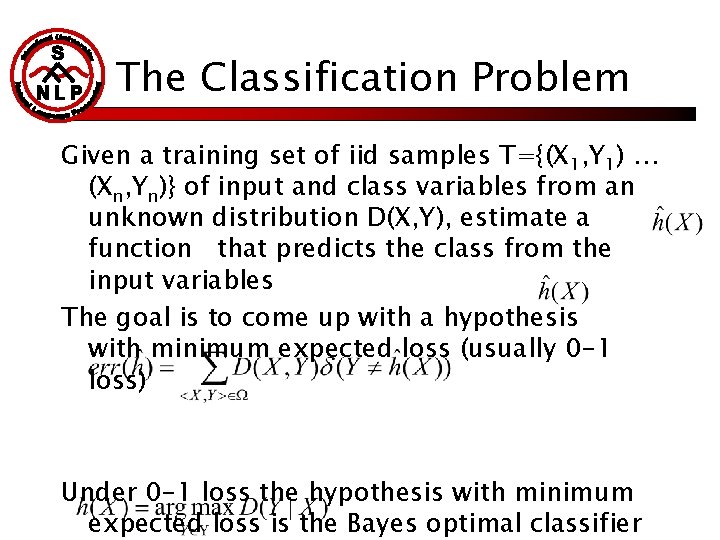

The Classification Problem

Given a training set of iid samples $T = \{(X_1, Y_1), \ldots, (X_n, Y_n)\}$ of input and class variables from an unknown distribution $D(X, Y)$, estimate a function that predicts the class from the input variables.

The goal is to come up with a hypothesis with minimum expected loss (usually 0-1 loss).

Under 0-1 loss, the hypothesis with minimum expected loss is the Bayes optimal classifier.
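As a small illustration of the last statement, the sketch below computes the Bayes optimal classifier for a known joint distribution and its expected 0-1 loss. The joint table and the names `bayes_optimal` and `expected_01_loss` are hypothetical and chosen only for this example; in practice D(X, Y) is unknown and must be estimated.

```python
from fractions import Fraction as F

def bayes_optimal(joint):
    """Map each input x to argmax_y P(x, y), which equals argmax_y P(y | x)."""
    best = {}
    for (x, y), p in joint.items():
        if x not in best or p > best[x][1]:
            best[x] = (y, p)
    return {x: y for x, (y, _) in best.items()}

def expected_01_loss(joint, classifier):
    """Probability mass of the (x, y) pairs the classifier gets wrong."""
    return sum(p for (x, y), p in joint.items() if classifier[x] != y)

# Hypothetical joint distribution over inputs {"a", "b"} and classes {0, 1}.
joint = {
    ("a", 0): F(3, 10), ("a", 1): F(1, 10),
    ("b", 0): F(2, 10), ("b", 1): F(4, 10),
}

h = bayes_optimal(joint)
risk = expected_01_loss(joint, h)
```

Any other deterministic classifier on this table has expected loss at least as large as `risk`, which is the sense in which the Bayes classifier is optimal.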

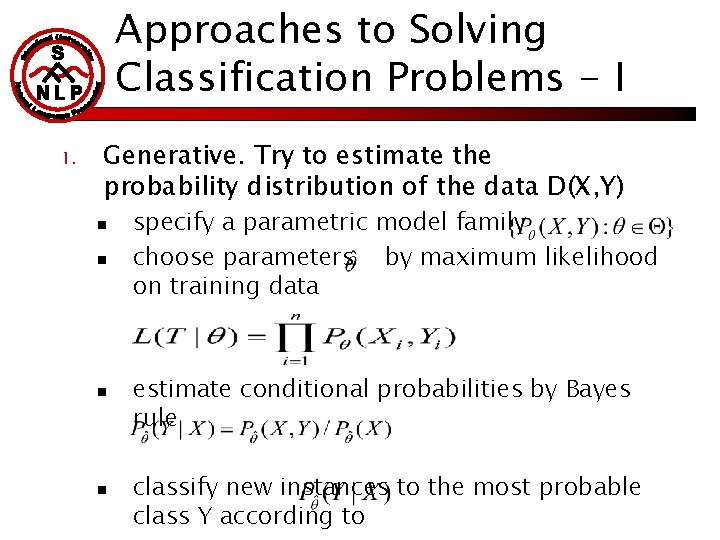

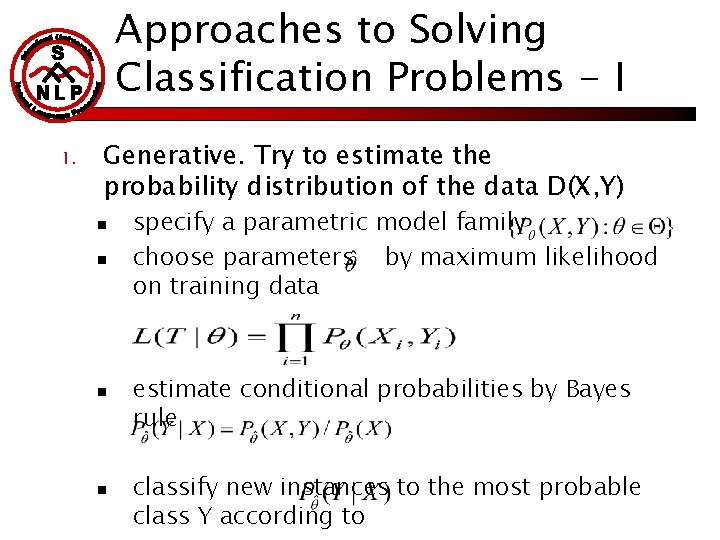

Approaches to Solving Classification Problems - I

1. Generative. Try to estimate the probability distribution of the data D(X, Y):
   - specify a parametric model family
   - choose parameters by maximum likelihood on training data
   - estimate conditional probabilities by Bayes rule
   - classify new instances to the most probable class Y according to the estimated conditional probabilities

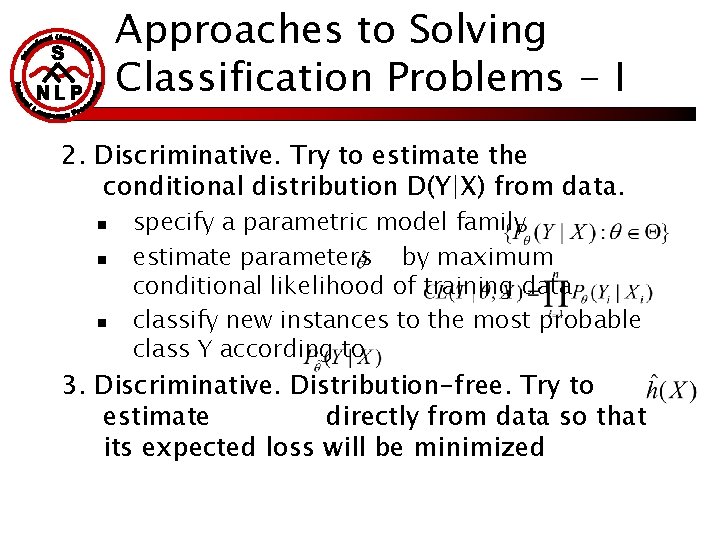

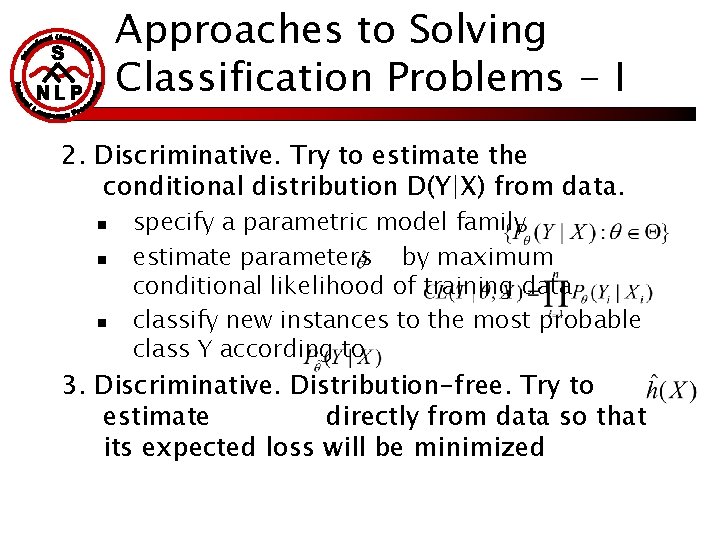

Approaches to Solving Classification Problems - I

2. Discriminative. Try to estimate the conditional distribution D(Y|X) from data:
   - specify a parametric model family
   - estimate parameters by maximum conditional likelihood of training data
   - classify new instances to the most probable class Y according to the estimated conditional distribution
3. Discriminative. Distribution-free. Try to estimate the classifier directly from data so that its expected loss is minimized.

Axes for comparison of different approaches

- Asymptotic accuracy
- Accuracy for limited training data
- Speed of convergence to the best hypothesis
- Complexity of training
- Modeling ease

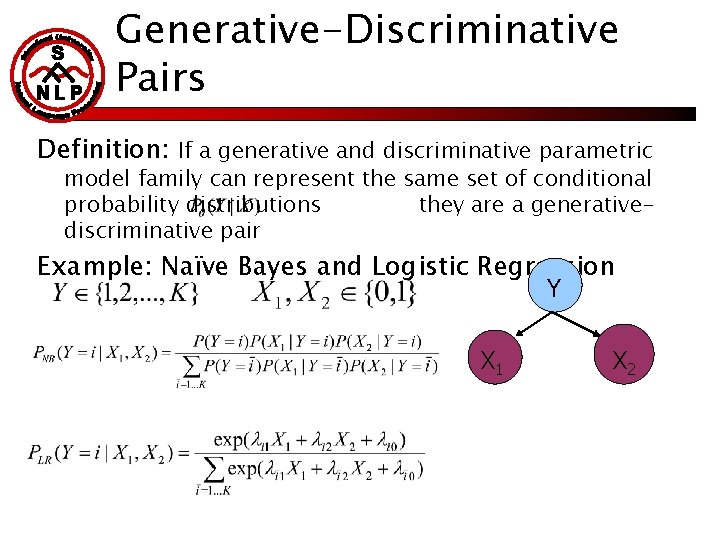

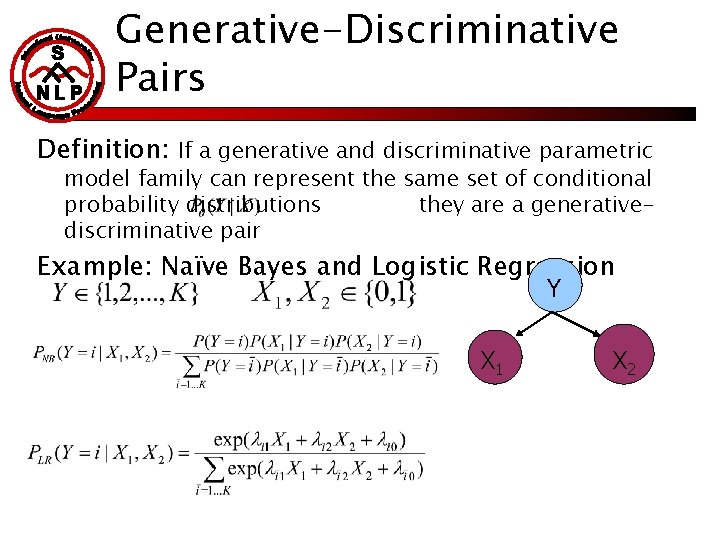

Generative-Discriminative Pairs

Definition: If a generative and a discriminative parametric model family can represent the same set of conditional probability distributions, they are a generative-discriminative pair.

Example: Naïve Bayes and Logistic Regression (the figure shows a class node Y with feature nodes X1 and X2).

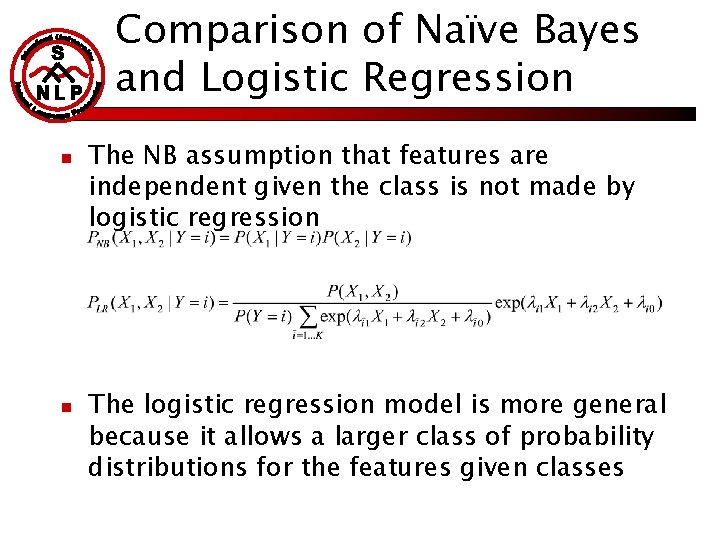

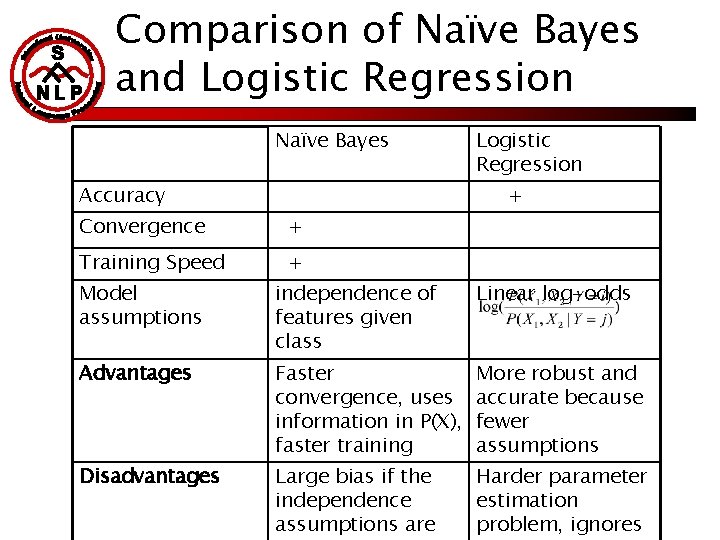

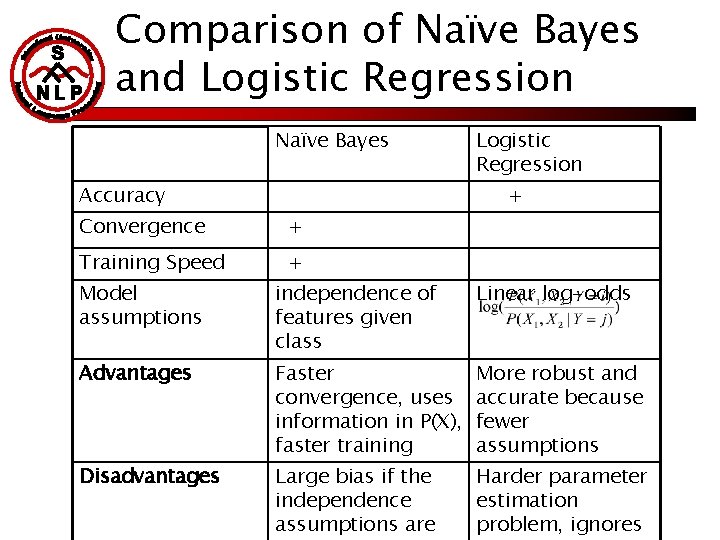

Comparison of Naïve Bayes and Logistic Regression

- The NB assumption that features are independent given the class is not made by logistic regression
- The logistic regression model is more general because it allows a larger class of probability distributions for the features given classes
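To make the pairing concrete, here is a minimal sketch, assuming binary features and a binary class, showing that the conditional distribution of a Naïve Bayes model can be written as a logistic regression with linear log-odds. The parameter values and the function names are hypothetical and serve only as an illustration.

```python
import math
from itertools import product

def nb_posterior(x, prior1, theta1, theta0):
    """P(Y=1 | x) under Naive Bayes with Bernoulli features."""
    def likelihood(theta):
        return math.prod(t if xi else 1 - t for xi, t in zip(x, theta))
    joint1 = prior1 * likelihood(theta1)
    joint0 = (1 - prior1) * likelihood(theta0)
    return joint1 / (joint1 + joint0)

def nb_to_logistic(prior1, theta1, theta0):
    """Bias and weights of the logistic regression with the same P(Y=1 | x)."""
    weights = [
        math.log(t1 / t0) - math.log((1 - t1) / (1 - t0))
        for t1, t0 in zip(theta1, theta0)
    ]
    bias = math.log(prior1 / (1 - prior1)) + sum(
        math.log((1 - t1) / (1 - t0)) for t1, t0 in zip(theta1, theta0)
    )
    return bias, weights

def logistic(x, bias, weights):
    z = bias + sum(w * xi for w, xi in zip(weights, x))
    return 1 / (1 + math.exp(-z))

# Hypothetical NB parameters: P(Y=1), P(X_j=1 | Y=1), P(X_j=1 | Y=0).
prior1, theta1, theta0 = 0.3, [0.8, 0.4], [0.2, 0.5]
bias, weights = nb_to_logistic(prior1, theta1, theta0)
gaps = [
    abs(nb_posterior(x, prior1, theta1, theta0) - logistic(x, bias, weights))
    for x in product([0, 1], repeat=2)
]
```

The converse does not hold in general: logistic regression weights fitted by conditional likelihood need not correspond to the maximum likelihood Naïve Bayes parameters, which is why the two estimates can differ.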

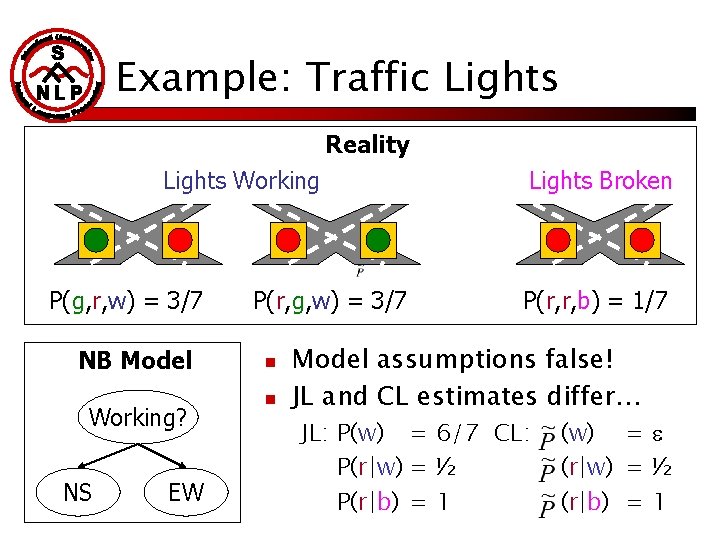

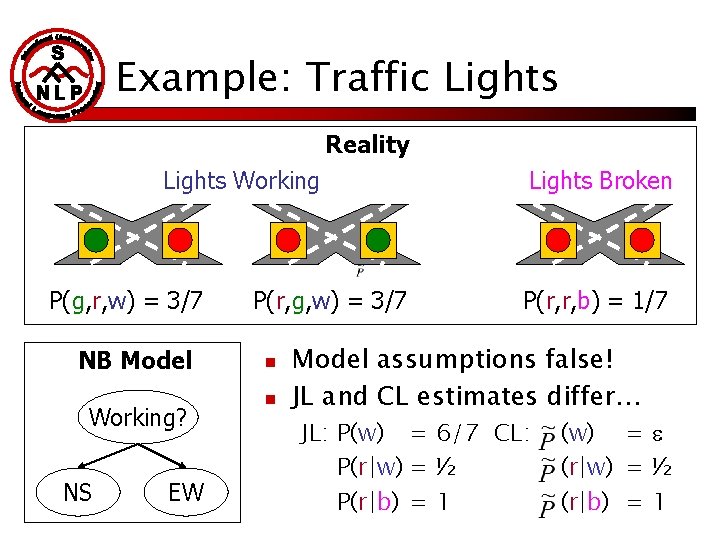

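Example: Traffic Lights

Reality:

- Lights working: P(g, r, w) = 3/7, P(r, g, w) = 3/7
- Lights broken: P(r, r, b) = 1/7

NB model: a class node "Working?" with two feature nodes, NS and EW (the north-south and east-west lights).

- Model assumptions false!
- JL (joint likelihood) and CL (conditional likelihood) estimates differ:
  - JL: P(w) = 6/7, P(r|w) = ½, P(r|b) = 1
  - CL: $\hat{P}(w) = \epsilon$, $\hat{P}(r|w)$ = ½, $\hat{P}(r|b)$ = 1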

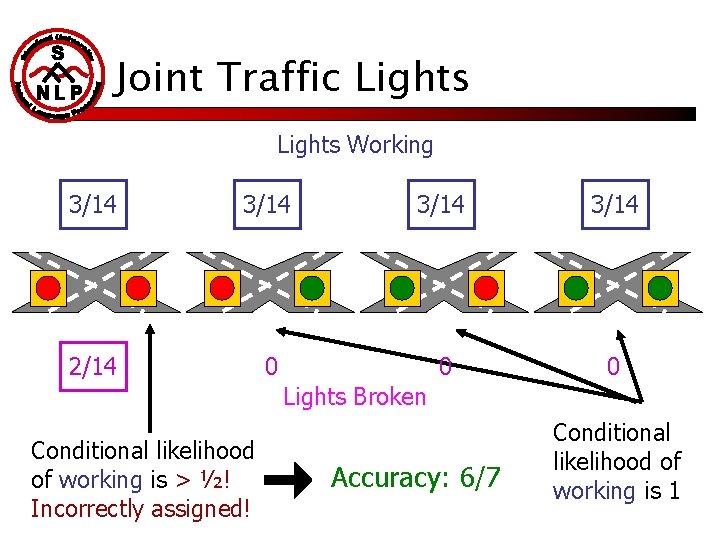

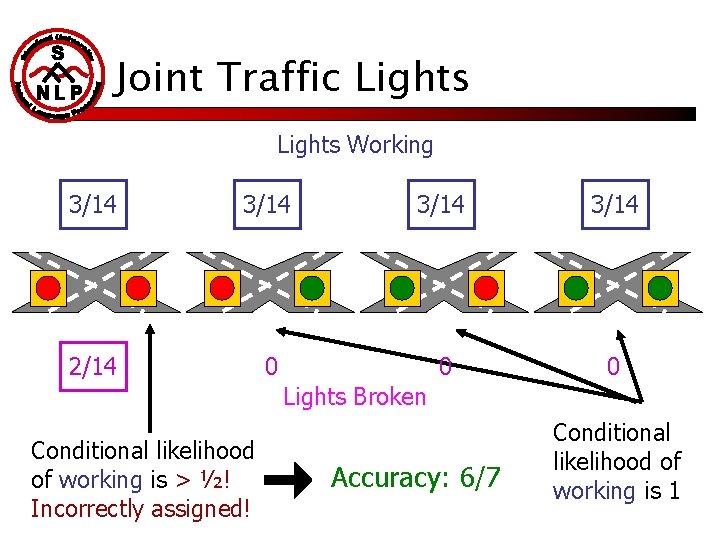

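Joint Traffic Lights

Joint probabilities under the JL estimates:

- Lights working: 3/14 for each light configuration
- Lights broken: 2/14 for (r, r), 0 for the other configurations

For (r, r), the conditional likelihood of working is > ½: incorrectly assigned! For the working configurations, the conditional likelihood of working is 1. Accuracy: 6/7.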

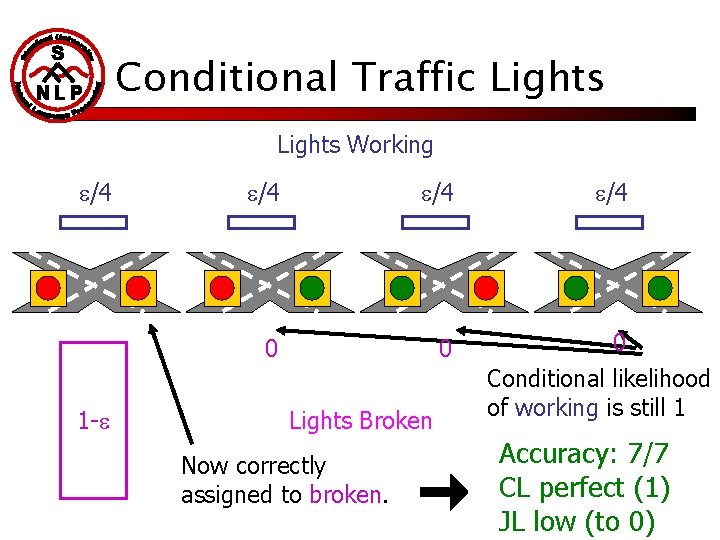

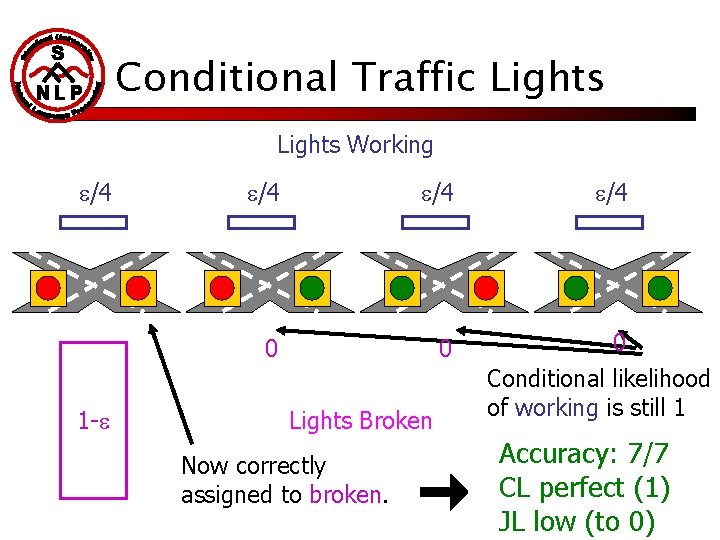

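Conditional Traffic Lights

Joint probabilities under the CL estimates:

- Lights working: $\epsilon/4$ for each light configuration
- Lights broken: $1 - \epsilon$ for (r, r), 0 for the other configurations

(r, r) is now correctly assigned to broken. For the working configurations, the conditional likelihood of working is still 1. Accuracy: 7/7. CL is perfect (1); JL is low (goes to 0).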
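The arithmetic of the traffic-light example can be checked with a short sketch. It uses the true distribution and the JL parameters given on the slides; for the CL case, the value 1/100 is an assumed stand-in for a prior P(w) close to 0, and the function names are hypothetical.

```python
from fractions import Fraction as F

# True distribution from the slide: (NS light, EW light, status) -> probability.
REALITY = {("g", "r", "w"): F(3, 7), ("r", "g", "w"): F(3, 7), ("r", "r", "b"): F(1, 7)}

def nb_joint(ns, ew, status, p_w, p_red):
    """Naive Bayes joint: P(status) * P(ns | status) * P(ew | status)."""
    prior = p_w if status == "w" else 1 - p_w
    def light(colour):
        return p_red[status] if colour == "r" else 1 - p_red[status]
    return prior * light(ns) * light(ew)

def posterior_working(ns, ew, p_w, p_red):
    working = nb_joint(ns, ew, "w", p_w, p_red)
    broken = nb_joint(ns, ew, "b", p_w, p_red)
    return working / (working + broken)

def accuracy(p_w, p_red):
    """Probability mass of REALITY on which the NB decision is correct."""
    correct = F(0)
    for (ns, ew, status), prob in REALITY.items():
        guess = "w" if posterior_working(ns, ew, p_w, p_red) > F(1, 2) else "b"
        if guess == status:
            correct += prob
    return correct

P_RED = {"w": F(1, 2), "b": F(1)}          # P(r | w) = 1/2, P(r | b) = 1
jl_accuracy = accuracy(F(6, 7), P_RED)     # joint-likelihood prior P(w) = 6/7
cl_accuracy = accuracy(F(1, 100), P_RED)   # assumed small prior standing in for P(w) near 0
```

With the JL prior, the posterior of "working" given two red lights is 3/5, so the broken case is misclassified; shrinking the prior flips that decision without changing the decisions for the working cases.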

Comparison of Naïve Bayes and Logistic Regression

| | Naïve Bayes | Logistic Regression |
| --- | --- | --- |
| Accuracy | | + |
| Convergence | + | |
| Training speed | + | |
| Model assumptions | Independence of features given class | Linear log-odds |
| Advantages | Faster convergence, uses information in P(X), faster training | More robust and accurate because fewer assumptions |
| Disadvantages | Large bias if the independence assumptions are | Harder parameter estimation problem, ignores |

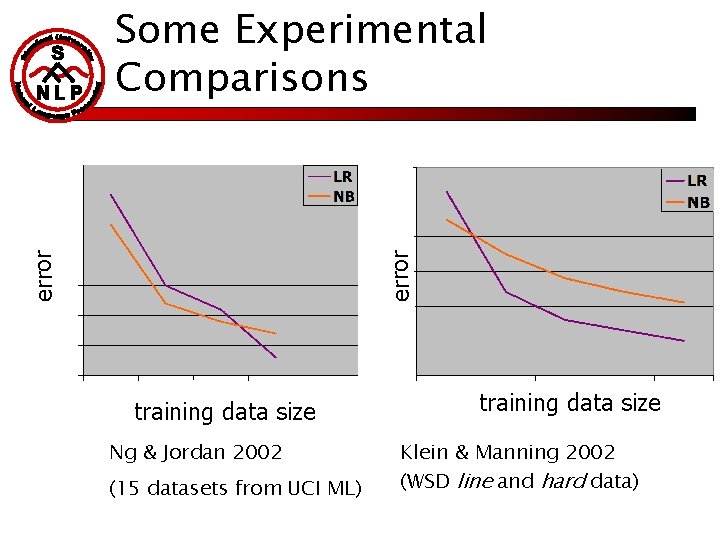

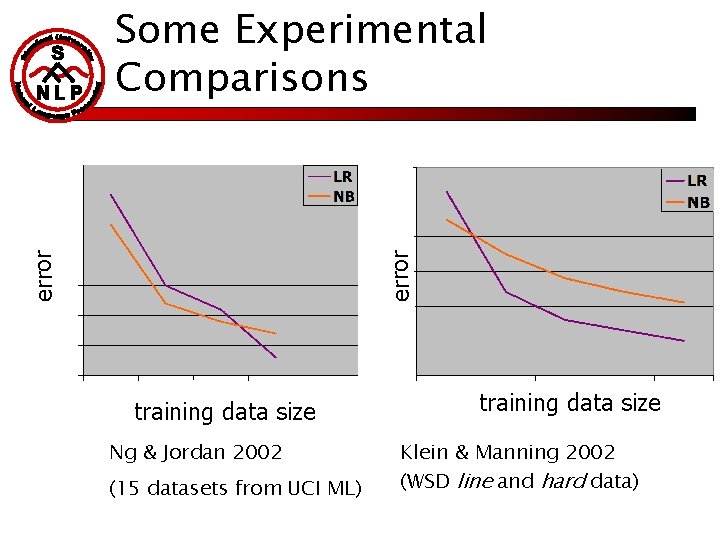

Some Experimental Comparisons

Plots of error against training data size: Ng & Jordan 2002 (15 datasets from UCI ML) and Klein & Manning 2002 (WSD "line" and "hard" data).

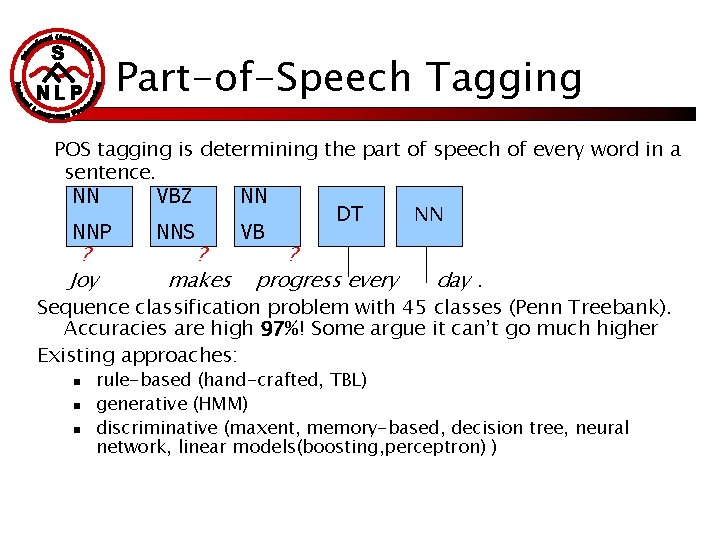

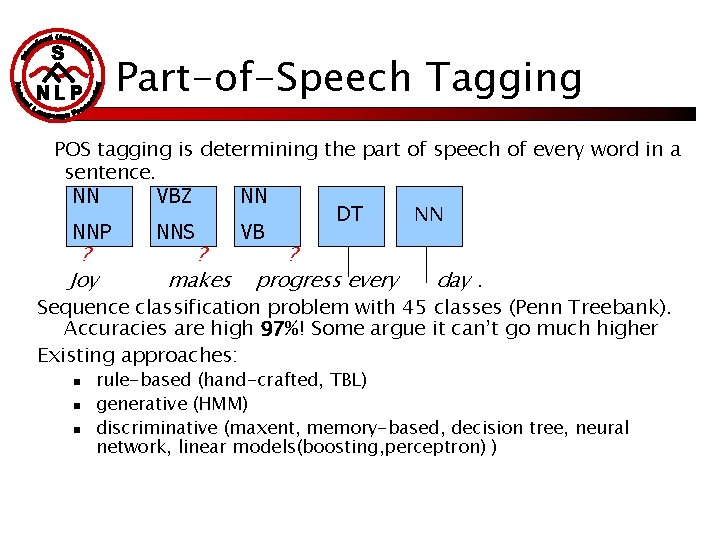

Part-of-Speech Tagging

POS tagging is determining the part of speech of every word in a sentence. Example: "Joy makes progress every day." Candidate tags: Joy (NN or NNP?), makes (VBZ or NNS?), progress (NN or VB?), every (DT), day (NN).

Sequence classification problem with 45 classes (Penn Treebank). Accuracies are high (97%)! Some argue it can't go much higher.

Existing approaches:

- rule-based (hand-crafted, TBL)
- generative (HMM)
- discriminative (maxent, memory-based, decision tree, neural network, linear models such as boosting and perceptron)

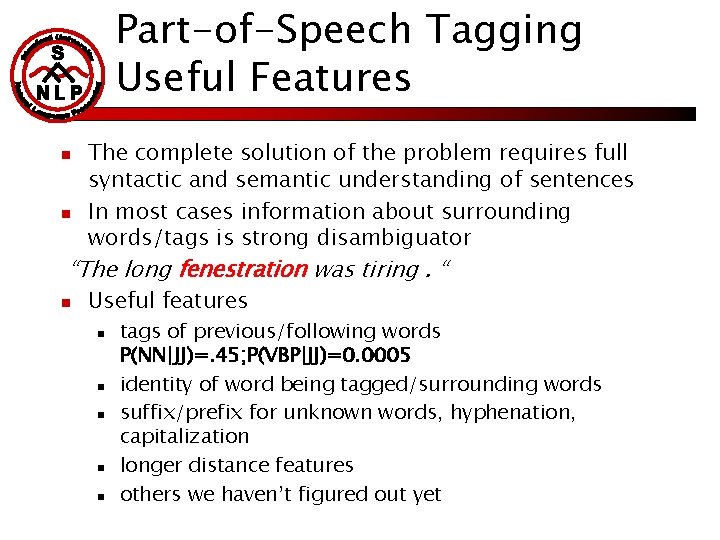

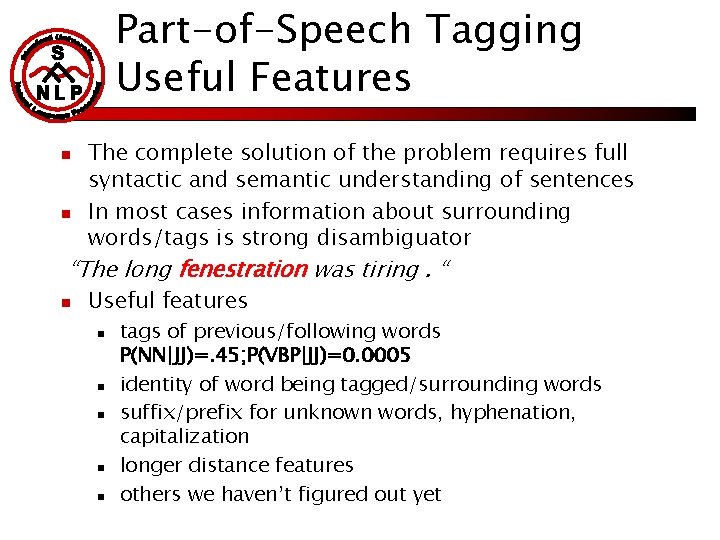

Part-of-Speech Tagging: Useful Features

- The complete solution of the problem requires full syntactic and semantic understanding of sentences
- In most cases, information about surrounding words/tags is a strong disambiguator, e.g. "The long fenestration was tiring."
- Useful features:
  - tags of previous/following words: P(NN|JJ) = 0.45; P(VBP|JJ) = 0.0005
  - identity of word being tagged/surrounding words
  - suffix/prefix for unknown words, hyphenation, capitalization
  - longer distance features
  - others we haven't figured out yet

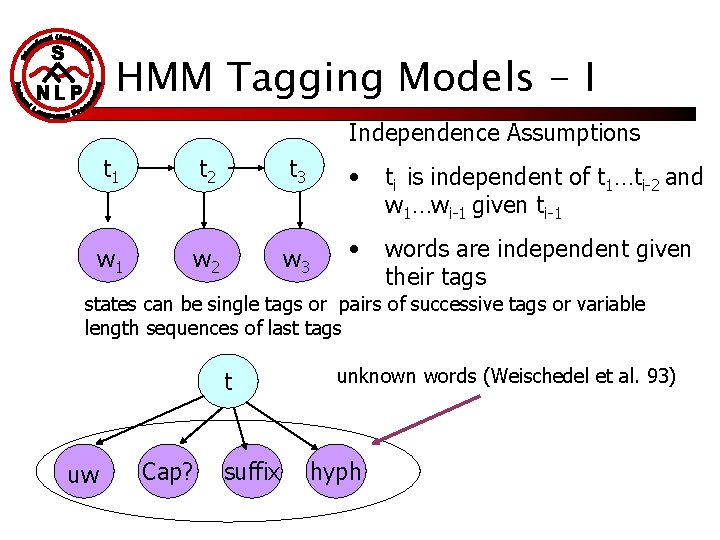

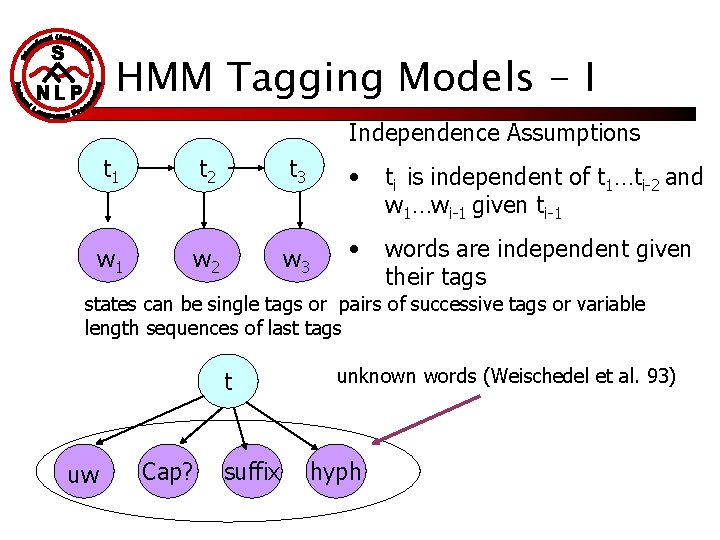

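HMM Tagging Models - I

Independence assumptions (graphical model: a tag chain t1, t2, t3 generating words w1, w2, w3):

- $t_i$ is independent of $t_1 \ldots t_{i-2}$ and $w_1 \ldots w_{i-1}$ given $t_{i-1}$
- words are independent given their tags

States can be single tags, pairs of successive tags, or variable-length sequences of the last tags.

Unknown words (Weischedel et al. 93): the tag t generates features of the unknown word uw: capitalization (Cap?), suffix, and hyphenation (hyph).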
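The independence assumptions above give a joint probability that factors into tag-transition and word-emission terms, so the best tag sequence can be found with Viterbi decoding. Below is a minimal sketch for a bigram HMM; the two-tag inventory and all probabilities are invented for illustration and are not taken from any tagger discussed here.

```python
from itertools import product

TAGS = ["N", "V"]
START = {"N": 0.7, "V": 0.3}                      # P(t_1)
TRANS = {"N": {"N": 0.4, "V": 0.6},               # P(t_i | t_{i-1})
         "V": {"N": 0.8, "V": 0.2}}
EMIT = {"N": {"joy": 0.5, "makes": 0.1, "progress": 0.4},   # P(w_i | t_i)
        "V": {"joy": 0.1, "makes": 0.6, "progress": 0.3}}

def joint_prob(words, tags):
    """P(words, tags) under the bigram HMM factorization."""
    p = START[tags[0]] * EMIT[tags[0]][words[0]]
    for i in range(1, len(words)):
        p *= TRANS[tags[i - 1]][tags[i]] * EMIT[tags[i]][words[i]]
    return p

def viterbi(words):
    """Most probable tag sequence, found by dynamic programming."""
    # best[t] = (probability of the best path ending in tag t, that path)
    best = {t: (START[t] * EMIT[t][words[0]], [t]) for t in TAGS}
    for w in words[1:]:
        new = {}
        for t in TAGS:
            prob, path = max(
                ((best[s][0] * TRANS[s][t], best[s][1]) for s in TAGS),
                key=lambda cand: cand[0],
            )
            new[t] = (prob * EMIT[t][w], path + [t])
        best = new
    return max(best.values(), key=lambda cand: cand[0])[1]

sentence = ["joy", "makes", "progress"]
decoded = viterbi(sentence)
# Brute-force check over all tag sequences (only feasible for toy inputs).
brute = max(product(TAGS, repeat=len(sentence)), key=lambda ts: joint_prob(sentence, ts))
```

Viterbi visits each position once and considers every tag pair, so its cost grows linearly with sentence length, whereas the brute-force check grows exponentially.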

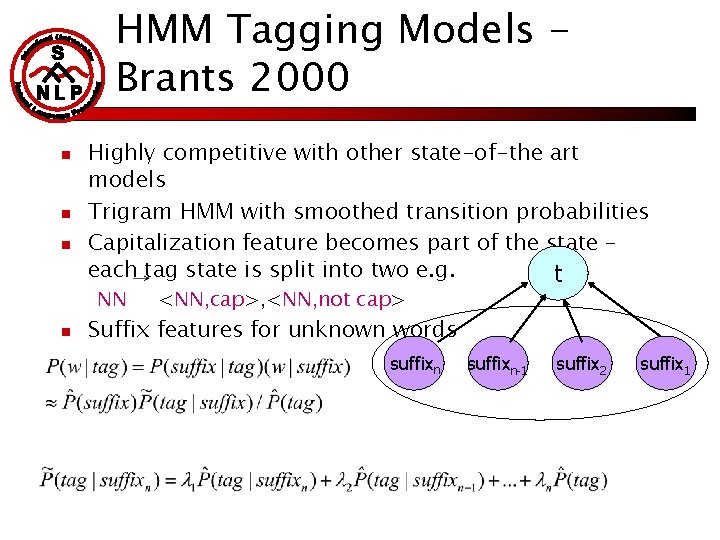

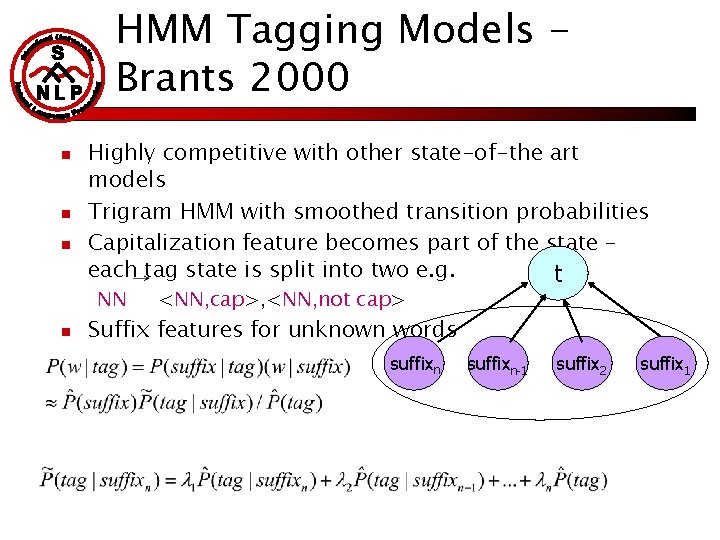

HMM Tagging Models: Brants 2000

- Highly competitive with other state-of-the-art models
- Trigram HMM with smoothed transition probabilities
- Capitalization feature becomes part of the state: each tag state is split into two, e.g. NN becomes <NN, cap> and <NN, not cap>
- Suffix features for unknown words (the figure shows a tag t with suffix n-1, ..., suffix 2, suffix 1)

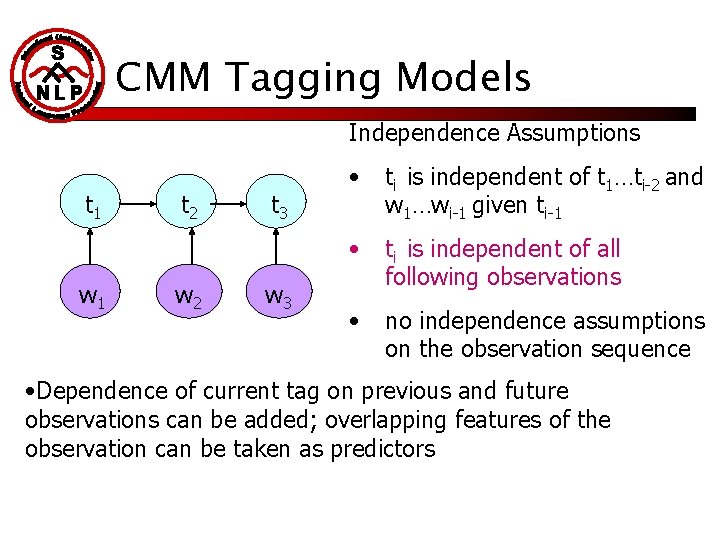

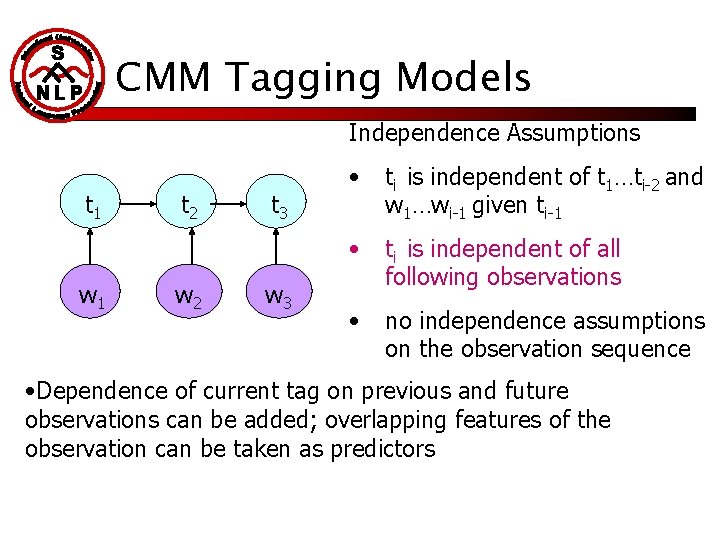

CMM Tagging Models

Independence assumptions (graphical model over tags t1, t2, t3 and words w1, w2, w3):

- $t_i$ is independent of $t_1 \ldots t_{i-2}$ and $w_1 \ldots w_{i-1}$ given $t_{i-1}$
- $t_i$ is independent of all following observations
- no independence assumptions on the observation sequence
- Dependence of the current tag on previous and future observations can be added; overlapping features of the observation can be taken as predictors

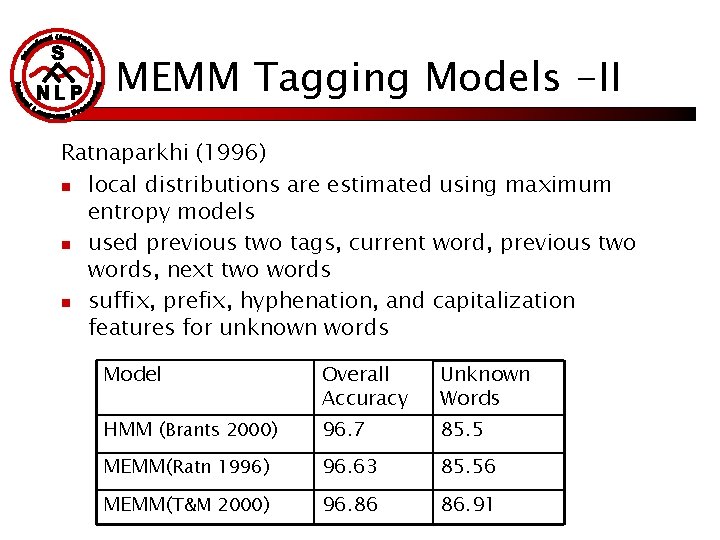

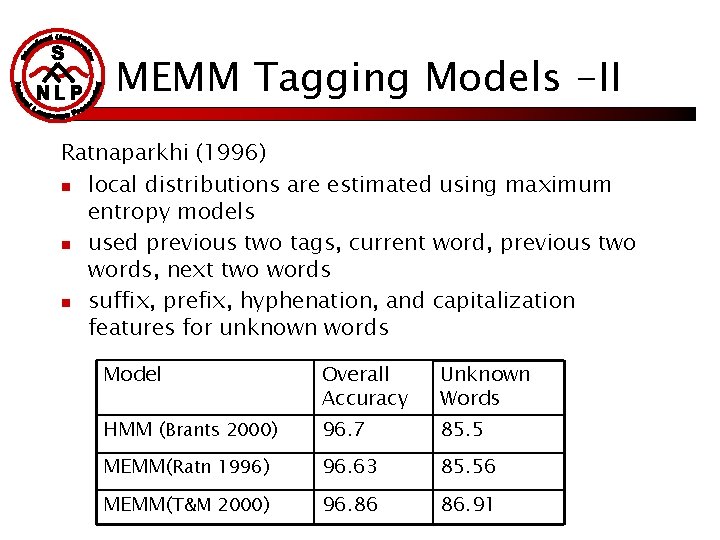

MEMM Tagging Models - II

Ratnaparkhi (1996):

- local distributions are estimated using maximum entropy models
- used previous two tags, current word, previous two words, next two words
- suffix, prefix, hyphenation, and capitalization features for unknown words

| Model | Overall Accuracy | Unknown Words |
| --- | --- | --- |
| HMM (Brants 2000) | 96.7 | 85.5 |
| MEMM (Ratn 1996) | 96.63 | 85.56 |
| MEMM (T&M 2000) | 96.86 | 86.91 |

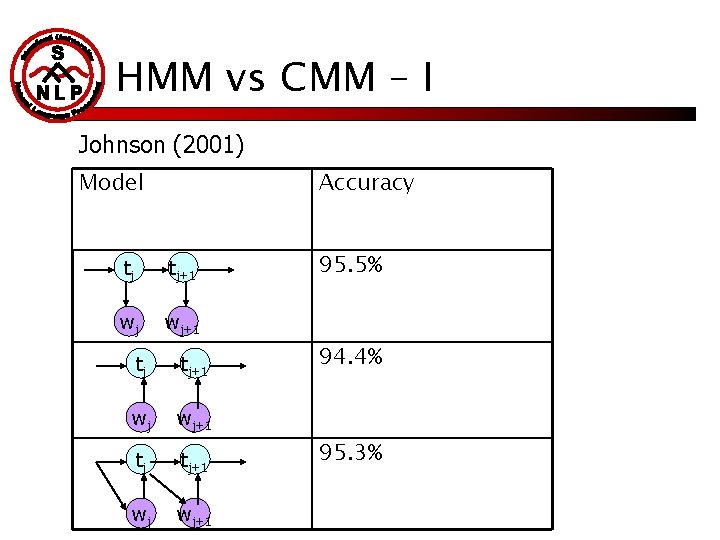

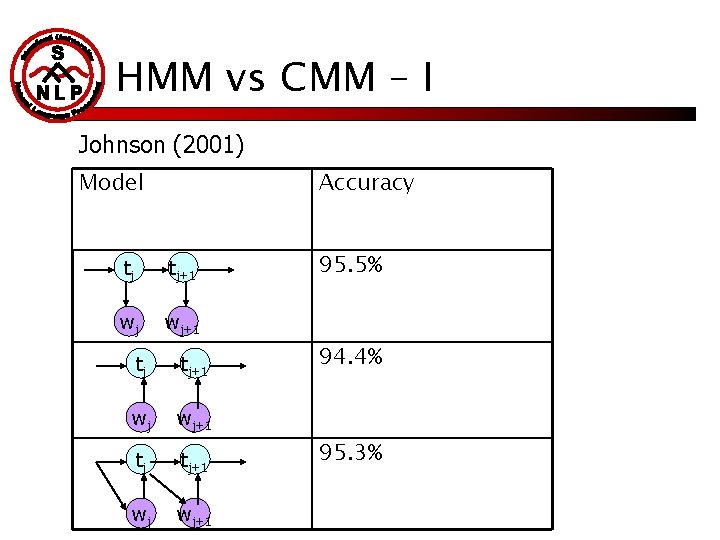

HMM vs CMM - I

Johnson (2001): accuracy of three models, shown on the slide as graphical models over tj, tj+1, wj, wj+1: 95.5%, 94.4%, 95.3%.

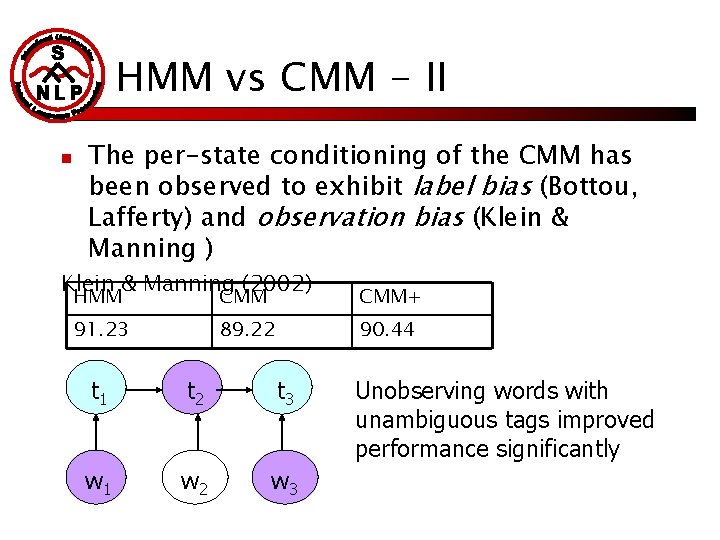

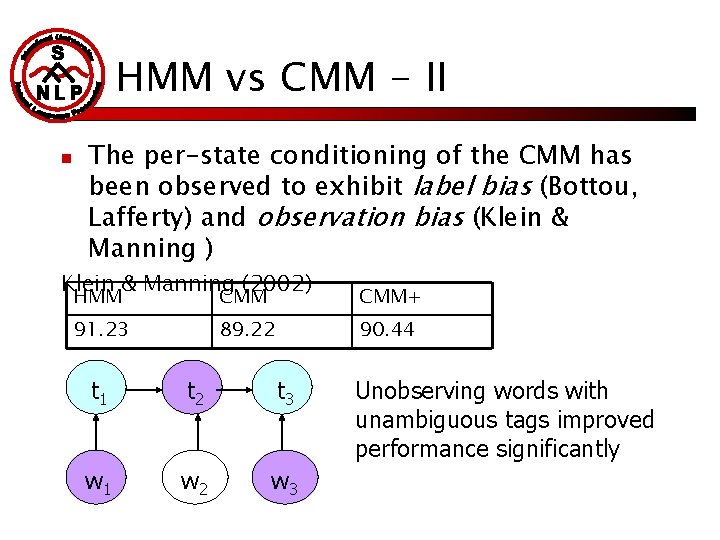

HMM vs CMM - II

- The per-state conditioning of the CMM has been observed to exhibit label bias (Bottou, Lafferty) and observation bias (Klein & Manning)

Klein & Manning (2002): accuracies 91.23, 89.22, 90.44 (the models on the slide include HMM and CMM+; graphical model over t1, t2, t3 and w1, w2, w3).

Unobserving words with unambiguous tags improved performance significantly.

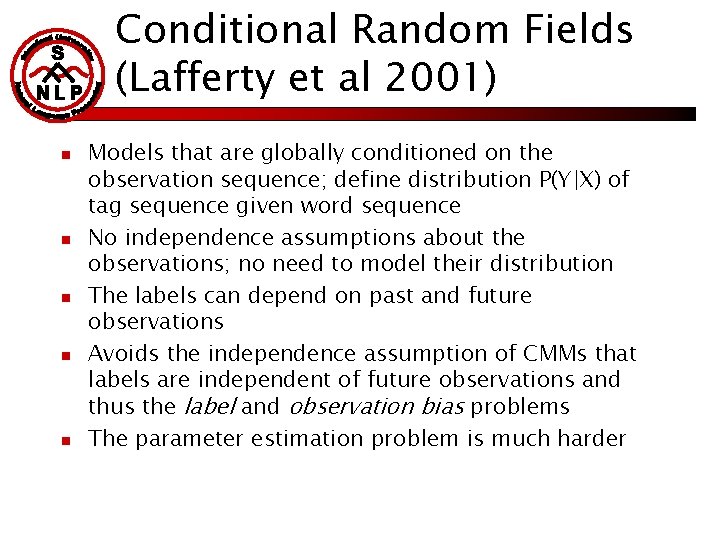

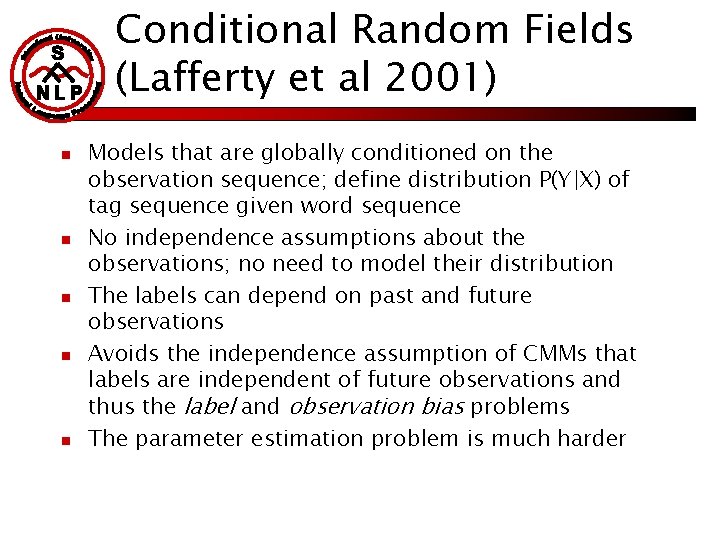

Conditional Random Fields (Lafferty et al 2001)

- Models that are globally conditioned on the observation sequence; define a distribution P(Y|X) of the tag sequence given the word sequence
- No independence assumptions about the observations; no need to model their distribution
- The labels can depend on past and future observations
- Avoids the independence assumption of CMMs that labels are independent of future observations, and thus the label and observation bias problems
- The parameter estimation problem is much harder

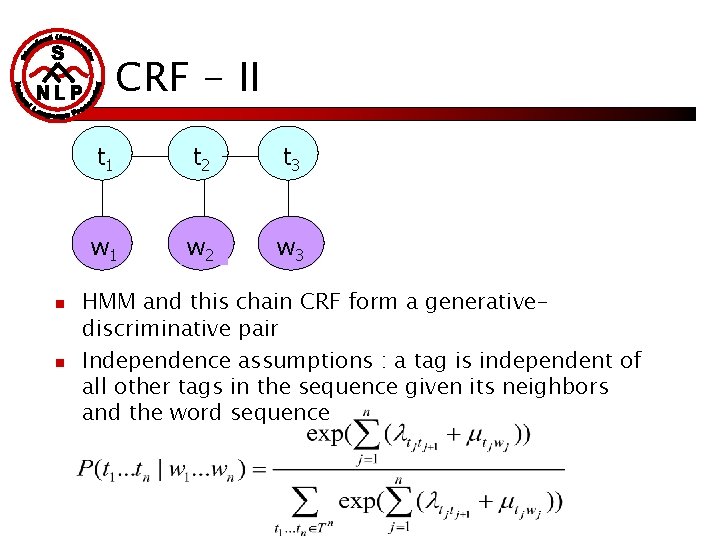

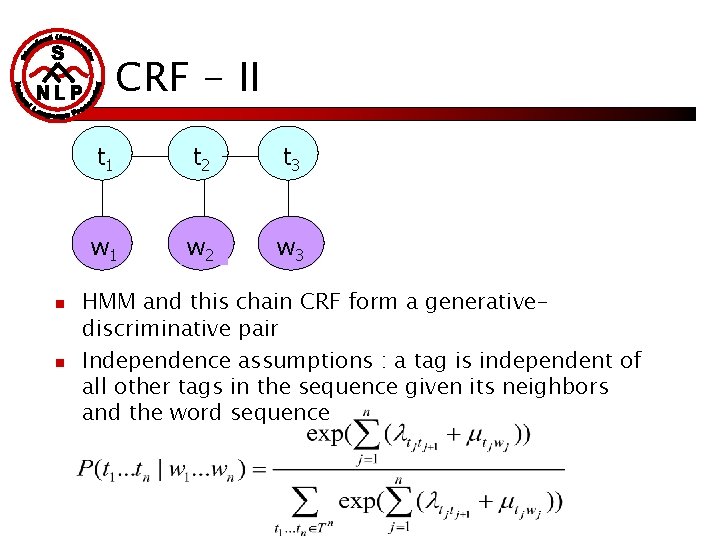

CRF - II

Graphical model: tags t1, t2, t3 over words w1, w2, w3.

- HMM and this chain CRF form a generative-discriminative pair
- Independence assumptions: a tag is independent of all other tags in the sequence given its neighbors and the word sequence
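The global conditioning of a chain CRF can be illustrated with a brute-force sketch: each tag sequence gets a score from emission-like and transition-like weights, and P(Y|X) is that score exponentiated and normalized over all tag sequences for the sentence. The weights and tag set below are hypothetical, and real implementations compute the normalizer with the forward algorithm rather than by enumeration.

```python
import math
from itertools import product

TAGS = ["N", "V"]
# Hypothetical feature weights; pairs that are absent have weight 0.
EMIT_W = {("N", "dogs"): 2.0, ("V", "bark"): 1.5, ("N", "bark"): 0.5}
TRANS_W = {("N", "V"): 1.0, ("V", "V"): -1.0}

def score(words, tags):
    """Sum of tag-word weights and adjacent tag-tag weights."""
    s = sum(EMIT_W.get((t, w), 0.0) for t, w in zip(tags, words))
    s += sum(TRANS_W.get(pair, 0.0) for pair in zip(tags, tags[1:]))
    return s

def crf_distribution(words):
    """P(tags | words), normalized over every possible tag sequence."""
    sequences = list(product(TAGS, repeat=len(words)))
    unnormalized = {seq: math.exp(score(words, seq)) for seq in sequences}
    z = sum(unnormalized.values())   # partition function Z(words)
    return {seq: u / z for seq, u in unnormalized.items()}

dist = crf_distribution(["dogs", "bark"])
best = max(dist, key=dist.get)
```

Because normalization happens once per sentence rather than once per state, probability mass can shift between whole sequences, which is what distinguishes this model from a CMM with the same features.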

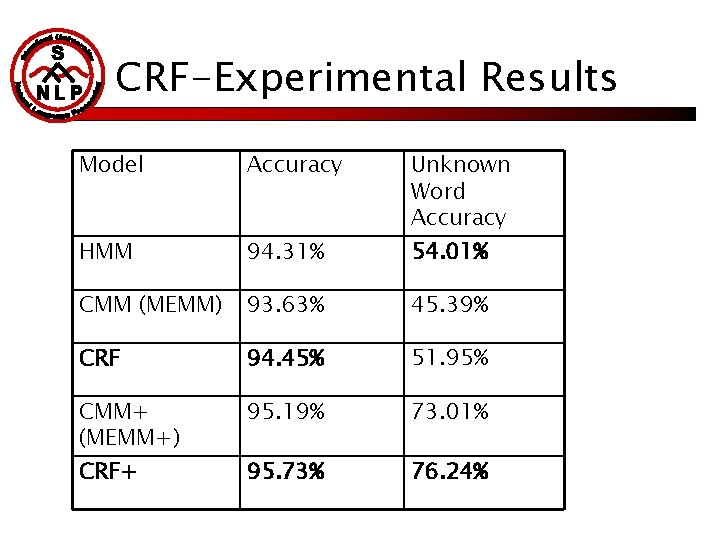

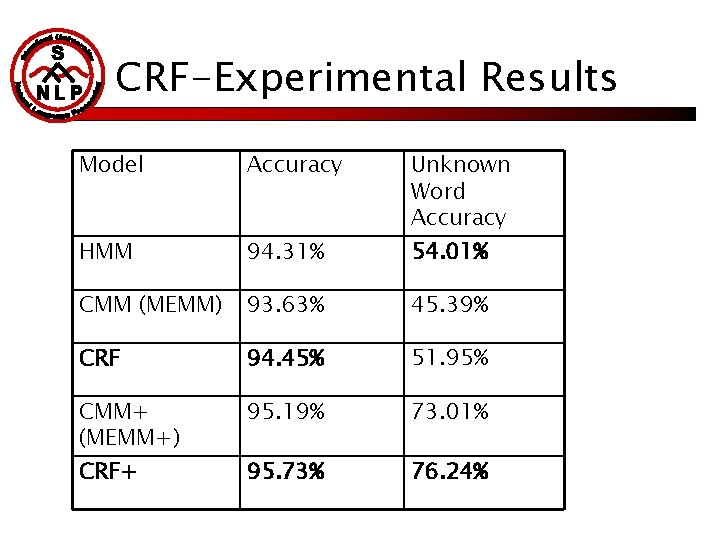

CRF: Experimental Results

| Model | Accuracy | Unknown Word Accuracy |
| --- | --- | --- |
| HMM | 94.31% | 54.01% |
| CMM (MEMM) | 93.63% | 45.39% |
| CRF | 94.45% | 51.95% |
| CMM+ (MEMM+) | 95.19% | 73.01% |
| CRF+ | 95.73% | 76.24% |

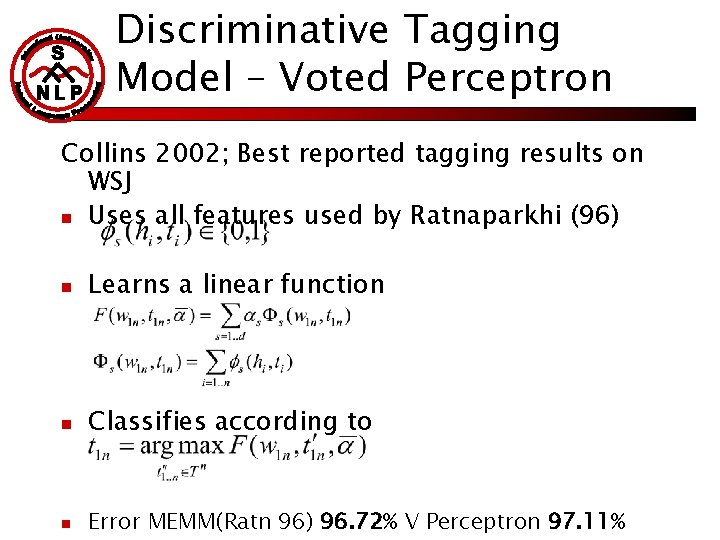

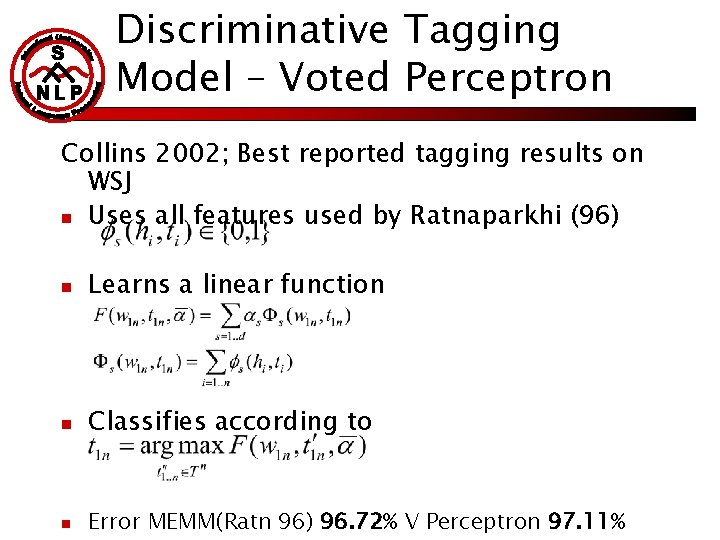

Discriminative Tagging Model: Voted Perceptron

Collins 2002; best reported tagging results on WSJ.

- Uses all features used by Ratnaparkhi (96)
- Learns a linear function
- Classifies according to the learned linear function

| Model | Accuracy |
| --- | --- |
| MEMM (Ratn 96) | 96.72% |
| V Perceptron | 97.11% |
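The core of perceptron training is a mistake-driven update of a linear scoring function. The sketch below is a deliberately simplified version that classifies each word independently and omits the voting or averaging step; it is not the sequence model of Collins 2002, which decodes whole tag sequences. The feature strings, tag set, and training examples are hypothetical.

```python
from collections import defaultdict

def predict(weights, feats, tags):
    """Pick the tag with the highest linear score for this feature set."""
    return max(tags, key=lambda t: sum(weights[(f, t)] for f in feats))

def train_perceptron(data, tags, epochs=5):
    """Mistake-driven updates: reward the gold tag, penalize the wrong guess."""
    weights = defaultdict(float)
    for _ in range(epochs):
        for feats, gold in data:
            guess = predict(weights, feats, tags)
            if guess != gold:
                for f in feats:
                    weights[(f, gold)] += 1.0
                    weights[(f, guess)] -= 1.0
    return weights

TAGS = ["DT", "NN", "VBZ"]
# Hypothetical training examples: (feature set, gold tag).
DATA = [
    ({"word=the", "suffix=he"}, "DT"),
    ({"word=dog", "suffix=og"}, "NN"),
    ({"word=barks", "suffix=ks"}, "VBZ"),
]

weights = train_perceptron(DATA, TAGS)
train_predictions = [predict(weights, feats, TAGS) for feats, _ in DATA]
```

No probabilities are estimated at any point, which is what makes this a distribution-free discriminative method in the sense used earlier.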

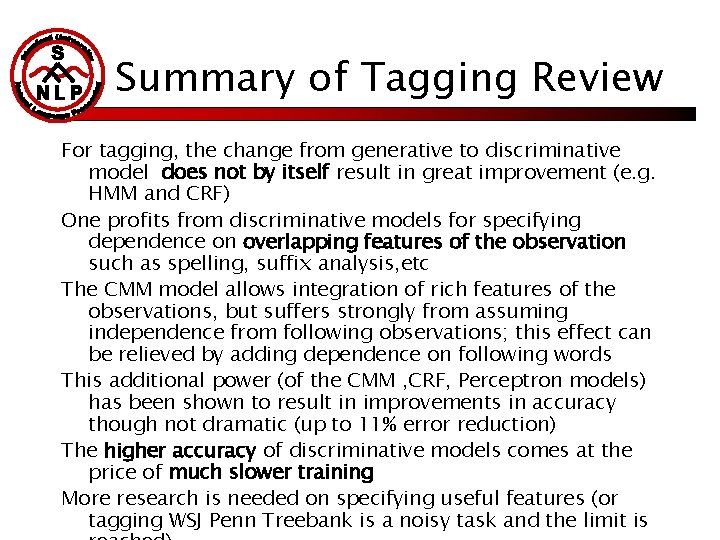

Summary of Tagging Review

- For tagging, the change from a generative to a discriminative model does not by itself result in great improvement (e.g. HMM and CRF)
- One profits from discriminative models for specifying dependence on overlapping features of the observation such as spelling, suffix analysis, etc.
- The CMM model allows integration of rich features of the observations, but suffers strongly from assuming independence from following observations; this effect can be relieved by adding dependence on following words
- This additional power (of the CMM, CRF, and Perceptron models) has been shown to result in improvements in accuracy, though not dramatic ones (up to 11% error reduction)
- The higher accuracy of discriminative models comes at the price of much slower training
- More research is needed on specifying useful features (or tagging WSJ Penn Treebank is a noisy task and the limit is

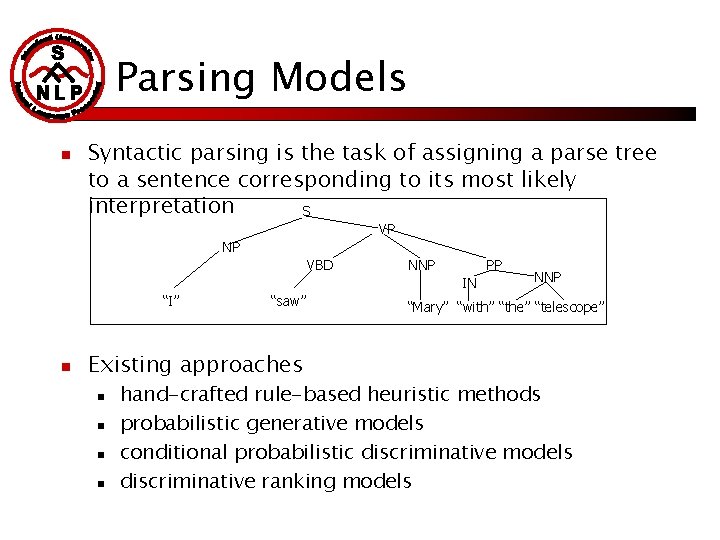

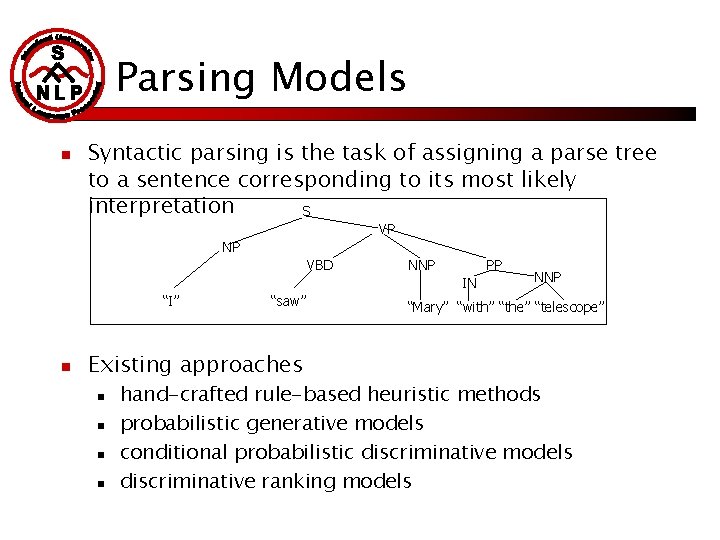

Parsing Models

- Syntactic parsing is the task of assigning a parse tree to a sentence corresponding to its most likely interpretation. Example: "I saw Mary with the telescope" (parse tree figure with nodes S, VP, NP, VBD, NNP, PP, IN)

Existing approaches:

- hand-crafted rule-based heuristic methods
- probabilistic generative models
- conditional probabilistic discriminative models
- discriminative ranking models

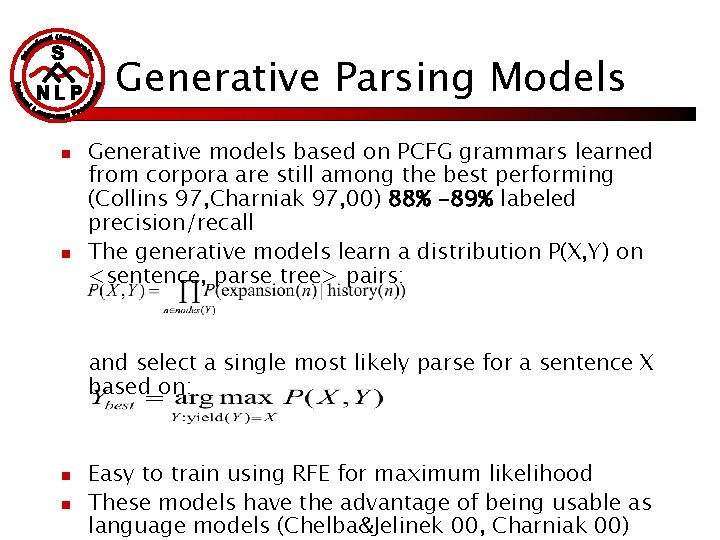

Generative Parsing Models

- Generative models based on PCFG grammars learned from corpora are still among the best performing (Collins 97, Charniak 97, 00): 88%-89% labeled precision/recall
- The generative models learn a distribution P(X, Y) on <sentence, parse tree> pairs and select a single most likely parse for a sentence X based on it
- Easy to train using RFE for maximum likelihood
- These models have the advantage of being usable as language models (Chelba & Jelinek 00, Charniak 00)
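For a plain PCFG, maximum likelihood training reduces to counting: each rule probability is the count of the rule divided by the count of its left-hand side. The sketch below assumes that "RFE" refers to this relative frequency estimation; the toy treebank, the nested-tuple tree encoding, and the function names are hypothetical.

```python
from collections import Counter

def rules(tree):
    """Yield (lhs, rhs) for every local tree; leaves are plain strings."""
    label, *children = tree
    rhs = tuple(c if isinstance(c, str) else c[0] for c in children)
    yield (label, rhs)
    for child in children:
        if not isinstance(child, str):
            yield from rules(child)

def estimate_pcfg(trees):
    """Relative frequency estimate: count(lhs -> rhs) / count(lhs)."""
    rule_counts, lhs_counts = Counter(), Counter()
    for tree in trees:
        for lhs, rhs in rules(tree):
            rule_counts[(lhs, rhs)] += 1
            lhs_counts[lhs] += 1
    return {rule: n / lhs_counts[rule[0]] for rule, n in rule_counts.items()}

def tree_prob(tree, probs):
    """Joint probability of tree and sentence: product of its rule probabilities."""
    p = 1.0
    for rule in rules(tree):
        p *= probs[rule]
    return p

# Hypothetical three-tree treebank.
treebank = [
    ("S", ("NP", "dogs"), ("VP", "bark")),
    ("S", ("NP", "dog"), ("VP", "barks")),
    ("S", ("NP", "dogs"), ("VP", "bark")),
]
probs = estimate_pcfg(treebank)
p_first = tree_prob(treebank[0], probs)
```

The same rule probabilities give both a parser (pick the tree with the highest joint probability) and a language model (sum the joint probability over all trees for a sentence).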

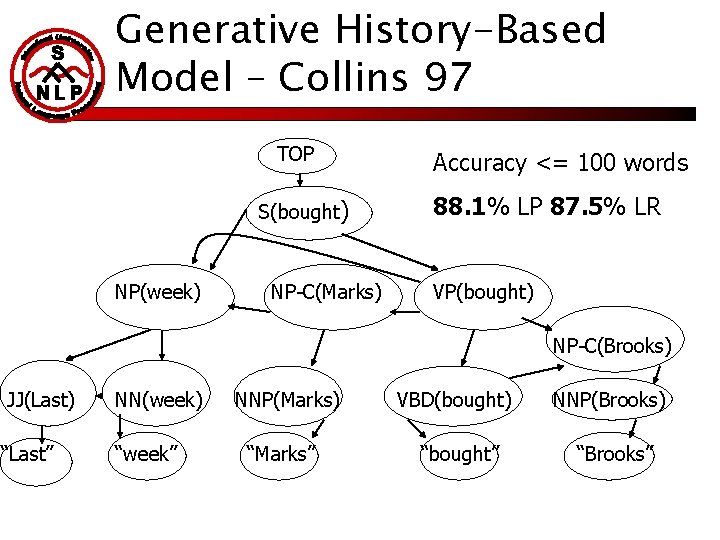

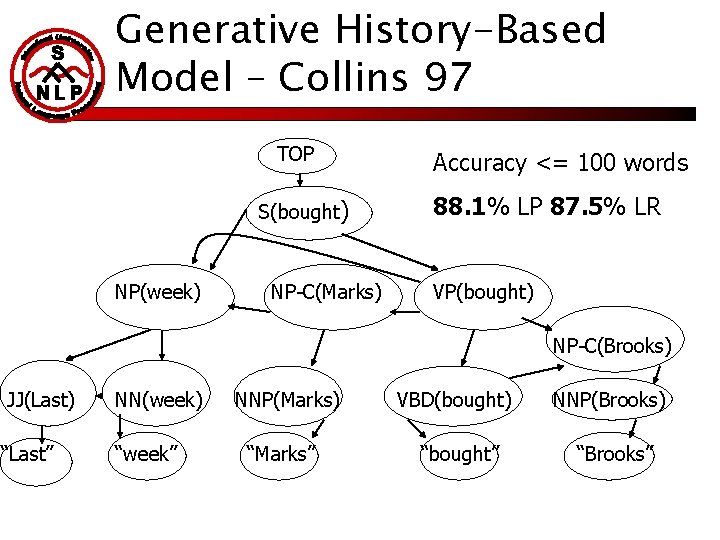

Generative History-Based Model: Collins 97

Example lexicalized tree for "Last week Marks bought Brooks": (TOP (S(bought) (NP(week) (JJ(Last) "Last") (NN(week) "week")) (NP-C(Marks) (NNP(Marks) "Marks")) (VP(bought) (VBD(bought) "bought") (NP-C(Brooks) (NNP(Brooks) "Brooks")))))

Accuracy on sentences of <= 100 words: 88.1% LP, 87.5% LR.

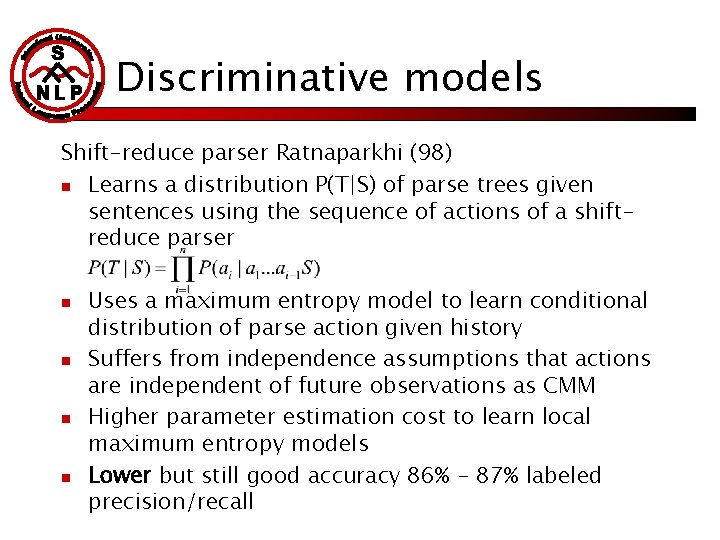

Discriminative Models: Shift-Reduce Parser, Ratnaparkhi (98)

- Learns a distribution P(T|S) of parse trees given sentences using the sequence of actions of a shift-reduce parser
- Uses a maximum entropy model to learn the conditional distribution of a parse action given the history
- Suffers from the independence assumption that actions are independent of future observations, as in the CMM
- Higher parameter estimation cost to learn local maximum entropy models
- Lower but still good accuracy: 86%-87% labeled precision/recall

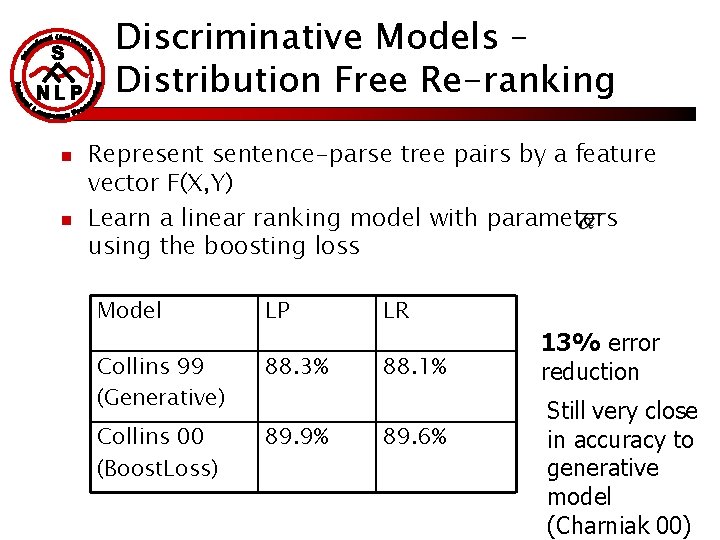

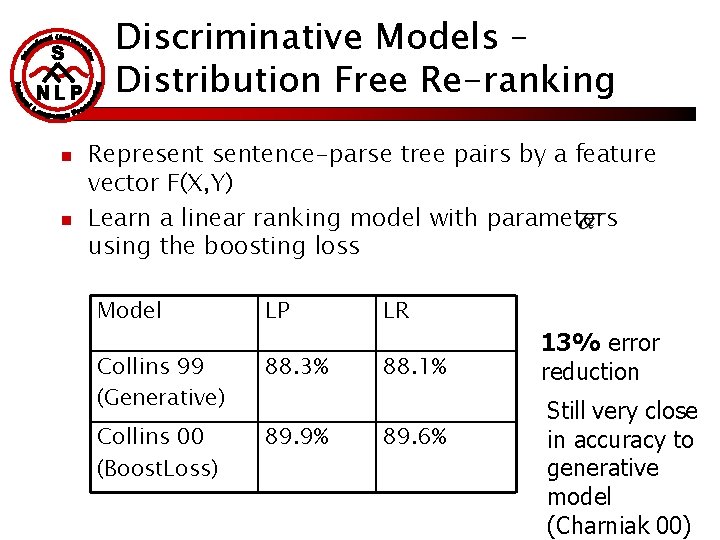

Discriminative Models: Distribution-Free Re-ranking

- Represent sentence-parse tree pairs by a feature vector F(X, Y)
- Learn a linear ranking model with parameters using the boosting loss

| Model | LP | LR |
| --- | --- | --- |
| Collins 99 (Generative) | 88.3% | 88.1% |
| Collins 00 (BoostLoss) | 89.9% | 89.6% |

13% error reduction. Still very close in accuracy to the generative model (Charniak 00).
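The error reduction quoted for re-ranking is a relative figure: the drop in error divided by the baseline error. A minimal sketch using the LP and LR values from the slide (the function name is hypothetical):

```python
def relative_error_reduction(baseline_acc, new_acc):
    """Fraction of the baseline error removed; accuracies are in percent."""
    baseline_err = 100.0 - baseline_acc
    new_err = 100.0 - new_acc
    return (baseline_err - new_err) / baseline_err

lp_reduction = relative_error_reduction(88.3, 89.9)   # labeled precision
lr_reduction = relative_error_reduction(88.1, 89.6)   # labeled recall
```

This gives roughly 13.7% for precision and 12.6% for recall, in line with the figure of about 13% reported on the slide.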

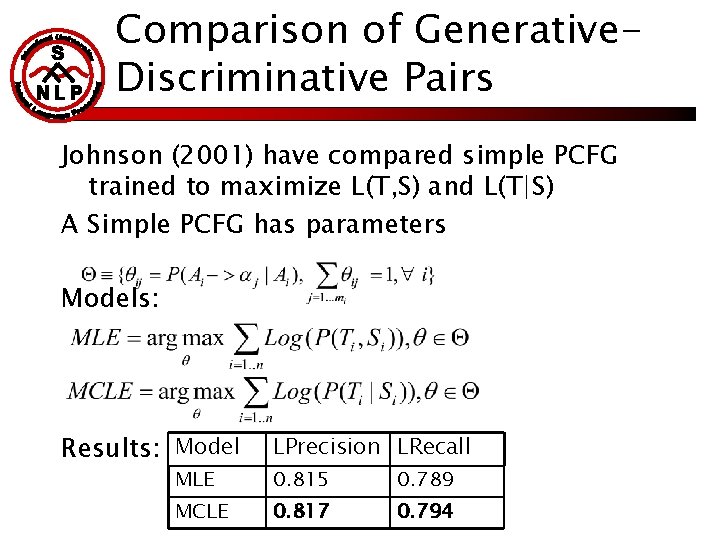

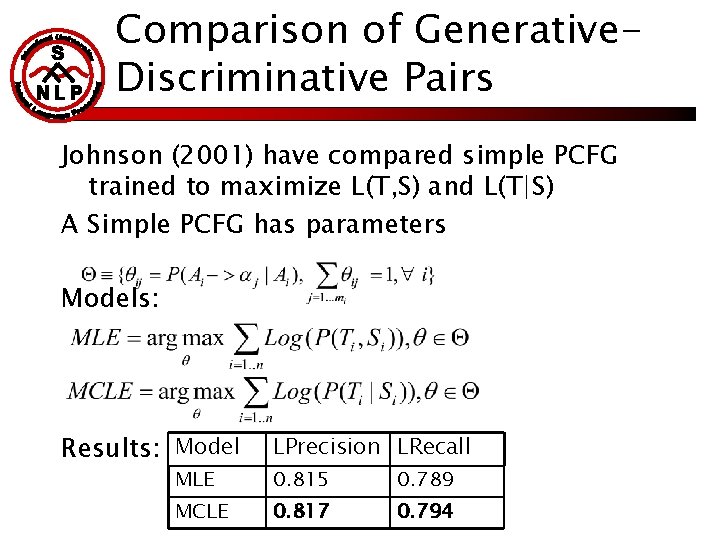

Comparison of Generative-Discriminative Pairs

Johnson (2001) compared a simple PCFG trained to maximize the joint likelihood L(T, S) and the conditional likelihood L(T|S).

Results:

| Model | Labeled Precision | Labeled Recall |
| --- | --- | --- |
| MLE | 0.815 | 0.789 |
| MCLE | 0.817 | 0.794 |

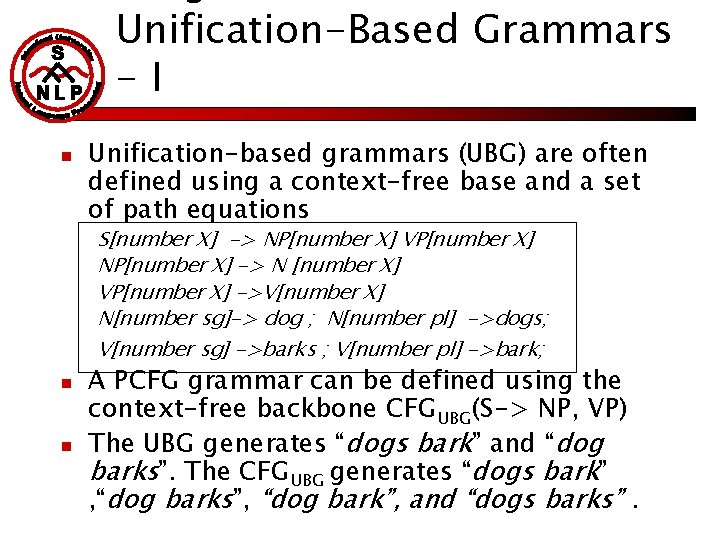

Unification-Based Grammars - I

- Unification-based grammars (UBG) are often defined using a context-free base and a set of path equations:
  - S[number X] -> NP[number X] VP[number X]
  - NP[number X] -> N[number X]
  - VP[number X] -> V[number X]
  - N[number sg] -> dog; N[number pl] -> dogs
  - V[number sg] -> barks; V[number pl] -> bark
- A PCFG grammar can be defined using the context-free backbone CFG_UBG (S -> NP VP)
- The UBG generates "dogs bark" and "dog barks". The CFG_UBG generates "dogs bark", "dog barks", "dog bark", and "dogs barks".
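The difference between the UBG and its context-free backbone can be reproduced with a few lines of code. This sketch hard-codes the toy lexicon from the slide and enumerates noun-verb sentences with and without the number-agreement constraint; the function names are hypothetical.

```python
from itertools import product

# Lexicon from the slide, with the value of the "number" feature.
NOUNS = {"dog": "sg", "dogs": "pl"}
VERBS = {"barks": "sg", "bark": "pl"}

def cfg_backbone_sentences():
    """Context-free backbone: S -> NP VP with the number feature ignored."""
    return {f"{n} {v}" for n, v in product(NOUNS, VERBS)}

def ubg_sentences():
    """UBG: the path equations force NP and VP to share the same number."""
    return {f"{n} {v}" for n, v in product(NOUNS, VERBS) if NOUNS[n] == VERBS[v]}

backbone = cfg_backbone_sentences()
unified = ubg_sentences()
overgenerated = backbone - unified
```

The strings in `overgenerated` are the analyses that receive probability mass under a PCFG for the backbone but are ruled out by the UBG.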

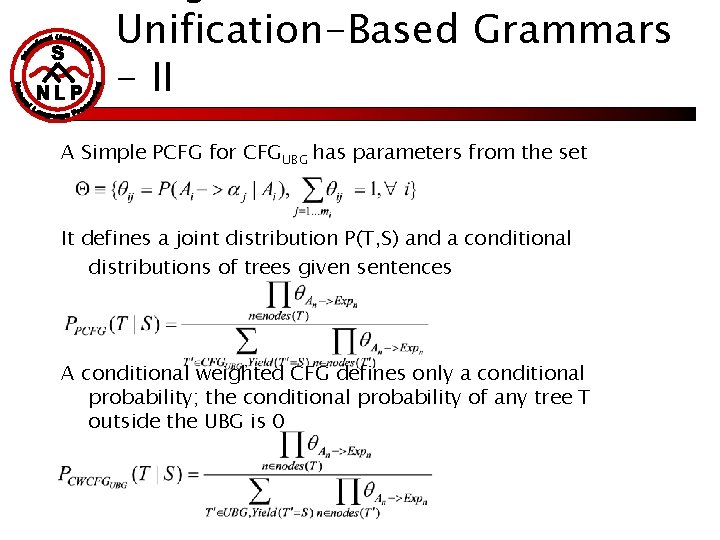

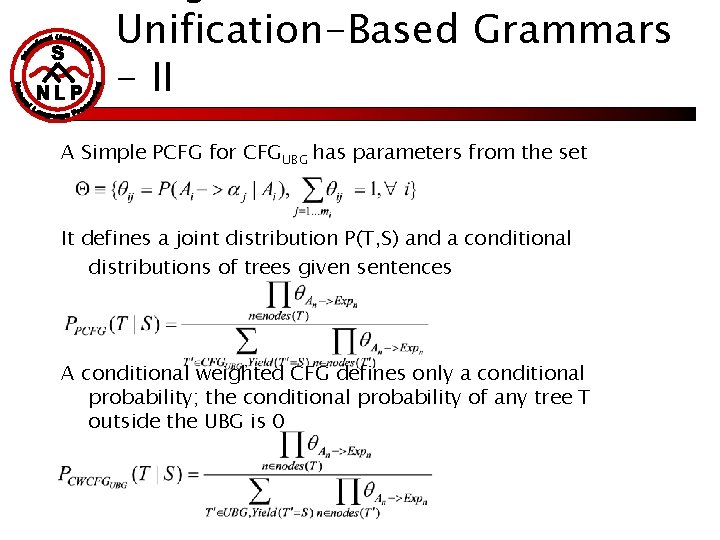

Unification-Based Grammars - II

- A simple PCFG for CFG_UBG has parameters from the set shown on the slide. It defines a joint distribution P(T, S) and a conditional distribution of trees given sentences.
- A conditional weighted CFG defines only a conditional probability; the conditional probability of any tree T outside the UBG is 0.

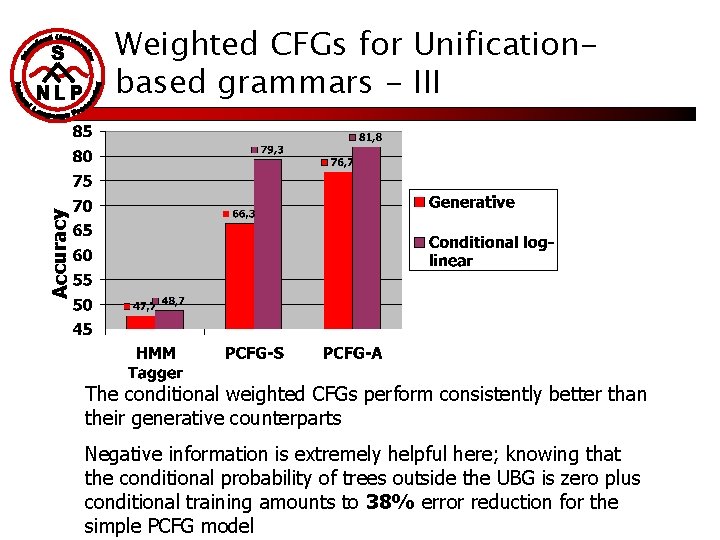

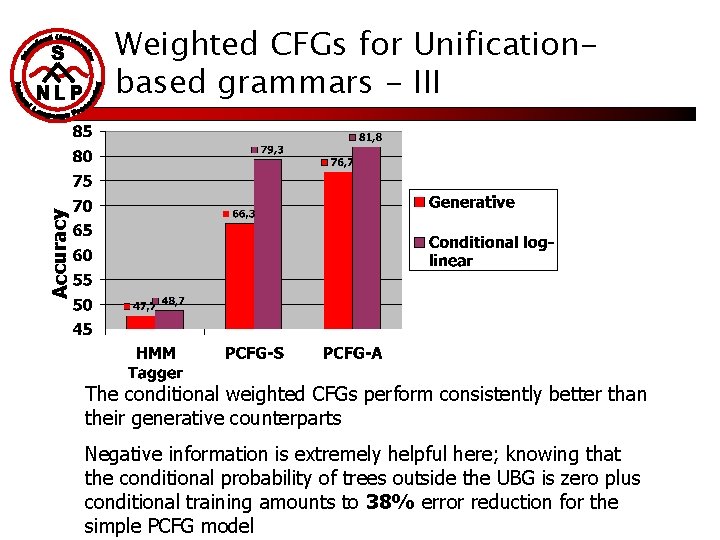

Weighted CFGs for Unification-Based Grammars - III

- The conditional weighted CFGs perform consistently better than their generative counterparts (accuracy plot on the slide)
- Negative information is extremely helpful here; knowing that the conditional probability of trees outside the UBG is zero, plus conditional training, amounts to a 38% error reduction for the simple PCFG model

Summary of Parsing Results

- The single small study comparing a generative-discriminative pair for PCFG parsing showed a small (insignificant) advantage for the discriminative model; the added computational cost is probably not worth it
- The best performing statistical parsers are still generative (Charniak 00, Collins 99) or use a generative model as a preprocessing stage (Collins 00, Collins 2002), part of which has to do with computational complexity
- Discriminative models allow more complex representations, such as the all-subtrees representation (Collins 2002) or other overlapping features (Collins 00), and this has led to up to 13% improvement over a generative model
- Discriminative training seems promising for parse selection tasks for UBG, where the number of possible analyses is not enormous

Conclusions

- For the current sizes of training data available for NLP tasks such as tagging and parsing, discriminative training has not by itself yielded large gains in accuracy
- The flexibility of including non-independent features of the observations in discriminative models has resulted in improved part-of-speech tagging models (for some tasks it might not justify the added computational complexity)
- For parsing, discriminative training has shown improvements when used for re-ranking or when using negative information (UBG)
- If you come up with a feature that is very hard to incorporate in a generative model and seems extremely useful, see if a discriminative approach will be computationally feasible!