Econometrics Chengyuan Yin School of Mathematics Econometrics 11


- Slides: 39


Econometrics 11. Asymptotic Distribution Theory

Preliminary

These notes and our class presentation give a moderately detailed sketch of these results. More complete presentations appear in Chapter 4 of your text. Please read this chapter thoroughly. We will develop the results that we need as we proceed. I believe this topic is conceptually the most difficult in this course, so do feel free to ask questions in class.

Asymptotics: Setting

Most modeling situations involve stochastic regressors, nonlinear models, or nonlinear estimation techniques. Very few exact statistical results, such as an expected value or a true distribution, can be obtained in these cases. We rely instead on approximate results that are based on what we know about the behavior of certain statistics in large samples. Example from basic statistics: what can we say about $1/\bar{x}$? We know a lot about $\bar{x}$. What do we know about its reciprocal?

Convergence Definitions

Kinds of convergence as n grows large:

1. To a constant; example: the sample mean.
2. To a random variable; example: a t statistic with n − 1 degrees of freedom.

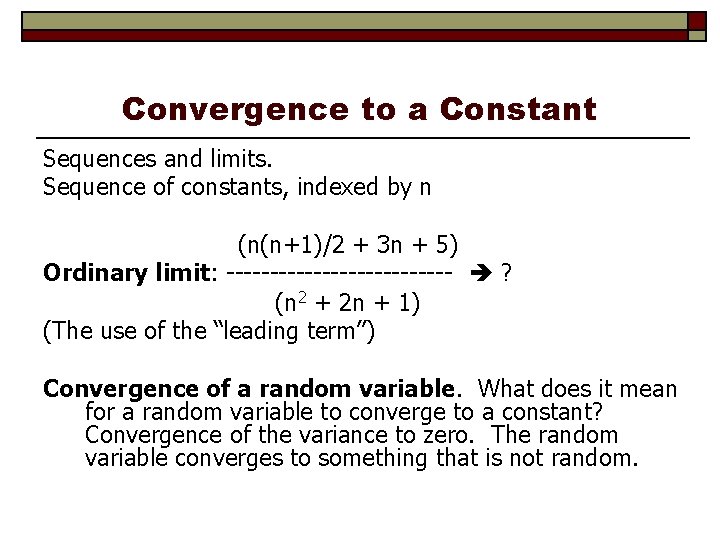

Convergence to a Constant

Sequences and limits. Sequence of constants, indexed by n. Ordinary limit:

$$\lim_{n \to \infty} \frac{n(n+1)/2 + 3n + 5}{n^2 + 2n + 1} = \ ?$$

(The use of the “leading term.”)

Convergence of a random variable. What does it mean for a random variable to converge to a constant? Convergence of the variance to zero: the random variable converges to something that is not random.
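The ordinary limit above can be checked numerically. This is a minimal sketch, not part of the original slides; the function name `ratio` is chosen here for illustration. The leading terms are n²/2 in the numerator and n² in the denominator, so the ratio should settle near 1/2.

```python
from fractions import Fraction


def ratio(n: int) -> Fraction:
    """Exact value of (n(n+1)/2 + 3n + 5) / (n^2 + 2n + 1)."""
    numerator = Fraction(n * (n + 1), 2) + 3 * n + 5
    denominator = n * n + 2 * n + 1
    return numerator / denominator


# Print the ratio for increasing n to see it approach the leading-term value.
for n in (10, 1_000, 100_000):
    print(n, float(ratio(n)))
```

Exact rational arithmetic is used so that the only approximation is the final conversion to a float for printing.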

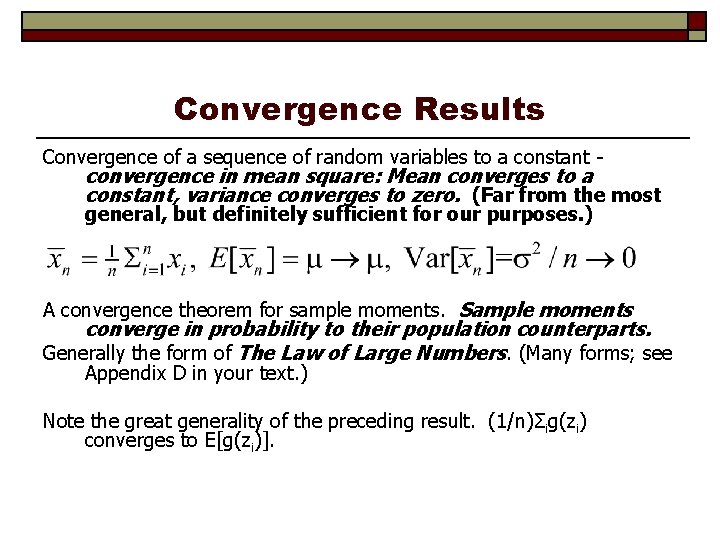

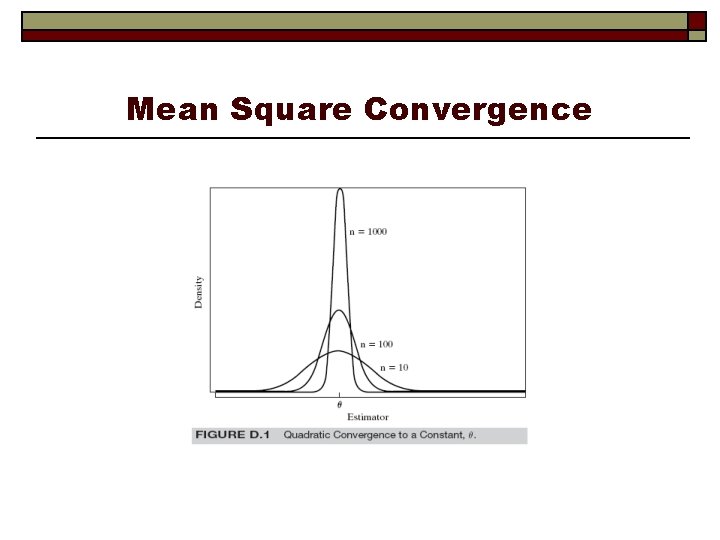

Convergence Results

Convergence of a sequence of random variables to a constant, convergence in mean square: the mean converges to a constant and the variance converges to zero. (Far from the most general, but definitely sufficient for our purposes.)

A convergence theorem for sample moments: sample moments converge in probability to their population counterparts. This is generally the form of the Law of Large Numbers. (Many forms; see Appendix D in your text.)

Note the great generality of the preceding result: $(1/n)\sum_i g(z_i)$ converges to $E[g(z_i)]$.
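As an illustration of the sample-moment result, the sketch below simulates (1/n)Σg(z_i) for one particular choice, g(z) = z² with z drawn from a standard normal, so that E[g(z)] = 1. The choice of g, the distribution, the seed, and the name `sample_moment` are all assumptions made for the example.

```python
import random


def sample_moment(n, g, rng):
    """Return (1/n) * sum of g(z_i) for n standard normal draws z_i."""
    return sum(g(rng.gauss(0.0, 1.0)) for _ in range(n)) / n


rng = random.Random(12345)  # fixed seed so the run is repeatable
for n in (100, 10_000, 200_000):
    # The sample second moment should move toward E[z^2] = 1 as n grows.
    print(n, sample_moment(n, lambda z: z * z, rng))
```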

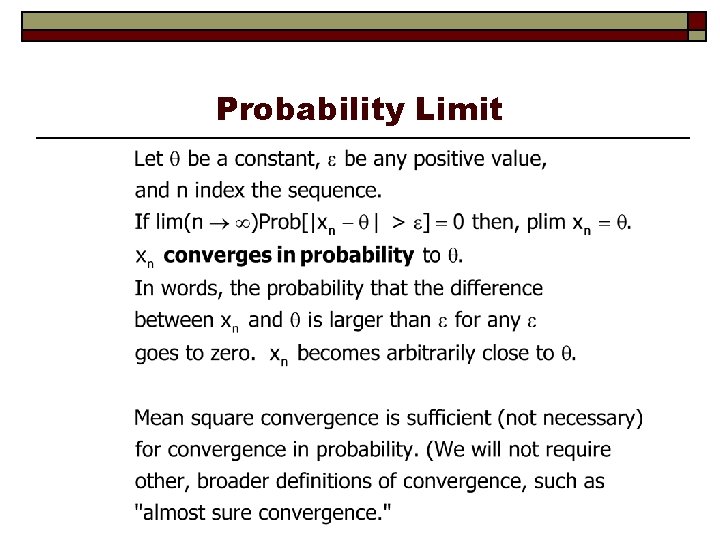

Probability Limit

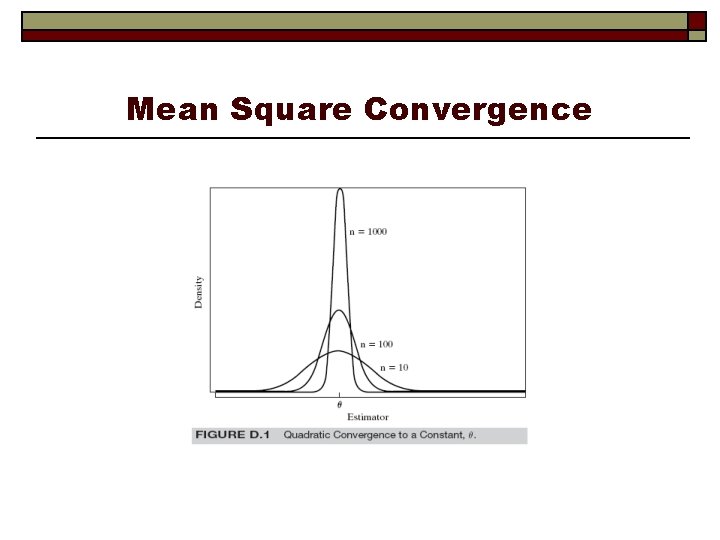

Mean Square Convergence

![Probability Limits and Expecations What is the difference between Exn and plim xn Probability Limits and Expecations What is the difference between E[xn] and plim xn?](https://slidetodoc.com/presentation_image_h2/7ac0eb20c87b3bd80d893754d5605875/image-10.jpg)

Probability Limits and Expectations

What is the difference between E[xn] and plim xn?
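One standard textbook illustration of the difference (assumed here; it may or may not be the example on the slide image) is the sequence x_n that equals n with probability 1/n and 0 otherwise. Its expectation is 1 for every n, yet the probability that it differs from 0 vanishes, so plim x_n = 0. The sketch computes both quantities exactly; the function names are hypothetical.

```python
def expectation(n: int) -> float:
    """E[x_n] for x_n = n with probability 1/n and 0 otherwise."""
    p = 1.0 / n
    return n * p + 0.0 * (1.0 - p)


def prob_not_near_zero(n: int, eps: float = 0.5) -> float:
    """P(|x_n - 0| > eps); for n > eps the only such outcome is x_n = n."""
    return 1.0 / n if n > eps else 0.0


for n in (10, 1_000, 100_000):
    # The expectation stays at 1 while the probability mass away from 0 shrinks.
    print(n, expectation(n), prob_not_near_zero(n))
```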

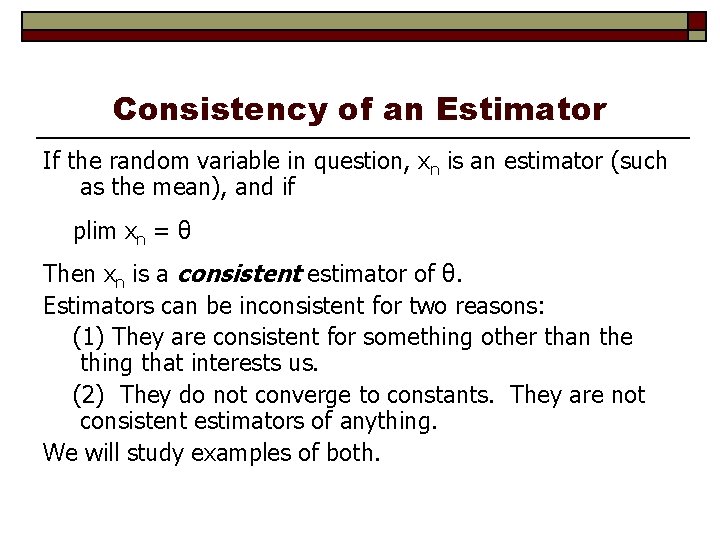

Consistency of an Estimator

If the random variable in question, $x_n$, is an estimator (such as the mean), and if plim $x_n = \theta$, then $x_n$ is a consistent estimator of $\theta$. Estimators can be inconsistent for two reasons:

(1) They are consistent for something other than the thing that interests us.
(2) They do not converge to constants. They are not consistent estimators of anything.

We will study examples of both.

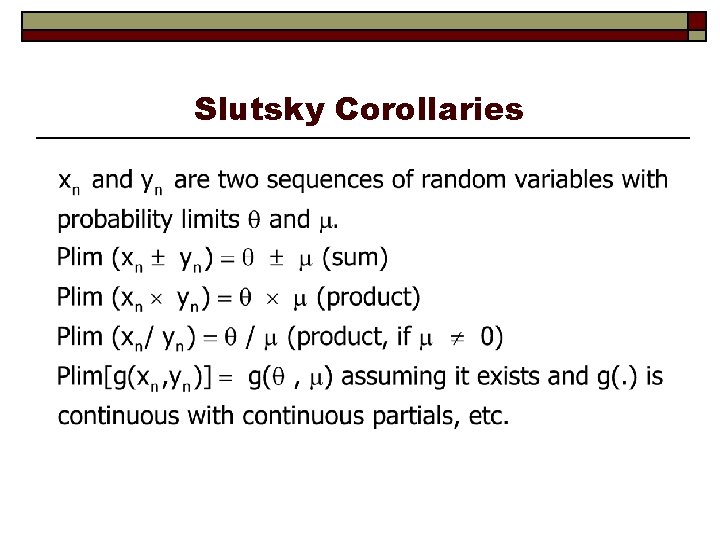

The Slutsky Theorem

Assumptions: $x_n$ is a random variable such that plim $x_n = \theta$. For now, we assume $\theta$ is a constant. g(·) is a continuous function with continuous derivatives. g(·) is not a function of n.

Conclusion: plim[$g(x_n)$] = g[plim($x_n$)], assuming g[plim($x_n$)] exists. (VVIR!)

Works for probability limits. Does not work for expectations.
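The contrast between probability limits and expectations can be seen in a small simulation. This sketch assumes g(x) = 1/x and draws from a uniform distribution on (1, 3), chosen only so that the reciprocal is well behaved. Then g applied to the sample mean approaches g(E[x]) = 1/2, while the mean of g(x_i) approaches E[1/x] = ln(3)/2, which is a different number.

```python
import math
import random

rng = random.Random(2024)  # fixed seed for repeatability
n = 100_000
x = [rng.uniform(1.0, 3.0) for _ in range(n)]

xbar = sum(x) / n
g_of_mean = 1.0 / xbar                        # g(xbar), converges to g(plim xbar) = 1/2
mean_of_g = sum(1.0 / xi for xi in x) / n     # estimates E[g(x)] = ln(3)/2

print("g(xbar)      :", g_of_mean)
print("mean of g(x) :", mean_of_g)
print("ln(3)/2      :", math.log(3.0) / 2.0)
```

The gap between the two printed estimates is the reason the theorem is stated for plim and not for E[·].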

Slutsky Corollaries

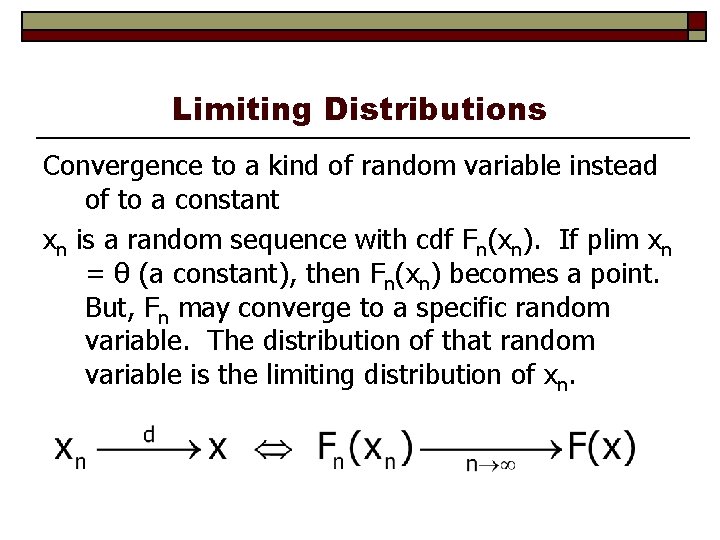

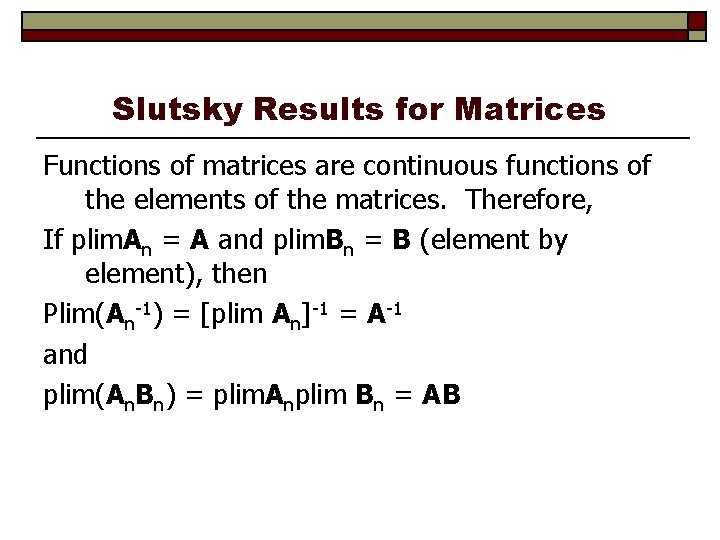

Slutsky Results for Matrices

Functions of matrices are continuous functions of the elements of the matrices. Therefore, if plim $A_n = A$ and plim $B_n = B$ (element by element), then

$$\text{plim}(A_n^{-1}) = [\text{plim}\, A_n]^{-1} = A^{-1}$$

and

$$\text{plim}(A_n B_n) = \text{plim}\, A_n \ \text{plim}\, B_n = AB$$

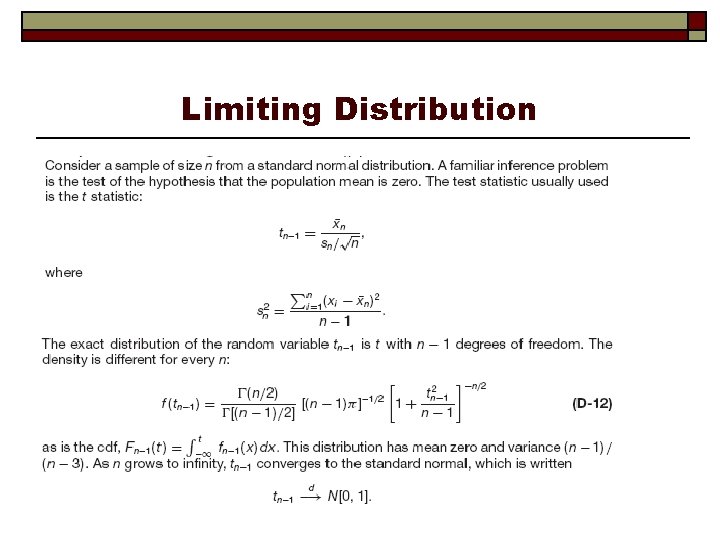

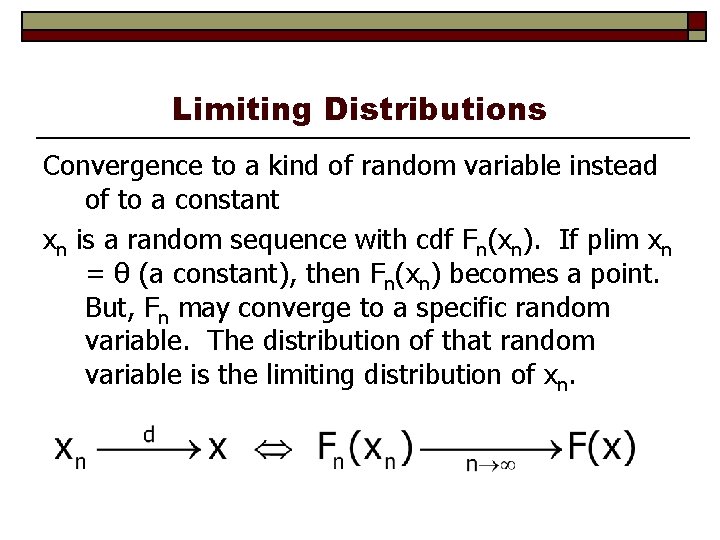

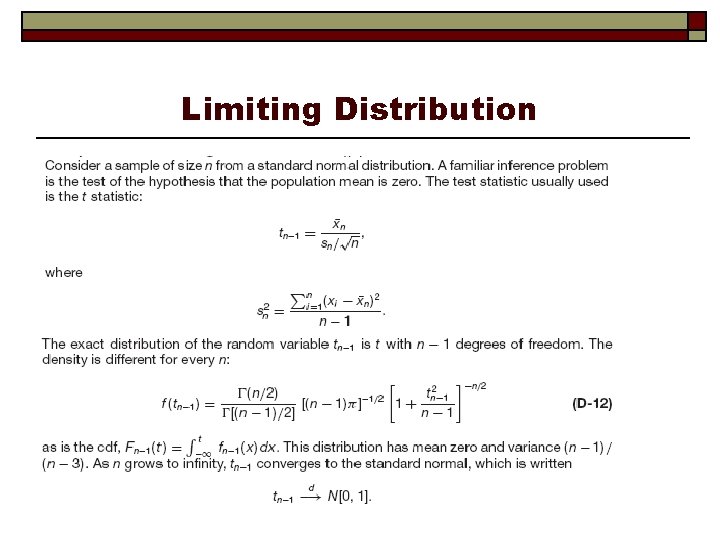

Limiting Distributions

Convergence to a kind of random variable instead of to a constant. $x_n$ is a random sequence with cdf $F_n(x_n)$. If plim $x_n = \theta$ (a constant), then $F_n(x_n)$ collapses to a point. But $F_n$ may instead converge to the cdf of a specific random variable. The distribution of that random variable is the limiting distribution of $x_n$.

Limiting Distribution

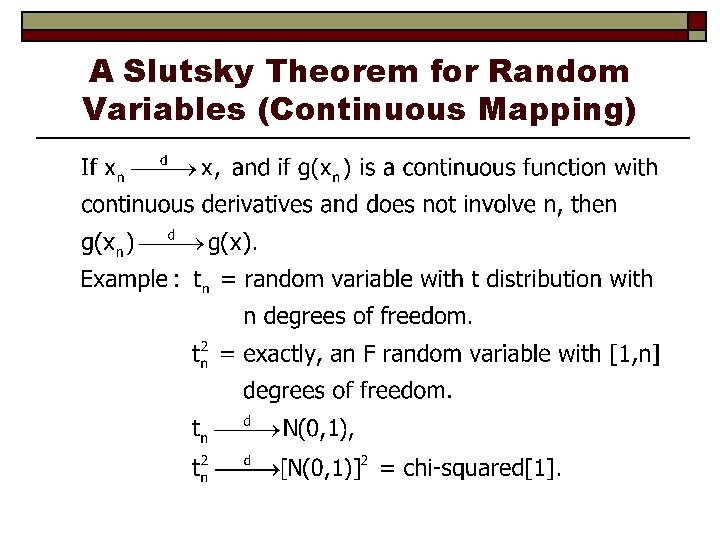

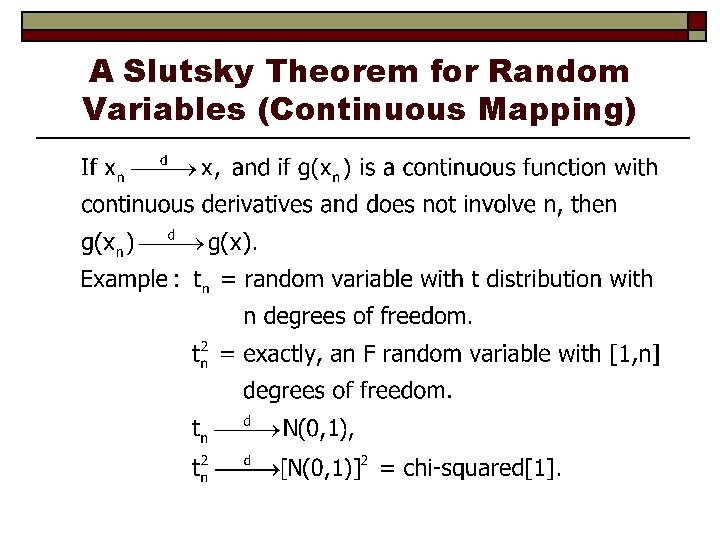

A Slutsky Theorem for Random Variables (Continuous Mapping)

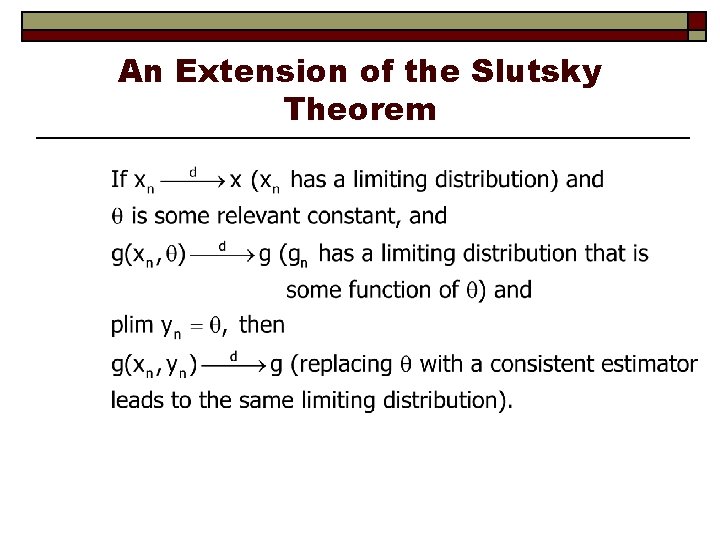

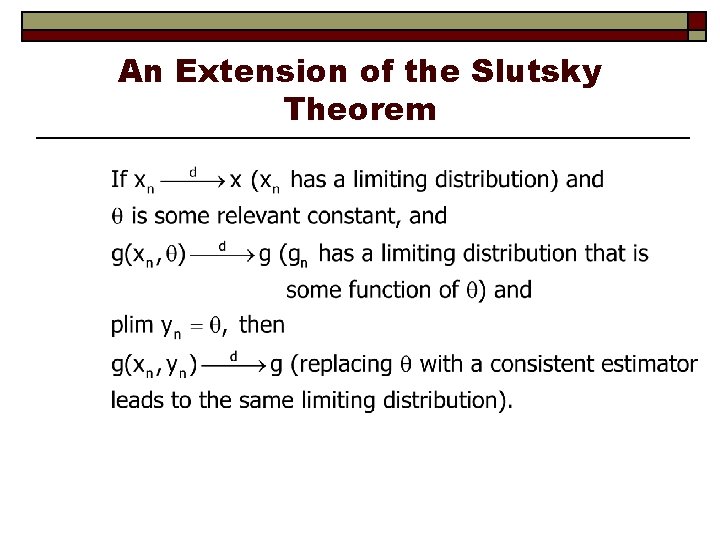

An Extension of the Slutsky Theorem

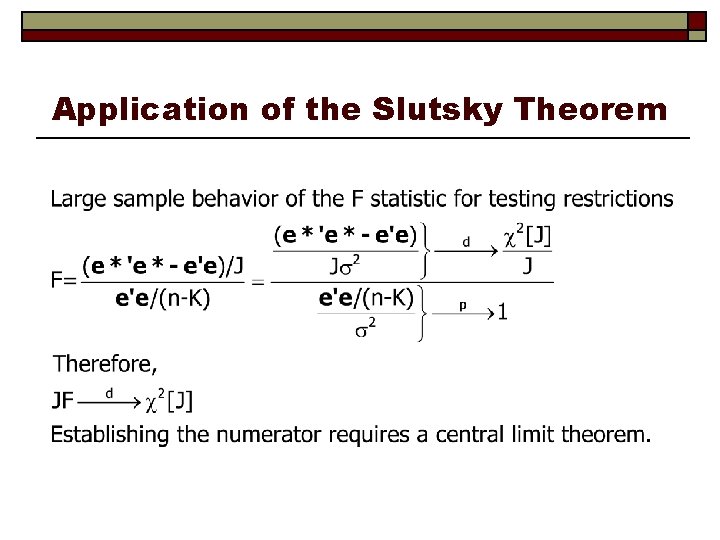

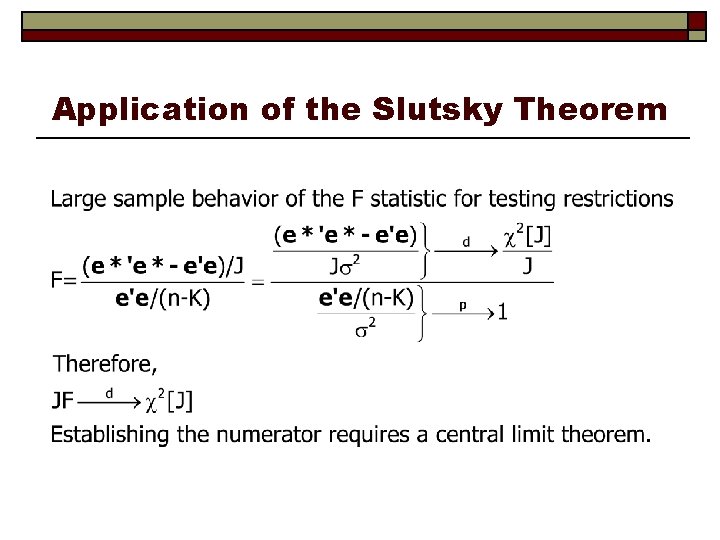

Application of the Slutsky Theorem

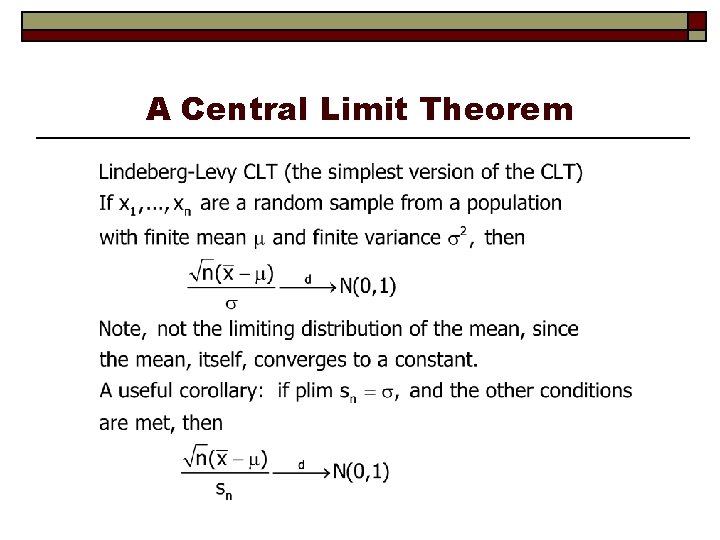

Central Limit Theorems describe the large sample behavior of random variables that involve sums of variables. “Tendency toward normality.” Generality: When you find sums of random variables, the CLT shows up eventually. The CLT does not state that means of samples have normal distributions.

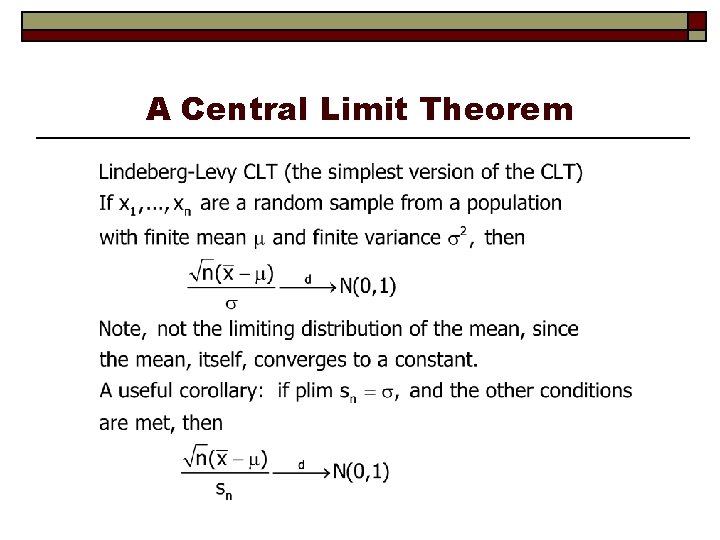

A Central Limit Theorem

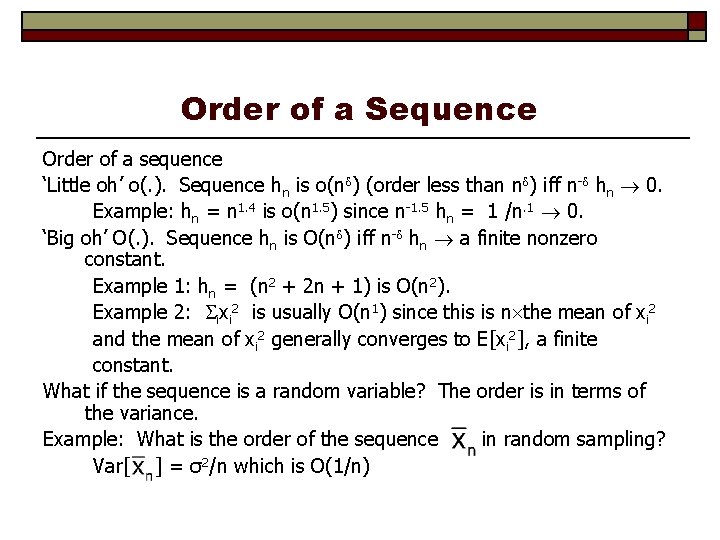

Lindeberg-Levy vs. Lindeberg-Feller

Lindeberg-Levy assumes random sampling: observations have the same mean and the same variance. Lindeberg-Feller allows variances to differ across observations, with some necessary assumptions about how they vary. Most econometric estimators require Lindeberg-Feller.
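A small simulation in the random-sampling (Lindeberg-Levy) setting shows the tendency toward normality. This is a sketch under assumptions made here: the draws are exponential with mean 1 and variance 1 (clearly non-normal), the sample size is 200, and the check is simply the share of standardized means falling within ±1.96, which should be close to 0.95 if the normal approximation is working.

```python
import math
import random


def standardized_mean(n, rng):
    """sqrt(n) * (xbar - mu) / sigma for n Exponential(1) draws (mu = sigma = 1)."""
    xbar = sum(rng.expovariate(1.0) for _ in range(n)) / n
    return math.sqrt(n) * (xbar - 1.0) / 1.0


rng = random.Random(7)  # fixed seed for repeatability
reps = 4000
z = [standardized_mean(200, rng) for _ in range(reps)]

# Share of standardized means inside the central 95% region of N(0, 1).
coverage = sum(abs(v) <= 1.96 for v in z) / reps
print("share within +/-1.96:", coverage)
```

Note that the statement concerns the standardized mean; the raw sample mean still collapses to a constant.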

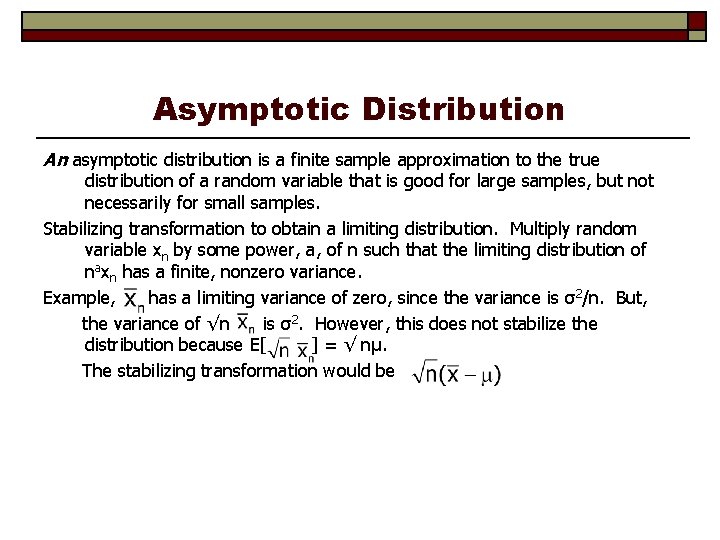

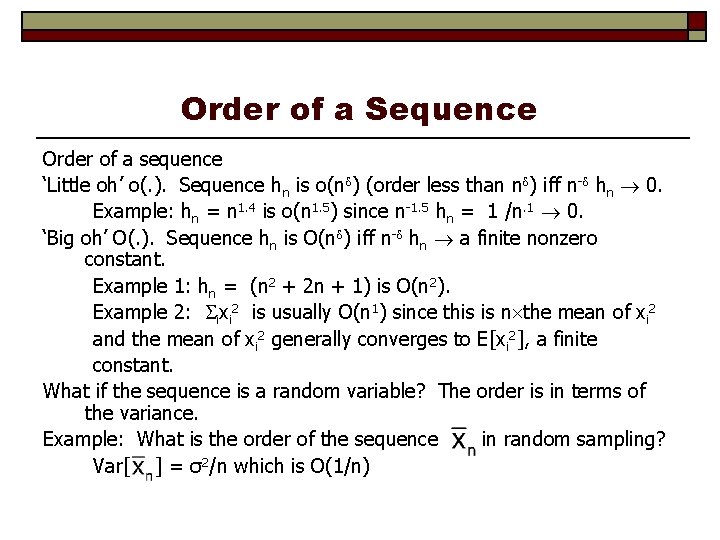

Order of a Sequence

‘Little oh’ o(·): a sequence $h_n$ is $o(n^{\delta})$ (order less than $n^{\delta}$) iff $n^{-\delta} h_n \to 0$.
Example: $h_n = n^{1.4}$ is $o(n^{1.5})$ since $n^{-1.5} h_n = 1/n^{0.1} \to 0$.

‘Big oh’ O(·): a sequence $h_n$ is $O(n^{\delta})$ iff $n^{-\delta} h_n \to$ a finite nonzero constant.
Example 1: $h_n = n^2 + 2n + 1$ is $O(n^2)$.
Example 2: $\sum_i x_i^2$ is usually $O(n^1)$, since this is $n \times$ the mean of $x_i^2$, and the mean of $x_i^2$ generally converges to $E[x_i^2]$, a finite constant.

What if the sequence is a random variable? The order is in terms of the variance. Example: what is the order of the sequence $\bar{x}_n$ in random sampling? Var$[\bar{x}_n] = \sigma^2/n$, which is $O(1/n)$.
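The two deterministic examples can be checked by scaling each sequence by the candidate power of n. A minimal sketch; the helper name `scaled` is introduced here for illustration only.

```python
def scaled(h, delta, n):
    """Return h(n) / n**delta, the quantity whose limit defines the order."""
    return h(n) / n ** delta


grid = (10, 10**4, 10**8)

# n^1.4 scaled by n^1.5 equals n^(-0.1): it shrinks toward 0, but slowly.
little_o = [scaled(lambda n: n ** 1.4, 1.5, n) for n in grid]

# (n^2 + 2n + 1) scaled by n^2 settles at a finite nonzero constant.
big_o = [scaled(lambda n: n ** 2 + 2 * n + 1, 2, n) for n in grid]

print("n^1.4 / n^1.5        :", little_o)
print("(n^2 + 2n + 1) / n^2 :", big_o)
```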

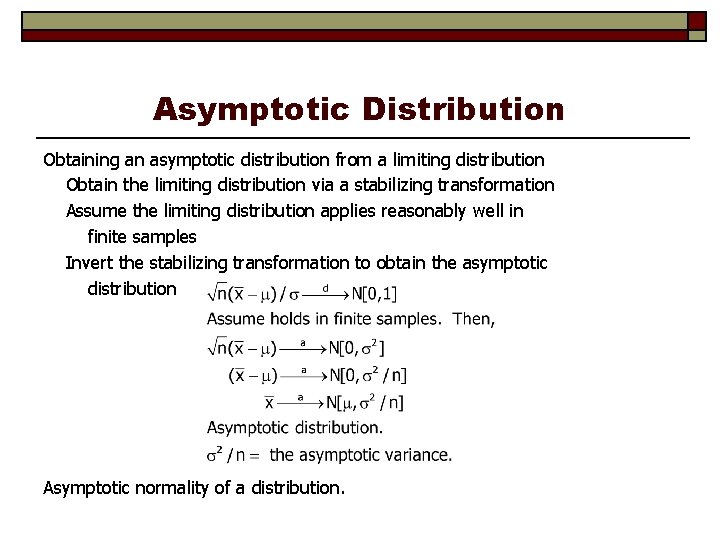

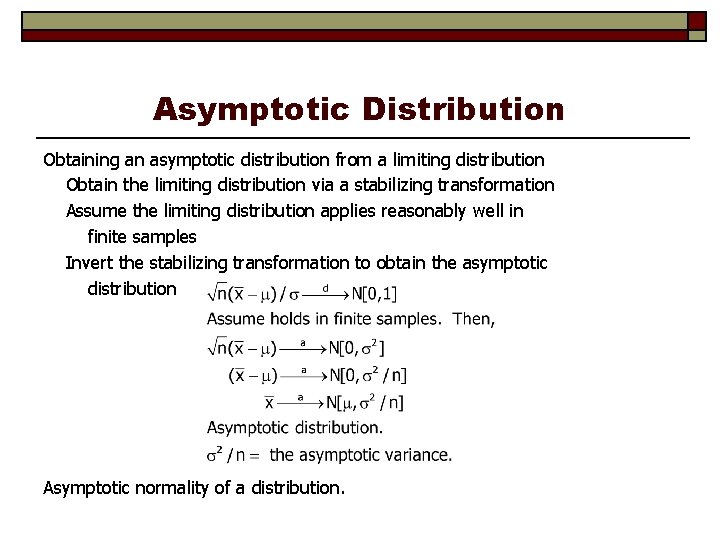

Asymptotic Distribution

An asymptotic distribution is a finite sample approximation to the true distribution of a random variable that is good for large samples, but not necessarily for small samples.

Stabilizing transformation to obtain a limiting distribution: multiply the random variable $x_n$ by some power, a, of n such that the limiting distribution of $n^a x_n$ has a finite, nonzero variance.

Example: $\bar{x}$ has a limiting variance of zero, since its variance is $\sigma^2/n$. But the variance of $\sqrt{n}\,\bar{x}$ is $\sigma^2$. However, this does not stabilize the distribution, because $E[\sqrt{n}\,\bar{x}] = \sqrt{n}\,\mu$. The stabilizing transformation would be $\sqrt{n}(\bar{x} - \mu)$.
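The sample-mean example can be illustrated by simulation. The sketch below assumes normal draws with μ = 3 and σ = 2 (values picked only for the illustration) and reports, for two sample sizes, the variance of the sample mean, the mean and variance of √n times the sample mean, and the mean and variance of the centered and scaled version. Only the last should have both a stable mean and a stable variance as n changes.

```python
import math
import random
import statistics


def stabilization_summary(n, reps, mu, sigma, rng):
    """Simulate `reps` sample means of size n and summarize three versions of them."""
    xbars = [sum(rng.gauss(mu, sigma) for _ in range(n)) / n for _ in range(reps)]
    root_n = math.sqrt(n)
    scaled = [root_n * xb for xb in xbars]             # sqrt(n) * xbar
    stabilized = [root_n * (xb - mu) for xb in xbars]  # sqrt(n) * (xbar - mu)
    return {
        "var_xbar": statistics.variance(xbars),
        "mean_scaled": statistics.fmean(scaled),
        "var_scaled": statistics.variance(scaled),
        "mean_stabilized": statistics.fmean(stabilized),
        "var_stabilized": statistics.variance(stabilized),
    }


rng = random.Random(11)  # fixed seed for repeatability
for n in (25, 400):
    print(n, stabilization_summary(n, 2000, mu=3.0, sigma=2.0, rng=rng))
```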

Asymptotic Distribution

Obtaining an asymptotic distribution from a limiting distribution:

1. Obtain the limiting distribution via a stabilizing transformation.
2. Assume the limiting distribution applies reasonably well in finite samples.
3. Invert the stabilizing transformation to obtain the asymptotic distribution.

Asymptotic normality of a distribution.

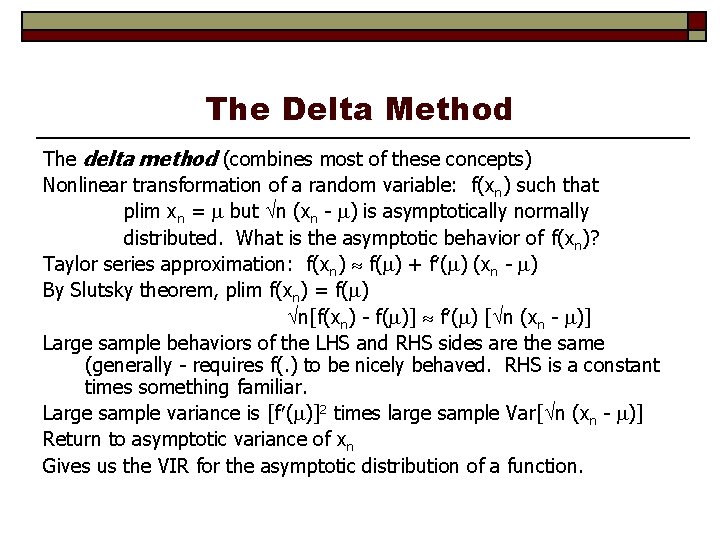

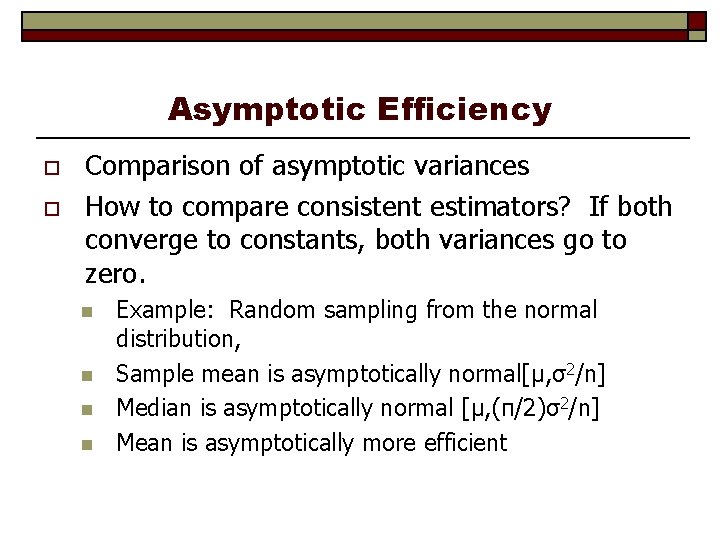

Asymptotic Efficiency

Comparison of asymptotic variances. How do we compare consistent estimators? If both converge to constants, both variances go to zero.

Example: random sampling from the normal distribution.

- Sample mean is asymptotically normal[μ, σ2/n]
- Median is asymptotically normal[μ, (π/2)σ2/n]
- Mean is asymptotically more efficient
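The mean versus median comparison can be checked with a simulation. This sketch assumes standard normal samples of size 101 and 4000 replications (both arbitrary choices) and compares the simulated variance of the median with that of the mean. The ratio should be in the neighborhood of π/2, with some finite-sample and simulation error.

```python
import math
import random
import statistics


def variance_ratio(n, reps, rng):
    """Simulated Var[median] / Var[mean] for normal samples of size n."""
    means, medians = [], []
    for _ in range(reps):
        sample = [rng.gauss(0.0, 1.0) for _ in range(n)]
        means.append(statistics.fmean(sample))
        medians.append(statistics.median(sample))
    return statistics.variance(medians) / statistics.variance(means)


ratio = variance_ratio(n=101, reps=4000, rng=random.Random(99))
print("simulated ratio:", ratio)
print("pi / 2         :", math.pi / 2)
```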

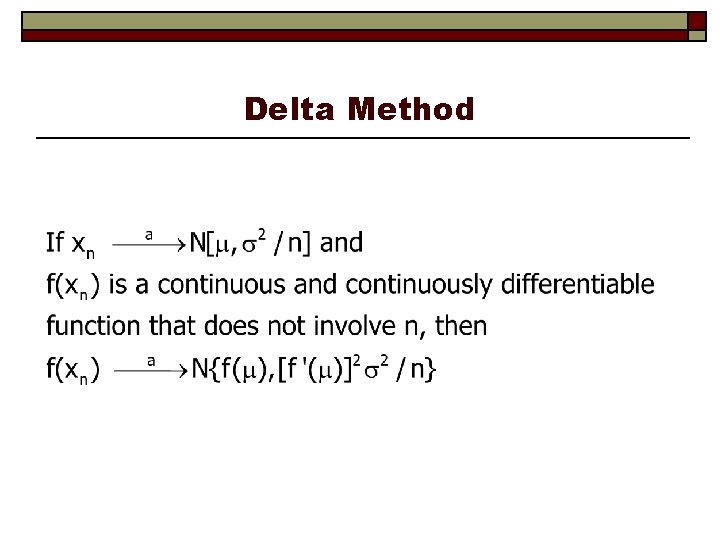

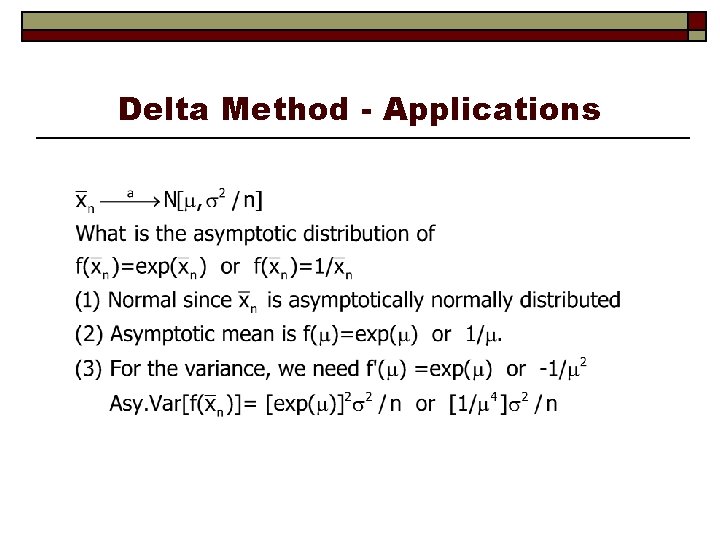

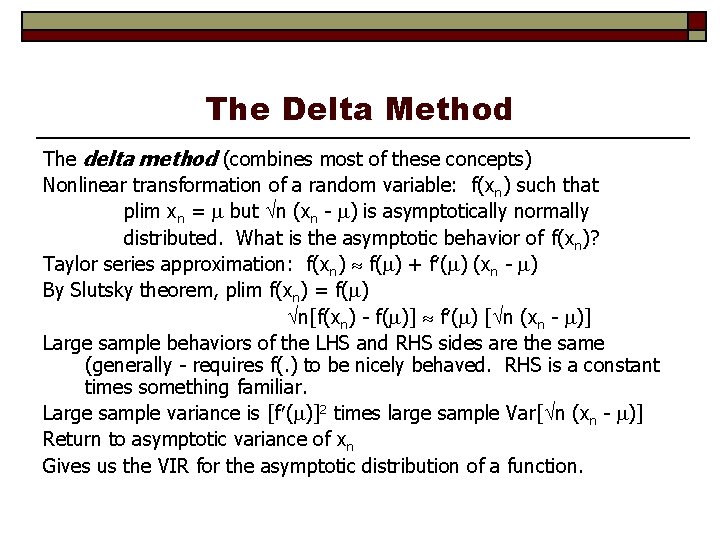

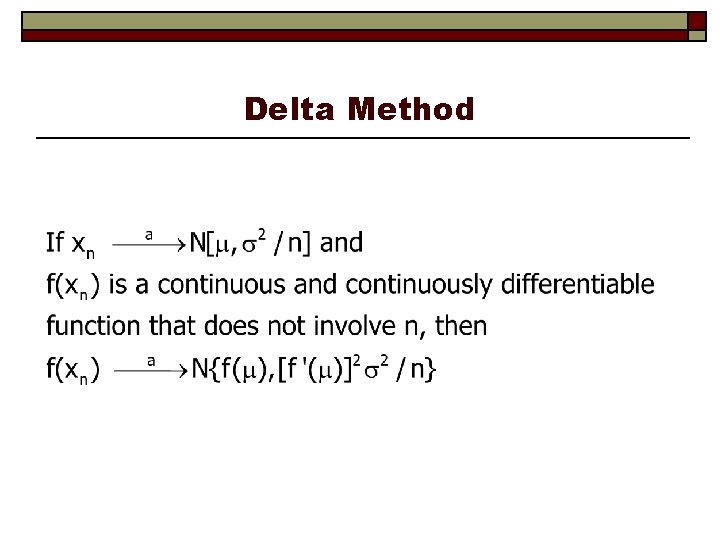

The Delta Method

The delta method combines most of these concepts. Nonlinear transformation of a random variable: $f(x_n)$ such that plim $x_n = \theta$ but $\sqrt{n}(x_n - \theta)$ is asymptotically normally distributed. What is the asymptotic behavior of $f(x_n)$?

Taylor series approximation:

$$f(x_n) \approx f(\theta) + f'(\theta)(x_n - \theta)$$

By the Slutsky theorem, plim $f(x_n) = f(\theta)$, and

$$\sqrt{n}\,[f(x_n) - f(\theta)] \approx f'(\theta)\,[\sqrt{n}\,(x_n - \theta)]$$

The large sample behaviors of the LHS and RHS are the same (generally; this requires f(·) to be nicely behaved). The RHS is a constant times something familiar. The large sample variance is $[f'(\theta)]^2$ times the large sample Var$[\sqrt{n}(x_n - \theta)]$. Return to the asymptotic variance of $x_n$. This gives us the VIR for the asymptotic distribution of a function.
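As a worked instance of the result, take the sample mean from a random sample with mean μ (assumed nonzero for the reciprocal) and variance σ², and apply the two functions used in the sample experiment later in these notes, 1/mean and exp(mean). The notation Asy.Var is used here for the asymptotic variance; this is a sketch of the algebra, not a statement of regularity conditions.

```latex
\sqrt{n}\,(\bar{x} - \mu) \xrightarrow{d} N(0, \sigma^2)
\quad\Longrightarrow\quad
\sqrt{n}\,\bigl[f(\bar{x}) - f(\mu)\bigr] \xrightarrow{d} N\bigl(0,\ [f'(\mu)]^2 \sigma^2\bigr)

f(x) = 1/x:\quad f'(\mu) = -\frac{1}{\mu^2},
\qquad \text{Asy.Var}\bigl[1/\bar{x}\bigr] = \frac{\sigma^2}{n\,\mu^4}

f(x) = e^{x}:\quad f'(\mu) = e^{\mu},
\qquad \text{Asy.Var}\bigl[e^{\bar{x}}\bigr] = \frac{e^{2\mu}\,\sigma^2}{n}
```

In practice μ and σ² are replaced by the sample mean and sample variance.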

Delta Method

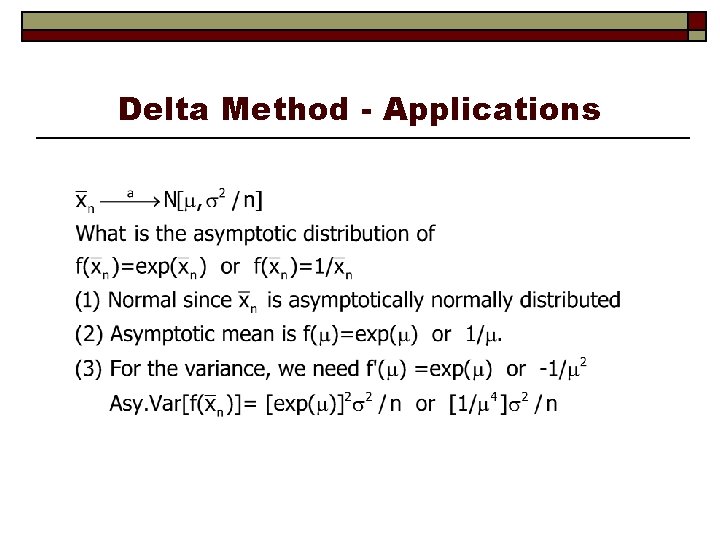

Delta Method - Applications

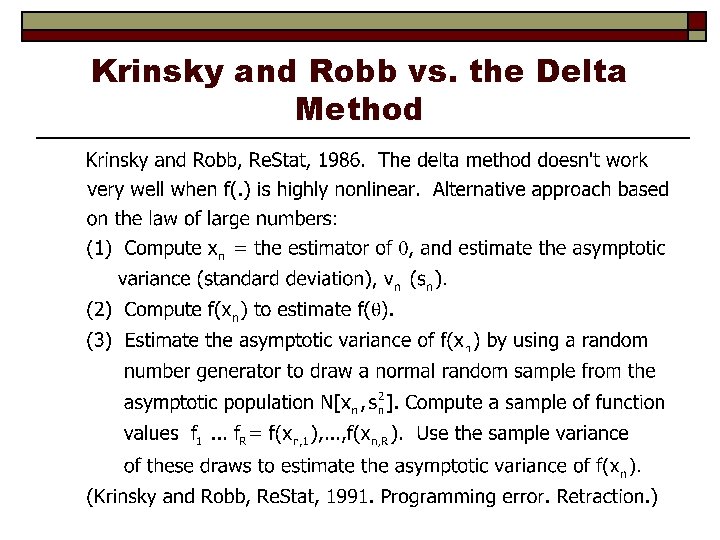

Krinsky and Robb vs. the Delta Method

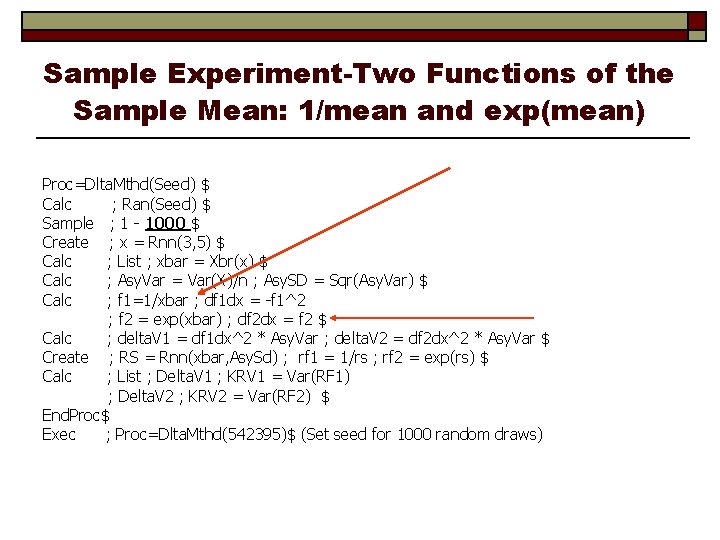

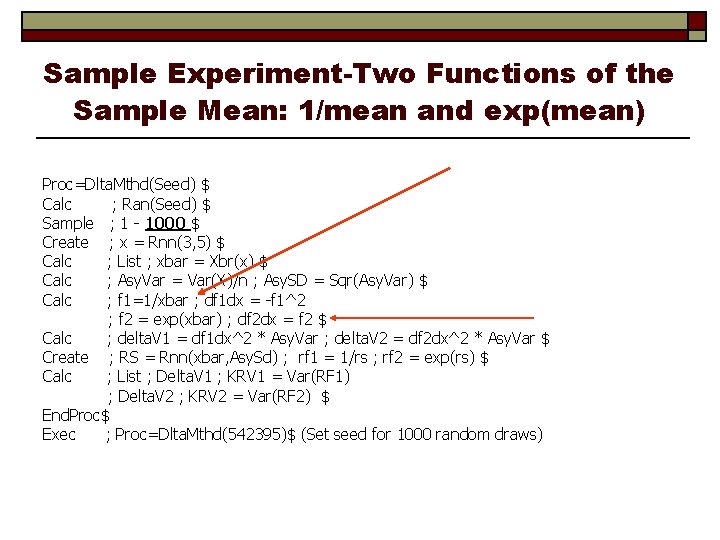

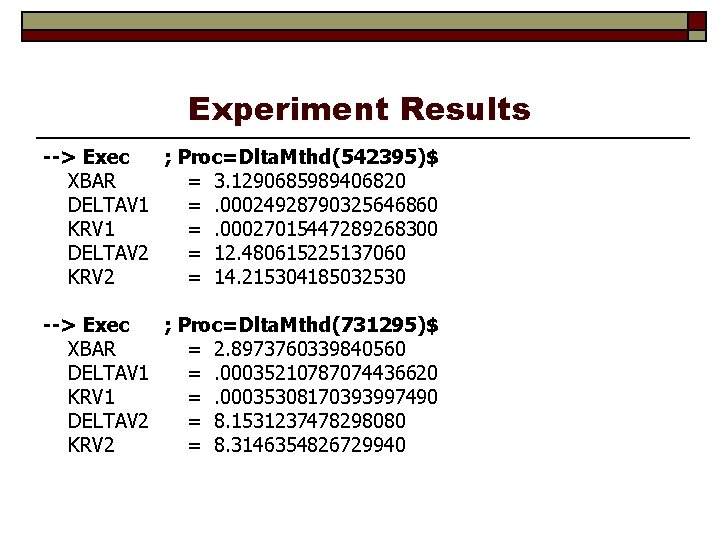

Sample Experiment: Two Functions of the Sample Mean, 1/mean and exp(mean)

    Proc=DltaMthd(Seed) $
    Calc ; Ran(Seed) $
    Sample ; 1 - 1000 $
    Create ; x = Rnn(3,5) $
    Calc ; List ; xbar = Xbr(x) $
    Calc ; AsyVar = Var(X)/n ; AsySD = Sqr(AsyVar) $
    Calc ; f1 = 1/xbar ; df1dx = -f1^2 ; f2 = exp(xbar) ; df2dx = f2 $
    Calc ; deltaV1 = df1dx^2 * AsyVar ; deltaV2 = df2dx^2 * AsyVar $
    Create ; RS = Rnn(xbar,AsySd) ; rf1 = 1/rs ; rf2 = exp(rs) $
    Calc ; List ; DeltaV1 ; KRV1 = Var(RF1) ; DeltaV2 ; KRV2 = Var(RF2) $
    EndProc $
    Exec ; Proc=DltaMthd(542395) $

(Set seed for 1000 random draws.)
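For readers without the original software, the experiment can be sketched in Python. This is a loose translation under stated assumptions, not the author's code: the data are read as normal draws with mean 3 and standard deviation 5 (which is consistent with the reported output), both the data and the Krinsky and Robb draws number 1000, and the function and key names are chosen here. Python's random number generator differs from the original, so the numbers will not match the reported results even with the same seed.

```python
import math
import random
import statistics


def delta_vs_krinsky_robb(seed, n=1000, draws=1000, mu=3.0, sigma=5.0):
    """Compare delta-method variances with simulated (Krinsky and Robb) variances."""
    rng = random.Random(seed)
    x = [rng.gauss(mu, sigma) for _ in range(n)]
    xbar = statistics.fmean(x)
    asy_var = statistics.variance(x) / n   # estimated variance of the sample mean
    asy_sd = math.sqrt(asy_var)

    # Delta method: squared derivative times the asymptotic variance of xbar.
    f1, f2 = 1.0 / xbar, math.exp(xbar)
    df1dx, df2dx = -f1 ** 2, f2
    delta_v1 = df1dx ** 2 * asy_var
    delta_v2 = df2dx ** 2 * asy_var

    # Krinsky and Robb: draw from the asymptotic distribution of xbar,
    # transform each draw, and take the sample variance of the results.
    rs = [rng.gauss(xbar, asy_sd) for _ in range(draws)]
    krv1 = statistics.variance([1.0 / r for r in rs])
    krv2 = statistics.variance([math.exp(r) for r in rs])

    return {"xbar": xbar, "delta_v1": delta_v1, "krv1": krv1,
            "delta_v2": delta_v2, "krv2": krv2}


result = delta_vs_krinsky_robb(542395)
for name, value in result.items():
    print(name, "=", value)
```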

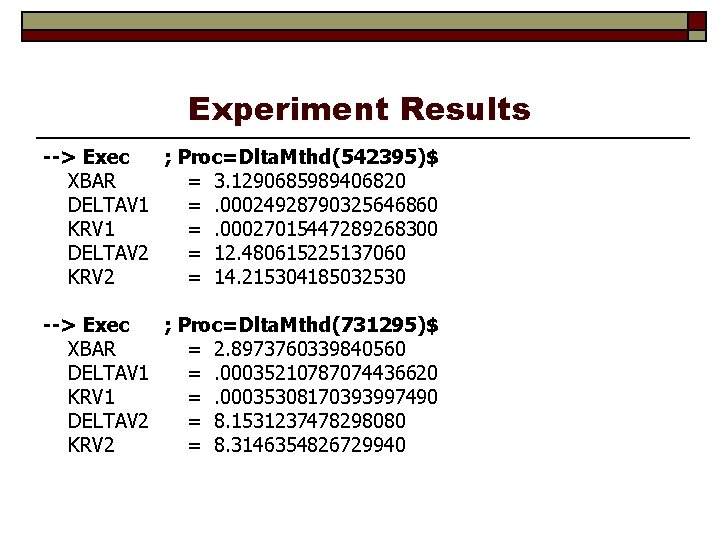

Experiment Results

    --> Exec ; Proc=DltaMthd(542395)$
    XBAR    = 3.1290685989406820
    DELTAV1 = .00024928790325646860
    KRV1    = .00027015447289268300
    DELTAV2 = 12.480615225137060
    KRV2    = 14.215304185032530
    --> Exec ; Proc=DltaMthd(731295)$
    XBAR    = 2.8973760339840560
    DELTAV1 = .00035210787074436620
    KRV1    = .00035308170393997490
    DELTAV2 = 8.1531237478298080
    KRV2    = 8.3146354826729940

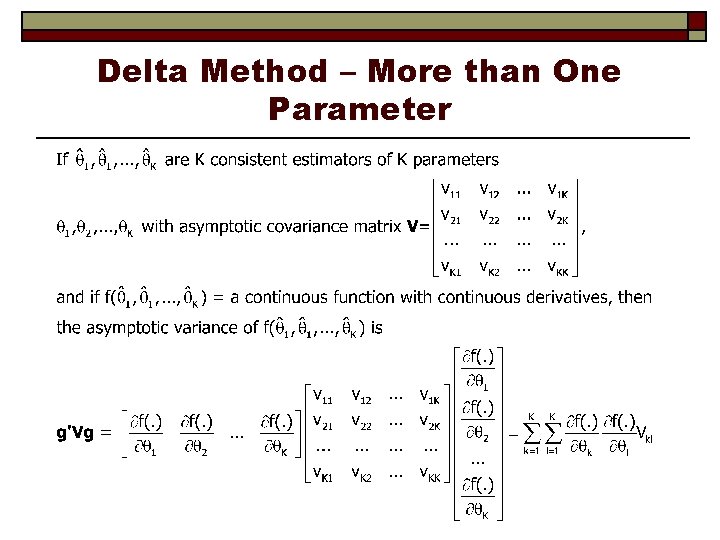

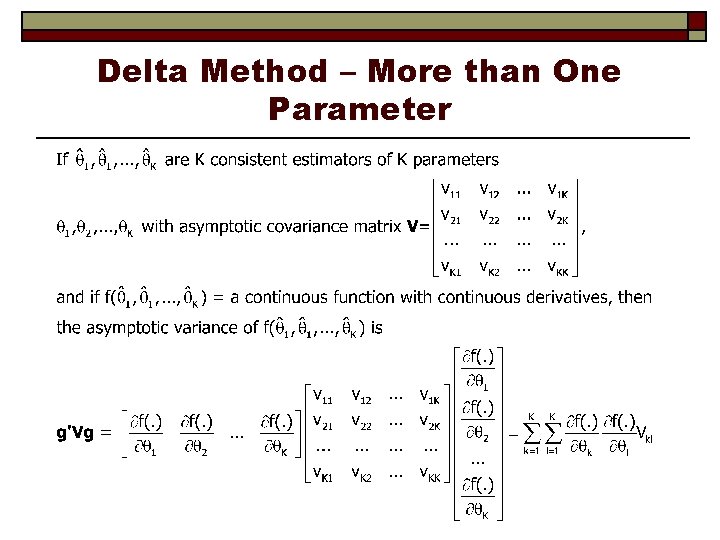

Delta Method – More than One Parameter

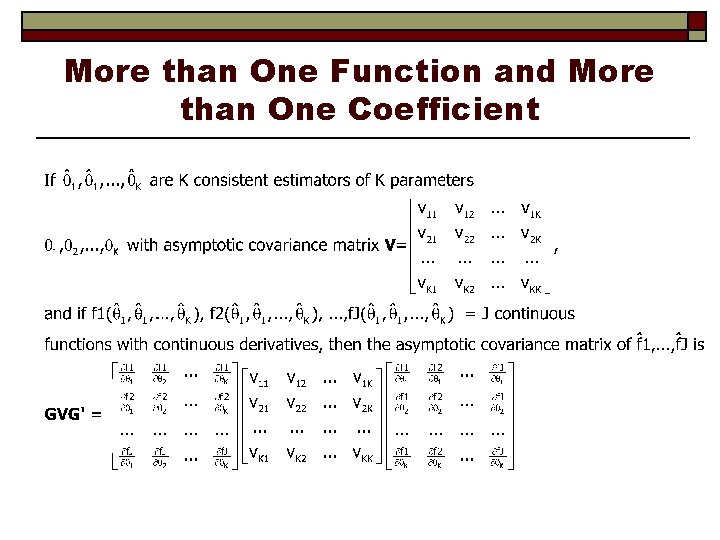

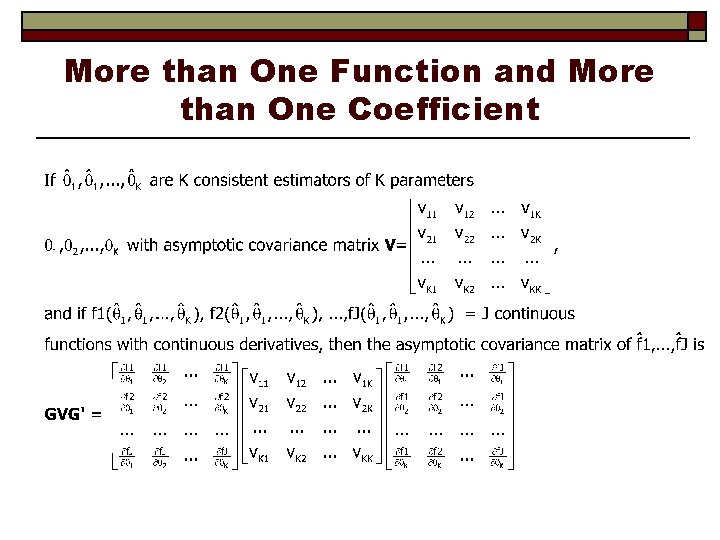

More than One Function and More than One Coefficient

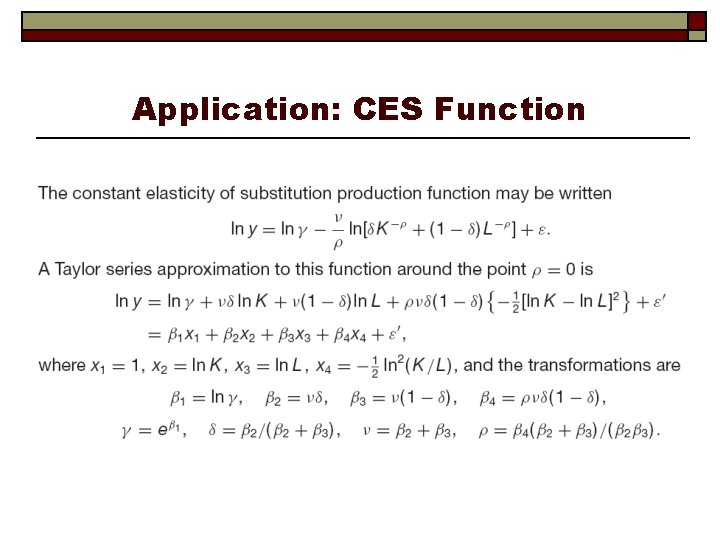

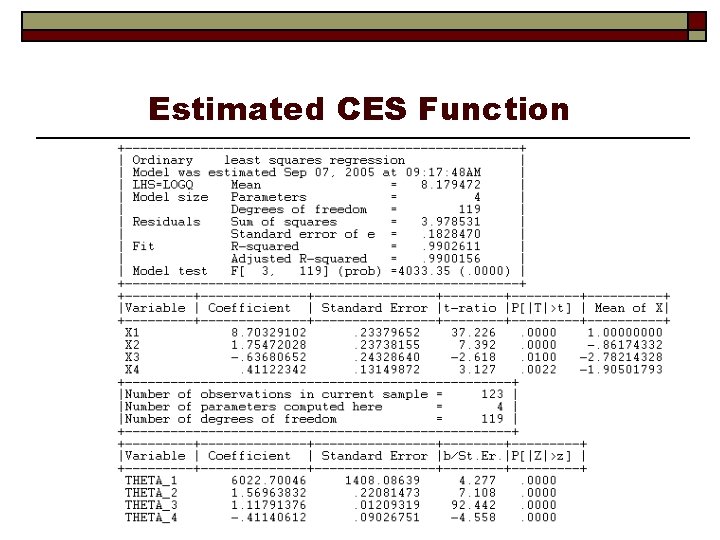

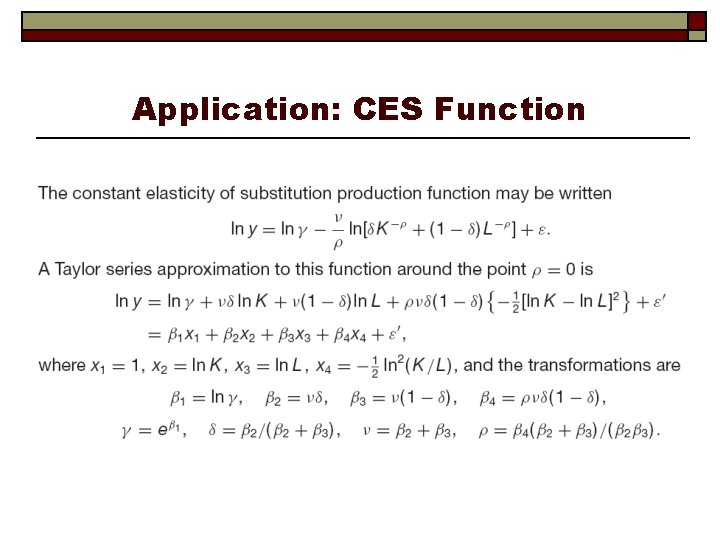

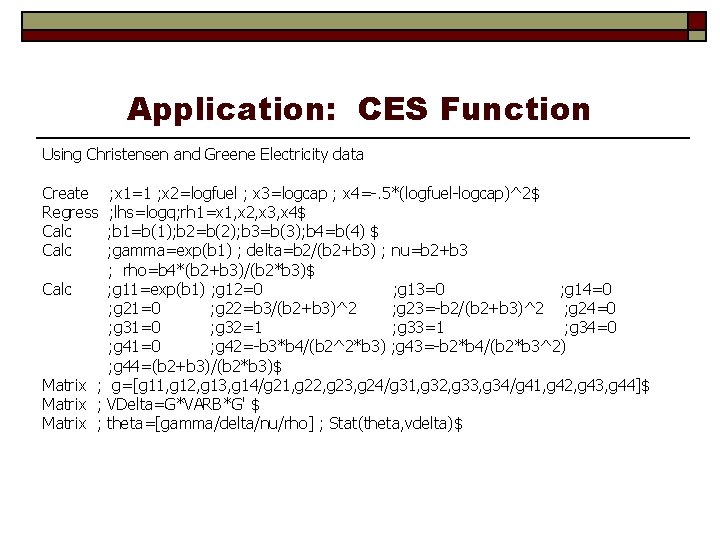

Application: CES Function

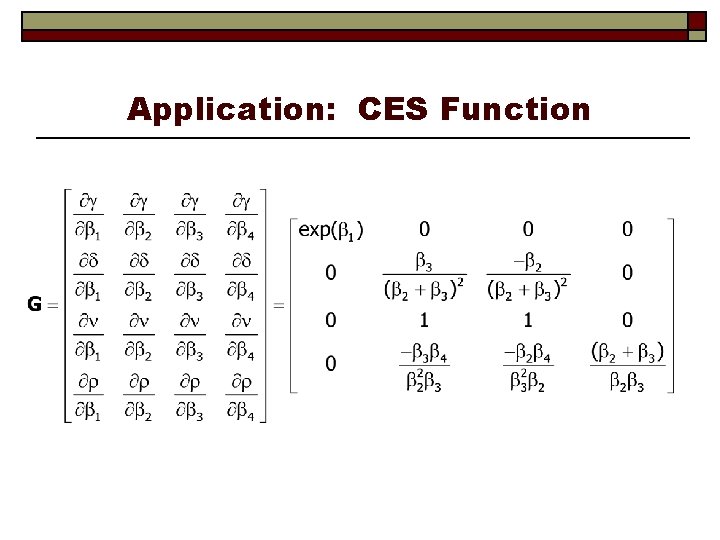

Application: CES Function

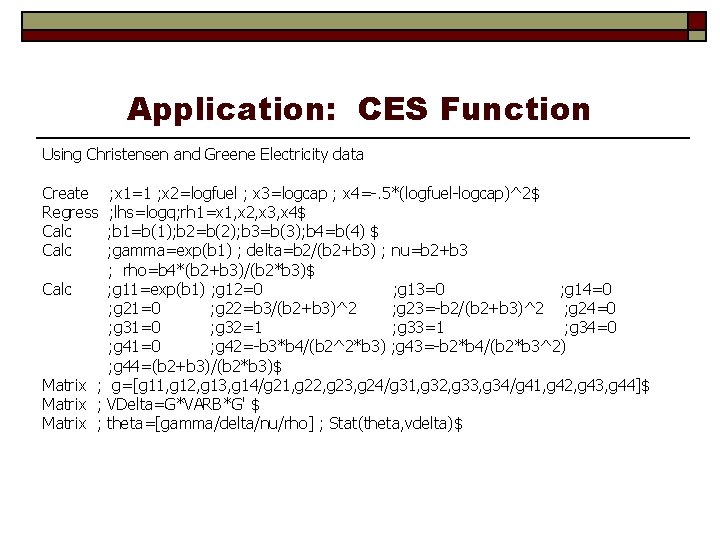

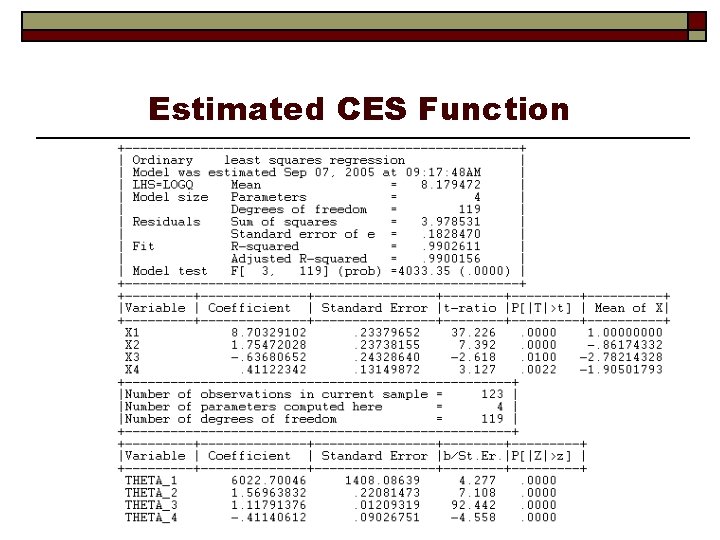

Application: CES Function

Using Christensen and Greene Electricity data

    Create ; x1=1 ; x2=logfuel ; x3=logcap ; x4=-.5*(logfuel-logcap)^2 $
    Regress ; lhs=logq ; rh1=x1,x2,x3,x4 $
    Calc ; b1=b(1) ; b2=b(2) ; b3=b(3) ; b4=b(4) $
    Calc ; gamma=exp(b1) ; delta=b2/(b2+b3) ; nu=b2+b3 ; rho=b4*(b2+b3)/(b2*b3) $
    Calc ; g11=exp(b1) ; g12=0 ; g13=0 ; g14=0
         ; g21=0 ; g22=b3/(b2+b3)^2 ; g23=-b2/(b2+b3)^2 ; g24=0
         ; g31=0 ; g32=1 ; g33=1 ; g34=0
         ; g41=0 ; g42=-b3*b4/(b2^2*b3) ; g43=-b2*b4/(b2*b3^2) ; g44=(b2+b3)/(b2*b3) $
    Matrix ; g=[g11,g12,g13,g14/g21,g22,g23,g24/g31,g32,g33,g34/g41,g42,g43,g44] $
    Matrix ; VDelta=G*VARB*G' $
    Matrix ; theta=[gamma/delta/nu/rho] ; Stat(theta,vdelta) $
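The Jacobian entries in the listing can be verified numerically. The sketch below codes the four functions of the coefficients and the analytic derivatives as they appear above, then compares them with central finite differences. The coefficient vector `b` is hypothetical (it is not an estimate from the electricity data), and the helper names are introduced here. A small `sandwich` function shows the G·V·G' step for any 4×4 covariance matrix supplied by the user.

```python
import math


def ces_params(b):
    """gamma, delta, nu, rho as functions of the regression coefficients b1..b4."""
    b1, b2, b3, b4 = b
    return [math.exp(b1), b2 / (b2 + b3), b2 + b3, b4 * (b2 + b3) / (b2 * b3)]


def analytic_jacobian(b):
    """Rows are d(gamma, delta, nu, rho) / d(b1, b2, b3, b4), simplified from the listing."""
    b1, b2, b3, b4 = b
    s = b2 + b3
    return [
        [math.exp(b1), 0.0, 0.0, 0.0],
        [0.0, b3 / s ** 2, -b2 / s ** 2, 0.0],
        [0.0, 1.0, 1.0, 0.0],
        [0.0, -b4 / b2 ** 2, -b4 / b3 ** 2, s / (b2 * b3)],
    ]


def numeric_jacobian(f, b, h=1e-6):
    """Central finite-difference Jacobian; rows index functions, columns index b."""
    columns = []
    for j in range(len(b)):
        up, down = list(b), list(b)
        up[j] += h
        down[j] -= h
        columns.append([(u - d) / (2 * h) for u, d in zip(f(up), f(down))])
    return [list(row) for row in zip(*columns)]


def sandwich(G, V):
    """Delta-method covariance G V G' for square matrices stored as nested lists."""
    k = len(G)
    GV = [[sum(G[i][m] * V[m][j] for m in range(k)) for j in range(k)] for i in range(k)]
    return [[sum(GV[i][m] * G[j][m] for m in range(k)) for j in range(k)] for i in range(k)]


b = [1.0, 0.4, 0.6, 0.1]  # hypothetical coefficient values, for checking only
G = analytic_jacobian(b)
G_num = numeric_jacobian(ces_params, b)
max_gap = max(abs(G[i][j] - G_num[i][j]) for i in range(4) for j in range(4))
print("largest analytic vs numeric difference:", max_gap)
```

A check of this kind is cheap insurance whenever derivatives for the delta method are coded by hand.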

Estimated CES Function

Asymptotics for Least Squares

Looking ahead…

Assumptions: convergence of $X'X/n$ (doesn’t require nonstochastic or stochastic X) and convergence of $X'\varepsilon/n$ to 0. Sufficient for consistency.

Assumptions: convergence of $(1/\sqrt{n})X'\varepsilon$ to a normal vector gives asymptotic normality.

What about asymptotic efficiency?
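As a preview of the consistency claim, the following sketch simulates a simple regression with one stochastic regressor and an independent disturbance, so that both conditions above hold by construction. The model y = 1 + 2x + ε, the normal distributions, and the function name are assumptions made for the illustration. The least squares slope should settle near 2 as n grows.

```python
import random


def ols_slope(n, rng, alpha=1.0, beta=2.0):
    """Least squares slope from n simulated observations of y = alpha + beta*x + e."""
    x = [rng.gauss(0.0, 1.0) for _ in range(n)]
    y = [alpha + beta * xi + rng.gauss(0.0, 1.0) for xi in x]
    xbar, ybar = sum(x) / n, sum(y) / n
    sxx = sum((xi - xbar) ** 2 for xi in x)
    sxy = sum((xi - xbar) * (yi - ybar) for xi, yi in zip(x, y))
    return sxy / sxx


rng = random.Random(2718)  # fixed seed for repeatability
for n in (50, 5_000, 50_000):
    print(n, ols_slope(n, rng))
```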