Visual Computing

Neural Network Basics I

Gang Cao

Outline
• Neural network
• CNN
• Attention in CV

Neural network

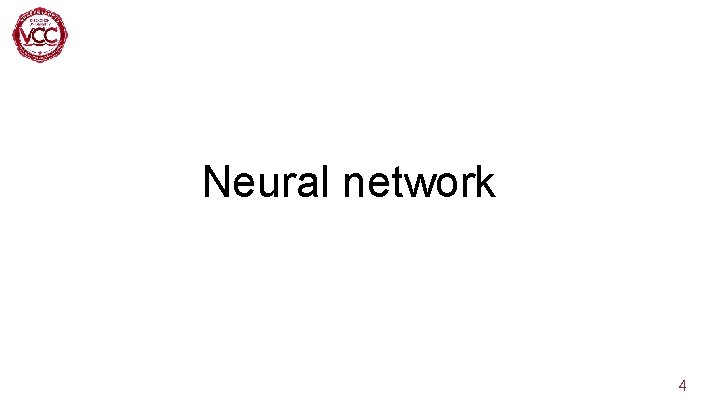

Neural network – A logistic regression neuron
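A single neuron computes a weighted sum of its inputs plus a bias and passes the result through the sigmoid, which is exactly logistic regression. A minimal NumPy sketch; the weights, bias and input are made-up values for illustration only.

```python
import numpy as np

def sigmoid(z):
    """Logistic function: squashes any real z into (0, 1)."""
    return 1.0 / (1.0 + np.exp(-z))

def neuron(x, w, b):
    """One logistic-regression neuron: a = sigmoid(w . x + b)."""
    z = np.dot(w, x) + b   # weighted sum of inputs plus bias
    return sigmoid(z)      # activation

# Hypothetical weights and input, chosen only for illustration
x = np.array([1.0, -1.0])
w = np.array([1.0, -2.0])
b = 1.0
a = neuron(x, w, b)  # z = 1 + 2 + 1 = 4, so a = sigmoid(4), about 0.982
```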

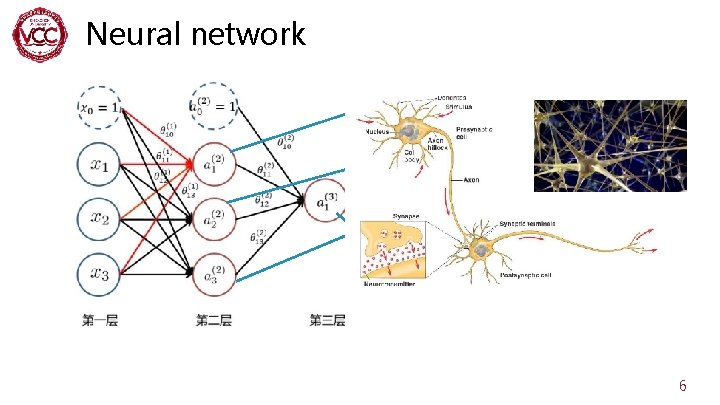

Neural network

![Neural network – Activation function](http://slidetodoc.com/presentation_image_h2/995cd462acb257ddfe1689a0f97dac57/image-11.jpg)

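Neural network – Activation function

Why do you need non-linear activation functions? With a linear activation, $a^{[1]} = z^{[1]} = W^{[1]}x + b^{[1]}$ and $a^{[2]} = z^{[2]} = W^{[2]}a^{[1]} + b^{[2]} = W'x + b'$, where $W' = W^{[2]}W^{[1]}$ and $b' = W^{[2]}b^{[1]} + b^{[2]}$. Stacked linear layers collapse into a single linear layer, so the hidden layer adds no expressive power.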
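The collapse of two linear layers into one can be checked numerically. A small NumPy sketch with random weights (sizes are arbitrary assumptions): with the identity as activation, the two-layer output equals a single layer with $W' = W^{[2]}W^{[1]}$ and $b' = W^{[2]}b^{[1]} + b^{[2]}$.

```python
import numpy as np

rng = np.random.default_rng(0)

# Two layers with a linear (identity) activation: a = z
W1, b1 = rng.normal(size=(4, 3)), rng.normal(size=4)
W2, b2 = rng.normal(size=(2, 4)), rng.normal(size=2)
x = rng.normal(size=3)

a1 = W1 @ x + b1   # z[1] = a[1]
a2 = W2 @ a1 + b2  # z[2] = a[2]

# The same mapping as ONE linear layer
W_prime = W2 @ W1
b_prime = W2 @ b1 + b2
a2_single = W_prime @ x + b_prime

# With a non-linearity (ReLU) in between, this collapse no longer holds in general
a2_relu = W2 @ np.maximum(W1 @ x + b1, 0) + b2
```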

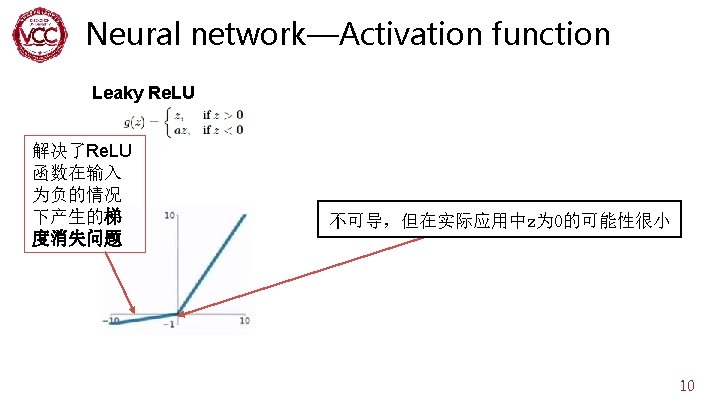

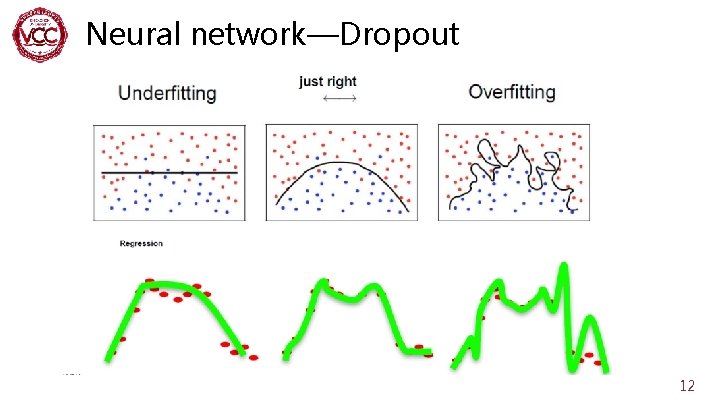

Neural network – Dropout

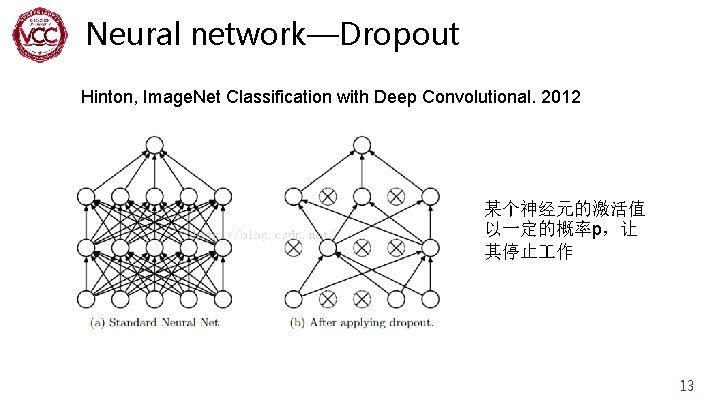

Neural network – Dropout

During training, each neuron's activation is stopped (set to zero) with a certain probability p.

Hinton, ImageNet Classification with Deep Convolutional Neural Networks, 2012
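A minimal NumPy sketch of dropout. Note the assumption: this is the common "inverted dropout" variant, which rescales surviving activations by 1 / (1 - p) during training; the 2012 ImageNet paper instead kept all neurons at test time and multiplied their outputs by 0.5. Both keep the expected activation the same between training and test.

```python
import numpy as np

def dropout(a, p, rng, train=True):
    """Inverted dropout: zero each activation with probability p during training.

    Survivors are scaled by 1 / (1 - p) so the expected activation is unchanged,
    which lets inference use the layer as-is (train=False).
    """
    if not train or p == 0.0:
        return a
    keep = rng.random(a.shape) >= p  # True with probability 1 - p
    return a * keep / (1.0 - p)

rng = np.random.default_rng(0)
a = np.ones(100_000)
out = dropout(a, p=0.5, rng=rng)
# About half the entries are 0.0, the rest are 2.0; the mean stays close to 1.0
```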

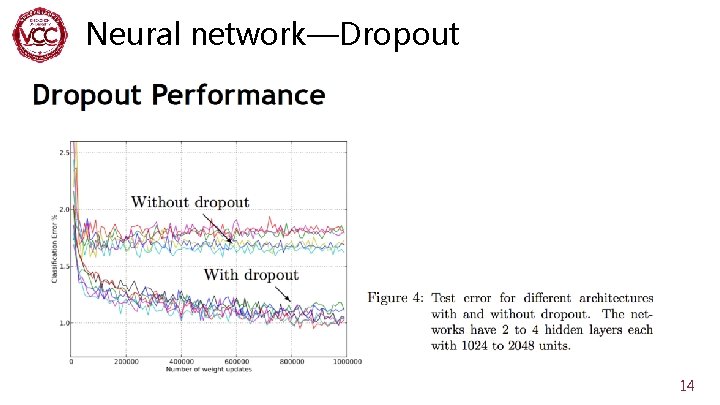

Neural network – Dropout

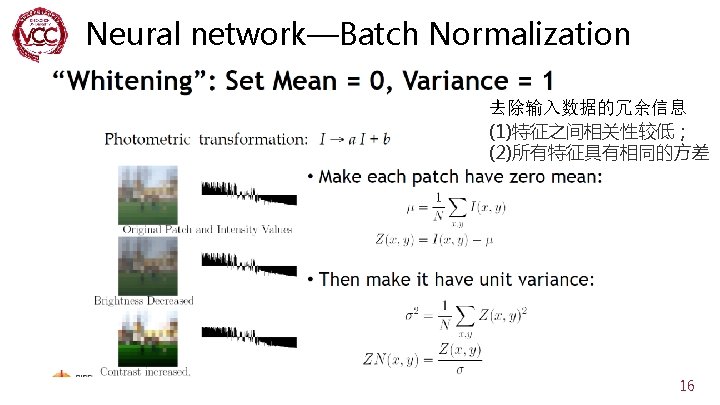

Neural network – Batch Normalization

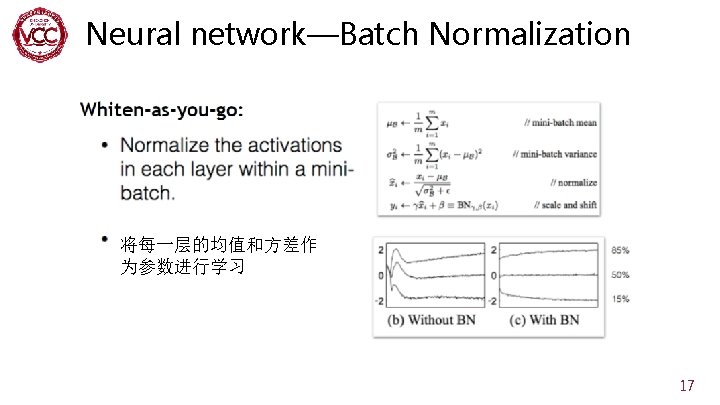

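Neural network – Batch Normalization

Each layer's inputs are normalized with the mini-batch mean and variance, then a learned scale γ and shift β are applied, so the mean and variance of each layer become parameters that are learned.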
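A sketch of the Batch Normalization forward pass in training mode, assuming a 2-D input of shape (batch, features). The γ and β values below are hypothetical stand-ins for learned parameters; the running averages used at inference time are omitted.

```python
import numpy as np

def batch_norm_train(x, gamma, beta, eps=1e-5):
    """Batch Normalization forward pass (training mode).

    x: (batch, features). Each feature is normalized with the mini-batch mean
    and variance, then scaled by gamma and shifted by beta (both learned).
    """
    mu = x.mean(axis=0)                    # per-feature mini-batch mean
    var = x.var(axis=0)                    # per-feature mini-batch variance
    x_hat = (x - mu) / np.sqrt(var + eps)  # zero mean, unit variance
    return gamma * x_hat + beta            # output mean becomes beta, std becomes gamma

rng = np.random.default_rng(0)
x = rng.normal(loc=5.0, scale=3.0, size=(256, 4))
gamma = np.array([1.0, 2.0, 0.5, 1.0])  # hypothetical learned scales
beta = np.array([0.0, 1.0, -1.0, 3.0])  # hypothetical learned shifts
y = batch_norm_train(x, gamma, beta)
```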

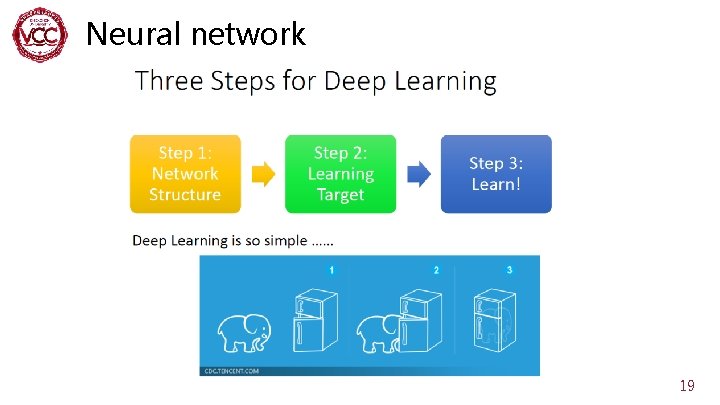

Neural network – Loss function

A good function should make the total loss over all training examples as small as possible.
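The slide does not fix the per-example loss. Assuming cross-entropy for a classifier, the total loss is the sum of the per-example losses, $L = \sum_n l_n$. A NumPy sketch with two made-up examples:

```python
import numpy as np

def softmax(z):
    """Row-wise softmax, shifted by the row max for numerical stability."""
    e = np.exp(z - z.max(axis=1, keepdims=True))
    return e / e.sum(axis=1, keepdims=True)

def total_cross_entropy(logits, labels):
    """Total loss L = sum over examples n of the cross-entropy l_n."""
    probs = softmax(logits)
    n = np.arange(len(labels))
    return -np.log(probs[n, labels]).sum()

# Two hypothetical examples, three classes
logits = np.array([[2.0, 0.0, 0.0],
                   [0.0, 0.0, 0.0]])
labels = np.array([0, 2])
L = total_cross_entropy(logits, labels)
```

Training searches for the parameters that make `L` as small as possible; a confident correct prediction (first example) contributes less than an uncertain one (second example).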

Neural network

CNN

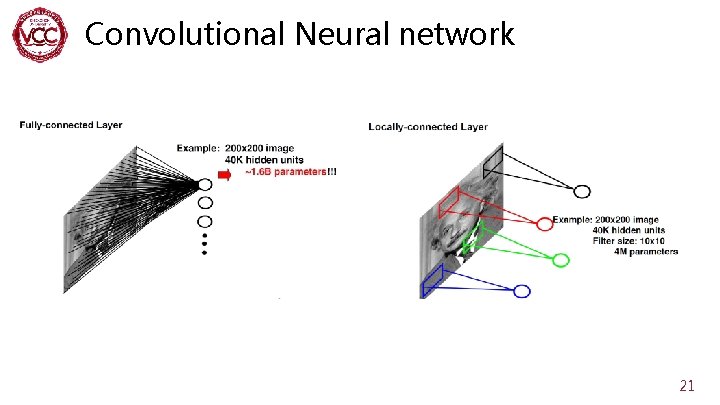

Convolutional Neural Network

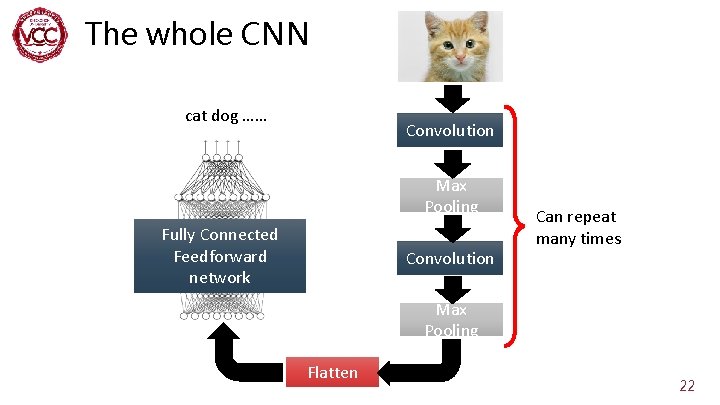

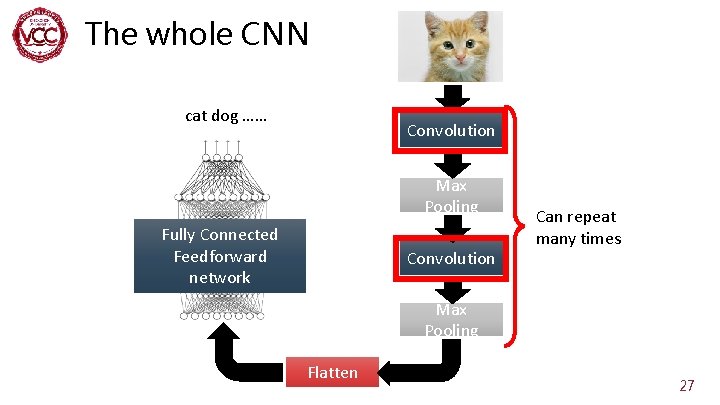

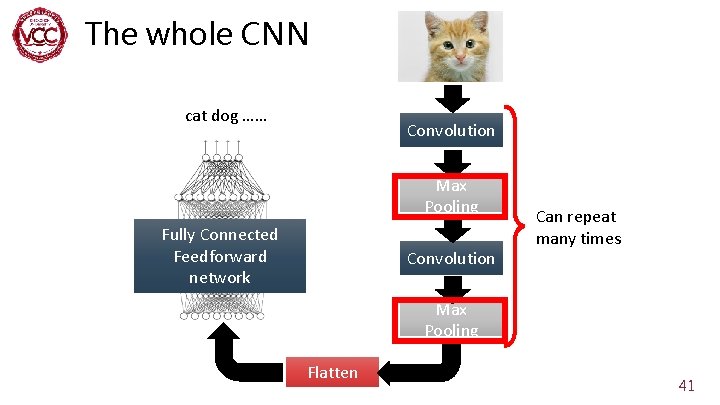

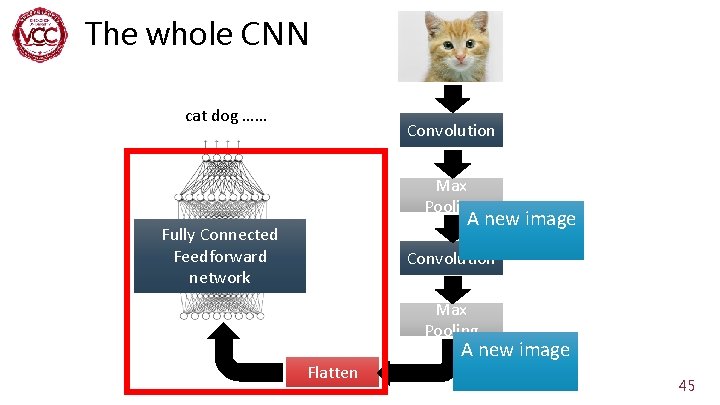

The whole CNN

Image → Convolution → Max Pooling → Convolution → Max Pooling (can repeat many times) → Flatten → Fully Connected Feedforward network → cat, dog, ……

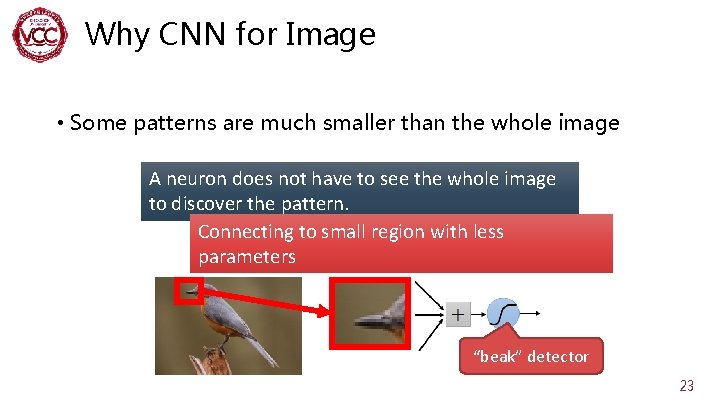

Why CNN for Image
• Some patterns are much smaller than the whole image.

A neuron does not have to see the whole image to discover the pattern (e.g., a “beak” detector). Connecting to a small region requires fewer parameters.

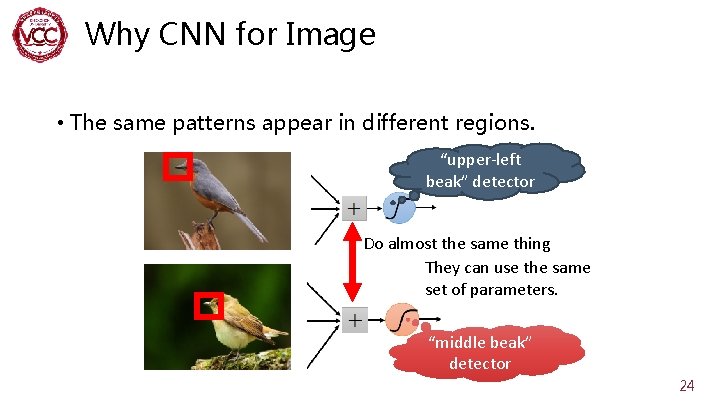

Why CNN for Image
• The same patterns appear in different regions.

The “upper-left beak” detector and the “middle beak” detector do almost the same thing, so they can use the same set of parameters.

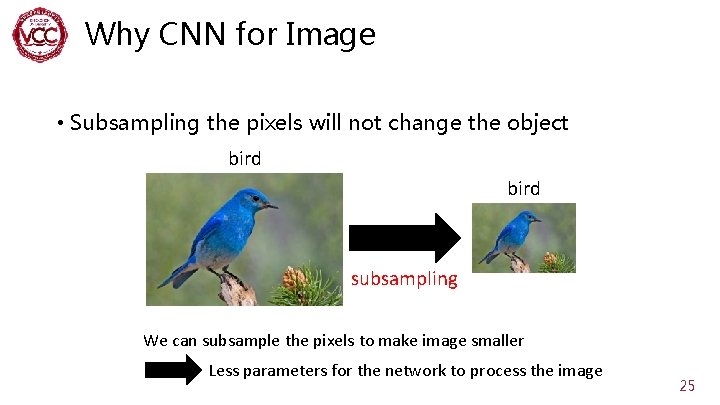

Why CNN for Image
• Subsampling the pixels will not change the object.

A subsampled bird is still a bird. We can subsample the pixels to make the image smaller, which leaves fewer parameters for the network to process the image.

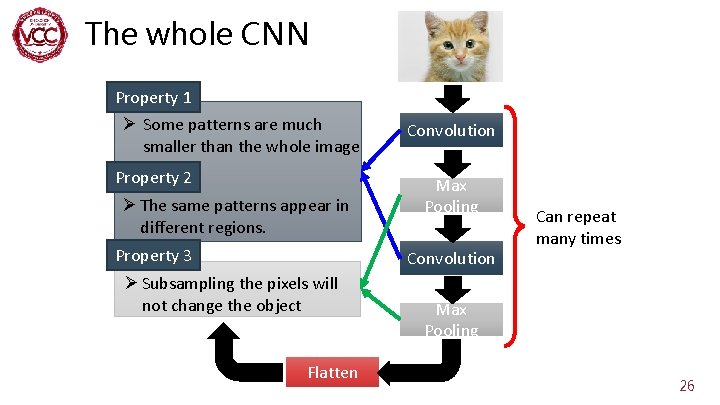

The whole CNN
• Property 1: Some patterns are much smaller than the whole image → Convolution
• Property 2: The same patterns appear in different regions → Convolution
• Property 3: Subsampling the pixels will not change the object → Max Pooling

Convolution and Max Pooling can repeat many times before Flatten.


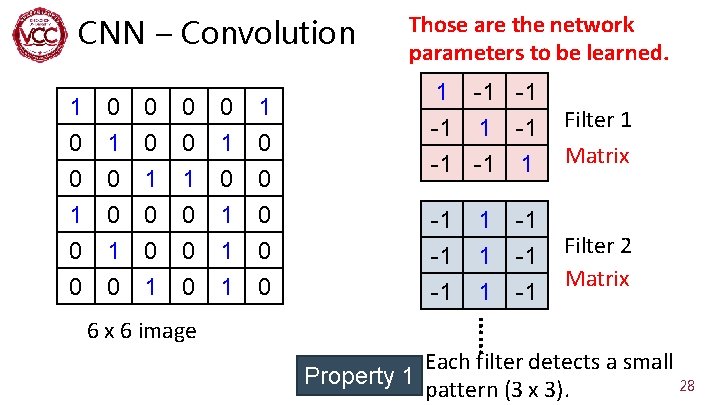

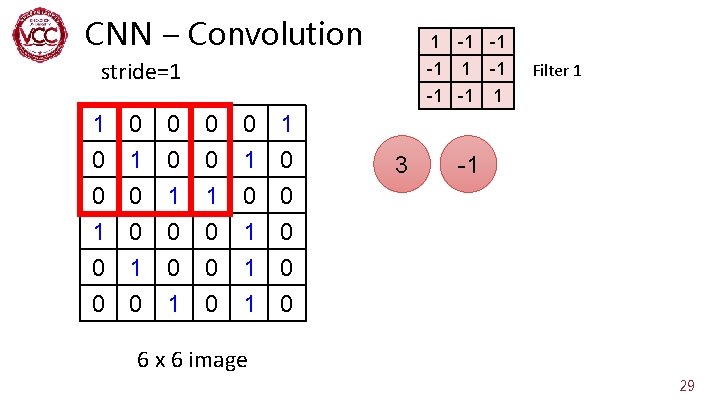

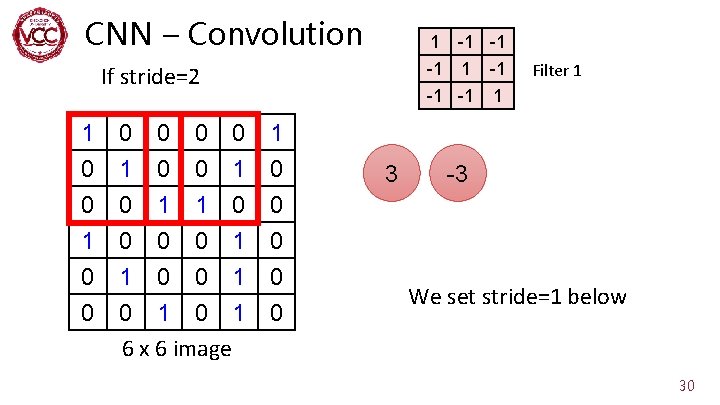

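CNN – Convolution

A 6 x 6 image and 3 x 3 filters (Filter 1, Filter 2, ……):

$$X=\begin{bmatrix}1&0&0&0&0&1\\0&1&0&0&1&0\\0&0&1&1&0&0\\1&0&0&0&1&0\\0&1&0&0&1&0\\0&0&1&0&1&0\end{bmatrix},\quad \text{Filter 1}=\begin{bmatrix}1&-1&-1\\-1&1&-1\\-1&-1&1\end{bmatrix},\quad \text{Filter 2}=\begin{bmatrix}-1&1&-1\\-1&1&-1\\-1&1&-1\end{bmatrix}$$

The filter entries are the network parameters to be learned. Each filter detects a small (3 x 3) pattern (Property 1).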

CNN – Convolution (stride=1)

Slide Filter 1 over the 6 x 6 image. At each position, take the inner product of the filter and the 3 x 3 patch it covers: the top-left patch gives 3.

CNN – Convolution (stride=2)

If stride=2, the filter moves two pixels at a time, so the first two outputs of Filter 1 are 3 and -3. We set stride=1 below.

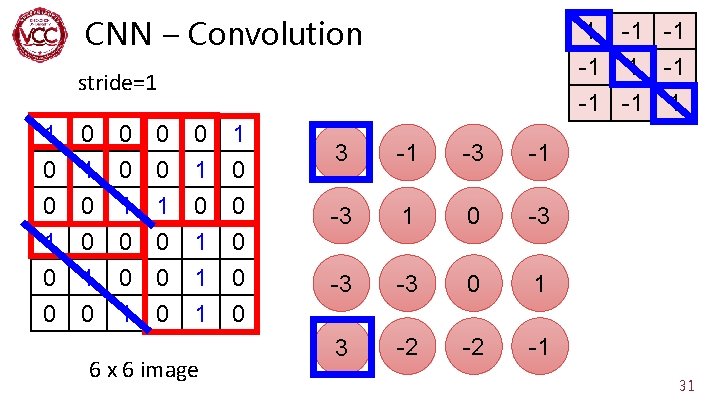

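CNN – Convolution (stride=1)

Sliding Filter 1 over the whole 6 x 6 image with stride=1 gives a 4 x 4 output:

$$\begin{bmatrix}3&-1&-3&-1\\-3&1&0&-3\\-3&-3&0&1\\3&-2&-2&-1\end{bmatrix}$$

The two 3s mark the two places where the image contains Filter 1's diagonal pattern.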

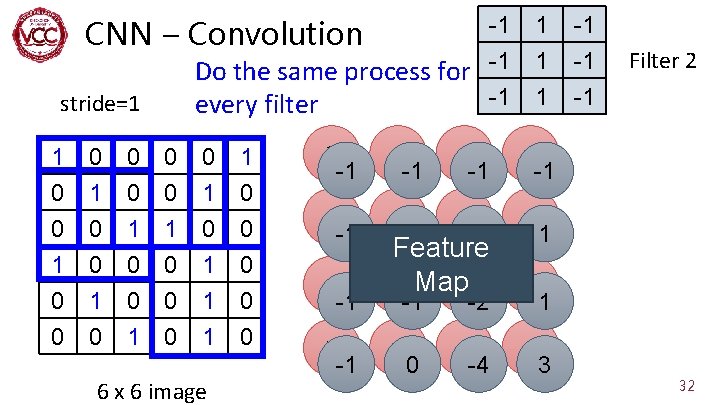

CNN – Convolution (stride=1)

Do the same process for every filter. Filter 2 gives a second 4 x 4 output:

$$\begin{bmatrix}-1&-1&-1&-1\\-1&-1&-2&1\\-1&-1&-2&1\\-1&0&-4&3\end{bmatrix}$$

Together, the outputs of all filters form the feature map.
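A NumPy sketch of the sliding-window computation above, assuming square inputs and no padding. The image and filter values are our reading of the example on the slides. As in most CNN libraries, the operation is cross-correlation: the kernel is not flipped.

```python
import numpy as np

def conv2d(image, kernel, stride=1):
    """Slide `kernel` over `image` (no padding), taking the inner product at each step."""
    k = kernel.shape[0]
    out = (image.shape[0] - k) // stride + 1
    result = np.zeros((out, out))
    for i in range(out):
        for j in range(out):
            patch = image[i * stride:i * stride + k, j * stride:j * stride + k]
            result[i, j] = np.sum(patch * kernel)
    return result

image = np.array([[1, 0, 0, 0, 0, 1],
                  [0, 1, 0, 0, 1, 0],
                  [0, 0, 1, 1, 0, 0],
                  [1, 0, 0, 0, 1, 0],
                  [0, 1, 0, 0, 1, 0],
                  [0, 0, 1, 0, 1, 0]])
filter1 = np.array([[1, -1, -1],
                    [-1, 1, -1],
                    [-1, -1, 1]])
filter2 = np.array([[-1, 1, -1],
                    [-1, 1, -1],
                    [-1, 1, -1]])

fmap1 = conv2d(image, filter1)                # 4 x 4, top-left value 3
fmap2 = conv2d(image, filter2)                # 4 x 4
fmap1_s2 = conv2d(image, filter1, stride=2)   # 2 x 2: [[3, -3], [-3, 0]]
feature_map = np.stack([fmap1, fmap2])        # one channel per filter: (2, 4, 4)
```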

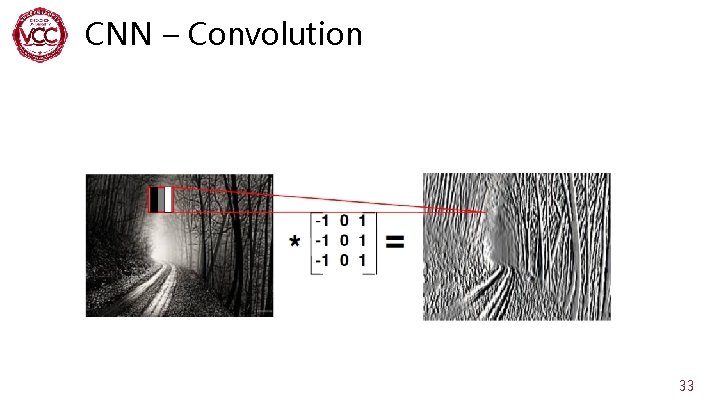

CNN – Convolution

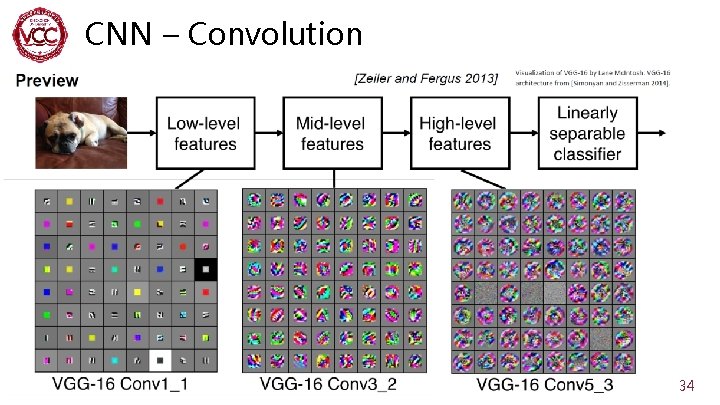

CNN – Convolution

![CNN – Convolution: VGG-16 conv5_3](http://slidetodoc.com/presentation_image_h2/995cd462acb257ddfe1689a0f97dac57/image-35.jpg)

CNN – Convolution

VGG-16, layer conv5_3 [Xiu-Shen Wei et al., 2017]

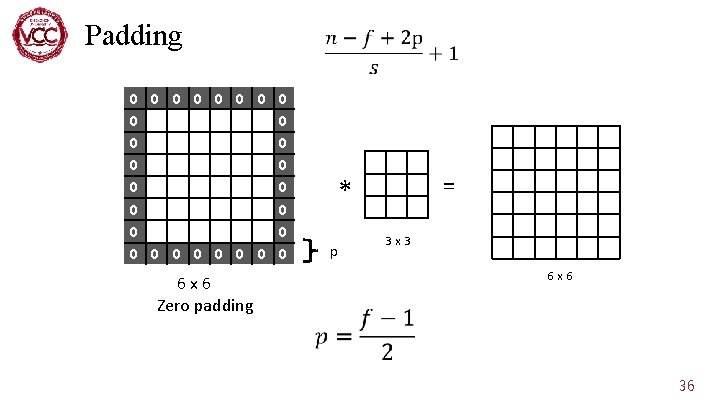

Padding

Convolving a 6 x 6 image with a 3 x 3 filter gives a 4 x 4 output. With zero padding (p = 1), a border of zeros is added around the image, so the output stays 6 x 6.
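The shapes in the padding example can be checked with NumPy. A sketch assuming an all-ones 6 x 6 input (the values do not matter for the shapes); `np.pad` adds the border of zeros and `sliding_window_view` enumerates every 3 x 3 window the filter visits.

```python
import numpy as np
from numpy.lib.stride_tricks import sliding_window_view

image = np.ones((6, 6))  # any 6 x 6 input; values do not matter for the shapes
f, p, s = 3, 1, 1        # filter size, zero padding, stride

padded = np.pad(image, pad_width=p, mode="constant", constant_values=0)  # 8 x 8
out_no_pad = (image.shape[0] - f) // s + 1   # 4
out_padded = (padded.shape[0] - f) // s + 1  # 6

# Every 3 x 3 window of the padded image: one window per output position
windows = sliding_window_view(padded, (f, f))
```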

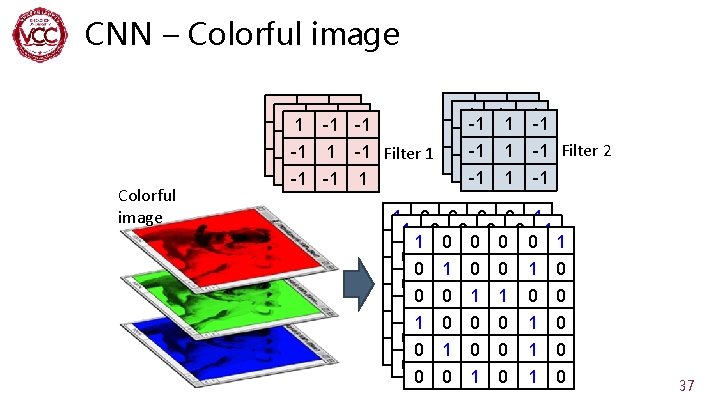

CNN – Colorful image

A color image has three channels (R, G, B), so the 6 x 6 image becomes a 6 x 6 x 3 input. Each filter spans all three channels, so Filter 1 and Filter 2 become 3 x 3 x 3, and each filter still produces a single output channel.
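A sketch of convolution on a multi-channel input, assuming channel-first arrays of shape (C, H, W). The binary RGB image and the ±1 filters are random, hypothetical values. Each filter has the same depth as the input, and each output value sums over the 3 x 3 window in every channel.

```python
import numpy as np

def conv2d_multichannel(image, kernel):
    """image: (C, H, W); kernel: (C, k, k). Returns one (H-k+1, W-k+1) channel.

    The filter spans all input channels, so each output value sums over
    the k x k window in every channel (R, G and B for a color image).
    """
    c, h, w = image.shape
    k = kernel.shape[1]
    out = np.zeros((h - k + 1, w - k + 1))
    for i in range(out.shape[0]):
        for j in range(out.shape[1]):
            out[i, j] = np.sum(image[:, i:i + k, j:j + k] * kernel)
    return out

rng = np.random.default_rng(0)
rgb = rng.integers(0, 2, size=(3, 6, 6))          # hypothetical 6 x 6 x 3 binary image
filters = rng.choice([-1, 1], size=(2, 3, 3, 3))  # two hypothetical 3 x 3 x 3 filters
feature_map = np.stack([conv2d_multichannel(rgb, f) for f in filters])  # (2, 4, 4)
```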

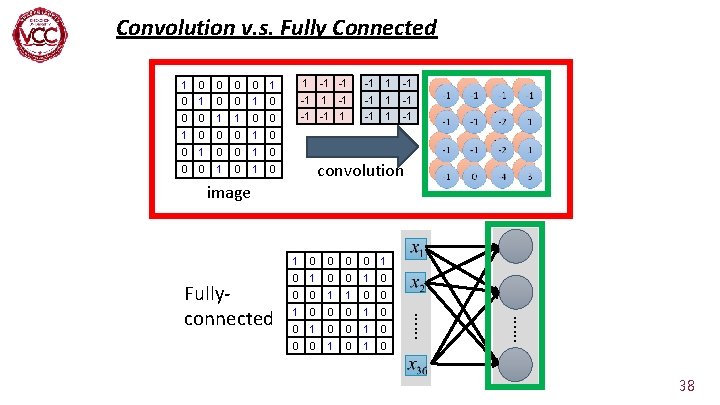

Convolution vs. Fully Connected

The 6 x 6 image can be processed either by convolution with filters such as Filter 1 and Filter 2, or by flattening it into a 36-dimensional vector and feeding it to a fully connected layer.

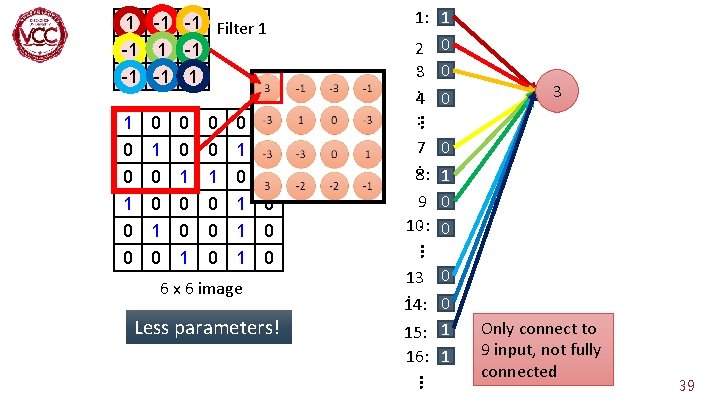

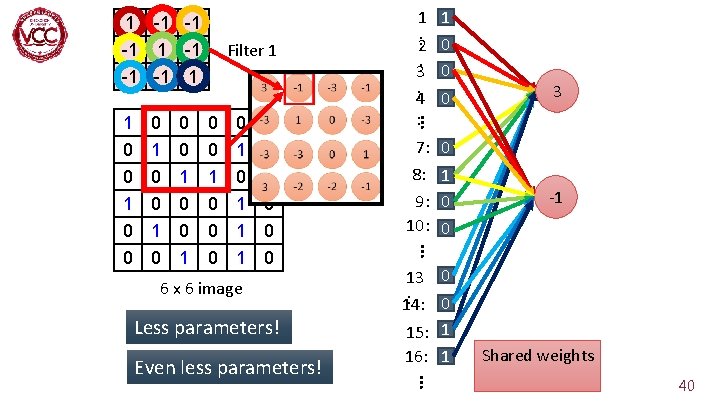

Flatten the 6 x 6 image into 36 inputs, numbered 1 to 36. The neuron that outputs the first value of Filter 1 (3) only connects to 9 inputs (1, 2, 3, 7, 8, 9, 13, 14, 15), not to all 36: it is not fully connected, so it needs fewer parameters!

The neuron that outputs the second value of Filter 1 (-1) connects to inputs 2, 3, 4, 8, 9, 10, 14, 15, 16 and uses the same 9 weights as the first neuron. These shared weights mean even fewer parameters!
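The two ideas above (local connectivity and shared weights) can be made concrete by writing the convolution as a fully connected layer whose weight matrix is mostly zeros and repeats the same 9 values in every row. A NumPy sketch, assuming stride 1, no padding, and the example image and Filter 1 as we read them from the slides; `conv_as_dense_matrix` is a hypothetical helper name.

```python
import numpy as np

def conv_as_dense_matrix(kernel, n):
    """Weight matrix (out*out, n*n) of a fully connected layer that computes the same
    result as a stride-1, unpadded convolution on an n x n image.

    Each row has only k*k non-zeros (local connectivity), and every row reuses
    the same k*k kernel values (shared weights).
    """
    k = kernel.shape[0]
    out = n - k + 1
    W = np.zeros((out * out, n * n))
    for i in range(out):
        for j in range(out):
            for di in range(k):
                for dj in range(k):
                    W[i * out + j, (i + di) * n + (j + dj)] = kernel[di, dj]
    return W

image = np.array([[1, 0, 0, 0, 0, 1],
                  [0, 1, 0, 0, 1, 0],
                  [0, 0, 1, 1, 0, 0],
                  [1, 0, 0, 0, 1, 0],
                  [0, 1, 0, 0, 1, 0],
                  [0, 0, 1, 0, 1, 0]])
filter1 = np.array([[1, -1, -1],
                    [-1, 1, -1],
                    [-1, -1, 1]])

W = conv_as_dense_matrix(filter1, 6)
outputs = W @ image.flatten()  # the 16 values of the 4 x 4 output, row by row

# 1-based input indices seen by the first and second output neurons
inputs_first = list(np.nonzero(W[0])[0] + 1)   # [1, 2, 3, 7, 8, 9, 13, 14, 15]
inputs_second = list(np.nonzero(W[1])[0] + 1)  # [2, 3, 4, 8, 9, 10, 14, 15, 16]

fully_connected_params = W.size          # 16 * 36 = 576 if every weight were free
local_params = np.count_nonzero(W)       # 16 * 9 = 144 with local connectivity only
shared_params = filter1.size             # 9 once the weights are shared
```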


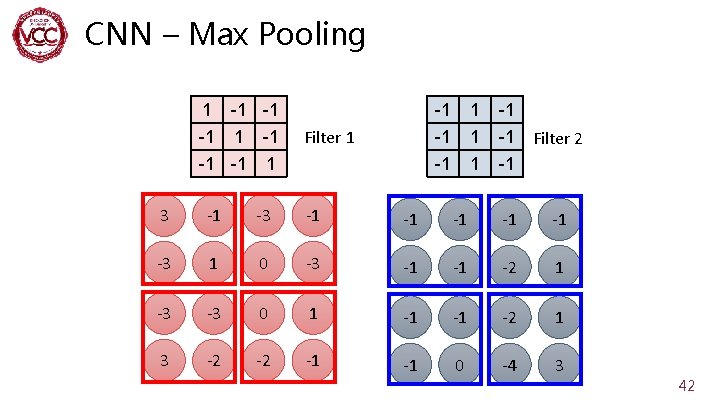

CNN – Max Pooling

Start from the 4 x 4 outputs of Filter 1 and Filter 2. Split each output into 2 x 2 blocks and keep only the maximum value of each block.

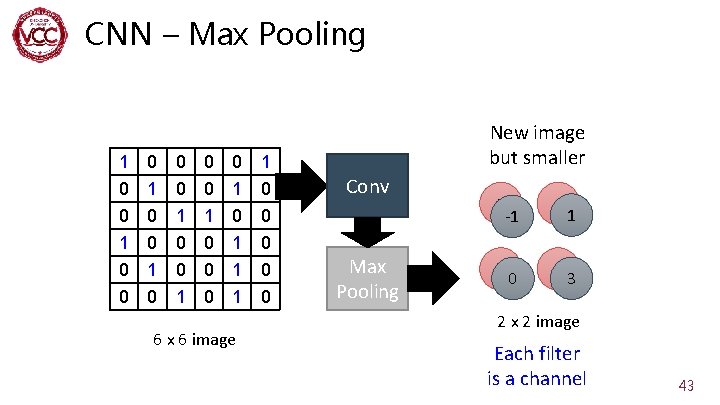

CNN – Max Pooling

6 x 6 image → Conv → Max Pooling → a new but smaller 2 x 2 image:

$$\begin{bmatrix}3&0\\3&1\end{bmatrix}\ (\text{Filter 1}),\qquad \begin{bmatrix}-1&1\\0&3\end{bmatrix}\ (\text{Filter 2})$$

Each filter is a channel.
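A NumPy sketch of non-overlapping 2 x 2 max pooling, applied to the two 4 x 4 outputs from the convolution example (values as read from the slides). The reshape groups each 2 x 2 block onto its own axes so the maximum can be taken in one call.

```python
import numpy as np

def max_pool(fmap, size=2):
    """Non-overlapping max pooling: keep the max of each size x size block.

    Rows/columns that do not fill a whole block are dropped.
    """
    h, w = fmap.shape
    cropped = fmap[:h - h % size, :w - w % size]
    blocks = cropped.reshape(h // size, size, w // size, size)
    return blocks.max(axis=(1, 3))

fmap1 = np.array([[3, -1, -3, -1], [-3, 1, 0, -3], [-3, -3, 0, 1], [3, -2, -2, -1]])
fmap2 = np.array([[-1, -1, -1, -1], [-1, -1, -2, 1], [-1, -1, -2, 1], [-1, 0, -4, 3]])
pooled = np.stack([max_pool(fmap1), max_pool(fmap2)])  # (2, 2, 2): each filter is a channel
```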

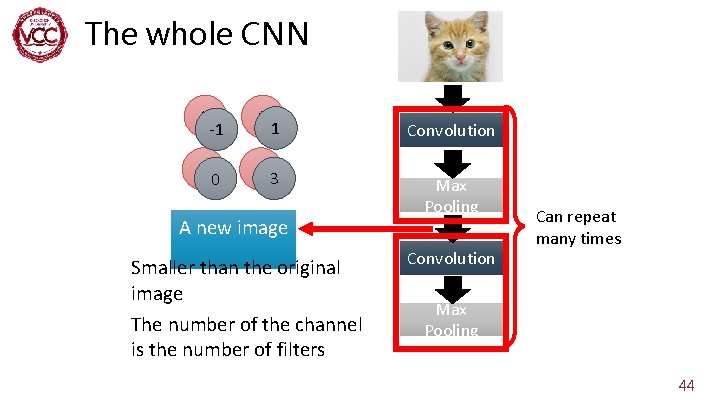

The whole CNN

Convolution followed by Max Pooling produces a new image that is smaller than the original image. The number of channels is the number of filters. Convolution and Max Pooling can repeat many times.

The whole CNN

Image → Convolution → Max Pooling → a new image → Convolution → Max Pooling → a new image → Flatten → Fully Connected Feedforward network → cat, dog, ……

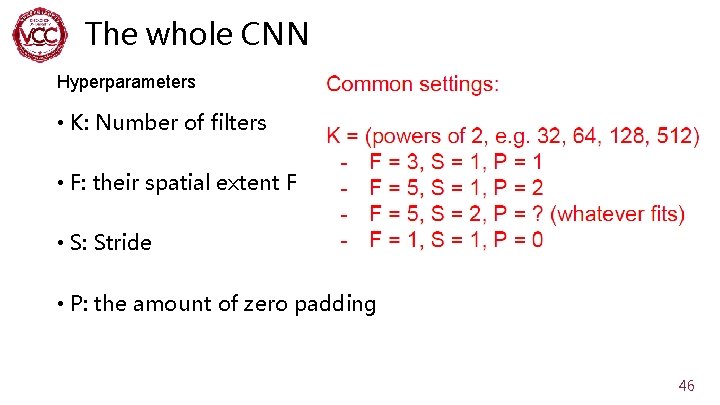

The whole CNN

Hyperparameters:
• K: number of filters
• F: their spatial extent
• S: stride
• P: the amount of zero padding
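With these four hyperparameters, the output size of a convolution layer follows the formula used in the cs231n notes: $(W - F + 2P)/S + 1$ per spatial dimension, with depth K. A plain-Python sketch; the function names are hypothetical, and floor division is an assumption that drops leftover border pixels when the division is not exact.

```python
def conv_output_shape(w_in, h_in, k, f, s, p):
    """Output volume of a conv layer with K filters of size F x F, stride S, zero padding P.

    Spatial size per dimension: (W - F + 2P) / S + 1 (floored); depth equals K.
    """
    w_out = (w_in - f + 2 * p) // s + 1
    h_out = (h_in - f + 2 * p) // s + 1
    return w_out, h_out, k

def conv_param_count(d_in, k, f):
    """Each of the K filters has F * F * D_in weights plus one bias."""
    return k * (f * f * d_in + 1)

print(conv_output_shape(6, 6, k=2, f=3, s=1, p=0))  # (4, 4, 2)
print(conv_output_shape(6, 6, k=2, f=3, s=2, p=0))  # (2, 2, 2)
print(conv_output_shape(6, 6, k=2, f=3, s=1, p=1))  # (6, 6, 2)
print(conv_param_count(d_in=3, k=2, f=3))           # 56 for two 3 x 3 x 3 filters
```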

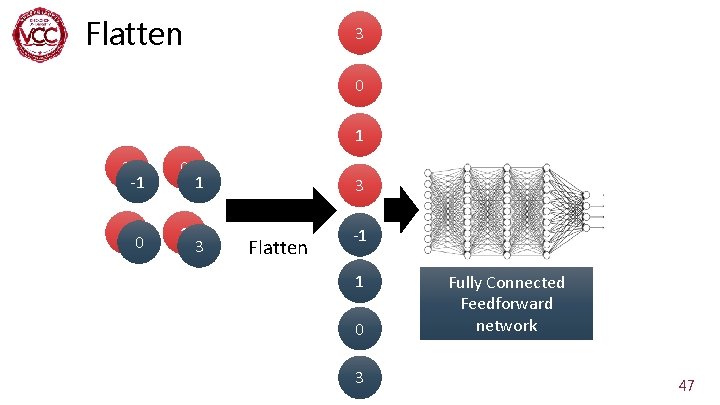

Flatten

The pooled channels (2 x 2 x 2 = 8 values) are flattened into a single vector, which is the input to the Fully Connected Feedforward network.
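A NumPy sketch of Flatten followed by one fully connected layer with softmax. The pooled values come from the max-pooling example; the two classes and the random weights are hypothetical. Row-major (C-order) flattening is an assumption, and any fixed order works as long as it is used consistently.

```python
import numpy as np

pooled = np.array([[[3, 0], [3, 1]],     # channel from Filter 1
                   [[-1, 1], [0, 3]]])   # channel from Filter 2
x = pooled.flatten()                     # 8 values, row-major (C-order)

rng = np.random.default_rng(0)
n_classes = 2                            # hypothetical: e.g. "cat" and "dog"
W = rng.normal(size=(n_classes, x.size)) # hypothetical fully connected weights
b = np.zeros(n_classes)

scores = W @ x + b
e = np.exp(scores - scores.max())        # numerically stable softmax
probs = e / e.sum()
```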


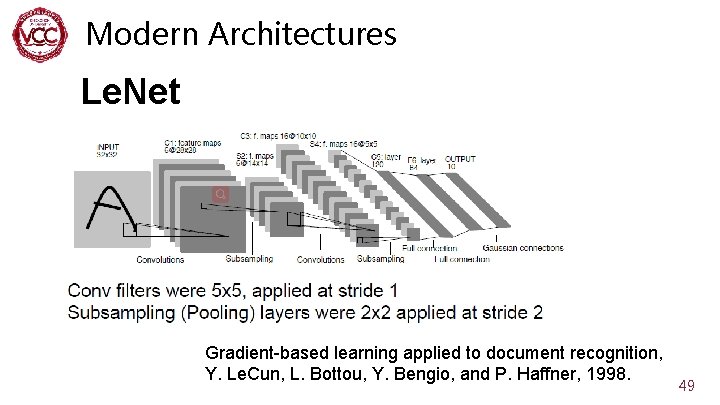

Modern Architectures – LeNet

Gradient-based learning applied to document recognition, Y. LeCun, L. Bottou, Y. Bengio, and P. Haffner, 1998.

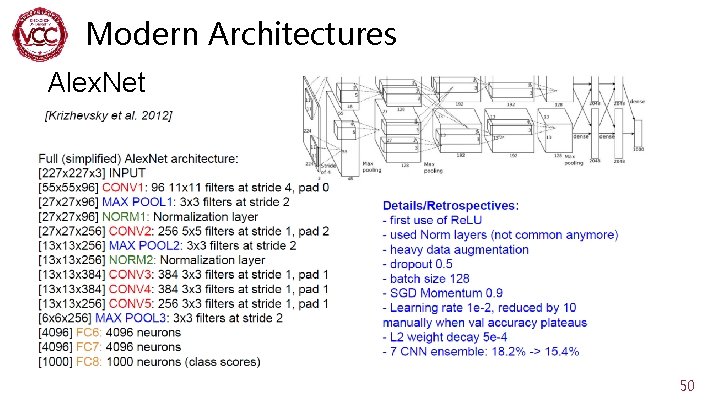

Modern Architectures – AlexNet

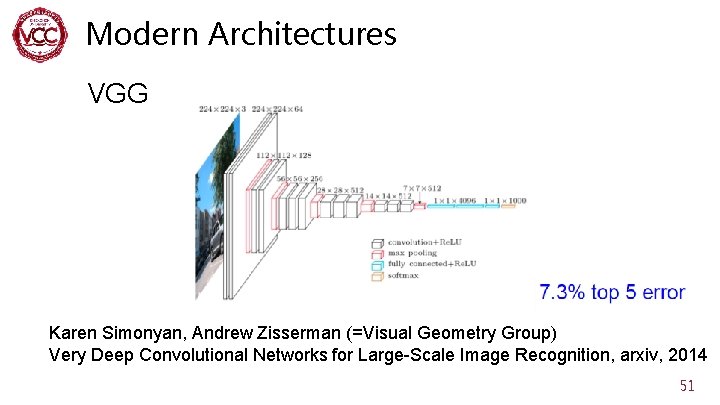

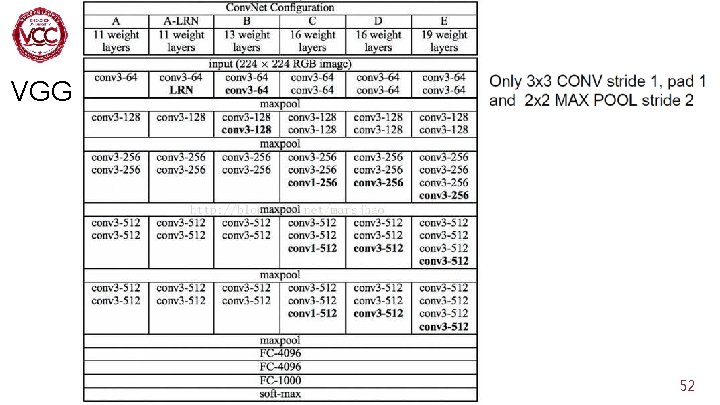

Modern Architectures – VGG

Karen Simonyan, Andrew Zisserman (Visual Geometry Group), Very Deep Convolutional Networks for Large-Scale Image Recognition, arXiv, 2014.

Modern Architectures – VGG

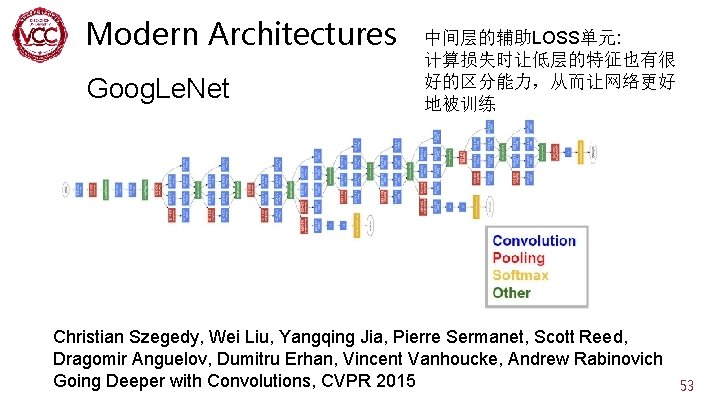

Modern Architectures – GoogLeNet

Auxiliary loss units at intermediate layers: when the loss is computed, they push lower-level features to be discriminative as well, so the network is trained better.

Christian Szegedy, Wei Liu, Yangqing Jia, Pierre Sermanet, Scott Reed, Dragomir Anguelov, Dumitru Erhan, Vincent Vanhoucke, Andrew Rabinovich, Going Deeper with Convolutions, CVPR 2015

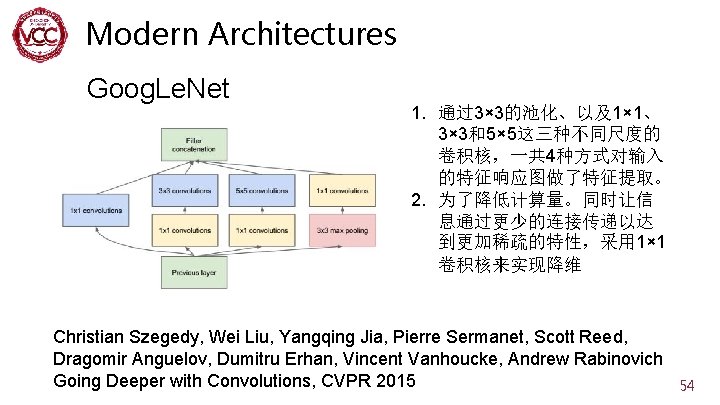

Modern Architectures – GoogLeNet

1. The input feature map is processed in four ways in parallel: 3 × 3 pooling and convolution kernels at three scales (1 × 1, 3 × 3, and 5 × 5).
2. To reduce computation, and to pass information through fewer connections for a sparser structure, 1 × 1 convolution kernels are used for dimensionality reduction.
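A 1 × 1 convolution mixes channels independently at every pixel, i.e. it is a per-pixel matrix multiply. The weight counts below show why reducing channels first saves computation. The channel sizes (192 in, 16 after reduction, 32 out) and the 28 × 28 map are assumptions chosen for illustration; biases are ignored. At stride 1, the multiply-adds per output position equal these weight counts, so computation shrinks by the same factor.

```python
import numpy as np

def conv1x1(x, w):
    """1 x 1 convolution on x of shape (H, W, C_in) with weights (C_in, C_out).

    Each pixel's channel vector is multiplied by the same weight matrix.
    """
    return x @ w

# Illustrative channel counts (assumed, not taken from the slides)
c_in, c_reduce, c_out, k = 192, 16, 32, 5

direct = k * k * c_in * c_out                         # 5x5 conv straight on 192 channels
reduced = c_in * c_reduce + k * k * c_reduce * c_out  # 1x1 down to 16 channels, then 5x5

rng = np.random.default_rng(0)
x = rng.normal(size=(28, 28, c_in))
y = conv1x1(x, rng.normal(size=(c_in, c_reduce)))  # (28, 28, 16): fewer channels, same H x W

print(direct, reduced)  # 153600 15872
```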

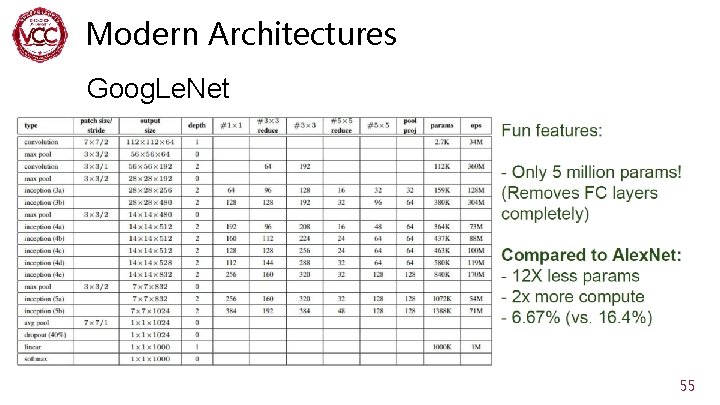

Modern Architectures – GoogLeNet

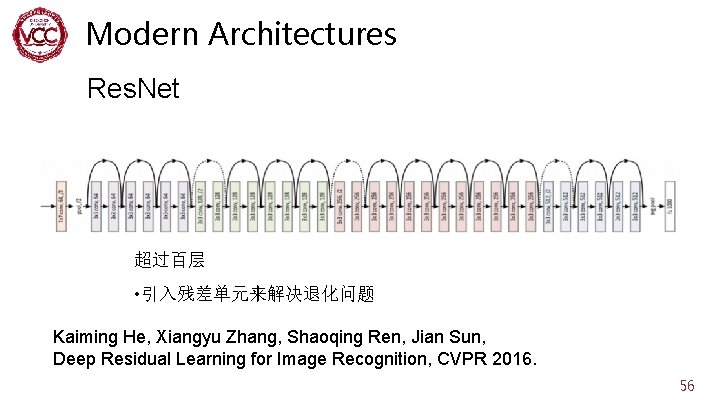

Modern Architectures – ResNet
• More than a hundred layers
• Introduces residual units to solve the degradation problem

Kaiming He, Xiangyu Zhang, Shaoqing Ren, Jian Sun, Deep Residual Learning for Image Recognition, CVPR 2016.

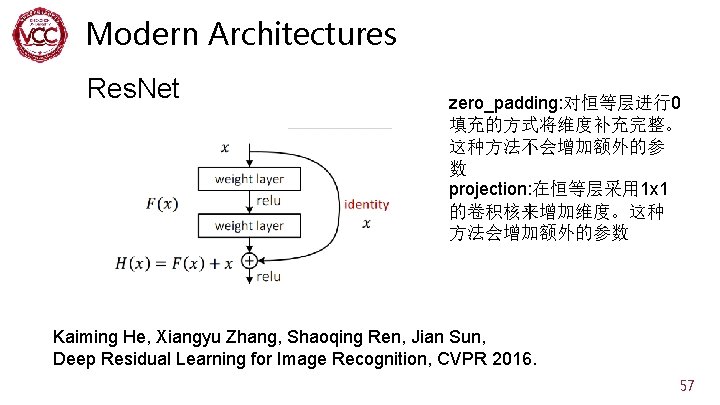

Modern Architectures – ResNet

When the shortcut must increase dimensions:
• zero_padding: pad the identity shortcut with zeros to fill in the missing dimensions. This adds no extra parameters.
• projection: apply 1 x 1 convolution kernels on the identity shortcut to increase the dimensions. This adds extra parameters.
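A NumPy sketch of a residual unit $y = \mathrm{ReLU}(F(x) + \text{shortcut}(x))$ with the three shortcut options, on feature maps of shape (H, W, C). Simplifying assumption: F is two 1 x 1 convolutions (per-pixel matrix multiplies) to keep the code short; real ResNet blocks use 3 x 3 convolutions with Batch Normalization, and the spatial downsampling that often accompanies a dimension increase is omitted. All shapes are hypothetical.

```python
import numpy as np

def relu(z):
    return np.maximum(z, 0)

def residual_unit(x, w1, w2, shortcut="identity", w_proj=None):
    """y = relu(F(x) + shortcut(x)) on a feature map x of shape (H, W, C_in)."""
    f = relu(x @ w1) @ w2                  # residual branch F(x), C_out channels
    c_in, c_out = x.shape[-1], f.shape[-1]
    if shortcut == "identity":             # dimensions already match
        s = x
    elif shortcut == "zero_padding":       # pad extra channels with zeros: no parameters
        s = np.pad(x, ((0, 0), (0, 0), (0, c_out - c_in)))
    elif shortcut == "projection":         # 1 x 1 conv: C_in * C_out extra parameters
        s = x @ w_proj
    else:
        raise ValueError(f"unknown shortcut: {shortcut}")
    return relu(f + s)

rng = np.random.default_rng(0)
x = rng.normal(size=(8, 8, 16))
w1, w2 = rng.normal(size=(16, 32)), rng.normal(size=(32, 32))  # F(x) has 32 channels
w_proj = rng.normal(size=(16, 32))

y_pad = residual_unit(x, w1, w2, "zero_padding")
y_proj = residual_unit(x, w1, w2, "projection", w_proj)

# If F(x) = 0, the identity unit just passes x through (up to the final ReLU):
y_id = residual_unit(x, np.zeros((16, 32)), np.zeros((32, 16)))
```

The last line illustrates the usual argument for residual units: driving the residual branch to zero recovers the identity mapping, which plain stacked layers find hard to learn.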

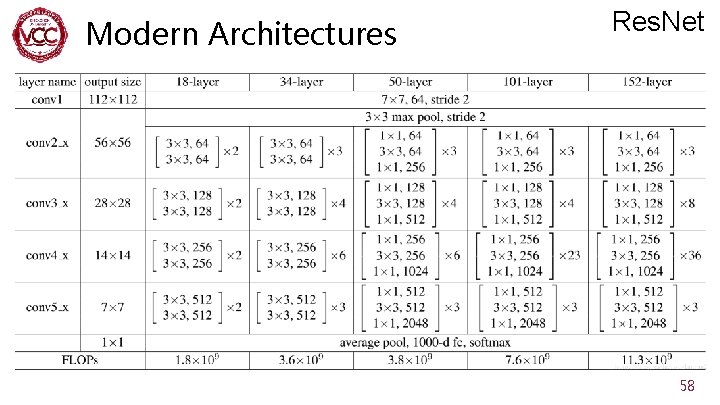

Modern Architectures – ResNet

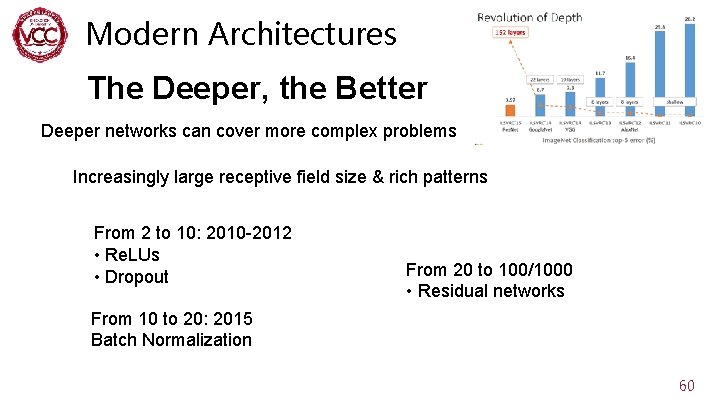

Modern Architectures – The Deeper, the Better

Modern Architectures – The Deeper, the Better

Deeper networks can cover more complex problems, with an increasingly large receptive field size and richer patterns.
• From 2 to 10 layers (2010-2012): ReLUs, Dropout
• From 10 to 20 layers (2015): Batch Normalization
• From 20 to 100/1000 layers: Residual networks
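The growth of the receptive field with depth can be computed layer by layer: each layer widens it by (kernel size - 1) times the product of all earlier strides. A plain-Python sketch; the layer stacks below are hypothetical examples, not a specific architecture from the slides.

```python
def receptive_field(layers):
    """Receptive field (in input pixels) of one output unit after a stack of layers.

    layers: list of (kernel_size, stride) pairs, applied in order.
    """
    rf, jump = 1, 1  # jump = distance in input pixels between neighboring units
    for k, s in layers:
        rf += (k - 1) * jump
        jump *= s
    return rf

print(receptive_field([(3, 1)] * 3))                    # 7: three stacked 3 x 3 convs
print(receptive_field([(3, 1)] * 10))                   # 21: ten stacked 3 x 3 convs
print(receptive_field([(3, 1), (3, 1), (2, 2), (3, 1)]))  # 10: stride-2 pooling speeds up growth
```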

Attention in CV

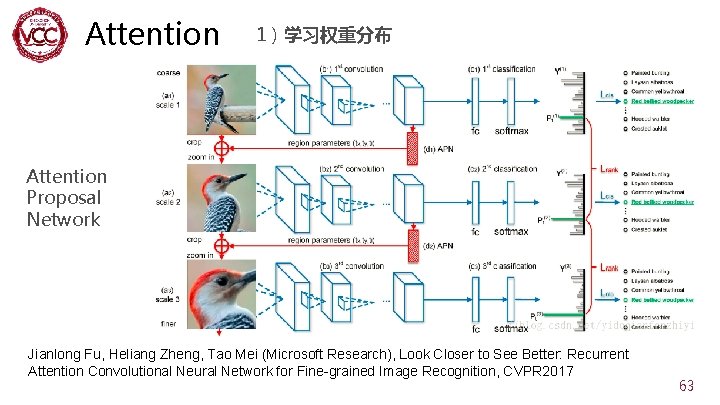

Attention

1) Learning a weight distribution: Attention Proposal Network

Jianlong Fu, Heliang Zheng, Tao Mei (Microsoft Research), Look Closer to See Better: Recurrent Attention Convolutional Neural Network for Fine-grained Image Recognition, CVPR 2017
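"Learning a weight distribution" can be illustrated with generic spatial soft attention: score every location of a feature map, turn the scores into weights with softmax, and take the weighted sum of the features. This is a swapped-in, generic technique, not RA-CNN's Attention Proposal Network, which (as we understand the paper) predicts an attended image region to crop and zoom into. The linear scorer `w` and the feature-map size are hypothetical.

```python
import numpy as np

def spatial_soft_attention(features, w):
    """Generic spatial soft attention over a (H, W, C) feature map.

    A score per location (here a hypothetical linear scorer w of shape (C,))
    is turned into a weight distribution with softmax; the output is the
    attention-weighted sum of the feature vectors.
    """
    h, wd, c = features.shape
    flat = features.reshape(h * wd, c)
    scores = flat @ w                  # one score per location
    weights = np.exp(scores - scores.max())
    weights /= weights.sum()           # non-negative, sums to 1
    context = weights @ flat           # (C,) weighted sum of features
    return weights.reshape(h, wd), context

rng = np.random.default_rng(0)
feats = rng.normal(size=(7, 7, 64))  # hypothetical conv feature map
w = rng.normal(size=64)              # hypothetical learned scoring vector
attn, context = spatial_soft_attention(feats, w)
```

With an all-zero scorer the weights become uniform and the output reduces to average pooling; learning `w` lets the network concentrate the weight on informative regions.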

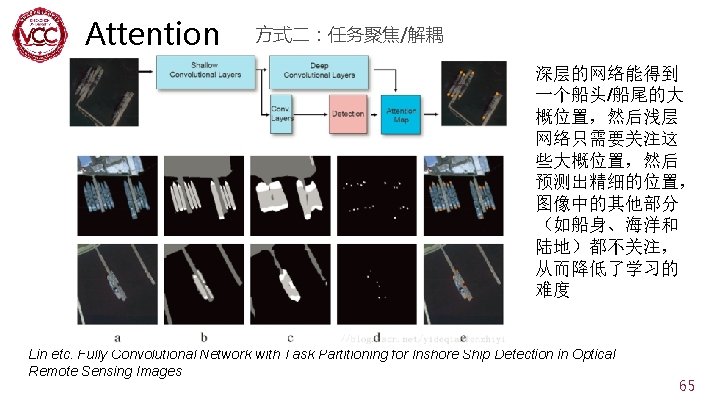


References
1. Creative.AI: Deep Learning for Graphics, NN Tricks & Architectures
2. Deep Learning Tutorial, 李宏毅 (Hung-yi Lee)
3. https://blog.csdn.net/qq_38284951/article/details/82769994 ([Computer Vision] An In-Depth Understanding of the Attention Mechanism)
4. cs231n

DOWNLOADS at http://vcc.szu.edu.cn

Thank You!
