CHAPTER 6 NEURAL NETWORK APPLICATION DESIGN

- Slides: 37

NN Application Design
Now that we have gained some insight into the theory of backpropagation networks, how can we design networks for particular applications? Designing NNs is basically an engineering task. As we discussed before, there is, for example, no formula that would allow you to determine the optimal number of hidden units in a BPN for a given task.

NN Application Design
We need to address the following issues for a successful application design:

- Choosing an appropriate data representation
- Performing an exemplar analysis
- Training the network and evaluating its performance

We are now going to look into each of these topics.

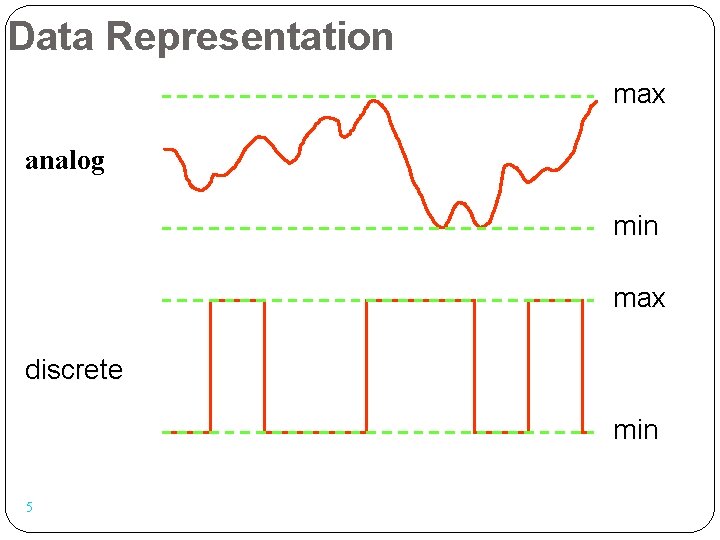

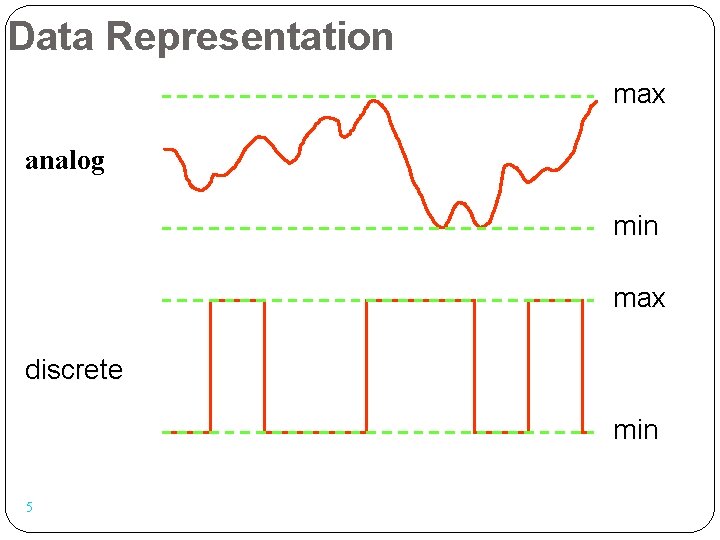

Data Representation
Most networks process information in the form of input pattern vectors. These networks produce output pattern vectors that are interpreted by the embedding application. All networks process one of two types of signal components: analog (continuously variable) signals or discrete (quantized) signals. In both cases, signals have a finite amplitude, bounded by a minimum and a maximum value.

Data Representation
[Figure: an analog signal and a discrete signal, each bounded by a minimum (min) and a maximum (max) amplitude.]

Data Representation
The main question is: How can we appropriately capture these signals and represent them as pattern vectors that we can feed into the network? We should aim for a data representation scheme that maximizes the ability of the network to detect (and respond to) relevant features in the input pattern. Relevant features are those that enable the network to generate the desired output pattern.

Data Representation
Similarly, we also need to define a set of desired outputs that the network can actually produce. Often, a “natural” representation of the output data turns out to be impossible for the network to produce. We are going to consider internal representation and external interpretation issues as well as specific methods for creating appropriate representations.

Internal Representation Issues
As we said before, in all network types, the amplitude of input signals and internal signals is limited:

- analog networks: values usually between 0 and 1
- binary networks: only values 0 and 1 allowed
- bipolar networks: only values -1 and 1 allowed

Without this limitation, patterns with large amplitudes would dominate the network’s behavior. A disproportionately large input signal can activate a neuron even if the relevant connection weight is very small.

External Interpretation Issues
From the perspective of the embedding application, we are concerned with the interpretation of input and output signals. These signals constitute the interface between the embedding application and its NN component. Often, these signals only become meaningful when we define an external interpretation for them. This is analogous to biological neural systems: The same signal takes on a completely different meaning when it is interpreted by different brain areas (motor cortex, visual cortex, etc.).

External Interpretation Issues
Without any interpretation, we can only use standard methods to define the difference (or similarity) between signals. For example, for binary patterns x and y, we could:

- treat them as binary numbers and compute their difference as |x - y|
- treat them as vectors and use the cosine of the angle between them as a measure of similarity
- count the number of digits that we would have to flip in order to transform x into y (Hamming distance)
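The three interpretation-free measures listed above can be sketched in a few lines of Python. This is only an illustration: the function names are made up for this example, and patterns are assumed to be given as strings of the characters 0 and 1.

```python
import math


def binary_number_difference(x: str, y: str) -> int:
    """Treat both bit strings as binary numbers and return |x - y|."""
    return abs(int(x, 2) - int(y, 2))


def cosine_similarity(x: str, y: str) -> float:
    """Treat both bit strings as vectors; return the cosine of their angle."""
    if len(x) != len(y):
        raise ValueError("patterns must have the same length")
    a = [int(bit) for bit in x]
    b = [int(bit) for bit in y]
    dot = sum(p * q for p, q in zip(a, b))
    norm = math.sqrt(sum(p * p for p in a)) * math.sqrt(sum(q * q for q in b))
    if norm == 0:
        raise ValueError("cosine is undefined for an all-zero pattern")
    return dot / norm


def hamming_distance(x: str, y: str) -> int:
    """Count the positions in which the two bit strings differ."""
    if len(x) != len(y):
        raise ValueError("patterns must have the same length")
    return sum(p != q for p, q in zip(x, y))
```

For the patterns 1100 and 1010, the binary-number difference is 2, the Hamming distance is 2, and the cosine similarity is 0.5. None of these numbers says anything about what the patterns mean to the embedding application.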

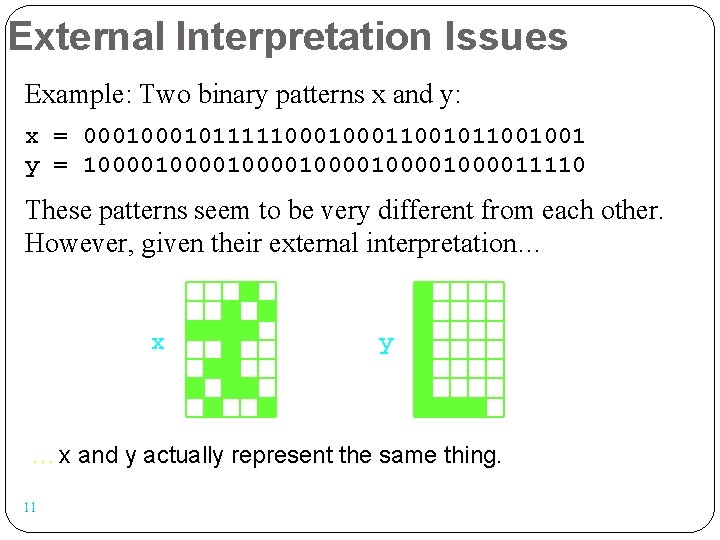

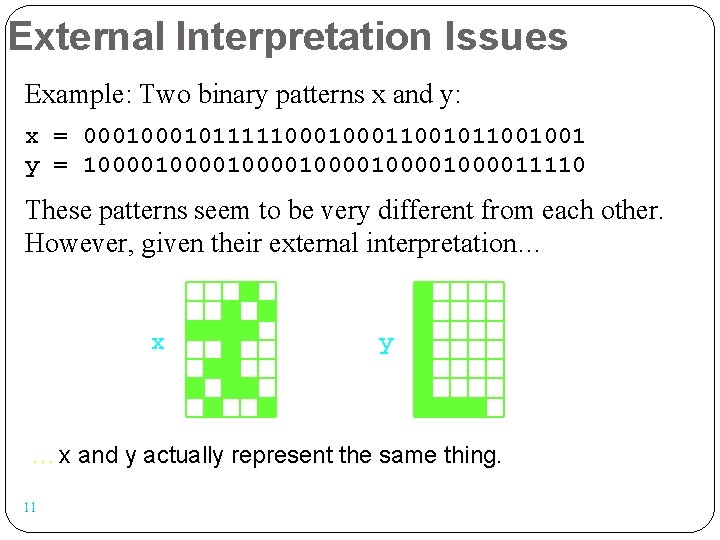

External Interpretation Issues
Example: Two binary patterns x and y:

- x = 000101111100011001001
- y = 10000100001000011110

These patterns seem to be very different from each other. However, given their external interpretation (pictured for x and y in the accompanying figure), x and y actually represent the same thing.

Creating Data Representations
The patterns that can be represented by an ANN most easily are binary patterns. Even analog networks “like” to receive and produce binary patterns: we can simply round values < 0.5 to 0 and values ≥ 0.5 to 1. To create a binary input vector, we can simply list all features that are relevant to the current task. Each component of our binary vector indicates whether one particular feature is present (1) or absent (0).
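A minimal sketch of both ideas, building a binary feature vector and rounding analog values to binary ones, might look as follows. The feature names and function names are hypothetical and only serve the illustration.

```python
# Hypothetical feature list; the order fixes the meaning of each component.
FEATURES = ["has_wings", "has_fur", "lays_eggs"]


def to_binary_feature_vector(present, all_features):
    """One component per feature: 1 if the feature is present, 0 if absent."""
    return [1 if feature in present else 0 for feature in all_features]


def round_to_binary(values, threshold=0.5):
    """Round analog activations: below the threshold -> 0, otherwise -> 1."""
    return [0 if value < threshold else 1 for value in values]
```

For instance, `to_binary_feature_vector({"has_wings", "lays_eggs"}, FEATURES)` gives `[1, 0, 1]`, and `round_to_binary([0.1, 0.5, 0.93])` gives `[0, 1, 1]`.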

Creating Data Representations
With regard to output patterns, most binary-data applications perform classification of their inputs. The output of such a network indicates to which class of patterns the current input belongs. Usually, each output neuron is associated with one class of patterns. As you already know, for any input, only one output neuron should be active (1) and the others inactive (0), indicating the class of the current input.

Creating Data Representations
In other cases, classes are not mutually exclusive, and more than one output neuron can be active at the same time. Another variant would be the use of binary input patterns and analog output patterns for “classification”. In that case, again, each output neuron corresponds to one particular class, and its activation indicates the probability (between 0 and 1) that the current input belongs to that class.
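How the embedding application reads such output vectors can be sketched as below. Picking the most active neuron for exclusive classes and using a threshold of 0.5 for non-exclusive classes are assumptions made for this example; the slides do not prescribe either rule.

```python
def winning_class(outputs, class_names):
    """Mutually exclusive classes: report the most active output neuron."""
    index = max(range(len(outputs)), key=lambda i: outputs[i])
    return class_names[index]


def active_classes(outputs, class_names, threshold=0.5):
    """Non-exclusive classes: report every sufficiently active neuron."""
    return [name for name, out in zip(class_names, outputs) if out >= threshold]
```

With class names A, B, and C, the output vector `[0.1, 0.8, 0.3]` is read as class B in the exclusive case, while `[0.7, 0.2, 0.9]` is read as classes A and C in the non-exclusive case.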

Creating Data Representations
Ternary (and n-ary) patterns can cause more problems than binary patterns when we want to format them for an ANN. For example, imagine the tic-tac-toe game. Each square of the board is in one of three different states:

- occupied by an X
- occupied by an O
- empty

Creating Data Representations
Let us now assume that we want to develop a network that plays tic-tac-toe. This network is supposed to receive the current game configuration as its input. Its output is the position where the network wants to place its next symbol (X or O). Obviously, it is impossible to represent the state of each square by a single binary value.

Creating Data Representations
Possible solution: Use multiple binary inputs to represent non-binary states. Treat each feature in the pattern as an individual subpattern. Represent each subpattern with as many positions (units) in the pattern vector as there are possible states for the feature. Then concatenate all subpatterns into one long pattern vector.

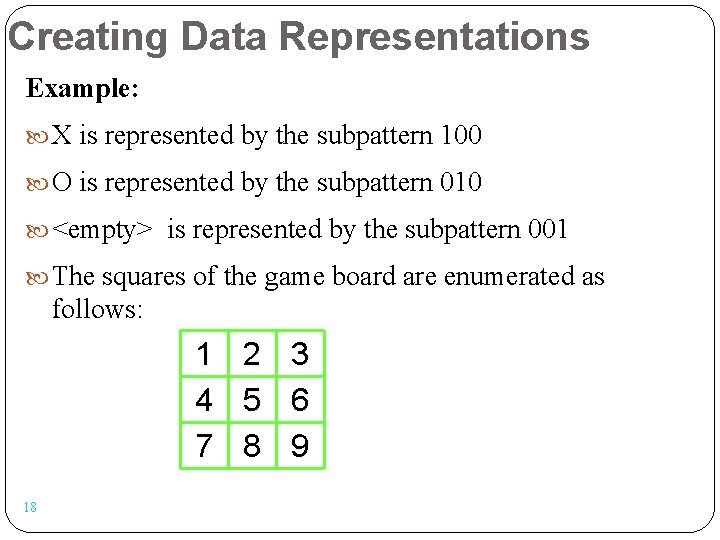

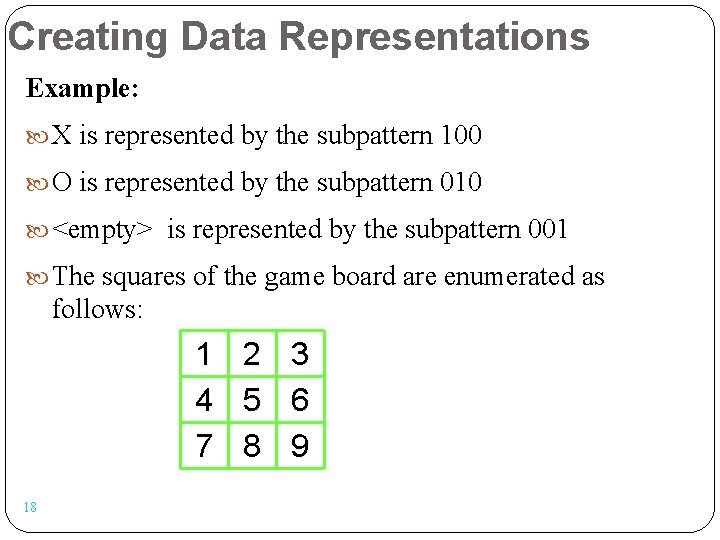

Creating Data Representations
Example:

- X is represented by the subpattern 100
- O is represented by the subpattern 010
- <empty> is represented by the subpattern 001

The squares of the game board are enumerated as follows:

- Top row: 1 4 7
- Middle row: 2 5 8
- Bottom row: 3 6 9

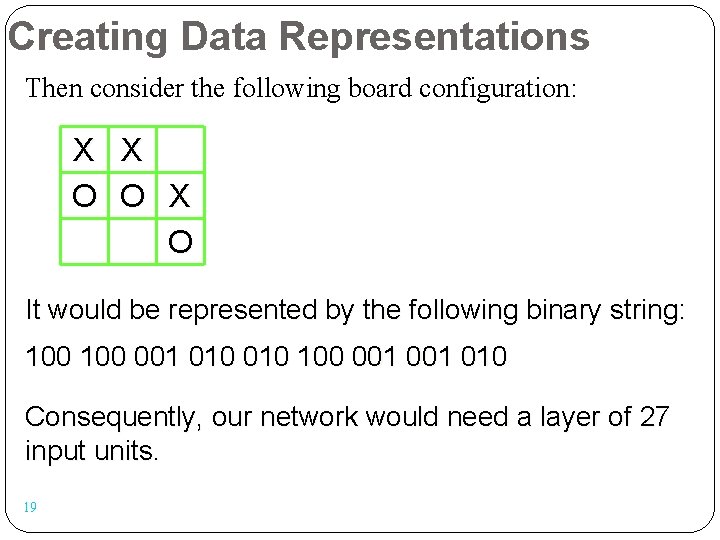

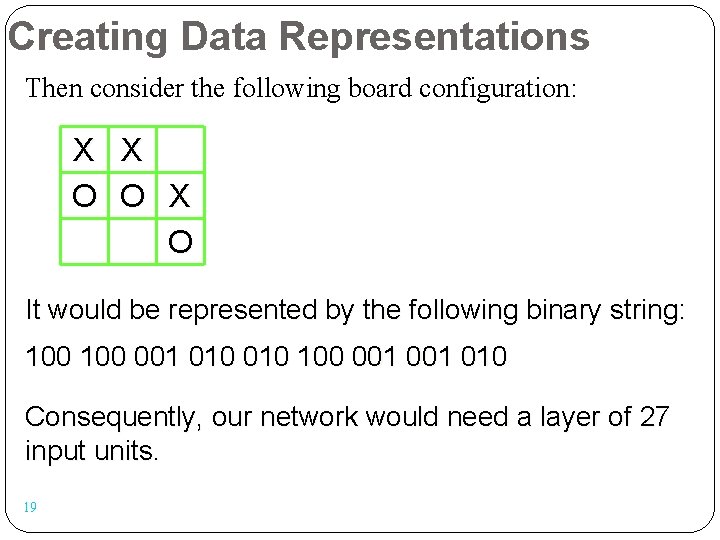

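Creating Data Representations
Then consider the following board configuration:

[Board figure; the symbols recovered from the slide are X X O O X O, without their positions.]

It would be represented by the following binary string, shown here in part: 100 001 010

Each of the nine squares contributes three units, so our network would need a layer of 9 × 3 = 27 input units.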
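The encoding scheme can be sketched as follows: one three-unit subpattern per square, concatenated in enumeration order. The board used in the usage note is an illustrative one, not the configuration from the slide, and representing an empty square by a single space character is an assumption of this example.

```python
# Subpatterns from the text: X -> 100, O -> 010, empty -> 001.
SUBPATTERNS = {"X": [1, 0, 0], "O": [0, 1, 0], " ": [0, 0, 1]}


def encode_board(squares):
    """Encode squares 1..9 (in enumeration order) as a 27-component vector."""
    if len(squares) != 9:
        raise ValueError("a tic-tac-toe board has exactly nine squares")
    vector = []
    for state in squares:
        vector.extend(SUBPATTERNS[state])  # concatenate the subpatterns
    return vector
```

For the illustrative board `["X", " ", "O", " ", "X", " ", " ", " ", "O"]`, the result has 27 components, exactly nine of which are 1, because each square activates exactly one unit of its subpattern.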

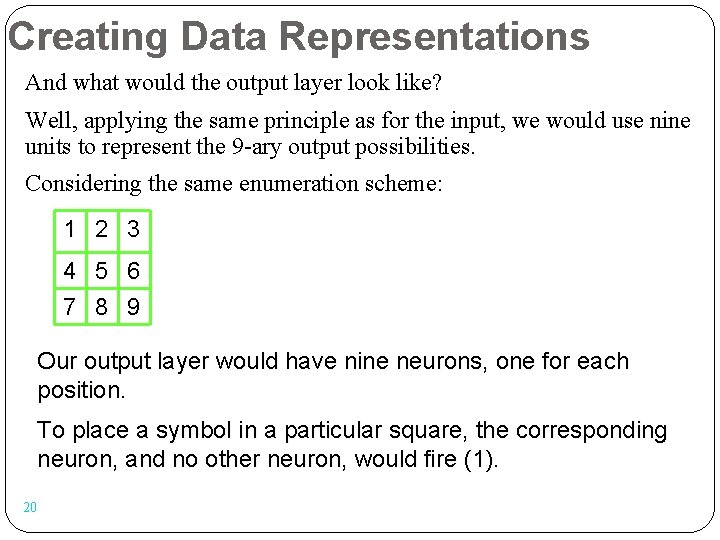

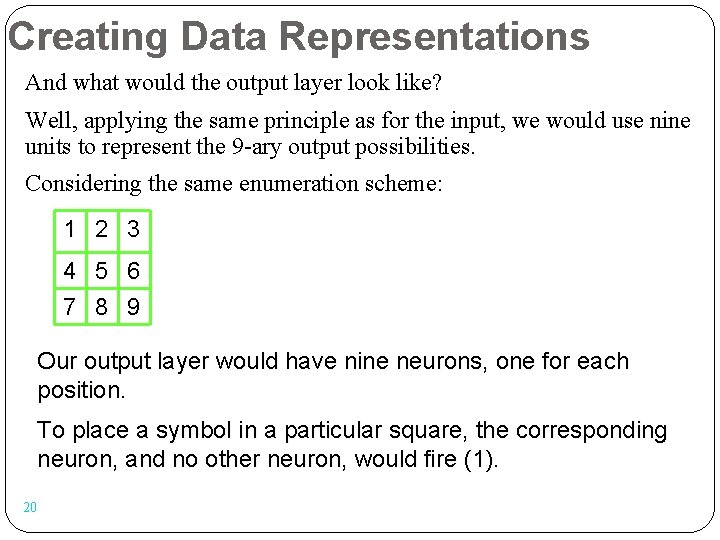

Creating Data Representations
And what would the output layer look like? Well, applying the same principle as for the input, we would use nine units to represent the 9-ary output possibilities. Considering the same enumeration scheme (1 2 3 4 5 6 7 8 9), our output layer would have nine neurons, one for each position. To place a symbol in a particular square, the corresponding neuron, and no other neuron, would fire (1).

Creating Data Representations
But would it not lead to a smaller, simpler network if we used a shorter encoding of the non-binary states? We do not need 3-digit strings such as 100, 010, and 001 to represent X, O, and the empty square, respectively. We can achieve a unique representation with 2-digit strings such as 10, 01, and 00.

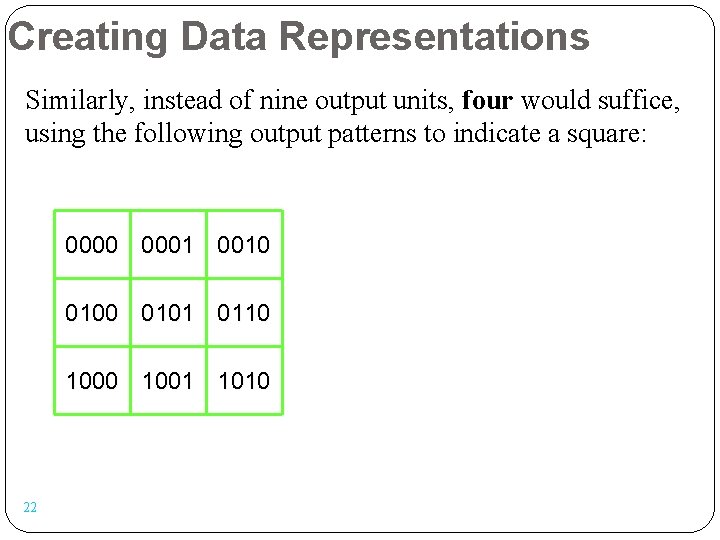

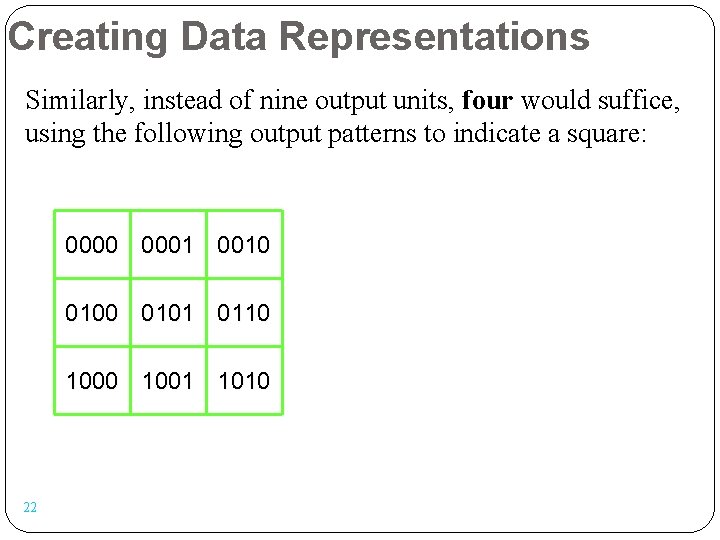

Creating Data Representations
Similarly, instead of nine output units, four would suffice, using the following output patterns to indicate a square: 0000, 0001, 0010, 0101, 0110, 1001, 1010

Creating Data Representations
The problem with such representations is that the meaning of the output of one neuron depends on the output of other neurons. This means that each neuron does not represent (detect) a certain feature, but groups of neurons do. In general, such functions are much more difficult to learn. Such networks usually need more hidden neurons and longer training, and their ability to generalize is weaker than that of one-neuron-per-feature-value networks.

Creating Data Representations
On the other hand, sets of orthogonal vectors (such as 100, 010, 001) can be processed by the network more easily. This becomes clear when we consider that a neuron’s net input signal is computed as the inner product of the input and weight vectors. The geometric interpretation of these vectors shows that orthogonal vectors are especially easy to discriminate for a single neuron.
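The inner-product argument can be checked with a small sketch. It computes the net input of a single neuron, assumed here to have no bias term, for each coded state. With the orthogonal code, a weight vector equal to one state's code responds to that state only. With the shorter code, the empty square is the zero vector, so its net input is 0 for every possible weight vector. The dictionary and function names are made up for this example.

```python
ONE_HOT = {"X": [1, 0, 0], "O": [0, 1, 0], "empty": [0, 0, 1]}
COMPACT = {"X": [1, 0], "O": [0, 1], "empty": [0, 0]}


def inner_product(u, v):
    """Inner product of two equally long vectors."""
    return sum(a * b for a, b in zip(u, v))


def net_inputs(weights, codes):
    """Net input of one bias-free neuron for every coded state."""
    return {state: inner_product(weights, code) for state, code in codes.items()}
```

Here `net_inputs([0, 0, 1], ONE_HOT)` yields 1 for the empty square and 0 for X and O, whereas `net_inputs(w, COMPACT)["empty"]` is 0 whatever `w` is, so the neuron cannot single out the empty square by its net input alone.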

Creating Data Representations
Another way of representing n-ary data in a neural network is using one neuron per feature, but scaling the (analog) value to indicate the degree to which a feature is present.

Good examples:

- the brightness of a pixel in an input image
- the distance between a robot and an obstacle

Poor examples:

- the letter (1 to 26) of a word
- the type (1 to 6) of a chess piece

Creating Data Representations
This can be explained as follows: The way NNs work (both biological and artificial ones) is that each neuron represents the presence/absence of a particular feature. Activations 0 and 1 indicate absence or presence of that feature, respectively, and in analog networks, intermediate values indicate the extent to which a feature is present. Consequently, a small change in one input value leads to only a small change in the network’s activation pattern.

Creating Data Representations
Therefore, it is appropriate to represent a non-binary feature by a single analog input value only if this value is scaled, i.e., it represents the degree to which a feature is present. This is the case for the brightness of a pixel or the output of a distance sensor (feature = obstacle proximity). It is not the case for letters or chess pieces. For example, assigning values to individual letters (a = 0, b = 0.04, c = 0.08, …, z = 1) implies that a and b are in some way more similar to each other than are a and z. Obviously, in most contexts, this is not a reasonable assumption.
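The letter example can be made concrete with a short sketch that compares the single-value code from the text (a = 0, b = 0.04, …, z = 1) with a one-unit-per-letter code. The function names are illustrative, and the alphabet is assumed to be the 26 lowercase ASCII letters.

```python
import string

LETTERS = string.ascii_lowercase  # 26 letters, a..z


def scalar_code(letter):
    """Single analog value: a = 0, b = 0.04, ..., z = 1."""
    return LETTERS.index(letter) / 25


def one_hot_code(letter):
    """One unit per letter; only the unit for `letter` is active."""
    return [1 if candidate == letter else 0 for candidate in LETTERS]


def squared_distance(u, v):
    """Squared Euclidean distance between two equally long vectors."""
    return sum((a - b) ** 2 for a, b in zip(u, v))
```

Under the scalar code, a and b differ by 0.04 while a and z differ by 1, which imposes a similarity structure that the letters do not have. Under the one-unit-per-letter code, any two distinct letters are equally far apart (squared distance 2).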

Creating Data Representations
It is also important to notice that in artificial (not natural!), completely connected networks, the order of the features that you specify for your input vectors does not influence the outcome. For the network performance, it is not necessary to represent, for example, similar features in neighboring input units. All units are treated equally; the adjacency of two neurons does not imply to the network that they represent similar features. Of course, once you have specified a particular order, you cannot change it anymore during training or testing.
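One way to see why the input order is irrelevant is to look at a single neuron of a completely connected layer. Its net input is a sum over all inputs, so reordering the inputs together with their weights leaves the sum unchanged. The sketch below illustrates only this single-neuron fact; the helper names and the example values are made up.

```python
def neuron_net_input(weights, inputs):
    """Net input of one neuron: inner product of weights and inputs."""
    return sum(w * x for w, x in zip(weights, inputs))


def permute(values, order):
    """Reorder `values` so that position k holds values[order[k]]."""
    return [values[i] for i in order]


weights = [0.5, -1.0, 2.0, 0.25]
inputs = [1, 0, 1, 1]
order = [2, 0, 3, 1]  # an arbitrary but fixed reordering of the features

original = neuron_net_input(weights, inputs)
reordered = neuron_net_input(permute(weights, order), permute(inputs, order))
```

Both `original` and `reordered` equal 2.75. Applying the permutation to the inputs but not to the weights would generally give a different value, which is why the order must stay fixed once it has been chosen.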

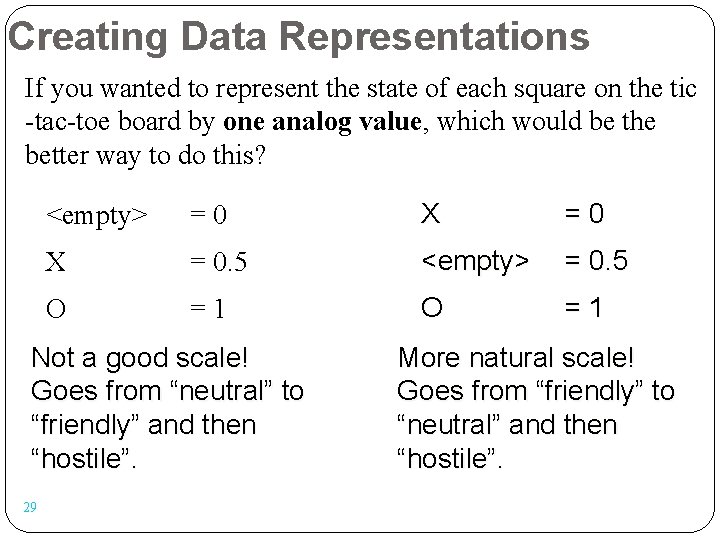

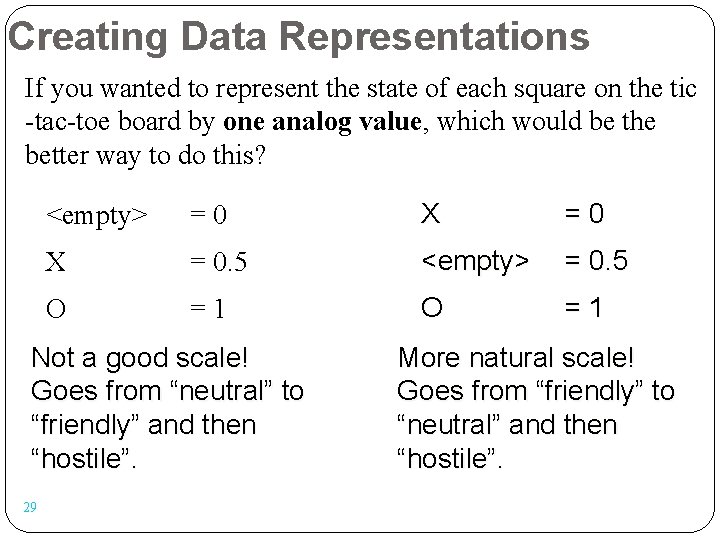

Creating Data Representations
If you wanted to represent the state of each square on the tic-tac-toe board by one analog value, which would be the better way to do this?

- <empty> = 0, X = 0.5, O = 1: Not a good scale! It goes from “neutral” to “friendly” and then “hostile”.
- X = 0, <empty> = 0.5, O = 1: A more natural scale! It goes from “friendly” to “neutral” and then “hostile”.

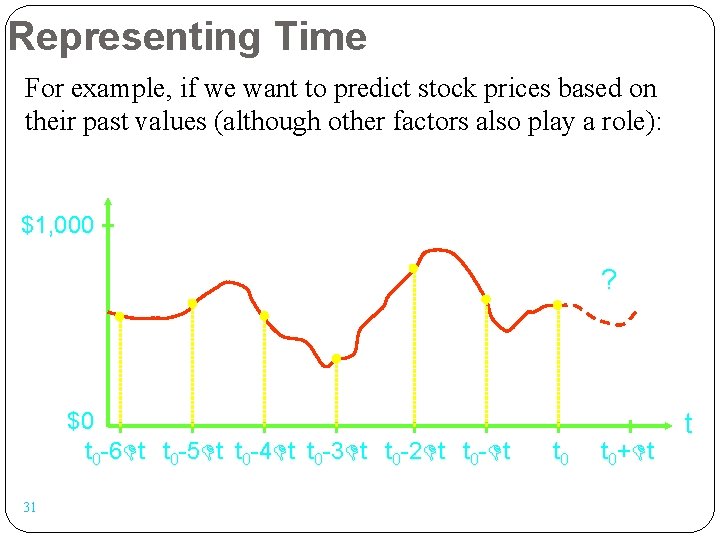

Representing Time
So far we have only considered static data, that is, data that do not change over time. How can we format temporal data to feed them into an ANN in order to detect spatiotemporal patterns or even predict future states of a system? The basic idea is to treat time as another input dimension. Instead of just feeding the current data (time $t_0$) into our network, we expand the input vectors to contain $n$ data vectors measured at $t_0, t_0 - \Delta t, t_0 - 2\Delta t, t_0 - 3\Delta t, \ldots, t_0 - (n - 1)\Delta t$.
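Treating time as another input dimension amounts to concatenating the most recent n data vectors into one input vector. A minimal sketch follows, assuming the measurements are stored in a list with one data vector per sampling step; the function name and the newest-first ordering are choices made for this example.

```python
def time_delay_input(series, t0_index, n, step=1):
    """Concatenate the n data vectors at t0, t0 - dt, ..., t0 - (n - 1) dt.

    `series` holds one data vector per sampling step, `t0_index` is the
    position of the current measurement, and `step` is dt in sampling steps.
    """
    oldest = t0_index - (n - 1) * step
    if oldest < 0 or t0_index >= len(series):
        raise ValueError("not enough history for the requested window")
    window = []
    for k in range(n):
        window.extend(series[t0_index - k * step])  # newest first
    return window
```

With `series = [[0.1, 0.2], [0.3, 0.4], [0.5, 0.6]]`, the call `time_delay_input(series, 2, 2)` returns `[0.5, 0.6, 0.3, 0.4]`: the current vector followed by the previous one.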

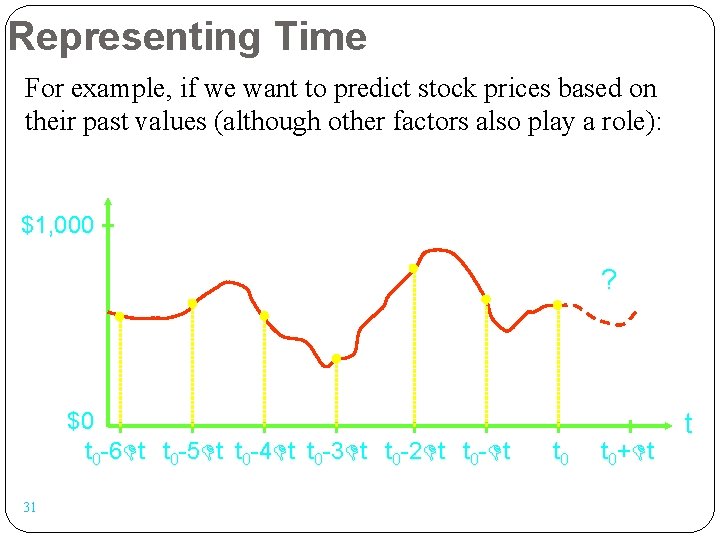

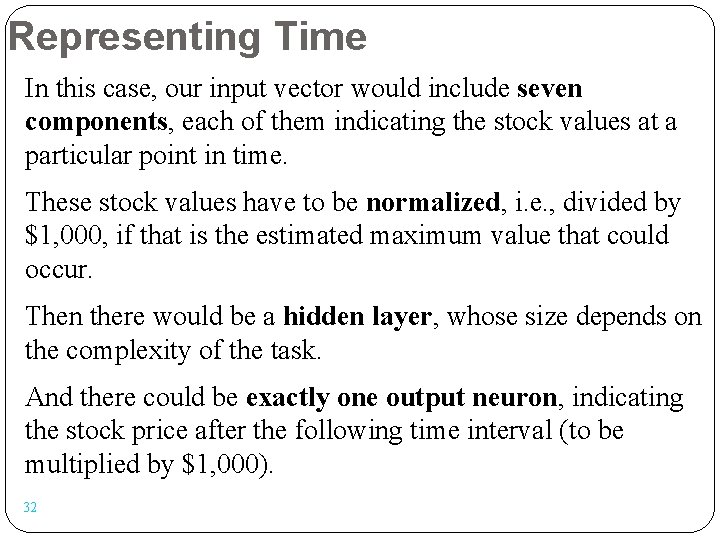

Representing Time
For example, if we want to predict stock prices based on their past values (although other factors also play a role):

[Figure: stock price, on an axis from $0 to $1,000, plotted against time t at the past sample points; the price at the next time step is marked “?”.]

Representing Time
In this case, our input vector would include seven components, each of them indicating the stock value at a particular point in time. These stock values have to be normalized, i.e., divided by $1,000, if that is the estimated maximum value that could occur. Then there would be a hidden layer, whose size depends on the complexity of the task. And there could be exactly one output neuron, indicating the stock price after the following time interval (to be multiplied by $1,000).

Representing Time
For example, a backpropagation network could do this task. It would be trained with many stock price samples that were recorded in the past so that the price for time $t_0 + \Delta t$ is already known. This price at time $t_0 + \Delta t$ would be the desired output value of the network and be used to apply the BPN learning rule. Afterwards, if past stock prices indeed allow the prediction of future ones, the network will be able to give some reasonable stock price predictions.
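Preparing such training samples from a recorded price history can be sketched as follows. The sketch covers only the data formatting, not the network or the learning rule. The maximum of 1,000 and the seven inputs are taken from the text; the function names and the oldest-first ordering of the inputs are assumptions of this example.

```python
MAX_PRICE = 1000.0  # estimated maximum price, used for normalization


def make_training_samples(prices, n_inputs=7):
    """Build (inputs, desired_output) pairs from a recorded price history.

    Each input vector holds n_inputs consecutive normalized prices (oldest
    first); the desired output is the normalized price one step later.
    """
    samples = []
    for end in range(n_inputs, len(prices)):
        inputs = [price / MAX_PRICE for price in prices[end - n_inputs:end]]
        desired = prices[end] / MAX_PRICE
        samples.append((inputs, desired))
    return samples


def to_dollars(network_output):
    """Convert a normalized network output back into a price."""
    return network_output * MAX_PRICE
```

A history of nine prices yields two samples, since each sample needs seven inputs plus one known follow-up price.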

Representing Time
Another example: Let us assume that we want to build a very simple surveillance system. We receive bitmap images at constant time intervals and want to determine for each quadrant of the image if there is any motion visible in it, and what the direction of this motion is. Let us assume that each image consists of 10 by 10 grayscale pixels with values from 0 to 255. Let us further assume that we only want to determine one of the four directions N, E, S, and W.

Representing Time
As said before, it makes sense to represent the brightness of each pixel by an individual analog value. We normalize these values by dividing them by 255. Consequently, if we were only interested in individual images, we would feed the network with input vectors of size 100. Let us assume that two successive images are sufficient to detect motion. Then at each point in time, we would like to feed the network with the current image and the previous image that we received from the camera.

Representing Time
We can simply concatenate the vectors representing these two images, resulting in a 200-dimensional input vector. Therefore, our network would have 200 input neurons, and a certain number of hidden units. With regard to the output, would it be a good idea to represent the direction (N, E, S, or W) by a single analog value? No, these values do not represent a scale, so this would make the network computations unnecessarily complicated.
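Building the 200-dimensional input from two successive frames can be sketched as follows. Frames are assumed to be given as lists of ten rows with ten integer pixel values each; the function names and the choice to put the current frame first are illustrative.

```python
def frame_to_vector(frame):
    """Flatten a 10x10 grayscale frame (0..255) into 100 values in [0, 1]."""
    if len(frame) != 10 or any(len(row) != 10 for row in frame):
        raise ValueError("expected a 10 by 10 frame")
    return [pixel / 255 for row in frame for pixel in row]


def motion_input(current, previous):
    """Concatenate the current and the previous frame into one input vector."""
    return frame_to_vector(current) + frame_to_vector(previous)
```

For an all-white current frame and an all-black previous frame, the result has 200 components: the first 100 are 1.0 and the last 100 are 0.0.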

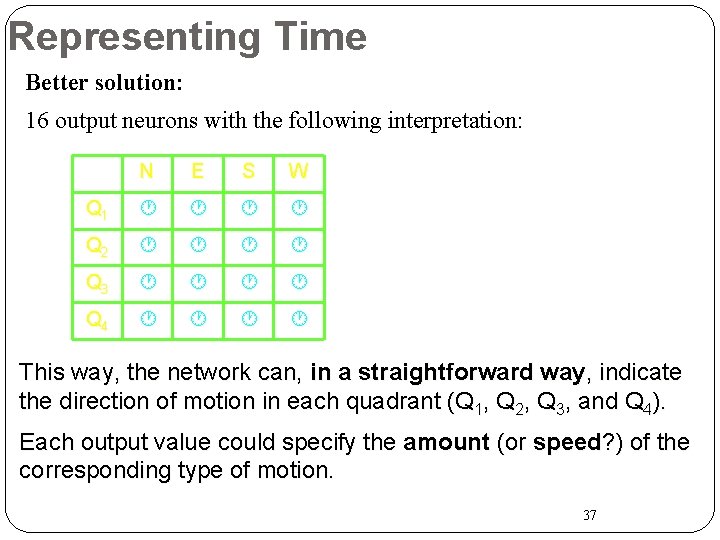

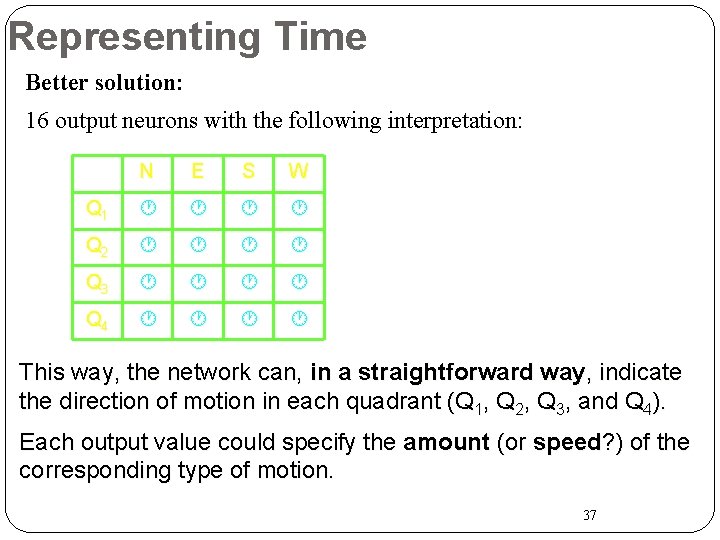

Representing Time
Better solution: 16 output neurons, one for each combination of direction (N, E, S, W) and quadrant (Q1, Q2, Q3, Q4). This way, the network can, in a straightforward way, indicate the direction of motion in each quadrant (Q1, Q2, Q3, and Q4). Each output value could specify the amount (or speed?) of the corresponding type of motion.
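How the embedding application might read these 16 outputs is sketched below. The layout (four consecutive outputs per quadrant, in the order N, E, S, W) and the threshold of 0.5 are assumptions made for this example; the slide only fixes that there is one neuron per quadrant and direction.

```python
DIRECTIONS = ["N", "E", "S", "W"]
QUADRANTS = ["Q1", "Q2", "Q3", "Q4"]


def interpret_outputs(outputs, threshold=0.5):
    """Map 16 output activations to {quadrant: [directions with motion]}."""
    if len(outputs) != 16:
        raise ValueError("expected 16 output values")
    result = {}
    for q_index, quadrant in enumerate(QUADRANTS):
        block = outputs[4 * q_index:4 * q_index + 4]  # N, E, S, W of this quadrant
        result[quadrant] = [d for d, act in zip(DIRECTIONS, block) if act >= threshold]
    return result
```

If only the second output (Q1, E) and the fifteenth output (Q4, S) are strongly active, the result reports eastward motion in Q1, southward motion in Q4, and no motion in Q2 and Q3.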