
GRAPH NEURAL NETWORKS
MLG Reading Group, CBL
Department of Engineering, University of Cambridge, Cambridgeshire, England, UK, EU
14 November 2018
Presenter: Matej Balog

OUTLINE
Part I: General introduction
• GNN encoders in contrast to RNN encoders
• implementation
• a historical look
Part II: Applications
• program source code [Allamanis et al., 2018]
• NP-hard problems [Li et al., 2018]
• ... and others
Part III: Power of GNNs

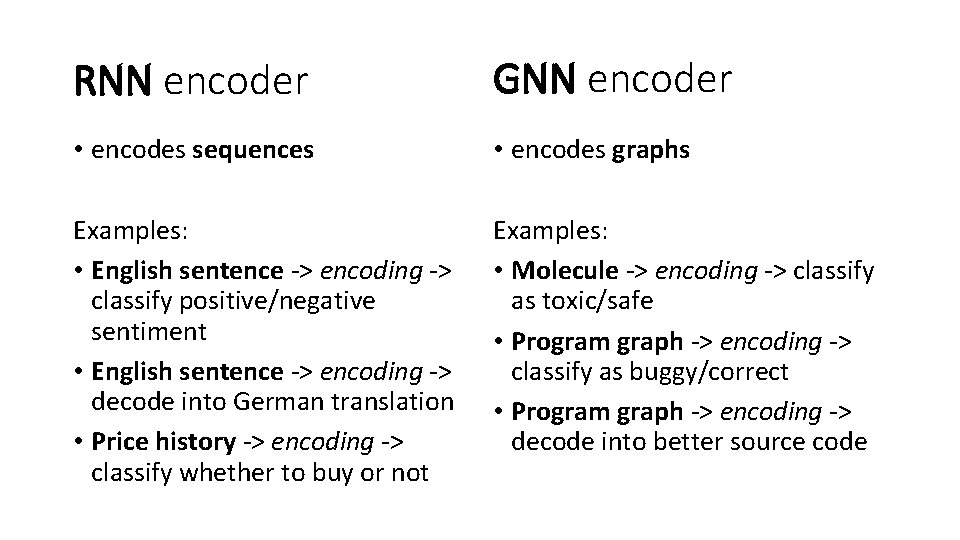

RNN encoder
• encodes sequences
Examples:
• English sentence -> encoding -> classify positive/negative sentiment
• English sentence -> encoding -> decode into German translation
• Price history -> encoding -> classify whether to buy or not

GNN encoder
• encodes graphs
Examples:
• Molecule -> encoding -> classify as toxic/safe
• Program graph -> encoding -> classify as buggy/correct
• Program graph -> encoding -> decode into better source code

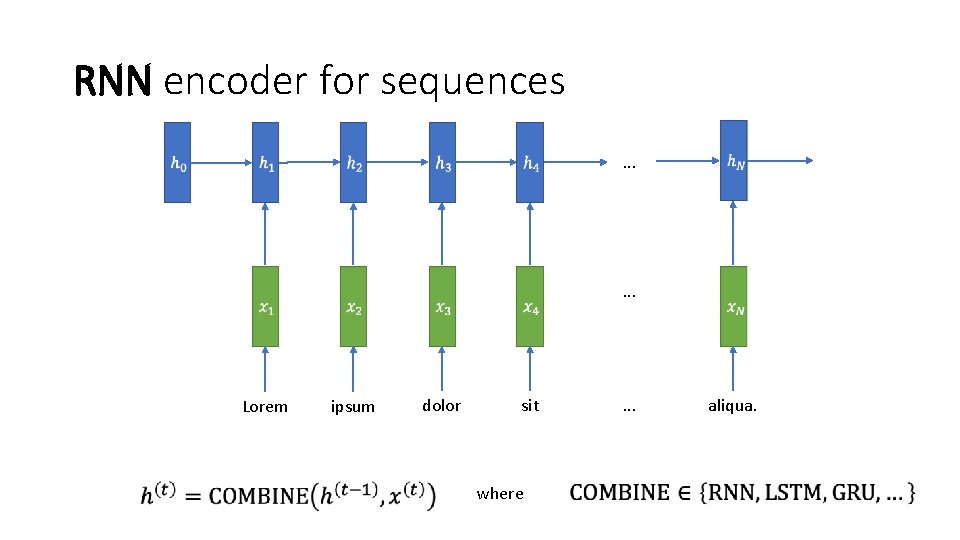

RNN encoder for sequences
[Figure: example input sequence: ... Lorem ipsum dolor sit where ... aliqua.]

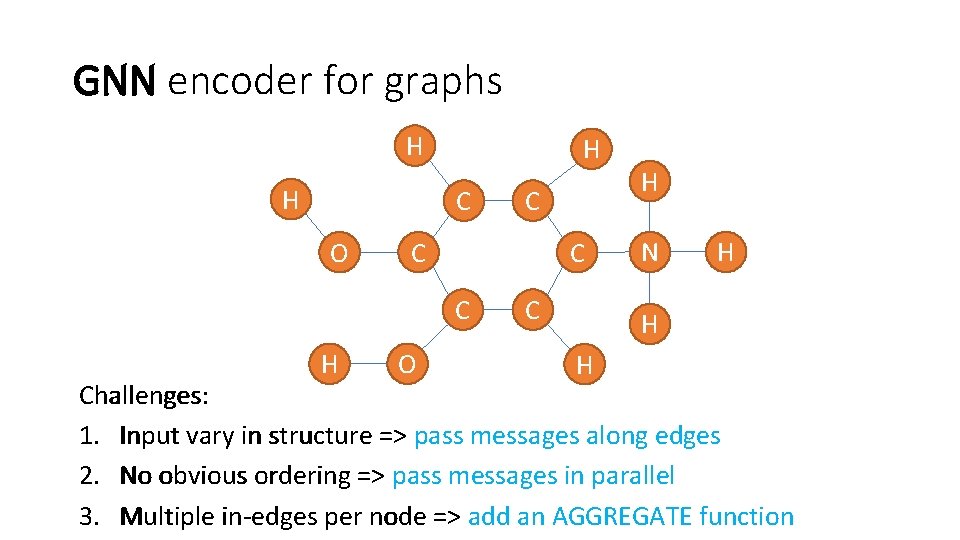

GNN encoder for graphs
[Figure: example molecule graph with H, C, O and N atoms as nodes]
Challenges:
1. Inputs vary in structure => pass messages along edges
2. No obvious ordering => pass messages in parallel
3. Multiple in-edges per node => add an AGGREGATE function

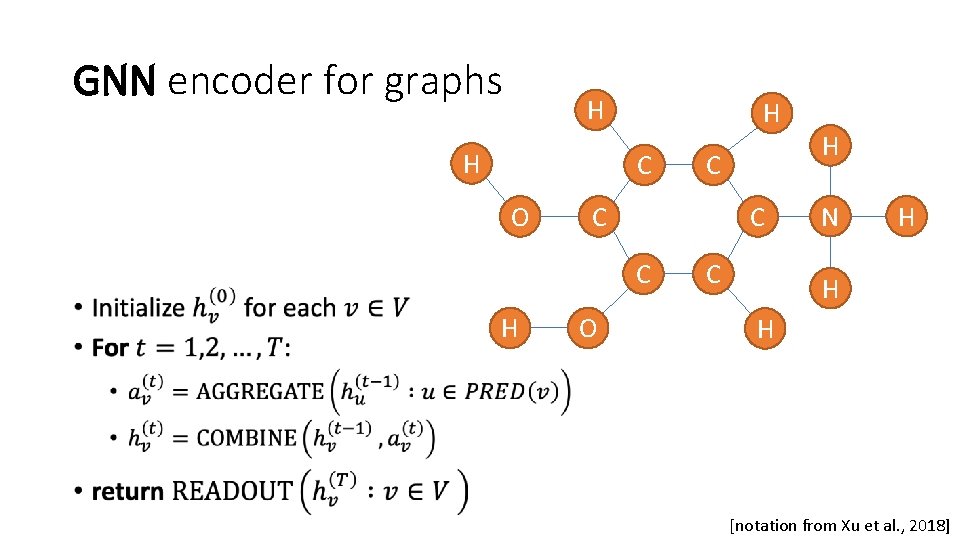

GNN encoder for graphs
[Figure: example molecule graph with H, C, O and N atoms as nodes]
[notation from Xu et al., 2018]
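The formulas on this slide did not survive extraction. In the notation of Xu et al. [2018], which the slide cites, one round of message passing and the final graph-level readout are usually written as below. This is a reconstruction from the cited paper, not the slide's own typesetting; $h_v^{(0)}$ is the input feature of node $v$, $\mathcal{N}(v)$ its set of neighbours, and $K$ the number of rounds.

```latex
a_v^{(k)} = \mathrm{AGGREGATE}^{(k)}\bigl(\{\, h_u^{(k-1)} : u \in \mathcal{N}(v) \,\}\bigr),
\qquad
h_v^{(k)} = \mathrm{COMBINE}^{(k)}\bigl(h_v^{(k-1)},\, a_v^{(k)}\bigr)

h_G = \mathrm{READOUT}\bigl(\{\, h_v^{(K)} : v \in G \,\}\bigr)
```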

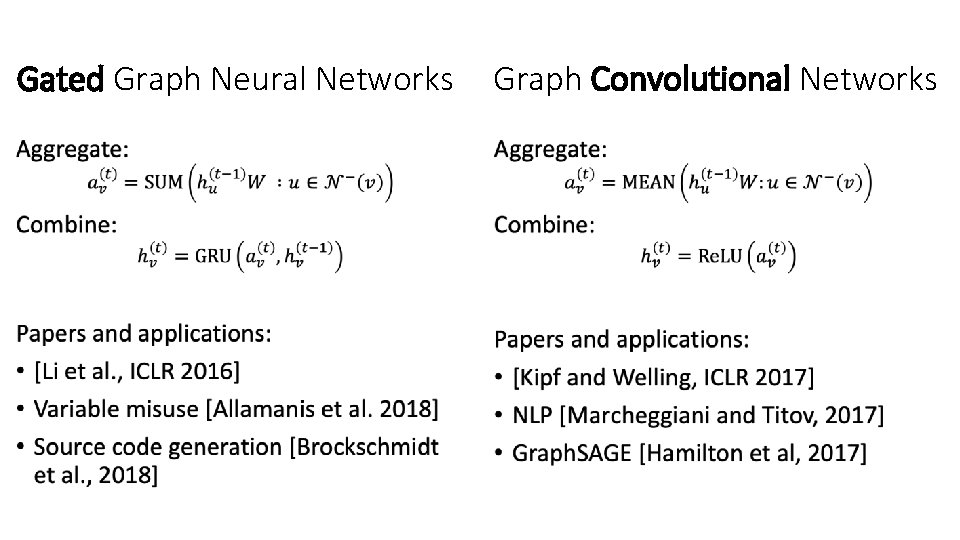

RNN encoder
• encodes sequences
• inputs vary in size
• sequential message passing
• learnable parameters:
  • input embeddings
  • COMBINE
  • READOUT

GNN encoder
• encodes graphs
• inputs vary in size and structure
• parallel message passing
• learnable parameters:
  • input embeddings
  • AGGREGATE
  • COMBINE
  • READOUT
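A minimal sketch of how the three GNN components fit together, assuming toy, non-learned choices for each: AGGREGATE and READOUT are element-wise sums, and COMBINE averages a node's state with its aggregated message. The names `gnn_encode`, `vector_sum` and `average_with_message` are illustrative and do not come from the slides.

```python
from typing import Callable, Dict, List

Vector = List[float]


def vector_sum(vectors: List[Vector]) -> Vector:
    """Element-wise sum of equally sized vectors (order does not matter)."""
    return [sum(column) for column in zip(*vectors)]


def average_with_message(own: Vector, message: Vector) -> Vector:
    """Toy COMBINE: average a node's own state with its aggregated message."""
    return [0.5 * (a + b) for a, b in zip(own, message)]


def gnn_encode(
    neighbours: Dict[int, List[int]],
    features: Dict[int, Vector],
    aggregate: Callable[[List[Vector]], Vector],
    combine: Callable[[Vector, Vector], Vector],
    readout: Callable[[List[Vector]], Vector],
    num_rounds: int,
) -> Vector:
    """Run `num_rounds` of parallel message passing, then pool all node states."""
    states = dict(features)
    for _ in range(num_rounds):
        # Every node reads the previous round's states, so all updates are parallel.
        states = {
            v: combine(states[v], aggregate([states[u] for u in neighbours[v]]))
            for v in states
        }
    return readout(list(states.values()))


# Path graph 0 - 1 - 2 with two-dimensional input embeddings.
neighbours = {0: [1], 1: [0, 2], 2: [1]}
features = {0: [1.0, 0.0], 1: [0.0, 1.0], 2: [1.0, 1.0]}
encoding = gnn_encode(
    neighbours, features, vector_sum, average_with_message, vector_sum, num_rounds=1
)
print(encoding)  # [2.0, 2.5]
```

Because both the aggregation and the readout are sums, relabelling the nodes leaves the encoding unchanged, which is the property that replaces the fixed reading order of an RNN.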

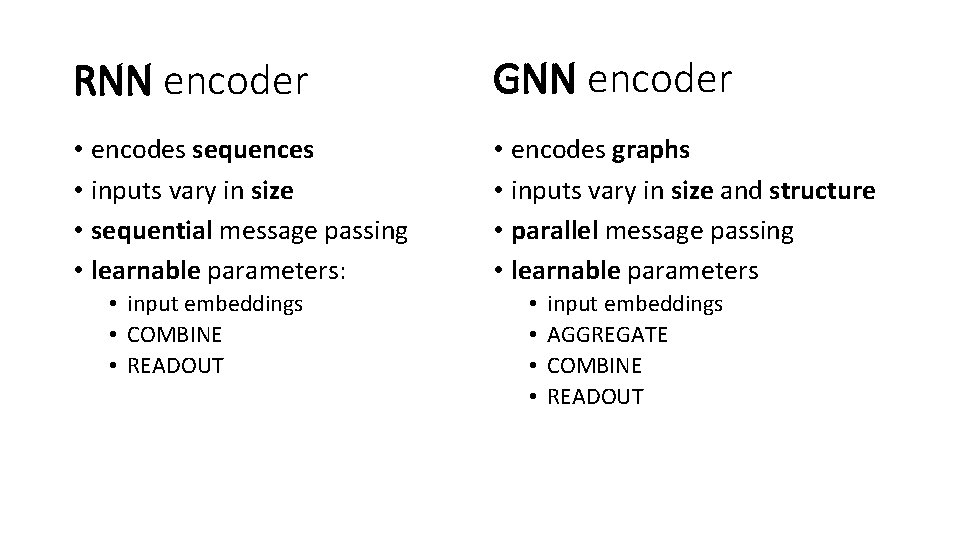

Gated Graph Neural Networks
Graph Convolutional Networks

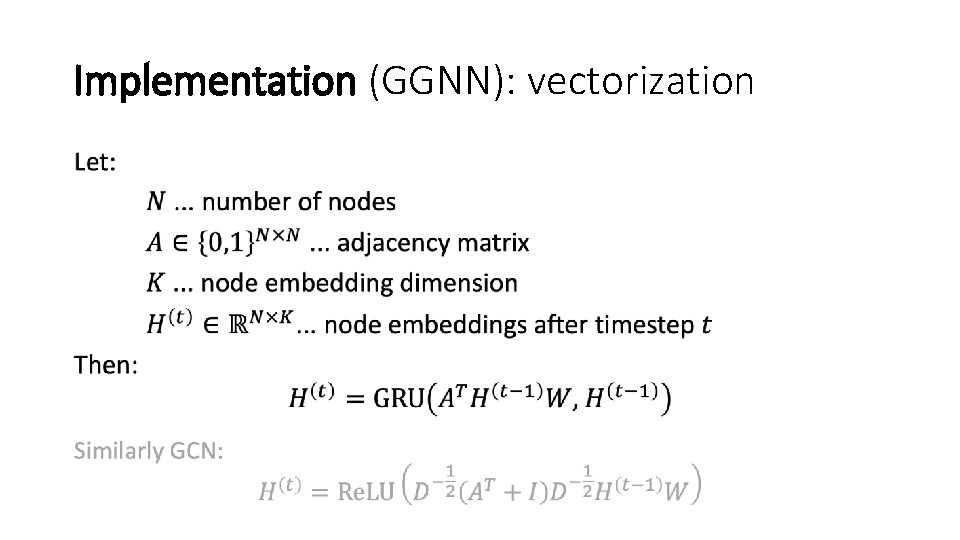

Implementation (GGNN): vectorization
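The body of this slide was lost, so the following is only a generic sketch of what vectorizing message passing usually means: summing neighbour states node by node gives the same result as one matrix product of the adjacency matrix with the matrix of node states. The convention `adjacency[v][u] == 1` for an edge from `u` to `v` and all function names are assumptions; a real implementation would use a tensor library's matrix multiply rather than the pure-Python `matmul` used here to keep the example self-contained.

```python
from typing import List

Matrix = List[List[float]]


def matmul(a: Matrix, b: Matrix) -> Matrix:
    """Plain matrix product, standing in for a tensor library call."""
    return [[sum(x * y for x, y in zip(row, col)) for col in zip(*b)] for row in a]


def messages_loop(adjacency: Matrix, states: Matrix) -> Matrix:
    """Reference version: visit every node and add up its neighbours' states."""
    n, d = len(states), len(states[0])
    out = [[0.0] * d for _ in range(n)]
    for v in range(n):
        for u in range(n):
            if adjacency[v][u]:
                for j in range(d):
                    out[v][j] += states[u][j]
    return out


def messages_vectorized(adjacency: Matrix, states: Matrix) -> Matrix:
    """Vectorized version: all incoming messages in one product A @ H."""
    return matmul(adjacency, states)


# Path graph 0 - 1 - 2; row v of the adjacency matrix marks the in-neighbours of v.
adjacency = [[0.0, 1.0, 0.0], [1.0, 0.0, 1.0], [0.0, 1.0, 0.0]]
states = [[1.0, 0.0], [0.0, 1.0], [1.0, 1.0]]
print(messages_vectorized(adjacency, states))  # [[0.0, 1.0], [2.0, 1.0], [0.0, 1.0]]
```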

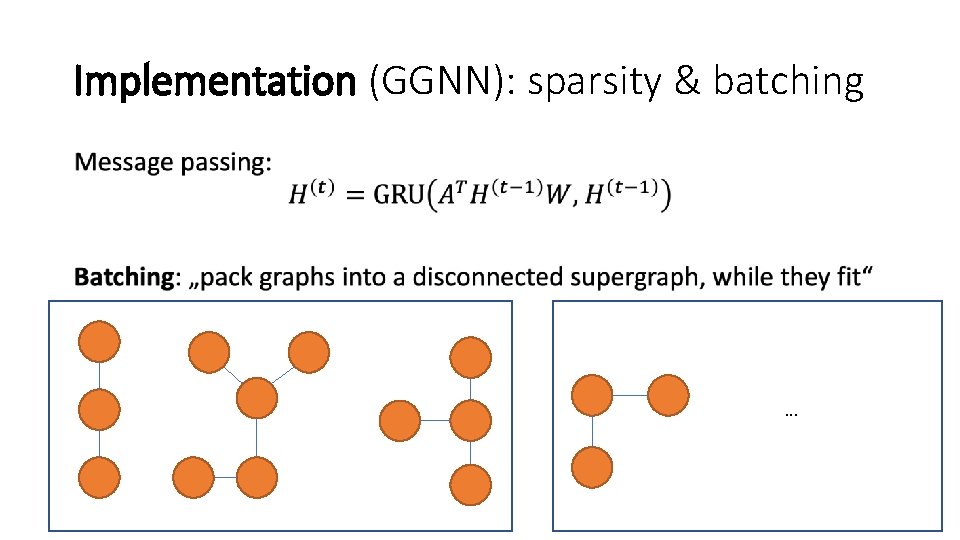

Implementation (GGNN): sparsity & batching
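The details of this slide were lost. One common way to combine sparsity with batching, sketched here as an assumption rather than as the slide's method, is to merge several graphs into a single disjoint union by shifting node indices, keep the edges as a list, and pass messages edge by edge. The helper names `batch_graphs` and `sum_incoming` are illustrative.

```python
from typing import List, Tuple

Edge = Tuple[int, int]  # (source, target)


def batch_graphs(graphs: List[Tuple[int, List[Edge]]]):
    """Merge (num_nodes, edges) graphs into one disjoint union.

    Returns the total node count, the index-shifted edge list, and the id of
    the graph each node came from.
    """
    edges: List[Edge] = []
    graph_of_node: List[int] = []
    offset = 0
    for graph_id, (num_nodes, graph_edges) in enumerate(graphs):
        edges.extend((s + offset, t + offset) for s, t in graph_edges)
        graph_of_node.extend([graph_id] * num_nodes)
        offset += num_nodes
    return offset, edges, graph_of_node


def sum_incoming(num_nodes: int, edges: List[Edge], states: List[float]) -> List[float]:
    """Sparse message passing: the work grows with the number of edges."""
    out = [0.0] * num_nodes
    for source, target in edges:
        out[target] += states[source]
    return out


# A 2-node graph and a 3-node graph become one 5-node batch.
total, edges, graph_of_node = batch_graphs([(2, [(0, 1), (1, 0)]), (3, [(0, 1), (1, 2)])])
messages = sum_incoming(total, edges, [1.0, 2.0, 3.0, 4.0, 5.0])
print(edges)     # [(0, 1), (1, 0), (2, 3), (3, 4)]
print(messages)  # [2.0, 1.0, 0.0, 3.0, 4.0]
```

No edge crosses between the two graphs, so they never exchange messages, and `graph_of_node` lets a graph-level readout pool each graph separately.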

![Implementation (GGNN): code](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-11.jpg)

Implementation (GGNN): code [github.com/Microsoft/gated-graph-neural-network-samples]

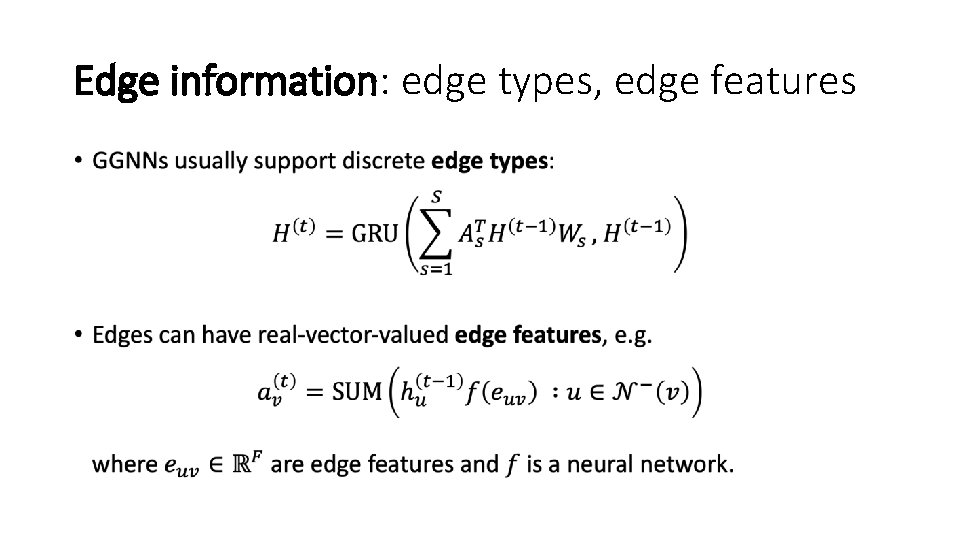

Edge information: edge types, edge features

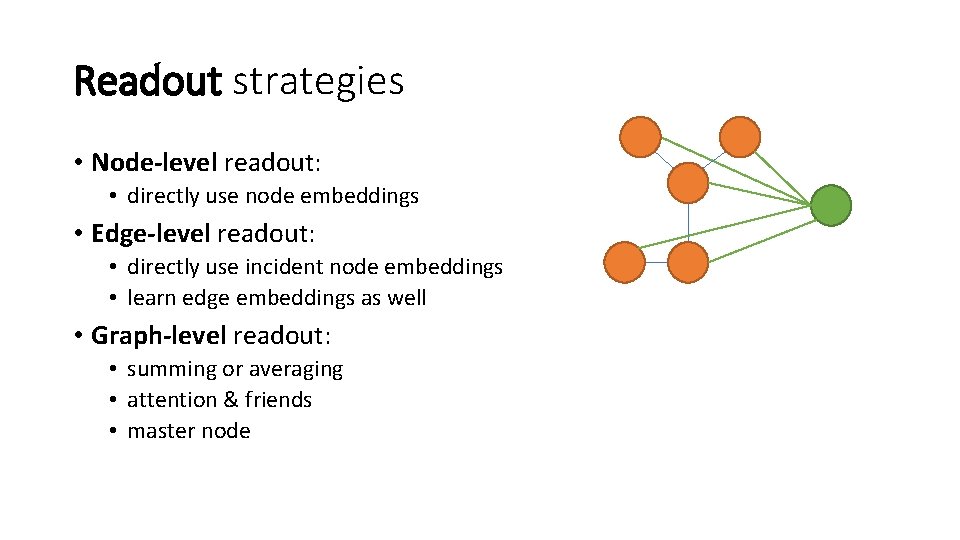

Readout strategies
• Node-level readout:
  • directly use node embeddings
• Edge-level readout:
  • directly use incident node embeddings
  • learn edge embeddings as well
• Graph-level readout:
  • summing or averaging
  • attention & friends
  • master node
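The graph-level options above can be sketched as follows. Sum and mean pooling are as named on the slide; the attention variant is one simple possibility, assuming a single query vector (hypothetical, and learned in a real model) that scores each node by a dot product before a softmax. The master-node strategy is not shown.

```python
import math
from typing import List

Vector = List[float]


def sum_readout(nodes: List[Vector]) -> Vector:
    """Graph embedding as the element-wise sum of node embeddings."""
    return [sum(column) for column in zip(*nodes)]


def mean_readout(nodes: List[Vector]) -> Vector:
    """Graph embedding as the element-wise mean of node embeddings."""
    return [sum(column) / len(nodes) for column in zip(*nodes)]


def attention_readout(nodes: List[Vector], query: Vector) -> Vector:
    """Softmax-weighted average; `query` is an assumed learnable vector."""
    scores = [sum(q * x for q, x in zip(query, h)) for h in nodes]
    top = max(scores)  # subtract the maximum for numerical stability
    weights = [math.exp(s - top) for s in scores]
    total = sum(weights)
    return [
        sum(w * h[j] for w, h in zip(weights, nodes)) / total
        for j in range(len(query))
    ]


node_embeddings = [[1.0, 2.0], [3.0, 4.0]]
print(sum_readout(node_embeddings))   # [4.0, 6.0]
print(mean_readout(node_embeddings))  # [2.0, 3.0]
```

With an all-zero query every node gets the same weight, so the attention readout reduces to the mean.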

![Historical look: Scarselli et al. [2009]](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-14.jpg)

Historical look: Scarselli et al. [2009]
• No explicit COMBINE function
• Learning: Almeida-Pineda learning algorithm
Consequences:
• memory efficient (intermediate states not needed for gradients)
• constrained parameters to ensure propagation map is contractive
• no need to initialize node features (unique fixed point)
Li et al. [2015]
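The fixed-point behaviour can be illustrated with a toy propagation map. This is a sketch under strong assumptions, not Scarselli et al.'s parameterization: node states are scalars, and each update adds the node's input to a damped mean of its neighbours' states. With `damping` below 1 the map is contractive, so iteration converges to the same states from any initialization. All names are illustrative.

```python
from typing import Dict, List


def propagate_to_fixed_point(
    neighbours: Dict[int, List[int]],
    inputs: Dict[int, float],
    init: Dict[int, float],
    damping: float = 0.5,
    tol: float = 1e-10,
    max_iters: int = 1000,
) -> Dict[int, float]:
    """Iterate h_v <- x_v + damping * mean(h_u for u in N(v)) until it stops changing."""
    states = dict(init)
    for _ in range(max_iters):
        new_states = {
            v: inputs[v] + damping * sum(states[u] for u in neighbours[v]) / len(neighbours[v])
            for v in states
        }
        if max(abs(new_states[v] - states[v]) for v in states) < tol:
            return new_states
        states = new_states
    return states


# Path graph 0 - 1 - 2; every node has at least one neighbour.
neighbours = {0: [1], 1: [0, 2], 2: [1]}
inputs = {0: 1.0, 1: 2.0, 2: 3.0}
from_zeros = propagate_to_fixed_point(neighbours, inputs, {0: 0.0, 1: 0.0, 2: 0.0})
from_noise = propagate_to_fixed_point(neighbours, inputs, {0: 100.0, 1: -50.0, 2: 7.0})
```

Both runs end at (approximately) the states 3, 4 and 5 for nodes 0, 1 and 2, which is why the initial node states do not matter in this setting.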

![Historical look: "Shallow" network learning](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-15.jpg)

Historical look: "Shallow" network learning
• Hamilton et al. [2018]

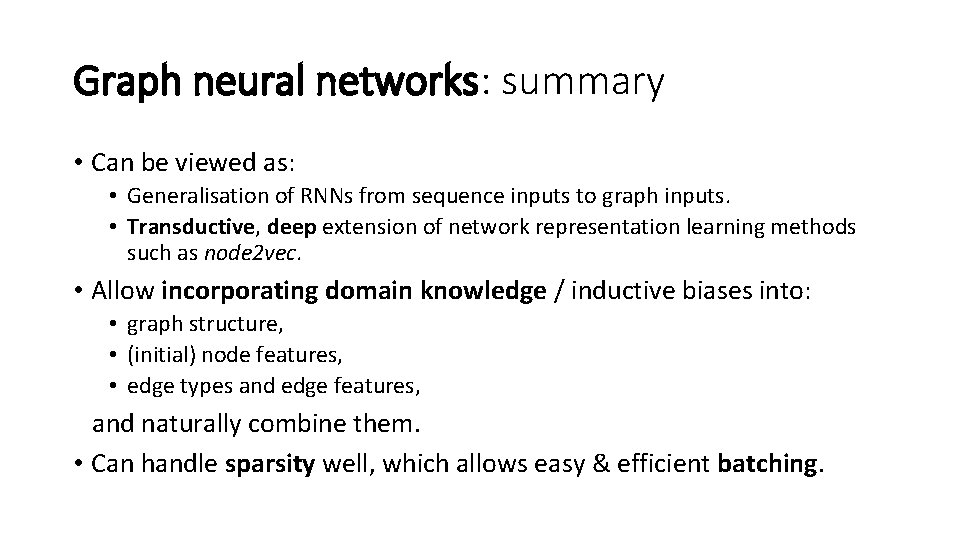

Graph neural networks: summary
• Can be viewed as:
  • Generalisation of RNNs from sequence inputs to graph inputs.
  • Transductive, deep extension of network representation learning methods such as node2vec.
• Allow incorporating domain knowledge / inductive biases into:
  • graph structure,
  • (initial) node features,
  • edge types and edge features,
  and naturally combine them.
• Can handle sparsity well, which allows easy & efficient batching.

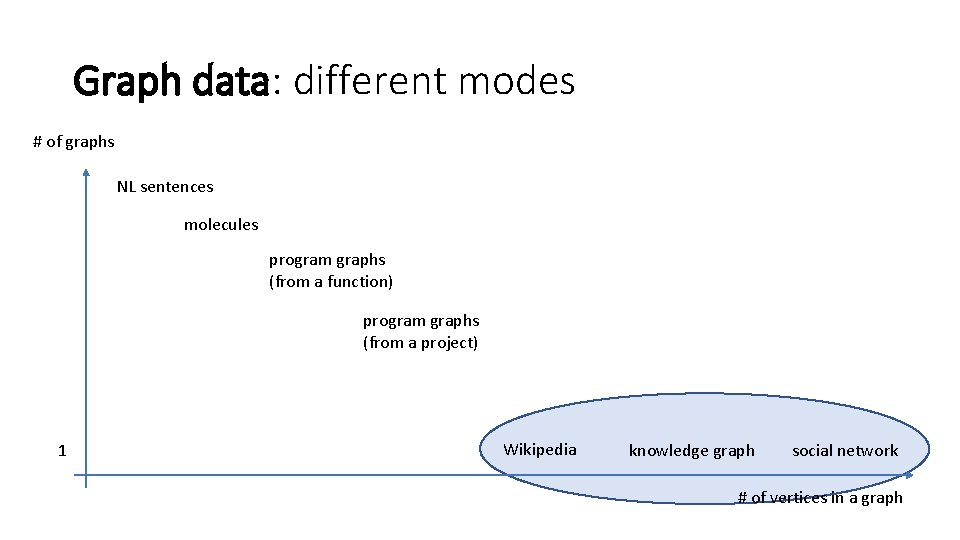

Graph data: different modes
[Figure: data modes placed on two axes, # of graphs and # of vertices in a graph (axis tick shown: 1). Labelled modes: NL sentences; molecules; program graphs (from a function); program graphs (from a project); Wikipedia knowledge graph; social network]

PART II: APPLICATIONS
• Program source code: variable misuse detection, and program synthesis
• NP-hard problems: Satisfiability (SAT), Maximum Independent Set (MIS)
• Others: molecules, NLP, recommendation systems

![Source code: graph representation](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-19.jpg)

Source code: graph representation [Allamanis et al., 2018]
Graph from syntax:
Additional edges from semantics:

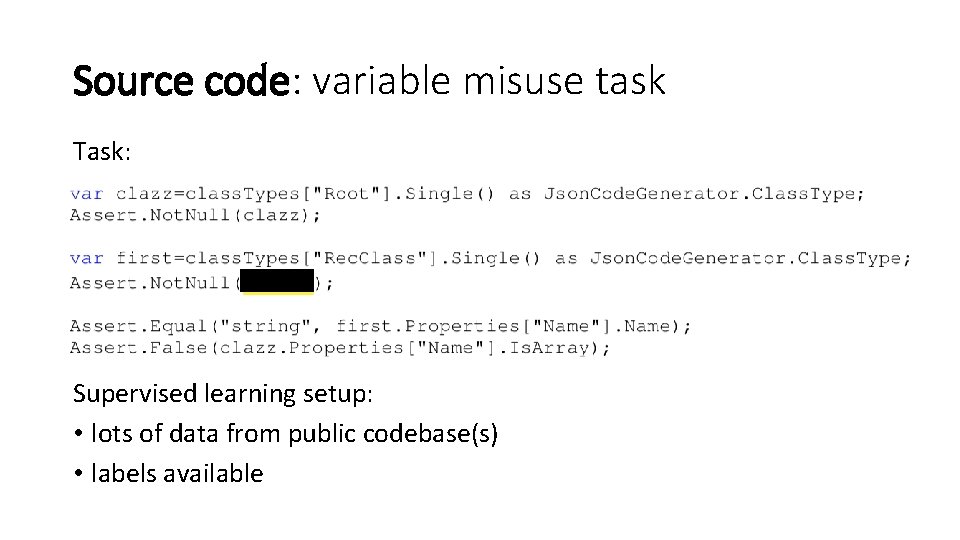

Source code: variable misuse task
Task:
Supervised learning setup:
• lots of data from public codebase(s)
• labels available

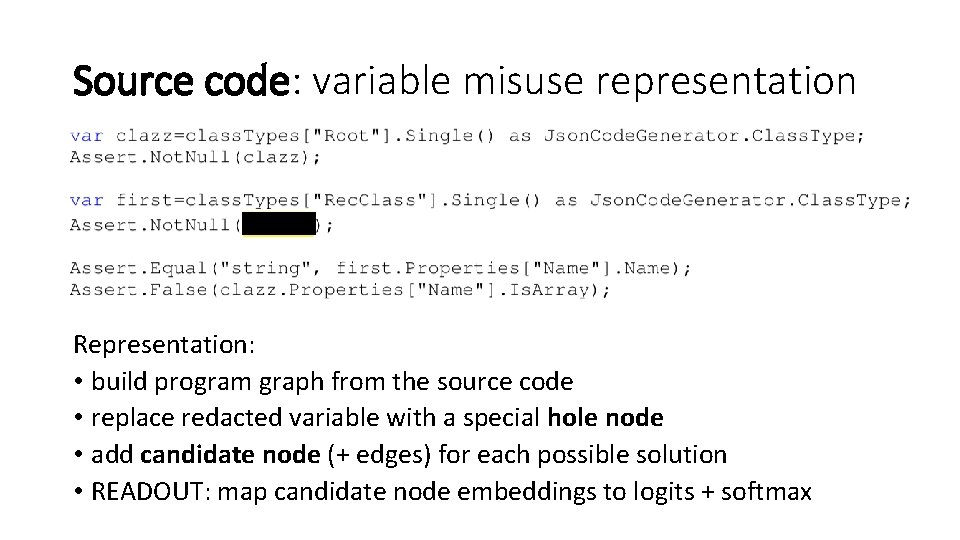

Source code: variable misuse representation
Representation:
• build program graph from the source code
• replace redacted variable with a special hole node
• add candidate node (+ edges) for each possible solution
• READOUT: map candidate node embeddings to logits + softmax

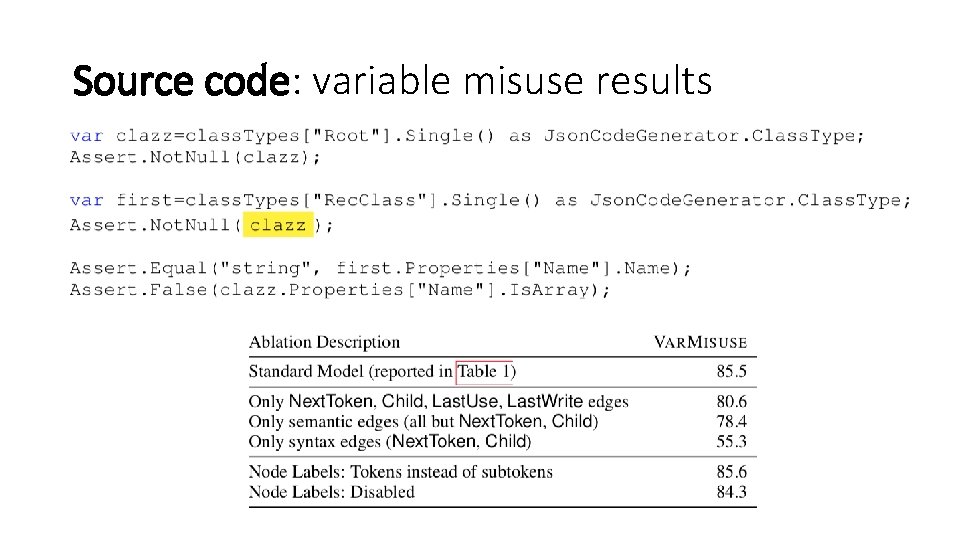

Source code: variable misuse results

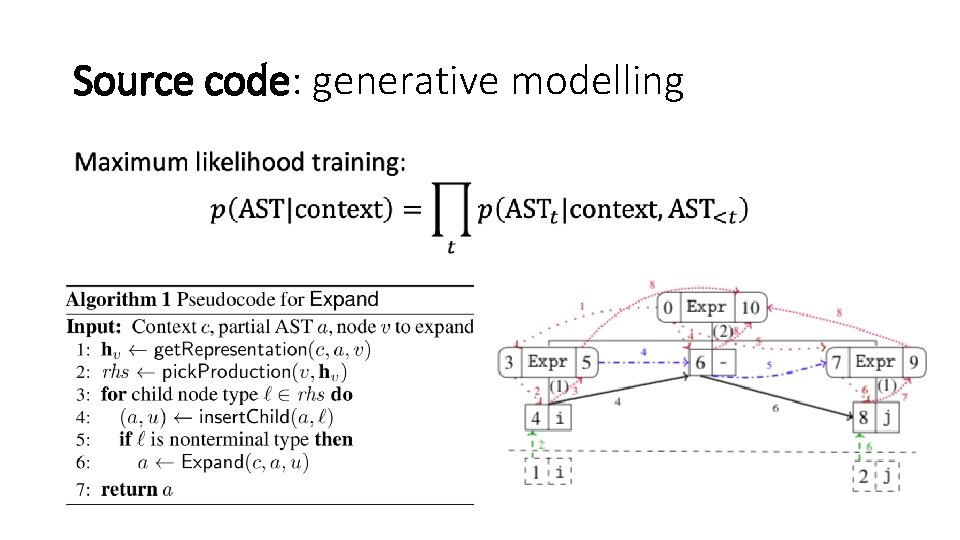

Source code: generative modelling

![NP-hard problems: SAT [Selsam et al., 2018]](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-24.jpg)

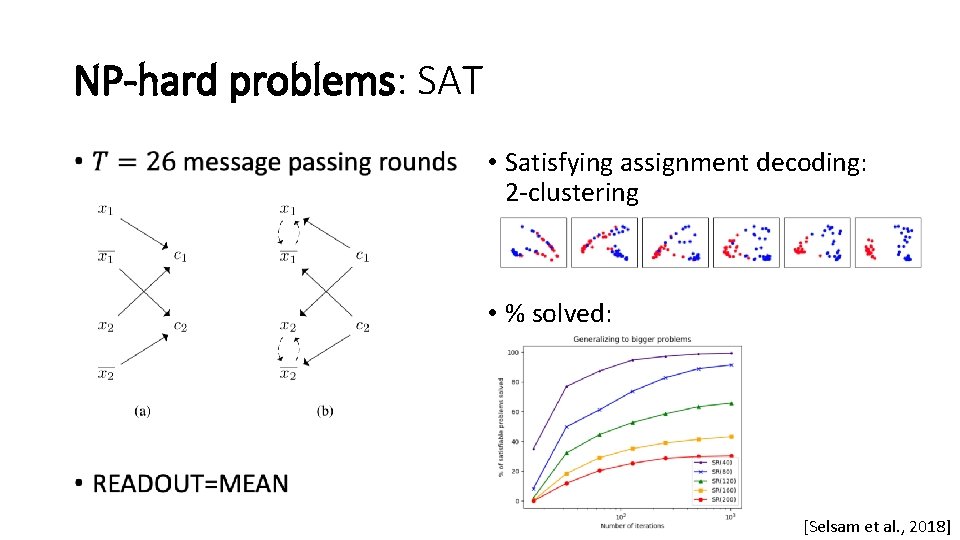

NP-hard problems: SAT
[Selsam et al., 2018]

NP-hard problems: SAT
• Satisfying assignment decoding: 2-clustering
• % solved:
[Selsam et al., 2018]

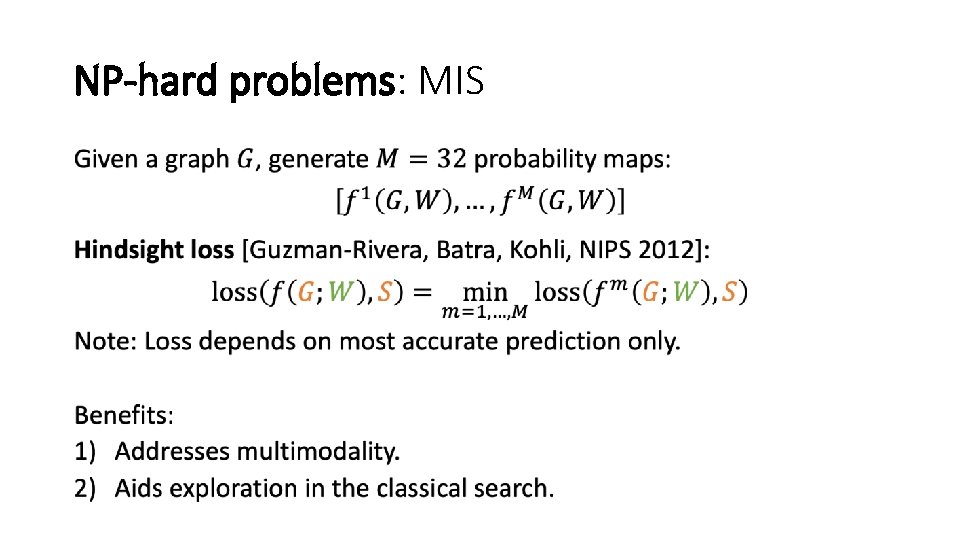

NP-hard problems: MIS
Combinatorial Optimization with Graph Convolutional Networks and Guided Tree Search
Zhuwen Li, Qifeng Chen, Vladlen Koltun [NIPS 2018]
Tricks:
• use Maximum Independent Set (MIS) as the canonical problem
• combine neural approach with classical search
• training loss permitting diverse candidate generation

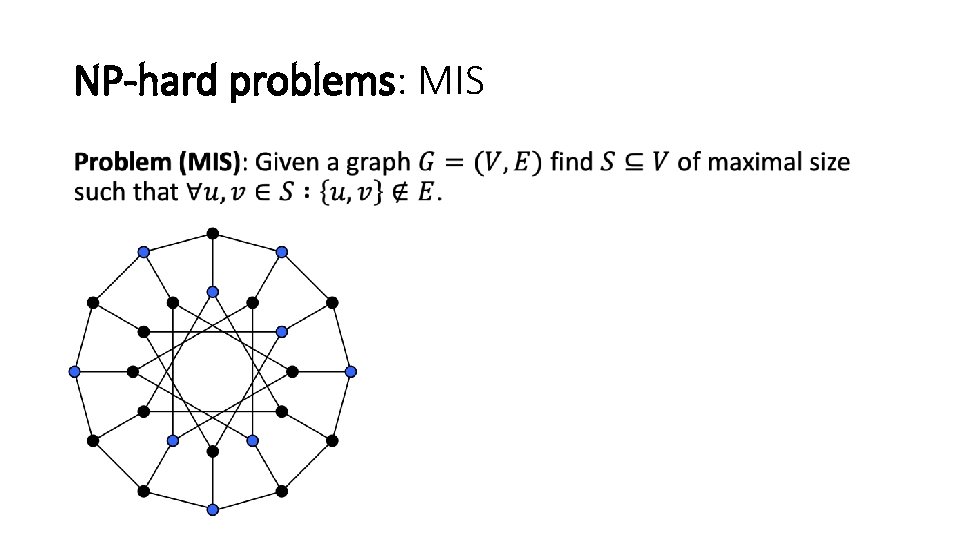

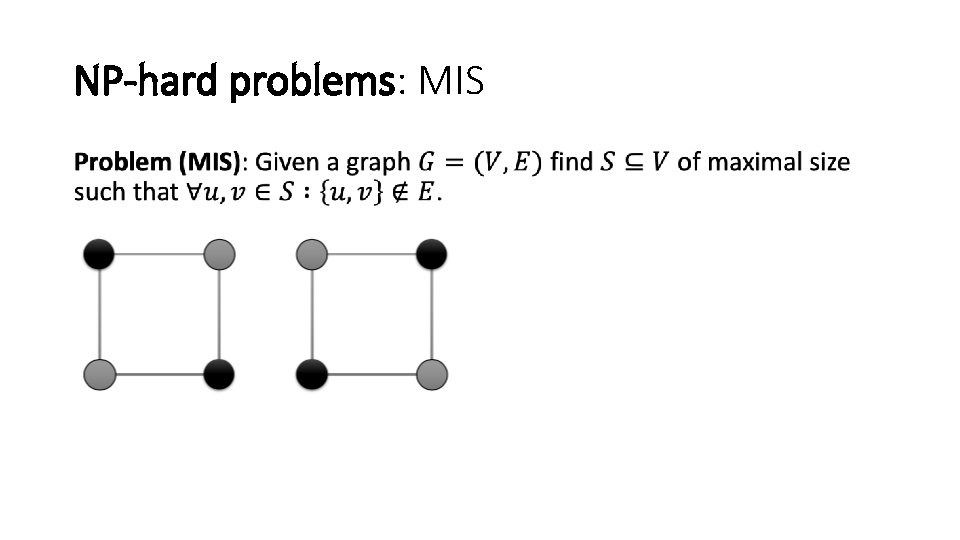

NP-hard problems: MIS

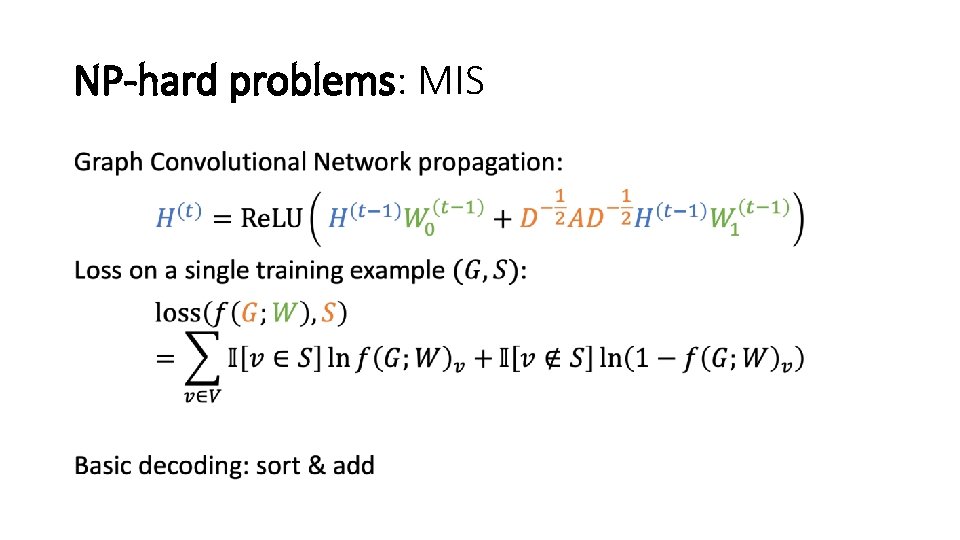


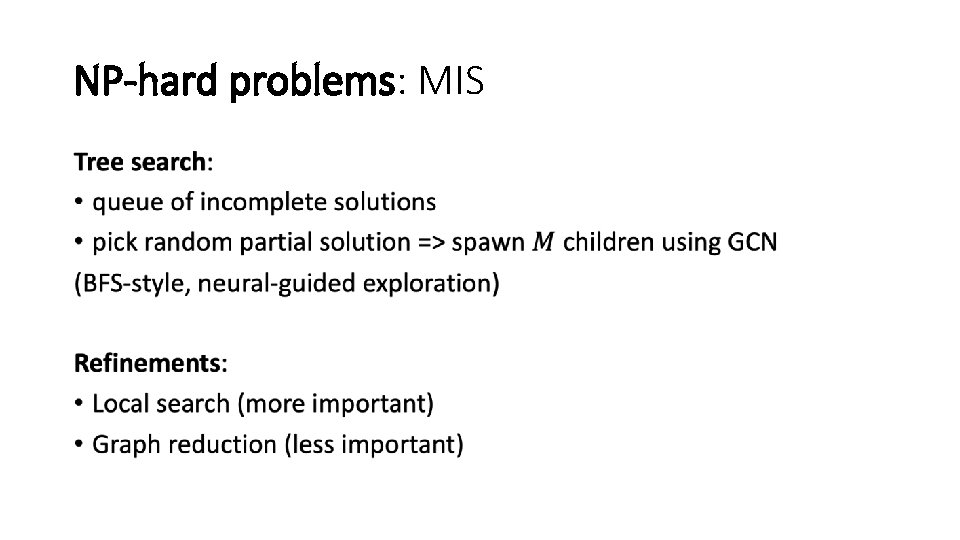

NP-hard problems: MIS
Data: SATLIB benchmark
• 40,000 synthetic, satisfiable 3-SAT instances
• Average size: 400 clauses (=> 1200 vertices)
[Li et al., 2018]
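The vertex count follows from the standard textbook reduction from SAT to MIS, sketched below on the assumption that this is the construction behind the slide's figure: each literal occurrence becomes a vertex (3 literals x 400 clauses = 1200 vertices), literals in the same clause are connected, and so are complementary literals. The formula is satisfiable exactly when the largest independent set has one vertex per clause. Function names are illustrative, and the brute-force solver is only for tiny examples.

```python
from itertools import combinations
from typing import List, Set, Tuple

Clause = Tuple[int, ...]  # DIMACS-style literals: 3 means x3, -3 means NOT x3


def sat_to_mis_graph(clauses: List[Clause]):
    """Build the conflict graph: one vertex per (clause index, literal) pair."""
    vertices = [(c, lit) for c, clause in enumerate(clauses) for lit in clause]
    edges: Set[Tuple[int, int]] = set()
    for i, j in combinations(range(len(vertices)), 2):
        (clause_i, lit_i), (clause_j, lit_j) = vertices[i], vertices[j]
        # Connect literals of the same clause and literals that contradict each other.
        if clause_i == clause_j or lit_i == -lit_j:
            edges.add((i, j))
    return vertices, edges


def max_independent_set_size(num_vertices: int, edges: Set[Tuple[int, int]]) -> int:
    """Exhaustive search over all vertex subsets; exponential, tiny graphs only."""
    best = 0
    for mask in range(1 << num_vertices):
        chosen = [v for v in range(num_vertices) if mask >> v & 1]
        if all(pair not in edges for pair in combinations(chosen, 2)):
            best = max(best, len(chosen))
    return best


# (x1 OR x2 OR x3) AND (NOT x1 OR NOT x2 OR x3): satisfiable, e.g. with x3 = True.
clauses = [(1, 2, 3), (-1, -2, 3)]
vertices, edges = sat_to_mis_graph(clauses)
print(len(vertices), len(edges))                      # 6 8
print(max_independent_set_size(len(vertices), edges)) # 2
```

The independent set of size 2 picks one true literal from each clause, which can be read back as a satisfying assignment.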

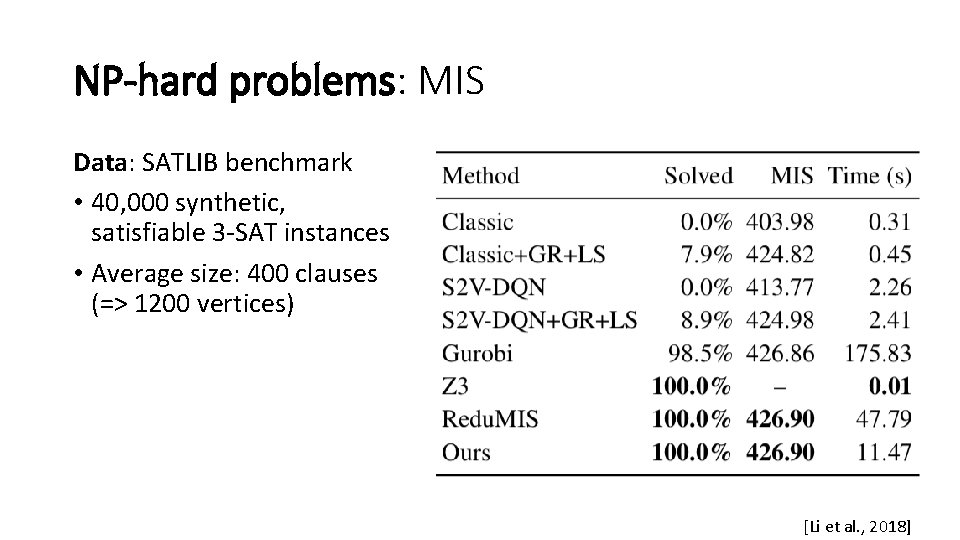

NP-hard problems: MIS
Generalization to other (1) datasets and (2) problems.
[BUAA-MC = challenging Maximum Clique dataset]
[Li et al., 2018]

NP-hard problems: MIS
Successful generalisation:
1. from synthetic graphs to real graphs
2. from SAT graphs to other problems
3. from graphs with ~1000 nodes to graphs with 100x to 10,000x more
Limitation:
• Maximum Clique is expensive (complementary graph too dense).
[Li et al., 2018]
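The limitation can be made concrete with a small sketch. A maximum clique in a graph is a maximum independent set in its complement, and the complement of a sparse graph is nearly complete. The function names and the example sizes below are illustrative assumptions, not figures from the paper.

```python
from itertools import combinations
from typing import Iterable, Set, Tuple

Edge = Tuple[int, int]


def complement_edges(num_vertices: int, edges: Iterable[Edge]) -> Set[Edge]:
    """All vertex pairs that are NOT connected in the original graph."""
    present = {tuple(sorted(edge)) for edge in edges}
    return {
        pair for pair in combinations(range(num_vertices), 2) if pair not in present
    }


def complement_edge_count(num_vertices: int, num_edges: int) -> int:
    """Edge count of the complement: all possible pairs minus the existing edges."""
    return num_vertices * (num_vertices - 1) // 2 - num_edges


# Path 0 - 1 - 2 - 3: its complement holds the three missing pairs.
print(complement_edges(4, [(0, 1), (1, 2), (2, 3)]))  # {(0, 2), (0, 3), (1, 3)}

# A sparse graph with 1000 vertices and 3000 edges has a very dense complement.
print(complement_edge_count(1000, 3000))  # 496500
```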

![Molecules: Neural Message Passing for Quantum Chemistry](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-35.jpg)

Molecules
Neural Message Passing for Quantum Chemistry
Gilmer, Schoenholz, Riley, Vinyals, Dahl [ICML 2017]
QM9 benchmark:
• 130,000 molecules, with 13 properties per molecule
• labels computed by expensive quantum mechanical simulation (DFT)
• up to 29 atoms in a molecule

Molecules

![Natural Language Processing [Marcheggiani and Titov, EMNLP 2017]](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-37.jpg)

Natural Language Processing [Marcheggiani and Titov, EMNLP 2017]

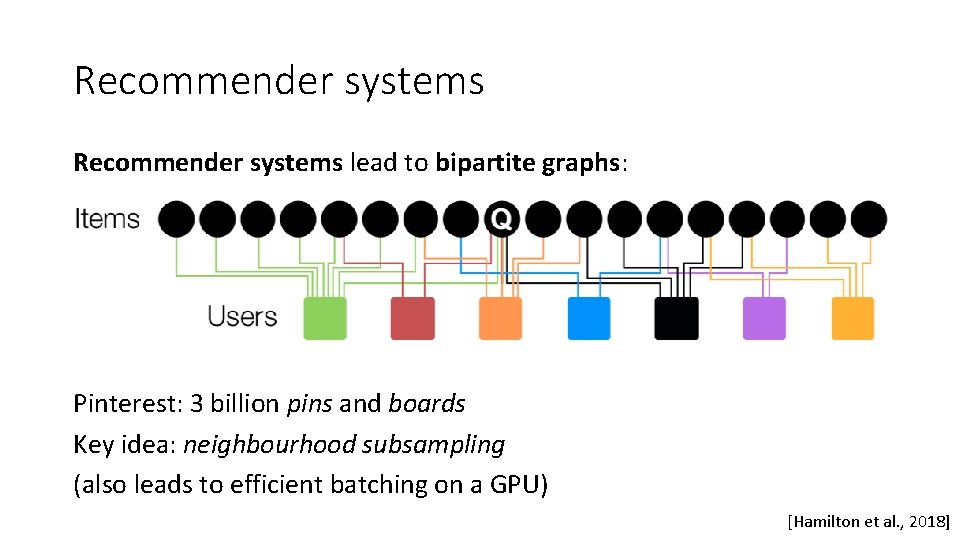

Recommender systems lead to bipartite graphs:
• Pinterest: 3 billion pins and boards
• Key idea: neighbourhood subsampling (also leads to efficient batching on a GPU)
[Hamilton et al., 2018]
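A minimal sketch of neighbourhood subsampling, assuming uniform sampling without replacement and scalar node states; the cited systems may sample differently, and the function names are illustrative. Capping the number of neighbours bounds the work per node and gives every node a neighbour list of at most a fixed size, which is what makes dense batching on a GPU straightforward.

```python
import random
from typing import Dict, List


def sample_neighbours(
    neighbours: Dict[int, List[int]],
    node: int,
    max_neighbours: int,
    rng: random.Random,
) -> List[int]:
    """Return at most `max_neighbours` neighbours, sampled uniformly (an assumption)."""
    candidates = neighbours[node]
    if len(candidates) <= max_neighbours:
        return list(candidates)
    return rng.sample(candidates, max_neighbours)


def sampled_mean_message(
    neighbours: Dict[int, List[int]],
    states: Dict[int, float],
    node: int,
    max_neighbours: int,
    rng: random.Random,
) -> float:
    """Aggregate by averaging over the sampled neighbours only."""
    sampled = sample_neighbours(neighbours, node, max_neighbours, rng)
    return sum(states[u] for u in sampled) / len(sampled)


# Node 0 is a hub with 100 neighbours; node 1 has only two.
rng = random.Random(0)
neighbours = {0: list(range(1, 101)), 1: [0, 2]}
hub_sample = sample_neighbours(neighbours, 0, 10, rng)
small_sample = sample_neighbours(neighbours, 1, 10, rng)
```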

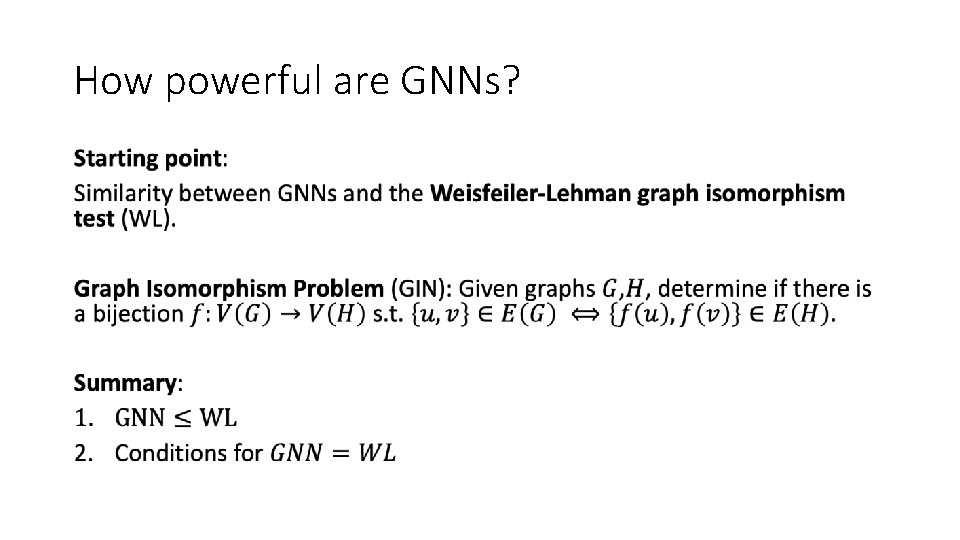

PART III: POWER OF GNNS

![How powerful are GNNs? [Xu, Hu, Leskovec, Jegelka, 2018]](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-40.jpg)

How powerful are GNNs? [Xu, Hu, Leskovec, Jegelka, 2018]
• "the design of new GNNs is mostly based on empirical intuition, heuristics, and experimental trial-and-error"
• "there is little theoretical understanding of the properties and limitations of GNNs, and formal analysis of GNNs' representational capacity is limited"
In this paper: When can a GNN distinguish two different graphs?

How powerful are GNNs?

![[Shervashidze, 2011]](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-42.jpg)

[Shervashidze, 2011]
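The cited work concerns the Weisfeiler-Lehman (WL) test, which Xu et al. use as the yardstick for GNN power. Below is a minimal sketch of 1-dimensional WL colour refinement, assuming unlabelled graphs and using nested tuples as colours instead of the usual compressed integer labels. Two graphs whose colour histograms differ are certainly not isomorphic; equal histograms are inconclusive. Function names are illustrative.

```python
from collections import Counter
from typing import Dict, List


def wl_colour_histogram(neighbours: Dict[int, List[int]], num_rounds: int) -> Counter:
    """Refine node colours `num_rounds` times and count how often each colour occurs."""
    colours = {v: () for v in neighbours}  # every node starts with the same colour
    for _ in range(num_rounds):
        # New colour = own colour plus the sorted multiset of neighbour colours.
        colours = {
            v: (colours[v], tuple(sorted(colours[u] for u in neighbours[v])))
            for v in neighbours
        }
    return Counter(colours.values())


def wl_indistinguishable(graph_a, graph_b, num_rounds: int = 3) -> bool:
    """True if the WL test cannot tell the two graphs apart after `num_rounds`."""
    return wl_colour_histogram(graph_a, num_rounds) == wl_colour_histogram(graph_b, num_rounds)


triangle = {0: [1, 2], 1: [0, 2], 2: [0, 1]}
path = {0: [1], 1: [0, 2], 2: [1]}
six_cycle = {i: [(i - 1) % 6, (i + 1) % 6] for i in range(6)}
two_triangles = {0: [1, 2], 1: [0, 2], 2: [0, 1], 3: [4, 5], 4: [3, 5], 5: [3, 4]}

print(wl_indistinguishable(triangle, path))            # False
print(wl_indistinguishable(six_cycle, two_triangles))  # True
```

The second pair is a classic failure case: both graphs are 2-regular on six nodes, so every node keeps the same colour in every round even though the graphs are not isomorphic.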

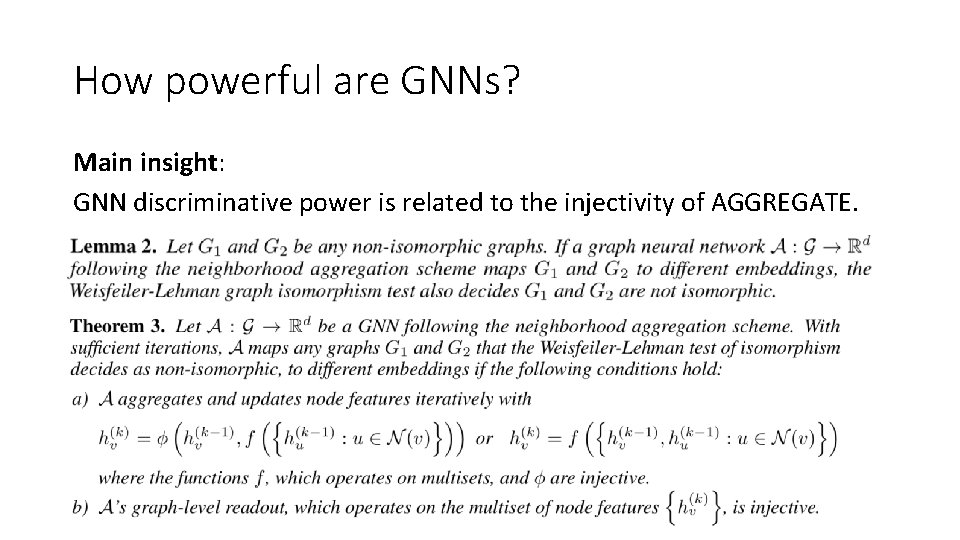

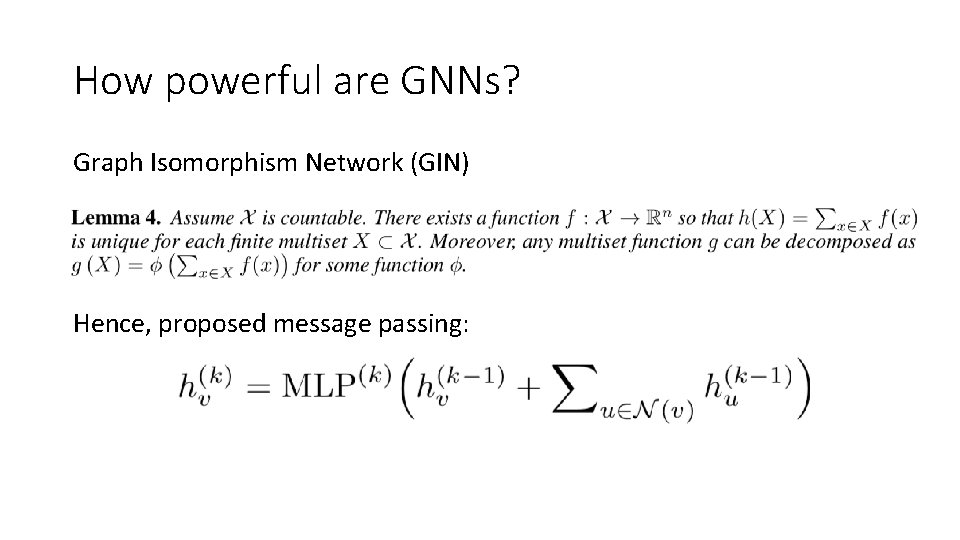

How powerful are GNNs? Main insight: GNN discriminative power is related to the injectivity of AGGREGATE.
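A small numeric illustration of why injectivity matters, in the spirit of the examples in Xu et al. [2018]: mean and max aggregation map different multisets of neighbour features to the same value, while sum keeps them apart. Scalar features and the helper name `summarise` are assumptions made for brevity.

```python
from typing import Dict, List


def summarise(multiset: List[float]) -> Dict[str, float]:
    """Apply three candidate AGGREGATE functions to a multiset of neighbour features."""
    return {
        "sum": sum(multiset),
        "mean": sum(multiset) / len(multiset),
        "max": max(multiset),
    }


# Two different neighbourhoods: {1, 2} versus {1, 1, 2, 2}.
small = summarise([1.0, 2.0])
large = summarise([1.0, 1.0, 2.0, 2.0])

print(small)  # {'sum': 3.0, 'mean': 1.5, 'max': 2.0}
print(large)  # {'sum': 6.0, 'mean': 1.5, 'max': 2.0}
```

A GNN that aggregates with mean or max gives the centre nodes of these two neighbourhoods identical messages, so it cannot tell them apart; with sum it can.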

How powerful are GNNs? Graph Isomorphism Network (GIN) Hence, proposed message passing:
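The formula on this slide did not survive extraction. As given in Xu et al. [2018], the GIN node update uses a sum over neighbours followed by a multi-layer perceptron, with $\epsilon^{(k)}$ either a learnable parameter or a fixed scalar:

```latex
h_v^{(k)} = \mathrm{MLP}^{(k)}\Bigl( \bigl(1 + \epsilon^{(k)}\bigr) \cdot h_v^{(k-1)} + \sum_{u \in \mathcal{N}(v)} h_u^{(k-1)} \Bigr)
```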

![How powerful are GNNs? [Xu et al., 2018]](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-45.jpg)

How powerful are GNNs? [Xu et al., 2018]

![How powerful are GNNs? [Xu et al., 2018]](http://slidetodoc.com/presentation_image_h/e1fef6498d67a5969121c4ad3f4c4532/image-46.jpg)

MAIN REFERENCES
• Learning to Represent Programs with Graphs. Miltos Allamanis, Marc Brockschmidt, Mahmoud Khademi [ICLR 2018]
• Combinatorial Optimization with Graph Convolutional Networks and Guided Tree Search. Zhuwen Li, Qifeng Chen, Vladlen Koltun [NIPS 2018]
• How Powerful are Graph Neural Networks? Keyulu Xu, Weihua Hu, Jure Leskovec, Stefanie Jegelka [under review at ICLR 2019]
