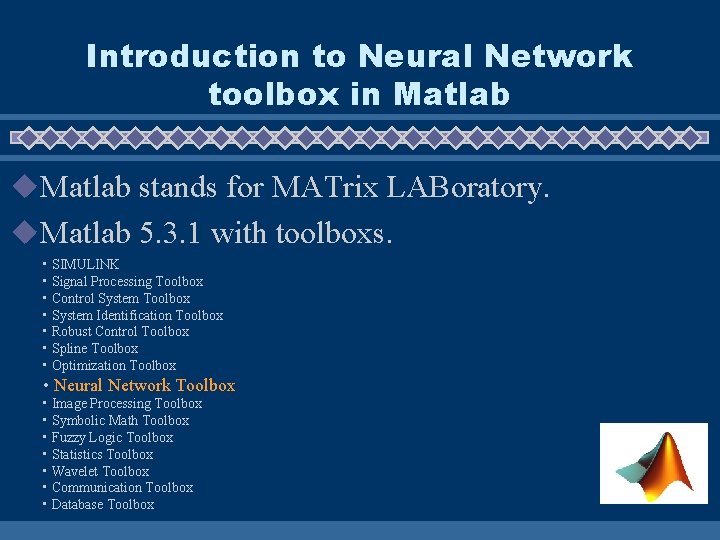

Introduction to Neural Network Toolbox in Matlab

- Matlab stands for MATrix LABoratory.
- Matlab 5.3.1 with toolboxes:
  - SIMULINK
  - Signal Processing Toolbox
  - Control System Toolbox
  - System Identification Toolbox
  - Robust Control Toolbox
  - Spline Toolbox
  - Optimization Toolbox
  - Neural Network Toolbox
  - Image Processing Toolbox
  - Symbolic Math Toolbox
  - Fuzzy Logic Toolbox
  - Statistics Toolbox
  - Wavelet Toolbox
  - Communication Toolbox
  - Database Toolbox

Programming Language: Matlab

- High-level scripting language with an interpreter.
- Huge library of functions and scripts.
- Acts as a computing environment that combines numeric computation, advanced graphics and visualization.

Entering Matlab

- Type `matlab` at the unix command prompt.
  - e.g. `sparc76.cs.cuhk.hk:/uac/gds/username> matlab`
  - If you see the command prompt `>>`, you have successfully entered Matlab.

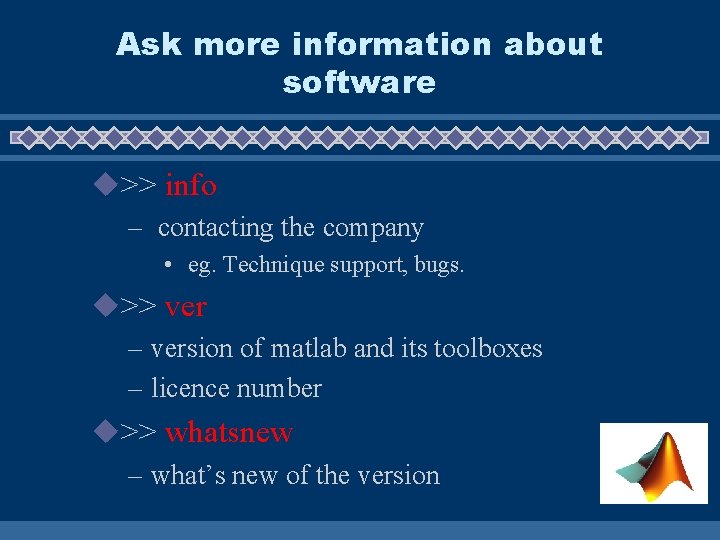

Asking for more information about the software

- `>> info`
  - contacting the company, e.g. technical support, bugs
- `>> ver`
  - version of Matlab and its toolboxes
  - licence number
- `>> whatsnew`
  - what's new in this version

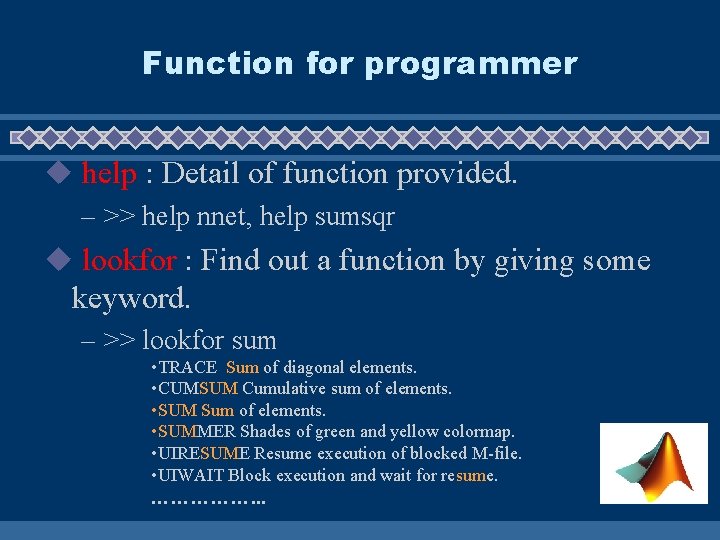

Functions for programmers

- `help`: details of a given function.
  - `>> help nnet`, `>> help sumsqr`
- `lookfor`: find a function by giving a keyword.
  - `>> lookfor sum`
    - TRACE Sum of diagonal elements.
    - CUMSUM Cumulative sum of elements.
    - SUM Sum of elements.
    - SUMMER Shades of green and yellow colormap.
    - UIRESUME Resume execution of blocked M-file.
    - UIWAIT Block execution and wait for resume.
    - ...

Functions for programmers (cont'd)

- `which`: the location of a function in the system (similar to `whereis` in the unix shell).
  - `>> which sum`
    - sum is a built-in function.
  - `>> which sumsqr`
    - /opt1/matlab-5.3.1/toolbox/nnet/sumsqr.m
  - Knowing the location lets you save a copy in your own directory and modify it.

Functions for programmers (cont'd)

- `!`: calls a unix command from within Matlab.
  - `>> !ls`
  - `>> !netscape`

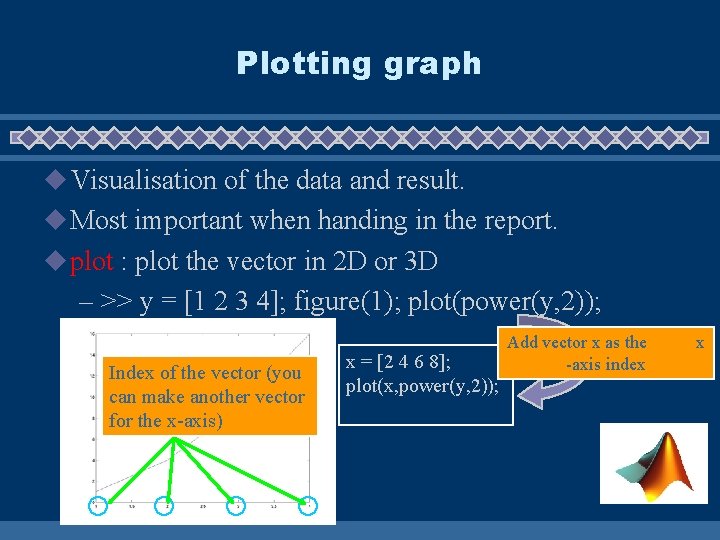

Plotting graphs

- Visualisation of the data and results.
- Most important when handing in the report.
- `plot`: plots a vector in 2-D (`plot3` is the 3-D counterpart).
  - `>> y = [1 2 3 4]; figure(1); plot(power(y, 2));`
    - With a single argument, the x-axis is the index of the vector.
  - `>> x = [2 4 6 8]; plot(x, power(y, 2));`
    - Passing a second vector `x` uses it as the x-axis.
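For a report, a plot usually also needs axis labels and a title. The following is a minimal sketch built on the vectors from the slide; the label and title strings are illustrative assumptions, not part of the original example.

```matlab
% Sketch: plot y squared against an explicit x vector and label the figure.
x = [2 4 6 8];                 % x-axis values from the slide
y = [1 2 3 4];
ysq = power(y, 2);             % element-wise square of y

figure(1);
plot(x, ysq, 'o-');            % circle markers joined by a solid line
xlabel('x');                   % illustrative label text (assumption)
ylabel('y squared');
title('Square of y against x');
grid on;
```

The third argument to `plot` is a line-style string; leaving it out gives a plain solid line as in the slide.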

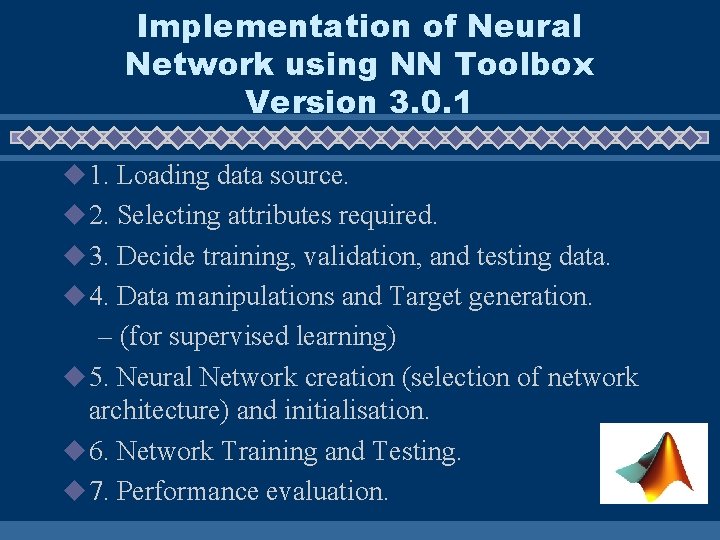

Implementation of a Neural Network using NN Toolbox Version 3.0.1

1. Loading the data source.
2. Selecting the attributes required.
3. Deciding the training, validation, and testing data.
4. Data manipulation and target generation (for supervised learning).
5. Neural network creation (selection of network architecture) and initialisation.
6. Network training and testing.
7. Performance evaluation.
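Steps 2 and 3 can be sketched with plain matrix indexing. The data matrix, the chosen columns and the 60/20/20 split below are all hypothetical; the slides do not prescribe any of them.

```matlab
% Sketch of attribute selection and a random train/validation/test split.
data = rand(100, 7);               % hypothetical stand-in for loaded data
cols = [2 4 5];                    % hypothetical attribute columns to keep
selected = data(:, cols);

n = size(selected, 1);
idx = randperm(n);                 % random ordering of the row indices
nTrain = round(0.6 * n);           % assumed 60% for training
nVal   = round(0.2 * n);           % assumed 20% for validation

trainSet = selected(idx(1:nTrain), :);
valSet   = selected(idx(nTrain+1:nTrain+nVal), :);
testSet  = selected(idx(nTrain+nVal+1:end), :);   % remaining rows
```

Shuffling with `randperm` before slicing keeps any ordering in the file from leaking into one of the three sets.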

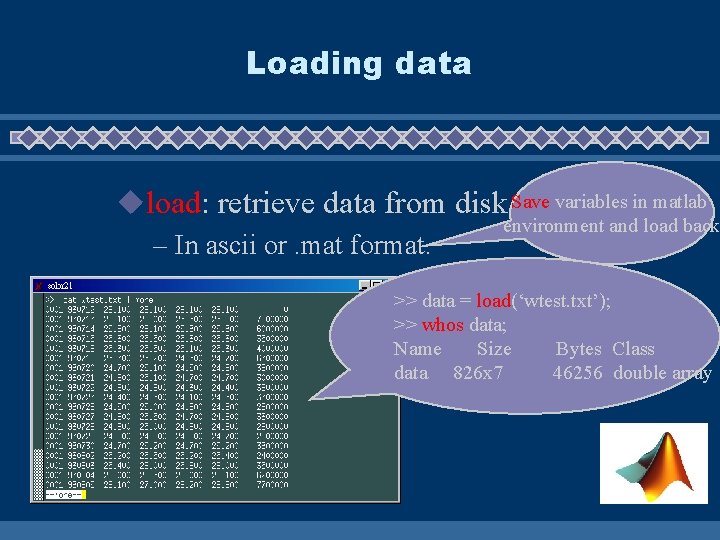

Loading data

- `load`: retrieves data from disk.
  - Variables in the Matlab environment can be saved and loaded back.
  - In ASCII or .mat format.
- `>> data = load('wtest.txt');`
- `>> whos data;`
  - Name: data, Size: 826x7, Bytes: 46256, Class: double array
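The save-and-load-back round trip in both formats might look like the sketch below. The matrix and the temporary file names are made up for illustration; only `save`, `load`, `tempname` and `delete` are used.

```matlab
% Sketch: round trip through an ASCII file and a .mat file.
M = [1 2; 3 4; 5 6];               % hypothetical data

txtname = [tempname '.txt'];       % temporary file name (assumption)
save(txtname, 'M', '-ascii');      % plain text, one matrix row per line
data = load(txtname);              % a numeric text file loads as a matrix

matname = [tempname '.mat'];
save(matname, 'M');                % .mat format keeps the variable name
S = load(matname);                 % with an output, load returns a struct
fromMat = S.M;                     % the saved matrix is the field M

delete(txtname);
delete(matname);
```

ASCII files hold a single numeric matrix with no names attached, while a .mat file can hold several named variables.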

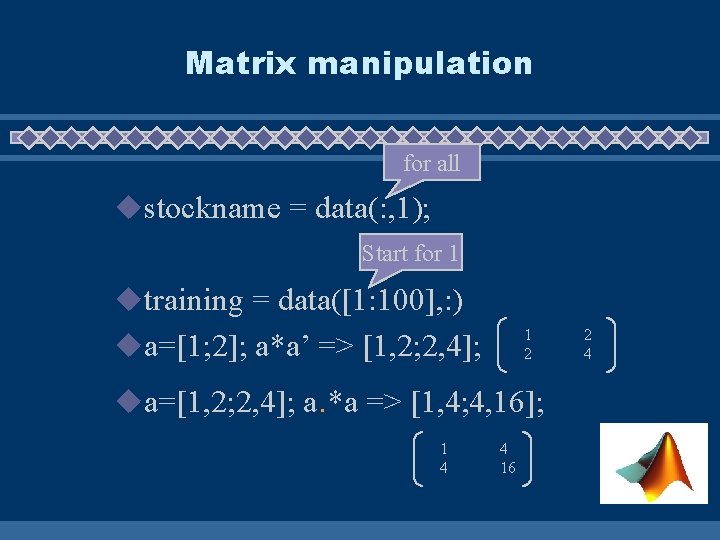

Matrix manipulation

- `stockname = data(:, 1);` where `:` selects all rows.
- `training = data([1:100], :)` where indices start from 1.
- `a = [1; 2]; a*a'` => `[1, 2; 2, 4]` (matrix product).
- `a = [1, 2; 2, 4]; a.*a` => `[1, 4; 4, 16]` (element-by-element product).
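The difference between `*` and `.*` is easiest to see side by side. In this sketch `magic(4)` is only a stand-in for the loaded data, and the variable names are illustrative.

```matlab
% Sketch: colon indexing, matrix product and element-wise product.
data = magic(4);              % stand-in for a loaded data matrix
firstcol = data(:, 1);        % all rows, column 1
toprows  = data(1:2, :);      % rows 1 to 2, all columns

a = [1; 2];
outer = a * a';               % 2x1 times 1x2 gives a 2x2 matrix

b = [1 2; 2 4];
elemwise = b .* b;            % each element multiplied by itself
matprod  = b * b;             % ordinary matrix multiplication
```

Here `elemwise` and `matprod` differ even though both multiply `b` by `b`, which is why the dot matters.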

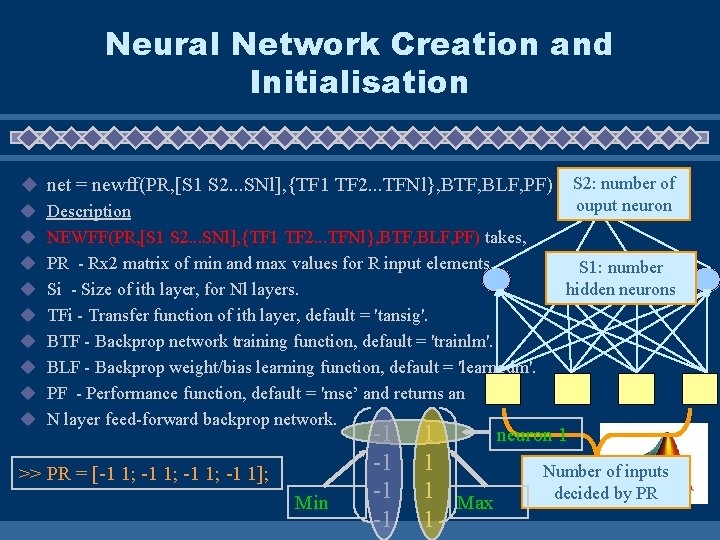

Neural Network Creation and Initialisation

- `net = newff(PR, [S1 S2 ... SNl], {TF1 TF2 ... TFNl}, BTF, BLF, PF)`
- Description: `NEWFF(PR, [S1 S2 ... SNl], {TF1 TF2 ... TFNl}, BTF, BLF, PF)` takes
  - PR - Rx2 matrix of min and max values for R input elements.
  - Si - Size of ith layer, for Nl layers. In a two-layer network, S1 is the number of hidden neurons and S2 is the number of output neurons.
  - TFi - Transfer function of ith layer, default = 'tansig'.
  - BTF - Backprop network training function, default = 'trainlm'.
  - BLF - Backprop weight/bias learning function, default = 'learngdm'.
  - PF - Performance function, default = 'mse'.
- It returns an N layer feed-forward backprop network.
- `>> PR = [-1 1; -1 1];`
  - Each row holds the min and max of one input (here -1 and 1 for both), so the number of inputs is decided by PR.
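When the ranges are not known in advance, PR can be computed from the data. This sketch uses only base functions and assumes the inputs are stored one row per input element and one column per pattern; the matrix `P` is illustrative. The toolbox's own `minmax` helper serves the same purpose.

```matlab
% Sketch: build the R x 2 range matrix PR from an input matrix P.
P = [-0.5 -1.0  0.5 -0.5;     % input 1 across four patterns (assumed data)
      1.0  0.5  1.0 -1.0];    % input 2 across four patterns

PR = [min(P, [], 2), max(P, [], 2)];   % column 1: row minima, column 2: row maxima
R  = size(PR, 1);                      % number of network inputs
```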

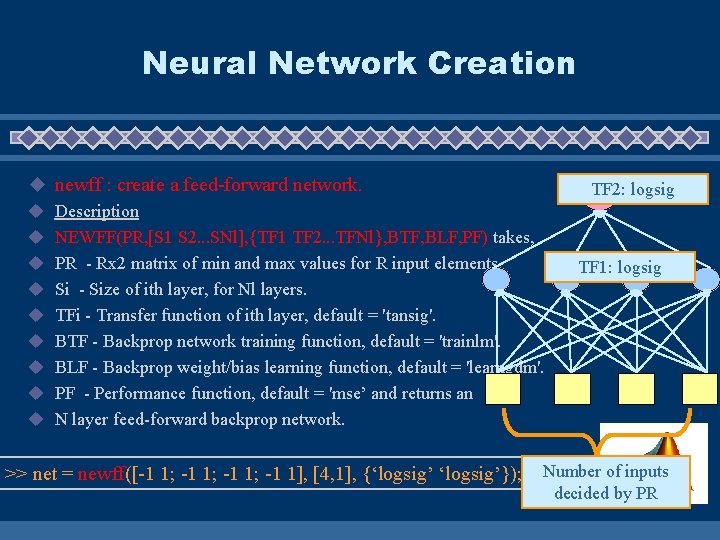

Neural Network Creation

- `newff`: creates a feed-forward network.
- `>> net = newff([-1 1; -1 1], [4, 1], {'logsig', 'logsig'});`
  - The number of inputs (2) is decided by PR.
  - Layer sizes: 4 hidden neurons and 1 output neuron.
  - TF1: logsig, TF2: logsig.

Network Initialisation

- Initialise the net's weights and biases.
  - `>> net = init(net); % init is called after newff`
- Re-initialise with other functions:
  - `net.layers{1}.initFcn = 'initwb';`
  - `net.inputWeights{1,1}.initFcn = 'rands';`
  - `net.biases{2,1}.initFcn = 'rands';`
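To make concrete what the weights and biases of a 2-4-1 logsig network do, here is a hand-written forward pass in base Matlab. It is a sketch, not the toolbox's implementation: the weights are drawn uniformly from [-1, 1] (the range `rands` uses), and all variable names and the input matrix are assumptions.

```matlab
% Sketch: forward pass of a 2-input, 4-hidden, 1-output logsig network.
R = 2; S1 = 4; S2 = 1;                 % layer sizes as in the newff example
W1 = 2*rand(S1, R)  - 1;               % hidden weights, uniform in [-1, 1]
b1 = 2*rand(S1, 1)  - 1;               % hidden biases
W2 = 2*rand(S2, S1) - 1;               % output weights
b2 = 2*rand(S2, 1)  - 1;               % output bias

P = [-0.5 -1.0  0.5 -0.5;              % assumed inputs, one column per pattern
      1.0  0.5  1.0 -1.0];
Q = size(P, 2);                        % number of patterns

N1 = W1*P + b1*ones(1, Q);             % net input of the hidden layer
A1 = 1 ./ (1 + exp(-N1));              % logsig activation, S1 x Q
N2 = W2*A1 + b2*ones(1, Q);            % net input of the output layer
Y  = 1 ./ (1 + exp(-N2));              % network output, S2 x Q
```

Because logsig maps into the interval (0, 1), every entry of `Y` lies strictly between 0 and 1 whatever the random weights are.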

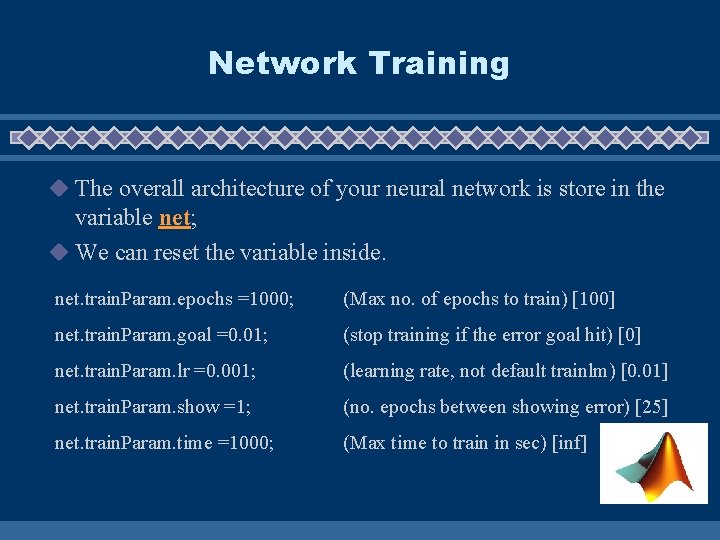

Network Training

- The overall architecture of your neural network is stored in the variable `net`.
- We can reset the parameters inside it (defaults in square brackets):
  - `net.trainParam.epochs = 1000;` maximum number of epochs to train [100]
  - `net.trainParam.goal = 0.01;` stop training if the error goal is hit [0]
  - `net.trainParam.lr = 0.001;` learning rate, not a parameter of the default trainlm [0.01]
  - `net.trainParam.show = 1;` number of epochs between showing the error [25]
  - `net.trainParam.time = 1000;` maximum time to train in seconds [inf]
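How `epochs`, `goal`, `lr` and `show` interact can be illustrated with a hand-written loop. This is not `trainlm` or any toolbox routine: it is plain batch gradient descent on a single linear neuron, with made-up data and parameter values chosen so that the goal is reachable. The time limit is left out.

```matlab
% Sketch: stopping criteria in a simple gradient-descent training loop.
epochs = 1000;                 % maximum number of passes over the data
goal   = 1e-4;                 % stop once the mse falls to this value
lr     = 0.1;                  % learning rate (assumed value)
show   = 25;                   % print the error every 25 epochs

P = [-0.5 -1.0  0.5 -0.5;      % assumed inputs, one column per pattern
      1.0  0.5  1.0 -1.0];
T = [1 -2]*P + 0.5;            % targets from a known linear map (assumption)
Q = size(P, 2);

W = zeros(1, 2);               % weights of the single linear neuron
b = 0;                         % bias
for epoch = 1:epochs
    E = T - (W*P + b);         % error for every pattern
    perf = mean(E.^2);         % mean squared error
    if mod(epoch, show) == 0
        fprintf('epoch %d, mse %g\n', epoch, perf);
    end
    if perf <= goal
        break;                 % error goal hit before the epoch limit
    end
    W = W + lr*(2/Q)*E*P';     % step against the mse gradient
    b = b + lr*(2/Q)*sum(E);
end
```

Whichever limit is reached first ends training, which is why a loose `goal` can stop a run long before `epochs` is exhausted.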

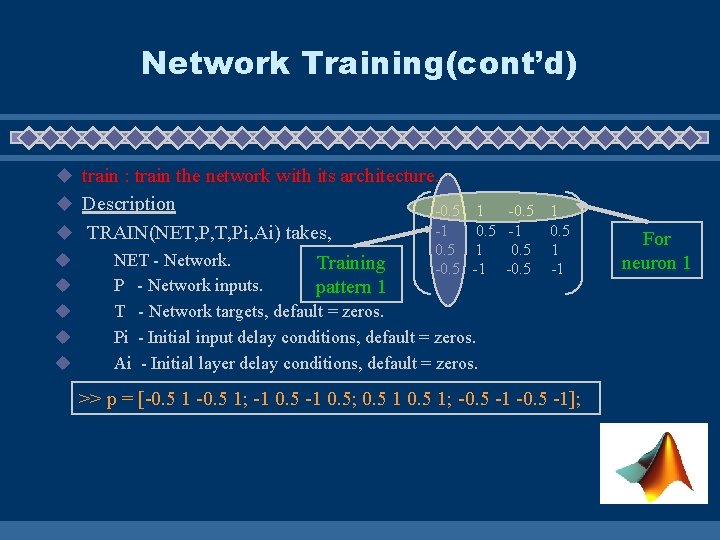

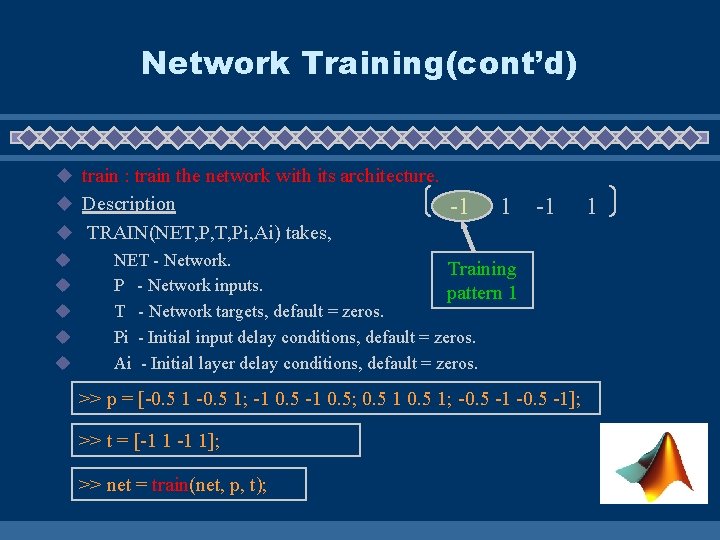

Network Training (cont'd)

- `train`: trains the network with its architecture.
- Description: `TRAIN(NET, P, T, Pi, Ai)` takes
  - NET - Network.
  - P - Network inputs.
  - T - Network targets, default = zeros.
  - Pi - Initial input delay conditions, default = zeros.
  - Ai - Initial layer delay conditions, default = zeros.
- `>> p = [-0.5 1; -1 0.5; 0.5 1; -0.5 -1]';`
  - Each column of `p` is one training pattern and each row feeds one input, so the transpose turns the four typed rows into four patterns for the two-input network.
- `>> t = [-1 1 -1 1];`
  - One target per training pattern.
- `>> net = train(net, p, t);`
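The toolbox expects inputs with one row per input element and one column per pattern, and targets with the same number of columns. A quick shape check before calling `train` can catch a mismatch early. The sketch below assumes that the four typed rows are four patterns with two inputs each and that there is one target per pattern; the target values are illustrative.

```matlab
% Sketch: check that inputs and targets line up before training.
p = [-0.5 1; -1 0.5; 0.5 1; -0.5 -1]';   % transpose: 2 inputs x 4 patterns
t = [-1 1 -1 1];                          % assumed: one target per pattern

[R, Q] = size(p);                         % R inputs, Q patterns
if size(t, 2) ~= Q
    error('Targets need %d columns, one per pattern.', Q);
end
fprintf('%d inputs, %d patterns, %d outputs\n', R, Q, size(t, 1));
```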

![Simulation of the network](http://slidetodoc.com/presentation_image/4c7cf4459f9532debaa8a592ce8318a0/image-18.jpg)

Simulation of the network

- `[Y] = SIM(model, UT)`
  - Y: returned output in matrix or structure format.
  - model: the network to simulate (here `net`).
  - UT: the inputs to the network, one row per input and one column per pattern.
- `>> UT = [-0.5 1; -0.25 1; -1 0.25; -1 0.5]';`
- `>> Y = sim(net, UT);`

Performance Evaluation

- Comparison between the target and the network's output on the testing set (generalisation ability).
- Comparison between the target and the network's output on the training set (memorisation ability).
- Design a function to measure the distance/similarity between the target and the output, or simply use `mse`, for example.
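Both comparisons reduce to the same calculation applied to different sets. The sketch below computes the mean squared error by hand, with a sign-agreement rate as one possible similarity measure. All target and output values are invented for illustration.

```matlab
% Sketch: compare targets and outputs on the training and testing sets.
tTrain = [-1 1 -1 1];                  % hypothetical training targets
yTrain = [-0.9 0.8 -1.1 0.7];          % hypothetical network outputs
tTest  = [1 -1];                       % hypothetical testing targets
yTest  = [0.5 -0.2];                   % hypothetical network outputs

mseTrain = mean((tTrain - yTrain).^2); % memorisation ability
mseTest  = mean((tTest  - yTest ).^2); % generalisation ability

% Alternative measure: fraction of outputs with the same sign as the target.
signAgree = mean(sign(yTest) == sign(tTest));
```

A testing error much larger than the training error, as in these made-up numbers, suggests the network memorises better than it generalises.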

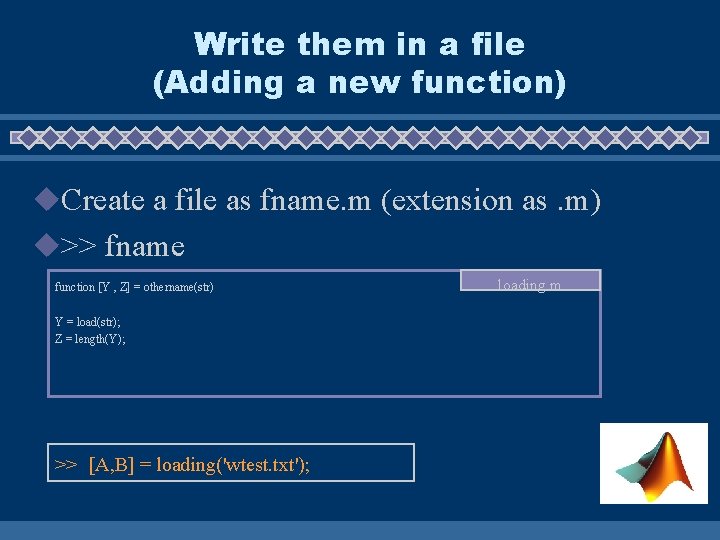

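Writing them in a file (adding a new function)

- Create a file named fname.m (with the extension .m).
- Call it from the prompt by its file name: `>> fname`
- Example file loading.m:
  - `function [Y, Z] = othername(str)`
  - `Y = load(str);`
  - `Z = length(Y);`
- `>> [A, B] = loading('wtest.txt');`
  - The function is called by its file name (loading), even though the name on the function line (othername) differs.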
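A version of loading.m in which the function name matches the file name is easier to follow. This is a sketch of the same helper with one deliberate change: it returns the row count with `size(Y, 1)`, whereas `length(Y)` returns the largest dimension, which is the row count only when the data has more rows than columns.

```matlab
function [Y, Z] = loading(str)
% LOADING Load a numeric data file and count its rows.
%   [Y, Z] = LOADING(STR) loads the file named STR into the matrix Y
%   and returns the number of rows of Y in Z.
Y = load(str);        % the file must contain a rectangular numeric table
Z = size(Y, 1);       % number of rows (one row per record)
```

Saved as loading.m in the current directory, it is called as `[A, B] = loading('wtest.txt');`, and the comment lines under the function line are what `help loading` prints.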

Reference

- Neural Networks Toolbox User's Guide
  - http://www.cse.cuhk.edu.hk/corner/tech/doc/manual/matlab-5.3.1/help/pdf_doc/nnet.pdf
- Matlab Help Desk
  - http://www.cse.cuhk.edu.hk/corner/tech/doc/manual/matlab-5.3.1/helpdesk.html
- Mathworks, owner of Matlab
  - http://www.mathworks.com/
