Near-Optimal Cache Block Placement with Reactive Nonuniform Cache Architectures

Nikos Hardavellas, Northwestern University

Team: M. Ferdman, B. Falsafi, A. Ailamaki

Northwestern, Carnegie Mellon, EPFL

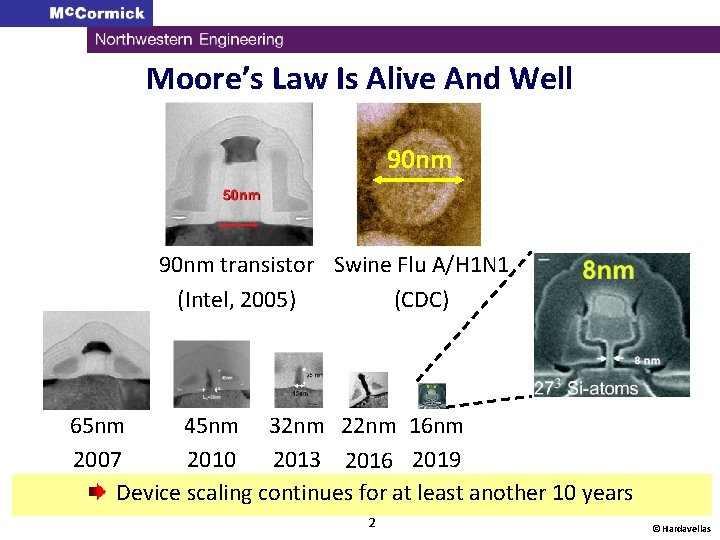

Moore’s Law Is Alive And Well

(Figure: a 90 nm transistor (Intel, 2005) shown next to the Swine Flu A/H1N1 virus (CDC). Technology nodes shown: 65 nm, 45 nm, 32 nm, 22 nm, 16 nm. Timeline: 2007, 2010, 2013, 2016, 2019.)

Device scaling continues for at least another 10 years

© Hardavellas

![Moore’s Law Is Alive And Well; Good Days Ended Nov. 2002 [Yelick 09]](http://slidetodoc.com/presentation_image_h2/921109563598f8f4f00f6bdb6d149ccd/image-3.jpg)

Moore’s Law Is Alive And Well / Good Days Ended Nov. 2002 [Yelick 09]

- “New” Moore’s Law: 2x cores with every generation
- On-chip cache grows commensurately to supply all cores with data

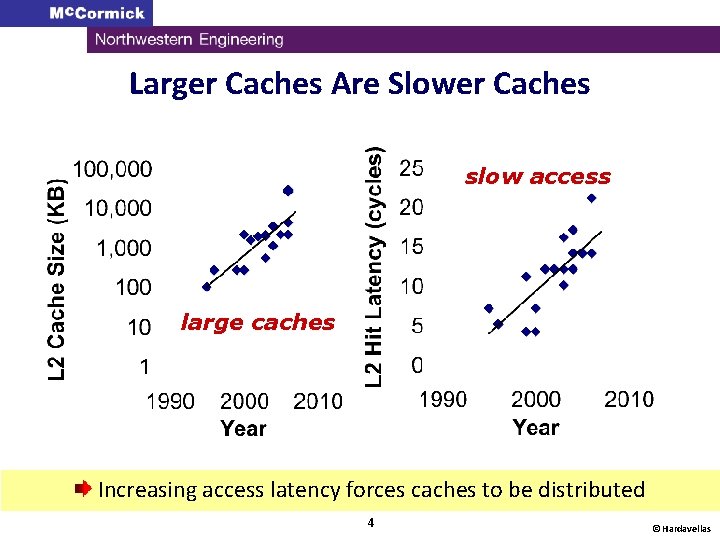

Larger Caches Are Slower Caches

- Large caches: slow access
- Increasing access latency forces caches to be distributed

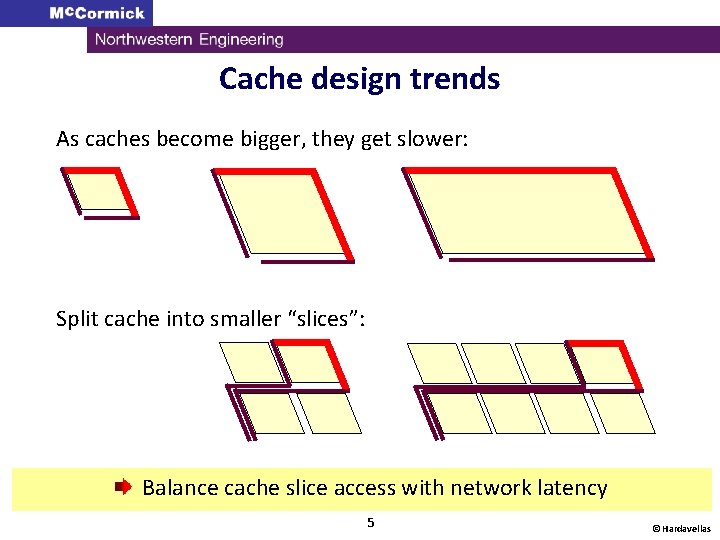

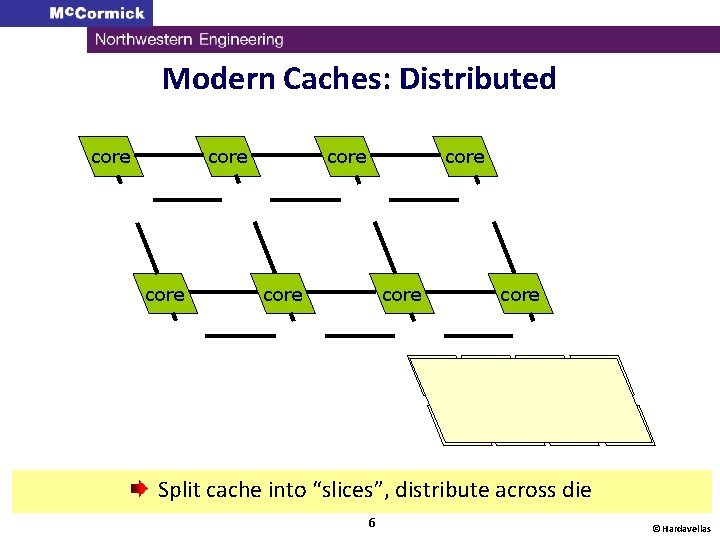

Cache design trends

- As caches become bigger, they get slower
- Split cache into smaller “slices”
- Balance cache slice access with network latency

Modern Caches: Distributed

(Diagram: cores, each paired with an L2 slice)

Split cache into “slices”, distribute across die

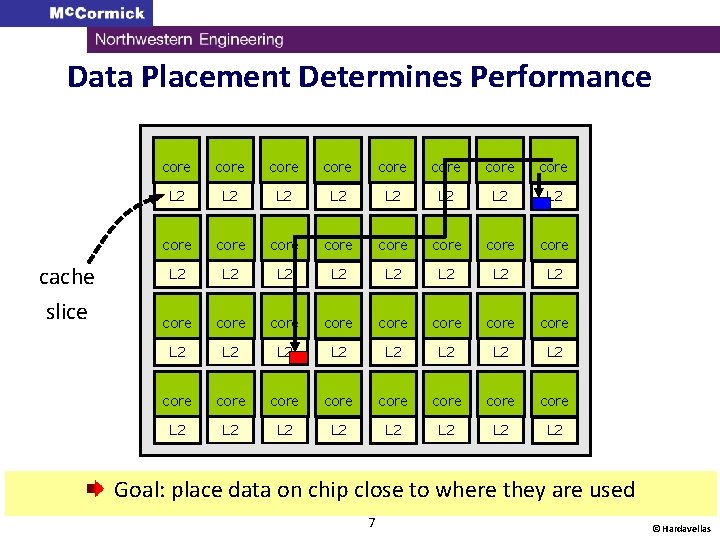

Data Placement Determines Performance

(Diagram: tiled chip of cores, each with an L2 cache slice)

Goal: place data on chip close to where they are used

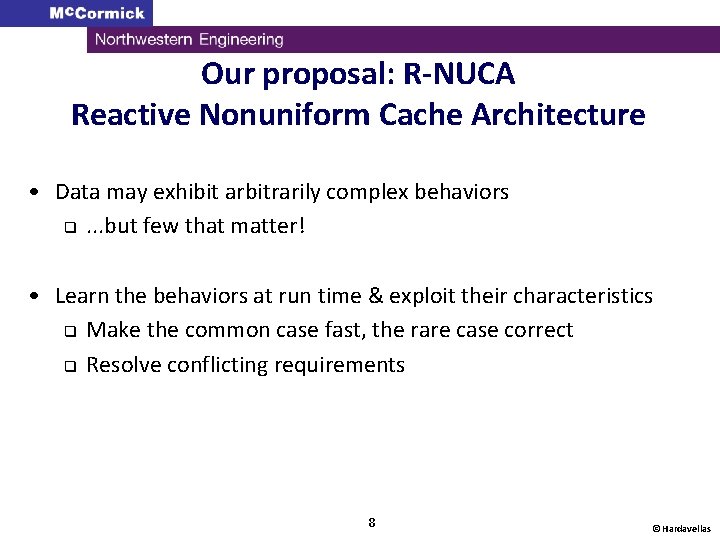

Our proposal: R-NUCA (Reactive Nonuniform Cache Architecture)

- Data may exhibit arbitrarily complex behaviors
  - ... but few that matter!
- Learn the behaviors at run time & exploit their characteristics
  - Make the common case fast, the rare case correct
  - Resolve conflicting requirements

![Reactive Nonuniform Cache Architecture](http://slidetodoc.com/presentation_image_h2/921109563598f8f4f00f6bdb6d149ccd/image-9.jpg)

Reactive Nonuniform Cache Architecture [Hardavellas et al, ISCA 2009] [Hardavellas et al, IEEE-Micro Top Picks 2010]

- Cache accesses can be classified at run-time
  - Each class amenable to different placement
- Per-class block placement
  - Simple, scalable, transparent
  - No need for HW coherence mechanisms at LLC
  - Up to 32% speedup (17% on average)
    - Within 5% on average of an ideal cache organization
- Rotational Interleaving
  - Data replication and fast single-probe lookup

Outline

- Introduction
- Why do Cache Accesses Matter?
- Access Classification and Block Placement
- Reactive NUCA Mechanisms
- Evaluation
- Conclusion

![Cache accesses dominate execution](http://slidetodoc.com/presentation_image_h2/921109563598f8f4f00f6bdb6d149ccd/image-11.jpg)

Cache accesses dominate execution [Hardavellas et al, CIDR 2007]

(Chart: lower is better; 4-core CMP; DSS: TPC-H on DB2, 1 GB database; includes an “Ideal” configuration)

Bottleneck shifts from memory to L2-hit stalls

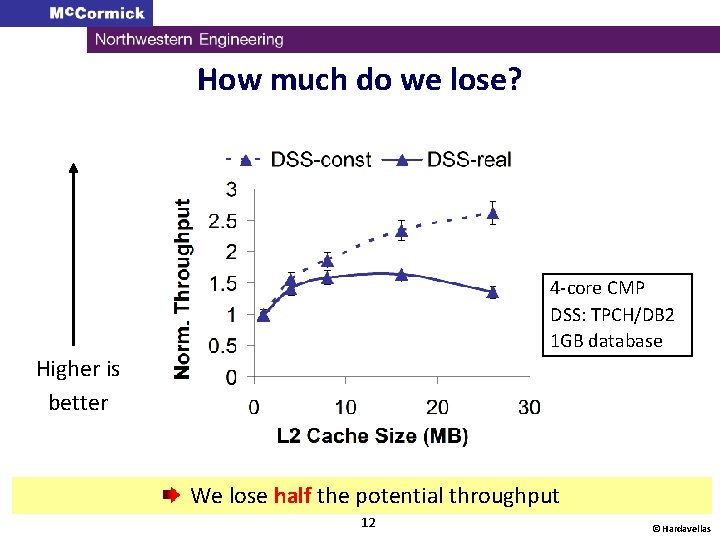

How much do we lose?

(Chart: higher is better; 4-core CMP; DSS: TPC-H on DB2, 1 GB database)

We lose half the potential throughput

Outline

- Introduction
- Why do Cache Accesses Matter?
- Access Classification and Block Placement
- Reactive NUCA Mechanisms
- Evaluation
- Conclusion

Terminology: Data Types

- Private: read or written by a single core
- Shared Read-Only: read by multiple cores, never written
- Shared Read-Write: read and written by multiple cores

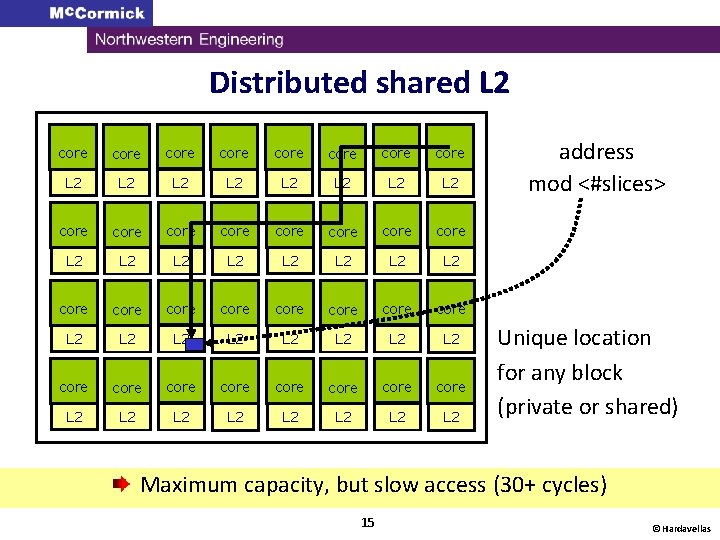

Distributed shared L2

- Slice selection: address mod <#slices>
- Unique location for any block (private or shared)
- Maximum capacity, but slow access (30+ cycles)
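The "address mod <#slices>" rule can be illustrated with a minimal sketch. The block size and slice count below are assumptions chosen for illustration (the slide states neither), and `home_slice` is a hypothetical helper name.

```python
BLOCK_BYTES = 64   # assumed cache block size; not stated on the slide
NUM_SLICES = 16    # assumed number of L2 slices


def home_slice(phys_addr: int,
               num_slices: int = NUM_SLICES,
               block_bytes: int = BLOCK_BYTES) -> int:
    """Return the unique slice that may hold the block containing phys_addr."""
    block_number = phys_addr // block_bytes   # drop the block-offset bits
    return block_number % num_slices          # address-interleave across slices
```

Because the slice is a pure function of the address, every core computes the same location for a block, which is why a shared organization needs no replication but often pays a multi-hop access.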

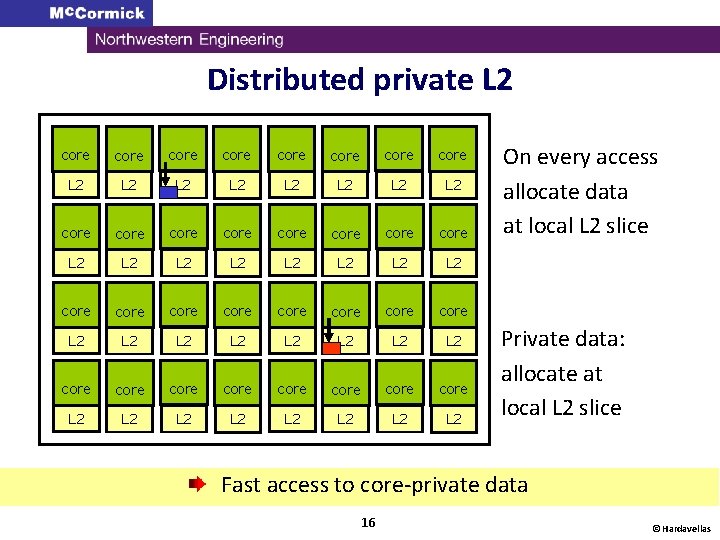

Distributed private L2

- On every access allocate data at local L2 slice
- Private data: allocate at local L2 slice
- Fast access to core-private data

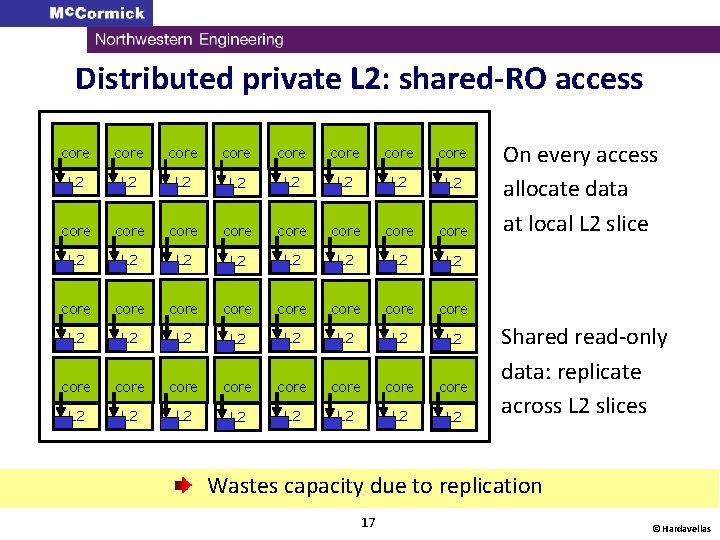

Distributed private L2: shared-RO access

- On every access allocate data at local L2 slice
- Shared read-only data: replicate across L2 slices
- Wastes capacity due to replication

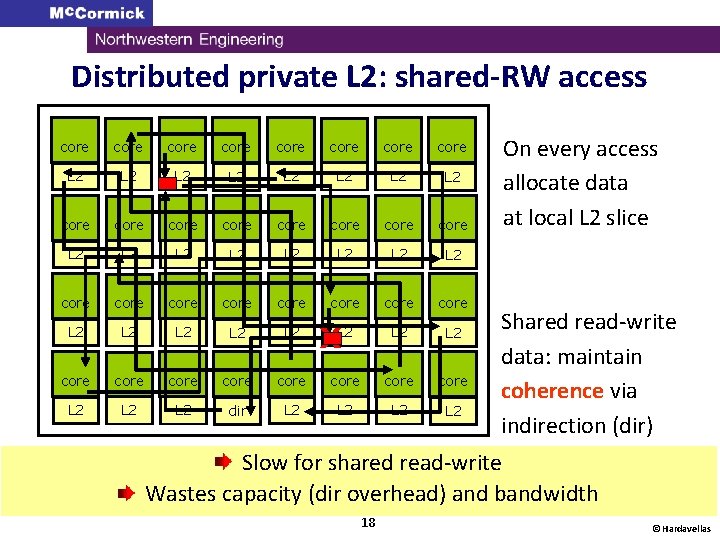

Distributed private L2: shared-RW access

- On every access allocate data at local L2 slice
- Shared read-write data: maintain coherence via indirection (dir)
- Slow for shared read-write
- Wastes capacity (dir overhead) and bandwidth

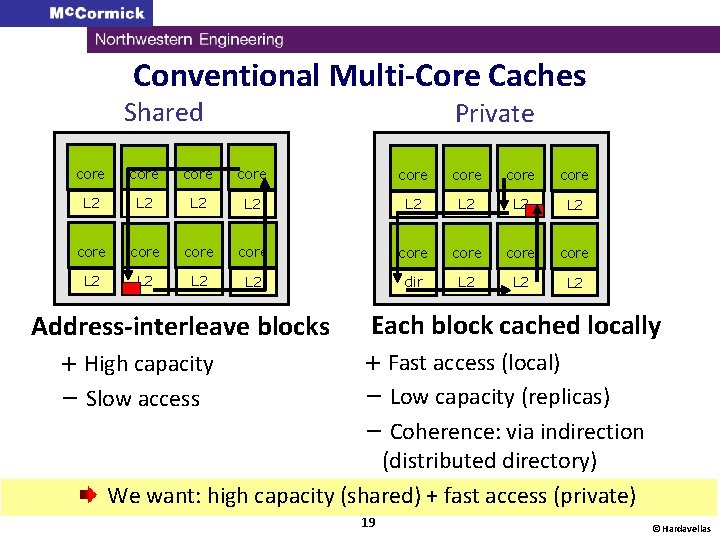

Conventional Multi-Core Caches

Shared:

- Address-interleave blocks
- + High capacity
- − Slow access

Private:

- Each block cached locally
- + Fast access (local)
- − Low capacity (replicas)
- − Coherence: via indirection (distributed directory)

We want: high capacity (shared) + fast access (private)

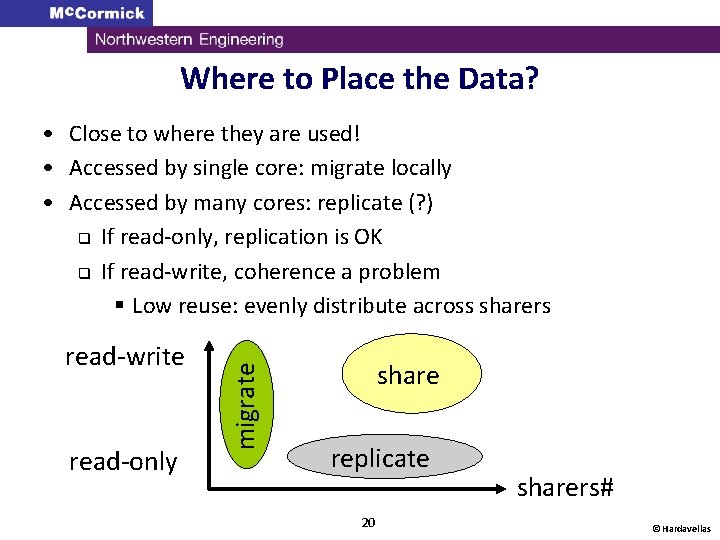

Where to Place the Data?

- Close to where they are used!
- Accessed by single core: migrate locally
- Accessed by many cores: replicate (?)
  - If read-only, replication is OK
  - If read-write, coherence a problem
    - Low reuse: evenly distribute across sharers

(Diagram: placement by number of sharers and by read-only vs. read-write, with regions labeled “migrate”, “replicate”, and “share”)

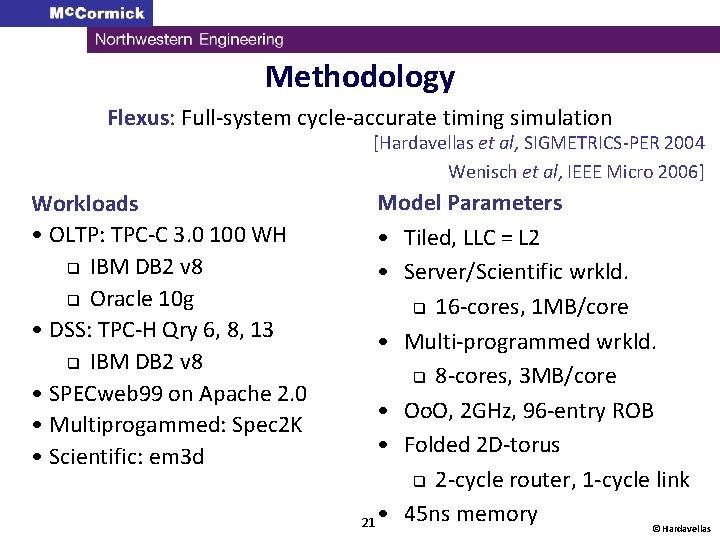

Methodology

Flexus: Full-system cycle-accurate timing simulation [Hardavellas et al, SIGMETRICS-PER 2004; Wenisch et al, IEEE Micro 2006]

Workloads

- OLTP: TPC-C 3.0, 100 WH
  - IBM DB2 v8
  - Oracle 10g
- DSS: TPC-H Qry 6, 8, 13
  - IBM DB2 v8
- SPECweb99 on Apache 2.0
- Multiprogrammed: Spec2K
- Scientific: em3d

Model Parameters

- Tiled, LLC = L2
- Server/Scientific wrkld.
  - 16 cores, 1 MB/core
- Multi-programmed wrkld.
  - 8 cores, 3 MB/core
- OoO, 2 GHz, 96-entry ROB
- Folded 2D-torus
  - 2-cycle router, 1-cycle link
- 45 ns memory

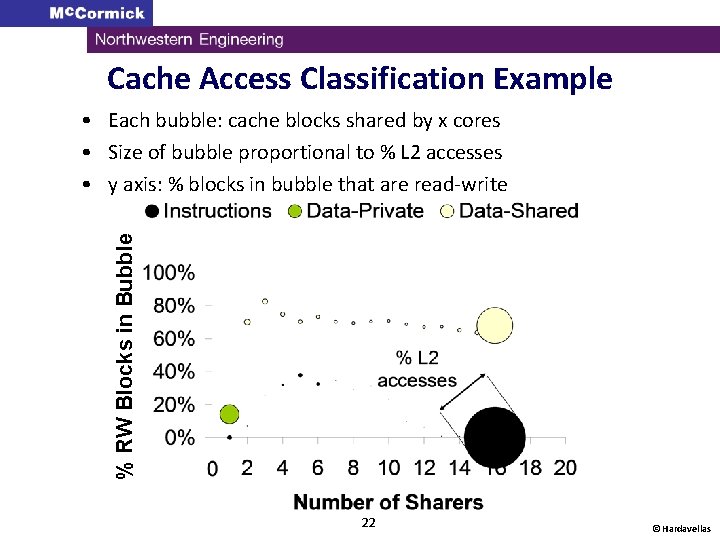

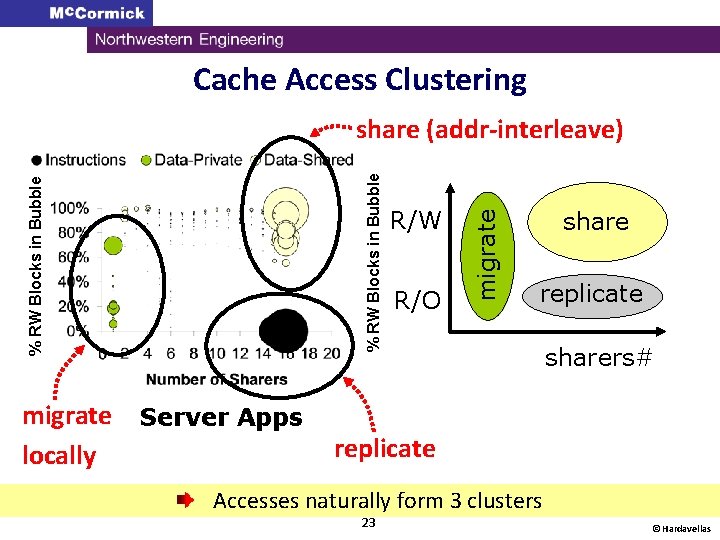

Cache Access Classification Example

- Each bubble: cache blocks shared by x cores
- Size of bubble proportional to % L2 accesses
- y axis: % blocks in bubble that are read-write

Cache Access Clustering

(Charts: Server Apps and Scientific/MP Apps; x axis: number of sharers; y axis: % RW blocks in bubble; regions labeled “migrate locally”, “share (addr-interleave)”, and “replicate”)

Accesses naturally form 3 clusters

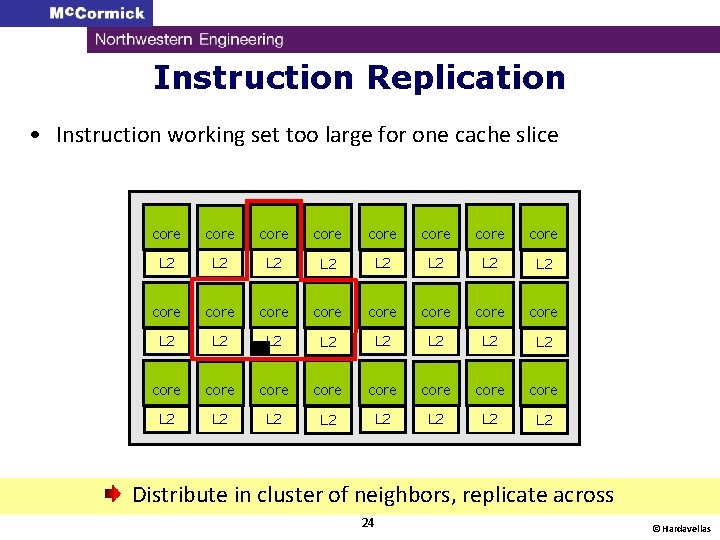

Instruction Replication

- Instruction working set too large for one cache slice

Distribute in cluster of neighbors, replicate across clusters

Reactive NUCA in a nutshell

- Classify accesses
  - private data: like private scheme (migrate)
  - shared data: like shared scheme (interleave)
  - instructions: controlled replication (middle ground)

To place cache blocks, we first need to classify them

Outline

- Introduction
- Access Classification and Block Placement
- Reactive NUCA Mechanisms
- Evaluation
- Conclusion

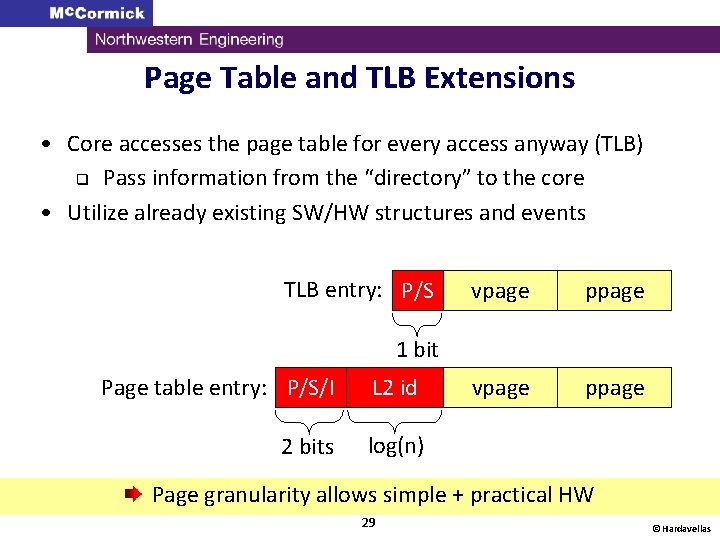

Classification Granularity

- Per-block classification
  - High area/power overhead (cut L2 size by half)
  - High latency (indirection through directory)
- Per-page classification (utilize OS page table)
  - Persistent structure
  - Core accesses the page table for every access anyway (TLB)
  - Utilize already existing SW/HW structures and events
  - Page classification is accurate (<0.5% error)

Classify entire data pages, page table/TLB for bookkeeping

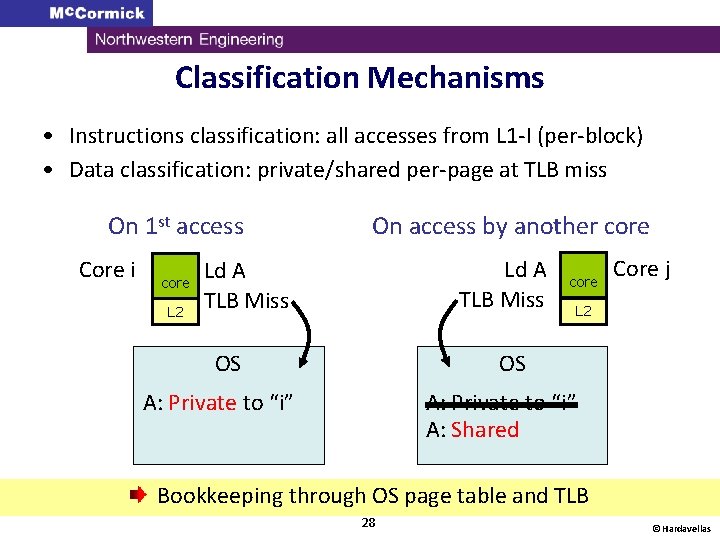

Classification Mechanisms

- Instructions classification: all accesses from L1-I (per-block)
- Data classification: private/shared per-page at TLB miss
  - On 1st access: core i issues Ld A and misses in the TLB; the OS marks page A as private to “i”
  - On access by another core: core j issues Ld A and misses in the TLB; the OS marks page A as shared

Bookkeeping through OS page table and TLB
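The two classification steps on this slide (first touch makes a page private, a touch by a different core makes it shared) can be sketched as a small state machine. This is a toy model under stated assumptions, not the OS implementation: `PageClassifier`, `PageEntry`, and `on_tlb_miss` are hypothetical names, and the TLB shootdown and cache invalidation that accompany a reclassification are deliberately left out.

```python
from dataclasses import dataclass
from typing import Dict, Optional

PRIVATE, SHARED = "P", "S"


@dataclass
class PageEntry:
    cls: str               # PRIVATE or SHARED
    owner: Optional[int]   # owning core while private, None once shared


class PageClassifier:
    """Toy stand-in for the OS page-table bookkeeping (hypothetical API)."""

    def __init__(self) -> None:
        self.table: Dict[int, PageEntry] = {}

    def on_tlb_miss(self, core: int, vpage: int) -> str:
        """Classify vpage for the core that just missed in its TLB."""
        entry = self.table.get(vpage)
        if entry is None:
            # First access: the page becomes private to the accessing core.
            self.table[vpage] = PageEntry(PRIVATE, core)
            return PRIVATE
        if entry.cls == PRIVATE and entry.owner != core:
            # Access by another core: reclassify the page as shared.
            entry.cls, entry.owner = SHARED, None
        return entry.cls
```

In this sketch a page never returns to private once it has been shared; whether and when such a transition happens is not covered by the slide.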

Page Table and TLB Extensions

- Core accesses the page table for every access anyway (TLB)
  - Pass information from the “directory” to the core
- Utilize already existing SW/HW structures and events
- TLB entry: P/S (1 bit), vpage, ppage
- Page table entry: P/S/I (2 bits), L2 id (log(n) bits)

Page granularity allows simple + practical HW
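As a rough worked example of the storage cost, the extra bits per entry can be computed directly from the field widths on the slide. The sketch assumes that n is the number of L2 slices and that log(n) means the base-2 logarithm rounded up; both are readings of the slide, and the function names are hypothetical.

```python
def tlb_extra_bits() -> int:
    """Extra bits per TLB entry: a single private/shared (P/S) flag."""
    return 1


def pte_extra_bits(num_slices: int) -> int:
    """Extra bits per page table entry: 2-bit P/S/I class plus the L2 id."""
    class_bits = 2
    l2_id_bits = (num_slices - 1).bit_length()   # ceil(log2(num_slices))
    return class_bits + l2_id_bits
```

Under these assumptions a 16-slice chip adds 6 bits to each page table entry and 1 bit to each TLB entry.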

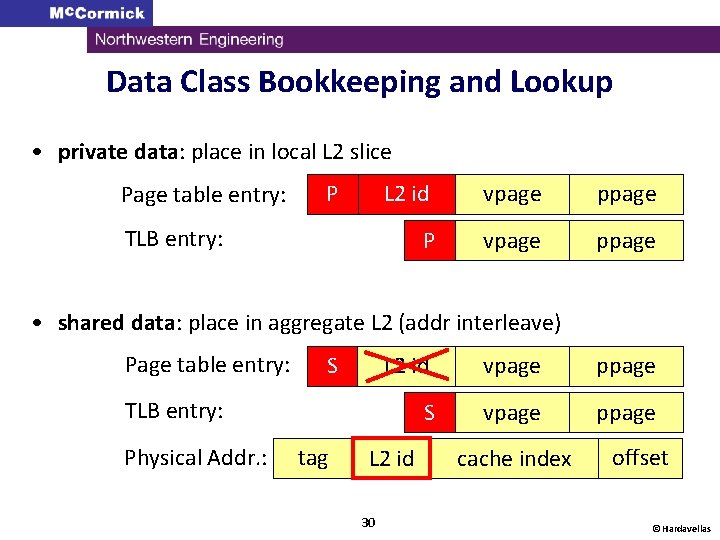

Data Class Bookkeeping and Lookup

- private data: place in local L2 slice
  - Page table entry: P, L2 id, vpage, ppage
  - TLB entry: P, vpage, ppage
- shared data: place in aggregate L2 (addr interleave)
  - Page table entry: S, L2 id, vpage, ppage
  - TLB entry: S, vpage, ppage
  - Physical Addr.: tag, L2 id, cache index, offset
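The lookup rule for data can be sketched as follows: the class bit from the TLB entry selects between the local slice and the slice named by the L2 id field of the physical address. The field order (tag, L2 id, cache index, offset) follows the slide; the field widths and the name `target_slice` are assumptions made only for this example.

```python
OFFSET_BITS = 6    # assumed 64-byte blocks
INDEX_BITS = 10    # assumed 1024 sets per slice
NUM_SLICES = 16    # assumed slice count (power of two)


def l2_id_field(phys_addr: int) -> int:
    """Extract the L2 id bits, which sit just above the cache index."""
    return (phys_addr >> (OFFSET_BITS + INDEX_BITS)) & (NUM_SLICES - 1)


def target_slice(page_class: str, phys_addr: int, local_slice: int) -> int:
    """Pick the L2 slice to probe for a data access."""
    if page_class == "P":
        return local_slice               # private data: local L2 slice
    if page_class == "S":
        return l2_id_field(phys_addr)    # shared data: address-interleaved
    raise ValueError(f"unknown page class: {page_class!r}")
```

Either way the core probes exactly one slice, which is the property the slide relies on for fast lookup.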

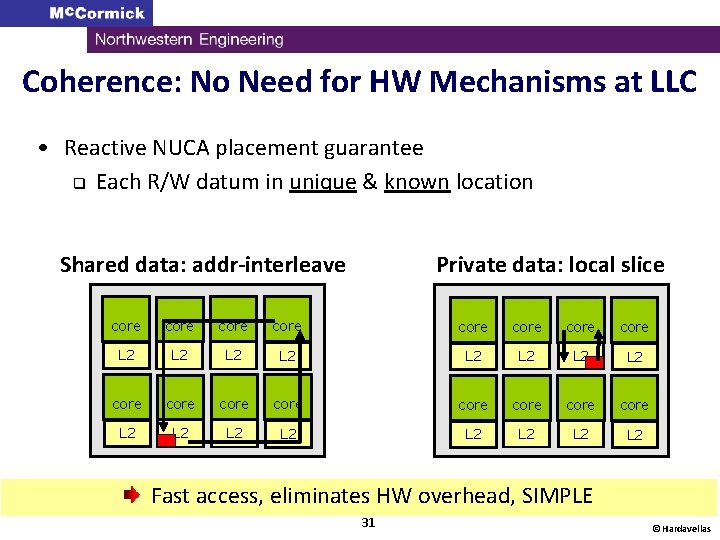

Coherence: No Need for HW Mechanisms at LLC

- Reactive NUCA placement guarantee
  - Each R/W datum in unique & known location
  - Shared data: addr-interleave
  - Private data: local slice

Fast access, eliminates HW overhead, SIMPLE

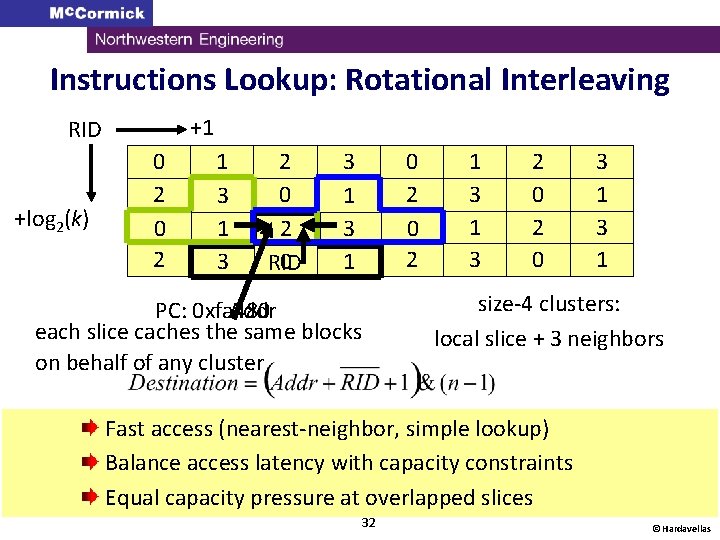

Instructions Lookup: Rotational Interleaving

(Diagram: grid of tiles labeled with rotational IDs (RIDs) 0 to 3; the RID increases by +1 in one direction and by +log2(k) in the other; example PC: 0xfa480)

- Size-4 clusters: local slice + 3 neighbors
- Each slice caches the same blocks on behalf of any cluster
- Fast access (nearest-neighbor, simple lookup)
- Balance access latency with capacity constraints
- Equal capacity pressure at overlapped slices

Outline

- Introduction
- Access Classification and Block Placement
- Reactive NUCA Mechanisms
- Evaluation
- Conclusion

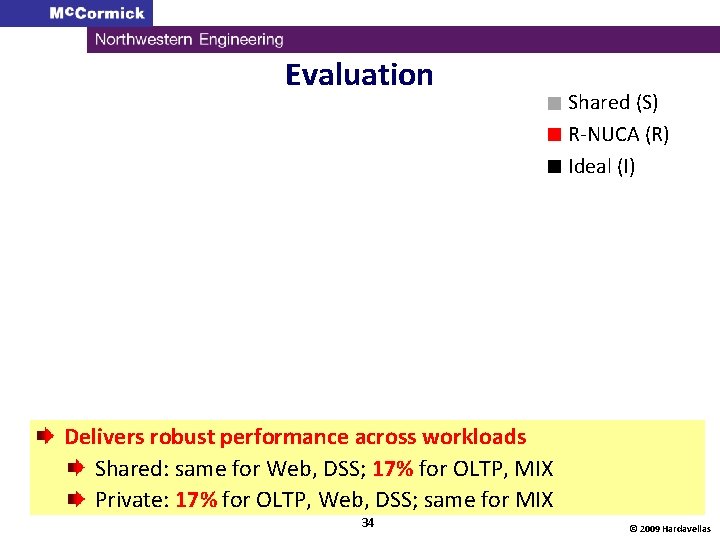

Evaluation

(Chart legend: Shared (S), R-NUCA (R), Ideal (I))

Delivers robust performance across workloads

- vs. Shared: same for Web, DSS; 17% better for OLTP, MIX
- vs. Private: 17% better for OLTP, Web, DSS; same for MIX

© 2009 Hardavellas

Conclusions

- Data may exhibit arbitrarily complex behaviors
  - ... but few that matter!
- Learn the behaviors that matter at run time
  - Make the common case fast, the rare case correct
- Reactive NUCA: near-optimal cache block placement
  - Simple, scalable, low-overhead, transparent, no coherence
  - Robust performance
    - Matches best alternative, or 17% better; up to 32%
    - Near-optimal placement (within 5% of ideal on average)

Thank You!

For more information:

- N. Hardavellas, M. Ferdman, B. Falsafi and A. Ailamaki. Near-Optimal Cache Block Placement with Reactive Nonuniform Cache Architectures. IEEE Micro Top Picks, Vol. 30(1), pp. 20-28, January/February 2010.
- N. Hardavellas, M. Ferdman, B. Falsafi and A. Ailamaki. Reactive NUCA: Near-Optimal Block Placement and Replication in Distributed Caches. ISCA 2009.

http://www.eecs.northwestern.edu/~hardav/

BACKUP SLIDES

Why Are Caches Growing So Large?

- Increasing number of cores: cache grows commensurately
  - Fewer but faster cores have the same effect
- Increasing datasets: faster than Moore’s Law!
- Power/thermal efficiency: caches are “cool”, cores are “hot”
  - So, it's easier to fit more cache in a power budget
- Limited bandwidth: large cache == more data on chip
  - Off-chip pins are used less frequently

Backup Slides: ASR

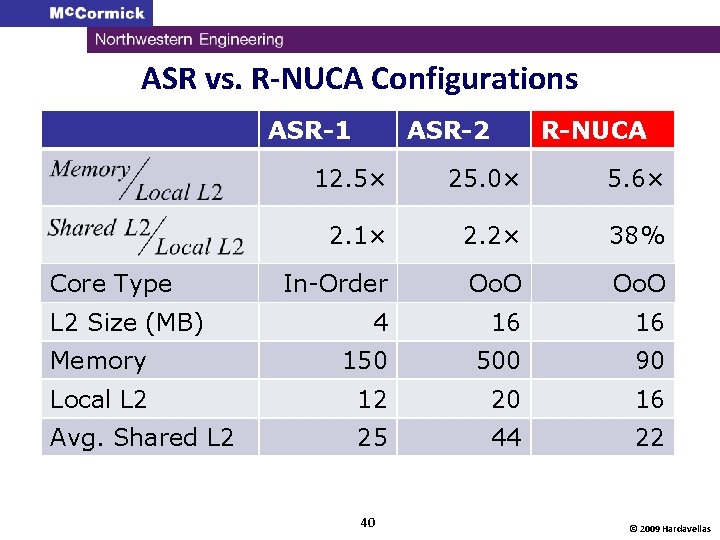

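ASR vs. R-NUCA Configurations

| | ASR-1 | ASR-2 | R-NUCA |
|---|---|---|---|
| L2 Size (MB) | 4 | 16 | 16 |
| Memory | 150 | 500 | 90 |
| Local L2 | 12 | 20 | 16 |
| Avg. Shared L2 | 25 | 44 | 22 |
| Memory / Local L2 | 12.5× | 25.0× | 5.6× |
| Avg. Shared L2 / Local L2 | 2.1× | 2.2× | 38% |

Core Type: In-Order, OoO (the per-column assignment is not recoverable from the extracted slide text)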

ASR design space search

Backup Slides: Prior Work

Prior Work

- Several proposals for CMP cache management
  - ASR, cooperative caching, victim replication, CMP-NuRapid, D-NUCA
- ... but suffer from shortcomings
  - complex, high-latency lookup/coherence
  - don’t scale
  - lower effective cache capacity
  - optimize only for subset of accesses

We need: Simple, scalable mechanism for fast access to all data

Shortcomings of Prior Work

- L2-Private
  - Wastes capacity
  - High latency (3 slice accesses + 3 hops on shr.)
- L2-Shared
  - High latency
- Cooperative Caching
  - Doesn’t scale (centralized tag structure)
- CMP-NuRapid
  - High latency (pointer dereference, 3 hops on shr.)
- OS-managed L2
  - Wastes capacity (migrates all blocks)
  - Spill to neighbors useless (all run same code)

Shortcomings of Prior Work (continued)

- D-NUCA
  - No practical implementation (lookup?)
- Victim Replication
  - High latency (like L2-Private)
  - Wastes capacity (home always stores block)
- Adaptive Selective Replication (ASR)
  - High latency (like L2-Private)
  - Capacity pressure (replicates at slice granularity)
  - Complex (4 separate HW structures to bias coin)

Backup Slides: Classification and Lookup

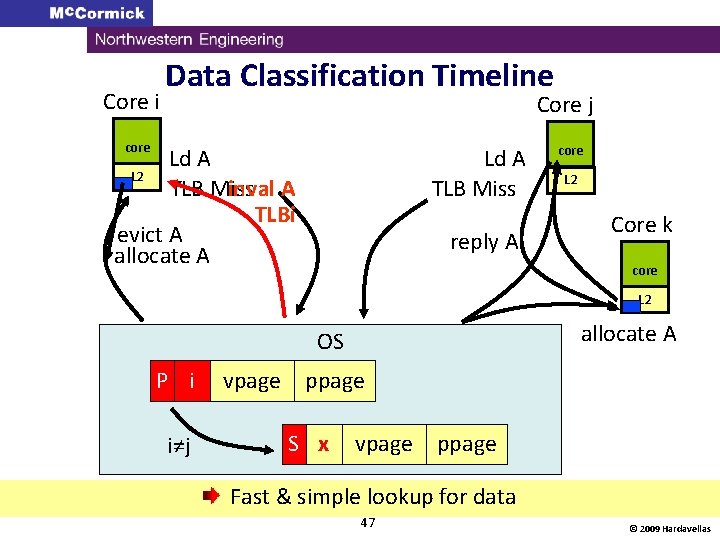

Data Classification Timeline

(Diagram: timeline across Core i, Core j, Core k, and the OS. Events shown: Ld A and TLB miss at core i, allocate A; Ld A and TLB miss at core j; the OS page entry changes from P with owner i (where i≠j) to S; TLB invalidation at core i (TLBi), inval A, evict A; reply A; allocate A at core k's L2.)

Fast & simple lookup for data

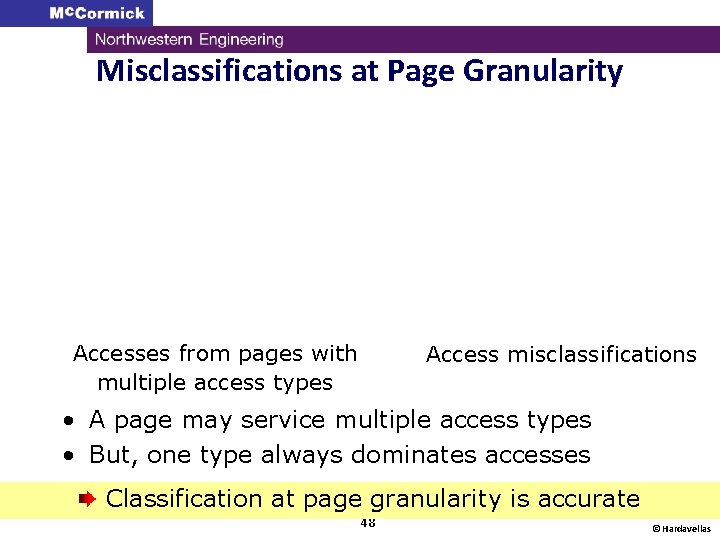

Misclassifications at Page Granularity

(Charts: “Accesses from pages with multiple access types” and “Access misclassifications”)

- A page may service multiple access types
- But, one type always dominates accesses

Classification at page granularity is accurate

Backup Slides: Placement

Private Data Placement

- Spill to neighbors if working set too large?
  - NO!!! Each core runs similar threads

Store in local L2 slice (like in private cache)

Private Data Working Set

- OLTP: Small per-core working set (3 MB/16 cores ≈ 200 KB/core)
- Web: primary working set <6 KB/core, remaining <1.5% L2 refs
- DSS: Policy doesn’t matter much (>100 MB working set, <13% L2 refs, very low reuse on private)

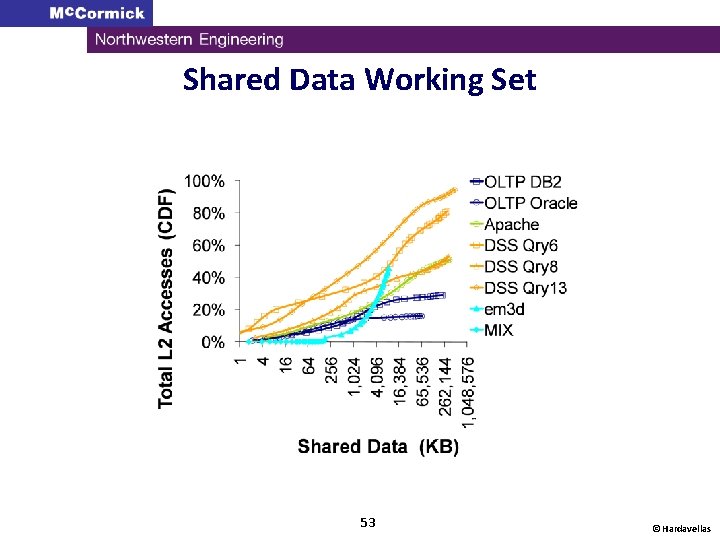

Shared Data Placement

- Read-write + large working set + low reuse
  - Unlikely to be in local slice for reuse
- Also, next sharer is random [WMPI’04]

Address-interleave in aggregate L2 (like shared cache)

Shared Data Working Set

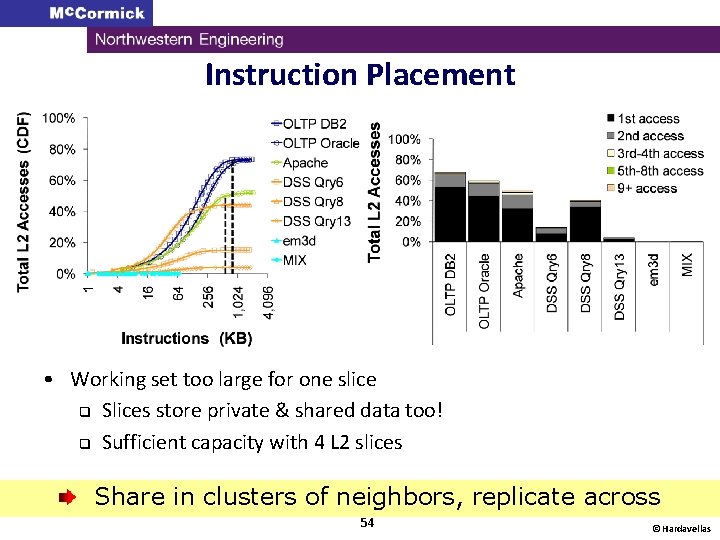

Instruction Placement

- Working set too large for one slice
  - Slices store private & shared data too!
  - Sufficient capacity with 4 L2 slices

Share in clusters of neighbors, replicate across clusters

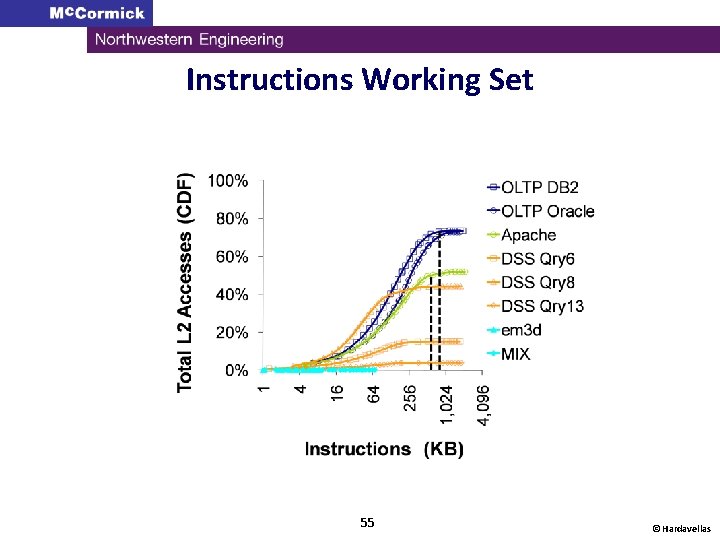

Instructions Working Set

Backup Slides: Rotational Interleaving

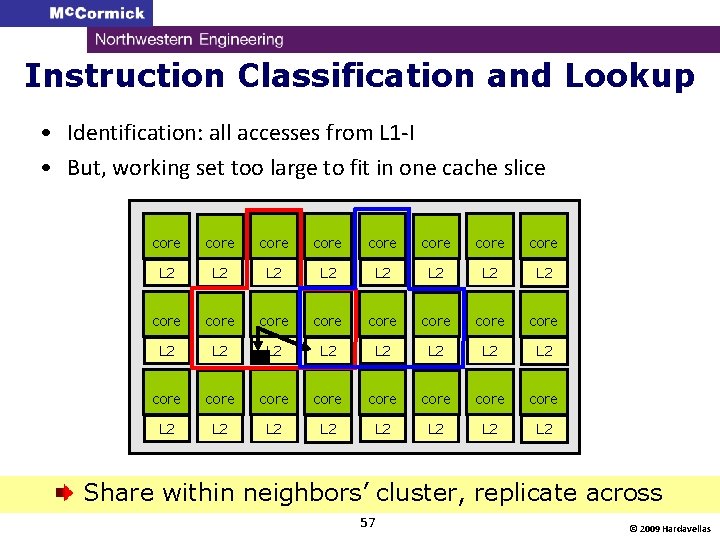

Instruction Classification and Lookup

- Identification: all accesses from L1-I
- But, working set too large to fit in one cache slice

Share within neighbors’ cluster, replicate across clusters

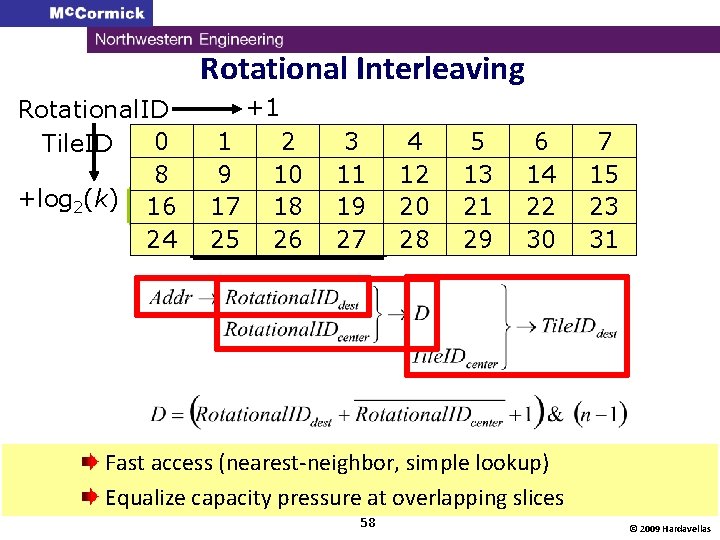

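Rotational Interleaving

Tile IDs with their rotational IDs (RIDs) in parentheses. The RID increases by +1 from one tile to the next in a row and by +log2(k) from one row to the next:

- Row 0: 0 (0), 1 (1), 2 (2), 3 (3), 4 (0), 5 (1), 6 (2), 7 (3)
- Row 1: 8 (2), 9 (3), 10 (0), 11 (1), 12 (2), 13 (3), 14 (0), 15 (1)
- Row 2: 16 (0), 17 (1), 18 (2), 19 (3), 20 (0), 21 (1), 22 (2), 23 (3)
- Row 3: 24 (2), 25 (3), 26 (0), 27 (1), 28 (2), 29 (3), 30 (0), 31 (1)

Fast access (nearest-neighbor, simple lookup)

Equalize capacity pressure at overlapping slices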
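The RID values in the grid above are consistent with a simple formula, and the nearest-neighbor lookup can be sketched on top of it. This is an illustrative model under stated assumptions, not the hardware's lookup logic: it assumes an 8x4 grid with row-major tile IDs, size-4 clusters, torus wrap-around, that the caller supplies the two address bits used for interleaving, and that the vertical neighbor used is the one below (above would have the same RID). All function names are hypothetical.

```python
COLS, ROWS = 8, 4   # grid shape inferred from the 32 tile IDs on the slide
CLUSTER = 4         # size-4 clusters, so RIDs range over 0..3
ROW_STEP = 2        # log2(CLUSTER): RID step between vertically adjacent tiles


def rid(tile: int) -> int:
    """Rotational ID of a tile: +1 along a row, +log2(k) down a column."""
    row, col = divmod(tile, COLS)
    return (col + ROW_STEP * row) % CLUSTER


def instruction_slice(tile: int, interleave_bits: int) -> int:
    """Tile in the size-4 cluster centered at `tile` whose RID equals the bits."""
    row, col = divmod(tile, COLS)
    delta = (interleave_bits - rid(tile)) % CLUSTER
    if delta == 0:
        return tile                               # local slice
    if delta == 1:
        return row * COLS + (col + 1) % COLS      # right neighbor (RID + 1)
    if delta == 3:
        return row * COLS + (col - 1) % COLS      # left neighbor (RID - 1)
    return ((row + 1) % ROWS) * COLS + col        # vertical neighbor (RID + 2)
```

Each tile reaches every RID either locally or one hop away, so any instruction block is found with a single probe; and because a slice only ever stores blocks whose interleaving bits match its own RID, it holds the same blocks no matter which overlapping cluster asks for them.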

Nearest-neighbor size-8 clusters
