Data Analysis for Assuring the Quality of your COSF Data

- Slides: 51

What are these numbers?

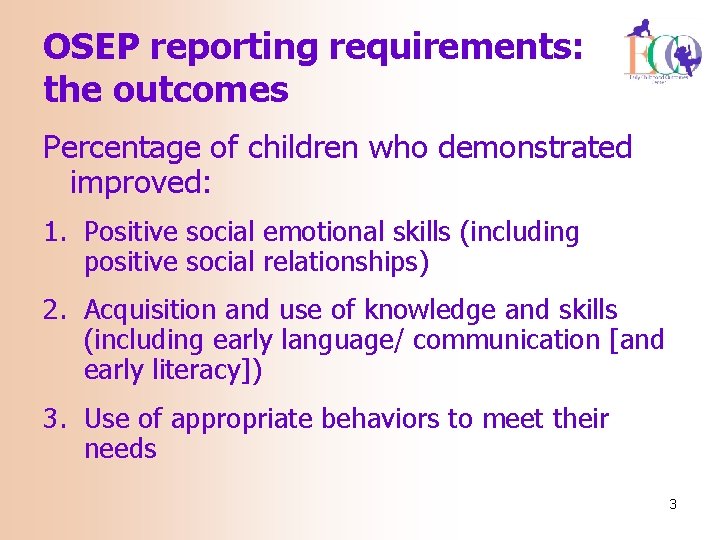

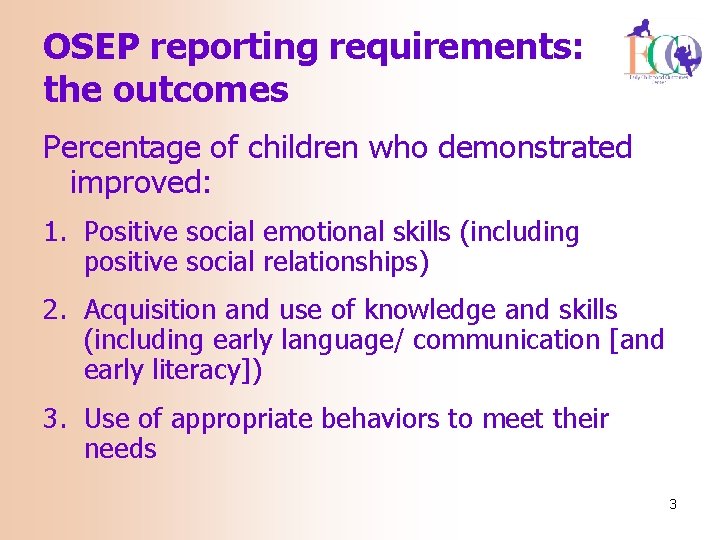

OSEP reporting requirements: the outcomes

Percentage of children who demonstrated improved:

1. Positive social emotional skills (including positive social relationships)
2. Acquisition and use of knowledge and skills (including early language/communication [and early literacy])
3. Use of appropriate behaviors to meet their needs

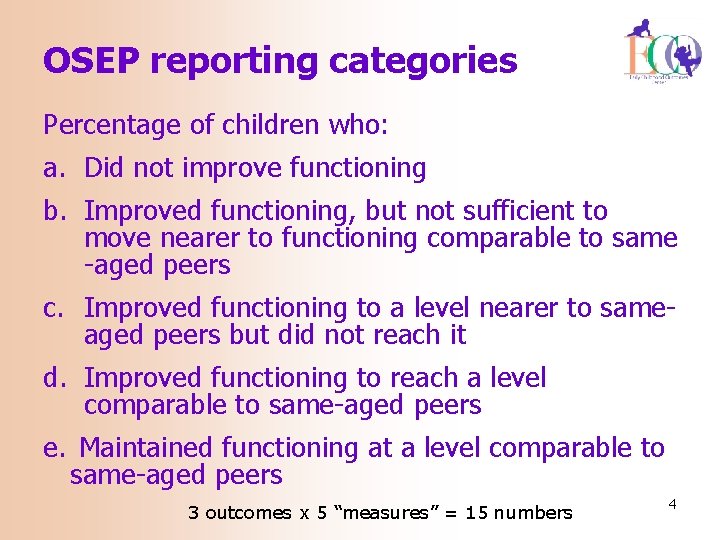

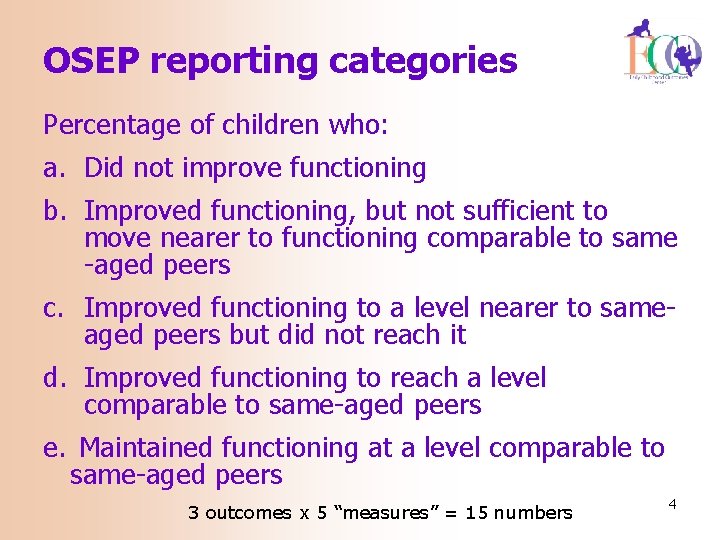

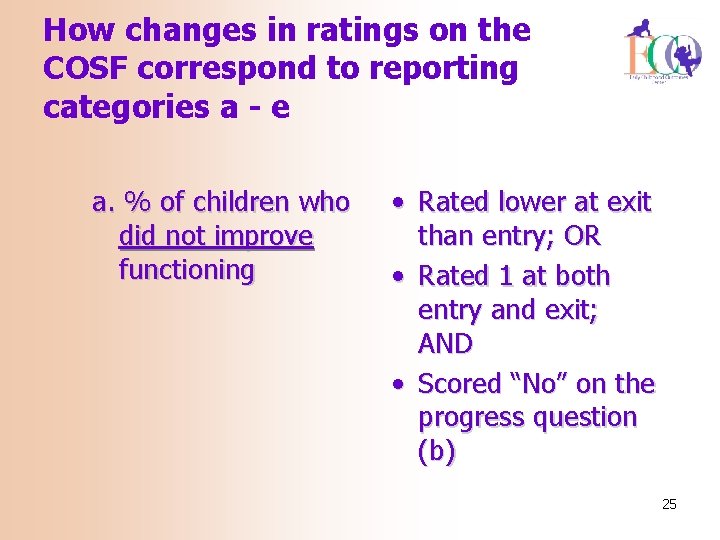

OSEP reporting categories

Percentage of children who:

- a. Did not improve functioning
- b. Improved functioning, but not sufficient to move nearer to functioning comparable to same-aged peers
- c. Improved functioning to a level nearer to same-aged peers but did not reach it
- d. Improved functioning to reach a level comparable to same-aged peers
- e. Maintained functioning at a level comparable to same-aged peers

3 outcomes × 5 “measures” = 15 numbers

Getting to progress categories from the COSF ratings

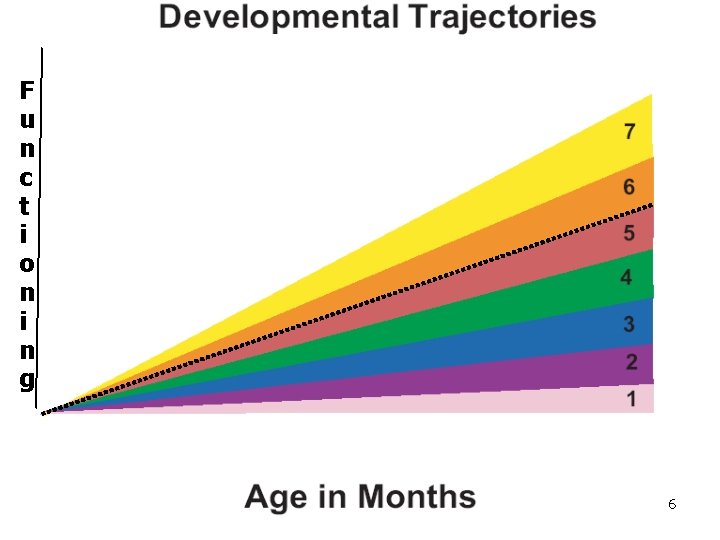

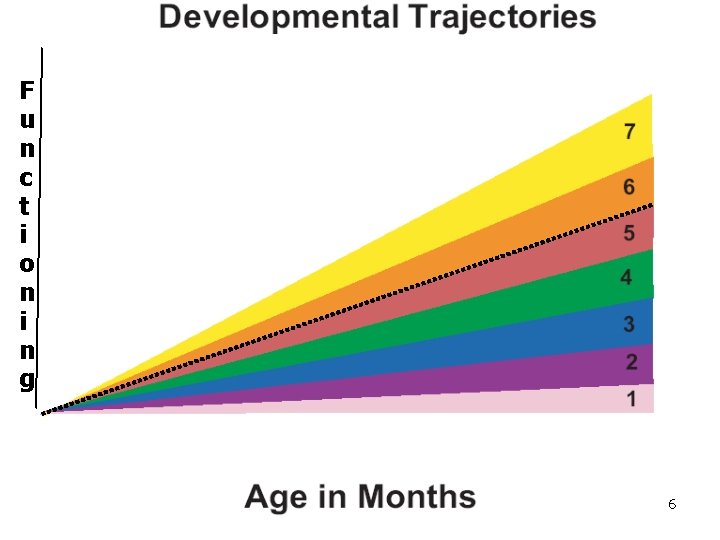

Chart label: Functioning

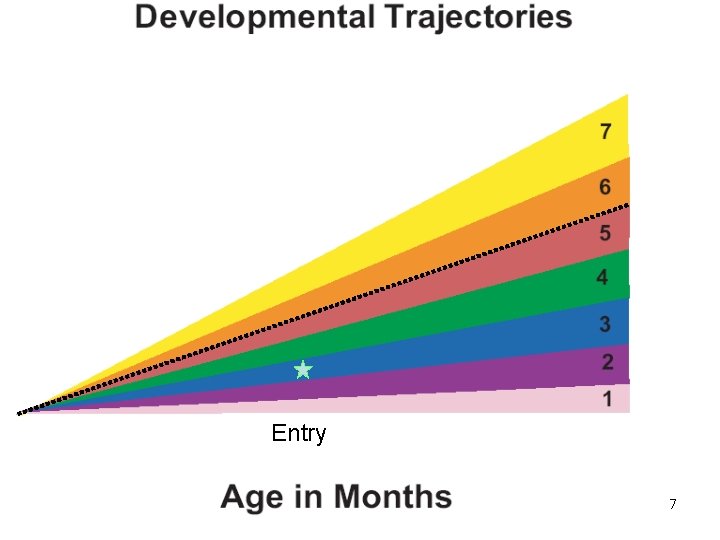

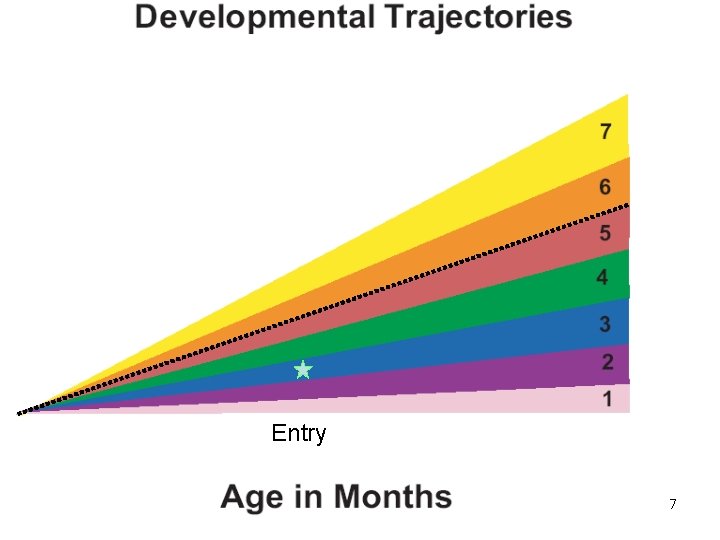

Chart label: Entry

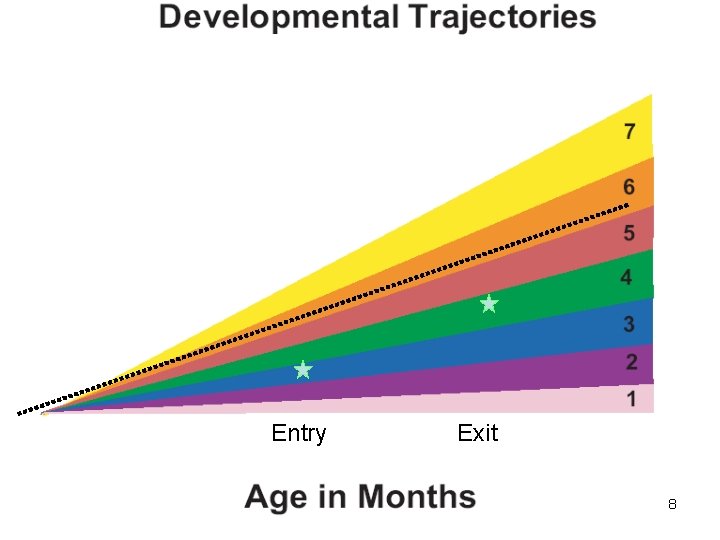

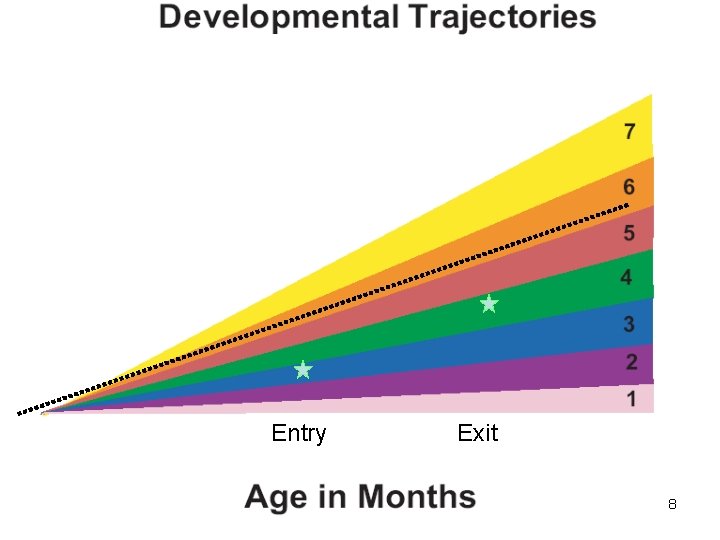

Chart labels: Entry, Exit

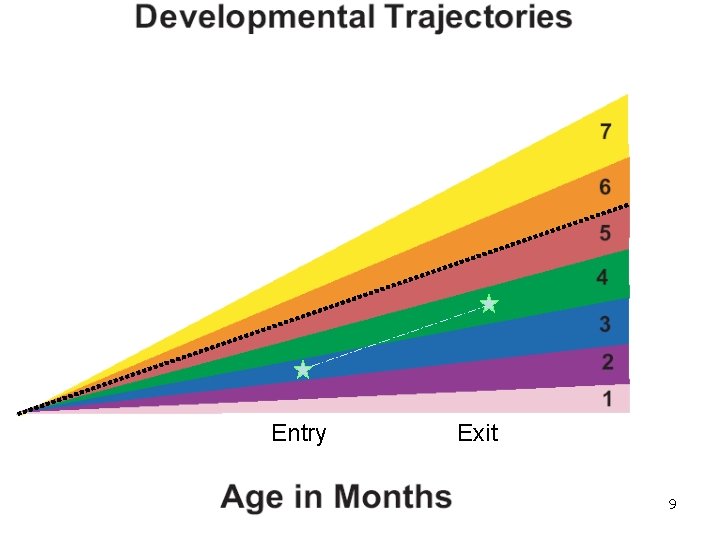

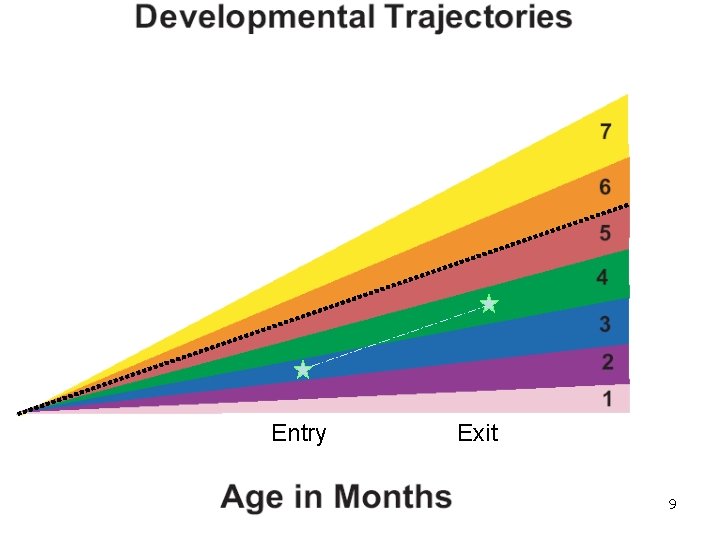

Chart labels: Entry, Exit

Key Point

- The OSEP categories describe the types of progress children can make between entry and exit.
- Two COSF ratings (entry and exit) are needed to determine which OSEP category describes a child's progress; categories a and b also depend on the answer to the progress question (b).

How changes in ratings on the COSF correspond to reporting categories a–e

e. % of children who maintain functioning at a level comparable to same-aged peers

- Rated 6 or 7 at entry; AND
- Rated 6 or 7 at exit
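The category e rule reduces to a single check on the two ratings. This is a minimal Python sketch, assuming ratings are stored as integers 1–7; the function name `is_category_e` is hypothetical and is not part of the COSF or the ECO Calculator.

```python
def is_category_e(entry_rating: int, exit_rating: int) -> bool:
    """Category e: rated 6 or 7 at entry AND rated 6 or 7 at exit.

    Assumes integer COSF ratings in the range 1-7 (hypothetical representation).
    """
    # Ratings of 6 or 7 describe functioning comparable to same-aged peers.
    return entry_rating in (6, 7) and exit_rating in (6, 7)
```

For example, a child rated 7 at entry and 6 at exit meets the rule, while a child rated 5 at entry and 7 at exit does not; that child's progress is described by a different category.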

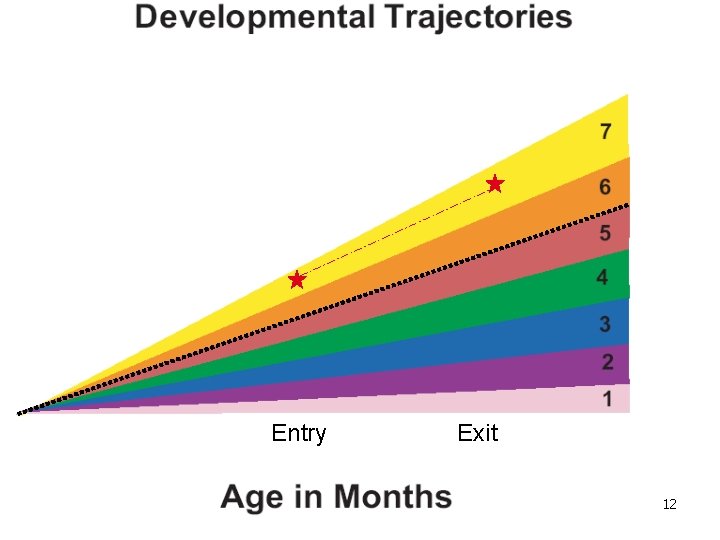

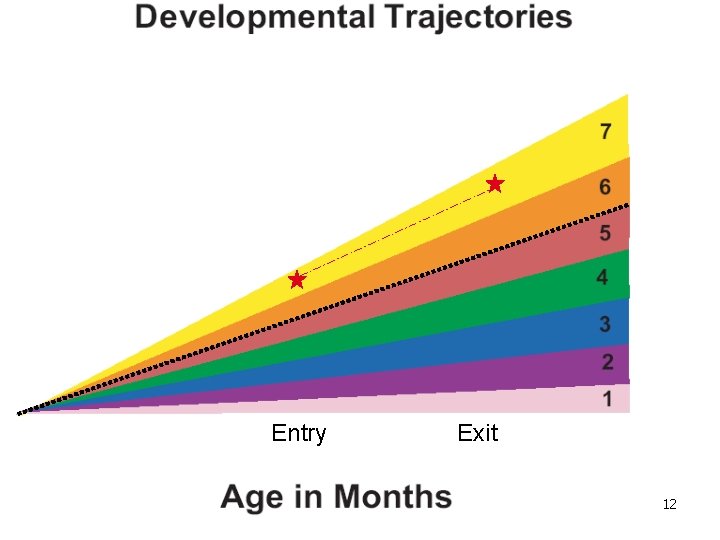

Chart labels: Entry, Exit

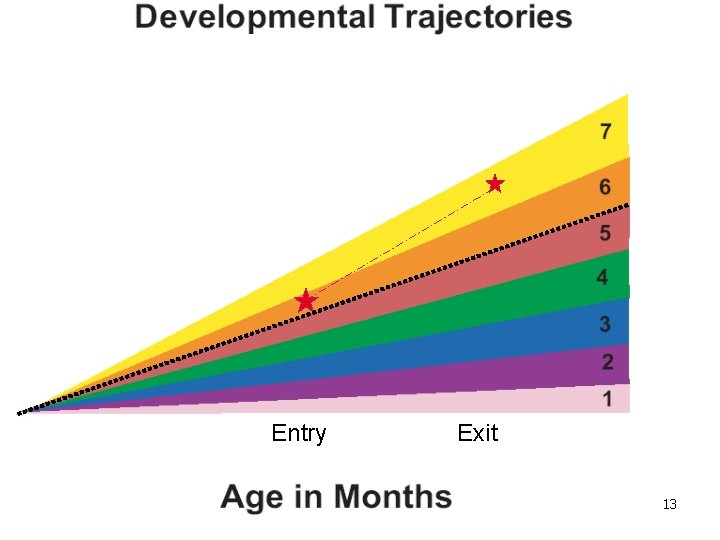

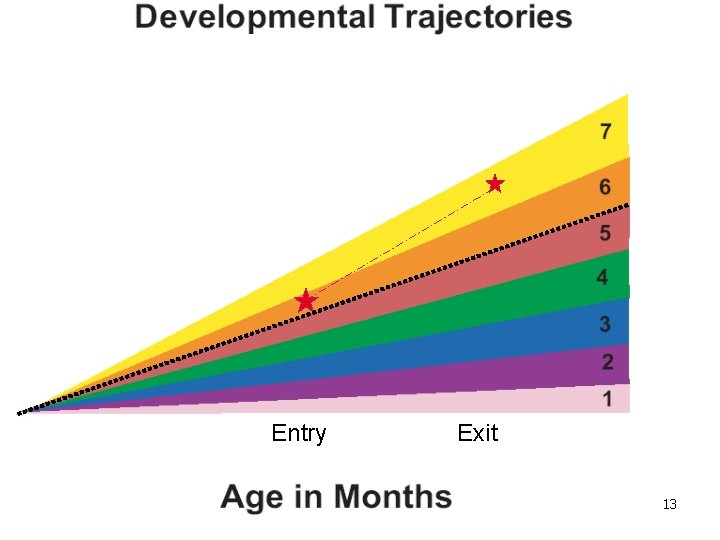

Chart labels: Entry, Exit

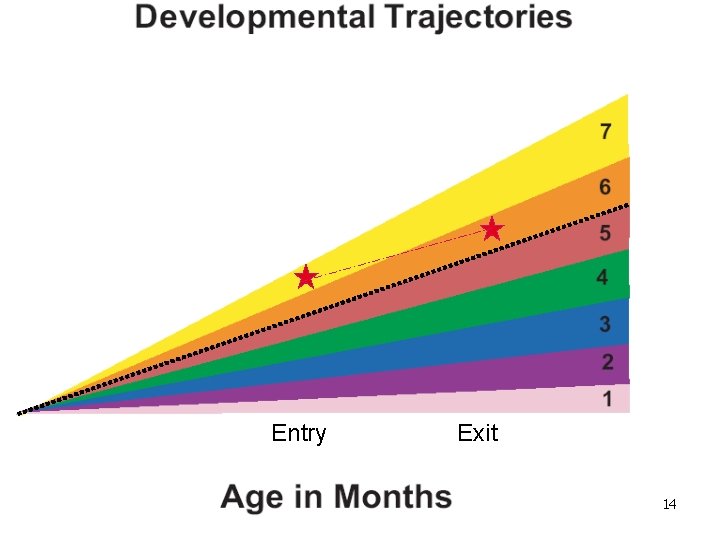

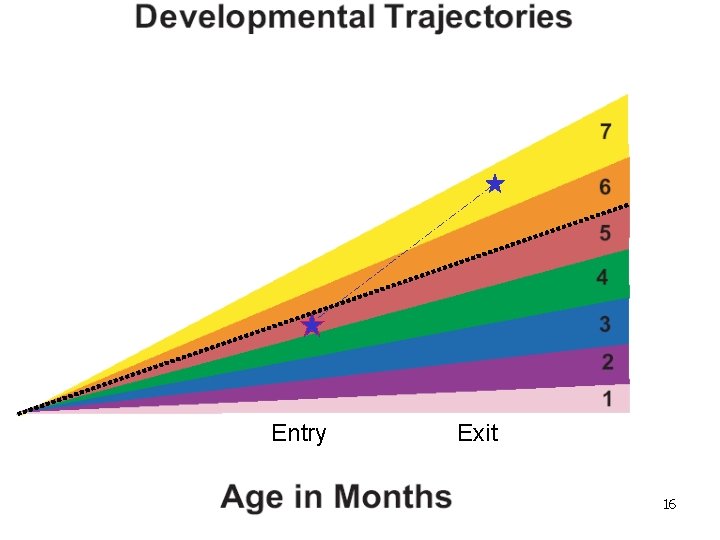

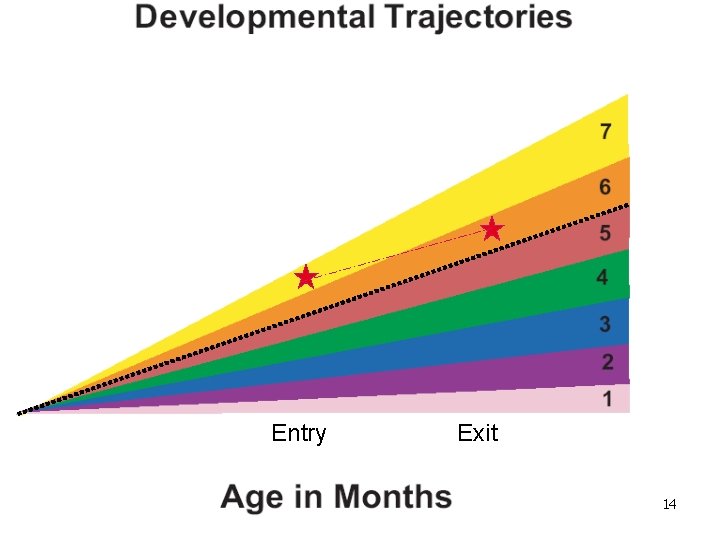

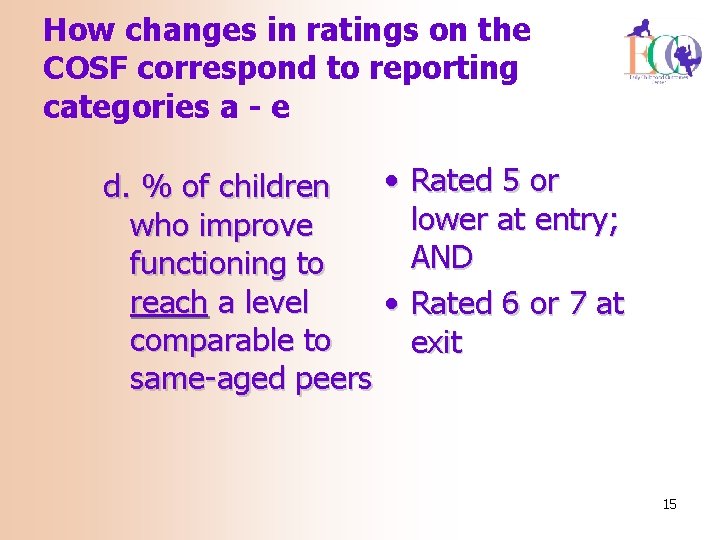

Chart labels: Entry, Exit

d. % of children who improve functioning to reach a level comparable to same-aged peers

- Rated 5 or lower at entry; AND
- Rated 6 or 7 at exit
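The category d rule can be sketched the same way in Python, assuming integer ratings 1–7; `is_category_d` is a hypothetical name used only for illustration.

```python
def is_category_d(entry_rating: int, exit_rating: int) -> bool:
    """Category d: rated 5 or lower at entry AND rated 6 or 7 at exit.

    Assumes integer COSF ratings in the range 1-7 (hypothetical representation).
    """
    # Below the peer-comparable range at entry, inside it at exit.
    return entry_rating <= 5 and exit_rating in (6, 7)
```

The entry condition is what separates d from e: children in both categories end at 6 or 7, but only category d children started at 5 or below.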

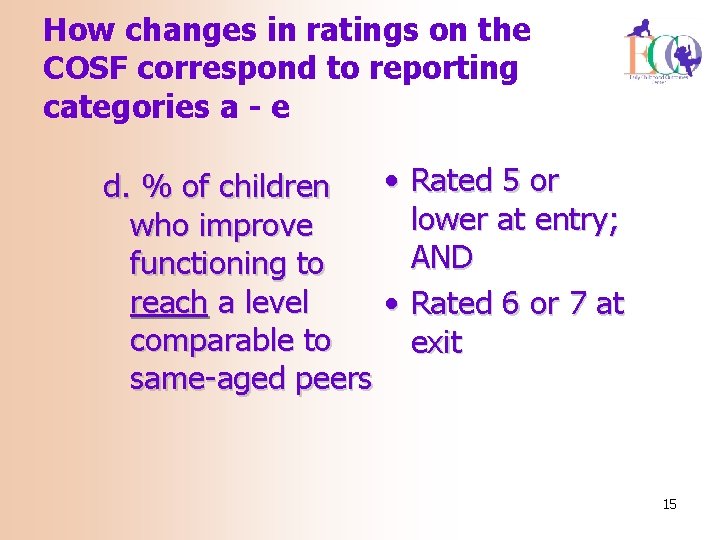

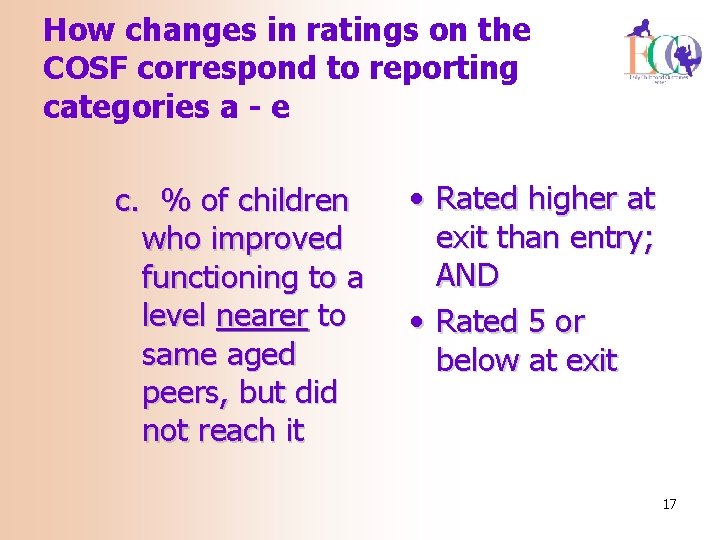

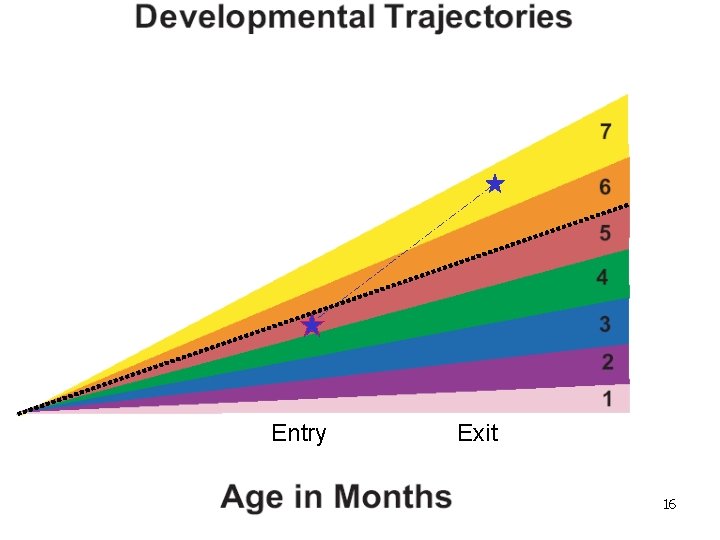

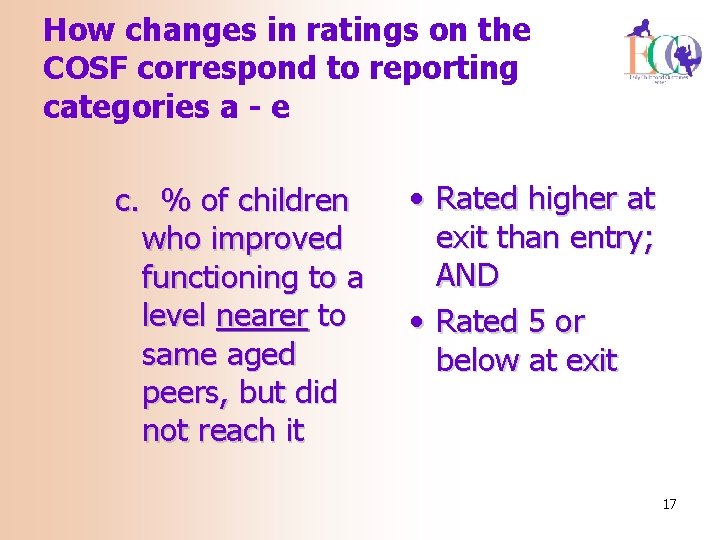

Chart labels: Entry, Exit

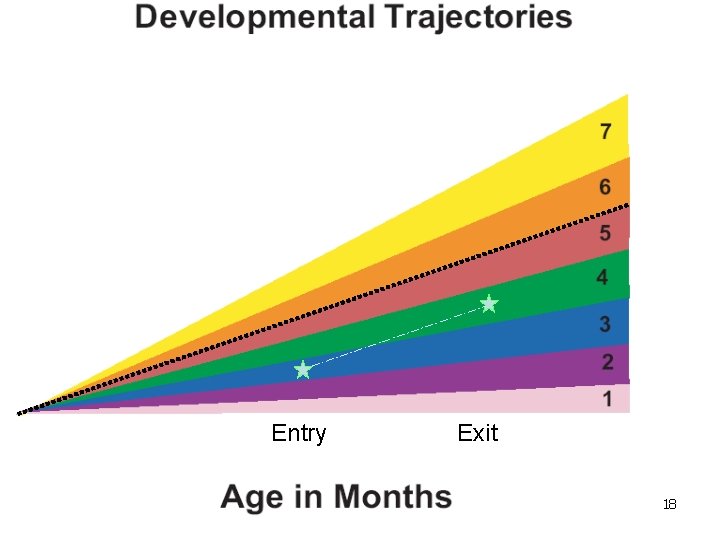

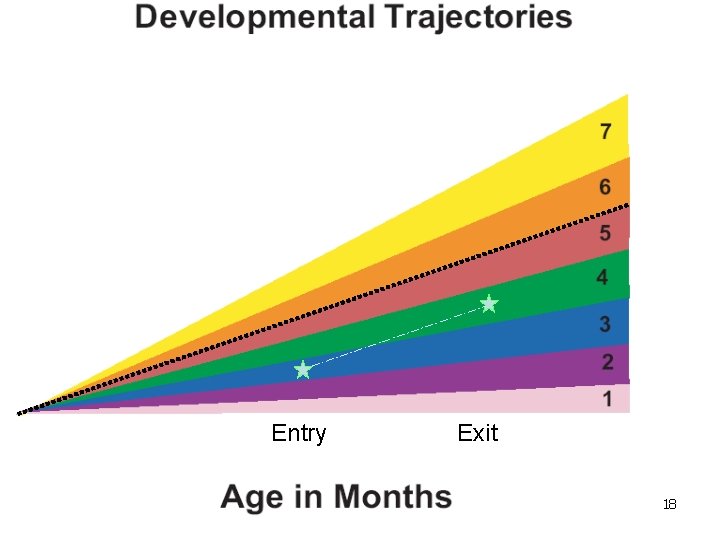

c. % of children who improved functioning to a level nearer to same-aged peers, but did not reach it

- Rated higher at exit than entry; AND
- Rated 5 or below at exit
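A Python sketch of the category c rule, assuming integer ratings 1–7 (`is_category_c` is a hypothetical name). The rule does not use the progress question, since a higher rating at exit already indicates the child moved nearer to same-aged peers.

```python
def is_category_c(entry_rating: int, exit_rating: int) -> bool:
    """Category c: rated higher at exit than entry AND rated 5 or below at exit.

    Assumes integer COSF ratings in the range 1-7 (hypothetical representation).
    """
    # Moved up the scale, but still below the peer-comparable range (6-7).
    return exit_rating > entry_rating and exit_rating <= 5
```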

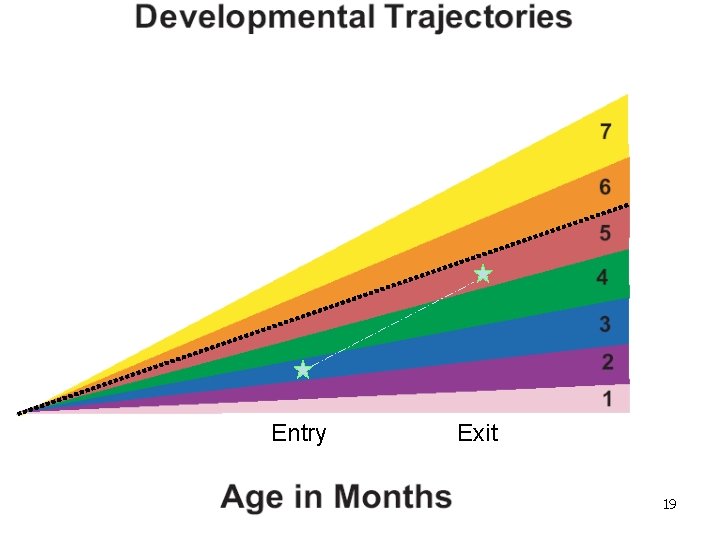

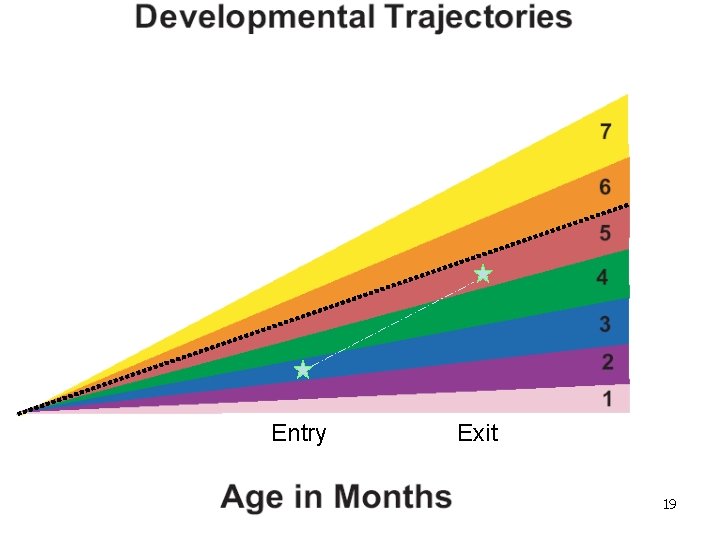

Chart labels: Entry, Exit

Chart labels: Entry, Exit

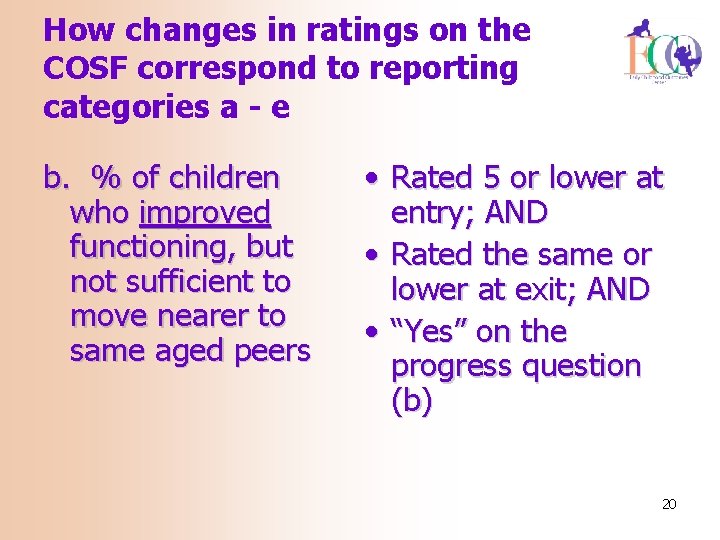

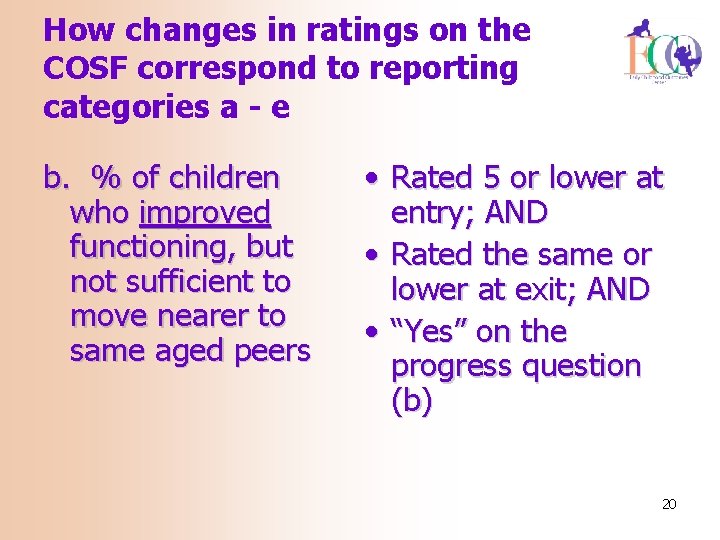

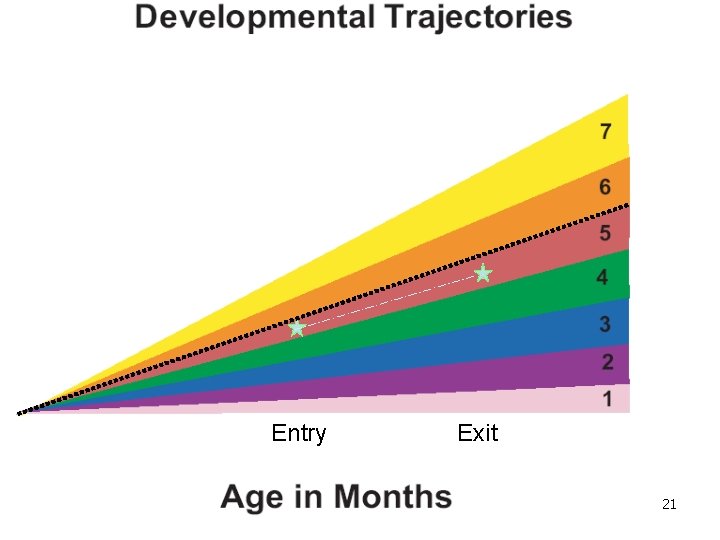

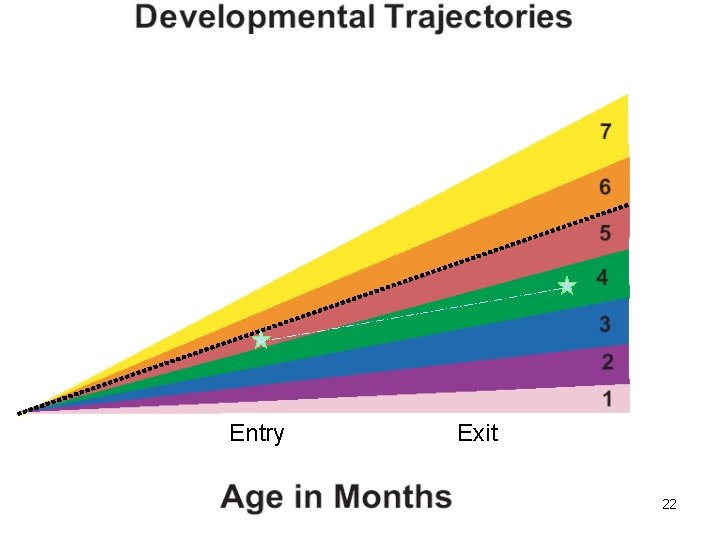

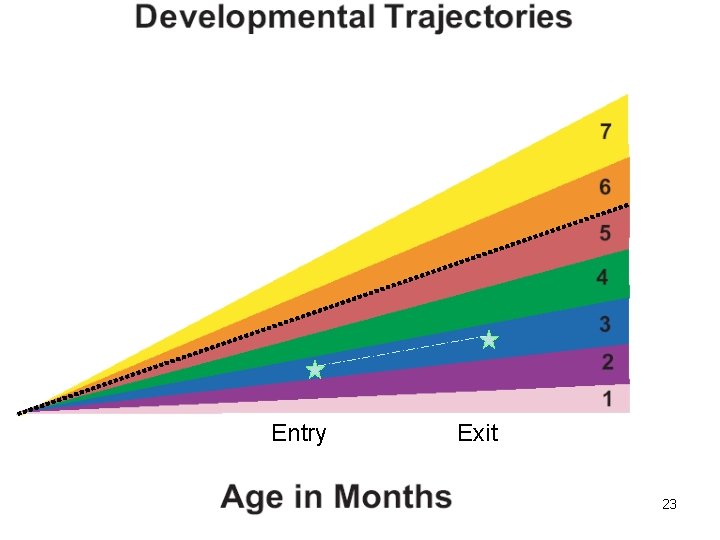

b. % of children who improved functioning, but not sufficient to move nearer to same-aged peers

- Rated 5 or lower at entry; AND
- Rated the same or lower at exit; AND
- “Yes” on the progress question (b)
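Category b is the first rule that needs the progress question. In this Python sketch the answer is assumed to be stored as a boolean (`True` for “Yes”), ratings as integers 1–7, and `is_category_b` is a hypothetical name.

```python
def is_category_b(entry_rating: int, exit_rating: int, made_progress: bool) -> bool:
    """Category b: rated 5 or lower at entry, rated the same or lower at exit,
    AND "Yes" on the progress question (b).

    Assumes integer ratings 1-7 and a boolean progress answer (hypothetical representation).
    """
    return entry_rating <= 5 and exit_rating <= entry_rating and made_progress
```

For example, a child rated 3 at both entry and exit who answered “Yes” lands in b: the child gained skills, but not enough to move nearer to same-aged peers.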

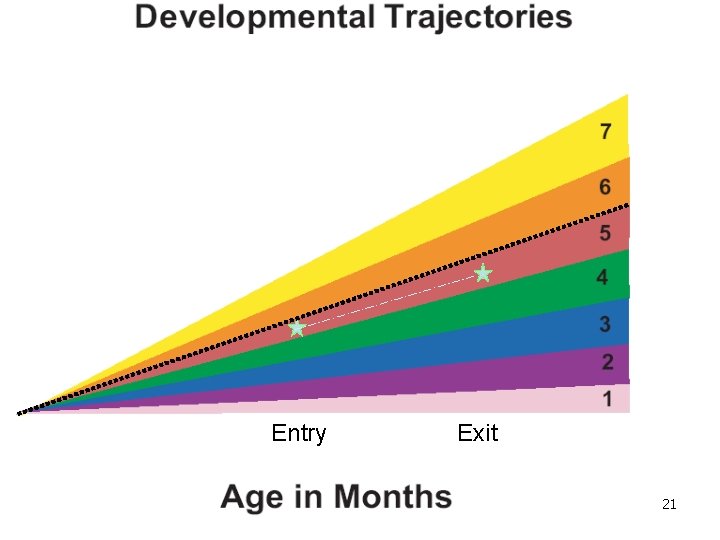

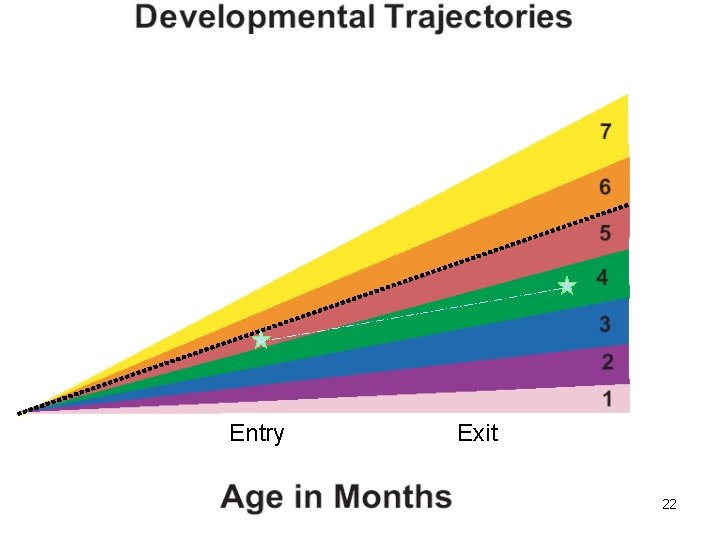

Chart labels: Entry, Exit

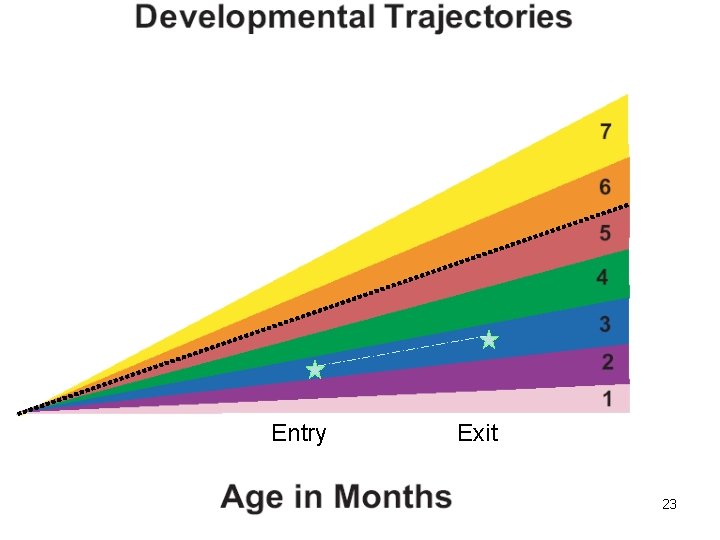

Chart labels: Entry, Exit

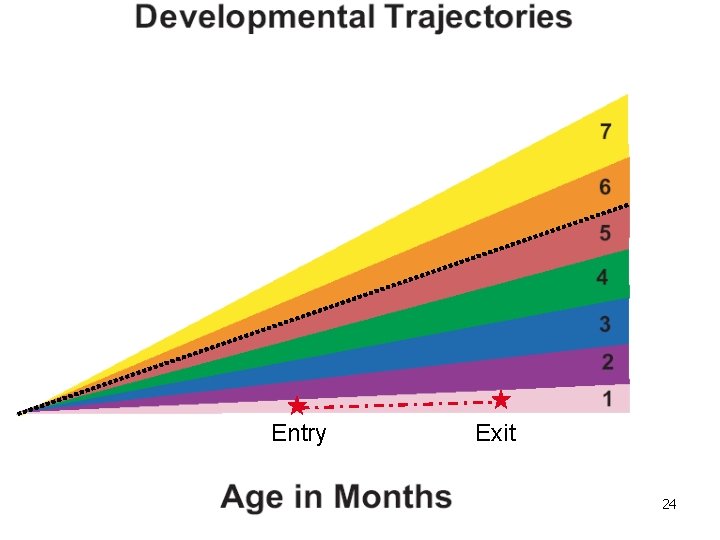

Chart labels: Entry, Exit

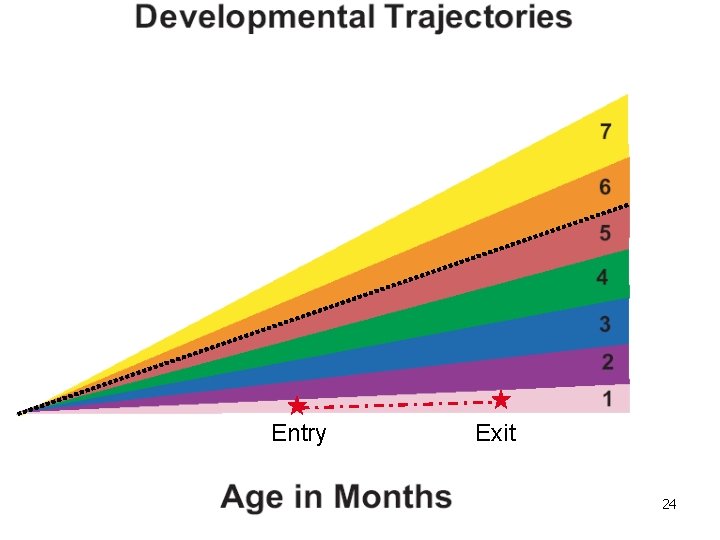

Chart labels: Entry, Exit

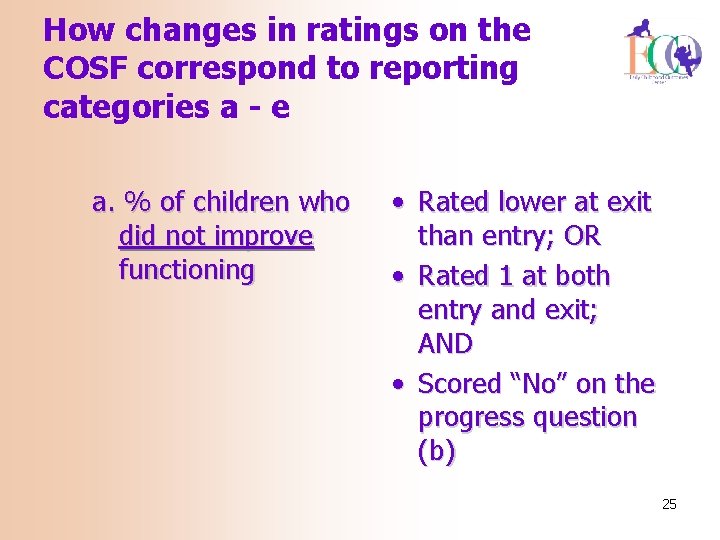

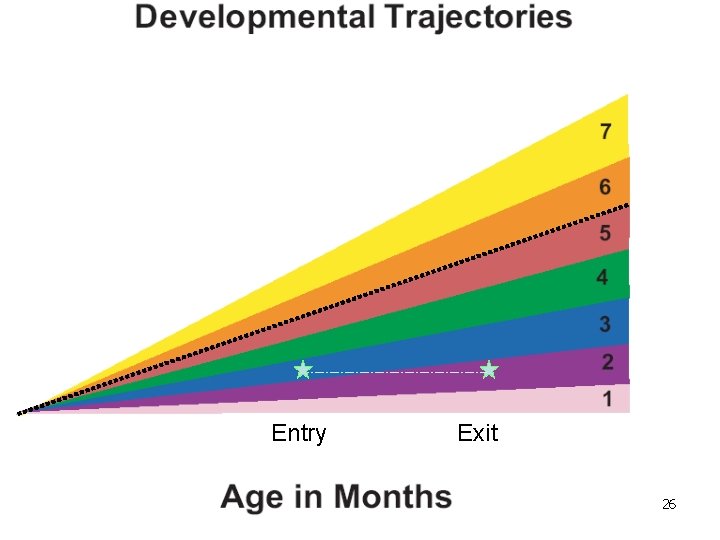

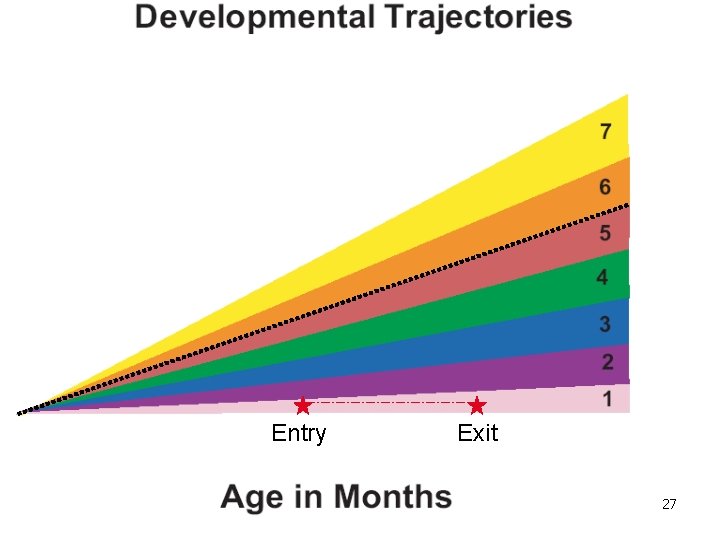

a. % of children who did not improve functioning

- Rated lower at exit than entry; OR
- Rated 1 at both entry and exit; AND
- Scored “No” on the progress question (b)
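The category a rule mixes OR and AND. This Python sketch reads it as (lower at exit OR rated 1 at both) AND “No”; that grouping is an assumption, as are the integer ratings 1–7, the boolean progress answer, and the hypothetical name `is_category_a`.

```python
def is_category_a(entry_rating: int, exit_rating: int, made_progress: bool) -> bool:
    """Category a, read as: (rated lower at exit than entry OR rated 1 at both
    entry and exit) AND "No" on the progress question (b).

    The grouping of OR/AND is an assumption of this sketch.
    """
    dropped = exit_rating < entry_rating
    stayed_at_one = entry_rating == 1 and exit_rating == 1
    return (dropped or stayed_at_one) and not made_progress
```

Note that this condition also holds for a child rated 7 at entry and 6 at exit with “No”, who meets the rule for e as well, so a full classifier has to decide which rule to check first.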

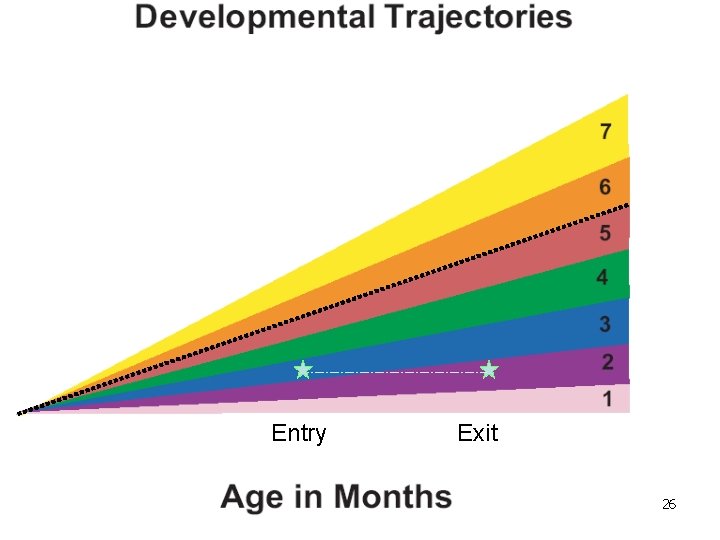

Chart labels: Entry, Exit

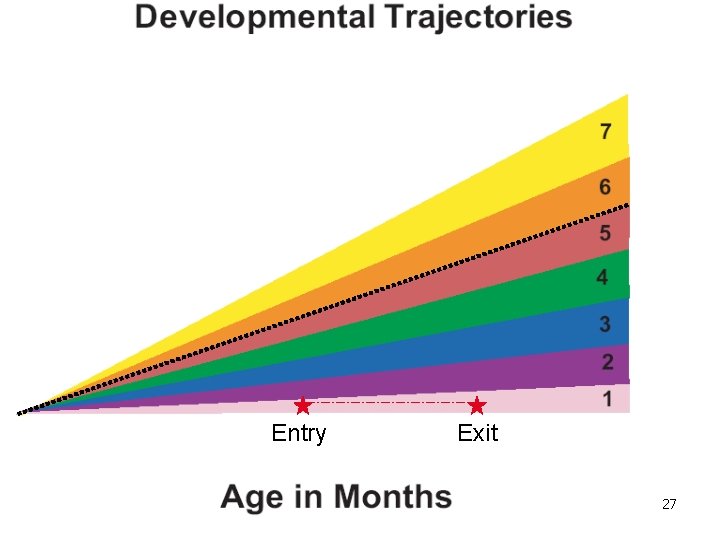

Chart labels: Entry, Exit

The ECO Calculator can be used to translate COSF entry and exit ratings to the 5 progress categories for federal reporting.
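To show the kind of translation involved, here is a minimal Python sketch that applies the a–e rules above to a list of children and reports the percentage in each category for one outcome. It does not reproduce the ECO Calculator itself. Assumptions: integer ratings 1–7, a boolean progress answer, hypothetical function names, and a rule order of e, d, c, b, a (so a child rated 7 at entry and 6 at exit with “No” counts as e). Combinations that match no rule return `None`; they are left out of the percentages and counted separately so they can be checked as possible data quality problems.

```python
from collections import Counter
from typing import Dict, Iterable, Optional, Tuple

# One child's record: (entry rating 1-7, exit rating 1-7, "Yes" on the progress question).
ChildRecord = Tuple[int, int, bool]


def progress_category(entry_rating: int, exit_rating: int, made_progress: bool) -> Optional[str]:
    """Return the OSEP progress category (a-e) for one child, or None if no rule matches."""
    if entry_rating in (6, 7) and exit_rating in (6, 7):
        return "e"  # maintained functioning comparable to same-aged peers
    if entry_rating <= 5 and exit_rating in (6, 7):
        return "d"  # improved to reach a level comparable to peers
    if exit_rating > entry_rating and exit_rating <= 5:
        return "c"  # moved nearer to peers but did not reach them
    if entry_rating <= 5 and exit_rating <= entry_rating and made_progress:
        return "b"  # improved, but not enough to move nearer to peers
    dropped = exit_rating < entry_rating
    stayed_at_one = entry_rating == 1 and exit_rating == 1
    if (dropped or stayed_at_one) and not made_progress:
        return "a"  # did not improve functioning
    return None  # not covered by the rules; flag for review


def category_percentages(children: Iterable[ChildRecord]) -> Tuple[Dict[str, float], int]:
    """Percent of matched children in each category a-e, plus the number of unmatched records."""
    counts = Counter(progress_category(*child) for child in children)
    matched = sum(counts[c] for c in "abcde")
    percentages = {c: (100.0 * counts[c] / matched if matched else 0.0) for c in "abcde"}
    return percentages, counts[None]


if __name__ == "__main__":
    # Hypothetical children for one outcome.
    children = [(3, 5, True), (4, 6, True), (6, 7, True), (2, 2, True), (5, 3, False), (3, 3, False)]
    pct, unmatched = category_percentages(children)
    print(pct, "unmatched:", unmatched)
```

With these six hypothetical children, each of the five categories gets 20% and one record, `(3, 3, False)`, is left unmatched: the same rating at entry and exit combined with “No” fits none of the rules as stated. Running this once per outcome gives the 3 × 5 = 15 numbers.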

Promoting quality data through data analysis

- Examine the data for inconsistencies.
- If/when you find something strange, what might help explain it?
- Does the variation reflect real differences among programs, or bad data? (At this point in the implementation process, data quality issues are likely!)

The validity of your data is questionable if the overall pattern in the data looks ‘strange’:

- Compared to what you expect
- Compared to other data
- Compared to similar states/regions/agencies

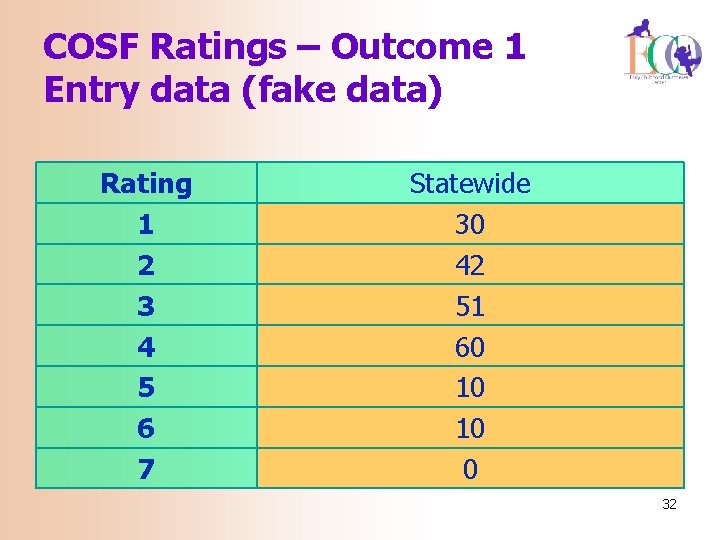

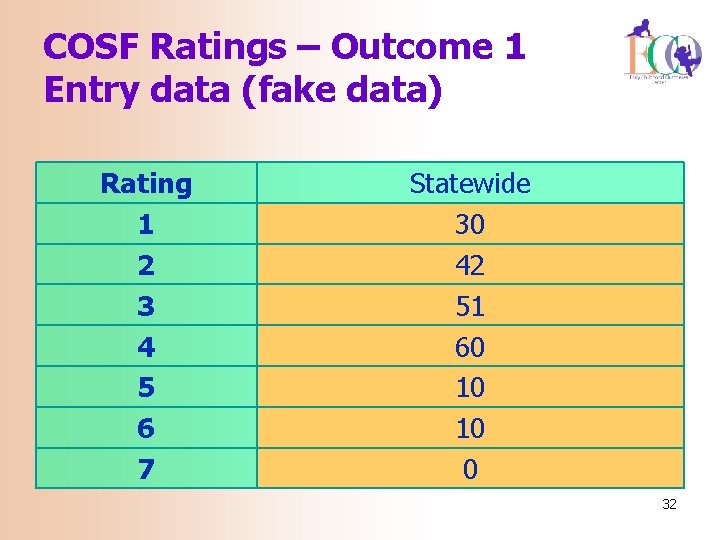

COSF Ratings – Outcome 1 Entry data (fake data)

| Rating | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| Statewide | 30 | 42 | 51 | 60 | 10 | 10 | 0 |

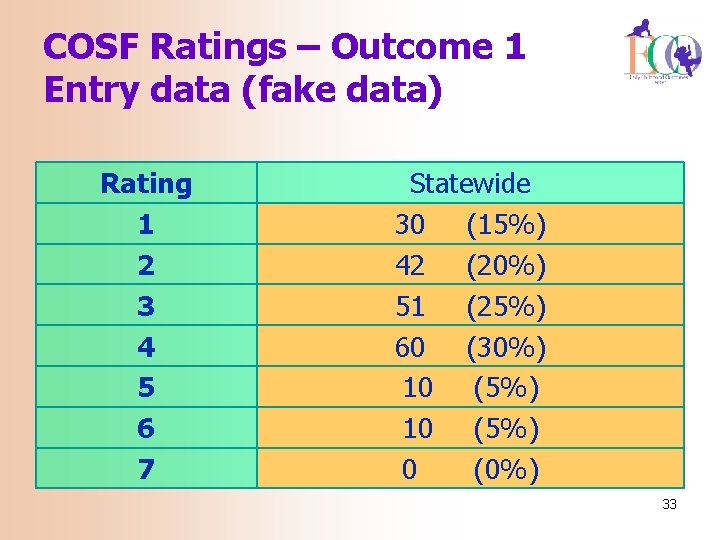

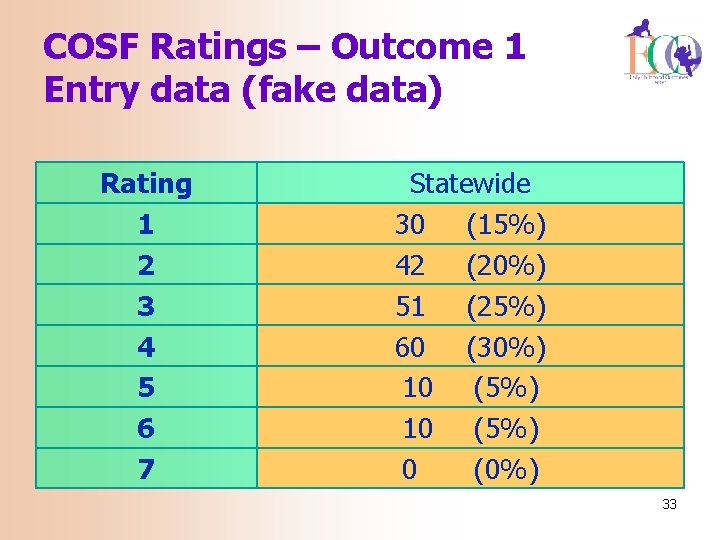

COSF Ratings – Outcome 1 Entry data (fake data)

| Rating | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| Statewide | 30 (15%) | 42 (20%) | 51 (25%) | 60 (30%) | 10 (5%) | 10 (5%) | 0 (0%) |
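Converting counts to percentages is division by the total number of children (203 here). A minimal Python sketch using the statewide entry counts from the slide; the function name `rating_percentages` is hypothetical. The exact shares differ slightly from the rounded figures on the slide: for example, 42 of 203 children is about 20.7%, shown there as 20%.

```python
from typing import Dict

# Statewide entry counts for Outcome 1 by COSF rating (fake data from the slide).
STATEWIDE_COUNTS: Dict[int, int] = {1: 30, 2: 42, 3: 51, 4: 60, 5: 10, 6: 10, 7: 0}


def rating_percentages(counts: Dict[int, int]) -> Dict[int, float]:
    """Percent of children at each rating (assumes at least one child).

    The values sum to 100, up to floating-point rounding.
    """
    total = sum(counts.values())
    return {rating: 100.0 * n / total for rating, n in counts.items()}


if __name__ == "__main__":
    for rating, pct in rating_percentages(STATEWIDE_COUNTS).items():
        print(f"rating {rating}: {STATEWIDE_COUNTS[rating]} children ({pct:.1f}%)")
```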

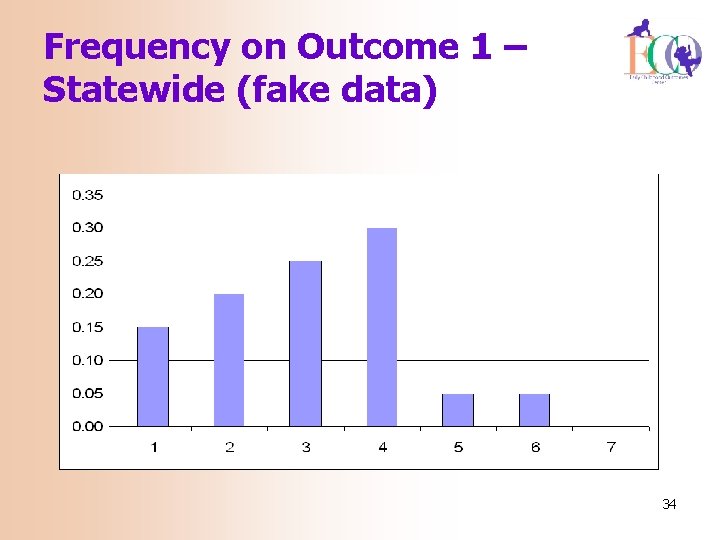

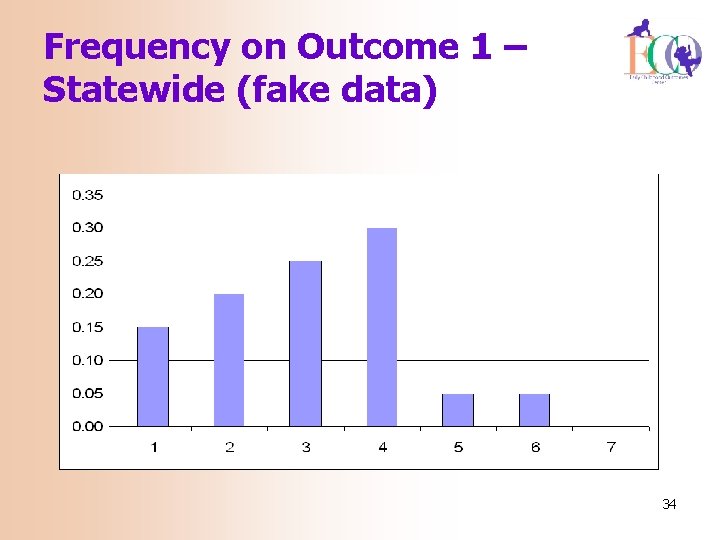

Frequency on Outcome 1 – Statewide (fake data)

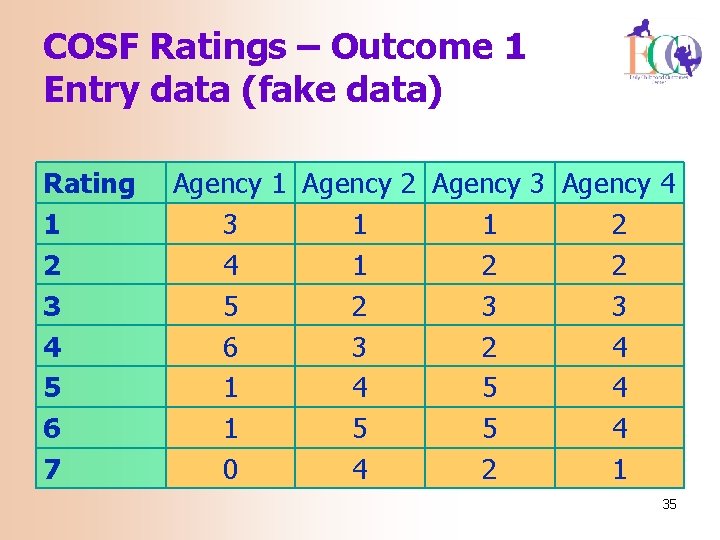

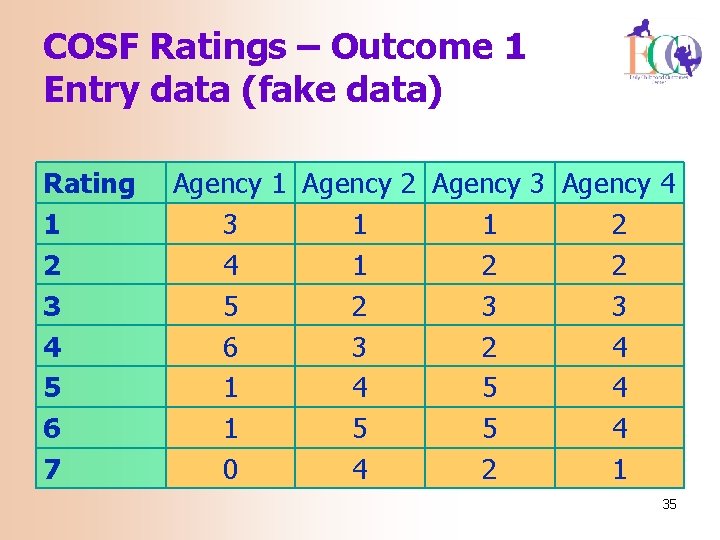

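COSF Ratings – Outcome 1 Entry data (fake data)

| Rating | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| Agency 1 | 3 | 4 | 5 | 6 | 1 | 1 | 0 |
| Agency 2 | 1 | 1 | 2 | 3 | 4 | 5 | 4 |
| Agency 3 | 1 | 2 | 3 | 2 | 5 | 5 | 2 |
| Agency 4 | 2 | 2 | 3 | 4 | 4 | 4 | 1 |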
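Agencies are easier to compare on a common scale. This Python sketch converts each agency's counts from the slide to percentages and reports the share of children rated 6 or 7 at entry, the ratings the category rules treat as comparable to same-aged peers. The function names are hypothetical, and reading the flattened counts as one row per agency is an assumption of this sketch. With 20 children per agency, each child moves that agency's percentages by 5 points, so small numbers call for caution.

```python
from typing import Dict, List

# Outcome 1 entry counts by agency (fake data from the slide); position 0 = rating 1.
AGENCY_COUNTS: Dict[str, List[int]] = {
    "Agency 1": [3, 4, 5, 6, 1, 1, 0],
    "Agency 2": [1, 1, 2, 3, 4, 5, 4],
    "Agency 3": [1, 2, 3, 2, 5, 5, 2],
    "Agency 4": [2, 2, 3, 4, 4, 4, 1],
}


def percent_distribution(counts: List[int]) -> List[float]:
    """Percent of an agency's children at each rating 1-7."""
    total = sum(counts)
    return [100.0 * n / total for n in counts]


def share_rated_6_or_7(counts: List[int]) -> float:
    """Percent of an agency's children rated 6 or 7 at entry."""
    return sum(percent_distribution(counts)[5:])  # positions 5 and 6 = ratings 6 and 7


if __name__ == "__main__":
    for agency, counts in AGENCY_COUNTS.items():
        dist = " ".join(f"{p:.0f}%" for p in percent_distribution(counts))
        print(f"{agency}: {dist} | rated 6 or 7: {share_rated_6_or_7(counts):.0f}%")
```

Agency 1 has 5% of its children rated 6 or 7 at entry, while Agency 2 has 45%. A gap like that is the kind of pattern worth checking against expectations and similar agencies before drawing conclusions.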

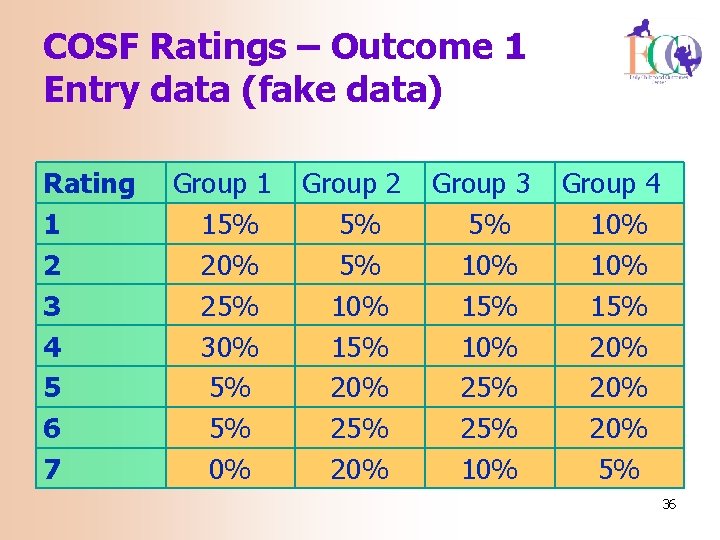

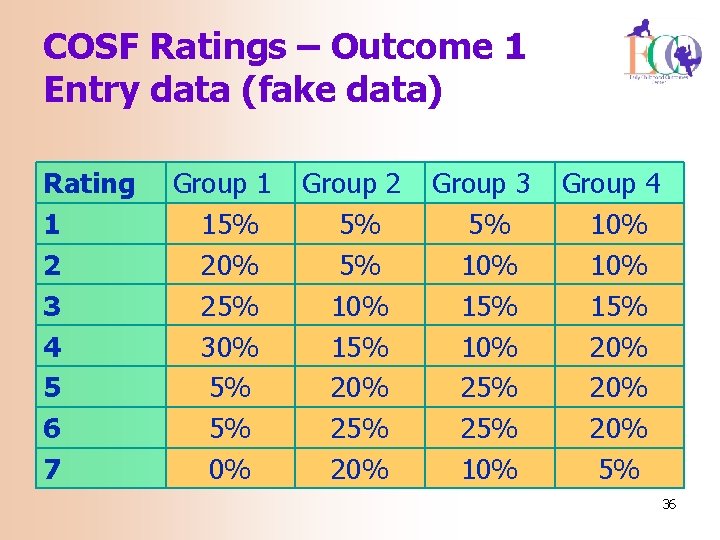

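COSF Ratings – Outcome 1 Entry data (fake data)

| Rating | 1 | 2 | 3 | 4 | 5 | 6 | 7 |
|---|---|---|---|---|---|---|---|
| Group 1 | 15% | 20% | 25% | 30% | 5% | 5% | 0% |
| Group 2 | 5% | 5% | 10% | 15% | 20% | 25% | 20% |
| Group 3 | 5% | 10% | 15% | 10% | 25% | 25% | 10% |
| Group 4 | 10% | 10% | 15% | 20% | 20% | 20% | 5% |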

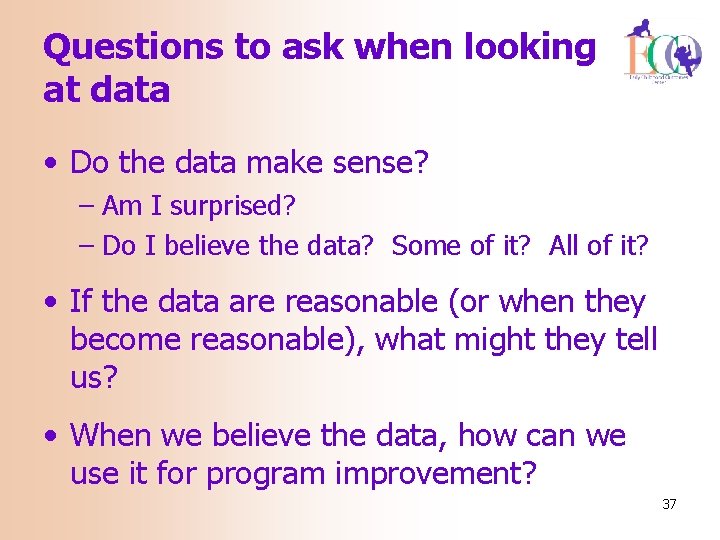

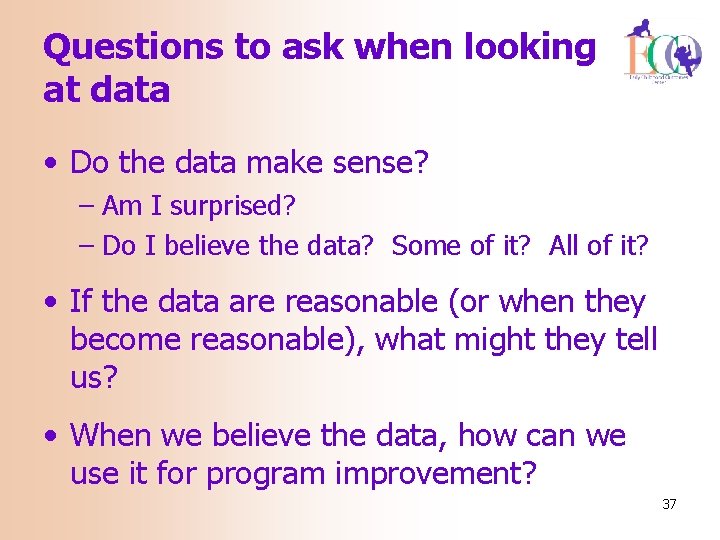

Questions to ask when looking at data

- Do the data make sense?
  - Am I surprised?
  - Do I believe the data? Some of it? All of it?
- If the data are reasonable (or when they become reasonable), what might they tell us?
- When we believe the data, how can we use it for program improvement?

Using data for program improvement

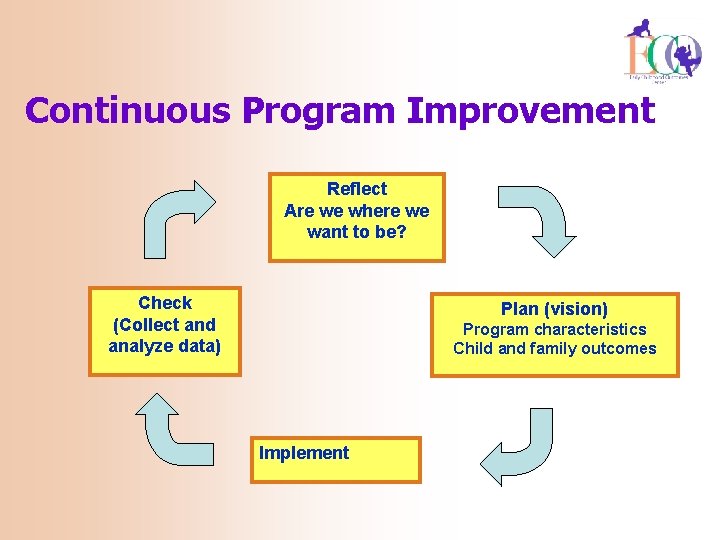

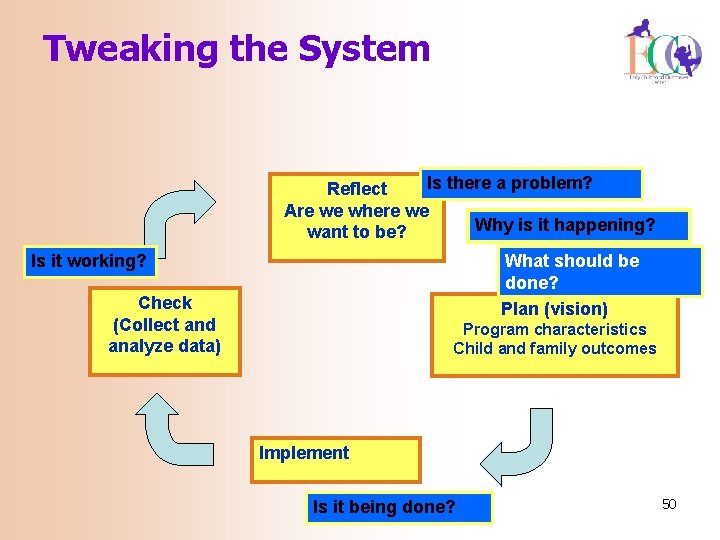

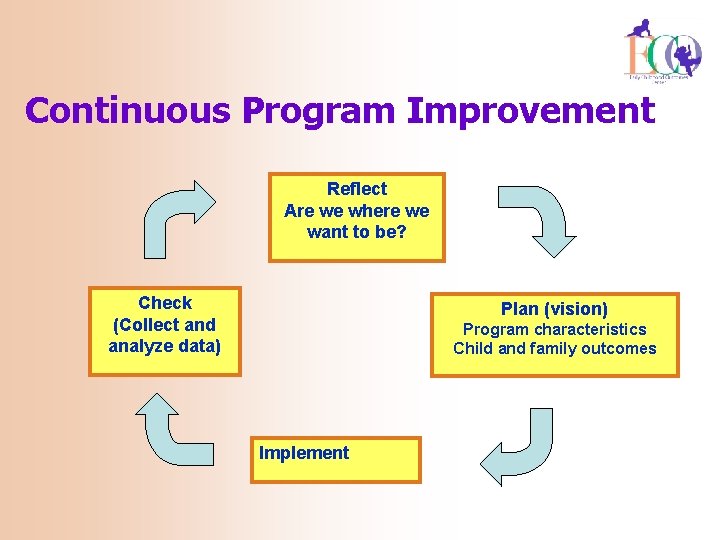

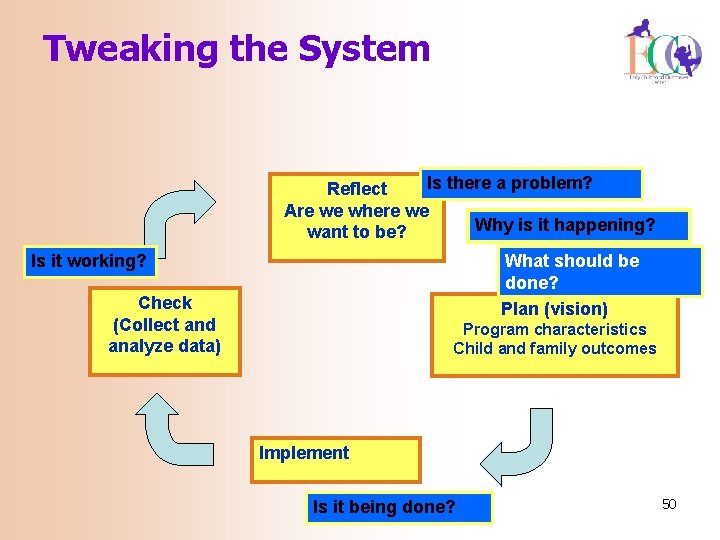

Continuous Program Improvement

Figure: cycle of Plan (vision) → Implement → Check (collect and analyze data) → Reflect (Are we where we want to be?); also labeled: program characteristics; child and family outcomes.

Using data for program improvement = EIA

- Evidence
- Inference
- Action

Evidence

- Evidence refers to the numbers, such as “45% of children in category b”
- The numbers are not debatable

Inference

- How do you interpret the #s?
- What can you conclude from the #s?
- Does evidence mean good news? Bad news? News we can’t interpret?
- To reach an inference, sometimes we analyze data in other ways (ask for more evidence)

- Inference is debatable: even reasonable people can reach different conclusions
- Stakeholders can help attach meaning to the numbers
- Early on, the inference may be more about the quality of the data

Explaining variation

- Who has good outcomes? In other words, do outcomes vary by:
  - Region of the state?
  - Amount of services received?
  - Type of services received?
  - Age at entry to service?
  - Level of functioning at entry?
  - Family outcomes?
  - Education level of parent?
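Each of these questions amounts to grouping children by one attribute and comparing outcomes across groups. A minimal Python sketch, assuming each child already has a progress category and a group label such as region; the function name, the region names, and the choice of categories d and e (the two categories in which children exit at a level comparable to same-aged peers) as the outcome to compare are illustrative assumptions.

```python
from collections import defaultdict
from typing import Dict, Iterable, Tuple


def share_reaching_peer_level(records: Iterable[Tuple[str, str]]) -> Dict[str, float]:
    """Percent of children in categories d or e, per group.

    Each record is (group, category), where category is one of "a"-"e".
    """
    totals: Dict[str, int] = defaultdict(int)
    reached: Dict[str, int] = defaultdict(int)
    for group, category in records:
        totals[group] += 1
        if category in ("d", "e"):
            reached[group] += 1
    return {group: 100.0 * reached[group] / totals[group] for group in totals}


if __name__ == "__main__":
    # Hypothetical regions and categories.
    records = [("North", "d"), ("North", "b"), ("South", "e"), ("South", "e"), ("South", "c")]
    print(share_reaching_peer_level(records))
```

Here North has 50% of its children in d or e and South about 66.7%. With groups this small, such a difference says little by itself; it is a prompt for the inference questions above, not an answer.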

Action

- Given the inference from the numbers, what should be done?
- Recommendations or action steps
- Action can be debatable, and often is
- Another role for stakeholders
- Again, early on the action might have to do with improving the quality of the data

Working Assumptions

- There are some high quality services and programs being provided across the state
- There are some children who are not getting the highest quality services
- If we can find ways to improve those services/programs, these children will experience better outcomes

Questions to ask of your data

- Are ALL services high quality?
- Are ALL children and families receiving ALL the services they should in a timely manner?
- Are ALL families being supported in being involved in their child’s program?
- What are the barriers to high quality services?

Program improvement: where and how

- At the state level: TA, policy
- At the agency level: supervision, guidance
- At the child level: modify intervention

Key points

- Evidence refers to the numbers, and the numbers by themselves are meaningless
- Inference is attached by those who read (interpret) the numbers
- You have the opportunity and obligation to attach meaning

Tweaking the System

Figure: the continuous improvement cycle (Plan (vision), Implement, Check (collect and analyze data), Reflect (Are we where we want to be?); program characteristics; child and family outcomes), annotated with the questions:

- Is there a problem?
- Why is it happening?
- What should be done?
- Is it being done?
- Is it working?

Continuous means… the cycle never ends.