Best First Search

- So far, we have assumed that all edges have the same cost, and that an optimal solution is a shortest path from the initial state to a goal state.
- Let’s generalize the model to allow individual edges with arbitrary costs.
- We’ll use the following terms:
  - length: the number of edges in the path
  - cost: the sum of the edge costs on the path

Best First Search

- Best-first search is an entire class of search algorithms, each of which employs a cost function.
- The cost function maps a node to its cost. Example: the sum of the edge costs from the root to the node.
- We assume that a lower-cost node is a better node.
- The different best-first search algorithms differ primarily in their cost function.

Best First Search

Best-first search employs two lists of nodes:
- Open list: contains those nodes that have been generated but not yet expanded.
- Closed list: contains those nodes that have already been completely expanded.

Best First Search

- Initially, just the root node is on the Open list, and the Closed list is empty.
- At each cycle of the algorithm, an Open node of lowest cost is expanded and moved to Closed, and its children are inserted into Open.
- The Open list is maintained as a priority queue.
- The algorithm terminates when a goal node is chosen for expansion, or when no nodes remain in the Open list.
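The cycle described above can be sketched in a few lines of Python. This is a minimal illustration, not a reference implementation: the function name `best_first_search`, its parameters, and the toy binary tree are all assumptions made for the example. The cost function is passed in as a parameter, so different cost functions give different best-first algorithms.

```python
import heapq
from itertools import count

def best_first_search(root, is_goal, children, cost):
    """Generic best-first search; `cost` maps a node to its priority."""
    tie = count()                       # insertion order breaks cost ties
    open_list = [(cost(root), next(tie), root)]
    closed = set()
    while open_list:
        _, _, node = heapq.heappop(open_list)   # Open node of lowest cost
        if is_goal(node):
            return node, closed                 # goal chosen for expansion
        if node in closed:
            continue                            # stale duplicate entry
        closed.add(node)                        # node is now expanded
        for child in children(node):
            if child not in closed:
                heapq.heappush(open_list, (cost(child), next(tie), child))
    return None, closed                         # Open is empty: no goal


# Breadth-first search as a special case: the cost of a node is its depth.
# Nodes are strings of 'L'/'R' moves in a (hypothetical) binary tree of depth 3.
children = lambda n: [n + "L", n + "R"] if len(n) < 3 else []
goal, expanded = best_first_search("", lambda n: n == "RL", children, len)
print(goal, sorted(expanded))
```

With depth as the cost, all nodes at depths 0 and 1 and the depth-2 nodes generated before the goal are expanded before the goal itself is chosen.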

Example of Best First Search

- One example of best-first search is breadth-first search. The cost here is the depth of the node below the root.
- Depth-first search is not considered a best-first search: in order to run in linear space, it does not maintain Open and Closed lists.

Uniform Cost Search

- Let g(n) be the sum of the edge costs from the root to node n. If g(n) is our overall cost function, then best-first search becomes Uniform Cost Search (UCS), also known as Dijkstra’s single-source shortest-path algorithm.
- Initially the root node is placed in Open with a cost of zero. At each step, the next node n to be expanded is an Open node whose cost g(n) is lowest among all Open nodes.

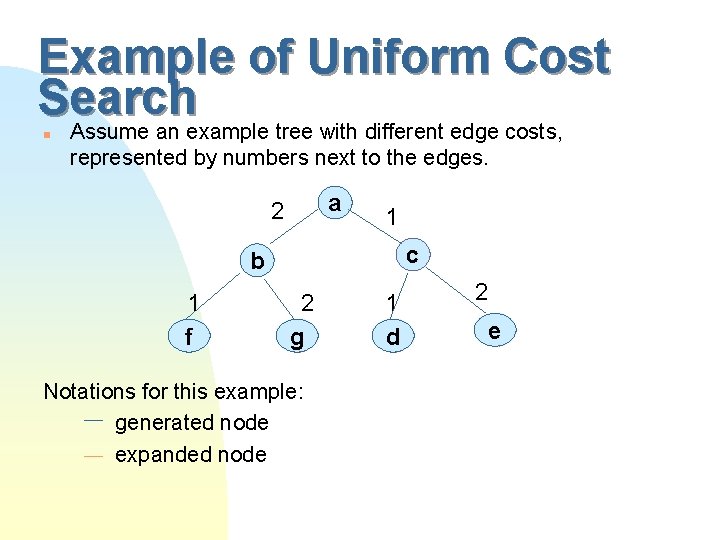

Example of Uniform Cost Search

Assume an example tree with different edge costs, represented by numbers next to the edges.

[Figure: example tree rooted at a. Edges and costs: a-b 2, a-c 1, c-d 1, c-e 2, b-f 1, b-g 2. The legend distinguishes generated nodes from expanded nodes.]

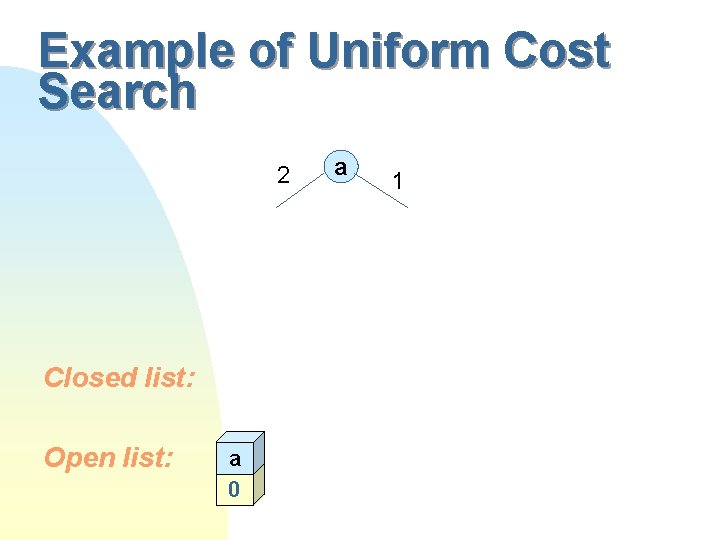

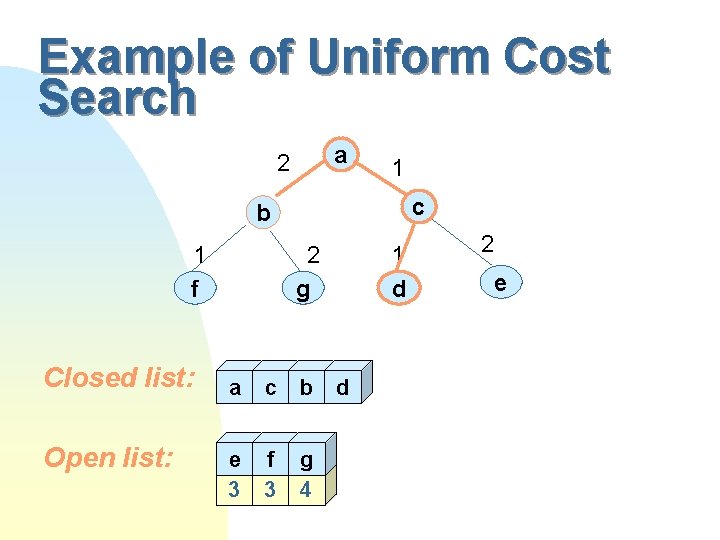

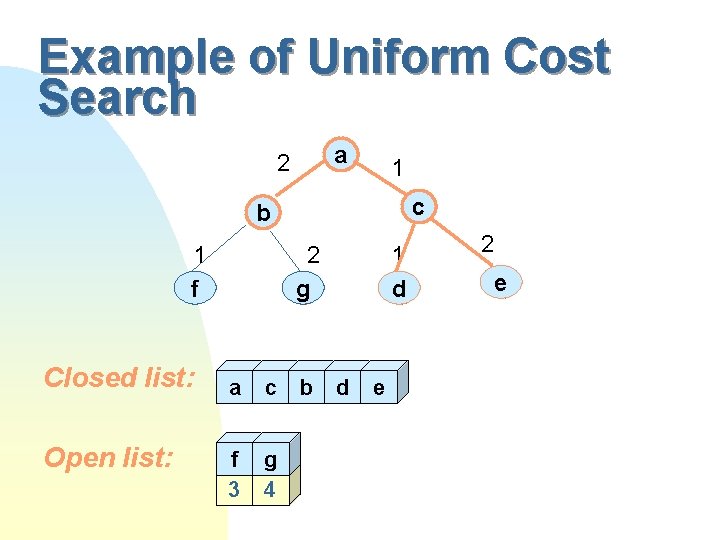

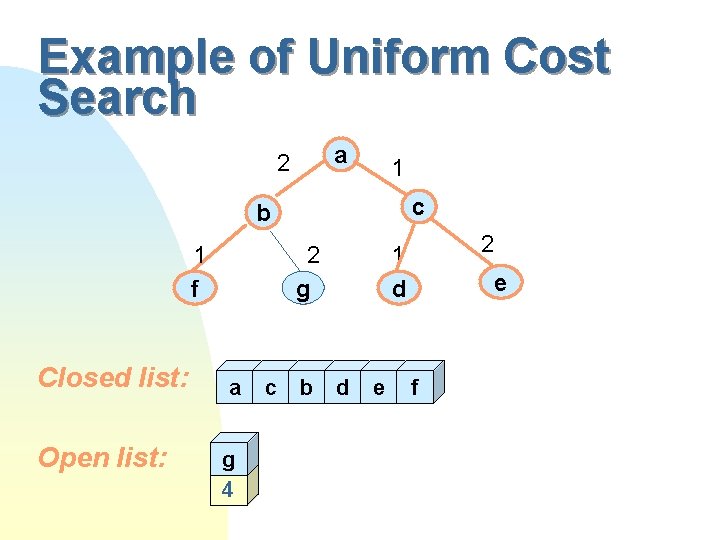

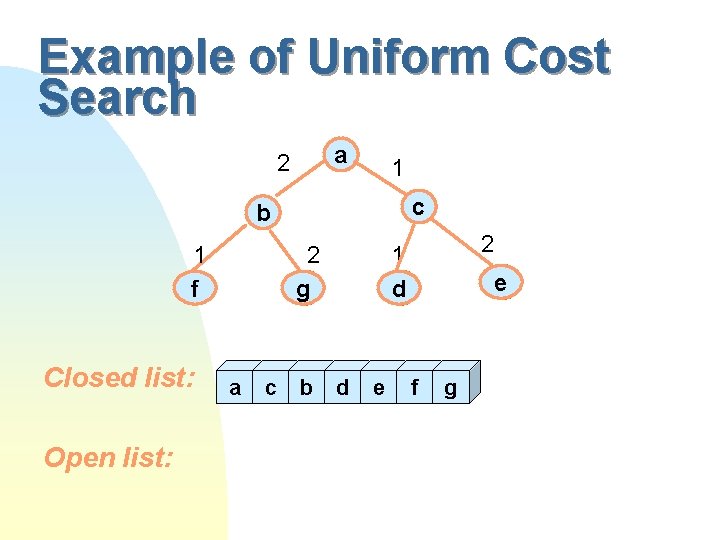

Example of Uniform Cost Search

Closed list: (empty)
Open list: a (0)

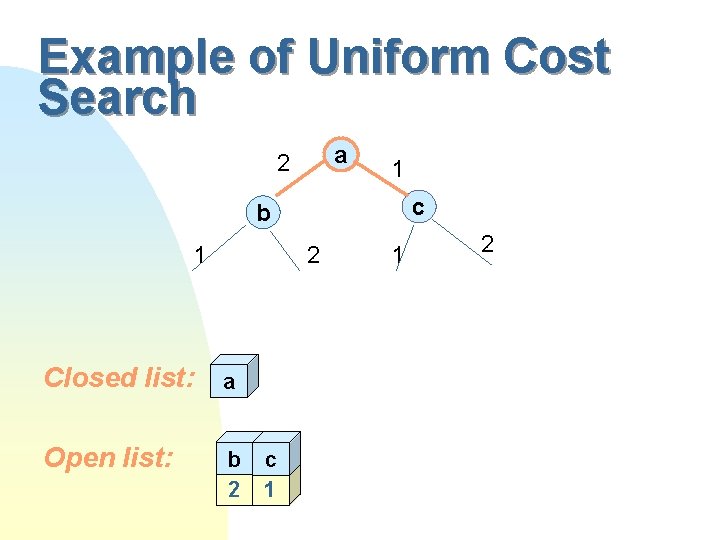

Example of Uniform Cost Search

Closed list: a
Open list: b (2), c (1)

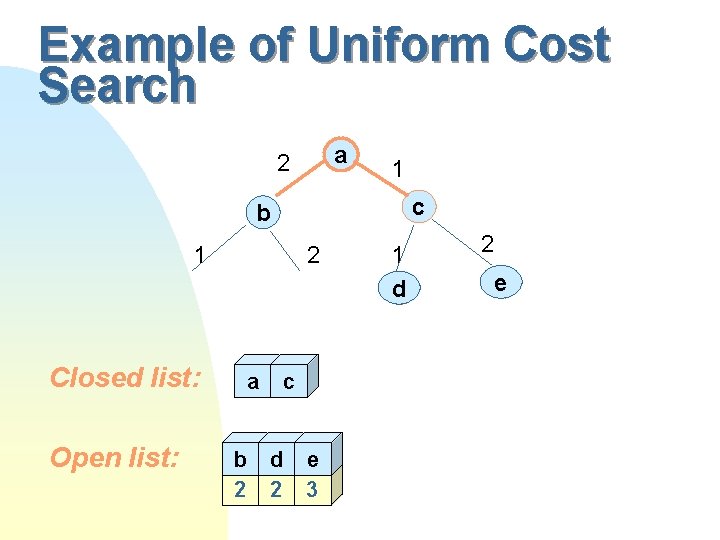

Example of Uniform Cost Search

Closed list: a, c
Open list: b (2), d (2), e (3)

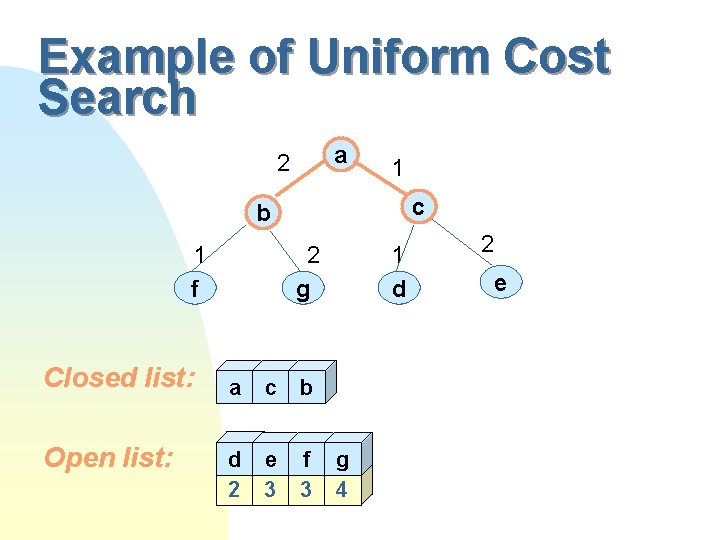

Example of Uniform Cost Search

Closed list: a, c, b
Open list: d (2), e (3), f (3), g (4)

Example of Uniform Cost Search

Closed list: a, c, b, d
Open list: e (3), f (3), g (4)

Example of Uniform Cost Search

Closed list: a, c, b, d, e
Open list: f (3), g (4)

Example of Uniform Cost Search

Closed list: a, c, b, d, e, f
Open list: g (4)

Example of Uniform Cost Search

Closed list: a, c, b, d, e, f, g
Open list: (empty)
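The trace above can be reproduced with a short Python sketch. The edge costs are read off the Open-list costs shown on the slides; breaking ties by insertion order is an assumption that happens to match the order in which the slides move nodes to Closed. The name `ucs_trace` is illustrative only.

```python
import heapq

# Example tree; edge costs are inferred from the Open-list costs in the trace.
TREE = {"a": [("b", 2), ("c", 1)],
        "c": [("d", 1), ("e", 2)],
        "b": [("f", 1), ("g", 2)]}

def ucs_trace(root):
    """Expand every node in order of g(n); return the expansion order."""
    open_list = [(0, 0, root)]      # entries are (g, insertion index, node)
    inserted = 1
    order = []
    while open_list:
        g, _, node = heapq.heappop(open_list)   # lowest g; ties by insertion order
        order.append((node, g))
        for child, edge_cost in TREE.get(node, []):
            heapq.heappush(open_list, (g + edge_cost, inserted, child))
            inserted += 1
    return order

print(ucs_trace("a"))
```

Since the example has no goal node, the sketch simply runs until Open is empty, which corresponds to the last slide of the trace.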

Uniform Cost Search

- We consider Uniform Cost Search to be a brute-force search, because it doesn’t use a heuristic function.
- Questions to ask:
  - Does Uniform Cost Search always terminate?
  - Is it guaranteed to find a goal state?

Uniform Cost Search Termination

- The algorithm will find a goal node or report that there is no goal node under the following conditions:
  - the problem space is finite
  - there must exist a path to a goal with finite length and finite cost
  - there must not be any infinitely long paths of finite cost
- We will assume that all edges have a minimum non-zero edge cost e, which solves the problem of infinite chains of nodes with zero-cost edges. Then UCS will eventually reach a goal of finite cost if one exists in the graph.

Uniform Cost Search Solution Quality

- Theorem 3.1: In a graph where all edges have a minimum positive cost, and in which a finite path exists to a goal node, uniform-cost search will return a lowest-cost path to a goal node.
- Steps of proof:
  - show that if Open contains a node on an optimal path to a goal before a node expansion, then it must contain one after the node expansion
  - show that if there is a path to a goal node, the algorithm will eventually find it
  - show that the first time a goal node is chosen for expansion, the algorithm terminates and returns the path to that node as the solution

Uniform Cost Search Time Complexity

- In the worst case:
  - every edge has the minimum edge cost e.
  - c is the cost of an optimal solution, so once all nodes of cost c have been chosen for expansion, a goal must be chosen.
  - the maximum length of any path searched up to this point cannot exceed c/e, and hence the worst-case number of such nodes is $b^{c/e}$.
- Thus, the worst-case asymptotic time complexity of UCS is $O(b^{c/e})$.

Uniform Cost Search Space Complexity

- As in all best-first searches, each node that is generated is stored in the Open or Closed list, and hence the asymptotic space complexity of UCS is the same as its asymptotic time complexity. The worst-case asymptotic space complexity of UCS is $O(b^{c/e})$.
- As a result, UCS is memory-limited in practice.

Complexity of Dijkstra’s Algorithm

- Dijkstra’s algorithm is the same as uniform cost search (why?), yet its time complexity is usually reported as $n^2$.
- This is not a discrepancy, because:
  - n is the total number of nodes in the graph, whereas in UCS we measure problem size by the branching factor b and the solution cost c.
  - In Dijkstra’s algorithm it is assumed that every node may be connected to every other node, which gives rise to the quadratic complexity. In UCS we assume a constant-bounded branching factor b.

Combinatorial Explosion

- All the algorithms we have seen so far are brute-force methods, i.e. they rely only on the problem space, the initial state and a description of the goal state.
- A brute-force algorithm can be expected to generate about a million states per second. For example, the Fifteen Puzzle has about $10^{13}$ states and would require about two months of computation, and the 3 x 3 Rubik’s Cube would take about 686 thousand years.
- The brute-force search algorithms are not efficient enough to solve even moderately large problems. A new idea is needed!

Heuristic Evaluation Functions

- The efficiency of a brute-force search can be greatly improved by the use of a heuristic static evaluation function, or heuristic function.
- Such a function can improve the efficiency of a search algorithm in two ways:
  - leading the algorithm toward a goal state
  - pruning off branches that don’t lie on any optimal solution path

Properties of Heuristic Functions

- The two most important properties of a heuristic function are:
  - it is relatively cheap to compute
  - it is a relatively accurate estimator of the cost to reach a goal. A heuristic is usually considered “good” if ½ opt(n) < h(n) < opt(n)
- Another property:
  - admissibility: the heuristic function is always a lower bound on the actual solution cost.

Example of Heuristic Functions

- Task: Navigating in a network of roads from one location to another.
  Heuristic function: Airline distance.
  The three requirements are valid here.
- Task: Sliding-tile puzzles.
  Heuristic function: Manhattan distance, the number of horizontal and vertical grid units that each tile is displaced from its goal position.
  The three requirements are valid here.

Example of Heuristic Functions

- Task: Traveling Salesman Problem (TSP).
  Heuristic function: the cost of a minimum spanning tree (MST) of the cities.
  The three requirements are valid here.
  Note that MST < TSP but also TSP < 2·MST, so ½ TSP < MST < TSP.

Pure Heuristic Search

- Given a heuristic evaluation function, the simplest algorithm that uses it is:
  f(n) = h(n)
  where f(n) is the cost function and h(n) is the heuristic function.
  This algorithm is called Pure Heuristic Search (PHS).
- PHS will eventually generate the entire graph, finding a goal node if one exists.
- If the graph is infinite, PHS is not guaranteed to terminate, even if a goal node exists.

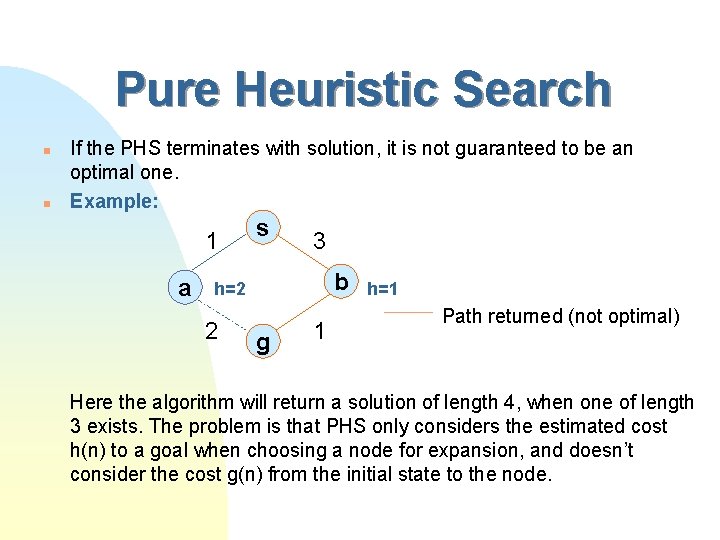

Pure Heuristic Search

- If PHS terminates with a solution, it is not guaranteed to be an optimal one.
- Example: [Figure: start node s with two routes to the goal g, one through node a and one through node b. Edge costs shown: 1, 3, 2, 1. Heuristic values shown: h = 2 and h = 1. The path returned is marked as not optimal.]
- Here the algorithm will return a solution of cost 4, when one of cost 3 exists.
- The problem is that PHS only considers the estimated cost h(n) to a goal when choosing a node for expansion, and doesn’t consider the cost g(n) from the initial state to the node.
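A small Python sketch makes the failure concrete. The graph below is one reading of the figure that is consistent with the numbers on the slide (edges s-a 1, a-g 2, s-b 3, b-g 1, with h(a) = 2 and h(b) = 1); the assignment of labels to edges and the value h(s) = 3 are assumptions, as is the function name.

```python
import heapq

# Assumed reading of the figure: s-a costs 1, a-g costs 2, s-b costs 3, b-g costs 1.
GRAPH = {"s": [("a", 1), ("b", 3)], "a": [("g", 2)], "b": [("g", 1)], "g": []}
H = {"s": 3, "a": 2, "b": 1, "g": 0}    # h(s) = 3 is an assumption

def pure_heuristic_search(start, goal):
    """Best-first search with f(n) = h(n); returns (path, path cost)."""
    open_list = [(H[start], start, 0, [start])]   # (h, node, path cost, path)
    closed = set()
    while open_list:
        _, node, cost, path = heapq.heappop(open_list)
        if node == goal:
            return path, cost
        if node in closed:
            continue
        closed.add(node)
        for child, edge in GRAPH[node]:
            # The priority ignores the cost so far; only h(child) matters.
            heapq.heappush(open_list, (H[child], child, cost + edge, path + [child]))
    return None

path, cost = pure_heuristic_search("s", "g")
print(path, cost)
```

Node b is expanded before a because h(b) < h(a), so the search commits to the route through b and returns a path of cost 4 even though the route through a costs 3.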

A* Algorithm

- We take into account both the cost of reaching a node from the initial state, g(n), and the heuristic estimate from that node to the goal node, h(n):
  f(n) = g(n) + h(n)
- For a given h(n), this is the best estimate of a lowest-cost path from the initial state to a goal state that is constrained to pass through node n.
- The A stands for “algorithm”, and the * indicates its optimality property.

A* - Terminating Conditions

- Like all best-first searches, A* terminates when it chooses a goal node for expansion, or when there are no more Open nodes.
  - In a finite graph, it will explore the entire graph if it doesn’t find a goal state.
  - In an infinite graph, it will find a finite-cost path if
    - all edge costs are finite and have a minimum positive value, and
    - all heuristic values are finite and non-negative.
- Under those conditions the cost of nodes will eventually increase without bound. Therefore, there cannot be an infinite loop.

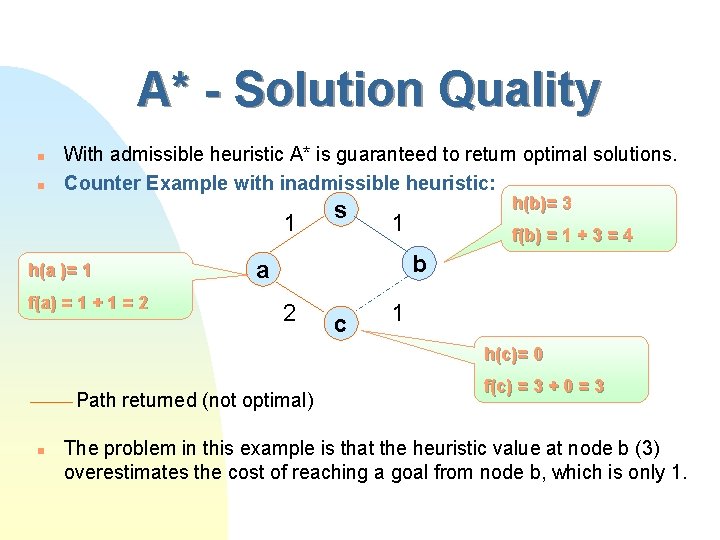

A* - Solution Quality

- With an admissible heuristic, A* is guaranteed to return optimal solutions.
- Counterexample with an inadmissible heuristic:
  [Figure: start node s with children a and b, both leading to the goal c. Edge costs shown: 1, 1, 2, 1. The path returned is marked as not optimal.]
  - h(a) = 1, f(a) = 1 + 1 = 2
  - h(b) = 3, f(b) = 1 + 3 = 4
  - h(c) = 0, f(c) = 3 + 0 = 3
- The problem in this example is that the heuristic value at node b (3) overestimates the cost of reaching a goal from node b, which is only 1.
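The counterexample can be checked with a minimal A* sketch in Python. The edge costs follow the f-values on the slide (s-a 1, s-b 1, a-c 2, b-c 1); the value h(s) = 0, the second "admissible" table, and the function name `a_star` are assumptions added for the illustration.

```python
import heapq

# Edge costs as in the counterexample: s-a 1, s-b 1, a-c 2, b-c 1 (c is the goal).
GRAPH = {"s": [("a", 1), ("b", 1)], "a": [("c", 2)], "b": [("c", 1)], "c": []}

def a_star(start, goal, h):
    """Return (path, cost) of the first goal chosen for expansion."""
    open_list = [(h[start], 0, [start])]          # entries are (f, g, path)
    best_g = {}                                   # cheapest g at which a node was expanded
    while open_list:
        f, g, path = heapq.heappop(open_list)
        node = path[-1]
        if node == goal:
            return path, g
        if node in best_g and best_g[node] <= g:
            continue                              # already expanded at least as cheaply
        best_g[node] = g
        for child, edge in GRAPH[node]:
            g2 = g + edge
            heapq.heappush(open_list, (g2 + h[child], g2, path + [child]))
    return None

inadmissible = {"s": 0, "a": 1, "b": 3, "c": 0}   # h(b) = 3 overestimates h*(b) = 1
admissible   = {"s": 0, "a": 1, "b": 1, "c": 0}   # h(b) lowered to h*(b) (assumed fix)
print(a_star("s", "c", inadmissible))
print(a_star("s", "c", admissible))
```

With the inadmissible table the goal is reached through a at cost 3 before b (f = 4) is ever expanded; with the admissible table the cheaper path through b, of cost 2, is returned.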

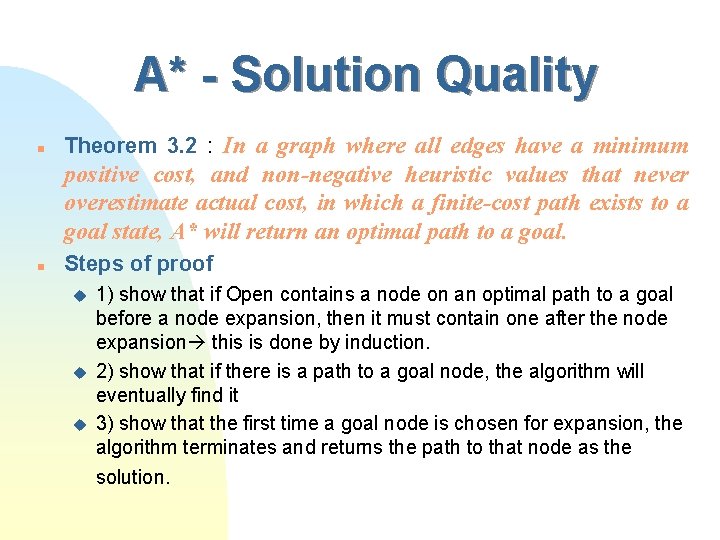

A* - Solution Quality

- Theorem 3.2: In a graph where all edges have a minimum positive cost, with non-negative heuristic values that never overestimate the actual cost, and in which a finite-cost path exists to a goal state, A* will return an optimal path to a goal.
- Steps of proof:
  1) show that if Open contains a node on an optimal path to a goal before a node expansion, then it must contain one after the node expansion. This is done by induction.
  2) show that if there is a path to a goal node, the algorithm will eventually find it.
  3) show that the first time a goal node is chosen for expansion, the algorithm terminates and returns the path to that node as the solution.

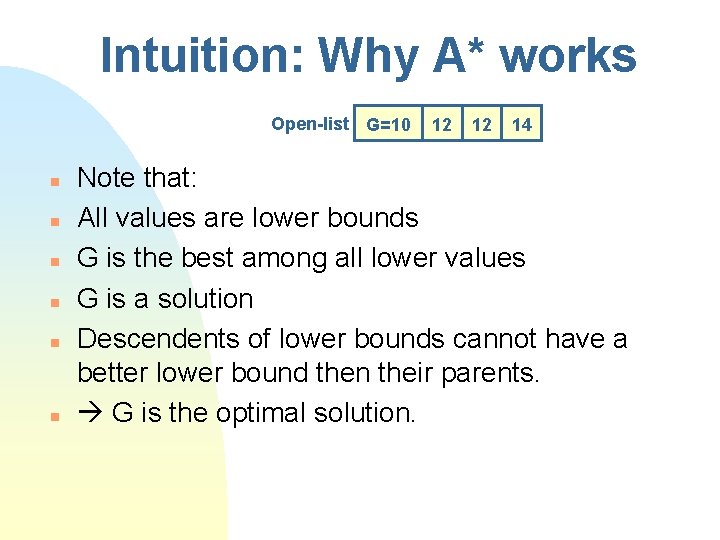

Intuition: Why A* works

Open list: G = 10, and other nodes with values 12, 12 and 14.

Note that:
- All values are lower bounds.
- G has the best (lowest) of all these lower bounds.
- G is a solution.
- Descendants of the other nodes cannot have a better lower bound than their parents.

Therefore, G is the optimal solution.

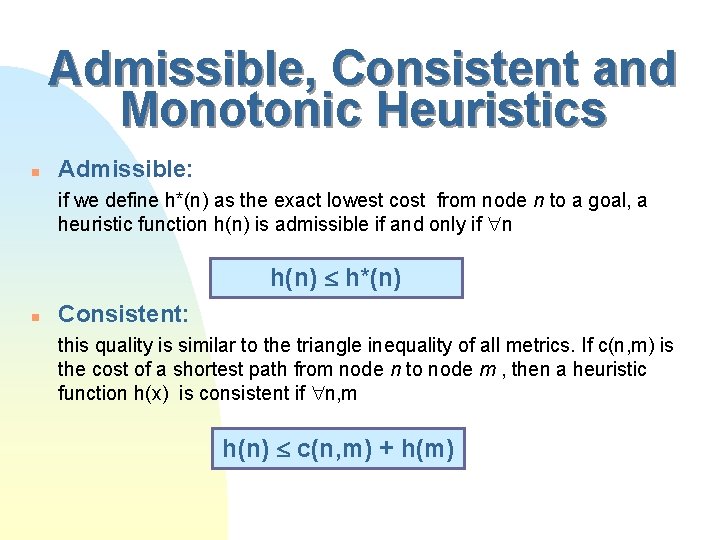

Admissible, Consistent and Monotonic Heuristics

- Admissible: if we define h*(n) as the exact lowest cost from node n to a goal, a heuristic function h(n) is admissible if and only if
  $\forall n:\ h(n) \le h^*(n)$
- Consistent: this property is similar to the triangle inequality of all metrics. If c(n, m) is the cost of a shortest path from node n to node m, then a heuristic function h(x) is consistent if
  $\forall n, m:\ h(n) \le c(n, m) + h(m)$

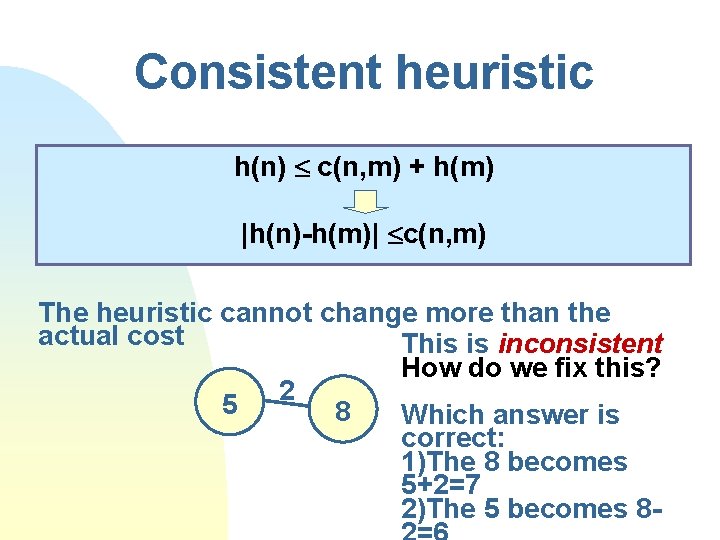

Consistent heuristic

$h(n) \le c(n, m) + h(m)$
$|h(n) - h(m)| \le c(n, m)$

The heuristic cannot change by more than the actual cost.

[Figure: two adjacent nodes with heuristic values 5 and 8, joined by an edge of cost 2.] This is inconsistent. How do we fix this?

Which answer is correct:
1) The 8 becomes 5+2=7
2) The 5 becomes 8 -

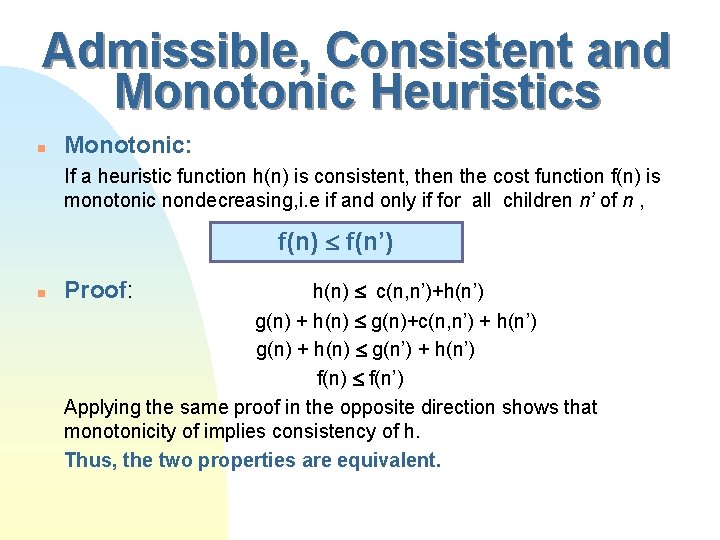

Admissible, Consistent and Monotonic Heuristics

- Monotonic: if a heuristic function h(n) is consistent, then the cost function f(n) is monotonically nondecreasing, i.e. for all children n’ of n, $f(n) \le f(n')$.
- Proof:
  $h(n) \le c(n, n') + h(n')$
  $g(n) + h(n) \le g(n) + c(n, n') + h(n')$
  $g(n) + h(n) \le g(n') + h(n')$
  $f(n) \le f(n')$
- Applying the same proof in the opposite direction shows that monotonicity of f implies consistency of h. Thus, the two properties are equivalent.

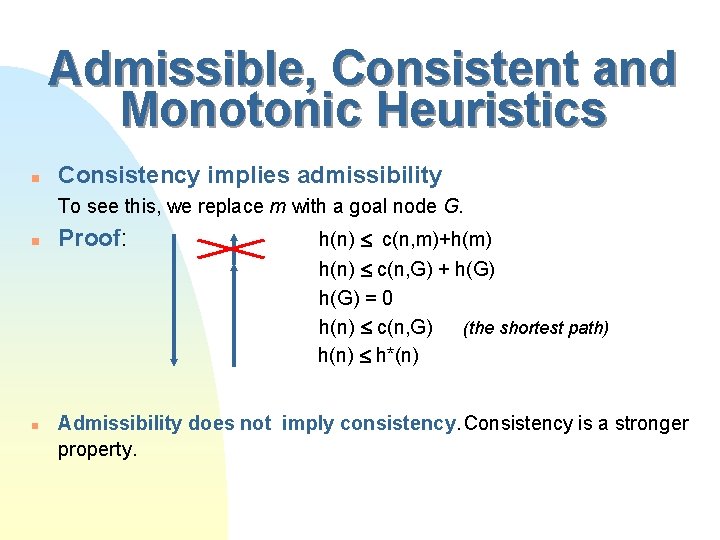

Admissible, Consistent and Monotonic Heuristics

- Consistency implies admissibility. To see this, we replace m with a goal node G.
- Proof:
  $h(n) \le c(n, m) + h(m)$
  $h(n) \le c(n, G) + h(G)$, where $h(G) = 0$
  $h(n) \le c(n, G)$ (the shortest path)
  $h(n) \le h^*(n)$
- Admissibility does not imply consistency. Consistency is a stronger property.
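Both properties can be checked mechanically on a small explicit graph. The Python sketch below is only an illustration under stated assumptions: the graph is undirected, the helper names are invented, and the three-node example (heuristic values 5 and 8 across an edge of cost 2, plus an assumed edge of cost 8 to the goal) is constructed to be admissible but inconsistent.

```python
import heapq

def shortest_costs_to(goal, edges):
    """Dijkstra from the goal over undirected edges {(u, v): cost}; gives h*."""
    adj = {}
    for (u, v), c in edges.items():
        adj.setdefault(u, []).append((v, c))
        adj.setdefault(v, []).append((u, c))
    dist = {goal: 0}
    heap = [(0, goal)]
    while heap:
        d, u = heapq.heappop(heap)
        if d > dist[u]:
            continue                      # outdated queue entry
        for v, c in adj[u]:
            if d + c < dist.get(v, float("inf")):
                dist[v] = d + c
                heapq.heappush(heap, (d + c, v))
    return dist

def is_admissible(h, edges, goal):
    h_star = shortest_costs_to(goal, edges)
    return all(h[n] <= h_star[n] for n in h)

def is_consistent(h, edges):
    # Checking single edges in both directions suffices: summing the edge
    # inequalities along a shortest path gives the inequality for any pair.
    return all(abs(h[u] - h[v]) <= c for (u, v), c in edges.items())

EDGES = {("s", "a"): 2, ("a", "g"): 8}    # hypothetical graph; g is the goal
h = {"s": 5, "a": 8, "g": 0}
print(is_admissible(h, EDGES, "g"), is_consistent(h, EDGES))
```

Here h never exceeds the true remaining cost (10 from s, 8 from a), yet it changes by 3 across an edge of cost 2, so it is admissible without being consistent.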

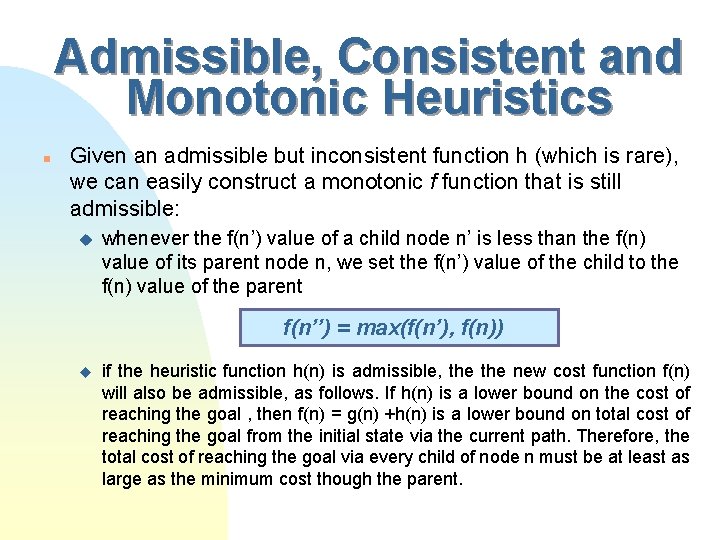

Admissible, Consistent and Monotonic Heuristics

- Given an admissible but inconsistent function h (which is rare), we can easily construct a monotonic f function that is still admissible:
  - whenever the f(n’) value of a child node n’ is less than the f(n) value of its parent node n, we set the f value of the child to the f value of the parent: f(n’) := max(f(n’), f(n))
  - if the heuristic function h(n) is admissible, the new cost function f(n) will also be admissible, as follows. If h(n) is a lower bound on the cost of reaching the goal, then f(n) = g(n) + h(n) is a lower bound on the total cost of reaching the goal from the initial state via the current path. Therefore, the total cost of reaching the goal via every child of node n must be at least as large as the minimum cost through the parent.
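The max rule above can be sketched in Python along a single path. The function name `pathmax_f` and the four-node path with its heuristic values are hypothetical, chosen so that h is admissible but drops by 4 across an edge of cost 1.

```python
def pathmax_f(path_costs, h):
    """f-values along one root-to-node path, made monotone by the max rule.

    path_costs: list of (node, g) pairs from the root downward.
    """
    f_values = []
    parent_f = float("-inf")
    for node, g in path_costs:
        f = max(g + h[node], parent_f)   # child inherits the parent's f if its own is lower
        f_values.append(f)
        parent_f = f
    return f_values

# Hypothetical path s -> a -> b -> g with edge costs 1, 1 and 4.
h = {"s": 5, "a": 1, "b": 4, "g": 0}
path = [("s", 0), ("a", 1), ("b", 2), ("g", 6)]
print(pathmax_f(path, h))
```

Without the rule the f-values along this path would be 5, 2, 6, 6; with it, the dip at node a is raised to the parent's value, and no value exceeds the true solution cost of 6.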

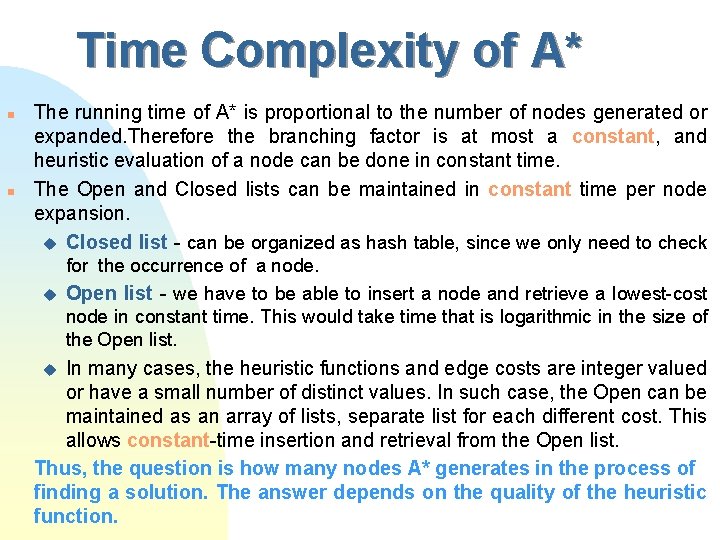

Time Complexity of A*

- The running time of A* is proportional to the number of nodes generated or expanded, provided that the branching factor is at most a constant, the heuristic evaluation of a node can be done in constant time, and the Open and Closed lists can be maintained in constant time per node expansion.
  - Closed list: can be organized as a hash table, since we only need to check for the occurrence of a node.
  - Open list: we have to be able to insert a node and retrieve a lowest-cost node in constant time. With a general priority queue, this would take time that is logarithmic in the size of the Open list. In many cases, however, the heuristic function and edge costs are integer valued or have a small number of distinct values. In such cases, the Open list can be maintained as an array of lists, with a separate list for each different cost. This allows constant-time insertion and retrieval from the Open list.
- Thus, the question is how many nodes A* generates in the process of finding a solution. The answer depends on the quality of the heuristic function.
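The array-of-lists idea for the Open list might look as follows in Python. This is a sketch under the assumption that costs are non-negative integers bounded by a known `max_cost`; the class name and its methods are invented for the illustration.

```python
class BucketOpenList:
    """Open list as an array of lists, one bucket per integer cost."""

    def __init__(self, max_cost):
        self.buckets = [[] for _ in range(max_cost + 1)]
        self.lowest = max_cost + 1          # index of the lowest bucket that may be non-empty
        self.size = 0

    def insert(self, node, cost):
        self.buckets[cost].append(node)     # constant time
        self.lowest = min(self.lowest, cost)
        self.size += 1

    def pop_lowest(self):
        if self.size == 0:
            raise IndexError("pop from an empty Open list")
        # Skip empty buckets. With a nondecreasing cost function the pointer
        # only moves forward, so the scan is amortized over the whole search.
        while not self.buckets[self.lowest]:
            self.lowest += 1
        self.size -= 1
        return self.buckets[self.lowest].pop(), self.lowest


open_list = BucketOpenList(max_cost=10)
for node, f in [("x", 4), ("y", 2), ("z", 4)]:
    open_list.insert(node, f)
first = open_list.pop_lowest()
print(first)
```

Nodes within one bucket are returned in last-in, first-out order here, which is a design choice of the sketch rather than something the slides prescribe.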

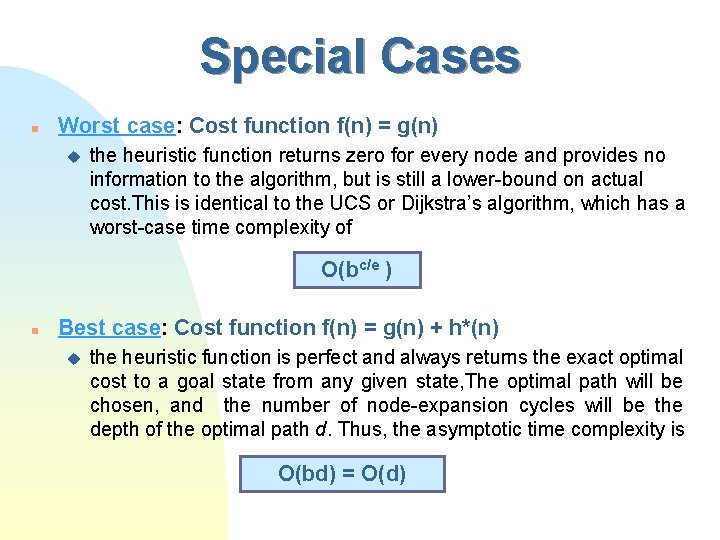

Special Cases

- Worst case: cost function f(n) = g(n)
  - the heuristic function returns zero for every node and provides no information to the algorithm, but is still a lower bound on the actual cost. This is identical to UCS or Dijkstra’s algorithm, which has a worst-case time complexity of $O(b^{c/e})$.
- Best case: cost function f(n) = g(n) + h*(n)
  - the heuristic function is perfect and always returns the exact optimal cost to a goal state from any given state. The optimal path will be chosen, and the number of node-expansion cycles will be the depth d of the optimal path. Thus, the asymptotic time complexity is $O(b \cdot d) = O(d)$.

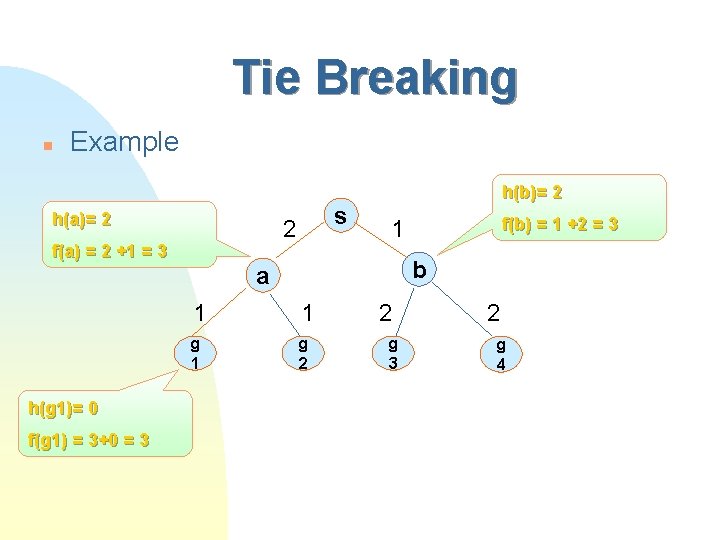

Tie Breaking

Example: [Figure: search tree with start node s, nodes a and b, and goal nodes g1 to g4. Labels shown: h(a) = 2, f(a) = 2 + 1 = 3; h(b) = 2, f(b) = 1 + 2 = 3; h(g1) = 0, f(g1) = 3 + 0 = 3.]

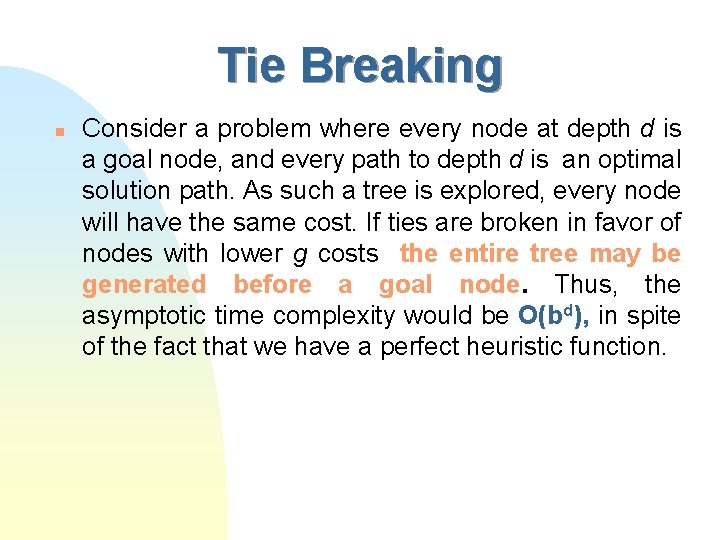

Tie Breaking

- Consider a problem where every node at depth d is a goal node, and every path to depth d is an optimal solution path. As such a tree is explored, every node will have the same cost. If ties are broken in favor of nodes with lower g costs, the entire tree may be generated before a goal node is chosen. Thus, the asymptotic time complexity would be $O(b^d)$, in spite of the fact that we have a perfect heuristic function.

Tie Breaking

- A better tie-breaking rule is to always break ties among nodes with the same f(n) value in favor of nodes with the smallest h(n) value (or the largest g(n) value). This ensures that:
  - any tie will always be broken in favor of a goal node, which has h(Goal) = 0 by definition.
  - the time complexity of A* with a perfect heuristic will be O(d).
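The effect of the tie-breaking rule can be measured on the tree from the previous slide, where every node at depth d is a goal and the heuristic is perfect. The Python sketch below is an illustration only; the function name and the choice of b = 2, d = 4 are assumptions.

```python
import heapq
from itertools import count

def expansions(b, d, prefer_low_h):
    """Count nodes expanded by A* on a uniform tree where every node at
    depth d is a goal and h is perfect, so every node has f = d."""
    tie = count()
    # A node is represented by its depth only: g = depth, h = d - depth.
    if prefer_low_h:
        key = lambda depth: (d, d - depth)   # same f, smaller h first
    else:
        key = lambda depth: (d, depth)       # same f, smaller g first
    open_list = [(key(0), next(tie), 0)]
    expanded = 0
    while open_list:
        _, _, depth = heapq.heappop(open_list)
        if depth == d:
            return expanded                  # goal chosen for expansion
        expanded += 1
        for _ in range(b):
            heapq.heappush(open_list, (key(depth + 1), next(tie), depth + 1))
    return expanded

print(expansions(2, 4, prefer_low_h=True), expansions(2, 4, prefer_low_h=False))
```

Preferring small h expands one node per level (d in total), while preferring small g expands every node above depth d, which is $b^d - 1$ nodes for b = 2.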

Conditions for Node Expansion by A*

- If the heuristic function is consistent, then the cost function f(n) is nondecreasing along any path away from the root node.
- The sequence of costs of the nodes expanded by A* starts at h(s) and stays the same or increases until it reaches the cost of an optimal solution.
- Some nodes with the optimal solution cost might be expanded, until a goal node is chosen.

Conditions for Node Expansion by A*

- This means that all nodes n whose cost f(n) < c will certainly be expanded (where c is the optimal solution cost), and no nodes n whose cost f(n) > c will be expanded.
- Some nodes n whose cost f(n) = c will be expanded.
- Thus, f(n) < c is a sufficient condition for A* to expand node n, and $f(n) \le c$ is a necessary condition.

Time optimality of A*

- Theorem 3.3: For a given consistent (admissible) heuristic function, every admissible algorithm must expand all nodes surely expanded by A*.
- Intuition: if a node m with f(m) < c is not expanded, maybe there is a solution path going through that node!
- Proof:
  - Suppose that there exist an admissible algorithm B, a problem P, a consistent heuristic function h and a node m such that node m is not expanded by algorithm B on problem P with heuristic function h, but node m is surely expanded by A*, meaning that f(m) = g(m) + h(m) < c.
  - Let’s construct a new problem P’ that is identical to problem P, except for the addition of a single new edge leaving node m, which leads to a new goal node z. Let the cost of the edge from node m to node z be h(m), the heuristic value of node m in problem P’, or c’(m, z) = h(m). We use c’(m, z) here to denote the actual cost from m to z in problem P’.
  - In problem P’, the cost of an optimal path from the initial state to the new goal state is g(m) + c’(m, z) = g(m) + h(m) = f(m), since every path to z must go through node m. Since f(m) < c, the cost of an optimal solution to problem P, the optimal solution to problem P’ is the path from the start through node m to goal z.
  - Now apply algorithm B to problem P’. By assumption, B never expands node m on problem P, thus it must not expand node m on problem P’ either. Thus B must fail to find the optimal solution to problem P’. Contradiction!

Intuition for the optimality of A*

- If a node m with f(m) < c is not expanded, maybe there is a solution path going through that node!

Space complexity of A*

- The main drawback of A* is its space complexity.
- Like all best-first search algorithms, it stores all the nodes it generates in either the Open list or the Closed list.
- Thus, its space complexity is the same as its time complexity, assuming that a node can be stored in a constant amount of space.
- On current computers, it will typically exhaust the available memory in a number of minutes. The algorithm is memory-limited.

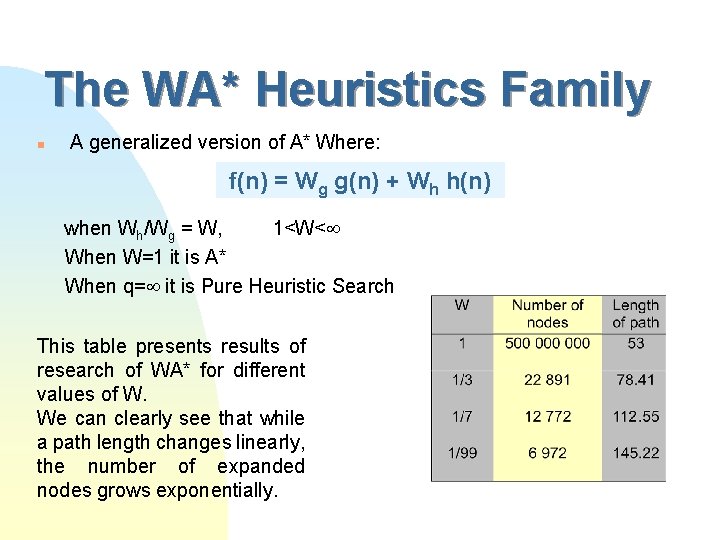

The WA* Heuristics Family

- A generalized version of A*:
  $f(n) = W_g\, g(n) + W_h\, h(n)$
  where $W_h / W_g = W$ and $1 < W < \infty$.
- When W = 1 it is A*.
- When $W = \infty$ it is Pure Heuristic Search.
- This table presents results of research on WA* for different values of W. We can clearly see that while the path length changes linearly, the number of expanded nodes grows exponentially.

Time complexity of A*

- A solution to an abstract model (Pearl 84)
- A solution to real world problems (Korf 2001)

Abstract Analytic model

- We assume that:
  - the problem space is a tree, with no cycles.
  - there is a uniform branching factor b, meaning that every node has b children.
  - every edge or operator costs one unit to apply.
  - there is a single goal node at depth d in the tree.
- The impact of this last assumption is that once we diverge from the optimal path from the root to the goal, the only way to reach the goal is to backtrack until we rejoin the single optimal path.

Constant Absolute Error

- We assume that the heuristic function has constant absolute error. This means that it never underestimates the optimal cost of reaching a goal by more than a constant. Thus, for some constant k,
  $h(n) = h^*(n) - k$
- We need to determine how many nodes will be expanded under these assumptions.

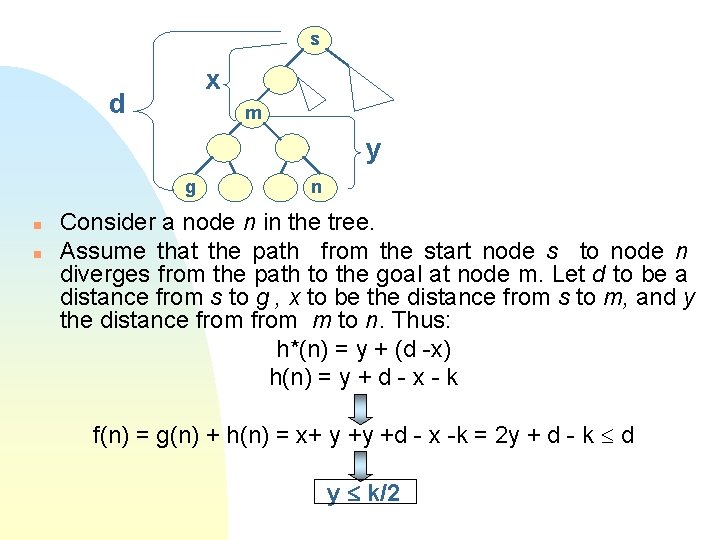

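[Figure: the optimal path from s to g of length d, with node m on it at distance x from s, and node n off the path at distance y below m.]

- Consider a node n in the tree. Assume that the path from the start node s to node n diverges from the path to the goal at node m.
- Let d be the distance from s to g, x the distance from s to m, and y the distance from m to n. Thus:
  $h^*(n) = y + (d - x)$
  $h(n) = y + d - x - k$
  $f(n) = g(n) + h(n) = x + y + y + d - x - k = 2y + d - k$
- Node n can be expanded only if $f(n) \le d$, i.e. $2y + d - k \le d$, which gives $y \le k/2$.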

Constant Absolute Error

- For any given node m on the optimal path, the number of nodes below it, but off the optimal path, whose depth below m doesn’t exceed k/2 is $(b-1)\,b^{k/2-1}$. This is because there are b - 1 branches immediately below node m that diverge from the optimal path, the remaining child being on the optimal path, and there are b children below every subsequent node.
- The number of such nodes m on the optimal path from which we could diverge is d - 1, since the last node on the optimal path is the goal itself.
- Thus, the total number of nodes whose total cost doesn’t exceed d is $(d-1)(b-1)\,b^{k/2-1}$. If we add the d nodes on the optimal path, this is exactly the set of nodes that are expanded by A* in the worst case.
- This function is O(d), since k and b are constants and hence $b^{k/2-1}$ is also a constant.
- Thus, the asymptotic time complexity of A* using a heuristic that has constant absolute error is linear in the solution depth.

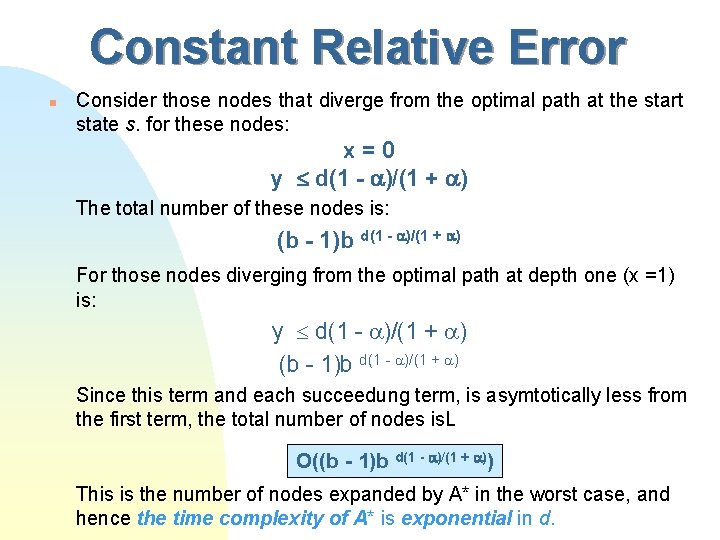

Constant Relative Error

- A much more realistic assumption for heuristic functions is constant relative error. We assume that the absolute error is a bounded percentage of the quantity being estimated: for some constant $\alpha$,
  $h(n) = \alpha\, h^*(n)$
- Let’s repeat the above analysis with the new heuristic model:
  $g(n) = x + y$
  $h^*(n) = y + d - x$
  $h(n) = \alpha (y + d - x)$
  $f(n) = g(n) + h(n) = x + y + \alpha (y + d - x)$
- Node n can be expanded only if $f(n) \le d$, which gives $y \le (d - x)(1 - \alpha)/(1 + \alpha) \le d(1 - \alpha)/(1 + \alpha)$.

Constant Relative Error

- Consider those nodes that diverge from the optimal path at the start state s. For these nodes, x = 0 and $y \le d(1 - \alpha)/(1 + \alpha)$. The total number of these nodes is $(b - 1)\,b^{d(1 - \alpha)/(1 + \alpha)}$.
- For those nodes diverging from the optimal path at depth one (x = 1), $y \le (d - 1)(1 - \alpha)/(1 + \alpha)$, and their number is $(b - 1)\,b^{(d - 1)(1 - \alpha)/(1 + \alpha)}$.
- Since this term, and each succeeding term, is asymptotically smaller than the first term, the total number of nodes is $O((b - 1)\,b^{d(1 - \alpha)/(1 + \alpha)})$.
- This is the number of nodes expanded by A* in the worst case, and hence the time complexity of A* is exponential in d.

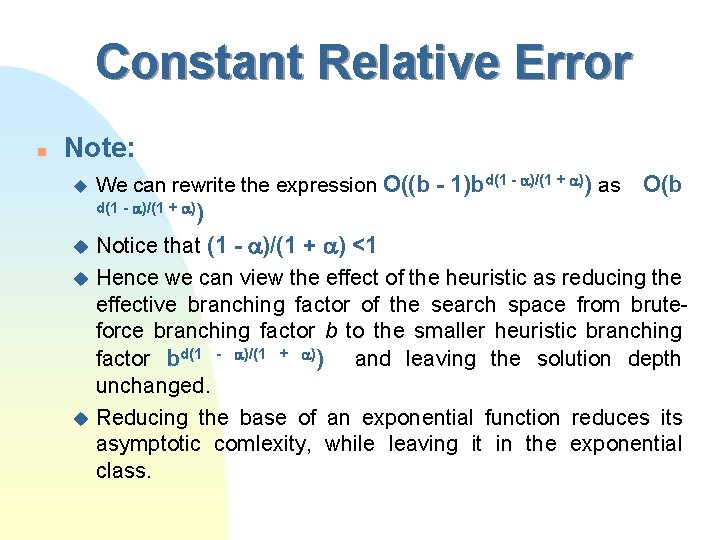

Constant Relative Error

- Note, with $\alpha$ denoting the constant relative-error factor of the heuristic:
  - We can rewrite the expression $O((b - 1)\,b^{d(1 - \alpha)/(1 + \alpha)})$ as $O(b^{d(1 - \alpha)/(1 + \alpha)})$.
  - Notice that $(1 - \alpha)/(1 + \alpha) < 1$.
  - Hence we can view the effect of the heuristic as reducing the effective branching factor of the search space from the brute-force branching factor b to the smaller heuristic branching factor $b^{(1 - \alpha)/(1 + \alpha)}$, while leaving the solution depth unchanged.
  - Reducing the base of an exponential function reduces its asymptotic complexity, while leaving it in the exponential class.

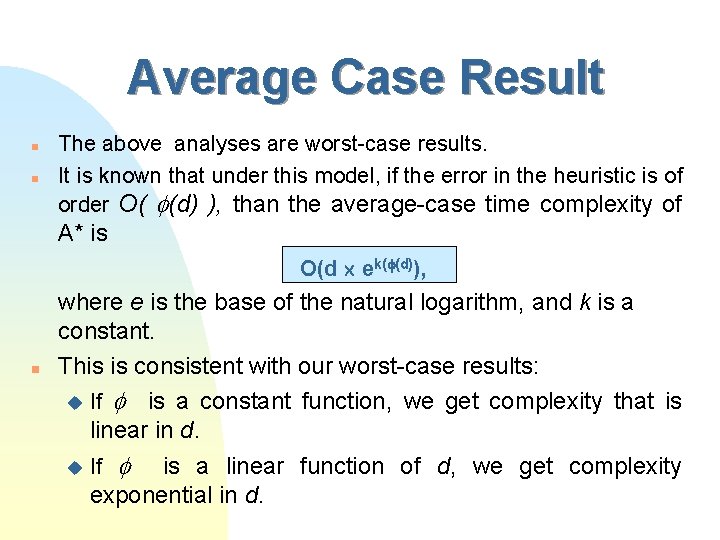

Average Case Result

- The above analyses are worst-case results.
- It is known that under this model, if the error in the heuristic is of order $O(\phi(d))$, then the average-case time complexity of A* is $O(d\, e^{k \phi(d)})$, where e is the base of the natural logarithm and k is a constant.
- This is consistent with our worst-case results:
  - If $\phi$ is a constant function, we get complexity that is linear in d.
  - If $\phi$ is a linear function of d, we get complexity exponential in d.

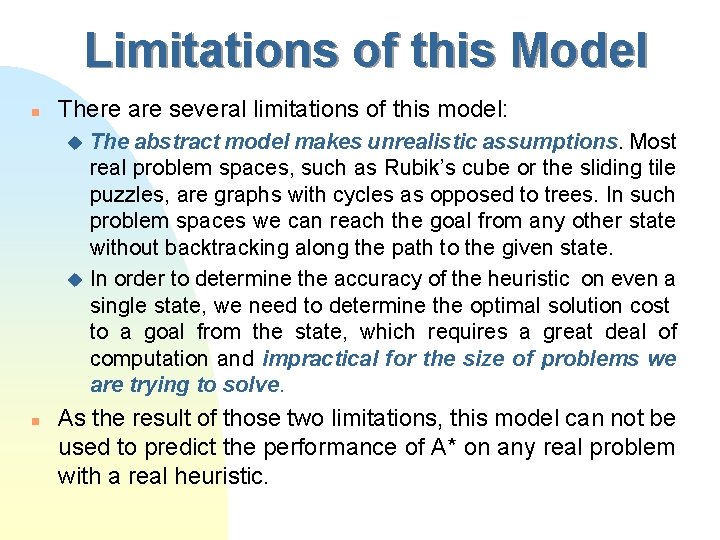

Limitations of this Model

- There are several limitations of this model:
  - The abstract model makes unrealistic assumptions. Most real problem spaces, such as Rubik’s cube or the sliding-tile puzzles, are graphs with cycles as opposed to trees. In such problem spaces we can reach the goal from any other state without backtracking along the path to the given state.
  - In order to determine the accuracy of the heuristic on even a single state, we need to determine the optimal solution cost to a goal from that state, which requires a great deal of computation and is impractical for the size of problems we are trying to solve.
- As a result of those two limitations, this model cannot be used to predict the performance of A* on any real problem with a real heuristic.

Heuristic analysis on real problems

Characterization of the Heuristic

- Instead of characterizing a heuristic by its accuracy for purposes of its analysis, we characterize a heuristic by the distribution of heuristic values over the nodes in the problem space.
- We can specify this distribution by a set of parameters D(x), the fraction of nodes for which $h(n) \le x$. We refer to this set of values as the overall distribution of the heuristic function, assuming that every state in the problem space is equally likely. Here $x \in [0, \infty)$, but for all $x \ge \max h(n)$, D(x) = 1.
- A way to view D(x) is that it is the probability that a state chosen randomly and uniformly from all states in the problem space has $h(n) \le x$.

Characterization of the Heuristic

- Note:
  - This characterization of a heuristic function in terms of its overall distribution is not a measure of the accuracy of the function.
  - The overall distribution is much easier to determine in practice than the accuracy of the heuristic function.
- The complexity of a search algorithm depends on a different distribution, called the equilibrium distribution and denoted P(x).
- This is the distribution of heuristic values at a given depth of a brute-force search, in the limit of large depth.
- For simplicity in this course, we assume that D(x) = P(x) = the probability that a node n has $h(n) \le x$.

Assumptions of Analysis

- Our search algorithm doesn’t check for or detect states that have been previously generated. While this is not true for A*, it is true for the linear-space variants. Thus, multiple nodes that correspond to the same state of the problem are counted separately.
- All edges have unit cost, and hence the cost of a solution is the number of edges in the solution path.
- The heuristic function is also integer valued.
- The heuristic is consistent.

Our Task

- Our task is to determine n(b, d, P), the asymptotic worst-case number of nodes generated by A* in searching a problem space with branching factor b and optimal solution depth d, using a heuristic characterized by the equilibrium distribution P(x).

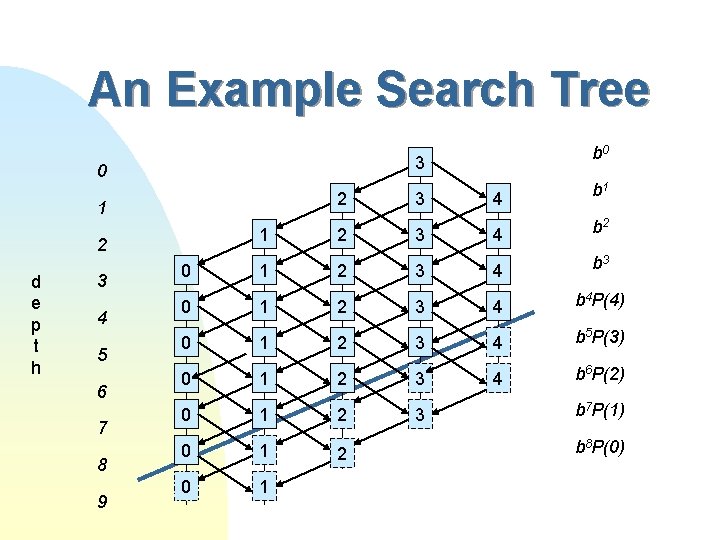

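An Example Search Tree

[Figure: example search tree. Vertical axis: depth, from 0 to 8. Horizontal axis: heuristic value, from 0 to 4. The number of nodes at depths 0 to 3 is $b^0, b^1, b^2, b^3$, and the number of fertile nodes at depths 4 to 8 is $b^4 P(4)$, $b^5 P(3)$, $b^6 P(2)$, $b^7 P(1)$, $b^8 P(0)$.]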

An Example Search Tree

- In the figure:
  - the vertical axis represents the depth of a node below the start node.
  - the horizontal axis represents the heuristic value.
  - each box represents a set of nodes at the same depth with the same heuristic value, indicated by the number in the box.
  - the arrows represent the relationship between parent and child nodes: $h(\text{child}) \ge h(\text{parent}) - 1$.
  - the solid boxes represent “fertile” nodes, which will be expanded.
  - the dotted boxes represent “sterile” nodes, which will not be expanded because their total cost exceeds the optimal solution cost.
  - the thick diagonal line separates the fertile nodes from the sterile nodes.
- In this particular example, the maximum value of the heuristic is 4, and the depth of the solution d is 8 moves. We chose 3 for the heuristic value of the initial state.

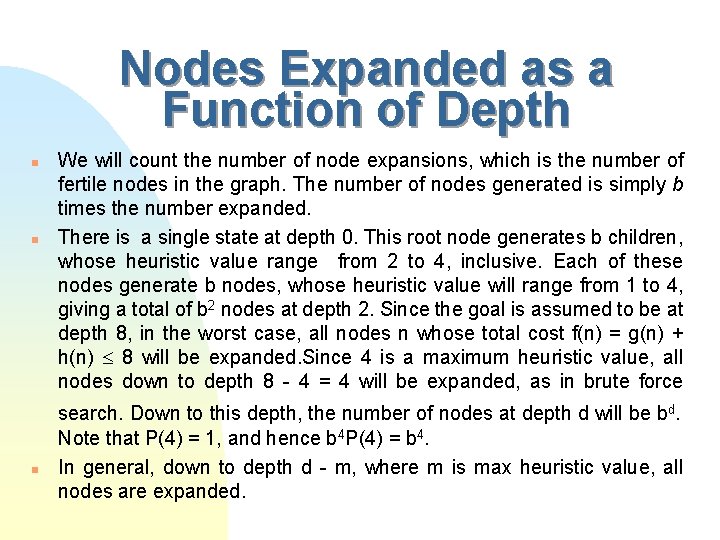

Nodes Expanded as a Function of Depth

- We will count the number of node expansions, which is the number of fertile nodes in the graph. The number of nodes generated is simply b times the number expanded.
- There is a single state at depth 0. This root node generates b children, whose heuristic values range from 2 to 4, inclusive. Each of these nodes generates b nodes, whose heuristic values range from 1 to 4, giving a total of $b^2$ nodes at depth 2.
- Since the goal is assumed to be at depth 8, in the worst case all nodes n whose total cost $f(n) = g(n) + h(n) \le 8$ will be expanded. Since 4 is the maximum heuristic value, all nodes down to depth 8 - 4 = 4 will be expanded, as in brute-force search. Down to this depth, the number of nodes at depth i will be $b^i$. Note that P(4) = 1, and hence $b^4 P(4) = b^4$.
- In general, down to depth d - m, where m is the maximum heuristic value, all nodes are expanded.

Nodes Expanded as a Function of Depth

- The nodes expanded at depth 5 are the fertile nodes, which are those with $f(n) = g(n) + h(n) = 5 + h(n) \le 8$, i.e. $h(n) \le 3$. In the equilibrium distribution, the fraction of nodes at depth 5 with $h(n) \le 3$ is P(3). Since all nodes at depth 4 are expanded, the total number of nodes at depth 5 is $b^5$, and the number of fertile nodes at depth 5 is $b^5 P(3)$.
- While there are nodes at depth 6 with all possible heuristic values, their distribution is no longer equal to the equilibrium distribution. The number of nodes at depth 6 with $h(n) \le 2$ is completely unaffected by this pruning and is the same as it would be in a brute-force search at depth 6, or $b^6 P(2)$.

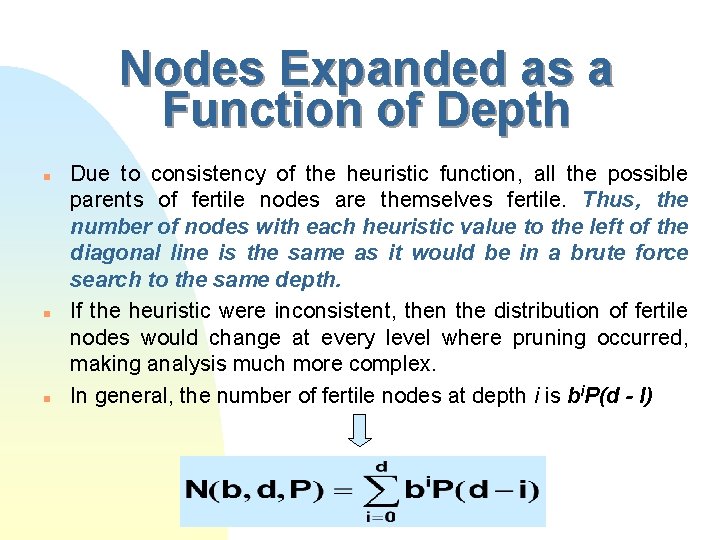

Nodes Expanded as a Function of Depth

- Due to the consistency of the heuristic function, all the possible parents of fertile nodes are themselves fertile. Thus, the number of nodes with each heuristic value to the left of the diagonal line is the same as it would be in a brute-force search to the same depth.
- If the heuristic were inconsistent, then the distribution of fertile nodes would change at every level where pruning occurred, making the analysis much more complex.
- In general, the number of fertile nodes at depth i is $b^i P(d - i)$.
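Summing the per-depth count $b^i P(d - i)$ over all depths i = 0..d gives the total number of node expansions, which is easy to evaluate numerically. In the Python sketch below the function name and the cumulative distribution values are made up for illustration; only the setting (maximum heuristic value 4, solution depth 8) follows the example tree.

```python
def nodes_expanded(b, d, P):
    """Sum of b**i * P(d - i) for i = 0..d.

    P(x) is the fraction of nodes with h(n) <= x; P(x) = 1 once x reaches
    the maximum heuristic value.
    """
    return sum(b**i * P(d - i) for i in range(d + 1))

# Hypothetical equilibrium distribution with maximum heuristic value 4.
CUMULATIVE = [0.1, 0.3, 0.6, 0.9, 1.0]          # P(0) .. P(4), made-up numbers
P = lambda x: CUMULATIVE[min(x, 4)]

with_heuristic = nodes_expanded(2, 8, P)
brute_force = nodes_expanded(2, 8, lambda x: 1.0)   # P = 1 everywhere: no pruning
print(with_heuristic, brute_force)
```

Down to depth 4 the two counts agree term by term, since P(x) = 1 there; the heuristic only thins out the last four levels.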

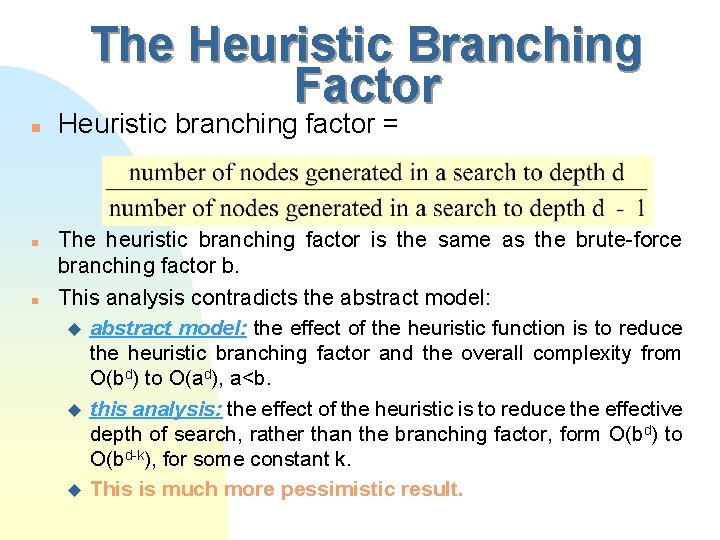

The Heuristic Branching Factor

- The heuristic branching factor is the same as the brute-force branching factor b.
- This analysis contradicts the abstract model:
  - abstract model: the effect of the heuristic function is to reduce the heuristic branching factor and the overall complexity from $O(b^d)$ to $O(a^d)$, a < b.
  - this analysis: the effect of the heuristic is to reduce the effective depth of search, rather than the branching factor, from $O(b^d)$ to $O(b^{d-k})$, for some constant k.
- This is a much more pessimistic result.

Linear Space Heuristic Searches

- The algorithms in Chapter 3 needed exponential space.
- Now we will see 3 algorithms that only require linear space.

Iterative Deepening A* (IDA*)

- IDA* is to A* as DFID is to BFS.
- How does it work:
  - A cost threshold is set.
  - f(n) = g(n) + h(n) is computed for each node generated in an iteration.
  - If $f(n) \le$ threshold, we expand the node.
  - Else the branch is pruned (we don’t expand it).

Iterative Deepening A* (IDA*)

  - If a goal node is reached with a cost that does not exceed the threshold, it is returned.
  - Else, if a whole iteration has ended without reaching the goal, another iteration is begun with a greater cost threshold.
  - The new cost threshold is set to the minimum cost of all nodes that were pruned on the previous iteration.
  - The cost threshold for the first iteration is set to the cost of the initial state.
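The steps above can be sketched as a recursive depth-first search inside an outer threshold loop. This Python version is a minimal illustration, not a tuned implementation: the function signature, the cycle check on the current path, and the small test graph with its heuristic table are assumptions.

```python
import math

def ida_star(start, is_goal, successors, h):
    """successors(n) yields (child, edge_cost); returns (path, cost) or None."""

    def search(path, g, threshold):
        node = path[-1]
        f = g + h(node)
        if f > threshold:
            return None, f                     # pruned: report its cost
        if is_goal(node):
            return list(path), g
        next_threshold = math.inf
        for child, edge in successors(node):
            if child in path:                  # avoid cycles on the current path
                continue
            path.append(child)
            found, t = search(path, g + edge, threshold)
            path.pop()
            if found is not None:
                return found, t
            next_threshold = min(next_threshold, t)
        return None, next_threshold

    threshold = h(start)                       # cost of the initial state
    while threshold < math.inf:
        found, t = search([start], 0, threshold)
        if found is not None:
            return found, t
        threshold = t                          # minimum cost among pruned nodes
    return None

# Hypothetical graph: s-a 1, s-b 1, a-c 2, b-c 1, with an admissible h; c is the goal.
GRAPH = {"s": [("a", 1), ("b", 1)], "a": [("c", 2)], "b": [("c", 1)], "c": []}
H = {"s": 0, "a": 1, "b": 1, "c": 0}
result = ida_star("s", lambda n: n == "c", lambda n: GRAPH[n], lambda n: H[n])
print(result)
```

Only the current path is stored, so memory use is proportional to the search depth; the price is that nodes near the root are regenerated on every iteration (two iterations here, with thresholds 0 and 2).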

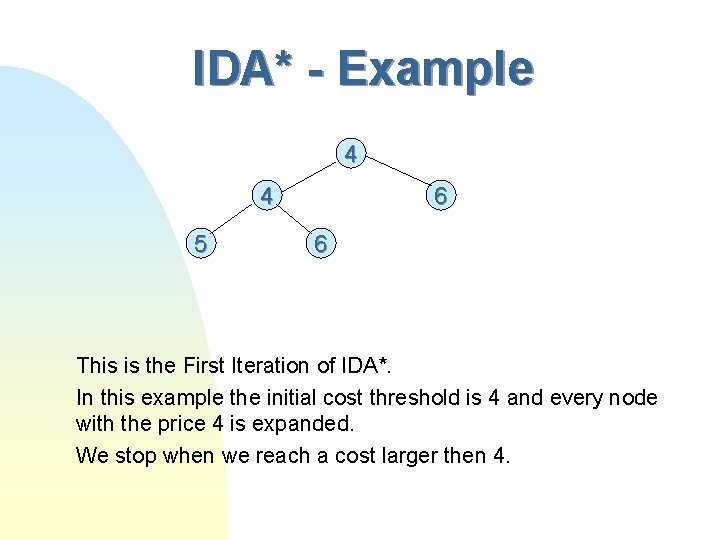

IDA* - Example

[Figure: search tree with node costs 4, 4, 5, 6, 6.]

This is the first iteration of IDA*. In this example the initial cost threshold is 4, and every node with cost 4 is expanded. We stop when we reach a cost larger than 4.

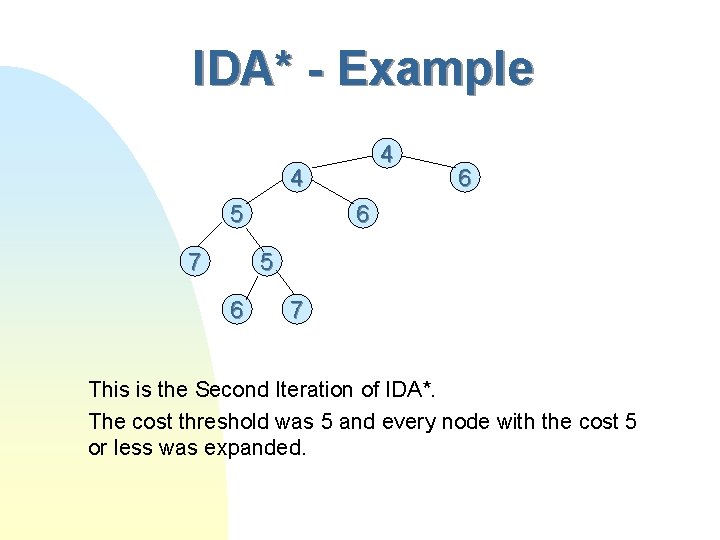

IDA* - Example

[Figure: search tree with node costs 4, 4, 5, 7, 6, 6, 5, 6, 7.]

This is the second iteration of IDA*. The cost threshold was 5, and every node with cost 5 or less was expanded.

Termination

- If a solution of finite cost exists, it will eventually be found and returned by IDA*.

Solution Optimality

- Theorem 4.1: In a graph where all edges have a minimum positive cost, with non-negative heuristic values that never overestimate the actual cost, and in which a finite-cost path exists to a goal state, IDA* will return an optimal path to a goal.
- Steps of proof (by induction):
  - For the induction step, assume that at iteration I there is a node n on the frontier that is on an optimal solution path.
  - During iteration I+1, node n will be generated again, since the threshold for iteration I+1 is greater than for iteration I.
  - In this way we can show that at the end of every iteration, there is at least one node n on the frontier that is on an optimal solution path.

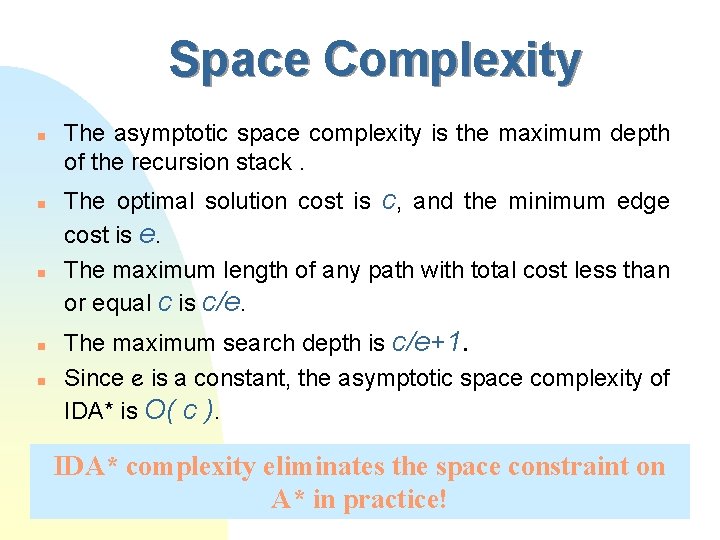

Space Complexity: The asymptotic space complexity is the maximum depth of the recursion stack. Let the optimal solution cost be c and the minimum edge cost be e. The maximum length of any path with total cost less than or equal to c is c/e, so the maximum search depth is c/e + 1. Since e is a constant, the asymptotic space complexity of IDA* is O(c). In practice, IDA* eliminates the space constraint of A*.

Time Complexity, the last iteration: The last iteration will expand all nodes connected to the root whose cost is less than or equal to c. This is only true if the heuristic function is consistent. In the worst case (when the heuristic function is not consistent) IDA* expands the same set of nodes as A* does.

Time Complexity, the previous iterations: Let N(x) be the number of nodes generated by an iteration of IDA* with cost threshold x. If N(x) grows exponentially with x, with branching factor b, then the number of nodes generated in each iteration also grows exponentially with the branching factor b.

Time Complexity: From what we have seen, the asymptotic time complexity of IDA* is the same as that of A*. As with iterative deepening (ID), most of the work is done in the final iteration. The running time is not harmed asymptotically by the earlier iterations, even though nodes at the shallower levels are revisited several times.

IDA* - Conclusion: IDA* keeps the solution optimality of A*. IDA* removes the space constraint of A* without any sacrifice in asymptotic time complexity. IDA* may even run faster than A* (why?). IDA* is much easier to implement than A*, because it is a DFS algorithm and no Open and Closed lists have to be kept.

Limitations of IDA*: When all the node costs are different, IDA* will run a separate iteration for each node, and in each iteration only one new node will be expanded. On such a tree the time complexity of A* will be O(b^d), but for IDA* it will be O(b^(2d)). If the asymptotic complexity of A* is O(N), the complexity of IDA* can reach O(N^2) in the worst case.
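The quadratic bound can be checked with a short calculation. Assume, as in the worst case described here, that A* expands N nodes in total and that each IDA* iteration expands exactly one node more than the previous one, so that iteration i expands i nodes:

```latex
\sum_{i=1}^{N} i \;=\; \frac{N(N+1)}{2} \;=\; O(N^2),
\qquad
N = O(b^d) \;\Longrightarrow\; O(N^2) = O(b^{2d}).
```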

Limitations of IDA*: The problem space for IDA* is effectively treated as a tree: if a certain node can be reached via multiple paths, it will be represented by more than one node in the search tree. A* can avoid duplicate nodes by storing them in memory, but IDA* is a DFS (it keeps no memory of visited nodes) and thus cannot detect most of the duplicates. This can increase the time complexity of IDA* compared to A*. Thus, if there are many short cycles in the graph and memory is not a problem, choose A*.

Experiments with IDA*: With the 8-tile puzzle, a good implementation of IDA* runs about 3 times faster per node generation than a good implementation of A*. IDA* with the Manhattan distance heuristic was the first algorithm to optimally solve random 15-puzzle instances. When trying to solve this problem with A*, memory quickly ran out, because billions of nodes need to be expanded. IDA* was also used to solve the 24-puzzle (it took a couple of weeks to solve). IDA* has also been used to solve the 3 x 3 x 3 Rubik's cube (this also takes about a week to solve).

ID as an Algorithm Schema: ID can be viewed as an algorithm schema with different instantiations based on the cost function. There are several types: DFID if cost = depth; IDA* if cost = g(n) + h(n); the ID version of Uniform Cost Search if cost = g(n).
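One way to picture the schema is a single iterative-deepening routine that takes the cost function as a parameter. The sketch below is only an illustration under assumptions: the function name `iterative_deepening`, the `cost(state, g, depth)` signature, and the small acyclic example graph `DAG` with heuristic table `H` are all made up for this example.

```python
import math

def iterative_deepening(root, successors, is_goal, cost):
    """cost(state, g, depth) is the pluggable cost function of the schema."""
    def dfs(path, g, bound):
        state = path[-1]
        c = cost(state, g, len(path) - 1)
        if c > bound:
            return c, None                      # pruned
        if is_goal(state):
            return c, list(path)
        smallest_pruned = math.inf
        for child, weight in successors(state):
            path.append(child)
            value, found = dfs(path, g + weight, bound)
            path.pop()
            if found is not None:
                return value, found
            smallest_pruned = min(smallest_pruned, value)
        return smallest_pruned, None

    bound, bounds_used = cost(root, 0, 0), []
    while bound < math.inf:
        bounds_used.append(bound)
        value, found = dfs([root], 0, bound)
        if found is not None:
            return found, bounds_used
        bound = value                           # smallest cost that was pruned
    return None, bounds_used

# Hypothetical acyclic example graph and heuristic.
DAG = {"A": [("B", 1), ("C", 4)], "B": [("C", 1), ("D", 5)], "C": [("D", 1)], "D": []}
H = {"A": 2, "B": 2, "C": 1, "D": 0}
succ, goal = (lambda s: DAG[s]), (lambda s: s == "D")

dfid = iterative_deepening("A", succ, goal, lambda s, g, d: d)         # cost = depth
ucs = iterative_deepening("A", succ, goal, lambda s, g, d: g)          # cost = g(n)
ida = iterative_deepening("A", succ, goal, lambda s, g, d: g + H[s])   # cost = g(n) + h(n)
```

Each call returns the path found and the list of thresholds used; only the cost function differs between the three instantiations.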

Depth First Branch and Bound: IDA* is not equally effective for all problems. For example, the Traveling Salesman Problem (TSP) asks for the cheapest way for a salesman to visit a finite number of cities, where each pair of cities has its own travel cost.

IDA* and TSP: IDA* is clearly not the best fit for this problem, because in the TSP the search tree has a finite, known depth. Iterative deepening is not useful in this case: we know the depth of the solution, so there is no use in repeatedly searching to different depths when the correct depth is known from the beginning.

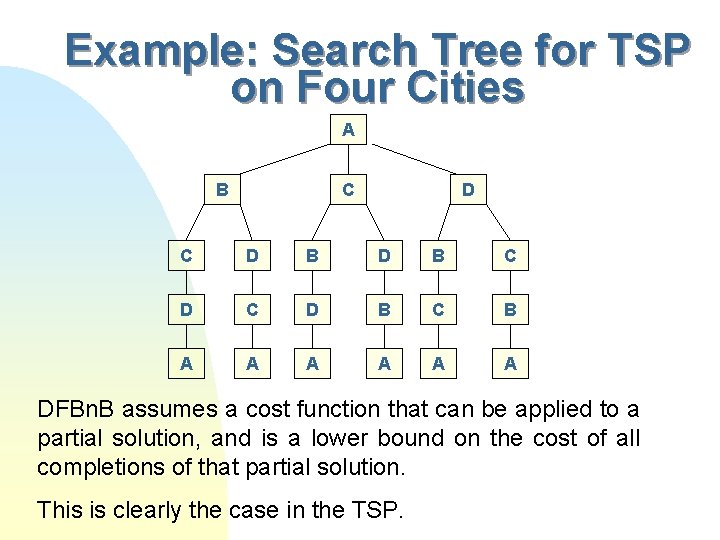

Example: Search Tree for TSP on Four Cities (A, B, C, D). DFBnB assumes a cost function that can be applied to a partial solution and is a lower bound on the cost of all completions of that partial solution. This is clearly the case in the TSP.

How DFBnB Works: DFBnB works like a simple DFS, but it keeps a global upper bound on the solution cost. The bound starts at infinity at the beginning of the search. When the first solution is found, its cost is stored as the bound. From this point on, each time the cost of the current partial path equals or exceeds the bound, that branch is pruned and we continue with the next one. Each time we reach a solution that costs less than the bound, we lower the bound to this cost and update the best solution. The search ends when the whole tree has been checked.
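A minimal Python sketch of this procedure on the TSP is given below. It is an illustration under assumptions, not the slides' own code: the function name `dfbnb_tsp`, the distance matrix `DIST`, and the choice to try nearer cities first are made up for the example, and the partial tour cost is a valid lower bound only because the edge costs are assumed non-negative.

```python
import math

def dfbnb_tsp(dist, start=0):
    """Depth-first branch and bound over tours; dist[i][j] >= 0 is assumed."""
    n = len(dist)
    best = {"cost": math.inf, "tour": None}    # global upper bound, starts at infinity

    def extend(tour, visited, cost):
        if cost >= best["cost"]:
            return                              # prune: cannot beat the best solution
        city = tour[-1]
        if len(tour) == n:                      # all cities visited: close the tour
            total = cost + dist[city][start]
            if total < best["cost"]:
                best["cost"], best["tour"] = total, tour + [start]
            return
        remaining = [c for c in range(n) if c not in visited]
        # Assumed ordering: try nearer cities first to find a cheap tour early.
        for nxt in sorted(remaining, key=lambda c: dist[city][c]):
            extend(tour + [nxt], visited | {nxt}, cost + dist[city][nxt])

    extend([start], {start}, 0)
    return best["tour"], best["cost"]

# Hypothetical symmetric distance matrix for four cities.
DIST = [
    [0, 2, 9, 10],
    [2, 0, 6, 4],
    [9, 6, 0, 3],
    [10, 4, 3, 0],
]
tour, cost = dfbnb_tsp(DIST)
```

On this matrix the first complete tour found already costs 18, and every later branch is either pruned or fails to improve on it.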

When DFBnB is Used: Depth-First Branch-and-Bound is often used when the optimal solution is required. It can also be applied in an infinite tree if there is a good upper bound available on the optimal solution depth or solution cost. It is also better than IDA* when there are many different costs: in the TSP, for example, nearly every cost differs from the others, so IDA* would generate only a few new nodes in each iteration and waste a lot of time.

Improving DFBnB: Node ordering: We use node ordering to find a low-cost solution as quickly as possible, which results in greater pruning in the remainder of the search. For example, in the TSP we can order the nodes in increasing order of the distance between child city and parent city. Heuristic evaluation function: We can use a heuristic evaluation function f(n) = g(n) + h(n) to improve the DFBnB algorithm.

Solution Quality and Complexity: DFBnB returns an optimal solution. The asymptotic space complexity is O(bd), since all the children of each node on the current path must be generated and stored in order to sort them. O(bd) = O(d) since we assume b is constant, so the asymptotic space complexity is O(d). An analysis of the time complexity of DFBnB has to model both the heuristic function and the efficiency of node ordering. The existing analyses of this algorithm are all based on abstract analytic models.

An Analytic Model and Surprising Anomaly: The model assumes a tree with uniform branching factor and depth, where the edges are assigned costs randomly from some distribution. Example: each edge costs zero or one with probability 0.5 each. It can be shown that if the expected number of zero-cost children of a node is greater than 1, DFBnB with node ordering will run in time that is polynomial in the search depth.

Truncated Branch and Bound: DFBnB is an anytime algorithm. Its distinguishing property is that the algorithm can be stopped at any given time and return a solution (not necessarily an optimal one). If you don't know how much time you have to solve the problem, you can use DFBnB, and when you stop it, it will return the best path found so far.

ID vs. DFBnB, similarities: Both guarantee optimal solutions given lower-bound heuristics. Both are DFS and have linear space complexity. Both use a global cost bound.

ID vs. DFBnB, main differences: In ID the cost threshold is always a lower bound on the cost of the best solution, and it increases over the iterations. DFBnB starts with an upper-bound cost threshold, which decreases during the search. Both expand more nodes than A*: ID expands only nodes with cost lower than the optimal cost C, but expands them several times, while DFBnB also expands nodes with a cost larger than C.

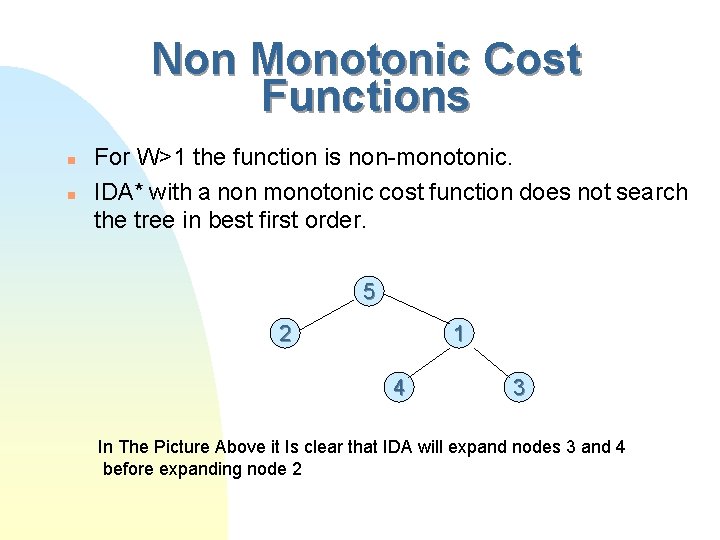

Non Monotonic Cost Functions: WA* has the cost function f(n) = g(n) + w·h(n). To avoid its large space complexity (the same as that of A*), we would like to use iterative deepening with this cost function.

Non Monotonic Cost Functions: For w > 1 the function is non-monotonic. IDA* with a non-monotonic cost function does not search the tree in best-first order. In the picture above (a tree whose nodes have costs 5, 2, 1, 4 and 3) it is clear that IDA* will expand nodes 3 and 4 before expanding node 2.
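A small numeric check of what non-monotonic means here: along a single path, the weighted cost can decrease from parent to child. The path below, given as (g, h) pairs, and the helper names are hypothetical values chosen only for illustration.

```python
def weighted_f(g, h, w):
    """Weighted cost f(n) = g(n) + w * h(n)."""
    return g + w * h

# Hypothetical (g, h) pairs along one root-to-goal path with unit edge costs.
PATH = [(0, 3), (1, 2), (2, 1), (3, 0)]

def f_along_path(path, w):
    return [weighted_f(g, h, w) for g, h in path]

def is_nondecreasing(values):
    return all(a <= b for a, b in zip(values, values[1:]))

f_w1 = f_along_path(PATH, 1)   # plain A* cost
f_w3 = f_along_path(PATH, 3)   # weighted cost with w = 3
```

With w = 1 the values never decrease along the path, while with w = 3 each child is cheaper than its parent, so a threshold that admits the root also admits everything below it on this path.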

Recursive Best-First Search: RBFS is a linear-space algorithm that expands nodes in best-first order even with a non-monotonic cost function, and generates fewer nodes than iterative deepening with a monotonic cost function.

Simple Recursive Best-First Search: SRBFS uses a local cost threshold for each recursive call. It takes two arguments: a node and an upper bound. It explores the subtree below the node as long as it contains frontier nodes whose costs do not exceed the upper bound. Every node has an upper bound on cost: upper bound = min(upper bound of its parent, current value of its lowest-cost sibling). Intuition: the upper bound is the cost of the second-best node on the Open list of the equivalent A* run.

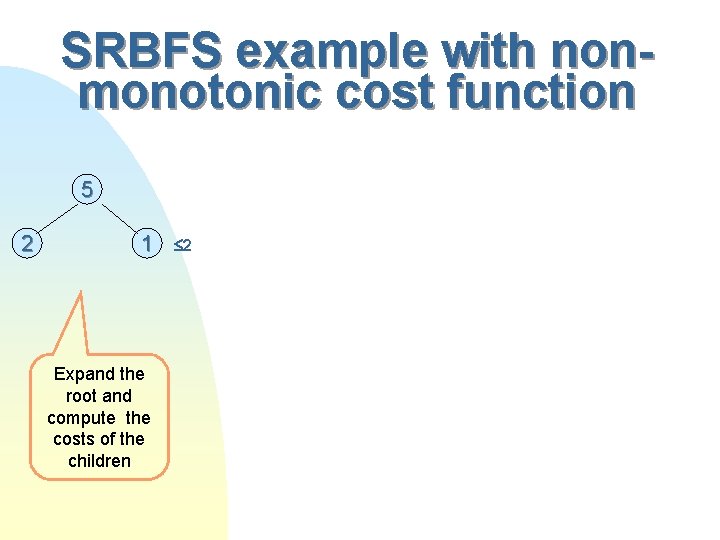

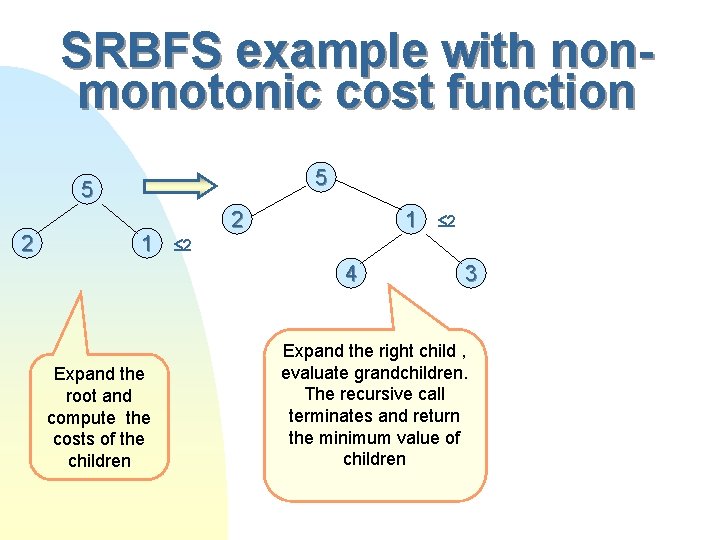

SRBFS example with nonmonotonic cost function (root cost 5, children costs 2 and 1): Expand the root and compute the costs of the children.

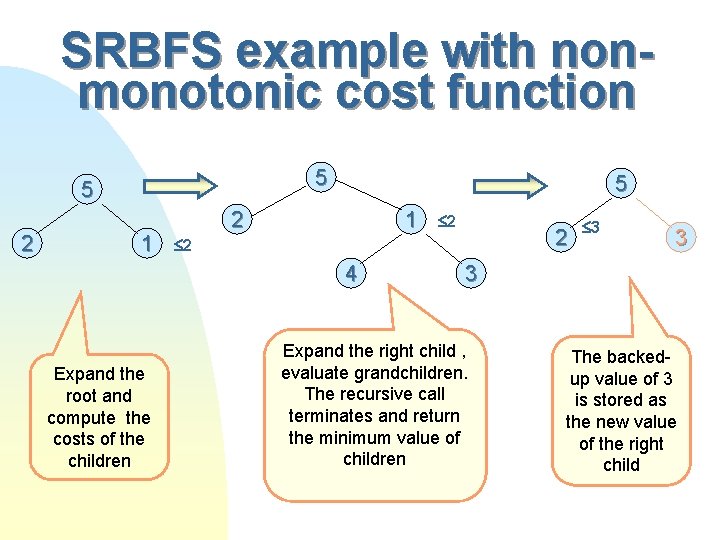

SRBFS example with nonmonotonic cost function, continued: Expand the right child and evaluate the grandchildren. The recursive call terminates and returns the minimum value of the children.

SRBFS example with nonmonotonic cost function, continued: The backed-up value of 3 is stored as the new value of the right child.

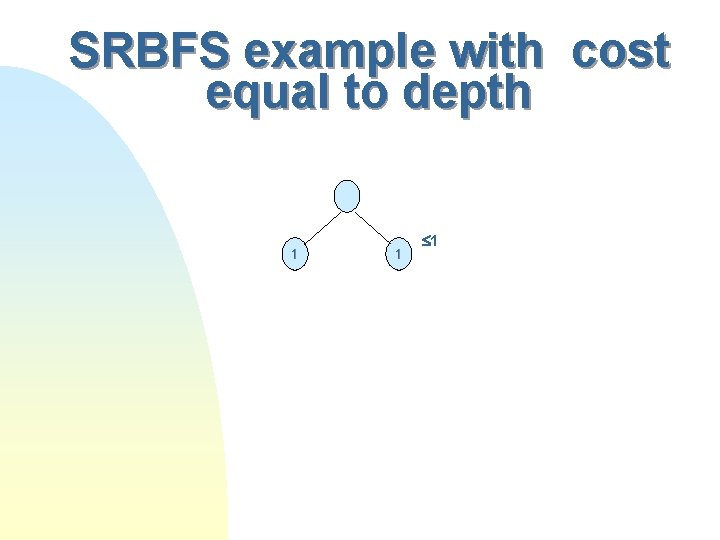

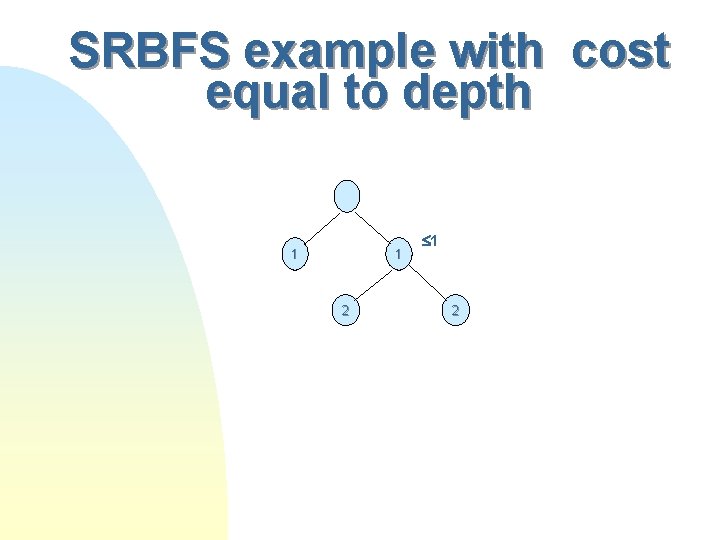

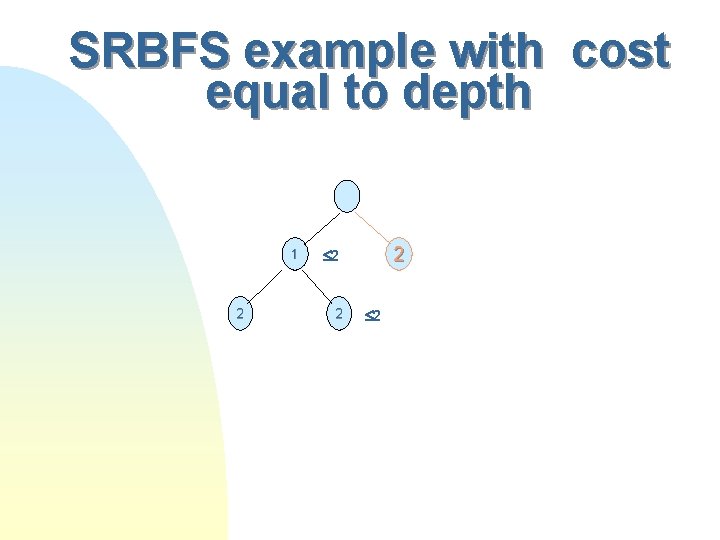

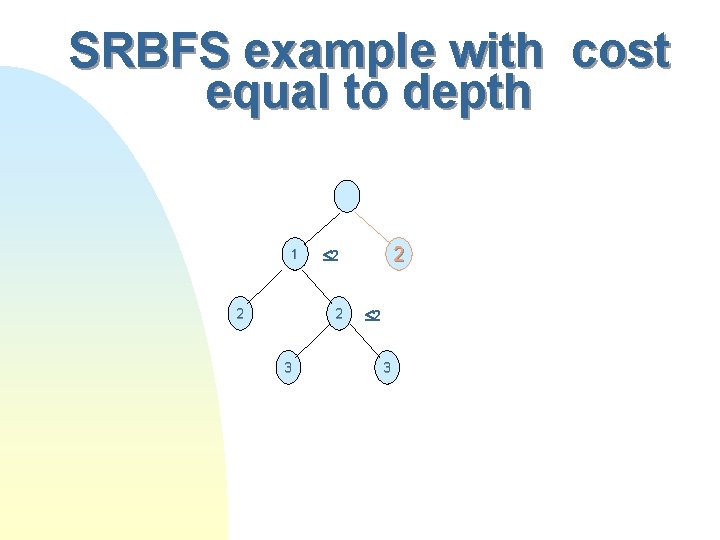

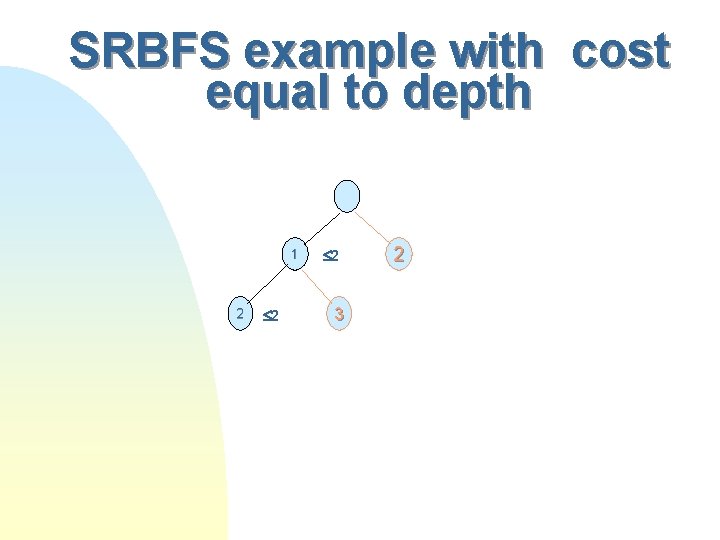

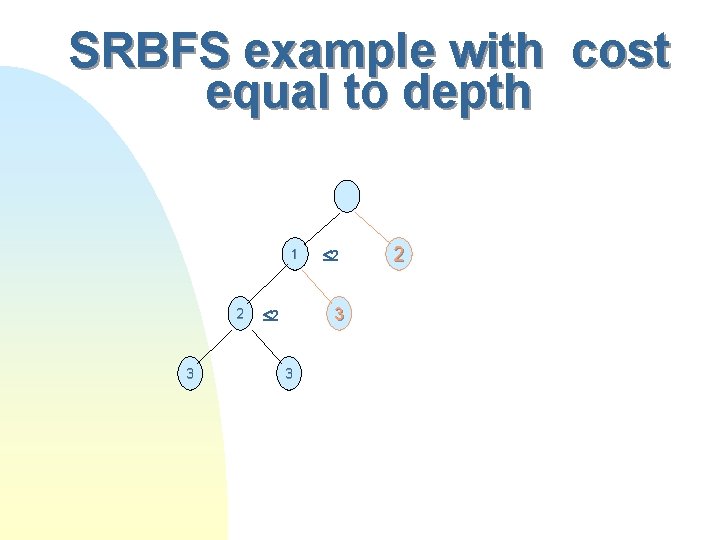

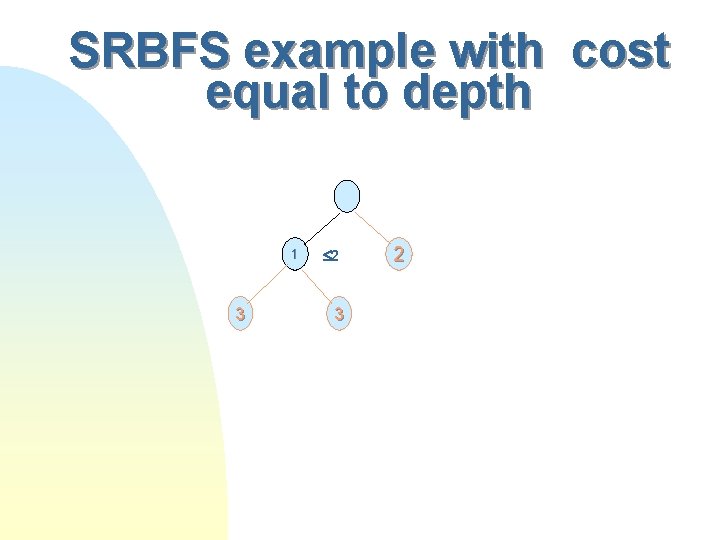

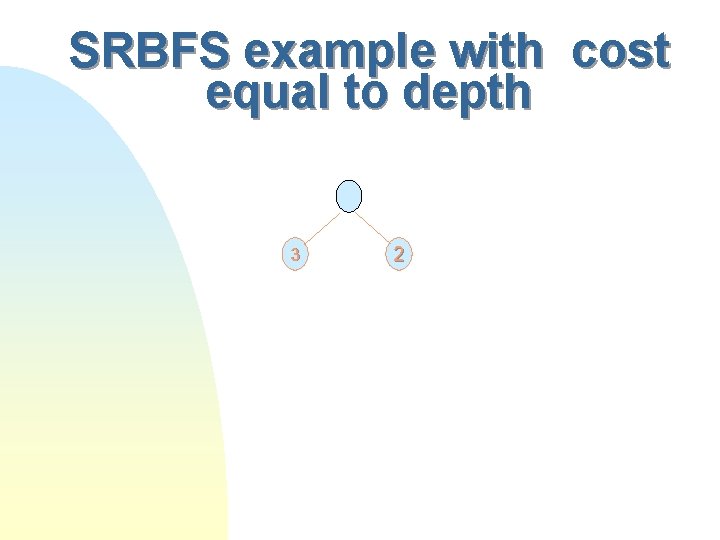

SRBFS example with cost equal to depth (a sequence of tree diagrams showing the node values at successive steps).

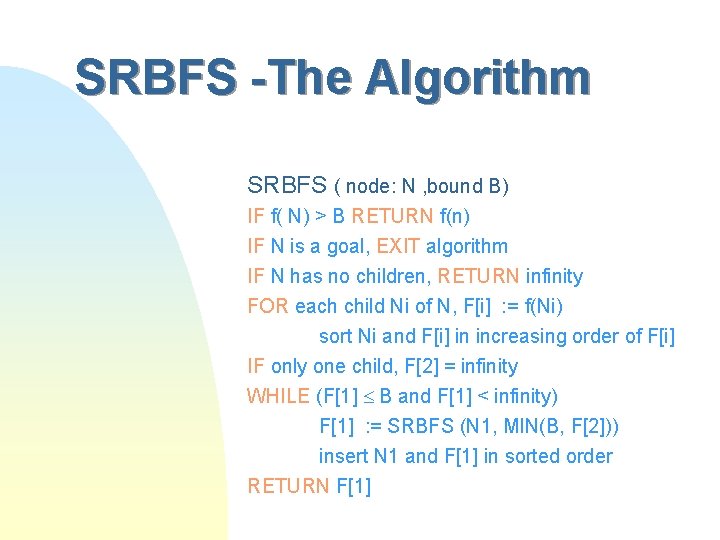

SRBFS - The Algorithm

    SRBFS (node: N, bound: B)
    IF f(N) > B, RETURN f(N)
    IF N is a goal, EXIT algorithm
    IF N has no children, RETURN infinity
    FOR each child Ni of N, F[i] := f(Ni)
    sort Ni and F[i] in increasing order of F[i]
    IF only one child, F[2] := infinity
    WHILE (F[1] <= B and F[1] < infinity)
        F[1] := SRBFS(N1, MIN(B, F[2]))
        insert N1 and F[1] in sorted order
    RETURN F[1]
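The pseudocode can be rendered in Python roughly as follows. This is a sketch under assumptions: "EXIT algorithm" is modelled with a hypothetical `GoalFound` exception, the `trace` list that records expansions is added only for illustration, and the example is an infinite binary tree with cost equal to depth, whose nodes are strings of "L"/"R" moves.

```python
import math

class GoalFound(Exception):
    """Hypothetical way to model 'EXIT algorithm' from the pseudocode."""
    def __init__(self, node):
        super().__init__(node)
        self.node = node

def srbfs(node, bound, f, children, is_goal, trace):
    if f(node) > bound:
        return f(node)
    if is_goal(node):
        raise GoalFound(node)
    trace.append(node)                               # record this expansion
    kids = children(node)
    if not kids:
        return math.inf
    entries = sorted(([f(k), k] for k in kids), key=lambda e: e[0])
    while entries[0][0] <= bound and entries[0][0] < math.inf:
        second = entries[1][0] if len(entries) > 1 else math.inf
        # Search below the best child, bounded by the second-best value.
        entries[0][0] = srbfs(entries[0][1], min(bound, second),
                              f, children, is_goal, trace)
        entries.sort(key=lambda e: e[0])             # re-insert in sorted order
    return entries[0][0]

def run_srbfs(root, f, children, is_goal):
    trace = []
    try:
        srbfs(root, math.inf, f, children, is_goal, trace)
    except GoalFound as found:
        return found.node, trace
    return None, trace

# Hypothetical example: infinite binary tree, cost = depth, goal at "LR".
goal_node, expansions = run_srbfs(
    "", len, lambda n: [n + "L", n + "R"], lambda n: n == "LR")
```

In the resulting trace the node "L" appears twice: it is expanded once at depth 1 and again when the search returns to its subtree, which is the kind of repeated work discussed for SRBFS.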

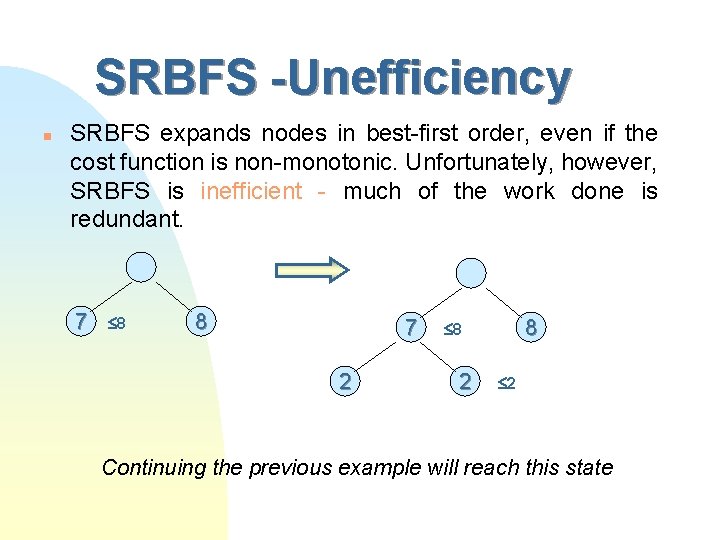

SRBFS - Inefficiency: SRBFS expands nodes in best-first order, even if the cost function is non-monotonic. Unfortunately, however, SRBFS is inefficient: much of the work done is redundant. Continuing the previous example will reach the state shown in the figure.

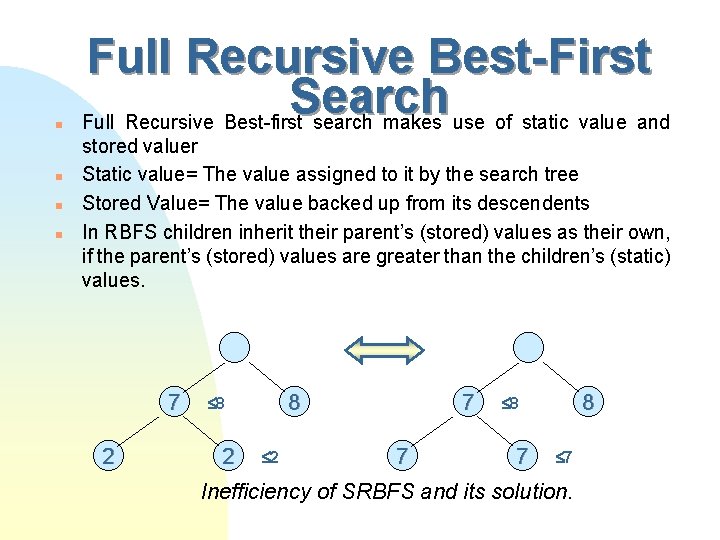

Full Recursive Best-First Search: The inefficiency of SRBFS and its solution. Full Recursive Best-First Search makes use of a static value and a stored value for each node. Static value = the value assigned to the node by the search tree. Stored value = the value backed up from its descendants. In RBFS, children inherit their parent's stored value as their own if the parent's stored value is greater than the children's static values.

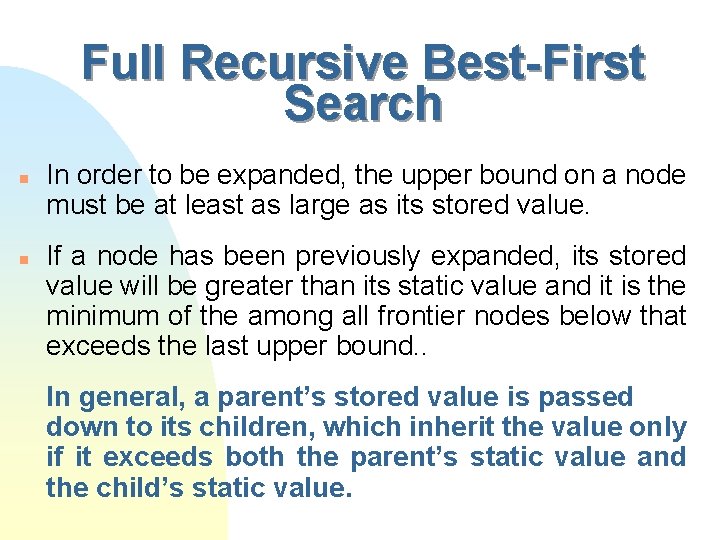

Full Recursive Best-First Search: In order for a node to be expanded, its upper bound must be at least as large as its stored value. If a node has been previously expanded, its stored value will be greater than its static value; it is the minimum cost among the frontier nodes below it that exceeded the last upper bound. In general, a parent's stored value is passed down to its children, which inherit the value only if it exceeds both the parent's static value and the child's static value.

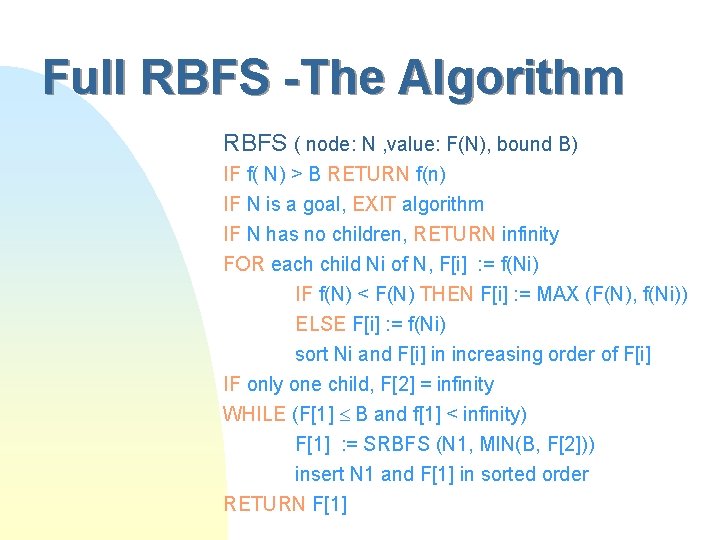

Full RBFS - The Algorithm

    RBFS (node: N, value: F(N), bound: B)
    IF f(N) > B, RETURN f(N)
    IF N is a goal, EXIT algorithm
    IF N has no children, RETURN infinity
    FOR each child Ni of N,
        IF f(N) < F(N) THEN F[i] := MAX(F(N), f(Ni))
        ELSE F[i] := f(Ni)
    sort Ni and F[i] in increasing order of F[i]
    IF only one child, F[2] := infinity
    WHILE (F[1] <= B and F[1] < infinity)
        F[1] := RBFS(N1, F[1], MIN(B, F[2]))
        insert N1 and F[1] in sorted order
    RETURN F[1]
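A Python rendering of this procedure might look like the sketch below. Several things are assumptions for the sake of the example: "EXIT algorithm" is modelled with a hypothetical `GoalFound` exception, the `trace` list exists only to show the expansion order, and the tiny tree reuses the node costs 5, 2, 1, 4, 3 from the non-monotonic example with an added hypothetical goal leaf `a1` of cost 2.

```python
import math

class GoalFound(Exception):
    """Hypothetical way to model 'EXIT algorithm' from the pseudocode."""
    def __init__(self, node):
        super().__init__(node)
        self.node = node

def rbfs(node, stored, bound, f, children, is_goal, trace):
    if f(node) > bound:
        return f(node)
    if is_goal(node):
        raise GoalFound(node)
    trace.append(node)                               # record this expansion
    kids = children(node)
    if not kids:
        return math.inf
    entries = []
    for k in kids:
        if f(node) < stored:                         # node was expanded before:
            entries.append([max(stored, f(k)), k])   # child inherits stored value
        else:
            entries.append([f(k), k])
    entries.sort(key=lambda e: e[0])
    while entries[0][0] <= bound and entries[0][0] < math.inf:
        second = entries[1][0] if len(entries) > 1 else math.inf
        entries[0][0] = rbfs(entries[0][1], entries[0][0], min(bound, second),
                             f, children, is_goal, trace)
        entries.sort(key=lambda e: e[0])             # re-insert in sorted order
    return entries[0][0]

def run_rbfs(root, f, children, is_goal):
    trace = []
    try:
        rbfs(root, f(root), math.inf, f, children, is_goal, trace)
    except GoalFound as found:
        return found.node, trace
    return None, trace

# Hypothetical tree with a non-monotonic cost function.
TREE = {"root": ["a", "b"], "a": ["a1"], "b": ["b1", "b2"],
        "a1": [], "b1": [], "b2": []}
COST = {"root": 5, "a": 2, "b": 1, "b1": 4, "b2": 3, "a1": 2}
goal_node, expansions = run_rbfs(
    "root", lambda n: COST[n], lambda n: TREE[n], lambda n: n == "a1")
```

Here the node of cost 2 is expanded before the nodes of cost 3 and 4 are touched, which is the best-first order that plain iterative deepening does not follow on this tree.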

Full Recursive Best-First Search: In order to fully understand RBFS one must 1) read the book and 2) generate a tree and run RBFS on it step by step.

RBFS vs SRBFS: RBFS behaves differently depending on whether it is expanding new nodes or previously expanded nodes. New nodes: it proceeds like best-first search. Previously expanded nodes: it behaves like depth-first search until it reaches a lowest-cost node, then it reverts back to best-first.

Space Complexity of SRBFS and RBFS: The space complexity of SRBFS and RBFS is O(bd), where b is the branching factor and d is the maximum search depth.

Time Complexity of SRBFS and RBFS: The asymptotic time complexity of SRBFS and RBFS is the number of node generations. The actual number of nodes generated depends on the particular cost function.

Worst case Time Complexity: As with ID, the worst-case time complexity occurs when all nodes have unique cost values, and these are arranged so that successive nodes in an ordered sequence of cost values are in different subtrees of the root node.

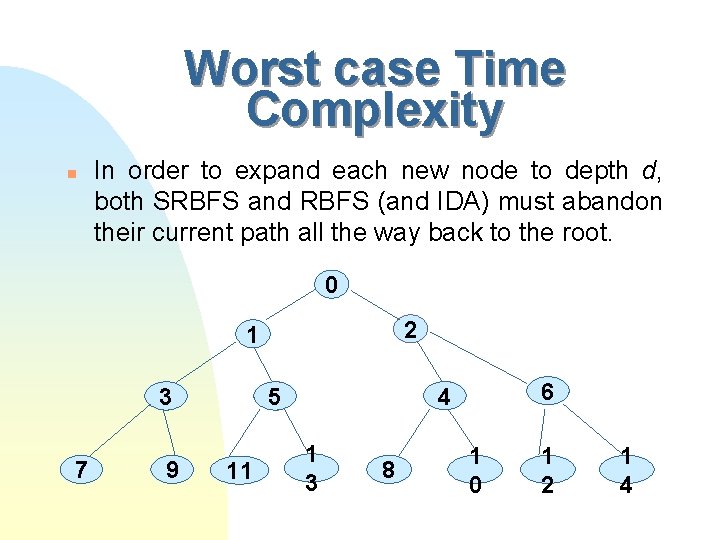

Worst case Time Complexity: In order to expand each new node to depth d, both SRBFS and RBFS (and IDA*) must abandon their current path all the way back to the root.

Worst case Time Complexity: The worst-case time complexity of SRBFS and RBFS is O(b^(2d-1)).

RBFS vs. ID: If the cost function is non-monotonic, the two algorithms are not directly comparable. RBFS generates fewer nodes than ID on average, because RBFS backtracks only to the common ancestor of the old and new paths instead of all the way to the root, as IDA* does.
