Local search and Optimisation Introduction global versus local

Local search and Optimisation

- Introduction: global versus local
- Study of the key local search techniques
- Concluding remarks

Introduction: Global versus Local search

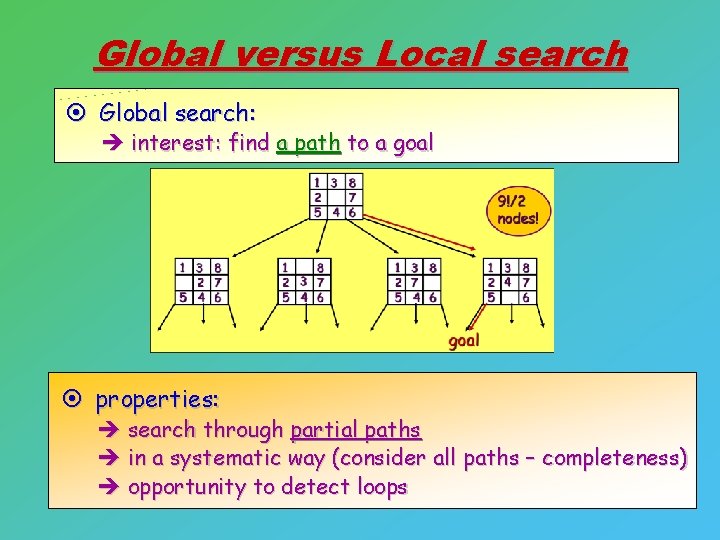

Global versus Local search

- Global search:
  - interest: find a path to a goal
- properties:
  - search through partial paths
  - in a systematic way (consider all paths: completeness)
  - opportunity to detect loops

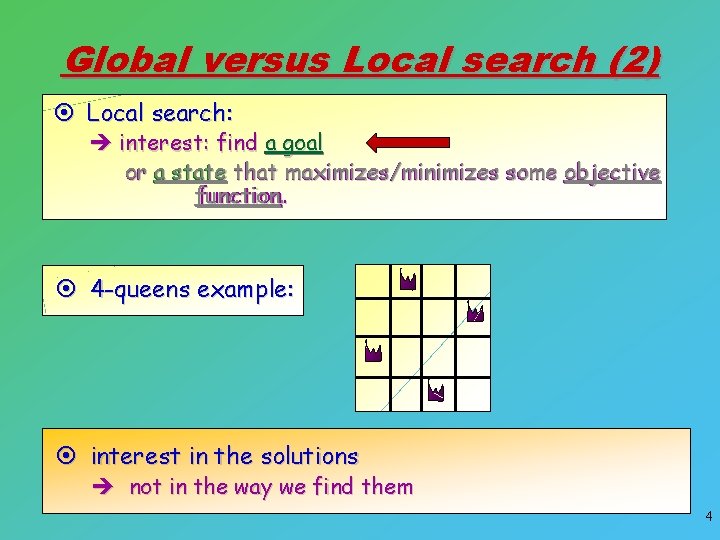

Global versus Local search (2)

- Local search:
  - interest: find a goal or a state that maximizes/minimizes some objective function.
- 4-queens example:
  - interest in the solutions, not in the way we find them

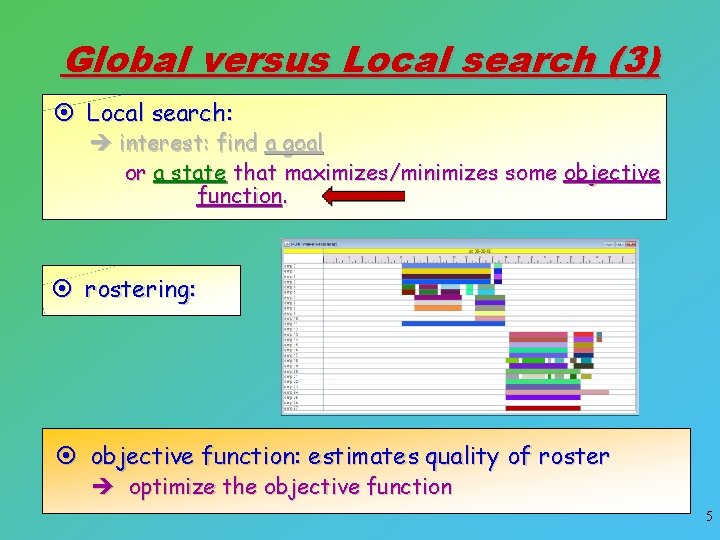

Global versus Local search (3)

- Local search:
  - interest: find a goal or a state that maximizes/minimizes some objective function.
- Rostering:
  - objective function: estimates the quality of a roster
  - optimize the objective function

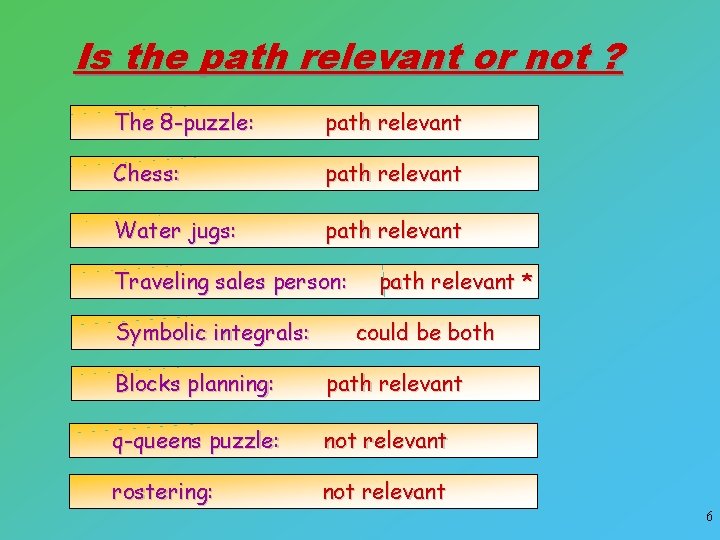

Is the path relevant or not?

- The 8-puzzle: path relevant
- Chess: path relevant
- Water jugs: path relevant
- Traveling sales person: could be both (*)
- Symbolic integrals: path relevant
- Blocks planning: path relevant
- n-queens puzzle: not relevant
- Rostering: not relevant

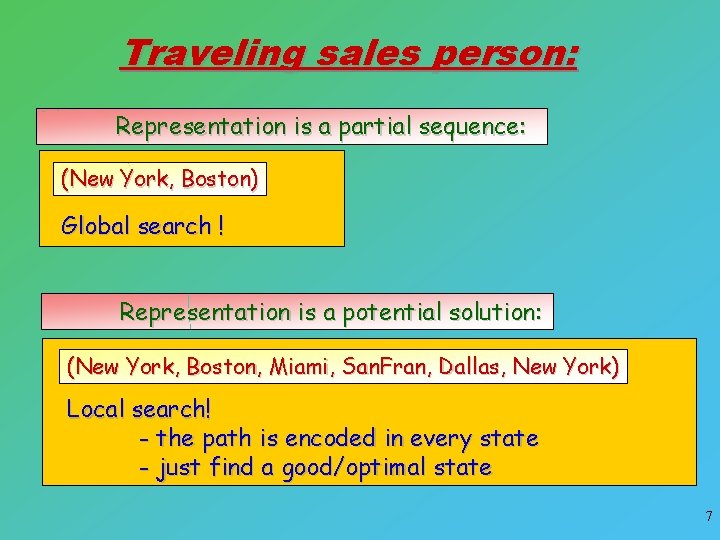

Traveling sales person:

- Representation is a partial sequence, e.g. (New York, Boston): Global search!
- Representation is a potential solution, e.g. (New York, Boston, Miami, San Fran, Dallas, New York): Local search!
  - the path is encoded in every state
  - just find a good/optimal state

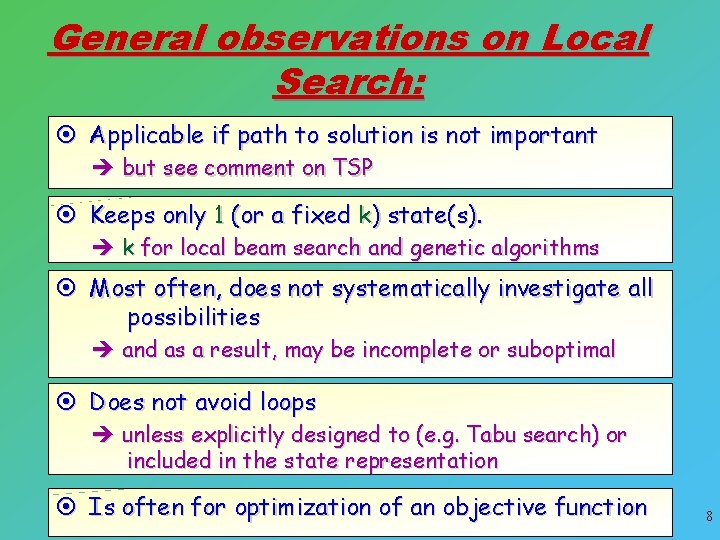

General observations on Local Search:

- Applicable if the path to the solution is not important (but see the comment on TSP).
- Keeps only 1 (or a fixed k) state(s): k for local beam search and genetic algorithms.
- Most often, does not systematically investigate all possibilities and, as a result, may be incomplete or suboptimal.
- Does not avoid loops, unless explicitly designed to (e.g. Tabu search) or unless loop information is included in the state representation.
- Is often used for optimization of an objective function.

Local Search Algorithms:

- Hill Climbing (3) (local version)
- Simulated Annealing
- Local k-Beam Search
- Genetic Algorithms
- Tabu Search
- Heuristics and Metaheuristics

Hill-Climbing (3) or Greedy local search The really “local-search” variant of Hill Climbing

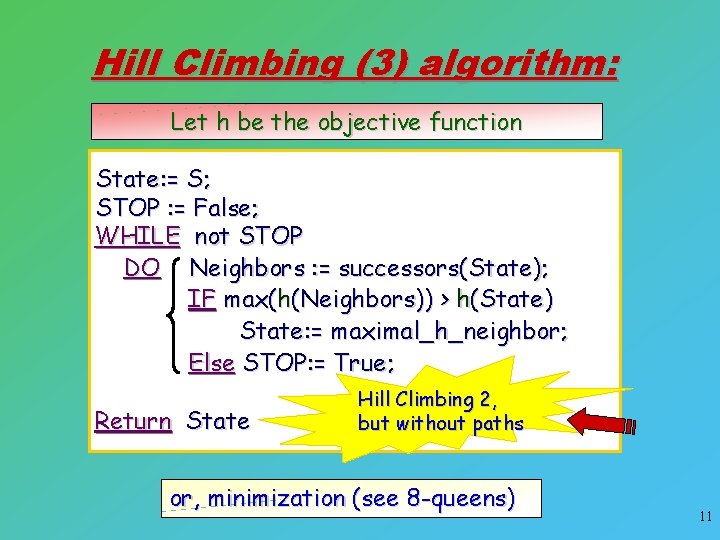

Hill Climbing (3) algorithm:

Let h be the objective function.

    State := S;
    STOP := False;
    WHILE not STOP DO
        Neighbors := successors(State);
        IF max(h(Neighbors)) > h(State)
            THEN State := maximal_h_neighbor;
            ELSE STOP := True;
    Return State

This is Hill Climbing 2, but without paths. It can equally be used for minimization (see 8-queens).
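The loop above can be sketched in Python as follows. This is a minimal illustration, not the lecture's own code: the callables `successors` and `h` and the toy objective are assumptions chosen only to make the example runnable.

```python
def hill_climb(state, successors, h):
    """Greedy local search: move to the best neighbor while it improves h."""
    while True:
        neighbors = list(successors(state))
        if not neighbors:
            return state
        best = max(neighbors, key=h)
        if h(best) > h(state):
            state = best          # strictly better neighbor: move there
        else:
            return state          # no better neighbor: local maximum


# Hypothetical toy problem: maximize h(x) = -(x - 3)^2 over the integers.
def h(x):
    return -(x - 3) ** 2


def successors(x):
    return [x - 1, x + 1]


result = hill_climb(0, successors, h)
```

Only the current state is kept; no path is stored, which is the difference with the global-search variant.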

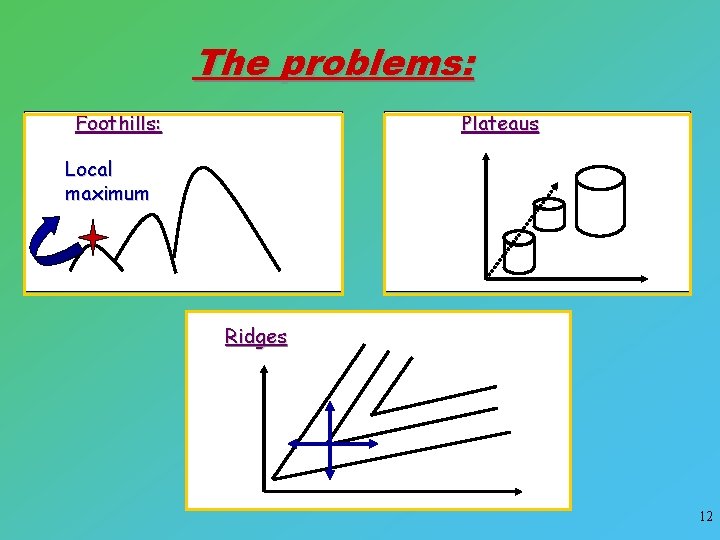

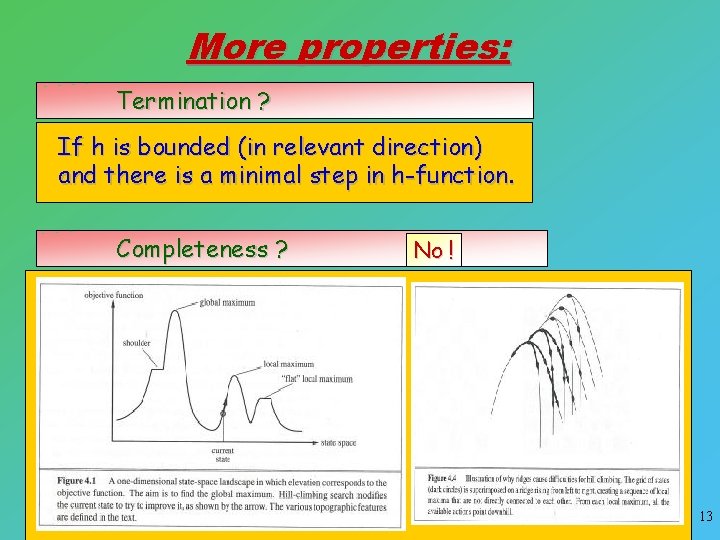

The problems:

- Plateaus
- Foothills: local maxima
- Ridges

More properties:

- Termination? Yes, if h is bounded (in the relevant direction) and there is a minimal step in the h-function.
- Completeness? No!

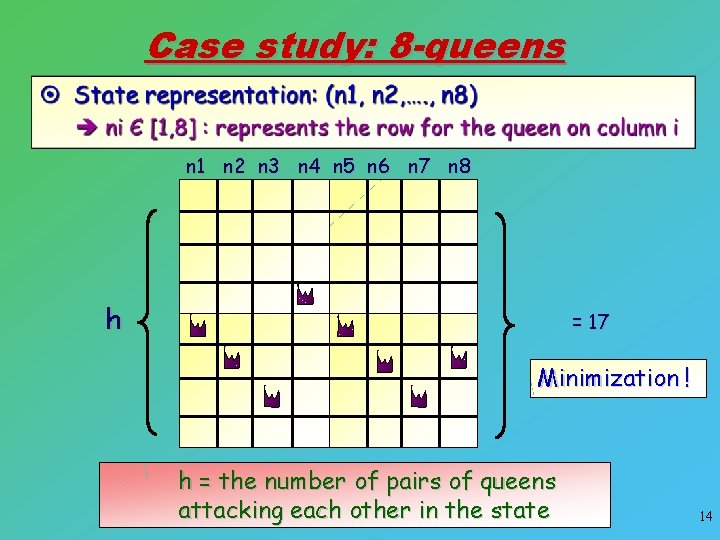

Case study: 8-queens

- State: (n1, n2, n3, n4, n5, n6, n7, n8)
- Minimization! h = the number of pairs of queens attacking each other in the state.
- Pictured state: h = 17

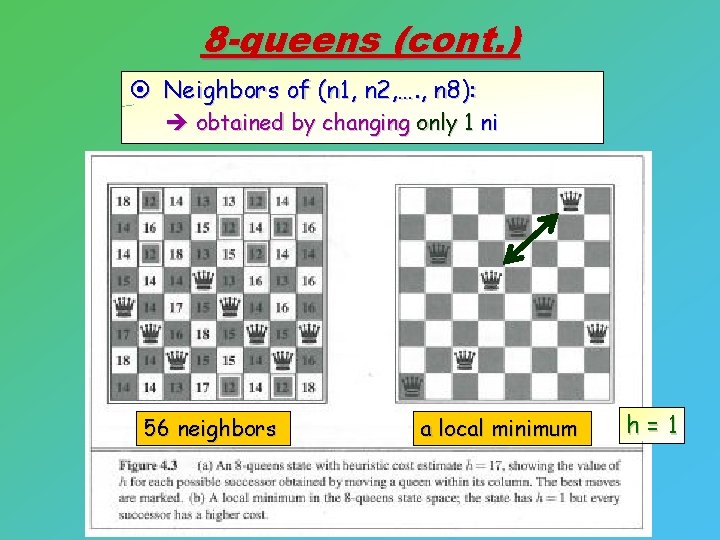

8-queens (cont.)

- Neighbors of (n1, n2, ..., n8): obtained by changing only one ni, which gives 8 × 7 = 56 neighbors.
- Pictured: a local minimum with h = 1.
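As a hedged illustration of the objective and neighborhood just described, the sketch below assumes that `state[i]` holds the row (1 to 8) of the queen in column i; the slides do not spell out this encoding, and the function names are illustrative.

```python
from itertools import combinations


def attacking_pairs(state):
    """h: number of pairs of queens that attack each other.

    Assumed encoding: state[i] is the row (1..n) of the queen in column i,
    so two queens can only clash on a row or on a diagonal.
    """
    count = 0
    for (c1, r1), (c2, r2) in combinations(enumerate(state), 2):
        if r1 == r2 or abs(r1 - r2) == abs(c1 - c2):
            count += 1
    return count


def neighbors(state):
    """All states that differ from `state` in exactly one position."""
    n = len(state)
    result = []
    for col in range(n):
        for row in range(1, n + 1):
            if row != state[col]:
                result.append(state[:col] + (row,) + state[col + 1:])
    return result


solution = (1, 5, 8, 6, 3, 7, 2, 4)   # a known 8-queens solution
worst = (1, 1, 1, 1, 1, 1, 1, 1)      # all queens on one row
```

For 8 queens each of the 8 columns can be changed to 7 other rows, which is where the 56 neighbors come from.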

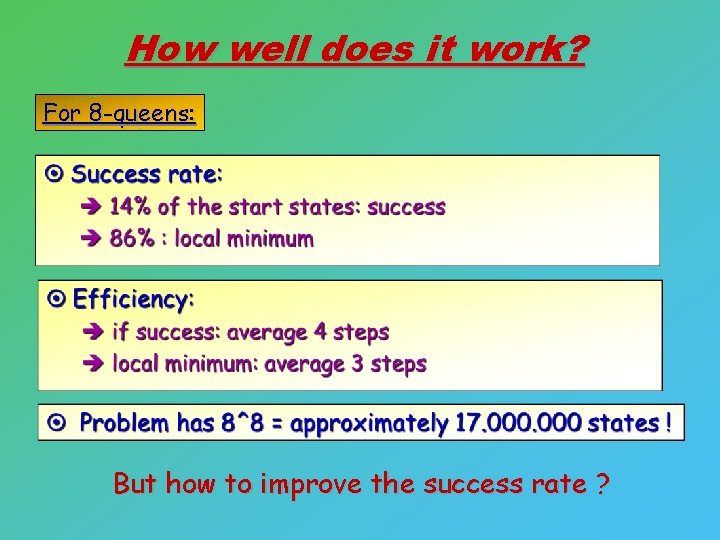

How well does it work? For 8 -queens: But how to improve the success rate ?

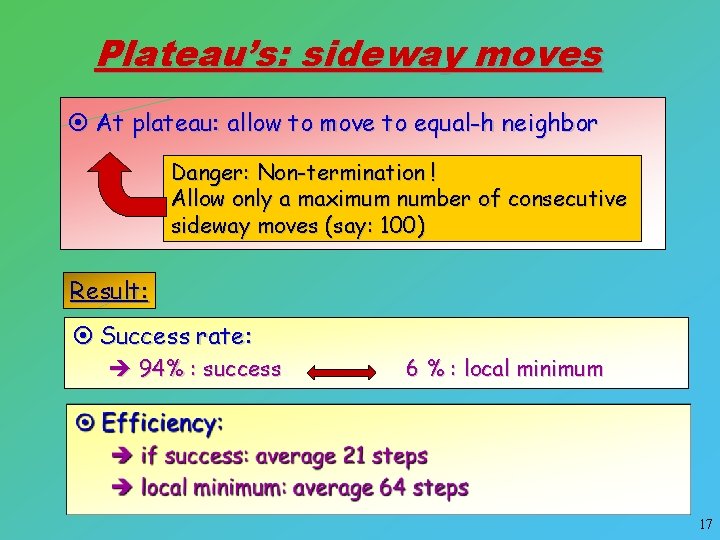

Plateaus: sideways moves

- At a plateau: allow a move to an equal-h neighbor.
- Danger: non-termination! Allow only a maximum number of consecutive sideways moves (say: 100).
- Result: success rate 94%; stuck in a local minimum 6%.

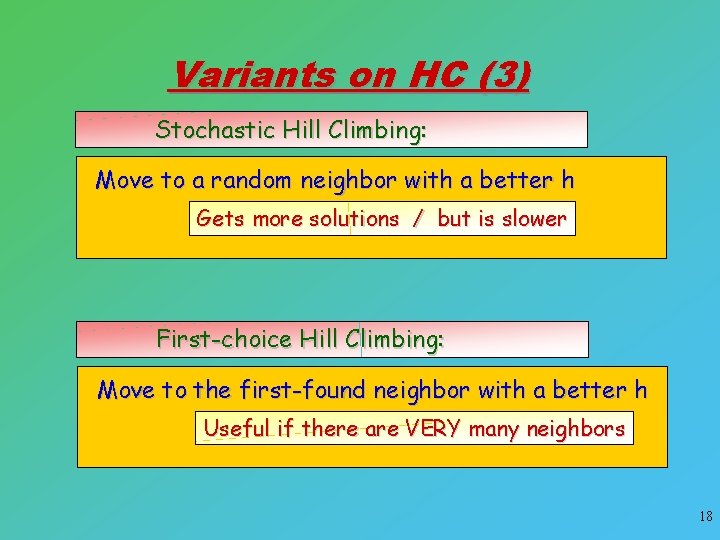

Variants on HC (3)

- Stochastic Hill Climbing: move to a random neighbor with a better h. Gets more solutions, but is slower.
- First-choice Hill Climbing: move to the first-found neighbor with a better h. Useful if there are VERY many neighbors.

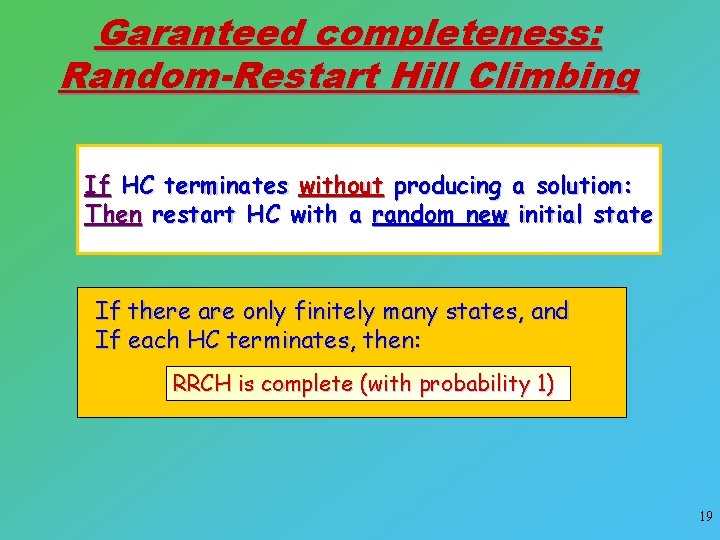

Guaranteed completeness: Random-Restart Hill Climbing (RRHC)

- If HC terminates without producing a solution, then restart HC with a random new initial state.
- If there are only finitely many states and each HC run terminates, then RRHC is complete (with probability 1).
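A possible Python sketch of random-restart hill climbing on 8-queens, combined with a bounded number of sideways moves. Several details are assumptions made for the example: rows are numbered 0 to 7, ties between equally good neighbors are broken at random, and `max_restarts` is a safety cap that the slides do not mention.

```python
import random
from itertools import combinations


def h(state):
    """Number of attacking queen pairs (to be minimized)."""
    return sum(1 for (c1, r1), (c2, r2) in combinations(enumerate(state), 2)
               if r1 == r2 or abs(r1 - r2) == abs(c1 - c2))


def neighbors(state):
    n = len(state)
    return [state[:c] + (r,) + state[c + 1:]
            for c in range(n) for r in range(n) if r != state[c]]


def hill_climb(state, rng, max_sideways=100):
    """Steepest descent on h, allowing a limited run of equal-h moves."""
    sideways = 0
    while True:
        current = h(state)
        if current == 0:
            return state
        scored = [(h(nb), nb) for nb in neighbors(state)]
        best = min(score for score, _ in scored)
        if best > current:
            return state                  # strict local minimum
        if best == current:
            sideways += 1
            if sideways > max_sideways:
                return state              # plateau budget used up
        else:
            sideways = 0
        state = rng.choice([nb for score, nb in scored if score == best])


def random_restart(n=8, seed=0, max_restarts=1000):
    """Rerun hill climbing from random states until a solution is found."""
    rng = random.Random(seed)
    for runs in range(1, max_restarts + 1):
        start = tuple(rng.randrange(n) for _ in range(n))
        result = hill_climb(start, rng)
        if h(result) == 0:
            return result, runs
    return None, max_restarts


solution, runs = random_restart()
```

Each run terminates because h either strictly decreases or the sideways budget runs out, which is the condition the completeness argument relies on.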

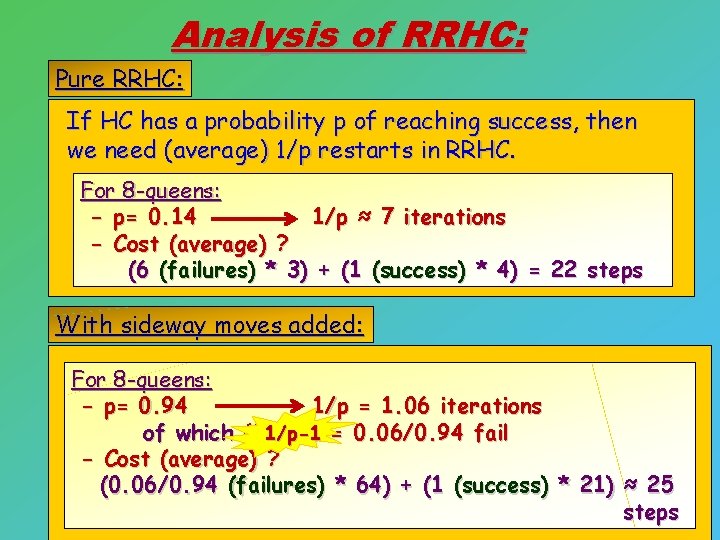

Analysis of RRHC:

Pure RRHC: if HC has a probability p of reaching success, then we need (on average) 1/p runs of HC in RRHC.

For 8-queens:

- p = 0.14, so 1/p ≈ 7 iterations
- Average cost: (6 failures × 3 steps) + (1 success × 4 steps) = 22 steps

With sideways moves added, for 8-queens:

- p = 0.94, so 1/p ≈ 1.06 iterations, of which (1 - p)/p = 1/p - 1 = 0.06/0.94 fail
- Average cost: (0.06/0.94 failures × 64 steps) + (1 success × 21 steps) ≈ 25 steps
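The cost estimate can be checked with a few lines of Python. The function name and parameters are illustrative; the numbers are the ones quoted above.

```python
def expected_cost(p, steps_per_failure, steps_per_success):
    """Average number of steps of RRHC when one HC run succeeds with probability p.

    On average (1 - p) / p runs fail before the single successful run.
    """
    expected_failures = (1 - p) / p
    return expected_failures * steps_per_failure + steps_per_success


pure = expected_cost(0.14, steps_per_failure=3, steps_per_success=4)
with_sideways = expected_cost(0.94, steps_per_failure=64, steps_per_success=21)
```

Without rounding 1/p to 7, the pure variant comes out slightly above 22 steps; the variant with sideways moves comes out at about 25, as stated.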

Conclusion?

- Local search is steadily replacing other solvers in _many_ domains, including optimization problems in ML.

Simulated Annealing Kirkpatrick et al. 1983 Simulate the process of annealing from metallurgy

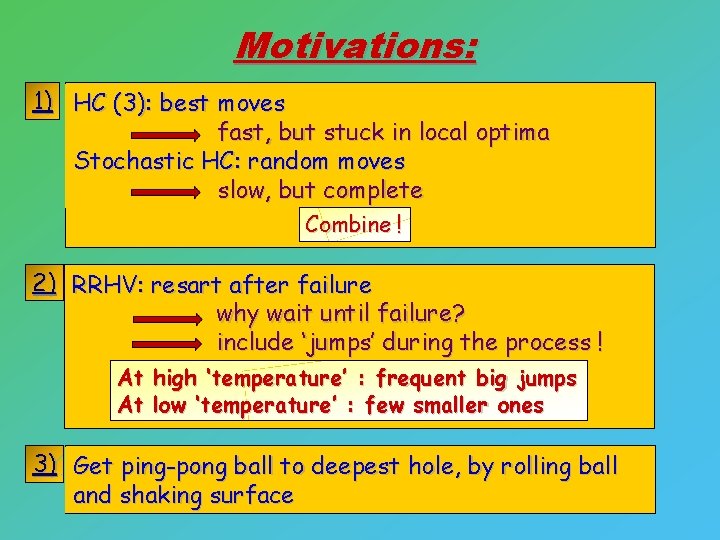

Motivations:

1) HC (3): best moves; fast, but gets stuck in local optima. Stochastic HC: random moves; slow, but complete. Combine them!
2) RRHC: restart after failure. Why wait until failure? Include 'jumps' during the process! At high 'temperature': frequent big jumps. At low 'temperature': few, smaller ones.
3) Get a ping-pong ball into the deepest hole by rolling the ball and shaking the surface.

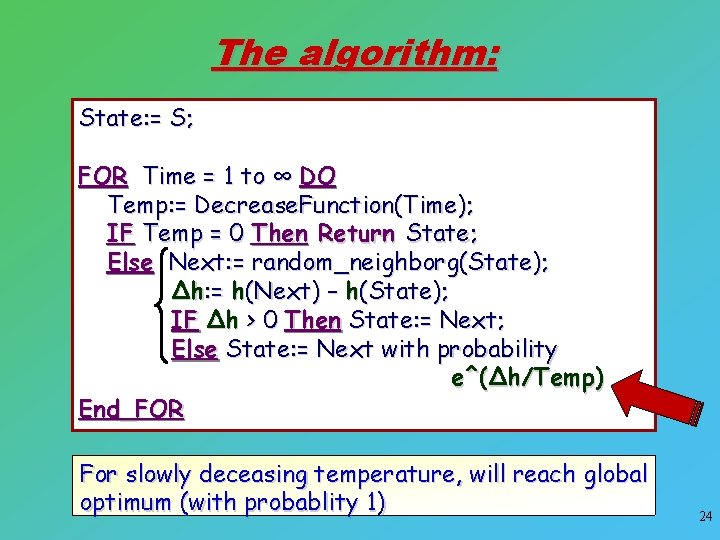

The algorithm:

    State := S;
    FOR Time = 1 to ∞ DO
        Temp := DecreaseFunction(Time);
        IF Temp = 0
            THEN Return State;
            ELSE
                Next := random_neighbor(State);
                Δh := h(Next) - h(State);
                IF Δh > 0
                    THEN State := Next;
                    ELSE State := Next with probability e^(Δh/Temp)
    End_FOR

For a sufficiently slowly decreasing temperature, the algorithm reaches a global optimum (with probability 1).
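A minimal Python sketch of this loop, for maximization. The geometric cooling schedule, its constants, and the toy objective are assumptions picked for illustration; the slides leave the decrease function open.

```python
import math
import random


def simulated_annealing(state, h, random_neighbor, schedule, rng):
    """Accept uphill moves always, downhill moves with probability e^(dh/T)."""
    time = 1
    while True:
        temp = schedule(time)
        if temp == 0:
            return state
        nxt = random_neighbor(state, rng)
        delta = h(nxt) - h(state)
        if delta > 0 or rng.random() < math.exp(delta / temp):
            state = nxt
        time += 1


# Hypothetical toy problem: maximize h(x) = -(x - 3)^2 over the integers.
def h(x):
    return -(x - 3) ** 2


def random_neighbor(x, rng):
    return x + rng.choice((-1, 1))


def schedule(time, t0=10.0, alpha=0.99, cutoff=1e-3):
    """Assumed geometric cooling; returns 0 once the temperature is negligible."""
    temp = t0 * alpha ** time
    return temp if temp > cutoff else 0


result = simulated_annealing(20, h, random_neighbor, schedule, random.Random(0))
```

At high temperature almost any move is accepted; as the temperature drops, the acceptance probability of a worsening move shrinks towards zero and the search behaves like stochastic hill climbing.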

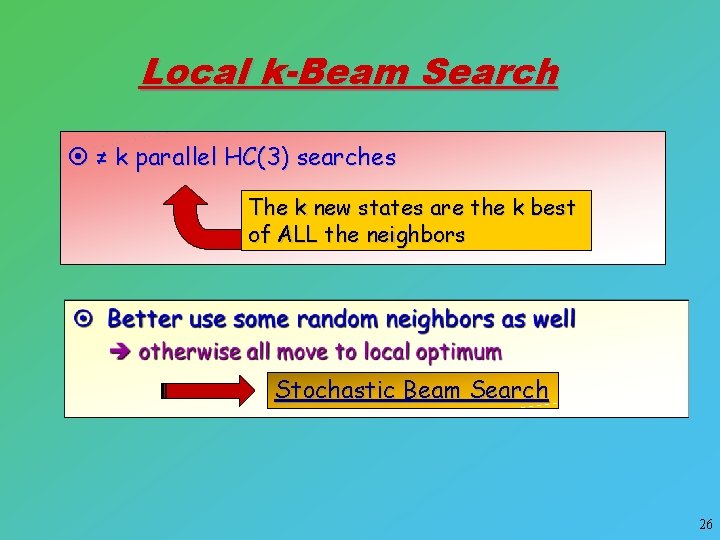

Local k-Beam Search Beam search, without keeping partial paths

Local k-Beam Search

- Not the same as k parallel HC (3) searches: the k new states are the k best of ALL the neighbors.
- Variant: Stochastic Beam Search
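The difference with k independent hill climbers can be sketched as follows. The stopping rule (stop when the best pooled neighbor does not improve on the beam) and the one-dimensional toy landscape are assumptions made for this example.

```python
import heapq


def local_beam_search(starts, h, successors, max_iters=100):
    """Keep the k best states among ALL neighbors of the current k states."""
    beam = list(starts)
    k = len(beam)
    for _ in range(max_iters):
        pool = {nb for state in beam for nb in successors(state)}
        if not pool:
            break
        new_beam = heapq.nlargest(k, pool, key=h)
        if h(new_beam[0]) <= max(h(state) for state in beam):
            break                       # no neighbor improves on the beam
        beam = new_beam
    return max(beam, key=h)


# Hypothetical landscape: local maximum at index 3, global maximum at index 12.
VALUES = [0, 1, 2, 3, 2, 1, 0, 1, 2, 3, 4, 5, 6, 5, 4, 3, 2, 1, 0]


def h(i):
    return VALUES[i]


def successors(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(VALUES)]


best = local_beam_search([0, 18], h, successors)
```

The climber that starts at index 0 would on its own stop at the local maximum (index 3); in the beam its slot is reassigned to the more promising region, so both slots end up near the global maximum.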

Genetic Algorithms Holland 1975 Search inspired by evolution theory

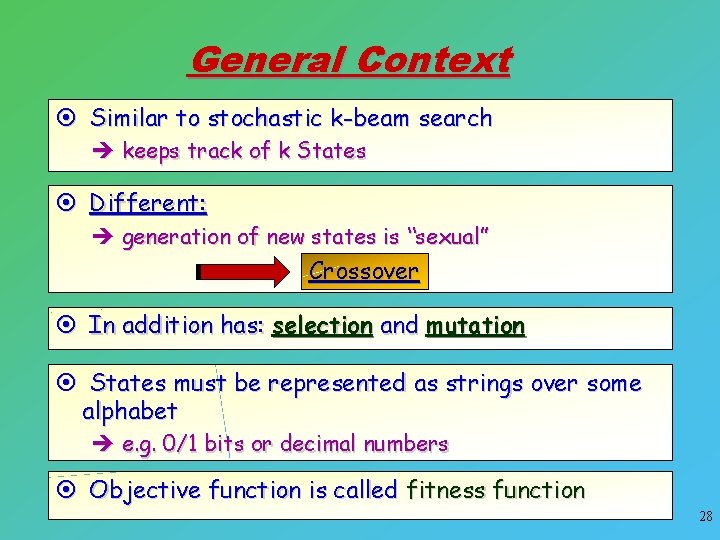

General Context

- Similar to stochastic k-beam search: keeps track of k states
- Different: generation of new states is "sexual" (crossover)
- In addition has: selection and mutation
- States must be represented as strings over some alphabet, e.g. 0/1 bits or decimal numbers
- The objective function is called the fitness function

![8-queens example: state representation and population](http://slidetodoc.com/presentation_image_h2/44d3023c771be4fb79c95836fa2eadb6/image-29.jpg)

8-queens example:

- State representation: 8-string of numbers in [1, 8]
- Population: set of k states (here: k = 4)

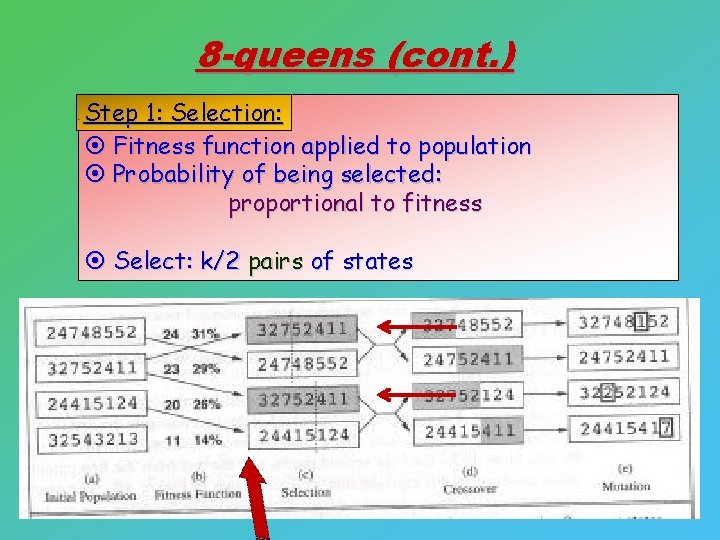

8-queens (cont.) Step 1: Selection:

- Fitness function applied to the population
- Probability of being selected: proportional to fitness
- Select: k/2 pairs of states

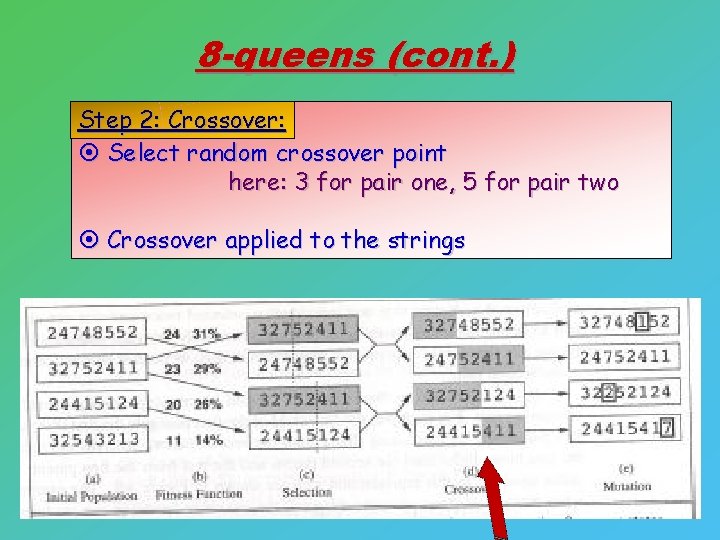

8-queens (cont.) Step 2: Crossover:

- Select a random crossover point (here: 3 for pair one, 5 for pair two)
- Crossover applied to the strings

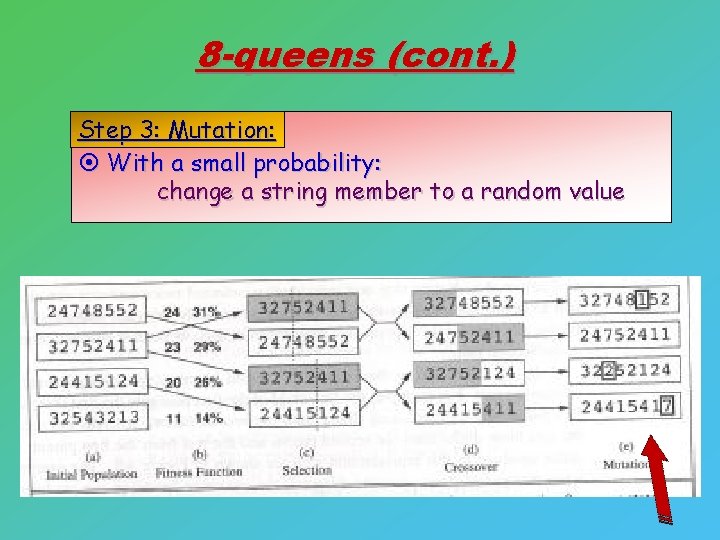

8-queens (cont.) Step 3: Mutation:

- With a small probability: change a string member to a random value

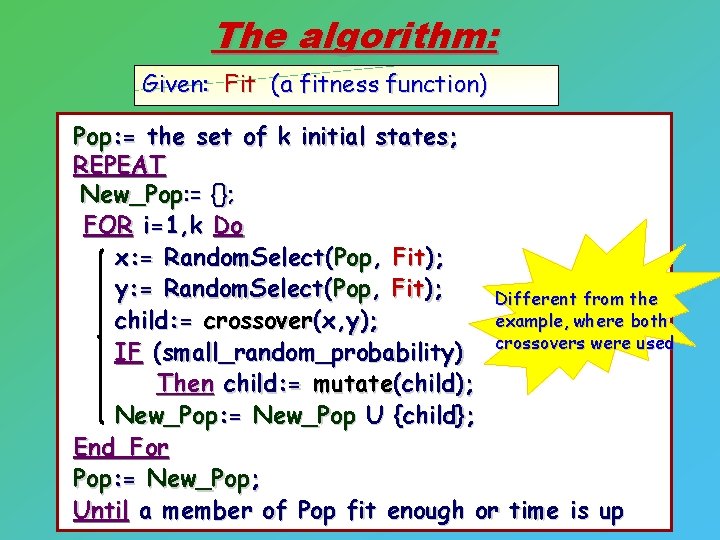

The algorithm:

Given: Fit (a fitness function)

    Pop := the set of k initial states;
    REPEAT
        New_Pop := {};
        FOR i = 1, k DO
            x := RandomSelect(Pop, Fit);
            y := RandomSelect(Pop, Fit);
            child := crossover(x, y);
            IF (small_random_probability) THEN child := mutate(child);
            New_Pop := New_Pop U {child};
        End_For
        Pop := New_Pop;
    UNTIL a member of Pop is fit enough or time is up

Note: this differs from the example, where both crossovers were used.
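A hedged Python sketch of this loop for 8-queens. The fitness definition (28 minus the number of attacking pairs), the population size, the mutation rate and the generation limit are all assumptions for the example, and the population is kept as a list rather than a set so that duplicates survive.

```python
import random
from itertools import combinations

MAX_PAIRS = 28  # number of queen pairs on an 8x8 board


def fitness(state):
    """Assumed fitness: number of NON-attacking pairs (28 means solved)."""
    attacks = sum(1 for (c1, r1), (c2, r2) in combinations(enumerate(state), 2)
                  if r1 == r2 or abs(r1 - r2) == abs(c1 - c2))
    return MAX_PAIRS - attacks


def crossover(x, y, point):
    """Single-point crossover: head of x, tail of y."""
    return x[:point] + y[point:]


def mutate(child, rng):
    i = rng.randrange(len(child))
    return child[:i] + (rng.randint(1, 8),) + child[i + 1:]


def genetic_algorithm(k=20, generations=200, p_mutation=0.1, seed=0):
    rng = random.Random(seed)
    pop = [tuple(rng.randint(1, 8) for _ in range(8)) for _ in range(k)]
    for _ in range(generations):
        best = max(pop, key=fitness)
        if fitness(best) == MAX_PAIRS:
            return best                       # a member is fit enough
        weights = [fitness(s) for s in pop]
        if not any(weights):
            weights = None                    # degenerate case: select uniformly
        new_pop = []
        for _ in range(k):
            x, y = rng.choices(pop, weights=weights, k=2)
            child = crossover(x, y, rng.randint(1, 7))
            if rng.random() < p_mutation:
                child = mutate(child, rng)
            new_pop.append(child)
        pop = new_pop
    return max(pop, key=fitness)              # time is up


best = genetic_algorithm()
```

The run is not guaranteed to find a solution within the generation limit; it returns the fittest member it has at that point.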

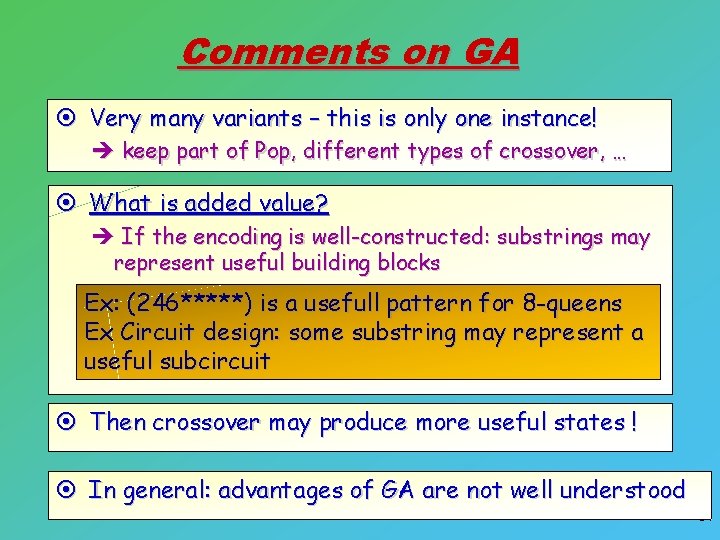

Comments on GA

- Very many variants; this is only one instance! E.g. keep part of Pop, different types of crossover, ...
- What is the added value?
  - If the encoding is well constructed, substrings may represent useful building blocks.
  - Example: (246*****) is a useful pattern for 8-queens.
  - Example, circuit design: some substring may represent a useful subcircuit.
- Then crossover may produce more useful states!
- In general: the advantages of GA are not well understood.

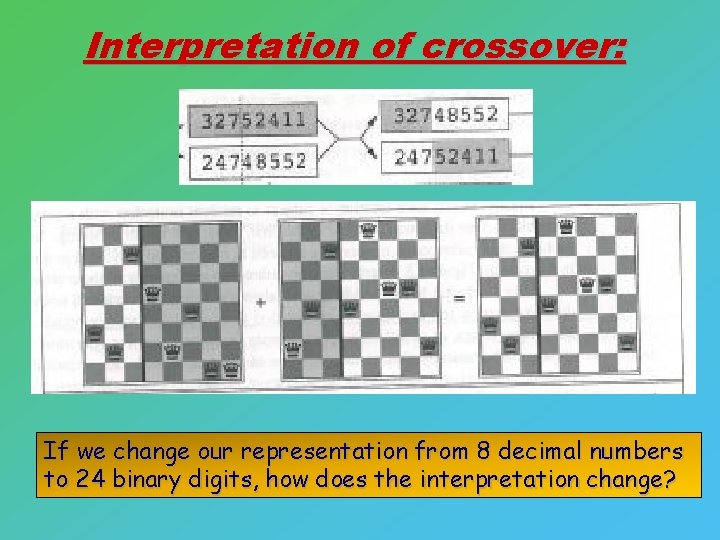

Interpretation of crossover: If we change our representation from 8 decimal numbers to 24 binary digits, how does the interpretation change?

Tabu Search Glover 1986 Another way to get HC out of local minima

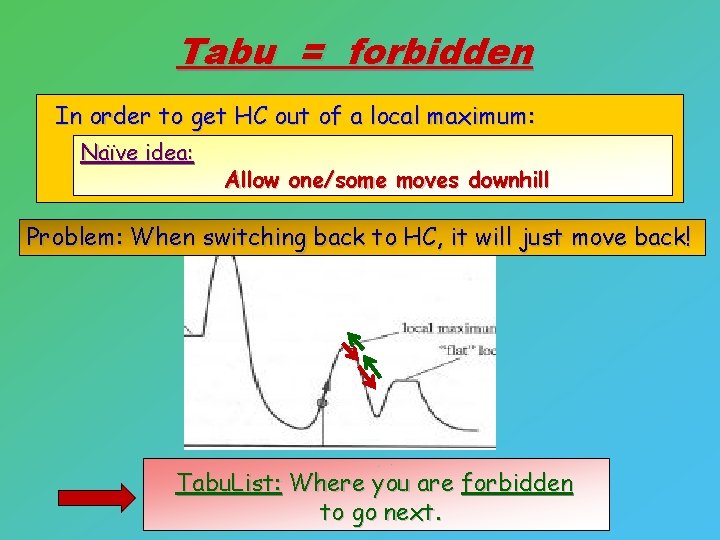

Tabu = forbidden

In order to get HC out of a local maximum:

- Naive idea: allow one/some moves downhill.
- Problem: when switching back to HC, it will just move back!
- TabuList: where you are forbidden to go next.

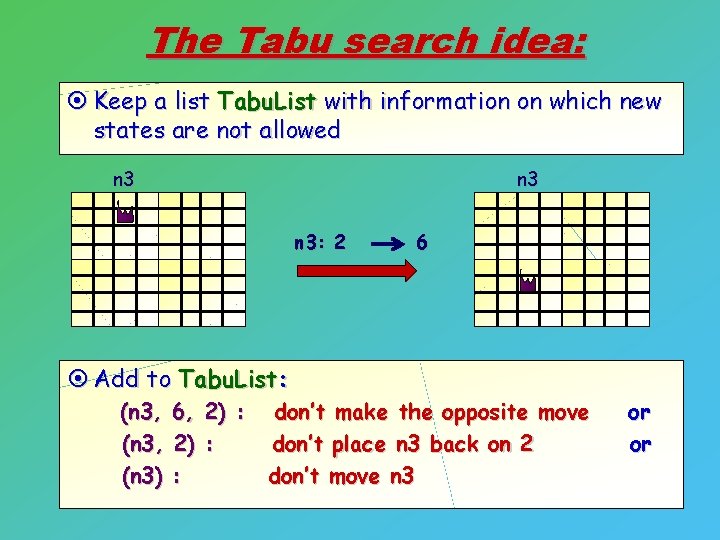

The Tabu search idea:

- Keep a list TabuList with information on which new states are not allowed.
- Example move: n3 changes from 2 to 6.
- Add to TabuList one of:
  - (n3, 6, 2): don't make the opposite move, or
  - (n3, 2): don't place n3 back on 2, or
  - (n3): don't move n3

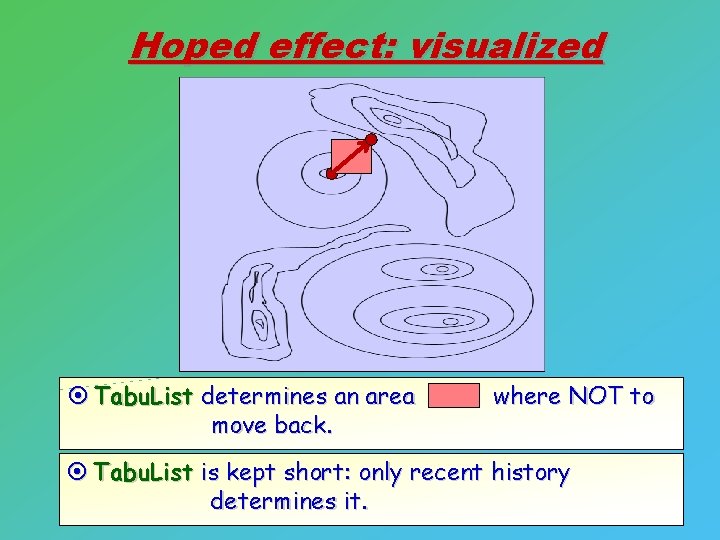

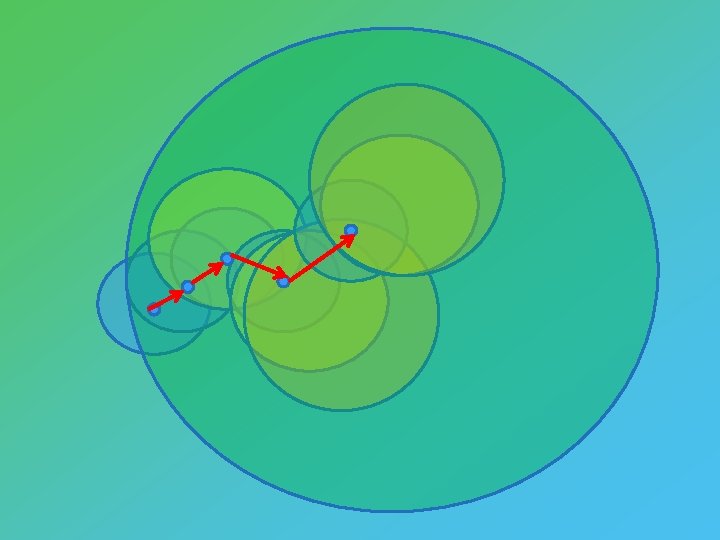

Hoped effect: visualized

- TabuList determines an area where NOT to move back.
- TabuList is kept short: only recent history determines it.

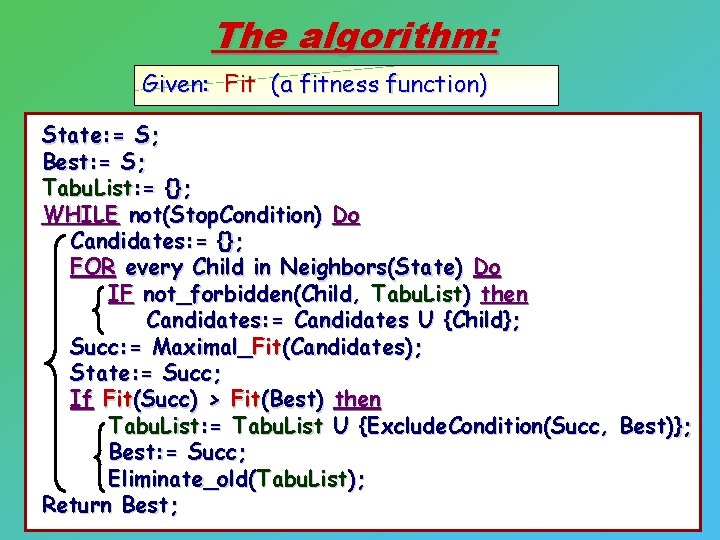

The algorithm:

Given: Fit (a fitness function)

    State := S; Best := S; TabuList := {};
    WHILE not(StopCondition) DO
        Candidates := {};
        FOR every Child in Neighbors(State) DO
            IF not_forbidden(Child, TabuList)
                THEN Candidates := Candidates U {Child};
        Succ := Maximal_Fit(Candidates);
        State := Succ;
        IF Fit(Succ) > Fit(Best) THEN
            TabuList := TabuList U {ExcludeCondition(Succ, Best)};
            Best := Succ;
        Eliminate_old(TabuList);
    Return Best;
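A small Python sketch of the idea. It deliberately uses a common simplification that is an assumption here, not the slide's exact rule: the tabu list stores the last few visited states themselves (fixed tenure) and is updated on every move. The toy landscape is also hypothetical.

```python
from collections import deque


def tabu_search(start, fit, neighbors, tenure=5, max_iters=100):
    """Always move to the best non-tabu neighbor, even if it is worse."""
    state = best = start
    tabu = deque([start], maxlen=tenure)   # old entries drop out automatically
    for _ in range(max_iters):
        candidates = [c for c in neighbors(state) if c not in tabu]
        if not candidates:
            break
        state = max(candidates, key=fit)
        tabu.append(state)
        if fit(state) > fit(best):
            best = state
    return best


# Hypothetical landscape: local maximum at index 3, global maximum at index 12.
VALUES = [0, 1, 2, 3, 2, 1, 0, 1, 2, 3, 4, 5, 6, 5, 4, 3, 2, 1, 0]


def fit(i):
    return VALUES[i]


def neighbors(i):
    return [j for j in (i - 1, i + 1) if 0 <= j < len(VALUES)]


best = tabu_search(0, fit, neighbors)
```

Plain hill climbing from index 0 stops at index 3. Here the move back to a recently visited state is forbidden, so the search is pushed through the valley and reaches index 12.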

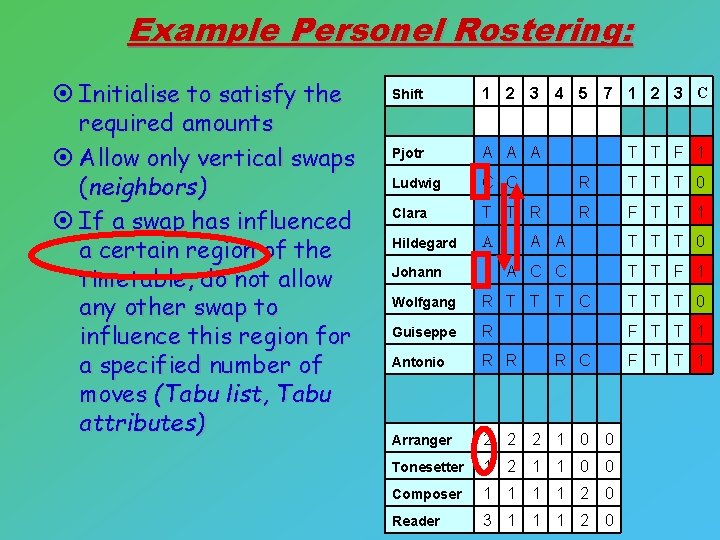

Example: Personnel Rostering

- Initialise to satisfy the required amounts.
- Allow only vertical swaps (neighbors).
- If a swap has influenced a certain region of the timetable, do not allow any other swap to influence this region for a specified number of moves (Tabu list, Tabu attributes).

[Figure: example roster with one row per employee (Pjotr, Ludwig, Clara, Hildegard, Johann, Wolfgang, Guiseppe, Antonio) and one column per shift, plus the required capacity per qualification (Arranger, Tonesetter, Composer, Reader).]

Heuristics and Meta-heuristics

- More differences with Global search
- Examples of problems and heuristics
- Meta-heuristics

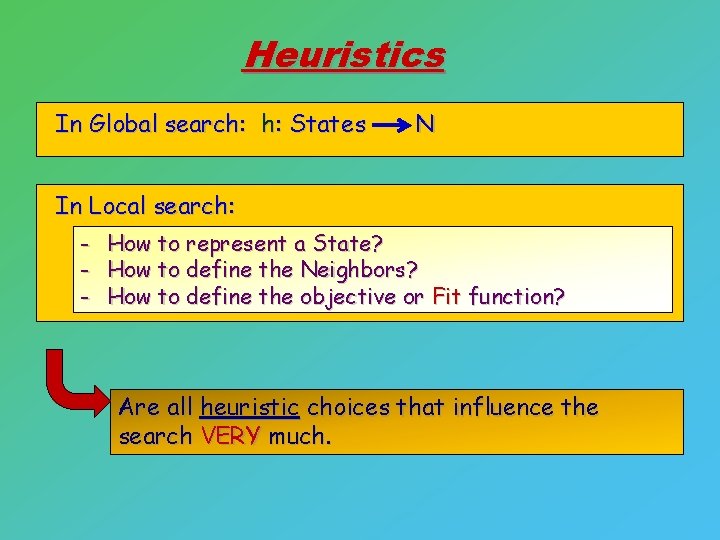

Heuristics

- In Global search: h: States → N
- In Local search:
  - How to represent a State?
  - How to define the Neighbors?
  - How to define the objective or Fit function?
- These are all heuristic choices that influence the search VERY much.

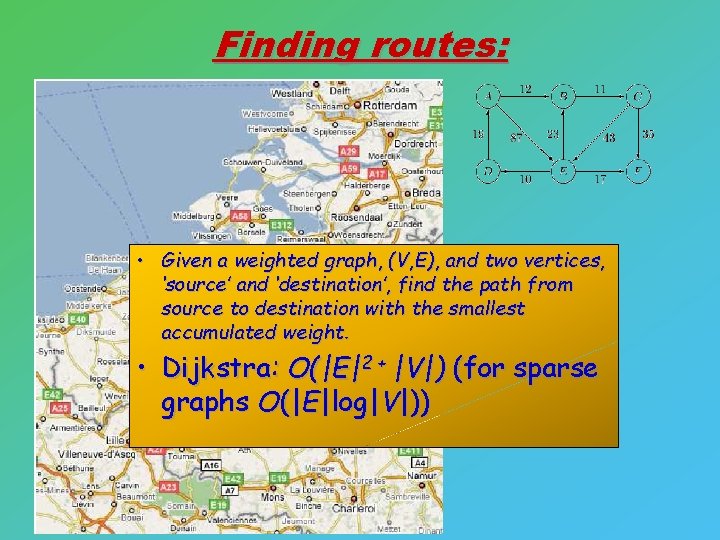

Finding routes:

- Given a weighted graph (V, E) and two vertices, 'source' and 'destination', find the path from source to destination with the smallest accumulated weight.
- Dijkstra: O(|V|^2 + |E|) with a simple array; for sparse graphs, O(|E| log |V|) with a binary heap.

The objective function: OR In general: many different functions possible

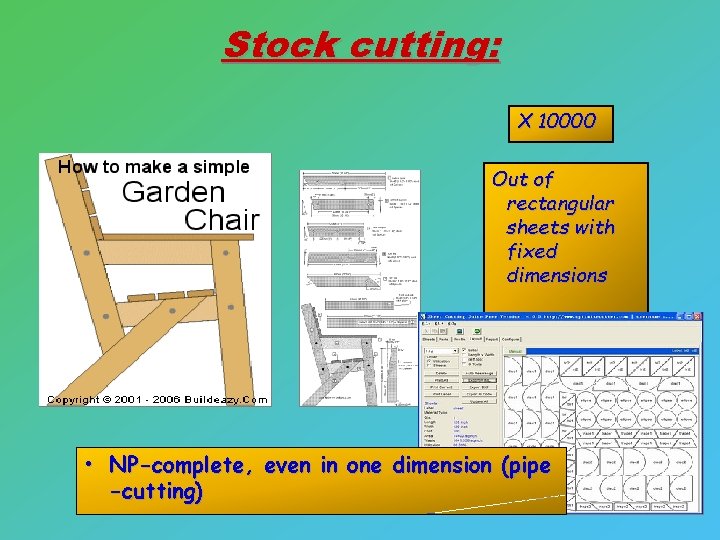

Stock cutting:

- Cut a large number of pieces (× 10000 in the pictured example) out of rectangular sheets with fixed dimensions.
- NP-complete, even in one dimension (pipe cutting).

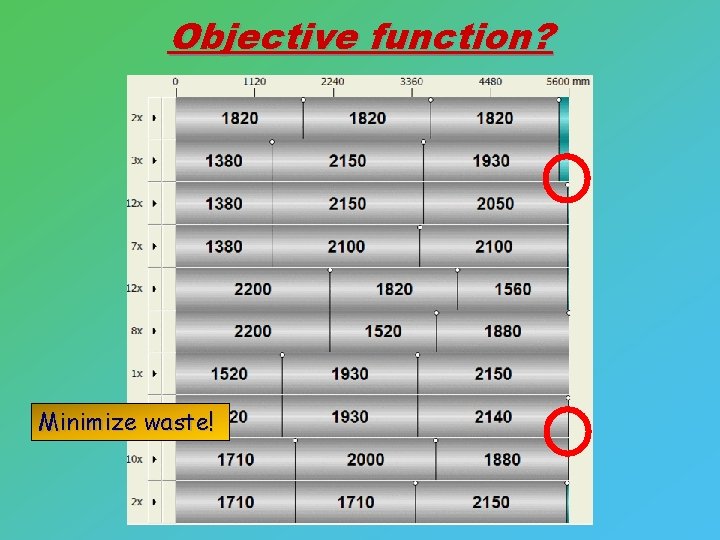

Objective function? Minimize waste!

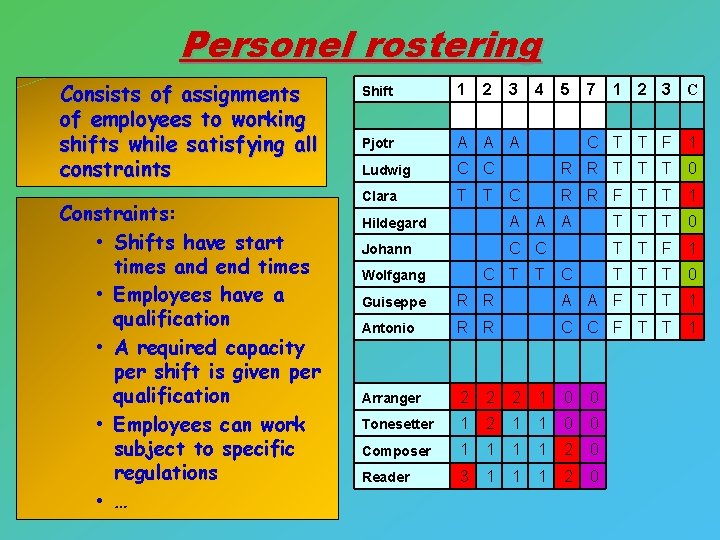

Personnel rostering

Consists of assignments of employees to working shifts while satisfying all constraints.

Constraints:

- Shifts have start times and end times
- Employees have a qualification
- A required capacity per shift is given per qualification
- Employees can work subject to specific regulations
- ...

[Figure: the example roster table]

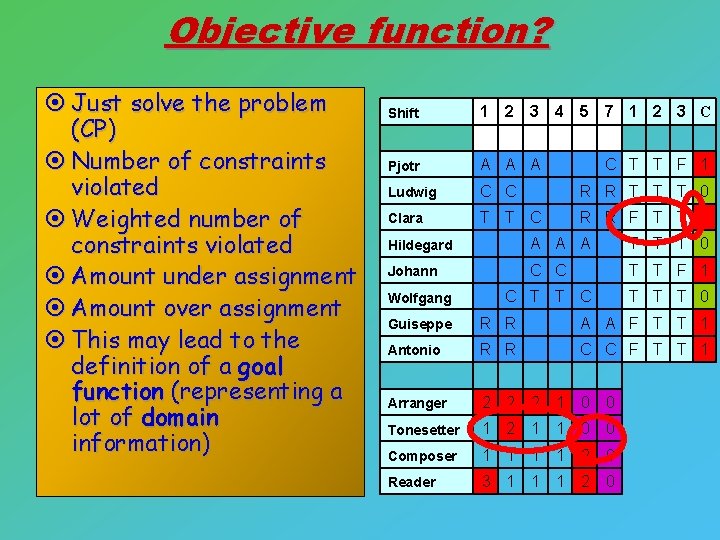

Objective function?

- Just solve the problem (CP)
- Number of constraints violated
- Weighted number of constraints violated
- Amount of under-assignment
- Amount of over-assignment
- This may lead to the definition of a goal function (representing a lot of domain information)

[Figure: the example roster table]

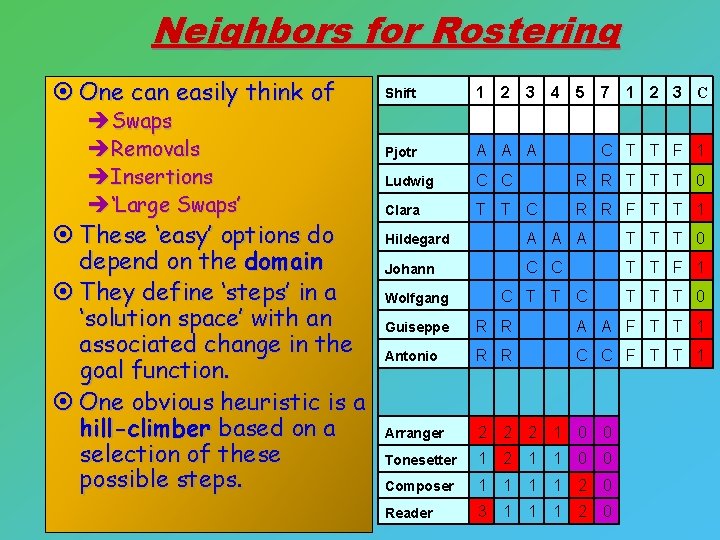

Neighbors for Rostering

- One can easily think of:
  - Swaps
  - Removals
  - Insertions
  - 'Large swaps'
- These 'easy' options do depend on the domain.
- They define 'steps' in a 'solution space' with an associated change in the goal function.
- One obvious heuristic is a hill-climber based on a selection of these possible steps.

[Figure: the example roster table]

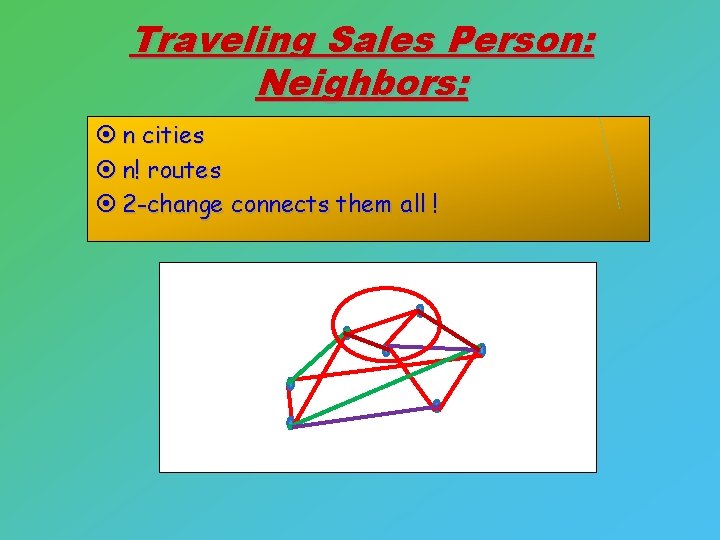

Traveling Sales Person: Neighbors

- n cities
- n! routes
- 2-change connects them all!
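One common way to realise a 2-change is to remove two edges of the tour and reconnect it, which amounts to reversing a contiguous segment. The sketch below assumes a closed tour stored as a tuple with a fixed first city and symmetric distances; the four-city instance is made up for illustration.

```python
import math


def two_change_neighbors(tour):
    """All tours obtained by reversing one contiguous segment of `tour`.

    The first city stays in place. Reversing everything after it would give
    the same cycle traversed backwards, so that case is skipped.
    """
    n = len(tour)
    result = []
    for i in range(1, n - 1):
        for j in range(i + 1, n):
            if i == 1 and j == n - 1:
                continue
            result.append(tour[:i] + tour[i:j + 1][::-1] + tour[j + 1:])
    return result


def tour_length(tour, points):
    """Length of the closed tour, returning to the first city at the end."""
    return sum(math.dist(points[tour[k]], points[tour[(k + 1) % len(tour)]])
               for k in range(len(tour)))


# Hypothetical instance: four cities on the corners of a unit square.
points = {0: (0, 0), 1: (1, 0), 2: (1, 1), 3: (0, 1)}
crossing = (0, 2, 1, 3)                       # tour with two crossing diagonals
options = two_change_neighbors(crossing)
best = min(options, key=lambda t: tour_length(t, points))
```

A single 2-change removes the crossing here. In general a hill climber over this neighborhood can still end in a local optimum, even though every tour is reachable by some sequence of 2-changes.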

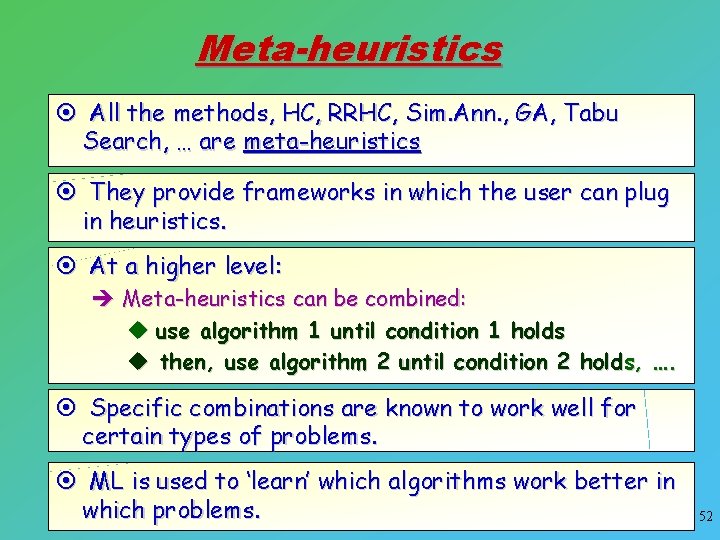

Meta-heuristics

- All the methods (HC, RRHC, Simulated Annealing, GA, Tabu Search, ...) are meta-heuristics.
- They provide frameworks in which the user can plug in heuristics.
- At a higher level, meta-heuristics can be combined:
  - use algorithm 1 until condition 1 holds
  - then use algorithm 2 until condition 2 holds, ...
- Specific combinations are known to work well for certain types of problems.
- ML is used to 'learn' which algorithms work better for which problems.

Concluding remarks

- Local Search in Continuous Spaces
- Variable Neighborhood Search
- Relation to BDA: some pointers

Continuous Search Spaces Some basic ideas

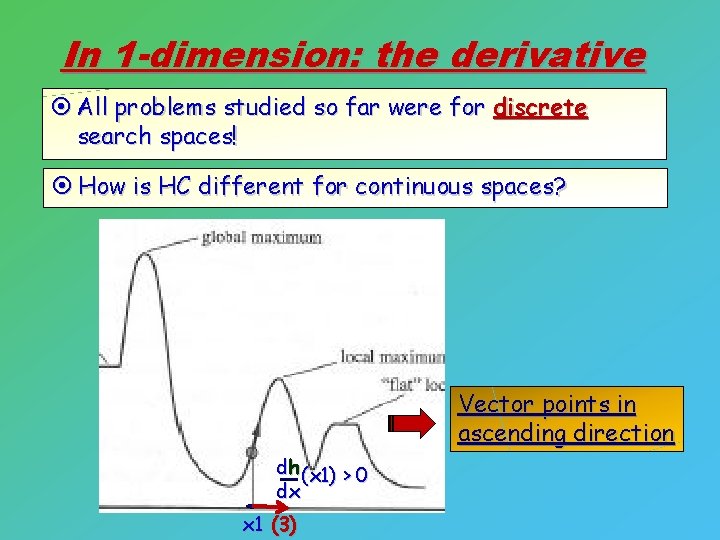

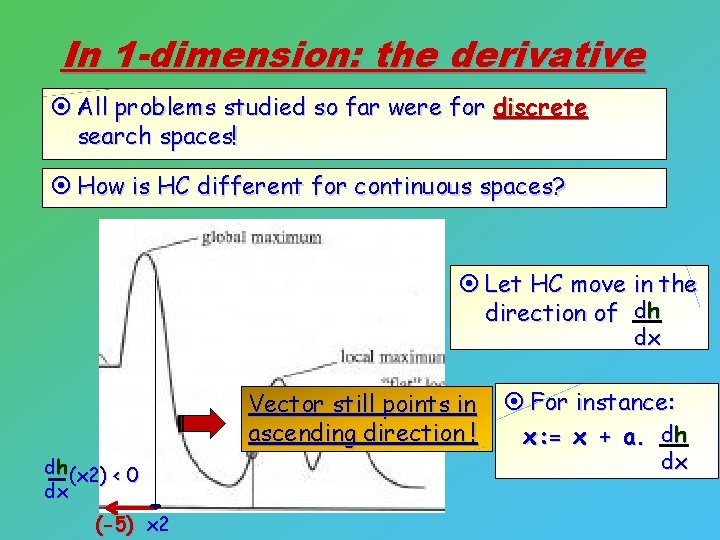

In 1 dimension: the derivative

- All problems studied so far were for discrete search spaces!
- How is HC different for continuous spaces?
- At $x_1$: $\frac{dh}{dx}(x_1) > 0$ (pictured value: 3). The vector points in the ascending direction.

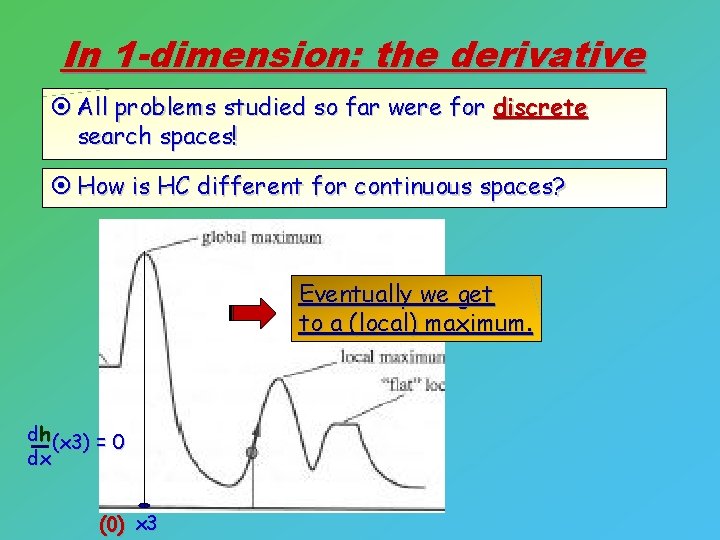

In 1 dimension: the derivative (cont.)

- Let HC move in the direction of $\frac{dh}{dx}$.
- At $x_2$: $\frac{dh}{dx}(x_2) < 0$ (pictured value: -5). The vector still points in the ascending direction!
- For instance: $x := x + a \cdot \frac{dh}{dx}$

In 1 dimension: the derivative (cont.)

- At $x_3$: $\frac{dh}{dx}(x_3) = 0$. Eventually we get to a (local) maximum.
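The update rule x := x + a · dh/dx can be tried on a toy function. The objective h(x) = -(x - 2)^2, the step size a = 0.1 and the number of steps are assumptions chosen for the example.

```python
def gradient_ascent(dh_dx, x, a=0.1, steps=100):
    """Repeat x := x + a * dh/dx(x); the sign of the derivative gives the direction."""
    for _ in range(steps):
        x = x + a * dh_dx(x)
    return x


# Hypothetical objective h(x) = -(x - 2)^2 with derivative dh/dx = -2 (x - 2).
def dh_dx(x):
    return -2 * (x - 2)


x_max = gradient_ascent(dh_dx, x=-5.0)
```

To the left of the maximum the derivative is positive and x increases; to the right it is negative and x decreases; at the maximum the derivative is 0 and x stops moving. A step size that is too large would overshoot instead of converging.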

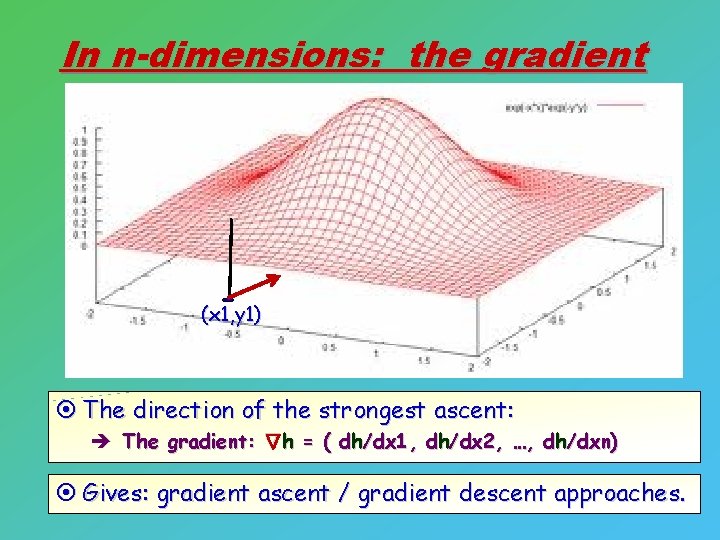

In n dimensions: the gradient

- The direction of the strongest ascent is the gradient: $\nabla h = \left( \frac{\partial h}{\partial x_1}, \frac{\partial h}{\partial x_2}, \dots, \frac{\partial h}{\partial x_n} \right)$
- This gives gradient ascent / gradient descent approaches.

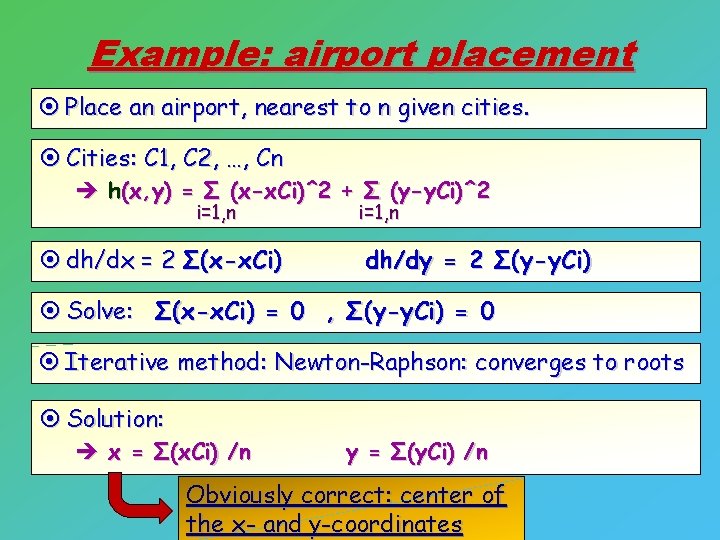

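Example: airport placement

- Place an airport nearest to n given cities.
- Cities: $C_1, C_2, \dots, C_n$ with coordinates $(x_{C_i}, y_{C_i})$
- $h(x, y) = \sum_{i=1}^{n} (x - x_{C_i})^2 + \sum_{i=1}^{n} (y - y_{C_i})^2$
- $\frac{\partial h}{\partial x} = 2 \sum_{i=1}^{n} (x - x_{C_i})$, $\frac{\partial h}{\partial y} = 2 \sum_{i=1}^{n} (y - y_{C_i})$
- Solve: $\sum_{i=1}^{n} (x - x_{C_i}) = 0$, $\sum_{i=1}^{n} (y - y_{C_i}) = 0$
- Iterative method: Newton-Raphson converges to the roots.
- Solution: $x = \frac{1}{n} \sum_{i=1}^{n} x_{C_i}$, $y = \frac{1}{n} \sum_{i=1}^{n} y_{C_i}$. Obviously correct: the center of the x- and y-coordinates.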
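As a numerical check, the sketch below minimizes h with plain gradient descent (not the Newton-Raphson method named above) and compares the result with the closed-form solution. The three city coordinates, the step size and the iteration count are assumptions.

```python
def place_airport(cities, step=0.1, iterations=200):
    """Gradient descent on h(x, y) = sum (x - x_Ci)^2 + sum (y - y_Ci)^2."""
    x, y = 0.0, 0.0
    for _ in range(iterations):
        grad_x = 2 * sum(x - cx for cx, _ in cities)
        grad_y = 2 * sum(y - cy for _, cy in cities)
        x -= step * grad_x      # move against the gradient: we minimize
        y -= step * grad_y
    return x, y


# Hypothetical city coordinates.
cities = [(0.0, 0.0), (4.0, 0.0), (2.0, 6.0)]
x, y = place_airport(cities)
mean_x = sum(cx for cx, _ in cities) / len(cities)
mean_y = sum(cy for _, cy in cities) / len(cities)
```

With n cities each step multiplies the distance to the optimum by (1 - 2 · n · step), so this fixed step only converges when step is below 1/n; for larger instances it has to be scaled down.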

Variable Neighborhood Search Exploit various different ways of defining neighborhoods to move out of local optima

Variable neighborhood search (Mladenović and Hansen 1997)

- Facts:
  - A local minimum with respect to one neighborhood structure is not necessarily so for another.
  - A global minimum is a local minimum with respect to all neighborhood structures.
  - For many problems, local minima with respect to one or several neighborhoods are relatively close to each other.

Idea: use different neighborhoods

- By moving to a different neighborhood, you may get out of the local optimum!
- Define a number of different neighborhoods, i.e. different ways to compute successors.
- If you cannot get out of a local optimum in one neighborhood, try the next one.

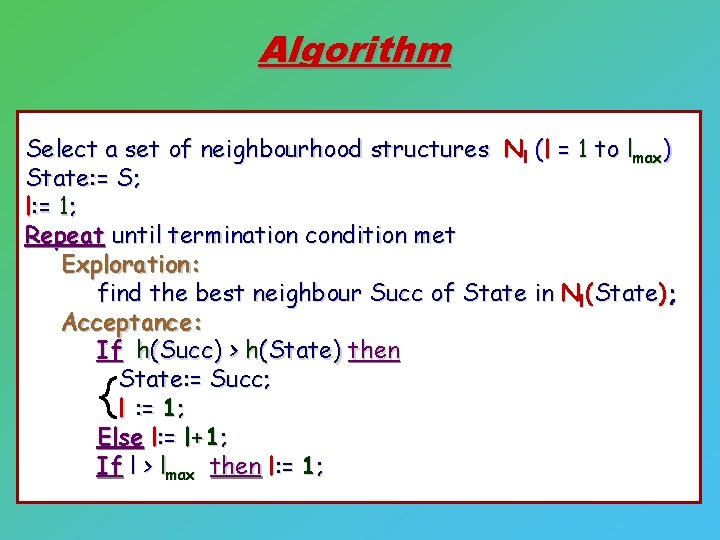

Algorithm

Select a set of neighborhood structures N_l (l = 1 to l_max)

    State := S; l := 1;
    Repeat until termination condition met
        Exploration: find the best neighbor Succ of State in N_l(State);
        Acceptance:
            If h(Succ) > h(State)
                then State := Succ; l := 1;
                else l := l + 1;
            If l > l_max then l := 1;

Broad subdomain

- Many variants exist!
- Possible topic for your presentation: find a paper on variable neighborhood search (technique or application).
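A compact Python sketch of this scheme. The termination condition is left open above; the sketch assumes one possible choice, namely to stop once every neighborhood has failed in a row. The landscape and the two neighborhoods are hypothetical.

```python
def variable_neighborhood_search(state, h, neighborhoods, max_iters=1000):
    """Cycle through neighborhoods; return to the first after each improvement."""
    level = 0
    failures = 0                      # consecutive neighborhoods without improvement
    for _ in range(max_iters):
        candidates = neighborhoods[level](state)
        succ = max(candidates, key=h) if candidates else state
        if h(succ) > h(state):
            state, level, failures = succ, 0, 0
        else:
            failures += 1
            if failures == len(neighborhoods):
                break                 # assumed stop rule: all neighborhoods failed
            level = (level + 1) % len(neighborhoods)
    return state


# Hypothetical landscape: local maximum at index 3, global maximum at index 12.
VALUES = [0, 1, 2, 3, 2, 1, 0, 1, 2, 3, 4, 5, 6, 5, 4, 3, 2, 1, 0]


def h(i):
    return VALUES[i]


def near(i):
    """N_1: direct neighbors."""
    return [j for j in (i - 1, i + 1) if 0 <= j < len(VALUES)]


def far(i):
    """N_2: positions at distance 2 to 8."""
    return [j for j in range(i - 8, i + 9)
            if 0 <= j < len(VALUES) and abs(j - i) >= 2]


result = variable_neighborhood_search(0, h, [near, far])
```

With only the first neighborhood the search stops at index 3; the second neighborhood sees past the valley, after which the first one finishes the climb to index 12.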

Relation to BDA: some pointers Examples Sub-modularity

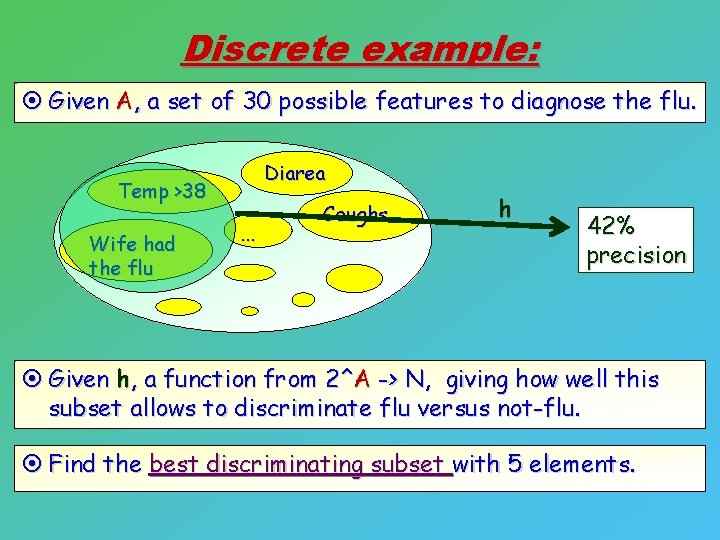

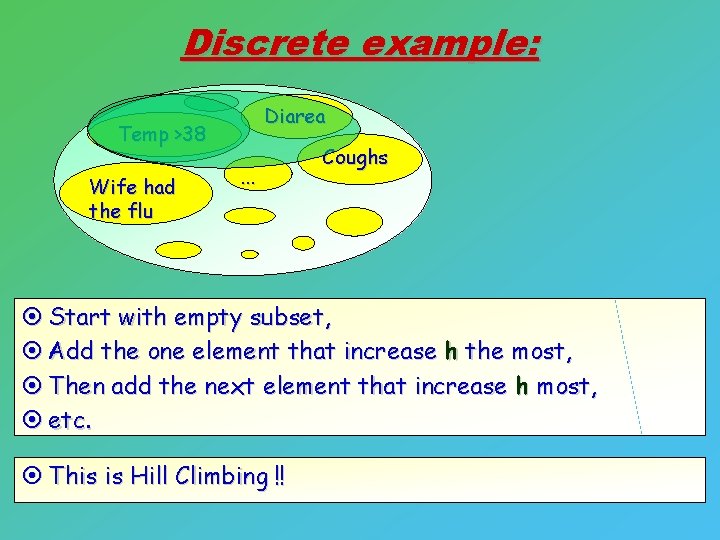

Discrete example:

- Given A, a set of 30 possible features to diagnose the flu (e.g. diarrhea, temperature > 38, wife had the flu, ..., coughs).
- Given h, a function from 2^A -> N, indicating how well a subset allows us to discriminate flu from not-flu (pictured: h = 42% precision).
- Find the best discriminating subset with 5 elements.

Discrete example (cont.):

- Start with the empty subset,
- add the one element that increases h the most,
- then add the next element that increases h the most,
- etc.
- This is Hill Climbing!!

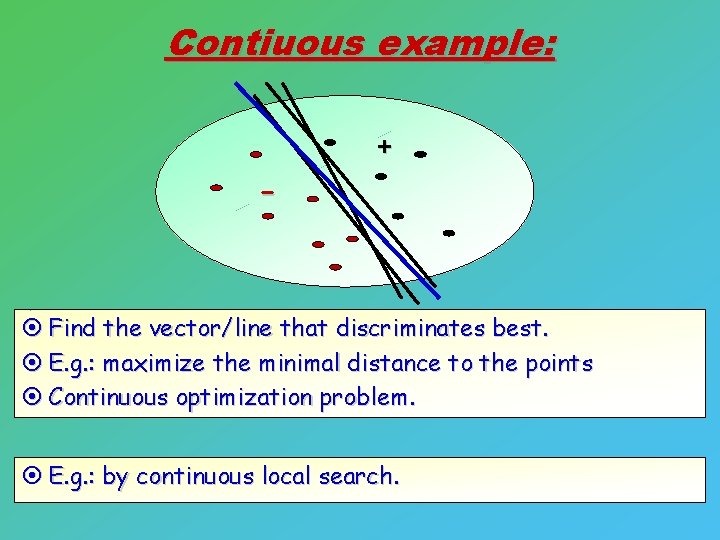

Continuous example:

- Find the vector/line that best discriminates the + points from the - points.
- E.g.: maximize the minimal distance to the points.
- This is a continuous optimization problem.
- It can be solved, for example, by continuous local search.

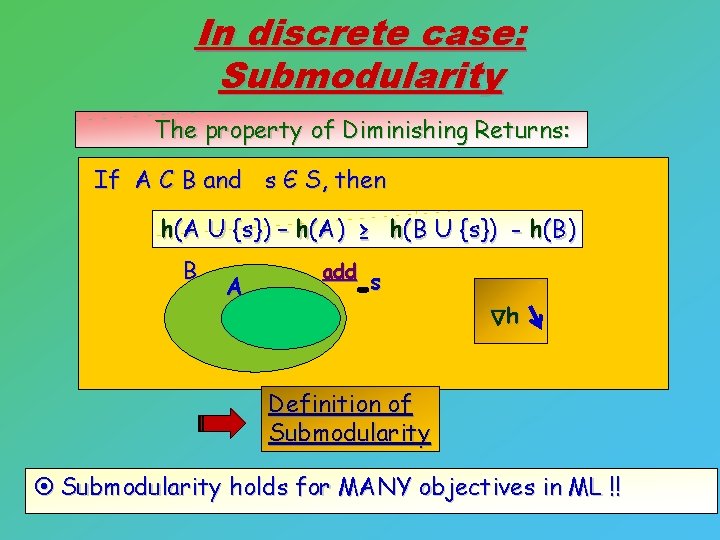

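In the discrete case: Submodularity

The property of Diminishing Returns (definition of submodularity): if $A \subseteq B \subseteq S$ and $s \in S \setminus B$, then

$h(A \cup \{s\}) - h(A) \ge h(B \cup \{s\}) - h(B)$

That is, adding s to the smaller set A increases h at least as much as adding s to the larger set B.

- Submodularity holds for MANY objectives in ML!!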

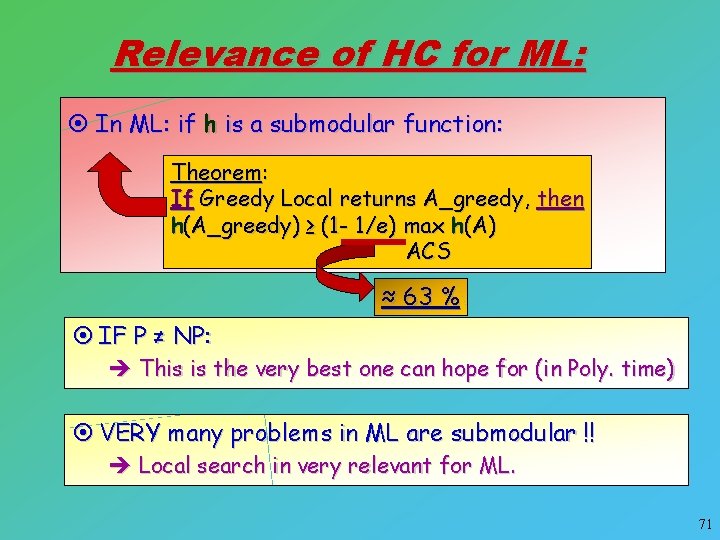

Relevance of HC for ML:

- In ML: if h is a monotone submodular function and at most k elements may be selected (as in the 5-feature example):
  - Theorem: if greedy local search returns $A_{greedy}$, then $h(A_{greedy}) \ge (1 - 1/e) \max_{A \subseteq S, |A| \le k} h(A)$, where $1 - 1/e \approx 63\%$.
- If P ≠ NP: this is the very best one can hope for (in polynomial time).
- VERY many problems in ML are submodular!! Local search is very relevant for ML.
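The greedy procedure and the bound can be illustrated on a small coverage objective, which is monotone and submodular. The feature names and the sets of cases they cover are invented for the example; the brute-force optimum is only feasible because the instance is tiny.

```python
import math
from itertools import combinations


def coverage(selected, covers):
    """h(A): number of distinct cases covered by the selected features."""
    covered = set()
    for name in selected:
        covered |= covers[name]
    return len(covered)


def greedy_select(covers, k):
    """Hill climbing on subsets: repeatedly add the feature with the largest gain."""
    chosen = []
    for _ in range(k):
        remaining = [name for name in covers if name not in chosen]
        if not remaining:
            break
        best = max(remaining, key=lambda name: coverage(chosen + [name], covers))
        chosen.append(best)
    return chosen


# Hypothetical data: each feature "covers" the cases it helps to diagnose.
covers = {
    "coughs": {1, 2, 3, 4},
    "temperature": {3, 4, 5},
    "partner_had_flu": {5, 6},
    "diarrhea": {1, 6, 7},
}

greedy = greedy_select(covers, k=2)
optimum = max(coverage(list(subset), covers) for subset in combinations(covers, 2))
bound = (1 - 1 / math.e) * optimum
```

On this instance the greedy value can be compared directly with `bound`; the theorem says it can never fall below it for a monotone submodular h.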

Reading assignment and presentations:

- Applications of Local Search
- Other Local Search methods or variants of the studied methods
- Applications of Local Search in ML
- Further aspects of Submodularity
- For the coming SAT-solving part: MAX-SAT solving, MiniSat, further relations between SAT and Local Search

Start with Google and Wiki. Study at least one "real"/scientific source. Provide the references to your sources.