MATLAB for High Performance Computing

Shaohao Chen, Brain and Cognitive Sciences (BCS), Massachusetts Institute of Technology (MIT)

Outline

- Why use MATLAB for HPC
- Use MATLAB on Openmind
- Optimize MATLAB code
- MATLAB Parallel Computing Toolbox

Why use MATLAB for HPC?

- Many MATLAB programs run faster on high-performance computing (HPC) clusters than on regular laptops or desktops.
- Openmind is an HPC cluster with over 1,700 CPU cores and around 250 GPUs.
- The MATLAB site license is available to all MIT users (unlimited on Openmind).
- MATLAB programs can be exceptionally fast if they are optimized and parallelized, and painfully slow if not.
- The MATLAB Parallel Computing Toolbox (PCT) is designed for multi-core CPUs, GPUs, and computer clusters.
- The optimization and parallelization methods covered in this tutorial can be used not only on HPC clusters but also on regular laptops and desktops.
- The skills you learn today should enable you to solve bigger problems faster using MATLAB.

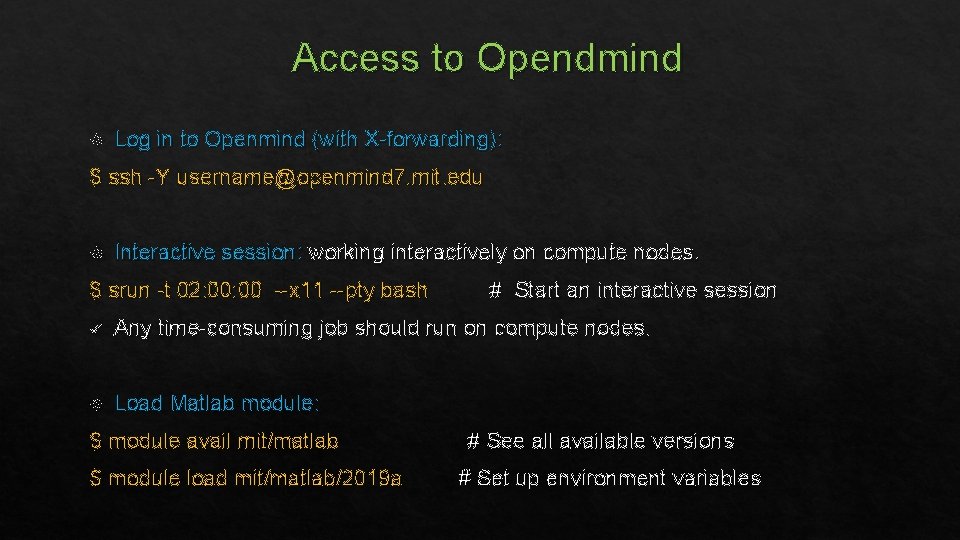

Access to Openmind

Log in to Openmind (with X forwarding):

    $ ssh -Y username@openmind7.mit.edu

Interactive session: work interactively on compute nodes.

    $ srun -t 02:00 --x11 --pty bash    # Start an interactive session

Any time-consuming job should run on compute nodes.

Load the MATLAB module:

    $ module avail mit/matlab           # See all available versions
    $ module load mit/matlab/2019a      # Set up environment variables

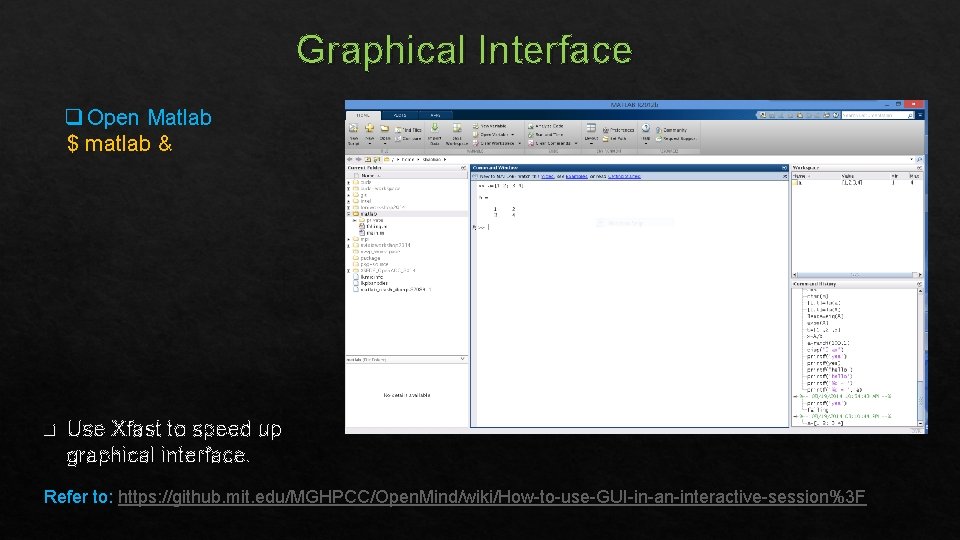

Graphical Interface

Open MATLAB:

    $ matlab &

Use Xfast to speed up the graphical interface. Refer to: https://github.mit.edu/MGHPCC/OpenMind/wiki/How-to-use-GUI-in-an-interactive-session%3F

Text platform

    $ matlab -nodisplay     # Work in text interface. Does not display any graphs.
    $ matlab -nodesktop     # Program in text interface. Pops up graphs when necessary.

Many Linux commands (some prefixed with an exclamation mark) are available within the MATLAB platform, such as cd, ls, pwd, !cp, !rm, !mv, !cat, !vim, !diff, and !grep.

Edit an M-file and run the program:

    >> !vim mfilename.m     % edit in text window
    >> edit mfilename.m     % create a new or open an existing M-file in graphical window
    >> open mfilename.m     % open an existing M-file in graphical window
    >> run mfilename.m      % run the program
    >> mfilename            % run the program

Submit a batch job to the background using a script

    $ sbatch script.sh

Write a batch job script using any Linux editor (such as vim, emacs, gedit, or nano).

A typical batch script for a MATLAB job (suppose that the source code is saved in the M-file my_program.m):

    #!/bin/bash
    #SBATCH -t 01:00                           # Wall time
    #SBATCH -n 1                               # 1 CPU core
    module load mit/matlab/2019a               # Set up environment variables
    matlab -nodisplay -r "my_program, exit"    # Run a MATLAB program

The first line, #!/bin/bash, starts a bash script and must not carry a trailing comment. The quotes around the MATLAB command must be plain ASCII double quotes.

Exercise 1

Run a MATLAB program on Openmind. Request an interactive session first.

i) Write MATLAB code in an M-file to print "Hello world" (e.g. use the disp function).
ii) Run the program interactively in the MATLAB platform.
iii) Submit a batch job to run the program in the background.

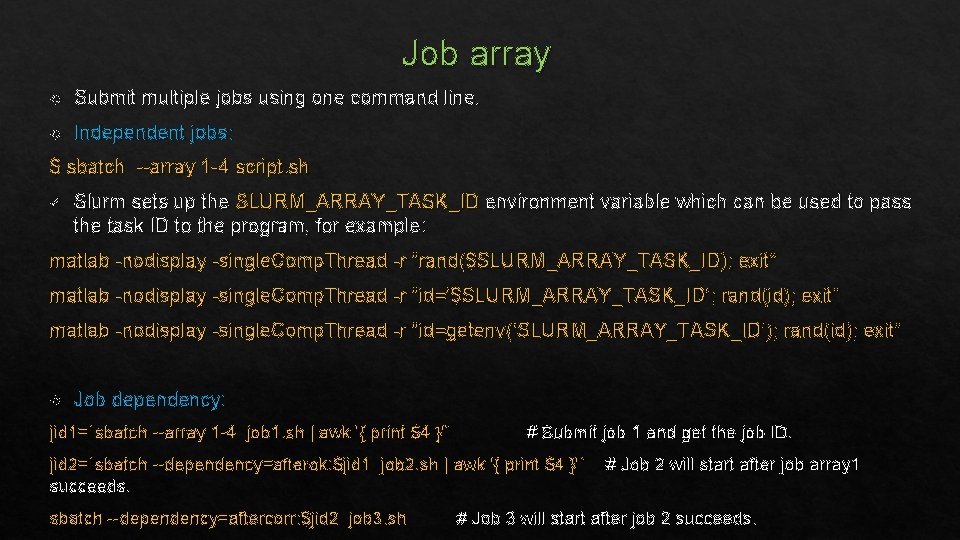

Job array

Submit multiple jobs using one command line.

Independent jobs:

    $ sbatch --array=1-4 script.sh

Slurm sets the SLURM_ARRAY_TASK_ID environment variable, which can be used to pass the task ID to the program. The ID reaches MATLAB as text when it is quoted or read with getenv, so convert it with str2double before using it as a number. For example:

    matlab -nodisplay -singleCompThread -r "rand($SLURM_ARRAY_TASK_ID); exit"
    matlab -nodisplay -singleCompThread -r "id=str2double('$SLURM_ARRAY_TASK_ID'); rand(id); exit"
    matlab -nodisplay -singleCompThread -r "id=str2double(getenv('SLURM_ARRAY_TASK_ID')); rand(id); exit"

Job dependency:

    jid1=`sbatch --array=1-4 job1.sh | awk '{ print $4 }'`                   # Submit job 1 and get the job ID.
    jid2=`sbatch --dependency=afterok:$jid1 job2.sh | awk '{ print $4 }'`    # Job 2 will start after job array 1 succeeds.
    sbatch --dependency=aftercorr:$jid2 job3.sh                              # Job 3 will start after job 2 succeeds.
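
Inside the MATLAB program, the task ID is typically used to select one piece of work per array task. A minimal sketch, assuming a hypothetical parameter list and placeholder computation (neither is from the slides), with a fallback so the script also runs outside a job array:

```matlab
% Hypothetical parameter sweep: one array task per entry of param_list.
param_list = [0.1, 0.5, 1.0, 2.0];      % illustrative values only

% getenv returns a character vector; it is empty outside a Slurm job array.
id_str = getenv('SLURM_ARRAY_TASK_ID');
if isempty(id_str)
    task_id = 1;                        % assumed fallback for interactive testing
else
    task_id = str2double(id_str);       % convert text to a number
end

param  = param_list(task_id);           % pick this task's parameter
result = param^2;                       % placeholder computation
fprintf('Task %d: param = %g, result = %g\n', task_id, param, result);
```

With `sbatch --array=1-4`, each of the four tasks would pick a different entry of `param_list`.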

Optimize MATLAB code

- Tools for measuring performance and optimizing code
- Remove unnecessary work
- Optimize memory usage
- Use built-in functions/operators

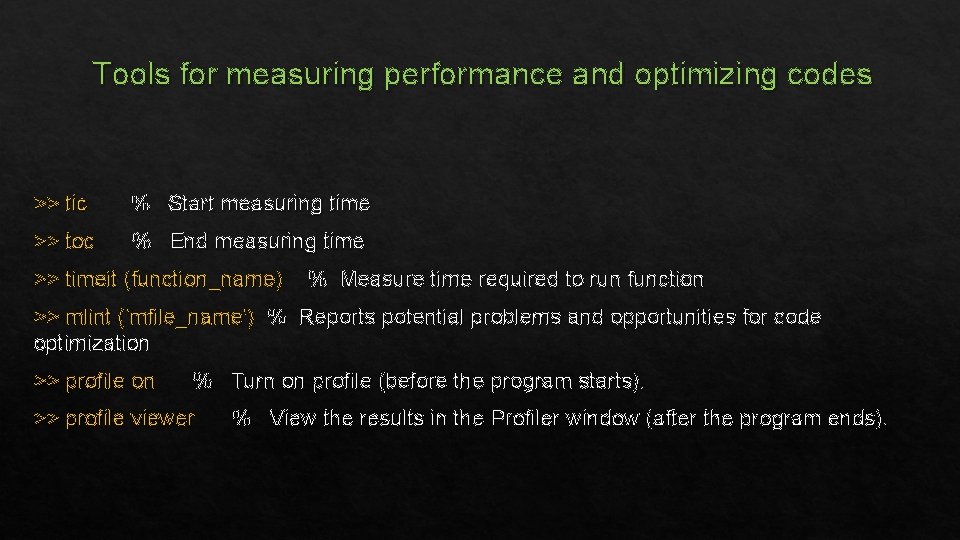

Tools for measuring performance and optimizing code

    >> tic                       % Start measuring time
    >> toc                       % End measuring time
    >> timeit(@function_name)    % Measure time required to run a function (pass a function handle)
    >> mlint('mfile_name')       % Report potential problems and opportunities for code optimization
    >> profile on                % Turn on the profiler (before the program starts).
    >> profile viewer            % View the results in the Profiler window (after the program ends).

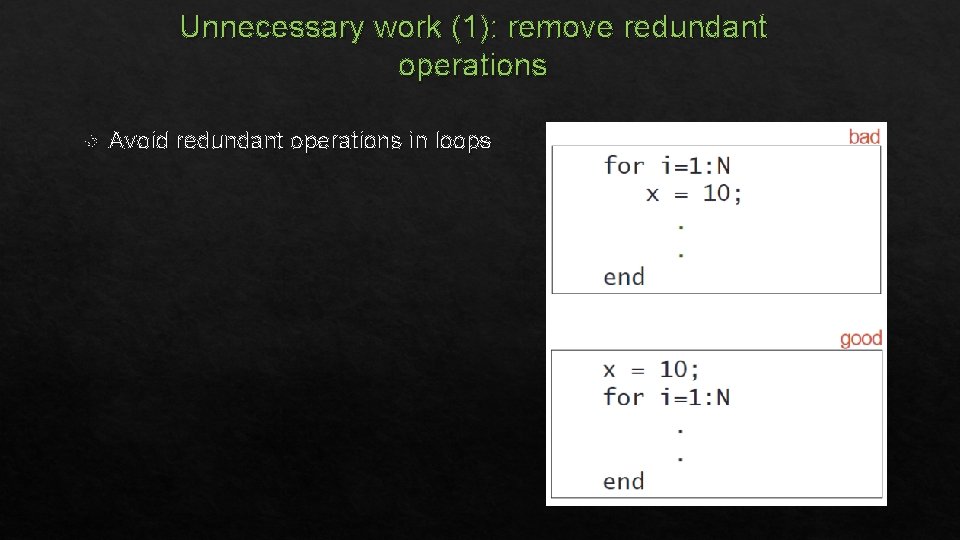
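
As a rough illustration of the timing tools, the sketch below times the same small computation with tic/toc and with timeit. The workload is an arbitrary example chosen here, not one from the slides.

```matlab
% Illustrative workload: sum of square roots of a large vector.
v = rand(1, 1e6);

% tic/toc: wall-clock time of whatever runs between the two calls.
tic
s1 = sum(sqrt(v));
t_tictoc = toc;

% timeit: takes a function handle, runs it several times, and returns
% the median run time, which is less noisy than a single tic/toc.
f = @() sum(sqrt(v));
t_timeit = timeit(f);

fprintf('tic/toc: %.4g s, timeit: %.4g s\n', t_tictoc, t_timeit);
```

For short computations, timeit is usually the more reliable of the two; tic/toc is convenient for timing whole sections of a script.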

Unnecessary work (1): remove redundant operations

Avoid redundant operations in loops.

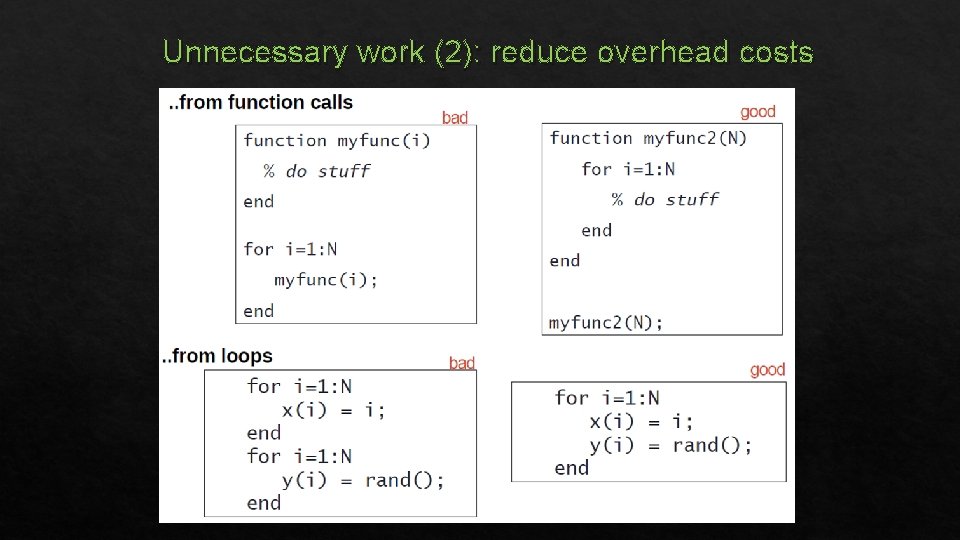
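
One common case of a redundant operation is a loop-invariant expression that is recomputed on every iteration. The following is a sketch with made-up values, not necessarily the example shown on the slide:

```matlab
n = 1000;
x = linspace(0, 1, n);

% Redundant version: the constant c is recomputed on every iteration.
y_slow = zeros(1, n);
for i = 1:n
    c = 2 * sin(0.3);          % loop-invariant, does not depend on i
    y_slow(i) = c * x(i);
end

% Leaner version: compute the invariant once, outside the loop.
c = 2 * sin(0.3);
y_fast = zeros(1, n);
for i = 1:n
    y_fast(i) = c * x(i);
end
```

Both loops produce the same values; the second simply does less work per iteration.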

Unnecessary work (2): reduce overhead costs

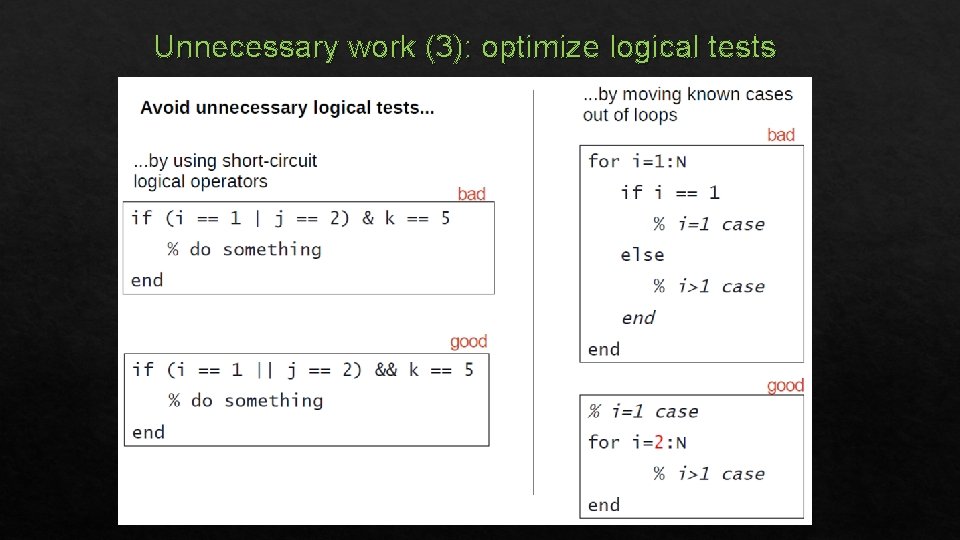

Unnecessary work (3): optimize logical tests

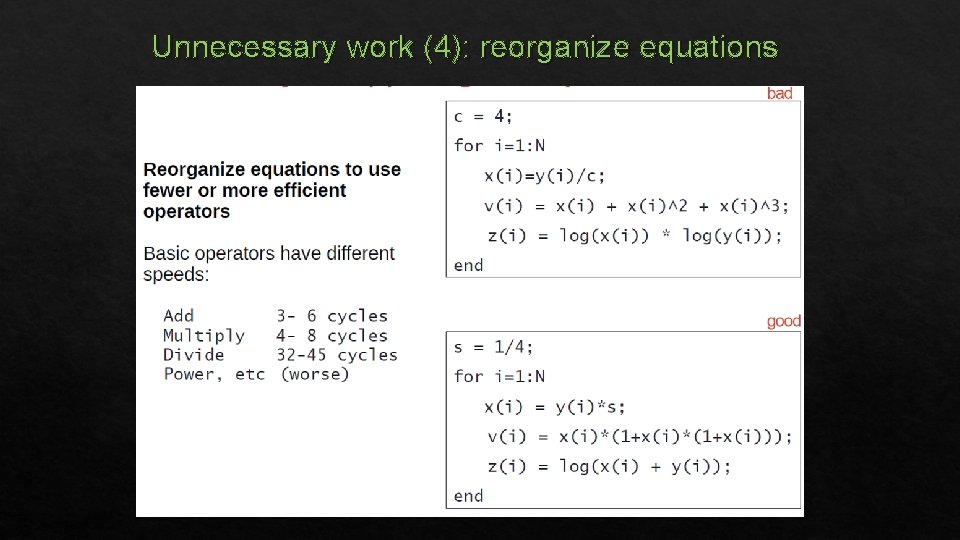
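
One way to optimize a logical test, assuming the goal is to skip work that is not needed, is to rely on the short-circuit operator && and put the cheaper condition first. The conditions and thresholds below are illustrative assumptions:

```matlab
x = rand(1, 1e5);
count = 0;
for i = 1:numel(x)
    % && short-circuits: the second test (with the costlier exp call)
    % is evaluated only when the cheap first test is true.
    if x(i) > 0.9 && exp(x(i)) > 2.6
        count = count + 1;
    end
end
```

Because the first test is false for most elements here, exp is evaluated for only a small fraction of them.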

Unnecessary work (4): reorganize equations

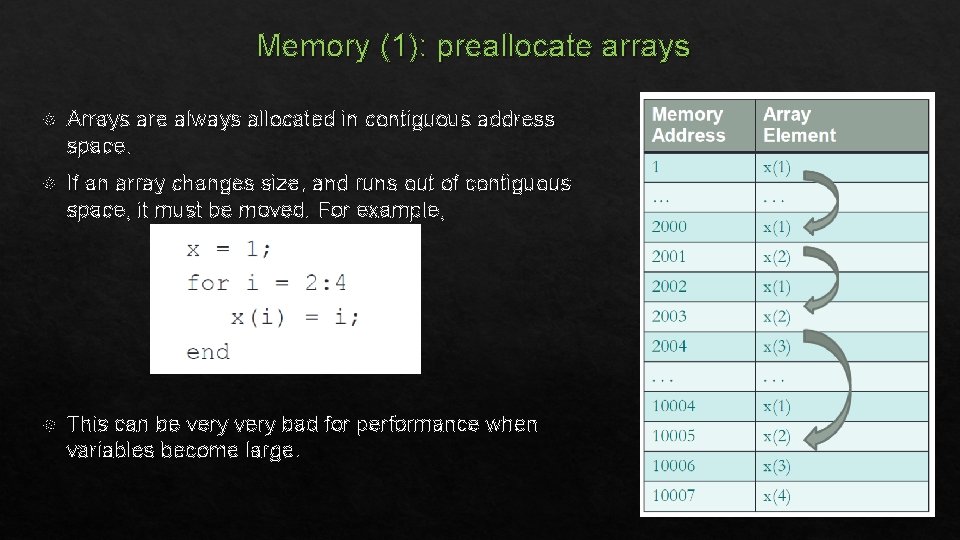

Memory (1): preallocate arrays

- Arrays are always allocated in contiguous address space.
- If an array changes size and runs out of contiguous space, it must be moved.
- This can be very bad for performance when variables become large.

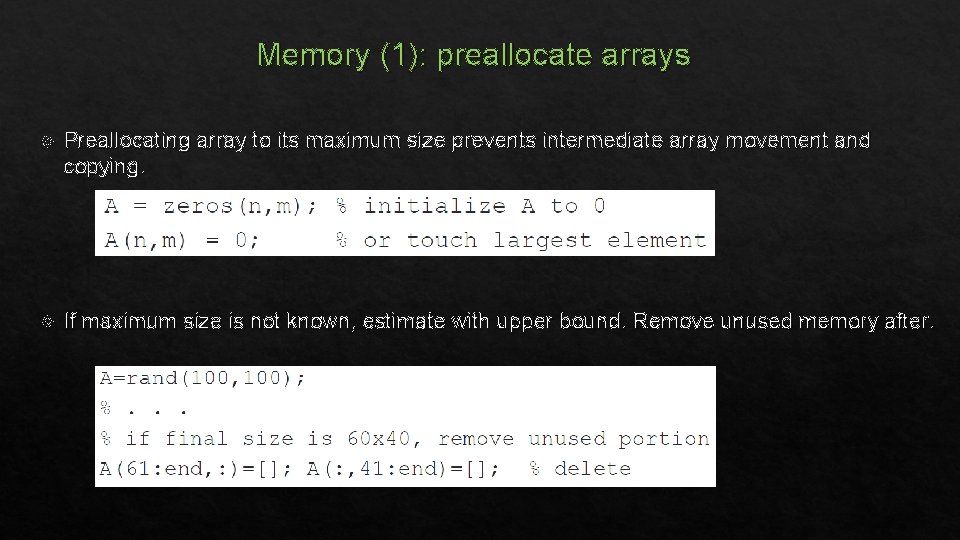

Memory (1): preallocate arrays

- Preallocating an array to its maximum size prevents intermediate array movement and copying.
- If the maximum size is not known, estimate it with an upper bound, then remove the unused memory afterwards.

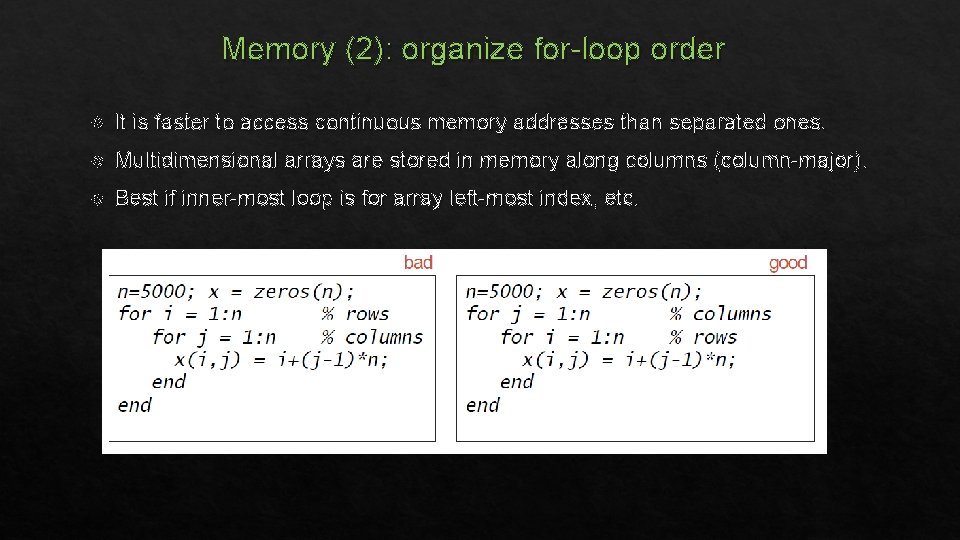
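
The preallocation advice above can be sketched as follows. The array sizes and the filter condition are arbitrary choices for illustration.

```matlab
n = 1e4;

% Growing version: a is resized (and possibly moved) as the loop runs.
a = [];
for i = 1:n
    a(i) = i^2;
end

% Preallocated version: allocate once, then fill in place.
b = zeros(1, n);
for i = 1:n
    b(i) = i^2;
end

% Unknown final size: preallocate an upper bound, then trim the unused tail.
c = zeros(1, n);
k = 0;
for i = 1:n
    if mod(i, 3) == 0
        k = k + 1;
        c(k) = i;
    end
end
c = c(1:k);      % remove unused memory
```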

Memory (2): organize for-loop order

- It is faster to access contiguous memory addresses than separated ones.
- Multidimensional arrays are stored in memory along columns (column-major).
- It is best if the innermost loop runs over the left-most array index, the next loop over the second index, and so on.

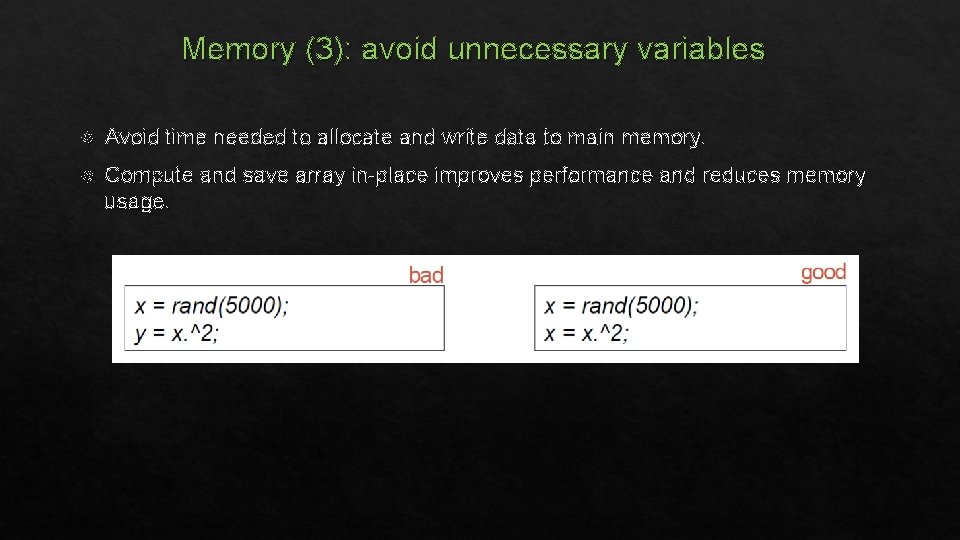
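
A small experiment along these lines, with an arbitrary matrix size, compares the two loop orders. Actual timings depend on the machine and MATLAB version, so none are quoted here.

```matlab
n = 2000;

% Slower order: the inner loop walks along a row, jumping n elements in memory each step.
A = zeros(n);
tic
for i = 1:n
    for j = 1:n
        A(i, j) = i + j;
    end
end
t_row = toc;

% Faster order: the inner loop runs over the left-most index i, walking down a column.
B = zeros(n);
tic
for j = 1:n
    for i = 1:n
        B(i, j) = i + j;
    end
end
t_col = toc;

fprintf('row-wise inner loop: %.3f s, column-wise inner loop: %.3f s\n', t_row, t_col);
```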

Memory (3): avoid unnecessary variables

- Avoid the time needed to allocate and write data to main memory.
- Computing and saving an array in place improves performance and reduces memory usage.

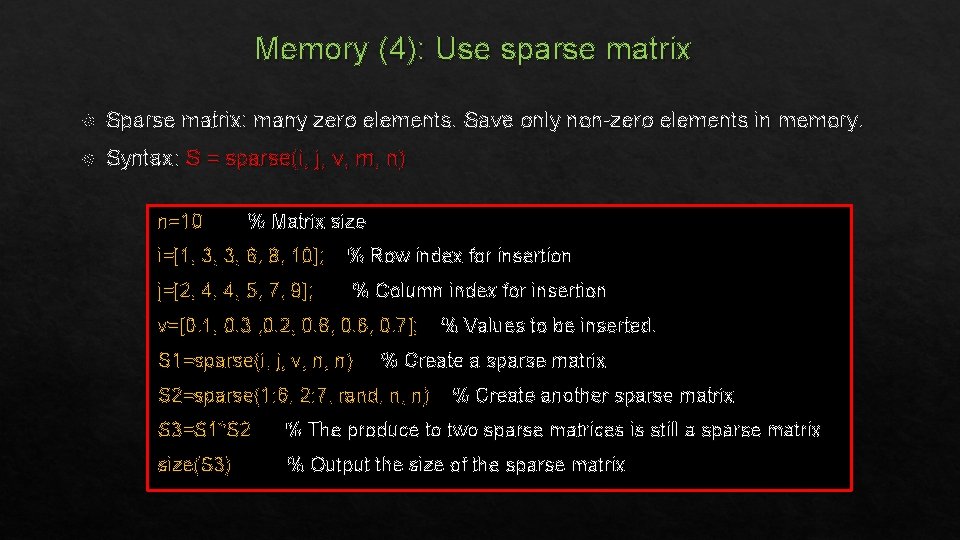

Memory (4): use sparse matrices

Sparse matrix: many zero elements. Save only the non-zero elements in memory.

Syntax: S = sparse(i, j, v, m, n)

    n = 10;                                % Matrix size
    i = [1, 3, 3, 6, 8, 10];               % Row index for insertion
    j = [2, 4, 4, 5, 7, 9];                % Column index for insertion
    v = [0.1, 0.3, 0.2, 0.8, 0.6, 0.7];    % Values to be inserted (repeated (i, j) pairs are summed)
    S1 = sparse(i, j, v, n, n)             % Create a sparse matrix
    S2 = sparse(1:6, 2:7, rand, n, n)      % Create another sparse matrix
    S3 = S1*S2                             % The product of two sparse matrices is still a sparse matrix
    size(S3)                               % Output the size of the sparse matrix

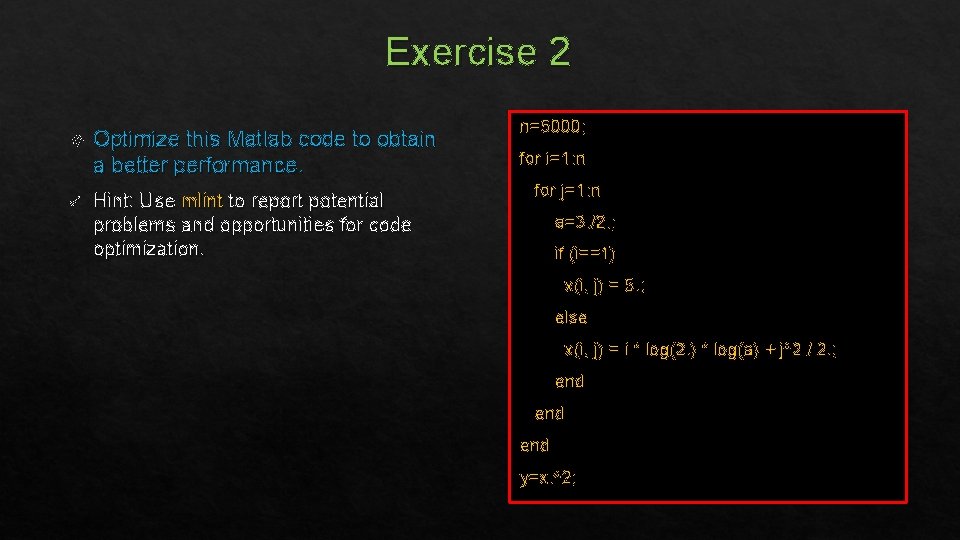

Exercise 2

Optimize this MATLAB code to obtain better performance.

Hint: use mlint to report potential problems and opportunities for code optimization.

    n = 5000;
    for i = 1:n
        for j = 1:n
            a = 3./2.;
            if (i == 1)
                x(i, j) = 5.;
            else
                x(i, j) = i * log(2.) * log(a) + j^2 / 2.;
            end
        end
    end
    y = x.^2;

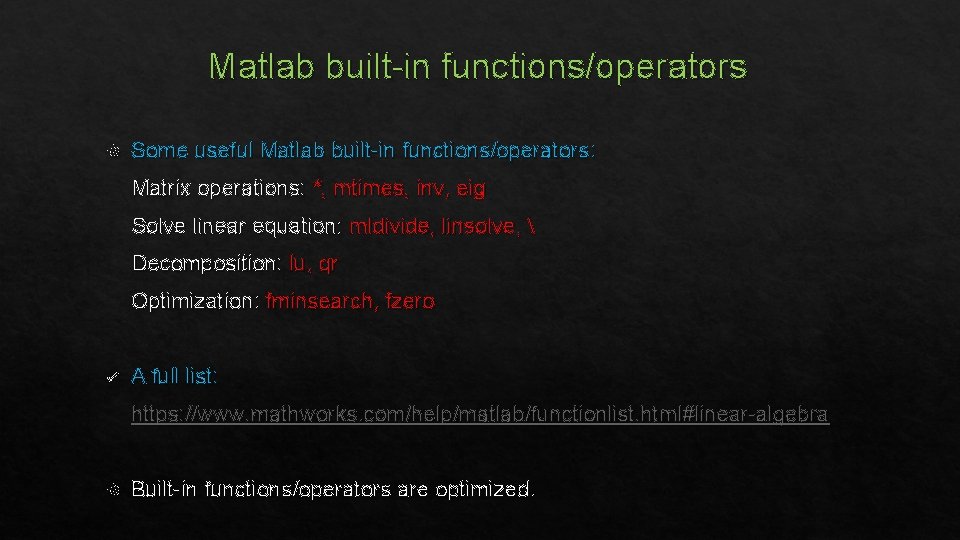

MATLAB built-in functions/operators

Some useful MATLAB built-in functions/operators:

- Matrix operations: *, mtimes, inv, eig
- Solve linear equations: mldivide, linsolve
- Decomposition: lu, qr
- Optimization: fminsearch, fzero

A full list: https://www.mathworks.com/help/matlab/functionlist.html#linear-algebra

Built-in functions/operators are optimized.

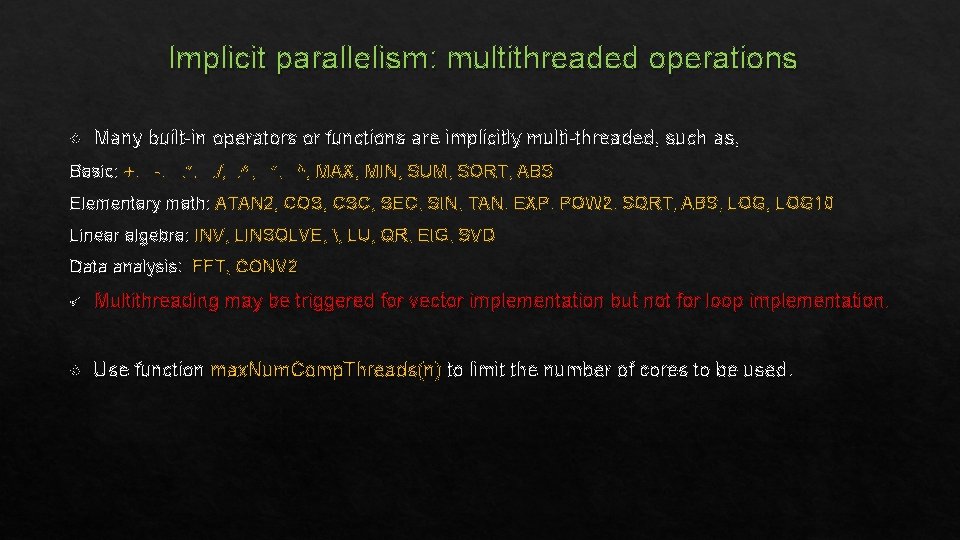

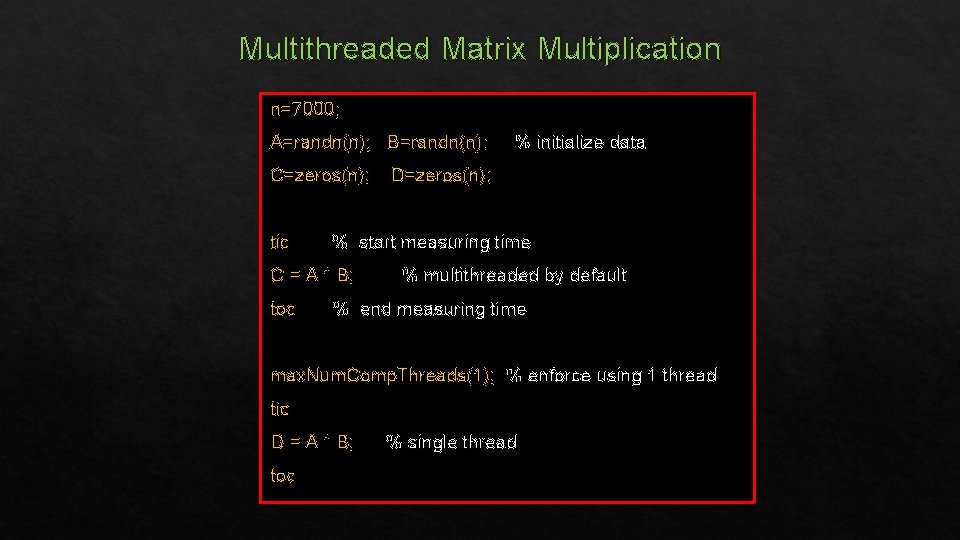
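
As one illustration of choosing the right built-in, a linear system A*x = b is usually better solved with mldivide (the backslash operator) than by forming the inverse explicitly. The test matrix below is an arbitrary, well-conditioned example chosen for this sketch:

```matlab
n = 500;
A = rand(n) + n * eye(n);    % diagonally dominant, so well conditioned
b = rand(n, 1);

x1 = A \ b;                  % mldivide: solves A*x = b without forming inv(A)
x2 = inv(A) * b;             % explicit inverse: typically slower and less accurate

fprintf('residual with backslash: %.3g\n', norm(A * x1 - b));
fprintf('residual with inv:       %.3g\n', norm(A * x2 - b));
```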

Implicit parallelism: multithreaded operations

Many built-in operators or functions are implicitly multithreaded, such as:

- Basic: +, -, .*, ./, .^, *, ^, MAX, MIN, SUM, SORT, ABS
- Elementary math: ATAN2, COS, CSC, SEC, SIN, TAN, EXP, POW2, SQRT, ABS, LOG10
- Linear algebra: INV, LINSOLVE, LU, QR, EIG, SVD
- Data analysis: FFT, CONV2

Multithreading may be triggered for a vector implementation but not for a loop implementation.

Use the function maxNumCompThreads(n) to limit the number of cores to be used.
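
To make the vector-versus-loop point concrete, here is a sketch that computes the same result both ways. The expression is an arbitrary example, and whether multithreading actually kicks in depends on the array size, the function, and the MATLAB version.

```matlab
n = 1e6;
x = rand(n, 1);

% Loop implementation: one scalar operation per iteration.
y_loop = zeros(n, 1);
tic
for i = 1:n
    y_loop(i) = sqrt(x(i)) + exp(x(i));
end
t_loop = toc;

% Vector implementation: whole-array calls that MATLAB may run on several threads.
tic
y_vec = sqrt(x) + exp(x);
t_vec = toc;

fprintf('loop: %.3f s, vectorized: %.3f s\n', t_loop, t_vec);
```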

Multithreaded Matrix Multiplication

    n = 7000;  A = randn(n);  B = randn(n);  C = zeros(n);  D = zeros(n);    % initialize data
    tic                        % start measuring time
    C = A * B;                 % multithreaded by default
    toc                        % end measuring time
    maxNumCompThreads(1);      % enforce using 1 thread
    tic
    D = A * B;                 % single thread
    toc

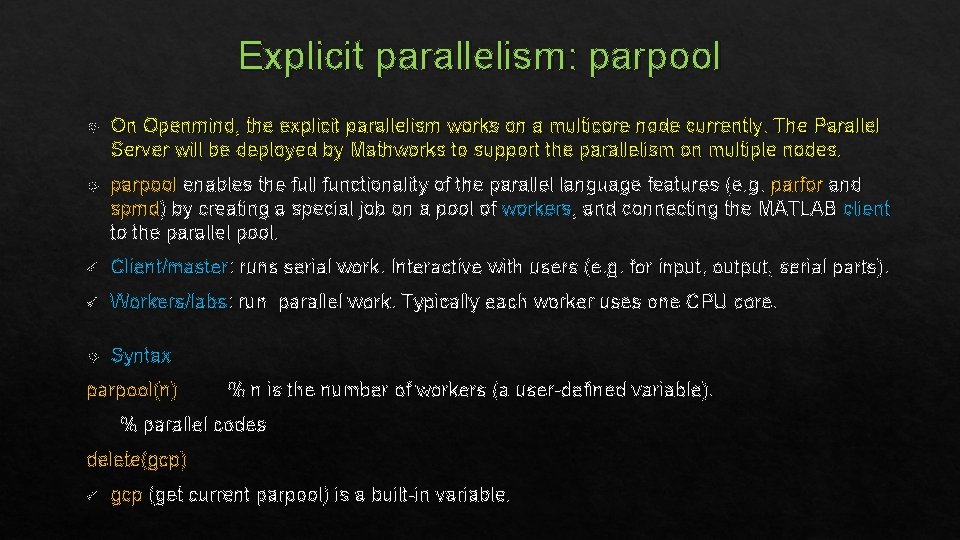

Explicit parallelism: parpool

- On Openmind, explicit parallelism currently works within a single multicore node. MathWorks will deploy the Parallel Server to support parallelism across multiple nodes.
- parpool enables the full functionality of the parallel language features (e.g. parfor and spmd) by creating a special job on a pool of workers and connecting the MATLAB client to the parallel pool.
- Client/master: runs serial work and interacts with users (e.g. for input, output, serial parts).
- Workers/labs: run parallel work. Typically each worker uses one CPU core.

Syntax:

    parpool(n)       % n is the number of workers (a user-defined variable).
    % parallel codes
    delete(gcp)

gcp (get current parallel pool) is a built-in function that returns the current pool.

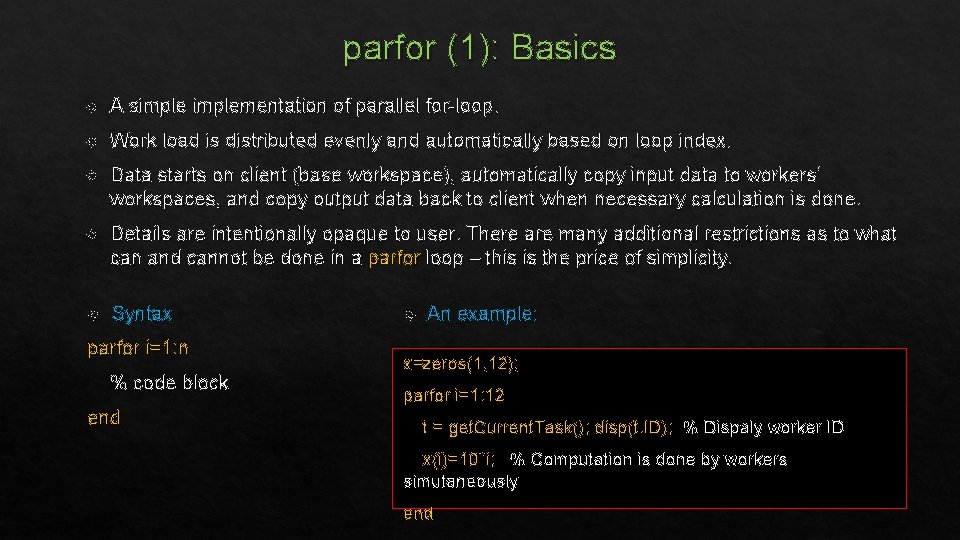

parfor (1): Basics

- A simple implementation of a parallel for-loop.
- The workload is distributed evenly and automatically based on the loop index.
- Data starts on the client (base workspace). Input data is automatically copied to the workers' workspaces, and output data is copied back to the client when the necessary calculation is done.
- Details are intentionally opaque to the user. There are many additional restrictions on what can and cannot be done in a parfor loop; this is the price of simplicity.

Syntax:

    parfor i = 1:n
        % code block
    end

An example:

    x = zeros(1, 12);
    parfor i = 1:12
        t = getCurrentTask();
        disp(t.ID);         % Display worker ID
        x(i) = 10*i;        % Computation is done by workers simultaneously
    end

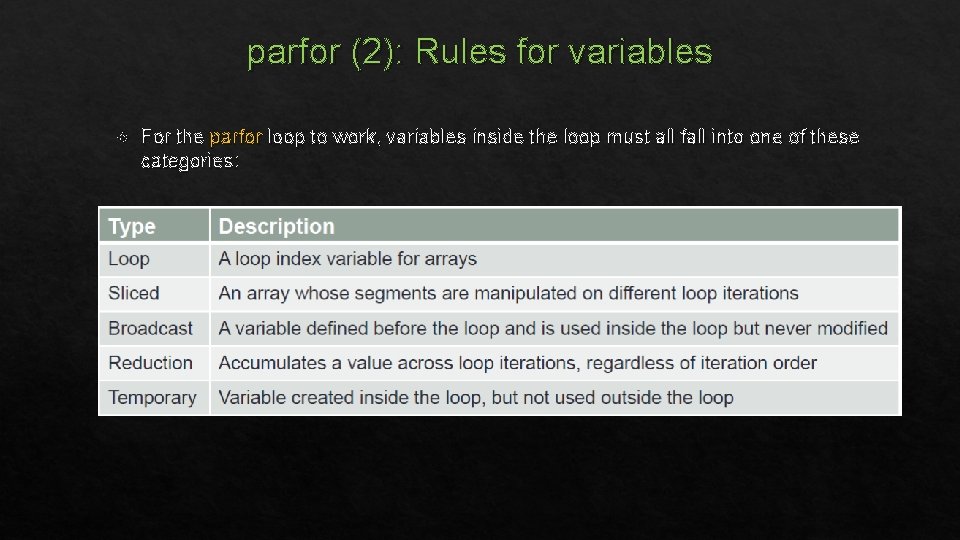

parfor (2): Rules for variables For the parfor loop to work, variables inside the loop must all fall into one of these categories:

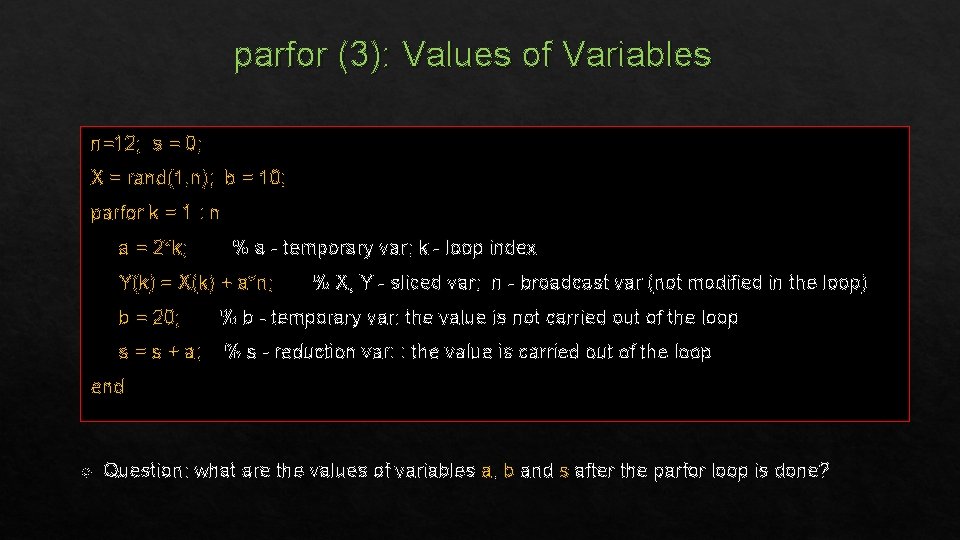

parfor (3): Values of Variables

    n = 12;  s = 0;  X = rand(1, n);  b = 10;
    parfor k = 1:n
        a = 2*k;              % a - temporary var; k - loop index
        Y(k) = X(k) + a*n;    % X, Y - sliced var; n - broadcast var (not modified in the loop)
        b = 20;               % b - temporary var: the value is not carried out of the loop
        s = s + a;            % s - reduction var: the value is carried out of the loop
    end

Question: what are the values of variables a, b and s after the parfor loop is done?

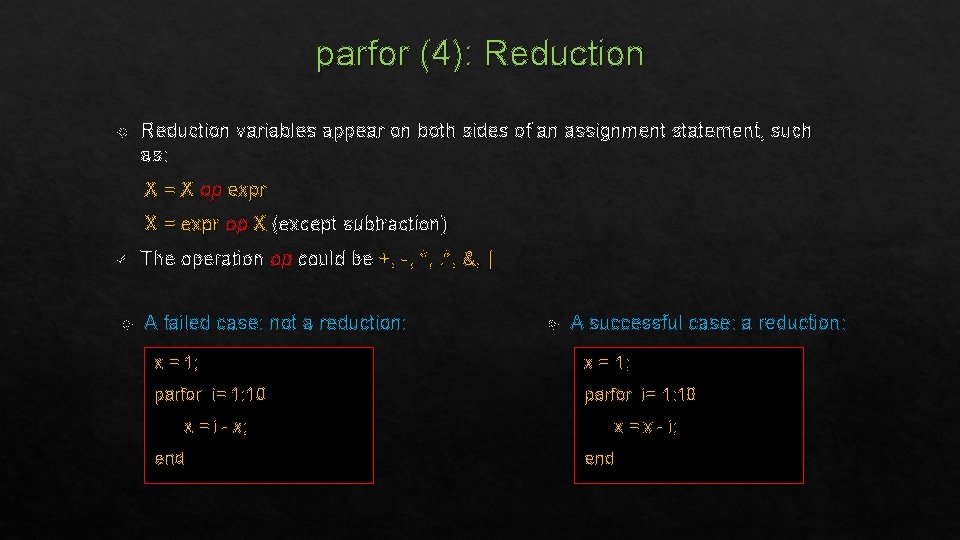

parfor (4): Reduction variables

Reduction variables appear on both sides of an assignment statement, such as:

- X = X op expr
- X = expr op X (except subtraction)

The operation op could be +, -, *, &, |

A failed case (not a reduction):

    x = 1;
    parfor i = 1:10
        x = i - x;
    end

A successful case (a reduction):

    x = 1;
    parfor i = 1:10
        x = x - i;
    end

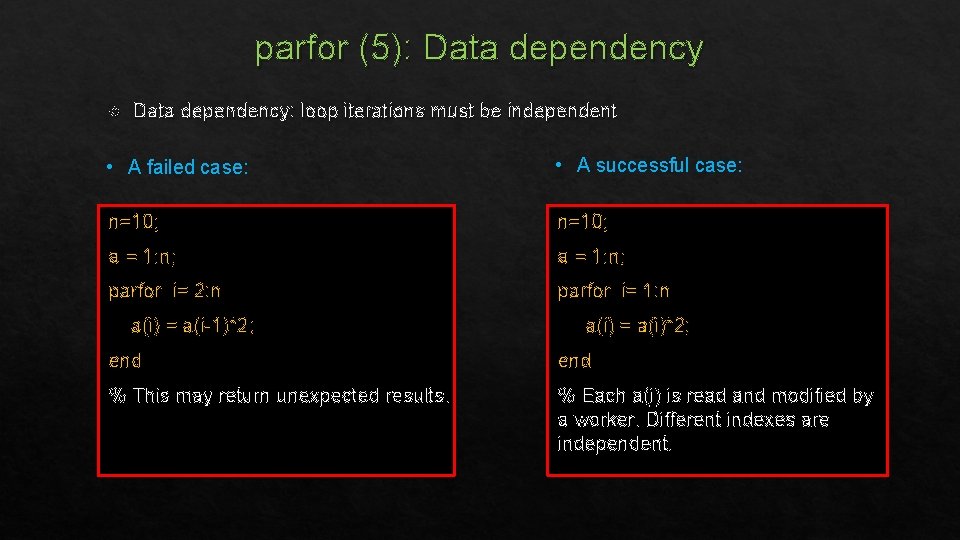

parfor (5): Data dependency: loop iterations must be independent

A failed case:

    n = 10;  a = 1:n;
    parfor i = 2:n
        a(i) = a(i-1)*2;
    end
    % This may return unexpected results.

A successful case:

    n = 10;  a = 1:n;
    parfor i = 1:n
        a(i) = a(i)*2;
    end
    % Each a(i) is read and modified by a worker. Different indexes are independent.

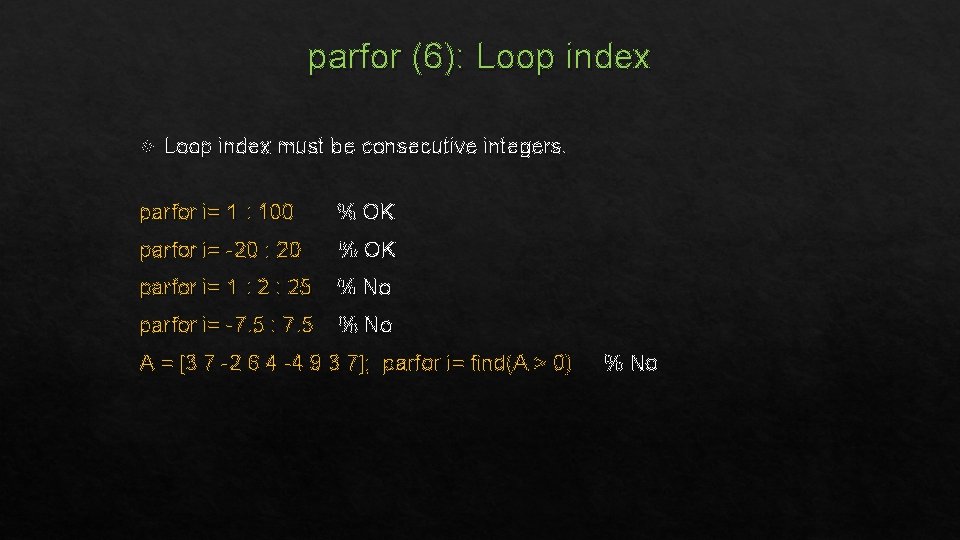

parfor (6): Loop index must be consecutive integers.

    parfor i = 1 : 100        % OK
    parfor i = -20 : 20       % OK
    parfor i = 1 : 2 : 25     % No
    parfor i = -7.5 : 7.5     % No
    A = [3 7 -2 6 4 -4 9 3 7];
    parfor i = find(A > 0)    % No

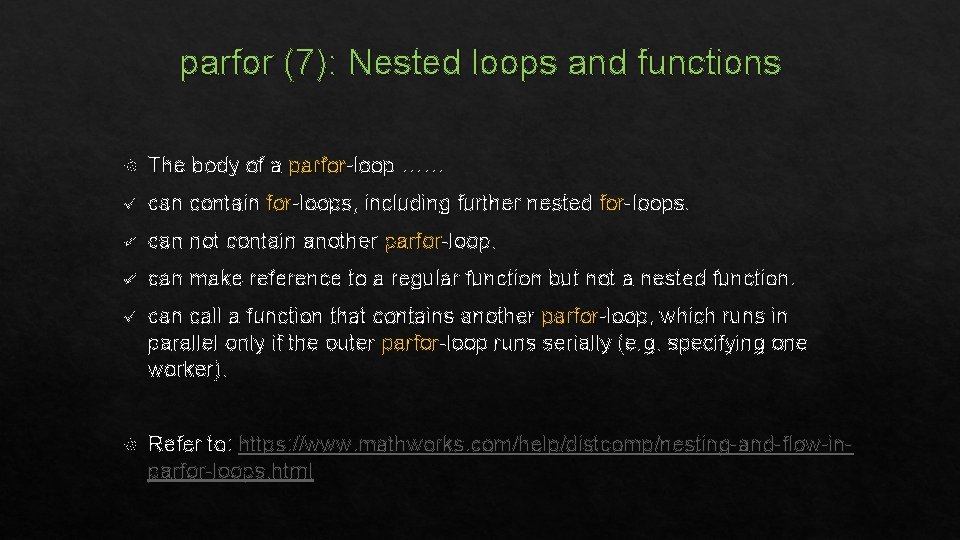
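
When the values to iterate over are not consecutive integers, a common workaround is to loop over positions 1..N in a lookup vector instead. A minimal sketch reusing the array A from the slide; the names idx and out, and the sqrt computation, are illustrative:

```matlab
A = [3 7 -2 6 4 -4 9 3 7];
idx = find(A > 0);              % non-consecutive positions: 1 2 4 5 7 8 9
out = zeros(1, numel(idx));

% Loop over consecutive integers 1..numel(idx) and look up the real index inside.
parfor k = 1:numel(idx)
    j = idx(k);                 % idx is a broadcast variable; j is temporary
    out(k) = sqrt(A(j));        % out is sliced by the loop variable k
end
```

The same pattern works for a stepped range such as 1:2:25: store it in a vector first, then index into it with a consecutive loop variable.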

parfor (7): Nested loops and functions

The body of a parfor-loop ...

- can contain for-loops, including further nested for-loops.
- cannot contain another parfor-loop.
- can make reference to a regular function but not a nested function.
- can call a function that contains another parfor-loop, which runs in parallel only if the outer parfor-loop runs serially (e.g. specifying one worker).

Refer to: https://www.mathworks.com/help/distcomp/nesting-and-flow-in-parfor-loops.html

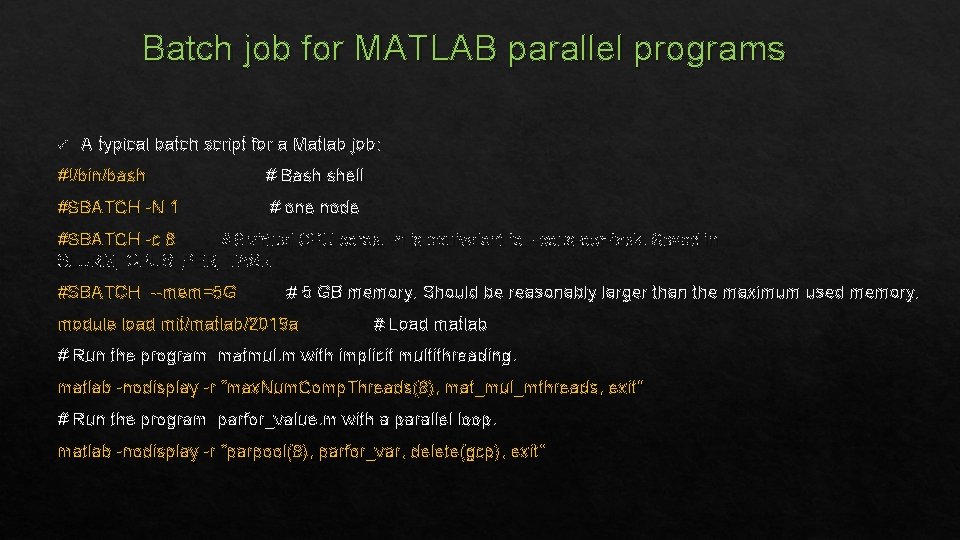

Batch job for MATLAB parallel programs

A typical batch script for a MATLAB job:

    #!/bin/bash
    # Bash shell
    #SBATCH -N 1          # one node
    #SBATCH -c 8          # 8 virtual CPU cores. -c is equivalent to --cpus-per-task. Saved in SLURM_CPUS_PER_TASK
    #SBATCH --mem=5G      # 5 GB memory. Should be reasonably larger than the maximum used memory.
    module load mit/matlab/2019a    # Load MATLAB
    # Run the program mat_mul_mthreads.m with implicit multithreading.
    matlab -nodisplay -r "maxNumCompThreads(8), mat_mul_mthreads, exit"
    # Run the program parfor_var.m with a parallel loop.
    matlab -nodisplay -r "parpool(8), parfor_var, delete(gcp), exit"

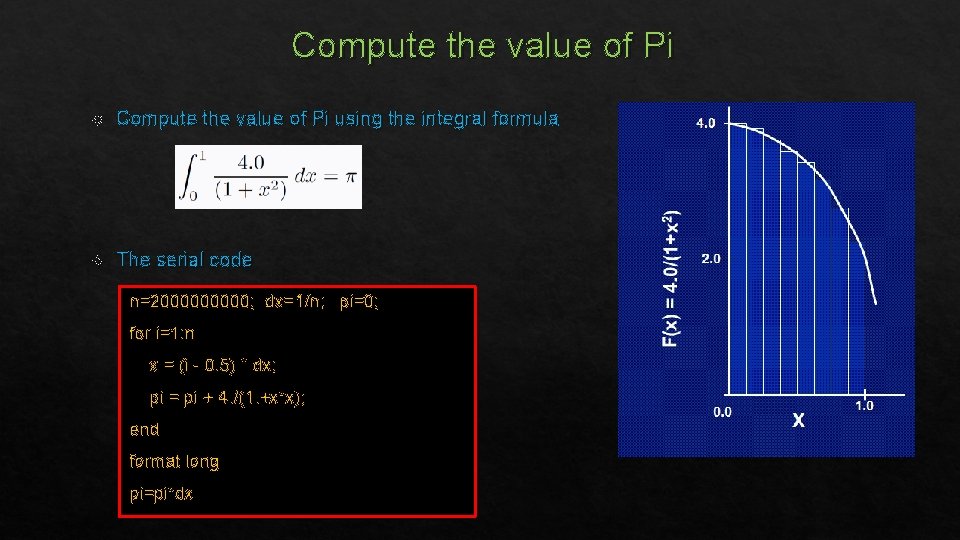
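
Rather than hard-coding the worker count in two places, the MATLAB program can read it from SLURM_CPUS_PER_TASK. This is a sketch under stated assumptions: the fallback value of 2 is arbitrary, and it presumes the Parallel Computing Toolbox is available and the cluster profile allows that many workers.

```matlab
% Read the core count that Slurm granted; getenv returns '' outside a Slurm job.
n_str = getenv('SLURM_CPUS_PER_TASK');
if isempty(n_str)
    n_workers = 2;                    % assumed fallback for interactive testing
else
    n_workers = str2double(n_str);    % convert text to a number
end

pool = parpool(n_workers);            % start a pool that matches the allocation

% ... parallel code (parfor, spmd) goes here ...

delete(pool);                         % shut the pool down when done
```

With this in place, changing `#SBATCH -c` in the batch script is enough to change the pool size.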

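Compute the value of Pi using the integral formula

The integral of 4/(1+x^2) from x = 0 to x = 1 equals Pi. The code approximates it with the midpoint rule on n intervals of width dx.

The serial code:

    n = 200000;
    dx = 1/n;
    pi = 0;
    for i = 1:n
        x = (i - 0.5) * dx;
        pi = pi + 4./(1.+x*x);
    end
    format long
    pi = pi*dx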

Exercise 3

Compute the value of Pi using parfor.

i) Parallelize the code using parfor. Check whether all variables in the parfor region fall into one of the valid categories.
ii) Compare the performance of the serial and the parallel codes.

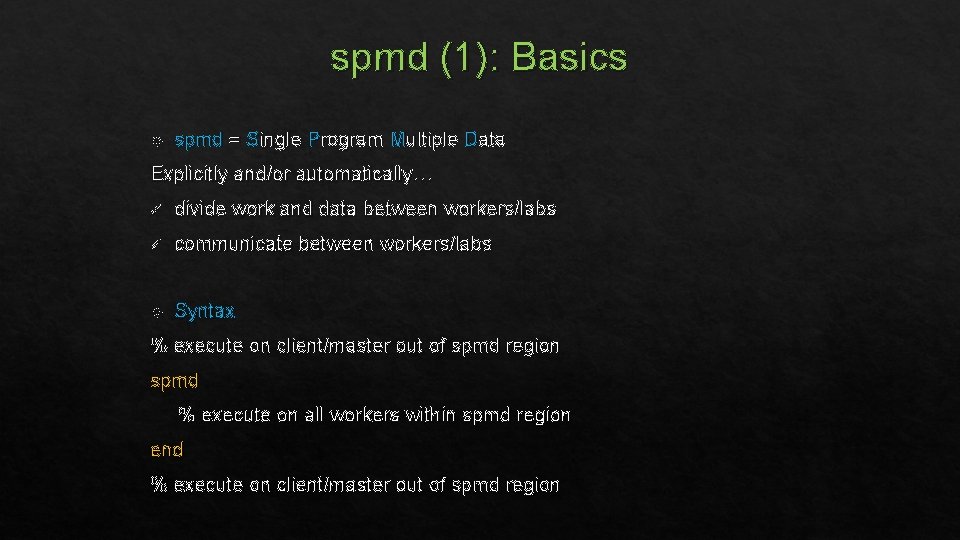

spmd (1): Basics

spmd = Single Program Multiple Data

Explicitly and/or automatically:

- divide work and data between workers/labs
- communicate between workers/labs

Syntax:

    % execute on client/master outside the spmd region
    spmd
        % execute on all workers within the spmd region
    end
    % execute on client/master outside the spmd region

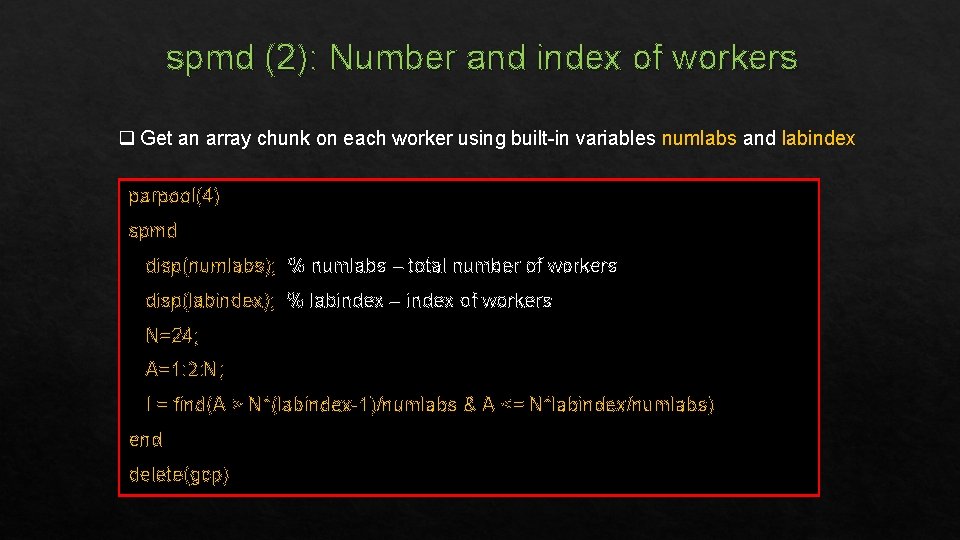

spmd (2): Number and index of workers

Get an array chunk on each worker using the built-in variables numlabs and labindex:

    parpool(4)
    spmd
        disp(numlabs);      % numlabs - total number of workers
        disp(labindex);     % labindex - index of this worker
        N = 24;  A = 1:2:N;
        I = find(A > N*(labindex-1)/numlabs & A <= N*labindex/numlabs)
    end
    delete(gcp)

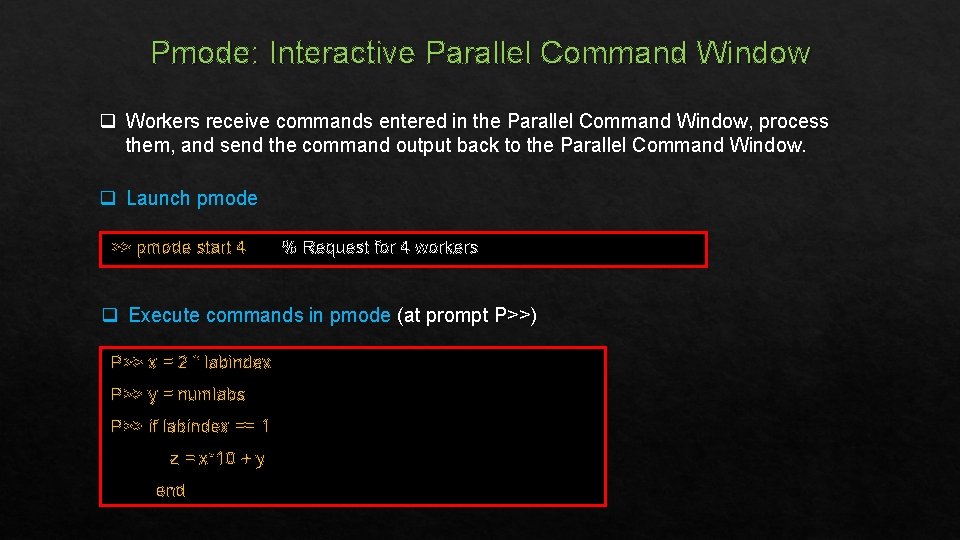

Pmode: Interactive Parallel Command Window

Workers receive commands entered in the Parallel Command Window, process them, and send the command output back to the Parallel Command Window.

Launch pmode:

    >> pmode start 4     % Request 4 workers

Execute commands in pmode (at the prompt P>>):

    P>> x = 2 * labindex
    P>> y = numlabs
    P>> if labindex == 1
            z = x*10 + y
        end

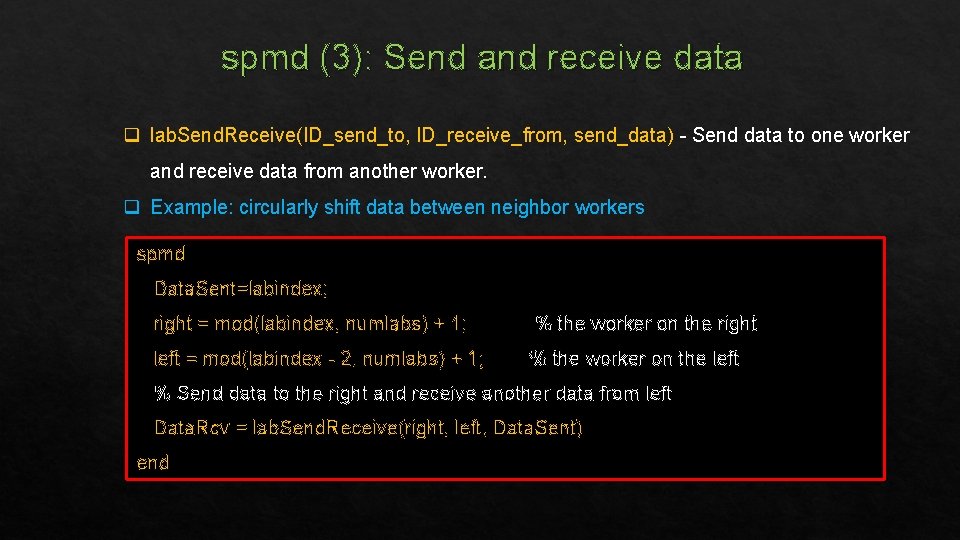

spmd (3): Send and receive data

labSendReceive(ID_send_to, ID_receive_from, send_data): send data to one worker and receive data from another worker.

Example: circularly shift data between neighbor workers.

    spmd
        DataSent = labindex;
        right = mod(labindex, numlabs) + 1;        % the worker on the right
        left  = mod(labindex - 2, numlabs) + 1;    % the worker on the left
        % Send data to the right and receive other data from the left
        DataRcv = labSendReceive(right, left, DataSent)
    end

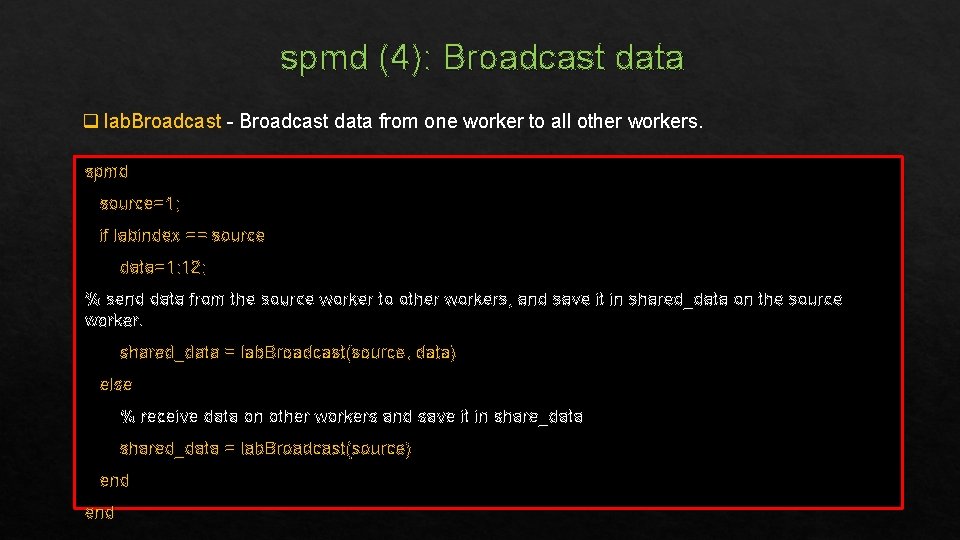

spmd (4): Broadcast data

labBroadcast: broadcast data from one worker to all other workers.

    spmd
        source = 1;
        if labindex == source
            data = 1:12;
            % send data from the source worker to the other workers, and save it in shared_data on the source worker
            shared_data = labBroadcast(source, data)
        else
            % receive data on the other workers and save it in shared_data
            shared_data = labBroadcast(source)
        end
    end

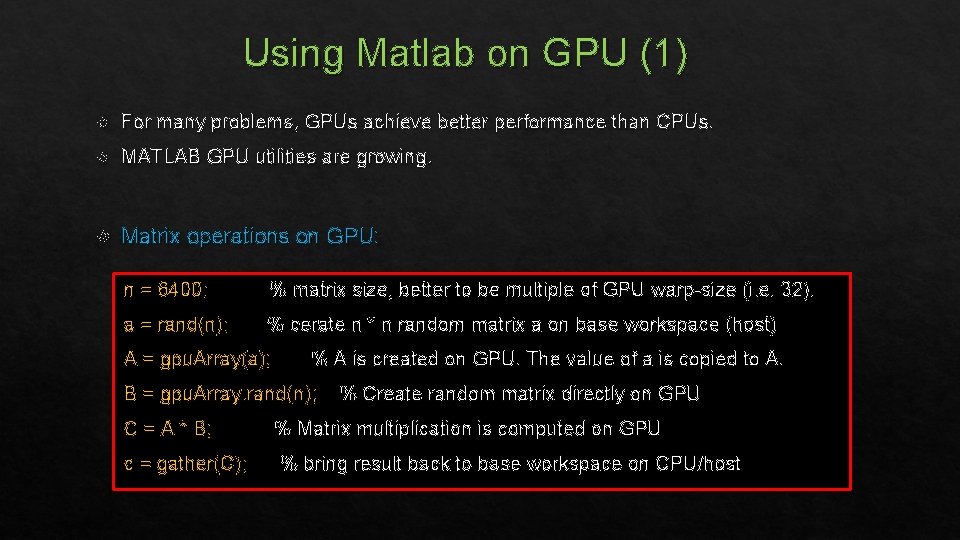

Using MATLAB on GPU (1)

For many problems, GPUs achieve better performance than CPUs. MATLAB GPU utilities are growing.

Matrix operations on GPU:

    n = 6400;                % matrix size, better to be a multiple of the GPU warp size (i.e. 32)
    a = rand(n);             % create an n-by-n random matrix a in the base workspace (host)
    A = gpuArray(a);         % A is created on the GPU. The value of a is copied to A.
    B = gpuArray.rand(n);    % create a random matrix directly on the GPU
    C = A * B;               % matrix multiplication is computed on the GPU
    c = gather(C);           % bring the result back to the base workspace on CPU/host

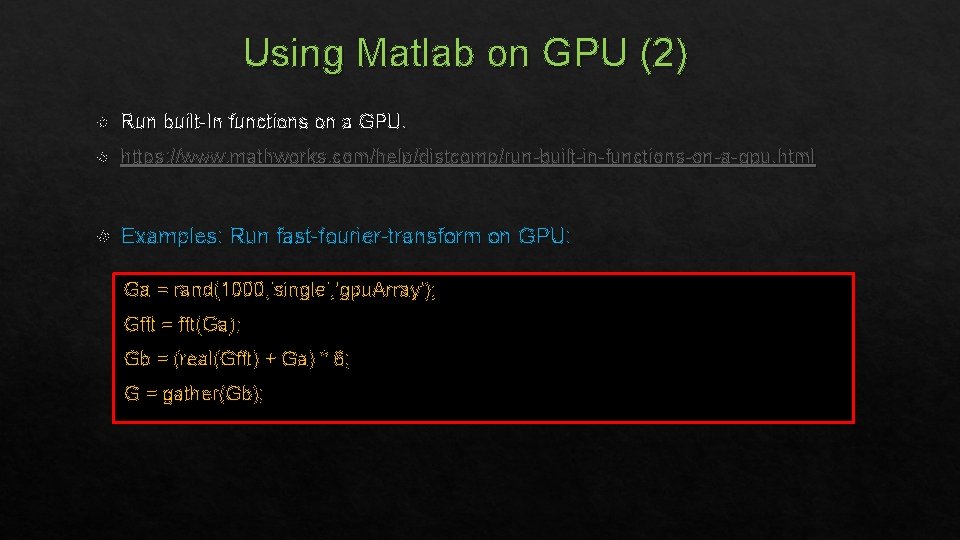

Using MATLAB on GPU (2)

Run built-in functions on a GPU: https://www.mathworks.com/help/distcomp/run-built-in-functions-on-a-gpu.html

Example: run a fast Fourier transform on the GPU.

    Ga = rand(1000, 'single', 'gpuArray');
    Gfft = fft(Ga);
    Gb = (real(Gfft) + Ga) * 6;
    G = gather(Gb);

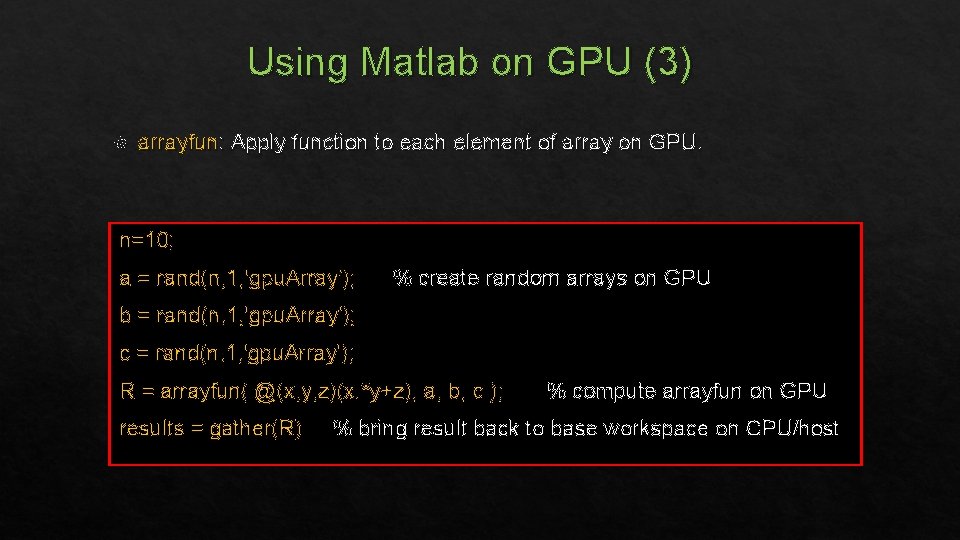

Using MATLAB on GPU (3)

arrayfun: apply a function to each element of an array on the GPU.

    n = 10;
    a = rand(n, 1, 'gpuArray');                    % create random arrays on the GPU
    b = rand(n, 1, 'gpuArray');
    c = rand(n, 1, 'gpuArray');
    R = arrayfun(@(x, y, z)(x.*y+z), a, b, c);     % compute arrayfun on the GPU
    results = gather(R)                            % bring the result back to the base workspace on CPU/host

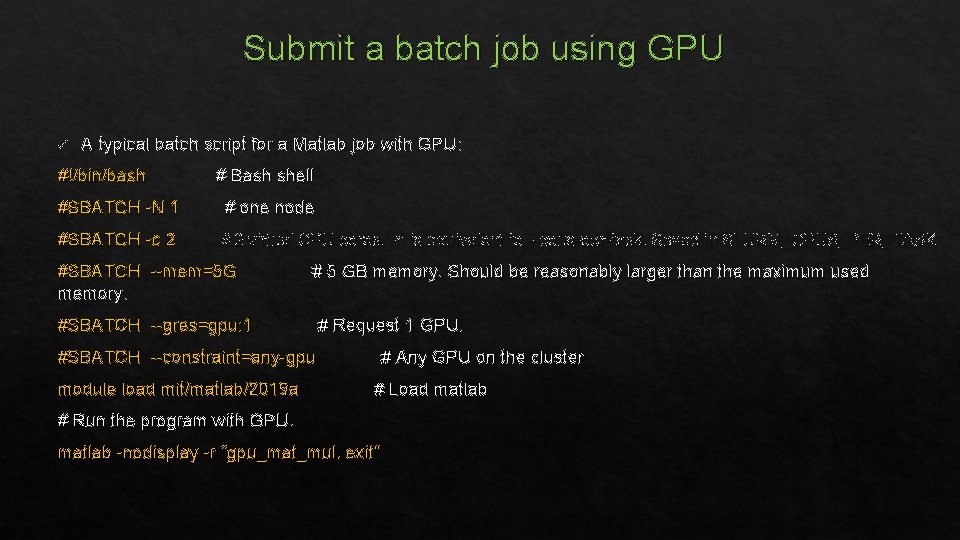

Submit a batch job using GPU

A typical batch script for a MATLAB job with GPU:

    #!/bin/bash
    # Bash shell
    #SBATCH -N 1                      # one node
    #SBATCH -c 2                      # 2 virtual CPU cores. -c is equivalent to --cpus-per-task. Saved in SLURM_CPUS_PER_TASK
    #SBATCH --mem=5G                  # 5 GB memory. Should be reasonably larger than the maximum used memory.
    #SBATCH --gres=gpu:1              # Request 1 GPU.
    #SBATCH --constraint=any-gpu      # Any GPU on the cluster
    module load mit/matlab/2019a      # Load MATLAB
    # Run the program with GPU.
    matlab -nodisplay -r "gpu_mat_mul, exit"

Further Information

MathWorks web sites:

- MATLAB Parallel Computing Toolbox documentation: http://www.mathworks.com/help/distcomp/index.html
- MATLAB documentation: http://www.mathworks.com/help/matlab/

A book: Accelerating MATLAB Performance: 1001 tips to speed up MATLAB programs, by Yair M. Altman

Further help:

- Submit an issue at https://github.mit.edu/MGHPCC/openmind/issues
- Email: shaohao@mit.edu
- Slack channel for OM users: openmind-46.slack.com
