
Machine Translation (II): Word-based SMT Ling 571 Fei Xia Week 10: 12/1/05 -12/6/05

Outline • General concepts – Source channel model – Notations – Word alignment • Model 1-2 • Model 3-4 • Model 5

IBM Model Basics • Classic paper: Brown et al. (1993) • Translation: F → E (or Fr → Eng) • Resource required: – Parallel data (a set of “sentence” pairs) • Main concepts: – Source channel model – Hidden word alignment – EM training

Intuition • Sentence pairs: word mapping is one-to-one. – (1) S: a b c d e T: l m n o p – (2) S: c a e T: p n m – (3) S: d a c T: n p l • What can be inferred: (b, o), (d, l), (e, m), and either (a, p), (c, n) or (a, n), (c, p)
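The inference on this slide can be checked mechanically. The following is a minimal sketch, assuming a strictly one-to-one mapping: a target word is a candidate for a source word only if it occurs in every pair containing that source word and in no pair lacking it. The names `PAIRS` and `candidates` are illustrative and not from the slides.

```python
# Toy sentence pairs from the slide (source words, target words).
PAIRS = [
    ("a b c d e".split(), "l m n o p".split()),
    ("c a e".split(), "p n m".split()),
    ("d a c".split(), "n p l".split()),
]

def candidates(pairs):
    """Return, for each source word, the target words consistent with a one-to-one mapping."""
    vocab = sorted({s for src, _ in pairs for s in src})
    result = {}
    for s in vocab:
        possible = None   # targets seen in every pair that contains s
        excluded = set()  # targets seen in some pair that lacks s
        for src, tgt in pairs:
            if s in src:
                possible = set(tgt) if possible is None else possible & set(tgt)
            else:
                excluded |= set(tgt)
        result[s] = possible - excluded
    return result

for word, targets in candidates(PAIRS).items():
    print(word, sorted(targets))
```

The words b, d, and e are resolved uniquely, while a and c remain ambiguous between n and p, which matches the two alternatives listed on the slide.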

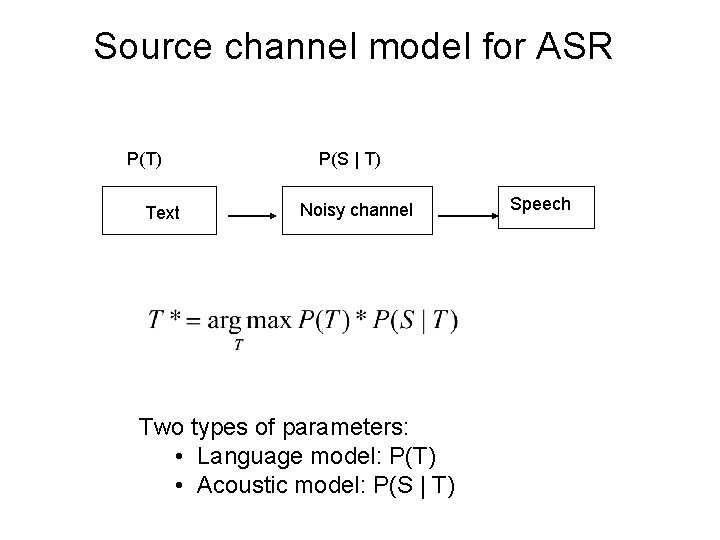

Source channel model • Task: S → T • Source channel (a.k.a. noisy channel, noisy source channel): uses Bayes' rule. • Two types of parameters: – P(T): language model – P(S | T): its meaning varies by task (e.g., acoustic model for ASR, translation model for MT).
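Written out, the Bayes' rule step behind the source channel model is the standard one below. The symbol $T^*$ for the best output is notation introduced here for illustration.

$$
T^* = \arg\max_T P(T \mid S) = \arg\max_T \frac{P(T)\, P(S \mid T)}{P(S)} = \arg\max_T P(T)\, P(S \mid T)
$$

The denominator $P(S)$ can be dropped because it does not depend on $T$.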

Source channel model for ASR • Diagram: P(T) → Text → [Noisy channel: P(S | T)] → Speech • Two types of parameters: – Language model: P(T) – Acoustic model: P(S | T)

Source Channel for ASR • People think in text. • A sentence can be characterized by a plausibility filter P(T). • Sentences are “corrupted” into speech by an acoustic model P(S | T). • Our goal is to find the original text. To achieve this goal, we efficiently evaluate P(T) * P(S | T) over many candidate sentences.

Source channel model for MT • Diagram: P(T) → Tgt sent → [Noisy channel: P(S | T)] → Src sent • Two types of parameters: – Language model: P(T) – Translation model: P(S | T)

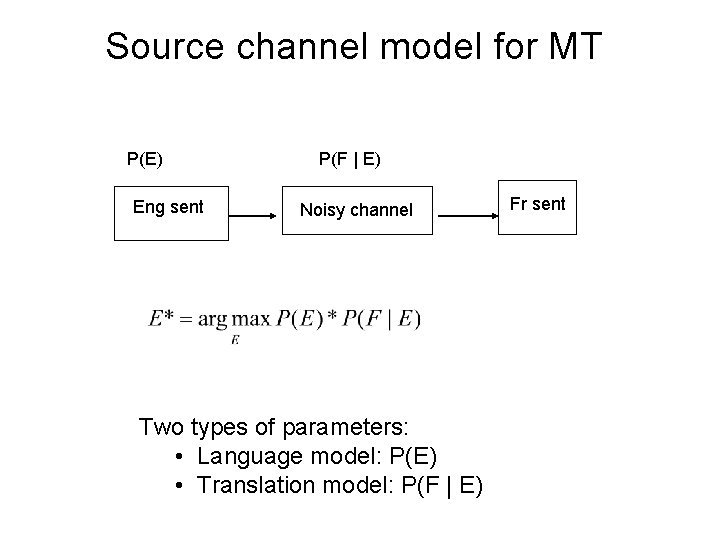

Source channel model for MT • Diagram: P(E) → Eng sent → [Noisy channel: P(F | E)] → Fr sent • Two types of parameters: – Language model: P(E) – Translation model: P(F | E)

Source channel for MT • People think in English. • English thoughts can be characterized by a plausibility filter P(E). • Sentences are “corrupted” into a different “language” by a translation model P(F | E). • Our goal is to find the original, uncorrupted English sentence e. To achieve this goal, we efficiently evaluate P(E) * P(F | E) over many candidate Eng sentences.

Source channel vs. direct model • Source channel: demand plausible Eng and strong correlation between e and f. • Direct model: demand strong correlation between e and f. • Question: Formally, they are the same. In practice, they are not due to different approximations.

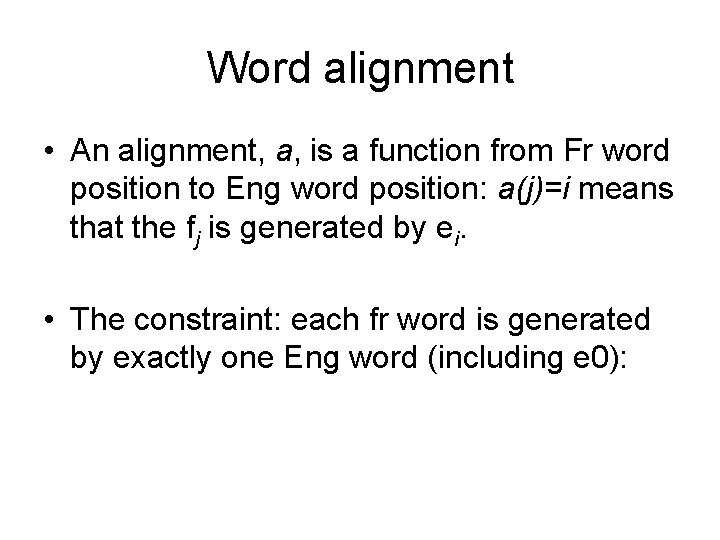

Word alignment • a(j) = i, also written aj = i • a = (a1, …, am) • Ex: – F: f1 f2 f3 f4 f5 – E: e1 e2 e3 e4 – a4 = 3 – a = (0, 1, 1, 3, 2)

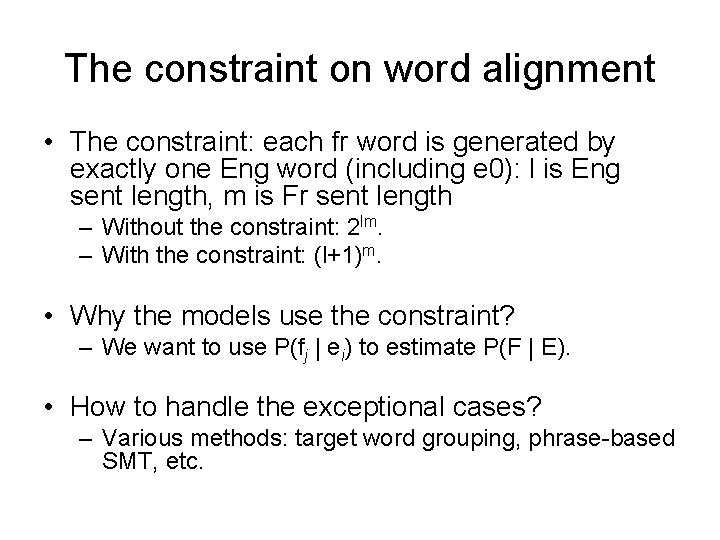

The constraint on word alignment • The constraint: each Fr word is generated by exactly one Eng word (including e0), where l is the Eng sent length and m is the Fr sent length. • Number of possible alignments: – Without the constraint: 2^(lm). – With the constraint: (l+1)^m. • Why do the models use the constraint? – We want to use P(fj | ei) to estimate P(F | E). • How to handle the exceptional cases? – Various methods: target word grouping, phrase-based SMT, etc.
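To get a feel for the two counts, here is a small sketch that enumerates the constrained alignments by brute force and compares them with the unconstrained count. The function names are illustrative, and l = 4, m = 5 is chosen to match the sizes in the earlier word alignment example.

```python
from itertools import product

def count_constrained(l: int, m: int) -> int:
    """Each of the m Fr positions picks exactly one Eng position in 0..l (0 = NULL)."""
    return sum(1 for _ in product(range(l + 1), repeat=m))

def count_unconstrained(l: int, m: int) -> int:
    """Each of the l*m (Eng, Fr) position pairs is independently linked or not."""
    return 2 ** (l * m)

l, m = 4, 5
print(count_constrained(l, m))    # equals (l+1)**m
print(count_unconstrained(l, m))  # equals 2**(l*m)
```

Even for this short sentence pair the constraint shrinks the space from about a million alignments to a few thousand.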

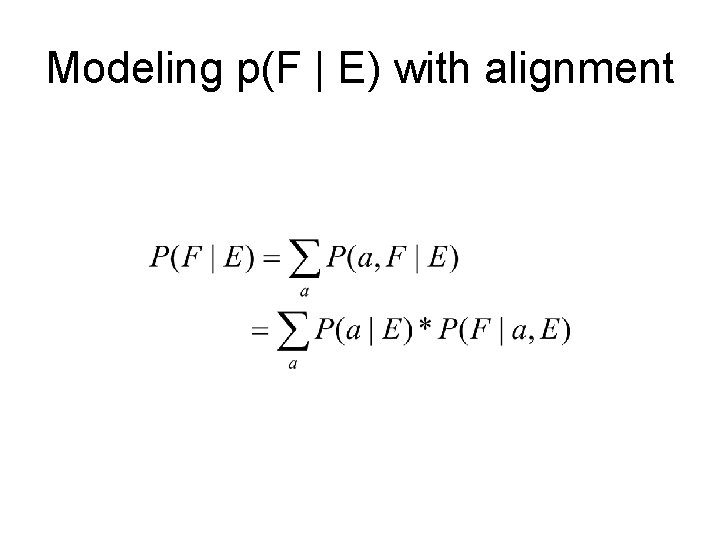

Modeling p(F | E) with alignment

Notation • E: the Eng sentence: E = e1 … el • ei: the i-th Eng word • F: the Fr sentence: F = f1 … fm • fj: the j-th Fr word • e0: the Eng NULL word • f0: the Fr NULL word • aj: the position of the Eng word that generates fj

Word alignment • An alignment, a, is a function from Fr word position to Eng word position: a(j) = i means that fj is generated by ei. • The constraint: each Fr word is generated by exactly one Eng word (including e0).

Notation (cont) • l: Eng sent length • m: Fr sent length • i: Eng word position • j: Fr word position • e: an Eng word • f: a Fr word

Outline • General concepts – Source channel model – Word alignment – Notations • Model 1-2 • Model 3-4

Model 1 and 2

Model 1 and 2 • Modeling – Generative process – Decomposition – Formula and types of parameters • Training • Finding the best alignment • Decoding

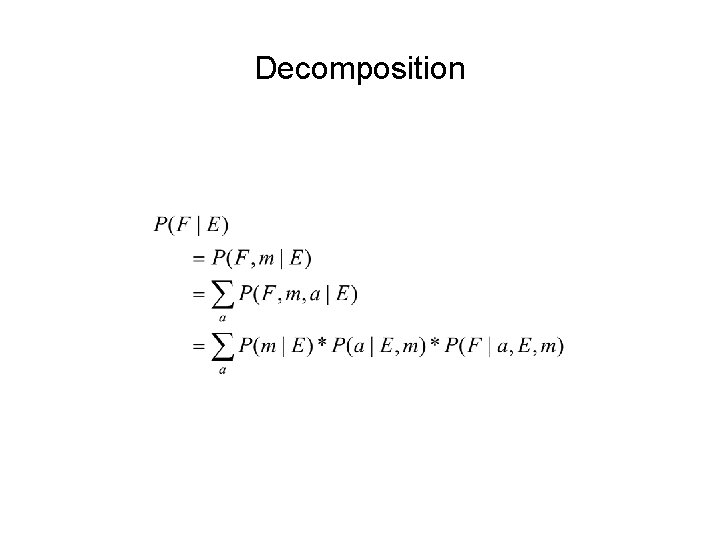

Generative process • To generate F from E: – Pick a length m for F, with prob P(m | l). – Choose an alignment a, with prob P(a | E, m). – Generate the Fr sent given the Eng sent and the alignment, with prob P(F | E, a, m). • Another way to look at it: – Pick a length m for F, with prob P(m | l). – For j = 1 to m • Pick an Eng word index aj, with prob P(aj | j, m, l). • Pick a Fr word fj according to the Eng word ei, where aj = i, with prob P(fj | ei).
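The second view of the generative story can be sketched as a sampler. This is a minimal illustration, assuming the Model 1 choice of a uniform P(aj | j, m, l) = 1/(l+1). The tables `LENGTH_PROB` and `T` hold made-up toy values, and `generate` is a hypothetical helper name.

```python
import random

# Toy parameters (made up for illustration only).
LENGTH_PROB = {2: {2: 0.7, 3: 0.3}}  # P(m | l), keyed by l
T = {                                # t(f | e), keyed by e
    "NULL": {"x": 0.5, "y": 0.5},
    "b": {"x": 0.1, "y": 0.9},
    "c": {"x": 0.8, "y": 0.2},
}

def generate(eng, length_prob, t, rng):
    """Sample a Fr sentence and its alignment from an Eng sentence."""
    e = ["NULL"] + eng                 # e[0] is the NULL word
    l = len(eng)
    lengths, probs = zip(*length_prob[l].items())
    m = rng.choices(lengths, weights=probs)[0]   # pick m with prob P(m | l)
    fre, alignment = [], []
    for _ in range(m):
        i = rng.randrange(l + 1)                 # pick a_j uniformly (Model 1)
        words, wprobs = zip(*t[e[i]].items())
        fre.append(rng.choices(words, weights=wprobs)[0])  # pick f_j with prob t(f_j | e_i)
        alignment.append(i)
    return fre, alignment

print(generate(["b", "c"], LENGTH_PROB, T, random.Random(0)))
```

For Model 2, the uniform draw of `i` would be replaced by a draw from a position-dependent distribution.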

Decomposition

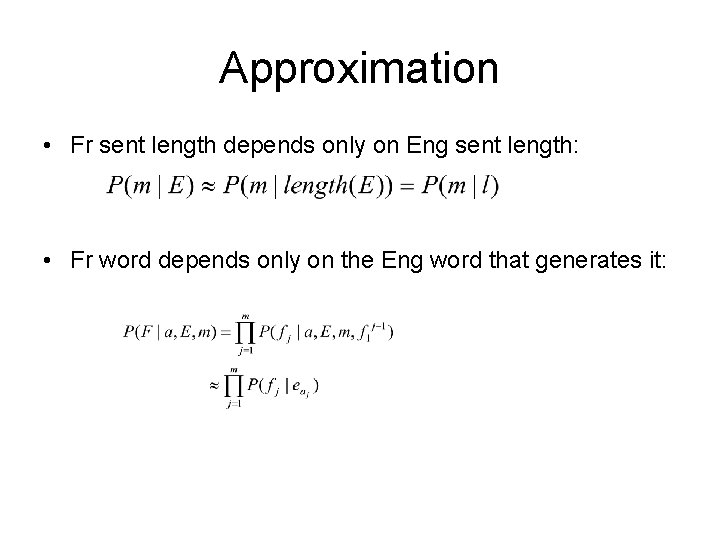

Approximation • Fr sent length depends only on Eng sent length: • Fr word depends only on the Eng word that generates it:

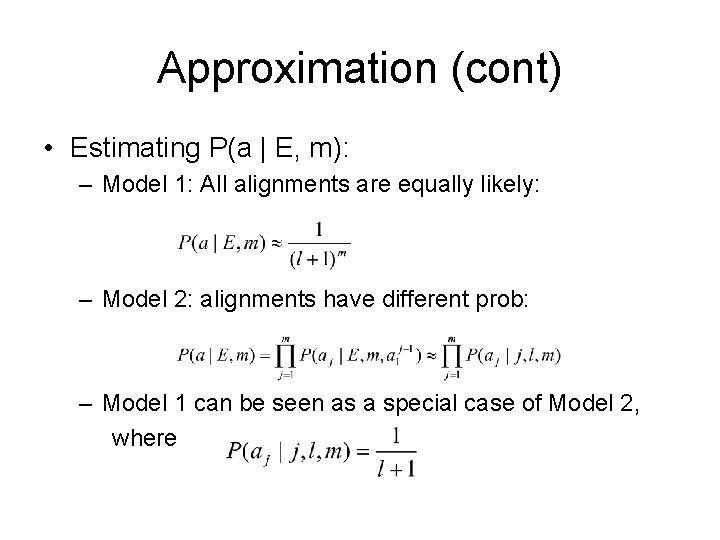

Approximation (cont) • Estimating P(a | E, m): – Model 1: All alignments are equally likely: – Model 2: alignments have different prob: – Model 1 can be seen as a special case of Model 2, where
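The formulas for these approximations appear only as images on the slides. A reconstruction that is consistent with the parameter lists given later for Model 1 and Model 2 would read as follows.

$$
P(m \mid E) \approx P(m \mid l), \qquad P(F \mid E, a, m) \approx \prod_{j=1}^{m} t(f_j \mid e_{a_j})
$$

$$
\text{Model 1: } P(a \mid E, m) = \frac{1}{(l+1)^m}, \qquad \text{Model 2: } P(a \mid E, m) = \prod_{j=1}^{m} d(a_j \mid j, m, l)
$$

Model 1 is then the special case of Model 2 in which $d(i \mid j, m, l) = \frac{1}{l+1}$ for every $i$.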

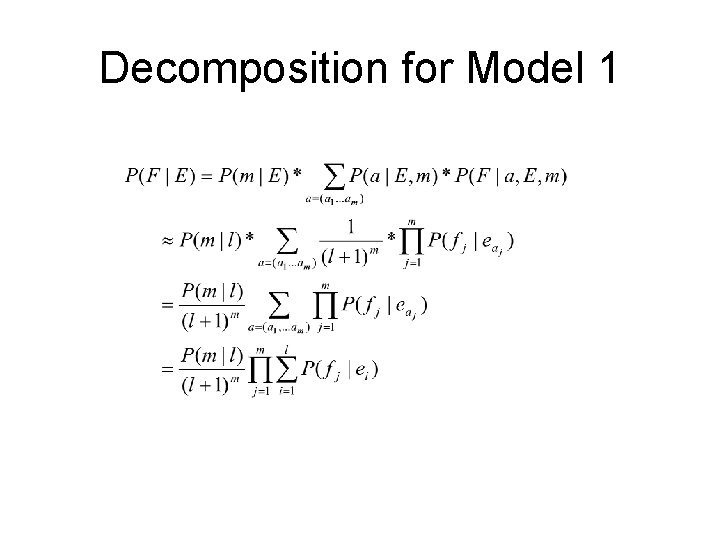

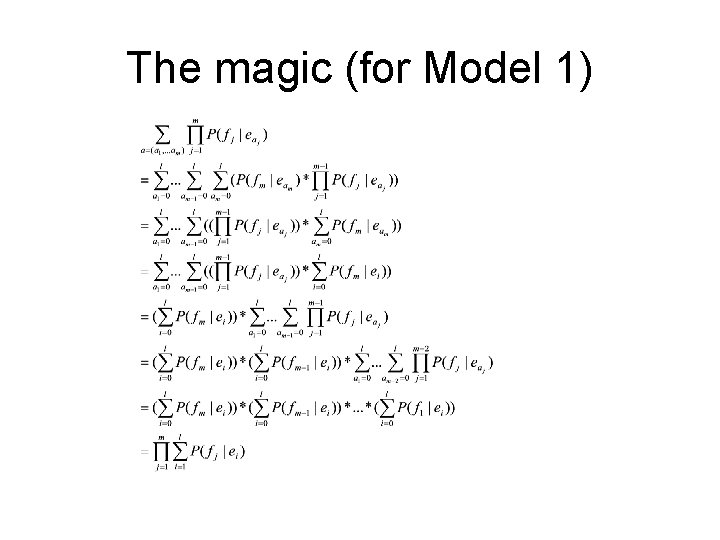

Decomposition for Model 1

The magic (for Model 1)
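The "magic" most likely refers to the identity that turns the sum over all (l+1)^m alignments into a product of m small sums: Σ_a Π_j t(fj | e_aj) = Π_j Σ_i t(fj | ei). The sketch below checks this numerically on toy values. `T_TOY`, `ENG`, and `FRE` are made-up data, and the function names are illustrative.

```python
from itertools import product
from math import isclose, prod

# Toy translation table t(f | e), keyed by (f, e); values are made up.
T_TOY = {
    ("x", "NULL"): 0.5, ("y", "NULL"): 0.5,
    ("x", "b"): 0.1, ("y", "b"): 0.9,
    ("x", "c"): 0.8, ("y", "c"): 0.2,
}
ENG = ["NULL", "b", "c"]  # position 0 is the NULL word
FRE = ["x", "y", "y"]

def sum_over_alignments(fre, eng, t):
    """Left-hand side: enumerate every alignment a and sum prod_j t(f_j | e_{a_j})."""
    return sum(
        prod(t[(f, eng[i])] for f, i in zip(fre, a))
        for a in product(range(len(eng)), repeat=len(fre))
    )

def product_of_sums(fre, eng, t):
    """Right-hand side: for each Fr word, sum t(f_j | e_i) over all Eng positions."""
    return prod(sum(t[(f, e)] for e in eng) for f in fre)

print(sum_over_alignments(FRE, ENG, T_TOY), product_of_sums(FRE, ENG, T_TOY))
```

The left-hand side costs on the order of (l+1)^m terms, while the right-hand side needs only about (l+1)·m lookups.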

Final formula and parameters for Model 1 • Two types of parameters: – Length prob: P(m | l) – Translation prob: P(fj | ei), also written t(fj | ei)

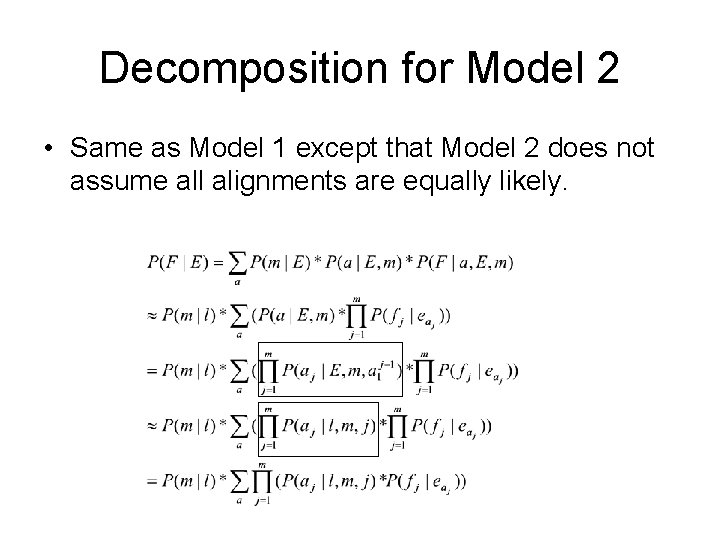

Decomposition for Model 2 • Same as Model 1 except that Model 2 does not assume all alignments are equally likely.

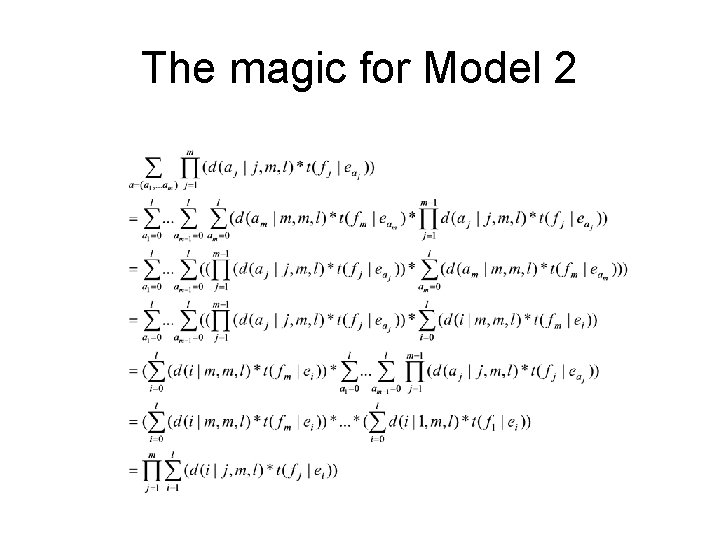

The magic for Model 2

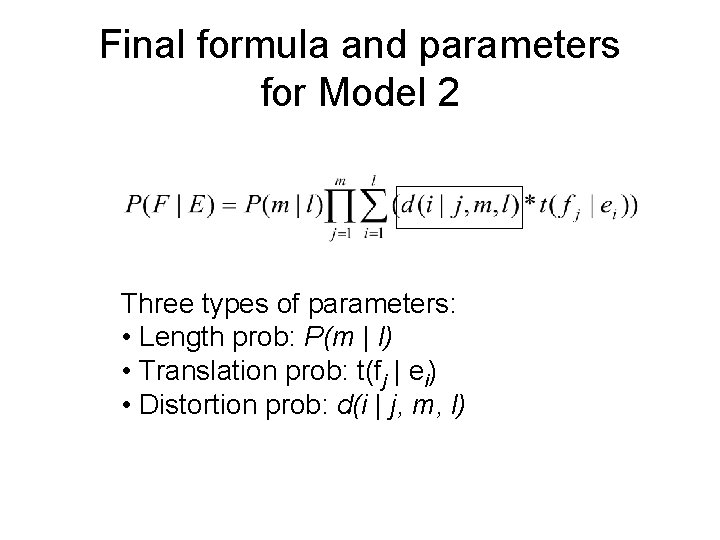

Final formula and parameters for Model 2 Three types of parameters: • Length prob: P(m | l) • Translation prob: t(fj | ei) • Distortion prob: d(i | j, m, l)

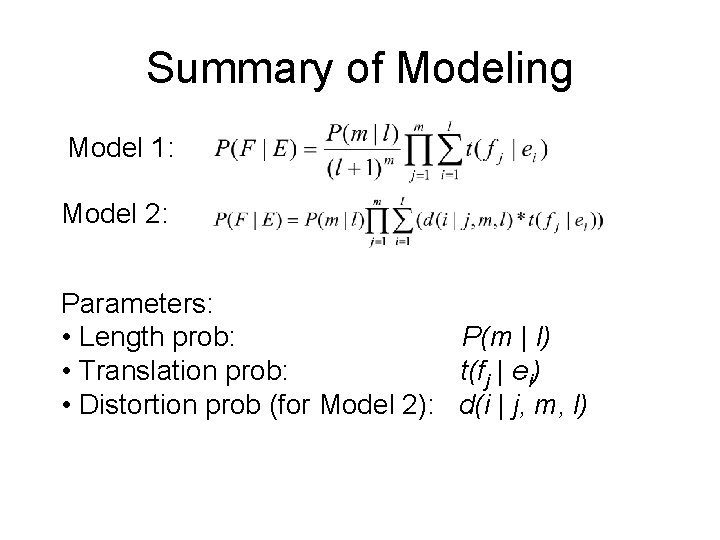

Summary of Modeling Model 1: Model 2: Parameters: • Length prob: P(m | l) • Translation prob: t(fj | ei) • Distortion prob (for Model 2): d(i | j, m, l)
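The two formulas on this summary slide are not preserved in the text. Under the approximations and parameter types listed above, they would take the following standard form.

$$
\text{Model 1: } P(F \mid E) = \frac{P(m \mid l)}{(l+1)^m} \prod_{j=1}^{m} \sum_{i=0}^{l} t(f_j \mid e_i)
$$

$$
\text{Model 2: } P(F \mid E) = P(m \mid l) \prod_{j=1}^{m} \sum_{i=0}^{l} t(f_j \mid e_i)\, d(i \mid j, m, l)
$$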

Model 1 and 2 • Modeling – Generative process – Decomposition – Formula and types of parameters • Training • Finding the best alignment • Decoding

Training • Mathematically motivated: – Having an objective function to optimize – Using several clever tricks • The resulting formulae – are intuitively expected – can be calculated efficiently • EM algorithm – Hill climbing: each iteration is guaranteed not to decrease the objective function. – It is not guaranteed to reach the global optimum.

Length prob: P(j | i) • Let Ct(j, i) be the number of sentence pairs where the Fr length is j and the Eng length is i. • Length prob: P(j | i) = Ct(j, i) / Σ_j' Ct(j', i) • No need for iterations
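Because the sentence lengths are observed, the length prob is a plain relative frequency. A minimal sketch, assuming the corpus is a list of (Eng words, Fr words) pairs; `length_probs` and `CORPUS_LEN` are illustrative names with toy data.

```python
from collections import Counter

def length_probs(corpus):
    """Return P(j | i) as a dict keyed by (Fr length j, Eng length i)."""
    counts = Counter((len(fre), len(eng)) for eng, fre in corpus)
    totals = Counter()
    for (j, i), c in counts.items():
        totals[i] += c  # number of pairs whose Eng length is i
    return {(j, i): c / totals[i] for (j, i), c in counts.items()}

CORPUS_LEN = [
    (["b", "c"], ["x", "y"]),
    (["b"], ["y"]),
    (["c", "b"], ["y", "x", "y"]),
]
print(length_probs(CORPUS_LEN))
```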

Estimating t(f|e): a naïve approach • A naïve approach: – Count the times that f appears in F and e appears in E. – Count the times that e appears in E. – Divide the first number by the second number. • Problem: – It cannot distinguish true translations from pure coincidence. – Ex: t(el | white) vs. t(blanco | white) • Solution: count the times that f aligns to e.

Estimating t(f|e) in Model 1 • When each sent pair has a unique word alignment • When each sent pair has several word alignments with prob • When there are no word alignments

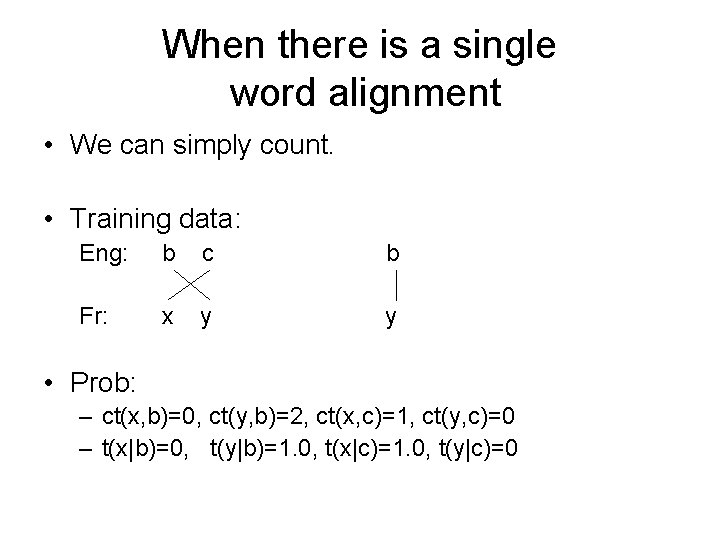

When there is a single word alignment • We can simply count. • Training data: – Sent 1: Eng: b c, Fr: x y – Sent 2: Eng: b, Fr: y • Prob: – ct(x, b)=0, ct(y, b)=2, ct(x, c)=1, ct(y, c)=0 – t(x|b)=0, t(y|b)=1.0, t(x|c)=1.0, t(y|c)=0

When there are several word alignments • If a sent pair has several word alignments, use fractional counts. • Training data: – Sent 1: Eng: b c, Fr: x y, with four alignments, P(a|E, F) = 0.3, 0.2, 0.4, 0.1 – Sent 2: Eng: b, Fr: y, with one alignment, P(a|E, F) = 1.0 • Prob: – Ct(x, b)=0.7, Ct(y, b)=1.5, Ct(x, c)=0.3, Ct(y, c)=0.5 – P(x|b)=7/22, P(y|b)=15/22, P(x|c)=3/8, P(y|c)=5/8
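The fractional counts on this slide can be reproduced with a few lines of code. The alignment diagrams are not preserved in the text, so the assignment of probabilities to the four alignments of the first pair below is an assumption, chosen to be consistent with the counts on the slide. The function names are illustrative.

```python
from collections import defaultdict
from fractions import Fraction

# Each entry: (Eng words, Fr words, [(P(a|E,F), alignment)]),
# where alignment[j] is the index of the Eng word linked to Fr word j.
# The pairing of probabilities with alignments is assumed, not taken from the slide.
DATA = [
    (["b", "c"], ["x", "y"], [
        (Fraction(3, 10), (0, 0)),  # x-b, y-b
        (Fraction(4, 10), (0, 1)),  # x-b, y-c
        (Fraction(2, 10), (1, 0)),  # x-c, y-b
        (Fraction(1, 10), (1, 1)),  # x-c, y-c
    ]),
    (["b"], ["y"], [(Fraction(1), (0,))]),
]

def fractional_counts(data):
    """Ct(f, e): sum of alignment probs over every link between f and e."""
    ct = defaultdict(Fraction)
    for eng, fre, alignments in data:
        for prob, a in alignments:
            for j, i in enumerate(a):
                ct[(fre[j], eng[i])] += prob
    return ct

def normalize(ct):
    """Divide each Ct(f, e) by the total count for e."""
    totals = defaultdict(Fraction)
    for (f, e), c in ct.items():
        totals[e] += c
    return {(f, e): c / totals[e] for (f, e), c in ct.items()}

print(normalize(fractional_counts(DATA)))
```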

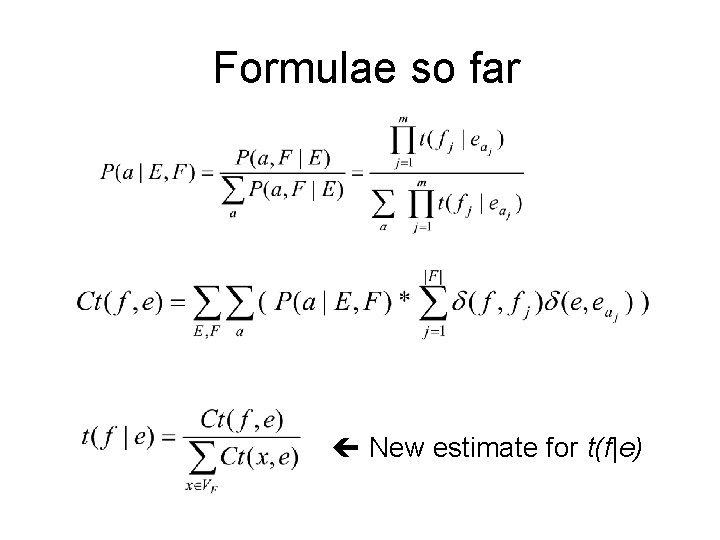

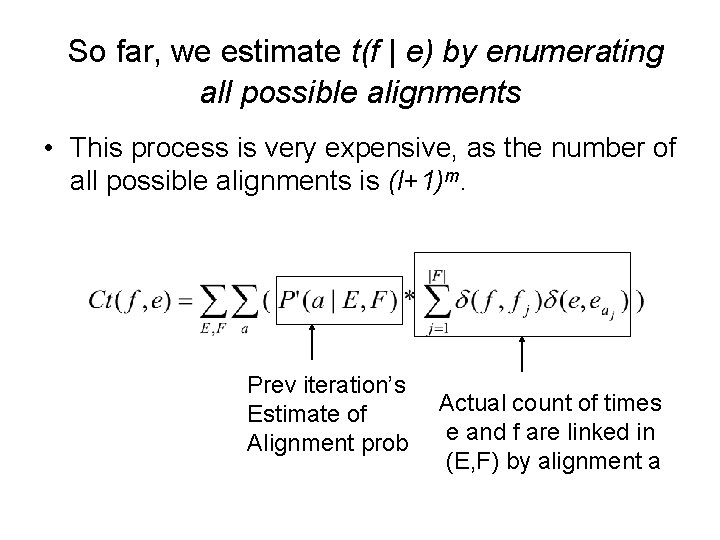

Fractional counts • Let Ct(f, e) be the fractional count of the (f, e) pair in the training data, given alignment prob P. • Formula annotations: P(a | E, F) is the alignment prob; it is multiplied by the actual count of times e and f are linked in (E, F) by alignment a.

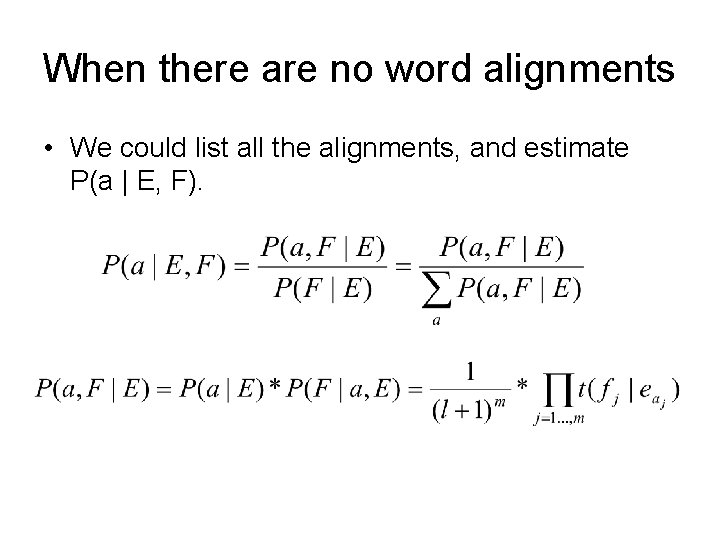

When there are no word alignments • We could list all the alignments, and estimate P(a | E, F).

Formulae so far New estimate for t(f|e)
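The formulae themselves survive only as images. A reconstruction for Model 1 that matches the descriptions on the preceding slides would be the following, where $\delta$ is the Kronecker delta (notation introduced here for illustration).

$$
P(a \mid E, F) = \frac{\prod_{j=1}^{m} t(f_j \mid e_{a_j})}{\sum_{a'} \prod_{j=1}^{m} t(f_j \mid e_{a'_j})}
$$

$$
Ct(f, e) = \sum_{(E, F)} \sum_{a} P(a \mid E, F) \sum_{j=1}^{m} \delta(f, f_j)\, \delta(e, e_{a_j})
$$

$$
t(f \mid e) = \frac{Ct(f, e)}{\sum_{f'} Ct(f', e)}
$$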

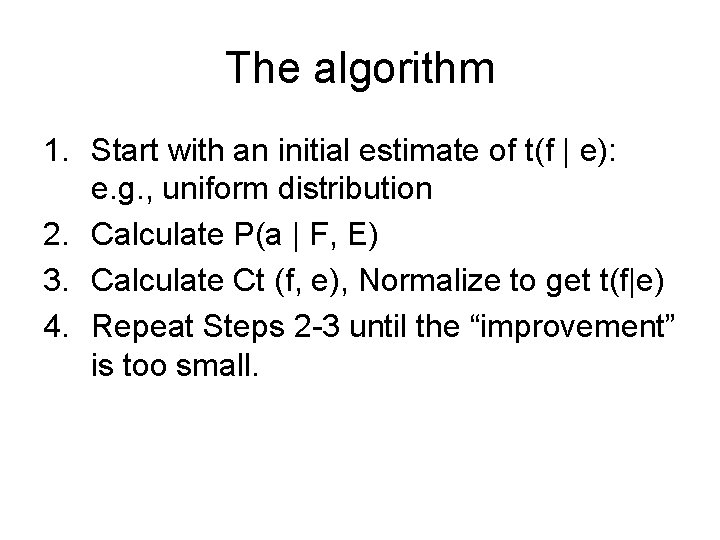

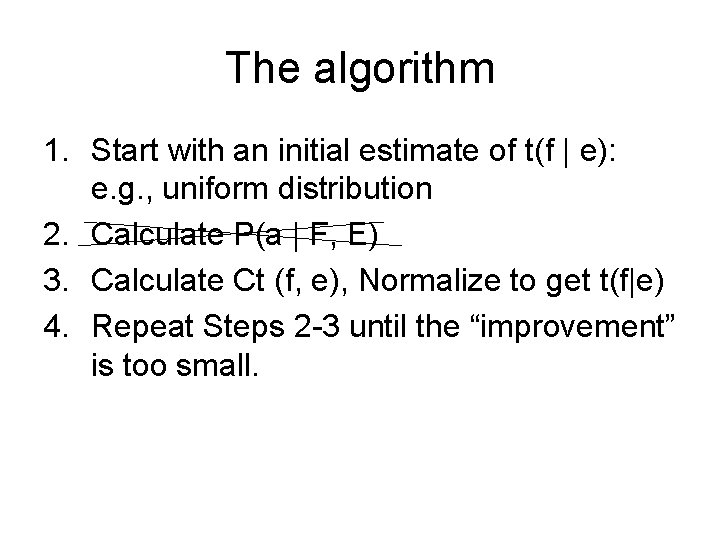

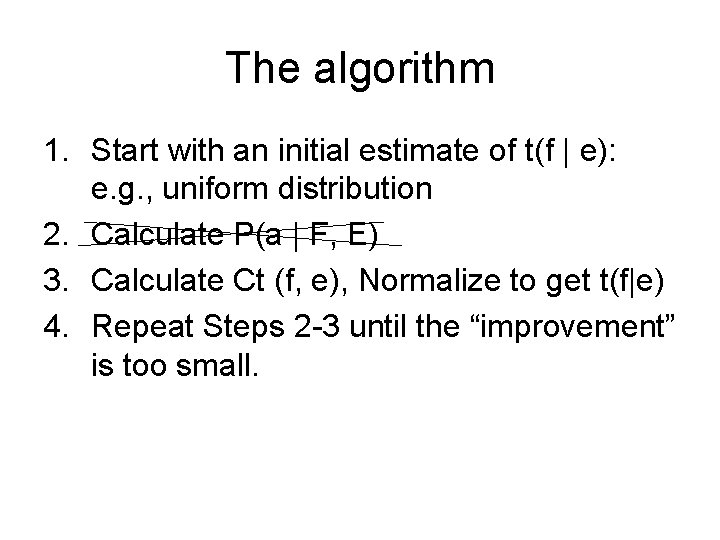

The algorithm 1. Start with an initial estimate of t(f | e), e.g., a uniform distribution. 2. Calculate P(a | F, E). 3. Calculate Ct(f, e) and normalize to get t(f | e). 4. Repeat Steps 2-3 until the “improvement” is too small.

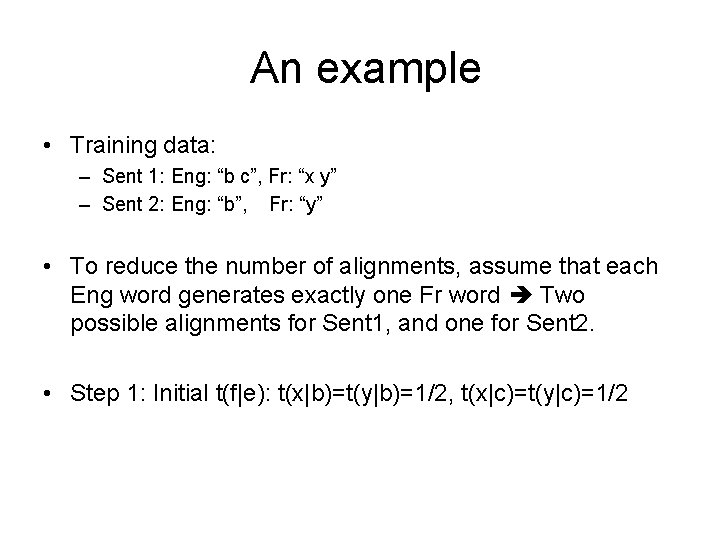

An example • Training data: – Sent 1: Eng: “b c”, Fr: “x y” – Sent 2: Eng: “b”, Fr: “y” • To reduce the number of alignments, assume that each Eng word generates exactly one Fr word. There are then two possible alignments for Sent 1, and one for Sent 2. • Step 1: Initial t(f|e): t(x|b)=t(y|b)=1/2, t(x|c)=t(y|c)=1/2

Step 2: calculating P(a|F, E) • Alignments: a1: b-x, c-y; a2: b-y, c-x; a3: b-y • Before normalization: – P(a1|E1, F1)*Z = 1/2*1/2 = 1/4 – P(a2|E1, F1)*Z = 1/2*1/2 = 1/4 – P(a3|E2, F2)*Z = 1/2 • After normalization: – P(a1|E1, F1) = (1/4) / (1/4+1/4) = 1/2 – P(a2|E1, F1) = (1/4) / (1/2) = 1/2 – P(a3|E2, F2) = (1/2) / (1/2) = 1

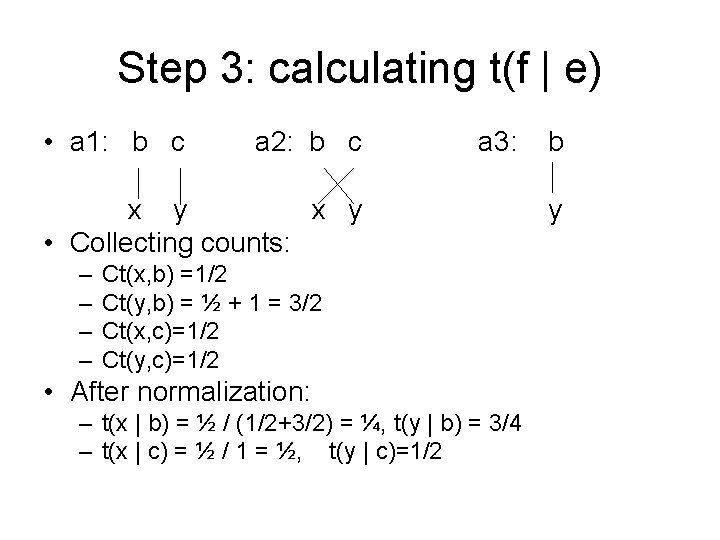

Step 3: calculating t(f | e) • Alignments: a1: b-x, c-y; a2: b-y, c-x; a3: b-y • Collecting counts: – Ct(x, b) = 1/2 – Ct(y, b) = 1/2 + 1 = 3/2 – Ct(x, c) = 1/2 – Ct(y, c) = 1/2 • After normalization: – t(x | b) = (1/2) / (1/2+3/2) = 1/4, t(y | b) = 3/4 – t(x | c) = (1/2) / 1 = 1/2, t(y | c) = 1/2

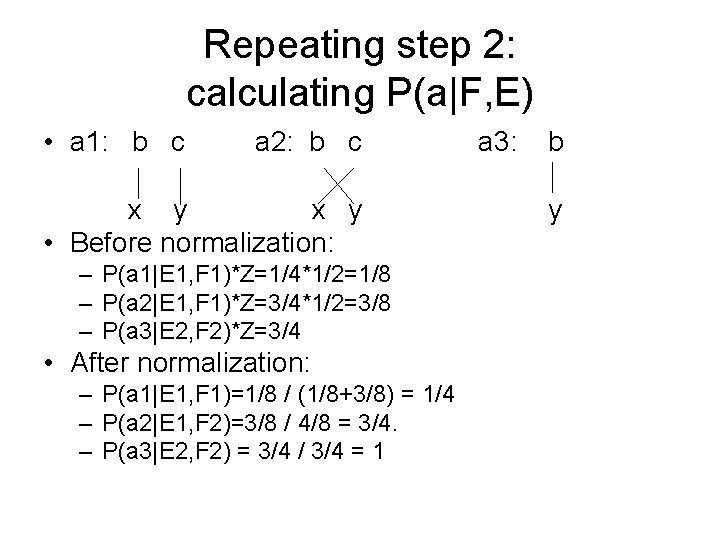

Repeating step 2: calculating P(a|F, E) • Alignments: a1: b-x, c-y; a2: b-y, c-x; a3: b-y • Before normalization: – P(a1|E1, F1)*Z = 1/4*1/2 = 1/8 – P(a2|E1, F1)*Z = 3/4*1/2 = 3/8 – P(a3|E2, F2)*Z = 3/4 • After normalization: – P(a1|E1, F1) = (1/8) / (1/8+3/8) = 1/4 – P(a2|E1, F1) = (3/8) / (4/8) = 3/4 – P(a3|E2, F2) = (3/4) / (3/4) = 1

Repeating step 3: calculating t(f | e) • Alignments: a1: b-x, c-y; a2: b-y, c-x; a3: b-y • Collecting counts: – Ct(x, b) = 1/4 – Ct(y, b) = 3/4 + 1 = 7/4 – Ct(x, c) = 3/4 – Ct(y, c) = 1/4 • After normalization: – t(x | b) = (1/4) / (1/4+7/4) = 1/8, t(y | b) = 7/8 – t(x | c) = (3/4) / (3/4+1/4) = 3/4, t(y | c) = 1/4

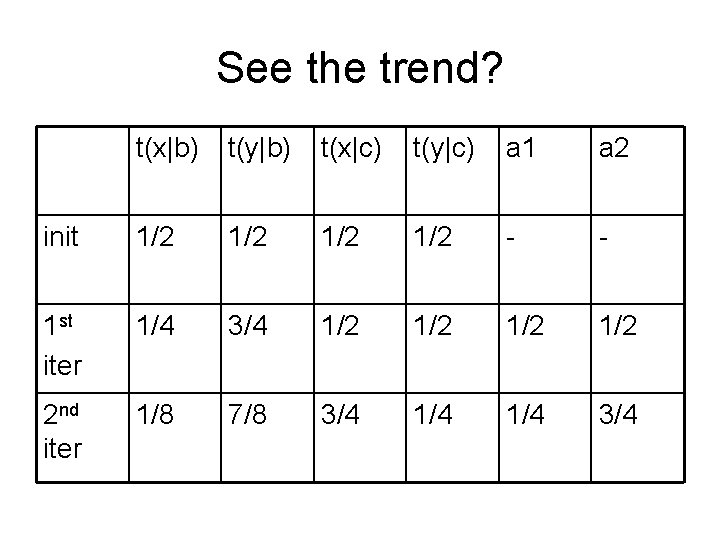

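See the trend?

| | t(x\|b) | t(y\|b) | t(x\|c) | t(y\|c) | P(a1) | P(a2) |
|---|---|---|---|---|---|---|
| init | 1/2 | 1/2 | 1/2 | 1/2 | - | - |
| 1st iter | 1/4 | 3/4 | 1/2 | 1/2 | 1/2 | 1/2 |
| 2nd iter | 1/8 | 7/8 | 3/4 | 1/4 | 1/4 | 3/4 |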
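The two iterations worked through above can be replayed in code. This is a minimal sketch that follows the example's simplifying assumption (each Eng word generates exactly one Fr word, so alignments are permutations) and enumerates every alignment explicitly. `em_iteration`, `CORPUS`, and `T0` are illustrative names, and exact fractions are used so the results can be compared with the slide values.

```python
from collections import defaultdict
from fractions import Fraction
from itertools import permutations
from math import prod

CORPUS = [(["b", "c"], ["x", "y"]), (["b"], ["y"])]
T0 = {(f, e): Fraction(1, 2) for f in "xy" for e in "bc"}  # uniform start

def em_iteration(corpus, t):
    """One EM iteration over explicitly enumerated one-to-one alignments."""
    ct = defaultdict(Fraction)
    for eng, fre in corpus:
        # a[j] is the Eng index linked to Fr position j; assumes len(fre) <= len(eng).
        aligns = list(permutations(range(len(eng)), len(fre)))
        scores = [prod(t[(fre[j], eng[i])] for j, i in enumerate(a)) for a in aligns]
        z = sum(scores)
        for a, s in zip(aligns, scores):
            for j, i in enumerate(a):
                ct[(fre[j], eng[i])] += s / z  # add P(a | E, F) for each link
    totals = defaultdict(Fraction)
    for (f, e), c in ct.items():
        totals[e] += c
    return {(f, e): c / totals[e] for (f, e), c in ct.items()}

t1 = em_iteration(CORPUS, T0)
t2 = em_iteration(CORPUS, t1)
print(t1)
print(t2)
```

Running further iterations continues the trend: t(y|b) and t(x|c) keep growing toward 1.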

So far, we estimate t(f | e) by enumerating all possible alignments • This process is very expensive, as the number of all possible alignments is (l+1)^m. • Formula annotations: P(a | E, F) is the previous iteration's estimate of the alignment prob; it is multiplied by the actual count of times e and f are linked in (E, F) by alignment a.

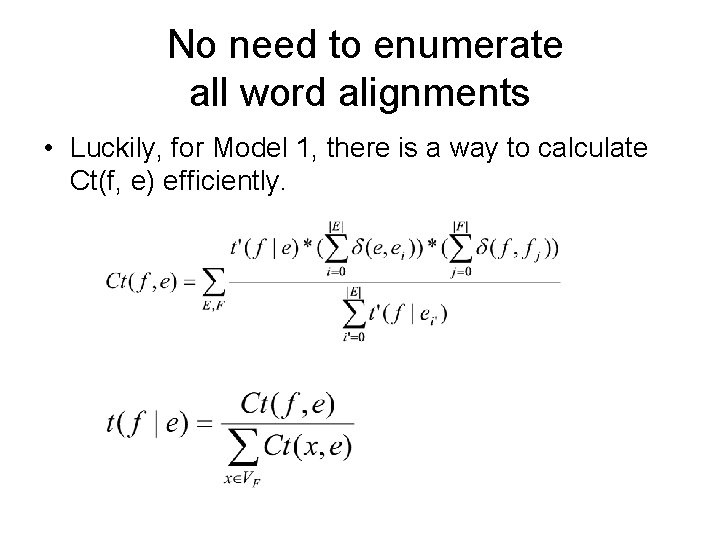

No need to enumerate all word alignments • Luckily, for Model 1, there is a way to calculate Ct(f, e) efficiently.

The algorithm 1. Start with an initial estimate of t(f | e), e.g., a uniform distribution. 2. Calculate P(a | F, E). 3. Calculate Ct(f, e) and normalize to get t(f | e). 4. Repeat Steps 2-3 until the “improvement” is too small.

Calculating t(f | e) with the new formulae • Training data: E1: b c, F1: x y; E2: b, F2: y • Collecting counts: – Ct(x, b) = (1/2) / (1/2+1/2) = 1/2 – Ct(y, b) = (1/2) / (1/2+1/2) + 1/1 = 3/2 – Ct(x, c) = (1/2) / (1/2+1/2) = 1/2 – Ct(y, c) = (1/2) / (1/2+1/2) = 1/2 • After normalization: – t(x | b) = (1/2) / (1/2+3/2) = 1/4, t(y | b) = 3/4 – t(x | c) = (1/2) / 1 = 1/2, t(y | c) = 1/2
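The counts above follow the pattern "for each Fr word f in F and each Eng word e in E, add t(f | e) / Σ_i t(f | ei)", which avoids enumerating alignments. A minimal sketch of one such update is shown below. As in the slide's arithmetic, the NULL word is left out, and `model1_em_step`, `CORPUS_M1`, and `T_UNIFORM` are illustrative names.

```python
from collections import defaultdict
from fractions import Fraction

CORPUS_M1 = [(["b", "c"], ["x", "y"]), (["b"], ["y"])]
T_UNIFORM = {(f, e): Fraction(1, 2) for f in "xy" for e in "bc"}

def model1_em_step(corpus, t):
    """One Model 1 EM update without enumerating alignments (NULL word omitted)."""
    ct = defaultdict(Fraction)
    for eng, fre in corpus:
        for f in fre:
            denom = sum(t[(f, e)] for e in eng)  # sum_i t(f | e_i) within this pair
            for e in eng:
                ct[(f, e)] += t[(f, e)] / denom  # expected number of f-e links
    totals = defaultdict(Fraction)
    for (f, e), c in ct.items():
        totals[e] += c
    return {(f, e): c / totals[e] for (f, e), c in ct.items()}

print(model1_em_step(CORPUS_M1, T_UNIFORM))
```

From the second iteration on, the values differ from the earlier enumerated example, because that example restricted itself to one-to-one alignments while this update sums over all alignments.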

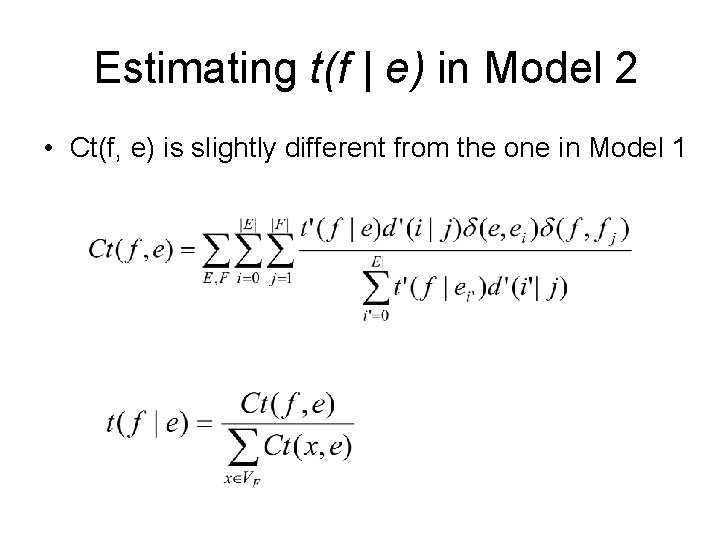

Estimating t(f | e) in Model 2 • Ct(f, e) is slightly different from the one in Model 1

Estimating d(i | j, m, l) in Model 2 • Let Ct(i, j, m, l) be the fractional count that Fr position j is linked to the Eng position i.

The algorithm 1. Start with an initial estimate of t(f | e), e.g., a uniform distribution. 2. Calculate P(a | F, E). 3. Calculate Ct(f, e) and normalize to get t(f | e). 4. Repeat Steps 2-3 until the “improvement” is too small.

EM algorithm • EM: expectation maximization • In a model with hidden states (e.g., word alignment), how can we estimate model parameters? • EM does the following: – E-step: Take an initial model parameterization and calculate the expected values of the hidden data. – M-step: Use the expected values to maximize the likelihood of the training data.

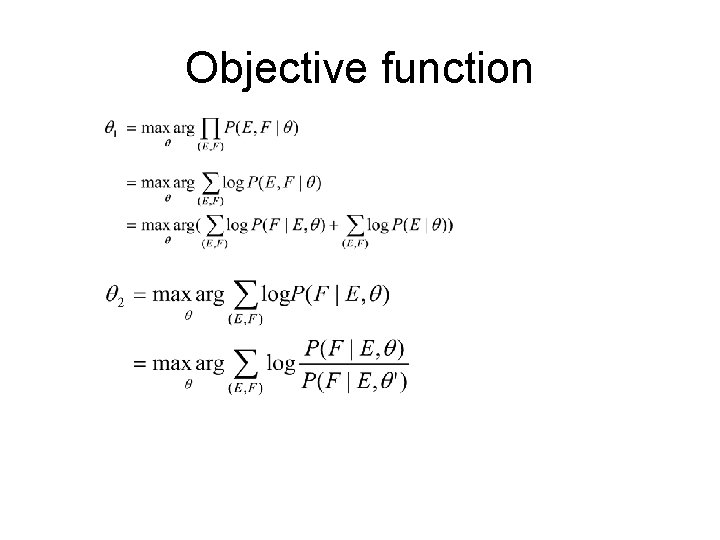

Objective function

Training Summary • Mathematically motivated: – Having an objective function to optimize – Using several clever tricks • The resulting formulae – are intuitively expected – can be calculated efficiently • EM algorithm – Hill climbing: each iteration is guaranteed not to decrease the objective function. – It is not guaranteed to reach the global optimum.

Model 1 and 2 • Modeling – Generative process – Decomposition – Formula and types of parameters • Training • Finding the best alignment

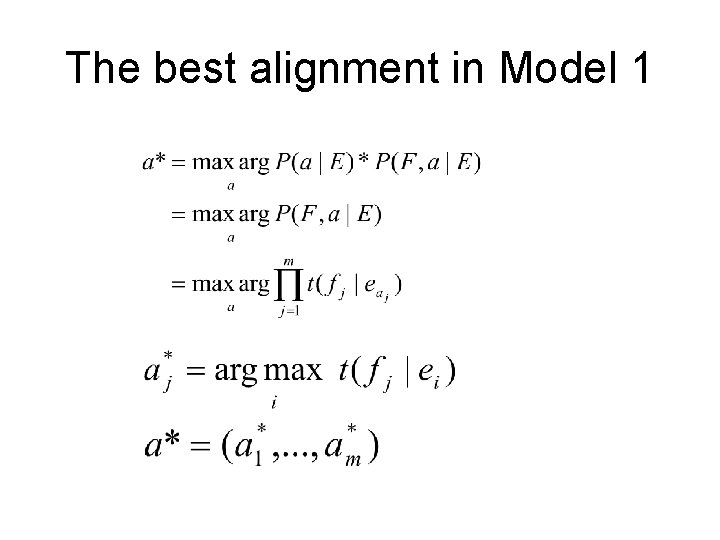

The best alignment in Model 1-5 • Given E and F, we are looking for the best alignment a*:

The best alignment in Model 1

The best alignment in Model 2
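Because each Fr position chooses its Eng position independently in Models 1 and 2, the best alignment can be found one position at a time: aj* = argmax_i t(fj | ei) for Model 1, and aj* = argmax_i t(fj | ei) · d(i | j, m, l) for Model 2. The sketch below assumes dictionary-based tables and a hypothetical helper name `best_alignment`; the values in `T_EX` and `D_EX` are illustrative only.

```python
def best_alignment(fre, eng, t, d=None):
    """Return a list a with a[j-1] = best Eng position for Fr position j (0 = NULL).

    t maps (f, e) to t(f | e); d, if given, maps (i, j, m, l) to d(i | j, m, l).
    Missing entries are treated as probability 0.
    """
    e = ["NULL"] + eng
    l, m = len(eng), len(fre)
    a = []
    for j, f in enumerate(fre, start=1):
        def score(i):
            s = t.get((f, e[i]), 0.0)
            # Model 1 ignores positions; Model 2 weights by the distortion prob.
            return s if d is None else s * d.get((i, j, m, l), 0.0)
        a.append(max(range(l + 1), key=score))
    return a

T_EX = {("x", "b"): 0.125, ("y", "b"): 0.875, ("x", "c"): 0.75, ("y", "c"): 0.25}
D_EX = {(1, 1, 2, 2): 0.9, (2, 1, 2, 2): 0.1, (1, 2, 2, 2): 0.5, (2, 2, 2, 2): 0.5}

print(best_alignment(["x", "y"], ["b", "c"], T_EX))        # Model 1
print(best_alignment(["x", "y"], ["b", "c"], T_EX, D_EX))  # Model 2
```

With these toy values the distortion table changes the choice for the first Fr word, which shows how position preferences in Model 2 can override the translation table.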

Summary of Model 1 and 2 • Modeling: – Pick the length of F with prob P(m | l). – For each position j • Pick an English word position aj, with prob P(aj | j, m, l). • Pick a Fr word fj according to the Eng word ei, with t(fj | ei), where i=aj – The resulting formula can be calculated efficiently. • Training: EM algorithm. The update can be done efficiently. • Finding the best alignment: can be easily done.

Limitations of Model 1 and 2 • There could be some relations among the Fr words generated by the same Eng word (w.r.t. positions and fertility). • The relations are not captured by Model 1 and 2. • They are captured by Model 3 and 4.

Outline • General concepts – Source channel model – Word alignment – Notations • Model 1-2 • Model 3-4

Model 3 and 4

Model 3 and 4 • Modeling – Generative process – Decomposition and final formula – Types of parameters • Training • Finding the best alignment • Decoding

Generative process • For each Eng word ei, choose a fertility φi. • For each ei, generate φi Fr words. • Choose the position of each Fr word.

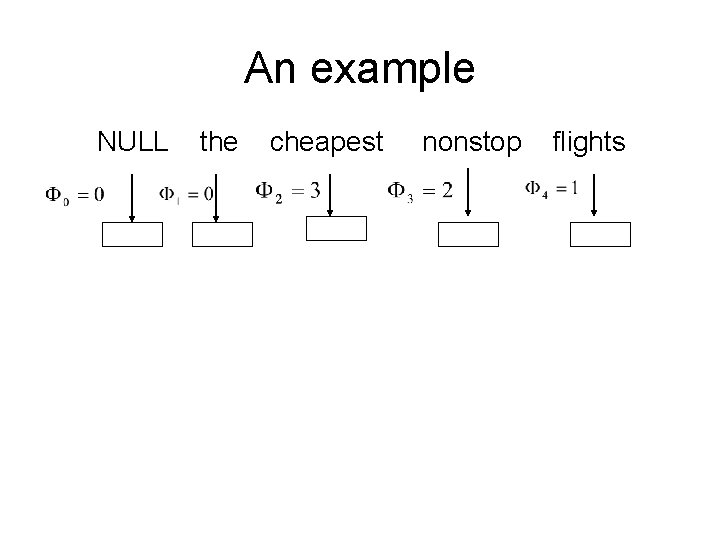

An example NULL the cheapest nonstop flights

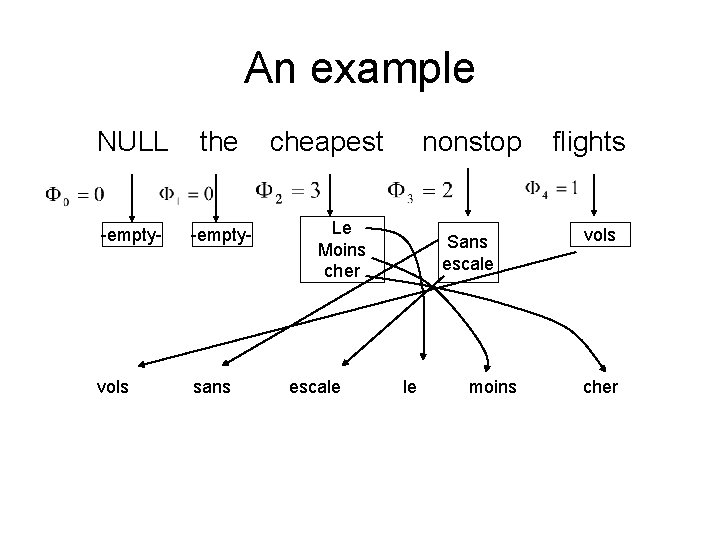

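An example • Eng: NULL the cheapest nonstop flights • Generated Fr words: NULL → -empty-, the → Le, cheapest → Moins cher, nonstop → Sans escale, flights → vols • After choosing positions: vols sans escale le moins cher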

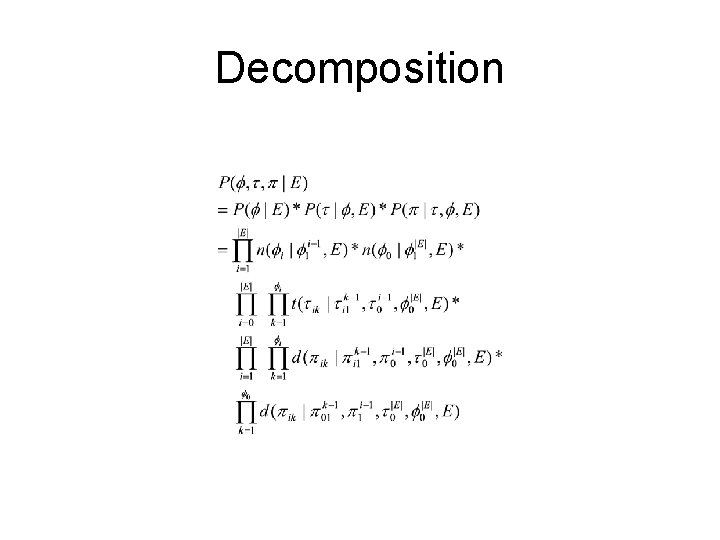

Decomposition

Approximations and types of parameters Where N is the number of empty slots.

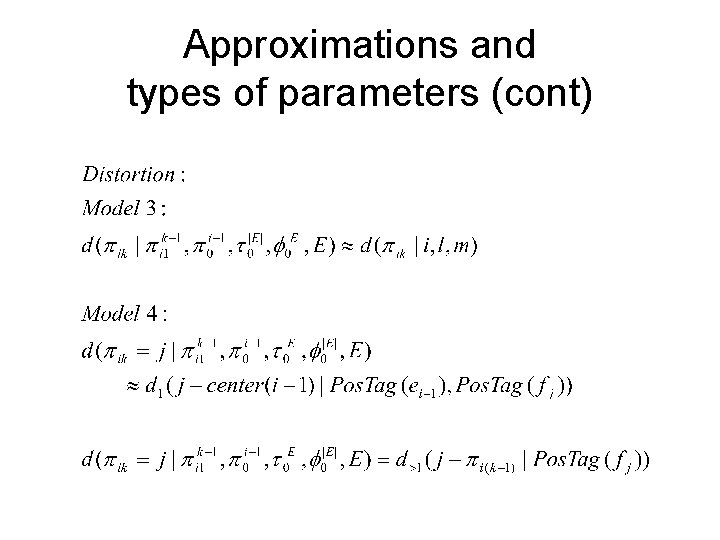

Approximations and types of parameters (cont)

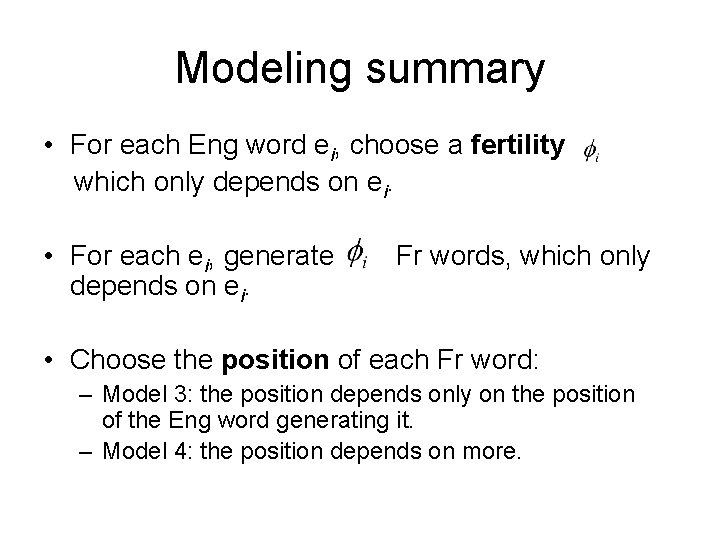

Modeling summary • For each Eng word ei, choose a fertility φi, which depends only on ei. • For each ei, generate φi Fr words, which depend only on ei. • Choose the position of each Fr word: – Model 3: the position depends only on the position of the Eng word generating it. – Model 4: the position depends on more.

Training • Use EM, just like Model 1 and 2. • Translation and distortion probabilities can be calculated efficiently; fertility probabilities cannot. • There are no efficient algorithms to find the best alignment.

Model 3 and 4 • Modeling – Generative process – Decomposition and final formula – Types of parameters • Training • Finding the best alignment • Decoding

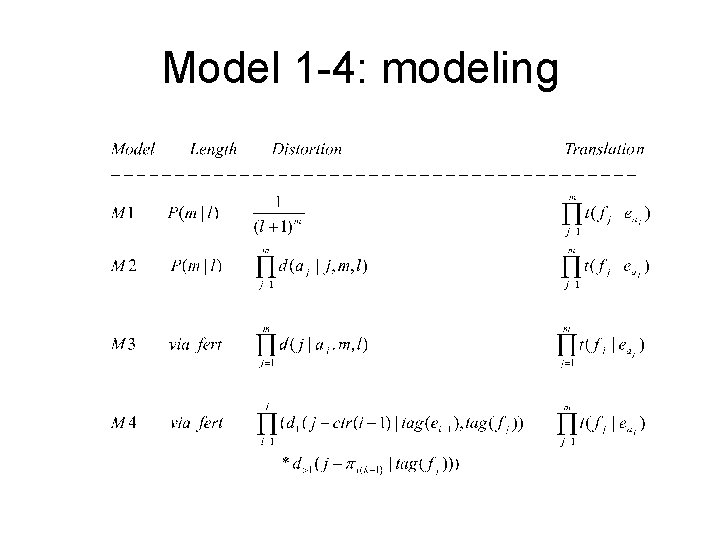

Model 1-4: modeling

Model 1-4: training • Similarities: – Same objective function – Same algorithm: EM algorithm • Differences: – Summation over all alignments can be done efficiently for Model 1-2, but not for Model 3-4. – Best alignment can be found efficiently for Model 1-2, but not for Model 3-4.

Summary • General concepts – Source channel model: P(E) and P(F|E) – Notations – Word alignment: each Fr word comes from exactly one Eng word (including e0). • Model 1-2 • Model 3-4
