Introduction to MT Ling 580 Fei Xia Week 1: 1/03/06

Outline

- Course overview
- Introduction to MT
  - Major challenges
  - Major approaches
  - Evaluation of MT systems
- Overview of word-based SMT

Course overview

General info

- Course website:
  - Syllabus (incl. slides and papers): updated every week.
  - Message board
  - ESubmit
- Office hour: Fri 10:30 am-12:30 pm.
- Prerequisites:
  - Ling 570 and Ling 571.
  - Programming: C or C++; Perl is a plus.
  - Introduction to probability and statistics

Expectations

- Reading:
  - Papers are online.
  - Finish reading before class. Bring your questions to class.
- Grade:
  - Leading discussion (1-2 papers): 50%
  - Project: 40%
  - Class participation: 10%
  - No quizzes or exams

Leading discussion

- Indicate your choice via EPost by Jan 8.
- You might want to read related papers.
- Make slides with PowerPoint. Email me your slides by 3:30 am on the Monday before your presentation.
- Present the paper in class and lead the discussion: 40-50 minutes.

Project

- Details will be available soon.
- Project presentation: 3/7/06
- Final report: due on 3/12/06
- Pongo account will be ready soon.

Introduction to MT

A brief history of MT (based on work by John Hutchins)

- Before the computer: in the mid-1930s, the French-Armenian Georges Artsrouni and the Russian Petr Troyanskii applied for patents for ‘translating machines’.
- The pioneers (1947-1954): the first public MT demo was given in 1954 (by IBM and Georgetown University).
- The decade of optimism (1954-1966): ended with the ALPAC (Automatic Language Processing Advisory Committee) report in 1966: "there is no immediate or predictable prospect of useful machine translation."

A brief history of MT (cont)

- The aftermath of the ALPAC report (1966-1980): a virtual end to MT research
- The 1980s: Interlingua, example-based MT
- The 1990s: Statistical MT
- The 2000s: Hybrid MT

Where are we now?

- Huge potential/need due to the internet, globalization and international politics.
- Quick development time due to SMT and the availability of parallel data and computers.
- Translation quality is reasonable for language pairs with a large amount of resources.
- Systems are starting to include more “minor” languages.

What is MT good for?

- Rough translation: web data
- Computer-aided human translation
- Translation for limited domains
- Cross-lingual IR
- Machines are better than humans in:
  - Speed: much faster than humans
  - Memory: can easily memorize millions of word/phrase translations
  - Manpower: machines are much cheaper than humans
  - Fast learner: it takes minutes or hours to build a new system. Erasable memory
  - Never complain, never get tired, …

Major challenges in MT

Translation is hard

- Novels
- Word play, jokes, puns, hidden messages
- Concept gaps: go Greek, bei fen
- Other constraints: lyrics, dubbing, poems, …

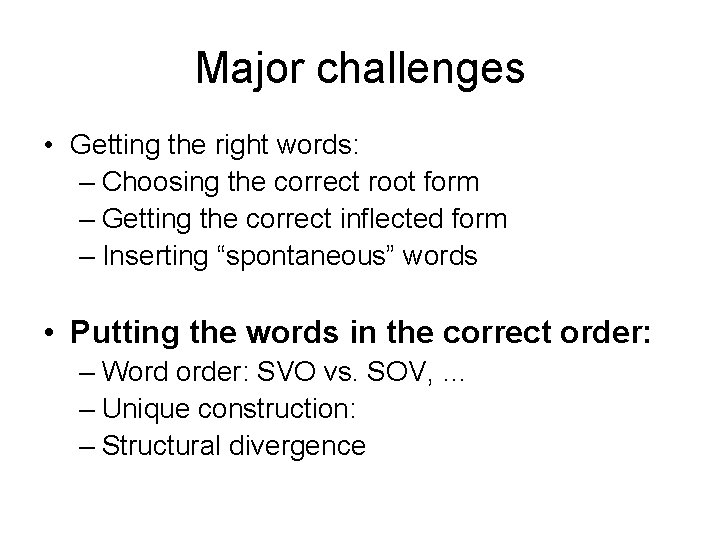

Major challenges

- Getting the right words:
  - Choosing the correct root form
  - Getting the correct inflected form
  - Inserting “spontaneous” words
- Putting the words in the correct order:
  - Word order: SVO vs. SOV, …
  - Unique constructions
  - Divergence

Lexical choice

- Homonymy/Polysemy: bank, run
- Concept gap: no corresponding concepts in another language: go Greek, go Dutch, feng shui, lame duck, …
- Coding (concept → lexeme mapping) differences:
  - More distinctions in one language: e.g., kinship vocabulary.
  - Different division of conceptual space

Choosing the appropriate inflection

- Inflection: gender, number, case, tense, …
- Ex:
  - Number: Ch-Eng: all the concrete nouns: ch_book → book, books
  - Gender: Eng-Fr: all the adjectives
  - Case: Eng-Korean: all the arguments
  - Tense: Ch-Eng: all the verbs: ch_buy → buy, bought, will buy

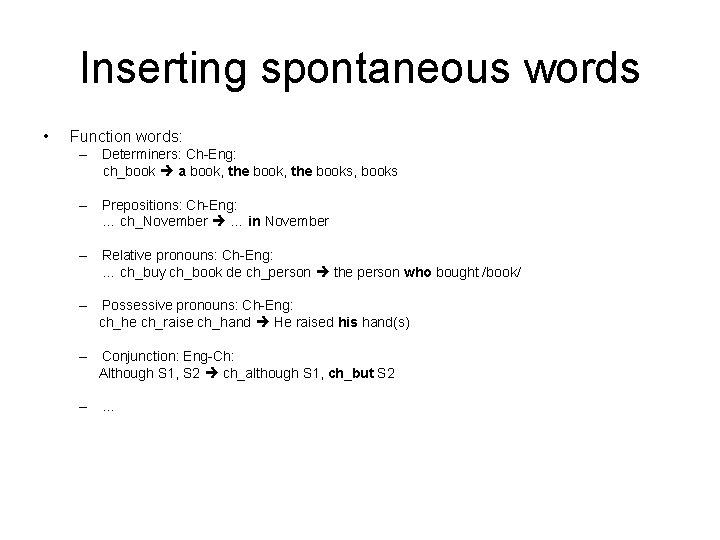

Inserting spontaneous words

- Function words:
  - Determiners: Ch-Eng: ch_book → a book, the books, books
  - Prepositions: Ch-Eng: … ch_November … → in November
  - Relative pronouns: Ch-Eng: … ch_buy ch_book de ch_person → the person who bought /book/
  - Possessive pronouns: Ch-Eng: ch_he ch_raise ch_hand → He raised his hand(s)
  - Conjunction: Eng-Ch: Although S1, S2 → ch_although S1, ch_but S2
  - …

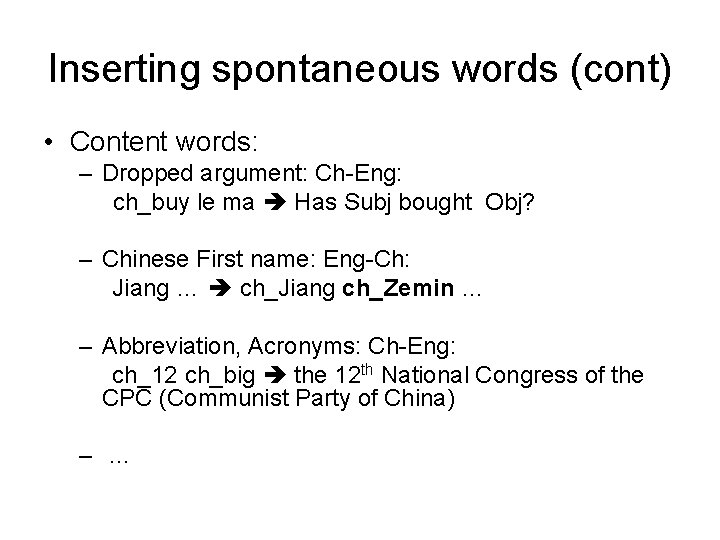

Inserting spontaneous words (cont)

- Content words:
  - Dropped argument: Ch-Eng: ch_buy le ma → Has Subj bought Obj?
  - Chinese first name: Eng-Ch: Jiang … → ch_Jiang ch_Zemin …
  - Abbreviations, acronyms: Ch-Eng: ch_12 ch_big → the 12th National Congress of the CPC (Communist Party of China)
  - …

Major challenges

- Getting the right words:
  - Choosing the correct root form
  - Getting the correct inflected form
  - Inserting “spontaneous” words
- Putting the words in the correct order:
  - Word order: SVO vs. SOV, …
  - Unique constructions
  - Structural divergence

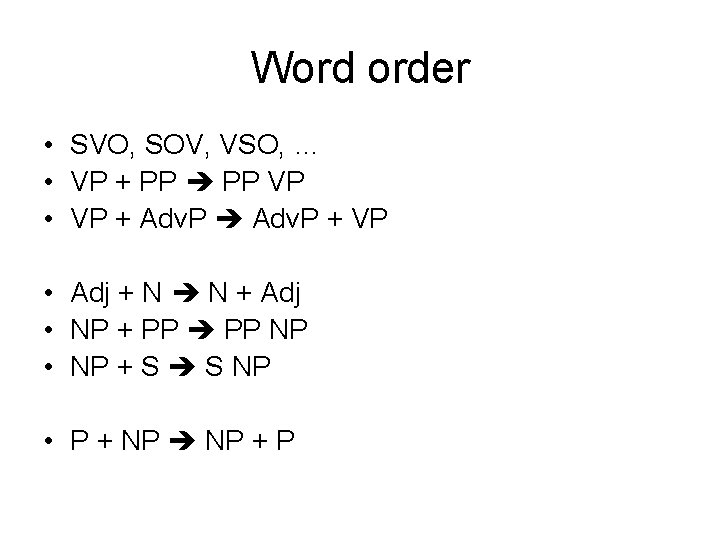

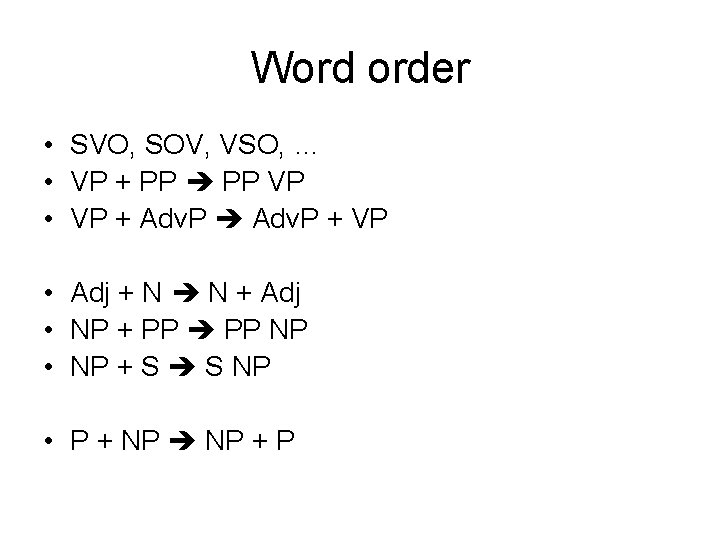

Word order

- SVO, SOV, VSO, …
- VP + PP → PP + VP
- VP + AdvP → AdvP + VP
- Adj + N → N + Adj
- NP + PP → PP + NP
- NP + S → S + NP
- P + NP → NP + P

“Unique” Constructions

- Overt wh-movement: Eng-Ch:
  - Eng: Why do you think that he came yesterday?
  - Ch: you why think he yesterday come ASP?
  - Ch: you think he yesterday why come?
- Ba-construction: Ch-Eng:
  - She ba homework finish ASP → She finished her homework.
  - He ba wall dig ASP CL hole → He dug a hole in the wall.
  - She ba orange peel ASP skin → She peeled the orange’s skin.

Translation divergences

- Source and target parse trees (dependency trees) are not identical.
- Example: I like Mary → S: María me gusta a mí (‘Mary pleases me’)
- More discussion next time.

Major approaches

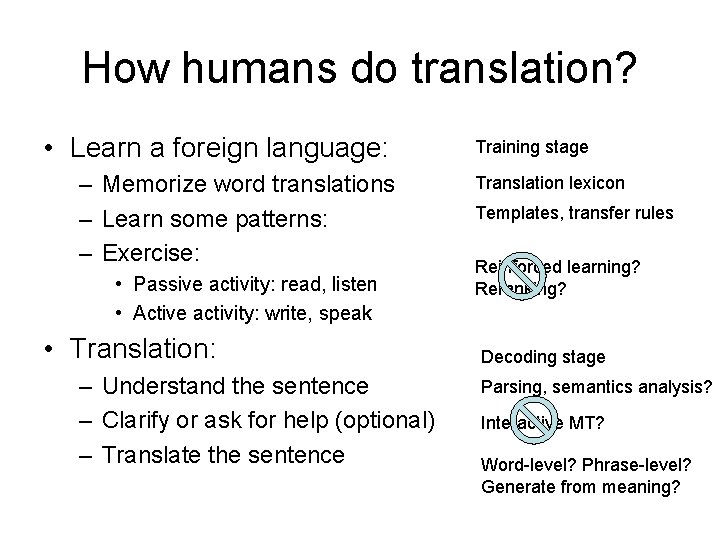

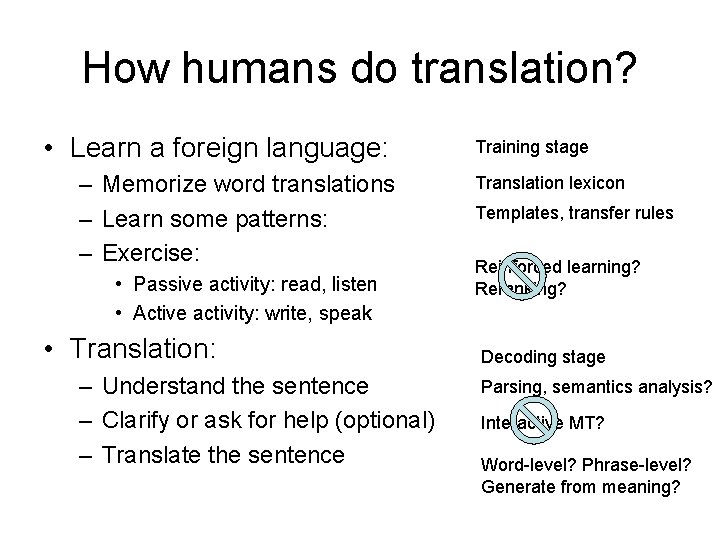

How do humans do translation?

| Human translator | MT counterpart |
| --- | --- |
| Learn a foreign language | Training stage |
| Memorize word translations | Translation lexicon |
| Learn some patterns | Templates, transfer rules |
| Exercise: passive activity (read, listen), active activity (write, speak) | Reinforced learning? Reranking? |
| Translation | Decoding stage |
| Understand the sentence | Parsing, semantic analysis? |
| Clarify or ask for help (optional) | Interactive MT? |
| Translate the sentence | Word-level? Phrase-level? Generate from meaning? |

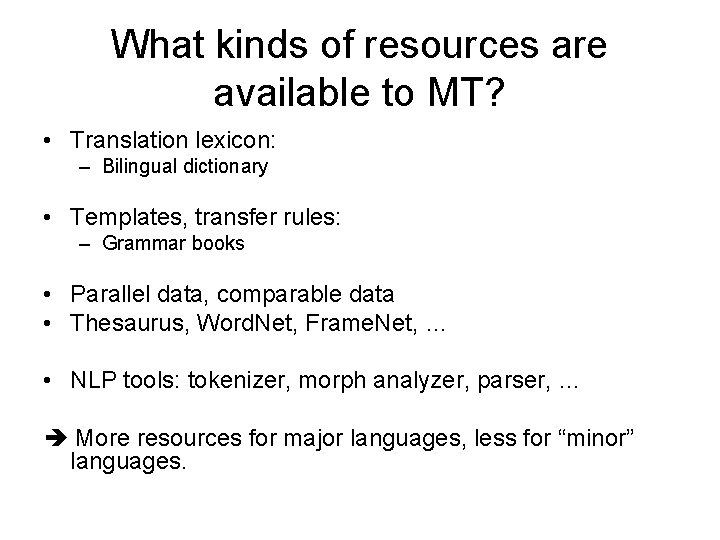

What kinds of resources are available to MT?

- Translation lexicon:
  - Bilingual dictionary
- Templates, transfer rules:
  - Grammar books
- Parallel data, comparable data
- Thesaurus, WordNet, FrameNet, …
- NLP tools: tokenizer, morph analyzer, parser, …

More resources for major languages, fewer for “minor” languages.

Major approaches

- Transfer-based
- Interlingua
- Example-based (EBMT)
- Statistical MT (SMT)
- Hybrid approach

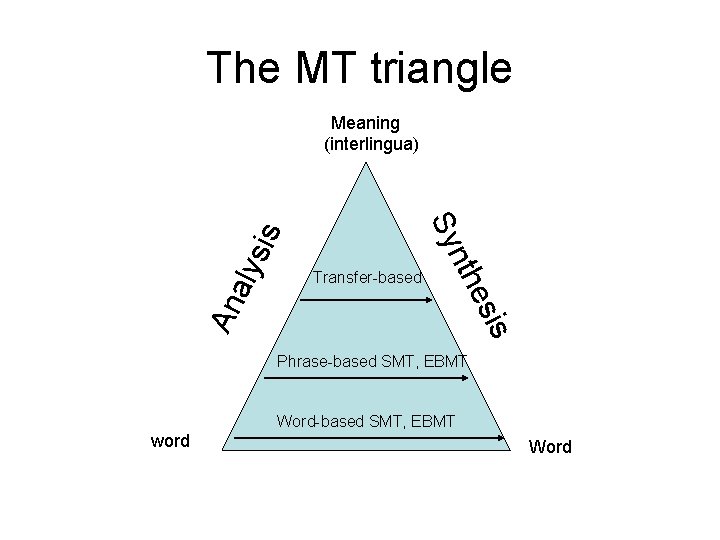

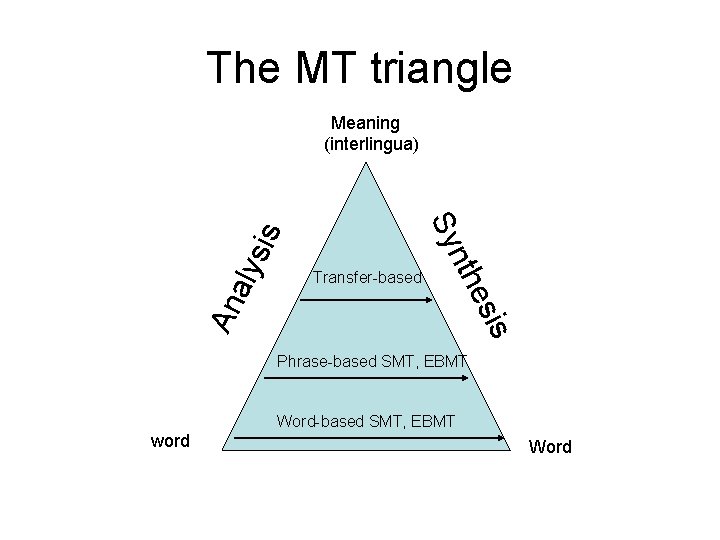

The MT triangle (figure): analysis runs up the source side and synthesis runs down the target side. Levels from top to bottom: meaning (interlingua); transfer-based; phrase-based SMT, EBMT; word-based SMT, EBMT (word → word).

Transfer-based MT

- Analysis, transfer, generation:
  1. Parse the source sentence
  2. Transform the parse tree with transfer rules
  3. Translate source words
  4. Get the target sentence from the tree
- Resources required:
  - Source parser
  - A translation lexicon
  - A set of transfer rules
- An example: Mary bought a book yesterday.

Transfer-based MT (cont)

- Parsing: linguistically motivated grammar or formal grammar?
- Transfer:
  - Context-free rules? A path on a dependency tree?
  - Apply at most one rule at each level?
  - How are rules created?
- Translating words: word-to-word translation?
- Generation: using LM or other additional knowledge?
- How to create the needed resources automatically?

Interlingua

- For n languages, we need n(n-1) MT systems.
- Interlingua uses a language-independent representation.
- Conceptually, Interlingua is elegant: we only need n analyzers and n generators.
- Resources needed:
  - A language-independent representation
  - Sophisticated analyzers
  - Sophisticated generators
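
As a quick arithmetic check of the counts on this slide, the sketch below compares the number of direct systems with the number of interlingua components. The function names are illustrative, and it assumes one system per translation direction.

```python
def direct_systems(n: int) -> int:
    """One MT system per ordered (source, target) pair of n languages."""
    return n * (n - 1)


def interlingua_components(n: int) -> int:
    """n analyzers (into the interlingua) plus n generators (out of it)."""
    return 2 * n


for n in (3, 10, 20):
    print(n, direct_systems(n), interlingua_components(n))
```

For 10 languages this gives 90 direct systems against 20 interlingua components, and the gap grows quadratically with n.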

Interlingua (cont)

- Questions:
  - Does a language-independent meaning representation really exist? If so, what does it look like?
  - It requires deep analysis (e.g., semantic analysis): how do we get such an analyzer?
  - It requires non-trivial generation: how is that done?
  - It forces disambiguation at various levels: lexical, syntactic, semantic, discourse.
  - It cannot take advantage of similarities between a particular language pair.

Example-based MT

- Basic idea: translate a sentence by using the closest match in parallel data.
- First proposed by Nagao (1981).
- Ex:
  - Training data:
    - w1 w2 w3 w4 → w1’ w2’ w3’ w4’
    - w5 w6 w7 → w5’ w6’ w7’
    - w8 w9 → w8’ w9’
  - Test sent:
    - w1 w2 w6 w7 w9 → w1’ w2’ w6’ w7’ w9’

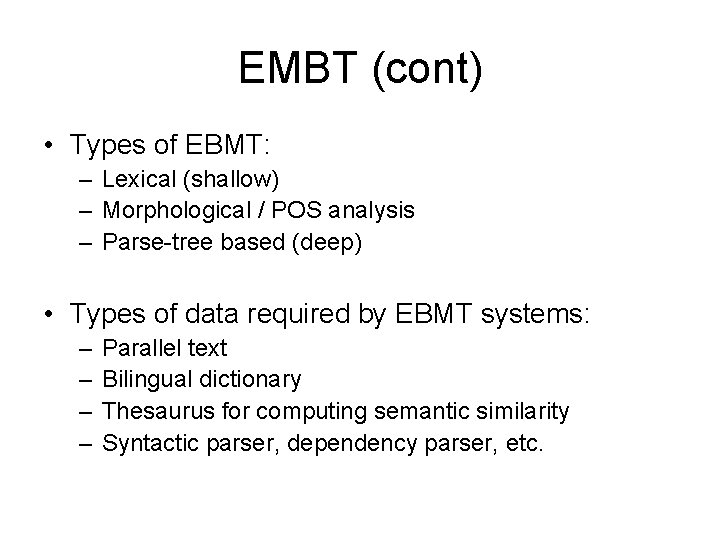

EBMT (cont)

- Types of EBMT:
  - Lexical (shallow)
  - Morphological / POS analysis
  - Parse-tree based (deep)
- Types of data required by EBMT systems:
  - Parallel text
  - Bilingual dictionary
  - Thesaurus for computing semantic similarity
  - Syntactic parser, dependency parser, etc.

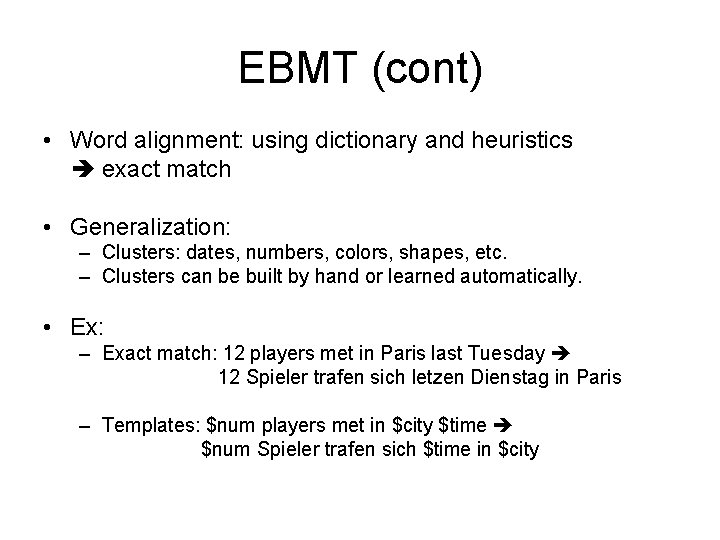

EBMT (cont)

- Word alignment: using dictionary and heuristics exact match
- Generalization:
  - Clusters: dates, numbers, colors, shapes, etc.
  - Clusters can be built by hand or learned automatically.
- Ex:
  - Exact match: 12 players met in Paris last Tuesday → 12 Spieler trafen sich letzten Dienstag in Paris
  - Templates: $num players met in $city $time → $num Spieler trafen sich $time in $city
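
The template idea on this slide can be sketched in a few lines. This is a toy illustration, not how any particular EBMT system works: the cluster patterns, the tiny translation tables, and the function names are all assumptions made up for the example.

```python
import re

# Hypothetical hand-built clusters: a regex that recognizes members of each
# cluster, and a lookup table that translates them (numbers are copied as-is).
CLUSTER_PATTERNS = {
    "num": r"\d+",
    "city": r"Paris|Berlin",
    "time": r"last Tuesday|last Friday",
}
CLUSTER_TRANSLATIONS = {
    "time": {"last Tuesday": "letzten Dienstag", "last Friday": "letzten Freitag"},
}


def compile_template(source_template):
    """Turn '$num players met in $city $time' into a regex with named groups."""
    parts = []
    for token in source_template.split():
        if token.startswith("$"):
            name = token[1:]
            parts.append(f"(?P<{name}>{CLUSTER_PATTERNS[name]})")
        else:
            parts.append(re.escape(token))
    return re.compile(r"\s+".join(parts))


def translate(sentence, source_template, target_template):
    """Fill the target template with translated slot fillers, or return None."""
    match = compile_template(source_template).fullmatch(sentence)
    if match is None:
        return None
    output = []
    for token in target_template.split():
        if token.startswith("$"):
            name = token[1:]
            filler = match.group(name)
            output.append(CLUSTER_TRANSLATIONS.get(name, {}).get(filler, filler))
        else:
            output.append(token)
    return " ".join(output)


SRC = "$num players met in $city $time"
TGT = "$num Spieler trafen sich $time in $city"
print(translate("12 players met in Paris last Tuesday", SRC, TGT))
print(translate("7 players met in Berlin last Friday", SRC, TGT))
```

The second call shows the point of generalization: a sentence never seen in the training data is still covered because its slot fillers belong to known clusters.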

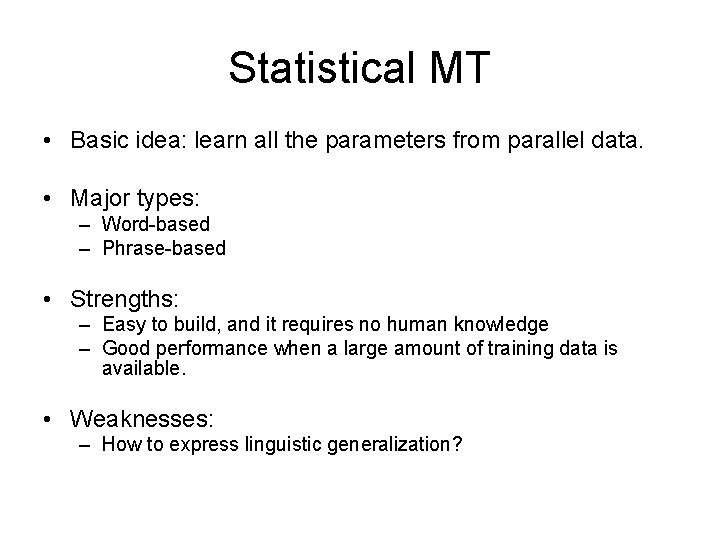

Statistical MT

- Basic idea: learn all the parameters from parallel data.
- Major types:
  - Word-based
  - Phrase-based
- Strengths:
  - Easy to build, and it requires no human knowledge
  - Good performance when a large amount of training data is available.
- Weaknesses:
  - How to express linguistic generalization?

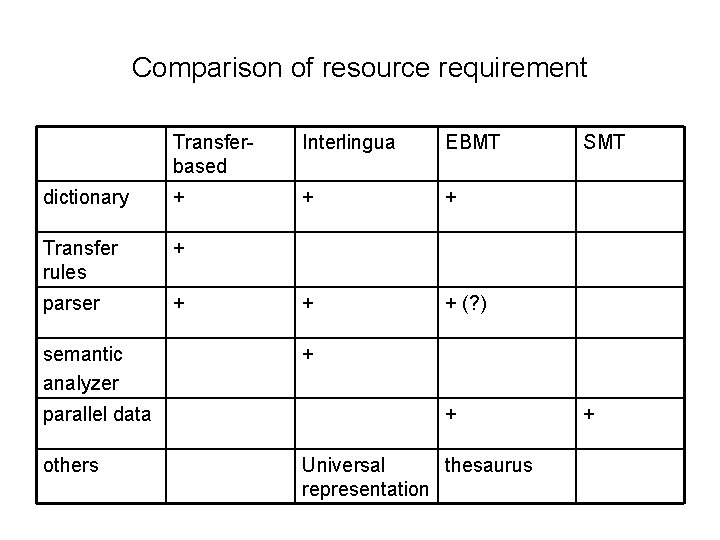

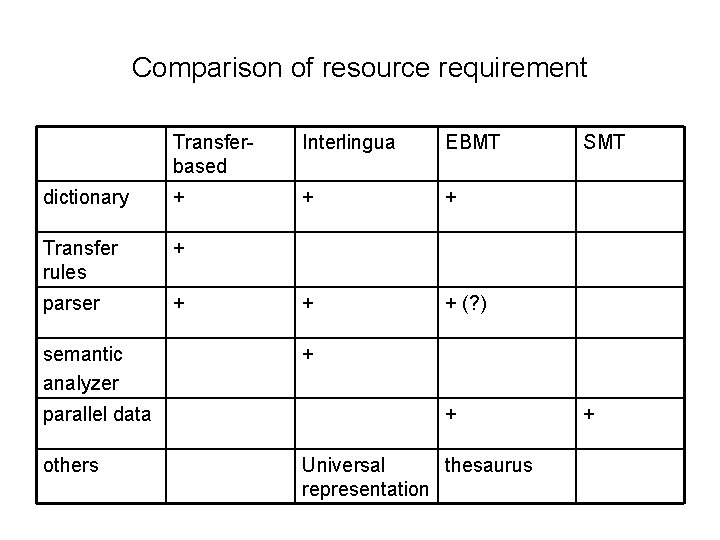

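Comparison of resource requirements

| | Transfer-based | Interlingua | EBMT | SMT |
| --- | --- | --- | --- | --- |
| Dictionary | + | + | + | |
| Transfer rules | + | | | |
| Parser | + | + | + (?) | |
| Semantic analyzer | | + | | |
| Parallel data | | | + | + |
| Others | | Universal representation | Thesaurus | |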

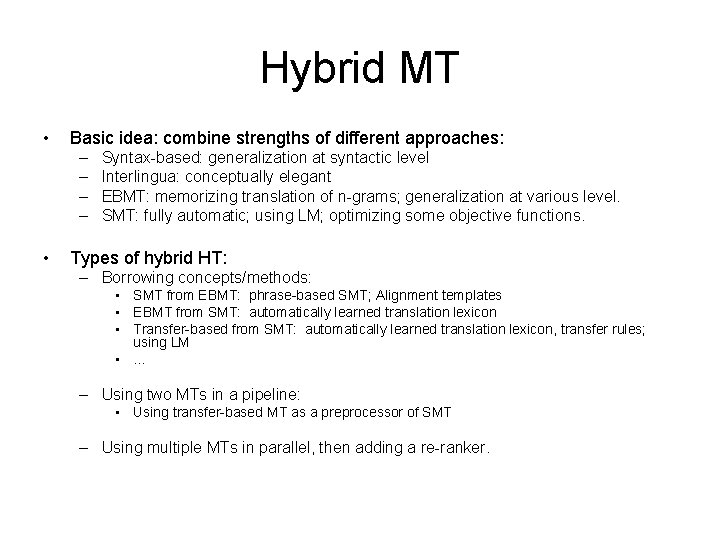

Hybrid MT

- Basic idea: combine strengths of different approaches:
  - Syntax-based: generalization at syntactic level
  - Interlingua: conceptually elegant
  - EBMT: memorizing translation of n-grams; generalization at various levels.
  - SMT: fully automatic; using LM; optimizing some objective functions.
- Types of hybrid MT:
  - Borrowing concepts/methods:
    - SMT from EBMT: phrase-based SMT; alignment templates
    - EBMT from SMT: automatically learned translation lexicon
    - Transfer-based from SMT: automatically learned translation lexicon, transfer rules; using LM
    - …
  - Using two MTs in a pipeline:
    - Using transfer-based MT as a preprocessor of SMT
  - Using multiple MTs in parallel, then adding a re-ranker.

Evaluation of MT

Evaluation

- Unlike many NLP tasks (e.g., tagging, chunking, parsing, IE, pronoun resolution), there is no single gold standard for MT.
- Human evaluation: accuracy, fluency, …
  - Problem: expensive, slow, subjective, non-reusable.
- Automatic measures:
  - Edit distance
  - Word error rate (WER), position-independent WER (PER)
  - Simple string accuracy (SSA), generation string accuracy (GSA)
  - BLEU

Edit distance

- The edit distance (a.k.a. Levenshtein distance) is defined as the minimal cost of transforming str1 into str2, using three operations (substitution, insertion, deletion).
- It can be computed with dynamic programming (DP) in O(m*n) time, where m and n are the lengths of str1 and str2.
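
A minimal sketch of the dynamic program follows. It fills an (m+1) x (n+1) table, so it does O(m*n) work; the function and parameter names are illustrative, and unit costs are assumed by default.

```python
def edit_distance(str1, str2, sub_cost=1, ins_cost=1, del_cost=1):
    """Minimal cost of turning str1 into str2 (works on strings or word lists)."""
    m, n = len(str1), len(str2)
    # d[i][j] = cost of transforming the first i items of str1
    # into the first j items of str2.
    d = [[0] * (n + 1) for _ in range(m + 1)]
    for i in range(1, m + 1):
        d[i][0] = i * del_cost          # delete everything in str1[:i]
    for j in range(1, n + 1):
        d[0][j] = j * ins_cost          # insert everything in str2[:j]
    for i in range(1, m + 1):
        for j in range(1, n + 1):
            sub = 0 if str1[i - 1] == str2[j - 1] else sub_cost
            d[i][j] = min(
                d[i - 1][j - 1] + sub,  # match or substitution
                d[i][j - 1] + ins_cost, # insertion
                d[i - 1][j] + del_cost, # deletion
            )
    return d[m][n]


print(edit_distance("w1 w2 w3 w4".split(), "w1 w3 w2".split()))  # 2
```

Passing word lists rather than raw strings gives the word-level distance that the MT metrics in this section use.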

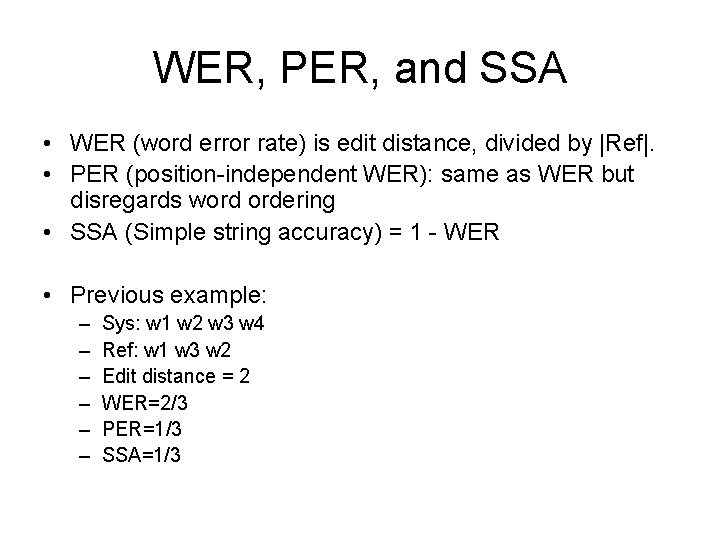

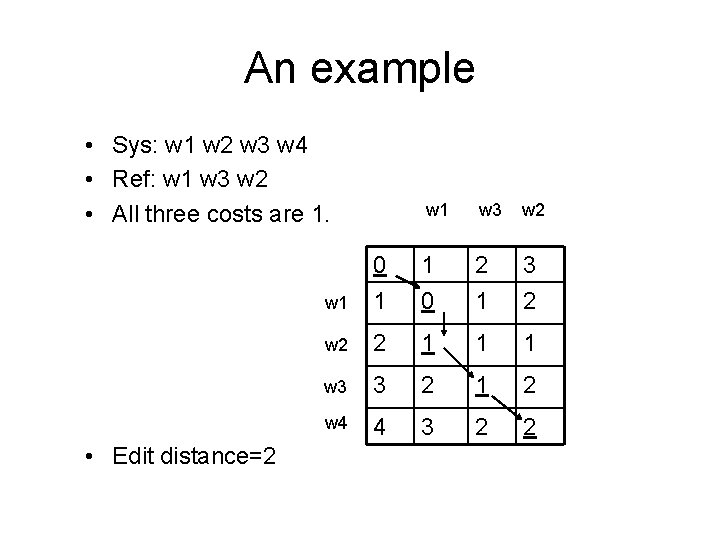

WER, PER, and SSA

- WER (word error rate) is the edit distance divided by |Ref|.
- PER (position-independent WER): same as WER but disregards word ordering.
- SSA (simple string accuracy) = 1 - WER
- Previous example:
  - Sys: w1 w2 w3 w4
  - Ref: w1 w3 w2
  - Edit distance = 2
  - WER = 2/3
  - PER = 1/3
  - SSA = 1/3
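
The three measures can be reproduced on the slide's example with the sketch below. Unit edit costs are assumed, and PER is computed with one common bag-of-words formulation (longer length minus the number of matching words, divided by |Ref|); that formulation is an assumption, since the slide does not spell it out.

```python
from collections import Counter
from fractions import Fraction


def edit_distance(sys_words, ref_words):
    """Word-level Levenshtein distance with unit costs (two-row DP)."""
    prev = list(range(len(ref_words) + 1))
    for i, s in enumerate(sys_words, start=1):
        curr = [i]
        for j, r in enumerate(ref_words, start=1):
            curr.append(min(prev[j - 1] + (s != r),  # match or substitution
                            curr[j - 1] + 1,          # insertion
                            prev[j] + 1))             # deletion
        prev = curr
    return prev[-1]


def wer(sys_words, ref_words):
    return Fraction(edit_distance(sys_words, ref_words), len(ref_words))


def per(sys_words, ref_words):
    # Word order is ignored: compare the two sentences as bags of words.
    matches = sum((Counter(sys_words) & Counter(ref_words)).values())
    errors = max(len(sys_words), len(ref_words)) - matches
    return Fraction(errors, len(ref_words))


def ssa(sys_words, ref_words):
    return 1 - wer(sys_words, ref_words)


sys_words = "w1 w2 w3 w4".split()
ref_words = "w1 w3 w2".split()
print(wer(sys_words, ref_words), per(sys_words, ref_words), ssa(sys_words, ref_words))
# 2/3 1/3 1/3
```

Exact fractions are used so the results can be compared directly with the values on the slide.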

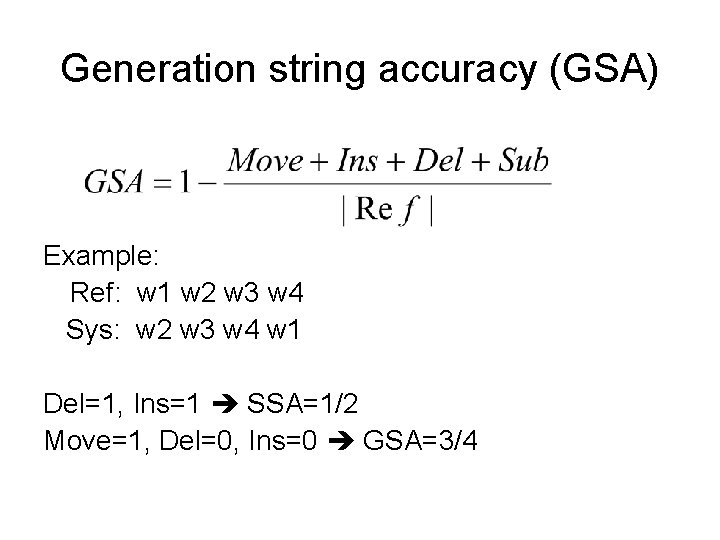

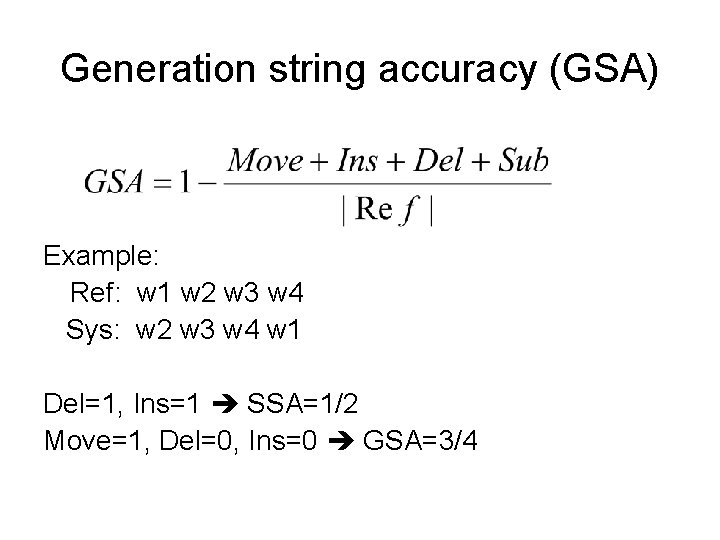

Generation string accuracy (GSA)

Example:

- Ref: w1 w2 w3 w4
- Sys: w2 w3 w4 w1
- Del=1, Ins=1 → SSA=1/2
- Move=1, Del=0, Ins=0 → GSA=3/4
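
One way to read the numbers in this example, assuming the usual formulation: SSA counts insertions (I), deletions (D) and substitutions (S) against the reference length R, while GSA treats a deletion and an insertion of the same word as a single move (M) and counts only the remaining insertions (I') and deletions (D'). Here R = 4 and the moved word is w1.

```latex
\mathrm{SSA} = 1 - \frac{I + D + S}{R} = 1 - \frac{1 + 1 + 0}{4} = \frac{1}{2}
\qquad
\mathrm{GSA} = 1 - \frac{M + I' + D' + S}{R} = 1 - \frac{1 + 0 + 0 + 0}{4} = \frac{3}{4}
```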

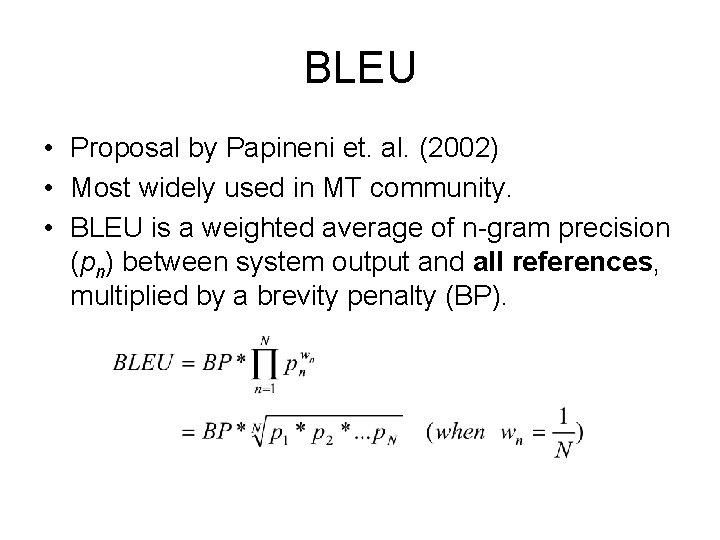

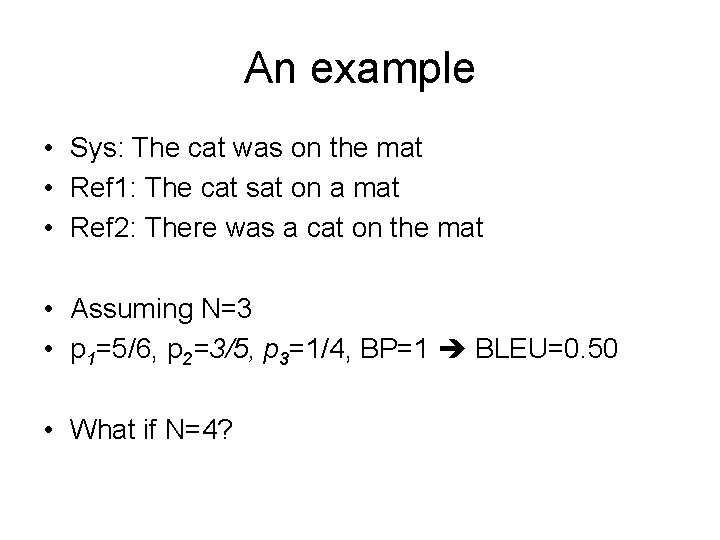

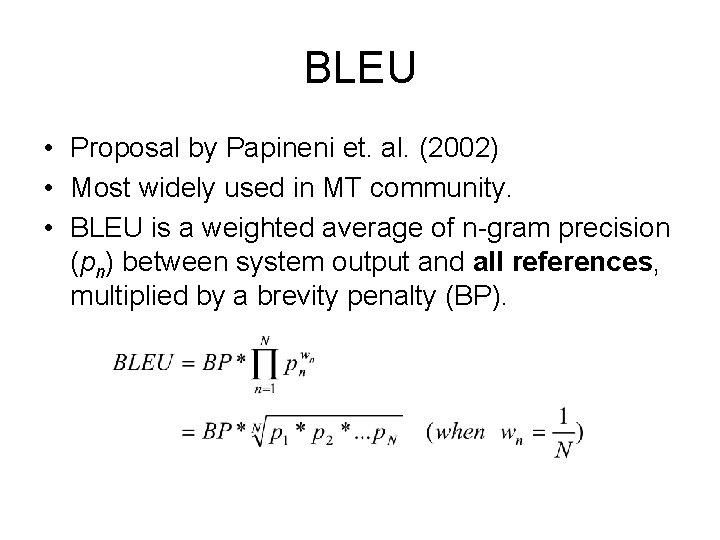

BLEU

- Proposed by Papineni et al. (2002).
- Most widely used in the MT community.
- BLEU is a weighted geometric average of n-gram precisions (p_n) between system output and all references, multiplied by a brevity penalty (BP).
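
Written out, the definition takes the following form. The uniform weights w_n = 1/N are the common default and are stated here as an assumption.

```latex
\mathrm{BLEU} = \mathrm{BP} \cdot \exp\Bigl( \sum_{n=1}^{N} w_n \log p_n \Bigr),
\qquad w_n = \frac{1}{N}
```

With uniform weights the exponential term is simply the geometric mean of p_1, …, p_N, so a single zero precision drives the whole score to zero.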

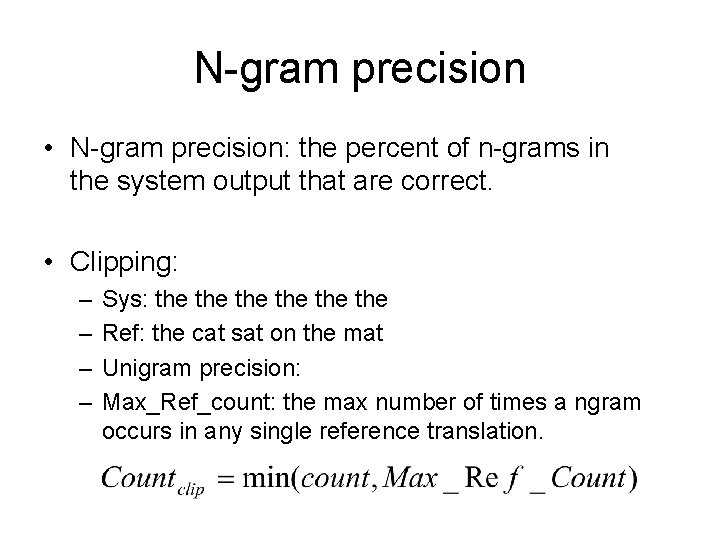

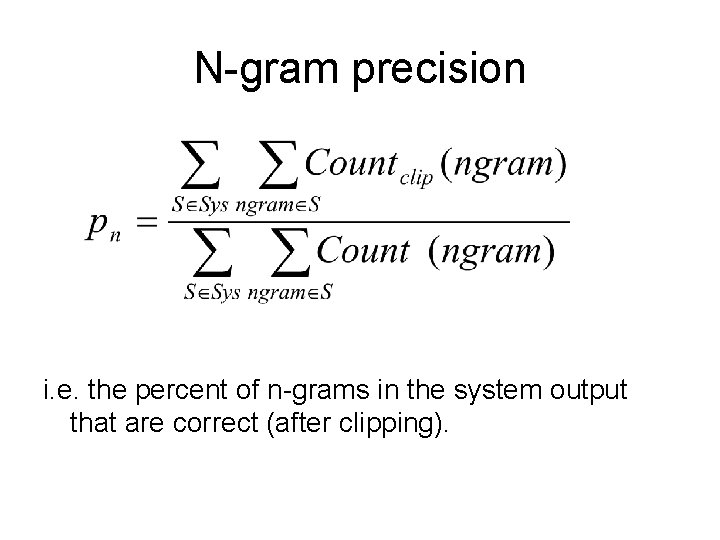

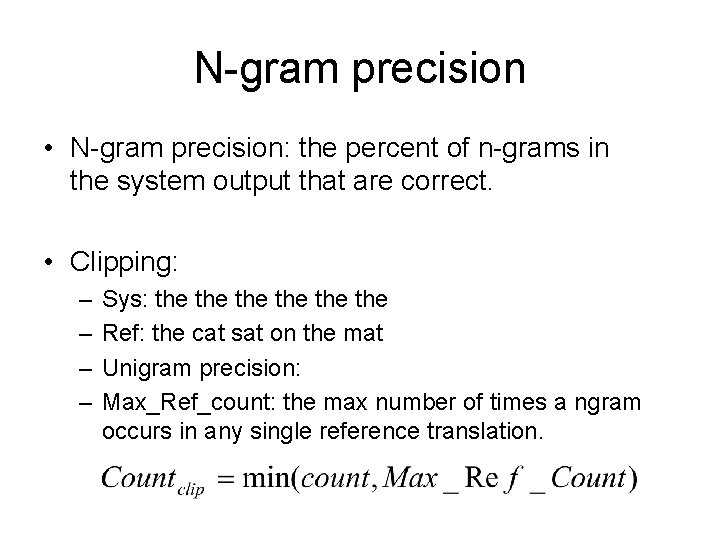

N-gram precision

- N-gram precision: the percent of n-grams in the system output that are correct.
- Clipping:
  - Sys: the the the
  - Ref: the cat sat on the mat
  - Unigram precision: 3/3 without clipping, 2/3 with clipping (“the” occurs only twice in the reference)
  - Max_Ref_count: the max number of times an n-gram occurs in any single reference translation.
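
Clipping can be sketched as follows: each n-gram in the system output is credited at most Max_Ref_count times. The function names are illustrative, and tokens are assumed to be already split and case-normalized.

```python
from collections import Counter
from fractions import Fraction


def ngrams(tokens, n):
    """All contiguous n-grams of a token list, as tuples."""
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_precision(sys_tokens, references, n):
    """Clipped n-gram precision of one system sentence against its references."""
    sys_counts = Counter(ngrams(sys_tokens, n))
    # Max_Ref_count: the most times each n-gram occurs in any single reference.
    max_ref_count = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref_count[gram] = max(max_ref_count[gram], count)
    clipped = sum(min(count, max_ref_count[gram])
                  for gram, count in sys_counts.items())
    total = sum(sys_counts.values())
    return Fraction(clipped, total) if total else Fraction(0)


sys_tokens = "the the the".split()
references = ["the cat sat on the mat".split()]
print(clipped_precision(sys_tokens, references, 1))  # 2/3
```

Without the `min(...)` clip, the degenerate output "the the the" would score a perfect unigram precision of 3/3.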

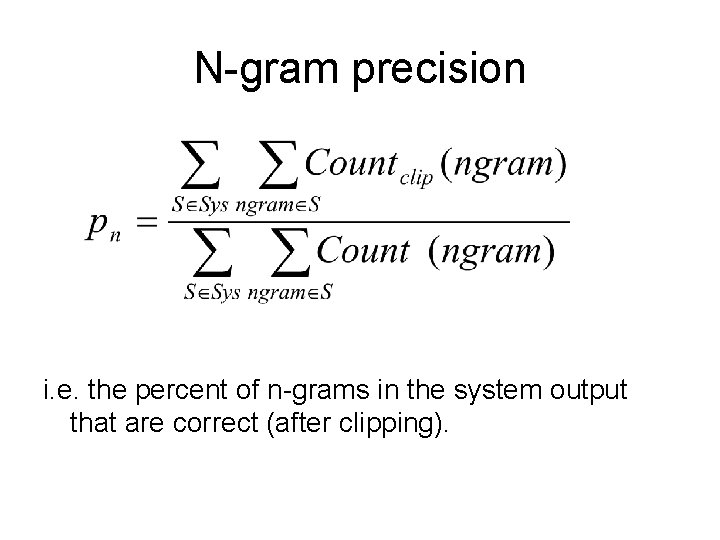

N-gram precision, i.e., the percent of n-grams in the system output that are correct (after clipping).

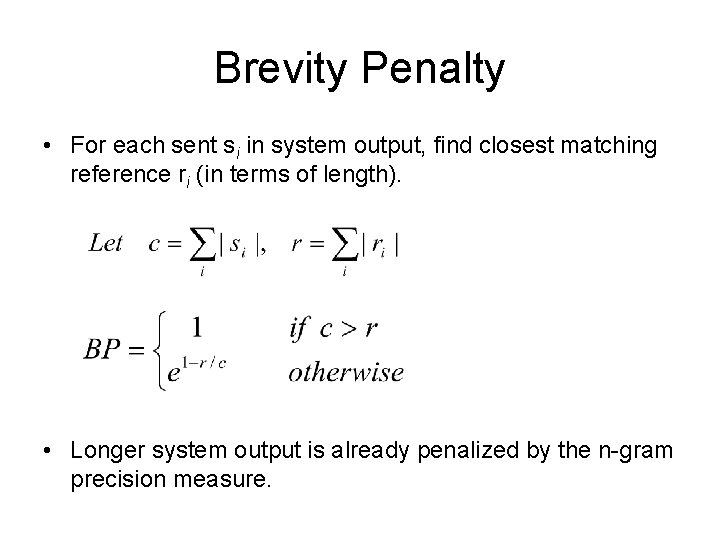

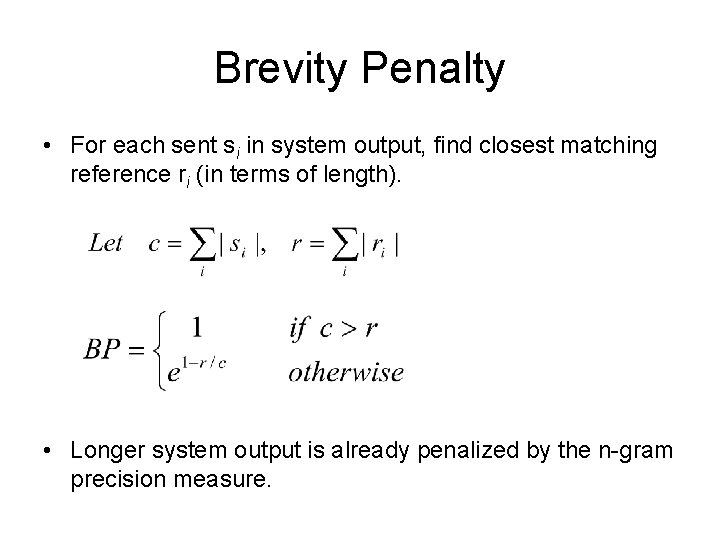

Brevity Penalty

- For each sentence s_i in the system output, find the closest matching reference r_i (in terms of length).
- Longer system output is already penalized by the n-gram precision measure.
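
In the standard formulation from Papineni et al. (2002), the penalty compares the total system length c with the total length r of the closest matching references:

```latex
\mathrm{BP} =
\begin{cases}
1 & \text{if } c > r \\
e^{\,1 - r/c} & \text{if } c \le r
\end{cases}
\qquad
c = \sum_i |s_i|, \quad r = \sum_i |r_i|
```

When c = r the exponent is zero, so BP = 1 and only output shorter than its references is penalized.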

An example

- Sys: The cat was on the mat
- Ref 1: The cat sat on a mat
- Ref 2: There was a cat on the mat
- Assuming N=3
- p1=5/6, p2=3/5, p3=1/4, BP=1 → BLEU=0.50
- What if N=4?
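
The numbers in this example can be checked with a small sentence-level sketch. It assumes lowercased whitespace tokenization, uniform n-gram weights, and no smoothing; the function names are illustrative, and real BLEU is normally computed over a whole test set rather than a single sentence.

```python
import math
from collections import Counter


def ngrams(tokens, n):
    return [tuple(tokens[i:i + n]) for i in range(len(tokens) - n + 1)]


def clipped_counts(sys_tokens, references, n):
    """Return (clipped matches, total n-grams) for one system sentence."""
    sys_counts = Counter(ngrams(sys_tokens, n))
    max_ref_count = Counter()
    for ref in references:
        for gram, count in Counter(ngrams(ref, n)).items():
            max_ref_count[gram] = max(max_ref_count[gram], count)
    matched = sum(min(c, max_ref_count[g]) for g, c in sys_counts.items())
    return matched, sum(sys_counts.values())


def bleu(sys_tokens, references, max_n):
    log_sum = 0.0
    for n in range(1, max_n + 1):
        matched, total = clipped_counts(sys_tokens, references, n)
        if matched == 0:
            return 0.0  # one zero precision makes the geometric mean zero
        log_sum += math.log(matched / total)
    c = len(sys_tokens)
    # Closest reference length; ties go to the shorter reference (an assumption).
    r = min((abs(len(ref) - c), len(ref)) for ref in references)[1]
    bp = 1.0 if c > r else math.exp(1 - r / c)
    return bp * math.exp(log_sum / max_n)


sys_tokens = "The cat was on the mat".lower().split()
references = ["The cat sat on a mat".lower().split(),
              "There was a cat on the mat".lower().split()]
print(round(bleu(sys_tokens, references, 3), 2))  # 0.5
print(bleu(sys_tokens, references, 4))            # 0.0
```

With N=4 no 4-gram of the system output appears in either reference, so p4 = 0 and the unsmoothed score collapses to 0, which is why sentence-level BLEU is usually smoothed or computed at corpus level.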

Summary

- Course overview
- Major challenges in MT
  - Choose the right words (root form, inflection, spontaneous words)
  - Put them in the right positions (word order, unique constructions, divergences)

Summary (cont)

- Major approaches
  - Transfer-based MT
  - Interlingua
  - Example-based MT
  - Statistical MT
  - Hybrid MT
- Evaluation of MT systems
  - Edit distance
  - WER, PER, SSA, GSA
  - BLEU

Additional slides

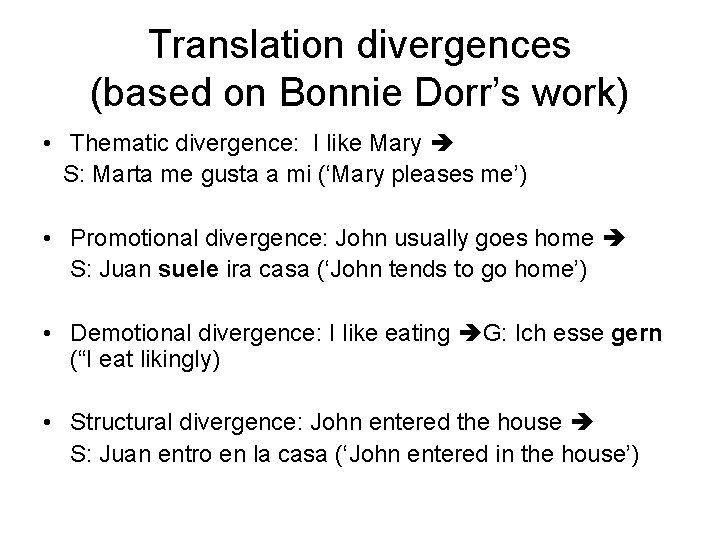

Translation divergences (based on Bonnie Dorr’s work)

- Thematic divergence: I like Mary → S: María me gusta a mí (‘Mary pleases me’)
- Promotional divergence: John usually goes home → S: Juan suele ir a casa (‘John tends to go home’)
- Demotional divergence: I like eating → G: Ich esse gern (‘I eat likingly’)
- Structural divergence: John entered the house → S: Juan entró en la casa (‘John entered in the house’)

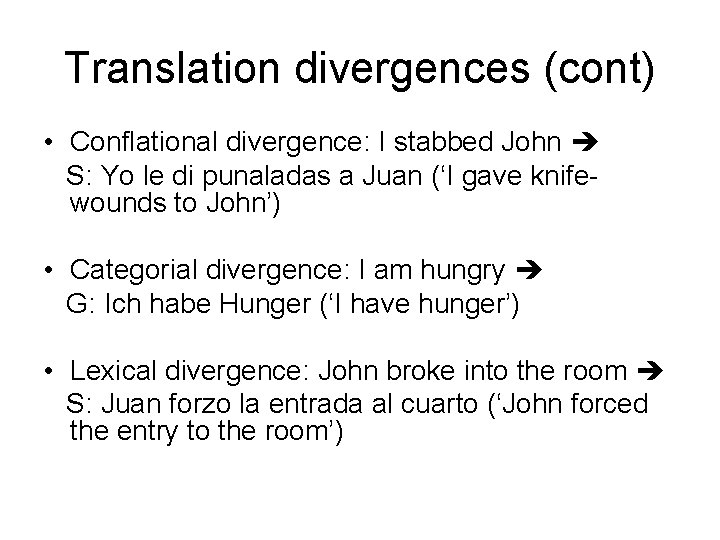

Translation divergences (cont)

- Conflational divergence: I stabbed John → S: Yo le di puñaladas a Juan (‘I gave knife-wounds to John’)
- Categorial divergence: I am hungry → G: Ich habe Hunger (‘I have hunger’)
- Lexical divergence: John broke into the room → S: Juan forzó la entrada al cuarto (‘John forced the entry to the room’)

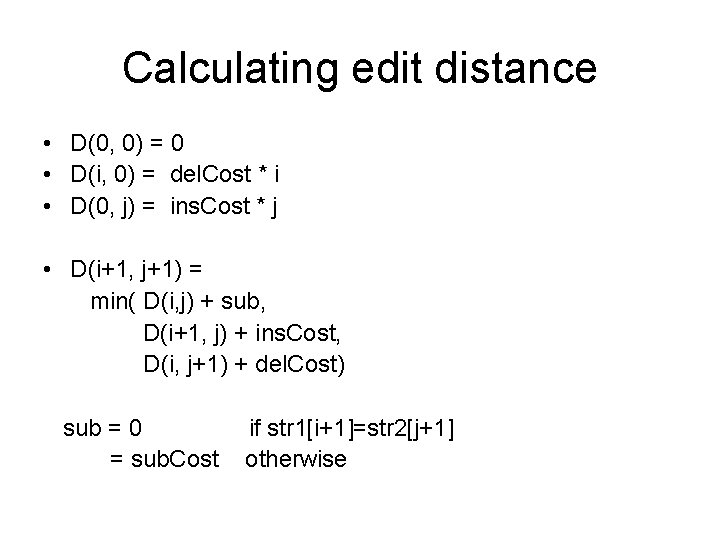

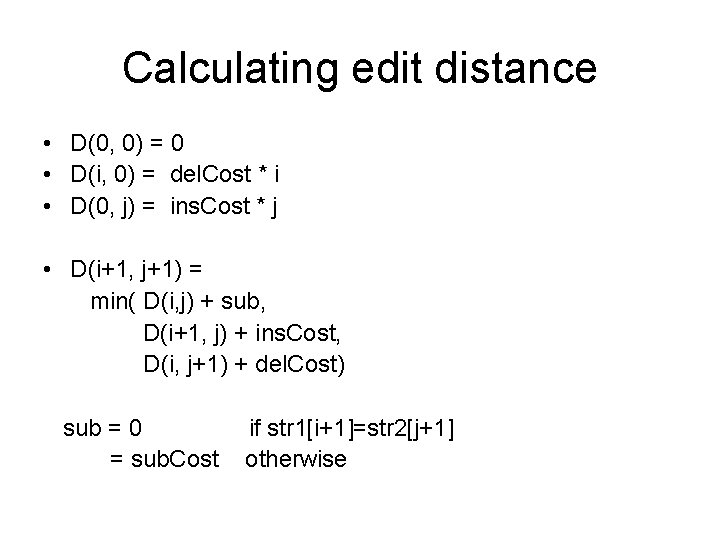

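Calculating edit distance

- $D(0, 0) = 0$
- $D(i, 0) = \mathrm{delCost} \cdot i$
- $D(0, j) = \mathrm{insCost} \cdot j$
- $D(i+1, j+1) = \min\bigl(D(i, j) + \mathrm{sub},\; D(i+1, j) + \mathrm{insCost},\; D(i, j+1) + \mathrm{delCost}\bigr)$
- $\mathrm{sub} = 0$ if $\mathrm{str}_1[i+1] = \mathrm{str}_2[j+1]$, and $\mathrm{sub} = \mathrm{subCost}$ otherwise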

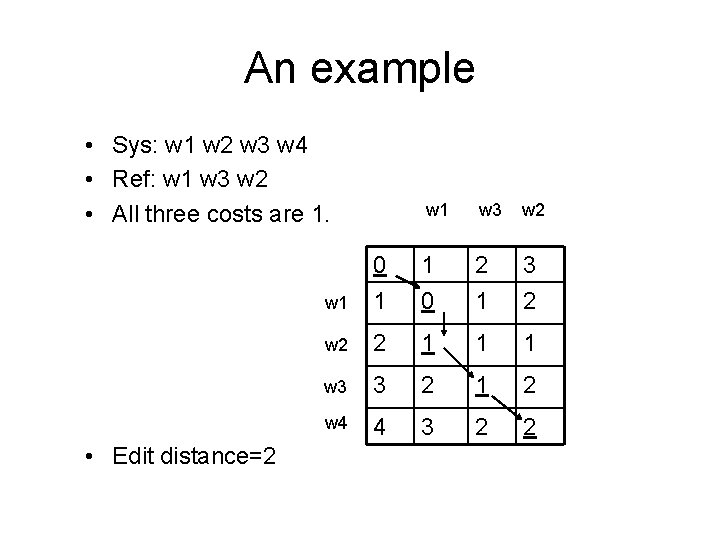

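An example

- Sys: w1 w2 w3 w4
- Ref: w1 w3 w2
- All three costs are 1.
- Edit distance = 2

DP table (rows: Sys prefix, columns: Ref prefix):

| | (empty) | w1 | w3 | w2 |
| --- | --- | --- | --- | --- |
| (empty) | 0 | 1 | 2 | 3 |
| w1 | 1 | 0 | 1 | 2 |
| w2 | 2 | 1 | 1 | 1 |
| w3 | 3 | 2 | 1 | 2 |
| w4 | 4 | 3 | 2 | 2 |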