Probabilistic Parsing Ling 571 Fei Xia Week 4

Probabilistic Parsing Ling 571 Fei Xia Week 4: 10/18-10/20/05

Outline
• Misc: Hw 3 and Hw 4: lexicalized rules
• CYK recap
  – Converting CFG into CNF
  – N-best
• Quiz #2
• Common prob equations
• Independence assumption
• Lexicalized models

CYK Recap

Converting CFG into CNF
• Extended CNF
• CFG in general vs. CFG for natural languages
• Converting CFG into CNF
• Converting PCFG into CNF
• Recovering parse trees

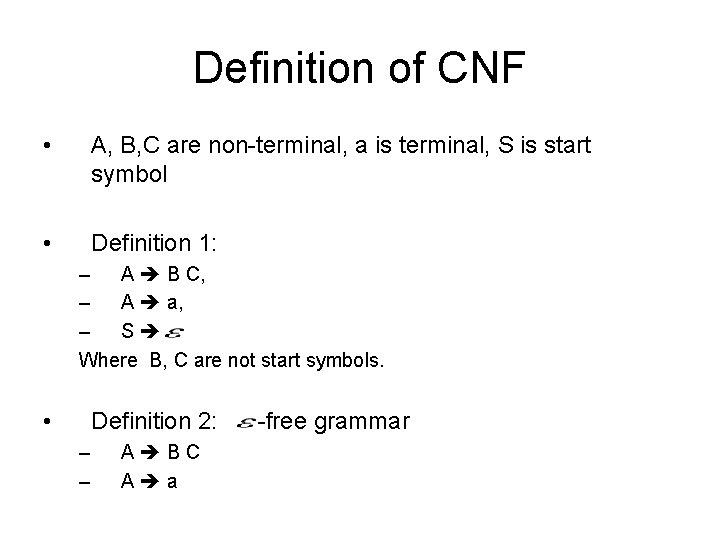

Definition of CNF
• A, B, C are non-terminals, a is a terminal, and S is the start symbol.
• Definition 1:
  – A → B C
  – A → a
  – S → ε
  where B and C are not the start symbol.
• Definition 2:
  – A → B C
  – A → a
  – The grammar is ε-free.

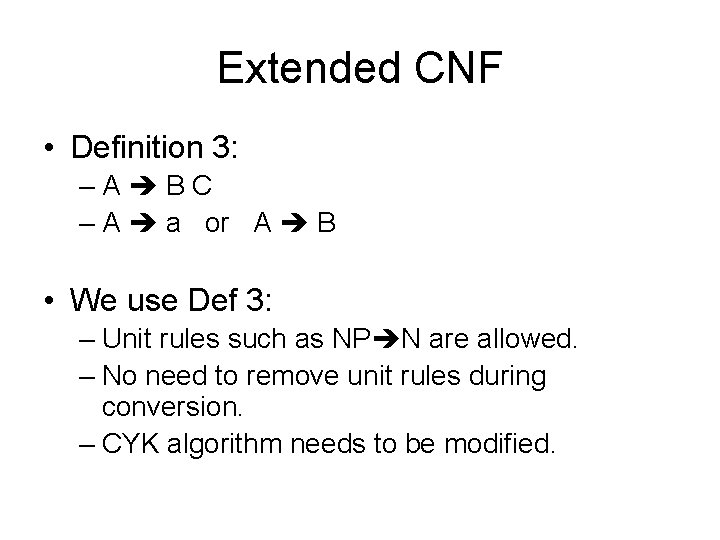

Extended CNF
• Definition 3:
  – A → B C
  – A → a or A → B
• We use Def 3:
  – Unit rules such as NP → N are allowed.
  – There is no need to remove unit rules during conversion.
  – The CYK algorithm needs to be modified.
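To make Definition 3 concrete, here is a minimal Python sketch of a checker for extended CNF. The rule representation (a list of `(lhs, rhs)` pairs with `rhs` a tuple) and the function name `is_extended_cnf` are hypothetical choices for illustration; any symbol not in the non-terminal set is treated as a terminal.

```python
def is_extended_cnf(rules, nonterminals):
    """Check Definition 3: every rule is A -> B C, A -> a, or A -> B.

    rules: iterable of (lhs, rhs) pairs, where rhs is a tuple of symbols.
    nonterminals: set of non-terminal symbols; anything else counts as a terminal.
    """
    for lhs, rhs in rules:
        if lhs not in nonterminals:
            return False
        if len(rhs) == 2:
            # A -> B C: both right-hand-side symbols must be non-terminals.
            if rhs[0] not in nonterminals or rhs[1] not in nonterminals:
                return False
        elif len(rhs) != 1:
            # Epsilon rules (length 0) and n-ary rules (length > 2) are not allowed.
            return False
        # Length 1 is fine: A -> a (lexical rule) or A -> B (unit rule).
    return True


NT = {"S", "NP", "VP", "PP", "V", "N", "P"}
grammar = [
    ("S", ("NP", "VP")),
    ("VP", ("V", "NP")),
    ("NP", ("N",)),          # unit rule: allowed under Def 3
    ("N", ("books",)),
    ("V", ("bought",)),
]
print(is_extended_cnf(grammar, NT))                                      # True
print(is_extended_cnf(grammar + [("VP", ("V", "NP", "PP", "PP"))], NT))  # False
```

Under Definitions 1 and 2 the unit rule NP → N would make the check fail; Definition 3 accepts it, which is why unit rules need not be removed during conversion.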

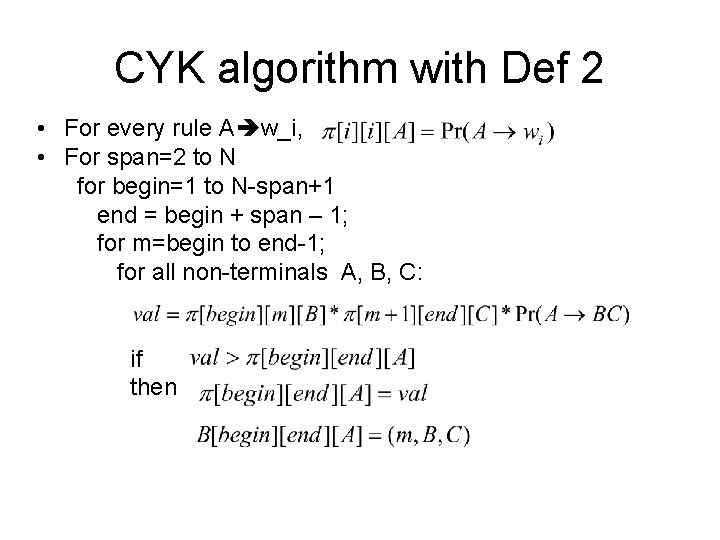

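CYK algorithm with Def 2
• For every rule A → w_i: δ[i][i][A] = P(A → w_i)
• For span = 2 to N
    for begin = 1 to N-span+1
      end = begin + span - 1;
      for m = begin to end-1;
        for all non-terminals A, B, C:
          val = δ[begin][m][B] * δ[m+1][end][C] * P(A → B C)
          if val > δ[begin][end][A] then
            δ[begin][end][A] = val
            B[begin][end][A] = (m, B, C)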
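The Def 2 loop above can be sketched in Python as follows. This is a minimal illustration, assuming the PCFG is given as two hypothetical dictionaries: `lexicon` maps `(A, word)` to P(A → word), and `binary_rules` maps `(A, B, C)` to P(A → B C). Instead of looping over all triples of non-terminals, it loops over the binary rules, which gives the same result because P(A → B C) = 0 for any triple not in the grammar. Positions are 1-based as on the slide.

```python
from collections import defaultdict

def cyk_viterbi(words, lexicon, binary_rules):
    """Viterbi CYK for a PCFG in CNF (Def 2: only A -> B C and A -> a).

    Returns (delta, back): delta[(begin, end, A)] is the best probability of A
    spanning words begin..end, back[(begin, end, A)] = (m, B, C) its back pointer.
    """
    n = len(words)
    delta = defaultdict(float)    # missing cells read as probability 0.0
    back = {}
    # Initialization: for every rule A -> w_i
    for i, w in enumerate(words, start=1):
        for (a, word), p in lexicon.items():
            if word == w:
                delta[(i, i, a)] = p
    for span in range(2, n + 1):
        for begin in range(1, n - span + 2):
            end = begin + span - 1
            for m in range(begin, end):
                for (a, b, c), p in binary_rules.items():
                    val = delta[(begin, m, b)] * delta[(m + 1, end, c)] * p
                    if val > delta[(begin, end, a)]:
                        delta[(begin, end, a)] = val
                        back[(begin, end, a)] = (m, b, c)
    return delta, back


# Hypothetical toy grammar, for illustration only.
lexicon = {("NP", "Mary"): 0.5, ("V", "bought"): 1.0, ("NP", "books"): 0.5}
binary_rules = {("S", "NP", "VP"): 1.0, ("VP", "V", "NP"): 0.8}
delta, back = cyk_viterbi(["Mary", "bought", "books"], lexicon, binary_rules)
print(delta[(1, 3, "S")], back[(1, 3, "S")])
```

The best tree is read off by following `back` from `(1, N, "S")` down to the diagonal.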

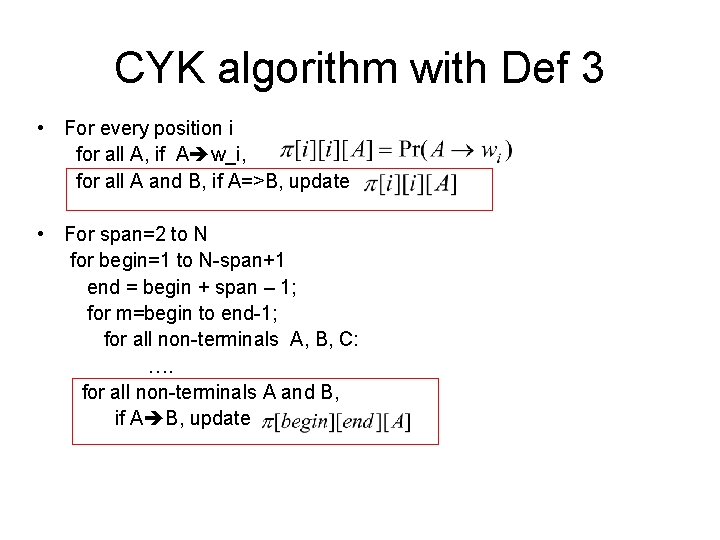

CYK algorithm with Def 3
• For every position i:
    for all A, if A → w_i, update δ[i][i][A]
    for all A and B, if A → B, update δ[i][i][A]
• For span = 2 to N
    for begin = 1 to N-span+1
      end = begin + span - 1;
      for m = begin to end-1;
        for all non-terminals A, B, C:
          …
      for all non-terminals A and B, if A → B, update δ[begin][end][A]
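The "update" step for unit rules can be sketched as a function applied to one chart cell, first on the diagonal after the lexical rules and then on each cell after the binary rules. The cell format (non-terminal mapped to `(prob, back_pointer)`), the `("unit", B)` back pointer, and the name `apply_unit_rules` are all hypothetical. The loop repeats until nothing improves, so chains such as S → NP → N are covered; it terminates because every rule probability is at most 1, so a cycle of unit rules can never strictly increase a score.

```python
def apply_unit_rules(cell, unit_rules):
    """Update one chart cell with unit rules A -> B (Def 3).

    cell:       dict mapping non-terminal -> (best prob, back pointer)
    unit_rules: dict mapping (A, B) -> P(A -> B)
    """
    changed = True
    while changed:
        changed = False
        for (a, b), p in unit_rules.items():
            if b in cell:
                val = p * cell[b][0]
                # Only a strictly better score replaces the current entry for A.
                if val > cell.get(a, (0.0, None))[0]:
                    cell[a] = (val, ("unit", b))
                    changed = True
    return cell


# Hypothetical diagonal cell for the word "books" after the lexical step.
cell = apply_unit_rules({"N": (0.5, "books")}, {("NP", "N"): 0.6, ("S", "NP"): 0.5})
print(cell)
```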

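CFG
• CFG in general:
  – G = (N, T, P, S)
  – Rules: A → α, where A ∈ N and α ∈ (N ∪ T)*
• CFG for natural languages:
  – G = (N, T, P, S)
  – Pre-terminals: a subset of N (the POS tags)
  – Rules:
    • Syntactic rules: A → B1 … Bn, where A, B1, …, Bn ∈ N
    • Lexicon: X → w, where X is a pre-terminal and w ∈ T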

Conversion from CFG to CNF
• CFG (in general) to CNF (Def 1):
  – Add S0 → S
  – Remove ε-rules
  – Remove unit rules
  – Replace n-ary rules with binary rules
• CFG (for NL) to CNF (Def 3):
  – CFG (for NL) has no ε-rules.
  – Unit rules are allowed in CNF (Def 3).
  – Only the last step is necessary.

An example
• VP → V NP PP PP
• To recover the parse tree w.r.t. the original CFG, just remove the added non-terminals.
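Removing the added non-terminals amounts to splicing each added node's children into its parent. A minimal Python sketch, assuming trees are nested tuples `(label, child, ...)` with words as plain strings, and assuming the added labels look like X1, X2, ... as in the conversion example; the names `unbinarize`, `recover`, and the `ADDED` pattern are hypothetical, and a real grammar must ensure no original label matches that pattern.

```python
import re

# Assumption: binarization introduced labels X1, X2, ...; no original label looks like this.
ADDED = re.compile(r"X\d+$")

def unbinarize(tree):
    """Return a list of subtrees with added non-terminals spliced out."""
    if isinstance(tree, str):            # a word
        return [tree]
    label, *children = tree
    new_children = []
    for child in children:
        new_children.extend(unbinarize(child))
    if ADDED.match(label):
        return new_children              # drop the added node, keep its children in place
    return [(label, *new_children)]

def recover(tree):
    # Assumes the root itself is an original (not added) non-terminal.
    return unbinarize(tree)[0]


binarized = ("VP", ("V", "bought"),
             ("X1", ("NP", "books"),
              ("X2", ("PP", ("P", "with"), ("NP", "cash")),
                     ("PP", ("P", "on"), ("NP", "Monday")))))
print(recover(binarized))
```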

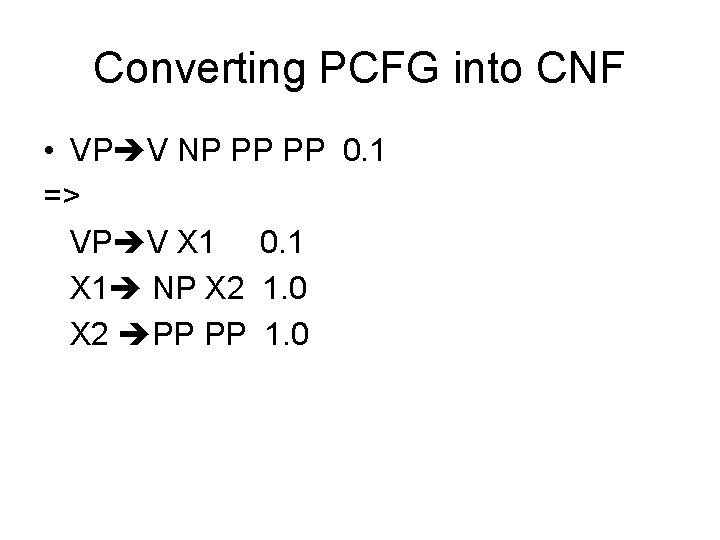

Converting PCFG into CNF
• VP → V NP PP PP  0.1
  =>
  VP → V X1  0.1
  X1 → NP X2  1.0
  X2 → PP PP  1.0
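The PCFG conversion above can be sketched in Python: the original probability stays on the first binary rule, and every rule whose left-hand side is a new non-terminal gets probability 1.0, so each derivation keeps its original probability (0.1 × 1.0 × 1.0 = 0.1). The function name `binarize` and the shared `counter` argument (which keeps new names unique across the whole grammar) are hypothetical choices for this sketch.

```python
from itertools import count

def binarize(lhs, rhs, prob, counter):
    """Binarize one PCFG rule lhs -> rhs into right-branching binary rules.

    counter yields fresh integers for the new non-terminals X1, X2, ...
    Rules with at most two right-hand-side symbols are returned unchanged.
    """
    rules = []
    left, p, symbols = lhs, prob, list(rhs)
    while len(symbols) > 2:
        new = f"X{next(counter)}"
        rules.append((left, (symbols[0], new), p))
        left, p, symbols = new, 1.0, symbols[1:]   # new non-terminals get prob 1.0
    rules.append((left, tuple(symbols), p))
    return rules


for rule in binarize("VP", ("V", "NP", "PP", "PP"), 0.1, count(1)):
    print(rule)
# ('VP', ('V', 'X1'), 0.1)
# ('X1', ('NP', 'X2'), 1.0)
# ('X2', ('PP', 'PP'), 1.0)
```

This sketch creates fresh symbols for every rule; some converters instead name the new symbol after the right-hand-side suffix it covers, so identical suffixes share one symbol.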

CYK with N-best output

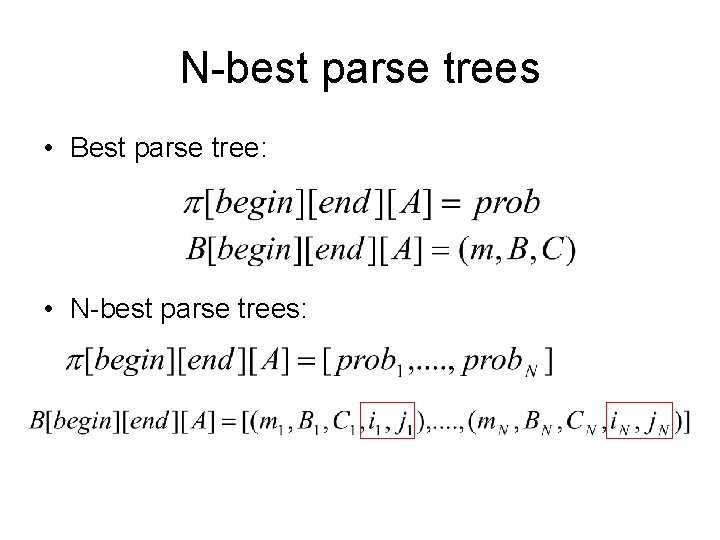

N-best parse trees
• Best parse tree: $\hat{T} = \arg\max_T P(T \mid S) = \arg\max_T P(T, S)$
• N-best parse trees: the N trees T with the highest P(T, S)

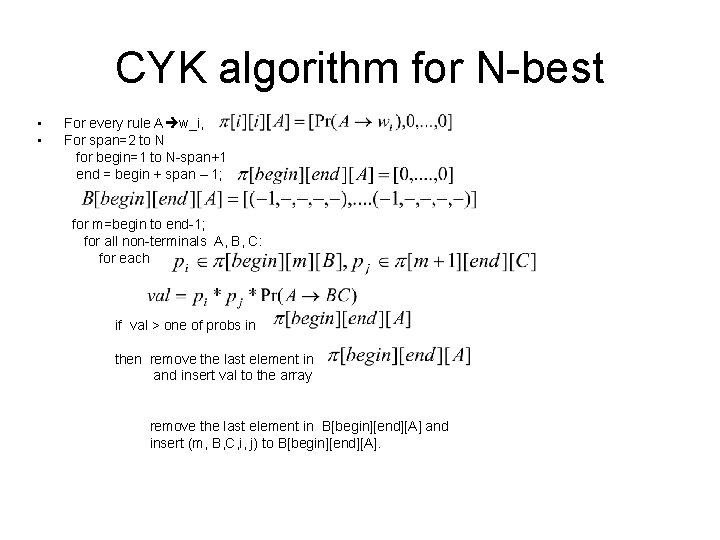

CYK algorithm for N-best
• For every rule A → w_i: insert P(A → w_i) into δ[i][i][A]
• For span = 2 to N
    for begin = 1 to N-span+1
      end = begin + span - 1;
      for m = begin to end-1;
        for all non-terminals A, B, C:
          for each i and j:
            val = δ[begin][m][B][i] * δ[m+1][end][C][j] * P(A → B C)
            if val > one of the probs in δ[begin][end][A] then
              remove the last element in δ[begin][end][A] and insert val into the array (kept sorted, best first)
              remove the last element in B[begin][end][A] and insert (m, B, C, i, j) into B[begin][end][A]
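A minimal Python sketch of the N-best loop: each chart cell holds a best-first list of at most `nbest` entries `(prob, back)`, where `back = (m, B, C, i, j)` means "split after m, use the i-th best B and the j-th best C" (1-based). The names `insert_nbest`, `cyk_nbest`, and `nbest` are hypothetical, and the toy grammar is made up for illustration; it was chosen so that the binary entries reproduce the back pointers listed in the example chart for "Mary bought books with cash".

```python
from collections import defaultdict

def insert_nbest(entries, val, back, nbest):
    """Insert (val, back) into a best-first list, keeping at most nbest entries."""
    if len(entries) < nbest or val > entries[-1][0]:
        k = 0
        while k < len(entries) and entries[k][0] >= val:
            k += 1
        entries.insert(k, (val, back))
        del entries[nbest:]          # drop the last element if the list overflowed

def cyk_nbest(words, lexicon, binary_rules, nbest):
    n = len(words)
    chart = defaultdict(list)        # chart[(begin, end, A)] = [(prob, back), ...]
    for pos, w in enumerate(words, start=1):
        for (a, word), p in lexicon.items():
            if word == w:
                insert_nbest(chart[(pos, pos, a)], p, w, nbest)
    for span in range(2, n + 1):
        for begin in range(1, n - span + 2):
            end = begin + span - 1
            for m in range(begin, end):
                for (a, b, c), p in binary_rules.items():
                    left = chart.get((begin, m, b), [])
                    right = chart.get((m + 1, end, c), [])
                    for i, (pb, _) in enumerate(left, start=1):
                        for j, (pc, _) in enumerate(right, start=1):
                            insert_nbest(chart[(begin, end, a)], pb * pc * p,
                                         (m, b, c, i, j), nbest)
    return chart


# Hypothetical toy PCFG, for illustration only.
words = ["Mary", "bought", "books", "with", "cash"]
lexicon = {("NP", "Mary"): 0.2, ("V", "bought"): 1.0, ("NP", "books"): 0.2,
           ("P", "with"): 1.0, ("NP", "cash"): 0.2}
rules = {("S", "NP", "VP"): 1.0, ("VP", "V", "NP"): 0.7, ("VP", "VP", "PP"): 0.3,
         ("NP", "NP", "PP"): 0.4, ("PP", "P", "NP"): 1.0}
chart = cyk_nbest(words, lexicon, rules, nbest=2)
for prob, back in chart[(1, 5, "S")]:
    print(prob, back)
```

Here the two S analyses differ only in which VP over "bought books with cash" they use: the second-best S points to the second-best VP, i.e. the PP-attachment alternative.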

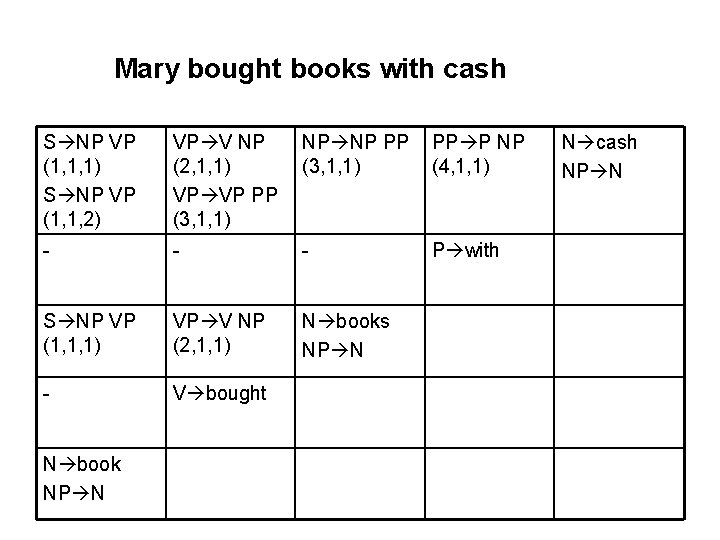

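Example: Mary bought books with cash
Each binary entry shows a rule followed by its back pointer (m, i, j):
• S → NP VP (1, 1, 1)
• S → NP VP (1, 1, 2)
• VP → V NP (2, 1, 1)
• VP → VP PP (3, 1, 1)
• NP → NP PP (3, 1, 1)
• PP → P NP (4, 1, 1)
• P → with
• S → NP VP (1, 1, 1)
• VP → V NP (2, 1, 1)
• N → books, NP → N
• V → bought
• N → book, NP → N
• N → cash, NP → N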

Common probability equations

Three types of probability
• Joint prob: P(x, y) = prob of x and y happening together
• Conditional prob: P(x|y) = prob of x given a specific value of y
• Marginal prob: P(x) = prob of x summed over all possible values of y, i.e. $P(x) = \sum_y P(x, y)$

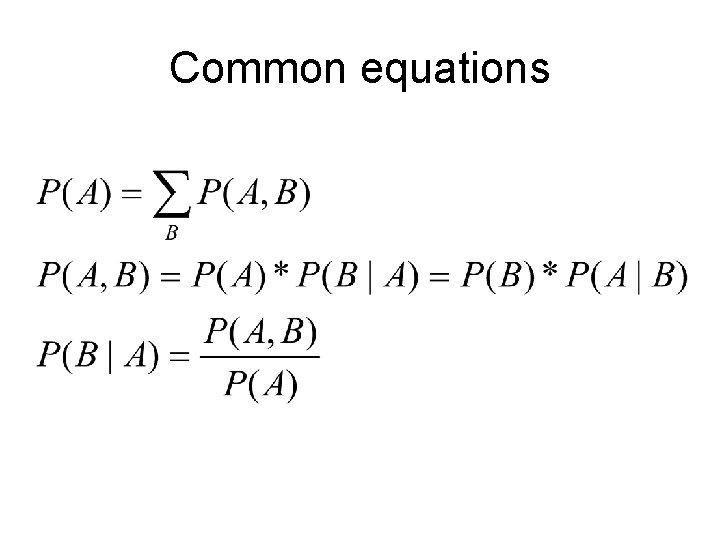

Common equations

An example
• #(words) = 100, #(nouns) = 40, #(verbs) = 20
• “books” appears 10 times: 3 as a verb, 7 as a noun
• P(w=books) = 0.1
• P(w=books, t=noun) = 0.07
• P(t=noun|w=books) = 0.7
• P(t=noun) = 0.4
• P(w=books|t=noun) = 7/40
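The numbers in this example can be checked mechanically. The sketch below recomputes each probability from the counts on the slide using exact fractions and verifies that the chain rule holds in both directions and that Bayes' rule links the two conditionals. Variable names are illustrative only.

```python
from fractions import Fraction

n_words, n_nouns = 100, 40
n_books, n_books_noun, n_books_verb = 10, 7, 3

p_w = Fraction(n_books, n_words)                 # P(w=books)          = 1/10
p_w_t = Fraction(n_books_noun, n_words)          # P(w=books, t=noun)  = 7/100
p_t_given_w = Fraction(n_books_noun, n_books)    # P(t=noun | w=books) = 7/10
p_t = Fraction(n_nouns, n_words)                 # P(t=noun)           = 2/5
p_w_given_t = Fraction(n_books_noun, n_nouns)    # P(w=books | t=noun) = 7/40

# Chain rule, both ways: P(w, t) = P(w) P(t | w) = P(t) P(w | t)
assert p_w_t == p_w * p_t_given_w == p_t * p_w_given_t
# Bayes' rule recovers one conditional from the other.
assert p_t_given_w == p_w_given_t * p_t / p_w
# "books" is only ever a noun or a verb, so its tag distribution sums to 1.
assert p_t_given_w + Fraction(n_books_verb, n_books) == 1
```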

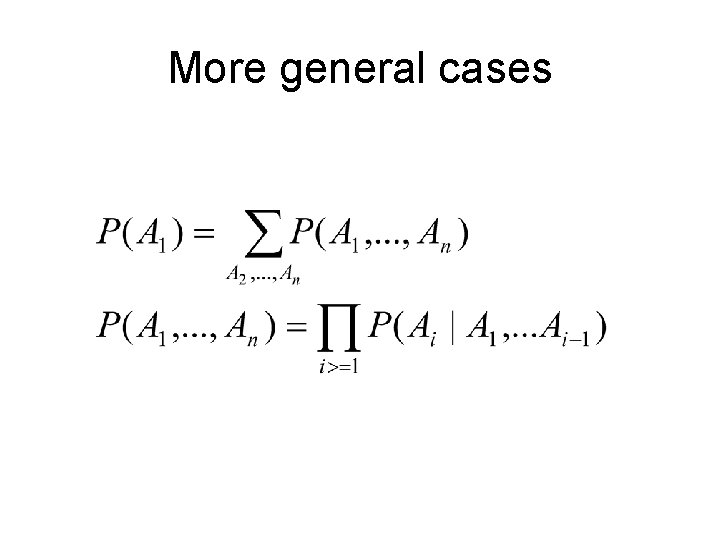

More general cases

Independence assumption

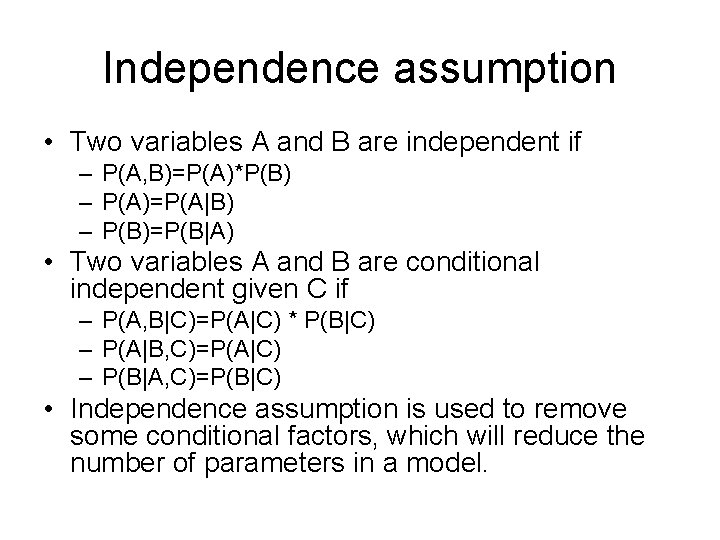

Independence assumption
• Two variables A and B are independent if
  – P(A, B) = P(A) * P(B)
  – P(A) = P(A|B)
  – P(B) = P(B|A)
• Two variables A and B are conditionally independent given C if
  – P(A, B|C) = P(A|C) * P(B|C)
  – P(A|B, C) = P(A|C)
  – P(B|A, C) = P(B|C)
• The independence assumption is used to remove some conditioning factors, which reduces the number of parameters in a model.
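The first definition can be tested directly on a joint distribution: compute both marginals and compare P(a, b) with P(a) * P(b) in every cell. A minimal sketch, assuming the joint is a dict from `(a, b)` to an exact `Fraction` (missing cells mean probability 0); `is_independent` and the toy distributions are hypothetical.

```python
from fractions import Fraction
from itertools import product

def is_independent(joint):
    """joint: dict (a, b) -> P(A=a, B=b). True iff P(a, b) == P(a) * P(b) for every cell."""
    a_vals = {a for a, _ in joint}
    b_vals = {b for _, b in joint}
    # Marginals: sum the joint over the other variable.
    p_a = {a: sum(joint.get((a, b), 0) for b in b_vals) for a in a_vals}
    p_b = {b: sum(joint.get((a, b), 0) for a in a_vals) for b in b_vals}
    return all(joint.get((a, b), 0) == p_a[a] * p_b[b]
               for a, b in product(a_vals, b_vals))


pa = {"x": Fraction(1, 4), "y": Fraction(3, 4)}
pb = {"u": Fraction(1, 2), "v": Fraction(1, 2)}
independent = {(a, b): pa[a] * pb[b] for a in pa for b in pb}
dependent = {("x", "u"): Fraction(1, 2), ("y", "v"): Fraction(1, 2)}
print(is_independent(independent), is_independent(dependent))   # True False
```

This also shows where the parameter savings come from: with k values per variable, a full joint table has k^2 - 1 free parameters, while the independent model needs only 2(k - 1).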

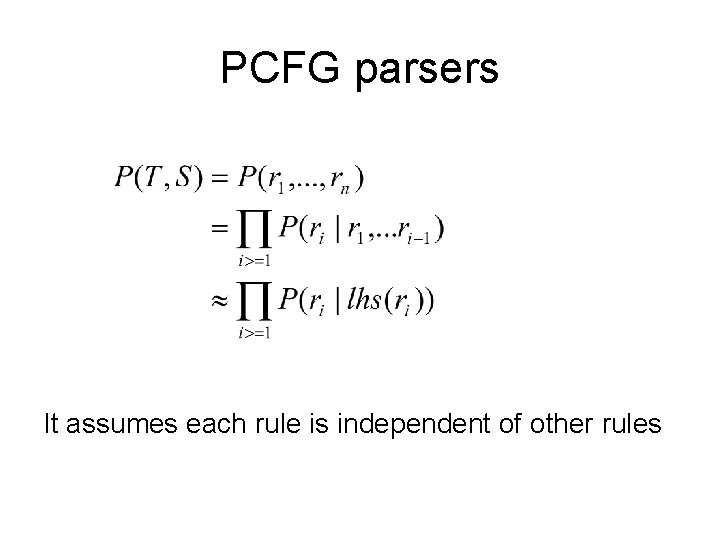

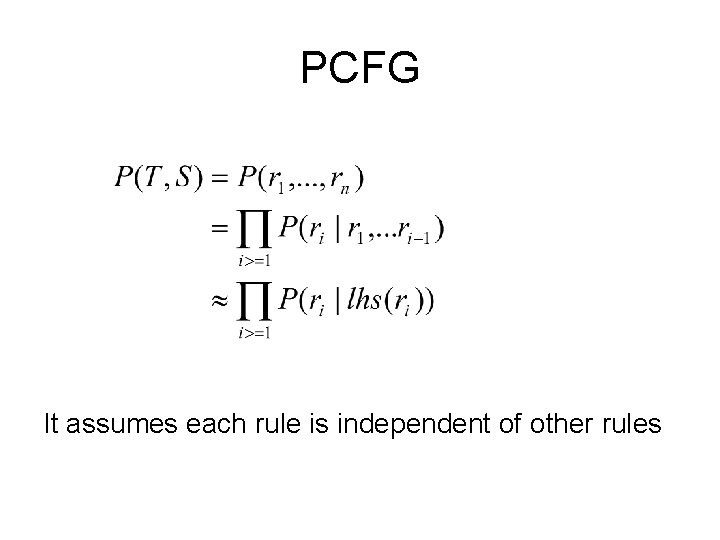

PCFG parsers
• A PCFG assumes that each rule is independent of the other rules.
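Under this assumption the probability of a tree is simply the product of the probabilities of the rules used in it. A minimal Python sketch, assuming trees are nested tuples `(label, child, ...)` with words as plain strings and rule probabilities in a dict keyed by `(lhs, rhs_tuple)`; the function name `tree_prob` and the toy probabilities are hypothetical.

```python
def tree_prob(tree, rule_prob):
    """P(T) under a PCFG: the product of P(rule) over every rule used in T."""
    if isinstance(tree, str):          # a word contributes no rule of its own
        return 1.0
    label, *children = tree
    rhs = tuple(c if isinstance(c, str) else c[0] for c in children)
    p = rule_prob[(label, rhs)]
    for child in children:
        p *= tree_prob(child, rule_prob)   # independence: just multiply
    return p


# Hypothetical toy PCFG fragment (probabilities chosen for illustration only).
rules = {
    ("S", ("NP", "VP")): 1.0, ("VP", ("V", "NP")): 0.5, ("NP", ("Pron",)): 0.5,
    ("Pron", ("he",)): 0.3, ("Pron", ("her",)): 0.2, ("V", ("likes",)): 0.1,
}
t = ("S", ("NP", ("Pron", "he")), ("VP", ("V", "likes"), ("NP", ("Pron", "her"))))
print(tree_prob(t, rules))
```

Note that P(NP → Pron) is used for both the subject and the object NP, whatever its position; that is exactly the structural independence questioned on the following slides.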

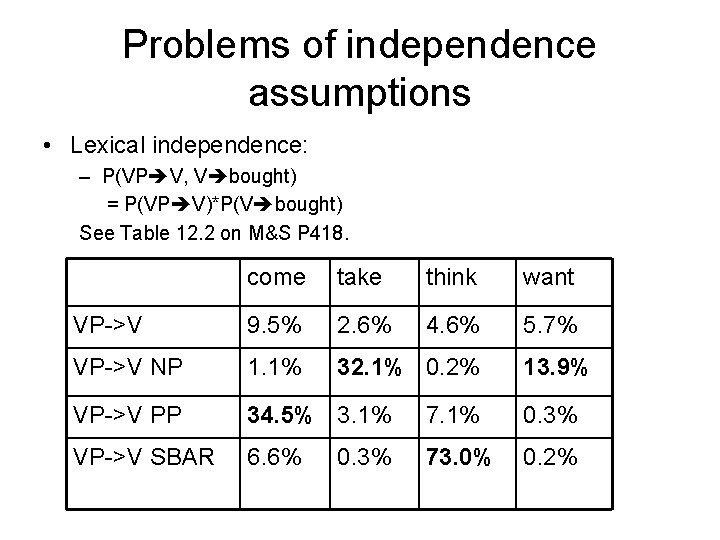

Problems of independence assumptions
• Lexical independence:
  – P(VP → V, V → bought) = P(VP → V) * P(V → bought)
• See Table 12.2 in M&S, p. 418:

| Rule | come | take | think | want |
|---|---|---|---|---|
| VP → V | 9.5% | 2.6% | 4.6% | 5.7% |
| VP → V NP | 1.1% | 32.1% | 0.2% | 13.9% |
| VP → V PP | 34.5% | 3.1% | 7.1% | 0.3% |
| VP → V SBAR | 6.6% | 0.3% | 73.0% | 0.2% |

Problems of independence assumptions (cont.)
• Structural independence:
  – P(S → NP VP, NP → Pron) = P(S → NP VP) * P(NP → Pron)
• See Table 12.3 in M&S, p. 420:

| Expansion | % as subj | % as obj |
|---|---|---|
| NP → Pron | 13.7% | 2.1% |
| NP → Det NN | 5.6% | 4.6% |
| NP → NP SBAR | 0.5% | 2.6% |
| NP → NP PP | 5.6% | 14.1% |

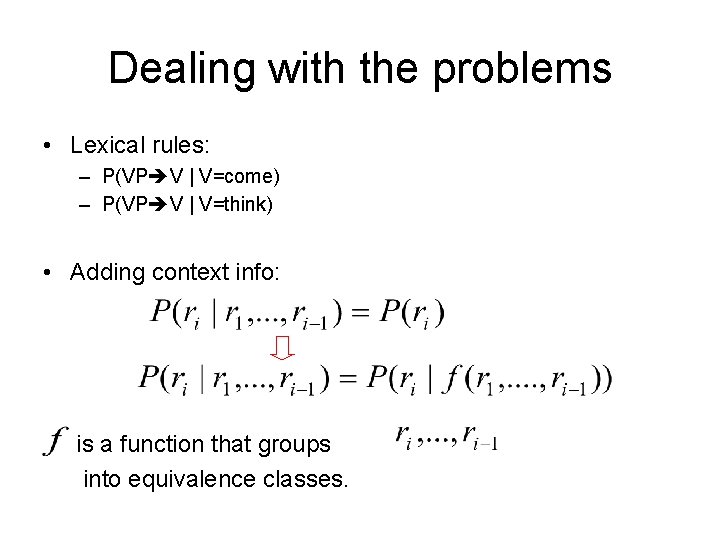

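Dealing with the problems
• Lexical rules:
  – P(VP → V | V = come)
  – P(VP → V | V = think)
• Adding context info: condition each rule on its context, e.g. $P(A \to \beta \mid \phi(\text{context}))$, where φ is a function that groups contexts into equivalence classes.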
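Lexicalized rule probabilities such as P(VP → V | V = come) can be estimated by relative frequency: count how often each rule occurs with each verb and divide by how often the verb occurs. A minimal sketch; the function name `lexicalized_rule_probs`, the string encoding of rules, and the counts are hypothetical toy values, not taken from any treebank.

```python
from collections import Counter

def lexicalized_rule_probs(events):
    """Relative-frequency estimate of P(rule | verb) = count(rule, verb) / count(verb).

    events: list of (rule, verb) pairs, one per VP occurrence in a treebank.
    """
    pair_counts = Counter(events)
    verb_counts = Counter(verb for _, verb in events)
    return {(rule, verb): c / verb_counts[verb] for (rule, verb), c in pair_counts.items()}


# Hypothetical toy counts, for illustration only.
events = ([("VP -> V", "come")] * 1 + [("VP -> V PP", "come")] * 3
          + [("VP -> V", "think")] * 1 + [("VP -> V SBAR", "think")] * 3)
probs = lexicalized_rule_probs(events)
print(probs[("VP -> V PP", "come")], probs[("VP -> V SBAR", "think")])  # 0.75 0.75
```

Conditioning on the verb lets "come" prefer VP → V PP and "think" prefer VP → V SBAR, which a plain P(VP → V PP) cannot express; the cost is many more parameters and sparser counts.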

PCFG
• It assumes that each rule is independent of the other rules.

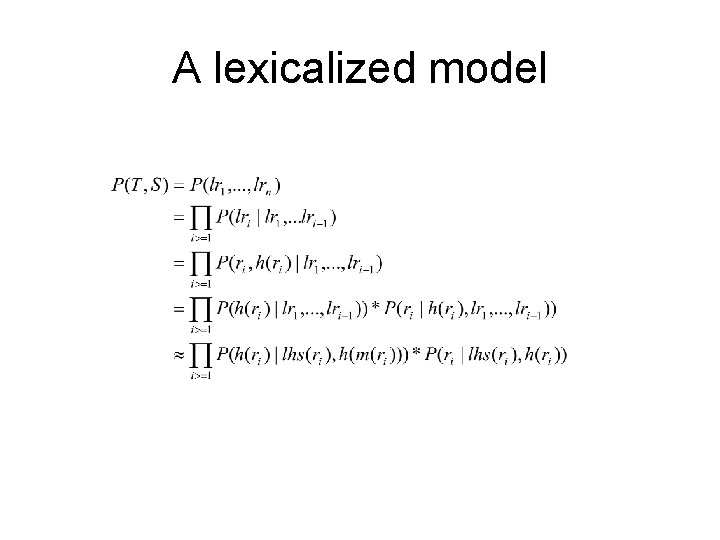

A lexicalized model

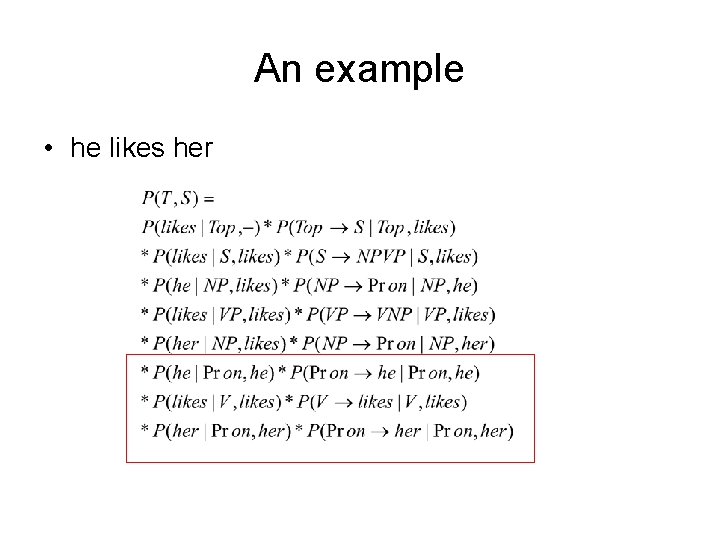

An example • he likes her

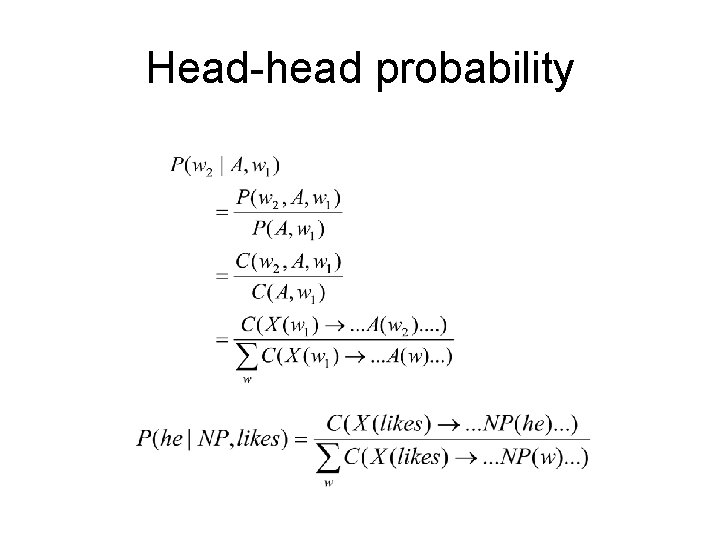

Head-head probability

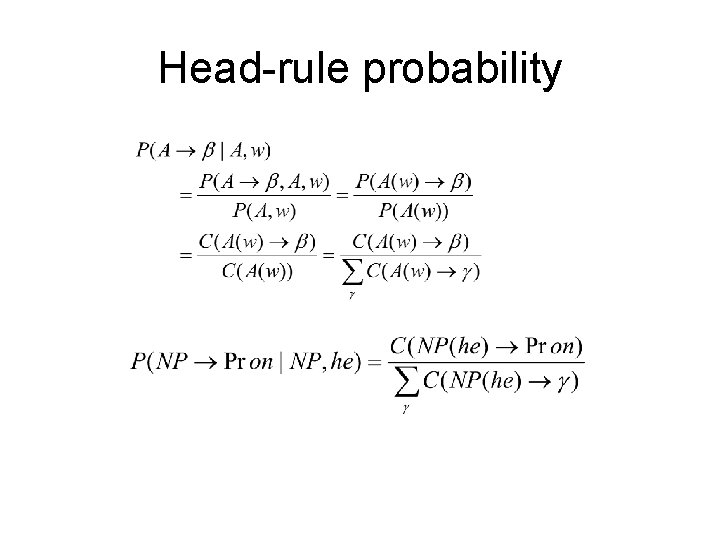

Head-rule probability

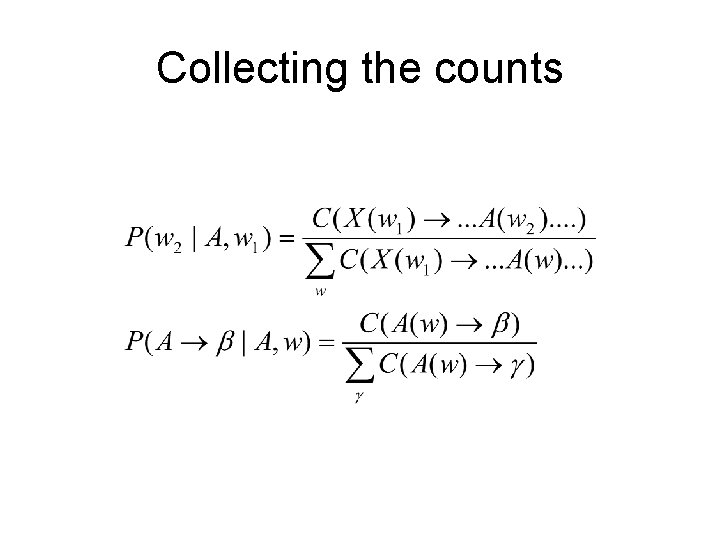

Collecting the counts

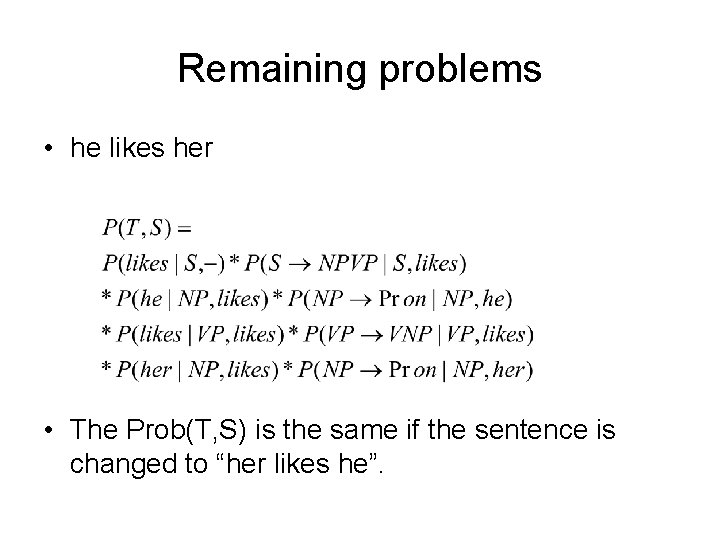
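The exact head-head and head-rule formulas are in the slide images, so the following is only a sketch under an assumed form: a head-rule probability P(rule | label, head) for every node, and a head-head probability P(head | label, parent head) for every non-head child, both estimated by relative frequency from lexicalized trees. The node format `(label, head_word, children, head_index)` and the names `collect_counts` and `estimate` are hypothetical.

```python
from collections import Counter

# Hypothetical node format: (label, head_word, children, head_index).
# A pre-terminal has children == the word (a str) and head_index == None.

def collect_counts(node, rule_ev, rule_ctx, hh_ev, hh_ctx):
    label, head, children, head_idx = node
    if isinstance(children, str):                  # pre-terminal: label -> word
        rhs = (children,)
    else:
        rhs = tuple(child[0] for child in children)
    rule_ev[(label, rhs, head)] += 1               # head-rule event
    rule_ctx[(label, head)] += 1
    if isinstance(children, str):
        return
    for k, child in enumerate(children):
        if k != head_idx:                          # non-head child: head-head event
            hh_ev[(child[1], child[0], head)] += 1
            hh_ctx[(child[0], head)] += 1
        collect_counts(child, rule_ev, rule_ctx, hh_ev, hh_ctx)

def estimate(trees):
    rule_ev, rule_ctx, hh_ev, hh_ctx = Counter(), Counter(), Counter(), Counter()
    for tree in trees:
        collect_counts(tree, rule_ev, rule_ctx, hh_ev, hh_ctx)
    # P(rule | label, head) and P(head | label, parent head), by relative frequency
    p_rule = {(lab, rhs, h): c / rule_ctx[(lab, h)] for (lab, rhs, h), c in rule_ev.items()}
    p_hh = {(h, lab, ph): c / hh_ctx[(lab, ph)] for (h, lab, ph), c in hh_ev.items()}
    return p_rule, p_hh


# "he likes her"
he = ("NP", "he", (("Pron", "he", "he", None),), 0)
her = ("NP", "her", (("Pron", "her", "her", None),), 0)
vp = ("VP", "likes", (("V", "likes", "likes", None), her), 0)
s = ("S", "likes", (he, vp), 1)

p_rule, p_hh = estimate([s])
print(p_rule[("S", ("NP", "VP"), "likes")])                       # 1.0
print(p_hh[("he", "NP", "likes")], p_hh[("her", "NP", "likes")])  # 0.5 0.5
```

In this one-tree example "he" (subject) and "her" (object) fall under the same context (NP, likes), so a model of this assumed form cannot tell the subject position from the object position.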

Remaining problems
• he likes her
• P(T, S) is the same if the sentence is changed to “her likes he”.

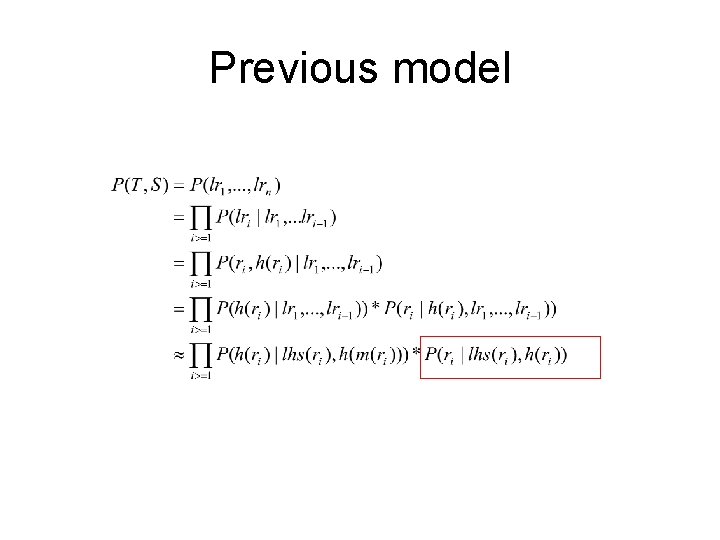

Previous model

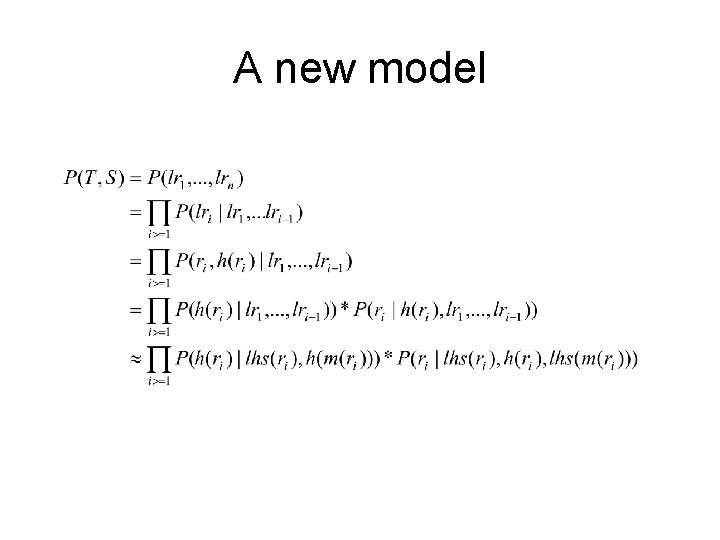

A new model

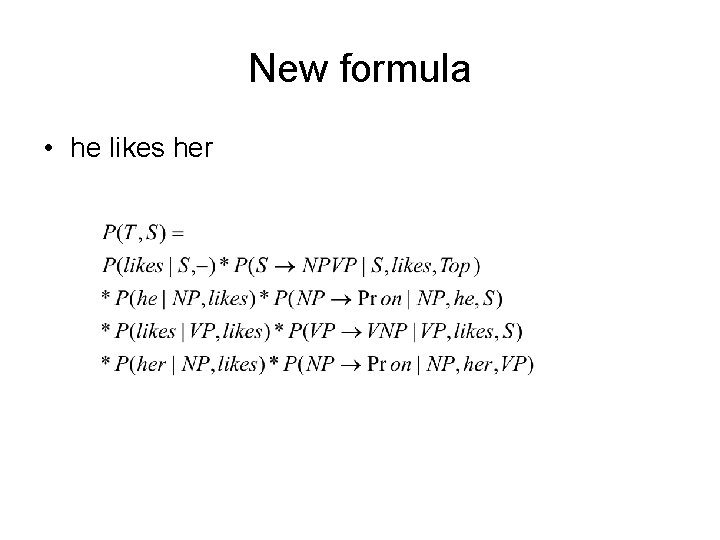

New formula • he likes her
