
Forward-backward algorithm LING 572 Fei Xia 02/23/06

Outline
• Forward and backward probability
• Expected counts and update formulae
• Relation with EM

HMM
• An HMM is a tuple $(S, \Sigma, \Pi, A, B)$:
  – A set of states $S = \{s_1, s_2, \ldots, s_N\}$
  – A set of output symbols $\Sigma = \{w_1, \ldots, w_M\}$
  – Initial state probabilities $\Pi = \{\pi_i\}$
  – State transition probabilities $A = \{a_{ij}\}$
  – Symbol emission probabilities $B = \{b_{ijk}\}$
• State sequence: $X_1 \ldots X_{T+1}$
• Output sequence: $o_1 \ldots o_T$

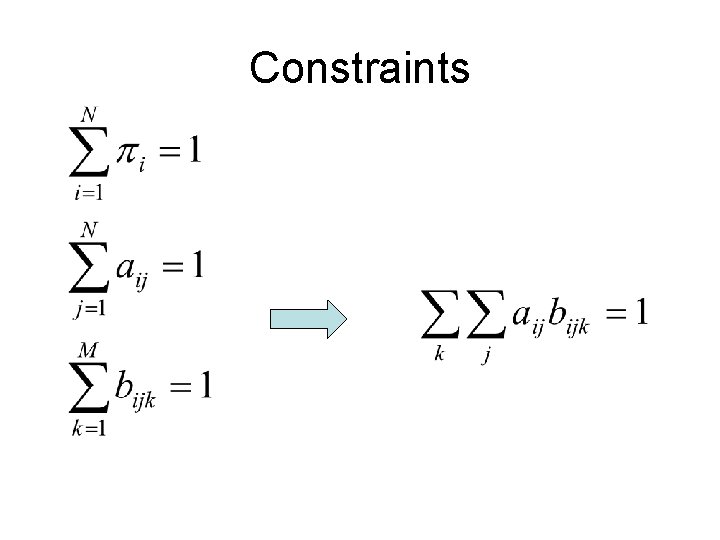

Constraints

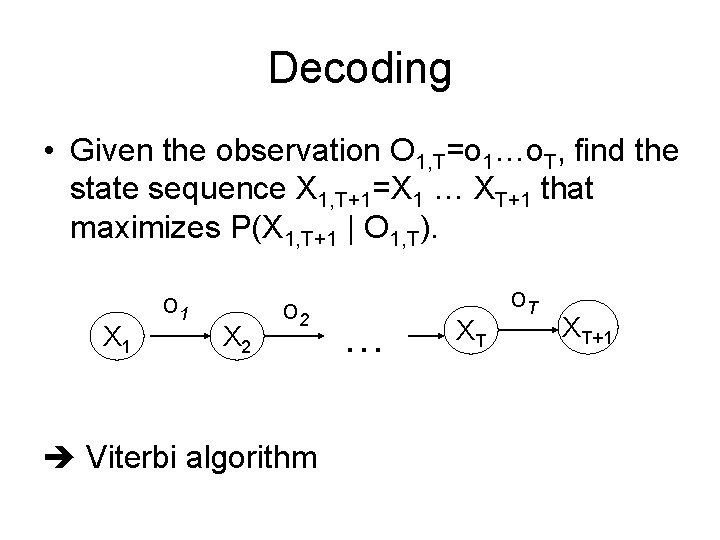
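Assuming the constraints pictured are the usual conditions for an arc-emission HMM (each parameter set is a probability distribution), they can be written out as below. This is a reconstruction, not a transcription of the slide image.

```latex
\begin{align*}
  \pi_i,\ a_{ij},\ b_{ijk} &\ge 0 \\
  \sum_{i=1}^{N} \pi_i &= 1 \\
  \sum_{j=1}^{N} a_{ij} &= 1, && 1 \le i \le N \\
  \sum_{k=1}^{M} b_{ijk} &= 1, && 1 \le i, j \le N
\end{align*}
```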

Decoding
• Given the observation $O_{1,T} = o_1 \ldots o_T$, find the state sequence $X_{1,T+1} = X_1 \ldots X_{T+1}$ that maximizes $P(X_{1,T+1} \mid O_{1,T})$.
(Figure: the trellis $X_1, o_1, X_2, o_2, \ldots, X_T, o_T, X_{T+1}$, labeled "Viterbi algorithm".)
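Since $P(O_{1,T})$ does not depend on the state sequence, maximizing $P(X_{1,T+1} \mid O_{1,T})$ is the same as maximizing the joint $P(X_{1,T+1}, O_{1,T})$, which the Viterbi algorithm does by dynamic programming. Below is a minimal NumPy sketch for an arc-emission HMM. It assumes the array layout `pi[i]` = $\pi_i$, `A[i, j]` = $a_{ij}$, `B[i, j, k]` = $b_{ijk}$ and 0-based time (row t holds slide time t+1). The function names and the random toy model are hypothetical, and the exhaustive search is only there to check the answer on a tiny case.

```python
import itertools
import numpy as np

def random_hmm(n_states, n_symbols, seed=0):
    """Hypothetical toy arc-emission HMM with random, properly normalized parameters."""
    rng = np.random.default_rng(seed)
    pi = rng.random(n_states)
    A = rng.random((n_states, n_states))
    B = rng.random((n_states, n_states, n_symbols))
    return pi / pi.sum(), A / A.sum(axis=1, keepdims=True), B / B.sum(axis=2, keepdims=True)

def viterbi(pi, A, B, obs):
    """Best state sequence X_1..X_{T+1} and its joint probability with obs (o_1..o_T)."""
    T, N = len(obs), len(pi)
    delta = np.zeros((T + 1, N))             # best path probability ending in each state
    back = np.zeros((T + 1, N), dtype=int)   # back-pointer to the best previous state
    delta[0] = pi
    for t, o in enumerate(obs):
        scores = delta[t][:, None] * A * B[:, :, o]   # scores[i, j] = delta_i * a_ij * b_{ij o_t}
        back[t + 1] = scores.argmax(axis=0)
        delta[t + 1] = scores.max(axis=0)
    states = [int(delta[T].argmax())]
    for t in range(T, 0, -1):                # follow back-pointers from X_{T+1} down to X_1
        states.append(int(back[t, states[-1]]))
    return states[::-1], float(delta[T].max())

def path_prob(pi, A, B, obs, states):
    """P(X_{1,T+1} = states, O_{1,T} = obs), used only for brute-force checking."""
    p = pi[states[0]]
    for t, o in enumerate(obs):
        p *= A[states[t], states[t + 1]] * B[states[t], states[t + 1], o]
    return p

pi, A, B = random_hmm(n_states=3, n_symbols=4)
obs = [0, 3, 1, 2]
best, prob = viterbi(pi, A, B, obs)
# Exhaustive search over all N^(T+1) sequences should pick the same path.
brute = max(itertools.product(range(len(pi)), repeat=len(obs) + 1),
            key=lambda x: path_prob(pi, A, B, obs, x))
print(best, list(brute))
```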

Notation
• A sentence: $O_{1,T} = o_1 \ldots o_T$, where T is the sentence length
• The state sequence: $X_{1,T+1} = X_1 \ldots X_{T+1}$
• t: time t, ranging from 1 to T+1
• $X_t$: the state at time t
• i, j: states $s_i$, $s_j$
• k: word $w_k$ in the vocabulary

Forward and backward probabilities

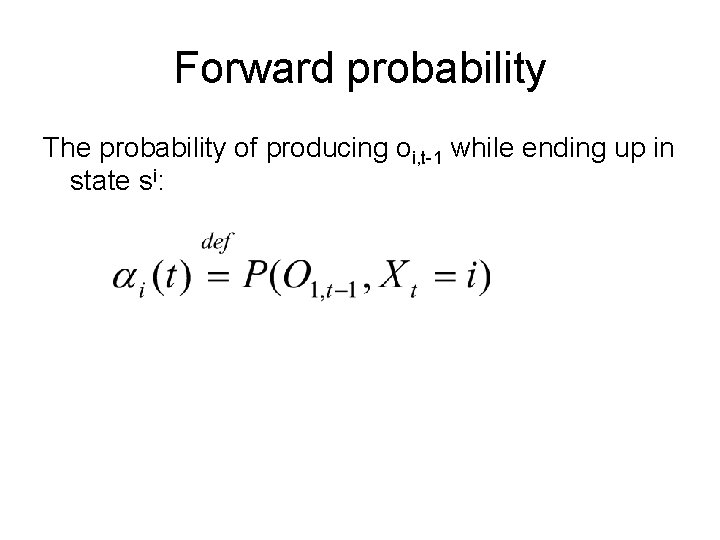

Forward probability
The probability of producing $O_{1,t-1}$ while ending up in state $s_i$ at time t: $\alpha_i(t) = P(O_{1,t-1}, X_t = s_i)$

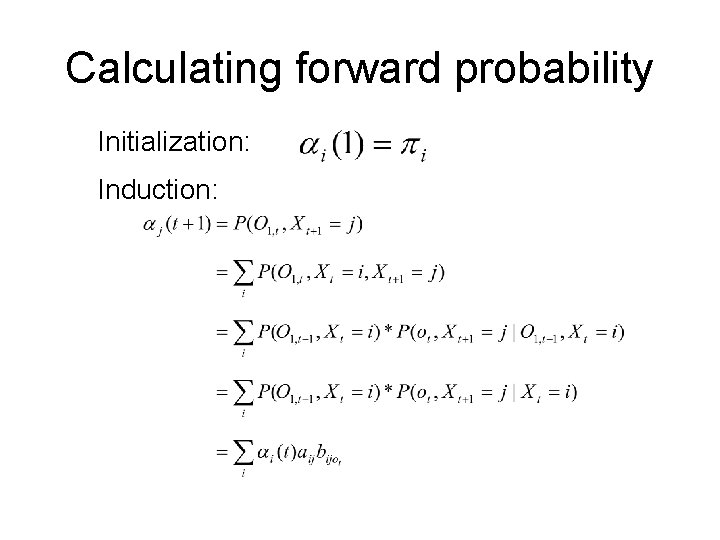

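Calculating forward probability
• Initialization: $\alpha_i(1) = \pi_i$, for $1 \le i \le N$
• Induction: $\alpha_j(t+1) = \sum_{i=1}^{N} \alpha_i(t)\, a_{ij}\, b_{ij o_t}$, for $1 \le t \le T$, $1 \le j \le N$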

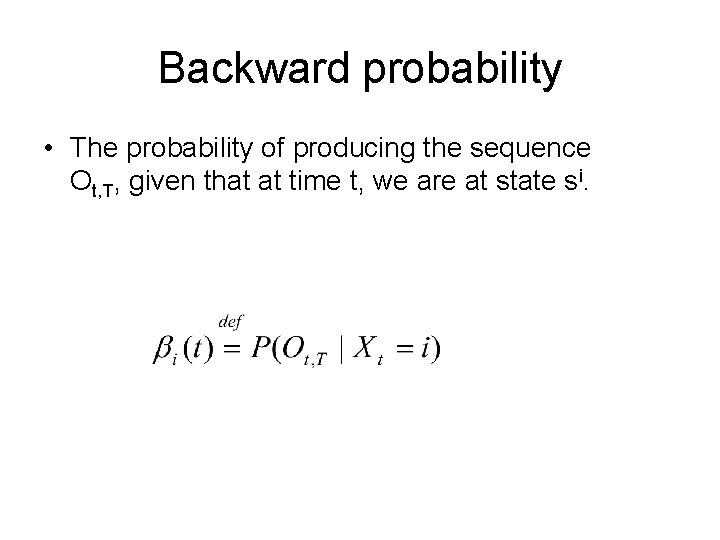
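The two forward recurrences can be sketched in NumPy as follows, assuming the array layout `pi[i]` = $\pi_i$, `A[i, j]` = $a_{ij}$, `B[i, j, k]` = $b_{ijk}$, with row t of the result holding slide time t+1. `forward`, `brute_force_prob` and the random toy model are hypothetical names for illustration. The brute-force sum over all $N^{T+1}$ state sequences only confirms, on a small case, that $\sum_i \alpha_i(T+1) = P(O)$.

```python
import itertools
import numpy as np

def random_hmm(n_states, n_symbols, seed=0):
    """Hypothetical toy arc-emission HMM with random, properly normalized parameters."""
    rng = np.random.default_rng(seed)
    pi = rng.random(n_states)
    A = rng.random((n_states, n_states))
    B = rng.random((n_states, n_states, n_symbols))
    return pi / pi.sum(), A / A.sum(axis=1, keepdims=True), B / B.sum(axis=2, keepdims=True)

def forward(pi, A, B, obs):
    """alpha[t, i] holds alpha_i(t+1) = P(o_1..o_t, X_{t+1} = s_i) (slides use 1-based t)."""
    T, N = len(obs), len(pi)
    alpha = np.zeros((T + 1, N))
    alpha[0] = pi                                  # initialization: alpha_i(1) = pi_i
    for t, o in enumerate(obs):
        # induction: alpha_j(t+1) = sum_i alpha_i(t) * a_ij * b_{ij o_t}
        alpha[t + 1] = alpha[t] @ (A * B[:, :, o])
    return alpha

def brute_force_prob(pi, A, B, obs):
    """P(O) by summing over all N^(T+1) state sequences; only for checking small cases."""
    total = 0.0
    for x in itertools.product(range(len(pi)), repeat=len(obs) + 1):
        p = pi[x[0]]
        for t, o in enumerate(obs):
            p *= A[x[t], x[t + 1]] * B[x[t], x[t + 1], o]
        total += p
    return total

pi, A, B = random_hmm(n_states=3, n_symbols=4)
obs = [0, 3, 1, 2]
alpha = forward(pi, A, B, obs)
print(alpha[-1].sum(), brute_force_prob(pi, A, B, obs))  # equal up to rounding
```

Summing row t of `alpha` over states gives the prefix probability $P(o_1 \ldots o_t)$, which is why the last row sums to the probability of the whole sentence.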

Backward probability
• The probability of producing the sequence $O_{t,T}$, given that at time t we are at state $s_i$: $\beta_i(t) = P(O_{t,T} \mid X_t = s_i)$

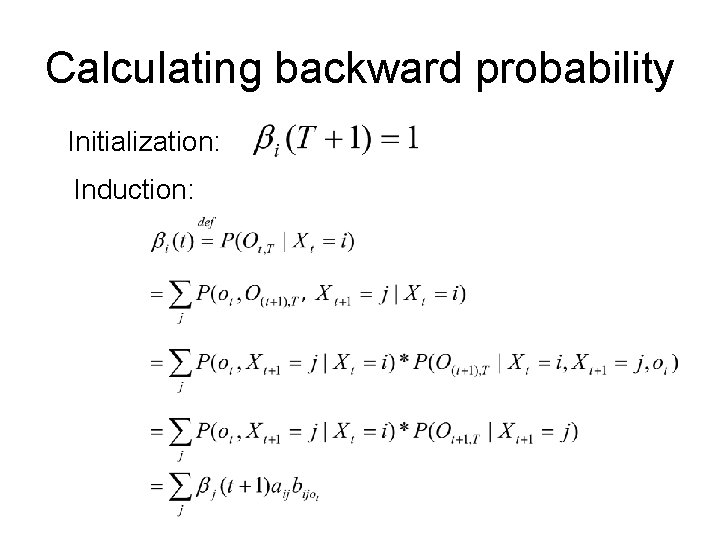

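Calculating backward probability
• Initialization: $\beta_i(T+1) = 1$, for $1 \le i \le N$
• Induction: $\beta_i(t) = \sum_{j=1}^{N} a_{ij}\, b_{ij o_t}\, \beta_j(t+1)$, for $1 \le t \le T$, $1 \le i \le N$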

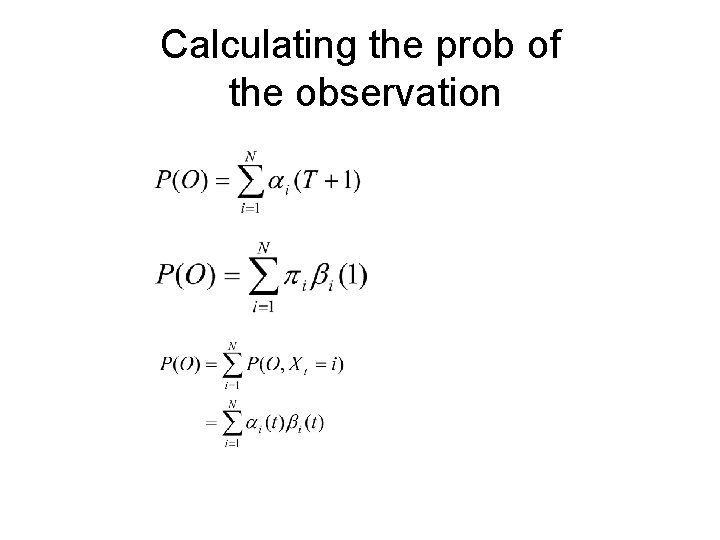
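A matching sketch of the backward pass, under the same assumed layout (`A[i, j]` = $a_{ij}$, `B[i, j, k]` = $b_{ijk}$, row t = slide time t+1). The names are hypothetical. The brute-force helper checks both $\sum_i \pi_i \beta_i(1) = P(O)$ and, by starting from a one-hot initial distribution, that $\beta_i(1) = P(O \mid X_1 = s_i)$.

```python
import itertools
import numpy as np

def random_hmm(n_states, n_symbols, seed=0):
    """Hypothetical toy arc-emission HMM with random, properly normalized parameters."""
    rng = np.random.default_rng(seed)
    pi = rng.random(n_states)
    A = rng.random((n_states, n_states))
    B = rng.random((n_states, n_states, n_symbols))
    return pi / pi.sum(), A / A.sum(axis=1, keepdims=True), B / B.sum(axis=2, keepdims=True)

def backward(A, B, obs):
    """beta[t, i] holds beta_i(t+1) = P(o_{t+1}..o_T | X_{t+1} = s_i) (slides use 1-based t)."""
    T, N = len(obs), A.shape[0]
    beta = np.ones((T + 1, N))                     # initialization: beta_i(T+1) = 1
    for t in range(T - 1, -1, -1):
        # induction (slide notation): beta_i(t) = sum_j a_ij * b_{ij o_t} * beta_j(t+1)
        beta[t] = (A * B[:, :, obs[t]]) @ beta[t + 1]
    return beta

def brute_force_prob(pi, A, B, obs):
    """P(O) by summing over all N^(T+1) state sequences; only for checking small cases."""
    total = 0.0
    for x in itertools.product(range(len(pi)), repeat=len(obs) + 1):
        p = pi[x[0]]
        for t, o in enumerate(obs):
            p *= A[x[t], x[t + 1]] * B[x[t], x[t + 1], o]
        total += p
    return total

pi, A, B = random_hmm(n_states=3, n_symbols=4)
obs = [0, 3, 1, 2]
beta = backward(A, B, obs)
print(pi @ beta[0], brute_force_prob(pi, A, B, obs))  # equal up to rounding
```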

Calculating the prob of the observation

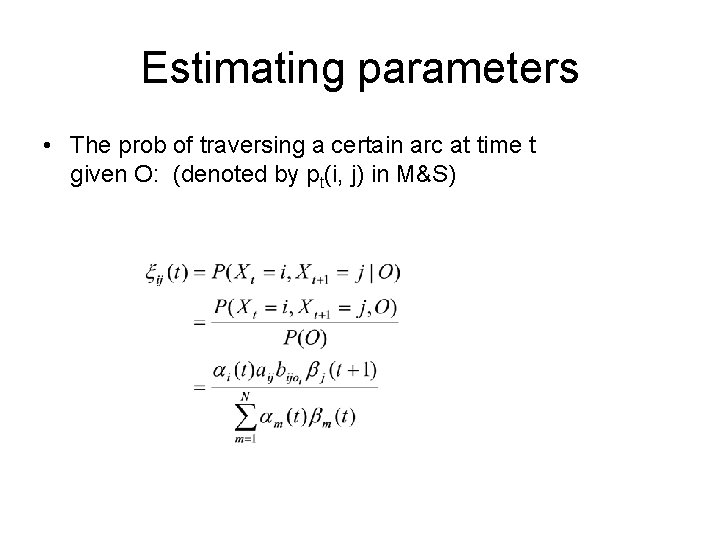

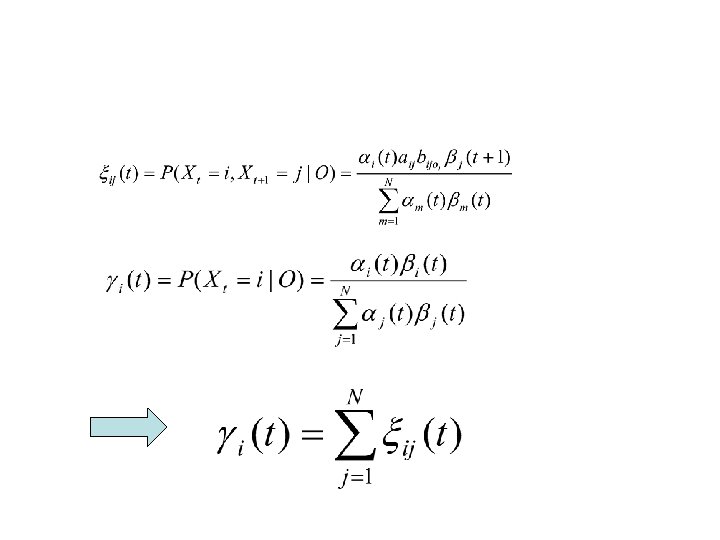
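A short derivation, assuming the arc-emission definitions $\alpha_i(t) = P(O_{1,t-1}, X_t = s_i)$ and $\beta_i(t) = P(O_{t,T} \mid X_t = s_i)$: split O at any time t and use the Markov property (given $X_t$, the outputs $O_{t,T}$ are independent of $O_{1,t-1}$). The two end points, t = T+1 and t = 1, give the two formulas usually quoted.

```latex
\begin{align*}
  P(O_{1,T}) &= \sum_{i=1}^{N} P(O_{1,t-1}, X_t = s_i)\, P(O_{t,T} \mid X_t = s_i)
              = \sum_{i=1}^{N} \alpha_i(t)\, \beta_i(t), && 1 \le t \le T+1 \\
  t = T+1:\quad P(O_{1,T}) &= \sum_{i=1}^{N} \alpha_i(T+1) && \text{since } \beta_i(T+1) = 1 \\
  t = 1:\quad P(O_{1,T}) &= \sum_{i=1}^{N} \pi_i\, \beta_i(1) && \text{since } \alpha_i(1) = \pi_i
\end{align*}
```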

Estimating parameters
• The prob of traversing a certain arc (from $s_i$ to $s_j$) at time t given O, denoted by $p_t(i,j)$ in M&S:

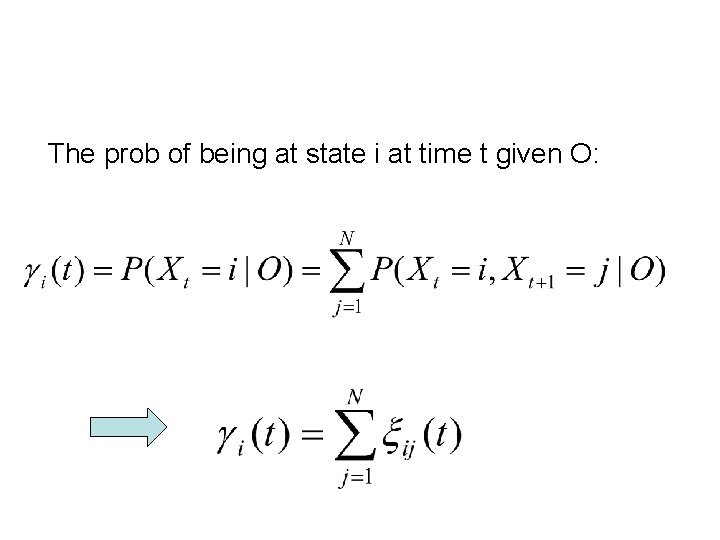
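Written out in the M&S arc-emission notation (a reconstruction, assuming it matches the slide image), the numerator reads as four factors: reach $s_i$ at time t having produced $O_{1,t-1}$ ($\alpha_i(t)$), take the arc to $s_j$ ($a_{ij}$) while emitting $o_t$ ($b_{ij o_t}$), then produce the rest of the sentence from $s_j$ ($\beta_j(t+1)$). The denominator is $P(O)$.

```latex
p_t(i,j) = P(X_t = s_i,\, X_{t+1} = s_j \mid O_{1,T})
         = \frac{\alpha_i(t)\, a_{ij}\, b_{ij o_t}\, \beta_j(t+1)}
                {\sum_{m=1}^{N} \alpha_m(t)\, \beta_m(t)},
\qquad 1 \le t \le T
```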

The prob of being at state i at time t given O:

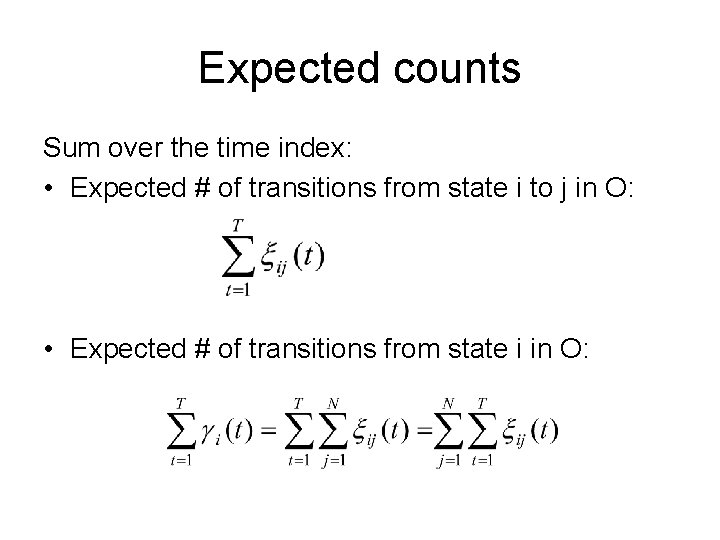
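Writing $\gamma_i(t)$ for this probability (the usual M&S symbol, assumed here), it follows either directly from the forward and backward probabilities or by summing the arc probabilities $p_t(i,j)$ over all next states $s_j$. The first two expressions also hold at t = T+1; the last one needs $t \le T$ because $p_t(i,j)$ is only defined up to T.

```latex
\gamma_i(t) = P(X_t = s_i \mid O_{1,T})
            = \frac{\alpha_i(t)\, \beta_i(t)}{P(O_{1,T})}
            = \sum_{j=1}^{N} p_t(i,j), \qquad 1 \le t \le T
```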

Expected counts
Sum over the time index:
• Expected # of transitions from state i to j in O: $\sum_{t=1}^{T} p_t(i,j)$
• Expected # of transitions from state i in O: $\sum_{t=1}^{T} \sum_{j=1}^{N} p_t(i,j)$

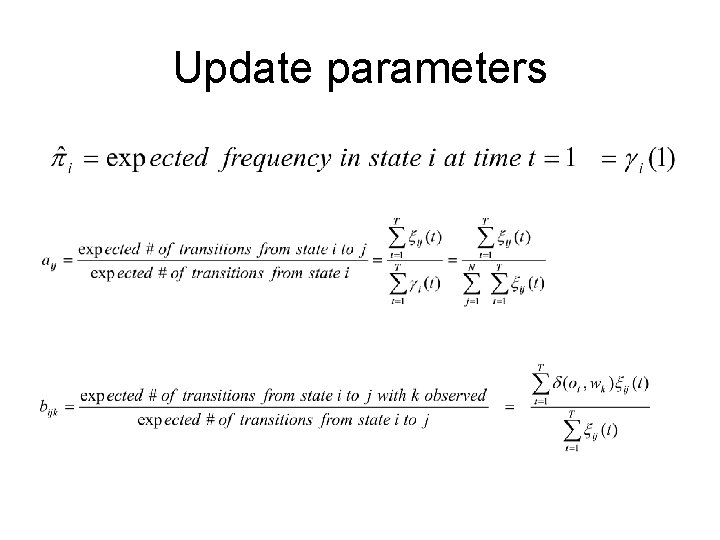
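A sketch of the expected-count computation in NumPy, assuming the same layout as before (`pi[i]` = $\pi_i$, `A[i, j]` = $a_{ij}$, `B[i, j, k]` = $b_{ijk}$, 0-based time). The helpers are redefined so the block runs on its own, and all function names are hypothetical. It computes $p_t(i,j)$ for every time step, $\gamma_i(t) = \alpha_i(t)\beta_i(t)/P(O)$, and the two sums over t.

```python
import numpy as np

def random_hmm(n_states, n_symbols, seed=0):
    """Hypothetical toy arc-emission HMM with random, properly normalized parameters."""
    rng = np.random.default_rng(seed)
    pi = rng.random(n_states)
    A = rng.random((n_states, n_states))
    B = rng.random((n_states, n_states, n_symbols))
    return pi / pi.sum(), A / A.sum(axis=1, keepdims=True), B / B.sum(axis=2, keepdims=True)

def forward(pi, A, B, obs):
    alpha = np.zeros((len(obs) + 1, len(pi)))
    alpha[0] = pi                                      # alpha_i(1) = pi_i
    for t, o in enumerate(obs):
        alpha[t + 1] = alpha[t] @ (A * B[:, :, o])     # sum_i alpha_i(t) a_ij b_{ij o_t}
    return alpha

def backward(A, B, obs):
    beta = np.ones((len(obs) + 1, A.shape[0]))         # beta_i(T+1) = 1
    for t in range(len(obs) - 1, -1, -1):
        beta[t] = (A * B[:, :, obs[t]]) @ beta[t + 1]  # sum_j a_ij b_{ij o_t} beta_j(t+1)
    return beta

def expected_counts(pi, A, B, obs):
    """Return p[t, i, j] = p_t(i, j), gamma[t, i] = gamma_i(t) and the two expected counts."""
    alpha, beta = forward(pi, A, B, obs), backward(A, B, obs)
    prob = alpha[-1].sum()                                       # P(O)
    # p_t(i, j) = alpha_i(t) a_ij b_{ij o_t} beta_j(t+1) / P(O), one (N, N) slice per time step
    p = np.stack([alpha[t][:, None] * A * B[:, :, o] * beta[t + 1][None, :]
                  for t, o in enumerate(obs)]) / prob
    gamma = alpha * beta / prob                                  # rows are times 1..T+1
    count_ij = p.sum(axis=0)           # expected # of transitions from s_i to s_j
    count_i = gamma[:-1].sum(axis=0)   # expected # of transitions from s_i (times 1..T)
    return p, gamma, count_ij, count_i

pi, A, B = random_hmm(n_states=3, n_symbols=4)
obs = [0, 3, 1, 2, 2]
p, gamma, count_ij, count_i = expected_counts(pi, A, B, obs)
print(count_ij)
print(count_i)
```

Two sanity properties follow from the definitions: each row of `count_ij` sums to the matching entry of `count_i`, and `count_i` sums to T, since exactly one transition happens at each of the T time steps. The loop over t keeps the arc formula readable; `np.einsum` could vectorize it.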

Update parameters

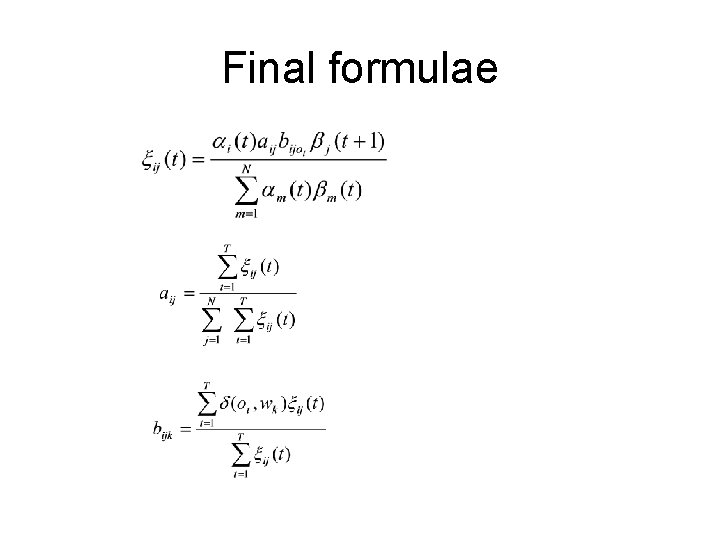
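Assuming the update shown is the usual ratio of expected counts, and writing $\gamma_i(t)$ for the probability of being at state $s_i$ at time t given O, the re-estimated initial and transition probabilities are:

```latex
\begin{align*}
  \hat{\pi}_i &= \gamma_i(1) \\
  \hat{a}_{ij} &= \frac{\text{expected \# of transitions from } s_i \text{ to } s_j}
                       {\text{expected \# of transitions from } s_i}
                = \frac{\sum_{t=1}^{T} p_t(i,j)}{\sum_{t=1}^{T} \gamma_i(t)}
\end{align*}
```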

Final formulae

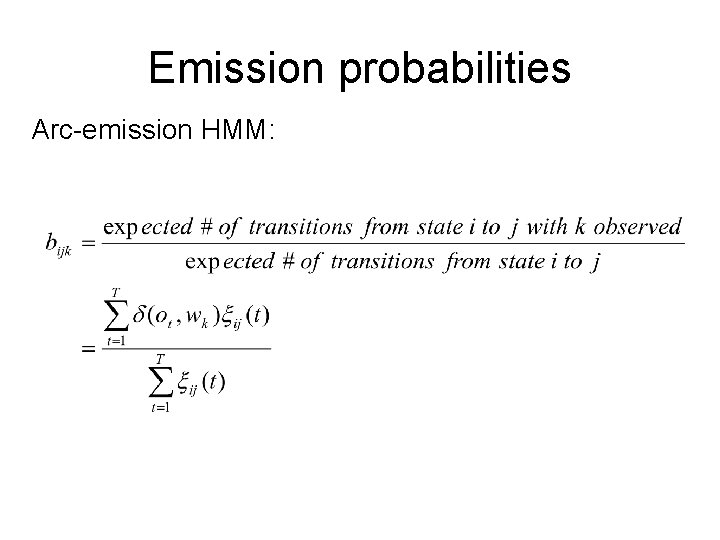

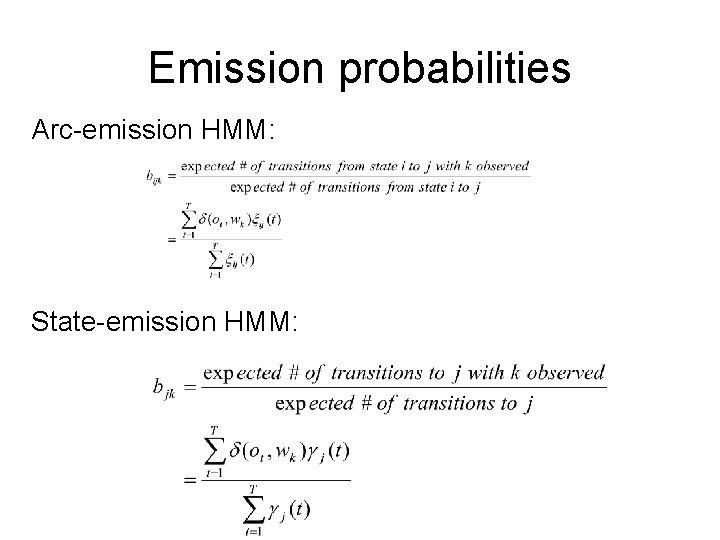
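Substituting the forward and backward probabilities into the ratios of expected counts gives formulas that never need $P(O)$ explicitly: it divides every term of both numerator and denominator, so it cancels. This is our own expansion, assuming the arc-emission definitions used throughout.

```latex
\begin{align*}
  \hat{\pi}_i &= \frac{\pi_i\, \beta_i(1)}{\sum_{m=1}^{N} \pi_m\, \beta_m(1)} \\
  \hat{a}_{ij} &= \frac{\sum_{t=1}^{T} \alpha_i(t)\, a_{ij}\, b_{ij o_t}\, \beta_j(t+1)}
                       {\sum_{t=1}^{T} \alpha_i(t)\, \beta_i(t)}
\end{align*}
```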

Emission probabilities
Arc-emission HMM:

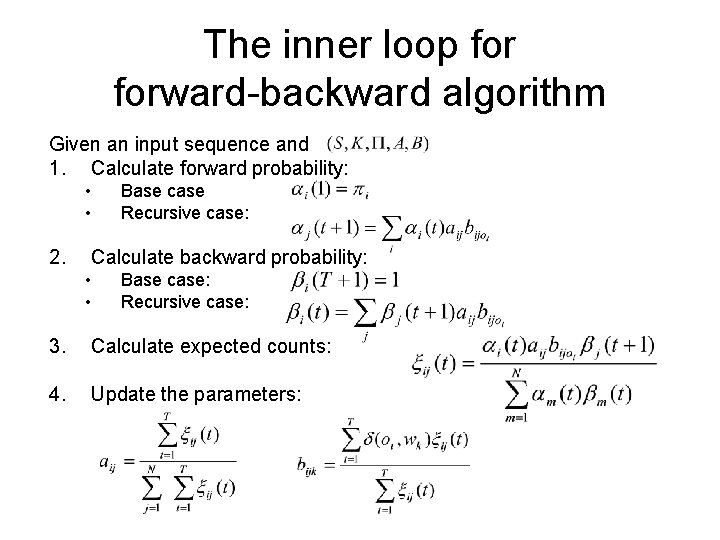
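For an arc-emission HMM the emission update, reconstructed here in the M&S form that the deck follows, counts how often the arc $s_i \to s_j$ is traversed while emitting $w_k$, relative to how often that arc is traversed at all:

```latex
\hat{b}_{ijk} = \frac{\text{expected \# of transitions from } s_i \text{ to } s_j \text{ emitting } w_k}
                     {\text{expected \# of transitions from } s_i \text{ to } s_j}
              = \frac{\sum_{t:\, o_t = w_k} p_t(i,j)}{\sum_{t=1}^{T} p_t(i,j)}
```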

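The inner loop of the forward-backward algorithm
Given an input sequence and the current parameter setting:
1. Calculate the forward probabilities:
   • Base case: $\alpha_i(1) = \pi_i$
   • Recursive case: $\alpha_j(t+1) = \sum_{i} \alpha_i(t)\, a_{ij}\, b_{ij o_t}$
2. Calculate the backward probabilities:
   • Base case: $\beta_i(T+1) = 1$
   • Recursive case: $\beta_i(t) = \sum_{j} a_{ij}\, b_{ij o_t}\, \beta_j(t+1)$
3. Calculate the expected counts from $p_t(i,j)$.
4. Update the parameters $\pi_i$, $a_{ij}$, $b_{ijk}$.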
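Putting the four steps together, one re-estimation step for a single training sequence might look like the sketch below. Function names are hypothetical, the array layout is assumed (`pi[i]` = $\pi_i$, `A[i, j]` = $a_{ij}$, `B[i, j, k]` = $b_{ijk}$), and there is no scaling or log-space arithmetic, so it only suits short sequences where the probabilities do not underflow. Running it repeatedly lets one watch $P(O)$ under successive parameter settings.

```python
import numpy as np

def random_hmm(n_states, n_symbols, seed=0):
    """Hypothetical toy arc-emission HMM with random, properly normalized parameters."""
    rng = np.random.default_rng(seed)
    pi = rng.random(n_states)
    A = rng.random((n_states, n_states))
    B = rng.random((n_states, n_states, n_symbols))
    return pi / pi.sum(), A / A.sum(axis=1, keepdims=True), B / B.sum(axis=2, keepdims=True)

def forward(pi, A, B, obs):
    alpha = np.zeros((len(obs) + 1, len(pi)))
    alpha[0] = pi                                      # base case
    for t, o in enumerate(obs):
        alpha[t + 1] = alpha[t] @ (A * B[:, :, o])     # recursive case
    return alpha

def backward(A, B, obs):
    beta = np.ones((len(obs) + 1, A.shape[0]))         # base case
    for t in range(len(obs) - 1, -1, -1):
        beta[t] = (A * B[:, :, obs[t]]) @ beta[t + 1]  # recursive case
    return beta

def reestimate(pi, A, B, obs):
    """One pass of steps 1-4 for one sequence; also returns P(O) under the old parameters."""
    alpha, beta = forward(pi, A, B, obs), backward(A, B, obs)   # steps 1 and 2
    prob = alpha[-1].sum()
    # Step 3: p[t, i, j] = p_t(i, j) for each arc and time step.
    p = np.stack([alpha[t][:, None] * A * B[:, :, o] * beta[t + 1][None, :]
                  for t, o in enumerate(obs)]) / prob
    gamma = p.sum(axis=2)                       # gamma_i(t) for t = 1..T
    # Step 4: ratios of expected counts.
    new_pi = gamma[0]
    new_A = p.sum(axis=0) / gamma.sum(axis=0)[:, None]
    new_B = np.zeros_like(B)
    for t, o in enumerate(obs):
        new_B[:, :, o] += p[t]                  # expected # of times arc i -> j emits w_k
    new_B /= p.sum(axis=0)[:, :, None]
    return new_pi, new_A, new_B, prob

pi, A, B = random_hmm(n_states=2, n_symbols=3)
obs = [0, 1, 2, 2, 1, 0, 0, 2]
history = []
for _ in range(10):
    pi, A, B, prob = reestimate(pi, A, B, obs)
    history.append(prob)       # P(O) under the parameters before this update
print(history)
```

For long sentences an implementation would normally rescale α and β at each time step or work in log space; that is left out here to keep the correspondence with the formulas visible.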

Relation to EM

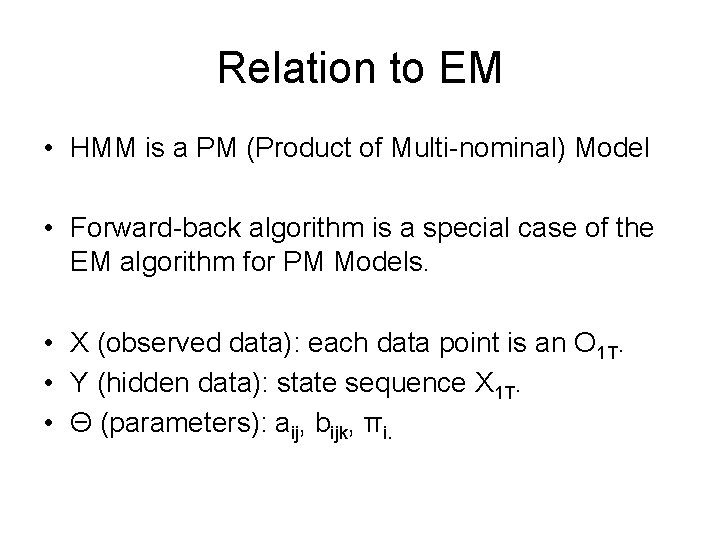

Relation to EM
• An HMM is a PM (Product of Multinomials) model.
• The forward-backward algorithm is a special case of the EM algorithm for PM models.
• X (observed data): each data point is an $O_{1,T}$.
• Y (hidden data): the state sequence $X_{1,T+1}$.
• Θ (parameters): $a_{ij}$, $b_{ijk}$, $\pi_i$.

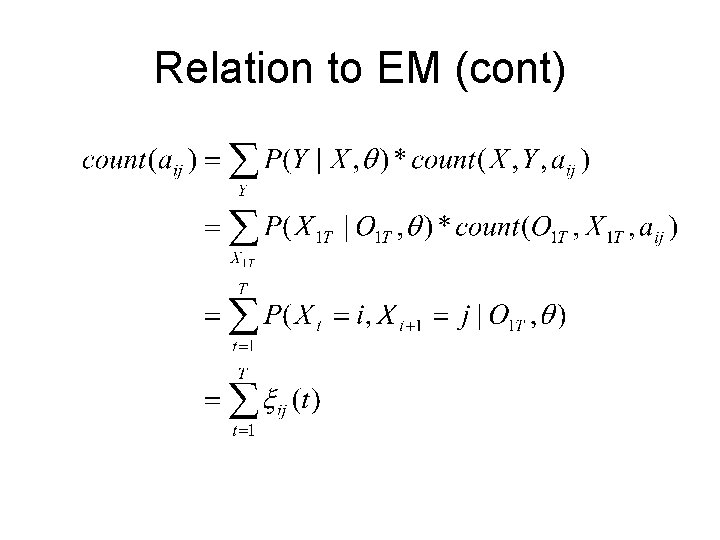

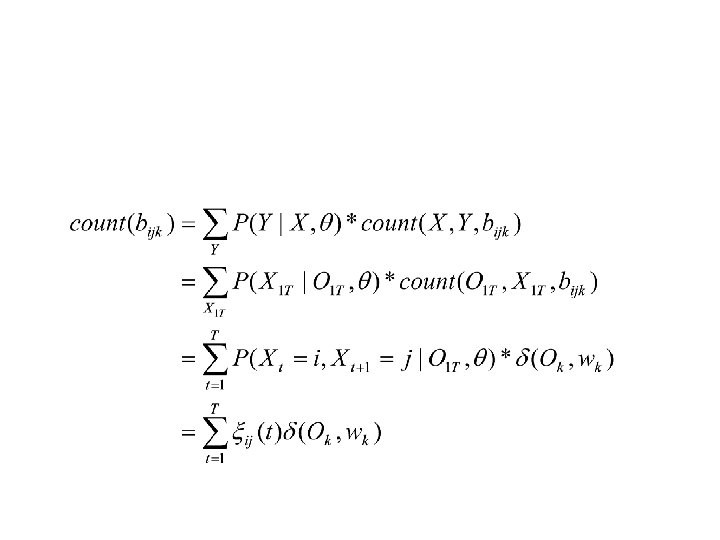

Relation to EM (cont)

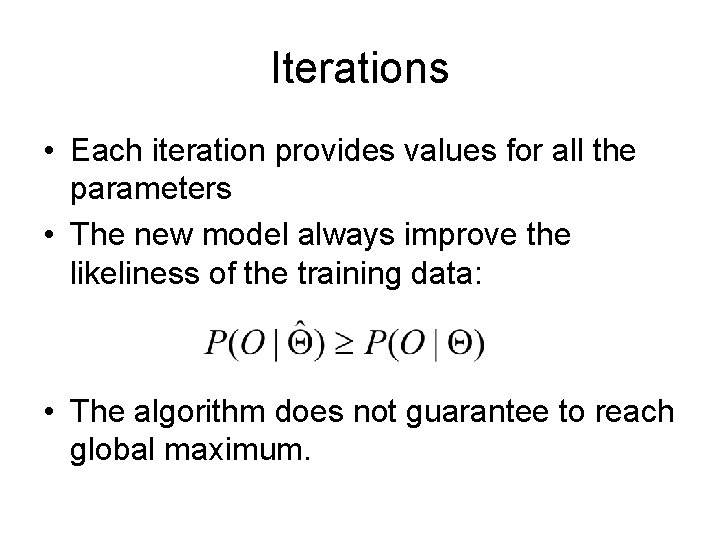

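Iterations
• Each iteration provides new values for all the parameters.
• The new model never decreases the likelihood of the training data: $P(O \mid \hat{\Theta}) \ge P(O \mid \Theta)$, where $\hat{\Theta}$ denotes the re-estimated parameters.
• The algorithm is not guaranteed to reach the global maximum.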

Summary
• A way of estimating the parameters of an HMM
  – Define forward and backward probabilities, which can be calculated efficiently with dynamic programming (DP).
  – Given an initial parameter setting, re-estimate the parameters at each iteration.
  – The forward-backward algorithm is a special case of the EM algorithm for PM models.

Additional slides

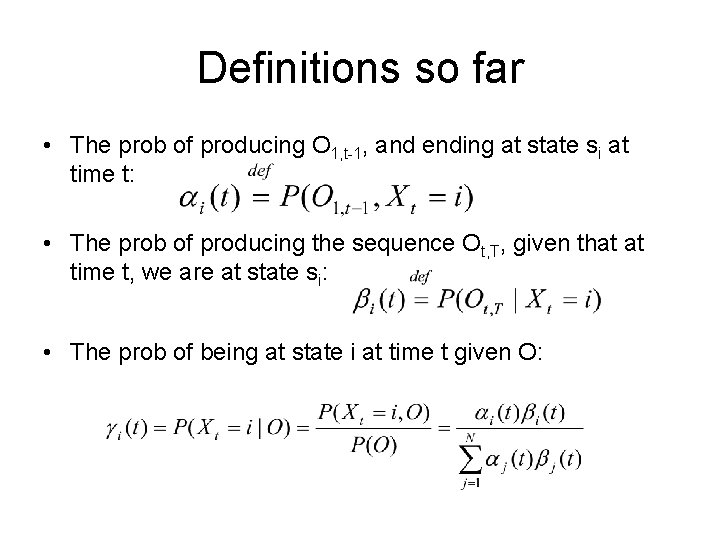

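Definitions so far
• The prob of producing $O_{1,t-1}$ and ending at state $s_i$ at time t: $\alpha_i(t) = P(O_{1,t-1}, X_t = s_i)$
• The prob of producing the sequence $O_{t,T}$, given that at time t we are at state $s_i$: $\beta_i(t) = P(O_{t,T} \mid X_t = s_i)$
• The prob of being at state i at time t given O: $\gamma_i(t) = P(X_t = s_i \mid O) = \alpha_i(t)\, \beta_i(t) / P(O)$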

Emission probabilities
Arc-emission HMM:
State-emission HMM:
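For comparison with the arc-emission update, a state-emission HMM attaches $b_{ik} = P(o_t = w_k \mid X_t = s_i)$ to states rather than arcs. Assuming $\gamma_i(t) = P(X_t = s_i \mid O)$ is computed under that model, the analogous update (our reconstruction of the standard form) replaces arc counts with state-occupancy counts:

```latex
\hat{b}_{ik} = \frac{\text{expected \# of times in } s_i \text{ emitting } w_k}
                    {\text{expected \# of times in } s_i}
             = \frac{\sum_{t:\, o_t = w_k} \gamma_i(t)}{\sum_{t=1}^{T} \gamma_i(t)}
```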
