Logistic Regression for binary outcomes

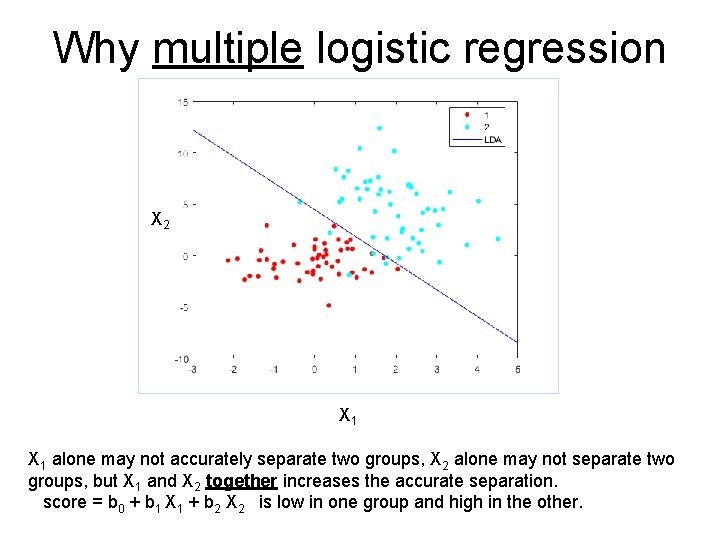

Why multiple logistic regression

$X_1$ alone may not accurately separate the two groups, and $X_2$ alone may not either, but $X_1$ and $X_2$ together can separate them more accurately. The score $b_0 + b_1 X_1 + b_2 X_2$ is low in one group and high in the other.

In linear regression, Y is continuous. In logistic regression, Y is binary (0, 1), and the “average” Y is the proportion P. We can’t use linear regression because:

1. P is bounded between 0 and 1, so it can’t be linearly related to the Xs over their whole range.
2. Y does NOT have a Gaussian (normal) distribution around the “mean” P.

We need a “linearizing” transformation and a non-Gaussian error model.

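Since $0 \le P \le 1$, we might use odds $= P/(1-P)$. Odds have no “ceiling” but have a “floor” of zero. So we use the logit transformation

$$\operatorname{logit}(P) = \ln\left(\frac{P}{1-P}\right) = \ln(\text{odds})$$

The logit has no floor or ceiling.

Model:

$$\operatorname{logit}(P) = \ln\left(\frac{P}{1-P}\right) = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k$$

or

$$\text{odds} = e^{\beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k} = e^{\text{logit}}$$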

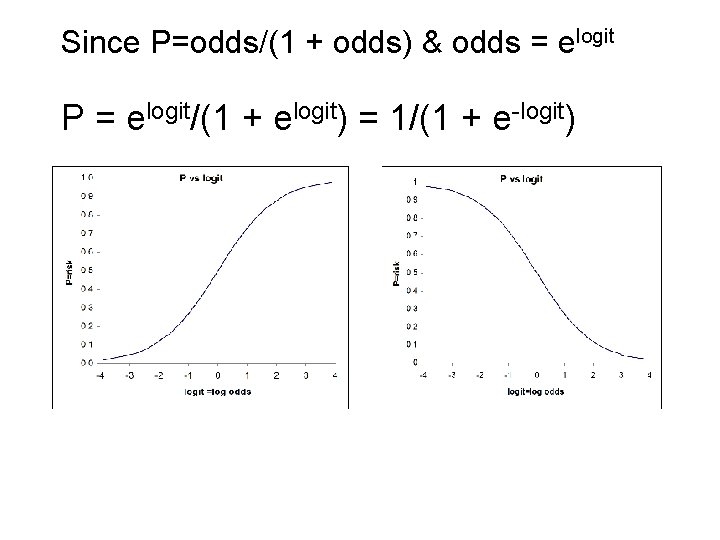

Since $P = \text{odds}/(1 + \text{odds})$ and $\text{odds} = e^{\text{logit}}$,

$$P = \frac{e^{\text{logit}}}{1 + e^{\text{logit}}} = \frac{1}{1 + e^{-\text{logit}}}$$
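As a minimal sketch of the conversions between P, odds, and logit, the helper functions below (the names `logit`, `inv_logit`, and `odds` are chosen here for illustration) show that the two transformations undo each other.

```python
import math

def odds(p):
    """Odds for a probability p, with 0 <= p < 1."""
    return p / (1.0 - p)

def logit(p):
    """Log odds of a probability p, with 0 < p < 1."""
    return math.log(odds(p))

def inv_logit(z):
    """Probability for a given logit: 1 / (1 + e^(-z))."""
    return 1.0 / (1.0 + math.exp(-z))

for p in (0.1, 0.5, 0.9):
    z = logit(p)
    print(f"P={p:.1f}  odds={odds(p):.3f}  logit={z:+.3f}  back to P={inv_logit(z):.3f}")
```

The logit is negative for P below 0.5, zero at P = 0.5, and positive above it, with no floor or ceiling.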

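If $\ln(\text{odds}) = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k$, then

$$\text{odds} = e^{\beta_0}\, e^{\beta_1 X_1}\, e^{\beta_2 X_2} \cdots e^{\beta_k X_k}$$

or

$$\text{odds} = (\text{base odds}) \times OR_1 \times OR_2 \times \cdots \times OR_k$$

The model is multiplicative on the odds scale. The base odds, $e^{\beta_0}$, are the odds when all Xs = 0, and $OR_i = e^{\beta_i X_i}$ is the odds ratio for the $i$th X relative to $X_i = 0$.

Risk = odds/(1 + odds)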

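Interpreting β coefficients

Example: dichotomous X, with X = 0 for males and X = 1 for females.

$$\operatorname{logit}(P) = \beta_0 + \beta_1 X$$

- Males (X = 0): $\operatorname{logit}(P_m) = \beta_0$
- Females (X = 1): $\operatorname{logit}(P_f) = \beta_0 + \beta_1$

$$\operatorname{logit}(P_f) - \operatorname{logit}(P_m) = \beta_1 = \ln(OR), \qquad e^{\beta_1} = OR$$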

Example: P is the proportion with disease.

$$\operatorname{logit}(P) = \beta_0 + \beta_1\,\text{age} + \beta_2\,\text{sex}$$

“sex” is coded 0 for M, 1 for F.

- The OR for disease for F vs M is $e^{\beta_2}$ if both are the same age.
- $e^{\beta_1}$ is the odds ratio for a one-year increase in age, that is, the factor by which the odds of disease are multiplied.
- $(e^{\beta_1})^k = e^{k\beta_1}$ is the OR for a k-year difference in age between two groups of the same sex.

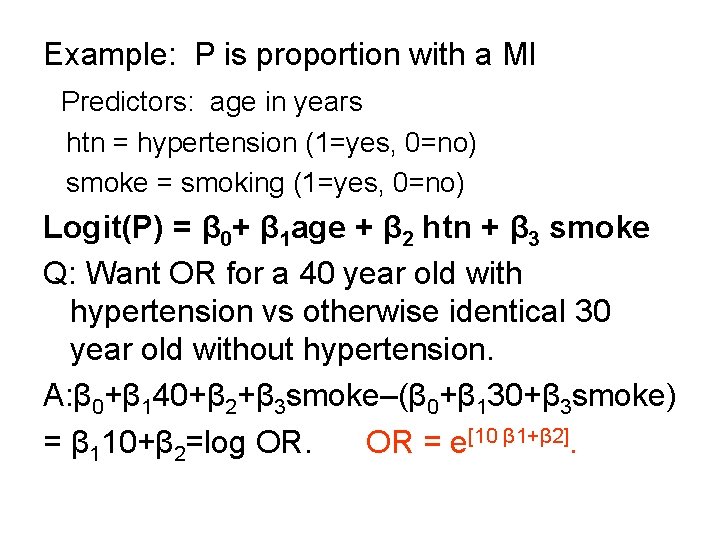

Example: P is the proportion with an MI.

Predictors:

- age in years
- htn = hypertension (1 = yes, 0 = no)
- smoke = smoking (1 = yes, 0 = no)

$$\operatorname{logit}(P) = \beta_0 + \beta_1\,\text{age} + \beta_2\,\text{htn} + \beta_3\,\text{smoke}$$

Q: What is the OR for a 40-year-old with hypertension vs an otherwise identical 30-year-old without hypertension?

A:

$$(\beta_0 + 40\beta_1 + \beta_2 + \beta_3\,\text{smoke}) - (\beta_0 + 30\beta_1 + \beta_3\,\text{smoke}) = 10\beta_1 + \beta_2 = \ln(OR)$$

so $OR = e^{10\beta_1 + \beta_2}$.
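The same subtraction can be checked numerically. The sketch below uses made-up coefficients (the values in `beta` are hypothetical, not estimates from the lecture) and confirms that the OR between the two profiles equals $e^{10\beta_1 + \beta_2}$ regardless of smoking status.

```python
import math

# Hypothetical coefficients for illustration only (not from the lecture).
beta = {"intercept": -6.0, "age": 0.05, "htn": 0.7, "smoke": 0.9}

def logit_score(x):
    """Linear predictor for a covariate profile given as a dict."""
    return beta["intercept"] + sum(beta[name] * value for name, value in x.items())

def odds_ratio(x_a, x_b):
    """OR for profile a vs profile b: exp of the difference in logits."""
    return math.exp(logit_score(x_a) - logit_score(x_b))

shortcut = math.exp(10 * beta["age"] + beta["htn"])
for smoke in (0, 1):
    older_htn = {"age": 40, "htn": 1, "smoke": smoke}
    younger_no_htn = {"age": 30, "htn": 0, "smoke": smoke}
    print(smoke, round(odds_ratio(older_htn, younger_no_htn), 4), round(shortcut, 4))
```

The intercept and the smoking term cancel in the difference, which is why only the age and hypertension coefficients appear in the answer.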

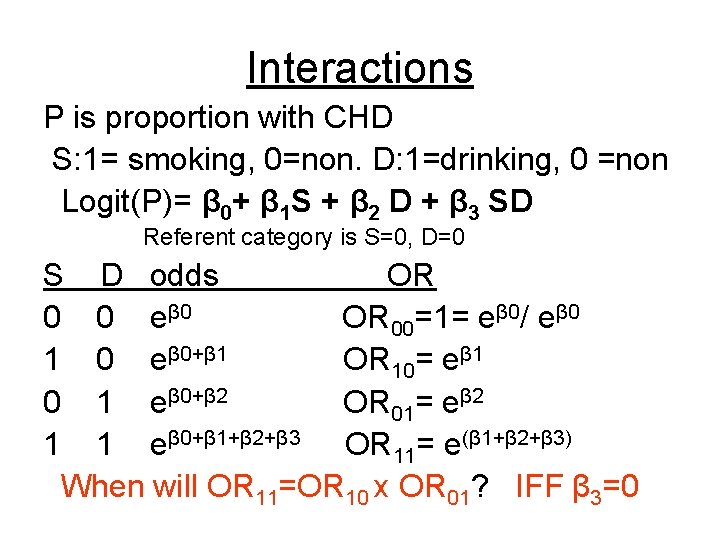

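Interactions

P is the proportion with CHD. S: 1 = smoking, 0 = non-smoking. D: 1 = drinking, 0 = non-drinking.

$$\operatorname{logit}(P) = \beta_0 + \beta_1 S + \beta_2 D + \beta_3 SD$$

The referent category is S = 0, D = 0.

| S | D | odds | OR |
|---|---|---|---|
| 0 | 0 | $e^{\beta_0}$ | $OR_{00} = e^{\beta_0}/e^{\beta_0} = 1$ |
| 1 | 0 | $e^{\beta_0+\beta_1}$ | $OR_{10} = e^{\beta_1}$ |
| 0 | 1 | $e^{\beta_0+\beta_2}$ | $OR_{01} = e^{\beta_2}$ |
| 1 | 1 | $e^{\beta_0+\beta_1+\beta_2+\beta_3}$ | $OR_{11} = e^{\beta_1+\beta_2+\beta_3}$ |

When will $OR_{11} = OR_{10} \times OR_{01}$? If and only if $\beta_3 = 0$.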
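A small numeric illustration of the interaction term, using hypothetical coefficients: with $\beta_3 = 0$ the joint OR equals the product of the two single-exposure ORs, and otherwise it differs from the product by the factor $e^{\beta_3}$.

```python
import math

def odds_ratios(b1, b2, b3):
    """ORs vs the referent (S=0, D=0) for logit = b0 + b1*S + b2*D + b3*S*D."""
    or10 = math.exp(b1)            # smoking only
    or01 = math.exp(b2)            # drinking only
    or11 = math.exp(b1 + b2 + b3)  # both exposures
    return or10, or01, or11

# Hypothetical coefficients; only b3 changes between the two runs.
for b3 in (0.0, 0.4):
    or10, or01, or11 = odds_ratios(0.6, 0.3, b3)
    ratio = or11 / (or10 * or01)
    print(f"b3={b3}: OR11={or11:.3f}  OR10*OR01={or10 * or01:.3f}  ratio={ratio:.3f}")
```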

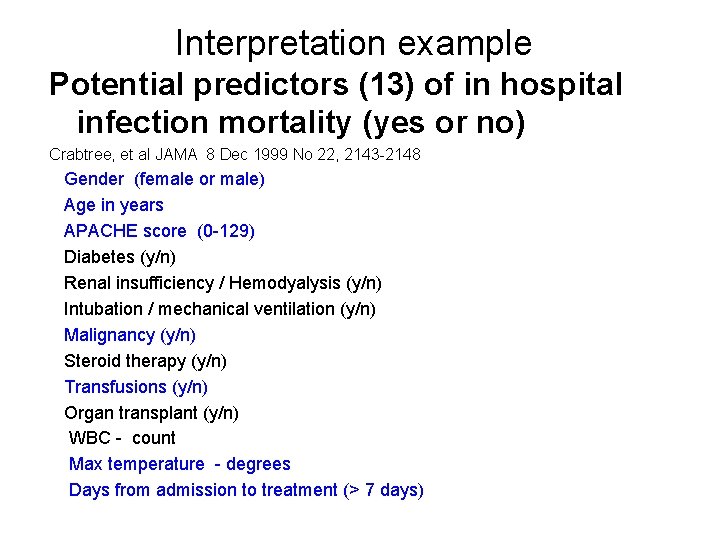

Interpretation example

Potential predictors (13) of in-hospital infection mortality (yes or no)

Crabtree et al., JAMA, 8 Dec 1999, No. 22, 2143-2148

- Gender (female or male)
- Age in years
- APACHE score (0-129)
- Diabetes (y/n)
- Renal insufficiency / hemodialysis (y/n)
- Intubation / mechanical ventilation (y/n)
- Malignancy (y/n)
- Steroid therapy (y/n)
- Transfusions (y/n)
- Organ transplant (y/n)
- WBC count
- Max temperature (degrees)
- Days from admission to treatment (> 7 days)

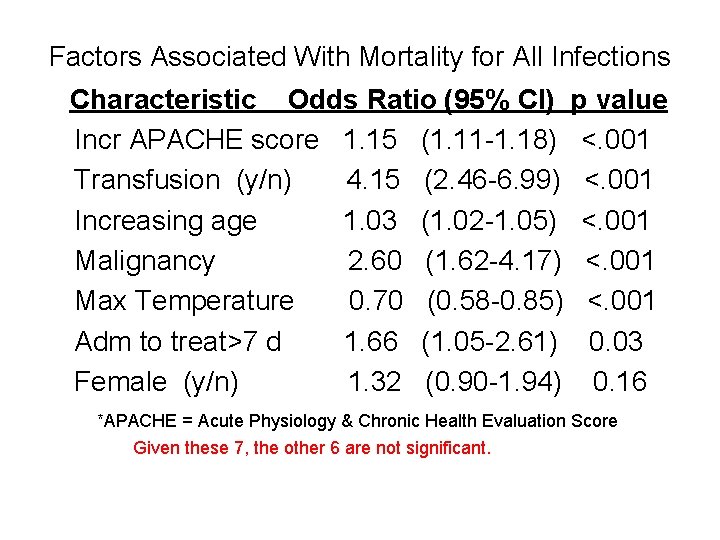

Factors Associated With Mortality for All Infections

| Characteristic | Odds Ratio (95% CI) | p value |
|---|---|---|
| Increasing APACHE* score | 1.15 (1.11-1.18) | <.001 |
| Transfusion (y/n) | 4.15 (2.46-6.99) | <.001 |
| Increasing age | 1.03 (1.02-1.05) | <.001 |
| Malignancy | 2.60 (1.62-4.17) | <.001 |
| Max temperature | 0.70 (0.58-0.85) | <.001 |
| Admission to treatment > 7 d | 1.66 (1.05-2.61) | 0.03 |
| Female (y/n) | 1.32 (0.90-1.94) | 0.16 |

*APACHE = Acute Physiology & Chronic Health Evaluation Score

Given these 7, the other 6 are not significant.

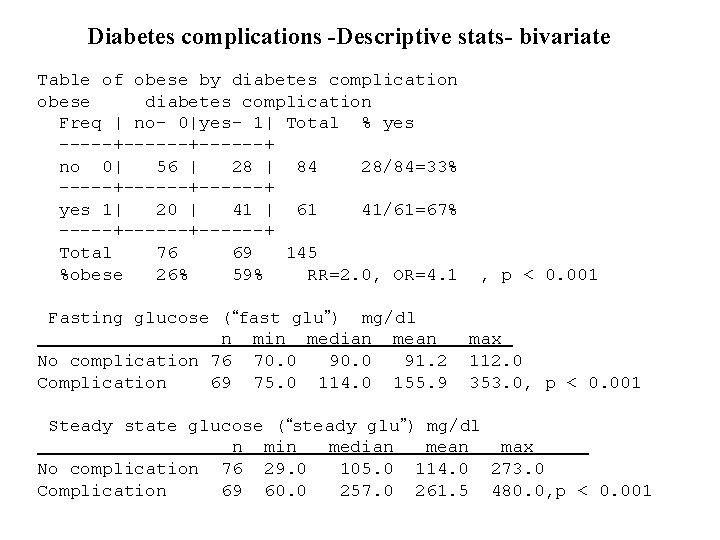

Diabetes complications: descriptive stats (bivariate)

Table of obese by diabetes complication (frequencies)

| obese | no complication (0) | complication (1) | Total | % with complication |
|---|---|---|---|---|
| no (0) | 56 | 28 | 84 | 28/84 = 33% |
| yes (1) | 20 | 41 | 61 | 41/61 = 67% |
| Total | 76 | 69 | 145 | |
| % obese | 26% | 59% | | |

RR = 2.0, OR = 4.1

Fasting glucose (“fast glu”), mg/dl

| | n | min | median | mean | max |
|---|---|---|---|---|---|
| No complication | 76 | 70.0 | | 91.2 | 112.0 |
| Complication | 69 | 75.0 | 114.0 | 155.9 | 353.0 |

p < 0.001

Steady state glucose (“steady glu”), mg/dl

| | n | min | median | mean | max |
|---|---|---|---|---|---|
| No complication | 76 | 29.0 | 105.0 | 114.0 | 273.0 |
| Complication | 69 | 60.0 | 257.0 | 261.5 | 480.0 |

p < 0.001
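The RR and OR quoted for the obesity table can be reproduced from the four cell counts. This is a minimal sketch; the function name and argument names are chosen here for illustration.

```python
def risk_ratio_and_odds_ratio(exposed_yes, exposed_no, unexposed_yes, unexposed_no):
    """RR and OR for a 2x2 table (exposure = obese, outcome = complication)."""
    risk_exposed = exposed_yes / (exposed_yes + exposed_no)
    risk_unexposed = unexposed_yes / (unexposed_yes + unexposed_no)
    rr = risk_exposed / risk_unexposed
    odds_ratio = (exposed_yes * unexposed_no) / (exposed_no * unexposed_yes)
    return rr, odds_ratio

# Obese: 41 with complication, 20 without. Not obese: 28 with, 56 without.
rr, odds_ratio = risk_ratio_and_odds_ratio(41, 20, 28, 56)
print(f"RR = {rr:.1f}, OR = {odds_ratio:.1f}")  # RR = 2.0, OR = 4.1
```

Because the complication is common in this sample (69 of 145), the OR is noticeably larger than the RR.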

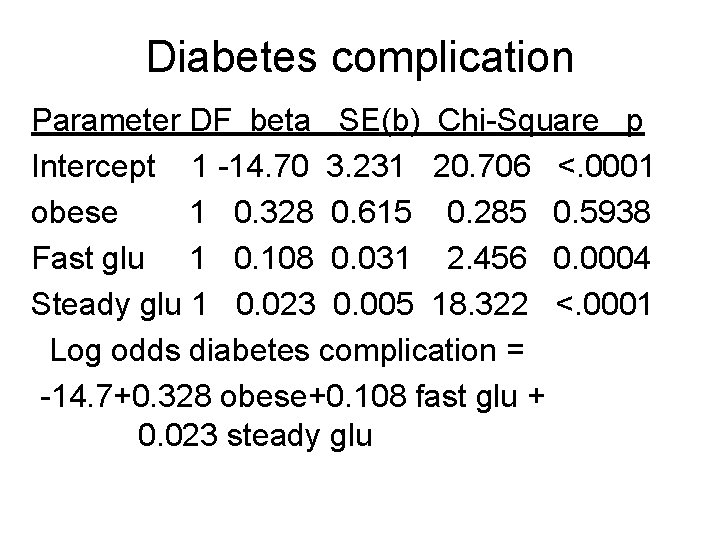

Diabetes complication

| Parameter | DF | beta | SE(b) | Chi-Square | p |
|---|---|---|---|---|---|
| Intercept | 1 | -14.70 | 3.231 | 20.706 | <.0001 |
| obese | 1 | 0.328 | 0.615 | 0.285 | 0.5938 |
| Fast glu | 1 | 0.108 | 0.031 | 12.456 | 0.0004 |
| Steady glu | 1 | 0.023 | 0.005 | 18.322 | <.0001 |

Log odds of diabetes complication = -14.7 + 0.328 obese + 0.108 fast glu + 0.023 steady glu

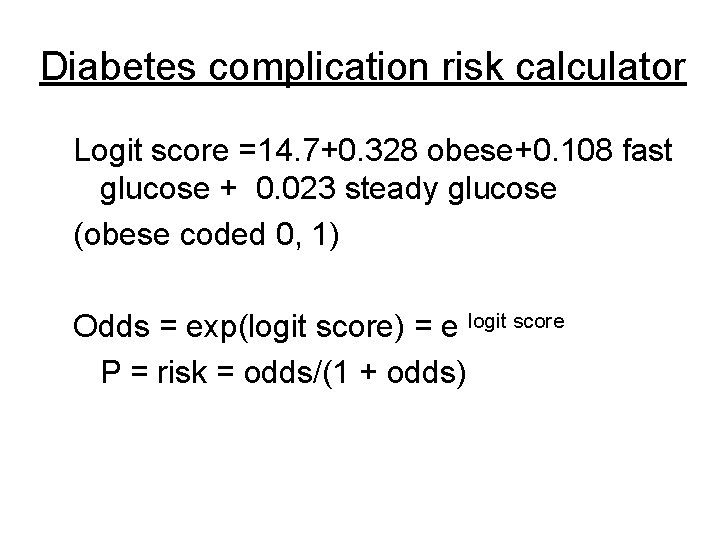

Diabetes complication risk calculator

Logit score = -14.7 + 0.328 obese + 0.108 fast glucose + 0.023 steady glucose (obese coded 0, 1)

Odds = exp(logit score) = $e^{\text{logit score}}$

P = risk = odds/(1 + odds)
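A minimal sketch of the risk calculator in code, using the rounded coefficients from the parameter table (intercept -14.7). The patient values are hypothetical, and results computed from rounded coefficients can differ slightly from software output based on full-precision estimates.

```python
import math

# Coefficients as reported (rounded) in the parameter table.
INTERCEPT, B_OBESE, B_FAST, B_STEADY = -14.7, 0.328, 0.108, 0.023

def complication_risk(obese, fast_glu, steady_glu):
    """Return (logit, odds, risk) for one person; obese is coded 0 or 1."""
    logit = INTERCEPT + B_OBESE * obese + B_FAST * fast_glu + B_STEADY * steady_glu
    odds = math.exp(logit)
    return logit, odds, odds / (1.0 + odds)

# Hypothetical patient: obese, fasting glucose 110 mg/dl, steady state glucose 200 mg/dl.
logit, odds, risk = complication_risk(obese=1, fast_glu=110, steady_glu=200)
print(f"logit={logit:.3f}  odds={odds:.2f}  risk={risk:.3f}")
```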

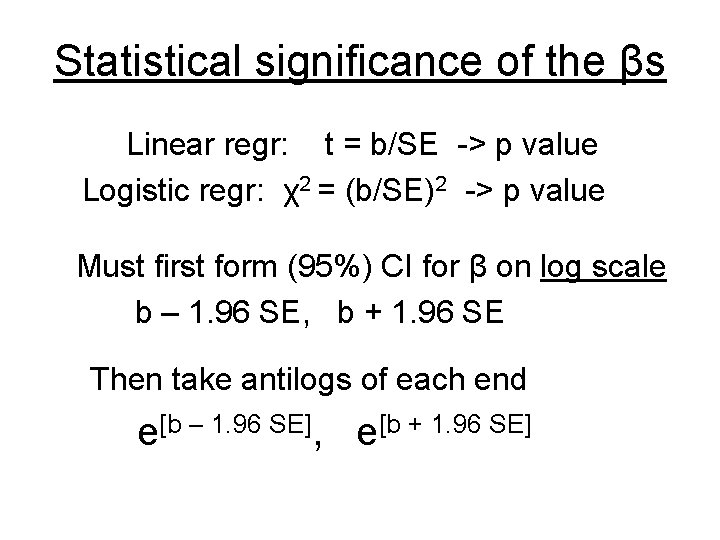

Statistical significance of the βs

- Linear regression: $t = b/SE$ → p value
- Logistic regression: $\chi^2 = (b/SE)^2$ → p value

To get a confidence interval for the OR, first form the (95%) CI for β on the log scale:

$$b - 1.96\,SE, \quad b + 1.96\,SE$$

Then take antilogs of each end:

$$e^{\,b - 1.96\,SE}, \quad e^{\,b + 1.96\,SE}$$

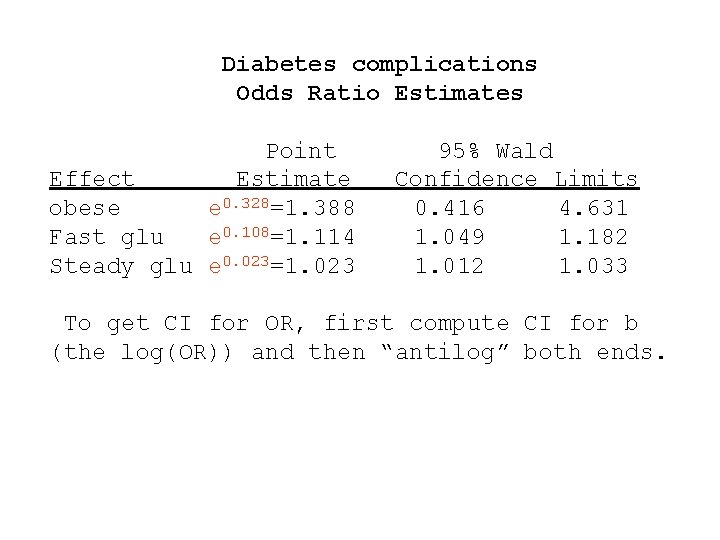

Diabetes complications: odds ratio estimates

| Effect | Point estimate | 95% Wald confidence limits |
|---|---|---|
| obese | $e^{0.328} = 1.388$ | 0.416, 4.631 |
| Fast glu | $e^{0.108} = 1.114$ | 1.049, 1.182 |
| Steady glu | $e^{0.023} = 1.023$ | 1.012, 1.033 |

To get the CI for the OR, first compute the CI for b (the log(OR)) and then “antilog” both ends.
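The Wald chi-square, its p value, and the OR with its 95% limits can all be computed from b and SE alone. The sketch below uses the rounded b and SE values from the parameter table, so the results agree with the tabulated limits only to about two decimals. The 1-df chi-square p value is obtained from the normal tail via `math.erfc`, which avoids any external library.

```python
import math

def wald_summary(b, se, z_crit=1.96):
    """Wald chi-square, p value, OR, and CI for the OR from a coefficient and its SE."""
    chi_sq = (b / se) ** 2
    # For 1 df, P(chi-square > x) equals the two-sided normal tail: erfc(sqrt(x / 2)).
    p_value = math.erfc(math.sqrt(chi_sq / 2.0))
    ci = (math.exp(b - z_crit * se), math.exp(b + z_crit * se))
    return chi_sq, p_value, math.exp(b), ci

estimates = [("obese", 0.328, 0.615), ("fast glu", 0.108, 0.031), ("steady glu", 0.023, 0.005)]
for name, b, se in estimates:
    chi_sq, p, or_hat, (lo, hi) = wald_summary(b, se)
    print(f"{name:10s} chi2={chi_sq:6.3f}  p={p:.4f}  OR={or_hat:.3f}  95% CI=({lo:.3f}, {hi:.3f})")
```

A CI for the OR that includes 1 (as for obese) corresponds to a coefficient that is not significant at the 5% level.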

Model fit statistics

Before one publishes results from a regression, one should evaluate the “model performance” and whether the model fits the data. One should not publish a model that is a poor fit to the data. Failing to provide model performance statistics prevents readers from knowing whether the model gives a reasonable representation of the relationship between the predictors (Xs) and the outcome (Y, logit, or P).

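Model fit: linear vs logistic regression

k variables, n observations

| Variation | df | Sum of squares (linear) or deviance (logistic) |
|---|---|---|
| Model | k | G |
| Error | | D |
| Total | n-1 | T = total (fixed) |

$Y_i$ = ith observation, $\hat{Y}_i$ = prediction for the ith observation

| Statistic | Linear regression | Logistic regression |
|---|---|---|
| D/(n-k) | Residual $SD_e$ | Mean deviance |
| $\sum[(Y_i-\hat{Y}_i)/\hat{Y}]^2$ | - | Hosmer-Lemeshow $\chi^2$ |
| $\mathrm{Corr}(Y, \hat{Y})^2$ | $R^2$ | Cox-Snell $R^2$ |
| Model var/total var | $R^2$ | Pseudo $R^2$ |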

Good regression models have large G (model variance) and small D (error variance), so $R^2$ is closer to 1.0. For logistic regression, D/(n-k), the mean deviance, should be near 1.0. There are two versions of $R^2$ for logistic regression.

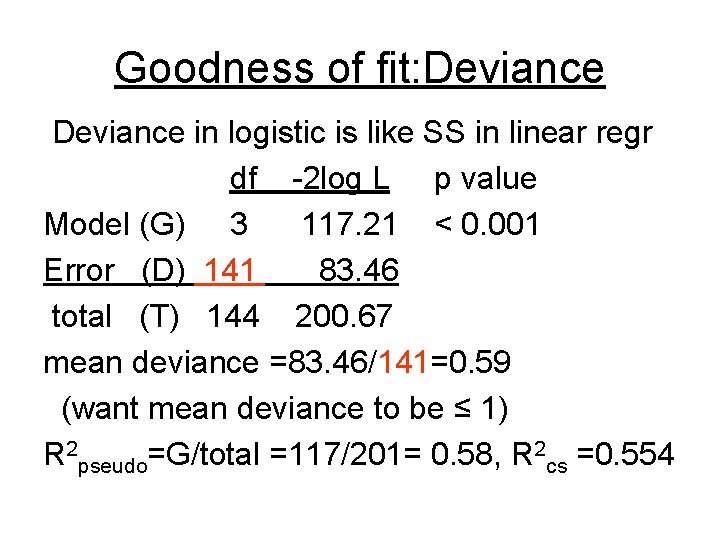

Goodness of fit: deviance in logistic regression is like SS in linear regression

| | df | -2 log L | p value |
|---|---|---|---|
| Model (G) | 3 | 117.21 | < 0.001 |
| Error (D) | 141 | 83.46 | |
| Total (T) | 144 | 200.67 | |

Mean deviance = 83.46/141 = 0.59 (want mean deviance to be ≤ 1)

$R^2_{\text{pseudo}}$ = G/total = 117/201 = 0.58, $R^2_{CS}$ = 0.554
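These summary statistics follow directly from the deviance table. In the sketch below, n = 145 is taken from the total df of 144, and the Cox-Snell value is computed with the standard definition $1 - e^{-G/n}$, which the slides do not spell out but which reproduces the reported 0.554.

```python
import math

n = 145             # observations (total df 144 + 1)
model_dev = 117.21  # G, model deviance
error_dev = 83.46   # D, error deviance
total_dev = 200.67  # T, total deviance
error_df = 141

mean_deviance = error_dev / error_df
pseudo_r2 = model_dev / total_dev
cox_snell_r2 = 1.0 - math.exp(-model_dev / n)  # standard Cox-Snell definition (assumed)

print(f"mean deviance = {mean_deviance:.2f}")
print(f"pseudo R2     = {pseudo_r2:.2f}")
print(f"Cox-Snell R2  = {cox_snell_r2:.3f}")
```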

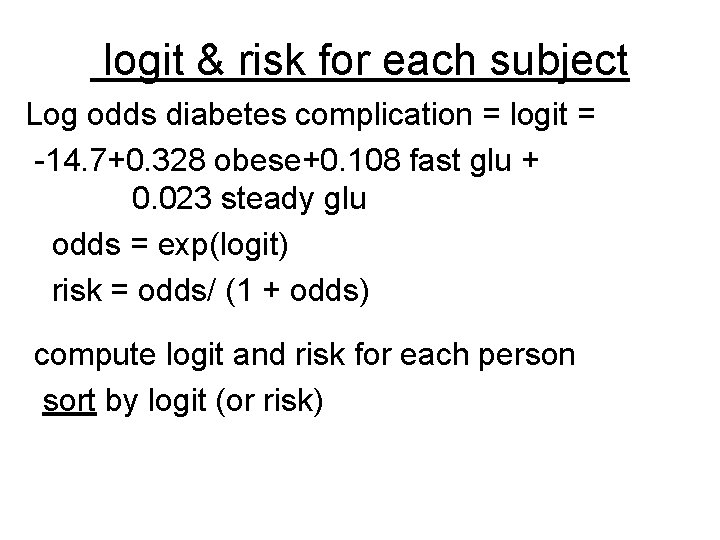

Logit & risk for each subject

Log odds of diabetes complication = logit = -14.7 + 0.328 obese + 0.108 fast glu + 0.023 steady glu

- odds = exp(logit)
- risk = odds/(1 + odds)

Compute the logit and risk for each person, then sort by logit (or risk).

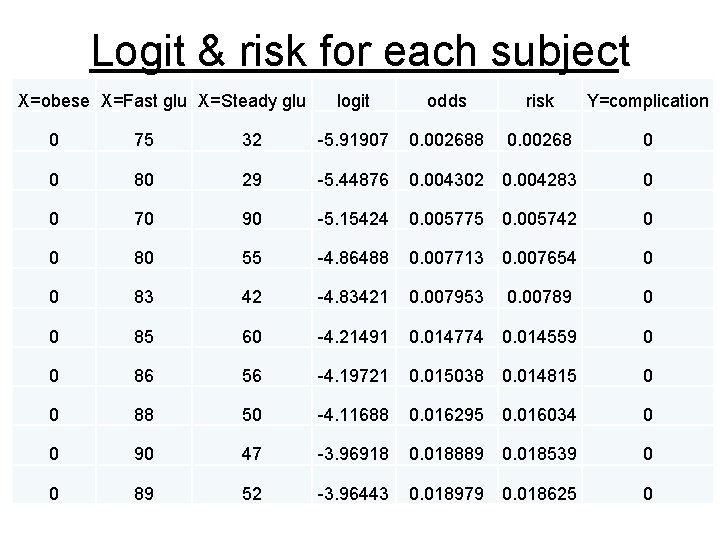

Logit & risk for each subject

| X=obese | X=Fast glu | X=Steady glu | logit | odds | risk | Y=complication |
|---|---|---|---|---|---|---|
| 0 | 75 | 32 | -5.91907 | 0.002688 | 0.00268 | 0 |
| 0 | 80 | 29 | -5.44876 | 0.004302 | 0.004283 | 0 |
| 0 | 70 | 90 | -5.15424 | 0.005775 | 0.005742 | 0 |
| 0 | 80 | 55 | -4.86488 | 0.007713 | 0.007654 | 0 |
| 0 | 83 | 42 | -4.83421 | 0.007953 | 0.00789 | 0 |
| 0 | 85 | 60 | -4.21491 | 0.014774 | 0.014559 | 0 |
| 0 | 86 | 56 | -4.19721 | 0.015038 | 0.014815 | 0 |
| 0 | 88 | 50 | -4.11688 | 0.016295 | 0.016034 | 0 |
| 0 | 90 | 47 | -3.96918 | 0.018889 | 0.018539 | 0 |
| 0 | 89 | 52 | -3.96443 | 0.018979 | 0.018625 | 0 |

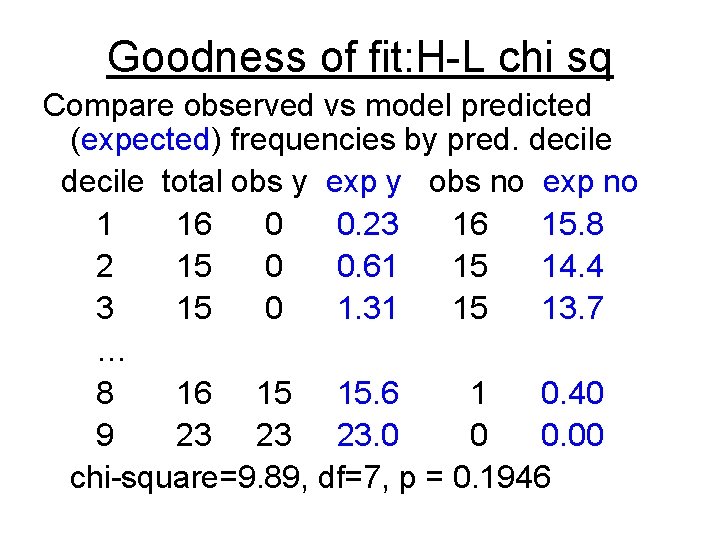

Goodness of fit: H-L chi-square

Compare observed vs model-predicted (expected) frequencies by predicted-risk decile.

| decile | total | obs y | exp y | obs no | exp no |
|---|---|---|---|---|---|
| 1 | 16 | 0 | 0.23 | 16 | 15.8 |
| 2 | 15 | 0 | 0.61 | 15 | 14.4 |
| 3 | 15 | 0 | 1.31 | 15 | 13.7 |
| … | | | | | |
| 8 | 16 | 15 | 15.6 | 1 | 0.40 |
| 9 | 23 | 23 | 23.0 | 0 | 0.00 |

chi-square = 9.89, df = 7, p = 0.1946
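A sketch of how an observed-vs-expected chi-square of this kind is assembled, assuming the usual form $\sum (O - E)^2 / E$ over the "yes" and "no" cells of every risk group, with df equal to the number of groups minus 2. The counts below are hypothetical (the full decile table is not reproduced in the slides), and cells with an expected count of zero are skipped, which is a choice made here to avoid dividing by zero.

```python
def hosmer_lemeshow_chi_sq(groups):
    """groups: iterable of (obs_yes, exp_yes, obs_no, exp_no), one tuple per risk group."""
    chi_sq = 0.0
    for obs_yes, exp_yes, obs_no, exp_no in groups:
        for obs, exp in ((obs_yes, exp_yes), (obs_no, exp_no)):
            if exp > 0:  # skip cells with zero expected count
                chi_sq += (obs - exp) ** 2 / exp
    return chi_sq

# Hypothetical counts for three risk groups (not the lecture data).
example = [(1, 1.5, 19, 18.5), (5, 4.0, 15, 16.0), (12, 12.5, 8, 7.5)]
statistic = hosmer_lemeshow_chi_sq(example)
df = len(example) - 2
print(f"chi-square = {statistic:.3f}, df = {df}")
```

A small chi-square (large p value) means the observed and expected frequencies agree, so a non-significant result is the desirable outcome here.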

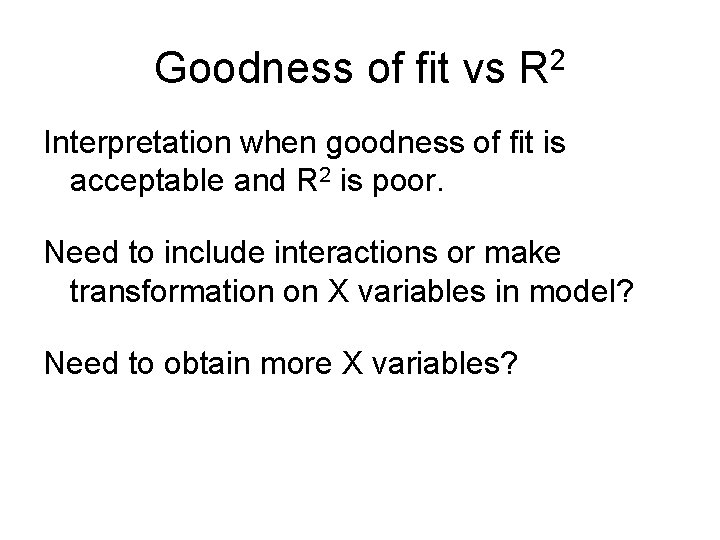

Goodness of fit vs $R^2$

Interpretation when goodness of fit is acceptable but $R^2$ is poor:

- Do we need to include interactions or transform the X variables in the model?
- Do we need to obtain more X variables?

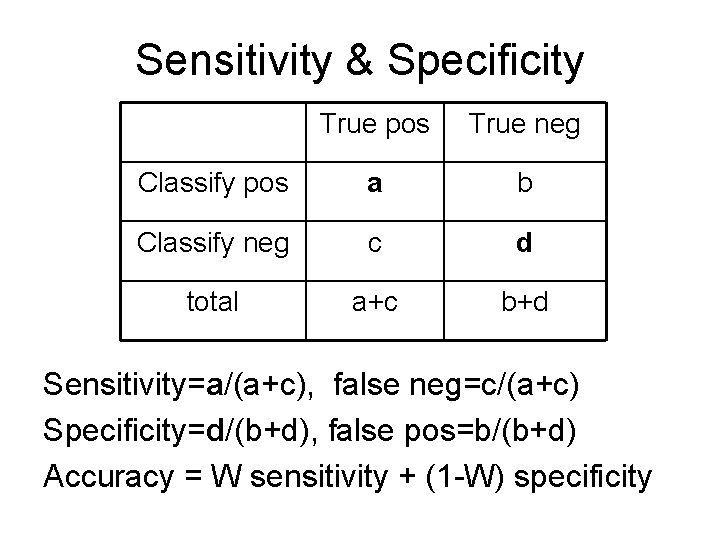

Sensitivity & Specificity

| | True pos | True neg |
|---|---|---|
| Classify pos | a | b |
| Classify neg | c | d |
| total | a+c | b+d |

- Sensitivity = a/(a+c), false neg = c/(a+c)
- Specificity = d/(b+d), false pos = b/(b+d)
- Accuracy = W × sensitivity + (1 - W) × specificity

Any good classification rule, including a logistic model, should have high sensitivity and specificity. In logistic regression, we choose a cutpoint $P_c$:

- Predict positive if $P > P_c$
- Predict negative if $P < P_c$

Equivalently, we can use the logit cutpoint $\ln(P_c/(1-P_c))$.

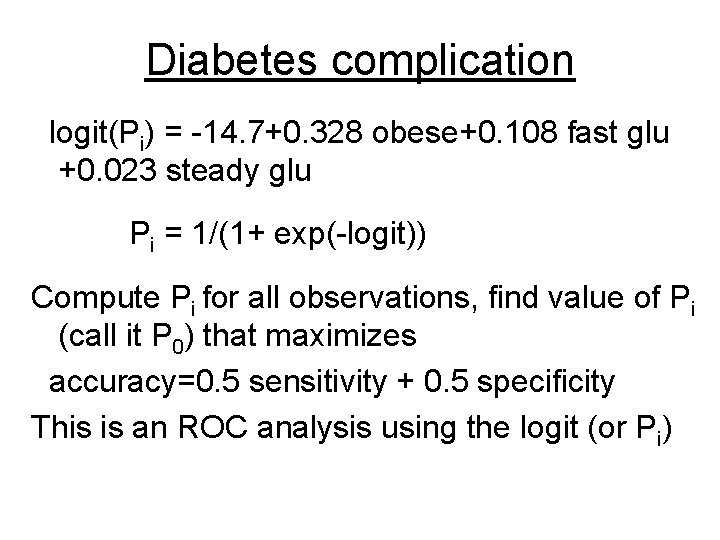

Diabetes complication

logit($P_i$) = -14.7 + 0.328 obese + 0.108 fast glu + 0.023 steady glu

$P_i = 1/(1 + \exp(-\text{logit}))$

Compute $P_i$ for all observations, then find the value of $P_i$ (call it $P_0$) that maximizes accuracy = 0.5 sensitivity + 0.5 specificity. This is an ROC analysis using the logit (or $P_i$).
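The cutpoint search can be sketched as a simple scan over the observed predicted risks. The data below are hypothetical, the function name `best_cutpoint` is chosen here for illustration, and the rule classifies as positive when P is at or above the candidate cutpoint (a small convention choice so that each observed P can serve as a candidate).

```python
def best_cutpoint(probs, labels, w=0.5):
    """Return (cutpoint, accuracy) maximizing w*sensitivity + (1-w)*specificity."""
    n_pos = sum(labels)
    n_neg = len(labels) - n_pos
    best_cut, best_acc = None, -1.0
    for cut in sorted(set(probs)):
        # Classify positive when P >= cut; count correct calls in each true group.
        tp = sum(1 for p, y in zip(probs, labels) if p >= cut and y == 1)
        tn = sum(1 for p, y in zip(probs, labels) if p < cut and y == 0)
        acc = w * tp / n_pos + (1 - w) * tn / n_neg
        if acc > best_acc:
            best_cut, best_acc = cut, acc
    return best_cut, best_acc

# Hypothetical predicted risks and true outcomes (1 = complication).
probs = [0.05, 0.10, 0.20, 0.35, 0.40, 0.60, 0.70, 0.80, 0.90, 0.95]
labels = [0, 0, 0, 1, 0, 0, 1, 1, 1, 1]
cut, acc = best_cutpoint(probs, labels)
print(f"best cutpoint = {cut}, accuracy = {acc:.2f}")
```

Changing `w` away from 0.5 shifts the chosen cutpoint toward higher sensitivity or higher specificity, depending on which error is more costly.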

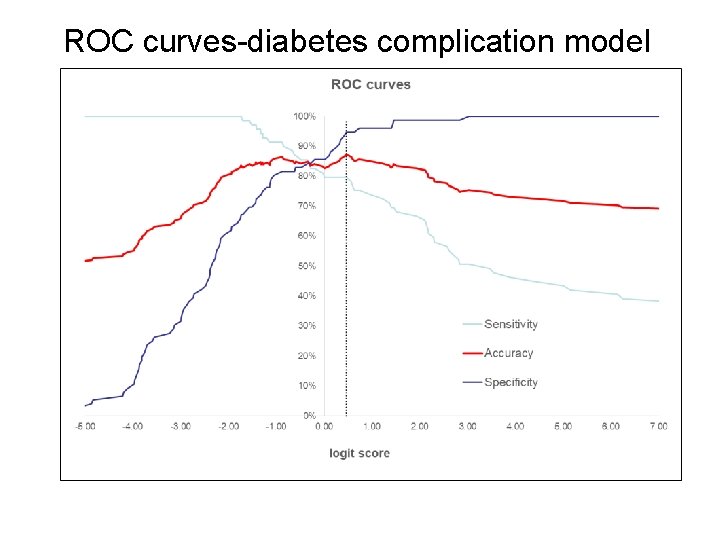

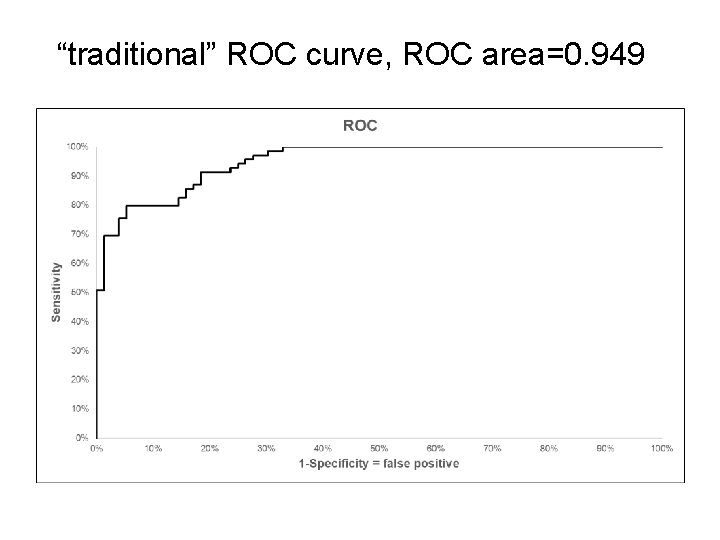

ROC curves-diabetes complication model

“Traditional” ROC curve, ROC area = 0.949

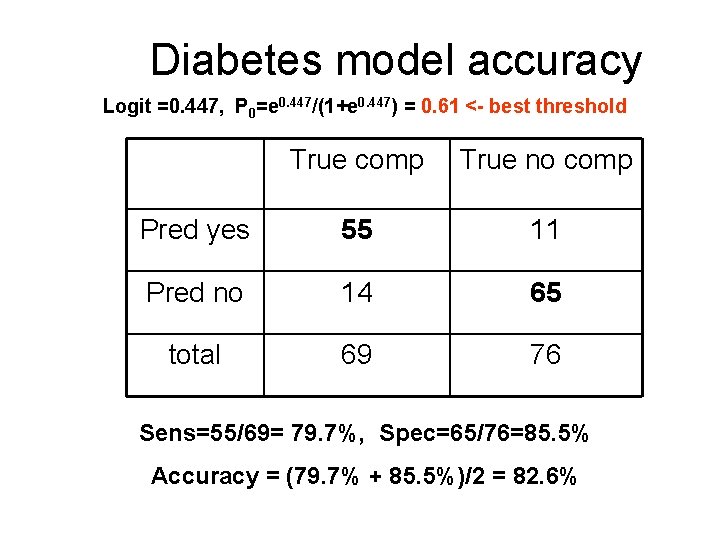

Diabetes model accuracy

Best threshold: logit = 0.447, $P_0 = e^{0.447}/(1 + e^{0.447}) = 0.61$

| | True comp | True no comp |
|---|---|---|
| Pred yes | 55 | 11 |
| Pred no | 14 | 65 |
| total | 69 | 76 |

Sens = 55/69 = 79.7%, Spec = 65/76 = 85.5%

Accuracy = (79.7% + 85.5%)/2 = 82.6%
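The figures on this slide can be reproduced from the four cell counts and the logit threshold. This is a minimal check in code; the variable names are chosen here for illustration.

```python
import math

tp, fp = 55, 11  # predicted yes: true complication, true no complication
fn, tn = 14, 65  # predicted no:  true complication, true no complication

sensitivity = tp / (tp + fn)
specificity = tn / (tn + fp)
accuracy = 0.5 * sensitivity + 0.5 * specificity

logit_cut = 0.447
p_cut = 1.0 / (1.0 + math.exp(-logit_cut))  # convert the logit threshold to a risk

print(f"sensitivity = {100 * sensitivity:.1f}%")
print(f"specificity = {100 * specificity:.1f}%")
print(f"accuracy    = {100 * accuracy:.1f}%")
print(f"P0          = {p_cut:.2f}")
```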

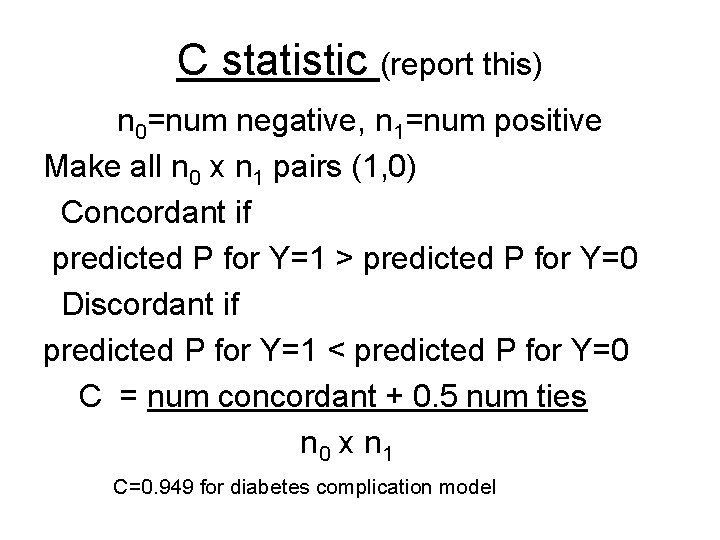

C statistic (report this)

$n_0$ = number negative, $n_1$ = number positive. Make all $n_0 \times n_1$ pairs (1, 0).

- Concordant if predicted P for Y=1 > predicted P for Y=0
- Discordant if predicted P for Y=1 < predicted P for Y=0

$$C = \frac{\text{num concordant} + 0.5 \times \text{num ties}}{n_0 \times n_1}$$

C = 0.949 for the diabetes complication model.
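The pair-counting definition translates directly into code. The sketch below uses a brute-force loop over all (positive, negative) pairs, which is fine for small samples; the data are hypothetical.

```python
def c_statistic(probs, labels):
    """C = (concordant pairs + 0.5 * tied pairs) / (n1 * n0)."""
    pos = [p for p, y in zip(probs, labels) if y == 1]
    neg = [p for p, y in zip(probs, labels) if y == 0]
    concordant = sum(1 for p1 in pos for p0 in neg if p1 > p0)
    ties = sum(1 for p1 in pos for p0 in neg if p1 == p0)
    return (concordant + 0.5 * ties) / (len(pos) * len(neg))

# Hypothetical predicted risks and true outcomes.
probs = [0.1, 0.3, 0.3, 0.6, 0.8, 0.9]
labels = [0, 0, 1, 0, 1, 1]
c = c_statistic(probs, labels)
print(f"C = {c:.3f}")  # 7 concordant pairs and 1 tie out of 9 pairs
```

C = 0.5 corresponds to predictions no better than chance, and C = 1.0 to perfect separation of the two groups.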

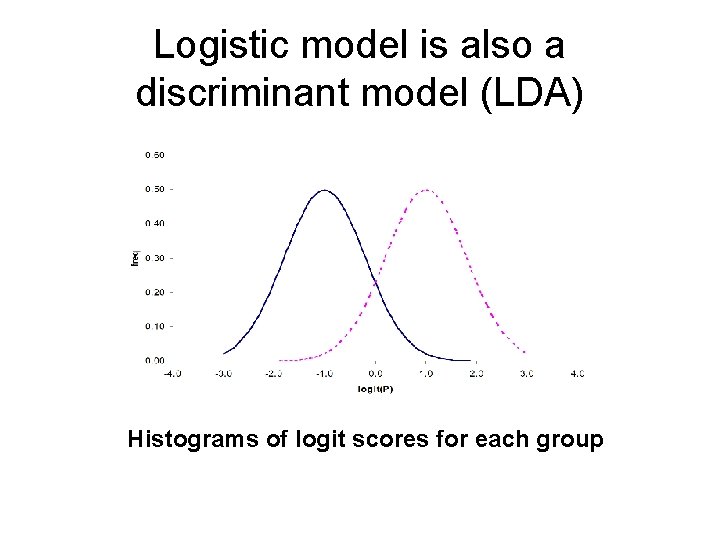

Logistic model is also a discriminant model (LDA)

Histograms of logit scores for each group

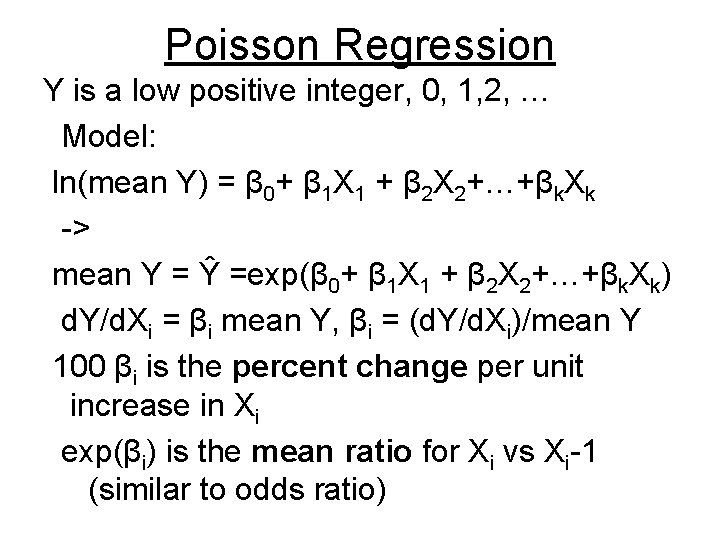

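Poisson Regression

Y is a non-negative integer count, usually small: 0, 1, 2, …

Model:

$$\ln(\text{mean } Y) = \beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k$$

so

$$\text{mean } Y = \hat{Y} = \exp(\beta_0 + \beta_1 X_1 + \beta_2 X_2 + \dots + \beta_k X_k)$$

- $d\hat{Y}/dX_i = \beta_i \hat{Y}$, so $\beta_i = (d\hat{Y}/dX_i)/\hat{Y}$
- $100\,\beta_i$ is approximately the percent change in mean Y per unit increase in $X_i$ (for small $\beta_i$)
- $\exp(\beta_i)$ is the mean ratio for $X_i$ vs $X_i - 1$ (similar to an odds ratio)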
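A short numeric illustration of the mean ratio, using hypothetical coefficients: raising $X_1$ by one unit multiplies the predicted mean by $e^{\beta_1}$, and $100\,\beta_1$ is close to the exact percent change $100\,(e^{\beta_1} - 1)$ only when $\beta_1$ is small.

```python
import math

def mean_count(beta0, betas, xs):
    """Predicted mean of Y under a log-linear (Poisson) model."""
    return math.exp(beta0 + sum(b * x for b, x in zip(betas, xs)))

# Hypothetical coefficients for two predictors.
beta0, betas = 0.2, [0.05, -0.30]

base = mean_count(beta0, betas, [10, 1])
plus_one = mean_count(beta0, betas, [11, 1])  # X1 increased by one unit

mean_ratio = plus_one / base                 # equals exp(beta_1)
approx_pct = 100 * betas[0]                  # slide's approximation
exact_pct = 100 * (math.exp(betas[0]) - 1)   # exact percent change

print(f"mean ratio = {mean_ratio:.4f}, exp(b1) = {math.exp(betas[0]):.4f}")
print(f"approx % change = {approx_pct:.2f}, exact % change = {exact_pct:.2f}")
```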

End of lecture presentation Additional example

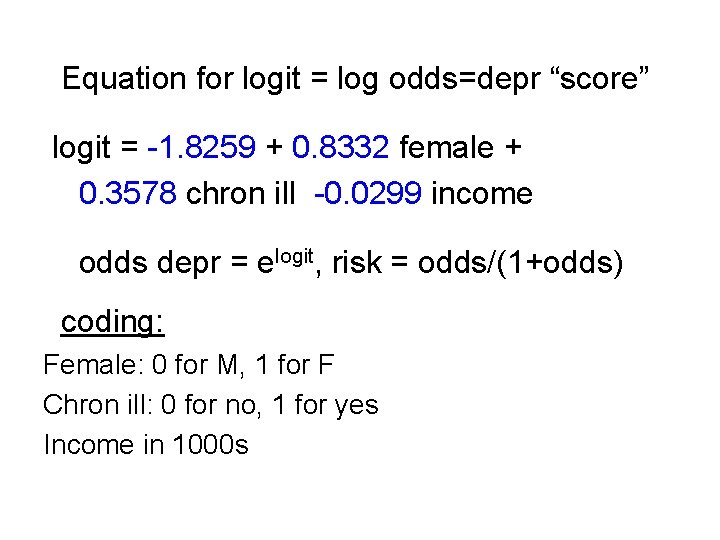

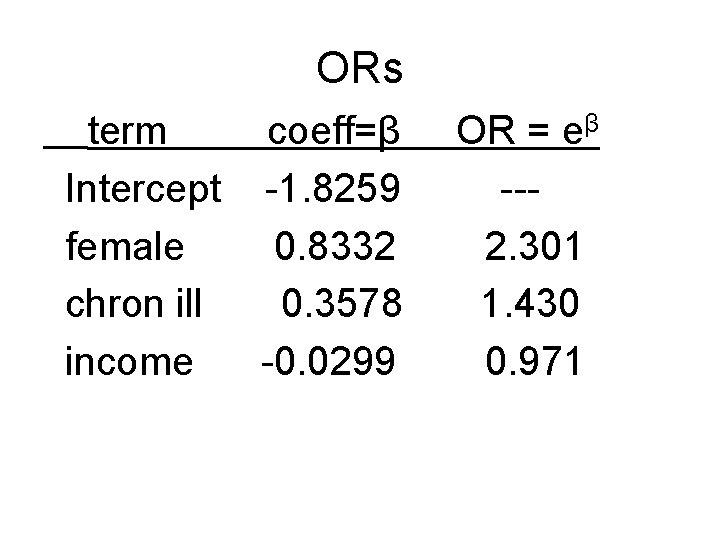

Equation for logit = log odds = depression “score”

logit = -1.8259 + 0.8332 female + 0.3578 chron ill - 0.0299 income

odds of depression = $e^{\text{logit}}$, risk = odds/(1 + odds)

Coding:

- Female: 0 for M, 1 for F
- Chron ill: 0 for no, 1 for yes
- Income in 1000s

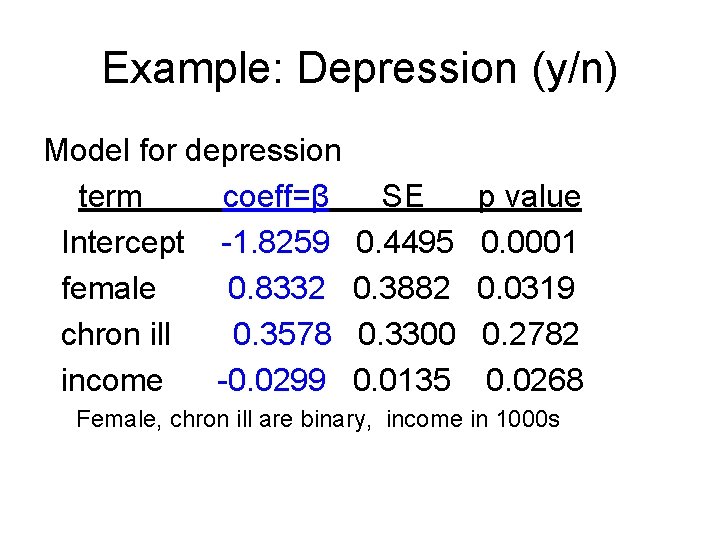

Example: Depression (y/n)

Model for depression

| term | coeff = β | SE | p value |
|---|---|---|---|
| Intercept | -1.8259 | 0.4495 | 0.0001 |
| female | 0.8332 | 0.3882 | 0.0319 |
| chron ill | 0.3578 | 0.3300 | 0.2782 |
| income | -0.0299 | 0.0135 | 0.0268 |

Female and chron ill are binary; income is in 1000s.

ORs

| term | coeff = β | OR = $e^{\beta}$ |
|---|---|---|
| Intercept | -1.8259 | -- |
| female | 0.8332 | 2.301 |
| chron ill | 0.3578 | 1.430 |
| income | -0.0299 | 0.971 |
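The odds ratios for the depression model are the antilogs of the coefficients, and the same coefficients give a predicted risk for any covariate profile. The sketch below uses the reported coefficients; the example person is hypothetical.

```python
import math

# Coefficients from the depression model.
coef = {"intercept": -1.8259, "female": 0.8332, "chron_ill": 0.3578, "income": -0.0299}

# OR = e^beta for every term except the intercept.
odds_ratios = {name: math.exp(b) for name, b in coef.items() if name != "intercept"}

def depression_risk(female, chron_ill, income_thousands):
    """Predicted risk of depression; female and chron_ill coded 0/1, income in 1000s."""
    logit = (coef["intercept"] + coef["female"] * female
             + coef["chron_ill"] * chron_ill + coef["income"] * income_thousands)
    return 1.0 / (1.0 + math.exp(-logit))

for name, value in odds_ratios.items():
    print(f"{name:10s} OR = {value:.3f}")

# Hypothetical person: female, chronically ill, income of 30 (thousands).
risk = depression_risk(1, 1, 30)
print(f"risk = {risk:.3f}")
```

The income OR of 0.971 applies per 1000 of income, so a difference of 10 (thousands) corresponds to an OR of $0.971^{10}$, about 0.74.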
