Advanced Quantitative Techniques Logistic regressions

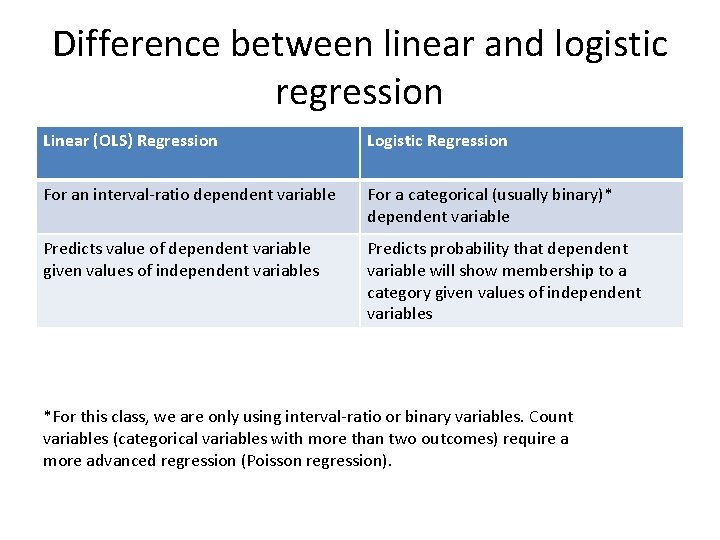

Difference between linear and logistic regression

| | Linear (OLS) regression | Logistic regression |
|---|---|---|
| Dependent variable | Interval-ratio | Categorical (usually binary)* |
| What it predicts | The value of the dependent variable, given values of the independent variables | The probability that the dependent variable falls in a given category, given values of the independent variables |

*For this class, we use only interval-ratio or binary dependent variables. Dependent variables with more than two categories require extensions such as multinomial or ordinal logistic regression, and count variables (counts of events) require models such as Poisson regression.

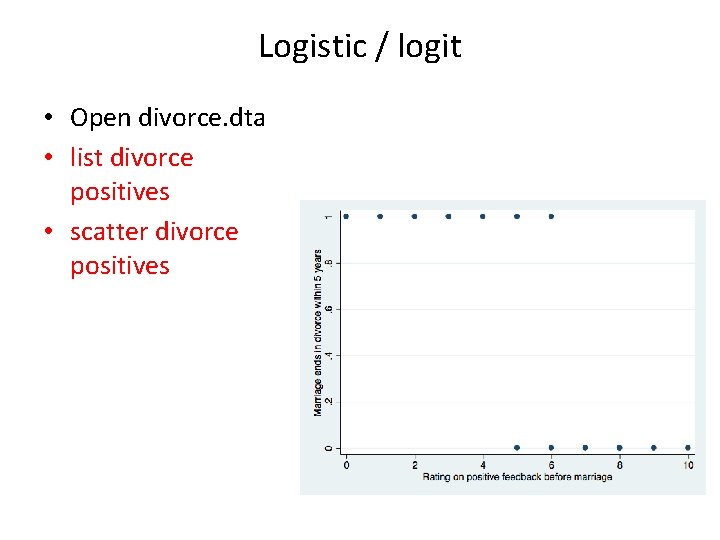

Logistic / logit
• Open divorce.dta
• list divorce positives
• scatter divorce positives

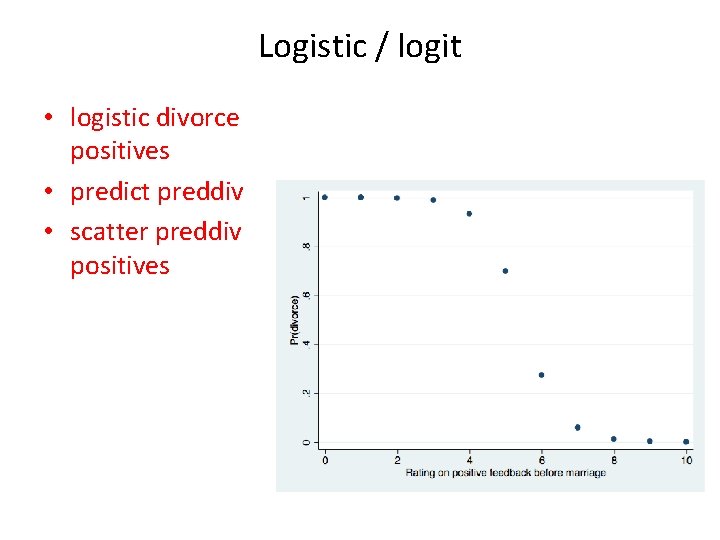

Logistic / logit
• logistic divorce positives
• predict preddiv
• scatter preddiv positives
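As a rough sketch of what predict stores after logistic, the Python snippet below applies the inverse-logit function to a linear predictor. It is not Stata code, and the coefficients B0 and B1 are hypothetical values chosen only for illustration, not estimates from divorce.dta. The point is that predicted probabilities always stay between 0 and 1.

```python
import math


def inv_logit(xb: float) -> float:
    """Convert a linear predictor (log odds) into a probability."""
    return 1.0 / (1.0 + math.exp(-xb))


# HYPOTHETICAL coefficients for illustration only (NOT estimates from divorce.dta).
B0 = -2.0  # intercept: log odds of divorce when positives = 0
B1 = 0.5   # change in log odds per one-unit increase in positives


def predicted_probability(positives: float) -> float:
    """Pr(divorce = 1 | positives), the kind of value predict stores after logistic."""
    return inv_logit(B0 + B1 * positives)


if __name__ == "__main__":
    for x in range(0, 9, 2):
        print(f"positives = {x}: Pr(divorce = 1) = {predicted_probability(x):.3f}")
```

Plotted against positives, these values trace an S-shaped curve bounded by 0 and 1. This shape is what distinguishes the logistic fit from a straight OLS line.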

Logistic / logit
Logit = ln(odds) = ln[p / (1 - p)]
• In Stata, there are two commands for logistic regression: logit and logistic.
• The logit command reports the regression coefficients, which are on the logit (log-odds) scale.
• The logistic command reports odds ratios (the exponentiated coefficients), which we use to interpret the effect size of the predictors.
• Both commands fit the same model. They simply present the same relationship between the independent and dependent variables on different scales (see Acock, section 11.2).

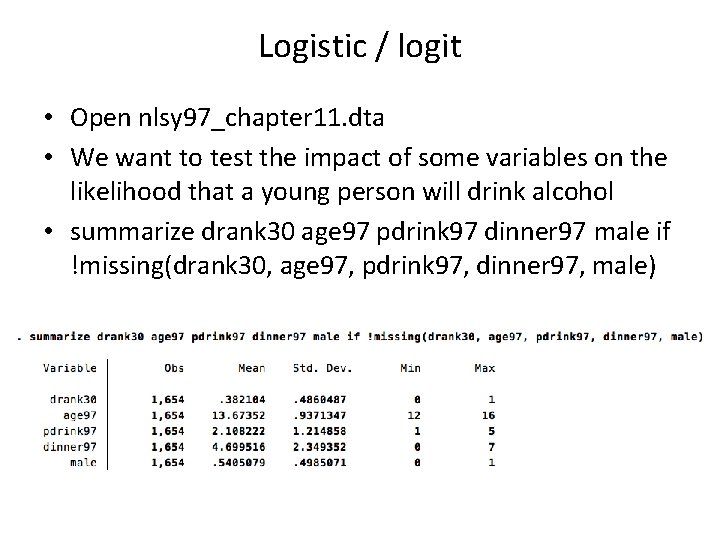
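The relationship between the two commands can be checked numerically. The Python sketch below uses a hypothetical one-predictor model (b0 and b1 are made-up values). Here logit would report b1 and logistic would report exp(b1), and the odds at x + 1 divided by the odds at x equal exp(b1) whatever value of x we start from.

```python
import math


def odds(p: float) -> float:
    """Odds of the event: p / (1 - p)."""
    return p / (1.0 - p)


def logit(p: float) -> float:
    """Logit = ln(odds)."""
    return math.log(odds(p))


def inv_logit(z: float) -> float:
    """Inverse of logit: turns log odds back into a probability."""
    return 1.0 / (1.0 + math.exp(-z))


# HYPOTHETICAL model on the logit scale (values for illustration only).
b0, b1 = -1.0, 0.3


def odds_at(x: float) -> float:
    """Predicted odds of the event at predictor value x."""
    return odds(inv_logit(b0 + b1 * x))


odds_ratio = math.exp(b1)  # the number logistic would report for x

if __name__ == "__main__":
    print(f"logit coefficient b1 = {b1}, odds ratio exp(b1) = {odds_ratio:.4f}")
    for x in (0, 2, 5):
        # Ratio of odds for a one-unit increase, starting from different x values
        print(x, round(odds_at(x + 1) / odds_at(x), 4))
```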

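Logistic / logit
• Open nlsy97_chapter11.dta
• We want to test the impact of several variables on the likelihood that a young person drinks alcohol.
• summarize drank30 age97 pdrink97 dinner97 male if !missing(drank30, age97, pdrink97, dinner97, male)
• The if condition restricts the summary to observations with no missing values on any of the model variables, that is, the sample the regression will use.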

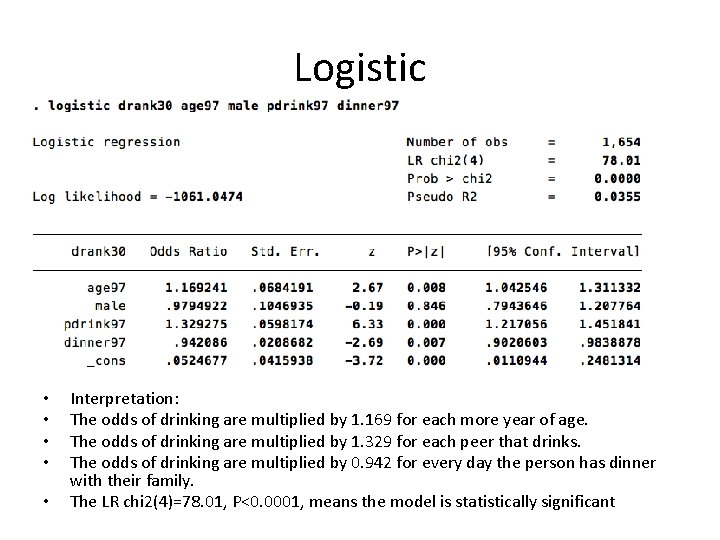

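Logistic
Interpretation (each effect holds the other predictors constant):
• The odds of drinking are multiplied by 1.169 for each additional year of age.
• The odds of drinking are multiplied by 1.329 for each one-unit increase in peer drinking (pdrink97).
• The odds of drinking are multiplied by 0.942 for each additional day per week the person has dinner with his or her family.
• The LR chi2(4) = 78.01, p < 0.0001, means the model as a whole is statistically significant: the four predictors jointly improve the fit compared with a model with no predictors.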

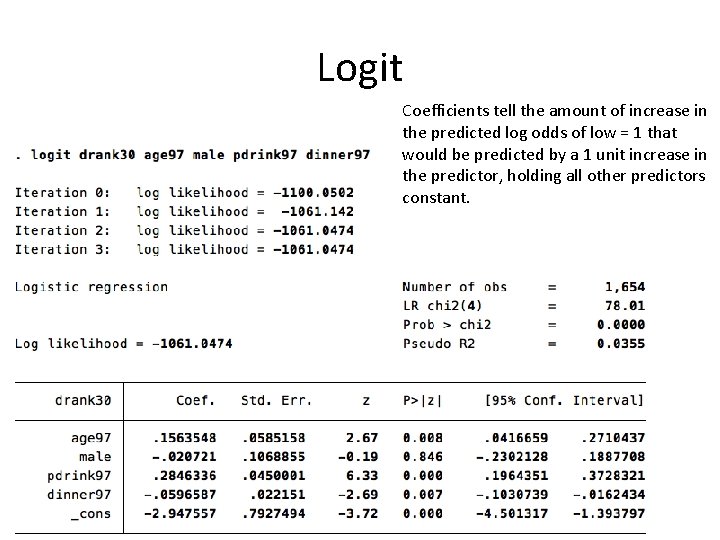
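Because odds ratios are multiplicative, a change of more than one unit multiplies the odds by the odds ratio raised to that power, not by a multiple of it. The Python sketch below applies this to the three odds ratios in the interpretation above. The helper name odds_multiplier is our own, and the results still describe odds, not probabilities, with the other predictors held constant.

```python
# Odds ratios reported in the interpretation of the drinking model.
OR_AGE = 1.169     # per additional year of age (age97)
OR_PEERS = 1.329   # per one-unit increase in peer drinking (pdrink97)
OR_DINNER = 0.942  # per additional day of family dinners (dinner97)


def odds_multiplier(odds_ratio: float, units: float) -> float:
    """Factor by which the odds change when the predictor rises by `units`."""
    return odds_ratio ** units


if __name__ == "__main__":
    print(round(odds_multiplier(OR_AGE, 2), 3))     # two more years of age -> 1.367
    print(round(odds_multiplier(OR_DINNER, 7), 3))  # 7 vs. 0 family dinners -> 0.658
```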

Logit
Coefficients give the change in the predicted log odds of drank30 = 1 for a one-unit increase in the predictor, holding all other predictors constant.
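Since the odds ratios reported by logistic are exponentiated logit coefficients, taking natural logs recovers the coefficients that logit would display for the same model. The recovery is approximate because the odds ratios are rounded to three decimals. A short Python check with the odds ratios from the interpretation slide:

```python
import math

# Odds ratios from the logistic output interpreted earlier (rounded to 3 decimals).
reported_or = {"age97": 1.169, "pdrink97": 1.329, "dinner97": 0.942}

# Logit coefficient = ln(odds ratio); a negative value means the log odds fall.
coefficients = {name: math.log(value) for name, value in reported_or.items()}

if __name__ == "__main__":
    for name, b in coefficients.items():
        print(f"{name}: OR = {reported_or[name]:.3f} -> logit coefficient ~ {b:.3f}")
```

An odds ratio below 1 always corresponds to a negative coefficient, and an odds ratio of exactly 1 corresponds to a coefficient of 0 (no effect).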

Comparing effects of variables
• It is hard to compare the effects of two independent variables using odds ratios when they are measured on different scales.
• For example, the variable male is binary (0 or 1), so its effect is simple to read in odds-ratio terms.
• But it is hard to compare the effect of male with the effect of dinner97 (number of days per week the person has dinner with his or her family), which ranges from 0 to 7.
• The odds ratio for male tells us how much higher the odds of drinking are for males than for females, whereas the odds ratio for dinner97 tells us the change in the odds for each additional day.
• Standardized (beta) coefficients put the effects on a common metric, allowing a comparison based on standard deviations.

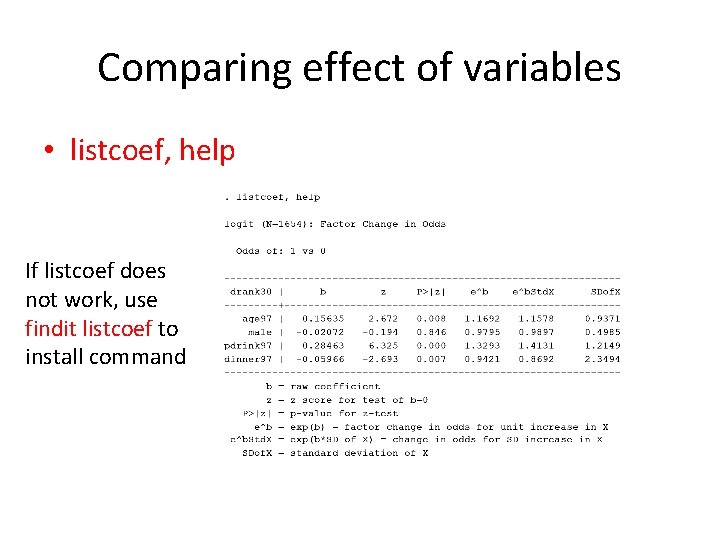
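One quantity listcoef reports is the factor change in the odds for a one-standard-deviation increase in a predictor, exp(b × SD of x). The Python sketch below computes it by hand. The standard deviation used is a hypothetical placeholder (the real value comes from the summarize output), so only the method carries over, not the number.

```python
import math


def sd_odds_ratio(odds_ratio: float, sd_x: float) -> float:
    """Factor change in the odds for a one-SD increase in x: exp(ln(OR) * SD)."""
    return math.exp(math.log(odds_ratio) * sd_x)


OR_DINNER = 0.942             # per-day odds ratio for dinner97 (from the slides)
SD_DINNER_HYPOTHETICAL = 2.5  # HYPOTHETICAL standard deviation of dinner97

if __name__ == "__main__":
    per_sd = sd_odds_ratio(OR_DINNER, SD_DINNER_HYPOTHETICAL)
    print(f"per day: {OR_DINNER}, per SD (hypothetical SD): {per_sd:.3f}")
```

Expressing every predictor per standard deviation puts a 0 to 7 variable and a 0/1 variable on the same footing, which is the comparison the standardized coefficients are meant to allow.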

Comparing effects of variables
• listcoef, help
• If listcoef does not work, use findit listcoef to install the command.

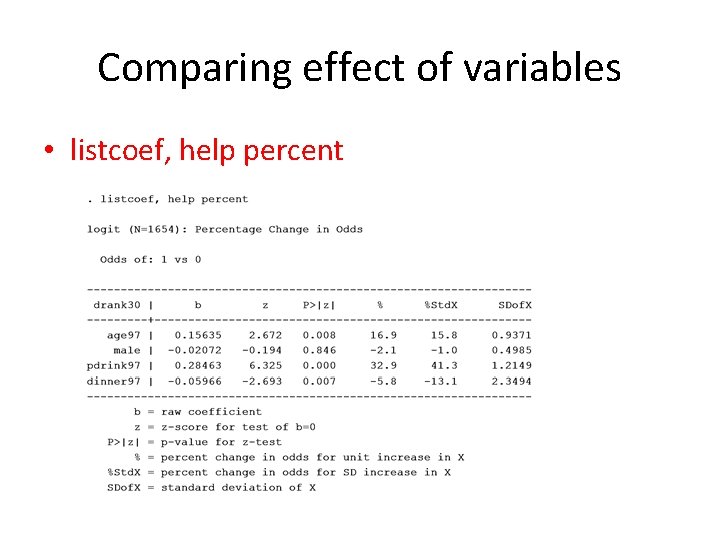

Comparing effects of variables
• listcoef, help percent
• The percent option reports the percentage change in the odds instead of the factor change.

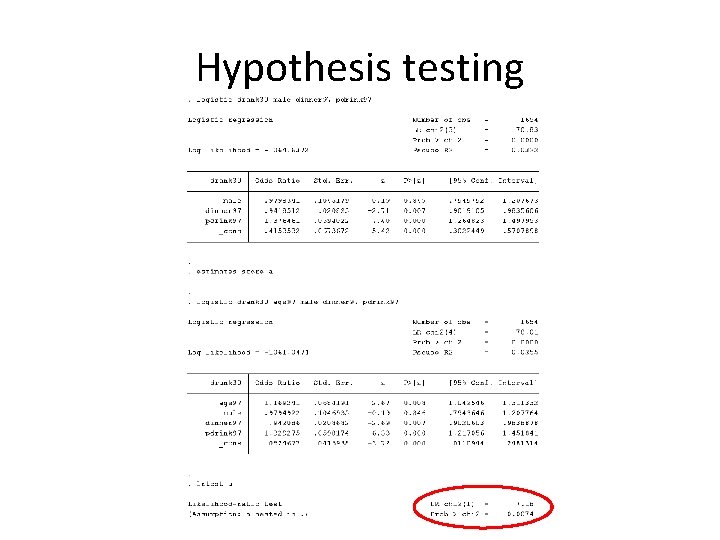
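The percentage change in the odds for a one-unit increase is 100 × (OR - 1), which is the kind of figure the percent option displays. The arithmetic is easy to reproduce. The Python sketch below applies it to the odds ratios from the interpretation slide, and the helper name is our own.

```python
def percent_change_in_odds(odds_ratio: float, units: float = 1.0) -> float:
    """Percentage change in the odds for an increase of `units` in the predictor."""
    return 100.0 * (odds_ratio ** units - 1.0)


reported_or = {"age97": 1.169, "pdrink97": 1.329, "dinner97": 0.942}

if __name__ == "__main__":
    for name, value in reported_or.items():
        print(f"{name}: {percent_change_in_odds(value):+.1f}% change in the odds per unit")
```

For example, each additional day of family dinners is associated with about a 5.8% decrease in the odds of drinking, other predictors held constant.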

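Hypothesis testing
• 1. Wald chi-squared test: based on the z statistic that Stata reports for each coefficient in the logistic output (z squared is the Wald chi-squared statistic with 1 degree of freedom).
• 2. Likelihood-ratio chi-squared test: compare the LR chi2 of the model with and without the variable you want to test.
• To test the variable age97 (both models must be fit on the same observations):
  logistic drank30 male dinner97 pdrink97
  estimates store a
  logistic drank30 age97 male dinner97 pdrink97
  lrtest a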
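When a single variable is tested, both tests reduce to a chi-squared statistic with 1 degree of freedom. The Python sketch below shows the arithmetic behind lrtest and the z test. The log-likelihoods and the standard error are hypothetical inputs, not values from the nlsy97 models. The p-value uses the identity P(chi2(1) > s) = erfc(sqrt(s / 2)).

```python
import math


def chi2_sf_df1(stat: float) -> float:
    """Upper-tail p-value of a chi-squared statistic with 1 degree of freedom."""
    return math.erfc(math.sqrt(stat / 2.0))


def lr_test(ll_reduced: float, ll_full: float):
    """LR chi-squared for nested models that differ by one parameter."""
    stat = 2.0 * (ll_full - ll_reduced)
    return stat, chi2_sf_df1(stat)


def wald_test(b: float, se: float):
    """z = b / se; z squared is the Wald chi-squared with 1 df (same p-value)."""
    z = b / se
    return z, chi2_sf_df1(z * z)


if __name__ == "__main__":
    # HYPOTHETICAL log-likelihoods: without age97 (reduced) and with age97 (full)
    print(lr_test(ll_reduced=-1000.0, ll_full=-995.0))
    # HYPOTHETICAL coefficient and standard error
    print(wald_test(b=0.3, se=0.1))
```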

Hypothesis testing

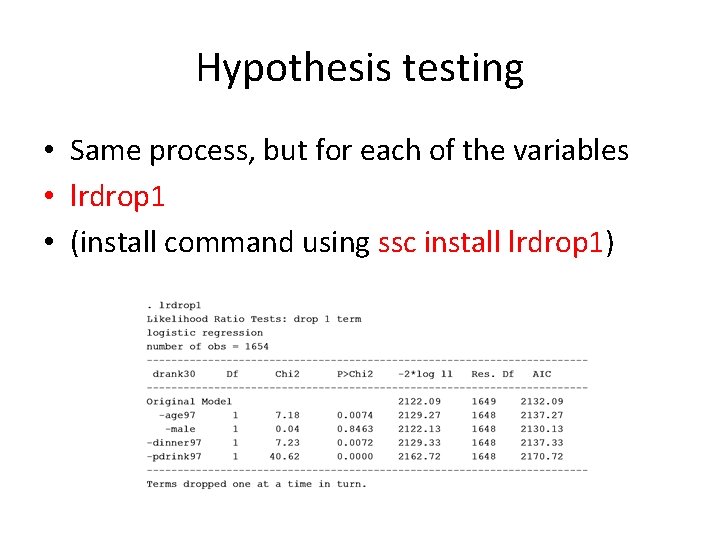

Hypothesis testing
• Same process, but for each of the variables: lrdrop1 runs the likelihood-ratio test dropping one variable at a time.
• lrdrop1
• (install the command using ssc install lrdrop1)

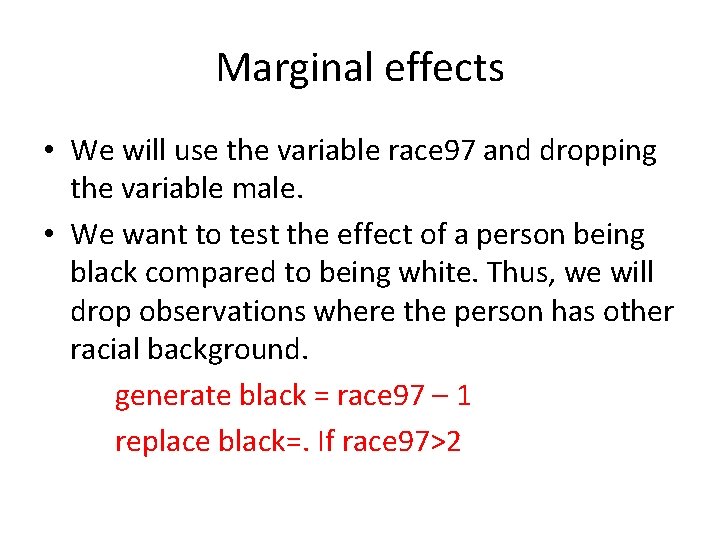

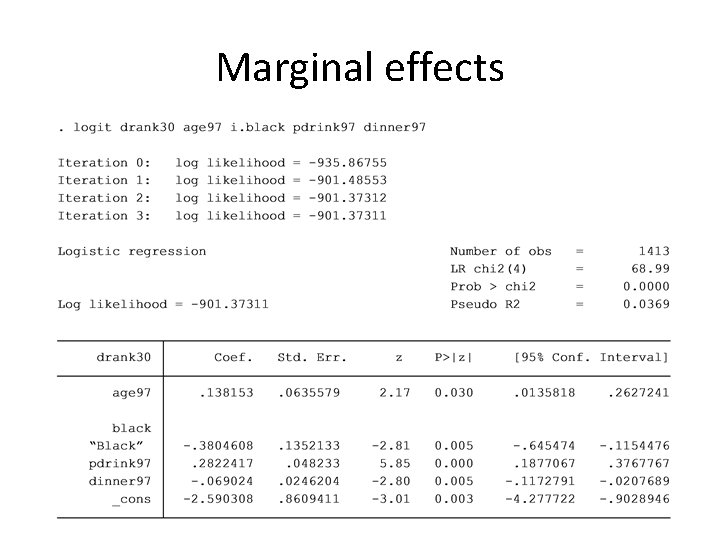

Marginal effects
• We will use the variable race97 and drop the variable male.
• We want to test the effect of a person being black compared with being white. Thus, we exclude observations where the person has another racial background by setting black to missing:
  generate black = race97 - 1
  replace black = . if race97 > 2

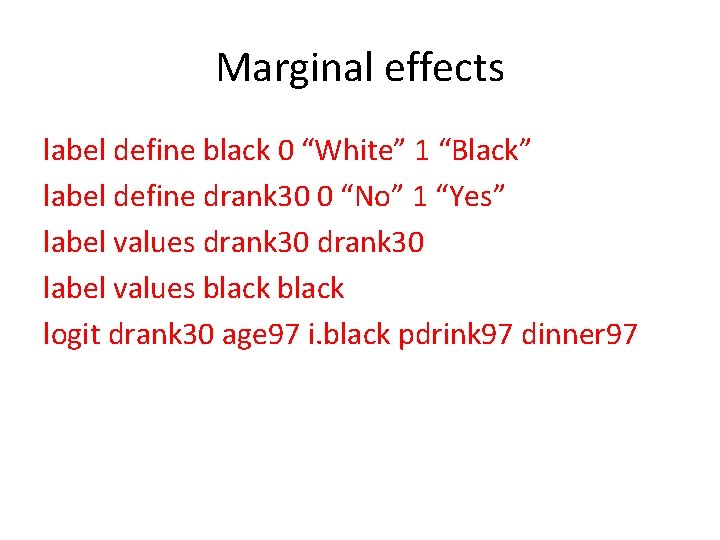

Marginal effects
  label define black 0 "White" 1 "Black"
  label define drank30 0 "No" 1 "Yes"
  label values drank30 drank30
  label values black black
  logit drank30 age97 i.black pdrink97 dinner97

Marginal effects

Marginal effects
• The margins command tells us the difference in the probability of having drunk in the last 30 days between an individual who is black and an individual who is white.
• Initially, we set the covariates at their means.
• So the command tells us the difference between black and white individuals who are average on the other covariates.

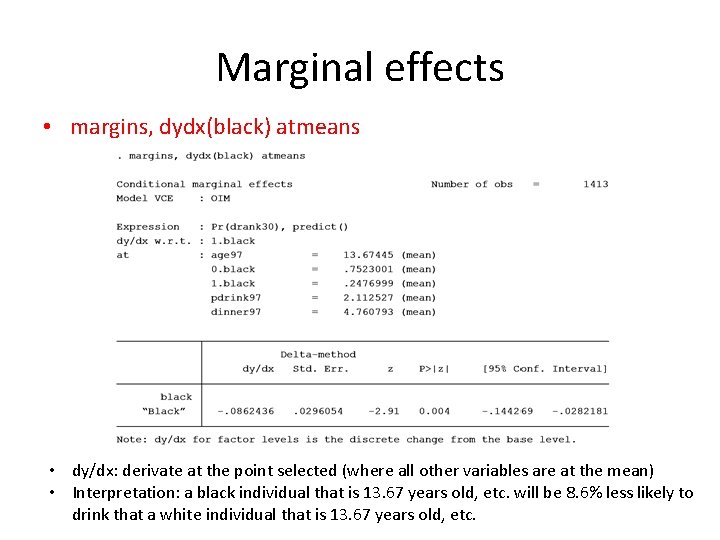

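Marginal effects
• margins, dydx(black) atmeans
• dy/dx: the marginal effect evaluated at the selected point (here, all other variables at their means). Because black enters the model as a factor variable (i.black), margins reports the discrete change in predicted probability from white (0) to black (1) rather than a derivative.
• Interpretation: a black individual who is 13.67 years old, etc., has a predicted probability of drinking 8.6 percentage points lower than a white individual who is 13.67 years old, etc.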

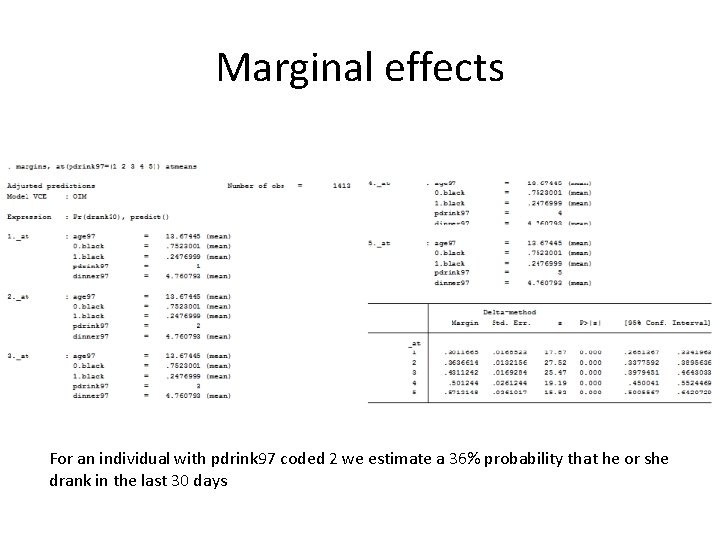
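To see what margins, dydx(black) atmeans computes, the Python sketch below evaluates the predicted probability twice at the covariate means, once with black = 0 and once with black = 1, and takes the difference. All coefficients, and every mean except the 13.67 average age, are hypothetical. The resulting number should therefore not be read as the estimate from the slide.

```python
import math


def inv_logit(z: float) -> float:
    """Convert log odds into a probability."""
    return 1.0 / (1.0 + math.exp(-z))


# HYPOTHETICAL logit coefficients (NOT the estimates in the Stata output).
coef = {"_cons": -3.0, "age97": 0.15, "black": -0.45, "pdrink97": 0.30, "dinner97": -0.06}

# Covariate means: 13.67 for age97 comes from the slide; the others are HYPOTHETICAL.
means = {"age97": 13.67, "pdrink97": 2.0, "dinner97": 4.5}


def prob(black: int) -> float:
    """Predicted Pr(drank30 = 1) with black fixed and other covariates at their means."""
    xb = coef["_cons"] + coef["black"] * black
    xb += sum(coef[name] * value for name, value in means.items())
    return inv_logit(xb)


# Discrete change from white (0) to black (1), on the probability scale.
discrete_change = prob(1) - prob(0)

if __name__ == "__main__":
    print(f"Pr(white) = {prob(0):.3f}, Pr(black) = {prob(1):.3f}, change = {discrete_change:.3f}")
```

Multiplying the change by 100 gives percentage points, which is why a result such as -0.086 reads as "8.6 percentage points lower".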

Marginal effects
• We can also estimate predicted probabilities at values other than the mean using the at() option, holding the other covariates at their means.
• margins, at(pdrink97=(1 2 3 4 5)) atmeans

Marginal effects
For an individual with pdrink97 = 2 (other covariates at their means), we estimate a 36% probability that he or she drank in the last 30 days.

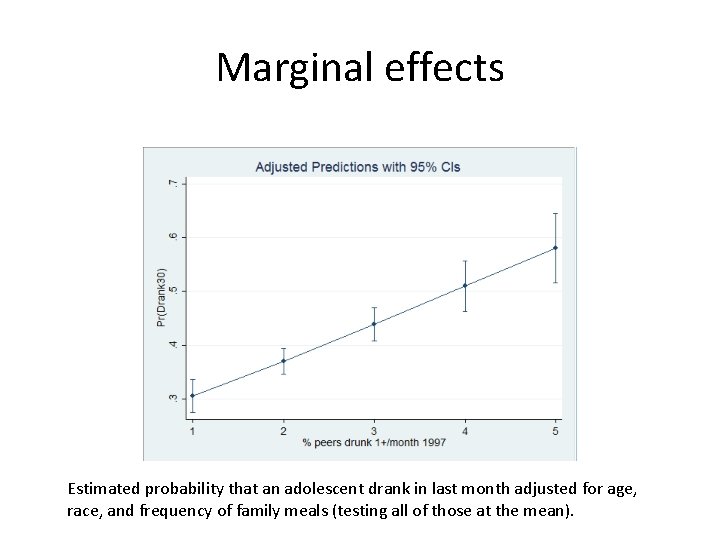
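The at() option repeats the adjusted prediction at each listed value of pdrink97 while the other covariates stay at their means. The Python sketch below mimics that loop. Both B_PDRINK and XB_OTHERS (the intercept plus the other covariates' contributions at their means) are hypothetical values. The sketch also shows that a constant odds ratio translates into unequal changes in probability.

```python
import math


def inv_logit(z: float) -> float:
    """Convert log odds into a probability."""
    return 1.0 / (1.0 + math.exp(-z))


# HYPOTHETICAL values, for illustration only:
B_PDRINK = 0.30   # logit coefficient for pdrink97
XB_OTHERS = -1.5  # intercept + sum of b * mean for the other covariates

# Adjusted predictions at pdrink97 = 1, 2, 3, 4, 5 (other covariates at their means).
predictions = {v: inv_logit(XB_OTHERS + B_PDRINK * v) for v in (1, 2, 3, 4, 5)}

if __name__ == "__main__":
    for v, p in predictions.items():
        print(f"pdrink97 = {v}: Pr(drank30 = 1) = {p:.3f}")
```

In this sketch the odds ratio between adjacent values is always exp(0.30), yet the probability steps grow as the prediction approaches 0.5. This is one reason predicted probabilities at chosen values are often easier to communicate than odds ratios.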

Marginal effects
Estimated probability that an adolescent drank in the last month, adjusted for age, race, and frequency of family meals (all held at their means).

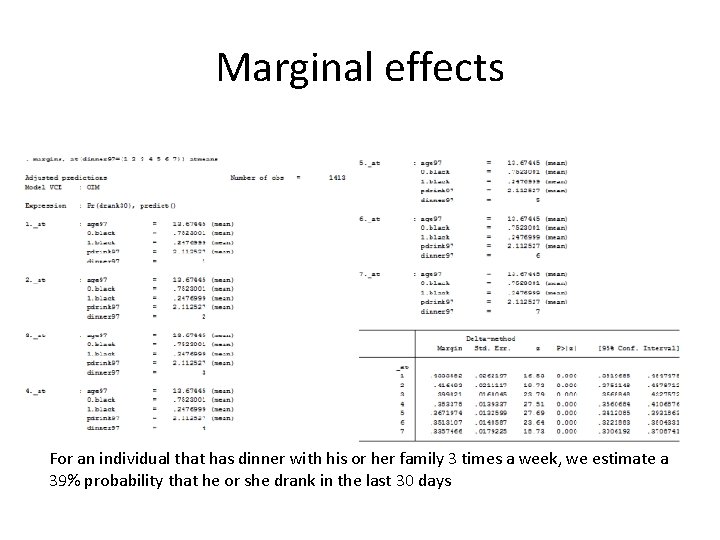

Marginal effects
For an individual who has dinner with his or her family 3 days a week, we estimate a 39% probability that he or she drank in the last 30 days.

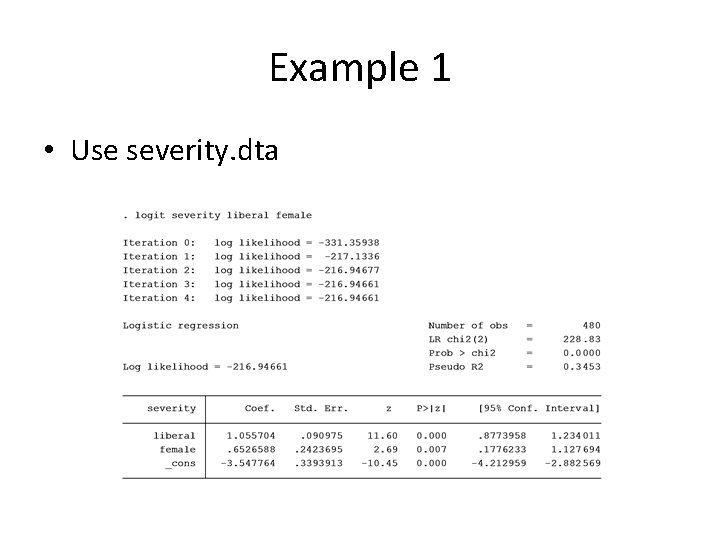

Example 1
• Use severity.dta

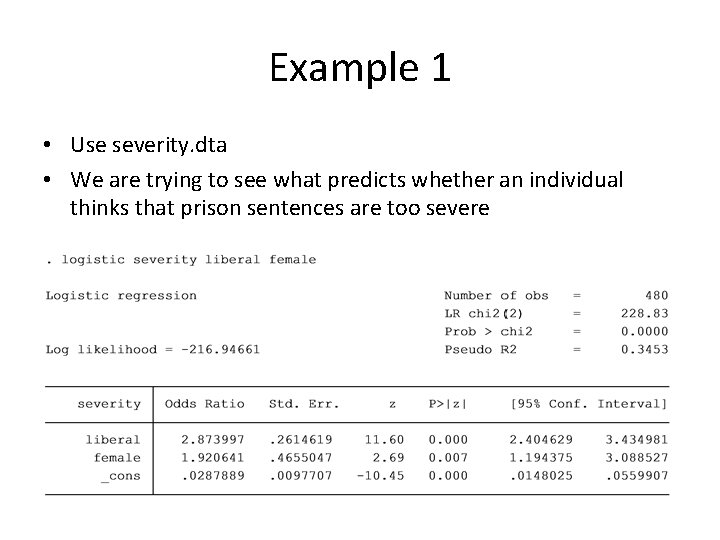

Example 1
• Use severity.dta
• We are trying to see what predicts whether an individual thinks that prison sentences are too severe.

Example 1

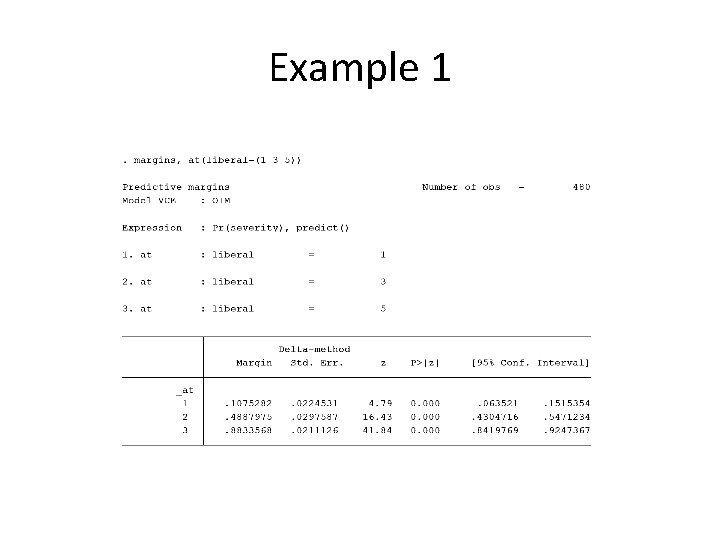

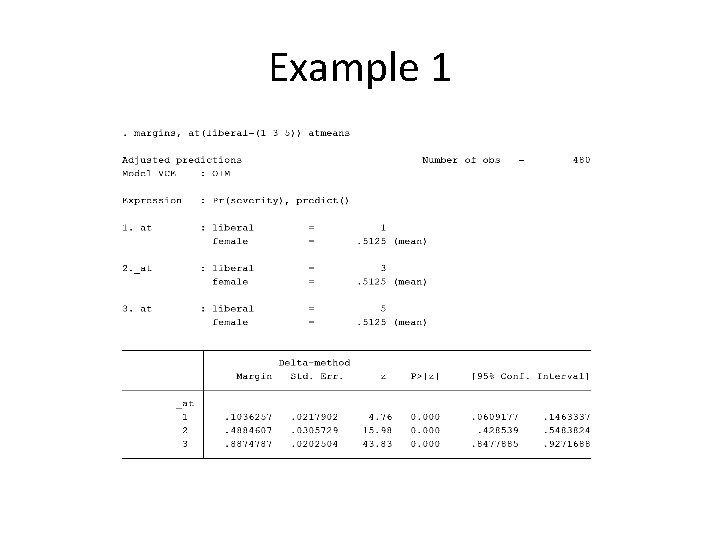

Example 1
