
School of Computer Science
10-601B Introduction to Machine Learning
Neural Networks
Readings: Bishop Ch. 5; Murphy Ch. 16.5, Ch. 28; Mitchell Ch. 4
Matt Gormley
Lecture 15
October 19, 2016

Reminders

Outline
• Logistic Regression (Recap)
• Neural Networks
• Backpropagation

RECALL: LOGISTIC REGRESSION

Recall: Using gradient ascent for linear classifiers
Key idea behind today’s lecture:
1. Define a linear classifier (logistic regression)
2. Define an objective function (likelihood)
3. Optimize it with gradient descent to learn parameters
4. Predict the class with highest probability under the model

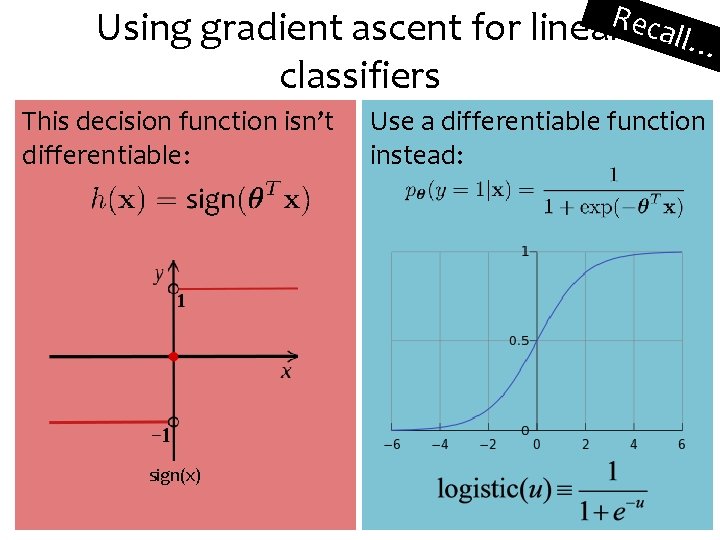

Recall: Using gradient ascent for linear classifiers
This decision function isn’t differentiable: sign(x)
Use a differentiable function instead: the logistic function (see figure)

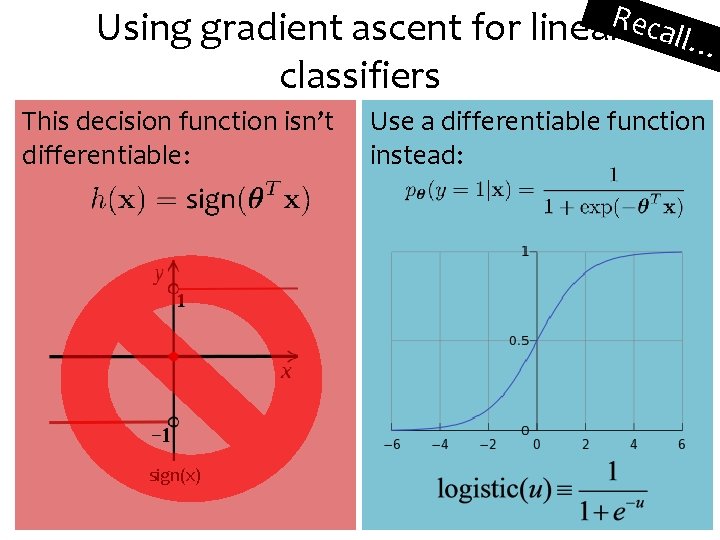


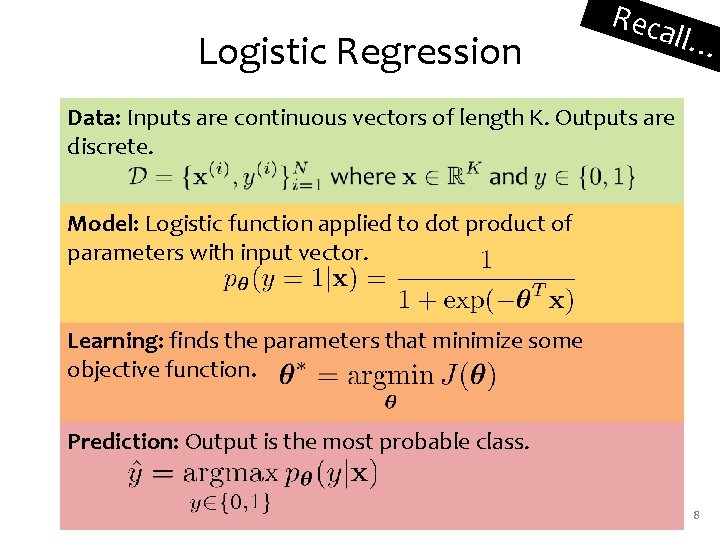

Recall: Logistic Regression
Data: Inputs are continuous vectors of length K. Outputs are discrete.
Model: Logistic function applied to dot product of parameters with input vector.
Learning: Finds the parameters that minimize some objective function.
Prediction: Output is the most probable class.
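The four parts of this recap (data, model, learning, prediction) can be sketched in a few lines of plain Python. This is a minimal illustration, not the course's reference implementation: the toy dataset, the learning rate `lr`, the epoch count, and all function names are assumptions. The objective is taken to be the negative log-likelihood, so descending it is the same as ascending the likelihood.

```python
import math
import random

def sigmoid(a):
    """Logistic function 1 / (1 + exp(-a))."""
    return 1.0 / (1.0 + math.exp(-a))

def predict_proba(theta, x):
    """Model: logistic function applied to the dot product theta . x."""
    return sigmoid(sum(t * xi for t, xi in zip(theta, x)))

def train_sgd(data, num_features, lr=0.5, epochs=200, seed=0):
    """Learning: minimize the negative log-likelihood with SGD."""
    rng = random.Random(seed)
    theta = [0.0] * num_features
    examples = list(data)
    for _ in range(epochs):
        rng.shuffle(examples)
        for x, y in examples:
            p = predict_proba(theta, x)
            # Gradient of -log p(y | x) with respect to theta is (p - y) * x.
            theta = [t - lr * (p - y) * xi for t, xi in zip(theta, x)]
    return theta

def predict(theta, x):
    """Prediction: output the most probable class (0 or 1)."""
    return 1 if predict_proba(theta, x) >= 0.5 else 0

# Hypothetical toy data: logical AND; the last feature is a constant 1 (bias).
toy_data = [([0.0, 0.0, 1.0], 0), ([0.0, 1.0, 1.0], 0),
            ([1.0, 0.0, 1.0], 0), ([1.0, 1.0, 1.0], 1)]
theta = train_sgd(toy_data, num_features=3)
predictions = [predict(theta, x) for x, _ in toy_data]
print(predictions)
```

The update uses one example at a time, which is what makes it stochastic gradient descent; summing the same per-example gradients over the whole dataset would give the batch version.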

NEURAL NETWORKS

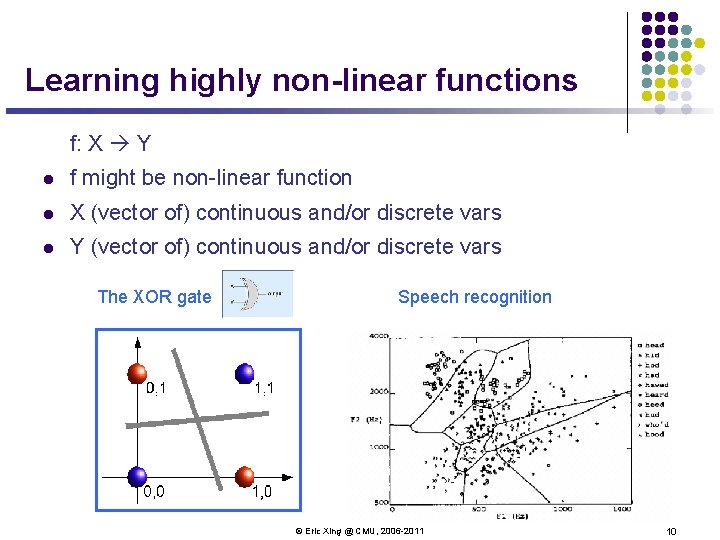

Learning highly non-linear functions
f: X → Y
• f might be a non-linear function
• X: (vector of) continuous and/or discrete variables
• Y: (vector of) continuous and/or discrete variables
Examples: the XOR gate; speech recognition
© Eric Xing @ CMU, 2006-2011

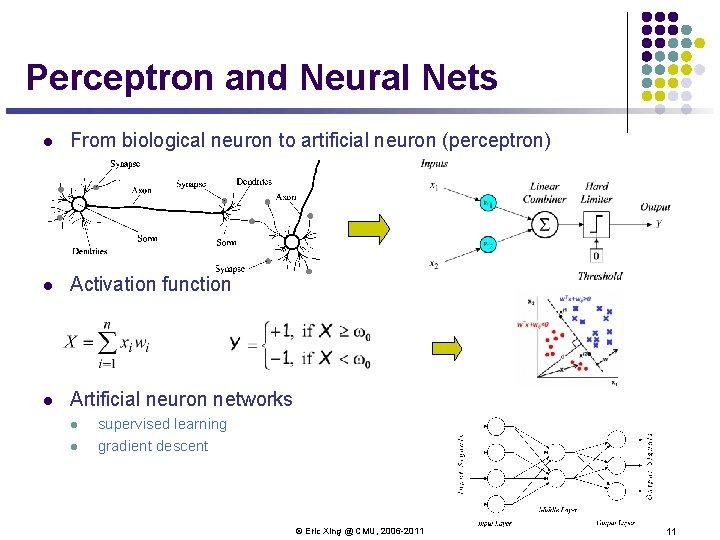

Perceptron and Neural Nets
• From biological neuron to artificial neuron (perceptron)
• Activation function
• Artificial neuron networks
  – supervised learning
  – gradient descent
© Eric Xing @ CMU, 2006-2011

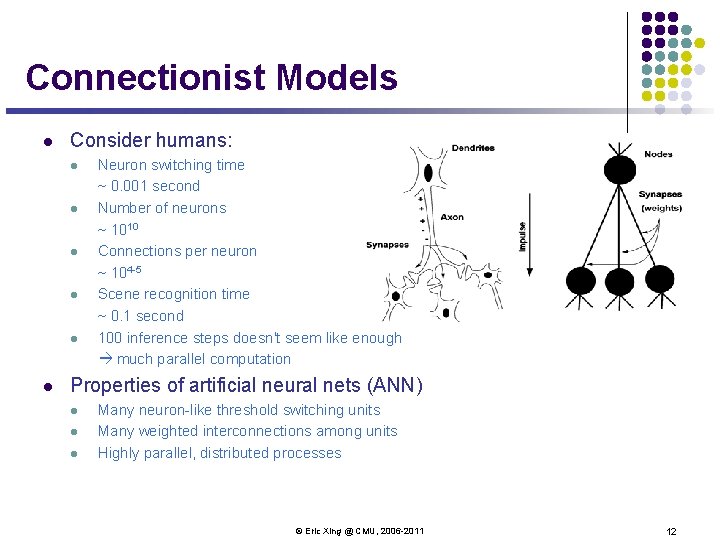

Connectionist Models
• Consider humans:
  – Neuron switching time ~ 0.001 second
  – Number of neurons ~ 10^10
  – Connections per neuron ~ 10^4 to 10^5
  – Scene recognition time ~ 0.1 second
  – 100 inference steps doesn't seem like enough → much parallel computation
• Properties of artificial neural nets (ANN):
  – Many neuron-like threshold switching units
  – Many weighted interconnections among units
  – Highly parallel, distributed processes
© Eric Xing @ CMU, 2006-2011

Motivation
Why is everyone talking about Deep Learning?
• Because a lot of money is invested in it…
  – DeepMind: Acquired by Google for $400 million
  – DNNResearch: Three-person startup (including Geoff Hinton) acquired by Google for unknown price tag
  – Enlitic, Ersatz, MetaMind, Nervana, Skylab: Deep Learning startups commanding millions of VC dollars
• Because it made the front page of the New York Times

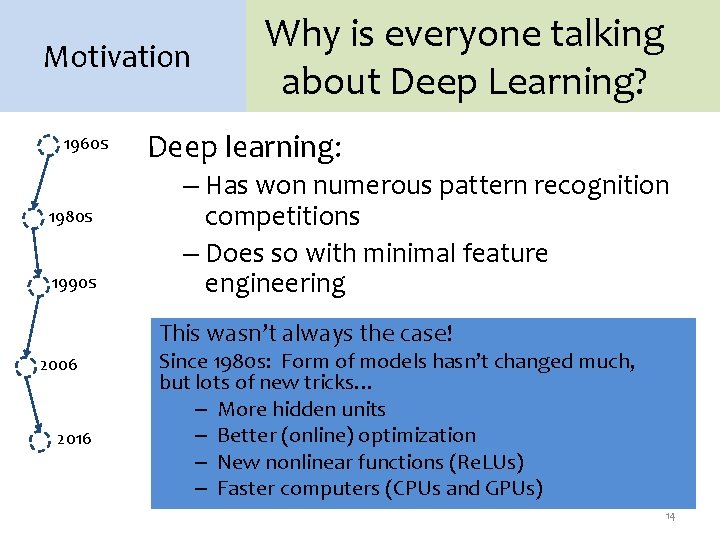

Motivation
Why is everyone talking about Deep Learning?
Deep learning:
– Has won numerous pattern recognition competitions
– Does so with minimal feature engineering
This wasn’t always the case!
[Timeline: 1960s, 1980s, 1990s, 2006, 2016]
Since 1980s: Form of models hasn’t changed much, but lots of new tricks…
– More hidden units
– Better (online) optimization
– New nonlinear functions (ReLUs)
– Faster computers (CPUs and GPUs)

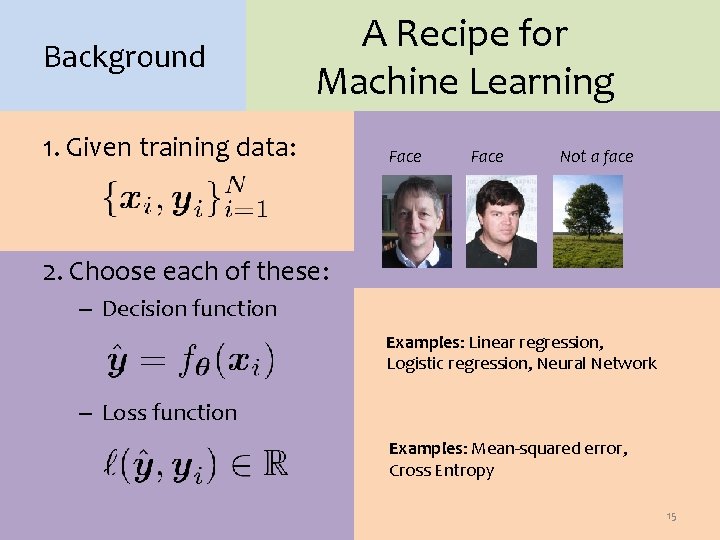

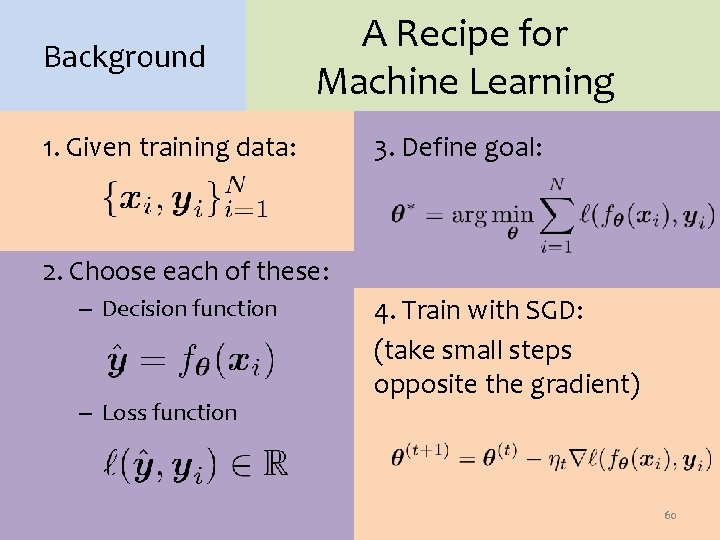

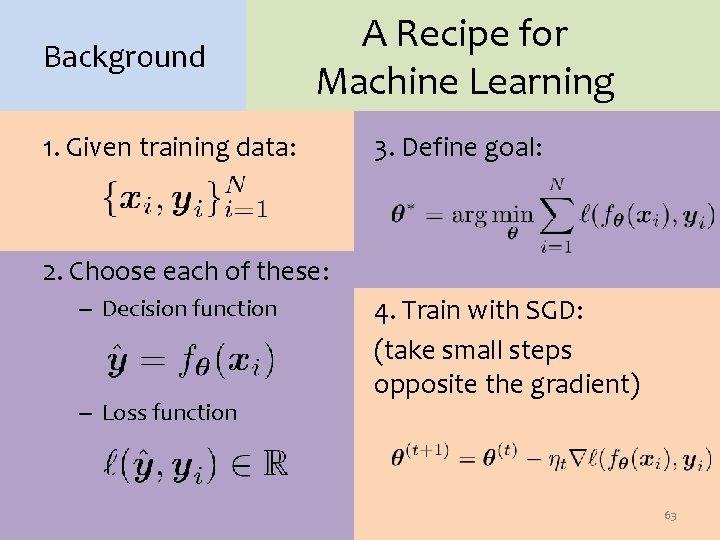

Background: A Recipe for Machine Learning
1. Given training data: (example images labeled “Face” and “Not a face”)
2. Choose each of these:
  – Decision function. Examples: Linear regression, Logistic regression, Neural Network
  – Loss function. Examples: Mean-squared error, Cross Entropy

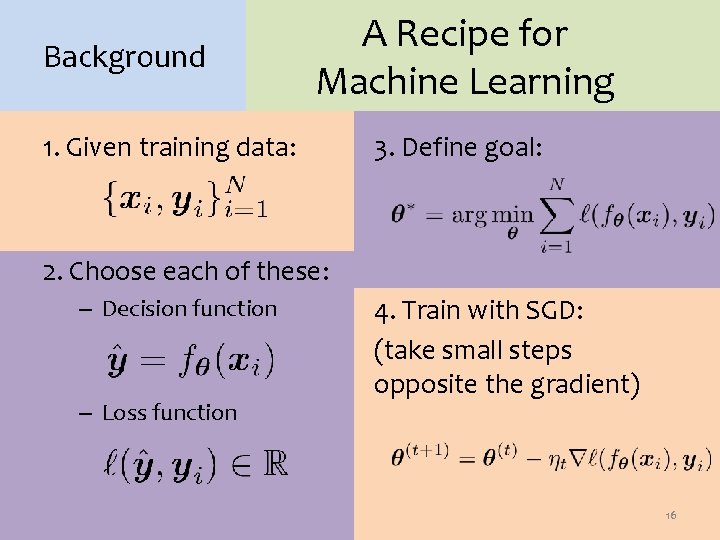

Background: A Recipe for Machine Learning
1. Given training data:
2. Choose each of these:
  – Decision function
  – Loss function
3. Define goal:
4. Train with SGD: (take small steps opposite the gradient)
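The formulas for the four steps were on the slide images and did not survive in the text. One common way to write the recipe is sketched below; the symbols ($N$ training examples, decision function $f_{\boldsymbol{\theta}}$, loss $\ell$, step size $\eta_t$) are assumed notation, not necessarily the lecture's own.

$$
\mathcal{D} = \{(\boldsymbol{x}_i, y_i)\}_{i=1}^{N}, \qquad \hat{y} = f_{\boldsymbol{\theta}}(\boldsymbol{x}_i), \qquad \ell(\hat{y}, y_i) \in \mathbb{R}
$$

$$
\boldsymbol{\theta}^{*} = \arg\min_{\boldsymbol{\theta}} \sum_{i=1}^{N} \ell\big(f_{\boldsymbol{\theta}}(\boldsymbol{x}_i), y_i\big)
$$

$$
\boldsymbol{\theta}^{(t+1)} = \boldsymbol{\theta}^{(t)} - \eta_t \, \nabla_{\boldsymbol{\theta}}\, \ell\big(f_{\boldsymbol{\theta}^{(t)}}(\boldsymbol{x}_i), y_i\big)
$$

The last line is the "small step opposite the gradient": each SGD update uses the gradient of the loss on a single example $i$.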

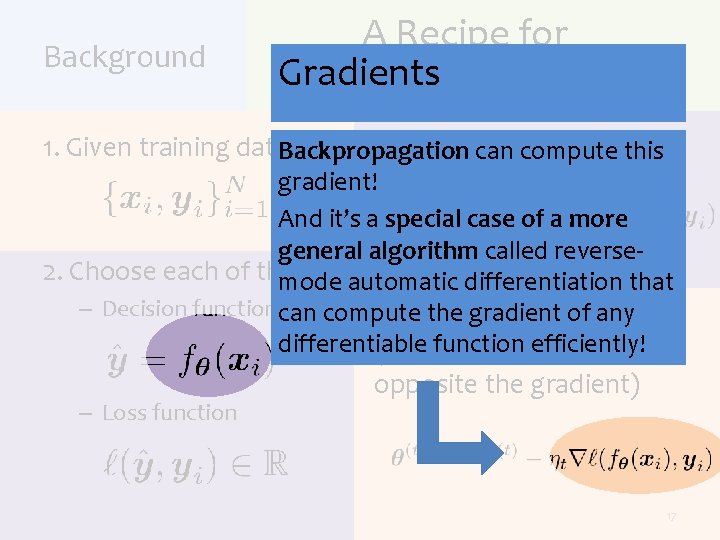

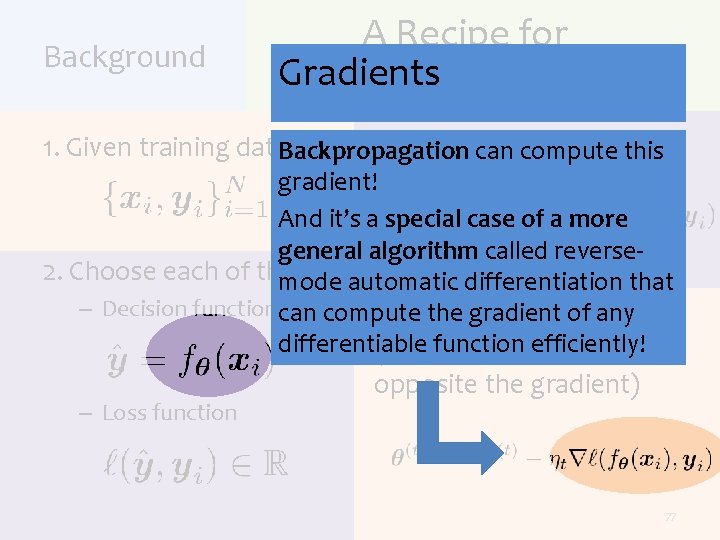

Background: A Recipe for Machine Learning
1. Given training data:
2. Choose each of these:
  – Decision function
  – Loss function
3. Define goal:
4. Train with SGD: (take small steps opposite the gradient)
Gradients: Backpropagation can compute this gradient! And it’s a special case of a more general algorithm called reverse-mode automatic differentiation that can compute the gradient of any differentiable function efficiently!

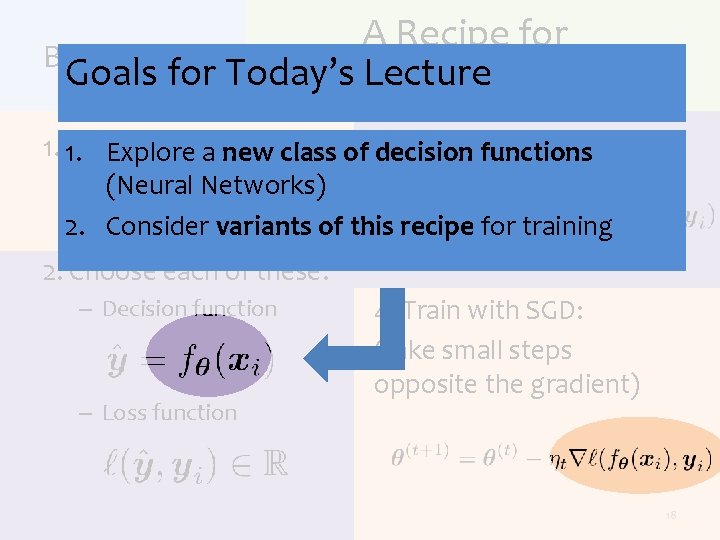

Background: A Recipe for Machine Learning
1. Given training data:
2. Choose each of these:
  – Decision function
  – Loss function
3. Define goal:
4. Train with SGD: (take small steps opposite the gradient)
Goals for Today’s Lecture
1. Explore a new class of decision functions (Neural Networks)
2. Consider variants of this recipe for training

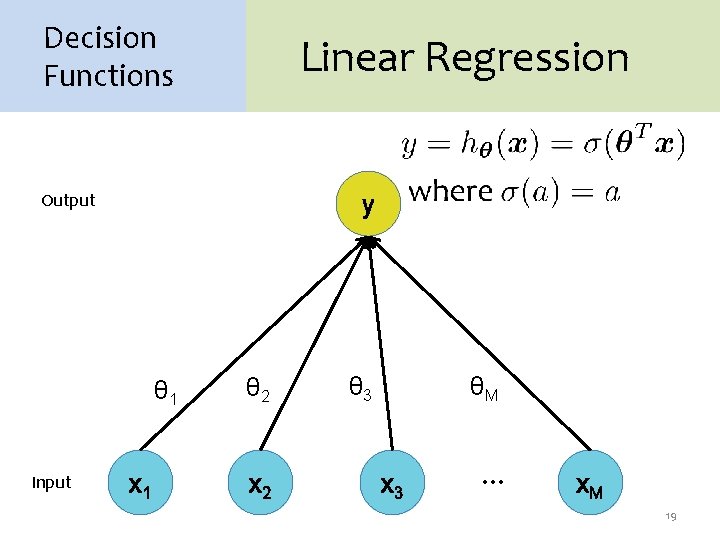

Decision Functions: Linear Regression
[Figure: a single output y connected to the inputs x_1, x_2, x_3, …, x_M through weights θ_1, θ_2, θ_3, …, θ_M]

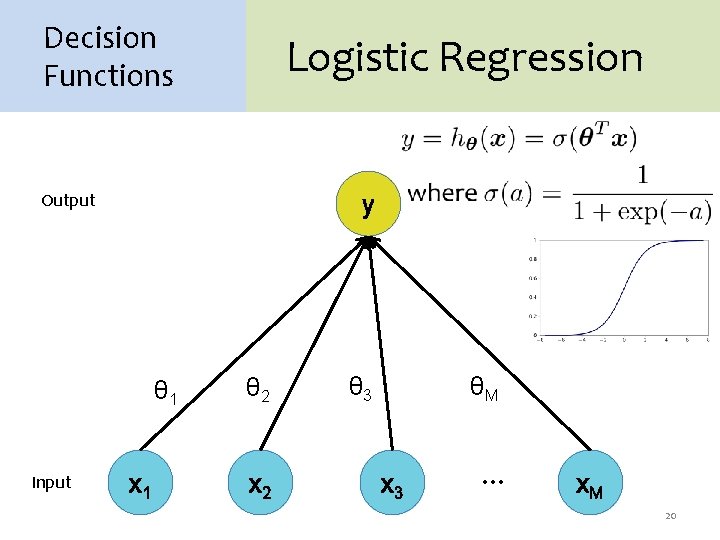

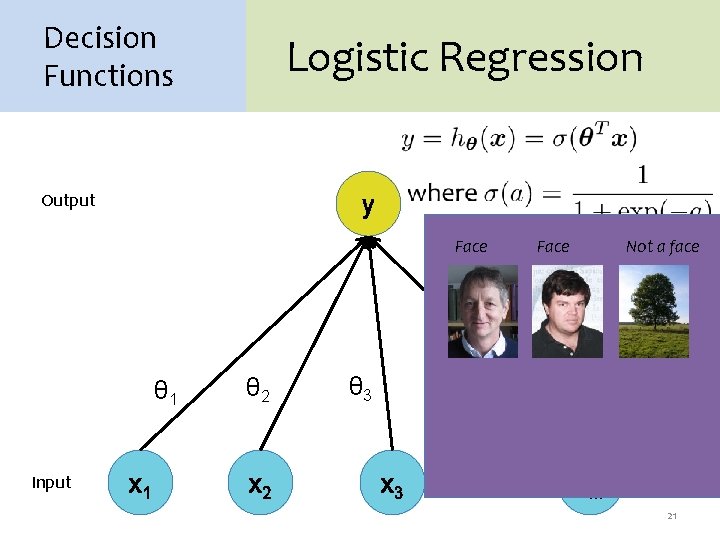

Decision Functions: Logistic Regression
[Figure: a single output y connected to the inputs x_1, x_2, x_3, …, x_M through weights θ_1, θ_2, θ_3, …, θ_M]

Decision Functions: Logistic Regression
[Figure: the same network, with example images labeled “Face”, “Face”, and “Not a face”]

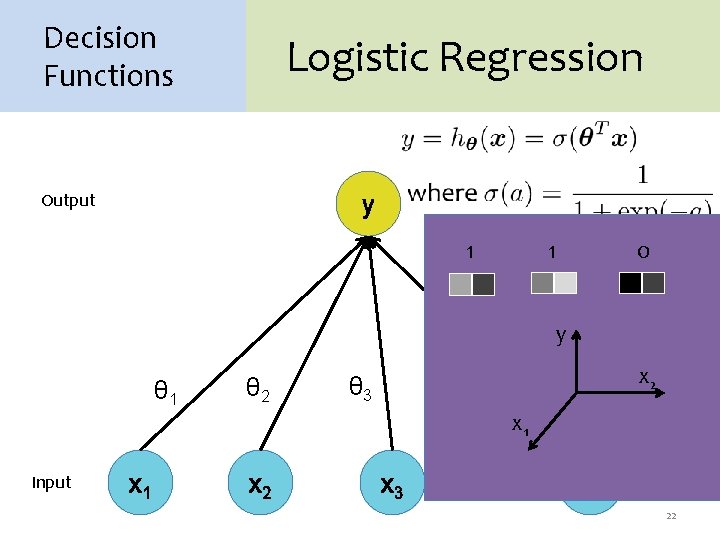

Decision Functions: Logistic Regression
[Figure: the same network, alongside a plot of the output y against the inputs x_1 and x_2]
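The formulas behind these two diagrams are not in the extracted text. A sketch that is consistent with the recap earlier in the lecture (a logistic function applied to the dot product of parameters and input) is given below; the names $h_{\boldsymbol{\theta}}$ and $\sigma$ are assumed notation.

$$
y = h_{\boldsymbol{\theta}}(\boldsymbol{x}) = \sigma\big(\boldsymbol{\theta}^{T}\boldsymbol{x}\big), \qquad
\sigma(a) =
\begin{cases}
a & \text{(linear regression)} \\[4pt]
\dfrac{1}{1 + \exp(-a)} & \text{(logistic regression)}
\end{cases}
$$

Written this way, the two decision functions share the same diagram and differ only in the function applied to the weighted sum.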

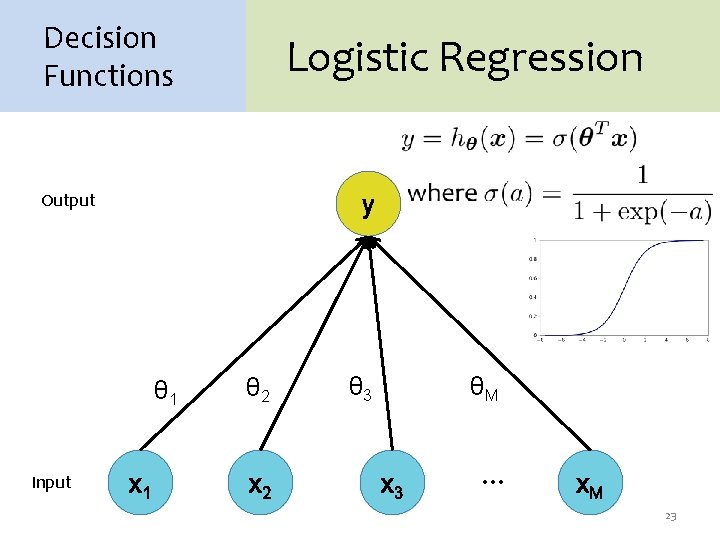


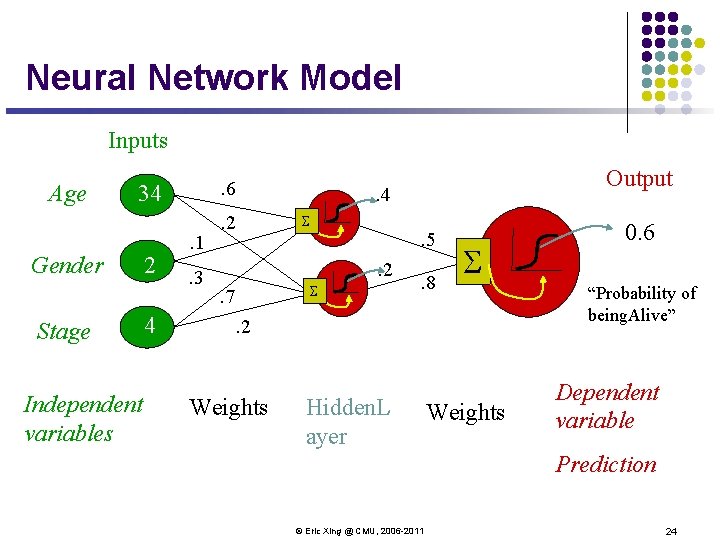

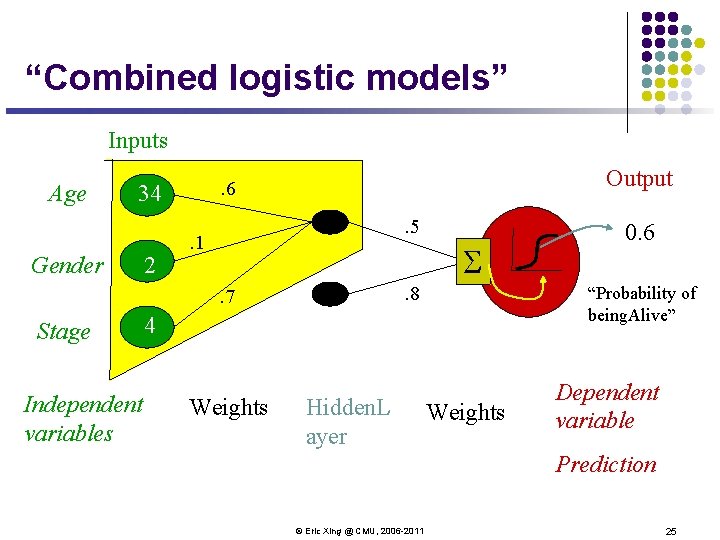

Neural Network Model
[Figure: the inputs (independent variables) Age = 34, Gender = 2, Stage = 4 feed through weights into a hidden layer, which feeds through weights into the output (dependent variable, prediction): “Probability of being Alive” = 0.6]
© Eric Xing @ CMU, 2006-2011

“Combined logistic models”
[Figure: the same network (inputs Age = 34, Gender = 2, Stage = 4; output “Probability of being Alive” = 0.6) with only a subset of the weights shown]
© Eric Xing @ CMU, 2006-2011

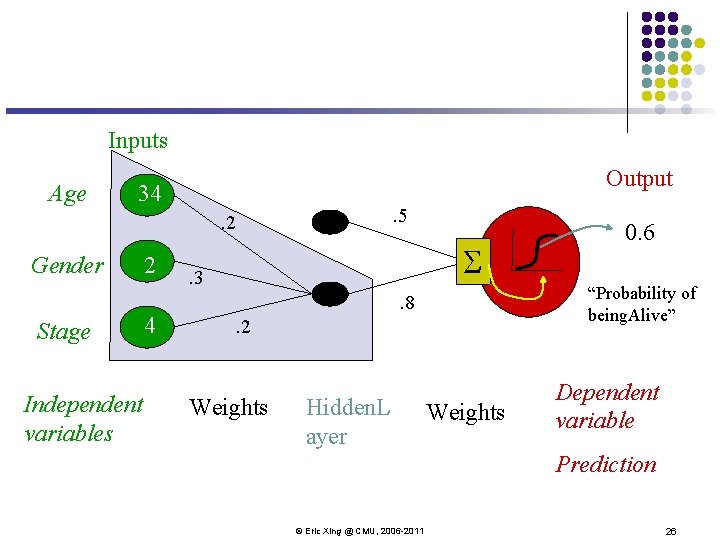

[Figure: the same network (inputs Age = 34, Gender = 2, Stage = 4; output “Probability of being Alive” = 0.6) with a different subset of the weights shown]
© Eric Xing @ CMU, 2006-2011

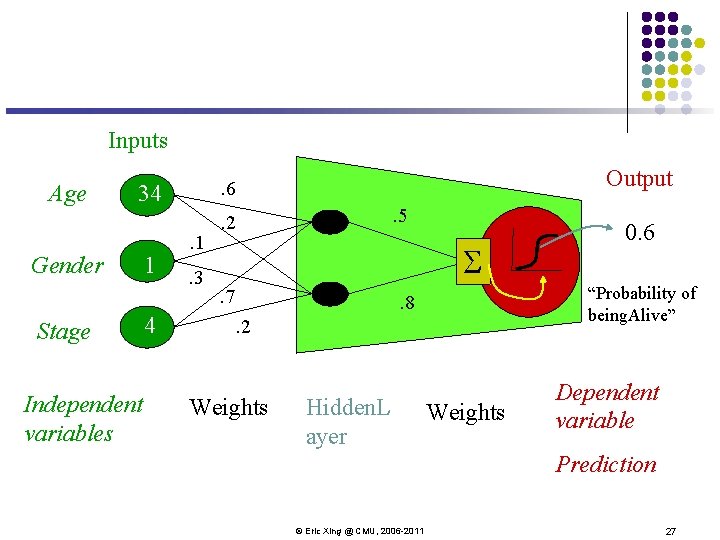

[Figure: the same network with all weights shown (inputs Age = 34, Gender = 1, Stage = 4; output “Probability of being Alive” = 0.6)]
© Eric Xing @ CMU, 2006-2011

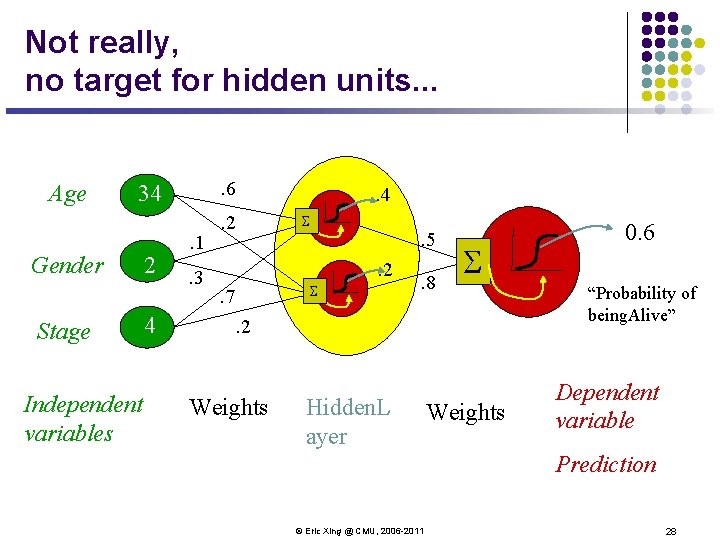

Not really, no target for hidden units...
[Figure: the full network (inputs Age = 34, Gender = 2, Stage = 4; hidden layer; output “Probability of being Alive” = 0.6)]
© Eric Xing @ CMU, 2006-2011

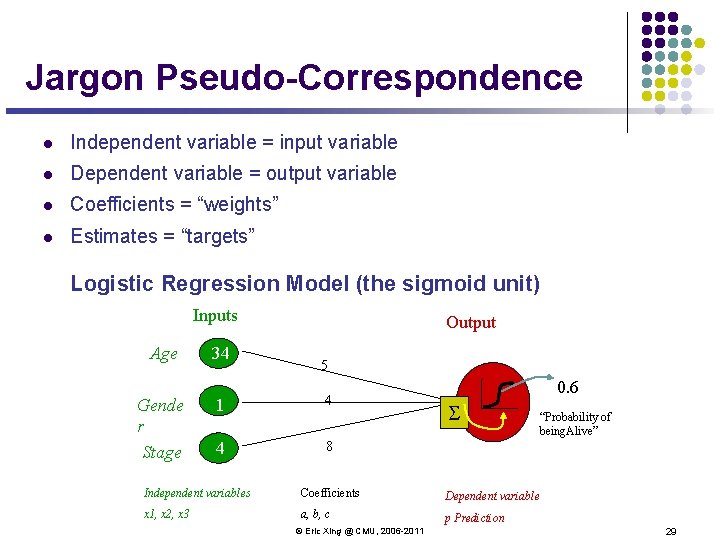

Jargon Pseudo-Correspondence
• Independent variable = input variable
• Dependent variable = output variable
• Coefficients = “weights”
• Estimates = “targets”
Logistic Regression Model (the sigmoid unit)
[Figure: the inputs Age, Gender, Stage (independent variables x1, x2, x3) are weighted by the coefficients a, b, c and fed to a single sigmoid unit whose output (dependent variable p, prediction) is “Probability of being Alive” = 0.6]
© Eric Xing @ CMU, 2006-2011

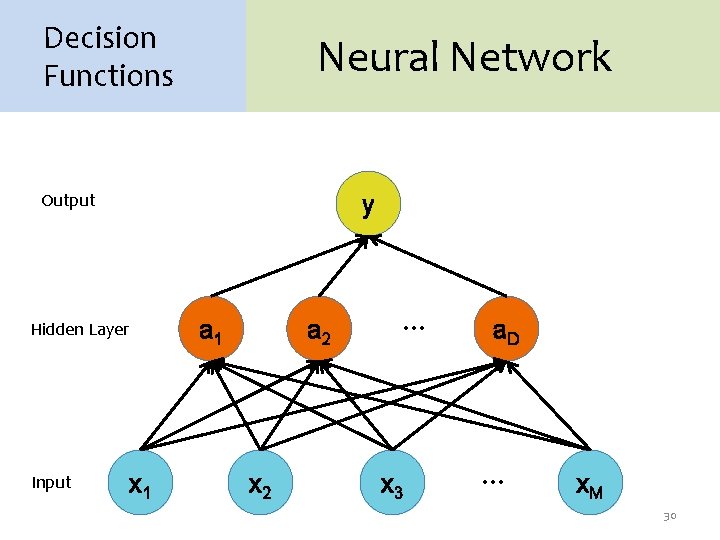

Decision Functions: Neural Network
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer a_1, a_2, …, a_D, which feeds the output y]

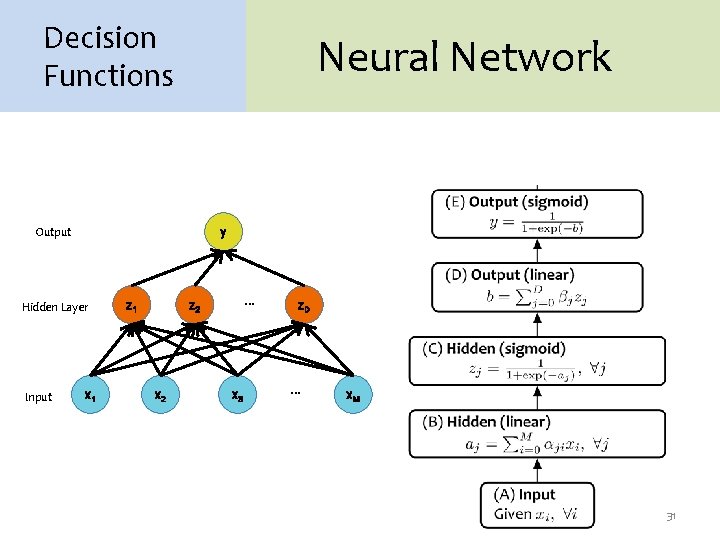

Decision Functions: Neural Network
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer z_1, z_2, …, z_D, which feeds the output y]
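The diagram can be read as a forward pass: each hidden unit is a logistic regression on the inputs, and the output is a logistic regression on the hidden units. The sketch below assumes sigmoid units throughout and omits bias terms; the weight names `alpha` and `beta` and all numeric values are hypothetical.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def forward(x, alpha, beta):
    """One-hidden-layer network with sigmoid units.

    alpha[j] holds the weights from all M inputs to hidden unit j;
    beta holds the weights from the D hidden units to the output.
    """
    # Hidden layer: each unit is a logistic regression on the inputs.
    z = [sigmoid(sum(w * xi for w, xi in zip(row, x))) for row in alpha]
    # Output: a logistic regression on the hidden activations.
    y = sigmoid(sum(b * zj for b, zj in zip(beta, z)))
    return z, y

# Hypothetical weights for M = 3 inputs and D = 2 hidden units.
alpha = [[0.6, -0.1, 0.3], [0.2, 0.4, -0.5]]
beta = [0.8, -0.2]
z, y = forward([1.0, 0.0, 2.0], alpha, beta)
print(z, y)
```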

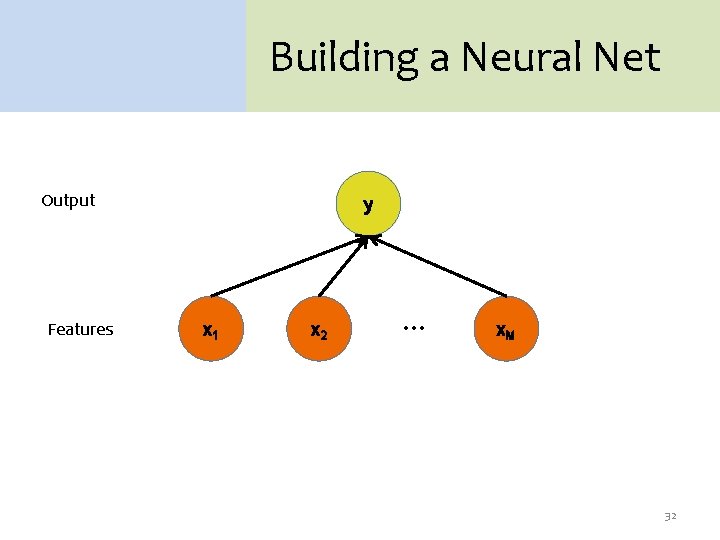

Building a Neural Net
[Figure: output y connected directly to the features x_1, x_2, …, x_M]

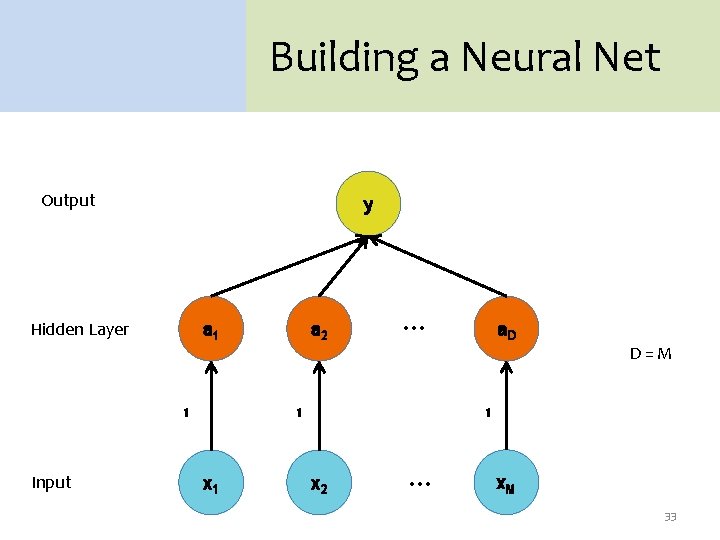

Building a Neural Net
[Figure: inputs x_1, x_2, …, x_M; hidden layer a_1, a_2, …, a_D with D = M; output y; the input-to-hidden connections shown are labeled 1]

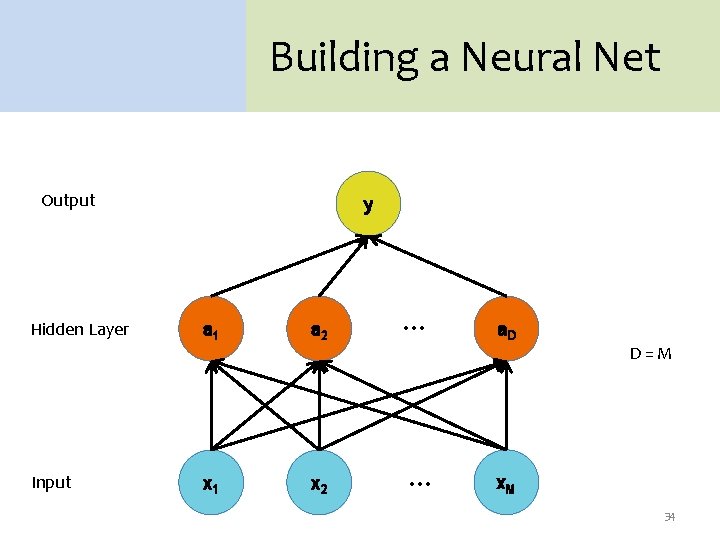

Building a Neural Net
[Figure: inputs x_1, x_2, …, x_M; hidden layer a_1, a_2, …, a_D with D = M; output y]

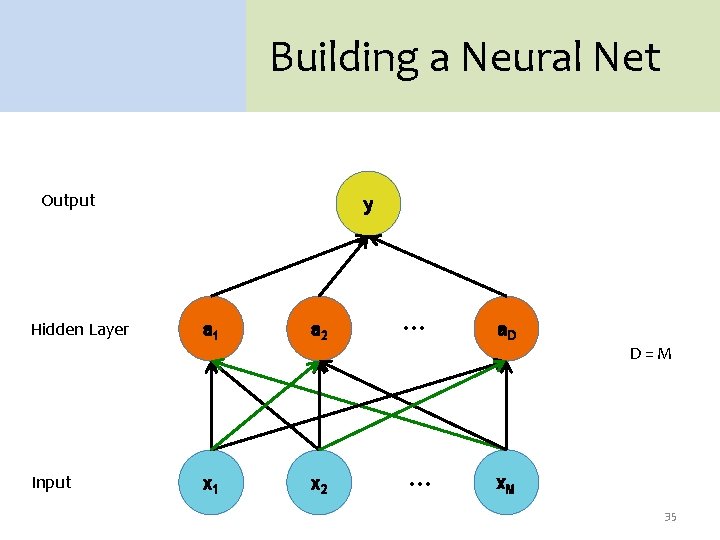


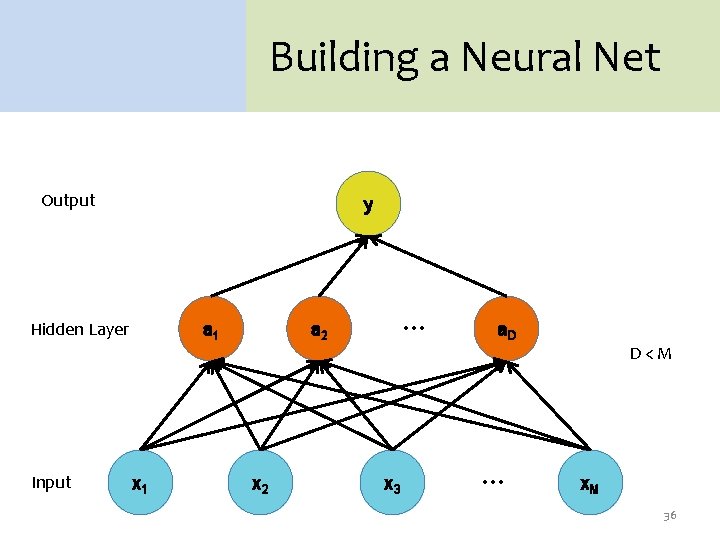

Building a Neural Net
[Figure: inputs x_1, x_2, x_3, …, x_M; hidden layer a_1, a_2, …, a_D with D < M; output y]

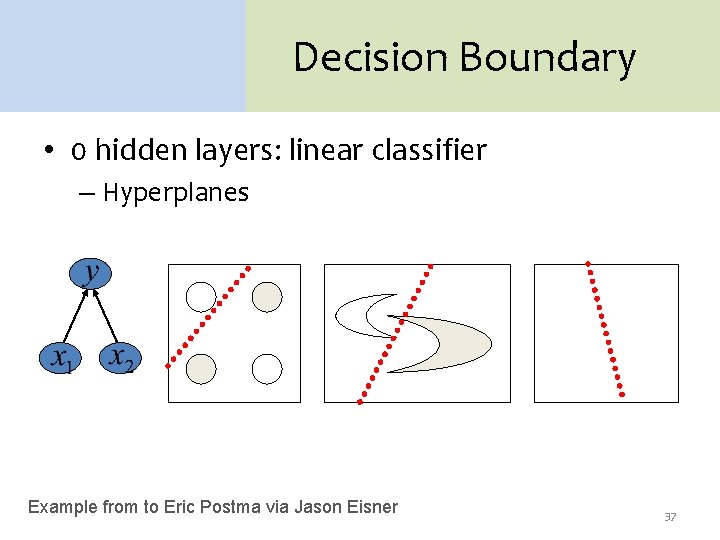

Decision Boundary
• 0 hidden layers: linear classifier
  – Hyperplanes
Example from Eric Postma via Jason Eisner

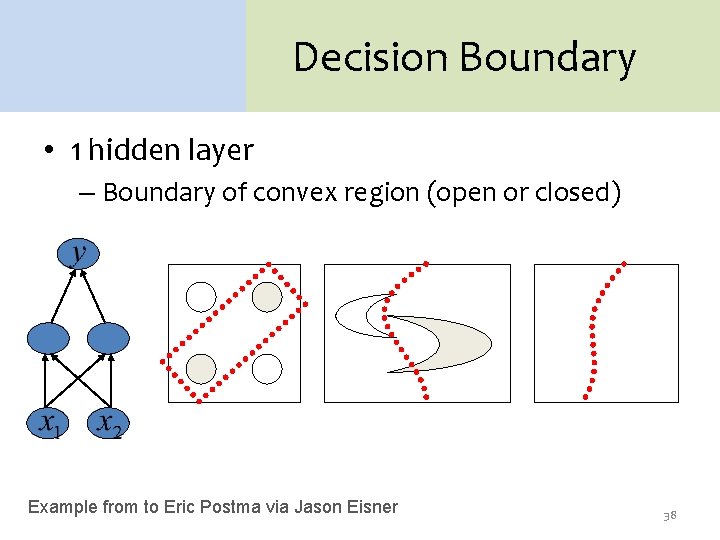

Decision Boundary
• 1 hidden layer
  – Boundary of convex region (open or closed)
Example from Eric Postma via Jason Eisner
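The XOR gate mentioned earlier in the lecture is a small instance of this claim: no single hyperplane separates its classes, but the positive class lies in a convex strip between two parallel lines. The sketch below uses two threshold hidden units with hand-picked weights; the weights and the helper names `step` and `xor_net` are assumptions chosen for illustration, not learned values.

```python
def step(a):
    """Threshold unit: fires (1) when its input is positive."""
    return 1 if a > 0 else 0

def xor_net(x1, x2):
    # Each hidden unit is a linear classifier (a half-plane).
    h_or = step(x1 + x2 - 0.5)     # fires if at least one input is on
    h_nand = step(1.5 - x1 - x2)   # fires unless both inputs are on
    # The output unit ANDs the two half-planes, giving a convex strip.
    return step(h_or + h_nand - 1.5)

table = [(x1, x2, xor_net(x1, x2)) for x1 in (0, 1) for x2 in (0, 1)]
for row in table:
    print(row)
```

The strip is an open convex region: the intersection of the half-planes x1 + x2 > 0.5 and x1 + x2 < 1.5.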

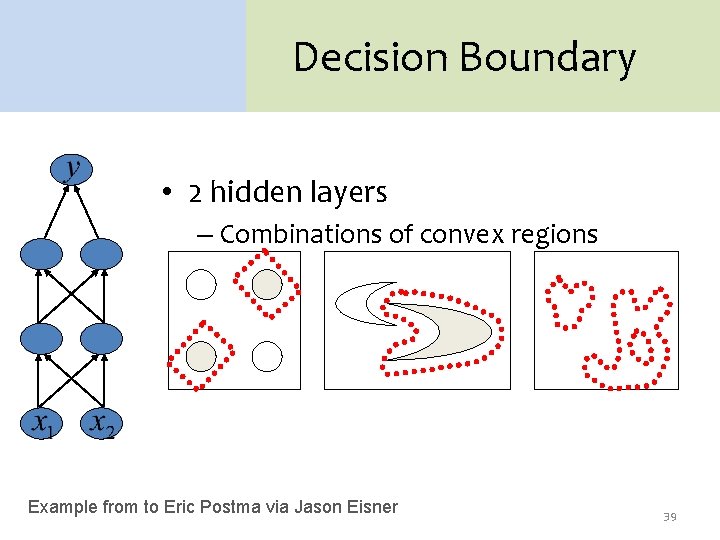

Decision Boundary
• 2 hidden layers
  – Combinations of convex regions
Example from Eric Postma via Jason Eisner

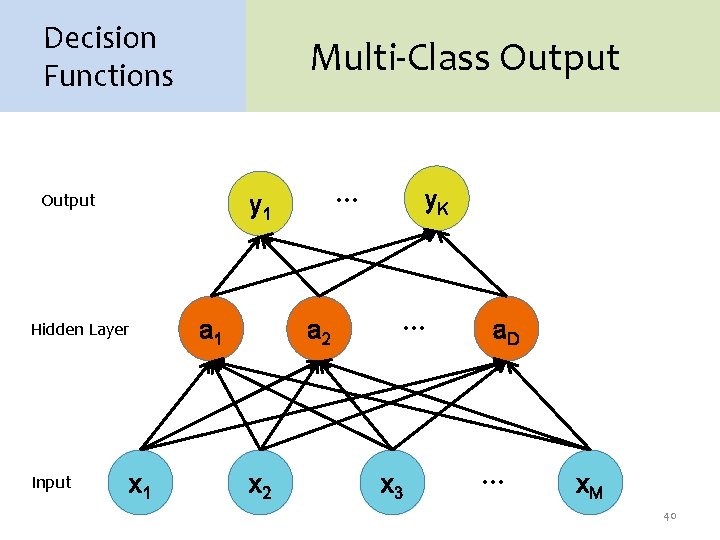

Decision Functions: Multi-Class Output
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer a_1, a_2, …, a_D, which feeds the outputs y_1, …, y_K]

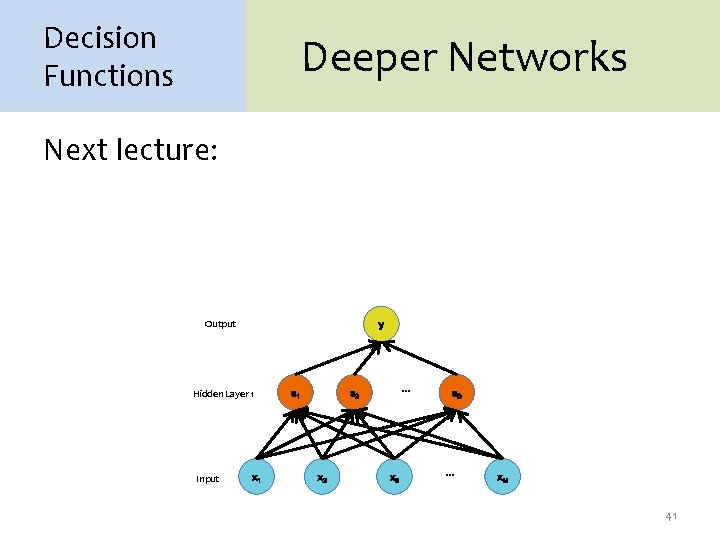

Decision Functions: Deeper Networks
Next lecture:
[Figure: inputs x_1, x_2, x_3, …, x_M; Hidden Layer 1 with units a_1, a_2, …, a_D; output y]

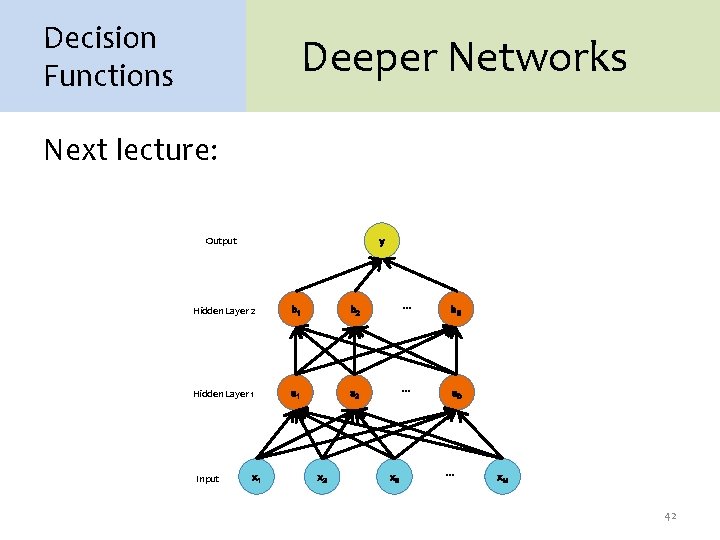

Decision Functions: Deeper Networks
Next lecture:
[Figure: inputs x_1, x_2, x_3, …, x_M; Hidden Layer 1 with units a_1, a_2, …, a_D; Hidden Layer 2 with units b_1, b_2, …, b_E; output y]

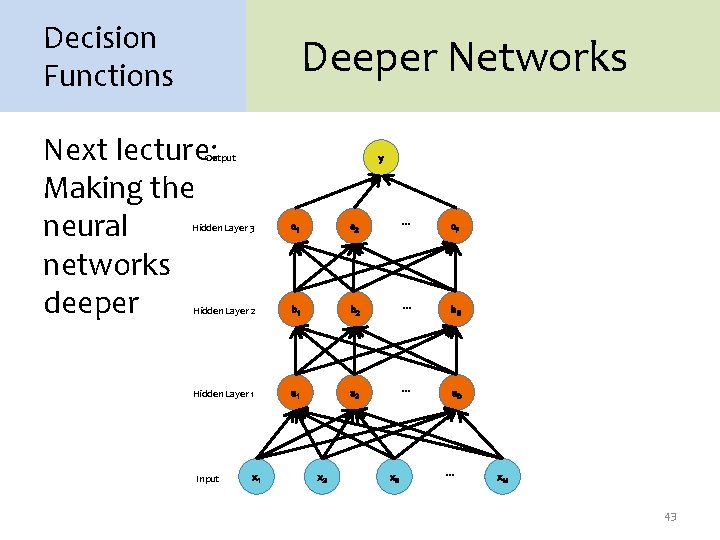

Decision Functions: Deeper Networks
Next lecture: Making the neural networks deeper
[Figure: inputs x_1, x_2, x_3, …, x_M; Hidden Layer 1 with units a_1, a_2, …, a_D; Hidden Layer 2 with units b_1, b_2, …, b_E; Hidden Layer 3 with units c_1, c_2, …, c_F; output y]

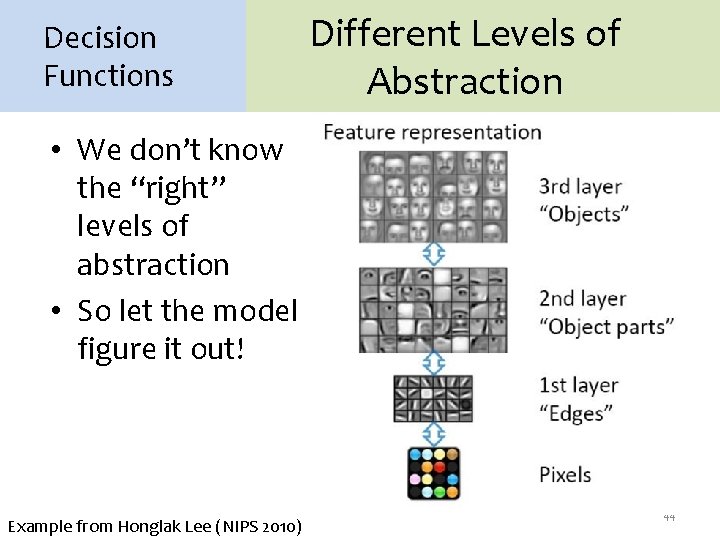

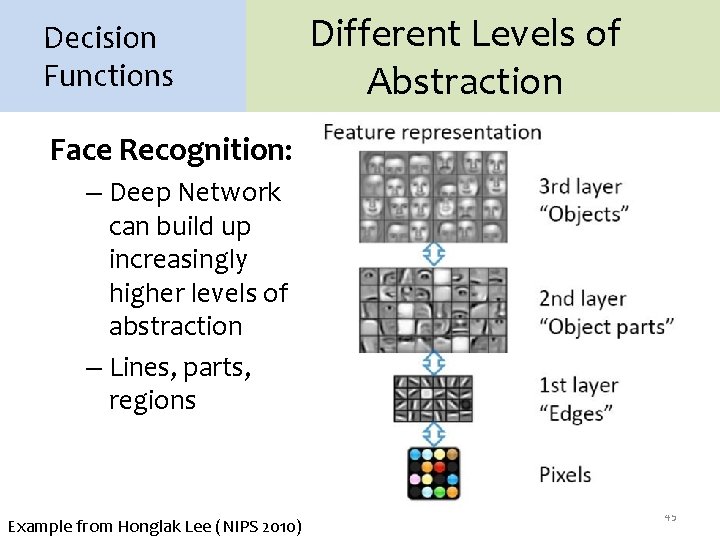

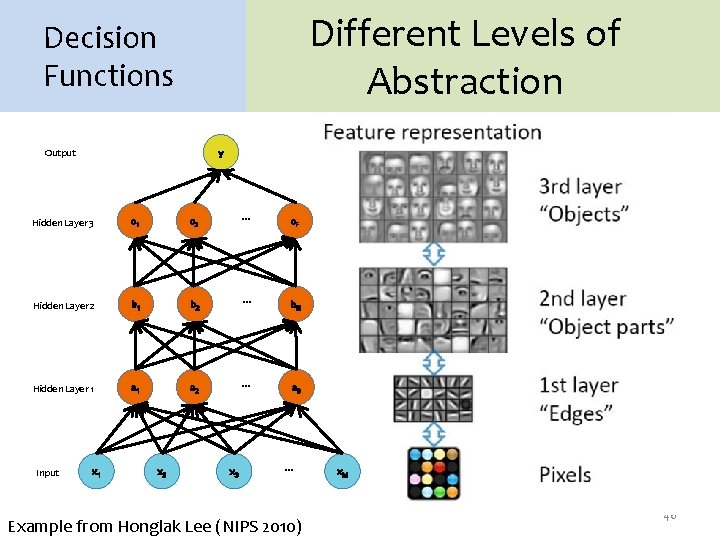

Decision Functions: Different Levels of Abstraction
• We don’t know the “right” levels of abstraction
• So let the model figure it out!
Example from Honglak Lee (NIPS 2010)

Decision Functions: Different Levels of Abstraction
Face Recognition:
– Deep Network can build up increasingly higher levels of abstraction
– Lines, parts, regions
Example from Honglak Lee (NIPS 2010)

Decision Functions: Different Levels of Abstraction
[Figure: inputs x_1, x_2, x_3, …, x_M; Hidden Layer 1 with units a_1, a_2, …, a_D; Hidden Layer 2 with units b_1, b_2, …, b_E; Hidden Layer 3 with units c_1, c_2, …, c_F; output y]
Example from Honglak Lee (NIPS 2010)

ARCHITECTURES

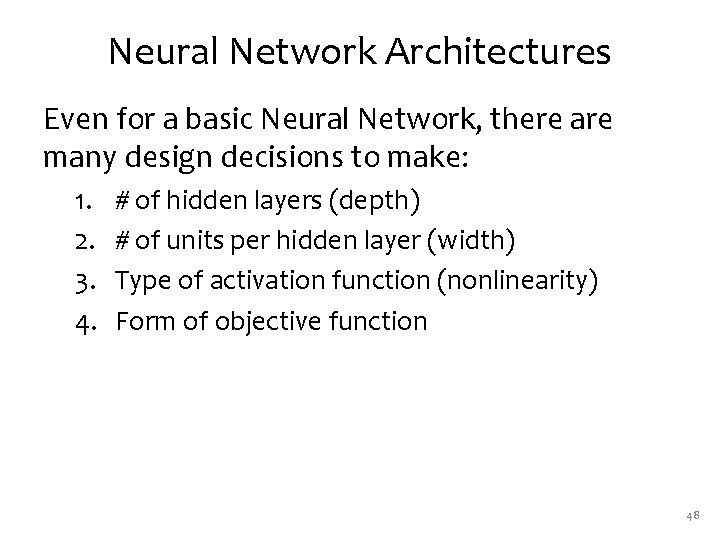

Neural Network Architectures
Even for a basic Neural Network, there are many design decisions to make:
1. # of hidden layers (depth)
2. # of units per hidden layer (width)
3. Type of activation function (nonlinearity)
4. Form of objective function

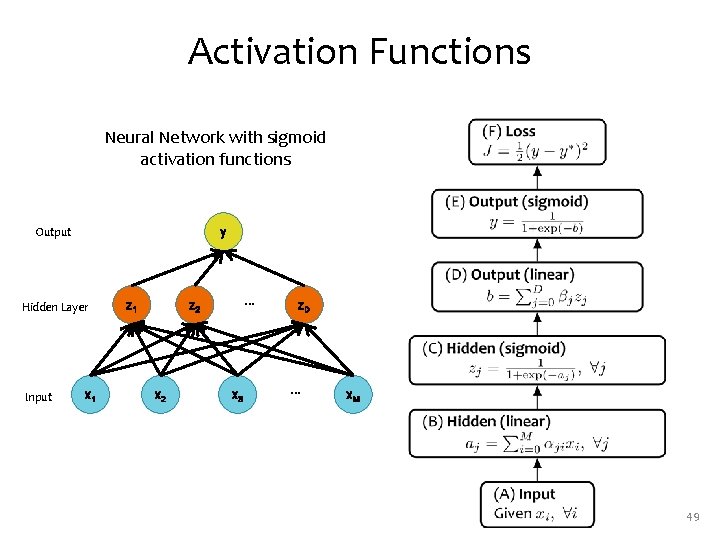

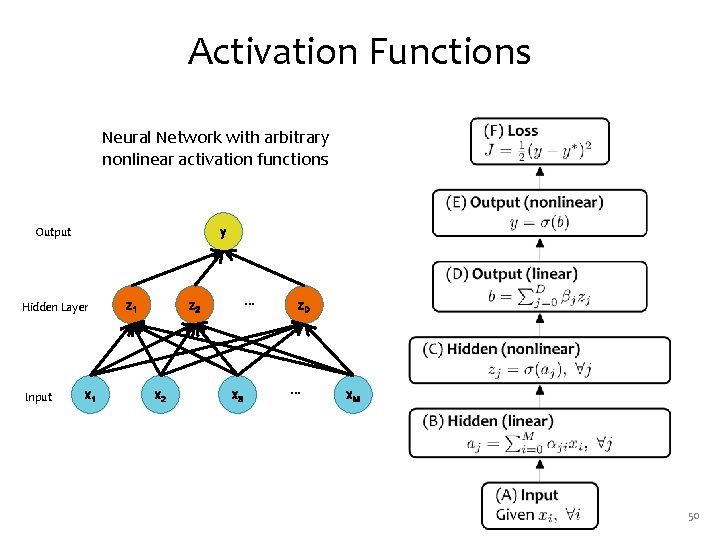

Activation Functions
Neural Network with sigmoid activation functions
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer z_1, z_2, …, z_D, which feeds the output y]

Activation Functions
Neural Network with arbitrary nonlinear activation functions
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer z_1, z_2, …, z_D, which feeds the output y]

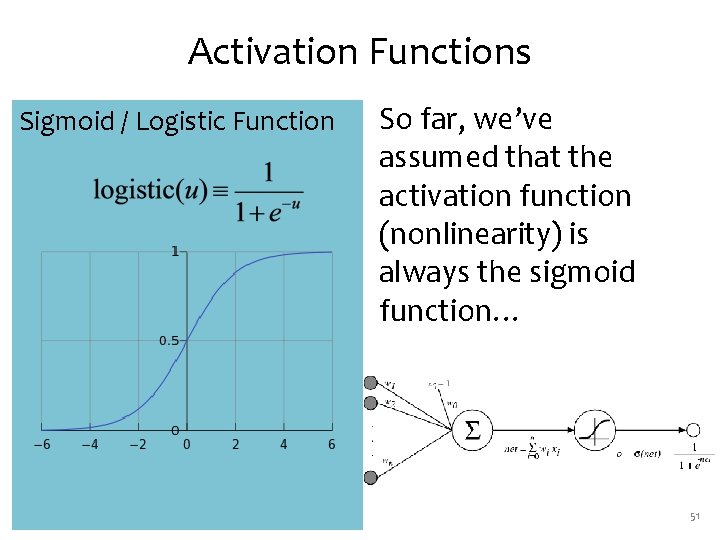

Activation Functions: Sigmoid / Logistic Function
So far, we’ve assumed that the activation function (nonlinearity) is always the sigmoid function…

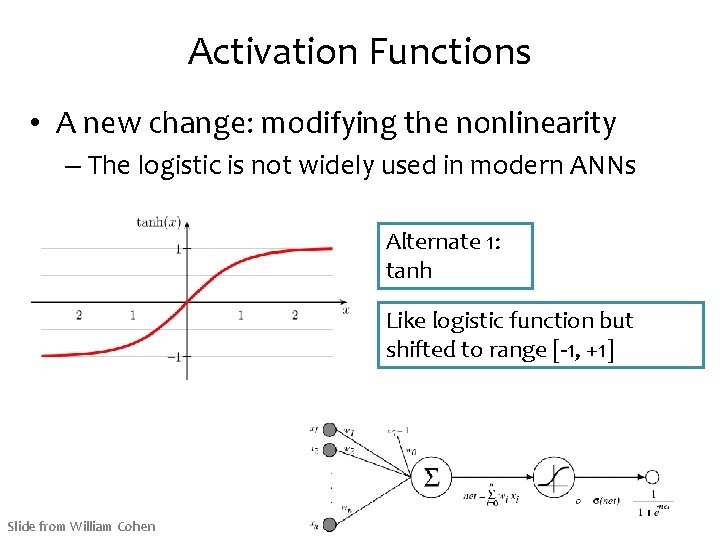

Activation Functions
• A new change: modifying the nonlinearity
  – The logistic is not widely used in modern ANNs
Alternate 1: tanh
Like logistic function but shifted to range [-1, +1]
Slide from William Cohen

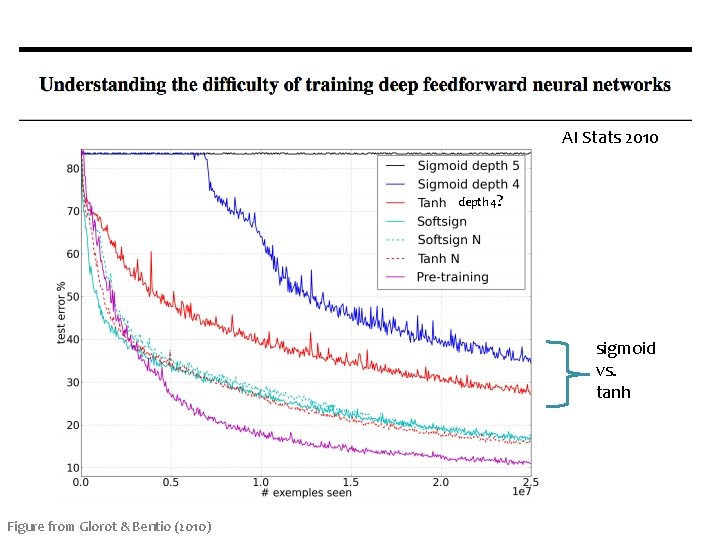

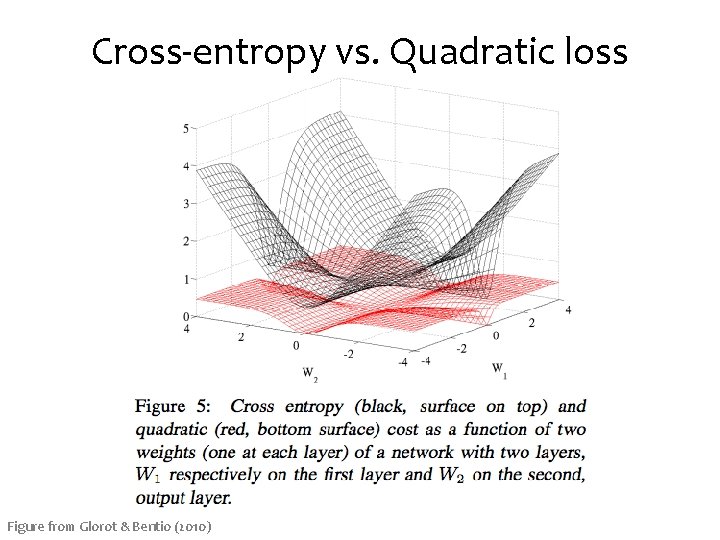

AISTATS 2010
sigmoid vs. tanh (depth 4?)
Figure from Glorot & Bengio (2010)

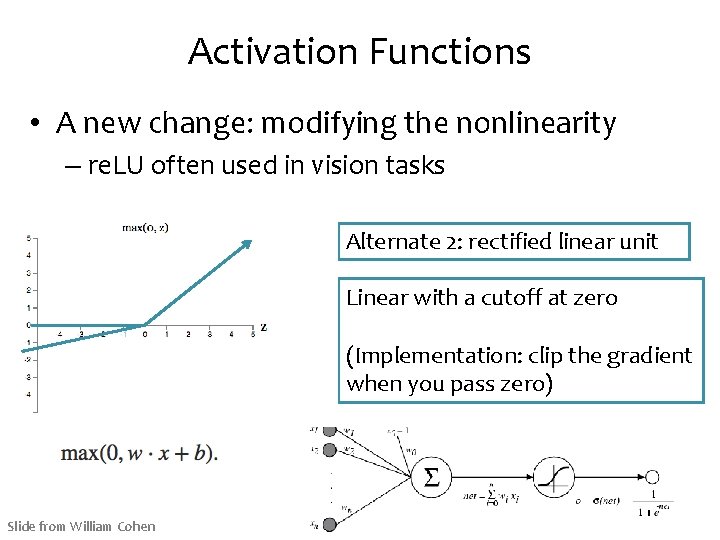

Activation Functions
• A new change: modifying the nonlinearity
  – ReLU often used in vision tasks
Alternate 2: rectified linear unit
Linear with a cutoff at zero
(Implementation: clip the gradient when you pass zero)
Slide from William Cohen

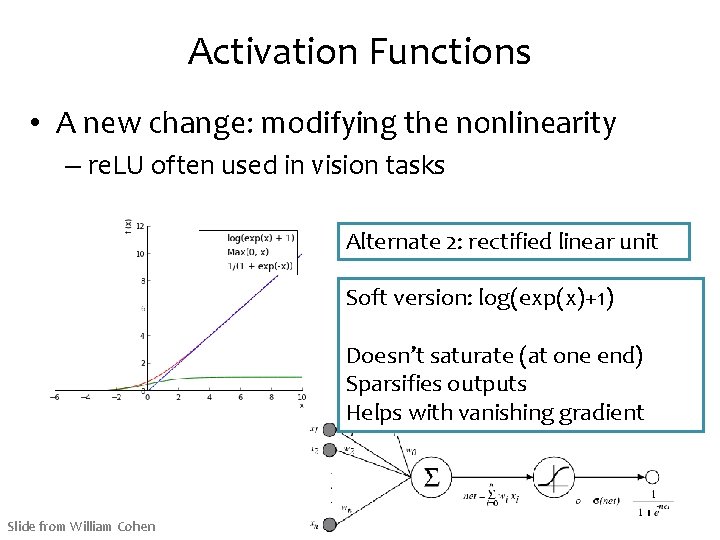

Activation Functions
• A new change: modifying the nonlinearity
  – ReLU often used in vision tasks
Alternate 2: rectified linear unit
Soft version: log(exp(x)+1)
• Doesn’t saturate (at one end)
• Sparsifies outputs
• Helps with vanishing gradient
Slide from William Cohen
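The activation functions named on these slides can be written out directly. The sketch below is a plain-Python illustration with assumed function names; the `tanh` definition uses the identity tanh(x) = 2·logistic(2x) − 1, which is one precise reading of "like logistic function but shifted to range [-1, +1]".

```python
import math

def logistic(x):
    return 1.0 / (1.0 + math.exp(-x))

def tanh(x):
    # Same S-shape as the logistic, rescaled to the range [-1, +1].
    return 2.0 * logistic(2.0 * x) - 1.0

def relu(x):
    # Linear with a cutoff at zero.
    return max(0.0, x)

def relu_grad(x):
    # Gradient is 1 on the linear side and 0 past the cutoff.
    return 1.0 if x > 0 else 0.0

def softplus(x):
    # Soft version of the ReLU: log(exp(x) + 1).
    return math.log(math.exp(x) + 1.0)

for x in (-2.0, 0.0, 2.0):
    print(x, logistic(x), tanh(x), relu(x), softplus(x))
```

For large positive x the ReLU and its soft version keep growing, which is the sense in which they do not saturate at that end; for negative x the ReLU outputs exactly zero, which is what sparsifies the outputs.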

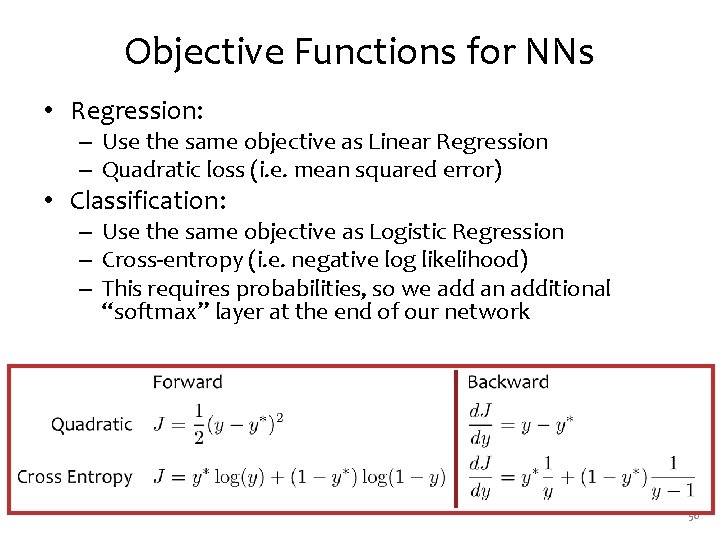

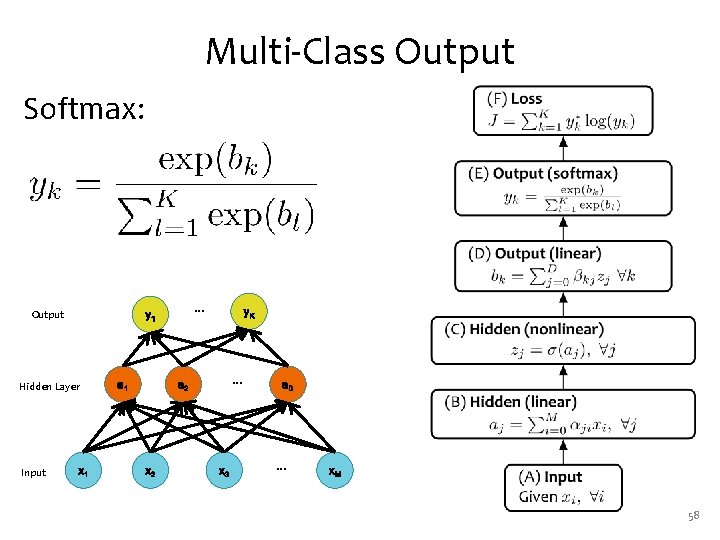

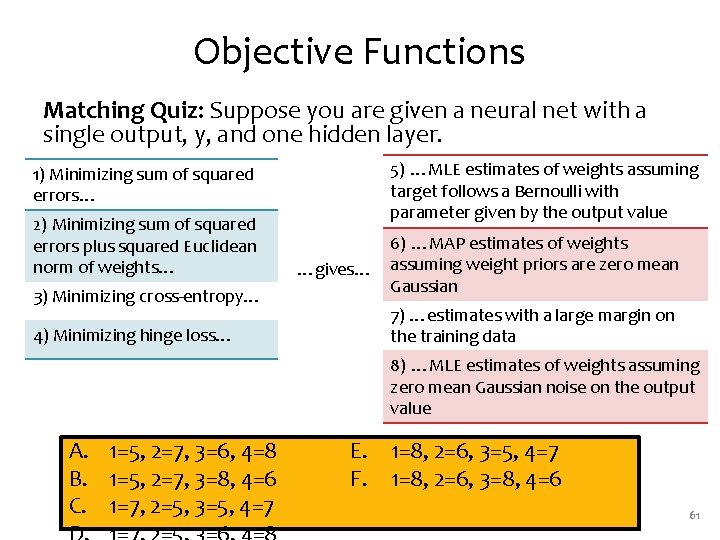

Objective Functions for NNs
• Regression:
  – Use the same objective as Linear Regression
  – Quadratic loss (i.e. mean squared error)
• Classification:
  – Use the same objective as Logistic Regression
  – Cross-entropy (i.e. negative log likelihood)
  – This requires probabilities, so we add an additional “softmax” layer at the end of our network
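A minimal sketch of the two objectives and the softmax layer, for a single training example. The function names, the factor of 1/2 in the quadratic loss, and the example scores are assumptions; subtracting the maximum score inside `softmax` is a standard numerical-stability step that does not change the result.

```python
import math

def quadratic_loss(y_hat, y):
    """Squared error for a single regression output."""
    return 0.5 * (y_hat - y) ** 2

def softmax(scores):
    """Turn K real-valued scores into K probabilities that sum to 1."""
    m = max(scores)                          # subtract the max for stability
    exps = [math.exp(s - m) for s in scores]
    total = sum(exps)
    return [e / total for e in exps]

def cross_entropy(probs, label):
    """Negative log likelihood of the true class index `label`."""
    return -math.log(probs[label])

scores = [2.0, 1.0, 0.1]   # hypothetical outputs of the last linear layer
probs = softmax(scores)
loss = cross_entropy(probs, 0)
print(probs, loss)
```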

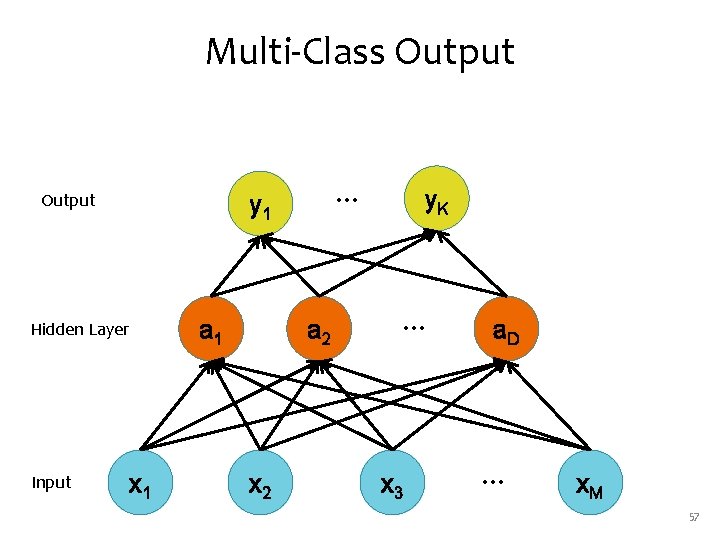

Multi-Class Output
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer a_1, a_2, …, a_D, which feeds the outputs y_1, …, y_K]

Multi-Class Output
Softmax:
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer a_1, a_2, …, a_D, which feeds the outputs y_1, …, y_K]

Cross-entropy vs. Quadratic loss
Figure from Glorot & Bengio (2010)


Objective Functions
Matching Quiz: Suppose you are given a neural net with a single output, y, and one hidden layer.
1) Minimizing sum of squared errors…
2) Minimizing sum of squared errors plus squared Euclidean norm of weights…
3) Minimizing cross-entropy…
4) Minimizing hinge loss…
…gives…
5) …MLE estimates of weights assuming target follows a Bernoulli with parameter given by the output value
6) …MAP estimates of weights assuming weight priors are zero mean Gaussian
7) …estimates with a large margin on the training data
8) …MLE estimates of weights assuming zero mean Gaussian noise on the output value
A. 1=5, 2=7, 3=6, 4=8
B. 1=5, 2=7, 3=8, 4=6
C. 1=7, 2=5, 3=5, 4=7
E. 1=8, 2=6, 3=5, 4=7
F. 1=8, 2=6, 3=8, 4=6
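The probabilistic items in the quiz can be checked by writing out the corresponding negative log-likelihood or negative log-prior. The sketch below uses assumed notation: $f_{\boldsymbol{\theta}}(\boldsymbol{x}_i)$ is the network output, $s^2$ a noise variance, and $\tau^2$ a prior variance.

$$
y_i \sim \mathcal{N}\big(f_{\boldsymbol{\theta}}(\boldsymbol{x}_i),\, s^2\big)
\;\Rightarrow\;
-\log p(\boldsymbol{y} \mid \boldsymbol{\theta}) = \frac{1}{2 s^2} \sum_{i=1}^{N} \big(y_i - f_{\boldsymbol{\theta}}(\boldsymbol{x}_i)\big)^2 + \text{const}
$$

$$
y_i \sim \text{Bernoulli}\big(f_{\boldsymbol{\theta}}(\boldsymbol{x}_i)\big)
\;\Rightarrow\;
-\log p(\boldsymbol{y} \mid \boldsymbol{\theta}) = -\sum_{i=1}^{N} \Big[ y_i \log f_{\boldsymbol{\theta}}(\boldsymbol{x}_i) + (1 - y_i) \log\big(1 - f_{\boldsymbol{\theta}}(\boldsymbol{x}_i)\big) \Big]
$$

$$
\boldsymbol{\theta} \sim \mathcal{N}\big(\boldsymbol{0},\, \tau^2 I\big)
\;\Rightarrow\;
-\log p(\boldsymbol{\theta}) = \frac{1}{2 \tau^2} \lVert \boldsymbol{\theta} \rVert_2^2 + \text{const}
$$

The first line is a sum of squared errors, the second is the cross-entropy, and adding the third to a negative log-likelihood turns an MLE objective into a MAP objective with a squared Euclidean norm penalty. The hinge loss $\max\big(0,\, 1 - y_i f_{\boldsymbol{\theta}}(\boldsymbol{x}_i)\big)$ with $y_i \in \{-1, +1\}$ has no such likelihood reading; it is zero only for examples classified with a margin of at least 1.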

BACKPROPAGATION


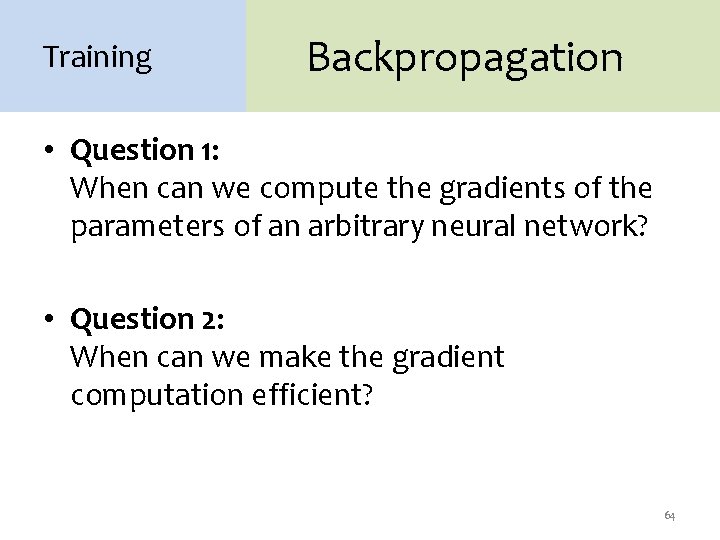

Training: Backpropagation
• Question 1: When can we compute the gradients of the parameters of an arbitrary neural network?
• Question 2: When can we make the gradient computation efficient?

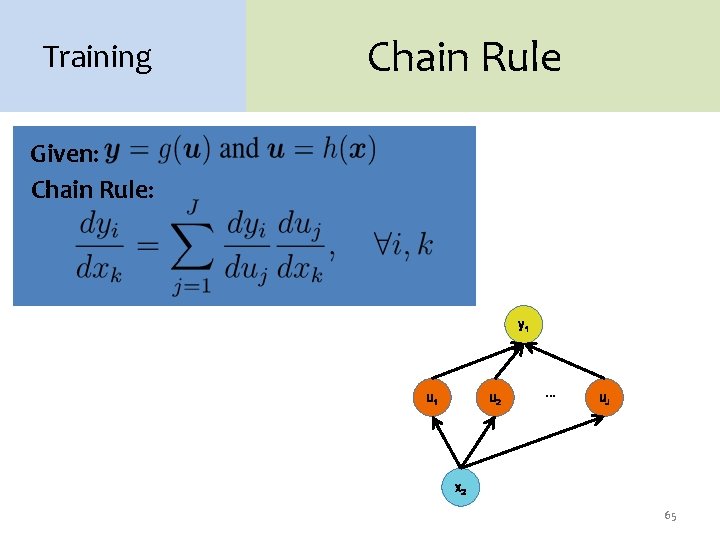

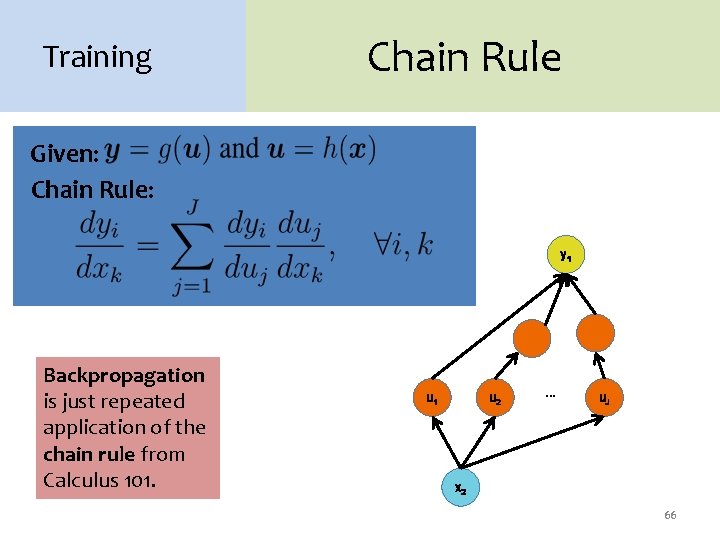

Training: Chain Rule
Given:
Chain Rule:
[Figure: computation graph from the input x_2 through the intermediate quantities u_1, u_2, …, u_J to the output y_1]

Training: Chain Rule
Given:
Chain Rule:
[Figure: computation graph from the input x_2 through the intermediate quantities u_1, u_2, …, u_J to the output y_1]
Backpropagation is just repeated application of the chain rule from Calculus 101.
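The "Given" and "Chain Rule" formulas were on the slide image. A sketch of the multivariate chain rule that matches the diagram (an input $x_k$ reaching an output $y_i$ through intermediate quantities $u_1, \ldots, u_J$) is below; the function names $g$ and $h$ are assumed notation.

$$
\text{Given: } \boldsymbol{y} = g(\boldsymbol{u}) \ \text{ and } \ \boldsymbol{u} = h(\boldsymbol{x})
$$

$$
\text{Chain rule: } \frac{\partial y_i}{\partial x_k} = \sum_{j=1}^{J} \frac{\partial y_i}{\partial u_j} \, \frac{\partial u_j}{\partial x_k} \qquad \text{for all } i, k
$$

Each term in the sum corresponds to one path from $x_k$ to $y_i$ through a single $u_j$ in the diagram.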

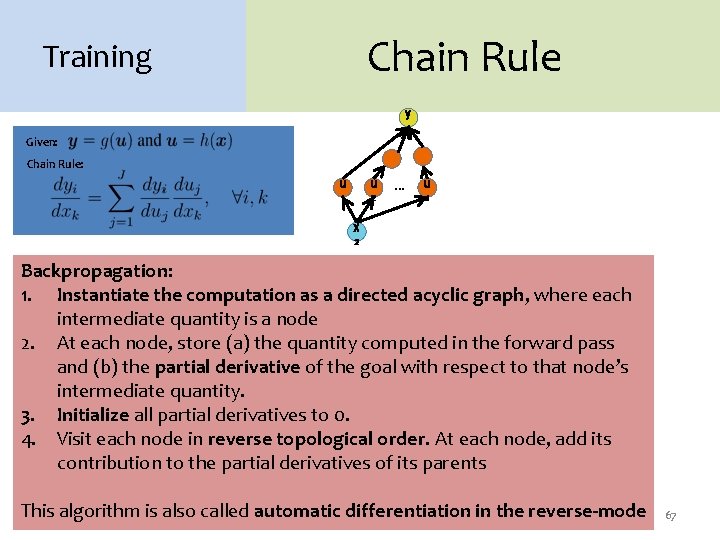

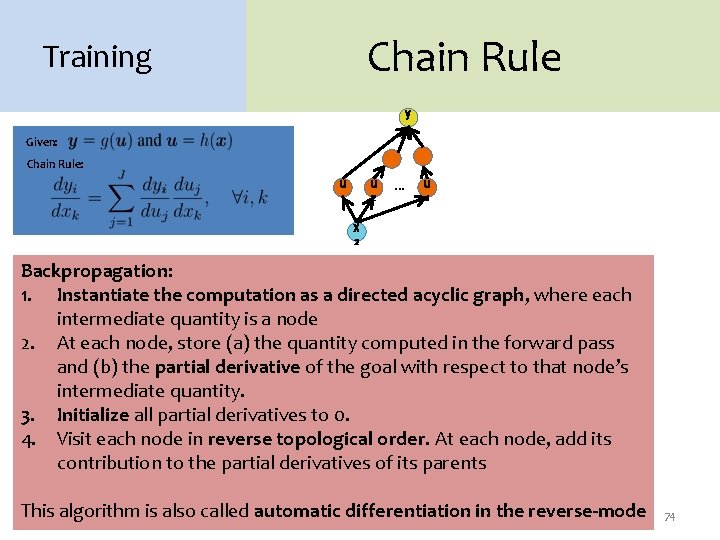

Training: Chain Rule
Given:
Chain Rule:
[Figure: computation graph from the input x_2 through the intermediate quantities u_1, u_2, …, u_J to the output y_1]
Backpropagation:
1. Instantiate the computation as a directed acyclic graph, where each intermediate quantity is a node
2. At each node, store (a) the quantity computed in the forward pass and (b) the partial derivative of the goal with respect to that node’s intermediate quantity.
3. Initialize all partial derivatives to 0.
4. Visit each node in reverse topological order. At each node, add its contribution to the partial derivatives of its parents
This algorithm is also called automatic differentiation in the reverse-mode
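The four steps above can be sketched as a tiny reverse-mode automatic differentiation routine. This is an illustration under assumptions, not a production library: the `Node` class and the helpers `add`, `mul`, `sin`, and `backward` are hypothetical names, and a node's "parents" are taken to be the nodes it was computed from.

```python
import math

class Node:
    """One intermediate quantity in the computation graph (step 1)."""
    def __init__(self, value, parents=()):
        self.value = value        # step 2(a): quantity from the forward pass
        self.grad = 0.0           # step 2(b) and 3: d(goal)/d(node), starts at 0
        self.parents = parents    # pairs (parent_node, local_derivative)

def add(a, b):
    return Node(a.value + b.value, ((a, 1.0), (b, 1.0)))

def mul(a, b):
    return Node(a.value * b.value, ((a, b.value), (b, a.value)))

def sin(a):
    return Node(math.sin(a.value), ((a, math.cos(a.value)),))

def backward(goal):
    """Step 4: visit nodes in reverse topological order."""
    order, seen = [], set()
    def visit(node):
        if id(node) not in seen:
            seen.add(id(node))
            for parent, _ in node.parents:
                visit(parent)
            order.append(node)    # every parent is appended before the node
    visit(goal)
    goal.grad = 1.0               # d(goal)/d(goal) = 1
    for node in reversed(order):
        for parent, local in node.parents:
            # Add this node's contribution to its parent's partial derivative.
            parent.grad += node.grad * local

x1, x2 = Node(2.0), Node(3.0)
y = add(mul(x1, x2), sin(x1))     # y = x1 * x2 + sin(x1)
backward(y)
print(x1.grad, x2.grad)           # dy/dx1 = x2 + cos(x1), dy/dx2 = x1
```

Because `x1` feeds two nodes, its gradient is accumulated from both; this is why the partial derivatives are initialized to 0 and then added to, rather than assigned.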

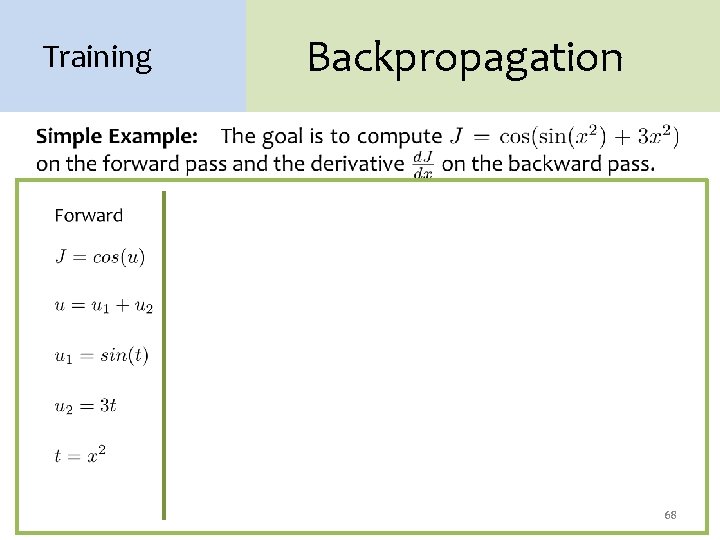

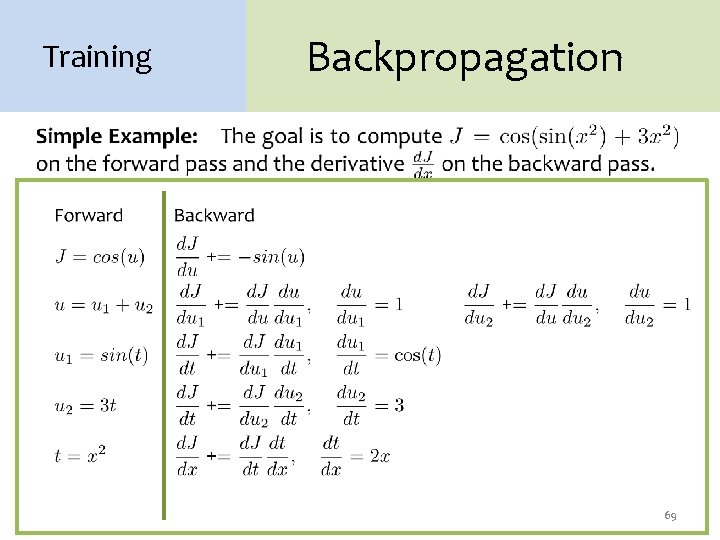

Training: Backpropagation


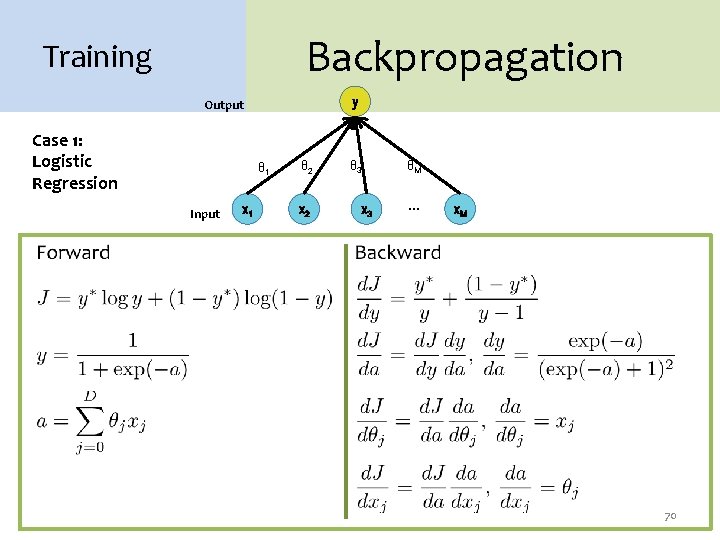

Training: Backpropagation
Case 1: Logistic Regression
[Figure: a single output y connected to the inputs x_1, x_2, x_3, …, x_M through weights θ_1, θ_2, θ_3, …, θ_M]
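The forward and backward equations for this case were on the slide image and are not in the text, so the following is a sketch under stated assumptions: the objective is the log-likelihood J = y* log y + (1 − y*) log(1 − y) for a single example with true label y*, and all variable names are hypothetical. A finite-difference check is included as a sanity test of the hand-derived gradient.

```python
import math

def forward(theta, x, y_star):
    a = sum(t * xj for t, xj in zip(theta, x))        # a = theta . x
    y = 1.0 / (1.0 + math.exp(-a))                    # y = sigmoid(a)
    J = y_star * math.log(y) + (1 - y_star) * math.log(1 - y)
    return a, y, J

def backward(theta, x, y_star):
    a, y, J = forward(theta, x, y_star)
    dJ_dy = y_star / y - (1 - y_star) / (1 - y)       # derivative of the objective
    dJ_da = dJ_dy * y * (1 - y)                       # chain rule: dy/da = y (1 - y)
    dJ_dtheta = [dJ_da * xj for xj in x]              # chain rule: da/dtheta_j = x_j
    return J, dJ_dtheta

theta, x, y_star = [0.5, -0.3, 0.8], [1.0, 2.0, -1.0], 1
J, grad = backward(theta, x, y_star)

# Finite-difference check of the first partial derivative.
eps = 1e-6
bumped = [theta[0] + eps] + theta[1:]
numeric = (forward(bumped, x, y_star)[2] - J) / eps
print(grad[0], numeric)
```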

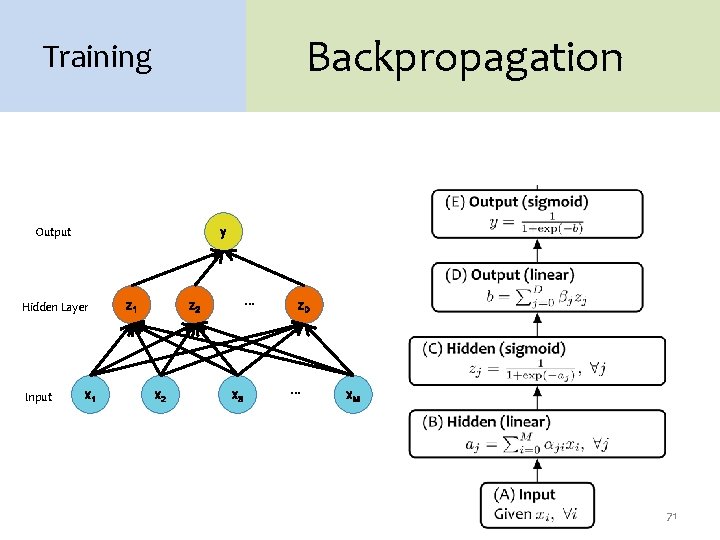

Training: Backpropagation
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer z_1, z_2, …, z_D, which feeds the output y]

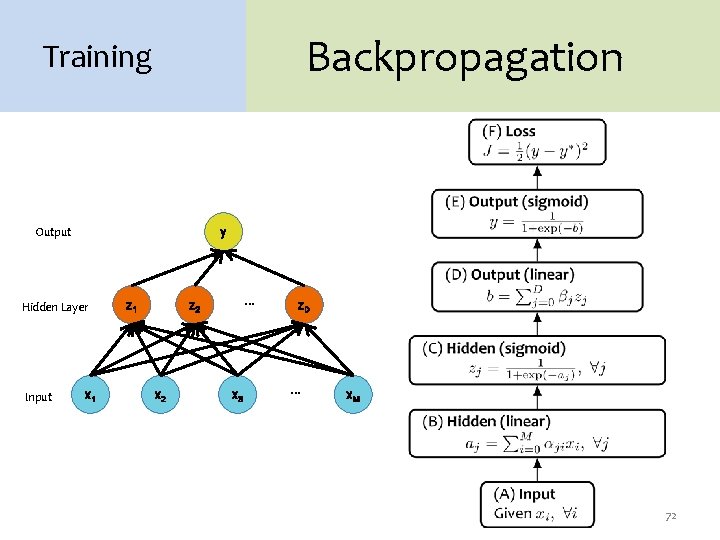


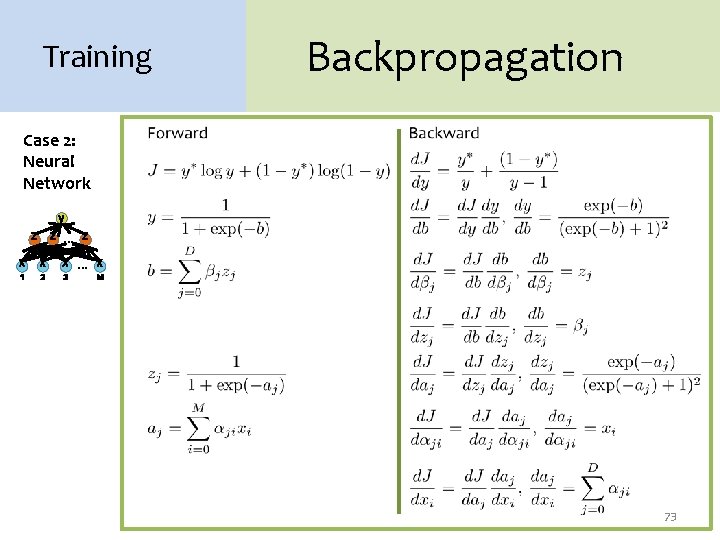

Training: Backpropagation
Case 2: Neural Network
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer z_1, z_2, …, z_D, which feeds the output y]
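As with Case 1, the equations for this slide are not in the extracted text. The sketch below assumes a one-hidden-layer network with sigmoid units, no bias terms, and the log-likelihood objective J = y* log y + (1 − y*) log(1 − y); the weight names `alpha` and `beta` and all numeric values are hypothetical. Each line of `backward` is one application of the chain rule, working from the output back toward the inputs.

```python
import math

def sigmoid(a):
    return 1.0 / (1.0 + math.exp(-a))

def forward(alpha, beta, x, y_star):
    a = [sum(w * xi for w, xi in zip(row, x)) for row in alpha]  # hidden pre-activations
    z = [sigmoid(aj) for aj in a]                                # hidden activations
    b = sum(bj * zj for bj, zj in zip(beta, z))                  # output pre-activation
    y = sigmoid(b)
    J = y_star * math.log(y) + (1 - y_star) * math.log(1 - y)
    return z, y, J

def backward(alpha, beta, x, y_star):
    z, y, J = forward(alpha, beta, x, y_star)
    dJ_db = y_star - y                         # dJ/dy * dy/db, simplified
    dJ_dbeta = [dJ_db * zj for zj in z]        # db/dbeta_j = z_j
    dJ_dz = [dJ_db * bj for bj in beta]        # db/dz_j = beta_j
    dJ_da = [dz * zj * (1 - zj) for dz, zj in zip(dJ_dz, z)]
    dJ_dalpha = [[da * xi for xi in x] for da in dJ_da]
    return J, dJ_dalpha, dJ_dbeta

alpha = [[0.6, -0.1, 0.3], [0.2, 0.4, -0.5]]
beta = [0.8, -0.2]
x, y_star = [1.0, 0.0, 2.0], 1
J, dJ_dalpha, dJ_dbeta = backward(alpha, beta, x, y_star)

# Finite-difference check of one hidden-layer weight.
eps = 1e-6
bumped = [row[:] for row in alpha]
bumped[0][2] += eps
numeric = (forward(bumped, beta, x, y_star)[2] - J) / eps
print(dJ_dalpha[0][2], numeric)
```

The backward pass reuses the forward-pass values `z` and `y`, which is what keeps the gradient computation about as cheap as the forward computation.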


Training: Chain Rule
Given:
Chain Rule:
[Figure: computation graph from the input x_2 through the intermediate quantities u_1, u_2, …, u_J to the output y_1]
Backpropagation:
1. Instantiate the computation as a directed acyclic graph, where each node represents a Tensor.
2. At each node, store (a) the quantity computed in the forward pass and (b) the partial derivatives of the goal with respect to that node’s Tensor.
3. Initialize all partial derivatives to 0.
4. Visit each node in reverse topological order. At each node, add its contribution to the partial derivatives of its parents
This algorithm is also called automatic differentiation in the reverse-mode

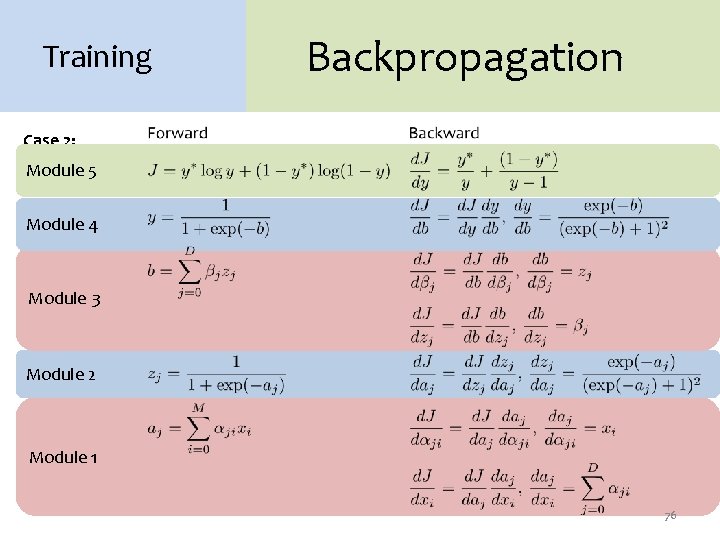

Training: Backpropagation
Case 2: Neural Network
[Figure: inputs x_1, x_2, x_3, …, x_M feed a hidden layer z_1, z_2, …, z_D, which feeds the output y; the computation is grouped into Module 1 through Module 5]


Summary
1. Neural Networks…
  – provide a way of learning features
  – are highly nonlinear prediction functions
  – (can be) a highly parallel network of logistic regression classifiers
  – discover useful hidden representations of the input
2. Backpropagation…
  – provides an efficient way to compute gradients
  – is a special case of reverse-mode automatic differentiation
