Business Research Methods Multiple Regression

Multiple Regression
• Multiple Regression Model
• Least Squares Method
• Multiple Coefficient of Determination
• Model Assumptions
• Testing for Significance
• Using the Estimated Regression Equation for Estimation and Prediction
• Qualitative Independent Variables

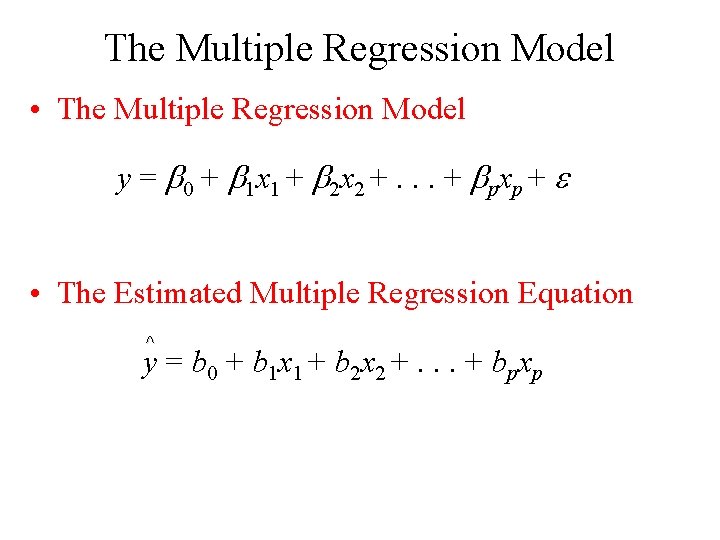

The Multiple Regression Model
• The Multiple Regression Model: $y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_p x_p + \varepsilon$
• The Estimated Multiple Regression Equation: $\hat{y} = b_0 + b_1 x_1 + b_2 x_2 + \dots + b_p x_p$

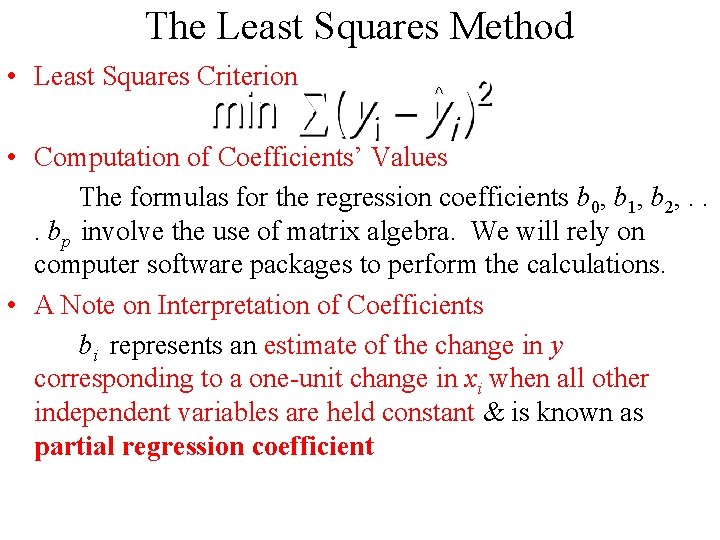

The Least Squares Method
• Least Squares Criterion: $\min \sum (y_i - \hat{y}_i)^2$
• Computation of Coefficients’ Values: The formulas for the regression coefficients $b_0, b_1, b_2, \dots, b_p$ involve the use of matrix algebra. We will rely on computer software packages to perform the calculations.
• A Note on Interpretation of Coefficients: $b_i$ represents an estimate of the change in $y$ corresponding to a one-unit change in $x_i$ when all other independent variables are held constant. It is known as the partial regression coefficient.

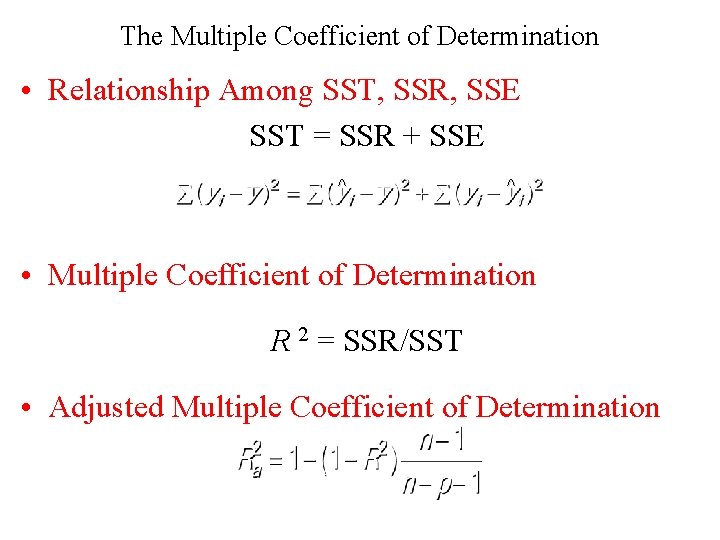

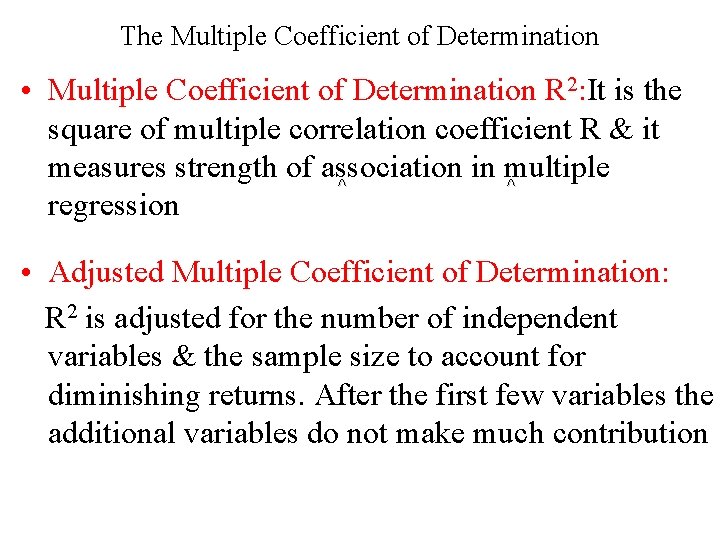

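The Multiple Coefficient of Determination
• Relationship Among SST, SSR, SSE: SST = SSR + SSE, that is, $\sum (y_i - \bar{y})^2 = \sum (\hat{y}_i - \bar{y})^2 + \sum (y_i - \hat{y}_i)^2$
• Multiple Coefficient of Determination: $R^2 = \mathrm{SSR}/\mathrm{SST}$
• Adjusted Multiple Coefficient of Determination: $R_a^2 = 1 - (1 - R^2)\,\dfrac{n - 1}{n - p - 1}$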

The Multiple Coefficient of Determination
• Multiple Coefficient of Determination $R^2$: It is the square of the multiple correlation coefficient $R$, and it measures the strength of association in multiple regression.
• Adjusted Multiple Coefficient of Determination: $R^2$ is adjusted for the number of independent variables and the sample size to account for diminishing returns. After the first few variables, additional variables contribute little.

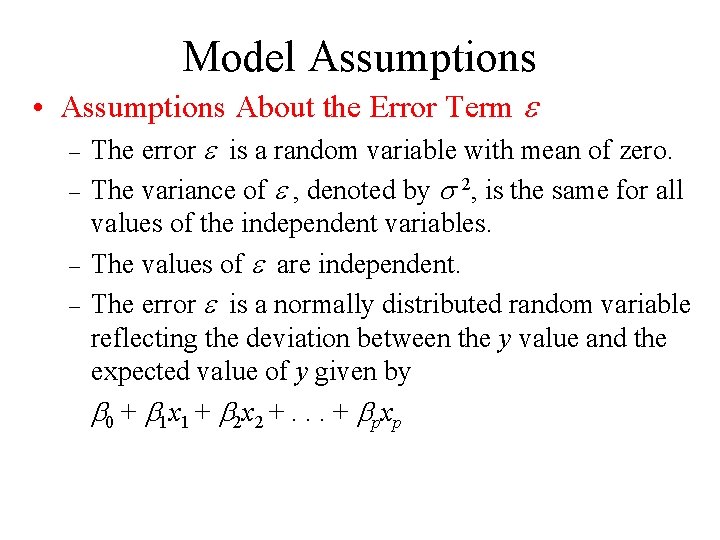

Model Assumptions
• Assumptions About the Error Term $\varepsilon$
– The error $\varepsilon$ is a random variable with a mean of zero.
– The variance of $\varepsilon$, denoted by $\sigma^2$, is the same for all values of the independent variables.
– The values of $\varepsilon$ are independent.
– The error $\varepsilon$ is a normally distributed random variable reflecting the deviation between the $y$ value and the expected value of $y$ given by $\beta_0 + \beta_1 x_1 + \beta_2 x_2 + \dots + \beta_p x_p$.

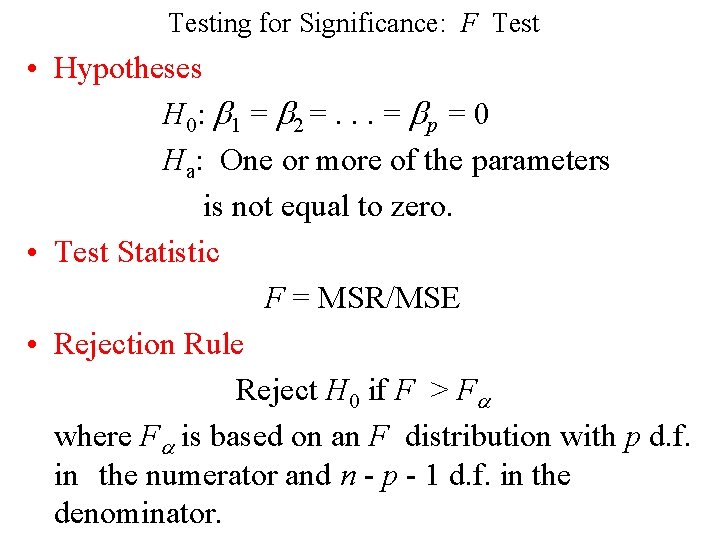

Testing for Significance: F Test
• Hypotheses
$H_0: \beta_1 = \beta_2 = \dots = \beta_p = 0$
$H_a$: One or more of the parameters is not equal to zero.
• Test Statistic: $F = \mathrm{MSR}/\mathrm{MSE}$
• Rejection Rule: Reject $H_0$ if $F > F_\alpha$, where $F_\alpha$ is based on an F distribution with $p$ d.f. in the numerator and $n - p - 1$ d.f. in the denominator.

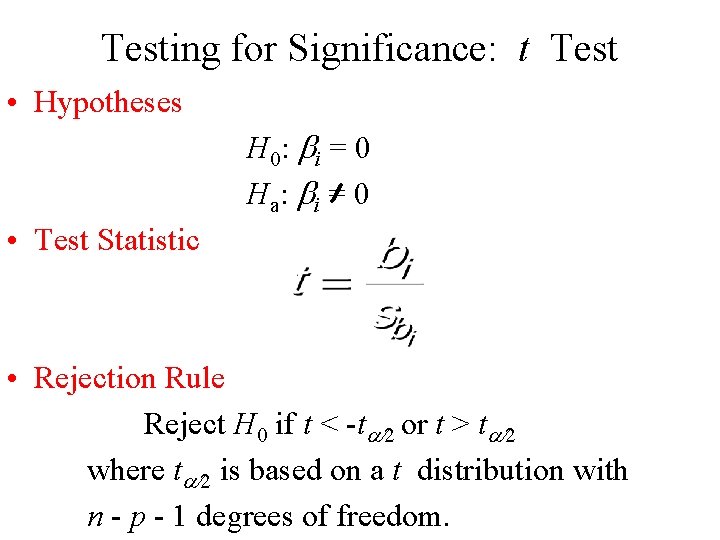

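Testing for Significance: t Test
• Hypotheses
$H_0: \beta_i = 0$
$H_a: \beta_i \neq 0$
• Test Statistic: $t = b_i / s_{b_i}$, where $s_{b_i}$ is the estimated standard deviation of $b_i$
• Rejection Rule: Reject $H_0$ if $t < -t_{\alpha/2}$ or $t > t_{\alpha/2}$, where $t_{\alpha/2}$ is based on a t distribution with $n - p - 1$ degrees of freedom.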

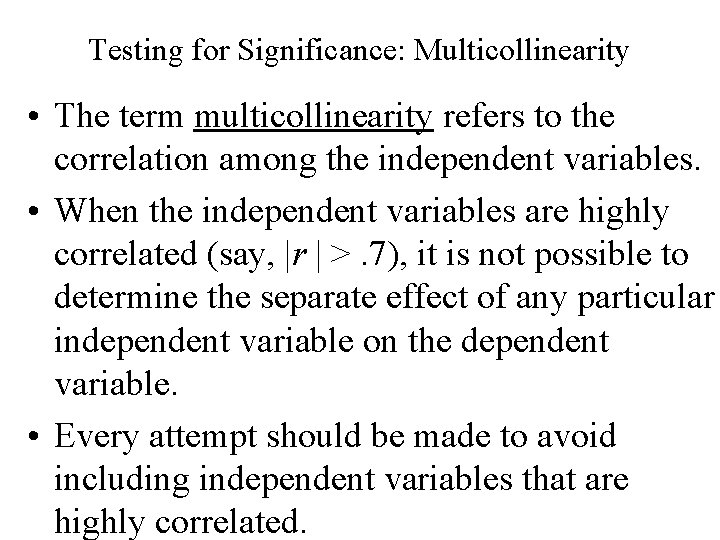

Testing for Significance: Multicollinearity
• The term multicollinearity refers to the correlation among the independent variables.
• When the independent variables are highly correlated (say, $|r| > 0.7$), it is not possible to determine the separate effect of any particular independent variable on the dependent variable.
• Every attempt should be made to avoid including independent variables that are highly correlated.
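
A quick screen for multicollinearity is to compute the sample correlation between each pair of independent variables. The sketch below does this in plain Python for the experience and aptitude-score columns of the programmer salary example used later in these slides. The function name `pearson_r` is illustrative, and the 0.7 cutoff is the rule of thumb quoted above, not a formal test.

```python
import math

# Independent variables from the programmer salary example (20 programmers).
exper = [4, 7, 1, 5, 8, 10, 0, 1, 6, 6, 9, 2, 10, 5, 6, 8, 4, 6, 3, 3]
score = [78, 100, 86, 82, 86, 84, 75, 80, 83, 91,
         88, 73, 75, 81, 74, 87, 79, 94, 70, 89]

def pearson_r(x, y):
    """Sample correlation coefficient between two equal-length lists."""
    n = len(x)
    mean_x, mean_y = sum(x) / n, sum(y) / n
    sxy = sum((a - mean_x) * (b - mean_y) for a, b in zip(x, y))
    sxx = sum((a - mean_x) ** 2 for a in x)
    syy = sum((b - mean_y) ** 2 for b in y)
    return sxy / math.sqrt(sxx * syy)

r = pearson_r(exper, score)
highly_correlated = abs(r) > 0.7  # rule-of-thumb cutoff from the slide
print(f"r(Exper, Score) = {r:.3f}, above 0.7: {highly_correlated}")
```

For these data the correlation is roughly 0.34, well under the cutoff, so keeping both variables in the model is reasonable.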

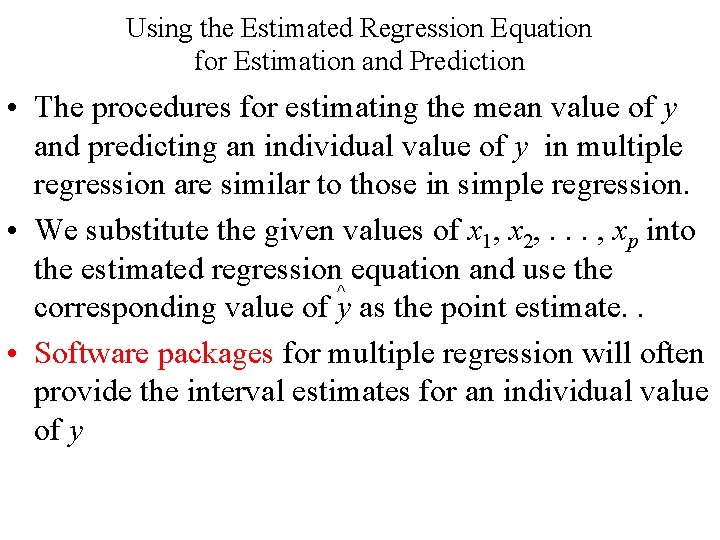

Using the Estimated Regression Equation for Estimation and Prediction
• The procedures for estimating the mean value of $y$ and predicting an individual value of $y$ in multiple regression are similar to those in simple regression.
• We substitute the given values of $x_1, x_2, \dots, x_p$ into the estimated regression equation and use the corresponding value of $\hat{y}$ as the point estimate.
• Software packages for multiple regression will often provide the interval estimates for an individual value of $y$.

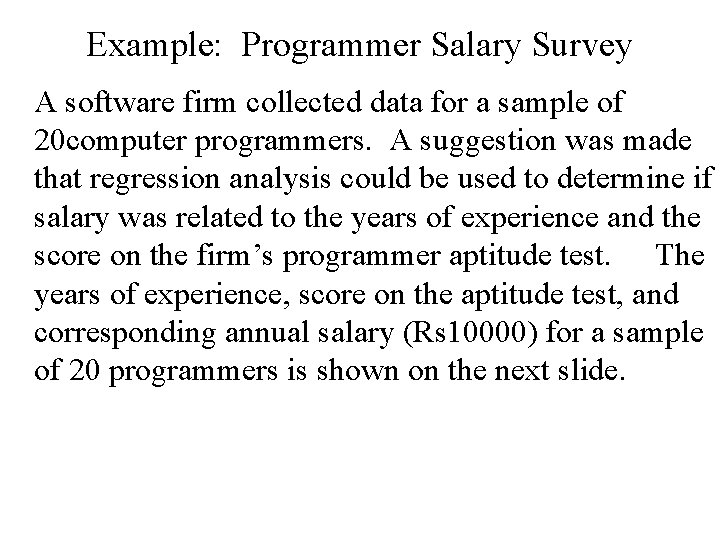

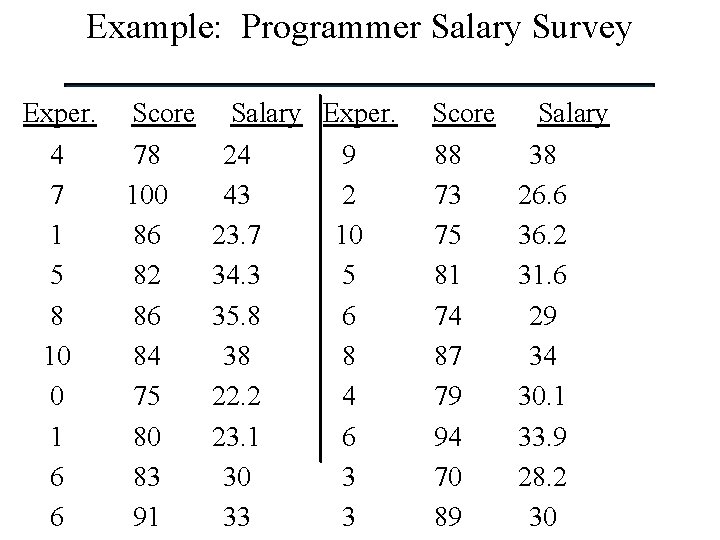

Example: Programmer Salary Survey
A software firm collected data for a sample of 20 computer programmers. A suggestion was made that regression analysis could be used to determine whether salary was related to the years of experience and the score on the firm’s programmer aptitude test. The years of experience, score on the aptitude test, and corresponding annual salary (in Rs 10,000) for the sample of 20 programmers are shown on the next slide.

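Example: Programmer Salary Survey

| Exper. | Score | Salary | Exper. | Score | Salary |
|---|---|---|---|---|---|
| 4 | 78 | 24 | 9 | 88 | 38 |
| 7 | 100 | 43 | 2 | 73 | 26.6 |
| 1 | 86 | 23.7 | 10 | 75 | 36.2 |
| 5 | 82 | 34.3 | 5 | 81 | 31.6 |
| 8 | 86 | 35.8 | 6 | 74 | 29 |
| 10 | 84 | 38 | 8 | 87 | 34 |
| 0 | 75 | 22.2 | 4 | 79 | 30.1 |
| 1 | 80 | 23.1 | 6 | 94 | 33.9 |
| 6 | 83 | 30 | 3 | 70 | 28.2 |
| 6 | 91 | 33 | 3 | 89 | 30 |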

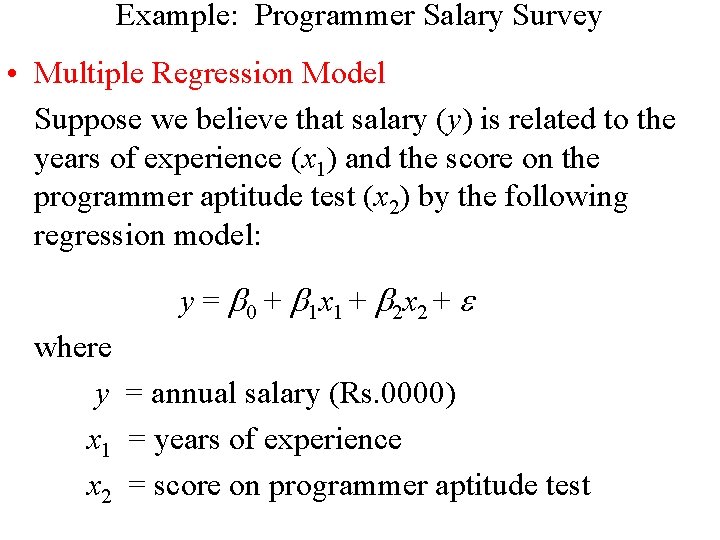

Example: Programmer Salary Survey
• Multiple Regression Model
Suppose we believe that salary ($y$) is related to the years of experience ($x_1$) and the score on the programmer aptitude test ($x_2$) by the following regression model:
$y = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \varepsilon$
where
$y$ = annual salary (in Rs 10,000)
$x_1$ = years of experience
$x_2$ = score on programmer aptitude test

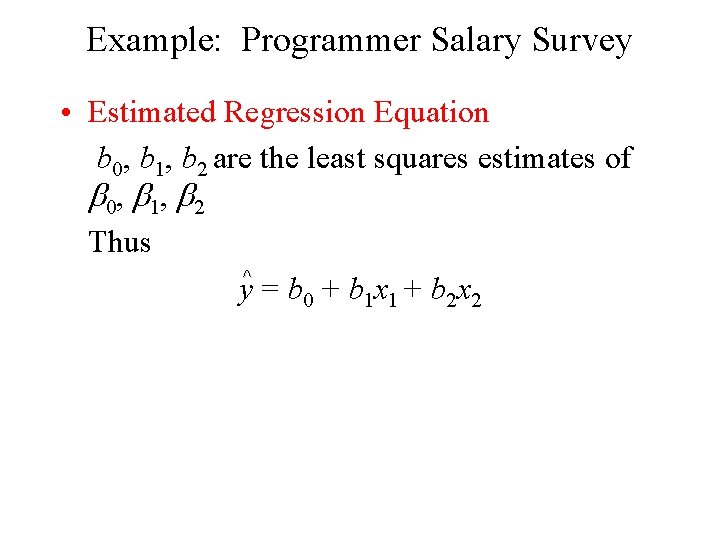

Example: Programmer Salary Survey
• Estimated Regression Equation
$b_0, b_1, b_2$ are the least squares estimates of $\beta_0, \beta_1, \beta_2$. Thus
$\hat{y} = b_0 + b_1 x_1 + b_2 x_2$

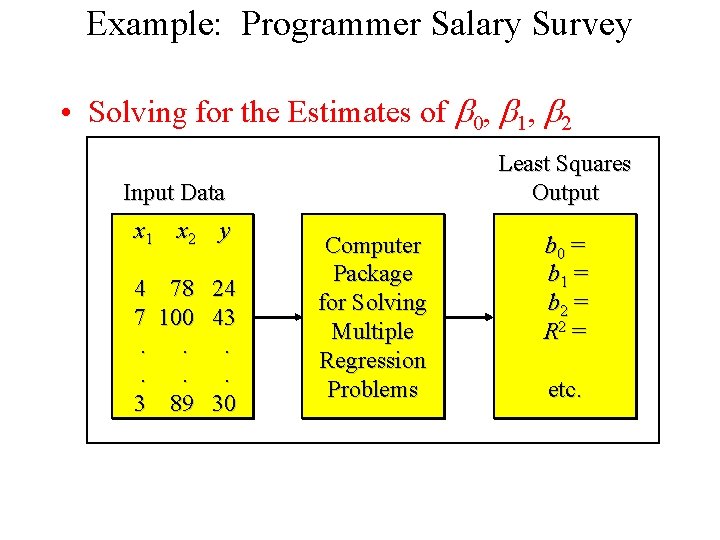

Example: Programmer Salary Survey
• Solving for the Estimates of $\beta_0, \beta_1, \beta_2$
Input Data (columns $x_1$, $x_2$, $y$; rows 4, 78, 24; 7, 100, 43; ...; 3, 89, 30) → Computer Package for Solving Multiple Regression Problems → Least Squares Output ($b_0 =$, $b_1 =$, $b_2 =$, $R^2 =$, etc.)
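
To make the "computer package" step concrete, here is a minimal sketch of one way the least squares estimates can be computed: form the normal equations $(X^T X)\,b = X^T y$ and solve them by Gauss-Jordan elimination. It is plain Python written for illustration, not the routine any particular package uses, and the function names `solve` and `least_squares` are assumptions of this sketch. The data are the 20 observations from the salary table.

```python
# Programmer salary data: years of experience, aptitude score, salary.
exper = [4, 7, 1, 5, 8, 10, 0, 1, 6, 6, 9, 2, 10, 5, 6, 8, 4, 6, 3, 3]
score = [78, 100, 86, 82, 86, 84, 75, 80, 83, 91,
         88, 73, 75, 81, 74, 87, 79, 94, 70, 89]
salary = [24, 43, 23.7, 34.3, 35.8, 38, 22.2, 23.1, 30, 33,
          38, 26.6, 36.2, 31.6, 29, 34, 30.1, 33.9, 28.2, 30]

def solve(a, b):
    """Solve the square system a x = b by Gauss-Jordan elimination."""
    n = len(b)
    m = [row[:] + [rhs] for row, rhs in zip(a, b)]  # augmented matrix
    for col in range(n):
        # Partial pivoting: bring the largest entry in this column to the diagonal.
        pivot = max(range(col, n), key=lambda r: abs(m[r][col]))
        m[col], m[pivot] = m[pivot], m[col]
        for r in range(n):
            if r != col:
                factor = m[r][col] / m[col][col]
                m[r] = [v - factor * p for v, p in zip(m[r], m[col])]
    return [m[i][n] / m[i][i] for i in range(n)]

def least_squares(columns, y):
    """Return [b0, b1, ..., bp] minimising the sum of squared residuals."""
    rows = [[1.0] + list(obs) for obs in zip(*columns)]  # leading 1 = intercept
    k = len(rows[0])
    xtx = [[sum(r[i] * r[j] for r in rows) for j in range(k)] for i in range(k)]
    xty = [sum(r[i] * yi for r, yi in zip(rows, y)) for i in range(k)]
    return solve(xtx, xty)

b0, b1, b2 = least_squares([exper, score], salary)
print(f"b0 = {b0:.3f}, b1 = {b1:.4f}, b2 = {b2:.5f}")
```

The estimates should agree, to rounding, with the coefficients reported in the computer output for this example.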

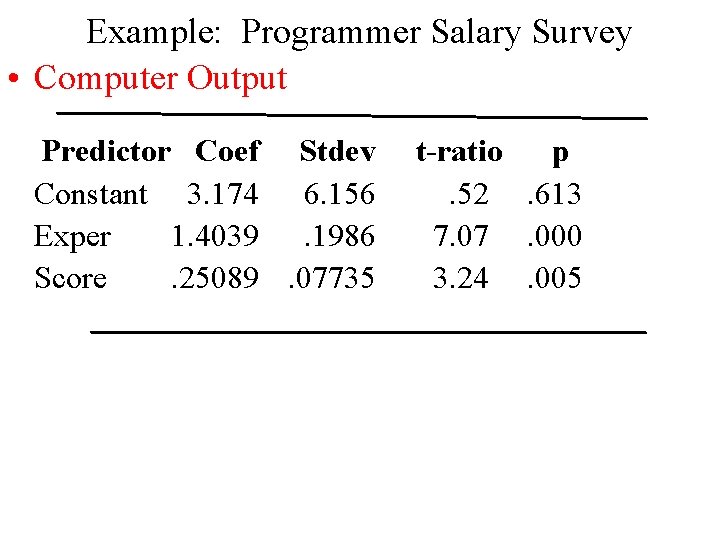

Example: Programmer Salary Survey
• Computer Output

| Predictor | Coef | Stdev | t-ratio | p |
|---|---|---|---|---|
| Constant | 3.174 | 6.156 | 0.52 | .613 |
| Exper | 1.4039 | 0.1986 | 7.07 | .000 |
| Score | 0.25089 | 0.07735 | 3.24 | .005 |
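
Each t-ratio in the output is the coefficient divided by its standard deviation, and the t test compares its absolute value with a critical value. The sketch below reproduces that arithmetic. The critical value 2.110 ($t_{.025}$ with $n - p - 1 = 17$ degrees of freedom) is taken from a standard t table and does not appear on the slides; the variable names are illustrative.

```python
# (coefficient, standard deviation) pairs from the computer output.
output = {
    "Constant": (3.174, 6.156),
    "Exper": (1.4039, 0.1986),
    "Score": (0.25089, 0.07735),
}

# Assumption: two-tailed test at alpha = .05 with 17 d.f. (standard t table).
T_CRIT = 2.110

# t = b_i / s_{b_i} for each predictor.
t_ratios = {name: coef / stdev for name, (coef, stdev) in output.items()}
significant = {name: abs(t) > T_CRIT for name, t in t_ratios.items()}

for name, t in t_ratios.items():
    print(f"{name:<8} t = {t:5.2f}  reject H0: {significant[name]}")
```

Experience and score both exceed the critical value, while the constant does not, which matches the p-values in the output.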

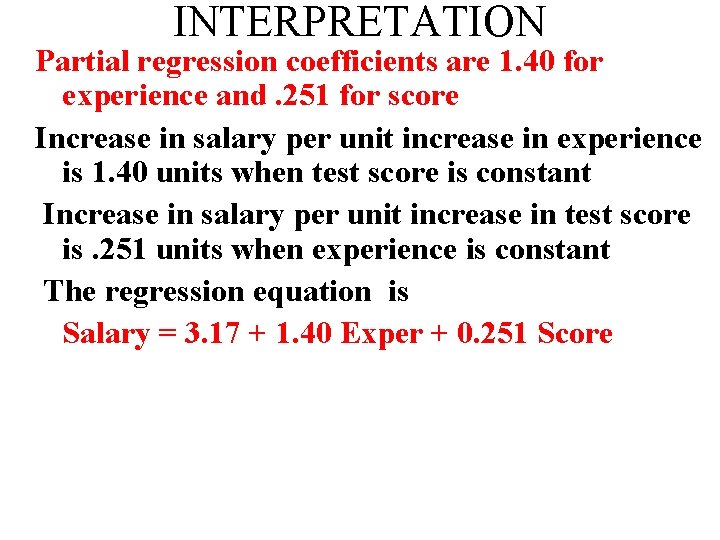

INTERPRETATION
• The partial regression coefficients are 1.40 for experience and 0.251 for score.
• The increase in salary per unit increase in experience is 1.40 units when the test score is held constant.
• The increase in salary per unit increase in test score is 0.251 units when experience is held constant.
• The regression equation is Salary = 3.17 + 1.40 Exper + 0.251 Score

• The p-value for Experience is .000, which is less than .05. Hence experience is a significant variable in explaining salary.
• The p-value for Score is .005, which is also less than .05. Hence score is also a significant variable in explaining salary.
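
As an illustration of using the estimated equation for a point estimate, the sketch below substitutes values of experience and score into the fitted equation. The coefficients are those in the computer output; the chosen programmer (4 years of experience, score of 78) is the first row of the data table, picked only as an example, and `predict_salary` is a hypothetical helper name.

```python
# Least squares estimates from the computer output.
b0, b1, b2 = 3.174, 1.4039, 0.25089

def predict_salary(exper, score):
    """Point estimate of salary, in the same units as the salary column."""
    return b0 + b1 * exper + b2 * score

y_hat = predict_salary(4, 78)   # first programmer in the data table
residual = 24 - y_hat           # observed salary minus point estimate
print(f"y_hat = {y_hat:.3f}, residual = {residual:.3f}")
```

The same value of $\hat{y}$ serves as the point estimate both of the mean salary for all programmers with these characteristics and of the salary of one such programmer; only the interval estimates differ.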

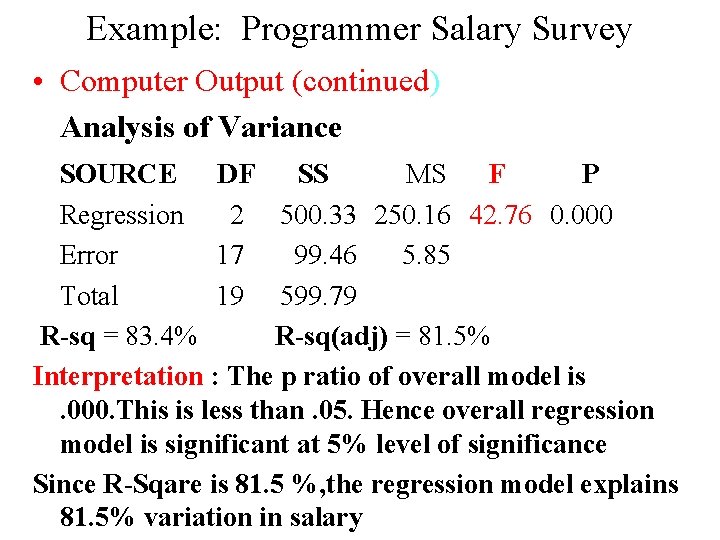

Example: Programmer Salary Survey
• Computer Output (continued)
Analysis of Variance

| SOURCE | DF | SS | MS | F | P |
|---|---|---|---|---|---|
| Regression | 2 | 500.33 | 250.16 | 42.76 | 0.000 |
| Error | 17 | 99.46 | 5.85 | | |
| Total | 19 | 599.79 | | | |

R-sq = 83.4%  R-sq(adj) = 81.5%
Interpretation: The p-value of the overall model is .000, which is less than .05. Hence the overall regression model is significant at the 5% level of significance. Since R-sq is 83.4%, the regression model explains 83.4% of the variation in salary (81.5% after adjusting for the number of independent variables and the sample size).
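
The summary figures in this output all follow from the sums of squares and the degrees of freedom. The sketch below recomputes them from SSR, SSE, $n$ and $p$ as a check on the arithmetic; `anova_summary` is an illustrative name, not a function from any statistics package.

```python
def anova_summary(ssr, sse, n, p):
    """Derive R-sq, adjusted R-sq, mean squares and F from an ANOVA table."""
    sst = ssr + sse                               # SST = SSR + SSE
    r_sq = ssr / sst
    r_sq_adj = 1 - (1 - r_sq) * (n - 1) / (n - p - 1)
    msr = ssr / p                                 # regression mean square
    mse = sse / (n - p - 1)                       # error mean square
    return {"R-sq": r_sq, "R-sq(adj)": r_sq_adj,
            "MSR": msr, "MSE": mse, "F": msr / mse}

# Values from the ANOVA table: 20 programmers, 2 independent variables.
summary = anova_summary(ssr=500.33, sse=99.46, n=20, p=2)
for name, value in summary.items():
    print(f"{name:<10} {value:.4f}")
```

Rounded, these give R-sq = 83.4%, R-sq(adj) = 81.5% and F close to 42.76, in line with the output.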

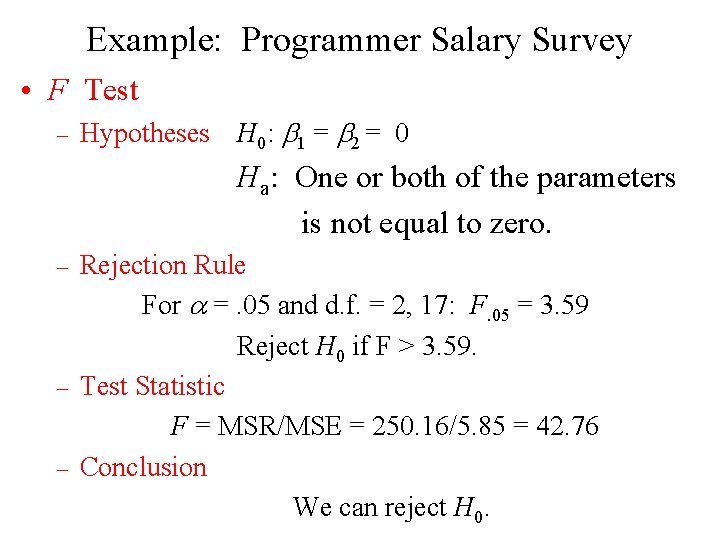

Example: Programmer Salary Survey
• F Test
– Hypotheses: $H_0: \beta_1 = \beta_2 = 0$; $H_a$: One or both of the parameters is not equal to zero.
– Rejection Rule: For $\alpha = .05$ and d.f. = 2, 17: $F_{.05} = 3.59$. Reject $H_0$ if $F > 3.59$.
– Test Statistic: $F = \mathrm{MSR}/\mathrm{MSE} = 250.16/5.85 = 42.76$
– Conclusion: We can reject $H_0$.

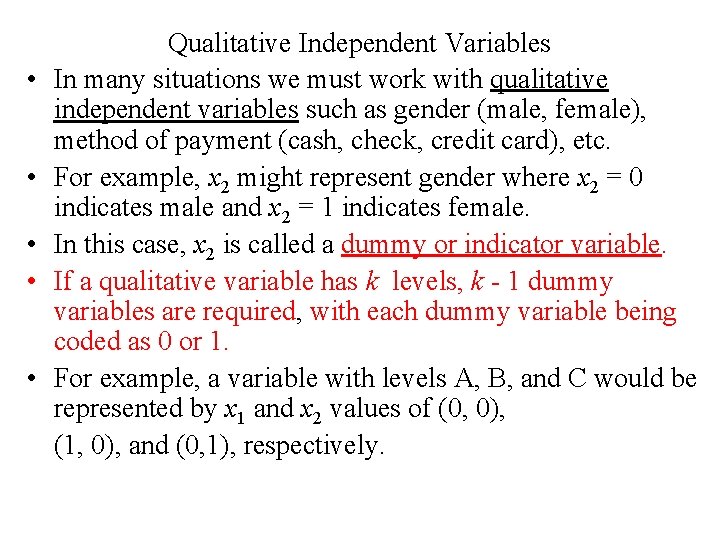

Qualitative Independent Variables
• In many situations we must work with qualitative independent variables such as gender (male, female), method of payment (cash, check, credit card), etc.
• For example, $x_2$ might represent gender, where $x_2 = 0$ indicates male and $x_2 = 1$ indicates female. In this case, $x_2$ is called a dummy or indicator variable.
• If a qualitative variable has $k$ levels, $k - 1$ dummy variables are required, with each dummy variable being coded as 0 or 1. For example, a variable with levels A, B, and C would be represented by $x_1$ and $x_2$ values of (0, 0), (1, 0), and (0, 1), respectively.
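
The $k - 1$ coding rule can be sketched in a few lines of Python. The helper `dummy_code` below is hypothetical, written only to illustrate the rule: the chosen reference level gets all zeros, and every other level gets a single 1 in its own position.

```python
def dummy_code(levels, reference):
    """Map each level to k - 1 indicator values; the reference level is all zeros."""
    others = [lvl for lvl in levels if lvl != reference]
    return {lvl: tuple(int(lvl == other) for other in others) for lvl in levels}

# Three levels need two dummy variables (x1, x2), with A as the reference level.
codes = dummy_code(["A", "B", "C"], reference="A")
for level, (x1, x2) in codes.items():
    print(level, x1, x2)
```

With A as the reference level this reproduces the (0, 0), (1, 0), (0, 1) pattern described above. Using $k$ dummies instead of $k - 1$ would make the columns sum to the intercept column, so the coefficients could not be estimated separately.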

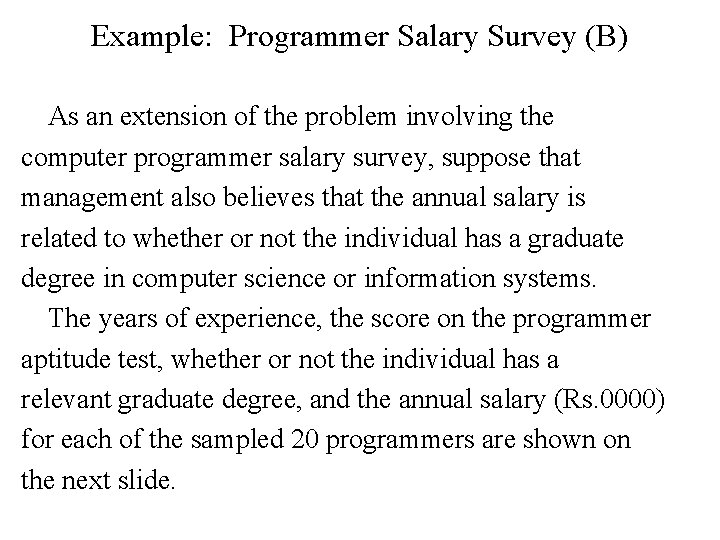

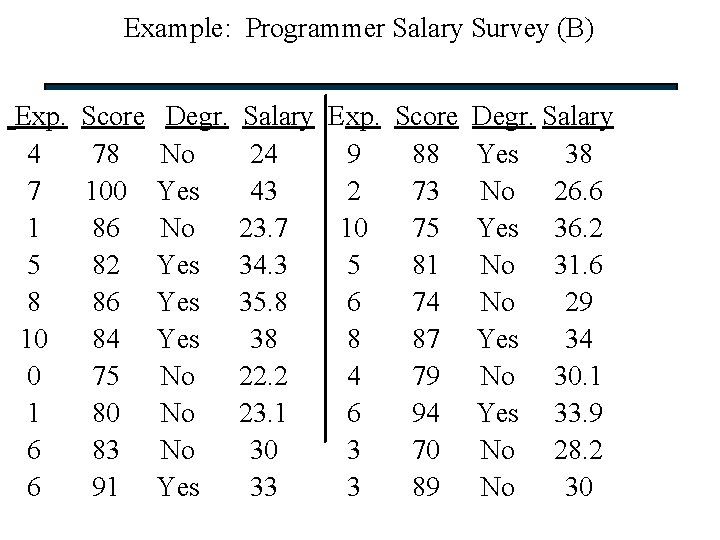

Example: Programmer Salary Survey (B)
As an extension of the problem involving the computer programmer salary survey, suppose that management also believes that the annual salary is related to whether or not the individual has a graduate degree in computer science or information systems. The years of experience, the score on the programmer aptitude test, whether or not the individual has a relevant graduate degree, and the annual salary (in Rs 10,000) for each of the 20 sampled programmers are shown on the next slide.

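Example: Programmer Salary Survey (B)

| Exp. | Score | Degr. | Salary | Exp. | Score | Degr. | Salary |
|---|---|---|---|---|---|---|---|
| 4 | 78 | | 24 | 9 | 88 | Yes | 38 |
| 7 | 100 | | 43 | 2 | 73 | No | 26.6 |
| 1 | 86 | | 23.7 | 10 | 75 | Yes | 36.2 |
| 5 | 82 | | 34.3 | 5 | 81 | No | 31.6 |
| 8 | 86 | | 35.8 | 6 | 74 | No | 29 |
| 10 | 84 | | 38 | 8 | 87 | Yes | 34 |
| 0 | 75 | | 22.2 | 4 | 79 | No | 30.1 |
| 1 | 80 | | 23.1 | 6 | 94 | Yes | 33.9 |
| 6 | 83 | | 30 | 3 | 70 | No | 28.2 |
| 6 | 91 | | 33 | 3 | 89 | No | 30 |

Degr. entries for the left-hand block survive only in part (seven of ten, in order): No, Yes, Yes, No, No, No, Yes.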

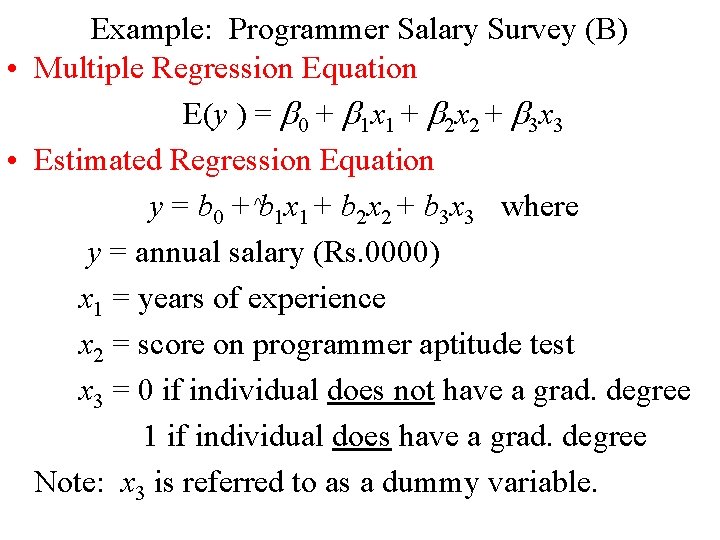

Example: Programmer Salary Survey (B)
• Multiple Regression Equation: $E(y) = \beta_0 + \beta_1 x_1 + \beta_2 x_2 + \beta_3 x_3$
• Estimated Regression Equation: $\hat{y} = b_0 + b_1 x_1 + b_2 x_2 + b_3 x_3$
where
$y$ = annual salary (in Rs 10,000)
$x_1$ = years of experience
$x_2$ = score on programmer aptitude test
$x_3$ = 0 if the individual does not have a grad. degree, 1 if the individual does have a grad. degree
Note: $x_3$ is referred to as a dummy variable.

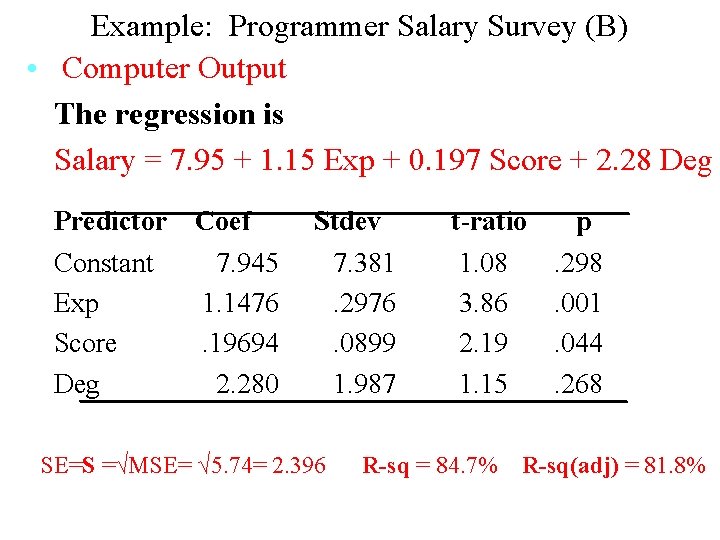

Example: Programmer Salary Survey (B)
• Computer Output
The regression is Salary = 7.95 + 1.15 Exp + 0.197 Score + 2.28 Deg

| Predictor | Coef | Stdev | t-ratio | p |
|---|---|---|---|---|
| Constant | 7.945 | 7.381 | 1.08 | .298 |
| Exp | 1.1476 | 0.2976 | 3.86 | .001 |
| Score | 0.19694 | 0.0899 | 2.19 | .044 |
| Deg | 2.280 | 1.987 | 1.15 | .268 |

S = √MSE = √5.74 = 2.396  R-sq = 84.7%  R-sq(adj) = 81.8%

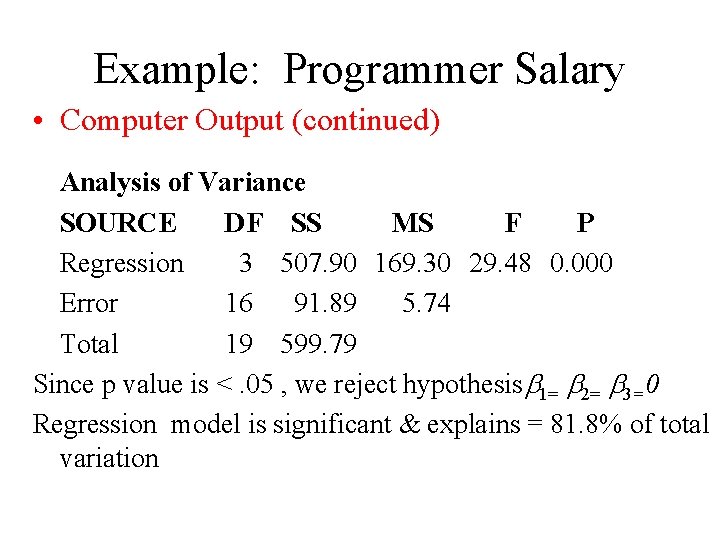

Example: Programmer Salary Survey (B)
• Computer Output (continued)
Analysis of Variance

| SOURCE | DF | SS | MS | F | P |
|---|---|---|---|---|---|
| Regression | 3 | 507.90 | 169.30 | 29.48 | 0.000 |
| Error | 16 | 91.89 | 5.74 | | |
| Total | 19 | 599.79 | | | |

Since the p-value is < .05, we reject the hypothesis $\beta_1 = \beta_2 = \beta_3 = 0$. The regression model is significant and explains 84.7% of the total variation (81.8% after adjustment).
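
One way to read the dummy variable coefficient is to write the estimated equation separately for each value of $x_3$. The worked step below uses only the coefficients reported in the computer output for example (B).

```latex
\begin{align*}
x_3 = 0 \text{ (no graduate degree)}:\quad
  \hat{y} &= 7.945 + 1.1476\,x_1 + 0.19694\,x_2 \\
x_3 = 1 \text{ (graduate degree)}:\quad
  \hat{y} &= (7.945 + 2.280) + 1.1476\,x_1 + 0.19694\,x_2 \\
          &= 10.225 + 1.1476\,x_1 + 0.19694\,x_2
\end{align*}
```

The two equations are parallel: the slopes for experience and score are the same, and the intercept shifts by $b_3 = 2.280$ salary units for programmers with a graduate degree. Because the p-value for Deg is .268, which exceeds .05, this shift is not statistically significant at the 5% level in this sample.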

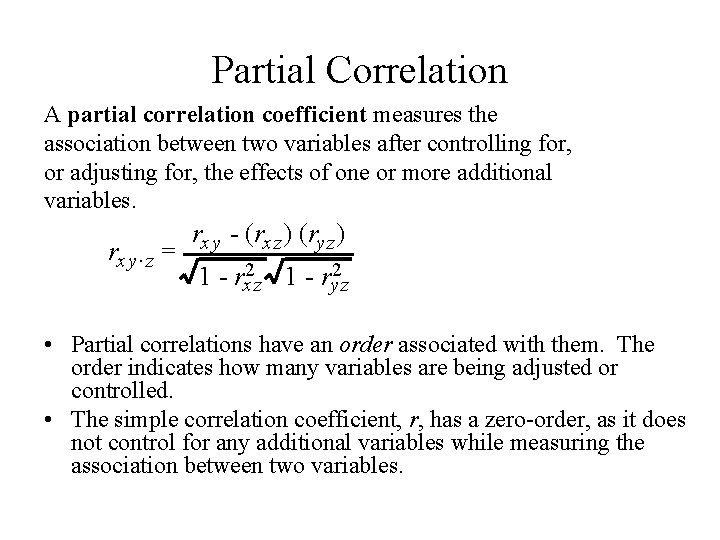

Partial Correlation
A partial correlation coefficient measures the association between two variables after controlling for, or adjusting for, the effects of one or more additional variables.
$r_{xy.z} = \dfrac{r_{xy} - r_{xz}\, r_{yz}}{\sqrt{1 - r_{xz}^2}\,\sqrt{1 - r_{yz}^2}}$
• Partial correlations have an order associated with them. The order indicates how many variables are being adjusted or controlled.
• The simple correlation coefficient, $r$, has a zero order, as it does not control for any additional variables while measuring the association between two variables.
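
The first-order partial correlation formula can be evaluated directly from three zero-order correlations. The sketch below does so; the input values 0.5, 0.3 and 0.4 are hypothetical, chosen only to illustrate the arithmetic, and `partial_corr` is an illustrative name.

```python
import math

def partial_corr(r_xy, r_xz, r_yz):
    """First-order partial correlation of x and y, controlling for z."""
    numerator = r_xy - r_xz * r_yz
    denominator = math.sqrt(1 - r_xz ** 2) * math.sqrt(1 - r_yz ** 2)
    return numerator / denominator

# Hypothetical zero-order correlations, for illustration only.
r_xy_z = partial_corr(r_xy=0.5, r_xz=0.3, r_yz=0.4)
print(f"r_xy.z = {r_xy_z:.3f}")

# If z is uncorrelated with both x and y, controlling for it changes nothing.
unchanged = partial_corr(r_xy=0.5, r_xz=0.0, r_yz=0.0)
```

With these inputs the partial correlation comes out near 0.43, somewhat below the zero-order value of 0.5, because part of the association between $x$ and $y$ is shared with $z$.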

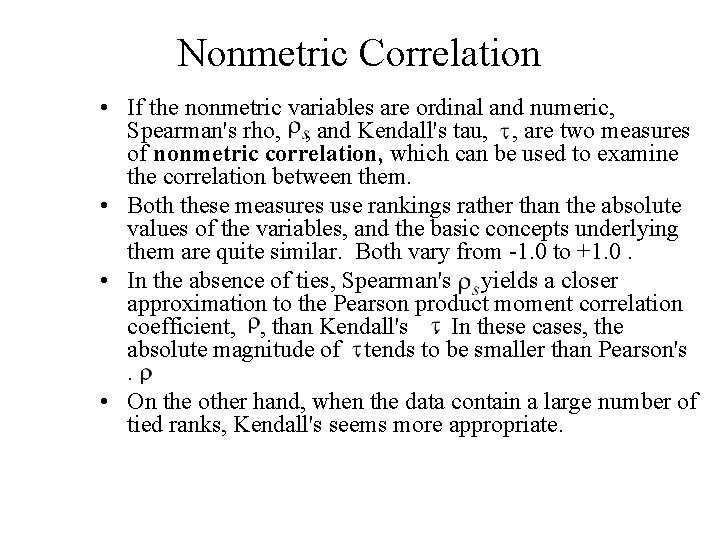

Nonmetric Correlation
• If the nonmetric variables are ordinal and numeric, Spearman's rho, $\rho_s$, and Kendall's tau, $\tau$, are two measures of nonmetric correlation that can be used to examine the correlation between them.
• Both these measures use rankings rather than the absolute values of the variables, and the basic concepts underlying them are quite similar. Both vary from -1.0 to +1.0.
• In the absence of ties, Spearman's $\rho_s$ yields a closer approximation to the Pearson product moment correlation coefficient, $\rho$, than Kendall's $\tau$. In these cases, the absolute magnitude of $\tau$ tends to be smaller than Pearson's $\rho$.
• On the other hand, when the data contain a large number of tied ranks, Kendall's $\tau$ seems more appropriate.
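
Both rank-based measures can be sketched in plain Python for data without ties. The code below uses the usual no-ties formulas, $\rho_s = 1 - 6\sum d_i^2 / (n(n^2 - 1))$ and $\tau = (C - D) / (n(n - 1)/2)$, where $d_i$ is the difference in ranks and $C$, $D$ count concordant and discordant pairs. These formulas and the small data set are assumptions of this sketch, not taken from the slides.

```python
from itertools import combinations

def ranks(values):
    """Rank values from 1 (smallest) upward; assumes there are no ties."""
    order = sorted(values)
    return [order.index(v) + 1 for v in values]

def spearman_rho(x, y):
    """Spearman's rho from squared rank differences (no ties)."""
    n = len(x)
    d_squared = sum((rx - ry) ** 2 for rx, ry in zip(ranks(x), ranks(y)))
    return 1 - 6 * d_squared / (n * (n ** 2 - 1))

def kendall_tau(x, y):
    """Kendall's tau from concordant and discordant pairs (no ties)."""
    concordant = discordant = 0
    for (x1, y1), (x2, y2) in combinations(zip(x, y), 2):
        direction = (x1 - x2) * (y1 - y2)
        if direction > 0:
            concordant += 1
        elif direction < 0:
            discordant += 1
    n = len(x)
    return (concordant - discordant) / (n * (n - 1) / 2)

# Hypothetical ordinal scores for five respondents.
x = [1, 2, 3, 4, 5]
y = [2, 1, 4, 3, 5]
rho, tau = spearman_rho(x, y), kendall_tau(x, y)
print(f"rho = {rho:.2f}, tau = {tau:.2f}")
```

For this small example $\tau$ is smaller in absolute value than $\rho_s$, consistent with the tendency noted above.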

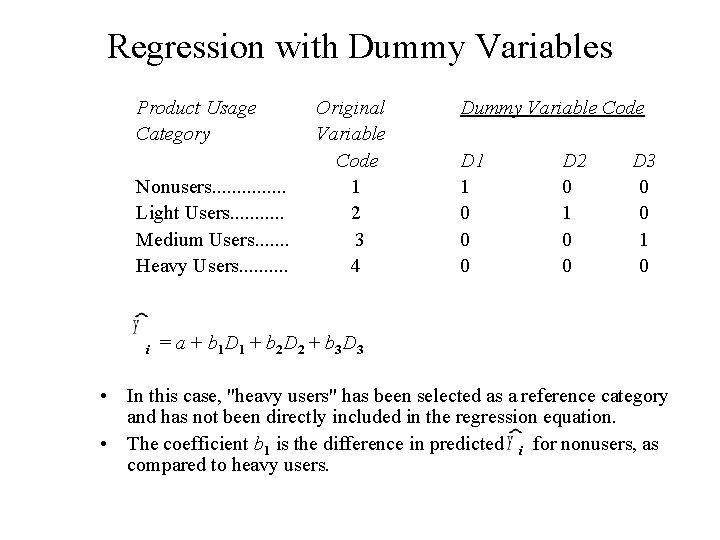

Regression with Dummy Variables

| Product Usage Category | Original Variable Code | Dummy Variable Code D1 | D2 | D3 |
|---|---|---|---|---|
| Nonusers | 1 | 1 | 0 | 0 |
| Light Users | 2 | 0 | 1 | 0 |
| Medium Users | 3 | 0 | 0 | 1 |
| Heavy Users | 4 | 0 | 0 | 0 |

$\hat{Y}_i = a + b_1 D_1 + b_2 D_2 + b_3 D_3$
• In this case, "heavy users" has been selected as the reference category and has not been directly included in the regression equation.
• The coefficient $b_1$ is the difference in predicted $\hat{Y}_i$ for nonusers, as compared to heavy users.

- Slides: 30