Introduction to CUDA 1 of n Patrick Cozzi

Introduction to CUDA (1 of n*)
Patrick Cozzi
University of Pennsylvania
CIS 565 - Spring 2011

* Where n is 2 or 3

Administrivia
- Paper presentation due Wednesday, 02/23
  - Topics first come, first served
- Assignment 4 handed out today
  - Due Friday, 03/04 at 11:59 pm

Agenda
- GPU architecture review
- CUDA
  - First of two or three dedicated classes

Acknowledgements
- Many slides are from
  - Kayvon Fatahalian's From Shader Code to a Teraflop: How GPU Shader Cores Work: http://bps10.idav.ucdavis.edu/talks/03-fatahalian_gpuArchTeraflop_BPS_SIGGRAPH2010.pdf
  - David Kirk and Wen-mei Hwu’s UIUC course: http://courses.engr.illinois.edu/ece498/al/

GPU Architecture Review
- GPUs are:
  - Parallel
  - Multithreaded
  - Many-core
- GPUs have:
  - Tremendous computational horsepower
  - High memory bandwidth

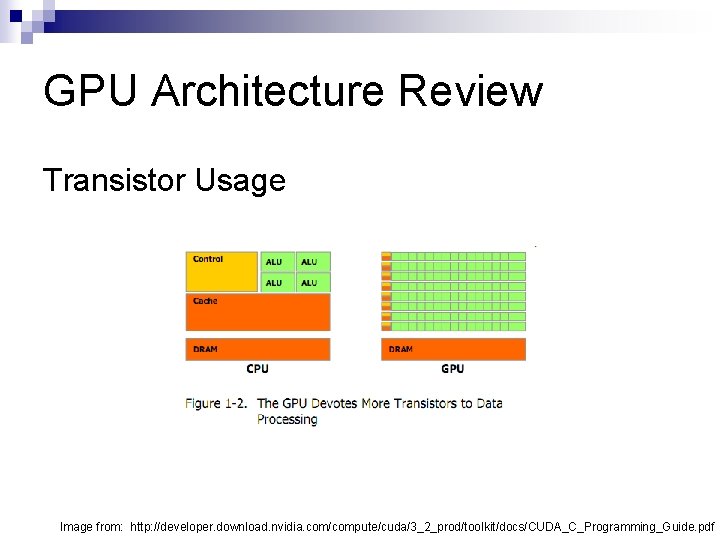

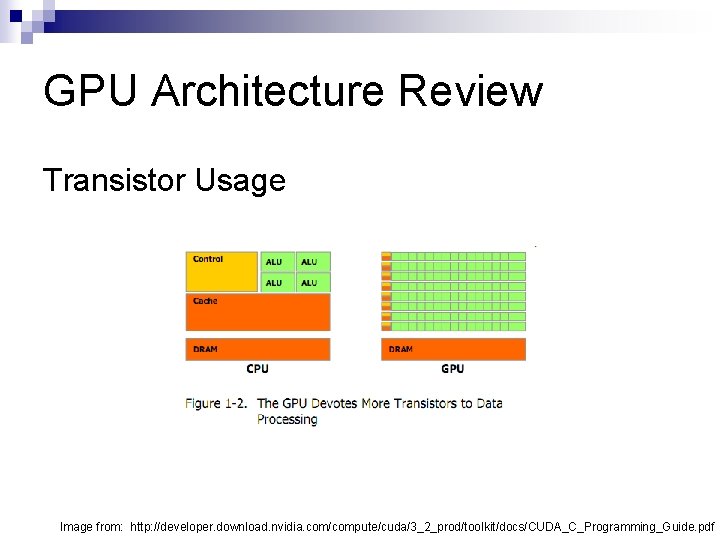

GPU Architecture Review
- GPUs are specialized for
  - Compute-intensive, highly parallel computation
  - Graphics!
- Transistors are devoted to:
  - Processing
  - Not:
    - Data caching
    - Flow control

GPU Architecture Review: Transistor Usage

Image from: http://developer.download.nvidia.com/compute/cuda/3_2_prod/toolkit/docs/CUDA_C_Programming_Guide.pdf

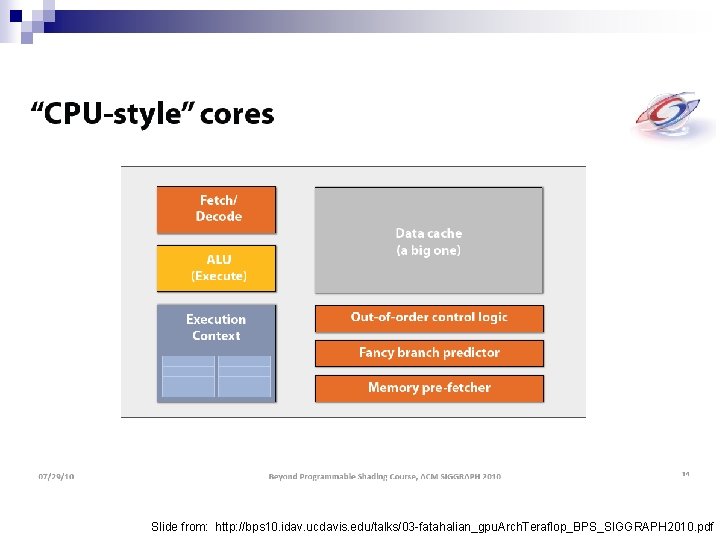

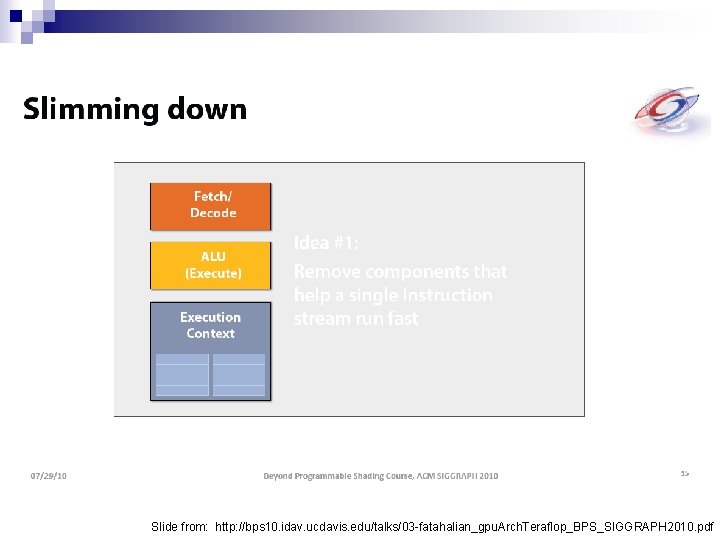

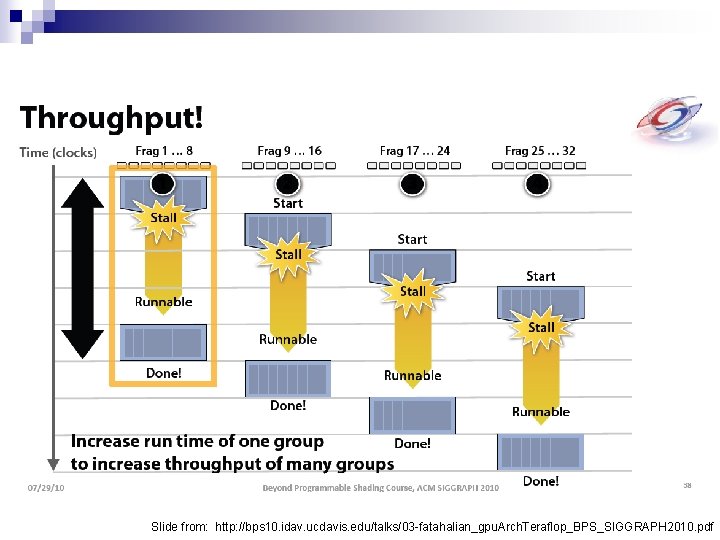

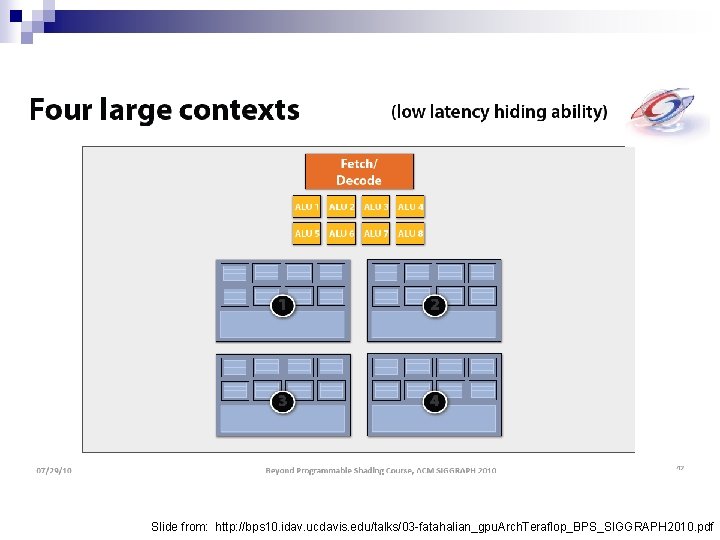

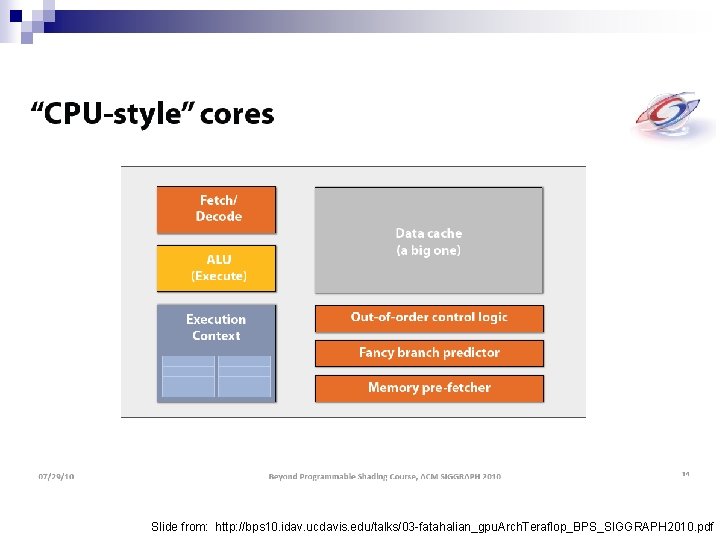

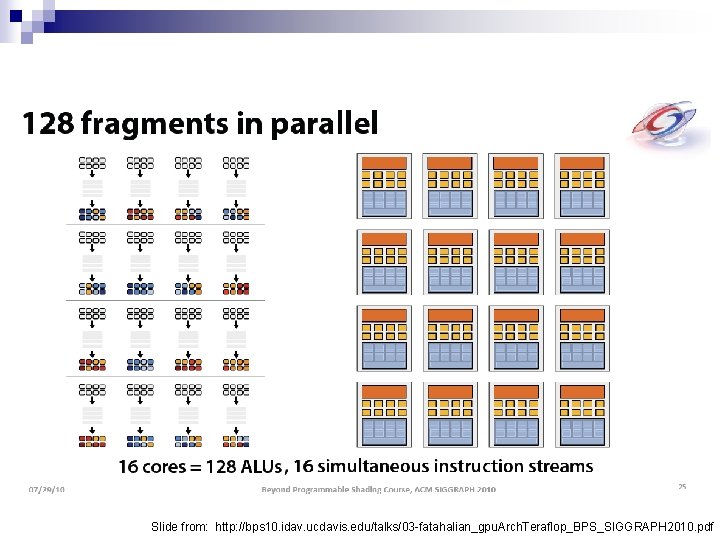

Slide from: http://bps10.idav.ucdavis.edu/talks/03-fatahalian_gpuArchTeraflop_BPS_SIGGRAPH2010.pdf

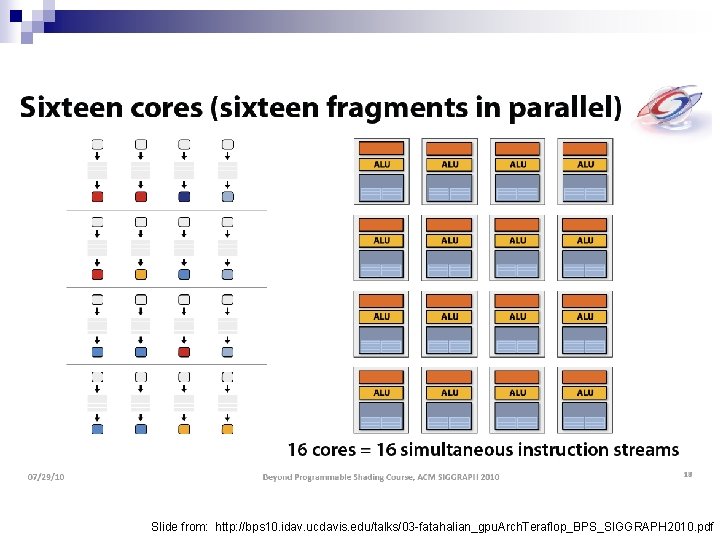

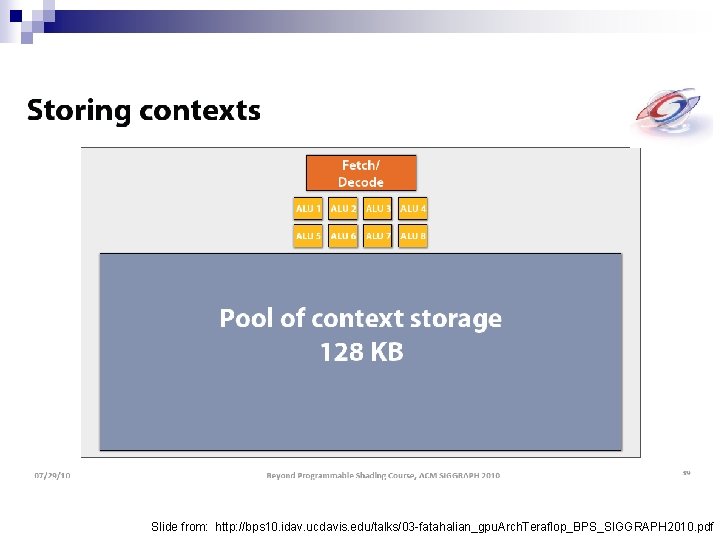

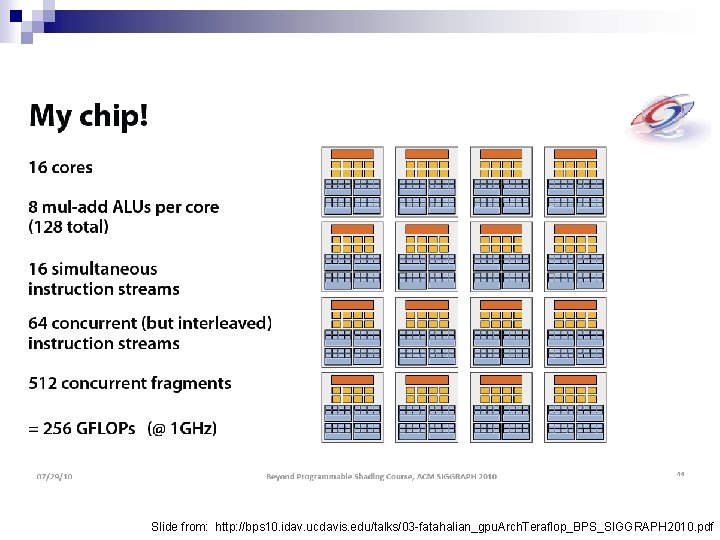

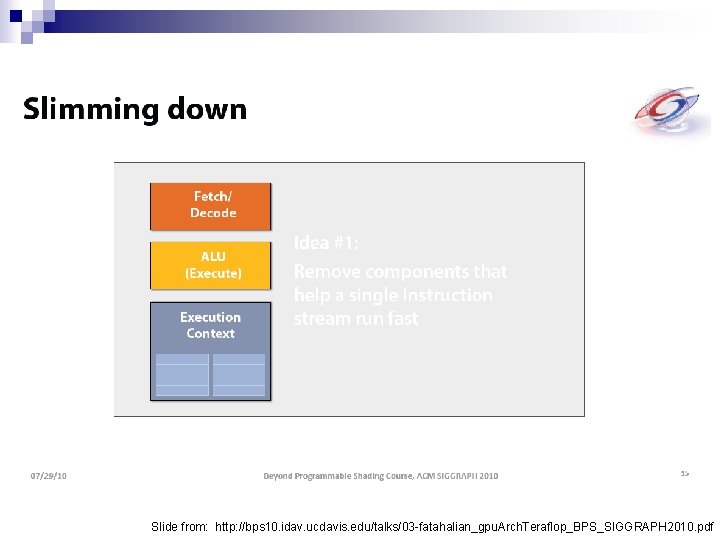

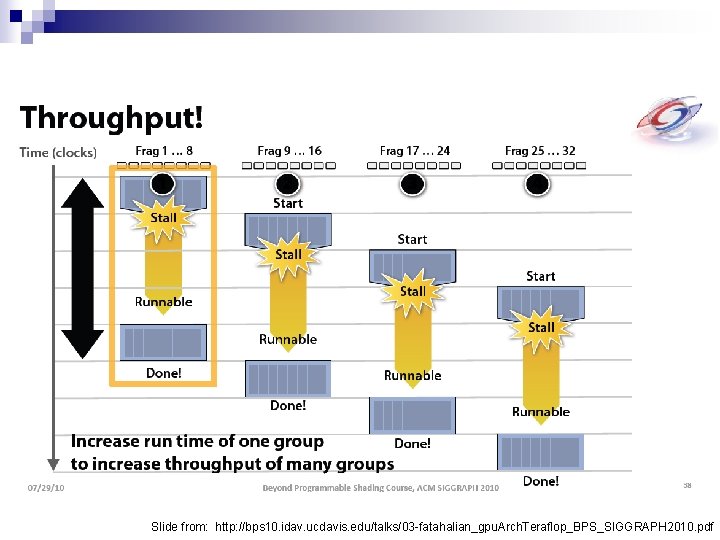

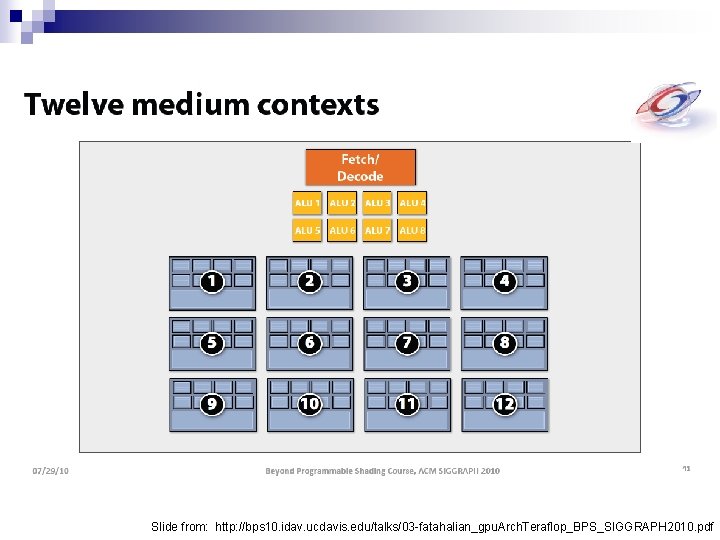

Slide from: http://bps10.idav.ucdavis.edu/talks/03-fatahalian_gpuArchTeraflop_BPS_SIGGRAPH2010.pdf

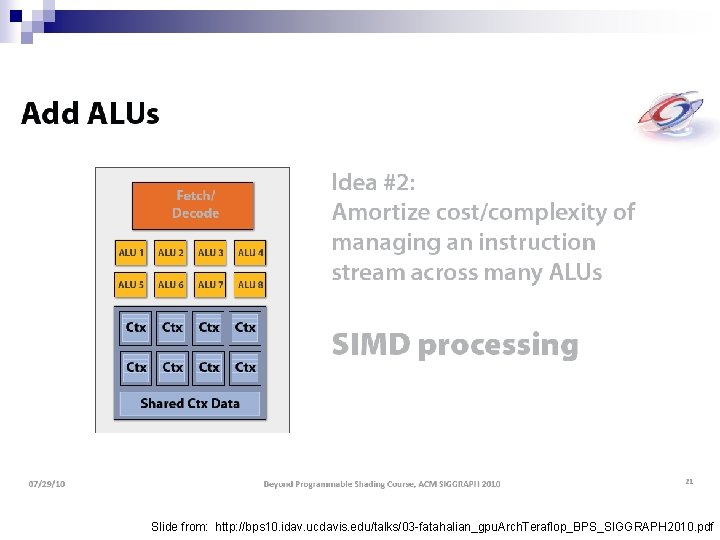

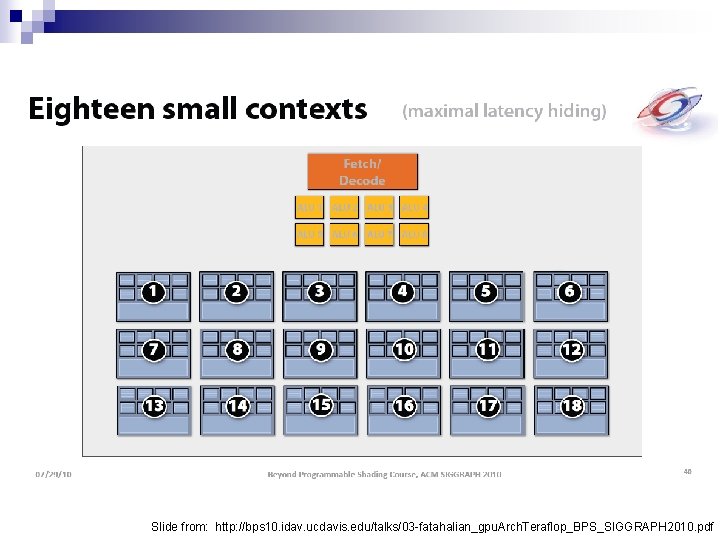

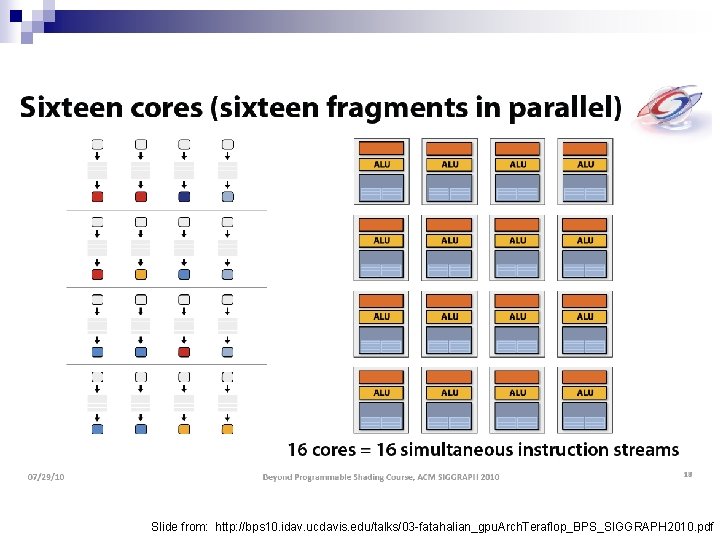

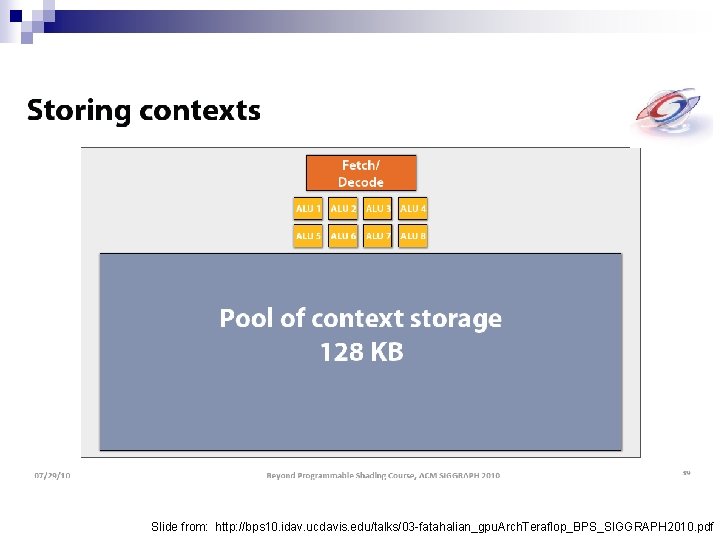

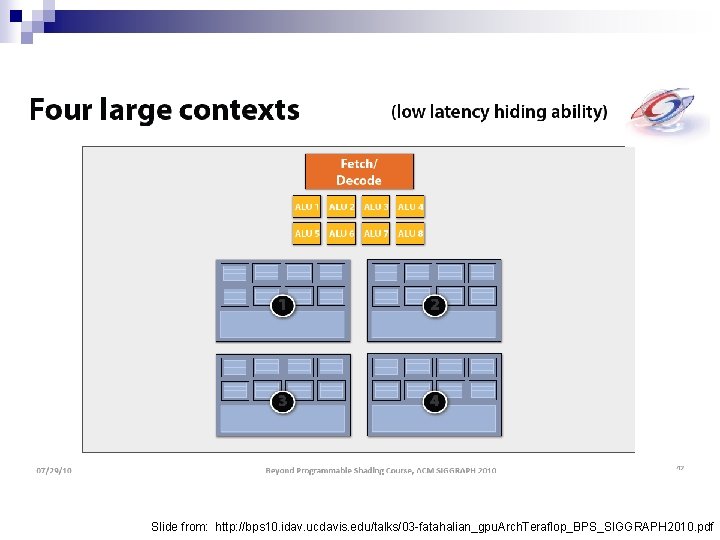

Slide from: http://bps10.idav.ucdavis.edu/talks/03-fatahalian_gpuArchTeraflop_BPS_SIGGRAPH2010.pdf

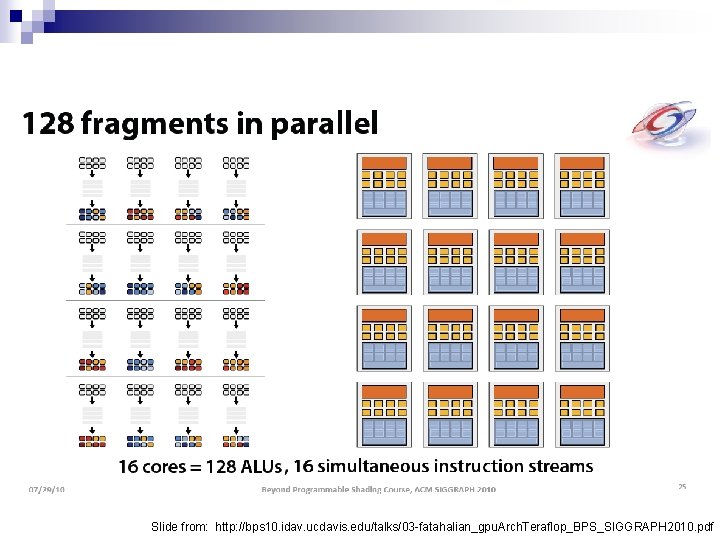

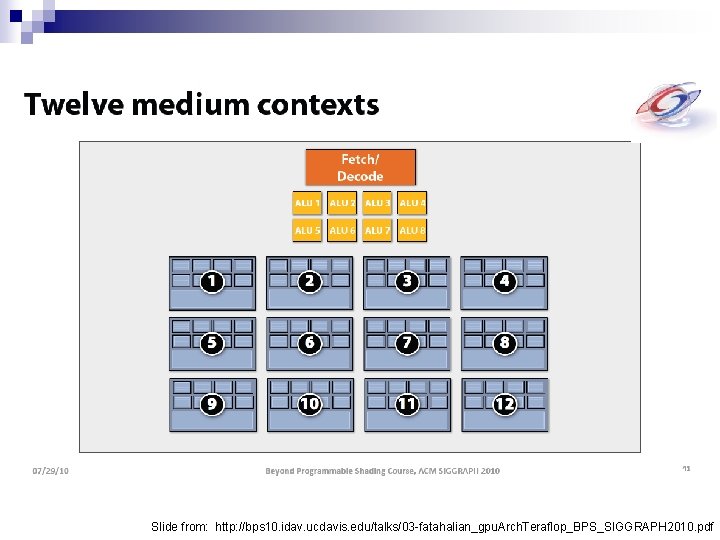

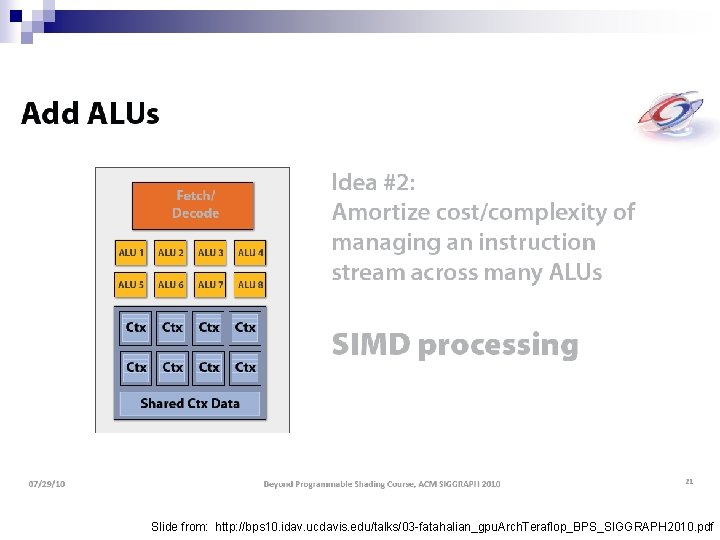

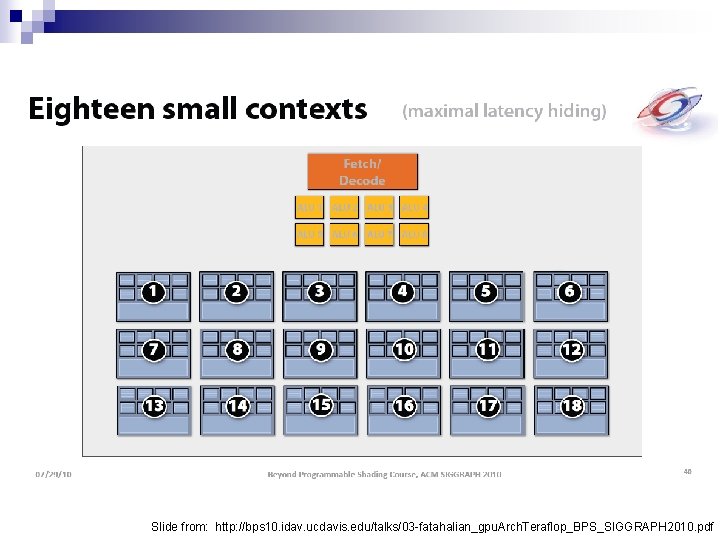

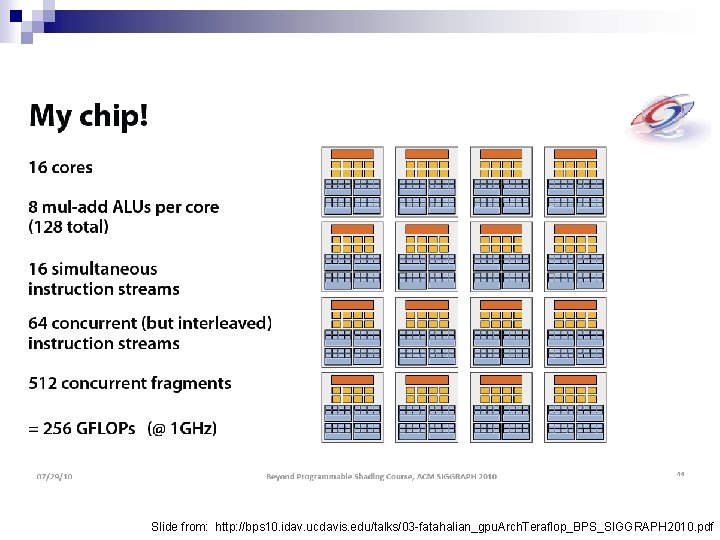

Slide from: http://bps10.idav.ucdavis.edu/talks/03-fatahalian_gpuArchTeraflop_BPS_SIGGRAPH2010.pdf

Let’s program this thing!

GPU Computing History
- 2001/2002 – researchers see the GPU as a data-parallel coprocessor
  - The GPGPU field is born
- 2007 – NVIDIA releases CUDA
  - CUDA – Compute Unified Device Architecture
  - GPGPU shifts to GPU Computing
- 2008 – Khronos releases the OpenCL specification

CUDA Abstractions
- A hierarchy of thread groups
- Shared memories
- Barrier synchronization

CUDA Terminology
- Host – typically the CPU
  - Code written in ANSI C
- Device – typically the GPU (data-parallel)
  - Code written in extended ANSI C
- Host and device have separate memories
- CUDA Program
  - Contains both host and device code

CUDA Terminology
- Kernel – data-parallel function
  - Invoking a kernel creates lightweight threads on the device
    - Threads are generated and scheduled with hardware
- Does a kernel remind you of a shader in OpenGL?

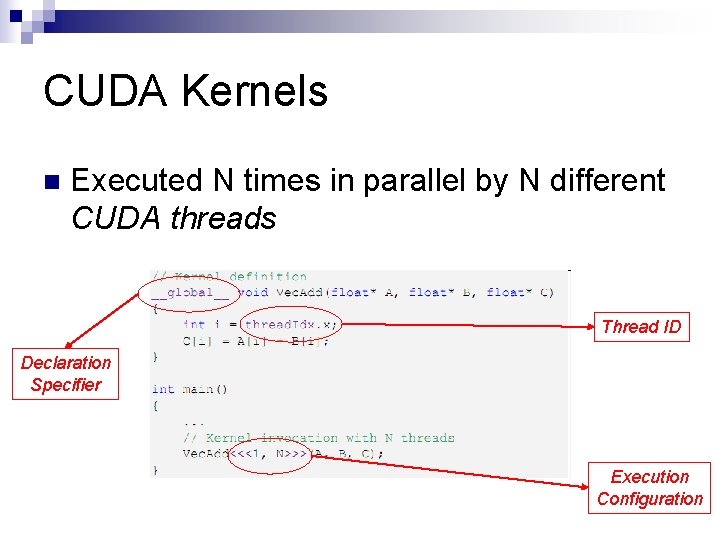

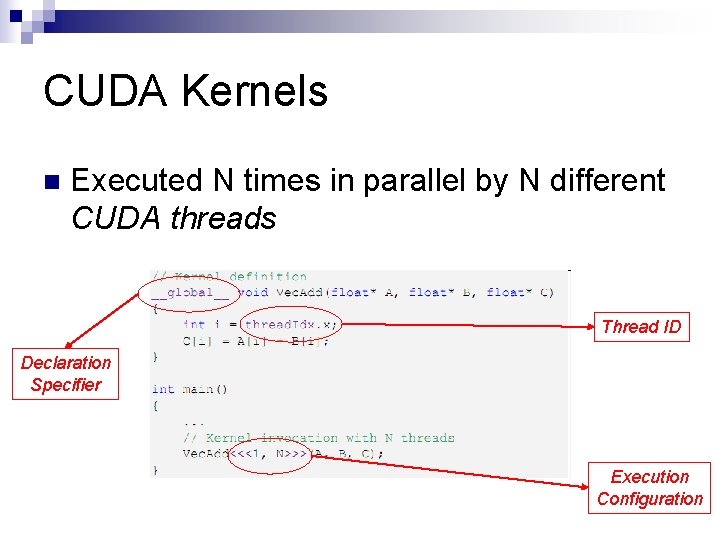

CUDA Kernels
- Executed N times in parallel by N different CUDA threads

(Code callouts: Thread ID, Declaration Specifier, Execution Configuration)
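The three callouts can be seen in a minimal vector-add sketch, modeled on the vector-add example in the CUDA C Programming Guide: `__global__` is the declaration specifier, `threadIdx.x` is the thread ID, and `<<<1, n>>>` is the execution configuration (one block of n threads). The host helper `vec_add_on_device` is a hypothetical name, and its error handling (return the first error) is an assumption, not code from the slides.

```cuda
#include <cuda_runtime.h>
#include <cstddef>

// Declaration specifier: __global__ marks a kernel, a function that runs on
// the device and is launched from the host.
__global__ void VecAdd(const float* A, const float* B, float* C)
{
    int i = threadIdx.x;  // Thread ID: thread i handles element i
    C[i] = A[i] + B[i];
}

// Hypothetical host helper (not from the slides). n must not exceed the
// per-block thread limit: 512 on G80/GT200, 1024 on Fermi.
cudaError_t vec_add_on_device(const float* A, const float* B, float* C, int n)
{
    size_t size = n * sizeof(float);
    float *dA = NULL, *dB = NULL, *dC = NULL;
    cudaError_t err = cudaMalloc((void**)&dA, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&dB, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&dC, size);
    if (err == cudaSuccess) err = cudaMemcpy(dA, A, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(dB, B, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        VecAdd<<<1, n>>>(dA, dB, dC);  // Execution configuration: 1 block, n threads
        err = cudaGetLastError();      // reports launch errors
    }
    // Waits for the kernel to finish, then copies the result back.
    if (err == cudaSuccess) err = cudaMemcpy(C, dC, size, cudaMemcpyDeviceToHost);
    cudaFree(dA);  // cudaFree(NULL) is a no-op
    cudaFree(dB);
    cudaFree(dC);
    return err;
}
```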

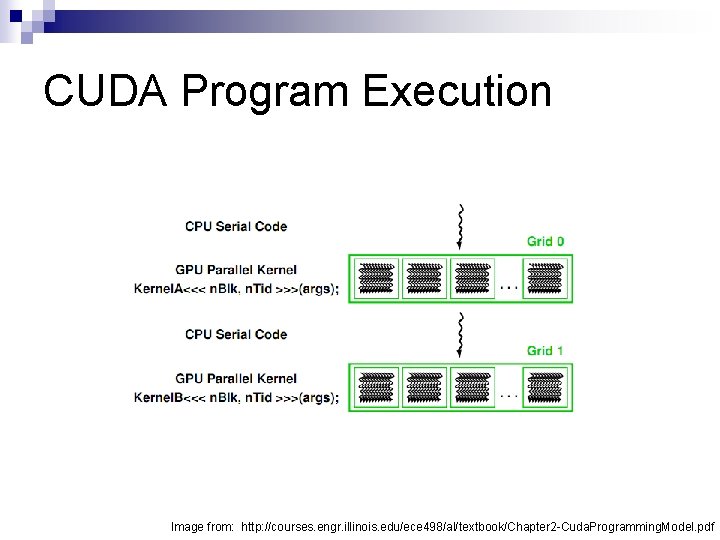

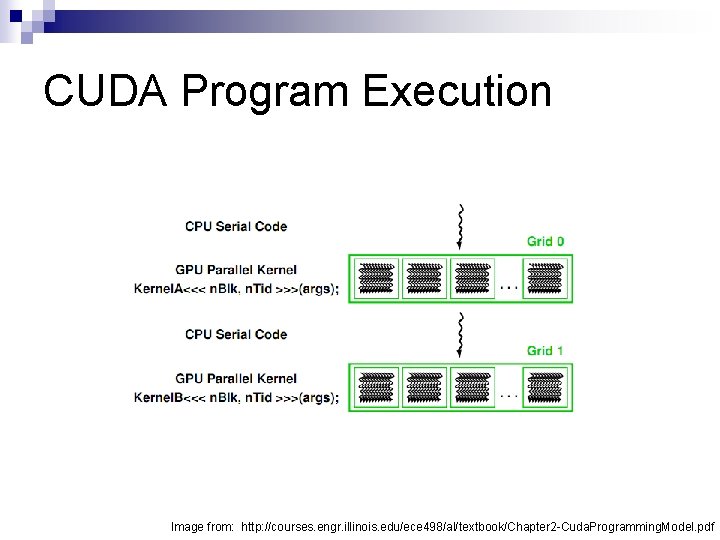

CUDA Program Execution

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

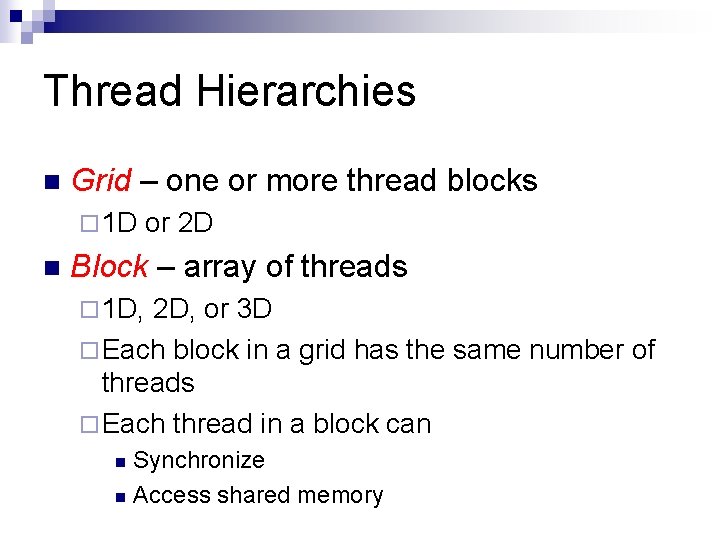

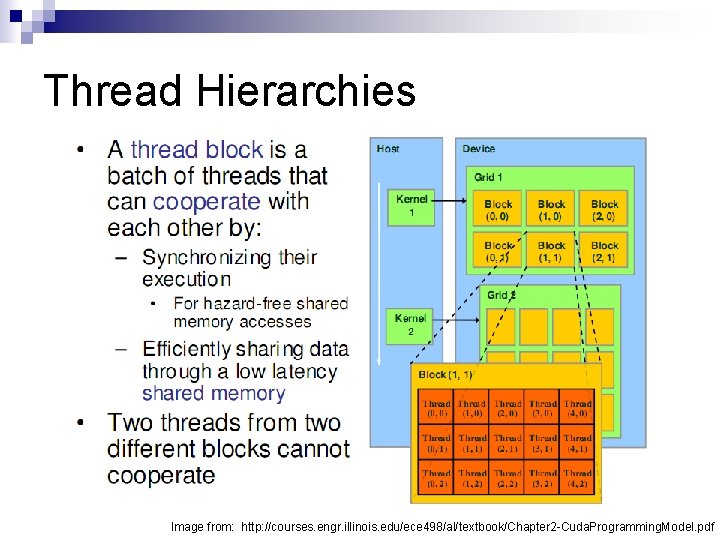

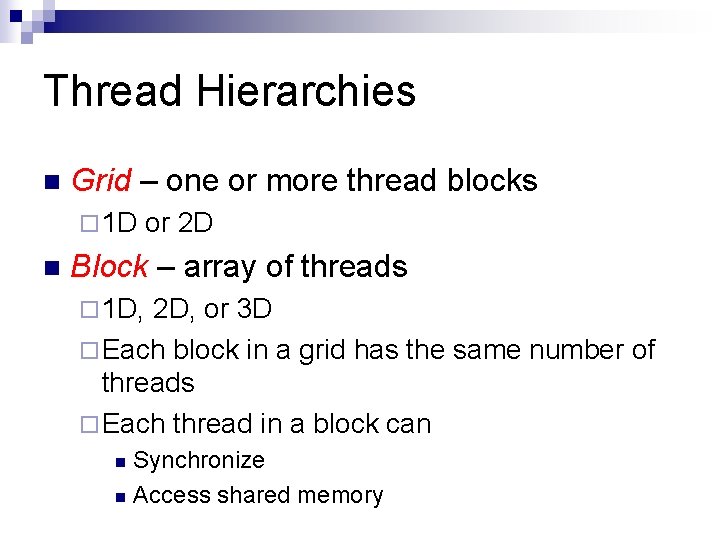

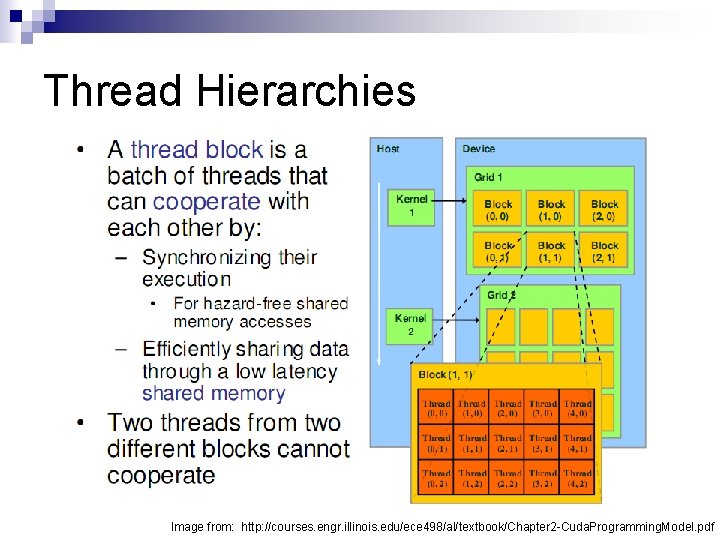

Thread Hierarchies
- Grid – one or more thread blocks
  - 1D or 2D
- Block – array of threads
  - 1D, 2D, or 3D
  - Each block in a grid has the same number of threads
  - Each thread in a block can
    - Synchronize
    - Access shared memory

Thread Hierarchies

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

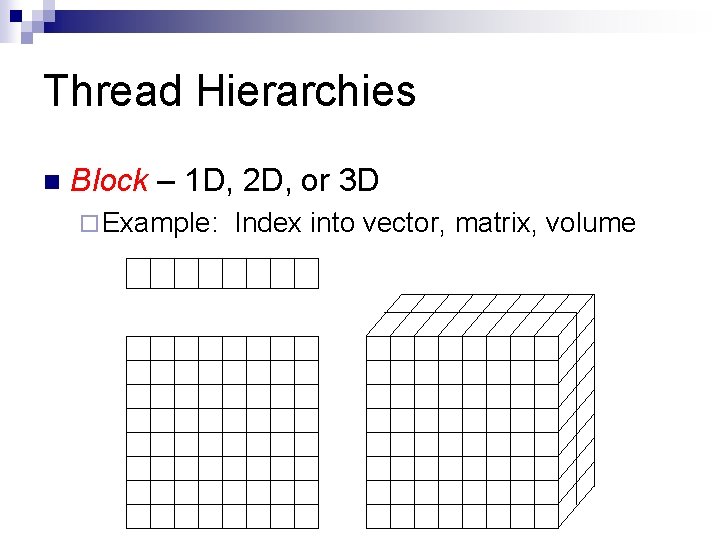

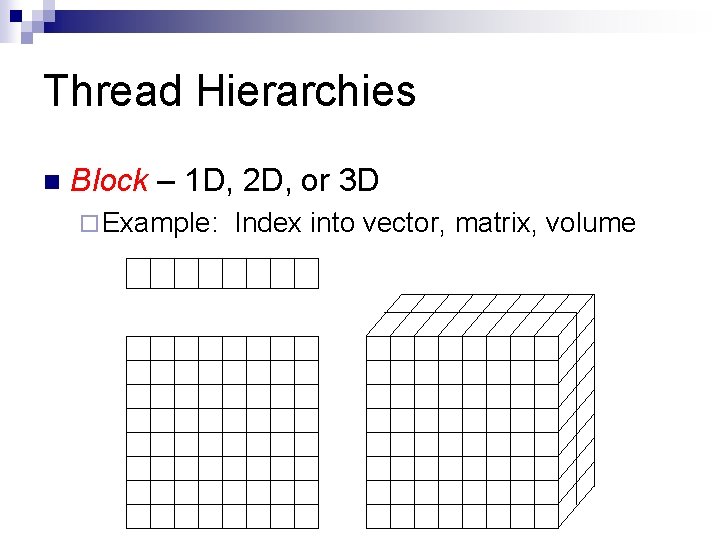

Thread Hierarchies
- Block – 1D, 2D, or 3D
  - Example: index into vector, matrix, volume

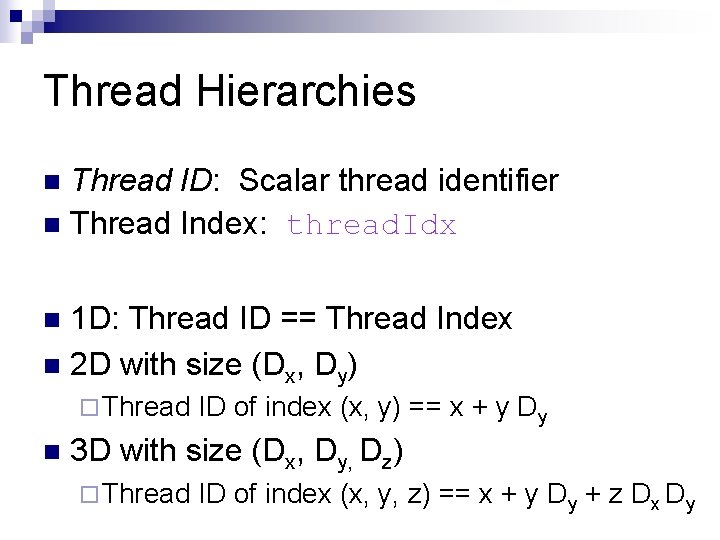

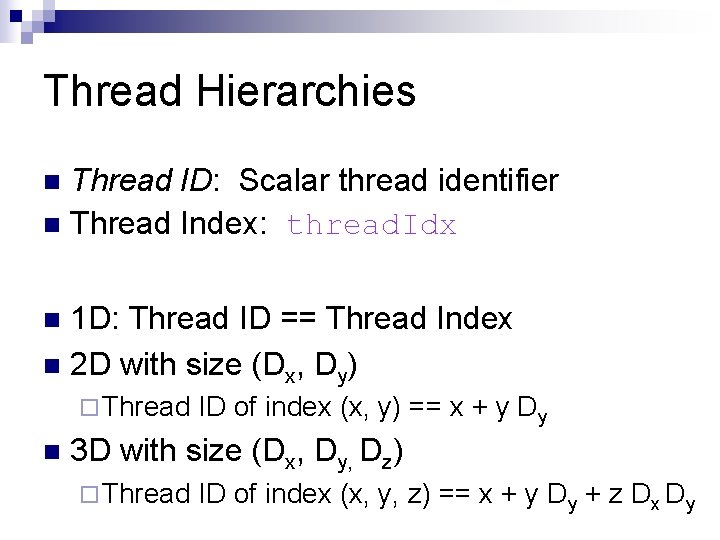

Thread Hierarchies
- Thread ID: scalar thread identifier
- Thread Index: threadIdx
- 1D: Thread ID == Thread Index
- 2D with size (Dx, Dy)
  - Thread ID of index (x, y) == x + y Dx
- 3D with size (Dx, Dy, Dz)
  - Thread ID of index (x, y, z) == x + y Dx + z Dx Dy
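A small kernel can check the thread ID formula for a 3D block of size (Dx, Dy, Dz): each thread computes x + y Dx + z Dx Dy from its threadIdx and records its own index at that position. The names `RecordThreadIndex` and `thread_ids_for_block` are hypothetical, and the decimal packing x + 10y + 100z is an assumption that only works while every block dimension is below 10.

```cuda
#include <cuda_runtime.h>
#include <cstddef>

// Each thread converts its 3D thread index (x, y, z) to the scalar thread ID
// and stores its packed index at out[threadID]. Packing x + 10*y + 100*z is
// only unambiguous when every block dimension is below 10.
__global__ void RecordThreadIndex(int* out)
{
    int x = threadIdx.x, y = threadIdx.y, z = threadIdx.z;
    int Dx = blockDim.x, Dy = blockDim.y;
    int threadID = x + y * Dx + z * Dx * Dy;
    out[threadID] = x + 10 * y + 100 * z;
}

// Hypothetical host helper: launches one block of Dx x Dy x Dz threads.
// out must hold Dx * Dy * Dz ints.
cudaError_t thread_ids_for_block(int Dx, int Dy, int Dz, int* out)
{
    size_t size = (size_t)Dx * Dy * Dz * sizeof(int);
    int* d_out = NULL;
    cudaError_t err = cudaMalloc((void**)&d_out, size);
    if (err == cudaSuccess) {
        RecordThreadIndex<<<1, dim3(Dx, Dy, Dz)>>>(d_out);
        err = cudaGetLastError();
    }
    if (err == cudaSuccess) err = cudaMemcpy(out, d_out, size, cudaMemcpyDeviceToHost);
    cudaFree(d_out);
    return err;
}
```

For a (4, 3, 2) block, thread (1, 2, 1) has ID 1 + 2·4 + 1·4·3 = 21, so out[21] holds 121.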

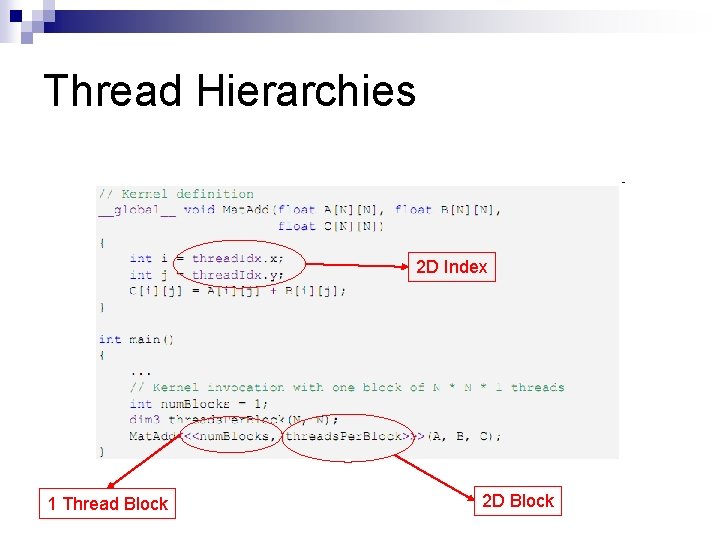

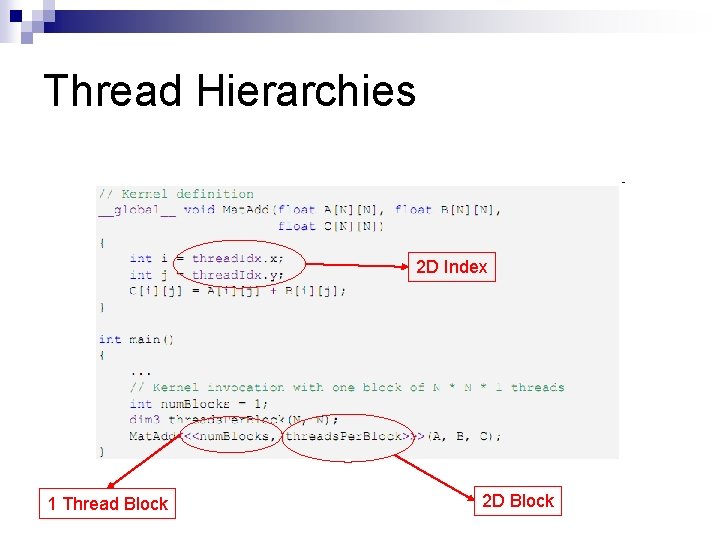

Thread Hierarchies

(Figure: one 2D thread block; each thread has a 2D index)

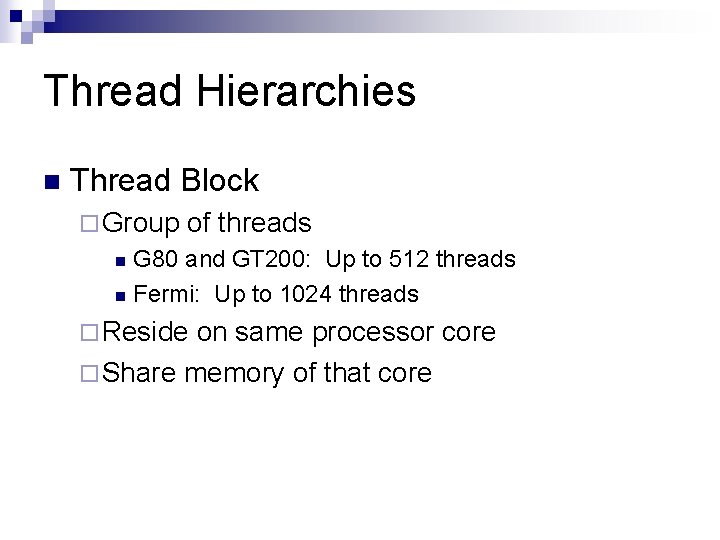

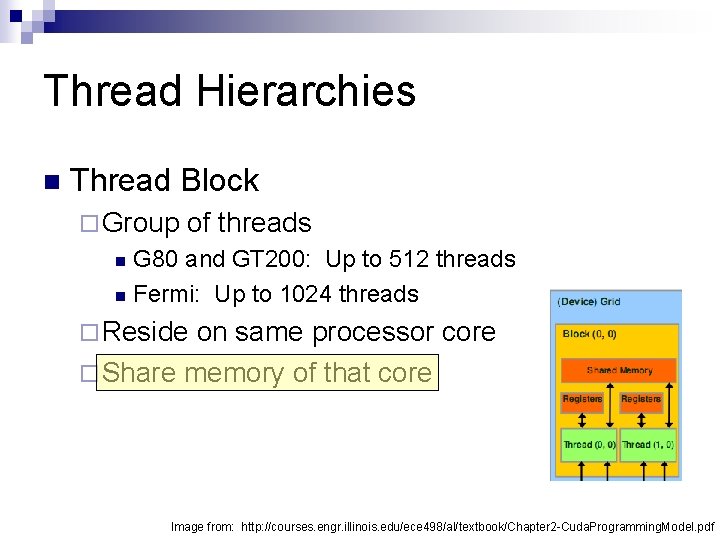

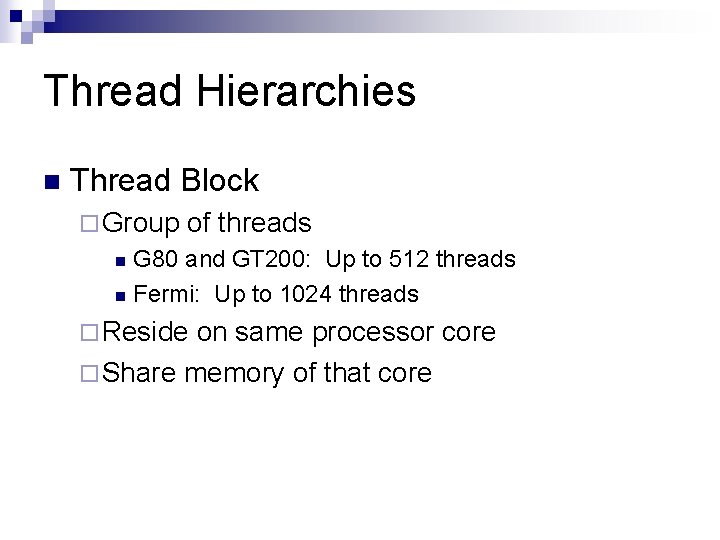

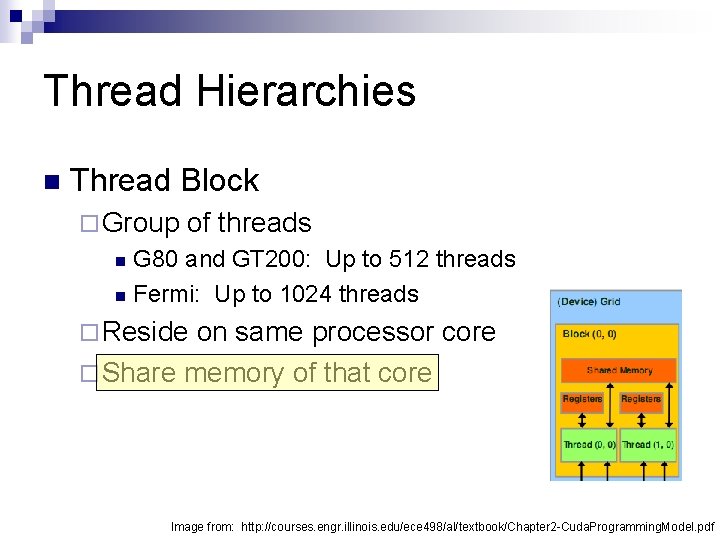

Thread Hierarchies
- Thread Block
  - Group of threads
    - G80 and GT200: up to 512 threads
    - Fermi: up to 1024 threads
  - Reside on same processor core
  - Share memory of that core

Thread Hierarchies

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

Thread Hierarchies
- Block Index: blockIdx
- Dimension: blockDim
  - 1D or 2D

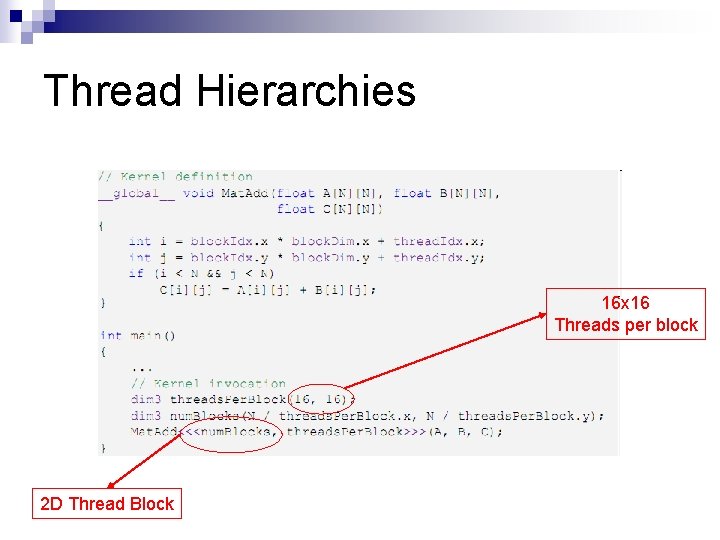

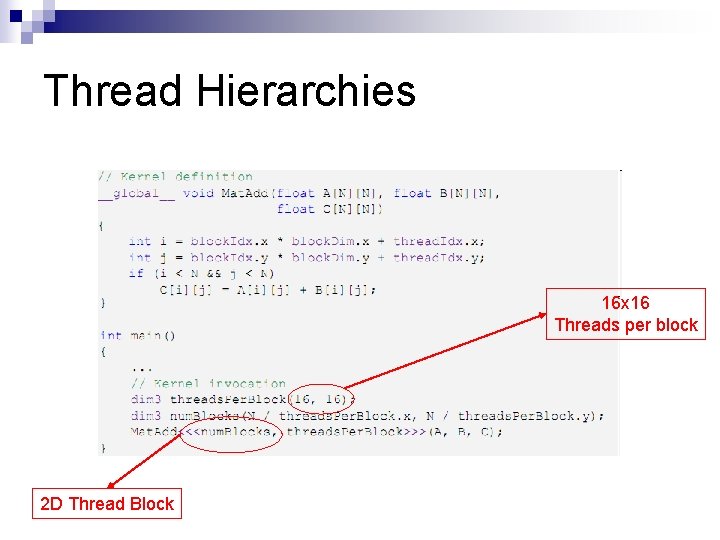

Thread Hierarchies

(Figure: 2D thread block with 16 x 16 threads per block)

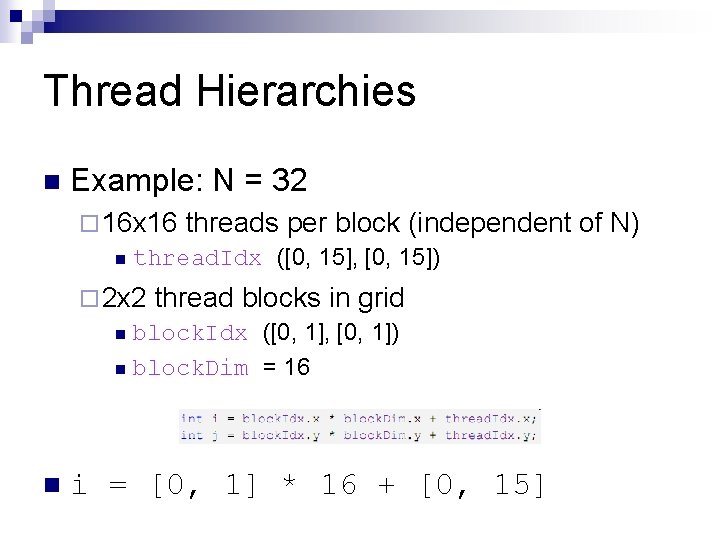

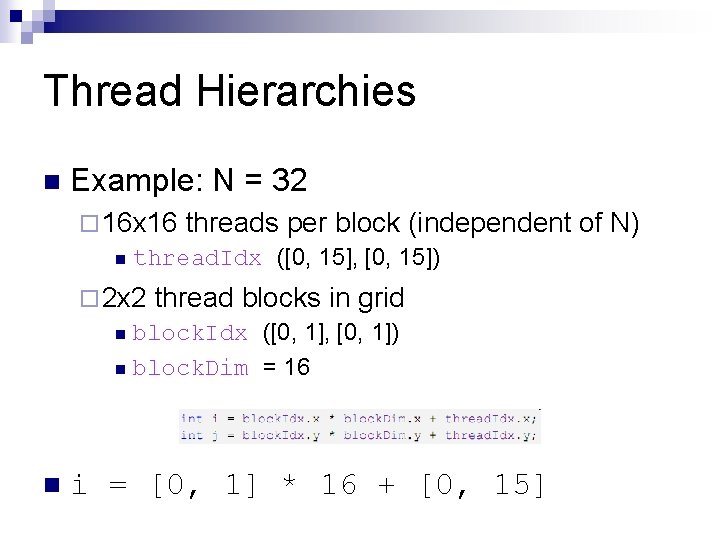

Thread Hierarchies
- Example: N = 32
  - 16 x 16 threads per block (independent of N)
    - threadIdx ([0, 15], [0, 15])
  - 2 x 2 thread blocks in grid
    - blockIdx ([0, 1], [0, 1])
    - blockDim = 16
  - i = [0, 1] * 16 + [0, 15]
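The N = 32 example maps onto a 2D grid launch: with 16 x 16 threads per block and a 2 x 2 grid, i = blockIdx.x * blockDim.x + threadIdx.x spans [0, 1] * 16 + [0, 15] = [0, 31]. This sketch follows the matrix-add pattern of the CUDA C Programming Guide; the row-major flat arrays, the bounds check, and the helper name `mat_add_on_device` are assumptions.

```cuda
#include <cuda_runtime.h>
#include <cstddef>

// Adds two n x n row-major matrices; each thread handles one element.
__global__ void MatAdd(const float* A, const float* B, float* C, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;  // column, in [0, n)
    int j = blockIdx.y * blockDim.y + threadIdx.y;  // row, in [0, n)
    if (i < n && j < n)  // guards partial blocks when 16 does not divide n
        C[j * n + i] = A[j * n + i] + B[j * n + i];
}

// Hypothetical host helper.
cudaError_t mat_add_on_device(const float* A, const float* B, float* C, int n)
{
    size_t size = (size_t)n * n * sizeof(float);
    float *dA = NULL, *dB = NULL, *dC = NULL;
    cudaError_t err = cudaMalloc((void**)&dA, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&dB, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&dC, size);
    if (err == cudaSuccess) err = cudaMemcpy(dA, A, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(dB, B, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        dim3 threadsPerBlock(16, 16);                   // independent of n
        dim3 numBlocks((n + 15) / 16, (n + 15) / 16);   // 2 x 2 when n = 32
        MatAdd<<<numBlocks, threadsPerBlock>>>(dA, dB, dC, n);
        err = cudaGetLastError();
    }
    if (err == cudaSuccess) err = cudaMemcpy(C, dC, size, cudaMemcpyDeviceToHost);
    cudaFree(dA);
    cudaFree(dB);
    cudaFree(dC);
    return err;
}
```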

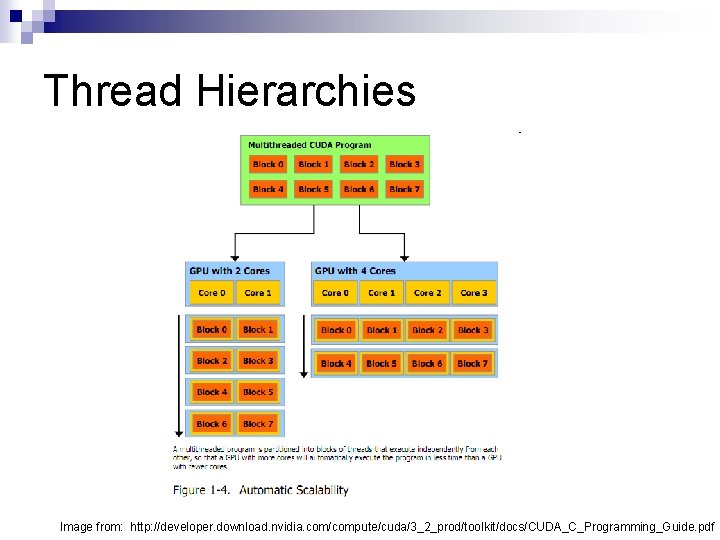

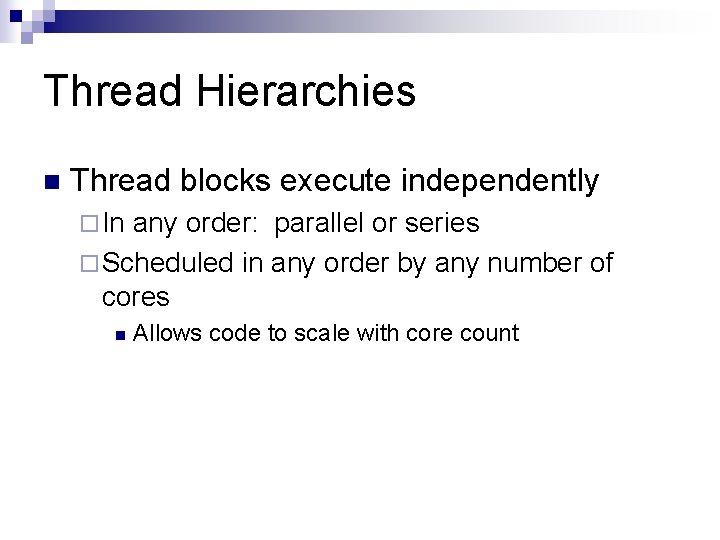

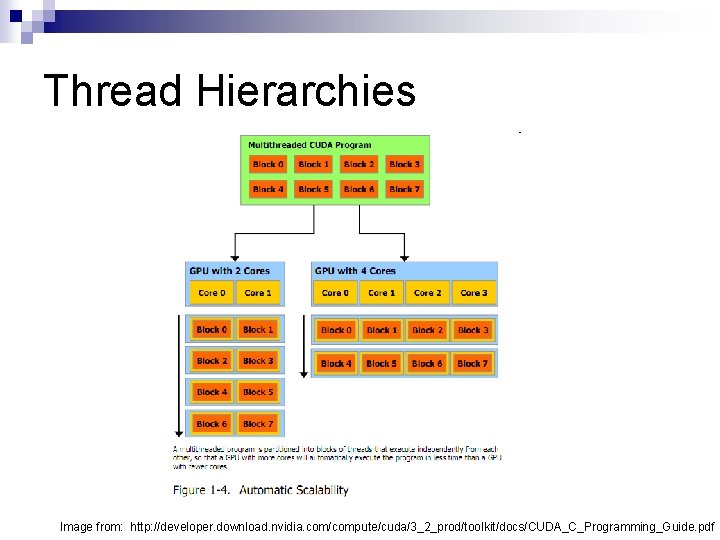

Thread Hierarchies
- Thread blocks execute independently
  - In any order: parallel or series
  - Scheduled in any order by any number of cores
- Allows code to scale with core count

Thread Hierarchies

Image from: http://developer.download.nvidia.com/compute/cuda/3_2_prod/toolkit/docs/CUDA_C_Programming_Guide.pdf

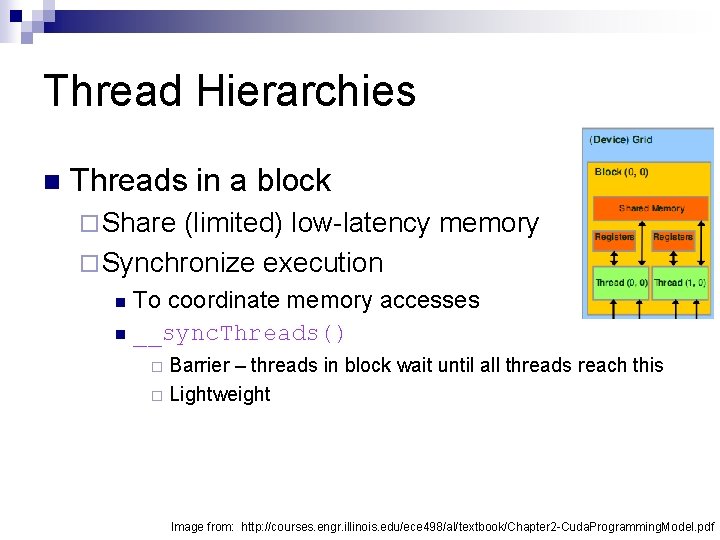

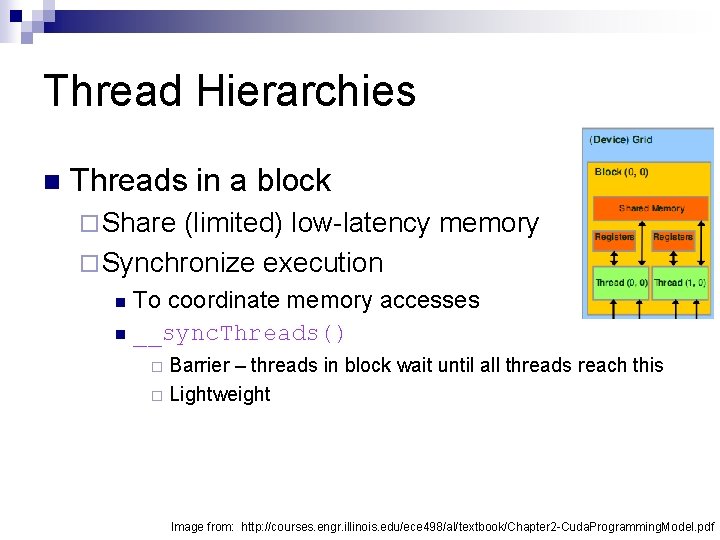

Thread Hierarchies
- Threads in a block
  - Share (limited) low-latency memory
  - Synchronize execution
    - To coordinate memory accesses
    - __syncthreads()
      - Barrier – threads in block wait until all threads reach this
      - Lightweight

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf
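A minimal sketch of why the barrier matters: each block loads its segment into shared memory, waits at __syncthreads(), and only then reads elements that other threads loaded. Without the barrier, a thread could read a shared slot before its owner has written it. `BLOCK_SIZE`, `ReverseInBlock`, and `reverse_segments` are hypothetical names, and the input length is assumed to be a multiple of `BLOCK_SIZE`.

```cuda
#include <cuda_runtime.h>
#include <cstddef>

#define BLOCK_SIZE 256  // hypothetical; must not exceed the per-block thread limit

// Each block reverses its own BLOCK_SIZE-element segment of d in place.
__global__ void ReverseInBlock(int* d)
{
    __shared__ int s[BLOCK_SIZE];         // low-latency memory shared by the block
    int t = threadIdx.x;
    int base = blockIdx.x * blockDim.x;
    s[t] = d[base + t];                   // each thread loads one element
    __syncthreads();                      // barrier: every load is now complete
    d[base + t] = s[blockDim.x - 1 - t];  // safe to read another thread's element
}

// Hypothetical host helper: h holds numBlocks * BLOCK_SIZE ints.
cudaError_t reverse_segments(int* h, int numBlocks)
{
    size_t size = (size_t)numBlocks * BLOCK_SIZE * sizeof(int);
    int* d = NULL;
    cudaError_t err = cudaMalloc((void**)&d, size);
    if (err == cudaSuccess) err = cudaMemcpy(d, h, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        ReverseInBlock<<<numBlocks, BLOCK_SIZE>>>(d);
        err = cudaGetLastError();
    }
    if (err == cudaSuccess) err = cudaMemcpy(h, d, size, cudaMemcpyDeviceToHost);
    cudaFree(d);
    return err;
}
```

No second barrier is needed here because no thread writes to s after the first one.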

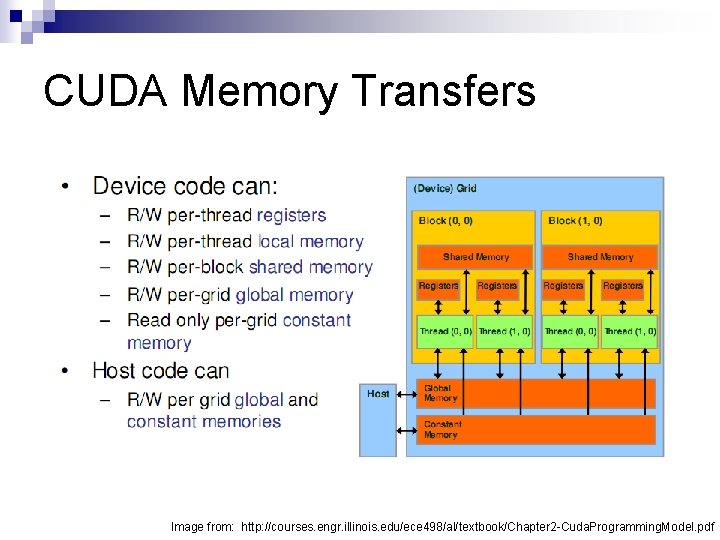

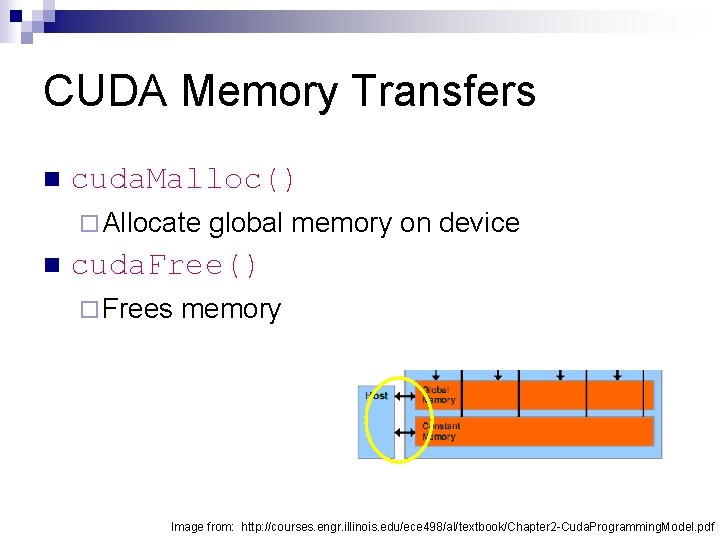

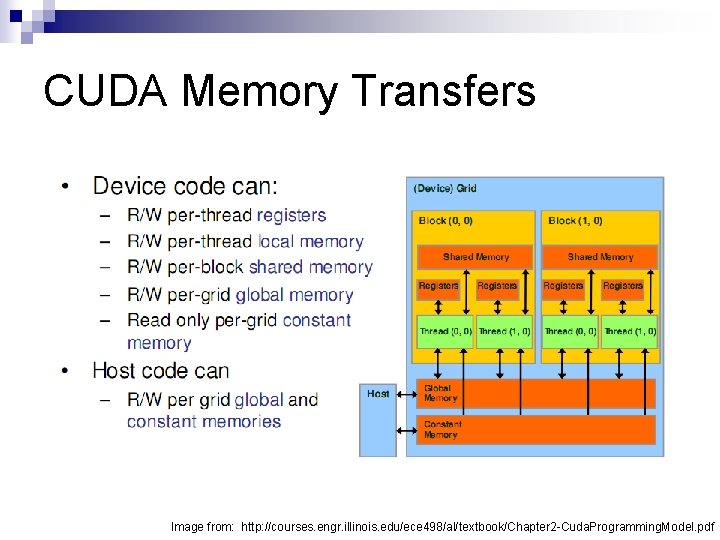

CUDA Memory Transfers

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

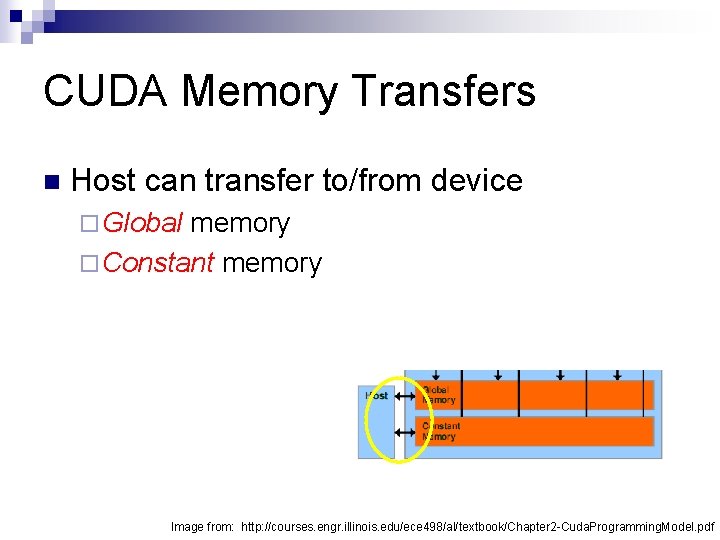

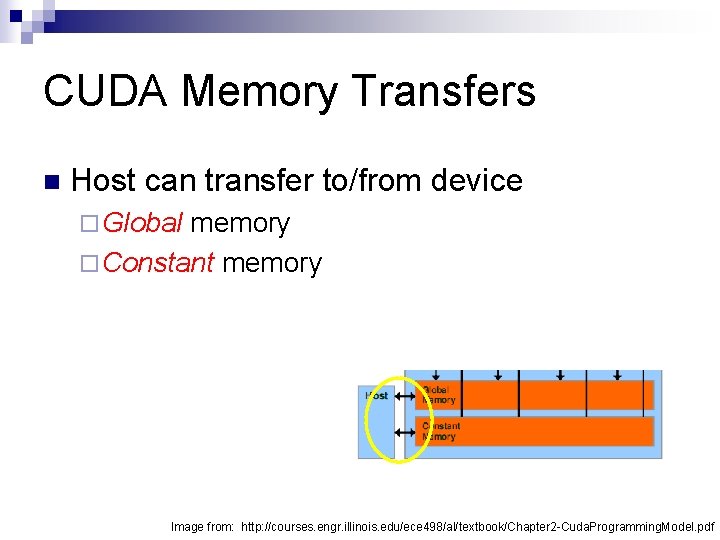

CUDA Memory Transfers
- Host can transfer to/from device
  - Global memory
  - Constant memory

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf
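Both transfer targets can be sketched together: cudaMemcpyToSymbol() writes a `__constant__` array, which kernels can only read, while cudaMemcpy() moves data to and from global memory. The kernel `ScaleAndOffset`, the helper `scale_on_device`, and the two-element parameter array `c_scale` are hypothetical; the memory calls are from the CUDA runtime API.

```cuda
#include <cuda_runtime.h>
#include <cstddef>

// Constant memory: written by the host, read-only inside kernels.
__constant__ float c_scale[2];  // hypothetical {scale, offset} pair

__global__ void ScaleAndOffset(float* d, int n)
{
    int i = blockIdx.x * blockDim.x + threadIdx.x;
    if (i < n)
        d[i] = d[i] * c_scale[0] + c_scale[1];
}

// Hypothetical host helper: transforms h in place on the device.
cudaError_t scale_on_device(float* h, int n, float scale, float offset)
{
    float params[2] = { scale, offset };
    // Host -> constant memory
    cudaError_t err = cudaMemcpyToSymbol(c_scale, params, sizeof(params));

    size_t size = n * sizeof(float);
    float* d = NULL;
    // Host -> global memory
    if (err == cudaSuccess) err = cudaMalloc((void**)&d, size);
    if (err == cudaSuccess) err = cudaMemcpy(d, h, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        int threads = 256;
        ScaleAndOffset<<<(n + threads - 1) / threads, threads>>>(d, n);
        err = cudaGetLastError();
    }
    // Global memory -> host
    if (err == cudaSuccess) err = cudaMemcpy(h, d, size, cudaMemcpyDeviceToHost);
    cudaFree(d);
    return err;
}
```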

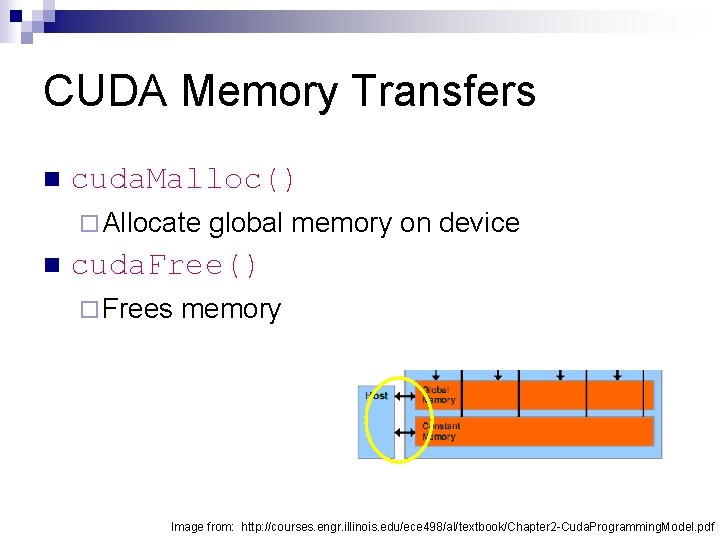

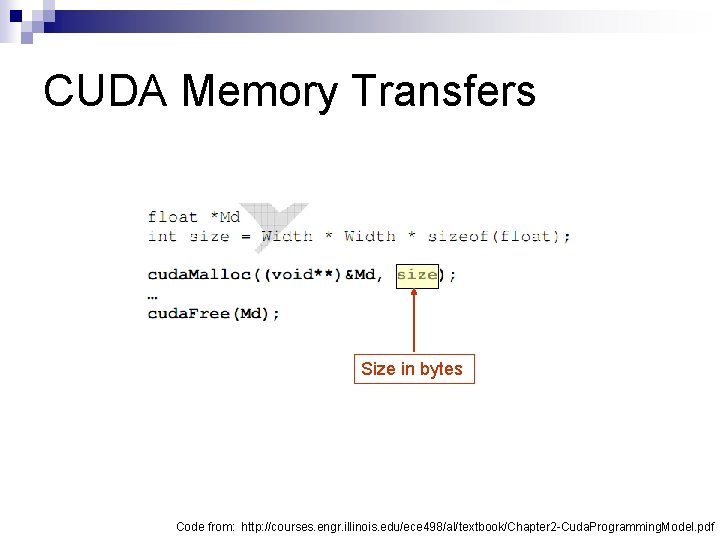

CUDA Memory Transfers
- cudaMalloc()
  - Allocate global memory on device
- cudaFree()
  - Frees memory

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf
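A minimal sketch of allocating and freeing device global memory, borrowing the Md naming from the matrix-multiply example. cudaMalloc() takes the address of a pointer to device memory and a size in bytes; the resulting pointer is only meaningful on the device. The function name `alloc_and_free_matrix` and the cudaMemset() call (used only to touch the allocation) are illustrative additions.

```cuda
#include <cuda_runtime.h>
#include <cstddef>
#include <cstdio>

// Allocates a width x width float matrix in device global memory, zeroes it,
// and frees it. Returns the first error encountered.
cudaError_t alloc_and_free_matrix(int width)
{
    float* Md = NULL;                                      // pointer to device memory
    size_t size = (size_t)width * width * sizeof(float);   // size in bytes
    cudaError_t err = cudaMalloc((void**)&Md, size);
    if (err != cudaSuccess) {
        fprintf(stderr, "cudaMalloc failed: %s\n", cudaGetErrorString(err));
        return err;
    }
    // Md must not be dereferenced on the host; use CUDA calls or kernels.
    err = cudaMemset(Md, 0, size);
    cudaFree(Md);                                          // release global memory
    return err;
}
```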

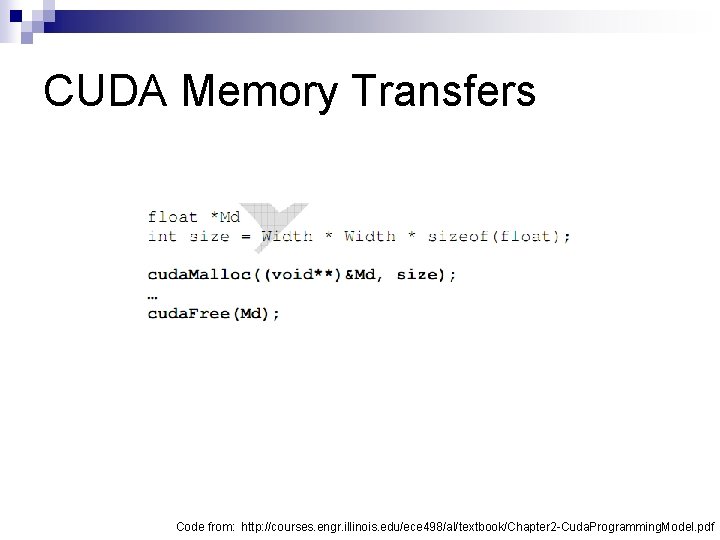

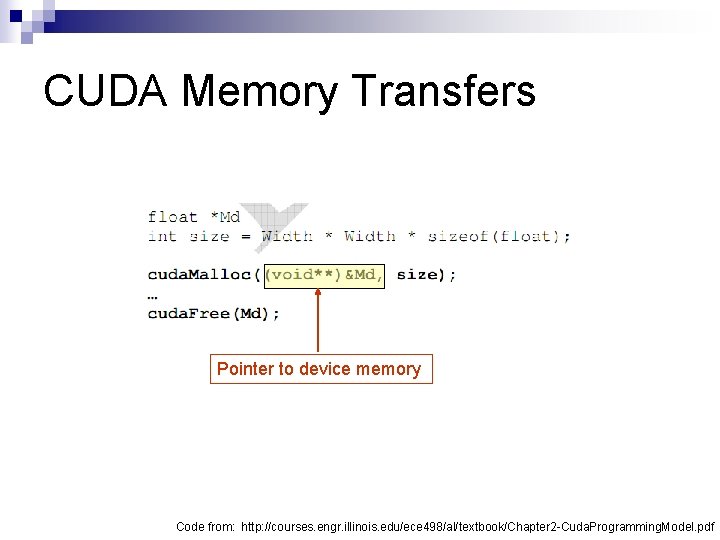

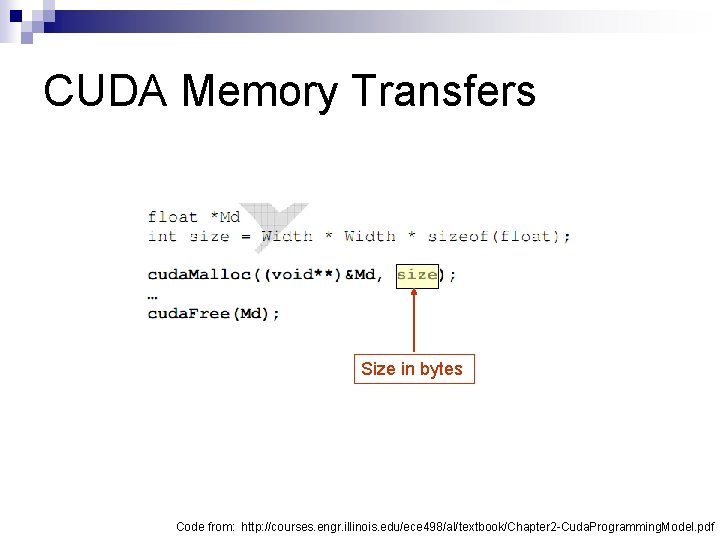

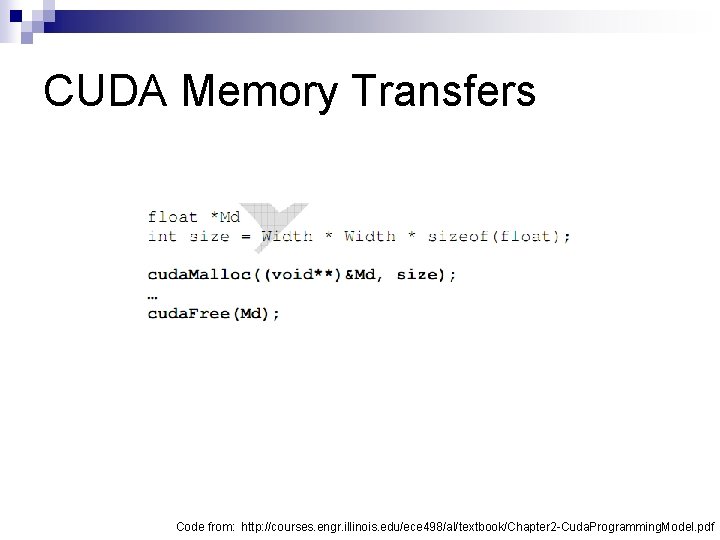

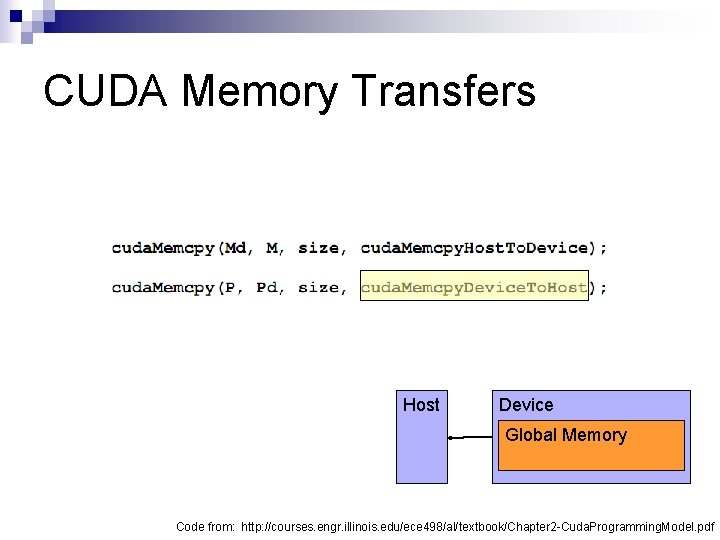

CUDA Memory Transfers

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

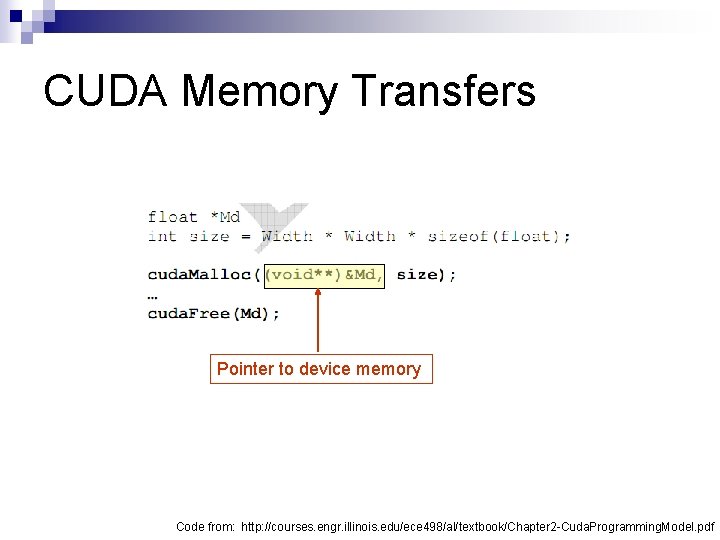

CUDA Memory Transfers

(Code callout: pointer to device memory)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

CUDA Memory Transfers

(Code callout: size in bytes)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

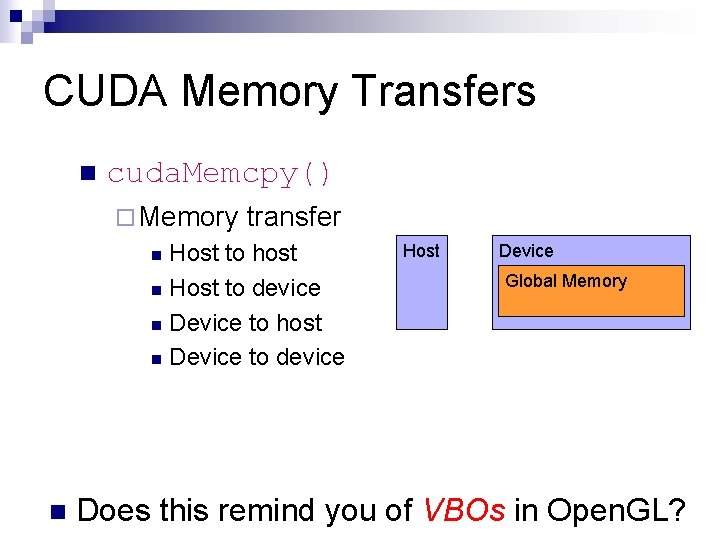

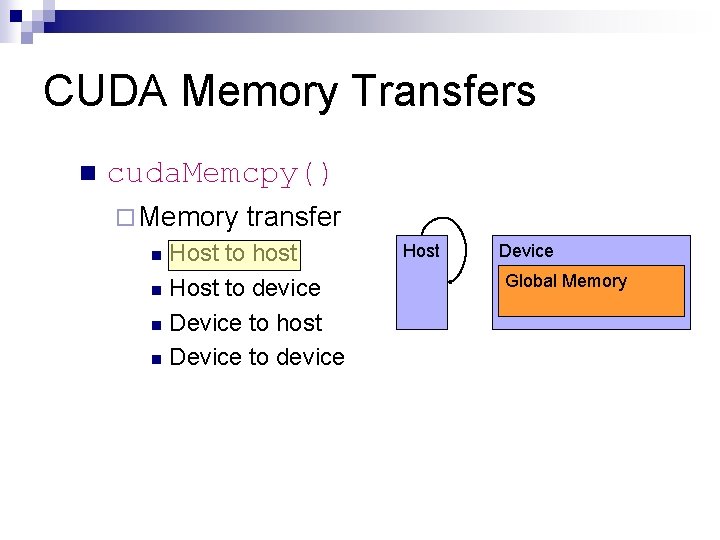

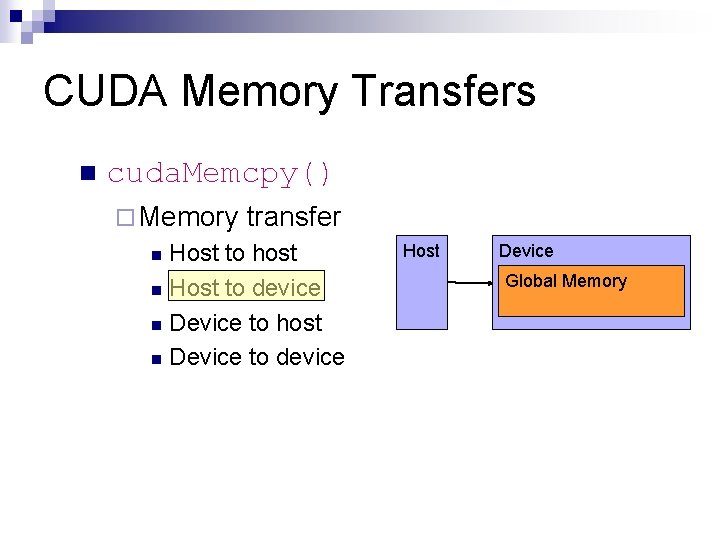

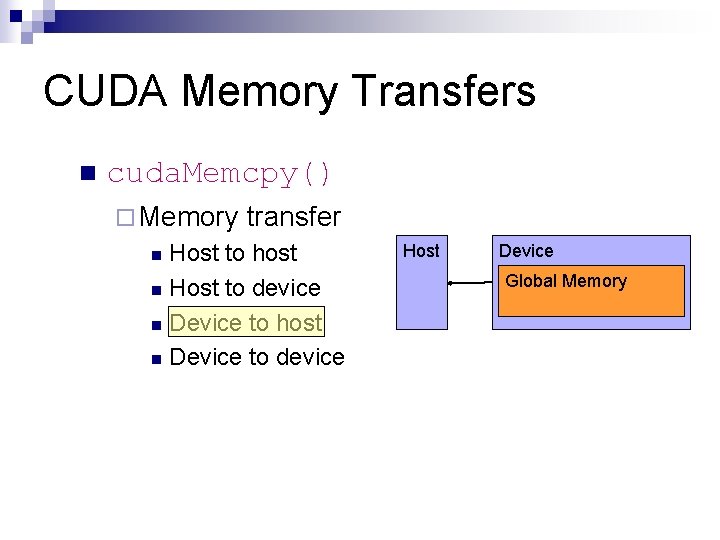

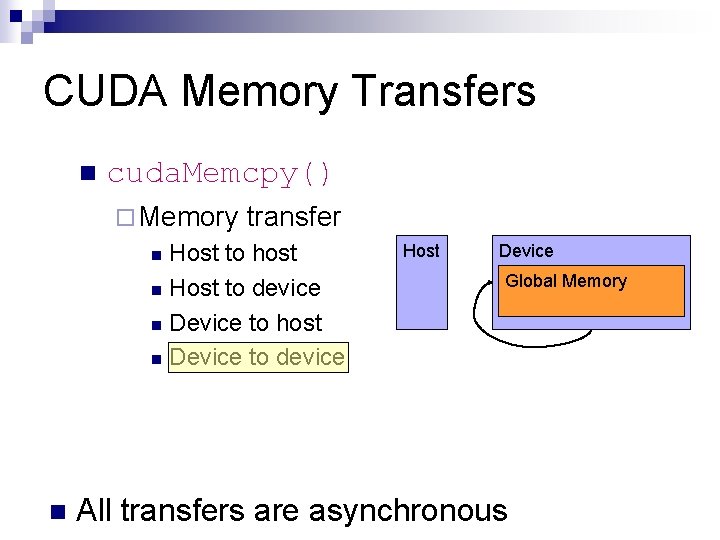

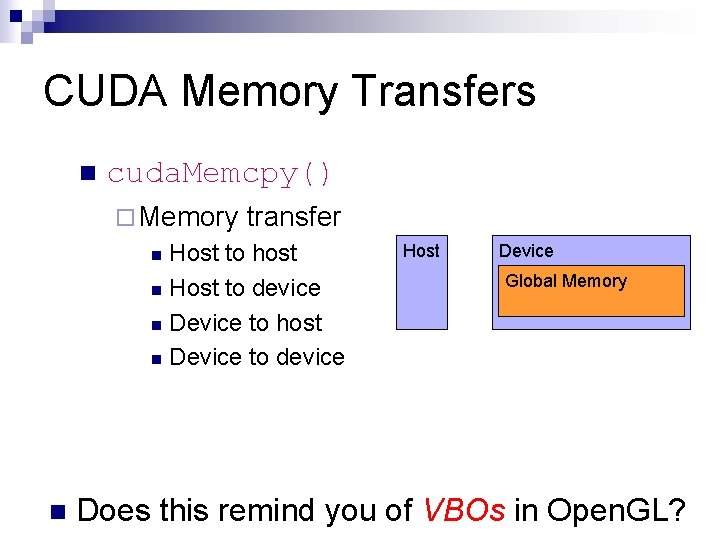

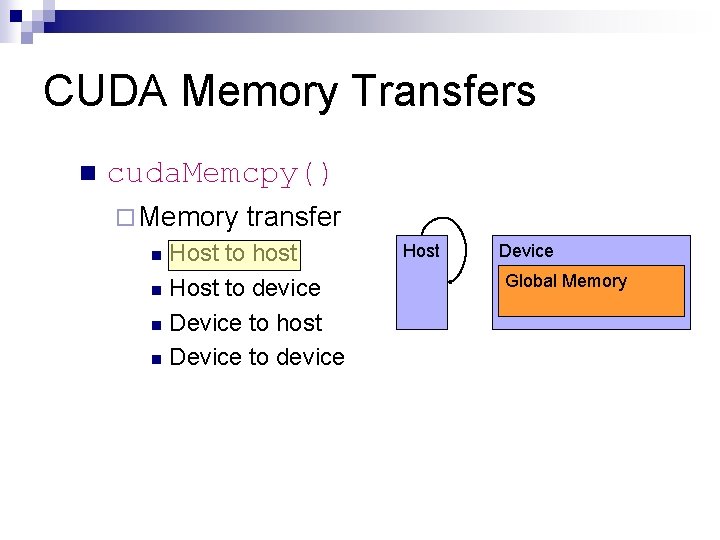

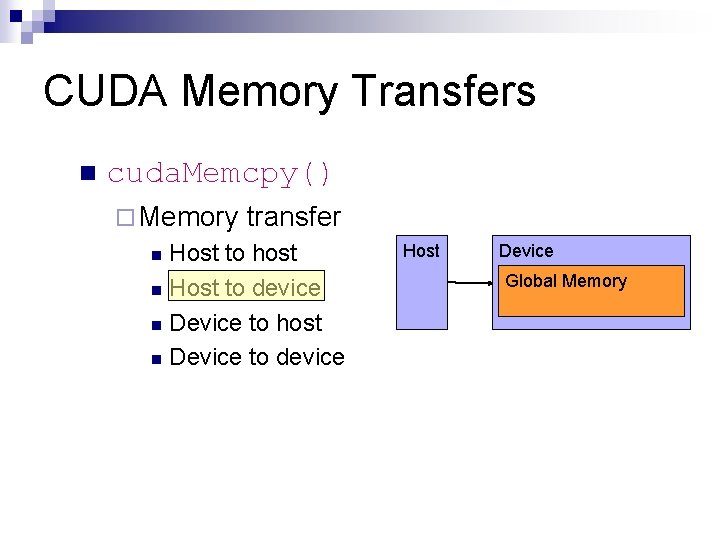

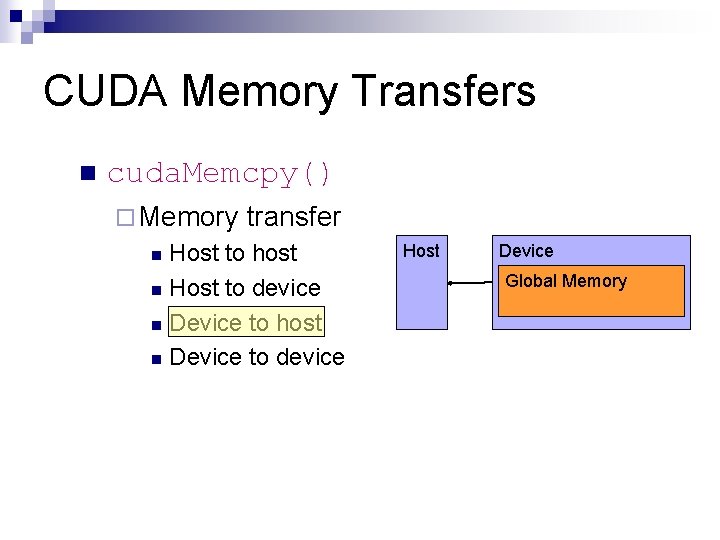

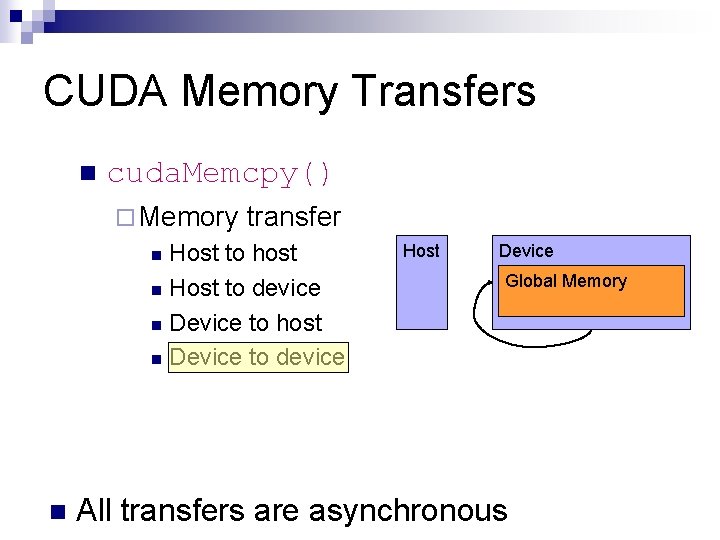

CUDA Memory Transfers
- cudaMemcpy()
  - Memory transfer
    - Host to host
    - Host to device
    - Device to host
    - Device to device
- Does this remind you of VBOs in OpenGL?

(Figure: Host, Device, Global Memory)

CUDA Memory Transfers
- cudaMemcpy() is synchronous with respect to the host for most transfers
  - Use cudaMemcpyAsync() for asynchronous transfers
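A minimal sketch of three of the four cudaMemcpy() directions, assuming pageable host buffers: host to device, device to device, then device to host. The argument order is destination, source, size in bytes, direction. For pageable memory the final device-to-host copy returns only after the data has arrived, so dst is safe to read once the function returns. `round_trip` is a hypothetical name.

```cuda
#include <cuda_runtime.h>
#include <cstddef>

// Copies src into dst by way of two device buffers.
cudaError_t round_trip(const float* src, float* dst, int n)
{
    size_t size = n * sizeof(float);
    float *d_a = NULL, *d_b = NULL;
    cudaError_t err = cudaMalloc((void**)&d_a, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&d_b, size);
    // cudaMemcpy(destination, source, size in bytes, direction)
    if (err == cudaSuccess) err = cudaMemcpy(d_a, src, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(d_b, d_a, size, cudaMemcpyDeviceToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(dst, d_b, size, cudaMemcpyDeviceToHost);
    cudaFree(d_a);  // cudaFree(NULL) is a no-op
    cudaFree(d_b);
    return err;
}
```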

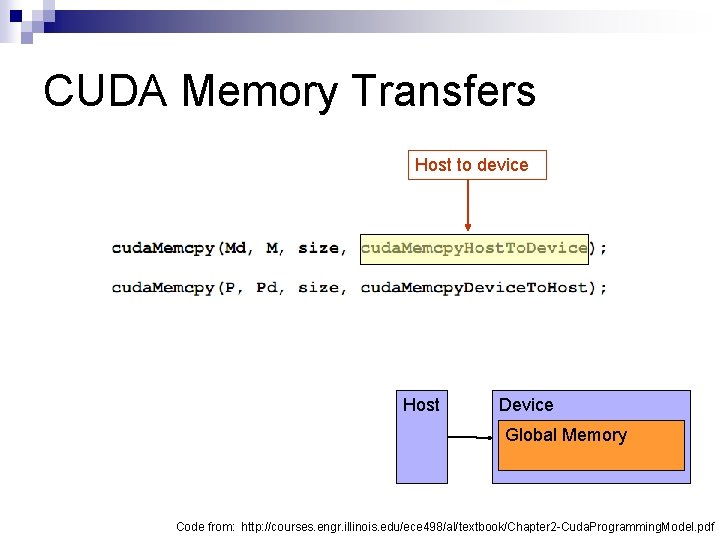

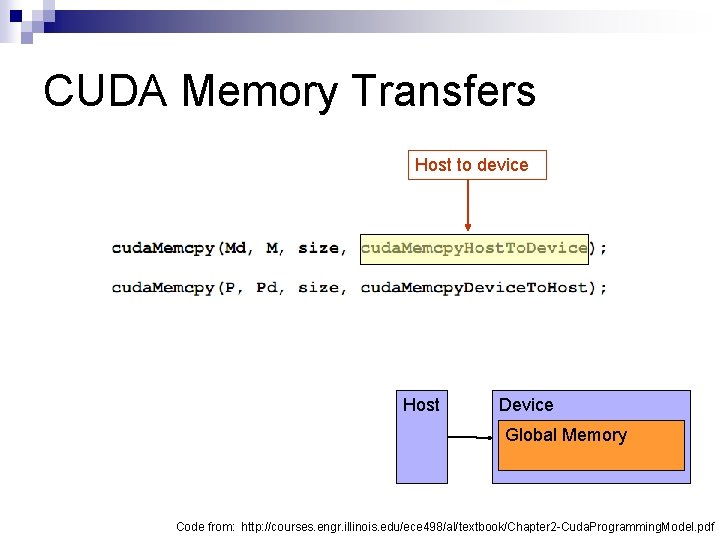

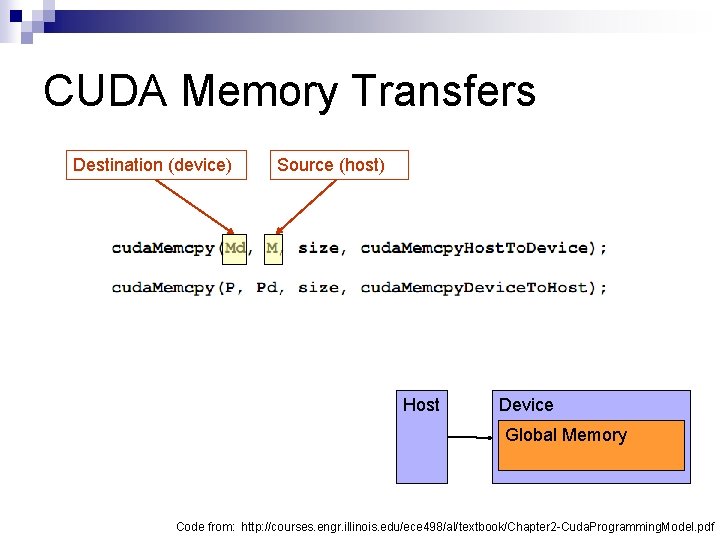

CUDA Memory Transfers: Host to device

(Figure: Host, Device, Global Memory)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

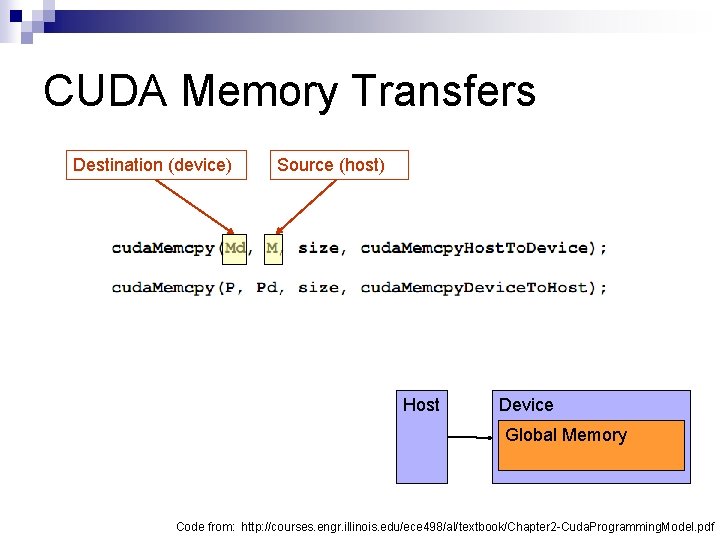

CUDA Memory Transfers

(Code callouts: destination (device), source (host); figure: Host, Device, Global Memory)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

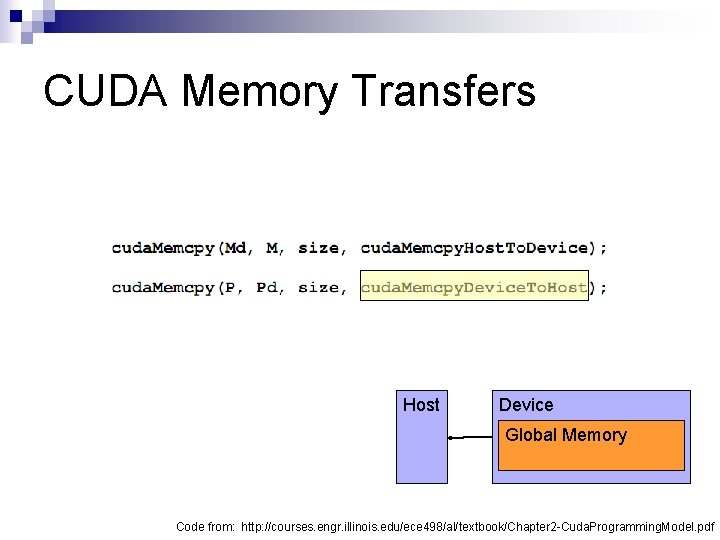

CUDA Memory Transfers

(Figure: Host, Device, Global Memory)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

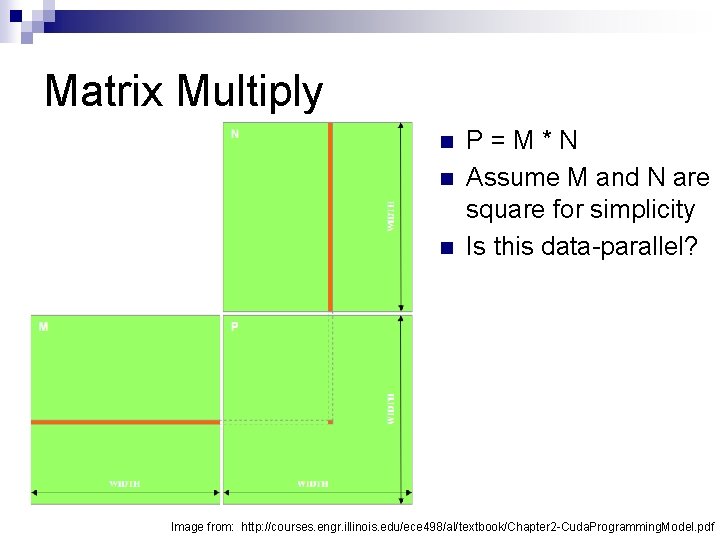

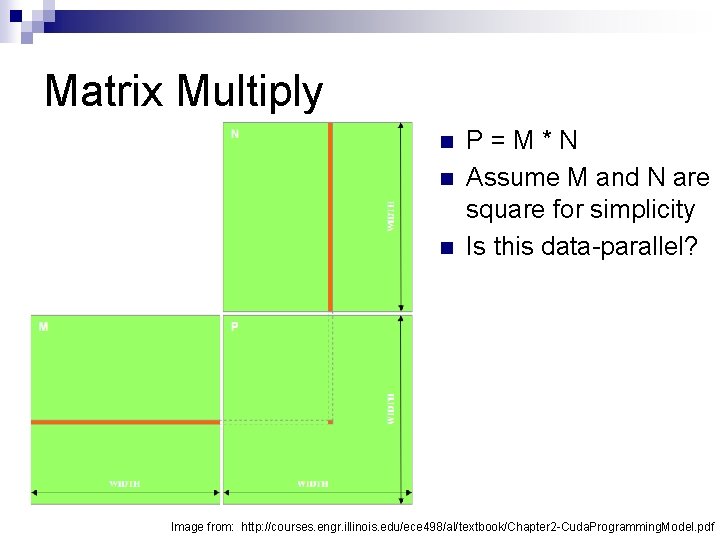

Matrix Multiply
- P = M * N
- Assume M and N are square for simplicity
- Is this data-parallel?

Image from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

Matrix Multiply
- 1,000 x 1,000 matrix
- 1,000,000 dot products
  - Each 1,000 multiplies and 1,000 adds
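The counts follow from the matrix size. Assuming square n x n matrices with n = 1,000, every element of P is one dot product of length n, and accumulating with sum += a * b costs one multiply and one add per term:

```latex
\begin{aligned}
\text{dot products} &= n^2 = (10^3)^2 = 10^6 \\
\text{operations per dot product} &= n + n = 2 \times 10^3 \\
\text{total operations} &= 2n^3 = 2 \times 10^9
\end{aligned}
```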

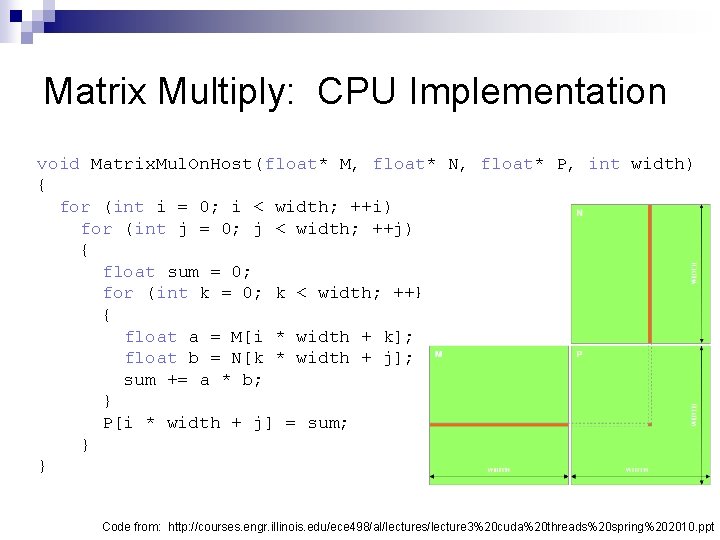

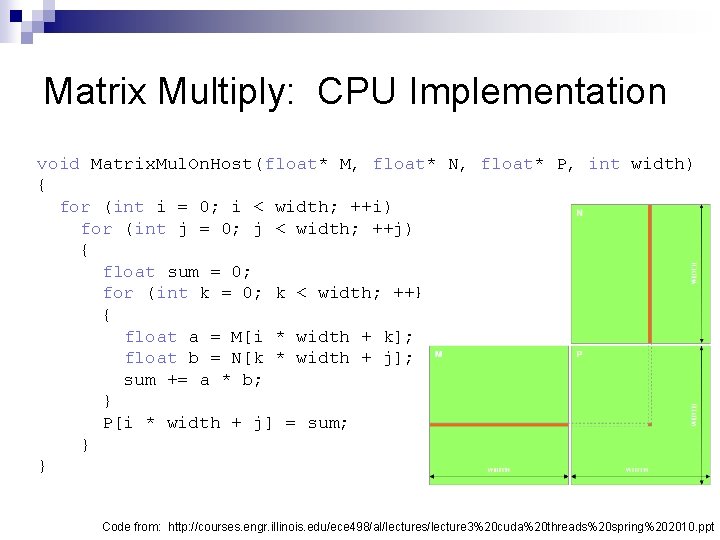

Matrix Multiply: CPU Implementation

    void MatrixMulOnHost(float* M, float* N, float* P, int width)
    {
        for (int i = 0; i < width; ++i)            // row of P
            for (int j = 0; j < width; ++j) {      // column of P
                float sum = 0;
                // Dot product of row i of M and column j of N
                for (int k = 0; k < width; ++k) {
                    float a = M[i * width + k];
                    float b = N[k * width + j];
                    sum += a * b;
                }
                P[i * width + j] = sum;
            }
    }

Code from: http://courses.engr.illinois.edu/ece498/al/lectures/lecture3%20cuda%20threads%20spring%202010.ppt

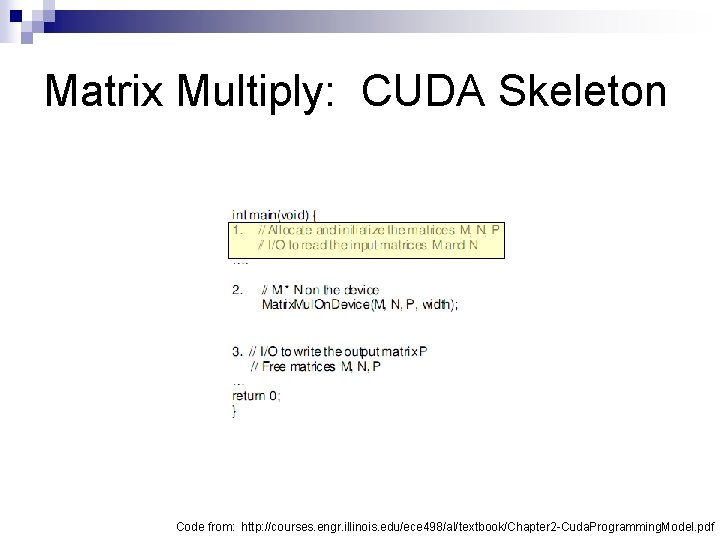

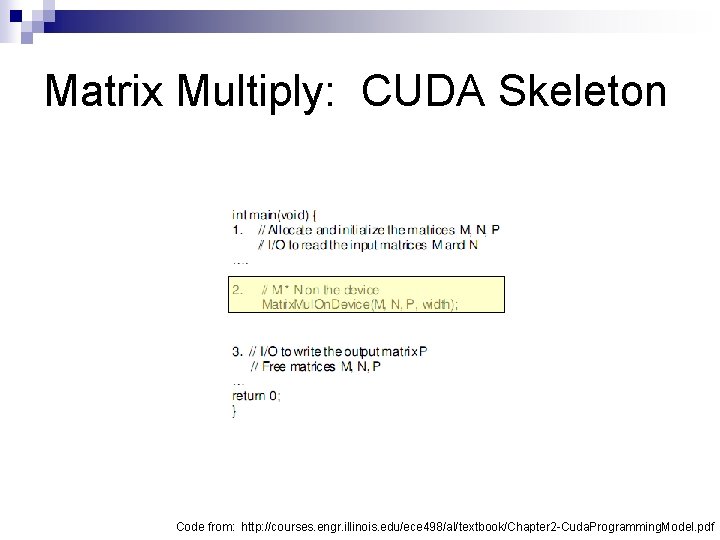

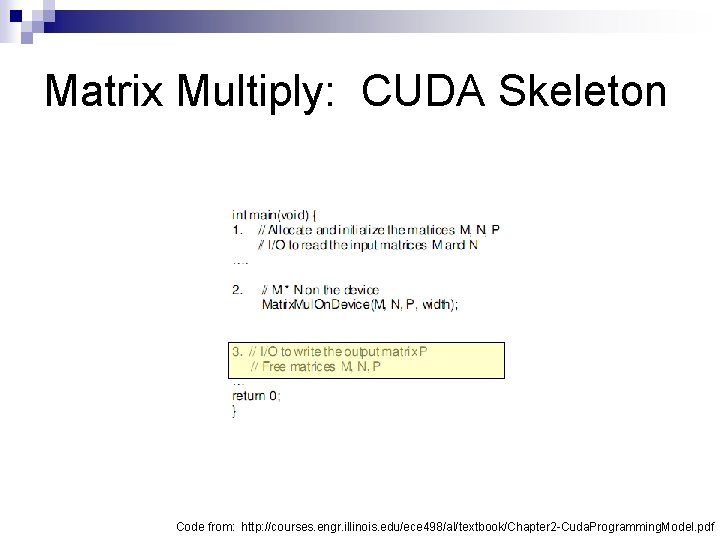

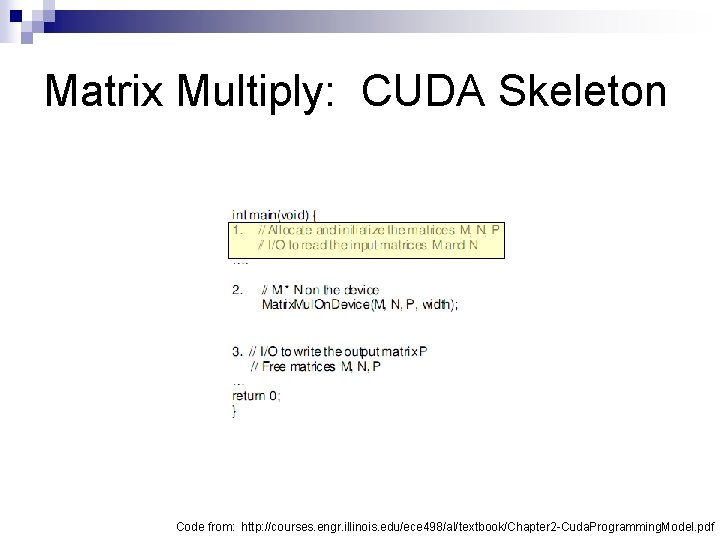

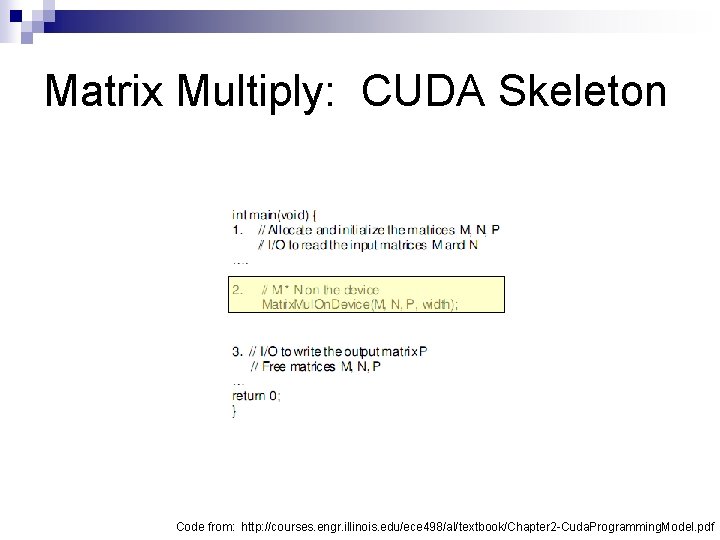

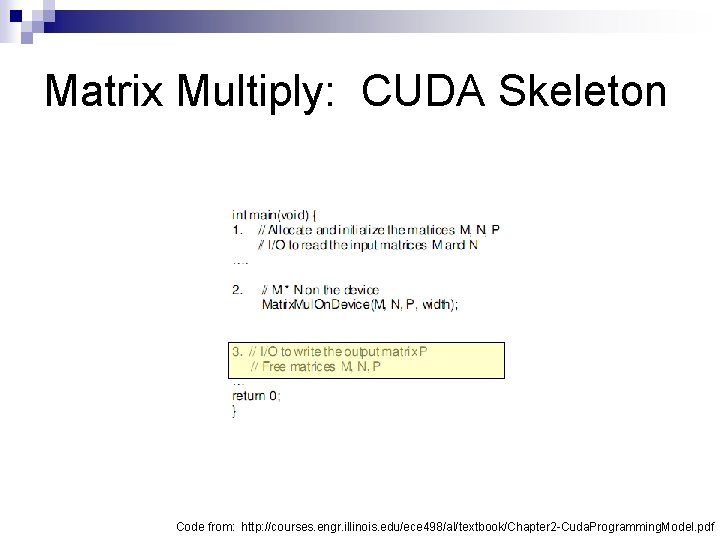

Matrix Multiply: CUDA Skeleton

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

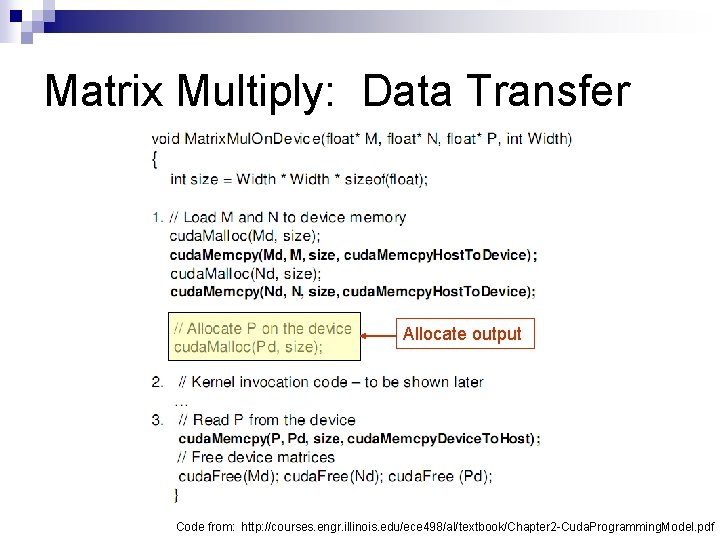

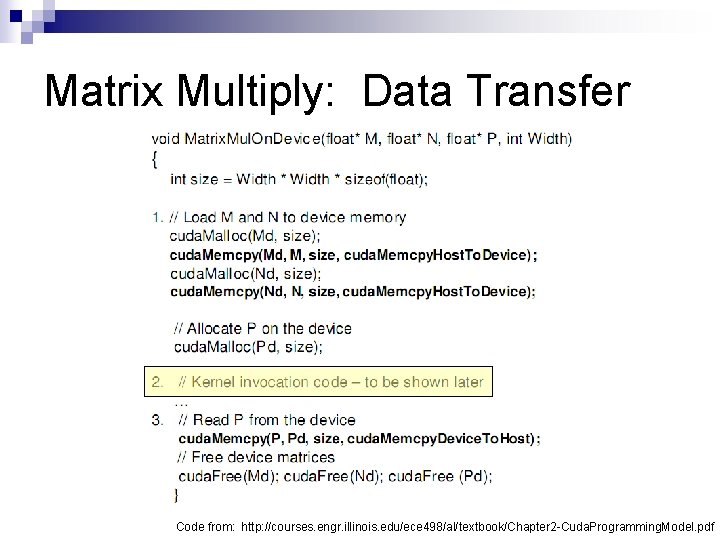

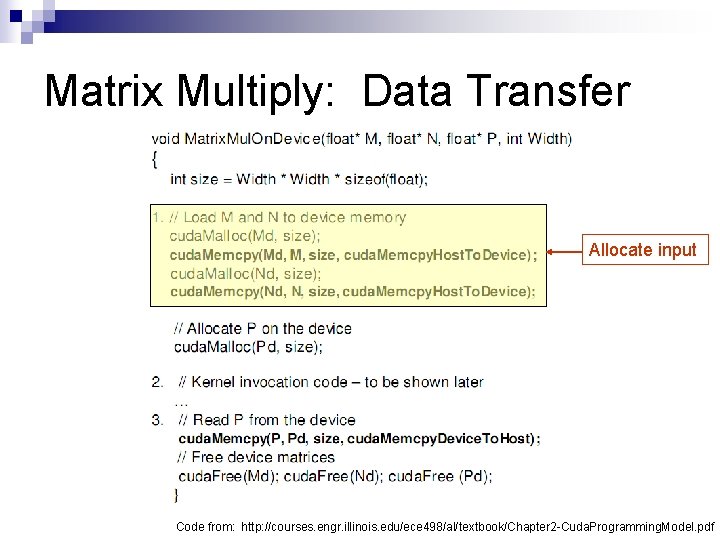

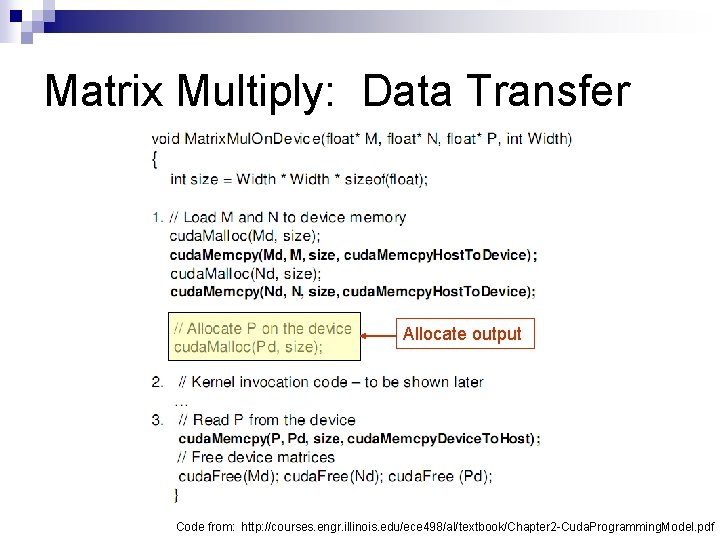

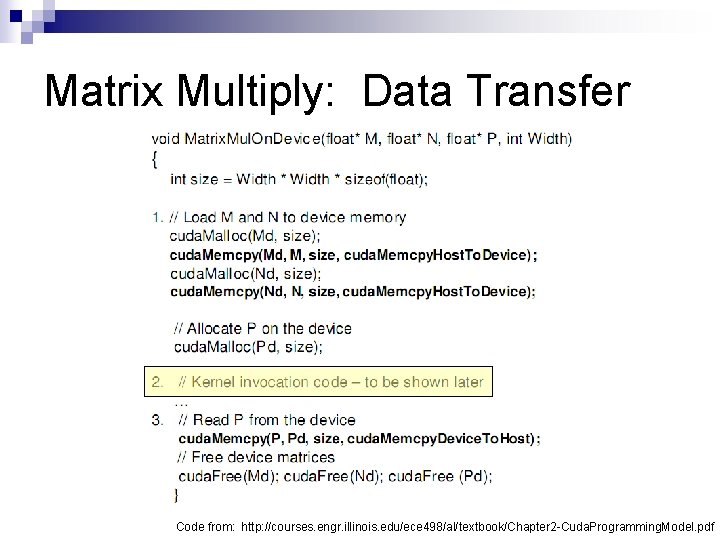

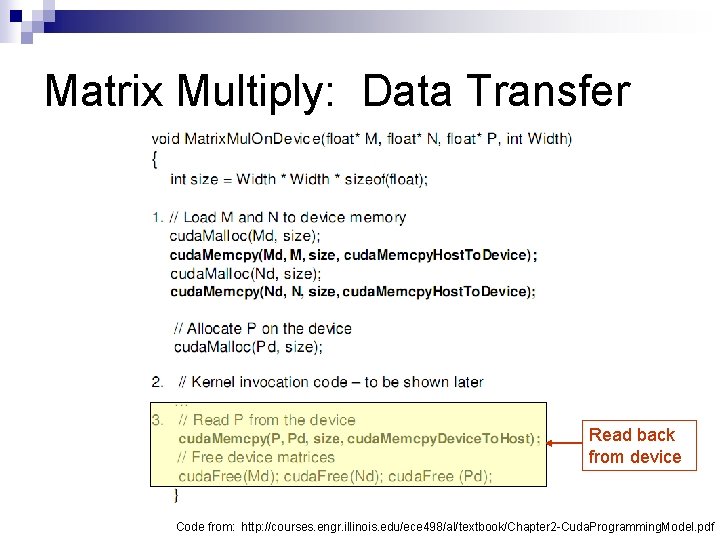

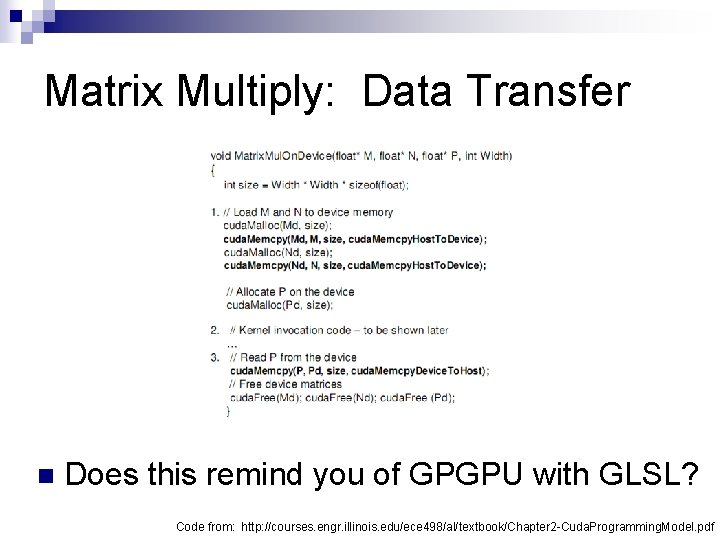

Matrix Multiply
- Step 1
  - Add CUDA memory transfers to the skeleton

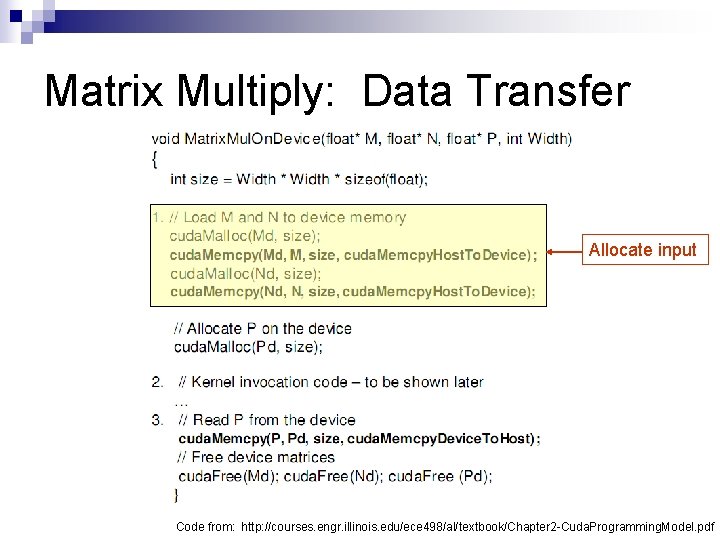

Matrix Multiply: Data Transfer

(Code callout: allocate input)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

Matrix Multiply: Data Transfer

(Code callout: allocate output)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

Matrix Multiply: Data Transfer

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

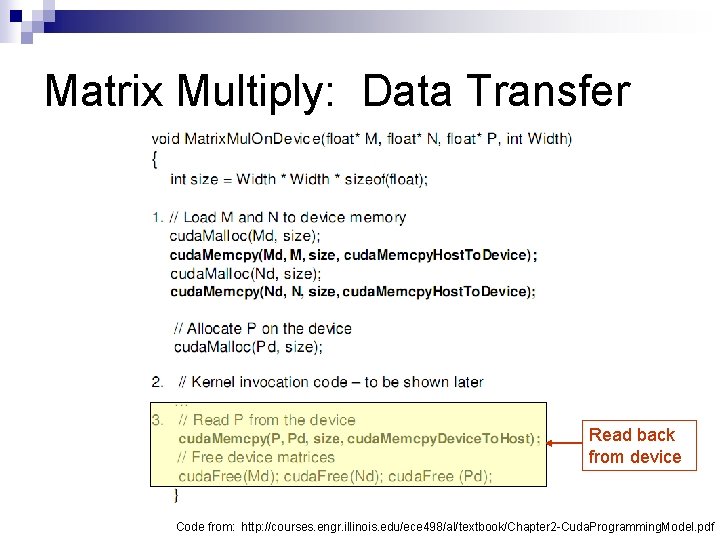

Matrix Multiply: Data Transfer

(Code callout: read back from device)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

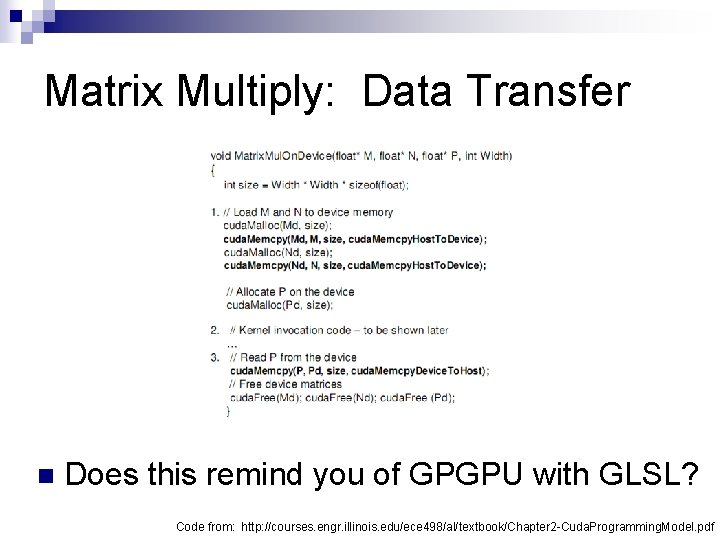

Matrix Multiply: Data Transfer
- Does this remind you of GPGPU with GLSL?

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

Matrix Multiply
- Step 2
  - Implement the kernel in CUDA C

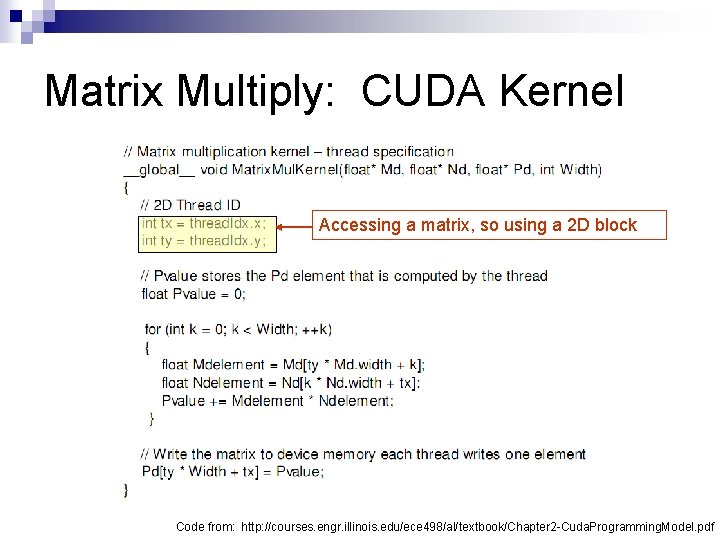

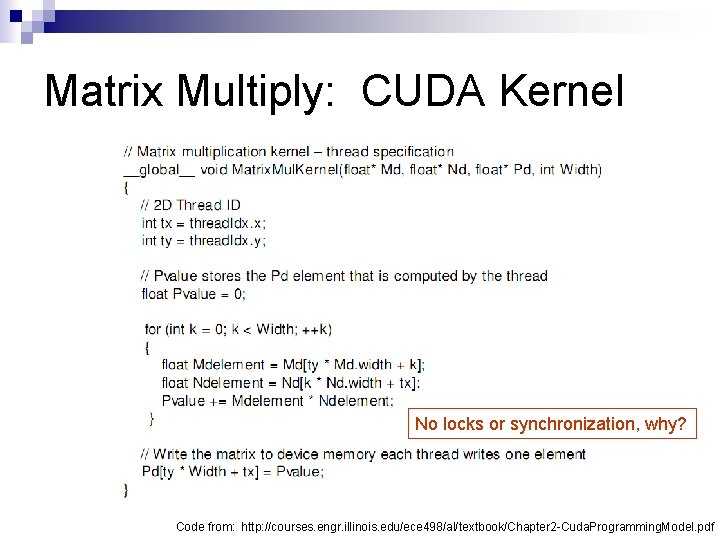

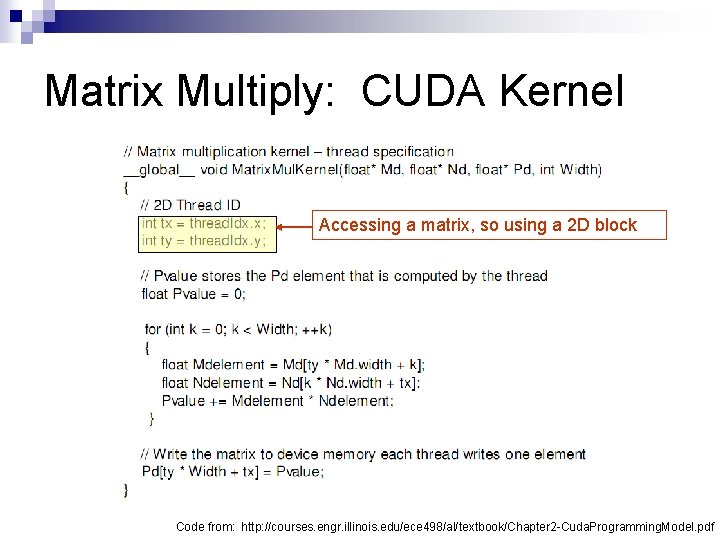

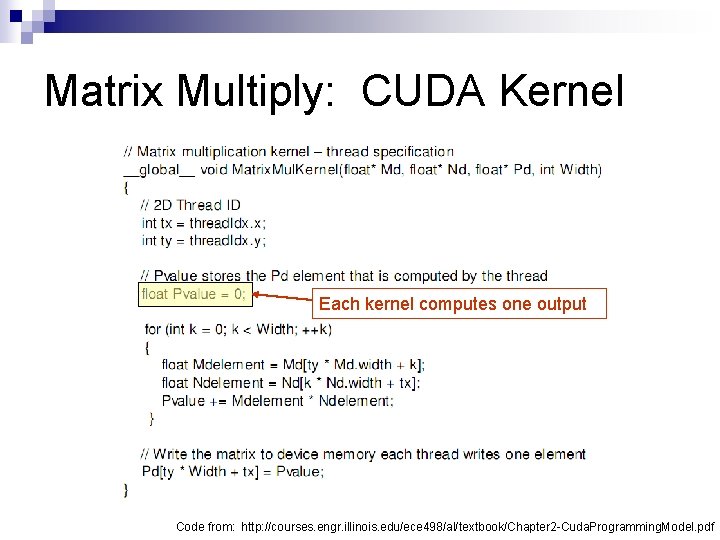

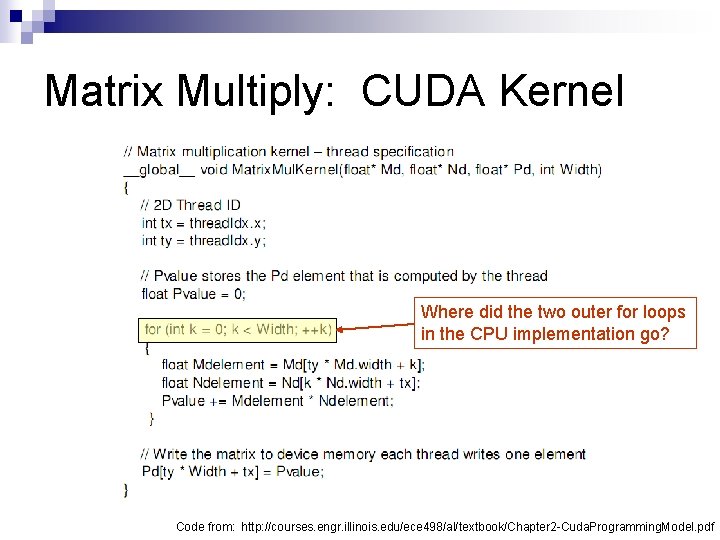

Matrix Multiply: CUDA Kernel

(Code callout: accessing a matrix, so using a 2D block)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

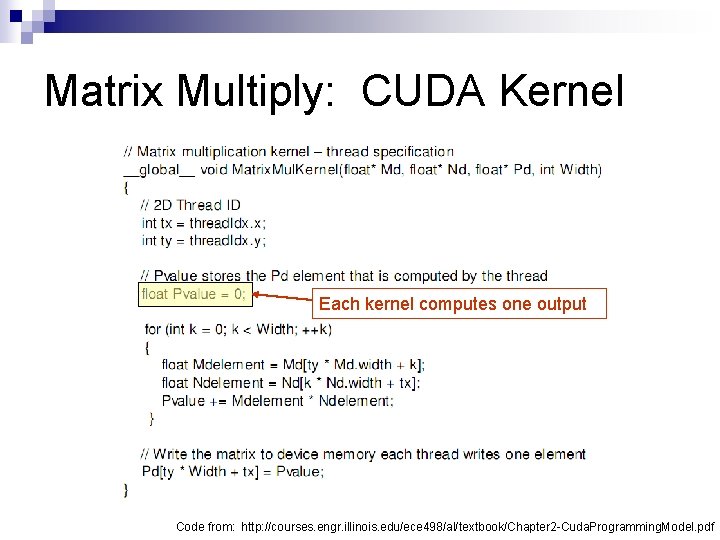

Matrix Multiply: CUDA Kernel

(Code callout: each thread computes one output element)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

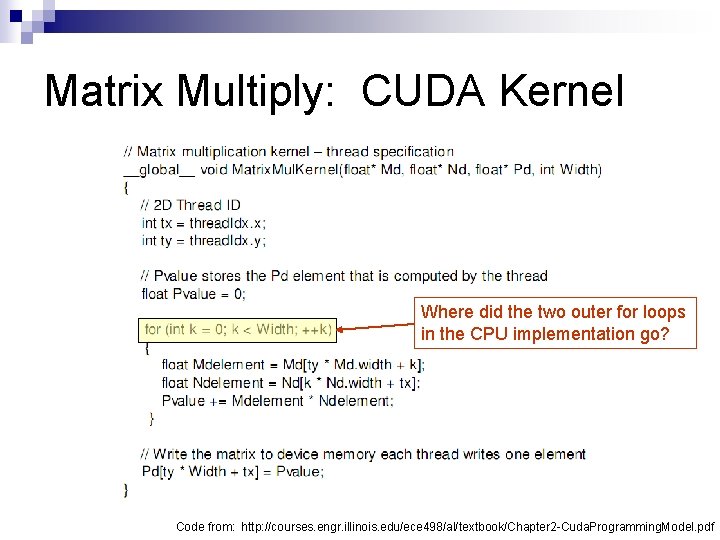

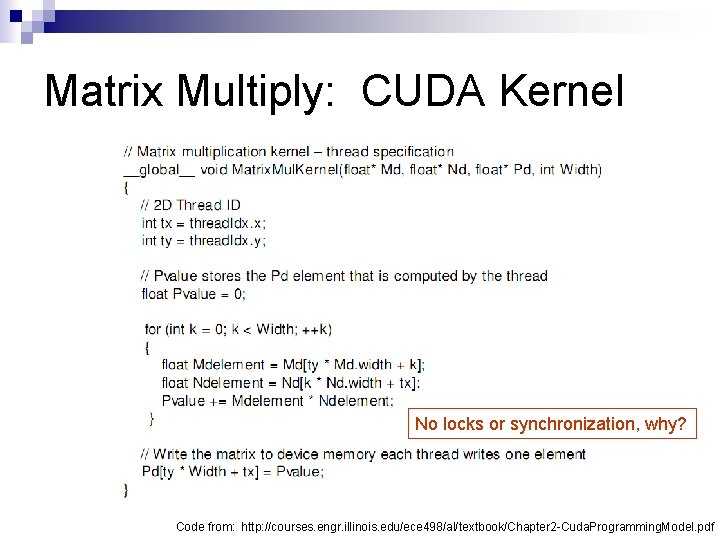

Matrix Multiply: CUDA Kernel
- Where did the two outer for loops in the CPU implementation go?

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

Matrix Multiply: CUDA Kernel
- No locks or synchronization, why?

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf

Matrix Multiply
- Step 3
  - Invoke the kernel in CUDA C

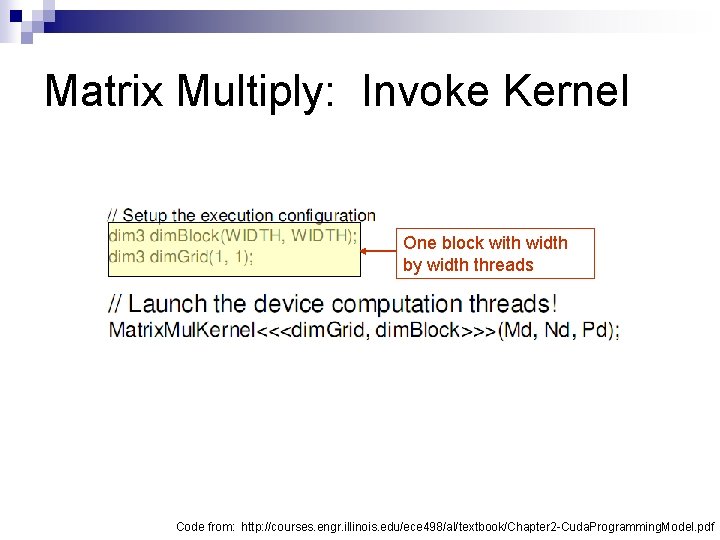

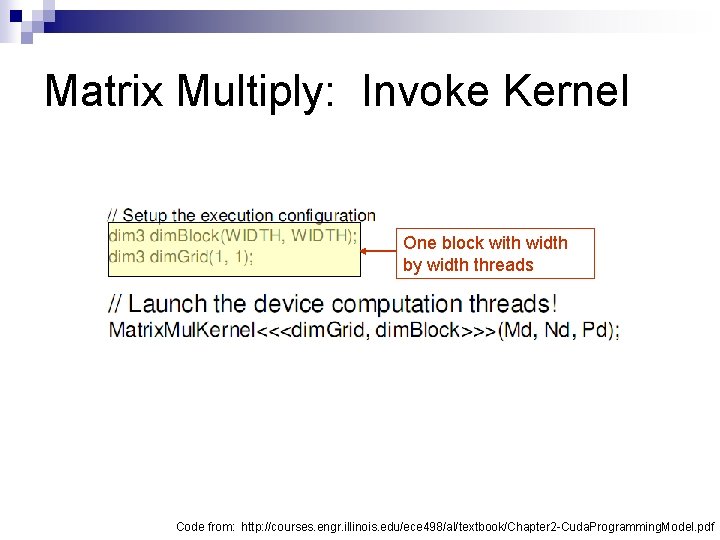

Matrix Multiply: Invoke Kernel

(Code callout: one block with width by width threads)

Code from: http://courses.engr.illinois.edu/ece498/al/textbook/Chapter2-CudaProgrammingModel.pdf
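Steps 1 to 3 can be combined into one sketch that follows the structure of the Kirk/Hwu matrix-multiply example these slides draw on: allocate and copy Md and Nd, launch one block of Width x Width threads, and read Pd back. This is a reconstruction, not a verbatim copy of the slide code; the error-checking chain and the `cudaError_t` return value are additions.

```cuda
#include <cuda_runtime.h>
#include <cstddef>

// Step 2: each thread computes one element of Pd. One 2D block of
// Width x Width threads covers the whole matrix, so threadIdx alone
// identifies the output element.
__global__ void MatrixMulKernel(const float* Md, const float* Nd, float* Pd, int Width)
{
    int tx = threadIdx.x;  // column of Pd
    int ty = threadIdx.y;  // row of Pd
    float Pvalue = 0;
    for (int k = 0; k < Width; ++k)
        Pvalue += Md[ty * Width + k] * Nd[k * Width + tx];
    Pd[ty * Width + tx] = Pvalue;  // each thread writes a distinct element
}

// Steps 1 and 3: memory transfers around a one-block launch.
// Width * Width must not exceed the per-block thread limit:
// Width <= 22 on G80/GT200 (512 threads), Width <= 32 on Fermi (1024).
cudaError_t MatrixMulOnDevice(const float* M, const float* N, float* P, int Width)
{
    size_t size = (size_t)Width * Width * sizeof(float);
    float *Md = NULL, *Nd = NULL, *Pd = NULL;
    cudaError_t err = cudaMalloc((void**)&Md, size);   // allocate input
    if (err == cudaSuccess) err = cudaMalloc((void**)&Nd, size);
    if (err == cudaSuccess) err = cudaMalloc((void**)&Pd, size);  // allocate output
    if (err == cudaSuccess) err = cudaMemcpy(Md, M, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) err = cudaMemcpy(Nd, N, size, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        dim3 dimBlock(Width, Width);  // one block with Width x Width threads
        dim3 dimGrid(1, 1);
        MatrixMulKernel<<<dimGrid, dimBlock>>>(Md, Nd, Pd, Width);
        err = cudaGetLastError();
    }
    // Read back from device; waits for the kernel to finish first.
    if (err == cudaSuccess) err = cudaMemcpy(P, Pd, size, cudaMemcpyDeviceToHost);
    cudaFree(Md);
    cudaFree(Nd);
    cudaFree(Pd);
    return err;
}
```

With Width = 2, M = [[1, 2], [3, 4]] and N = [[5, 6], [7, 8]] give P = [[19, 22], [43, 50]].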

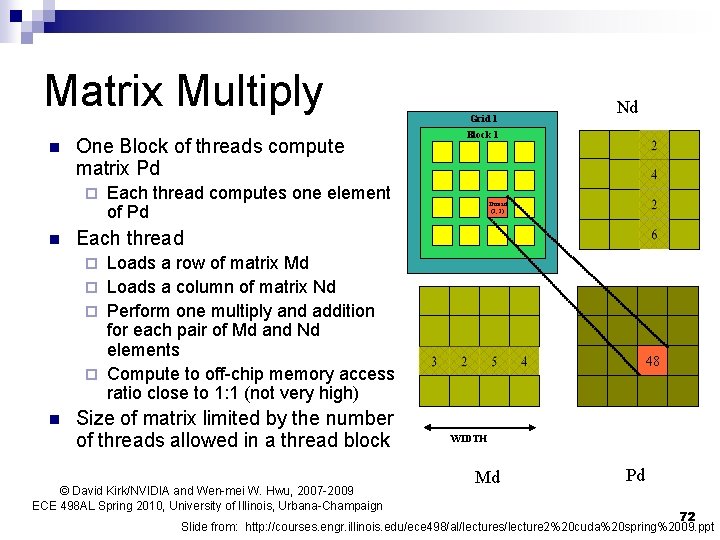

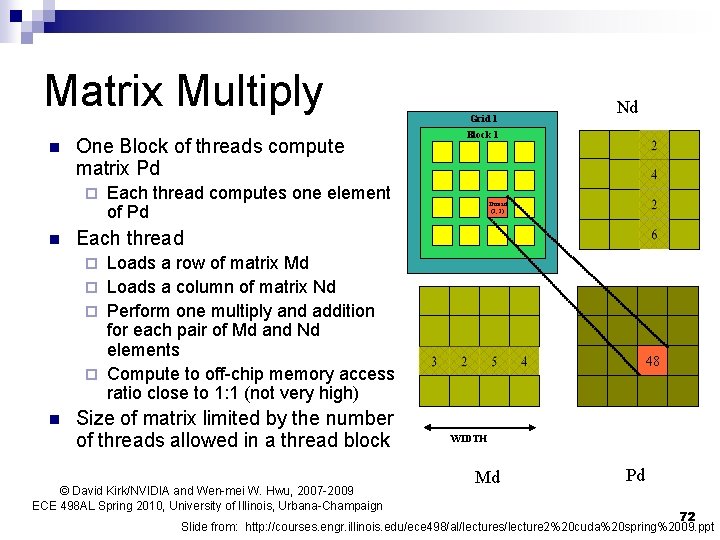

Matrix Multiply
- One block of threads computes matrix Pd
  - Each thread computes one element of Pd
- Each thread
  - Loads a row of matrix Md
  - Loads a column of matrix Nd
  - Performs one multiply and addition for each pair of Md and Nd elements
  - Compute to off-chip memory access ratio close to 1:1 (not very high)
- Size of matrix limited by the number of threads allowed in a thread block

(Figure: Grid 1, Block 1, Thread (2, 2); matrices Md, Nd, Pd of size WIDTH)

© David Kirk/NVIDIA and Wen-mei W. Hwu, 2007-2009
ECE 498AL Spring 2010, University of Illinois, Urbana-Champaign

Slide from: http://courses.engr.illinois.edu/ece498/al/lectures/lecture2%20cuda%20spring%2009.ppt

Matrix Multiply
- What is the major performance problem with our implementation?
- What is the major limitation?