CUDA - 101 Basics

Overview
• What is CUDA?
• Data Parallelism
• Host-Device model
• Thread execution
• Matrix-multiplication

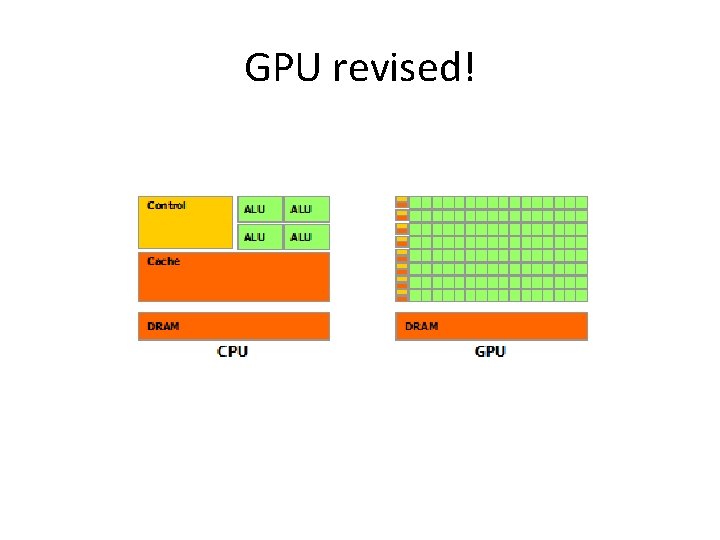

GPU revised!

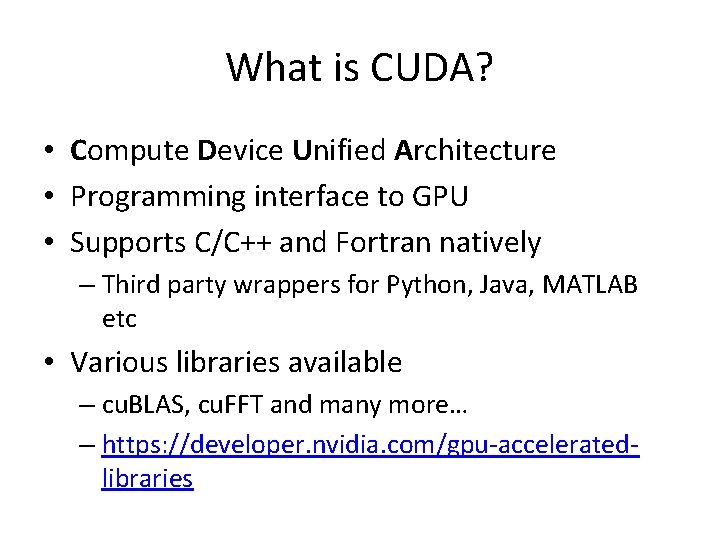

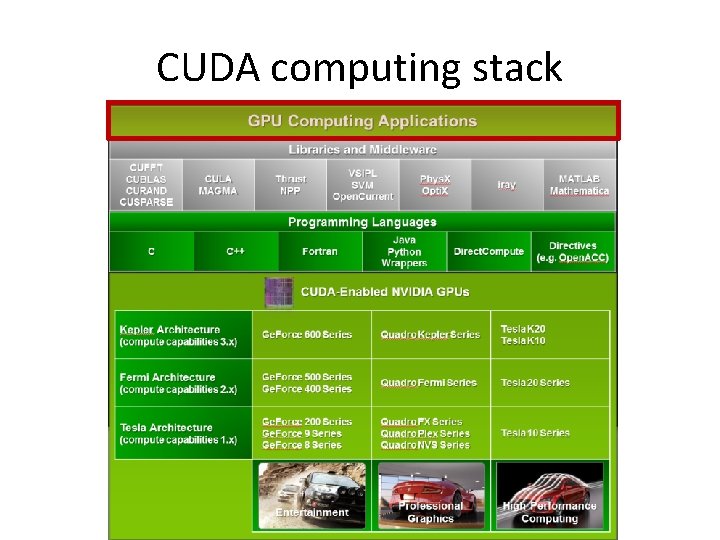

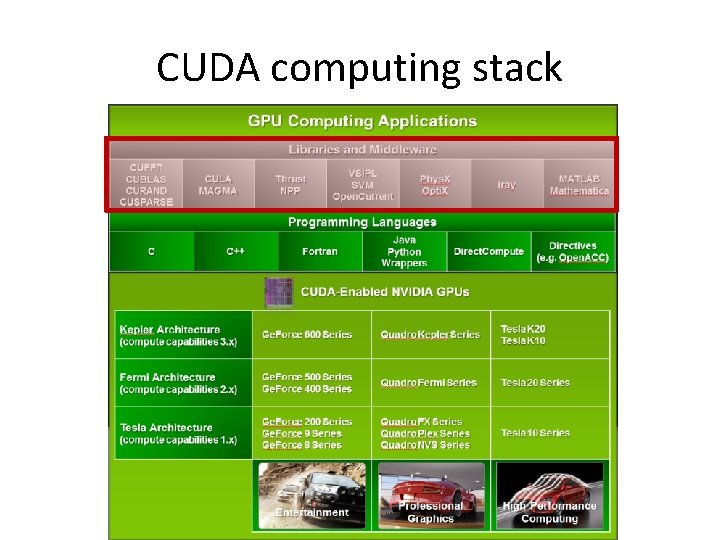

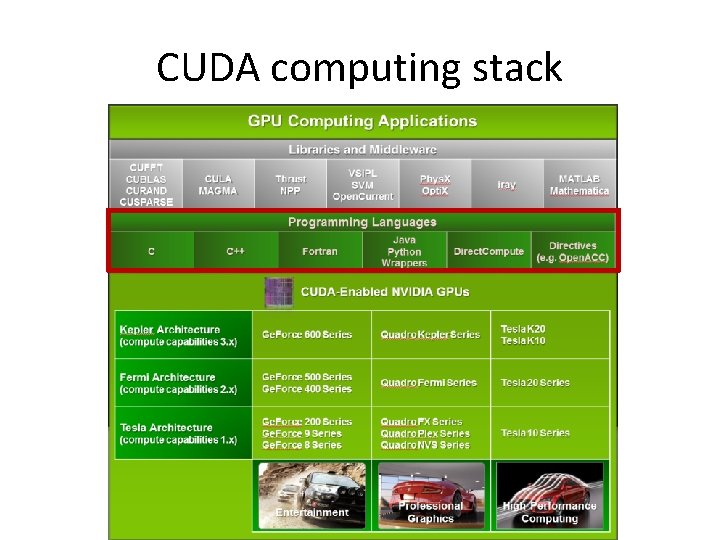

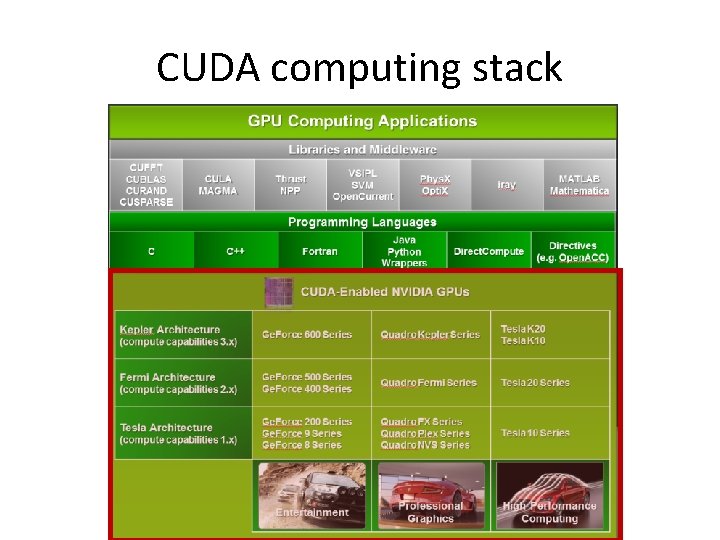

What is CUDA?
• Compute Unified Device Architecture
• Programming interface to the GPU
• Supports C/C++ and Fortran natively
  – Third-party wrappers for Python, Java, MATLAB, etc.
• Various libraries available
  – cuBLAS, cuFFT and many more…
  – https://developer.nvidia.com/gpu-accelerated-libraries

CUDA computing stack

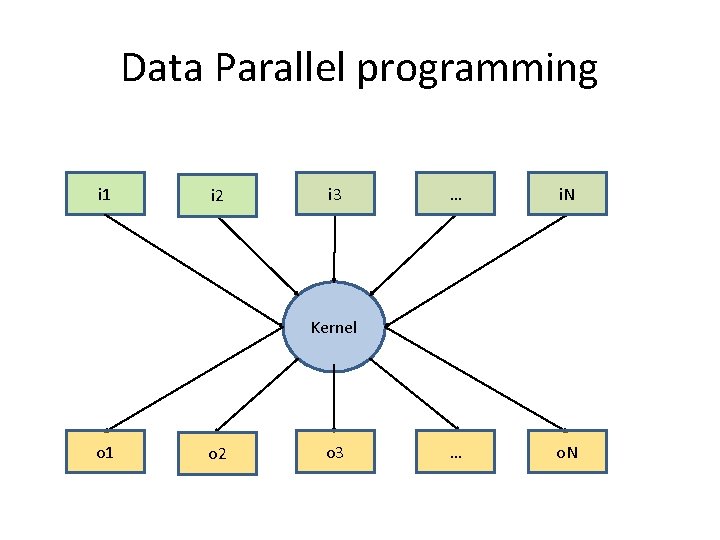

Data Parallel programming
Figure: inputs i1, i2, i3, …, iN -> Kernel -> outputs o1, o2, o3, …, oN

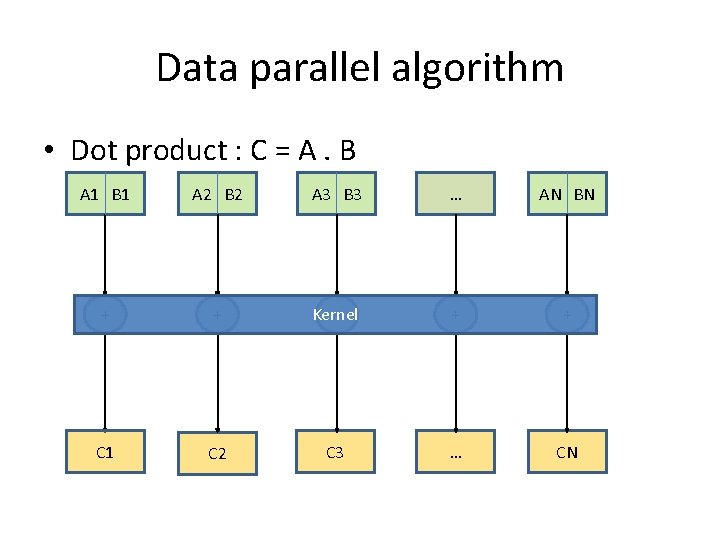

Data parallel algorithm
• Dot product: C = A · B
Figure: each pair (A1, B1), (A2, B2), (A3, B3), …, (AN, BN) passes through its own kernel instance (drawn as "+"), giving C1, C2, C3, …, CN

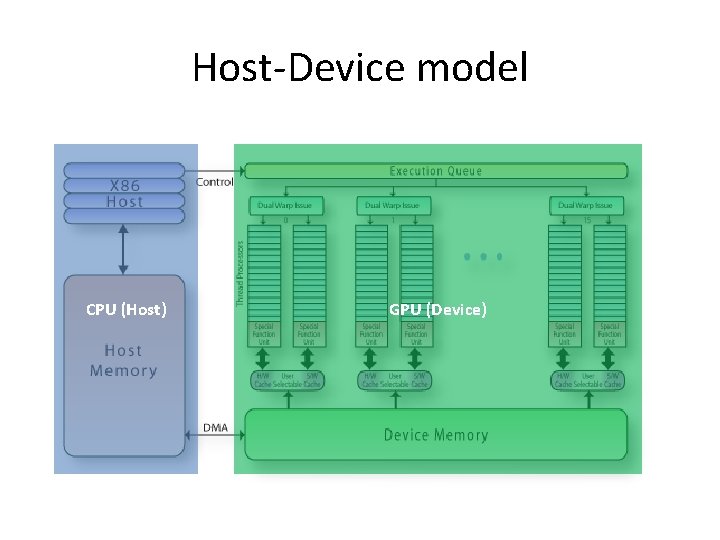

Host-Device model
• CPU (Host)
• GPU (Device)

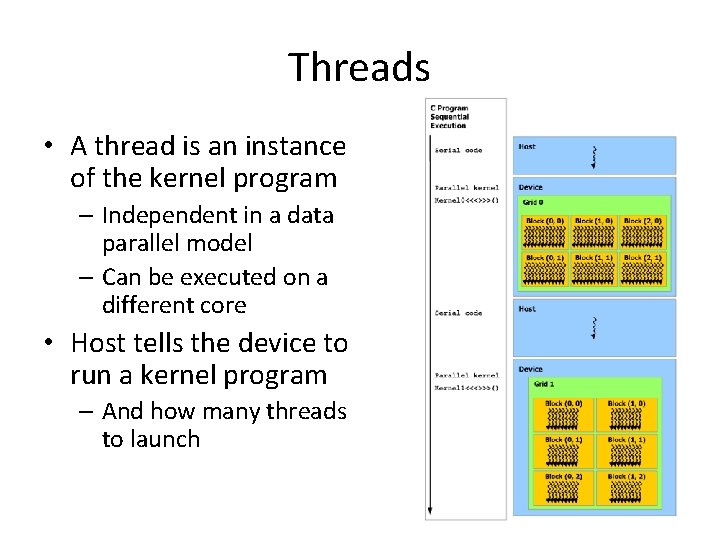

Threads
• A thread is an instance of the kernel program
  – Independent in a data parallel model
  – Can be executed on a different core
• Host tells the device to run a kernel program
  – And how many threads to launch
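As a minimal sketch of the launch described above: the kernel `scaleByTwo` and the helper `launchScaleByTwo` are hypothetical names, not taken from the slides. The host sets the number of threads in the `<<<blocks, threads>>>` launch configuration, and each thread picks its own element. The allocation and copy calls used here are the ones introduced on the later slides.

```cuda
#include <cuda_runtime.h>
#include <stddef.h>

// Hypothetical kernel: every thread is one instance of this function
// and handles exactly one element of the array.
__global__ void scaleByTwo(float *data, int n)
{
    int i = threadIdx.x;              // this thread's index within the block
    if (i < n)
        data[i] = 2.0f * data[i];
}

// Hypothetical host helper: doubles n floats using one block of n threads.
// n must not exceed the per-block thread limit (1024 on current GPUs).
// Returns 0 on success, -1 on any CUDA error.
int launchScaleByTwo(float *hostData, int n)
{
    size_t bytes = (size_t)n * sizeof(float);
    float *devData = NULL;

    if (cudaMalloc((void **)&devData, bytes) != cudaSuccess)
        return -1;

    cudaError_t err = cudaMemcpy(devData, hostData, bytes, cudaMemcpyHostToDevice);
    if (err == cudaSuccess) {
        scaleByTwo<<<1, n>>>(devData, n);   // host chooses: 1 block, n threads
        err = cudaGetLastError();           // reports an invalid launch configuration
    }
    if (err == cudaSuccess)
        err = cudaMemcpy(hostData, devData, bytes, cudaMemcpyDeviceToHost);

    cudaFree(devData);
    return (err == cudaSuccess) ? 0 : -1;
}
```

Calling `launchScaleByTwo(values, 4)` on a four-element host array would start four threads, one per element.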

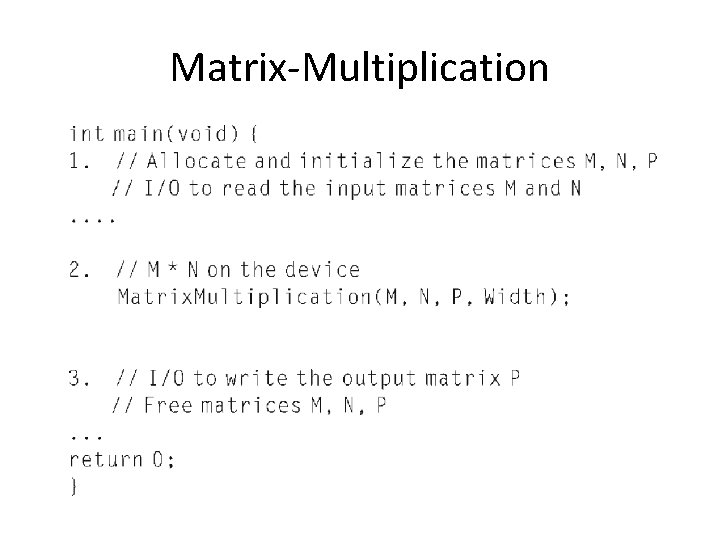

Matrix-Multiplication

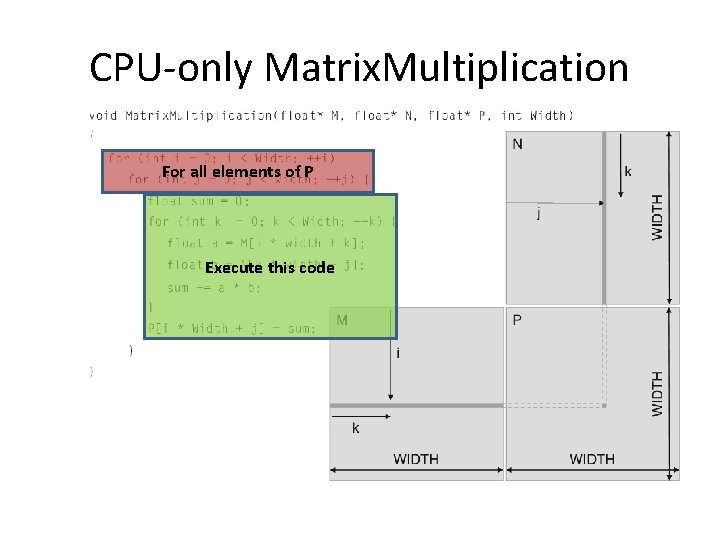

CPU-only Matrix Multiplication
• For all elements of P
  – Execute this code

![Memory Indexing in C (and CUDA): M(i, j) = M[i + j * width]](http://slidetodoc.com/presentation_image/b6f0eb351cf9839c8839c0f204368c24/image-15.jpg)

Memory Indexing in C (and CUDA)
• M(i, j) = M[i + j * width]
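A possible CPU-only version using this indexing is sketched below. It assumes that `i` is the column and `j` the row (row-major storage), which is one reading of the formula. The function name `matrixMulCPU` is hypothetical. The code is plain C, which is also valid CUDA host code.

```cuda
// CPU-only sketch: M, N and P are width x width matrices stored as flat
// arrays, with element (i, j) at index i + j * width.
// Assumption: i is the column index and j is the row index.
void matrixMulCPU(const float *M, const float *N, float *P, int width)
{
    for (int j = 0; j < width; ++j) {          // j: row of P
        for (int i = 0; i < width; ++i) {      // i: column of P
            float sum = 0.0f;
            for (int k = 0; k < width; ++k) {
                // row j of M (walking columns k) times column i of N (walking rows k)
                sum += M[k + j * width] * N[i + k * width];
            }
            P[i + j * width] = sum;            // one output element per (i, j)
        }
    }
}
```

The two outer loops visit every element of P one after another; these are the iterations that a data parallel version can hand to separate threads.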

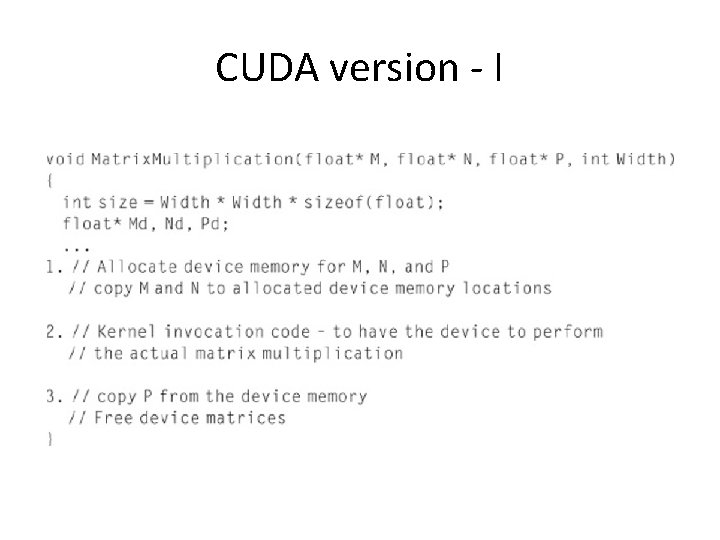

CUDA version - 1

CUDA program flow
• Allocate input and output memory on host
  – Do the same for device
• Transfer input data from host -> device
• Launch kernel on device
• Transfer output data from device -> host

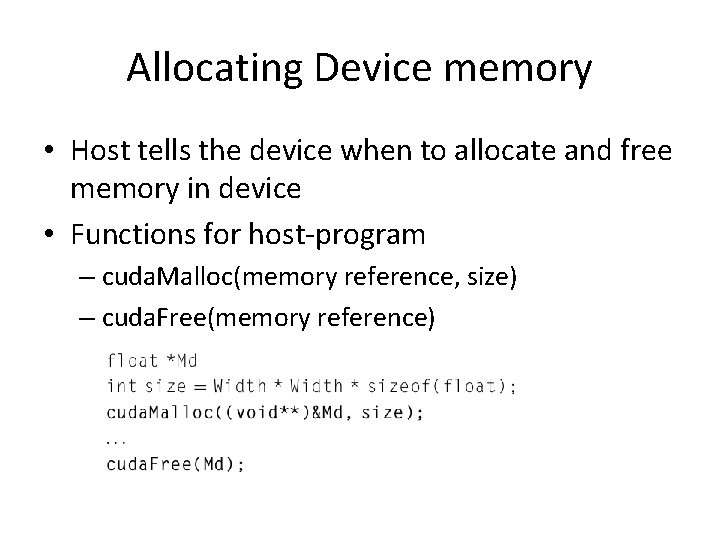

Allocating Device memory
• Host tells the device when to allocate and free memory on the device
• Functions for the host program
  – cudaMalloc(memory reference, size)
  – cudaFree(memory reference)
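A small sketch of these two calls, assuming hypothetical helper names `allocDeviceFloats` and `freeDeviceFloats`. Note that `cudaMalloc` takes the address of the pointer and a size in bytes, and both calls return a `cudaError_t` status.

```cuda
#include <cuda_runtime.h>
#include <stddef.h>

// Hypothetical helper: reserves room for `count` floats on the device.
// Returns the device pointer, or NULL if the allocation failed.
float *allocDeviceFloats(size_t count)
{
    float *devPtr = NULL;
    // cudaMalloc receives the ADDRESS of the pointer so it can fill it in;
    // the size is given in bytes.
    cudaError_t err = cudaMalloc((void **)&devPtr, count * sizeof(float));
    return (err == cudaSuccess) ? devPtr : NULL;
}

// Hypothetical helper: releases device memory. Returns 0 on success.
int freeDeviceFloats(float *devPtr)
{
    return (cudaFree(devPtr) == cudaSuccess) ? 0 : -1;
}
```

The returned pointer refers to device memory, so host code should pass it to CUDA calls and kernels rather than dereference it directly.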

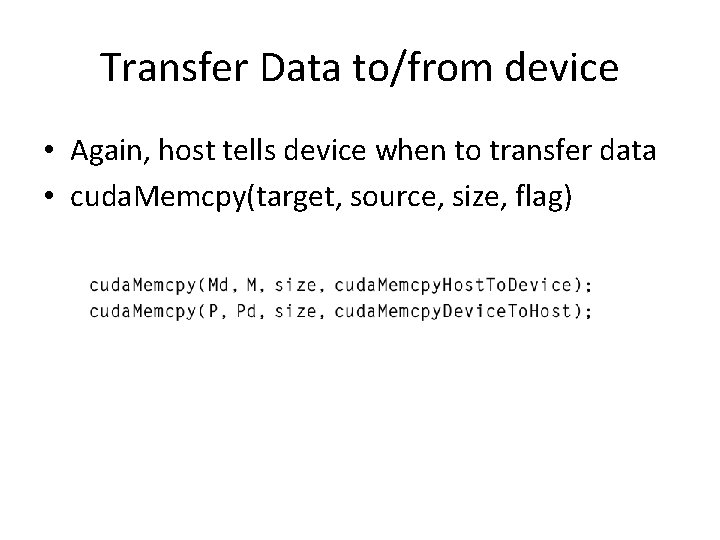

Transfer Data to/from device
• Again, host tells device when to transfer data
• cudaMemcpy(target, source, size, flag)
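The argument order can be illustrated with a round trip, host -> device -> host. This is only a sketch; the helper name `roundTrip` is hypothetical. The flag values `cudaMemcpyHostToDevice` and `cudaMemcpyDeviceToHost` name the copy direction.

```cuda
#include <cuda_runtime.h>
#include <stddef.h>

// Hypothetical helper: copies `count` floats from src (host) to the device
// and back into dst (host). Returns 0 on success, -1 on any CUDA error.
int roundTrip(const float *src, float *dst, size_t count)
{
    size_t bytes = count * sizeof(float);
    float *dev = NULL;

    if (cudaMalloc((void **)&dev, bytes) != cudaSuccess)
        return -1;

    // Order is (target, source, size, flag); the flag gives the direction.
    cudaError_t err = cudaMemcpy(dev, src, bytes, cudaMemcpyHostToDevice);
    if (err == cudaSuccess)
        err = cudaMemcpy(dst, dev, bytes, cudaMemcpyDeviceToHost);

    cudaFree(dev);
    return (err == cudaSuccess) ? 0 : -1;
}
```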

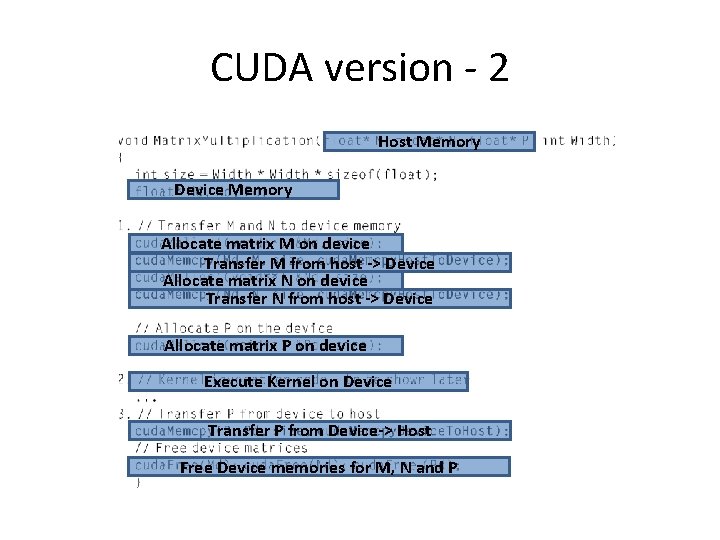

CUDA version - 2
(Figure: Host Memory and Device Memory)
• Allocate matrix M on device
• Transfer M from host -> device
• Allocate matrix N on device
• Transfer N from host -> device
• Allocate matrix P on device
• Execute kernel on device
• Transfer P from device -> host
• Free device memories for M, N and P

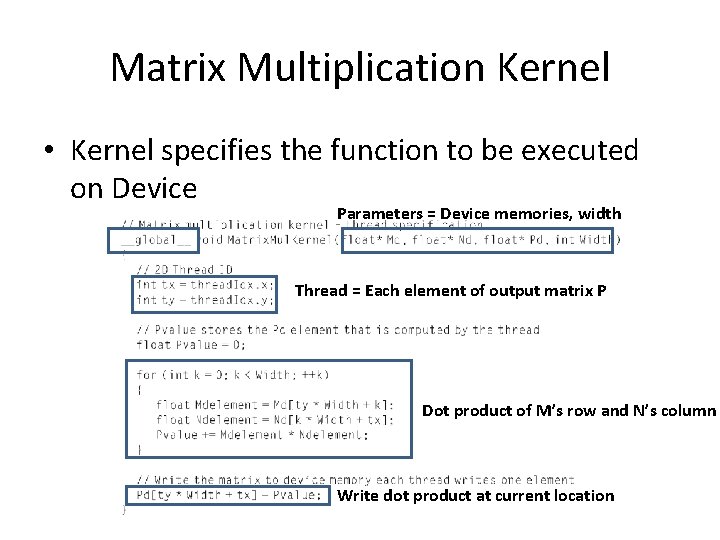

Matrix Multiplication Kernel
• Kernel specifies the function to be executed on the device
  – Parameters = device memories, width
  – Thread = each element of output matrix P
  – Dot product of M's row and N's column
  – Write dot product at current location
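One way the kernel and its host-side steps might look is sketched below. The names `matrixMulKernel` and `matrixMulOnDevice` are hypothetical, and the sketch assumes `i` is the column and `j` the row in M(i, j) = M[i + j * width]. It launches a single block, so it only works for small matrices.

```cuda
#include <cuda_runtime.h>
#include <stddef.h>

// One thread per element of P: dot product of M's row and N's column.
__global__ void matrixMulKernel(const float *Md, const float *Nd, float *Pd, int width)
{
    int i = threadIdx.x;   // column of P handled by this thread
    int j = threadIdx.y;   // row of P handled by this thread

    float sum = 0.0f;
    for (int k = 0; k < width; ++k)
        sum += Md[k + j * width] * Nd[i + k * width];

    Pd[i + j * width] = sum;   // write the dot product at the current location
}

// Hypothetical host wrapper: allocate, transfer in, launch, transfer out, free.
// Single block only: width * width must not exceed the per-block thread
// limit (1024 on current GPUs, so width <= 32).
// Returns 0 on success, -1 on any CUDA error.
int matrixMulOnDevice(const float *M, const float *N, float *P, int width)
{
    size_t bytes = (size_t)width * width * sizeof(float);
    float *Md = NULL, *Nd = NULL, *Pd = NULL;

    bool ok = cudaMalloc((void **)&Md, bytes) == cudaSuccess
           && cudaMemcpy(Md, M, bytes, cudaMemcpyHostToDevice) == cudaSuccess
           && cudaMalloc((void **)&Nd, bytes) == cudaSuccess
           && cudaMemcpy(Nd, N, bytes, cudaMemcpyHostToDevice) == cudaSuccess
           && cudaMalloc((void **)&Pd, bytes) == cudaSuccess;

    if (ok) {
        dim3 block(width, width);                      // width x width threads
        matrixMulKernel<<<1, block>>>(Md, Nd, Pd, width);
        ok = cudaGetLastError() == cudaSuccess
          && cudaMemcpy(P, Pd, bytes, cudaMemcpyDeviceToHost) == cudaSuccess;
    }

    // cudaFree on a NULL pointer performs no operation, so this is safe
    // even if an earlier allocation failed.
    cudaFree(Md);
    cudaFree(Nd);
    cudaFree(Pd);
    return ok ? 0 : -1;
}
```

Compared with a CPU loop nest over P, the two outer loops disappear: the thread indices select the output element, and only the dot-product loop over `k` remains inside the kernel.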

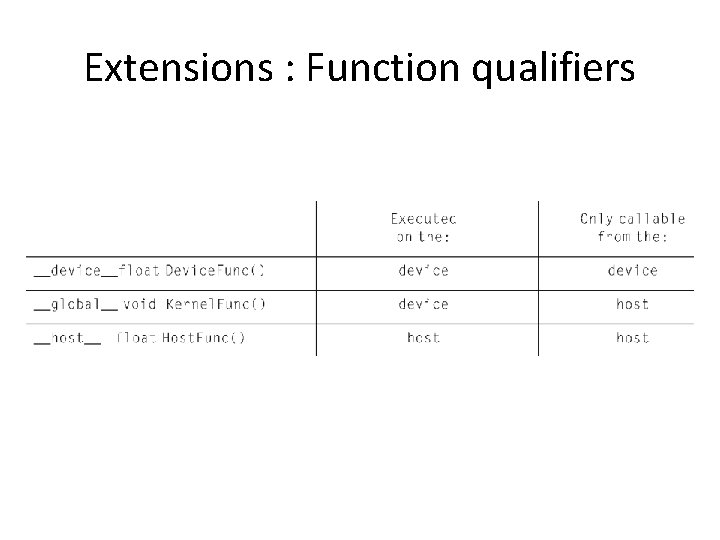

Extensions : Function qualifiers

Extensions : Thread indexing
• All threads execute the same code
  – But they need to work on separate data in memory
• threadIdx.x & threadIdx.y
  – These variables automatically receive the corresponding values for each thread

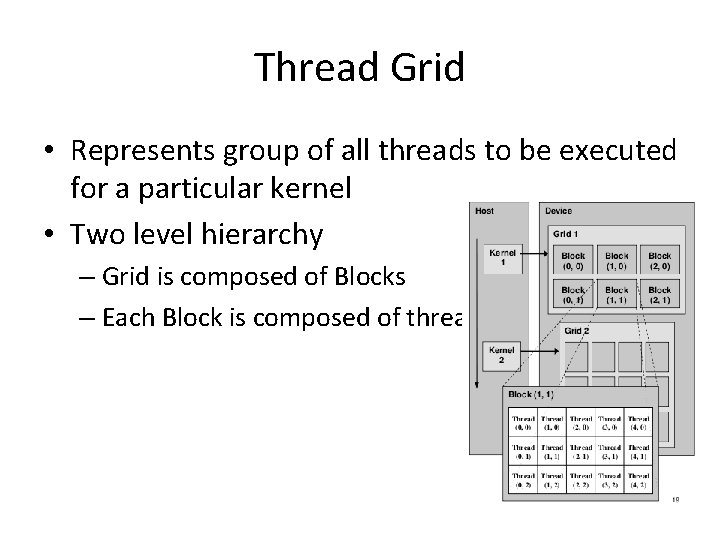

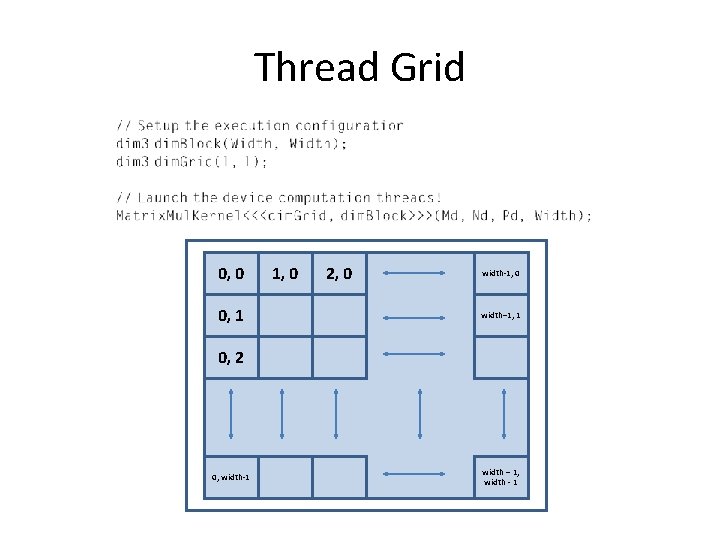

Thread Grid
• Represents the group of all threads to be executed for a particular kernel
• Two-level hierarchy
  – Grid is composed of blocks
  – Each block is composed of threads

Thread Grid
Figure: threads labelled from (0, 0) to (width-1, width-1)
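A sketch of how a thread might find its position when the grid has several blocks. The built-in variables `blockIdx` and `blockDim` and the `dim3` type are standard CUDA but are not named on the slides; the function names and the 16 x 16 block size are assumptions chosen for illustration.

```cuda
#include <cuda_runtime.h>
#include <stddef.h>

// Each thread combines its block index and its thread index into a global
// (x, y) position, then writes its own linear index at that location.
__global__ void writeGlobalIndex(int *out, int width)
{
    int x = blockIdx.x * blockDim.x + threadIdx.x;
    int y = blockIdx.y * blockDim.y + threadIdx.y;
    if (x < width && y < width)            // the grid may overshoot the edge
        out[x + y * width] = x + y * width;
}

// Hypothetical host helper: fills hostOut (width * width ints).
// Returns 0 on success, -1 on any CUDA error.
int fillWithGlobalIndex(int *hostOut, int width)
{
    size_t bytes = (size_t)width * width * sizeof(int);
    int *devOut = NULL;

    if (cudaMalloc((void **)&devOut, bytes) != cudaSuccess)
        return -1;

    dim3 block(16, 16);                              // threads per block (assumed size)
    dim3 grid((width + block.x - 1) / block.x,       // enough blocks to cover width
              (width + block.y - 1) / block.y);
    writeGlobalIndex<<<grid, block>>>(devOut, width);

    cudaError_t err = cudaGetLastError();
    if (err == cudaSuccess)
        err = cudaMemcpy(hostOut, devOut, bytes, cudaMemcpyDeviceToHost);

    cudaFree(devOut);
    return (err == cudaSuccess) ? 0 : -1;
}
```

With `width = 40` this launches a 3 x 3 grid of 16 x 16 blocks; the bounds check keeps the extra threads in the last row and column of blocks from writing outside the array.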

Conclusion
• Sample code and tutorials
• CUDA nodes?
• Programming guide
  – http://docs.nvidia.com/cuda-c-programming-guide/
• SDK
  – https://developer.nvidia.com/cuda-downloads
  – Available for Windows, Mac and Linux
  – Lots of sample programs

QUESTIONS?
