CUDA Lecture 7: CUDA Threads and Atomics. Prepared 8/8/2011 by T. O’Neil for 3460:677, Fall 2011, The University of Akron.

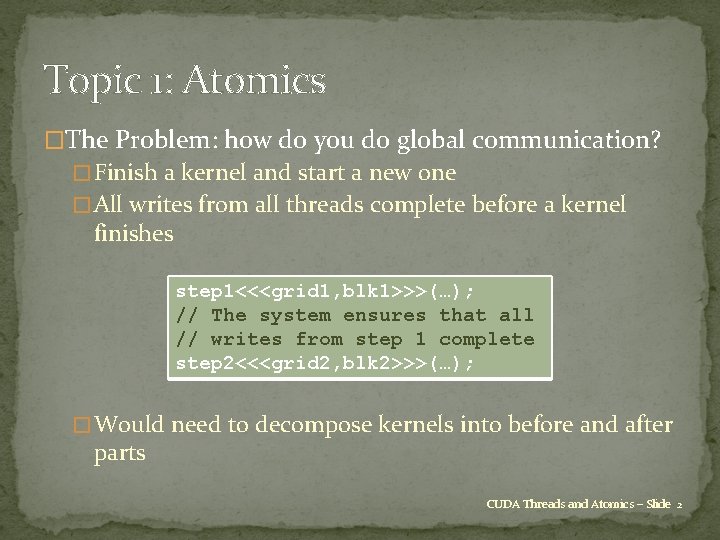

Topic 1: Atomics

- The problem: how do you do global communication?
  - Finish a kernel and start a new one
  - All writes from all threads complete before a kernel finishes

        step1<<<grid1, blk1>>>(…);
        // The system ensures that all
        // writes from step1 complete
        step2<<<grid2, blk2>>>(…);

  - Would need to decompose kernels into before and after parts

CUDA Threads and Atomics – Slide 2

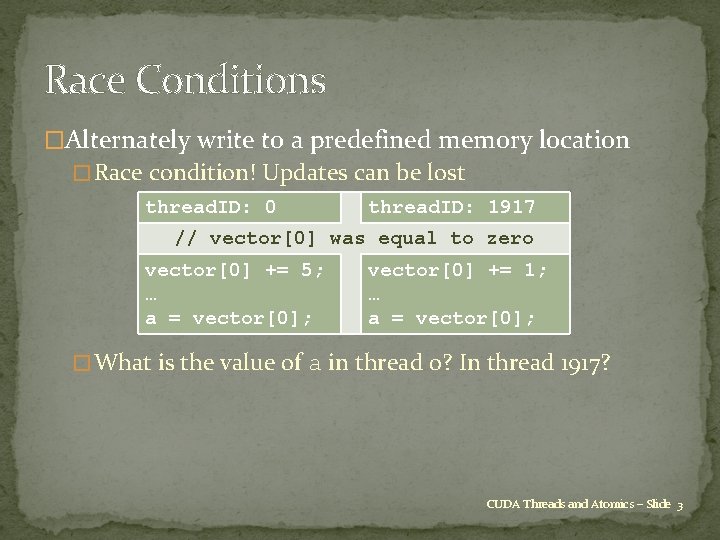

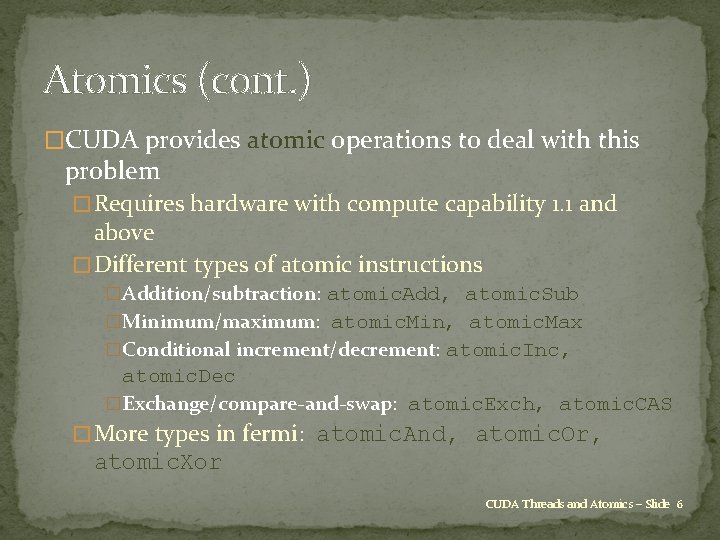

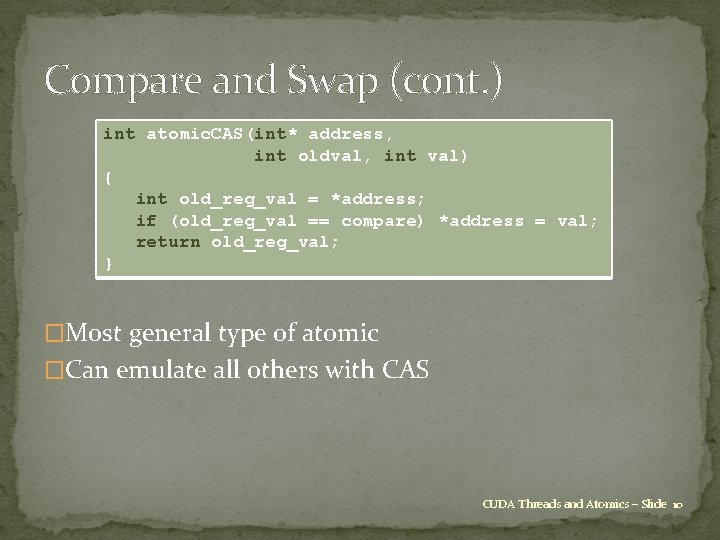

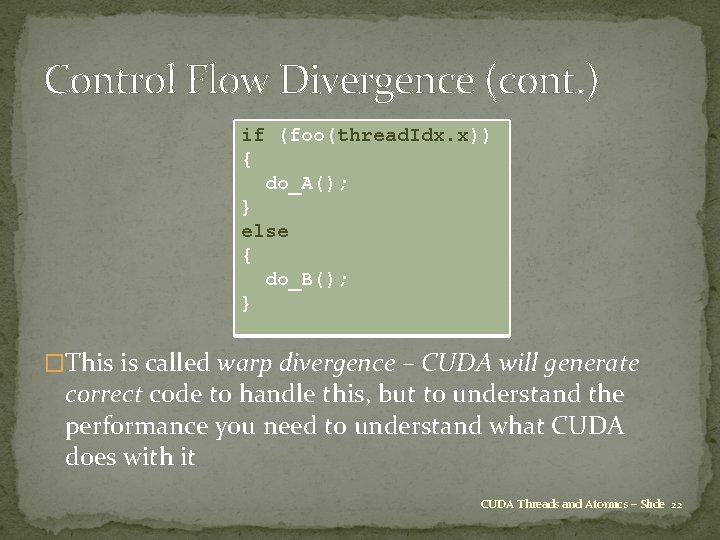

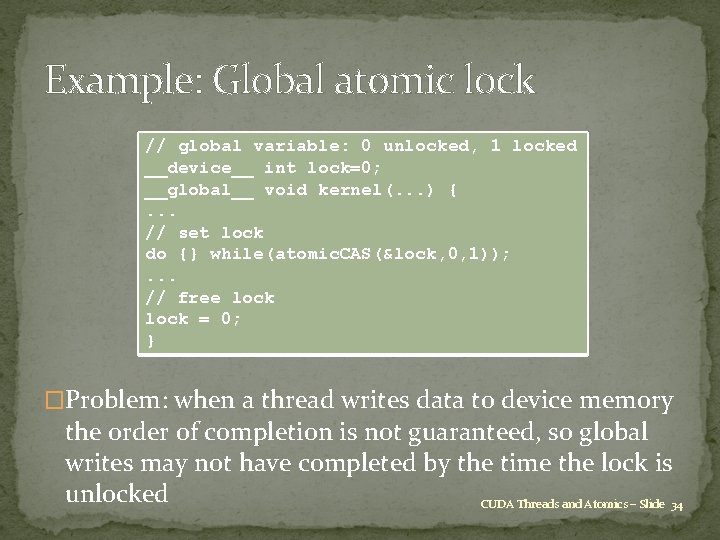

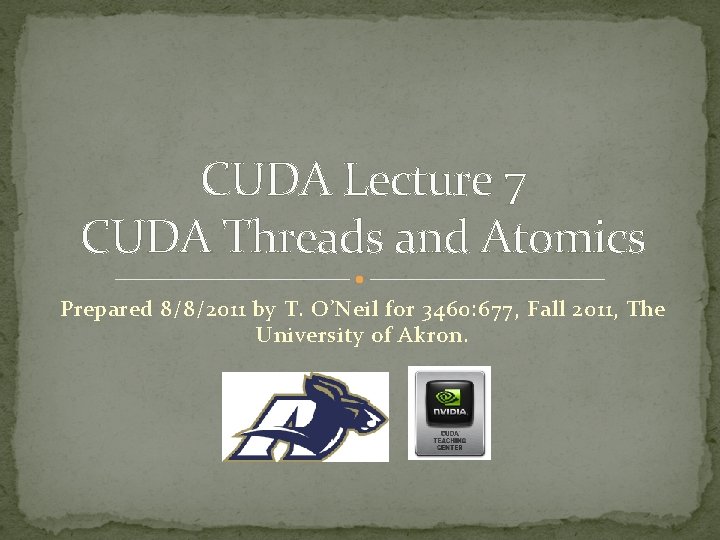

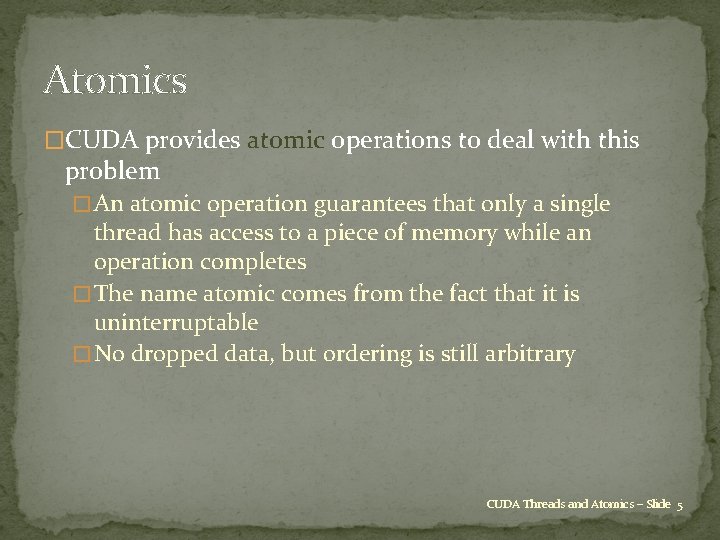

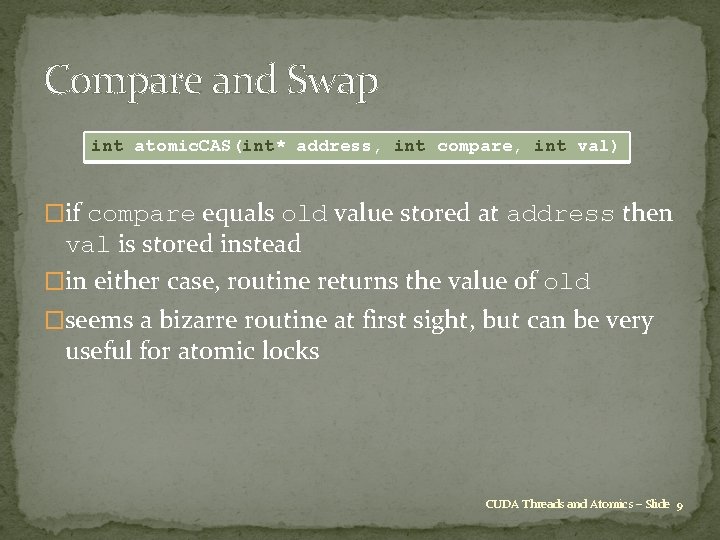

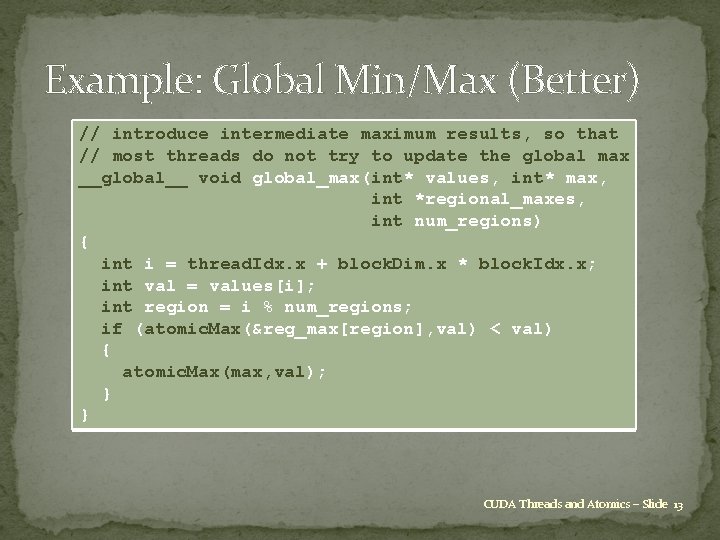

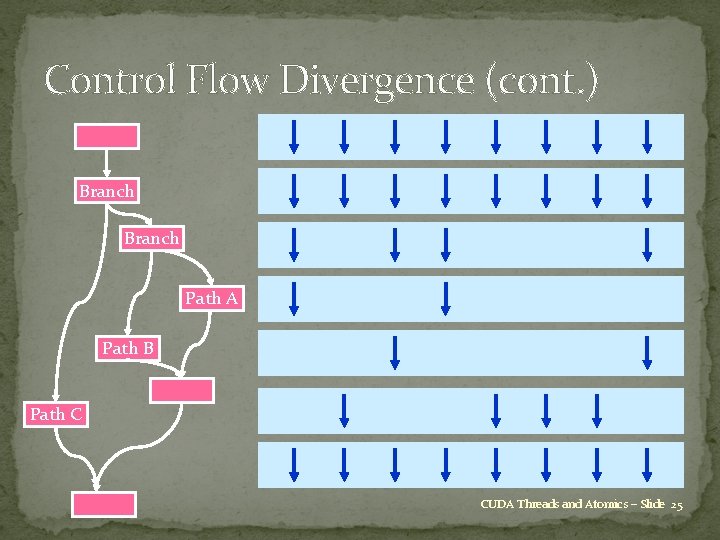

Race Conditions

- Alternatively, write to a predefined memory location
  - Race condition! Updates can be lost

        // vector[0] was equal to zero
        // threadID: 0       // threadID: 1917
        vector[0] += 5;      vector[0] += 1;
        …                    …
        a = vector[0];       a = vector[0];

- What is the value of a in thread 0? In thread 1917?

Race Conditions (cont.)

Two threads, with vector[0] initially equal to zero:

    // threadID: 0       // threadID: 1917
    vector[0] += 5;      vector[0] += 1;
    …                    …
    a = vector[0];       a = vector[0];

- Thread 0 could have finished execution before 1917 started
- Or the other way around
- Or both are executing at the same time
- Answer: not defined by the programming model; can be arbitrary
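The lost-update problem above can be made visible with a small experiment. The following is a minimal sketch, not part of the original slides: the kernel name `count`, the helper `run_count` and the launch sizes are invented for illustration, and error checking is omitted for brevity. It uses `atomicAdd`, one of the CUDA atomic operations covered on the following slides, as the safe counterpart.

```cuda
#include <cassert>
#include <cstdio>
#include <cuda_runtime.h>

// Every thread adds 1 to both counters.
__global__ void count(int* racy, int* safe) {
    *racy += 1;          // plain read-modify-write: updates can be lost
    atomicAdd(safe, 1);  // atomic read-modify-write: no update is lost
}

// Hypothetical host helper: launches the kernel, stores the racy result in
// *racy_out and returns the atomic result.
int run_count(int blocks, int threads, int* racy_out) {
    int* d;  // d[0] is the racy counter, d[1] the atomic counter
    cudaMalloc(&d, 2 * sizeof(int));
    cudaMemset(d, 0, 2 * sizeof(int));
    count<<<blocks, threads>>>(d, d + 1);
    int h[2];
    cudaMemcpy(h, d, sizeof(h), cudaMemcpyDeviceToHost);  // waits for the kernel
    cudaFree(d);
    *racy_out = h[0];
    return h[1];
}

int main() {
    int racy = 0;
    int safe = run_count(64, 256, &racy);
    printf("racy = %d, atomic = %d\n", racy, safe);
    assert(safe == 64 * 256);  // only the atomic counter is guaranteed
    return 0;
}
```

The atomic counter always ends at the number of threads launched. The racy counter has no guaranteed value, and it typically comes out far smaller.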

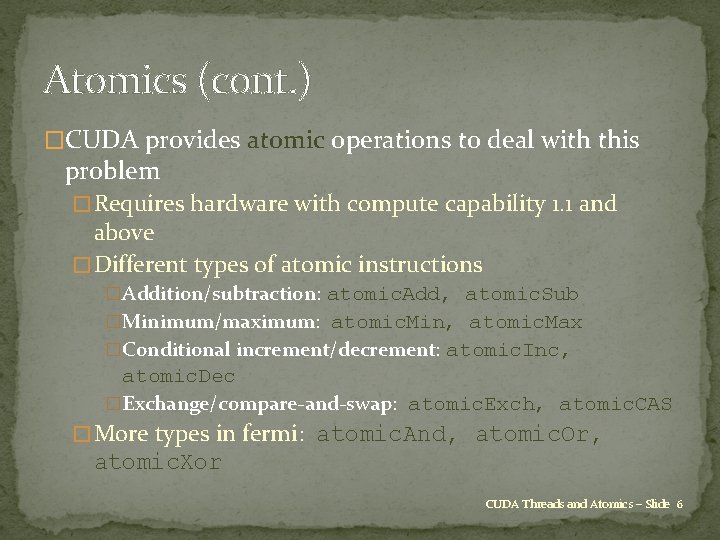

Atomics

- CUDA provides atomic operations to deal with this problem
  - An atomic operation guarantees that only a single thread has access to a piece of memory while an operation completes
  - The name atomic comes from the fact that it is uninterruptible
  - No dropped data, but ordering is still arbitrary

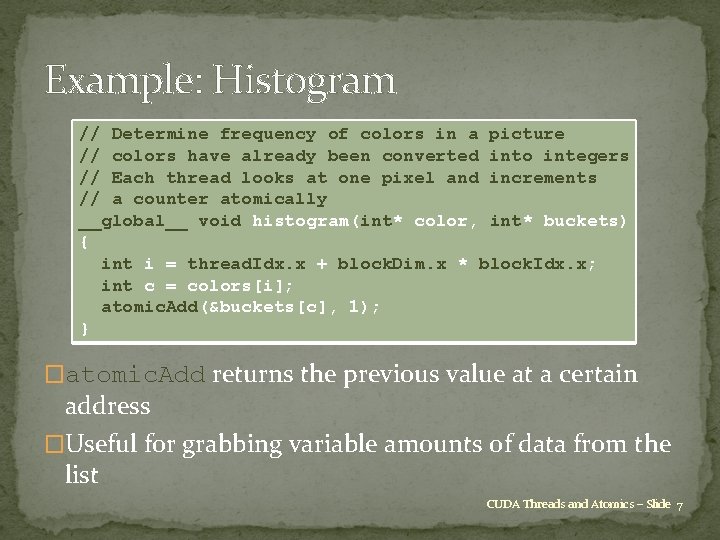

Atomics (cont.)

- CUDA provides atomic operations to deal with this problem
  - Requires hardware with compute capability 1.1 and above
  - Different types of atomic instructions
    - Addition/subtraction: atomicAdd, atomicSub
    - Minimum/maximum: atomicMin, atomicMax
    - Conditional increment/decrement: atomicInc, atomicDec
    - Exchange/compare-and-swap: atomicExch, atomicCAS
    - Bitwise: atomicAnd, atomicOr, atomicXor
  - Fermi adds further variants, for example atomicAdd on 32-bit floats

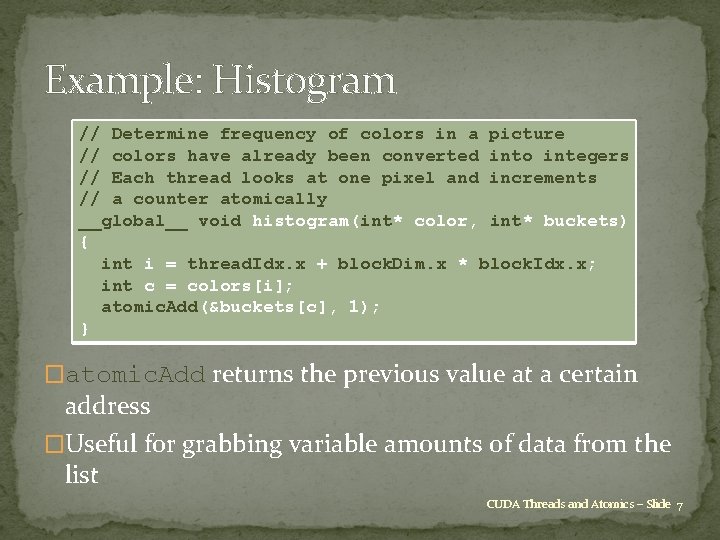

Example: Histogram

    // Determine frequency of colors in a picture
    // colors have already been converted into integers
    // Each thread looks at one pixel and increments
    // a counter atomically
    __global__ void histogram(int* colors, int* buckets)
    {
        int i = threadIdx.x + blockDim.x * blockIdx.x;
        int c = colors[i];
        atomicAdd(&buckets[c], 1);
    }

- atomicAdd returns the previous value at a certain address
- Useful for grabbing variable amounts of data from the list

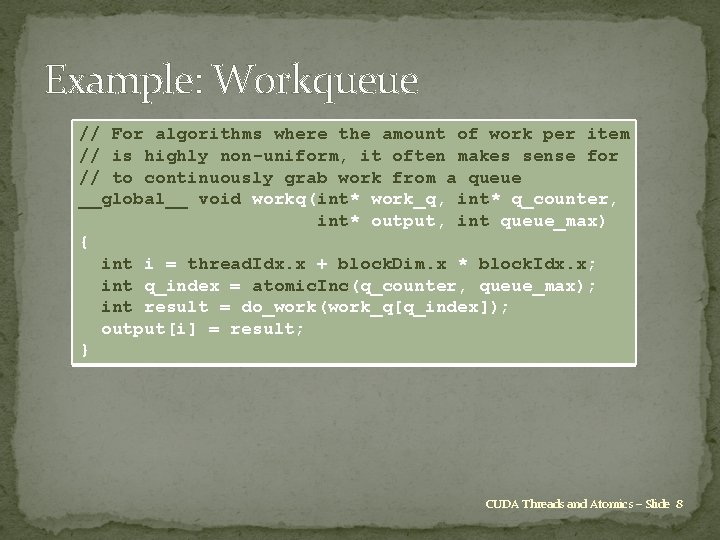

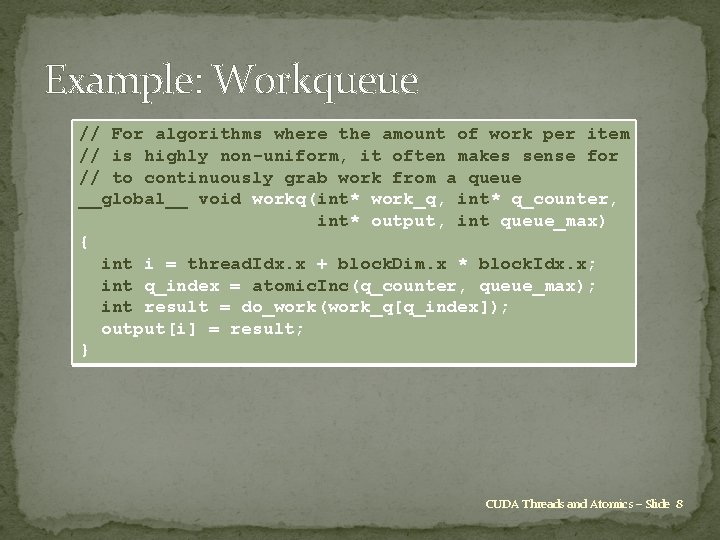

Example: Workqueue

    // For algorithms where the amount of work per item
    // is highly non-uniform, it often makes sense for
    // threads to continuously grab work from a queue
    __global__ void workq(int* work_q, unsigned int* q_counter,
                          int* output, unsigned int queue_max)
    {
        int i = threadIdx.x + blockDim.x * blockIdx.x;
        // atomicInc works on unsigned int; it returns the old counter value
        // and wraps the counter back to 0 once it reaches queue_max
        unsigned int q_index = atomicInc(q_counter, queue_max);
        int result = do_work(work_q[q_index]);
        output[i] = result;
    }

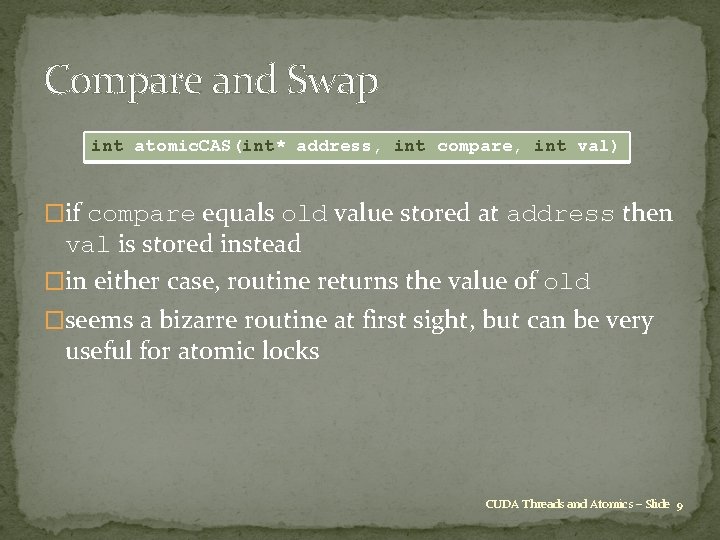

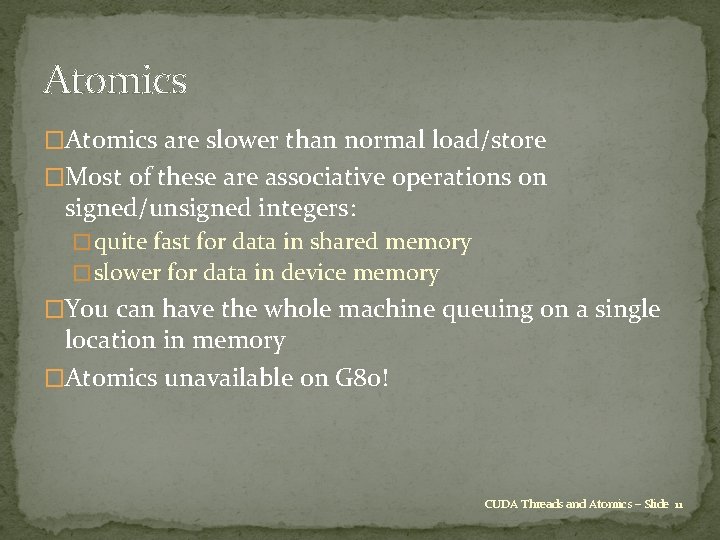

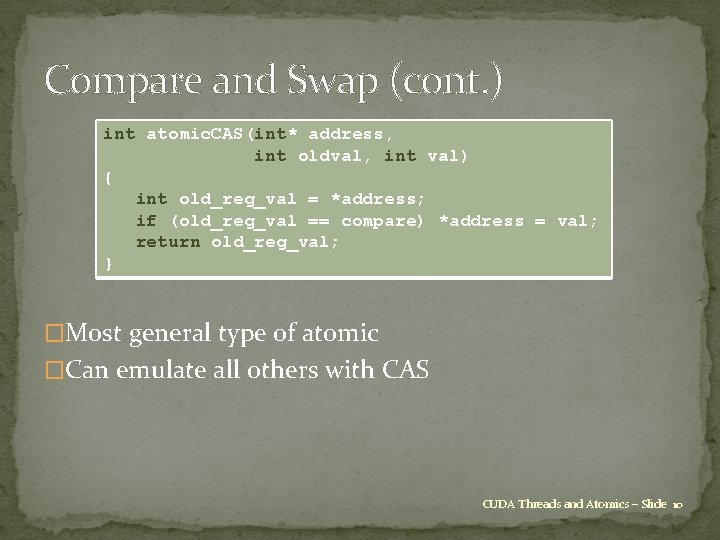

Compare and Swap

    int atomicCAS(int* address, int compare, int val);

- if compare equals the old value stored at address, then val is stored instead
- in either case, the routine returns the old value
- seems a bizarre routine at first sight, but can be very useful for atomic locks

Compare and Swap (cont.)

    // What atomicCAS does, carried out as one uninterruptible step
    int atomicCAS(int* address, int compare, int val)
    {
        int old_reg_val = *address;
        if (old_reg_val == compare) *address = val;
        return old_reg_val;
    }

- Most general type of atomic
- Can emulate all others with CAS
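To illustrate the claim that other atomics can be emulated with CAS, here is a minimal sketch of an integer add built from `atomicCAS` alone. The names `atomicAddViaCAS`, `add_one` and `run_cas_add` are invented for this example, and error checking is omitted. The retry loop is the usual pattern: read the current value, compute the new one, and publish it only if nobody changed the location in the meantime.

```cuda
#include <cassert>
#include <cstdio>
#include <cuda_runtime.h>

// Integer add built only from atomicCAS (illustrative, not a CUDA built-in).
__device__ int atomicAddViaCAS(int* address, int val) {
    int old = *address;
    int assumed;
    do {
        assumed = old;
        // Store assumed + val only if *address still holds assumed.
        old = atomicCAS(address, assumed, assumed + val);
    } while (old != assumed);  // another thread got in first: retry
    return old;                // previous value, like the built-in atomicAdd
}

__global__ void add_one(int* total) {
    atomicAddViaCAS(total, 1);
}

// Hypothetical host helper: every launched thread adds 1; returns the sum.
int run_cas_add(int blocks, int threads) {
    int* d_total;
    cudaMalloc(&d_total, sizeof(int));
    cudaMemset(d_total, 0, sizeof(int));
    add_one<<<blocks, threads>>>(d_total);
    int h_total = 0;
    cudaMemcpy(&h_total, d_total, sizeof(int), cudaMemcpyDeviceToHost);
    cudaFree(d_total);
    return h_total;
}

int main() {
    int total = run_cas_add(32, 128);
    printf("total = %d\n", total);
    assert(total == 32 * 128);
    return 0;
}
```

The same loop shape works for operations that have no built-in atomic: only the expression passed as the new value changes.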

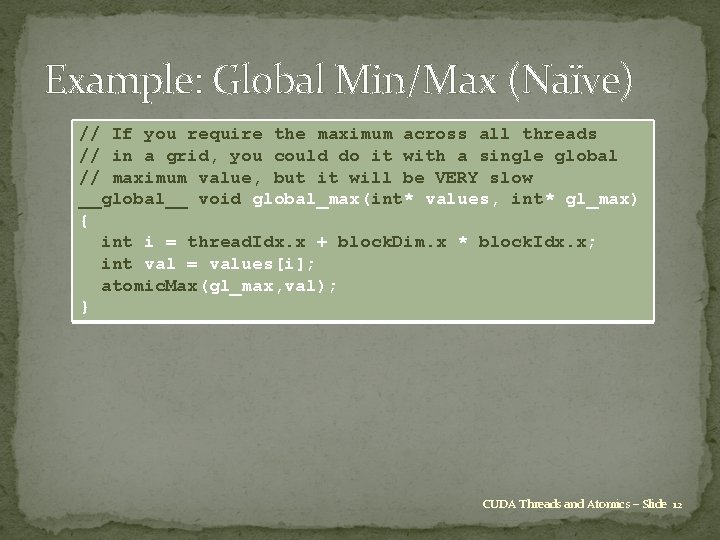

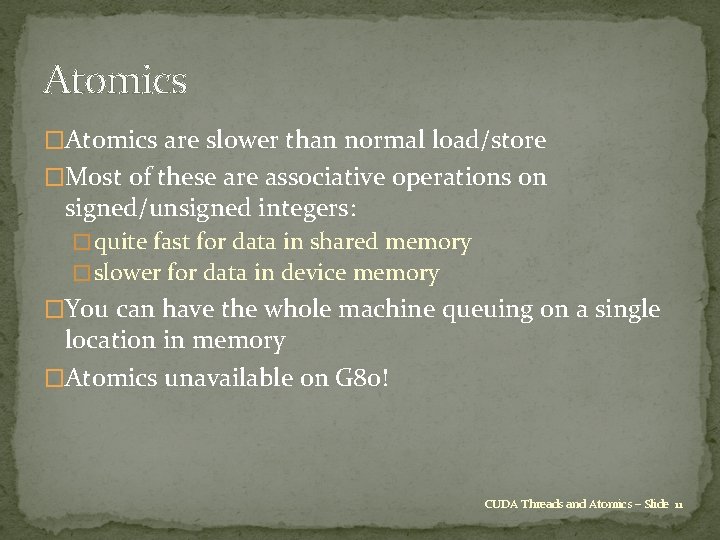

Atomics

- Atomics are slower than normal load/store
- Most of these are associative operations on signed/unsigned integers:
  - quite fast for data in shared memory
  - slower for data in device memory
- You can have the whole machine queuing on a single location in memory
- Atomics unavailable on G80!

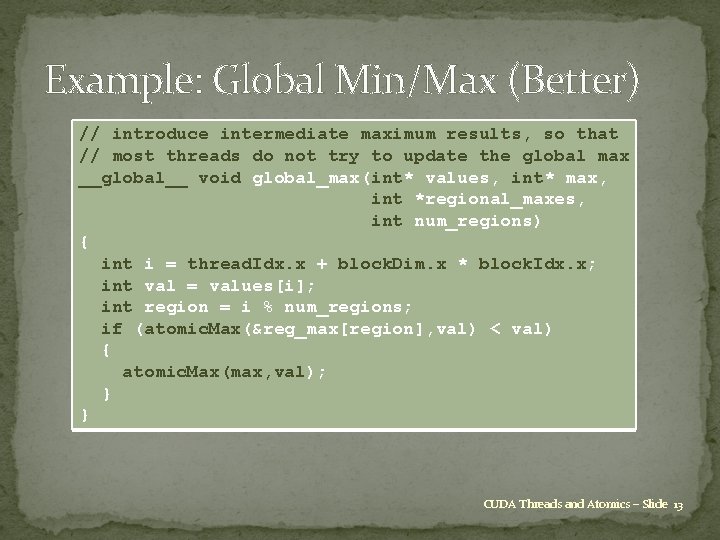

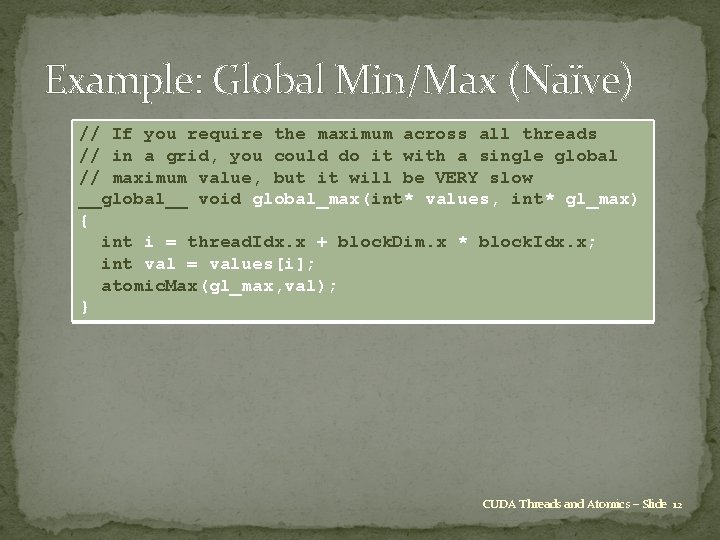

Example: Global Min/Max (Naïve)

    // If you require the maximum across all threads
    // in a grid, you could do it with a single global
    // maximum value, but it will be VERY slow
    __global__ void global_max(int* values, int* gl_max)
    {
        int i = threadIdx.x + blockDim.x * blockIdx.x;
        int val = values[i];
        atomicMax(gl_max, val);
    }

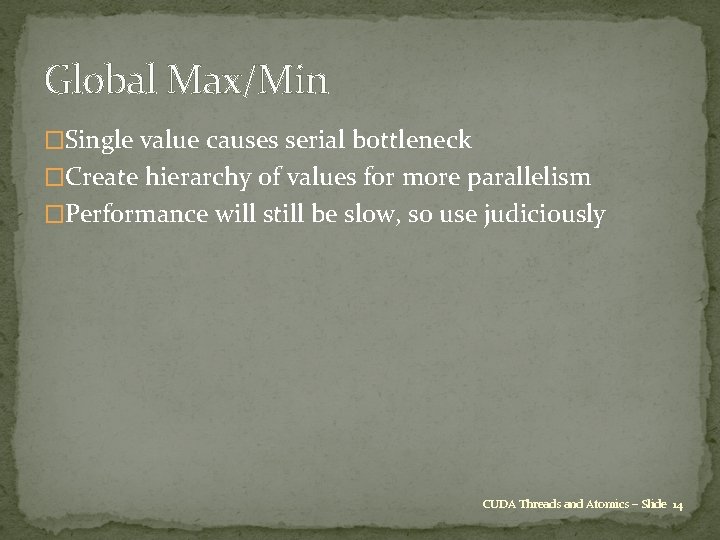

Example: Global Min/Max (Better)

    // introduce intermediate maximum results, so that
    // most threads do not try to update the global max
    __global__ void global_max(int* values, int* max,
                               int* regional_maxes, int num_regions)
    {
        int i = threadIdx.x + blockDim.x * blockIdx.x;
        int val = values[i];
        int region = i % num_regions;
        // atomicMax returns the old regional maximum; only a thread that
        // raised it goes on to touch the global maximum
        if (atomicMax(&regional_maxes[region], val) < val)
        {
            atomicMax(max, val);
        }
    }

Global Max/Min

- Single value causes serial bottleneck
- Create hierarchy of values for more parallelism
- Performance will still be slow, so use judiciously
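One way to build such a hierarchy is to combine it with the earlier remark that atomics on shared memory are comparatively fast. The sketch below is a variation on the slides' regional-maximum idea, not the slides' own code: each block first reduces into a per-block maximum in shared memory, and only one thread per block touches the global maximum. The names `global_max_shared` and `run_global_max` and the block size of 256 are assumptions, error checking is omitted, and shared-memory atomics need hardware that supports them.

```cuda
#include <cassert>
#include <climits>
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

__global__ void global_max_shared(const int* values, int n, int* gl_max) {
    __shared__ int block_max;            // one intermediate maximum per block
    if (threadIdx.x == 0) block_max = INT_MIN;
    __syncthreads();

    int i = threadIdx.x + blockDim.x * blockIdx.x;
    if (i < n) atomicMax(&block_max, values[i]);  // contention stays inside the block
    __syncthreads();

    if (threadIdx.x == 0) atomicMax(gl_max, block_max);  // one global atomic per block
}

// Hypothetical host helper: returns the maximum of h_values computed on the GPU.
int run_global_max(const std::vector<int>& h_values) {
    int n = static_cast<int>(h_values.size());
    int h_max = INT_MIN;
    int *d_values, *d_max;
    cudaMalloc(&d_values, n * sizeof(int));
    cudaMalloc(&d_max, sizeof(int));
    cudaMemcpy(d_values, h_values.data(), n * sizeof(int), cudaMemcpyHostToDevice);
    cudaMemcpy(d_max, &h_max, sizeof(int), cudaMemcpyHostToDevice);
    int threads = 256;
    int blocks = (n + threads - 1) / threads;
    global_max_shared<<<blocks, threads>>>(d_values, n, d_max);
    cudaMemcpy(&h_max, d_max, sizeof(int), cudaMemcpyDeviceToHost);
    cudaFree(d_values);
    cudaFree(d_max);
    return h_max;
}

int main() {
    std::vector<int> values(10000);
    for (int i = 0; i < 10000; ++i) values[i] = (i * 37) % 1000;
    values[1234] = 5000;                 // planted maximum
    int result = run_global_max(values);
    printf("max = %d\n", result);
    assert(result == 5000);
    return 0;
}
```

With 10000 inputs and 256 threads per block, the global location sees 40 atomic updates instead of 10000.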

Atomics: Summary

- Can’t use normal load/store for inter-thread communication because of race conditions
- Use atomic instructions for sparse and/or unpredictable global communication
- Decompose data (very limited use of single global sum/max/min/etc.) for more parallelism

Topic 2: Streaming Multiprocessor Execution and Divergence

- How a streaming multiprocessor (SM) executes threads
  - Overview of how a streaming multiprocessor works
  - SIMT Execution
  - Divergence

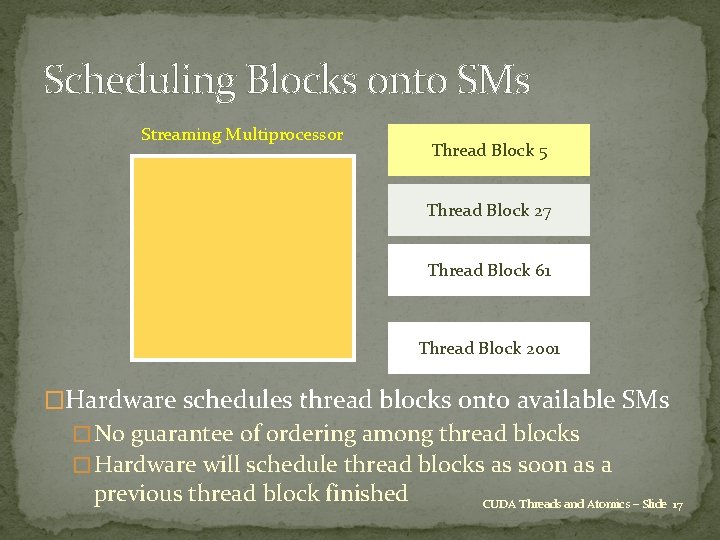

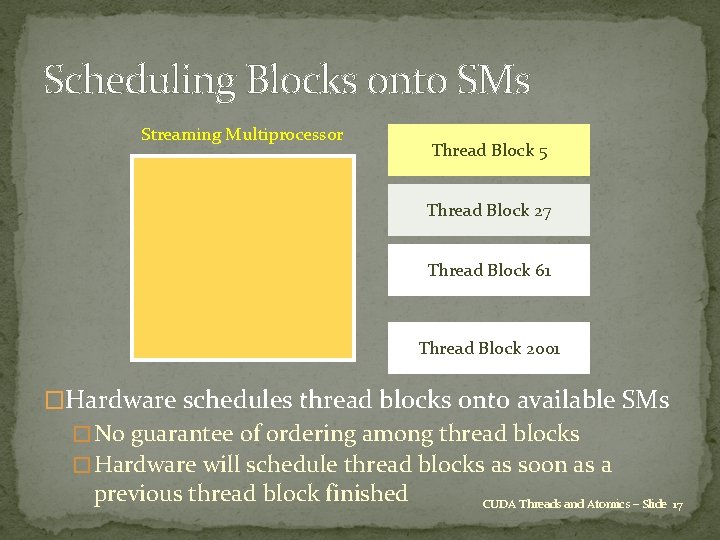

Scheduling Blocks onto SMs

[Figure: a streaming multiprocessor holding Thread Block 5, Thread Block 27, Thread Block 61 and Thread Block 2001]

- Hardware schedules thread blocks onto available SMs
  - No guarantee of ordering among thread blocks
  - Hardware will schedule thread blocks as soon as a previous thread block finishes

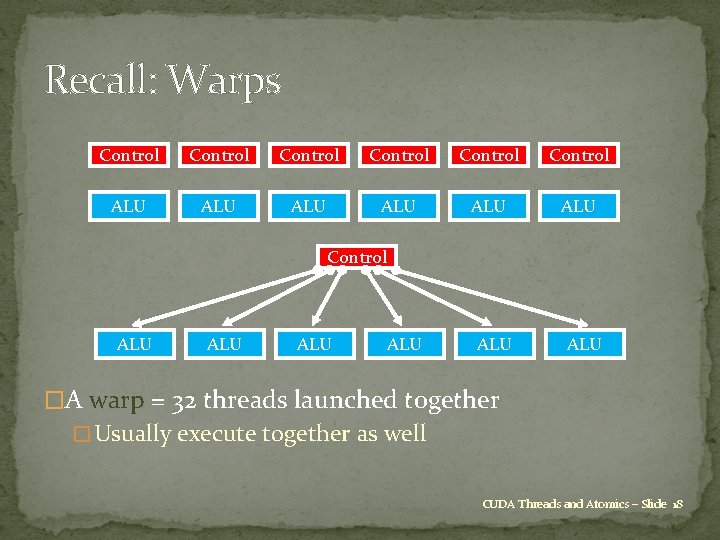

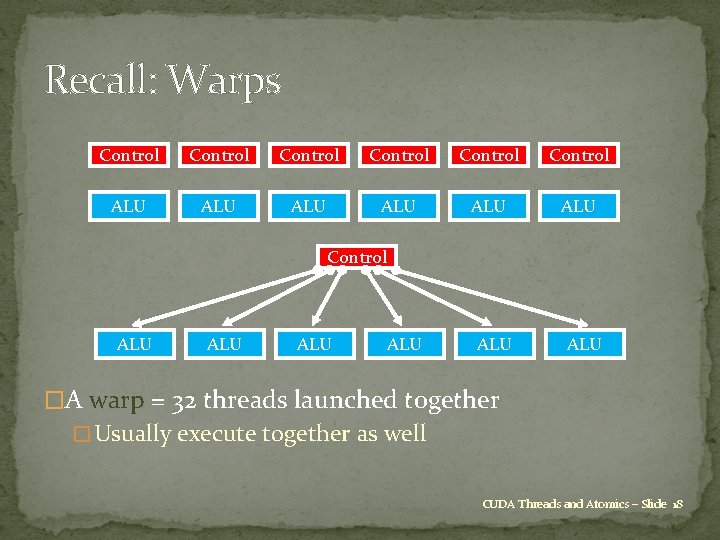

Recall: Warps

[Figure: control units and ALUs]

- A warp = 32 threads launched together
  - Usually execute together as well

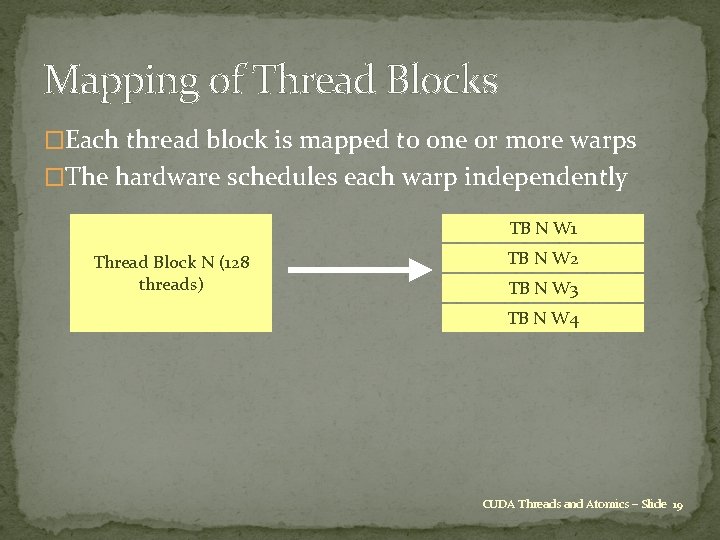

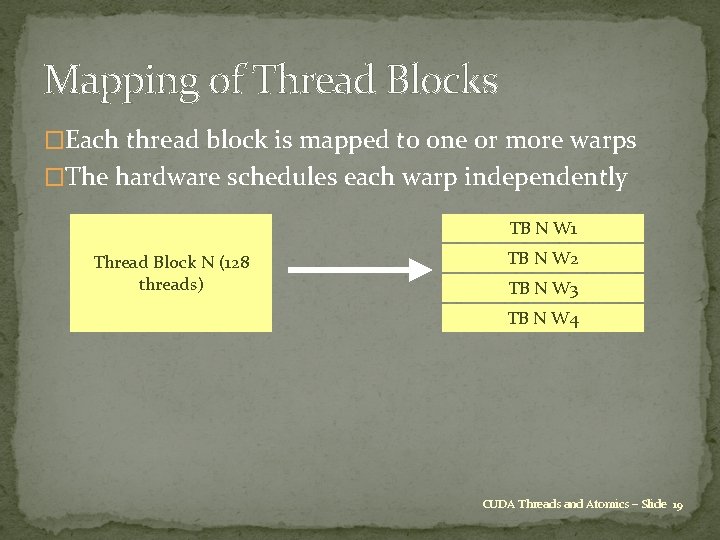

Mapping of Thread Blocks

- Each thread block is mapped to one or more warps
- The hardware schedules each warp independently

[Figure: Thread Block N (128 threads) split into four warps, TB N W1 to TB N W4]

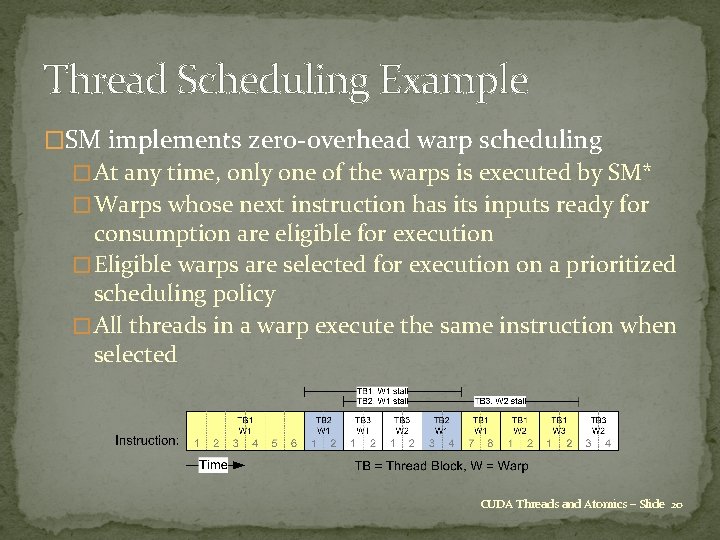

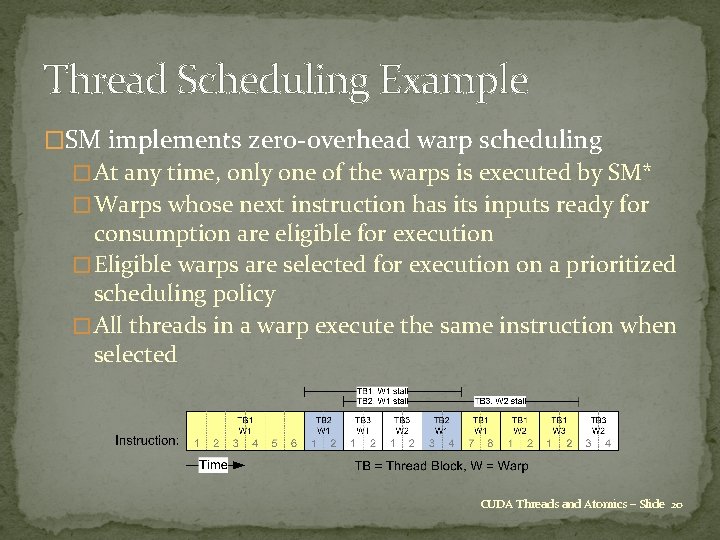

Thread Scheduling Example

- SM implements zero-overhead warp scheduling
  - At any time, only one of the warps is executed by SM*
  - Warps whose next instruction has its inputs ready for consumption are eligible for execution
  - Eligible warps are selected for execution on a prioritized scheduling policy
  - All threads in a warp execute the same instruction when selected

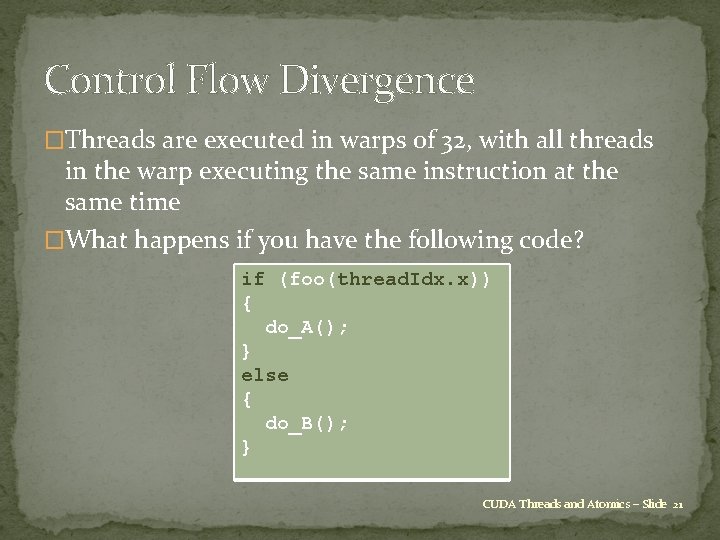

Control Flow Divergence

- Threads are executed in warps of 32, with all threads in the warp executing the same instruction at the same time
- What happens if you have the following code?

      if (foo(threadIdx.x)) {
          do_A();
      } else {
          do_B();
      }

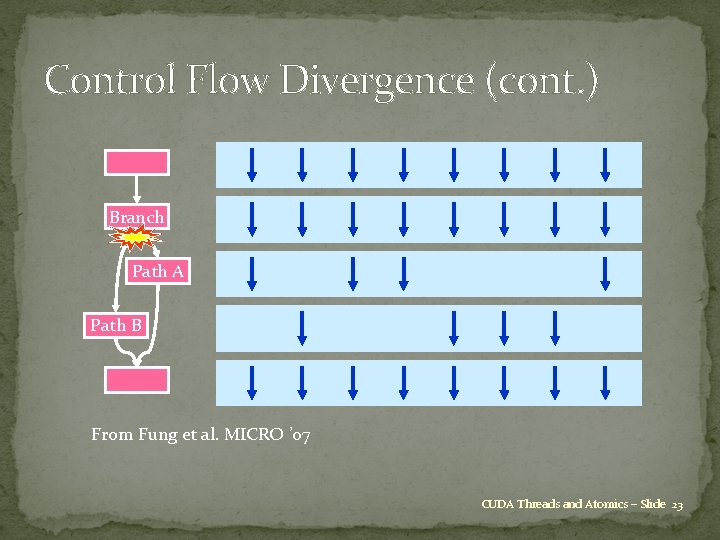

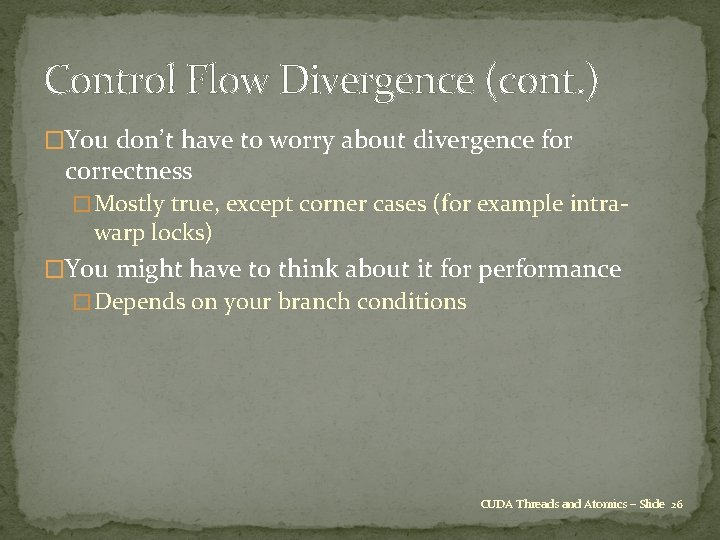

Control Flow Divergence (cont.)

    if (foo(threadIdx.x)) {
        do_A();
    } else {
        do_B();
    }

- This is called warp divergence. CUDA will generate correct code to handle this, but to understand the performance you need to understand what CUDA does with it

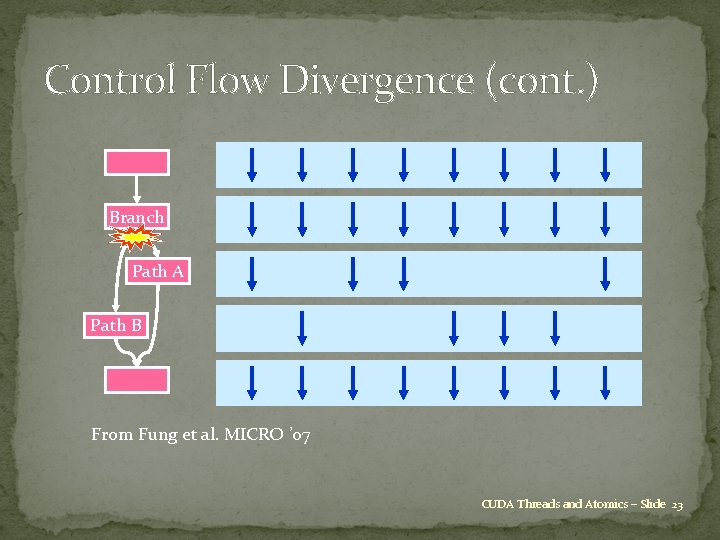

Control Flow Divergence (cont.)

[Figure: a branch splitting a warp's execution into Path A and Path B. From Fung et al., MICRO ’07]

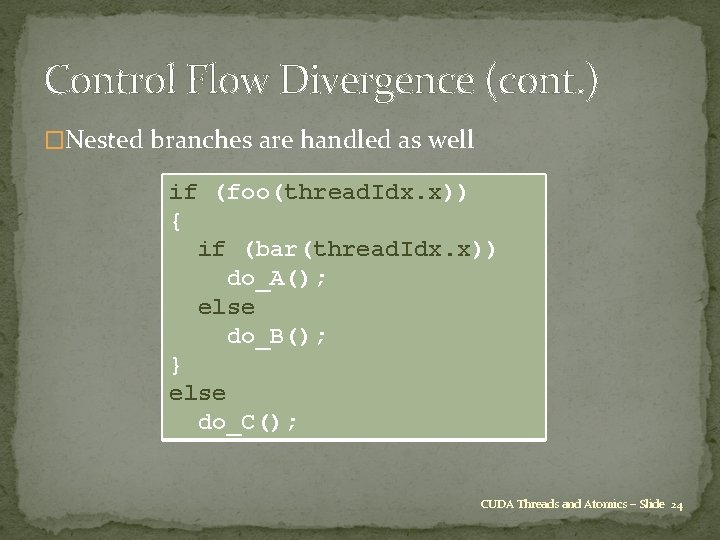

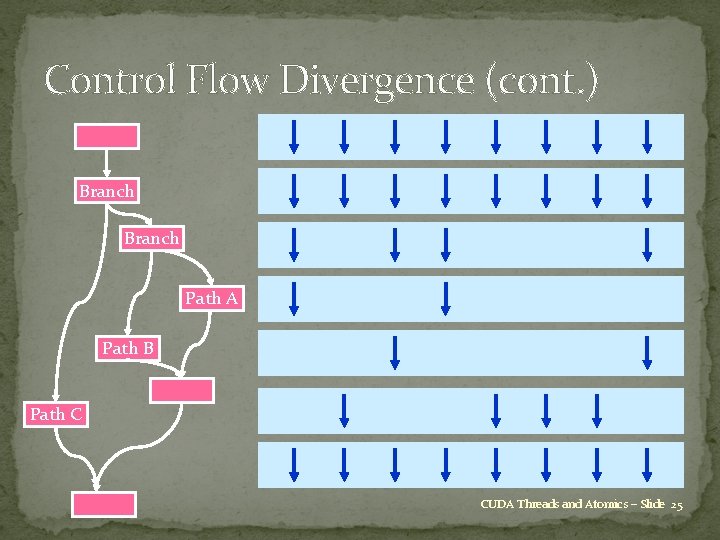

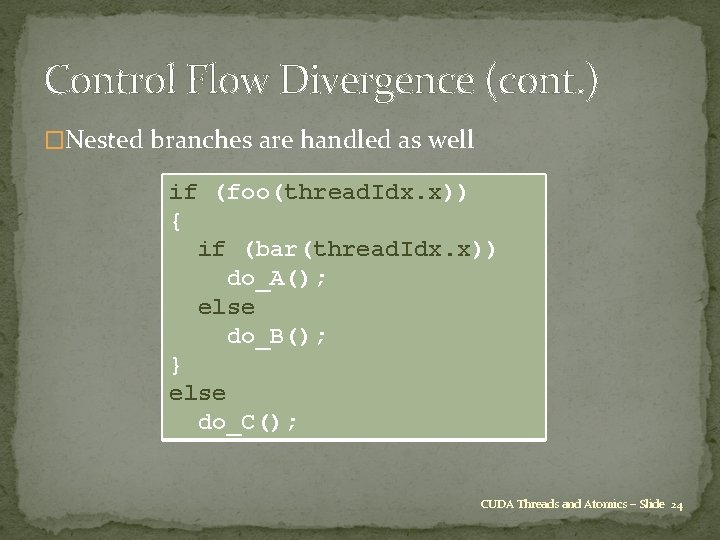

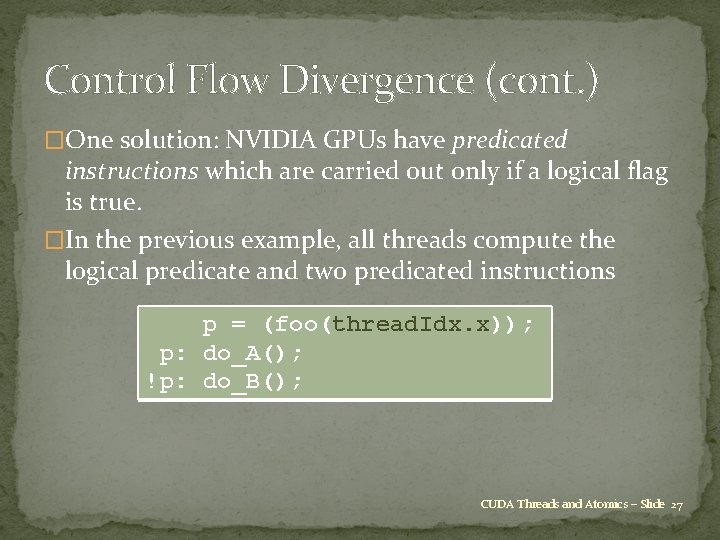

Control Flow Divergence (cont.)

- Nested branches are handled as well

      if (foo(threadIdx.x)) {
          if (bar(threadIdx.x))
              do_A();
          else
              do_B();
      } else
          do_C();

Control Flow Divergence (cont.)

[Figure: nested branches splitting a warp's execution into Path A, Path B and Path C]

Control Flow Divergence (cont.)

- You don’t have to worry about divergence for correctness
  - Mostly true, except corner cases (for example intra-warp locks)
- You might have to think about it for performance
  - Depends on your branch conditions
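How much the branch condition matters can be seen by comparing two ways of choosing between the paths. This is a minimal sketch under stated assumptions: `do_A` and `do_B` are given made-up bodies, and the kernel and helper names are invented for illustration. In the first kernel the condition alternates between neighbouring threads, so every warp holds threads on both paths. In the second it is constant across each group of 32 threads, so each warp follows a single path.

```cuda
#include <cassert>
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

// Stand-ins for the slides' do_A() and do_B(); the bodies are made up.
__device__ int do_A(int i) { return 2 * i; }
__device__ int do_B(int i) { return i + 100; }

// Condition alternates between neighbouring threads: every warp contains
// threads on both paths, so it has to go through both.
__global__ void branch_per_thread(int* out) {
    int i = threadIdx.x + blockDim.x * blockIdx.x;
    if (threadIdx.x % 2 == 0) out[i] = do_A(i);
    else                      out[i] = do_B(i);
}

// Condition is identical for all threads of a warp: each warp takes one path.
__global__ void branch_per_warp(int* out) {
    int i = threadIdx.x + blockDim.x * blockIdx.x;
    if ((threadIdx.x / warpSize) % 2 == 0) out[i] = do_A(i);
    else                                   out[i] = do_B(i);
}

// Hypothetical host helper: runs one 64-thread block of either kernel.
std::vector<int> run_branch(bool per_warp) {
    const int n = 64;
    int* d_out;
    cudaMalloc(&d_out, n * sizeof(int));
    if (per_warp) branch_per_warp<<<1, n>>>(d_out);
    else          branch_per_thread<<<1, n>>>(d_out);
    std::vector<int> h_out(n);
    cudaMemcpy(h_out.data(), d_out, n * sizeof(int), cudaMemcpyDeviceToHost);
    cudaFree(d_out);
    return h_out;
}

int main() {
    std::vector<int> t = run_branch(false);
    std::vector<int> w = run_branch(true);
    assert(t[0] == 0 && t[1] == 101);     // even thread: do_A, odd thread: do_B
    assert(w[31] == 62 && w[32] == 132);  // warp 0: do_A, warp 1: do_B
    printf("ok\n");
    return 0;
}
```

Both kernels are correct. With bodies this small the compiler may well remove the branch altogether, so the difference only shows up in timings once each path does substantial work.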

Control Flow Divergence (cont.)

- One solution: NVIDIA GPUs have predicated instructions which are carried out only if a logical flag is true.
- In the previous example, all threads compute the logical predicate and the two predicated instructions

      p = (foo(threadIdx.x));
      p:  do_A();
      !p: do_B();

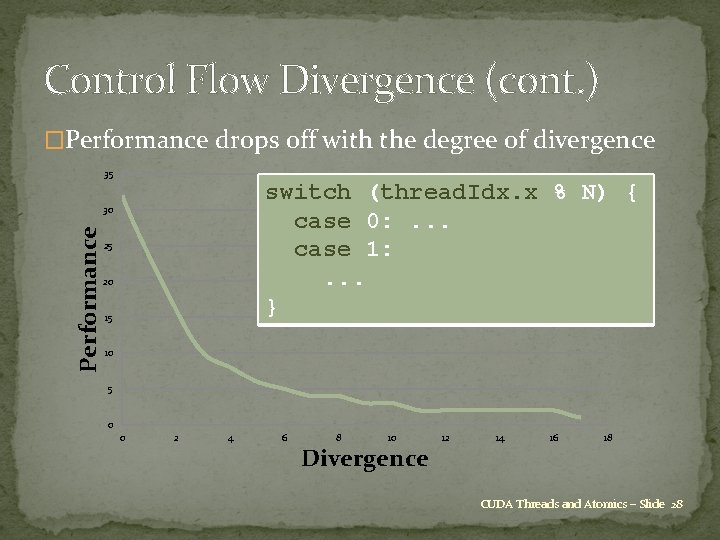

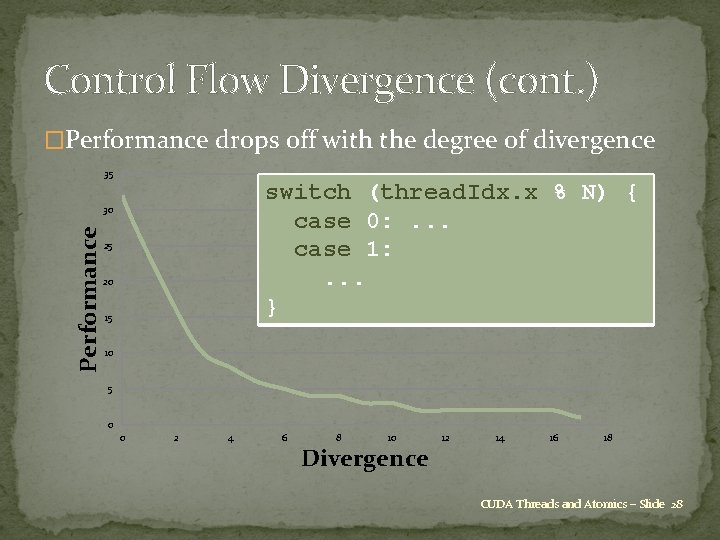

Control Flow Divergence (cont.)

- Performance drops off with the degree of divergence

      switch (threadIdx.x % N) {
          case 0: ...
          case 1: ...
      }

[Plot: performance (vertical axis, 0 to 35) against divergence (horizontal axis, 0 to 18)]

Control Flow Divergence (cont.)

- Performance drops off with the degree of divergence
  - In the worst case, effectively lose a factor of 32 in performance if one thread needs an expensive branch while the rest do nothing
- Another example: processing a long list of elements where, depending on run-time values, a few require very expensive processing
- GPU implementation:
  - first process the list to build two sub-lists of “simple” and “expensive” elements
  - then process the two sub-lists separately

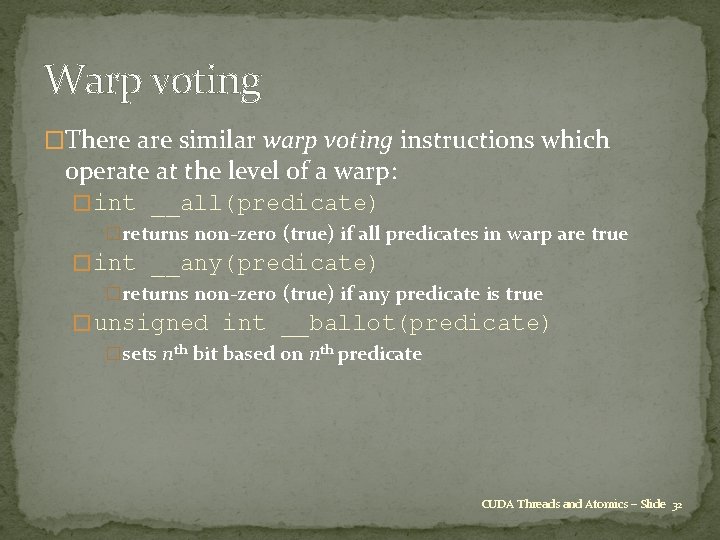

Synchronization

- Already introduced __syncthreads(); which forms a barrier: all threads wait until every one has reached this point.
- When writing conditional code, must be careful to make sure that all threads do reach the __syncthreads();
- Otherwise, can end up in deadlock
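A common way to follow the advice above is to guard the memory accesses with the condition while keeping the barrier outside it. The sketch below is an illustration, not taken from the slides: the kernel `reverse_in_block`, the helper `run_reverse` and the tile size `BLOCK` are assumptions, and error checking is omitted. It reverses each block-sized chunk of an array whose length need not be a multiple of the block size, so the last block contains threads with nothing to load.

```cuda
#include <cassert>
#include <cstdio>
#include <vector>
#include <cuda_runtime.h>

constexpr int BLOCK = 128;  // threads per block; the kernel assumes blockDim.x == BLOCK

__global__ void reverse_in_block(int* data, int n) {
    __shared__ int tile[BLOCK];
    int first = static_cast<int>(blockIdx.x) * BLOCK;  // first index owned by this block
    int i = first + static_cast<int>(threadIdx.x);

    if (i < n) tile[threadIdx.x] = data[i];  // guard the access ...
    __syncthreads();                         // ... not the barrier: every thread gets here

    int count = min(BLOCK, n - first);       // valid elements in this block's tile
    if (i < n) data[i] = tile[count - 1 - threadIdx.x];
}

// Hypothetical host helper: fills 0..n-1, reverses each chunk, returns the result.
std::vector<int> run_reverse(int n) {
    std::vector<int> h(n);
    for (int i = 0; i < n; ++i) h[i] = i;
    int* d;
    cudaMalloc(&d, n * sizeof(int));
    cudaMemcpy(d, h.data(), n * sizeof(int), cudaMemcpyHostToDevice);
    int blocks = (n + BLOCK - 1) / BLOCK;
    reverse_in_block<<<blocks, BLOCK>>>(d, n);
    cudaMemcpy(h.data(), d, n * sizeof(int), cudaMemcpyDeviceToHost);
    cudaFree(d);
    return h;
}

int main() {
    std::vector<int> out = run_reverse(200);    // second block is only partly full
    assert(out[0] == 127 && out[127] == 0);     // first chunk reversed
    assert(out[128] == 199 && out[199] == 128); // partial chunk reversed
    printf("ok\n");
    return 0;
}
```

Writing `if (i < n) { ...; __syncthreads(); ... }` instead would leave the idle threads of the last block outside the barrier, which is the situation the slide warns about.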

Synchronization (cont.)

- Fermi supports some new synchronisation instructions which are similar to __syncthreads() but have extra capabilities:
  - int __syncthreads_count(predicate): counts how many predicates are true
  - int __syncthreads_and(predicate): returns non-zero (true) if all predicates are true
  - int __syncthreads_or(predicate): returns non-zero (true) if any predicate is true

Warp voting

- There are similar warp voting instructions which operate at the level of a warp:
  - int __all(predicate): returns non-zero (true) if all predicates in warp are true
  - int __any(predicate): returns non-zero (true) if any predicate is true
  - unsigned int __ballot(predicate): sets nth bit based on nth predicate
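The following sketch shows the three votes on a single warp. One caveat: the slides date from 2011, and since CUDA 9 the unsuffixed `__all`, `__any` and `__ballot` have been deprecated in favour of `__all_sync`, `__any_sync` and `__ballot_sync`, which take an extra mask naming the participating lanes. The example assumes such a toolkit; the `VoteResult` struct, the kernel `vote` and the helper `run_vote` are invented for illustration, and error checking is omitted.

```cuda
#include <cassert>
#include <cstdio>
#include <cuda_runtime.h>

struct VoteResult { int all; int any; unsigned int ballot; int count; };

// Launched with exactly one warp (32 threads).
__global__ void vote(const int* values, VoteResult* result) {
    const unsigned int full_mask = 0xffffffffu;  // all 32 lanes take part
    int predicate = values[threadIdx.x] > 0;
    int all = __all_sync(full_mask, predicate);               // every lane true?
    int any = __any_sync(full_mask, predicate);               // at least one lane true?
    unsigned int ballot = __ballot_sync(full_mask, predicate); // bit n = lane n's predicate
    if (threadIdx.x == 0) {
        result->all = all;
        result->any = any;
        result->ballot = ballot;
        result->count = __popc(ballot);  // number of lanes whose predicate is true
    }
}

// Hypothetical host helper: lanes 0, 4, 8, ... hold a positive value.
VoteResult run_vote() {
    int h_values[32];
    for (int i = 0; i < 32; ++i) h_values[i] = (i % 4 == 0) ? 1 : 0;
    int* d_values;
    VoteResult* d_result;
    cudaMalloc(&d_values, sizeof(h_values));
    cudaMalloc(&d_result, sizeof(VoteResult));
    cudaMemcpy(d_values, h_values, sizeof(h_values), cudaMemcpyHostToDevice);
    vote<<<1, 32>>>(d_values, d_result);
    VoteResult h_result;
    cudaMemcpy(&h_result, d_result, sizeof(VoteResult), cudaMemcpyDeviceToHost);
    cudaFree(d_values);
    cudaFree(d_result);
    return h_result;
}

int main() {
    VoteResult r = run_vote();
    printf("all=%d any=%d ballot=0x%08x count=%d\n", r.all, r.any, r.ballot, r.count);
    assert(r.all == 0 && r.any != 0);
    assert(r.ballot == 0x11111111u && r.count == 8);
    return 0;
}
```

Combining the ballot with `__popc` gives a per-warp count without any atomics or shared memory.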

Topic 3: Locks

- Use very judiciously
- Always include a max_iter in your spinloop!
- Decompose your data and your locks

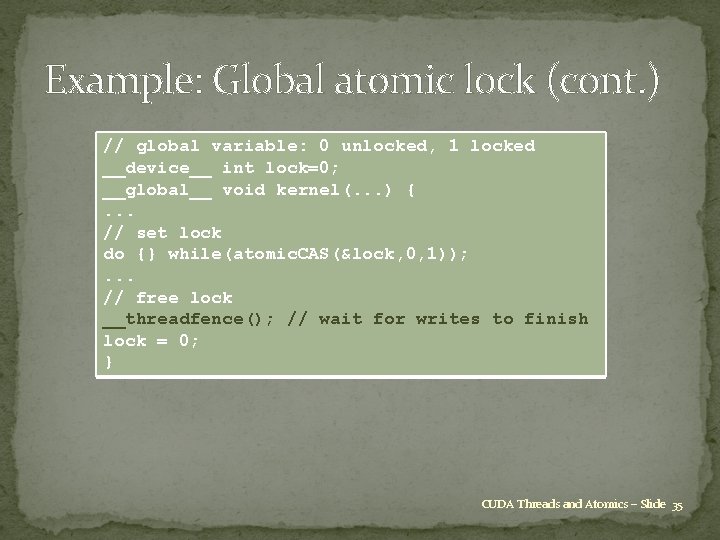

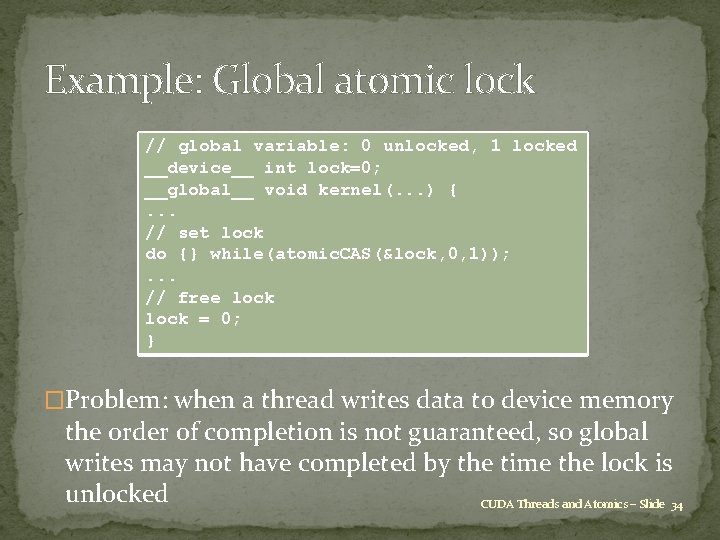

Example: Global atomic lock

    // global variable: 0 unlocked, 1 locked
    __device__ int lock = 0;

    __global__ void kernel(...)
    {
        ...
        // set lock
        do {} while (atomicCAS(&lock, 0, 1));
        ...
        // free lock
        lock = 0;
    }

- Problem: when a thread writes data to device memory the order of completion is not guaranteed, so global writes may not have completed by the time the lock is unlocked

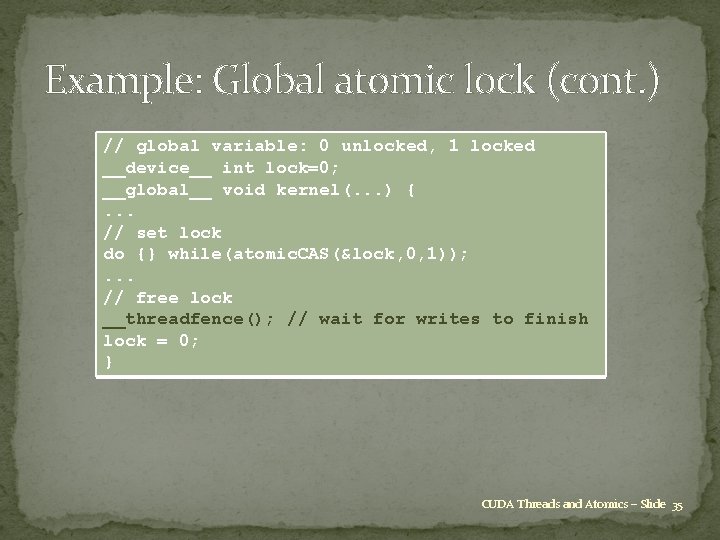

Example: Global atomic lock (cont.)

    // global variable: 0 unlocked, 1 locked
    __device__ int lock = 0;

    __global__ void kernel(...)
    {
        ...
        // set lock
        do {} while (atomicCAS(&lock, 0, 1));
        ...
        // free lock
        __threadfence();  // wait for writes to finish
        lock = 0;
    }

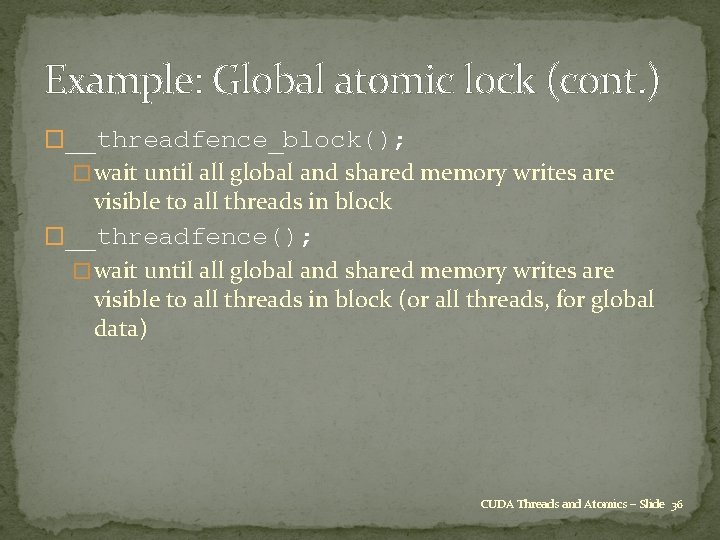

Example: Global atomic lock (cont.)

- __threadfence_block();
  - wait until all global and shared memory writes are visible to all threads in block
- __threadfence();
  - wait until all global and shared memory writes are visible to all threads in block (or all threads, for global data)
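Putting the pieces of this topic together, the sketch below combines the CAS lock, a bounded spin loop with `max_iter`, and `__threadfence()` before the release. It is one possible arrangement, not the slides' code: the kernel `locked_increment`, the helper `run_locked`, the `failures` counter and the use of `atomicExch` for the release are assumptions, and error checking is omitted. Only one thread per block competes for the lock, which sidesteps the intra-warp lock corner case mentioned earlier.

```cuda
#include <cassert>
#include <cstdio>
#include <cuda_runtime.h>

// lock: 0 unlocked, 1 locked. total is only modified while holding the lock.
__global__ void locked_increment(int* lock, int* total, int* failures, int max_iter) {
    if (threadIdx.x != 0) return;          // one contender per block
    volatile int* shared_total = total;    // always read and write through to memory
    for (int iter = 0; iter < max_iter; ++iter) {
        if (atomicCAS(lock, 0, 1) == 0) {  // old value 0: we now hold the lock
            __threadfence();               // order the lock acquire before the update
            *shared_total = *shared_total + 1;  // critical section
            __threadfence();               // make the write visible before unlocking
            atomicExch(lock, 0);           // free lock
            return;
        }
    }
    atomicAdd(failures, 1);                // gave up after max_iter attempts
}

// Hypothetical host helper: returns the protected total, reports give-ups.
int run_locked(int blocks, int max_iter, int* failures_out) {
    int* d;  // d[0] = lock, d[1] = total, d[2] = failures
    cudaMalloc(&d, 3 * sizeof(int));
    cudaMemset(d, 0, 3 * sizeof(int));
    locked_increment<<<blocks, 32>>>(d, d + 1, d + 2, max_iter);
    int h[3];
    cudaMemcpy(h, d, sizeof(h), cudaMemcpyDeviceToHost);
    cudaFree(d);
    *failures_out = h[2];
    return h[1];
}

int main() {
    int failures = 0;
    int total = run_locked(64, 1 << 20, &failures);
    printf("total = %d, failures = %d\n", total, failures);
    assert(total + failures == 64);  // each block either incremented or gave up
    return 0;
}
```

The bounded loop trades a possible hang for a possible give-up, so the caller has to decide what a non-zero `failures` count means for the algorithm.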

Summary

- lots of esoteric capabilities: don’t worry about most of them
- essential to understand warp divergence: it can have a very big impact on performance
- __syncthreads(); is vital
- the rest can be ignored until you have a critical need; then read the documentation carefully and look for examples in the SDK

End Credits

- Based on original material from
  - Oxford University: Mike Giles
  - Stanford University: Jared Hoberock, David Tarjan
- Revision history: last updated 8/8/2011.