High Performance Linux Clusters Guru Session Usenix Boston

High Performance Linux Clusters Guru Session, Usenix, Boston June 30, 2004 Greg Bruno, SDSC

Overview of San Diego Supercomputer Center n Founded in 1985 ¨ n n n Over 400 employees Mission: Innovate, develop, and deploy technology to advance science Recognized as an international leader in: ¨ ¨ ¨ n Non-military access to supercomputers Grid and Cluster Computing Data Management High Performance Computing Networking Visualization Primarily funded by NSF

My Background n 1984 - 1998: NCR - Helped to build the world’s largest database computers ¨ n n Saw the transistion from proprietary parallel systems to clusters 1999 - 2000: HPVM - Helped build Windows clusters 2000 - Now: Rocks - Helping to build Linuxbased clusters

Why Clusters?

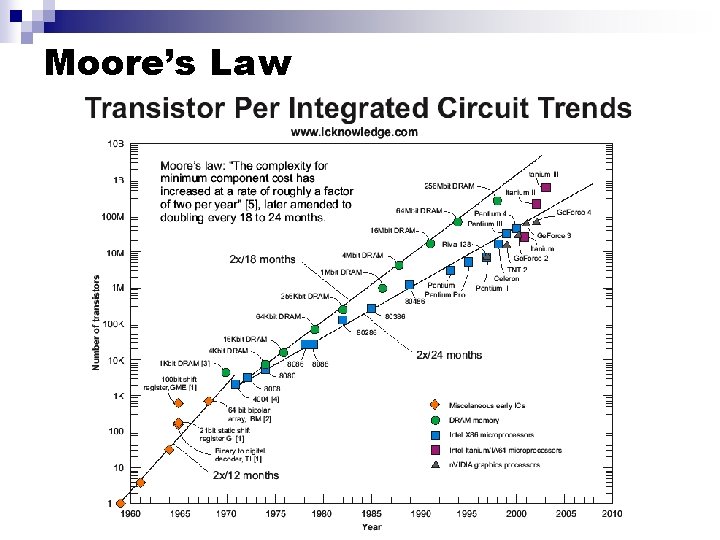

Moore’s Law

Cluster Pioneers n In the mid-1990 s, Network of Workstations project (UC Berkeley) and the Beowulf Project (NASA) asked the question: Can You Build a High Performance Machine From Commodity Components?

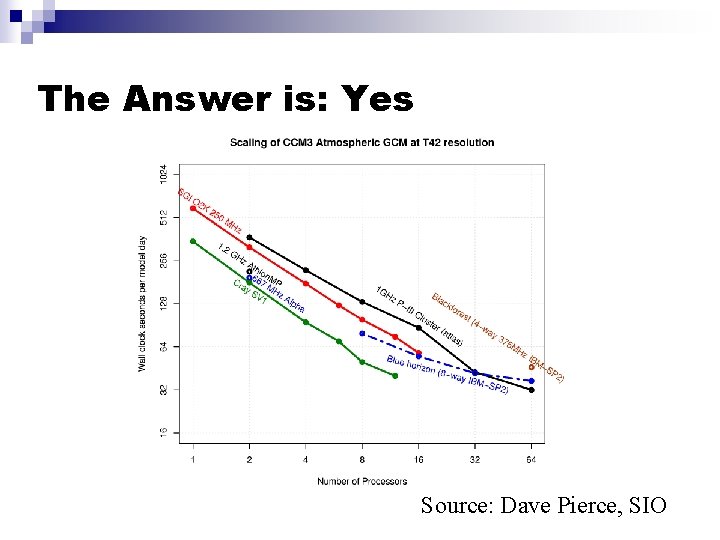

The Answer is: Yes Source: Dave Pierce, SIO

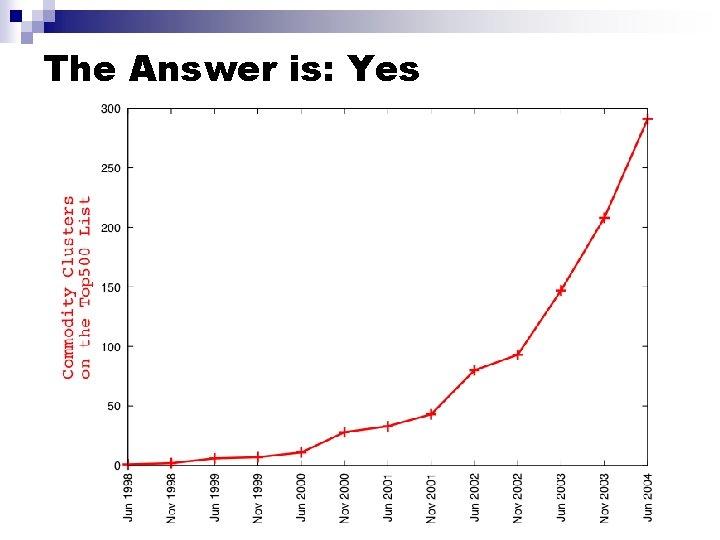

The Answer is: Yes

Types of Clusters n High Availability ¨ Generally small (less than 8 nodes) n Visualization n High Performance Computational tools for scientific computing ¨ Large database machines ¨

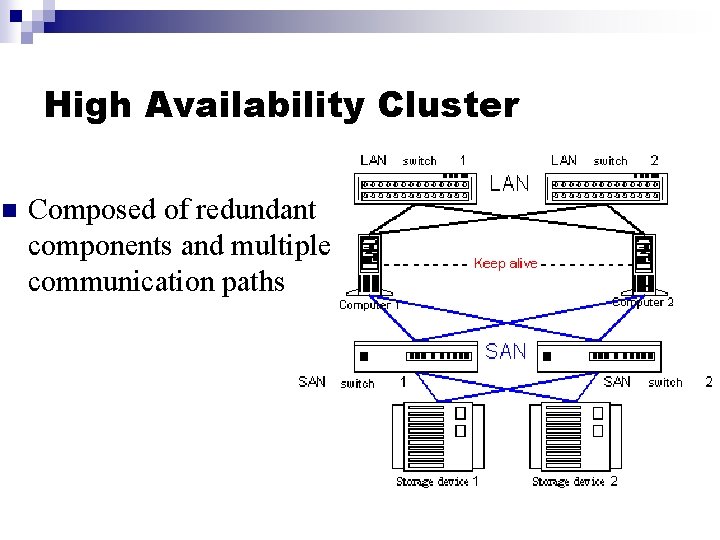

High Availability Cluster n Composed of redundant components and multiple communication paths

Visualization Cluster n Each node in the cluster drives a display

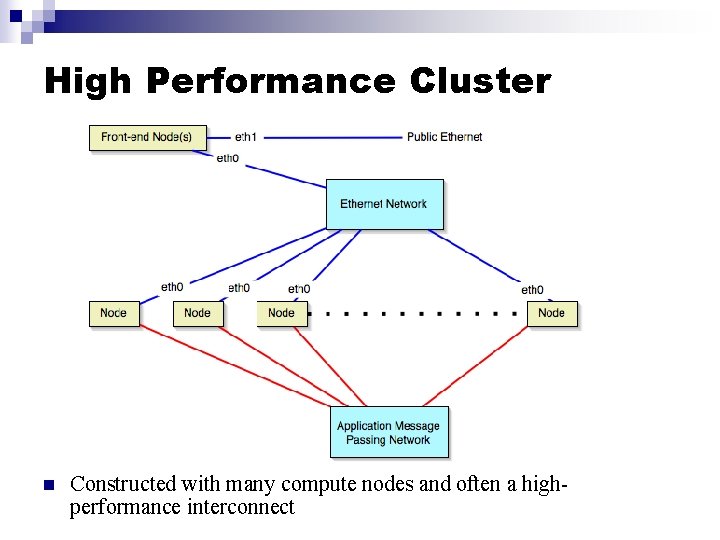

High Performance Cluster n Constructed with many compute nodes and often a highperformance interconnect

Cluster Hardware Components

Cluster Processors n n n Pentium/Athlon Opteron Itanium

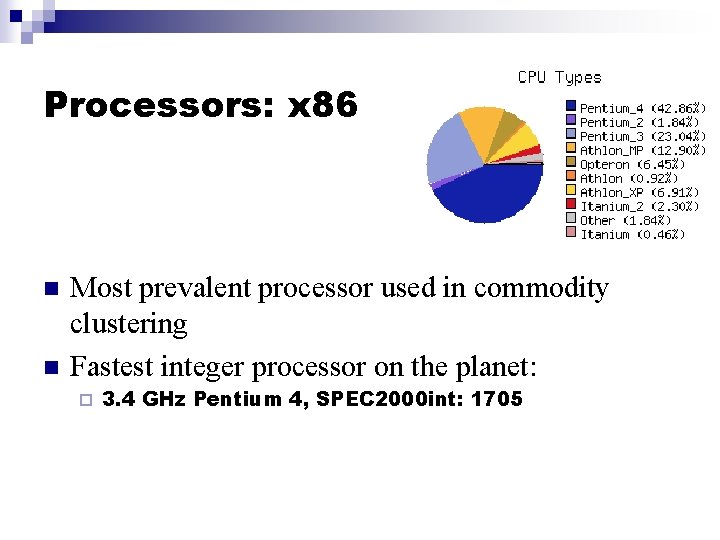

Processors: x 86 n n Most prevalent processor used in commodity clustering Fastest integer processor on the planet: ¨ 3. 4 GHz Pentium 4, SPEC 2000 int: 1705

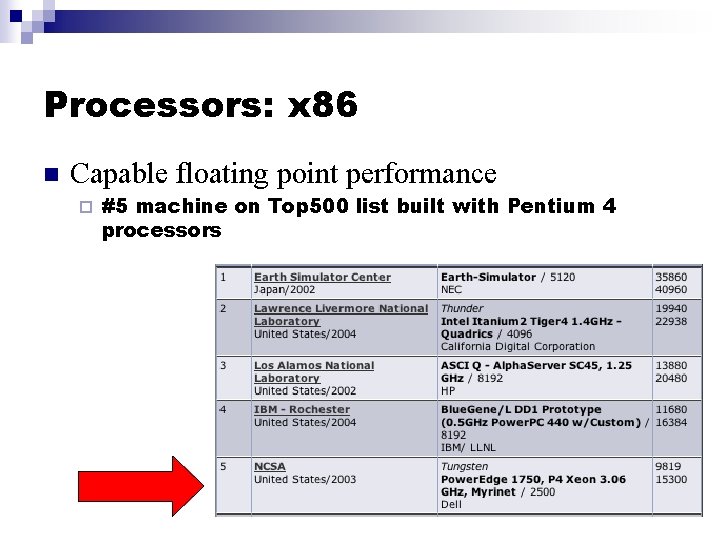

Processors: x 86 n Capable floating point performance ¨ #5 machine on Top 500 list built with Pentium 4 processors

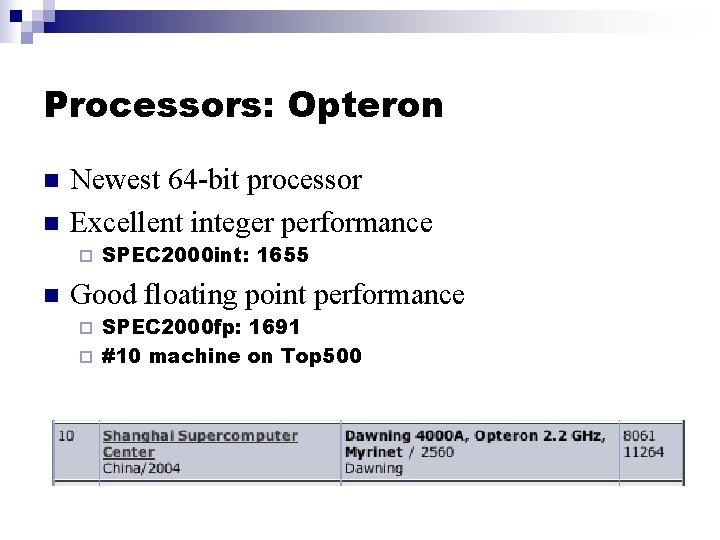

Processors: Opteron n n Newest 64 -bit processor Excellent integer performance ¨ n SPEC 2000 int: 1655 Good floating point performance SPEC 2000 fp: 1691 ¨ #10 machine on Top 500 ¨

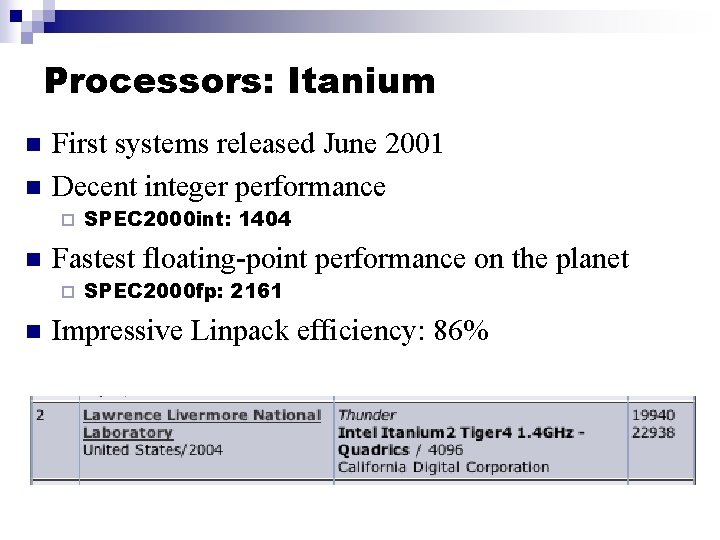

Processors: Itanium n n First systems released June 2001 Decent integer performance ¨ n Fastest floating-point performance on the planet ¨ n SPEC 2000 int: 1404 SPEC 2000 fp: 2161 Impressive Linpack efficiency: 86%

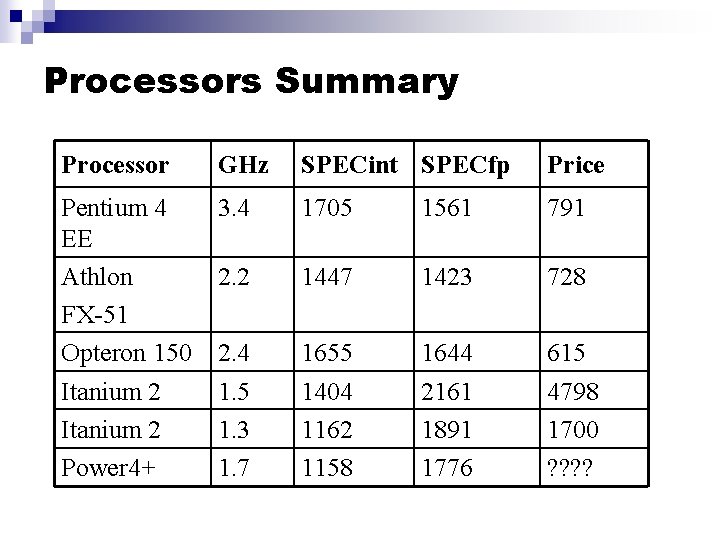

Processors Summary Processor GHz SPECint SPECfp Price Pentium 4 EE Athlon FX-51 Opteron 150 Itanium 2 Power 4+ 3. 4 1705 1561 791 2. 2 1447 1423 728 2. 4 1. 5 1. 3 1. 7 1655 1404 1162 1158 1644 2161 1891 1776 615 4798 1700 ? ?

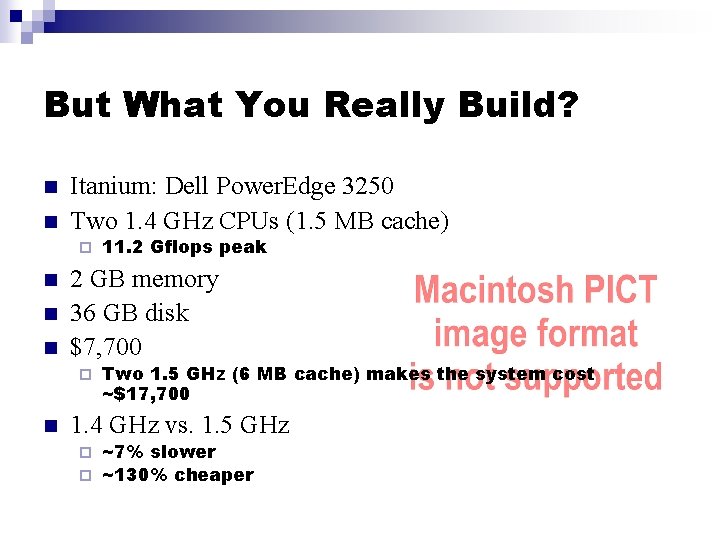

But What You Really Build? n n Itanium: Dell Power. Edge 3250 Two 1. 4 GHz CPUs (1. 5 MB cache) ¨ n n n 2 GB memory 36 GB disk $7, 700 ¨ n 11. 2 Gflops peak Two 1. 5 GHz (6 MB cache) makes the system cost ~$17, 700 1. 4 GHz vs. 1. 5 GHz ~7% slower ¨ ~130% cheaper ¨

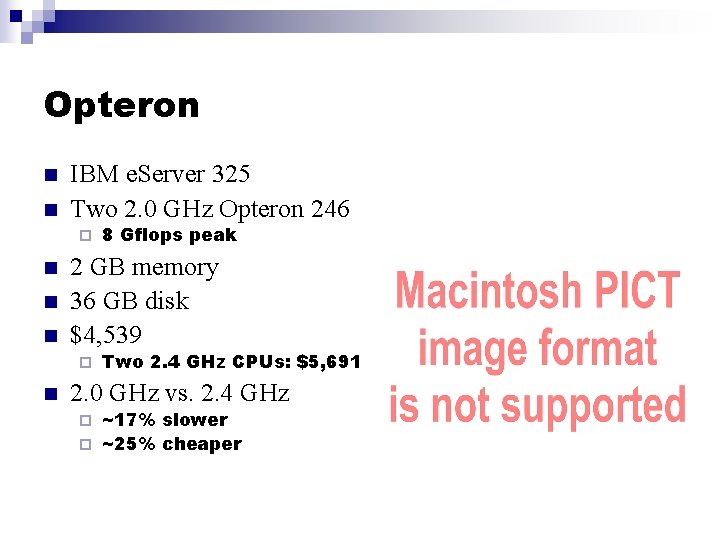

Opteron n n IBM e. Server 325 Two 2. 0 GHz Opteron 246 ¨ n n n 2 GB memory 36 GB disk $4, 539 ¨ n 8 Gflops peak Two 2. 4 GHz CPUs: $5, 691 2. 0 GHz vs. 2. 4 GHz ~17% slower ¨ ~25% cheaper ¨

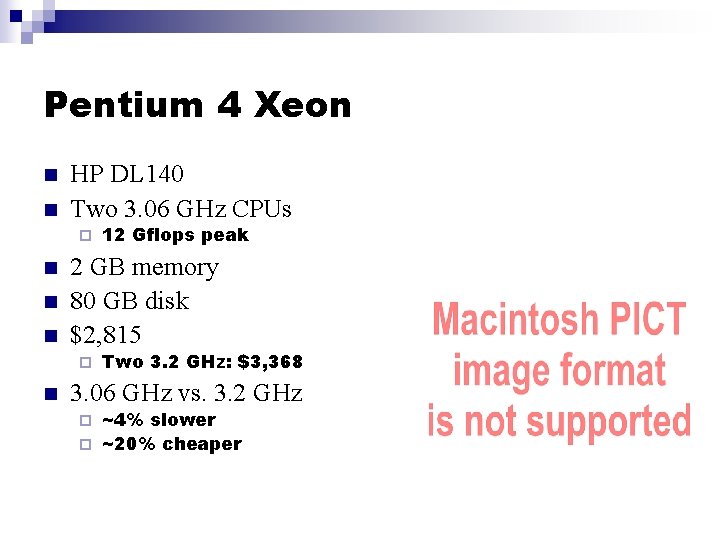

Pentium 4 Xeon n n HP DL 140 Two 3. 06 GHz CPUs ¨ n n n 2 GB memory 80 GB disk $2, 815 ¨ n 12 Gflops peak Two 3. 2 GHz: $3, 368 3. 06 GHz vs. 3. 2 GHz ~4% slower ¨ ~20% cheaper ¨

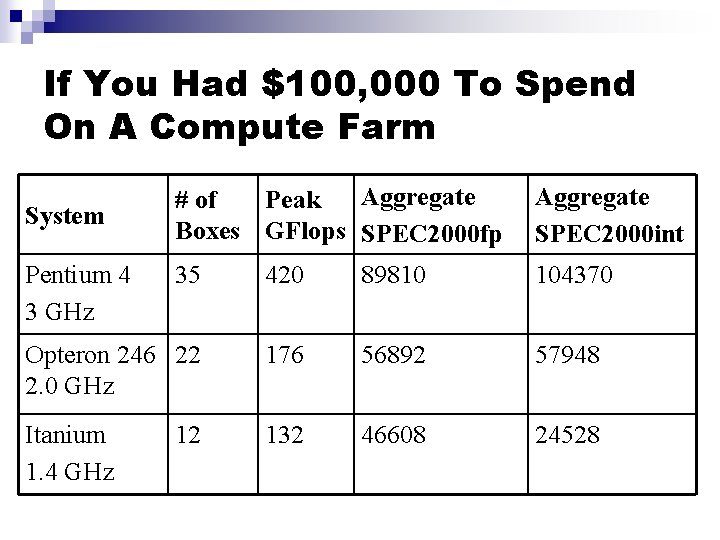

If You Had $100, 000 To Spend On A Compute Farm Aggregate # of Peak Boxes GFlops SPEC 2000 fp Aggregate SPEC 2000 int 35 420 89810 104370 Opteron 246 22 2. 0 GHz 176 56892 57948 Itanium 1. 4 GHz 132 46608 24528 System Pentium 4 3 GHz 12

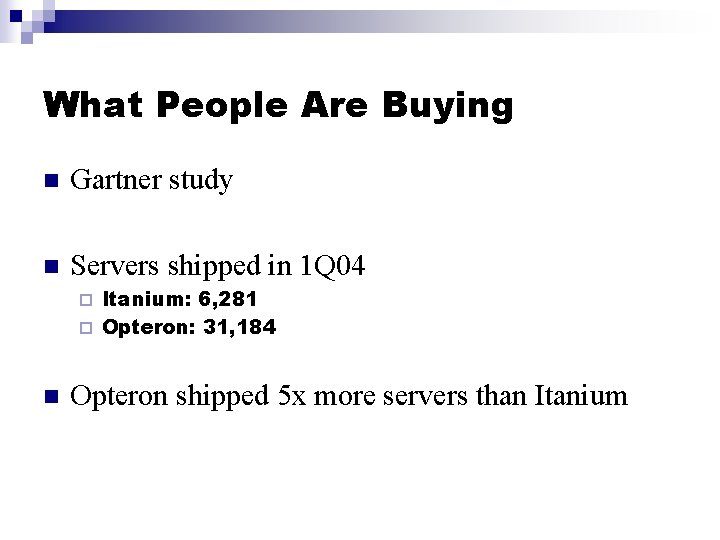

What People Are Buying n Gartner study n Servers shipped in 1 Q 04 Itanium: 6, 281 ¨ Opteron: 31, 184 ¨ n Opteron shipped 5 x more servers than Itanium

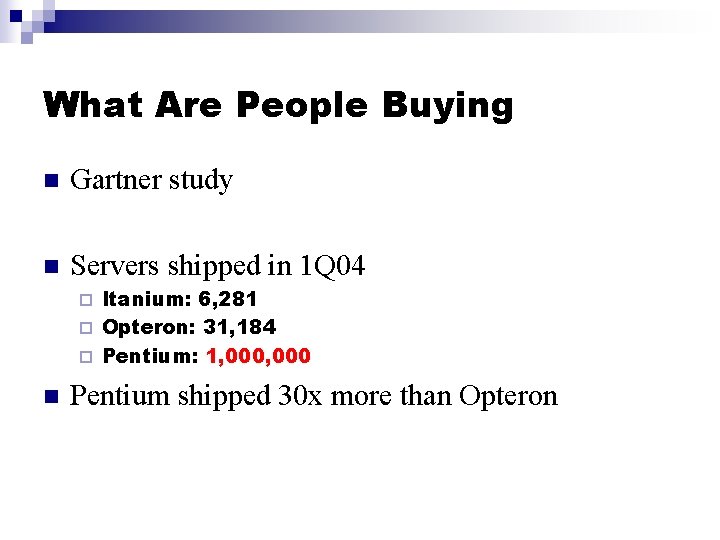

What Are People Buying n Gartner study n Servers shipped in 1 Q 04 Itanium: 6, 281 ¨ Opteron: 31, 184 ¨ Pentium: 1, 000 ¨ n Pentium shipped 30 x more than Opteron

Interconnects

Interconnects n Ethernet ¨ n Most prevalent on clusters Low-latency interconnects Myrinet ¨ Infiniband ¨ Quadrics ¨ Ammasso ¨

Why Low-Latency Interconnects? n Performance Lower latency ¨ Higher bandwidth ¨ n Accomplished through OS-bypass

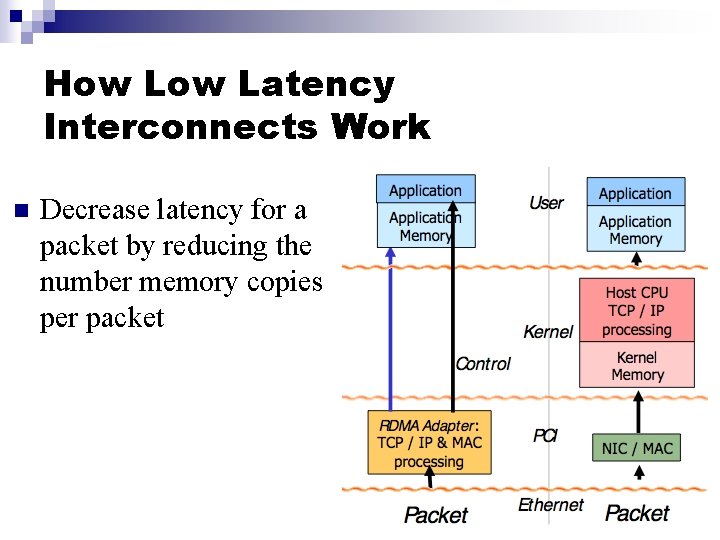

How Latency Interconnects Work n Decrease latency for a packet by reducing the number memory copies per packet

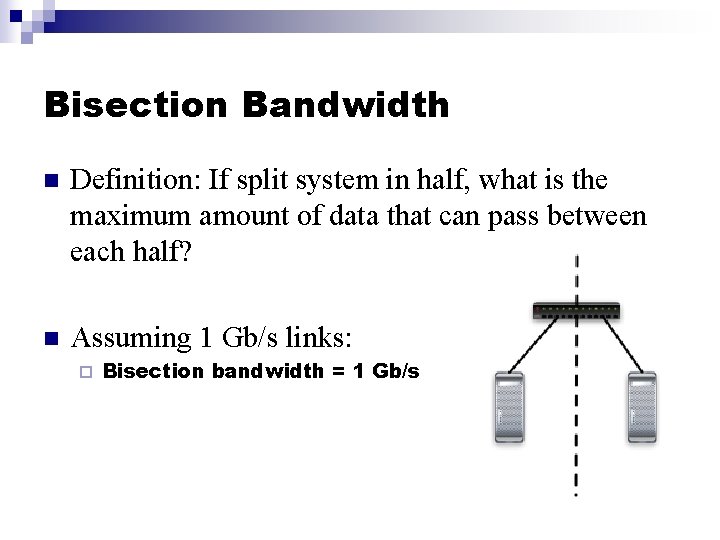

Bisection Bandwidth n Definition: If split system in half, what is the maximum amount of data that can pass between each half? n Assuming 1 Gb/s links: ¨ Bisection bandwidth = 1 Gb/s

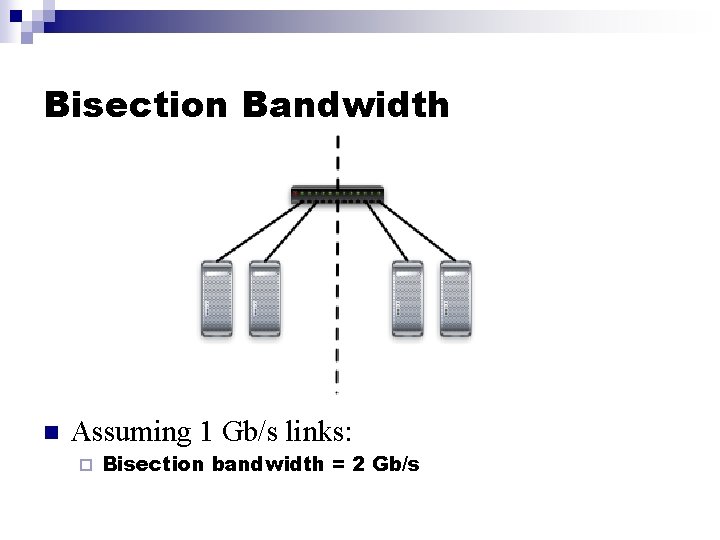

Bisection Bandwidth n Assuming 1 Gb/s links: ¨ Bisection bandwidth = 2 Gb/s

Bisection Bandwidth n n Definition: Full bisection bandwidth is a network topology that can support N/2 simultaneous communication streams. That is, the nodes on one half of the network can communicate with the nodes on the other half at full speed.

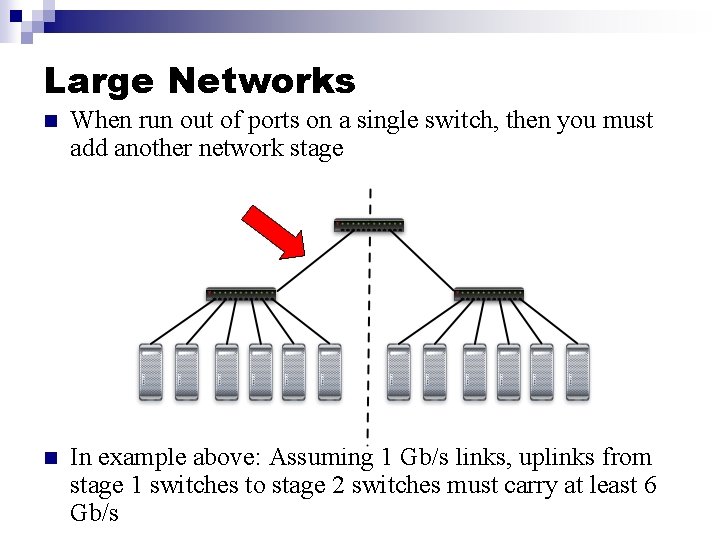

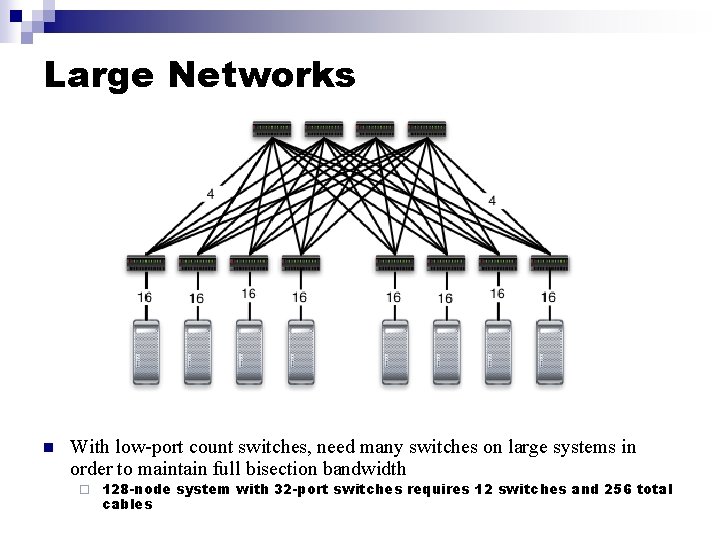

Large Networks n When run out of ports on a single switch, then you must add another network stage n In example above: Assuming 1 Gb/s links, uplinks from stage 1 switches to stage 2 switches must carry at least 6 Gb/s

Large Networks n With low-port count switches, need many switches on large systems in order to maintain full bisection bandwidth ¨ 128 -node system with 32 -port switches requires 12 switches and 256 total cables

Myrinet n Long-time interconnect vendor ¨ Delivering products since 1995 n Deliver single 128 -port full bisection bandwidth switch n MPI Performance: Latency: 6. 7 us ¨ Bandwidth: 245 MB/s ¨ Cost/port (based on 64 -port configuration): $1000 ¨ n n Switch + NIC + cable http: //www. myri. com/myrinet/product_list. html

Myrinet n Recently announced 256 port switch ¨ Available August 2004

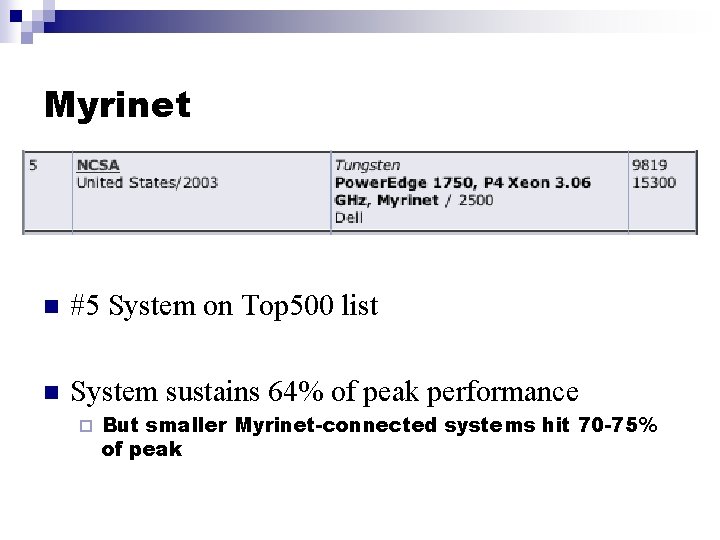

Myrinet n #5 System on Top 500 list n System sustains 64% of peak performance ¨ But smaller Myrinet-connected systems hit 70 -75% of peak

Quadrics n Qs. Net. II E-series ¨ Released at the end of May 2004 n Deliver 128 -port standalone switches n MPI Performance: Latency: 3 us ¨ Bandwidth: 900 MB/s ¨ Cost/port (based on 64 -port configuration): $1800 ¨ n n Switch + NIC + cable http: //doc. quadrics. com/Quadrics. Home. nsf/Display. Pages/ A 3 EE 4 AED 738 B 6 E 2480256 DD 30057 B 227

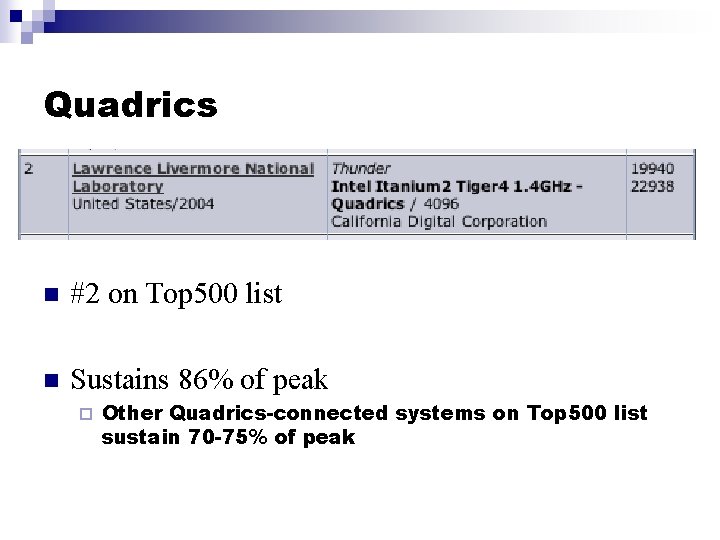

Quadrics n #2 on Top 500 list n Sustains 86% of peak ¨ Other Quadrics-connected systems on Top 500 list sustain 70 -75% of peak

Infiniband n Newest cluster interconnect n Currently shipping 32 -port switches and 192 -port switches n MPI Performance: Latency: 6. 8 us ¨ Bandwidth: 840 MB/s ¨ Estimated cost/port (based on 64 -port configuration): $1700 - 3000 ¨ n n Switch + NIC + cable http: //www. techonline. com/community/related_content/24364

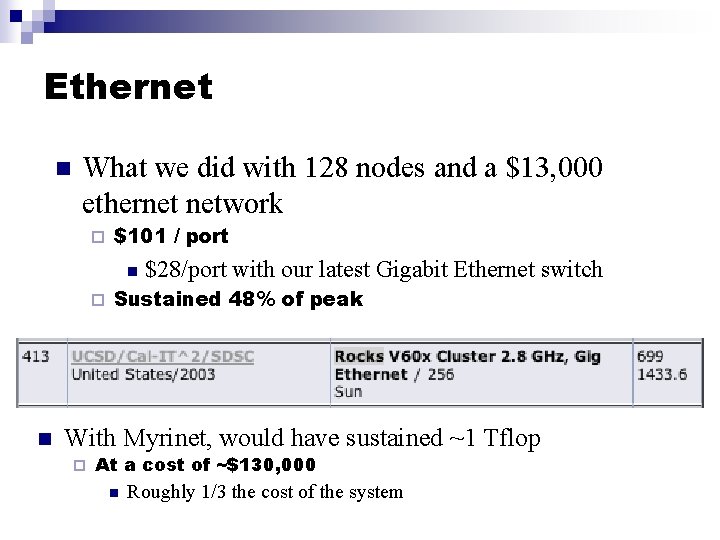

Ethernet n Latency: 80 us n Bandwidth: 100 MB/s n Top 500 list has ethernet-based systems sustaining between 35 -59% of peak

Ethernet n What we did with 128 nodes and a $13, 000 ethernet network ¨ $101 / port n ¨ n $28/port with our latest Gigabit Ethernet switch Sustained 48% of peak With Myrinet, would have sustained ~1 Tflop ¨ At a cost of ~$130, 000 n Roughly 1/3 the cost of the system

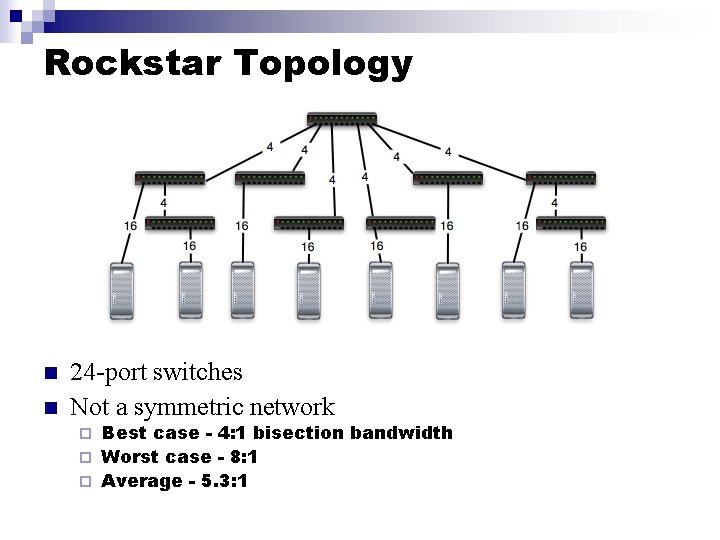

Rockstar Topology n n 24 -port switches Not a symmetric network Best case - 4: 1 bisection bandwidth ¨ Worst case - 8: 1 ¨ Average - 5. 3: 1 ¨

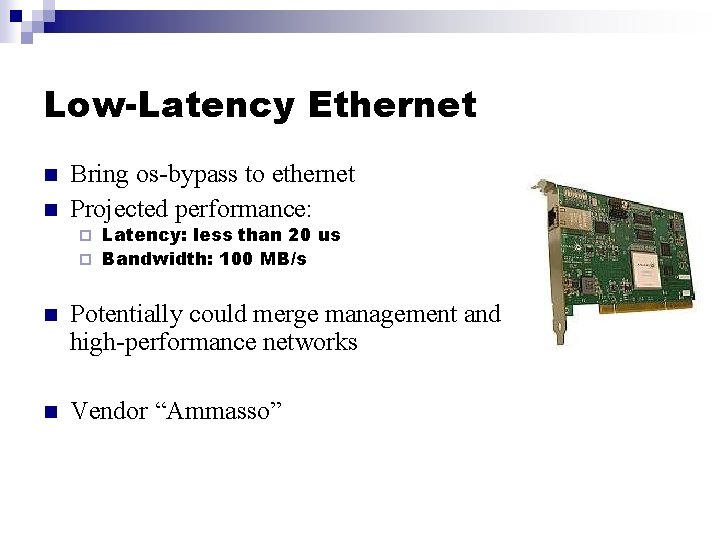

Low-Latency Ethernet n n Bring os-bypass to ethernet Projected performance: Latency: less than 20 us ¨ Bandwidth: 100 MB/s ¨ n Potentially could merge management and high-performance networks n Vendor “Ammasso”

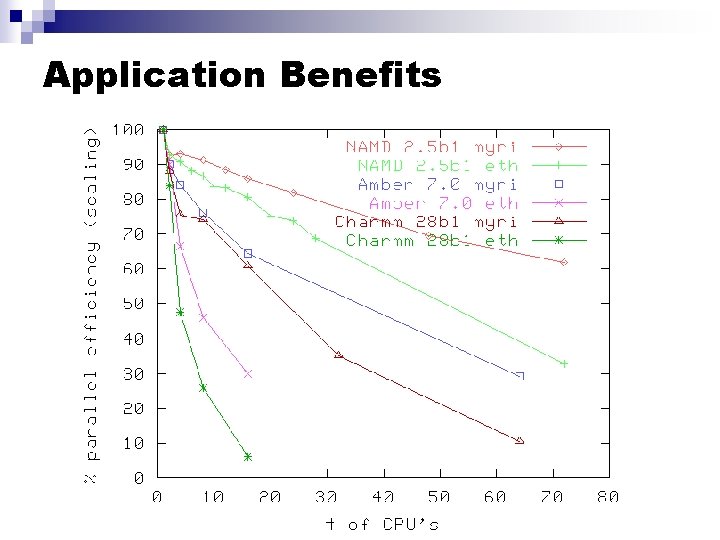

Application Benefits

Storage

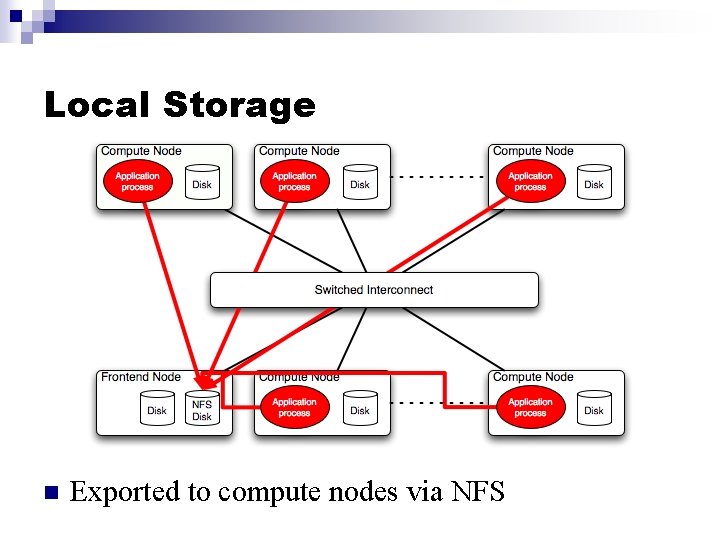

Local Storage n Exported to compute nodes via NFS

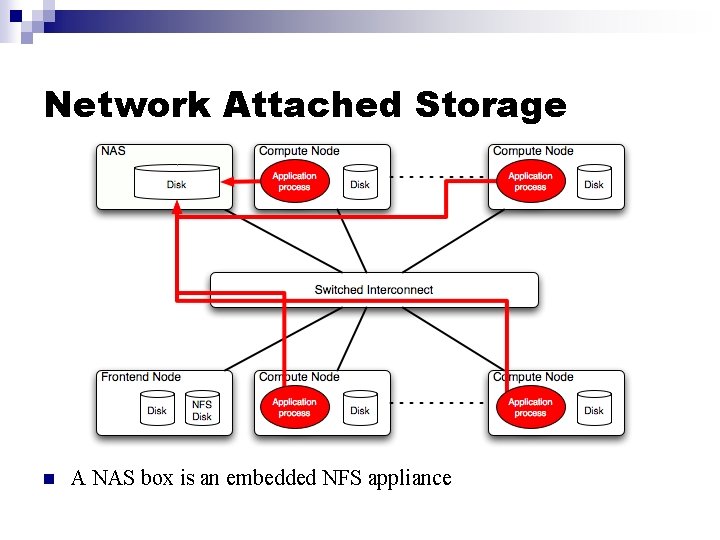

Network Attached Storage n A NAS box is an embedded NFS appliance

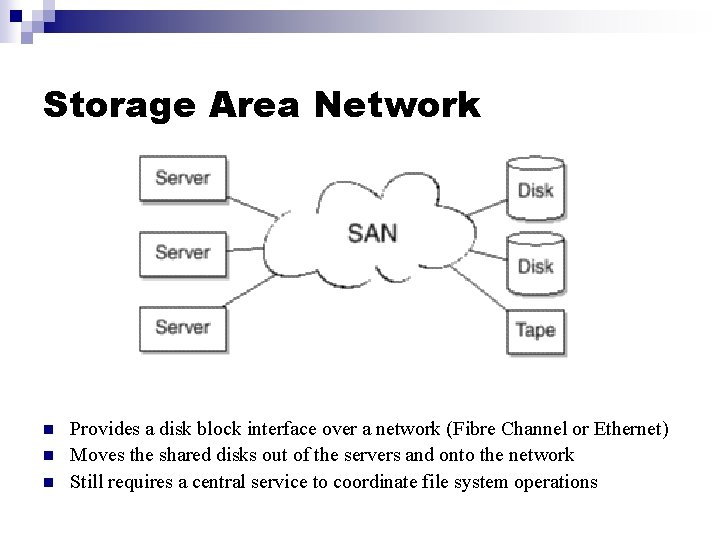

Storage Area Network n n n Provides a disk block interface over a network (Fibre Channel or Ethernet) Moves the shared disks out of the servers and onto the network Still requires a central service to coordinate file system operations

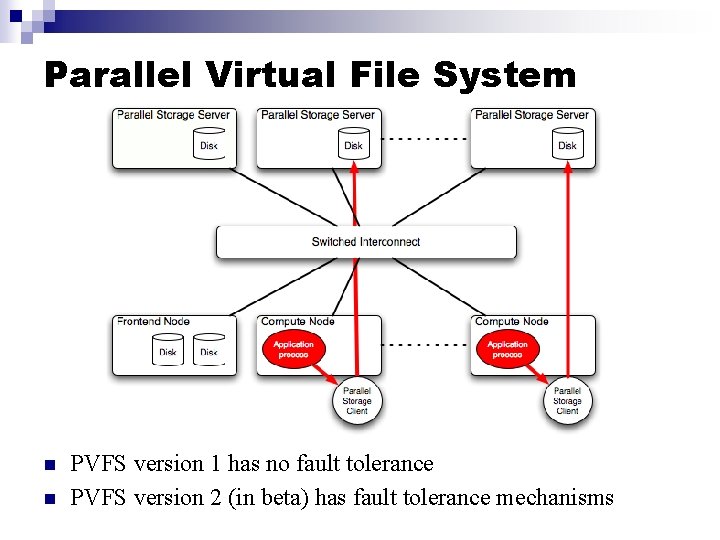

Parallel Virtual File System n n PVFS version 1 has no fault tolerance PVFS version 2 (in beta) has fault tolerance mechanisms

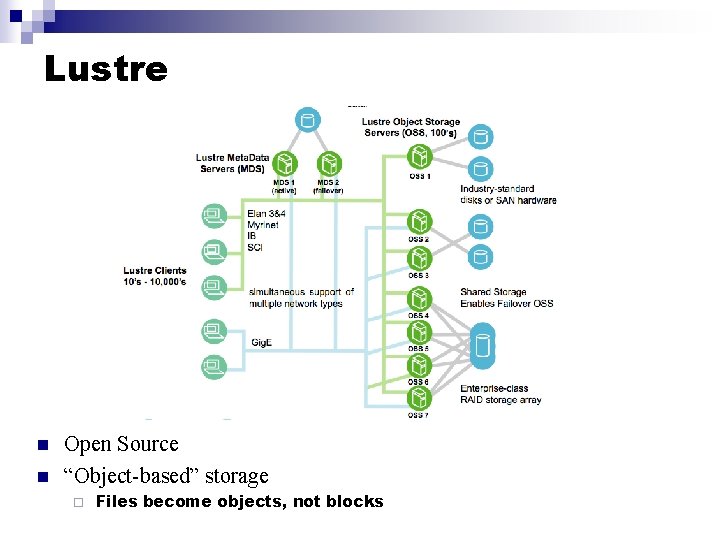

Lustre n n Open Source “Object-based” storage ¨ Files become objects, not blocks

Cluster Software

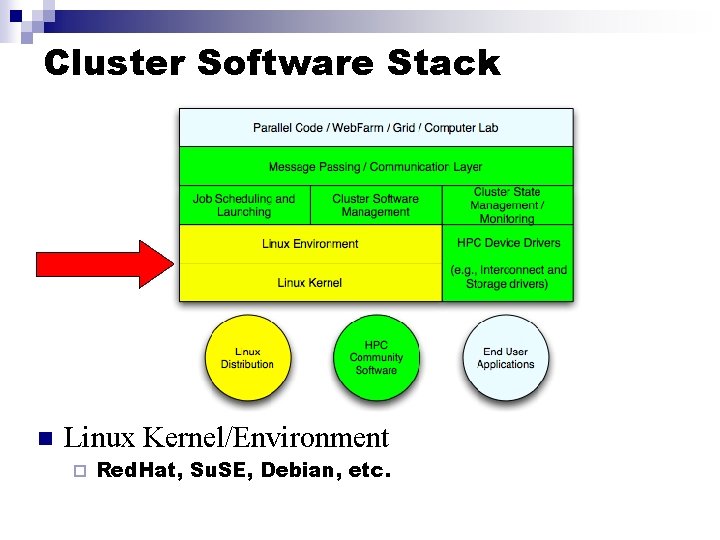

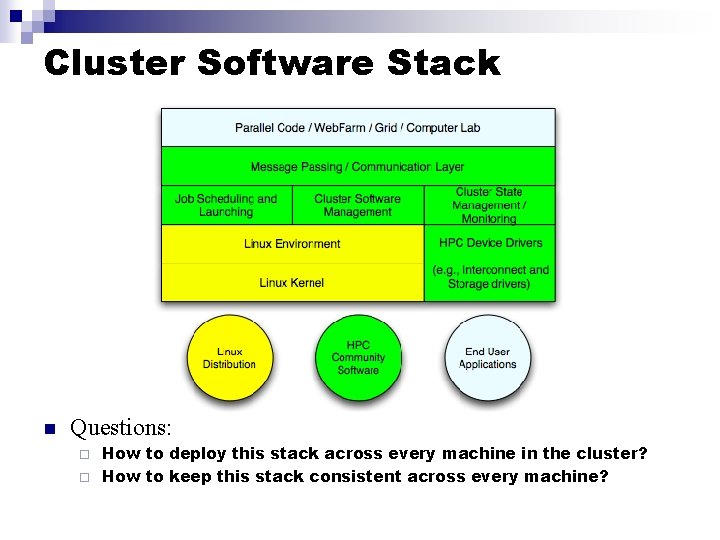

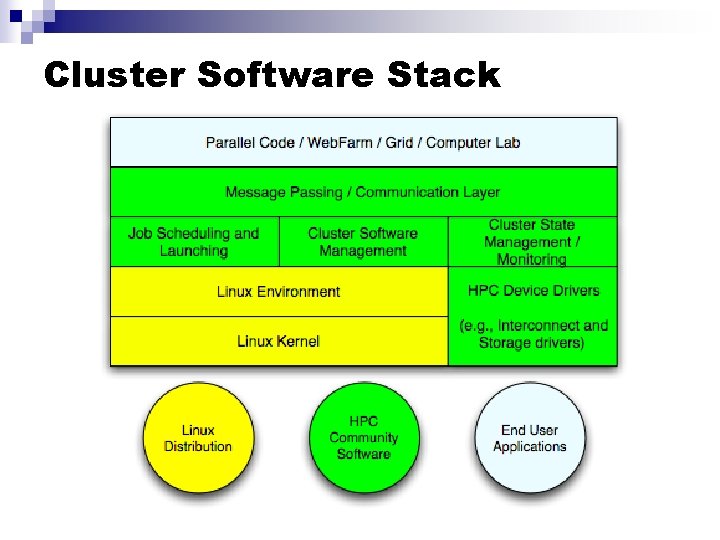

Cluster Software Stack n Linux Kernel/Environment ¨ Red. Hat, Su. SE, Debian, etc.

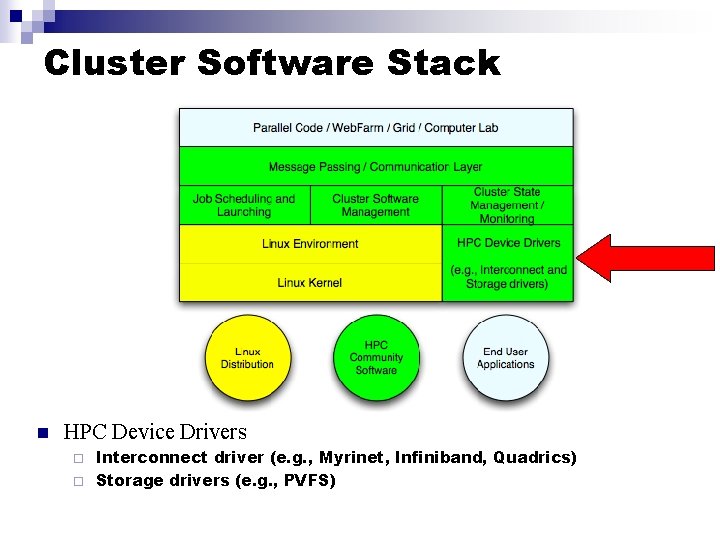

Cluster Software Stack n HPC Device Drivers Interconnect driver (e. g. , Myrinet, Infiniband, Quadrics) ¨ Storage drivers (e. g. , PVFS) ¨

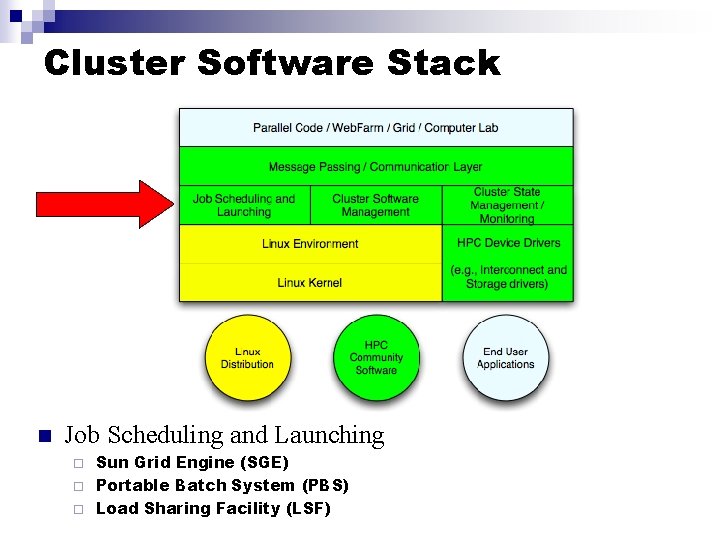

Cluster Software Stack n Job Scheduling and Launching Sun Grid Engine (SGE) ¨ Portable Batch System (PBS) ¨ Load Sharing Facility (LSF) ¨

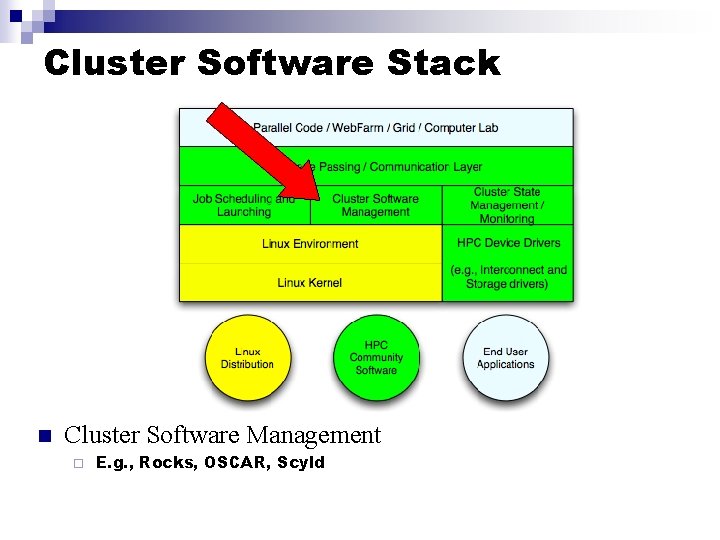

Cluster Software Stack n Cluster Software Management ¨ E. g. , Rocks, OSCAR, Scyld

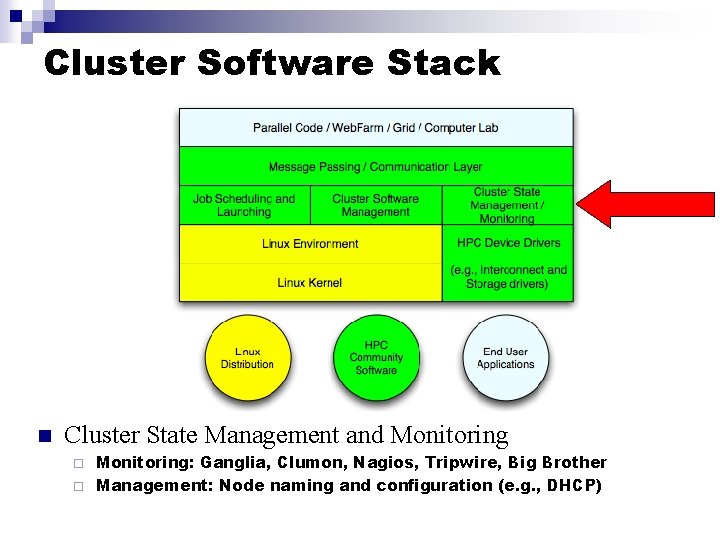

Cluster Software Stack n Cluster State Management and Monitoring: Ganglia, Clumon, Nagios, Tripwire, Big Brother ¨ Management: Node naming and configuration (e. g. , DHCP) ¨

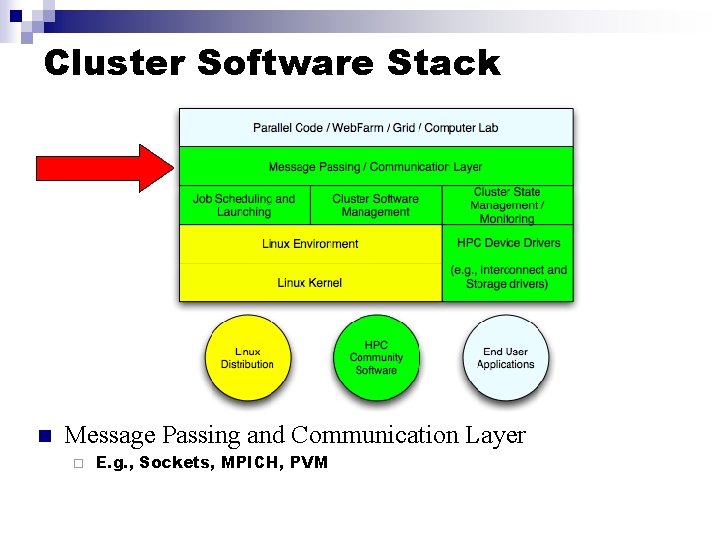

Cluster Software Stack n Message Passing and Communication Layer ¨ E. g. , Sockets, MPICH, PVM

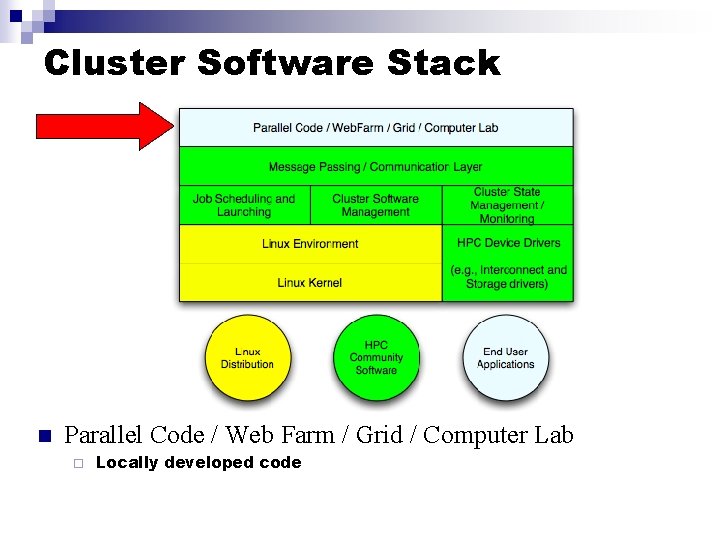

Cluster Software Stack n Parallel Code / Web Farm / Grid / Computer Lab ¨ Locally developed code

Cluster Software Stack n Questions: How to deploy this stack across every machine in the cluster? ¨ How to keep this stack consistent across every machine? ¨

Software Deployment n Known methods: Manual Approach ¨ “Add-on” method ¨ n Bring up a frontend, then add cluster packages ¨ ¨ Open. Mosix, OSCAR, Warewulf Integrated n Cluster packages are added at frontend installation time ¨ Rocks, Scyld

Rocks

Primary Goal n Make clusters easy n Target audience: Scientists who want a capable computational resource in their own lab

Philosophy n n Not fun to “care and feed” for a system All compute nodes are 100% automatically installed ¨ n Critical for scaling Essential to track software updates ¨ RHEL 3. 0 has issued 232 source RPM updates since Oct 21 n n Roughly 1 updated SRPM per day Run on heterogeneous standard high volume components ¨ Use the components that offer the best price/performance!

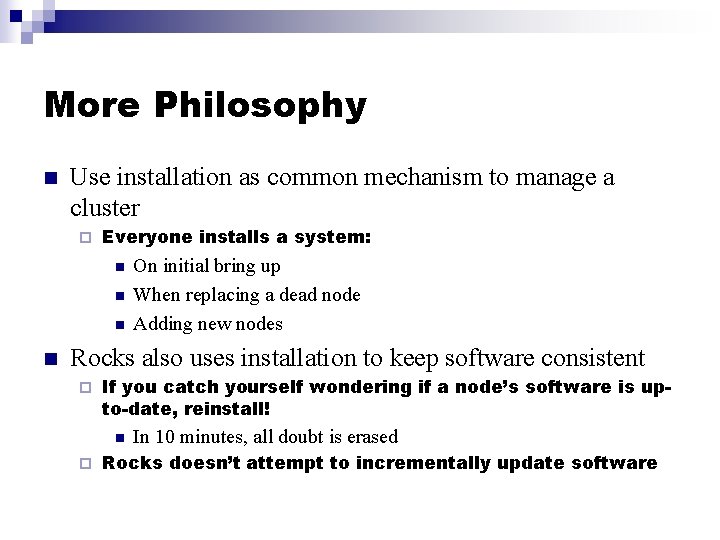

More Philosophy n Use installation as common mechanism to manage a cluster ¨ Everyone installs a system: n n On initial bring up When replacing a dead node Adding new nodes Rocks also uses installation to keep software consistent ¨ If you catch yourself wondering if a node’s software is upto-date, reinstall! n ¨ In 10 minutes, all doubt is erased Rocks doesn’t attempt to incrementally update software

Rocks Cluster Distribution n Fully-automated cluster-aware distribution ¨ n Cluster on a CD set Software Packages ¨ Full Red Hat Linux distribution n Red Hat Linux Enterprise 3. 0 rebuilt from source De-facto standard cluster packages ¨ Rocks community packages ¨ n System Configuration ¨ Configure the services in packages

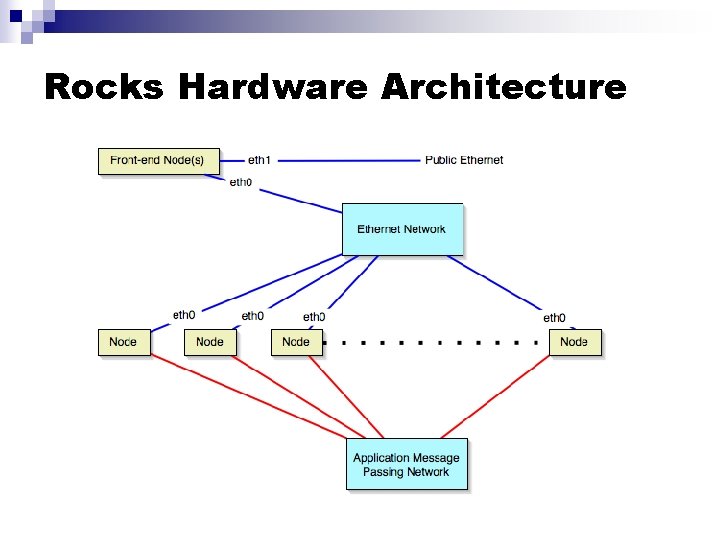

Rocks Hardware Architecture

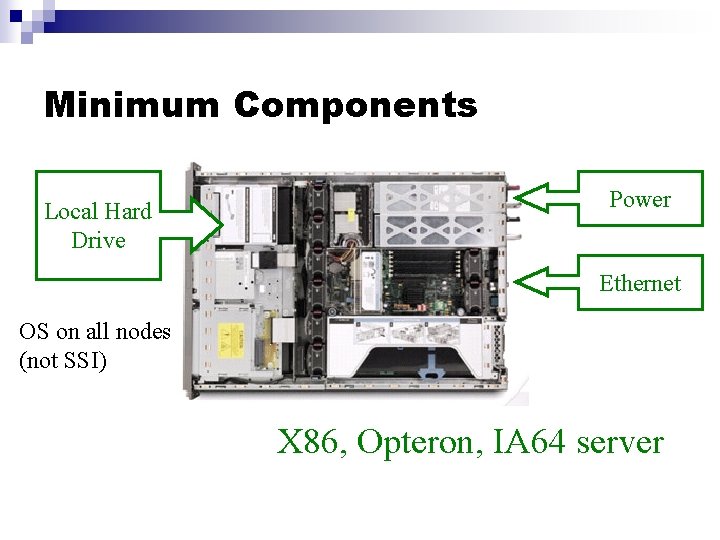

Minimum Components Local Hard Drive Power Ethernet OS on all nodes (not SSI) X 86, Opteron, IA 64 server

Optional Components n Myrinet high-performance network ¨ Infiniband support in Nov 2004 n Network-addressable power distribution unit n keyboard/video/mouse network not required Non-commodity ¨ How do you manage your management network? ¨ Crash carts have a lower TCO ¨

Storage n NFS ¨ n The frontend exports all home directories Parallel Virtual File System version 1 ¨ System nodes can be targeted as Compute + PVFS or strictly PVFS nodes

Minimum Hardware Requirements n Frontend: 2 ethernet connections ¨ 18 GB disk drive ¨ 512 MB memory ¨ n Compute: 1 ethernet connection ¨ 18 GB disk drive ¨ 512 MB memory ¨ n n Power Ethernet switches

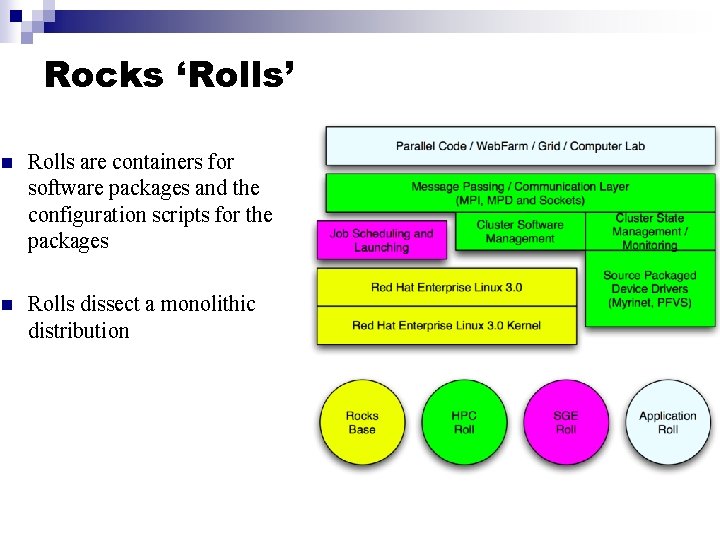

Cluster Software Stack

Rocks ‘Rolls’ n Rolls are containers for software packages and the configuration scripts for the packages n Rolls dissect a monolithic distribution

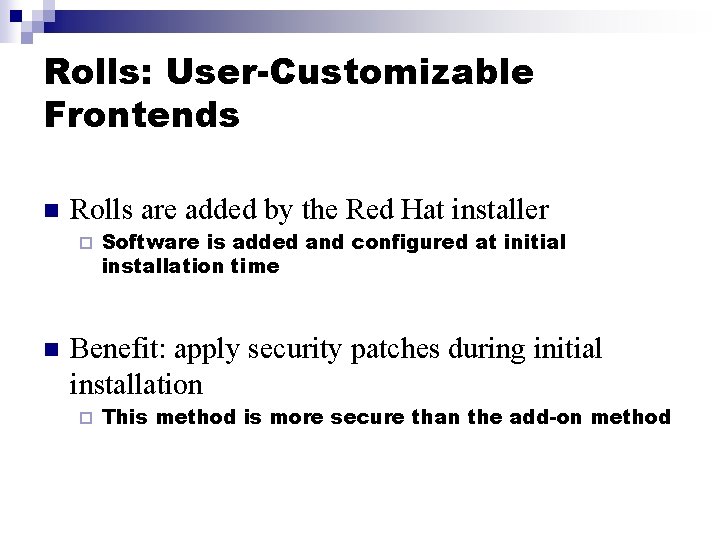

Rolls: User-Customizable Frontends n Rolls are added by the Red Hat installer ¨ n Software is added and configured at initial installation time Benefit: apply security patches during initial installation ¨ This method is more secure than the add-on method

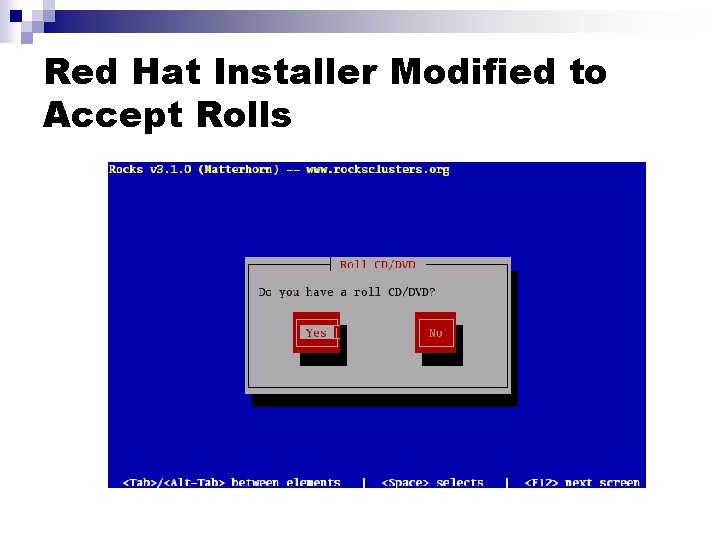

Red Hat Installer Modified to Accept Rolls

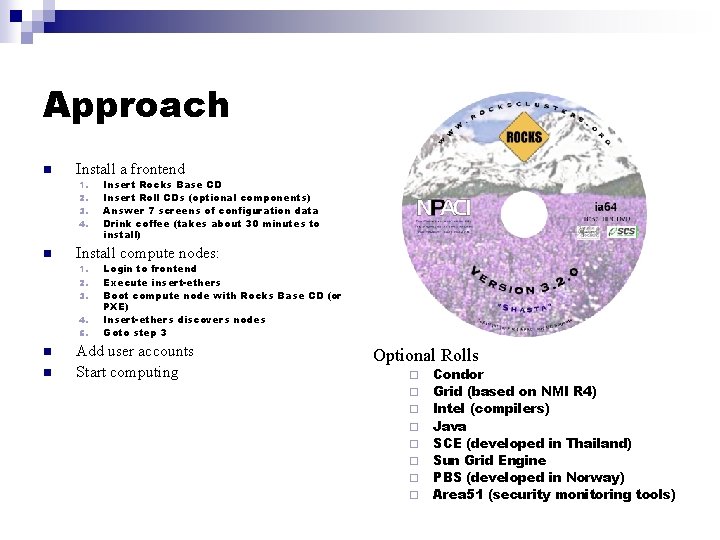

Approach n Install a frontend 1. 2. 3. 4. n Install compute nodes: 1. 2. 3. 4. 5. n n Insert Rocks Base CD Insert Roll CDs (optional components) Answer 7 screens of configuration data Drink coffee (takes about 30 minutes to install) Login to frontend Execute insert-ethers Boot compute node with Rocks Base CD (or PXE) Insert-ethers discovers nodes Goto step 3 Add user accounts Start computing Optional Rolls ¨ ¨ ¨ ¨ Condor Grid (based on NMI R 4) Intel (compilers) Java SCE (developed in Thailand) Sun Grid Engine PBS (developed in Norway) Area 51 (security monitoring tools)

Login to Frontend n Create ssh public/private key Ask for ‘passphrase’ ¨ These keys are used to securely login into compute nodes without having to enter a password each time you login to a compute node ¨ n Execute ‘insert-ethers’ ¨ This utility listens for new compute nodes

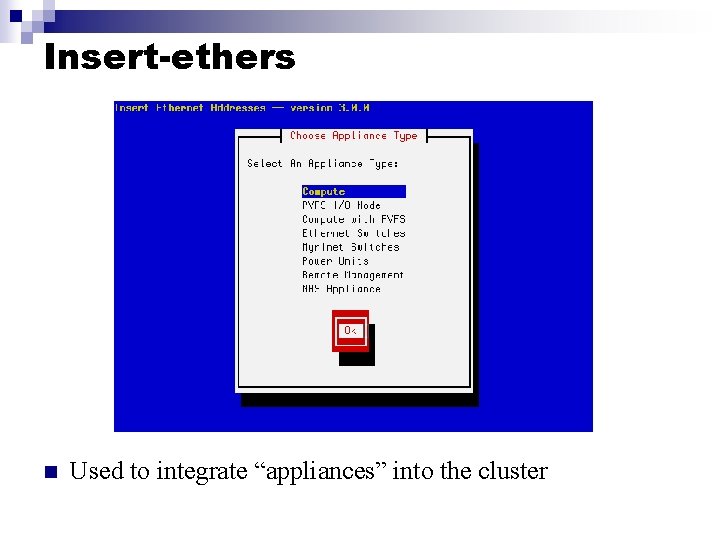

Insert-ethers n Used to integrate “appliances” into the cluster

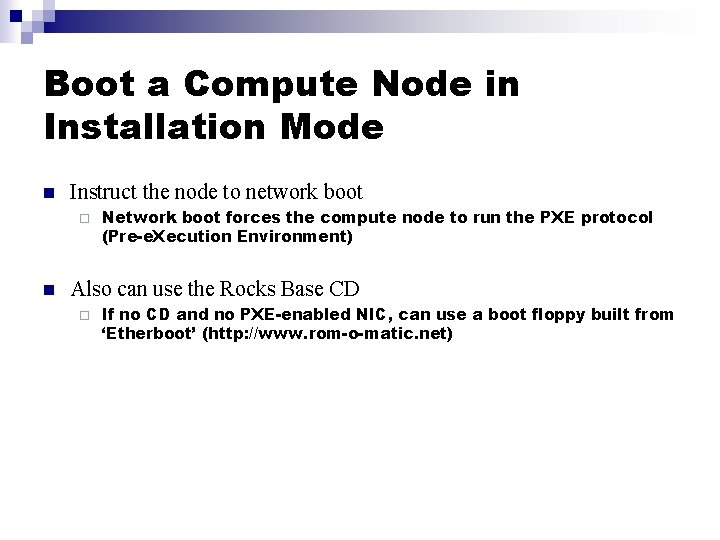

Boot a Compute Node in Installation Mode n Instruct the node to network boot ¨ n Network boot forces the compute node to run the PXE protocol (Pre-e. Xecution Environment) Also can use the Rocks Base CD ¨ If no CD and no PXE-enabled NIC, can use a boot floppy built from ‘Etherboot’ (http: //www. rom-o-matic. net)

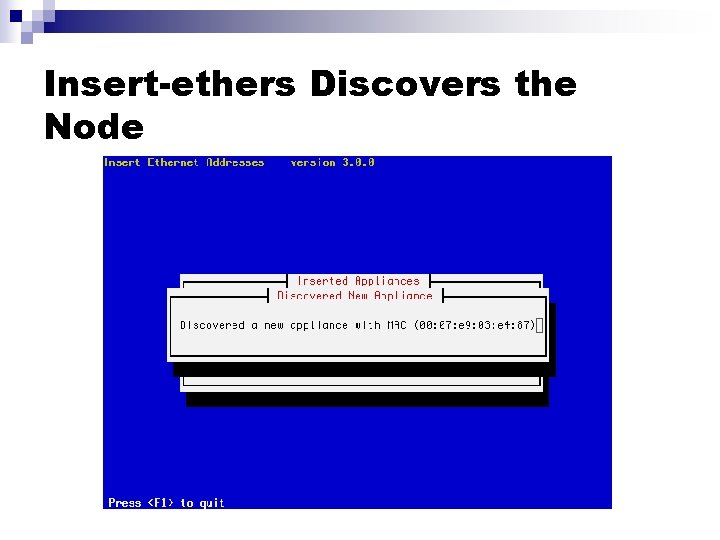

Insert-ethers Discovers the Node

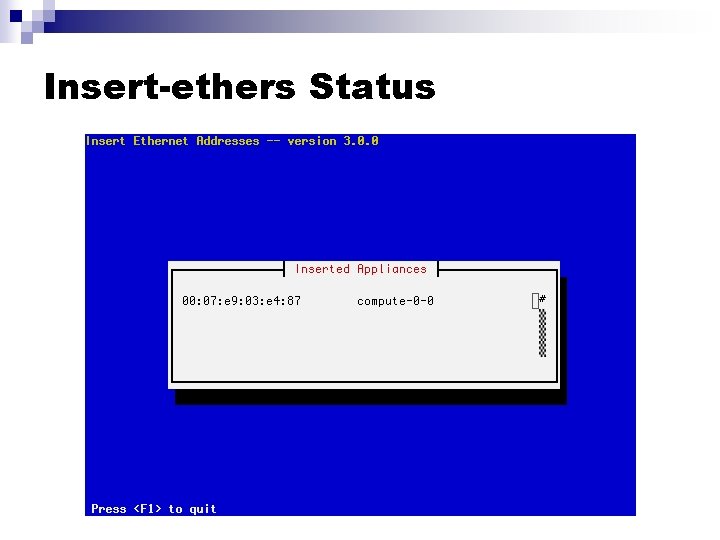

Insert-ethers Status

e. KV Ethernet Keyboard and Video n Monitor your compute node installation over the ethernet network ¨ n No KVM required! Execute: ‘ssh compute-0 -0’

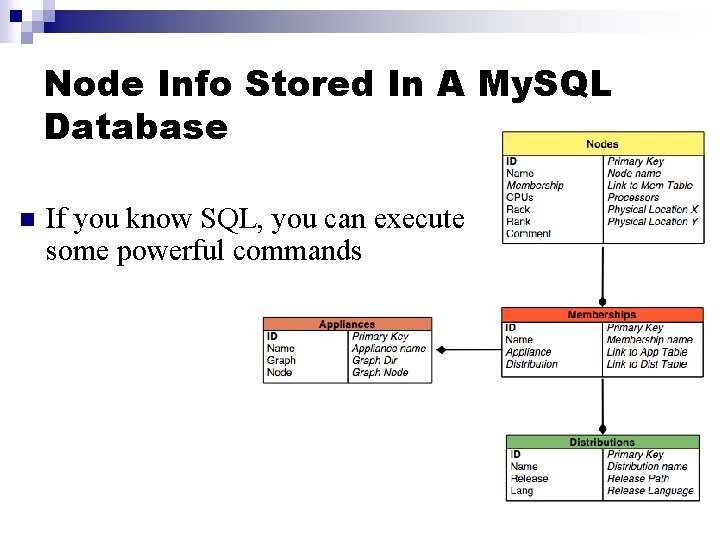

Node Info Stored In A My. SQL Database n If you know SQL, you can execute some powerful commands

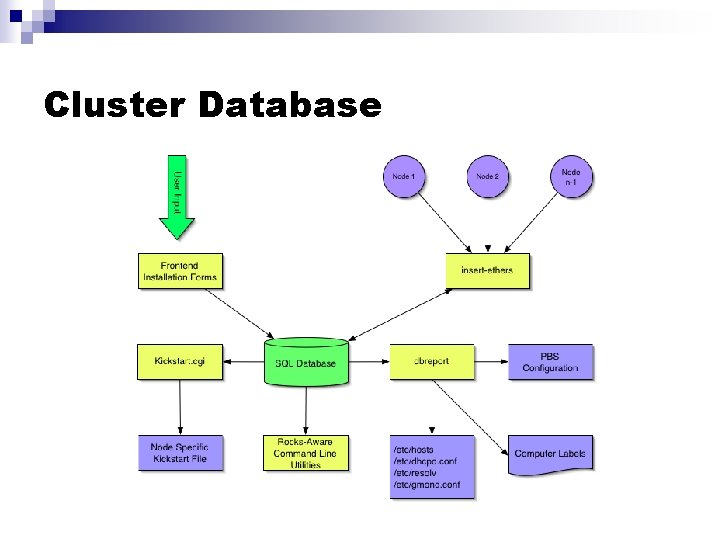

Cluster Database

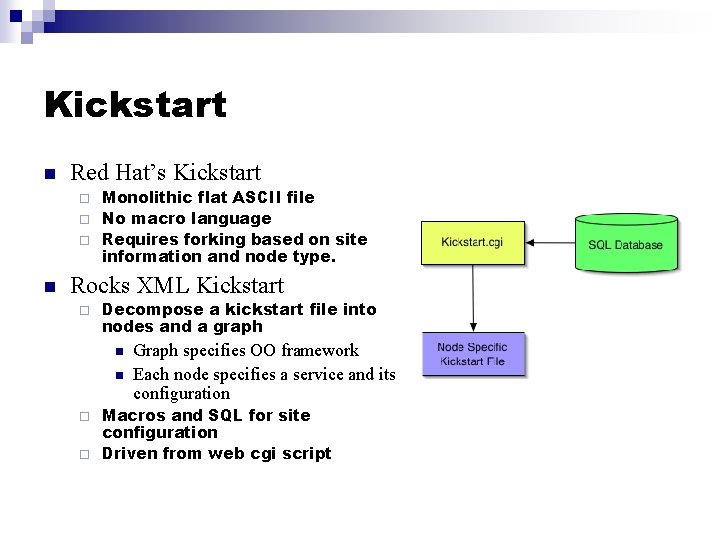

Kickstart n Red Hat’s Kickstart Monolithic flat ASCII file ¨ No macro language ¨ Requires forking based on site information and node type. ¨ n Rocks XML Kickstart ¨ Decompose a kickstart file into nodes and a graph n n Graph specifies OO framework Each node specifies a service and its configuration Macros and SQL for site configuration ¨ Driven from web cgi script ¨

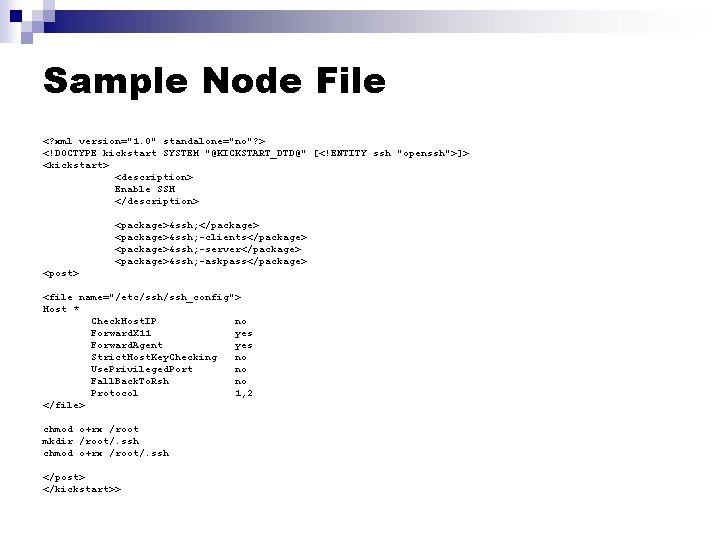

Sample Node File <? xml version="1. 0" standalone="no"? > <!DOCTYPE kickstart SYSTEM "@KICKSTART_DTD@" [<!ENTITY ssh "openssh">]> <kickstart> <description> Enable SSH </description> <package>&ssh; </package> <package>&ssh; -clients</package> <package>&ssh; -server</package> <package>&ssh; -askpass</package> <post> <file name="/etc/ssh_config"> Host * Check. Host. IP no Forward. X 11 yes Forward. Agent yes Strict. Host. Key. Checking no Use. Privileged. Port no Fall. Back. To. Rsh no Protocol 1, 2 </file> chmod o+rx /root mkdir /root/. ssh chmod o+rx /root/. ssh </post> </kickstart>>

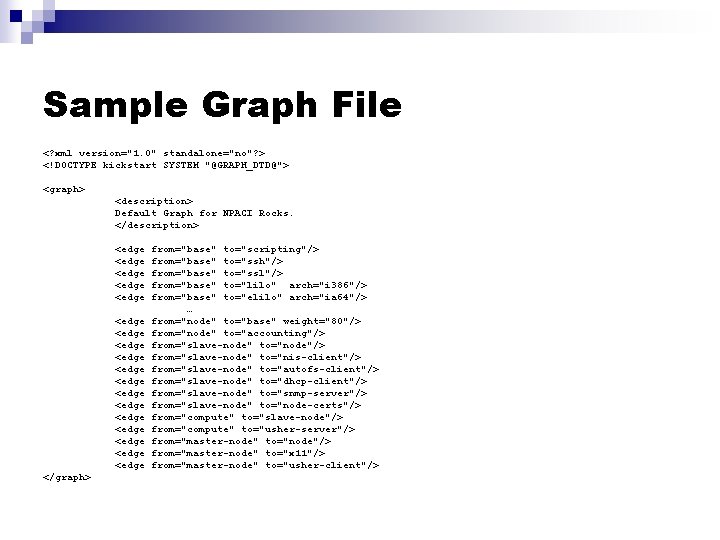

Sample Graph File <? xml version="1. 0" standalone="no"? > <!DOCTYPE kickstart SYSTEM "@GRAPH_DTD@"> <graph> <description> Default Graph for NPACI Rocks. </description> <edge <edge <edge <edge <edge </graph> from="base" to="scripting"/> from="base" to="ssh"/> from="base" to="ssl"/> from="base" to="lilo" arch="i 386"/> from="base" to="elilo" arch="ia 64"/> … from="node" to="base" weight="80"/> from="node" to="accounting"/> from="slave-node" to="node"/> from="slave-node" to="nis-client"/> from="slave-node" to="autofs-client"/> from="slave-node" to="dhcp-client"/> from="slave-node" to="snmp-server"/> from="slave-node" to="node-certs"/> from="compute" to="slave-node"/> from="compute" to="usher-server"/> from="master-node" to="node"/> from="master-node" to="x 11"/> from="master-node" to="usher-client"/>

Kickstart framework

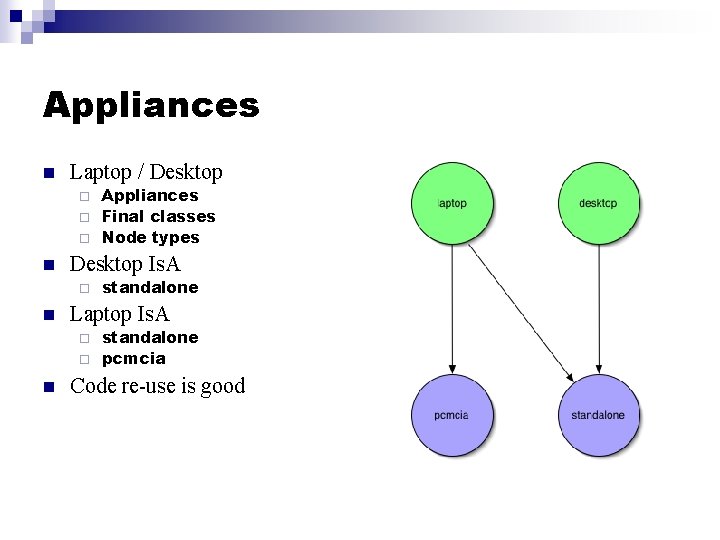

Appliances n Laptop / Desktop Appliances ¨ Final classes ¨ Node types ¨ n Desktop Is. A ¨ n standalone Laptop Is. A standalone ¨ pcmcia ¨ n Code re-use is good

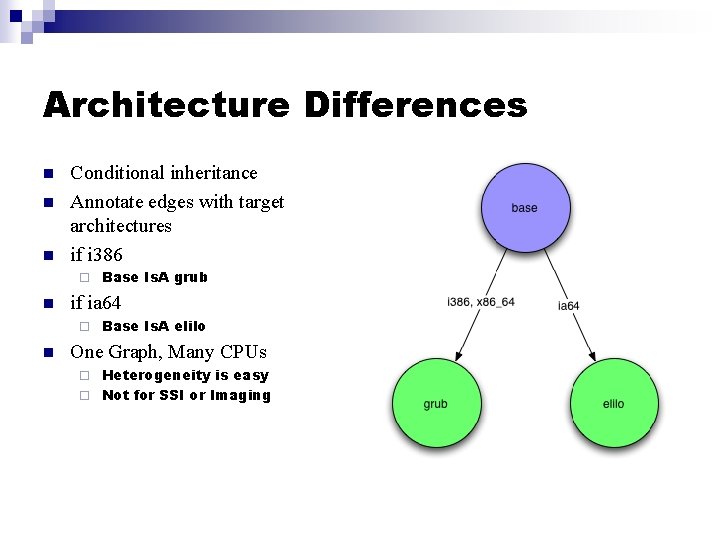

Architecture Differences n n n Conditional inheritance Annotate edges with target architectures if i 386 ¨ n if ia 64 ¨ n Base Is. A grub Base Is. A elilo One Graph, Many CPUs Heterogeneity is easy ¨ Not for SSI or Imaging ¨

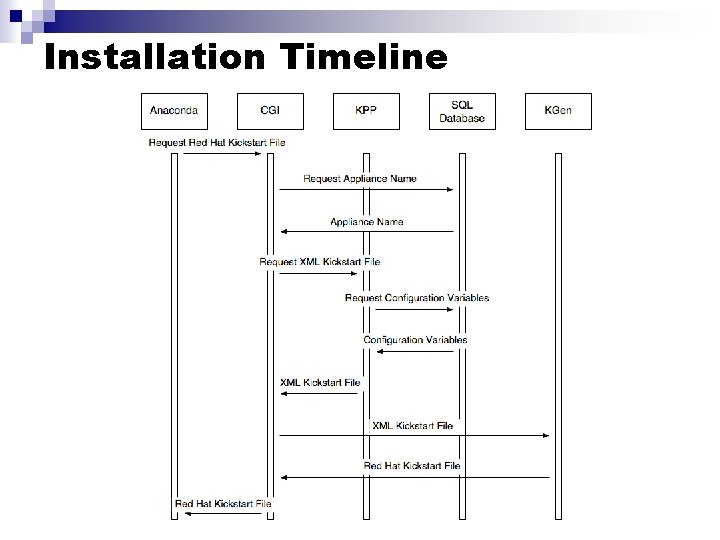

Installation Timeline

Status

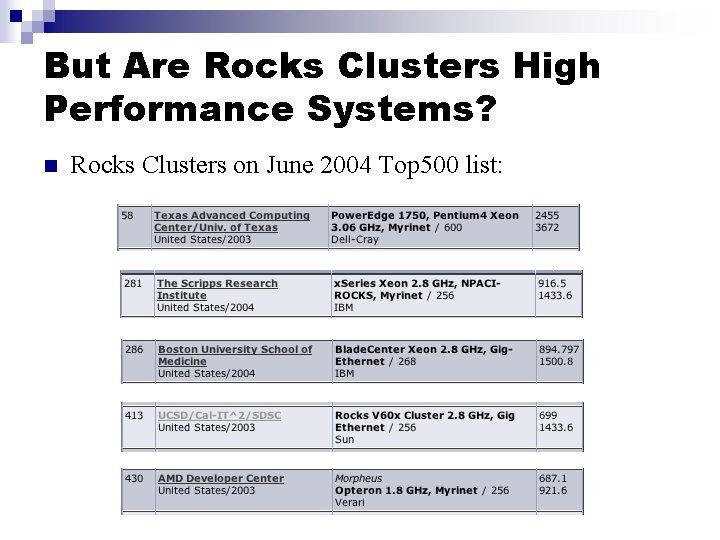

But Are Rocks Clusters High Performance Systems? n Rocks Clusters on June 2004 Top 500 list:

What We Proposed To Sun n n Let’s build a Top 500 machine … … from the ground up … … in 2 hours … … in the Sun booth at Supercomputing ‘ 03

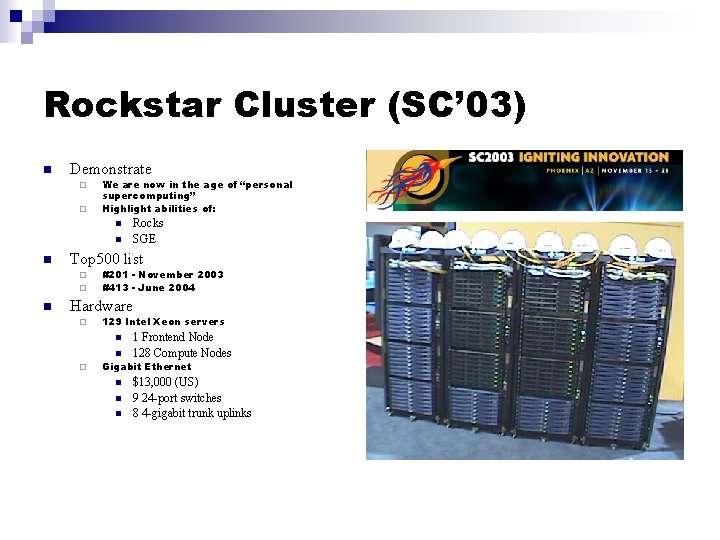

Rockstar Cluster (SC’ 03) n Demonstrate ¨ ¨ We are now in the age of “personal supercomputing” Highlight abilities of: n n n Top 500 list ¨ ¨ n Rocks SGE #201 - November 2003 #413 - June 2004 Hardware ¨ 129 Intel Xeon servers n n ¨ 1 Frontend Node 128 Compute Nodes Gigabit Ethernet n n n $13, 000 (US) 9 24 -port switches 8 4 -gigabit trunk uplinks

- Slides: 96