Beowulf Clusters
Paul Tymann
Computer Science Department
Rochester Institute of Technology
ptt@cs.rit.edu
364 - Beowulf Clusters

Parallel Computers (Summary)
• In the mid-70s and 80s, high performance computing was dominated by systems that were contained in a single “box”.
• Architectures were specialized and very different from each other.
  – There is no von Neumann architecture for parallel computers
  – If the software and hardware architectures matched, you could attain significant improvements in performance
  – Difficult (almost impossible in some cases) to port programs
  – Programmers had to be specialized
  – Very expensive
9/25/2020 364 - Beowulf Clusters

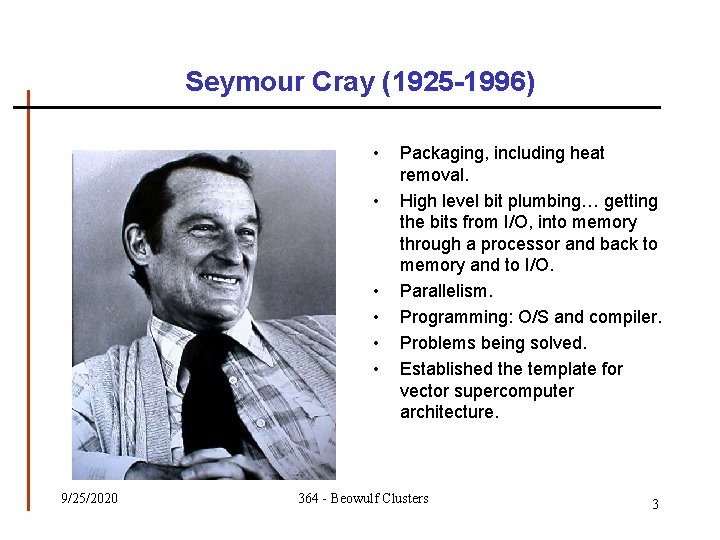

Seymour Cray (1925-1996)
• Packaging, including heat removal.
• High-level bit plumbing… getting the bits from I/O, into memory, through a processor, and back to memory and to I/O.
• Parallelism.
• Programming: O/S and compiler.
• Problems being solved.
• Established the template for vector supercomputer architecture.

Cray X-MP/4

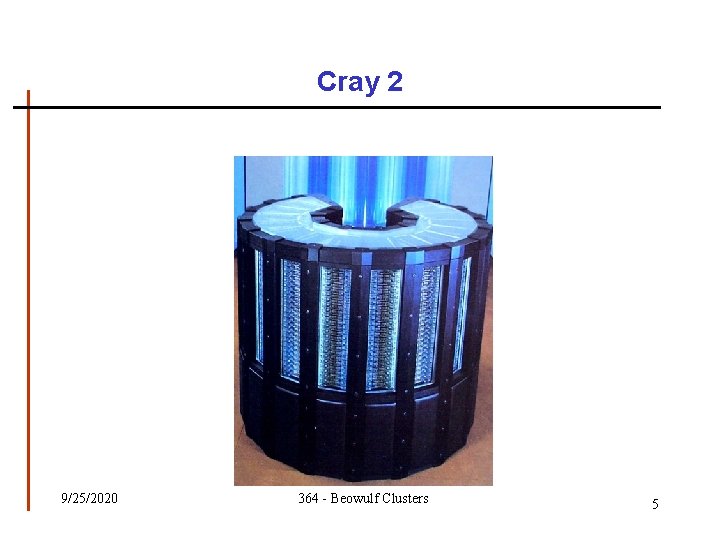

Cray-2

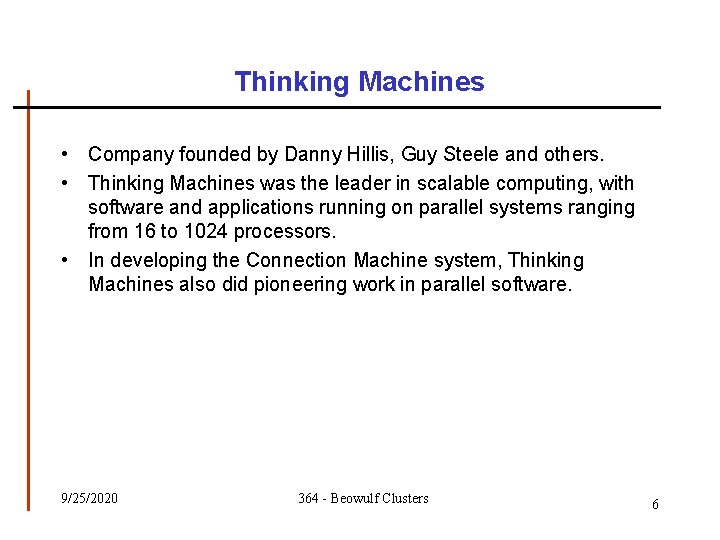

Thinking Machines
• Company founded by Danny Hillis, Guy Steele and others.
• Thinking Machines was the leader in scalable computing, with software and applications running on parallel systems ranging from 16 to 1024 processors.
• In developing the Connection Machine system, Thinking Machines also did pioneering work in parallel software.

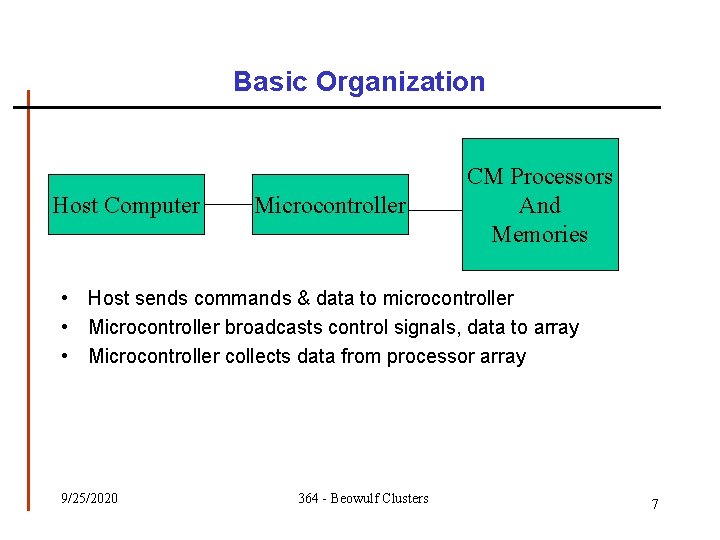

Basic Organization
[Diagram: Host Computer, Microcontroller, CM Processors and Memories]
• Host sends commands & data to microcontroller
• Microcontroller broadcasts control signals and data to the processor array
• Microcontroller collects data from the processor array
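The three bullets above can be sketched as a toy model in Python. This is only an illustration of the broadcast-and-collect pattern, not Connection Machine software: the class names `ProcessorArray` and `Microcontroller`, the function `host_program`, and the choice of eight processing elements are all assumptions made for the example.

```python
# Toy model of the host / microcontroller / processor-array organization.
# All names here are hypothetical and exist only for illustration.

class ProcessorArray:
    def __init__(self, local_values):
        # One memory cell per processing element.
        self.memory = list(local_values)

    def execute(self, op, operand):
        # Every element applies the same broadcast instruction to its own data.
        self.memory = [op(value, operand) for value in self.memory]


class Microcontroller:
    def __init__(self, array):
        self.array = array

    def broadcast(self, op, operand):
        # Forward one instruction plus one operand to the whole array.
        self.array.execute(op, operand)

    def collect(self):
        # Gather the per-element results back for the host.
        return list(self.array.memory)


def host_program():
    # The host only talks to the microcontroller, never to the array directly.
    controller = Microcontroller(ProcessorArray(range(8)))
    controller.broadcast(lambda x, k: x * k, 3)  # multiply every element by 3
    controller.broadcast(lambda x, k: x + k, 1)  # then add 1 to every element
    return controller.collect()


print(host_program())
```

Each element ends up holding 3x + 1 for its own starting value x, which shows the single instruction stream acting on many data items at once.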

CM-2

CM-5

SPMD Computing
• SPMD stands for single program, multiple data
  – The same program is run on all the processors of an MIMD machine
  – Occasionally the processors may synchronize
  – Because an entire program is executed on separate data, it is possible that different branches are taken, leading to asynchronous parallelism
• SPMD came about from a desire to do SIMD-like calculations on MIMD machines
  – SPMD is not a hardware paradigm; it is the software equivalent of SIMD
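A minimal sketch of the SPMD idea in Python, under loud assumptions: threads stand in for the processors of an MIMD machine, and the names `spmd_program`, `run_spmd`, `rank`, and `size` are hypothetical choices for this example rather than part of any particular library. Every worker runs the same function on its own slice of the data, the branch taken depends on the data, and the only synchronization point is the final combine.

```python
from concurrent.futures import ThreadPoolExecutor


def spmd_program(rank, size, data):
    """The single program: every worker runs this on its own slice of the data."""
    local = data[rank::size]  # cyclic partition of the data by rank
    total = 0
    for x in local:
        if x % 2 == 0:        # data-dependent branch: workers may diverge here
            total += x * x
        else:
            total += x
    return total


def run_spmd(data, size=4):
    # Threads are a stand-in for separate processors (an assumption of this sketch).
    with ThreadPoolExecutor(max_workers=size) as pool:
        partials = list(pool.map(lambda r: spmd_program(r, size, data), range(size)))
    # Collecting the partial results is the only synchronization point.
    return partials, sum(partials)


partials, total = run_spmd(list(range(16)))
print(partials, total)
```

With the data 0..15 split cyclically over four workers, ranks 0 and 2 see only even values and ranks 1 and 3 see only odd values, so the same program follows different branches on different workers.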

Distributed Systems
• A collection of autonomous computers linked by a network, with software designed to produce an integrated computing facility.
• The introduction of LANs at the beginning of the 1970s triggered the development of distributed systems.
• As an alternative to expensive parallel systems, many researchers began to “build” parallel computers using distributed computing technology.
[Diagram: Local Area Network]

Distributed vs. High Performance
• Distributed systems and distributed software in common use today:
  – Web servers
  – ATM networks
  – Cell phone system
  – …
• These systems use distributed computing for architectural reasons (reliability, modularity, …), not necessarily for speed.
• High performance distributed computing uses distributed computing to reduce the run time of an application
  – Primary interest is speed
  – Primary use is parallel computing

Common Systems
• Beowulf – conqueror of computationally intensive problems
• COWS – Clusters of Workstations

Clusters of Workstations
• Cycle vampires
  – Use wasted compute cycles on the desktop
• Utilize equipment that is not designed for distributed computing
  – 100 Mbps may be fine for mail…
  – Must work with an OS that is designed for general purpose computing
  – Typically suspend computation when workstation becomes active
• Some common software environments include
  – Condor
  – PVM/P4
  – Autorun
  – …
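The "suspend computation when workstation becomes active" behavior can be sketched with a simulated activity trace. This is a hedged illustration only: it does not show how Condor or any other listed environment works, and the function `scavenge`, the per-tick `owner_active` trace, and the squaring "work" are all invented for the example.

```python
def scavenge(work_items, owner_active):
    """Process one work item per idle tick; do nothing while the owner is active.

    owner_active is a hypothetical activity trace: one boolean per time tick,
    True meaning the workstation's owner is using the machine.
    """
    done = []
    pending = list(work_items)
    for tick, active in enumerate(owner_active):
        if not pending:
            break                 # all borrowed work is finished
        if active:
            continue              # suspended: leave the cycles to the owner
        item = pending.pop(0)
        done.append((tick, item * item))  # stand-in for a real computation
    return done, pending


done, pending = scavenge([1, 2, 3, 4],
                         [False, True, True, False, False, True, False])
print(done, pending)
```

The recorded tick numbers show the gaps where the job sat idle, which is why throughput on a cluster of workstations depends on how busy the desktop owners are.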

Communication
• Communication is vital in any kind of distributed application.
• Initially most people wrote their own protocols
  – Tower of Babel effect
• Eventually standards appeared
  – Parallel Virtual Machine (PVM)
  – Message Passing Interface (MPI)

What Is a Beowulf Cluster?
• “It's a kind of high-performance massively parallel computer built primarily out of commodity hardware components, running a free-software operating system like Linux or FreeBSD, interconnected by a private high-speed network.” – Beowulf FAQ.
• A key feature of a Beowulf cluster is that the machines in the cluster are dedicated to running high-performance computing tasks.
  – The cluster is on a private network.
  – It is usually connected to the outside world through only a single node.

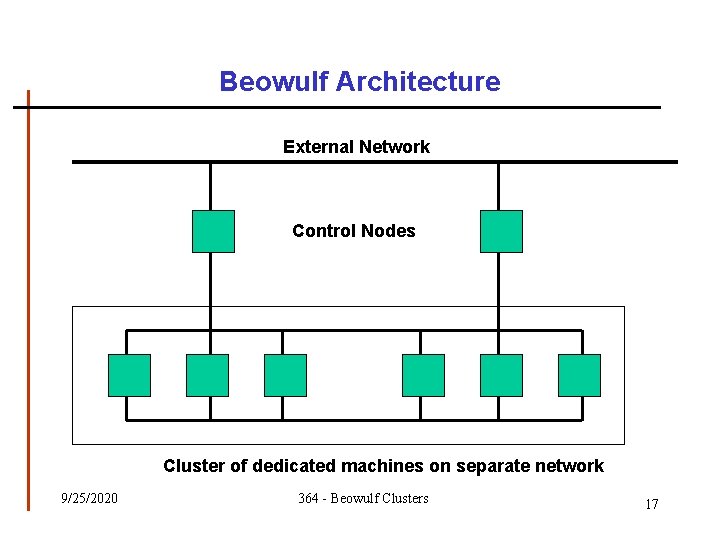

Beowulf Architecture
[Diagram: External Network, Control Nodes …, Cluster of dedicated machines on separate network]

Origins of Beowulf
• In the early 1990s, NASA researchers Becker & Sterling identified these problems:
  – Computing projects need more power.
  – Budgets are increasingly tight.
  – Supercomputer manufacturers were going bust.
    » Maintenance contracts voided.
    » Proprietary hardware no longer upgradeable.
    » Proprietary software no longer maintainable.
“Learning the peculiarities of a specific vendor only enslaves you to that vendor.”

1994: Wiglaf
• Becker & Sterling named their prototype system Wiglaf:
  – 16 nodes, each with
    • 486-DX4 CPU (100 MHz)
    • 16 MB RAM (60 ns)
    • 540 MB or 1 GB disk
    • three 10-Mbps Ethernet cards (communication load spread across three distinct Ethernets)
  – Triple-bus topology
  – 42 Mflops (measured)
Source: Joel Adams, http://www.calvin.edu/~adams/

1995: Hrothgar
• They named their next system Hrothgar:
  – 16 nodes, each with
    • Pentium CPU (100 MHz)
    • 32 MB RAM
    • 1.2 GB disk
    • Two 100-Mbps NICs
  – Two 100-Mbps switches, double-bus topology
  – 280 Mflops (measured)
Source: Joel Adams, http://www.calvin.edu/~adams/
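To put the Wiglaf and Hrothgar figures side by side, the short calculation below derives per-node throughput and per-node network capacity from the numbers on the two slides. The dictionary layout and the helper name `summarize` are assumptions made for this sketch; the per-node network figure is simply the number of NICs times the link speed, which is an upper bound and not a measured value.

```python
# Figures copied from the Wiglaf and Hrothgar slides; names are illustrative.
systems = {
    "Wiglaf (1994)":   {"nodes": 16, "mflops": 42.0,  "nics": 3, "nic_mbps": 10},
    "Hrothgar (1995)": {"nodes": 16, "mflops": 280.0, "nics": 2, "nic_mbps": 100},
}


def summarize(spec):
    return {
        # Measured aggregate Mflops divided evenly across the nodes.
        "mflops_per_node": spec["mflops"] / spec["nodes"],
        # Nominal link capacity per node (NIC count times link speed).
        "net_mbps_per_node": spec["nics"] * spec["nic_mbps"],
    }


for name, spec in systems.items():
    print(name, summarize(spec))

# Ratio of measured aggregate performance between the two systems.
speedup = systems["Hrothgar (1995)"]["mflops"] / systems["Wiglaf (1994)"]["mflops"]
print(f"Hrothgar / Wiglaf measured Mflops ratio: {speedup:.2f}")
```

With the same node count, the measured performance grew by a factor of about 6.7 in one year, while nominal per-node network capacity grew from 30 Mbps to 200 Mbps.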

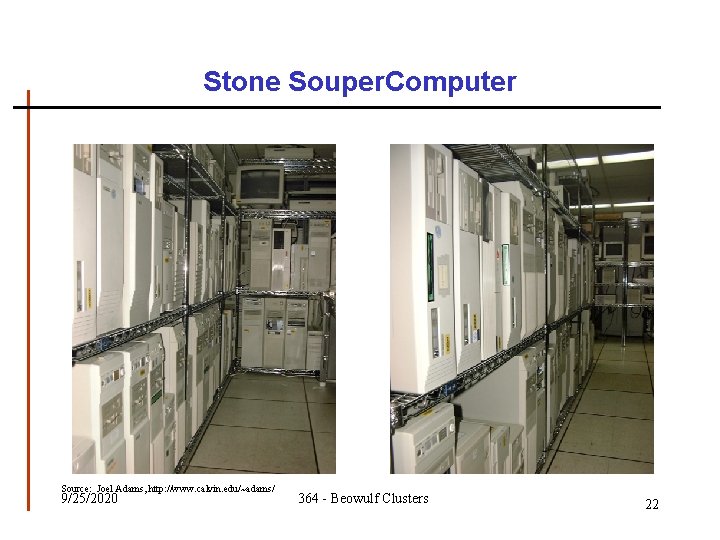

1997: Stone Soupercomputer
• Hoffman & Hargrove built ORNL’s Stone Soupercomputer
  – donated/castoff nodes
    • 486s, Pentiums, ...
    • whatever RAM, disk
    • one 10-Mbps NIC
  – one 10-Mbps Ethernet (bus topology)
• Feb 2002: 133 nodes
  – Total cost: $0
  – Performance/Price ratio:
Source: Joel Adams, http://www.calvin.edu/~adams/

Stone SouperComputer
Source: Joel Adams, http://www.calvin.edu/~adams/

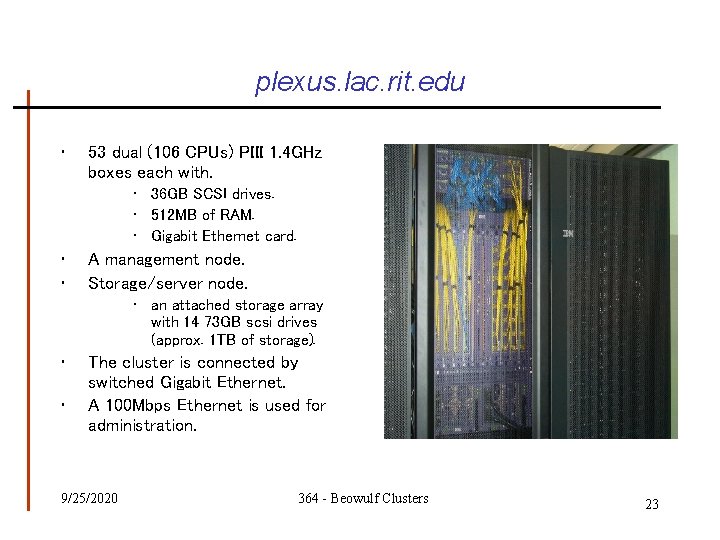

plexus.lac.rit.edu
• 53 dual-processor (106 CPUs) PIII 1.4 GHz boxes, each with:
  – 36 GB SCSI drives.
  – 512 MB of RAM.
  – Gigabit Ethernet card.
• A management node.
• Storage/server node.
  – An attached storage array with fourteen 73 GB SCSI drives (approx. 1 TB of storage).
• The cluster is connected by switched Gigabit Ethernet.
• A 100 Mbps Ethernet is used for administration.
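The totals quoted on this slide can be checked with a few lines of arithmetic. The variable names are illustrative, and the RAM total is a derived figure (not stated on the slide) that counts only the 53 compute boxes.

```python
# Figures copied from the slide; variable names are illustrative.
nodes = 53
cpus_per_node = 2          # "dual" boxes
ram_mb_per_node = 512
array_drives = 14
array_drive_gb = 73

total_cpus = nodes * cpus_per_node                 # matches the slide's 106 CPUs
total_ram_gb = nodes * ram_mb_per_node / 1024      # derived: compute nodes only
array_capacity_gb = array_drives * array_drive_gb  # raw capacity, approx. 1 TB

print(f"CPUs: {total_cpus}")
print(f"Aggregate compute-node RAM: {total_ram_gb} GB")
print(f"Storage array raw capacity: {array_capacity_gb} GB")
```

The raw array capacity comes to 1022 GB, which is where the slide's "approx. 1 TB" comes from.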

Beowulf Resources
• The Beowulf Project
  » http://www.beowulf.org
• The Beowulf Underground
  » http://www.beowulf-underground.org/
• The Beowulf HOWTO
  » http://www.linux.com/howto/Beowulf-HOWTO.html
• The Scyld Computing Corporation
  » http://www.scyld.com

Communication
• Communication is vital in any kind of distributed application.
• Initially most people wrote their own protocols.
  – Tower of Babel effect.
• Eventually standards appeared.
  – Parallel Virtual Machine (PVM).
  – Message Passing Interface (MPI).

What is MPI?
• A message passing library specification
  – Message-passing model
  – Not a compiler specification (i.e., not a language)
  – Not a specific product
• Designed for parallel computers, clusters, and heterogeneous networks
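The message-passing model itself can be sketched without MPI. The Python example below is not MPI and does not use any MPI binding: threads stand in for cluster processes, and the `Mailboxes` class with its `send` and `recv` methods is a hypothetical stand-in invented for this illustration. What it shows is the core idea of the model: processes share no variables and cooperate only by sending and receiving explicit messages addressed by rank.

```python
import queue
import threading


class Mailboxes:
    """Hypothetical stand-in for a message-passing library: one inbox per rank."""

    def __init__(self, size):
        self.size = size
        self.inboxes = [queue.Queue() for _ in range(size)]

    def send(self, dest, source, payload):
        self.inboxes[dest].put((source, payload))

    def recv(self, rank):
        return self.inboxes[rank].get()  # blocks until a message arrives


def worker(rank, comm, results):
    if rank == 0:
        # Rank 0 hands every other rank a number, then gathers the replies.
        for dest in range(1, comm.size):
            comm.send(dest, 0, dest * 10)
        replies = [comm.recv(0) for _ in range(comm.size - 1)]
        results.extend(sorted(replies))  # sort: arrival order is not fixed
    else:
        _, value = comm.recv(rank)
        comm.send(0, rank, value + rank)


def run(size=4):
    comm = Mailboxes(size)
    results = []
    threads = [threading.Thread(target=worker, args=(r, comm, results))
               for r in range(size)]
    for t in threads:
        t.start()
    for t in threads:
        t.join()
    return results


print(run())
```

Because replies can arrive in any order, rank 0 sorts them before reporting; handling that kind of nondeterminism is a routine part of writing message-passing programs.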

The MPI Process
• Development began in early 1992
• Open process/Broad participation
  – IBM, Intel, TMC, Meiko, Cray, Convex, nCUBE
  – PVM, p4, Express, Linda, …
  – Laboratories, Universities, Government
• Final version of draft in May 1994
• Public and vendor implementations are now widely available
