Correlation Clustering
Nikhil Bansal
Joint work with Avrim Blum and Shuchi Chawla

Introduction

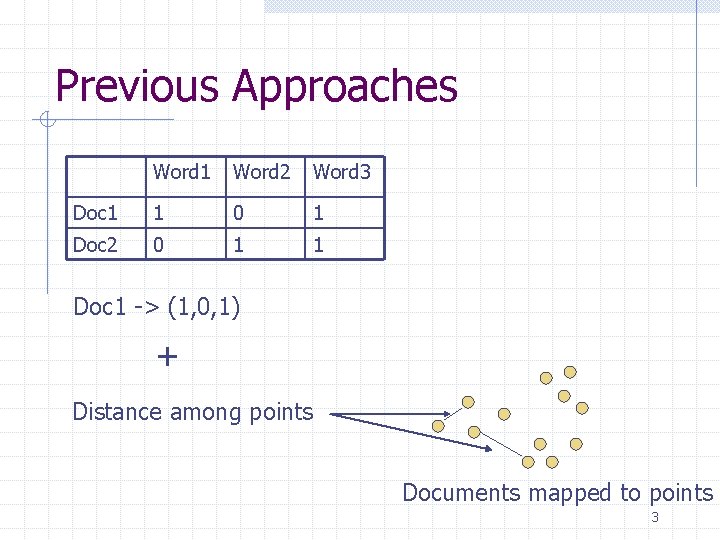

Previous Approaches: documents are mapped to points, plus a distance among points.

| | Word 1 | Word 2 | Word 3 |
|---|---|---|---|
| Doc 1 | 1 | 0 | 1 |
| Doc 2 | 0 | 1 | 1 |

Doc 1 -> (1, 0, 1)

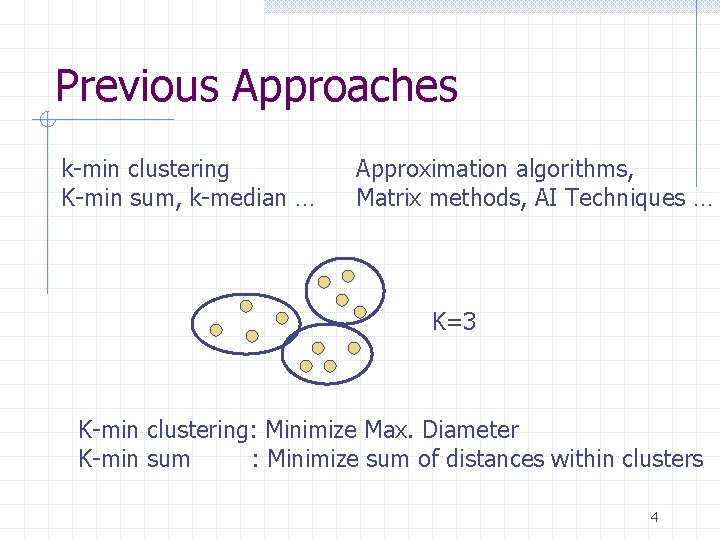

Previous Approaches: k-min clustering, k-min sum, k-median, … using approximation algorithms, matrix methods, AI techniques, …
(Figure: K = 3.)
K-min clustering: minimize the maximum diameter.
K-min sum: minimize the sum of distances within clusters.

Some Limitations: 1) Have to specify “k”. If k is not restricted, it is best to just put each vertex in its own cluster, since every objective above then drops to zero.

Some Limitations: 2) Restrictions on edge weights: the edge weights must form a metric.

Some Limitations: 3) No clean notion of the quality of a clustering. E.g., minimize the sum of distances within clusters: what really is my cluster quality?

Outline: Introduction; Our Approach + Problem Formulation; Approximating Agreements; Approximating Disagreements; Conclusion

![Our Approach: pairwise similarity classifier](http://slidetodoc.com/presentation_image_h2/0390adfbfa4c2a4dca4fcc30e0694568/image-9.jpg)

Our Approach: A classifier takes 2 documents and returns a weight $W \in [-1, +1]$ indicating their similarity (+1: similar, -1: dissimilar). In this talk, $W = -1$ or $+1$.

![Our Approach: pairwise similarity classifier](http://slidetodoc.com/presentation_image_h2/0390adfbfa4c2a4dca4fcc30e0694568/image-10.jpg)

Our Approach: A classifier takes 2 documents and returns a weight $W \in [-1, +1]$ indicating their similarity (+1: similar, -1: dissimilar). In this talk, $W = -1$ or $+1$. (Figure: edges labeled -1, -1, +1.)

![Our Approach: pairwise similarity classifier](http://slidetodoc.com/presentation_image_h2/0390adfbfa4c2a4dca4fcc30e0694568/image-11.jpg)

Our Approach: A classifier takes 2 documents and returns a weight $W \in [-1, +1]$ indicating their similarity (+1: similar, -1: dissimilar). In this talk, $W = -1$ or $+1$. (Figure: edges labeled -1, -1, +1.) Our Goal: find a clustering that agrees with this labeling.

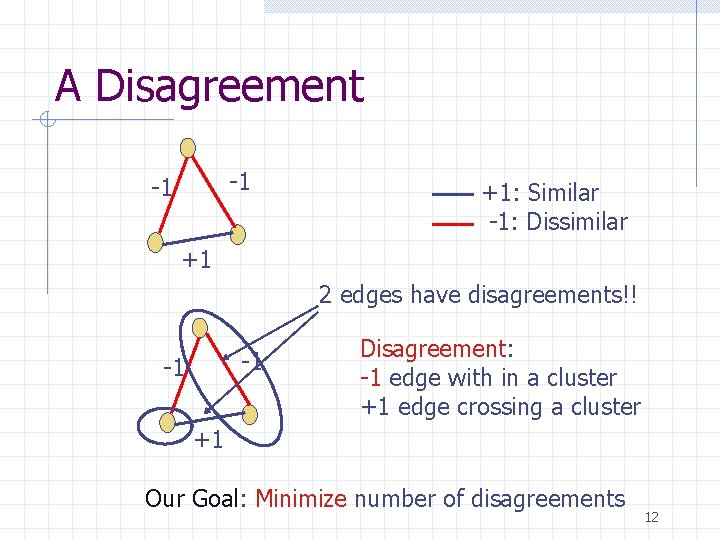

A Disagreement (figure: +1 and -1 edges over a clustering; +1: similar, -1: dissimilar). Here 2 edges have disagreements! A disagreement is a -1 edge within a cluster, or a +1 edge crossing between clusters. Our Goal: minimize the number of disagreements.
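The disagreement count can be read off directly from the definition. What follows is a minimal Python sketch. Its representation is an assumption made for illustration, not something from the talk: labels are a dict keyed by vertex pairs `(u, w)` with `u < w`, and a clustering is a list of vertex sets.

```python
from typing import Dict, List, Set, Tuple

def count_disagreements(clusters: List[Set[int]],
                        labels: Dict[Tuple[int, int], int]) -> int:
    """Count labeled pairs that the clustering gets wrong.

    labels maps (u, w) with u < w to +1 (similar) or -1 (dissimilar).
    """
    cluster_of = {v: i for i, c in enumerate(clusters) for v in c}
    bad = 0
    for (u, w), sign in labels.items():
        same = cluster_of[u] == cluster_of[w]
        # -1 edge inside a cluster, or +1 edge crossing two clusters
        if (sign == -1 and same) or (sign == +1 and not same):
            bad += 1
    return bad

# Triangle with two +1 edges and one -1 edge.
triangle = {(0, 1): +1, (1, 2): +1, (0, 2): -1}
print(count_disagreements([{0, 1, 2}], triangle))    # 1: the -1 edge is inside
print(count_disagreements([{0, 1}, {2}], triangle))  # 1: +1 edge (1, 2) crosses
```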

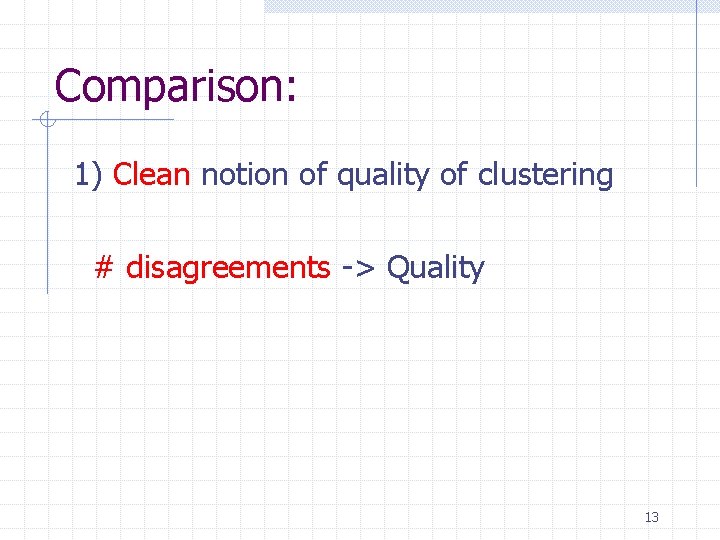

Comparison: 1) Clean notion of clustering quality: # disagreements -> quality.

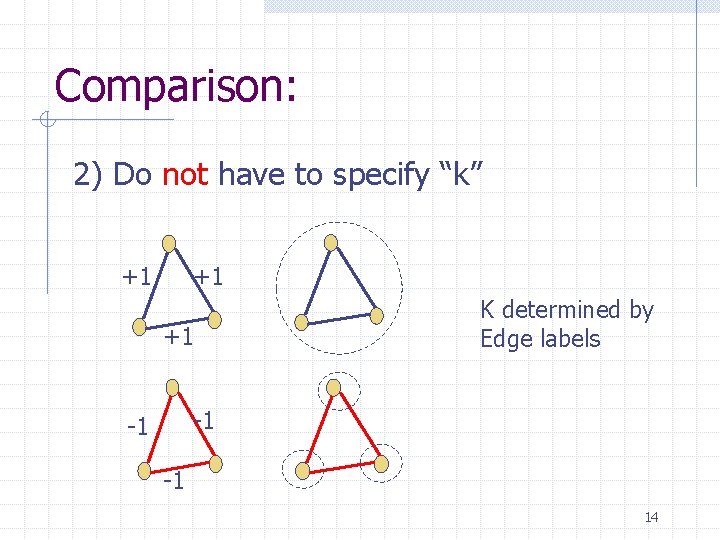

Comparison: 2) Do not have to specify “k”: k is determined by the edge labels. (Figure: +1 +1 +1 / -1 -1 -1.)

Comparison: 3) Arbitrary edge weights: no metric, no dependence.

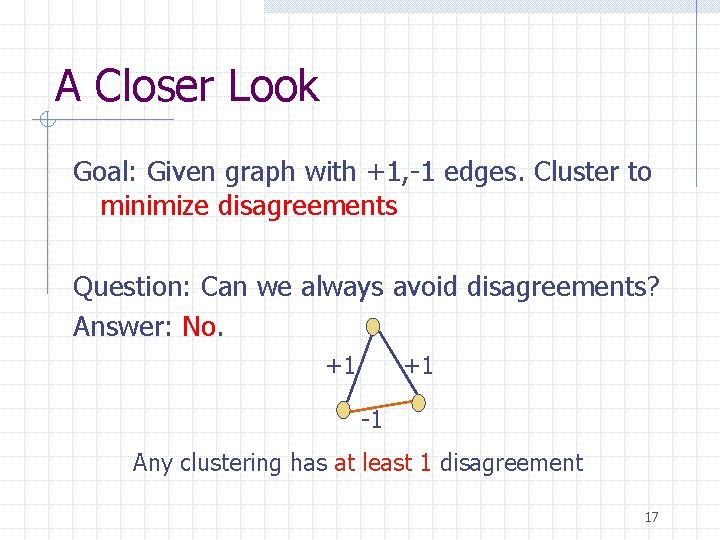

A Closer Look. Goal: given a graph with +1 and -1 edges, cluster it to minimize disagreements. Question: can we always avoid disagreements?

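A Closer Look. Goal: given a graph with +1 and -1 edges, cluster it to minimize disagreements. Question: can we always avoid disagreements? Answer: no. On a triangle with edges +1, +1, -1, any clustering has at least 1 disagreement. If the endpoints of the -1 edge share a cluster, that edge disagrees. Otherwise the third vertex is separated from at least one of them, so a +1 edge crosses clusters.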
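On tiny inputs, the claim can be checked by exhaustive search over every set partition. The Python sketch below is a brute-force checker written for illustration; it is not a method from the talk. Its running time grows with the Bell numbers, which is fine for three vertices and hopeless beyond a dozen or so. The dict-of-pairs label format is an assumption.

```python
from typing import Dict, Iterator, List, Tuple

def partitions(items: List[int]) -> Iterator[List[List[int]]]:
    """Yield every set partition of items (Bell-number many; tiny inputs only)."""
    if not items:
        yield []
        return
    first, rest = items[0], items[1:]
    for part in partitions(rest):
        # put `first` into each existing block ...
        for i in range(len(part)):
            yield part[:i] + [[first] + part[i]] + part[i + 1:]
        # ... or into a new block of its own
        yield [[first]] + part

def disagreements(part: List[List[int]], labels: Dict[Tuple[int, int], int]) -> int:
    block = {v: i for i, b in enumerate(part) for v in b}
    # a pair disagrees exactly when "is -1" coincides with "same block"
    return sum(1 for (u, w), s in labels.items() if (s == -1) == (block[u] == block[w]))

def min_disagreements(n: int, labels: Dict[Tuple[int, int], int]) -> int:
    return min(disagreements(p, labels) for p in partitions(list(range(n))))

triangle = {(0, 1): +1, (1, 2): +1, (0, 2): -1}
print(min_disagreements(3, triangle))  # 1
```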

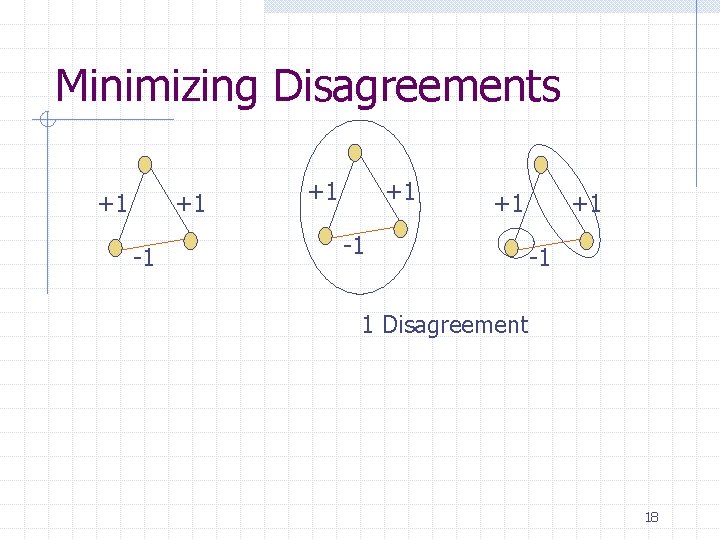

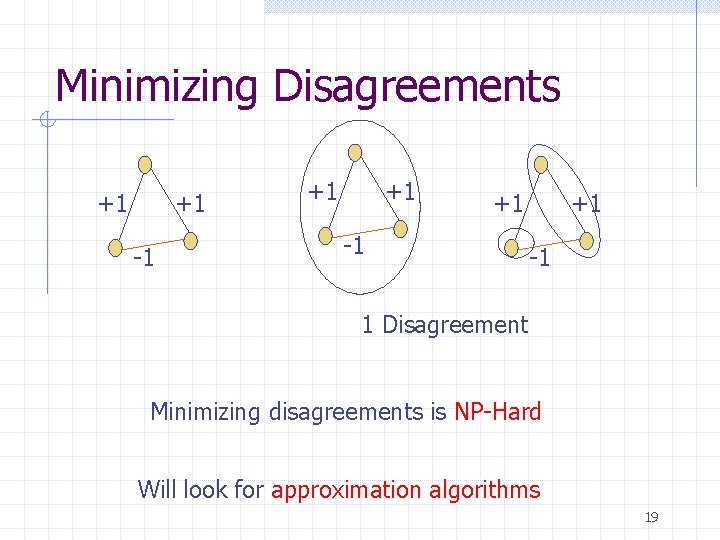

Minimizing Disagreements: (triangle with edges +1, +1, -1) 1 disagreement.

Minimizing Disagreements: (triangle with edges +1, +1, -1) 1 disagreement. Minimizing disagreements is NP-hard, so we will look for approximation algorithms.

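Agreements vs. Disagreements
Observation: Agreements + Disagreements = total number of labeled pairs, which is $\binom{n}{2}$ when every pair carries a label. So minimizing disagreements is equivalent to maximizing agreements: the optimal clustering is the same.
They are very different in terms of approximation. Suppose Opt has 1 disagreement and we have n disagreements. Then for disagreements the ratio is n, while for agreements the ratio is ≈ 1.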
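The arithmetic behind that example follows. It assumes, as in the talk's setting, that all $\binom{n}{2}$ pairs carry a label. $A$ and $D$ denote agreements and disagreements; these symbols are introduced here only for the calculation.

```latex
A + D = \binom{n}{2}, \qquad
\frac{D_{\text{alg}}}{D_{\text{opt}}} = \frac{n}{1} = n, \qquad
\frac{A_{\text{opt}}}{A_{\text{alg}}} = \frac{\binom{n}{2} - 1}{\binom{n}{2} - n}
  \xrightarrow[n \to \infty]{} 1 .
```

For instance, with $n = 100$ we get $\binom{100}{2} = 4950$, so the agreement ratio is $4949/4850 \approx 1.0204$, while the disagreement ratio is 100.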

Outline: Introduction; Our Approach + Problem Formulation; Approximating Agreements; Approximating Disagreements; Conclusion

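Maximizing Agreements: a 2-approximation is easy.
Algorithm: if #(+1 edges) > #(-1 edges), put all points in a single cluster; else, put each point in its own cluster.
Proof: Opt's agreements are at most the total number of edges $|E|$. We agree on at least $|E|/2$, because the chosen option agrees with every edge of the majority sign.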
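A minimal Python sketch of this majority rule, assuming the same illustrative label format as before (a dict from pairs `(u, w)` with `u < w` to ±1). The helper `agreements` is a hypothetical name introduced here to check the $|E|/2$ guarantee.

```python
from typing import Dict, List, Set, Tuple

Labels = Dict[Tuple[int, int], int]   # (u, w) with u < w  ->  +1 or -1

def two_approx_agreements(n: int, labels: Labels) -> List[Set[int]]:
    """All points in one cluster if +1 edges are the majority, else all singletons."""
    plus = sum(1 for s in labels.values() if s == +1)
    minus = len(labels) - plus
    if plus > minus:
        return [set(range(n))]           # every +1 edge agrees
    return [{v} for v in range(n)]       # every -1 edge agrees

def agreements(clusters: List[Set[int]], labels: Labels) -> int:
    cluster_of = {v: i for i, c in enumerate(clusters) for v in c}
    # a pair agrees exactly when "is +1" coincides with "same cluster"
    return sum(1 for (u, w), s in labels.items()
               if (s == +1) == (cluster_of[u] == cluster_of[w]))

triangle = {(0, 1): +1, (1, 2): +1, (0, 2): -1}
best = two_approx_agreements(3, triangle)
print(best, agreements(best, triangle))  # [{0, 1, 2}] 2
```

Whichever branch runs, the result agrees with at least half of all labeled pairs. Opt can agree with at most all of them, which gives the factor 2.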

Our Result: a PTAS for maximizing agreements, i.e., a $(1+\epsilon)$-approximation in time $n^{O(\mathrm{poly}(1/\epsilon))}$.

Outline: Introduction; Our Approach + Problem Formulation; Approximating Agreements; Approximating Disagreements; Conclusion

Our Result: an $O(1)$-approximation for minimizing disagreements.

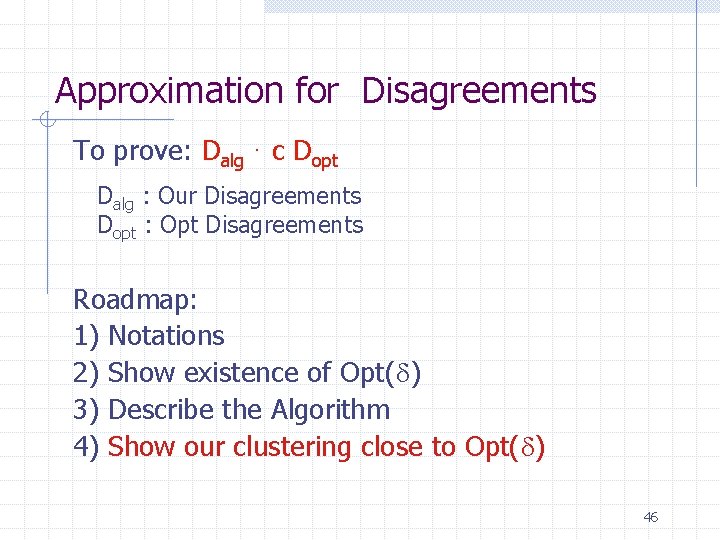

Approximation for Disagreements. To prove: $D_{\text{alg}} \le c \cdot D_{\text{opt}}$, where $D_{\text{alg}}$ is our number of disagreements and $D_{\text{opt}}$ is Opt's. Roadmap: 1) Notation 2) Show existence of Opt($\delta$) 3) Describe the algorithm 4) Show our clustering is close to Opt($\delta$)

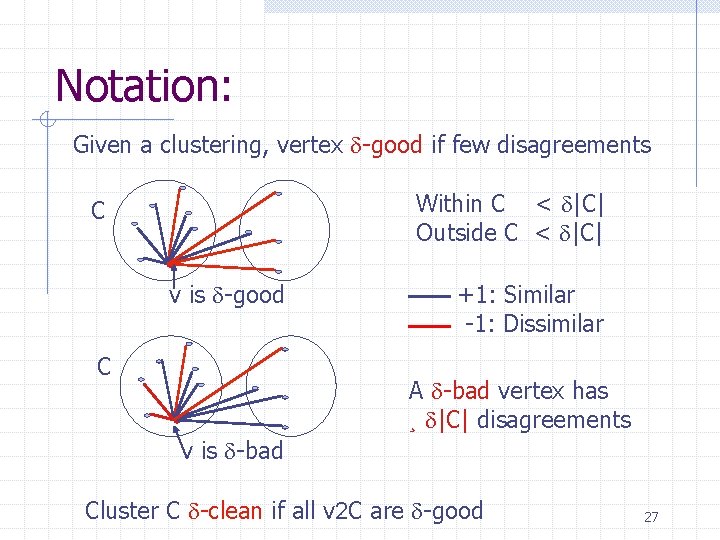

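Notation: Given a clustering, a vertex $v$ in cluster $C$ is $\delta$-good if it has few disagreements: fewer than $\delta|C|$ within $C$ (-1 edges from $v$ into $C$) and fewer than $\delta|C|$ outside $C$ (+1 edges from $v$ leaving $C$). A $\delta$-bad vertex has $\ge \delta|C|$ disagreements. Cluster $C$ is $\delta$-clean if all $v \in C$ are $\delta$-good. (+1: similar, -1: dissimilar.)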
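The two thresholds translate directly into set operations. This is a minimal Python sketch under two assumptions. First, `plus[v]` holds $N^+(v)$, the +1 neighbours of $v$, and includes $v$ itself so that $v$ counts toward its own cluster; the slides do not fix this convention. Second, the strict inequalities follow the formal notation slide at the end of the deck.

```python
from typing import Dict, Set

def is_delta_good(v: int, C: Set[int], plus: Dict[int, Set[int]], delta: float) -> bool:
    """plus[v] = N+(v), assumed to include v itself."""
    inside = len(plus[v] & C)    # +1 neighbours inside C
    outside = len(plus[v] - C)   # +1 neighbours outside C
    # few -1 edges into C (inside large) and few +1 edges leaving C
    return inside > (1 - delta) * len(C) and outside < delta * len(C)

def is_delta_clean(C: Set[int], plus: Dict[int, Set[int]], delta: float) -> bool:
    return all(is_delta_good(v, C, plus, delta) for v in C)

# A 4-clique {0, 1, 2, 3}; vertex 0 also has a +1 edge to outsider 4.
plus = {0: {0, 1, 2, 3, 4}, 1: {0, 1, 2, 3}, 2: {0, 1, 2, 3},
        3: {0, 1, 2, 3}, 4: {0, 4}}
C = {0, 1, 2, 3}
print(is_delta_good(0, C, plus, 0.3), is_delta_good(0, C, plus, 0.2))  # True False
```

The single outside +1 edge of vertex 0 is tolerated when $\delta|C| = 1.2$ but not when $\delta|C| = 0.8$.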

Approximation for Disagreements. To prove: $D_{\text{alg}} \le c \cdot D_{\text{opt}}$, where $D_{\text{alg}}$ is our number of disagreements and $D_{\text{opt}}$ is Opt's. Roadmap: 1) Notation 2) Show existence of Opt($\delta$) 3) Describe the algorithm 4) Show our clustering is close to Opt($\delta$)

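Existence of Opt($\delta$). Main idea: transform Opt -> Opt($\delta$) such that 1) all “non-singleton” clusters are $\delta$-clean, and 2) it is only a constant factor worse than Opt: $D_{\text{Opt}(\delta)} = O(1/\delta^2) \cdot D_{\text{opt}}$.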

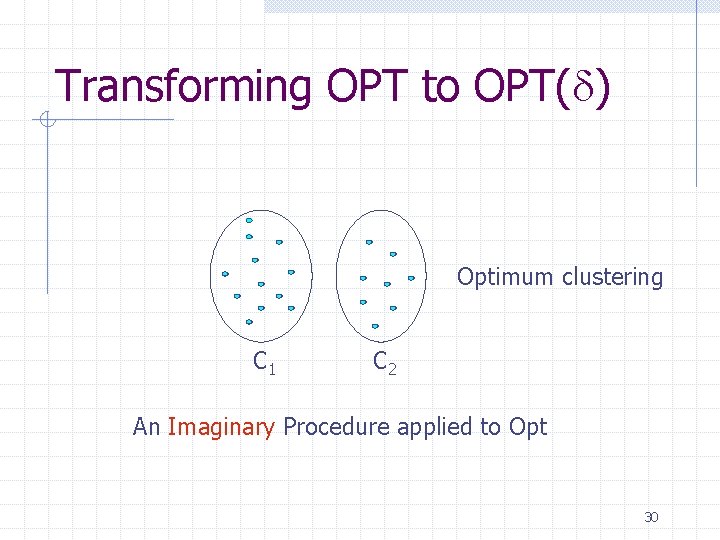

Transforming OPT to OPT($\delta$): an imaginary procedure applied to Opt. (Figure: optimum clustering with clusters $C_1$, $C_2$.)

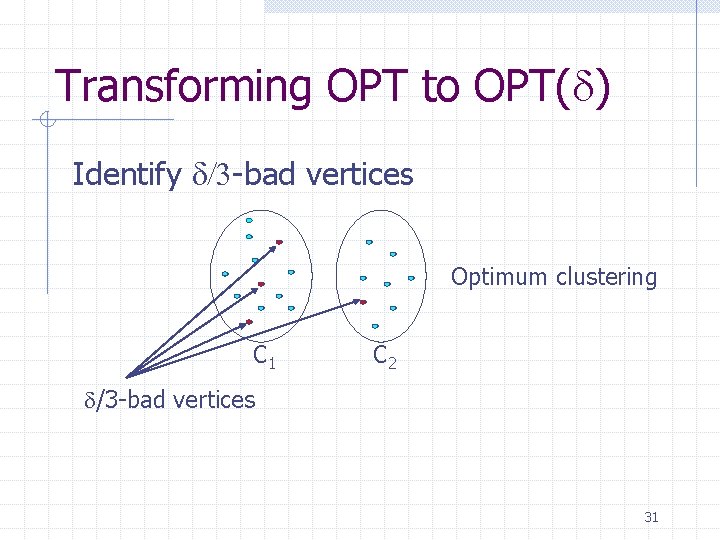

Transforming OPT to OPT($\delta$): identify the $\delta/3$-bad vertices. (Figure: optimum clustering $C_1$, $C_2$ with the $\delta/3$-bad vertices marked.)

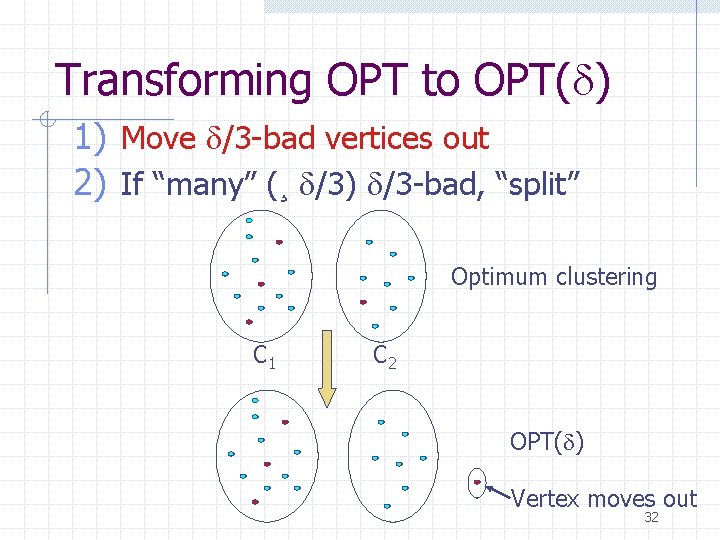

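Transforming OPT to OPT($\delta$): 1) Move the $\delta/3$-bad vertices out. 2) If there are “many” ($\ge (\delta/3)|C|$) $\delta/3$-bad vertices, “split” the cluster. (Figure: optimum clustering $C_1$, $C_2$ -> OPT($\delta$); a vertex moves out.)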

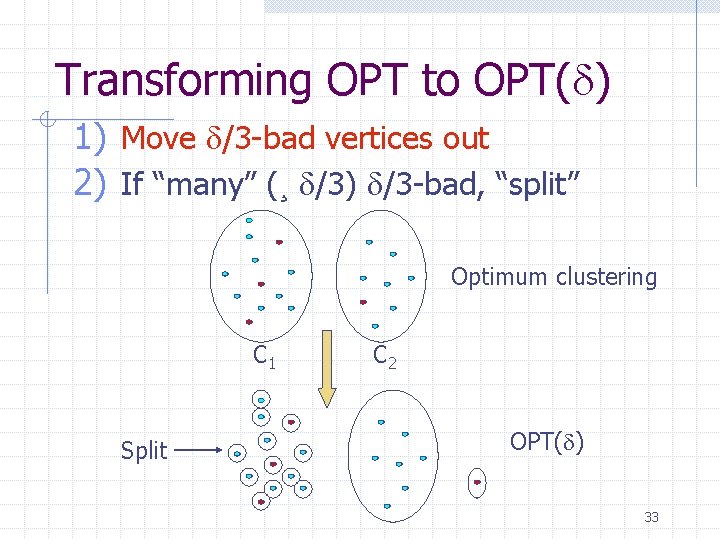

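Transforming OPT to OPT($\delta$): 1) Move the $\delta/3$-bad vertices out. 2) If there are “many” ($\ge (\delta/3)|C|$) $\delta/3$-bad vertices, “split” the cluster. (Figure: optimum clustering; $C_1$ is split, $C_2$ is kept -> OPT($\delta$).)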
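Opt itself is unknown, so this procedure is only a proof device. Its bookkeeping can still be run on any clustering you already have. Below is a minimal Python sketch. It assumes `plus[v]` is $N^+(v)$ including $v$, and it uses the strict goodness thresholds. It also assumes that a "split" breaks the whole cluster into singletons, as the figure appears to show. The function names are hypothetical.

```python
from typing import Dict, List, Set

def is_good(v: int, C: Set[int], plus: Dict[int, Set[int]], eps: float) -> bool:
    # strict thresholds from the notation slide; plus[v] = N+(v), assumed to include v
    return (len(plus[v] & C) > (1 - eps) * len(C)
            and len(plus[v] - C) < eps * len(C))

def opt_to_opt_delta(clusters: List[Set[int]], plus: Dict[int, Set[int]],
                     delta: float) -> List[Set[int]]:
    eps = delta / 3                              # identify delta/3-bad vertices
    result: List[Set[int]] = []
    for C in clusters:
        bad = {v for v in C if not is_good(v, C, plus, eps)}
        if len(bad) >= eps * len(C):
            # "many" bad vertices: split C (assumed here: into singletons)
            result.extend({v} for v in sorted(C))
        else:
            # few bad vertices: keep the good core, move each bad vertex out alone
            result.append(C - bad)
            result.extend({v} for v in sorted(bad))
    return result

# Vertex 3 sits in the cluster but has no +1 edges to it.
plus = {0: {0, 1, 2}, 1: {0, 1, 2}, 2: {0, 1, 2}, 3: {3}}
print(opt_to_opt_delta([{0, 1, 2, 3}], plus, delta=0.9))  # [{0, 1, 2}, {3}]
```

The large $\delta = 0.9$ only keeps the toy example small. With small $\delta$, a four-vertex cluster tolerates no disagreements at all.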

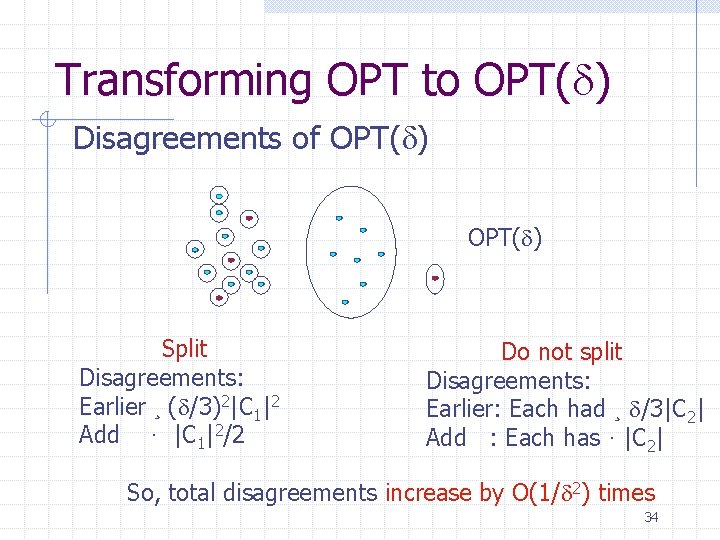

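Transforming OPT to OPT($\delta$): disagreements of OPT($\delta$).
Split ($C_1$): earlier there were $\ge (\delta/3)^2|C_1|^2$ disagreements; splitting adds $\le |C_1|^2/2$.
Do not split ($C_2$): earlier, each moved vertex had $\ge (\delta/3)|C_2|$ disagreements; moving it adds $\le |C_2|$.
So the total number of disagreements increases by at most an $O(1/\delta^2)$ factor.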
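The constants hidden in $O(1/\delta^2)$ can be made explicit. The derivation below is a hedged reconstruction. It charges each disagreeing edge to at most its two endpoints, which costs a factor of $\tfrac12$ relative to the split-case lower bound on the slide but keeps the count safe. $D_{\text{before}}$ and $D_{\text{added}}$ are labels introduced here for Opt's disagreements at the affected vertices and for the new disagreements created.

```latex
\text{Split } C_1:\quad
D_{\text{before}} \ge \tfrac{1}{2}\cdot\tfrac{\delta}{3}|C_1|\cdot\tfrac{\delta}{3}|C_1|
  = \tfrac{\delta^2}{18}|C_1|^2,
\qquad
D_{\text{added}} \le \binom{|C_1|}{2} \le \tfrac{1}{2}|C_1|^2
\;\Longrightarrow\;
\frac{D_{\text{added}}}{D_{\text{before}}} \le \frac{9}{\delta^2}.

\text{Keep } C_2:\quad
\frac{D_{\text{added}}(v)}{D_{\text{before}}(v)} \le \frac{|C_2|}{(\delta/3)|C_2|} = \frac{3}{\delta}
\quad\text{for each moved vertex } v.
```

The split case dominates for small $\delta$, which is where the $O(1/\delta^2)$ factor comes from.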

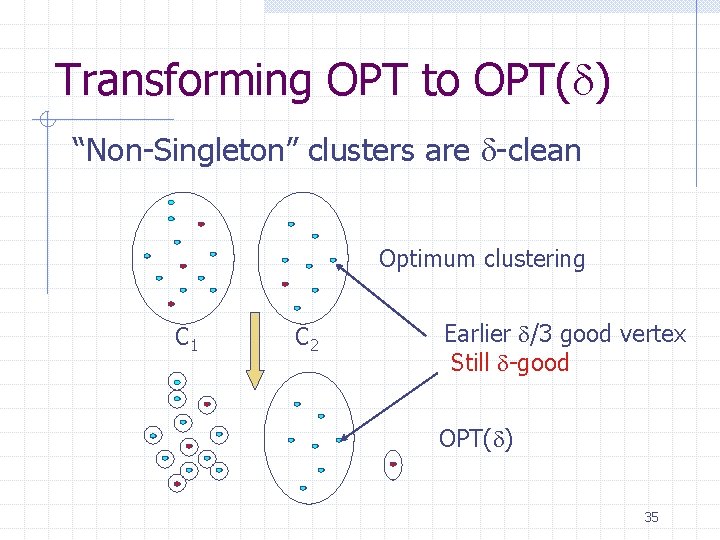

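Transforming OPT to OPT($\delta$): “non-singleton” clusters are $\delta$-clean. A vertex that was $\delta/3$-good earlier is still $\delta$-good in OPT($\delta$), because fewer than $(\delta/3)|C|$ vertices leave its cluster, so its disagreements stay below $\delta$ times the new cluster size. (Figure: optimum clustering $C_1$, $C_2$ -> OPT($\delta$).)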

Approximation for Disagreements. To prove: $D_{\text{alg}} \le c \cdot D_{\text{opt}}$, where $D_{\text{alg}}$ is our number of disagreements and $D_{\text{opt}}$ is Opt's. Roadmap: 1) Notation 2) Show existence of Opt($\delta$) 3) Describe the algorithm 4) Show our clustering is close to Opt($\delta$)

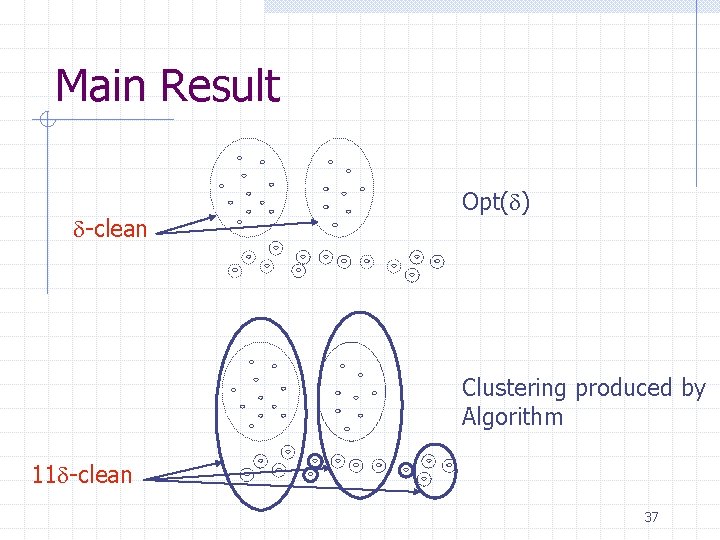

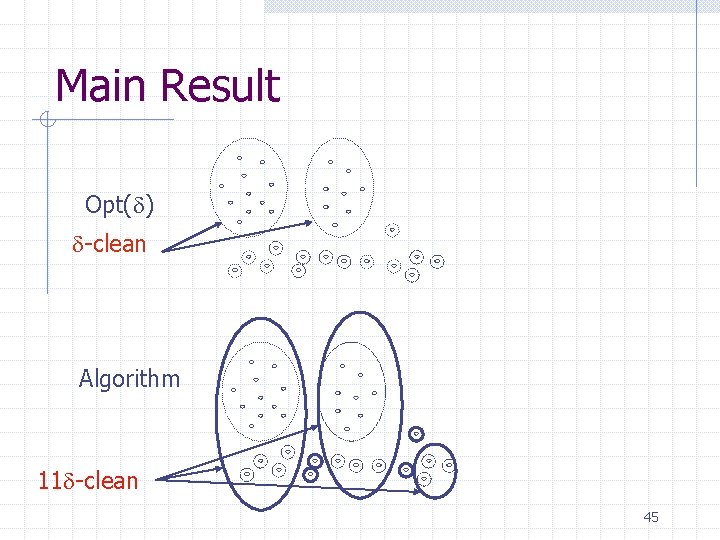

Main Result: $\delta$-clean Opt($\delta$) -> the clustering produced by the algorithm is $11\delta$-clean.

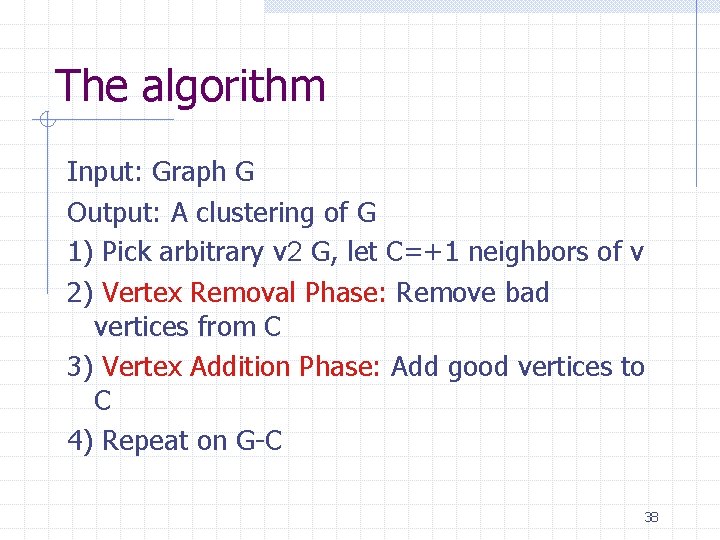

The Algorithm
Input: graph G. Output: a clustering of G.
1) Pick an arbitrary $v \in G$; let C = the +1 neighbors of v.
2) Vertex Removal Phase: remove bad vertices from C.
3) Vertex Addition Phase: add good vertices to C.
4) Repeat on G - C.
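The four steps can be sketched in Python as follows, using the $3\delta$ removal and $7\delta$ addition thresholds given on the step slides. This is an illustrative reconstruction, not the authors' code, and it makes several assumptions:
- `plus[v]` is $N^+(v)$ including $v$, and every pair not listed there is a -1 edge.
- "Arbitrary" choices are made with `min`/`sorted` only for determinism.
- Goodness is computed inside the remaining graph $G - $ (clusters so far).
- If removal empties the set, $v$ is emitted as a singleton.

```python
from typing import Dict, List, Set

def is_good(x: int, L: Set[int], plus: Dict[int, Set[int]],
            alive: Set[int], eps: float) -> bool:
    """True if x is eps-good w.r.t. L inside the remaining graph `alive`."""
    if not L:
        return False
    nbrs = plus[x] & alive                  # N+(x) restricted to G - (clusters so far)
    return (len(nbrs & L) > (1 - eps) * len(L)
            and len(nbrs - L) < eps * len(L))

def cautious_clustering(n: int, plus: Dict[int, Set[int]], delta: float) -> List[Set[int]]:
    alive = set(range(n))
    clusters: List[Set[int]] = []
    while alive:
        v = min(alive)                      # "arbitrary" v; min() only for determinism
        L = plus[v] & alive                 # Step 1: L = N+(v), as a fresh set
        # Step 2: vertex removal, drop 3δ-bad vertices one at a time until none remain
        while True:
            bad = next((x for x in sorted(L)
                        if not is_good(x, L, plus, alive, 3 * delta)), None)
            if bad is None:
                break
            L.discard(bad)
        # Step 3: vertex addition, take every remaining vertex that is 7δ-good w.r.t. L
        Y = {y for y in alive if is_good(y, L, plus, alive, 7 * delta)}
        C = (L | Y) or {v}                  # assumption: if L emptied out, emit v alone
        clusters.append(C)
        alive -= C                          # Step 4: repeat on G - C
    return clusters

# Two 6-cliques joined by a single noisy +1 edge (5, 6).
plus = {v: set(range(6)) for v in range(6)}
plus.update({v: set(range(6, 12)) for v in range(6, 12)})
plus[5].add(6)
plus[6].add(5)
print(cautious_clustering(12, plus, delta=0.1))
# [{0, 1, 2, 3, 4, 5}, {6, 7, 8, 9, 10, 11}]
```

The example uses $\delta = 0.1$, larger than the analysis needs. On toy graphs a tiny $\delta$ makes a single disagreement exceed $3\delta|L|$, so small clusters fall apart. On larger, cleaner inputs the same code runs unchanged with $\delta < 1/44$.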

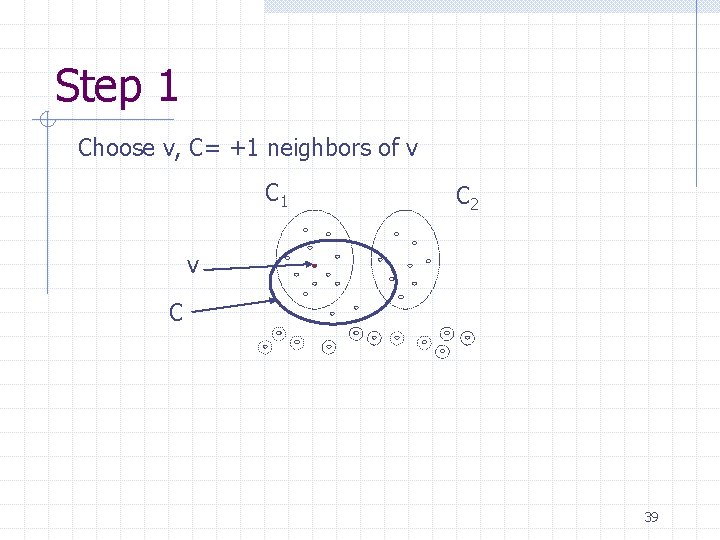

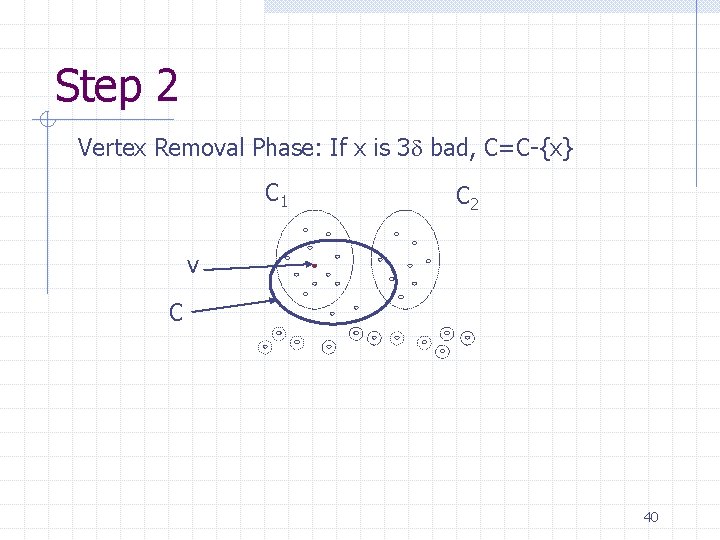

Step 1: Choose v; C = +1 neighbors of v. (Figure: clusters $C_1$, $C_2$ of Opt($\delta$), vertex v, and C.)

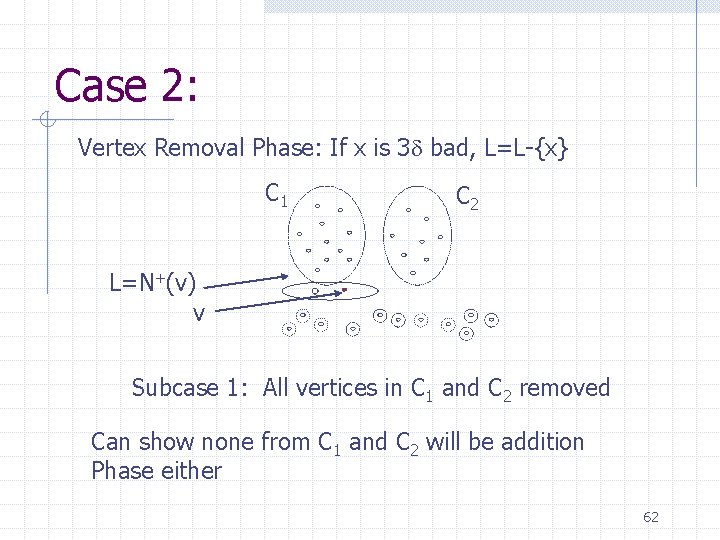

Step 2: Vertex Removal Phase: if x is $3\delta$-bad, C = C - {x}. (Figure: $C_1$, $C_2$, v, C.)

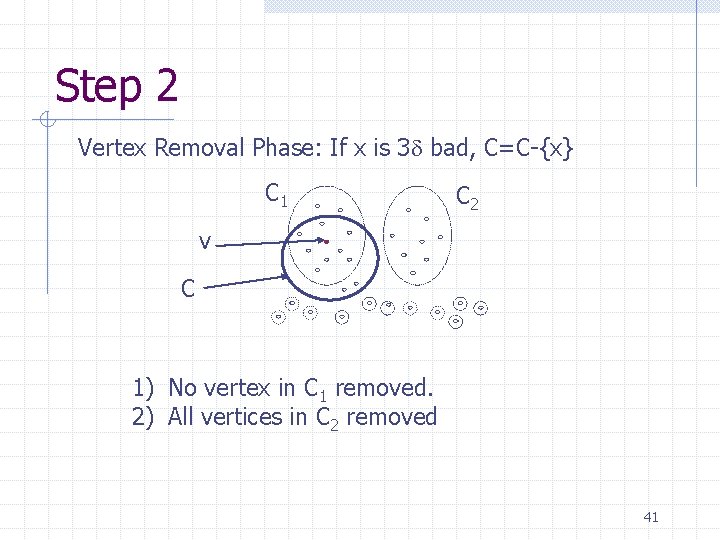

Step 2: Vertex Removal Phase: if x is $3\delta$-bad, C = C - {x}. (Figure: $C_1$, $C_2$, v, C.) 1) No vertex in $C_1$ is removed. 2) All vertices in $C_2$ are removed.

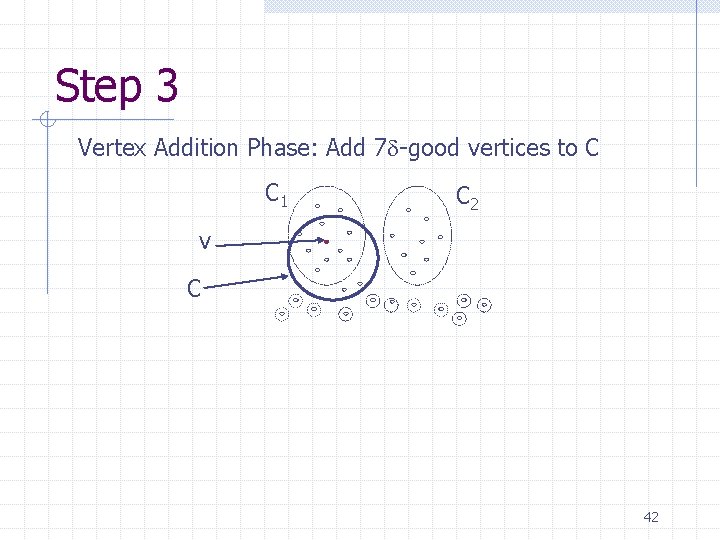

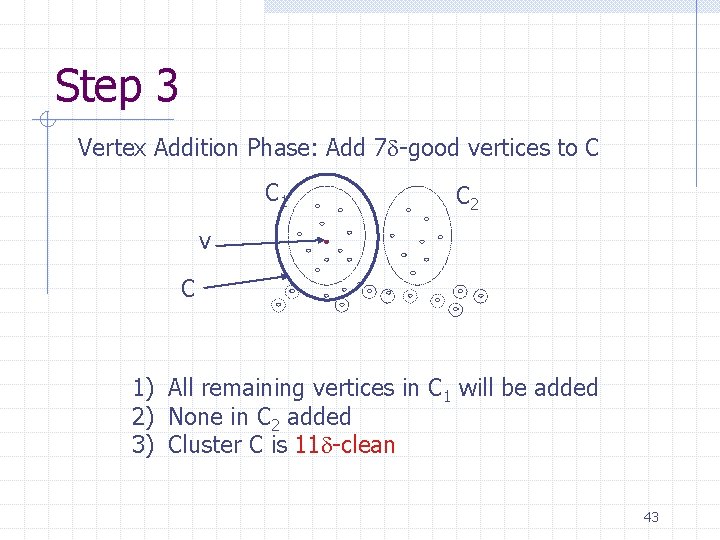

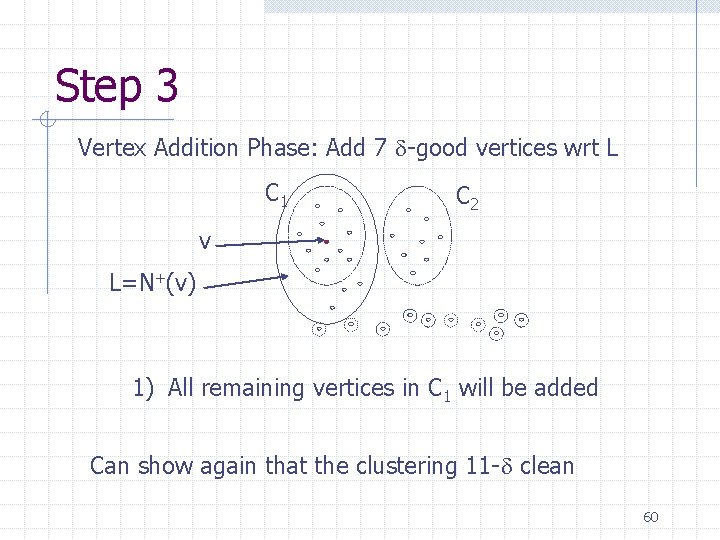

Step 3: Vertex Addition Phase: add $7\delta$-good vertices to C. (Figure: $C_1$, $C_2$, v, C.)

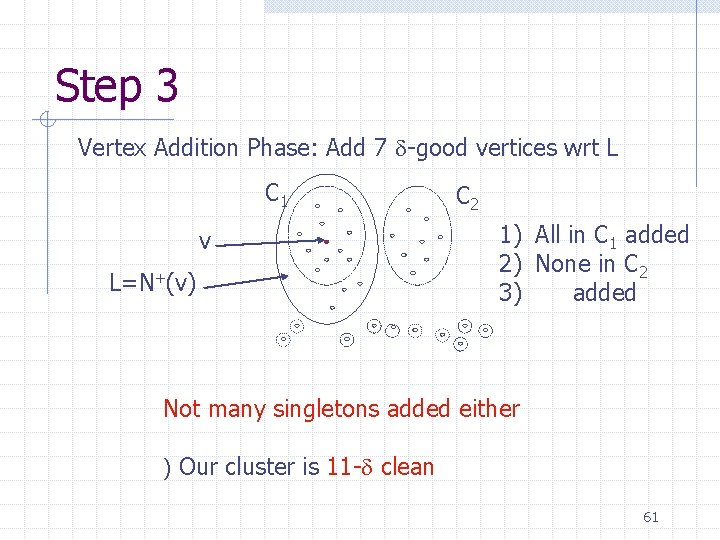

Step 3: Vertex Addition Phase: add $7\delta$-good vertices to C. (Figure: $C_1$, $C_2$, v, C.) 1) All remaining vertices in $C_1$ will be added. 2) None in $C_2$ are added. 3) Cluster C is $11\delta$-clean.

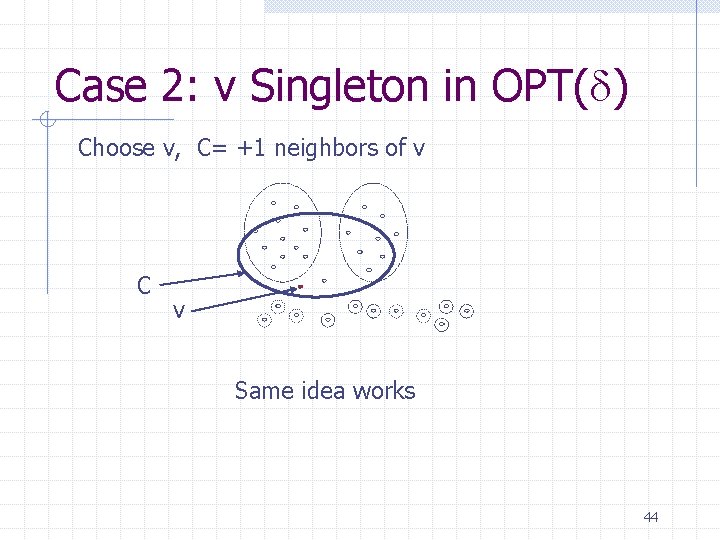

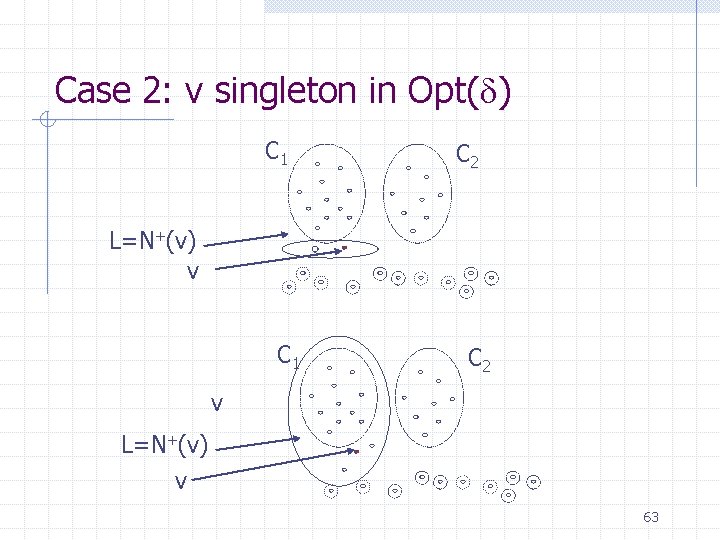

Case 2: v is a singleton in OPT($\delta$). Choose v; C = +1 neighbors of v. The same idea works.

Main Result: Opt($\delta$) is $\delta$-clean -> the algorithm's clustering is $11\delta$-clean.

Approximation for Disagreements. To prove: $D_{\text{alg}} \le c \cdot D_{\text{opt}}$, where $D_{\text{alg}}$ is our number of disagreements and $D_{\text{opt}}$ is Opt's. Roadmap: 1) Notation 2) Show existence of Opt($\delta$) 3) Describe the algorithm 4) Show our clustering is close to Opt($\delta$)

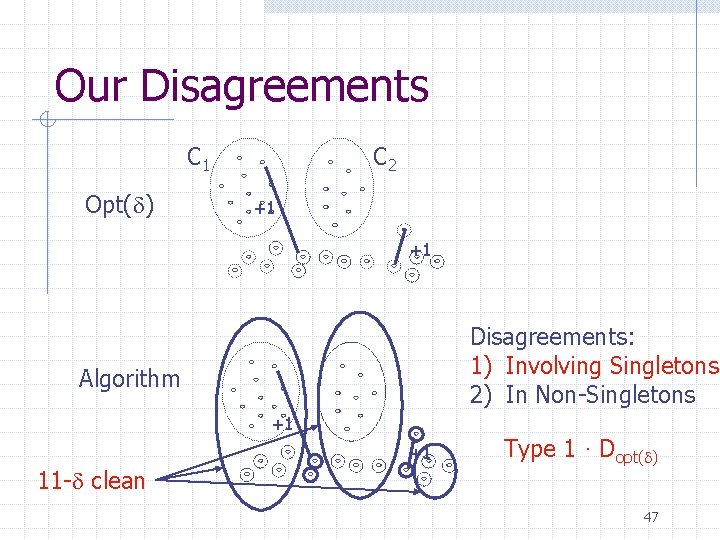

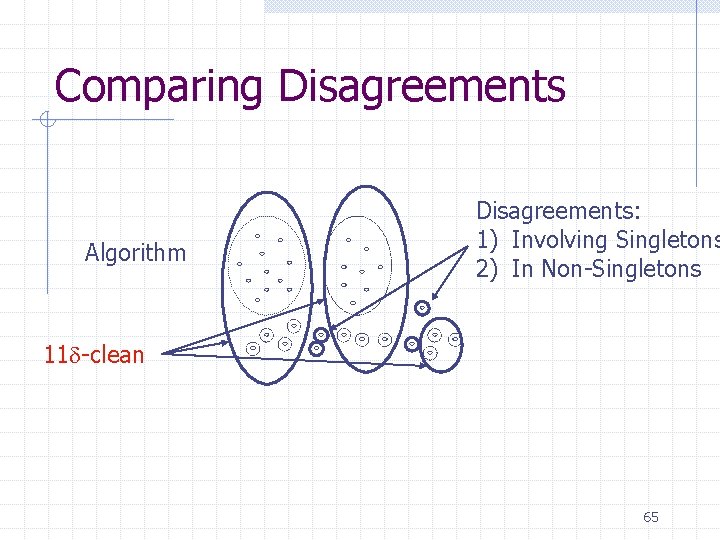

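Our Disagreements (figure: clusters $C_1$, $C_2$ of Opt($\delta$) next to the algorithm's $11\delta$-clean clusters, with +1 edges). Our disagreements are of two types: 1) those involving singletons, and 2) those in non-singleton clusters. Type 1 $\le D_{\text{Opt}(\delta)}$.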

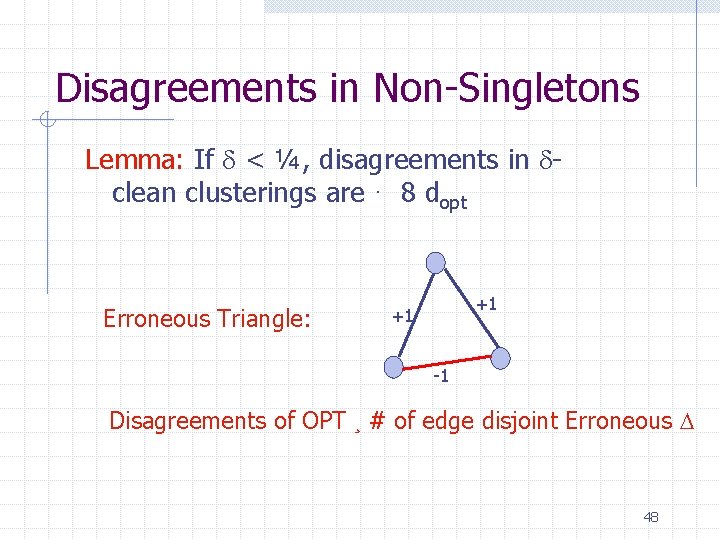

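Disagreements in Non-Singletons. Lemma: if $\delta < 1/4$, the disagreements in $\delta$-clean clusterings are $\le 8 D_{\text{opt}}$. An erroneous triangle has edges +1, +1, -1, and every clustering disagrees on at least one of its edges. Hence the disagreements of OPT are $\ge$ the number of edge-disjoint erroneous triangles.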
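Any packing of edge-disjoint erroneous triangles gives a lower bound on $D_{\text{opt}}$, because each triangle forces a disagreement on a distinct edge. The Python sketch below builds such a packing greedily. It is an illustration, not the proof's construction, and assumes every pair `(u, w)` with `u < w` carries a ±1 label. Greedy may miss the largest packing, but the count it returns is still a valid lower bound.

```python
from itertools import combinations
from typing import Dict, List, Tuple

def edge_disjoint_bad_triangles(n: int,
                                labels: Dict[Tuple[int, int], int]) -> List[Tuple[int, int, int]]:
    """Greedily collect edge-disjoint triangles labeled (+1, +1, -1)."""
    used = set()                                  # edges consumed by chosen triangles
    chosen = []
    for a, b, c in combinations(range(n), 3):     # a < b < c, so pairs are sorted
        edges = [(a, b), (a, c), (b, c)]
        if any(e in used for e in edges):
            continue                              # would share an edge
        if sorted(labels[e] for e in edges) == [-1, 1, 1]:
            chosen.append((a, b, c))
            used.update(edges)
    return chosen

# Complete graph on 4 vertices: all +1 except the pair (0, 3).
labels = {(u, w): (-1 if (u, w) == (0, 3) else +1) for u, w in combinations(range(4), 2)}
print(edge_disjoint_bad_triangles(4, labels))  # [(0, 1, 3)]
```

Here both erroneous triangles share the edge (0, 3), so only one is counted. That matches the true optimum of 1 disagreement (all four vertices in one cluster).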

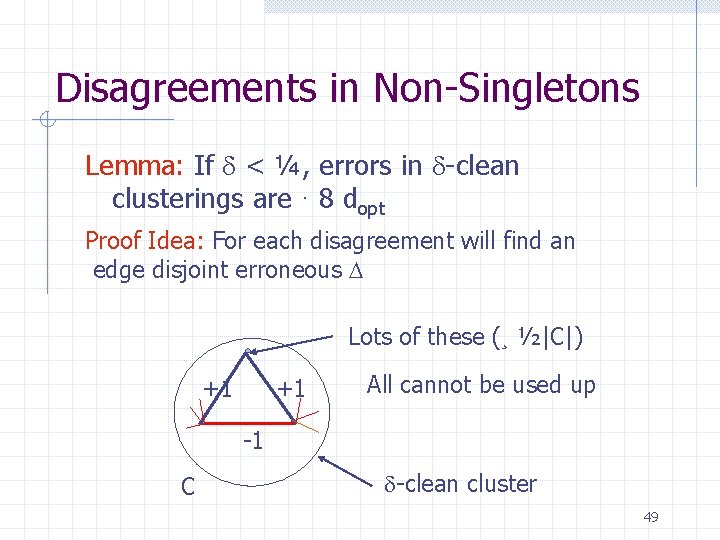

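Disagreements in Non-Singletons. Lemma: if $\delta < 1/4$, the errors in $\delta$-clean clusterings are $\le 8 D_{\text{opt}}$. Proof idea: for each disagreement we find an edge-disjoint erroneous triangle. For a -1 edge inside a $\delta$-clean cluster C, there are lots of candidate triangles ($\ge \tfrac12|C|$, each closed by two +1 edges), so they cannot all be used up.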
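The count “$\ge \tfrac12|C|$” comes from inclusion–exclusion. This is a hedged derivation using the strict $\delta$-good thresholds. Take a -1 edge $(u, w)$ inside a $\delta$-clean cluster $C$. Both endpoints are $\delta$-good, and $N^+(u) \cap C$ and $N^+(w) \cap C$ both lie inside $C$. Each common +1 neighbour $x$ closes an erroneous triangle $(u, x, w)$.

```latex
|N^+(u) \cap N^+(w) \cap C|
  \;\ge\; |N^+(u) \cap C| + |N^+(w) \cap C| - |C|
  \;>\; 2(1-\delta)|C| - |C|
  \;=\; (1 - 2\delta)|C|
  \;>\; \tfrac{1}{2}|C|
  \quad \text{for } \delta < \tfrac{1}{4}.
```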

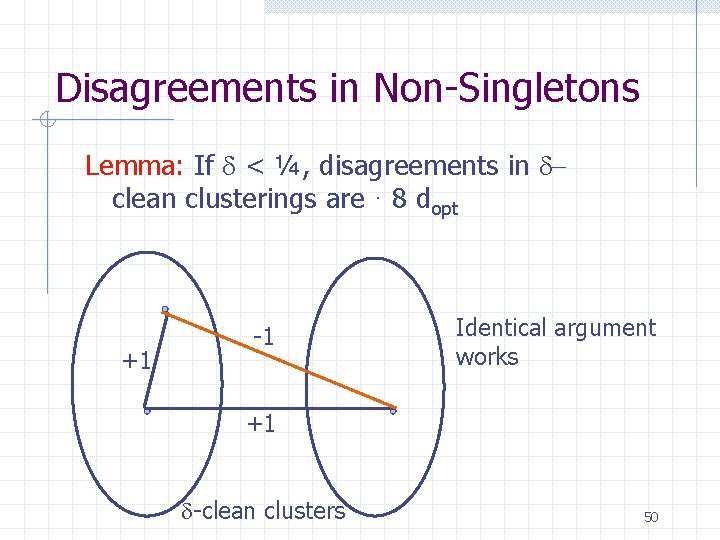

Disagreements in Non-Singletons. Lemma: if $\delta < 1/4$, the disagreements in $\delta$-clean clusterings are $\le 8 D_{\text{opt}}$. For a +1 edge between two $\delta$-clean clusters, an identical argument works.

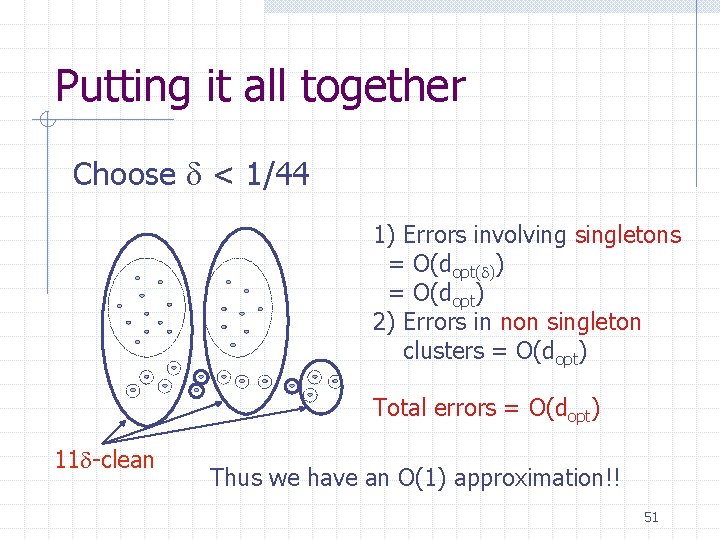

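Putting it all together: choose $\delta < 1/44$, so that $11\delta < 11/44 = 1/4$ and the lemma applies to the algorithm's $11\delta$-clean clusters.
1) Errors involving singletons $= O(D_{\text{Opt}(\delta)}) = O(D_{\text{opt}})$.
2) Errors in non-singleton clusters $= O(D_{\text{opt}})$.
Total errors $= O(D_{\text{opt}})$. Thus we have an $O(1)$-approximation!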

Outline: Introduction; Our Approach + Problem Formulation; Approximating Agreements; Approximating Disagreements; Conclusion

Conclusion: We defined the notion of correlation clustering, with natural measures of quality (agreements and disagreements), and obtained provably good algorithms for both.

Future Work: performance in practice; more heuristics; improved theoretical bounds; more notions of clustering that have provably good algorithms.

Thank You! Paper: http://www.cs.cmu.edu/~nikhil Comments: nikhil@cs.cmu.edu

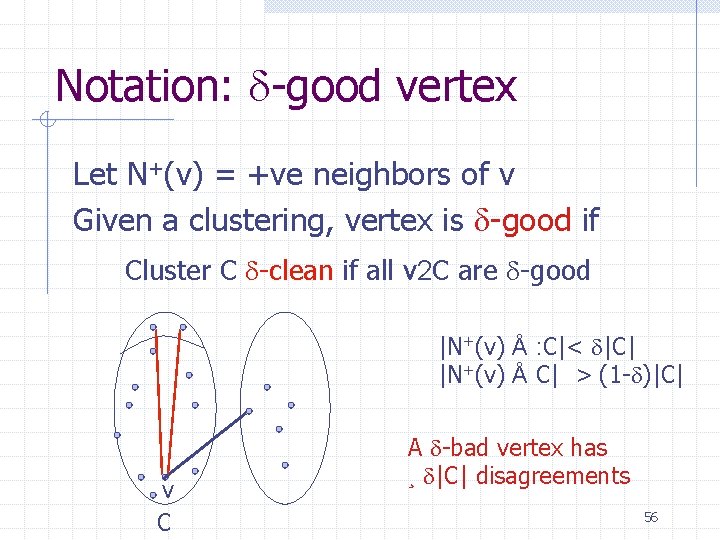

Notation: $\delta$-good vertex. Let $N^+(v)$ = the +1 neighbors of $v$. Given a clustering, a vertex $v \in C$ is $\delta$-good if $|N^+(v) \cap C| > (1-\delta)|C|$ and $|N^+(v) \cap \bar{C}| < \delta|C|$. Cluster $C$ is $\delta$-clean if all $v \in C$ are $\delta$-good. A $\delta$-bad vertex has $\ge \delta|C|$ disagreements.

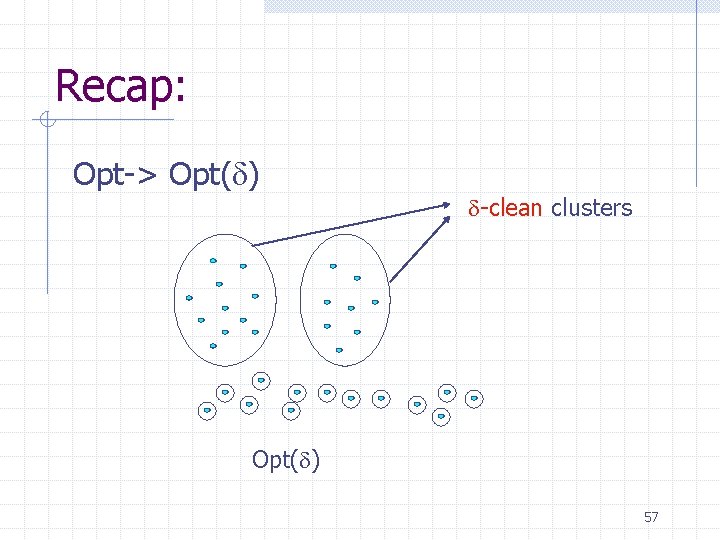

Recap: Opt -> Opt($\delta$), whose non-singleton clusters are $\delta$-clean.

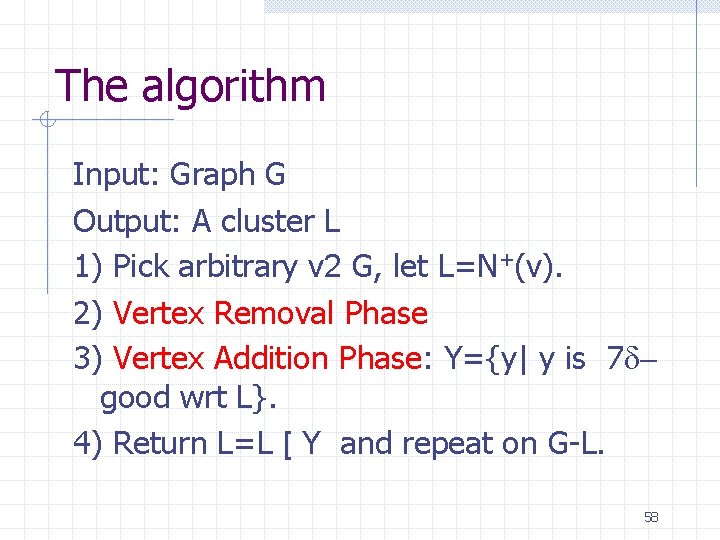

The Algorithm
Input: graph G. Output: a cluster L.
1) Pick an arbitrary $v \in G$; let $L = N^+(v)$.
2) Vertex Removal Phase.
3) Vertex Addition Phase: $Y = \{y \mid y \text{ is } 7\delta\text{-good w.r.t. } L\}$.
4) Return $L = L \cup Y$ and repeat on G - L.

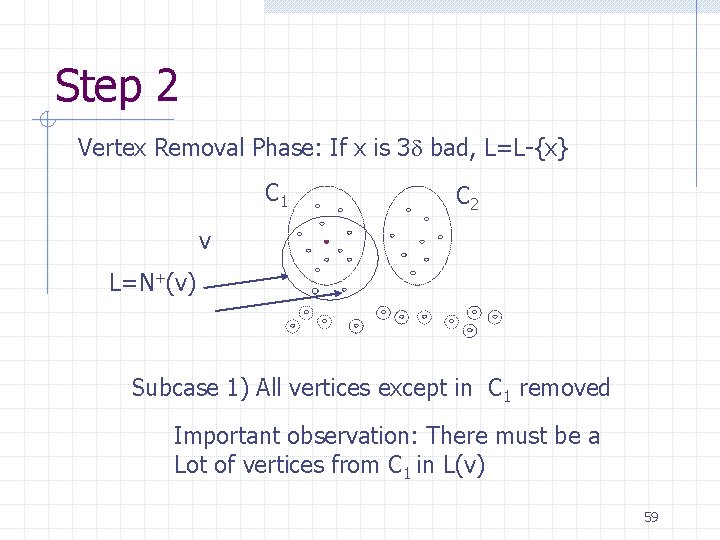

Step 2: Vertex Removal Phase: if x is $3\delta$-bad, L = L - {x}. (Figure: $C_1$, $C_2$, v, $L = N^+(v)$.) Subcase 1: all vertices except those in $C_1$ are removed. Important observation: there must be a lot of vertices from $C_1$ in $L(v)$.

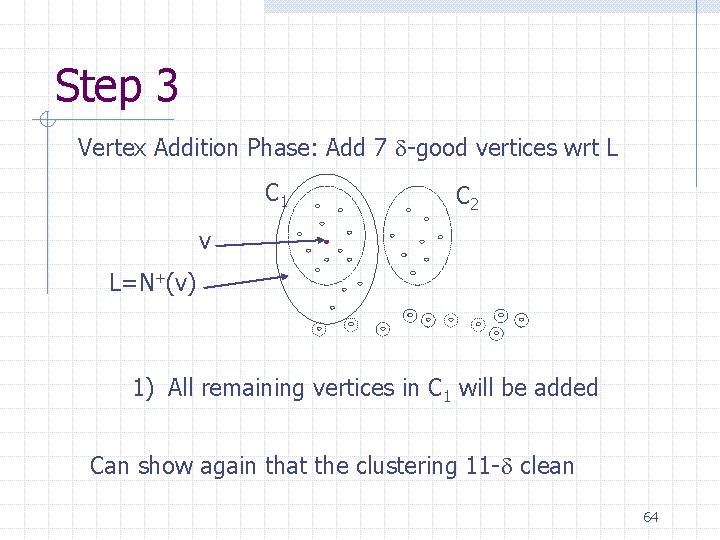

Step 3: Vertex Addition Phase: add $7\delta$-good vertices w.r.t. L. (Figure: $C_1$, $C_2$, v, $L = N^+(v)$.) 1) All remaining vertices in $C_1$ will be added. One can show again that the clustering is $11\delta$-clean.

Step 3: Vertex Addition Phase: add $7\delta$-good vertices w.r.t. L. (Figure: $C_1$, $C_2$, v, $L = N^+(v)$.) 1) All of $C_1$ is added. 2) None of $C_2$ is added. 3) Not many singletons are added either. Therefore our cluster is $11\delta$-clean.

Case 2: Vertex Removal Phase: if x is $3\delta$-bad, L = L - {x}. (Figure: $C_1$, $C_2$, v, $L = N^+(v)$.) Subcase 1: all vertices in $C_1$ and $C_2$ are removed. One can show that none from $C_1$ or $C_2$ will be added in the addition phase either.

Case 2: v is a singleton in Opt($\delta$). (Figure: $C_1$, $C_2$, v, $L = N^+(v)$.)


Comparing Disagreements: the algorithm's disagreements are 1) those involving singletons and 2) those in non-singleton ($11\delta$-clean) clusters.
