
Active Learning as Active Inference
Brigham S. Anderson
School of Computer Science, Carnegie Mellon University
www.cs.cmu.edu/~brigham@cmu.edu
Copyright © 2006, Brigham S. Anderson

Definitions
• Learning: You are given some data… learn a model.
• Inference: You are given a model… infer something about an example.

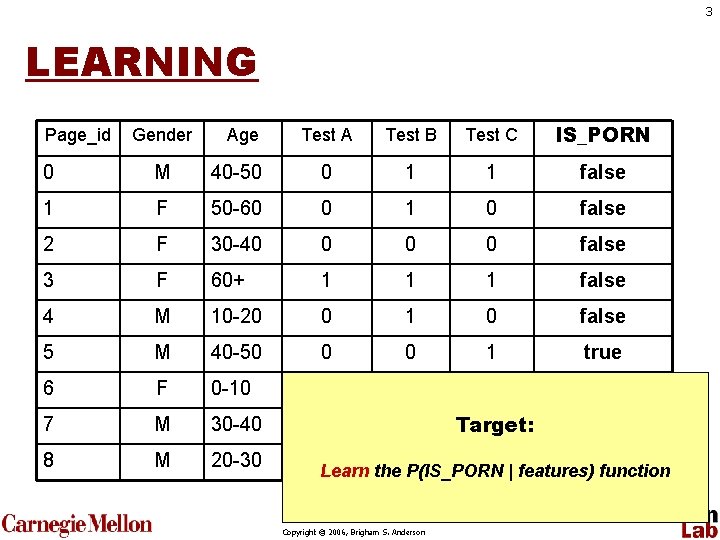

LEARNING
Page_id Gender Age Test A Test B Test C IS_PORN
0 M 40-50 0 1 1 false
1 F 50-60 0 1 0 false
2 F 30-40 0 false
3 F 60+ 1 1 1 false
4 M 10-20 0 1 0 false
5 M 40-50 0 0 1 true
6 F 0-10 0 false
7 M 30-40 1 1 0 false
8 M 20-30 0 0 1 true
Target: IS_PORN
Learn the P(IS_PORN | features) function

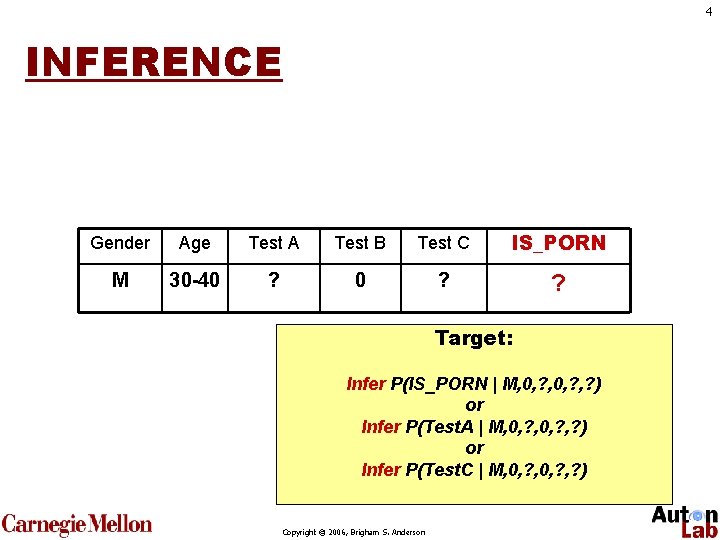

INFERENCE
Columns: Gender, Age, Test A, Test B, Test C, IS_PORN
Example row: M 30-40 ? ?
Target, one of:
• Infer P(IS_PORN | M, 0, ?, ?)
• Infer P(TestA | M, 0, ?, ?)
• Infer P(TestC | M, 0, ?, ?)

Definitions
• Active Learning: learn about the model by selecting an unlabelled example.
• Active Inference: infer something about an example by selecting a hidden feature of the example.

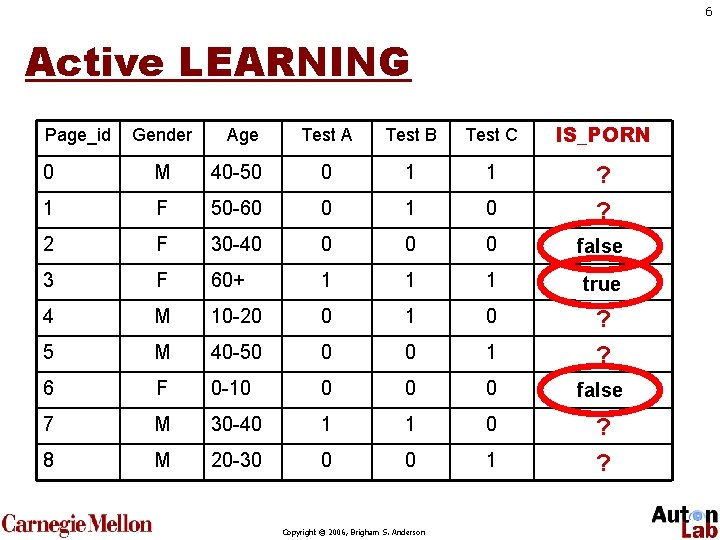

Active LEARNING
Page_id Gender Age Test A Test B Test C IS_PORN
0 M 40-50 0 1 1 ?
1 F 50-60 0 1 0 ?
2 F 30-40 0 ?
3 F 60+ 1 1 1 ?
4 M 10-20 0 1 0 ?
5 M 40-50 0 0 1 ?
6 F 0-10 0 ?
7 M 30-40 1 1 0 ?
8 M 20-30 0 0 1 ?
Every IS_PORN label starts hidden (?); the slide reveals three of them on request (false, true, false).

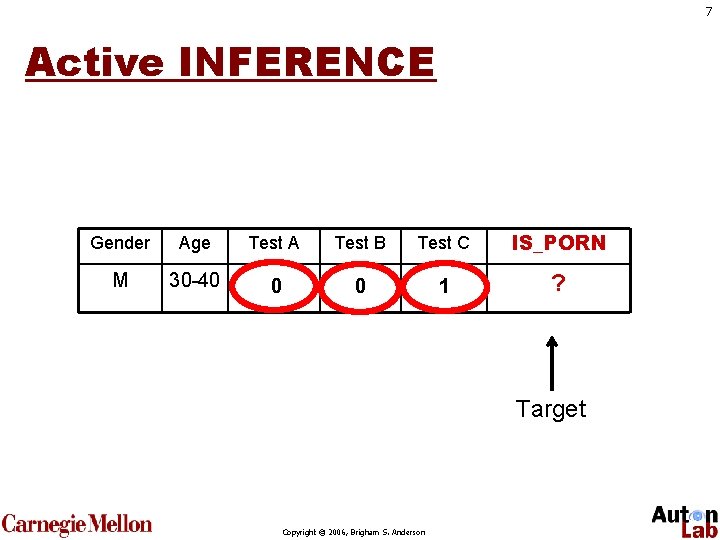

Active INFERENCE
Columns: Gender, Age, Test A, Test B, Test C, IS_PORN (Target)
Example row: M 30-40 ? 0 ? 1 ?

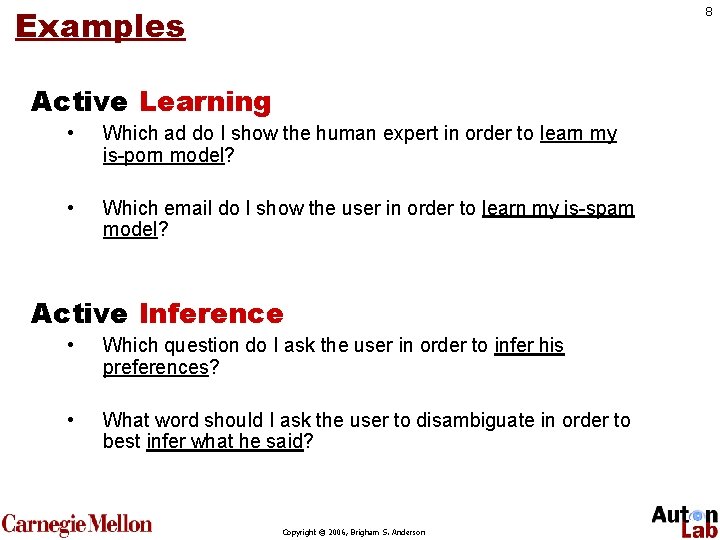

Examples
Active Learning
• Which ad do I show the human expert in order to learn my is-porn model?
• Which email do I show the user in order to learn my is-spam model?
Active Inference
• Which question do I ask the user in order to infer his preferences?
• Which word should I ask the user to disambiguate in order to best infer what he said?

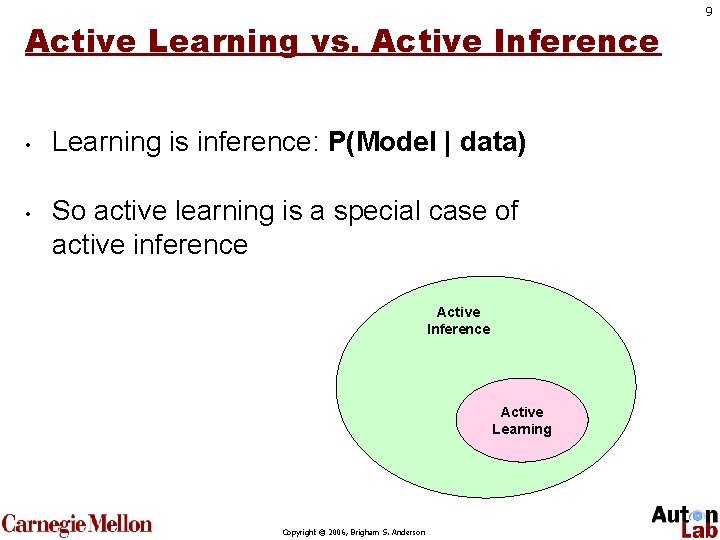

Active Learning vs. Active Inference
• Learning is inference: P(Model | data)
• So active learning is a special case of active inference
Diagram: Active Learning nested inside Active Inference.

Outline
• Review: Probabilistic Models
• Active Learning
  • Algorithm #1: Uncertainty sampling
  • Algorithm #2: Query by Committee
  • Algorithm #3: Information Gain
• Active Inference
  • New Loss function
  • Algorithm #4: Gini Gain

Active Learning

Active Learning Flavors
Today’s topic
• Pool (“random access” to patients)
• Sequential (must decide as patients walk in the door)

Active Learning Methods?
• Humans do “active learning” all the time.
• What makes a doctor curious about a patient?
• What makes you curious about an experimental result?

1994

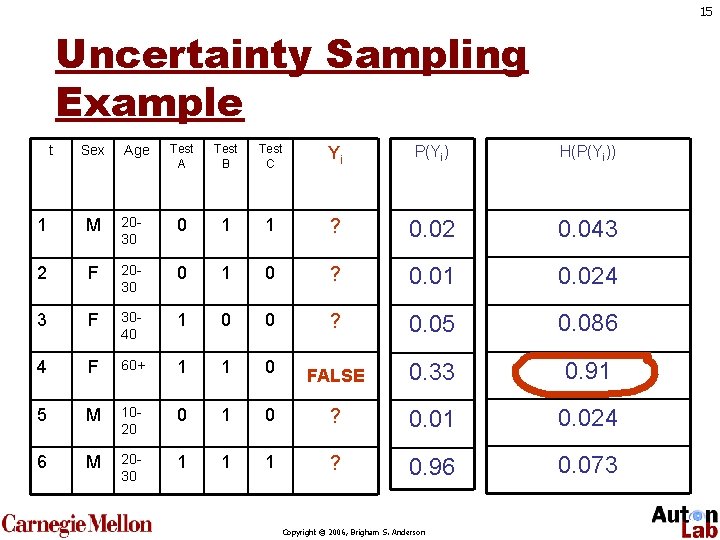

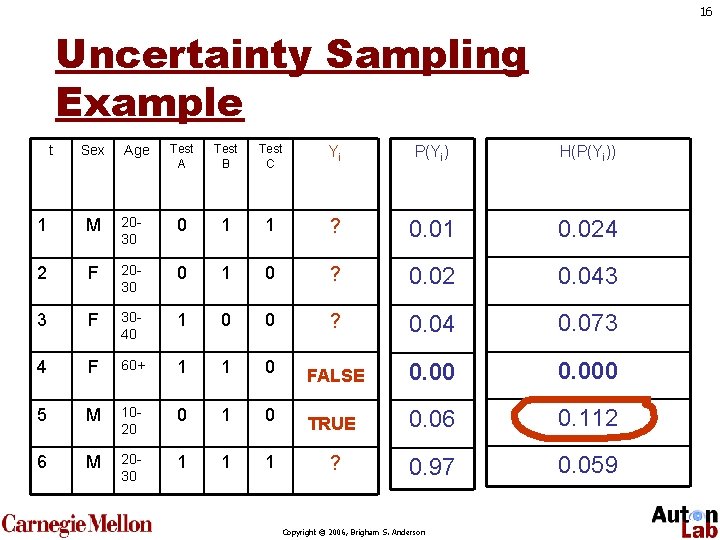

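Uncertainty Sampling Example

| t | Sex | Age | Test A | Test B | Test C | Yi | P(Yi) | H(P(Yi)) |
|---|-----|-----|--------|--------|--------|----|-------|----------|
| 1 | M | 20-30 | 0 | 1 | 1 | ? | 0.02 | 0.043 |
| 2 | F | 20-30 | 0 | 1 | 0 | ? | 0.01 | 0.024 |
| 3 | F | 30-40 | 1 | 0 | 0 | ? | 0.05 | 0.086 |
| 4 | F | 60+ | 1 | 1 | 0 | ? → FALSE | 0.33 | 0.91 |
| 5 | M | 10-20 | 0 | 1 | 0 | ? | 0.01 | 0.024 |
| 6 | M | 20-30 | 1 | 1 | 1 | ? | 0.96 | 0.073 |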

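Uncertainty Sampling Example

| t | Sex | Age | Test A | Test B | Test C | Yi | P(Yi) | H(P(Yi)) |
|---|-----|-----|--------|--------|--------|----|-------|----------|
| 1 | M | 20-30 | 0 | 1 | 1 | ? | 0.01 | 0.024 |
| 2 | F | 20-30 | 0 | 1 | 0 | ? | 0.02 | 0.043 |
| 3 | F | 30-40 | 1 | 0 | 0 | ? | 0.04 | 0.073 |
| 4 | F | 60+ | 1 | 1 | 0 | FALSE | | 0.000 |
| 5 | M | 10-20 | 0 | 1 | 0 | ? → TRUE | 0.06 | 0.112 |
| 6 | M | 20-30 | 1 | 1 | 1 | ? | 0.97 | 0.059 |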

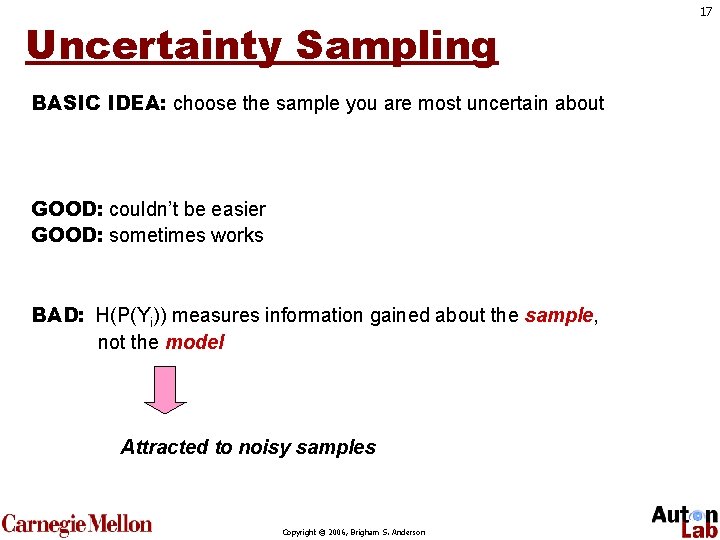

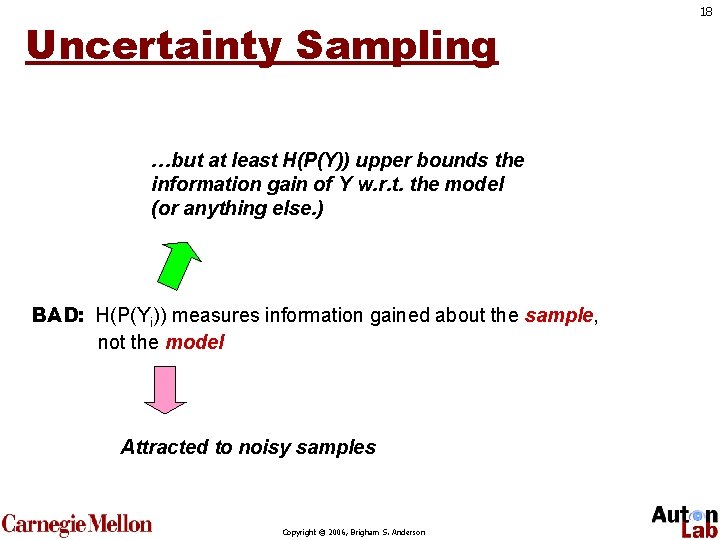

Uncertainty Sampling
BASIC IDEA: choose the sample you are most uncertain about
GOOD: couldn’t be easier
GOOD: sometimes works
BAD: H(P(Yi)) measures information gained about the sample, not the model
• Attracted to noisy samples
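The selection rule can be sketched in a few lines of Python. This is a minimal illustration, not the author's implementation: the function names are hypothetical, the probabilities are the P(Yi) values from the first example table, and entropy is measured in bits (the slide does not state its log base).

```python
import math

def binary_entropy(p: float) -> float:
    """Entropy in bits of a binary label with P(TRUE) = p; 0 when p is 0 or 1."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))

def uncertainty_sampling(p_true: dict) -> int:
    """Return the id of the unlabelled example whose label is most uncertain."""
    return max(p_true, key=lambda i: binary_entropy(p_true[i]))

# P(Yi = TRUE) for the six unlabelled examples in the first example table.
pool = {1: 0.02, 2: 0.01, 3: 0.05, 4: 0.33, 5: 0.01, 6: 0.96}
chosen = uncertainty_sampling(pool)
print("query example", chosen)
```

Example 4 has the probability closest to 0.5, so it is the one queried, which matches the row the slide labels next.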

Uncertainty Sampling
BAD: H(P(Yi)) measures information gained about the sample, not the model
• Attracted to noisy samples
…but at least H(P(Y)) upper-bounds the information gain of Y w.r.t. the model (or anything else).

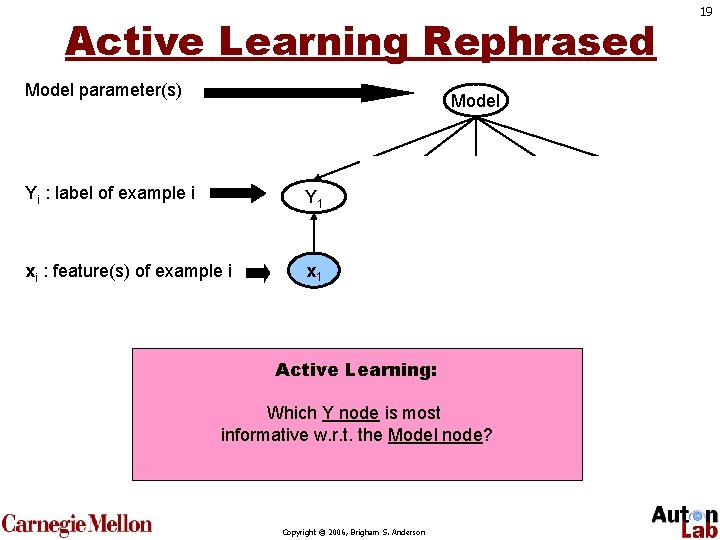

Active Learning Rephrased
Nodes: Model (the model parameter(s)); Y1 … Y5; x1 … x5
• Yi: label of example i
• xi: feature(s) of example i
Active Learning: Which Y node is most informative w.r.t. the Model node?

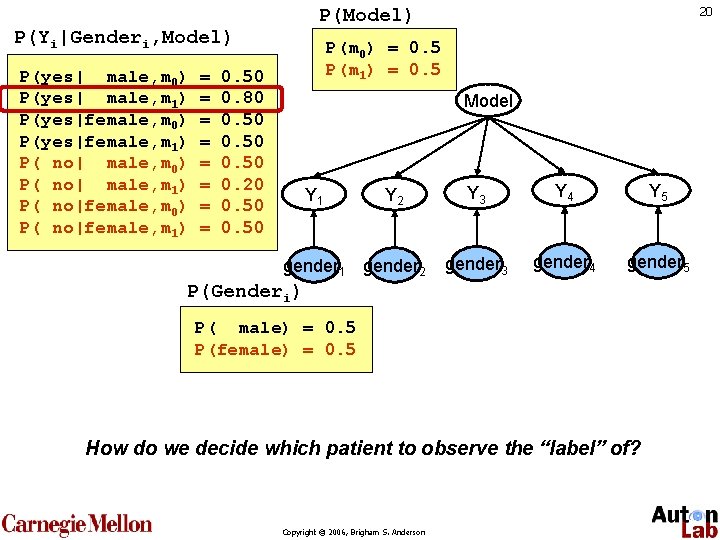

Nodes: Model; Y1 … Y5; gender1 … gender5
P(Model): P(m0) = 0.5, P(m1) = 0.50
P(Gender_i): P(male) = 0.5, P(female) = 0.5
P(Yi | Gender_i, Model): table with entries P(yes|male, m0), P(yes|male, m1), P(yes|female, m0), P(yes|female, m1), P(no|male, m0), P(no|male, m1), P(no|female, m0), P(no|female, m1); values on the slide: 0.80, 0.50, 0.20, 0.50
How do we decide which patient to observe the “label” of?

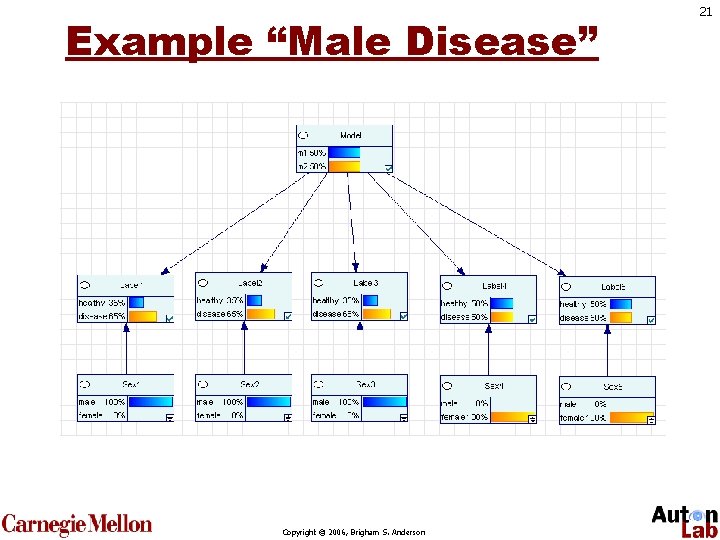

Example “Male Disease”

Where are we?
• Uncertainty sampling is easy, but it confuses “sample information” and “model information”.

We can do better than uncertainty sampling

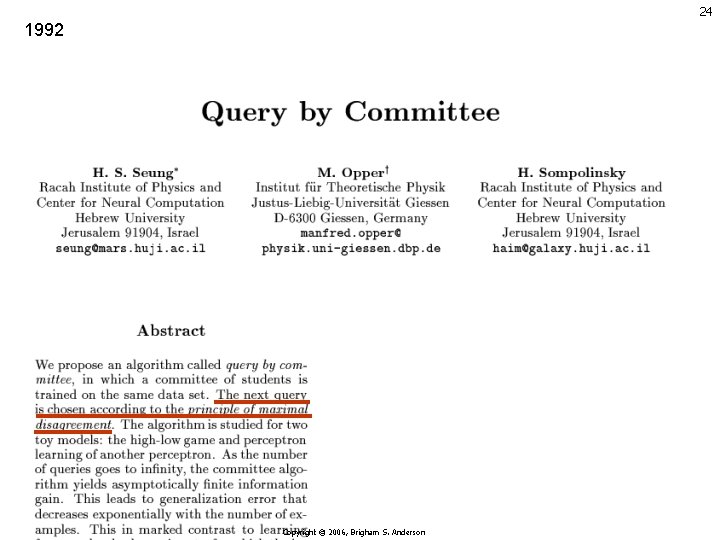

1992

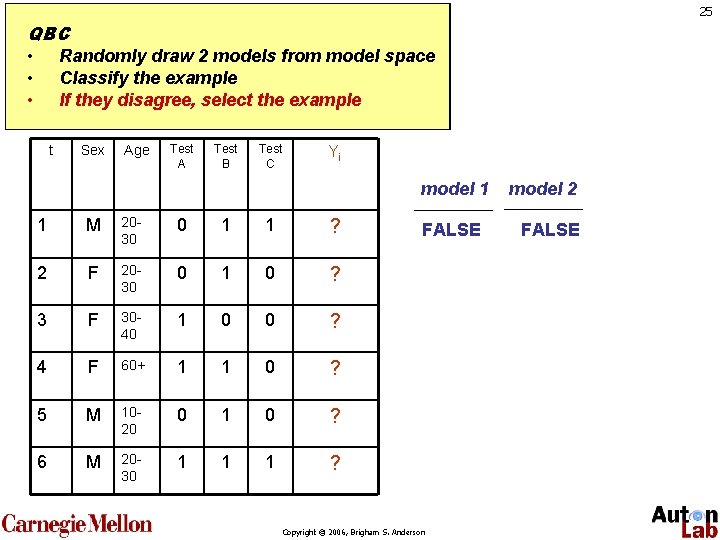

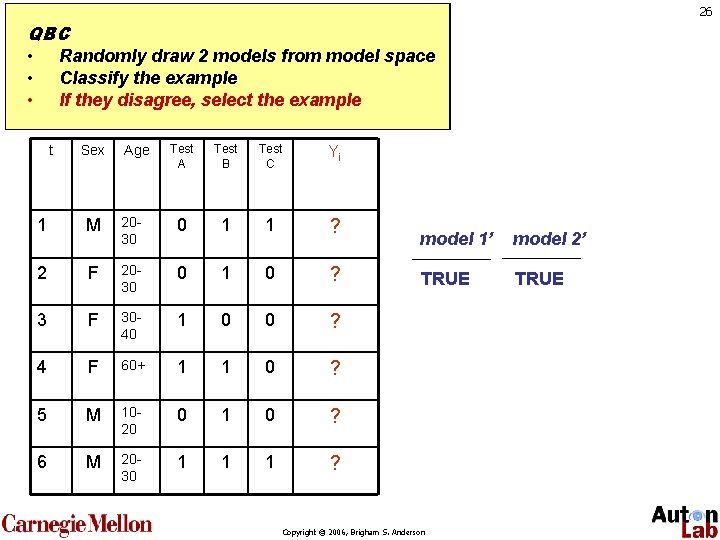

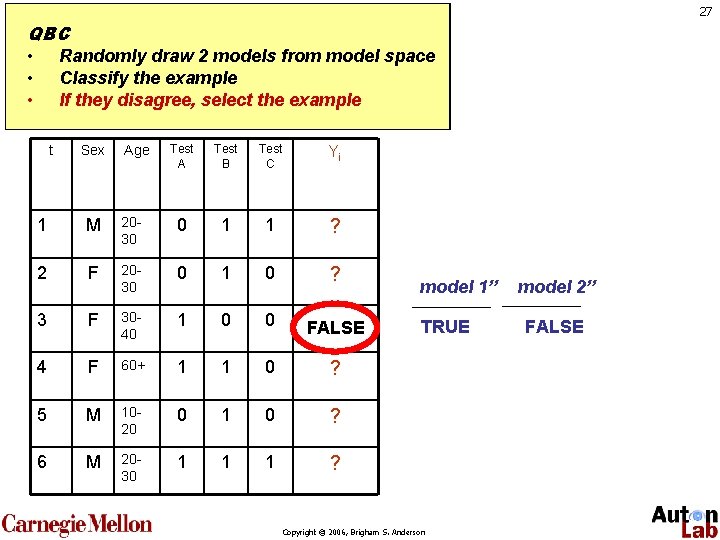

QBC
• Randomly draw 2 models from model space
• Classify the example
• If they disagree, select the example

| t | Sex | Age | Test A | Test B | Test C | Yi |
|---|-----|-----|--------|--------|--------|----|
| 1 | M | 20-30 | 0 | 1 | 1 | ? |
| 2 | F | 20-30 | 0 | 1 | 0 | ? |
| 3 | F | 30-40 | 1 | 0 | 0 | ? |
| 4 | F | 60+ | 1 | 1 | 0 | ? |
| 5 | M | 10-20 | 0 | 1 | 0 | ? |
| 6 | M | 20-30 | 1 | 1 | 1 | ? |

Draws shown on three successive slides:
• model 1: FALSE, model 2: FALSE
• model 1′ and model 2′: TRUE
• model 1″: TRUE, model 2″: FALSE; the Yi cell of example 3 then reads FALSE

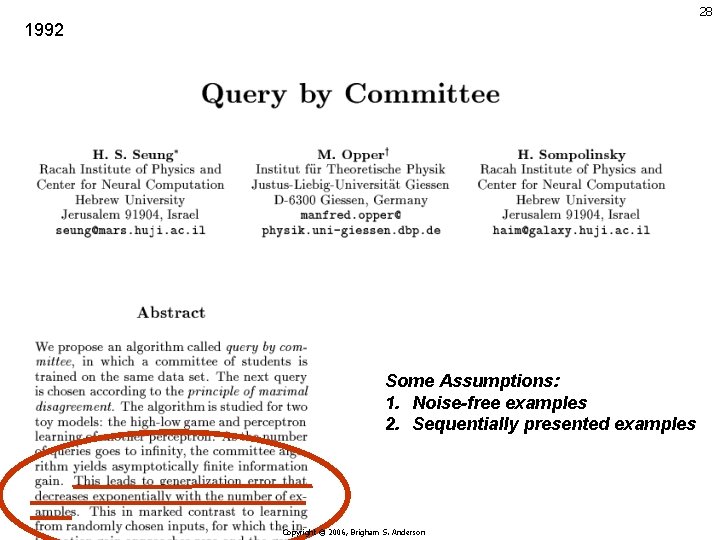

1992
Some Assumptions:
1. Noise-free examples
2. Sequentially presented examples

Query By Committee
BASIC IDEA: choose controversial examples.
GOOD: easy to implement
BAD: Theory based on noise-free examples
BAD: Designed for scanning the examples
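A minimal sketch of the sequential QBC loop follows. The model space here is an assumption made purely for illustration: one-dimensional threshold classifiers whose threshold is drawn uniformly from an interval `[lo, hi]` standing in for "models consistent with the labels seen so far". The names `qbc_select` and `make_sampler` are hypothetical.

```python
import random

def qbc_select(stream, sample_model, rng):
    """Scan examples in order; return the first one on which two
    freshly drawn models disagree, or None if they never disagree."""
    for x in stream:
        model_1, model_2 = sample_model(rng), sample_model(rng)
        if model_1(x) != model_2(x):
            return x
    return None

def make_sampler(lo, hi):
    """Hypothetical model space: classifiers x >= t with t uniform in [lo, hi]."""
    def sample_model(rng):
        t = rng.uniform(lo, hi)
        return lambda x: x >= t
    return sample_model

rng = random.Random(0)
stream = [0.05, 0.95, 0.10, 0.50, 0.90]
picked = qbc_select(stream, make_sampler(0.3, 0.7), rng)
print("selected example:", picked)
```

Examples far from the plausible thresholds (0.05, 0.95) can never be selected, because every model in the space classifies them the same way; only the example inside the disputed interval can cause a disagreement.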

Where Are We?
• Two strategies so far…
  • Uncertainty Sampling: choose uncertain samples
  • Query By Committee: choose controversial samples

QBC Sort of Minimizes Entropy
• Information Gain is a more rigorous framework for QBC (MacKay, 1992) (Anderson, Siddiqi, and Moore, 2006)

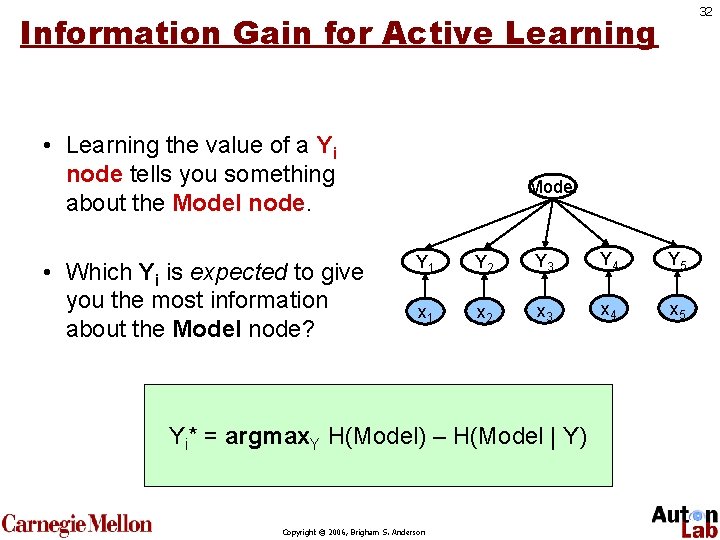

Information Gain for Active Learning
• Learning the value of a Yi node tells you something about the Model node.
• Which Yi is expected to give you the most information about the Model node?
Nodes: Model; Y1 … Y5; x1 … x5

$$Y_i^* = \arg\max_{Y} \; H(\text{Model}) - H(\text{Model} \mid Y)$$

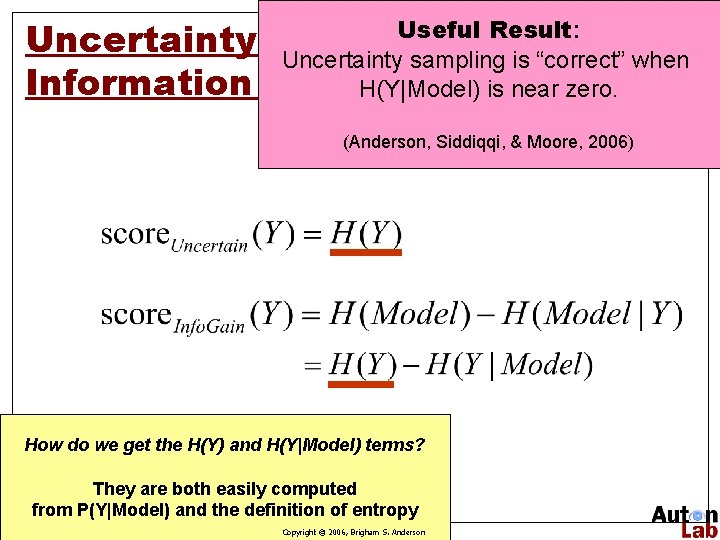

Useful Result: Uncertainty Sampling & Information Gain
Uncertainty sampling is “correct” when H(Y|Model) is near zero. (Anderson, Siddiqi, & Moore, 2006)
How do we get the H(Y) and H(Y|Model) terms? They are both easily computed from P(Y|Model) and the definition of entropy.
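The two entropy terms can be computed directly, using the standard identity that the information gain between a label Y and the Model equals H(Y) − H(Y|Model). The sketch below assumes a discrete set of candidate models with a prior P(Model); the two candidate examples and their probabilities are hypothetical values chosen to contrast a noisy example with a controversial one.

```python
import math

def entropy(dist):
    """Entropy in bits of a discrete distribution given as a list of probabilities."""
    return -sum(p * math.log2(p) for p in dist if p > 0.0)

def information_gain(p_model, p_yes_given_model):
    """I(Y; Model) = H(Y) - H(Y | Model) for a binary label Y."""
    p_yes = sum(pm * py for pm, py in zip(p_model, p_yes_given_model))
    h_y = entropy([p_yes, 1.0 - p_yes])
    h_y_given_model = sum(
        pm * entropy([py, 1.0 - py]) for pm, py in zip(p_model, p_yes_given_model)
    )
    return h_y - h_y_given_model

p_model = [0.5, 0.5]  # prior over two candidate models
# Hypothetical P(yes | example, model) for two candidate examples.
candidates = {
    "noisy": [0.5, 0.5],          # both models predict a coin flip
    "controversial": [0.9, 0.1],  # the models disagree
}
gains = {name: information_gain(p_model, py) for name, py in candidates.items()}
best = max(gains, key=gains.get)
print(gains, "->", best)
```

Both examples have the same label entropy H(Y) = 1 bit, so uncertainty sampling cannot tell them apart, yet only the controversial one carries information about the model.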

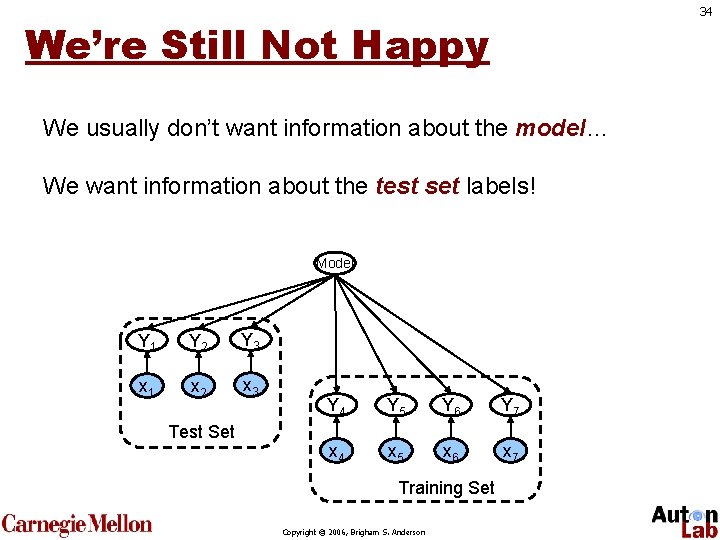

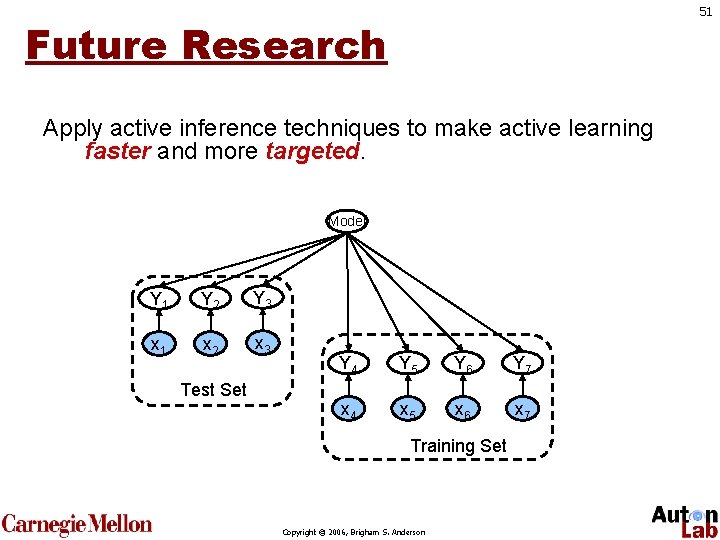

We’re Still Not Happy
We usually don’t want information about the model… We want information about the test set labels!
Diagram: Model node with label nodes Y1 … Y7 over features x1 … x7, split into a Training Set and a Test Set.

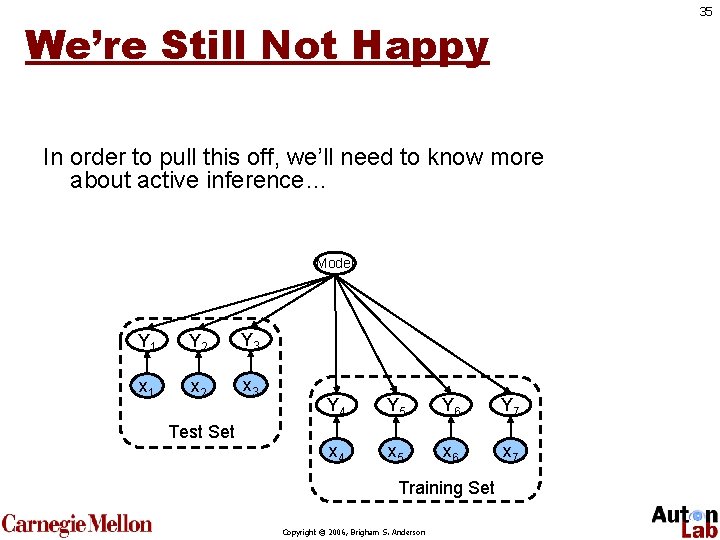

We’re Still Not Happy
In order to pull this off, we’ll need to know more about active inference…
Diagram: Model node with label nodes Y1 … Y7 over features x1 … x7, split into a Training Set and a Test Set.

Active INFERENCE

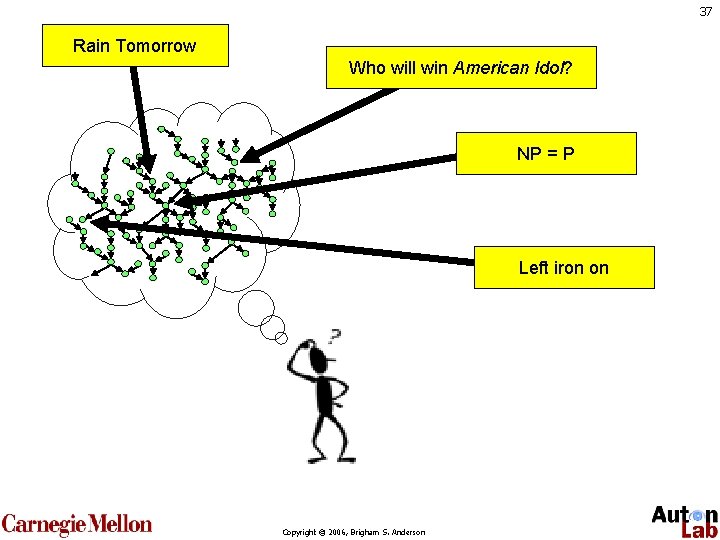

Nodes: Rain Tomorrow; Who will win American Idol?; NP = P; Left iron on

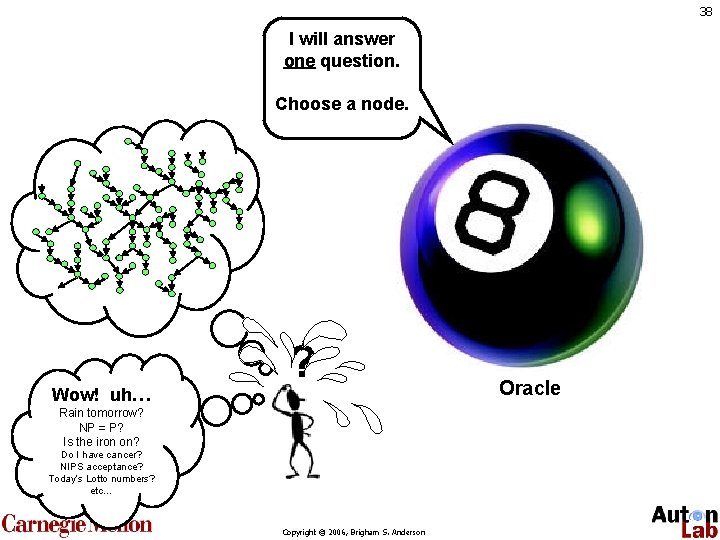

Oracle: I will answer one question. Choose a node.
Wow! uh…?
• Rain tomorrow?
• NP = P?
• Is the iron on?
• Do I have cancer?
• NIPS acceptance?
• Today’s Lotto numbers?
• etc…

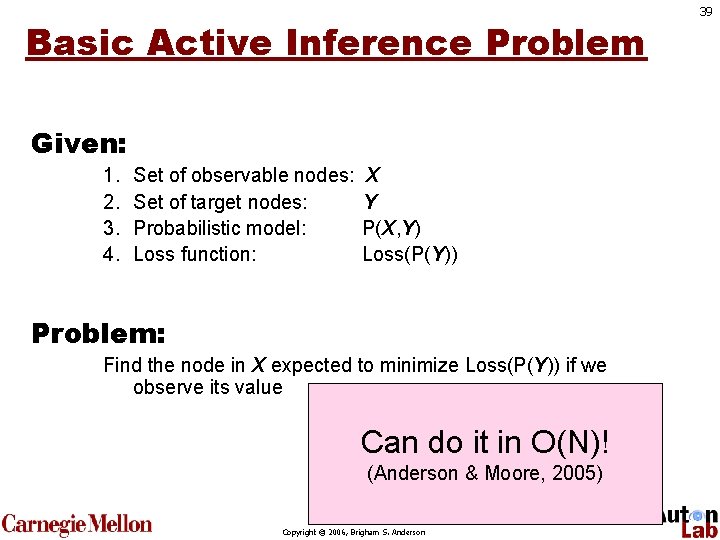

Basic Active Inference Problem
Given:
1. Set of observable nodes: X
2. Set of target nodes: Y
3. Probabilistic model: P(X, Y)
4. Loss function: Loss(P(Y))
Problem: Find the node in X expected to minimize Loss(P(Y)) if we observe its value.
Can do it in O(N)! (Anderson & Moore, 2005)

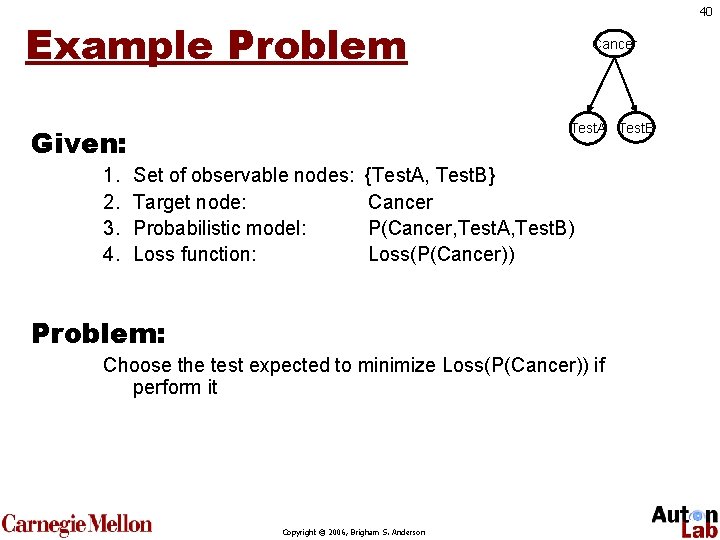

Example Problem
Nodes: Cancer; TestA; TestB
Given:
1. Set of observable nodes: {TestA, TestB}
2. Target node: Cancer
3. Probabilistic model: P(Cancer, TestA, TestB)
4. Loss function: Loss(P(Cancer))
Problem: Choose the test expected to minimize Loss(P(Cancer)) if we perform it.

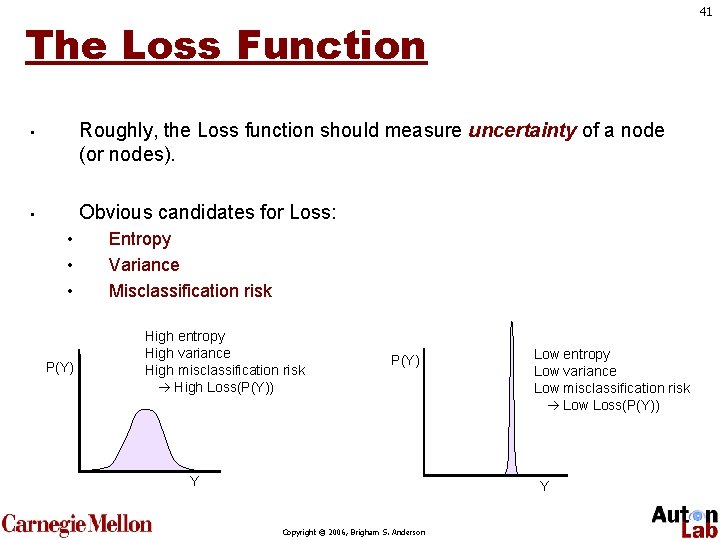

The Loss Function
Roughly, the Loss function should measure uncertainty of a node (or nodes).
• Obvious candidates for Loss:
  • Entropy
  • Variance
  • Misclassification risk
Figure: two distributions P(Y) over Y. One has high entropy, high variance, and high misclassification risk, hence high Loss(P(Y)); the other has low entropy, low variance, and low misclassification risk, hence low Loss(P(Y)).

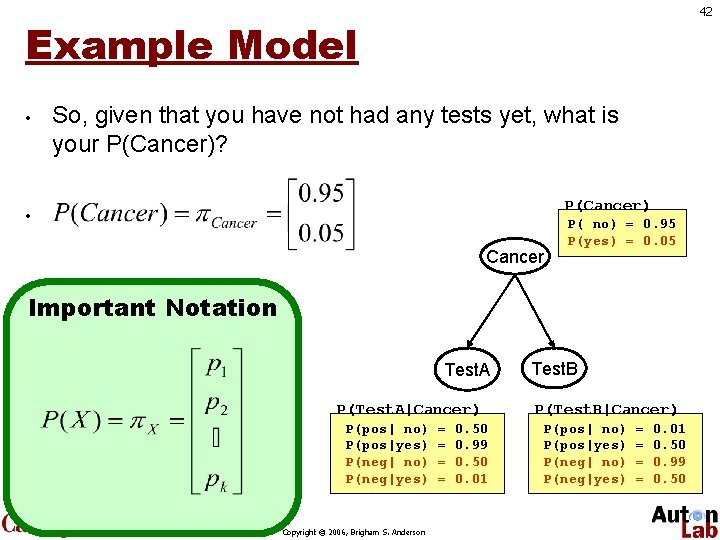

Example Model
• So, given that you have not had any tests yet, what is your P(Cancer)?
Important Notation
P(Cancer):
• P(no) = 0.95
• P(yes) = 0.05
P(TestA | Cancer):
• P(pos|no) = 0.50
• P(pos|yes) = 0.99
• P(neg|no) = 0.50
• P(neg|yes) = 0.01
P(TestB | Cancer):
• P(pos|no) = 0.01
• P(pos|yes) = 0.50
• P(neg|no) = 0.99
• P(neg|yes) = 0.50
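With this model, the test-selection problem can be solved by brute force: for each test, average the loss of the posterior P(Cancer | outcome) over the possible outcomes. The sketch below uses the CPT values from the slide and the Gini loss (1 − Σ p²) as one possible loss; the function names are hypothetical, and this enumeration is only an illustration, not the linear-time algorithm the talk refers to.

```python
def posterior(prior, likelihood, outcome):
    """Bayes rule: return (P(outcome), P(Cancer | outcome))."""
    joint = {c: prior[c] * likelihood[c][outcome] for c in prior}
    evidence = sum(joint.values())
    return evidence, {c: joint[c] / evidence for c in joint}

def gini(dist):
    """Gini loss: chance of being wrong when guessing in proportion to dist."""
    return 1.0 - sum(p * p for p in dist.values())

def expected_loss(prior, likelihood, loss):
    """Loss of P(Cancer) after the test, averaged over the test's outcomes."""
    total = 0.0
    for outcome in ("pos", "neg"):
        p_outcome, post = posterior(prior, likelihood, outcome)
        total += p_outcome * loss(post)
    return total

prior = {"no": 0.95, "yes": 0.05}
tests = {  # P(test outcome | Cancer), values from the slide
    "TestA": {"no": {"pos": 0.50, "neg": 0.50}, "yes": {"pos": 0.99, "neg": 0.01}},
    "TestB": {"no": {"pos": 0.01, "neg": 0.99}, "yes": {"pos": 0.50, "neg": 0.50}},
}
losses = {name: expected_loss(prior, cpt, gini) for name, cpt in tests.items()}
best_test = min(losses, key=losses.get)
print(losses, "->", best_test)
```

Under the Gini loss, TestB is expected to reduce uncertainty about Cancer more than TestA: a positive TestB result is rare but moves the posterior a long way, whereas TestA is positive about half the time regardless.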

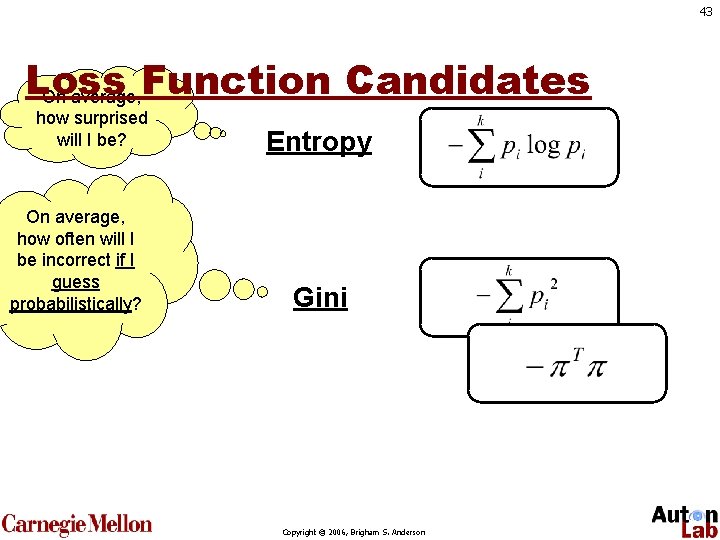

Loss Function Candidates
• Entropy: On average, how surprised will I be?
• Gini: On average, how often will I be incorrect if I guess probabilistically?

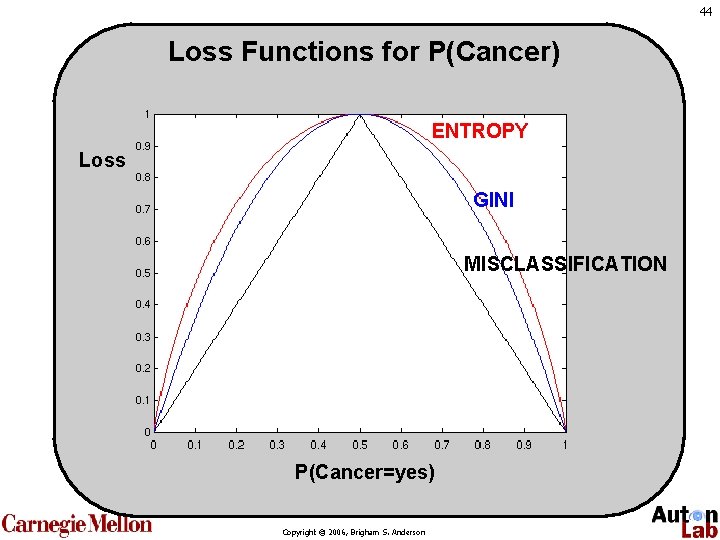

Loss Functions for P(Cancer)
Figure: ENTROPY, GINI, and MISCLASSIFICATION loss plotted against P(Cancer=yes).
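For a binary node the three losses have simple closed forms, sketched below as functions of p = P(Cancer=yes). These are the standard textbook definitions (entropy in bits, Gini as 1 − Σ p², misclassification as the probability of the less likely value); the exact scaling used in the plot is an assumption.

```python
import math

def entropy_loss(p: float) -> float:
    """Expected surprise, in bits, of a binary variable with P(yes) = p."""
    if p <= 0.0 or p >= 1.0:
        return 0.0
    return -(p * math.log2(p) + (1.0 - p) * math.log2(1.0 - p))

def gini_loss(p: float) -> float:
    """Error rate when guessing 'yes' with probability p: 1 - p^2 - (1-p)^2."""
    return 2.0 * p * (1.0 - p)

def misclassification_loss(p: float) -> float:
    """Error rate when always guessing the more likely value."""
    return min(p, 1.0 - p)

for p in (0.05, 0.25, 0.50):
    print(p, entropy_loss(p), gini_loss(p), misclassification_loss(p))
```

All three are zero when the node is certain and peak at p = 0.5; Gini is the only one of the three that is a polynomial in p.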

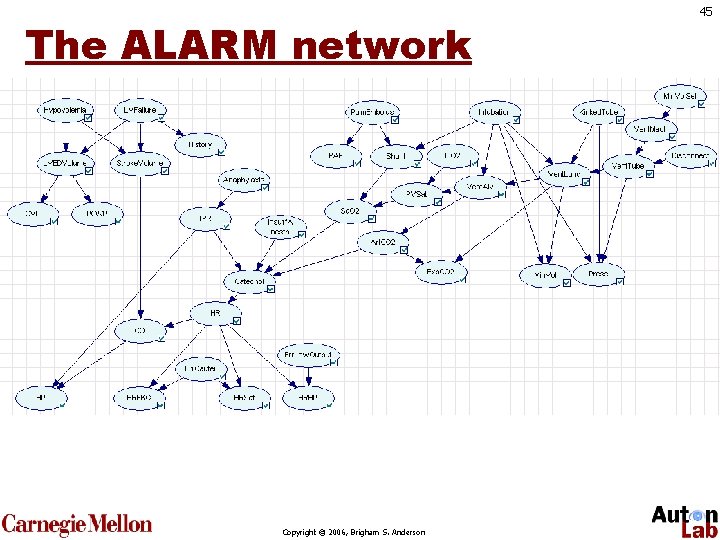

The ALARM network

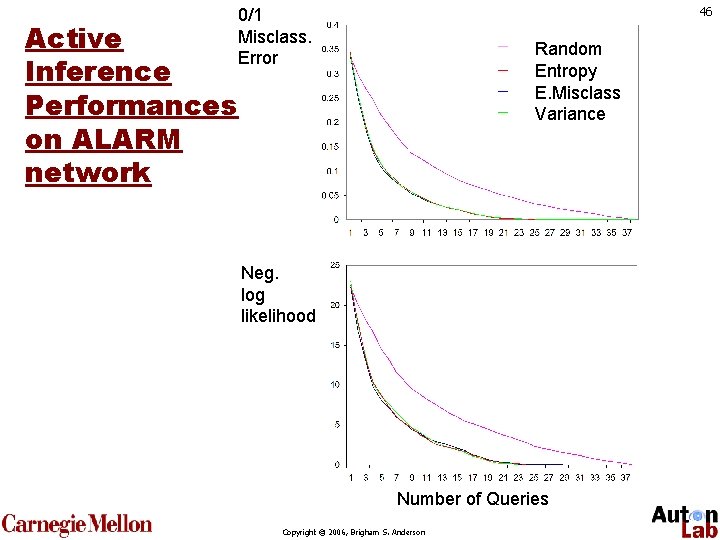

Active Inference Performances on ALARM network
Figure: 0/1 misclassification error and negative log likelihood vs. number of queries, for the query strategies Random, Entropy, E. Misclass, and Variance.

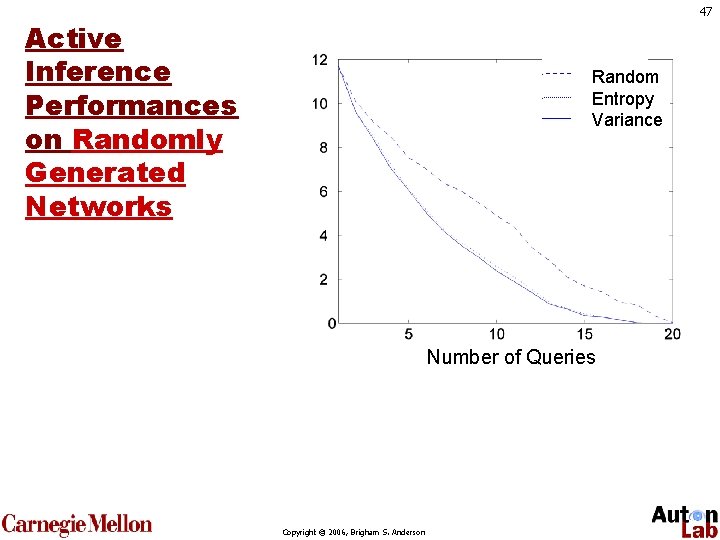

Active Inference Performances on Randomly Generated Networks
Figure: performance vs. number of queries for the query strategies Random, Entropy, and Variance.

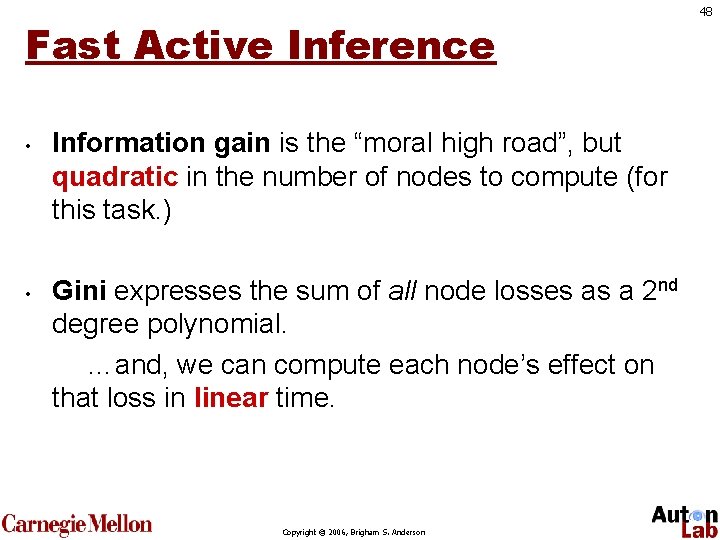

Fast Active Inference
• Information gain is the “moral high road”, but it is quadratic in the number of nodes to compute (for this task).
• Gini expresses the sum of all node losses as a 2nd-degree polynomial…
• …and we can compute each node’s effect on that loss in linear time.

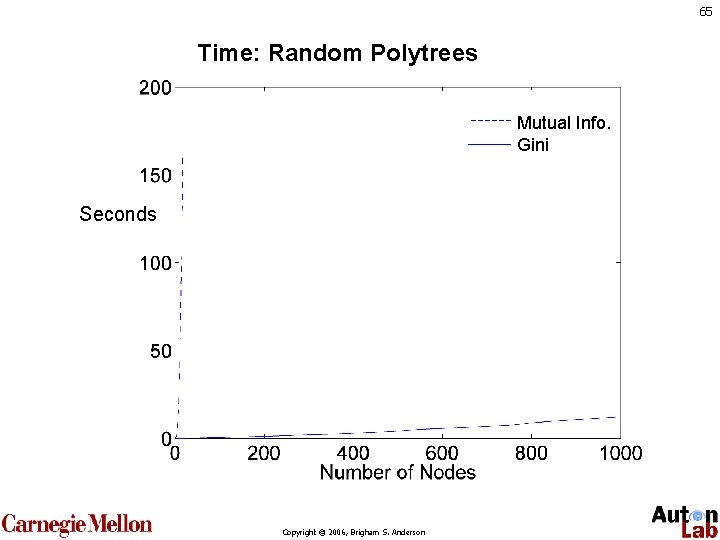

Time: Random Polytrees
Figure: running time in seconds, Mutual Info. vs. Gini.

Recent Research
• Hidden Markov Models (Anderson & Moore, ICML, 2005)
  • Active Viterbi
  • Active Baum-Welch
  • Active Forward-Backward
• General active inference (Anderson & Moore, NIPS, 2005)
• Active sequence selection for Hidden Markov Models (Anderson, Siddiqi, & Moore, 2006)

Future Research
Apply active inference techniques to make active learning faster and more targeted.
Diagram: Model node with label nodes Y1 … Y7 over features x1 … x7, split into a Training Set and a Test Set.

Summary
• Graphical models are a good tool for understanding active learning and inference
• Active learning is just an instance of active inference.
• Three popular types of active learning
  • Uncertainty sampling
  • QBC
  • Information Gain


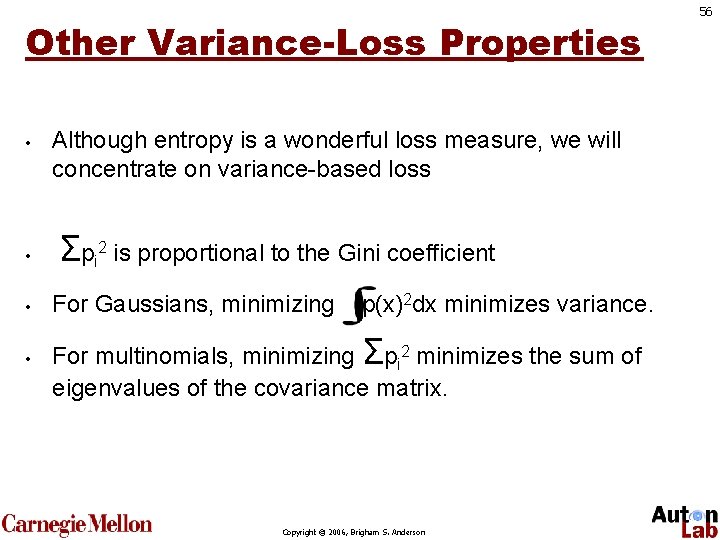

Other Variance-Loss Properties
• Although entropy is a wonderful loss measure, we will concentrate on variance-based loss
• $\sum_i p_i^2$ is closely related to the Gini index, $1 - \sum_i p_i^2$
• For Gaussians, minimizing $1 - \int p(x)^2\,dx$ minimizes variance.
• For multinomials, minimizing $1 - \sum_i p_i^2$ minimizes the sum of eigenvalues of the covariance matrix.

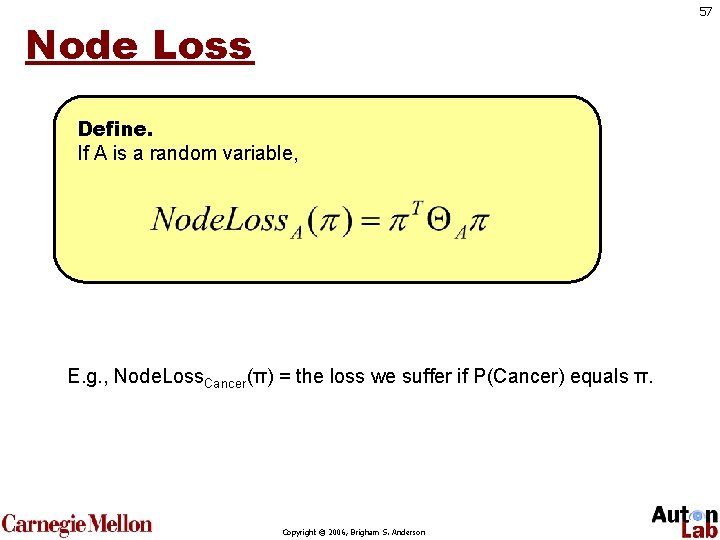

Node Loss
Define. If A is a random variable,
E.g., NodeLoss_Cancer(π) = the loss we suffer if P(Cancer) equals π.

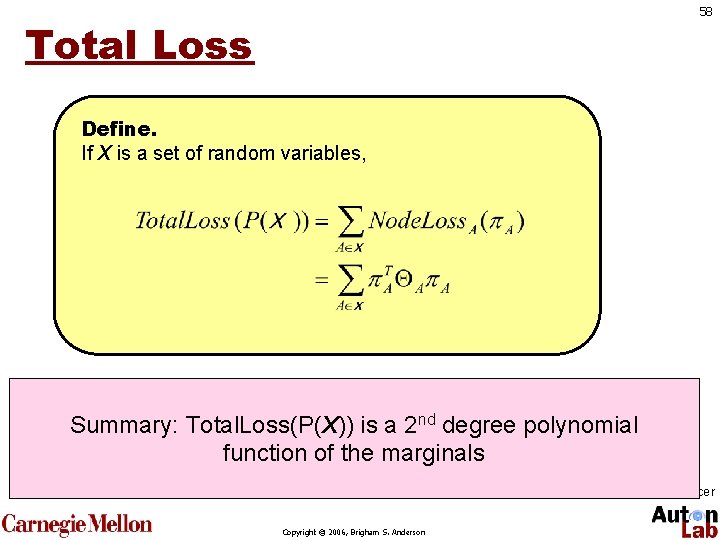

Total Loss
Define. If X is a set of random variables,
E.g., TotalLoss(P(Cancer, TestA, TestB)) = sum of all node losses given our current beliefs about all the nodes. In this case, the only node with nonzero Θ is Cancer. So, TotalLoss = NodeLoss_Cancer.
Summary: TotalLoss(P(X)) is a 2nd-degree polynomial function of the marginals.

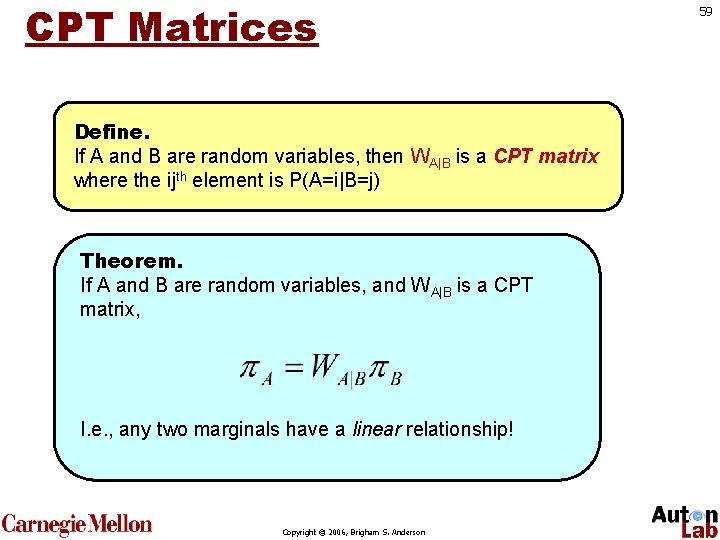

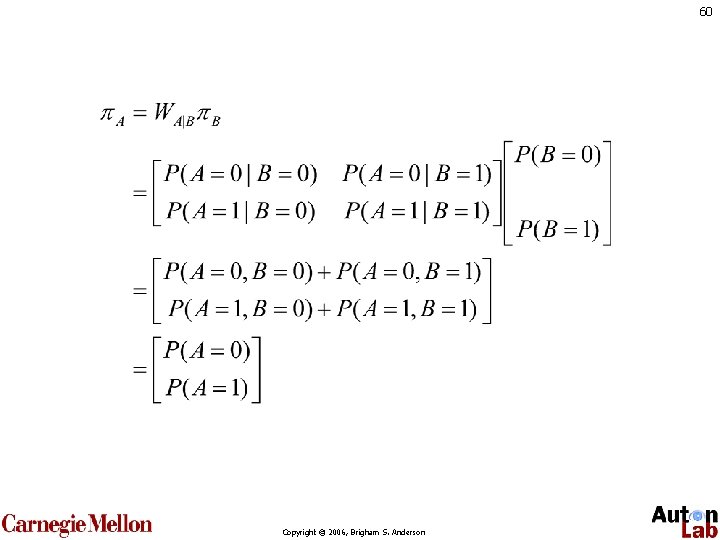

CPT Matrices
Define. If A and B are random variables, then $W_{A|B}$ is a CPT matrix whose ij-th element is P(A=i | B=j).
Theorem. If A and B are random variables, and $W_{A|B}$ is a CPT matrix, then $P(A) = W_{A|B}\,P(B)$.
I.e., any two marginals have a linear relationship!
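The linear relationship is the law of total probability written as a matrix-vector product, P(A=i) = Σ_j P(A=i | B=j) P(B=j). A small sketch, using the TestA and Cancer numbers from the example model earlier in the talk (the function name is hypothetical):

```python
def marginal_from_cpt(w_a_given_b, p_b):
    """Compute P(A) from the CPT matrix W[i][j] = P(A=i | B=j) and the marginal P(B)."""
    return [sum(w_ij * p_j for w_ij, p_j in zip(row, p_b)) for row in w_a_given_b]

# Rows index TestA (pos, neg); columns index Cancer (no, yes).
w_testa_given_cancer = [
    [0.50, 0.99],
    [0.50, 0.01],
]
p_cancer = [0.95, 0.05]

p_testa = marginal_from_cpt(w_testa_given_cancer, p_cancer)
print(p_testa)
```

Because each column of the CPT matrix sums to one, the result is again a probability vector.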


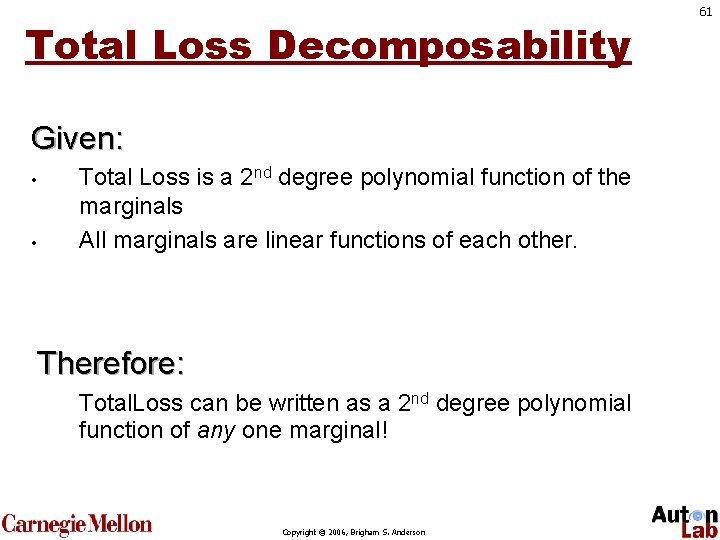

Total Loss Decomposability
Given:
• Total Loss is a 2nd-degree polynomial function of the marginals
• All marginals are linear functions of each other.
Therefore: TotalLoss can be written as a 2nd-degree polynomial function of any one marginal!

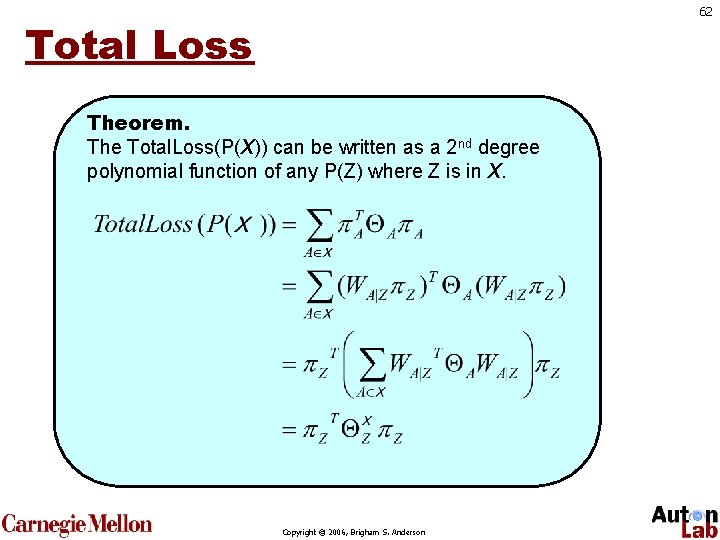

Total Loss
Theorem. TotalLoss(P(X)) can be written as a 2nd-degree polynomial function of any P(Z) where Z is in X.

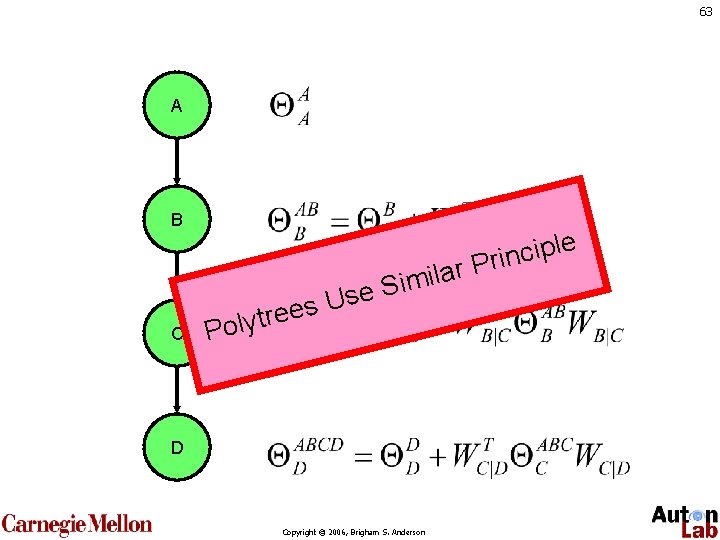

Polytrees Use Similar Principle
Nodes: A, B, C, D

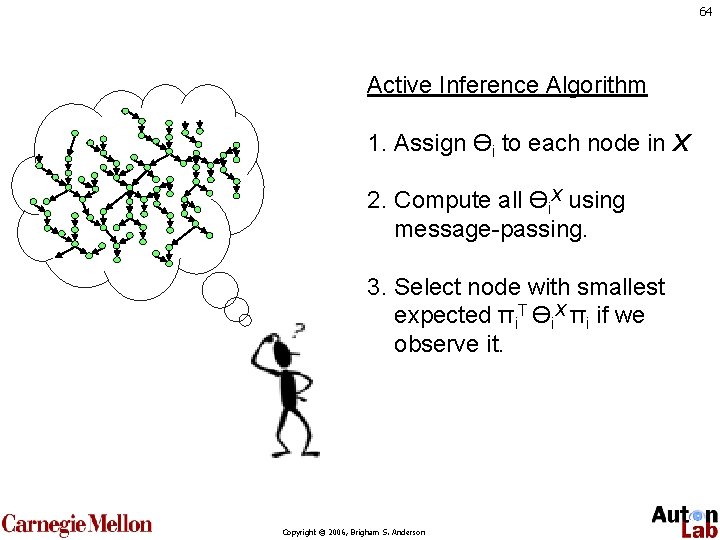

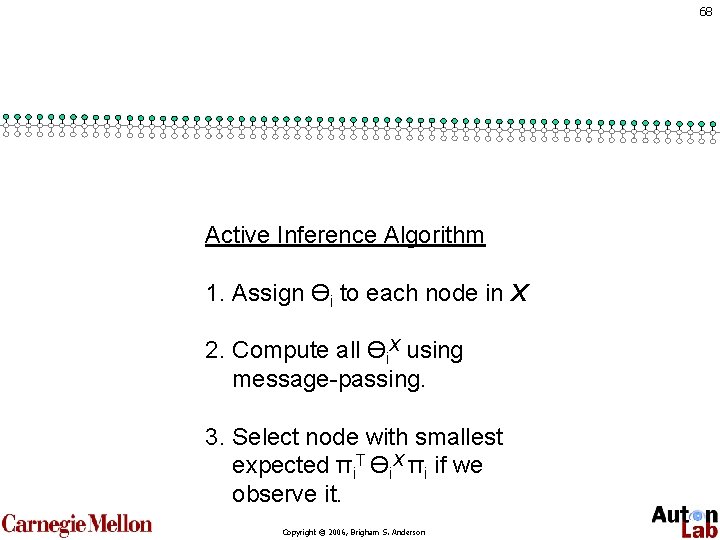

Active Inference Algorithm
1. Assign $\Theta_i$ to each node in X.
2. Compute all $\Theta_i^X$ using message-passing.
3. Select the node with the smallest expected $\pi_i^T \Theta_i^X \pi_i$ if we observe it.

Time: Random Polytrees
Figure: running time in seconds, Mutual Info. vs. Gini.

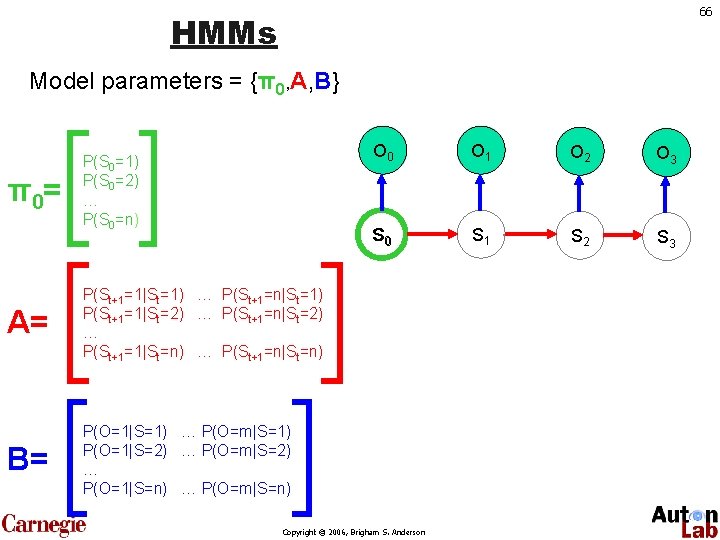

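HMMs
Model parameters = {π0, A, B}

$$\pi_0 = \begin{pmatrix} P(S_0=1) & P(S_0=2) & \cdots & P(S_0=n) \end{pmatrix}$$

$$A = \begin{pmatrix} P(S_{t+1}=1 \mid S_t=1) & \cdots & P(S_{t+1}=n \mid S_t=1) \\ P(S_{t+1}=1 \mid S_t=2) & \cdots & P(S_{t+1}=n \mid S_t=2) \\ \vdots & & \vdots \\ P(S_{t+1}=1 \mid S_t=n) & \cdots & P(S_{t+1}=n \mid S_t=n) \end{pmatrix}$$

$$B = \begin{pmatrix} P(O=1 \mid S=1) & \cdots & P(O=m \mid S=1) \\ P(O=1 \mid S=2) & \cdots & P(O=m \mid S=2) \\ \vdots & & \vdots \\ P(O=1 \mid S=n) & \cdots & P(O=m \mid S=n) \end{pmatrix}$$

Nodes: observations O0 … O3; hidden states S0 … S3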
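As a reminder of how these three parameters are used for inference, here is a minimal sketch of the standard forward-backward algorithm, which returns the posterior over each hidden state given all observations. It follows the layout above (row i of A is the distribution of the next state given state i, row i of B is the observation distribution in state i), with states and observations indexed from 0; the numeric parameters are hypothetical. It is the plain algorithm, not the active variant discussed in the talk.

```python
def forward_backward(pi0, A, B, obs):
    """Return, for each timestep t, the list P(S_t = i | all observations)."""
    n, T = len(pi0), len(obs)

    # Forward pass, normalized at every step to avoid underflow.
    alpha, prev = [], None
    for t, o in enumerate(obs):
        if t == 0:
            a = [pi0[i] * B[i][o] for i in range(n)]
        else:
            a = [B[j][o] * sum(prev[i] * A[i][j] for i in range(n)) for j in range(n)]
        z = sum(a)
        prev = [v / z for v in a]
        alpha.append(prev)

    # Backward pass, also normalized (the scale cancels in the final step).
    beta = [[1.0] * n for _ in range(T)]
    for t in range(T - 2, -1, -1):
        b = [sum(A[i][j] * B[j][obs[t + 1]] * beta[t + 1][j] for j in range(n))
             for i in range(n)]
        z = sum(b)
        beta[t] = [v / z for v in b]

    # Combine and renormalize to get the state posteriors.
    gammas = []
    for t in range(T):
        g = [alpha[t][i] * beta[t][i] for i in range(n)]
        z = sum(g)
        gammas.append([v / z for v in g])
    return gammas

# Hypothetical two-state, two-symbol HMM.
pi0 = [0.5, 0.5]
A = [[0.9, 0.1], [0.1, 0.9]]
B = [[0.8, 0.2], [0.2, 0.8]]
obs = [0, 0, 1]
gammas = forward_backward(pi0, A, B, obs)
print(gammas)
```

Each returned row is a marginal over one hidden state, which is the kind of quantity a loss such as entropy or Gini can then be applied to.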

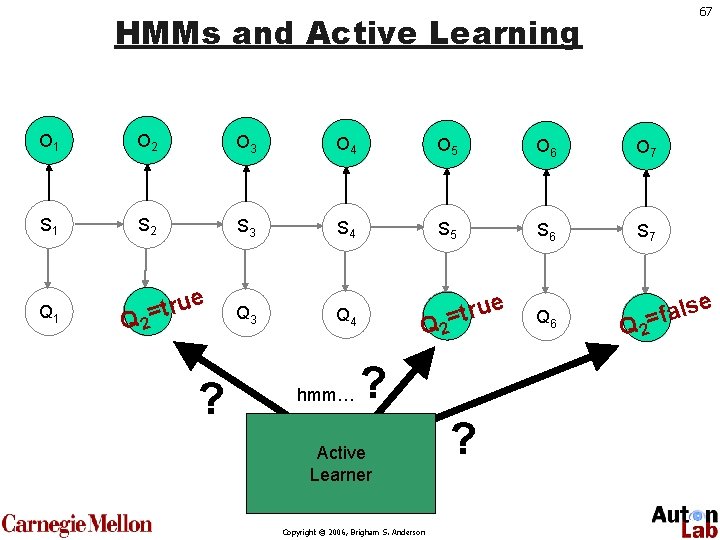

HMMs and Active Learning
Figure: an HMM with observations O1 … O7 and hidden states S1 … S7, each paired with a query node Q1 … Q7. Q2 = true, Q5 = true, and Q7 = false are shown as answered; the Active Learner (“hmm…”) is deciding which query to ask.

Active Inference Algorithm
1. Assign $\Theta_i$ to each node in X.
2. Compute all $\Theta_i^X$ using message-passing.
3. Select the node with the smallest expected $\pi_i^T \Theta_i^X \pi_i$ if we observe it.

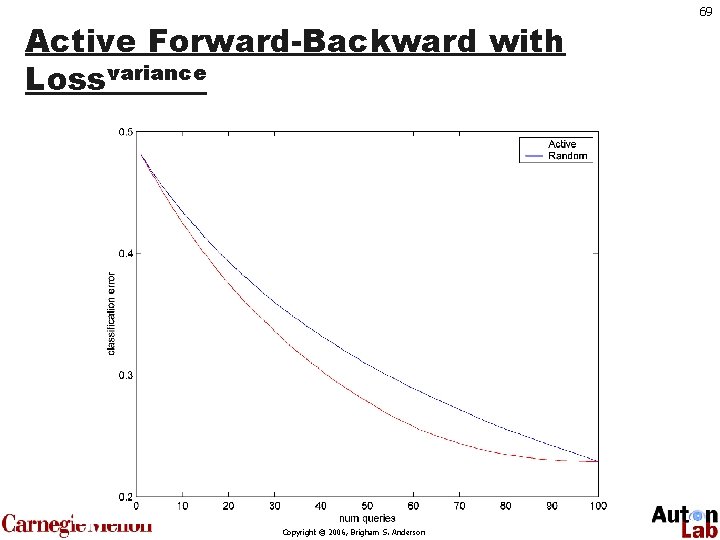

Active Forward-Backward with Loss_variance

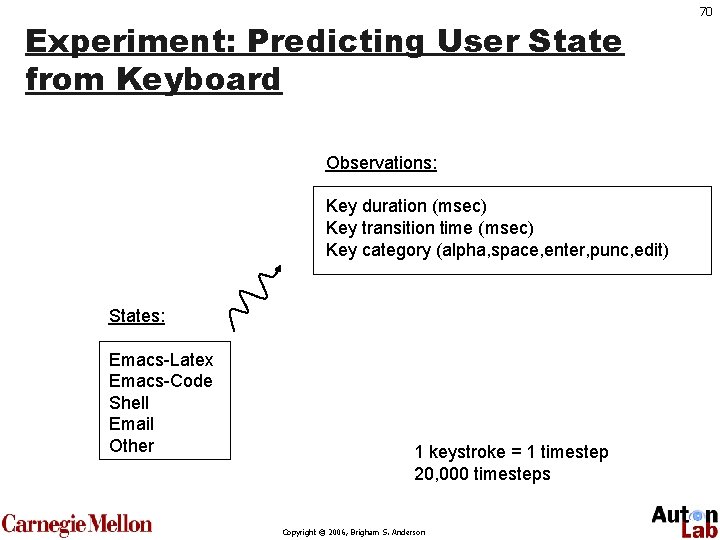

Experiment: Predicting User State from Keyboard
Observations:
• Key duration (msec)
• Key transition time (msec)
• Key category (alpha, space, enter, punc, edit)
States:
• Emacs-Latex
• Emacs-Code
• Shell
• Email
• Other
1 keystroke = 1 timestep; 20,000 timesteps

Results
Figure: Random sampling vs. Uncertainty sampling vs. Active Forward-Back.

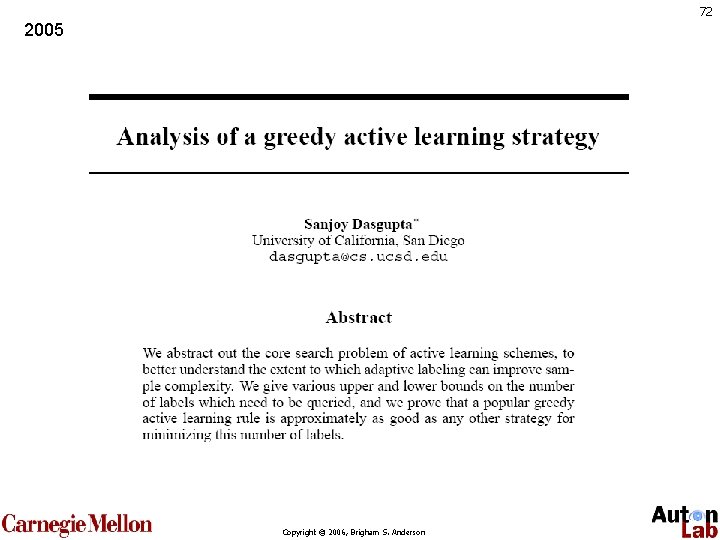

2005

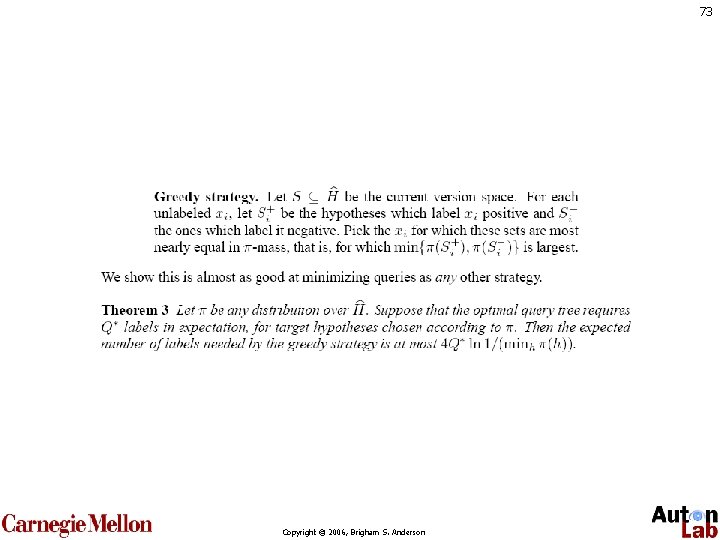


1997

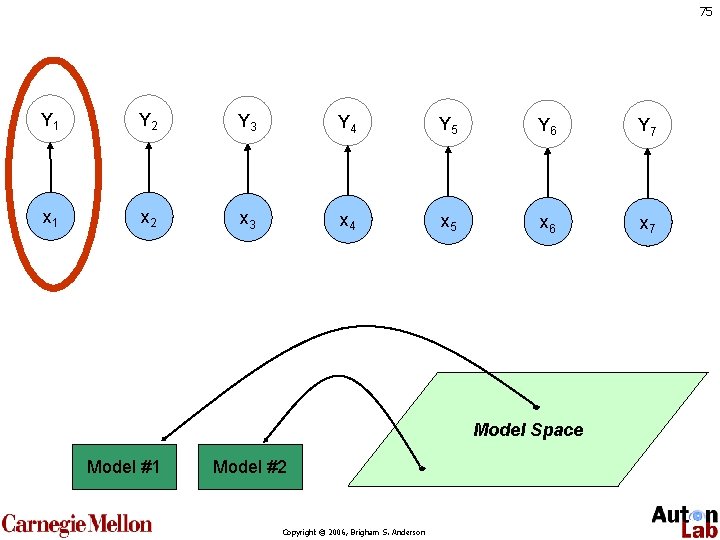

Figure: labels Y1 … Y7 over features x1 … x7; Model Space containing Model #1 and Model #2.

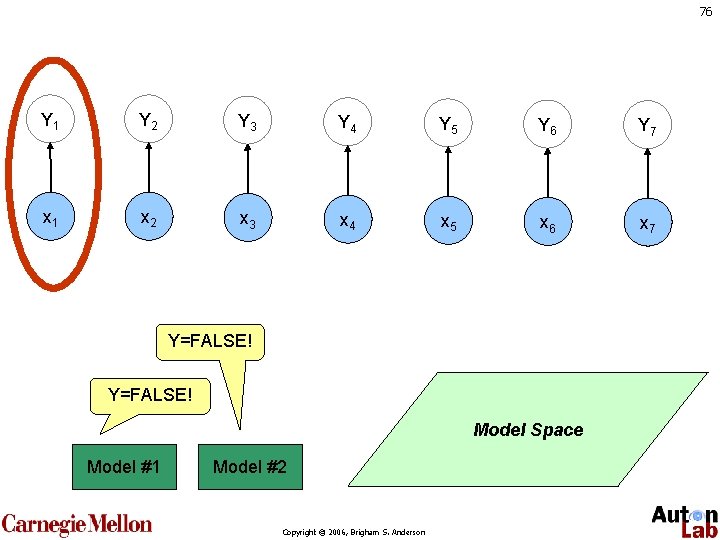

Figure: labels Y1 … Y7 over features x1 … x7; Model Space containing Model #1 and Model #2. Callout: Y=FALSE!

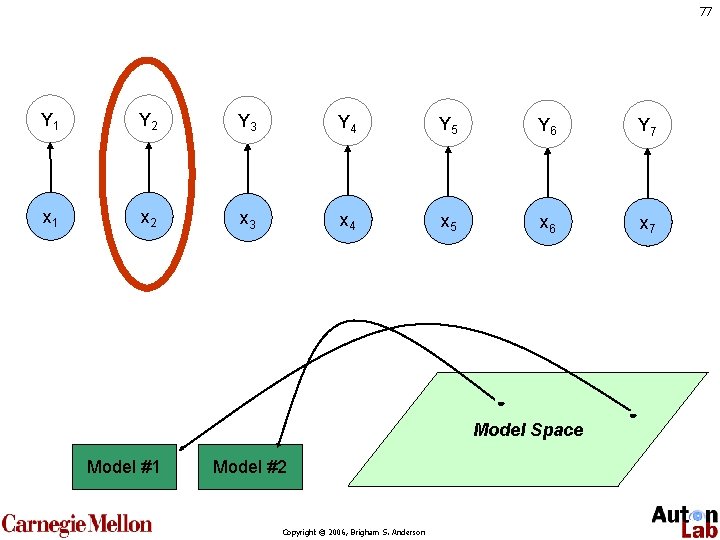

Figure: labels Y1 … Y7 over features x1 … x7; Model Space containing Model #1 and Model #2.

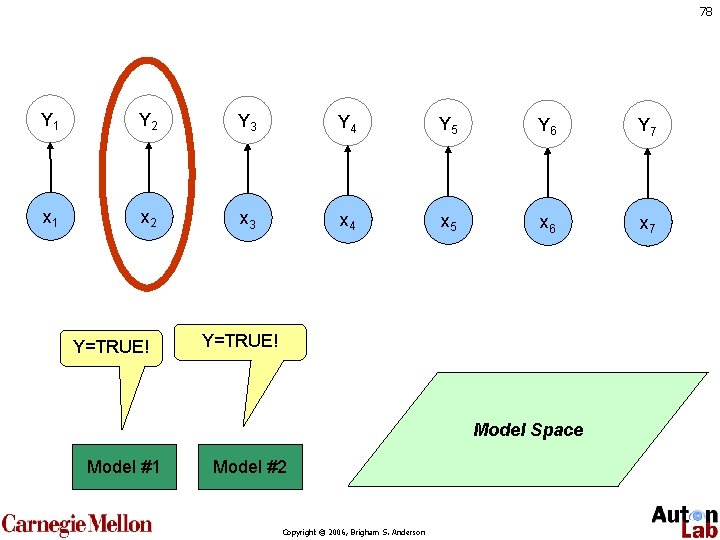

Figure: labels Y1 … Y7 over features x1 … x7; Model Space containing Model #1 and Model #2. Callout: Y=TRUE!

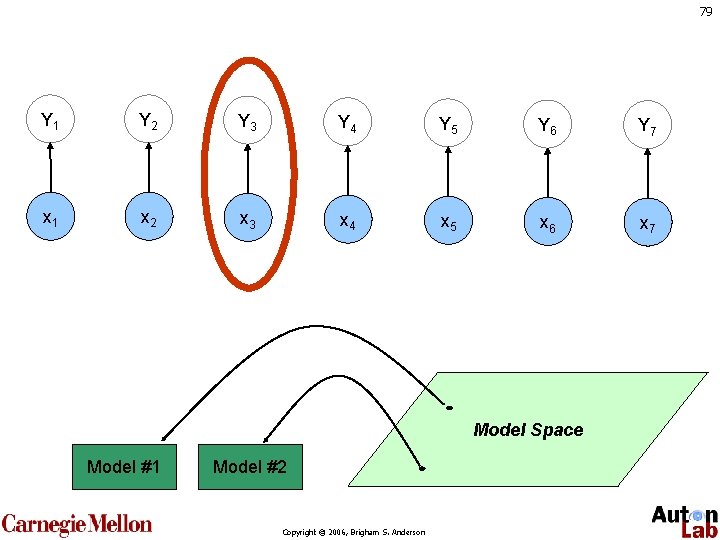

Figure: labels Y1 … Y7 over features x1 … x7; Model Space containing Model #1 and Model #2.

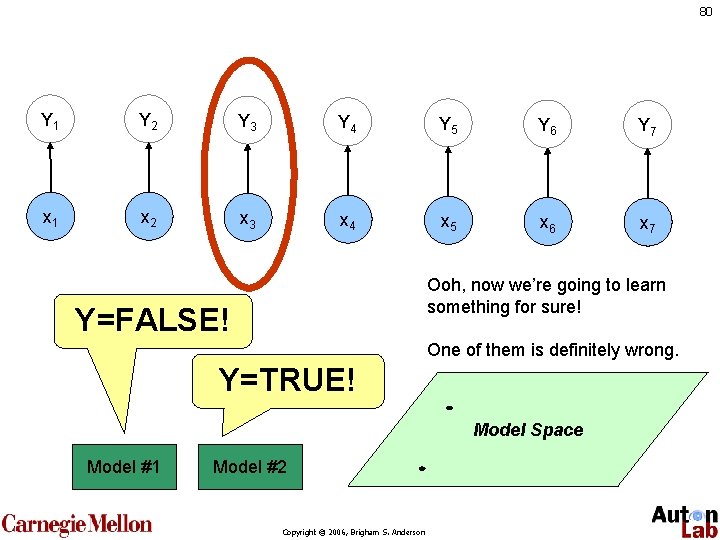

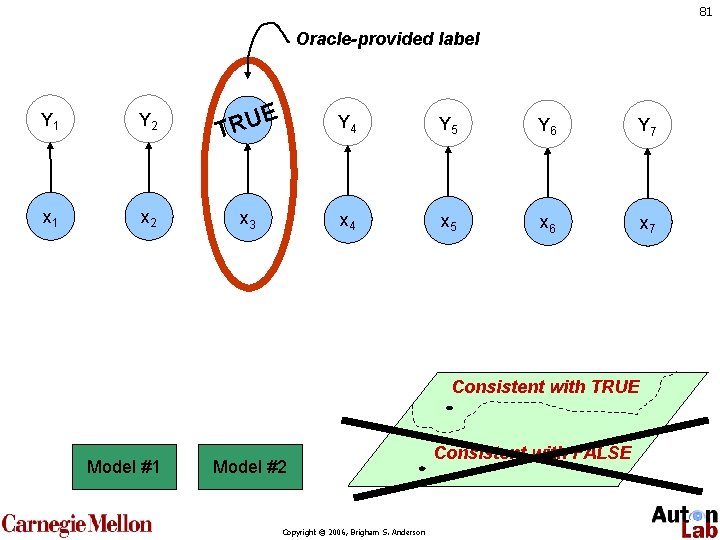

Figure: labels Y1 … Y7 over features x1 … x7; Model Space containing Model #1 and Model #2. Callouts: Y=FALSE! and Y=TRUE!
Ooh, now we’re going to learn something for sure! One of them is definitely wrong.

Oracle-provided label: TRUE
Figure: labels Y1 … Y7 over features x1 … x7; the model space is split into a region consistent with TRUE and a region consistent with FALSE, with Model #1 and Model #2 marked.


Review: Probabilistic Models

Probabilities
• P(A) is “the probability that A is true”
• How can we represent the function P(A)? How about P(A, B, C)?
• For discrete variables, the simplest form is a lookup table called a “Joint Probability Table” (JPT)

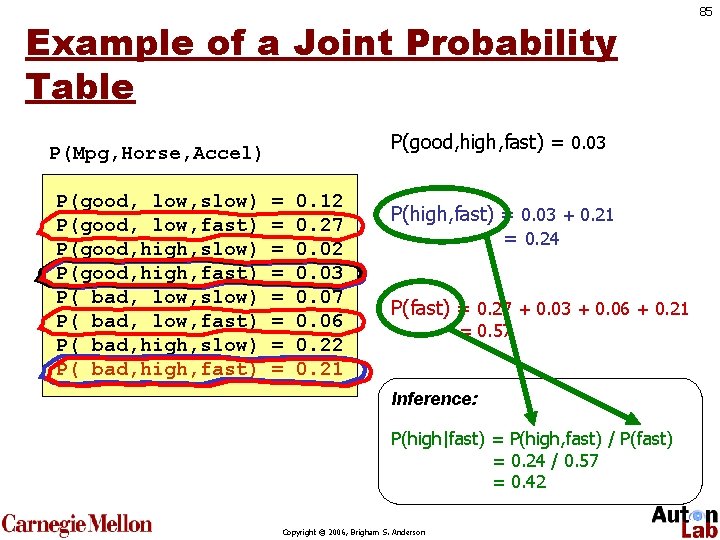

Example of a Joint Probability Table

| Mpg | Horse | Accel | P(Mpg, Horse, Accel) |
|-----|-------|-------|----------------------|
| good | low | slow | 0.12 |
| good | low | fast | 0.27 |
| good | high | slow | 0.02 |
| good | high | fast | 0.03 |
| bad | low | slow | 0.07 |
| bad | low | fast | 0.06 |
| bad | high | slow | 0.22 |
| bad | high | fast | 0.21 |

P(good, high, fast) = 0.03
P(high, fast) = 0.03 + 0.21 = 0.24
P(fast) = 0.27 + 0.03 + 0.06 + 0.21 = 0.57
Inference: P(high|fast) = P(high, fast) / P(fast) = 0.24 / 0.57 = 0.42
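The same inference can be reproduced mechanically: marginals are sums over matching JPT rows, and a conditional is a ratio of two marginals. The sketch below uses the table values from this slide; the helper name `marginal` and the variable names are hypothetical.

```python
VARIABLES = ("mpg", "horse", "accel")

# Joint probability table P(Mpg, Horse, Accel) from the slide.
jpt = {
    ("good", "low", "slow"): 0.12,
    ("good", "low", "fast"): 0.27,
    ("good", "high", "slow"): 0.02,
    ("good", "high", "fast"): 0.03,
    ("bad", "low", "slow"): 0.07,
    ("bad", "low", "fast"): 0.06,
    ("bad", "high", "slow"): 0.22,
    ("bad", "high", "fast"): 0.21,
}

def marginal(table, **fixed):
    """Sum the joint entries consistent with the given variable=value assignments."""
    total = 0.0
    for assignment, p in table.items():
        row = dict(zip(VARIABLES, assignment))
        if all(row[name] == value for name, value in fixed.items()):
            total += p
    return total

p_high_fast = marginal(jpt, horse="high", accel="fast")
p_fast = marginal(jpt, accel="fast")
p_high_given_fast = p_high_fast / p_fast
print(round(p_high_given_fast, 2))
```

Any query over these three variables can be answered this way, which is also why the approach stops scaling: the table has one row per joint assignment.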

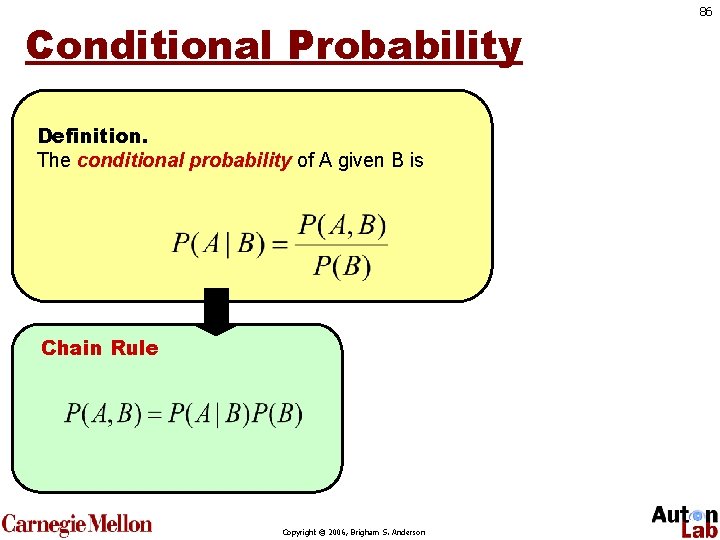

Conditional Probability
Definition. The conditional probability of A given B is

$$P(A \mid B) = \frac{P(A, B)}{P(B)}$$

Chain Rule

$$P(A, B) = P(B)\,P(A \mid B)$$

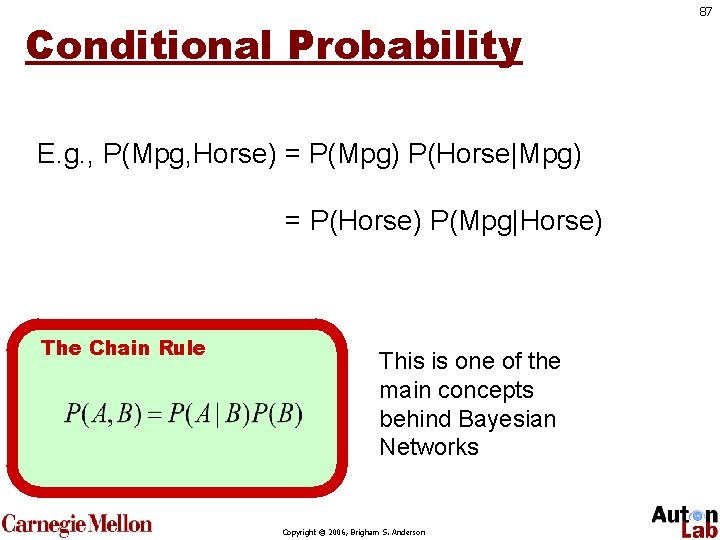

Conditional Probability
The Chain Rule
E.g., P(Mpg, Horse) = P(Mpg) P(Horse|Mpg) = P(Horse) P(Mpg|Horse)
This is one of the main concepts behind Bayesian Networks.

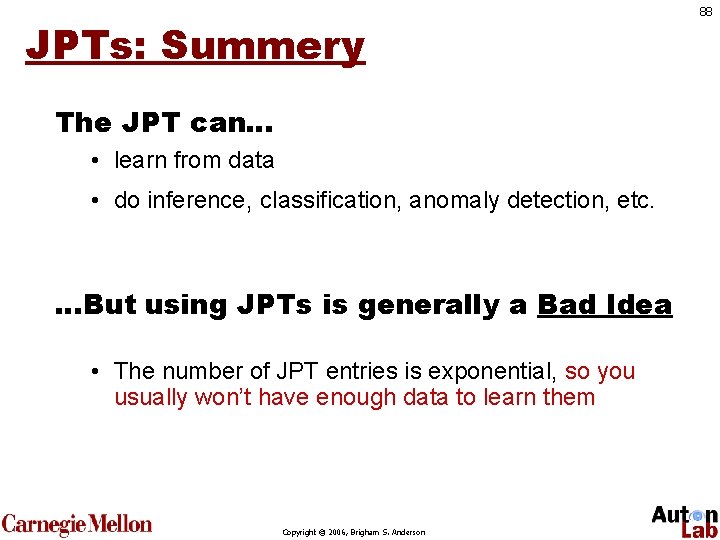

JPTs: Summary
The JPT can…
• learn from data
• do inference, classification, anomaly detection, etc.
…But using JPTs is generally a Bad Idea
• The number of JPT entries is exponential in the number of variables, so you usually won’t have enough data to learn them

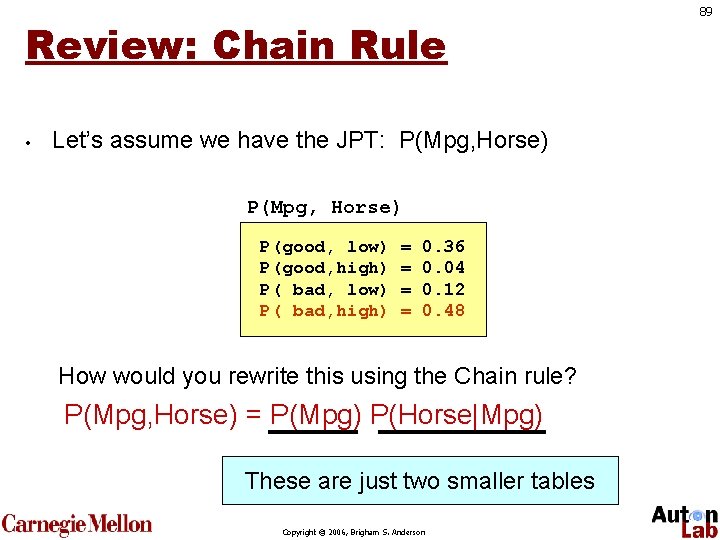

Review: Chain Rule
• Let’s assume we have the JPT P(Mpg, Horse):
  • P(good, low) = 0.36
  • P(good, high) = 0.04
  • P(bad, low) = 0.12
  • P(bad, high) = 0.48
How would you rewrite this using the Chain rule?
P(Mpg, Horse) = P(Mpg) P(Horse|Mpg)
These are just two smaller tables.

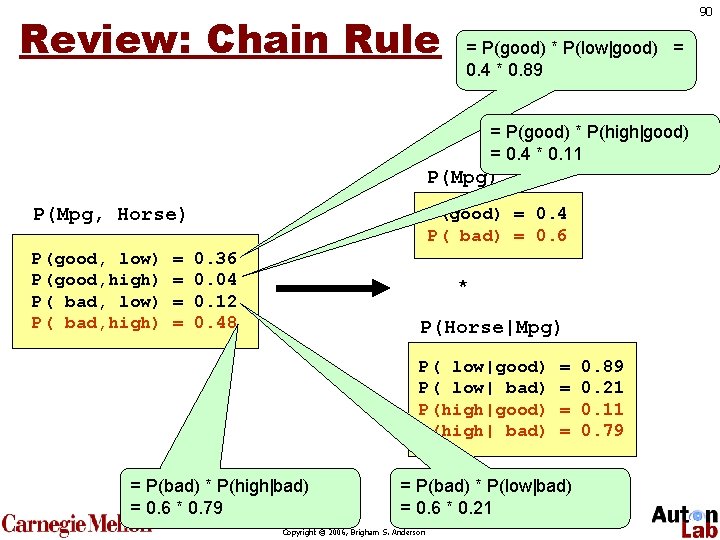

Review: Chain Rule
P(Mpg, Horse) = P(Mpg) * P(Horse|Mpg)
P(Mpg, Horse):
• P(good, low) = 0.36
• P(good, high) = 0.04
• P(bad, low) = 0.12
• P(bad, high) = 0.48
P(Mpg):
• P(good) = 0.4
• P(bad) = 0.6
P(Horse|Mpg):
• P(low|good) = 0.89
• P(low|bad) = 0.21
• P(high|good) = 0.11
• P(high|bad) = 0.79
Each joint entry is a product of the two smaller tables:
• P(good, low) = P(good) * P(low|good) = 0.4 * 0.89
• P(good, high) = P(good) * P(high|good) = 0.4 * 0.11
• P(bad, low) = P(bad) * P(low|bad) = 0.6 * 0.21
• P(bad, high) = P(bad) * P(high|bad) = 0.6 * 0.79
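The factorization can be checked numerically by deriving both smaller tables from the JPT and multiplying them back together. The sketch below uses the joint values from the slide; the variable names are hypothetical. Computed exactly from the listed joint, the conditionals come out as 0.9, 0.1, 0.2, and 0.8, which differ slightly from the 0.89, 0.11, 0.21, and 0.79 printed on the slide.

```python
# Joint table P(Mpg, Horse) from the slide.
joint = {
    ("good", "low"): 0.36,
    ("good", "high"): 0.04,
    ("bad", "low"): 0.12,
    ("bad", "high"): 0.48,
}

# First factor: the marginal P(Mpg), obtained by summing out Horse.
p_mpg = {}
for (mpg, _horse), p in joint.items():
    p_mpg[mpg] = p_mpg.get(mpg, 0.0) + p

# Second factor: the conditional P(Horse | Mpg) = P(Mpg, Horse) / P(Mpg).
p_horse_given_mpg = {(horse, mpg): p / p_mpg[mpg] for (mpg, horse), p in joint.items()}

# Chain rule: multiplying the two factors recovers the joint.
rebuilt = {
    (mpg, horse): p_mpg[mpg] * p_horse_given_mpg[(horse, mpg)]
    for (mpg, horse) in joint
}
print(p_mpg)
print(p_horse_given_mpg)
```

The two factors are exactly the tables attached to the Mpg and Horse nodes when the joint is drawn as a two-node Bayes net.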

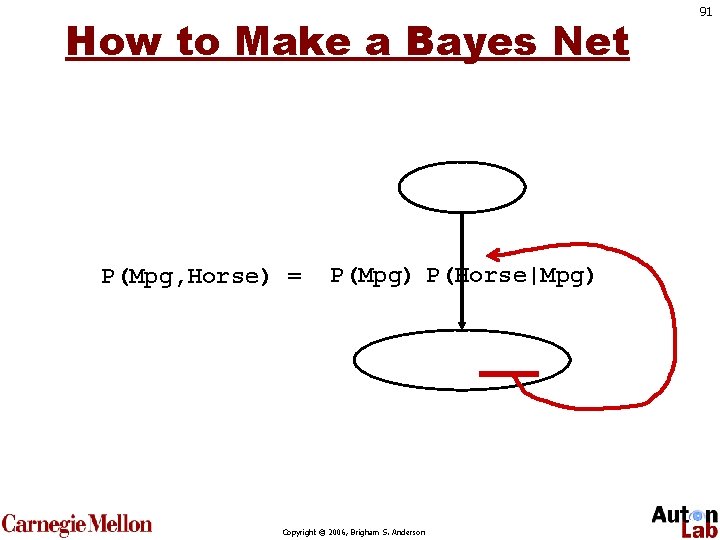

How to Make a Bayes Net
P(Mpg, Horse) = P(Mpg) P(Horse|Mpg)

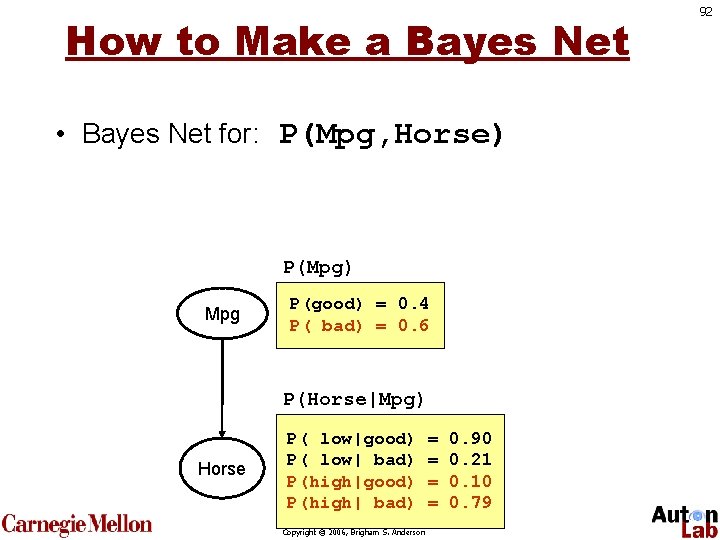

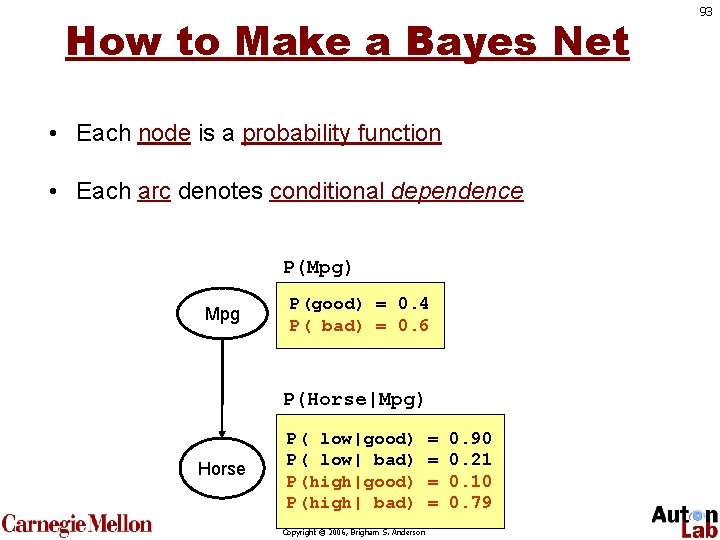

How to Make a Bayes Net
• Bayes Net for: P(Mpg, Horse)
Node Mpg, P(Mpg):
• P(good) = 0.4
• P(bad) = 0.6
Node Horse, P(Horse|Mpg):
• P(low|good) = 0.90
• P(low|bad) = 0.21
• P(high|good) = 0.10
• P(high|bad) = 0.79

How to Make a Bayes Net
• Each node is a probability function
• Each arc denotes conditional dependence
Node Mpg, P(Mpg):
• P(good) = 0.4
• P(bad) = 0.6
Node Horse, P(Horse|Mpg):
• P(low|good) = 0.90
• P(low|bad) = 0.21
• P(high|good) = 0.10
• P(high|bad) = 0.79

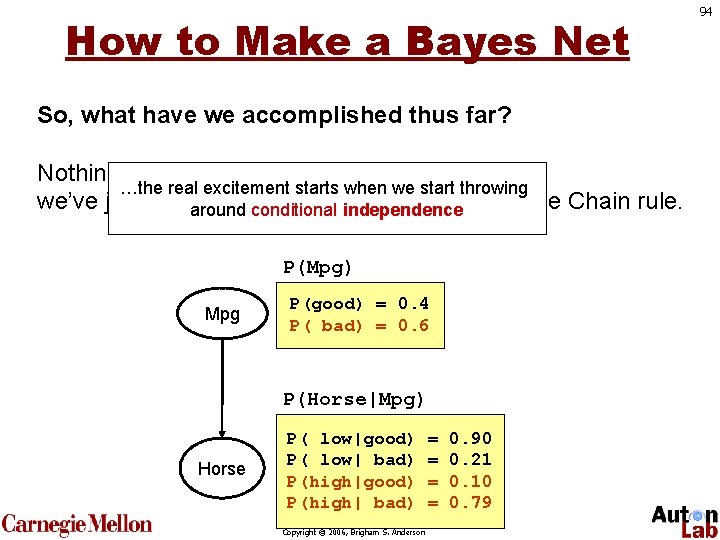

How to Make a Bayes Net
So, what have we accomplished thus far? Nothing; we’ve just “Bayes Net-ified” P(Mpg, Horse) via the Chain rule.
…the real excitement starts when we start throwing around conditional independence.
Node Mpg, P(Mpg):
• P(good) = 0.4
• P(bad) = 0.6
Node Horse, P(Horse|Mpg):
• P(low|good) = 0.90
• P(low|bad) = 0.21
• P(high|good) = 0.10
• P(high|bad) = 0.79

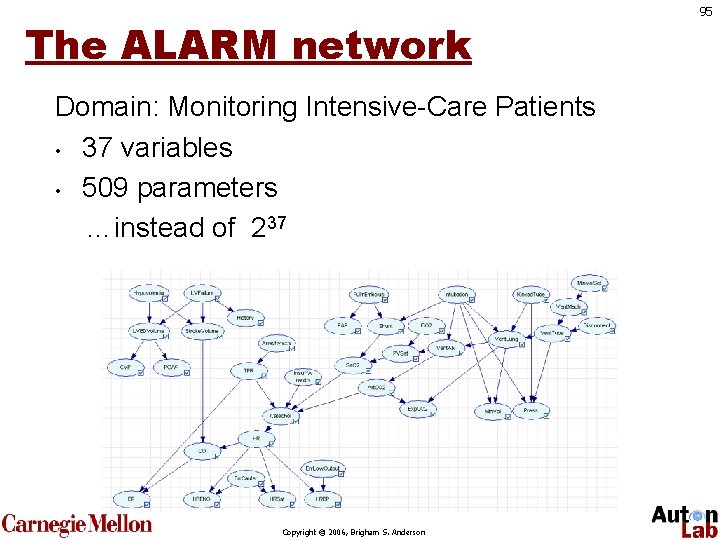

The ALARM network
Domain: Monitoring Intensive-Care Patients
• 37 variables
• 509 parameters …instead of 2^37

Bayes Nets: Summary
• Bayes nets factor the JPT using the chain rule and conditional independence.
• Just like the JPT, a Bayes net can do inference, anomaly detection, classification, regression, clustering, feature selection, etc.
