Reinforcement Learning: Introduction & Passive Learning

Alan Fern

Based in part on slides by Daniel Weld

So far…

- Given an MDP model, we know how to find optimal policies (for moderately sized MDPs)
    - Value Iteration or Policy Iteration
- Given just a simulator of an MDP, we know how to select actions
    - Monte-Carlo Planning
- What if we don't have a model or simulator?
    - Like an infant…
    - Like in many real-world applications
    - All we can do is wander around the world observing what happens, getting rewarded and punished
- Enter reinforcement learning

Reinforcement Learning

- No knowledge of the environment
    - Can only act in the world and observe states and rewards
- Many factors make RL difficult:
    - Actions have non-deterministic effects
        - Which are initially unknown
    - Rewards / punishments are infrequent
        - Often at the end of long sequences of actions
        - How do we determine which action(s) were really responsible for a reward or punishment? (credit assignment)
    - The world is large and complex
- Nevertheless, the learner must decide what actions to take
    - We will assume the world behaves as an MDP

Pure Reinforcement Learning vs. Monte-Carlo Planning

- In pure reinforcement learning:
    - the agent begins with no knowledge
    - wanders around the world observing outcomes
- In Monte-Carlo planning:
    - the agent begins with no declarative knowledge of the world
    - has an interface to a world simulator that allows observing the outcome of taking any action in any state
- The simulator gives the agent the ability to "teleport" to any state, at any time, and then apply any action
- A pure RL agent does not have the ability to teleport
    - Can only observe the outcomes that it happens to reach

Pure Reinforcement Learning vs. Monte-Carlo Planning

- MC planning is sometimes called RL with a "strong simulator"
    - i.e. a simulator where we can set the current state to any state at any moment
    - Here we often focus on computing an action for a start state
- Pure RL is sometimes called RL with a "weak simulator"
    - i.e. a simulator where we cannot set the state
- A strong simulator can emulate a weak simulator
    - So pure RL can be used in the MC planning framework
    - But not vice versa
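The claim that a strong simulator can emulate a weak one can be illustrated with a minimal sketch. The class names `StrongSimulator` and `WeakSimulator`, the `sample`/`step` methods, and the toy counter dynamics are all hypothetical, chosen only for illustration; they are not taken from the slides.

```python
import random


class StrongSimulator:
    """Hypothetical strong simulator: sample the outcome of any action in ANY state."""

    def __init__(self, transition_fn, seed=0):
        # transition_fn(state, action, rng) -> (next_state, reward); assumed interface
        self.transition_fn = transition_fn
        self.rng = random.Random(seed)

    def sample(self, state, action):
        return self.transition_fn(state, action, self.rng)


class WeakSimulator:
    """Wraps a strong simulator but hides the ability to set the state."""

    def __init__(self, strong, start_state):
        self._strong = strong
        self._state = start_state

    def step(self, action):
        # The caller can only act from wherever the agent currently is.
        next_state, reward = self._strong.sample(self._state, action)
        self._state = next_state
        return next_state, reward


def toy_dynamics(state, action, rng):
    """Toy deterministic counter world: reward 1 on reaching state 3."""
    next_state = state + action
    return next_state, float(next_state == 3)


strong = StrongSimulator(toy_dynamics)
weak = WeakSimulator(strong, start_state=0)
first = weak.step(1)           # moves 0 -> 1
second = weak.step(2)          # moves 1 -> 3 and collects the reward
teleport = strong.sample(10, 1)  # only the strong simulator can query state 10
```

The reverse wrapping is not possible: nothing in `WeakSimulator.step` lets a caller choose the state an action is applied in, which is why the emulation only goes one way.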

Passive vs. Active Learning

- Passive learning
    - The agent has a fixed policy and tries to learn the utilities of states by observing the world go by
    - Analogous to policy evaluation
    - Often serves as a component of active learning algorithms
    - Often inspires active learning algorithms
- Active learning
    - The agent attempts to find an optimal (or at least good) policy by acting in the world
    - Analogous to solving the underlying MDP, but without first being given the MDP model

Model-Based vs. Model-Free RL

- Model-based approach to RL:
    - learn the MDP model, or an approximation of it
    - use it for policy evaluation or to find the optimal policy
- Model-free approach to RL:
    - derive the optimal policy without explicitly learning the model
    - useful when the model is difficult to represent and/or learn
- We will consider both types of approaches

Small vs. Huge MDPs

- We will first cover RL methods for small MDPs
    - MDPs where the number of states and actions is reasonably small
    - These algorithms will inspire more advanced methods
- Later we will cover algorithms for huge MDPs
    - Function Approximation Methods
    - Policy Gradient Methods
    - Least-Squares Policy Iteration

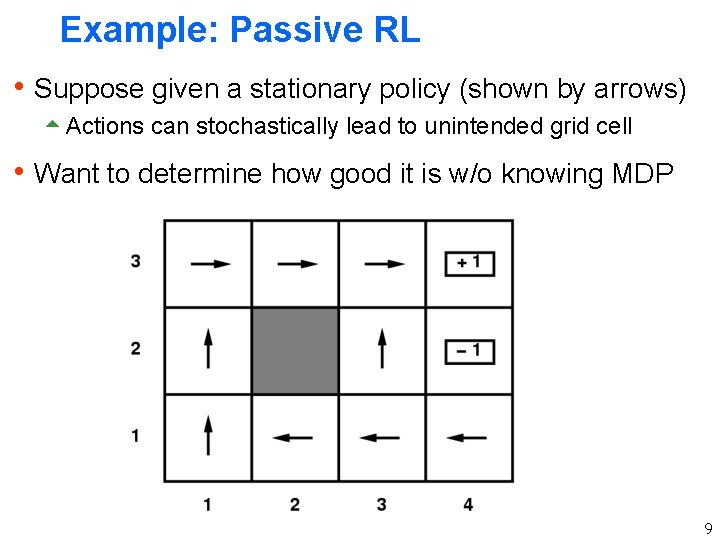

Example: Passive RL

- Suppose we are given a stationary policy (shown by arrows)
    - Actions can stochastically lead to an unintended grid cell
- We want to determine how good the policy is without knowing the MDP

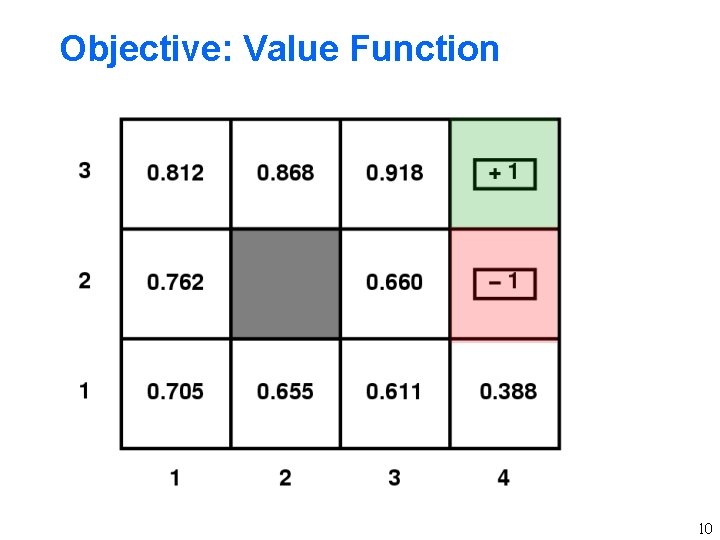

Objective: Value Function
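The formula for this slide survives only as an image. As a reminder, and assuming the standard discounted, state-based reward formulation (the symbols $R$, $\gamma$, and $s_t$ are assumptions here, not recovered from the slide), the value of a fixed policy $\pi$ is usually written as:

```latex
V^{\pi}(s) \;=\; \mathbb{E}\!\left[\, \sum_{t=0}^{\infty} \gamma^{t} R(s_t) \;\middle|\; s_0 = s,\ \pi \right]
```

Here $s_t$ is the state reached after following $\pi$ for $t$ steps from $s$, and $\gamma \in [0, 1]$ is the discount factor.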

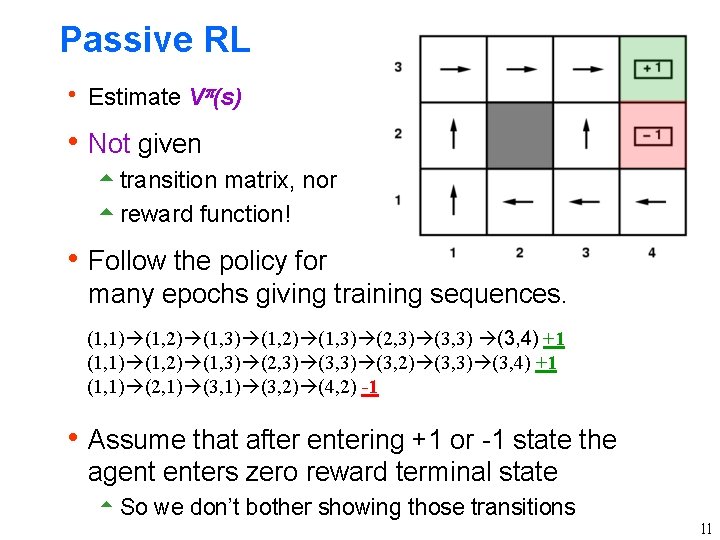

Passive RL

- Estimate $V^\pi(s)$
- Not given
    - the transition matrix, nor
    - the reward function!
- Follow the policy for many epochs, giving training sequences:
    - (1,1) → (1,2) → (1,3) → (2,3) → (3,4) +1
    - (1,1) → (1,2) → (1,3) → (2,3) → (3,2) → (3,3) → (3,4) +1
    - (1,1) → (2,1) → (3,2) → (4,2) -1
- Assume that after entering the +1 or -1 state the agent enters a zero-reward terminal state
    - So we don't bother showing those transitions

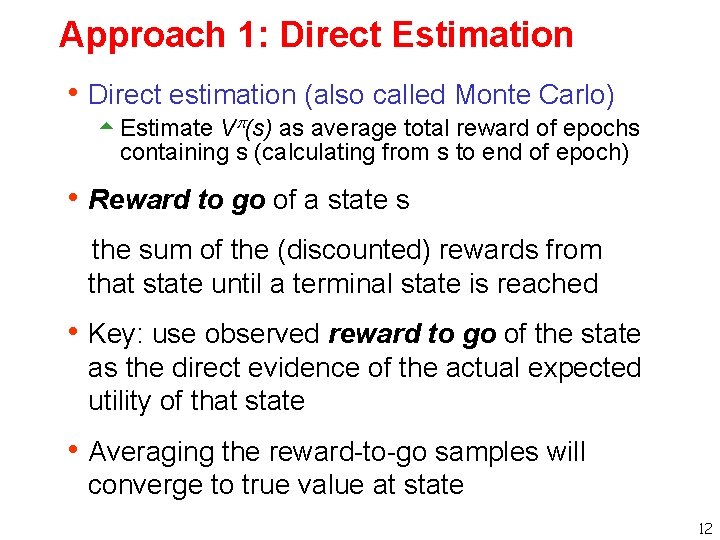

Approach 1: Direct Estimation

- Direct estimation (also called Monte Carlo)
    - Estimate $V^\pi(s)$ as the average total reward of epochs containing s (calculated from s to the end of the epoch)
- The reward-to-go of a state s is the sum of the (discounted) rewards from that state until a terminal state is reached
- Key: use the observed reward-to-go of the state as direct evidence of the actual expected utility of that state
- Averaging the reward-to-go samples will converge to the true value at the state
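Direct estimation can be sketched in a few lines. The epochs below are the three training sequences from the Passive RL slide, copied as they appear there; the zero reward in non-terminal states and the discount factor of 1 are assumptions made for this illustration, and `direct_estimate` is a hypothetical helper name.

```python
from collections import defaultdict

# Training epochs: (visited states, reward received in the final state).
# Assumption: every non-final state gives reward 0.
EPOCHS = [
    ([(1, 1), (1, 2), (1, 3), (2, 3), (3, 4)], +1.0),
    ([(1, 1), (1, 2), (1, 3), (2, 3), (3, 2), (3, 3), (3, 4)], +1.0),
    ([(1, 1), (2, 1), (3, 2), (4, 2)], -1.0),
]


def direct_estimate(epochs, gamma=1.0):
    """Average the observed reward-to-go over every visit to each state."""
    totals = defaultdict(float)
    counts = defaultdict(int)
    for states, final_reward in epochs:
        rewards = [0.0] * (len(states) - 1) + [final_reward]
        reward_to_go = 0.0
        # Walk the epoch backwards so reward-to-go accumulates in one pass.
        for state, reward in zip(reversed(states), reversed(rewards)):
            reward_to_go = reward + gamma * reward_to_go
            totals[state] += reward_to_go
            counts[state] += 1
    return {state: totals[state] / counts[state] for state in totals}


values = direct_estimate(EPOCHS)
```

Under these assumptions, state (1,1) appears in all three epochs with reward-to-go +1, +1, and -1, so its estimate is 1/3, while (3,2) is seen once on a +1 path and once on a -1 path and averages to 0.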

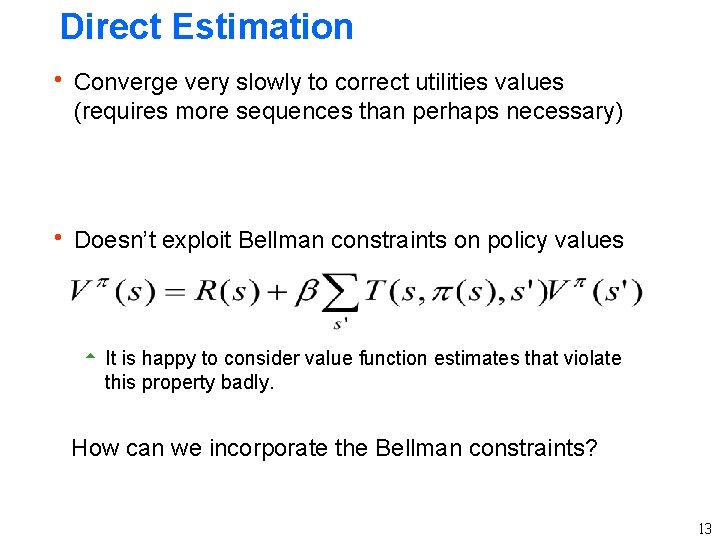

Direct Estimation

- Converges very slowly to the correct utility values (requires more sequences than perhaps necessary)
- Doesn't exploit Bellman constraints on policy values
    - It is happy to consider value function estimates that badly violate these constraints
- How can we incorporate the Bellman constraints?

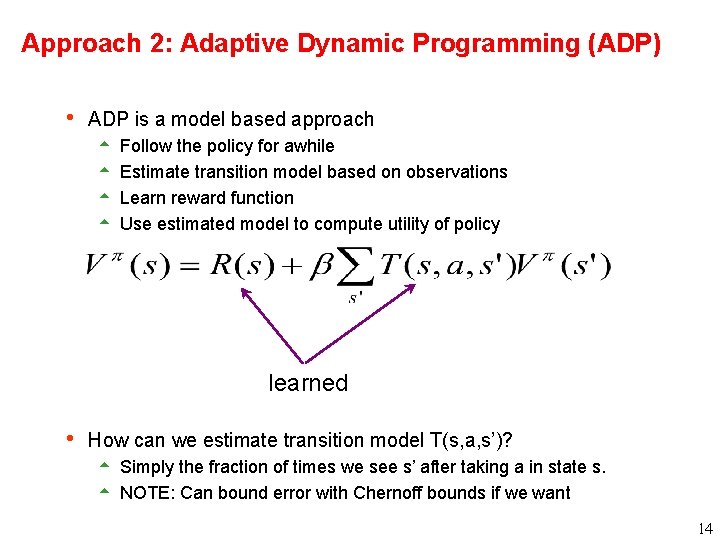

Approach 2: Adaptive Dynamic Programming (ADP)

- ADP is a model-based approach
    - Follow the policy for a while
    - Estimate the transition model based on observations
    - Learn the reward function
    - Use the estimated model to compute the utility of the policy
- How can we estimate the transition model T(s, a, s')?
    - Simply the fraction of times we see s' after taking a in state s
    - NOTE: Can bound the error with Chernoff bounds if we want
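A minimal sketch of the ADP steps, under stated assumptions: experience is a list of `(s, r, s_next)` triples gathered while following the fixed policy (so the action is implied by the state), rewards are deterministic per state, and policy evaluation is done by repeated Bellman backups. The state names `A`, `B`, `C`, `END` and both function names are hypothetical.

```python
from collections import defaultdict


def estimate_model(experience):
    """Estimate T(s, pi(s), s') as observed frequencies, and R(s) as the seen reward."""
    counts = defaultdict(lambda: defaultdict(int))
    reward = {}
    for s, r, s_next in experience:
        counts[s][s_next] += 1
        reward[s] = r  # assumes the reward at s is deterministic
    model = {}
    for s, successors in counts.items():
        total = sum(successors.values())
        model[s] = {s2: n / total for s2, n in successors.items()}
    return model, reward


def evaluate_policy(model, reward, gamma=0.9, sweeps=500):
    """Iterative policy evaluation on the estimated model."""
    values = defaultdict(float)  # states never left (terminals) keep value 0
    for _ in range(sweeps):
        for s in model:
            expected_next = sum(p * values[s2] for s2, p in model[s].items())
            values[s] = reward[s] + gamma * expected_next
    return dict(values)


# Hypothetical experience: from A we saw B three times and C once.
experience = (
    [("A", 0.0, "B")] * 3
    + [("A", 0.0, "C")]
    + [("B", 1.0, "END"), ("C", -1.0, "END")]
)
model, reward = estimate_model(experience)
adp_values = evaluate_policy(model, reward)
```

With this toy data the estimated model gives T(A, B) = 0.75 and T(A, C) = 0.25, so the evaluated value of A is 0.9 × (0.75 × 1 + 0.25 × (−1)) = 0.45. A real implementation might solve the linear system directly instead of sweeping.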

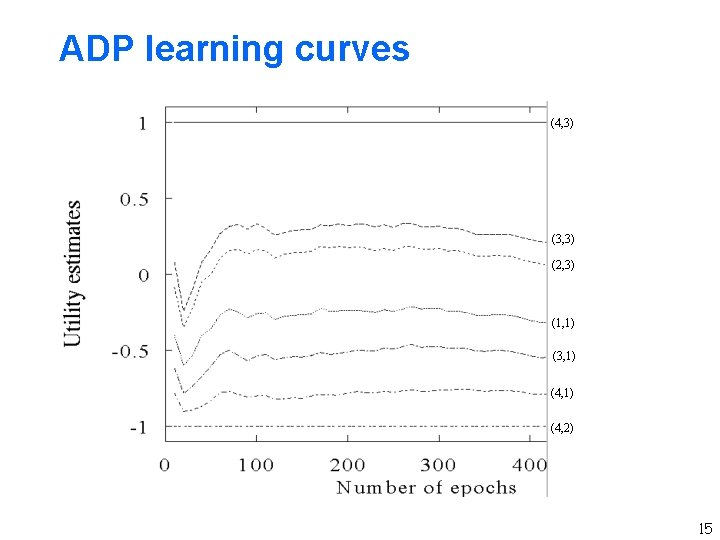

ADP learning curves (figure: curves labeled by state (4,3), (3,3), (2,3), (1,1), (3,1), (4,2))

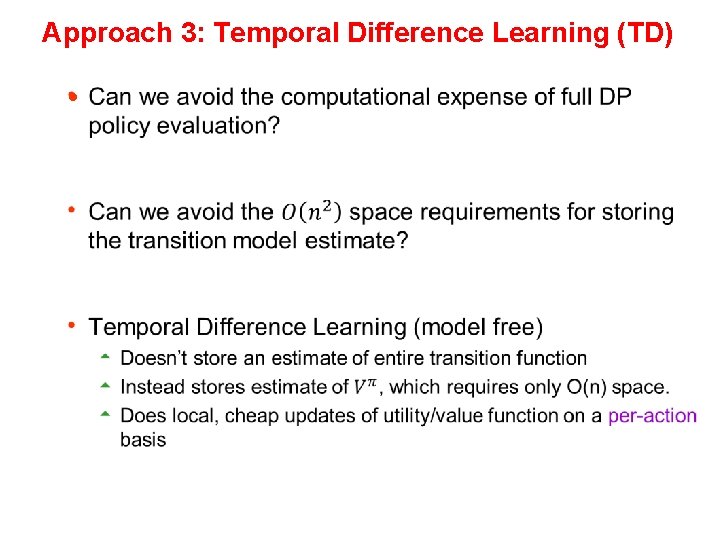

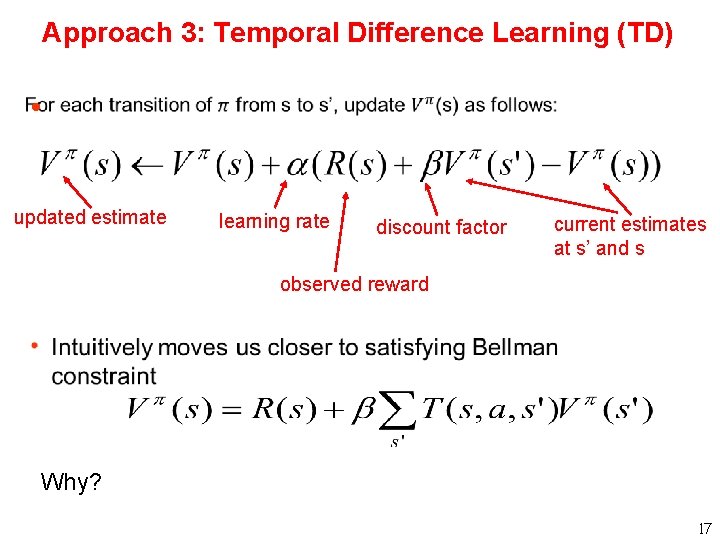

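Approach 3: Temporal Difference Learning (TD)

- TD update for a transition from s to s':

$$V^\pi(s) \leftarrow V^\pi(s) + \alpha \left( R(s) + \gamma V^\pi(s') - V^\pi(s) \right)$$

- The left-hand side is the updated estimate, $\alpha$ is the learning rate, $R(s)$ is the observed reward, $\gamma$ is the discount factor, and $V^\pi(s')$ and $V^\pi(s)$ are the current estimates at s' and s
- Why?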

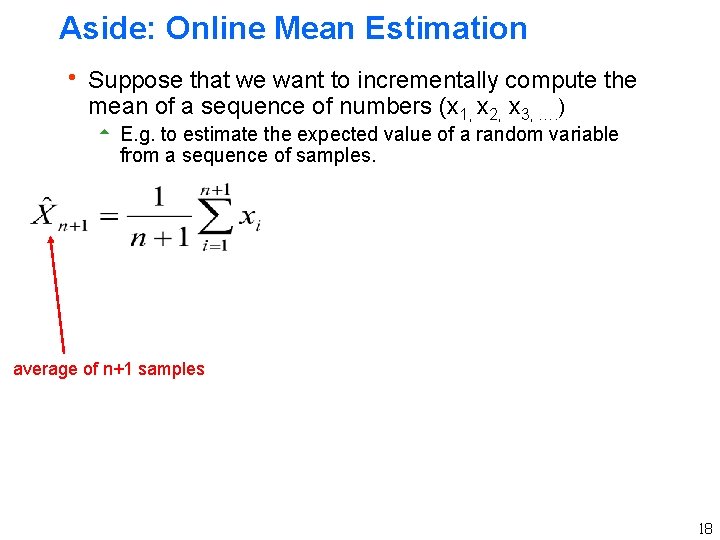

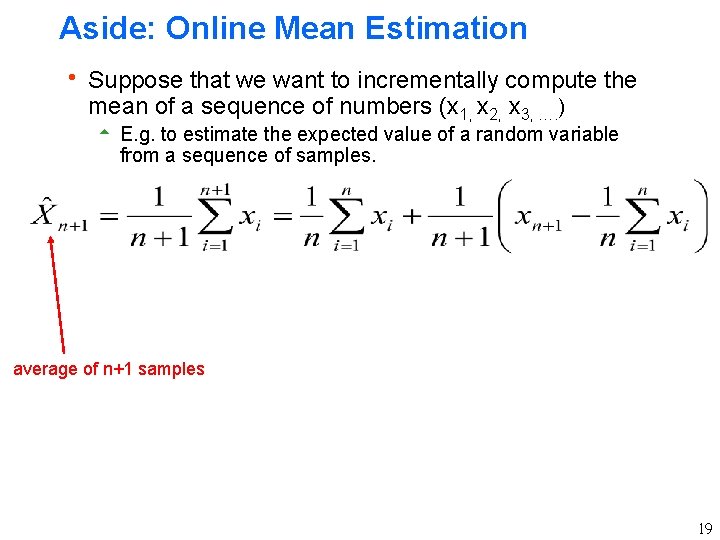

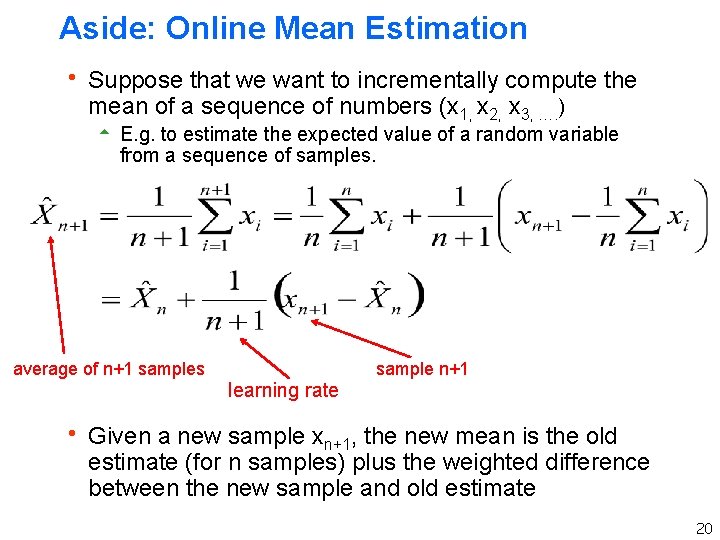

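Aside: Online Mean Estimation

- Suppose that we want to incrementally compute the mean of a sequence of numbers $(x_1, x_2, x_3, \ldots)$
    - E.g. to estimate the expected value of a random variable from a sequence of samples

$$\hat{X}_{n+1} = \frac{1}{n+1} \sum_{i=1}^{n+1} x_i = \hat{X}_n + \frac{1}{n+1} \left( x_{n+1} - \hat{X}_n \right)$$

- Here $\hat{X}_{n+1}$ is the average of n+1 samples, $\frac{1}{n+1}$ plays the role of the learning rate, and $x_{n+1}$ is sample n+1
- Given a new sample $x_{n+1}$, the new mean is the old estimate (for n samples) plus the weighted difference between the new sample and the old estimate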
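The incremental mean can be checked with a short sketch. The function name `running_mean` is hypothetical; the update itself is the "old estimate plus weighted difference" rule described above, with weight 1/(n+1).

```python
def running_mean(samples):
    """Incrementally compute the mean without storing the samples."""
    estimate = 0.0
    for n, x in enumerate(samples):
        # n samples seen so far; the new sample gets weight 1 / (n + 1).
        learning_rate = 1.0 / (n + 1)
        estimate += learning_rate * (x - estimate)
    return estimate


mean = running_mean([2.0, 4.0, 6.0, 8.0])
```

Tracing the loop by hand, the estimate moves 2 → 3 → 4 → 5, matching the batch average of the four samples.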

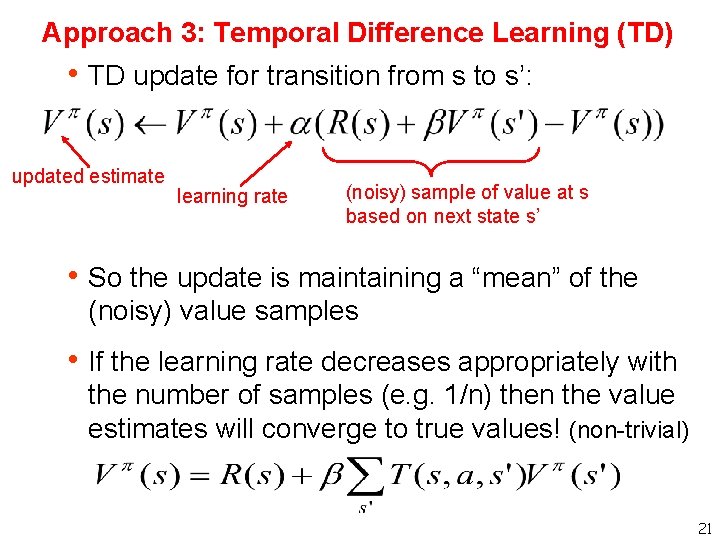

Approach 3: Temporal Difference Learning (TD)

- TD update for a transition from s to s':

$$V^\pi(s) \leftarrow V^\pi(s) + \alpha \left( R(s) + \gamma V^\pi(s') - V^\pi(s) \right)$$

- The left-hand side is the updated estimate, $\alpha$ is the learning rate, and $R(s) + \gamma V^\pi(s')$ is a (noisy) sample of the value at s based on the next state s'
- So the update is maintaining a "mean" of the (noisy) value samples
- If the learning rate decreases appropriately with the number of samples (e.g. 1/n), then the value estimates will converge to the true values! (non-trivial)
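A minimal sketch of passive TD learning: each observed transition moves the estimate at s toward the sample "reward at s plus discounted current estimate at s'", with a per-state learning rate of 1/n. The function name `td0`, the chain `A → B → END`, and the use of a visit count per state for n are assumptions made for illustration.

```python
from collections import defaultdict


def td0(epochs, gamma=1.0):
    """Passive TD learning with learning rate 1/n, n = visits to the state."""
    values = defaultdict(float)  # unseen and terminal states start at 0
    visits = defaultdict(int)
    for transitions in epochs:
        for s, r, s_next in transitions:
            visits[s] += 1
            alpha = 1.0 / visits[s]
            sample = r + gamma * values[s_next]  # noisy sample of the value at s
            values[s] += alpha * (sample - values[s])
    return dict(values)


# Hypothetical chain: A -> B -> END, with reward 1 received at B.
epoch = [("A", 0.0, "B"), ("B", 1.0, "END")]
td_values = td0([epoch] * 3)
```

After three epochs the estimate at B is already 1, but A has only reached 2/3: its first sample was taken while the estimate at B was still 0, and the running mean has not yet forgotten it. This lag is one reason TD typically needs more experience than ADP.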

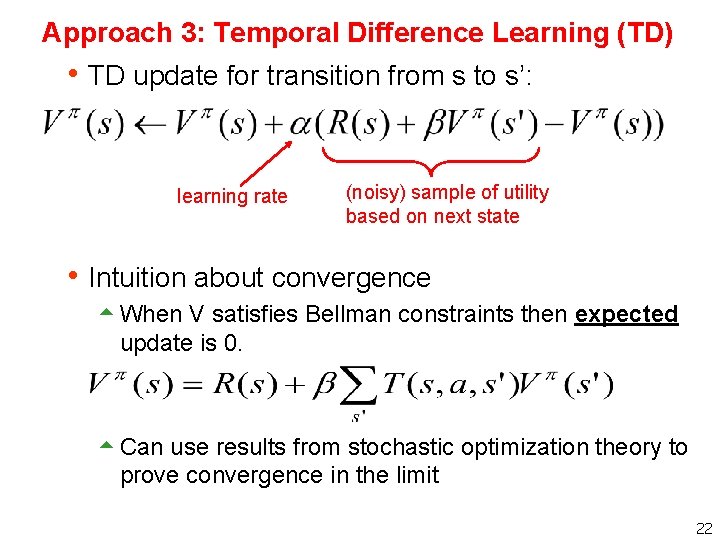

Approach 3: Temporal Difference Learning (TD)

- TD update for a transition from s to s':

$$V^\pi(s) \leftarrow V^\pi(s) + \alpha \left( R(s) + \gamma V^\pi(s') - V^\pi(s) \right)$$

- Here $\alpha$ is the learning rate and $R(s) + \gamma V^\pi(s')$ is a (noisy) sample of the utility based on the next state
- Intuition about convergence
    - When V satisfies the Bellman constraints, the expected update is 0
    - Can use results from stochastic optimization theory to prove convergence in the limit
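The claim that the expected update is zero can be spelled out in one line. This is a sketch assuming the state-based reward $R(s)$ and the transition model $T(s, \pi(s), s')$ used earlier; the expectation is over the next state $s'$.

```latex
\mathbb{E}_{s'}\!\left[ R(s) + \gamma V^{\pi}(s') - V^{\pi}(s) \right]
  \;=\; R(s) + \gamma \sum_{s'} T\big(s, \pi(s), s'\big)\, V^{\pi}(s') \;-\; V^{\pi}(s)
  \;=\; 0
```

The last equality holds exactly when $V^{\pi}(s) = R(s) + \gamma \sum_{s'} T(s, \pi(s), s')\, V^{\pi}(s')$, which is the Bellman constraint for the fixed policy. Individual updates are still nonzero because each one uses a single sampled $s'$.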

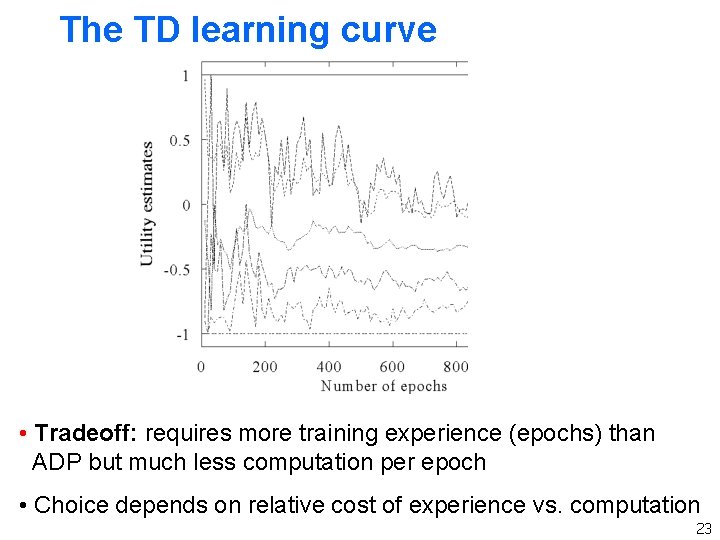

The TD learning curve

- Tradeoff: requires more training experience (epochs) than ADP but much less computation per epoch
- Choice depends on the relative cost of experience vs. computation

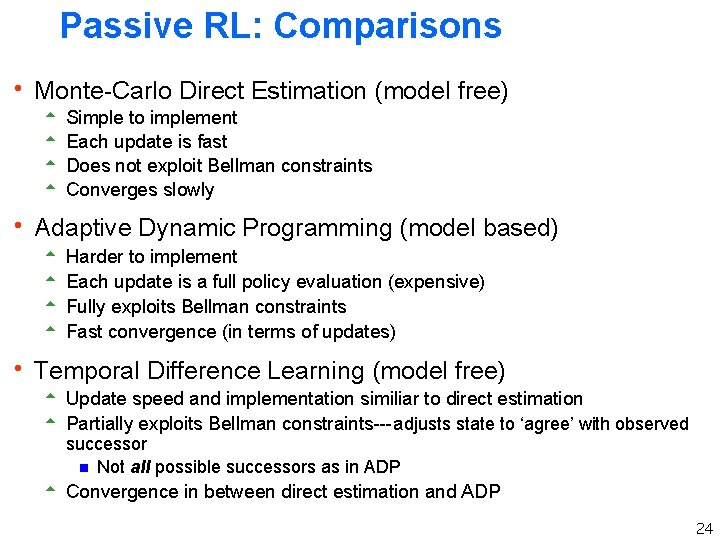

Passive RL: Comparisons

- Monte-Carlo Direct Estimation (model free)
    - Simple to implement
    - Each update is fast
    - Does not exploit Bellman constraints
    - Converges slowly
- Adaptive Dynamic Programming (model based)
    - Harder to implement
    - Each update is a full policy evaluation (expensive)
    - Fully exploits Bellman constraints
    - Fast convergence (in terms of updates)
- Temporal Difference Learning (model free)
    - Update speed and implementation similar to direct estimation
    - Partially exploits Bellman constraints: adjusts a state's value to "agree" with its observed successor
        - Not all possible successors as in ADP
    - Convergence speed in between direct estimation and ADP

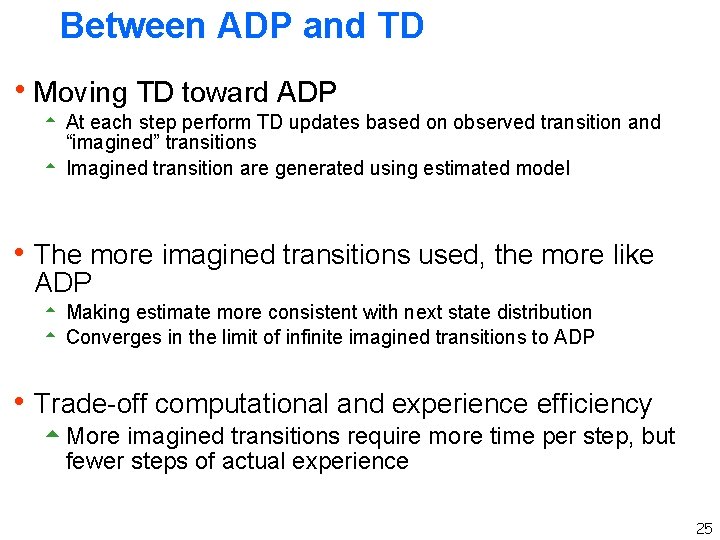

Between ADP and TD

- Moving TD toward ADP
    - At each step, perform TD updates based on the observed transition and on "imagined" transitions
    - Imagined transitions are generated using the estimated model
- The more imagined transitions used, the more like ADP
    - Makes the estimate more consistent with the next-state distribution
    - Converges to ADP in the limit of infinitely many imagined transitions
- Trade-off between computational and experience efficiency
    - More imagined transitions require more time per step, but fewer steps of actual experience
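One way to sketch the idea of mixing real and imagined transitions is shown below. It is an illustration under assumptions, not the slides' algorithm: the estimated model is simply the list of outcomes seen so far from each state (sampling from it draws from the empirical next-state distribution), imagined transitions start from the state just visited, and the learning rate is a fixed constant. The name `td_with_imagined` and the chain `A → B → END` are hypothetical.

```python
import random
from collections import defaultdict


def td_with_imagined(experience, n_imagined=5, gamma=1.0, alpha=0.1, seed=0):
    """TD updates on each real transition plus n_imagined model-sampled ones."""
    rng = random.Random(seed)
    values = defaultdict(float)
    outcomes = defaultdict(list)  # empirical model: s -> observed (reward, s_next)

    def td_update(s, r, s_next):
        values[s] += alpha * (r + gamma * values[s_next] - values[s])

    for s, r, s_next in experience:
        outcomes[s].append((r, s_next))
        td_update(s, r, s_next)  # update from the real transition
        for _ in range(n_imagined):
            # Imagined transition: resample a successor of s from the model.
            r_im, s_im = rng.choice(outcomes[s])
            td_update(s, r_im, s_im)
    return dict(values)


# Hypothetical chain: A -> B -> END, with reward 1 received at B.
experience = [("A", 0.0, "B"), ("B", 1.0, "END")] * 3
plain = td_with_imagined(experience, n_imagined=0)
mixed = td_with_imagined(experience, n_imagined=5)
```

With the same three real visits to B, plain TD reaches 1 − 0.9³ ≈ 0.27, while five imagined updates per step bring the estimate to 1 − 0.9¹⁸ ≈ 0.85, at the cost of six times as many updates per real step.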
