
Probabilistic Analytics
Brigham S. Anderson, School of Computer Science, Carnegie Mellon University
www.cs.cmu.edu/~brigham, brigham@cmu.edu
Copyright © 2001, Andrew W. Moore. Aug 25th, 2001

OUTLINE
• Probability: Axioms, Rules
• Probabilistic Models: Probability Tables, Bayes Nets
• Machine Learning: Inference, Anomaly detection, Classification

What we’re NOT going to do
• We will not be talking about:
  • Decision Trees
  • Neural Nets
  • Rule learners
  • SVM

Probability Theory and Bayes Nets
• There are only two concepts required for understanding Bayes Nets.
• I will point them out when we get there.

OUTLINE
• Probability: Axioms, Rules
• Probabilistic Models: Full Joint, Bayes Nets
• Machine Learning: Inference, Anomaly detection, Classification

Probability
• The world is a very uncertain place.
• 30 years of Artificial Intelligence and Database research danced around this fact.
• And then a few AI researchers decided to use some ideas from the eighteenth century.

Probability: Big Picture
Definition
• Probability is a measure of the likelihood that an event takes place when a random experiment is conducted.
Miscellanea
• Probability is usually expressed as a number between 0 and 1.
• An alternative form is a percentage, from 0% to 100%; 110% makes no sense as a probability.
• A more cumbersome style is in terms of odds: if you are placing a bet at the Kentucky Derby and you are offered odds of m to n against an event, this implies a probability of n/(m+n) that the event happens.
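As a small illustration of the odds convention above, here is a minimal Python sketch that converts odds of m to n into a probability and a percentage. The helper name `odds_to_probability` is hypothetical, and it assumes the odds are quoted against the event, as bookmakers do.

```python
from fractions import Fraction


def odds_to_probability(m: int, n: int) -> Fraction:
    """Probability implied by odds of m to n against an event: n / (m + n).

    Hypothetical helper; assumes the bookmaker convention of odds *against*.
    """
    if m < 0 or n < 0 or m + n == 0:
        raise ValueError("odds must be non-negative and not both zero")
    return Fraction(n, m + n)


p = odds_to_probability(3, 1)  # odds of 3 to 1 against
print(p)                       # 1/4
print(f"{float(p):.0%}")       # 25%
```

Exact fractions are used so that the result matches the n/(m+n) formula without rounding.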

Probability Definition

Sets - Definition and Notation
The study of sets is a fundamental requirement for studying probability.
Definitions
• A Set is a collection of objects.
• These objects are called Members or Elements of the Set.
Notation
• Sets are denoted by upper case letters.
• Elements are denoted by lower case letters.
• If an element a belongs to a set X, we write a ∈ X (“a belongs to X”).
• If an element b does not belong to a set X, we write b ∉ X (“b does not belong to X”).

Sets Construction/Description
There are two ways of describing a set:
• Roster Method: listing all members of the set
  • Set of possible results of tossing a die = {1, 2, 3, 4, 5, 6}
  • Set of vowels = {a, e, i, o, u}
• Property Method: describing a property held by all members and no non-members
  • Set of possible results of tossing a die = {x | x is an integer, 1 <= x <= 6}
  • Set of positive even integers = {x | x is an integer, x > 0, x is divisible by 2}
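The two construction methods map directly onto Python set literals and set comprehensions. This is a minimal sketch; because a program cannot hold an infinite set, the positive even integers are truncated to an arbitrary finite window (an assumption made only for illustration).

```python
# Roster method: list every member explicitly.
die_roster = {1, 2, 3, 4, 5, 6}
vowels = {"a", "e", "i", "o", "u"}

# Property method: keep exactly those candidates that satisfy the property.
# The candidate range must contain every member; -10..10 is more than enough.
die_property = {x for x in range(-10, 11) if 1 <= x <= 6}

# {x | x is an integer, x > 0, x is divisible by 2}, truncated to x <= 20.
positive_evens_upto_20 = {x for x in range(1, 21) if x > 0 and x % 2 == 0}

print(die_roster == die_property)            # True: same set, two descriptions
print(3 in die_roster, 7 not in die_roster)  # True True  (a ∈ X, b ∉ X)
print(sorted(positive_evens_upto_20))
```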

Probability
• Probability makes use of set theory.
• We will assume a basic familiarity with the concept of a set.

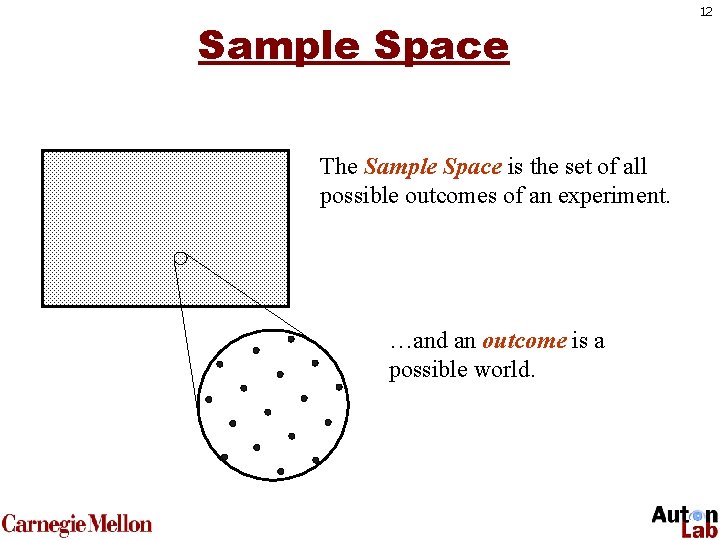

Sample Space
The Sample Space is the set of all possible outcomes of an experiment.
…and an outcome is a possible world.

Sample Spaces
Sample space of a coin flip: S = {H, T}

Sample Spaces
Sample space for a wheel spin: S = {blue, red, green, yellow}

Sample Spaces
Sample space of a die roll: S = {1, 2, 3, 4, 5, 6}

Sample Spaces
Sample space of three die rolls? S = {111, 112, 113, …, 664, 665, 666}

Sample Spaces
Sample space of a single draw from a deck of cards: S = {As, Ac, Ah, Ad, 2s, 2c, 2h, …, Ks, Kc, Kd, Kh}
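The sample spaces above can be enumerated directly. The sketch below builds each one as a Python set, writing cards as rank followed by suit letter (s, c, h, d) in the same style as the slides; the variable names are illustrative only.

```python
from itertools import product

coin = {"H", "T"}
wheel = {"blue", "red", "green", "yellow"}
die = {1, 2, 3, 4, 5, 6}

# Three die rolls: ordered triples written as strings "111", "112", ..., "666".
three_dice = {"".join(str(r) for r in roll) for roll in product(range(1, 7), repeat=3)}

# Single card draw: rank followed by suit letter, e.g. "As", "10h", "Kd".
RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["s", "c", "h", "d"]
deck = {rank + suit for rank in RANKS for suit in SUITS}

for name, space in [("coin", coin), ("wheel", wheel), ("die", die),
                    ("three dice", three_dice), ("card", deck)]:
    print(f"{name}: |S| = {len(space)}")
# prints |S| = 2, 4, 6, 216 and 52 for the five spaces
```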

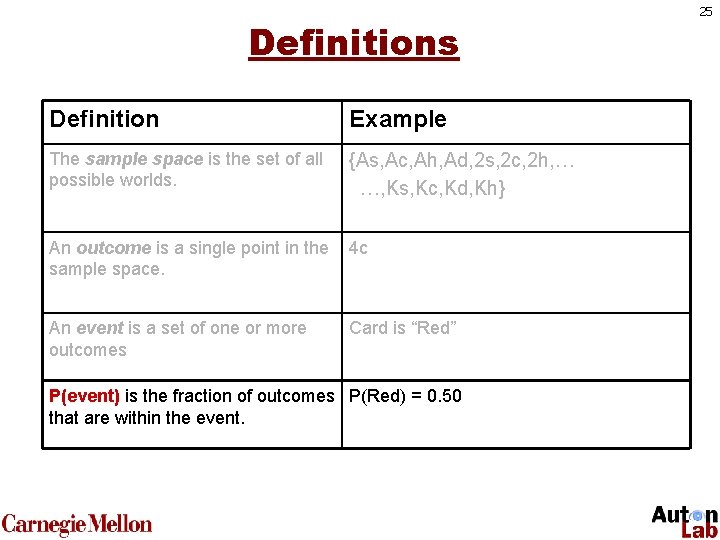

So Far…
• Definition: The sample space is the set of all possible worlds. Example: {As, Ac, Ah, Ad, 2s, 2c, 2h, …, Ks, Kc, Kd, Kh}
• Definition: An outcome is a single point in the sample space. Example: 4c

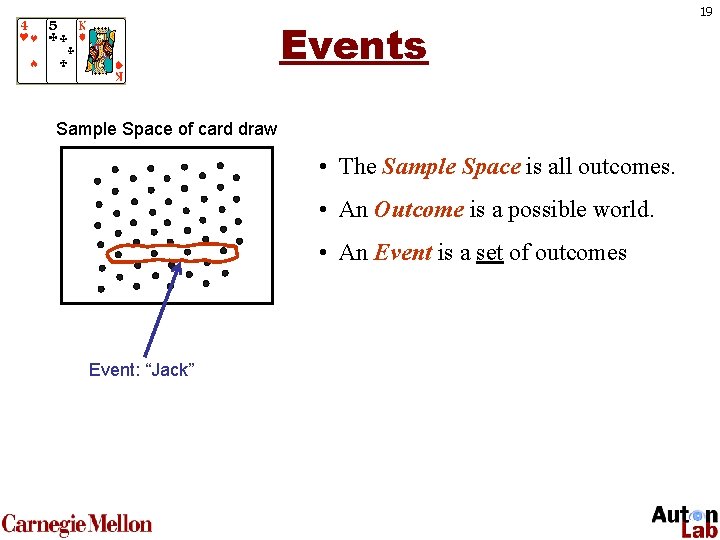

Events (sample space of a card draw)
• The Sample Space is all outcomes.
• An Outcome is a possible world.
• An Event is a set of outcomes.
Event shown: “Jack”

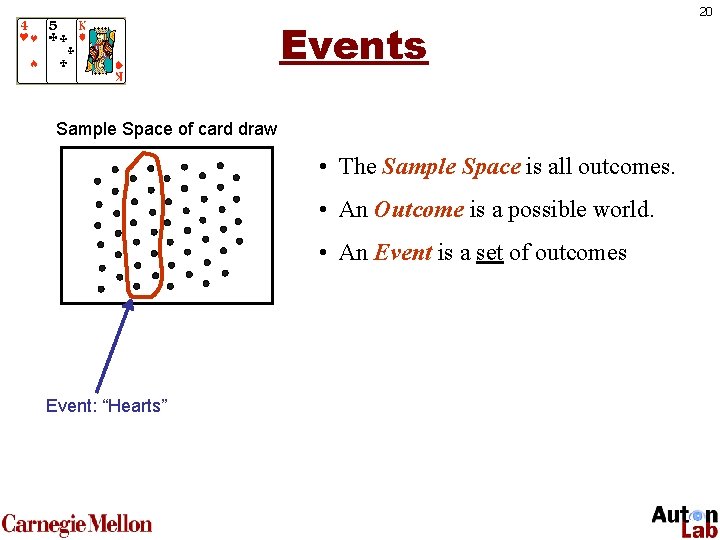

Events (sample space of a card draw)
• The Sample Space is all outcomes.
• An Outcome is a possible world.
• An Event is a set of outcomes.
Event shown: “Hearts”

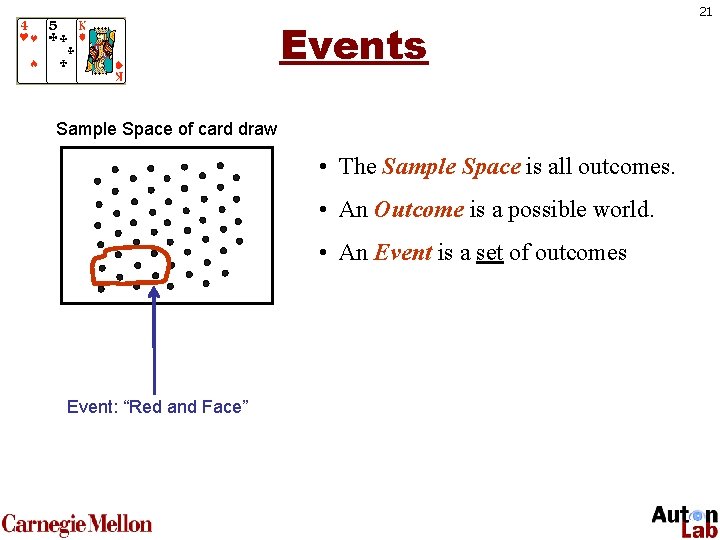

Events (sample space of a card draw)
• The Sample Space is all outcomes.
• An Outcome is a possible world.
• An Event is a set of outcomes.
Event shown: “Red and Face”

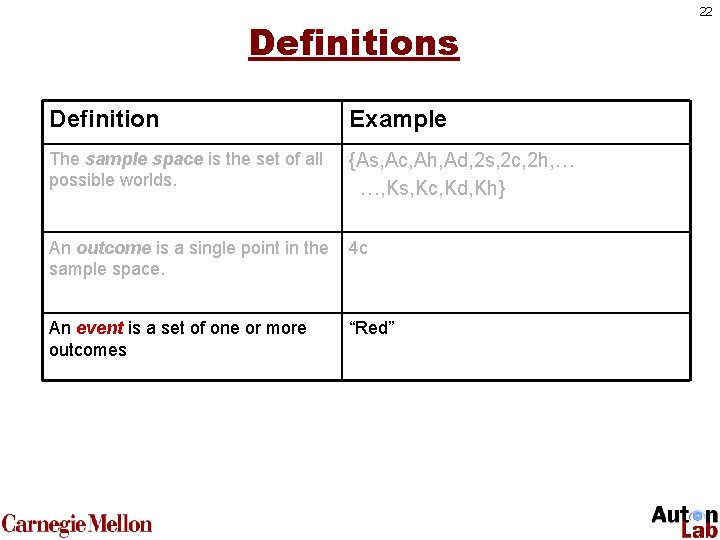

Definitions
• The sample space is the set of all possible worlds. Example: {As, Ac, Ah, Ad, 2s, 2c, 2h, …, Ks, Kc, Kd, Kh}
• An outcome is a single point in the sample space. Example: 4c
• An event is a set of outcomes. Example: “Red”

Special Events
Definitions
• S itself is called the Sure Event or the Certain Event, since we know that any outcome will be an element of S.
• The empty set ∅ is called the Impossible Event, since no outcome can be an element of ∅.
Since events are sets, statements about events can be translated into the language of sets, and conversely.

Probability Definition
A probability function maps events to real numbers… and satisfies the axioms of probability.

Definitions
• The sample space is the set of all possible worlds. Example: {As, Ac, Ah, Ad, 2s, 2c, 2h, …, Ks, Kc, Kd, Kh}
• An outcome is a single point in the sample space. Example: 4c
• An event is a set of outcomes. Example: card is “Red”
• P(event) is the fraction of outcomes that are within the event (assuming all outcomes are equally likely). Example: P(Red) = 0.50
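Under the equally-likely assumption, P(event) is just a ratio of set sizes. Here is a minimal sketch for the card-draw events “Red”, “Jack” and “Red and Face”; `prob` is a hypothetical helper, and exact fractions are used so the results match the hand calculation.

```python
from fractions import Fraction

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["s", "c", "h", "d"]
S = {rank + suit for rank in RANKS for suit in SUITS}  # sample space


def prob(event: set, space: set = S) -> Fraction:
    """|event| / |space|; only valid when every outcome is equally likely."""
    return Fraction(len(event & space), len(space))


red = {card for card in S if card[-1] in ("h", "d")}
jack = {card for card in S if card[:-1] == "J"}
face = {card for card in S if card[:-1] in ("J", "Q", "K")}

print(prob(red))         # 1/2   (= 0.50)
print(prob(jack))        # 1/13
print(prob(red & face))  # 3/26  (6 of 52 cards)
```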

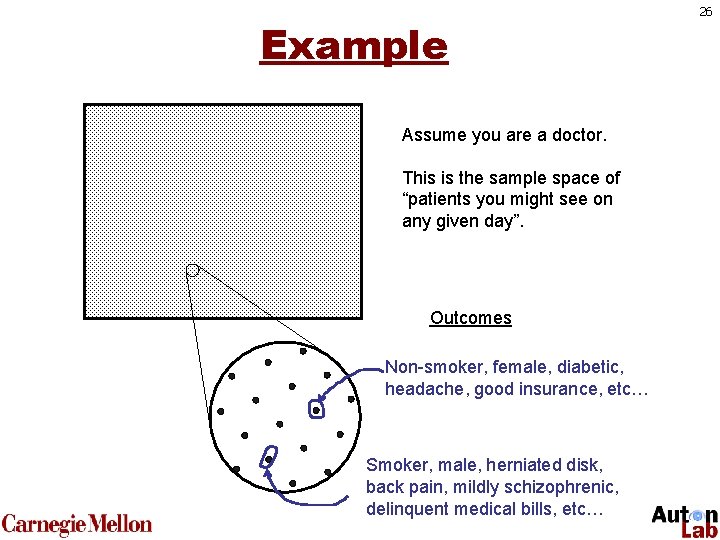

Example
Assume you are a doctor. This is the sample space of “patients you might see on any given day”.
Outcomes:
• Non-smoker, female, diabetic, headache, good insurance, etc…
• Smoker, male, herniated disk, back pain, mildly schizophrenic, delinquent medical bills, etc…

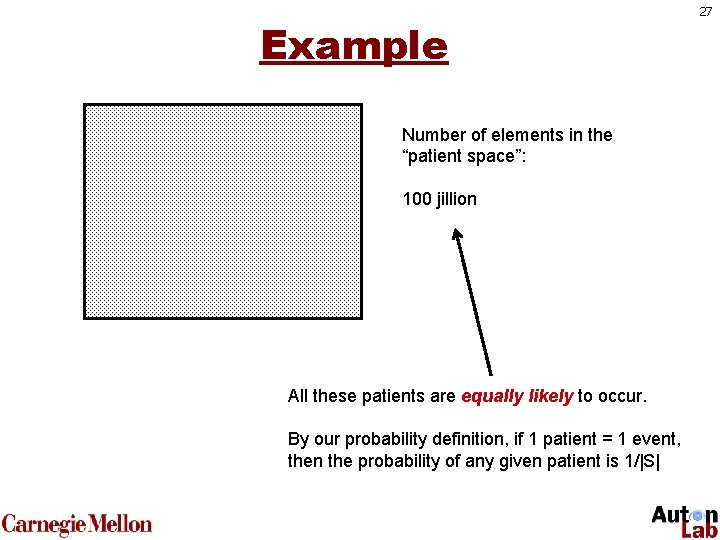

Example
Number of elements in the “patient space”: 100 jillion.
All these patients are equally likely to occur. By our probability definition, if each patient is one outcome, then the probability of any given patient is 1/|S|.

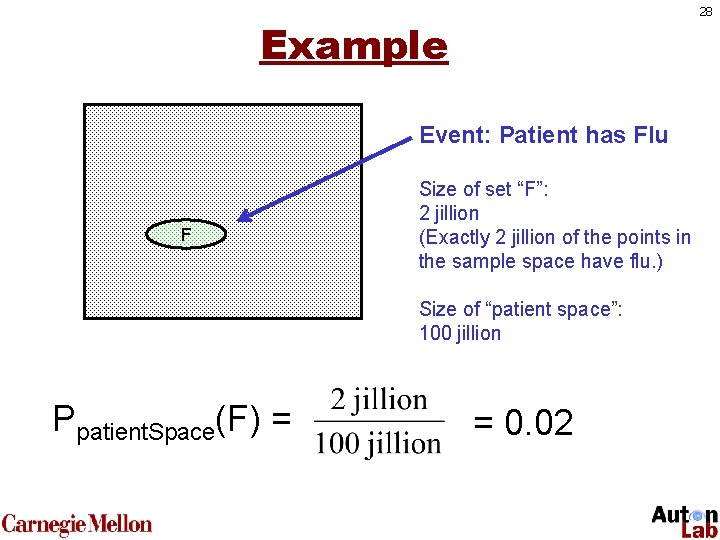

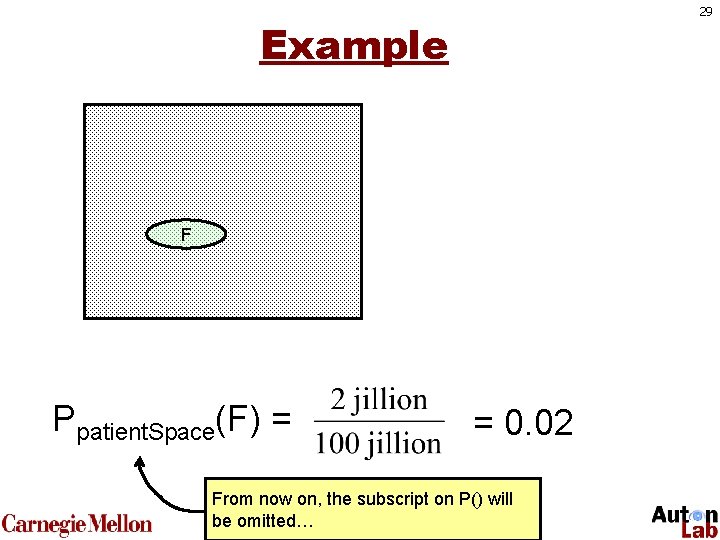

Example
Event: Patient has Flu (F)
Size of set F: 2 jillion (exactly 2 jillion of the points in the sample space have flu).
Size of “patient space”: 100 jillion
$$P_{\text{patientSpace}}(F) = \frac{|F|}{|\text{patientSpace}|} = \frac{2 \text{ jillion}}{100 \text{ jillion}} = 0.02$$

Example
$$P_{\text{patientSpace}}(F) = \frac{|F|}{|\text{patientSpace}|} = 0.02$$
From now on, the subscript on P() will be omitted…

We now have a reasonable definition of probability: “The probability of an event is the fraction of outcomes that are within the event.” But let’s make that a little more concrete.

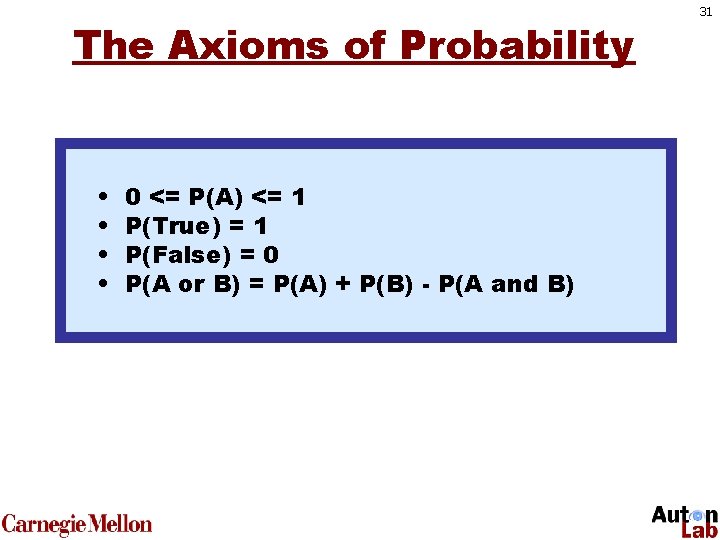

The Axioms of Probability
• 0 <= P(A) <= 1
• P(True) = 1
• P(False) = 0
• P(A or B) = P(A) + P(B) - P(A and B)
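The axioms can be checked numerically on the card-draw space. This sketch assumes equally likely outcomes (the helper `P` is hypothetical) and verifies the bounds, P(True), P(False) and the “or” axiom for the events Hearts and Jack, whose overlap is the single card Jh.

```python
from fractions import Fraction

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["s", "c", "h", "d"]
S = {rank + suit for rank in RANKS for suit in SUITS}


def P(event: set) -> Fraction:
    """Probability of an event as a fraction of equally likely outcomes."""
    return Fraction(len(event), len(S))


hearts = {card for card in S if card.endswith("h")}
jack = {card for card in S if card[:-1] == "J"}
TRUE, FALSE = S, set()  # the sure event and the impossible event

assert all(0 <= P(e) <= 1 for e in (hearts, jack, hearts | jack, hearts & jack))
assert P(TRUE) == 1 and P(FALSE) == 0

lhs = P(hearts | jack)                        # P(A or B)
rhs = P(hearts) + P(jack) - P(hearts & jack)  # P(A) + P(B) - P(A and B)
print(lhs, rhs)  # 4/13 4/13
```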

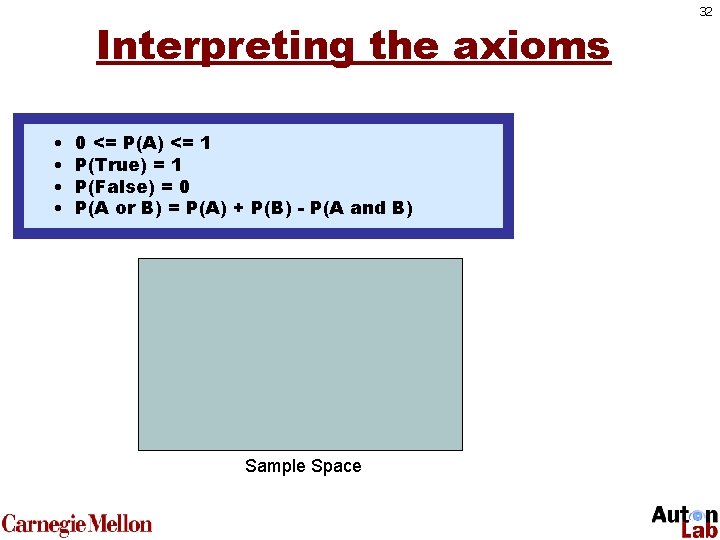

Interpreting the axioms
• 0 <= P(A) <= 1
• P(True) = 1
• P(False) = 0
• P(A or B) = P(A) + P(B) - P(A and B)
(Figure: the sample space.)

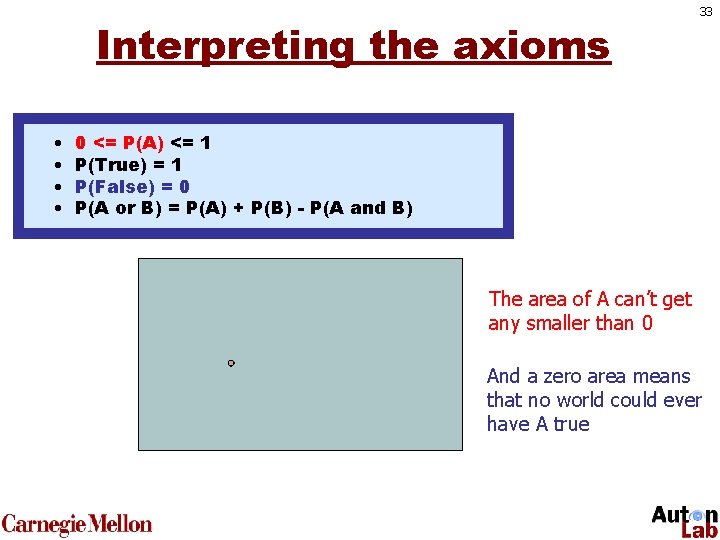

Interpreting the axioms: 0 <= P(A)
The area of A can’t get any smaller than 0, and a zero area means that no world could ever have A true.

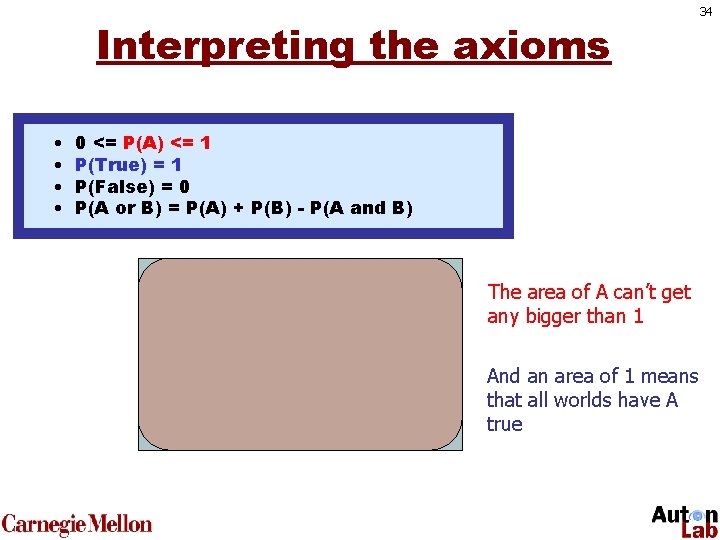

Interpreting the axioms: P(A) <= 1
The area of A can’t get any bigger than 1, and an area of 1 means that all worlds have A true.

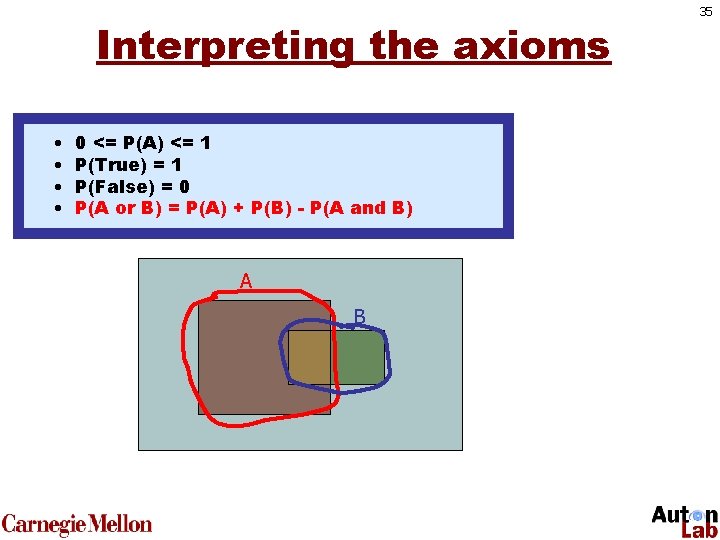

Interpreting the axioms: P(A or B) = P(A) + P(B) - P(A and B)
(Figure: two overlapping events A and B.)

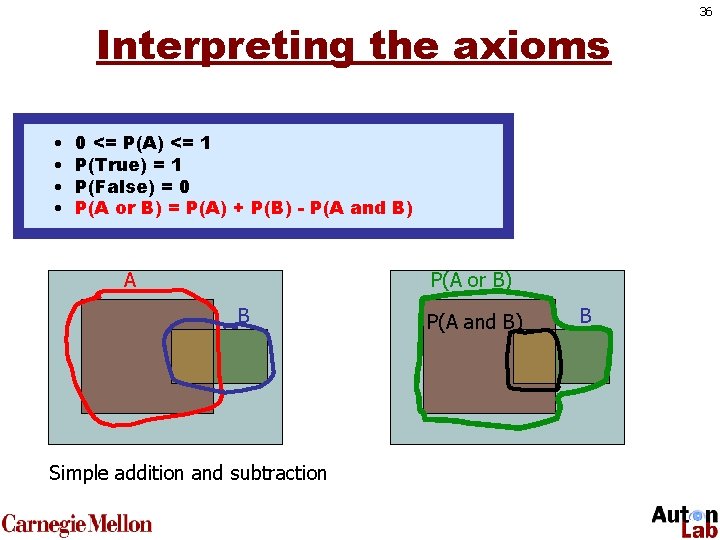

Interpreting the axioms: P(A or B) = P(A) + P(B) - P(A and B)
Simple addition and subtraction: the sum P(A) + P(B) counts the overlap P(A and B) twice, so it is subtracted once to leave the area P(A or B).

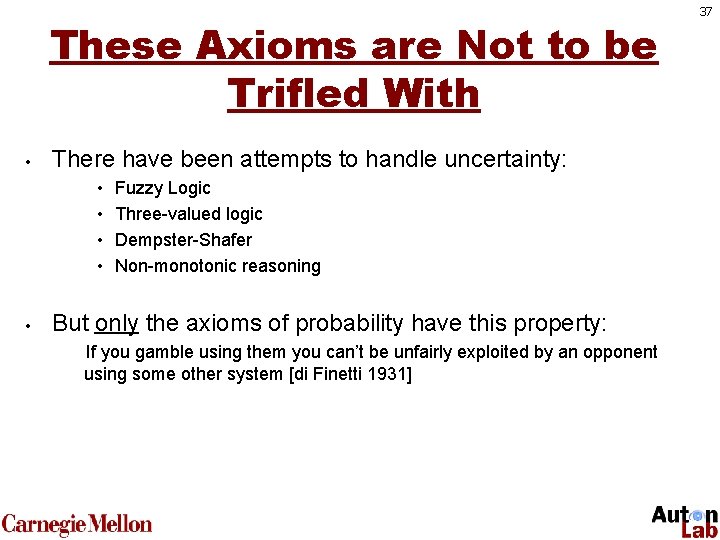

These Axioms are Not to be Trifled With
• There have been other attempts to handle uncertainty:
  • Fuzzy Logic
  • Three-valued logic
  • Dempster-Shafer
  • Non-monotonic reasoning
• But only the axioms of probability have this property: if you gamble using them, you can’t be unfairly exploited by an opponent using some other system [de Finetti 1931].

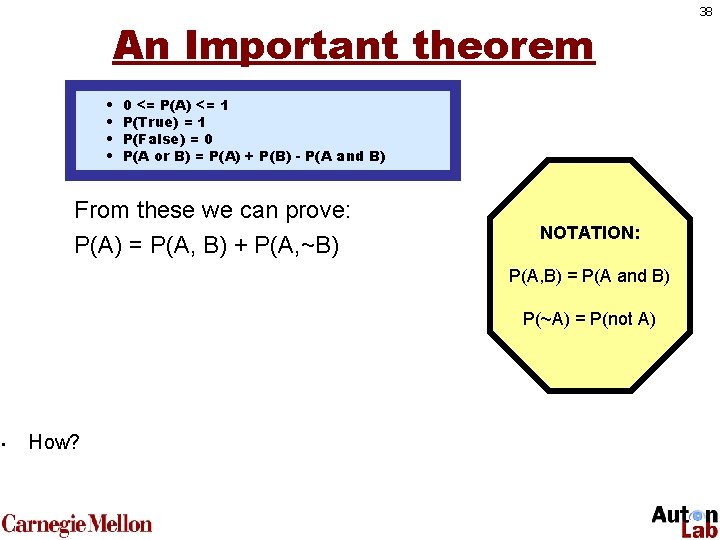

An Important Theorem
Given the axioms
• 0 <= P(A) <= 1
• P(True) = 1
• P(False) = 0
• P(A or B) = P(A) + P(B) - P(A and B)
From these we can prove: P(A) = P(A, B) + P(A, ~B)
NOTATION: P(A, B) = P(A and B); P(~A) = P(not A)
• How?
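One way to answer “How?” using only the four axioms above: split A into the worlds where B also holds and the worlds where it does not. The two pieces can never both be true, so the overlap term in the “or” axiom vanishes.

```latex
\begin{aligned}
P(A) &= P\big((A \wedge B) \vee (A \wedge {\sim}B)\big)
  && A \text{ is equivalent to } (A \wedge B) \vee (A \wedge {\sim}B) \\
&= P(A \wedge B) + P(A \wedge {\sim}B) - P\big((A \wedge B) \wedge (A \wedge {\sim}B)\big)
  && \text{the ``or'' axiom} \\
&= P(A \wedge B) + P(A \wedge {\sim}B) - P(\text{False})
  && B \wedge {\sim}B \text{ is always false} \\
&= P(A, B) + P(A, {\sim}B)
  && P(\text{False}) = 0
\end{aligned}
```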

Random Variables, Events and Outcomes

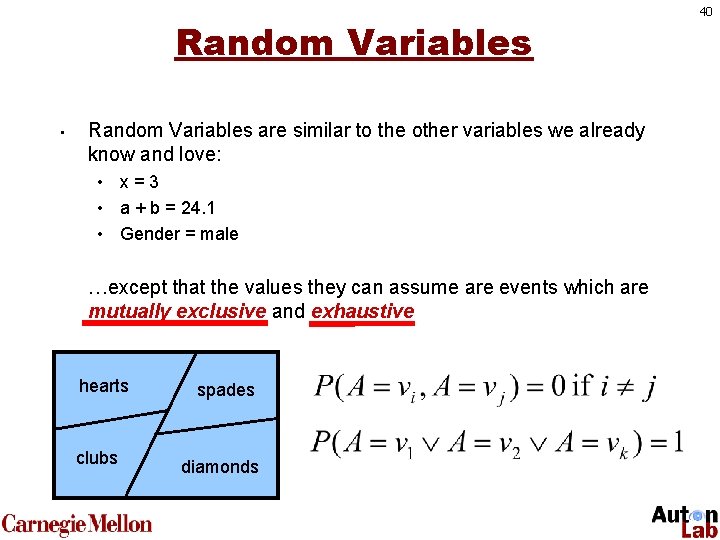

Random Variables
• Random Variables are similar to the other variables we already know and love:
  • x = 3
  • a + b = 24.1
  • Gender = male
…except that the values they can assume are events, which are mutually exclusive and exhaustive.
(Figure: the suits hearts, clubs, spades and diamonds as the values of a random variable.)

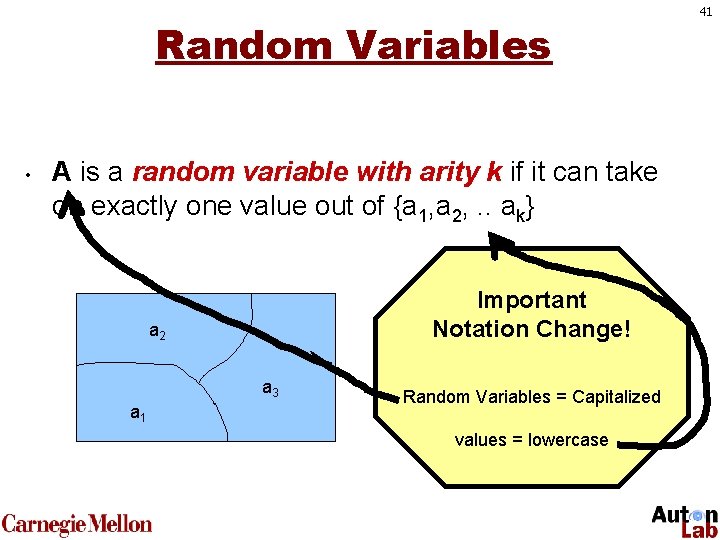

Random Variables
• A is a random variable with arity k if it can take on exactly one value out of $\{a_1, a_2, \ldots, a_k\}$.
(Figure: a sample space divided into regions $a_1$, $a_2$, $a_3$.)
Important Notation Change! Random variables are Capitalized; values are lowercase.

Discrete/Continuous Random Variables
• A discrete r.v. can assume only a countable number of values.
• A continuous r.v. can assume a range of values (e.g., sensor readings).
• We will only deal with discrete variables today, though the math works just as well for continuous ones (with sums replaced by integrals).

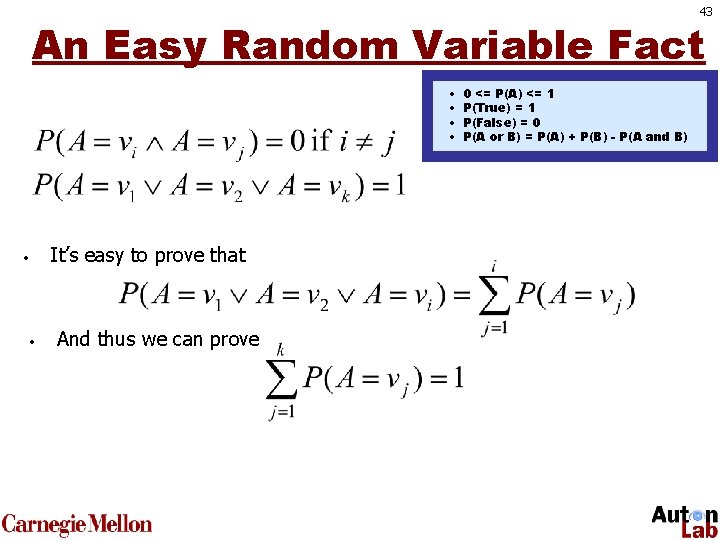

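An Easy Random Variable Fact
• Using the axioms 0 <= P(A) <= 1, P(True) = 1, P(False) = 0 and P(A or B) = P(A) + P(B) - P(A and B), together with the fact that the values of A are mutually exclusive and exhaustive,
• It’s easy to prove that $P(A = a_1 \vee A = a_2 \vee \cdots \vee A = a_i) = \sum_{j=1}^{i} P(A = a_j)$
• And thus we can prove $\sum_{j=1}^{k} P(A = a_j) = 1$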

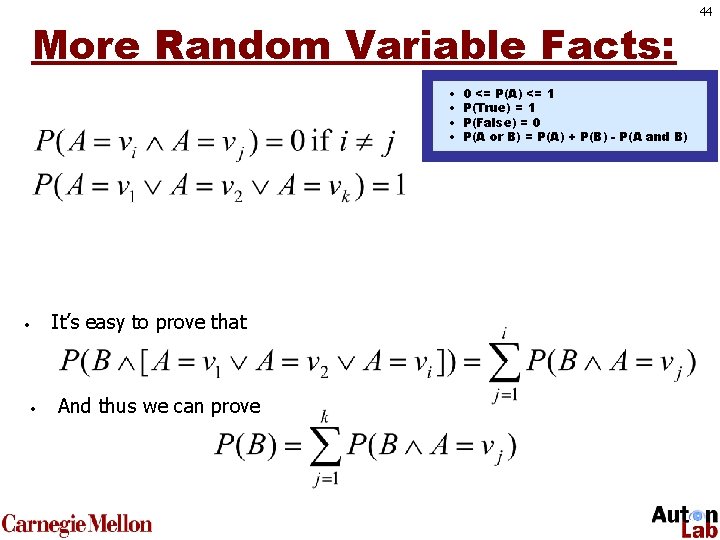

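More Random Variable Facts
• Using the same axioms (0 <= P(A) <= 1, P(True) = 1, P(False) = 0, P(A or B) = P(A) + P(B) - P(A and B)),
• It’s easy to prove that $P(B \wedge [A = a_1 \vee A = a_2 \vee \cdots \vee A = a_i]) = \sum_{j=1}^{i} P(B \wedge A = a_j)$
• And thus we can prove $P(B) = \sum_{j=1}^{k} P(B \wedge A = a_j)$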
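These random-variable facts can be sanity-checked by treating the suit of a drawn card as a random variable with arity 4. In this sketch (equally likely cards assumed; names are illustrative), the four suit events are mutually exclusive and exhaustive, their probabilities sum to 1, and summing P(B, Suit = s) over the suits recovers P(B) for B = “face card”.

```python
from fractions import Fraction

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["s", "c", "h", "d"]
S = {rank + suit for rank in RANKS for suit in SUITS}


def P(event: set) -> Fraction:
    return Fraction(len(event), len(S))


# Random variable Suit: each value s picks out the event {cards with suit s}.
suit_event = {s: {card for card in S if card.endswith(s)} for s in SUITS}
face = {card for card in S if card[:-1] in ("J", "Q", "K")}  # the event B

events = list(suit_event.values())
# Mutually exclusive: no two values share an outcome. Exhaustive: together they cover S.
assert all(a.isdisjoint(b) for i, a in enumerate(events) for b in events[i + 1:])
assert set().union(*events) == S

print(sum(P(e) for e in events))                  # 1
print(sum(P(face & e) for e in events), P(face))  # 3/13 3/13
```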

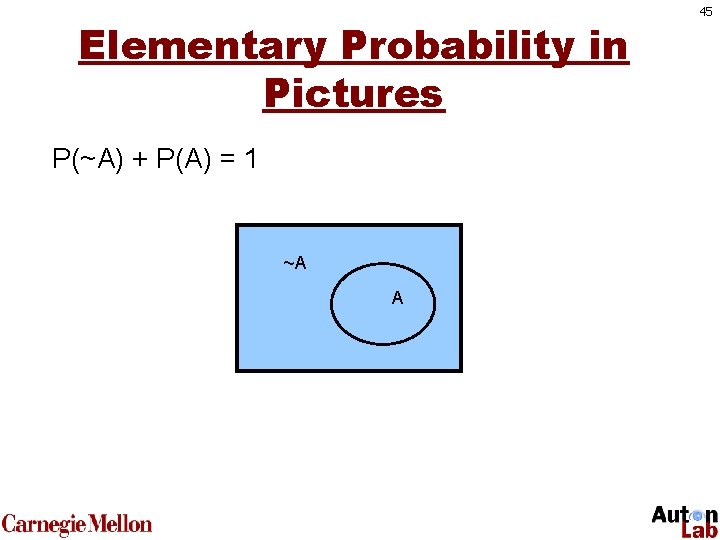

Elementary Probability in Pictures
P(~A) + P(A) = 1
(Figure: the sample space divided into A and ~A.)

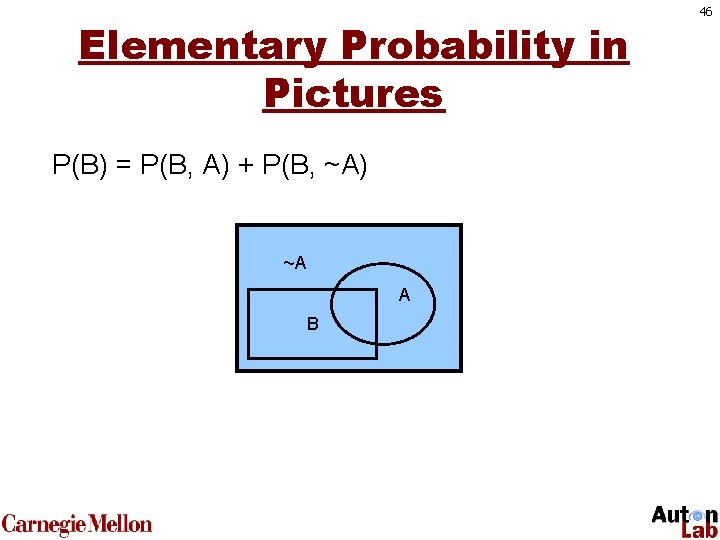

Elementary Probability in Pictures
P(B) = P(B, A) + P(B, ~A)
(Figure: event B overlapping both A and ~A.)

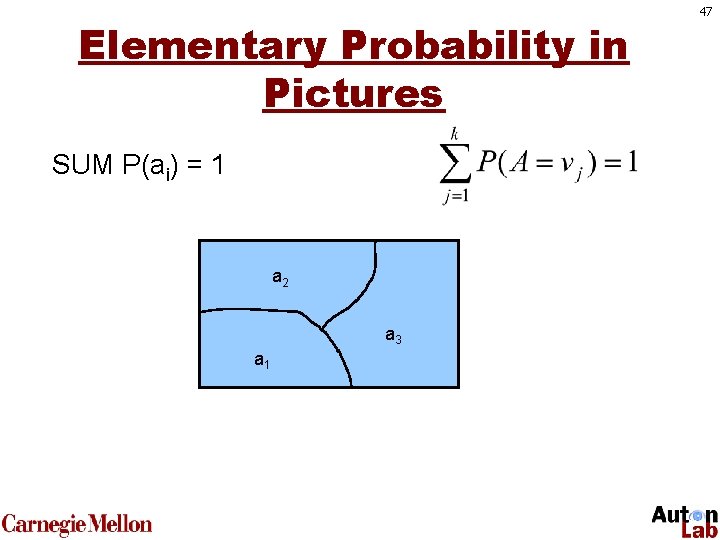

Elementary Probability in Pictures
$$\sum_i P(a_i) = 1$$
(Figure: the sample space divided into regions $a_1$, $a_2$, $a_3$.)

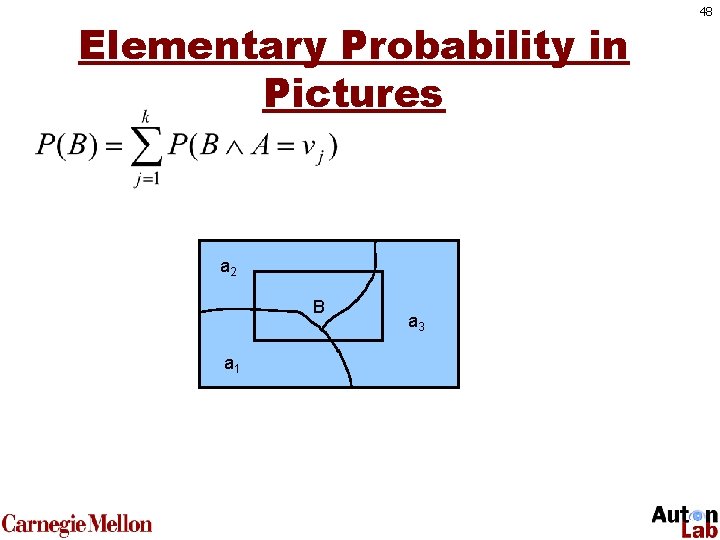

Elementary Probability in Pictures
(Figure: event B overlapping the regions $a_1$, $a_2$, $a_3$.)

Conditional Probability
• Probability gets really interesting when we start using the little bar symbol “|”.
• This notation simply indicates conditional probability.

Conditional Probability
Assume once more that you are a doctor. Again, this is the sample space of “patients you might see on any given day”.

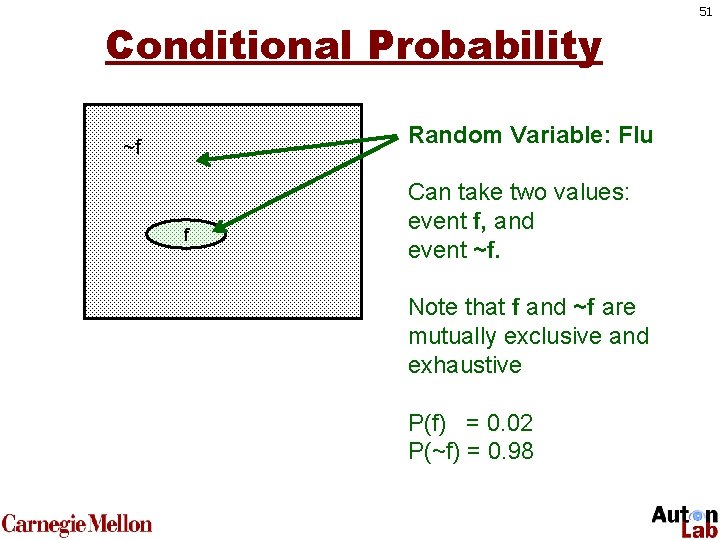

Conditional Probability
Random Variable: Flu. It can take two values: event f and event ~f. Note that f and ~f are mutually exclusive and exhaustive.
• P(f) = 0.02
• P(~f) = 0.98
(Figure: the patient space divided into f and ~f.)

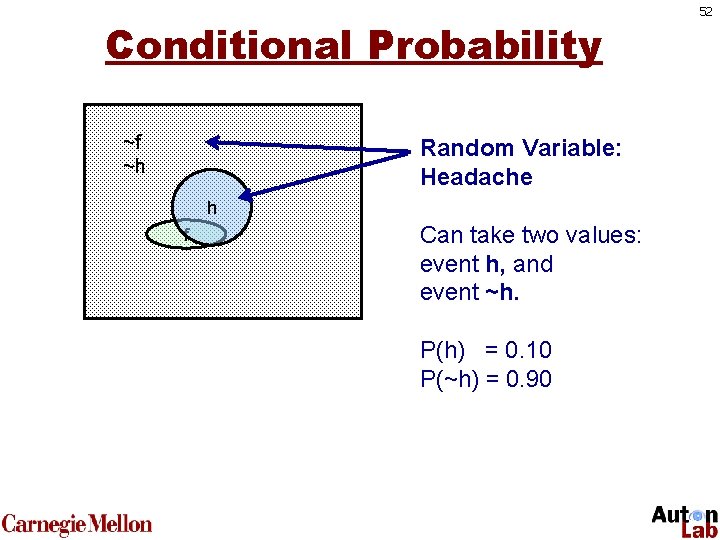

Conditional Probability
Random Variable: Headache. It can take two values: event h and event ~h.
• P(h) = 0.10
• P(~h) = 0.90
(Figure: the regions h and ~h drawn over f and ~f.)

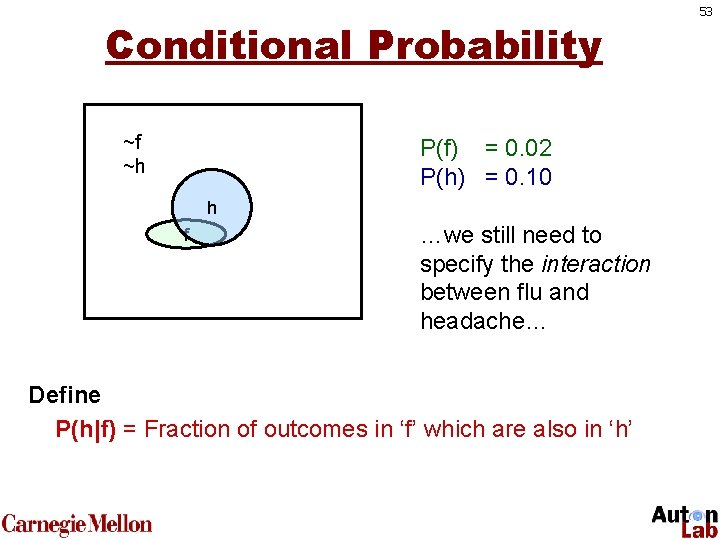

Conditional Probability
P(f) = 0.02, P(h) = 0.10
…we still need to specify the interaction between flu and headache…
Define P(h|f) = fraction of outcomes in f which are also in h.

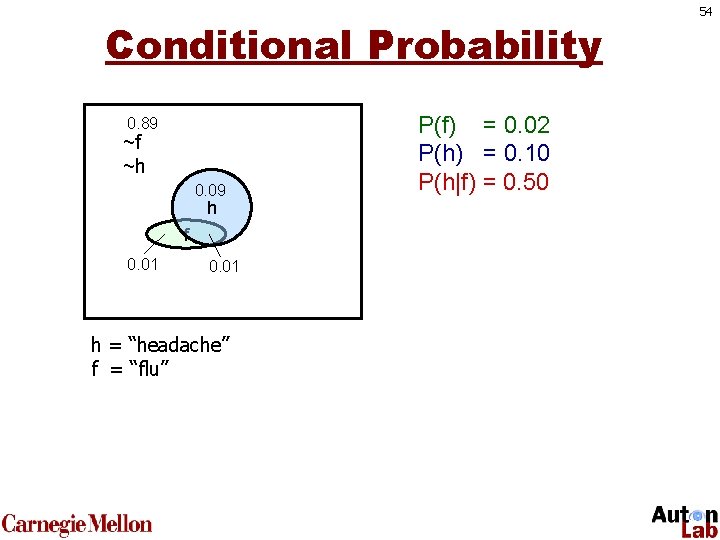

Conditional Probability
h = “headache”, f = “flu”
P(f) = 0.02, P(h) = 0.10, P(h|f) = 0.50
(Figure: region probabilities 0.89 for ~f and ~h, 0.09 for h and ~f, and 0.01 for h and f.)

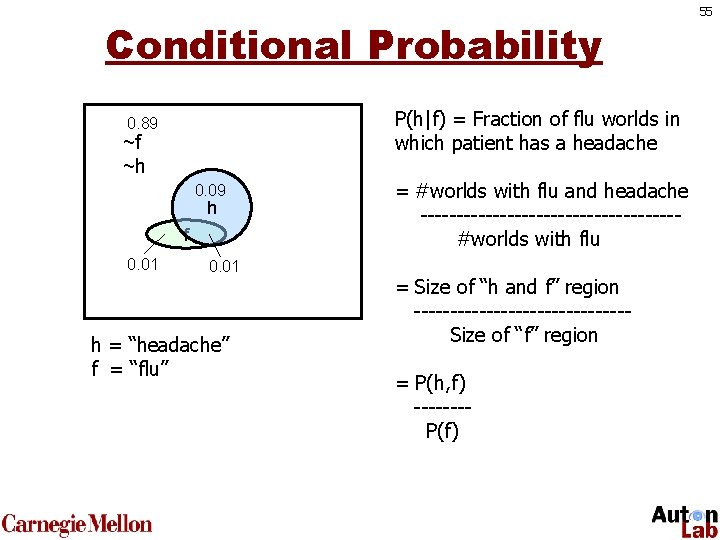

Conditional Probability
P(h|f) = fraction of flu worlds in which the patient has a headache (h = “headache”, f = “flu”)
$$P(h \mid f) = \frac{\#\text{worlds with flu and headache}}{\#\text{worlds with flu}} = \frac{\text{size of the } h \wedge f \text{ region}}{\text{size of the } f \text{ region}} = \frac{P(h, f)}{P(f)}$$
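The same calculation can be done from a joint table. The four cell values below are not all printed on the slide; they are derived from P(f) = 0.02, P(h) = 0.10 and P(h|f) = 0.50, giving P(h, f) = 0.01, P(~h, f) = 0.01, P(h, ~f) = 0.09 and P(~h, ~f) = 0.89. The helper names are hypothetical.

```python
from fractions import Fraction as F

# Joint distribution over (Flu, Headache); keys are (flu value, headache value).
joint = {
    ("f", "h"): F("0.01"),
    ("f", "~h"): F("0.01"),
    ("~f", "h"): F("0.09"),
    ("~f", "~h"): F("0.89"),
}
assert sum(joint.values()) == 1  # the four worlds are exhaustive


def p_flu(value: str) -> F:
    """Marginal P(Flu = value): add up every world with that flu value."""
    return sum(p for (flu, _), p in joint.items() if flu == value)


def p_headache(value: str) -> F:
    """Marginal P(Headache = value)."""
    return sum(p for (_, head), p in joint.items() if head == value)


def p_headache_given_flu(h: str, f: str) -> F:
    """P(h | f) = P(h, f) / P(f)."""
    return joint[(f, h)] / p_flu(f)


print(p_flu("f"), p_headache("h"))     # 1/50 1/10  (0.02 and 0.10)
print(p_headache_given_flu("h", "f"))  # 1/2        (0.50)
```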

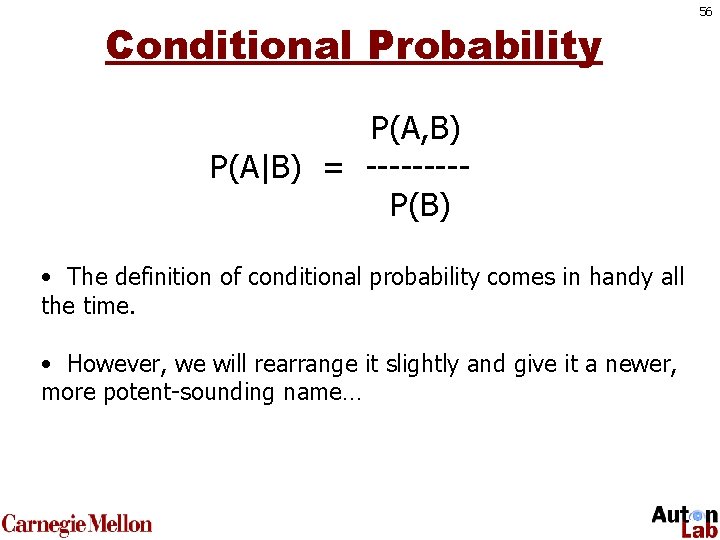

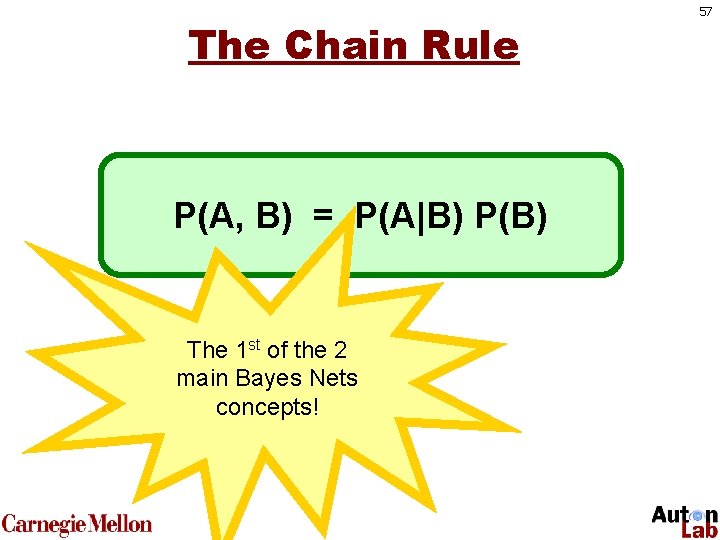

Conditional Probability
$$P(A \mid B) = \frac{P(A, B)}{P(B)}$$
• The definition of conditional probability comes in handy all the time.
• However, we will rearrange it slightly and give it a newer, more potent-sounding name…

The Chain Rule
P(A, B) = P(A|B) P(B)
The 1st of the 2 main Bayes Nets concepts!

Inference
One day you wake up queasy. You think: “Drat! 50% of flus are associated with queasiness, so I must have a 50-50 chance of coming down with flu.”
Is this reasoning good?

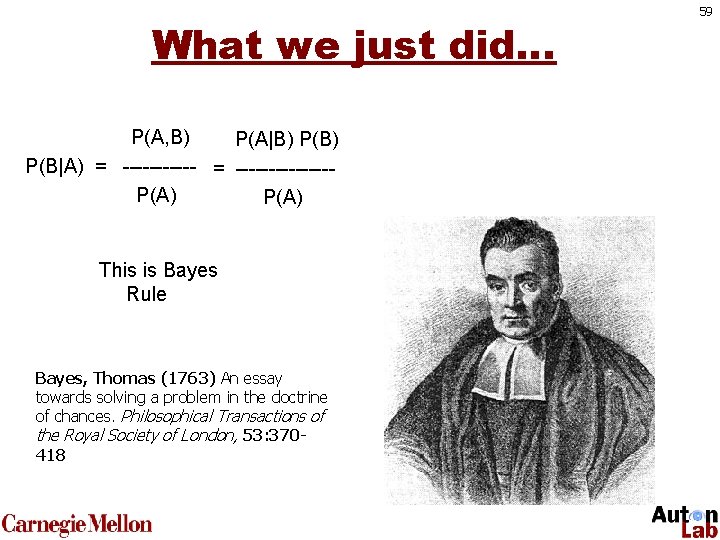

What we just did…
$$P(B \mid A) = \frac{P(A, B)}{P(A)} = \frac{P(A \mid B)\, P(B)}{P(A)}$$
This is Bayes Rule.
Bayes, Thomas (1763). An essay towards solving a problem in the doctrine of chances. Philosophical Transactions of the Royal Society of London, 53:370-418.
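Bayes Rule answers the question on the previous slide once numbers are available. The queasiness slide gives none, so this sketch reuses the flu/headache figures from the earlier slides (P(h|f) = 0.50, P(f) = 0.02, P(h) = 0.10) and treats headache as the symptom; the function name is illustrative.

```python
from fractions import Fraction as F


def bayes(p_a_given_b: F, p_b: F, p_a: F) -> F:
    """Bayes Rule: P(B|A) = P(A|B) P(B) / P(A)."""
    if p_a == 0:
        raise ValueError("P(A) must be positive to condition on A")
    return p_a_given_b * p_b / p_a


# A = headache (the evidence), B = flu (the hypothesis).
p_flu_given_headache = bayes(F("0.50"), F("0.02"), F("0.10"))
print(p_flu_given_headache)  # 1/10
```

With these numbers the “50-50” reasoning confuses P(h|f) with P(f|h): only 10% of headache sufferers have flu.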

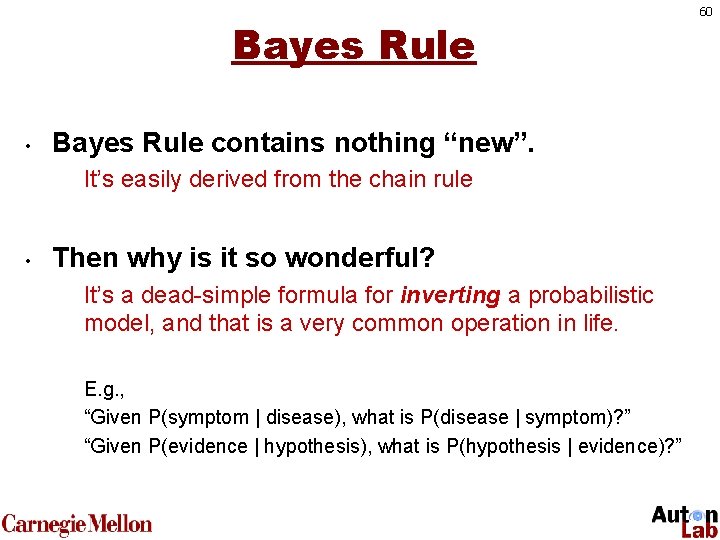

Bayes Rule
• Bayes Rule contains nothing “new”. It’s easily derived from the chain rule: P(B|A) P(A) = P(A, B) = P(A|B) P(B); then divide both sides by P(A).
• Then why is it so wonderful? It’s a dead-simple formula for inverting a probabilistic model, and that is a very common operation in life. E.g.,
  • “Given P(symptom | disease), what is P(disease | symptom)?”
  • “Given P(evidence | hypothesis), what is P(hypothesis | evidence)?”

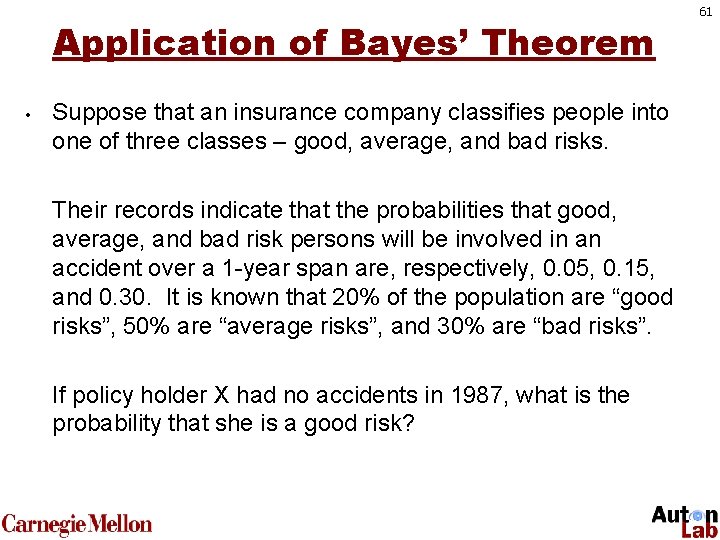

Application of Bayes’ Theorem
• Suppose that an insurance company classifies people into one of three classes: good, average, and bad risks. Their records indicate that the probabilities that good, average, and bad risk persons will be involved in an accident over a 1-year span are, respectively, 0.05, 0.15, and 0.30. It is known that 20% of the population are “good risks”, 50% are “average risks”, and 30% are “bad risks”. If policy holder X had no accidents in 1987, what is the probability that she is a good risk?

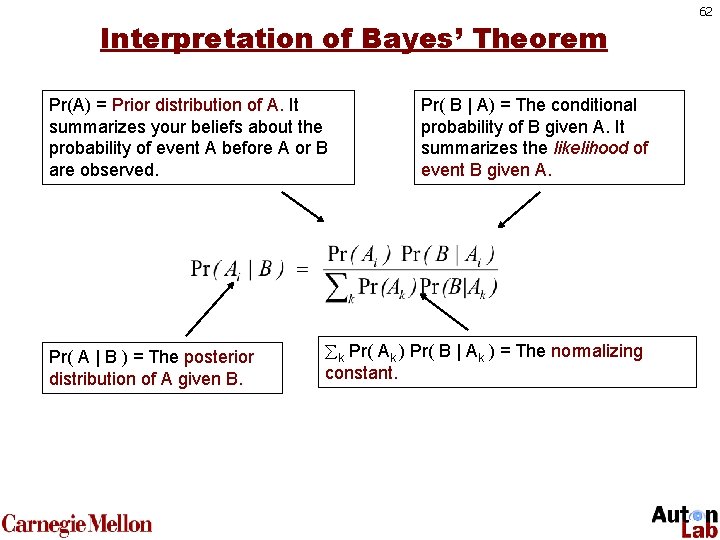

Interpretation of Bayes’ Theorem
• Pr(A) = the prior distribution of A. It summarizes your beliefs about the probability of event A before A or B are observed.
• Pr(A | B) = the posterior distribution of A given B.
• Pr(B | A) = the conditional probability of B given A. It summarizes the likelihood of event B given A.
• $\sum_k \Pr(A_k) \Pr(B \mid A_k)$ = the normalizing constant.

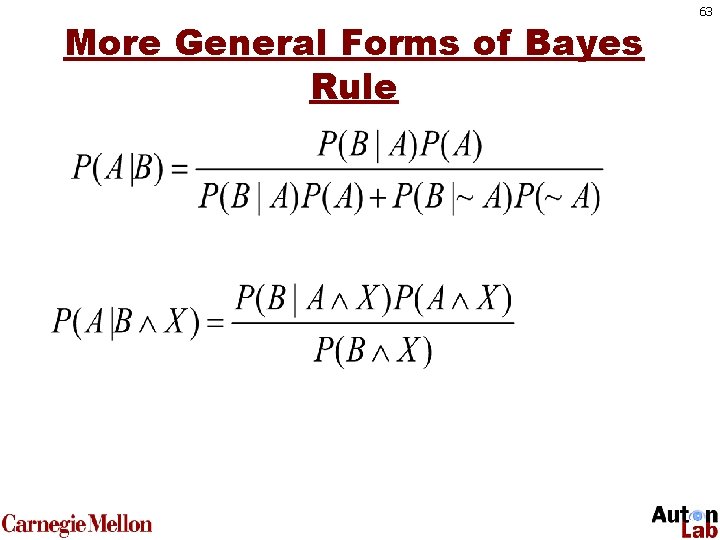

More General Forms of Bayes Rule

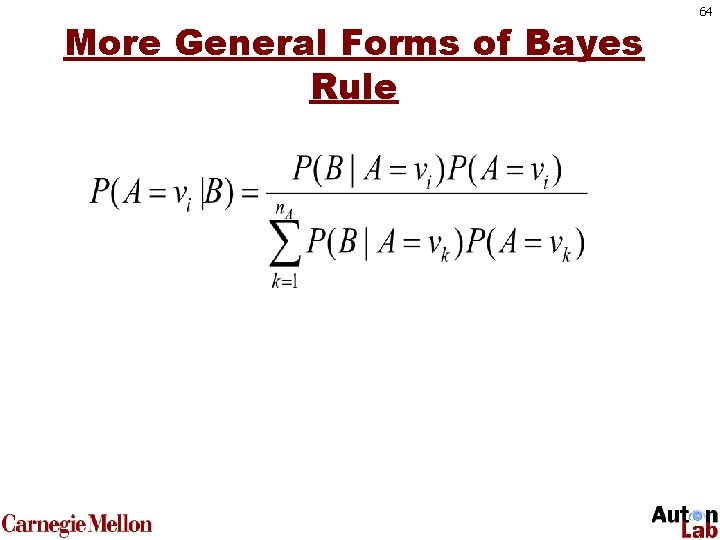

More General Forms of Bayes Rule

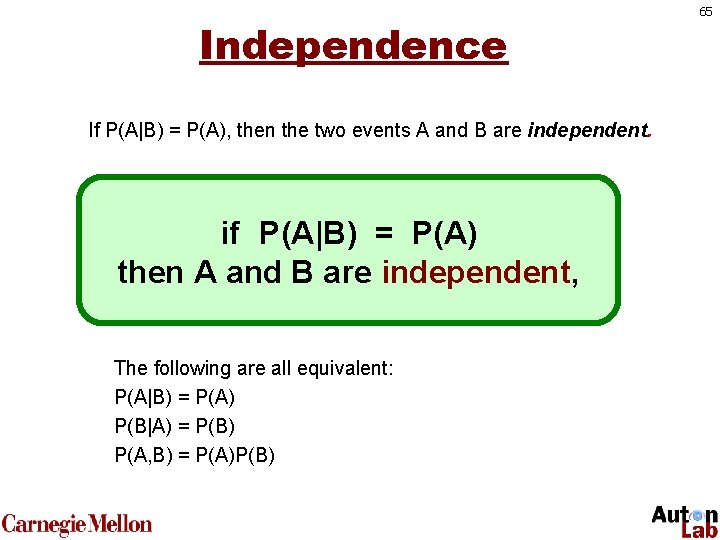

Independence
If P(A|B) = P(A), then the two events A and B are independent.
The following are all equivalent:
• P(A|B) = P(A)
• P(B|A) = P(B)
• P(A, B) = P(A) P(B)
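The three equivalent tests can be tried on the card events from earlier. This sketch (equally likely cards assumed; helper names hypothetical) shows that “Jack” and “Hearts” pass all three tests, while “Hearts” and “Red” do not: knowing the card is red doubles the chance that it is a heart.

```python
from fractions import Fraction

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["s", "c", "h", "d"]
S = {rank + suit for rank in RANKS for suit in SUITS}


def P(event: set) -> Fraction:
    return Fraction(len(event), len(S))


def P_given(a: set, b: set) -> Fraction:
    """P(a | b) = P(a, b) / P(b), computed by counting."""
    return Fraction(len(a & b), len(b))


hearts = {c for c in S if c.endswith("h")}
red = {c for c in S if c[-1] in ("h", "d")}
jack = {c for c in S if c[:-1] == "J"}

# Jack and Hearts: all three equivalent tests agree (independent).
print(P_given(jack, hearts) == P(jack),
      P_given(hearts, jack) == P(hearts),
      P(jack & hearts) == P(jack) * P(hearts))  # True True True

# Hearts and Red: dependent.
print(P_given(hearts, red), P(hearts))  # 1/2 1/4
```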

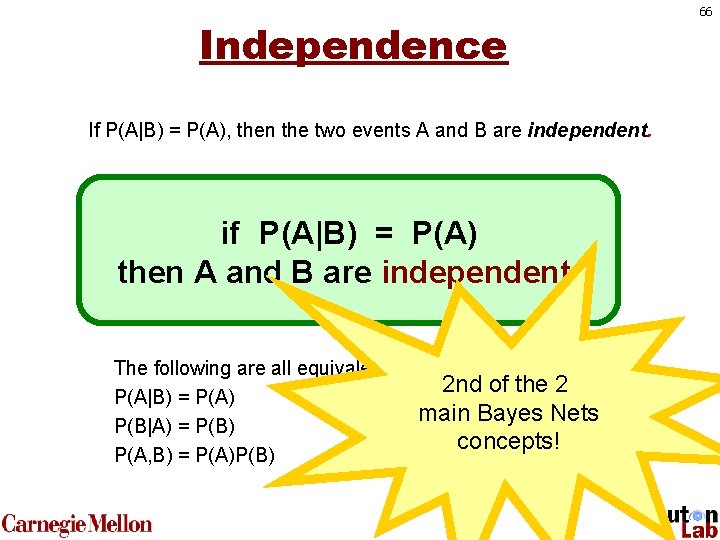

Independence
If P(A|B) = P(A), then the two events A and B are independent.
The following are all equivalent:
• P(A|B) = P(A)
• P(B|A) = P(B)
• P(A, B) = P(A) P(B)
The 2nd of the 2 main Bayes Nets concepts!

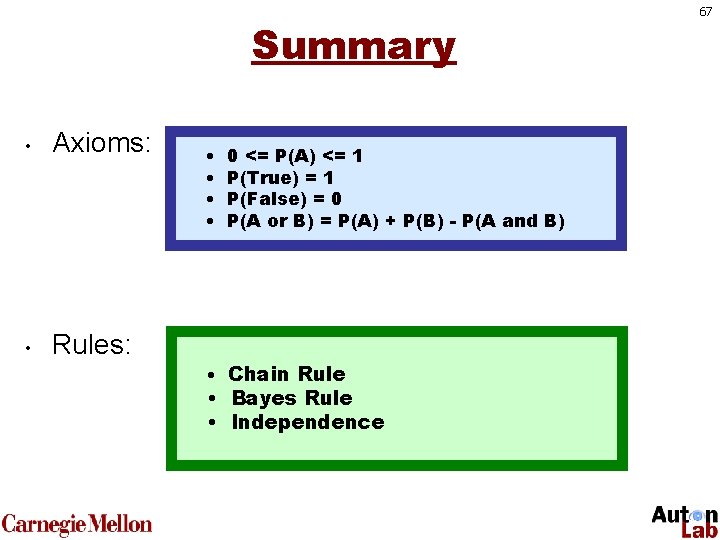

Summary
• Axioms:
  • 0 <= P(A) <= 1
  • P(True) = 1
  • P(False) = 0
  • P(A or B) = P(A) + P(B) - P(A and B)
• Rules:
  • Chain Rule
  • Bayes Rule
  • Independence


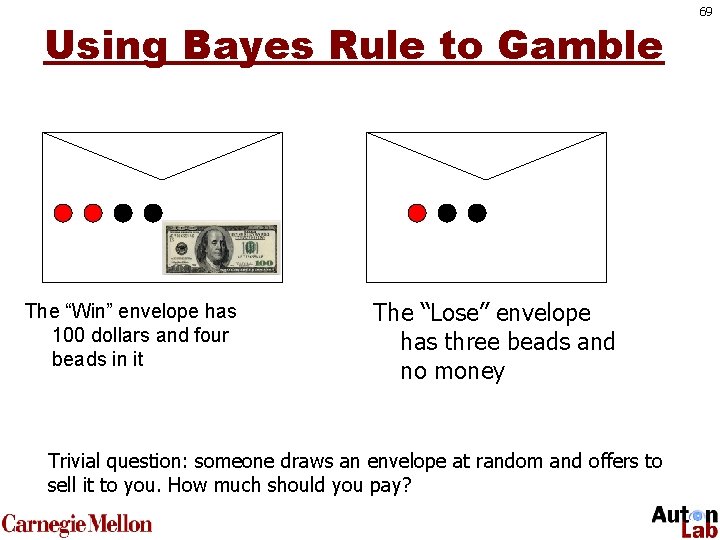

Using Bayes Rule to Gamble
• The “Win” envelope has 100 dollars and four beads in it.
• The “Lose” envelope has three beads and no money.
Trivial question: someone draws an envelope at random and offers to sell it to you. How much should you pay?

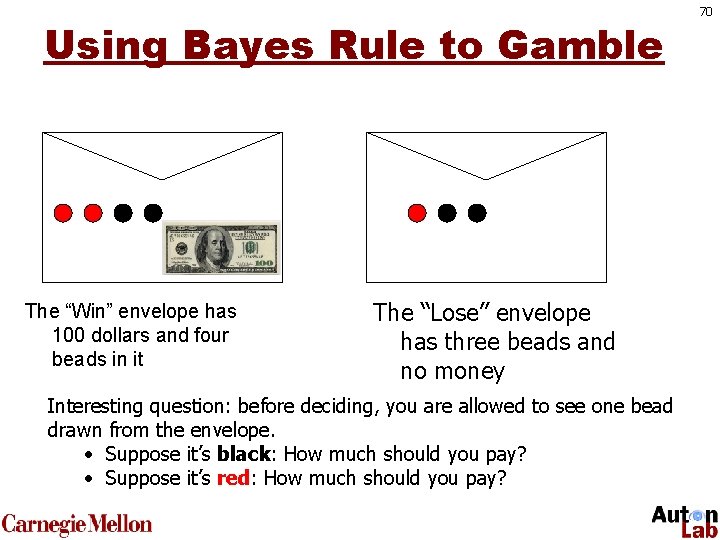

Using Bayes Rule to Gamble
• The “Win” envelope has 100 dollars and four beads in it.
• The “Lose” envelope has three beads and no money.
Interesting question: before deciding, you are allowed to see one bead drawn from the envelope.
• Suppose it’s black: how much should you pay?
• Suppose it’s red: how much should you pay?
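A sketch of how Bayes Rule prices the envelope after one bead is seen. The bead colours appear only in the figure, so the counts below (Win: 2 red and 2 black; Lose: 1 red and 2 black) are hypothetical placeholders that merely respect the stated totals of four and three beads; substitute the real counts. The function name is also illustrative. It assumes the envelope was drawn at random (prior 1/2) and that the bead is drawn uniformly from that envelope.

```python
from fractions import Fraction


def envelope_value(win_beads: dict, lose_beads: dict, seen: str, prize: int = 100) -> Fraction:
    """Expected dollar value of the envelope after seeing one bead of colour `seen`."""
    p_win = Fraction(1, 2)  # the envelope was drawn at random
    like_win = Fraction(win_beads.get(seen, 0), sum(win_beads.values()))     # P(seen | Win)
    like_lose = Fraction(lose_beads.get(seen, 0), sum(lose_beads.values()))  # P(seen | Lose)
    evidence = like_win * p_win + like_lose * (1 - p_win)                    # P(seen)
    if evidence == 0:
        raise ValueError(f"a {seen} bead cannot come from either envelope")
    p_win_given_seen = like_win * p_win / evidence  # Bayes Rule
    return p_win_given_seen * prize                 # the Lose envelope is worth $0


# HYPOTHETICAL colour split; only the totals (4 and 3 beads) come from the slide.
win = {"red": 2, "black": 2}
lose = {"red": 1, "black": 2}
print(Fraction(100, 2))                    # 50     (trivial question: no bead seen)
print(envelope_value(win, lose, "black"))  # 300/7  (about $42.86)
print(envelope_value(win, lose, "red"))    # 60
```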

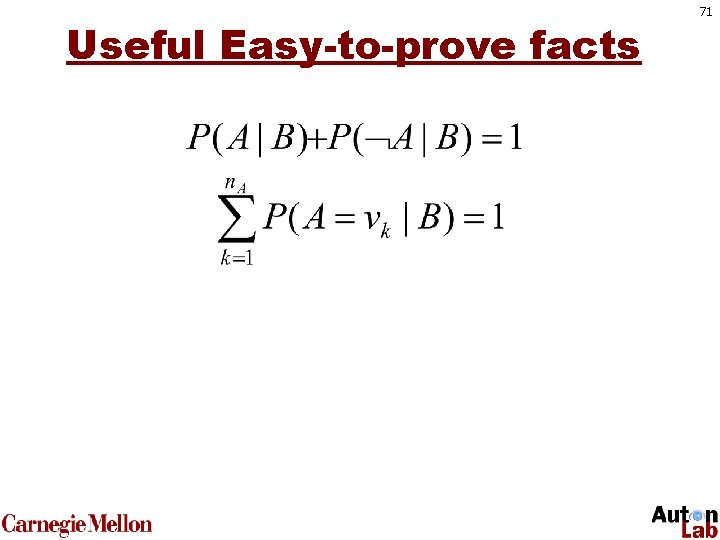

Useful Easy-to-prove facts

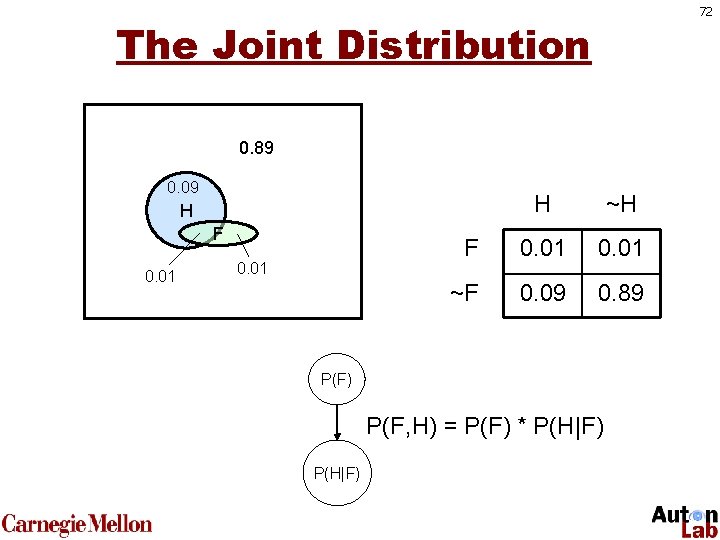

The Joint Distribution
(Figure: the flu/headache sample space and the corresponding joint table over F and H, with cell values 0.89, 0.09 and 0.01.)
P(F, H) = P(F) * P(H|F)

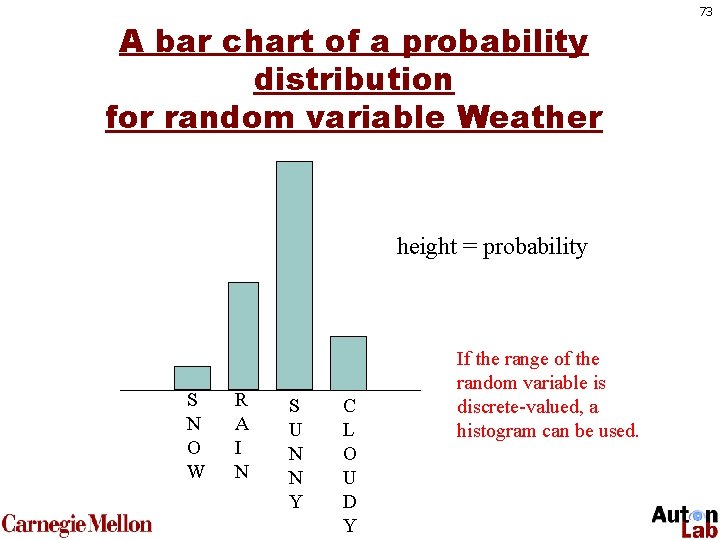

A bar chart of a probability distribution for random variable Weather
(Figure: one bar per value, Snow, Rain, Sunny and Cloudy; bar height = probability.)
If the range of the random variable is discrete-valued, a histogram can be used.

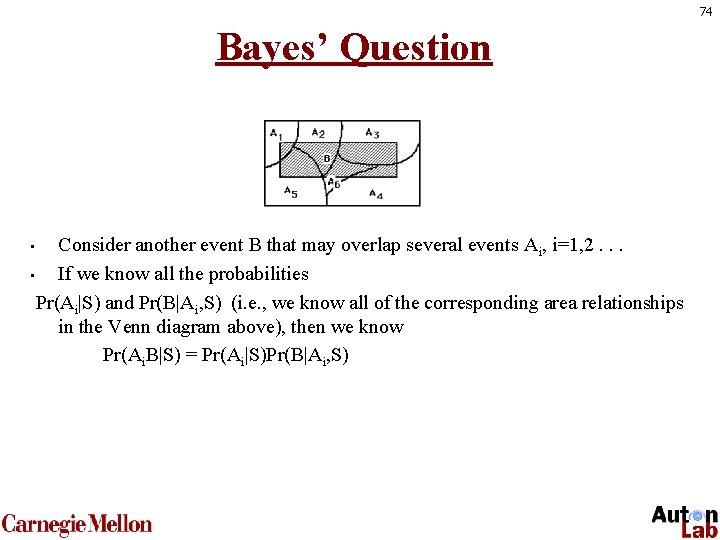

Bayes’ Question
• Consider another event B that may overlap several events $A_i$, $i = 1, 2, \ldots$
• If we know all the probabilities $\Pr(A_i \mid S)$ and $\Pr(B \mid A_i, S)$ (i.e., we know all of the corresponding area relationships in the Venn diagram above), then we know
$$\Pr(A_i B \mid S) = \Pr(A_i \mid S)\, \Pr(B \mid A_i, S)$$

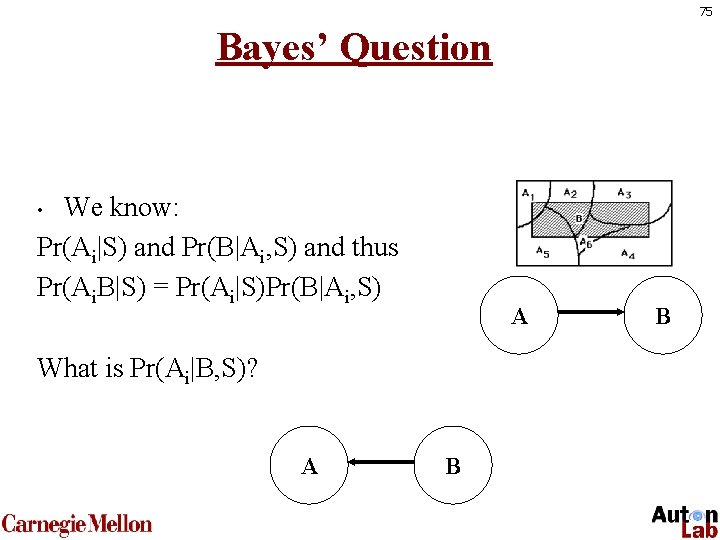

Bayes’ Question
We know $\Pr(A_i \mid S)$ and $\Pr(B \mid A_i, S)$, and thus $\Pr(A_i B \mid S) = \Pr(A_i \mid S)\, \Pr(B \mid A_i, S)$.
• What is $\Pr(A_i \mid B, S)$?
(Figure: Venn diagrams of A and B.)

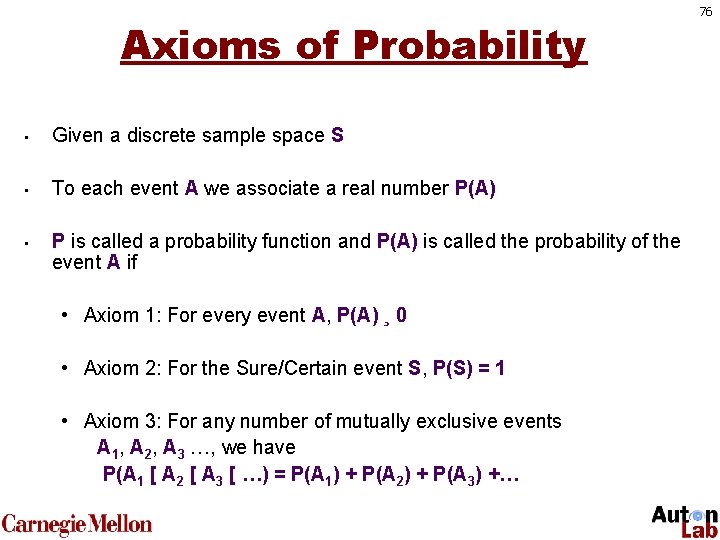

Axioms of Probability
• Given a discrete sample space S
• To each event A we associate a real number P(A)
• P is called a probability function and P(A) is called the probability of the event A if
  • Axiom 1: For every event A, $P(A) \ge 0$
  • Axiom 2: For the Sure/Certain event S, $P(S) = 1$
  • Axiom 3: For any number of mutually exclusive events $A_1, A_2, A_3, \ldots$, we have $P(A_1 \cup A_2 \cup A_3 \cup \cdots) = P(A_1) + P(A_2) + P(A_3) + \cdots$

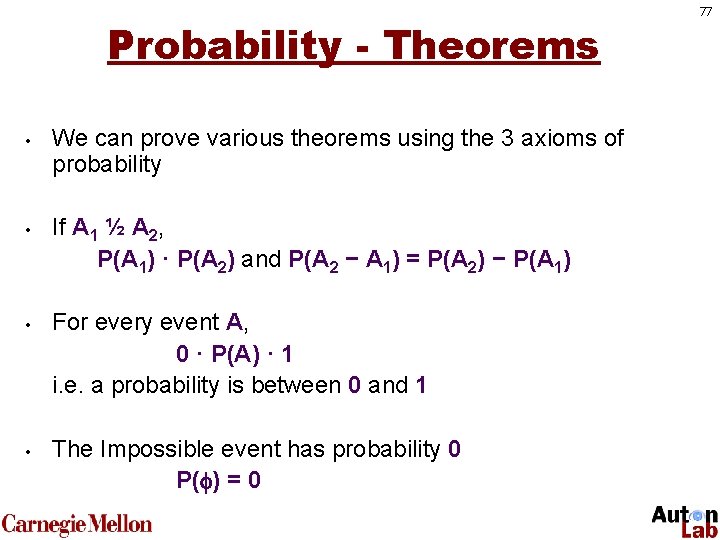

Probability - Theorems
• We can prove various theorems using the 3 axioms of probability:
• If $A_1 \subset A_2$, then $P(A_1) \le P(A_2)$ and $P(A_2 - A_1) = P(A_2) - P(A_1)$
• For every event A, $0 \le P(A) \le 1$, i.e., a probability is between 0 and 1
• The Impossible event has probability 0: $P(\emptyset) = 0$

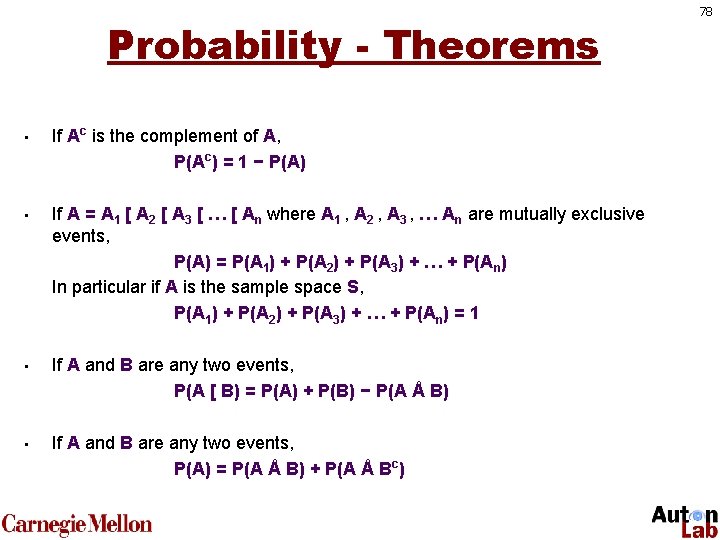

Probability - Theorems
• If $A^c$ is the complement of A, $P(A^c) = 1 - P(A)$
• If $A = A_1 \cup A_2 \cup A_3 \cup \cdots \cup A_n$, where $A_1, A_2, A_3, \ldots, A_n$ are mutually exclusive events, then $P(A) = P(A_1) + P(A_2) + P(A_3) + \cdots + P(A_n)$
  • In particular, if A is the sample space S, $P(A_1) + P(A_2) + P(A_3) + \cdots + P(A_n) = 1$
• If A and B are any two events, $P(A \cup B) = P(A) + P(B) - P(A \cap B)$
• If A and B are any two events, $P(A) = P(A \cap B) + P(A \cap B^c)$

Some concepts
• Definition of probability functions
• Empirical probability
• Odds
• Refer to the textbook (pages 418-420)

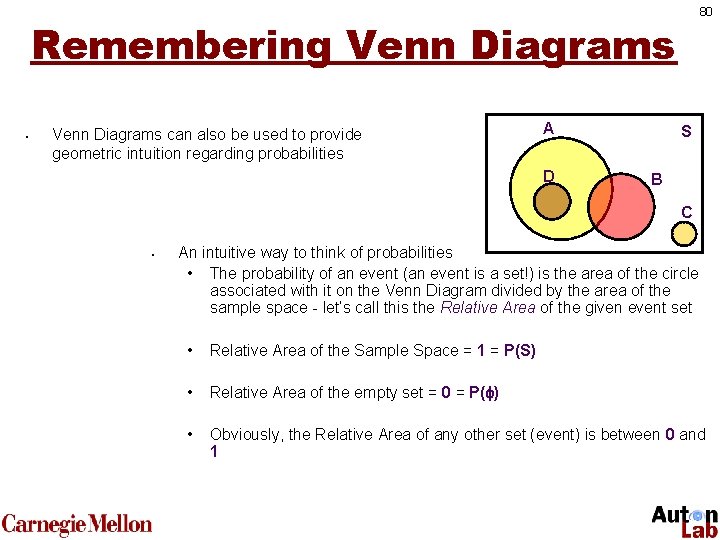

Remembering Venn Diagrams
• Venn Diagrams can also be used to provide geometric intuition regarding probabilities. (Figure: events A, B, C and D drawn inside the sample space S.)
• An intuitive way to think of probabilities:
  • The probability of an event (an event is a set!) is the area of the circle associated with it on the Venn Diagram divided by the area of the sample space. Let’s call this the Relative Area of the given event set.
  • Relative Area of the Sample Space = 1 = P(S)
  • Relative Area of the empty set = 0 = P(∅)
  • Obviously, the Relative Area of any other set (event) is between 0 and 1.

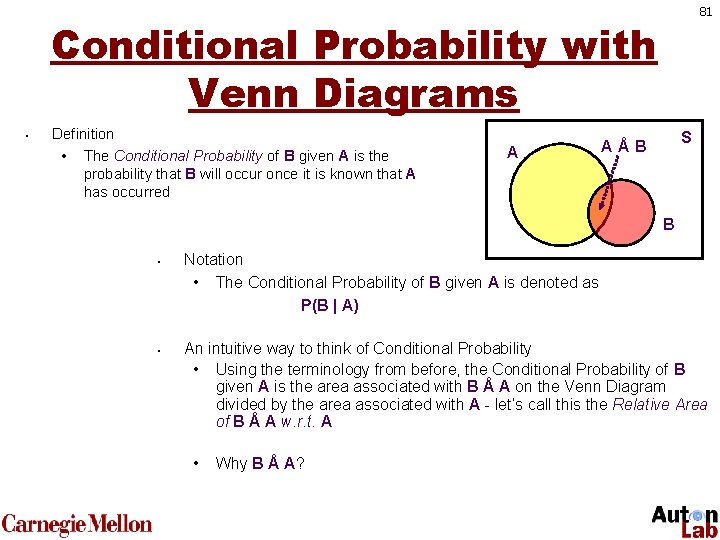

Conditional Probability with Venn Diagrams
• Definition
  • The Conditional Probability of B given A is the probability that B will occur once it is known that A has occurred. (Figure: events A and B inside S, with their overlap A ∩ B.)
• Notation
  • The Conditional Probability of B given A is denoted as P(B | A).
• An intuitive way to think of Conditional Probability
  • Using the terminology from before, the Conditional Probability of B given A is the area associated with B ∩ A on the Venn Diagram divided by the area associated with A. Let’s call this the Relative Area of B ∩ A w.r.t. A.
  • Why B ∩ A?

Conditional Probability Formula
• We just said:
  • the conditional probability of B given A is the area associated with B ∩ A on the Venn Diagram divided by the area associated with A.
• So we must have $P(B \mid A) = P(B \cap A) / P(A)$, or alternatively $P(B \cap A) = P(A) \times P(B \mid A)$.
• In words: the probability that both B and A occur is the product of the probability that A occurs and the conditional probability of B given A.

Conditional Probability Theorem
• Theorem: For any three events $A_1$, $A_2$ and $A_3$, we have
$$P(A_1 \cap A_2 \cap A_3) = P(A_1) \times P(A_2 \mid A_1) \times P(A_3 \mid A_1 \cap A_2)$$
• How would you go about proving this?
  • Let $B = A_1 \cap A_2$.
  • Then from the Conditional Probability formula, $P(B \cap A_3) = P(B) \times P(A_3 \mid B)$.
  • Again from the Conditional Probability formula, $P(B) = P(A_1 \cap A_2) = P(A_1) \times P(A_2 \mid A_1)$.
  • Putting everything together, we get $P(A_1 \cap A_2 \cap A_3) = P(A_1) \times P(A_2 \mid A_1) \times P(A_3 \mid A_1 \cap A_2)$.
• Voilà! We can easily extend this to more than 3 events.
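The three-event theorem can be checked by brute force. In this sketch the events are assumed, purely for illustration, to be $A_i$ = “the i-th card drawn (without replacement) is a heart”; the chain-rule product is compared with a direct count over all 52 × 51 × 50 ordered draws.

```python
from fractions import Fraction
from itertools import permutations

RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["s", "c", "h", "d"]
deck = [rank + suit for rank in RANKS for suit in SUITS]

# Chain rule: P(A1 ∩ A2 ∩ A3) = P(A1) P(A2 | A1) P(A3 | A1 ∩ A2)
chain = Fraction(13, 52) * Fraction(12, 51) * Fraction(11, 50)

# Direct count over every ordered draw of three distinct cards.
total = hits = 0
for draw in permutations(deck, 3):
    total += 1
    if all(card.endswith("h") for card in draw):
        hits += 1
brute = Fraction(hits, total)

print(chain, brute, total)  # 11/850 11/850 132600
```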

Example 1 of Bayes’ Theorem
• Suppose that an insurance company classifies people into one of three classes: good risks, average risks, and bad risks. Their records indicate that the probabilities that good, average, and bad risk persons will be involved in an accident over a 1-year span are, respectively, 0.05, 0.15, and 0.30. If 20% of the population are “good risks”, 50% are “average risks”, and 30% are “bad risks”, what proportion of people have accidents in a fixed year? If policy holder A had no accidents in 1987, what is the probability that he or she is a good risk?
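Both questions can be answered with the law of total probability and Bayes’ Theorem; the normalizing constant $\sum_k \Pr(A_k)\Pr(B \mid A_k)$ is exactly P(accident) or P(no accident). A minimal sketch using only the numbers given in the example; the dictionary keys are illustrative.

```python
from fractions import Fraction as F

prior = {"good": F("0.20"), "average": F("0.50"), "bad": F("0.30")}
p_accident = {"good": F("0.05"), "average": F("0.15"), "bad": F("0.30")}

# Q1: total probability of an accident in a fixed year.
p_acc = sum(prior[k] * p_accident[k] for k in prior)

# Q2: posterior class probabilities given NO accident (Bayes' Theorem).
p_no_acc = 1 - p_acc  # normalizing constant
posterior = {k: prior[k] * (1 - p_accident[k]) / p_no_acc for k in prior}

print(p_acc, round(float(p_acc), 4))                          # 7/40 0.175
print(posterior["good"], round(float(posterior["good"]), 4))  # 38/165 0.2303
assert sum(posterior.values()) == 1
```

So 17.5% of people have an accident in a given year, and a policy holder with no accidents is a good risk with probability 38/165 ≈ 0.23, slightly up from the prior 0.20.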

Discrete Random Variables
• A is a boolean-valued random variable if A denotes an event, and there is some degree of uncertainty as to whether A occurs.
• Examples:
  • A = The US president in 2023 will be male
  • A = You wake up tomorrow with a headache
  • A = You have Ebola


Sets Construction/Description
There are two ways of describing a set: the Roster Method (listing all members of the set) and the Property Method (describing a property held by all members and no non-members).
Two ways of constructing sets:
• Construct the following using the roster method: {Amstel, Budweiser, Miller, Coors}
• Construct the following using the property method: {x | x is a Prime Number}

Special Events
• Similar to the special sets, we have special events.
Definitions
• S itself is called the Sure Event or the Certain Event, since we know that any outcome will be an element of S.
• The empty set ∅ is called the Impossible Event, since no outcome can be an element of ∅.
• Since events are sets, statements about events can be translated into the language of sets, and conversely.

Set Operations on Events
• Again, since events are sets in S, performing set operations on events leads us to other events in S.
• If A and B are events:
  • A ∪ B is the event “either A or B or both”
  • A ∩ B is the event “both A and B”
  • $A^c$ is the event “not A”
  • A − B is the event “A but not B”
• Definition
  • If the sets corresponding to the events A and B are disjoint, i.e., A ∩ B = ∅, we say that the events A and B are Mutually Exclusive.
  • If events A and B are Mutually Exclusive, they cannot occur simultaneously.
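Python’s set operators line up with the event operations listed above (`|` for ∪, `&` for ∩, `-` for difference, and `S - A` for the complement). A minimal sketch on the card-draw space; the event names are illustrative.

```python
RANKS = ["A", "2", "3", "4", "5", "6", "7", "8", "9", "10", "J", "Q", "K"]
SUITS = ["s", "c", "h", "d"]
S = {rank + suit for rank in RANKS for suit in SUITS}

hearts = {c for c in S if c.endswith("h")}
spades = {c for c in S if c.endswith("s")}
red = {c for c in S if c[-1] in ("h", "d")}
face = {c for c in S if c[:-1] in ("J", "Q", "K")}

either = hearts | face         # A ∪ B: "hearts or face or both"
both = hearts & face           # A ∩ B: "hearts and face"
not_hearts = S - hearts        # complement: "not hearts"
red_not_hearts = red - hearts  # A − B: "red but not hearts" (the diamonds)

print(len(either), len(both), len(not_hearts), len(red_not_hearts))  # 22 3 39 13
print(hearts.isdisjoint(spades))  # True: mutually exclusive, A ∩ B = ∅
print(hearts.isdisjoint(face))    # False: both can occur (e.g. Kh)
```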

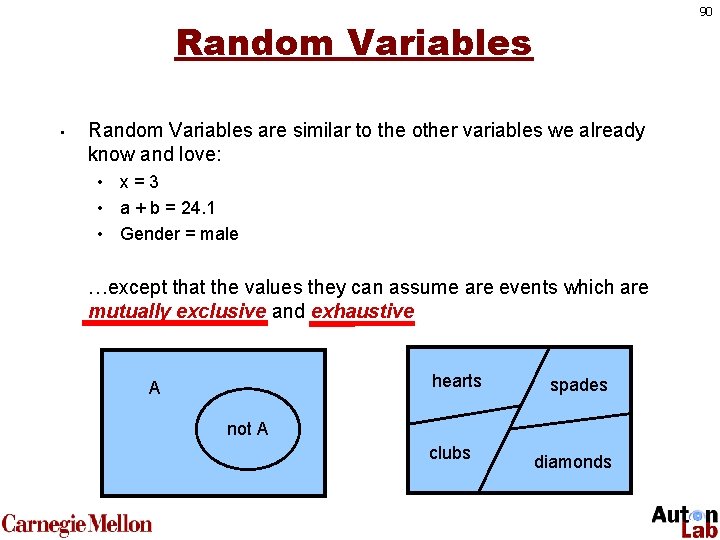

Random Variables
• Random Variables are similar to the other variables we already know and love:
  • x = 3
  • a + b = 24.1
  • Gender = male
…except that the values they can assume are events, which are mutually exclusive and exhaustive.
(Figure: the suits hearts, clubs, spades and diamonds, with an event A and its complement not A.)

Random Variables
• In many experiments, it is easier to deal with a “summary variable” than with the original probability structure.
• Example: in an opinion poll, we ask 50 people whether they agree or disagree with a certain issue.
  • Suppose we record a “1” for agree and “0” for disagree.
  • The sample space for this experiment has $2^{50}$ elements.
  • Suppose we are only interested in the number of people who agree.
  • Define the variable X = sum of 1’s recorded out of 50.
  • This is easier to deal with: the sample space of X has only 51 elements.
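The size argument can be made concrete. This sketch counts the $2^{50}$ raw outcomes and, for each possible value of X, how many of them map to it (a binomial coefficient); for a small poll of 4 people, an arbitrary size chosen for the check, it also confirms the counts by brute-force enumeration.

```python
from itertools import product
from math import comb

n = 50
raw_outcomes = 2 ** n                                # every agree/disagree pattern
outcomes_per_x = [comb(n, k) for k in range(n + 1)]  # patterns with exactly k agrees

print(raw_outcomes)         # 1125899906842624
print(len(outcomes_per_x))  # 51 possible values of X
assert sum(outcomes_per_x) == raw_outcomes

# Brute-force check on a 4-person poll: X = sum of the recorded 1s.
small = 4
counts = [0] * (small + 1)
for responses in product((0, 1), repeat=small):
    counts[sum(responses)] += 1
print(counts)  # [1, 4, 6, 4, 1]
assert counts == [comb(small, k) for k in range(small + 1)]
```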

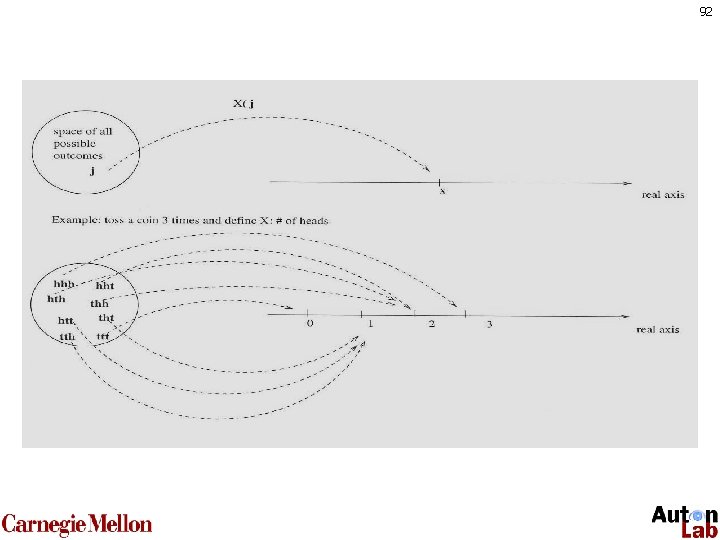
