What Queueing Theory Teaches Us About Computer Systems Design

Mor Harchol-Balter, Computer Science Dept., CMU

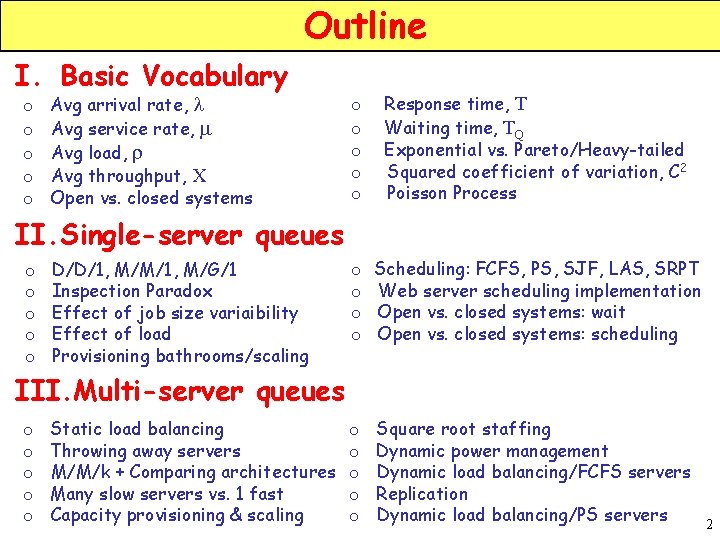

Outline
- I. Basic vocabulary
  - Avg arrival rate, λ; avg service rate, μ; avg load, ρ; avg throughput, X
  - Open vs. closed systems
  - Response time, T; waiting time, T_Q
  - Exponential vs. Pareto/heavy-tailed
  - Squared coefficient of variation, C²
  - Poisson process
- II. Single-server queues
  - D/D/1, M/M/1, M/G/1
  - Inspection paradox
  - Effect of job size variability
  - Effect of load
  - Provisioning bathrooms/scaling
  - Scheduling: FCFS, PS, SJF, LAS, SRPT
  - Web server scheduling implementation
  - Open vs. closed systems: wait
  - Open vs. closed systems: scheduling
- III. Multi-server queues
  - Static load balancing
  - Throwing away servers
  - M/M/k + comparing architectures
  - Many slow servers vs. 1 fast
  - Capacity provisioning & scaling
  - Square root staffing
  - Dynamic power management
  - Dynamic load balancing/FCFS servers
  - Replication
  - Dynamic load balancing/PS servers

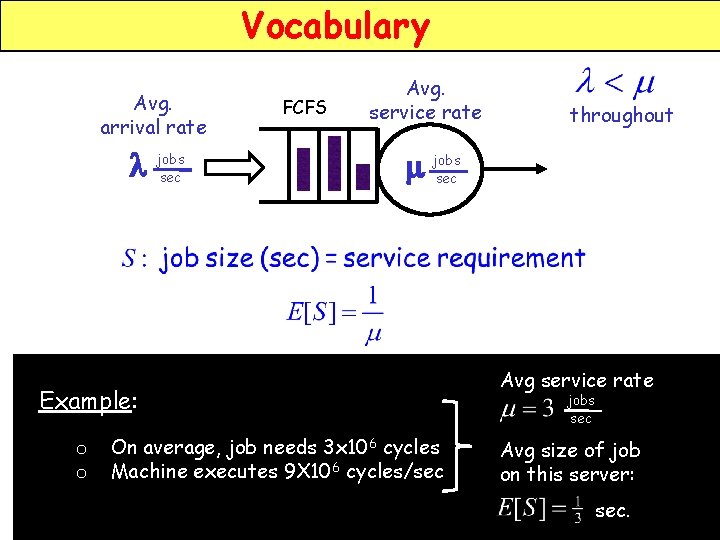

Vocabulary: consider an FCFS server with average arrival rate λ (jobs/sec) and average service rate μ (jobs/sec).

Example: on average, a job needs 3 × 10^6 cycles, and the machine executes 9 × 10^6 cycles/sec. The average service rate is therefore μ = 3 jobs/sec, and the average size of a job on this server is E[S] = 1/μ = 1/3 sec.

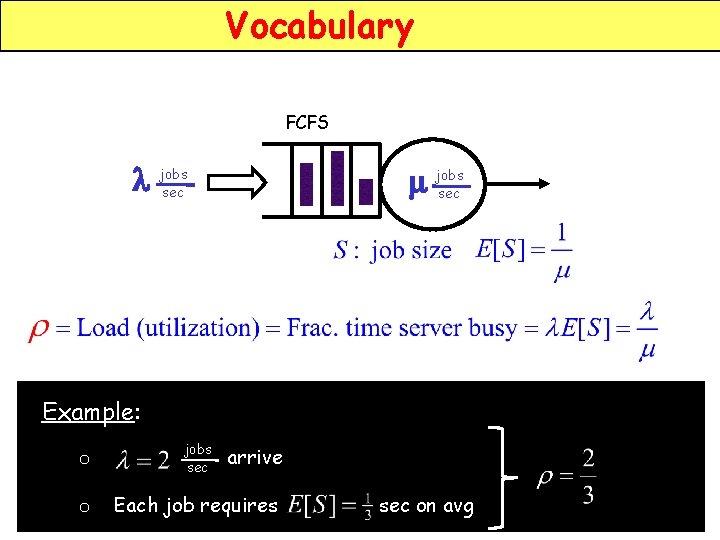

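Vocabulary: the average load of the FCFS server is $\rho = \lambda/\mu = \lambda \cdot E[S]$, which is the fraction of time the server is busy. Example: if jobs arrive at λ jobs/sec and each job requires E[S] sec on average, then the server is busy a fraction ρ = λ·E[S] of the time.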
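
The sketch below checks the arithmetic from the two vocabulary slides. The arrival rate `lam` is a hypothetical value chosen for illustration and does not come from the slides.

```python
# Service rate, mean job size, and load for the cycles example above.
cycles_per_job = 3e6      # average cycles a job needs
cycles_per_sec = 9e6      # machine speed

mu = cycles_per_sec / cycles_per_job   # avg service rate (jobs/sec)
mean_size = 1.0 / mu                   # E[S] in seconds

lam = 2.0                 # hypothetical arrival rate (jobs/sec), not from the slides
rho = lam / mu            # load: fraction of time the server is busy

print(f"mu = {mu:.1f} jobs/sec, E[S] = {mean_size:.3f} sec, rho = {rho:.3f}")
```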

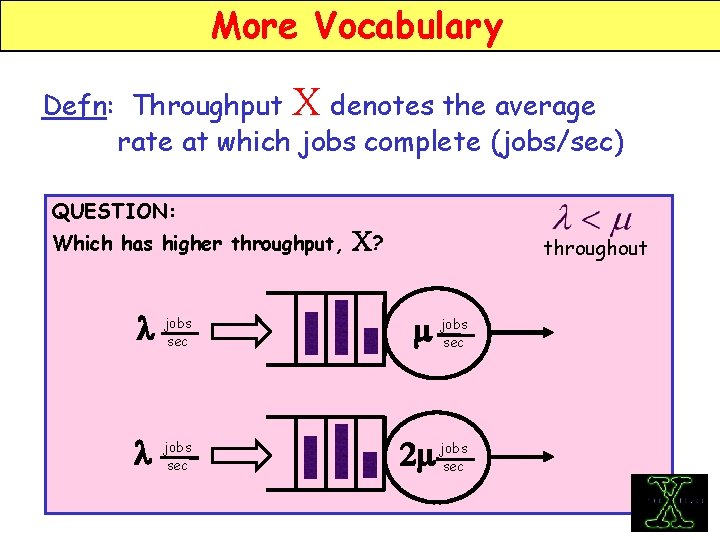

More Vocabulary. Definition: throughput X is the average rate at which jobs complete (jobs/sec). QUESTION: Jobs arrive at λ jobs/sec. Which has higher throughput: a server with service rate μ jobs/sec, or a server with service rate 2μ jobs/sec?

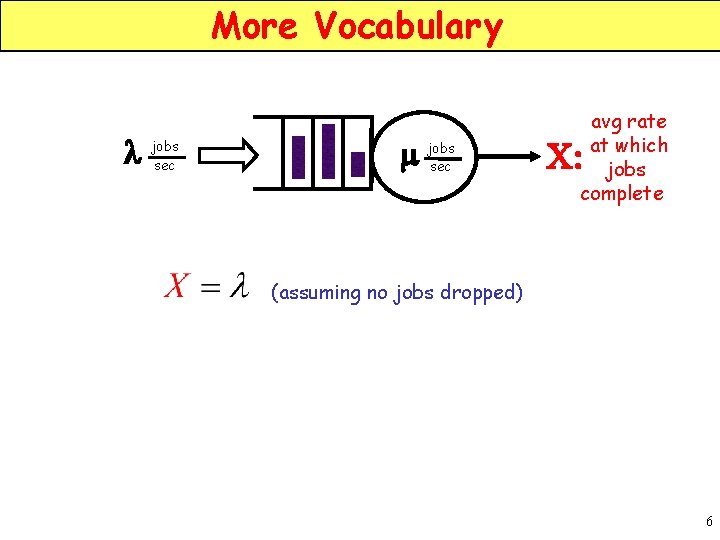

More Vocabulary (answer): neither. In both systems X = λ, because the average rate at which jobs complete equals the rate at which they arrive. This assumes no jobs are dropped and λ < μ.
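
A small simulation of an FCFS M/M/1 queue illustrates the answer. This is a sketch, and the rates, job count, and seed are arbitrary assumptions. Doubling the service rate does not change how fast jobs complete in an open system, because every arriving job eventually leaves.

```python
import random

def throughput(lam, mu, n_jobs=200_000, seed=1):
    """Simulate an FCFS M/M/1 queue; return completions per unit time."""
    rng = random.Random(seed)
    arrival = departure = 0.0
    for _ in range(n_jobs):
        arrival += rng.expovariate(lam)              # Poisson arrivals
        start = max(arrival, departure)              # wait if the server is busy
        departure = start + rng.expovariate(mu)      # Exponential service
    return n_jobs / departure                        # jobs completed per unit time

for mu in (2.0, 4.0):    # a server of rate mu vs. one of rate 2*mu, both with lam = 1
    print(mu, round(throughput(lam=1.0, mu=mu), 3))
```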

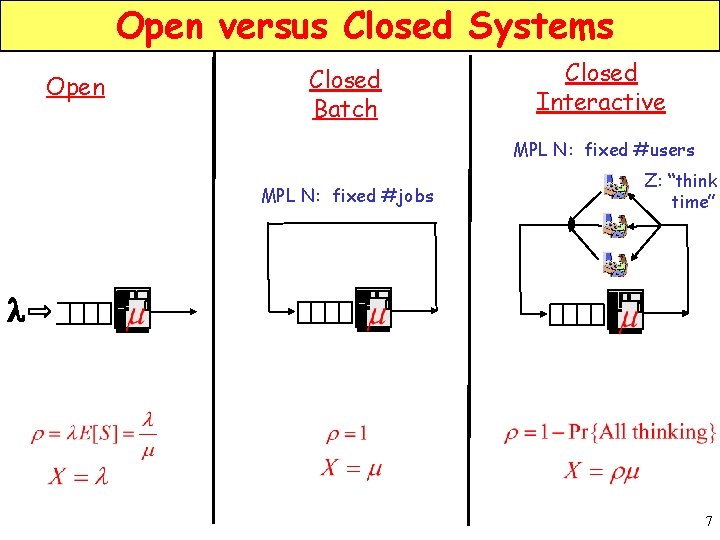

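Open versus Closed Systems:
- Open: jobs arrive from outside at rate λ.
- Closed batch: the multiprogramming level (MPL) N is a fixed number of jobs.
- Closed interactive: the MPL N is a fixed number of users, each with a “think time” Z between requests.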
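
In a closed system, throughput is an output of the system rather than an input. The sketch below models the closed interactive case with exact Mean Value Analysis, a standard technique that the slides do not cover. It assumes a single FCFS server with Exponential service times and a mean think time Z. As N grows, X saturates at 1/E[S] instead of following any external arrival rate.

```python
def closed_interactive(n_users, mean_size, think_time):
    """Exact Mean Value Analysis for N users, one exponential FCFS server,
    and a think (delay) station. Returns (throughput X, mean response time E[T])."""
    q = 0.0                                   # mean number at the server with n-1 users
    x = r = 0.0
    for n in range(1, n_users + 1):
        r = mean_size * (1.0 + q)             # arrival theorem: an arrival sees q jobs ahead
        x = n / (r + think_time)              # Response Time Law: X = N / (E[T] + E[Z])
        q = x * r                             # Little's Law at the server
    return x, r

for n in (1, 10, 100):
    x, t = closed_interactive(n, mean_size=0.1, think_time=1.0)
    print(f"N={n:3d}  X={x:.3f} jobs/sec  E[T]={t:.3f} sec")
```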

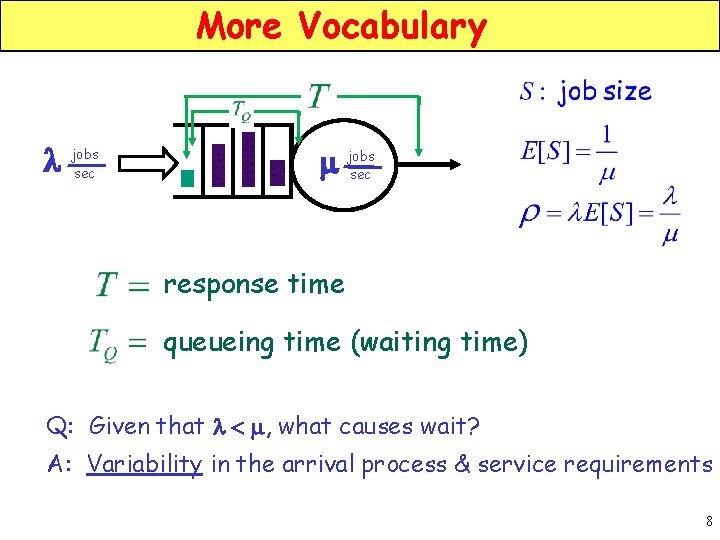

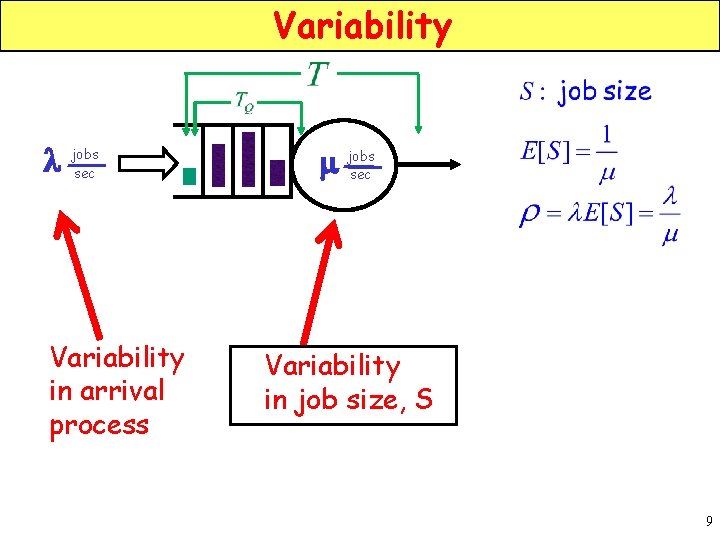

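More Vocabulary: the response time T is the total time from a job's arrival until it completes. The queueing time (waiting time) T_Q is the part of T spent waiting before service, so $T = T_Q + S$ under FCFS. Q: Given that λ < μ, what causes wait? A: Variability in the arrival process and in service requirements.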
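
The sketch below shows that variability, not load, causes waiting. It uses Lindley's recursion for FCFS waiting times; the technique is our choice, and λ = 0.8, μ = 1 are arbitrary. With deterministic arrivals and sizes no job ever waits. With Exponential arrivals and sizes, the same load produces a substantial mean wait.

```python
import random

def mean_wait(interarrival, size, n_jobs=100_000):
    """Mean FCFS queueing time via Lindley's recursion:
    W_{n+1} = max(0, W_n + S_n - A_{n+1})."""
    w = total = 0.0
    for _ in range(n_jobs):
        total += w
        w = max(0.0, w + size() - interarrival())
    return total / n_jobs

rng = random.Random(7)
lam, mu = 0.8, 1.0   # lam < mu in both cases, so the load is 0.8

deterministic = mean_wait(lambda: 1 / lam, lambda: 1 / mu)                        # D/D/1
variable = mean_wait(lambda: rng.expovariate(lam), lambda: rng.expovariate(mu))   # M/M/1
print(f"D/D/1: {deterministic:.3f}   M/M/1: {variable:.3f}")
```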

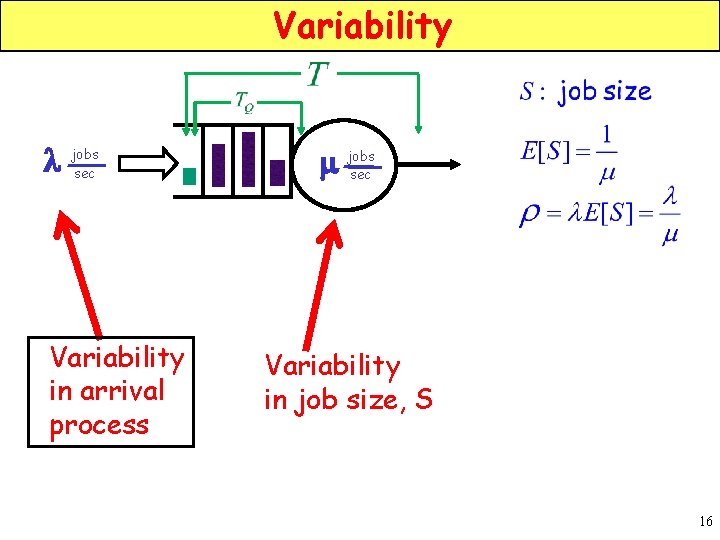

Variability: wait comes from two sources. One is variability in the arrival process (rate λ); the other is variability in the job size S (service rate μ).

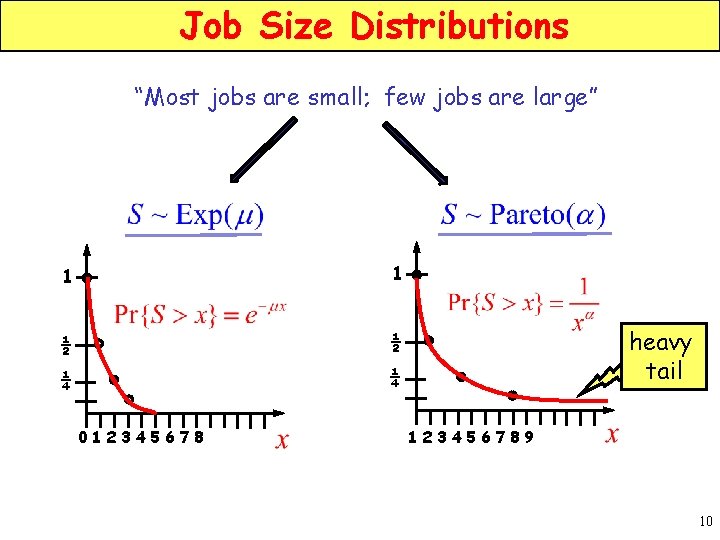

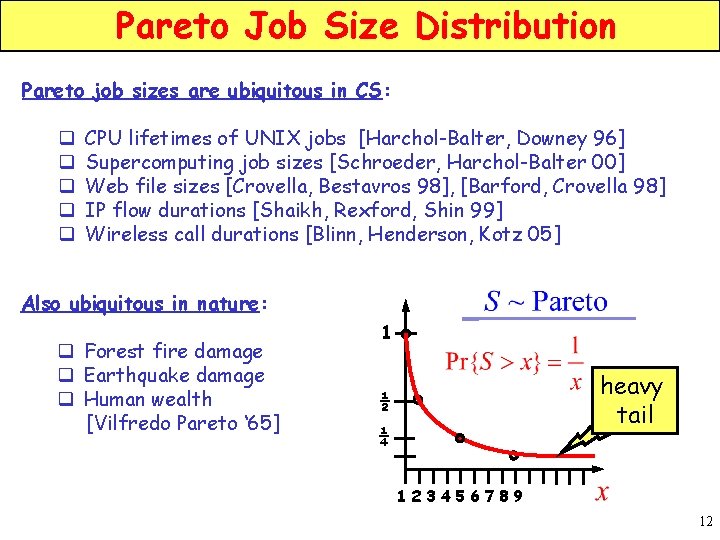

Job Size Distributions: “Most jobs are small; few jobs are large.” [Figure: two decreasing job size distributions, a light-tailed one and a heavy-tailed one.]

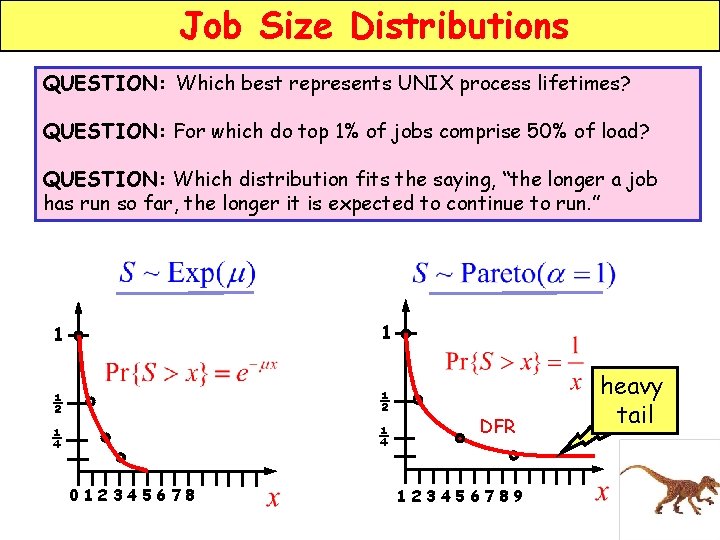

Job Size Distributions:
- QUESTION: Which best represents UNIX process lifetimes?
- QUESTION: For which do the top 1% of jobs comprise 50% of the load?
- QUESTION: Which distribution fits the saying, “the longer a job has run so far, the longer it is expected to continue to run”?

[Figure: the same two distributions; the heavy-tailed one is labeled DFR (decreasing failure rate).]
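
Closed-form expressions give the load carried by the largest jobs. For an unbounded Pareto with shape α > 1, the largest fraction p of jobs carries a fraction $p^{1-1/\alpha}$ of the total load. For an Exponential, the fraction is $p(1 + \ln(1/p))$. The sketch evaluates both at p = 1%. The shape α = 1.1 is an assumption, matching the value used later in the Unfairness Question. Under these idealized assumptions the Pareto share comes out above one half, while the Exponential share is only a few percent.

```python
import math

def pareto_top_share(p, alpha):
    """Fraction of total load carried by the largest fraction p of jobs,
    for an (unbounded) Pareto distribution with shape alpha > 1."""
    return p ** (1.0 - 1.0 / alpha)

def exp_top_share(p):
    """The same quantity for an Exponential distribution (any rate)."""
    return p * (1.0 + math.log(1.0 / p))

print(f"Pareto(alpha=1.1): top 1% of jobs carry {pareto_top_share(0.01, 1.1):.0%} of load")
print(f"Exponential:       top 1% of jobs carry {exp_top_share(0.01):.0%} of load")
```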

Pareto Job Size Distribution: Pareto job sizes are ubiquitous in CS:
- CPU lifetimes of UNIX jobs [Harchol-Balter, Downey 96]
- Supercomputing job sizes [Schroeder, Harchol-Balter 00]
- Web file sizes [Crovella, Bestavros 98], [Barford, Crovella 98]
- IP flow durations [Shaikh, Rexford, Shin 99]
- Wireless call durations [Blinn, Henderson, Kotz 05]

They are also ubiquitous in nature:
- Forest fire damage
- Earthquake damage
- Human wealth [Vilfredo Pareto ‘65]

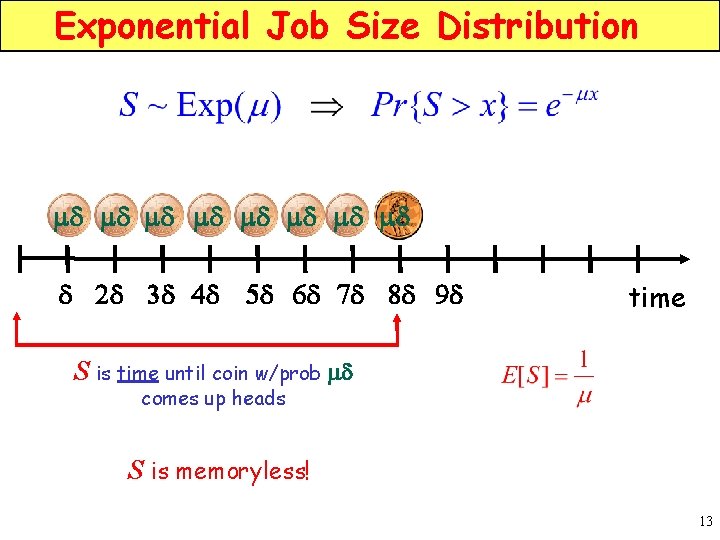

Exponential Job Size Distribution: divide time into slots of length δ, and in each slot flip a coin that comes up heads with probability μδ. S is the time until the coin first comes up heads. As δ → 0, S ~ Exp(μ). S is memoryless!
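
The coin-flipping picture can be checked directly. The sketch computes P(S > t) when a coin with heads probability μδ is flipped every δ seconds; μ, t, s, and the δ values are arbitrary, and times are assumed to be whole multiples of δ. As δ shrinks, the tail approaches $e^{-\mu t}$. The ratio P(S > s + t)/P(S > s) equals P(S > t), which is exactly memorylessness.

```python
import math

def coin_tail(t, mu, delta):
    """P(S > t) when S is the time until the first head, flipping a coin
    with P(head) = mu*delta once every delta seconds (t a multiple of delta)."""
    slots = round(t / delta)
    return (1.0 - mu * delta) ** slots

mu, t = 2.0, 1.5
for delta in (0.1, 0.01, 0.0001):
    print(delta, coin_tail(t, mu, delta), math.exp(-mu * t))

# Memorylessness: P(S > s + t | S > s) equals P(S > t).
s, delta = 0.7, 0.001
conditional = coin_tail(s + t, mu, delta) / coin_tail(s, mu, delta)
print(conditional, coin_tail(t, mu, delta))
```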

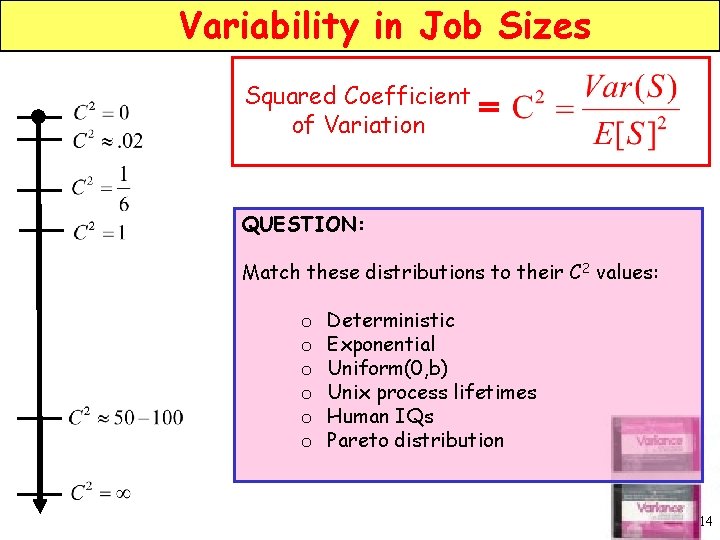

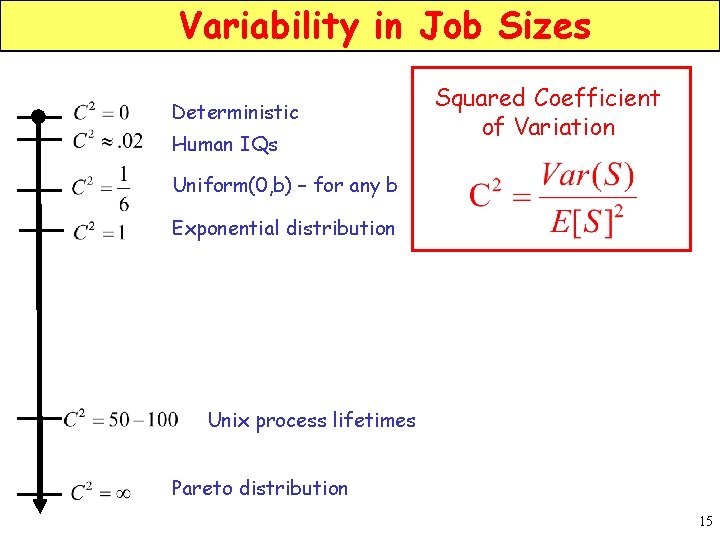

Variability in Job Sizes: the squared coefficient of variation is $C^2 = \mathrm{Var}(S)/E[S]^2$. QUESTION: Match these distributions to their C² values: Deterministic, Exponential, Uniform(0, b), Unix process lifetimes, Human IQs, Pareto distribution.

Variability in Job Sizes (answer), in increasing order of squared coefficient of variation:
- Deterministic: C² = 0
- Human IQs: C² close to 0
- Uniform(0, b): C² = 1/3, for any b
- Exponential distribution: C² = 1
- Unix process lifetimes: C² ≫ 1
- Pareto distribution: C² ≫ 1
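
The sketch below computes C² from textbook means and variances. The parameters are arbitrary because C² is scale-free for these families. For a Pareto with shape α, C² = 1/(α(α − 2)) when α > 2, and C² is infinite otherwise. The heavy-tailed fits in this talk (α around 1 to 1.1) therefore sit at the far end of the scale, while a Pareto with large α is not especially variable.

```python
import math

def c2(mean, var):
    """Squared coefficient of variation: C^2 = Var(S) / E[S]^2."""
    return var / mean ** 2

def pareto_c2(alpha):
    """C^2 of a Pareto(alpha) distribution; the variance is infinite for alpha <= 2."""
    return math.inf if alpha <= 2 else 1.0 / (alpha * (alpha - 2))

b, rate = 7.0, 0.5   # arbitrary parameters: C^2 does not depend on them
print("Deterministic  ", c2(3.0, 0.0))                 # zero variance
print("Uniform(0, b)  ", c2(b / 2, b ** 2 / 12))        # mean b/2, variance b^2/12
print("Exponential    ", c2(1 / rate, 1 / rate ** 2))   # mean 1/rate, variance 1/rate^2
for alpha in (3.0, 2.1, 1.1):
    print(f"Pareto({alpha})    ", pareto_c2(alpha))
```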

Poisson Process with rate λ: QUESTION: What's a Poisson process with rate λ? Hint: It's related to Exp(λ).

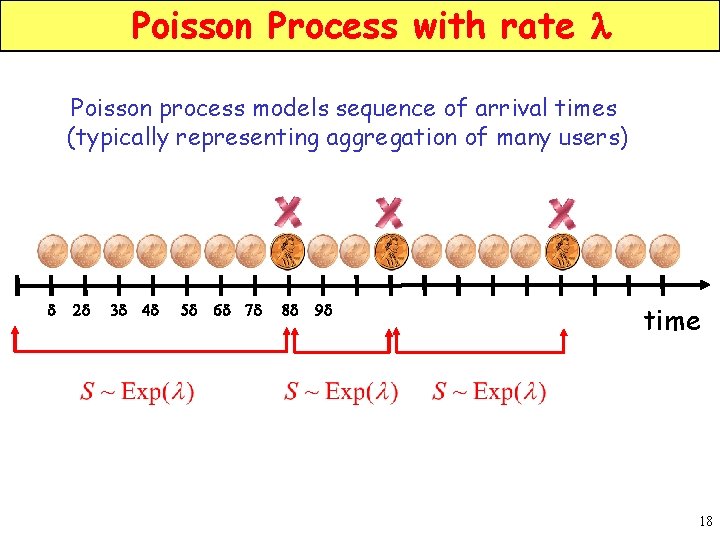

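Poisson Process with rate λ: a Poisson process models a sequence of arrival times, typically representing the aggregation of many users. Picture the same coin as before, with heads probability λδ flipped every δ time units; the arrival times are the times of the heads. Equivalently, the interarrival times are i.i.d. Exp(λ).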
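
The sketch below builds a Poisson process by summing i.i.d. Exp(λ) interarrival times. λ, the horizon, and the number of runs are arbitrary. The count of arrivals in [0, t] should have mean and variance both close to λt.

```python
import random

def poisson_arrivals(lam, horizon, rng):
    """Arrival times of a Poisson process with rate lam on [0, horizon]:
    successive interarrival times are i.i.d. Exp(lam)."""
    times, t = [], rng.expovariate(lam)
    while t <= horizon:
        times.append(t)
        t += rng.expovariate(lam)
    return times

rng = random.Random(42)
lam, horizon, runs = 3.0, 10.0, 2000
counts = [len(poisson_arrivals(lam, horizon, rng)) for _ in range(runs)]
mean = sum(counts) / runs
var = sum((c - mean) ** 2 for c in counts) / (runs - 1)
print(f"mean count {mean:.2f}, variance {var:.2f}, lam*horizon = {lam * horizon}")
```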

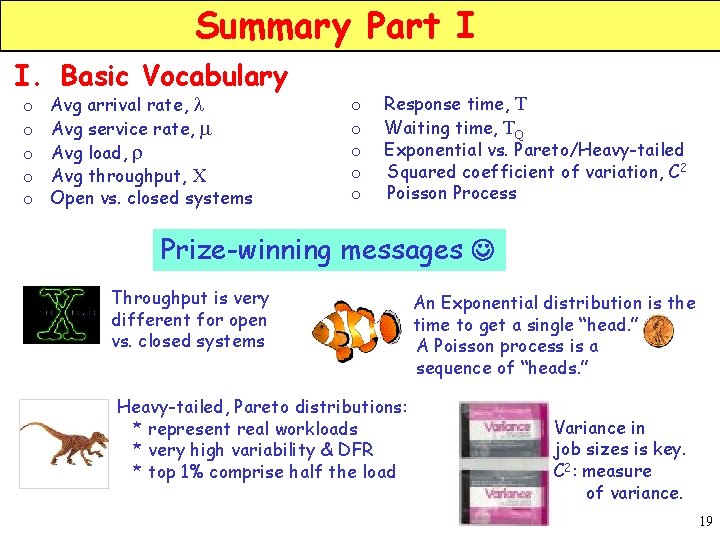

Summary Part I: I. Basic vocabulary (avg arrival rate λ; avg service rate μ; avg load ρ; avg throughput X; open vs. closed systems; response time T; waiting time T_Q; Exponential vs. Pareto/heavy-tailed; squared coefficient of variation C²; Poisson process).

Prize-winning messages:
- Throughput is very different for open vs. closed systems.
- Heavy-tailed, Pareto distributions:
  - represent real workloads;
  - have very high variability & DFR;
  - the top 1% of jobs comprise half the load.
- An Exponential distribution is the time to get a single “head.” A Poisson process is a sequence of “heads.”
- Variance in job sizes is key. C² is a measure of variance.

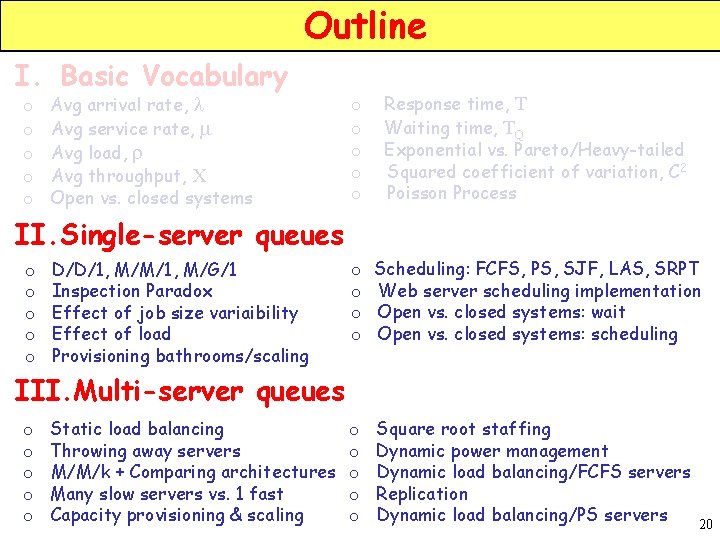

Outline I. Basic Vocabulary o o o Avg arrival rate, l Avg service rate, m Avg load, r Avg throughput, X Open vs. closed systems o o o Response time, T Waiting time, TQ Exponential vs. Pareto/Heavy-tailed Squared coefficient of variation, C 2 Poisson Process o o Scheduling: FCFS, PS, SJF, LAS, SRPT Web server scheduling implementation Open vs. closed systems: wait Open vs. closed systems: scheduling o o o Square root staffing Dynamic power management Dynamic load balancing/FCFS servers Replication Dynamic load balancing/PS servers II. Single-server queues o o o D/D/1, M/M/1, M/G/1 Inspection Paradox Effect of job size variaibility Effect of load Provisioning bathrooms/scaling III. Multi-server queues o o o Static load balancing Throwing away servers M/M/k + Comparing architectures Many slow servers vs. 1 fast Capacity provisioning & scaling 20

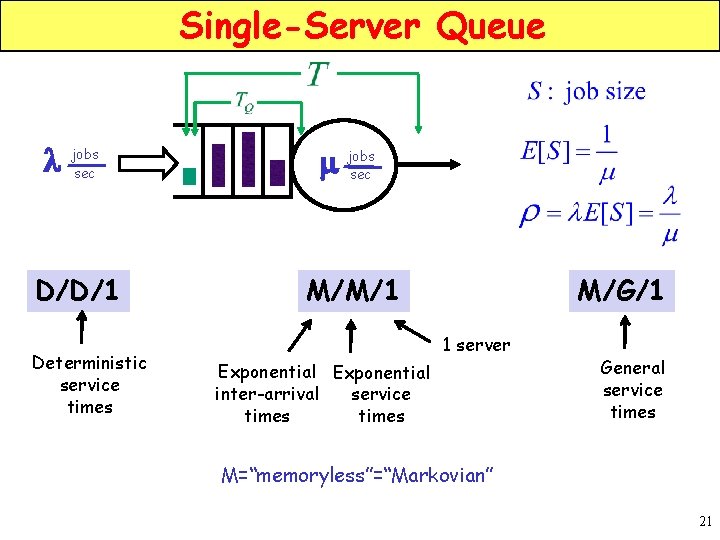

Single-Server Queue: one server, arrival rate λ jobs/sec, service rate μ jobs/sec.
- D/D/1: deterministic interarrival and service times.
- M/M/1: Exponential interarrival and service times.
- M/G/1: Exponential interarrival times, general service times.

M = “memoryless” = “Markovian.”

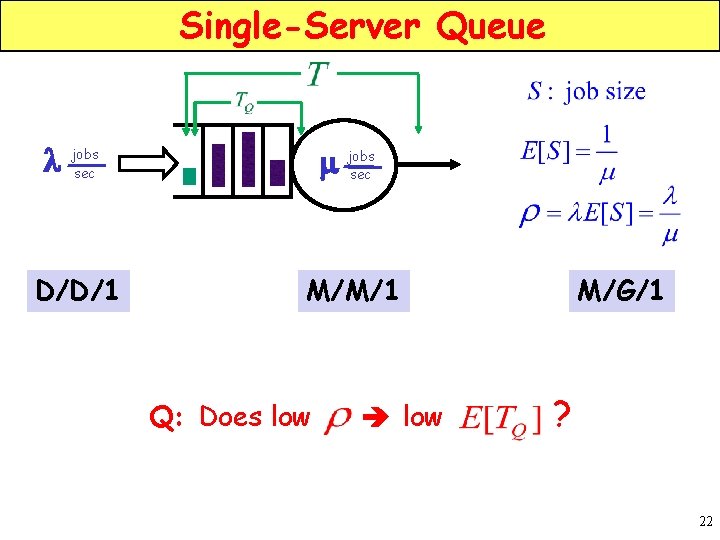

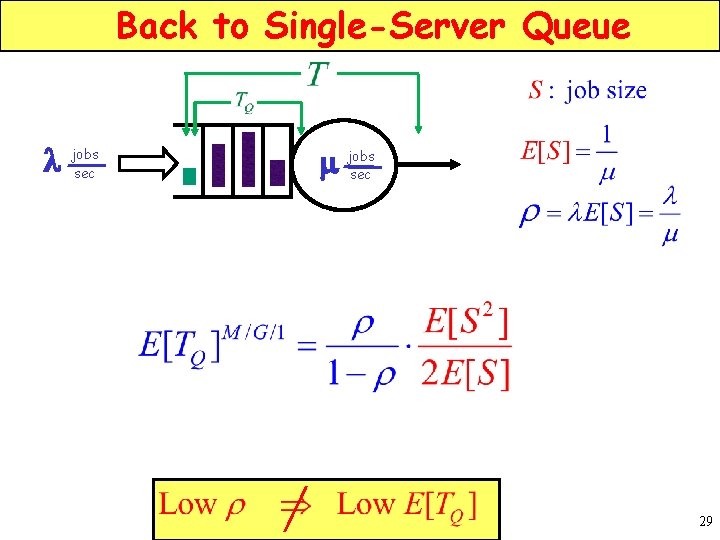

Single-Server Queue (D/D/1, M/M/1, M/G/1): Q: Does low load ρ imply low waiting time E[T_Q]?

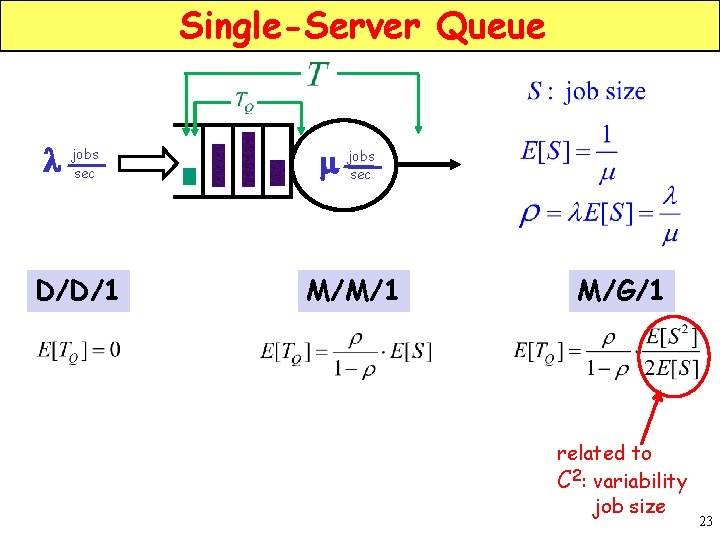

Single-Server Queue: for the M/G/1, the mean waiting time is related to C², the variability of job size:

$$E[T_Q] = \frac{\rho}{1-\rho}\cdot\frac{C^2+1}{2}\cdot E[S]$$

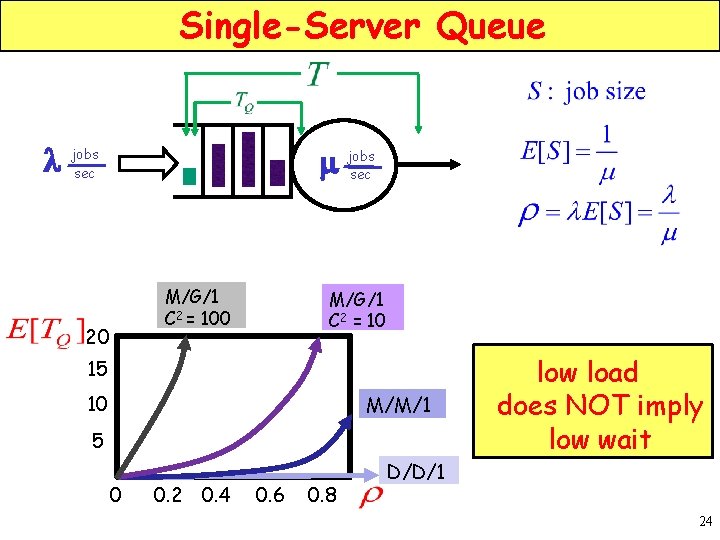

Single-Server Queue: [Figure: E[T_Q] (0 to 20) versus load ρ (0 to 0.8) for D/D/1, M/M/1, M/G/1 with C² = 10, and M/G/1 with C² = 100.] Low load does NOT imply low wait.
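
The Pollaczek-Khinchine mean waiting time can be tabulated directly. This minimal sketch assumes E[S] = 1. Note that C² = 0 gives the M/D/1 queue, whose Poisson arrivals still cause waiting, unlike the D/D/1.

```python
def mg1_wait(rho, mean_size, c2):
    """Pollaczek-Khinchine mean queueing time E[T_Q] for an FCFS M/G/1 queue."""
    if not 0 <= rho < 1:
        raise ValueError("need 0 <= rho < 1 for stability")
    return rho / (1 - rho) * (c2 + 1) / 2 * mean_size

for c2 in (0, 1, 10, 100):   # M/D/1, M/M/1, and two high-variability M/G/1 queues
    waits = [round(mg1_wait(rho, 1.0, c2), 2) for rho in (0.2, 0.5, 0.8)]
    print(f"C^2 = {c2:3d}: E[T_Q] at rho = 0.2, 0.5, 0.8 -> {waits}")
```

For example, an M/G/1 with C² = 100 at ρ = 0.2 waits longer on average than an M/M/1 at ρ = 0.8.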

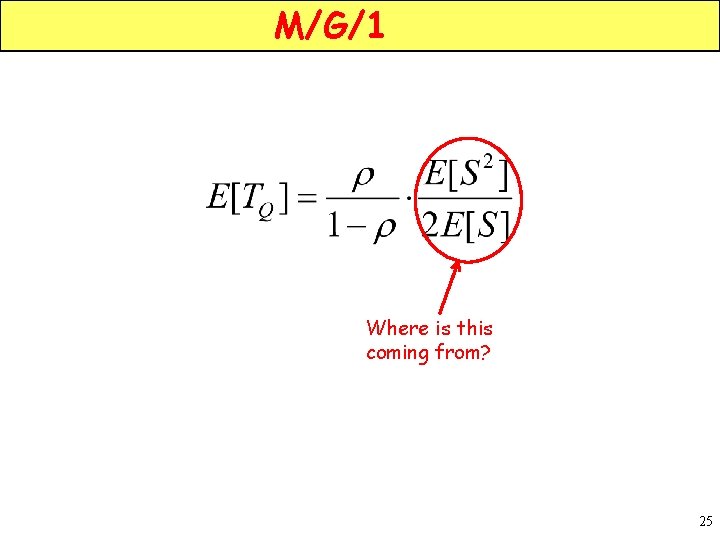

M/G/1: Where is this coming from?

Waiting for the bus

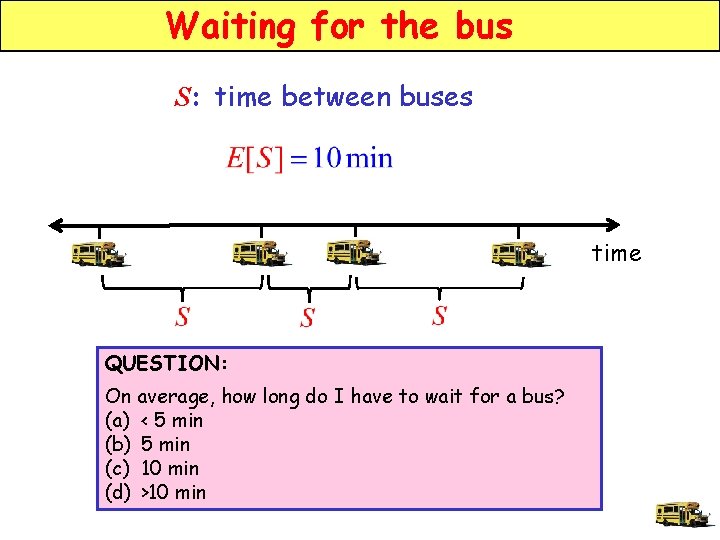

Waiting for the bus: let S be the time between buses. QUESTION: On average, how long do I have to wait for a bus? (a) < 5 min (b) 5 min (c) 10 min (d) > 10 min

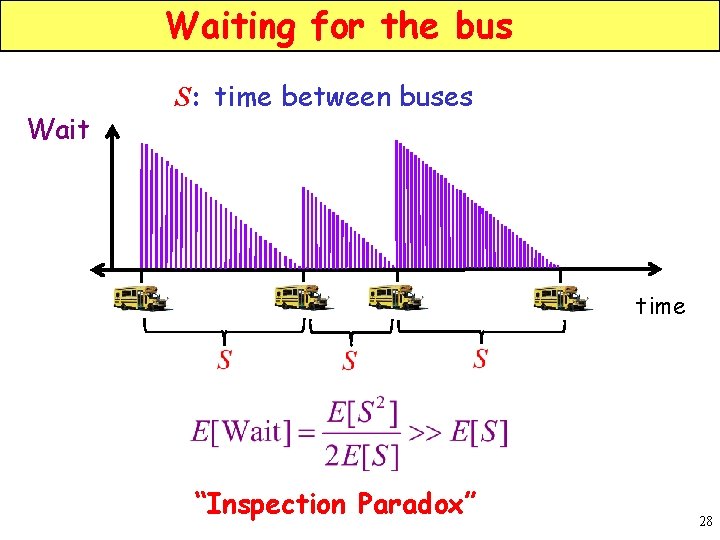

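Waiting for the bus: my wait is the remaining part of whichever gap S I land in, and I am more likely to land in a long gap than in a short one. So the average wait is $E[S^2]/(2E[S]) = \frac{C^2+1}{2}E[S]$, which exceeds E[S]/2 whenever the gaps vary. This is the “Inspection Paradox.”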
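
The sketch below simulates the bus question. It assumes buses arrive on average every 10 minutes (the mean gap is our assumption) and that riders show up at uniformly random times. With perfectly regular buses the average wait is half the gap. With Exponential gaps of the same mean, the average wait is the full mean gap, matching $E[S^2]/(2E[S])$.

```python
import bisect
import random

def mean_bus_wait(gaps, n_riders, rng):
    """Average wait for riders who arrive at uniformly random times,
    given the successive gaps between buses."""
    bus_times, t = [], 0.0
    for gap in gaps:
        t += gap
        bus_times.append(t)
    total = 0.0
    for _ in range(n_riders):
        arrive = rng.uniform(0.0, bus_times[-2])      # stay clear of the last bus
        next_bus = bus_times[bisect.bisect_right(bus_times, arrive)]
        total += next_bus - arrive
    return total / n_riders

rng = random.Random(3)
n_buses, n_riders = 100_000, 50_000
regular_wait = mean_bus_wait([10.0] * n_buses, n_riders, rng)       # a bus exactly every 10 min
random_gaps = [rng.expovariate(1 / 10) for _ in range(n_buses)]     # Exp gaps, mean 10 min
random_wait = mean_bus_wait(random_gaps, n_riders, rng)
print(f"regular buses: {regular_wait:.2f} min, random buses: {random_wait:.2f} min")
```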

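Back to the single-server queue (arrival rate λ, service rate μ): an arriving job waits behind the remaining work of the job in service. By the same inspection paradox, that job is biased toward being large, which is why the M/G/1 waiting time grows with C².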

Waiting for the Loo: check out the line for the men's room …

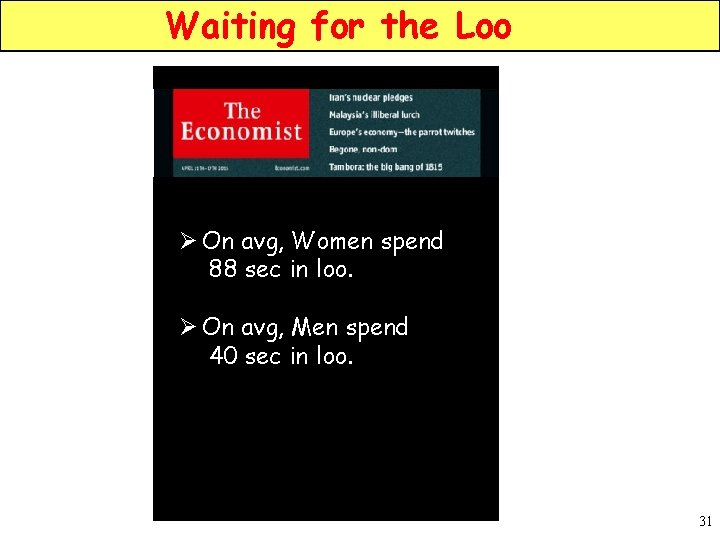

Waiting for the Loo:
- On avg, women spend 88 sec in the loo.
- On avg, men spend 40 sec in the loo.

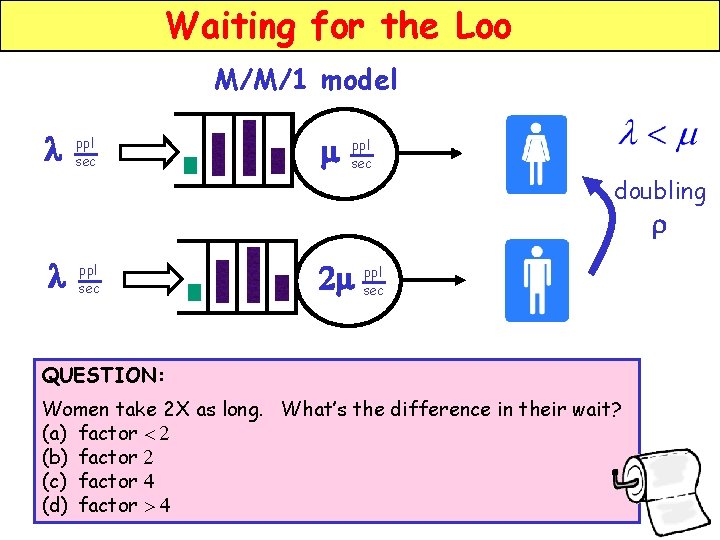

Waiting for the Loo, M/M/1 model: men arrive at λ ppl/sec and are served at μ ppl/sec. Women arrive at λ ppl/sec and are served at μ/2 ppl/sec, which doubles ρ. QUESTION: Women take 2× as long. What's the difference in their wait? (a) factor < 2 (b) factor 2 (c) factor 4 (d) factor > 4

Waiting for the Loo: under both the M/M/1 and M/G/1 models, doubling ρ can increase E[T_Q] by a factor of 4 to ∞.
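
In the M/M/1 model, $E[T_Q] = \frac{\rho}{1-\rho}E[S]$. Halving the service rate doubles both ρ and E[S], so the ratio of waits is $4(1-\rho)/(1-2\rho)$. That ratio starts at 4 and blows up as 2ρ → 1. A short sketch follows; μ = 1 and the arrival rates are arbitrary.

```python
def mm1_wait(lam, mu):
    """E[T_Q] = rho / (1 - rho) * E[S] for an M/M/1 queue."""
    rho = lam / mu
    if rho >= 1:
        raise ValueError("unstable queue: need lam < mu")
    return rho / (1 - rho) / mu

mu = 1.0                                  # men's service rate (arbitrary units)
for lam in (0.01, 0.2, 0.4, 0.49):        # women's load 2*lam/mu must stay below 1
    ratio = mm1_wait(lam, mu / 2) / mm1_wait(lam, mu)
    print(f"men's load {lam / mu:.2f}: women wait {ratio:.1f}x as long")
```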

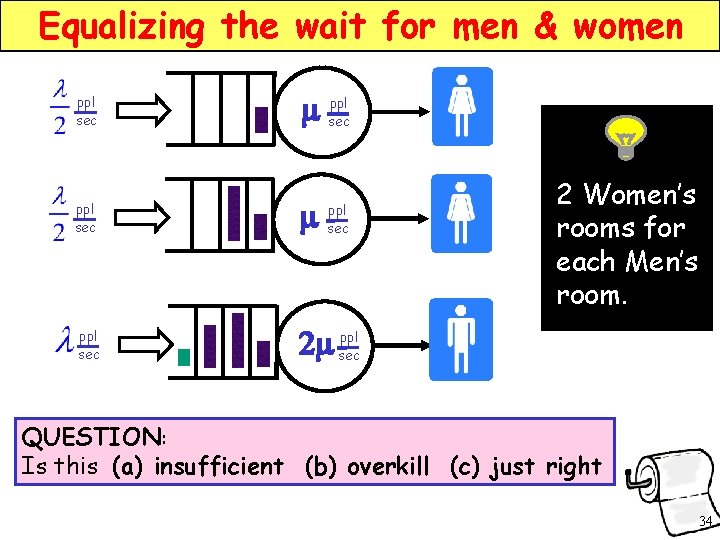

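Equalizing the wait for men & women: build 2 women's rooms for each men's room. Men arrive at λ ppl/sec to one room served at μ ppl/sec. Women arrive at λ ppl/sec, split so that each of the two rooms receives λ/2 ppl/sec, and each room serves at μ/2 ppl/sec. QUESTION: Is this (a) insufficient (b) overkill (c) just right?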

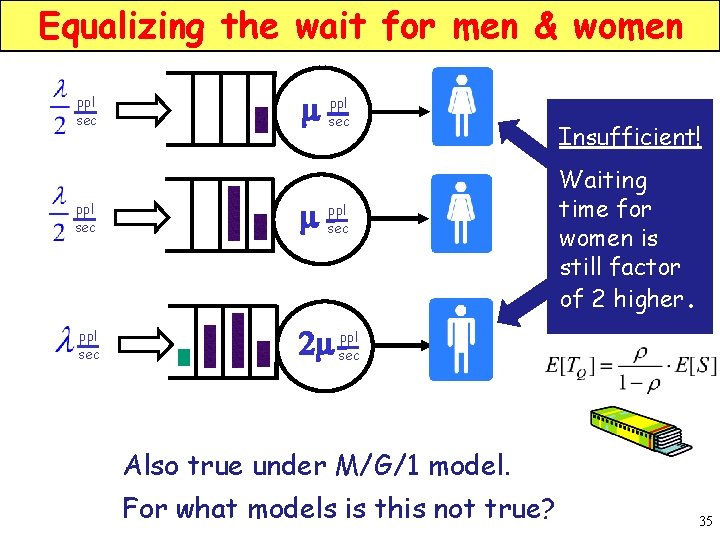

Equalizing the wait for men & women: Insufficient! Each women's room now has the same load ρ as the men's room, but every service takes twice as long. The waiting time for women is therefore still a factor of 2 higher. This is also true under the M/G/1 model. For what models is this not true?
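
The sketch below checks the two-rooms answer with the M/M/1 formula; λ = 0.3 and μ = 1 are arbitrary. Each women's room sees half the arrivals at half the service rate, so its load equals the men's. Its wait, however, is scaled by the doubled mean service time.

```python
def mm1_wait(lam, mu):
    """E[T_Q] = rho / (1 - rho) * E[S] for an M/M/1 queue."""
    rho = lam / mu
    if rho >= 1:
        raise ValueError("unstable queue: need lam < mu")
    return rho / (1 - rho) / mu

lam, mu = 0.3, 1.0
men = mm1_wait(lam, mu)               # one room, service rate mu
women = mm1_wait(lam / 2, mu / 2)     # each of two rooms: half the arrivals, rate mu/2
print(f"load per room: {lam / mu:.2f} vs {(lam / 2) / (mu / 2):.2f}; "
      f"wait ratio = {women / men:.2f}")
```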

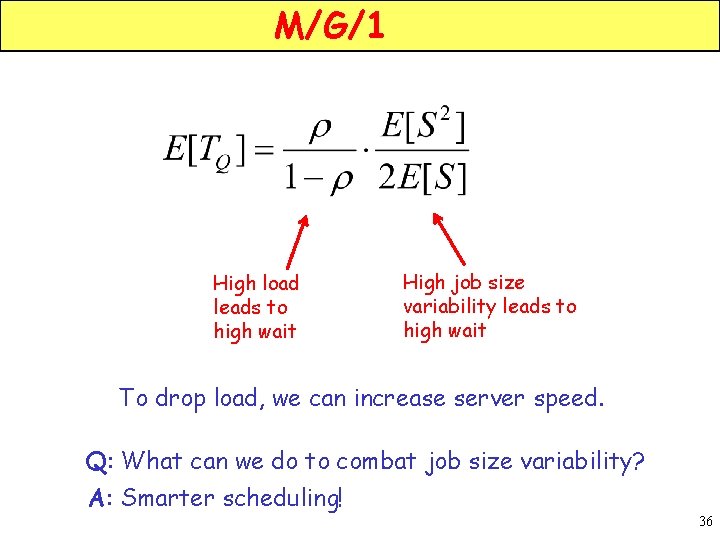

M/G/1 lessons: high load leads to high wait, and high job size variability leads to high wait. To reduce load, we can increase server speed. Q: What can we do to combat job size variability? A: Smarter scheduling!

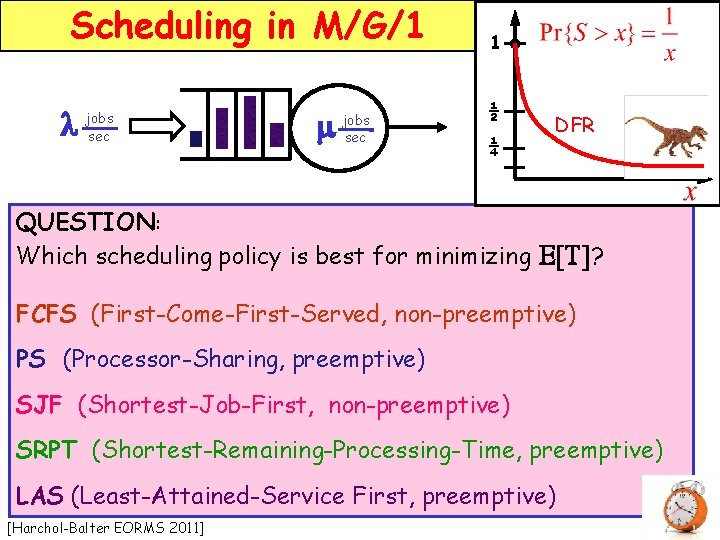

Scheduling in M/G/1 (arrival rate λ jobs/sec, service rate μ jobs/sec, DFR job size distribution). QUESTION: Which scheduling policy is best for minimizing E[T]?
- FCFS (First-Come-First-Served, non-preemptive)
- PS (Processor-Sharing, preemptive)
- SJF (Shortest-Job-First, non-preemptive)
- SRPT (Shortest-Remaining-Processing-Time, preemptive)
- LAS (Least-Attained-Service First, preemptive)

[Harchol-Balter EORMS 2011]

Scheduling in M/G/1: [Figure: E[T] versus load ρ (0 to 1.0) under FCFS, PS, SJF, LAS, and SRPT, for a DFR job size distribution with C² = 10 (left) and C² = 100 (right).]
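
An event-driven simulation makes the comparison concrete. The sketch below compares FCFS, PS, and preemptive SRPT on one server, under assumptions that are not on the slides: Poisson arrivals at ρ = 0.7, and hyperexponential job sizes with mean 1 and C² ≈ 15.6. Between events, each policy fixes the rate at which every active job is served, so the loop jumps straight to the next arrival or completion. All three policies see the same arrivals and sizes.

```python
import random

def simulate(policy, lam, draw_size, n_jobs, seed=0):
    """Mean response time of an M/G/1 queue under "FCFS", "PS", or preemptive "SRPT"."""
    rng = random.Random(seed)
    t, next_arrival = 0.0, rng.expovariate(lam)
    active = []                        # jobs as [arrival_time, remaining_work]
    arrived = done = 0
    total_response = 0.0
    while done < n_jobs:
        rates = [0.0] * len(active)
        if active and policy == "PS":  # every job gets an equal share
            rates = [1.0 / len(active)] * len(active)
        elif active:                   # FCFS: oldest job; SRPT: least remaining work
            field = 0 if policy == "FCFS" else 1
            rates[min(range(len(active)), key=lambda i: active[i][field])] = 1.0
        # time until the first completion at the current rates
        dt_done, who = min(((job[1] / r, i) for i, (job, r) in enumerate(zip(active, rates)) if r > 0),
                           default=(float("inf"), -1))
        dt_arrival = next_arrival - t if arrived < n_jobs else float("inf")
        dt = max(0.0, min(dt_done, dt_arrival))
        for job, r in zip(active, rates):
            job[1] -= r * dt
        t += dt
        if dt_arrival <= dt_done:      # next event is an arrival
            active.append([t, draw_size(rng)])
            arrived += 1
            next_arrival = t + rng.expovariate(lam)
        else:                          # next event is a completion
            total_response += t - active.pop(who)[0]
            done += 1
    return total_response / n_jobs

def draw_size(rng):
    """Hyperexponential sizes: mean 1, C^2 about 15.6 (an assumed workload)."""
    return rng.expovariate(10.0) if rng.random() < 0.9 else rng.expovariate(1 / 9.1)

results = {p: simulate(p, 0.7, draw_size, 20_000, seed=1) for p in ("FCFS", "PS", "SRPT")}
for policy, mean_t in results.items():
    print(f"{policy:4s}  E[T] = {mean_t:.2f}")
```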

Scheduling in M/G/1: we saw E[T] as a function of ρ under PS and SRPT, with SRPT far lower. But isn't SRPT unfair to large jobs, when compared to PS?

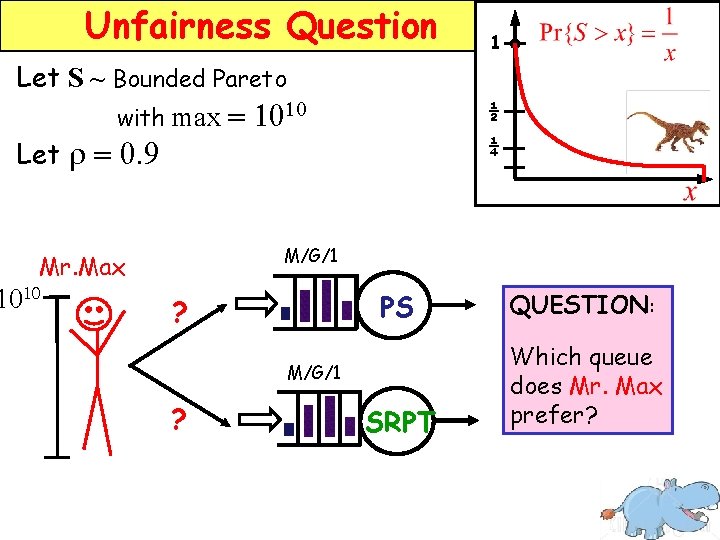

Unfairness Question: let S ~ Bounded Pareto with max = 10^10, and let ρ = 0.9. Mr. Max, a job of size 10^10, can join an M/G/1 queue under PS or an M/G/1 queue under SRPT. QUESTION: Which queue does Mr. Max prefer?

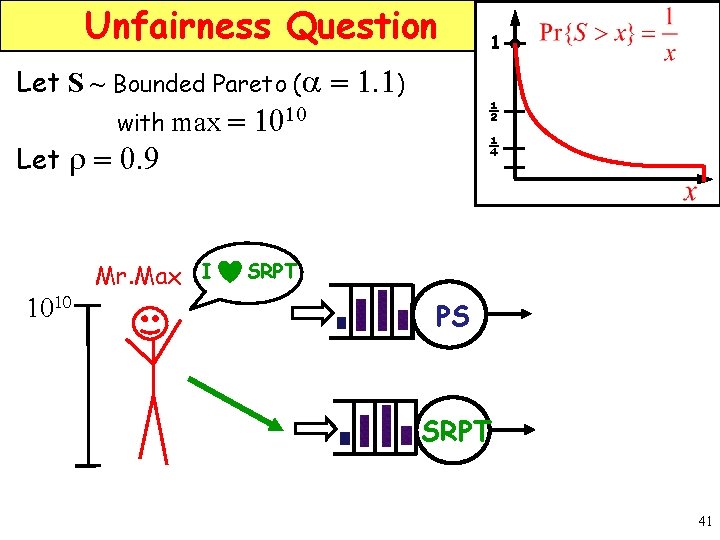

Unfairness Question (answer): with S ~ Bounded Pareto (α = 1.1) with max = 10^10 and ρ = 0.9, Mr. Max (size 10^10) prefers SRPT over PS.

Unfairness Question: the All-can-win theorem [Bansal, Harchol-Balter, Sigmetrics 2001], which defies Kleinrock's Conservation Law. Under M/G/1, for all job size distributions, if ρ < 0.5, then $E[T(x)]^{SRPT} < E[T(x)]^{PS}$ for every job size x. For heavy-tailed distributions, this holds for ρ < 0.95.

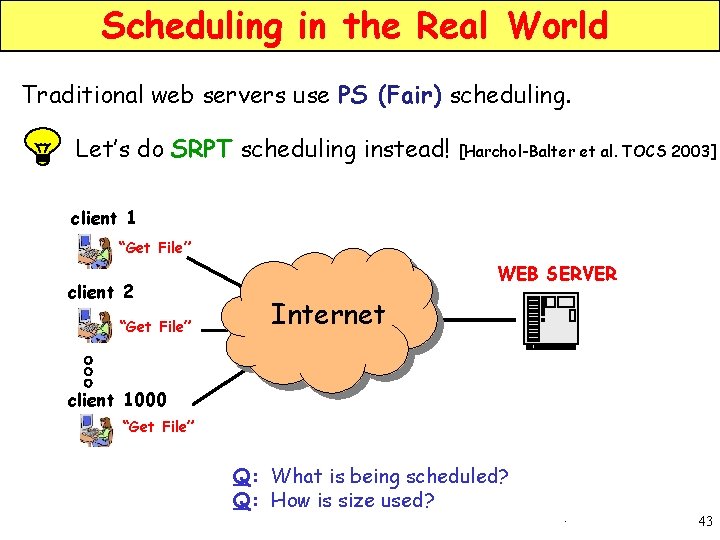

Scheduling in the Real World: traditional web servers use PS (fair) scheduling. Let's do SRPT scheduling instead! [Harchol-Balter et al. TOCS 2003] Clients 1 through 1000 each send a “Get File” request over the Internet to the web server. Q: What is being scheduled? Q: How is size used?

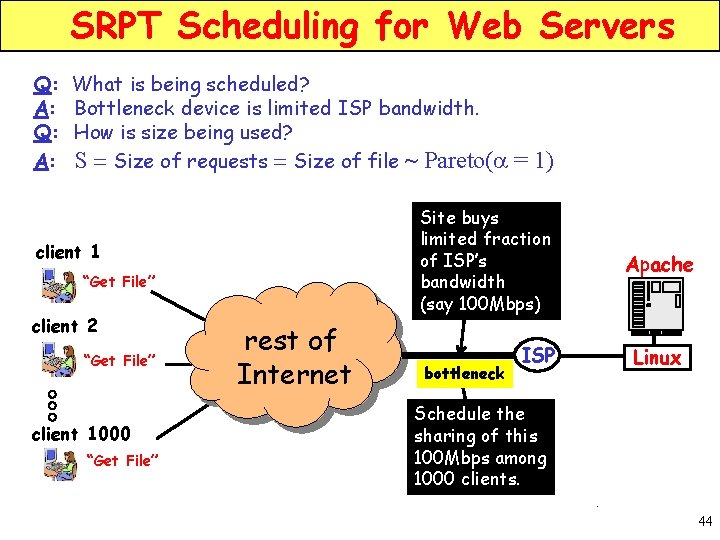

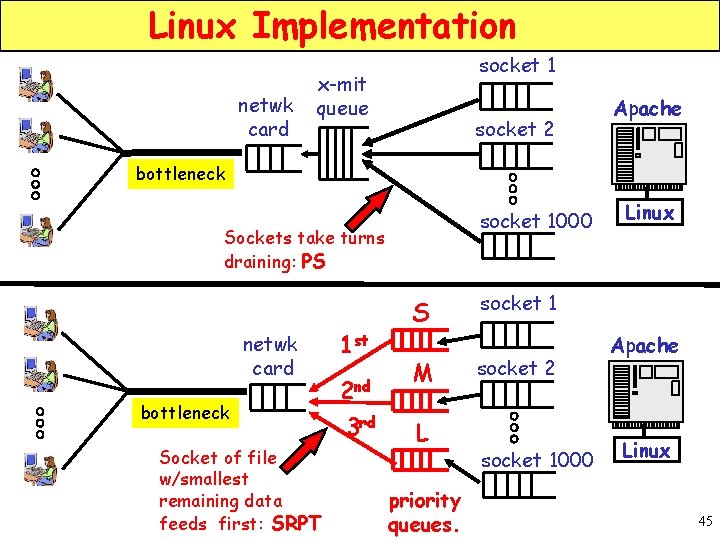

SRPT Scheduling for Web Servers:
- Q: What is being scheduled? A: The bottleneck device is the limited ISP bandwidth. The site buys a limited fraction of its ISP's bandwidth (say 100 Mbps), and we schedule the sharing of this 100 Mbps among 1000 clients.
- Q: How is size used? A: S = size of request = size of file ~ Pareto(α = 1).

[Diagram: clients send “Get File” requests through the rest of the Internet and the ISP bottleneck to Apache on Linux.]

Linux Implementation:
- PS: Apache's sockets (socket 1, socket 2, …, socket 1000) feed the network card's transmit queue. The sockets take turns draining into the bottleneck.
- SRPT: Linux priority queues (1st, 2nd, 3rd for S, M, L). The socket of the file with the smallest remaining data feeds first.
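
The toy sketch below illustrates the idea behind the SRPT implementation. Sockets are sorted into a few priority bands by remaining bytes, and data is always sent from the highest-priority non-empty band. The band cutoffs, chunk size, file names, and round-robin order within a band are hypothetical choices, not the parameters of the TOCS 2003 implementation.

```python
from collections import deque

CUTOFFS = (10_000, 100_000)   # hypothetical band boundaries, in bytes

def band(remaining):
    """Priority band by bytes still to send: 0 = small, 1 = medium, 2 = large."""
    return sum(remaining > c for c in CUTOFFS)

def drain(sockets, chunk=1_500):
    """Send `chunk` bytes at a time from the highest-priority non-empty band,
    round-robin within a band; return the order in which sockets finish."""
    bands = [deque() for _ in range(len(CUTOFFS) + 1)]
    for name, size in sockets.items():
        bands[band(size)].append([name, size])
    finished = []
    while any(bands):
        queue = next(q for q in bands if q)       # smallest-remaining band goes first
        entry = queue.popleft()
        entry[1] -= chunk
        if entry[1] <= 0:
            finished.append(entry[0])
        else:
            bands[band(entry[1])].append(entry)   # may move up to a higher-priority band
    return finished

print(drain({"big.iso": 250_000, "page.html": 4_000, "photo.jpg": 60_000}))
```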

Mean response time results: [Figure: mean response time E[T] (0.05 s to 0.20 s) versus load ρ (0.4 to 1.0) under PS and SRPT.]

Response time as a function of size: [Figure: E[T(x)] on a log scale (10^-3 s to 1.0 s) versus the percentile of request size x (20% to 100%) under PS and SRPT.]

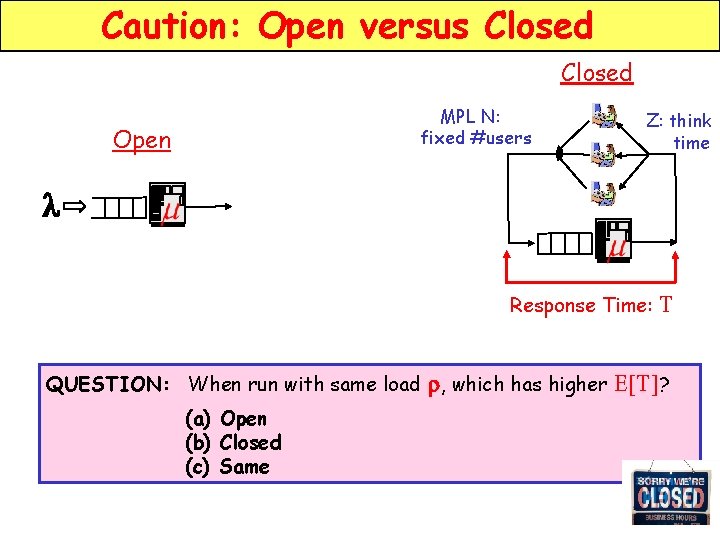

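Caution: Open versus Closed. The open system has arrivals at rate λ. The closed interactive system has MPL N (a fixed number of users) and think time Z. Response time T is measured in each. QUESTION: When run with the same load ρ, which has higher E[T]? (a) Open (b) Closed (c) Same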

Caution: Open versus Closed. [Figure: performance of an auction site, E[T] in ms (log scale, 10^-1 to 10^2) versus load ρ (0.2 to 1.0), for the open system and for closed systems with MPL 10, 100, and 1000.] [Schroeder, Wierman, Harchol-Balter NSDI 2006] E[T] is much lower for the closed system at the same ρ.

Caution: Open versus Closed. [Figure: E[T] versus ρ under PS and SRPT, for the open system (left) and the closed system (right).] The closed and open systems are run with the same job size distribution and the same load. [Schroeder, Wierman, Harchol-Balter, NSDI 06]

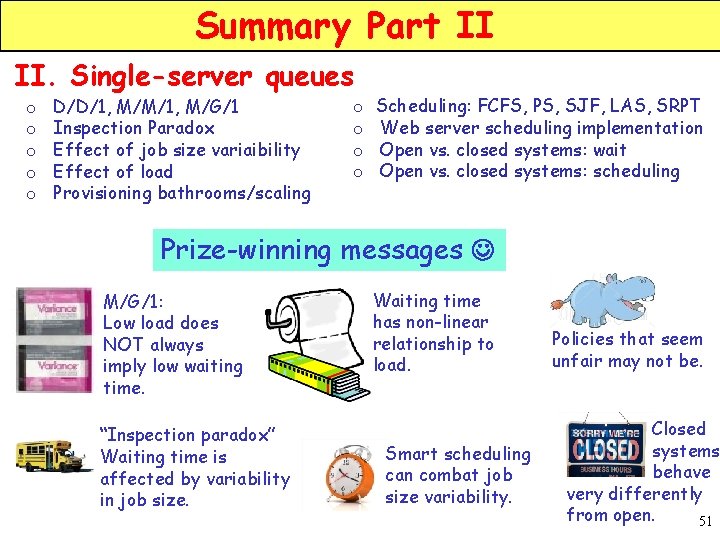

Summary Part II: II. Single-server queues (D/D/1, M/M/1, M/G/1; inspection paradox; effect of job size variability; effect of load; provisioning bathrooms/scaling; scheduling: FCFS, PS, SJF, LAS, SRPT; web server scheduling implementation; open vs. closed systems: wait; open vs. closed systems: scheduling).

Prize-winning messages:
- M/G/1: low load does NOT always imply low waiting time (“inspection paradox”).
- Waiting time is affected by variability in job size.
- Waiting time has a non-linear relationship to load.
- Smart scheduling can combat job size variability.
- Policies that seem unfair may not be.
- Closed systems behave very differently from open systems.

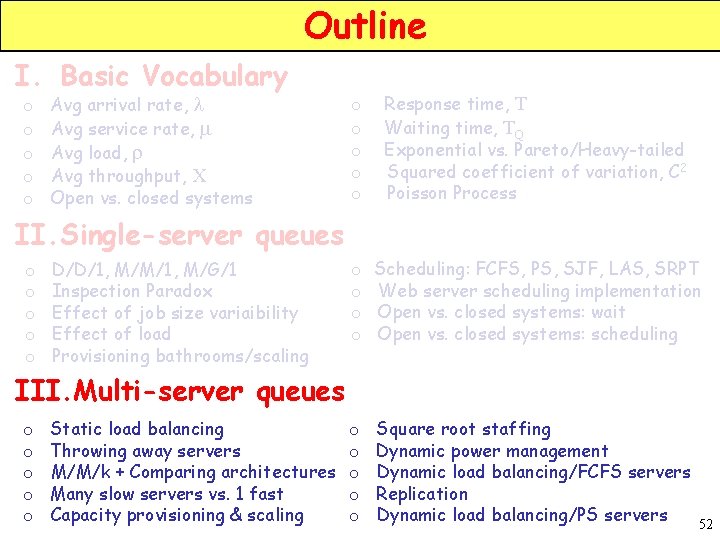

Outline I. Basic Vocabulary o o o Avg arrival rate, l Avg service rate, m Avg load, r Avg throughput, X Open vs. closed systems o o o Response time, T Waiting time, TQ Exponential vs. Pareto/Heavy-tailed Squared coefficient of variation, C 2 Poisson Process o o Scheduling: FCFS, PS, SJF, LAS, SRPT Web server scheduling implementation Open vs. closed systems: wait Open vs. closed systems: scheduling o o o Square root staffing Dynamic power management Dynamic load balancing/FCFS servers Replication Dynamic load balancing/PS servers II. Single-server queues o o o D/D/1, M/M/1, M/G/1 Inspection Paradox Effect of job size variaibility Effect of load Provisioning bathrooms/scaling III. Multi-server queues o o o Static load balancing Throwing away servers M/M/k + Comparing architectures Many slow servers vs. 1 fast Capacity provisioning & scaling 52

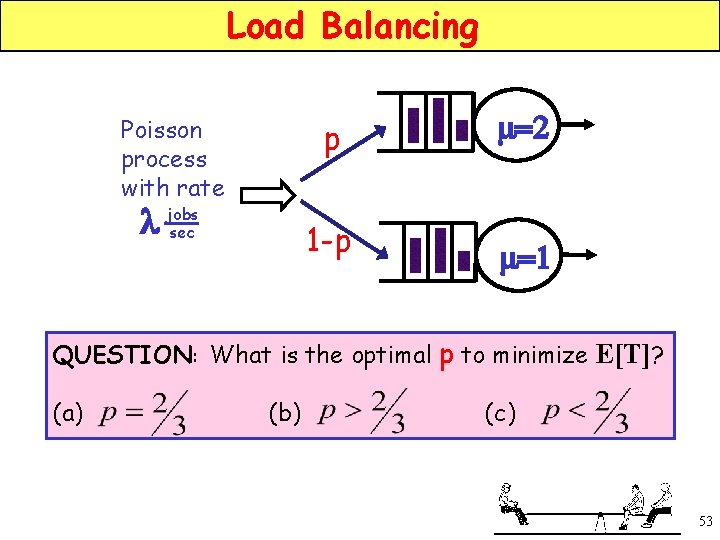

Load Balancing: a Poisson process with rate λ jobs/sec is split. A fraction p of jobs goes to a server with μ = 2, and the remaining 1 − p go to a server with μ = 1. QUESTION: What is the optimal p to minimize E[T]?

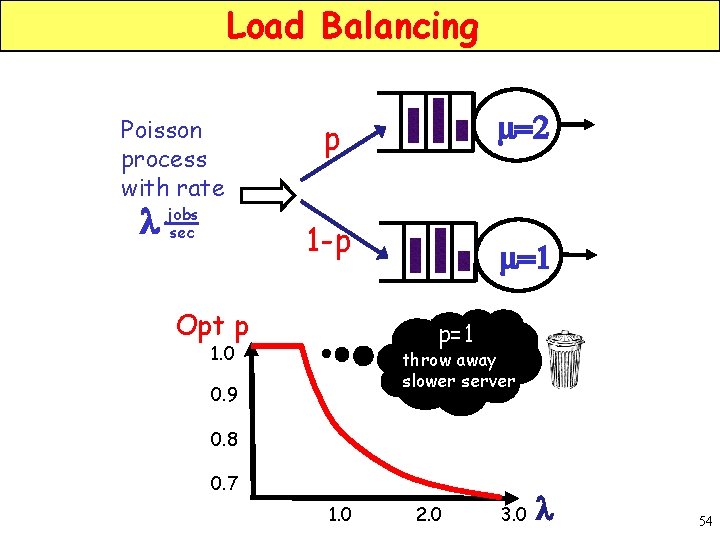

Load Balancing (answer): [Figure: optimal p (0.7 to 1.0) versus λ (1.0 to 3.0).] The region where the optimal p = 1 means: throw away the slower server!
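
The sketch below finds the optimal split by grid search, modeling each server as an M/M/1 queue. The M/M/1 model is an assumption; the rates μ = 2 and μ = 1 are from the slide. Here E[T](p) = p/(2 − pλ) + (1 − p)/(1 − (1 − p)λ). The derivative at p = 1 is 2/(2 − λ)² − 1, so under this model the slow server should be thrown away whenever λ < 2 − √2 ≈ 0.59.

```python
def mean_response(p, lam, mu_fast=2.0, mu_slow=1.0):
    """E[T] when a fraction p of Poisson(lam) jobs goes to an M/M/1 server of rate
    mu_fast and the rest to one of rate mu_slow; infinite if either is unstable."""
    lam_fast, lam_slow = p * lam, (1 - p) * lam
    if lam_fast >= mu_fast or lam_slow >= mu_slow:
        return float("inf")
    t = 0.0
    if p > 0:
        t += p / (mu_fast - lam_fast)
    if p < 1:
        t += (1 - p) / (mu_slow - lam_slow)
    return t

def best_p(lam, steps=10_000):
    """Grid search for the split that minimizes mean response time."""
    grid = [i / steps for i in range(steps + 1)]
    return min(grid, key=lambda p: mean_response(p, lam))

for lam in (0.25, 0.5, 1.0, 2.0, 2.5, 2.9):
    print(lam, best_p(lam))
```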

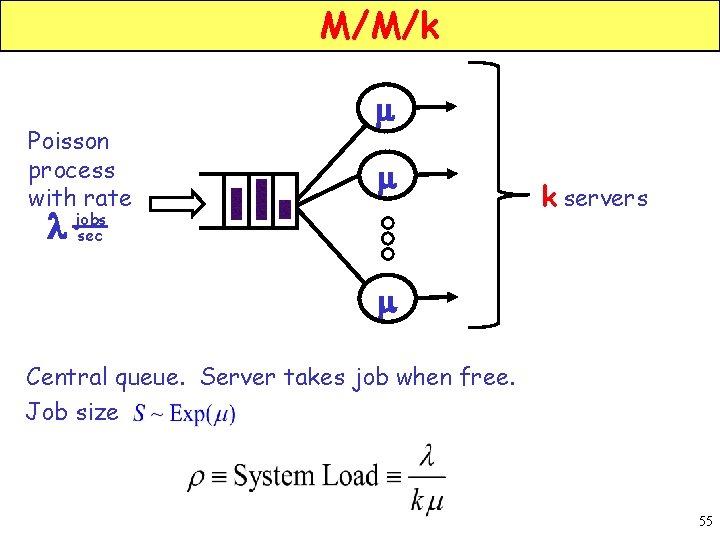

M/M/k: a Poisson process with rate λ jobs/sec feeds a central queue served by k servers, each with rate μ. A server takes the next job from the queue when it becomes free. Job sizes are Exp(μ).

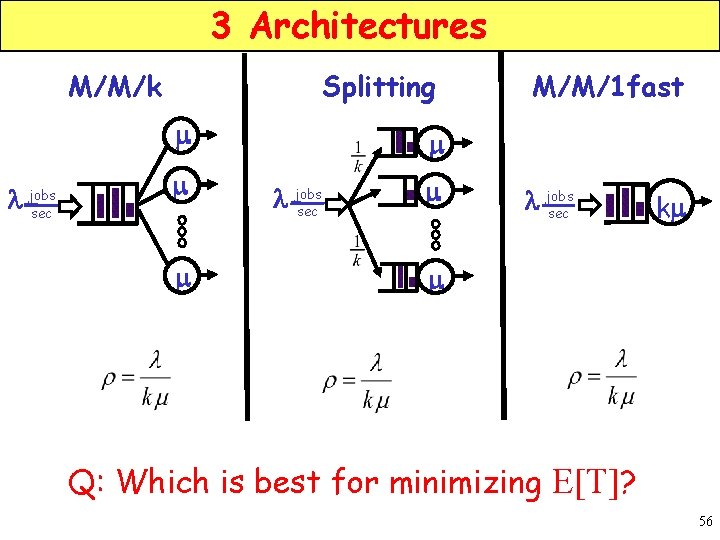

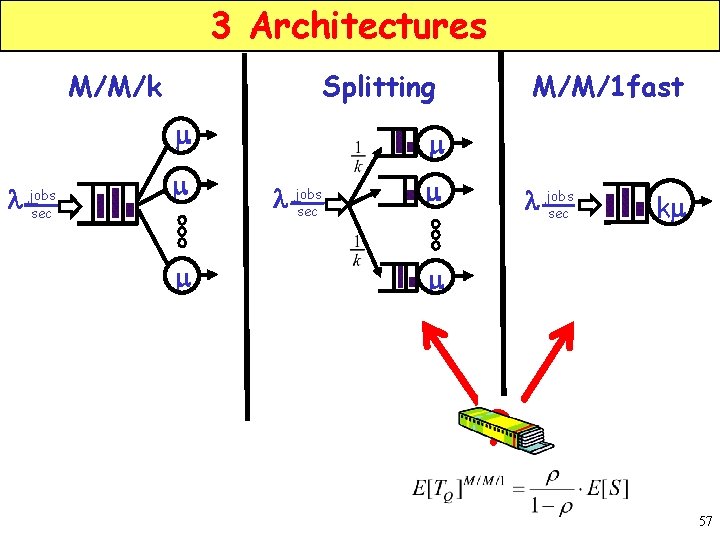

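3 Architectures, each fed λ jobs/sec:
- M/M/k: a central queue feeding k servers of rate μ.
- Splitting: arrivals split among k servers of rate μ, each with its own queue.
- M/M/1 fast: a single server of rate kμ.

Q: Which is best for minimizing E[T]?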

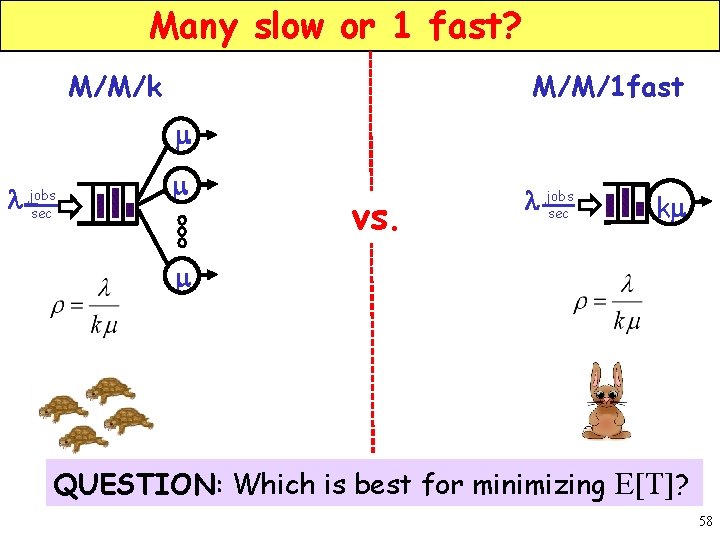

Many slow or 1 fast? Compare M/M/k with k servers of rate μ against M/M/1 with a single server of rate kμ, both fed λ jobs/sec. QUESTION: Which is best for minimizing E[T]?

Many slow or 1 fast? [Figure: E[T] versus λ (2 to 10) for M/M/1 fast and M/M/k.]
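
With Exponential job sizes, the comparison can be computed exactly with the Erlang C formula. The sketch below compares E[T] for M/M/k against a single M/M/1 server of rate kμ; k = 10 and μ = 1 are arbitrary. It uses the Erlang-B recursion for numerical stability.

```python
def erlang_c(k, a):
    """Probability an arrival must queue in M/M/k with offered load a = lam/mu < k,
    computed from the Erlang-B recursion for numerical stability."""
    b = 1.0
    for n in range(1, k + 1):
        b = a * b / (n + a * b)
    return k * b / (k - a * (1 - b))

def mmk_response(lam, mu, k):
    """E[T] = P_Q / (k*mu - lam) + 1/mu for M/M/k."""
    return erlang_c(k, lam / mu) / (k * mu - lam) + 1 / mu

def fast_response(lam, mu, k):
    """E[T] for a single M/M/1 server of rate k*mu."""
    return 1 / (k * mu - lam)

mu, k = 1.0, 10
lams = (1.0, 5.0, 9.0, 9.9)
for lam in lams:
    print(f"lam={lam}: M/M/{k} {mmk_response(lam, mu, k):.3f}  "
          f"vs  M/M/1 fast {fast_response(lam, mu, k):.3f}")
```

Under Exponential sizes the single fast server comes out ahead at every λ shown. The next slides revisit this question with general job sizes.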

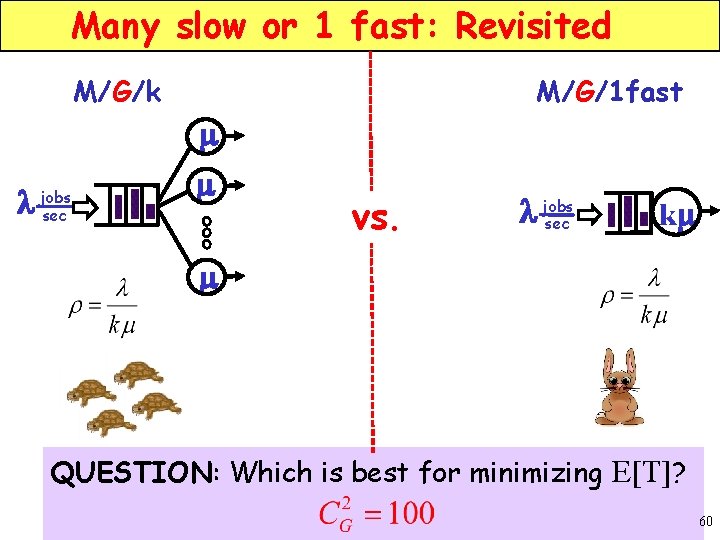

Many slow or 1 fast: Revisited. Compare M/G/k with k servers of rate μ against M/G/1 with a single server of rate kμ, both fed λ jobs/sec. QUESTION: Which is best for minimizing E[T]?

Many slow or 1 fast: Revisited. [Figure: E[T] (0 to 10) versus λ (2 to 6) for M/G/1 fast and M/G/10.]

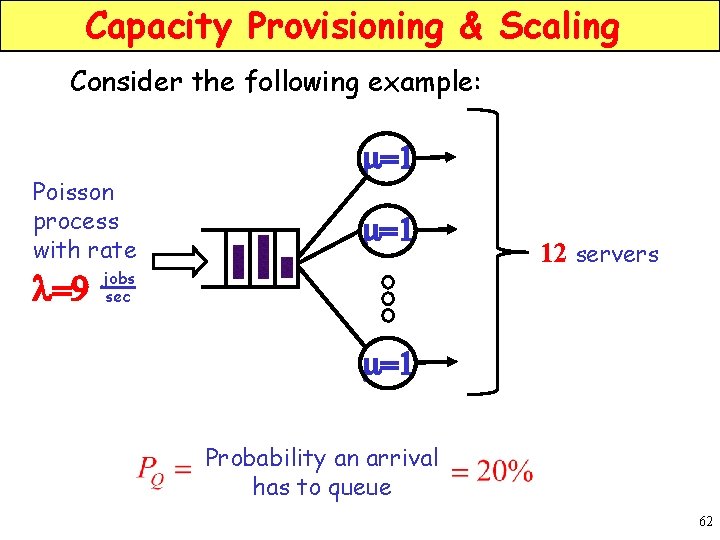

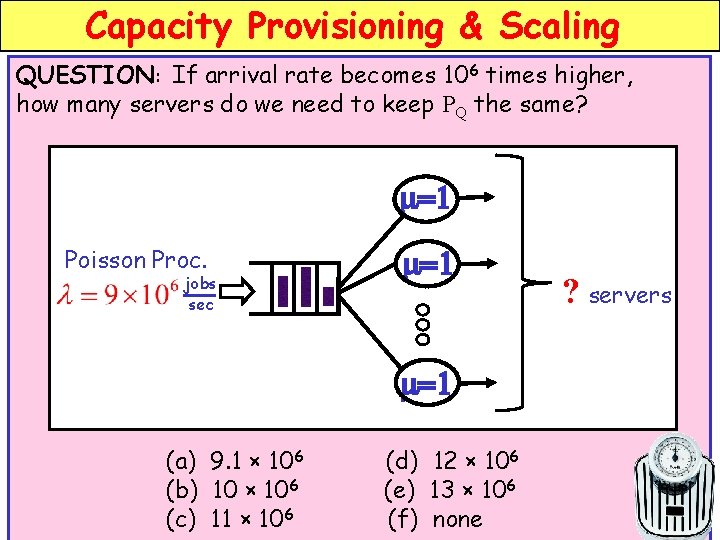

Capacity Provisioning & Scaling: consider the following example. A Poisson process with rate λ = 9 jobs/sec feeds 12 servers, each with μ = 1. P_Q denotes the probability that an arrival has to queue.

Capacity Provisioning & Scaling: QUESTION: If the arrival rate becomes 10^6 times higher, how many servers (each with μ = 1) do we need to keep P_Q the same? (a) 9.1 × 10^6 (b) 10 × 10^6 (c) 11 × 10^6 (d) 12 × 10^6 (e) 13 × 10^6 (f) none

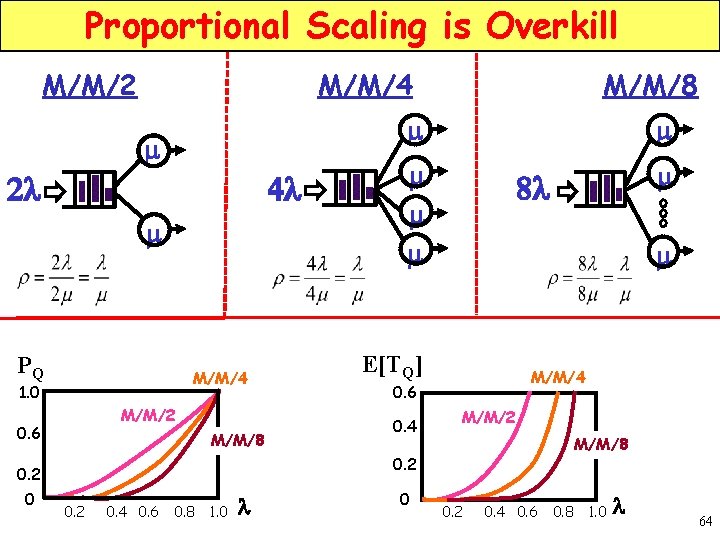

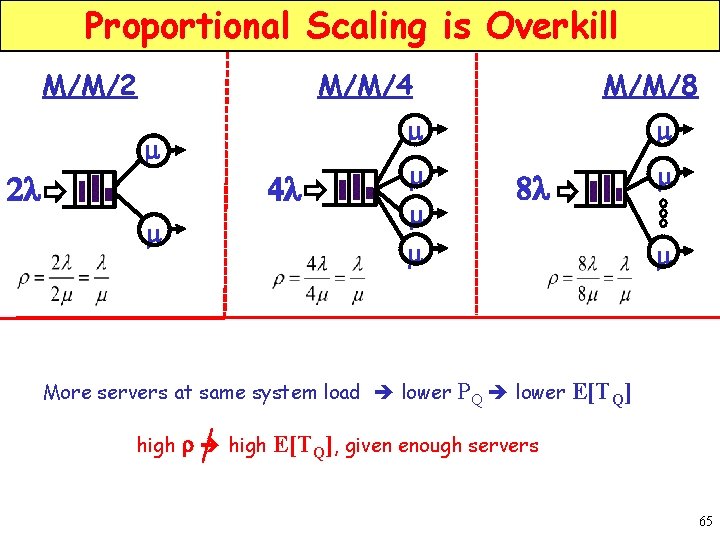

Proportional Scaling is Overkill: [Figure: M/M/2 with arrival rate 2λ, M/M/4 with 4λ, and M/M/8 with 8λ, all with server rate μ; P_Q (left) and E[T_Q] (right) versus λ (0 to 1.0).]

Proportional Scaling is Overkill: at the same system load ρ, more servers give lower P_Q and lower E[T_Q]. Even at high ρ, E[T_Q] stays low, given enough servers.

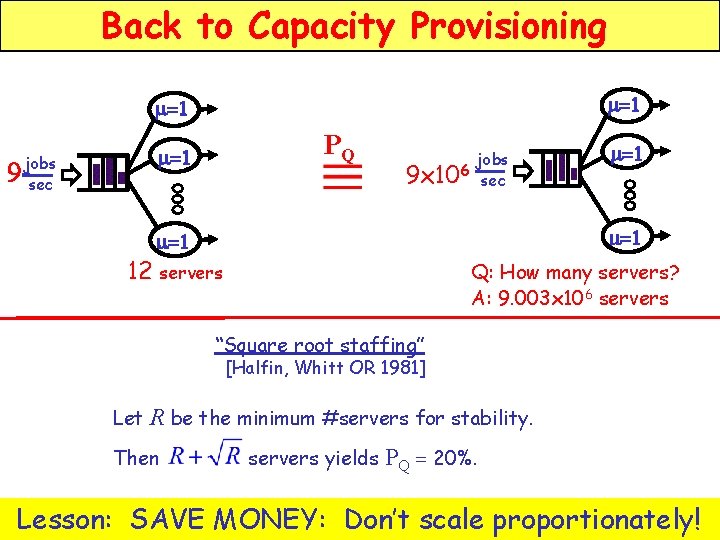

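Back to Capacity Provisioning: λ = 9 jobs/sec with 12 servers of rate μ = 1 gives some P_Q. Now λ = 9 × 10^6 jobs/sec. Q: How many servers? A: 9.003 × 10^6 servers.

“Square root staffing” [Halfin, Whitt OR 1981]: let R = λ/μ be the minimum number of servers for stability. Then $k = R + \sqrt{R}$ servers yields P_Q ≈ 20%. For λ = 9 this gives 9 + 3 = 12 servers; for λ = 9 × 10^6 it gives 9 × 10^6 + 3000 = 9.003 × 10^6 servers. Lesson: SAVE MONEY: don't scale proportionately!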
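
The square-root staffing rule can be checked with the Erlang C computation. In this sketch μ = 1, and λ is scaled by 100 and 10^4 rather than 10^6 to keep the Erlang-B loop fast. Staffing k = ⌈R + √R⌉ servers should keep P_Q roughly constant, somewhere between 20% and 30%, without any proportional growth. As R grows, P_Q should settle near the Halfin-Whitt limit of about 0.22.

```python
import math

def erlang_c(k, a):
    """P_Q for M/M/k with offered load a = lam/mu < k (Erlang-B recursion, then Erlang C)."""
    b = 1.0
    for n in range(1, k + 1):
        b = a * b / (n + a * b)
    return k * b / (k - a * (1 - b))

def sqrt_staffing(lam, mu=1.0):
    """Servers suggested by square-root staffing: R + sqrt(R), with R = lam/mu."""
    r = lam / mu
    return math.ceil(r + math.sqrt(r))

rows = []
for scale in (1, 100, 10_000):
    lam = 9.0 * scale
    k = sqrt_staffing(lam)
    rows.append((lam, k, erlang_c(k, lam)))
    print(f"lam = {lam:>9,.0f}  k = {k:>7,d}  extra servers = {k - lam:>5,.0f}  P_Q = {rows[-1][2]:.3f}")

print(f"lam = 9e6 would need about {sqrt_staffing(9e6):,} servers")
```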

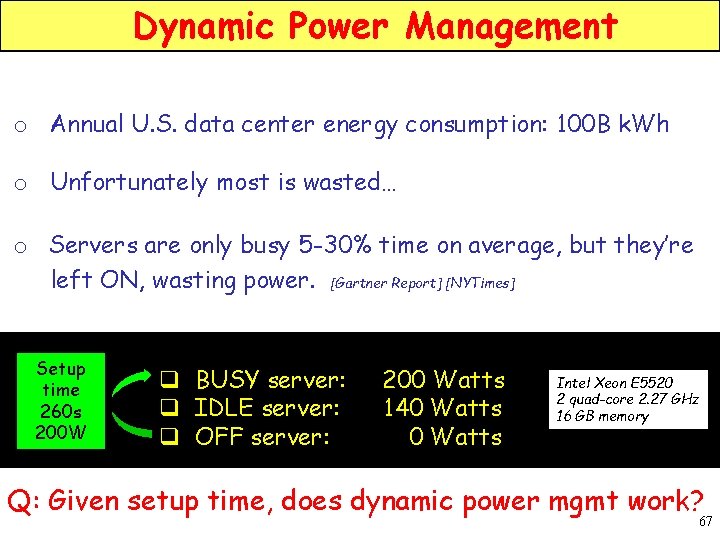

Dynamic Power Management:
- Annual U.S. data center energy consumption: 100B kWh.
- Unfortunately, most of it is wasted.
- Servers are only busy 5-30% of the time on average, but they're left ON, wasting power. [Gartner Report] [NYTimes]

Measured on an Intel Xeon E5520 server (2 quad-core 2.27 GHz, 16 GB memory):
- BUSY server: 200 Watts
- IDLE server: 140 Watts
- OFF server: must go through a setup time of 260 s, drawing 200 W, to come back on

Q: Given the setup time, does dynamic power management work?

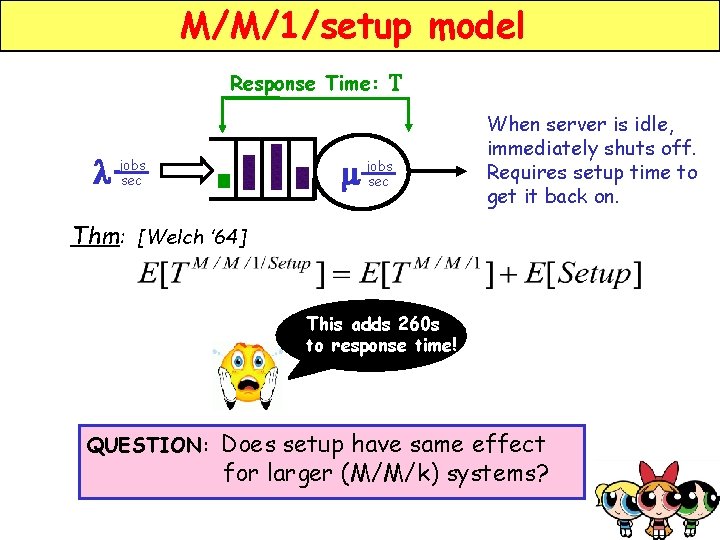

M/M/1/setup model: arrival rate λ jobs/sec, service rate μ jobs/sec, response time T. When the server is idle, it immediately shuts off, and it requires a setup time to get back on. Thm [Welch '64]: this adds 260 s to response time! QUESTION: Does setup have the same effect for larger (M/M/k) systems?
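
Welch's result for a server that turns off when idle is usually stated as an additive decomposition: $E[T] = E[T]^{M/G/1} + \frac{2E[I] + \lambda E[I^2]}{2(1 + \lambda E[I])}$, where I is the setup time. The sketch below evaluates the extra term and treats the formula as an assumption to check against the original paper. For Exponential setup times the extra delay is exactly E[I] = 260 s at every λ. For a deterministic setup it approaches E[I]/2 at high λ.

```python
def setup_delay(lam, mean_setup, second_moment_setup):
    """Extra mean response time caused by setup for a single server that turns off
    when idle (Welch-style decomposition, taken here as an assumption):
        (2 E[I] + lam E[I^2]) / (2 (1 + lam E[I]))."""
    return (2 * mean_setup + lam * second_moment_setup) / (2 * (1 + lam * mean_setup))

I = 260.0                 # setup time in seconds, from the slides
for lam in (0.1, 1.0, 10.0):
    exp_setup = setup_delay(lam, I, 2 * I ** 2)   # Exponential setup: E[I^2] = 2 E[I]^2
    det_setup = setup_delay(lam, I, I ** 2)       # deterministic setup: E[I^2] = E[I]^2
    print(f"lam={lam}: +{exp_setup:.1f} s (Exp setup), +{det_setup:.1f} s (deterministic setup)")
```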

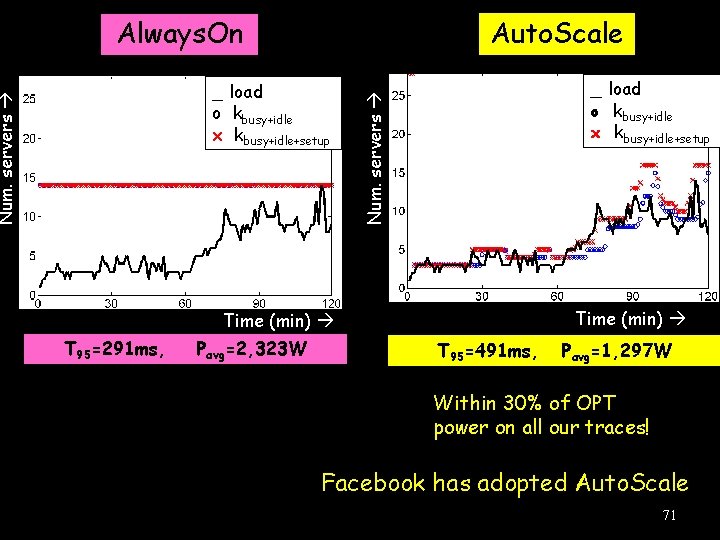

Effect of setup in larger systems: we scale up the system size while keeping the load fixed, using M/M/k/setup with arrival rate λk and k servers of rate μ. [Figure: effect of setup (0 to 100) versus number of servers k.] Setup matters less as k increases. This is why dynamic power management works! [Gandhi, Doroudi, Harchol-Balter, Scheller-Wolf Sigmetrics 2013]

![Dynamic Power Mgmt Implementation [Gandhi, Harchol-Balter, Raghunathan, Kozuch TOCS 2012] Unknown Power Aware Load Dynamic Power Mgmt Implementation [Gandhi, Harchol-Balter, Raghunathan, Kozuch TOCS 2012] Unknown Power Aware Load](http://slidetodoc.com/presentation_image_h2/65fb795f79c5e256836da08f2c30d4a5/image-70.jpg)

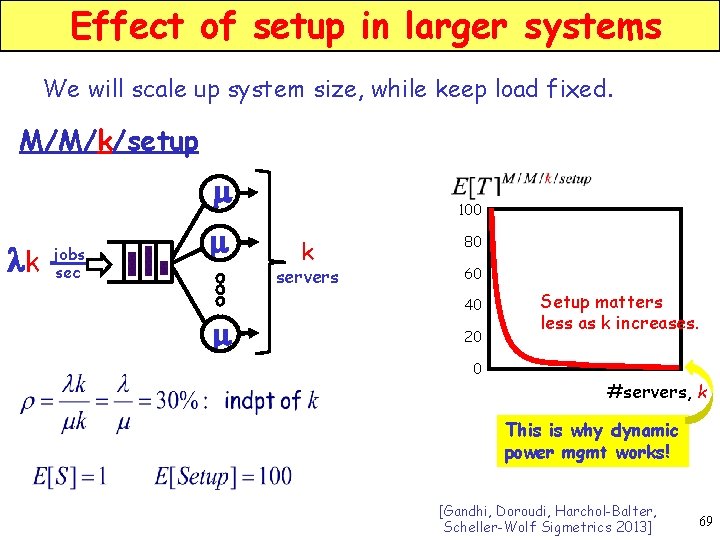

Dynamic Power Mgmt Implementation [Gandhi, Harchol-Balter, Raghunathan, Kozuch TOCS 2012]: an unknown load arrives at a power-aware load balancer in front of 28 application servers, 7 Memcached servers, and a 500 GB DB.
- Key-value workload: a mix of CPU & I/O.
- 1 job = 1 to 3000 KV pairs, 120 ms total on avg.
- SLA: T95 < 500 ms.
- Setup time: 260 s.

AutoScale vs. AlwaysOn: [Figure: number of servers over time (min) under each policy, showing the load, k_busy+idle, and k_busy+idle+setup.]
- AlwaysOn: T95 = 291 ms, P_avg = 2,323 W.
- AutoScale: T95 = 491 ms, P_avg = 1,297 W.

AutoScale is within 30% of OPT power on all our traces! Facebook has adopted AutoScale.

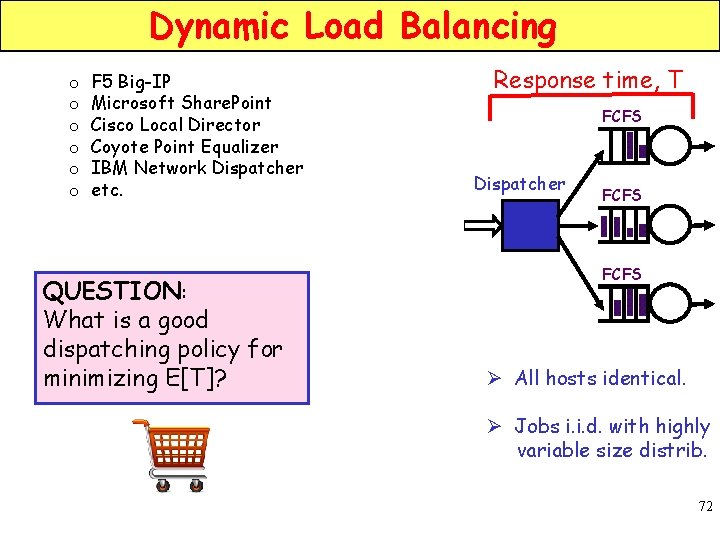

Dynamic Load Balancing: commercial dispatchers include F5 Big-IP, Microsoft SharePoint, Cisco Local Director, Coyote Point Equalizer, IBM Network Dispatcher, etc. QUESTION: What is a good dispatching policy for minimizing E[T]? In the setup, a dispatcher sends each job to one of several FCFS hosts, and T is the response time.
- All hosts are identical.
- Jobs are i.i.d. with a highly variable size distribution.

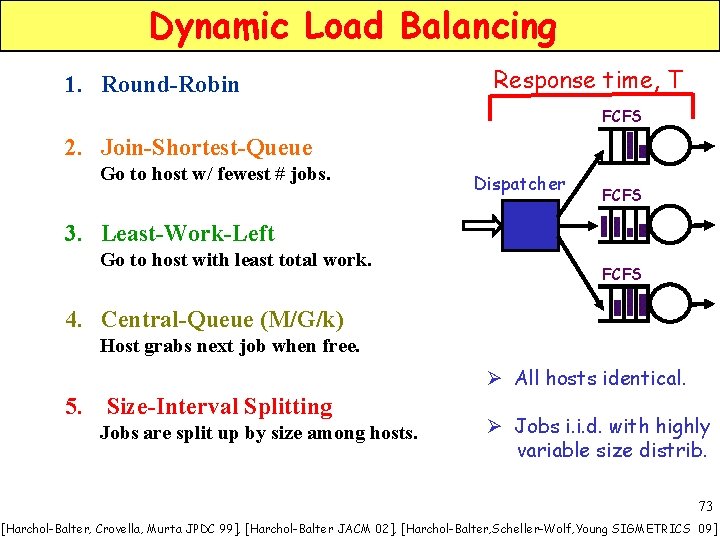

Dynamic Load Balancing: candidate dispatching policies for FCFS hosts:
1. Round-Robin.
2. Join-Shortest-Queue: go to the host with the fewest jobs.
3. Least-Work-Left: go to the host with the least total work.
4. Central-Queue (M/G/k): a host grabs the next job when free.
5. Size-Interval Splitting: jobs are split up by size among hosts.

All hosts are identical; jobs are i.i.d. with a highly variable size distribution. [Harchol-Balter, Crovella, Murta JPDC 99], [Harchol-Balter JACM 02], [Harchol-Balter, Scheller-Wolf, Young SIGMETRICS 09]

Dynamic Load Balancing (answer): for FCFS hosts, the five policies run from generally high E[T] (1. Round-Robin) to low E[T] (5. Size-Interval Splitting).

Dynamic Load Balancing: Central-Queue vs. Size-Interval Task Assignment (SITA):
- Central-Queue: + good utilization of servers; + some isolation for small jobs.
- SITA: − worse utilization of servers; + great isolation for small jobs!
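
The sketch below compares three of the dispatching policies for FCFS hosts by simulation. Its assumptions are not on the slides: 4 hosts, Poisson arrivals at load ρ = 0.7, and hyperexponential job sizes with mean 1 and C² ≈ 15.6. On an FCFS host, a job's response time is the host's unfinished work at arrival plus its own size, so the simulator only tracks work left and pending departure times. Least-Work-Left produces the same response times as Central-Queue (M/G/k). Size-Interval Splitting is omitted because its results depend heavily on how the size cutoffs are chosen.

```python
import random
from collections import deque

def dispatch_sim(policy, k, lam, draw_size, n_jobs, seed=0):
    """Mean response time when a dispatcher sends Poisson(lam) arrivals to k
    identical FCFS hosts using "RR", "JSQ", or "LWL"."""
    rng = random.Random(seed)
    work = [0.0] * k                         # unfinished work at each host
    pending = [deque() for _ in range(k)]    # departure times of jobs still at each host
    t = total = 0.0
    for n in range(n_jobs):
        gap = rng.expovariate(lam)
        t += gap
        work = [max(0.0, w - gap) for w in work]   # each host works at rate 1
        for queue in pending:                      # drop jobs that have already left
            while queue and queue[0] <= t:
                queue.popleft()
        size = draw_size(rng)
        if policy == "RR":
            host = n % k
        elif policy == "JSQ":                      # fewest jobs present
            host = min(range(k), key=lambda h: len(pending[h]))
        else:                                      # "LWL": least unfinished work
            host = min(range(k), key=lambda h: work[h])
        response = work[host] + size               # FCFS: wait for the work ahead, then serve
        work[host] += size
        pending[host].append(t + response)
        total += response
    return total / n_jobs

def draw_size(rng):
    """Hyperexponential sizes: mean 1, C^2 about 15.6 (an assumed workload)."""
    return rng.expovariate(10.0) if rng.random() < 0.9 else rng.expovariate(1 / 9.1)

k, rho = 4, 0.7
results = {p: dispatch_sim(p, k, rho * k, draw_size, 50_000, seed=5) for p in ("RR", "JSQ", "LWL")}
print(results)
```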

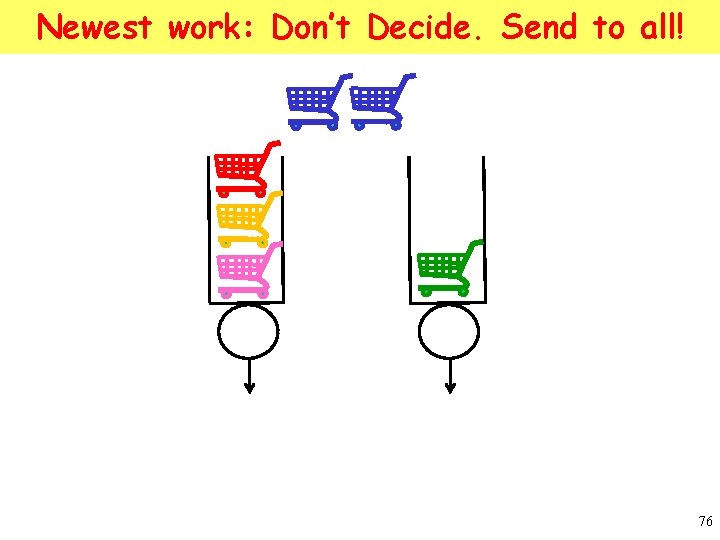

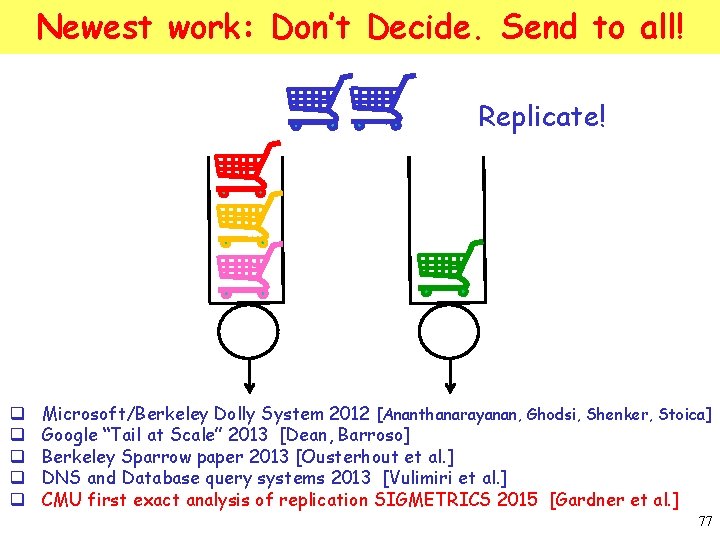

Newest work: Don’t Decide. Send to all! 76

Newest work: Don't decide. Send to all! Replicate!
- Microsoft/Berkeley Dolly system 2012 [Ananthanarayanan, Ghodsi, Shenker, Stoica]
- Google “Tail at Scale” 2013 [Dean, Barroso]
- Berkeley Sparrow paper 2013 [Ousterhout et al.]
- DNS and database query systems 2013 [Vulimiri et al.]
- CMU: first exact analysis of replication, SIGMETRICS 2015 [Gardner et al.]

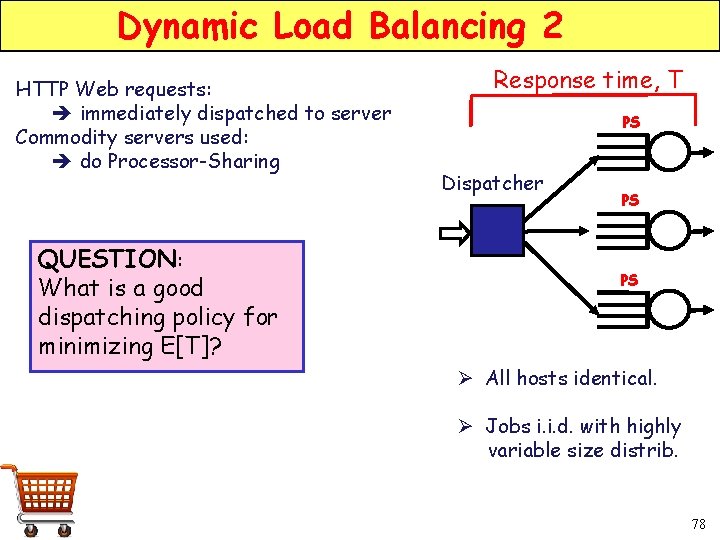

Dynamic Load Balancing 2: HTTP web requests are immediately dispatched to a server, and the commodity servers used do Processor-Sharing. QUESTION: What is a good dispatching policy for minimizing E[T]? In the setup, a dispatcher sends each job to one of several PS hosts, and T is the response time.
- All hosts are identical.
- Jobs are i.i.d. with a highly variable size distribution.

Dynamic Load Balancing 2: candidate policies for PS hosts, listed from high to low E[T] as ranked for FCFS hosts:
1. Round-Robin.
2. Join-Shortest-Queue: go to the host with the fewest jobs.
3. Least-Work-Left: go to the host with the least total work.
4. Size-Interval Splitting: jobs are split up by size among hosts.

All hosts are identical; jobs are i.i.d. with a highly variable size distribution.

Dynamic Load Balancing 2: QUESTION: What is the best of these policies for PS server farms?

Dynamic Load Balancing 2 (best policy for PS server farms): [Gupta, Harchol-Balter, Sigman, Whitt Performance 07]

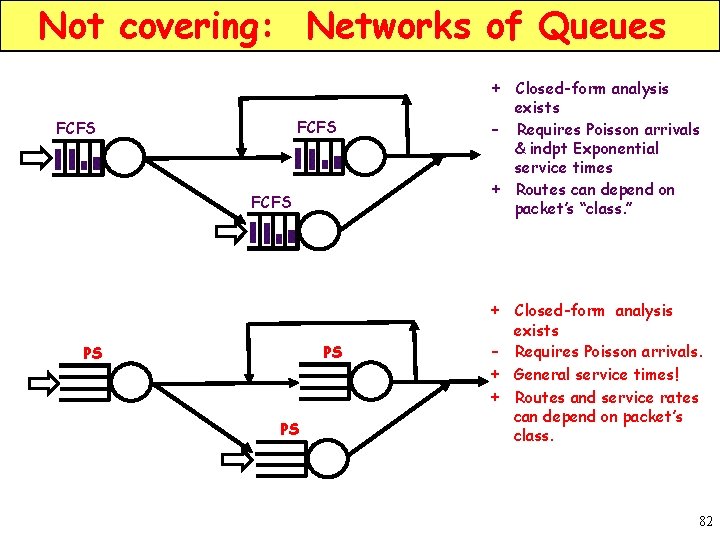

Not covering: Networks of Queues.
- Networks of FCFS queues: + closed-form analysis exists; − requires Poisson arrivals and independent Exponential service times; + routes can depend on a packet's “class.”
- Networks of PS queues: + closed-form analysis exists; − requires Poisson arrivals; + general service times!; + routes and service rates can depend on a packet's class.

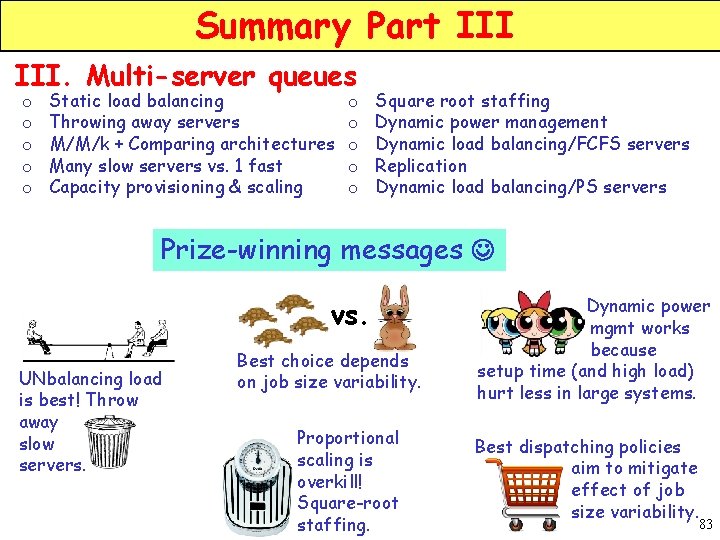

Summary Part III: III. Multi-server queues (static load balancing; throwing away servers; M/M/k + comparing architectures; many slow servers vs. 1 fast; capacity provisioning & scaling; square root staffing; dynamic power management; dynamic load balancing/FCFS servers; replication; dynamic load balancing/PS servers).

Prize-winning messages:
- UNbalancing load is best! Throw away slow servers.
- Many slow vs. 1 fast: the best choice depends on job size variability.
- Proportional scaling is overkill! Use square-root staffing.
- Dynamic power mgmt works because setup time (and high load) hurt less in large systems.
- The best dispatching policies aim to mitigate the effect of job size variability.

THANK YOU! www.cs.cmu.edu/~harchol/
