Solution of Nonlinear Equations Root Finding Problems Definitions

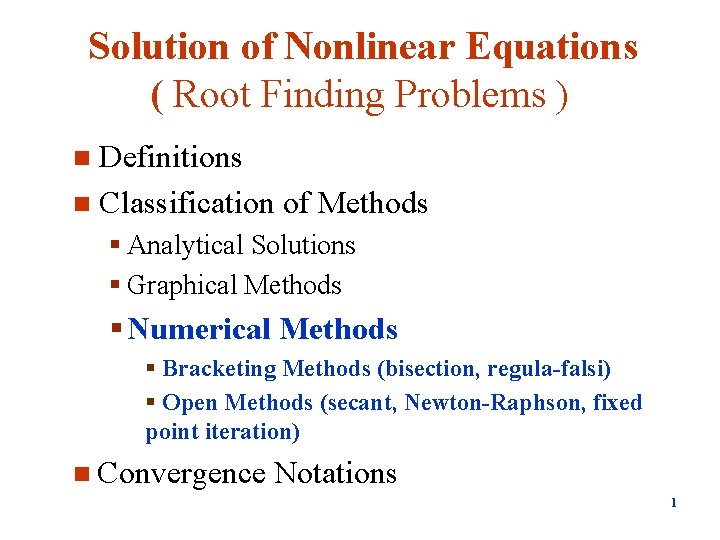

Solution of Nonlinear Equations ( Root Finding Problems ) Definitions n Classification of Methods n § Analytical Solutions § Graphical Methods § Numerical Methods § Bracketing Methods (bisection, regula-falsi) § Open Methods (secant, Newton-Raphson, fixed point iteration) n Convergence Notations 1

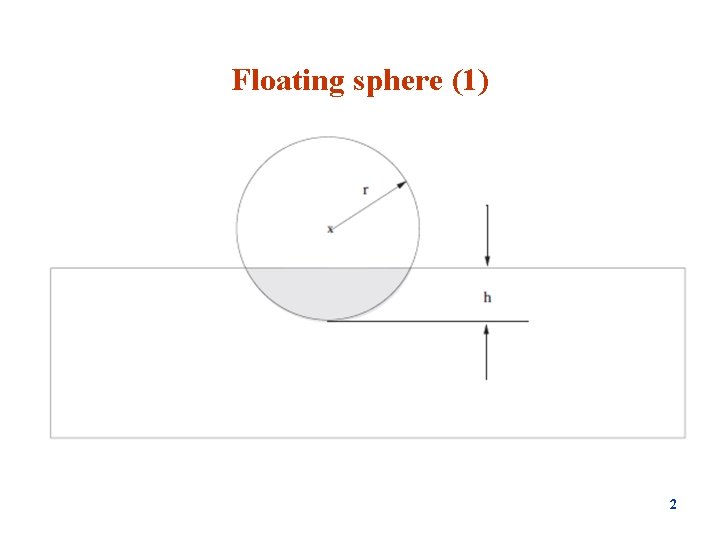

Floating sphere (1) 2

Floating sphere (2) Consider a sphere of solid material floating in water. Archimedes’ principle states that the buoyancy force is equal to the weight of the replaced liquid. Let Vs = (4/3)πr 3 be the volume of the sphere, and let Vw be the volume of water it displaces when it is partially submerged. In static equilibrium, the weight of the sphere is balanced by the buoyancy force ρsg. Vs = ρwg. Vw where ρs is the density of the sphere material, g is the acceleration due to gravity, ρw, is the density of water. The volume Vh of water displaced when a sphere is submerged to a depth h is Vh =π/3 h 2(3 r − h) Applying Archimedes’ principle produces the following equation in term of h h 3 − 3 rh 2 + 4ρr 3 = 0 Given values of r and the specific gravity of the sphere material ρ = ρs / ρw , the solutions h of the above equation can be obtained by iterative methods. 3

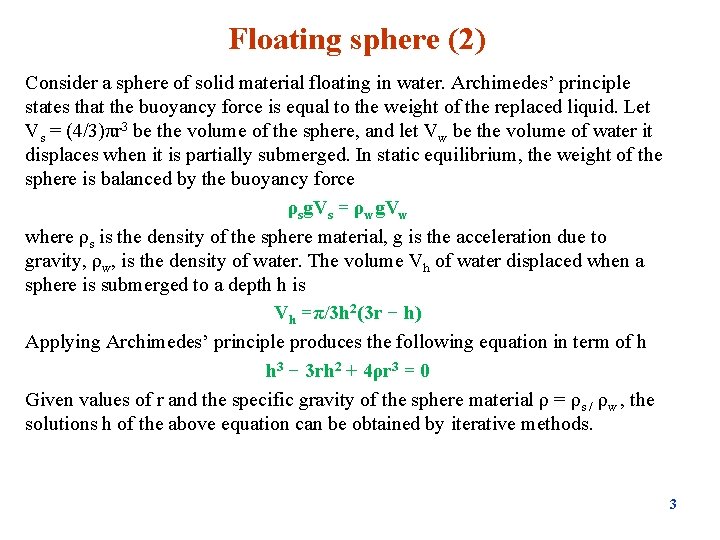

Nonlinear Equation Solvers Bracketing Graphical • Bisection • False Position (Regula-Falsi) Open Methods • Newton Raphson • Secant • Fixed point iteration All Iterative 4

Roots of Equations - Examples 5

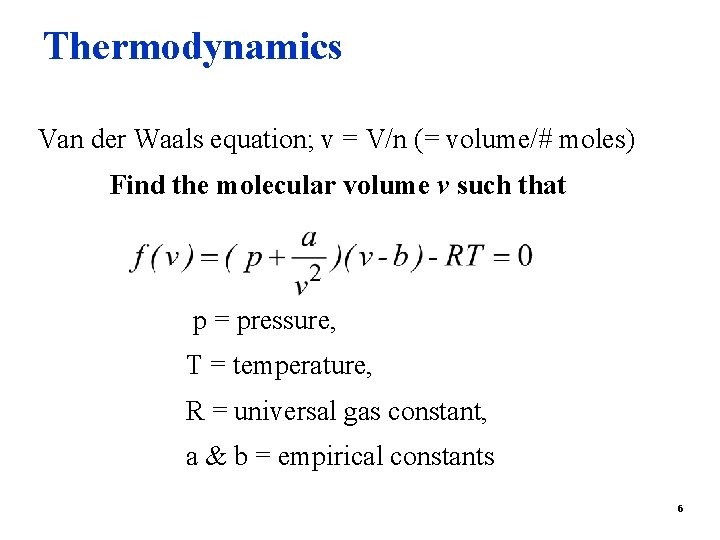

Thermodynamics Van der Waals equation; v = V/n (= volume/# moles) Find the molecular volume v such that p = pressure, T = temperature, R = universal gas constant, a & b = empirical constants 6

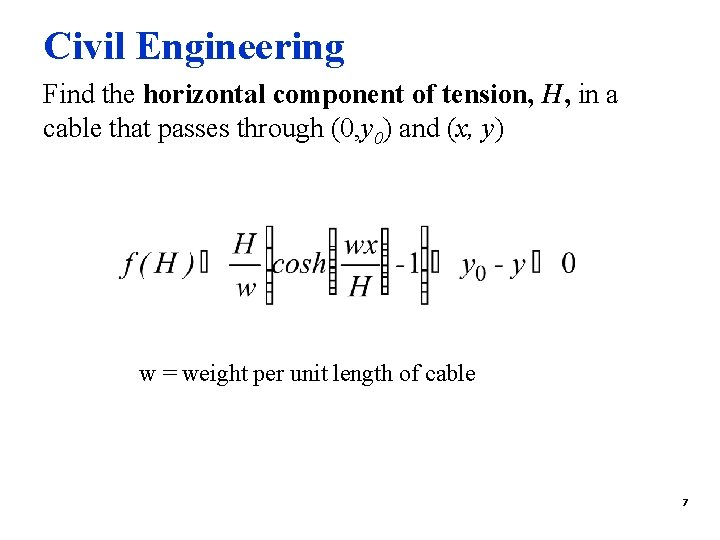

Civil Engineering Find the horizontal component of tension, H, in a cable that passes through (0, y 0) and (x, y) w = weight per unit length of cable 7

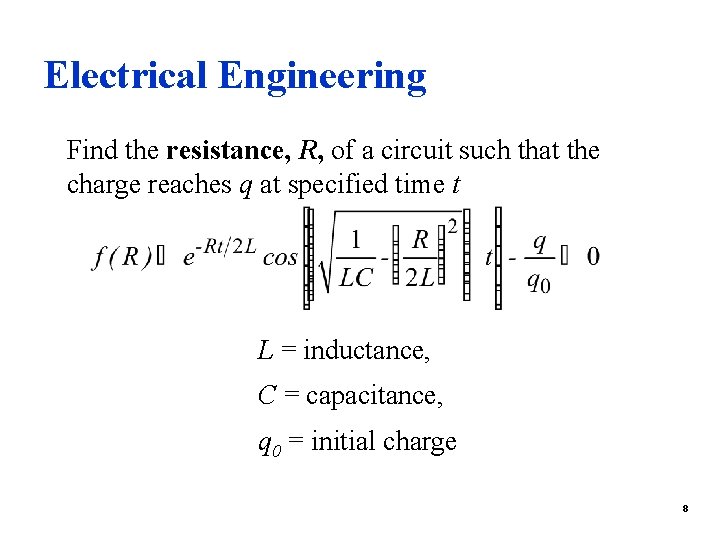

Electrical Engineering Find the resistance, R, of a circuit such that the charge reaches q at specified time t L = inductance, C = capacitance, q 0 = initial charge 8

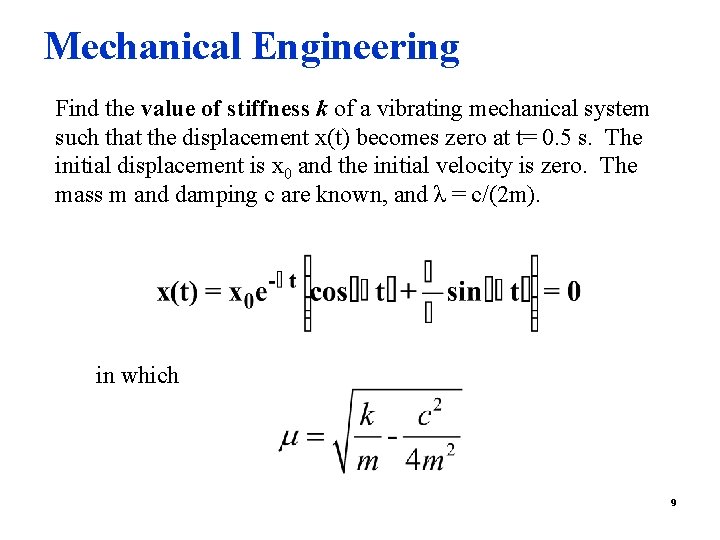

Mechanical Engineering Find the value of stiffness k of a vibrating mechanical system such that the displacement x(t) becomes zero at t= 0. 5 s. The initial displacement is x 0 and the initial velocity is zero. The mass m and damping c are known, and λ = c/(2 m). in which 9

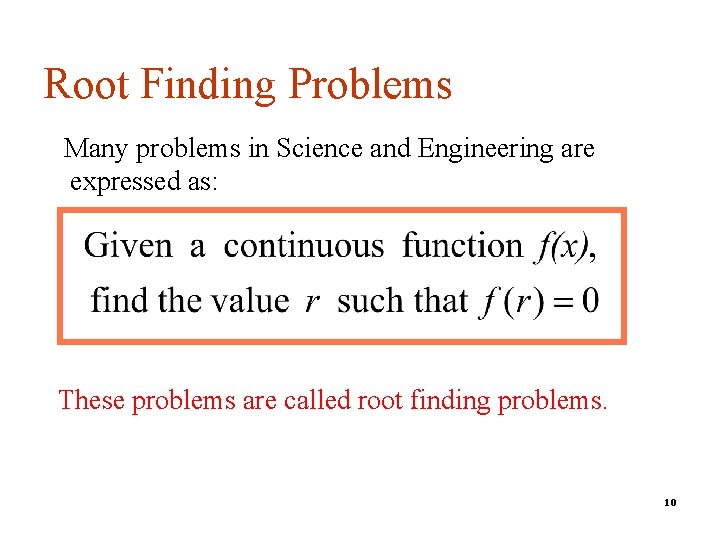

Root Finding Problems Many problems in Science and Engineering are expressed as: These problems are called root finding problems. 10

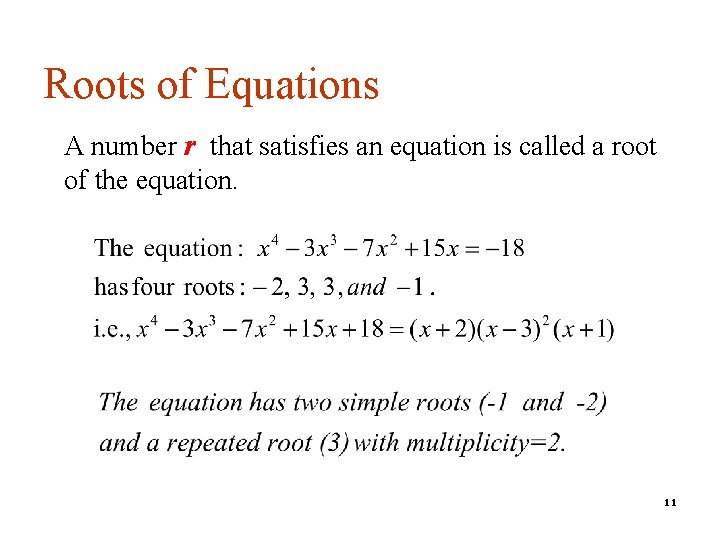

Roots of Equations A number r that satisfies an equation is called a root of the equation. 11

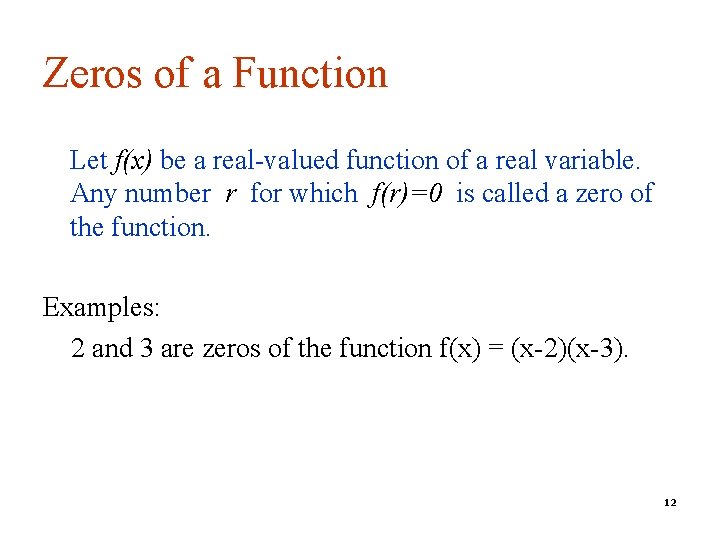

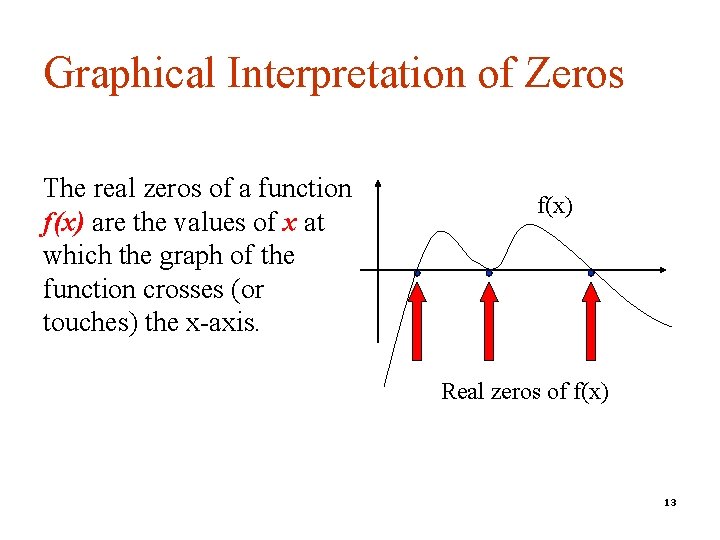

Zeros of a Function Let f(x) be a real-valued function of a real variable. Any number r for which f(r)=0 is called a zero of the function. Examples: 2 and 3 are zeros of the function f(x) = (x-2)(x-3). 12

Graphical Interpretation of Zeros The real zeros of a function f(x) are the values of x at which the graph of the function crosses (or touches) the x-axis. f(x) Real zeros of f(x) 13

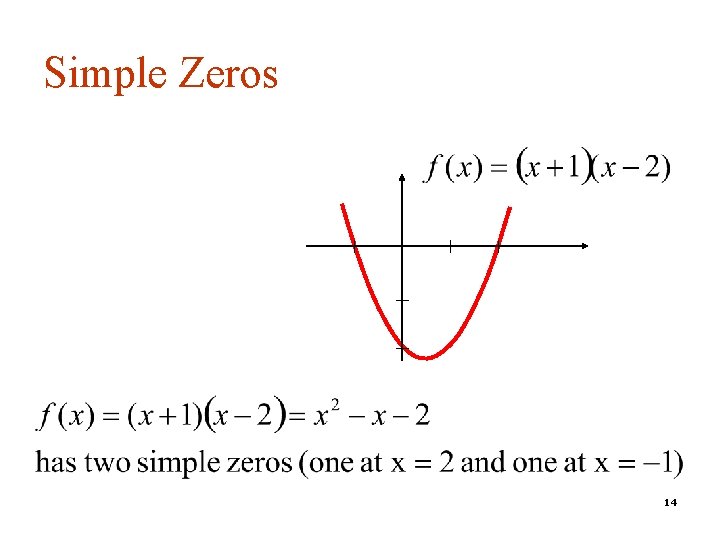

Simple Zeros 14

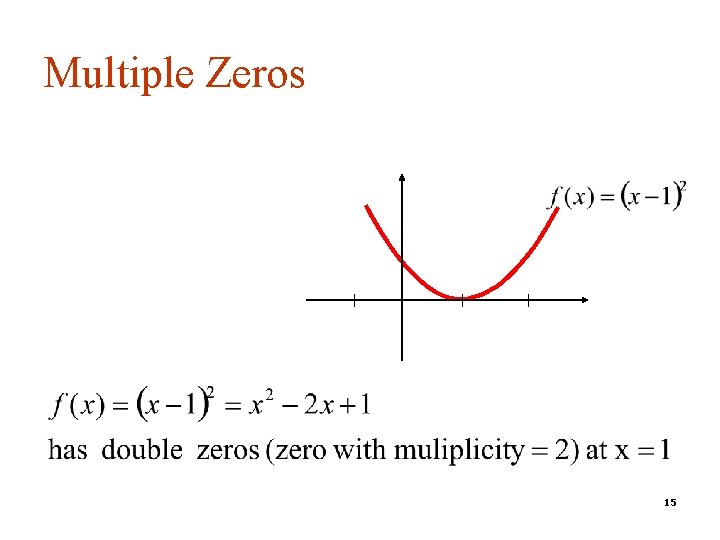

Multiple Zeros 15

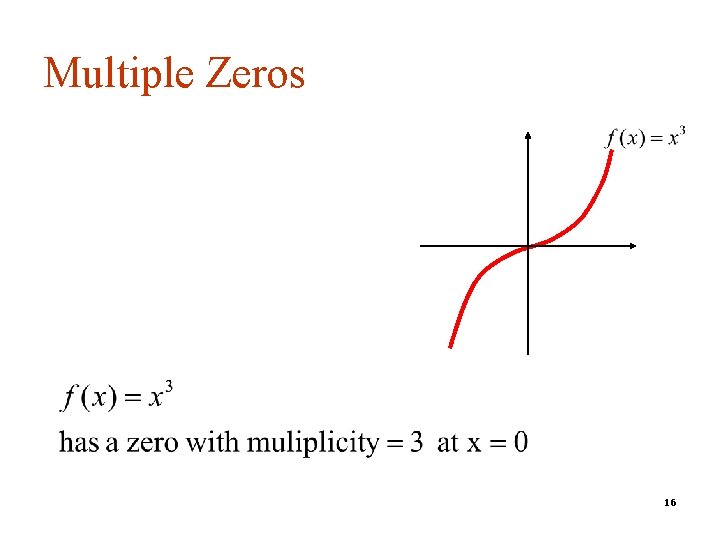

Multiple Zeros 16

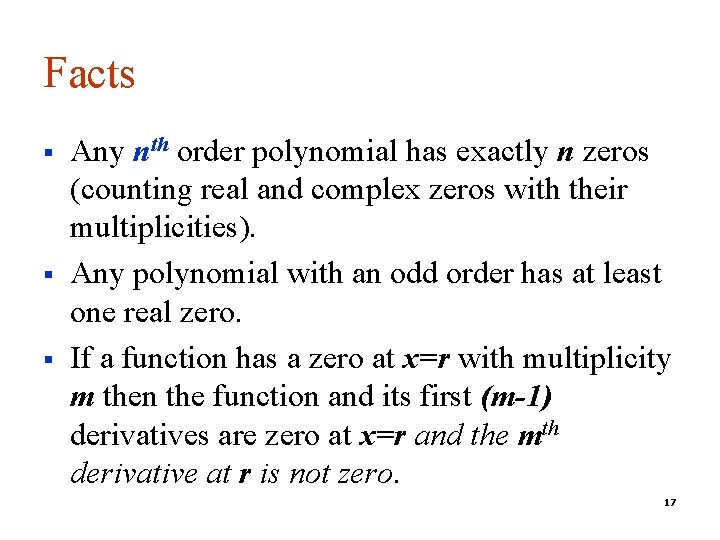

Facts § § § Any nth order polynomial has exactly n zeros (counting real and complex zeros with their multiplicities). Any polynomial with an odd order has at least one real zero. If a function has a zero at x=r with multiplicity m then the function and its first (m-1) derivatives are zero at x=r and the mth derivative at r is not zero. 17

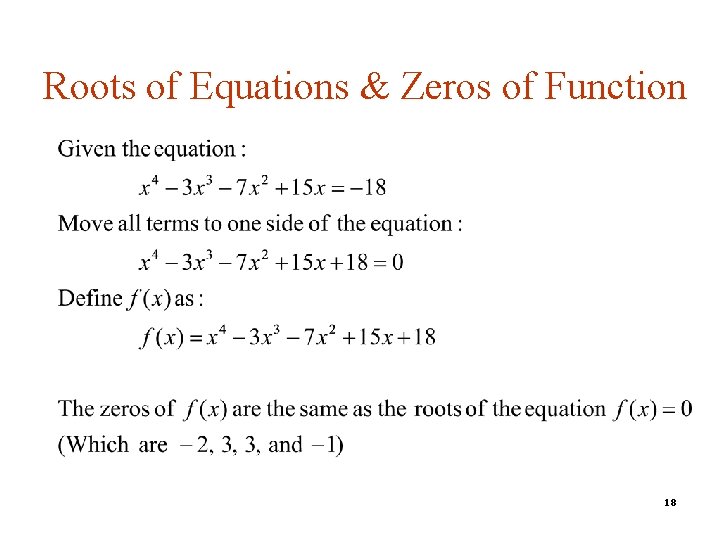

Roots of Equations & Zeros of Function 18

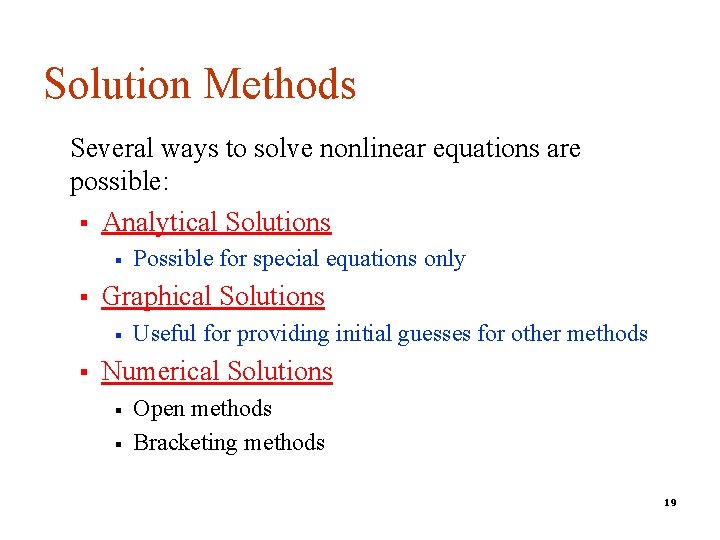

Solution Methods Several ways to solve nonlinear equations are possible: § Analytical Solutions § § Graphical Solutions § § Possible for special equations only Useful for providing initial guesses for other methods Numerical Solutions § § Open methods Bracketing methods 19

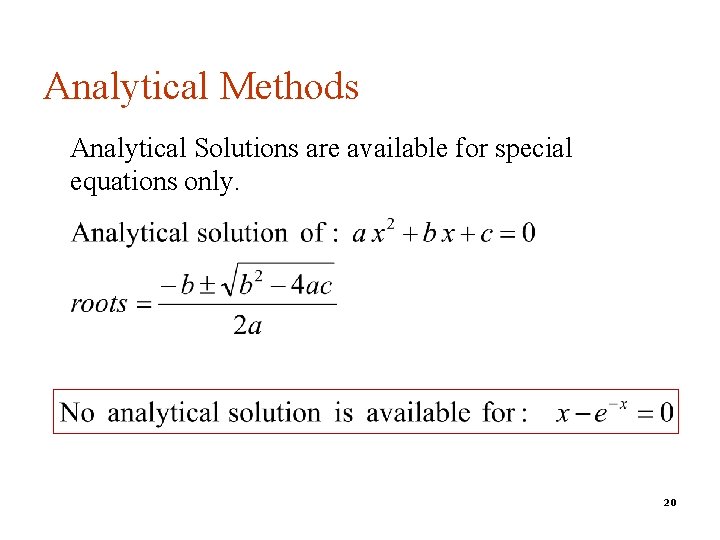

Analytical Methods Analytical Solutions are available for special equations only. 20

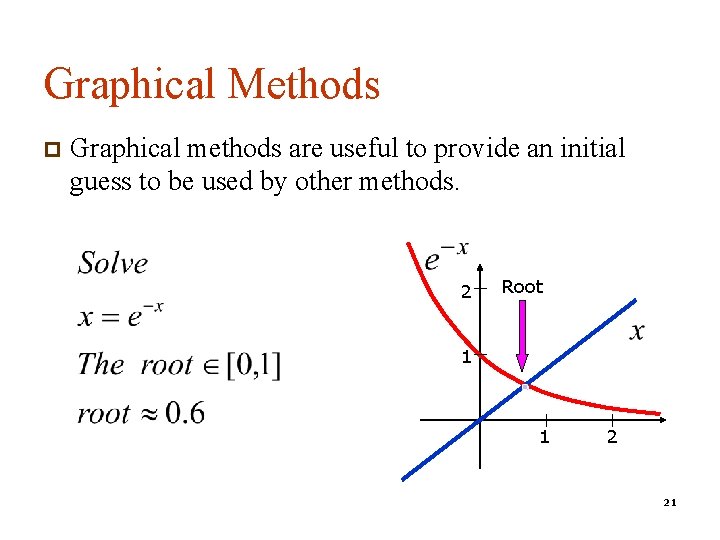

Graphical Methods p Graphical methods are useful to provide an initial guess to be used by other methods. 2 Root 1 1 2 21

Numerical Methods Many methods are available to solve nonlinear equations: § Bisection Method bracketing method § Newton’s Method open method § Secant Method open method § False position Method (bracketing method) § Fixed point iterations (open method) n Muller’s Method n Bairstow’s Method n ………. 22

Bracketing Methods p In bracketing methods, the method starts with an interval that contains the root and a procedure is used to obtain a smaller interval containing the root. p Examples of bracketing methods: n Bisection method n False position method (Regula-Falsi) 23

Open Methods p In the open methods, the method starts with one or more initial guess points. In each iteration, a new guess of the root is obtained. p Open methods are usually more efficient than bracketing methods. p They may not converge to a root. 24

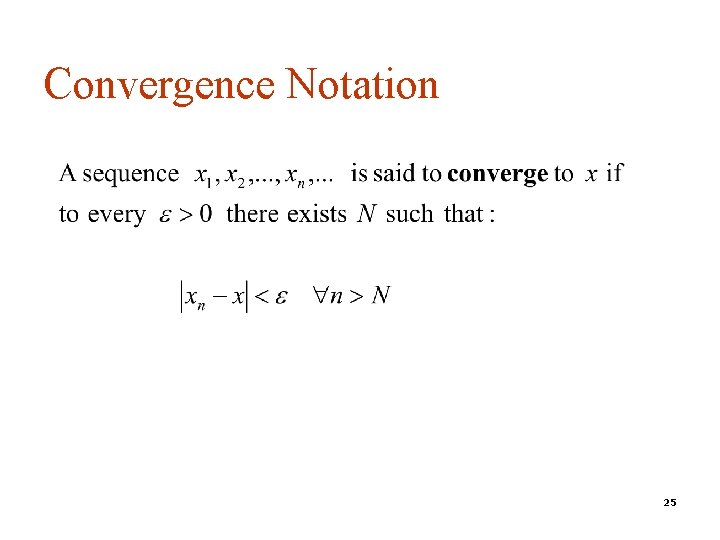

Convergence Notation 25

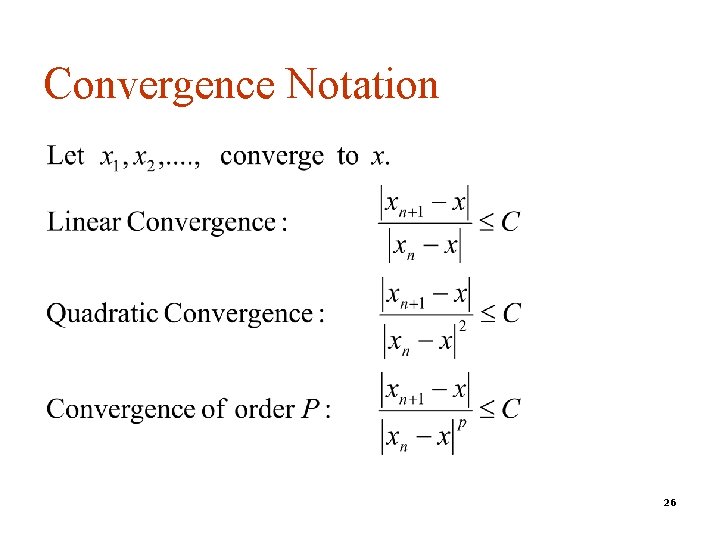

Convergence Notation 26

Speed of Convergence p We can compare different methods in terms of their convergence rate. p Quadratic convergence is faster than linear convergence. p A method with convergence order q converges faster than a method with convergence order p if q>p. p Methods of convergence order p>1 are said to have super linear convergence. 27

Bracketing Methods 28

Bisection Method n n n The Bisection Algorithm Convergence Analysis of Bisection Method Examples 29

Introduction p p The Bisection method is one of the simplest methods to find a zero of a nonlinear function (also called interval halving method). To use the Bisection method, one needs an initial interval that is known to contain a zero of the function. The method systematically reduces the interval. It does this by dividing the interval into two equal parts, performs a simple test and based on the result of the test, half of the interval is thrown away. The procedure is repeated until the desired interval size is obtained. 30

![Intermediate Value Theorem p p Let f(x) be defined on the interval [a, b]. Intermediate Value Theorem p p Let f(x) be defined on the interval [a, b].](http://slidetodoc.com/presentation_image_h2/a7e41f2e27c9ef1c66164125362792c8/image-31.jpg)

Intermediate Value Theorem p p Let f(x) be defined on the interval [a, b]. Intermediate value theorem: if a function is continuous and f(a) and f(b) have different signs then the function has at least one zero in the interval [a, b]. f(a) a b f(b) 31

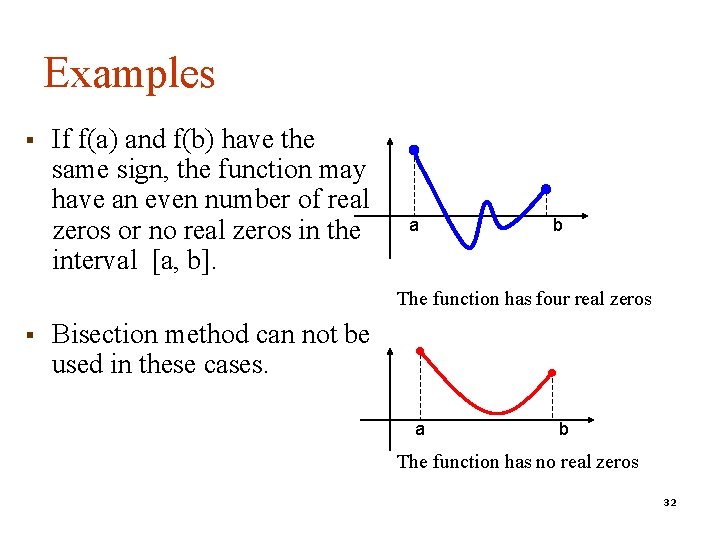

Examples § If f(a) and f(b) have the same sign, the function may have an even number of real zeros or no real zeros in the interval [a, b]. a b The function has four real zeros § Bisection method can not be used in these cases. a b The function has no real zeros 32

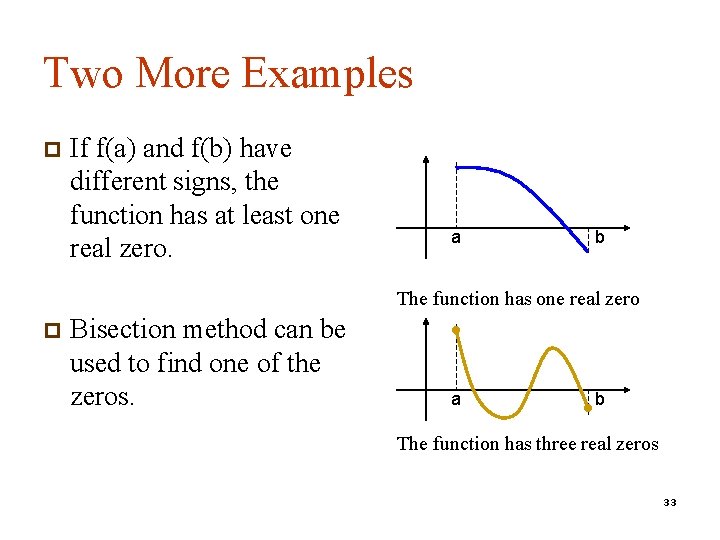

Two More Examples p If f(a) and f(b) have different signs, the function has at least one real zero. a b The function has one real zero p Bisection method can be used to find one of the zeros. a b The function has three real zeros 33

![Bisection Method § If the function is continuous on [a, b] and f(a) and Bisection Method § If the function is continuous on [a, b] and f(a) and](http://slidetodoc.com/presentation_image_h2/a7e41f2e27c9ef1c66164125362792c8/image-34.jpg)

Bisection Method § If the function is continuous on [a, b] and f(a) and f(b) have different signs, Bisection method obtains a new interval that is half of the current interval and the sign of the function at the end points of the interval are different. § This allows us to repeat the Bisection procedure to further reduce the size of the interval. 34

![Bisection Method Assumptions: Given an interval [a, b] f(x) is continuous on [a, b] Bisection Method Assumptions: Given an interval [a, b] f(x) is continuous on [a, b]](http://slidetodoc.com/presentation_image_h2/a7e41f2e27c9ef1c66164125362792c8/image-35.jpg)

Bisection Method Assumptions: Given an interval [a, b] f(x) is continuous on [a, b] f(a) and f(b) have opposite signs. These assumptions ensure the existence of at least one zero in the interval [a, b] and the bisection method can be used to obtain a smaller interval that contains the zero. 35

![Bisection Algorithm Assumptions: p f(x) is continuous on [a, b] p f(a) f(b) < Bisection Algorithm Assumptions: p f(x) is continuous on [a, b] p f(a) f(b) <](http://slidetodoc.com/presentation_image_h2/a7e41f2e27c9ef1c66164125362792c8/image-36.jpg)

Bisection Algorithm Assumptions: p f(x) is continuous on [a, b] p f(a) f(b) < 0 Algorithm: Loop 1. Compute the mid point c=(a+b)/2 2. Evaluate f(c) 3. If f(a) f(c) < 0 then new interval [a, c] If f(a) f(c) > 0 then new interval [c, b] End loop f(a) c b a f(b) 36

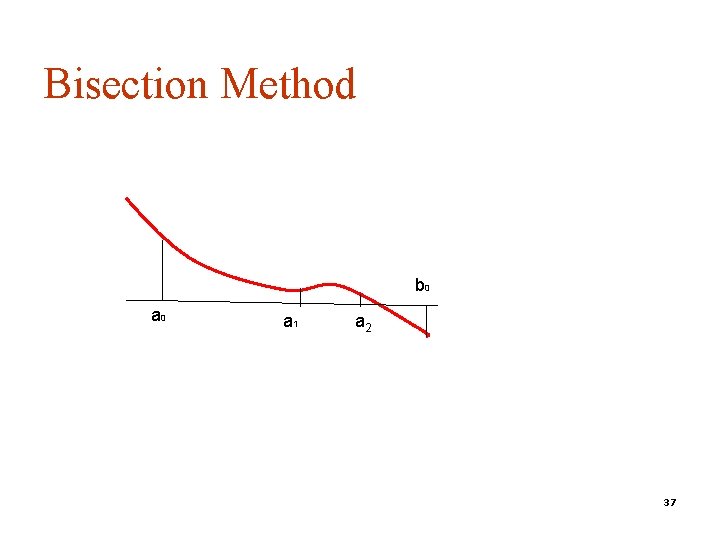

Bisection Method b 0 a 1 a 2 37

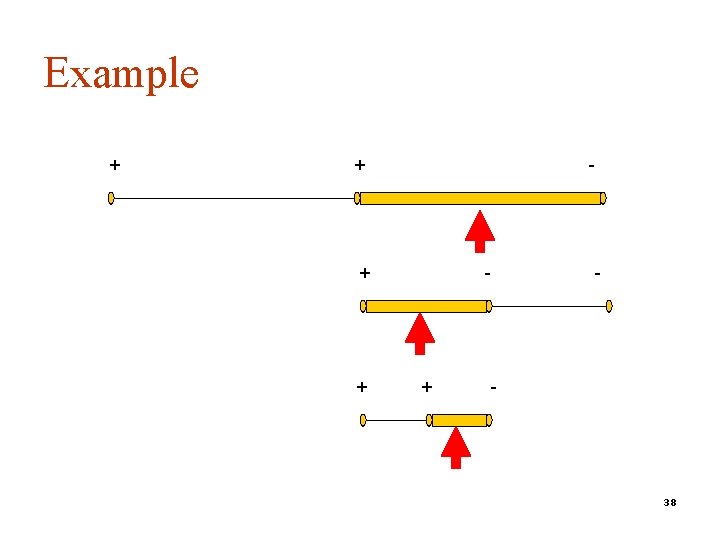

Example + + - + - - 38

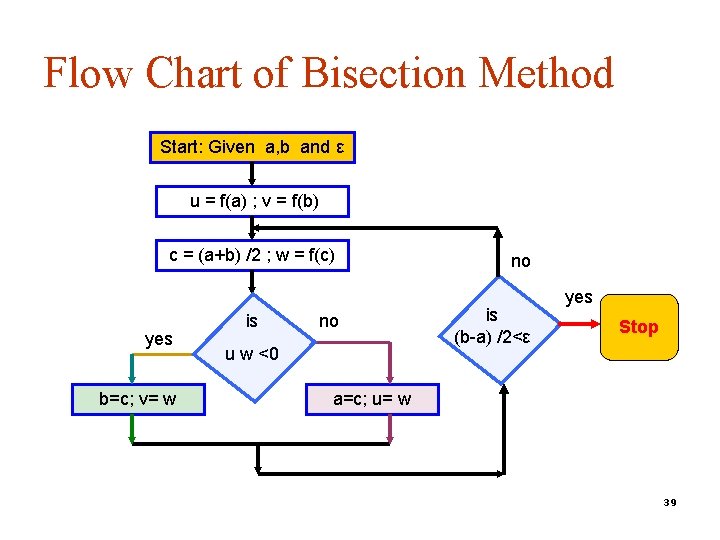

Flow Chart of Bisection Method Start: Given a, b and ε u = f(a) ; v = f(b) c = (a+b) /2 ; w = f(c) yes b=c; v= w is no u w <0 no is (b-a) /2<ε yes Stop a=c; u= w 39

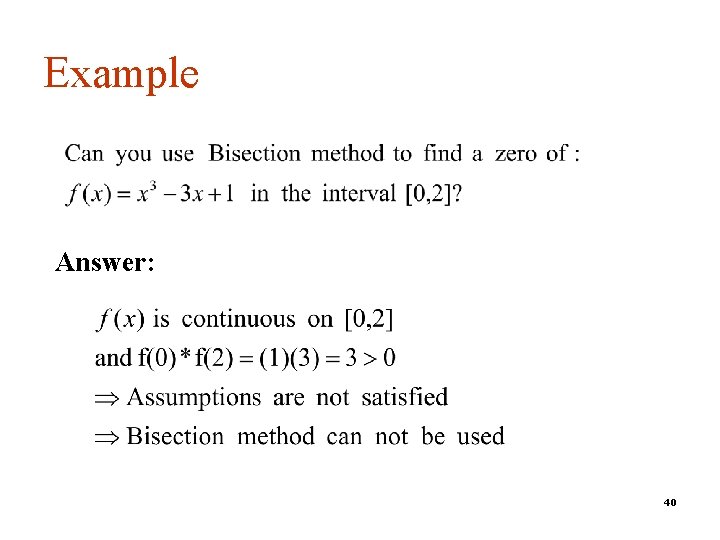

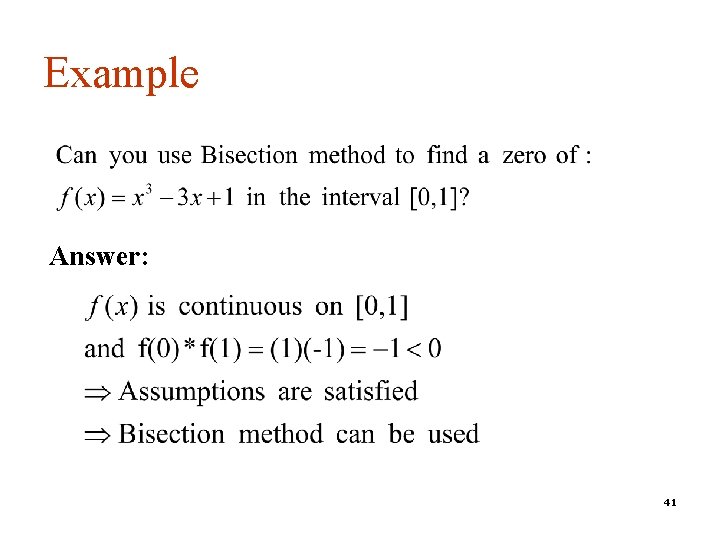

Example Answer: 40

Example Answer: 41

Best Estimate and Error Level Bisection method obtains an interval that is guaranteed to contain a zero of the function. Questions: p What is the best estimate of the zero of f(x)? p What is the error level in the obtained estimate? 42

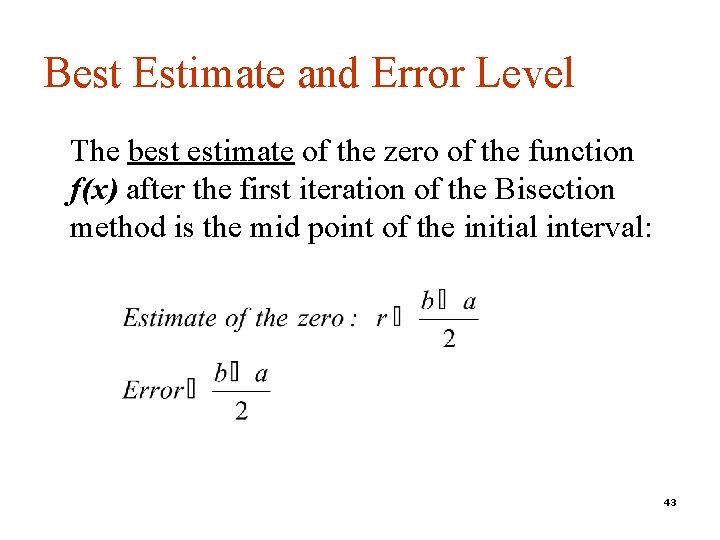

Best Estimate and Error Level The best estimate of the zero of the function f(x) after the first iteration of the Bisection method is the mid point of the initial interval: 43

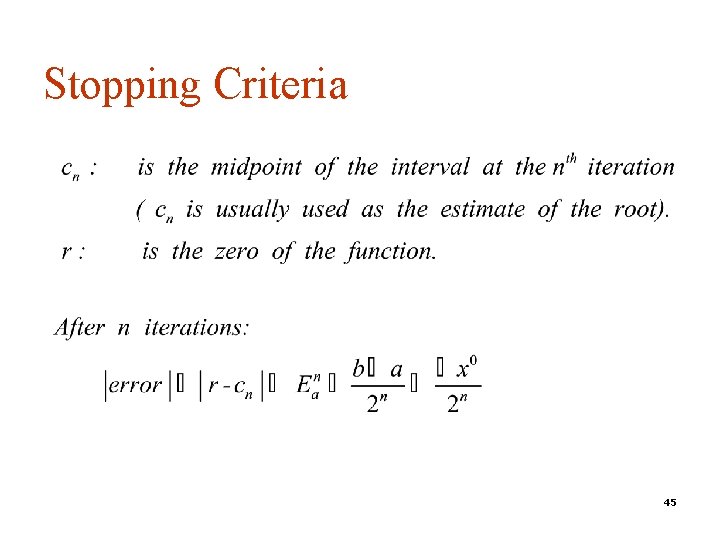

Stopping Criteria Two common stopping criteria 1. 2. Stop after a fixed number of iterations Stop when the absolute error is less than a specified value How are these criteria related? 44

Stopping Criteria 45

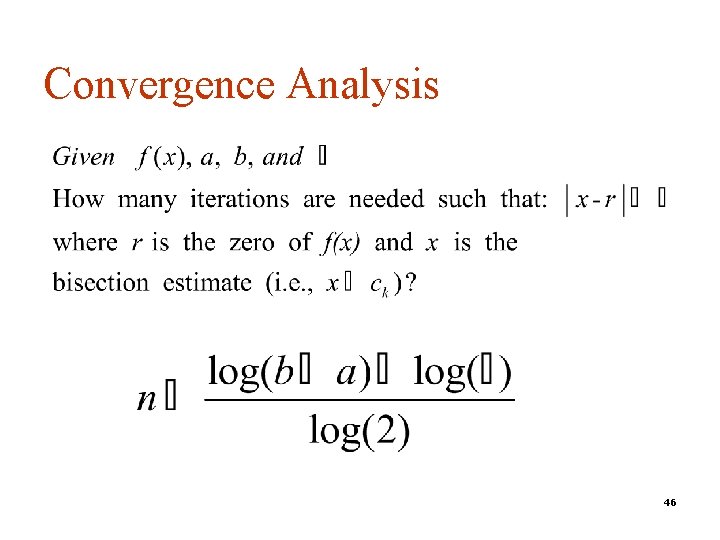

Convergence Analysis 46

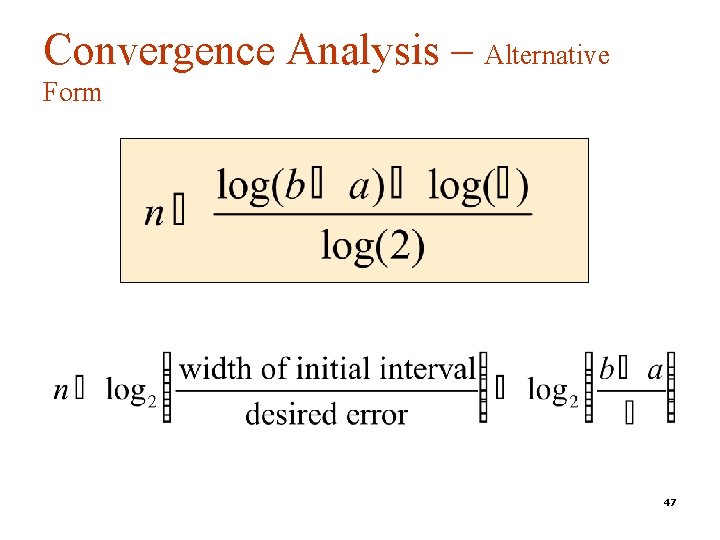

Convergence Analysis – Alternative Form 47

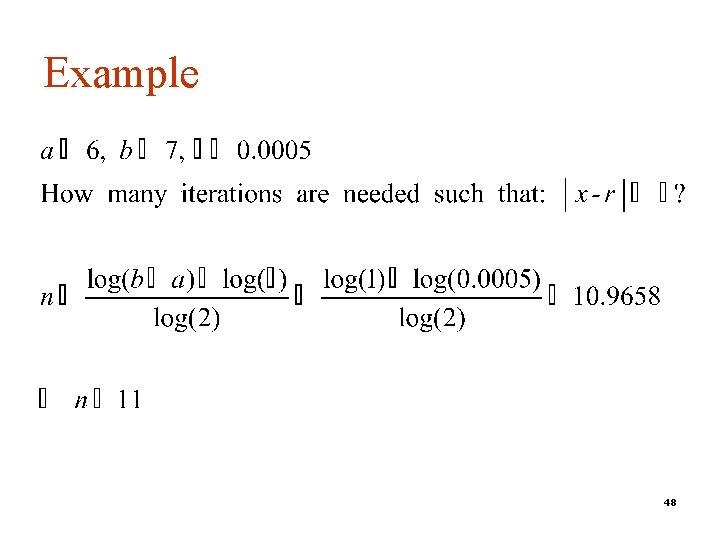

Example 48

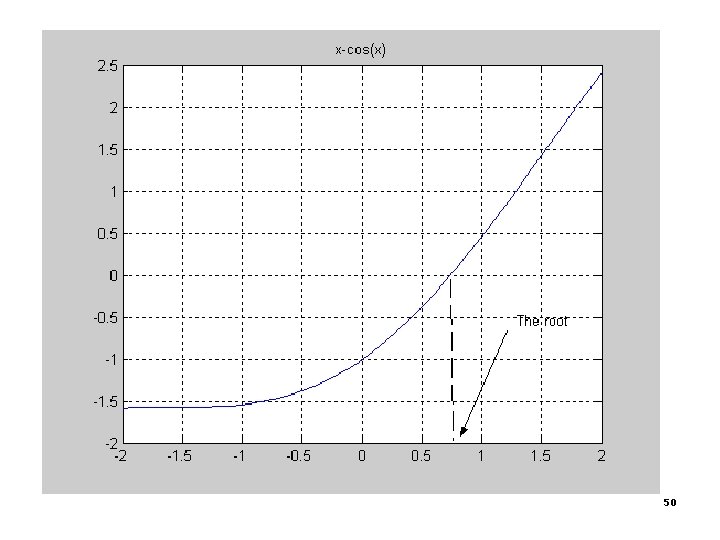

Example p Use Bisection method to find a root of the equation x = cos(x) with absolute error <0. 02 (assume the initial interval [0. 5, 0. 9]) Question 1: What is f (x) ? Question 2: Are the assumptions satisfied ? Question 3: How many iterations are needed ? Question 4: How to compute the new estimate ? 49

50

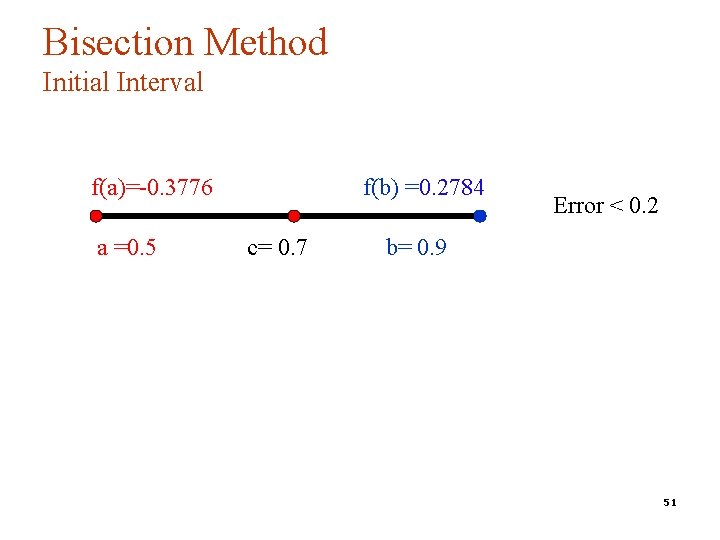

Bisection Method Initial Interval f(a)=-0. 3776 a =0. 5 f(b) =0. 2784 c= 0. 7 Error < 0. 2 b= 0. 9 51

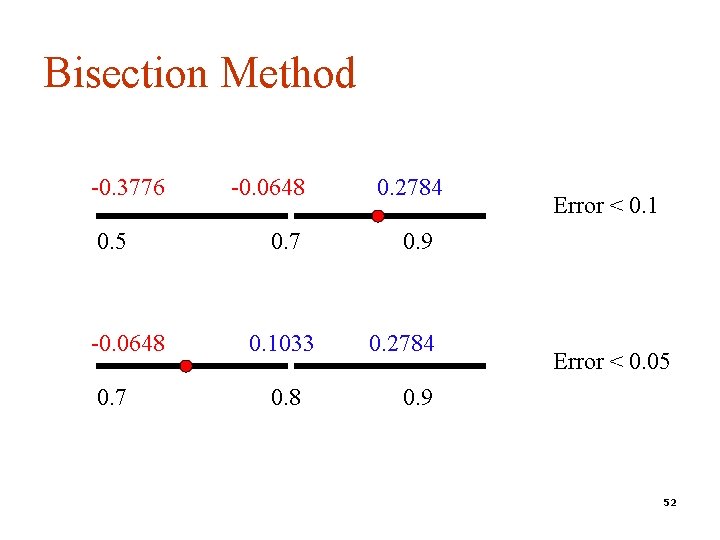

Bisection Method -0. 3776 0. 5 -0. 0648 0. 7 0. 1033 0. 8 0. 2784 Error < 0. 1 0. 9 0. 2784 Error < 0. 05 0. 9 52

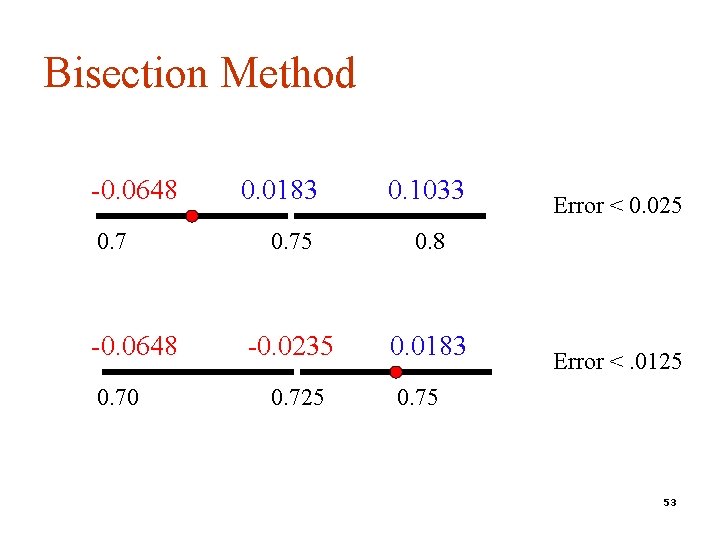

Bisection Method -0. 0648 0. 70 0. 0183 0. 1033 0. 75 0. 8 -0. 0235 0. 0183 0. 725 Error < 0. 025 Error <. 0125 0. 75 53

![Summary p Initial p After interval containing the root: [0. 5, 0. 9] 5 Summary p Initial p After interval containing the root: [0. 5, 0. 9] 5](http://slidetodoc.com/presentation_image_h2/a7e41f2e27c9ef1c66164125362792c8/image-54.jpg)

Summary p Initial p After interval containing the root: [0. 5, 0. 9] 5 iterations: Interval containing the root: [0. 725, 0. 75] n Best estimate of the root is 0. 7375 n | Error | < 0. 0125 n 54

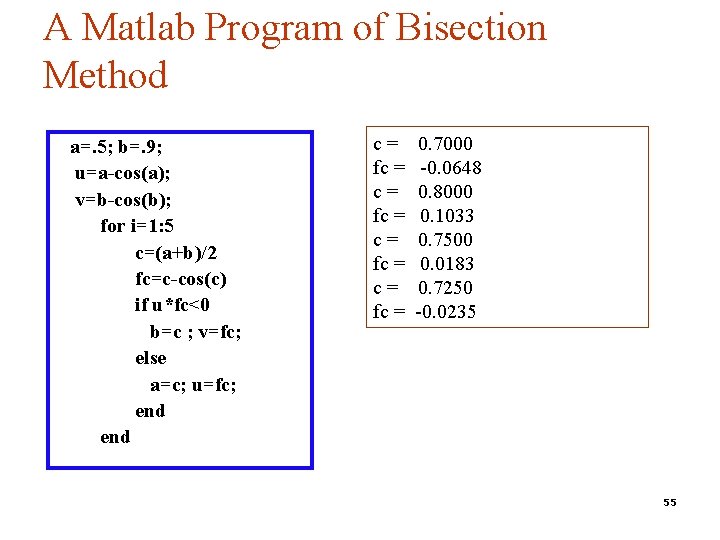

A Matlab Program of Bisection Method a=. 5; b=. 9; u=a-cos(a); v=b-cos(b); for i=1: 5 c=(a+b)/2 fc=c-cos(c) if u*fc<0 b=c ; v=fc; else a=c; u=fc; end c= fc = 0. 7000 -0. 0648 0. 8000 0. 1033 0. 7500 0. 0183 0. 7250 -0. 0235 55

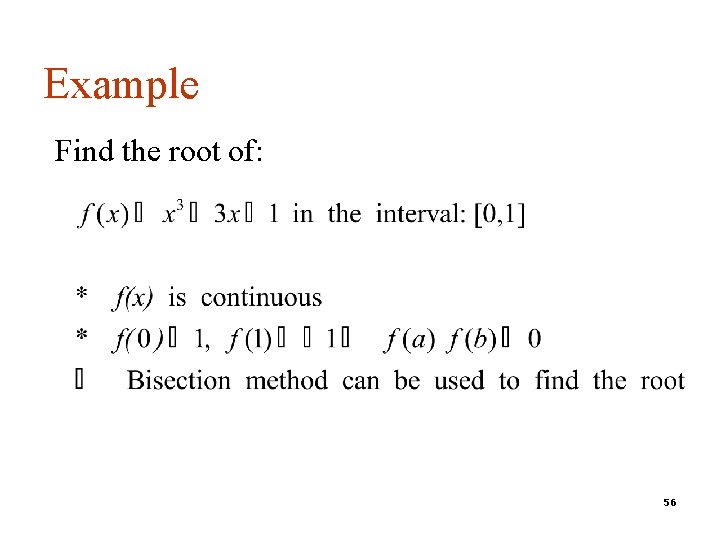

Example Find the root of: 56

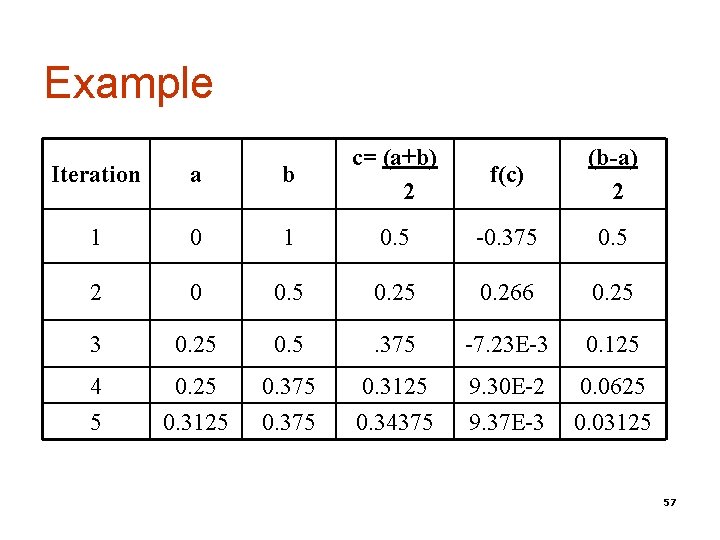

Example Iteration a b c= (a+b) 2 f(c) (b-a) 2 1 0. 5 -0. 375 0. 5 2 0 0. 5 0. 266 0. 25 3 0. 25 0. 5 . 375 -7. 23 E-3 0. 125 4 5 0. 25 0. 3125 0. 375 0. 3125 0. 34375 9. 30 E-2 9. 37 E-3 0. 0625 0. 03125 57

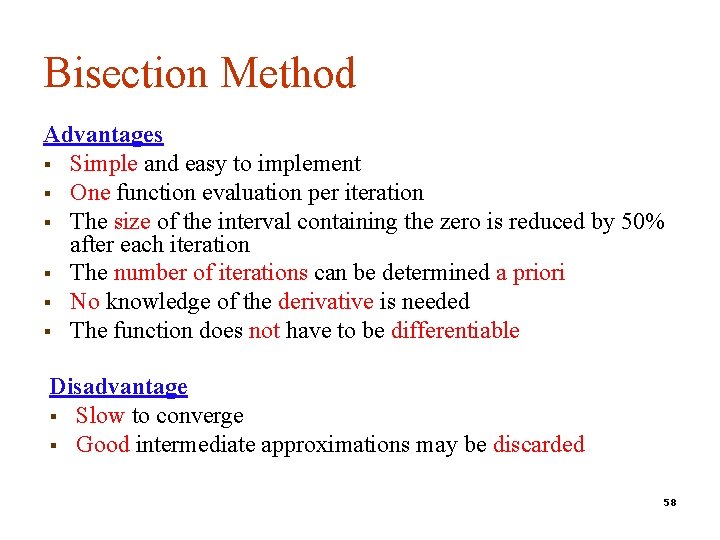

Bisection Method Advantages § Simple and easy to implement § One function evaluation per iteration § The size of the interval containing the zero is reduced by 50% after each iteration § The number of iterations can be determined a priori § No knowledge of the derivative is needed § The function does not have to be differentiable Disadvantage § Slow to converge § Good intermediate approximations may be discarded 58

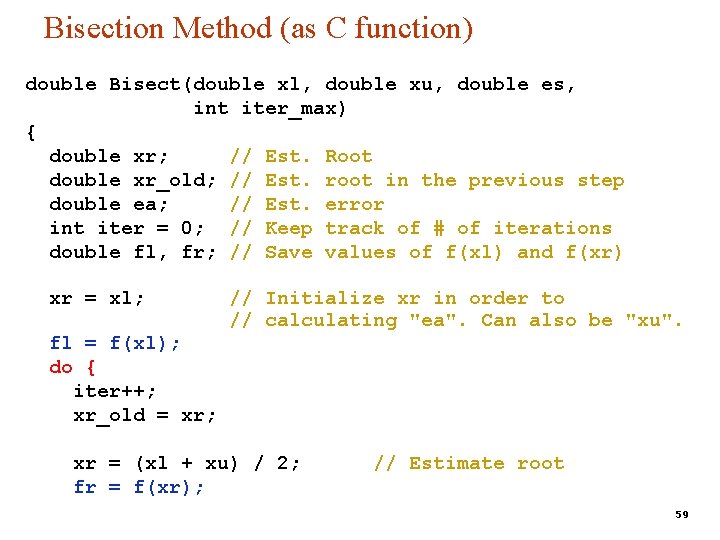

Bisection Method (as C function) double Bisect(double xl, double xu, double es, int iter_max) { double xr; // Est. Root double xr_old; // Est. root in the previous step double ea; // Est. error int iter = 0; // Keep track of # of iterations double fl, fr; // Save values of f(xl) and f(xr) xr = xl; // Initialize xr in order to // calculating "ea". Can also be "xu". fl = f(xl); do { iter++; xr_old = xr; xr = (xl + xu) / 2; fr = f(xr); // Estimate root 59

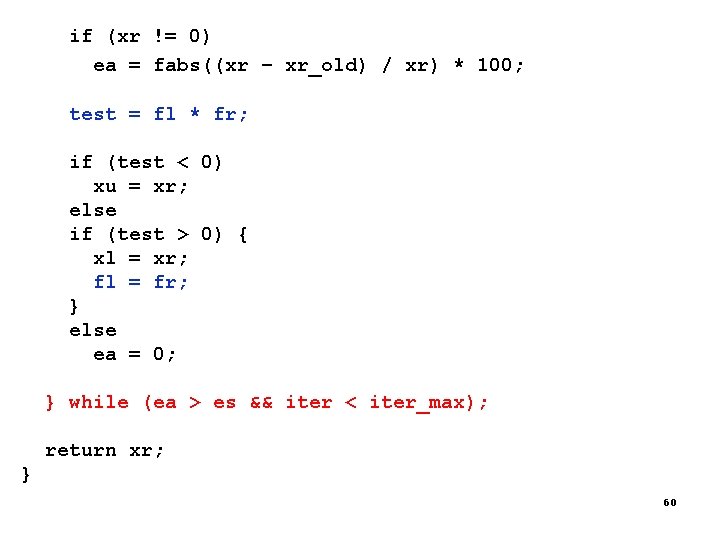

if (xr != 0) ea = fabs((xr – xr_old) / xr) * 100; test = fl * fr; if (test < 0) xu = xr; else if (test > 0) { xl = xr; fl = fr; } else ea = 0; } while (ea > es && iter < iter_max); return xr; } 60

Regula – Falsi Method 61

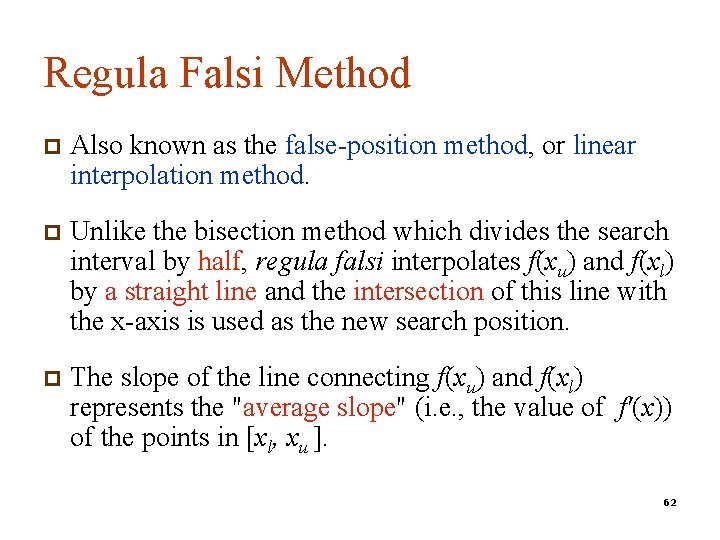

Regula Falsi Method p Also known as the false-position method, or linear interpolation method. p Unlike the bisection method which divides the search interval by half, regula falsi interpolates f(xu) and f(xl) by a straight line and the intersection of this line with the x-axis is used as the new search position. p The slope of the line connecting f(xu) and f(xl) represents the "average slope" (i. e. , the value of f'(x)) of the points in [xl, xu ]. 62

63

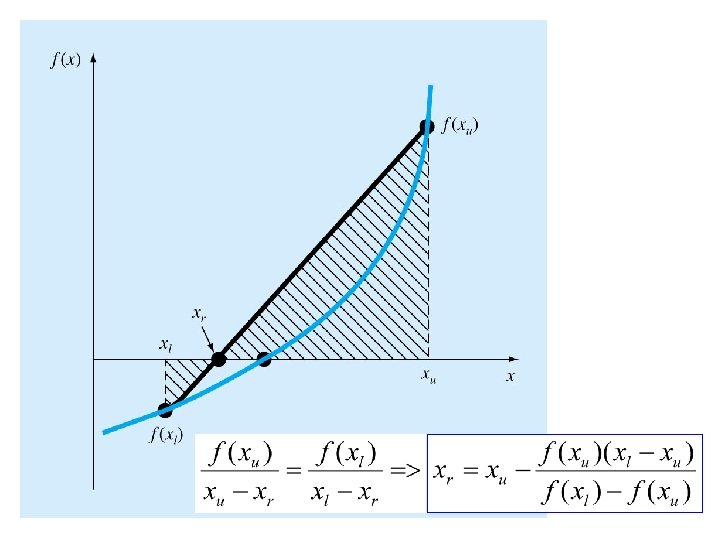

64

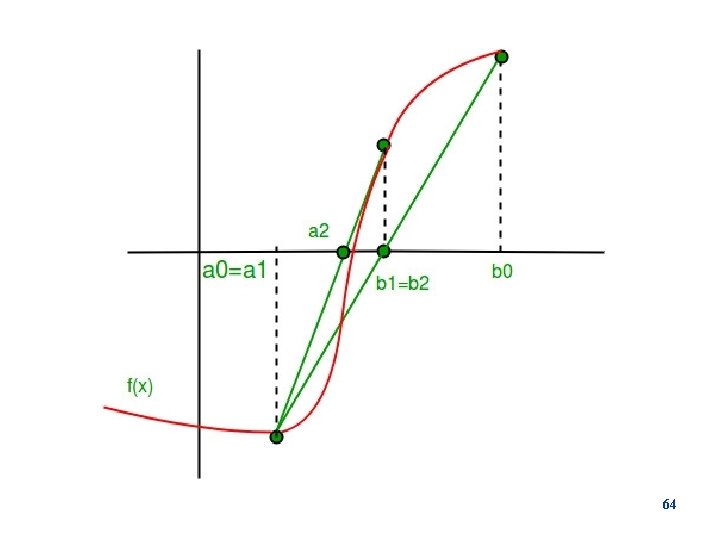

Regula Falsi Method p The regula falsi method start with two point, (a, f(a)) and (b, f(b)), satisfying the condition that f(a)f(b)<0. p The straight line through the two points (a, f(a)), (b, f(b)) is p The next approximation to the zero is the value of x where the straight line through the initial points crosses the x-axis. 65

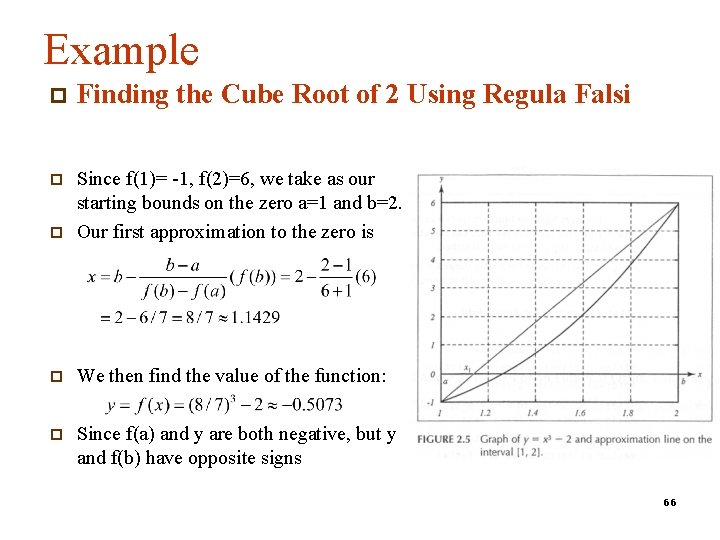

Example p Finding the Cube Root of 2 Using Regula Falsi p p Since f(1)= -1, f(2)=6, we take as our starting bounds on the zero a=1 and b=2. Our first approximation to the zero is p We then find the value of the function: p Since f(a) and y are both negative, but y and f(b) have opposite signs 66

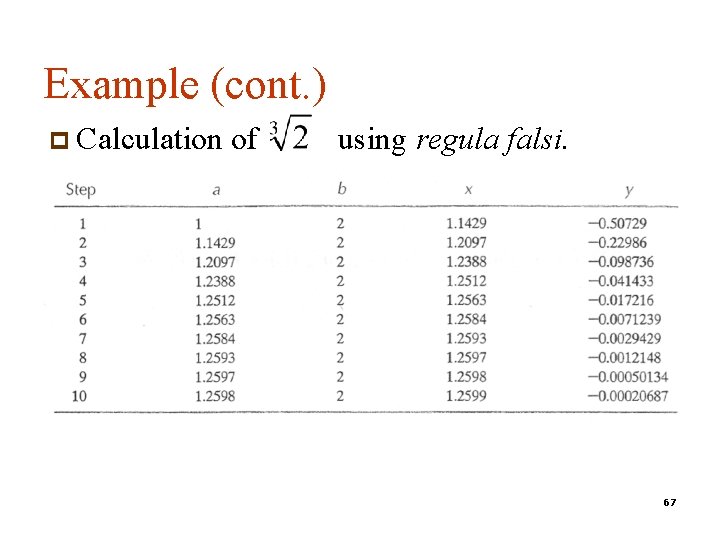

Example (cont. ) p Calculation of using regula falsi. 67

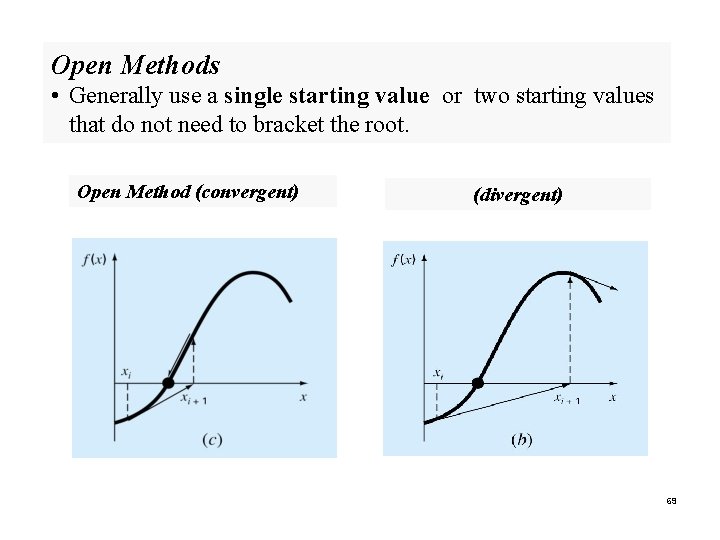

Open Methods 68

Open Methods • Generally use a single starting value or two starting values that do not need to bracket the root. Open Method (convergent) (divergent) 69

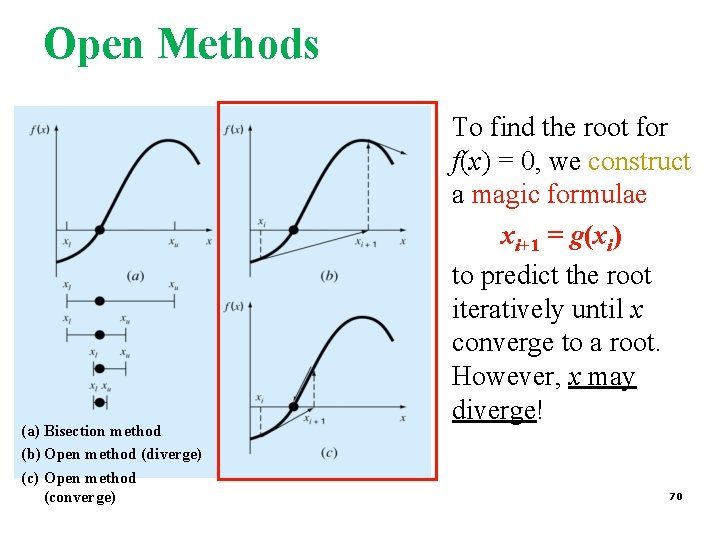

Open Methods (a) Bisection method (b) Open method (diverge) (c) Open method (converge) To find the root for f(x) = 0, we construct a magic formulae xi+1 = g(xi) to predict the root iteratively until x converge to a root. However, x may diverge! 70

What you should know about Open Methods § How to construct the magic formulae g(x)? § How can we ensure convergence? § What makes a method converges quickly or diverge? § How fast does a method converge? 71

OPEN METHOD Newton-Raphson Method n n Assumptions Interpretation Examples Convergence Analysis 72

Newton-Raphson Method (Also known as Newton’s Method) Given an initial guess of the root x 0, Newton. Raphson method uses information about the function and its derivative at that point to find a better guess of the root. Assumptions: n n f(x) is continuous and the first derivative is known An initial guess x 0 such that f’(x 0)≠ 0 is given 73

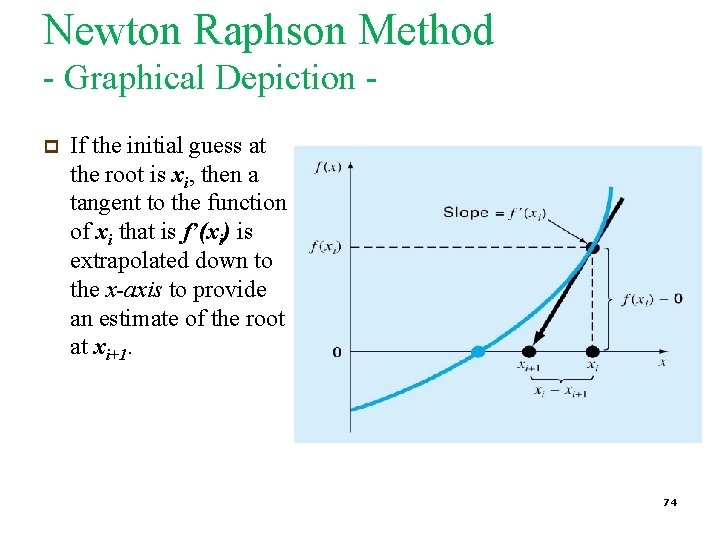

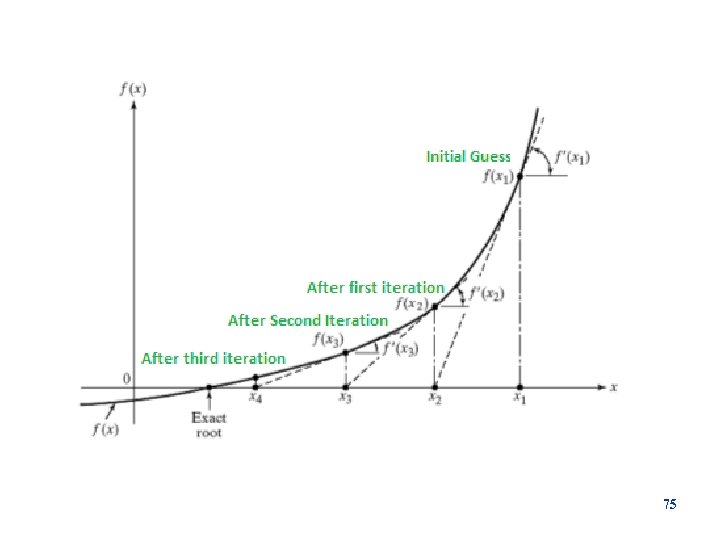

Newton Raphson Method - Graphical Depiction p If the initial guess at the root is xi, then a tangent to the function of xi that is f’(xi) is extrapolated down to the x-axis to provide an estimate of the root at xi+1. 74

75

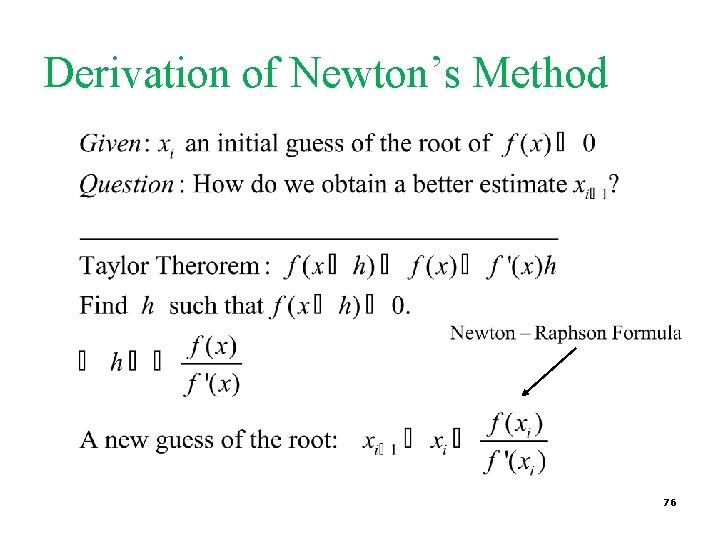

Derivation of Newton’s Method 76

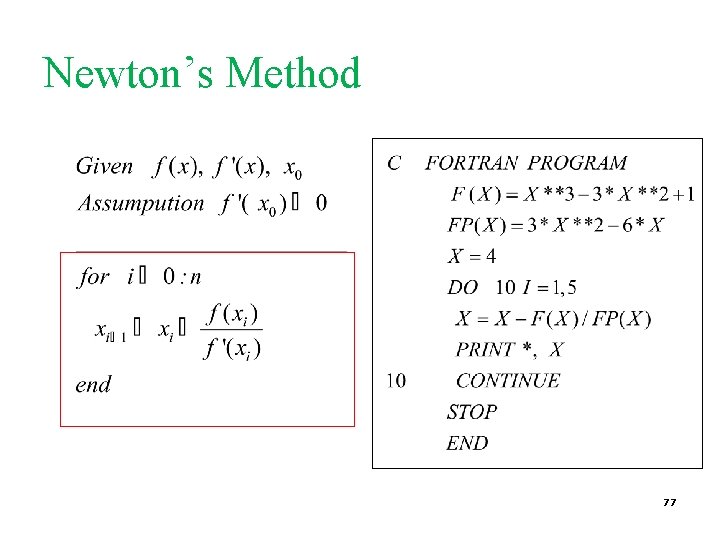

Newton’s Method 77

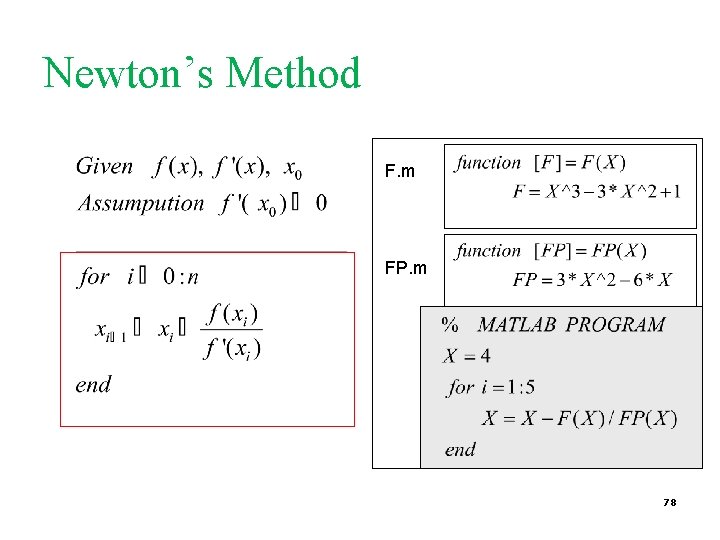

Newton’s Method F. m FP. m 78

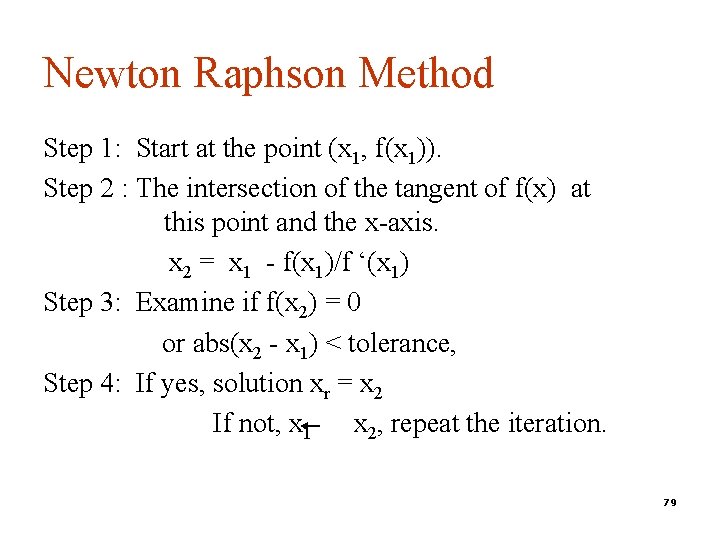

Newton Raphson Method Step 1: Start at the point (x 1, f(x 1)). Step 2 : The intersection of the tangent of f(x) at this point and the x-axis. x 2 = x 1 - f(x 1)/f ‘(x 1) Step 3: Examine if f(x 2) = 0 or abs(x 2 - x 1) < tolerance, Step 4: If yes, solution xr = x 2 If not, x 1 x 2, repeat the iteration. 79

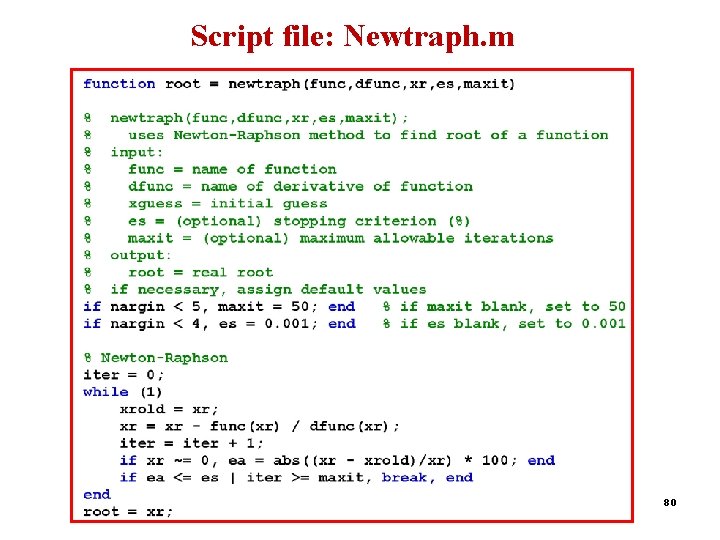

Script file: Newtraph. m 80

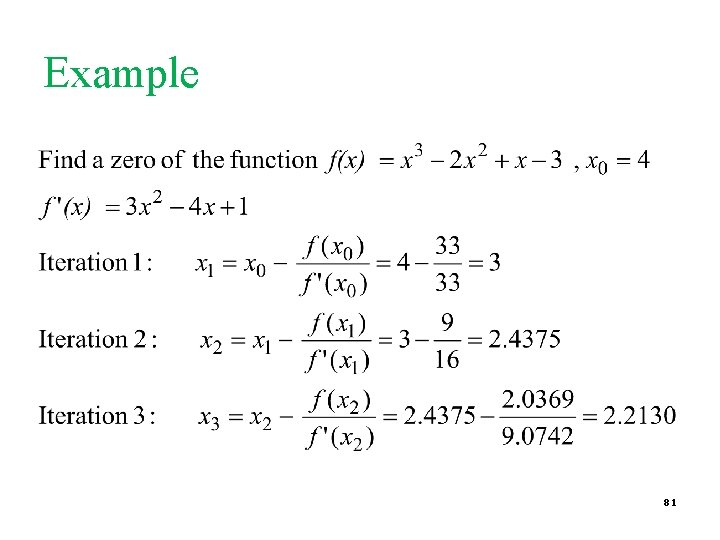

Example 81

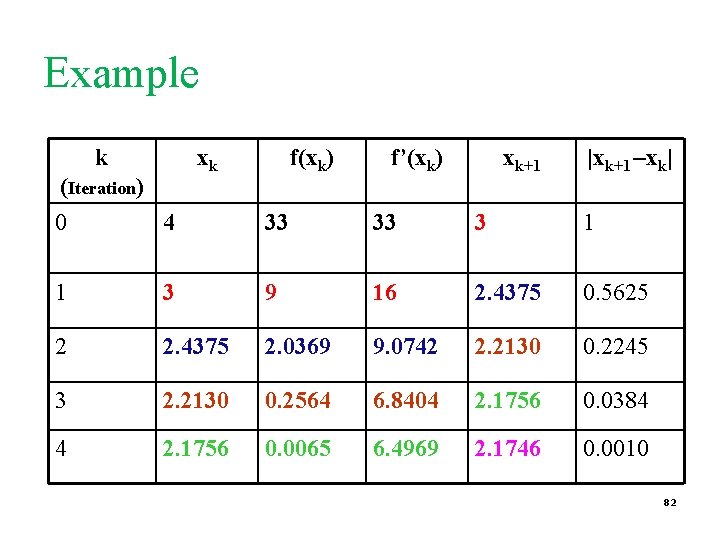

Example k xk (Iteration) f(xk) f’(xk) xk+1 |xk+1 –xk| 0 4 33 33 3 1 1 3 9 16 2. 4375 0. 5625 2 2. 4375 2. 0369 9. 0742 2. 2130 0. 2245 3 2. 2130 0. 2564 6. 8404 2. 1756 0. 0384 4 2. 1756 0. 0065 6. 4969 2. 1746 0. 0010 82

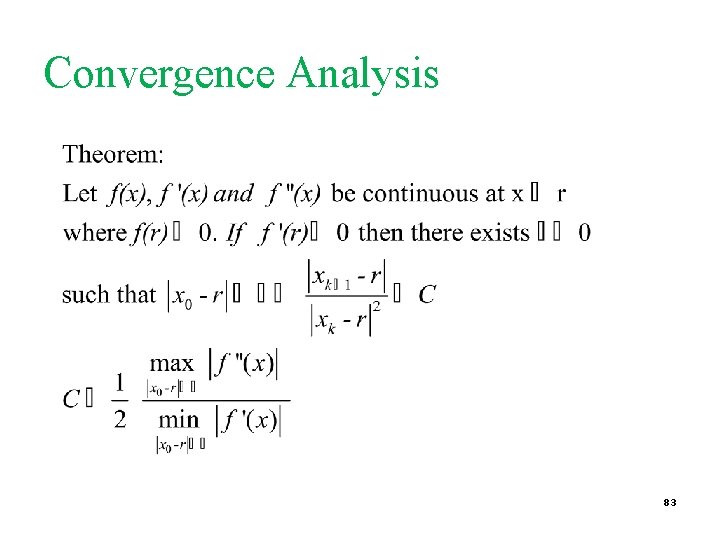

Convergence Analysis 83

Convergence Analysis Remarks § When the guess is close enough to a simple root of the function then Newton’s method is guaranteed to converge quadratically. § Quadratic convergence means that the number of correct digits is nearly doubled at each iteration. 84

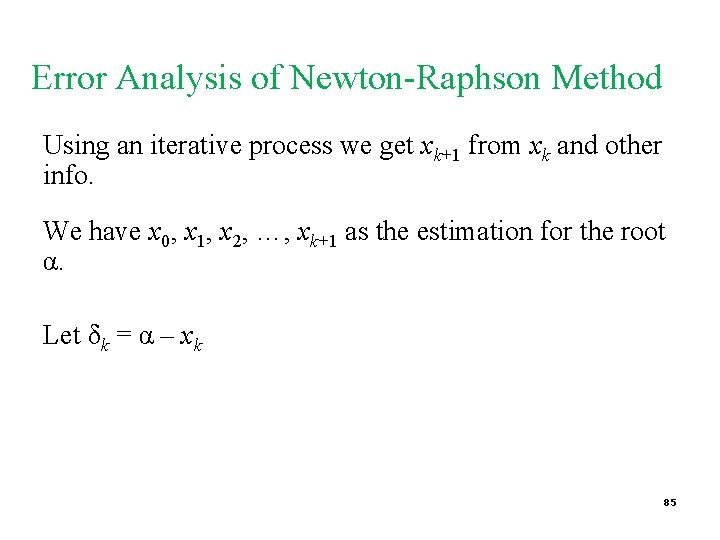

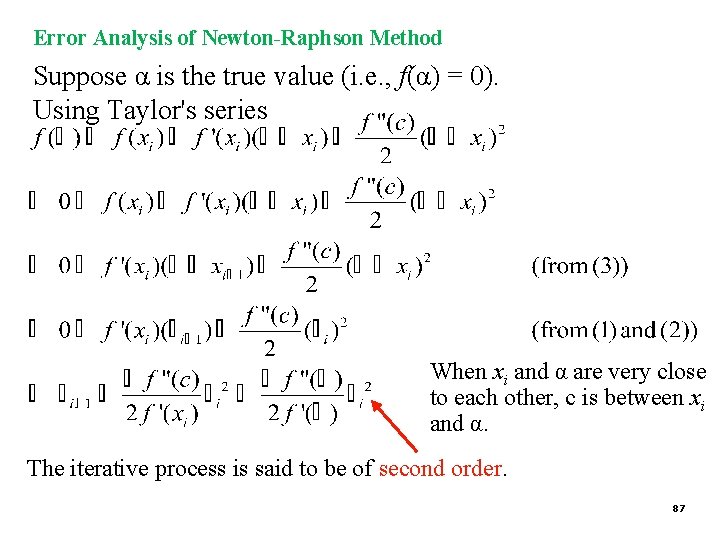

Error Analysis of Newton-Raphson Method Using an iterative process we get xk+1 from xk and other info. We have x 0, x 1, x 2, …, xk+1 as the estimation for the root α. Let δk = α – xk 85

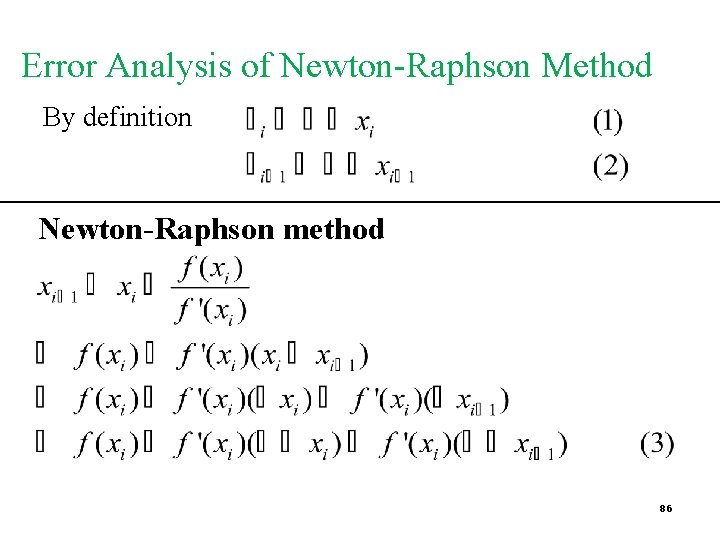

Error Analysis of Newton-Raphson Method By definition Newton-Raphson method 86

Error Analysis of Newton-Raphson Method Suppose α is the true value (i. e. , f(α) = 0). Using Taylor's series When xi and α are very close to each other, c is between xi and α. The iterative process is said to be of second order. 87

Problems with Newton’s Method • If the initial guess of the root is far from the root the method may not converge. • Newton’s method converges linearly near multiple zeros { f(r) = f’(r) =0 }. In such a case, modified algorithms can be used to regain the quadratic convergence. 88

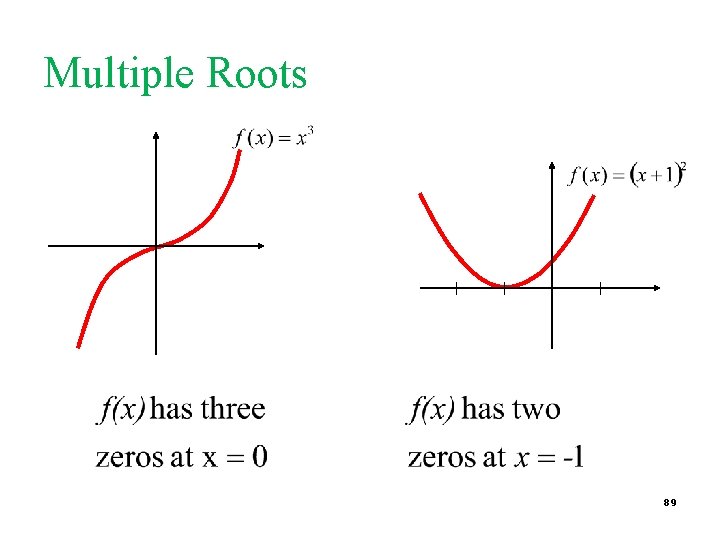

Multiple Roots 89

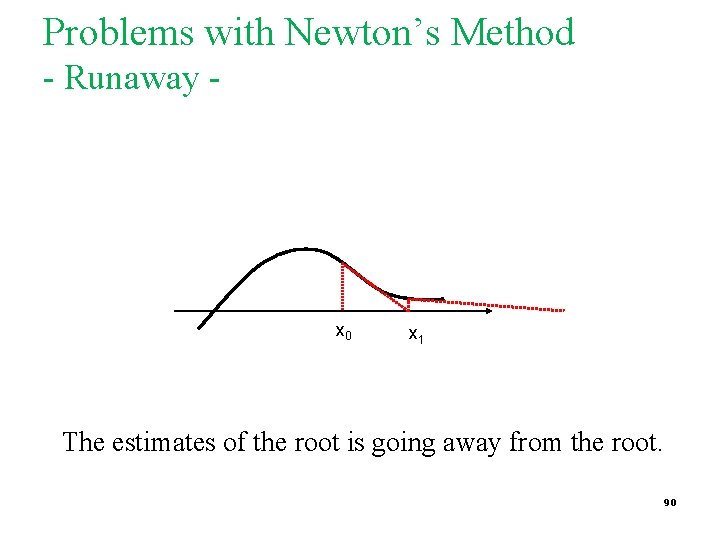

Problems with Newton’s Method - Runaway - x 0 x 1 The estimates of the root is going away from the root. 90

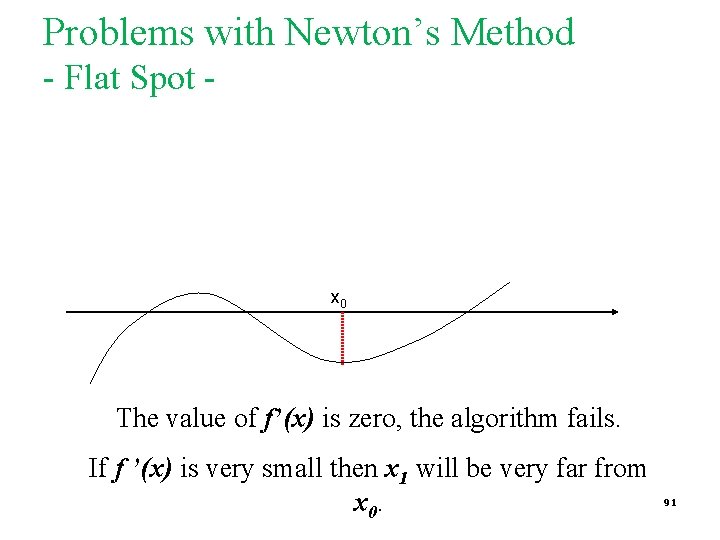

Problems with Newton’s Method - Flat Spot - x 0 The value of f’(x) is zero, the algorithm fails. If f ’(x) is very small then x 1 will be very far from x 0. 91

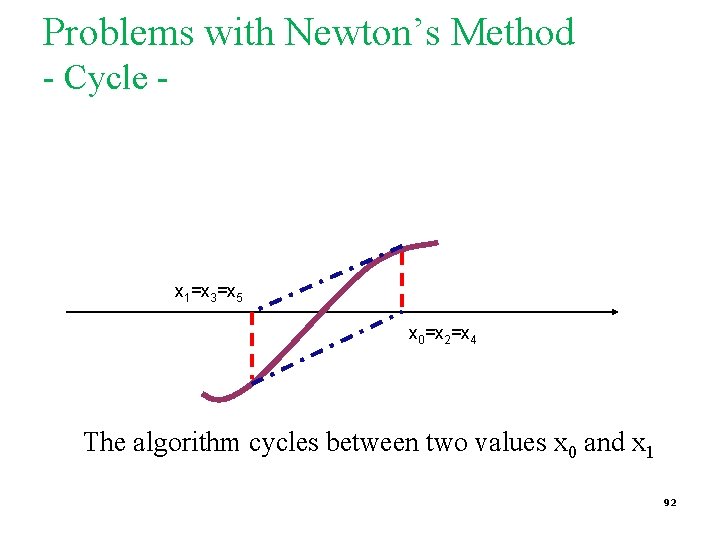

Problems with Newton’s Method - Cycle - x 1=x 3=x 5 x 0=x 2=x 4 The algorithm cycles between two values x 0 and x 1 92

Secant Method p p p Secant Method Examples Convergence Analysis 93

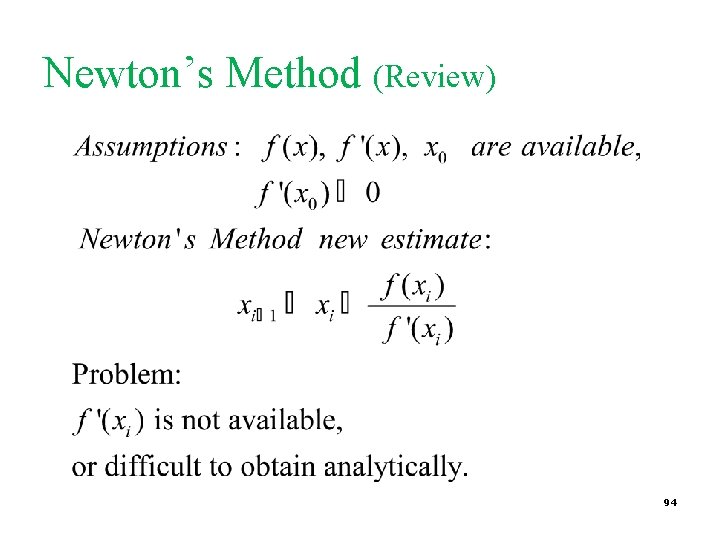

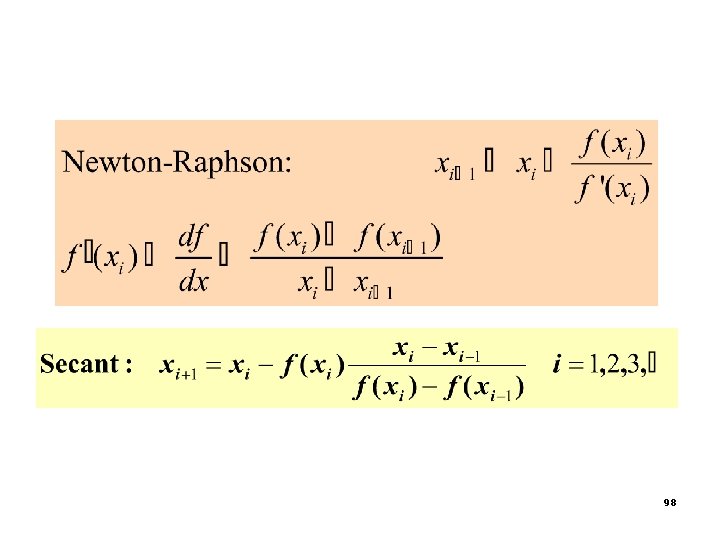

Newton’s Method (Review) 94

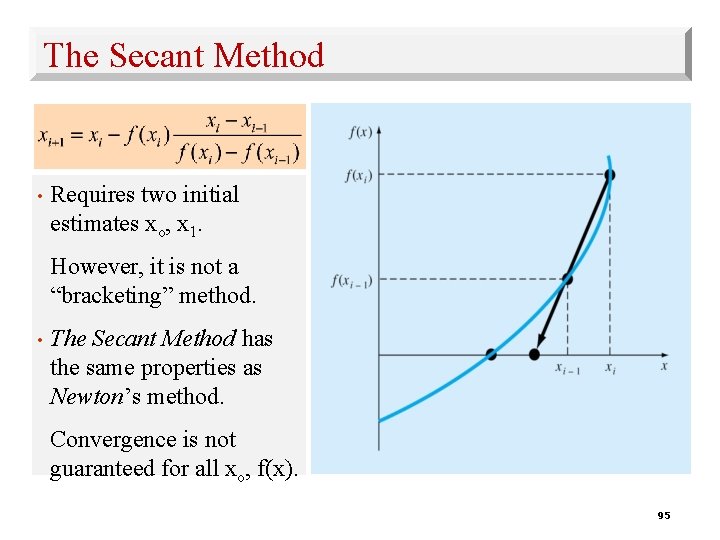

The Secant Method • Requires two initial estimates xo, x 1. However, it is not a “bracketing” method. • The Secant Method has the same properties as Newton’s method. Convergence is not guaranteed for all xo, f(x). 95

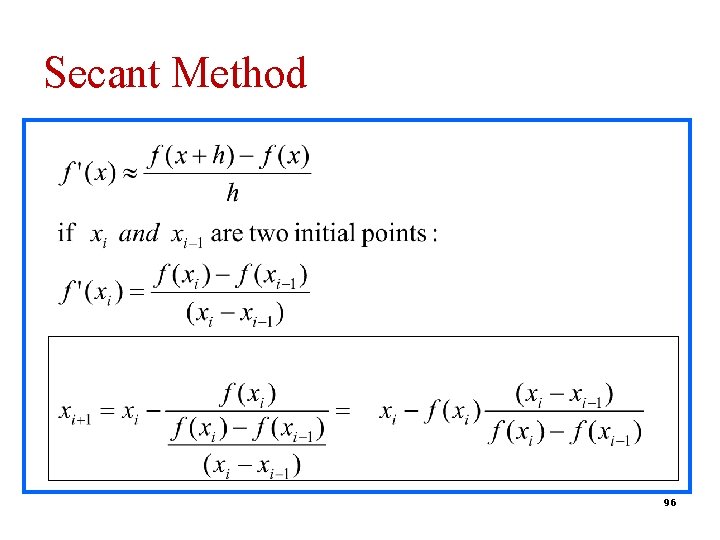

Secant Method 96

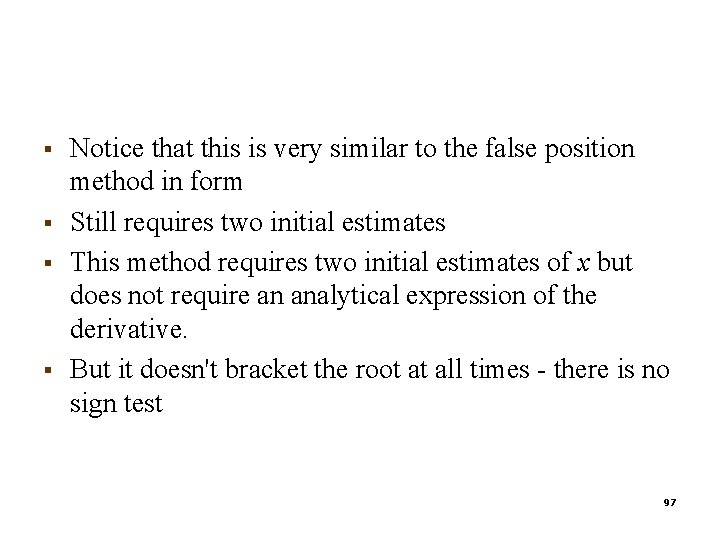

§ § Notice that this is very similar to the false position method in form Still requires two initial estimates This method requires two initial estimates of x but does not require an analytical expression of the derivative. But it doesn't bracket the root at all times - there is no sign test 97

98

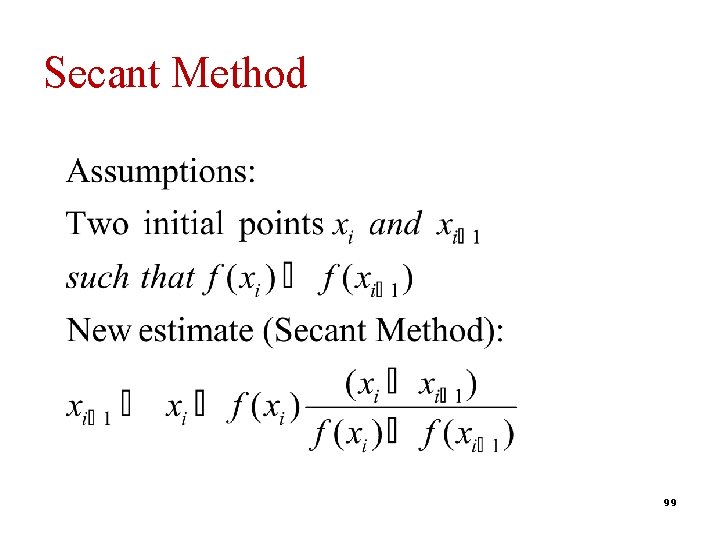

Secant Method 99

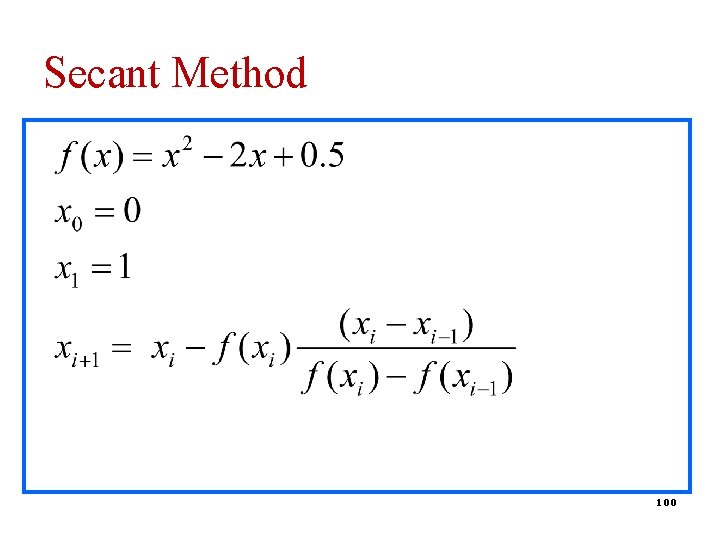

Secant Method 100

![Algorithm for Secant method Open Method 1. Begin with any two endpoints [a, b] Algorithm for Secant method Open Method 1. Begin with any two endpoints [a, b]](http://slidetodoc.com/presentation_image_h2/a7e41f2e27c9ef1c66164125362792c8/image-101.jpg)

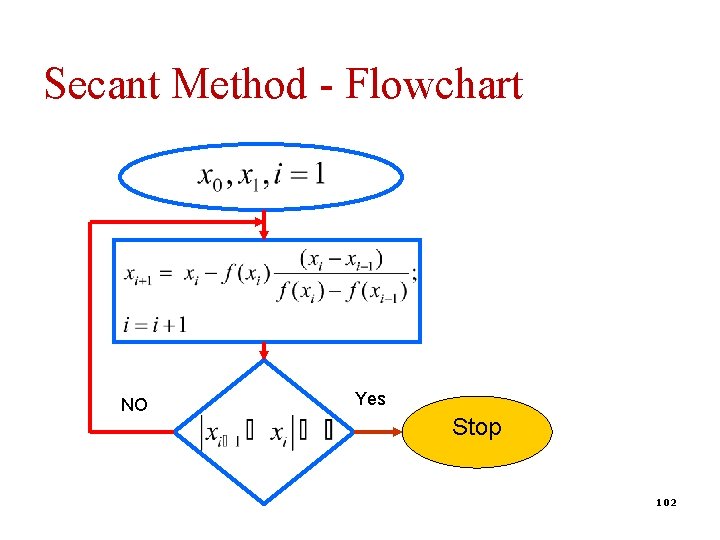

Algorithm for Secant method Open Method 1. Begin with any two endpoints [a, b] = [x 0 , x 1] 2. Calculate x 2 using the secant method formula 3. Replace x 0 with x 1, replace x 1 with x 2 and repeat from (2) until convergence is reached Use the two most recently generated points in subsequent iterations (not a bracket method!) 101

Secant Method - Flowchart NO Yes Stop 102

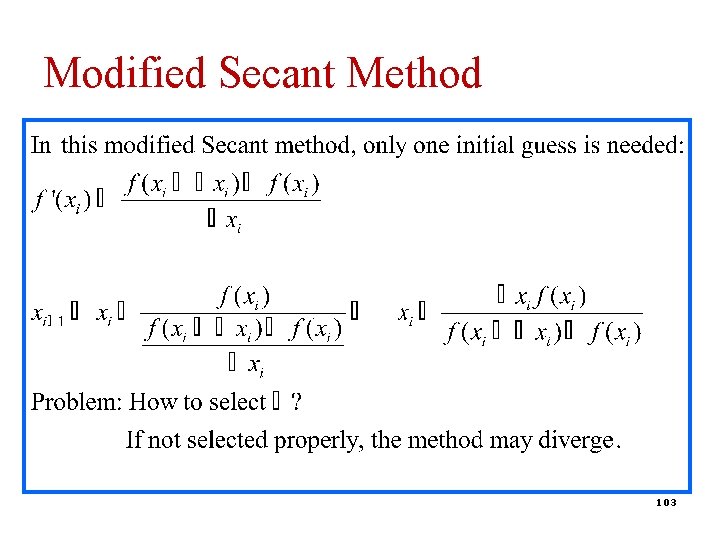

Modified Secant Method 103

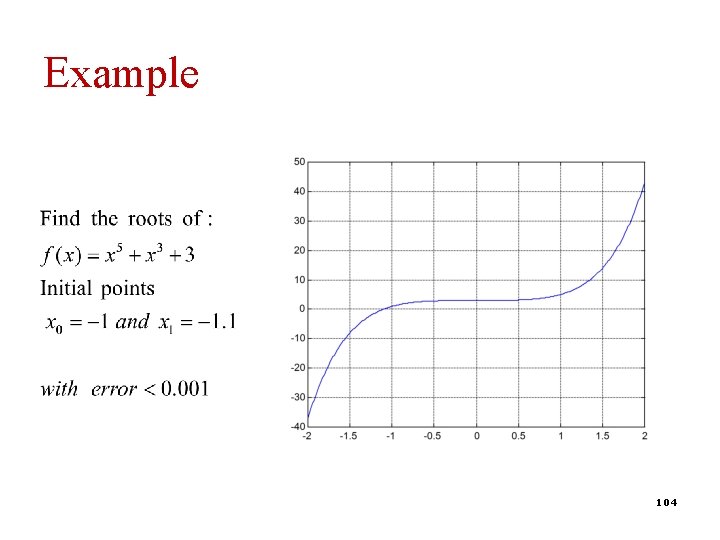

Example 104

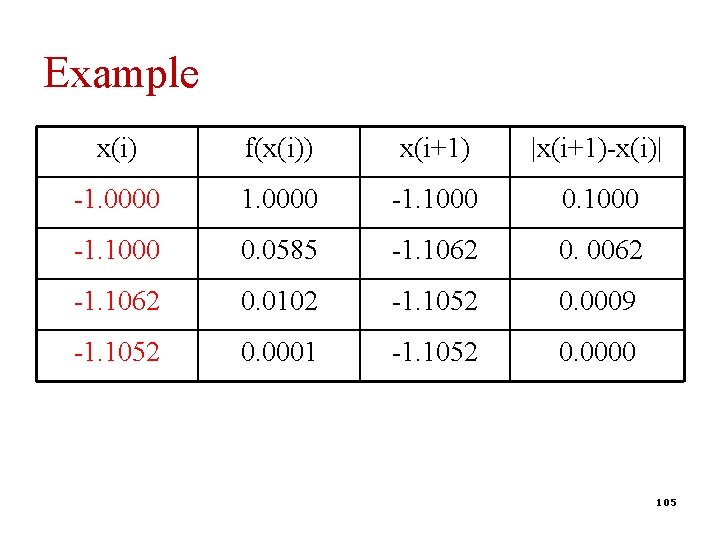

Example x(i) f(x(i)) x(i+1) |x(i+1)-x(i)| -1. 0000 -1. 1000 0. 1000 -1. 1000 0. 0585 -1. 1062 0. 0062 -1. 1062 0. 0102 -1. 1052 0. 0009 -1. 1052 0. 0001 -1. 1052 0. 0000 105

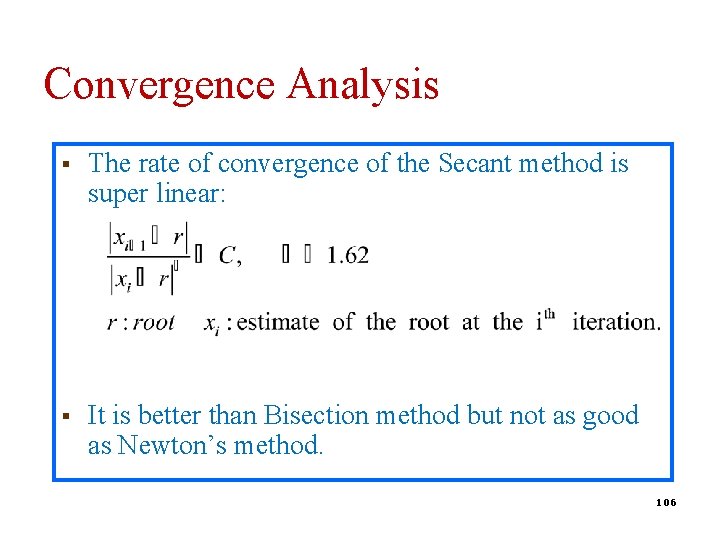

Convergence Analysis § The rate of convergence of the Secant method is super linear: § It is better than Bisection method but not as good as Newton’s method. 106

OPEN METHOD Fixed Point Iteration 107

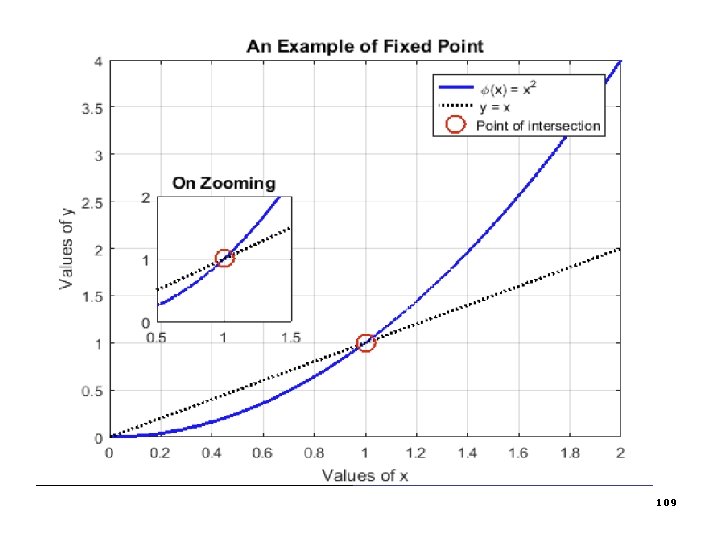

What is fixed point? Formal definition "A fixed point of a function is an element of function's domain that is mapped to itself by the function. " § § rewrite original equation f(x) = 0 into another form x = g(x). Use iteration xi+1 = g(xi ) to find a value that reaches convergence 108

109

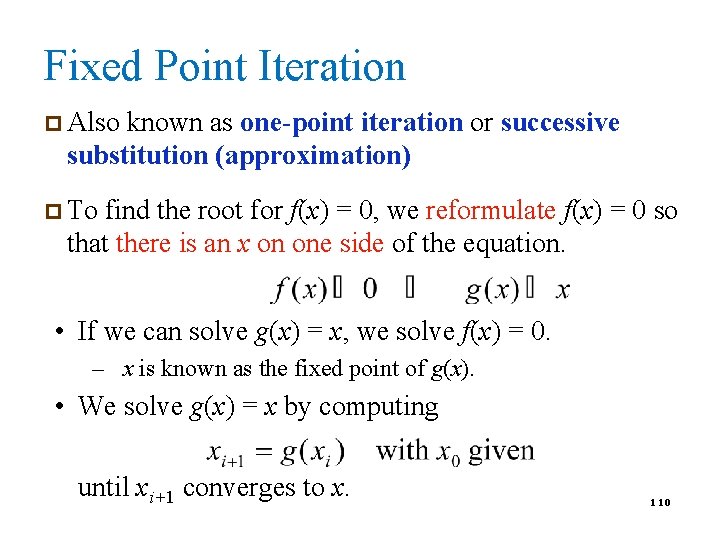

Fixed Point Iteration p Also known as one-point iteration or successive substitution (approximation) p To find the root for f(x) = 0, we reformulate f(x) = 0 so that there is an x on one side of the equation. • If we can solve g(x) = x, we solve f(x) = 0. – x is known as the fixed point of g(x). • We solve g(x) = x by computing until xi+1 converges to x. 110

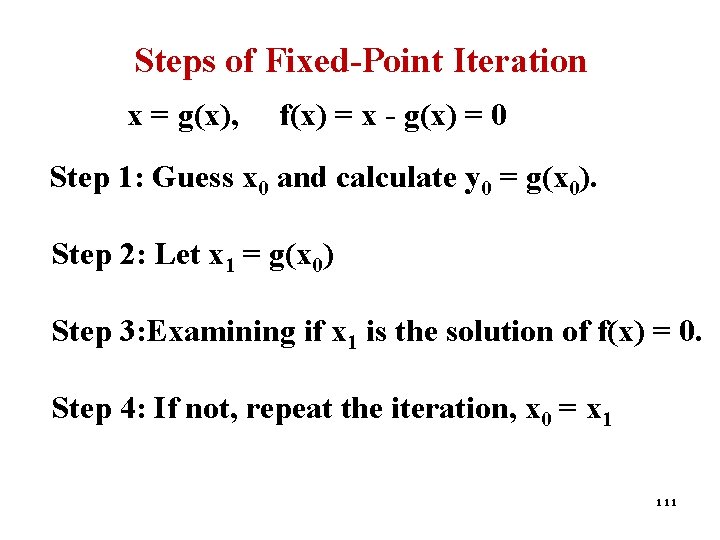

Steps of Fixed-Point Iteration x = g(x), f(x) = x - g(x) = 0 Step 1: Guess x 0 and calculate y 0 = g(x 0). Step 2: Let x 1 = g(x 0) Step 3: Examining if x 1 is the solution of f(x) = 0. Step 4: If not, repeat the iteration, x 0 = x 1 111

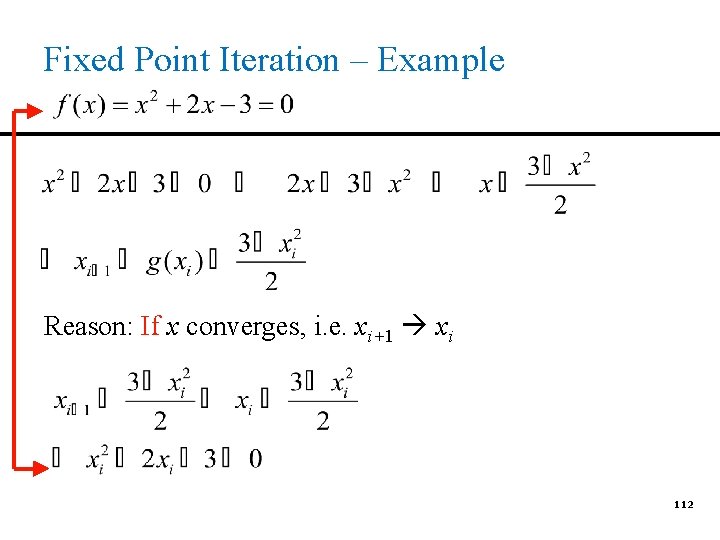

Fixed Point Iteration – Example Reason: If x converges, i. e. xi+1 xi 112

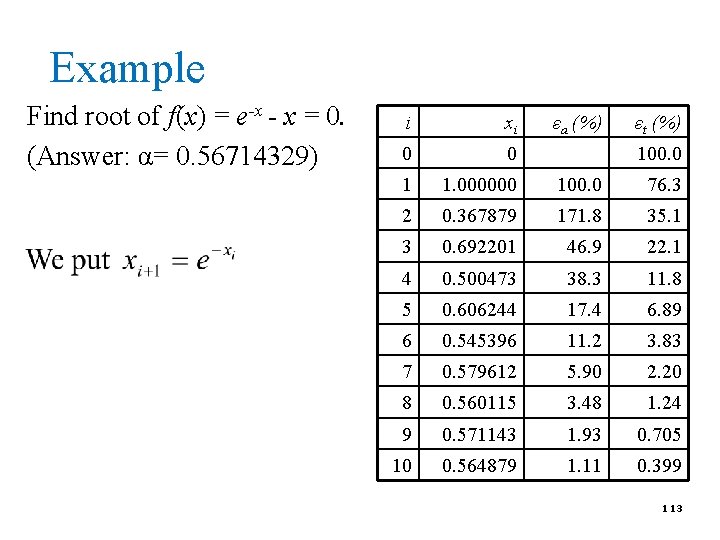

Example Find root of f(x) = e-x - x = 0. (Answer: α= 0. 56714329) i xi εa (%) εt (%) 0 0 1 1. 000000 100. 0 76. 3 2 0. 367879 171. 8 35. 1 3 0. 692201 46. 9 22. 1 4 0. 500473 38. 3 11. 8 5 0. 606244 17. 4 6. 89 6 0. 545396 11. 2 3. 83 7 0. 579612 5. 90 2. 20 8 0. 560115 3. 48 1. 24 9 0. 571143 1. 93 0. 705 10 0. 564879 1. 11 0. 399 100. 0 113

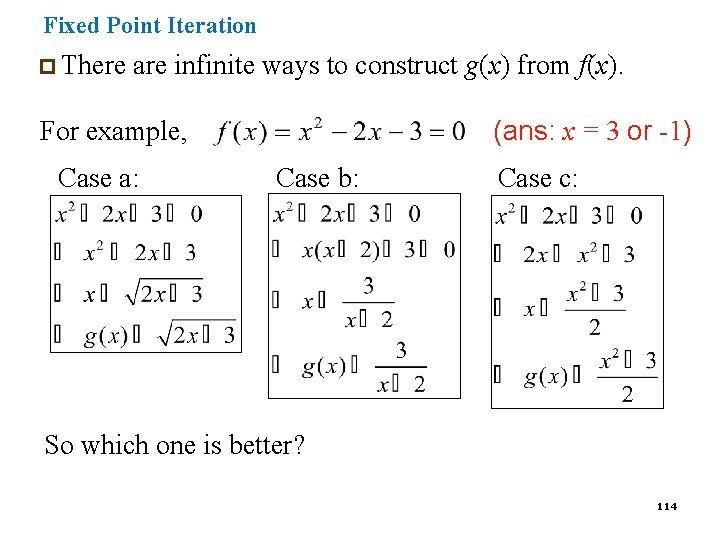

Fixed Point Iteration p There are infinite ways to construct g(x) from f(x). (ans: x = 3 or -1) For example, Case a: Case b: Case c: So which one is better? 114

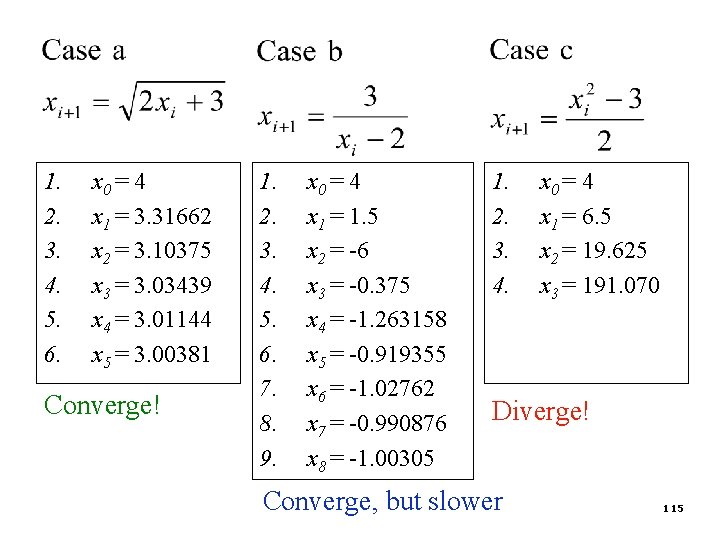

1. 2. 3. 4. 5. 6. x 0 = 4 x 1 = 3. 31662 x 2 = 3. 10375 x 3 = 3. 03439 x 4 = 3. 01144 x 5 = 3. 00381 Converge! 1. 2. 3. 4. 5. 6. 7. 8. 9. x 0 = 4 x 1 = 1. 5 x 2 = -6 x 3 = -0. 375 x 4 = -1. 263158 x 5 = -0. 919355 x 6 = -1. 02762 x 7 = -0. 990876 x 8 = -1. 00305 1. 2. 3. 4. x 0 = 4 x 1 = 6. 5 x 2 = 19. 625 x 3 = 191. 070 Diverge! Converge, but slower 115

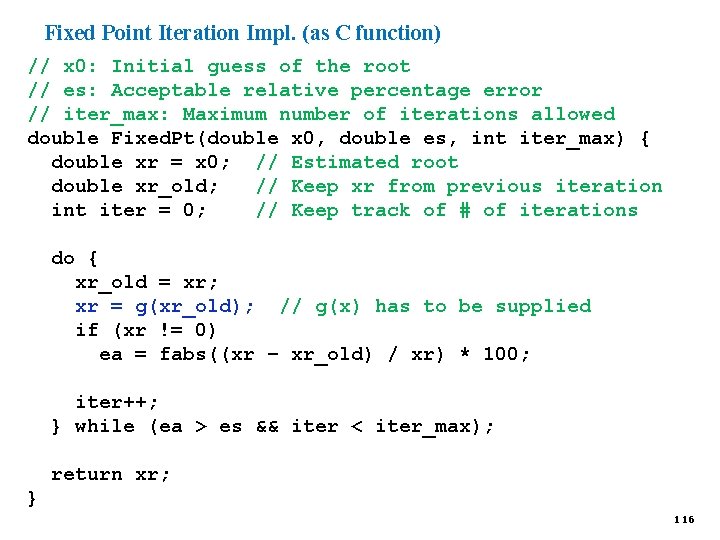

Fixed Point Iteration Impl. (as C function) // x 0: Initial guess of the root // es: Acceptable relative percentage error // iter_max: Maximum number of iterations allowed double Fixed. Pt(double x 0, double es, int iter_max) { double xr = x 0; // Estimated root double xr_old; // Keep xr from previous iteration int iter = 0; // Keep track of # of iterations do { xr_old = xr; xr = g(xr_old); // g(x) has to be supplied if (xr != 0) ea = fabs((xr – xr_old) / xr) * 100; iter++; } while (ea > es && iter < iter_max); return xr; } 116

Comparison of Root Finding Methods p p Advantages/disadvantages Examples 117

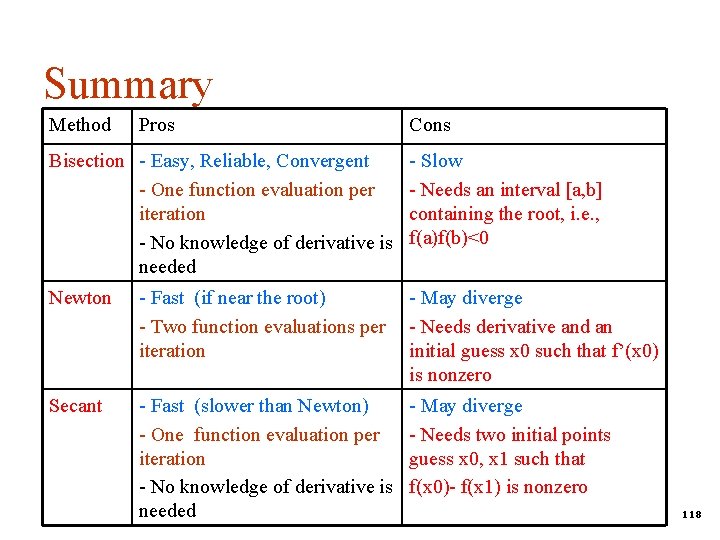

Summary Method Pros Cons Bisection - Easy, Reliable, Convergent - One function evaluation per iteration - No knowledge of derivative is needed - Slow - Needs an interval [a, b] containing the root, i. e. , f(a)f(b)<0 Newton - Fast (if near the root) - Two function evaluations per iteration - May diverge - Needs derivative and an initial guess x 0 such that f’(x 0) is nonzero Secant - Fast (slower than Newton) - One function evaluation per iteration - No knowledge of derivative is needed - May diverge - Needs two initial points guess x 0, x 1 such that f(x 0)- f(x 1) is nonzero 118

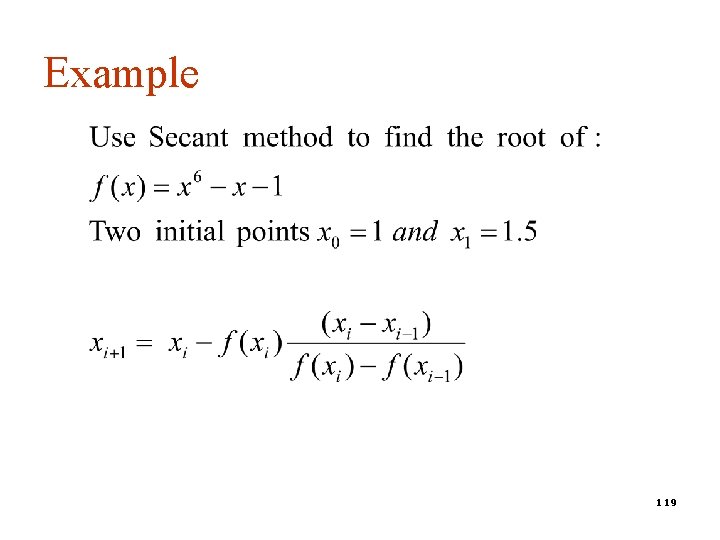

Example 119

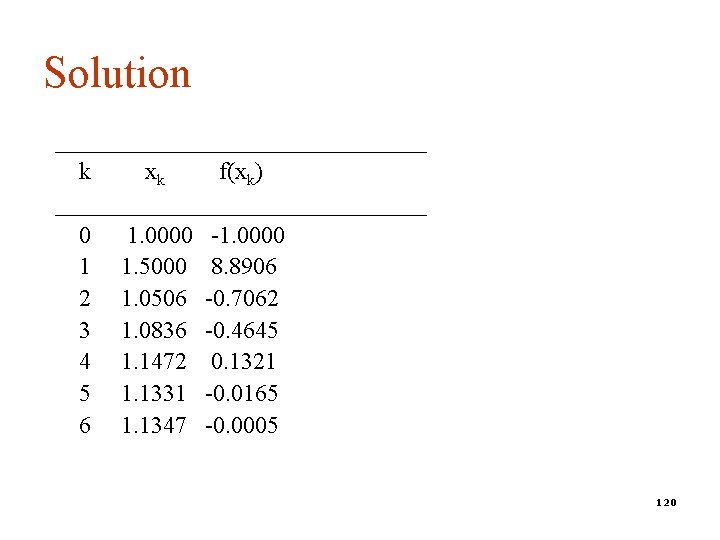

Solution ________________ k xk f(xk) ________________ 0 1. 0000 -1. 0000 1 1. 5000 8. 8906 2 1. 0506 -0. 7062 3 1. 0836 -0. 4645 4 1. 1472 0. 1321 5 1. 1331 -0. 0165 6 1. 1347 -0. 0005 120

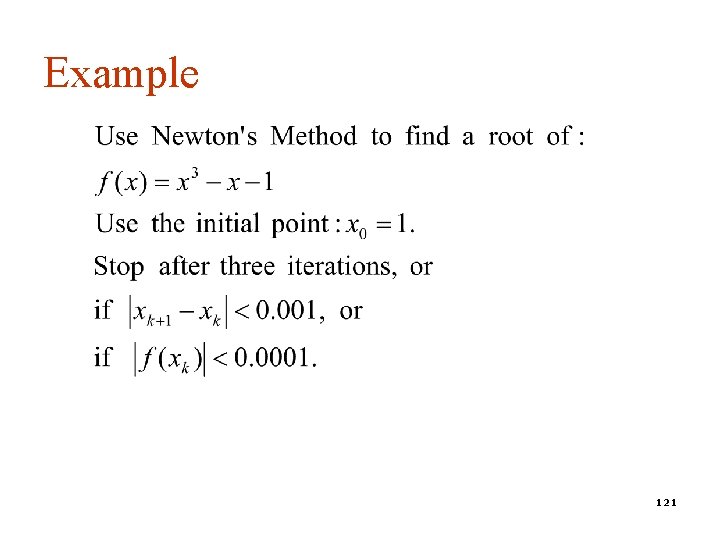

Example 121

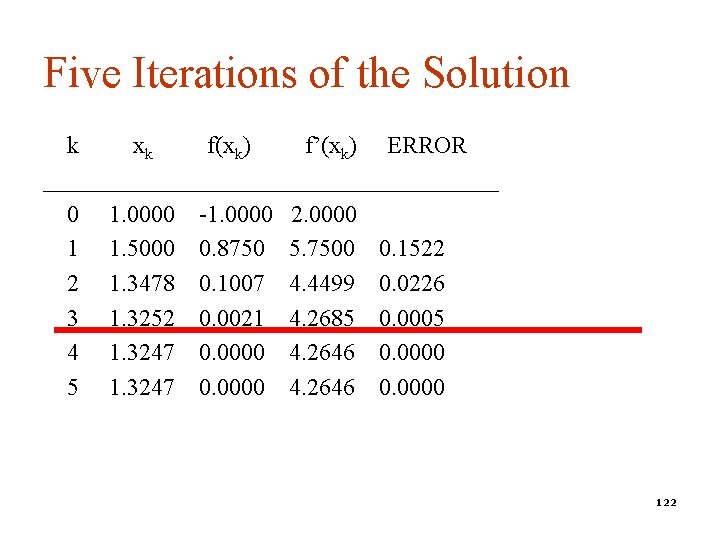

Five Iterations of the Solution k xk f(xk) f’(xk) ERROR ___________________ 0 1. 0000 -1. 0000 2. 0000 1 1. 5000 0. 8750 5. 7500 0. 1522 2 1. 3478 0. 1007 4. 4499 0. 0226 3 1. 3252 0. 0021 4. 2685 0. 0005 4 1. 3247 0. 0000 4. 2646 0. 0000 5 1. 3247 0. 0000 4. 2646 0. 0000 122

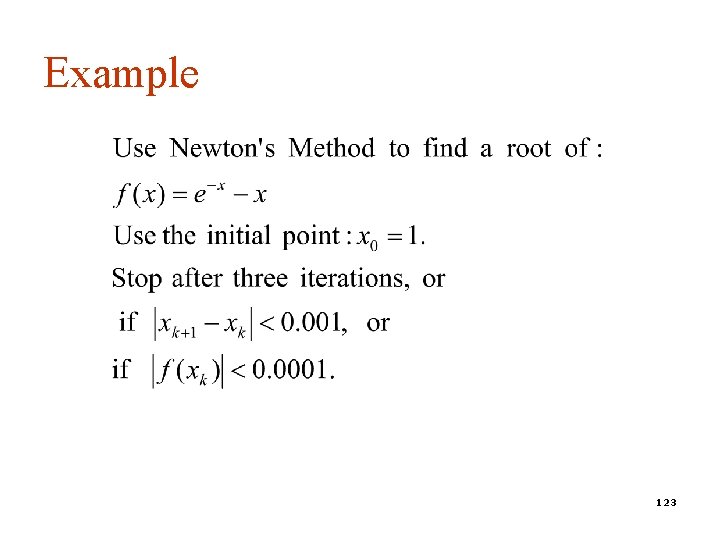

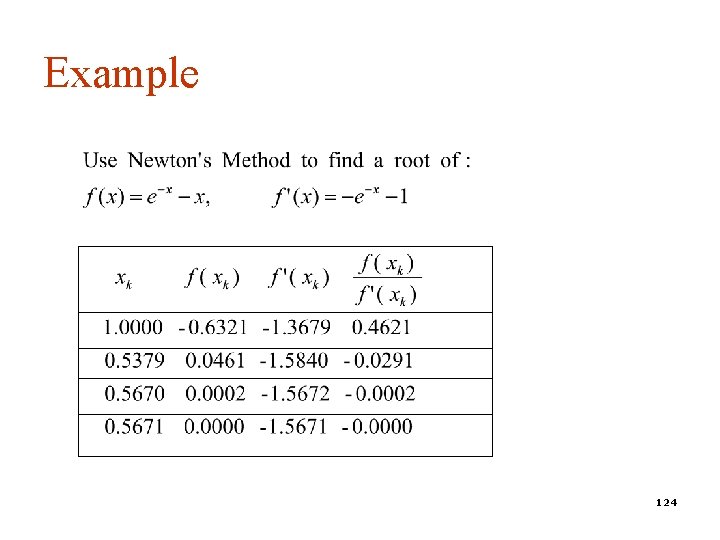

Example 123

Example 124

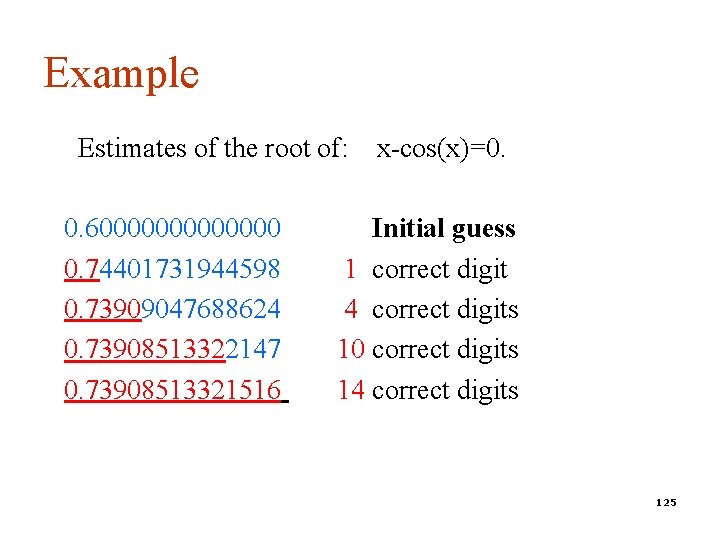

Example Estimates of the root of: 0. 60000000 0. 74401731944598 0. 73909047688624 0. 73908513322147 0. 73908513321516 x-cos(x)=0. Initial guess 1 correct digit 4 correct digits 10 correct digits 14 correct digits 125

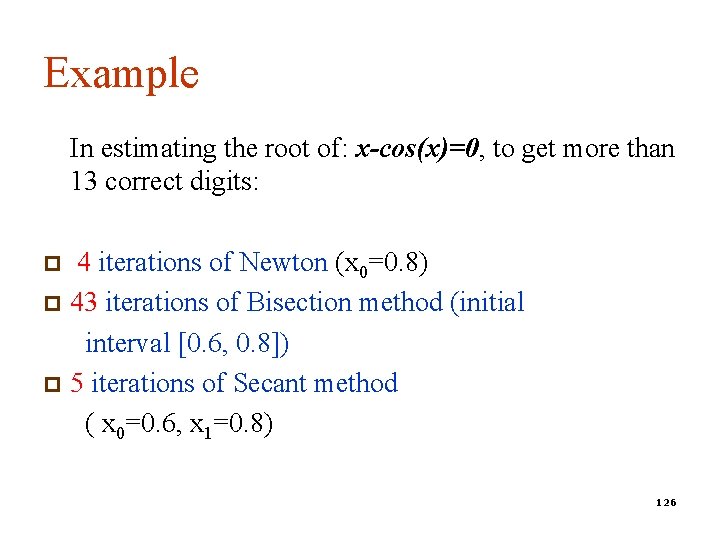

Example In estimating the root of: x-cos(x)=0, to get more than 13 correct digits: 4 iterations of Newton (x 0=0. 8) p 43 iterations of Bisection method (initial interval [0. 6, 0. 8]) p 5 iterations of Secant method ( x 0=0. 6, x 1=0. 8) p 126

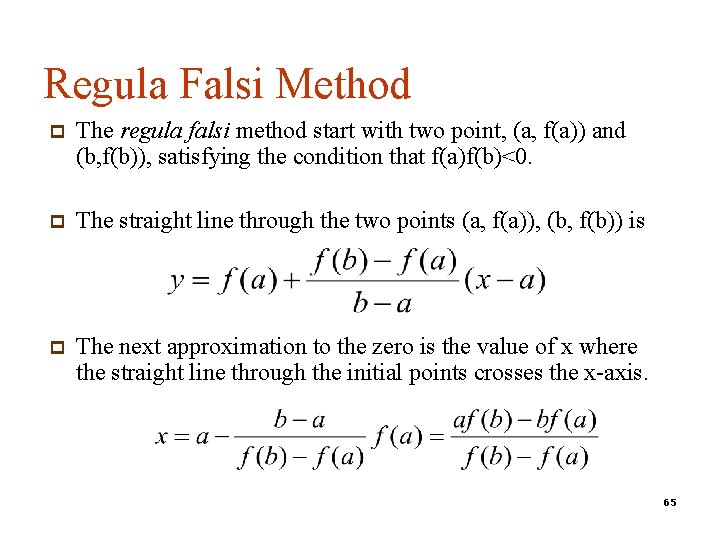

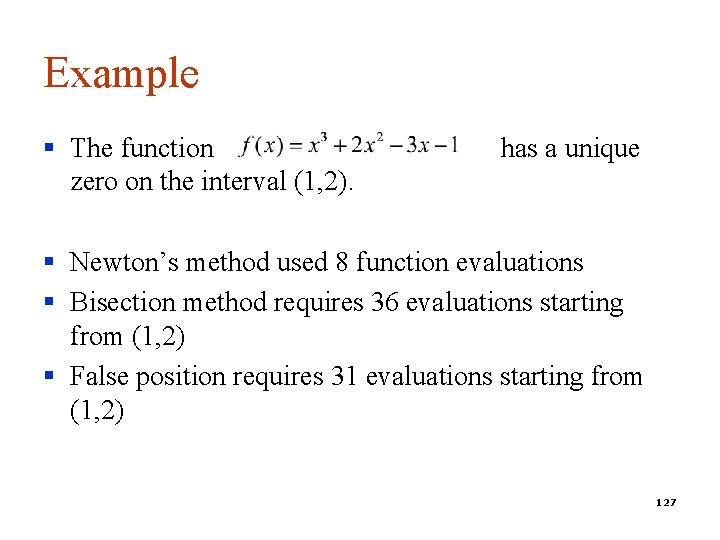

Example § The function zero on the interval (1, 2). has a unique § Newton’s method used 8 function evaluations § Bisection method requires 36 evaluations starting from (1, 2) § False position requires 31 evaluations starting from (1, 2) 127

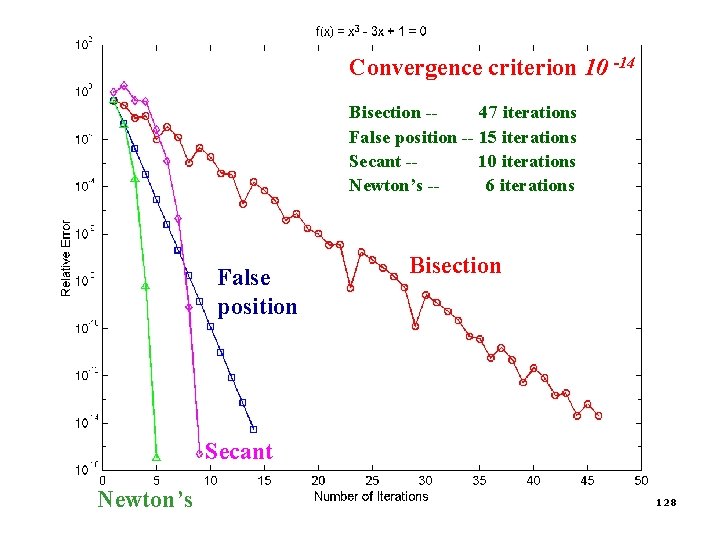

Convergence criterion 10 -14 Bisection -47 iterations False position -- 15 iterations Secant -10 iterations Newton’s -6 iterations False position Bisection Secant Newton’s 128

- Slides: 128