
Robustness in Unsupervised and Supervised Machine Learning
Gautam Kamath, University of Waterloo
May 12, 2020
University of Waterloo Algorithms and Complexity Seminar

Modern Challenge: Robustness
How can we ensure machine learning systems are prepared for when the real world doesn't match our models?

Classic Motivations
• Model misspecification
• Nature doesn't sample from Gaussians
• Truly i.i.d. samples are rare
• Dirty datasets

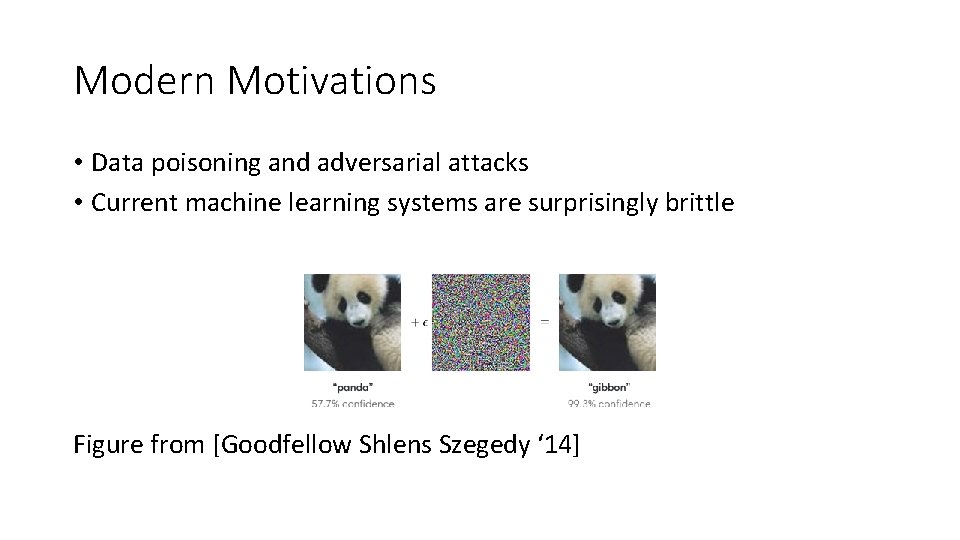

Modern Motivations
• Data poisoning and adversarial attacks
• Current machine learning systems are surprisingly brittle
Figure from [Goodfellow Shlens Szegedy '14]

![Modern Motivations](http://slidetodoc.com/presentation_image_h/03223f4524d03dd8ad5cfb6c6ea7c8ad/image-5.jpg)

Modern Motivations. From [Gu Dolan-Gavitt Garg '17]

![Modern Motivations](http://slidetodoc.com/presentation_image_h/03223f4524d03dd8ad5cfb6c6ea7c8ad/image-6.jpg)

Modern Motivations. From [Athalye Engstrom Ilyas Kwok '17]

Robustness
• Can we develop algorithms which are provably robust to worst-case noise?
Today: provably robust and effective algorithms for parameter estimation and fundamental supervised learning tasks

Robust High-Dimensional Parameter Estimation
Robust Estimators in High Dimensions without the Computational Intractability [Diakonikolas K Kane Li Moitra Stewart, FOCS '16]

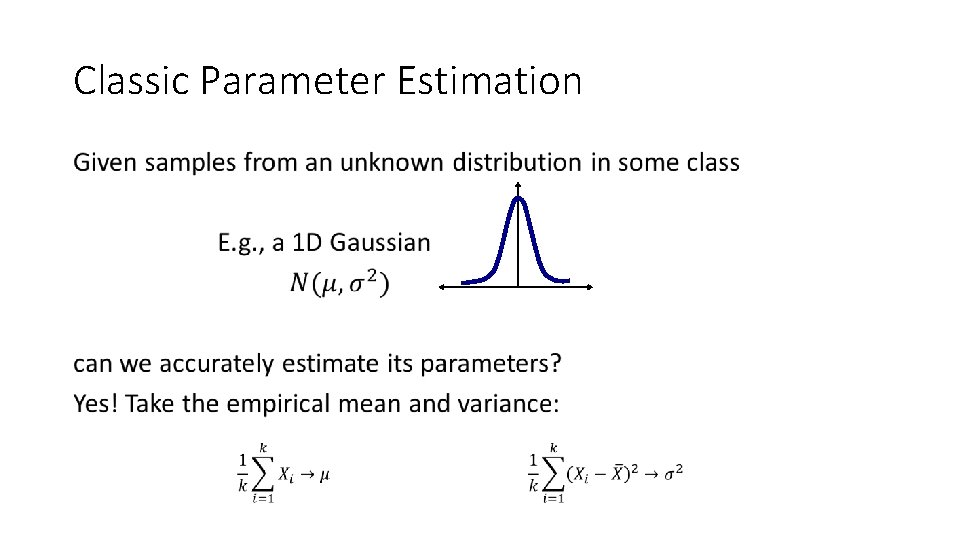

Classic Parameter Estimation

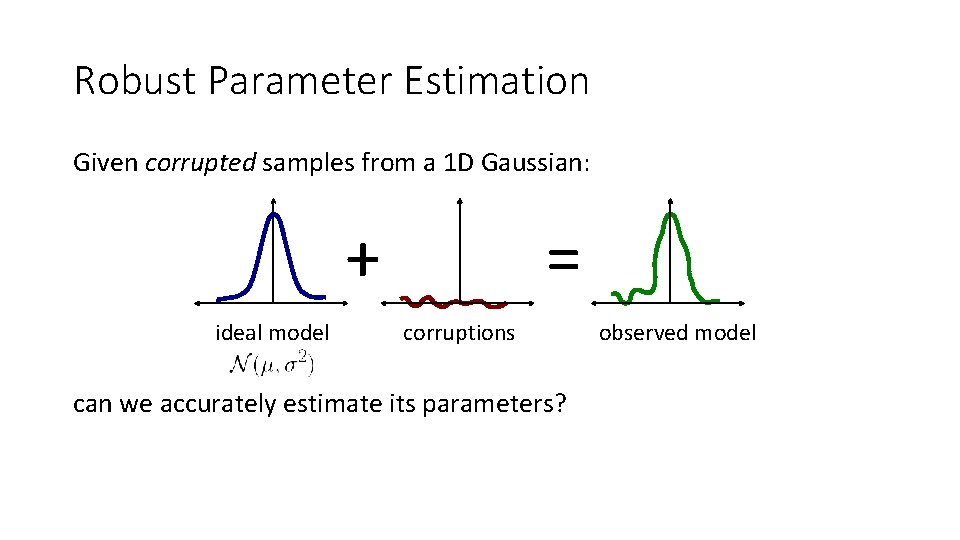

Robust Parameter Estimation
Given corrupted samples from a 1D Gaussian, can we accurately estimate its parameters?
Figure: ideal model + corruptions = observed model

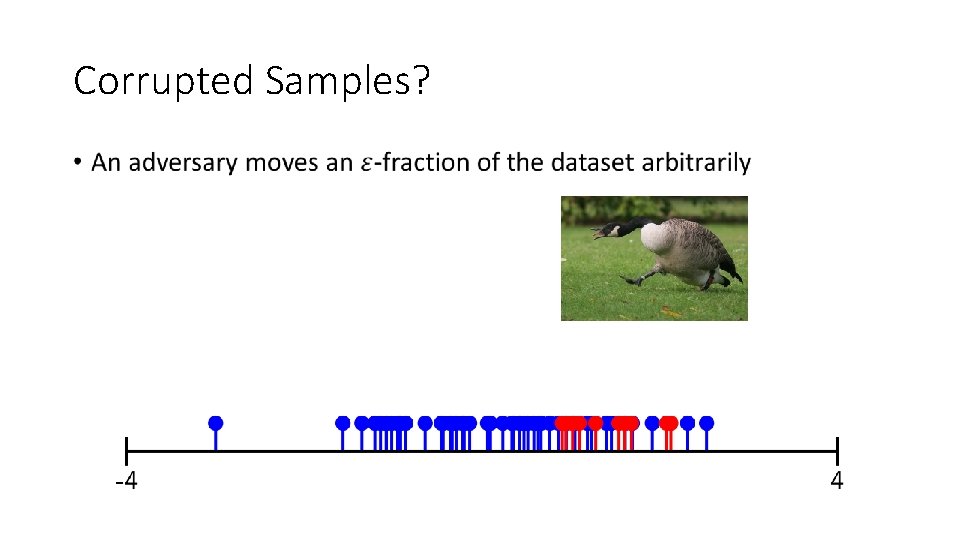

Corrupted Samples?

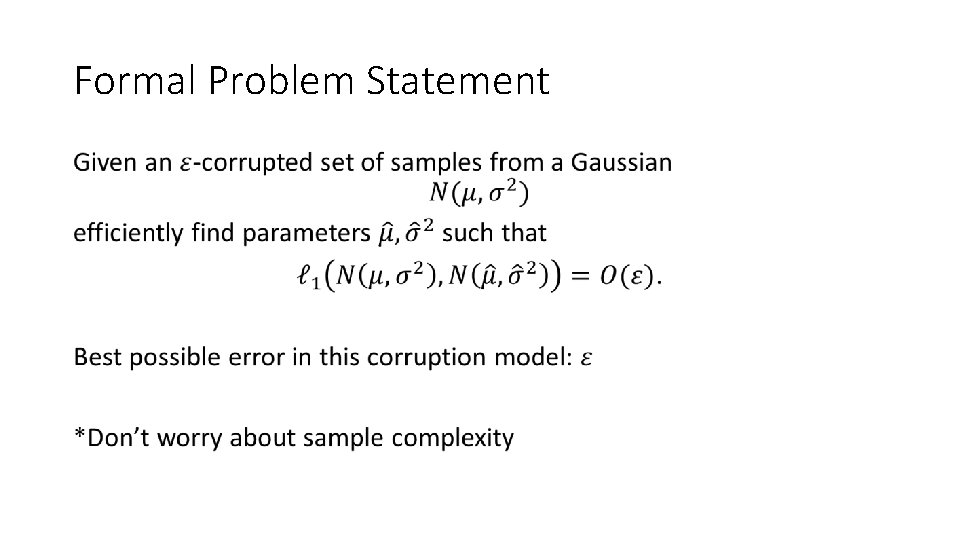

Formal Problem Statement

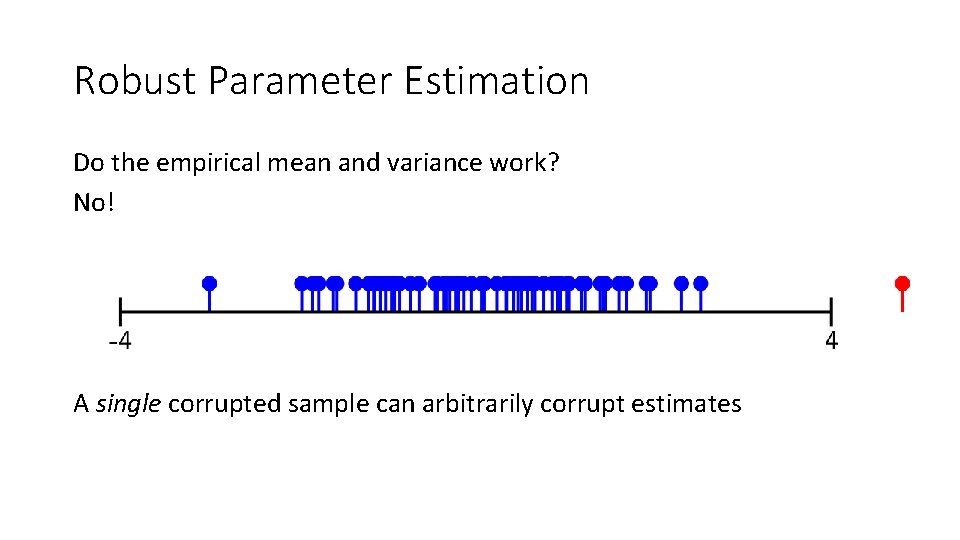

Robust Parameter Estimation
Do the empirical mean and variance work? No! A single corrupted sample can arbitrarily corrupt the estimates.

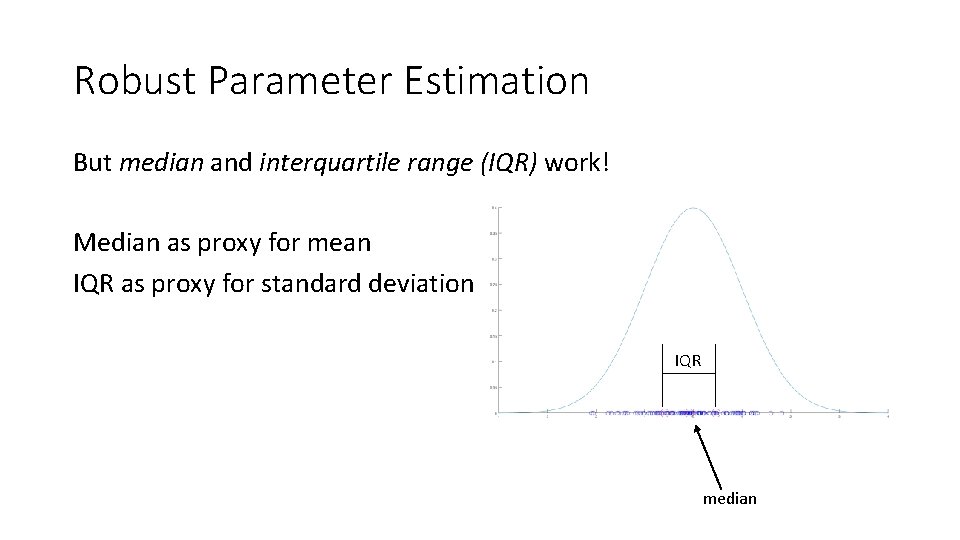

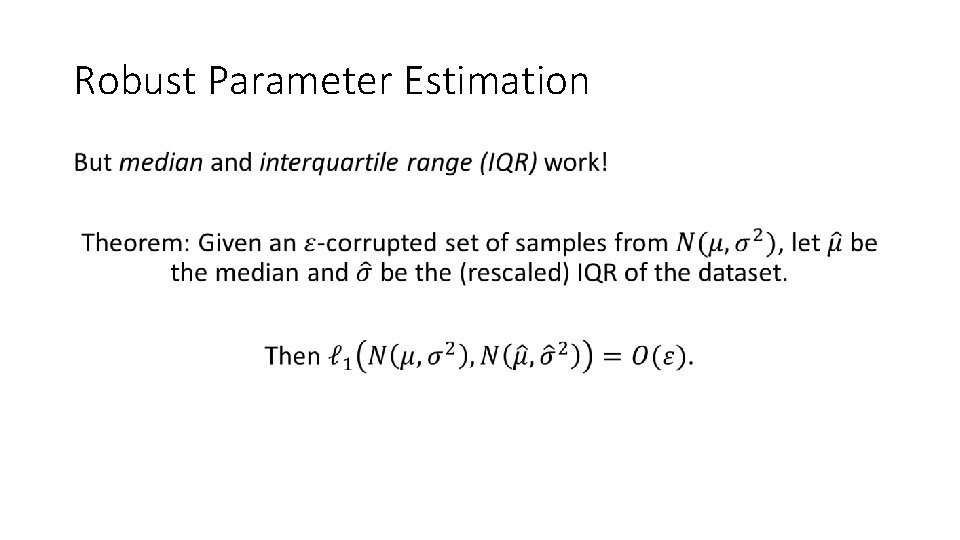

Robust Parameter Estimation
But the median and interquartile range (IQR) work!
• Median as proxy for the mean
• IQR as proxy for the standard deviation
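To make the contrast concrete, here is a minimal NumPy sketch, assuming 1000 samples from N(0, 1) with a single sample replaced by a huge value. The function name `robust_gaussian_1d` and the corruption value are illustrative choices, not from the talk. The rescaling constant uses the fact that a Gaussian's IQR is about 1.349 standard deviations.

```python
import numpy as np

def robust_gaussian_1d(x):
    """Estimate (mean, std) of a 1D Gaussian via the median and the IQR."""
    q25, q50, q75 = np.percentile(x, [25, 50, 75])
    # For N(mu, sigma^2), IQR = 2 * 0.6745 * sigma, so sigma ~= IQR / 1.349.
    return q50, (q75 - q25) / 1.349

rng = np.random.default_rng(0)
samples = rng.normal(loc=0.0, scale=1.0, size=1000)
samples[0] = 1e6  # one corrupted sample (hypothetical adversary)

naive_mean, naive_std = samples.mean(), samples.std()
robust_mean, robust_std = robust_gaussian_1d(samples)
print(f"empirical: mean={naive_mean:.2f}, std={naive_std:.2f}")
print(f"robust:    mean={robust_mean:.2f}, std={robust_std:.2f}")
```

The empirical estimates are dragged arbitrarily far by the one bad point, while the median and rescaled IQR stay close to the true parameters (0 and 1).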

Robust Parameter Estimation

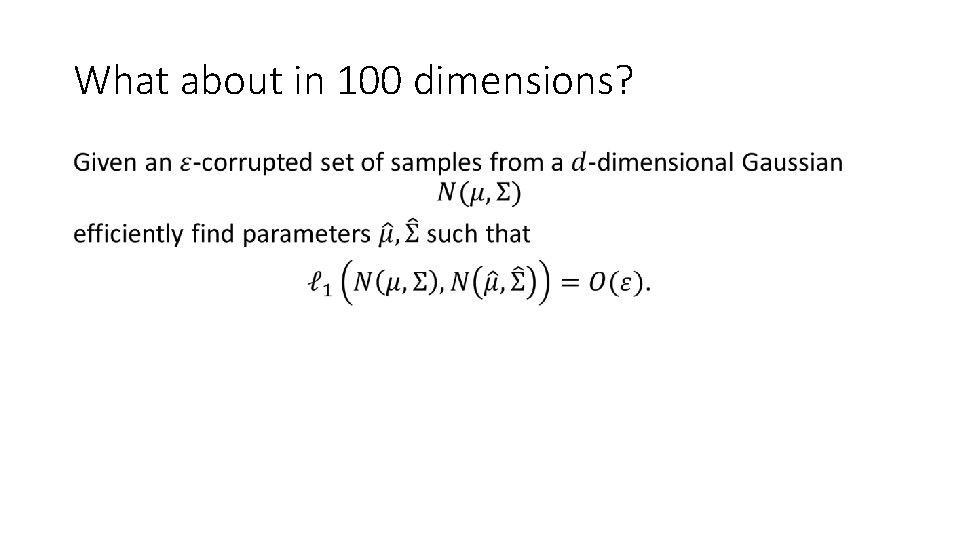

What about in 100 dimensions?

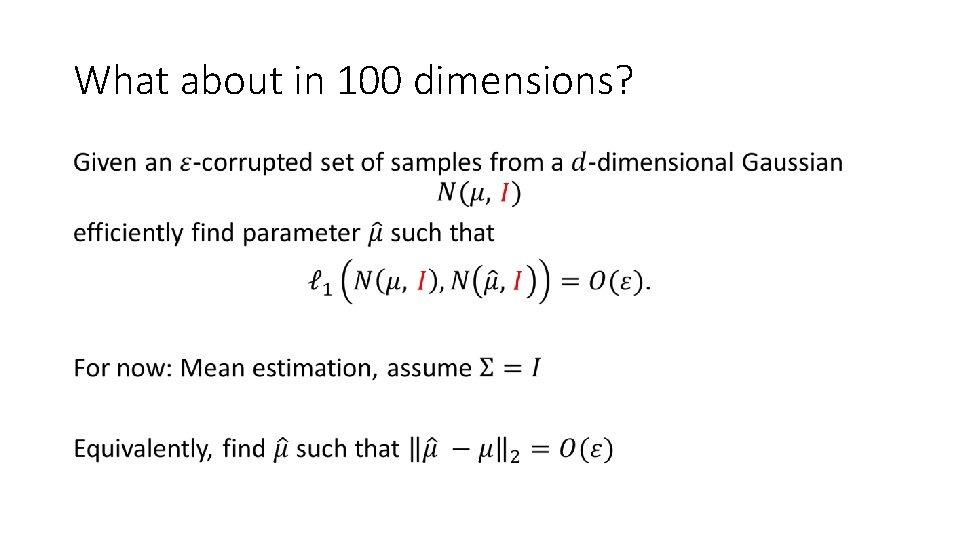


![Compendium of approaches: Tukey Median](http://slidetodoc.com/presentation_image_h/03223f4524d03dd8ad5cfb6c6ea7c8ad/image-18.jpg)

Compendium of approaches: Tukey Median [Tukey '75]
• Tukey depth of a point: minimum number of data points on one side of a hyperplane through the point
• Tukey median of a dataset: point with maximum Tukey depth (not necessarily in the dataset!)
• Running time: NP-hard
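The depth definition above can be illustrated with a small sketch. Computing the exact Tukey depth means minimizing over all hyperplanes through the point; the code below instead samples random directions, which only gives an upper bound on the true depth. The function name `approx_tukey_depth` and the number of sampled directions are assumptions for illustration.

```python
import numpy as np

def approx_tukey_depth(point, data, n_directions=2000, seed=0):
    """Upper bound on the Tukey depth of `point` using random directions."""
    rng = np.random.default_rng(seed)
    d = data.shape[1]
    directions = rng.normal(size=(n_directions, d))
    directions /= np.linalg.norm(directions, axis=1, keepdims=True)
    # Signed offset of every data point from `point` along every direction.
    proj = (data - point) @ directions.T  # shape (n_points, n_directions)
    # Count the data points on each closed side of each hyperplane.
    side_a = (proj >= 0).sum(axis=0)
    side_b = (proj <= 0).sum(axis=0)
    return int(min(side_a.min(), side_b.min()))

rng = np.random.default_rng(1)
data = rng.normal(size=(500, 2))
depth_center = approx_tukey_depth(np.zeros(2), data)
depth_far = approx_tukey_depth(np.array([5.0, 5.0]), data)
print(depth_center, depth_far)
```

A point near the center of the data has depth close to half the dataset, while a point outside the cloud has depth zero because some hyperplane through it leaves every data point on one side.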


![Compendium of approaches](http://slidetodoc.com/presentation_image_h/03223f4524d03dd8ad5cfb6c6ea7c8ad/image-22.jpg)

Compendium of approaches

| Approach | Error guarantee | Running time |
| --- | --- | --- |
| Tukey Median [Tukey '75] | | NP-hard |
| Geometric Median | | Near-linear! [CLMPS '16] |
| Naïve Pruning | | Linear |
| "Tournament" | | |
| … | … | … |

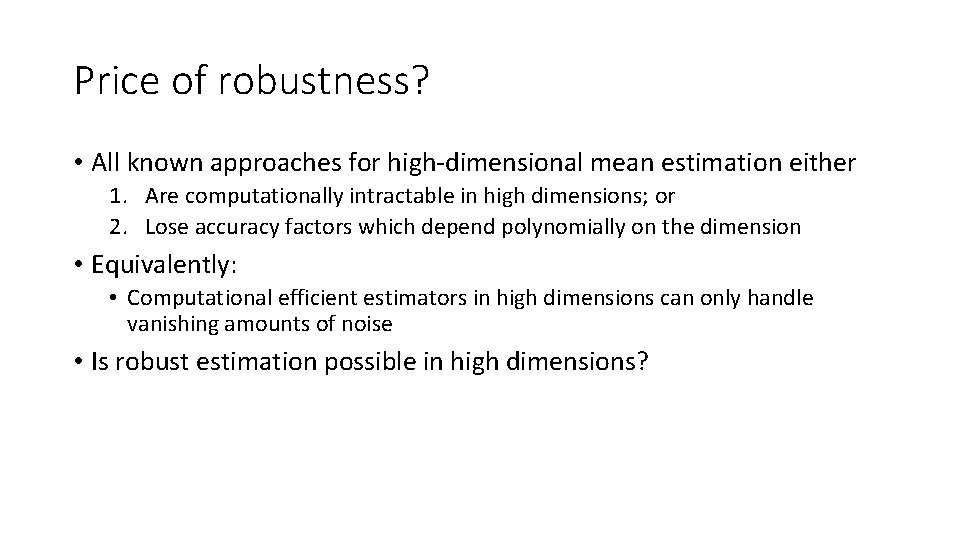

Price of robustness?
• All known approaches for high-dimensional mean estimation either
  1. are computationally intractable in high dimensions; or
  2. lose accuracy factors which depend polynomially on the dimension
• Equivalently: computationally efficient estimators in high dimensions can only handle vanishing amounts of noise
• Is robust estimation possible in high dimensions?

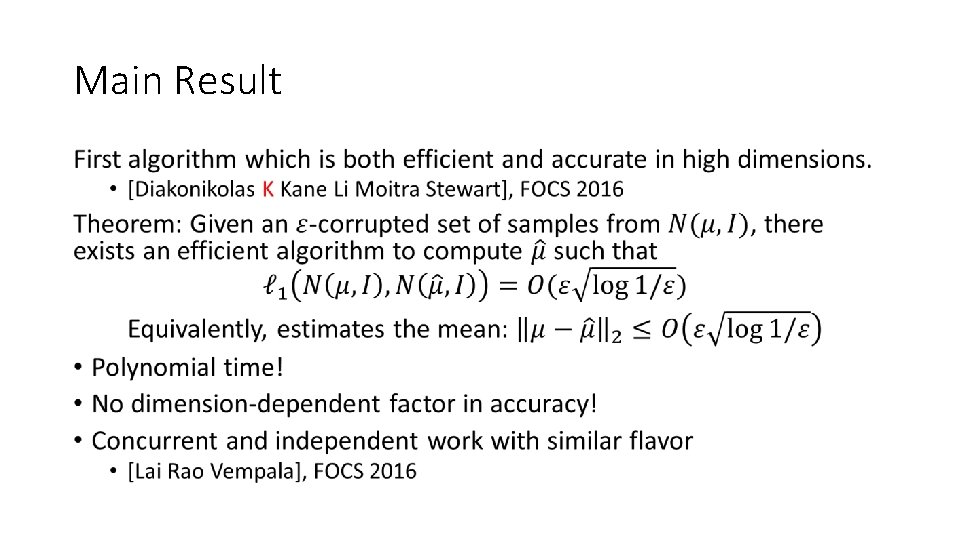

Main Result

Why do naïve methods get stuck?
Consider the following simple algorithm.
Naïve pruning: remove all the points which are obviously too far away to have come from the Gaussian, then take the empirical mean of the remaining points.

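A minimal sketch of naïve pruning, under assumptions that are not from the talk: the data is N(0, I) in d dimensions, "too far" means farther than 2·sqrt(d) from the coordinate-wise median, and a hypothetical adversary puts 10% of the points at distance sqrt(d) from the true mean, all in the same direction. Since honest Gaussian samples also sit at distance about sqrt(d), the pruning step cannot tell them apart.

```python
import numpy as np

def naive_prune_mean(x, radius_factor=2.0):
    """Drop points far from a rough center, then average the rest."""
    d = x.shape[1]
    center = np.median(x, axis=0)  # rough coordinate-wise center
    dist = np.linalg.norm(x - center, axis=1)
    # Assumed rule: "too far" = beyond radius_factor * sqrt(d).
    keep = dist <= radius_factor * np.sqrt(d)
    return x[keep].mean(axis=0), keep

rng = np.random.default_rng(0)
n, d, eps = 5000, 100, 0.1
n_bad = int(eps * n)
good = rng.normal(size=(n - n_bad, d))  # true mean is the origin
# Hypothetical adversary: every bad point sits at distance sqrt(d),
# in the same direction, so it looks like a typical Gaussian sample.
direction = np.ones(d) / np.sqrt(d)
bad = np.tile(np.sqrt(d) * direction, (n_bad, 1))
x = np.vstack([good, bad])

estimate, keep = naive_prune_mean(x)
error = np.linalg.norm(estimate)
print(f"bad points kept: {keep[-n_bad:].sum()} of {n_bad}, error = {error:.2f}")
```

In this setup none of the corrupted points are pruned, and the error is about eps·sqrt(d), which grows with the dimension.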

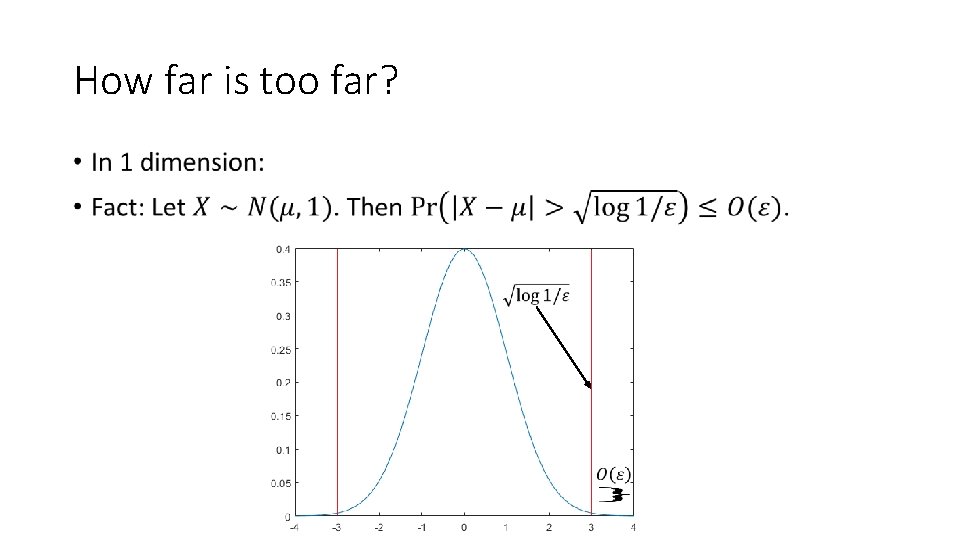

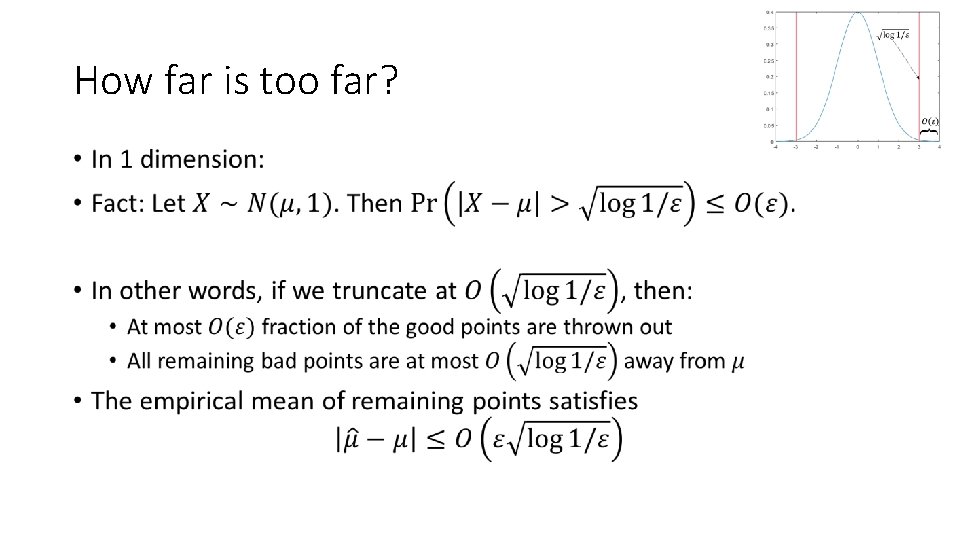

How far is too far?


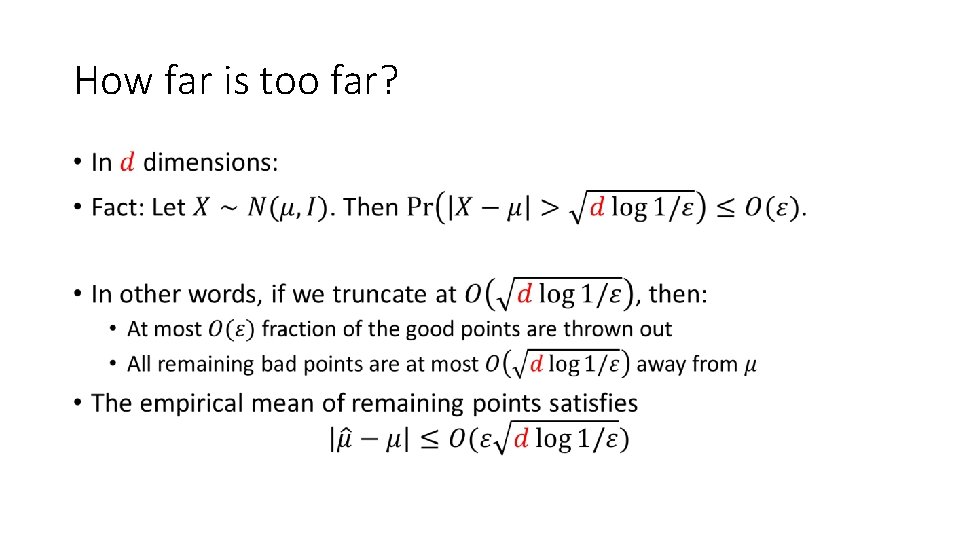

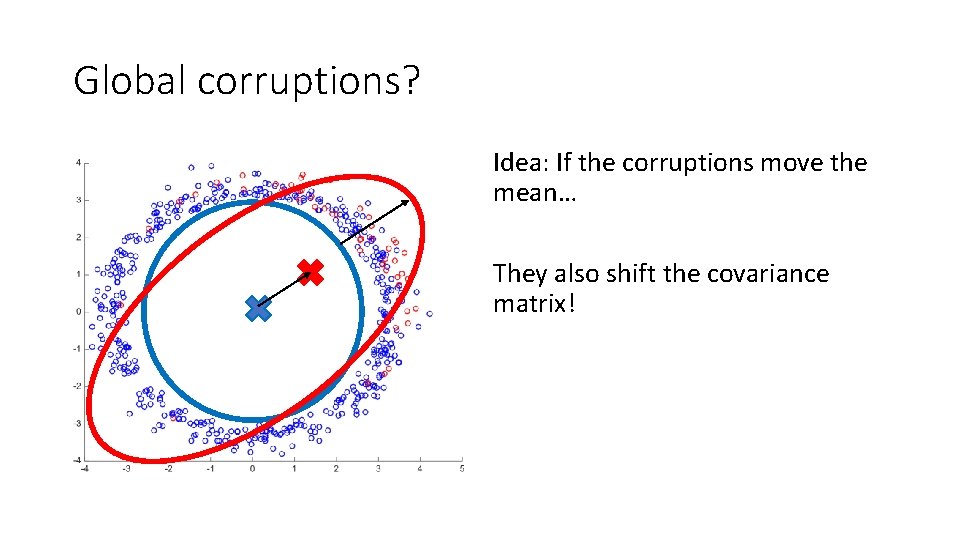

Corruptions in 2 dimensions

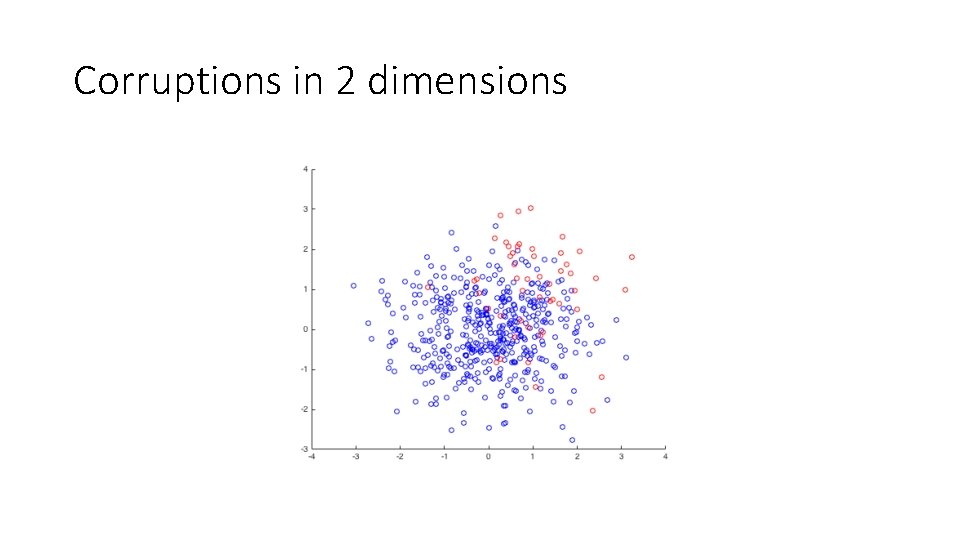

Corruptions in high dimensions

Global corruptions?
Idea: if the corruptions move the mean, they also shift the covariance matrix!
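The idea above suggests a spectral check: if the top eigenvalue of the empirical covariance is much larger than 1 (for identity-covariance data), something has shifted the mean along that eigenvector. Below is a simplified sketch in that spirit, not the authors' algorithm. The stopping threshold `1 + const * eps * log(1/eps)` with `const=3`, and the rule of dropping a fixed fraction of the highest-scoring points each round, are assumptions made for illustration.

```python
import numpy as np

def filter_mean(x, eps, const=3.0, max_rounds=50):
    """Simplified spectral filter for data assumed to be N(mu, I) plus outliers."""
    x = np.asarray(x, dtype=float)
    threshold = 1.0 + const * eps * np.log(1.0 / eps)  # assumed stopping rule
    for _ in range(max_rounds):
        mu = x.mean(axis=0)
        eigvals, eigvecs = np.linalg.eigh(np.cov(x, rowvar=False))
        if eigvals[-1] <= threshold:  # covariance looks clean: stop
            break
        # Score each point by its deviation along the top eigenvector.
        scores = np.abs((x - mu) @ eigvecs[:, -1])
        n_drop = max(1, int(0.5 * eps * len(x)))
        x = x[np.argsort(scores)[:-n_drop]]  # drop the highest scores
    return x.mean(axis=0)

rng = np.random.default_rng(0)
n, d, eps = 10000, 50, 0.1
n_bad = int(eps * n)
good = rng.normal(size=(n - n_bad, d))  # true mean is the origin
bad = np.ones((n_bad, d))               # at distance sqrt(d), one direction
x = np.vstack([good, bad])

naive_err = np.linalg.norm(x.mean(axis=0))
filter_err = np.linalg.norm(filter_mean(x, eps))
print(f"empirical mean error: {naive_err:.2f}, filtered error: {filter_err:.2f}")
```

On this synthetic example the corrupted points inflate the variance along one direction, so they receive the highest scores and are removed within a few rounds.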

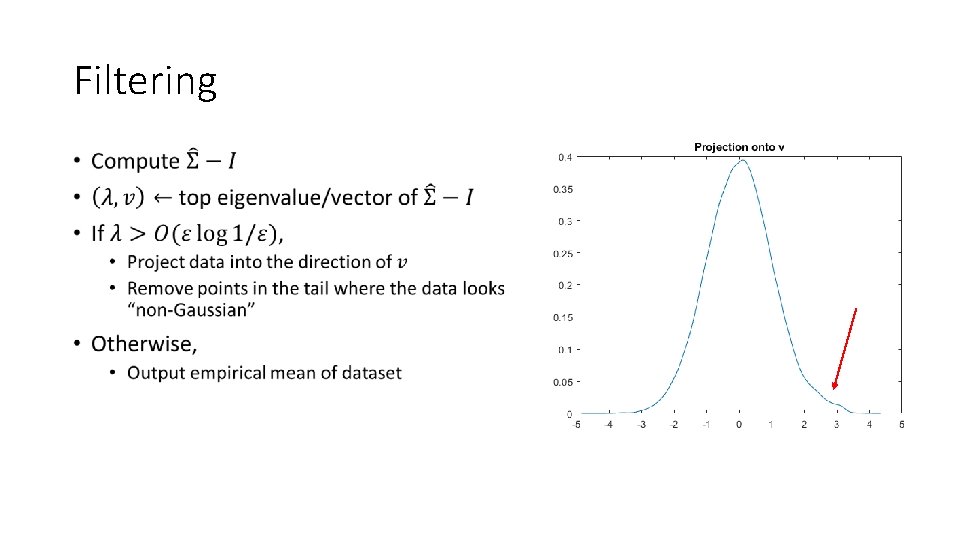

Filtering


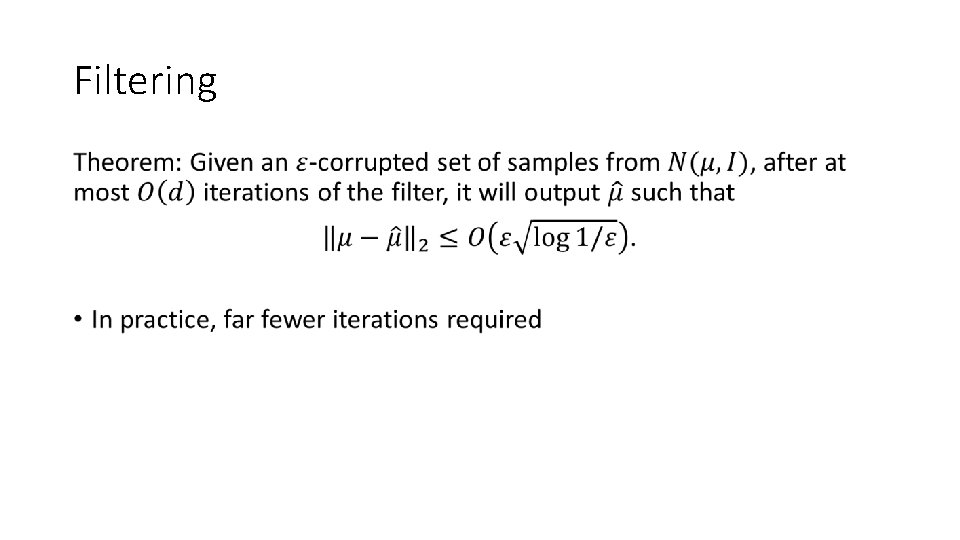

Unsupervised Learning
Being Robust (in High Dimensions) Can Be Practical [Diakonikolas K Kane Li Moitra Stewart, ICML '17]

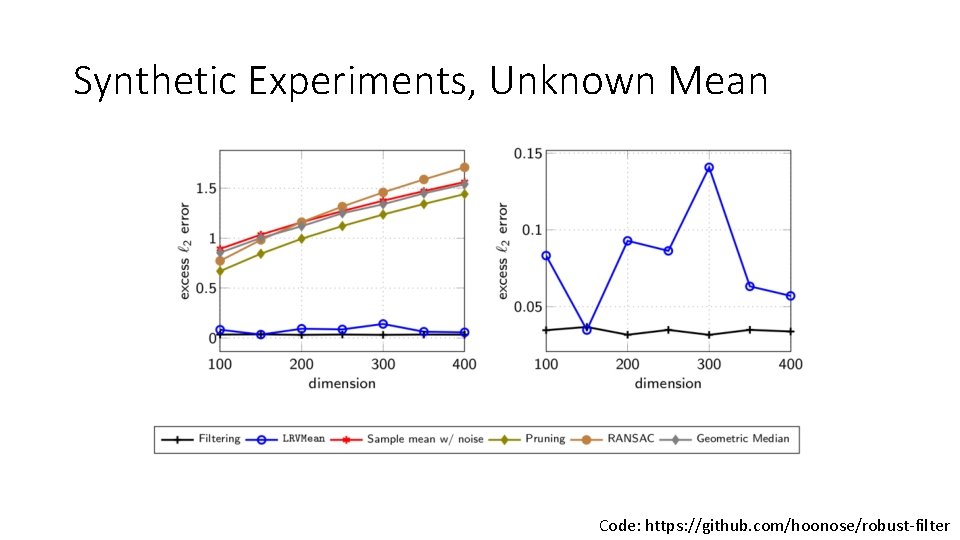

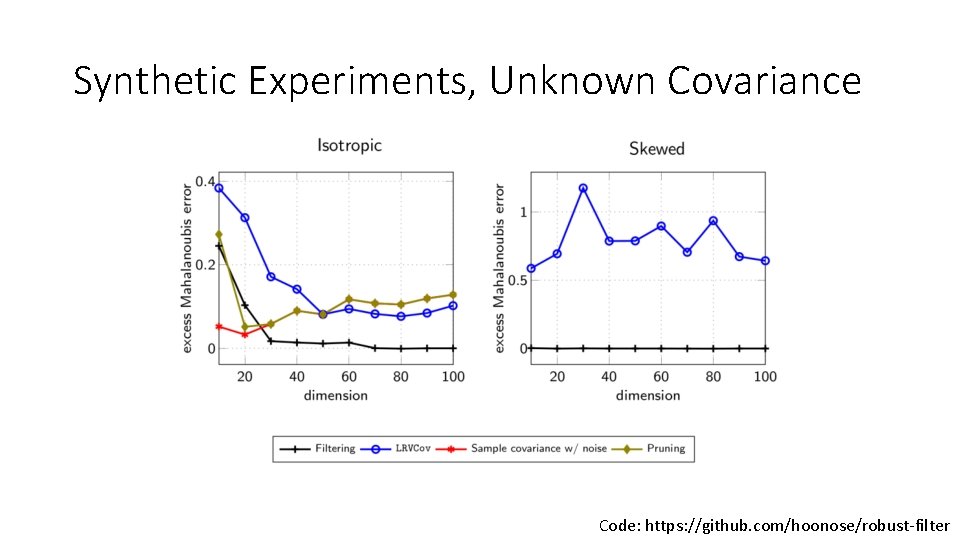

Does the filter "work"?
• 90% Gaussian data, 10% adversarial noise
• Isotropic Gaussian
  • Estimate mean
  • Estimate covariance
• Skewed Gaussian
  • Estimate covariance

Synthetic Experiments, Unknown Mean
Code: https://github.com/hoonose/robust-filter

Synthetic Experiments, Unknown Covariance

Exploratory Data Analysis
Being Robust (in High Dimensions) Can Be Practical [Diakonikolas K Kane Li Moitra Stewart, ICML '17]

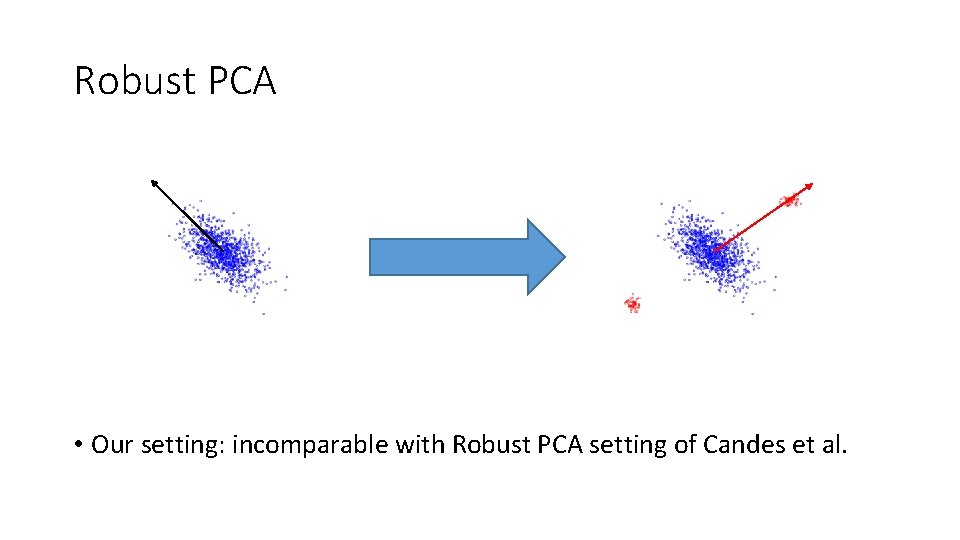

Robust PCA
• Our setting: incomparable with the Robust PCA setting of Candès et al.

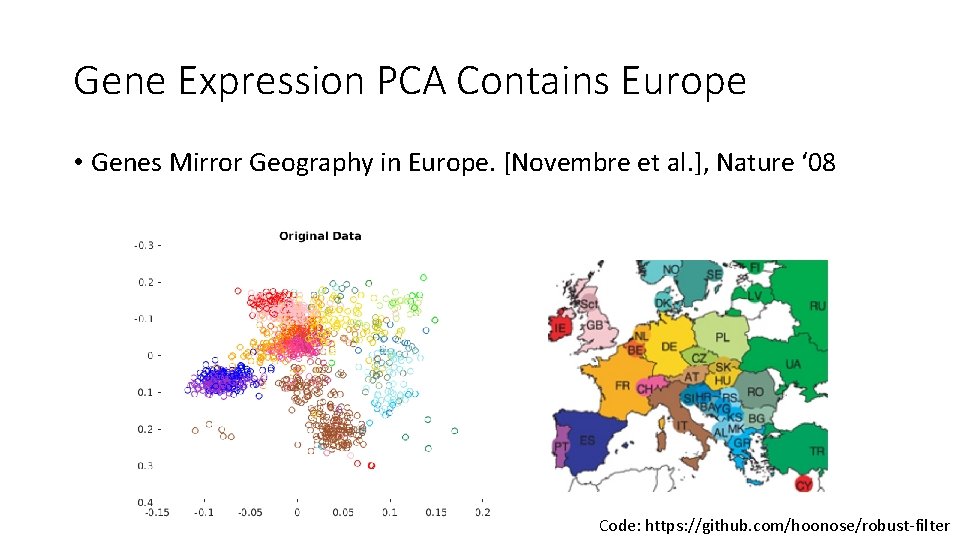

Gene Expression PCA Contains Europe
• Genes Mirror Geography in Europe [Novembre et al., Nature '08]
Code: https://github.com/hoonose/robust-filter

![Naively, Corruptions Destroy Europe](http://slidetodoc.com/presentation_image_h/03223f4524d03dd8ad5cfb6c6ea7c8ad/image-42.jpg)

Naively, Corruptions Destroy Europe

![Europe is RANSACked](http://slidetodoc.com/presentation_image_h/03223f4524d03dd8ad5cfb6c6ea7c8ad/image-43.jpg)

Europe is RANSACked

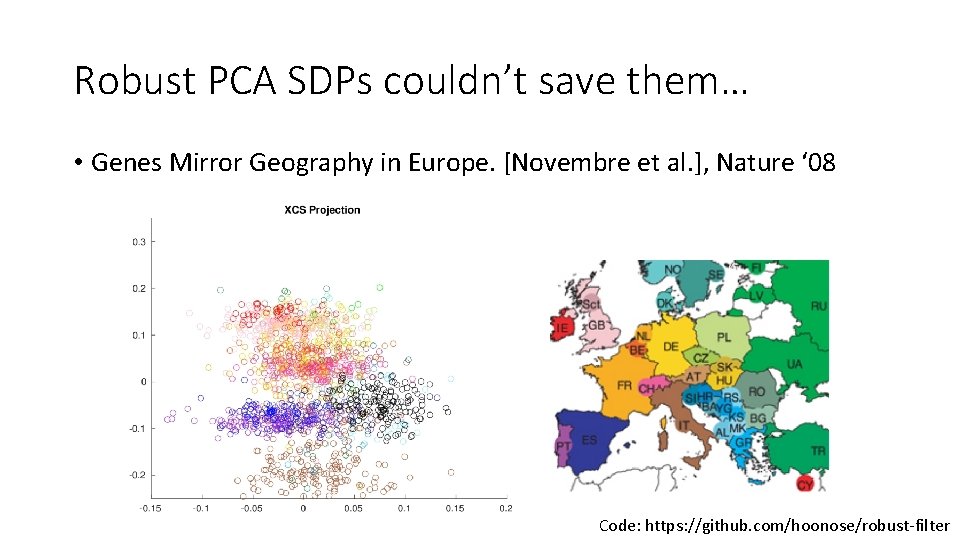

Robust PCA SDPs couldn't save them…

![Our Algorithms Fix Europe!](http://slidetodoc.com/presentation_image_h/03223f4524d03dd8ad5cfb6c6ea7c8ad/image-45.jpg)

Our Algorithms Fix Europe!

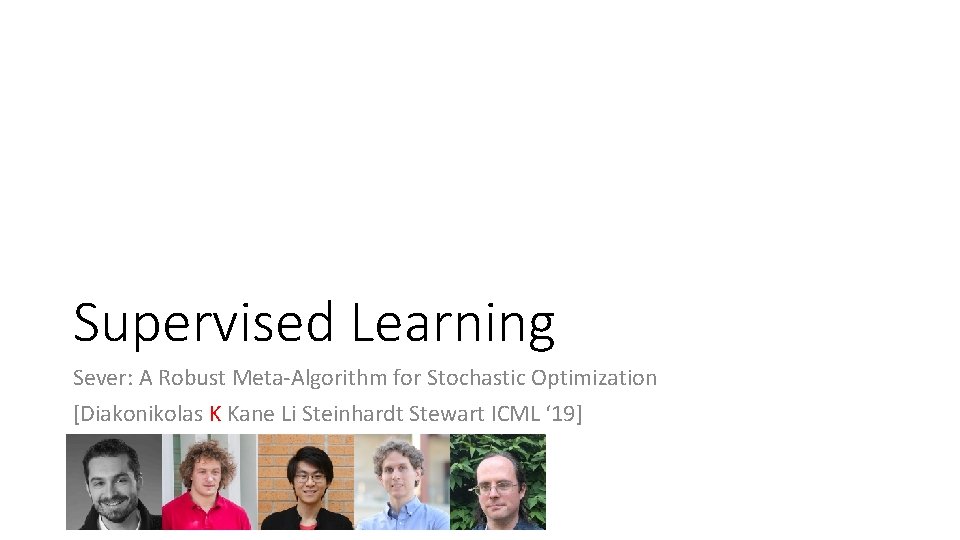

Supervised Learning
Sever: A Robust Meta-Algorithm for Stochastic Optimization [Diakonikolas K Kane Li Steinhardt Stewart, ICML '19]

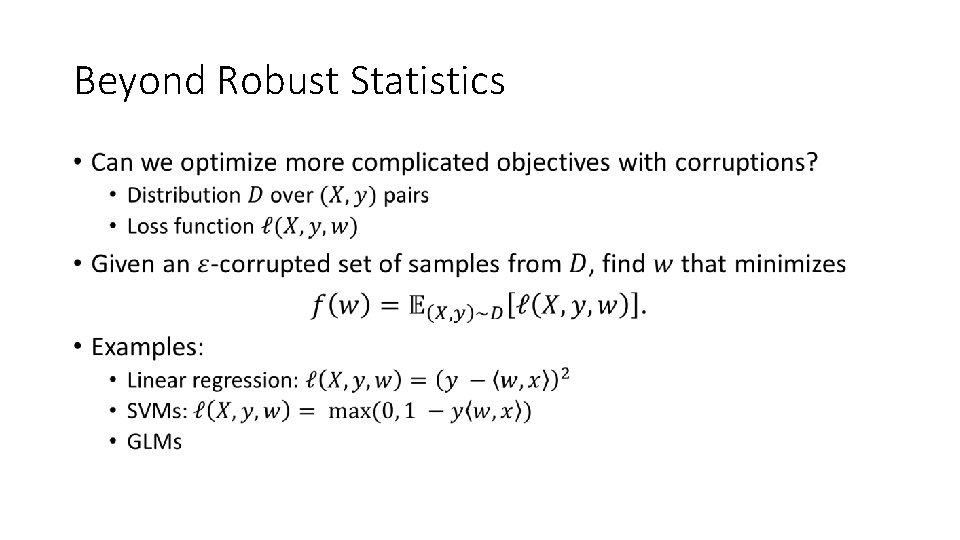

Beyond Robust Statistics

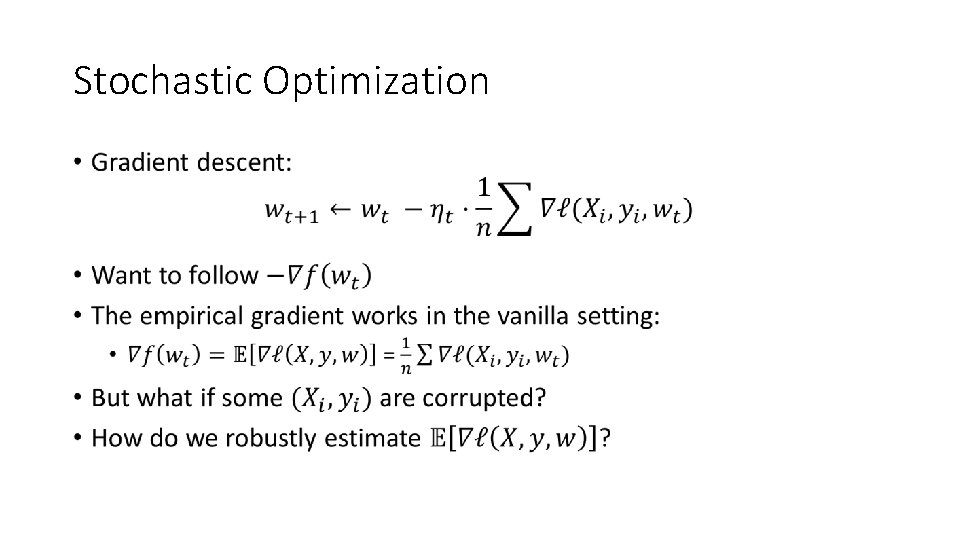

Stochastic Optimization

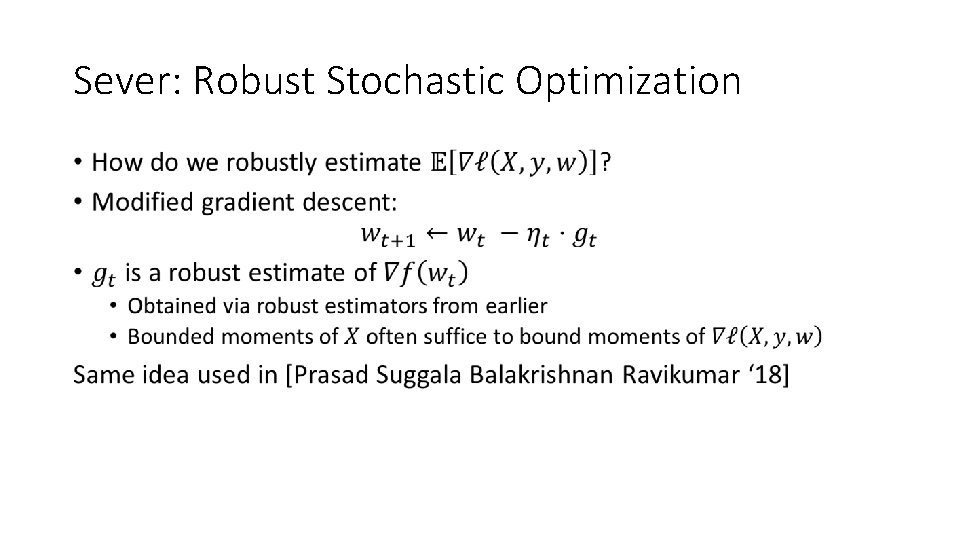

Sever: Robust Stochastic Optimization

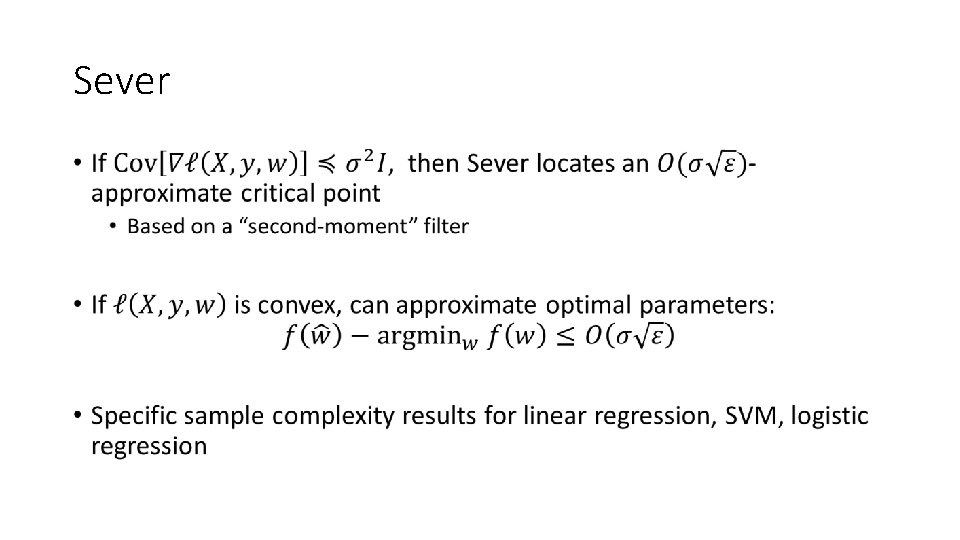

Sever
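A rough sketch in the spirit of Sever as a meta-algorithm around a base learner: fit the model, score each sample by the squared projection of its centered loss gradient onto the top singular vector of the gradient matrix, drop the highest-scoring samples, and repeat. Everything specific here is an assumption for illustration and not the authors' implementation: ridge regression as the base learner, the names `ridge_fit` and `sever_ridge`, a fixed 5% removed per round, four rounds, and the synthetic corruption.

```python
import numpy as np

def ridge_fit(X, y, lam):
    """Minimize sum_i [0.5 * (x_i.w - y_i)^2 + 0.5 * lam * ||w||^2]."""
    n, d = X.shape
    return np.linalg.solve(X.T @ X + n * lam * np.eye(d), X.T @ y)

def sever_ridge(X, y, lam=1e-3, drop_frac=0.05, n_rounds=4):
    """Alternate between fitting and removing samples with outlying gradients."""
    active = np.arange(len(y))
    for _ in range(n_rounds):
        Xa, ya = X[active], y[active]
        w = ridge_fit(Xa, ya, lam)
        # Per-sample gradients of the loss at the current fit.
        grads = (Xa @ w - ya)[:, None] * Xa + lam * w
        centered = grads - grads.mean(axis=0)
        # Top right singular vector: direction of largest gradient variance.
        _, _, vt = np.linalg.svd(centered, full_matrices=False)
        scores = (centered @ vt[0]) ** 2
        n_drop = int(drop_frac * len(active))
        active = active[np.argsort(scores)[: len(active) - n_drop]]
    return ridge_fit(X[active], y[active], lam), active

rng = np.random.default_rng(0)
n, d, n_bad = 2000, 10, 200
w_true = rng.normal(size=d)
X = rng.normal(size=(n, d))
y = X @ w_true + 0.1 * rng.normal(size=n)
# Hypothetical corruption: 10% of points share one location and a shifted label.
X[-n_bad:] = 1.0
y[-n_bad:] = X[-n_bad:] @ w_true + 10.0

naive_err = np.linalg.norm(ridge_fit(X, y, 1e-3) - w_true)
w_sever, active = sever_ridge(X, y)
sever_err = np.linalg.norm(w_sever - w_true)
print(f"plain ridge error: {naive_err:.2f}, after filtering: {sever_err:.2f}")
```

The loop also discards some clean samples, which is the price of a fixed removal fraction; the authors' code linked in the experiments slides is the reference for the actual procedure.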

Making it practical

Experiments

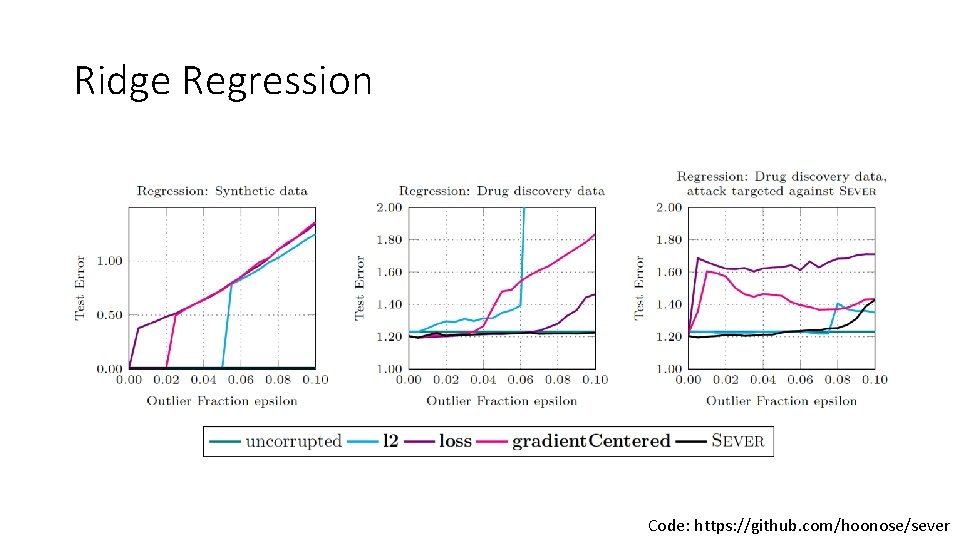

Ridge Regression
Code: https://github.com/hoonose/sever

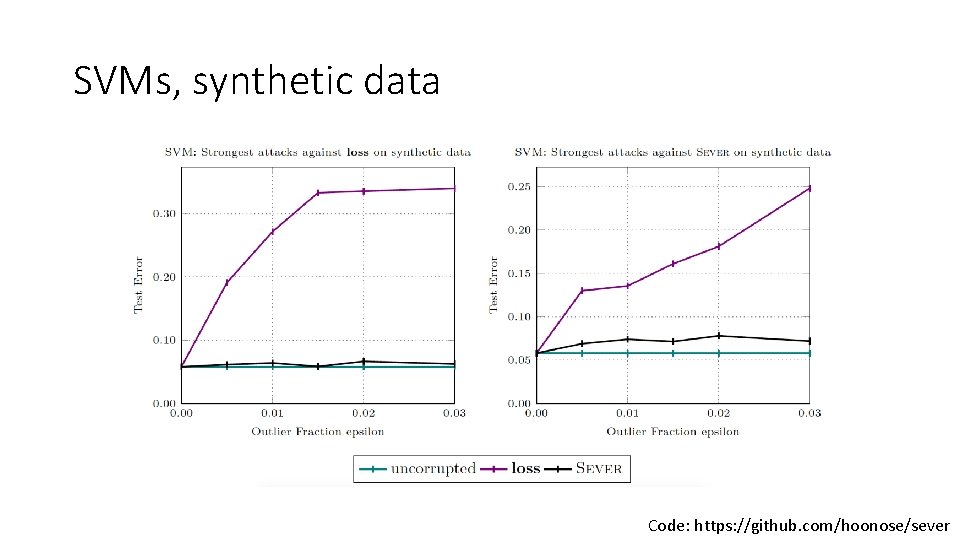

SVMs, synthetic data

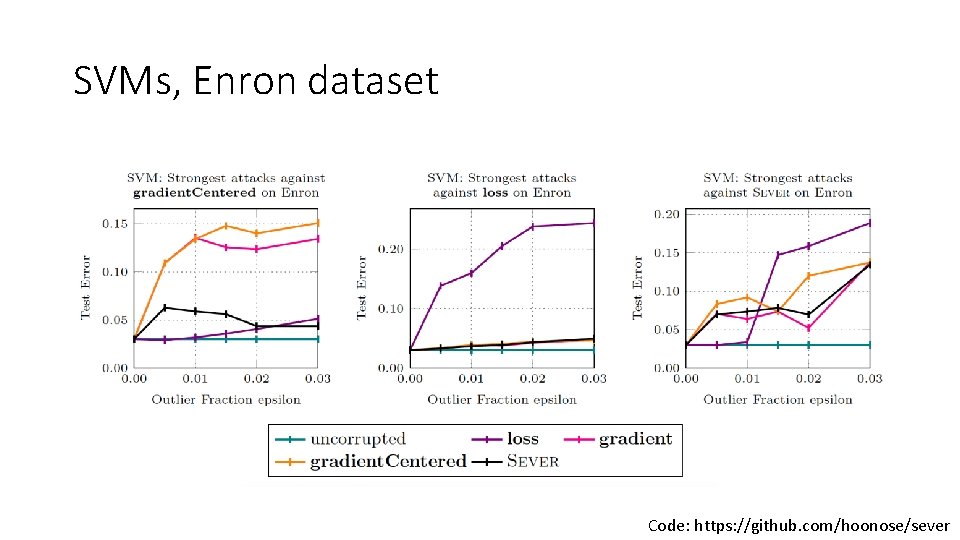

SVMs, Enron dataset

Conclusions
• Methods for robust estimation
• Applicable in many settings
• Computationally efficient
• Sample efficient
• Accurate in high dimensions
• Realizable!
• Still a lot to explore…
