Prototype-based models in unsupervised and supervised machine learning

Michael Biehl, Aleke Nolte
Johann Bernoulli Institute for Mathematics and Computer Science, University of Groningen, NL
www.cs.rug.nl/~biehl (preprints, reprints, available code)

Lingyu Wang
Kapteyn Astronomical Inst. and SRON Groningen Astrophysics Science Group, Groningen, NL

SUNDIAL H2020 Network: www.astro.rug.nl/~sundial/

Overview

- Introduction / Motivation: prototypes, exemplars; neural activation / learning
- Unsupervised Learning: Vector Quantization (VQ), competitive learning; Kohonen's Self-Organizing Map (SOM)
- Supervised Learning: Learning Vector Quantization (LVQ); adaptive distances and relevance learning
- Illustration: SOM clustering of galaxy data, post-labelling; supervised classification, LVQ + relevance learning

Astroinformatics, Cape Town, November 2017

Introduction

prototypes, exemplars: representation of information in terms of typical representatives (e.g. of a class of objects); a much debated concept in cognitive psychology

machine learning: prototype- (and distance-) based systems
- easy to implement, highly flexible, online training
- white box: parameterization in the space of observed data
- yield interpretable classifiers / regression systems
- help to detect bias in training data and other artifacts
- provide insights into the data set / problem at hand

Accuracy is not enough! [Paulo Lisboa]

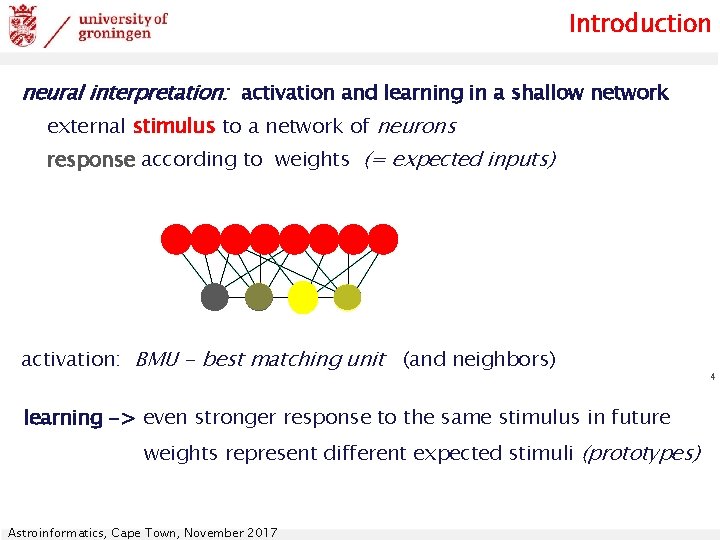

Introduction

neural interpretation: activation and learning in a shallow network
- external stimulus to a network of neurons
- response according to weights (= expected inputs)
- activation: BMU, the best matching unit (and its neighbors)
- learning → even stronger response to the same stimulus in the future
- weights represent different expected stimuli (prototypes)

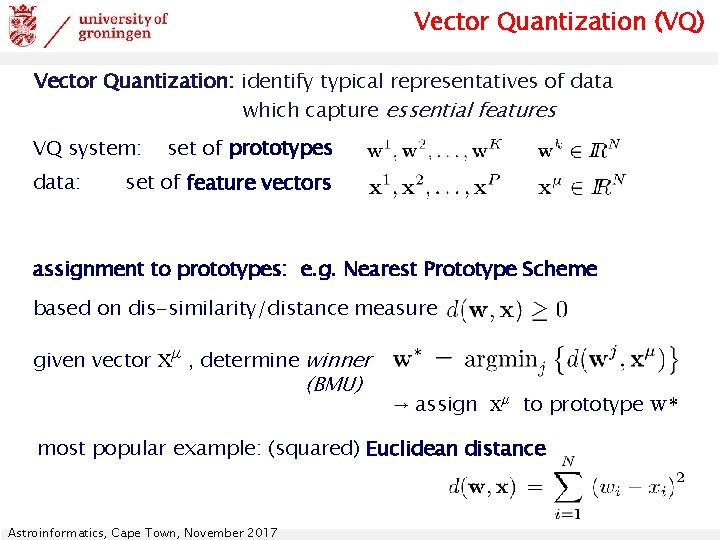

Vector Quantization (VQ)

Vector Quantization: identify typical representatives of the data which capture essential features
- VQ system: set of prototypes $\{w_k\}$
- data: set of feature vectors $\{x^\mu\}$
- assignment to prototypes, e.g. by the Nearest Prototype Scheme, based on a dissimilarity / distance measure $d(w, x)$
- given a vector $x^\mu$, determine the winner (BMU) $w^*$ with $d(w^*, x^\mu) \le d(w_k, x^\mu)$ for all $k$ → assign $x^\mu$ to prototype $w^*$
- most popular example: (squared) Euclidean distance $d(w, x) = \lVert w - x \rVert^2$

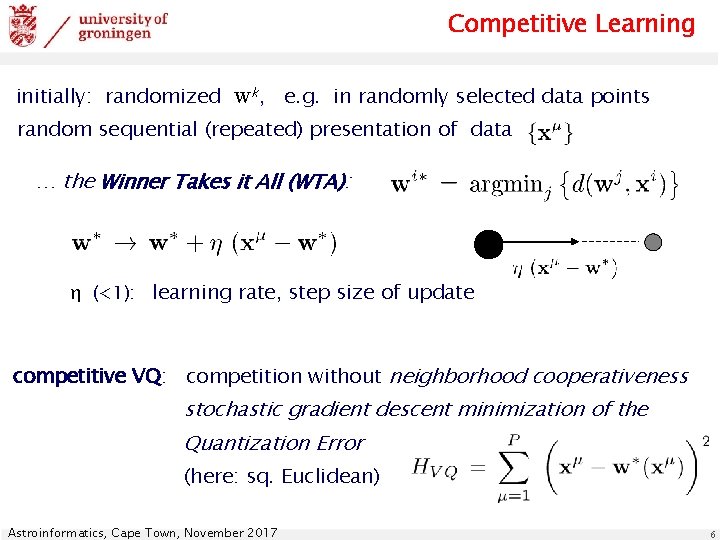
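
The nearest prototype scheme from the VQ slide can be sketched in a few lines of NumPy. The function names and the toy numbers below are illustrative assumptions, not taken from the talk or from any toolbox.

```python
import numpy as np

def squared_euclidean(prototypes, x):
    """Squared Euclidean distance of x to every prototype (one prototype per row)."""
    diff = prototypes - x
    return np.sum(diff * diff, axis=1)

def winner_index(prototypes, x):
    """Index of the best matching unit (BMU), i.e. the closest prototype."""
    return int(np.argmin(squared_euclidean(prototypes, x)))

def quantization_error(prototypes, data):
    """Sum over all data points of the squared distance to their winner."""
    return float(sum(squared_euclidean(prototypes, x).min() for x in data))

# Toy example with made-up numbers, for illustration only
prototypes = np.array([[0.0, 0.0], [1.0, 1.0]])
data = np.array([[0.1, -0.1], [0.9, 1.2], [0.2, 0.3]])
assignments = [winner_index(prototypes, x) for x in data]
print(assignments, quantization_error(prototypes, data))
```

Any other dissimilarity measure could replace `squared_euclidean` without changing the assignment logic.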

Competitive Learning

- initially: randomized $w_k$, e.g. placed in randomly selected data points
- random sequential (repeated) presentation of data
- ... the Winner Takes it All (WTA): $w^* \leftarrow w^* + \eta\,(x^\mu - w^*)$
- $\eta$ (< 1): learning rate, step size of the update
- competitive VQ: competition without neighborhood cooperativeness
- stochastic gradient descent minimization of the Quantization Error (here: squared Euclidean)

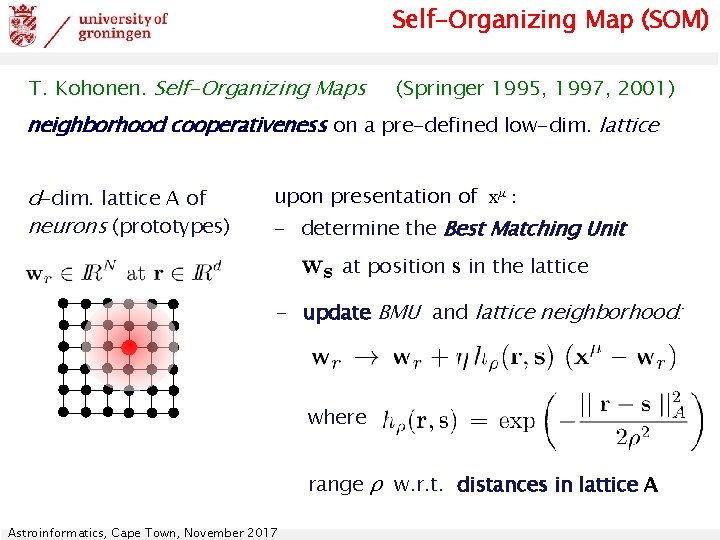
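
A minimal sketch of Winner-Takes-All competitive learning, assuming the standard update in which only the winner moves a fraction η towards the presented data point. The function name, the default values of `eta` and `n_epochs`, and the synthetic two-cluster data are hypothetical choices for illustration.

```python
import numpy as np

def train_competitive_vq(data, n_prototypes, eta=0.05, n_epochs=20, seed=0):
    """Winner-Takes-All vector quantization with squared Euclidean distance."""
    rng = np.random.default_rng(seed)
    # initialize the prototypes in randomly selected data points
    init = rng.choice(len(data), size=n_prototypes, replace=False)
    prototypes = data[init].astype(float)
    for _ in range(n_epochs):
        # random sequential presentation: a new random order in every epoch
        for mu in rng.permutation(len(data)):
            x = data[mu]
            dists = np.sum((prototypes - x) ** 2, axis=1)
            k = np.argmin(dists)                        # winner (BMU)
            prototypes[k] += eta * (x - prototypes[k])  # only the winner is updated
    return prototypes

# Synthetic data: two well separated clusters (made up for this sketch)
rng_data = np.random.default_rng(1)
data = np.vstack([rng_data.normal(0.0, 0.1, size=(50, 2)),
                  rng_data.normal(3.0, 0.1, size=(50, 2))])
prototypes = train_competitive_vq(data, n_prototypes=2)
print(prototypes)
```

Because every update is a convex combination of a prototype and a data point (for 0 < η < 1), the prototypes never leave the region spanned by the data.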

Self-Organizing Map (SOM)

T. Kohonen. Self-Organizing Maps (Springer 1995, 1997, 2001)

neighborhood cooperativeness on a pre-defined low-dim. lattice
- d-dim. lattice A of neurons (prototypes)
- upon presentation of $x^\mu$:
  - determine the Best Matching Unit at position s in the lattice
  - update the BMU and its lattice neighborhood: $w_r \leftarrow w_r + \eta\, h_\rho(r, s)\,(x^\mu - w_r)$, where the neighborhood function $h_\rho(r, s)$ has range $\rho$ w.r.t. distances in the lattice A

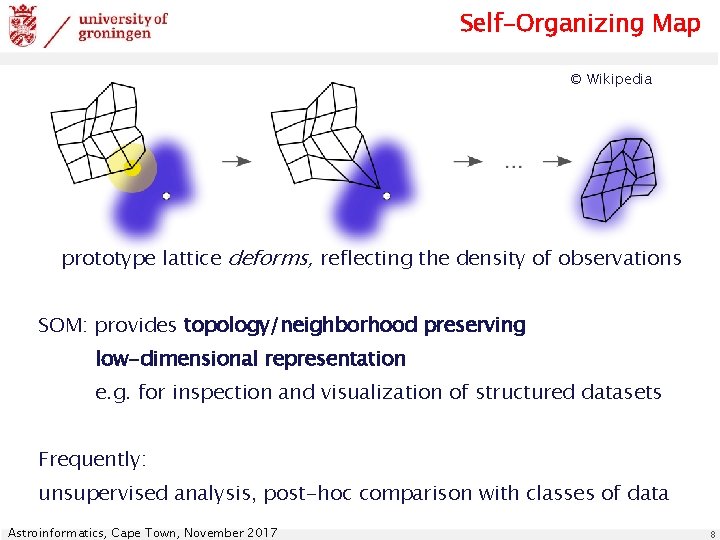
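
The SOM update can be sketched as a single training step. A Gaussian neighborhood function of range ρ is assumed here, which is a common choice but is not stated in the slide text. The function `som_step` and the 3 × 3 toy lattice are hypothetical, and periodic boundary conditions are not handled.

```python
import numpy as np

def som_step(weights, grid, x, eta, rho):
    """One SOM update.

    weights: (K, N) array of prototypes, modified in place
    grid:    (K, d) array of lattice positions of the K units
    """
    bmu = int(np.argmin(np.sum((weights - x) ** 2, axis=1)))  # winner in data space
    lattice_d2 = np.sum((grid - grid[bmu]) ** 2, axis=1)      # distances in the lattice
    h = np.exp(-lattice_d2 / (2.0 * rho ** 2))                # assumption: Gaussian neighborhood
    weights += eta * h[:, None] * (x - weights)               # BMU and neighbors move towards x
    return bmu

# 3 x 3 lattice with randomly initialized 2-dim. prototypes (toy example)
grid = np.array([[i, j] for i in range(3) for j in range(3)], dtype=float)
rng = np.random.default_rng(0)
weights = rng.random((9, 2))
before = weights.copy()
x = np.array([0.5, 0.5])
s = som_step(weights, grid, x, eta=0.5, rho=1.0)
print(s, weights[s])
```

In practice both η and ρ are usually decreased during training, so that the map first unfolds globally and is then refined locally.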

Self-Organizing Map

(image © Wikipedia)

- the prototype lattice deforms, reflecting the density of observations
- SOM: provides a topology / neighborhood preserving low-dimensional representation, e.g. for inspection and visualization of structured data sets
- frequently: unsupervised analysis, followed by post-hoc comparison with the classes of the data

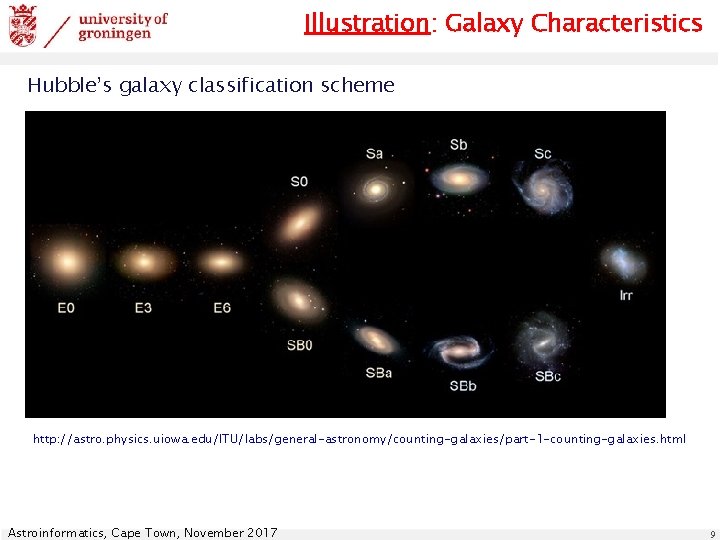

Illustration: Galaxy Characteristics

Hubble's galaxy classification scheme
http://astro.physics.uiowa.edu/ITU/labs/general-astronomy/counting-galaxies/part-1-counting-galaxies.html

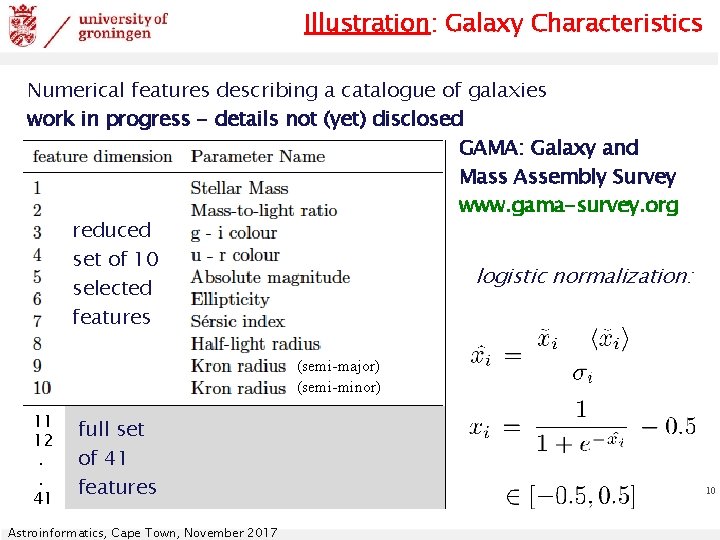

Illustration: Galaxy Characteristics

Numerical features describing a catalogue of galaxies (work in progress, details not (yet) disclosed)
- GAMA: Galaxy and Mass Assembly Survey, www.gama-survey.org
- full set of 41 features (numbered 1 to 41)
- reduced set of 10 selected features; annotations in the feature list: (semi-major), (semi-minor)
- logistic normalization of the features

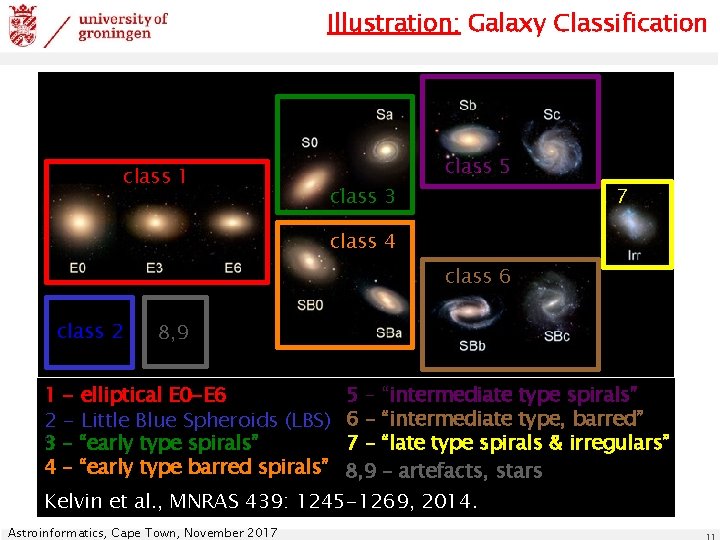

Illustration: Galaxy Classification

- class 1: elliptical E0-E6
- class 2: Little Blue Spheroids (LBS)
- class 3: "early type spirals"
- class 4: "early type barred spirals"
- class 5: "intermediate type spirals"
- class 6: "intermediate type, barred"
- class 7: "late type spirals & irregulars"
- classes 8, 9: artefacts, stars

Kelvin et al., MNRAS 439:1245-1269, 2014.

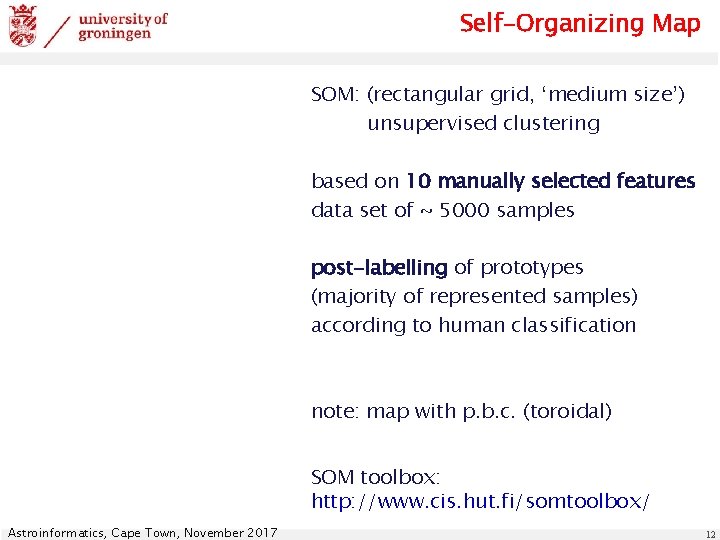

Self-Organizing Map

SOM (rectangular grid, 'medium size'): unsupervised clustering
- based on 10 manually selected features
- data set of ~5000 samples
- post-labelling of prototypes (majority of represented samples) according to the human classification
- note: map with periodic boundary conditions (p.b.c., toroidal)

SOM toolbox: http://www.cis.hut.fi/somtoolbox/

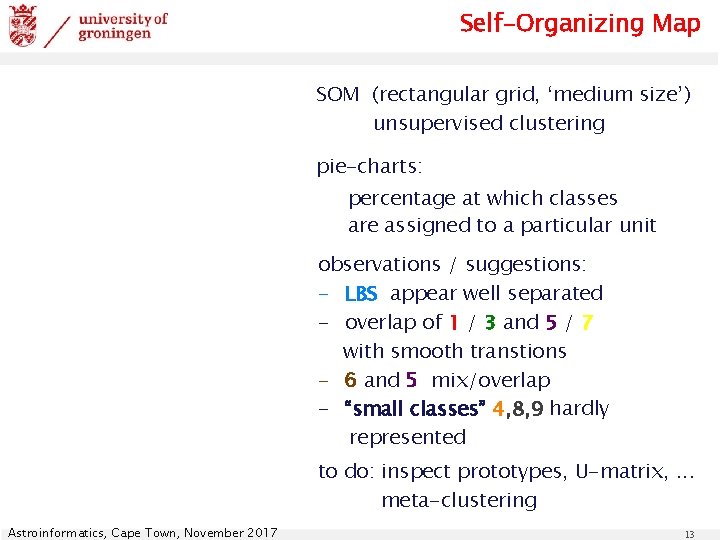

Self-Organizing Map

SOM (rectangular grid, 'medium size'): unsupervised clustering

pie charts: percentage at which classes are assigned to a particular unit

observations / suggestions:
- LBS appear well separated
- overlap of 1 / 3 and 5 / 7 with smooth transitions
- 6 and 5 mix / overlap
- "small classes" 4, 8, 9 are hardly represented

to do: inspect prototypes, U-matrix, ... meta-clustering

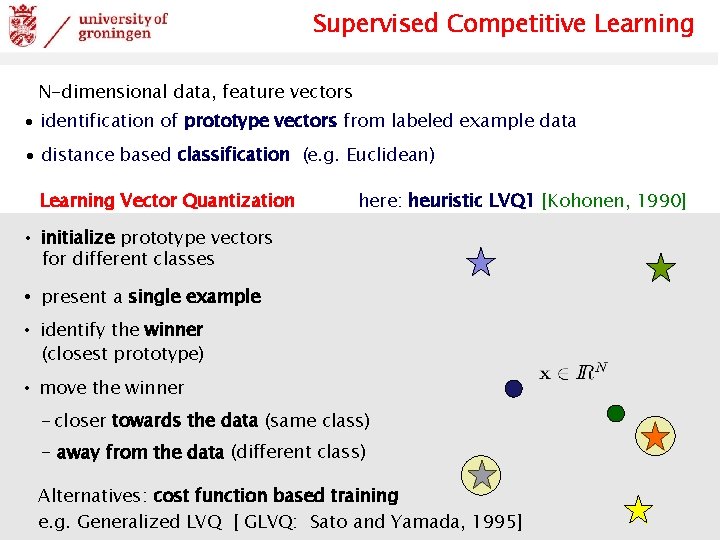

Supervised Competitive Learning

N-dimensional data, feature vectors
- identification of prototype vectors from labeled example data
- distance-based classification (e.g. Euclidean)

Learning Vector Quantization, here: heuristic LVQ1 [Kohonen, 1990]
- initialize prototype vectors for the different classes
- present a single example
- identify the winner (closest prototype)
- move the winner
  - closer towards the data (same class)
  - away from the data (different class)

Alternatives: cost function based training, e.g. Generalized LVQ [GLVQ: Sato and Yamada, 1995]

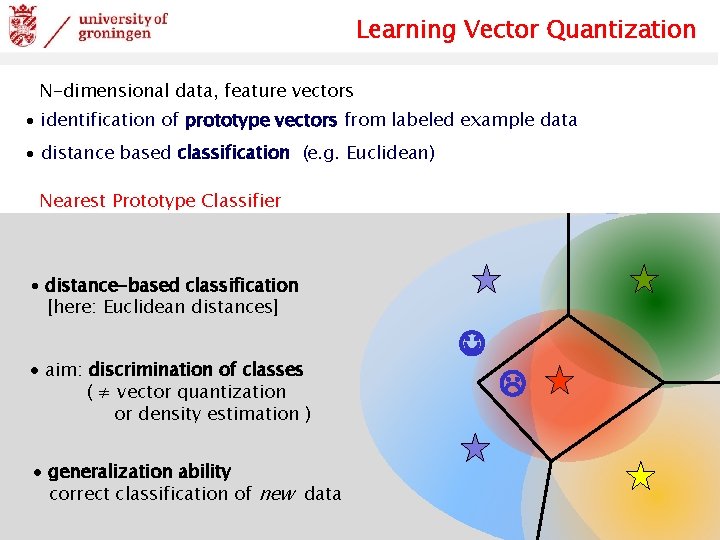
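
The LVQ1 steps listed on the slide can be sketched as follows. The step size `eta`, the number of epochs and the toy numbers are assumptions made for illustration; the slide does not specify them.

```python
import numpy as np

def lvq1_step(prototypes, proto_labels, x, y, eta):
    """One LVQ1 update for the example (x, y); prototypes are modified in place."""
    k = int(np.argmin(np.sum((prototypes - x) ** 2, axis=1)))  # winner
    sign = 1.0 if proto_labels[k] == y else -1.0               # attract or repel
    prototypes[k] += sign * eta * (x - prototypes[k])
    return k

def train_lvq1(X, y, prototypes, proto_labels, eta=0.05, n_epochs=30, seed=0):
    """Repeated random sequential presentation of the labeled examples."""
    rng = np.random.default_rng(seed)
    W = prototypes.astype(float)  # astype returns a copy, the input stays untouched
    for _ in range(n_epochs):
        for mu in rng.permutation(len(X)):
            lvq1_step(W, proto_labels, X[mu], y[mu], eta)
    return W

# Two single update steps on made-up numbers
W = np.array([[0.0, 0.0], [2.0, 2.0]])
labels = np.array([0, 1])
x = np.array([0.5, 0.0])
lvq1_step(W, labels, x, 0, eta=0.1)   # same class: winner moves towards x
after_attract = W[0].copy()
lvq1_step(W, labels, x, 1, eta=0.1)   # different class: winner moves away from x
after_repel = W[0].copy()
print(after_attract, after_repel)
```

Cost function based variants such as GLVQ replace this heuristic rule with gradient steps on an explicit objective; they are not covered by this sketch.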

Learning Vector Quantization

N-dimensional data, feature vectors
- identification of prototype vectors from labeled example data
- distance-based classification (e.g. Euclidean)

Nearest Prototype Classifier
- distance-based classification [here: Euclidean distances]
- aim: discrimination of classes (≠ vector quantization or density estimation)
- generalization ability: correct classification of new data

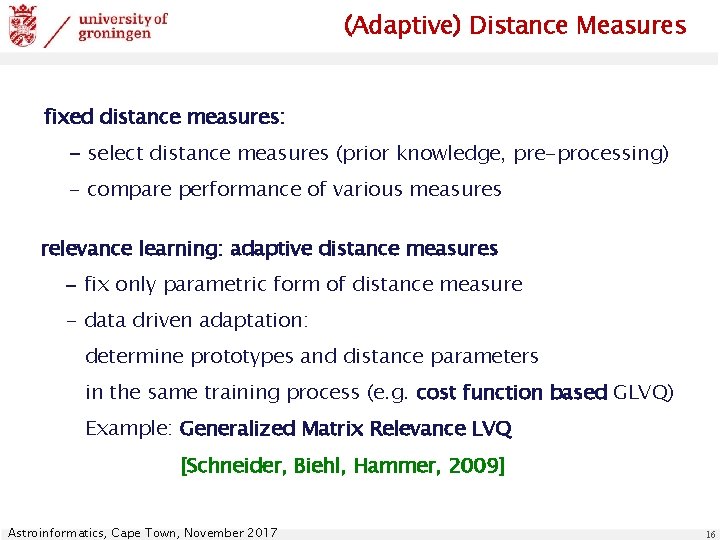
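
A minimal sketch of the Nearest Prototype Classifier applied to new data. The prototypes, labels and test points are made up for illustration; `npc_predict` and `accuracy` are hypothetical helper names.

```python
import numpy as np

def npc_predict(prototypes, proto_labels, X):
    """Assign every row of X the label of its closest prototype (squared Euclidean)."""
    d = ((X[:, None, :] - prototypes[None, :, :]) ** 2).sum(axis=2)  # shape (n_samples, n_prototypes)
    return proto_labels[np.argmin(d, axis=1)]

def accuracy(y_true, y_pred):
    """Fraction of correctly classified examples."""
    return float(np.mean(y_true == y_pred))

# Several prototypes may represent the same class
prototypes = np.array([[0.0, 0.0], [0.0, 1.0], [3.0, 3.0]])
proto_labels = np.array([1, 1, 2])

# "New" data that was not used for training (toy numbers)
X_new = np.array([[0.2, 0.1], [2.5, 3.5], [0.1, 1.4], [1.8, 1.8]])
y_new = np.array([1, 2, 1, 1])

y_pred = npc_predict(prototypes, proto_labels, X_new)
print(y_pred, accuracy(y_new, y_pred))
```

The accuracy on such held-out data, not on the training set, is what estimates the generalization ability.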

(Adaptive) Distance Measures

fixed distance measures:
- select distance measures (prior knowledge, pre-processing)
- compare the performance of various measures

relevance learning: adaptive distance measures
- fix only the parametric form of the distance measure
- data-driven adaptation: determine prototypes and distance parameters in the same training process (e.g. cost function based GLVQ)

Example: Generalized Matrix Relevance LVQ [Schneider, Biehl, Hammer, 2009]

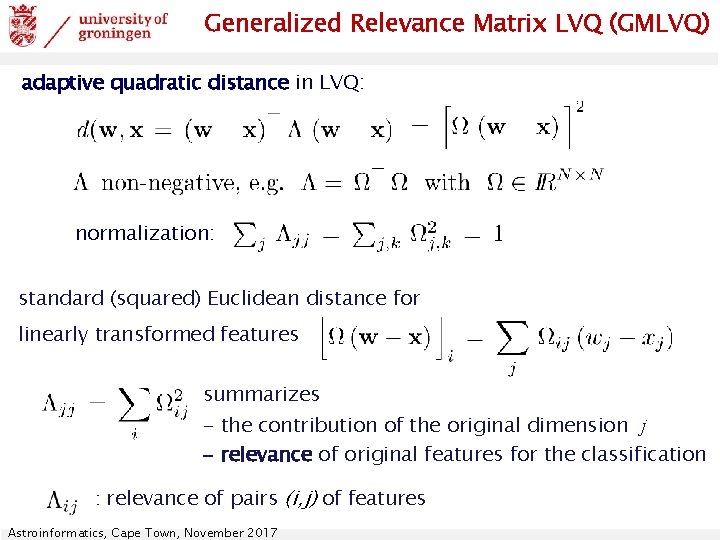

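Generalized Matrix Relevance LVQ (GMLVQ)

adaptive quadratic distance in LVQ: $d_\Lambda(w, x) = (x - w)^\top \Lambda\,(x - w)$ with $\Lambda = \Omega^\top \Omega$
- normalization: $\sum_i \Lambda_{ii} = 1$
- corresponds to the standard (squared) Euclidean distance for linearly transformed features: $d_\Lambda(w, x) = \lVert \Omega\,(x - w) \rVert^2$
- the diagonal element $\Lambda_{jj} = \sum_i \Omega_{ij}^2$ summarizes the contribution of the original dimension j, i.e. the relevance of the original feature for the classification
- the off-diagonal element $\Lambda_{ij}$: relevance of pairs (i, j) of features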

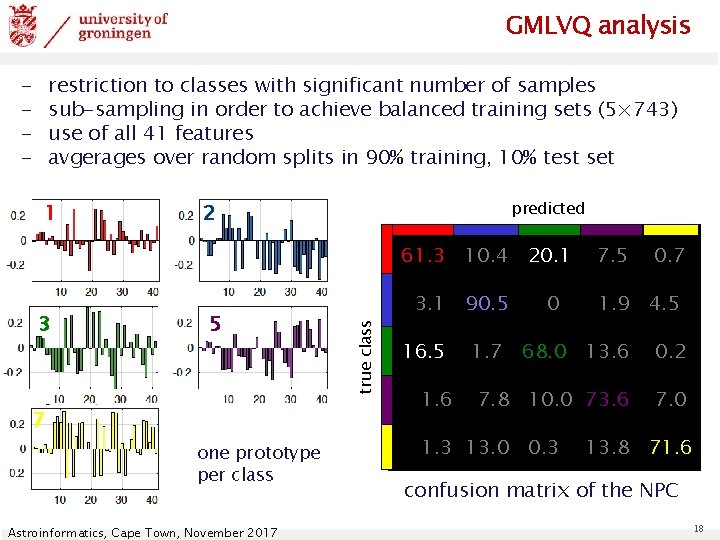
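
The adaptive quadratic distance can be illustrated with a small sketch, assuming the parameterization Λ = ΩᵀΩ with unit trace as in the GMLVQ literature. Only the distance is shown; the training of Ω and of the prototypes is not. The matrix entries and function names are made up for illustration.

```python
import numpy as np

def normalize_omega(omega):
    """Rescale omega so that Lambda = omega^T omega has unit trace."""
    return omega / np.sqrt(np.sum(omega ** 2))

def relevance_matrix(omega):
    """Lambda = omega^T omega, symmetric and positive semi-definite by construction."""
    return omega.T @ omega

def adaptive_distance(omega, w, x):
    """d(w, x) = (x - w)^T Lambda (x - w), computed as || omega (x - w) ||^2."""
    z = omega @ (x - w)
    return float(z @ z)

# Hypothetical 2 x 2 transformation, for illustration only
omega = normalize_omega(np.array([[2.0, 0.0], [1.0, 1.0]]))
lam = relevance_matrix(omega)
w = np.array([0.0, 0.0])
x = np.array([1.0, -1.0])
print(np.diag(lam), adaptive_distance(omega, w, x))
```

Writing the distance in terms of Ω guarantees that it is non-negative for any parameter values, which is why Ω rather than Λ is usually the adapted quantity.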

GMLVQ analysis

- restriction to classes with a significant number of samples
- sub-sampling in order to achieve balanced training sets (5 × 743)
- use of all 41 features
- one prototype per class
- averages over random splits into 90% training and 10% test set

confusion matrix of the NPC (in %; rows: true class, columns: predicted class)

| true \ predicted | 1 | 2 | 3 | 5 | 7 |
|---|---|---|---|---|---|
| 1 | 61.3 | 10.4 | 20.1 | 7.5 | 0.7 |
| 2 | 3.1 | 90.5 | 0 | 1.9 | 4.5 |
| 3 | 16.5 | 1.7 | 68.0 | 13.6 | 0.2 |
| 5 | 1.6 | 7.8 | 10.0 | 73.6 | 7.0 |
| 7 | 1.3 | 13.0 | 0.3 | 13.8 | 71.6 |

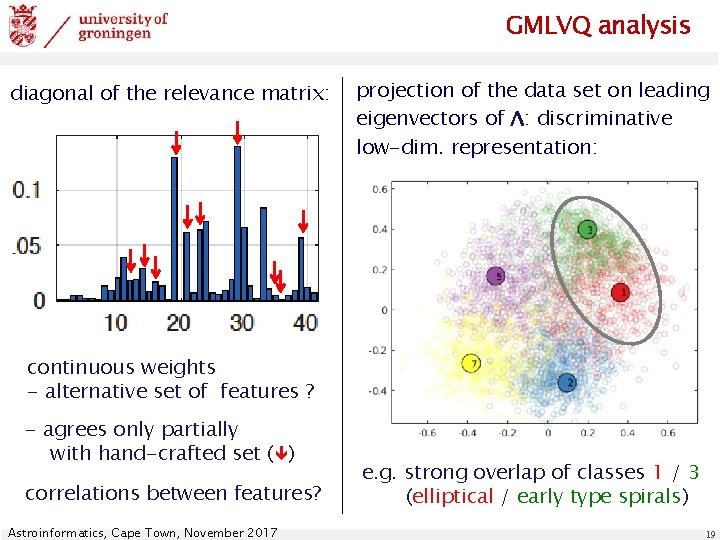
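
The evaluation protocol (balanced sub-sampling, confusion matrix in percent) could be sketched as below. This is not the authors' code: the helper names are hypothetical, the labels are toy values, and the matrix is normalized per true class, which is an assumption about how the percentages on the slide were computed.

```python
import numpy as np

def balanced_subsample(y, n_per_class, rng):
    """Indices of a subset containing exactly n_per_class samples of every class."""
    idx = [rng.choice(np.flatnonzero(y == c), size=n_per_class, replace=False)
           for c in np.unique(y)]
    return np.concatenate(idx)

def confusion_percent(y_true, y_pred, classes):
    """Confusion matrix in percent; rows: true class, columns: predicted class.

    Assumes that every class in `classes` occurs at least once in y_true.
    """
    pos = {c: i for i, c in enumerate(classes)}
    cm = np.zeros((len(classes), len(classes)))
    for t, p in zip(y_true, y_pred):
        cm[pos[int(t)], pos[int(p)]] += 1
    return 100.0 * cm / cm.sum(axis=1, keepdims=True)

# Toy labels, for illustration only
rng = np.random.default_rng(0)
y_all = np.array([1, 1, 1, 1, 1, 2, 2, 2])
subset = balanced_subsample(y_all, n_per_class=3, rng=rng)

y_true = np.array([1, 1, 2, 2, 2, 2])
y_pred = np.array([1, 2, 2, 2, 2, 1])
cm = confusion_percent(y_true, y_pred, classes=[1, 2])
print(cm)
```

Averaging such matrices over repeated random 90% / 10% splits would give numbers of the kind reported in the table.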

GMLVQ analysis

diagonal of the relevance matrix:
- continuous weights: an alternative set of features?
- agrees only partially with the hand-crafted set
- correlations between features?

projection of the data set on the leading eigenvectors of Λ:
- discriminative low-dim. representation
- e.g. strong overlap of classes 1 / 3 (elliptical / early type spirals)
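
Both views of the relevance matrix, its diagonal and the projection on its leading eigenvectors, can be sketched with NumPy. The matrix `omega` and the data below are hypothetical stand-ins, not results from the galaxy analysis.

```python
import numpy as np

def leading_eigenvectors(lam, n_components=2):
    """Eigenvalues and eigenvectors of the symmetric matrix lam, largest eigenvalues first."""
    eigvals, eigvecs = np.linalg.eigh(lam)   # eigh returns ascending eigenvalues
    order = np.argsort(eigvals)[::-1][:n_components]
    return eigvals[order], eigvecs[:, order]

def project(X, eigvecs):
    """Coordinates of the rows of X along the given eigenvectors (columns)."""
    return X @ eigvecs

# Hypothetical relevance matrix built from a made-up 2 x 3 omega
omega = np.array([[1.0, 0.5, 0.0],
                  [0.0, 1.0, 0.0]])
lam = omega.T @ omega
lam /= np.trace(lam)                 # normalization: diagonal sums to 1

relevances = np.diag(lam)            # one relevance value per original feature
eigvals, eigvecs = leading_eigenvectors(lam, n_components=2)

X = np.random.default_rng(0).normal(size=(4, 3))   # stand-in for feature vectors
X_2d = project(X, eigvecs)           # two coordinates per sample, e.g. for a scatter plot
print(relevances, eigvals, X_2d.shape)
```

In this toy example the third feature has zero relevance, so it does not influence the projection at all.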

Summary

Prototype-based systems in machine learning: represent data in terms of exemplars; white box parameterization of clustering / classification / regression

Unsupervised Learning
- data reduction, vector quantization, clustering
- low-dimensional representation, topology preserving SOM

Supervised Learning
- example: LVQ for classification with adaptive distance, Generalized Matrix Relevance LVQ (GMLVQ) (*)
- white box, transparent, intuitive, powerful
- accuracy is not enough: insight into the problem / data set, e.g. with respect to feature selection / weighting

(*) GMLVQ (Matlab) toolboxes: www.cs.rug.nl/~biehl

there is a lot more...

Unsupervised Learning
- Neural Gas (NG)
- Generative Topographic Map (GTM)
- Relevance learning in dimension reduction

Regression
- Ordinal Regression in GMLVQ
- Radial Basis Function networks (RBF)

Probabilistic classification
- likelihood-based classifiers (Robust Soft LVQ)

Distances / Similarities
- unconventional, problem-specific similarity measures, e.g. for functional data (time series, spectra, histograms, ...)
- non-vectorial data, relational data
- relevances: weak / strong, bounds
- ...

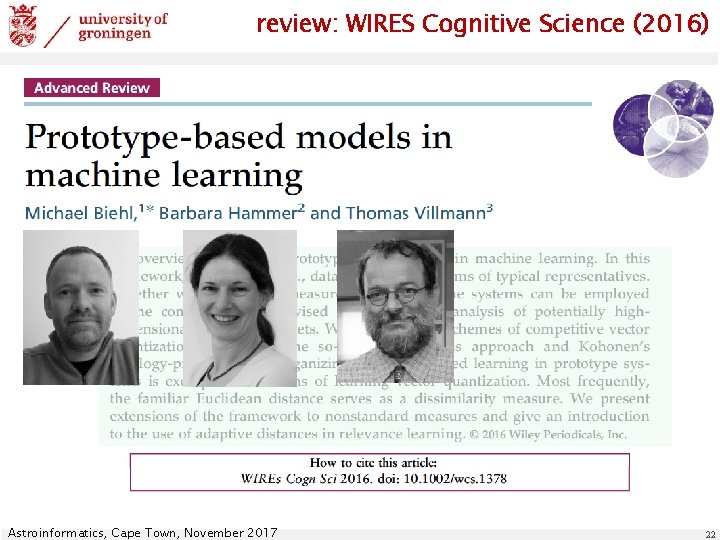

review: WIREs Cognitive Science (2016)
