On Power-Proportional Processors
Yasuko Watanabe (watanabe@cs.wisc.edu)
Advisor: Dr. David A. Wood
Oral Defense, October 11, 2011

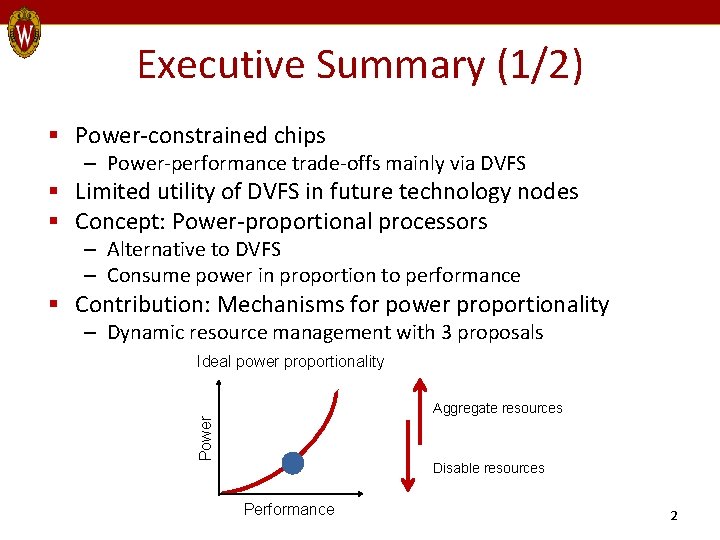

Executive Summary (1/2)
§ Power-constrained chips
  – Power-performance trade-offs mainly via DVFS
§ Limited utility of DVFS in future technology nodes
§ Concept: Power-proportional processors
  – Alternative to DVFS
  – Consume power in proportion to performance
§ Contribution: Mechanisms for power proportionality
  – Dynamic resource management with 3 proposals
[Figure: power vs. performance with the ideal power-proportionality line; aggregate resources to scale up, disable resources to scale down]

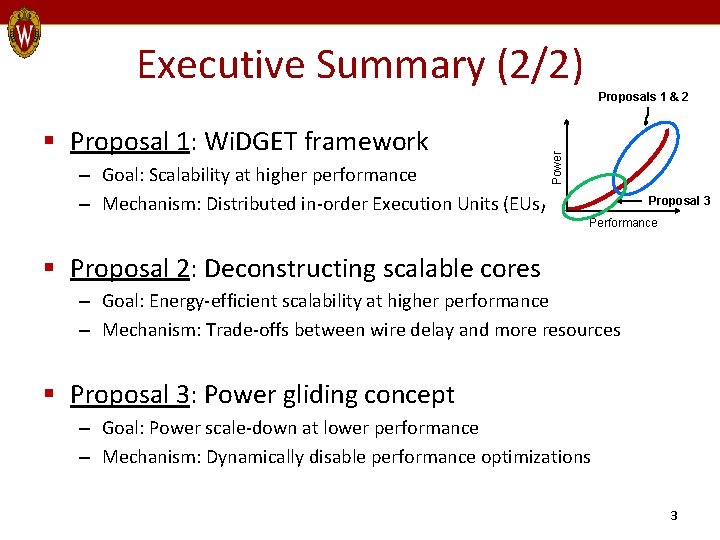

Executive Summary (2/2)
§ Proposal 1: WiDGET framework
  – Goal: Scalability at higher performance
  – Mechanism: Distributed in-order Execution Units (EUs)
§ Proposal 2: Deconstructing scalable cores
  – Goal: Energy-efficient scalability at higher performance
  – Mechanism: Trade-offs between wire delay and more resources
§ Proposal 3: Power gliding concept
  – Goal: Power scale-down at lower performance
  – Mechanism: Dynamically disable performance optimizations
[Figure: power vs. performance; Proposals 1 & 2 and Proposal 3 marked on the curve]

Outline
§ Motivation
§ Proposal 1: WiDGET framework
§ Proposal 2: Deconstructing scalable cores
§ Proposal 3: Power gliding
§ Related work
§ Conclusions

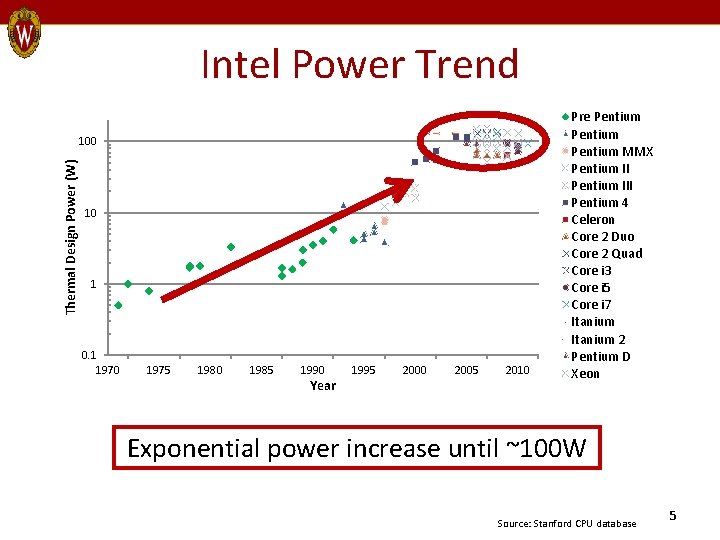

Intel Power Trend
[Figure: thermal design power (W, log scale) vs. year, 1970-2010, for Intel processors from pre-Pentium parts through Pentium, Celeron, Core 2, Core i3/i5/i7, Itanium 2, and Xeon]
Exponential power increase until ~100 W
Source: Stanford CPU database

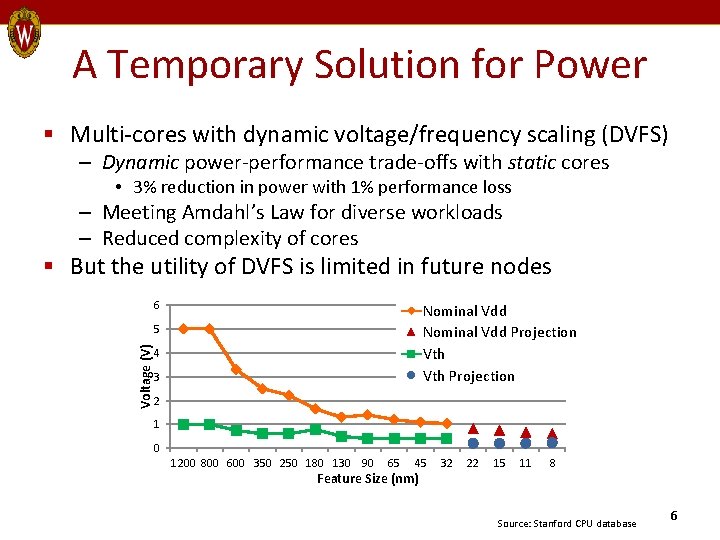

A Temporary Solution for Power
§ Multi-cores with dynamic voltage/frequency scaling (DVFS)
  – Dynamic power-performance trade-offs with static cores
    • 3% reduction in power with 1% performance loss
  – Meeting Amdahl’s Law for diverse workloads
  – Reduced complexity of cores
§ But the utility of DVFS is limited in future nodes
[Figure: nominal Vdd and Vth projections, voltage (V) vs. feature size from 1200 nm down to 8 nm. Source: Stanford CPU database]

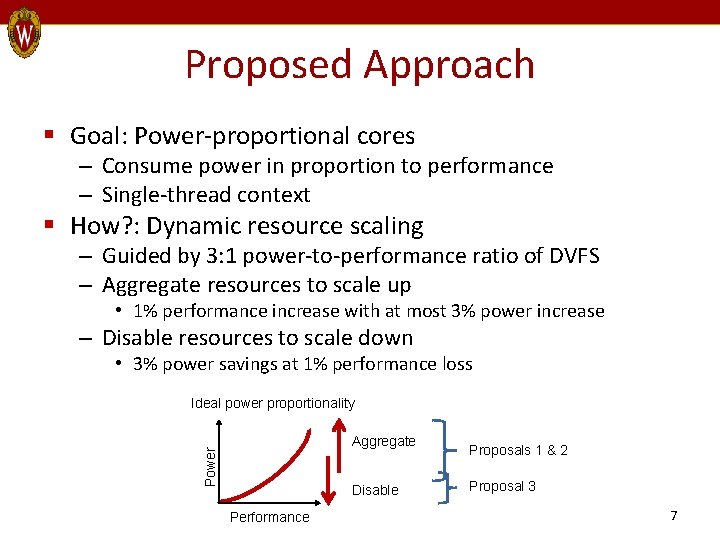

Proposed Approach
§ Goal: Power-proportional cores
  – Consume power in proportion to performance
  – Single-thread context
§ How? Dynamic resource scaling
  – Guided by the 3:1 power-to-performance ratio of DVFS
  – Aggregate resources to scale up
    • 1% performance increase with at most 3% power increase
  – Disable resources to scale down
    • 3% power savings at 1% performance loss
[Figure: power vs. performance with the ideal power-proportionality line; aggregate (Proposals 1 & 2) and disable (Proposal 3) regions]

Outline
§ Motivation
§ Proposal 1: WiDGET framework
  – Skip
  – 3-slide version
§ Proposal 2: Deconstructing scalable cores
§ Proposal 3: Power gliding
§ Related work
§ Conclusions

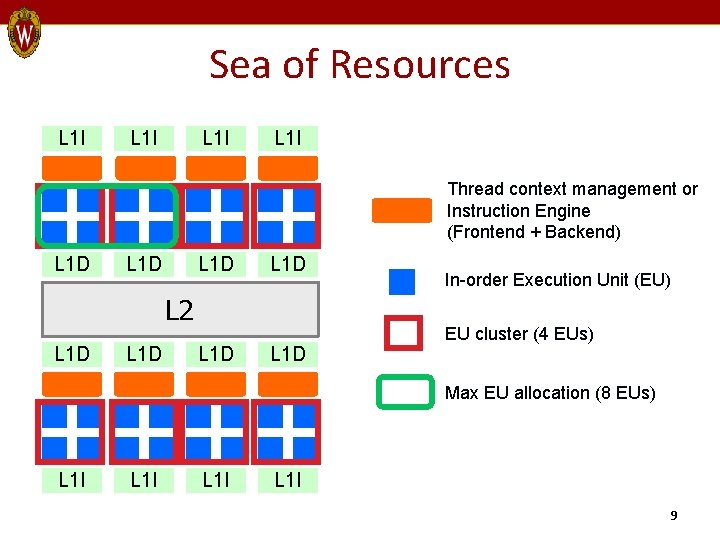

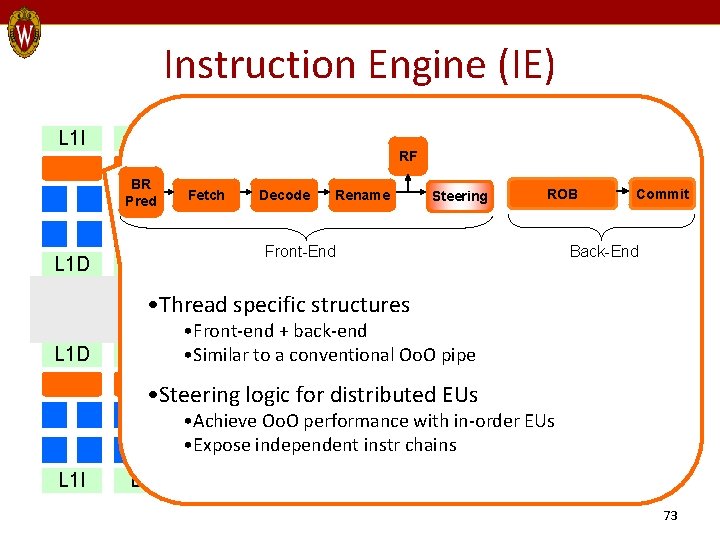

Sea of Resources
[Figure: chip floorplan with L1-I, L1-D, and L2 caches; thread context management or Instruction Engine (frontend + backend); in-order Execution Units (EUs); an EU cluster holds 4 EUs; maximum EU allocation is 8 EUs]

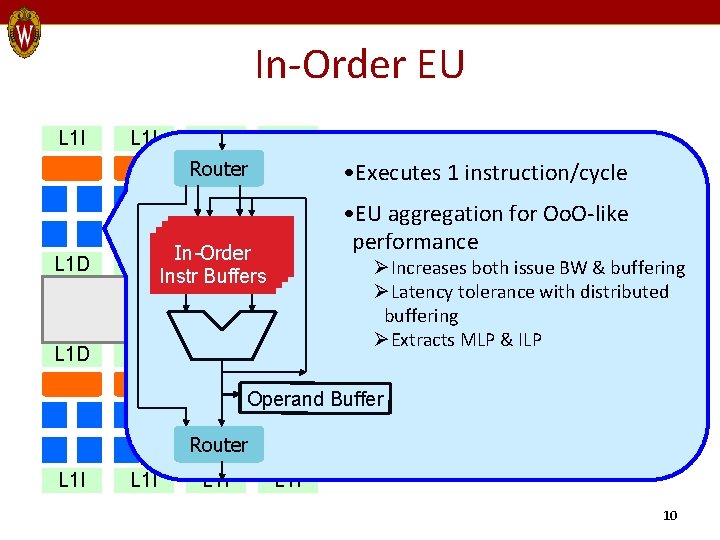

In-Order EU
• Executes 1 instruction/cycle
• EU aggregation for OoO-like performance
  – Increases both issue bandwidth & buffering
  – Latency tolerance with distributed buffering
  – Extracts MLP & ILP
[Figure: in-order EU with routers, in-order instruction buffers, and an operand buffer]

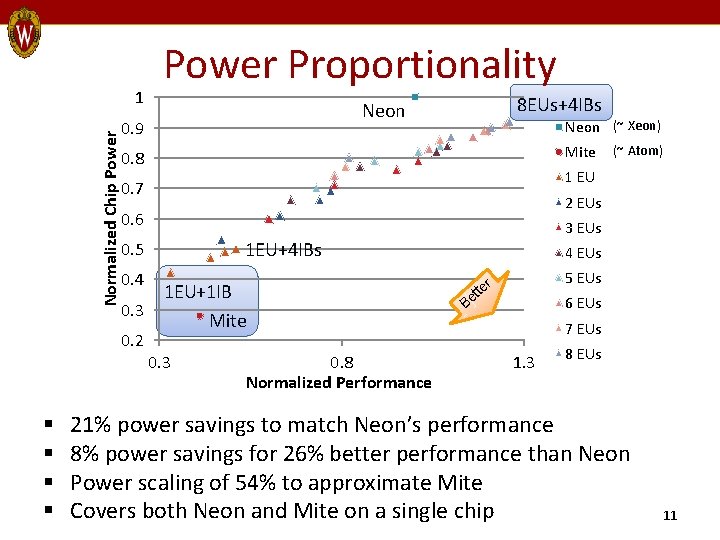

Power Proportionality
[Figure: normalized chip power vs. normalized performance for WiDGET configurations from 1 EU+1 IB to 8 EUs+4 IBs, compared with Neon (~Xeon) and Mite (~Atom)]
§ 21% power savings to match Neon’s performance
§ 8% power savings for 26% better performance than Neon
§ Power scaling of 54% to approximate Mite
§ Covers both Neon and Mite on a single chip

Outline
§ Motivation
§ Proposal 1: WiDGET framework
§ Proposal 2: Deconstructing scalable cores
§ Proposal 3: Power gliding
§ Related work
§ Conclusions

Other Scaling Opportunities?
§ Questions left unanswered by WiDGET
  – Scaling other resources?
  – Better power scale-down?
  – Impact of wire delay on performance?
§ Deconstruct prior scalable cores
  – Understand trade-offs in achieving scalability
    • Resource acquisition vs. wire delay
  – 2 categories
    1. Resource borrowing
    2. Resource overprovisioning

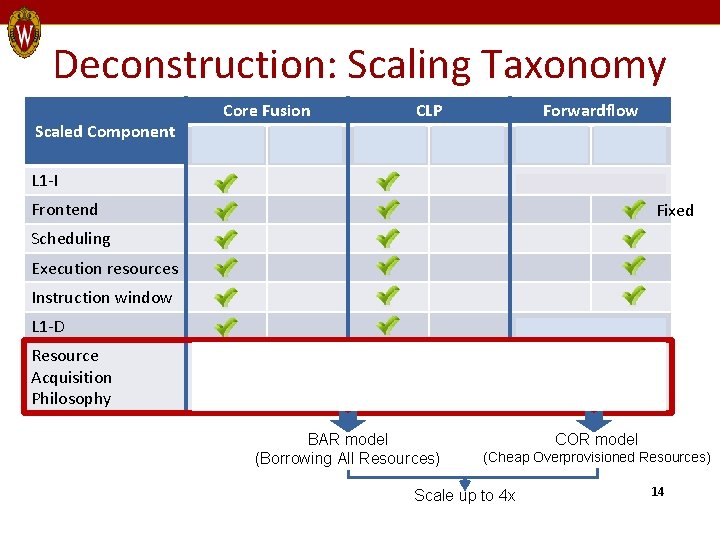

Deconstruction: Scaling Taxonomy
[Table: Core Fusion, CLP, and Forwardflow classified per scaled component (L1-I, frontend, scheduling, execution resources, instruction window, L1-D) and by resource acquisition philosophy, as borrowing or overprovisioning]
§ BAR model (Borrowing All Resources): Aggregate neighboring cores
§ COR model (Cheap Overprovisioned Resources): Overprovision a few core-private resources
§ Scale up to 4x

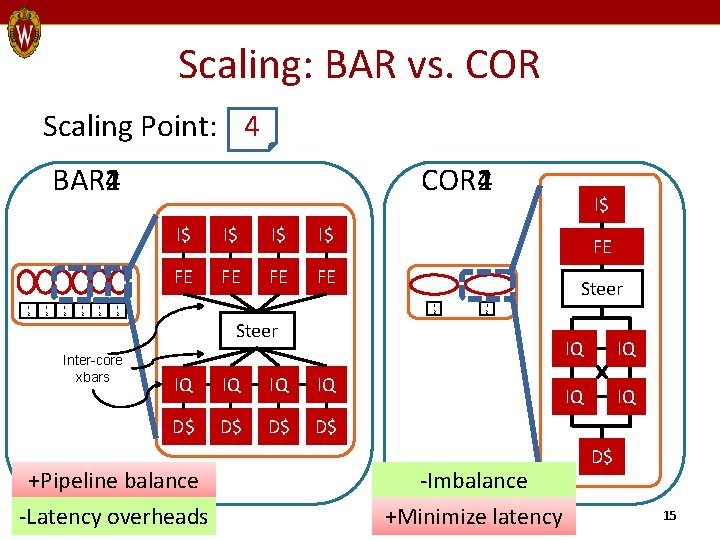

Scaling: BAR vs. COR
[Figure: block diagrams of BAR1/BAR2/BAR4 and COR1/COR2/COR4 at scaling points 1, 2, and 4, showing I$, frontend (FE), steering, IQs, D$, L2, and BAR's inter-core crossbars]
§ BAR: + Pipeline balance, - Latency overheads
§ COR: - Imbalance, + Minimize latency

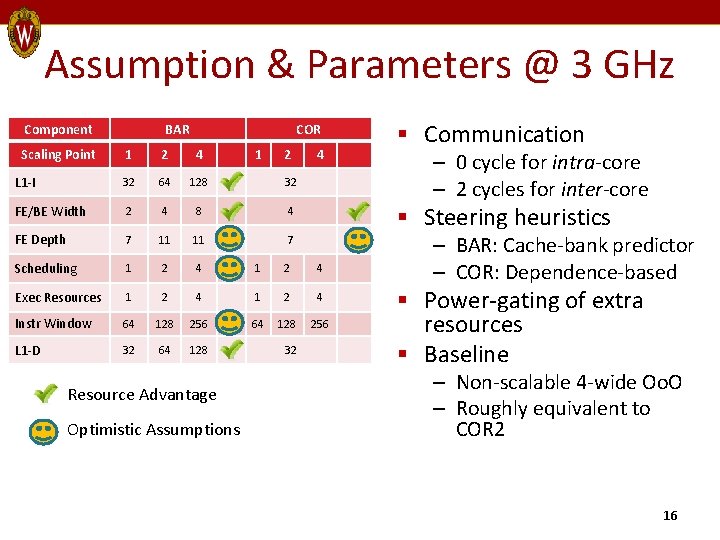

Assumption & Parameters @ 3 GHz
[Table: per-component parameters at scaling points 1 / 2 / 4; annotated with "Resource Advantage" and "Optimistic Assumptions"]
  L1-I: BAR 32 / 64 / 128; COR 32
  FE/BE width: BAR 2 / 4 / 8; COR 4
  FE depth: BAR 7 / 11 / 11; COR 7
  Scheduling: 1 / 2 / 4
  Exec resources: 1 / 2 / 4
  Instr window: 64 / 128 / 256
  L1-D: BAR 32 / 64 / 128; COR 32
§ Communication
  – 0 cycles for intra-core
  – 2 cycles for inter-core
§ Steering heuristics
  – BAR: Cache-bank predictor
  – COR: Dependence-based
§ Power-gating of extra resources
§ Baseline
  – Non-scalable 4-wide OoO
  – Roughly equivalent to COR2

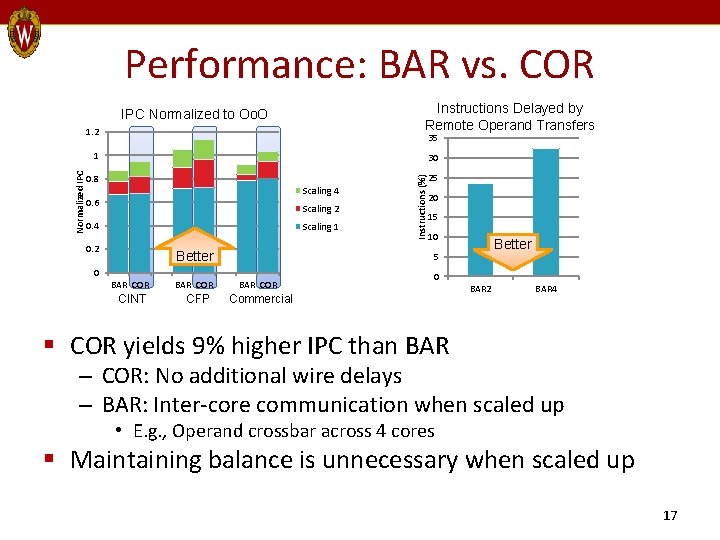

Performance: BAR vs. COR
[Figure: IPC normalized to OoO for BAR and COR at scaling points 1, 2, and 4 on CINT, CFP, and commercial workloads; percentage of instructions delayed by remote operand transfers for BAR2 and BAR4]
§ COR yields 9% higher IPC than BAR
  – COR: No additional wire delays
  – BAR: Inter-core communication when scaled up
    • E.g., operand crossbar across 4 cores
§ Maintaining balance is unnecessary when scaled up

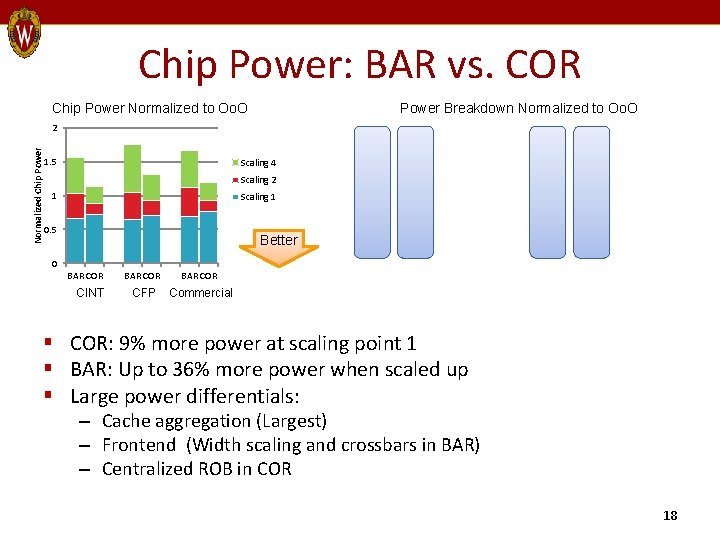

Chip Power: BAR vs. COR
[Figure: chip power and power breakdown normalized to OoO for BAR and COR at scaling points 1, 2, and 4 on CINT, CFP, and commercial workloads]
§ COR: 9% more power at scaling point 1
§ BAR: Up to 36% more power when scaled up
§ Large power differentials:
  – Cache aggregation (largest)
  – Frontend (width scaling and crossbars in BAR)
  – Centralized ROB in COR

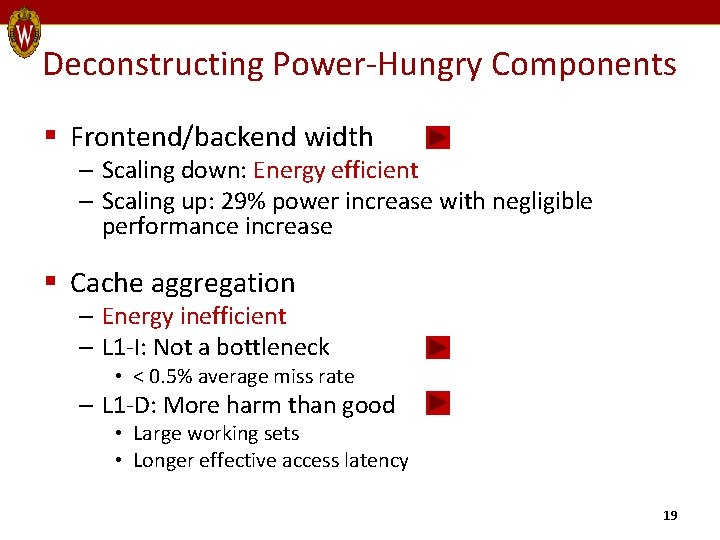

Deconstructing Power-Hungry Components
§ Frontend/backend width
  – Scaling down: Energy efficient
  – Scaling up: 29% power increase with negligible performance increase
§ Cache aggregation
  – Energy inefficient
  – L1-I: Not a bottleneck
    • < 0.5% average miss rate
  – L1-D: More harm than good
    • Large working sets
    • Longer effective access latency

Improving Scalable Cores: COBRA
§ One scaling philosophy does not fit all situations
§ Hybrid of BAR and COR
  – Performance scalability features from COR
    • Overprovisioned window/execution resources
  – Low-power features from BAR
    • Interleaved ROB (core private)
    • Pipeline width scale-down (core private)
  – Borrow only execution resources
    • Scaling of up to 8x

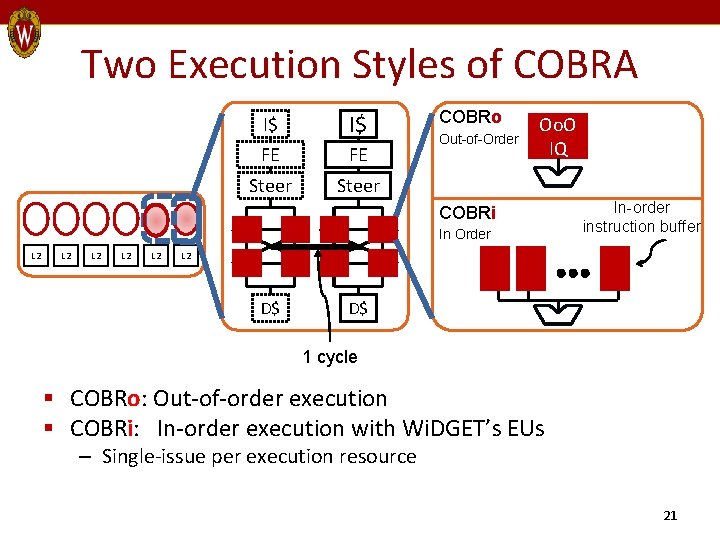

Two Execution Styles of COBRA
[Figure: COBRo (out-of-order, with an OoO IQ) and COBRi (in-order, with in-order instruction buffers), each showing I$, FE, steering, D$, and L2; 1-cycle link]
§ COBRo: Out-of-order execution
§ COBRi: In-order execution with WiDGET’s EUs
  – Single-issue per execution resource

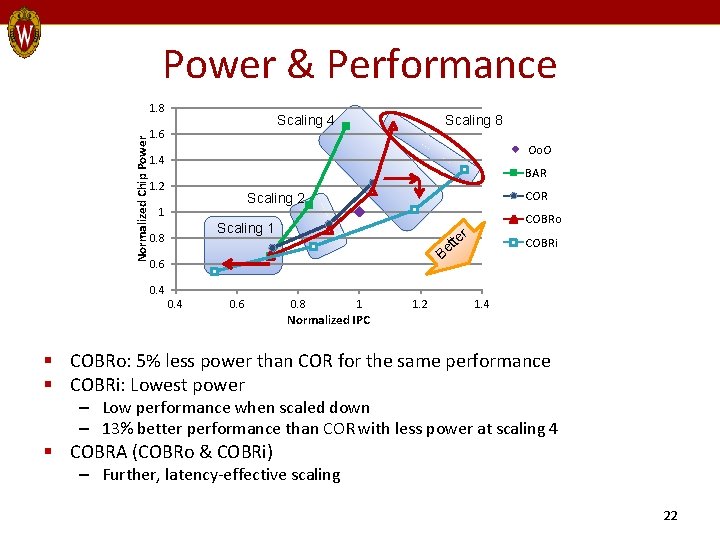

Power & Performance
[Figure: normalized chip power vs. normalized IPC for OoO, BAR, COR, COBRo, and COBRi at scaling points 1, 2, 4, and 8]
§ COBRo: 5% less power than COR for the same performance
§ COBRi: Lowest power
  – Low performance when scaled down
  – 13% better performance than COR with less power at scaling 4
§ COBRA (COBRo & COBRi)
  – Further, latency-effective scaling

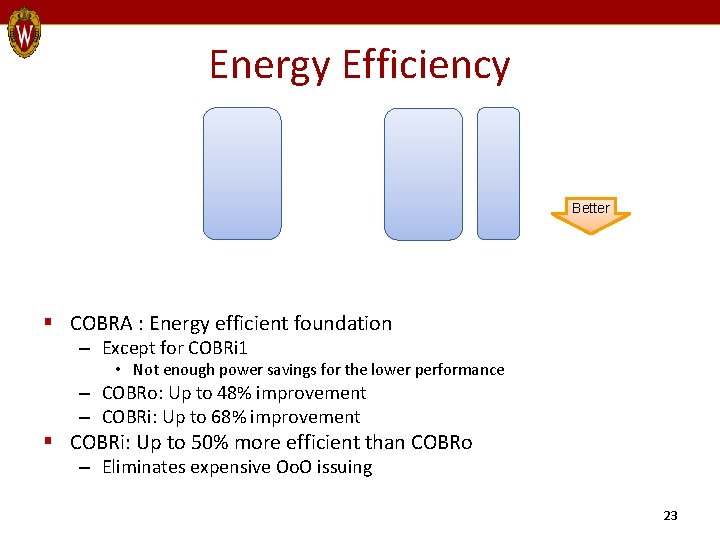

Energy Efficiency
§ COBRA: Energy-efficient foundation
  – Except for COBRi1
    • Not enough power savings for the lower performance
  – COBRo: Up to 48% improvement
  – COBRi: Up to 68% improvement
§ COBRi: Up to 50% more efficient than COBRo
  – Eliminates expensive OoO issuing

Summary
§ Whole-core scaling is energy inefficient
  – Wire delays outweigh the benefits
§ Scaled-down cores should maintain pipe balance
§ COBRA: Energy-efficient foundation
  – Overprovisions window resources
  – Only borrows small, latency-effective resources
  – Scales down frontend/backend with the window
  – Aggregating in-order execution is more energy efficient than using OoO execution

Outline
§ Motivation
§ Proposal 1: WiDGET framework
§ Proposal 2: Deconstructing scalable cores
§ Proposal 3: Power gliding
§ Related work
§ Conclusions
[Figure: power vs. performance; power gliding (Proposal 3) marked on the curve]

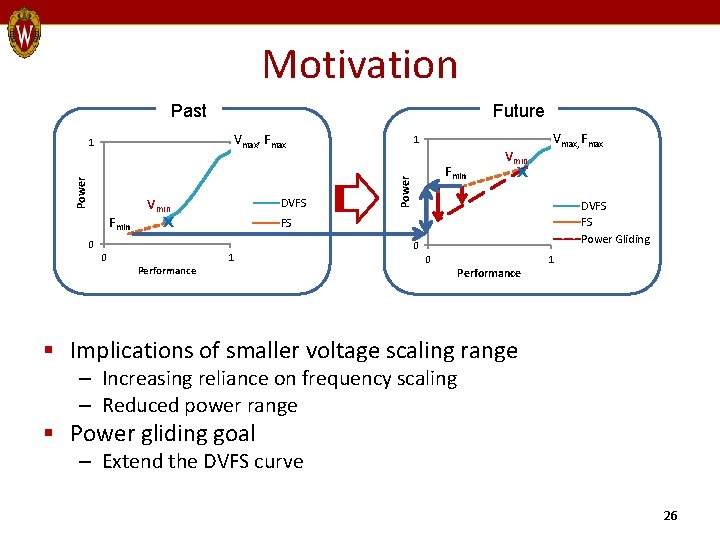

Motivation
[Figure: past and future power-performance curves between (Vmax, Fmax) and (Vmin, Fmin), showing the DVFS and frequency scaling (FS) regions and where power gliding applies]
§ Implications of smaller voltage scaling range
  – Increasing reliance on frequency scaling
  – Reduced power range
§ Power gliding goal
  – Extend the DVFS curve

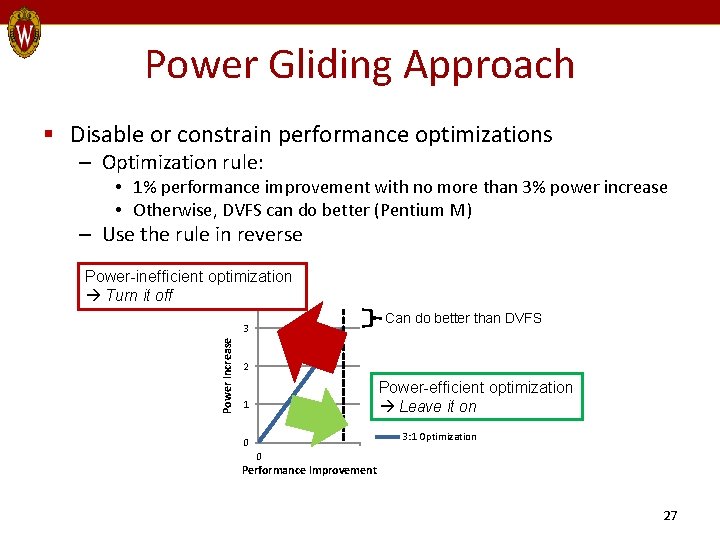

Power Gliding Approach
§ Disable or constrain performance optimizations
  – Optimization rule:
    • 1% performance improvement with no more than 3% power increase
    • Otherwise, DVFS can do better (Pentium M)
  – Use the rule in reverse
[Figure: power increase vs. performance improvement with the 3:1 line. Power-inefficient optimization: turn it off (can do better than DVFS). Power-efficient optimization: leave it on]
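The 3:1 rule above can be read as a keep-or-disable test per optimization. The following is a minimal Python sketch, assuming that per-optimization estimates of performance gain and power increase are available; the function name `keep_optimization`, the candidate names, and the numbers are hypothetical and do not come from the slides.

```python
DVFS_RATIO = 3.0  # 3:1 power-to-performance ratio of DVFS, as stated on the slide


def keep_optimization(perf_gain_pct: float, power_increase_pct: float,
                      ratio: float = DVFS_RATIO) -> bool:
    """Return True if the optimization is power-efficient under the 3:1 rule."""
    if perf_gain_pct <= 0.0:
        # No measurable speedup: any power spent on it is wasted.
        return False
    return power_increase_pct <= ratio * perf_gain_pct


# Hypothetical candidates: (performance gain %, power increase %)
candidates = {"opt_a": (1.0, 2.0), "opt_b": (1.0, 5.0)}

# Using the rule in reverse: anything that fails the test is a candidate to turn off.
disabled = [name for name, (perf, power) in candidates.items()
            if not keep_optimization(perf, power)]
```

Here `opt_b` costs 5% power for 1% performance, so it falls above the 3:1 line and is the one selected for disabling.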

Two Case Studies
§ 2 different targets
  1. Core frontend
  2. L2 cache
§ Approach: Use existing low-power techniques
  – Chosen based on intuition
    • Associated performance loss not always appropriate for high-performance processors
    • But viable options under the 3:1 ratio
  – Use without complex policies

Methodology
§ Baseline
  – Non-scalable 4-wide OoO
§ Comparison
  – Frequency scaling (simulated)
    • Modeled after POWER7
    • Min frequency = 50% of nominal frequency
  – DVFS (analytical)
    • 22% operating voltage range based on Pentium M
§ Goal
  – Power-performance curve closer to DVFS than to frequency scaling

Case Study 1: Frontend Power Gliding

Power-Dominant Optimizations
§ Renamer checkpointing
  + Fast branch misprediction recovery
  – Only 0.05% of checkpoints useful due to highly accurate branch predictor
§ Aggressive fetching
  + Fast window re-fills
  – Underutilized once the scheduler is full
  – Prone to fetch wrong-path instructions

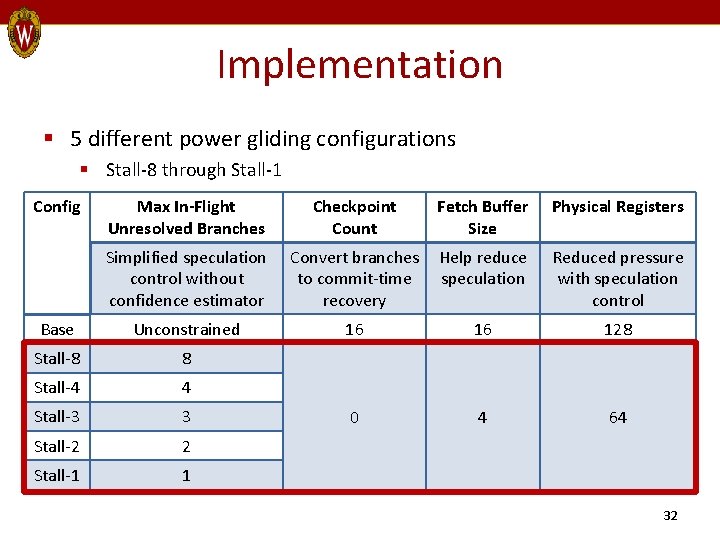

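Implementation
§ 5 different power gliding configurations
§ Stall-8 through Stall-1

| Config | Max in-flight unresolved branches | Checkpoint count | Fetch buffer size | Physical registers |
|---|---|---|---|---|
| Base | Unconstrained | 16 | 16 | 128 |
| Stall-8 | 8 | 0 | 4 | 64 |
| Stall-4 | 4 | 0 | 4 | 64 |
| Stall-3 | 3 | 0 | 4 | 64 |
| Stall-2 | 2 | 0 | 4 | 64 |
| Stall-1 | 1 | 0 | 4 | 64 |

§ Max in-flight unresolved branches: Simplified speculation control without confidence estimator
§ Checkpoint count: Convert branches to commit-time recovery
§ Fetch buffer size: Help reduce speculation
§ Physical registers: Reduced pressure with speculation control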

Case Study 2: L2 Power Gliding

Observations on L2 Cache
§ Static power dominated
§ Not all workloads need full capacity or optimized latency
§ Memory-intensive workloads
  – Tolerant of smaller L2 sizes
§ Compute-bound workloads
  – Sensitive to smaller L2 sizes
  – But low L2 miss rate (0.3%)

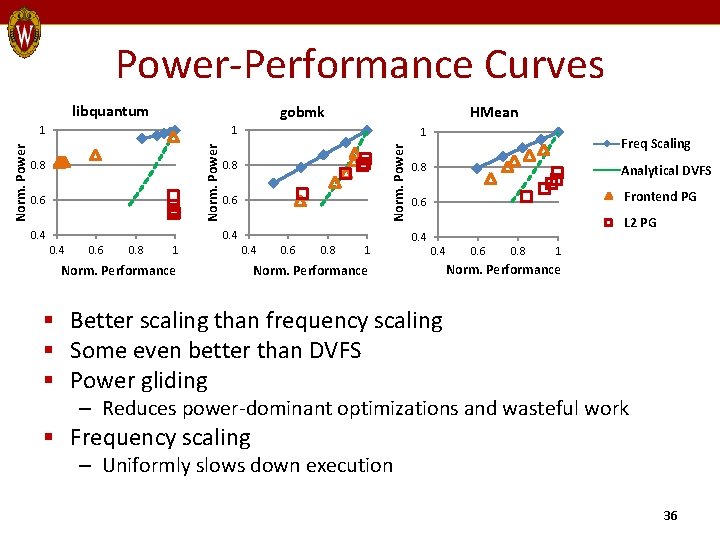

Implementation
§ Level-[12]
  – Reduce static power with drowsy mode
§ Level-[345]
  – Reduce L2 associativity (8-way 1 MB -> direct-mapped 128 KB)
[Table: per-configuration settings for Base and Level-1 through Level-5, listing drowsy L2 data (Y/N), drowsy L2 tags (Y/N), L2 associativity (8, 4, 2, 1), and L2 access cycles (12, 13, 14)]

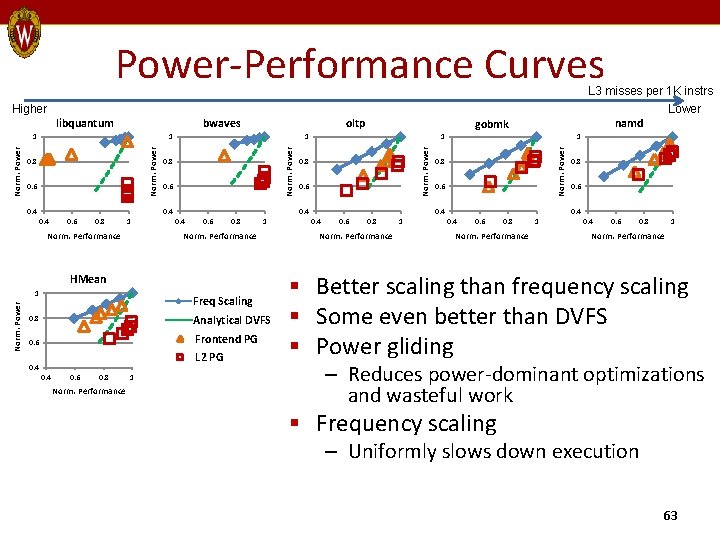

Power-Performance Curves
[Figure: normalized power vs. normalized performance for libquantum, gobmk, and the harmonic mean (HMean); series: frequency scaling, analytical DVFS, frontend power gliding (PG), L2 PG]
§ Better scaling than frequency scaling
§ Some even better than DVFS
§ Power gliding
  – Reduces power-dominant optimizations and wasteful work
§ Frequency scaling
  – Uniformly slows down execution

Summary
§ Frequency scaling
  – Limited to linear dynamic power reduction
  – Less effective on memory-intensive workloads
§ Power gliding
  – Disables/constrains optimizations that meet 3:1 ratio
  – Addresses leakage power
  – More efficient scaling than frequency scaling
  – Exceeds power savings by DVFS in some cases

Outline
§ Motivation
§ Proposal 1: WiDGET framework
§ Proposal 2: Deconstructing scalable cores
§ Proposal 3: Power gliding
§ Related work
§ Conclusions

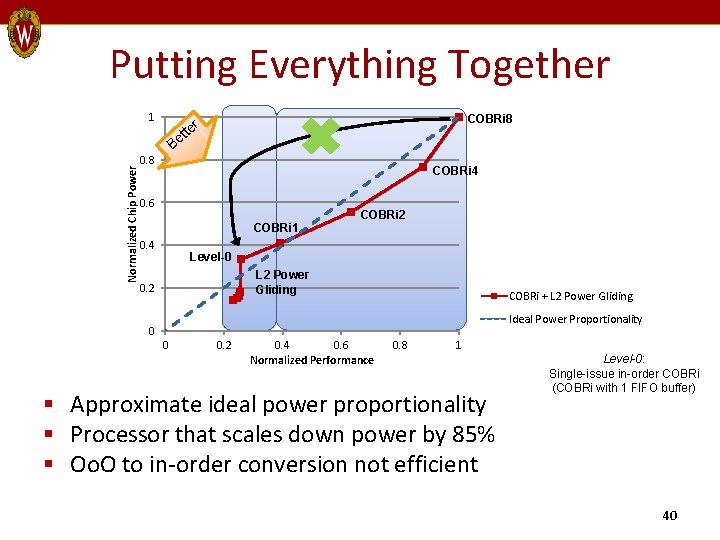

Putting Everything Together
[Figure: normalized chip power vs. normalized performance for COBRi1, COBRi2, COBRi4, COBRi8, Level-0, and COBRi + L2 power gliding, against ideal power proportionality]
§ Approximate ideal power proportionality
§ Processor that scales down power by 85%
§ OoO to in-order conversion not efficient
Level-0: Single-issue in-order COBRi (COBRi with 1 FIFO buffer)

Conclusions
§ Limited DVFS-driven power management
§ Power-proportional cores for future technology nodes
  – Dynamic resource allocation
  – Aggregate resources to scale up
    • WiDGET & COBRA
  – Disable resources to scale down
    • WiDGET, COBRA, & Power Gliding
  – One processor, many different operating points

Acknowledgement Committee Special thanks to: David Wood John Davis UW architecture students Dan Gibson Derek Hower AMD Research Joe Eckert 42

Backup Slides

Orthogonal Work
§ Circuit-level techniques
  – Supply-voltage reduction
    • Near-threshold operation [Dreslinski 10]
    • Subthreshold operation [Chandrakasan 10]
  – Globally-asynchronous locally-synchronous designs [Kalla 10]
  – Transistor optimization [Azizi 10]
    • Multi- / variable-threshold CMOS
    • Sleep transistors
§ System-level techniques
  – PowerNap [Meisner 09]
  – Thread Motion [Rangan 09]

Related Power Management Work
§ Energy-proportional computing [Barroso 07]
§ Dynamically adaptive cores [Albonesi 03]
  – Localized changes
  – Limited scalability
  – Wasteful power reduction with minimum performance impact
§ Heterogeneous CMP [Kumar 03]
  – Bound to static design choices
  – Less effective for non-targeted apps
  – More verification (and design) time

Related Low-Complexity uArch Work
§ Clustered architectures
  – Goal: Superscalar ILP without impacting cycle time
  – Usually OoO-execution clusters
  – Performance-centric steering policies
    • Load balancing over locality
§ Approximating OoO execution
  – Braid architecture [Tseng 08]
  – Instruction Level Distributed Processing [Kim 02]
  – Both require ISA changes or binary translation

Prior Scalable Cores
§ Similar vision, different scaling mechanisms
§ Core Fusion [Ipek 07]
  – Whole-core aggregation
  – Centralized rename
§ Composable Lightweight Processors (CLP) [Kim 07]
  – Whole-core aggregation
  – EDGE ISA assisted scheduling
§ Forwardflow [Gibson 10]
  – Only scales the window & execution
  – Dataflow architecture

Dynamic Voltage/Freq Scaling (DVFS)
§ Dynamically trade off power for performance
  – Change voltage and frequency at runtime
  – Often regulated by OS
    • Slow response time
§ Linear reduction of V & F yields:
  – Cubic reduction in dynamic power
  – Linear reduction in performance
  – Quadratic reduction in dynamic energy
§ Effective for thermal management
§ Challenges
  – Controlling DVFS
  – Diminishing returns of DVFS
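The cubic, linear, and quadratic relationships above follow from dynamic power being proportional to C·V²·f when V and f are scaled by the same factor. The following Python sketch works through that arithmetic under stated assumptions: a compute-bound workload whose run time scales as 1/f, and no leakage power. The function name `dvfs_scale` is hypothetical.

```python
def dvfs_scale(s: float) -> dict:
    """Relative dynamic power, performance, and dynamic energy when both
    voltage and frequency are scaled by the same factor s (0 < s <= 1).

    Assumes P_dyn ~ C * V^2 * f, a compute-bound workload, and no leakage.
    """
    if not 0.0 < s <= 1.0:
        raise ValueError("scale factor must be in (0, 1]")
    return {
        "dynamic_power": s ** 3,   # V^2 * f -> s^2 * s
        "performance": s,          # run time grows as 1/s
        "dynamic_energy": s ** 2,  # power * time -> s^3 * (1/s)
    }


# A 1% slowdown yields roughly a 3% dynamic power reduction (the 3:1 ratio).
one_percent = dvfs_scale(0.99)
power_saving_pct = (1.0 - one_percent["dynamic_power"]) * 100.0
```

Under these assumptions, `power_saving_pct` comes out just under 3, which is consistent with the 3% power for 1% performance figure quoted earlier in the talk.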

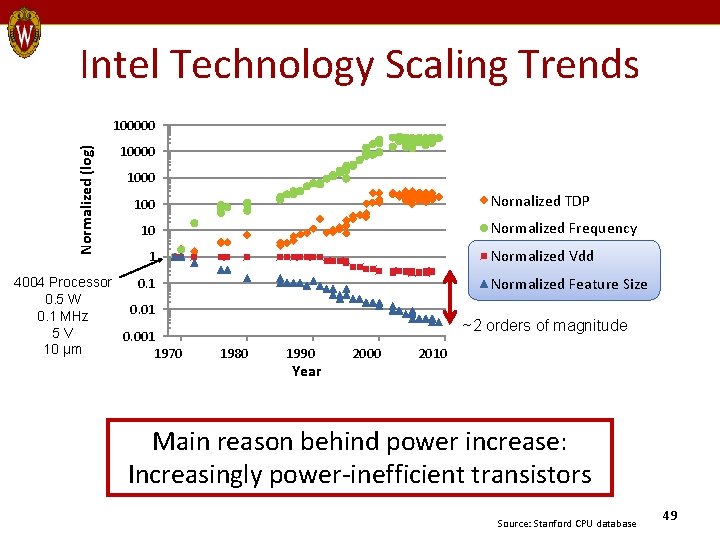

Intel Technology Scaling Trends
[Figure: normalized (log scale) TDP, frequency, Vdd, and feature size vs. year, 1970-2010, relative to the 4004 processor (0.5 W, 0.1 MHz, 5 V, 10 µm); a gap of ~2 orders of magnitude is annotated]
Main reason behind power increase: Increasingly power-inefficient transistors
Source: Stanford CPU database

Core Wars Backup Slides

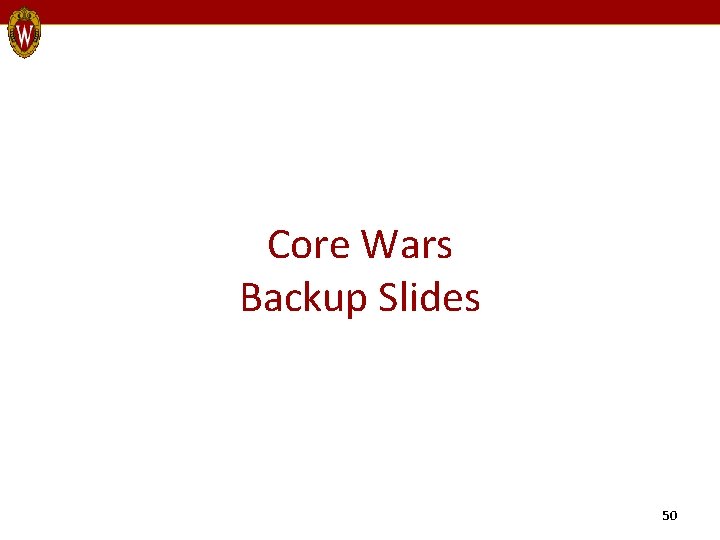

Core Scaling Taxonomy

| Component | Definition | Scaling alternatives |
|---|---|---|
| L1-I | Mechanism to aggregate L1-Is | No scaling; sub-banked L1-Is |
| Frontend | Mechanism to scale frontend width | Static overprovisioning; aggregated frontend |
| Scheduling | Mechanism to scale instruction scheduler | Steering based on architectural register dependency; steering with an L1-D bank predictor |
| Execution resources | Mechanism to scale the number of functional pipelines | Static overprovisioning; scaled with scheduler |
| Instruction window | Mechanism to scale the size of the instruction window | Static overprovisioning; scaled with scheduler |
| L1-D | Mechanism to aggregate L1-Ds | No scaling; bank-interleaved L1-Ds; ad hoc coherent L1-Ds |
| Resource acquisition philosophy | Means by which cores are provided with additional resources when scaled up | Resource borrowing; resource overprovisioning |

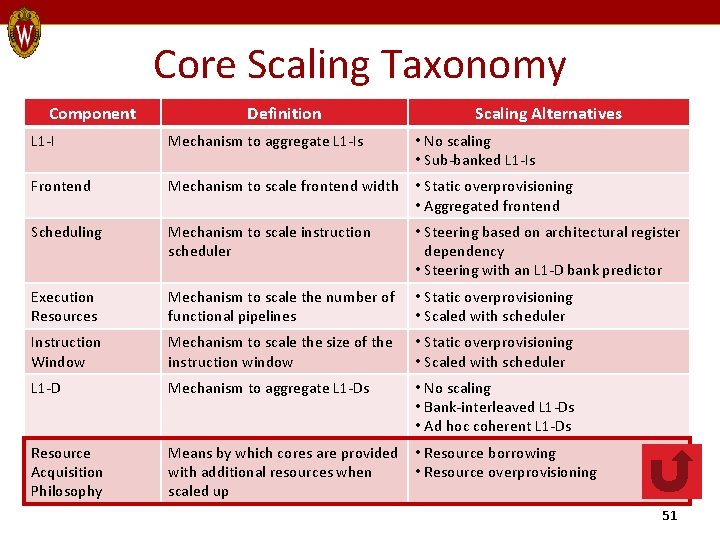

Area and Wire Delays
§ Smaller technologies / higher frequencies -> Smaller distance
§ @ 3 GHz & 45 nm
  – 2 mm of distance per cycle
[Figure: maximum signalling distance (microns) vs. clock frequency (MHz) for 45 nm and 32 nm]
• Restriction on size and placement of shared resources
  – E.g., 2-issue OoO core in 45 nm at 3 GHz
[Figure: 2-core and 4-core layouts sharing a 32 KB L1-D, with 1-cycle and 2-cycle distances marked]
• More cores to share -> Tighter constraints

Sensitivity to Communication Overheads
§ Not sensitive to frontend depth
§ Cache-bank misprediction penalties dominate the overheads
§ BAR outperforms COR only at scaling point 4 with no wire delay

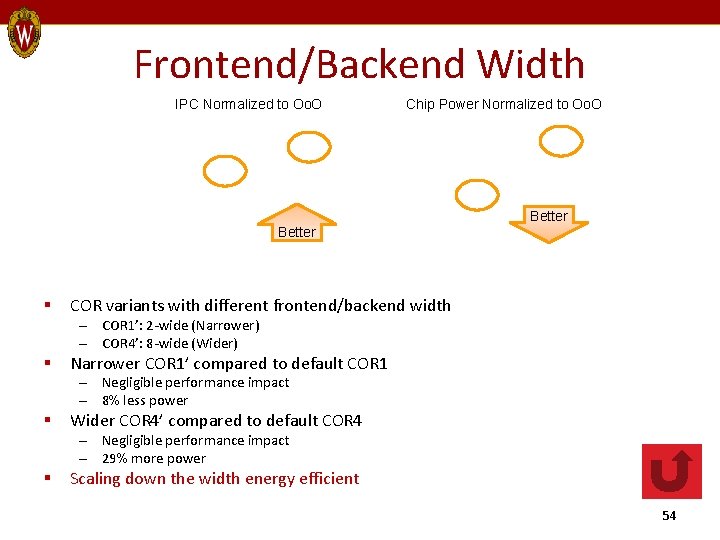

Frontend/Backend Width
[Figure: IPC and chip power normalized to OoO]
§ COR variants with different frontend/backend width
  – COR1’: 2-wide (narrower)
  – COR4’: 8-wide (wider)
§ Narrower COR1’ compared to default COR1
  – Negligible performance impact
  – 8% less power
§ Wider COR4’ compared to default COR4
  – Negligible performance impact
  – 29% more power
§ Scaling down the width is energy efficient

L1-I Aggregation
[Figure: BAR4’ with a single L1-I and COR4’ with a single L1-I, cores C0-C3, with 2-cycle links]
§ Little impact on overall performance
  – Avg miss rate < 0.5%
  – Highest miss rate: 6% (OLTP)
§ L1-I aggregation not necessary

L1-D Aggregation
[Figure: BAR4’ with a single L1-D and COR4’ with a single L1-D, cores C0-C3, with 2-cycle links]
§ More harm than good
  – Especially for fully scaled-up cores
  – 2% for BAR4’ and 4% for COR4’
§ Why the counterintuitive results?
  – Large working sets
  – Longer effective access latency
    • BAR: Cache-core mispredictions
    • COR’: Remote cache access latencies
§ L1-D aggregation not necessary

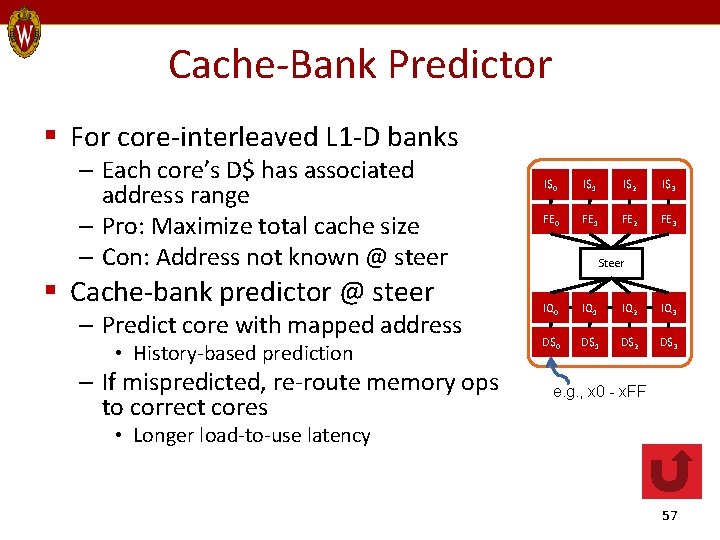

Cache-Bank Predictor
§ For core-interleaved L1-D banks
  – Each core’s D$ has an associated address range
  – Pro: Maximize total cache size
  – Con: Address not known @ steer
§ Cache-bank predictor @ steer
  – Predict core with mapped address
    • History-based prediction
  – If mispredicted, re-route memory ops to correct cores
    • Longer load-to-use latency
[Figure: four cores with I$0-I$3, FE0-FE3, a shared steering stage, IQ0-IQ3, and D$0-D$3; D$0 covers, e.g., x0 - xFF]
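The predict-then-verify flow above can be sketched in a few lines of Python. The slide only states that the prediction is history-based, so the last-bank table indexed by instruction PC, the 256-byte interleaving, and every name below (`CacheBankPredictor`, `resolve`, and so on) are illustrative assumptions rather than the design evaluated in the talk.

```python
class CacheBankPredictor:
    """Last-bank predictor indexed by a memory instruction's PC (assumed design)."""

    def __init__(self, num_banks: int = 4, table_entries: int = 256,
                 bytes_per_bank: int = 256) -> None:
        self.num_banks = num_banks
        self.bytes_per_bank = bytes_per_bank
        self.table = [0] * table_entries  # every entry starts out predicting bank 0

    def actual_bank(self, address: int) -> int:
        # Assumed interleaving: consecutive 256-byte ranges rotate across banks.
        return (address // self.bytes_per_bank) % self.num_banks

    def predict(self, pc: int) -> int:
        # Used at steer time, before the effective address is known.
        return self.table[pc % len(self.table)]

    def resolve(self, pc: int, address: int) -> bool:
        """Train once the address is known; return True on a misprediction,
        in which case the memory op would be re-routed to the correct core."""
        bank = self.actual_bank(address)
        mispredicted = self.predict(pc) != bank
        self.table[pc % len(self.table)] = bank
        return mispredicted
```

A load that keeps touching the same bank mispredicts at most once in this sketch; a load that strides across banks would mispredict repeatedly, which corresponds to the longer load-to-use latency the slide lists as the cost.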

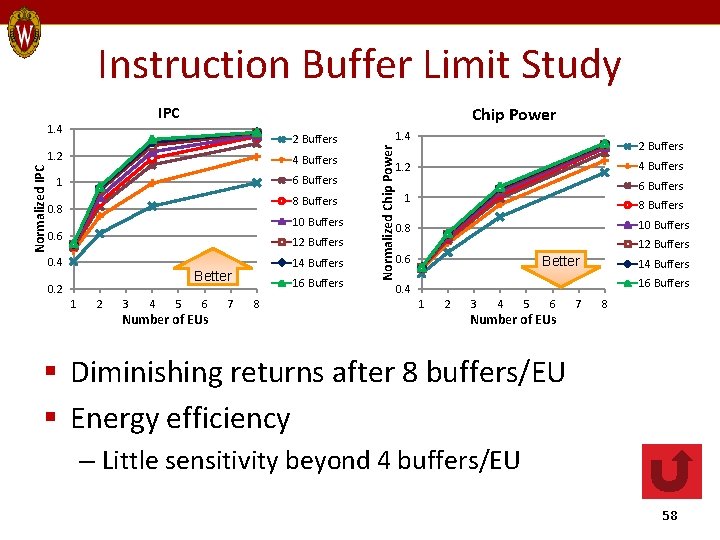

Instruction Buffer Limit Study
[Figure: normalized IPC and normalized chip power vs. number of EUs (1-8) for 2 to 16 instruction buffers per EU]
§ Diminishing returns after 8 buffers/EU
§ Energy efficiency
  – Little sensitivity beyond 4 buffers/EU

Power Gliding Backup Slides

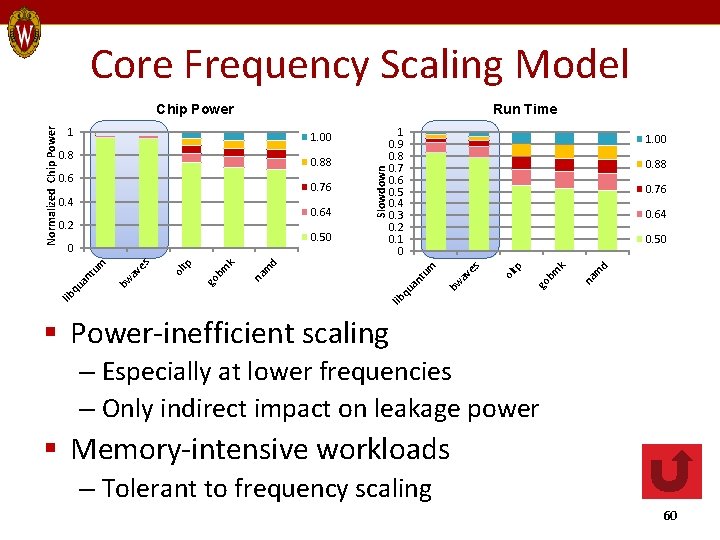

Core Frequency Scaling Model
[Figure: normalized chip power and run-time slowdown at relative frequencies 1.00, 0.88, 0.76, 0.64, and 0.50 for libquantum, bwaves, oltp, gobmk, and namd]
§ Power-inefficient scaling
  – Especially at lower frequencies
  – Only indirect impact on leakage power
§ Memory-intensive workloads
  – Tolerant to frequency scaling

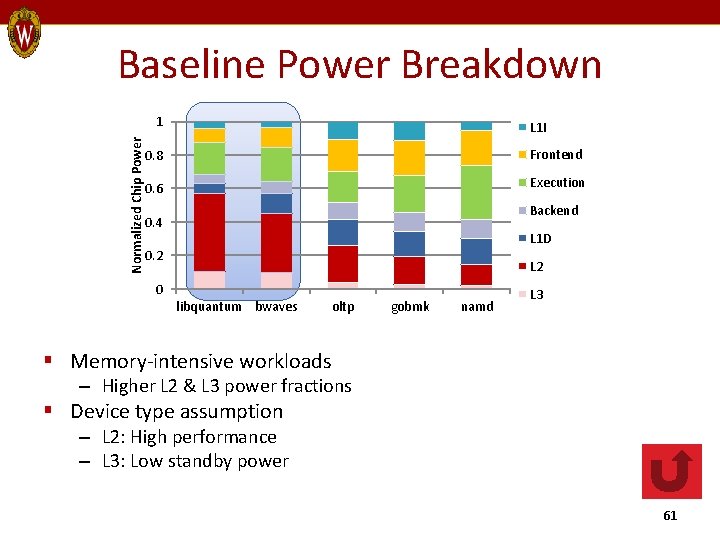

Baseline Power Breakdown
[Figure: normalized chip power breakdown (L1-I, frontend, execution, backend, L1-D, L2, L3) for libquantum, bwaves, oltp, gobmk, and namd]
§ Memory-intensive workloads
  – Higher L2 & L3 power fractions
§ Device type assumption
  – L2: High performance
  – L3: Low standby power

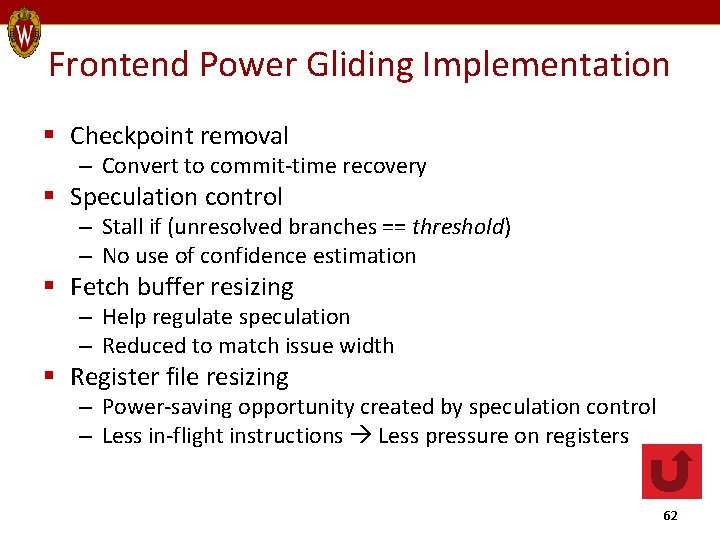

Frontend Power Gliding Implementation
§ Checkpoint removal
  – Convert to commit-time recovery
§ Speculation control
  – Stall if (unresolved branches == threshold)
  – No use of confidence estimation
§ Fetch buffer resizing
  – Help regulate speculation
  – Reduced to match issue width
§ Register file resizing
  – Power-saving opportunity created by speculation control
  – Fewer in-flight instructions -> Less pressure on registers
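The speculation-control rule above (stall when the number of unresolved branches reaches the threshold) amounts to a single counter. The Python sketch below is an illustration under that reading; the class and method names are hypothetical, and the threshold corresponds to the N in the Stall-N configurations.

```python
class SpeculationControl:
    """Stall fetch once the number of unresolved branches reaches a threshold."""

    def __init__(self, threshold: int) -> None:
        if threshold < 1:
            raise ValueError("threshold must be at least 1")
        self.threshold = threshold  # e.g., 8 for Stall-8, 1 for Stall-1
        self.unresolved = 0

    def can_fetch(self) -> bool:
        # No confidence estimation: the decision depends only on the count.
        return self.unresolved < self.threshold

    def branch_fetched(self) -> None:
        if not self.can_fetch():
            raise RuntimeError("fetch is stalled")
        self.unresolved += 1

    def branch_resolved(self) -> None:
        if self.unresolved == 0:
            raise RuntimeError("no unresolved branch to resolve")
        self.unresolved -= 1


# Stall-2: fetch stops after two unresolved branches and resumes when one resolves.
control = SpeculationControl(threshold=2)
control.branch_fetched()
control.branch_fetched()
stalled = not control.can_fetch()
control.branch_resolved()
resumed = control.can_fetch()
```

Keeping the policy to one comparison matches the slide's point that no confidence estimator is used.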

Power-Performance Curves
[Figure: normalized power vs. normalized performance for libquantum, bwaves, oltp, gobmk, and namd (ordered by L3 misses per 1K instructions) and the harmonic mean (HMean); series: frequency scaling, analytical DVFS, frontend power gliding (PG), L2 PG]
§ Better scaling than frequency scaling
§ Some even better than DVFS
§ Power gliding
  – Reduces power-dominant optimizations and wasteful work
§ Frequency scaling
  – Uniformly slows down execution

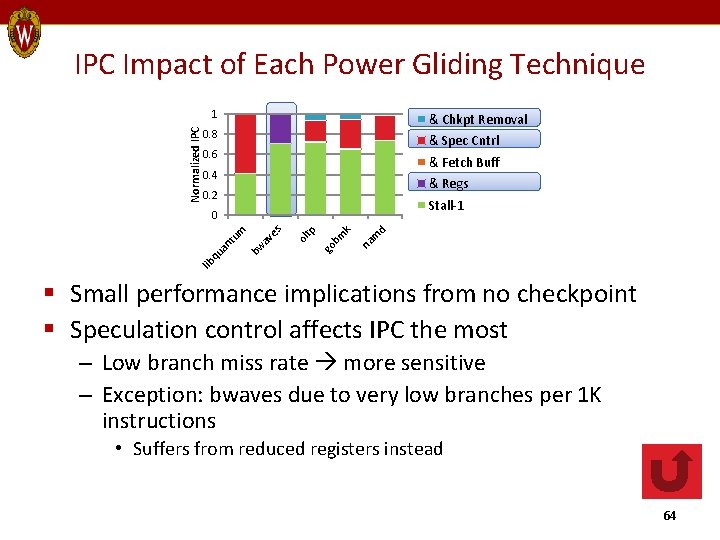

IPC Impact of Each Power Gliding Technique
[Figure: normalized IPC for libquantum, bwaves, oltp, gobmk, and namd as techniques are added cumulatively: checkpoint removal, speculation control, fetch buffer resizing, register resizing (Stall-1)]
§ Small performance implications from removing checkpoints
§ Speculation control affects IPC the most
  – Workloads with a low branch miss rate are more sensitive
  – Exception: bwaves, due to very few branches per 1K instructions
    • Suffers from reduced registers instead

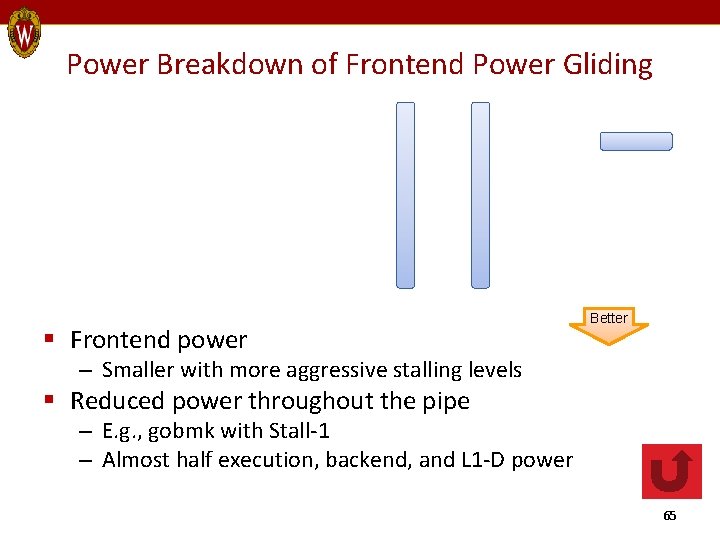

Power Breakdown of Frontend Power Gliding
§ Frontend power
  – Smaller with more aggressive stalling levels
§ Reduced power throughout the pipe
  – E.g., gobmk with Stall-1
  – Almost half execution, backend, and L1-D power

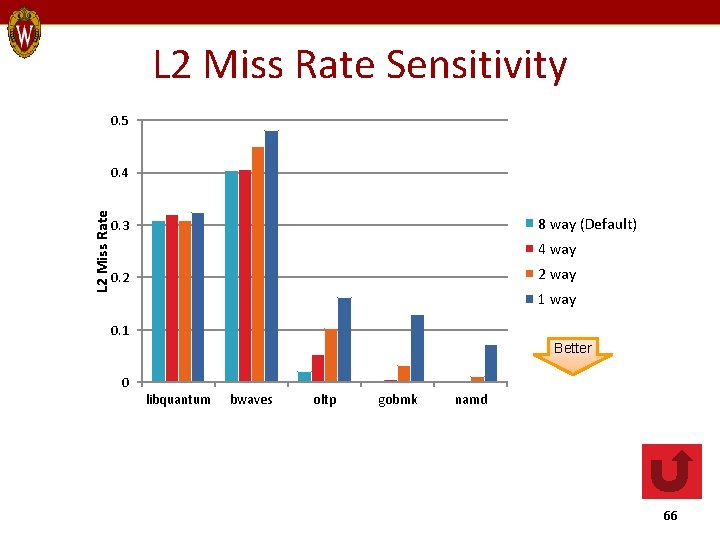

L2 Miss Rate Sensitivity
[Figure: L2 miss rate for libquantum, bwaves, oltp, gobmk, and namd with 8-way (default), 4-way, 2-way, and 1-way L2 associativity]

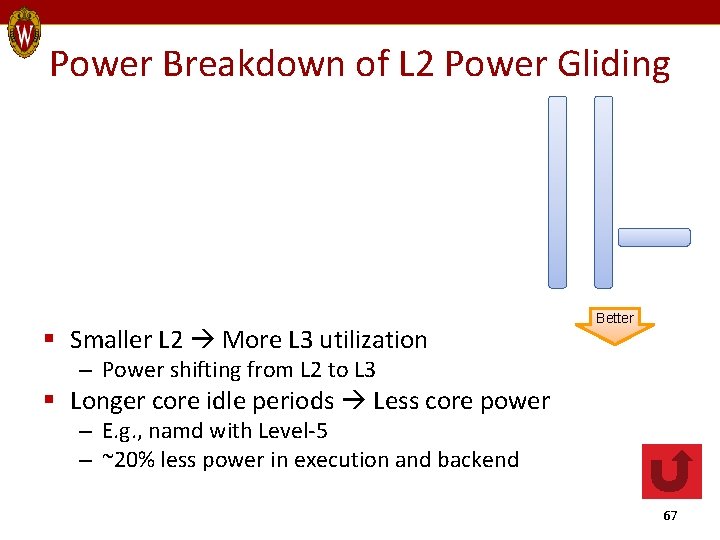

Power Breakdown of L2 Power Gliding
§ Smaller L2 -> More L3 utilization
  – Power shifting from L2 to L3
§ Longer core idle periods -> Less core power
  – E.g., namd with Level-5
  – ~20% less power in execution and backend

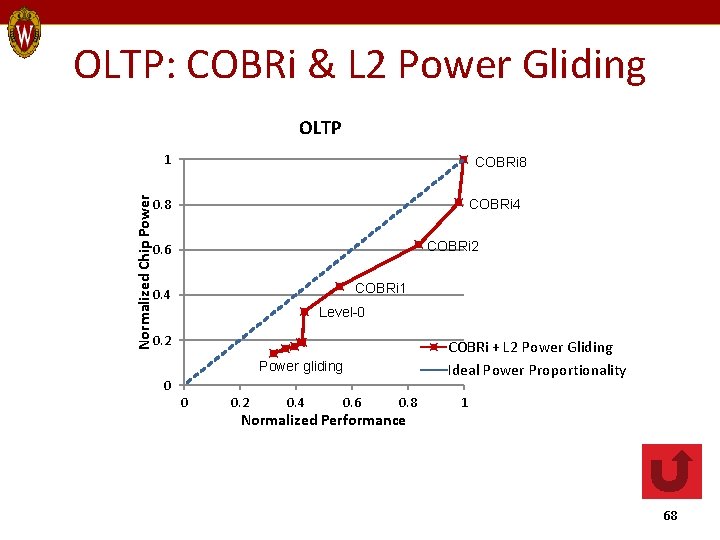

OLTP: COBRi & L2 Power Gliding
[Figure: normalized chip power vs. normalized performance on OLTP for COBRi1, COBRi2, COBRi4, COBRi8, Level-0, and COBRi + L2 power gliding, against ideal power proportionality]

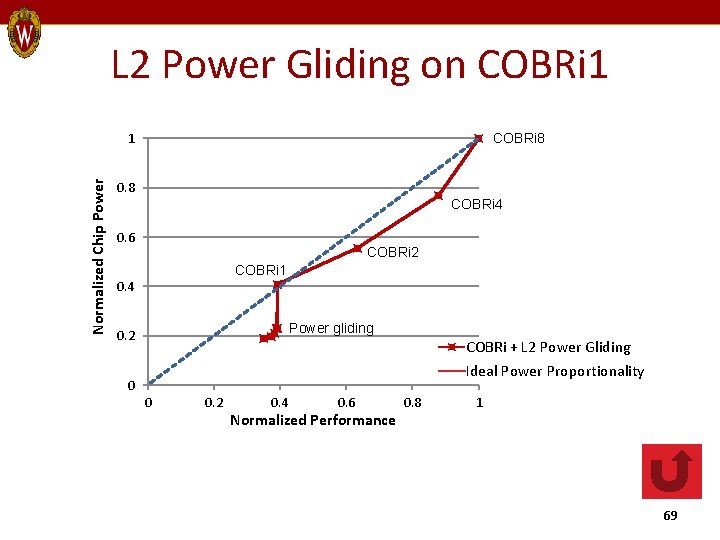

L2 Power Gliding on COBRi1
[Figure: normalized chip power vs. normalized performance for COBRi1, COBRi2, COBRi4, COBRi8, and COBRi + L2 power gliding, against ideal power proportionality]

WiDGET Backup Slides

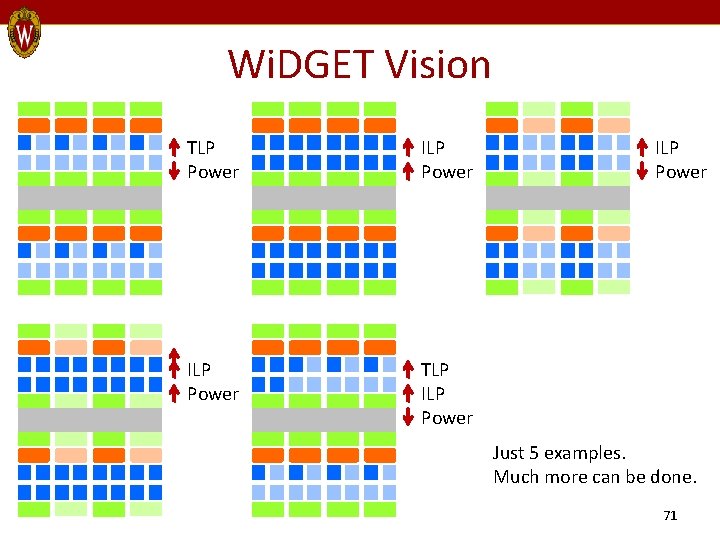

WiDGET Vision
[Figure: five example chip configurations trading off TLP, ILP, and power]
Just 5 examples. Much more can be done.

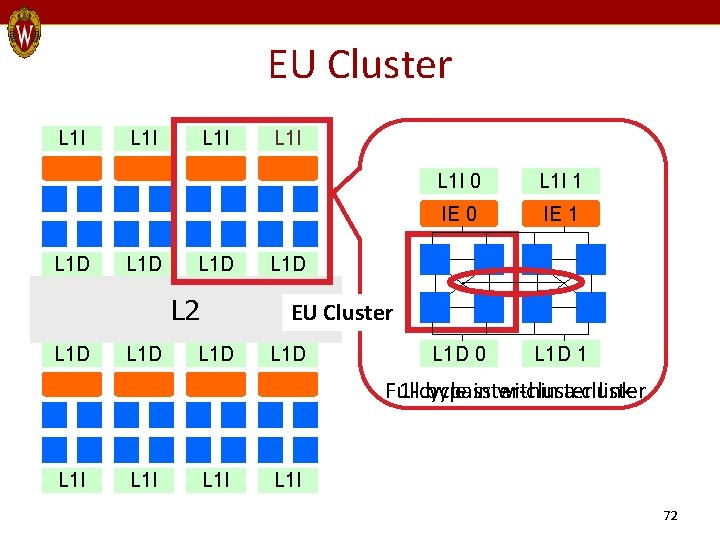

EU Cluster
[Figure: two Instruction Engines (IE0, IE1; thread context management, front-end + back-end) with L1-I0/L1-I1 and L1-D0/L1-D1; EU clusters of in-order Execution Units (EUs); full 1-cycle bypass within a cluster; inter-cluster link]

Instruction Engine (IE)
[Figure: IE pipeline with branch predictor, fetch, decode, rename, and steering in the front-end; register file, ROB, and commit in the back-end]
• Thread-specific structures
• Front-end + back-end
• Similar to a conventional OoO pipe
• Steering logic for distributed EUs
• Achieve OoO performance with in-order EUs
• Expose independent instr chains

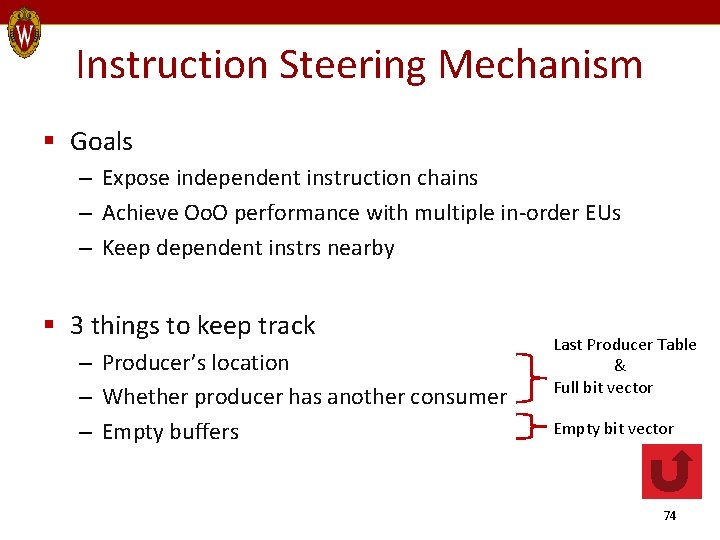

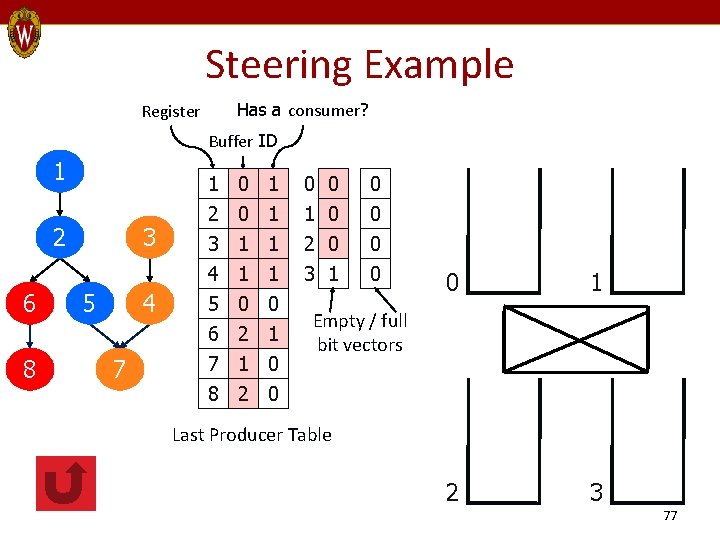

Instruction Steering Mechanism
§ Goals
  – Expose independent instruction chains
  – Achieve OoO performance with multiple in-order EUs
  – Keep dependent instrs nearby
§ 3 things to keep track of
  – Producer’s location: Last Producer Table
  – Whether producer has another consumer: Full bit vector
  – Empty buffers: Empty bit vector

Steering Heuristic
§ Based on dependence-based steering [Palacharla 97]
  – Expose independent instr chains
  – Consumer directly behind the producer
  – Stall steering when no empty buffer is found
§ WiDGET: Power-performance goal
  – Emphasize locality & scalability
[Figure: steering decision by number of outstanding operands. 0: any empty buffer. 1: producer's buffer if the slot behind the producer is available, else an empty buffer within the cluster. 2: a producer's buffer if the slot behind either producer is available, else an empty buffer in either of the producers' clusters]
• Consumer-push operand transfers
  – Send steered EU ID to the producer EU
  – Multi-cast result to all consumers
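The decision flow on this slide can be written as a short function. The Python sketch below is one reading of the flowchart, not the implementation from the talk: the data layout (`producers` as pairs of buffer ID and has-consumer flag, `cluster_of` as a buffer-to-cluster map) and the function name `steer` are assumptions, and returning `None` stands for stalling the steering stage.

```python
from typing import Dict, List, Optional, Tuple


def steer(producers: List[Tuple[int, bool]],
          empty_buffers: List[int],
          cluster_of: Dict[int, int]) -> Optional[int]:
    """Pick a buffer for an instruction, or None to stall.

    producers: (buffer_id, has_consumer) for each outstanding source operand
               (0, 1, or 2 entries).
    """
    # 1 or 2 outstanding operands: prefer the slot directly behind a producer
    # that does not already have a consumer queued behind it.
    for buffer_id, has_consumer in producers:
        if not has_consumer:
            return buffer_id

    if producers:
        # Otherwise stay local: an empty buffer in a producer's cluster.
        home = {cluster_of[buffer_id] for buffer_id, _ in producers}
        candidates = [b for b in empty_buffers if cluster_of[b] in home]
    else:
        # No outstanding operands: any empty buffer starts a new chain.
        candidates = list(empty_buffers)

    # Assumption: stall rather than spill to a remote cluster.
    return candidates[0] if candidates else None
```

With two clusters of two buffers each (`cluster_of = {0: 0, 1: 0, 2: 1, 3: 1}`), an instruction whose only producer sits in buffer 0 and already has a consumer is placed in buffer 1 if it is empty, and stalls if only cluster 1 has space. Whether the real heuristic falls back to a remote cluster in that case is not recoverable from the slide.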

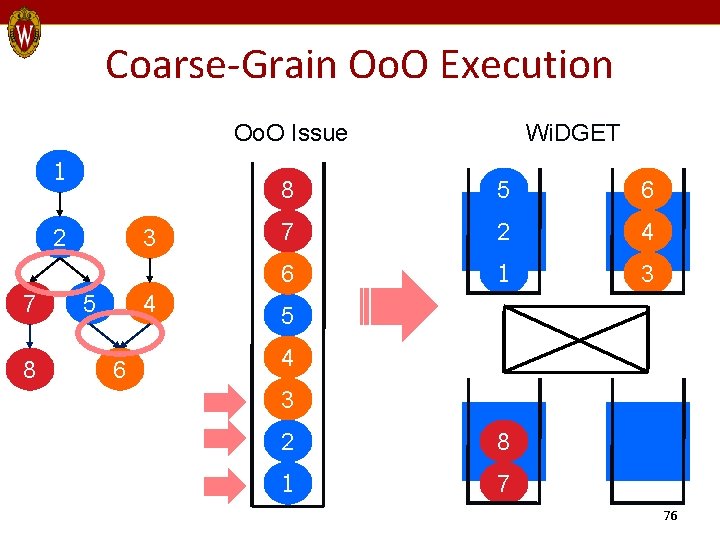

Coarse-Grain OoO Execution
[Figure: the same 8-instruction dependence graph scheduled by OoO issue and by WiDGET]

Steering Example
[Figure: an 8-instruction dependence graph steered across buffers 0-3, with the Last Producer Table (per register: buffer ID and has-a-consumer bit) and the empty/full bit vectors]

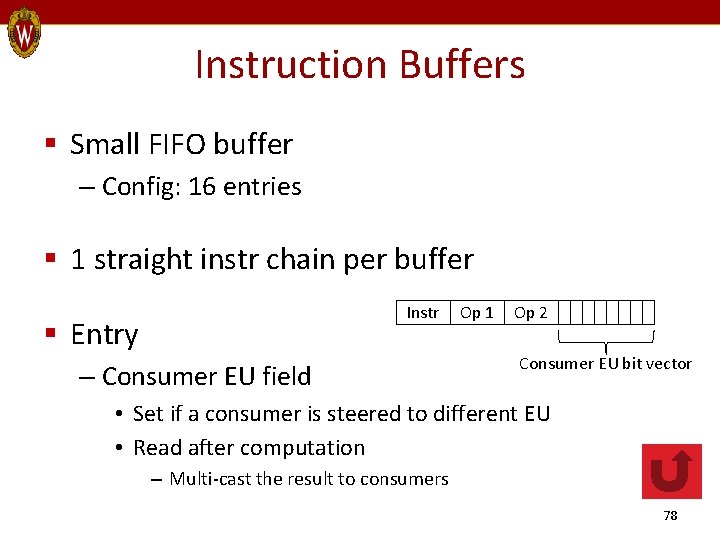

Instruction Buffers
§ Small FIFO buffer
  – Config: 16 entries
§ 1 straight instr chain per buffer
§ Entry
  – Consumer EU field
    • Set if a consumer is steered to a different EU
    • Read after computation
  – Multi-cast the result to consumers
[Figure: buffer entry format: Instr, Op 1, Op 2, Consumer EU bit vector]

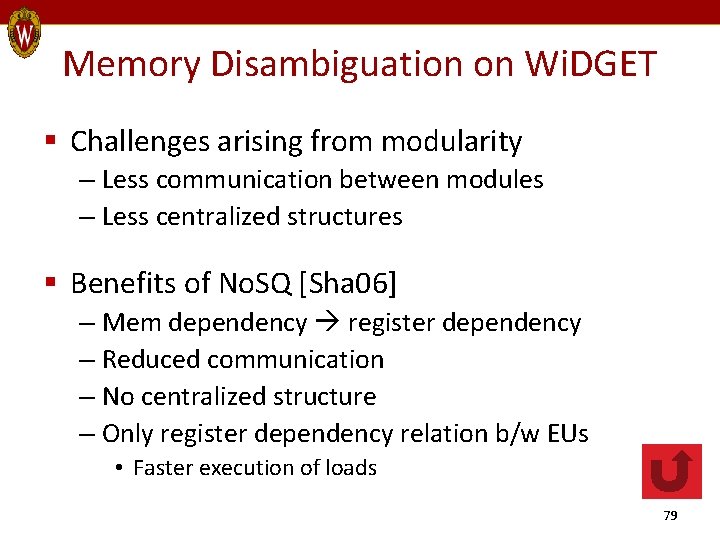

Memory Disambiguation on WiDGET
§ Challenges arising from modularity
  – Less communication between modules
  – Less centralized structures
§ Benefits of NoSQ [Sha 06]
  – Mem dependency -> register dependency
  – Reduced communication
  – No centralized structure
  – Only register dependency relation b/w EUs
    • Faster execution of loads

Memory Instructions?
§ No LSQ thanks to NoSQ [Sha 06]
§ Instead,
  – Exploit in-window ST-LD forwarding
  – LDs: Predict if dependent ST is in-flight @ Rename
    • If so, read from ST’s source register, not from cache
    • Else, read from cache
    • @ Commit, re-execute if necessary
  – STs: Write @ Commit
  – Prediction
    • Dynamic distance in stores
    • Path-sensitive

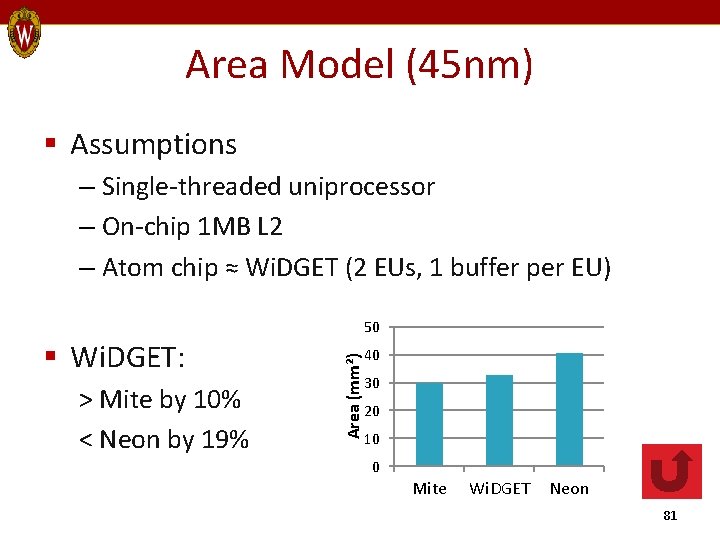

Area Model (45 nm)
§ Assumptions
  – Single-threaded uniprocessor
  – On-chip 1 MB L2
  – Atom chip ≈ WiDGET (2 EUs, 1 buffer per EU)
§ WiDGET:
  – > Mite by 10%
  – < Neon by 19%
[Figure: area (mm²) of Mite, WiDGET, and Neon]

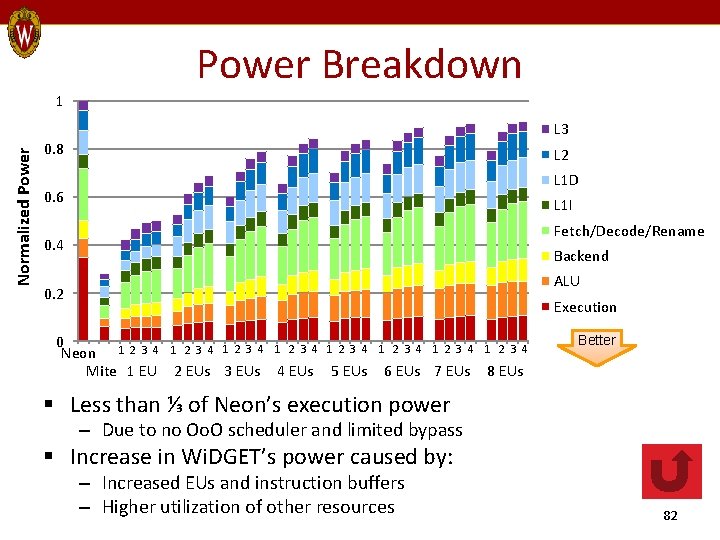

Power Breakdown
[Figure: normalized power breakdown (L3, L2, L1-D, L1-I, fetch/decode/rename, backend, ALU, execution) for Neon, Mite, and WiDGET with 1 to 8 EUs and 1 to 4 instruction buffers]
§ Less than ⅓ of Neon’s execution power
  – Due to no OoO scheduler and limited bypass
§ Increase in WiDGET’s power caused by:
  – Increased EUs and instruction buffers
  – Higher utilization of other resources

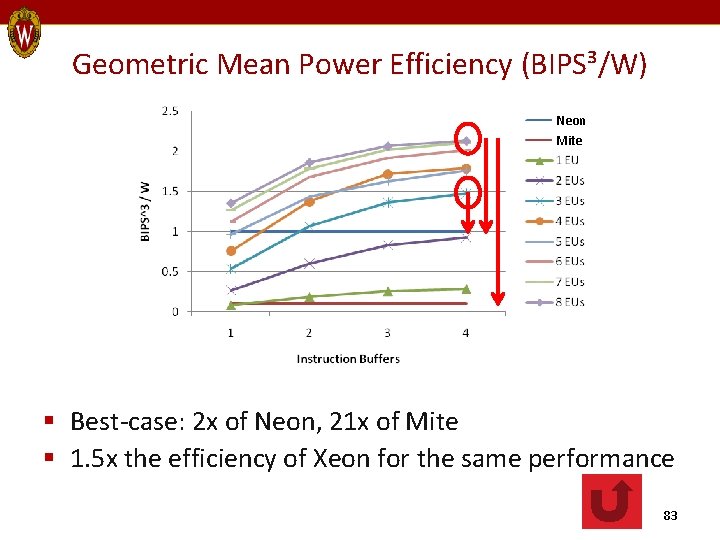

Geometric Mean Power Efficiency (BIPS³/W)
[Figure: geometric mean BIPS³/W of WiDGET configurations compared with Neon and Mite]
§ Best case: 2x of Neon, 21x of Mite
§ 1.5x the efficiency of Xeon for the same performance
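For readers unfamiliar with the metric, BIPS³/W cubes throughput (billions of instructions per second) and divides by power, which for a fixed amount of work is equivalent to the inverse of the energy-delay-squared product. The Python sketch below only shows the arithmetic; the function name and the sample inputs are hypothetical and are not measurements from the talk.

```python
def bips3_per_watt(instructions: float, seconds: float, watts: float) -> float:
    """Power efficiency metric BIPS^3 / W for one run."""
    if seconds <= 0.0 or watts <= 0.0:
        raise ValueError("seconds and watts must be positive")
    bips = instructions / seconds / 1e9  # billions of instructions per second
    return bips ** 3 / watts


# Hypothetical run: 2 billion instructions in 1 second at 4 W.
baseline = bips3_per_watt(2e9, 1.0, 4.0)
# Same work in half the time at the same power: 8x better on this metric.
faster = bips3_per_watt(2e9, 0.5, 4.0)
```

Because performance enters cubed, the metric weights speed much more heavily than power, which is why it is a common choice for comparing high-performance designs.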

Related Work Backup Slides

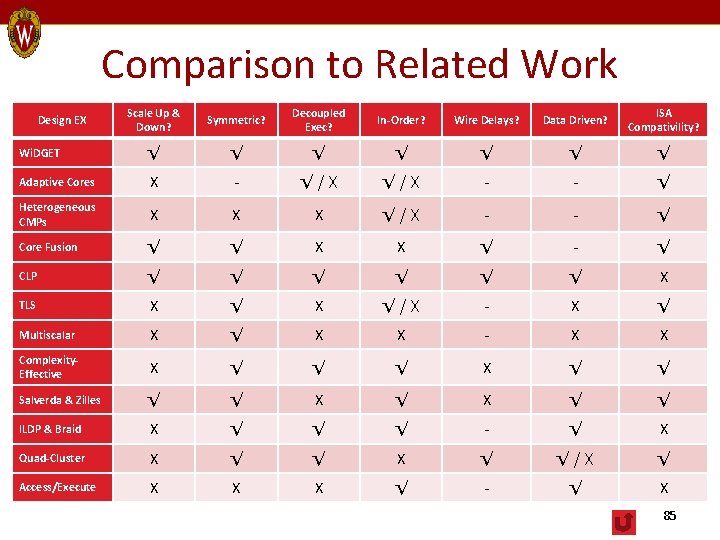

Comparison to Related Work
[Table: WiDGET compared with adaptive cores, heterogeneous CMPs, Core Fusion, CLP, TLS, Multiscalar, complexity-effective superscalars, Salverda & Zilles, ILDP & Braid, Quad-Cluster, and Access/Execute on seven criteria: scale up & down, symmetric, decoupled execution, in-order, wire delays, data driven, and ISA compatibility]

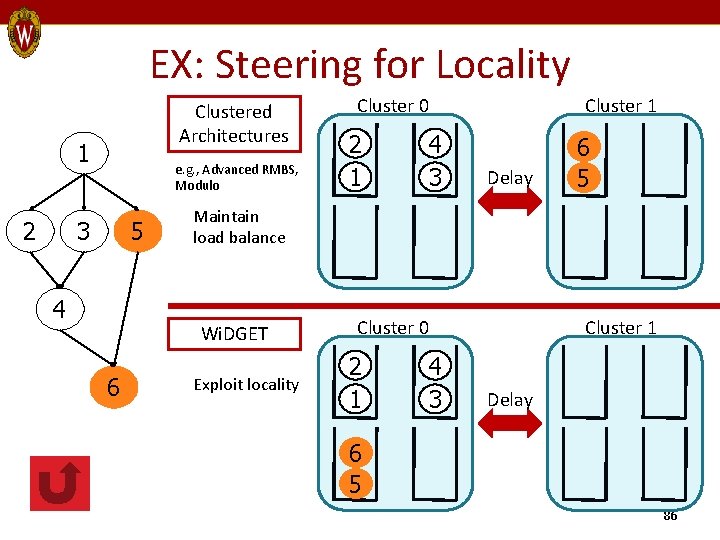

EX: Steering for Locality
[Figure: a 6-instruction dependence graph steered across Cluster 0 and Cluster 1 under two policies, with inter-cluster delay marked]
§ Clustered architectures (e.g., Advanced RMBS, Modulo): Maintain load balance
§ WiDGET: Exploit locality

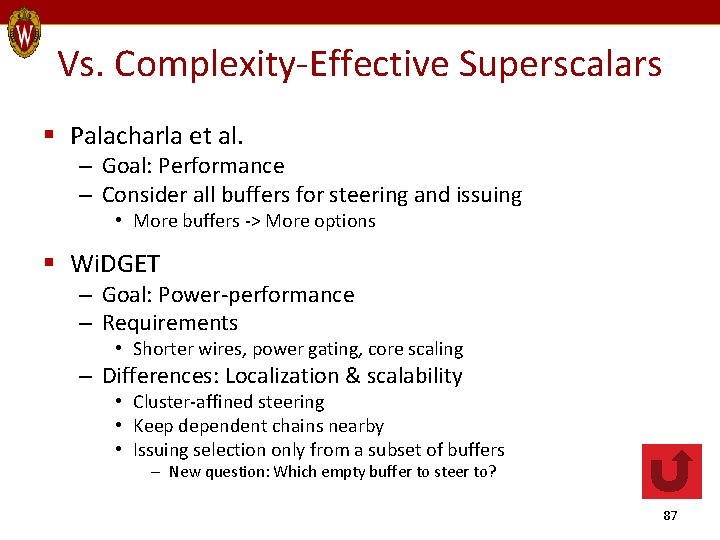

Vs. Complexity-Effective Superscalars
§ Palacharla et al.
  – Goal: Performance
  – Consider all buffers for steering and issuing
    • More buffers -> More options
§ WiDGET
  – Goal: Power-performance
  – Requirements
    • Shorter wires, power gating, core scaling
  – Differences: Localization & scalability
    • Cluster-affined steering
    • Keep dependent chains nearby
    • Issuing selection only from a subset of buffers
  – New question: Which empty buffer to steer to?

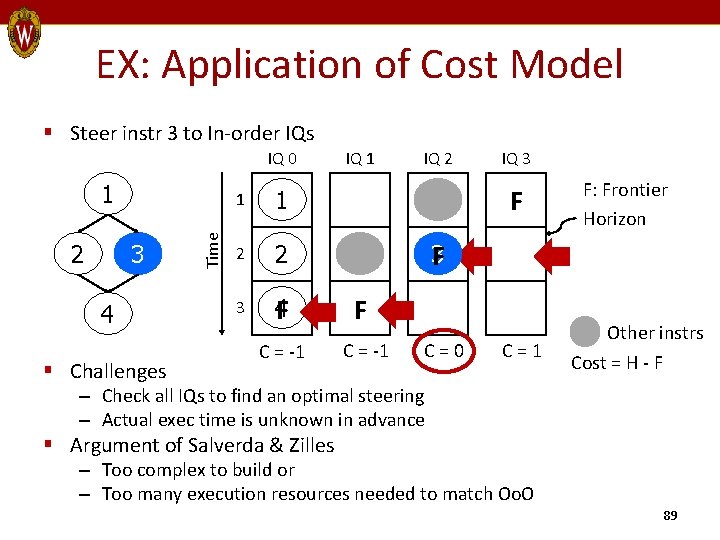

Steering Cost Model [Salverda 08]
§ Steer to distributed IQs
§ Steering policy determines issue time
  – Constrained by dependency, structural hazards, issue policy
§ Ideal steering will issue an instr:
  – As soon as it becomes ready (horizon)
  – Without blocking others (frontier) (constraints of in-order)
§ Steering Cost = horizon – frontier
  – Good steering: Min absolute (Steering Cost)
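The cost definition above translates directly into code. The Python sketch below is a minimal illustration, assuming the horizon of the instruction and the frontier of each in-order IQ are already known as cycle numbers (which, as the talk notes elsewhere, is not true in advance in real hardware); the function names are hypothetical.

```python
from typing import List


def steering_cost(horizon: int, frontier: int) -> int:
    """Cost = horizon - frontier for one candidate issue queue."""
    return horizon - frontier


def best_queue(horizon: int, frontiers: List[int]) -> int:
    """Index of the queue with the smallest absolute steering cost.

    Ties go to the lowest-numbered queue (an arbitrary choice made here).
    """
    return min(range(len(frontiers)),
               key=lambda q: (abs(steering_cost(horizon, frontiers[q])), q))


# Instruction ready at cycle 3; three queues with frontiers at cycles 4, 3, and 2.
costs = [steering_cost(3, f) for f in [4, 3, 2]]
choice = best_queue(3, [4, 3, 2])
```

The three candidate costs are -1, 0, and 1, so the queue whose frontier equals the horizon is chosen: the instruction issues as soon as it is ready without blocking anything behind it.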

EX: Application of Cost Model
§ Steer instr 3 to in-order IQs
[Figure: IQ0-IQ3 over time with each queue's frontier (F) and the horizon of instr 3 marked; candidate costs C = -1, C = 0, and C = 1, where Cost = H - F]
§ Challenges
  – Check all IQs to find an optimal steering
  – Actual exec time is unknown in advance
§ Argument of Salverda & Zilles
  – Too complex to build, or
  – Too many execution resources needed to match OoO

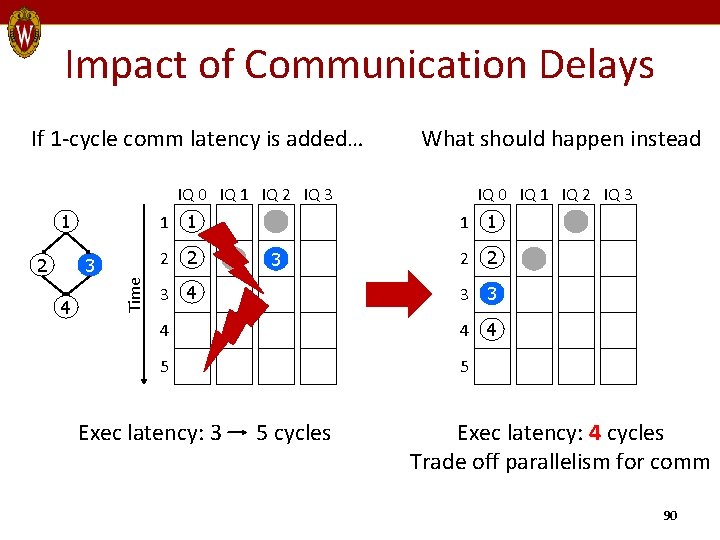

Impact of Communication Delays
[Figure: two schedules of the same instructions on IQ0-IQ3. If 1-cycle comm latency is added: exec latency grows from 3 to 5 cycles. What should happen instead: exec latency of 4 cycles]
Trade off parallelism for comm

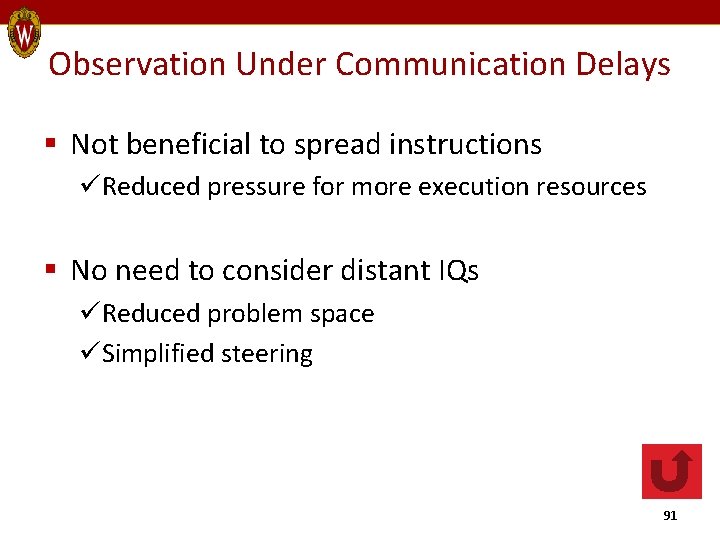

Observation Under Communication Delays
§ Not beneficial to spread instructions
  ✓ Reduced pressure for more execution resources
§ No need to consider distant IQs
  ✓ Reduced problem space
  ✓ Simplified steering

Energy-Proportional Computing for Servers [Barroso 07]
§ Servers
  – 10-50% utilization most of the time
    • Yet, availability is crucial
  – Common energy-saving techniques inapplicable
  – 50% of full power even during low utilization
§ Solution: Energy proportionality
  – Energy consumption in proportion to work done
§ Key features
  – Wide dynamic power range
  – Active low-power modes
    • Better than sleep states with wake-up penalties

PowerNap [Meisner 09]
§ Goals
  – Reduction of server idle power
  – Exploitation of frequent idle periods
§ Mechanisms
  – System level
  – Reduce transition time into & out of nap state
  – Ease power-performance trade-offs
  – Modify hardware subsystems with high idle power
    • E.g., DRAM (self-refresh), fans (variable speed)

Thread Motion [Rangan 09]
§ Goals
  – Fine-grained power management for CMPs
  – Alternative to per-core DVFS
  – High system throughput within power budget
§ Mechanisms
  – Migrate threads rather than adjusting voltage
  – Homogeneous cores in multiple, static voltage/freq domains
  – 2 migration policies
    • Time-driven & miss-driven

Vs. Thread-Level Speculation
§ Their way
  – SW: Divides into contiguous segments
  – HW: Runs speculative threads in parallel
[Figure: cores sharing an L2 with speculation support]
§ Shortcomings
  – Only successful for regular program structures
  – Load imbalance
  – Squash propagation
§ My way
  – No SW reliance
  – Support a wider range of programs

Vs. Braid Architecture [Tseng 08]
§ Their way
  – ISA extension
  – SW: Re-orders instrs based on dependency
  – HW: Sends a group of instrs to FIFO issue queues
§ Shortcomings
  – Re-ordering limited to basic blocks
§ My way
  – No SW reliance
  – Exploit dynamic dependency

Vs. Instruction Level Distributed Processing (ILDP) [Kim 02]
§ Their way
  – New ISA or binary translation
  – SW: Identifies instr dependency
  – HW: Sends a group of instrs to FIFO issue queues
§ Shortcomings
  – Lose binary compatibility
§ My way
  – No SW reliance
  – Exploit dynamic dependency
