Midterm review Lotzi Bölöni Fall 2002 EEL 5708

Acknowledgements

• All the lecture slides were adapted from the slides of David Patterson (1998, 2001) and David E. Culler (2001), Copyright 1998-2002, University of California Berkeley

Instruction sets

![Levels of Representation](http://slidetodoc.com/presentation_image_h2/e1227594742e97b03701d55b01c7cd2b/image-4.jpg)

Levels of Representation

• High Level Language Program: temp = v[k]; v[k] = v[k+1]; v[k+1] = temp;
• Compiler
• Assembly Language Program: lw $15, 0($2); lw $16, 4($2); sw $16, 0($2); sw $15, 4($2)
• Assembler
• Machine Language Program: 0000 1010 1100 0101 1001 1111 0110 1000 1100 0101 1010 0000 0110 1000 1111 1001 1010 0000 0101 1100 1111 1000 0110 0101 1100 0000 1010 1000 0110 1001 1111
• Machine Interpretation
• Control Signal Specification: ALUOP[0:3] <= InstReg[9:11] & MASK

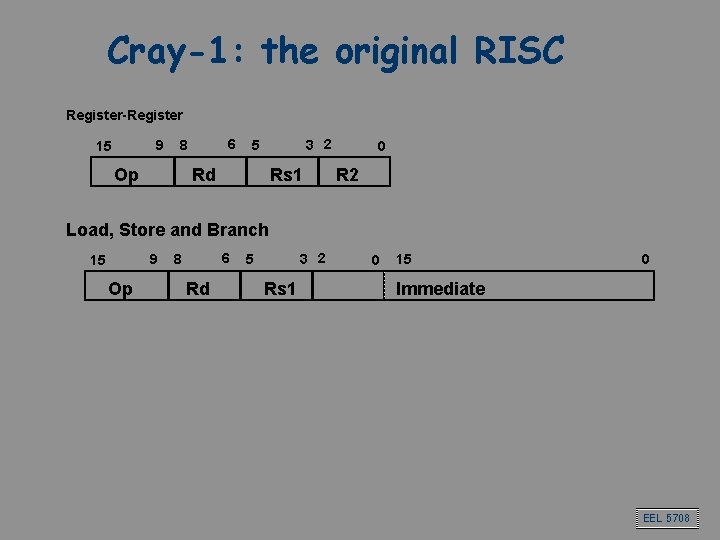

Cray-1: the original RISC

• Register-Register: Op [15:9] | Rd [8:6] | Rs1 [5:3] | R2 [2:0]
• Load, Store and Branch: Op [15:9] | Rd [8:6] | Rs1 [5:3] | Immediate [2:0] + [15:0]

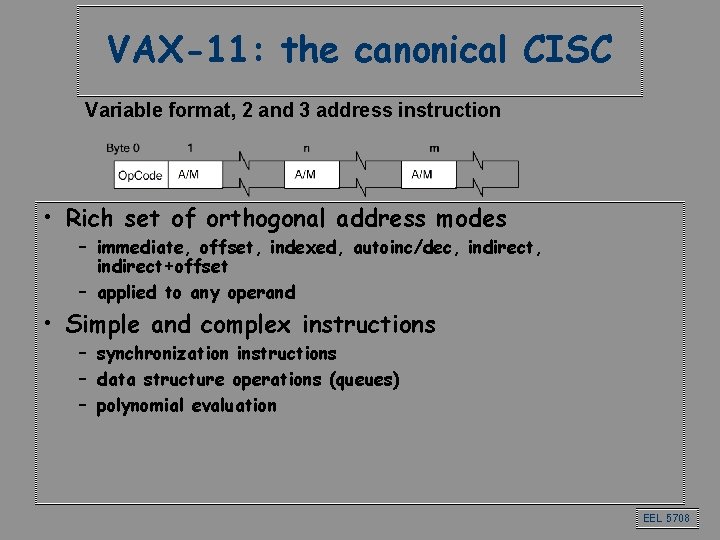

VAX-11: the canonical CISC

• Variable format, 2 and 3 address instructions
• Rich set of orthogonal address modes
  – immediate, offset, indexed, autoinc/dec, indirect, indirect+offset
  – applied to any operand
• Simple and complex instructions
  – synchronization instructions
  – data structure operations (queues)
  – polynomial evaluation

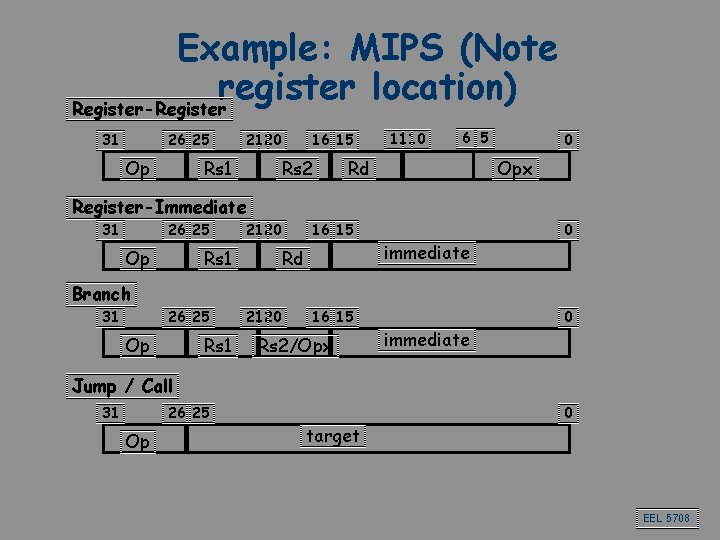

Example: MIPS (Note register location)

• Register-Register: Op [31:26] | Rs1 [25:21] | Rs2 [20:16] | Rd [15:11] | Opx [10:0]
• Register-Immediate: Op [31:26] | Rs1 [25:21] | Rd [20:16] | immediate [15:0]
• Branch: Op [31:26] | Rs1 [25:21] | Rs2/Opx [20:16] | immediate [15:0]
• Jump / Call: Op [31:26] | target [25:0]
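The field layout above can be exercised with a few shifts and masks. The following is a minimal sketch, not part of the original slides: the function name `decode_fields` and the dictionary keys are hypothetical, and the two sample words assume the standard MIPS encodings of `add $8, $9, $10` and `lw $15, 0($2)`.

```python
def decode_fields(word):
    """Split a 32-bit instruction word into the fields named on the slide.

    Every field is extracted regardless of format; the caller picks the
    ones that apply to the format selected by the opcode.
    """
    return {
        "op": (word >> 26) & 0x3F,         # bits 31..26, all formats
        "rs1": (word >> 21) & 0x1F,        # bits 25..21
        "rs2_or_rd": (word >> 16) & 0x1F,  # bits 20..16: Rs2 (R-type) or Rd (I-type)
        "rd": (word >> 11) & 0x1F,         # bits 15..11, register-register only
        "opx": word & 0x7FF,               # bits 10..0, register-register only
        "immediate": word & 0xFFFF,        # bits 15..0, immediate and branch formats
        "target": word & 0x3FFFFFF,        # bits 25..0, jump / call
    }


r_type = decode_fields(0x012A4020)  # add $8, $9, $10 (assumed standard MIPS encoding)
i_type = decode_fields(0x8C4F0000)  # lw $15, 0($2)   (assumed standard MIPS encoding)

print(r_type["op"], r_type["rs1"], r_type["rs2_or_rd"], r_type["rd"], hex(r_type["opx"]))
print(i_type["op"], i_type["rs1"], i_type["rs2_or_rd"], i_type["immediate"])
```

Note that the destination register sits in bits 15..11 for register-register instructions but in bits 20..16 for register-immediate ones, which is the "register location" the slide title points at.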

Processor organization. Basic pipelining

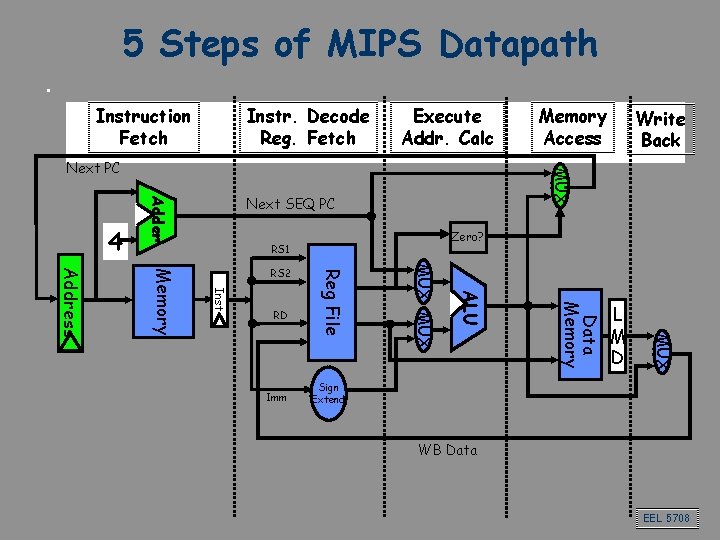

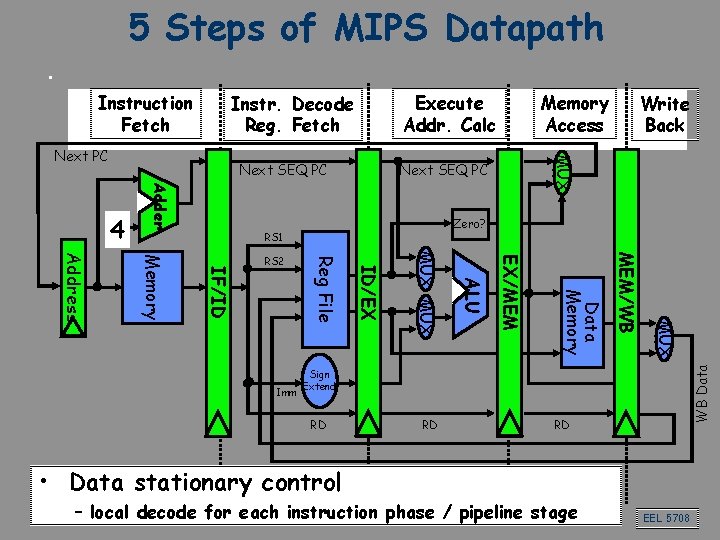

5 Steps of MIPS Datapath

Stages: Instruction Fetch | Instr. Decode / Reg. Fetch | Execute / Addr. Calc | Memory Access | Write Back

(Figure: datapath with Next PC MUX, Next SEQ PC adder (+4), Inst Memory, Reg File (RS1, RS2, RD), Sign Extend (Imm), MUXes, ALU, Zero? test, Data Memory, LMD, WB Data)

5 Steps of MIPS Datapath

Stages: Instruction Fetch | Instr. Decode / Reg. Fetch | Execute / Addr. Calc | Memory Access | Write Back

(Figure: the same datapath with pipeline registers IF/ID, ID/EX, EX/MEM and MEM/WB between the stages; RD is carried along through the pipeline registers)

• Data stationary control
  – local decode for each instruction phase / pipeline stage

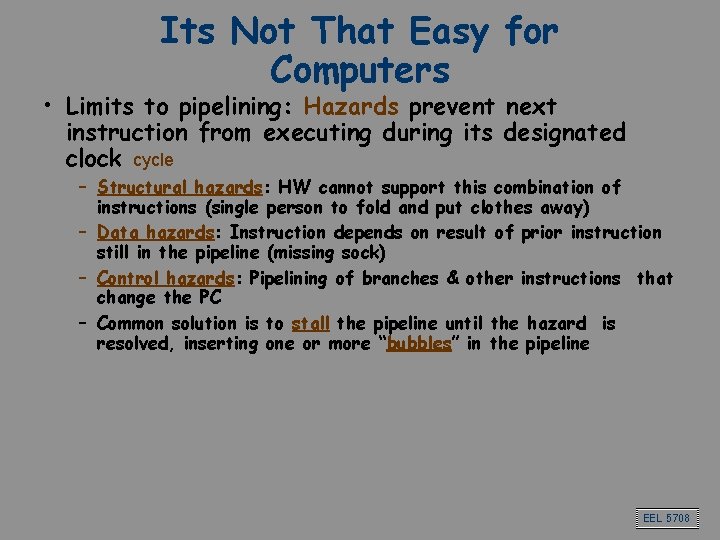

It's Not That Easy for Computers

• Limits to pipelining: Hazards prevent next instruction from executing during its designated clock cycle
  – Structural hazards: HW cannot support this combination of instructions (single person to fold and put clothes away)
  – Data hazards: Instruction depends on result of prior instruction still in the pipeline (missing sock)
  – Control hazards: Pipelining of branches & other instructions that change the PC
• Common solution is to stall the pipeline until the hazard is resolved, inserting one or more “bubbles” in the pipeline

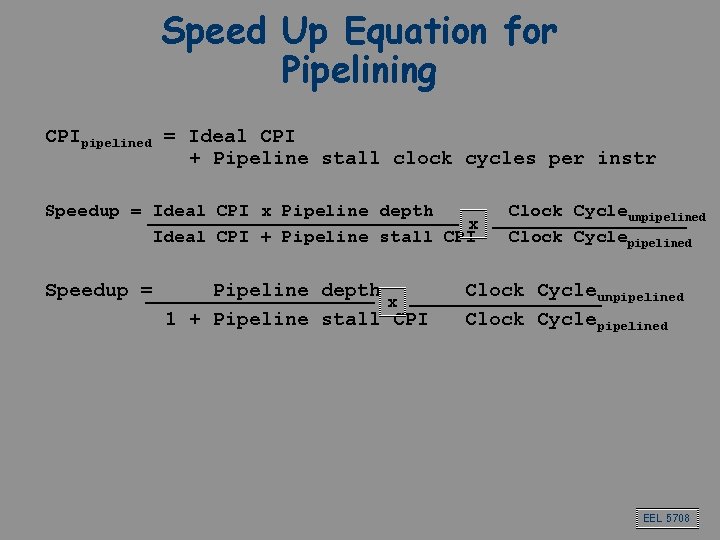

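Speed Up Equation for Pipelining

$$\text{CPI}_{\text{pipelined}} = \text{Ideal CPI} + \text{Pipeline stall clock cycles per instr}$$

$$\text{Speedup} = \frac{\text{Ideal CPI} \times \text{Pipeline depth}}{\text{Ideal CPI} + \text{Pipeline stall CPI}} \times \frac{\text{Clock Cycle}_{\text{unpipelined}}}{\text{Clock Cycle}_{\text{pipelined}}}$$

With Ideal CPI = 1:

$$\text{Speedup} = \frac{\text{Pipeline depth}}{1 + \text{Pipeline stall CPI}} \times \frac{\text{Clock Cycle}_{\text{unpipelined}}}{\text{Clock Cycle}_{\text{pipelined}}}$$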
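As a quick numeric check of the speedup equation, here is a small sketch. The function and parameter names are illustrative, and the sample numbers (a 5-stage pipeline with 0.25 stall cycles per instruction) are assumptions chosen for the example, not values from the slides.

```python
def pipelined_cpi(ideal_cpi, stall_cpi):
    """CPI of the pipelined machine: ideal CPI plus stall cycles per instruction."""
    return ideal_cpi + stall_cpi


def pipeline_speedup(depth, stall_cpi, ideal_cpi=1.0, clock_ratio=1.0):
    """Speedup over the unpipelined machine.

    clock_ratio is (unpipelined clock cycle) / (pipelined clock cycle);
    1.0 means both machines run at the same clock.
    """
    return (ideal_cpi * depth) / pipelined_cpi(ideal_cpi, stall_cpi) * clock_ratio


# Assumed example: 5 stages, 0.25 stall cycles per instruction, equal clocks.
example_speedup = pipeline_speedup(depth=5, stall_cpi=0.25)
print(example_speedup)
```

With no stalls the speedup equals the pipeline depth; every stall cycle per instruction eats directly into it.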

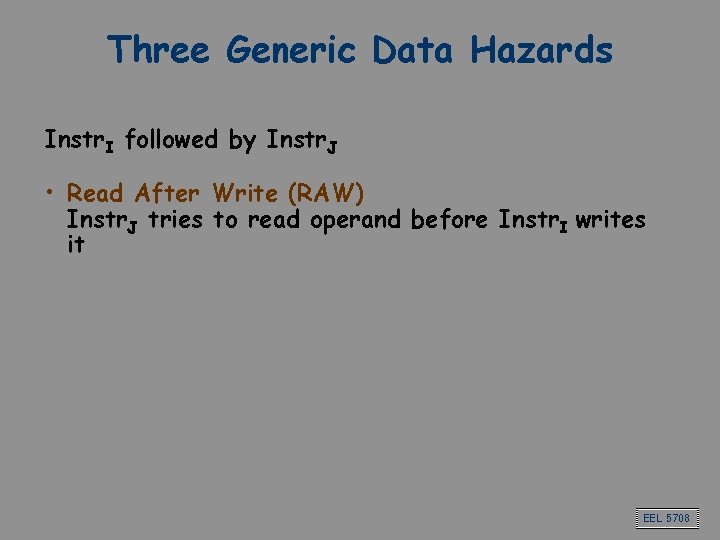

Three Generic Data Hazards

Instr. I followed by Instr. J

• Read After Write (RAW): Instr. J tries to read operand before Instr. I writes it

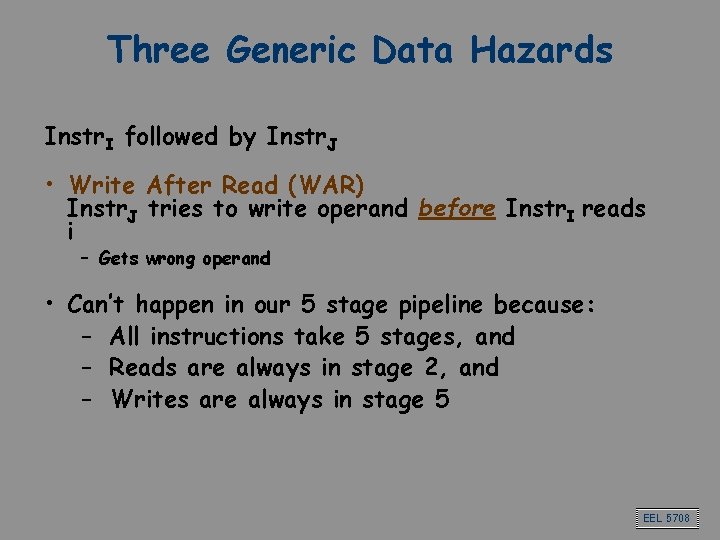

Three Generic Data Hazards

Instr. I followed by Instr. J

• Write After Read (WAR): Instr. J tries to write operand before Instr. I reads it
  – Gets wrong operand
• Can’t happen in our 5 stage pipeline because:
  – All instructions take 5 stages, and
  – Reads are always in stage 2, and
  – Writes are always in stage 5

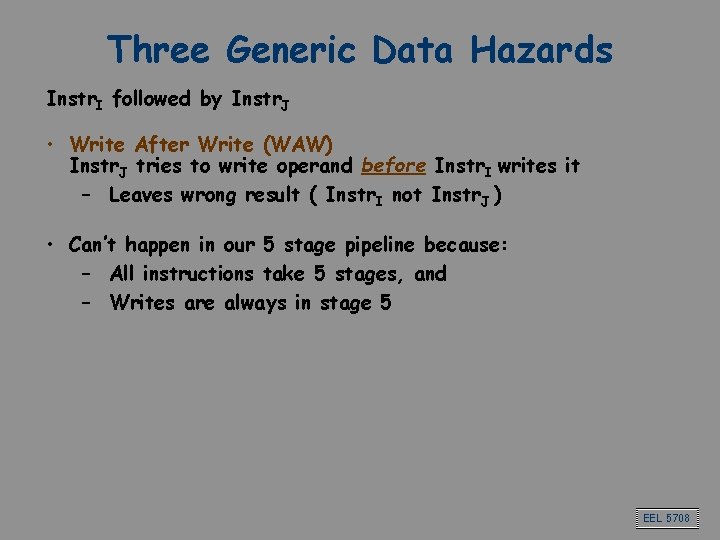

Three Generic Data Hazards

Instr. I followed by Instr. J

• Write After Write (WAW): Instr. J tries to write operand before Instr. I writes it
  – Leaves wrong result (Instr. I, not Instr. J)
• Can’t happen in our 5 stage pipeline because:
  – All instructions take 5 stages, and
  – Writes are always in stage 5
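The three hazard types reduce to simple checks on which registers each instruction reads and writes. The sketch below is illustrative only: the `Instr` tuple and `classify_dependences` are hypothetical names, and the code reports the register dependences that could become hazards; whether they actually do depends on the pipeline, as the slides note for WAR and WAW.

```python
from collections import namedtuple

# Hypothetical instruction record: one destination register and a list of sources.
Instr = namedtuple("Instr", ["name", "dest", "srcs"])


def classify_dependences(i, j):
    """Return the potential hazards when instruction i is followed by j."""
    found = set()
    if i.dest is not None and i.dest in j.srcs:
        found.add("RAW")  # j reads what i writes (true dependence)
    if j.dest is not None and j.dest in i.srcs:
        found.add("WAR")  # j writes what i reads (antidependence)
    if i.dest is not None and i.dest == j.dest:
        found.add("WAW")  # both write the same register (output dependence)
    return found


add = Instr("ADD r1, r2, r3", "r1", ["r2", "r3"])
sub = Instr("SUB r4, r1, r5", "r4", ["r1", "r5"])
orr = Instr("OR r2, r4, r5", "r2", ["r4", "r5"])
and_ = Instr("AND r1, r6, r7", "r1", ["r6", "r7"])

print(classify_dependences(add, sub))
print(classify_dependences(add, orr))
print(classify_dependences(add, and_))
```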

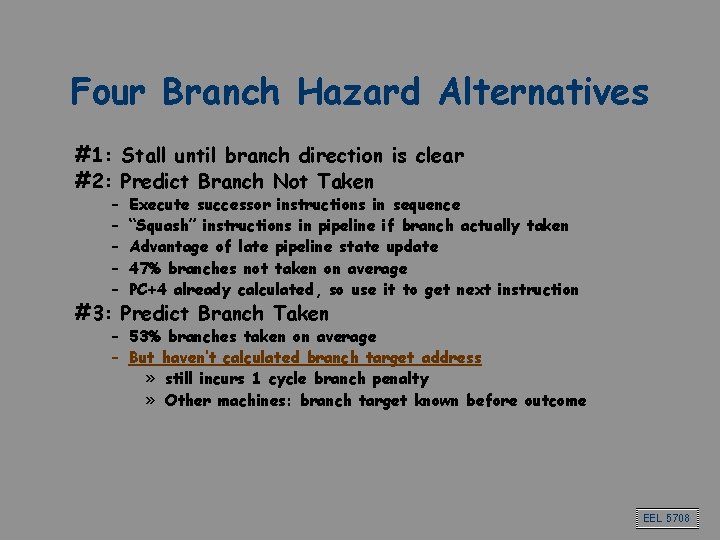

Four Branch Hazard Alternatives

#1: Stall until branch direction is clear
#2: Predict Branch Not Taken
  – Execute successor instructions in sequence
  – “Squash” instructions in pipeline if branch actually taken
  – Advantage of late pipeline state update
  – 47% branches not taken on average
  – PC+4 already calculated, so use it to get next instruction
#3: Predict Branch Taken
  – 53% branches taken on average
  – But haven’t calculated branch target address
    » still incurs 1 cycle branch penalty
    » Other machines: branch target known before outcome

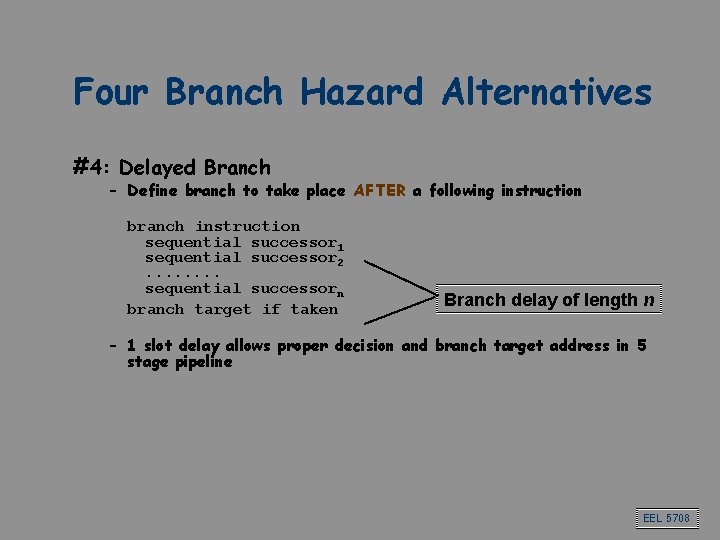

Four Branch Hazard Alternatives

#4: Delayed Branch
  – Define branch to take place AFTER a following instruction

    branch instruction
    sequential successor 1
    sequential successor 2
    ...
    sequential successor n
    branch target if taken

    (Branch delay of length n)
  – 1 slot delay allows proper decision and branch target address in 5 stage pipeline
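To compare the alternatives numerically, the branch penalty can be folded into the CPI. This is a rough sketch under stated assumptions: the 20% branch frequency and the 50% unfilled delay-slot rate are made-up illustration values, the 1-cycle penalty and the 53% taken rate come from the slides, and `cpi_with_branches` is a hypothetical helper.

```python
def cpi_with_branches(branch_freq, penalty_cycles, fraction_paying_penalty, ideal_cpi=1.0):
    """Effective CPI when only some branches pay the branch penalty."""
    return ideal_cpi + branch_freq * fraction_paying_penalty * penalty_cycles


BRANCH_FREQ = 0.20    # assumption: one instruction in five is a branch
UNFILLED_SLOTS = 0.5  # assumption: half of the delay slots hold a NOP

cpi_stall = cpi_with_branches(BRANCH_FREQ, 1, 1.0)               # every branch stalls
cpi_not_taken = cpi_with_branches(BRANCH_FREQ, 1, 0.53)          # taken branches pay
cpi_taken = cpi_with_branches(BRANCH_FREQ, 1, 1.0)               # target not ready in time
cpi_delayed = cpi_with_branches(BRANCH_FREQ, 1, UNFILLED_SLOTS)  # wasted slots pay

for name, cpi in [("stall", cpi_stall), ("predict not taken", cpi_not_taken),
                  ("predict taken", cpi_taken), ("delayed branch", cpi_delayed)]:
    print(f"{name}: CPI = {cpi:.3f}")
```

In this simple pipeline, predict-taken gains nothing over stalling because the target address is not available any earlier than the branch outcome.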

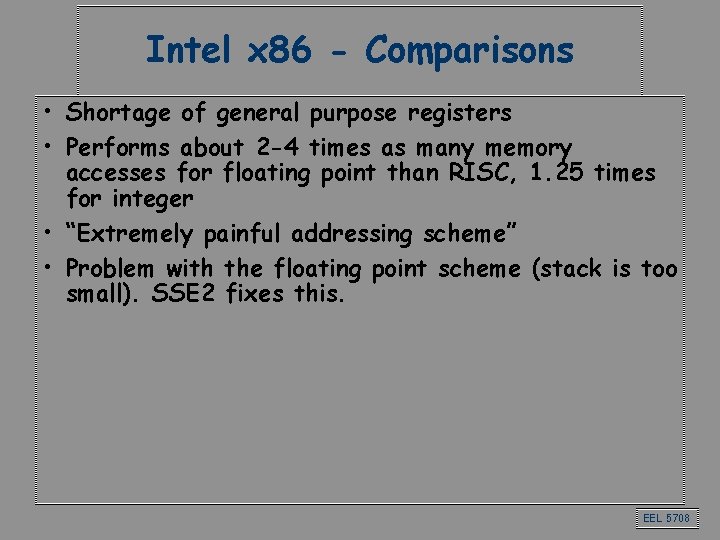

Intel x86 - Comparisons

• Shortage of general purpose registers
• Performs about 2-4 times as many memory accesses for floating point as RISC, 1.25 times for integer
• “Extremely painful addressing scheme”
• Problem with the floating point scheme (stack is too small). SSE2 fixes this.

Instruction level parallelism

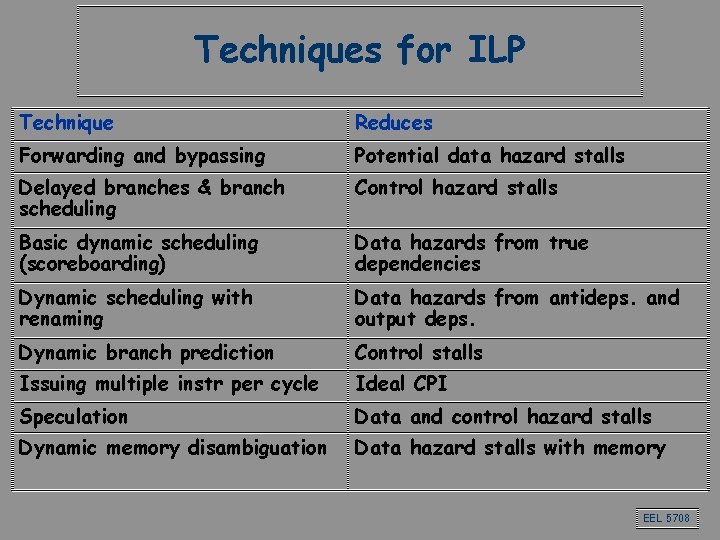

Techniques for ILP

| Technique | Reduces |
|---|---|
| Forwarding and bypassing | Potential data hazard stalls |
| Delayed branches & branch scheduling | Control hazard stalls |
| Basic dynamic scheduling (scoreboarding) | Data hazards from true dependencies |
| Dynamic scheduling with renaming | Data hazards from antideps. and output deps. |
| Dynamic branch prediction | Control stalls |
| Issuing multiple instr per cycle | Ideal CPI |
| Speculation | Data and control hazard stalls |
| Dynamic memory disambiguation | Data hazard stalls with memory |

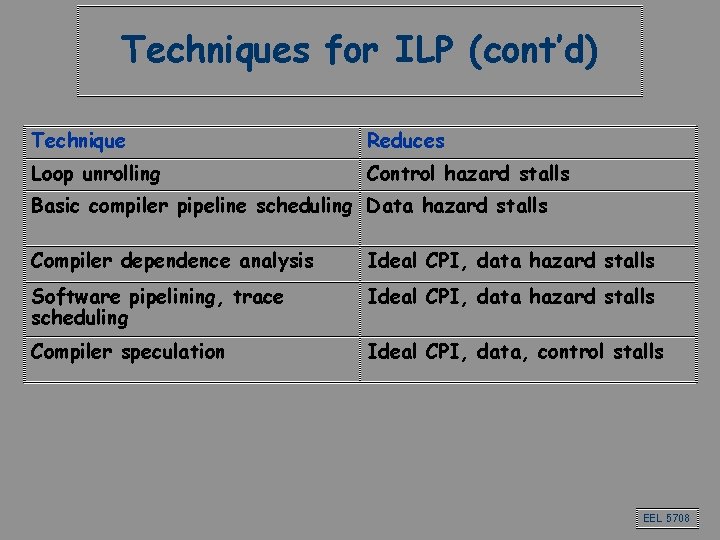

Techniques for ILP (cont’d)

| Technique | Reduces |
|---|---|
| Loop unrolling | Control hazard stalls |
| Basic compiler pipeline scheduling | Data hazard stalls |
| Compiler dependence analysis | Ideal CPI, data hazard stalls |
| Software pipelining, trace scheduling | Ideal CPI, data hazard stalls |
| Compiler speculation | Ideal CPI, data, control stalls |

Loop unrolling

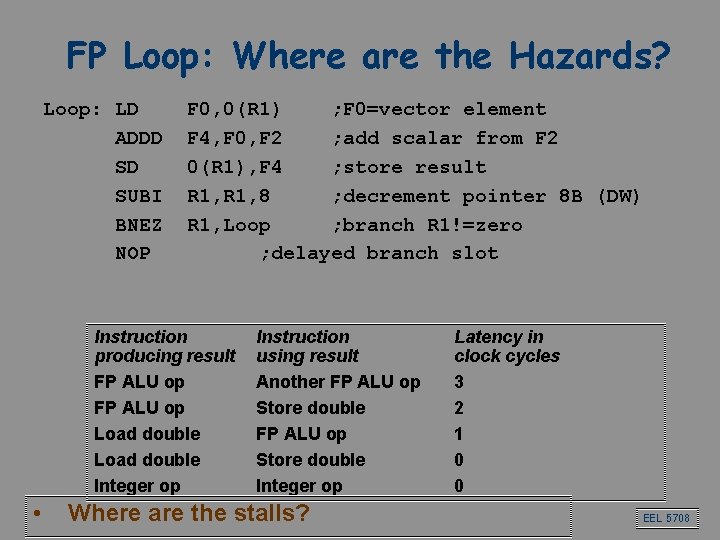

FP Loop: Where are the Hazards?

    Loop: LD   F0, 0(R1)    ; F0=vector element
          ADDD F4, F0, F2   ; add scalar from F2
          SD   0(R1), F4    ; store result
          SUBI R1, R1, 8    ; decrement pointer 8B (DW)
          BNEZ R1, Loop     ; branch R1!=zero
          NOP               ; delayed branch slot

| Instruction producing result | Instruction using result | Latency in clock cycles |
|---|---|---|
| FP ALU op | Another FP ALU op | 3 |
| FP ALU op | Store double | 2 |
| Load double | FP ALU op | 1 |
| Load double | Store double | 0 |
| Integer op | Integer op | 0 |

• Where are the stalls?

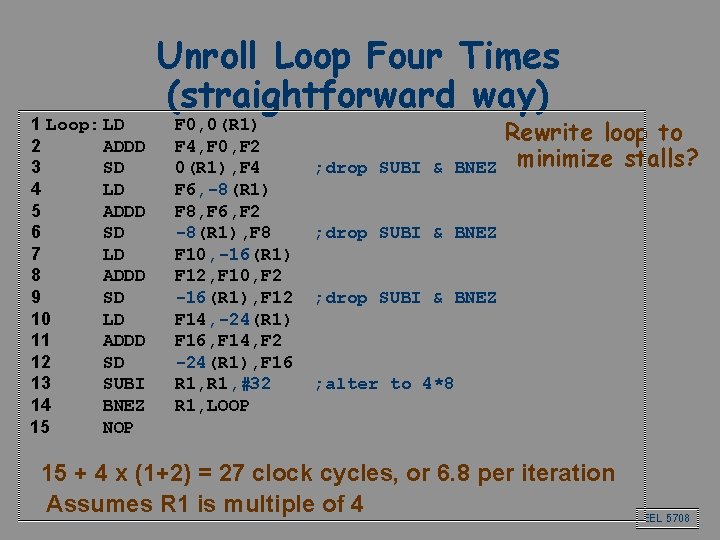

Unroll Loop Four Times (straightforward way)

    Loop: LD   F0, 0(R1)
          ADDD F4, F0, F2
          SD   0(R1), F4       ; drop SUBI & BNEZ
          LD   F6, -8(R1)
          ADDD F8, F6, F2
          SD   -8(R1), F8      ; drop SUBI & BNEZ
          LD   F10, -16(R1)
          ADDD F12, F10, F2
          SD   -16(R1), F12    ; drop SUBI & BNEZ
          LD   F14, -24(R1)
          ADDD F16, F14, F2
          SD   -24(R1), F16
          SUBI R1, R1, #32     ; alter to 4*8
          BNEZ R1, LOOP
          NOP

15 + 4 x (1+2) = 27 clock cycles, or 6.8 per iteration. Assumes R1 is multiple of 4.

Rewrite loop to minimize stalls?

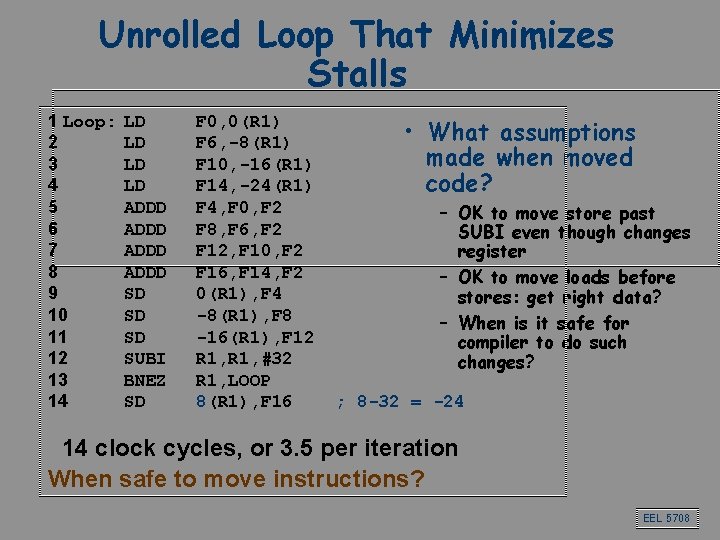

Unrolled Loop That Minimizes Stalls

    Loop: LD   F0, 0(R1)
          LD   F6, -8(R1)
          LD   F10, -16(R1)
          LD   F14, -24(R1)
          ADDD F4, F0, F2
          ADDD F8, F6, F2
          ADDD F12, F10, F2
          ADDD F16, F14, F2
          SD   0(R1), F4
          SD   -8(R1), F8
          SD   -16(R1), F12
          SUBI R1, R1, #32
          BNEZ R1, LOOP
          SD   8(R1), F16      ; 8-32 = -24

14 clock cycles, or 3.5 per iteration.

• What assumptions made when moved code?
  – OK to move store past SUBI even though changes register
  – OK to move loads before stores: get right data?
  – When is it safe for compiler to do such changes?
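The 27-cycle and 14-cycle figures can be reproduced with a very small in-order, single-issue timing model. This is a sketch under simplifying assumptions, not the course's own tool: the tuple format, `OP_CLASS` and `count_cycles` are hypothetical, memory offsets are ignored because they do not affect timing, and only the producer/consumer latencies from the FP Loop table are modeled (every other pair is treated as latency 0).

```python
# Stall cycles needed between a producer and a dependent consumer,
# taken from the latency table on the "FP Loop" slide.
LATENCY = {
    ("fpalu", "fpalu"): 3,
    ("fpalu", "store"): 2,
    ("load", "fpalu"): 1,
    ("load", "store"): 0,
}
OP_CLASS = {"LD": "load", "ADDD": "fpalu", "SD": "store",
            "SUBI": "int", "BNEZ": "int", "NOP": "int"}


def count_cycles(program):
    """program: list of (opcode, dest register or None, [source registers])."""
    producer = {}  # register -> (issue cycle, class of the producing instruction)
    cycle = 0
    for op, dest, srcs in program:
        cls = OP_CLASS[op]
        issue = cycle + 1  # in-order, at most one instruction per cycle
        for reg in srcs:
            if reg in producer:
                p_cycle, p_cls = producer[reg]
                issue = max(issue, p_cycle + 1 + LATENCY.get((p_cls, cls), 0))
        if dest is not None:
            producer[dest] = (issue, cls)
        cycle = issue
    return cycle


def ld(f):
    return ("LD", f, ["R1"])

def addd(dst, src):
    return ("ADDD", dst, [src, "F2"])

def sd(f):
    return ("SD", None, [f, "R1"])

SUBI = ("SUBI", "R1", ["R1"])
BNEZ = ("BNEZ", None, ["R1"])
NOP = ("NOP", None, [])

pairs = [("F0", "F4"), ("F6", "F8"), ("F10", "F12"), ("F14", "F16")]

straightforward = []
for a, b in pairs:
    straightforward += [ld(a), addd(b, a), sd(b)]
straightforward += [SUBI, BNEZ, NOP]

scheduled = ([ld(a) for a, _ in pairs] + [addd(b, a) for a, b in pairs]
             + [sd(b) for _, b in pairs[:3]] + [SUBI, BNEZ, sd("F16")])

print(count_cycles(straightforward), count_cycles(scheduled))
```

The model only counts cycles; it does not check that the reordering is legal, which is exactly the question the slide raises.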

Register renaming through Tomasulo’s algorithm

Another Dynamic Algorithm: Tomasulo’s Algorithm

• For IBM 360/91 about 3 years after CDC 6600 (1966)
• Goal: High Performance without special compilers
• Differences between IBM 360 & CDC 6600 ISA
  – IBM has only 2 register specifiers/instr vs. 3 in CDC 6600
  – IBM has 4 FP registers vs. 8 in CDC 6600
• Why Study? Led to Alpha 21264, HP 8000, MIPS 10000, Pentium II, PowerPC 604, …

Three Stages of Tomasulo Algorithm

1. Issue: get instruction from FP Op Queue
   If reservation station free (no structural hazard), control issues instr & sends operands (renames registers).
2. Execution: operate on operands (EX)
   When both operands ready then execute; if not ready, watch Common Data Bus for result
3. Write result: finish execution (WB)
   Write on Common Data Bus to all awaiting units; mark reservation station available

• Normal data bus: data + destination (“go to” bus)
• Common data bus: data + source (“come from” bus)
  – 64 bits of data + 4 bits of Functional Unit source address
  – Write if matches expected Functional Unit (produces result)
  – Does the broadcast

Tomasulo Drawbacks

• Complexity
  – delays of 360/91, MIPS 10000, IBM 620?
• Many associative stores (CDB) at high speed
• Performance limited by Common Data Bus
  – Multiple CDBs => more FU logic for parallel assoc stores

Tomasulo Summary

• Reservation stations: renaming to larger set of registers + buffering source operands
  – Prevents registers as bottleneck
  – Avoids WAR, WAW hazards of Scoreboard
  – Allows loop unrolling in HW
• Not limited to basic blocks (integer unit gets ahead, beyond branches)
• Helps cache misses as well
• Lasting Contributions
  – Dynamic scheduling
  – Register renaming
  – Load/store disambiguation
• 360/91 descendants are Pentium II; PowerPC 604; MIPS R10000; HP-PA 8000; Alpha 21264

Hardware Speculation

What about interrupts?

• We want the interrupt to happen as if the instructions had been executed in order: precise interrupts
• State of machine looks as if no instruction beyond the faulting instruction has issued
• Tomasulo had: in-order issue, out-of-order execution, and out-of-order completion
• Need to “fix” the out-of-order completion aspect so that we can find precise breakpoint in instruction stream.

Relationship between precise interrupts and speculation:

• Speculation: guess the outcome of the branches and execute as if our guesses were correct.
• Branch prediction is important:
  – Need to “take our best shot” at predicting branch direction.
• If we speculate and are wrong, need to back up and restart execution to point at which we predicted incorrectly:
  – This is exactly same as precise exceptions!
• Technique for both precise interrupts/exceptions and speculation: in-order completion or commit

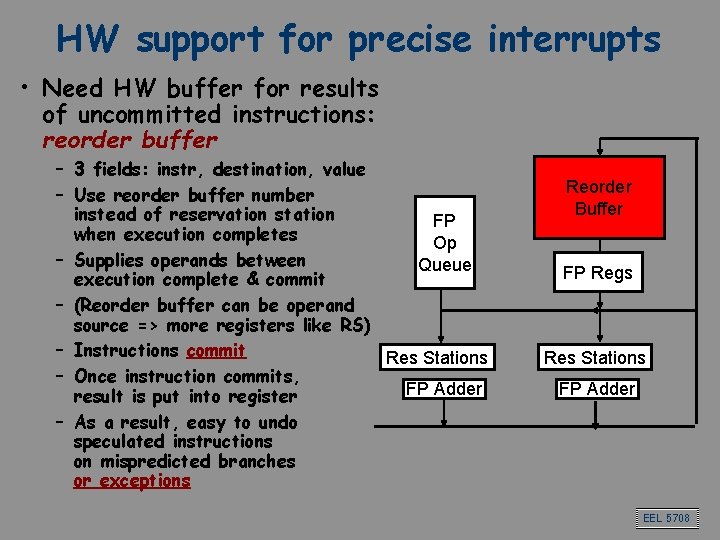

HW support for precise interrupts

• Need HW buffer for results of uncommitted instructions: reorder buffer
  – 3 fields: instr, destination, value
  – Use reorder buffer number instead of reservation station when execution completes
  – Supplies operands between execution complete & commit
  – (Reorder buffer can be operand source => more registers like RS)
  – Instructions commit
  – Once instruction commits, result is put into register
  – As a result, easy to undo speculated instructions on mispredicted branches or exceptions

(Figure: FP Op Queue, Reorder Buffer, FP Regs, Res Stations, FP Adder)

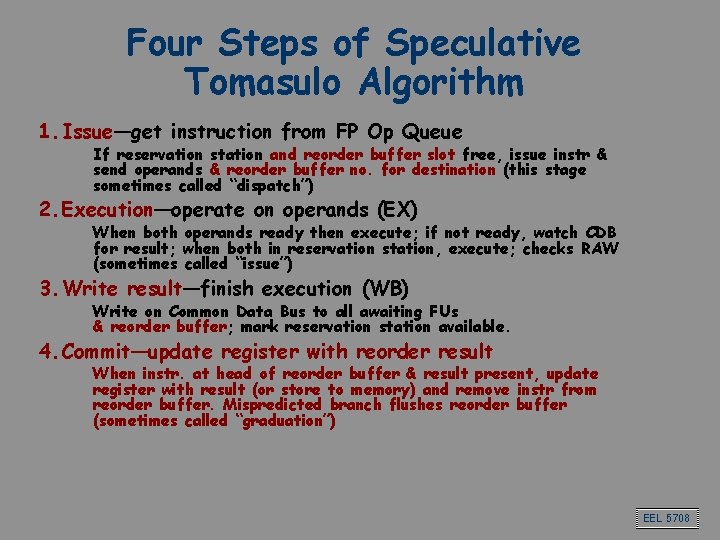

Four Steps of Speculative Tomasulo Algorithm

1. Issue: get instruction from FP Op Queue
   If reservation station and reorder buffer slot free, issue instr & send operands & reorder buffer no. for destination (this stage sometimes called “dispatch”)
2. Execution: operate on operands (EX)
   When both operands ready then execute; if not ready, watch CDB for result; when both in reservation station, execute; checks RAW (sometimes called “issue”)
3. Write result: finish execution (WB)
   Write on Common Data Bus to all awaiting FUs & reorder buffer; mark reservation station available.
4. Commit: update register with reorder result
   When instr. at head of reorder buffer & result present, update register with result (or store to memory) and remove instr from reorder buffer. Mispredicted branch flushes reorder buffer (sometimes called “graduation”)
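The commit step is what restores in-order completion. The toy model below illustrates only that part and is an assumption-laden sketch rather than a description of any real machine: the class and method names are hypothetical, reservation stations and the Common Data Bus are left out, and each entry carries the three fields named on the reorder buffer slide (instr, destination, value).

```python
from collections import deque


class ReorderBuffer:
    """Toy reorder buffer: results may arrive out of order, commits are in order."""

    def __init__(self):
        self.entries = deque()  # oldest instruction at the left (head)
        self.registers = {}     # architectural register file

    def issue(self, instr, dest):
        entry = {"instr": instr, "dest": dest, "value": None, "done": False}
        self.entries.append(entry)
        return entry

    def write_result(self, entry, value):
        entry["value"] = value
        entry["done"] = True

    def commit(self):
        """Retire finished instructions from the head only; return their names."""
        committed = []
        while self.entries and self.entries[0]["done"]:
            entry = self.entries.popleft()
            self.registers[entry["dest"]] = entry["value"]
            committed.append(entry["instr"])
        return committed

    def flush(self):
        """Discard all uncommitted work, e.g. after a mispredicted branch."""
        self.entries.clear()


rob = ReorderBuffer()
first = rob.issue("MULTD F0, F2, F4", "F0")
second = rob.issue("ADDD F6, F8, F2", "F6")

rob.write_result(second, 3.0)  # the younger instruction finishes first
early = rob.commit()           # nothing retires: the head is still executing
rob.write_result(first, 8.0)
late = rob.commit()            # both retire, in program order
print(early, late, rob.registers)
```

Because registers change only at commit, flushing the buffer is enough to undo speculated work.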

Branch prediction

Branch prediction

• As the amount of ILP grows, control dependencies become the limiting factor
• The effectiveness of a branch prediction scheme depends
  – On the accuracy
  – On the cost of the branch when we are correct, and when we are incorrect

7 Branch Prediction Schemes

1. 1-bit Branch-Prediction Buffer
2. 2-bit Branch-Prediction Buffer
3. Correlating Branch Prediction Buffer
4. Tournament Branch Predictor
5. Branch Target Buffer
6. Integrated Instruction Fetch Units
7. Return Address Predictors
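As an illustration of scheme 2, here is a minimal sketch of a 2-bit branch-prediction buffer built from saturating counters. The table size, the `pc % entries` indexing and the initial counter value of 0 are assumptions made for the example; real designs differ in all three.

```python
class TwoBitPredictor:
    """Branch-prediction buffer of 2-bit saturating counters."""

    def __init__(self, entries=16):
        self.entries = entries
        # Counter values 0 and 1 predict not taken; 2 and 3 predict taken.
        self.counters = [0] * entries

    def predict(self, pc):
        return self.counters[pc % self.entries] >= 2

    def update(self, pc, taken):
        i = pc % self.entries
        if taken:
            self.counters[i] = min(3, self.counters[i] + 1)
        else:
            self.counters[i] = max(0, self.counters[i] - 1)


def count_mispredictions(predictor, pc, outcomes):
    misses = 0
    for taken in outcomes:
        if predictor.predict(pc) != taken:
            misses += 1
        predictor.update(pc, taken)
    return misses


# A loop branch taken three times, then falling through, executed twice.
pattern = [True, True, True, False] * 2
misses = count_mispredictions(TwoBitPredictor(), 0x40, pattern)
print(misses)
```

After warm-up the predictor misses only once per loop execution (at the exit), whereas a 1-bit scheme would also miss on the first iteration of the next execution.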

Superscalar processors

Getting CPI < 1: Issuing Multiple Instructions/Cycle

• Vector Processing: Explicit coding of independent loops as operations on large vectors of numbers
  – Multimedia instructions being added to many processors
• Superscalar: varying no. instructions/cycle (1 to 8), scheduled by compiler or by HW (Tomasulo)
  – IBM PowerPC, Sun UltraSparc, DEC Alpha, Pentium III/4
• (Very) Long Instruction Words (V)LIW: fixed number of instructions (4-16) scheduled by the compiler; put ops into wide templates (TBD)
  – Intel Architecture-64 (IA-64) 64-bit address
    » Renamed: “Explicitly Parallel Instruction Computer (EPIC)”
  – Transmeta Crusoe
• Anticipated success of multiple instructions led to Instructions Per Clock cycle (IPC) vs. CPI

Memory technologies

Caches

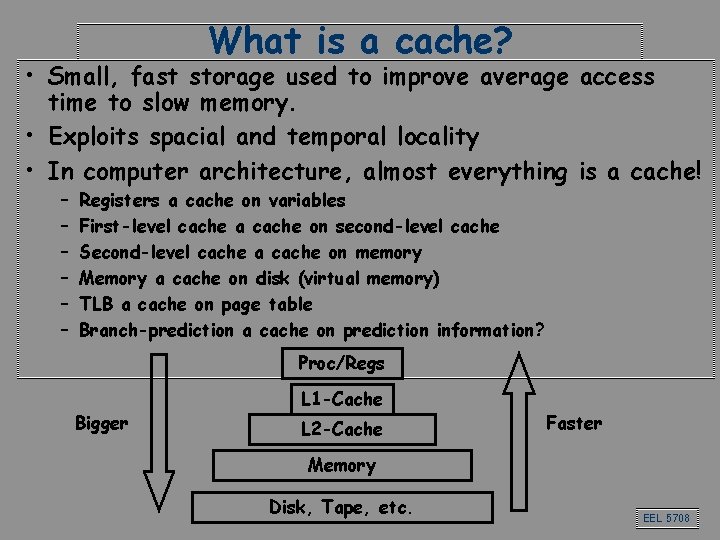

What is a cache?

• Small, fast storage used to improve average access time to slow memory.
• Exploits spatial and temporal locality
• In computer architecture, almost everything is a cache!
  – Registers a cache on variables
  – First-level cache a cache on second-level cache
  – Second-level cache a cache on memory
  – Memory a cache on disk (virtual memory)
  – TLB a cache on page table
  – Branch-prediction a cache on prediction information?

(Figure: memory hierarchy, Proc/Regs → L1-Cache → L2-Cache → Memory → Disk, Tape, etc.; faster toward the processor, bigger toward disk)

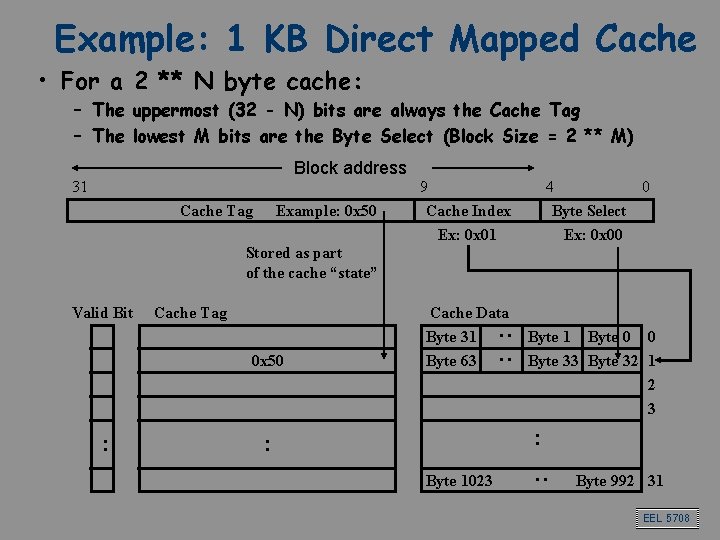

Example: 1 KB Direct Mapped Cache

• For a 2 ** N byte cache:
  – The uppermost (32 - N) bits are always the Cache Tag
  – The lowest M bits are the Byte Select (Block Size = 2 ** M)
• Address fields (Cache Tag + Cache Index = block address):
  – Cache Tag: bits 31–10 (example: 0x50), stored as part of the cache “state”
  – Cache Index: bits 9–5 (ex: 0x01)
  – Byte Select: bits 4–0 (ex: 0x00)
• Cache layout: 32 entries (index 0–31), each with a Valid Bit, a Cache Tag and 32 bytes of Cache Data (entry 0: Byte 0–Byte 31; entry 1: Byte 32–Byte 63; ...; entry 31: Byte 992–Byte 1023)
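The address split for this example can be written out directly. The sketch below assumes the slide's parameters (1 KB cache, 32-byte blocks, 32-bit addresses, power-of-two sizes); `split_address` is a hypothetical helper name, and the sample address 0x14020 is simply the slide's example fields (tag 0x50, index 0x01, byte select 0x00) packed back together.

```python
def split_address(addr, cache_bytes=1024, block_bytes=32):
    """Return (tag, index, byte_select) for a direct mapped cache.

    Both sizes are assumed to be powers of two.
    """
    offset_bits = block_bytes.bit_length() - 1                  # M = 5 for 32-byte blocks
    index_bits = (cache_bytes // block_bytes).bit_length() - 1  # N - M = 5 for 32 entries
    byte_select = addr & (block_bytes - 1)
    index = (addr >> offset_bits) & ((1 << index_bits) - 1)
    tag = addr >> (offset_bits + index_bits)                    # remaining upper (32 - N) bits
    return tag, index, byte_select


example_addr = (0x50 << 10) | (0x01 << 5) | 0x00  # 0x14020
tag, index, byte_select = split_address(example_addr)
print(hex(example_addr), hex(tag), hex(index), hex(byte_select))
```

A lookup then compares `tag` against the tag stored at entry `index` and checks the valid bit; on a hit, `byte_select` picks the byte within the 32-byte block.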

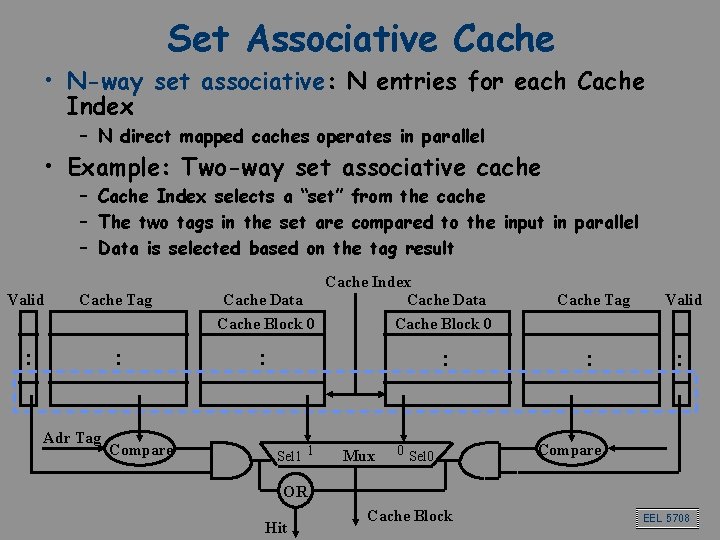

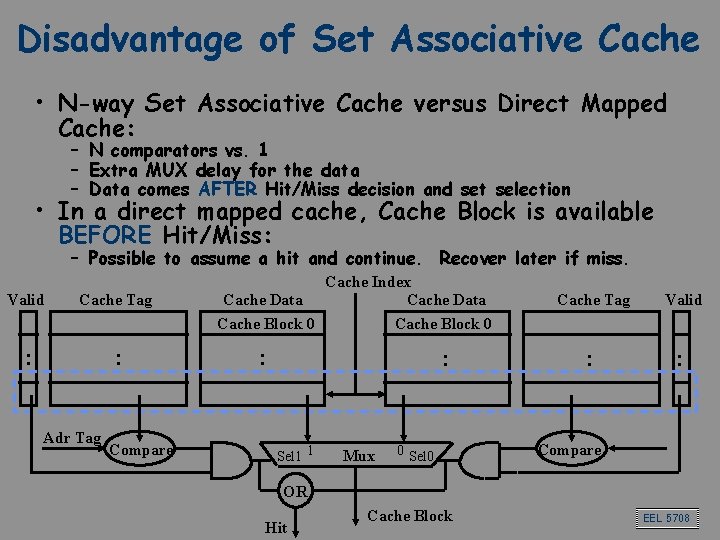

Set Associative Cache

• N-way set associative: N entries for each Cache Index
  – N direct mapped caches operate in parallel
• Example: Two-way set associative cache
  – Cache Index selects a “set” from the cache
  – The two tags in the set are compared to the input in parallel
  – Data is selected based on the tag result

(Figure: two ways, each with Valid, Cache Tag and Cache Data arrays; the Cache Index selects a set, the Adr Tag is compared against both stored tags, the compare results drive Sel0/Sel1 of a Mux that picks the Cache Block and are ORed to form Hit)

Disadvantage of Set Associative Cache

• N-way Set Associative Cache versus Direct Mapped Cache:
  – N comparators vs. 1
  – Extra MUX delay for the data
  – Data comes AFTER Hit/Miss decision and set selection
• In a direct mapped cache, Cache Block is available BEFORE Hit/Miss:
  – Possible to assume a hit and continue. Recover later if miss.

(Figure: the same two-way set associative organization as on the previous slide)

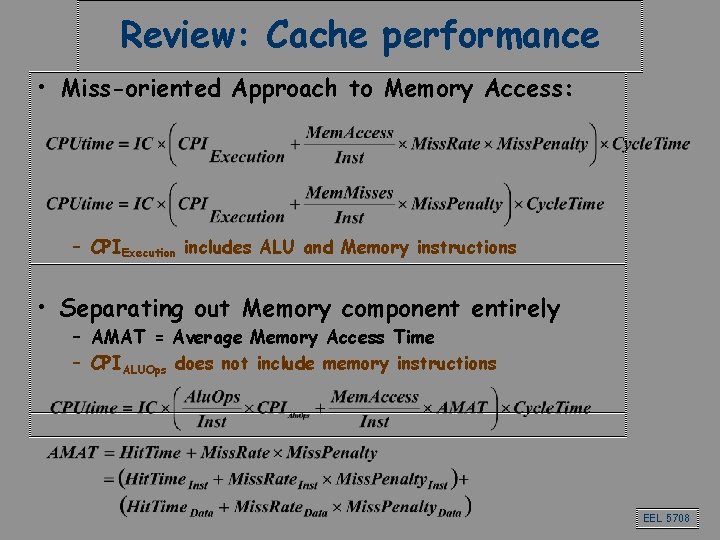

Review: Cache performance

• Miss-oriented Approach to Memory Access:
  – CPI_Execution includes ALU and Memory instructions
• Separating out Memory component entirely
  – AMAT = Average Memory Access Time
  – CPI_ALUOps does not include memory instructions
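The equations for the two approaches did not survive in the text above. The sketch below uses the standard textbook forms, which is an assumption about what the slide showed: CPU time = IC × (CPI_Execution + memory accesses per instruction × miss rate × miss penalty) × cycle time for the miss-oriented view, and CPU time = IC × (ALU ops per instruction × CPI_ALUOps + memory accesses per instruction × AMAT) × cycle time for the separated view, with AMAT = hit time + miss rate × miss penalty. All function names and sample numbers are illustrative.

```python
def amat(hit_time, miss_rate, miss_penalty):
    """Average memory access time, in the same unit as hit_time (cycles here)."""
    return hit_time + miss_rate * miss_penalty


def cpu_time_miss_oriented(ic, cpi_execution, mem_per_instr,
                           miss_rate, miss_penalty, cycle_time):
    """CPI_Execution already covers ALU and memory instructions that hit."""
    return ic * (cpi_execution + mem_per_instr * miss_rate * miss_penalty) * cycle_time


def cpu_time_amat(ic, alu_ops_per_instr, cpi_alu_ops,
                  mem_per_instr, amat_cycles, cycle_time):
    """Memory component separated out entirely; CPI_ALUOps excludes memory."""
    return ic * (alu_ops_per_instr * cpi_alu_ops + mem_per_instr * amat_cycles) * cycle_time


# Assumed sample numbers: 1-cycle hit, 5% miss rate, 20-cycle miss penalty.
example_amat = amat(hit_time=1, miss_rate=0.05, miss_penalty=20)

# Assumed workload: 1M instructions, 1.3 memory accesses each, 1 ns cycle.
t_miss = cpu_time_miss_oriented(1_000_000, 1.1, 1.3, 0.02, 50, 1e-9)
t_amat = cpu_time_amat(1_000_000, 0.5, 1.0, 1.3, example_amat, 1e-9)
print(example_amat, t_miss, t_amat)
```

The two calls describe different hypothetical machines, so their results are not meant to agree; the point is only which terms each formulation needs.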
