Advanced Pipelining and Instruction Level Parallelism
Lotzi Bölöni
EEL 5708

Acknowledgements
• All the lecture slides were adapted from the slides of David Patterson (1998, 2001) and David E. Culler (2001), Copyright 1998-2002, University of California, Berkeley

Review: Summary of Pipelining Basics
• Hazards limit performance
  – Structural: need more HW resources
  – Data: need forwarding, compiler scheduling
  – Control: early evaluation & PC, delayed branch, prediction
• Increasing the length of the pipe increases the impact of hazards; pipelining helps instruction bandwidth, not latency
• Interrupts, instruction set, and FP make pipelining harder
• Compilers reduce the cost of data and control hazards
  – Load delay slots
  – Branch prediction
• Today: Longer pipelines => Better branch prediction, more instruction parallelism?

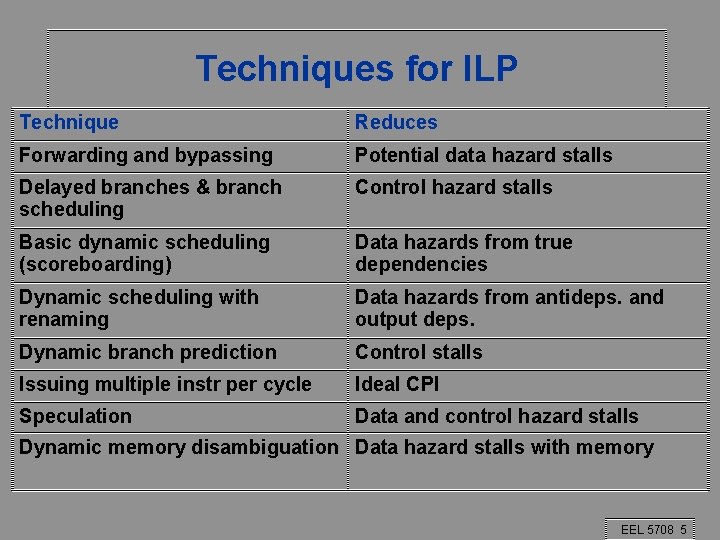

The CPI for pipelined processors
• Pipeline CPI = Ideal pipeline CPI + Structural stalls + Data hazard stalls + Control stalls
• There are a variety of techniques for reducing each of these components. What we have seen so far is just the beginning.
• Techniques can be:
  – Hardware (dynamic)
  – Software (static)
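As a rough illustration of the CPI equation, the sketch below adds per-instruction stall rates to an ideal CPI. The function name `pipeline_cpi` and the stall rates are made up for this example and do not come from the slides.

```python
def pipeline_cpi(ideal_cpi, structural_stalls, data_stalls, control_stalls):
    """Average cycles per instruction: ideal CPI plus stall cycles per instruction."""
    return ideal_cpi + structural_stalls + data_stalls + control_stalls

# Hypothetical stall cycles per instruction, chosen only for illustration.
cpi = pipeline_cpi(ideal_cpi=1.0,
                   structural_stalls=0.05,
                   data_stalls=0.30,
                   control_stalls=0.15)
print(f"Pipeline CPI is about {cpi:.2f}")
```

Each technique on the following slides attacks one of the four terms, so lowering any single argument lowers the total.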

Techniques for ILP

| Technique | Reduces |
|---|---|
| Forwarding and bypassing | Potential data hazard stalls |
| Delayed branches & branch scheduling | Control hazard stalls |
| Basic dynamic scheduling (scoreboarding) | Data hazards from true dependencies |
| Dynamic scheduling with renaming | Data hazards from antideps. and output deps. |
| Dynamic branch prediction | Control stalls |
| Issuing multiple instr per cycle | Ideal CPI |
| Speculation | Data and control hazard stalls |
| Dynamic memory disambiguation | Data hazard stalls with memory |

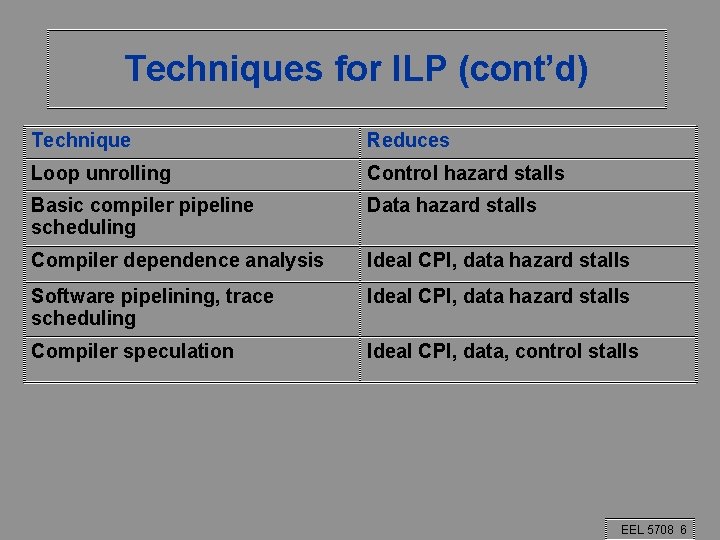

Techniques for ILP (cont’d)

| Technique | Reduces |
|---|---|
| Loop unrolling | Control hazard stalls |
| Basic compiler pipeline scheduling | Data hazard stalls |
| Compiler dependence analysis | Ideal CPI, data hazard stalls |
| Software pipelining, trace scheduling | Ideal CPI, data hazard stalls |
| Compiler speculation | Ideal CPI, data, control stalls |

Loop unrolling (software, static method)

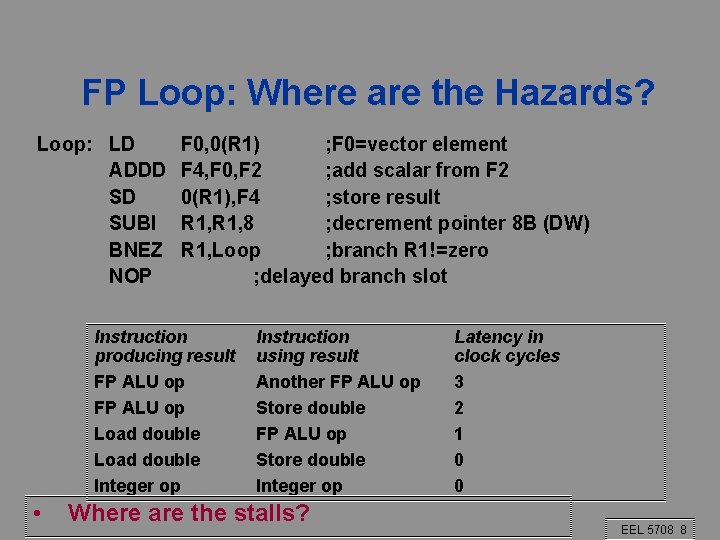

FP Loop: Where are the Hazards?

    Loop: LD    F0, 0(R1)     ; F0=vector element
          ADDD  F4, F0, F2    ; add scalar from F2
          SD    0(R1), F4     ; store result
          SUBI  R1, R1, 8     ; decrement pointer 8B (DW)
          BNEZ  R1, Loop      ; branch R1!=zero
          NOP                 ; delayed branch slot

| Instruction producing result | Instruction using result | Latency in clock cycles |
|---|---|---|
| FP ALU op | Another FP ALU op | 3 |
| FP ALU op | Store double | 2 |
| Load double | FP ALU op | 1 |
| Load double | Store double | 0 |
| Integer op | Integer op | 0 |

• Where are the stalls?

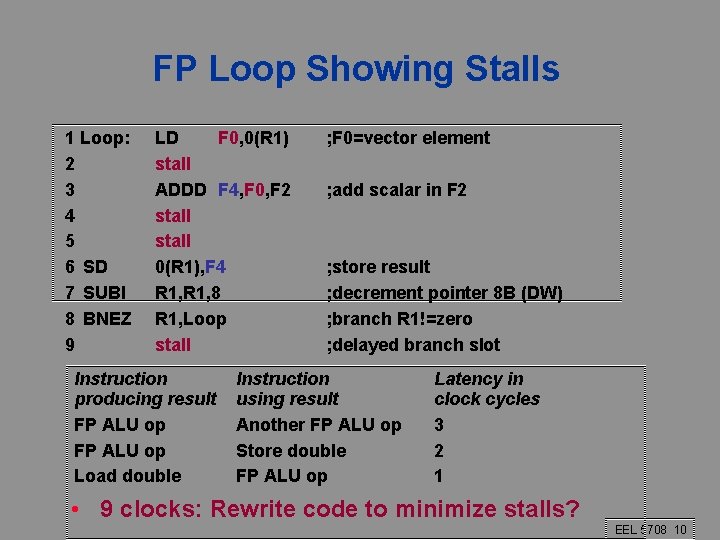

FP Loop Showing Stalls

    1 Loop: LD    F0, 0(R1)     ; F0=vector element
    2       stall
    3       ADDD  F4, F0, F2    ; add scalar in F2
    4       stall
    5       stall
    6       SD    0(R1), F4     ; store result
    7       SUBI  R1, R1, 8     ; decrement pointer 8B (DW)
    8       BNEZ  R1, Loop      ; branch R1!=zero
    9       stall               ; delayed branch slot

| Instruction producing result | Instruction using result | Latency in clock cycles |
|---|---|---|
| FP ALU op | Another FP ALU op | 3 |
| FP ALU op | Store double | 2 |
| Load double | FP ALU op | 1 |

• 9 clocks: can we rewrite the code to minimize stalls?

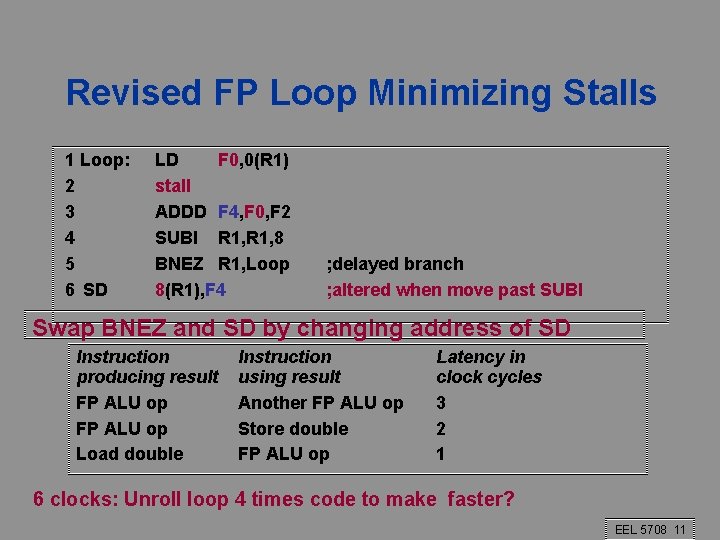

Revised FP Loop Minimizing Stalls

    1 Loop: LD    F0, 0(R1)
    2       stall
    3       ADDD  F4, F0, F2
    4       SUBI  R1, R1, 8
    5       BNEZ  R1, Loop      ; delayed branch
    6       SD    8(R1), F4     ; altered when moved past SUBI

• Swap BNEZ and SD by changing the address of SD

| Instruction producing result | Instruction using result | Latency in clock cycles |
|---|---|---|
| FP ALU op | Another FP ALU op | 3 |
| FP ALU op | Store double | 2 |
| Load double | FP ALU op | 1 |

• 6 clocks: unroll the loop 4 times to make the code faster?
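The cycle counts for the two versions of the loop can be reproduced with a small model of an in-order, single-issue pipeline. This is only a sketch: the instruction encoding `(kind, dest, srcs)`, the function `count_cycles`, and the treatment of the empty delay slot as a `nop` are assumptions made for the example, and only the latencies listed in the table are modelled.

```python
# Stalls required between a producer and a dependent consumer, taken from
# the latency table; pairs that are not listed are assumed to need none.
LATENCY = {
    ("fp", "fp"): 3,
    ("fp", "store"): 2,
    ("load", "fp"): 1,
    ("load", "store"): 0,
}

def count_cycles(instrs):
    """Return the issue cycle of the last instruction.

    Each instruction is (kind, dest, srcs); dest is None if nothing is written.
    """
    last_writer = {}  # register -> (issue cycle, kind) of its most recent writer
    cycle = 0
    for kind, dest, srcs in instrs:
        earliest = cycle + 1  # in-order issue: at most one instruction per cycle
        for reg in srcs:
            if reg in last_writer:
                issued, producer = last_writer[reg]
                stalls = LATENCY.get((producer, kind), 0)
                earliest = max(earliest, issued + 1 + stalls)
        cycle = earliest
        if dest is not None:
            last_writer[dest] = (cycle, kind)
    return cycle

original = [
    ("load", "F0", ("R1",)),         # LD   F0, 0(R1)
    ("fp", "F4", ("F0", "F2")),      # ADDD F4, F0, F2
    ("store", None, ("R1", "F4")),   # SD   0(R1), F4
    ("int", "R1", ("R1",)),          # SUBI R1, R1, 8
    ("branch", None, ("R1",)),       # BNEZ R1, Loop
    ("nop", None, ()),               # empty delayed branch slot
]
revised = [
    ("load", "F0", ("R1",)),         # LD   F0, 0(R1)
    ("fp", "F4", ("F0", "F2")),      # ADDD F4, F0, F2
    ("int", "R1", ("R1",)),          # SUBI R1, R1, 8
    ("branch", None, ("R1",)),       # BNEZ R1, Loop
    ("store", None, ("R1", "F4")),   # SD   8(R1), F4 fills the delay slot
]
print(count_cycles(original), count_cycles(revised))  # 9 6
```

The model only counts cycles; it does not check that a reordering is legal, which is the subject of the dependence slides.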

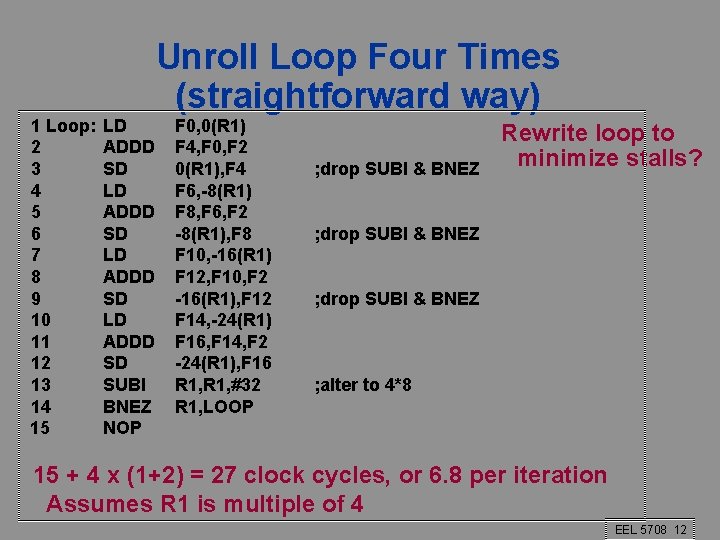

Unroll Loop Four Times (straightforward way)

    1  Loop: LD    F0, 0(R1)
    2        ADDD  F4, F0, F2
    3        SD    0(R1), F4       ; drop SUBI & BNEZ
    4        LD    F6, -8(R1)
    5        ADDD  F8, F6, F2
    6        SD    -8(R1), F8      ; drop SUBI & BNEZ
    7        LD    F10, -16(R1)
    8        ADDD  F12, F10, F2
    9        SD    -16(R1), F12    ; drop SUBI & BNEZ
    10       LD    F14, -24(R1)
    11       ADDD  F16, F14, F2
    12       SD    -24(R1), F16
    13       SUBI  R1, R1, #32     ; alter to 4*8
    14       BNEZ  R1, Loop
    15       NOP

• 15 + 4 x (1+2) = 27 clock cycles, or 6.8 per iteration
• Assumes the iteration count (R1/8) is a multiple of 4
• Rewrite loop to minimize stalls?
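At the source level, the same transformation replicates the loop body four times and updates the index once per pass. The sketch below is an illustrative Python analogue, not the slide's code; the function names are hypothetical, and the unrolled version assumes the element count is a multiple of 4.

```python
def add_scalar(x, s):
    """Original loop: walk down from the last element, one element per iteration."""
    x = list(x)
    i = len(x) - 1
    while i >= 0:
        x[i] += s
        i -= 1          # one index update and one loop test per element
    return x

def add_scalar_unrolled4(x, s):
    """Unrolled by 4: one index update and one loop test per four elements."""
    assert len(x) % 4 == 0, "sketch assumes the element count is a multiple of 4"
    x = list(x)
    i = len(x) - 1
    while i >= 0:
        x[i] += s
        x[i - 1] += s
        x[i - 2] += s
        x[i - 3] += s
        i -= 4
    return x

data = [1.0, 2.0, 3.0, 4.0, 5.0, 6.0, 7.0, 8.0]
print(add_scalar(data, 0.5) == add_scalar_unrolled4(data, 0.5))  # True
```

The loop overhead (index update and branch) is paid once per four elements, which is where the saving in the assembly version comes from.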

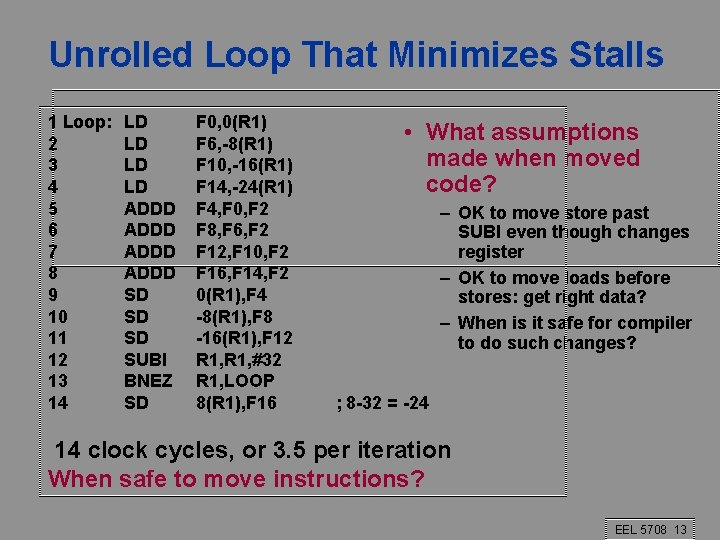

Unrolled Loop That Minimizes Stalls

    1  Loop: LD    F0, 0(R1)
    2        LD    F6, -8(R1)
    3        LD    F10, -16(R1)
    4        LD    F14, -24(R1)
    5        ADDD  F4, F0, F2
    6        ADDD  F8, F6, F2
    7        ADDD  F12, F10, F2
    8        ADDD  F16, F14, F2
    9        SD    0(R1), F4
    10       SD    -8(R1), F8
    11       SD    -16(R1), F12
    12       SUBI  R1, R1, #32
    13       BNEZ  R1, Loop
    14       SD    8(R1), F16      ; 8-32 = -24

• 14 clock cycles, or 3.5 per iteration
• What assumptions were made when the code was moved?
  – OK to move the store past SUBI even though SUBI changes the register
  – OK to move loads before stores: do they still get the right data?
  – When is it safe for the compiler to make such changes?
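Moving a store past the SUBI is safe only if its offset is adjusted by the amount subtracted from the base register. A minimal sketch of that arithmetic follows; `offset_after_decrement` is a hypothetical helper and the value of R1 is arbitrary.

```python
def offset_after_decrement(offset, decrement):
    """Offset to use once the access is moved below `SUBI base, base, decrement`."""
    return offset + decrement

# Unrolled loop: SD -24(R1) moved below SUBI R1, R1, #32 becomes SD 8(R1).
print(offset_after_decrement(-24, 32))  # 8
# Single-iteration loop: SD 0(R1) moved below SUBI R1, R1, 8 becomes SD 8(R1).
print(offset_after_decrement(0, 8))     # 8

# The effective address is unchanged for any starting value of R1.
r1 = 1000                       # arbitrary value, for illustration only
address_before = r1 + (-24)     # store issued before the SUBI
address_after = (r1 - 32) + 8   # store issued after the SUBI
print(address_before == address_after)  # True
```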

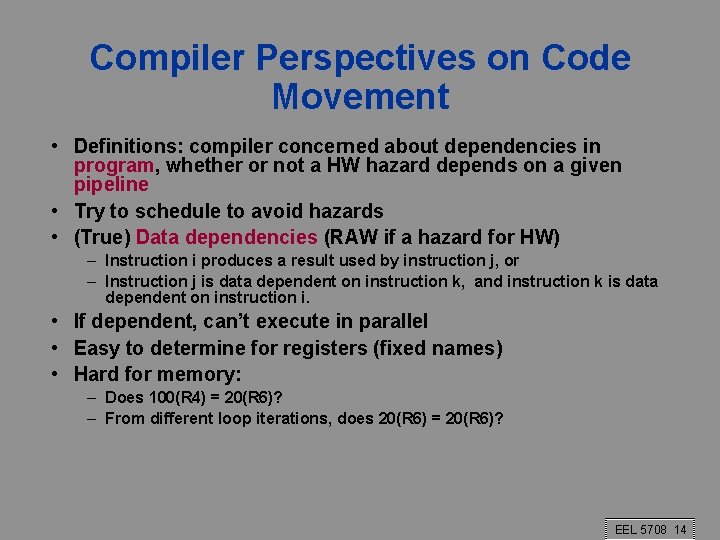

Compiler Perspectives on Code Movement
• Definitions: the compiler is concerned with dependencies in the program; whether a dependency becomes a HW hazard depends on the given pipeline
• Try to schedule to avoid hazards
• (True) Data dependencies (RAW if a hazard for HW)
  – Instruction i produces a result used by instruction j, or
  – Instruction j is data dependent on instruction k, and instruction k is data dependent on instruction i.
• If dependent, can’t execute in parallel
• Easy to determine for registers (fixed names)
• Hard for memory:
  – Does 100(R4) = 20(R6)?
  – From different loop iterations, does 20(R6) = 20(R6)?

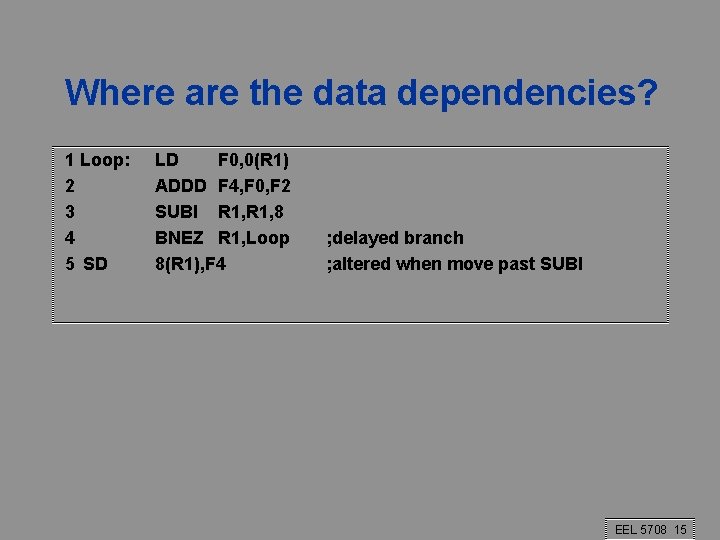

Where are the data dependencies?

    1 Loop: LD    F0, 0(R1)
    2       ADDD  F4, F0, F2
    3       SUBI  R1, R1, 8
    4       BNEZ  R1, Loop      ; delayed branch
    5       SD    8(R1), F4     ; altered when moved past SUBI

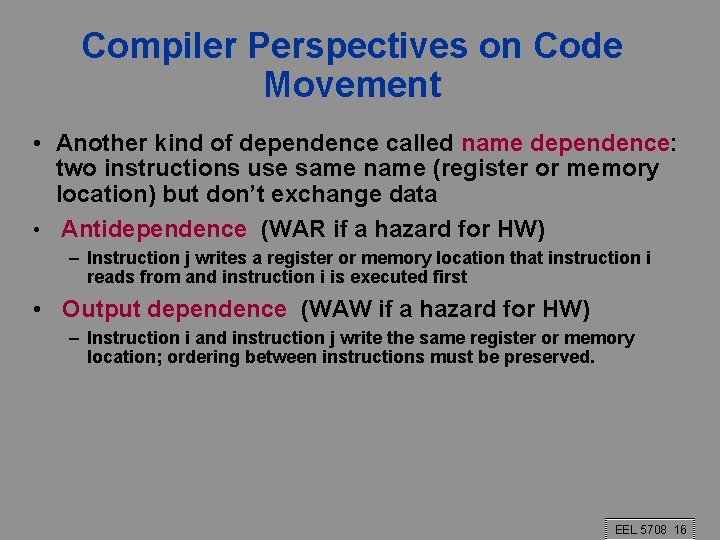

Compiler Perspectives on Code Movement
• Another kind of dependence is called name dependence: two instructions use the same name (register or memory location) but don’t exchange data
• Antidependence (WAR if a hazard for HW)
  – Instruction j writes a register or memory location that instruction i reads from, and instruction i is executed first
• Output dependence (WAW if a hazard for HW)
  – Instruction i and instruction j write the same register or memory location; ordering between the instructions must be preserved.
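The three register dependence kinds defined so far (true, anti, output) can be told apart by comparing what each instruction reads and writes. The sketch below is a simplified pairwise check for registers only; the `Instr` tuple and the function name are assumptions for this example, and it ignores any instruction between the pair that redefines a register.

```python
from collections import namedtuple

# dest is the register written (None if none); srcs are the registers read.
Instr = namedtuple("Instr", "op dest srcs")

def dependences(first, second):
    """Kinds of register dependence from `first` to a later `second`."""
    kinds = set()
    if first.dest is not None and first.dest in second.srcs:
        kinds.add("true (RAW)")     # second reads what first wrote
    if second.dest is not None and second.dest in first.srcs:
        kinds.add("anti (WAR)")     # second overwrites what first read
    if first.dest is not None and first.dest == second.dest:
        kinds.add("output (WAW)")   # both write the same register
    return kinds

load1 = Instr("LD", "F0", ("R1",))          # LD   F0, 0(R1)
add1 = Instr("ADDD", "F4", ("F0", "F2"))    # ADDD F4, F0, F2
load2 = Instr("LD", "F0", ("R1",))          # LD   F0, -8(R1)

print(dependences(load1, add1))   # {'true (RAW)'}
print(dependences(add1, load2))   # {'anti (WAR)'}
print(dependences(load1, load2))  # {'output (WAW)'}
```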

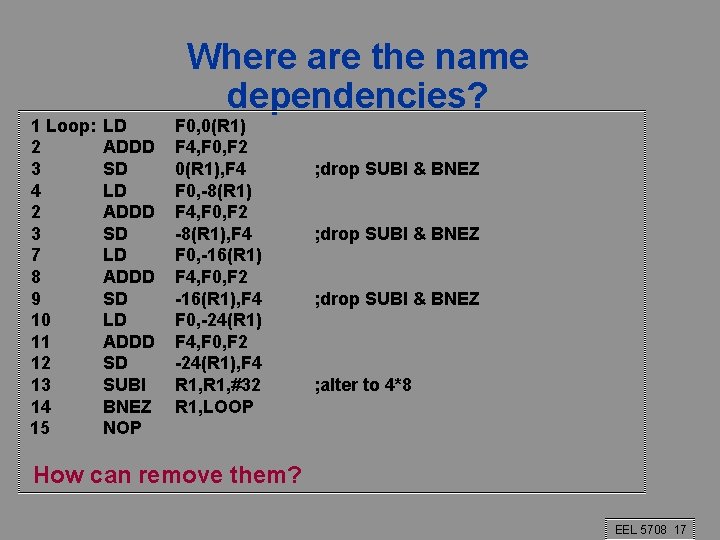

Where are the name dependencies?

    1  Loop: LD    F0, 0(R1)
    2        ADDD  F4, F0, F2
    3        SD    0(R1), F4       ; drop SUBI & BNEZ
    4        LD    F0, -8(R1)
    5        ADDD  F4, F0, F2
    6        SD    -8(R1), F4      ; drop SUBI & BNEZ
    7        LD    F0, -16(R1)
    8        ADDD  F4, F0, F2
    9        SD    -16(R1), F4     ; drop SUBI & BNEZ
    10       LD    F0, -24(R1)
    11       ADDD  F4, F0, F2
    12       SD    -24(R1), F4
    13       SUBI  R1, R1, #32     ; alter to 4*8
    14       BNEZ  R1, Loop
    15       NOP

• How can we remove them?
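One way to remove name dependencies is register renaming: give every write a fresh register and redirect later reads to it, which is what the earlier unrolled loop does by using F6, F8, F10 and so on. The sketch below shows the idea for straight-line code; the `rename` function and the temporary names `T0`, `T1`, ... are hypothetical.

```python
def rename(instrs, fresh):
    """Give each written register a fresh name so only true dependences remain.

    instrs: list of (op, dest, srcs); fresh: iterator of unused register names.
    """
    current = {}  # original register name -> latest renamed register
    renamed = []
    for op, dest, srcs in instrs:
        new_srcs = tuple(current.get(s, s) for s in srcs)  # read the latest version
        new_dest = None
        if dest is not None:
            new_dest = next(fresh)
            current[dest] = new_dest
        renamed.append((op, new_dest, new_srcs))
    return renamed

body = [
    ("LD", "F0", ("R1",)),
    ("ADDD", "F4", ("F0", "F2")),
    ("SD", None, ("R1", "F4")),
    ("LD", "F0", ("R1",)),          # reuses F0: anti and output dependences
    ("ADDD", "F4", ("F0", "F2")),   # reuses F4
    ("SD", None, ("R1", "F4")),
]
temps = iter(f"T{n}" for n in range(16))
for instr in rename(body, temps):
    print(instr)
```

After renaming, the second copy of the body writes T2 and T3 instead of F0 and F4, so it no longer conflicts with the first copy and can be scheduled alongside it.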

Compiler Perspectives on Code Movement
• Again, name dependencies are hard to determine for memory accesses
  – Does 100(R4) = 20(R6)?
  – From different loop iterations, does 20(R6) = 20(R6)?
• Our example required the compiler to know that if R1 doesn’t change then:
  0(R1) ≠ -8(R1) ≠ -16(R1) ≠ -24(R1)
• There were no dependencies between some loads and stores, so they could be moved past each other
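The reasoning about 0(R1), -8(R1), and so on can be phrased as a conservative overlap test. The sketch below assumes 8-byte (double word) accesses and that a base register is not modified between the two accesses; `may_overlap` is a hypothetical helper, and it answers "maybe" whenever the base registers differ.

```python
def may_overlap(base_a, offset_a, base_b, offset_b, width=8):
    """Conservatively decide whether two `width`-byte accesses can share bytes."""
    if base_a != base_b:
        # Nothing is known about how the two registers relate: assume overlap.
        return True
    return abs(offset_a - offset_b) < width

print(may_overlap("R1", 0, "R1", -8))    # False: safe to reorder
print(may_overlap("R1", -8, "R1", -24))  # False
print(may_overlap("R4", 100, "R6", 20))  # True: cannot tell without more analysis
```

A `True` result only means that independence could not be proven, so the compiler must keep the original order.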

Compiler Perspectives on Code Movement
• The final kind of dependence is called control dependence
• Example:

    if p1 {S1;};
    if p2 {S2;};

• S1 is control dependent on p1, and S2 is control dependent on p2 but not on p1.

Compiler Perspectives on Code Movement
• Two (obvious) constraints on control dependences:
  – An instruction that is control dependent on a branch cannot be moved before the branch so that its execution is no longer controlled by the branch.
  – An instruction that is not control dependent on a branch cannot be moved after the branch so that its execution is controlled by the branch.
• Control dependencies can be relaxed to get parallelism; the effect is the same if we preserve the order of exceptions (an address in a register is checked by the branch before use) and the data flow (a value in a register depends on the branch)

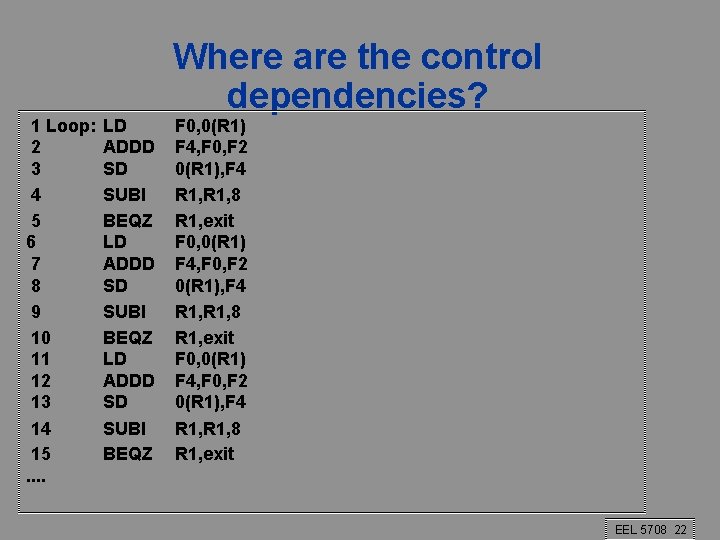

Where are the control dependencies?

    1  Loop: LD    F0, 0(R1)
    2        ADDD  F4, F0, F2
    3        SD    0(R1), F4
    4        SUBI  R1, R1, 8
    5        BEQZ  R1, exit
    6        LD    F0, 0(R1)
    7        ADDD  F4, F0, F2
    8        SD    0(R1), F4
    9        SUBI  R1, R1, 8
    10       BEQZ  R1, exit
    11       LD    F0, 0(R1)
    12       ADDD  F4, F0, F2
    13       SD    0(R1), F4
    14       SUBI  R1, R1, 8
    15       BEQZ  R1, exit
             ...

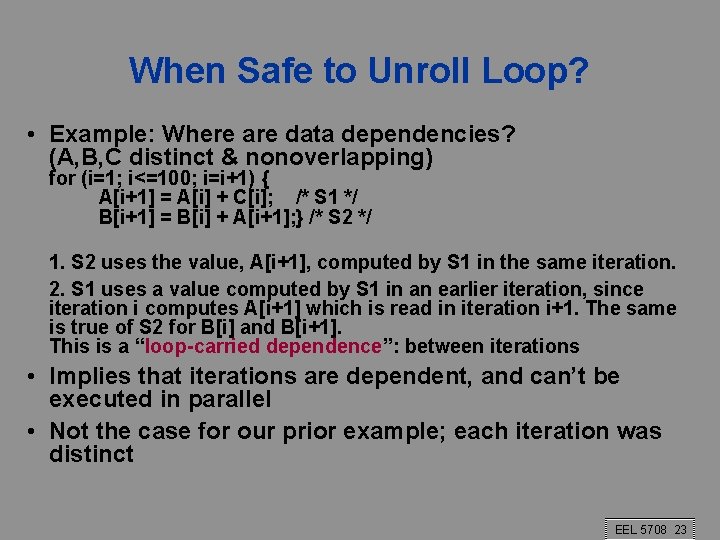

When Safe to Unroll Loop?
• Example: Where are the data dependencies? (A, B, C distinct & nonoverlapping)

    for (i=1; i<=100; i=i+1) {
        A[i+1] = A[i] + C[i];      /* S1 */
        B[i+1] = B[i] + A[i+1];    /* S2 */
    }

  1. S2 uses the value, A[i+1], computed by S1 in the same iteration.
  2. S1 uses a value computed by S1 in an earlier iteration, since iteration i computes A[i+1], which is read in iteration i+1. The same is true of S2 for B[i] and B[i+1]. This is a “loop-carried dependence”: a dependence between iterations
• Implies that iterations are dependent, and can’t be executed in parallel
• Not the case for our prior example; each iteration was distinct
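The effect of the loop-carried dependence can be seen by comparing the real loop with a version in which every iteration reads only the values that existed before the loop started, as would happen if all iterations ran at once. This is an illustrative sketch: the function names are hypothetical and the loop runs 4 iterations rather than 100.

```python
def run_in_order(A, B, C, n):
    """The loop as written: iteration i+1 sees what iteration i stored."""
    A, B = list(A), list(B)
    for i in range(1, n + 1):
        A[i + 1] = A[i] + C[i]        # S1
        B[i + 1] = B[i] + A[i + 1]    # S2
    return A, B

def run_all_at_once(A, B, C, n):
    """Every iteration reads A[i] and B[i] as they were before the loop."""
    old_A, old_B = list(A), list(B)
    A, B = list(A), list(B)
    for i in range(1, n + 1):
        A[i + 1] = old_A[i] + C[i]        # ignores the value from iteration i-1
        B[i + 1] = old_B[i] + A[i + 1]
    return A, B

n = 4
zeros = [0] * (n + 2)
ones = [1] * (n + 2)
print(run_in_order(zeros, zeros, ones, n)[0])     # [0, 0, 1, 2, 3, 4]
print(run_all_at_once(zeros, zeros, ones, n)[0])  # [0, 0, 1, 1, 1, 1]
```

The two results differ, so the iterations cannot simply be overlapped; in the earlier add-scalar loop each iteration touched a different element, so the same experiment would give identical results.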

Summary
• Instruction Level Parallelism (ILP) can be exploited in SW or HW
• Loop-level parallelism is the easiest to see
• SW dependencies are defined for the program; they become hazards if the HW cannot resolve them
• SW dependencies and compiler sophistication determine whether the compiler can unroll loops
  – Memory dependencies are the hardest to determine

- Slides: 22