Memory Controller Innovations for High-Performance Systems Rajeev Balasubramonian

Memory Controller Innovations for High-Performance Systems Rajeev Balasubramonian School of Computing University of Utah Sep 25th 2013 1

Micron Road Trip (map labels: Micron, Boise, Salt Lake City) 2

DRAM Chip Innovations 3

Feedback - I Don’t bother modifying the DRAM chip. 4

Feedback - II We love what you’re doing with the memory controller and OS. 5

Academic Research Agendas • Not giving up on memory device innovations – Several examples of academic papers resonating with commercial innovations • Greater focus on memory controller improvements 6

This Talk’s Focus: The Memory Controller • More relevant to Intel, Micron • Cores are being commoditized, but memory controller features are still evolving – new devices (buffer chips, HMC), chipkill, compression • Lots of room for improvement – MCs haven’t seen the same innovation frenzy as the cores 7

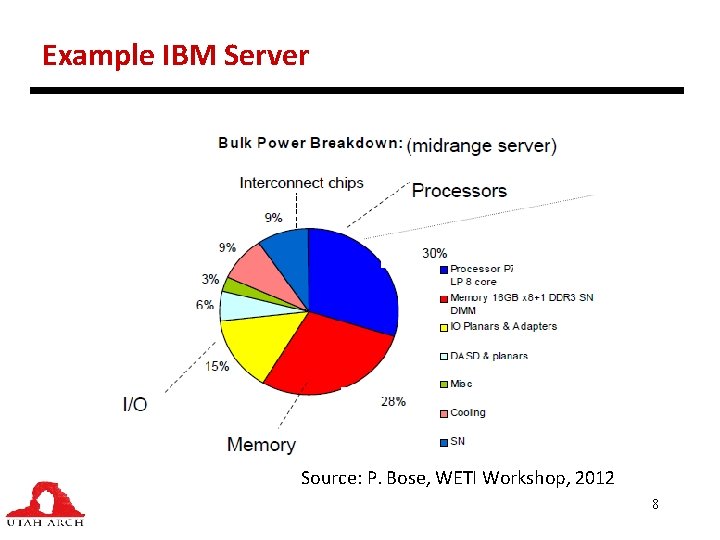

Example IBM Server Source: P. Bose, WETI Workshop, 2012 8

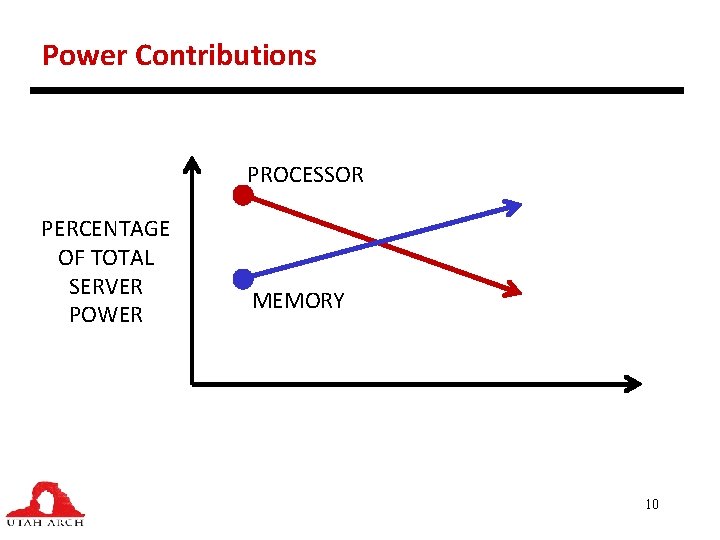

Power Contributions (chart: percentage of total server power, processor vs. memory) 9

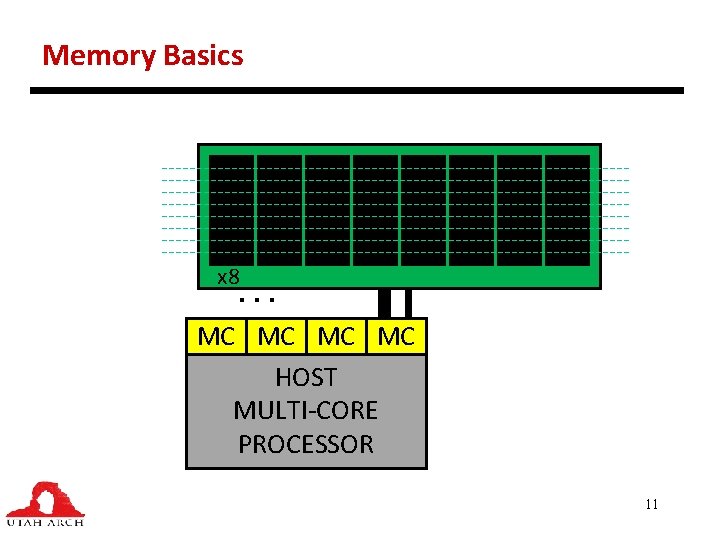

Memory Basics (diagram labels: host multi-core processor, MC, MC, x8 …) 11

Outline • Background • Focusing on the memory controller • Memory basics • Implementing memory compression (MemZip) • Implementing chipkill (LESS-ECC) • Voltage and current aware scheduling (MICRO 2013) 12

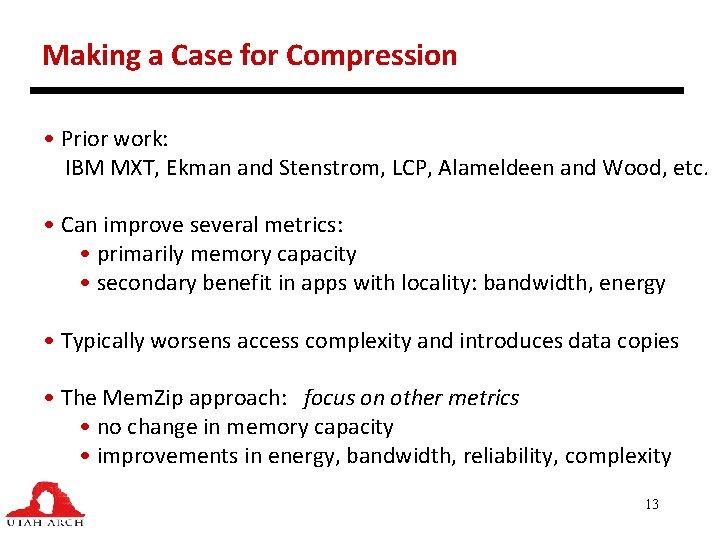

Making a Case for Compression • Prior work: IBM MXT, Ekman and Stenstrom, LCP, Alameldeen and Wood, etc. • Can improve several metrics: – primarily memory capacity – secondary benefit in apps with locality: bandwidth, energy • Typically worsens access complexity and introduces data copies • The MemZip approach: focus on other metrics – no change in memory capacity – improvements in energy, bandwidth, reliability, complexity 13

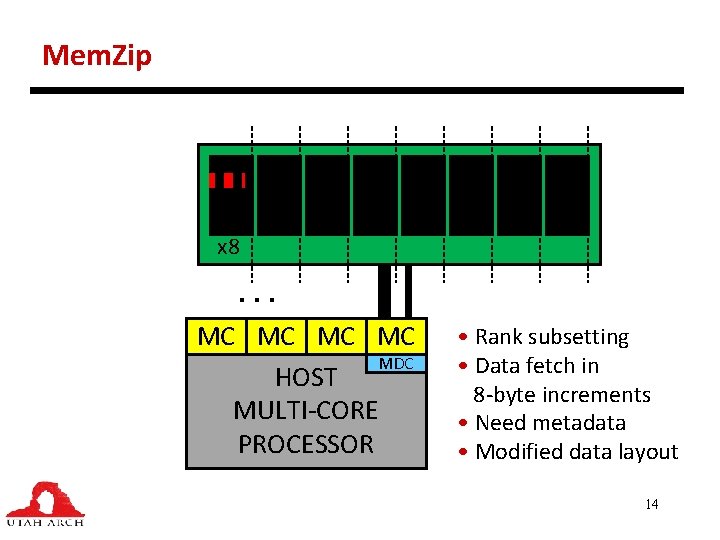

MemZip (diagram labels: host multi-core processor, MC, MC, MDC, x8 …) • Rank subsetting • Data fetch in 8-byte increments • Need metadata • Modified data layout 14

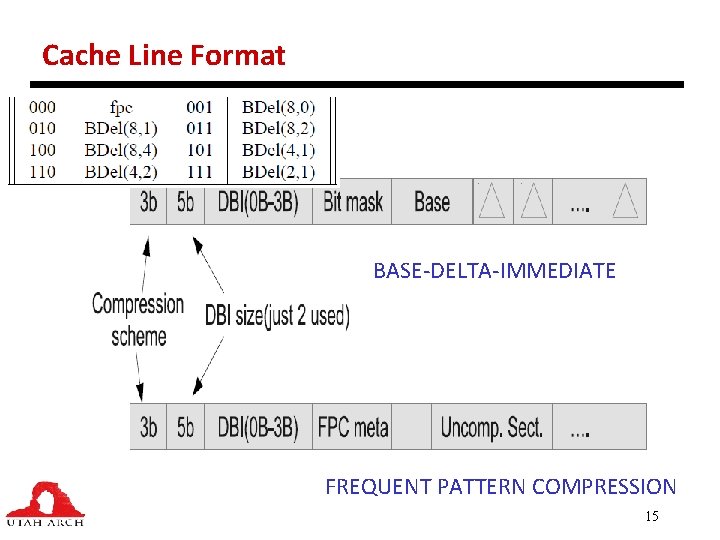

Cache Line Format • Base-Delta-Immediate • Frequent Pattern Compression 15

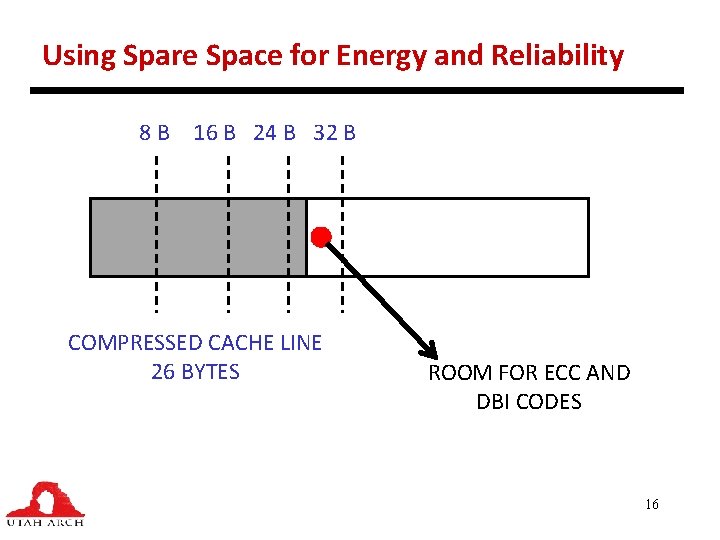

Using Spare Space for Energy and Reliability (diagram: fetch boundaries at 8 B, 16 B, 24 B, 32 B; a compressed cache line of 26 bytes leaves room for ECC and DBI codes) 16
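The spare-space arithmetic on this slide can be sketched as follows. This is a minimal illustration, not the MemZip implementation: the 8-byte fetch granularity comes from the slides, while the 64-byte uncompressed line size and the function name `spare_bytes` are assumptions made for the example.

```python
import math

FETCH_GRANULARITY = 8  # bytes per fetch increment (from the slides)
LINE_SIZE = 64         # uncompressed cache line size in bytes (assumed)


def spare_bytes(compressed_size: int) -> tuple[int, int]:
    """Return (bytes fetched, spare bytes) for one compressed cache line."""
    if not 0 < compressed_size <= LINE_SIZE:
        raise ValueError("compressed size must be between 1 and LINE_SIZE")
    # Round the compressed size up to the next fetch boundary.
    fetched = math.ceil(compressed_size / FETCH_GRANULARITY) * FETCH_GRANULARITY
    return fetched, fetched - compressed_size


print(spare_bytes(26))  # (32, 6)
```

For the 26-byte line in the diagram, four 8-byte fetches are needed, so 6 bytes are left over for ECC and DBI codes.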

Making the ECC Access More Efficient • Baseline ECC: ECC code is fetched in parallel from the 9th chip • Subranking with embedded-ECC: no extra chip; ECC is located in the same row as data; need extra COL-RDs to fetch ECC codes • MemZip with embedded-ECC: in many cases, the ECC is fetched with no additional COL-RD 17

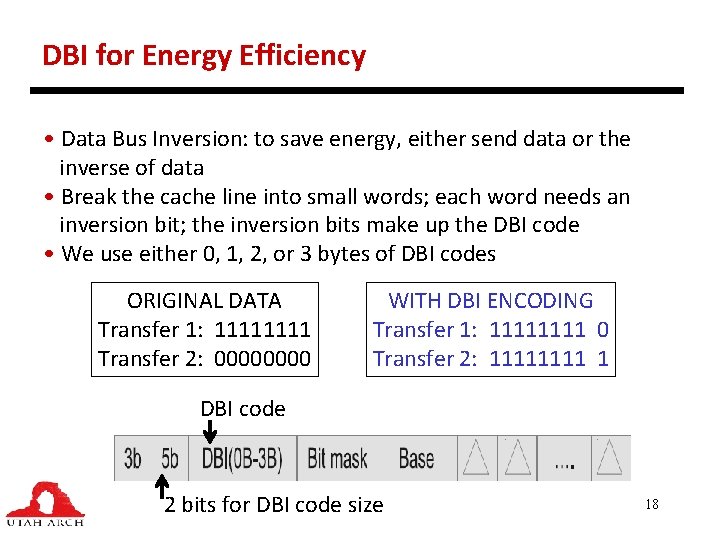

DBI for Energy Efficiency • Data Bus Inversion: to save energy, send either the data or the inverse of the data • Break the cache line into small words; each word needs an inversion bit; the inversion bits make up the DBI code • We use either 0, 1, 2, or 3 bytes of DBI codes; 2 bits encode the DBI code size • Example: original data is Transfer 1: 1111, Transfer 2: 0000; with DBI encoding it becomes Transfer 1: 1111 with DBI bit 0, Transfer 2: 1111 with DBI bit 1 18
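A minimal sketch of the inversion idea, assuming a transition-minimizing variant in which a word is inverted when that causes fewer bit toggles relative to the previous transfer, and the first word is sent unmodified. The function names are hypothetical, and the toggling of the DBI bit itself is ignored for simplicity.

```python
def invert(bits: str) -> str:
    """Flip every bit in a bit string."""
    return "".join("1" if b == "0" else "0" for b in bits)


def toggles(a: str, b: str) -> int:
    """Count the bit positions that differ between two transfers."""
    return sum(x != y for x, y in zip(a, b))


def dbi_encode(words: list[str]) -> tuple[list[str], str]:
    """Return (words as sent on the bus, DBI code with one bit per word)."""
    sent, flags, prev = [], [], None
    for word in words:
        inverse = invert(word)
        # Invert only if that strictly reduces toggles against the last transfer.
        if prev is not None and toggles(prev, inverse) < toggles(prev, word):
            sent.append(inverse)
            flags.append("1")
        else:
            sent.append(word)
            flags.append("0")
        prev = sent[-1]
    return sent, "".join(flags)


def dbi_decode(sent: list[str], flags: str) -> list[str]:
    """Recover the original words from the bus words and the DBI code."""
    return [invert(w) if f == "1" else w for w, f in zip(sent, flags)]


print(dbi_encode(["1111", "0000"]))  # (['1111', '1111'], '01')
```

On the two-transfer example from the slide, the second word is inverted so the data lines do not toggle between transfers.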

Methodology • Simics (8 out-of-order cores) and USIMM memory system timing • Micron power calculator for DRAM power estimates • Collection of workloads from SPEC2k6, NASPB, Parsec, CloudSuite; multi-programmed and multi-threaded 19

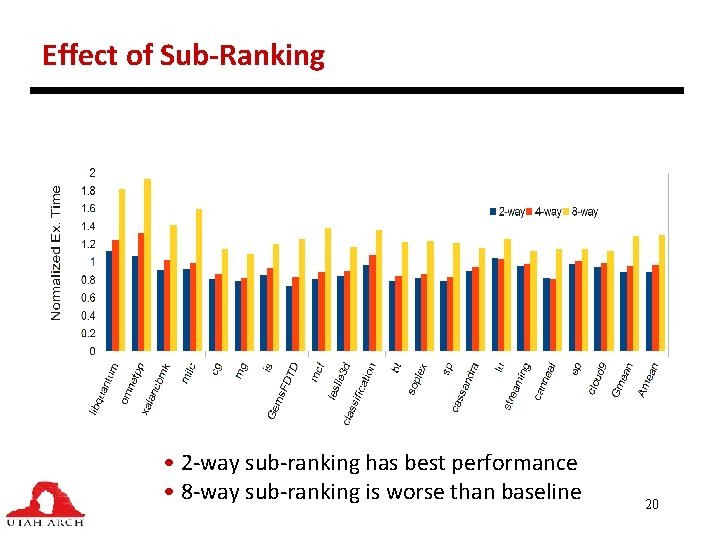

Effect of Sub-Ranking • 2-way sub-ranking has best performance • 8-way sub-ranking is worse than baseline 20

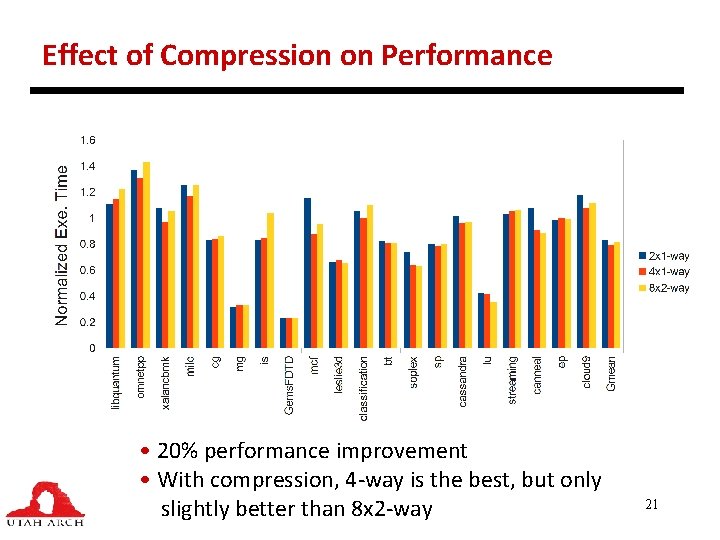

Effect of Compression on Performance • 20% performance improvement • With compression, 4-way is the best, but only slightly better than 8 x 2-way 21

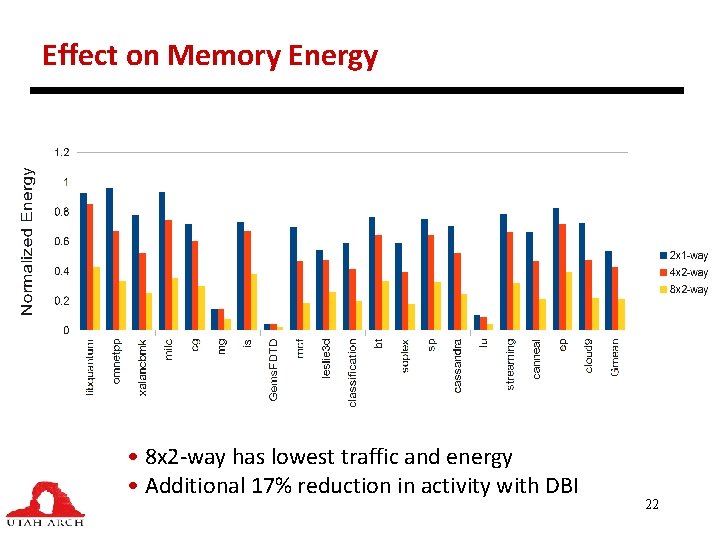

Effect on Memory Energy • 8 x 2-way has lowest traffic and energy • Additional 17% reduction in activity with DBI 22

Outline • Background • Focusing on the memory controller • Memory basics • Implementing memory compression (MemZip) • Implementing chipkill (LESS-ECC) • Voltage and current aware scheduling (MICRO 2013) 23

Chipkill Overview • Chipkill: the ability to recover from the failure of an entire memory chip • Commercial symbol-based chipkill: 4 check symbols are required to recover from 1 corrupted data symbol; hence each access needs 32+4 x4 DRAM chips (two channels) 24

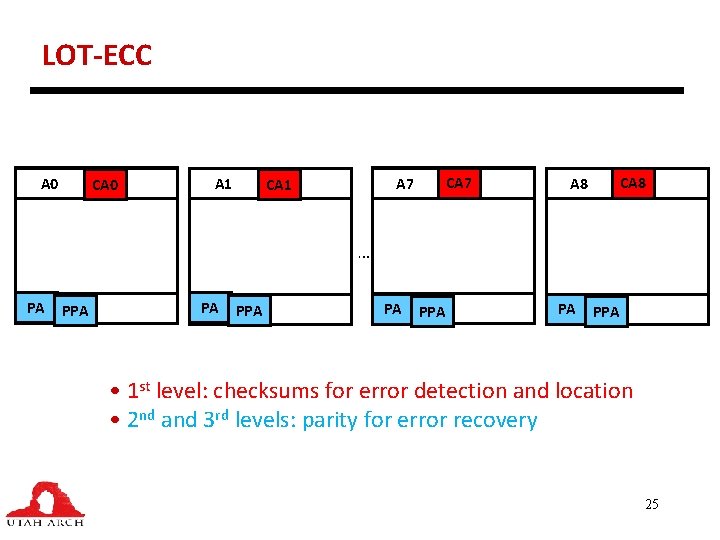

LOT-ECC (diagram labels: A0, A1, …; CA0, CA1, … CA7, CA8; PA; PPA) • 1st level: checksums for error detection and location • 2nd and 3rd levels: parity for error recovery 25

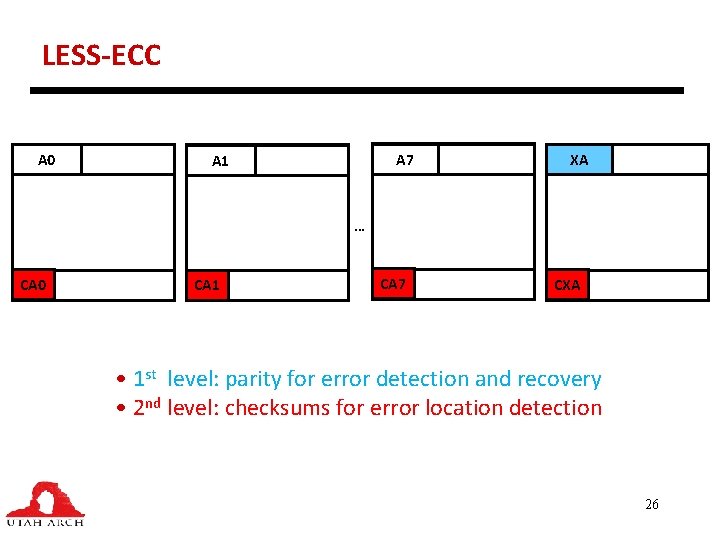

LESS-ECC (diagram labels: A0, A1, … A7, XA; CA0, CA1, … CA7, CXA) • 1st level: parity for error detection and recovery • 2nd level: checksums for error location detection 26
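The two-level idea can be sketched as below, under several assumptions that are not from the talk: the parity is a bytewise XOR across the per-chip chunks, the checksum is a placeholder sum modulo 256, the parity and checksum storage itself is fault-free, and all names are hypothetical.

```python
from functools import reduce


def checksum(chunk: bytes) -> int:
    # Placeholder 8-bit checksum; the talk does not specify the function.
    return sum(chunk) % 256


def xor_bytes(a: bytes, b: bytes) -> bytes:
    return bytes(x ^ y for x, y in zip(a, b))


def parity(chunks: list[bytes]) -> bytes:
    """Bytewise XOR of all per-chip chunks."""
    return reduce(xor_bytes, chunks)


def read_with_recovery(chunks, stored_parity, stored_checksums):
    """Return the data chunks, repairing one faulty chunk if needed."""
    # Level 1: parity detects that something is wrong.
    if parity(chunks) == stored_parity:
        return list(chunks)
    # Level 2: checksums are consulted only to locate the faulty chip.
    bad = [i for i, c in enumerate(chunks) if checksum(c) != stored_checksums[i]]
    if len(bad) != 1:
        raise RuntimeError("detected but unrecoverable error (DUE)")
    idx = bad[0]
    others = [c for j, c in enumerate(chunks) if j != idx]
    # XOR of the stored parity with the healthy chunks rebuilds the lost one.
    fixed = list(chunks)
    fixed[idx] = reduce(xor_bytes, others, stored_parity)
    return fixed
```

In this sketch the checksums are read only after parity has flagged an error, and a checksum that fails to pinpoint a single chip produces a reported failure rather than silently returning bad data.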

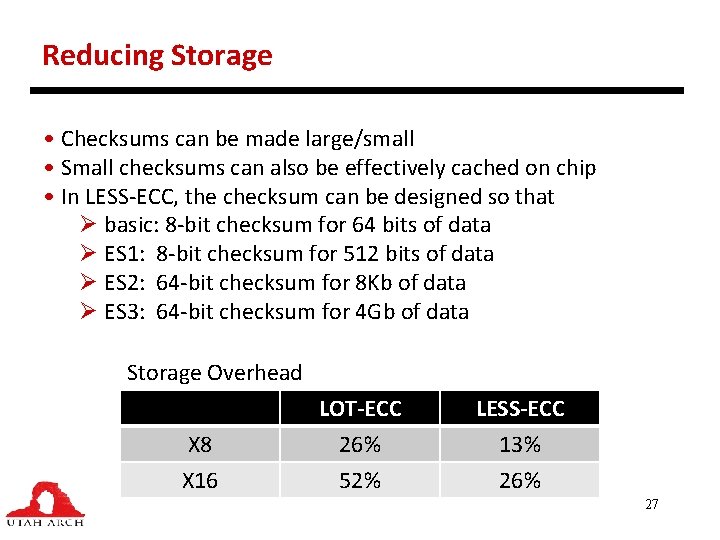

Reducing Storage • Checksums can be made large/small • Small checksums can also be effectively cached on chip • In LESS-ECC, the checksum can be designed so that – basic: 8-bit checksum for 64 bits of data – ES1: 8-bit checksum for 512 bits of data – ES2: 64-bit checksum for 8 Kb of data – ES3: 64-bit checksum for 4 Gb of data 27

| Storage overhead | x8 | x16 |
| --- | --- | --- |
| LOT-ECC | 26% | 52% |
| LESS-ECC | 13% | 26% |
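The checksum-only share of storage for the four configurations listed on this slide can be worked out directly. The sketch below assumes 1 Kb = 1024 bits and 1 Gb = 2^30 bits; it covers only the checksum bits, not the parity, so the results are not the totals reported in the storage overhead figures.

```python
from fractions import Fraction

# (checksum bits, data bits covered) per configuration, from the slide.
CONFIGS = {
    "basic": (8, 64),
    "ES1": (8, 512),
    "ES2": (64, 8 * 1024),      # 8 Kb, assuming 1 Kb = 1024 bits
    "ES3": (64, 4 * 1024**3),   # 4 Gb, assuming 1 Gb = 2**30 bits
}


def checksum_overhead(checksum_bits: int, data_bits: int) -> Fraction:
    """Checksum bits stored per bit of protected data."""
    return Fraction(checksum_bits, data_bits)


for name, (c_bits, d_bits) in CONFIGS.items():
    print(f"{name}: {float(checksum_overhead(c_bits, d_bits)):.6%}")
```

The basic design costs 1/8 of the data size in checksums, while ES1 and ES2 reduce that to 1/64 and 1/128, and ES3 makes it negligible.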

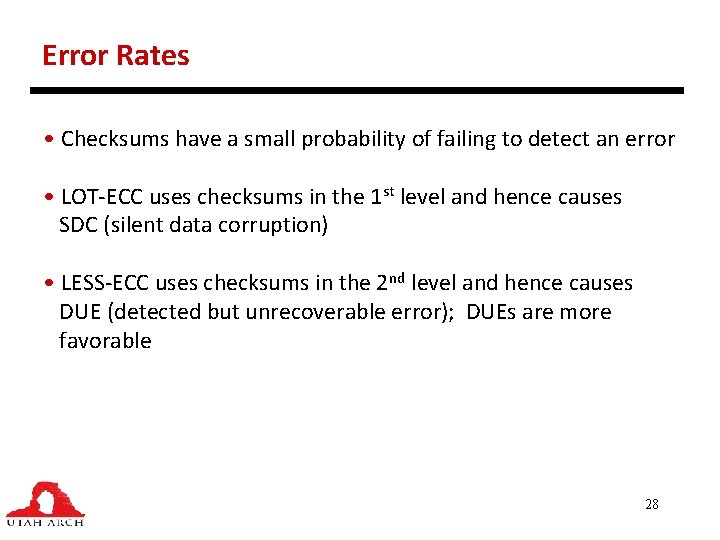

Error Rates • Checksums have a small probability of failing to detect an error • LOT-ECC uses checksums in the 1st level, so a checksum miss results in SDC (silent data corruption) • LESS-ECC uses checksums in the 2nd level, so a checksum miss results in a DUE (detected but unrecoverable error); DUEs are preferable to SDCs 28

LESS-ECC Summary • Benefits: energy, parallelism, storage, SDC • Disadvantage: checksum cache and more logic at the memory controller 29

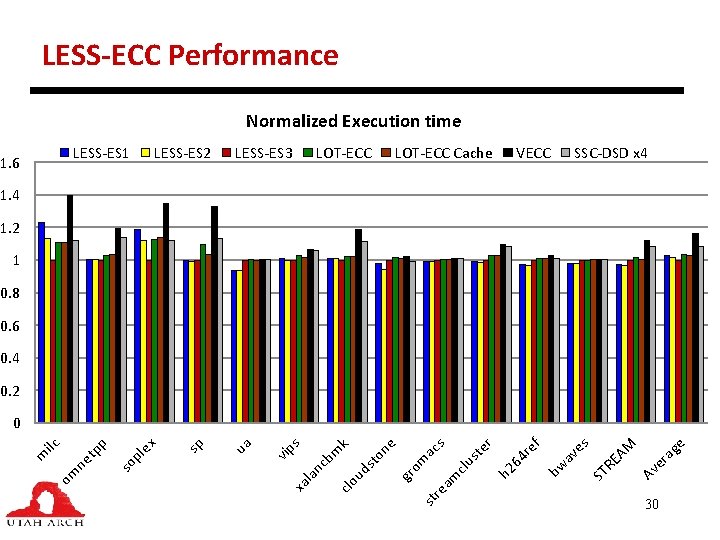

LESS-ECC Performance (chart: normalized execution time per benchmark and on average; legend: LESS-ES1, LESS-ES2, LESS-ES3, LOT-ECC, Cache VECC, SSC-DSD x4) 30

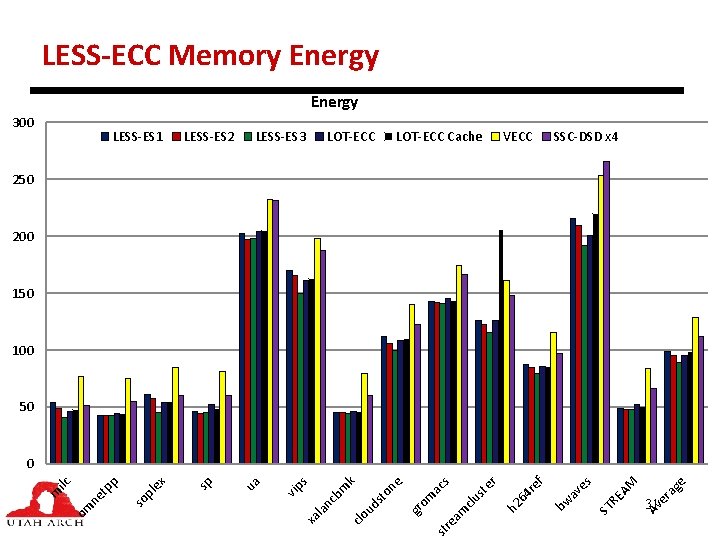

LESS-ECC Memory Energy (chart: memory energy per benchmark and on average; legend: LESS-ES1, LESS-ES2, LESS-ES3, LOT-ECC, Cache VECC, SSC-DSD x4) 31

LESS-ECC Energy Efficiency • LESS-ECC-x8 has 0.5% lower energy than LOT-ECC-x8 but 15% less energy per usable byte • LESS-ECC-x16 has 26% lower energy than LOT-ECC-x8 (both have similar storage overhead of 26%) 32
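One reading that reproduces the 15% figure is sketched below. It assumes that storage overhead is expressed as a fraction of total capacity, so the usable fraction is one minus the overhead, and it uses the x8 storage overheads reported earlier in the talk (26% for LOT-ECC, 13% for LESS-ECC). The talk does not state how energy per usable byte was computed, so this is an illustration rather than the authors' calculation.

```python
def energy_per_usable_byte(total_energy: float, overhead_fraction: float) -> float:
    """Energy divided by the fraction of capacity left for user data."""
    return total_energy / (1.0 - overhead_fraction)


# Energies normalized to LOT-ECC-x8; LESS-ECC-x8 uses 0.5% less.
lot_x8 = energy_per_usable_byte(1.000, 0.26)
less_x8 = energy_per_usable_byte(0.995, 0.13)

reduction = 1.0 - less_x8 / lot_x8
print(f"{reduction:.1%}")  # 15.4%
```

Under this assumption nearly all of the gain comes from the larger usable capacity rather than from the raw energy difference.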

Outline • Background • Focusing on the memory controller • Memory basics • Implementing memory compression (MemZip) • Implementing chipkill (LESS-ECC) • Voltage and current aware scheduling (MICRO 2013) 33

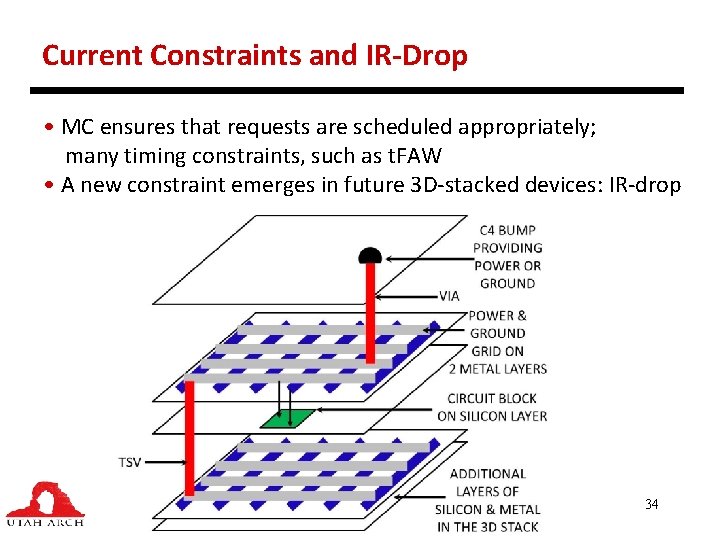

Current Constraints and IR-Drop • MC ensures that requests are scheduled appropriately; many timing constraints, such as tFAW • A new constraint emerges in future 3D-stacked devices: IR-drop 34

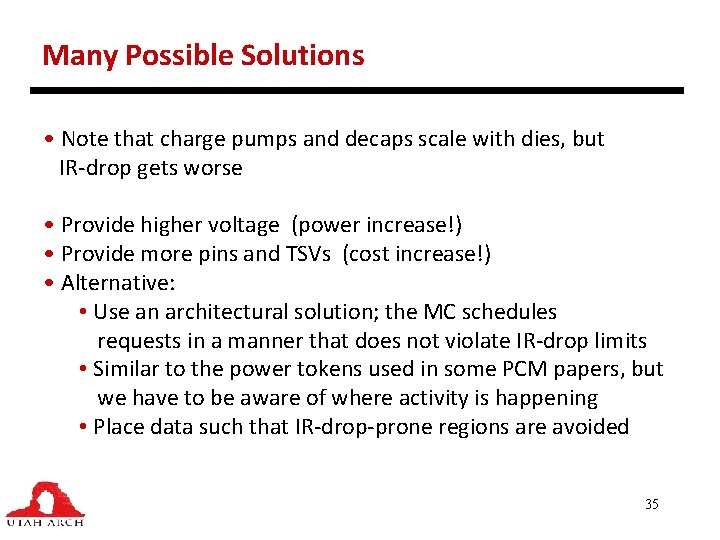

Many Possible Solutions • Note that charge pumps and decaps scale with dies, but IR-drop gets worse • Provide higher voltage (power increase!) • Provide more pins and TSVs (cost increase!) • Alternative: • Use an architectural solution; the MC schedules requests in a manner that does not violate IR-drop limits • Similar to the power tokens used in some PCM papers, but we have to be aware of where activity is happening • Place data such that IR-drop-prone regions are avoided 35

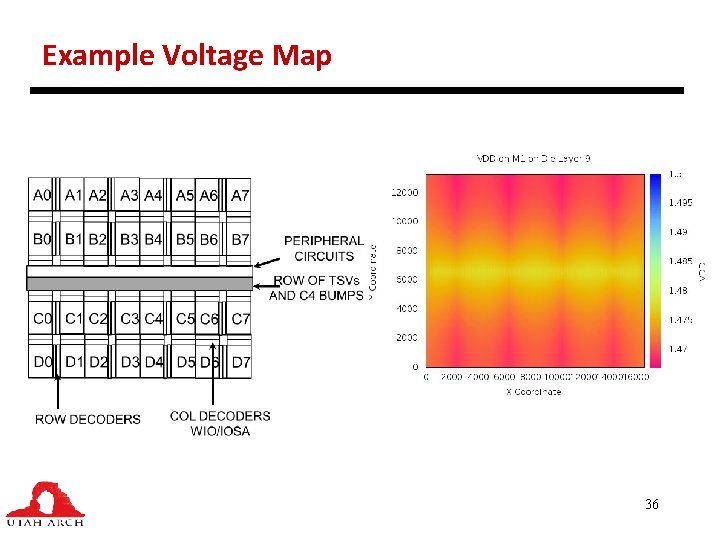

Example Voltage Map (figure axis label: Y coordinate) 36

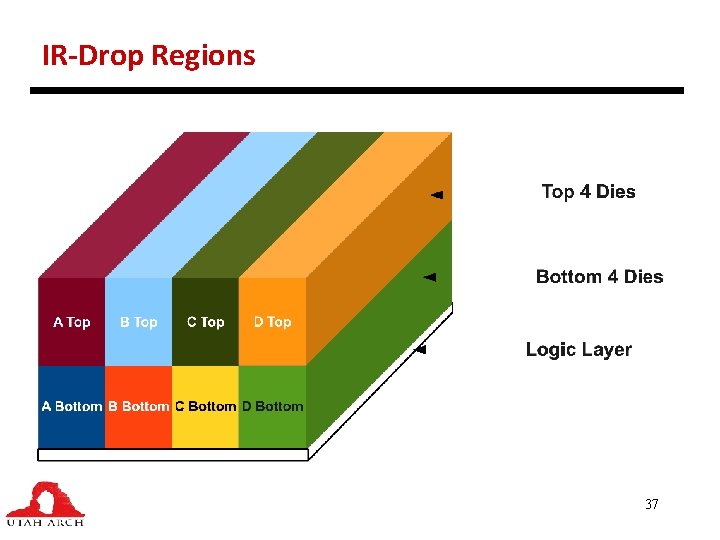

IR-Drop Regions 37

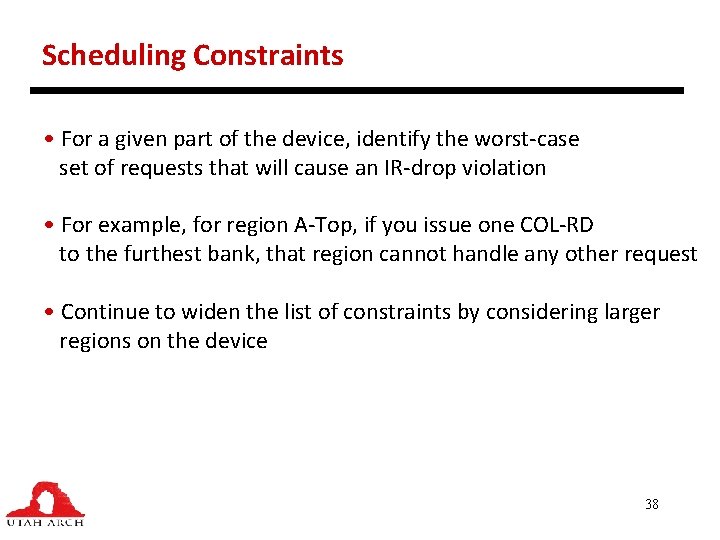

Scheduling Constraints • For a given part of the device, identify the worst-case set of requests that will cause an IR-drop violation • For example, for region A-Top, if you issue one COL-RD to the furthest bank, that region cannot handle any other request • Continue to widen the list of constraints by considering larger regions on the device 38

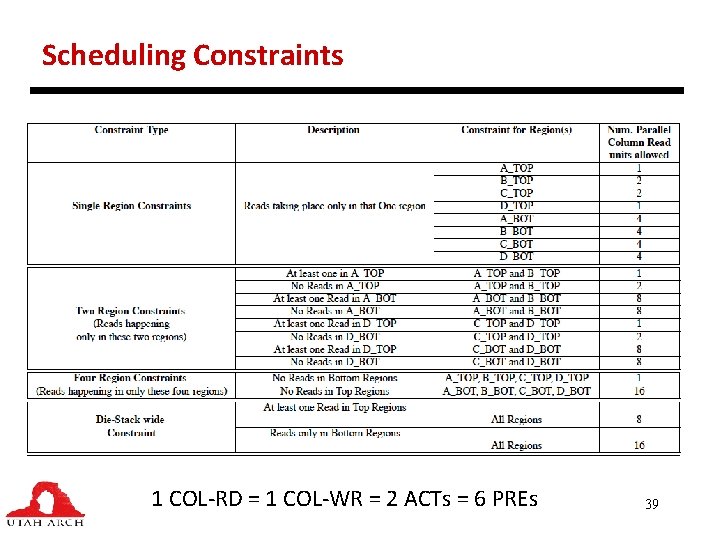

Scheduling Constraints 1 COL-RD = 1 COL-WR = 2 ACTs = 6 PREs 39
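One way to read the equivalence on this slide is as a per-region current budget, where each command consumes a share of what the region can tolerate. The sketch below follows that reading; the budget of one COL-RD equivalent and the function name `can_issue` are assumptions for illustration, and real limits would vary by region.

```python
from fractions import Fraction

# Relative cost per command, derived from
# 1 COL-RD = 1 COL-WR = 2 ACTs = 6 PREs.
COST = {
    "COL-RD": Fraction(1),
    "COL-WR": Fraction(1),
    "ACT": Fraction(1, 2),
    "PRE": Fraction(1, 6),
}


def can_issue(in_flight: list[str], new_cmd: str, budget: Fraction = Fraction(1)) -> bool:
    """Check whether new_cmd fits in the region's budget (hypothetical value)."""
    used = sum((COST[c] for c in in_flight), Fraction(0))
    return used + COST[new_cmd] <= budget


print(can_issue(["ACT"], "ACT"))     # True: two ACTs fill the budget exactly
print(can_issue(["COL-RD"], "PRE"))  # False: the COL-RD already uses it all
```

A memory controller following this scheme would keep one such tally per IR-drop region and hold back any command that would exceed the region's limit.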

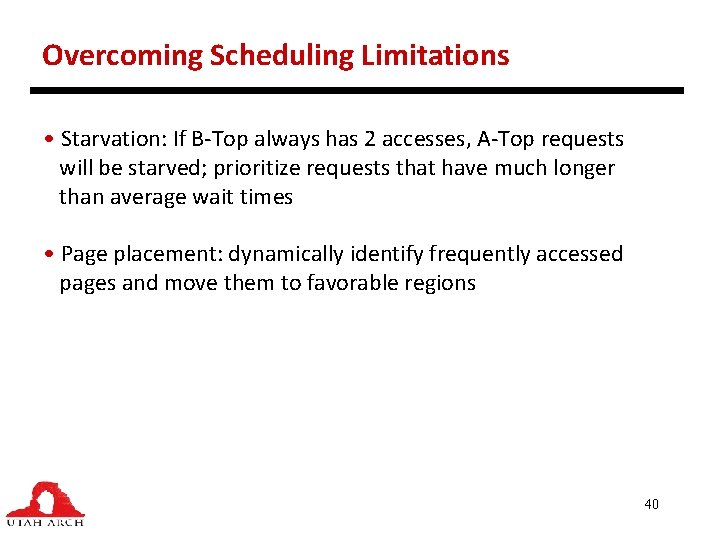

Overcoming Scheduling Limitations • Starvation: If B-Top always has 2 accesses, A-Top requests will be starved; prioritize requests that have much longer than average wait times • Page placement: dynamically identify frequently accessed pages and move them to favorable regions 40
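The starvation rule can be sketched as a selection policy. This is a simplified illustration: the `Request` type, the `STARVATION_FACTOR` threshold, and the `is_schedulable` callback are hypothetical, and the talk does not specify how "much longer than average" is measured.

```python
from dataclasses import dataclass
from typing import Callable, Optional


@dataclass
class Request:
    region: str   # e.g. "A-Top" or "B-Top"
    arrival: int  # cycle at which the request entered the queue


STARVATION_FACTOR = 4  # hypothetical multiple of the average wait time


def pick_next(
    queue: list[Request],
    now: int,
    avg_wait: float,
    is_schedulable: Callable[[Request], bool],
) -> Optional[Request]:
    """Pick the next request, giving priority to starved ones."""
    starved = [r for r in queue if now - r.arrival > STARVATION_FACTOR * avg_wait]
    if starved:
        # Return the oldest starved request even if it cannot issue yet;
        # the caller holds back other requests until it can.
        return min(starved, key=lambda r: r.arrival)
    ready = [r for r in queue if is_schedulable(r)]
    return min(ready, key=lambda r: r.arrival) if ready else None
```

With this policy, a steady stream of B-Top accesses can no longer block an A-Top request indefinitely, because the A-Top request eventually crosses the wait threshold and takes priority.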

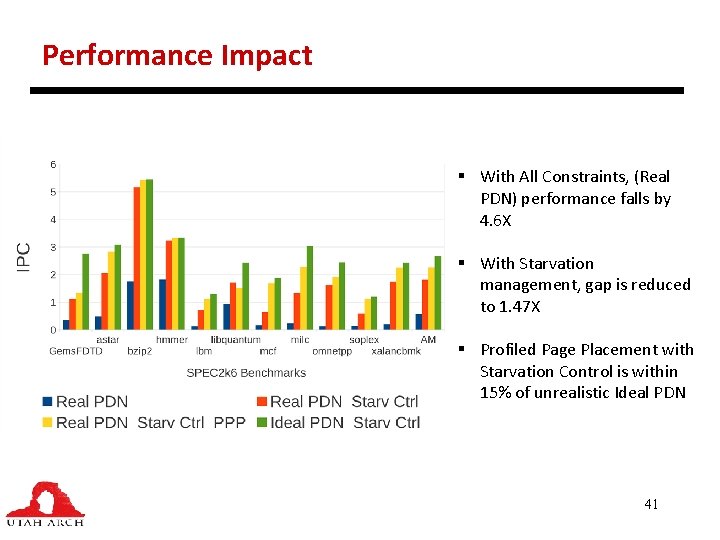

Performance Impact • With all constraints (Real PDN), performance falls by 4.6X • With starvation management, the gap is reduced to 1.47X • Profiled page placement with starvation control is within 15% of the unrealistic Ideal PDN 41

Summary • Many features expected of future memory controllers: handling compression, errors, new devices • Lots of low-hanging fruit • Significant energy/performance benefits from compression • Energy-efficient and storage-efficient chipkill possible, but requires some effort in the MC • More scheduling constraints being imposed as technology evolves; we show in an IR-drop case study for 3D-stacked devices that the performance impacts can be large 42

Acks • Students in the Utah Arch Lab (Amirali Boroumand, Nil Chatterjee, Seth Pugsley, Ali Shafiee, Manju Shevgoor, Meysam Taassori) • Other collaborators from Samsung (Jung-Sik Kim), HP Labs (Naveen Muralimanohar), ARM (Ani Udipi), U. Nebrija (Pedro Reviriego), Utah (Al Davis) • Funding sources: NSF, Samsung, HP, IBM 43
