
IBM Federal: NASA NCCS Application Performance Discussion

Koushik K. Ghosh, Ph.D., HPC Technical Specialist, IBM Federal HPC

IBM System x iDataPlex: Parallel Scientific Applications Development, April 22-23, 2009

IBM © 2006 IBM Corporation

Topics

- HW/SW System Architecture
  - Platform/Chipset
  - Processor
  - Memory
  - Interconnect
- Building Apps on System x
  - Compilation
  - MPI
- Executing Apps on System x
  - Runtime options
- Tools: Oprofile / MIO I/O Perf / MPI Trace
- Discussion of NCCS apps

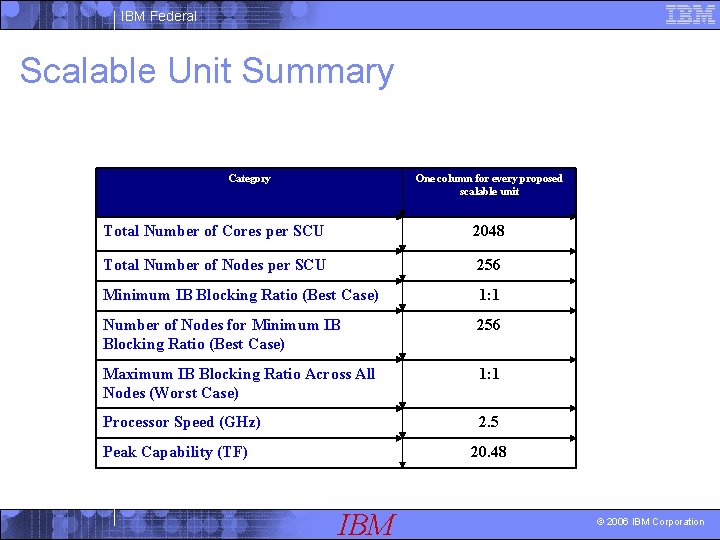

Scalable Unit Summary

| Category | Value (one column for every proposed scalable unit) |
|---|---|
| Total number of cores per SCU | 2048 |
| Total number of nodes per SCU | 256 |
| Minimum IB blocking ratio (best case) | 1:1 |
| Number of nodes for minimum IB blocking ratio (best case) | 256 |
| Maximum IB blocking ratio across all nodes (worst case) | 1:1 |
| Processor speed (GHz) | 2.5 |
| Peak capability (TF) | 20.48 |
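The peak capability in the summary can be reproduced from the core count and clock rate. The sketch below assumes 4 double-precision floating-point operations per core per cycle (one 2-wide SSE add plus one 2-wide SSE multiply), which the slide does not state; the function name is illustrative only.

```python
def peak_tflops(cores, clock_ghz, flops_per_cycle=4):
    """Theoretical peak in TFLOPS.

    flops_per_cycle=4 is an assumption (2-wide SSE add + 2-wide SSE multiply
    per cycle in double precision); it is not given on the slide.
    """
    gflops = cores * clock_ghz * flops_per_cycle
    return gflops / 1000.0


if __name__ == "__main__":
    # 2048 cores per scalable unit at 2.5 GHz
    print(f"{peak_tflops(2048, 2.5):.2f} TF")
```

Under that assumption the result matches the 20.48 TF listed for one scalable unit.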

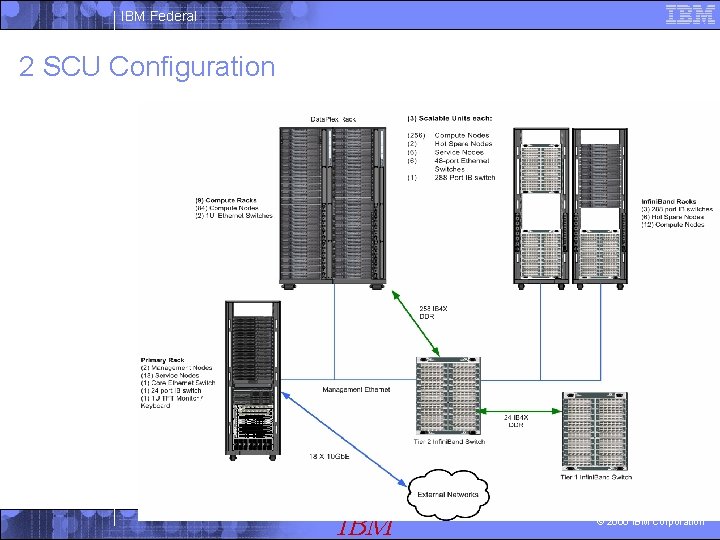

2 SCU Configuration

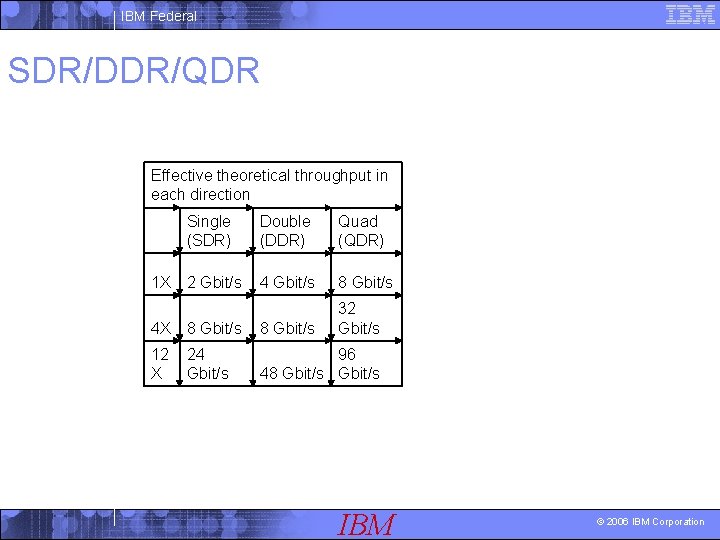

SDR/DDR/QDR: effective theoretical throughput in each direction

| Link width | Single (SDR) | Double (DDR) | Quad (QDR) |
|---|---|---|---|
| 1X | 2 Gbit/s | 4 Gbit/s | 8 Gbit/s |
| 4X | 8 Gbit/s | 16 Gbit/s | 32 Gbit/s |
| 12X | 24 Gbit/s | 48 Gbit/s | 96 Gbit/s |
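The effective rates follow from the per-lane signaling rate and the 8b/10b line encoding used by SDR, DDR and QDR InfiniBand. A minimal sketch, with the per-lane signaling rates (2.5, 5 and 10 Gbit/s) supplied here as background rather than taken from the slide:

```python
# Per-lane signaling rates in Gbit/s (background facts, not stated on the slide).
SIGNALING_GBIT_PER_LANE = {"SDR": 2.5, "DDR": 5.0, "QDR": 10.0}


def effective_gbit(lanes, rate):
    """Effective data rate in one direction after 8b/10b encoding overhead."""
    # 8 payload bits are carried for every 10 bits on the wire.
    return lanes * SIGNALING_GBIT_PER_LANE[rate] * 8 / 10


if __name__ == "__main__":
    for lanes in (1, 4, 12):
        row = ", ".join(f"{rate}: {effective_gbit(lanes, rate):g} Gbit/s"
                        for rate in ("SDR", "DDR", "QDR"))
        print(f"{lanes}X  {row}")
```

The 4X DDR entry (16 Gbit/s) is the link type used by the compute-node HCAs described in the Interconnect slide.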

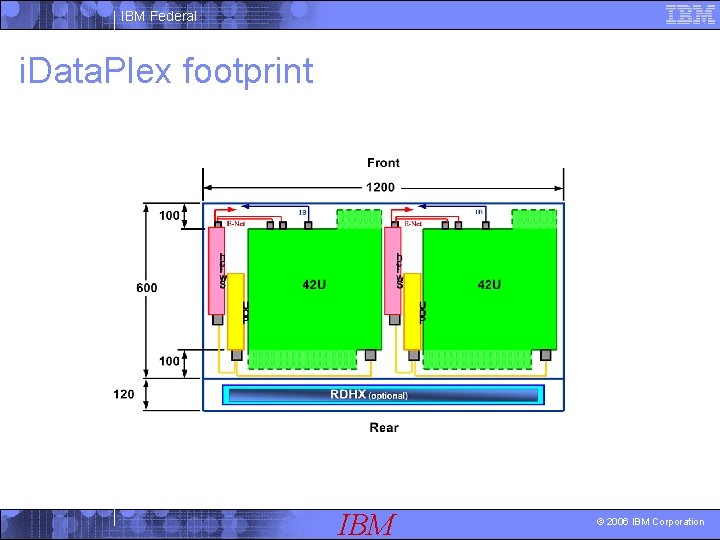

iDataPlex footprint

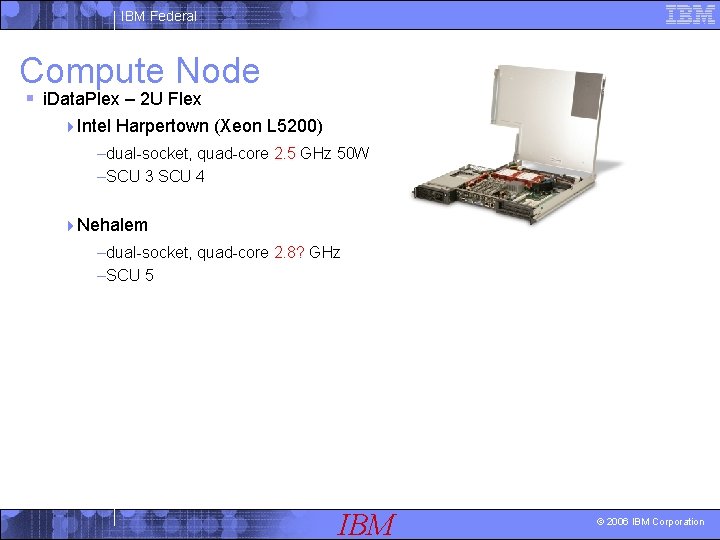

Compute Node

- iDataPlex 2U Flex
  - Intel Harpertown (Xeon L5420): dual-socket, quad-core, 2.5 GHz, 50 W (SCU 3, SCU 4)
  - Nehalem: dual-socket, quad-core, 2.8? GHz (SCU 5)

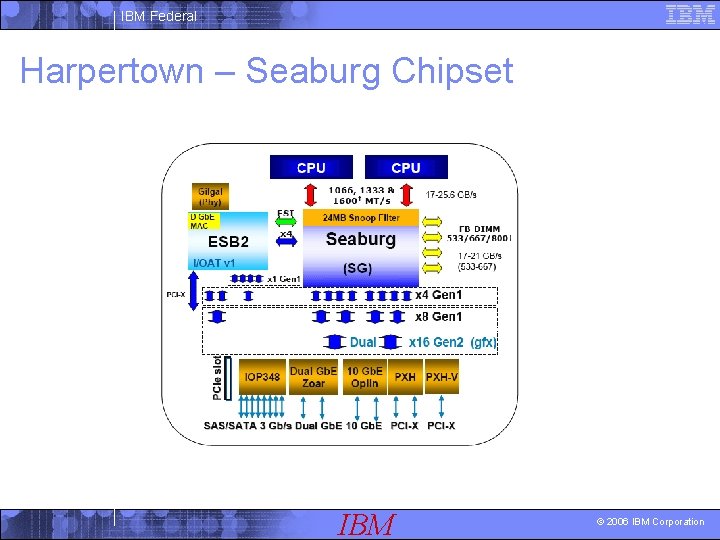

Harpertown: Seaburg Chipset

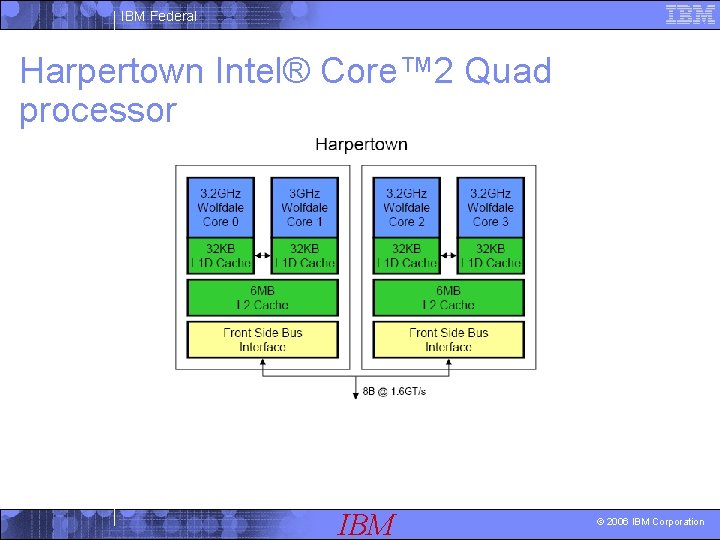

Harpertown: Intel® Core™ 2 Quad processor

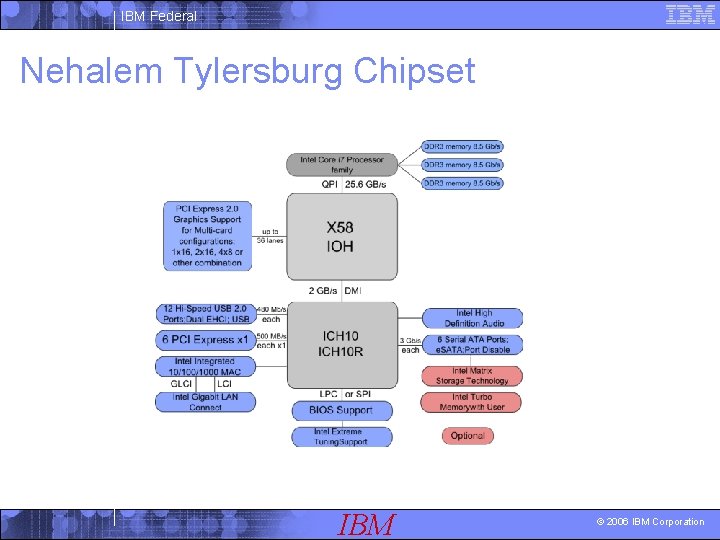

Nehalem: Tylersburg Chipset

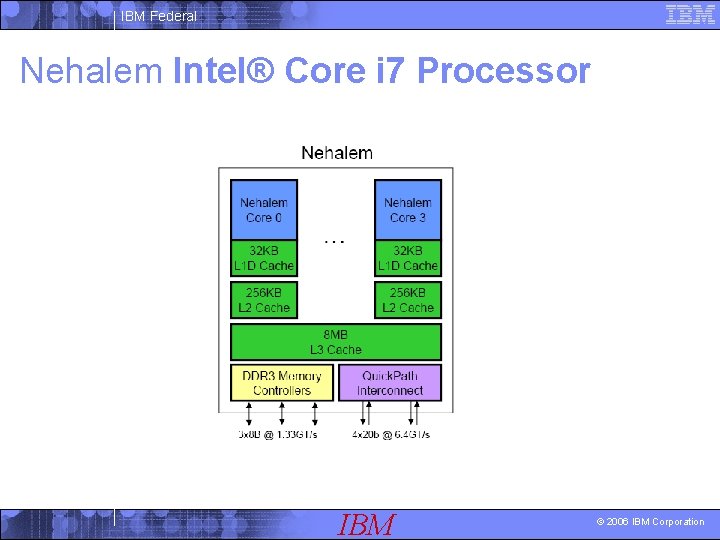

Nehalem: Intel® Core i7 Processor

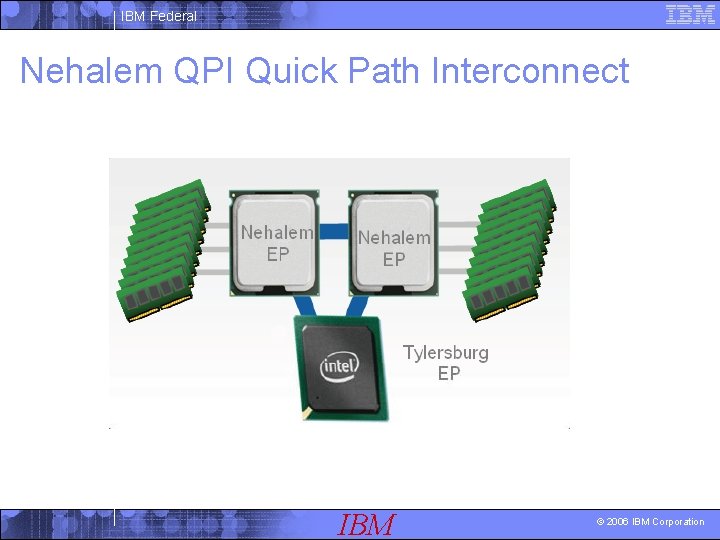

Nehalem QPI: QuickPath Interconnect

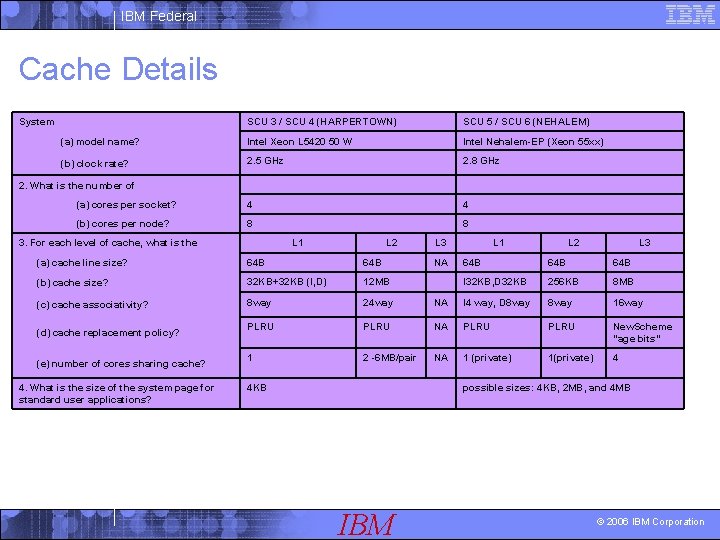

Cache Details

| System | SCU 3 / SCU 4 (Harpertown) | SCU 5 / SCU 6 (Nehalem) |
|---|---|---|
| Model name | Intel Xeon L5420, 50 W | Intel Nehalem-EP (Xeon 55xx) |
| Clock rate | 2.5 GHz | 2.8 GHz |
| Cores per socket | 4 | 4 |
| Cores per node | 8 | 8 |

For each level of cache:

| Property | Harpertown L1 | Harpertown L2 | Harpertown L3 | Nehalem L1 | Nehalem L2 | Nehalem L3 |
|---|---|---|---|---|---|---|
| Cache line size | 64 B | 64 B | NA | 64 B | 64 B | 64 B |
| Cache size | 32 KB + 32 KB (I, D) | 12 MB | NA | I 32 KB, D 32 KB | 256 KB | 8 MB |
| Cache associativity | 8 way | 24 way | NA | I 4 way, D 8 way | | 16 way |
| Cache replacement policy | PLRU | PLRU | NA | PLRU | | New scheme ("age bits") |
| Number of cores sharing cache | 1 | 2 (6 MB/pair) | NA | 1 (private) | 1 (private) | 4 |

Size of the system page for standard user applications: 4 KB. Possible sizes: 4 KB, 2 MB, and 4 MB.

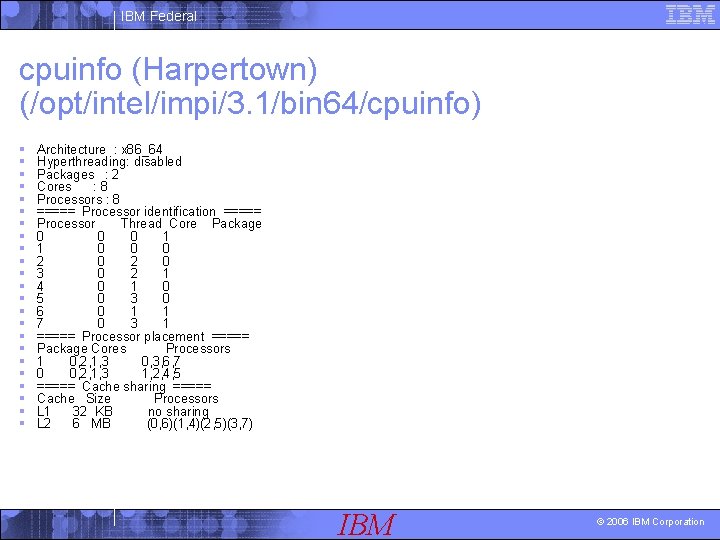

cpuinfo (Harpertown): /opt/intel/impi/3.1/bin64/cpuinfo

- Architecture: x86_64
- Hyperthreading: disabled
- Packages: 2
- Cores: 8
- Processors: 8

Processor identification

| Processor | Thread | Core | Package |
|---|---|---|---|
| 0 | 0 | 0 | 1 |
| 1 | 0 | 0 | 0 |
| 2 | 0 | 2 | 0 |
| 3 | 0 | 2 | 1 |
| 4 | 0 | 1 | 0 |
| 5 | 0 | 3 | 0 |
| 6 | 0 | 1 | 1 |
| 7 | 0 | 3 | 1 |

Processor placement

| Package | Cores | Processors |
|---|---|---|
| 1 | 0, 2, 1, 3 | 0, 3, 6, 7 |
| 0 | 0, 2, 1, 3 | 1, 2, 4, 5 |

Cache sharing

| Cache | Size | Processors |
|---|---|---|
| L1 | 32 KB | no sharing |
| L2 | 6 MB | (0, 6)(1, 4)(2, 5)(3, 7) |

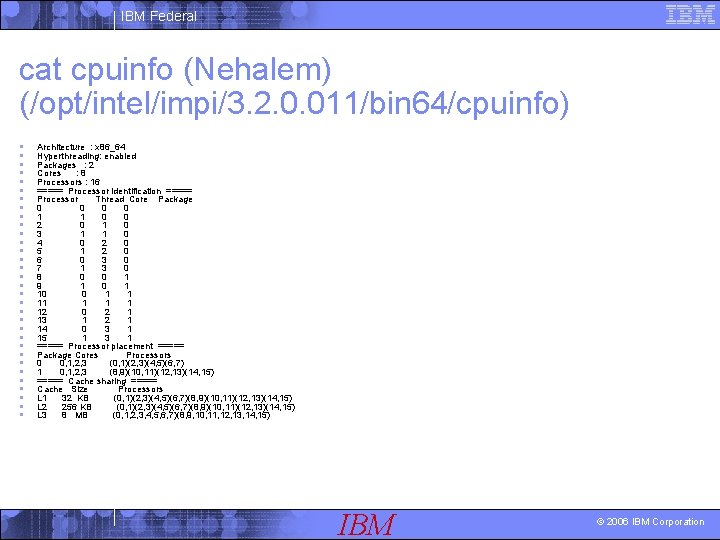

cat cpuinfo (Nehalem): /opt/intel/impi/3.2.0.011/bin64/cpuinfo

- Architecture: x86_64
- Hyperthreading: enabled
- Packages: 2
- Cores: 8
- Processors: 16

Processor identification

| Processor | Thread | Core | Package |
|---|---|---|---|
| 0 | 0 | 0 | 0 |
| 1 | 1 | 0 | 0 |
| 2 | 0 | 1 | 0 |
| 3 | 1 | 1 | 0 |
| 4 | 0 | 2 | 0 |
| 5 | 1 | 2 | 0 |
| 6 | 0 | 3 | 0 |
| 7 | 1 | 3 | 0 |
| 8 | 0 | 0 | 1 |
| 9 | 1 | 0 | 1 |
| 10 | 0 | 1 | 1 |
| 11 | 1 | 1 | 1 |
| 12 | 0 | 2 | 1 |
| 13 | 1 | 2 | 1 |
| 14 | 0 | 3 | 1 |
| 15 | 1 | 3 | 1 |

Processor placement

| Package | Cores | Processors |
|---|---|---|
| 0 | 0, 1, 2, 3 | (0, 1)(2, 3)(4, 5)(6, 7) |
| 1 | 0, 1, 2, 3 | (8, 9)(10, 11)(12, 13)(14, 15) |

Cache sharing

| Cache | Size | Processors |
|---|---|---|
| L1 | 32 KB | (0, 1)(2, 3)(4, 5)(6, 7)(8, 9)(10, 11)(12, 13)(14, 15) |
| L2 | 256 KB | (0, 1)(2, 3)(4, 5)(6, 7)(8, 9)(10, 11)(12, 13)(14, 15) |
| L3 | 8 MB | (0, 1, 2, 3, 4, 5, 6, 7)(8, 9, 10, 11, 12, 13, 14, 15) |

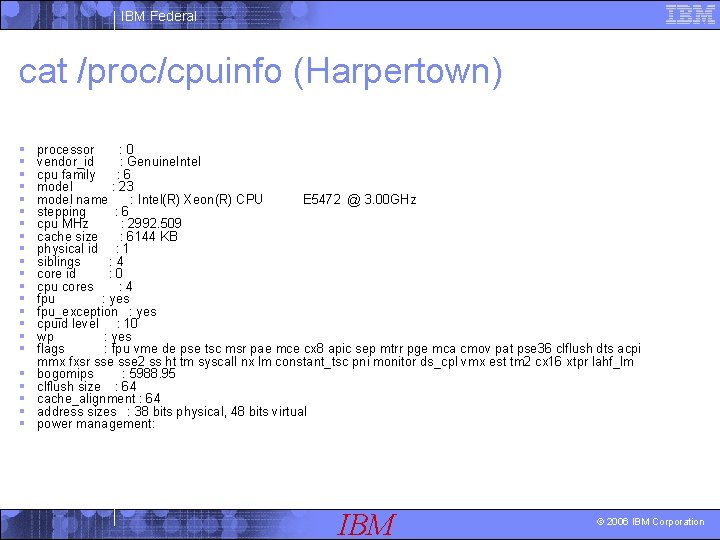

cat /proc/cpuinfo (Harpertown)

- processor : 0
- vendor_id : GenuineIntel
- cpu family : 6
- model : 23
- model name : Intel(R) Xeon(R) CPU E5472 @ 3.00GHz
- stepping : 6
- cpu MHz : 2992.509
- cache size : 6144 KB
- physical id : 1
- siblings : 4
- core id : 0
- cpu cores : 4
- fpu : yes
- fpu_exception : yes
- cpuid level : 10
- wp : yes
- flags : fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse2 ss ht tm syscall nx lm constant_tsc pni monitor ds_cpl vmx est tm2 cx16 xtpr lahf_lm
- bogomips : 5988.95
- clflush size : 64
- cache_alignment : 64
- address sizes : 38 bits physical, 48 bits virtual
- power management:

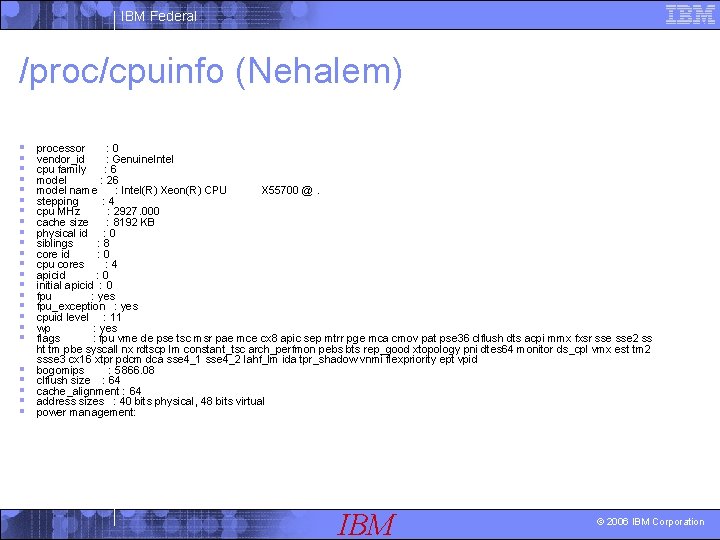

/proc/cpuinfo (Nehalem)

- processor : 0
- vendor_id : GenuineIntel
- cpu family : 6
- model : 26
- model name : Intel(R) Xeon(R) CPU X 55700 @.
- stepping : 4
- cpu MHz : 2927.000
- cache size : 8192 KB
- physical id : 0
- siblings : 8
- core id : 0
- cpu cores : 4
- apicid : 0
- initial apicid : 0
- fpu : yes
- fpu_exception : yes
- cpuid level : 11
- wp : yes
- flags : fpu vme de pse tsc msr pae mce cx8 apic sep mtrr pge mca cmov pat pse36 clflush dts acpi mmx fxsr sse2 ss ht tm pbe syscall nx rdtscp lm constant_tsc arch_perfmon pebs bts rep_good xtopology pni dtes64 monitor ds_cpl vmx est tm2 ssse3 cx16 xtpr pdcm dca sse4_1 sse4_2 lahf_lm ida tpr_shadow vnmi flexpriority ept vpid
- bogomips : 5866.08
- clflush size : 64
- cache_alignment : 64
- address sizes : 40 bits physical, 48 bits virtual
- power management:

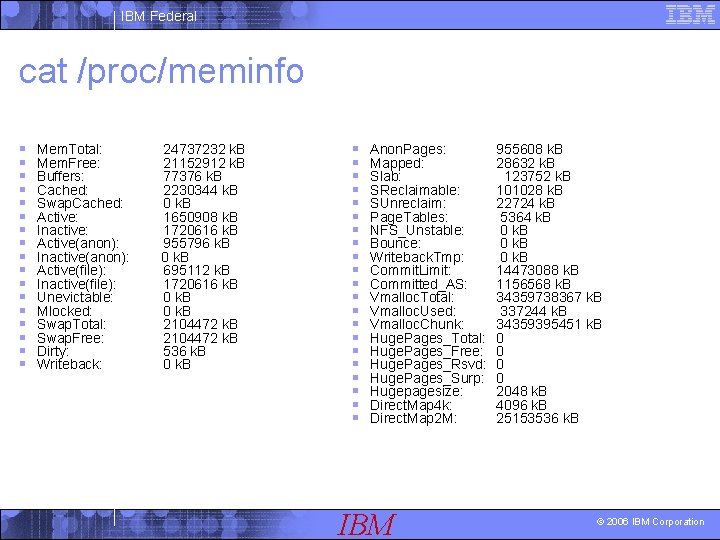

IBM Federal cat /proc/meminfo § § § § § Mem. Total: Mem. Free: Buffers: Cached: Swap. Cached: Active: Inactive: Active(anon): Inactive(anon): Active(file): Inactive(file): Unevictable: Mlocked: Swap. Total: Swap. Free: Dirty: Writeback: 24737232 k. B 21152912 k. B 77376 k. B 2230344 k. B 0 k. B 1650908 k. B 1720616 k. B 955796 k. B 0 k. B 695112 k. B 1720616 k. B 0 k. B 2104472 k. B 536 k. B 0 k. B § § § § § § Anon. Pages: Mapped: Slab: SReclaimable: SUnreclaim: Page. Tables: NFS_Unstable: Bounce: Writeback. Tmp: Commit. Limit: Committed_AS: Vmalloc. Total: Vmalloc. Used: Vmalloc. Chunk: Huge. Pages_Total: Huge. Pages_Free: Huge. Pages_Rsvd: Huge. Pages_Surp: Hugepagesize: Direct. Map 4 k: Direct. Map 2 M: IBM 955608 k. B 28632 k. B 123752 k. B 101028 k. B 22724 k. B 5364 k. B 0 k. B 14473088 k. B 1156568 k. B 34359738367 k. B 337244 k. B 34359395451 k. B 0 0 2048 k. B 4096 k. B 25153536 k. B © 2006 IBM Corporation

Meminfo explanation

- High-level statistics
  - MemTotal: Total usable RAM (i.e. physical RAM minus a few reserved bits and the kernel binary code)
  - MemFree: Sum of LowFree + HighFree (overall stat)
  - MemShared: 0; kept for compatibility reasons but always zero
  - Buffers: Memory in the buffer cache; mostly useless as a metric nowadays
  - Cached: Memory in the pagecache (diskcache) minus SwapCache
  - SwapCache: Memory that was once swapped out and has been swapped back in, but is still also in the swapfile (if memory is needed it doesn't need to be swapped out again because it is already in the swapfile; this saves I/O)
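As an illustration of how these fields combine, the sketch below parses meminfo-style text and estimates memory in use by applications (total minus free, buffers and page cache). The sample values are the first four entries of the meminfo listing in this deck; the function name and the "used" formula are illustrative assumptions, not part of the original slides.

```python
def parse_meminfo(text):
    """Parse 'Key:   value kB' lines into a dict of integers (values in kB)."""
    stats = {}
    for line in text.splitlines():
        key, sep, rest = line.partition(":")
        if not sep:
            continue  # skip lines without a 'key: value' shape
        fields = rest.split()
        if fields:
            stats[key.strip()] = int(fields[0])
    return stats


SAMPLE = """MemTotal:       24737232 kB
MemFree:        21152912 kB
Buffers:           77376 kB
Cached:          2230344 kB
"""

stats = parse_meminfo(SAMPLE)
# Memory not free and not held by buffer cache or page cache.
used_kb = stats["MemTotal"] - stats["MemFree"] - stats["Buffers"] - stats["Cached"]

if __name__ == "__main__":
    print(f"used by applications and kernel: {used_kb} kB")
```

On a live node the same function could be fed the contents of /proc/meminfo instead of the sample string.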

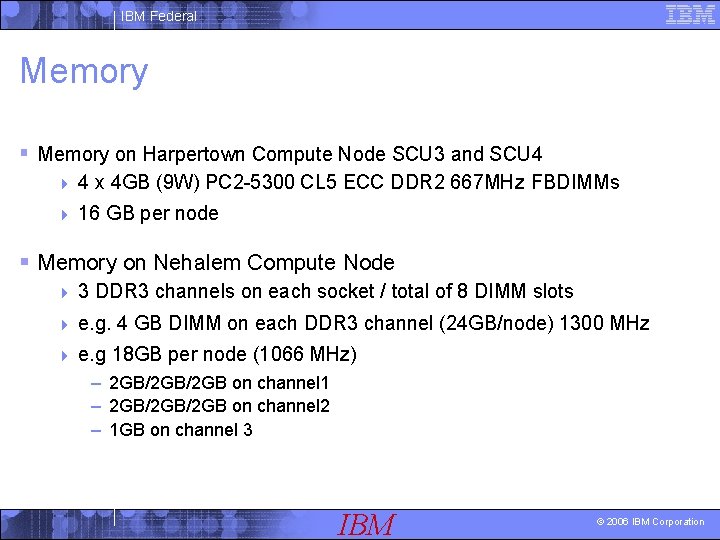

Memory

- Memory on Harpertown compute node (SCU 3 and SCU 4)
  - 4 x 4 GB (9 W) PC2-5300 CL5 ECC DDR2 667 MHz FBDIMMs
  - 16 GB per node
- Memory on Nehalem compute node
  - 3 DDR3 channels on each socket / total of 8 DIMM slots
  - e.g. 4 GB DIMM on each DDR3 channel (24 GB/node), 1300 MHz
  - e.g. 18 GB per node (1066 MHz)
    - 2 GB/2 GB on channel 1
    - 2 GB/2 GB on channel 2
    - 1 GB on channel 3
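The per-node totals for the two Nehalem examples can be checked with a short calculation. This sketch assumes the per-channel DIMM layout is repeated on both sockets of the dual-socket node; the function name is illustrative.

```python
def node_memory_gb(channels, sockets=2):
    """Total memory per node in GB.

    channels: list of per-channel DIMM sizes (GB) for ONE socket.
    sockets:  assumed identical population on every socket.
    """
    per_socket = sum(sum(dimms) for dimms in channels)
    return sockets * per_socket


if __name__ == "__main__":
    print(node_memory_gb([[4], [4], [4]]))        # one 4 GB DIMM per channel
    print(node_memory_gb([[2, 2], [2, 2], [1]]))  # 2+2, 2+2 and 1 GB per channel
```

The first layout gives the 24 GB configuration and the second the 18 GB configuration listed above.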

Interconnect

- (1) Mellanox ConnectX dual-port DDR IB 4X HCA, PCIe 2.0 x8
- IB 4X DDR Cisco 9024D 288-port DDR switches for each scalable unit, cabled in the following manner:
  - 256 ports to compute nodes
  - 2 ports to spare compute nodes
  - 6 ports to service nodes
  - 24 ports uplinked to Tier 1 InfiniBand switch
- "ConnectX" InfiniBand 4X DDR HCAs
  - 16 Gb/second of uni-directional peak MPI bandwidth
  - less than 2 microseconds MPI latency

Nehalem Features

- The Nehalem microarchitecture has many new features, some of which are present in the Core i7. The ones that represent significant changes from the Core 2 include:
- The new LGA 1366 socket is incompatible with earlier processors.
- On-die memory controller: the memory is directly connected to the processor. It is called the uncore part and runs at a different clock (uncore clock) than the execution cores.
  - Three-channel memory: each channel can support one or two DDR3 DIMMs. Motherboards for Core i7 generally have three, four (3+1) or six DIMM slots.
  - Support for DDR3 only.
  - No ECC support.
- The front side bus has been replaced by the Intel QuickPath Interconnect interface. Motherboards must use a chipset that supports QuickPath.
- The following caches:
  - 32 KB L1 instruction and 32 KB L1 data cache per core
  - 256 KB L2 cache (combined instruction and data) per core
  - 8 MB L3 (combined instruction and data) "inclusive", shared by all cores
- Single-die device: all four cores, the memory controller, and all cache are on a single die.

Nehalem Features contd.

- "Turbo Boost" technology allows all active cores to intelligently clock themselves up
  - in steps of 133 MHz over the design clock rate
  - as long as the CPU's predetermined thermal/electrical requirements are still met.
- Re-implemented Hyper-Threading. Each of the four cores can process up to two threads simultaneously.
  - The processor appears to the OS as eight CPUs.
  - This feature was dropped in Core (Harpertown).
- Only one QuickPath interface: not intended for multi-processor motherboards.
- 45 nm process technology.
- 731 M transistors.
- 263 mm² die size.
- Sophisticated power management places unused cores in a zero-power mode.
- Support for SSE4.2 and SSE4.1 instruction sets.

I/O and Filesystem

- /discover/home
- /discover/nobackup
- IBM General Parallel File System (GPFS) used on all nodes
- Serviced by 4 I/O nodes
- Read/write access from all nodes
- /discover/home 2 TB, /discover/nobackup 4 TB
  - Individual quota:
    - /discover/home: 500 MB
    - /discover/nobackup: 100 GB
- Very fast (peak: 350 MB/sec, normal: 150-250 MB/sec)

Software

- OS: Linux (RHEL 5.2)
- Compilers:
  - Intel Fortran, C/C++
  - Math libs: BLAS, LAPACK, ScaLAPACK, MKL
- MPI: MPI-2
- Scheduler: PBSPro

Linux page size

- getconf PAGESIZE returns 4096

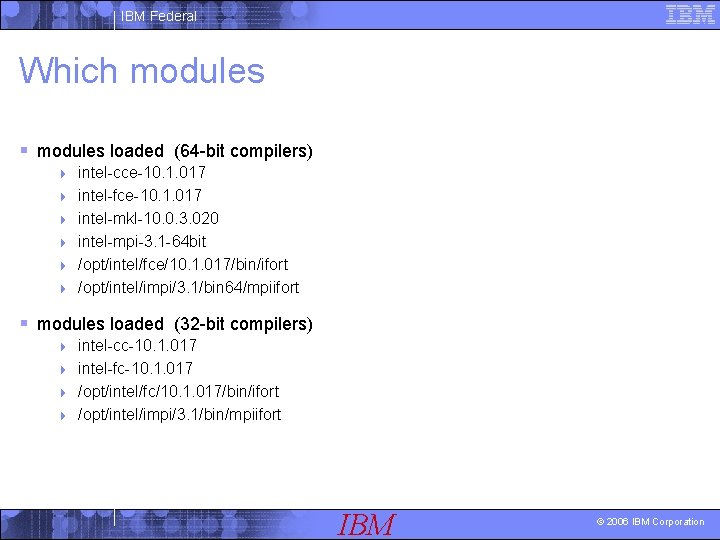

Which modules

- Modules loaded (64-bit compilers)
  - intel-cce-10.1.017
  - intel-fce-10.1.017
  - intel-mkl-10.0.3.020
  - intel-mpi-3.1-64bit
  - /opt/intel/fce/10.1.017/bin/ifort
  - /opt/intel/impi/3.1/bin64/mpiifort
- Modules loaded (32-bit compilers)
  - intel-cc-10.1.017
  - intel-fc-10.1.017
  - /opt/intel/fc/10.1.017/bin/ifort
  - /opt/intel/impi/3.1/bin/mpiifort

IFC Compiler Options of some Physics/Chemistry/Climate Applications

- CubedSphere: -safe_cray_ptr -i_dynamic -convert big_endian -assume byterecl -ftz -i4 -r8 -O3 -xS
- NPB 3.2: -O3 -xT -ip -no-prec-div -ansi-alias -fno-alias
- HPCC: -O2 -xT
- GAMESS: -O3 -xS -ipo -i-static -fno-pic -ipo
- GTC: -O1
- CAM: -O3 -xT
- MILC: -O3 -xT
- PARATEC: -O3 -xS -ipo -i-static -fno-fnalias -fno-alias
- STREAM: -O3 -opt-streaming-stores=always -xS -ip
- SpecCPU2006: ???

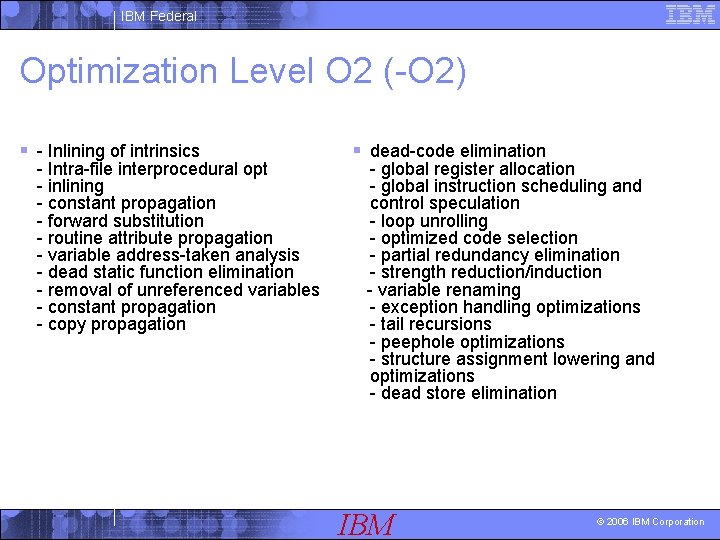

Optimization Level O2 (-O2)

- Inlining of intrinsics
- Intra-file interprocedural optimizations
  - inlining
  - constant propagation
  - forward substitution
  - routine attribute propagation
  - variable address-taken analysis
  - dead static function elimination
  - removal of unreferenced variables
- constant propagation
- copy propagation
- dead-code elimination
- global register allocation
- global instruction scheduling and control speculation
- loop unrolling
- optimized code selection
- partial redundancy elimination
- strength reduction/induction
- variable renaming
- exception handling optimizations
- tail recursions
- peephole optimizations
- structure assignment lowering and optimizations
- dead store elimination

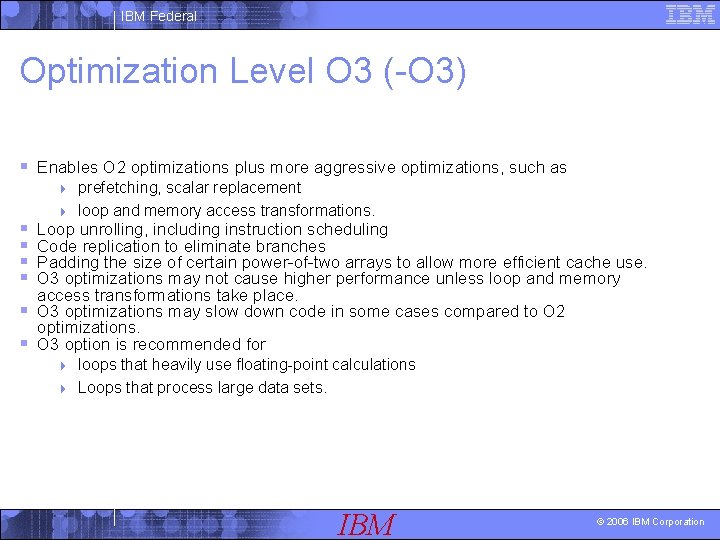

Optimization Level O3 (-O3)

- Enables O2 optimizations plus more aggressive optimizations, such as prefetching, scalar replacement, and loop and memory access transformations
- Loop unrolling, including instruction scheduling
- Code replication to eliminate branches
- Padding the size of certain power-of-two arrays to allow more efficient cache use
- O3 optimizations may not cause higher performance unless loop and memory access transformations take place
- O3 optimizations may slow down code in some cases compared to O2 optimizations
- The O3 option is recommended for loops that heavily use floating-point calculations and process large data sets

O2 vs. O3

- O2 alone delivers a significant share of the achievable performance
- Whether O3 adds more depends on code constructs and memory optimizations
- Both levels should be tried and timed for each application

Interprocedural Optimizations (-ip)

- Interprocedural optimizations for single-file compilation
- Subset of full intra-file interprocedural optimizations
- e.g. perform inline function expansion for calls to functions defined within the current source file

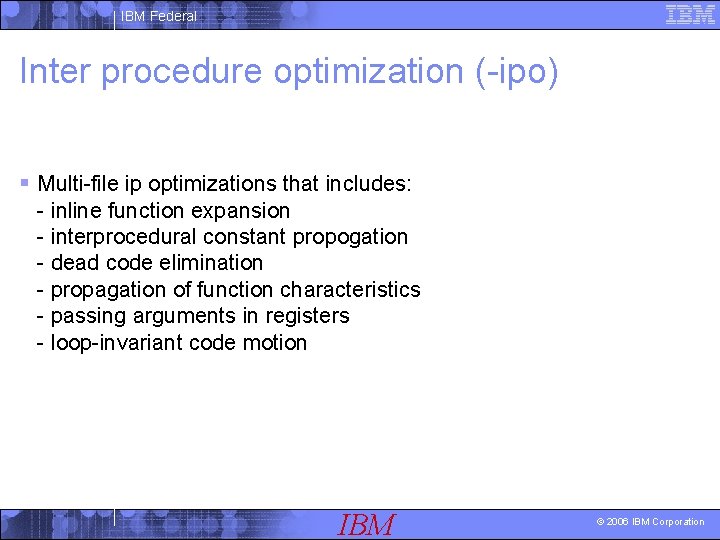

Interprocedural Optimization (-ipo)

- Multi-file interprocedural optimizations that include:
  - inline function expansion
  - interprocedural constant propagation
  - dead code elimination
  - propagation of function characteristics
  - passing arguments in registers
  - loop-invariant code motion

Inlining

- -inline-level=<n>: control inline expansion
  - n=0: disable inlining
  - n=1: no inlining (unless -ip specified)
  - n=2: inline any function, at the compiler's discretion (same as -ip)
- -f[no-]inline-functions
  - inline any function at the compiler's discretion
- -finline-limit=<n>
  - set maximum number of statements to be considered for inlining
- -no-inline-min-size
  - no size limit for inlining small routines
- -no-inline-max-size
  - no size limit for inlining large routines

Did Inlining, IPO and PGO Help?

- Use selectively on bottlenecks
- Better for small chunks of code

The -fast Option

- Includes options that can improve run-time performance:
  - -O3 (maximum speed and high-level optimizations)
  - -ipo (enables interprocedural optimizations across files)
  - -xT (generate code specialized for Intel(R) Xeon(R) processors with SSE3, SSE4 etc.)
  - -static (statically link in libraries at link time)
  - -no-prec-div (disable -prec-div), where -prec-div improves precision of FP divides (some speed impact)

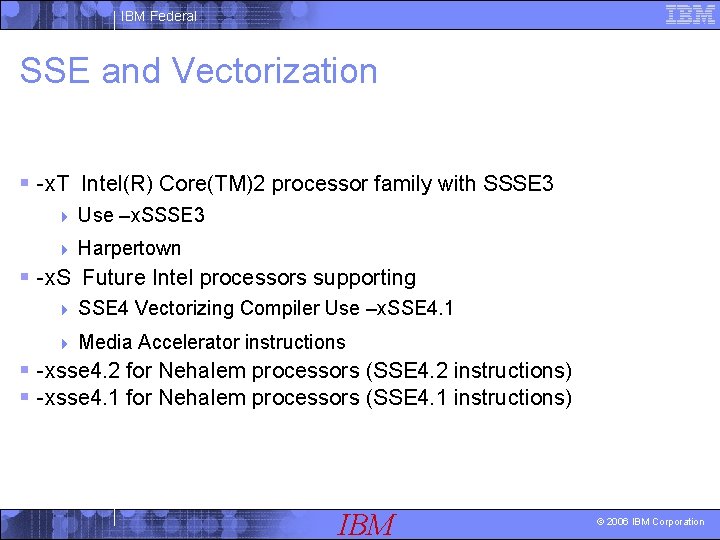

SSE and Vectorization

- -xT: Intel(R) Core(TM)2 processor family with SSSE3
  - Use -xSSSE3
  - Harpertown
- -xS: Future Intel processors supporting SSE4 Vectorizing Compiler and Media Accelerator instructions
  - Use -xSSE4.1
- -xsse4.2 for Nehalem processors (SSE4.2 instructions)
- -xsse4.1 for Nehalem processors (SSE4.1 instructions)

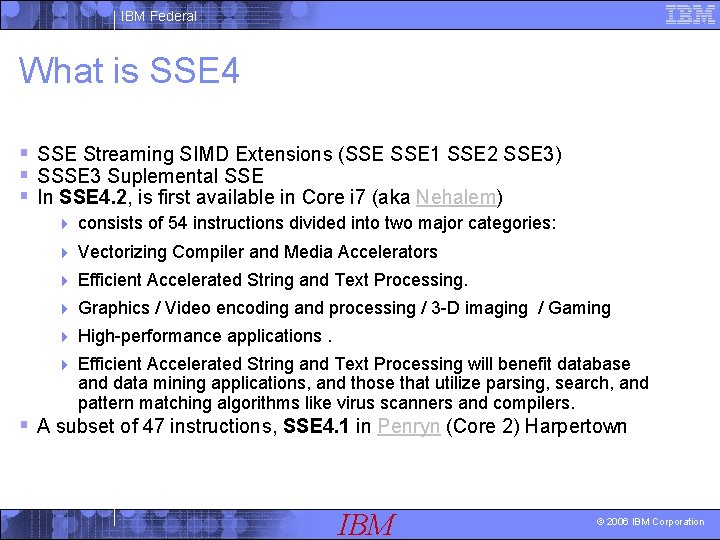

What is SSE4

- SSE: Streaming SIMD Extensions (SSE1, SSE2, SSE3)
- SSSE3: Supplemental SSE3
- SSE4 consists of 54 instructions; the full set (SSE4.2) is first available in Core i7 (aka Nehalem). The instructions are divided into two major categories:
  - Vectorizing Compiler and Media Accelerators: graphics, video encoding and processing, 3-D imaging, gaming, and high-performance applications
  - Efficient Accelerated String and Text Processing: will benefit database and data mining applications, and those that utilize parsing, search, and pattern matching algorithms, like virus scanners and compilers
- A subset of 47 instructions, SSE4.1, is available in Penryn (Core 2), i.e. Harpertown


Vectorization (Intra register) -vec

Given the loop

    void vecadd(float a[], float b[], float c[], int n)
    {
        int i;
        for (i = 0; i < n; i++) {
            c[i] = a[i] + b[i];
        }
    }

the Intel compiler will transform the loop to allow four floating-point additions to occur simultaneously using the addps instruction. Simply put, using a pseudo-vector notation, the result would look something like this:

    for (i = 0; i < n; i += 4) {
        c[i:i+3] = a[i:i+3] + b[i:i+3];
    }
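The pseudo-vector loop above glosses over what happens when n is not a multiple of four. The sketch below models the usual strip-mining pattern in plain Python: a main loop over full groups of four elements plus a scalar remainder loop. It only illustrates the loop structure and is not what the Intel compiler literally emits; the function name is hypothetical.

```python
def vecadd_strip_mined(a, b, width=4):
    """Add two equal-length sequences in blocks of `width`, then a scalar tail."""
    n = len(a)
    c = [0.0] * n
    main_end = n - n % width  # last index covered by full blocks

    # "Vector" part: each iteration handles `width` elements at once,
    # standing in for one addps on four packed floats.
    for i in range(0, main_end, width):
        c[i:i + width] = [x + y for x, y in zip(a[i:i + width], b[i:i + width])]

    # Scalar remainder loop for the leftover n % width elements.
    for i in range(main_end, n):
        c[i] = a[i] + b[i]
    return c


if __name__ == "__main__":
    print(vecadd_strip_mined([1.0, 2.0, 3.0, 4.0, 5.0, 6.0], [10.0] * 6))
```

With six elements, one full block of four is processed in the first loop and the last two elements fall to the remainder loop.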

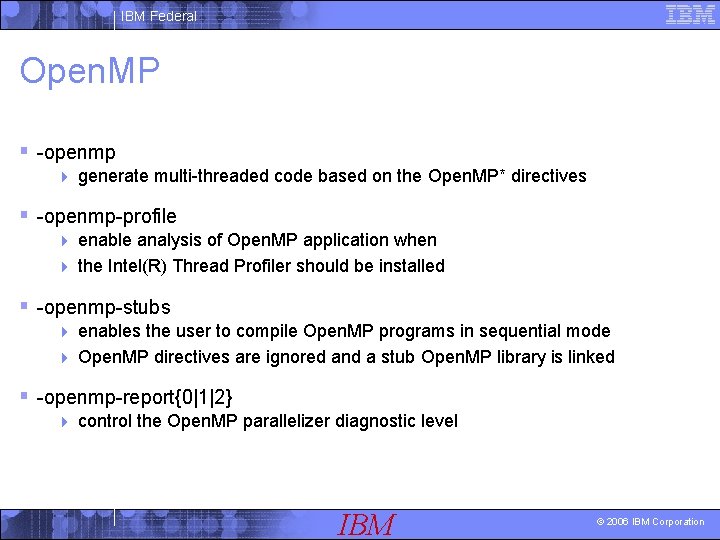

OpenMP

- -openmp
  - generate multi-threaded code based on the OpenMP* directives
- -openmp-profile
  - enable analysis of OpenMP applications (the Intel(R) Thread Profiler should be installed)
- -openmp-stubs
  - enables the user to compile OpenMP programs in sequential mode
  - OpenMP directives are ignored and a stub OpenMP library is linked
- -openmp-report{0|1|2}
  - control the OpenMP parallelizer diagnostic level

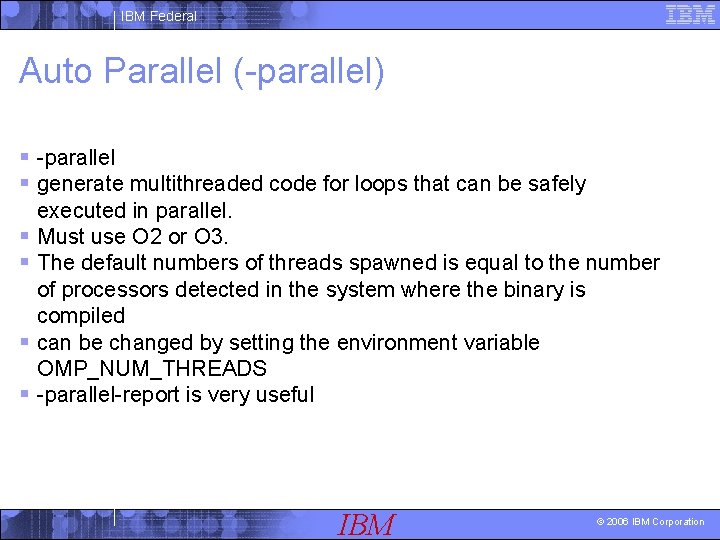

Auto Parallel (-parallel)

- -parallel: generate multithreaded code for loops that can be safely executed in parallel
- Must use O2 or O3
- The default number of threads spawned is equal to the number of processors detected in the system where the binary is compiled; it can be changed by setting the environment variable OMP_NUM_THREADS
- -parallel-report is very useful

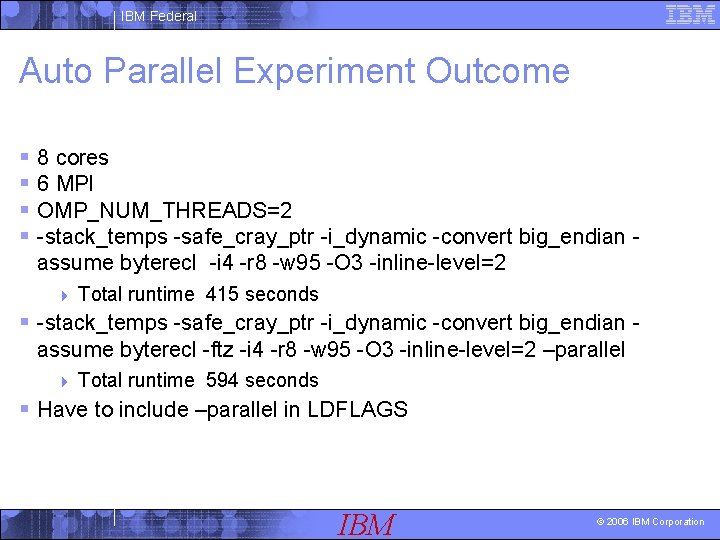

Auto Parallel Experiment Outcome

- 8 cores
- 6 MPI
- OMP_NUM_THREADS=2
- -stack_temps -safe_cray_ptr -i_dynamic -convert big_endian -assume byterecl -i4 -r8 -w95 -O3 -inline-level=2
  - Total runtime 415 seconds
- -stack_temps -safe_cray_ptr -i_dynamic -convert big_endian -assume byterecl -ftz -i4 -r8 -w95 -O3 -inline-level=2 -parallel
  - Total runtime 594 seconds
- Have to include -parallel in LDFLAGS

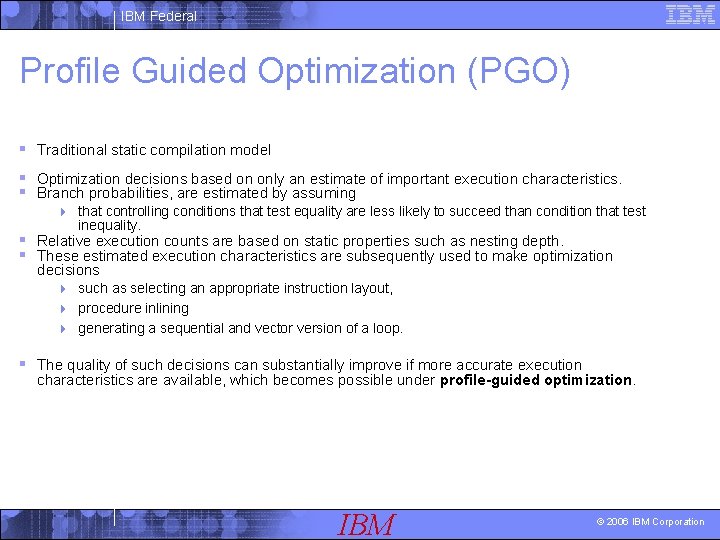

Profile Guided Optimization (PGO)

- Traditional static compilation model
- Optimization decisions are based on only an estimate of important execution characteristics.
- Branch probabilities are estimated by assuming
  - that controlling conditions that test equality are less likely to succeed than conditions that test inequality.
- Relative execution counts are based on static properties such as nesting depth.
- These estimated execution characteristics are subsequently used to make optimization decisions
  - such as selecting an appropriate instruction layout,
  - procedure inlining,
  - generating a sequential and vector version of a loop.
- The quality of such decisions can substantially improve if more accurate execution characteristics are available, which becomes possible under profile-guided optimization.

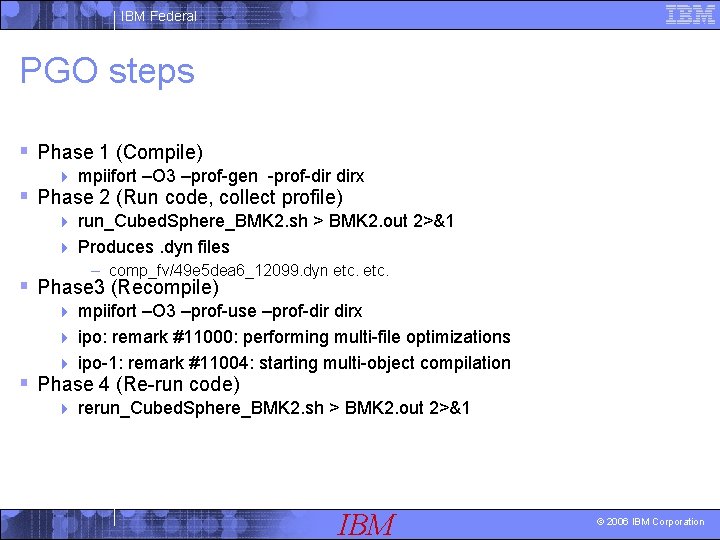

PGO steps

- Phase 1 (Compile)
  - mpiifort -O3 -prof-gen -prof-dir dirx
- Phase 2 (Run code, collect profile)
  - run_CubedSphere_BMK2.sh > BMK2.out 2>&1
  - Produces .dyn files
    - comp_fv/49e5dea6_12099.dyn etc.
- Phase 3 (Recompile)
  - mpiifort -O3 -prof-use -prof-dir dirx
  - ipo: remark #11000: performing multi-file optimizations
  - ipo-1: remark #11004: starting multi-object compilation
- Phase 4 (Re-run code)
  - rerun_CubedSphere_BMK2.sh > BMK2.out 2>&1

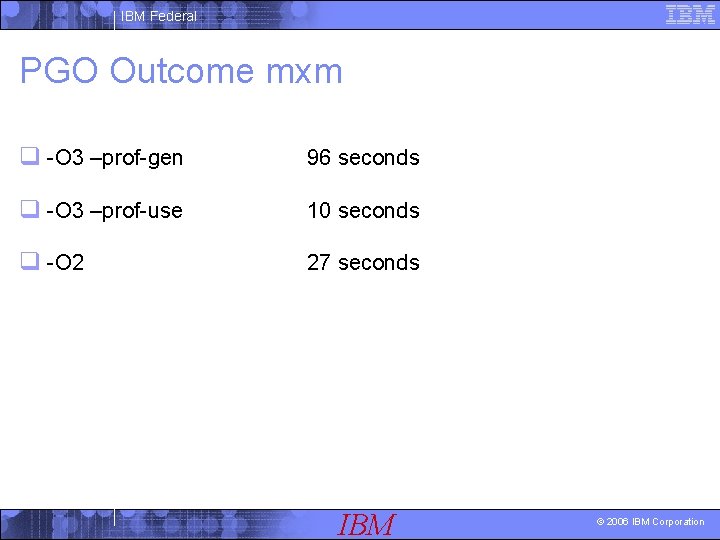

PGO Outcome (mxm)

| Compiler options | Runtime |
|---|---|
| -O3 -prof-gen | 96 seconds |
| -O3 -prof-use | 10 seconds |
| -O2 | 27 seconds |


Optimization Reports

- -vec-report[n]: control amount of vectorizer diagnostic information
  - n=3: indicate vectorized/non-vectorized loops and prohibiting data dependence info
- -opt-report [n]: generate an optimization report to stderr
  - n=3: maximum report output
- -opt-report-file=<file>
  - specify the filename for the generated report
- -opt-report-routine=<name>
  - reports on routines containing the given name


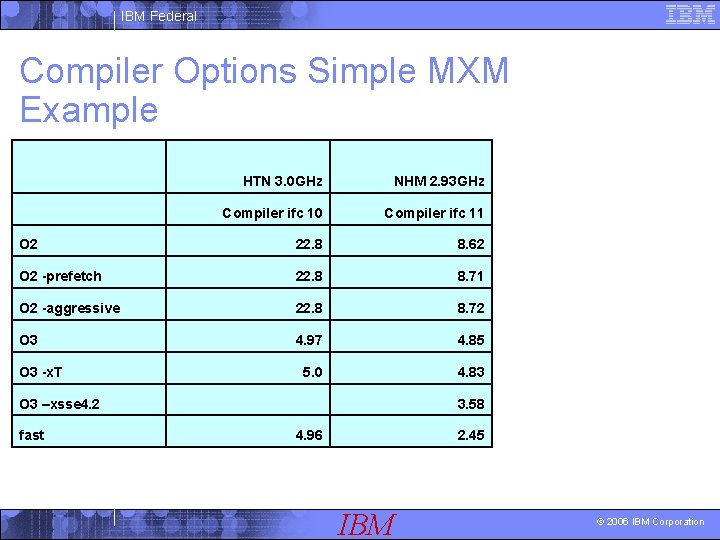

Compiler Options: Simple MXM Example

| Compiler options | HTN 3.0 GHz (compiler ifc 10) | NHM 2.93 GHz (compiler ifc 11) |
|---|---|---|
| O2 | 22.8 | 8.62 |
| O2 -prefetch | 22.8 | 8.71 |
| O2 -aggressive | 22.8 | 8.72 |
| O3 | 4.97 | 4.85 |
| O3 -xT | 5.0 | 4.83 |
| O3 -xsse4.2 | | 4.96 |
| fast | 3.58 | 2.45 |

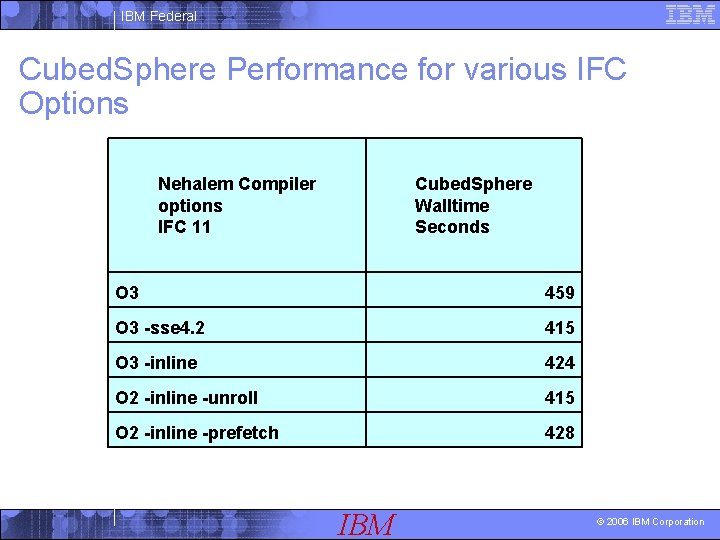

CubedSphere Performance for various IFC Options (Nehalem)

| Compiler options (IFC 11) | CubedSphere walltime (seconds) |
|---|---|
| O3 | 459 |
| O3 -sse4.2 | 415 |
| O3 -inline | 424 |
| O2 -inline -unroll | 415 |
| O2 -inline -prefetch | 428 |

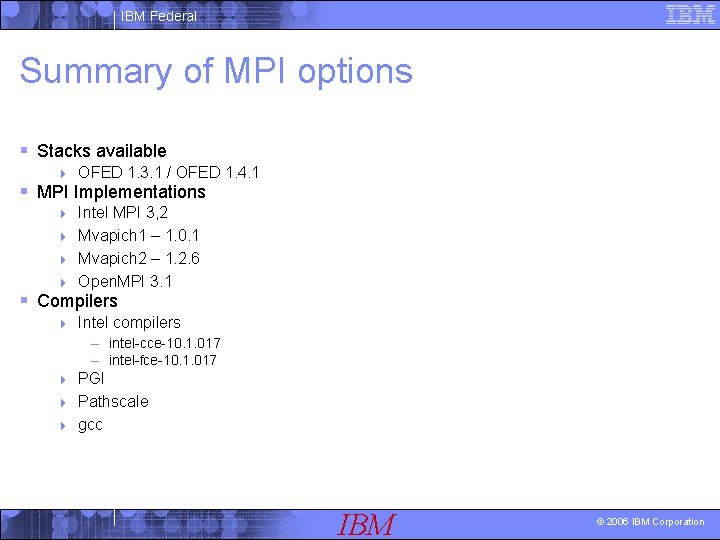

Summary of MPI options

- Stacks available
  - OFED 1.3.1 / OFED 1.4.1
- MPI implementations
  - Intel MPI 3.2
  - MVAPICH1: 1.0.1
  - MVAPICH2: 1.2.6
  - Open MPI 3.1
- Compilers
  - Intel compilers
    - intel-cce-10.1.017
    - intel-fce-10.1.017
  - PGI
  - Pathscale
  - gcc

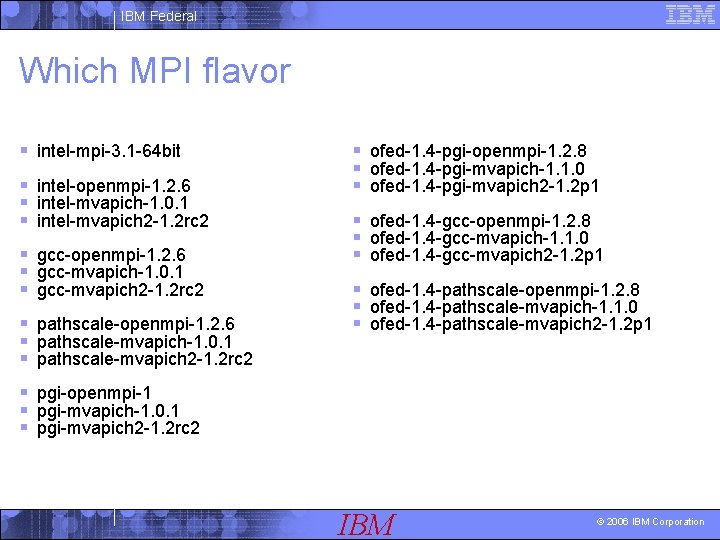

Which MPI flavor

- intel-mpi-3.1-64bit
- intel-openmpi-1.2.6
- intel-mvapich-1.0.1
- intel-mvapich2-1.2rc2
- gcc-openmpi-1.2.6
- gcc-mvapich-1.0.1
- gcc-mvapich2-1.2rc2
- pathscale-openmpi-1.2.6
- pathscale-mvapich-1.0.1
- pathscale-mvapich2-1.2rc2
- ofed-1.4-pgi-openmpi-1.2.8
- ofed-1.4-pgi-mvapich-1.1.0
- ofed-1.4-pgi-mvapich2-1.2p1
- ofed-1.4-gcc-openmpi-1.2.8
- ofed-1.4-gcc-mvapich-1.1.0
- ofed-1.4-gcc-mvapich2-1.2p1
- ofed-1.4-pathscale-openmpi-1.2.8
- ofed-1.4-pathscale-mvapich-1.1.0
- ofed-1.4-pathscale-mvapich2-1.2p1
- pgi-openmpi-1
- pgi-mvapich-1.0.1
- pgi-mvapich2-1.2rc2

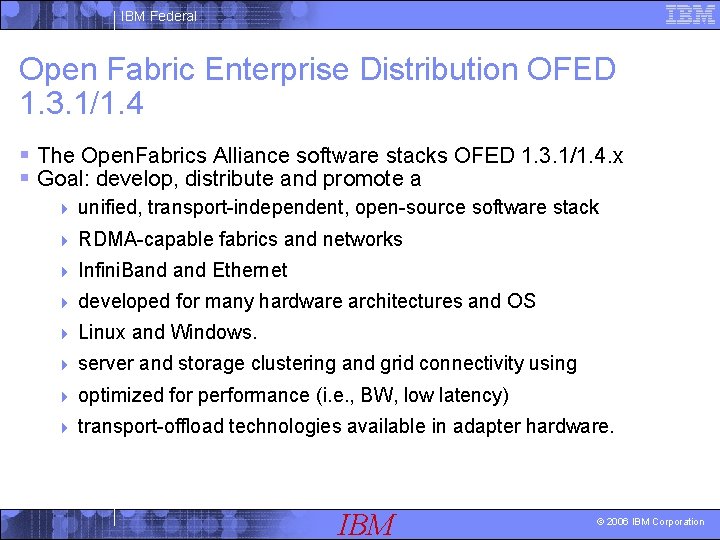

Open Fabrics Enterprise Distribution: OFED 1.3.1/1.4

- The OpenFabrics Alliance software stacks: OFED 1.3.1/1.4.x
- Goal: develop, distribute and promote a
  - unified, transport-independent, open-source software stack
  - for RDMA-capable fabrics and networks (InfiniBand, Ethernet)
  - developed for many hardware architectures and operating systems (Linux and Windows)
  - for server and storage clustering and grid connectivity
  - optimized for performance (i.e., bandwidth, low latency)
  - using transport-offload technologies available in adapter hardware

MVAPICH

- MVAPICH (MPI-1 over OpenFabrics/Gen2, OpenFabrics/Gen2-UD, uDAPL, InfiniPath, VAPI and TCP/IP)
- MPI-1 implementation
- Based on MPICH and MVICH
- The latest release is MVAPICH 1.1 (includes MPICH 1.2.7)
- It is available under BSD licensing

MVAPICH2

- MPI-2 over OpenFabrics-IB, OpenFabrics-iWARP, uDAPL and TCP/IP
- MPI-2 implementation which includes all MPI-1 features
- Based on MPICH2 and MVICH
- The latest release is MVAPICH2 1.2 (includes MPICH2 1.0.7)

Open MPI: Version 1.3.1

- http://www.open-mpi.org
- High performance message passing library
- Open source MPI-2 implementation
- Developed and maintained by a consortium of academic, research, and industry partners
- Many OS supported

MPIEXEC options

- Two major areas:
  - DEVICE
  - PINNING

RUNTIME MPI Issues

- shm
  - Shared-memory only (no sockets)
- ssm
  - Combined sockets + shared memory (for clusters with SMP nodes)
- rdma
  - RDMA-capable network fabrics including InfiniBand*, Myrinet* (via DAPL*)
- rdssm
  - Combined sockets + shared memory + DAPL*
  - for clusters with SMP nodes and RDMA-capable network fabrics

Typical mpiexec command

- mpiexec -genv I_MPI_DEVICE rdssm -genv I_MPI_PIN 1 -genv I_MPI_PIN_PROCESSOR_LIST 0,2-3,4 -np 16 -perhost 4 a.out
- -genv X Y: associate environment variable X with value Y for all MPI ranks
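The processor list in the command mixes single logical CPU numbers with a range. The sketch below shows one way such a string can be read; it is a stand-alone illustration with a hypothetical function name and is not Intel MPI's own parser, whose full list syntax is not covered here.

```python
def expand_processor_list(spec):
    """Expand a string such as '0,2-3,4' into a list of logical CPU numbers."""
    cpus = []
    for item in spec.split(","):
        item = item.strip()
        if "-" in item:
            # A range 'first-last' is inclusive on both ends.
            first, last = (int(part) for part in item.split("-", 1))
            cpus.extend(range(first, last + 1))
        else:
            cpus.append(int(item))
    return cpus


if __name__ == "__main__":
    print(expand_processor_list("0,2-3,4"))
```

With -perhost 4, the four ranks placed on each node would then have one listed CPU each to be pinned to.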

MPI_DEVICE for CubedSphere

- ssm: 250 seconds
- rdma: 250 seconds
- rdssm: 250 seconds

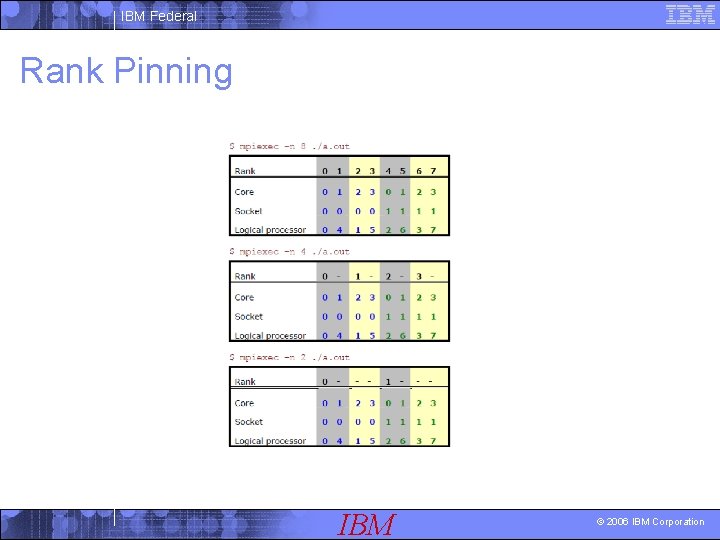

Rank Pinning

Task Affinity

- taskset
  - taskset -c 0,1,4,5 ...
- numactl

Interactive Tools for Monitoring

- top
- mpstat
- vmstat
- iostat

SMT

- BIOS option set at boot time
- Run 2 threads at the same time per core
- Share resources (execution units)
- Take advantage of 4-wide execution engine
- Keep it fed with multiple threads
- Hide latency of a single thread
- Most power-efficient performance feature
- Very low die area cost
- Can provide significant performance benefit depending on application
- Much more efficient than adding an entire core
- Implications for out-of-order execution
- Might be good for MPI + OpenMP
- Might lead to extra bandwidth pressure and pressure on L1, L2, L3 caches

SMT + MPI

- NOAA NCEP GFS code T190 (240 hour simulation)
- SMT OFF: 9709 seconds
- SMT ON, TURBO ON: 7276 seconds

TURBO

- Turbo mode boosts operating frequency based on thermal headroom
- When the processor is operating below its peak power,
  - increase the clock speed of the active cores by one or more bins to increase performance.
- Common reasons for operating below peak power are
  - one or more cores may be powered down
  - the active workload is relatively low power (e.g. no floating point, or few memory accesses).
- Active cores can increase their clock frequency in relatively coarse increments of 133 MHz speed bins, depending on
  - the SKU
  - the available power
  - thermal headroom
  - other environmental factors.
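The speed-bin arithmetic is simple enough to write down. The sketch below uses the 133 MHz bin size from the slide and, as an assumption for illustration, the 2.93 GHz Nehalem clock quoted in the Simple MXM example as the base; how many bins are actually granted depends on the SKU, power and thermal conditions and is not specified in this deck.

```python
def turbo_frequency_mhz(base_mhz, bins, bin_mhz=133):
    """Clock after stepping up by `bins` turbo speed bins."""
    if bins < 0:
        raise ValueError("bins must be non-negative")
    return base_mhz + bins * bin_mhz


if __name__ == "__main__":
    base = 2930  # assumed base clock in MHz (2.93 GHz Nehalem)
    for bins in range(3):  # the bin counts shown are hypothetical
        print(bins, turbo_frequency_mhz(base, bins), "MHz")
```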

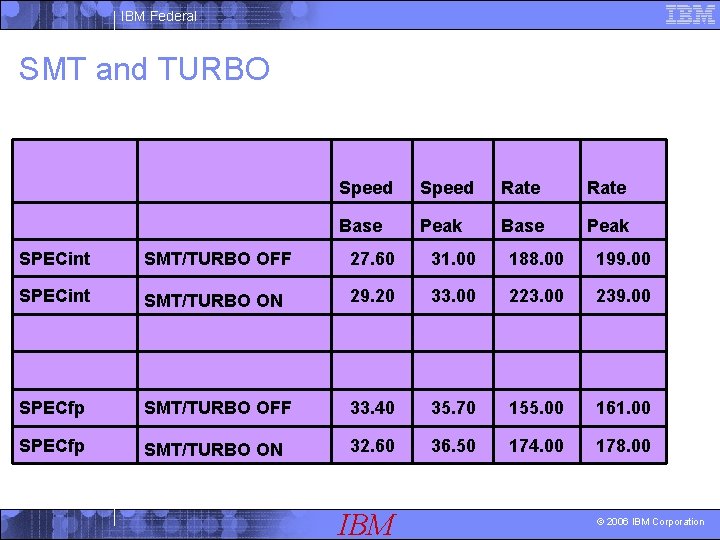

SMT and TURBO

| Benchmark | SMT/TURBO | Speed (Base) | Speed (Peak) | Rate (Base) | Rate (Peak) |
|---|---|---|---|---|---|
| SPECint | OFF | 27.60 | 31.00 | 188.00 | 199.00 |
| SPECint | ON | 29.20 | 33.00 | 223.00 | 239.00 |
| SPECfp | OFF | 33.40 | 35.70 | 155.00 | 161.00 |
| SPECfp | ON | 32.60 | 36.50 | 174.00 | 178.00 |

SMT + Hybrid MPI + OpenMP

- 8 MPI tasks
- OMP_NUM_THREADS=1
- OMP_NUM_THREADS=2
- Potentially a good way to exploit SMP

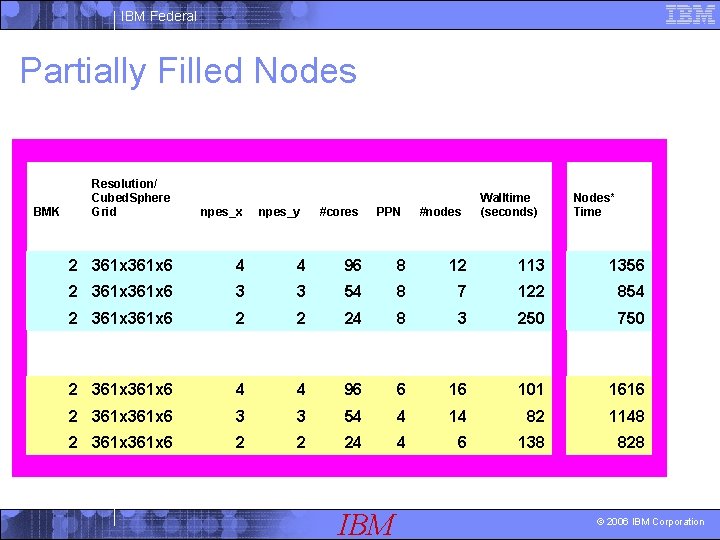

Partially Filled Nodes

| BMK | Resolution / CubedSphere grid | npes_x | npes_y | #cores | PPN | #nodes | Walltime (seconds) | Nodes * Time |
|---|---|---|---|---|---|---|---|---|
| 2 | 361x6 | 4 | 4 | 96 | 8 | 12 | 113 | 1356 |
| 2 | 361x6 | 3 | 3 | 54 | 8 | 7 | 122 | 854 |
| 2 | 361x6 | 2 | 2 | 24 | 8 | 3 | 250 | 750 |
| 2 | 361x6 | 4 | 4 | 96 | 6 | 16 | 101 | 1616 |
| 2 | 361x6 | 3 | 3 | 54 | 4 | 14 | 82 | 1148 |
| 2 | 361x6 | 2 | 2 | 24 | 4 | 6 | 138 | 828 |
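The node counts and the Nodes * Time column can be recomputed from the core count, processes per node (PPN) and walltime. A minimal sketch using the six runs above; the function and variable names are illustrative:

```python
import math

# (cores, processes per node, walltime in seconds) for the six runs in the table
RUNS = [(96, 8, 113), (54, 8, 122), (24, 8, 250),
        (96, 6, 101), (54, 4, 82), (24, 4, 138)]


def node_seconds(cores, ppn, walltime_s):
    """Return (nodes needed, nodes * walltime) for one run."""
    nodes = math.ceil(cores / ppn)  # a partially used node still counts as a whole node
    return nodes, nodes * walltime_s


if __name__ == "__main__":
    for cores, ppn, walltime in RUNS:
        nodes, cost = node_seconds(cores, ppn, walltime)
        print(f"{cores:3d} cores, PPN {ppn}: {nodes:2d} nodes, {cost} node-seconds")
```

The numbers show the trade-off: for 54 cores, running 4 processes per node cuts walltime from 122 to 82 seconds but raises the cost from 854 to 1148 node-seconds.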

MPI optimization

- Affinity
- Mapping tasks to nodes
- Mapping tasks to cores
- Barriers
- Collectives
- Environment variables
- Partially/fully loaded nodes

SHM vs. SSM

- shm: 411 seconds
- ssm: 424 seconds

Events available for Oprofile

- CPU_CLK_UNHALTED
- UNHALTED_REFERENCE_CYCLES
- INST_RETIRED_ANY_P
- LLC_MISSES
- LLC_REFS
- BR_MISS_PRED_RETIRED

PAPI issues

- Agree 100% that performance tools are desperately needed
- Our LTC team has been actively driving the distros to add support.
- End 2009, a decision was made to drive perfmon2 as the preferred method
- Have had some success in driving it into the next major releases, RHEL 6 and SLES 11
- Unfortunately, (possibly) we missed the first release of SLES 11 and it will be in SP1
- This would be the first time we could officially support it installed.
- If running with the kernel patch, problems have to be reproduced on a non-patched system.
- This has worked for POWER Linux users at some pretty large sites.
- Use TDS systems as the vehicle to have the patches and do some perf testing.
- SCU 5 without the PAPI patch and SCU 6 with??
- If a kernel problem occurs that needs to be reproduced, it could just be rerun on SCU 5??

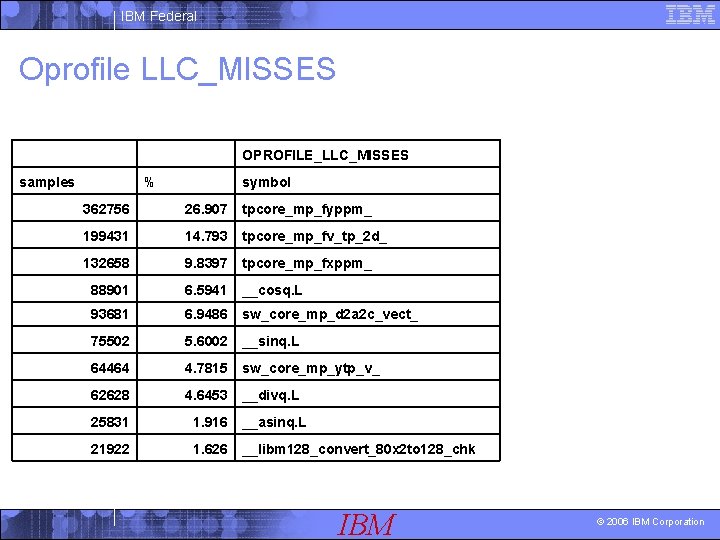

Oprofile LLC_MISSES

| Samples | % | Symbol |
|---|---|---|
| 362756 | 26.907 | tpcore_mp_fyppm_ |
| 199431 | 14.793 | tpcore_mp_fv_tp_2d_ |
| 132658 | 9.8397 | tpcore_mp_fxppm_ |
| 88901 | 6.5941 | __cosq.L |
| 93681 | 6.9486 | sw_core_mp_d2a2c_vect_ |
| 75502 | 5.6002 | __sinq.L |
| 64464 | 4.7815 | sw_core_mp_ytp_v_ |
| 62628 | 4.6453 | __divq.L |
| 25831 | 1.916 | __asinq.L |
| 21922 | 1.626 | __libm128_convert_80x2to128_chk |

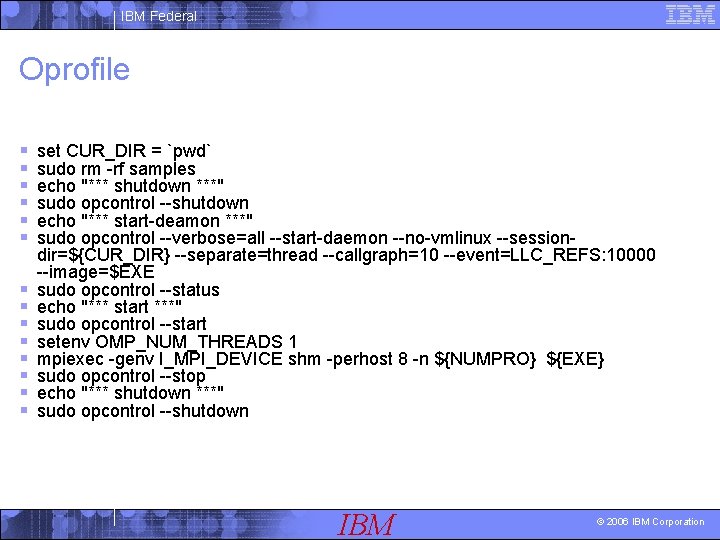

Oprofile

    set CUR_DIR = `pwd`
    sudo rm -rf samples
    echo "*** shutdown ***"
    sudo opcontrol --shutdown
    echo "*** start-daemon ***"
    sudo opcontrol --verbose=all --start-daemon --no-vmlinux --session-dir=${CUR_DIR} --separate=thread --callgraph=10 --event=LLC_REFS:10000 --image=$EXE
    sudo opcontrol --status
    echo "*** start ***"
    sudo opcontrol --start
    setenv OMP_NUM_THREADS 1
    mpiexec -genv I_MPI_DEVICE shm -perhost 8 -n ${NUMPRO} ${EXE}
    sudo opcontrol --stop
    echo "*** shutdown ***"
    sudo opcontrol --shutdown

I/O optimization

- High-performance filesystems
  - Striped disks
  - GPFS (parallel filesystem)
- MIO

MIOSTAT Statistics Collection

    set MIOSTAT = /home/kghosh/vmio/tools/bin/miostats
    $MIOSTAT -v ./c2l.x

MIO optimized code execution

    setenv MIO /home/kghosh/vmio/tools
    setenv MIO_LIBRARY_PATH $MIO"/BLD/xLinux.64/lib"
    setenv LD_PRELOAD $MIO"/BLD/xLinux.64/libTKIO.so"
    setenv TKIO_ALTLIBX "fv.x[$MIO/BLD/xLinux.64/lib/get_MIO_ptrs_64.so/abort]"
    setenv MIO_STATS "./MIO.%{PID}.stats"
    setenv MIO_FILES "*.nc [ trace/stats/mbytes | pf/cache=2g/page=2m/pref=2 | trace/stats/mbytes | async/nthread=2/naio=100/nchild=1 | trace/stats/mbytes ]"

MIO with C2L (CubeToLatLon)

- BEFORE
  - Timestamp @ Start 14:00:45, cumulative time 0.000 sec
  - Timestamp @ Stop 14:08:25, cumulative time 460.451 sec
- AFTER
  - MIO_FILES = "*.nc [ trace/stats/mbytes | pf/cache=2g/page=2m/pref=2 | trace/stats/mbytes | async/nthread=2/naio=100/nchild=1 | trace/stats/mbytes ]"
  - Timestamp @ Start 14:31:53, cumulative time 0.004 sec
  - Timestamp @ Stop 14:34:04, cumulative time 130.618 sec

GPFS I/O

- Timestamp @ Start 10:14:44, cumulative time 0.012 sec
- Timestamp @ Stop 10:15:12, cumulative time 27.835 sec

Non-invasive MPI Trace Tool from IBM

- No recompile needed (relink only)
- Uses the PMPI layer
- mpiifort $(LDFLAGS) libmpi_trace.a *.o -o a.out

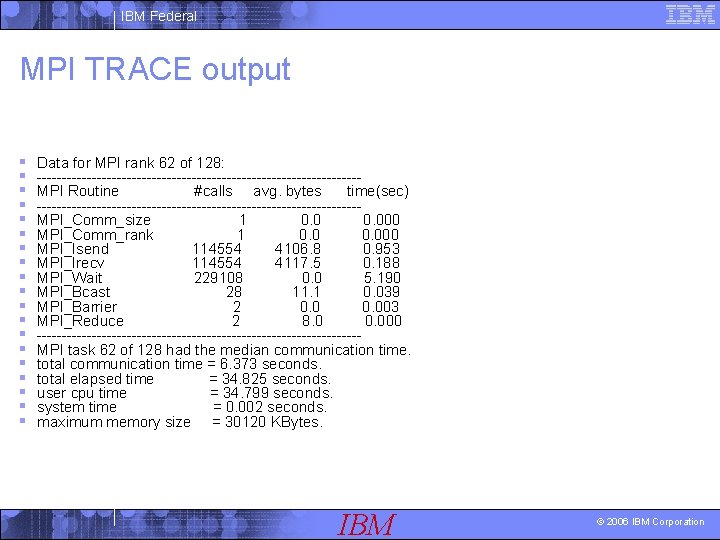

MPI TRACE output

Data for MPI rank 62 of 128:

| MPI Routine | #calls | avg. bytes | time (sec) |
|---|---|---|---|
| MPI_Comm_size | 1 | | 0.000 |
| MPI_Comm_rank | 1 | | 0.000 |
| MPI_Isend | 114554 | 4106.8 | 0.953 |
| MPI_Irecv | 114554 | 4117.5 | 0.188 |
| MPI_Wait | 229108 | 0.0 | 5.190 |
| MPI_Bcast | 28 | 11.1 | 0.039 |
| MPI_Barrier | 2 | | 0.003 |
| MPI_Reduce | 2 | 8.0 | 0.000 |

- MPI task 62 of 128 had the median communication time.
- total communication time = 6.373 seconds.
- total elapsed time = 34.825 seconds.
- user cpu time = 34.799 seconds.
- system time = 0.002 seconds.
- maximum memory size = 30120 KBytes.
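A quick way to read this output is to check that the per-routine times add up to the reported communication time and to see which routine dominates. The sketch below uses only the numbers from the trace above; the variable names are illustrative.

```python
# Per-routine times (seconds) for MPI rank 62, copied from the trace output.
ROUTINE_TIME_S = {
    "MPI_Comm_size": 0.000,
    "MPI_Comm_rank": 0.000,
    "MPI_Isend": 0.953,
    "MPI_Irecv": 0.188,
    "MPI_Wait": 5.190,
    "MPI_Bcast": 0.039,
    "MPI_Barrier": 0.003,
    "MPI_Reduce": 0.000,
}
ELAPSED_S = 34.825

comm_total = sum(ROUTINE_TIME_S.values())
comm_fraction = comm_total / ELAPSED_S
dominant = max(ROUTINE_TIME_S, key=ROUTINE_TIME_S.get)
dominant_share = ROUTINE_TIME_S[dominant] / comm_total

if __name__ == "__main__":
    print(f"communication: {comm_total:.3f} s ({comm_fraction:.1%} of elapsed)")
    print(f"dominant routine: {dominant} ({dominant_share:.1%} of communication)")
```

For this rank, roughly 18% of elapsed time is communication, and most of that is spent in MPI_Wait, i.e. waiting for the non-blocking sends and receives to complete.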

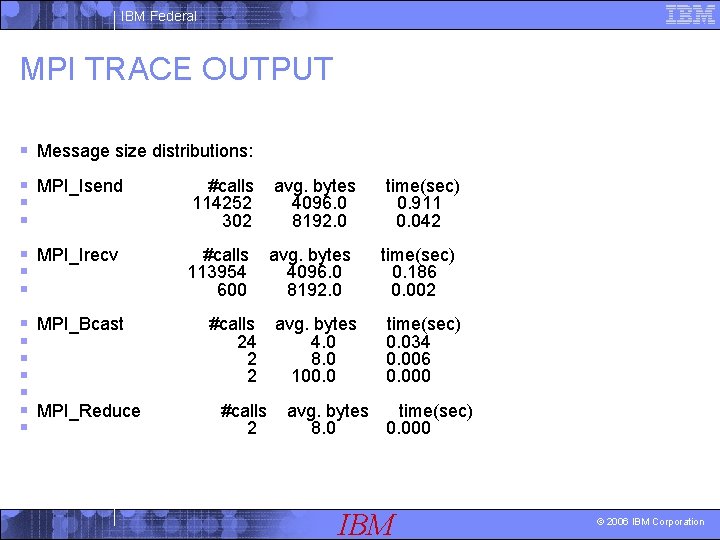

MPI TRACE OUTPUT

Message size distributions:

| MPI Routine | #calls | avg. bytes | time (sec) |
|---|---|---|---|
| MPI_Isend | 114252 | 4096.0 | 0.911 |
| MPI_Isend | 302 | 8192.0 | 0.042 |
| MPI_Irecv | 113954 | 4096.0 | 0.186 |
| MPI_Irecv | 600 | 8192.0 | 0.002 |
| MPI_Bcast | 24 | 4.0 | 0.034 |
| MPI_Bcast | 2 | 8.0 | 0.006 |
| MPI_Bcast | 2 | 100.0 | 0.000 |
| MPI_Reduce | 2 | 8.0 | 0.000 |

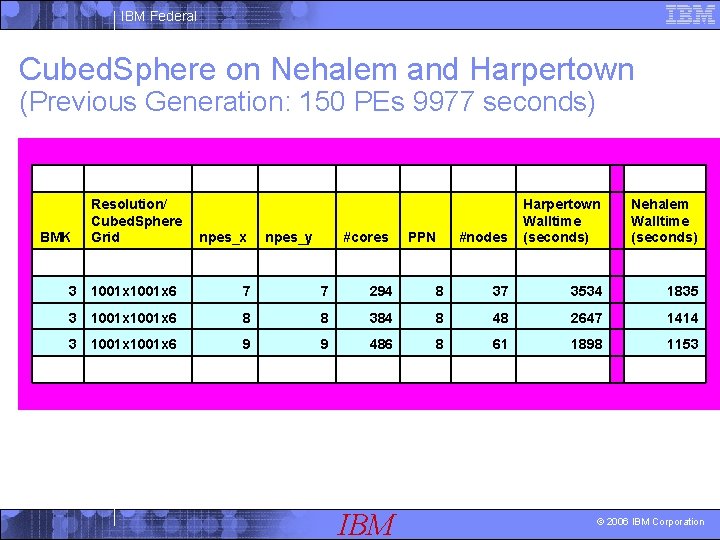

CubedSphere on Nehalem and Harpertown (Previous Generation: 150 PEs, 9977 seconds)

| BMK | Resolution / CubedSphere grid | npes_x | npes_y | #cores | PPN | #nodes | Harpertown walltime (seconds) | Nehalem walltime (seconds) |
|---|---|---|---|---|---|---|---|---|
| 3 | 1001x6 | 7 | 7 | 294 | 8 | 37 | 3534 | 1835 |
| 3 | 1001x6 | 8 | 8 | 384 | 8 | 48 | 2647 | 1414 |
| 3 | 1001x6 | 9 | 9 | 486 | 8 | 61 | 1898 | 1153 |
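The table can be summarized as a per-configuration Nehalem-over-Harpertown speedup. The sketch below also recomputes the core count as npes_x * npes_y * 6, on the assumption that the factor 6 corresponds to the six faces of the cubed-sphere grid; names are illustrative.

```python
# (npes_x, npes_y, Harpertown walltime s, Nehalem walltime s) from the table
RESULTS = [(7, 7, 3534, 1835), (8, 8, 2647, 1414), (9, 9, 1898, 1153)]
FACES = 6  # assumed: one npes_x by npes_y decomposition per cube face


def summarize(npes_x, npes_y, htn_s, nhm_s):
    """Return (core count, Nehalem speedup over Harpertown)."""
    cores = npes_x * npes_y * FACES
    return cores, htn_s / nhm_s


if __name__ == "__main__":
    for row in RESULTS:
        cores, speedup = summarize(*row)
        print(f"{cores} cores: Nehalem is {speedup:.2f}x faster than Harpertown")
```

The speedup narrows from about 1.9x at 294 cores to about 1.6x at 486 cores in these runs.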
