Genetic Algorithms for clustering problem

Pasi Fränti, 7.4.2016

General structure

Genetic Algorithm:
  Generate S initial solutions
  REPEAT Z iterations
    Select best solutions
    Create new solutions by crossover
    Mutate solutions
  END-REPEAT
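The loop above can be sketched in Python as follows. This is a minimal illustration, not the author's implementation: the function names (`init`, `fitness`, `crossover`, `mutate`), the choice to keep the better half of the population as parents, and the toy problem (minimizing a sum of squares) are all assumptions made for the example.

```python
import random

def genetic_algorithm(init, fitness, crossover, mutate, S=10, Z=20, seed=0):
    """Generic GA loop; lower fitness is better (e.g. distortion)."""
    rng = random.Random(seed)
    # Generate S initial solutions.
    population = [init(rng) for _ in range(S)]
    for _ in range(Z):
        # Select best solutions: keep the better half as parents (assumption).
        population.sort(key=fitness)
        parents = population[:max(2, S // 2)]
        # Create new solutions by crossover, then mutate them.
        children = []
        while len(children) < S - 1:
            a, b = rng.sample(parents, 2)
            children.append(mutate(crossover(a, b, rng), rng))
        # Carry the best solution over unchanged so quality never degrades.
        population = [parents[0]] + children
    return min(population, key=fitness)

# Hypothetical toy problem: minimize the sum of squares of a 3-vector.
def init(rng):
    return [rng.uniform(-5, 5) for _ in range(3)]

def fitness(x):
    return sum(v * v for v in x)

def crossover(a, b, rng):
    return [rng.choice(pair) for pair in zip(a, b)]

def mutate(x, rng):
    return [v + rng.gauss(0, 0.1) for v in x]

best = genetic_algorithm(init, fitness, crossover, mutate)
```

Because the best parent is copied into the next generation, the returned solution is never worse than the best initial one.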

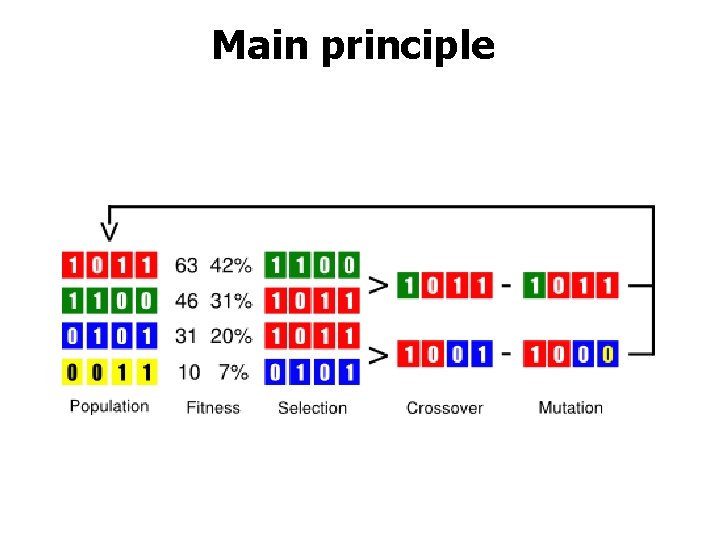

Main principle

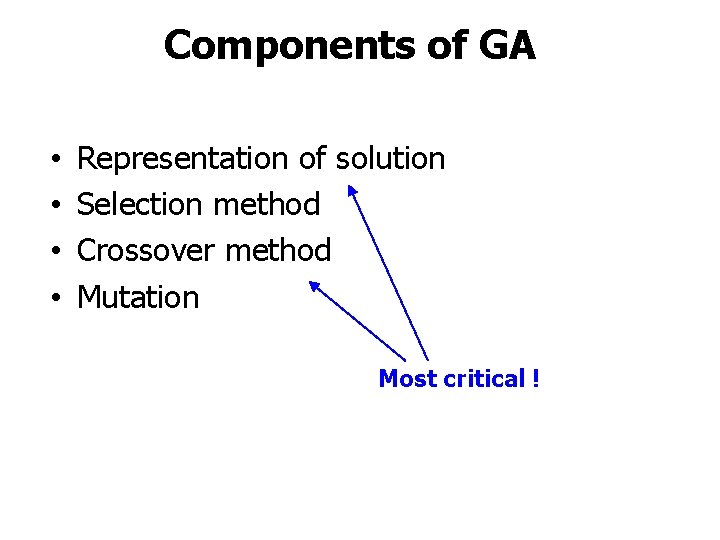

Components of GA
• Representation of solution
• Selection method
• Crossover method (most critical!)
• Mutation

Representation

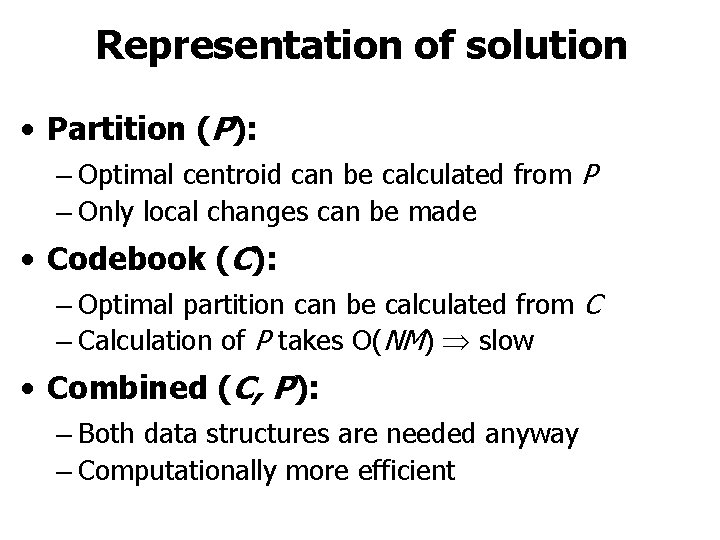

Representation of solution
• Partition (P):
  – Optimal centroids can be calculated from P
  – Only local changes can be made
• Codebook (C):
  – Optimal partition can be calculated from C
  – Calculating P takes O(NM) time, which is slow
• Combined (C, P):
  – Both data structures are needed anyway
  – Computationally more efficient
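The two conversions between representations can be sketched as below. This is a plain-Python illustration under the usual squared Euclidean distance; the function names and the tiny data set are hypothetical.

```python
def sq_dist(x, c):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(x, c))

def optimal_partition(data, codebook):
    """Map each of the N vectors to its nearest of M centroids: O(NM)."""
    return [min(range(len(codebook)), key=lambda j: sq_dist(x, codebook[j]))
            for x in data]

def optimal_codebook(data, partition, M):
    """Centroid (mean) of each cluster; an empty cluster yields None."""
    dim = len(data[0])
    sums = [[0.0] * dim for _ in range(M)]
    counts = [0] * M
    for x, j in zip(data, partition):
        counts[j] += 1
        for k in range(dim):
            sums[j][k] += x[k]
    return [tuple(s / n for s in row) if n else None
            for row, n in zip(sums, counts)]

data = [(0.0, 0.0), (0.0, 2.0), (10.0, 10.0), (12.0, 10.0)]
P = optimal_partition(data, [(1.0, 1.0), (9.0, 9.0)])  # P from C
C = optimal_codebook(data, P, 2)                       # C from P
```

Applying the two functions alternately is exactly one iteration of K-means.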

Selection method
• To select which solutions will be used in crossover for generating new solutions
• Main principle: good solutions should be used rather than weak solutions
• Two main strategies:
  – Roulette wheel selection
  – Elitist selection
• Exact implementation not so important

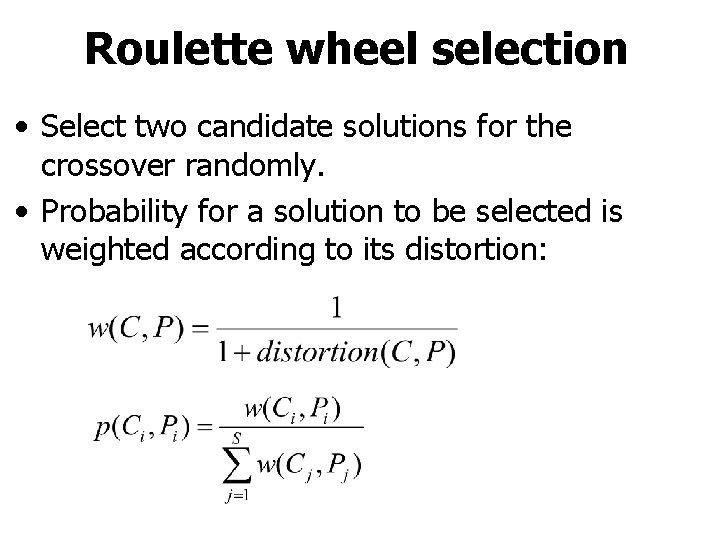

Roulette wheel selection
• Select two candidate solutions for the crossover randomly.
• The probability for a solution to be selected is weighted according to its distortion:

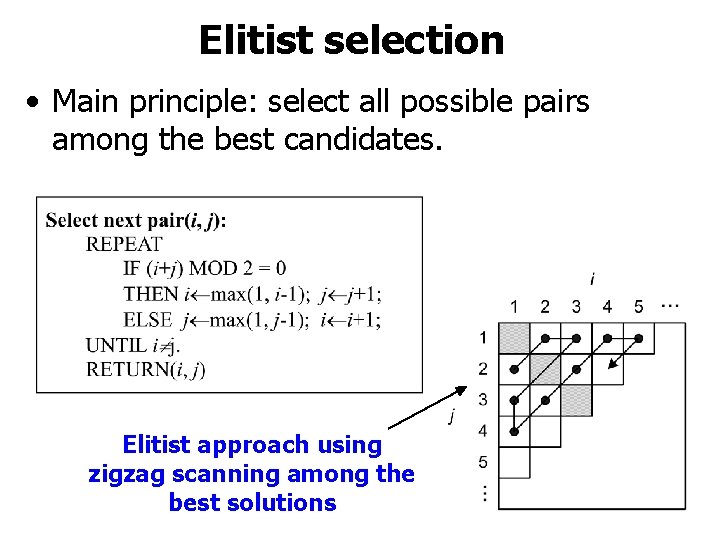
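A minimal sketch of roulette wheel selection follows. The exact weighting formula is in the slide image and is not reproduced in the text, so the weight 1/(1 + d) for a solution with distortion d is an assumption here; the function name is hypothetical.

```python
import random

def roulette_select(distortions, rng):
    """Pick two distinct solution indices; lower distortion, higher chance."""
    # Assumed weighting: w_i = 1 / (1 + d_i), normalized implicitly by choices().
    weights = [1.0 / (1.0 + d) for d in distortions]
    indices = range(len(weights))
    first = rng.choices(indices, weights=weights)[0]
    # Exclude the first pick so the two parents differ.
    rest = [i for i in indices if i != first]
    second = rng.choices(rest, weights=[weights[i] for i in rest])[0]
    return first, second

pair = roulette_select([3.0, 1.0, 2.0], random.Random(1))
```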

Elitist selection
• Main principle: select all possible pairs among the best candidates.

Figure: elitist approach using zigzag scanning among the best solutions.
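The pairing among the best candidates can be sketched as below. The function name and the `n_best` parameter are hypothetical, and the pairs are listed in plain combination order: the zigzag scanning order from the figure is not reproduced.

```python
from itertools import combinations

def elitist_pairs(distortions, n_best):
    """All index pairs among the n_best lowest-distortion solutions."""
    ranked = sorted(range(len(distortions)), key=lambda i: distortions[i])
    return list(combinations(ranked[:n_best], 2))

# Solutions 1, 2 and 0 are the three best; solution 3 is never used.
pairs = elitist_pairs([5.0, 1.0, 3.0, 9.0], n_best=3)
```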

Crossover

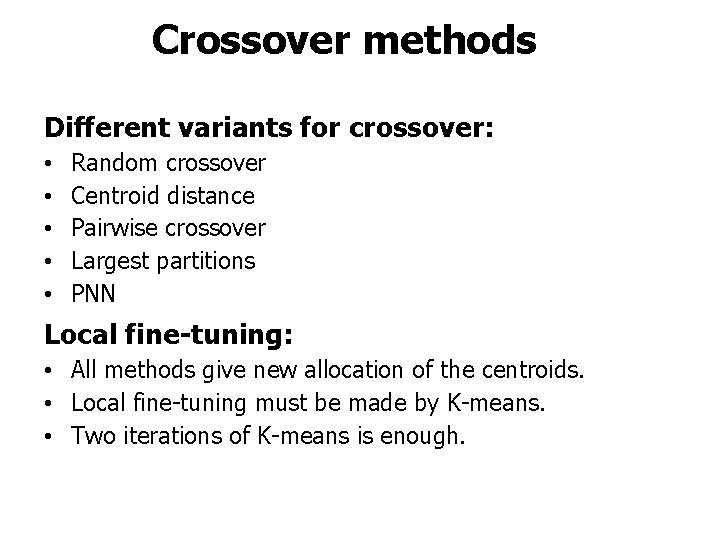

Crossover methods

Different variants for crossover:
• Random crossover
• Centroid distance
• Pairwise crossover
• Largest partitions
• PNN

Local fine-tuning:
• All methods give a new allocation of the centroids.
• Local fine-tuning must be made by K-means.
• Two iterations of K-means are enough.

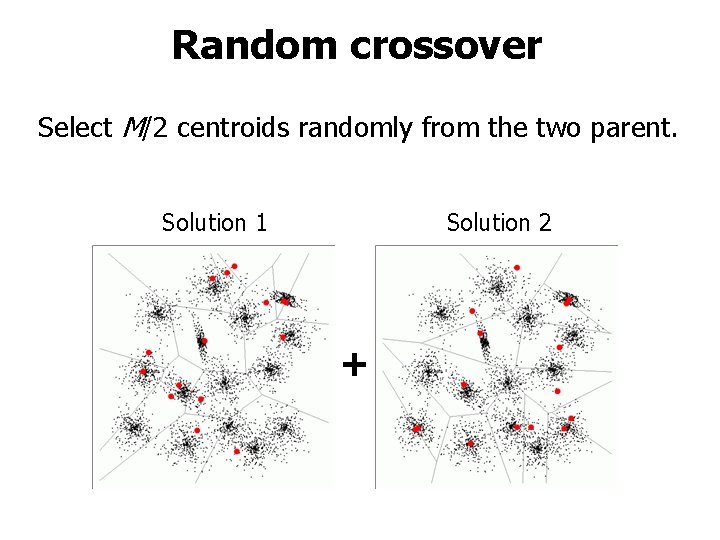

Random crossover

Select M/2 centroids randomly from each of the two parents: Solution 1 + Solution 2.

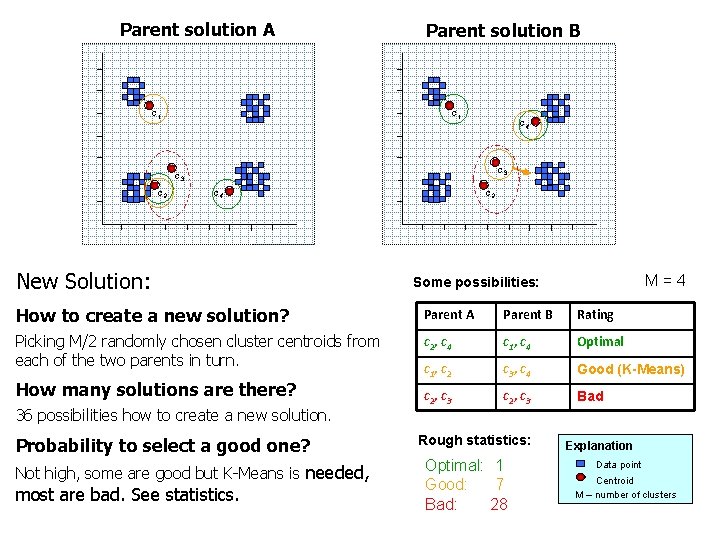

Example with parent solutions A and B (M = 4)

How to create a new solution? Pick M/2 randomly chosen cluster centroids from each of the two parents in turn.

Some possibilities (picks from parent A + picks from parent B: rating):
• c2, c4 + c1, c4: optimal
• c1, c2 + c3, c4: good (K-means needed)
• c2, c3: bad

How many solutions are there? There are 36 possibilities for creating a new solution.

Probability of selecting a good one? Not high: some are good but need K-means, and most are bad.

Rough statistics: optimal: 1, good: 7, bad: 28.

Legend: data point; centroid; M is the number of clusters.

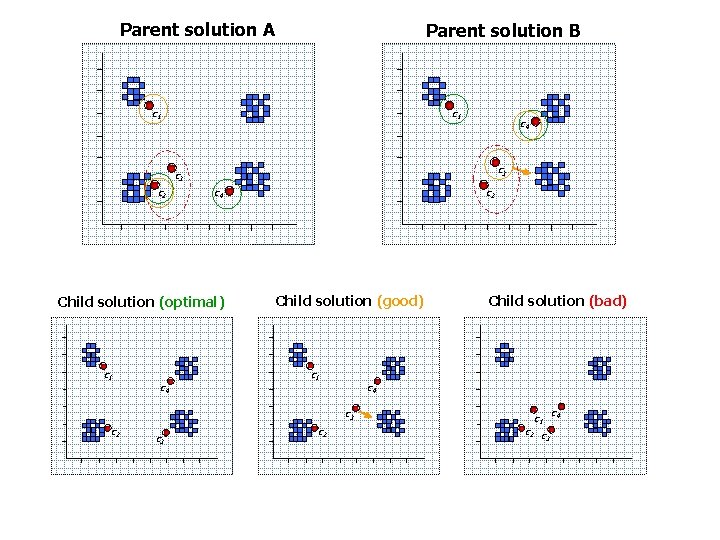

Figure: parent solutions A and B with three example child solutions: optimal, good, and bad.
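Random crossover and the count of 36 possible children can be sketched as follows. The centroid coordinates are made up for illustration, and M is assumed to be even.

```python
import random
from math import comb

def random_crossover(parent_a, parent_b, rng):
    """Take M/2 randomly chosen centroids from each parent (M assumed even)."""
    half = len(parent_a) // 2
    return rng.sample(parent_a, half) + rng.sample(parent_b, half)

# Hypothetical parent codebooks with M = 4 centroids each.
A = [(1.0, 1.0), (2.0, 5.0), (4.0, 2.0), (8.0, 6.0)]
B = [(1.5, 1.0), (2.0, 6.0), (5.0, 2.0), (8.0, 5.0)]
child = random_crossover(A, B, random.Random(0))

# Choosing 2 of 4 centroids from each parent gives C(4,2) * C(4,2) children.
M = 4
n_children = comb(M, M // 2) ** 2
```

With M = 4 this gives 6 × 6 = 36 combinations, matching the count in the example.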

![Centroid distance crossover](http://slidetodoc.com/presentation_image/023037dfb6e8a20a8004fb170ba724ae/image-15.jpg)

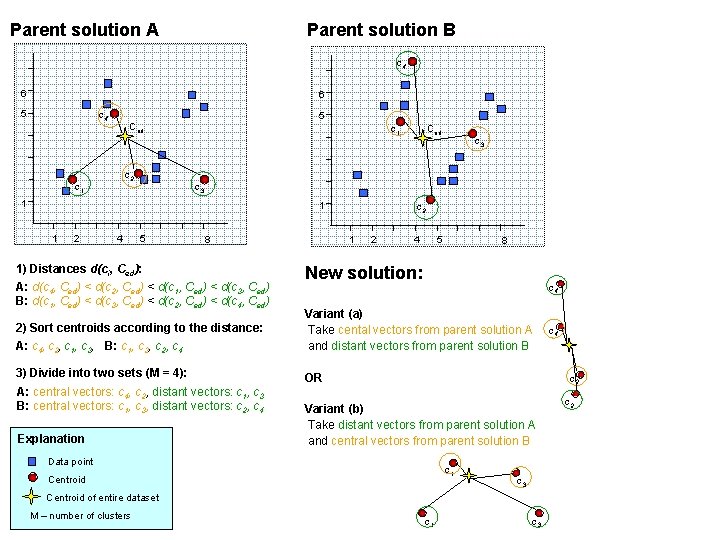

Centroid distance crossover [Pan, McInnes, Jack, 1995: Electronics Letters] [Scheunders, 1996: Pattern Recognition Letters]
• For each centroid, calculate its distance to the center point of the entire data set.
• Sort the centroids according to the distance.
• Divide into two sets: central vectors (M/2 closest) and distant vectors (M/2 furthest).
• Take central vectors from one codebook and distant vectors from the other.
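The four steps above can be sketched as below. Only one direction is shown (central vectors from A, distant vectors from B); the function name and the coordinates are hypothetical.

```python
def sq_dist(x, c):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(x, c))

def centroid_distance_crossover(parent_a, parent_b, data_center):
    """Central vectors (closest half) from A, distant vectors from B."""
    half = len(parent_a) // 2
    # Sort each codebook by distance to the center of the whole data set.
    a_sorted = sorted(parent_a, key=lambda c: sq_dist(c, data_center))
    b_sorted = sorted(parent_b, key=lambda c: sq_dist(c, data_center))
    return a_sorted[:half] + b_sorted[half:]

# Made-up codebooks; the data set center is placed at the origin.
A = [(5.0, 0.0), (1.0, 0.0), (3.0, 0.0), (2.0, 0.0)]
B = [(0.0, 4.0), (0.0, 1.0), (0.0, 6.0), (0.0, 2.0)]
child = centroid_distance_crossover(A, B, (0.0, 0.0))
```

Swapping the two arguments gives the other direction (central vectors from B, distant vectors from A).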

Example with parent solutions A and B (M = 4)

1) Distances d(ci, Ced):
   A: d(c4, Ced) < d(c2, Ced) < d(c1, Ced) < d(c3, Ced)
   B: d(c1, Ced) < d(c3, Ced) < d(c2, Ced) < d(c4, Ced)
2) Sort centroids according to the distance:
   A: c4, c2, c1, c3
   B: c1, c3, c2, c4
3) Divide into two sets (M = 4):
   A: central vectors: c4, c2; distant vectors: c1, c3
   B: central vectors: c1, c3; distant vectors: c2, c4

New solution:
• Variant (a): take central vectors from parent solution A and distant vectors from parent solution B, OR
• Variant (b): take distant vectors from parent solution A and central vectors from parent solution B

Legend: data point; centroid; Ced is the centroid of the entire dataset; M is the number of clusters.

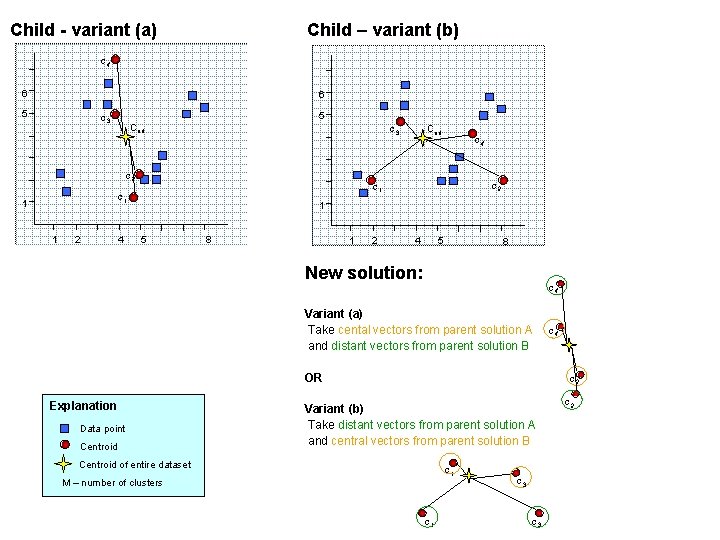

Figure: child solutions for variant (a) (central vectors from parent solution A, distant vectors from parent solution B) and variant (b) (distant vectors from parent solution A, central vectors from parent solution B).

![Pairwise crossover](http://slidetodoc.com/presentation_image/023037dfb6e8a20a8004fb170ba724ae/image-18.jpg)

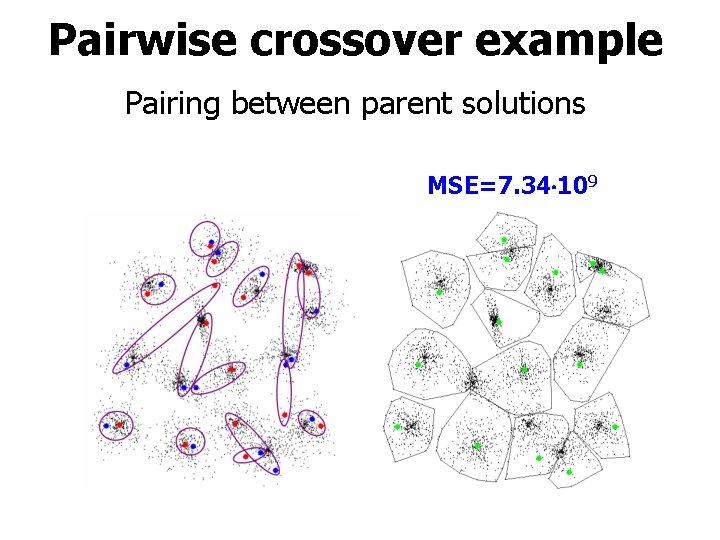

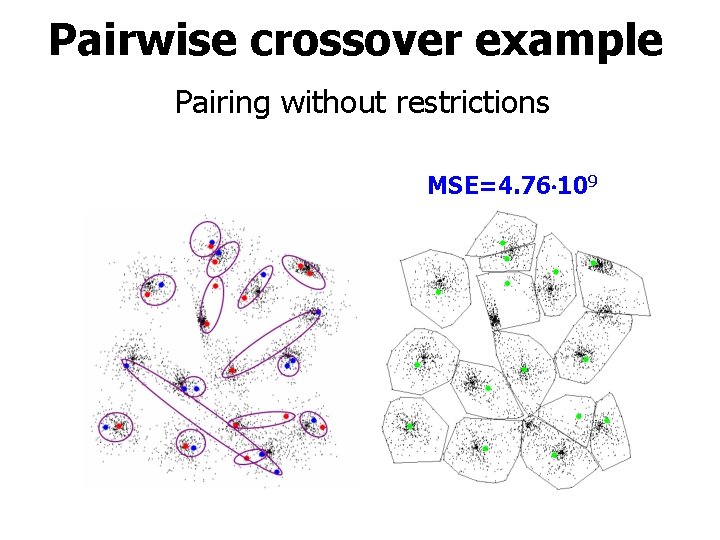

Pairwise crossover [Fränti et al, 1997: Computer Journal]

Greedy approach:
• For each centroid, find its nearest centroid in the other parent solution that is not yet used.
• From each pair, select one of the two centroids randomly.

Small improvement:
• No reason to consider the parents as separate solutions.
• Take the union of all centroids.
• Make the pairing independent of parent.
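The greedy pairing between parents can be sketched as follows. This covers only the basic variant (pairs always span the two parents), not the improved pairing over the union; processing parent A in list order is an assumption, and the names and coordinates are hypothetical.

```python
import random

def sq_dist(x, c):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(x, c))

def pairwise_crossover(parent_a, parent_b, rng):
    """Pair each centroid of A with its nearest unused centroid of B,
    then keep one centroid of each pair at random."""
    unused = list(parent_b)
    child = []
    for ca in parent_a:
        cb = min(unused, key=lambda c: sq_dist(ca, c))
        unused.remove(cb)  # each centroid of B is paired only once
        child.append(rng.choice([ca, cb]))
    return child

A = [(0.0, 0.0), (10.0, 10.0)]
B = [(9.0, 9.0), (1.0, 1.0)]
child = pairwise_crossover(A, B, random.Random(0))
```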

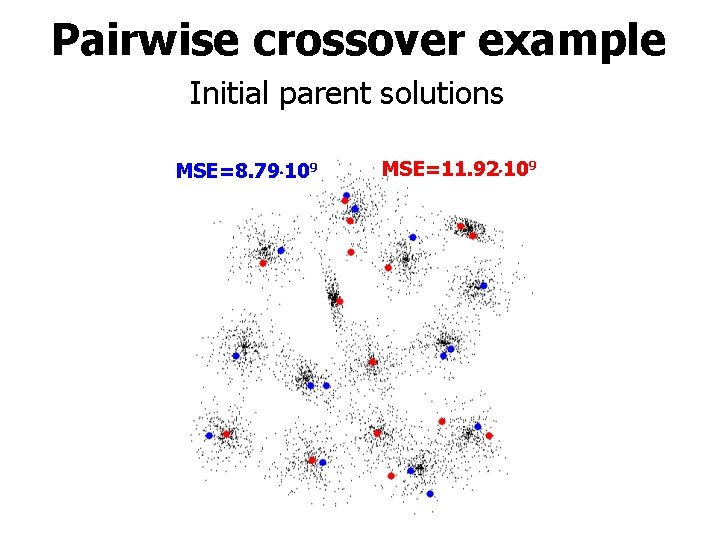

Pairwise crossover example
• Initial parent solutions: MSE = 8.79 × 10^9 and MSE = 11.92 × 10^9
• Pairing between parent solutions: MSE = 7.34 × 10^9
• Pairing without restrictions: MSE = 4.76 × 10^9

![Largest partitions](http://slidetodoc.com/presentation_image/023037dfb6e8a20a8004fb170ba724ae/image-22.jpg)

Largest partitions [Fränti et al, 1997: Computer Journal]

Crossover algorithm:
• Each cluster in the solutions A and B is assigned a number, the cluster size S, indicating how many data objects belong to it.
• In each phase we pick the centroid of the largest cluster.
• Assume that cluster i was chosen from A. The cluster centroid Ci is removed from A to avoid its reselection.
• For the same reason we update the cluster sizes of B by removing the effect of those data objects in B that were assigned to the chosen cluster i in A.
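A sketch of the largest partitions crossover is given below. It tracks which data objects are already covered by a chosen cluster and counts only uncovered objects when comparing sizes. The function name, the tie-breaking rule (first maximum wins) and the example values are assumptions.

```python
def largest_partitions_crossover(cent_a, part_a, cent_b, part_b):
    """cent_*: list of M centroids; part_*: cluster index of each data object."""
    M = len(cent_a)
    parents = [(cent_a, part_a), (cent_b, part_b)]
    available = sorted((p, j) for p in range(2) for j in range(M))
    covered = set()  # data objects already represented in the child

    def size(key):
        # Effective cluster size: members not yet covered by a chosen cluster.
        p, j = key
        return sum(1 for i, c in enumerate(parents[p][1])
                   if c == j and i not in covered)

    child = []
    for _ in range(M):
        p, j = max(available, key=size)  # largest remaining cluster
        child.append(parents[p][0][j])
        available.remove((p, j))         # avoid reselecting this centroid
        covered.update(i for i, c in enumerate(parents[p][1]) if c == j)
    return child

# Hypothetical example with 6 data objects and M = 2.
cent_a, part_a = [(1.0, 1.0), (8.0, 8.0)], [0, 0, 0, 0, 1, 1]
cent_b, part_b = [(0.0, 0.0), (5.0, 5.0)], [0, 1, 1, 1, 1, 1]
child = largest_partitions_crossover(cent_a, part_a, cent_b, part_b)
```

Here the size-5 cluster of B is picked first; after that, the size-4 cluster of A counts as size 1 because three of its objects are already covered.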

![Largest partitions example](http://slidetodoc.com/presentation_image/023037dfb6e8a20a8004fb170ba724ae/image-23.jpg)

Largest partitions [Fränti et al, 1997: Computer Journal]

Figure: parent solutions A and B with cluster sizes marked (S = 50, S = 30, S = 100, S = 100, S = 20) and centroid c1 labeled. Legend: data point; centroid.

![PNN crossover for GA](http://slidetodoc.com/presentation_image/023037dfb6e8a20a8004fb170ba724ae/image-24.jpg)

PNN crossover for GA [Fränti et al, 1997: The Computer Journal]

Figure: the two initial solutions (Initial 1, Initial 2) are combined by union (Combined), and PNN is then applied to the union (After PNN).
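The union-then-PNN idea can be sketched as below. The merge cost n_a·n_b/(n_a + n_b)·‖c_a − c_b‖² is the standard PNN cost. Taking the cluster sizes directly from the parents, rather than recomputing the partition of the union, is a simplification made for this example, and the names are hypothetical.

```python
def sq_dist(x, c):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(x, c))

def pnn_crossover(cent_a, sizes_a, cent_b, sizes_b):
    """Union of both codebooks, then PNN merges until M centroids remain."""
    M = len(cent_a)
    clusters = list(zip(cent_a + cent_b, sizes_a + sizes_b))
    while len(clusters) > M:
        best = None
        # Exhaustive search for the cheapest pair to merge.
        for i in range(len(clusters)):
            for j in range(i + 1, len(clusters)):
                (ci, ni), (cj, nj) = clusters[i], clusters[j]
                cost = ni * nj / (ni + nj) * sq_dist(ci, cj)
                if best is None or cost < best[0]:
                    best = (cost, i, j)
        _, i, j = best
        (ci, ni), (cj, nj) = clusters[i], clusters[j]
        # The merged centroid is the size-weighted mean of the two.
        merged = tuple((ni * a + nj * b) / (ni + nj) for a, b in zip(ci, cj))
        clusters[i] = (merged, ni + nj)
        del clusters[j]
    return [c for c, _ in clusters]

child = pnn_crossover([(0.0, 0.0), (10.0, 0.0)], [5, 5],
                      [(1.0, 0.0), (11.0, 0.0)], [5, 5])
```

The crossover is deterministic: the same two parents always give the same child.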

![The PNN crossover method (1)](http://slidetodoc.com/presentation_image/023037dfb6e8a20a8004fb170ba724ae/image-25.jpg)

The PNN crossover method (1) [Fränti, 2000: Pattern Recognition Letters]

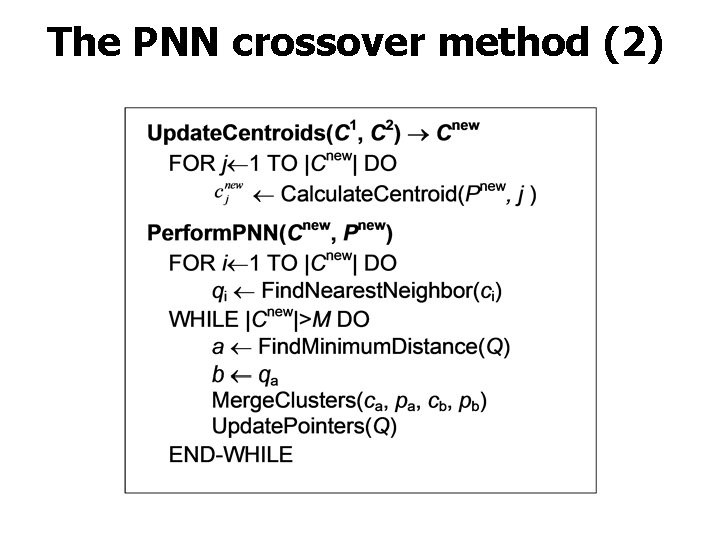

The PNN crossover method (2)

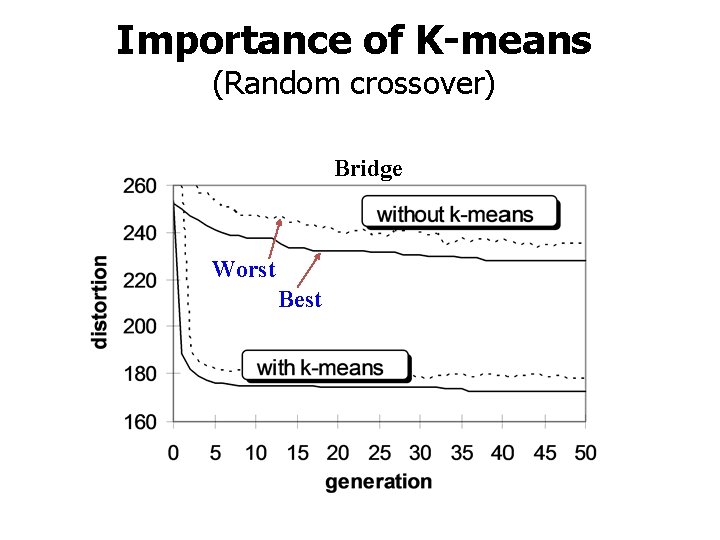

Importance of K-means (random crossover)

Figure: Bridge data set, with results labeled Worst and Best.

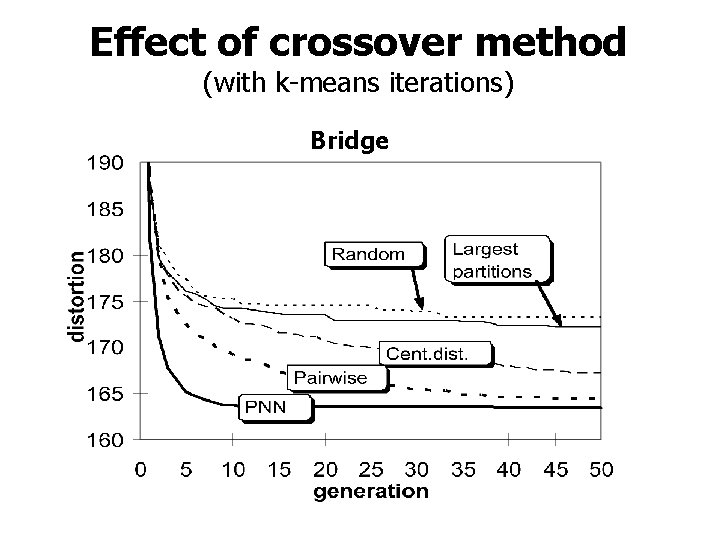

Effect of crossover method (with k-means iterations): Bridge

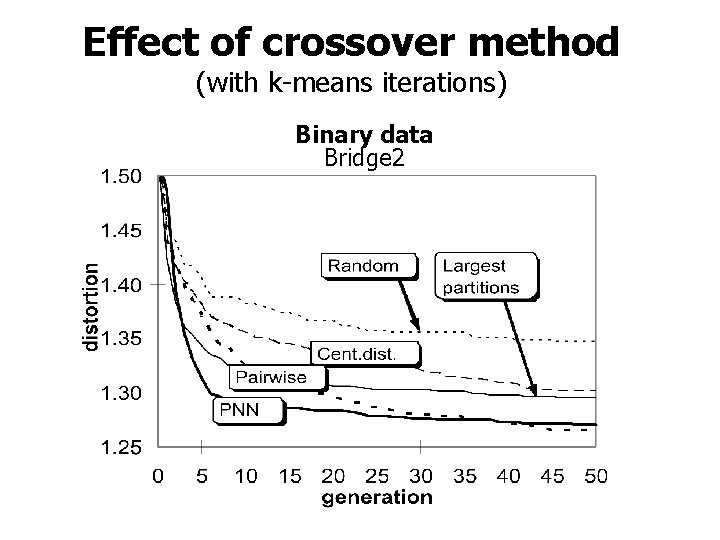

Effect of crossover method (with k-means iterations): binary data (Bridge 2)

Mutations

Mutations
• Purpose is to implement small random changes to the solutions.
• Happens with a small probability.
• Sensible approach: change the location of one centroid by a random swap!
• Role of mutations is to simulate local search.
• If mutations are needed, the crossover method is not very good.
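A random swap mutation can be sketched as follows, assuming the swap replaces one randomly chosen centroid with a randomly chosen data vector. The function name and the example values are hypothetical.

```python
import random

def random_swap(codebook, data, rng):
    """Replace one randomly chosen centroid with a randomly chosen data vector."""
    mutated = list(codebook)  # copy, so the parent solution is left intact
    mutated[rng.randrange(len(mutated))] = rng.choice(data)
    return mutated

codebook = [(0.0, 0.0), (5.0, 5.0)]
data = [(1.0, 1.0), (2.0, 2.0), (9.0, 9.0)]
mutated = random_swap(codebook, data, random.Random(0))
```

In a GA this would be applied with a small probability per solution and followed by K-means fine-tuning.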

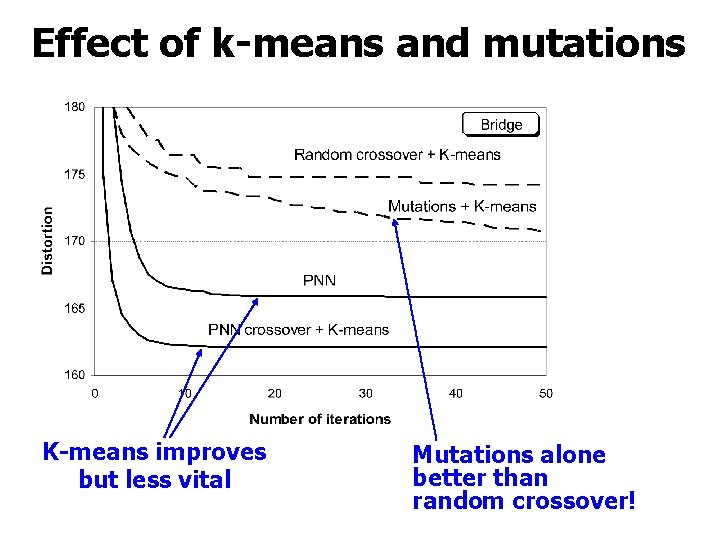

Effect of k-means and mutations
• K-means improves but is less vital
• Mutations alone are better than random crossover!

GAIS – Going extreme

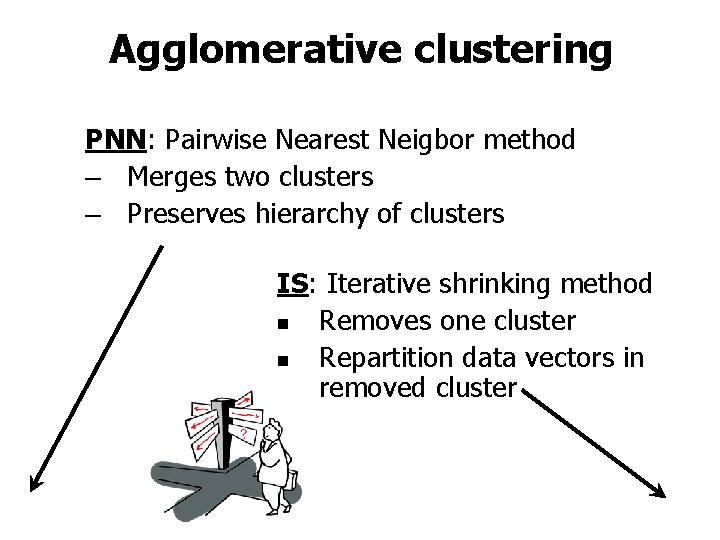

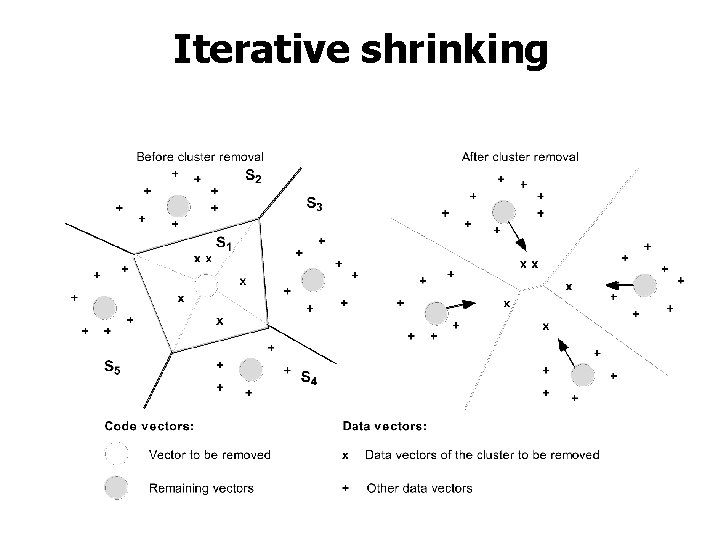

Agglomerative clustering

PNN: Pairwise Nearest Neighbor method
– Merges two clusters
– Preserves hierarchy of clusters

IS: Iterative shrinking method
– Removes one cluster
– Repartitions the data vectors of the removed cluster

Iterative shrinking

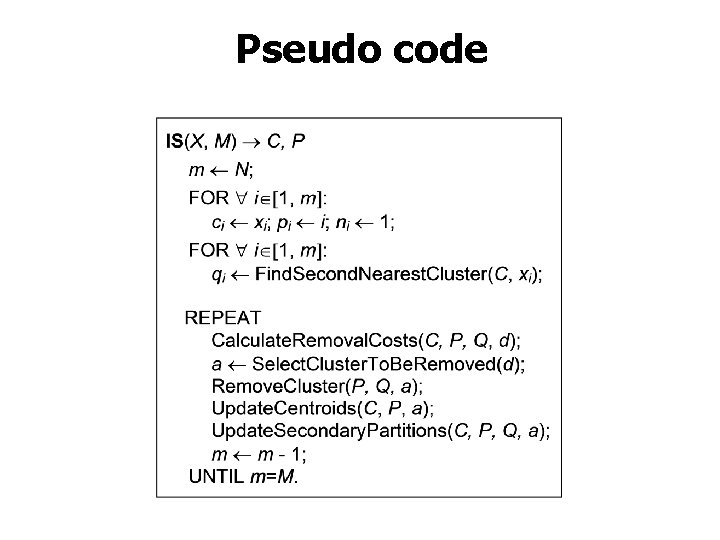

Pseudo code

Local optimization of IS
• Finding the secondary cluster:
• Removal cost of a single vector:

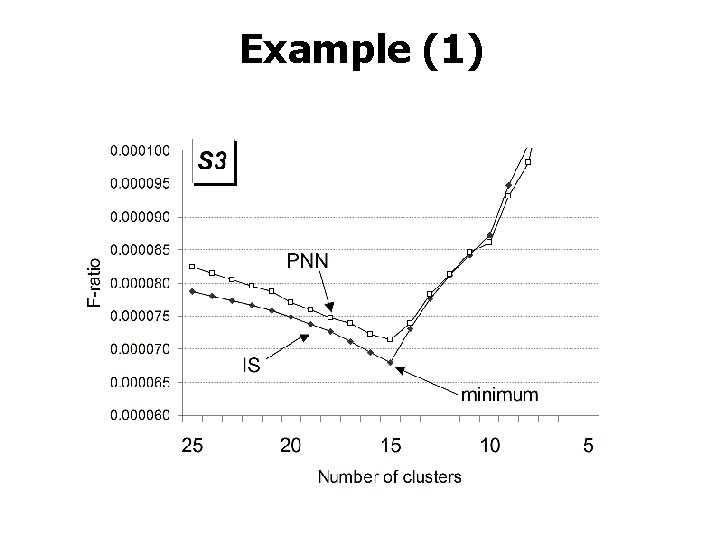

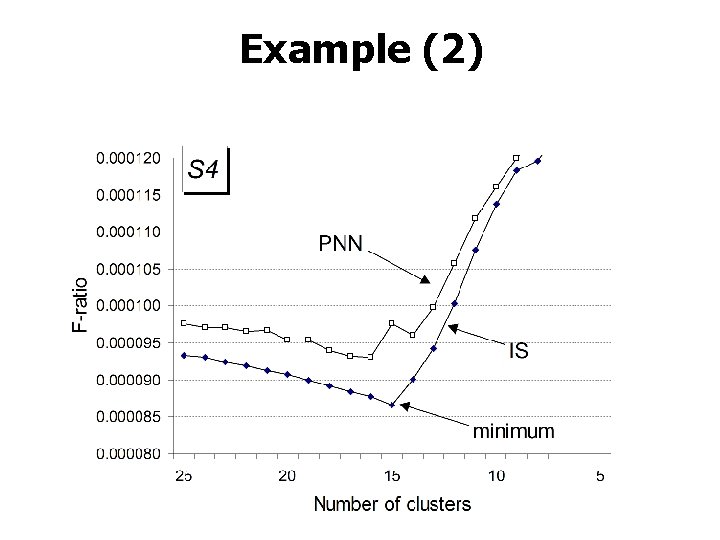
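The formulas themselves are in the slide images, so the sketch below rests on assumptions. Moving a vector x into a cluster q of size n_q increases that cluster's squared error by n_q/(n_q + 1)·‖x − c_q‖², which is a standard identity. The secondary cluster is assumed to be the one minimizing this increase, and the removal cost of a cluster is assumed to be the sum over its vectors of that increase minus the vector's current error. All names are hypothetical.

```python
def sq_dist(x, c):
    """Squared Euclidean distance between two vectors."""
    return sum((a - b) ** 2 for a, b in zip(x, c))

def removal_cost(a, data, partition, codebook, sizes):
    """Approximate distortion increase if cluster a is removed."""
    def move_cost(x, k):
        # Error increase of cluster k when x is added to it.
        return sizes[k] / (sizes[k] + 1) * sq_dist(x, codebook[k])

    cost = 0.0
    for x, j in zip(data, partition):
        if j != a:
            continue
        # Secondary cluster: cheapest other cluster to move x into (assumed).
        q = min((k for k in range(len(codebook)) if k != a),
                key=lambda k: move_cost(x, k))
        cost += move_cost(x, q) - sq_dist(x, codebook[a])
    return cost

# Hypothetical one-dimensional example with three clusters.
data = [(0.0,), (2.0,), (10.0,), (20.0,), (22.0,)]
partition = [0, 0, 1, 2, 2]
codebook = [(1.0,), (10.0,), (21.0,)]
sizes = [2, 1, 2]
costs = [removal_cost(a, data, partition, codebook, sizes) for a in range(3)]
cheapest = min(range(3), key=lambda a: costs[a])
```

One shrinking step would remove the cluster with the smallest cost and move its vectors to their secondary clusters.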

Example (1)

Example (2)

![Pseudo code of GAIS](http://slidetodoc.com/presentation_image/023037dfb6e8a20a8004fb170ba724ae/image-40.jpg)

Pseudo code of GAIS [Virmajoki & Fränti, 2006: Pattern Recognition]

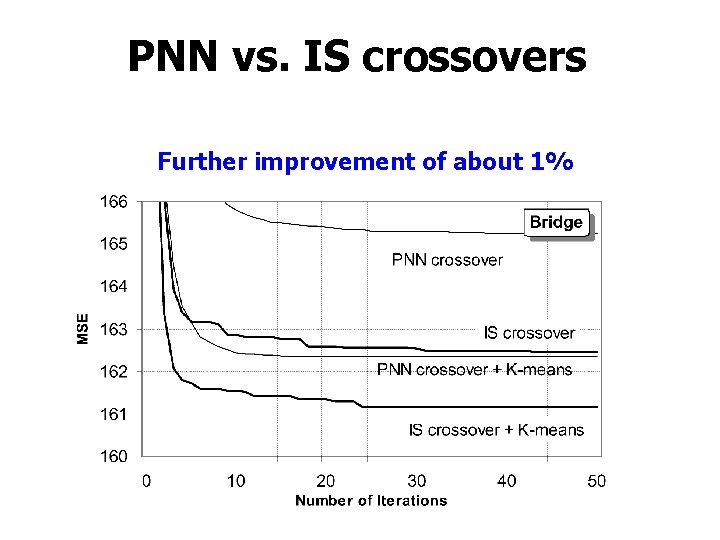

PNN vs. IS crossovers

Further improvement of about 1%.

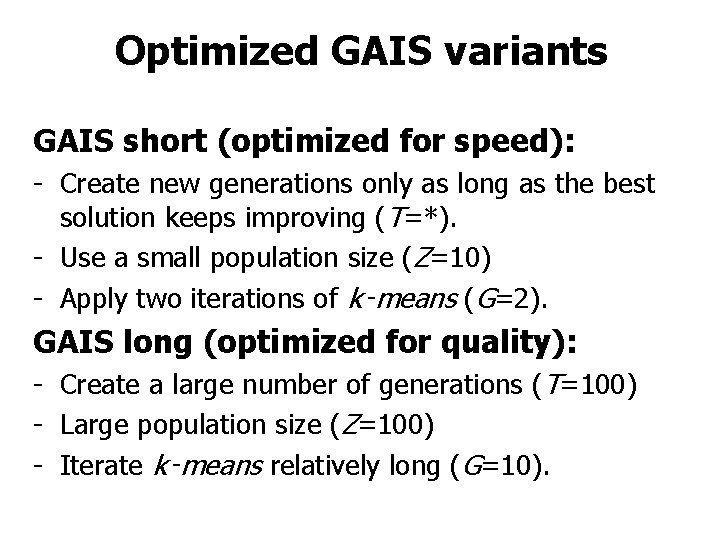

Optimized GAIS variants

GAIS short (optimized for speed):
- Create new generations only as long as the best solution keeps improving (T=*).
- Use a small population size (Z=10).
- Apply two iterations of k-means (G=2).

GAIS long (optimized for quality):
- Create a large number of generations (T=100).
- Use a large population size (Z=100).
- Iterate k-means relatively long (G=10).

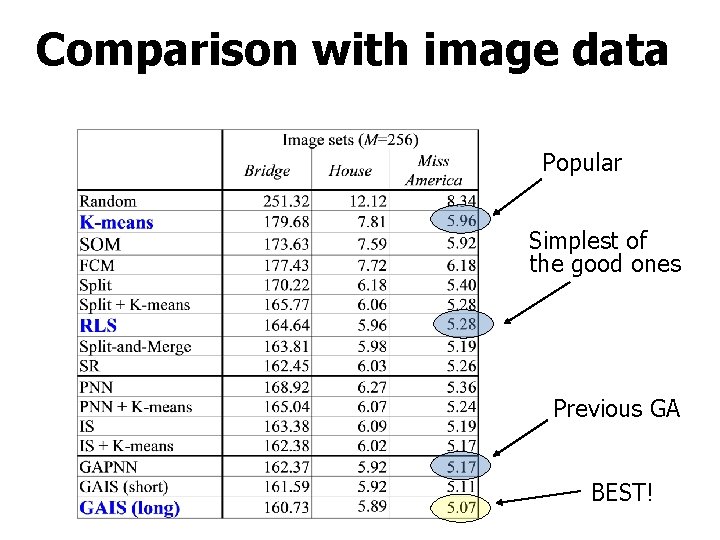

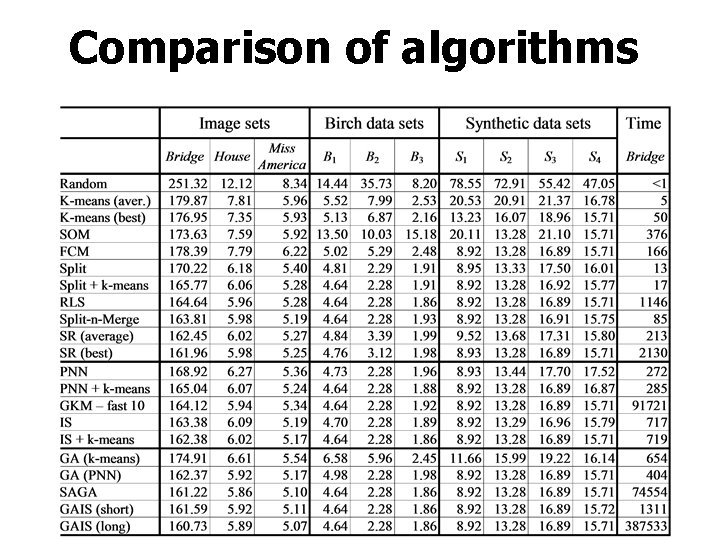

Comparison with image data

Figure annotations: Popular; Simplest of the good ones; Previous GA; BEST!

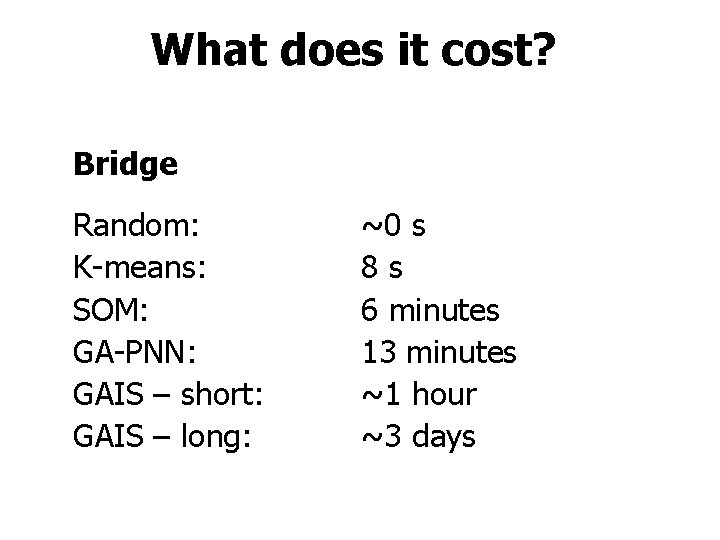

What does it cost? (Bridge)
• Random: ~0 s
• K-means: 8 s
• SOM: 6 minutes
• GA-PNN: 13 minutes
• GAIS short: ~1 hour
• GAIS long: ~3 days

Comparison of algorithms

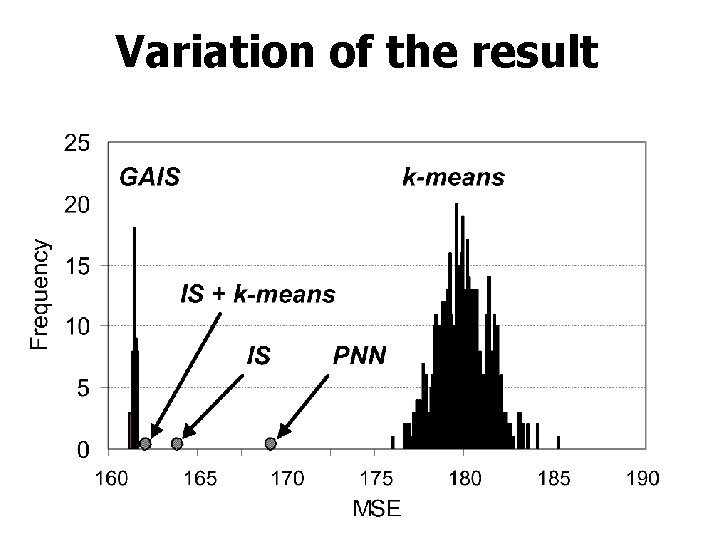

Variation of the result

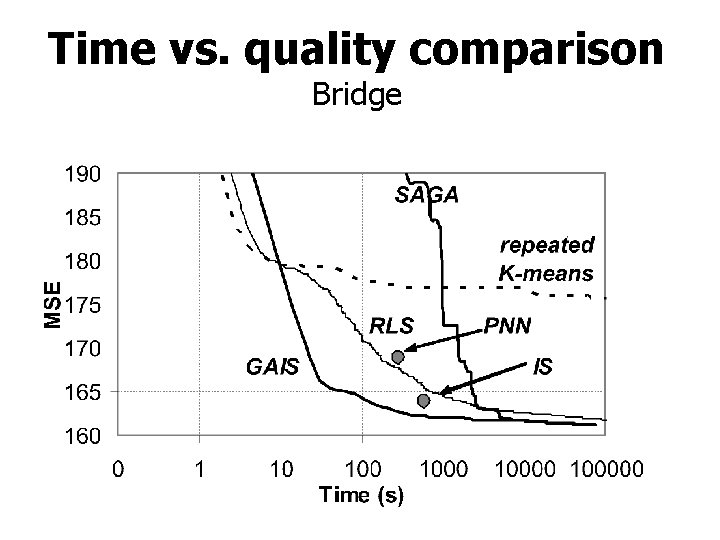

Time vs. quality comparison: Bridge

Conclusions
• Best clustering obtained by GA
• Crossover method most important
• Mutations not needed

References
1. P. Fränti and O. Virmajoki, "Iterative shrinking method for clustering problems", Pattern Recognition, 39 (5), 761-765, May 2006.
2. P. Fränti, "Genetic algorithm with deterministic crossover for vector quantization", Pattern Recognition Letters, 21 (1), 61-68, January 2000.
3. P. Fränti, J. Kivijärvi, T. Kaukoranta and O. Nevalainen, "Genetic algorithms for large scale clustering problems", The Computer Journal, 40 (9), 547-554, 1997.
4. J. Kivijärvi, P. Fränti and O. Nevalainen, "Self-adaptive genetic algorithm for clustering", Journal of Heuristics, 9 (2), 113-129, 2003.
5. J. S. Pan, F. R. McInnes and M. A. Jack, "VQ codebook design using genetic algorithms", Electronics Letters, 31, 1418-1419, August 1995.
6. P. Scheunders, "A genetic Lloyd-Max quantization algorithm", Pattern Recognition Letters, 17, 547-556, 1996.
