
Corpora and Statistical Methods Albert Gatt

In this lecture
- We have considered distributions of words and lexical variation in corpora.
- Today we consider collocations:
  - definition and characteristics
  - measures of collocational strength
  - experiments on corpora
  - hypothesis testing

Part 1 Collocations: Definition and characteristics

A motivating example
- Consider phrases such as:
  - strong tea vs. powerful tea
  - strong support vs. powerful support
  - powerful drug vs. strong drug
- Traditional semantic theories have difficulty accounting for these patterns.
  - strong and powerful seem to be near-synonyms
  - do we claim they have different senses?
  - what is the crucial difference?

The empiricist view of meaning
- Firth’s view (1957):
  - “You shall know a word by the company it keeps”
- This is a contextual view of meaning, akin to that espoused by Wittgenstein (1953).
- In the Firthian tradition, attention is paid to patterns that crop up with regularity in language.
- Contrast this with symbolic/rationalist approaches, which emphasise polysemy, componential analysis, etc.
- Statistical work on collocations tends to follow the Firthian tradition.


Defining collocations
- “Collocations … are statements of the habitual or customary places of [a] word.” (Firth 1957)
- Characteristics/expectations:
  - regular/frequently attested;
  - occur within a narrow window (a span of a few words);
  - not fully compositional;
  - non-substitutable;
  - non-modifiable;
  - display category restrictions.

Frequency and regularity
- We know that language is regular (non-random) and rule-based.
  - this aspect is emphasised by rationalist approaches to grammar
- We also need to acknowledge that frequency of usage is an important factor in language development.
  - why do big and large collocate differently with different nouns?

Regularity/frequency
- f(strong tea) > f(powerful tea)
- f(credit card) > f(credit bankruptcy)
- f(white wine) > f(yellow wine)
  - (even though white wine is actually yellowish)

Narrow window (textual proximity)
- Usually, we specify an n-gram window within which to analyse collocations:
  - bigram: credit card, credit crunch
  - trigram: credit card fraud, credit card expiry
  - …
- The idea is to look at the co-occurrence of words within a specific n-gram window.
- We can also count n-grams with intervening words:
  - federal (\w+ )*subsidy
  - matches: federal subsidy, federal farm subsidy, federal manufacturing subsidy, …
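As a minimal sketch of counting co-occurrences with intervening words, the hypothetical helper `gapped_matches` below scans a tokenised text for w1 followed by w2 with at most `max_gap` tokens in between. Unlike an unbounded regex such as `.*`, the explicit gap limit keeps the search inside a narrow window. The sample sentence is made up for illustration.

```python
from collections import Counter

def gapped_matches(tokens, w1, w2, max_gap=2):
    """Count w1 ... w2 with at most max_gap intervening tokens,
    keyed by the matched phrase (nearest w2 only)."""
    counts = Counter()
    for i, tok in enumerate(tokens):
        if tok != w1:
            continue
        # positions i+1 .. i+1+max_gap can hold w2
        for j in range(i + 1, min(i + 2 + max_gap, len(tokens))):
            if tokens[j] == w2:
                counts[" ".join(tokens[i:j + 1])] += 1
                break  # stop at the nearest w2
    return counts

text = ("the federal subsidy was cut while a federal farm subsidy "
        "and a federal manufacturing subsidy survived")
print(gapped_matches(text.split(), "federal", "subsidy"))
```

Setting `max_gap=0` reduces this to plain bigram counting.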

Textual proximity (continued)
- Usually, collocates of a word occur close to that word.
  - they may still occur across a span
- Examples:
  - bigram: white wine, powerful tea
  - longer than a bigram: knock on the door; knock on X’s door

Non-compositionality
- white wine
  - not really “white”: the meaning is not fully predictable from the component words + syntax
- signal interpretation
  - a term used in Intelligent Signal Processing: its connotations go beyond its compositional meaning
- Similarly:
  - regression coefficient
  - good practice guidelines
- Extreme cases:
  - idioms such as kick the bucket
  - the meaning is completely frozen

Non-substitutability
- If a phrase is a collocation, we can’t replace a word in the phrase with a near-synonym and still have the same overall meaning.
- E.g.:
  - white wine vs. yellow wine
  - strong tea vs. powerful tea
  - …

Non-modifiability
- Often, there are restrictions on inserting additional lexical items into the collocation, especially in the case of idioms.
- Example:
  - kick the bucket vs. ?kick the large bucket
- NB:
  - this is a matter of degree!
  - non-idiomatic collocations are more flexible

Category restrictions
- Frequency alone doesn’t indicate collocational strength:
  - by the is a very frequent phrase in English
  - but it is not a collocation
- Collocations tend to be formed from content words:
  - A+N: powerful tea
  - N+N: regression coefficient, mass demonstration
  - N+PREP+N: degrees of freedom

Collocations “in a broad sense”
- In many statistical NLP applications, the term collocation is understood quite broadly:
  - any phrase which is frequent/regular enough…
  - proper names (New York)
  - compound nouns (elevator operator)
  - set phrases (part of speech)
  - idioms (kick the bucket)

Why are collocations interesting?
- Several applications need to “know” about collocations:
  - terminology extraction: technical or domain-specific phrases crop up frequently in text (oil prices)
  - document classification: specialist phrases are good indicators of the topic of a text
  - named entity recognition: names such as New York tend to occur together frequently; phrases like new toy don’t

Example application: Parsing
- She spotted the man with a pair of binoculars
  1. [VP spotted [NP the man [PP with a pair of binoculars]]]
  2. [VP spotted [NP the man] [PP with a pair of binoculars]]
- A parser might prefer (2) if spot and binoculars co-occur frequently within a window of a certain width.

Example application: Generation
- NLG systems often need to map a semantic representation to a lexical/syntactic one.
- They shouldn’t use the wrong adjective-noun combinations: clean face vs. ?immaculate face
- Lapata et al. (1999):
  - ran an experiment asking people to rate different adjective-noun combinations
  - found that the frequency of the combination is a strong predictor of people’s preferences
  - argue that NLG systems need to be able to make contextually-informed decisions in lexical choice

Finding collocations in corpora: basic methods

Frequency-based approach
- Motivation:
  - if two (or three, or…) words occur together a lot within some window, they’re a collocation
- Problems:
  - frequent “collocations” under this definition include with the, onto a, etc.
  - not very interesting…

Improving the frequency-based approach
- Justeson & Katz (1995):
  - part-of-speech filter
  - only look at word combinations of the “right” category:
    - N + N: regression coefficient
    - N + PREP + N: jack in (the) box
    - …
  - dramatically improves the results
  - content-word combinations are more likely to be phrases
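A minimal sketch of raw bigram counting versus counting with a part-of-speech filter. The tagged toy corpus and the simplified tag set (A adjective, N noun, P preposition, D determiner, V verb) are hypothetical, and only the two bigram patterns A+N and N+N are included; Justeson & Katz’s actual filter also covers longer patterns such as N+PREP+N.

```python
from collections import Counter

# Hypothetical hand-tagged toy corpus in word/TAG format
TAGGED = ("the/D regression/N coefficient/N of/P the/D model/N in/P the/D "
          "table/N of/P the/D paper/N shows/V strong/A support/N for/P "
          "the/D regression/N coefficient/N")
tagged = [tuple(tok.split("/")) for tok in TAGGED.split()]

# Bigram tag patterns only (a subset of a Justeson & Katz-style filter)
PATTERNS = {("A", "N"), ("N", "N")}

def bigram_counts(tagged, patterns=None):
    """Count adjacent word pairs, optionally keeping only allowed tag pairs."""
    counts = Counter()
    for (w1, t1), (w2, t2) in zip(tagged, tagged[1:]):
        if patterns is None or (t1, t2) in patterns:
            counts[(w1, w2)] += 1
    return counts

raw = bigram_counts(tagged)                 # function-word pairs rank high
filtered = bigram_counts(tagged, PATTERNS)  # only content-word pairs remain
print(raw.most_common(3))
print(filtered.most_common())
```

In the raw counts, of the is as frequent as regression coefficient; the filter removes it.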

Case study: strong vs. powerful
- See: Manning & Schutze ’99, Sec. 5.2
- Motivation:
  - try to distinguish the meanings of two quasi-synonyms
  - data from the New York Times corpus
- Basic strategy:
  - find all bigrams <w1, w2> where w1 = strong or powerful
  - apply a POS filter to remove strong on [crime], powerful in [industry], etc.

Case study (cont’d)
- Sample results from Manning & Schutze ’99:
  - f(strong support) = 50
  - f(strong supporter) = 10
  - f(powerful force) = 13
  - f(powerful computers) = 10
- Teaser:
  - would you also expect powerful supporter?
  - what’s the difference between strong supporter and powerful supporter?

Limitations of frequency-based search
- Only works for fixed phrases.
- But collocations can be “looser”, allowing other words to be interpolated:
  - knock on [the, X’s, a] door
  - pull [a] punch
- Simple frequency won’t do for these: the different interpolated words dilute the frequency.

Using mean and variance
- General idea: include bigrams even at a distance, e.g. w1 X w2: pull a punch
- Strategy:
  - find co-occurrences of the two words in windows of varying length
  - compute the mean offset between w1 and w2
  - compute the variance of the offset between w1 and w2
  - if the offsets are randomly distributed, the variance is high and we conclude that <w1, w2> is not a collocation
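The mean-and-variance strategy can be sketched as follows, assuming a tokenised corpus and a window of ±3 tokens (an arbitrary choice). Following the sign convention of the M&S examples, a negative offset means that `word` precedes `anchor`. The helper name `offsets` and the toy text are hypothetical.

```python
import statistics

def offsets(tokens, anchor, word, window=3):
    """Signed distance of each occurrence of `word` from `anchor`
    within +/- `window` tokens (negative = `word` precedes `anchor`)."""
    found = []
    for i, tok in enumerate(tokens):
        if tok != anchor:
            continue
        lo = max(0, i - window)
        hi = min(len(tokens), i + window + 1)
        for j in range(lo, hi):
            if j != i and tokens[j] == word:
                found.append(j - i)
    return found

tokens = "a strong opposition b c d e strong new opposition".split()
d = offsets(tokens, anchor="opposition", word="strong")
mean = statistics.mean(d)
sd = statistics.stdev(d)  # sample standard deviation (needs >= 2 values)
print(d, mean, round(sd, 2))
```

A low standard deviation, as here, means strong sits at a consistent position relative to opposition.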

Example outcomes (M&S ’99)
- position of strong with respect to opposition:
  - mean = −1.15, standard deviation = 0.67
  - i.e. most occurrences are strong […] opposition, with strong just before opposition
- position of strong with respect to for:
  - mean = −1.12, standard deviation = 2.15
  - i.e. for occurs at many different positions around strong: the SD is large relative to the size of the mean
  - we can get strong support for, for the strong support, etc.

More limitations of frequency
- If we use simple frequency or mean & variance, we have a good way of ranking likely collocations.
- But how do we know if a frequent pattern is frequent enough? Is it above what would be predicted by chance?
- We need to think in terms of hypothesis testing.
- Given <w1, w2>, we want to compare:
  - the hypothesis that they are non-independent
  - the hypothesis that they are independent

Preliminaries: Hypothesis testing and the binomial distribution

Permutations
- Suppose we have the 5 words {the, dog, ate, a, bone}.
- How many permutations (possible orderings) are there of these words?
  - the dog ate a bone
  - dog the ate a bone
  - …
- In general, n distinct items have n! orderings, so there are 5! = 120 ways of permuting 5 words.

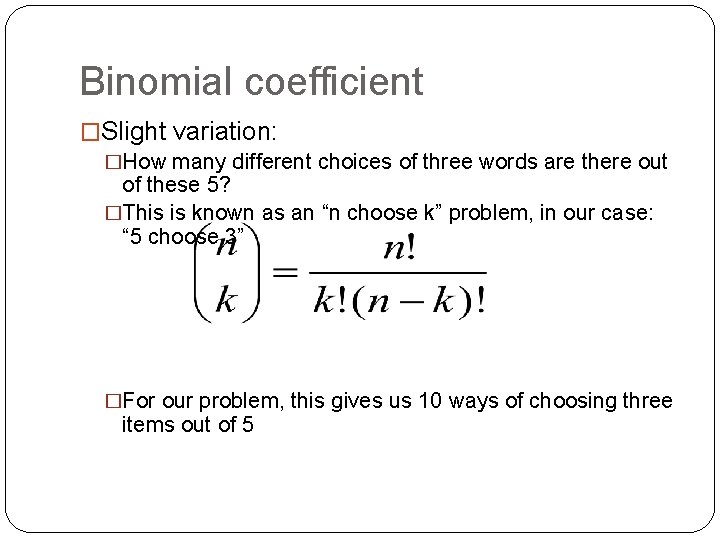

Binomial coefficient
- Slight variation:
  - How many different choices of three words (ignoring order) are there out of these 5?
- This is known as an “n choose k” problem; in our case, “5 choose 3”:

$$\binom{n}{k} = \frac{n!}{k!\,(n-k)!}, \qquad \binom{5}{3} = \frac{5!}{3!\,2!} = 10$$

- So there are 10 ways of choosing three items out of 5.
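Both counts (orderings and unordered choices of the five words) can be checked by brute force with Python’s standard library: `itertools.permutations` enumerates orderings and `itertools.combinations` enumerates unordered choices, which should agree with 5! and “5 choose 3” (`math.comb` needs Python 3.8+).

```python
import math
from itertools import combinations, permutations

words = ["the", "dog", "ate", "a", "bone"]

# Every ordering of the 5 words: n! = 5! = 120
orderings = list(permutations(words))
# Every unordered choice of 3 of the 5 words: "5 choose 3" = 10
choices = list(combinations(words, 3))

print(len(orderings), math.factorial(len(words)))
print(len(choices), math.comb(len(words), 3))
```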

Bernoulli trials
- A Bernoulli (or binomial) trial is like a coin flip. Features:
  1. There are two possible outcomes (not necessarily with the same likelihood), e.g. success/failure or 1/0.
  2. If the situation is repeated, then the likelihoods of the two outcomes are stable.

Sampling with/without replacement
- Suppose we’re interested in the probability of pulling out a function word from a corpus of 100 words.
  - we pull out words one by one without putting them back
- Is this a Bernoulli trial?
  - we have a notion of success/failure: w is either a function word (“success”) or not (“failure”)
  - but our chances aren’t the same across trials: they change from draw to draw, since we sample without replacement

Cutting corners
- If the sample (e.g. the corpus) is large enough, then we can assume a Bernoulli situation even if we sample without replacement.
  - Suppose our corpus has 52 million words.
  - Success = pulling out a function word.
  - Suppose there are 13 million function words.
  - First trial: p(success) = 13,000,000/52,000,000 = 0.25
  - Second trial (after one success): p(success) = 12,999,999/51,999,999 ≈ 0.25
- On very large samples, the chances remain relatively stable even without replacement.

Binomial probabilities - I
- Let π represent the probability of success on a Bernoulli trial (e.g. our simple word game on a large corpus).
- Then p(failure) = 1 − π.
- Problem: what are the chances of achieving success 3 times out of 5 trials?
- Assumption: each trial is independent of every other.
  - (Is this assumption reasonable?)

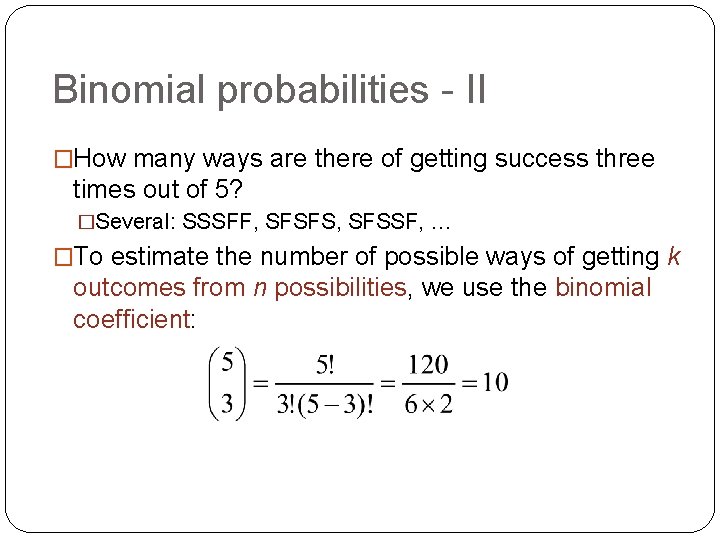

Binomial probabilities - II
- How many ways are there of getting success three times out of 5?
  - Several: SSSFF, SFSFS, SFSSF, …
- To count the ways of getting k successes in n trials, we use the binomial coefficient:

$$\binom{n}{k} = \frac{n!}{k!\,(n-k)!}$$

Binomial probabilities - III
- “5 choose 3” gives 10.
- Given independence, each of these sequences is equally likely.
- What’s the probability of a sequence?
  - it’s an AND problem (multiplication rule):
  - $P(SSSFF) = \pi\,\pi\,\pi\,(1-\pi)(1-\pi) = \pi^3(1-\pi)^2$
  - $P(SFSFS) = \pi\,(1-\pi)\,\pi\,(1-\pi)\,\pi = \pi^3(1-\pi)^2$
  - (they all come out the same)
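A brute-force check of the multiplication argument, assuming a hypothetical success probability π = 0.25: enumerate every S/F sequence of length 5 with exactly three successes, and confirm that there are 10 of them and that each has probability π³(1 − π)².

```python
import math
from itertools import product

def sequences_with_k_successes(n, k):
    """All length-n strings over {S, F} containing exactly k S's."""
    return ["".join(seq) for seq in product("SF", repeat=n) if seq.count("S") == k]

def sequence_prob(seq, pi):
    """Multiplication rule: probability of one specific sequence of
    independent trials with success probability pi."""
    p = 1.0
    for outcome in seq:
        p *= pi if outcome == "S" else 1 - pi
    return p

pi = 0.25  # hypothetical success probability
seqs = sequences_with_k_successes(5, 3)
print(len(seqs), seqs[:3])
print(all(math.isclose(sequence_prob(s, pi), pi**3 * (1 - pi)**2) for s in seqs))
```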

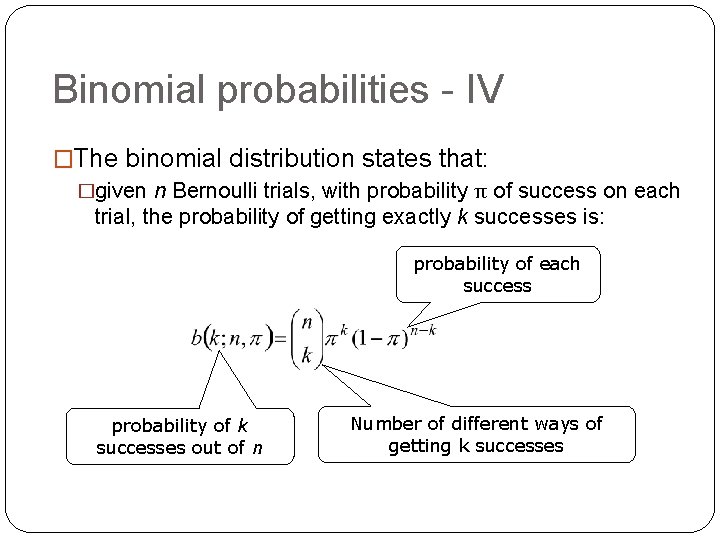

Binomial probabilities - IV
- The binomial distribution states that, given n Bernoulli trials with probability π of success on each trial, the probability of getting exactly k successes is:

$$P(X = k) = \binom{n}{k}\,\pi^k (1-\pi)^{n-k}$$

- Here $\binom{n}{k}$ is the number of different ways of getting k successes, and $\pi^k(1-\pi)^{n-k}$ is the probability of each such sequence.
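A direct transcription of the binomial formula into Python; the function name `binomial_pmf` is our own, and `math.comb` needs Python 3.8+. The example uses π = 0.25 (the function-word rate from the corpus example) with n = 5 and k = 3.

```python
import math

def binomial_pmf(k, n, pi):
    """P(X = k) for X ~ Binomial(n, pi): C(n, k) * pi^k * (1 - pi)^(n - k)."""
    return math.comb(n, k) * pi**k * (1 - pi)**(n - k)

print(binomial_pmf(3, 5, 0.25))  # 0.087890625, i.e. 10 * 0.25**3 * 0.75**2
```

Summing `binomial_pmf(k, 5, 0.25)` over k = 0..5 gives 1, as it should for a probability distribution.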

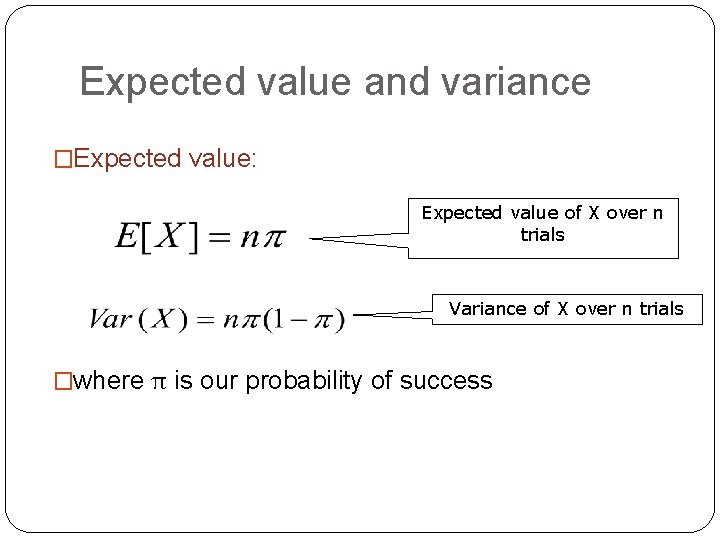

Expected value and variance
- Expected value of X over n trials: $E[X] = n\pi$
- Variance of X over n trials: $\mathrm{Var}(X) = n\pi(1-\pi)$
  - where π is our probability of success
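To see that the closed forms hold, the sketch below computes the mean and variance by summing over the whole binomial distribution and compares them with nπ and nπ(1 − π). The values n = 5 and π = 0.25 are arbitrary choices.

```python
import math

def binomial_pmf(k, n, pi):
    return math.comb(n, k) * pi**k * (1 - pi)**(n - k)

def binomial_moments(n, pi):
    """Mean and variance computed by summing over the whole distribution."""
    ks = range(n + 1)
    mean = sum(k * binomial_pmf(k, n, pi) for k in ks)
    var = sum((k - mean) ** 2 * binomial_pmf(k, n, pi) for k in ks)
    return mean, var

n, pi = 5, 0.25  # hypothetical
mean, var = binomial_moments(n, pi)
print(mean, n * pi)            # both approximately 1.25
print(var, n * pi * (1 - pi))  # both approximately 0.9375
```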

Using the t-test for collocation discovery

The logic of hypothesis testing
- The typical scenario in hypothesis testing compares two hypotheses:
  1. the research hypothesis
  2. a null hypothesis
- The idea is to set up our experiment (study, etc.) in such a way that, if we show the null hypothesis to be false, we can affirm our research hypothesis with a certain degree of confidence.

H0 for collocation studies
- There is no real association between w1 and w2, i.e. occurrence of <w1, w2> is no more likely than chance.
- More formally:
  - $H_0: P(w_1 \,\&\, w_2) = P(w_1)\,P(w_2)$
  - i.e. w1 and w2 occur independently of each other

Some more on hypothesis testing
- Our research hypothesis (H1):
  - <w1, w2> are strong collocates
  - $P(w_1 \,\&\, w_2) > P(w_1)\,P(w_2)$
- A null hypothesis (H0):
  - $P(w_1 \,\&\, w_2) = P(w_1)\,P(w_2)$
- How do we know whether our results are sufficient to affirm H1?
  - i.e. how big is our risk of wrongly rejecting H0?

The notion of significance
- We generally fix a significance level in advance.
- In many disciplines, the convention is to tolerate a 5% chance of rejecting H0 when it is in fact true (a Type I error); this is often described informally as being 95% confident in the result.
- Therefore, we state our results at p = 0.05:
  - “The probability of wrongly rejecting H0 is 5% (0.05)”

Tests for significance
- Many of the tests we use involve:
  1. having a prior notion of what the mean/variance of a population is, according to H0;
  2. computing the mean/variance on our sample of the population;
  3. checking whether the sample mean/variance is different from that predicted by H0, at 95% confidence.

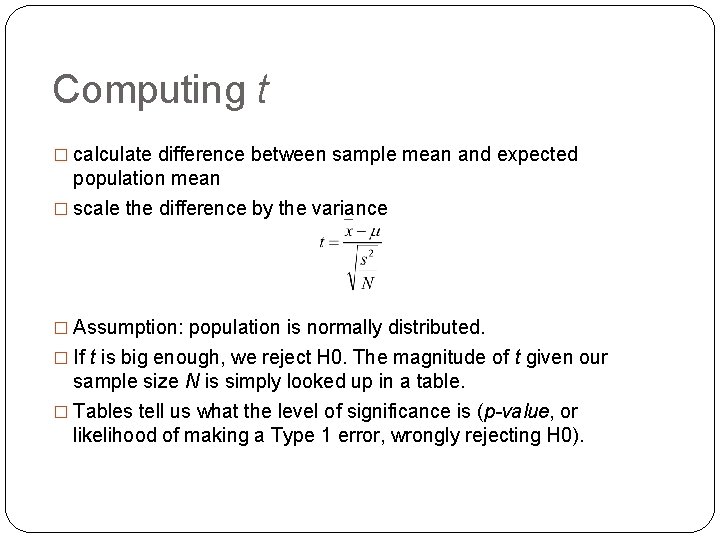

The t-test: strategy
- obtain the mean ($\bar{x}$) and variance ($s^2$) for a sample
- H0: the sample is drawn from a population with mean μ and variance σ²
- estimate the t value: this compares the sample mean/variance to the expected (population) mean/variance under H0
- check whether any difference found is significant enough to reject H0

Computing t
- Calculate the difference between the sample mean and the expected population mean.
- Scale the difference by the variance (via the standard error of the mean):

$$t = \frac{\bar{x} - \mu}{\sqrt{s^2 / N}}$$

- Assumption: the population is normally distributed.
- If t is big enough, we reject H0. The critical value of t for our sample size N is simply looked up in a table.
- Tables tell us the level of significance (the p-value, or probability of making a Type I error, i.e. wrongly rejecting H0).

Example: new companies
- We think of our corpus as a series of bigrams, and each sample we take is an indicator variable (Bernoulli trial):
  - value = 1 if the bigram is new companies
  - value = 0 otherwise
- Compute P(new) and P(companies) using standard MLE (relative frequencies).
- H0: P(new companies) = P(new)P(companies)

Example continued
- We have computed the probability of our bigram of interest under H0.
- Since each sample is a Bernoulli trial, this is also our expected mean μ.
- We then compute the actual sample probability of <w1, w2> (new companies), which is our sample mean $\bar{x}$.
- Compute t and check its significance.
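A sketch of the one-sample t computation for new companies, treating every bigram position as a Bernoulli trial. The counts are those used in Manning & Schutze’s worked version of this example (NY Times corpus); the function name `bigram_t_score` is hypothetical. As on the slides, the Bernoulli variance x̄(1 − x̄) is used for s², which is practically equal to x̄ here.

```python
import math

def bigram_t_score(c1, c2, c12, n):
    """One-sample t for the bigram <w1, w2>: each of the n bigram positions
    is a Bernoulli trial (1 if it is <w1, w2>, 0 otherwise)."""
    mu = (c1 / n) * (c2 / n)        # expected mean under H0 (independence)
    x_bar = c12 / n                 # observed sample mean
    s2 = x_bar * (1 - x_bar)        # Bernoulli variance, approximately x_bar
    return (x_bar - mu) / math.sqrt(s2 / n)

# C(new), C(companies), C(new companies) and N from M&S's worked example
t = bigram_t_score(c1=15828, c2=4675, c12=8, n=14307668)
print(t)
```

This gives t just under 1, well below 2.576 (the large-sample critical value for a one-tailed test at α = 0.005), so at that level H0 would not be rejected for new companies.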

Uses of the t-test
- Often used to rank candidate collocations, rather than to compute significance.
- Stop word lists must be used, else all bigrams will be significant.
  - e.g. M&S report that 824 out of 831 bigrams pass the significance test.
- Reason:
  - language is just not random
  - regularities mean that, if the corpus is large enough, all bigrams will occur together regularly and often enough to be significant.
- Kilgarriff (2005): any null hypothesis will be rejected on a large enough corpus.
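A sketch of using t as a ranking score rather than as a significance test. The stop list and the toy token sequence are hypothetical, and unigram probabilities are estimated by dividing counts by the number of bigram positions, a simplification that makes little difference on a real corpus.

```python
import math
from collections import Counter

# Hypothetical, deliberately tiny stop list
STOP_WORDS = {"the", "of", "a", "and", "in"}

def rank_by_t(tokens, stop_words=STOP_WORDS):
    """Rank bigrams (excluding any containing a stop word) by t-score."""
    n = len(tokens) - 1                  # number of bigram positions
    unigrams = Counter(tokens)
    bigrams = Counter(zip(tokens, tokens[1:]))
    scores = {}
    for (w1, w2), c12 in bigrams.items():
        if w1 in stop_words or w2 in stop_words:
            continue
        mu = (unigrams[w1] / n) * (unigrams[w2] / n)  # expected under H0
        x_bar = c12 / n                                # observed
        scores[(w1, w2)] = (x_bar - mu) / math.sqrt(x_bar * (1 - x_bar) / n)
    return sorted(scores, key=scores.get, reverse=True)

tokens = "strong tea is strong tea and strong tea is good".split()
ranking = rank_by_t(tokens)
print(ranking)
```

Note that tea is still ranks second: a small stop list removes only the most obvious function-word pairs.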

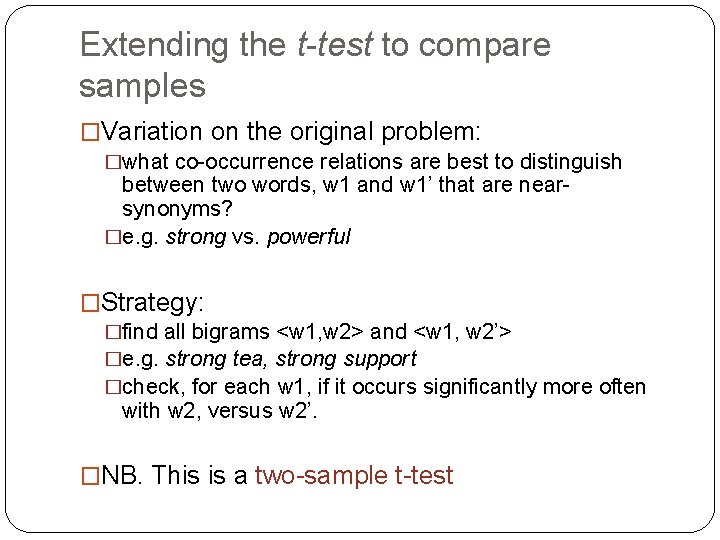

Extending the t-test to compare samples
- Variation on the original problem:
  - which co-occurrence relations best distinguish between two near-synonyms, w1 and w1’?
  - e.g. strong vs. powerful
- Strategy:
  - find all bigrams <w1, w2> and <w1’, w2>
  - e.g. strong tea, powerful tea; strong support, powerful support
  - check, for each w2, whether it occurs significantly more often with w1 than with w1’.
- NB. This is a two-sample t-test.

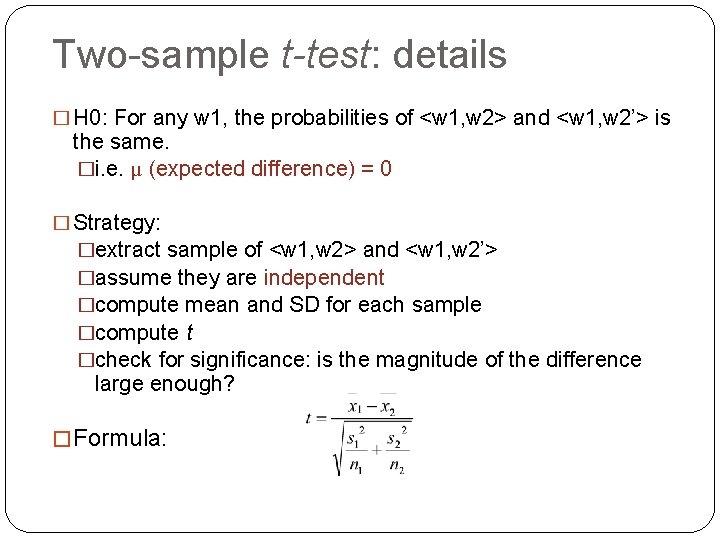

Two-sample t-test: details
- H0: for any w2, the probabilities of <w1, w2> and <w1’, w2> are the same.
  - i.e. μ (the expected difference) = 0
- Strategy:
  - extract samples of <w1, w2> and <w1’, w2>
  - assume they are independent
  - compute the mean and SD for each sample
  - compute t
  - check for significance: is the magnitude of the difference large enough?
- Formula:

$$t = \frac{\bar{x}_1 - \bar{x}_2}{\sqrt{\dfrac{s_1^2}{n_1} + \dfrac{s_2^2}{n_2}}}$$

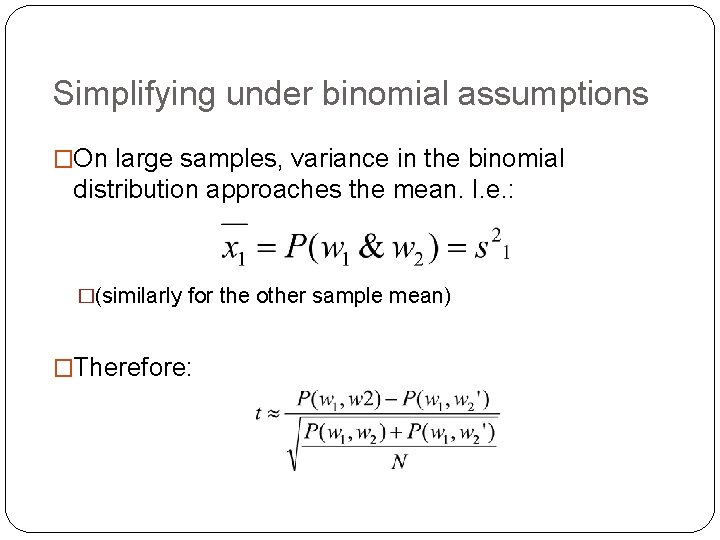

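Simplifying under binomial assumptions
- For a Bernoulli variable with a very small probability of success, as for any particular bigram, the variance p(1 − p) is approximately p, i.e. it approaches the mean:

$$s_1^2 = \bar{x}_1(1 - \bar{x}_1) \approx \bar{x}_1 = \frac{C(w_1 w_2)}{N}$$

  - (similarly for the other sample mean, $\bar{x}_2 = C(w_1' w_2)/N$)
- Therefore, with $n_1 = n_2 = N$:

$$t \approx \frac{\bar{x}_1 - \bar{x}_2}{\sqrt{\dfrac{\bar{x}_1 + \bar{x}_2}{N}}} = \frac{C(w_1 w_2) - C(w_1' w_2)}{\sqrt{C(w_1 w_2) + C(w_1' w_2)}}$$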
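The simplified statistic needs only the two bigram counts, because N cancels. The sketch below checks this against the full two-sample formula with s² ≈ x̄. The function names are hypothetical; the count of 50 is f(strong support) from the case study, while the count of 2 for powerful support is made up for illustration.

```python
import math

def t_near_synonyms(c_a, c_b):
    """Approximate two-sample t for a collocate w2, where
    c_a = C(w1 w2) and c_b = C(w1' w2), e.g. C(strong w2), C(powerful w2).
    Assumes s^2 ~ x_bar for each sample, so N cancels out."""
    return (c_a - c_b) / math.sqrt(c_a + c_b)

def t_two_sample_full(c_a, c_b, n):
    """Same statistic from the full formula, x_bar_i = c_i / n, s_i^2 = x_bar_i."""
    x1, x2 = c_a / n, c_b / n
    return (x1 - x2) / math.sqrt(x1 / n + x2 / n)

print(t_near_synonyms(50, 2))
```

A positive t means w2 leans towards w1 (strong); a negative t means it leans towards w1’ (powerful).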

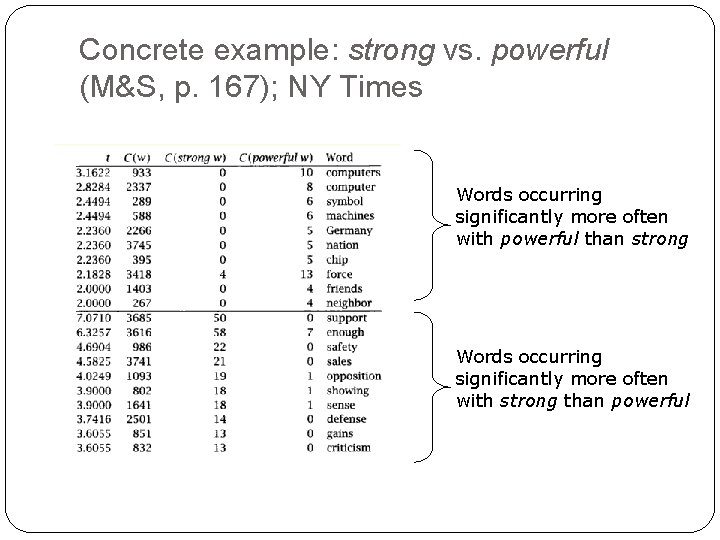

Concrete example: strong vs. powerful (M&S, p. 167; NY Times corpus)
- Table columns: words occurring significantly more often with powerful than with strong; words occurring significantly more often with strong than with powerful.

Criticisms of the t-test
- It assumes that the probabilities are normally distributed. This is probably not the case in linguistic data, where probabilities tend to be very large or very small.
- Alternative: the chi-squared test (χ²)
  - compares differences between expected and observed frequencies (e.g. of bigrams)

The chi-square test

Example
- Imagine we’re interested in whether poor performance is a good collocation.
- H0: the frequency of poor performance is no different from the expected frequency if each word occurs independently.
- Find the frequencies of bigrams containing poor, performance and poor performance.
  - compare actual to expected frequencies
  - check whether the value is high enough to reject H0

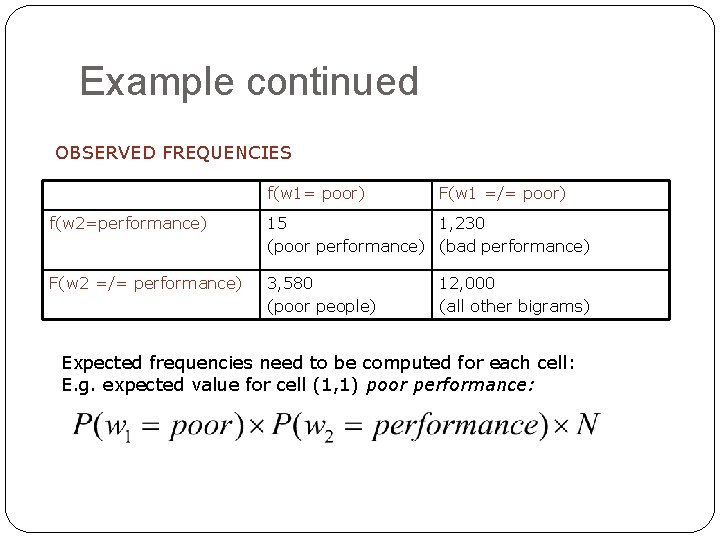

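Example continued

Observed frequencies:

| | w1 = poor | w1 ≠ poor |
|---|---|---|
| w2 = performance | 15 (poor performance) | 1,230 (bad performance) |
| w2 ≠ performance | 3,580 (poor people) | 12,000 (all other bigrams) |

Expected frequencies need to be computed for each cell. E.g. the expected value for cell (1,1), poor performance, is the row total times the column total, divided by the total number of bigrams N:

$$E_{11} = \frac{(O_{11} + O_{12})(O_{11} + O_{21})}{N}$$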

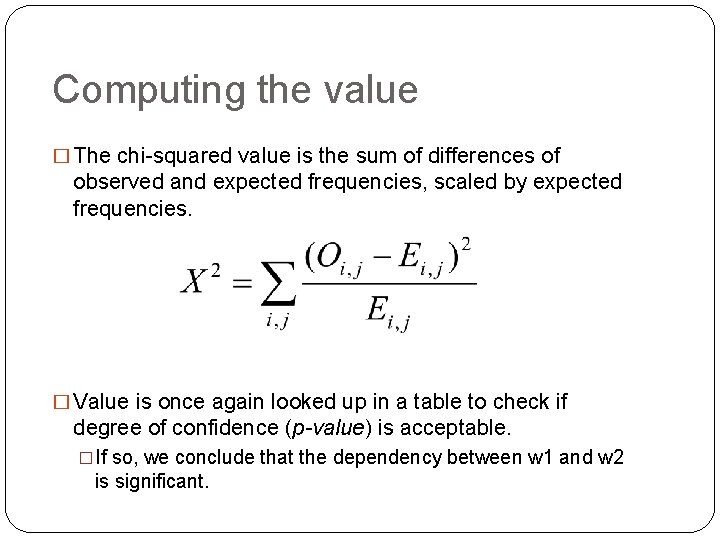

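Computing the value
- The chi-squared value is the sum, over all cells, of the squared differences between observed and expected frequencies, each scaled by the expected frequency:

$$\chi^2 = \sum_{i,j} \frac{(O_{ij} - E_{ij})^2}{E_{ij}}$$

- The value is once again looked up in a table to check whether the degree of confidence (p-value) is acceptable; for a 2×2 table (1 degree of freedom), the critical value at p = 0.05 is 3.841.
- If so, we conclude that the dependency between w1 and w2 is significant.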
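A minimal sketch of Pearson’s χ² for the 2×2 poor performance table: compute each expected count from the row and column totals, then sum the scaled squared differences. The function name `chi_square_2x2` is hypothetical; the counts are the toy figures from the table above.

```python
def chi_square_2x2(o11, o12, o21, o22):
    """Pearson chi-square for a 2x2 table of observed bigram counts.
    Rows: w2 = performance / w2 != performance;
    columns: w1 = poor / w1 != poor."""
    observed = [[o11, o12], [o21, o22]]
    n = o11 + o12 + o21 + o22
    row_totals = [o11 + o12, o21 + o22]
    col_totals = [o11 + o21, o12 + o22]
    expected = [[row_totals[i] * col_totals[j] / n for j in range(2)]
                for i in range(2)]
    chi2 = sum((observed[i][j] - expected[i][j]) ** 2 / expected[i][j]
               for i in range(2) for j in range(2))
    return chi2, expected

chi2, expected = chi_square_2x2(15, 1230, 3580, 12000)
print(f"E11 = {expected[0][0]:.2f}, chi2 = {chi2:.1f}")
```

With these toy counts, the expected value for poor performance is about 266 against an observed 15, so the large χ² (roughly 325) reflects poor performance occurring far less often than independence predicts. χ² measures the size of the deviation, not its direction, so comparing O11 with E11 is still necessary.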

More applications of this statistic
- Kilgarriff and Rose (1998) use chi-square as a measure of corpus similarity:
  - draw up an n (rows) × 2 (columns) table
  - columns correspond to corpora
  - rows correspond to individual types
  - compare the difference in counts between corpora
- H0: the corpora are drawn from the same underlying linguistic population (e.g. register or variety)
  - corpora will be highly similar if the ratio of counts for each word is roughly constant.
- This uses lexical variation to compute corpus similarity.
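A minimal sketch of the n × 2 table described above: each row is a word type, each column a corpus, and χ² is summed over all cells. How the rows (word types) are chosen is left open here, and the counts are hypothetical; this is not presented as Kilgarriff and Rose’s exact procedure.

```python
def corpus_chi_square(counts_a, counts_b):
    """Chi-square over an n x 2 table: rows are word types, columns corpora.
    Lower values mean the two corpora use these words in more similar
    proportions."""
    total_a, total_b = sum(counts_a), sum(counts_b)
    n = total_a + total_b
    chi2 = 0.0
    for a, b in zip(counts_a, counts_b):
        row = a + b
        for observed, col_total in ((a, total_a), (b, total_b)):
            expected = row * col_total / n
            chi2 += (observed - expected) ** 2 / expected
    return chi2

# Same proportions in both corpora, so chi-square is 0
print(corpus_chi_square([10, 20, 30], [20, 40, 60]))
```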

Limitations of t-test and chi-square
- Not easily interpretable:
  - a large chi-square or t value suggests a large difference
  - but it makes more sense as a comparative measure than in absolute terms
- The t-test is problematic because of the normality assumption.
- Chi-square doesn’t work very well for small frequencies (by convention, we don’t calculate it if the expected value for any of the cells is less than 5)
  - but n-grams will often be infrequent!

Likelihood ratios for collocation discovery

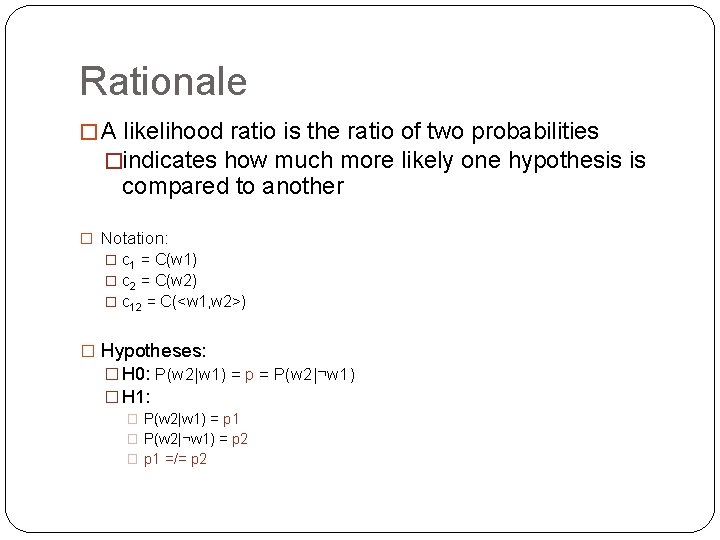

Rationale
- A likelihood ratio is the ratio of two probabilities:
  - it indicates how much more likely one hypothesis is compared to another
- Notation:
  - $c_1 = C(w_1)$
  - $c_2 = C(w_2)$
  - $c_{12} = C(\langle w_1, w_2 \rangle)$
- Hypotheses:
  - $H_0$: $P(w_2 \mid w_1) = p = P(w_2 \mid \neg w_1)$
  - $H_1$: $P(w_2 \mid w_1) = p_1$ and $P(w_2 \mid \neg w_1) = p_2$, with $p_1 \neq p_2$

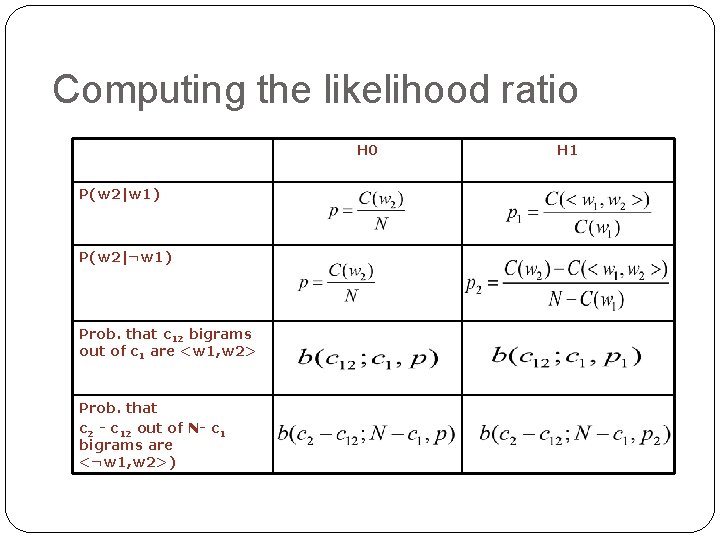

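Computing the likelihood ratio

| | H0 | H1 |
|---|---|---|
| $P(w_2 \mid w_1)$ | $p = c_2/N$ | $p_1 = c_{12}/c_1$ |
| $P(w_2 \mid \neg w_1)$ | $p = c_2/N$ | $p_2 = (c_2 - c_{12})/(N - c_1)$ |
| Prob. that $c_{12}$ out of $c_1$ bigrams are $\langle w_1, w_2 \rangle$ | $b(c_{12}; c_1, p)$ | $b(c_{12}; c_1, p_1)$ |
| Prob. that $c_2 - c_{12}$ out of $N - c_1$ bigrams are $\langle \neg w_1, w_2 \rangle$ | $b(c_2 - c_{12}; N - c_1, p)$ | $b(c_2 - c_{12}; N - c_1, p_2)$ |

Here $b(k; n, x) = \binom{n}{k} x^k (1-x)^{n-k}$ is the binomial probability of k successes in n trials.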

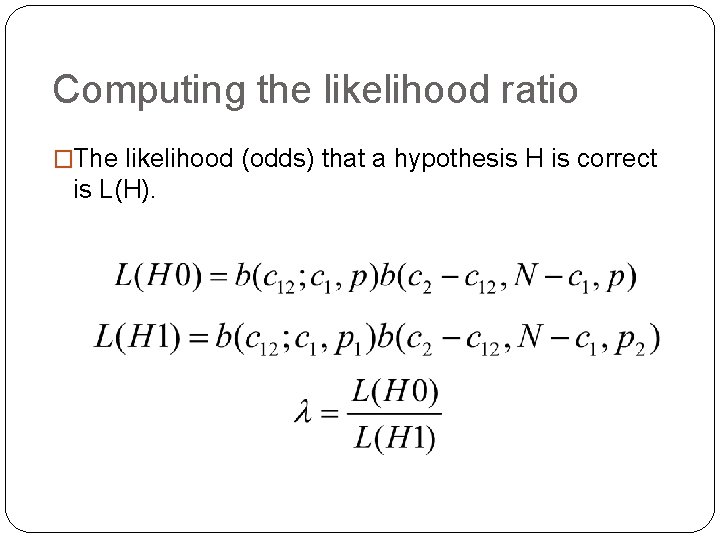

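Computing the likelihood ratio
- The likelihood of a hypothesis H, L(H), is the probability of the observed counts if H is true. Writing b(k; n, x) for the binomial probability of k successes in n trials:

$$L(H_0) = b(c_{12}; c_1, p)\; b(c_2 - c_{12}; N - c_1, p)$$

$$L(H_1) = b(c_{12}; c_1, p_1)\; b(c_2 - c_{12}; N - c_1, p_2)$$

- The likelihood ratio is $\lambda = L(H_0)/L(H_1)$.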

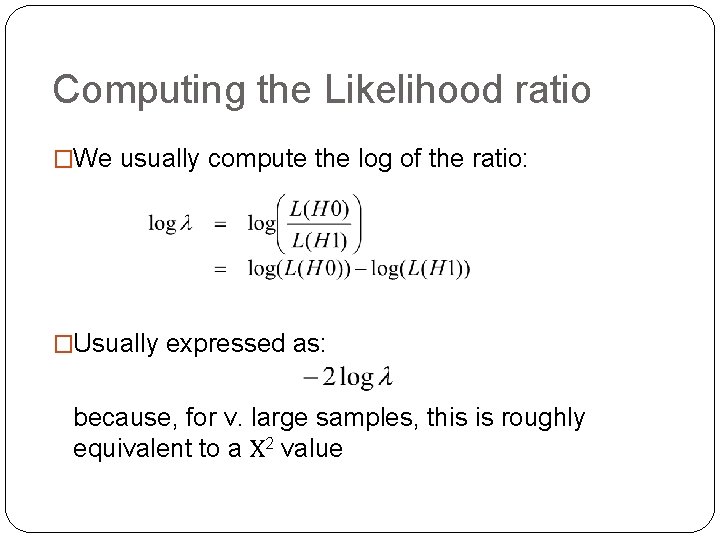

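Computing the Likelihood ratio
- We usually compute the log of the ratio. The binomial coefficients cancel, so, writing $L(k, n, x) = x^k (1-x)^{n-k}$:

$$\log\lambda = \log L(c_{12}, c_1, p) + \log L(c_2 - c_{12}, N - c_1, p) - \log L(c_{12}, c_1, p_1) - \log L(c_2 - c_{12}, N - c_1, p_2)$$

- This is usually expressed as $-2\log\lambda$, because for very large samples this quantity is approximately χ²-distributed.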
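A sketch of computing −2 log λ from the four counts, using the maximum-likelihood estimates p = c2/N, p1 = c12/c1 and p2 = (c2 − c12)/(N − c1). The function names are hypothetical, and the 0 · log 0 = 0 convention handles cells with zero counts. The example counts are made up: in the first call w2 occurs at the same rate with and without w1, so the statistic is 0.

```python
import math

def log_l(k, n, x):
    """log of x**k * (1 - x)**(n - k), treating 0 * log(0) as 0."""
    total = 0.0
    if k > 0:
        total += k * math.log(x)
    if n - k > 0:
        total += (n - k) * math.log(1 - x)
    return total

def neg2_log_lambda(c1, c2, c12, n):
    """-2 log lambda for the bigram <w1, w2> (H0: independence)."""
    p = c2 / n                      # P(w2) under H0
    p1 = c12 / c1                   # P(w2 | w1) under H1
    p2 = (c2 - c12) / (n - c1)      # P(w2 | not w1) under H1
    log_lambda = (log_l(c12, c1, p) + log_l(c2 - c12, n - c1, p)
                  - log_l(c12, c1, p1) - log_l(c2 - c12, n - c1, p2))
    return -2 * log_lambda

print(neg2_log_lambda(100, 200, 20, 1000))  # no association
print(neg2_log_lambda(100, 200, 50, 1000))  # w2 much more likely after w1
```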

Interpreting the ratio
- Suppose that, for some bigram <w1, w2>, the ratio $L(H_1)/L(H_0) = 1/\lambda$ is x. This says:
  - the observed counts are x times more likely under the hypothesis that w2 depends on w1 than under the hypothesis that w2 simply occurs at its base rate, independently of w1.
- This ratio also copes better with sparse data:
  - we can use $-2\log\lambda$ as an approximate chi-square value even when expected frequencies are small.

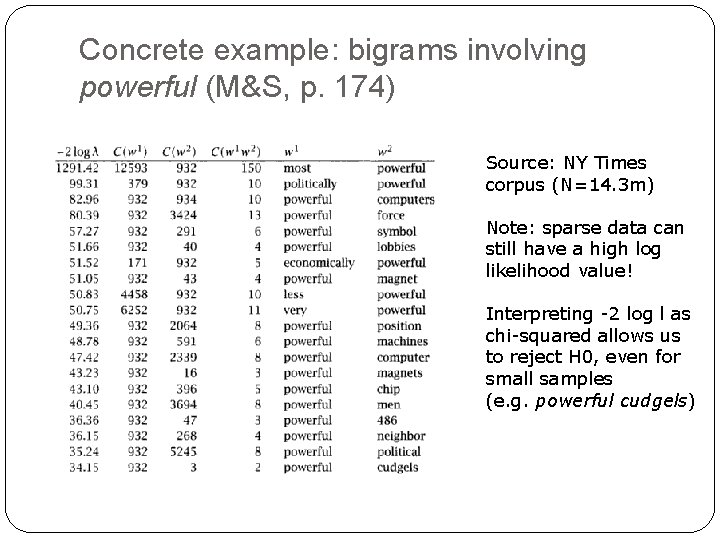

Concrete example: bigrams involving powerful (M&S, p. 174)
- Source: NY Times corpus (N = 14.3m)
- Note: sparse data can still have a high log-likelihood value!
- Interpreting −2 log λ as chi-squared allows us to reject H0, even for small samples (e.g. powerful cudgels).

Relative frequency ratios
- An extension of the same logic as a likelihood ratio
  - used to compare collocations across corpora
- Let <w1, w2> be our bigram of interest.
- Let C1 and C2 be two corpora:
  - p1 = P(<w1, w2>) in C1
  - p2 = P(<w1, w2>) in C2
  - r = p1/p2 gives an indication of the relative likelihood of <w1, w2> in C1 and C2.

Example application
- Manning and Schutze (p. 176) compare:
  - C1: NY Times texts from 1990
  - C2: NY Times texts from 1989
- The bigram <East, Berliners> occurs 44 times in C2, but only 2 times in C1; after normalising each count by the size of its corpus, r ≈ 0.03.
- The big difference is due to the 1989 papers dealing more with the fall of the Berlin Wall.
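A minimal sketch of the relative frequency ratio, normalising each count by its corpus size. Only the counts 2 and 44 come from the example; the corpus sizes are hypothetical. With equal-sized corpora r would simply be 2/44 ≈ 0.045, and a larger C1 pushes r lower.

```python
def relative_frequency_ratio(count1, size1, count2, size2):
    """r = p1 / p2, where p_i = count_i / size_i is the relative
    frequency of the bigram in corpus C_i."""
    p1 = count1 / size1
    p2 = count2 / size2
    return p1 / p2

# Hypothetical corpus sizes
r_equal = relative_frequency_ratio(2, 1_000_000, 44, 1_000_000)
r_bigger_c1 = relative_frequency_ratio(2, 1_500_000, 44, 1_000_000)
print(r_equal, r_bigger_c1)
```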

Summary
- We’ve now considered two forms of hypothesis testing:
  - t-test
  - chi-square
- Also, log-likelihood ratios as measures of relative probability under different hypotheses.
- Next, we begin to look at the problem of lexical acquisition.

References
- M. Lapata, S. McDonald & F. Keller (1999). Determinants of adjective-noun plausibility. Proceedings of the 9th Conference of the European Chapter of the Association for Computational Linguistics (EACL-99).
- A. Kilgarriff (2005). Language is never, ever random. Corpus Linguistics and Linguistic Theory 1(2): 263.
- K. Church & P. Hanks (1990). Word association norms, mutual information and lexicography. Computational Linguistics 16(1).
