Corpora and Statistical Methods Lecture 3 Albert Gatt


Part 1 Zipf’s law and the Zipfian distribution

Identifying words

Words

- Levels of identification:
  - Graphical word (a token)
    - Dependent on surface properties of text
  - Underlying word (stem, root…)
    - Dependent on some form of morphological analysis
- Practical definition: a word…
  - is an indivisible (!) sequence of characters
  - carries elementary meaning
  - is reusable in different contexts

Indivisibility

- Words can have compositional meaning from parts that are either words themselves, or prefixes and suffixes
  - colour + -less = colourless (derivation)
  - data + base = database (compounding)
- The notion of “atomicity” or “indivisibility” is a matter of degree.

Problems with indivisibility

- Definite article in Maltese
  - il-kelb (DEF-dog) “the dog”
  - the article is phonologically dependent on the word
- German compounding
  - Lebensversicherungsgesellschaftsangestellter “life insurance company employee”
- Arabic conjunctions
  - waliy
  - One possible gloss: “and I follow” (w- is “and”)

Reusability

- Words become part of the lexicon of a language, and can be reused.
- But some words can be formed on the fly using productive morphological processes.
- Many words are used very rarely
  - A large majority of the lexicon is inaccessible to native speakers
  - Approximately 50% of the distinct words (types) in a novel will be used only once within that novel (hapax legomena)

The graphic definition

- Many corpora, starting with Brown, use a definition of a graphic word:
  - sequence of letters/numbers
  - possibly some other symbols
  - separated by whitespace or punctuation
- But even here, there are exceptions.
- Not much use for tokenisation of languages like Arabic.

Non-alphanumeric characters

- Numbers such as 22.5
  - in word frequency counts, typically mapped to a single type ##
- Other characters:
  - Abbreviations: U.S.A.
  - Apostrophes: O’Hara vs. John’s
  - Whitespace: New Delhi
    - A problem for tokenisation
  - Hyphenated compounds:
    - so-called, A-1-plus vs. aluminum-export industry
    - How many words do we have here?

Tokenisation

- Task of breaking up running text into component words.
- Crucial for most NLP tasks, as parameters are typically estimated based on words.
- Can be statistical or rule-based. Often, simple regular expressions will go a long way.
- Some practical problems:
  - Whitespace: very useful in Indo-European languages. In other writing systems (e.g. East Asian languages, ancient Greek) no space is used.
  - Non-alphanumeric symbols: need to decide if these are part of a word or not.
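As a rough illustration of how far a simple regular expression can go, here is a minimal rule-based tokeniser sketch. The pattern and the names `TOKEN_RE` and `tokenise` are assumptions made for this example, not a standard; the pattern treats internal hyphens and apostrophes as part of a word and every other non-space symbol as a separate token.

```python
import re

# Hypothetical pattern (an assumption for this sketch):
#   - a run of letters/digits, optionally extended by "-" or an apostrophe
#     followed by more letters/digits (so-called, John's, il-kelb), or
#   - any single character that is neither a word character nor whitespace.
TOKEN_RE = re.compile(r"\w+(?:[-'’]\w+)*|[^\w\s]")

def tokenise(text):
    """Split running text into graphic words and punctuation marks."""
    return TOKEN_RE.findall(text)

tokens = tokenise("The so-called expert spoke to John's child.")
print(tokens)
# ['The', 'so-called', 'expert', 'spoke', 'to', "John's", 'child', '.']
```

The same pattern splits `22.5` into three tokens (`22`, `.`, `5`) and `New Delhi` into two, which is exactly the kind of decision about non-alphanumeric symbols and whitespace that a real tokeniser has to make explicitly.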

Types and tokens

Running example

- Throughout this lecture, data is taken from a corpus of Maltese texts:
  - ca. 51,000 words
  - all from Maltese-language newspapers
  - various topics and article types
- Compared to data from English corpora taken from Baroni 2007

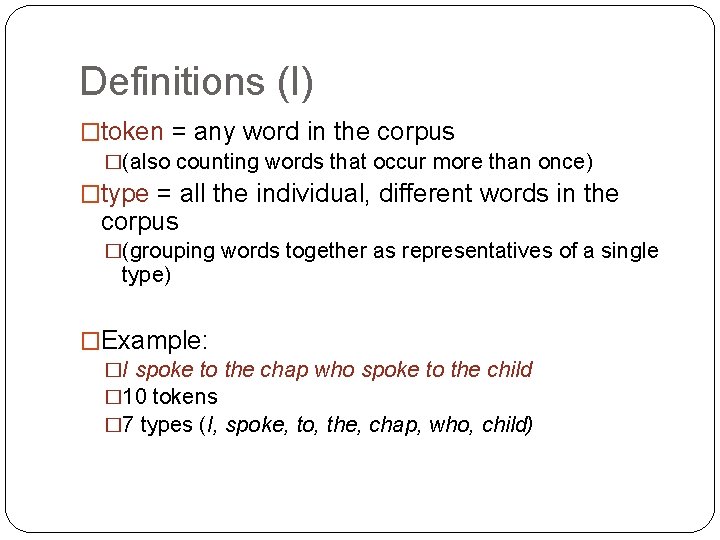

Definitions (I)

- token = any word in the corpus
  - (also counting words that occur more than once)
- type = each individual, distinct word in the corpus
  - (grouping repeated words together as representatives of a single type)
- Example:
  - I spoke to the chap who spoke to the child
  - 10 tokens
  - 7 types (I, spoke, to, the, chap, who, child)
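The example sentence can be checked with a few lines of Python. This is only a sketch: it assumes that splitting on whitespace is an adequate tokenisation for this sentence, and the variable names are illustrative.

```python
sentence = "I spoke to the chap who spoke to the child"

tokens = sentence.split()   # every running word, repeats included
types = set(tokens)         # each distinct word counted once

print(len(tokens))  # 10
print(len(types))   # 7
```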

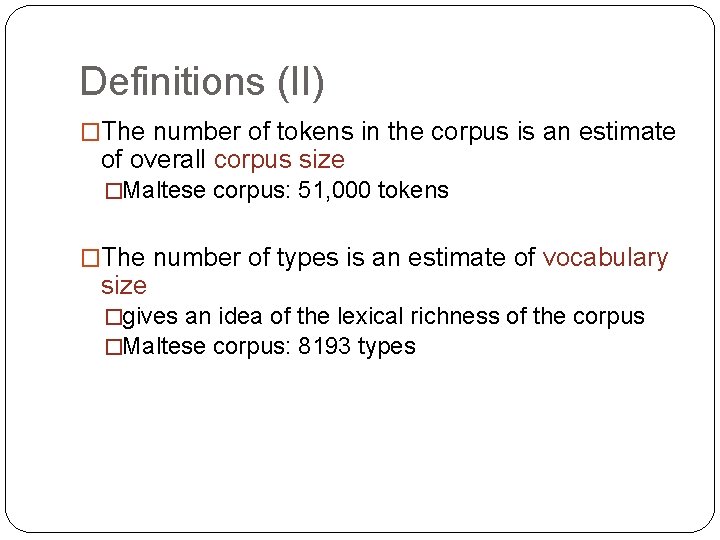

Definitions (II)

- The number of tokens in the corpus is an estimate of overall corpus size
  - Maltese corpus: ca. 51,000 tokens
- The number of types is an estimate of vocabulary size
  - gives an idea of the lexical richness of the corpus
  - Maltese corpus: 8193 types

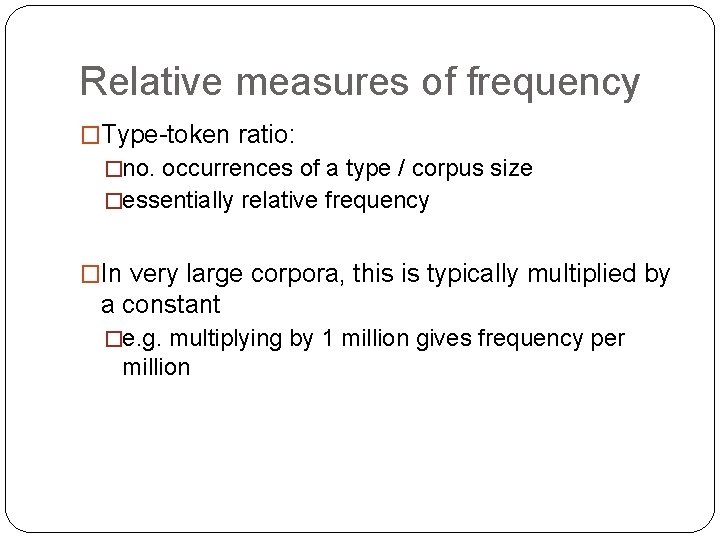

Relative measures of frequency

- Relative frequency of a type:
  - no. of occurrences of the type / corpus size (no. of tokens)
- In very large corpora, this is typically multiplied by a constant
  - e.g. multiplying by 1 million gives frequency per million
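A minimal sketch of the calculation, using a figure from the Maltese frequency list in this lecture (aħħar, 97 occurrences) and the approximate corpus size of 51,000 tokens. The function names are assumptions made for this example.

```python
def relative_frequency(count, corpus_size):
    """Occurrences of a type divided by the number of tokens in the corpus."""
    return count / corpus_size

def per_million(count, corpus_size):
    """Relative frequency scaled to occurrences per million tokens."""
    return relative_frequency(count, corpus_size) * 1_000_000

# aħħar ("last"): 97 occurrences in a corpus of ca. 51,000 tokens
print(round(relative_frequency(97, 51_000), 4))  # 0.0019
print(round(per_million(97, 51_000)))            # 1902
```

Scaling to a common base such as one million makes counts from corpora of different sizes easier to read side by side.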

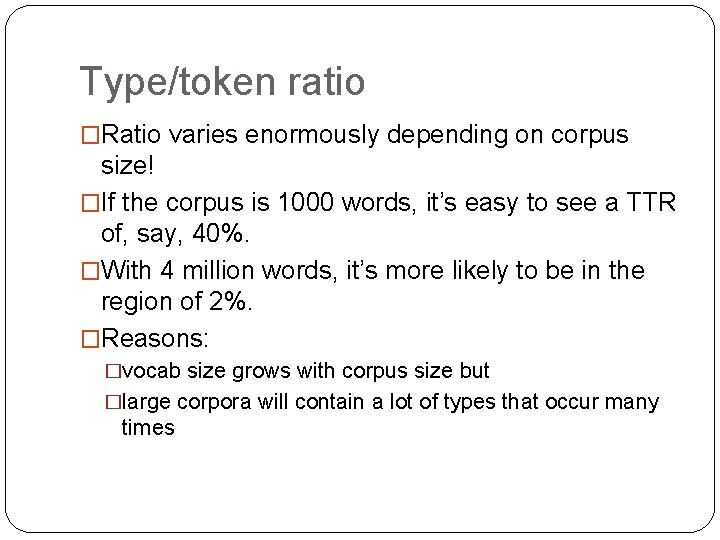

Type/token ratio

- Type/token ratio (TTR) = no. of types / no. of tokens
- Ratio varies enormously depending on corpus size!
  - If the corpus is 1000 words, it’s easy to see a TTR of, say, 40%.
  - With 4 million words, it’s more likely to be in the region of 2%.
- Reasons:
  - vocabulary size grows with corpus size, but
  - large corpora will contain a lot of types that occur many times
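The dependence on corpus size can be seen even on a toy scale. The sketch below assumes TTR is computed as distinct words over running words; `type_token_ratio` is an illustrative name, and doubling the sample by repetition is an artificial way to add tokens without adding types.

```python
def type_token_ratio(tokens):
    """Number of distinct types divided by the number of tokens."""
    return len(set(tokens)) / len(tokens)

tokens = "I spoke to the chap who spoke to the child".split()

print(type_token_ratio(tokens))      # 0.7  (7 types / 10 tokens)
print(type_token_ratio(tokens * 2))  # 0.35 (7 types / 20 tokens)

# Maltese corpus figures from this lecture: 8193 types, ca. 51,000 tokens
print(round(8193 / 51_000, 3))       # 0.161
```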

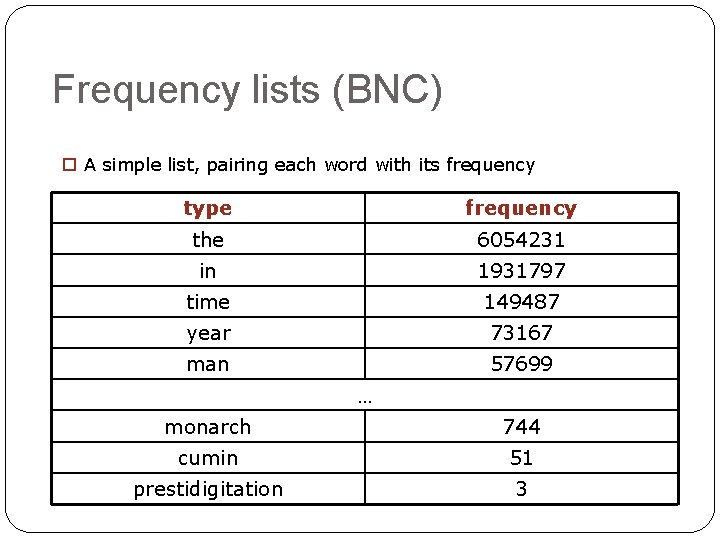

Frequency lists (BNC)

A simple list, pairing each word with its frequency:

| type | frequency |
|---|---|
| the | 6054231 |
| in | 1931797 |
| time | 149487 |
| year | 73167 |
| man | 57699 |
| … | … |
| monarch | 744 |
| cumin | 51 |
| prestidigitation | 3 |

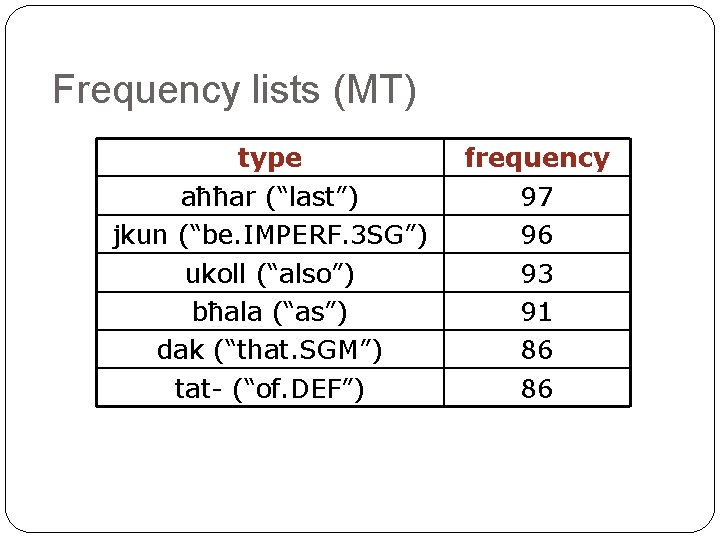

Frequency lists (MT)

| type | frequency |
|---|---|
| aħħar (“last”) | 97 |
| jkun (“be.IMPERF.3SG”) | 96 |
| ukoll (“also”) | 93 |
| bħala (“as”) | 91 |
| dak (“that.SGM”) | 86 |
| tat- (“of.DEF”) | 86 |

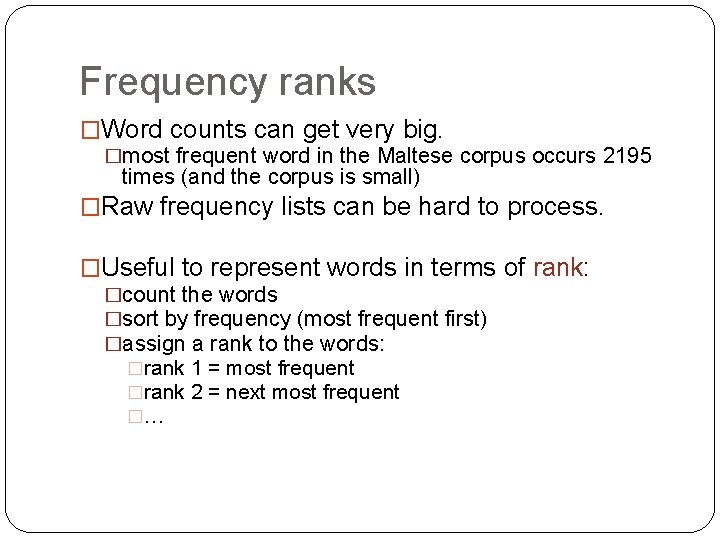

Frequency ranks

- Word counts can get very big.
  - most frequent word in the Maltese corpus occurs 2195 times (and the corpus is small)
- Raw frequency lists can be hard to process.
- Useful to represent words in terms of rank:
  - count the words
  - sort by frequency (most frequent first)
  - assign a rank to the words:
    - rank 1 = most frequent
    - rank 2 = next most frequent
    - …
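The count, sort and rank steps can be sketched with the standard library's `collections.Counter`. The function name `rank_frequency_profile` and the toy sentence are assumptions for illustration; a real profile would be built from a full corpus.

```python
from collections import Counter

def rank_frequency_profile(tokens):
    """Return (rank, type, frequency) triples, most frequent type first."""
    counts = Counter(tokens)
    # most_common() sorts by descending count; types with equal counts keep
    # the order in which they were first seen, so ties get arbitrary ranks.
    return [
        (rank, word, freq)
        for rank, (word, freq) in enumerate(counts.most_common(), start=1)
    ]

tokens = "I spoke to the chap who spoke to the child".split()
profile = rank_frequency_profile(tokens)
for rank, word, freq in profile:
    print(rank, word, freq)
```

Every type receives its own rank, so the three types that occur twice in the toy sentence end up at ranks 1 to 3 in first-seen order.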

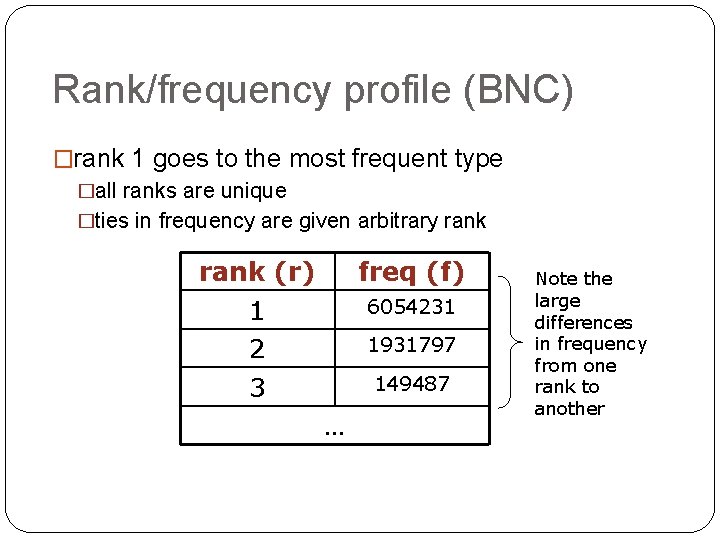

Rank/frequency profile (BNC)

- rank 1 goes to the most frequent type
- all ranks are unique
  - ties in frequency are given arbitrary ranks

| rank (r) | freq (f) |
|---|---|
| 1 | 6054231 |
| 2 | 1931797 |
| 3 | 149487 |
| … | … |

Note the large differences in frequency from one rank to another.

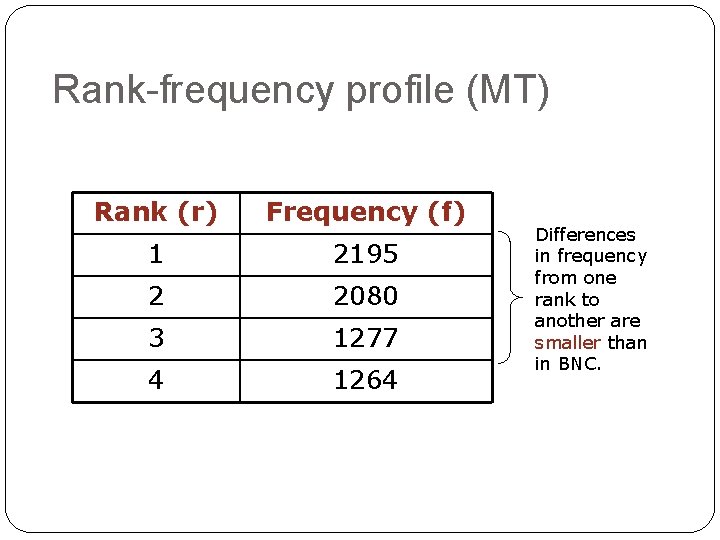

Rank-frequency profile (MT)

| Rank (r) | Frequency (f) |
|---|---|
| 1 | 2195 |
| 2 | 2080 |
| 3 | 1277 |
| 4 | 1264 |

Differences in frequency from one rank to another are smaller than in BNC.

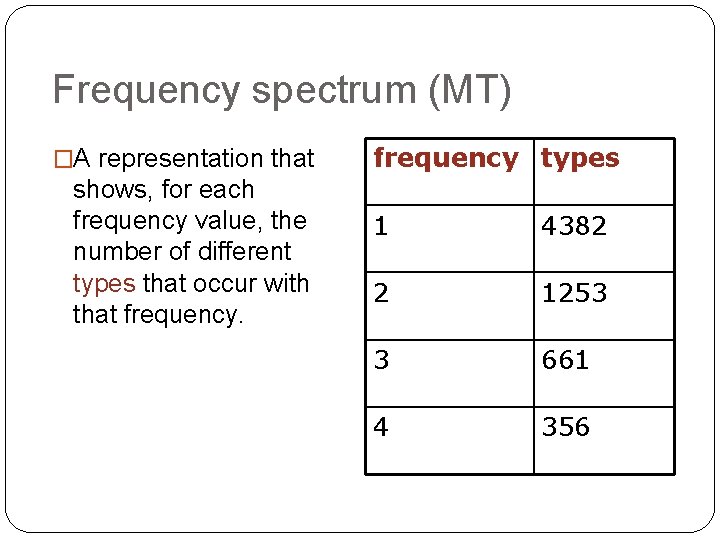

Frequency spectrum (MT)

- A representation that shows, for each frequency value, the number of different types that occur with that frequency

| frequency | types |
|---|---|
| 1 | 4382 |
| 2 | 1253 |
| 3 | 661 |
| 4 | 356 |
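A frequency spectrum is a count of counts, which makes it a two-step use of `collections.Counter`. The sketch below runs on a toy sentence rather than the Maltese corpus, and `frequency_spectrum` is an illustrative name.

```python
from collections import Counter

def frequency_spectrum(tokens):
    """Map each frequency value to the number of types with that frequency."""
    type_counts = Counter(tokens)          # type -> frequency
    return Counter(type_counts.values())   # frequency -> number of types

tokens = "I spoke to the chap who spoke to the child".split()
spectrum = frequency_spectrum(tokens)

for freq in sorted(spectrum):
    print(freq, spectrum[freq])
# 1 4
# 2 3
```

Summing frequency times number of types over the spectrum recovers the token count (here 1 × 4 + 2 × 3 = 10), which is a useful sanity check.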

Word distributions (few giants, many midgets)

Non-linguistic case study

- Suppose we are interested in measuring people’s height.
  - population = adult, male/female, European
  - sample: N people from the relevant population
  - measure height of each person in the sample
- Results:
  - person 1: 1.6 m
  - person 2: 1.5 m
  - …

Measures of central tendency

- Given the height of individuals in our sample, we can calculate some summary statistics:
  - mean (“average”): sum of all heights in sample, divided by N
  - mode: most frequent value
- What are your expectations?
  - will most people be extremely tall?
  - extremely short?
  - more or less average?

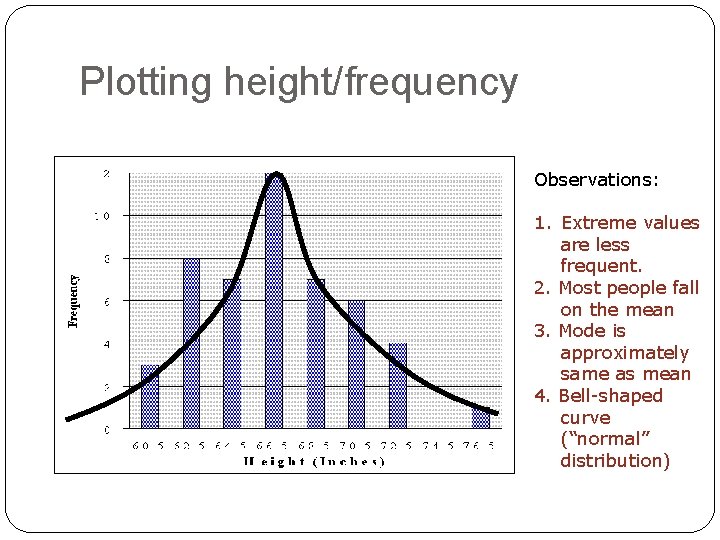

Plotting height/frequency

Observations:

1. Extreme values are less frequent.
2. Most people fall close to the mean.
3. Mode is approximately the same as the mean.
4. Bell-shaped curve (“normal” distribution).

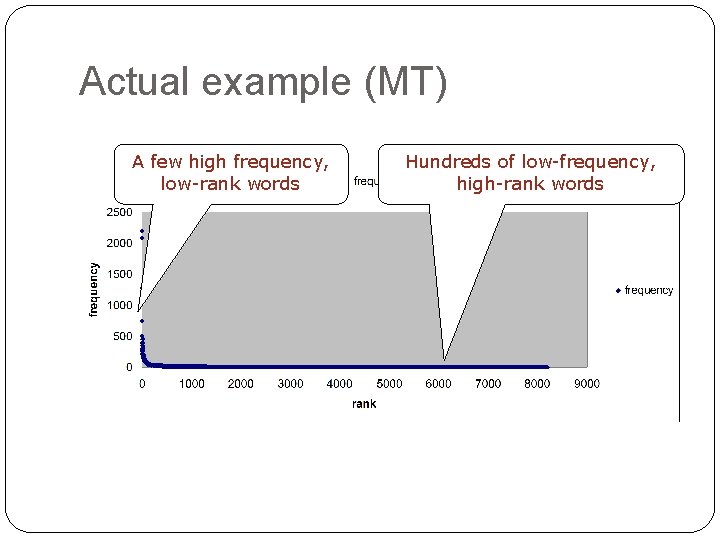

Distributions of words

- Out of 51,000 tokens in the Maltese corpus:
  - 8016 tokens belong to just the 5 most frequent types (the types at ranks 1 to 5)
  - ca. 15% of our corpus size is made up of only 5 different words!
- Out of 8193 types:
  - 4382 are hapax legomena, occurring only once (bottom ranks)
  - 1253 occur only twice
  - …
- In this data, the mean won’t tell us very much.
  - it hides huge variations!
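The proportions quoted for the Maltese corpus follow directly from the counts. The sketch below simply redoes the arithmetic; the variable names are illustrative, and the corpus size is the approximate figure of 51,000 tokens.

```python
corpus_size = 51_000      # tokens (approximate figure for the Maltese corpus)
vocabulary_size = 8193    # types
top5_tokens = 8016        # tokens belonging to the 5 most frequent types
hapaxes = 4382            # types that occur exactly once

top5_share = top5_tokens / corpus_size
hapax_share = hapaxes / vocabulary_size

print(f"top 5 types cover {top5_share:.1%} of all tokens")
print(f"hapax legomena make up {hapax_share:.1%} of all types")
```

A handful of types account for a sizeable share of the tokens, while more than half of the vocabulary is seen only once.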

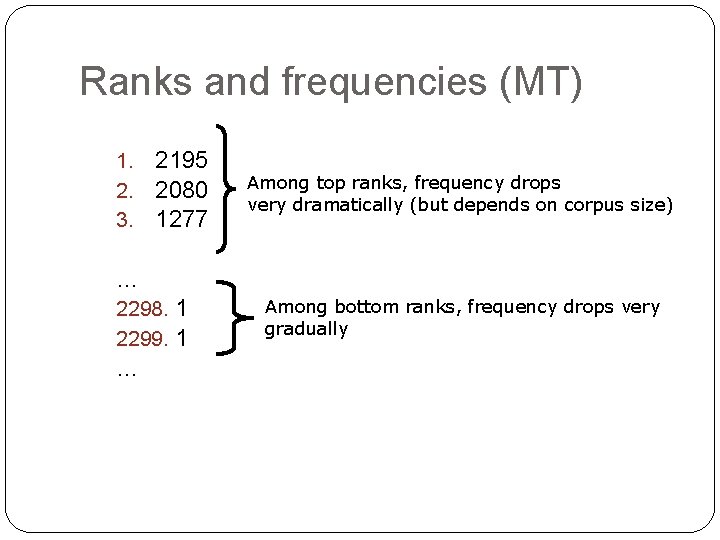

Ranks and frequencies (MT)

| rank | frequency |
|---|---|
| 1 | 2195 |
| 2 | 2080 |
| 3 | 1277 |
| … | … |
| 2298 | 1 |
| 2299 | 1 |
| … | … |

- Among top ranks, frequency drops very dramatically (but this depends on corpus size).
- Among bottom ranks, frequency drops very gradually.

General observations

- There are always a few very high-frequency words, and many low-frequency words.
- Among the top ranks, frequency differences are big.
- Among bottom ranks, frequency differences are very small.

So what are the high-frequency words?

- Top-ranked words in the Maltese data:
  - li (“that”), l- (DEF), il- (DEF), u (“and”), ta’ (“of”), tal- (“of the”)
- Bottom-ranked words:
  - żona (“zone”) f = 1
  - yankee f = 1
  - żwieten (“Zejtun residents”) f = 1
  - xortih (“luck.3SGM”) f = 1
  - widnejhom (“ear.POSS.3PL”) f = 1

Frequency distributions in corpora

- The top few frequency ranks are taken up by function words.
  - In the Brown corpus, the 10 top-ranked words make up 23% of total corpus size (Baroni, 2007)
- Bottom-ranked words display lots of ties in frequency.
  - Lots of words occurring only once (hapax legomena)
  - In Brown, ca. ½ of vocabulary size is made up of words that occur only once.

Implications

- The mean or average frequency hides huge deviations.
  - In Brown, average frequency of a type is 19 tokens. But:
    - the mean is inflated by a few very frequent types
    - most words will have frequency well below the mean
- Mean will therefore be higher than median (the middle value)
  - not a very meaningful indicator of central tendency
- Mode (most frequent value) is usually 1.
- This is typical of most large corpora. The same happens if we look at n-grams rather than words.
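The same pattern shows up in the Maltese figures given earlier in this lecture. The sketch below derives mean, median and mode of type frequency from the corpus totals and the first rows of the frequency spectrum. It assumes that no frequency value above 4 is shared by more types than frequency 1, which holds for any spectrum of the usual Zipfian shape; the variable names are illustrative.

```python
n_tokens = 51_000   # approximate corpus size
n_types = 8193
# First rows of the Maltese frequency spectrum: frequency -> number of types
spectrum_head = {1: 4382, 2: 1253, 3: 661, 4: 356}

# Mean frequency of a type: tokens per type
mean_freq = n_tokens / n_types

# Median: walk up the spectrum until half of all types are covered
half = n_types / 2
cumulative = 0
median_freq = None
for freq in sorted(spectrum_head):
    cumulative += spectrum_head[freq]
    if cumulative >= half:
        median_freq = freq
        break

# Mode: the frequency value shared by the largest number of types
# (assumption: the rows beyond frequency 4 are all smaller than the first)
mode_freq = max(spectrum_head, key=spectrum_head.get)

print(round(mean_freq, 1), median_freq, mode_freq)  # 6.2 1 1
```

Because the hapax legomena alone cover more than half of the types, the median is 1 even though the mean is above 6.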

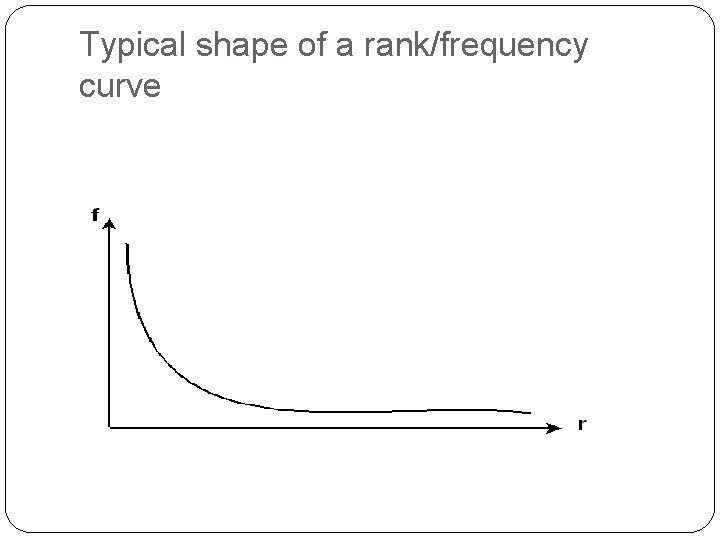

Typical shape of a rank/frequency curve

Actual example (MT)

Figure labels: a few high-frequency, low-rank words; hundreds of low-frequency, high-rank words.

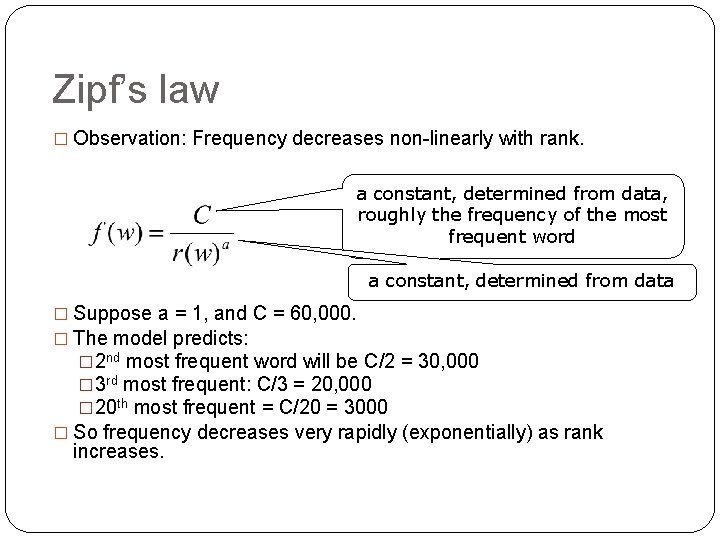

Zipf’s law

- Observation: frequency decreases non-linearly with rank.

$$f(w) = \frac{C}{r(w)^{a}}$$

- Here f(w) is the frequency of word w and r(w) is its rank, with:
  - C: a constant, determined from data, roughly the frequency of the most frequent word
  - a: a constant, determined from data
- Suppose a = 1, and C = 60,000.
- The model predicts:
  - 2nd most frequent word will be C/2 = 30,000
  - 3rd most frequent: C/3 = 20,000
  - 20th most frequent: C/20 = 3000
- So frequency decreases very rapidly as rank increases (as an inverse power of rank, not exponentially).
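The predictions above can be reproduced with a one-line function. This is a sketch of the model with the example constants C = 60,000 and a = 1; `zipf_frequency` is an illustrative name.

```python
def zipf_frequency(rank, C=60_000, a=1.0):
    """Frequency predicted by Zipf's law for a word of the given rank."""
    return C / rank ** a

for rank in (1, 2, 3, 20):
    print(rank, zipf_frequency(rank))
# 1 60000.0
# 2 30000.0
# 3 20000.0
# 20 3000.0
```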

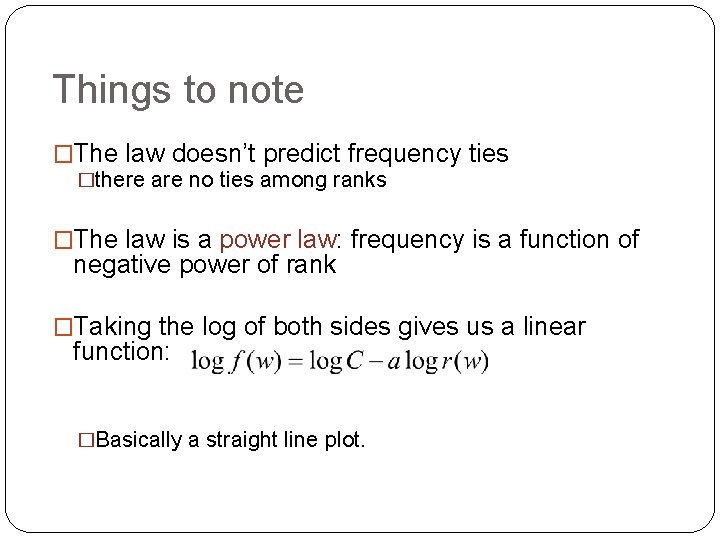

Things to note

- The law doesn’t predict frequency ties
  - there are no ties among ranks
- The law is a power law: frequency is a function of a negative power of rank
- Taking the log of both sides gives us a linear function:

$$\log f(w) = \log C - a \log r(w)$$

- Basically a straight line plot, with slope -a and intercept log C.
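Because the log-transformed law is a straight line, the constants a and C can be estimated by ordinary least squares on log rank and log frequency. The sketch below is one simple way to do this and is not necessarily how the plots in this lecture were produced; `fit_zipf` is an illustrative name, and the data is synthetic (exact Zipf frequencies with C = 60,000 and a = 1).

```python
import math

def fit_zipf(ranks, freqs):
    """Least-squares fit of log f = log C - a * log r; returns (a, C)."""
    xs = [math.log(r) for r in ranks]
    ys = [math.log(f) for f in freqs]
    n = len(xs)
    mean_x = sum(xs) / n
    mean_y = sum(ys) / n
    # Slope of the regression line in log-log space
    slope = (
        sum((x - mean_x) * (y - mean_y) for x, y in zip(xs, ys))
        / sum((x - mean_x) ** 2 for x in xs)
    )
    intercept = mean_y - slope * mean_x
    return -slope, math.exp(intercept)

# Synthetic data that follows the law exactly
ranks = list(range(1, 101))
freqs = [60_000 / r for r in ranks]

a, C = fit_zipf(ranks, freqs)
print(round(a, 3), round(C))  # approximately 1.0 and 60000
```

On real rank/frequency data the fitted line will not pass through every point, and the residuals at the very top and very bottom ranks are where deviations from the law tend to show.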

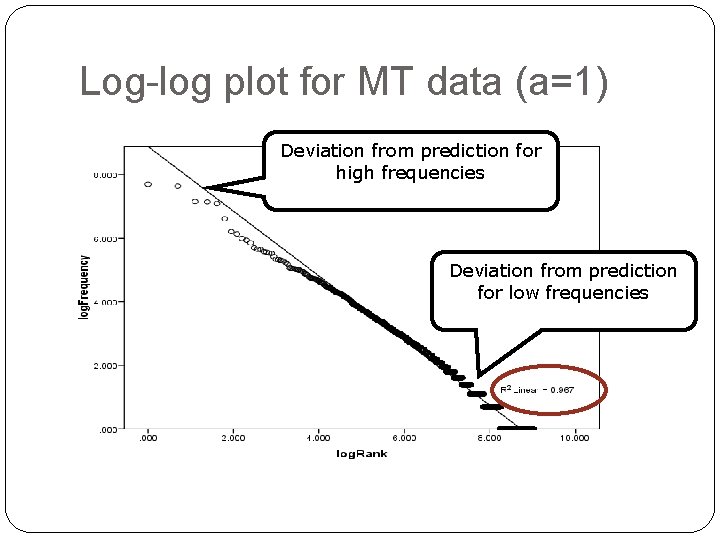

Log-log plot for MT data (a=1)

Figure labels: deviation from prediction for high frequencies; deviation from prediction for low frequencies.

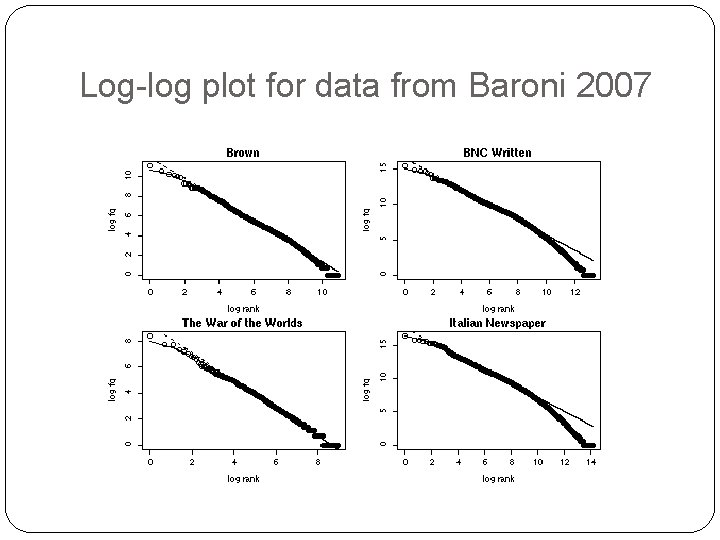

Log-log plot for data from Baroni 2007

Some observations

- Empirical work has shown that the law doesn’t perfectly predict frequencies:
  - at the bottom ranks (low frequencies), actual frequency drops more rapidly than predicted
  - at the top ranks (high frequencies), the model predicts higher frequencies than actually attested

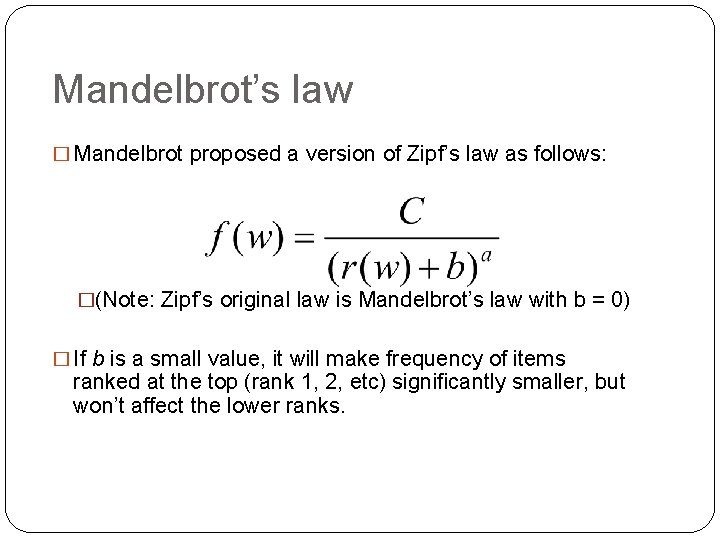

Mandelbrot’s law

- Mandelbrot proposed a version of Zipf’s law as follows:

$$f(w) = \frac{C}{(r(w) + b)^{a}}$$

- (Note: Zipf’s original law is Mandelbrot’s law with b = 0)
- If b is a small value, it will make the predicted frequency of items ranked at the top (rank 1, 2, etc.) significantly smaller, but will hardly affect the lower ranks.

Comparison

- Let C = 60,000, a = 1 and b = 1
- Then, for a word of rank 1:
  - Zipf’s law predicts f(w) = 60,000/1 = 60,000
  - Mandelbrot’s law predicts f(w) = 60,000/(1+1) = 30,000
- For a word of rank 1000:
  - Zipf predicts: f(w) = 60,000/1000 = 60
  - Mandelbrot: f(w) = 60,000/1001 = 59.94
- So differences are bigger at the top than at the bottom.
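The comparison can be reproduced directly. The sketch below uses the example constants C = 60,000, a = 1 and b = 1; both function names are illustrative.

```python
def zipf_frequency(rank, C=60_000, a=1.0):
    """Zipf's law: f = C / r^a."""
    return C / rank ** a

def mandelbrot_frequency(rank, C=60_000, a=1.0, b=1.0):
    """Mandelbrot's law: f = C / (r + b)^a; b = 0 gives Zipf's law."""
    return C / (rank + b) ** a

for rank in (1, 1000):
    z = zipf_frequency(rank)
    m = mandelbrot_frequency(rank)
    print(rank, z, round(m, 2), round(z - m, 2))
# 1 60000.0 30000.0 30000.0
# 1000 60.0 59.94 0.06
```

The shift b halves the prediction at rank 1 but changes it by well under one occurrence at rank 1000.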

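Log-transformed version of Mandelbrot

$$\log f(w) = \log C - a \log (r(w) + b)$$

- Note: for b ≠ 0 this is no longer a straight line in a log-log plot of frequency against rank, so it should fit our data better.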

Consequences of the law

- Data sparseness: no matter how big your corpus, most of the words in it will be of very low frequency.
- You can’t exhaust the vocabulary of a language: new words will crop up as corpus size increases.
  - implication: you can’t compare vocabulary richness of corpora of different sizes

Explanation for Zipfian distributions

- Zipf’s own explanation (“least effort” principle):
  - Speaker’s goal is to minimise effort by using a few distinct words as frequently as possible
  - Hearer’s goal is to maximise clarity by having as large a vocabulary as possible
  - On this view, the observed distribution is a compromise between the two pressures

Other Zipfian distributions

- Zipf’s law crops up in other domains (e.g. distribution of incomes)
- Even randomly generated character strings show the same pattern!
  - there are few distinct short strings, but each is likely to crop up by chance
  - there are many more distinct long strings, but each one is individually less likely
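The random-string case is easy to simulate. The sketch below is a toy setup, not taken from the lecture: it draws characters uniformly from a two-letter alphabet plus a space, treats the space as a word separator, and builds a rank/frequency list. The function name, alphabet, text length and seed are all assumptions.

```python
import random
from collections import Counter

def random_text_profile(n_chars=100_000, alphabet="ab", seed=0):
    """Rank/frequency list for 'words' in uniformly random character text."""
    rng = random.Random(seed)       # fixed seed so the run is repeatable
    symbols = alphabet + " "        # the space acts as the word separator
    text = "".join(rng.choices(symbols, k=n_chars))
    counts = Counter(text.split())  # split() drops empty strings
    return counts.most_common()     # (word, frequency), most frequent first

profile = random_text_profile()
hapaxes = sum(1 for _, freq in profile if freq == 1)

print(profile[:5])
print(len(profile), "types,", hapaxes, "hapax legomena")
```

The top ranks go to the shortest strings, and the tail consists of long strings that occur once each, which is the few-giants, many-midgets shape seen in real corpora.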
