Virtuoso Adaptive Virtualized Distributed Computing Peter A Dinda

Virtuoso: Adaptive Virtualized Distributed Computing Peter A. Dinda Prescience Lab Department of Electrical Engineering and Computer Science Northwestern University http://presciencelab.org © 2006 Peter A. Dinda

People and Acknowledgements • Students – Jack Lange, Ananth Sundararaj, Bin Lin, Ashish Gupta, Blair Heuer, Alex Shoykhet • Collaborators – In-Vigo project at University of Florida (NSF collaborative project) • Renato Figueiredo, Jose Fortes – Wren project at W&M • Bruce Lowekamp • Funders/Gifts – NSF through several awards, VMware 2

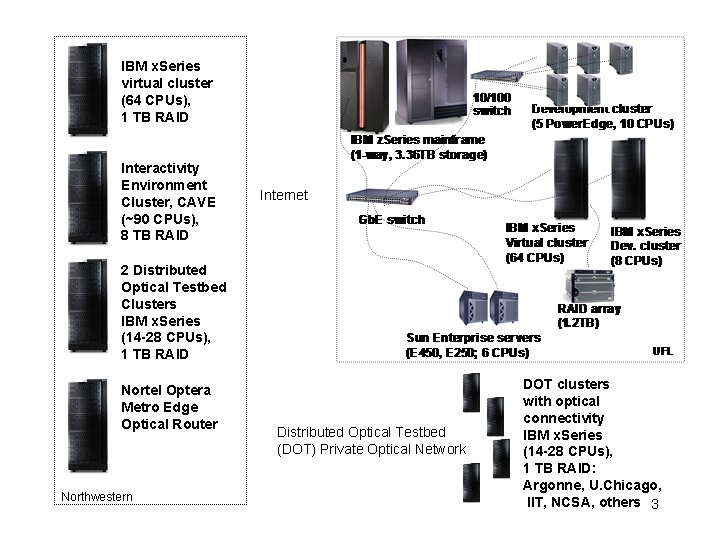

IBM xSeries virtual cluster (64 CPUs), 1 TB RAID Interactivity Environment Cluster, CAVE (~90 CPUs), 8 TB RAID Internet2 Distributed Optical Testbed Clusters IBM xSeries (14-28 CPUs), 1 TB RAID Nortel Optera Metro Edge Optical Router Northwestern Distributed Optical Testbed (DOT) Private Optical Network DOT clusters with optical connectivity IBM xSeries (14-28 CPUs), 1 TB RAID: Argonne, U. Chicago, IIT, NCSA, others

Users already know how to deal with this complexity at another level 4

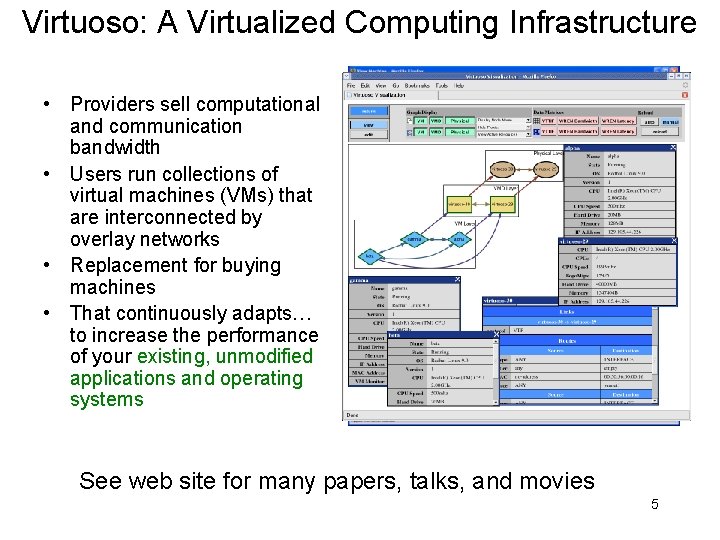

Virtuoso: A Virtualized Computing Infrastructure • Providers sell computational and communication bandwidth • Users run collections of virtual machines (VMs) that are interconnected by overlay networks • Replacement for buying machines • That continuously adapts… to increase the performance of your existing, unmodified applications and operating systems See web site for many papers, talks, and movies 5

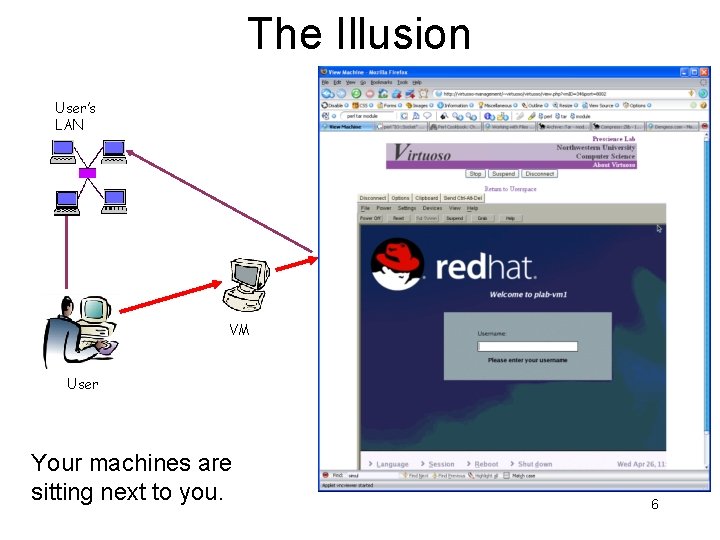

The Illusion User’s LAN VM User Your machines are sitting next to you. 6

Claim (initially published in 2003) • VMs interconnected with overlay networks enables the broad application of dream techniques… – Inference/measurement/prediction – Adaptation – Resource reservation – Marketplaces • … using existing, unmodified applications and operating systems – So actual people can use the techniques 7

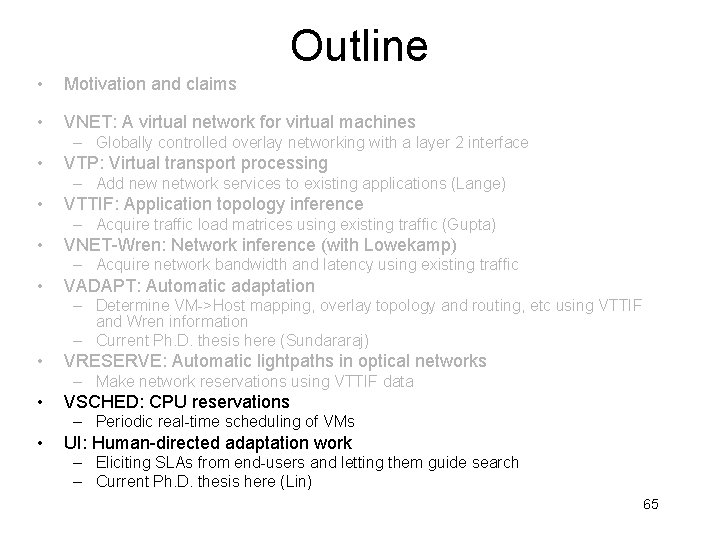

Outline • Motivation and claims • VNET: A virtual network for virtual machines – Globally controlled overlay networking with a layer 2 interface • VTP: Virtual transport processing – Add new network services to existing applications (Lange) • VTTIF: Application topology inference – Acquire traffic load matrices using existing traffic (Gupta) • VNET-Wren: Network inference (with Lowekamp) – Acquire network bandwidth and latency using existing traffic • VADAPT: Automatic adaptation – Determine VM->Host mapping, overlay topology and routing, etc., using VTTIF and Wren information – Current Ph.D. thesis here (Sundararaj) • VRESERVE: Automatic lightpaths in optical networks – Make network reservations using VTTIF data • VSCHED: CPU reservations – Periodic real-time scheduling of VMs • UI: Human-directed adaptation work – Eliciting SLAs from end-users and letting them guide search – Current Ph.D. thesis here (Lin)

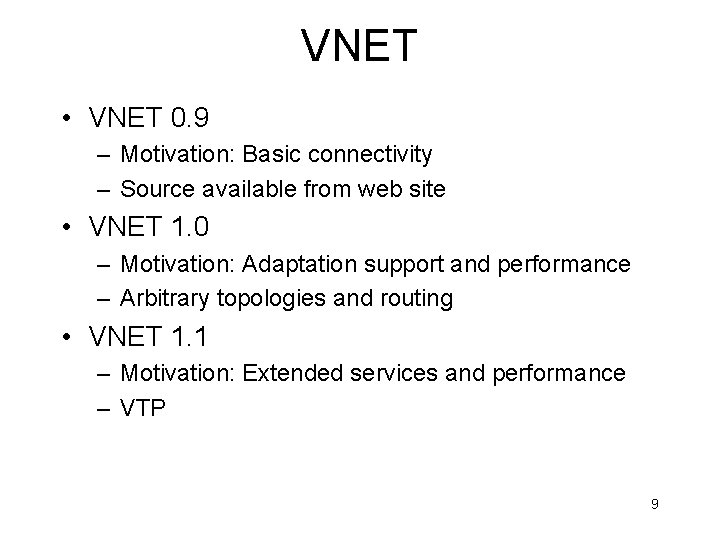

VNET • VNET 0.9 – Motivation: Basic connectivity – Source available from web site • VNET 1.0 – Motivation: Adaptation support and performance – Arbitrary topologies and routing • VNET 1.1 – Motivation: Extended services and performance – VTP

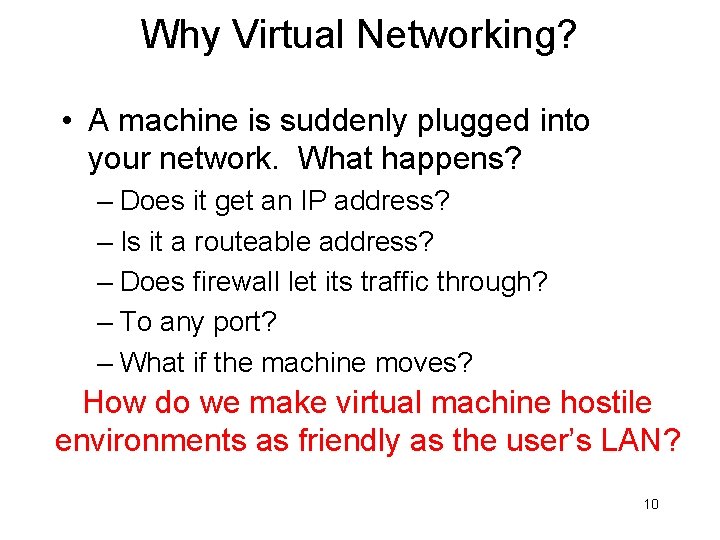

Why Virtual Networking? • A machine is suddenly plugged into your network. What happens? – Does it get an IP address? – Is it a routeable address? – Does firewall let its traffic through? – To any port? – What if the machine moves? How do we make virtual machine hostile environments as friendly as the user’s LAN? 10

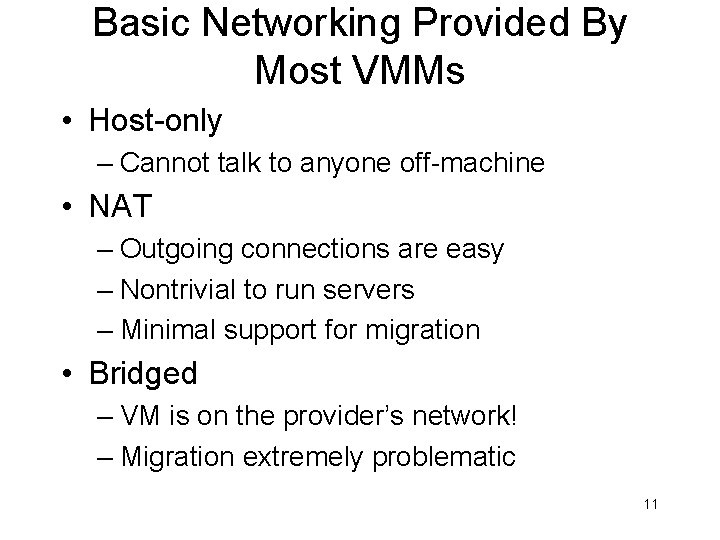

Basic Networking Provided By Most VMMs • Host-only – Cannot talk to anyone off-machine • NAT – Outgoing connections are easy – Nontrivial to run servers – Minimal support for migration • Bridged – VM is on the provider’s network! – Migration extremely problematic 11

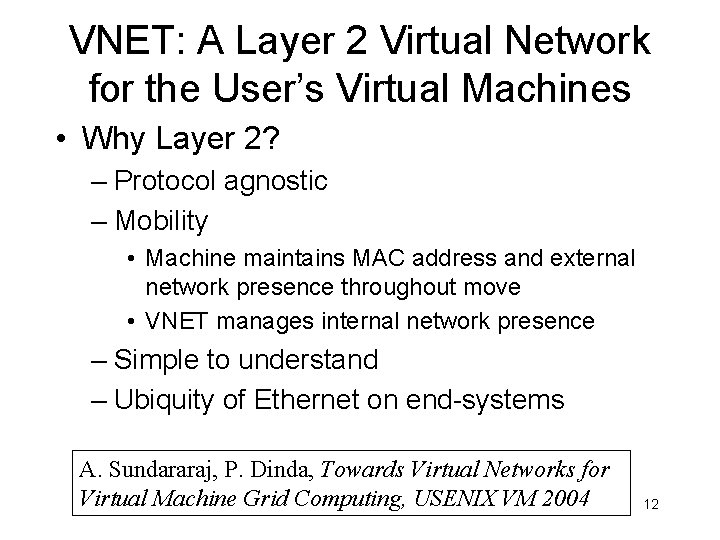

VNET: A Layer 2 Virtual Network for the User’s Virtual Machines • Why Layer 2? – Protocol agnostic – Mobility • Machine maintains MAC address and external network presence throughout move • VNET manages internal network presence – Simple to understand – Ubiquity of Ethernet on end-systems A. Sundararaj, P. Dinda, Towards Virtual Networks for Virtual Machine Grid Computing, USENIX VM 2004 12

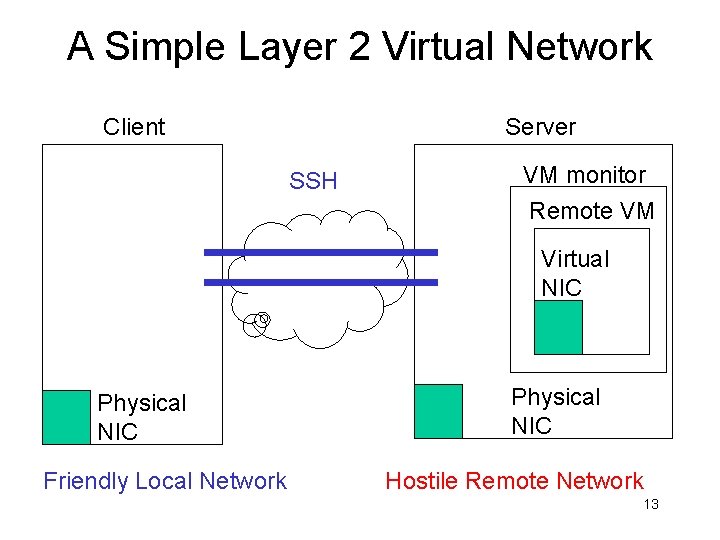

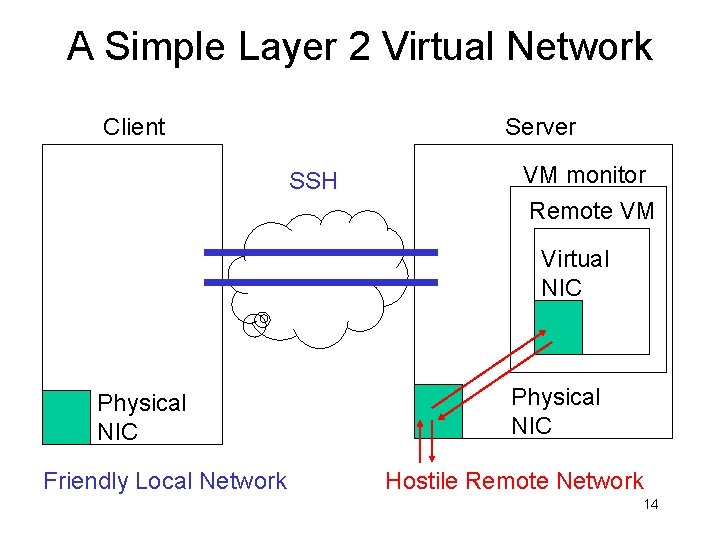

A Simple Layer 2 Virtual Network Client Server SSH VM monitor Remote VM Virtual NIC Physical NIC Friendly Local Network Physical NIC Hostile Remote Network 13


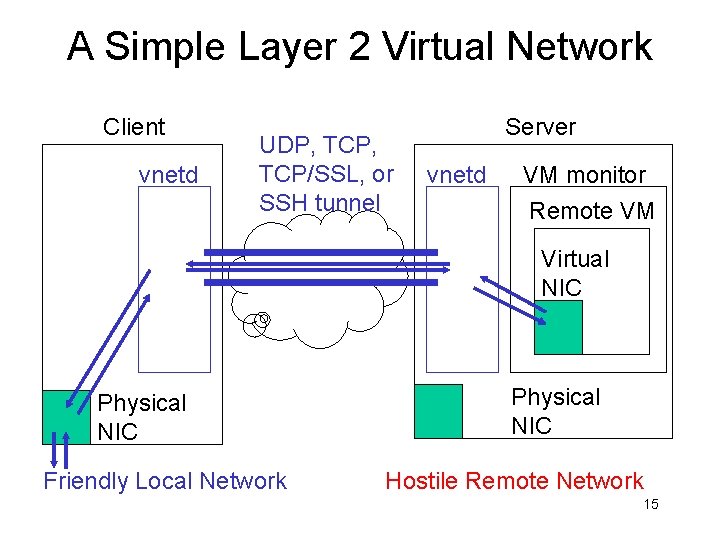

A Simple Layer 2 Virtual Network Client vnetd UDP, TCP/SSL, or SSH tunnel Server vnetd VM monitor Remote VM Virtual NIC Physical NIC Friendly Local Network Physical NIC Hostile Remote Network 15

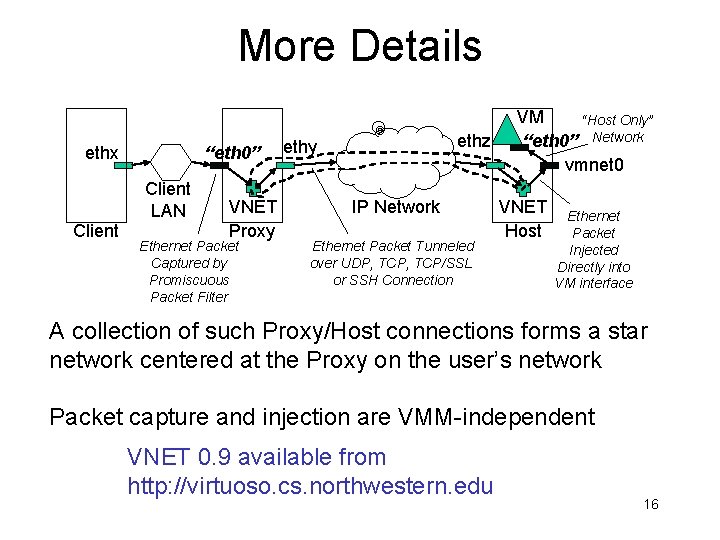

More Details ethx “eth0” Client LAN Client VNET Proxy Ethernet Packet Captured by Promiscuous Packet Filter ethz ethy IP Network Ethernet Packet Tunneled over UDP, TCP/SSL or SSH Connection VM “Host Only” “eth0” Network vmnet0 VNET Host Ethernet Packet Injected Directly into VM interface. A collection of such Proxy/Host connections forms a star network centered at the Proxy on the user’s network. Packet capture and injection are VMM-independent. VNET 0.9 available from http://virtuoso.cs.northwestern.edu

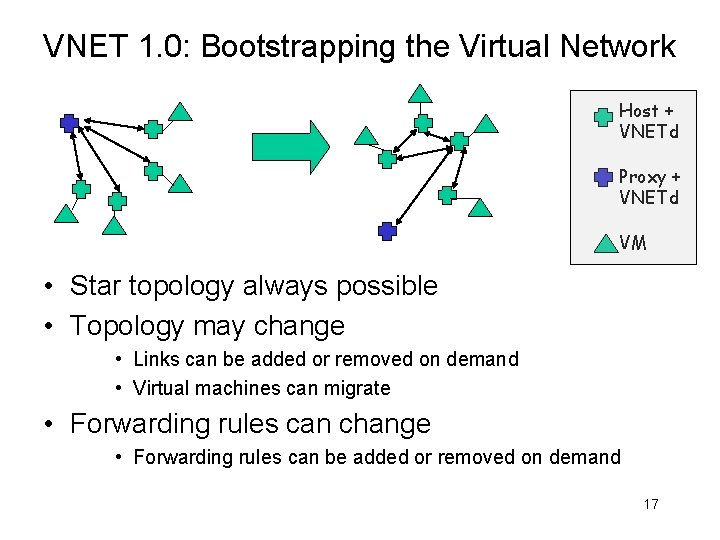

VNET 1.0: Bootstrapping the Virtual Network Host + VNETd Proxy + VNETd VM • Star topology always possible • Topology may change • Links can be added or removed on demand • Virtual machines can migrate • Forwarding rules can change • Forwarding rules can be added or removed on demand

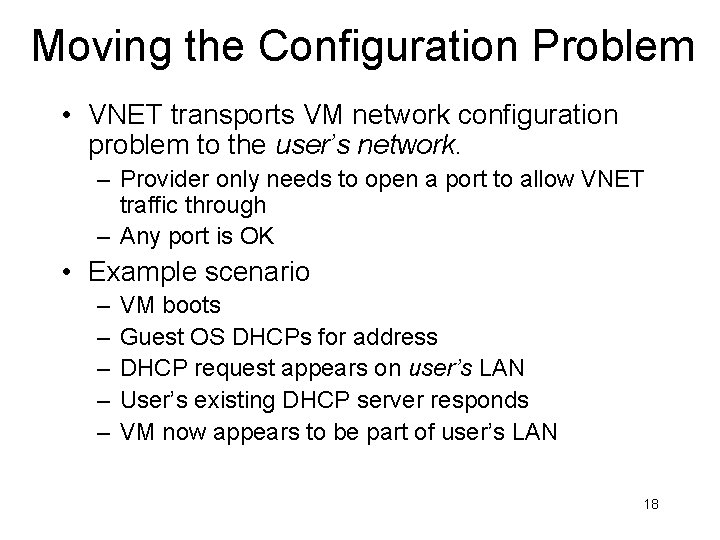

Moving the Configuration Problem • VNET transports VM network configuration problem to the user’s network. – Provider only needs to open a port to allow VNET traffic through – Any port is OK • Example scenario – VM boots – Guest OS DHCPs for address – DHCP request appears on user’s LAN – User’s existing DHCP server responds – VM now appears to be part of user’s LAN

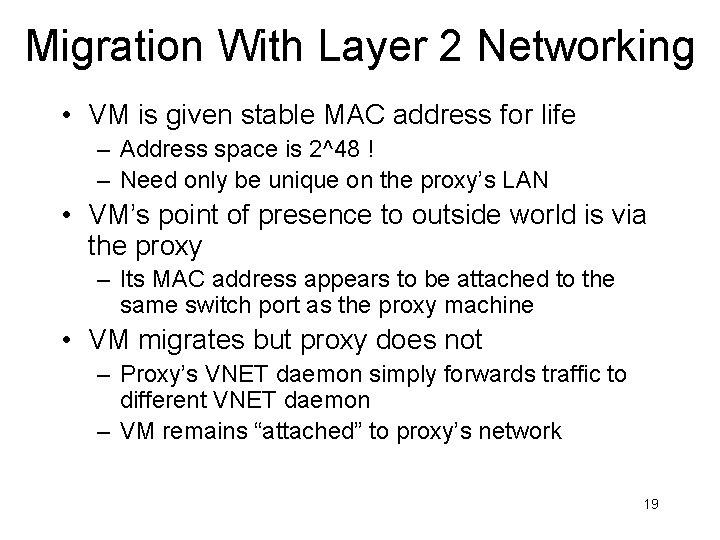

Migration With Layer 2 Networking • VM is given stable MAC address for life – Address space is 2^48 ! – Need only be unique on the proxy’s LAN • VM’s point of presence to outside world is via the proxy – Its MAC address appears to be attached to the same switch port as the proxy machine • VM migrates but proxy does not – Proxy’s VNET daemon simply forwards traffic to different VNET daemon – VM remains “attached” to proxy’s network 19
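The proxy-side behavior described here (the VM keeps its MAC address, and only the daemon that the proxy forwards to changes) can be pictured as a small forwarding table. The following is a minimal sketch under stated assumptions, not VNET's actual code: the class name `ProxyForwarder`, its methods, and the daemon labels are all hypothetical.

```python
from __future__ import annotations

from dataclasses import dataclass, field


@dataclass
class ProxyForwarder:
    """Toy model of a proxy daemon: maps a VM's MAC to the daemon hosting it."""

    # Hypothetical rule table: VM MAC address -> label of the remote daemon.
    rules: dict[str, str] = field(default_factory=dict)

    def attach(self, vm_mac: str, host_daemon: str) -> None:
        # Install (or overwrite) the forwarding rule for this VM.
        self.rules[vm_mac] = host_daemon

    def migrate(self, vm_mac: str, new_host_daemon: str) -> None:
        # The MAC stays the same for life; only the tunnel endpoint changes.
        if vm_mac not in self.rules:
            raise KeyError(f"unknown VM MAC {vm_mac}")
        self.rules[vm_mac] = new_host_daemon

    def next_hop(self, dest_mac: str) -> str | None:
        # None means "not one of our VMs": leave the frame on the local LAN.
        return self.rules.get(dest_mac)


proxy = ProxyForwarder()
proxy.attach("00:50:56:00:00:01", "host1-vnetd")
proxy.migrate("00:50:56:00:00:01", "host2-vnetd")
print(proxy.next_hop("00:50:56:00:00:01"))
```

From the LAN's point of view nothing moved: frames for the VM's MAC still arrive at the proxy, which now tunnels them to a different daemon.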

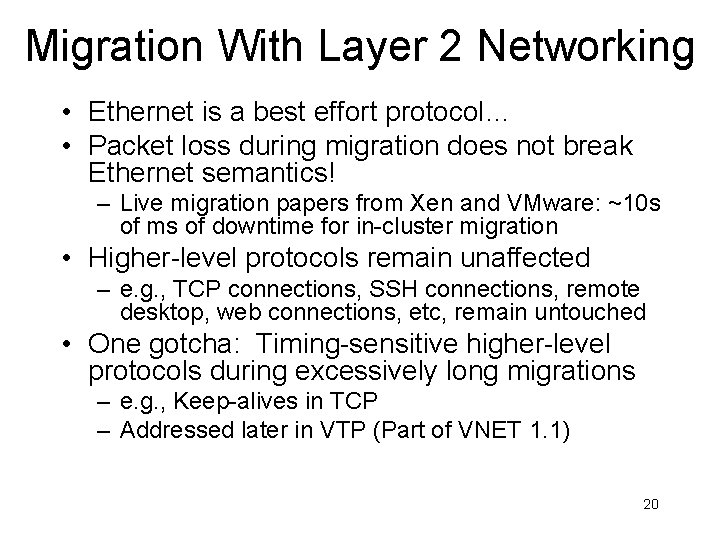

Migration With Layer 2 Networking • Ethernet is a best effort protocol… • Packet loss during migration does not break Ethernet semantics! – Live migration papers from Xen and VMware: ~10s of ms of downtime for in-cluster migration • Higher-level protocols remain unaffected – e.g., TCP connections, SSH connections, remote desktop, web connections, etc., remain untouched • One gotcha: Timing-sensitive higher-level protocols during excessively long migrations – e.g., keep-alives in TCP – Addressed later in VTP (part of VNET 1.1)

VNET 1.0 Performance • Using UDP links and forwarding rule cache • ~20 MB/s, ~0.5 ms – VM-to-VM ttcp and ping tests – VMware Bridged: ~26 MB/s • Add/Delete Link: 21 ms • Add/Delete Forwarding Rule: 16 ms • IBM e1350 cluster, Gigabit switch • Dual 2.0 GHz Xeon • RH 9 host, RH 9 guest, VMware GSX 2.5 • VMware Server and Xen tests similar J. Lange, A. Sundararaj, P. Dinda, Automatic Dynamic Run-time Optical Network Reservations, HPDC 2005


Virtual Transport Processing (VTP) • VNET can inspect Ethernet packet content… – At layer 2, 3, 4, … • Manipulate it… – Packet header and content modifications • Generate new packets… – Acks, keep-alive • And maintain state. – Example: virtual TCP endpoint • Addresses limitations of layer 2 tunneling – Example: Long-duration migration • Allows addition of new services 23

VTP: Address Translation • Implement NAT and firewall processing • At whatever combination of layers you would like • Subnet Tunneling – Mac address modification 24

VTP: Enhancing the Transport Layer • VM opens TCP connection and sends data • VTP locally ACKs each segment, forcing VM to send at a high rate, even if the app in the VM has made a ridiculously bad socket buffer choice • VTP buffers ACKed packets and delivers them according to network-appropriate rules – E.g., full delay-bandwidth product, Vegas congestion control, selective ACKs, etc. • Performance Enhancing Proxy – RFC 3135 • Working example: Local ACKs • Working example: TCP keep-alive processing during long-duration VM migration to maintain all TCP connections

VTP: Enhancing Transport Layer • Recognize UDP flows • Enforce rate-limits – Packet modification – Queuing • Add TCP-compatible congestion control – DCCP 26

VTP: Replacing the Transport Layer • VM creates TCP connection • VTP translates to UDT/FastTCP/Parallel TCP, etc. – Like spliced TCP connections but with different transport layer protocols • Allows us to add latest generation transport layer protocols to existing applications and OSes without changing either of them. • Working Example: TCP to UDT

VTP: Seamless SOCKS • Application opens TCP connection to X • VTP pretends to be the endpoint – Buffers packet data – Fakes ARPs, DNS • VTP connects to SOCKS proxy to talk to X • VTP redirects TCP data stream to X via SOCKS proxy • …But app is unaware of SOCKS proxy and need not support SOCKS at all • Working Example: seamless interface of any TCP-using application to the Tor anonymous network


VTTIF: Application Traffic Load Measurement and Topology Inference • Parallel and distributed applications display particular communication patterns on particular topologies – Intensity of communication can also vary from node to node or time to time. – Combined representation: Traffic Load Matrix • VNET already sees every packet sent or received by a VM • Can we use this information to compute a global traffic load matrix? • Can we eliminate irrelevant communication from matrix to get at application topology? 30


Traffic Monitoring and Reduction Ethernet Packet Format: SRC|DEST|TYPE|DATA (size) VMTrafficMatrix[SRC][DEST] += size Each VM on the host contributes a row and column to the VM traffic matrix. VM “Host Only” Network “eth0” vmnet0 ethz VNET Host: packets observed here. Global reduction to find overall matrix, broadcast back to VNETs. Each VNET daemon has a view of the global network load.
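The per-packet update and the global reduction on this slide can be sketched in a few lines. This is an illustrative sketch only: the function names `observe` and `reduce_global` are hypothetical, and the real daemons exchange matrices over the overlay rather than through an in-memory list.

```python
from collections import defaultdict


def observe(matrix, src_mac, dest_mac, size):
    """Per-packet update, mirroring VMTrafficMatrix[SRC][DEST] += size."""
    matrix[(src_mac, dest_mac)] += size


def reduce_global(local_matrices):
    """Sum the per-host matrices into one global traffic load matrix."""
    total = defaultdict(int)
    for local in local_matrices:
        for pair, nbytes in local.items():
            total[pair] += nbytes
    return total


# Two hosts, each seeing only the traffic of its own VMs.
host_a = defaultdict(int)
host_b = defaultdict(int)
observe(host_a, "vm1", "vm2", 1500)
observe(host_a, "vm1", "vm2", 500)
observe(host_b, "vm2", "vm1", 64)

global_matrix = reduce_global([host_a, host_b])
print(dict(global_matrix))
```

Keying by (source, destination) pair keeps the matrix sparse, which matters when most VM pairs never talk to each other.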

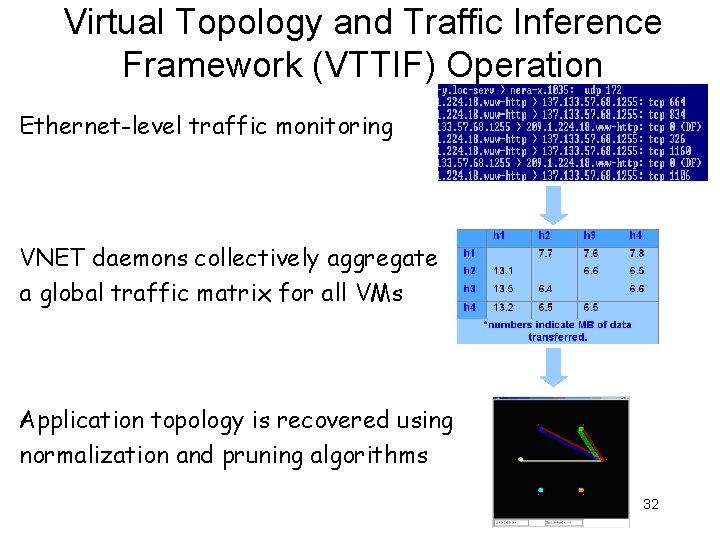

Virtual Topology and Traffic Inference Framework (VTTIF) Operation Ethernet-level traffic monitoring VNET daemons collectively aggregate a global traffic matrix for all VMs Application topology is recovered using normalization and pruning algorithms 32
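One plausible reading of "normalization and pruning" is to scale every entry by the largest one and drop entries below a cutoff. The sketch below assumes exactly that; the function name `infer_topology` and the default `cutoff` of 0.1 are hypothetical choices for illustration, not values taken from VTTIF.

```python
def infer_topology(matrix, cutoff=0.1):
    """Return the set of (src, dst) pairs that survive pruning.

    matrix maps (src, dst) -> bytes. Entries are normalized by the largest
    entry; anything below `cutoff` is treated as irrelevant background traffic.
    """
    peak = max(matrix.values(), default=0)
    if peak == 0:
        return set()
    return {pair for pair, nbytes in matrix.items() if nbytes / peak >= cutoff}


traffic = {("a", "b"): 1000, ("b", "a"): 900, ("a", "c"): 5}
print(sorted(infer_topology(traffic)))
```

Here the tiny a-to-c flow is pruned, leaving only the heavy a/b exchange as the application topology.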

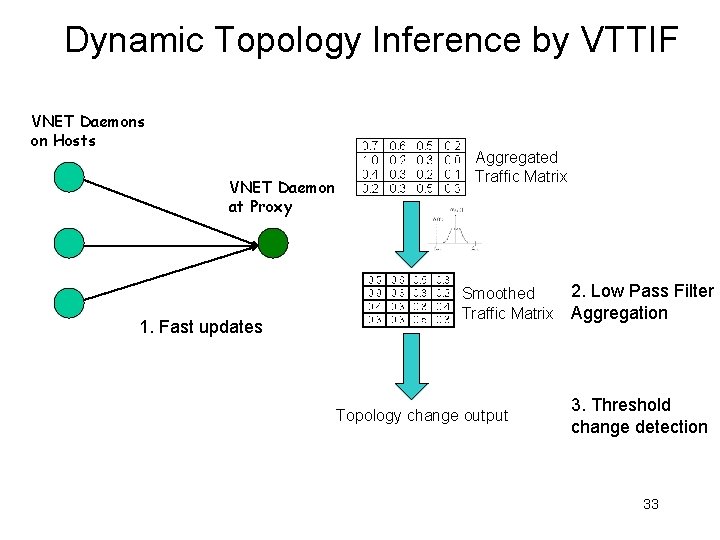

Dynamic Topology Inference by VTTIF VNET Daemons on Hosts VNET Daemon at Proxy 1. Fast updates Aggregated Traffic Matrix Smoothed Traffic Matrix Topology change output 2. Low Pass Filter Aggregation 3. Threshold change detection 33
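The low-pass filter and threshold steps can be illustrated with an exponential moving average and a relative-change test. Both are assumptions made for this sketch (the slide does not name the filter), and `smooth`, `changed`, `alpha`, and `threshold` are hypothetical names and parameters.

```python
def smooth(previous, sample, alpha=0.25):
    """Exponential moving average over matrix entries (a simple low-pass filter)."""
    keys = set(previous) | set(sample)
    return {
        k: (1 - alpha) * previous.get(k, 0.0) + alpha * sample.get(k, 0.0)
        for k in keys
    }


def changed(reported, smoothed, threshold=0.2):
    """True if any entry moved by more than `threshold`, relative to the larger value."""
    for k in set(reported) | set(smoothed):
        old = reported.get(k, 0.0)
        new = smoothed.get(k, 0.0)
        base = max(old, new)
        if base > 0 and abs(new - old) / base > threshold:
            return True
    return False


last_reported = {("vm1", "vm2"): 100.0}
current = smooth(last_reported, {("vm1", "vm2"): 200.0}, alpha=0.5)
if changed(last_reported, current):
    print("topology change output:", current)
```

Smoothing before thresholding keeps a short burst from triggering an adaptation, while a sustained shift eventually crosses the threshold.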

Overheads (100 Mbit LAN) • Essentially zero latency impact • 4.2% throughput reduction versus VNET A. Sundararaj, A. Gupta, and P. Dinda, Increasing Application Performance In Virtual Environments Through Run-time Inference and Adaptation, HPDC 2005. A. Gupta, P. Dinda, Inferring the Topology and Traffic Load of Parallel Programs Running In a Virtual Machine Environment, JSSPP 2004.


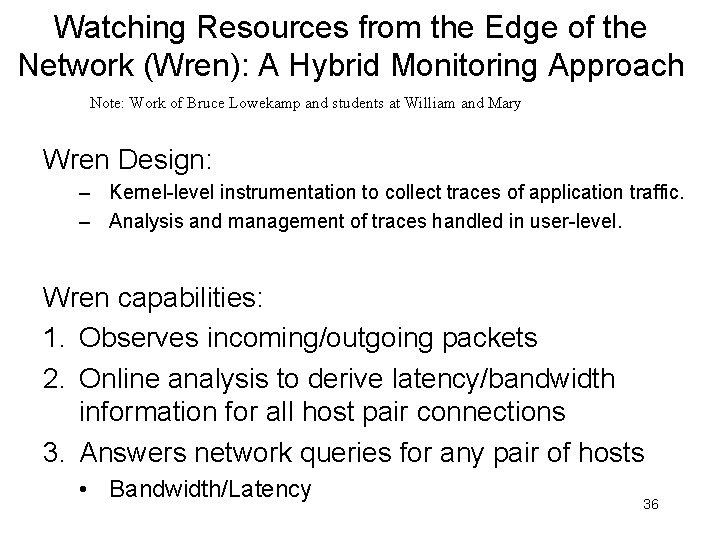

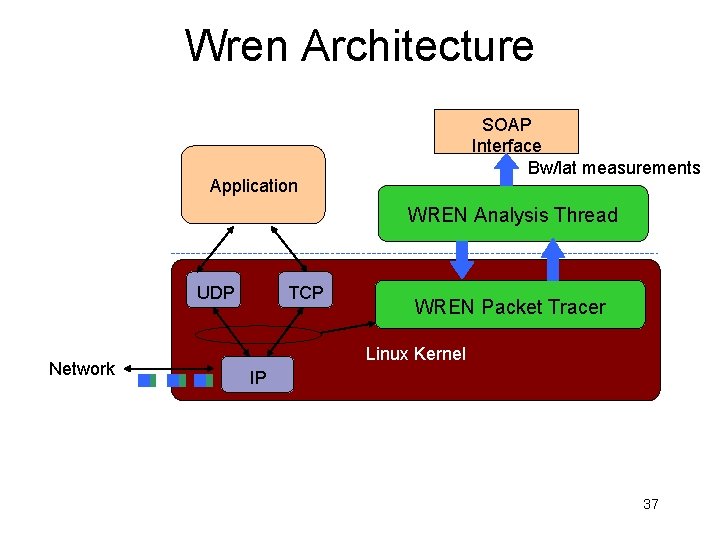

Watching Resources from the Edge of the Network (Wren): A Hybrid Monitoring Approach Note: Work of Bruce Lowekamp and students at William and Mary Wren Design: – Kernel-level instrumentation to collect traces of application traffic. – Analysis and management of traces handled in user-level. Wren capabilities: 1. Observes incoming/outgoing packets 2. Online analysis to derive latency/bandwidth information for all host pair connections 3. Answers network queries for any pair of hosts • Bandwidth/Latency 36

Wren Architecture Grid Application UDP Network TCP SOAP Interface Bw/lat measurements WREN Analysis Thread WREN Packet Tracer Linux Kernel IP 37

Wren Online Available Bandwidth Algorithm Applies the self-induced congestion principle – If packets are sent at a rate larger than the available bandwidth, the queuing delays will show an increasing trend. – Find the rate just before queuing delays are incurred. 1. Identifies maximal-length outgoing trains of similarly spaced packets. 2. Calculates the ISR (Initial Sending Rate) for these trains. 3. Monitors the ACK return rate to determine trends in RTTs. 4. An increasing trend indicates congestion; a non-increasing trend indicates a lower bound for the available bandwidth.
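Steps 2 through 4 can be sketched as follows. This is a simplified illustration, not Wren's implementation: the trend test used here (the fraction of consecutive RTT pairs that rise) is an assumption, and the function names and the 0.66 cutoff are hypothetical.

```python
def initial_sending_rate(send_times, packet_bytes):
    """ISR in bits per second for a train of equally sized packets."""
    if len(send_times) < 2:
        raise ValueError("a train needs at least two packets")
    duration = send_times[-1] - send_times[0]
    if duration <= 0:
        raise ValueError("send times must span a positive interval")
    # The first packet marks the start of the interval, so count the rest.
    return (len(send_times) - 1) * packet_bytes * 8 / duration


def rtts_increasing(rtts, fraction=0.66):
    """Crude trend test: do most consecutive RTT pairs rise?"""
    if len(rtts) < 2:
        return False
    rises = sum(1 for a, b in zip(rtts, rtts[1:]) if b > a)
    return rises / (len(rtts) - 1) > fraction


def bandwidth_lower_bound(trains):
    """Highest ISR among trains that showed no increasing RTT trend.

    trains: iterable of (send_times, packet_bytes, rtts). Returns None when
    every observed train looked congested.
    """
    rates = [
        initial_sending_rate(times, size)
        for times, size, rtts in trains
        if not rtts_increasing(rtts)
    ]
    return max(rates, default=None)


trains = [
    ([0.0, 0.125, 0.25, 0.375], 1000, [10, 10, 9, 10]),      # no trend
    ([0.0, 0.0625, 0.125, 0.1875], 1000, [10, 11, 12, 13]),  # congested
]
print(bandwidth_lower_bound(trains))
```

The faster second train is discarded because its RTTs climb steadily, so only the first train contributes a lower bound.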

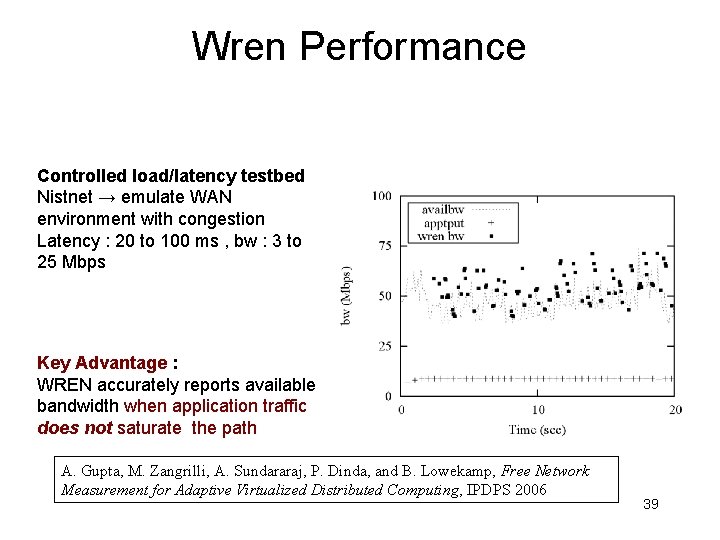

Wren Performance Controlled load/latency testbed Nistnet → emulate WAN environment with congestion Latency: 20 to 100 ms, bw: 3 to 25 Mbps Key Advantage: WREN accurately reports available bandwidth when application traffic does not saturate the path A. Gupta, M. Zangrilli, A. Sundararaj, P. Dinda, and B. Lowekamp, Free Network Measurement for Adaptive Virtualized Distributed Computing, IPDPS 2006

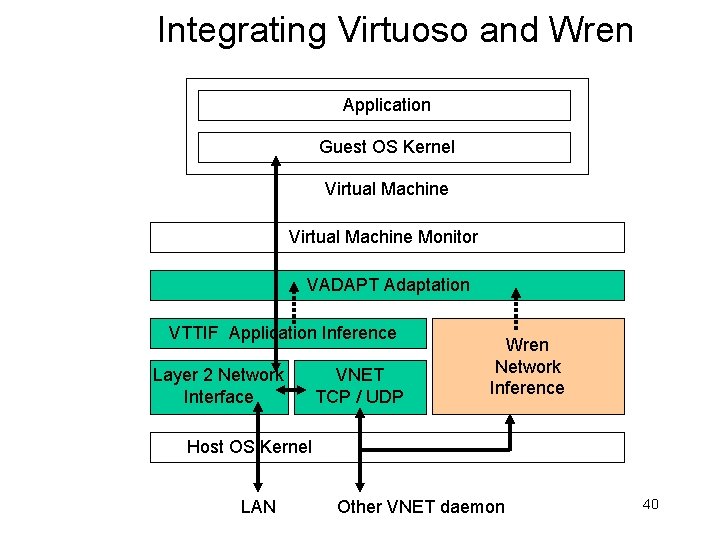

Integrating Virtuoso and Wren Application Guest OS Kernel Virtual Machine Monitor VADAPT Adaptation VTTIF Application Inference Layer 2 Network Interface VNET TCP / UDP Wren Network Inference Host OS Kernel LAN Other VNET daemon 40


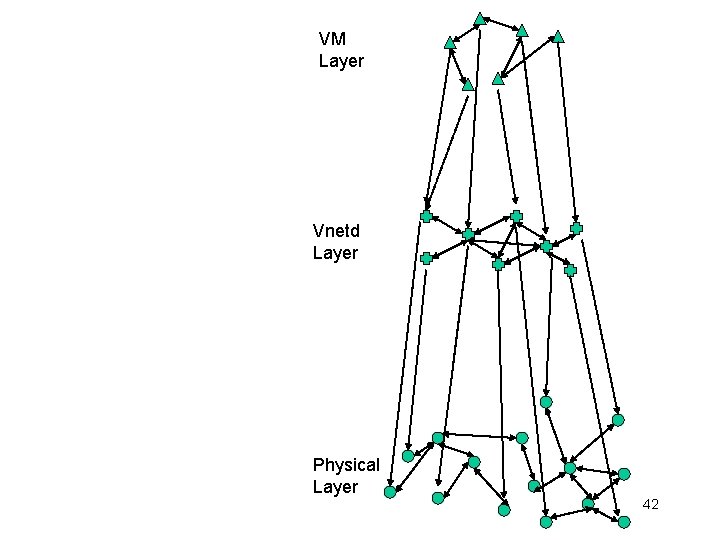

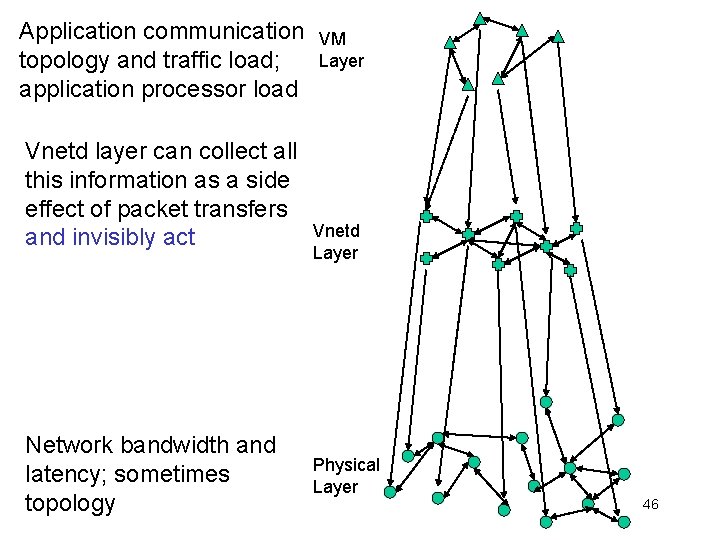

VM Layer Vnetd Layer Physical Layer 42

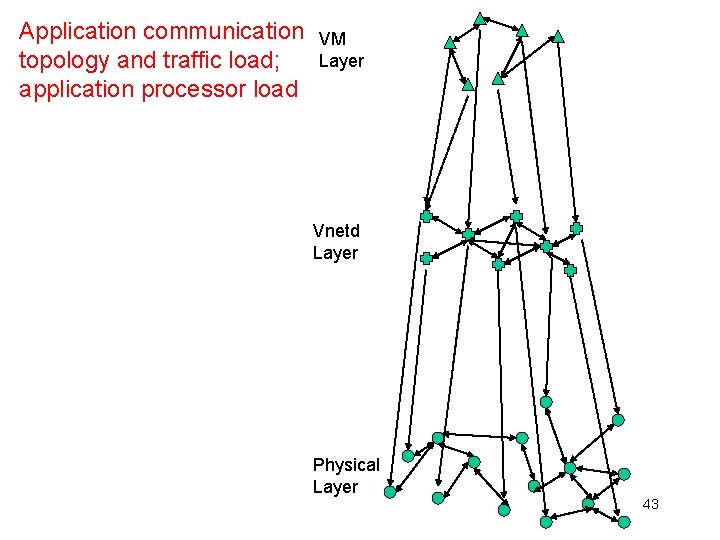

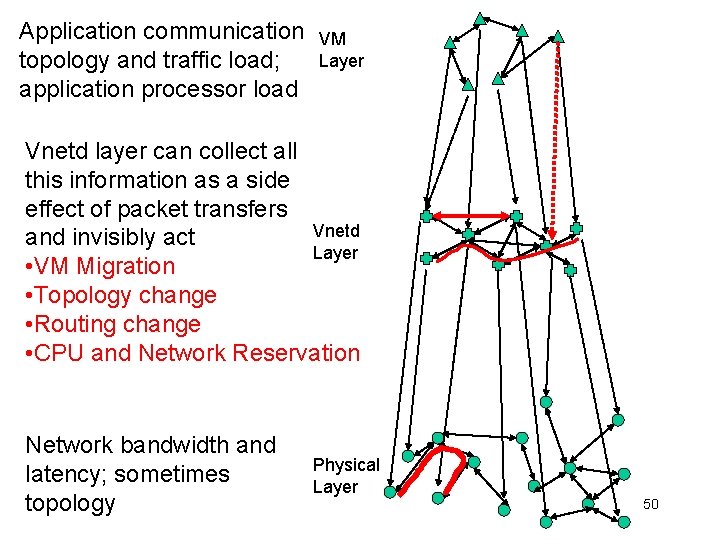

Application communication topology and traffic load; application processor load VM Layer Vnetd Layer Physical Layer 43

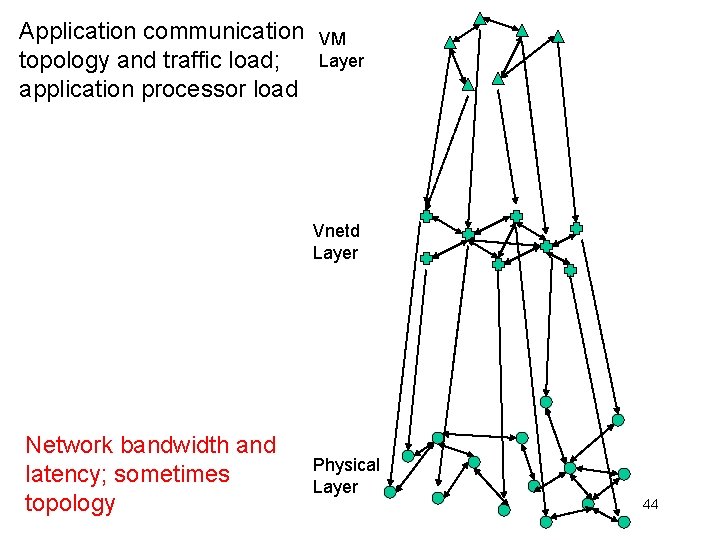

Application communication topology and traffic load; application processor load VM Layer Vnetd Layer Network bandwidth and latency; sometimes topology Physical Layer 44

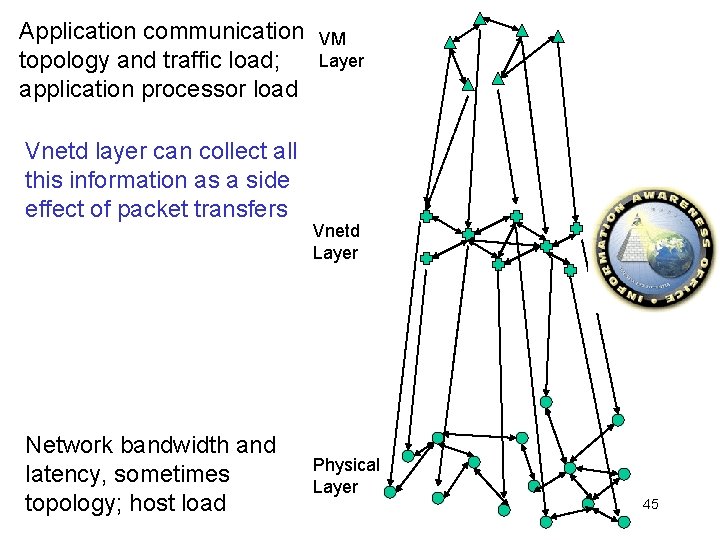

Application communication topology and traffic load; application processor load Vnetd layer can collect all this information as a side effect of packet transfers Network bandwidth and latency, sometimes topology; host load VM Layer Vnetd Layer Physical Layer 45

Application communication topology and traffic load; application processor load Vnetd layer can collect all this information as a side effect of packet transfers and invisibly act Network bandwidth and latency; sometimes topology VM Layer Vnetd Layer Physical Layer 46

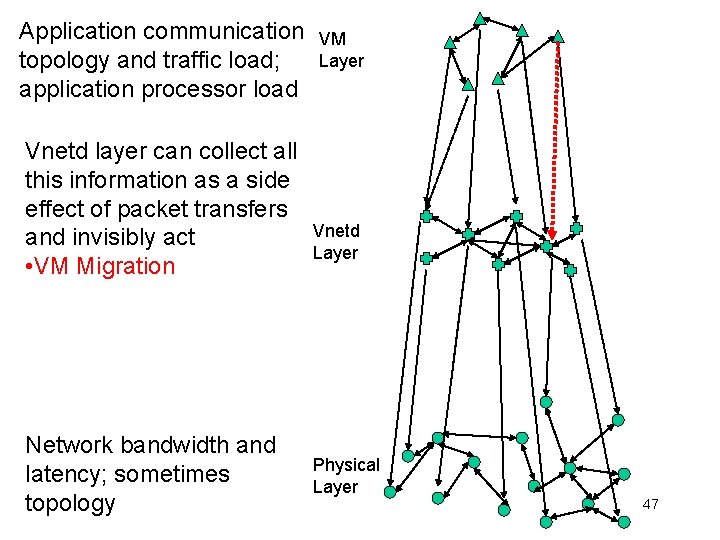

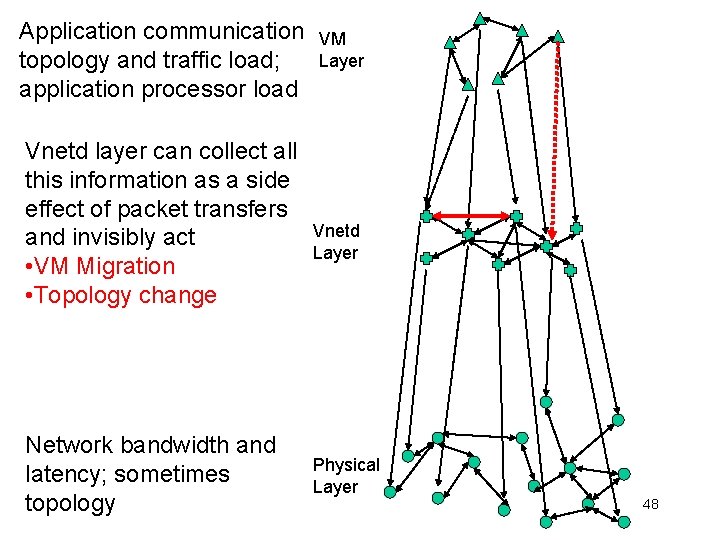

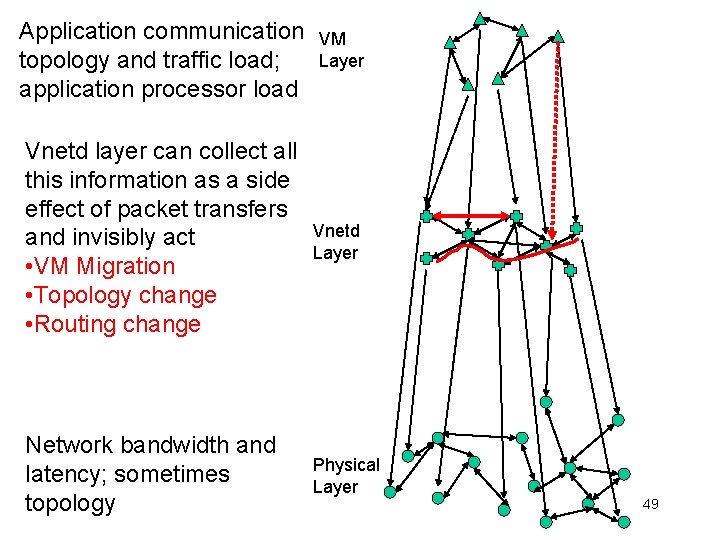

Application communication topology and traffic load; application processor load Vnetd layer can collect all this information as a side effect of packet transfers and invisibly act • VM Migration Network bandwidth and latency; sometimes topology VM Layer Vnetd Layer Physical Layer 47

Application communication topology and traffic load; application processor load Vnetd layer can collect all this information as a side effect of packet transfers and invisibly act • VM Migration • Topology change Network bandwidth and latency; sometimes topology VM Layer Vnetd Layer Physical Layer 48

Application communication topology and traffic load; application processor load VM Layer Vnetd layer can collect all this information as a side effect of packet transfers and invisibly act • VM Migration • Topology change • Routing change Vnetd Layer Network bandwidth and latency; sometimes topology Physical Layer 49

Application communication topology and traffic load; application processor load VM Layer Vnetd layer can collect all this information as a side effect of packet transfers and invisibly act • VM Migration • Topology change • Routing change • CPU and Network Reservation Vnetd Layer Network bandwidth and latency; sometimes topology Physical Layer

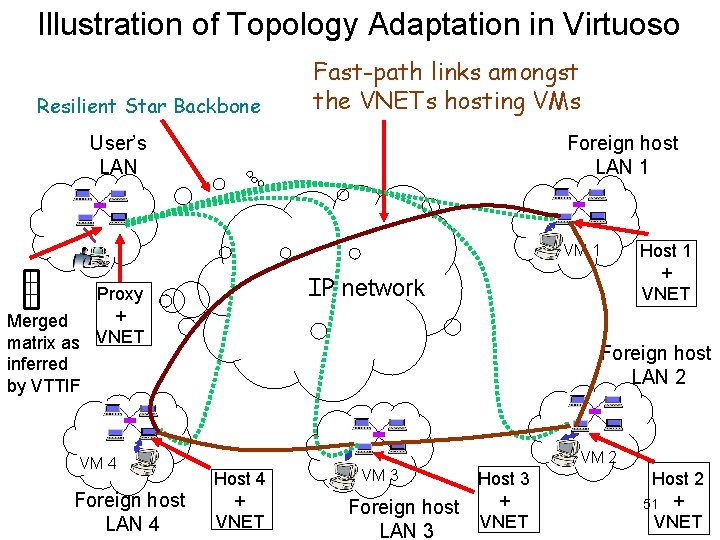

Illustration of Topology Adaptation in Virtuoso. Figure labels: Resilient Star Backbone; Fast-path links amongst the VNETs hosting VMs; User’s LAN with Proxy + VNET; IP network; Merged matrix as inferred by VTTIF; Foreign host LANs 1 to 4; Hosts 1 to 4, each + VNET; VMs 1 to 4.
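One simple way to picture how fast-path links might be chosen from the VTTIF matrix is a greedy rule: add links between the host pairs that carry the most VM-to-VM traffic. The sketch below assumes that rule for illustration; the function `pick_fast_path_links` and its arguments are hypothetical, and it is not presented as VADAPT's actual heuristic.

```python
def pick_fast_path_links(traffic, vm_host, k):
    """Return up to k host pairs, heaviest combined VM traffic first.

    traffic: (src_vm, dst_vm) -> bytes, as in the inferred traffic matrix.
    vm_host: vm -> host currently running it.
    """
    load = {}
    for (src_vm, dst_vm), nbytes in traffic.items():
        a, b = vm_host[src_vm], vm_host[dst_vm]
        if a == b:
            continue  # Same host: no overlay link is needed.
        pair = tuple(sorted((a, b)))  # Treat links as undirected.
        load[pair] = load.get(pair, 0) + nbytes
    # Sort by descending load; ties broken by host names for determinism.
    ranked = sorted(load, key=lambda p: (-load[p], p))
    return ranked[:k]


traffic = {
    ("vm1", "vm2"): 100,
    ("vm2", "vm1"): 50,
    ("vm1", "vm3"): 500,
    ("vm3", "vm4"): 10,
}
vm_host = {"vm1": "h1", "vm2": "h2", "vm3": "h3", "vm4": "h3"}
print(pick_fast_path_links(traffic, vm_host, 1))
```

Pairs that get no fast-path link still communicate through the star backbone via the proxy, so k only trades setup cost against performance.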

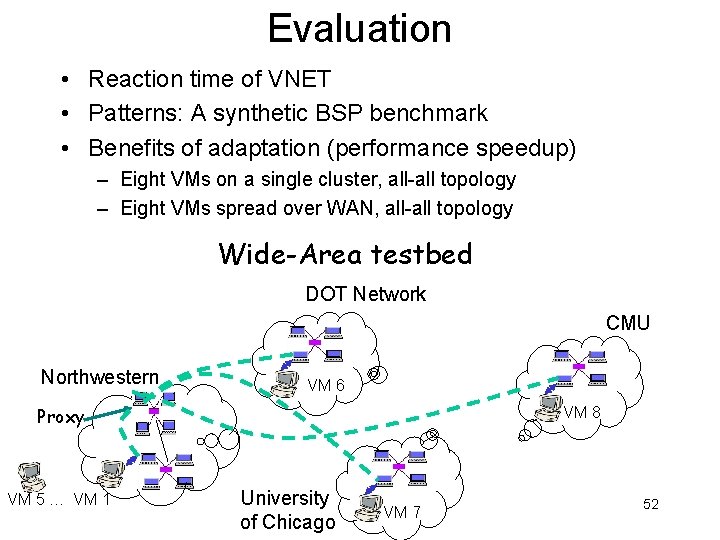

Evaluation • Reaction time of VNET • Patterns: A synthetic BSP benchmark • Benefits of adaptation (performance speedup) – Eight VMs on a single cluster, all-all topology – Eight VMs spread over WAN, all-all topology Wide-Area testbed DOT Network CMU Northwestern VM 6 Proxy VM 5 … VM 1 VM 8 University of Chicago VM 7 52

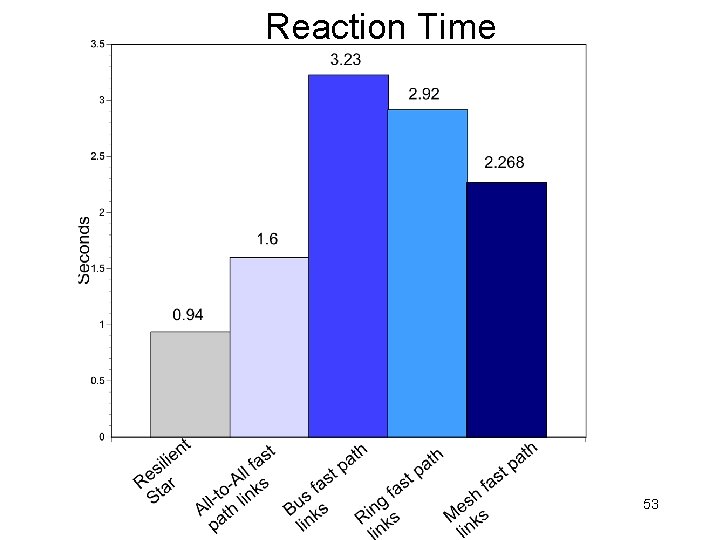

Reaction Time 53

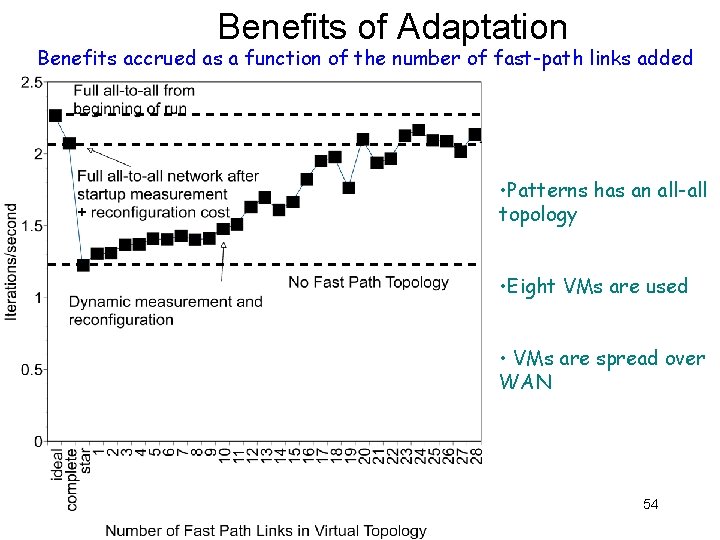

Benefits of Adaptation Benefits accrued as a function of the number of fast-path links added • Patterns has an all-all topology • Eight VMs are used • VMs are spread over WAN 54

Optimization Problems With Topology + Migration Informally stated: • Input – Network traffic load matrix of application – Physical network topology and measurements • Output – Mapping of VMs to hosts – Overlay topology connecting hosts – Forwarding rules on the topology • Such that the application throughput is maximized

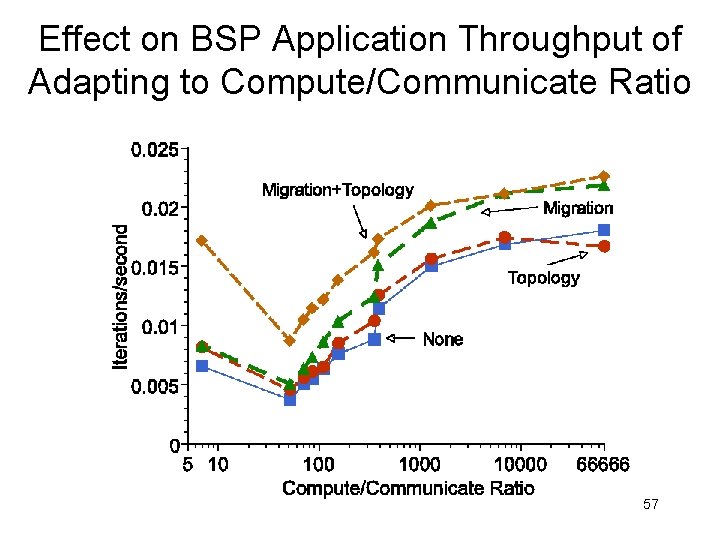

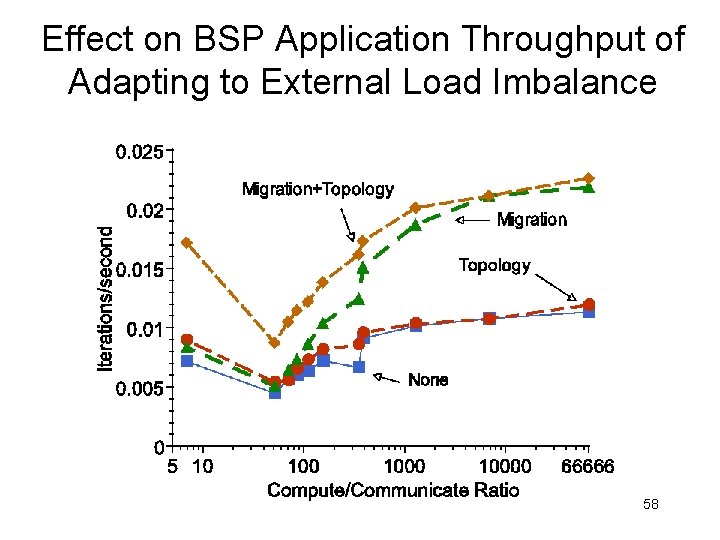

Evaluation • Applications – Patterns: A synthetic BSP benchmark – TPC-W: Transactional web ecommerce benchmark • Benefits of adaptation (performance speedup) – Adapting to compute/communicate ratio – Adapting to external load imbalance 56

Effect on BSP Application Throughput of Adapting to Compute/Communicate Ratio 57

Effect on BSP Application Throughput of Adapting to External Load Imbalance 58

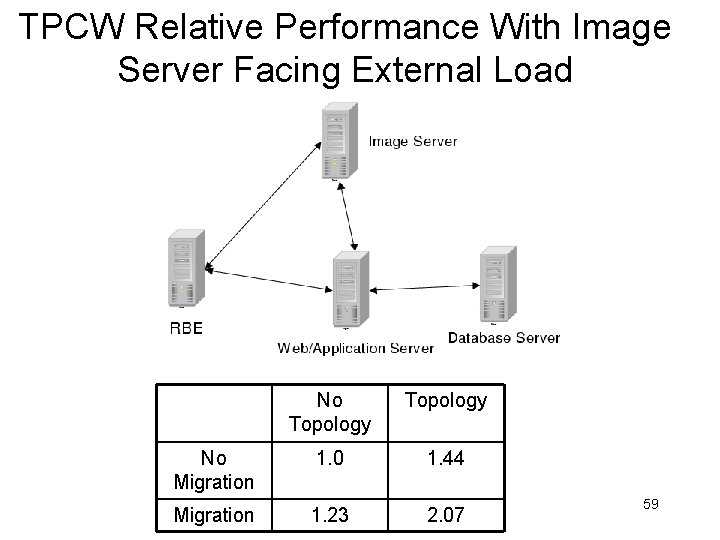

TPC-W Relative Performance With Image Server Facing External Load

| | No Topology | Topology |
|---|---|---|
| No Migration | 1.0 | 1.44 |
| Migration | 1.23 | 2.07 |

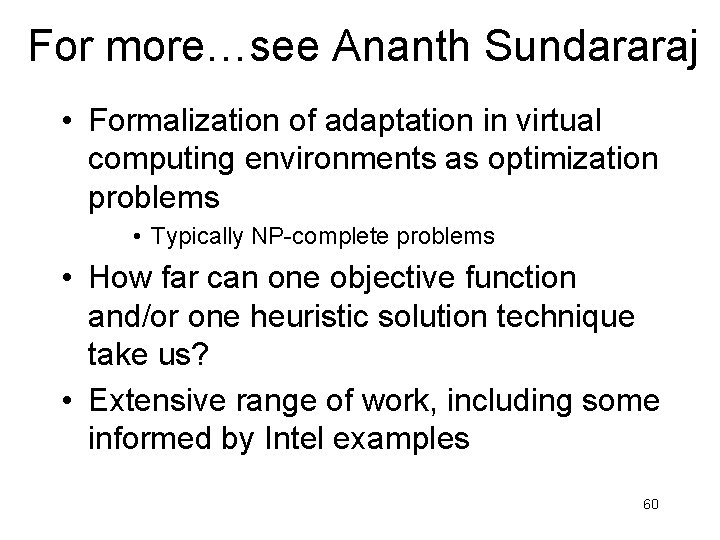

For more…see Ananth Sundararaj • Formalization of adaptation in virtual computing environments as optimization problems • Typically NP-complete problems • How far can one objective function and/or one heuristic solution technique take us? • Extensive range of work, including some informed by Intel examples 60


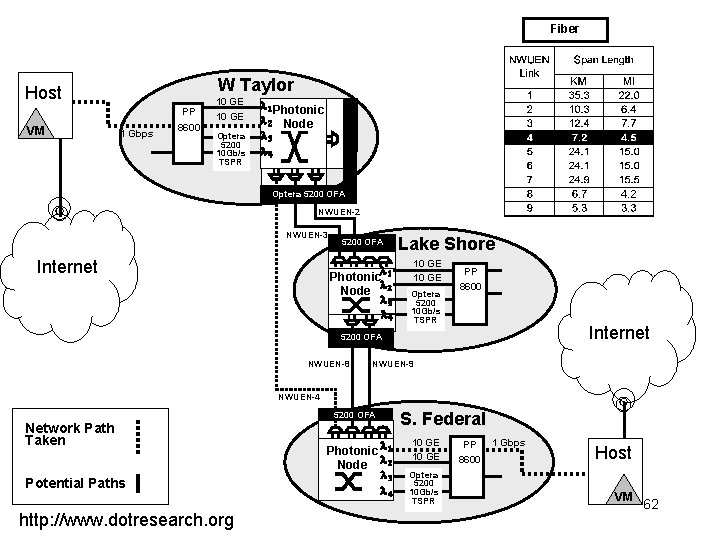

Figure: DOT optical network diagram. Sites: W Taylor, Lake Shore, S. Federal. Labeled elements: Host, VM, 1 Gbps, PP 8600, 10 GE, Optera 5200 10 Gb/s TSPR, Photonic Node (l1 to l4), 5200 OFA, Internet, Fiber segments NWUEN-2, NWUEN-3, NWUEN-4, NWUEN-8, NWUEN-9. Legend: Network Path Taken, Potential Paths. http://www.dotresearch.org

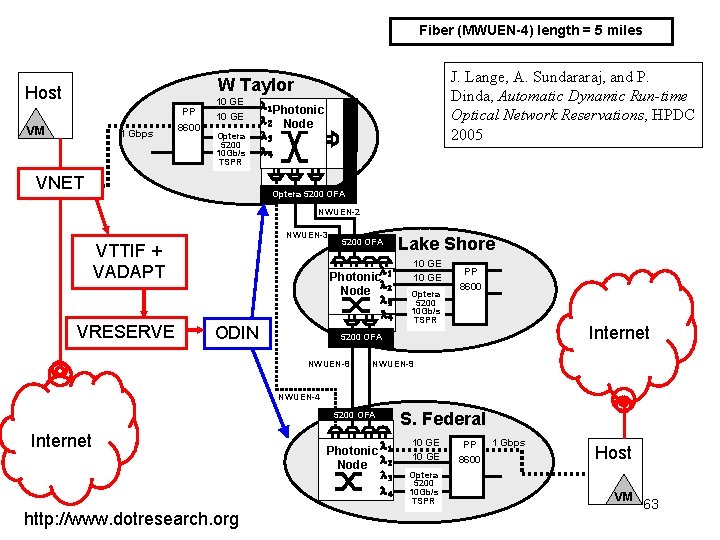

Figure: DOT optical network diagram with Virtuoso components. Fiber (MWUEN-4) length = 5 miles. Sites: W Taylor, Lake Shore, S. Federal. Labeled elements: Host, VM, VNET, VTTIF + VADAPT, VRESERVE, ODIN, 1 Gbps, PP 8600, 10 GE, Optera 5200 10 Gb/s TSPR, Photonic Node (l1 to l4), 5200 OFA, Internet, NWUEN-2, NWUEN-3, NWUEN-4, NWUEN-8, NWUEN-9. http://www.dotresearch.org J. Lange, A. Sundararaj, and P. Dinda, Automatic Dynamic Run-time Optical Network Reservations, HPDC 2005

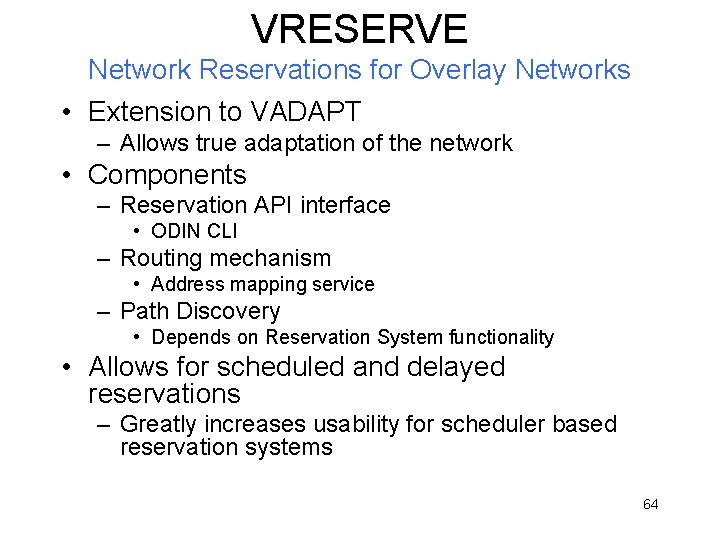

VRESERVE Network Reservations for Overlay Networks • Extension to VADAPT – Allows true adaptation of the network • Components – Reservation API interface • ODIN CLI – Routing mechanism • Address mapping service – Path Discovery • Depends on Reservation System functionality • Allows for scheduled and delayed reservations – Greatly increases usability for scheduler based reservation systems 64


Challenges For CPU Reservations • Resource providers price VM execution according to interactivity and compute rate constraints – How to express, validate, and enforce? • A workload-diverse set of VMs – How to schedule them on a single physical machine? 66

Meeting the Needs of Diverse Workloads • Interactive workloads – substitute a remote VM for a desktop computer. – desktop applications, web applications and games • Batch workloads – scientific simulations, analysis codes • Batch parallel workloads – scientific simulations, analysis codes that can be scaled by adding more VMs • Goals – interactivity does not suffer – batch machines meet both their advance reservation deadlines and gang scheduling constraints. 67

Periodic Real-time Scheduling Model • Task is run for slice seconds every period seconds. – slice / period defines the compute rate of the task • Unifying abstraction for needs of various classes of applications – Each running VM is a process; can be scheduled as a whole • Implementation: EDF scheduler built on top of Linux FIFO scheduler 68
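The (period, slice) model lends itself to a short worked example. The sketch below computes each VM's compute rate as slice / period and applies the classic uniprocessor EDF utilization bound (total utilization at most 1, for deadlines equal to periods) as an admission test. The function names and the `limit` parameter are hypothetical; a real scheduler would likely reserve headroom below 1.

```python
from fractions import Fraction


def compute_rate(period_ms, slice_ms):
    """Fraction of the CPU a VM receives: slice / period."""
    if not 0 < slice_ms <= period_ms:
        raise ValueError("need 0 < slice <= period")
    return Fraction(slice_ms) / Fraction(period_ms)


def admissible(vms, limit=Fraction(1)):
    """EDF utilization test: accept the set if total compute rate <= limit.

    vms: iterable of (period_ms, slice_ms) pairs, one per VM.
    """
    total = sum((compute_rate(p, s) for p, s in vms), Fraction(0))
    return total <= limit


# A VM scheduled with period 30 ms and slice 15 ms gets half the CPU.
print(compute_rate(30, 15))
print(admissible([(30, 15), (100, 40)]))
print(admissible([(30, 15), (100, 40), (10, 2)]))
```

Exact fractions avoid floating-point surprises when the total sits right at the limit.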

VSched • Soft real-time; entirely user-level • Interacts with Linux kernel running below any type-II VMM (virtual machine monitor) to schedule all VMs • Allows dynamic adjustment of VM constraints – Immediate performance improvement, or – Migrate the VM to another physical machine 69

VSched • Supports periods and slices ranging to days – Fine millisecond and sub-millisecond ranges are needed for highly interactive VMs – Much coarser resolutions for batch VMs. • Client/Server; remotely controlled scheduling • Publicly released B. Lin, and P. Dinda, VSched: Mixing Batch and Interactive Virtual Machines Using Periodic Real-time Scheduling, Proceedings of ACM/IEEE SC 2005 70

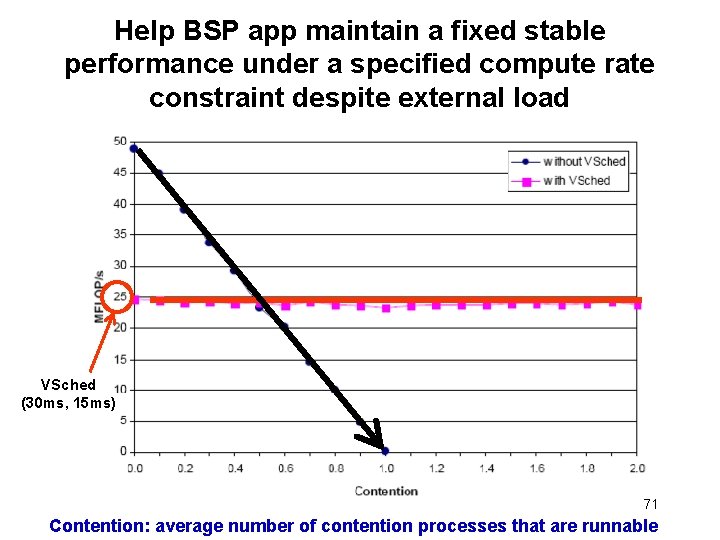

Help BSP app maintain a fixed, stable performance under a specified compute rate constraint despite external load. VSched (30 ms, 15 ms). Contention: average number of contention processes that are runnable


The Adaptation Problem • Given measurements – Application traffic (VTTIF) – Network characteristics (Wren) • Choose configuration – VM->Host mapping – Overlay topology and forwarding rules – CPU and network reservations • Such that – Some performance metric is maximized – Some SLA is satisfied – Some collection of the above is satisfied (multiple users) • Subject to constraints – 0/1 => NP Hardness – Capacity, etc. – Hidden constraints 73

The Adaptation Problem • Formalizations of specific instances – MAMA 2005, HPDC 2005, USENIX VM 2004, IPDPS 2006 • Mechanisms to solve them – Heuristics – Simulated annealing / Genetic programming • Claims of sufficiency – One problem fits many instances (Sundararaj)

The Adaptation Problem • But exactly what are the metrics, SLAs, and hidden constraints for a given problem? – And how do they affect solution approaches? • Virtualization layer removes us from the user, making it much harder to get this information • Long history of adaptive application developers being unable to provide SLAs, metrics, or constraints. 75

Human-directed Adaptation • User interfaces that – Elicit SLAs or performance metrics from users – Permit users to guide the search of the solution space • Mechanisms that can be guided – VSCHED – … more in progress 76

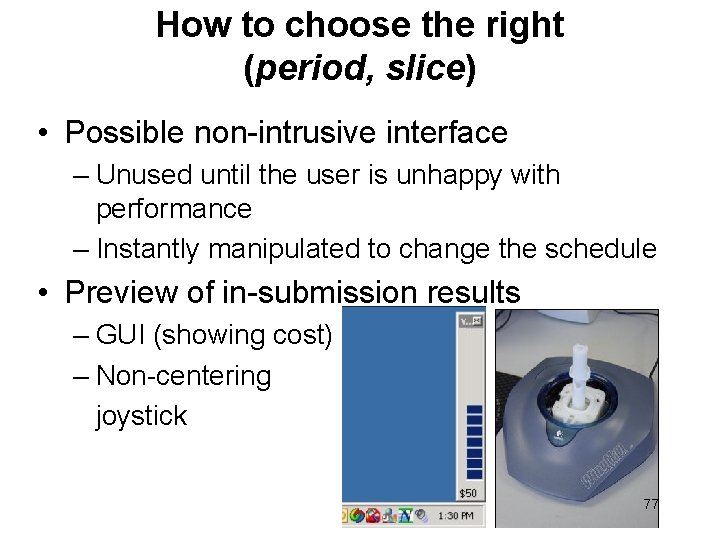

How to choose the right (period, slice) • Possible non-intrusive interface – Unused until the user is unhappy with performance – Instantly manipulated to change the schedule • Preview of in-submission results – GUI (showing cost) – Non-centering joystick 77

Results • User study of ~40 participants looking at comfort with CPU, disk, and memory resource contention for Word, Powerpoint, web browsing and game tasks – Very high variability in tolerance (intolerance=button press) – No such thing as a typical user A. Gupta, B. Lin, and P. Dinda, Measuring and Understanding User Comfort With Resource Borrowing, Proceedings of HPDC 2004. • User study of ~20 participants using the joystick control to set schedules for Word, Powerpoint, web browser, and game tasks – Almost all could find a setting that was comfortable and believed to be of lowest cost – Lowest cost highly variable, as expected given previous results – Interface adequate to capture individual user tradeoffs 78

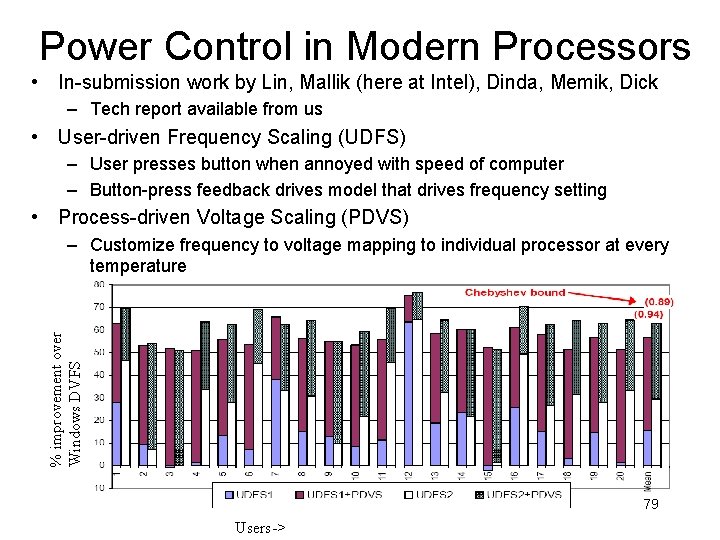

Power Control in Modern Processors • In-submission work by Lin, Mallik (here at Intel), Dinda, Memik, Dick – Tech report available from us • User-driven Frequency Scaling (UDFS) – User presses button when annoyed with speed of computer – Button-press feedback drives model that drives frequency setting • Process-driven Voltage Scaling (PDVS) – Customize frequency to voltage mapping to individual processor at every temperature. Chart axes: % improvement over Windows DVFS, by user

Where to Next • Time-sharing BSP-style parallel applications with performance isolation and control – In submission • Multiple schedule distributed applications • User interfaces for eliciting SLAs in high dimensionality configuration spaces with categorical dimensions – General adaptation in Virtuoso • User interfaces for guided search in high dimensionality configuration spaces and categorical dimensions – General adaptation in Virtuoso 80


Future Work • Develop mechanisms for hardware-level performance from virtual networking and other devices, even in tightly coupled machines • Develop an open VMM framework for modern architectures – Compile-time composition to build specialized VMMs – Run-time extensibility – Small footprint, suitable for use in HPC and education as well as in systems and architecture research • Generalize VTP concept • Explore tradeoff between amount of elicited user information or control and adaptation performance • Generalize “direct human input” approach to address other systems problems • Speculative remote display 82

For More Information • Virtuoso – http://virtuoso.cs.northwestern.edu • Prescience Lab – http://presciencelab.org • Peter Dinda – http://pdinda.org 83

FIN, FIN-ACK, ACK • FOLLOWING SLIDES ARE BACKUPS 84

Optical Overlays • VRESERVE routing is accomplished with VNET overlay links • Issues – Reservation based resources usually aimed at high performance – Application unaware of changing network conditions • TCP performance is typically poor in high performance networks • Benefits – Application unaware of changing network conditions – Routing is much easier at the overlay level • Network is capable of reacting to global state changes 85

More Marketplace Ideas in Virtuoso • Pricing of – CPU ($/hour-bogomip) – Storage ($/month-MB) • Scoreboarding (public bids/asks) – Providers price their CPU and storage costs – Users query them using constraints • A. Shoykhet, J. Lange, P. Dinda, Virtuoso: A System For Virtual Machine Marketplaces, Technical Report NWU-CS-04-39, July 2004. • Changing in current Virtuoso implementation 86
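
The scoreboarding idea (providers post prices, users query with constraints) can be sketched as a filter-and-sort over posted asks. Everything below is hypothetical: the `Ask` fields, the 720-hour month, and the single budget constraint are assumptions for illustration, not the interface described in the technical report.

```python
from dataclasses import dataclass


@dataclass(frozen=True)
class Ask:
    provider: str
    cpu_price: float      # $ per hour-bogomip
    storage_price: float  # $ per month-MB
    bogomips: float       # CPU capacity on offer
    storage_mb: float     # storage capacity on offer


def monthly_cost(ask, bogomips, storage_mb, hours=720):
    """Cost of running the requested CPU for `hours` plus one month of storage."""
    return ask.cpu_price * bogomips * hours + ask.storage_price * storage_mb


def query(scoreboard, bogomips, storage_mb, budget):
    """Return asks that satisfy the capacity and budget constraints, cheapest first."""
    feasible = [
        a for a in scoreboard
        if a.bogomips >= bogomips
        and a.storage_mb >= storage_mb
        and monthly_cost(a, bogomips, storage_mb) <= budget
    ]
    return sorted(feasible, key=lambda a: monthly_cost(a, bogomips, storage_mb))
```

A user would call `query` with the resources a VM needs and a spending limit, then pick from the returned list.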

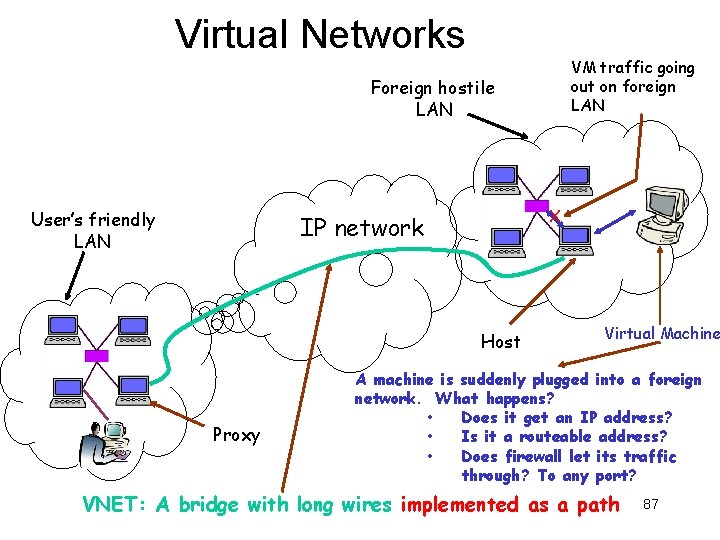

Virtual Networks • [Diagram labels: VM traffic going out on foreign LAN; Foreign hostile LAN; User’s friendly LAN; X; IP network; Host; Proxy; Virtual Machine] • A machine is suddenly plugged into a foreign network. What happens? – Does it get an IP address? – Is it a routable address? – Does firewall let its traffic through? To any port? • VNET: A bridge with long wires implemented as a path 87

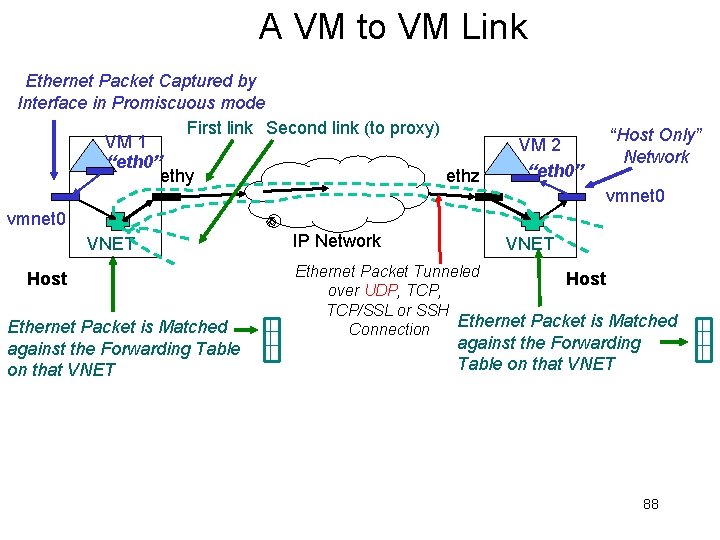

A VM to VM Link • [Diagram labels: VM 1 “eth0”; “Host Only” Network vmnet0; VNET; Host; ethy; IP Network; ethz; VNET; Host; VM 2 “eth0”; First link; Second link (to proxy)] • Ethernet Packet Captured by Interface in Promiscuous mode • Ethernet Packet is Matched against the Forwarding Table on that VNET • Ethernet Packet Tunneled over UDP, TCP/SSL or SSH Connection • Ethernet Packet is Matched against the Forwarding Table on that VNET 88

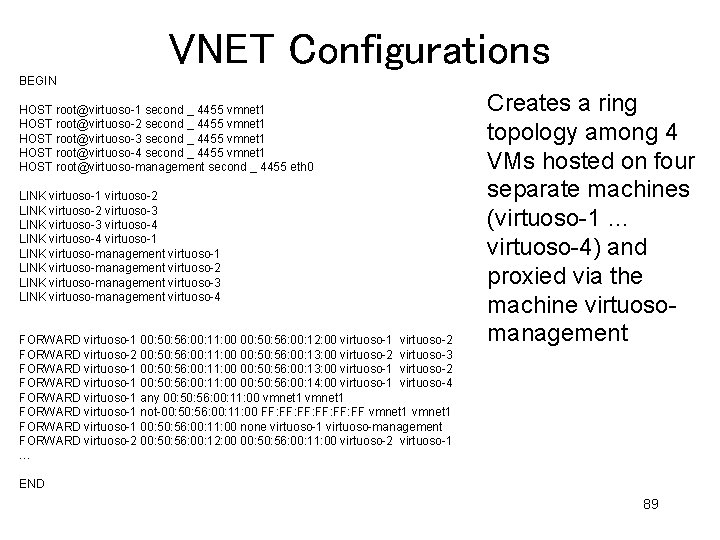

VNET Configurations
BEGIN
HOST root@virtuoso-1 second _ 4455 vmnet1
HOST root@virtuoso-2 second _ 4455 vmnet1
HOST root@virtuoso-3 second _ 4455 vmnet1
HOST root@virtuoso-4 second _ 4455 vmnet1
HOST root@virtuoso-management second _ 4455 eth0
LINK virtuoso-1 virtuoso-2
LINK virtuoso-2 virtuoso-3
LINK virtuoso-3 virtuoso-4
LINK virtuoso-4 virtuoso-1
LINK virtuoso-management virtuoso-2
LINK virtuoso-management virtuoso-3
LINK virtuoso-management virtuoso-4
FORWARD virtuoso-1 00:56:00:11:00 00:56:00:12:00 virtuoso-1 virtuoso-2
FORWARD virtuoso-2 00:56:00:11:00 00:56:00:13:00 virtuoso-2 virtuoso-3
FORWARD virtuoso-1 00:56:00:11:00 00:56:00:13:00 virtuoso-1 virtuoso-2
FORWARD virtuoso-1 00:56:00:11:00 00:56:00:14:00 virtuoso-1 virtuoso-4
FORWARD virtuoso-1 any 00:56:00:11:00 vmnet1
FORWARD virtuoso-1 not-00:56:00:11:00 FF:FF:FF:FF vmnet1
FORWARD virtuoso-1 00:56:00:11:00 none virtuoso-1 virtuoso-management
FORWARD virtuoso-2 00:56:00:12:00 00:56:00:11:00 virtuoso-2 virtuoso-1
…
END
Creates a ring topology among 4 VMs hosted on four separate machines (virtuoso-1 … virtuoso-4) and proxied via the machine virtuoso-management 89
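
The FORWARD lines above pair a source pattern and a destination pattern with either an overlay link or a local interface. A minimal sketch of how such a table might be consulted is shown below. It makes several assumptions that the slide does not confirm: rules are tried in order and the first match wins, `any` matches every address, and `not-X` matches every address except X. The `none` pattern is deliberately left unmodeled, and the addresses are copied in the shortened form shown on the slide.

```python
def matches(pattern, mac):
    """Check one address against a rule pattern (exact, 'any', or 'not-<mac>')."""
    if pattern == "any":
        return True
    if pattern.startswith("not-"):
        return mac.lower() != pattern[4:].lower()
    return mac.lower() == pattern.lower()


def lookup(table, src, dst):
    """Return the target of the first rule matching (src, dst), or None."""
    for src_pat, dst_pat, target in table:
        if matches(src_pat, src) and matches(dst_pat, dst):
            return target
    return None


# Rules for virtuoso-1, transcribed from the slide (the 'none' rule is omitted).
VIRTUOSO_1 = [
    ("00:56:00:11:00", "00:56:00:12:00", ("virtuoso-1", "virtuoso-2")),
    ("00:56:00:11:00", "00:56:00:13:00", ("virtuoso-1", "virtuoso-2")),
    ("00:56:00:11:00", "00:56:00:14:00", ("virtuoso-1", "virtuoso-4")),
    ("any", "00:56:00:11:00", "vmnet1"),
    ("not-00:56:00:11:00", "FF:FF:FF:FF", "vmnet1"),
]
```

Under these assumptions, a frame from the local VM to the VM at 00:56:00:13:00 is sent over the link toward virtuoso-2, and any frame addressed to the local VM is injected into vmnet1.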

VNET 1.0 • Client/Server – SSL + password authentication • Simple text-based protocol • Dependencies (all public) – C++ (g++) – Perl 5.8 – Sockets – Libpcap (packet capture) – Libnet (packet injection) – SSL • VMM must expose interface • True of VMware, Xen, User Mode Linux • Otherwise, VMM-independent 90

Performance Enhancements • Faster ways of getting packets into and out of the VM… • While maintaining ability to run simply on interfaces • “Bootstrap” to fastest interface possible • Ethernet->Ethernet mapping for inter-VM communication when both VMs are on the same LAN 91

Fast VM<->VNET communication • Host Kernel / VNET shared memory segment communication for packet filtering and injection – Avoid copy cost, system-call overhead • In-Host kernel implementation of VNET forwarding loop – Avoid context switch from VMM to VNET, avoid copy cost • In-Guest kernel VNET device driver – Avoid additional context switch and copy step 92

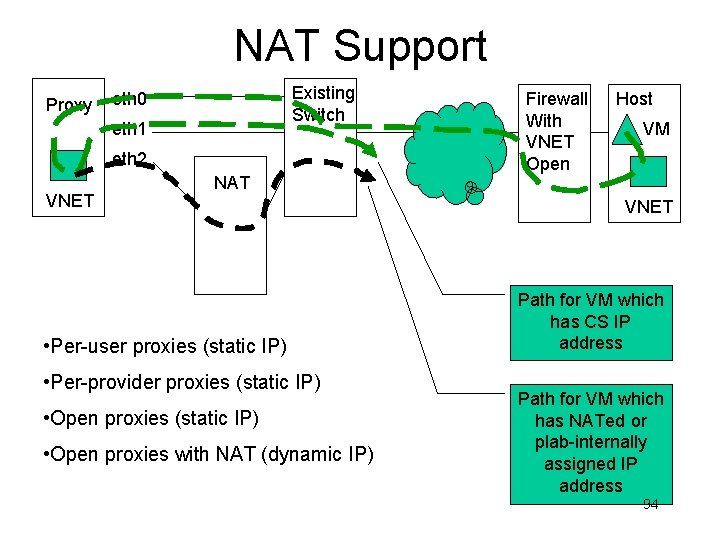

Policy Conformance Routing • VNET overlay links can take many forms… • UDP, TCP/SSL, SSH (Current) • UDP+STUN, TCP+STUN, TOR, HTTP (Planned) – STUN => traverse NATs – TOR => anonymous • Many topologies and forwarding rules possible among a collection of VNET daemons supporting VMs with a given application topology and traffic load matrix • Which topology and what choice of link type for each edge achieves desired connectivity (if possible) with highest performance? • A form of the adaptation problems we have already made much progress on in Virtuoso. 93
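
The question of which link type to use on each edge can be illustrated with a per-edge selection rule. The sketch below is a simplification under stated assumptions: the performance scores are made-up relative numbers, the only policy properties modeled are the two named on the slide (STUN traverses NATs, TOR is anonymous), and each edge is chosen independently, whereas the full problem also involves topology and the traffic load matrix.

```python
# name -> (policy properties provided, assumed relative performance score)
# The scores are hypothetical placeholders, not measurements.
LINK_TYPES = {
    "UDP": (frozenset(), 10),
    "TCP/SSL": (frozenset(), 8),
    "SSH": (frozenset(), 6),
    "UDP+STUN": (frozenset({"nat"}), 9),
    "TCP+STUN": (frozenset({"nat"}), 7),
    "TOR": (frozenset({"anonymous"}), 2),
    "HTTP": (frozenset(), 4),
}


def choose_link(required):
    """Pick the highest-scoring link type that provides every required property."""
    required = frozenset(required)
    candidates = [
        (score, name)
        for name, (props, score) in LINK_TYPES.items()
        if required <= props
    ]
    if not candidates:
        return None  # desired connectivity is not achievable on this edge
    return max(candidates)[1]


def plan(edges):
    """Map each edge to a link type, given per-edge policy requirements."""
    return {edge: choose_link(req) for edge, req in edges.items()}
```

For example, `plan({("a", "b"): set(), ("b", "c"): {"nat"}})` assigns plain UDP to the first edge and UDP+STUN to the second under the assumed scores.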

NAT Support • Per-user proxies (static IP) • Per-provider proxies (static IP) • Open proxies with NAT (dynamic IP) • [Diagram labels: Proxy; Existing Switch; eth0; eth1; eth2; VNET; NAT; Firewall; With VNET; Open Host; VM; VNET] • [Legend: Path for VM which has CS IP address; Path for VM which has NATed or plab-internally assigned IP address] 94

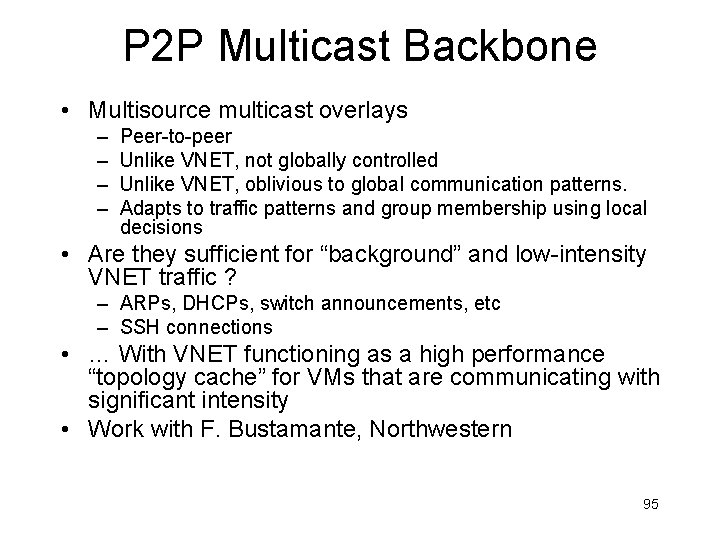

P2P Multicast Backbone • Multisource multicast overlays – Peer-to-peer – Unlike VNET, not globally controlled – Unlike VNET, oblivious to global communication patterns – Adapts to traffic patterns and group membership using local decisions • Are they sufficient for “background” and low-intensity VNET traffic? – ARPs, DHCPs, switch announcements, etc. – SSH connections • … With VNET functioning as a high performance “topology cache” for VMs that are communicating with significant intensity • Work with F. Bustamante, Northwestern 95
