Distributed Computing Part 1 Resource: Distributed Computing, Principles, Algorithms and Systems, Ajay D. Kshemkalyani and Mukesh Singhal, Cambridge University Press, 2008. 1

Introduction • A distributed system distributes jobs or computations among several physical processors (autonomous computers). • Several processors interconnected by a communication network thus form a distributed system. – A distributed system is a collection of independent entities that cooperate to solve a problem that cannot be individually solved. • The autonomous processors communicate over a communication network. – A distributed system allows resource sharing, including software, by systems connected to the network. 2

Introduction • A distributed system is a collection of autonomous computers that are connected by a communication network and communicate with each other by passing messages. • The processors each have their own local memory and use a distribution middleware. • This helps them share resources and capabilities so that users see a single, integrated, coherent network. • https://www.ques10.com/p/24024/define-and-give-examples-ofdistributed-computing-/ 3

Introduction • An important goal of a distributed system is to make it easy for users (and applications) to access and share remote resources. – Resources can be virtually anything, but typical examples include peripherals, storage facilities, data, files, services, networks, etc. – Is Facebook a distributed system? • Facebook, the online social network (OSN) system, relies on globally distributed data centers, which are highly dependent on centralized U.S. data centers. – Is Google a distributed system? • Google is one of the largest distributed systems in use today. • Examples of distributed systems/distributed computing: • Intranets, Internet, WWW, email, P2P networks, telecommunication networks, Google File System (GFS), etc. 4

Introduction • The following are the advantages of a distributed system: – Resource sharing – Openness – Concurrency – Scalability – Fault tolerance – Transparency 5

Features of a Distributed System • A distributed system can be characterized by the following features: – No common physical clock – No shared memory – Geographical separation – Autonomy and heterogeneity • The processors are “loosely coupled” – they have different speeds and each can be running a different OS. 6

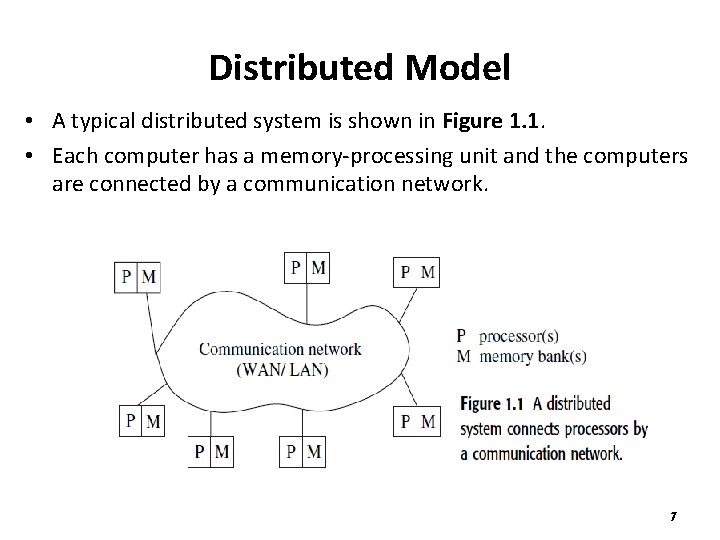

Distributed Model • A typical distributed system is shown in Figure 1.1. • Each computer has a memory-processing unit and the computers are connected by a communication network. 7

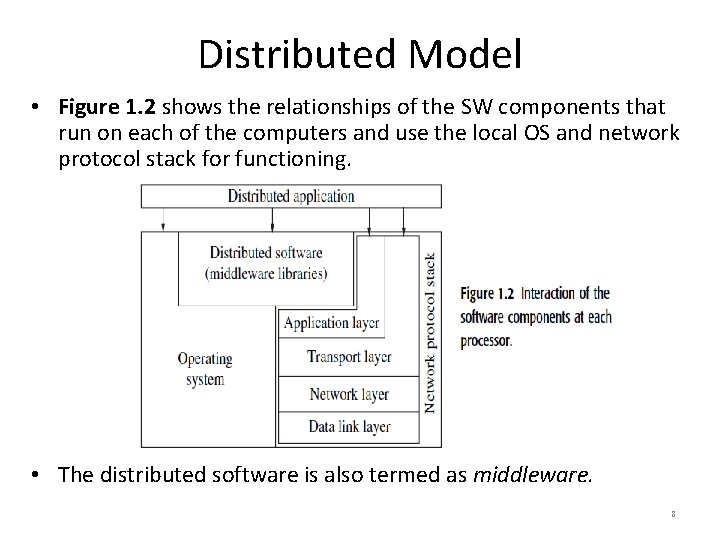

Distributed Model • Figure 1.2 shows the relationships of the software components that run on each of the computers and use the local OS and network protocol stack for functioning. • The distributed software is also termed middleware. 8

Distributed System Motivation • The motivation for distributed systems includes: – Inherently distributed computations: such as money transfer in banking, or reaching consensus among parties that are geographically distant. – Resource sharing: Resources such as peripherals, complete data sets in databases, special libraries, etc. can be shared among machines. – Access to geographically remote data and resources: Computers can access geographically remote data through their network arrangement. – Enhanced reliability: • Availability, i.e., the resource should be accessible at all times. • Integrity: the value/state of the resource should be correct, in the face of concurrent access from multiple processors, as per the application. • Fault tolerance: the ability to recover from system failures. – Increased performance/cost ratio: By resource sharing and accessing geographically remote data/resources, the performance/cost ratio is increased. – Modularity and incremental expandability: Heterogeneous processors may be easily added into the system without affecting the performance. 9

Message-passing vs. Shared Memory Systems • Emulating message-passing (MP) on a shared memory (SM) system (MP → SM) • Emulating shared memory on a message-passing system (SM → MP) 10

Emulating message-passing on a shared memory system (MP → SM) • The shared address space can be partitioned into disjoint parts, one part being assigned to each processor. • “Send” and “receive” operations can be implemented by writing to and reading from the destination/sender processor’s address space, respectively. • A Pi–Pj message-passing can be emulated by a write by Pi to the mailbox and then a read by Pj from the mailbox. • These mailboxes can be assumed to have unbounded size. • The write and read operations need to be controlled using synchronization primitives to inform the receiver/sender after the data has been sent/received. – Partition shared address space – Send/Receive emulated by writing/reading from special mailbox per pair of processes 11
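
The mailbox scheme on this slide (message passing emulated on top of shared memory) can be sketched roughly as follows. This is only an illustration under stated assumptions: threads stand in for processors sharing an address space, and the names `Mailbox`, `send`, `receive`, and `demo` are hypothetical, not taken from the source.

```python
import threading
from collections import deque


class Mailbox:
    """Unbounded mailbox in shared memory for one (sender, receiver) pair.

    Hypothetical helper for illustration; the slides only describe the idea.
    """

    def __init__(self):
        self._messages = deque()            # the shared memory region
        self._cond = threading.Condition()  # the synchronization primitive

    def send(self, msg):
        # "Send" is emulated by a write into the mailbox, followed by a
        # notification so that a waiting receiver learns data has arrived.
        with self._cond:
            self._messages.append(msg)
            self._cond.notify()

    def receive(self):
        # "Receive" is emulated by a read from the mailbox; the reader
        # blocks until the writer has deposited at least one message.
        with self._cond:
            while not self._messages:
                self._cond.wait()
            return self._messages.popleft()


def demo():
    box = Mailbox()
    received = []

    def receiver():
        for _ in range(3):
            received.append(box.receive())

    t = threading.Thread(target=receiver)
    t.start()
    for i in range(3):
        box.send(f"m{i}")
    t.join()
    return received
```

Calling `demo()` runs one writer (Pi) and one reader (Pj) against a single mailbox; with one mailbox per ordered pair of processes, each pair gets its own emulated channel.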

Emulating Shared Memory on a Message-Passing System (SM → MP) • This involves the use of “send” and “receive” operations for “write” and “read” operations. • Each shared location can be modeled as a separate process; – “write” to a shared location is emulated by sending an update message to the corresponding owner process; • Write to shared object emulated by sending message to owner process of the object – “read” of a shared location is emulated by sending a query message to the owner process; • Read from shared object emulated by sending query to owner process of the shared object 12
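
A minimal sketch of the owner-process idea follows, assuming that a `queue.Queue` plays the role of a message channel and a thread plays the role of the owner process. The message format, the acknowledgement sent on a write, and the names `owner_process`, `write`, `read`, and `demo` are illustrative assumptions, not part of the source.

```python
import queue
import threading


def owner_process(inbox):
    """Owns one shared location and serves update/query messages for it."""
    value = None
    while True:
        kind, payload, reply = inbox.get()
        if kind == "update":      # a remote "write"
            value = payload
            reply.put("ack")      # acknowledgement is an assumption here
        elif kind == "query":     # a remote "read"
            reply.put(value)
        elif kind == "stop":      # shutdown message, only for the demo
            return


def write(inbox, value):
    # "write" is emulated by sending an update message to the owner.
    reply = queue.Queue()
    inbox.put(("update", value, reply))
    return reply.get()


def read(inbox):
    # "read" is emulated by sending a query message to the owner.
    reply = queue.Queue()
    inbox.put(("query", None, reply))
    return reply.get()


def demo():
    inbox = queue.Queue()
    t = threading.Thread(target=owner_process, args=(inbox,))
    t.start()
    write(inbox, 42)
    result = read(inbox)
    inbox.put(("stop", None, None))
    t.join()
    return result
```

Every read and write costs a request/reply round trip to the owner, which is the source of the high latency of this emulation.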

Emulating Shared Memory on a Message-Passing System (SM → MP) • The latencies involved in read and write operations may be high even when using shared memory emulation because the read and write operations are implemented by using network-wide communication under the system specification. 13

Emulating Shared Memory on a Message-Passing System (SM → MP) • An application can of course use a combination of shared memory and message-passing: – In a MIMD message-passing multicomputer system, each “processor” may be a tightly coupled multiprocessor system with shared memory. – Within the multiprocessor system, the processors communicate via shared memory. – Between two computers, the communication is by message passing. • As message-passing systems are more common and more suited for wide-area distributed systems, they are the main focus here. 14

Communication Primitives • Synchronous (send/receive) – Handshake between sender and receiver – Send completes when receive completes – Receive completes when data copied into buffer • The processing for the Receive primitive completes when the data is copied into the receiver’s buffer. – A send operation is called synchronous when the operation completes only after the message sent has been received • Asynchronous (send) – Control returns to process when data copied out of user-specified buffer • It does not make sense to define asynchronous Receive primitives. 15

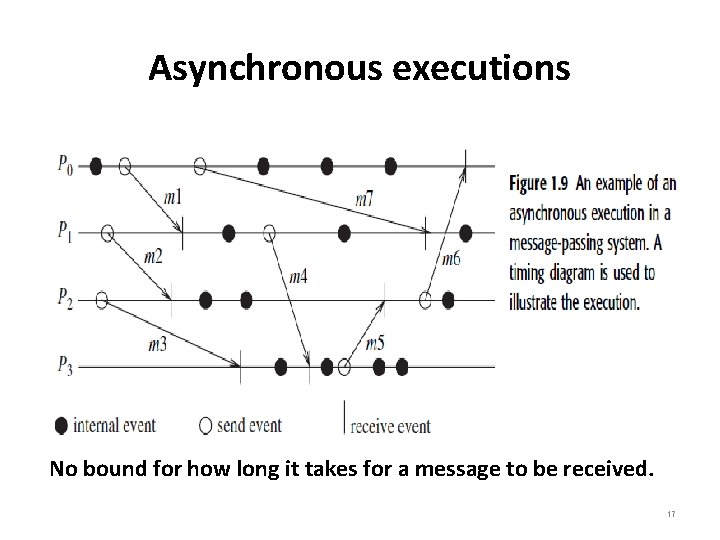

Synchronous versus asynchronous executions • An asynchronous execution is an execution in which – there is no processor synchrony and there is no bound on the drift rate of processor clocks, – message delays (transmission + propagation times) are finite but unbounded, and – there is no upper bound on the time taken by a process to execute a step. • Asynchronous means that you can execute multiple things at a time and you don't have to finish executing the current thing in order to move on to the next one. • An example asynchronous execution with four processes P0 to P3 is shown in Figure 1.9 (the arrows denote the messages). 16

Asynchronous executions No bound for how long it takes for a message to be received. 17

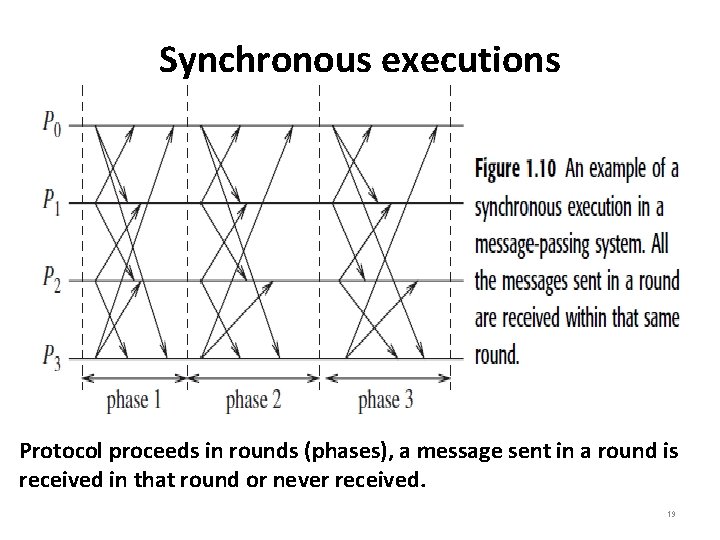

Synchronous executions • A synchronous execution is an execution in which: – processors are synchronized and the clock drift rate between any two processors is bounded, – message delivery (transmission + delivery) times are such that they occur in one logical step or round, and – there is a known upper bound on the time taken by a process to execute a step. • When you execute something synchronously, you wait for it to finish before moving on to the next step. When you execute something asynchronously, you can move on to the next step or task before it finishes. • An example of a synchronous execution with four processes P0 to P3 is shown in Figure 1.10 (the arrows denote the messages). 18

Synchronous executions Protocol proceeds in rounds (phases), a message sent in a round is received in that round or never received. 19

Distributed Systems Challenges • The following functions must be addressed when designing and building a distributed system: – Communication: This task involves designing appropriate mechanisms for communication among the processes in the network, for example: RPC, remote object invocation (ROI), message-oriented vs. stream-oriented (audio stream) communications, etc. • Message-oriented communication can be classified as follows: • In persistent communication, messages are stored at each intermediate hop along the way until the next node is ready to take delivery of the message. Example: e-mail. • In transient communication, messages are buffered only for small periods of time. If the message cannot be delivered or the host is down, it is discarded. Example: general TCP/IP communication. • In synchronous or blocking communication, the sender blocks further operations until an acknowledgement or response is received. • In asynchronous or non-blocking communication, the sender continues execution without waiting for any acknowledgement or response. 20

Distributed Systems Challenges – Processes: This involves the management of processes and threads at clients/servers, code migration, the design of protocols, etc. – Naming: Name schemes, identifiers, and addresses are essential for locating resources. – Synchronization: Mechanisms for synchronization or coordination among the processes are essential. – Data storage and access: Schemes for data storage, and implicitly for accessing the data in a fast and scalable manner across the network, are important for efficiency. 21

Distributed Systems Challenges – Consistency and replication: To avoid bottlenecks, to provide fast access to data, and to provide scalability, replication of data objects is highly desirable • This leads to issues of managing the replicas, and dealing with consistency among the replicas/caches. – Fault tolerance: Fault tolerance requires maintaining correct and efficient operation in spite of any failures of links, nodes, and processes. – Security: In a distributed system, security involves various aspects of cryptography, secure channels, access control, key management – generation and distribution, authorization, secure group management, etc. 22

Distributed Systems Challenges – Scalability and modularity: The algorithms, data (objects), and services must be as distributed as possible. Various techniques such as replication, caching and cache management, and asynchronous processing help to achieve scalability. 23

Load balancing • The goal of load balancing is to gain higher throughput and reduce the user-perceived latency. • Load balancing may be necessary because of high network traffic or a high request rate causing the network connection to be a bottleneck, or because of high computational load. • The following are some forms of load balancing: – Data migration: The ability to move data (which may be replicated) around in the system, based on the access pattern of the users. – Computation migration: The ability to relocate processes in order to perform a redistribution of the workload. – Distributed scheduling: This achieves a better turnaround time for the users by using idle processing power in the system more efficiently. 24

A Model of Distributed Computation • A distributed system consists of a set of processors that are connected by a communication network. • The communication network provides the facility of information exchange among processors. • The communication delay is finite but unpredictable. • The processors do not share a common global memory and communicate solely by passing messages over the communication network. • There is no physical global clock in the system to which processes have instantaneous access. 25

A Model of Distributed Program • A distributed program is composed of a set of n asynchronous processes p1, p2, …, pn that communicate by message passing over the communication network. • Each process runs on a different processor; the processes do not share a global memory and communicate solely by passing messages. • Let Cij denote the channel from process pi to process pj and let mij denote a message sent by pi to pj. – The message transmission delay is finite and unpredictable. • Process execution and message transfer are asynchronous – a process may execute an action spontaneously and a process sending a message does not wait for the delivery of the message to be complete. 26

A Model of Distributed Execution • The execution of a process consists of atomic actions, which are modeled as three types of events: internal events, message send events, and message receive events. • Let e_i^x denote the xth event at process pi. • Let send(m) and rec(m) denote the send and receive events of a message m. • The occurrence of events changes the states of respective processes and channels: – A send event changes the state of the process that sends the message and the state of the channel on which the message is sent. – A receive event changes the state of the process that receives the message and the state of the channel on which the message is received. 27

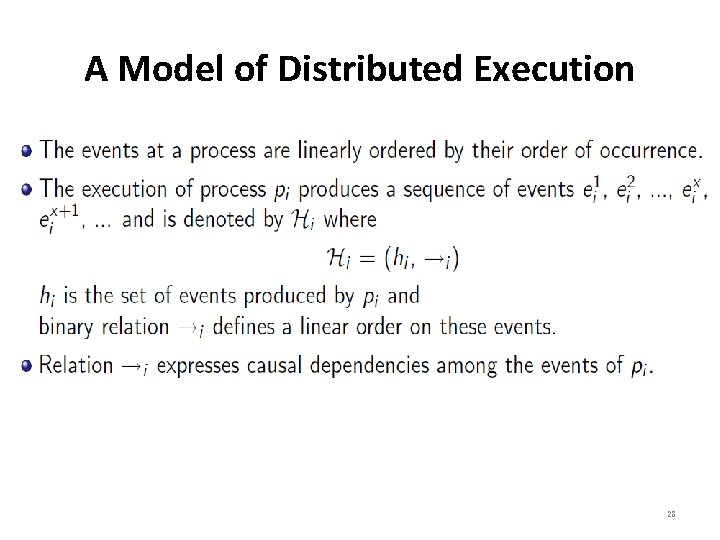

A Model of Distributed Execution 28

A Model of Distributed Execution • The send and the receive events signify the flow of information between processes and establish causal dependency from the sender process to the receiver process. • A relation →msg that captures the causal dependency due to message exchange, is defined as follows: – For every message m that is exchanged between two processes, we have, send(m) →msg rec(m) • Relation →msg defines causal dependencies between the pairs of corresponding send and receive events. 29

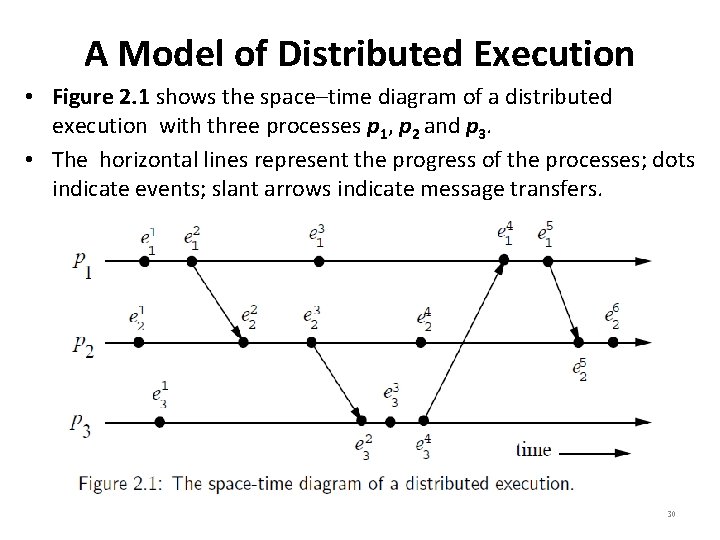

A Model of Distributed Execution • Figure 2.1 shows the space–time diagram of a distributed execution with three processes p1, p2 and p3. • The horizontal lines represent the progress of the processes; dots indicate events; slant arrows indicate message transfers. 30

Causal Precedence Relation • The relation → is Lamport’s “happens before” relation. • For any two events ei and ej, if ei → ej, then event ej is directly or transitively dependent on event ei (event ei happens before event ej). • The relation → denotes flow of information in a distributed computation and ei → ej dictates that all the information available at ei is potentially accessible at ej (see Figure 2.1). 31

Causal Precedence Relation 32

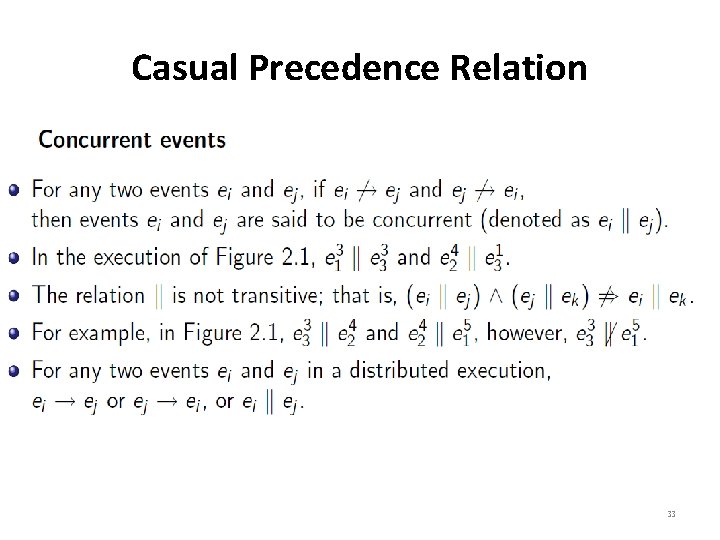

Causal Precedence Relation 33

Logical vs. Physical Concurrency • In a distributed computation, two events are logically concurrent iff they do not causally affect each other. • Physical concurrency: the events occur at the same instant in physical time. • Two or more events may be logically concurrent even though they do not occur at the same instant in physical time: – It depends on processor speed and message delays. – Whether a set of logically concurrent events coincide in the physical time or not, does not change the outcome of the computation. 34

Models of Communication Networks • There are several models of the service provided by communication networks: FIFO, non-FIFO, and causally ordered (CO). – In the FIFO model, each channel acts as a first-in first-out message queue and thus message ordering is preserved by a channel. – In the non-FIFO model, a channel acts like a set in which the sender process adds messages and the receiver process removes messages from it in a random order. – In the CO model, message communication is based on Lamport’s “happens before” relation. 35

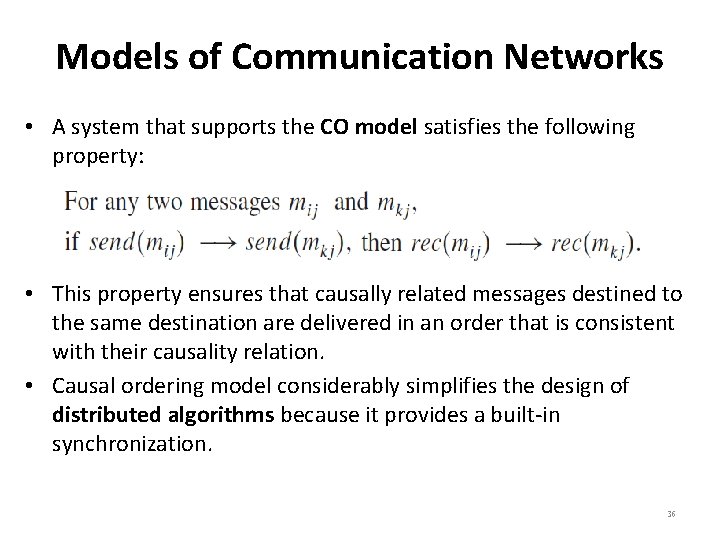

Models of Communication Networks • A system that supports the CO model satisfies the following property: for any two messages mij and mkj, if send(mij) → send(mkj), then rec(mij) → rec(mkj). • This property ensures that causally related messages destined to the same destination are delivered in an order that is consistent with their causality relation. • The causal ordering model considerably simplifies the design of distributed algorithms because it provides a built-in synchronization. 36

Global State of a Distributed System • Global State (GS) of a distributed system is a collection of its local states (LS) including the states of processes and the communication channels: – The state of a process is defined by the contents of processor registers, stacks, local memory, etc. and depends on the local context of the distributed application. – The state of channel is given by the set of messages in transit in the channel. 37

Global State of a Distributed System • The occurrence of events changes the states of respective processes and channels: – A send event changes the state of the process that sends the message and the state of the channel on which the message is sent. – A receive event changes the state of the process that receives the message and the state of the channel on which the message is received. 38

Global State of a Distributed System 39

Global State of a Distributed System 40

Global State of a Distributed System • A Consistent Global State – Even if the state of all the send and receive components is not recorded at the same instant, such a state will be meaningful when every message that is recorded as received is also recorded as sent. – Basic idea is that a state should not violate causality: • A message cannot be received if it was not sent. Such states are called consistent global states and are meaningful global states. – Inconsistent global states are not meaningful in the sense that a distributed system can never be in an inconsistent state. 41

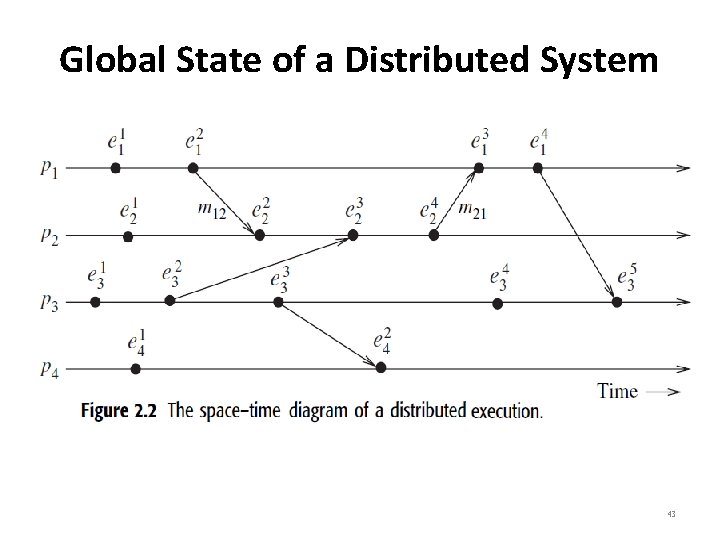

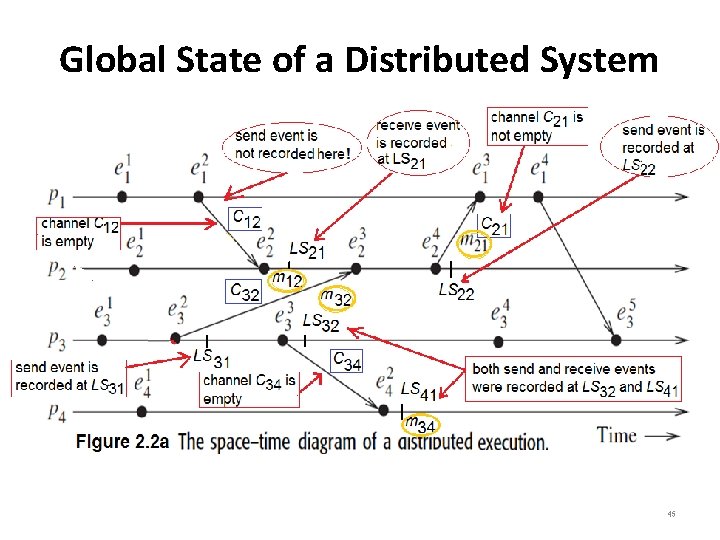

Global State of a Distributed System • A model of distributed execution is shown in Figure 2.2. 42

Global State of a Distributed System 43

Global State of a Distributed System • A global state is transitless iff all channels are recorded as empty (that is, every message sent has reached its destination and none is left in transit). • A global state is strongly consistent iff it is transitless as well as consistent. • The following can be noted from Figure 2.2a: – The message m12 sent by p1 is an inconsistent message because its send event is not recorded in the local state (LS) of p1. – The message m21 sent by p2 is a consistent message because its send event is recorded at LS 22 and the channel C21 is not empty. – The message m34 sent by p3 is a strongly consistent message because its send and receive events are recorded at LS 31 and LS 41 and the channel C34 is empty after recording the received message at LS 41. 44

Global State of a Distributed System 45

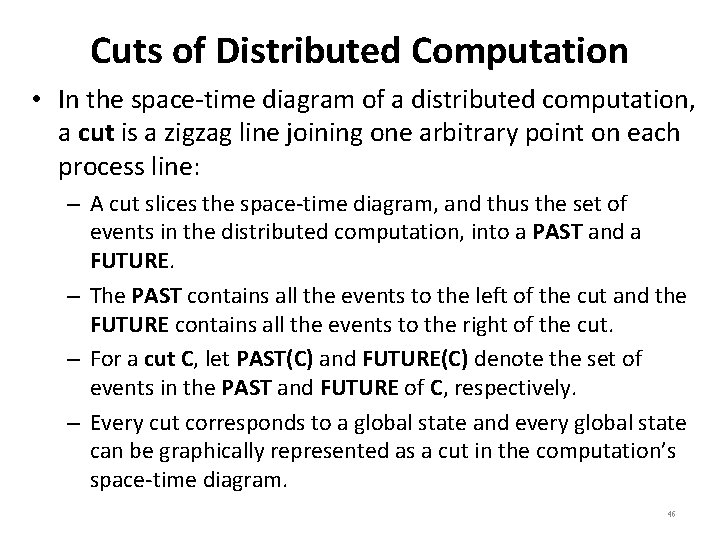

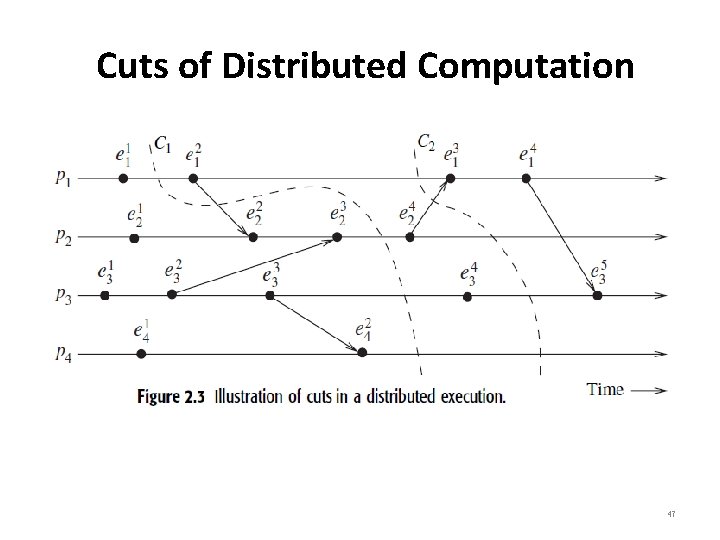

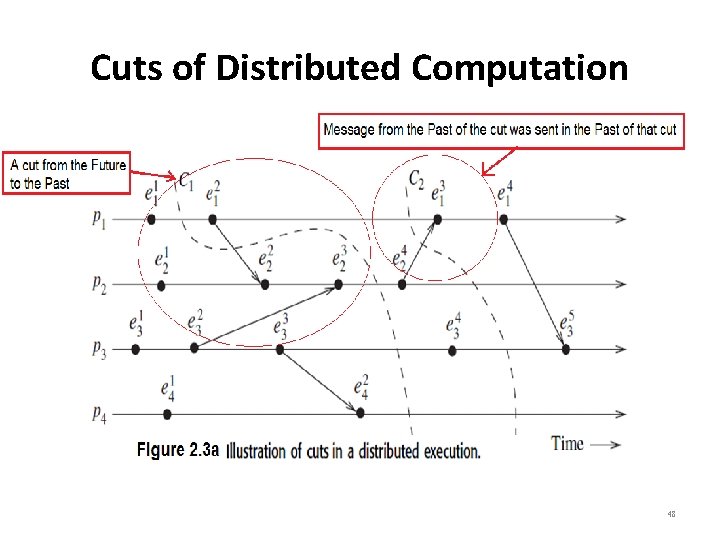

Cuts of Distributed Computation • In the space-time diagram of a distributed computation, a cut is a zigzag line joining one arbitrary point on each process line: – A cut slices the space-time diagram, and thus the set of events in the distributed computation, into a PAST and a FUTURE. – The PAST contains all the events to the left of the cut and the FUTURE contains all the events to the right of the cut. – For a cut C, let PAST(C) and FUTURE(C) denote the set of events in the PAST and FUTURE of C, respectively. – Every cut corresponds to a global state and every global state can be graphically represented as a cut in the computation’s space-time diagram. 46

Cuts of Distributed Computation 47

Cuts of Distributed Computation 48

Cuts of Distributed Computation 49

Models of Process Communication • There are two basic models of process communications – synchronous and asynchronous. • The synchronous communication model is a blocking type where on a message send, the sender process blocks until the message has been received by the receiver process. • The sender process resumes execution only after it learns that the receiver process has accepted the message. – Thus, the sender and the receiver processes must synchronize to exchange a message. • Asynchronous communication model is a non-blocking type where the sender and the receiver do not synchronize to exchange a message. – After having sent a message, the sender process does not wait for the message to be delivered to the receiver process. 50

Logical time • The knowledge of the causal precedence relation among the events of processes helps solve a variety of problems in distributed systems, such as distributed algorithms design, tracking of dependent events, knowledge about the progress of a computation, concurrency measures, etc. • In a distributed computation, the causality relation between events produced by a program execution and its fundamental monotonicity property can be accurately captured by logical clocks. 51

Logical time • The logical clock C (proposed by Lamport) is a function that maps an event e in a distributed system to an element in the time domain T, denoted as C(e) and called the timestamp of e, and is defined as follows: for two events ei and ej, ei → ej ⇒ C(ei) < C(ej). • Relation < is called the happened before or causal precedence. 52

Implementing Logical Clocks • Implementation of logical clocks requires addressing two issues: – Data structures local to every process to represent logical time: • A local logical clock, denoted by lci, that helps process pi measure its own progress. • A logical global clock, denoted by gci, that is a representation of process pi’s local view of the logical global time. Typically, lci is a part of gci. – A protocol with the following rules to ensure the consistency condition: • R1: This rule governs how the local logical clock is updated by a process when it executes an event. • R2: This rule governs how a process updates its global logical clock to update its view of the global time and global progress. 53

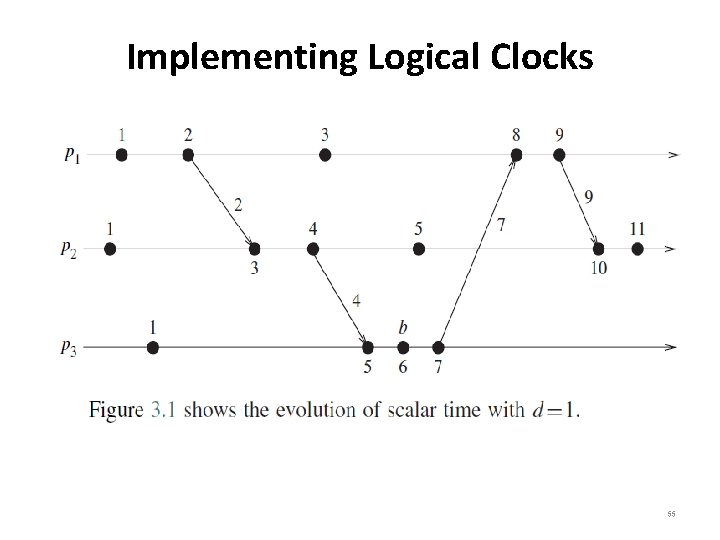

Implementing Logical Clocks • The time domain is the set of non-negative integers. • Rules R1 and R2 to update the clocks are as follows: – R1: Before executing an event (send, receive, or internal), process pi executes the following: Ci := Ci + d (d > 0). – R2: Each message piggybacks the clock value of its sender at sending time. When a process pi receives a message with timestamp Cmsg, it executes the following actions: • Ci := max(Ci, Cmsg) • Execute R1 • Deliver the message • Figure 3.1 shows the evolution of scalar time with d = 1. 54
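
Rules R1 and R2 for scalar time can be sketched in a few lines. This is an illustrative sketch, not the authors' code; the class name `LamportClock` and its method names are assumptions made for the example.

```python
class LamportClock:
    """Scalar logical clock of one process (illustrative sketch)."""

    def __init__(self, d=1):
        self.time = 0   # clocks start at zero
        self.d = d      # increment, d > 0

    def tick(self):
        # R1: executed before every event (internal, send, or receive).
        self.time += self.d
        return self.time

    def send(self):
        # A send is an event, so R1 applies; the returned value is the
        # timestamp piggybacked on the outgoing message.
        return self.tick()

    def receive(self, msg_timestamp):
        # R2: take the maximum of the local clock and the message
        # timestamp, then execute R1, then deliver the message.
        self.time = max(self.time, msg_timestamp)
        return self.tick()
```

For instance, if a process whose clock reads 4 receives a message stamped 6, `receive(6)` sets the clock to max(4, 6) + 1 = 7, while a process at 6 receiving a message stamped 4 also moves to 7.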

Implementing Logical Clocks 55

Implementing Logical Clocks • Conditions satisfied by the system of clocks (C): – For any events a and b: if a → b, then C(a) < C(b). – [C1]: For a and b in a process Pi, if a occurs before b, then Ci(a) < Ci(b). – [C2]: If a is the event of sending m in Pi and b is the event of receiving m at Pj, then Ci(a) < Cj(b). • Implementation rules: – [R1]: Clock Ci is incremented between any two successive events in Pi: Ci := Ci + d (d > 0); if a and b are successive events in Pi and a → b, then Ci(b) = Ci(a) + d. – [R2]: If event a in Pi sends a message m, then m carries the timestamp tm = Ci(a) (after R1). On receiving m, Pj sets Cj := max(Cj + d, tm + d), where Cj on the right-hand side is the clock value before the receive event (usually the value of d is 1). 56

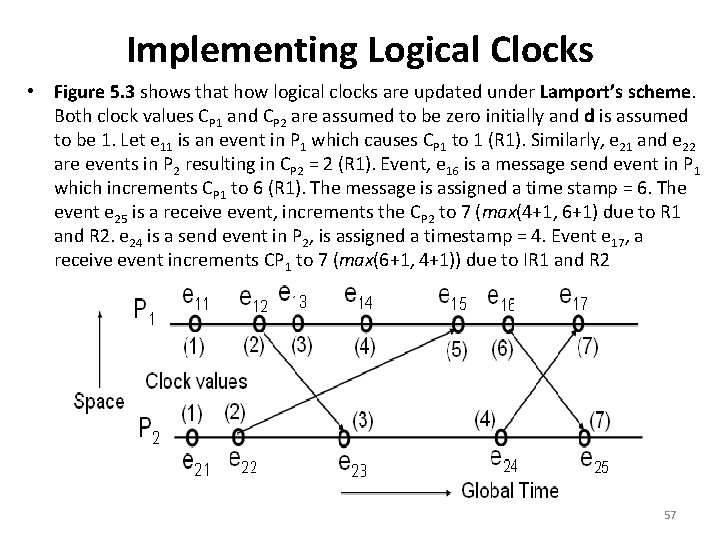

Implementing Logical Clocks • Figure 5.3 shows how logical clocks are updated under Lamport’s scheme. Both clock values CP1 and CP2 are assumed to be zero initially and d is assumed to be 1. Let e11 be an event in P1 that sets CP1 to 1 (R1). Similarly, e21 and e22 are events in P2 resulting in CP2 = 2 (R1). Event e16 is a message send event in P1 that increments CP1 to 6 (R1); the message is assigned a timestamp of 6. The receive event e25 sets CP2 to 7 (max(4+1, 6+1)) due to R1 and R2. Event e24 is a send event in P2 and is assigned a timestamp of 4. The receive event e17 sets CP1 to 7 (max(6+1, 4+1)) due to R1 and R2. 57

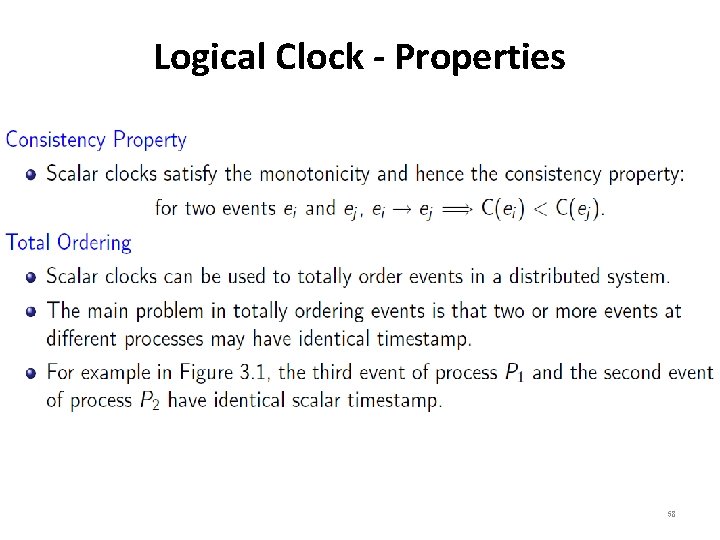

Logical Clock - Properties 58

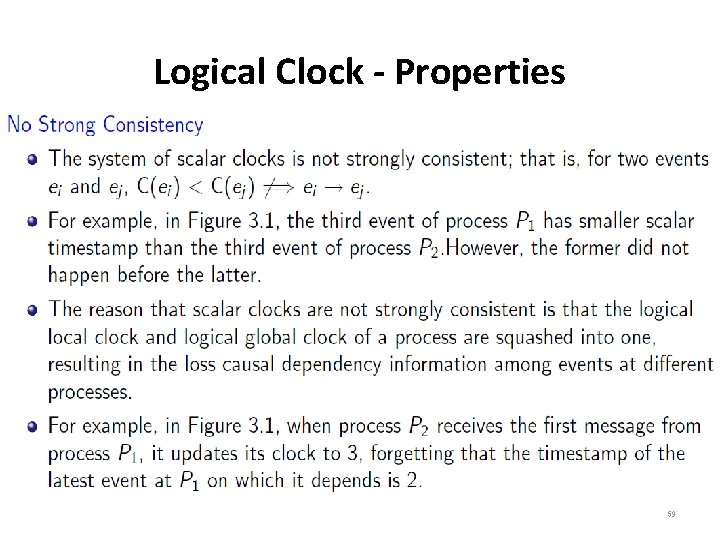

Logical Clock - Properties 59

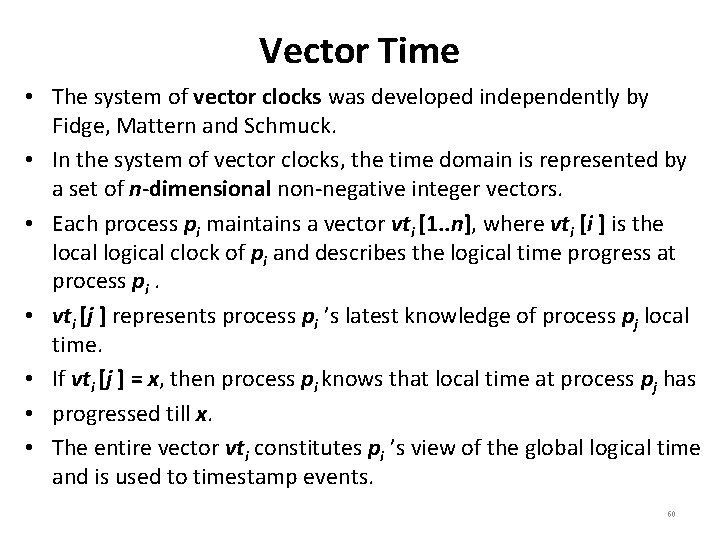

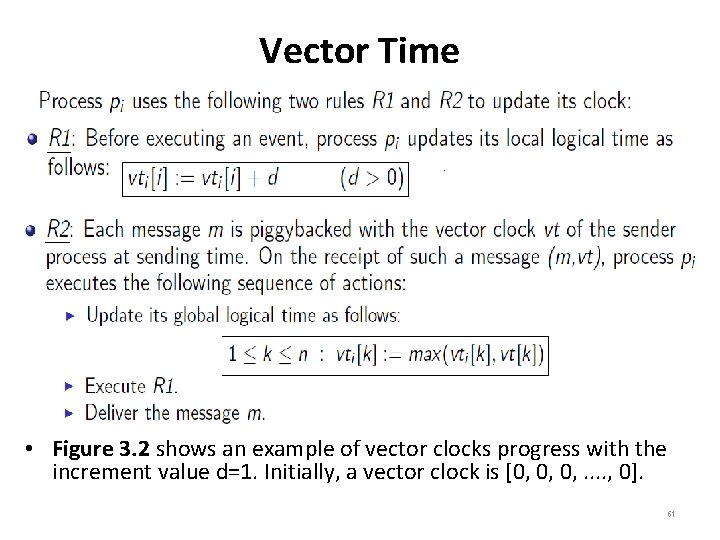

Vector Time • The system of vector clocks was developed independently by Fidge, Mattern and Schmuck. • In the system of vector clocks, the time domain is represented by a set of n-dimensional non-negative integer vectors. • Each process pi maintains a vector vti[1..n], where vti[i] is the local logical clock of pi and describes the logical time progress at process pi. • vti[j] represents process pi’s latest knowledge of process pj’s local time. • If vti[j] = x, then process pi knows that local time at process pj has progressed till x. • The entire vector vti constitutes pi’s view of the global logical time and is used to timestamp events. 60
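
The vector described above can be sketched as follows. The update rules are not spelled out in the slide text, so this sketch assumes the standard vector-clock rules (increment the own component before each event; on receive, take the component-wise maximum with the piggybacked vector and then increment the own component). The names `VectorClock`, `happened_before`, and `concurrent` are hypothetical, and indices are 0-based here rather than 1..n.

```python
class VectorClock:
    """Vector clock of process i in a system of n processes (sketch)."""

    def __init__(self, n, i, d=1):
        self.vt = [0] * n   # initially [0, 0, ..., 0]
        self.i = i          # index of the owning process (0-based)
        self.d = d

    def tick(self):
        # Before any event, advance the local component vt[i].
        self.vt[self.i] += self.d
        return list(self.vt)

    def send(self):
        # The returned copy is the timestamp piggybacked on the message.
        return self.tick()

    def receive(self, vt_msg):
        # Merge the sender's knowledge, then count the receive event.
        self.vt = [max(a, b) for a, b in zip(self.vt, vt_msg)]
        return self.tick()


def happened_before(u, v):
    # u -> v iff u <= v component-wise and u differs from v.
    return all(a <= b for a, b in zip(u, v)) and u != v


def concurrent(u, v):
    # Distinct timestamps that are not ordered either way.
    return u != v and not happened_before(u, v) and not happened_before(v, u)
```

With three processes, a send at process 0 yields [1, 0, 0]; the matching receive at process 1 yields [1, 1, 0], which is causally after the send, while an unrelated local event at process 2 with timestamp [0, 0, 1] is concurrent with both.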

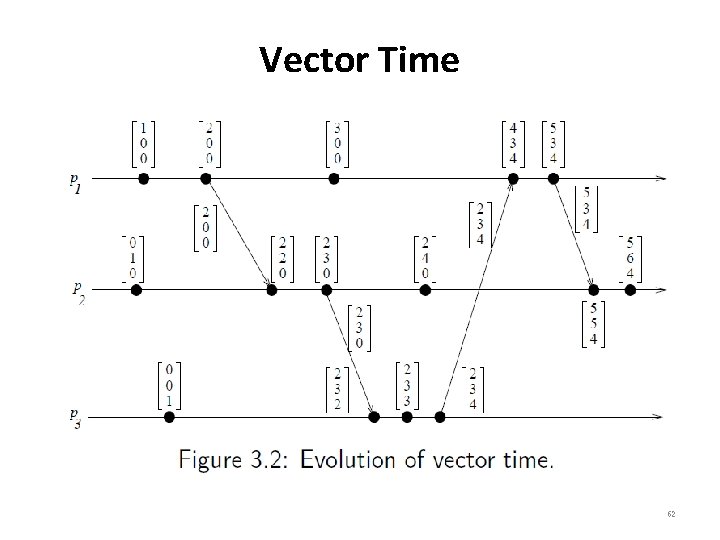

Vector Time • Figure 3.2 shows an example of vector clock progress with the increment value d = 1. Initially, a vector clock is [0, 0, 0, ..., 0]. 61

Vector Time 62

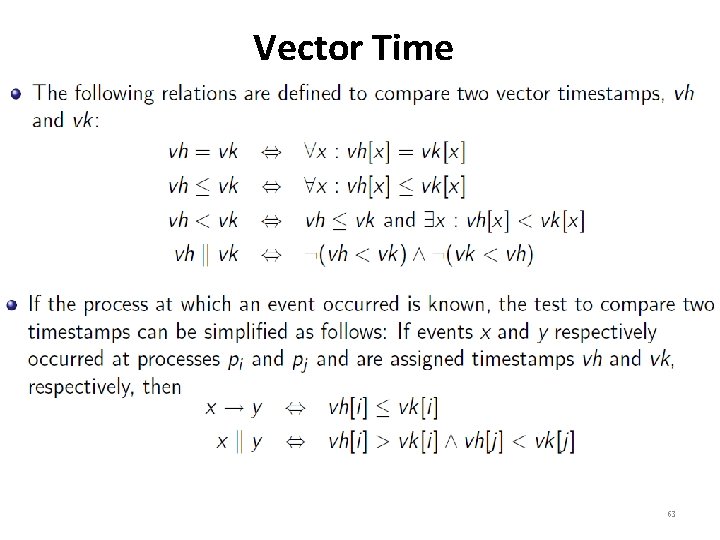

Vector Time 63

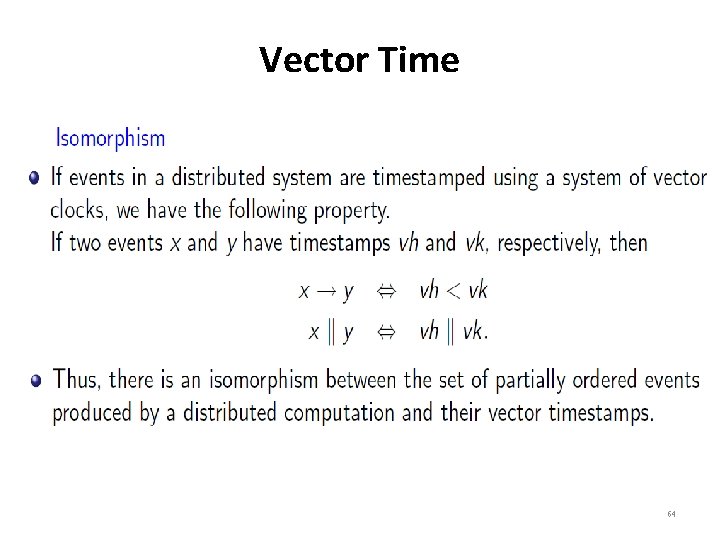

Vector Time 64

Vector Time • Since vector time tracks causal dependencies exactly, it finds a wide variety of applications: – For example, they are used in distributed debugging, implementations of causal ordering communication , causal distributed shared memory, establishment of global breakpoints, and in determining the consistency of checkpoints in optimistic recovery. 65

Global State and Snapshot Recording • Recording the global state of a distributed system on-the-fly is an important paradigm. • The lack of globally shared memory and of a global clock, together with unpredictable message delays in a distributed system, makes this problem non-trivial. 66

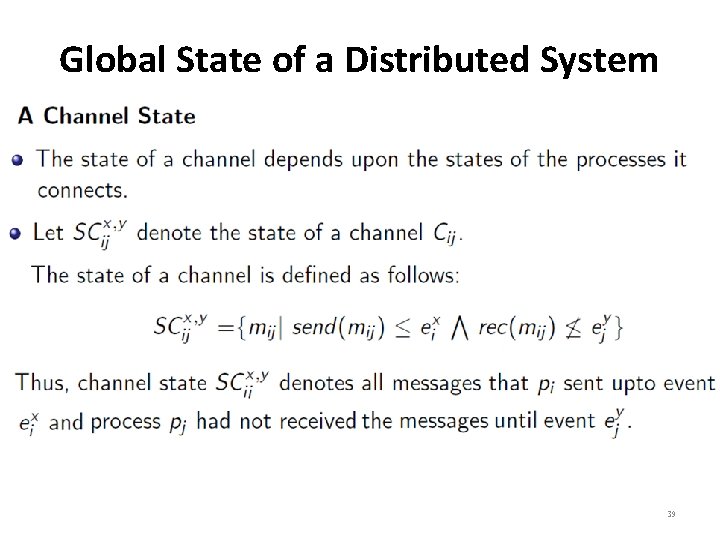

System Model • The system consists of a collection of n processes p1, p2, ..., pn that are connected by channels. • Processes communicate by passing messages through communication channels. • Cij denotes the channel from process pi to process pj and the channel state is denoted by SCij. • The actions performed by a process are modeled as three types of events: internal events, message send events, and message receive events. • For a message mij that is sent by process pi to process pj, let send(mij) and rec(mij) denote its send and receive events. 67

System Model • At any instant, the state of process pi, denoted by LSi, is the result of the sequence of all the events executed by pi up to that instant. • For an event e and a process state LSi, e ∈ LSi iff e belongs to the sequence of events that have taken process pi to state LSi. • For an event e and a process state LSi, e ∉ LSi iff e does not belong to the sequence of events that have taken process pi to state LSi. • For a channel Cij, the following set of messages can be defined based on the local states of the processes pi and pj: Transit: transit(LSi, LSj) = {mij | send(mij) ∈ LSi ∧ rec(mij) ∉ LSj} • That is, message mij has been sent and recorded by pi, is on the channel Cij, and has not been received and recorded by pj. 68

Model of Communication • There are three models of communication: FIFO, non-FIFO, and causally ordered: – In the FIFO model, each channel acts as a first-in first-out message queue and thus message ordering is preserved by a channel. – In the non-FIFO model, a channel acts like a set in which the sender process adds messages and the receiver process removes messages from it in a random order. – A causal delivery of messages satisfies the following property: “For any two messages mij and mkj, if send(mij) → send(mkj), then rec(mij) → rec(mkj)”. 69

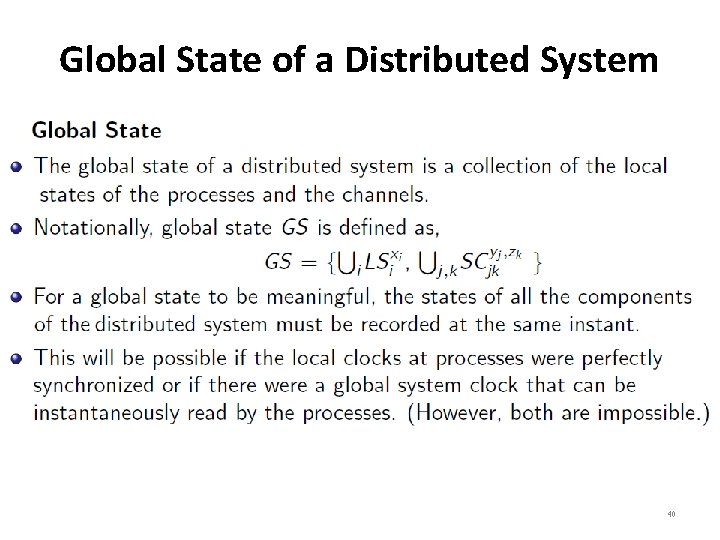

Consistent Global State • The global state (GS) of a distributed system is a collection of the local states (LS) of the processes and the channel states (SC). • A GS is a consistent GS iff it satisfies the following two conditions: – C1: send(mij) ∈ LSi ⇒ mij ∈ SCij ⊕ rec(mij) ∈ LSj (⊕ is the exclusive-or operator). Every message mij that is recorded as sent in the LS of a process pi must be captured either in the state of the channel Cij or in the collected LS of the receiver process pj. – C2: send(mij) ∉ LSi ⇒ mij ∉ SCij ∧ rec(mij) ∉ LSj. If a message mij is not recorded as sent in the LS of process pi, then it must be present neither in the state of the channel Cij nor in the collected LS of the receiver process pj. 70
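
Conditions C1 and C2 can be checked mechanically once a recorded global state is written down. The sketch below assumes a simple hypothetical encoding: for each channel (i, j), a set of message identifiers recorded as sent in LSi, a set recorded as received in LSj, and a set recorded in the channel state SCij. The function name `is_consistent` and this encoding are illustrative assumptions.

```python
def is_consistent(sent, received, channel):
    """Check conditions C1 and C2 on a recorded global state.

    Each argument maps a channel (i, j) to a set of message ids:
      sent[(i, j)]     -- messages whose send event is in LS_i
      received[(i, j)] -- messages whose receive event is in LS_j
      channel[(i, j)]  -- messages recorded in the channel state SC_ij
    """
    for ch in set(sent) | set(received) | set(channel):
        s = sent.get(ch, set())
        r = received.get(ch, set())
        c = channel.get(ch, set())
        # C1: a message recorded as sent is in the channel state or in
        # the receiver's state, but not both (exclusive or).
        for m in s:
            if (m in c) == (m in r):
                return False
        # C2: a message not recorded as sent appears neither in the
        # channel state nor in the receiver's state.
        if (c | r) - s:
            return False
    return True
```

A state in which m1 is recorded as received but not as sent fails C2, which is exactly the "received but never sent" situation that consistency rules out.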

Consistent Global State • In a consistent GS, every message that is recorded as received is also recorded as sent. • Such a global state captures the notion of causality that a message cannot be received if it was not sent. • Consistent GS are meaningful global states and inconsistent GS are not meaningful in the sense that a distributed system can never be in an inconsistent state. 71

Recording a Snapshot of a Distributed Computation • A global physical clock is not available in a distributed system and the following two issues need to be addressed in recording a consistent global snapshot (state) of a distributed system: – How to distinguish the messages to be recorded in the snapshot (either in a channel state or in a process state) from those not to be recorded? • Any message that is sent by a process before recording its snapshot must be recorded in the global snapshot (from C1). • Process pj must record its snapshot before processing a message mij that was sent by process pi after recording its snapshot (from C2). 72

Recording a Snapshot of a Distributed Computation • Snapshot recording algorithms assume different IPC capabilities of the underlying system and illustrate how IPC affects the design complexity of these algorithms. • Two types of messages are considered: computation messages and control messages. – The computation messages are exchanged by the underlying application and the control messages are exchanged by the snapshot algorithm. – Execution of a snapshot algorithm is transparent to the underlying application. 73

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels • The Chandy-Lamport algorithm was the first algorithm to record the global state snapshot in a distributed computation system. The assumptions of the algorithm are as follows: – There are no failures and all messages arrive intact and only once. – The communication channels are unidirectional and FIFO ordered. – There is a communication path between any two processes in the system. – Any process may initiate the snapshot algorithm. – The snapshot algorithm does not interfere with the normal execution of the processes. – Each process in the system records its local state and the state of its incoming channels. 74

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels • Chandy-Lamport algorithm: – The algorithm uses a control message, called a marker. – After a site has recorded its snapshot, it sends the marker along all of its outgoing channels before sending out any more messages. – A marker separates the messages in the channel into those to be included in the snapshot (i.e., channel state or process state) and those not to be recorded in the snapshot. 75

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels • Since all messages that follow a marker on channel Cij have been sent by process pi after pi has taken its snapshot, process pj must record its snapshot not later than when it receives a marker on channel Cij. • In general, a process must record its snapshot no later than when it receives a marker on any of its incoming channels. 76

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels • The algorithm can be initiated by any process by executing the “Marker Sending Rule”, by which it records its local state and sends a marker on each of its outgoing channels. • A process executes the “Marker Receiving Rule” on receiving a marker. • If the process has not yet recorded its local state, it records the state of the channel on which the marker is received as empty and executes the “Marker Sending Rule” to record its local state. • The algorithm terminates after each process has received a marker on all of its incoming channels. • All the local snapshots get disseminated to all other processes and all the processes can determine the global state. 77

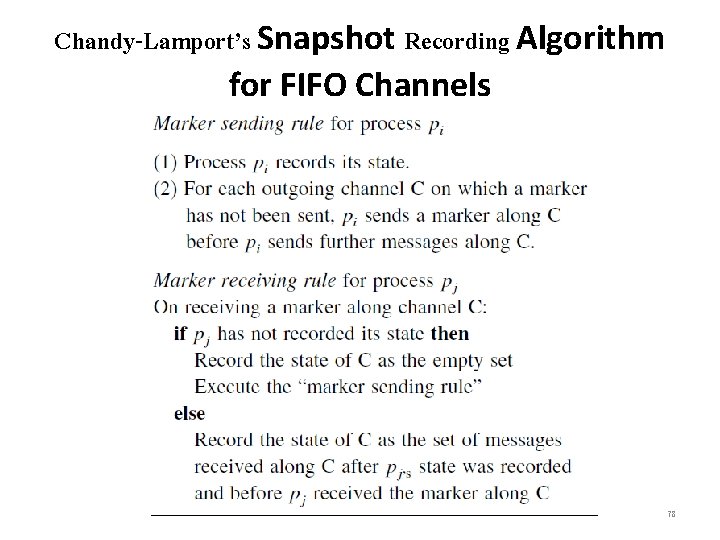

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels 78

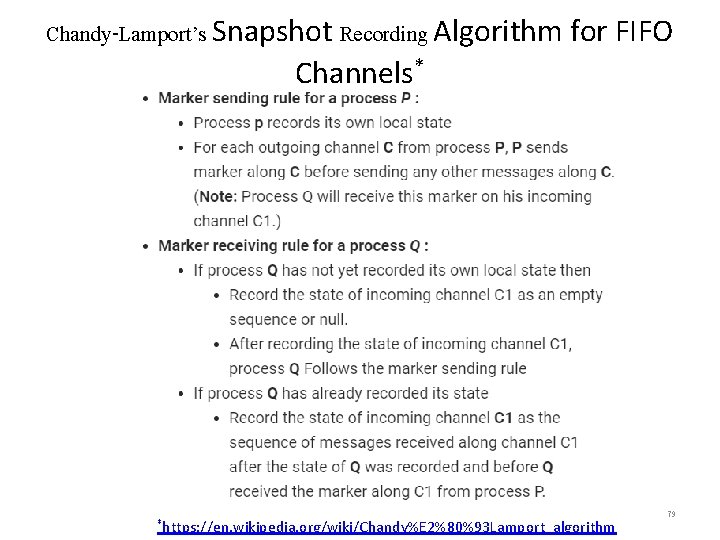

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels* *https://en.wikipedia.org/wiki/Chandy%E2%80%93Lamport_algorithm 79

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels • A process initiates snapshot collection by executing the marker sending rule by which it records its local state and sends a marker on each outgoing channel. • A process executes the marker receiving rule on receiving a marker. • If the process has not yet recorded its local state, it records the state of the channel on which the marker is received as empty and executes the marker sending rule to record its local state. • Otherwise, the state of the incoming channel on which the marker is received is recorded as the set of computation messages received on that channel after recording the local state but before receiving the marker on that channel. • The algorithm can be initiated by any process by executing the marker sending rule. The algorithm terminates after each process has received a marker on all of its incoming channels. 80
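
The marker sending and marker receiving rules can be illustrated with a small single-threaded simulation. This is a sketch under several assumptions that are not in the source: FIFO channels are modeled as `deque` objects, the application is a toy money transfer in which a message is an integer amount, and the names `Process`, `record_and_send_markers`, `on_message`, and `demo` are hypothetical.

```python
from collections import deque

MARKER = "MARKER"  # the control message


class Process:
    def __init__(self, pid, state):
        self.pid = pid
        self.state = state          # application state (an integer balance)
        self.recorded_state = None  # local snapshot, once taken
        self.channel_state = {}     # incoming channel source -> messages
        self.recording = set()      # incoming channels still being recorded
        self.incoming = []          # pids with a channel into this process
        self.outgoing = {}          # destination pid -> FIFO channel (deque)

    def record_and_send_markers(self):
        # Marker sending rule: record the local state, then send a marker
        # on every outgoing channel before any further message.
        self.recorded_state = self.state
        self.recording = set(self.incoming)
        self.channel_state = {src: [] for src in self.incoming}
        for ch in self.outgoing.values():
            ch.append(MARKER)

    def on_message(self, src, msg):
        if msg == MARKER:
            # Marker receiving rule.
            if self.recorded_state is None:
                # First marker: take the snapshot now; the channel from
                # src is recorded as empty.
                self.record_and_send_markers()
            # Either way, recording of the channel from src stops here.
            self.recording.discard(src)
        else:
            if src in self.recording:
                # Received after the local snapshot but before the marker:
                # the message belongs to the recorded channel state.
                self.channel_state[src].append(msg)
            self.state += msg  # the application processes the transfer


def demo():
    p1, p2 = Process(1, 100), Process(2, 50)
    c12, c21 = deque(), deque()
    p1.outgoing, p1.incoming = {2: c12}, [2]
    p2.outgoing, p2.incoming = {1: c21}, [1]

    p1.state -= 10
    c12.append(10)                 # p1 sends 10 to p2
    p1.record_and_send_markers()   # p1 initiates the snapshot
    p2.state -= 5
    c21.append(5)                  # p2 sends 5 before seeing the marker

    while c12:
        p2.on_message(1, c12.popleft())
    while c21:
        p1.on_message(2, c21.popleft())
    return p1, p2
```

In this run the recorded process states plus the recorded channel states add up to the 150 units that exist in the system, even though the two local states were recorded at different moments.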

Chandy-Lamport’s Snapshot Recording Algorithm for FIFO Channels • The recorded local snapshots can be put together to create the global snapshot in several ways. • One policy is to have each process send its local snapshot to the initiator of the algorithm. • Another policy is to have each process send the information it records along outgoing channels, and to have each process receiving such information for the first time propagate it along its outgoing channels. • All the local snapshots get disseminated to all other processes and all the processes can determine the global state. 81

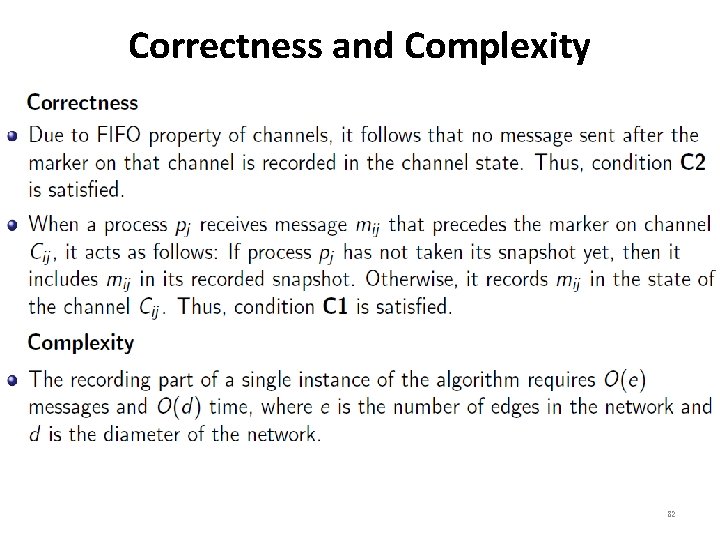

Correctness and Complexity 82
